Validating Kinetic Model Frameworks in DBTL Cycles: A Roadmap for Biomedical Researchers

This article provides a comprehensive framework for validating kinetic models within Design-Build-Test-Learn (DBTL) cycles, addressing a critical need in pharmaceutical development and metabolic engineering.

Validating Kinetic Model Frameworks in DBTL Cycles: A Roadmap for Biomedical Researchers

Abstract

This article provides a comprehensive framework for validating kinetic models within Design-Build-Test-Learn (DBTL) cycles, addressing a critical need in pharmaceutical development and metabolic engineering. We explore the foundational principles of kinetic modeling, from classical tracer kinetics to modern mechanistic systems biology. The content details methodological applications across diverse domains, including combinatorial pathway optimization and biotherapeutic stability prediction. We present systematic troubleshooting approaches for overcoming common implementation challenges and establish rigorous validation protocols comparing machine learning methods and model discrimination frameworks. This resource equips researchers and drug development professionals with practical strategies for enhancing model reliability, accelerating therapeutic development, and improving prediction accuracy in complex biological systems.

The Evolution of Kinetic Modeling: From Tracer Kinetics to Modern DBTL Integration

The collaboration between Mones Berman and Robert Levy at the National Institutes of Health in the late 1950s and early 1960s represents a watershed moment in biomedical research. Their partnership aligned computational expertise with physiological insight at a time when radioisotopes were just becoming available for metabolic studies and computers filled entire rooms [1]. This convergence of technologies enabled groundbreaking investigations into plasma lipoprotein metabolism that would establish foundational principles for kinetic modeling. Berman, an engineer by training, envisioned that linear algebra, linear differential equations, and computers comprised the ideal set of tools to formulate biological models and test them against tracer kinetic data [1]. His development of the SAAM (Simulation, Analysis, and Modeling) FORTRAN code provided the practical means to implement this vision, creating one of the first comprehensive computational tools for biological system modeling [1] [2].

Meanwhile, Levy recognized that combining ultracentrifugation with radio-iodinated proteins offered unprecedented opportunities to investigate the metabolic properties of plasma lipoproteins [1]. Their collaborative work attacked pivotal questions about lipoprotein metabolism, establishing an intellectual and methodological legacy that continues to influence modern pharmacological research, particularly in the context of Design-Build-Test-Learn (DBTL) cycle validation. The Berman-Levy approach demonstrated early that quantitative modeling could answer fundamental physiological questions that were otherwise intractable, such as distinguishing between excessive production versus insufficient removal of LDL-cholesterol in disease states [1].

Comparative Analysis of Modeling Approaches

Methodological Foundations and Evolution

Table 1: Comparative Analysis of Kinetic Modeling Approaches

| Modeling Characteristic | Classical Tracer Kinetics (Berman-Levy) | Mechanistic Systems Biology (MSB) | Modern Systems Pharmacology |

|---|---|---|---|

| Fundamental Principle | Steady-state assumption | Explicit molecular mechanisms | Hybrid: mechanistic + empirical |

| Computational Framework | Linear differential equations | Nonlinear differential equations | Multi-scale, multi-mechanism |

| Data Requirements | Tracer kinetic data at steady state | Non-steady state perturbation data | Multi-modal (omics, kinetic, clinical) |

| Regulatory Insight | Identifies altered processes | Reveals molecular control mechanisms | Predicts pharmacological interventions |

| Temporal Resolution | Static (steady state) | Dynamic (transients) | Multi-temporal |

| Key Limitation | Hides molecular mechanisms | Computational complexity | Model validation across scales |

The Berman-Levy approach established the power of tracer kinetics for distinguishing between metabolic pathways. When confronting elevated LDL-cholesterol concentrations, their methods could determine whether this resulted from excessive production or insufficient removal—a distinction impossible based on concentration measurements alone [1]. The fundamental strength of tracer kinetics lies in its ability to extract rate constants that reflect the net effect of all regulatory controls (transcriptional, translational, posttranslational, and allosteric) operating in a steady state [1]. However, this power comes with the limitation that these detailed regulatory mechanisms remain hidden from view, with the full complex rate law reducing to a single rate constant under steady-state conditions [1].

Parallel to tracer kinetics, another school of biological modeling developed with equally distinguished proponents. In physiology, Arthur Guyton's group at the University of Mississippi, and in biochemistry, David Garfinkel and colleagues at the University of Pennsylvania, assembled large complex models of cardiovascular physiology and cardiac energy metabolism [1]. These early examples of Mechanistic Systems Biology (MSB) employed very large systems of nonlinear differential equations to analyze physiological non-steady states, making control and regulation explicit rather than hidden [1]. This tradition now finds expression in modern systems pharmacology, where models increasingly incorporate molecular mechanisms that dominate 21st-century biomedical research.

Quantitative Comparison of Model Performance

Table 2: Experimental Validation Data Across Modeling Paradigms

| Validation Metric | SAAM/Tracer Kinetics | Mechanistic Systems Biology | Integrated LDBT Approach |

|---|---|---|---|

| Prediction Accuracy for LDL Flux | High (established methodology) | Moderate (context-dependent) | Emerging evidence |

| HDL Metabolism Prediction | Limited to flux quantification | Gadkar-Lu model: apoA1 recycling | Not yet fully evaluated |

| CETP Inhibition Prediction | Not applicable | Correctly predicted [1] | Potential for enhanced accuracy |

| Time to Model Convergence | Days-Weeks | Weeks-Months | Hours-Days (with automation) |

| Multi-Perturbation Integration | Single perturbations | 5+ therapeutic interventions [1] | High-throughput capacity |

| Required Sample Size | Moderate (group comparisons) | Large (parameter estimation) | Reduced (active learning) |

The evolution from classical tracer kinetics to modern integrated approaches is exemplified by the work of Gadkar, Lu, and colleagues, who have built upon decades of tracer kinetic modeling while adding mechanistic and molecular detail [1]. Their model represents one of the first efforts in cholesterol metabolism to explicitly account for both steady-state tracer kinetic data and non-steady state pharmacological perturbation responses [1]. This integration challenges both modeling traditions: nonlinear mechanistic models must reproduce tracer kinetic results, while traditional tracer kinetics must expand to account for pharmacological dynamics.

Experimental Protocols and Methodologies

Foundational Tracer Kinetic Protocols

The classical Berman-Levy approach employed rigorous experimental protocols that established the gold standard for kinetic modeling validation:

Subject Selection: Recruitment of normal volunteer populations and individuals with abnormal phenotypes for comparative studies [1]

Tracer Administration: Introduction of lipoproteins with tagged lipid molecules or apolipoproteins (radio-iodinated proteins) allowing quantification independent of endogenous molecules [1]

Sample Collection: Serial blood sampling over time courses sufficient to characterize metabolic trajectories

Lipoprotein Separation: Ultracentrifugation techniques to isolate specific lipoprotein classes for analysis [1]

Data Analysis: Application of SAAM programming to model kinetic parameters and distinguish production from clearance rates [1]

The most challenging aspect of this approach was the experimental requirement: recruiting appropriate subject populations and collecting comprehensive tracer kinetic data [1]. Computational analysis, while sophisticated for its time, was secondary to the rigorous experimental design and sample processing.

Modern Cell-Free Validation Protocols

Contemporary validation methodologies have dramatically accelerated through cell-free transcription-translation (TX-TL) systems:

DNA Template Preparation: Synthesis of DNA templates without intermediate cloning steps [3]

Cell-Free Reaction Assembly: Combination of cellular biosynthesis machinery from crude lysates or purified components with DNA templates [3]

Protein Expression: Rapid in vitro transcription and translation (≥1 g/L protein in <4 hours) [3]

Functional Assays: Implementation of colorimetric or fluorescent-based assays for high-throughput sequence-to-function mapping [3]

Automated Processing: Integration with liquid handling robots and microfluidics to screen >100,000 picoliter-scale reactions [3]

These protocols enable quantitative evaluation of genetic constructs under consistent conditions, facilitating direct comparison between modeling predictions and experimental outcomes while eliminating confounding biological variables inherent in living systems.

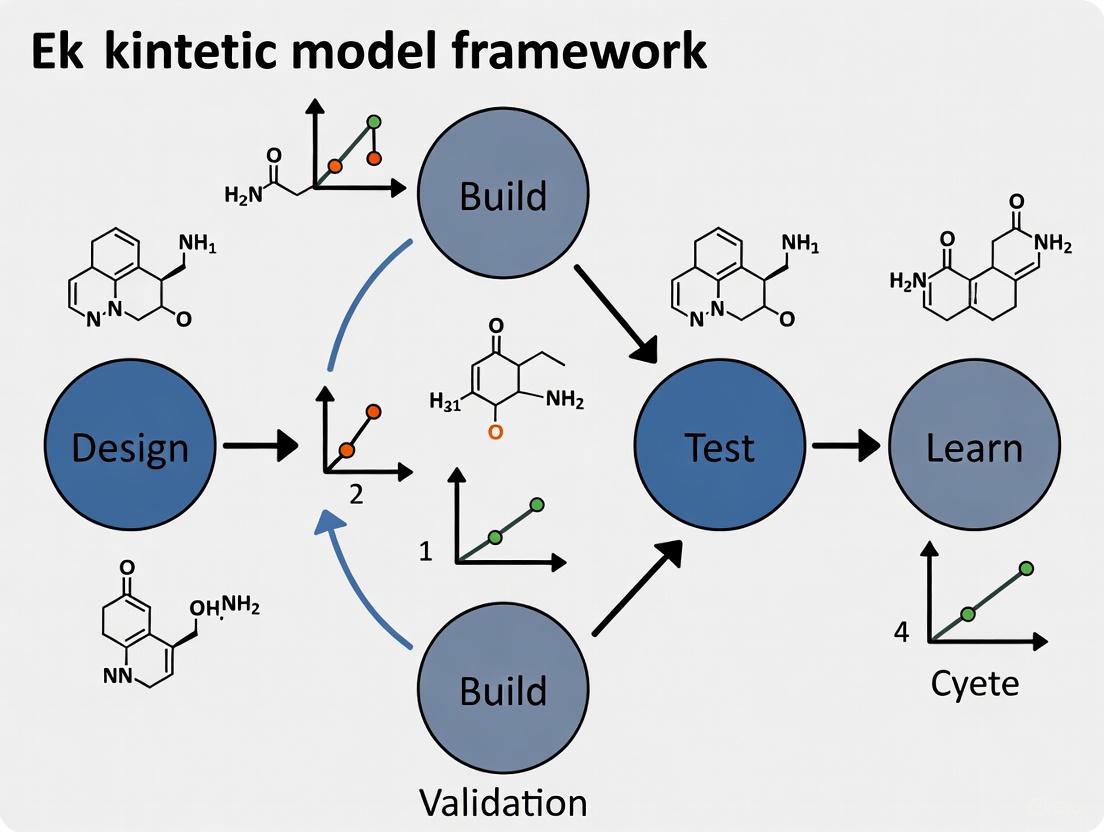

The DBTL Cycle in Kinetic Modeling: Evolution to LDBT

Traditional DBTL Workflow

The classic Design-Build-Test-Learn cycle has long structured iterative improvement in kinetic modeling and synthetic biology:

Design: Researchers define objectives for desired biological function and design parts or systems using domain knowledge and computational modeling [3]. In kinetic modeling, this corresponds to formulating mathematical representations of biological systems based on existing knowledge.

Build: DNA constructs are synthesized and assembled into plasmids or other vectors, then introduced into characterization systems [3]. For kinetic modeling, this phase involves implementing mathematical models in computational frameworks.

Test: Experimental measurement of engineered biological construct performance [3]. In modeling, this involves comparing predictions to empirical data.

Learn: Analysis of collected data compared to design objectives to inform subsequent design rounds [3]. This iterative refinement continues until desired function is achieved.

The Emerging LDBT Paradigm

Recent advances have prompted a paradigm shift from DBTL to LDBT (Learn-Design-Build-Test), where machine learning precedes design:

Learn: Machine learning models analyze existing biological data to detect patterns in high-dimensional spaces, enabling predictive design before physical construction [3]. Protein language models (ESM, ProGen) capture evolutionary relationships, while structural models (MutCompute, ProteinMPNN) predict sequences folding into specific backbones [3].

Design: Computational generation of biological designs informed by machine learning predictions rather than solely domain expertise [3]. This includes zero-shot prediction of functional sequences without additional training.

Build: Rapid construction using cell-free systems that express proteins without cloning steps, achieving high yields in hours rather than days [3].

Test: High-throughput functional characterization in cell-free systems, providing reproducible data under controlled conditions [3].

This reordering creates a feedback-efficient system that minimizes trial-and-error by frontloading computational learning, potentially achieving functional solutions in a single cycle rather than multiple iterations [3].

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagents for Kinetic Modeling Validation

| Reagent/Resource | Function/Application | Specific Examples |

|---|---|---|

| Cell-Free TX-TL Systems | Rapid protein expression without living cells | E. coli lysates, purified components [3] |

| Fluorescent Reporters | Quantitative measurement of biological activity | mCherry, GFP [4] |

| Bioluminescence Reporters | Highly sensitive, quantitative signaling | LuxCDEAB operon [4] |

| Machine Learning Models | Predictive protein design and optimization | ESM, ProGen, MutCompute, ProteinMPNN [3] |

| Specialized Plasmids | Genetic construct delivery and expression | pSEVA261 (medium-low copy number) [4] |

| Selection Markers | Maintenance of genetic constructs in hosts | Kanamycin resistance cassette [4] |

| Microfluidic Platforms | Ultra-high-throughput screening | DropAI droplet microfluidics [3] |

| Inducible Promoter Systems | Controlled gene expression testing | pTet/pLac with TetR/LacI regulators [4] |

Case Study: Integration of Tracer Kinetics with Mechanistic Modeling

The Gadkar-Lu model of HDL metabolism exemplifies the integration of classical and modern approaches [1]. This model was formulated with explicit hypotheses tested quantitatively against multiple pharmacological perturbations:

- Upregulation of apoA1 synthesis

- Administration of reconstituted HDL

- Infusion of delipidated HDL

- CETP inhibition

- ABCA1 upregulation [1]

The model introduced a quantitative concept of HDL remodeling and apoA1 recycling that accounted for classic biphasic apoA1 kinetics previously reported by Ikewaki and colleagues [1]. When tested against Schwartz tracer time course data for lipoprotein cholesteryl ester kinetics, the model showed remarkable agreement in reported cholesterol fluxes despite differences in methodological approach [1]. This case study demonstrates how a single mechanistic model can account for both non-steady state perturbation data and steady-state tracer kinetic data, leveraging the unique capabilities of both modeling schools.

The legacy of the Berman-Levy collaboration and SAAM modeling extends far beyond their original applications in lipoprotein metabolism. Their work established foundational principles for combining experimental data with computational modeling that continue to evolve in modern systems pharmacology. The current convergence of linear, steady-state tracer kinetic modeling with nonlinear, mechanistic, non-steady state modeling represents a maturation of both traditions, each contributing unique strengths to the comprehensive understanding of biological systems [1].

This integration is particularly valuable in pharmaceutical development, where human and financial incentives encourage testing theories against as many different experimental protocols as possible [1]. The more validation tests a model passes, the greater confidence researchers have in its predictions. This comprehensive modeling approach ultimately benefits patients and provides competitive advantages to organizations that understand its value [1]. As kinetic modeling continues to evolve within the LDBT paradigm, the foundational principles established by Berman, Levy, and their contemporaries provide enduring guidance for relating mathematical representations to biological reality.

In the field of kinetic model framework validation within Design-Build-Test-Learn (DBTL) cycles, two dominant modeling philosophies provide complementary insights: classical Tracer Kinetics (TK) and Mechanistic Systems Biology (MSB) [1]. For decades, TK has been a powerful tool for quantifying metabolic fluxes in steady-state systems, using radioisotopes or stable isotopes to track molecular fate. In parallel, MSB has evolved to model the complex, nonlinear dynamics of biological systems by explicitly incorporating molecular mechanisms and regulatory structures [1] [5]. This guide provides an objective comparison of these approaches, detailing their performance characteristics, appropriate applications, and roles in modern pharmacological and metabolic engineering research.

Core Philosophical and Technical Differences

The fundamental distinction between these modeling approaches lies in their scope and objective. TK aims to describe systemic behavior by extracting composite rate constants from steady-state data, while MSB seeks to explain system behavior by mathematically representing underlying physical and biochemical mechanisms [1].

Tracer Kinetics operates on the principle of introducing traceable, non-perturbing amounts of labeled compounds into a system at steady state. The resulting data are analyzed, typically with linear differential equations and compartmental models, to determine kinetic parameters like production rates and clearance rate constants [1] [6]. Its power comes from the ability to distinguish between alternative physiological states—for example, determining whether elevated LDL-cholesterol stems from overproduction or impaired clearance [1]. However, this approach has a significant limitation: all molecular regulatory mechanisms remain hidden from view because, at steady state, transcriptional, translational, and allosteric controls are constant and thus invisible to the model [1].

Mechanistic Systems Biology explicitly represents these hidden controls. MSB models consist of large systems of nonlinear differential equations where every rate law includes known or hypothesized control mechanisms [1]. This allows upstream controllers to propagate changes to downstream processes, integrating multiple feedback mechanisms. These models are particularly valuable for analyzing physiological non-steady states, such as transitions from rest to exercise or metabolic responses to pharmacological perturbations [1]. In translational pharmaceutical research, TK models align with what the body does to a drug (pharmacokinetics), while MSB models align with what the drug does to the body (pharmacodynamics) [1].

The following diagram illustrates the fundamental differences in approach and information flow between these two modeling paradigms:

Comparative Performance Analysis

The table below summarizes the fundamental characteristics and performance metrics of TK and MSB approaches across key modeling dimensions.

Table 1: Fundamental Characteristics and Performance Comparison

| Modeling Dimension | Tracer Kinetics (TK) | Mechanistic Systems Biology (MSB) |

|---|---|---|

| Primary Objective | Quantify metabolic fluxes & rate constants [1] | Elucidate molecular mechanisms & regulatory structures [1] |

| Mathematical Foundation | Linear algebra & linear differential equations [1] | Nonlinear differential equations [1] |

| System State Requirement | Steady state assumption [1] | Steady state or non-steady state [1] |

| Molecular Mechanisms | Hidden from view (lumped into rate constants) [1] | Explicitly represented in rate laws [1] |

| Regulatory Control | Invisible at steady state [1] | Explicitly modeled (allosteric, transcriptional, etc.) [1] |

| Predictive Scope | Limited to similar steady states [1] | Can predict responses to novel perturbations [1] [7] |

| Data Requirements | Tracer time-course data [1] | Multi-omics, kinetic parameters, perturbation data [1] [5] |

| Computational Intensity | Lower | Higher [1] |

Experimental Applications and Validation Protocols

Representative Experimental Designs

The experimental protocols for TK and MSB differ significantly in design and objective, as shown in the comparative table below.

Table 2: Experimental Protocol Comparison

| Protocol Component | Tracer Kinetics Experiment | Mechanistic Systems Biology Experiment |

|---|---|---|

| Experimental Goal | Identify which processes differ between states [1] | Validate hypothesized molecular mechanisms [1] |

| Subject Groups | Normal vs. abnormal phenotype (e.g., healthy vs. disease) [1] | Multiple groups with different mechanistic perturbations [1] |

| Intervention Type | Introduction of traceable label at steady state [1] | Targeted perturbations (genetic, pharmacological, environmental) [1] [7] |

| Key Measurements | Time-course of labeled metabolites [1] | Multi-omics data: metabolomics, fluxomics, proteomics [7] [5] |

| Validation Approach | Statistical comparison of rate constants between groups [1] | Ability to predict non-steady state responses to new perturbations [1] |

| Data Interpretation | Identifies where to look for mechanisms [1] | Proposes specific testable molecular mechanisms [1] |

Performance in Practical Applications

In practical applications, each approach demonstrates distinct strengths and limitations, as evidenced by their implementation across various fields.

Table 3: Application Performance in Different Domains

| Application Domain | Tracer Kinetics Performance | Mechanistic Systems Biology Performance |

|---|---|---|

| Lipoprotein Metabolism | 50+ year history quantifying LDL production/clearance [1] | Emerging capability to model pharmacological perturbations [1] |

| Metabolic Engineering | Limited to steady-state flux analysis | Enables combinatorial pathway optimization in DBTL cycles [7] |

| Medical Imaging (DCE-MRI) | Standard models (e.g., Extended-Tofts) provide basic parameters [8] [9] | Advanced models (DP, TH) show superior diagnostic performance (AUC: 0.88 vs 0.73) [8] |

| Drug Development | Pharmacokinetics (what body does to drug) [1] | Systems pharmacology (what drug does to body) [1] |

| Nutritional Science | Whole-body nutrient utilization & requirements [5] | Multi-scale integration from molecular to physiological levels [5] |

Integration in Modern DBTL Cycle Research

The most powerful contemporary approaches recognize the complementary strengths of TK and MSB, integrating them within iterative DBTL cycles. The following diagram illustrates how both modeling paradigms contribute to this integrated research framework:

In metabolic engineering, this integration is particularly advanced. Kinetic models of metabolic pathways serve as "digital twins" that simulate the effects of genetic modifications before physical strain construction [7] [10]. These models use ordinary differential equations parameterized with enzyme kinetic constants (Km, Vmax) to dynamically predict metabolite concentrations and pathway fluxes, capturing nonlinear effects and regulatory feedback missed by simpler steady-state models [7] [10]. The DBTL cycle becomes increasingly efficient as model predictions guide which strains to build and test, with experimental results refining model parameters in return [7].

Essential Research Toolkit

Successful implementation of TK and MSB approaches requires specific computational and experimental resources, as detailed in the table below.

Table 4: Essential Research Tools and Reagents

| Tool/Reagent Category | Specific Examples | Research Function |

|---|---|---|

| Computational Modeling Software | SAAM, NONMEM, MONOLIX, specialized DCE analysis software [1] [8] [6] | Parameter estimation, compartmental modeling, nonlinear mixed-effects modeling [1] [6] |

| Tracer Compounds | Radioisotopes (¹⁴C, ³H, ¹²⁵I), stable isotopes (¹³C, ¹⁵N), PET tracers ([¹⁸F]FDG) [1] [11] | Metabolic pathway tracing, flux quantification, in vivo imaging [1] |

| Kinetic Parameters | Enzyme kinetic constants (Km, Vmax), inhibition constants, allosteric regulation parameters [7] [10] | Parameterizing mechanistic models, predicting pathway behavior [7] |

| Analytical Platforms | LC-MS/MS, GC-MS, NMR, MRI/PET scanners [7] [8] [5] | Quantifying metabolites, proteins, metabolic fluxes, and imaging parameters [7] [8] |

| Data Integration Tools | Multi-omics integration platforms, constraint-based modeling tools [7] [5] [12] | Integrating genomic, transcriptomic, proteomic, and metabolomic data [5] [12] |

Tracer Kinetics and Mechanistic Systems Biology represent complementary rather than competing approaches to biological system modeling. TK excels at quantifying "what" is changing in steady-state systems, providing essential numerical constraints on metabolic fluxes. MSB aims to explain "why" systems behave as they do by explicitly representing underlying molecular mechanisms. The most powerful contemporary research frameworks integrate both approaches within iterative DBTL cycles, using TK to provide quantitative flux constraints and MSB to generate testable mechanistic hypotheses and predict system responses to novel perturbations. This synergistic approach accelerates discovery in metabolic engineering, drug development, and biomedical research by combining the descriptive power of TK with the predictive capability of MSB.

The Power and Limitations of Steady-State Assumptions in Biological Systems

The steady-state assumption represents a cornerstone simplification in the modeling and analysis of biological systems, from intracellular metabolic networks to enzymatic reactions. This principle, which posits that the concentrations of intermediate species remain constant over time, enables the tractable formulation of complex kinetic models that would otherwise be mathematically intractable. The validity and utility of this assumption are perpetually tested and refined within the iterative cycles of Design-Build-Test-Learn (DBTL), a framework central to modern biological engineering and kinetic model validation research.

While the steady-state approximation has driven significant advances, its application is bounded by intrinsic limitations. As noted in epistemological analyses of biological knowledge, "fundamental limitations arise from the structure imposed on the mathematical model by the nature of the science, in particular, its formal mathematical structure and its internal tractability" [13]. This article provides a comprehensive comparison of steady-state approaches across biological applications, examining their performance against more complex non-steady-state alternatives through experimental data, computational analyses, and their critical role in the DBTL cycle.

Theoretical Foundations of Steady-State Assumptions

Conceptual Framework and Mathematical Basis

The steady-state assumption fundamentally simplifies biological system analysis by asserting that the production and consumption rates of intermediate species are balanced. In mathematical terms, for a biological species with concentration ( C ), the steady-state condition is expressed as:

[ \dot C = 0 ]

This transforms differential equations that describe system dynamics into algebraic equations, dramatically reducing computational complexity. For instance, in the classic Michaelis-Menten enzyme kinetics model, applying steady-state to the enzyme-substrate complex concentration enables derivation of the familiar hyperbolic rate equation [14].

The theoretical justification for this assumption often rests on timescale separation – the concept that metabolic processes occur much faster than other cellular processes like gene expression [15]. This permits treating metabolism as being in a quasi-steady-state relative to slower cellular dynamics.

Expanding Beyond Traditional Applications

Recent mathematical frameworks have demonstrated that the steady-state assumption can be applied to a broader range of systems than previously recognized, including oscillating and growing systems where metabolites do not remain at constant levels at every time point, but where their production and consumption balance over longer periods [15]. This expanded perspective maintains the assumption's utility while acknowledging that "the average concentrations may not be compatible with the average fluxes" in such dynamic systems [15].

Table 1: Fundamental Types of Steady-State Assumptions in Biological Systems

| Assumption Type | Mathematical Basis | Primary Application Domain | Key Requirement |

|---|---|---|---|

| Classical Quasi-Steady-State (sQSSA) | ( \dot C = 0 ) for intermediate species | Michaelis-Menten enzyme kinetics | Low enzyme concentration relative to KM |

| Total Quasi-Steady-State (tQSSA) | ( \dot{\bar{s}} = 0 ) for total substrate | Enzyme kinetics at higher enzyme concentrations | Low initial substrate concentration [14] |

| Metabolic Steady-State | ( \frac{dM}{dt} = \text{Production} - \text{Consumption} = 0 ) | Genome-scale metabolic modeling | Balance over relevant time period [15] |

| Operational Steady-State | Observable outputs constant over time | Biosensor performance characterization | Stable system parameters and inputs |

Steady-State Approaches in the DBTL Cycle

The DBTL Framework in Biological Engineering

The Design-Build-Test-Learn (DBTL) cycle represents a systematic, iterative framework for biological engineering and model validation. In this context, steady-state assumptions play dual roles: they inform the design of biological constructs and provide the theoretical basis for testable models that can be validated experimentally.

Multiple iGEM teams have documented their use of iterative DBTL cycles to refine biological systems. For instance, the WIST team applied seven distinct DBTL cycles to optimize a cell-free arsenic biosensor, adjusting parameters such as plasmid concentration ratios and incubation times based on performance data [16]. Similarly, the LYON team employed DBTL cycles to engineer biosensors for detecting PFAS compounds, with steady-state performance characterization being a key testing component [4].

The Emergence of LDBT: A Learning-First Paradigm

Recent advances in machine learning are transforming the traditional DBTL approach. The proposed LDBT (Learn-Design-Build-Test) framework repositions learning at the beginning of the cycle, leveraging pre-trained models to inform initial designs [3] [17]. This paradigm shift enhances the role of steady-state principles, as they can be embedded within machine learning models that generate more effective starting designs, potentially reducing the number of iterations required to achieve functional biological systems.

The integration of cell-free transcription-translation (TX-TL) systems has further accelerated the Build-Test phases, enabling rapid empirical validation of steady-state assumptions and model predictions [3] [17]. This combination of computational and experimental advances creates a more efficient feedback loop for validating kinetic models incorporating steady-state approximations.

Comparative Analysis of Steady-State Methodologies

Enzyme Kinetics: sQSSA vs. tQSSA

The irreversible single-substrate, single-enzyme Michaelis-Menten reaction mechanism provides a classic test case for comparing steady-state approximations. The standard quasi-steady-state assumption (sQSSA) and total quasi-steady-state assumption (tQSSA) represent different mathematical approaches to simplifying this system.

The sQSSA assumes the enzyme-substrate complex is in quasi-steady-state with respect to the substrate, deriving the well-known Michaelis-Menten equation:

[ \dot s = -\frac{k2 e0 s}{K_M + s} ]

This reduction is based on the assumption of low initial reduced enzyme concentration (( e0/KM \ll 1 )) [14]. In contrast, the tQSSA, introduced by Borghans et al. (1996) and developed by Tzafriri (2003), replaces substrate ( s ) with total substrate ( \bar{s} = s + c ), proposing a modified equation that remains valid under broader conditions [14].

Recent mathematical analysis has clarified that the tQSSA's effectiveness is particularly "reasonable" under conditions of low initial substrate concentration (( s0/KM \ll 1 )) [14]. This work has helped resolve previous ambiguities about the tQSSA's range of validity, while also demonstrating its limitations at high substrate concentrations.

Table 2: Performance Comparison of Steady-State Approximations in Enzyme Kinetics

| Parameter | Standard QSSA (sQSSA) | Total QSSA (tQSSA) | Linear tQSSA |

|---|---|---|---|

| Key Assumption | Low enzyme concentration (( e0/KM \ll 1 )) | Low initial substrate (( s0/KM \ll 1 )) [14] | Low initial substrate (( s0/KM \ll 1 )) [14] |

| Validity Range | Limited to classic Michaelis conditions | Broader parameter range | Specific to low ( s_0 ) |

| Mathematical Complexity | Moderate | Higher | Simplified linear form |

| Prediction Accuracy | High within validity range | Generally improved over sQSSA | High for targeted conditions |

| Experimental Validation | Extensive | Growing support [14] | Recent computational support [14] |

Metabolic Network Analysis

In metabolic engineering, the steady-state assumption enables flux balance analysis (FBA) by constraining metabolite concentrations to remain constant over time. This application demonstrates the power of steady-state approaches in handling genome-scale networks with hundreds or thousands of reactions.

The mathematical foundation for this application establishes that "the assumption of steady-state also applies to oscillating and growing systems without requiring quasi-steady-state at any time point" [15]. This represents a significant expansion of the concept's utility, acknowledging that steady-state can reflect a balance over longer time periods rather than instantaneous constancy.

However, this perspective also reveals limitations, as "the average concentrations may not be compatible with the average fluxes" in such systems [15]. This disconnect necessitates careful interpretation of steady-state results in dynamic biological contexts.

Biosensor Characterization and Optimization

The DBTL cycles documented by iGEM teams provide practical examples of steady-state principles in biosensor development. The WIST team's arsenic biosensor optimization involved characterizing steady-state performance metrics including sensitivity, specificity, and dynamic range across multiple iterations [16]. Their experimental protocols measured fluorescence output at equilibrium conditions to determine optimal plasmid concentration ratios (settling on a 1:10 sense-to-reporter ratio) and incubation parameters (standardizing at 37°C for 2-4 hours) [16].

The LYON team's PFAS biosensor development similarly employed steady-state fluorescence and bioluminescence measurements to characterize promoter activity and system performance [4]. Their approach highlights how steady-state measurements provide standardized metrics for comparing design iterations within the DBTL cycle.

Experimental Protocols and Methodologies

Protocol 1: Characterizing Enzyme Kinetics Under Steady-State Assumptions

Objective: Determine kinetic parameters (( KM ), ( V{max} )) using steady-state assumptions.

Methodology:

- Prepare enzyme solutions at varying concentrations, ensuring compatibility with sQSSA or tQSSA requirements

- Initiate reactions with substrate concentrations spanning expected ( K_M ) values

- Measure initial velocity rates under conditions where product accumulation is minimal (<5% substrate conversion)

- Record time-course data to verify steady-state conditions are maintained during measurements

- Fit data to appropriate steady-state model (Michaelis-Menten for sQSSA, modified equations for tQSSA)

Critical Considerations:

- Verify assumption validity through parameter consistency checks

- For tQSSA applications, ensure initial substrate concentration is sufficiently low [14]

- Account for enzyme concentration effects when applying sQSSA

Protocol 2: DBTL-Based Biosensor Performance Characterization

Objective: Optimize biosensor performance through iterative DBTL cycles with steady-state output measurements.

Methodology (adapted from iGEM WIST team [16]):

- Design: Specify desired sensitivity, dynamic range, and response time

- Build: Construct genetic circuits using standardized assembly methods

- Test:

- Prepare master mix with cell-free lysate, polymerase, plasmids, and reporter molecules

- Incubate at standardized temperature (e.g., 37°C) until steady-state response is achieved

- Measure fluorescence/luminescence output across analyte concentrations

- Determine signal-to-noise ratio and leakiness

- Learn: Analyze performance data to inform next design iteration

Technical Refinements:

- Implement simultaneous addition of all reaction components to minimize variability

- Use kinetic reading over extended periods (e.g., 90 minutes) to identify response plateaus

- Systematically vary component ratios (e.g., plasmid concentrations) to optimize dynamic range

Limitations and Boundary Conditions

Mathematical and Conceptual Constraints

The power of steady-state assumptions is counterbalanced by intrinsic limitations. As noted in analyses of biological knowledge, "fundamental limitations arise from the structure imposed on the mathematical model by the nature of the science" [13]. These include:

Mathematical Complexity: As biological models increase in size and complexity, deriving closed-form analytic solutions becomes increasingly difficult. Examples include "deriving limit cycles and mean first passage times in Markovian models of gene regulatory networks" [13]. This complexity often necessitates model reduction, which increases stochasticity and decreases predictability.

Experimental Constraints: Measurement technologies limit our ability to fully parameterize complex models, leading to systems with "latent variables" that introduce apparent stochasticity [13]. The p53 network example demonstrates how unobserved variables (like DNA damage status) can create seemingly stochastic behavior in deterministic systems [13].

Knowledge Discovery Limitations: The steady-state assumption may obscure transient dynamics that provide crucial insights into system behavior, particularly in oscillating systems or those with multi-timescale processes.

Practical Limitations in Application

Timescale Mismatch: The steady-state assumption breaks down when the timescales of interacting processes do not separate cleanly. This is particularly problematic in systems combining fast metabolic processes with slower genetic regulation.

Context Dependence: As demonstrated in DBTL cycles, optimal parameters for steady-state performance are often highly specific to experimental context. The WIST team found that plasmid concentration ratios, incubation times, and temperature all required context-specific optimization [16].

Computational Trade-offs: While steady-state approaches reduce computational complexity, they may sacrifice accuracy in dynamic systems. Recent machine learning approaches like DLRN (Deep Learning Reaction Network) have emerged to address some limitations, demonstrating "comparable performance and, in part, even better than a classical fitting analysis" for analyzing complex kinetic data [18].

Computational Advances and Emerging Alternatives

Machine Learning-Enhanced Kinetic Modeling

Recent computational advances are transforming kinetic modeling beyond traditional steady-state approaches. The DLRN framework uses deep neural networks with Inception-Resnet architecture to analyze time-resolved data and identify kinetic models, including their parameters and pathways [18]. This approach demonstrates particular utility for complex multi-step processes like ATP-driven DNA dynamics and enzymatic reaction networks [18].

Similarly, the UniKP framework leverages pretrained language models to predict enzyme kinetic parameters (( k{cat} ), ( KM ), and ( k{cat}/KM )) from protein sequences and substrate structures [19]. This unified framework shows remarkable improvement over previous prediction methods, with a 20% improvement in prediction accuracy for ( k_{cat} ) values compared to earlier approaches [19].

Hybrid Approaches for Complex Systems

For systems where pure steady-state assumptions are insufficient but complete dynamic modeling is intractable, hybrid approaches offer promising alternatives. These include:

Multi-Timescale Modeling: Segmenting systems based on characteristic timescales and applying appropriate approximations to each segment.

Piecewise Steady-State Analysis: Applying steady-state assumptions to specific subsystems or during certain operational phases.

Physics-Informed Machine Learning: Integrating physical principles with data-driven approaches to maintain biological plausibility while leveraging pattern recognition capabilities [3].

Table 3: Computational Tools for Kinetic Modeling Beyond Steady-State

| Tool/Framework | Methodology | Application Scope | Performance Advantages |

|---|---|---|---|

| DLRN [18] | Deep learning (Inception-Resnet) | Chemical reaction networks from time-resolved data | Identifies complex kinetic models with high accuracy (83.1% Top 1 accuracy) |

| UniKP [19] | Pretrained language models (ProtT5, SMILES) | Enzyme kinetic parameter prediction | 20% improvement in ( k_{cat} ) prediction accuracy over previous tools |

| EF-UniKP [19] | Two-layer ensemble model | Enzyme kinetics with environmental factors | Robust prediction considering pH and temperature effects |

| LDBT Framework [3] [17] | Machine learning-first DBTL | Biological design automation | Accelerates design process through zero-shot predictions |

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 4: Key Research Reagents and Experimental Materials

| Reagent/Material | Function | Application Examples |

|---|---|---|

| Cell-Free TX-TL Systems | In vitro transcription-translation for rapid testing | Protein expression without cloning steps; biosensor characterization [3] [17] |

| Plasmid Vectors (e.g., pSEVA261) | Genetic construct delivery | Controlled gene expression; biosensor assembly [4] |

| Reporter Systems (Luciferase, GFP, mCherry) | Quantitative output measurement | Promoter activity assessment; system performance quantification [4] |

| Microplate Readers with Kinetic Capabilities | Time-resolved fluorescence/luminescence measurement | Steady-state verification; dynamic response characterization [16] |

| Specialized Buffers with Cofactor Supplements | Optimized reaction environments | Maintaining enzyme activity; supporting cell-free reactions [16] |

The steady-state assumption remains a powerful tool in biological system analysis, providing essential simplification that enables the study of otherwise intractable systems. Its continued utility within DBTL frameworks demonstrates its enduring value for biological engineering and kinetic model validation. However, researchers must remain cognizant of its limitations and the boundary conditions for its application.

Emerging computational approaches, particularly machine learning frameworks like DLRN and UniKP, are extending our capabilities beyond traditional steady-state methods. These tools, combined with high-throughput experimental platforms like cell-free TX-TL systems, are creating new paradigms for biological design and analysis. The evolution from DBTL to LDBT cycles represents a fundamental shift toward learning-driven biological engineering, where steady-state principles inform rather than constrain biological design.

As biological complexity continues to challenge our modeling capabilities, the judicious application of steady-state assumptions – with clear understanding of their power and limitations – will remain essential for advancing our understanding and engineering of biological systems.

Visual Appendix: Signaling Pathways and Workflows

Simplified p53 Regulatory Network

DBTL Cycle Workflow

Michaelis-Menten Reaction Mechanism

Integrating Linear Pharmacokinetics with Nonlinear Pharmacodynamic Models

The integration of Linear Pharmacokinetics (PK) with Nonlinear Pharmacodynamic (PD) models represents a critical frontier in modern drug development. This integration allows researchers to quantitatively link a drug's concentration-time profile (PK) to its pharmacological effect (PD), even when the relationship between exposure and response is complex and saturable [20]. Within the framework of kinetic model validation research, the Design-Build-Test-Learn (DBTL) cycle has emerged as a powerful, iterative paradigm for optimizing this integration [7] [21]. This guide provides a comparative analysis of linear PK and nonlinear PD models, detailing their respective roles, the experimental data required for their development, and their application within a DBTL cycle to enhance the efficiency and predictive power of therapeutic development.

The core challenge this integration addresses is the frequent disconnect between a drug's predictable, dose-proportional exposure in the body (linear PK) and its often disproportionate, saturable biological effect (nonlinear PD). By combining these elements into a unified mathematical model, scientists can make more informed decisions on dosing, patient selection, and trial design, ultimately streamlining the path from discovery to clinic [22] [20].

Comparative Foundations: Linear PK vs. Nonlinear PD

Core Principles of Linear Pharmacokinetics

Linear pharmacokinetics are characterized by processes where the parameters governing drug absorption, distribution, and elimination are independent of the drug's concentration and time [23]. The most crucial feature is constant clearance, where the rate of drug elimination is directly proportional to its concentration in plasma [23] [24].

- Dose Proportionality: A fundamental principle of linear PK is that the Area Under the Curve (AUC), representing total drug exposure, is directly proportional to the administered dose. Doubling the dose results in a doubling of the AUC [23].

- Schedule Independence: The total drug exposure (AUC) is not affected by changes in the administration schedule. For example, the AUC from a single large bolus dose is equivalent to the combined AUC from multiple smaller doses or a continuous infusion of the same total dose [23].

- Constant Half-Life: The elimination half-life remains constant, regardless of the drug's concentration. This leads to a predictable, exponential decay in plasma concentration over time [23].

Core Principles of Nonlinear Pharmacodynamics

In contrast, nonlinear pharmacodynamics describe a scenario where the magnitude of the drug's effect does not change in direct proportion to its concentration at the effect site. This nonlinearity is often due to the saturation of biological systems [25].

- Saturable Receptors or Pathways: The drug's effect may plateau at higher concentrations once all target receptors are occupied or a downstream signaling pathway is fully activated.

- Signal Transduction Amplification: Biological systems can amplify a signal, meaning a small increase in target engagement can lead to a disproportionately large biological effect.

- Indirect Mechanisms of Action: The drug may act through complex, multi-step mechanisms (e.g., inhibition of protein synthesis or cell cycle arrest) where the observed effect is delayed and not directly proportional to the instantaneous plasma concentration.

The table below summarizes the key distinctions between these two concepts.

Table 1: Fundamental Characteristics of Linear PK and Nonlinear PD Models

| Feature | Linear Pharmacokinetics | Nonlinear Pharmacodynamics |

|---|---|---|

| Core Relationship | Parameters are independent of dose and time [23] | Effect is not directly proportional to drug concentration at the site of action |

| Dose-Exposure/Effect | AUC is proportional to dose [23] | Effect plateaus at high concentrations (Emax model) |

| Key Model Parameter | Clearance (CL) - constant [23] [24] | EC50 (potency) & Emax (efficacy) |

| Governing Equation | Rate of Elimination = CL × Cp [24] |

E = (Emax × C) / (EC50 + C) (Basic Emax model) |

| Primary Cause | Unsaturated elimination pathways (enzymes, transporters) | Saturation of target binding, signal transduction, or physiological systems |

| Clinical Implication | Predictable exposure; simple dose scaling | Complex dose optimization; risk of diminished returns or increased toxicity at high doses |

The DBTL Cycle Framework for Model Integration

The DBTL cycle provides a structured, iterative framework for developing and validating integrated PK/PD models. Its power lies in using data from one cycle to inform and improve the design of the next, creating a continuous feedback loop for model refinement [7]. The workflow of this cycle and the specific role of PK/PD integration within it are visualized below.

Diagram 1: The DBTL cycle workflow, highlighting the integration of PK and PD data into a unified model during the 'Learn' phase.

Phase 1: Design

The "Design" phase involves defining the integrated PK/PD model's mathematical structure and planning the experiments that will generate data for its parameterization [21]. For a model integrating linear PK with nonlinear PD, the structural model might be:

- PK Sub-model: A one- or two-compartment linear model with first-order elimination [23].

- PD Sub-model: A direct or indirect

Emaxmodel linking the plasma or effect-site concentration from the PK model to the observed effect [20].

A critical activity in this phase is Design of Experiments (DoE), which aims to maximize information gain while minimizing experimental effort. For a pathway with multiple factors, Resolution IV fractional factorial designs have been shown to effectively identify optimal conditions and guide subsequent optimization cycles without the prohibitive cost of a full factorial approach [26].

Phase 2: Build

In the "Build" phase, the planned experiments are executed. This involves:

- Synthesizing the drug compound or biologic.

- Preparing the in vitro (e.g., cell cultures, tissue preparations) or in vivo (e.g., animal models) systems.

- Implementing the dosing regimens and sampling schedules defined in the DoE [7].

Phase 3: Test

The "Test" phase is dedicated to data collection. High-quality, well-timed data is crucial for robust model parameterization [23] [21]. Key activities include:

- PK Sampling: Collecting serial blood/plasma samples at precise times to characterize the drug's concentration-time profile.

- PD Monitoring: Measuring the pharmacological response(s) concurrently with PK sampling. This can range from biomarker quantification (e.g., protein levels, metabolic products) to clinical endpoint assessment [20].

Phase 4: Learn

The "Learn" phase is where PK and PD data are integrated. The collected data are used to:

- Parameterize the Model: Estimate the key parameters of both the PK (e.g., Clearance, Volume of distribution) and PD (e.g.,

EC50,Emax) sub-models using nonlinear regression or population modeling techniques [20]. - Validate the Model: Assess the model's predictive performance against a validation dataset or through statistical diagnostics [21].

- Generate Hypotheses: The validated model is used to simulate new scenarios, such as different dosing regimens, which then inform the "Design" of the next DBTL cycle [7].

Experimental Protocols and Data for Model Development

Protocol for Characterizing Linear PK

Objective: To determine the fundamental PK parameters (AUC, CL, V, t½) and confirm linear kinetics over the intended therapeutic dose range.

Methodology:

- Study Design: A single-dose, multi-level escalating dose study in a relevant pre-clinical model (e.g., rodent, non-human primate) or human participants. Doses should span the anticipated therapeutic range.

- Dosing & Sampling: Administer the drug via the intended clinical route (e.g., IV bolus, oral). Collect serial blood samples at pre-defined times post-dose (e.g., 0.25, 0.5, 1, 2, 4, 8, 12, 24 hours).

- Bioanalysis: Use a validated analytical method (e.g., LC-MS/MS) to quantify drug concentrations in each plasma sample [27].

- Data Analysis:

- Calculate AUC for each dose level using non-compartmental analysis (NCA).

- Plot AUC vs. Dose. A linear relationship with a high coefficient of determination (R² > 0.95) confirms dose proportionality [23].

- Calculate clearance (CL = Dose / AUC) and other parameters. Consistent CL values across dose levels confirm linearity [23].

Protocol for Characterizing Nonlinear PD

Objective: To establish the quantitative relationship between drug concentration and pharmacological effect and parameterize a nonlinear Emax model.

Methodology:

- Study Design: An in vivo efficacy study or an in vitro cell-based assay where the biological effect can be measured across a wide concentration range.

- Intervention & Sampling: Expose the system to a range of drug concentrations. For in vivo studies, this may involve different dose groups. Measure the PD endpoint (e.g., enzyme activity, cell proliferation, pain response) at each concentration. Concurrent PK sampling is ideal to link effect to actual exposure.

- Data Analysis:

- Plot the measured effect against the corresponding drug concentration.

- Fit the data to a sigmoidal

Emaxmodel:E = E0 + (Emax × C^h) / (EC50^h + C^h), whereE0is the baseline effect,Emaxis the maximum effect,EC50is the concentration producing 50% ofEmax, andhis the Hill coefficient accounting for sigmoidicity. - Use nonlinear regression software to estimate the parameters

Emax,EC50, andh.

Table 2: Key Parameters from PK and PD Experimental Protocols

| Parameter | Description | Interpretation | Typical Units |

|---|---|---|---|

| AUC | Area Under the plasma Concentration-time curve | Total drug exposure | h*μg/mL |

| CL | Clearance | Volume of plasma cleared of drug per unit time; constant in linear PK | L/h |

| EC50 | Drug concentration producing 50% of maximal effect | Measure of drug potency; lower EC50 = higher potency | μg/mL or nM |

| Emax | Maximum achievable effect | Measure of drug efficacy | Varies (e.g., % inhibition, score) |

| Hill Coefficient (h) | Steepness of the concentration-effect curve | h > 1 suggests cooperative binding | Unitless |

Integrated PK/PD Modeling and the Scientist's Toolkit

The final step is to mathematically link the PK and PD components. For a model with linear PK and direct-effect nonlinear PD, the workflow is illustrated below.

Diagram 2: Logical flow of an integrated PK/PD model, where the output of the linear PK model serves as the input to the nonlinear PD model.

Successful implementation of this research requires a combination of computational tools, experimental reagents, and analytical services.

Table 3: Essential Research Reagent Solutions and Tools

| Item Name | Category | Primary Function in PK/PD Research |

|---|---|---|

| LC-MS/MS System | Analytical Instrument | High-sensitivity quantification of drug concentrations in biological matrices (plasma, tissue) for PK analysis [27]. |

| Validated Bioanalytical Assay | Method | Ensures accuracy, precision, and reproducibility of concentration measurements, which is critical for model parameterization [27]. |

| PBPK/PD Software (e.g., Simcyp) | Computational Tool | Mechanistic, physiologically-based modeling platform to simulate and scale PK/PD from pre-clinical to human populations [20]. |

| Population PK/PD Software (e.g., NONMEM) | Computational Tool | Used for parameterizing models using sparse, variable data from clinical populations, quantifying inter-individual variability [22] [24]. |

| Michaelis-Menten Enzyme Kinetics Assay | Reagent/Biochemical Kit | Characterizes saturable metabolic pathways in vitro, providing initial estimates for Vmax and Km that may explain nonlinear PK [25]. |

| High-Throughput Screening Systems | Platform | Enables rapid testing of PD effects (e.g., on-cell target engagement) across a wide concentration range for Emax model building [7]. |

The strategic integration of linear pharmacokinetic models with nonlinear pharmacodynamic frameworks provides a powerful, quantitative approach to understanding and predicting drug behavior. When embedded within a rigorous DBTL cycle, this integrated approach transforms drug development from an empirical, trial-and-error process into a rational, iterative learning system. By objectively comparing the principles, data requirements, and modeling outputs of linear PK and nonlinear PD, researchers can more effectively design experiments, build predictive models, and ultimately accelerate the development of safer and more effective therapeutics.

The Rise of Systems Pharmacology in Contemporary Drug Development

The landscape of drug development is undergoing a fundamental transformation, moving away from traditional reductionist approaches toward a more holistic, systems-level framework. Systems pharmacology represents this paradigm shift, integrating computational modeling, multiscale biological data, and quantitative methods to understand complex interactions between drugs, biological networks, and disease processes. This approach addresses the critical limitations of single-target drug discovery, which has faced high attrition rates in clinical trials due to inadequate efficacy and unexpected toxicity in complex diseases [28]. The emergence of systems pharmacology coincides with growing recognition that most diseases, particularly cancer, neurodegenerative disorders, and metabolic syndromes, involve dysregulated networks rather than isolated molecular defects, necessitating therapeutic strategies that target multiple pathways simultaneously [29] [28].

The foundation of modern systems pharmacology rests upon several key technological advancements: the availability of large-scale biological datasets ("omics" technologies), sophisticated computational modeling platforms, and artificial intelligence (AI) applications. According to recent analyses, the integration of these elements through Model-Informed Drug Development (MIDD) frameworks can significantly shorten development timelines and reduce costs—estimated savings of $5 million and 10 months per development program based on Pfizer data [30]. The field has matured to the point where regulatory agencies like the FDA and EMA are increasingly accepting these approaches, with a notable rise in submissions leveraging Quantitative Systems Pharmacology (QSP) models over the past decade [31] [30].

Methodological Frameworks: DBTL Cycles and Kinetic Modeling

The Design-Build-Test-Learn (DBTL) Cycle

At the core of modern systems pharmacology lies the iterative Design-Build-Test-Learn (DBTL) cycle, a systematic framework for optimizing therapeutic interventions. This engineering-inspired approach enables researchers to continuously refine hypotheses and designs based on experimental feedback [7] [32]. The DBTL cycle consists of four interconnected phases:

- Design: Researchers identify targets and plan interventions using computational tools and prior knowledge

- Build: Genetic constructs or drug candidates are created using synthetic biology and automated platforms

- Test: High-throughput experiments evaluate the performance of designs in biological systems

- Learn: Data analysis and machine learning extract insights to inform the next design cycle

Recent advancements have introduced "knowledge-driven" DBTL cycles that incorporate upstream in vitro investigations to accelerate the learning phase. For instance, researchers developing dopamine-producing Escherichia coli strains used cell-free protein synthesis systems to test enzyme expression levels before implementing changes in living organisms, significantly reducing development iterations [32]. This approach enabled a 2.6 to 6.6-fold improvement in dopamine production over existing methods by systematically optimizing pathway enzyme levels through ribosome binding site engineering [32].

Kinetic Modeling Frameworks

Kinetic models provide the mathematical foundation for systems pharmacology by describing biological systems through ordinary differential equations that capture the dynamics of metabolic and signaling pathways [7]. These mechanistic models differ from purely statistical approaches by incorporating biological constraints and prior knowledge, enabling more accurate predictions of system behavior under perturbation.

A key advantage of kinetic models is their ability to simulate counterintuitive pathway behaviors that challenge conventional wisdom. For example, in metabolic engineering, simply increasing enzyme concentrations does not always enhance flux toward desired products; in some cases, it can deplete substrates and reduce output—a phenomenon that can be predicted and avoided through kinetic modeling [7]. These models create virtual testbeds for exploring "what-if" scenarios before committing to costly experimental work.

Table 1: Comparison of Modeling Approaches in Drug Development

| Modeling Approach | Key Features | Primary Applications | Limitations |

|---|---|---|---|

| Quantitative Systems Pharmacology (QSP) | Multiscale, mechanistic, incorporates pathophysiology | Target identification, dose optimization, clinical trial simulation | High computational demand, requires extensive biological knowledge |

| Physiologically Based Pharmacokinetics (PBPK) | Organ-level resolution, species scaling | ADME prediction, drug-drug interactions, first-in-human dosing | Limited pharmacodynamic components |

| Population PK/PD | Statistical, accounts for variability | Exposure-response analysis, dosing individualization | Often empirical rather than mechanistic |

| Quantitative Structure-Activity Relationship (QSAR) | Ligand-based, uses molecular descriptors | Compound screening, toxicity prediction | Limited to similar chemical scaffolds |

Comparative Analysis: Traditional vs. Network Pharmacology

The transition from traditional to network pharmacology represents more than just technological advancement—it constitutes a fundamental shift in how we conceptualize drug action and therapeutic intervention. Classical pharmacology has operated predominantly on a "one-drug-one-target" model that emerged from receptor theory, focusing on highly specific molecular interactions between drugs and their protein targets [28]. While this approach has produced successful treatments for infectious diseases and conditions with well-defined molecular etiology, it has proven inadequate for addressing complex multifactorial diseases characterized by redundant pathways and network-level dysregulation [28].

Network pharmacology, in contrast, embraces the complexity of biological systems by examining drug actions within interconnected molecular networks. This paradigm leverages omics technologies, bioinformatics, and computational modeling to identify multi-target strategies that can produce more robust therapeutic effects with reduced side effects [28]. The distinction between these approaches extends throughout the drug development process, from target identification to clinical application.

Table 2: Traditional Pharmacology vs. Network Pharmacology

| Feature | Traditional Pharmacology | Network Pharmacology |

|---|---|---|

| Targeting Approach | Single-target | Multi-target / network-level |

| Disease Suitability | Monogenic or infectious diseases | Complex, multifactorial disorders |

| Model of Action | Linear (receptor-ligand) | Systems/network-based |

| Risk of Side Effects | Higher (off-target effects) | Lower (network-aware prediction) |

| Failure in Clinical Trials | Higher (60-70%) | Lower due to pre-network analysis |

| Technological Tools Used | Molecular biology, pharmacokinetics | Omics data, bioinformatics, graph theory |

| Personalized Therapy | Limited | High potential (precision medicine) |

The therapeutic advantages of network approaches are particularly evident in oncology, where resistance to single-target therapies remains a major clinical challenge. For example, QSP models in immuno-oncology have successfully identified combination therapies that simultaneously target tumor cells and modulate immune responses, leading to improved anti-tumor efficacy in scenarios where monotherapies fail [29]. These models capture the dynamic interactions between tumor biology, drug pharmacokinetics, and immune system components, enabling more predictive simulation of treatment outcomes across patient populations.

Key Technologies and Research Solutions

Computational and Modeling Platforms

The implementation of systems pharmacology relies on sophisticated software platforms that enable the construction, simulation, and analysis of complex biological networks. The MATLAB/SimBiology environment has emerged as a popular choice for QSP modeling, providing tools for building dynamical systems models, estimating parameters from experimental data, and running virtual patient simulations [29] [33]. Other platforms like R-based packages (nlmixr, mrgsolve, RxODE) and specialized tools such as Cell Collective offer complementary capabilities for different aspects of model development and analysis [29].

These computational environments support the QSP workflow which typically involves: (1) model building using diagrammatic or programmatic interfaces, (2) importing and visualizing experimental data, (3) parameter estimation through optimization algorithms, (4) simulation of "what-if" scenarios, (5) sensitivity analysis to identify key pathways, and (6) virtual patient generation to explore population heterogeneity [33]. This workflow enables researchers to move iteratively between experimental data and model refinement, progressively improving the predictive power of their simulations.

Experimental and Reagent Solutions

The computational aspects of systems pharmacology are grounded in experimental biology, with specific reagent systems and research tools playing critical roles in model development and validation.

Table 3: Essential Research Reagents and Platforms in Systems Pharmacology

| Reagent/Platform | Function | Application Example |

|---|---|---|

| Cell-free protein synthesis (CFPS) systems | Test enzyme expression and pathway function | In vitro optimization of dopamine pathway [32] |

| Ribosome Binding Site (RBS) libraries | Fine-tune gene expression levels | Metabolic pathway optimization in E. coli [32] |

| Promoter libraries | Vary transcription rates | Combinatorial pathway optimization [7] |

| UTR Designer tools | Modulate translation efficiency | RBS engineering for synthetic biology [32] |

| Kinetic model platforms (SKiMpy) | Simulate metabolic pathways | Predicting flux in engineered strains [7] |

| High-throughput screening automation | Rapid testing of genetic variants | DBTL cycle implementation [7] [32] |

Artificial Intelligence and Machine Learning Integration

The integration of artificial intelligence (AI) and machine learning (ML) with systems pharmacology creates a powerful synergy between mechanistic understanding and data-driven pattern recognition [34]. ML algorithms excel at identifying complex patterns in high-dimensional data, while QSP models provide biological context and mechanistic constraints. This combination is particularly valuable in areas such as target prediction, where ML can screen vast chemical spaces while QSP models assess the system-level consequences of target modulation [34].

Leading AI-driven drug discovery companies have demonstrated the practical potential of these integrated approaches. Exscientia, for example, has developed an automated platform that combines AI-based compound design with robotic synthesis and testing, achieving approximately 70% faster design cycles while requiring 10-fold fewer synthesized compounds than traditional medicinal chemistry [35]. Similarly, Insilico Medicine reported advancing an idiopathic pulmonary fibrosis drug from target discovery to Phase I trials in just 18 months—significantly faster than the typical 5-year timeline for conventional approaches [35].

Case Studies and Experimental Validation

Dopamine Production in E. coli via Knowledge-Driven DBTL

A recent study demonstrates the power of combining kinetic modeling with experimental validation in optimizing microbial production of dopamine [32]. Researchers implemented a knowledge-driven DBTL cycle that began with in vitro testing in cell lysate systems to determine optimal enzyme ratios before moving to live cells. This approach resulted in a high-yielding dopamine strain producing 69.03 ± 1.2 mg/L, representing a 2.6 to 6.6-fold improvement over previous reports [32].

The experimental protocol involved:

- Strain engineering: Creating E. coli FUS4.T2 with enhanced L-tyrosine production

- Pathway construction: Introducing heterologous genes hpaBC and ddc under inducible control

- In vitro testing: Using cell lysate systems to determine optimal enzyme expression levels

- RBS library construction: Generating 16 variants with modified Shine-Dalgarno sequences

- High-throughput screening: Evaluating dopamine production across variants

- Model refinement: Incorporating results into kinetic models for further prediction

This case highlights how upstream in vitro investigation can guide subsequent in vivo engineering, reducing the number of DBTL cycles required to achieve performance targets.

Immuno-Oncology Applications

Quantitative Systems Pharmacology has shown particular promise in immuno-oncology, where it helps unravel the complex interactions between tumors, immune cells, and therapeutic agents. Recent QSP models have incorporated tumor heterogeneity, immune cell trafficking, and checkpoint inhibitor mechanisms to simulate patient responses to immunotherapies [29]. These models have identified combination therapies that simultaneously target multiple pathways in the cancer-immunity cycle, leading to improved anti-tumor efficacy compared to monotherapies.

One published QSP model focused on triple-negative breast cancer successfully predicted the efficacy of atezolizumab and nab-paclitaxel combination therapy by capturing the dynamics of immune cell infiltration and tumor cell killing [29]. The model provided insights into optimal dosing schedules that would be difficult to determine through clinical trials alone, demonstrating how QSP can guide clinical translation of combination immunotherapies.

Signaling Pathways and Workflow Visualization

The implementation of systems pharmacology relies on clearly defined workflows and pathway representations. The following diagrams illustrate key processes in systems pharmacology approaches.

DBTL Cycle Workflow

Dopamine Biosynthesis Pathway

Future Perspectives and Challenges

As systems pharmacology continues to evolve, several emerging trends and challenges will shape its trajectory. The integration of multi-omics data (genomics, transcriptomics, proteomics, metabolomics) with QSP models promises to enhance their predictive power and biological relevance [28]. Similarly, the creation of virtual patient populations through QSP modeling addresses a critical need in drug development, particularly for rare diseases and pediatric populations where clinical trials are ethically or practically challenging [30].

Significant challenges remain, including the need for standardized model qualification methods, improved data quality and accessibility, and broader organizational acceptance of model-informed approaches [31] [29]. The field must also address technical hurdles related to model scalability and computational efficiency as systems representations become increasingly comprehensive.

Perhaps most importantly, the ultimate validation of systems pharmacology will come through clinical translation—demonstrating that model-informed therapeutic strategies actually improve patient outcomes. While AI-designed molecules are advancing through clinical trials, none have yet received regulatory approval, raising questions about whether these approaches deliver better success or merely faster failures [35]. Ongoing clinical studies will determine the real-world impact of systems pharmacology on drug development efficiency and therapeutic success rates.

Despite these challenges, the continued expansion of systems pharmacology appears inevitable given the compelling economic and scientific value proposition. As one industry analysis concluded: "QSP is no longer an emerging methodology; it is becoming the new standard in drug development" [30]. This transition represents not just a technological shift but a fundamental reimagining of how we understand and intervene in biological systems for therapeutic benefit.

Implementing Kinetic DBTL Frameworks: Practical Applications Across Biomedical Domains

Mechanistic Kinetic Models as In Silico Testbeds for DBTL Cycle Optimization

In the field of synthetic biology and metabolic engineering, the Design-Build-Test-Learn (DBTL) cycle serves as the fundamental engineering paradigm for developing efficient microbial cell factories. Mechanistic kinetic models have emerged as powerful in silico testbeds that provide a computational framework to simulate and optimize these iterative cycles before embarking on costly experimental work. These mathematical models simulate the dynamic behavior of biological systems, enabling researchers to predict pathway performance, identify metabolic bottlenecks, and evaluate optimization strategies under controlled virtual conditions. The integration of these models creates a simulated biological environment where different experimental designs, machine learning approaches, and optimization algorithms can be rigorously tested and validated, thereby accelerating the development of robust microbial strains for chemical production [36] [37] [38].

The broader thesis context of kinetic model framework validation research positions these computational tools as essential components for establishing predictive biological engineering. By providing a ground-truth simulation environment, kinetic models enable direct comparison between predicted and actual biological behavior, facilitating the validation of DBTL frameworks under conditions that mimic real-world biological complexity while offering complete parameter control and reduced experimental variance [36]. This review objectively compares the performance of various DBTL optimization strategies evaluated through kinetic modeling approaches, providing researchers with evidence-based guidance for selecting appropriate methods for their specific applications.

Kinetic Modeling Frameworks for DBTL Validation

Fundamental Framework Architecture

Mechanistic kinetic modeling frameworks for DBTL cycle validation typically employ ordinary differential equation (ODE) systems that mathematically represent the biochemical reactions within metabolic pathways. These frameworks simulate the dynamic flux of metabolites through engineered pathways, capturing complex regulatory interactions and enzyme kinetics that influence overall production yields. The foundational structure comprises mass-action kinetics and enzyme catalytic mechanisms that collectively determine pathway dynamics and emergent properties [36] [37].

The kinetic model framework introduced by van Ladereen et al. exemplifies this approach, implementing a virtual seven-gene pathway with parameters derived from experimentally validated enzyme kinetics [36] [37] [38]. This framework specifically simulates the performance of full factorial strain libraries and serves as a benchmark for comparing reduced experimental designs. The model incorporates biological noise and experimental variance parameters, enabling researchers to evaluate optimization methods under conditions that mirror real-world laboratory challenges, including measurement error and biological variability that can significantly impact algorithm performance and experimental conclusions [26] [36].

Key Framework Components and Capabilities

Table 1: Core Components of Kinetic Modeling Frameworks for DBTL Validation

| Component | Function | Implementation Example |

|---|---|---|

| Virtual Pathway | Serves as ground truth for method validation | Seven-gene pathway with known optimal expression combination [26] [36] |

| Noise Integration | Mimics experimental variance in biological data | Incorporation of Gaussian noise models for measurement error [26] [36] |

| Performance Metrics | Quantifies optimization algorithm effectiveness | Production yield, convergence speed, resource utilization [26] [36] [37] |

| Experimental Design Simulator | Tests different factor combinations and sample sizes | Comparison of full factorial, fractional factorial, and Plackett-Burman designs [26] |

| Machine Learning Interface | Enables algorithm training and prediction testing | Integration with random forest, gradient boosting, and linear models [36] [37] |

The simulated DBTL cycle framework employs a modular architecture that separately implements each phase of the engineering cycle. The design phase incorporates algorithms for selecting genetic parts and expression levels, while the build phase simulates strain construction with predictable success rates. The test phase generates synthetic analytical data with configurable noise profiles, and the learn phase applies machine learning algorithms to extract patterns and recommend improved designs for subsequent cycles [36] [37] [38]. This comprehensive approach enables researchers to systematically evaluate how different strategies at each DBTL phase contribute to overall optimization efficiency, providing insights that would be prohibitively expensive or time-consuming to obtain through purely experimental approaches.

Comparative Analysis of DoE Methods via Kinetic Models

Experimental Protocol for DoE Comparison