Validating Autonomous DBTL Platforms: How Robotic Systems are Revolutionizing Bioengineering and Drug Development

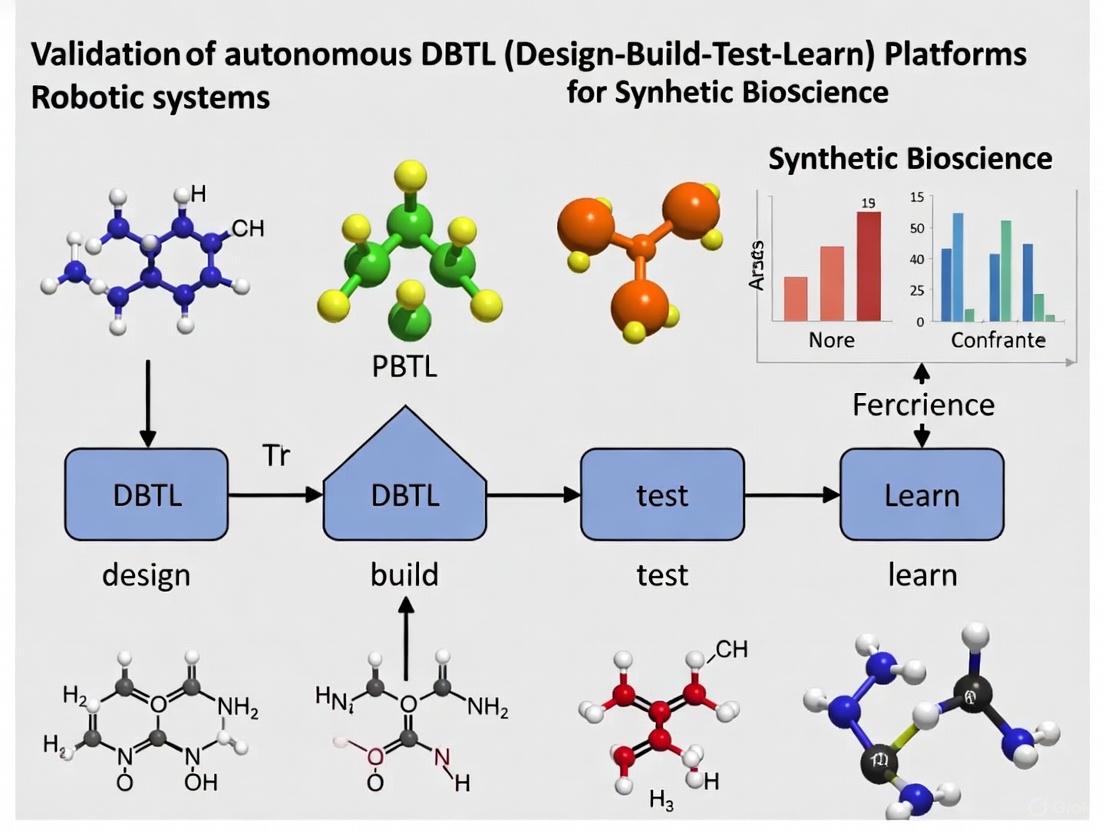

Autonomous Design-Build-Test-Learn (DBTL) platforms, integrating robotic biofoundries with artificial intelligence, are transforming the pace and precision of biological research and development.

Abstract

Autonomous Design-Build-Test-Learn (DBTL) platforms, integrating robotic biofoundries with artificial intelligence, are transforming the pace and precision of biological research and development. This article explores the foundational principles of these self-driving laboratories, detailing their core components from liquid handlers and incubators to AI-driven optimizers. We examine methodological implementations across diverse applications, including enzyme engineering and metabolite production, and address critical troubleshooting and optimization strategies for platform deployment. Finally, we present a comparative analysis of validation case studies that benchmark performance, demonstrating how fully autonomous DBTL cycles are achieving unprecedented efficiency and success in strain optimization, protein engineering, and therapeutic development.

The Foundations of Autonomous Experimentation: Core Components and the Drive for Automation

The Design-Build-Test-Learn (DBTL) cycle is a foundational framework in synthetic biology, enabling the systematic engineering of biological systems [1]. This iterative process involves designing biological parts, building DNA constructs, testing their function in assays, and learning from the data to inform the next design round [2] [1]. While effective, traditional DBTL cycles are often slow, labor-intensive, and limited by human throughput.

The field is now shifting from human-driven iterations to fully autonomous DBTL cycles powered by robotics and artificial intelligence. This shift rethinks the bioengineering process, enabling "self-driving laboratories" in which machine learning precedes design and automated systems handle building and testing with minimal human intervention [2]. This article examines the transition through the lens of robotic systems research, comparing performance metrics and reviewing experimental validation for autonomous platforms.

The Evolution from Manual to Autonomous DBTL

The Traditional DBTL Framework

In conventional synthetic biology workflows, the DBTL cycle requires significant manual effort at each stage [1]. The Design phase relies heavily on researcher expertise and limited computational modeling. The Build phase involves laborious molecular cloning, vector assembly, and transformation processes. The Test phase requires manual functional assays and characterization, creating bottlenecks in data generation [1]. The Learning phase depends on human analysis of results to inform subsequent designs, making multiple iterations time-consuming and costly.

The Emergence of the LDBT Paradigm

Recent advances are fundamentally restructuring this approach into what researchers term the "LDBT" cycle (Learn-Design-Build-Test), where machine learning precedes initial design [2]. In this model, pre-trained AI models capable of zero-shot predictions leverage vast biological datasets to generate optimized designs before any physical experimentation begins [2]. This paradigm shift is made possible by:

- Protein language models like ESM and ProGen that capture evolutionary relationships in amino acid sequences [2]

- Structure-based deep learning tools such as ProteinMPNN and MutCompute that enable robust protein design [2]

- Functional prediction models for properties like thermostability (Prethermut, Stability Oracle) and solubility (DeepSol) [2]

When combined with rapid cell-free expression systems that accelerate building and testing, this reordering enables a single, highly efficient cycle that can generate functional biological parts without multiple iterations [2].

The Role of Robotics in Autonomous Implementation

Robotic systems serve as the physical enabler of autonomous DBTL cycles, providing the integration layer between AI-driven design and experimental execution. The pharmaceutical robotics market is projected to grow from approximately $215 million in 2024 to nearly $460 million by 2033, reflecting increased adoption of automation in biological research [3]. Key robotic technologies facilitating this transition include:

- Traditional robotic systems for automating repetitive laboratory tasks like liquid handling and sample preparation [4]

- Collaborative robots (cobots) designed to work safely alongside humans in shared spaces [4]

- Autonomous robots that perform experiments and analyses without human intervention by combining AI, vision systems, and machine learning [4]

- Integrated robotic workstations that combine multiple functions like pipetting, dispensing, mixing, and thermocycling in compact units [5]

Performance Comparison: Manual vs. Automated vs. Autonomous DBTL

The transition from manual to fully autonomous DBTL implementation occurs across a spectrum of technological capability. The table below summarizes key performance differences across this evolution:

| Performance Metric | Manual DBTL | Automated DBTL | Autonomous DBTL |
|---|---|---|---|
| Cycle Duration | Weeks to months [1] | Days to weeks | Hours to days [2] |
| Throughput Scale | 10-100 constructs/cycle [1] | 100-10,000 constructs/cycle | 10,000-1,000,000+ constructs/cycle [2] |
| Human Intervention | Full involvement at all stages | Partial automation with human supervision | Minimal intervention; closed-loop operation [2] |
| Data Generation | Limited by manual assay capacity | Moderate-scale data production | Megascale data generation [2] |
| Primary Limitation | Human labor and error [1] | Integration between automated islands | Computational infrastructure and model training |
| Key Technologies | Basic lab equipment, manual cloning [1] | Liquid handlers, colony pickers | AI agents, cell-free systems, robotic integration [2] |
| Experimental Cost per Construct | High (significant labor) | Moderate (equipment amortization) | Low (massive parallelization) [3] |

Table 1: Performance comparison across DBTL implementation levels. Autonomous systems leverage cell-free expression and AI for radical acceleration.

Experimental Validation of Autonomous DBTL Platforms

Protocol 1: Ultra-High-Throughput Protein Stability Mapping

Objective: Validate autonomous DBTL performance for protein engineering applications by mapping stability landscapes of thousands of protein variants.

Methodology:

- Learn Phase: Pre-trained protein language models (ESM, ProGen) generated initial variant libraries based on evolutionary patterns [2].

- Design Phase: Stability Oracle and Prethermut algorithms predicted thermodynamic stability changes (ΔΔG) for generated sequences [2].

- Build Phase: DNA templates were synthesized without intermediate cloning steps and expressed using cell-free transcription-translation systems [2].

- Test Phase: Expressed proteins were coupled with cDNA display and stability measurements were performed in microfluidic droplets, enabling characterization of 776,000 protein variants in a single experiment [2].

Results: This autonomous workflow generated a massive dataset of protein stability measurements that was used to benchmark zero-shot predictors, demonstrating the ability to map complex sequence-function relationships at unprecedented scale [2].
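Benchmarking a zero-shot predictor against measured stabilities usually comes down to a rank correlation between predicted and measured values. The sketch below is a minimal, dependency-free Spearman correlation; the function names and the toy numbers are illustrative assumptions, not the metric or data used in the cited study.

```python
import math
from typing import Sequence


def ranks(values: Sequence[float]) -> list[float]:
    """Return 1-based ranks, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(var_x * var_y)


def spearman(predicted: Sequence[float], measured: Sequence[float]) -> float:
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(ranks(predicted), ranks(measured))


# Toy example: predicted vs. measured stability changes for four variants.
predicted_ddg = [0.1, 0.5, 0.9, 1.4]
measured_ddg = [1.0, 2.0, 3.5, 9.0]
print(spearman(predicted_ddg, measured_ddg))
```

A rank-based score is a common choice here because zero-shot models often order variants correctly without matching the absolute scale of the measurement.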

Protocol 2: AI-Driven Antimicrobial Peptide Discovery

Objective: Demonstrate closed-loop DBTL for designing novel antimicrobial peptides (AMPs) with therapeutic potential.

Methodology:

- Learn Phase: Deep learning models were trained on known AMP sequences and properties [2].

- Design Phase: Models computationally surveyed over 500,000 potential AMP sequences and selected 500 optimal variants for experimental testing [2].

- Build Phase: Selected sequences were synthesized and expressed using high-throughput cell-free systems [2].

- Test Phase: Expressed peptides were automatically screened for antimicrobial activity using robotic liquid handling and fluorescence-based assays [2].

Results: The autonomous system identified 6 promising AMP designs with validated antimicrobial activity, demonstrating the efficiency of machine learning-guided discovery compared to traditional screening methods [2].
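The design step in this protocol is a large in-silico survey followed by a small experimental shortlist. A minimal sketch of that selection pattern follows; `toy_score` is a hypothetical placeholder (fraction of cationic residues), not the deep learning model described in the source.

```python
import heapq
from typing import Callable, Iterable


def select_top_candidates(
    sequences: Iterable[str],
    score: Callable[[str], float],
    k: int,
) -> list[str]:
    """Return the k highest-scoring sequences, best first.

    heapq.nlargest keeps only k items in memory at a time, so the
    candidate pool can be streamed rather than fully materialized.
    """
    return heapq.nlargest(k, sequences, key=score)


def toy_score(sequence: str) -> float:
    """Placeholder score: fraction of lysine/arginine residues.

    This stands in for a trained model and has no predictive value.
    """
    return sum(sequence.count(residue) for residue in "KR") / len(sequence)


pool = ["KKRR", "AAAA", "KAAA", "GKRA"]
shortlist = select_top_candidates(pool, toy_score, k=2)
print(shortlist)
```

In the workflow described above, the pool would hold roughly 500,000 sequences and `k` would be 500.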

Protocol 3: Pathway Optimization via iPROBE

Objective: Implement autonomous DBTL for optimizing biosynthetic pathways.

Methodology:

- Learn Phase: Neural networks were trained on combinatorial pathway data including enzyme combinations and expression levels [2].

- Design Phase: Models predicted optimal pathway configurations for enhanced 3-hydroxybutyrate (3-HB) production [2].

- Build Phase: Pathway variants were rapidly assembled and expressed using cell-free prototyping systems (iPROBE) [2].

- Test Phase: Metabolite production was automatically measured using robotic analytical systems [2].

Results: The autonomous platform successfully increased 3-HB production in a Clostridium host by over 20-fold, showcasing the power of AI-directed pathway engineering [2].
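To make the combinatorial design space concrete, the sketch below enumerates every pairing of enzyme homolog and expression level per pathway step, and computes a fold improvement against a baseline titer. The step names, homolog labels, and dose levels are invented for illustration and are not taken from the iPROBE work.

```python
from itertools import product


def enumerate_designs(
    homologs_per_step: dict[str, list[str]],
    levels: list[float],
) -> list[dict[str, tuple[str, float]]]:
    """List every combination of homolog choice and expression level per step."""
    steps = list(homologs_per_step)
    designs = []
    for enzymes in product(*(homologs_per_step[step] for step in steps)):
        for doses in product(levels, repeat=len(steps)):
            designs.append(
                {step: (enzyme, dose) for step, enzyme, dose in zip(steps, enzymes, doses)}
            )
    return designs


def fold_improvement(titer: float, baseline: float) -> float:
    """Ratio of an optimized titer to the baseline titer."""
    if baseline <= 0:
        raise ValueError("baseline titer must be positive")
    return titer / baseline


# Hypothetical two-step pathway with 2 and 3 homologs and two dose levels.
space = enumerate_designs(
    {"step_1": ["h1a", "h1b"], "step_2": ["h2a", "h2b", "h2c"]},
    levels=[0.5, 1.0],
)
print(len(space))  # 2 * 3 homolog choices times 2**2 dose settings
```

Even this toy space has 24 designs, which illustrates why a model that ranks configurations before building them reduces the number of cell-free reactions needed.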

Architectural Framework for Autonomous DBTL Implementation

The diagram below illustrates the integrated architecture of a fully autonomous DBTL system, highlighting the robotic and computational components that enable continuous operation:

Diagram 1: Autonomous DBTL system architecture showing computational and robotic layers.

The Scientist's Toolkit: Essential Research Reagents and Robotic Systems

Successful implementation of autonomous DBTL cycles requires specialized reagents and equipment designed for robotic compatibility and high-throughput operation. The table below details key components:

| Tool Category | Specific Examples | Function in Autonomous DBTL |
|---|---|---|
| Cell-Free Expression Systems | CFPS kits, PURExpress | Enable rapid protein synthesis without cloning; support non-canonical amino acids [2] |
| Automated Liquid Handlers | Tecan Veya, Eppendorf Research 3 neo | Provide precise, walk-up automation for reagent dispensing and assay setup [5] |
| Integrated Workstations | SPT Labtech firefly+, MO:BOT platform | Combine multiple functions (pipetting, mixing, thermocycling) in unified systems [5] |
| Microfluidic Devices | DropAI platform | Enable ultra-high-throughput screening via picoliter-scale reactions [2] |
| DNA Assembly Kits | Automated library prep kits | Support rapid, error-free construction of genetic variants [5] |
| Analysis Software | Stability Oracle, ProteinMPNN | Provide AI-driven prediction of protein properties for design optimization [2] |
| Robotic Integration Software | FlowPilot, Mosaic, Labguru | Schedule complex workflows and manage sample tracking across systems [5] |

Table 2: Essential research reagents and robotic systems for autonomous DBTL implementation.

Implementation Challenges and Strategic Considerations

Despite their transformative potential, autonomous DBTL platforms face significant implementation barriers that researchers must strategically address:

Technical and Operational Hurdles

- Integration Complexity: Legacy laboratory facilities often require substantial redesign to accommodate robotic systems, with significant employee retraining needs [3].

- Data Quality Requirements: Autonomous systems require massive, high-quality datasets for training AI models, yet many organizations struggle with fragmented, siloed data with inconsistent metadata [5].

- Initial Investment: While offering long-term savings, upfront costs for robotic systems are substantial, including acquisition, integration, and validation expenses [3].

Strategic Implementation Recommendations

- Phased Adoption: Begin with automating discrete DBTL components before implementing full integration, allowing for workflow optimization and staff training.

- Metadata Standardization: Establish consistent metadata protocols early to ensure AI models receive well-structured, meaningful data [5].

- Collaborative Partnerships: Leverage expertise from robotics manufacturers and AI software developers to accelerate implementation and troubleshooting.
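For the metadata standardization recommendation, one lightweight starting point is a fixed record schema that every instrument export must satisfy before it reaches a training set. The sketch below is an assumption-laden illustration: the field names, the 96-well naming rule, and the ISO 8601 timestamp requirement are hypothetical choices, not a published standard.

```python
import re
from dataclasses import dataclass, fields
from datetime import datetime


@dataclass(frozen=True)
class SampleRecord:
    """One measurement with the context needed to interpret it later."""
    sample_id: str
    plate_barcode: str
    well: str            # 96-well naming, e.g. "A1" to "H12"
    instrument: str
    timestamp: str       # ISO 8601, e.g. "2025-01-15T09:30:00+00:00"
    measurement: str     # e.g. "OD600" or "GFP_fluorescence"
    value: float
    unit: str


def validate(record: SampleRecord) -> list[str]:
    """Return a list of human-readable problems; empty means the record passes."""
    problems = []
    for field in fields(record):
        if getattr(record, field.name) in ("", None):
            problems.append(f"missing {field.name}")
    if not re.fullmatch(r"[A-H](0?[1-9]|1[0-2])", record.well):
        problems.append(f"invalid well: {record.well!r}")
    try:
        datetime.fromisoformat(record.timestamp)
    except ValueError:
        problems.append(f"invalid timestamp: {record.timestamp!r}")
    return problems


record = SampleRecord(
    sample_id="S-0001", plate_barcode="PLATE-42", well="B7",
    instrument="plate_reader_1", timestamp="2025-01-15T09:30:00+00:00",
    measurement="OD600", value=0.42, unit="AU",
)
print(validate(record))
```

Rejecting malformed records at ingestion is generally cheaper than repairing inconsistent metadata after it has been merged across instruments.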

The transition from manual DBTL cycles to fully autonomous, self-driving laboratories represents a fundamental shift in how biological engineering is approached. By integrating machine learning at the inception of the design process and leveraging robotic systems for physical implementation, autonomous DBTL platforms can achieve orders-of-magnitude improvements in speed, scale, and efficiency [2].

The experimental validations presented demonstrate that this paradigm is already delivering tangible advances in protein engineering, drug discovery, and metabolic pathway optimization. As robotic systems become more sophisticated and accessible, and as AI models continue to improve their predictive capabilities, autonomous DBTL will increasingly become the standard approach for biological engineering.

For researchers and drug development professionals, embracing this transition requires both technical adaptation and conceptual flexibility. The future of biological design lies not in replacing human creativity, but in augmenting it with autonomous systems that handle routine experimentation at massive scale, freeing scientists to focus on higher-level innovation and discovery.

The validation of autonomous Design-Build-Test-Learn (DBTL) platforms in robotic systems research represents a paradigm shift in scientific experimentation. These platforms enable continuous, self-optimizing research cycles where experimental parameters are automatically adjusted based on previous outcomes, dramatically accelerating discovery timelines in fields like drug development and synthetic biology. The core hardware components—robotic arms, liquid handlers, and incubators—form the physical infrastructure that enables this autonomy, each playing a distinct yet interconnected role in the experimental workflow. Their performance characteristics directly determine the reliability, throughput, and reproducibility of the entire system, making objective comparison essential for researchers designing these platforms.

Within the DBTL context, robotic arms provide physical manipulation and transfer capabilities between stations; liquid handlers enable precise fluidic operations for assay preparation and reagent dispensing; and incubators maintain optimal environmental conditions for biological processes to occur. The integration of these components into a seamless workflow, often guided by artificial intelligence and machine learning algorithms, transforms traditional manual experimentation into closed-loop autonomous systems. This guide provides an objective comparison of these critical hardware components, focusing on performance metrics and experimental data relevant to scientists, researchers, and drug development professionals implementing autonomous research platforms.

Performance Comparison of Core Hardware Components

Robotic Arms

Robotic arms serve as the material handling backbone of automated laboratory systems, providing the physical connectivity between discrete instruments. Their performance in DBTL platforms is measured by precision, adaptability, and integration capabilities with vision systems and other peripherals.

Table 1: Performance Comparison of Robotic Arm Technologies

| Technology/Feature | Key Performance Metrics | Optimal Application Context | Integration Considerations |
|---|---|---|---|
| Visual Servoing Systems [6] | Accuracy >95%, recall >86%, F1 score >90%; Processing: 110 FPS at 4K; Tracking error converges to a minimal range | Dynamic target tracking; High-precision assembly | Requires integration with vision systems (e.g., BFS-Canny-IED algorithm) |
| Deep Reinforcement Learning (DRL) Control [7] | SAC (T1): Best for continuous tasks; DDQN (T2): Superior for discrete planning | Autonomous learning of design rules; Robotic assembly tasks | Compatible with policy optimization (PPO, A2C); Requires training period |
| AI-Powered Industrial Arms [8] | Market growth to $192B by 2033; Enhanced precision and force control | Manufacturing, electronics, automotive assembly | IoT-enabled for real-time data exchange; Collaborative features |

Recent research demonstrates significant advances in robotic arm precision through improved visual servoing technologies. One study established a robotic arm visual servo system (RAVS) based on a BFS-Canny image edge detection algorithm whose accuracy, recall, and F1 score exceeded 95%, 86%, and 90%, respectively. The system maintained a computational efficiency of 110 FPS even at 4K image resolution (4096×2160), with an average running time of 30.28 ms on test datasets, enabling real-time dynamic tracking in which the tracking error converges to a minimal range [6]. These metrics are particularly relevant for DBTL platforms requiring high-precision manipulation in biological experiments.

For autonomous learning applications, different deep reinforcement learning approaches show specialized strengths. In a block wall assembly scenario modeling architectural robotics, the problem was strategically decomposed into target reaching (T1 - continuous action space) and sequential planning (T2 - discrete action space). Performance evaluations revealed that Soft Actor-Critic (SAC) excelled in target reaching tasks, while Double Deep Q-Network (DDQN) demonstrated superior performance in sequential planning, additionally exhibiting strong learning adaptability that yielded diverse final layouts in response to varying initial conditions [7]. This capacity for autonomous adaptation is particularly valuable in DBTL platforms where environmental conditions may fluctuate.

Liquid Handlers

Liquid handling systems form the fluidic core of automated biological experimentation, enabling precise reagent dispensing, serial dilutions, and assay setup. Their performance directly impacts experimental reproducibility and minimal usable reagent volumes.

Table 2: Liquid Handler Market Overview and Performance Characteristics

| Characteristic | Market Data & Statistics | Growth Projections | Technology Trends |
|---|---|---|---|
| Overall Market Size [9] [10] | $5.1B (2025); $1.29B (2024) | $7.4B by 2030 (CAGR 8.0%); $2.57B by 2033 (CAGR 7.98%) | Integration with AI, IoT, and LIMS |
| Product Types [11] [10] | Automated systems dominate (60% share); Standalone systems lead segment | Automated systems: 9.0% CAGR; Disposable tips: 8.3% CAGR | Miniaturization, microfluidics |
| Regional Analysis [9] [10] | North America dominates; Asia-Pacific: 21.6% share (2024), fastest growth | Europe: 8.5% CAGR (2025-2033) | Rising healthcare spending in Asia-Pacific |
| Application Analysis [9] [11] | Drug discovery dominates segment; PCR setup: 11.1% CAGR | High-throughput screening driving demand | Application-specific customization |

The market for automated liquid handling is growing steadily, projected to reach USD 7.4 billion by 2030 from USD 5.1 billion in 2025, at a compound annual growth rate (CAGR) of 8.0% [9]. Another report, working from a narrower market definition, estimates growth from USD 1.39 billion in 2025 to USD 2.57 billion by 2033, at a similar CAGR of 7.98% [10]. This growth is primarily driven by increasing automation in laboratories, rising throughput requirements in genomics and proteomics research, and expanding biopharmaceutical R&D investments. Automated systems account for the largest market share, driven by demand for higher precision, faster processing, and improved operational efficiency in laboratory workflows [9].
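Because the two reports use different baselines and horizons, it can help to recompute the growth rates directly when comparing them. The sketch below applies the standard compound annual growth rate formula to the figures quoted above; the function names are illustrative.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by a start value, end value, and horizon."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding start_value at a fixed annual rate."""
    return start_value * (1 + rate) ** years


# Figures quoted in the text, in billions of USD.
print(f"Implied CAGR 2025-2030: {cagr(5.1, 7.4, 5):.2%}")
print(f"1.39B compounded at 7.98% for 8 years: {project(1.39, 0.0798, 8):.2f}B")
```

The second projection reproduces the quoted USD 2.57 billion closely, while the first pair of endpoints implies a rate of roughly 7.7%, slightly below the stated 8.0%; differences of this size are typical of rounding in published market summaries.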

Performance across different liquid handling modalities varies significantly. Disposable tip systems currently dominate the market share, while fixed tip systems offer economic advantages for specific applications like handling purified PCR samples and DNA/RNA sequencing mixtures [10]. By procedure, PCR setup represents the highest growth segment with an expected CAGR of 11.1%, driven by integration of automated liquid handling workstations like the PerkinElmer Zephyr G3 and Tecan Freedom EVO for various PCR applications including gene sequencing, cloning, and mutation testing [10]. Regional performance differences are notable, with North America maintaining dominance due to strong pharmaceutical R&D presence, while the Asia-Pacific region is experiencing the fastest growth fueled by rapid expansion of pharmaceutical and biotechnology industries and increasing government funding for life sciences research [9].

Incubators

Incubators provide the controlled environments essential for cell culture, microbial growth, and other biological processes in DBTL platforms. Their performance is measured by stability, uniformity, and contamination control capabilities.

Table 3: Incubator Performance Parameters and Market Outlook

| Parameter | Performance Standards | Impact on Experiments | Market Context |
|---|---|---|---|
| Temperature Control [12] | High-quality units maintain ±0.2°C stability | Fluctuations alter growth rates, enzyme reactions, cell viability | Critical for reproducibility |
| CO₂ Regulation [12] | Precision control at 5% for cell culture; IR sensors | Fluctuations cause abnormal growth or cell death | Standard for mammalian cell culture |
| Humidity Control [12] | Prevents desiccation and condensation | Evaporation of media or contamination | Essential for long-term viability |
| Market Data [13] | N/A | N/A | $500M market (2025); 7% CAGR to 2033; exceeding $900M by 2033 |
| Key Players [14] [13] | N/A | N/A | Thermo Fisher Scientific, Memmert, Binder; 60% market share by major players |

Temperature stability represents perhaps the most critical performance parameter for laboratory incubators. Even small fluctuations can alter microbial growth rates, enzyme reactions, or cell viability, compromising experimental reproducibility [12]. High-quality incubators use advanced sensors, insulated walls, and precise controllers to minimize these risks, with validation studies often required to confirm heat distribution meets industry standards. Modern units from leading manufacturers like Thermo Fisher Scientific and Memmert have demonstrated temperature stability of ±0.2°C over extended periods, ensuring consistent experimental conditions [14] [12].

For cell culture applications, CO₂ regulation is equally vital, with precision control at 5% CO₂ representing the standard for most mammalian cell culture work. Fluctuations in CO₂ concentration can cause cells to grow abnormally or even die due to pH shifts in the bicarbonate buffering system [12]. Modern incubators address this through infrared sensors and automatic gas injection systems that provide the stability required for long-term experiments. Additionally, proper humidity control prevents desiccation of samples while avoiding excessive condensation that could encourage contamination [12]. The global standard incubator market is projected to grow from $500 million in 2025 to exceed $900 million by 2033, at a CAGR of 7%, reflecting increasing demand from research institutions, pharmaceutical companies, and biotechnology firms [13].

Experimental Protocols and Validation Methodologies

Autonomous DBTL Platform Implementation

One study demonstrated a fully automated test-learn cycle for optimizing induction of bacterial systems on a robotic platform, providing a validated experimental framework for autonomous DBTL implementation [15]. The platform was designed to autonomously optimize the inducer concentration for a Bacillus subtilis system, and the combination of inducer and feed release for an Escherichia coli system, with production of a green fluorescent protein (GFP) reporter as the measurable output.

Experimental Workflow:

- Cultivation: Bacteria were cultivated in 96-well flat-bottom microtiter plates (MTP) inside a custom robotic platform (Analytik Jena).

- Induction: The system tested varying inducer concentrations (lactose/IPTG) and enzyme amounts to control glucose release rates.

- Measurement: A PheraSTAR FSX plate reader (BMG) measured OD600 nm (cell density) and fluorescence (GFP production).

- Analysis: An optimizer module selected subsequent measurement points based on a balance between exploration and exploitation.

- Iteration: The platform conducted four full iterations of the test-learn cycle, using either an active-learning machine learning approach or a random search algorithm as a baseline comparison.
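The exploration-exploitation balance in the analysis step can be illustrated with a simple upper confidence bound (UCB) rule over a discrete set of inducer concentrations. This is a stand-in chosen for brevity, not the optimizer used in the cited study; the dose-response function `simulated_gfp`, the candidate concentrations, and the one-measurement-per-step loop are all assumptions.

```python
import math
from typing import Callable, Sequence


def simulated_gfp(inducer_mm: float) -> float:
    """Hypothetical dose-response: saturating induction with a toxicity penalty."""
    return inducer_mm / (0.1 + inducer_mm) * math.exp(-inducer_mm / 2.0)


def choose_next(stats: dict[float, tuple[int, float]], step: int, exploration: float) -> float:
    """Pick an untested concentration first, otherwise the highest UCB score."""
    for concentration, (count, _) in stats.items():
        if count == 0:
            return concentration

    def ucb(concentration: float) -> float:
        count, mean = stats[concentration]
        # Mean signal (exploitation) plus a bonus for rarely tested points (exploration).
        return mean + exploration * math.sqrt(math.log(step) / count)

    return max(stats, key=ucb)


def run_campaign(
    candidates: Sequence[float],
    measure: Callable[[float], float],
    rounds: int,
    exploration: float = 0.5,
) -> tuple[float, dict[float, tuple[int, float]]]:
    """Run a closed test-learn loop and return the best concentration found."""
    stats = {c: (0, 0.0) for c in candidates}  # concentration -> (count, running mean)
    for step in range(1, rounds + 1):
        concentration = choose_next(stats, step, exploration)
        signal = measure(concentration)         # "test": one cultivation and readout
        count, mean = stats[concentration]      # "learn": update the running mean
        stats[concentration] = (count + 1, mean + (signal - mean) / (count + 1))
    tested = [c for c in stats if stats[c][0] > 0]
    best = max(tested, key=lambda c: stats[c][1])
    return best, stats


best, stats = run_campaign([0.01, 0.05, 0.1, 0.5, 1.0, 2.0], simulated_gfp, rounds=24)
print(best, stats[best])
```

A random-search baseline, as used for comparison in the study, would replace `choose_next` with a uniform random pick; on a real platform each step would cover a full plate of wells rather than a single measurement.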

Hardware Configuration:

- Incubation: Cytomat two tower shake incubator (Thermo Fisher Scientific) holding 29 MTPs simultaneously at 37°C and 1,000 rpm [15]

- Liquid Handling: Two CyBio FeliX liquid handling robots (Analytik Jena) - one 8-channel (individually addressable) and one 96-channel (full plate processing) [15]

- Transport: Linear axis mounted robotic arm with gripper (PreciseFlex; RoboDK) for MTP transfer between workstations [15]

- Storage: Multiple racks and carousels for plates and tip boxes, including refrigerated positions (4°C) for reagent storage [15]

This implementation successfully transformed a static robotic platform into a dynamic system capable of autonomous parameter adjustment, establishing a benchmark for DBTL hardware integration.

Diagram 1: Autonomous DBTL Hardware Integration. This workflow illustrates how core hardware components enable the continuous Design-Build-Test-Learn cycle in autonomous research platforms.

Performance Validation of Robotic Arm Visual Servoing

A separate study established rigorous methodology for validating robotic arm visual servo system performance using a novel image edge detection algorithm [6]. The experimental protocol evaluated both computational efficiency and tracking accuracy:

Image Processing Validation:

- Algorithm: BFS-Canny Image Edge Detection (BFS-Canny-IED) combining Breadth First Search, Canny algorithm, Harris algorithm, and parallel processing

- Performance Metrics: Accuracy, recall, F1 score (>95%, >86%, >90% respectively)

- Computational Efficiency: 110 FPS at 4K resolution (4096×2160), average running time ≤30.28 ms

- Comparison Baseline: Traditional visual servoing algorithms

Dynamic Tracking Validation:

- Objective: Evaluate tracking error convergence in practical applications

- Method: Continuous position adjustment based on real-time visual feedback

- Result: Successful convergence of tracking error to minimal range, significantly improved dynamic tracking performance

This validation methodology provides a template for assessing robotic arm integration in DBTL platforms, particularly for applications requiring high-precision manipulation or dynamic target tracking.
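For readers adapting this template, the detection metrics reported above derive from pixel- or object-level confusion counts. The sketch below computes them from true/false positives and negatives; the counts are invented, and how the cited study defined a "positive" edge detection is not specified here.

```python
def classification_metrics(tp: int, fp: int, fn: int, tn: int) -> dict[str, float]:
    """Accuracy, precision, recall, and F1 score from confusion-matrix counts."""
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = (
        2 * precision * recall / (precision + recall)
        if (precision + recall)
        else 0.0
    )
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Invented counts for illustration only.
print(classification_metrics(tp=90, fp=10, fn=10, tn=890))
```

Reporting recall and F1 alongside accuracy matters for edge detection because edge pixels are usually a small minority, so accuracy alone can look high even when many edges are missed.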

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of autonomous DBTL platforms requires careful selection of both hardware components and biological reagents. The following essential materials represent core requirements for the experimental workflows described in the research.

Table 4: Essential Research Reagent Solutions for Autonomous DBTL Platforms

| Reagent/Material | Function/Purpose | Application Context | Performance Considerations |
|---|---|---|---|
| Bacterial Expression Systems [15] | Protein production chassis; GFP reporter output | Bacillus subtilis and Escherichia coli systems | Inducible promoters enable controlled expression |
| Inducer Compounds [15] | Control expression timing and level | Lactose and IPTG for induction | Concentration optimization critical for yield |
| Culture Media [15] | Support microbial growth and production | Defined compositions for reproducible results | Affects growth rates and protein expression |
| Detection Reagents [15] | Enable output measurement | GFP fluorescence, OD600 measurements | Must be compatible with automation equipment |
| Pipette Tips [10] | Liquid handling precision | Disposable and fixed tip systems | Choice affects cross-contamination risk |
| Microtiter Plates [15] | Standardized cultivation format | 96-well flat-bottom plates | Must withstand shaking and robotic handling |

Diagram 2: DBTL System Component Relationships. This diagram illustrates the interconnected relationships between hardware, software, and reagents in an autonomous DBTL platform.

The objective comparison of robotic arms, liquid handlers, and incubators reveals distinct performance characteristics that must be carefully matched to application requirements in autonomous DBTL platforms. Robotic arms with advanced visual servoing capabilities provide the manipulation precision necessary for dynamic experiments, with DRL-enabled systems offering autonomous learning advantages. Liquid handlers demonstrate varying performance across modalities, with automated systems providing the throughput essential for large-scale screening studies. Incubators deliver critical environmental stability, with temperature, CO₂, and humidity control being non-negotiable for reproducible biological experimentation.

The integration of these components into a cohesive system, as demonstrated in the autonomous test-learn cycle for bacterial induction optimization, enables truly autonomous research platforms that can significantly accelerate discovery timelines. When selecting components for such platforms, researchers should prioritize interoperability, data integration capabilities, and reliability alongside pure performance metrics. As these technologies continue to evolve—with advancements in AI integration, IoT connectivity, and energy efficiency—autonomous DBTL platforms will become increasingly capable of managing complex experimental workflows with minimal human intervention, potentially transforming the landscape of scientific discovery in drug development and biotechnology.

In the evolving landscape of robotic systems research, the validation of autonomous Design-Build-Test-Learn (DBTL) platforms has emerged as a critical frontier. These self-driving laboratories represent a paradigm shift, integrating artificial intelligence (AI) and machine learning (ML) with robotic automation to accelerate scientific discovery. The core hypothesis is that the sophistication of the AI and data management software layer, not just the robotic hardware, determines the success and efficiency of these systems. As organizations increasingly align their data strategies with AI goals, the underlying data architecture becomes the decisive factor in research outcomes [16]. This guide objectively compares the performance of different AI-powered data management approaches within autonomous DBTL platforms, providing researchers with validated experimental data and methodologies for implementation.

Comparative Analysis of Autonomous Platform Performance

The performance of autonomous experimentation platforms varies significantly based on their integrated AI and data management capabilities. The table below summarizes quantitative performance data from recent implementations across different biological domains.

Table 1: Performance Comparison of Autonomous DBTL Platforms

| Platform / Study Focus | Experimental Duration | Throughput (Variants Tested) | Performance Improvement | Key AI/ML Methodology |

|---|---|---|---|---|

| Generalized Enzyme Engineering [17] | 4 weeks | <500 variants per enzyme | 26-fold activity improvement (YmPhytase); 16-fold activity improvement (AtHMT) | Protein LLM (ESM-2) + Epistasis Model + Low-N ML |

| Bacterial System Optimization [15] | Multiple iterations (4 cycles) | 96-well microtiter plates | Significant accuracy gains for complex QA; optimized inducer concentrations | Active Learning + Random Search (Baseline) |

| AI-Native Data Management [16] | N/A (Architectural) | N/A | Projected 60% reduction in manual integration tasks; proactive quality issue prevention | AI-Enabled Data Integration + Automated Cataloging |

The data show that platforms leveraging specialized AI models, such as protein large language models (LLMs), achieve 16- to 26-fold activity improvements while testing fewer than 500 variants per enzyme [17]. This demonstrates the value of AI in guiding experimental design toward high-potential variants. Furthermore, the integration of automated data management is projected to reduce manual integration tasks by about 60%, shifting researcher roles from routine maintenance to strategic analysis [16].

Experimental Protocols and Methodologies

A critical examination of the featured platforms reveals distinct experimental methodologies that enable their autonomous operation.

Protocol for Generalized Autonomous Enzyme Engineering

The platform described in Nature Communications employs a rigorous, modular workflow for autonomous enzyme engineering [17]:

- Design: An initial library of protein variants is designed using a combination of a protein LLM (ESM-2) and an epistasis model (EVmutation). This approach maximizes library diversity and quality by predicting amino acid likelihoods and analyzing local homologs.

- Build: A high-fidelity (HiFi) assembly-based mutagenesis method is automated on the biofoundry (iBioFAB). This method eliminates the need for intermediate sequence verification, achieving approximately 95% accuracy and enabling continuous workflow.

- Test: Mutant characterization runs within the platform's seven fully automated modules, which span both construction and testing: mutagenesis PCR, DNA assembly, transformation, colony picking, plasmid purification, protein expression, and high-throughput enzyme assays. The process is scheduled via integrated software (Thermo Momentum) and a central robotic arm.

- Learn: Assay data from each cycle trains a low-N machine learning model to predict variant fitness. These predictions inform the design of the subsequent library for iterative optimization, closing the autonomous loop.

Protocol for Bacterial System Optimization using Robotic Platforms

The research on bacterial systems outlines a protocol for closing the test-learn cycle autonomously [15]:

- Cultivation: Bacterial cultures are cultivated in 96-well flat-bottom microtiter plates (MTPs) within a custom robotic platform, maintained by a shake incubator.

- Induction and Measurement: Liquid handling robots (CyBio FeliX) administer inducers according to a parameter set retrieved from a database. A plate reader (PheraSTAR FSX) then measures optical density (OD600) and fluorescence (e.g., from GFP production).

- Data Import and Optimization: An importer software component writes measurement data to a central database. An optimizer module then selects the next set of measurement points by balancing exploration and exploitation against a defined objective function (e.g., maximizing fluorescence).

- Iteration: The platform uses these new points to reprogram the subsequent induction experiments, executing consecutive iterations of the test-learn cycle without human intervention.
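The optimizer step above can be sketched in a few lines. This is a minimal illustration and not the algorithm used in [15]: it assumes an upper-confidence-bound-style heuristic in which the prediction for a candidate is the signal at the nearest tested point and the distance to that point stands in for uncertainty. The function name, the `kappa` weight, and the example values are all hypothetical.

```python
def select_next_points(measured, candidates, n_points=3, kappa=1.0):
    """Pick the next inducer concentrations to test.

    measured   -- dict mapping tested concentration -> observed fluorescence
    candidates -- list of concentrations the platform could test
    kappa      -- weight on exploration (hypothetical tuning parameter)
    """
    span = (max(candidates) - min(candidates)) or 1.0
    best = max(measured.values())

    def score(c):
        # Exploitation: predicted signal is the value at the nearest
        # tested point, scaled by the best value seen so far.
        nearest = min(measured, key=lambda m: abs(m - c))
        predicted = measured[nearest] / best if best else 0.0
        # Exploration: normalized distance to the nearest tested point
        # serves as a crude uncertainty proxy.
        uncertainty = abs(nearest - c) / span
        return predicted + kappa * uncertainty

    untested = [c for c in candidates if c not in measured]
    return sorted(untested, key=score, reverse=True)[:n_points]


measured = {0.0: 100.0, 1.0: 900.0}          # illustrative values only
candidates = [0.0, 0.1, 0.5, 0.9, 1.0]
greedy = select_next_points(measured, candidates, n_points=1, kappa=0.0)
curious = select_next_points(measured, candidates, n_points=1, kappa=10.0)
```

With `kappa=0.0` the selection stays next to the best known condition, while a large `kappa` pushes it toward the least-sampled region, which is the trade-off the objective function is meant to balance.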

Workflow Visualization of Autonomous Platforms

The efficiency of autonomous DBTL platforms is governed by the seamless logical flow between software intelligence and robotic hardware.

Logical Workflow of an Autonomous DBTL Cycle

This diagram illustrates the core closed-loop logic of an autonomous platform. The process begins with a protein sequence and a measurable fitness goal. The AI-powered Design module generates variant libraries, which the automated Build module constructs. The robotic Test module executes experiments, and the resulting data feeds the ML-driven Learn module. The model's predictions then inform the next Design cycle, iterating autonomously until the fitness goal is met [17].
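The closed-loop logic described here can be expressed as a short control loop. The sketch below is illustrative only: `design`, `build_and_test`, and `learn` are hypothetical callables standing in for the AI design module, the robotic build and test modules, and the ML model, and the toy fitness function is invented for the example.

```python
def run_dbtl(design, build_and_test, learn, fitness_goal, max_rounds=4):
    """Iterate Design -> Build/Test -> Learn until the goal or round limit."""
    model = None      # no experimental data exists before the first round
    history = []      # (variant, measured_fitness) pairs across all rounds
    for round_no in range(1, max_rounds + 1):
        library = design(model)                                # Design
        results = [(v, build_and_test(v)) for v in library]    # Build + Test
        history.extend(results)
        model = learn(history)                                 # Learn
        best_variant, best_fitness = max(history, key=lambda r: r[1])
        if best_fitness >= fitness_goal:
            break                                              # goal met
    return best_variant, best_fitness, round_no


# Toy stand-ins: a variant is a single number, fitness peaks at 3.0.
def toy_design(model):
    return [0.0, 1.0] if model is None else [model - 0.5, model, model + 0.5]

def toy_assay(x):
    return 10.0 - (x - 3.0) ** 2

def toy_learn(history):
    return max(history, key=lambda r: r[1])[0]   # "model" = best variant so far

result = run_dbtl(toy_design, toy_assay, toy_learn, fitness_goal=9.9)
```

The loop stops either when the measured fitness reaches the goal or when the round budget is exhausted, mirroring the four-round campaigns reported in the case studies.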

Data Architecture for Autonomous Experimentation

This architecture highlights the critical data management layer. Data flows from robotic hardware to a central database via an importer. An AI optimizer accesses this data to select subsequent experimental parameters, which are executed by a scheduler. This seamless flow of information and commands is the backbone of autonomous operation, transforming a static robotic platform into a dynamic, self-optimizing system [15].
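As a rough sketch of this data flow, the example below uses Python's built-in `sqlite3` module as the central database. The table layout, column names, and the choice of GFP per OD600 as the quantity the optimizer reads are assumptions made for illustration; the platform in [15] may structure its data differently.

```python
import sqlite3

def make_db():
    """Create an in-memory database with a hypothetical measurements table."""
    db = sqlite3.connect(":memory:")
    db.execute(
        "CREATE TABLE measurements ("
        "iteration INTEGER, well TEXT, inducer_mM REAL, od600 REAL, gfp REAL)"
    )
    return db

def import_measurements(db, iteration, rows):
    """Importer: write plate-reader rows of (well, inducer_mM, od600, gfp)."""
    db.executemany(
        "INSERT INTO measurements VALUES (?, ?, ?, ?, ?)",
        [(iteration, *row) for row in rows],
    )
    db.commit()

def best_condition(db):
    """Optimizer input: inducer level with the highest GFP per OD600 so far."""
    return db.execute(
        "SELECT inducer_mM, gfp / od600 AS specific FROM measurements "
        "WHERE od600 > 0 ORDER BY specific DESC LIMIT 1"
    ).fetchone()


db = make_db()
import_measurements(db, 1, [
    ("A1", 0.1, 0.5, 100.0),   # illustrative readings, not real data
    ("A2", 0.5, 0.8, 400.0),
    ("A3", 1.0, 0.5, 300.0),
])
best = best_condition(db)
```

Keeping the importer and the optimizer decoupled through the database means either side can be swapped out without touching the other, which is one plausible reason for this layout.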

The Scientist's Toolkit: Research Reagent Solutions

Successful implementation of autonomous DBTL cycles relies on a foundation of specific reagents, materials, and software.

Table 2: Essential Research Reagents and Materials for Autonomous DBTL Platforms

| Item | Function / Application | Implementation Example |

|---|---|---|

| Microtiter Plates (96-well) | High-throughput cultivation and screening vessel. | Used for bacterial cultivation and fluorescence measurements in robotic platforms [15]. |

| Inducers (e.g., IPTG, Lactose) | Chemically trigger protein expression in genetic systems. | Automatically dispensed by liquid handlers to optimize induction conditions [15]. |

| Reporter Proteins (e.g., GFP) | Provide a quantifiable readout for gene expression and protein production. | Served as a measurable target for optimizing bacterial systems [15]. |

| Polymerases & Assembly Mixes | Enable precise and automated DNA construction via PCR and assembly. | Critical for the HiFi-assembly mutagenesis method in automated enzyme engineering [17]. |

| Protein LLMs (e.g., ESM-2) | AI models that predict protein sequence fitness to guide library design. | Used to generate high-quality, diverse initial variant libraries, minimizing experimental burden [17]. |

| Epistasis Models (e.g., EVmutation) | Computational models that identify interacting mutations in proteins. | Combined with protein LLMs to enhance the quality of designed variant libraries [17]. |

The validation of autonomous DBTL platforms confirms that their critical software layer—comprising AI, machine learning, and sophisticated data management systems—is the primary driver of research acceleration. The comparative data shows that platforms integrating specialized AI for experimental design and robust data handling can achieve order-of-magnitude improvements in protein function while requiring the construction and testing of fewer than 500 variants [17]. The emerging trend is a shift toward unified data ecosystems and AI-native databases that actively interpret data, moving beyond passive storage [16]. For researchers and drug development professionals, the strategic imperative is clear: investing in and mastering this integrated software stack is not ancillary but fundamental to leading the next wave of innovation in automated science.

A significant transformation is underway in bioengineering, shifting from manual, artisanal laboratory practices towards industrialized, automated processes. The central thesis of this guide is that the full potential of autonomous Design-Build-Test-Learn (DBTL) platforms is only realized when they are validated as integrated robotic systems, rather than as collections of independent tools. The following comparison of experimental data and methodologies demonstrates that automation is the critical catalyst overcoming the fundamental bottlenecks in biological discovery and scaling.

The Core Bottleneck: Manual Workflows in the Data Age

The central challenge in modern bioengineering is a profound imbalance: while artificial intelligence (AI) possesses immense computational power for biological design, the physical processes for building and testing these designs remain slow, labor-intensive, and prone to error. This creates a critical data-generation bottleneck.

Manual laboratory processes are characterized by high variability, limited throughput, and significant hands-on time, which restricts the collection of large, reproducible datasets required for robust AI/ML models [18]. One analysis suggests that 89% of published life science articles feature manual protocols that have existing automated alternatives [19]. This "automation gap" limits the pace of discovery, as researchers spend excessive time on repetitive tasks rather than on experimental design and interpretation.

The economic implication is a steep cost curve for data. Generating a billion-row protein-binding dataset with conventional methods has been estimated to cost nearly a billion dollars, whereas miniaturized automation could reduce this figure to the realm of ten million, transforming it from a fantasy into a feasible consortium project [18].
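The per-data-point arithmetic behind this estimate is worth making explicit. The figures below are the order-of-magnitude values quoted above ("nearly a billion dollars" and "the realm of ten million"), rounded for illustration rather than taken as exact costs.

```python
rows = 1_000_000_000            # "billion-row" dataset
cost_conventional = 1e9         # roughly a billion dollars
cost_automated = 1e7            # roughly ten million dollars

per_row_conventional = cost_conventional / rows   # dollars per data point
per_row_automated = cost_automated / rows
reduction = cost_conventional / cost_automated

print(f"conventional: ${per_row_conventional:.2f} per row")
print(f"automated:    ${per_row_automated:.2f} per row")
print(f"reduction:    {reduction:.0f}x")
```

Under these rounded assumptions the cost falls from about one dollar to about one cent per data point, a hundredfold reduction.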

Experimental Validation: Case Studies in Automated DBTL Platforms

The performance advantage of integrated robotic systems becomes clear when comparing their outputs against traditional manual methods or semi-automated approaches. The table below summarizes key quantitative results from recent implementations of autonomous platforms.

Table 1: Performance Comparison of Automated vs. Manual Bioengineering Workflows

| Experimental Goal / System | Manual / Pre-Automation Performance | Automated Platform Performance | Key Improvement Metrics |

|---|---|---|---|

| Autonomous Enzyme Engineering (iBioFAB Platform) [17] | Engineering process was specialist-dependent, slow, and expensive. | Integrated ML and LLMs with biofoundry automation. | 90-fold improvement in substrate preference; 16-fold & 26-fold activity improvements in 4 weeks. |

| Bacterial Protein Expression Optimization [15] | Prolonged, manual DBTL cycles with high variability. | Robotic platform with active learning for inducer optimization. | Fully autonomous "Test-Learn" cycles; optimized complex biological systems without human intervention. |

| Strain Engineering for Protein Yield [20] | Hands-on time limited throughput and introduced variability. | Automated liquid handling for cell culture and protein quantification. | Enabled large-library screening for ML; reduced hands-on time and cross-contamination risk. |

| Enzyme Discovery for Plastic Biorecycling [20] | Manual spotting and transformations were slow and labor-intensive. | Automated liquid handling and bacterial spotting in 96-well format. | Achieved much higher throughput with reduced variability; accelerated data acquisition for pipeline. |

Detailed Experimental Protocol: Autonomous Enzyme Engineering

The following methodology details the workflow from the generalized AI-powered platform for autonomous enzyme engineering, which achieved the results noted in Table 1 [17].

Objective: To engineer enzymes for enhanced function (e.g., improved substrate preference, activity at neutral pH) without human intervention, relying solely on an input protein sequence and a quantifiable fitness function.

Platform Components:

- Hardware: The Illinois Biological Foundry for Advanced Biomanufacturing (iBioFAB), a fully integrated robotic system featuring liquid handlers (CyBio FeliX), a robotic arm for plate transport, incubators, and plate readers.

- Software & AI: A combination of machine learning (ML) for fitness prediction, large language models (LLMs) like ESM-2 for initial library design, and epistasis models (EVmutation) for variant prioritization.

Procedure:

- Learn/Design: An initial library of 180 protein variants is designed using a combination of a protein LLM (ESM-2) and an epistasis model (EVmutation) to maximize diversity and quality.

- Build: The platform executes an automated, high-fidelity mutagenesis method via HiFi-assembly. The overall workflow is divided into seven fully automated modules: mutagenesis PCR, DNA assembly, transformation, colony picking, plasmid purification, protein expression, and functional enzyme assays. A key feature is the elimination of mid-campaign sequence verification, creating an uninterrupted workflow.

- Test: The expressed protein variants are characterized using automated, high-throughput functional enzyme assays (e.g., for methyltransferase or phytase activity).

- Iterate: The assay data is used to train a "low-N" machine learning model that predicts variant fitness. This model designs the subsequent library for the next DBTL round. The platform required construction and characterization of fewer than 500 variants for each enzyme to achieve significant improvements.

Critical Automation Differentiator: The seamless physical and digital integration of the platform allows for continuous operation. The system's modularity ensures robustness, enabling recovery from errors without restarting the entire process. This end-to-end integration is what eliminates the need for human judgment and domain expertise between cycles.

Detailed Experimental Protocol: Autonomous Optimization of Protein Expression

This protocol exemplifies the implementation of a fully autonomous "Test-Learn" cycle to optimize a biological process, a critical sub-function of a full DBTL platform [15].

Objective: To autonomously optimize the inducer concentration and feed release for maximizing GFP production in Bacillus subtilis and Escherichia coli expression systems.

Platform Components:

- Hardware: A custom robotic platform (Analytik Jena) with a Cytomat shake incubator, CyBio FeliX liquid handlers, a PheraSTAR FSX plate reader, and a robotic arm for plate transfer.

- Software: A dedicated framework comprising an "importer" to retrieve measurement data, a database, and an "optimizer" module that selects the next measurement points based on an objective function balancing exploration and exploitation.

Procedure:

- The robotic platform initiates bacterial cultivations in 96-well microtiter plates.

- At the induction point, the liquid handler adds pre-determined concentrations of inducer (e.g., lactose/IPTG) and/or feed-release enzyme.

- The plate reader periodically measures optical density (OD600) and fluorescence (GFP) to monitor cell growth and protein production.

- After a cultivation round, the software importer feeds all data into the database.

- The optimizer (using either an active-learning algorithm or a random search as a baseline) analyzes the data and selects the next set of conditions (inducer/enzyme concentrations) to test, aiming to maximize GFP output.

- The platform automatically begins the next cultivation round with the new parameters, closing the loop for four consecutive iterations without human intervention.

Critical Automation Differentiator: The real-time data analysis and autonomous decision-making transform the robotic platform from a static executor of protocols into a dynamic, hypothesis-generating system. This "continuous learning" approach drastically reduces the time from data acquisition to experimental redesign, which is typically a major manual bottleneck.

The Paradigm Shift: From DBTL to LDBT

The advent of sophisticated AI is prompting a fundamental re-evaluation of the classic DBTL cycle. Evidence suggests a paradigm shift towards "LDBT," where Learning precedes Design [21].

In this new model, machine learning models (especially protein language models trained on vast evolutionary data) are used for zero-shot prediction, generating high-quality initial designs without any prior experimental data from the specific system. This inverts the traditional cycle, where learning occurred only after building and testing. The "Build" and "Test" phases, supercharged by cell-free systems and automation, then serve to rapidly validate the AI's predictions and generate minimal, high-value data for final model fine-tuning [21]. This shift is a key enabler for the dramatic acceleration seen in the case studies, moving synthetic biology closer to a "Design-Build-Work" engineering discipline.

The following diagram illustrates the logical and operational differences between the traditional DBTL cycle and the emerging LDBT paradigm.

The Scientist's Toolkit: Essential Reagents and Materials for Automated Platforms

Transitioning to automated workflows requires not only robotic hardware but also a suite of specialized reagents and labware designed for reliability and scalability at high throughput.

Table 2: Key Research Reagent Solutions for Automated DBTL Platforms

| Item Name / Category | Function in Automated Workflow | Key Features for Automation |

|---|---|---|

| Cell-Free Expression System [21] | A transcription-translation system for rapid protein synthesis without living cells. | Bypasses cloning and cell cultivation; highly scalable from pL to L; enables production of toxic proteins. |

| High-Fidelity Assembly Mix [17] | Enzymatic mix for error-free DNA assembly and mutagenesis. | Critical for reliable, continuous workflows; eliminates need for mid-campaign sequence verification. |

| 96-/384-Well Microtiter Plates | Standardized labware for cell cultivation and assays. | Enables parallel processing; compatible with robotic grippers and liquid handling heads. |

| Nano-Glo HiBiT Reagent [20] | Luciferase-based assay for sensitive protein quantification. | Compatible with automated liquid handling; requires precise pipetting for standard curves (R² >0.99). |

| Automation-Friendly Cell Lysis Reagent | Chemical lysis of bacterial cultures for protein analysis. | Enables crude lysate removal via automated liquid handling, streamlining the test phase. |

The experimental data and protocols presented confirm that the bottleneck in bioengineering is not a lack of computational power or creative ideas, but a physical constraint in data generation. The validation of autonomous DBTL platforms must therefore focus on their performance as integrated robotic systems, not merely as disconnected automation tools.

The key differentiators of a successful platform are:

- Closure of the Loop: Fully autonomous cycling between Test and Learn phases, eliminating manual data handling and analysis [15].

- AI Integration at the Forefront: The use of LLMs and ML for initial design (LDBT), not just late-stage optimization [17] [21].

- Modular and Robust Workflows: Automated modules that allow for error recovery and protocol flexibility without halting the entire system [17] [18].

The evidence demonstrates that only through this holistic approach can the field achieve the necessary step-change in reproducibility, throughput, and cost-efficiency to scale biological discovery to meet future challenges in health, energy, and sustainability.

The field of synthetic biology is undergoing a fundamental transformation, moving from traditional, labor-intensive processes towards highly automated, intelligent systems. For years, the engineering of biological systems has been guided by the Design-Build-Test-Learn (DBTL) cycle, an iterative framework that, while systematic, often requires multiple slow, expensive iterations [21]. Today, two key technologies are revolutionizing this paradigm: Protein Language Models (PLMs) and Bayesian Optimization (BO). When integrated within autonomous robotic platforms, these technologies are enabling a new generation of self-driving bio-foundries that can dramatically accelerate the pace of discovery and optimization in protein engineering and drug development [22].

This shift is encapsulated in the emerging "LDBT" (Learn-Design-Build-Test) cycle, where machine learning, powered by vast biological datasets, precedes and guides the design phase [21]. This article provides a comparative guide to these enabling technologies, detailing their performance, experimental protocols, and their synergistic role in validating autonomous DBTL platforms.

Protein Language Models: Decoding the Language of Life

Protein Language Models are deep learning models, primarily based on the Transformer architecture, that are pre-trained on millions of protein sequences to learn the fundamental "grammar" and "syntax" of proteins [23] [24]. By treating amino acids as words and protein sequences as sentences, these models learn evolutionary patterns and structure-function relationships, allowing them to make powerful predictions without requiring experimentally determined structures [21] [23].

Table 1: Key Protein Language Models and Their Capabilities

| Model Name | Architecture | Key Features | Primary Applications |

|---|---|---|---|

| ESM-2 [21] [22] | Transformer Encoder | Scalable with up to 15B parameters, state-of-the-art structure prediction | Zero-shot fitness prediction, variant design, function annotation |

| ProtTrans [24] | Family of Transformer models (e.g., BERT, ALBERT, ELECTRA, T5) | Trained on massive datasets (UniRef, BFD); produces rich embeddings | Protein representation learning, feature extraction for downstream models |

| ProteinMPNN [21] | Message-passing neural network (structure encoder, autoregressive decoder) | Structure-conditioned sequence generation | De novo protein design, fixed-backbone sequence design (inverse folding) |

| AntiBERTa [24] | Transformer Encoder | Specialized for antibody sequences | Paratope prediction, antibody engineering |

Experimental Protocol for PLM-Guided Protein Evolution

A study published in Nature Communications in 2025 detailed a protocol for a Protein Language Model-enabled Automatic Evolution (PLMeAE) platform [22]. The system was used to engineer a tRNA synthetase, achieving a 2.4-fold increase in enzyme activity in just four rounds of evolution over ten days.

Methodology Details:

- Zero-Shot Library Design (Design Phase): The PLM (ESM-2) was used to score single-point mutants of the wild-type enzyme. The model calculated the likelihood of each variant improving fitness, and the top 96 predicted variants were selected for the first round of testing [22].

- Automated Construction (Build Phase): An automated biofoundry synthesized the DNA sequences and expressed the protein variants without human intervention [22].

- High-Throughput Screening (Test Phase): The same biofoundry tested the enzymatic activity of all 96 variants in a high-throughput assay [22].

- Fitness Predictor Training (Learn Phase): The experimental results were fed back to train a supervised machine learning model (a multi-layer perceptron) to correlate sequence with fitness. This model then designed the subsequent round of 96 variants [22].

This closed-loop process demonstrates a complete, automated LDBT cycle where PLMs are integral to the initial design and subsequent learning phases.
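The zero-shot library design step can be sketched as "enumerate, score, rank, keep the top 96". In the example below, `score_fn` is a hypothetical stand-in for a PLM-derived score; it does not call ESM-2, and the toy scoring rule is invented purely so the example runs.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def single_point_mutants(wild_type):
    """Yield (label, sequence) for every single amino-acid substitution."""
    for pos, original in enumerate(wild_type):
        for aa in AMINO_ACIDS:
            if aa != original:
                mutant = wild_type[:pos] + aa + wild_type[pos + 1:]
                yield f"{original}{pos + 1}{aa}", mutant

def select_top_variants(wild_type, score_fn, n=96):
    """Rank all single-point mutants by score_fn and keep the top n labels."""
    scored = [(score_fn(seq), label)
              for label, seq in single_point_mutants(wild_type)]
    scored.sort(reverse=True)
    return [label for _, label in scored[:n]]


def toy_score(seq):
    # Placeholder scoring rule; a real campaign would use model likelihoods.
    return seq.count("W")

first_round = select_top_variants("MKTAYIAKQR", toy_score, n=96)
```

A 10-residue sequence yields 190 single-point mutants, of which 96 fill one plate; the same pattern applies to a full-length enzyme, only with a much larger candidate pool.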

Performance Comparison of PLM Approaches

Table 2: Performance Metrics of PLM-Enabled Protein Engineering

| Method | Key Metric | Reported Performance | Experimental Context |

|---|---|---|---|

| PLMeAE (Module I) [22] | Experimental Rounds & Activity Gain | 4 rounds, 2.4-fold activity increase | Engineering tRNA synthetase with no prior sites |

| PLMeAE (Module II) [22] | Efficiency vs. Random Screening | Superior to random selection | Engineering proteins with known mutation sites |

| Zero-Shot Design [21] | Success Rate in de novo Design | ~10-fold increase in design success | Combining ProteinMPNN with AlphaFold2 |

| Cell-Free PLM Screening [21] | Throughput & Scale | ~500,000 variants computationally surveyed, 500 validated | Antimicrobial peptide (AMP) design |

The following diagram illustrates the integrated workflow of the PLMeAE system, showcasing the closed-loop, automated cycle [22]:

Bayesian Optimization: Navigating Complex Fitness Landscapes

Bayesian Optimization is a machine learning strategy for efficiently optimizing expensive-to-evaluate "black-box" functions, such as experimental assays. It builds a probabilistic surrogate model (e.g., a Gaussian Process) of the underlying system and uses an acquisition function to decide which experiments to run next, optimally balancing exploration (testing uncertain regions) and exploitation (testing regions likely to be good) [25] [26].
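To make the exploration-exploitation balance concrete, the sketch below implements the standard closed-form Expected Improvement acquisition function for maximization, given a Gaussian posterior mean and standard deviation at a candidate point. The surrogate model itself is omitted; the means and standard deviations in the usage lines are made-up inputs, and the `xi` margin is an optional parameter added for illustration.

```python
import math

def expected_improvement(mu, sigma, best_so_far, xi=0.0):
    """Closed-form EI for maximization under a posterior N(mu, sigma^2)."""
    improvement = mu - best_so_far - xi
    if sigma <= 0.0:
        # No uncertainty: improvement is either certain or impossible.
        return max(improvement, 0.0)
    z = improvement / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return improvement * cdf + sigma * pdf


best = 1.0
ei_confident = expected_improvement(mu=0.9, sigma=0.05, best_so_far=best)
ei_uncertain = expected_improvement(mu=0.7, sigma=0.40, best_so_far=best)
```

Here the second candidate has a lower predicted mean but a much higher uncertainty, and it receives the larger EI, which is how the acquisition function rewards exploring poorly characterized regions.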

In drug discovery, this is often applied as Multi-Fidelity Bayesian Optimization (MF-BO), which intelligently allocates resources across experiments of differing cost and accuracy (e.g., docking, single-point inhibition, and dose-response assays) [26].

Experimental Protocol for MF-BO in Drug Discovery

A 2025 study in ACS Central Science detailed a protocol for an autonomous molecular discovery platform using MF-BO to find novel histone deacetylase inhibitors (HDACIs) [26].

Methodology Details:

- Search Space Generation: A genetic algorithm, based on executable reaction templates, generated a diverse and synthetically accessible library of candidate molecules [26].

- Multi-Fidelity Experimental Setup:

  - Low-Fidelity: Docking scores computed using DiffDock (~3,500 molecules per week).

  - Medium-Fidelity: Single-point percent inhibition assays run automatically on the platform (~120 molecules per week).

  - High-Fidelity: Dose-response IC50 values (manually evaluated for a handful of top molecules) [26].

- The MF-BO Algorithm:

  - Surrogate Model: A Gaussian Process with a Tanimoto kernel, using Morgan fingerprints (radius 2, 1024 bit) as molecular representations.

  - Acquisition Function: Expected Improvement (EI), extended for multiple fidelities via the Targeted Variance Reduction (TVR) heuristic.

  - Batch Selection: A Monte Carlo method selected batches of molecule-fidelity pairs to test within a predefined budget (costs: Docking=0.01, Single-point=0.2, Dose-response=1.0; weekly budget=10.0) [26].

- Autonomous Platform Execution: The platform integrated liquid handlers, HPLC-MS, a plate reader, and robotic arms to synthesize and test the molecules selected by the MF-BO algorithm automatically [26].
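Two ingredients of the protocol above lend themselves to a short sketch: the Tanimoto similarity that underlies the kernel, and the cost accounting for a batch of molecule-fidelity pairs. The per-fidelity costs and weekly budget are those quoted from [26]; everything else is an assumption for illustration. Fingerprints are written by hand as sets of on-bit indices rather than computed from structures, and the Monte Carlo batch selection is not reproduced.

```python
COSTS = {"docking": 0.01, "single_point": 0.2, "dose_response": 1.0}
WEEKLY_BUDGET = 10.0

def tanimoto(bits_a, bits_b):
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    if not bits_a and not bits_b:
        return 1.0
    shared = len(bits_a & bits_b)
    return shared / (len(bits_a) + len(bits_b) - shared)

def batch_cost(batch):
    """Total cost of a batch of (molecule, fidelity) pairs."""
    return sum(COSTS[fidelity] for _, fidelity in batch)

def within_budget(batch, budget=WEEKLY_BUDGET):
    # Small tolerance guards against floating-point accumulation error.
    return batch_cost(batch) <= budget + 1e-9


similarity = tanimoto({1, 2, 3}, {2, 3, 4})

batch = (
    [(f"mol{i}", "docking") for i in range(100)]
    + [(f"mol{i}", "single_point") for i in range(20)]
    + [(f"mol{i}", "dose_response") for i in range(5)]
)
```

This hypothetical batch spends 1.0 on docking, 4.0 on single-point assays, and 5.0 on dose-response curves, exactly exhausting the weekly budget; adding one more dose-response measurement would exceed it.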

Performance Comparison of Bayesian Optimization Methods

Table 3: Performance Comparison of Optimization Strategies in Drug Discovery

| Optimization Method | Key Metric | Reported Performance | Experimental Context |

|---|---|---|---|

| Multi-Fidelity BO (MF-BO) [26] | Rediscovery of Top-2% Inhibitors | Outperformed all other methods | Retrospective benchmark; also applied in the prospective search for HDAC inhibitors |

| Classic Experimental Funnel (EF) [26] | Rediscovery of Top-2% Inhibitors | Lower rate than MF-BO | Retrospective analysis on ChEMBL data |

| Transfer Learning (TL) [26] | Rediscovery of Top-2% Inhibitors | Lower rate than MF-BO | Retrospective analysis on ChEMBL data |

| Single-Fidelity BO (BO) [26] | Rediscovery of Top-2% Inhibitors | Lower rate than MF-BO | Retrospective analysis on ChEMBL data |

| Cloud-based BO [25] | Experimental Efficiency | Found optimal conditions testing ~21 conditions vs. 294 brute-force | Cell-free assay optimization for papain |

The logical workflow of the Multi-Fidelity Bayesian Optimization platform, integrating both in silico and physical robotic components, is shown below [26]:

The Integrated Toolkit for Autonomous DBTL Platforms

The validation of autonomous DBTL systems relies on the seamless integration of computational and physical components. The following table details the essential research reagents and tools that form the backbone of these platforms.

Table 4: Research Reagent Solutions for Autonomous DBTL Platforms

| Category | Item / Technology | Function & Application |

|---|---|---|

| Computational Models | ESM-2 Protein Language Model [22] | Provides zero-shot prediction of protein fitness and variant design. |

| Computational Models | Gaussian Process with Tanimoto Kernel [26] | Serves as the surrogate model in BO for predicting molecular activity. |

| Data & Libraries | UniProt/UniRef Databases [23] [24] | Source of millions of protein sequences for pre-training PLMs. |

| Data & Libraries | ChEMBL Database [26] | Provides curated bioactivity data for validating and testing BO algorithms. |

| Experimental Subsystems | Cell-Free Gene Expression Systems [21] | Enables rapid, high-throughput protein synthesis without cloning. |

| Experimental Subsystems | Droplet Microfluidics [21] | Allows ultra-high-throughput screening of >100,000 picoliter-scale reactions. |

| Robotic Hardware | Automated Liquid Handlers [22] [26] | Executes precise liquid transfers for synthesis and assays in the Build/Test phases. |

| Robotic Hardware | Robotic Arm & Scheduling Software [22] | Coordinates multiple instruments (HPLC-MS, plate readers) for workflow orchestration. |

The objective comparison of Protein Language Models and Bayesian Optimization reveals that neither technology operates in isolation in a modern autonomous DBTL platform. PLMs provide a powerful, knowledge-rich starting point from evolutionary data, enabling effective "zero-shot" designs that dramatically reduce the initial search space [21] [22]. Bayesian Optimization, particularly in its multi-fidelity form, provides a rigorous mathematical framework for the efficient exploration of that space, optimally allocating precious experimental resources across assays of varying cost and accuracy [26].

The experimental data confirms that their integration leads to a synergistic effect. The PLMeAE platform demonstrated a rapid engineering campaign completed in days rather than months [22], while the MF-BO platform successfully discovered novel, potent drug candidates by navigating a complex chemical space that would be prohibitively expensive to explore with traditional methods [26]. For researchers validating autonomous robotic systems, these technologies are not merely enabling; they are foundational. They transform the DBTL cycle from a manual, sequential process into a self-driving, intelligent, and iterative discovery engine, setting a new benchmark for speed and efficiency in biological engineering and drug development.

From Theory to Practice: Implementing Autonomous DBTL Platforms in Real-World Research

The traditional Design-Build-Test-Learn (DBTL) cycle, the cornerstone of biological engineering, has long been hampered by its reliance on deep human expertise, making it slow, resource-intensive, and difficult to scale. While advancements in laboratory automation have accelerated the "Build" and "Test" phases, the "Design" and "Learn" steps have remained a persistent bottleneck, requiring expert human judgment. This article examines a paradigm shift: the creation of a fully autonomous, generalized platform that seamlessly integrates the Illinois Biological Foundry for Advanced Biomanufacturing (iBioFAB) with machine learning (ML) and large language models (LLMs). We will objectively compare its performance against traditional and semi-automated alternatives, validating its role as a transformative tool in autonomous robotic systems research.

Platform Architecture: A Deep Dive into the Integrated System

The generalized platform's architecture is designed to eliminate human intervention from the entire DBTL cycle. Its power derives from the synergistic integration of three core components: a fully automated robotic biofoundry, specialized machine learning models, and large language models trained on biological data.

The Robotic Core: Illinois Biological Foundry for Advanced Biomanufacturing (iBioFAB)

The iBioFAB serves as the physical engine of the platform, a fully automated robotic system that executes all wet-lab operations. It transforms digital designs into physical reality and generates the high-quality data required for machine learning. The platform automates the entire protein engineering workflow, which is broken down into seven robust, modular components: mutagenesis PCR, DNA assembly, transformation, colony picking, plasmid purification, protein expression, and functional enzyme assays [17]. A key innovation that ensures continuity is a high-fidelity mutagenesis method achieving approximately 95% accuracy, eliminating the need for time-consuming intermediate sequence verification and enabling truly uninterrupted workflows [17] [27].

The Intelligent Design Engine: Protein LLMs and Epistasis Models

The "Design" phase is powered by unsupervised models that require no prior experimental data for the target enzyme. The platform leverages a state-of-the-art protein language model, ESM-2, a transformer trained on millions of natural protein sequences. ESM-2 understands the deep grammatical and evolutionary rules of protein language to predict beneficial mutations by estimating the likelihood of amino acids at specific positions [17] [27]. This is complemented by an epistasis model, EVmutation, which focuses on the local homologs of the target protein. Together, they generate a diverse and high-quality initial library, maximizing the chance of identifying promising mutants early in the process [17].

The Learning Loop: Iterative Machine Learning

Once the iBioFAB has generated initial experimental data, the platform switches to a data-driven learning mode. The results from the first round are used to train a supervised "low-N" regression model. Informed by measurements of the target enzyme's own fitness landscape, this model predicts the next generation of mutants, combining the best single mutations into higher-order variants [17] [27]. Each cycle of prediction and experimentation moves the platform further up the fitness landscape and refines the model with a new round of data.
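To make the idea of combining single mutations into higher-order variants concrete, the sketch below uses a purely additive log-fitness model. This is a deliberately simpler stand-in for the supervised low-N regression model described above: it ignores epistasis, and the mutation names, fitness values, and function names are hypothetical.

```python
import math
from itertools import combinations

def fit_additive_effects(single_mutant_fitness, wild_type_fitness):
    """Estimate each mutation's effect as log fold-change over wild type."""
    return {
        mutation: math.log(fitness / wild_type_fitness)
        for mutation, fitness in single_mutant_fitness.items()
    }

def propose_combinations(effects, order=2, top_k=3):
    """Score higher-order variants by summing single-mutation effects."""
    beneficial = {m: e for m, e in effects.items() if e > 0}
    candidates = []
    for combo in combinations(sorted(beneficial), order):
        # Skip combinations that mutate the same position twice.
        positions = {m[1:-1] for m in combo}
        if len(positions) < order:
            continue
        predicted_fold = math.exp(sum(beneficial[m] for m in combo))
        candidates.append((combo, predicted_fold))
    candidates.sort(key=lambda item: item[1], reverse=True)
    return candidates[:top_k]

# Hypothetical round-one results, expressed relative to wild type = 1.0.
round_one = {"A10V": 2.0, "K25R": 1.5, "K25E": 1.2, "T40S": 0.8}
effects = fit_additive_effects(round_one, wild_type_fitness=1.0)
proposals = propose_combinations(effects, order=2, top_k=2)
for combo, fold in proposals:
    print("+".join(combo), f"predicted {fold:.2f}-fold")
```

A regression model trained on sequence encodings can, in principle, depart from this additive assumption, which is one reason to retrain on each new round of data rather than stacking the best single mutations once.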

The following diagram illustrates the seamless integration of these components into a closed-loop, autonomous workflow.

Performance Comparison: Benchmarking Against Alternatives

To objectively evaluate the platform's performance, we compare its achievements in engineering two distinct enzymes against the outcomes expected from traditional directed evolution and an earlier automated system, BioAutomata.

Quantitative Results and Efficiency Metrics

Table 1: Comparative Performance in Enzyme Engineering Campaigns

| Engineering Metric | Traditional Directed Evolution | BioAutomata (Lycopene Pathway, 2019) [28] | Generalized AI Platform (AtHMT, 2025) [17] [27] | Generalized AI Platform (YmPhytase, 2025) [17] [27] |
|---|---|---|---|---|
| Improvement in Activity | Variable, often 2-10 fold | Optimized pathway expression | 16-fold (ethyltransferase activity) | 26-fold (specific activity at neutral pH) |
| Other Key Improvements | N/A | N/A | 90-fold shift in substrate preference | N/A |
| Variants Screened | 10,000+ | <1% of possible variants (high efficiency) | <500 variants | <500 variants |
| Project Timeline | 6-12 months | Not specified | 4 weeks (4 rounds) | 4 weeks (4 rounds) |
| Key Differentiator | Relies on random mutagenesis & expert screening | Bayesian optimization of gene expression | Fully autonomous DBTL | Fully autonomous DBTL |

The data demonstrate a significant leap in efficiency and capability. The 2025 platform achieved 16- and 26-fold functional improvements in four weeks with a small experimental footprint (<500 variants screened per enzyme) [17]. In contrast, traditional directed evolution is slow and labor-intensive, often requiring the screening of tens of thousands of variants over many months. The BioAutomata platform, a precursor, showed high efficiency by evaluating less than 1% of the possible search space but was focused on a narrower problem of optimizing gene expression for a metabolic pathway [28]. The new platform generalizes this approach and adds fully autonomous decision-making, moving from a system that efficiently finds a maximum in a predefined space to one that actively designs the space itself.

Experimental Protocol and Workflow

The following methodology, derived from the featured platform, details the steps for a single, autonomous DBTL cycle.

Design.

- Input: The process begins with a single input: the wild-type protein sequence of the target enzyme (e.g., AtHMT or YmPhytase) [17].

- Library Generation: A first-generation library of ~180 variants is designed de novo using the ESM-2 protein LLM and the EVmutation epistasis model, which predict mutations likely to improve fitness based on evolutionary patterns and co-evolutionary statistics [17].

Build.

- Automated Cloning: The iBioFAB executes a high-fidelity, HiFi-assembly-based mutagenesis protocol to construct the variant library in plasmids [17].

- Transformation and Culturing: The platform performs automated microbial transformation, colonies are picked robotically, and cultures are grown in microtiter plates within automated incubators [17].
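As an illustration of the bookkeeping behind plate-based culturing, the sketch below assigns a ~180-variant library to 96-well plates. The 8 x 12 plate geometry is standard, but the choice of two control wells per plate, their labels, and the function name `assign_wells` are assumptions made for this example, not details reported for the iBioFAB.

```python
from string import ascii_uppercase

ROWS = ascii_uppercase[:8]   # rows A-H of a 96-well plate
COLS = range(1, 13)          # columns 1-12

def assign_wells(variants, controls_per_plate=("WT", "BLANK")):
    """Assign variants to 96-well plates, reserving the first wells for controls."""
    wells = [f"{r}{c}" for r in ROWS for c in COLS]
    capacity = len(wells) - len(controls_per_plate)
    layout = []
    for start in range(0, len(variants), capacity):
        plate = start // capacity + 1
        samples = list(controls_per_plate) + variants[start:start + capacity]
        # zip stops at the shorter list, so a partly filled last plate is fine.
        layout.extend((plate, well, s) for well, s in zip(wells, samples))
    return layout

variants = [f"variant_{i:03d}" for i in range(1, 181)]
layout = assign_wells(variants)
print(layout[:3])
print("plates used:", max(plate for plate, _, _ in layout))
```

Keeping a wild-type control on every plate is a common way to normalize plate-to-plate variation before the data reach the learning step.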

Test.

- Protein Expression: The system induces protein expression in the cultured cells [17].

- Fitness Assay: A high-throughput, automation-friendly functional assay is performed. For the case studies, this involved measuring fluorescence (for a GFP reporter) or direct enzyme activity assays (e.g., for methyltransferase or phytase activity) to quantify the fitness of each variant [17] [27]. The plate reader modules within the robotic platform automatically collect this data.

Learn.

- Data Integration: An importer software component automatically retrieves all measurement data from the platform's devices and writes it to a central database [15] [17].

- Model Training and Prediction: The experimental data is used to train a supervised machine learning model (a "low-N" regression model). This model then predicts the fitness of a new set of variants, proposing the next batch of experiments by combining beneficial mutations from the first round [17]. An optimizer algorithm selects the next measurement points based on a balance between exploration and exploitation [15].
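The balance between exploration and exploitation mentioned in the Learn step can be sketched with an upper-confidence-bound rule, one common acquisition strategy. The source does not state which rule the optimizer uses, so the rule, the candidate names, and the predicted means and uncertainties below are all illustrative assumptions.

```python
def select_next_batch(candidates, batch_size=3, exploration_weight=1.0):
    """Pick candidates with the highest upper confidence bound (mean + k * std)."""
    ranked = sorted(
        candidates,
        key=lambda c: c["mean"] + exploration_weight * c["std"],
        reverse=True,
    )
    return [c["name"] for c in ranked[:batch_size]]

# Hypothetical model predictions: mean fitness and predictive uncertainty.
candidates = [
    {"name": "A10V+K25R", "mean": 3.0, "std": 0.2},
    {"name": "A10V+T40S", "mean": 2.0, "std": 1.5},
    {"name": "K25R+T40S", "mean": 2.5, "std": 0.1},
    {"name": "A10V+G55D", "mean": 1.0, "std": 0.3},
]

# Weight 0 is pure exploitation; larger weights favor uncertain candidates.
exploit = select_next_batch(candidates, batch_size=2, exploration_weight=0.0)
explore = select_next_batch(candidates, batch_size=2, exploration_weight=2.0)
print("exploit:", exploit)
print("explore:", explore)
```

With the weight set to zero the batch contains only the highest predicted means, while a larger weight pulls in the uncertain `A10V+T40S` variant, whose measurement would tell the model more.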

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 2: Key Research Reagents and Materials for Autonomous Enzyme Engineering

| Item Name | Function in the Workflow | Specific Examples / Specifications |
|---|---|---|
| Protein Language Model (ESM-2) | An unsupervised model used for the de novo design of high-quality initial variant libraries by predicting beneficial mutations. | ESM-2 (Evolutionary Scale Modeling) [17] [27] |
| Epistasis Model (EVmutation) | Complements the protein LLM by analyzing co-evolution in protein families to identify residue-residue interactions critical for function. | EVmutation model [17] |
| HiFi DNA Assembly Mix | Enables high-fidelity assembly of DNA fragments during mutagenesis, crucial for achieving ~95% accuracy and avoiding sequencing verification. | Not specified (Commercial high-fidelity assembly kit) [17] |
| Automated Robotic Platform | Integrated system of liquid handlers, incubators, and plate readers to perform all "Build" and "Test" steps without human intervention. | iBioFAB (Components: Cytomat incubator, CyBio FeliX liquid handlers, PheraSTAR FSX plate reader) [15] [17] |
| Fitness Assay Reagents | Chemicals and substrates required for the high-throughput functional screen that quantifies variant performance. | Substrates for target enzyme (e.g., iodide compounds for AtHMT, phytic acid for YmPhytase) and detection reagents [17] |

The integration of iBioFAB, ML, and LLMs into a single platform represents a validated architectural blueprint for autonomous biological research. The experimental data indicate that this generalized system outperforms traditional methods in speed, efficiency, and scope, achieving 16- to 26-fold activity improvements in four weeks rather than months while screening fewer than 500 variants per enzyme. By closing the DBTL loop without human intervention, it moves biological engineering from a bespoke, specialist-dependent craft toward a scalable, predictable, and more widely accessible science. This platform sets a new benchmark for autonomous robotic systems, paving the way for accelerated advancements across drug discovery, renewable energy, and sustainable chemistry.

The engineering of enzymes for enhanced activity and substrate preference is a cornerstone of industrial biotechnology, with applications ranging from pharmaceutical manufacturing to sustainable chemistry. Traditional enzyme engineering, relying on manual iterations of the Design-Build-Test-Learn (DBTL) cycle, is often slow, resource-intensive, and limited by human bandwidth and expertise [17] [27]. This case study examines a groundbreaking, generalized platform for autonomous enzyme engineering that integrates artificial intelligence (AI) with robotic laboratory automation to create a self-driving discovery system [17]. This platform eliminates human intervention from the DBTL loop, demonstrating a transformative approach for robotic systems research. We will objectively analyze its performance against prior methodologies, detail its experimental protocols, and validate its efficacy through quantitative data from the engineering of two distinct enzymes: Arabidopsis thaliana halide methyltransferase (AtHMT) and Yersinia mollaretii phytase (YmPhytase) [17].

Experimental Protocols & System Architecture

The Autonomous Workflow Protocol

The platform's operation is a continuous, automated cycle executed on the Illinois Biological Foundry for Advanced Biomanufacturing (iBioFAB) [17]. The end-to-end workflow for each engineering cycle is modularized into seven distinct, automated protocols:

- AI-Driven Design: The cycle initiates with computational design. For the initial library, a combination of a protein Large Language Model (ESM-2) and an epistasis model (EVmutation) predicts beneficial mutations without any prior experimental data for the target enzyme [17] [27]. In subsequent cycles, a supervised "low-N" machine learning (ML) model is trained on the accumulated experimental data to propose higher-order mutants [17].

- DNA Construction: Variants are constructed using a high-fidelity, HiFi-assembly-based mutagenesis method. This method eliminates the need for intermediate sequence verification, achieving approximately 95% accuracy and enabling an uninterrupted workflow [17].

- Transformation: The constructed DNA is transformed into microbial hosts via an automated 96-well microbial transformation protocol [17].

- Protein Expression: Transformed colonies are automatically picked and cultured in 96-well deep-well plates for protein expression [17].

- Lysate Preparation: Following expression, crude cell lysates are prepared automatically to release the expressed enzyme variants for functional testing [17].

- Functional Assay: The platform performs automated, high-throughput enzyme activity assays. For AtHMT, this measured methyltransferase and ethyltransferase activity. For YmPhytase, activity was measured at neutral pH [17].

- Data Analysis and Learning: Assay data is automatically processed and fed back to the ML model, which learns from the results to design the next, improved library of variants, closing the autonomous loop [17].
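The closed-loop structure of the protocols above can be summarized as a short control loop in which each stage is a replaceable module. The sketch below is schematic: the stage functions are toy stand-ins with hypothetical names, the fitness values are random numbers, and nothing here reflects the actual iBioFAB scheduling software.

```python
import random

def run_dbtl(design, build, test, learn, wild_type, rounds=4):
    """Run a closed DBTL loop; each stage is a plain callable."""
    history = []   # accumulated (variant, fitness) records
    model = None   # no experimental data exists before round one
    for round_index in range(1, rounds + 1):
        library = design(wild_type, model, history)
        constructs = build(library)
        results = test(constructs)
        history.extend(results)
        model = learn(history)   # retrain on all data gathered so far
        best = max(history, key=lambda record: record[1])
        print(f"round {round_index}: {len(results)} tested, best so far {best[0]}")
    return history

# Toy stand-ins for the automated stages (illustrative only).
rng = random.Random(0)

def toy_design(wild_type, model, history):
    return [f"{wild_type}_v{len(history) + i}" for i in range(3)]

def toy_build(library):
    return library

def toy_test(constructs):
    return [(construct, rng.random()) for construct in constructs]

def toy_learn(history):
    return {"n_train": len(history)}

history = run_dbtl(toy_design, toy_build, toy_test, toy_learn, "WT", rounds=4)
```

Passing the stages in as callables mirrors the modular design described in the text: a protocol can be swapped or repaired without touching the rest of the loop.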

System Visualization: The Autonomous DBTL Cycle

The following diagram illustrates the integrated, closed-loop workflow of the autonomous platform, showing the seamless flow from AI-driven design to robotic execution and iterative learning.

Performance Comparison & Quantitative Validation

This autonomous platform was validated by engineering two enzymes and comparing the outcomes against typical figures for traditional manual methods. The quantitative results, summarized in the table below, demonstrate a marked acceleration and enhancement of engineering outcomes.

Table 1: Quantitative Performance Comparison of Autonomous vs. Traditional Enzyme Engineering

| Engineering Metric | Autonomous AI Platform | Traditional Directed Evolution (Benchmark) |
|---|---|---|
| Project Duration | 4 weeks for 4 full DBTL rounds [17] | Typically several months to a year for similar outcomes [27] |
| Throughput (Variants Screened) | < 500 variants per enzyme to achieve result [17] | Often requires screening thousands to tens of thousands of variants [27] |
| AtHMT Improvement | | |
| - Ethyltransferase Activity | 16-fold improvement [17] | Not specifically reported |
| - Substrate Preference | 90-fold shift in preference [17] | Not specifically reported |
| YmPhytase Improvement | | |
| - Activity at Neutral pH | 26-fold improvement in specific activity [17] | Previous campaigns showed improvement but with lower efficiency [17] [27] |
| Library Design Efficiency | ~55-60% of initial library variants performed above wild-type baseline [17] | Initial libraries often have a very low hit rate (<5%) [27] |
| Key Enabler | Integrated AI/ML and robotics | Expert-driven design and manual screening |

The data underscores the platform's core advantage: efficiency. By leveraging AI to intelligently navigate the fitness landscape, it achieves superior results by testing orders of magnitude fewer variants in a fraction of the time required by traditional approaches [17] [27].

Comparative Visualization of Engineering Efficiency

The following diagram contrasts the fundamental workflows of the autonomous platform with the traditional manual DBTL cycle, highlighting the source of its efficiency gains.

The Scientist's Toolkit: Key Research Reagents & Solutions

The successful implementation of this autonomous platform relies on a suite of specialized reagents and computational tools. The table below details the core components of this "toolkit" and their functions within the engineered system.

Table 2: Essential Research Reagents and Solutions for Autonomous Enzyme Engineering

| Tool Category | Specific Tool / Solution | Function in the Experimental Workflow |
|---|---|---|
| Computational Models | Protein LLM (ESM-2) [17] [27] | Provides zero-shot predictions of beneficial mutations using knowledge from millions of natural protein sequences. |
| | Epistasis Model (EVmutation) [17] | Identifies co-evolved residues and epistatic interactions to guide library design. |
| | Low-N Machine Learning Model [17] | A regression model trained on experimental data to predict variant fitness and propose subsequent libraries. |
| Automation Hardware | Illinois BioFoundry (iBioFAB) [17] | A fully integrated robotic system that automates all physical steps: DNA construction, transformation, expression, and assay. |
| Molecular Biology Kits | High-Fidelity DNA Assembly Kit [17] | Enables accurate, seamless plasmid assembly for mutagenesis with ~95% accuracy, avoiding sequencing delays. |
| | DpnI Restriction Enzyme [17] | Digests the methylated parent plasmid template following mutagenesis PCR to reduce background. |