The DBTL Framework in Metabolic Engineering: A Guide to Iterative Strain Development and AI-Driven Optimization

Abstract

This article provides a comprehensive overview of the Design-Build-Test-Learn (DBTL) framework, a cornerstone methodology in metabolic engineering and synthetic biology for developing efficient microbial cell factories. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles of the iterative DBTL cycle, detailing its application in pathway optimization and strain engineering for the production of biofuels, pharmaceuticals, and other valuable compounds. The content delves into advanced methodologies, including the integration of machine learning and automated recommendation tools to accelerate the 'Learn' phase. It further addresses common troubleshooting challenges and optimization strategies to avoid cyclical inefficiencies, and examines emerging paradigms and validation techniques for comparing DBTL strategies, offering insights into the future of high-precision biological design.

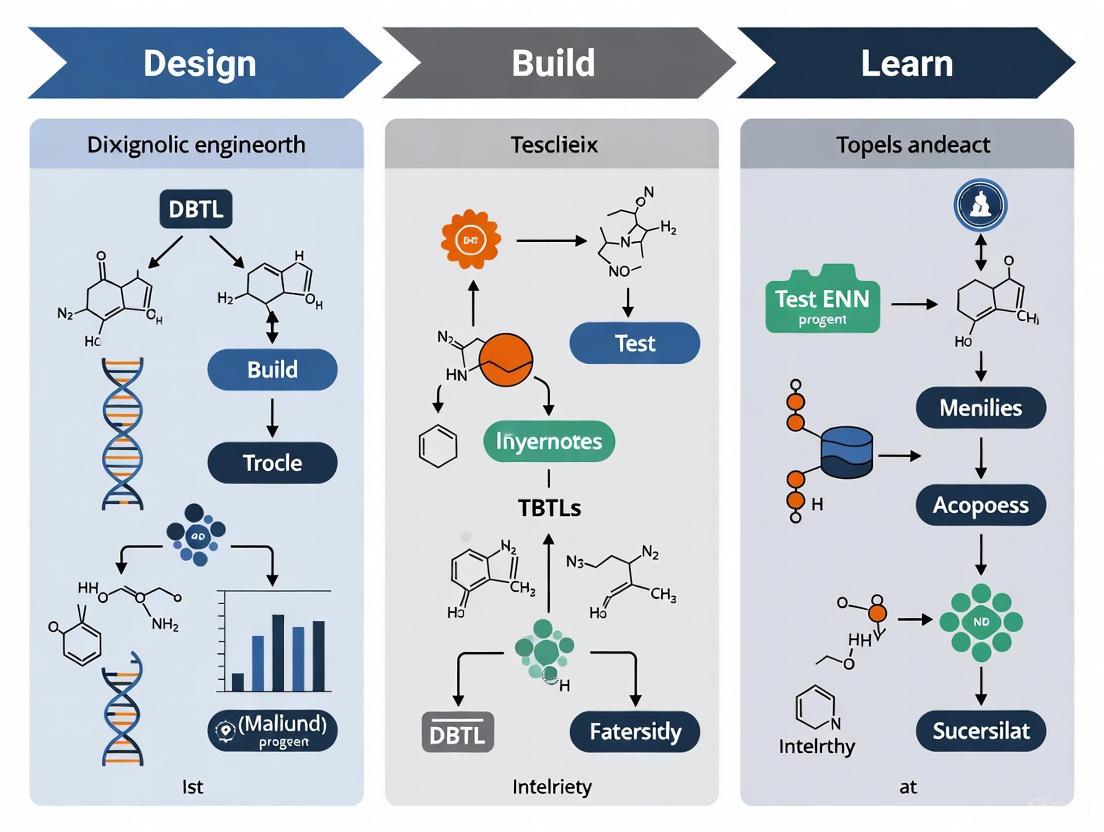

Demystifying the DBTL Cycle: The Foundational Framework of Modern Metabolic Engineering

The Design-Build-Test-Learn (DBTL) cycle is a foundational framework in synthetic biology and metabolic engineering that enables the systematic, iterative development of engineered biological systems. This structured approach supports the engineering of microbial cell factories for sustainable production of valuable compounds, and it gives biological engineers a problem-solving methodology comparable to the scientific method [1] [2]. By integrating modern engineering strategies within an iterative framework, the DBTL cycle has significantly enhanced the potential of microbial cell factories as sustainable alternatives to the petrochemical industry [3].

In contemporary biotechnology research and development, the DBTL framework is undergoing a transformative shift with the integration of automation and advanced software solutions. This evolution is leading to unprecedented advancements in speed, efficiency, and precision throughout the bioengineering workflow [4]. The cyclical nature of DBTL allows researchers to continuously refine their biological designs based on experimental data, progressively optimizing system performance until the desired function is achieved [1]. This review comprehensively examines the four pillars of the DBTL cycle, detailing their technical specifications, implementation methodologies, and integration within metabolic engineering research.

The Design Phase: Conceptualization and In Silico Modeling

The Design phase is the initial conceptualization step, in which researchers create a digital blueprint of the biological system they intend to implement. It encompasses protein design (selecting natural enzymes or designing novel proteins), genetic design (translating amino acid sequences into coding sequences, designing ribosome binding sites, and planning operon architecture), and assay design (establishing biochemical reaction conditions) [4].

A critical component of the Design phase is assembly design, which involves strategically dividing plasmids into fragments for DNA assembly. This process requires careful consideration of restriction enzyme sites, overhang sequences, and GC content to ensure efficient assembly [4]. Manual design is error-prone in this context, and design errors lead to failed experiments. Advanced software platforms now generate detailed DNA assembly protocols tailored to specific project needs, automatically selecting appropriate cloning methods (e.g., Gibson assembly or Golden Gate cloning) and strategically arranging DNA fragments in assembly reactions [4].
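The kind of check that assembly design software automates can be illustrated with a minimal Python sketch. It is not taken from any specific platform: the function names, the 30-70% GC window, the example fragment, and the short list of restriction sites are illustrative assumptions, and a real tool would also evaluate overhangs, homology arms, and secondary structure.

```python
# Hypothetical pre-assembly sanity check for a single DNA fragment.
# The GC window and the site list are illustrative assumptions, not values from the source.
RESTRICTION_SITES = {"BsaI": "GGTCTC", "BsmBI": "CGTCTC", "EcoRI": "GAATTC"}

def reverse_complement(seq: str) -> str:
    """Return the reverse complement of an A/C/G/T sequence."""
    return seq.upper().translate(str.maketrans("ACGT", "TGCA"))[::-1]

def gc_content(seq: str) -> float:
    """Fraction of bases that are G or C."""
    seq = seq.upper()
    return (seq.count("G") + seq.count("C")) / len(seq)

def internal_sites(seq: str, sites=RESTRICTION_SITES) -> list:
    """Names of enzymes whose recognition site occurs on either strand."""
    seq = seq.upper()
    return [name for name, site in sites.items()
            if site in seq or reverse_complement(site) in seq]

def check_fragment(seq: str, gc_min: float = 0.30, gc_max: float = 0.70) -> dict:
    """Summarize GC content and unwanted internal restriction sites."""
    gc = gc_content(seq)
    return {"gc": round(gc, 3),
            "gc_ok": gc_min <= gc <= gc_max,
            "sites": internal_sites(seq)}

# Made-up example fragment that contains one internal BsaI site.
fragment = "ATGGCTAGCGGTCTCAAGCTTGCATGCCTGCAGG"
report = check_fragment(fragment)
print(report)
```

A fragment flagged with an internal Type IIS site such as BsaI would typically be recoded or assigned to a different cloning method before a Golden Gate assembly is attempted.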

Table 1: Key Design Phase Components and Functions

| Component | Function | Tools & Methods |
|---|---|---|
| Protein Design | Selection or engineering of enzymes for metabolic pathways | Structure-based design, natural enzyme selection |
| Genetic Design | Translation of protein designs into DNA sequences | Coding sequence optimization, RBS design, operon architecture |
| Assembly Design | Planning DNA construction from fragments | Restriction enzyme selection, homology arm design, GC content optimization |
| Assay Design | Establishing experimental validation protocols | Reporter system selection, measurement parameters, control design |

The Design phase increasingly incorporates in silico modeling and machine learning approaches to predict system behavior before physical implementation. For metabolic engineering projects, this involves designing metabolic pathways by selecting appropriate enzymes, determining their required expression levels, and identifying potential bottlenecks [5]. The design output serves as a detailed specification for the subsequent Build phase, with precision in this phase being crucial to avoid costly mistakes and time-consuming troubleshooting in later stages [4].

Diagram 1: Design Phase Workflow and Components

The Build Phase: Physical Construction and Assembly

The Build phase translates digital designs into physical biological entities through the construction of DNA constructs, strains, or organisms. This phase requires high precision in assembling DNA constructs, as even minor errors can lead to significant functional deviations in the final biological system [1] [4]. The Build phase encompasses several critical laboratory processes, including DNA synthesis, molecular cloning, and transformation into host organisms.

Modern Build workflows leverage automated liquid handling systems from manufacturers such as Labcyte, Tecan, Beckman Coulter, and Hamilton Robotics to enhance precision and efficiency. These systems provide high-accuracy pipetting essential for processes like PCR setup, DNA normalization, and plasmid preparation [4]. Integration with DNA synthesis providers like Twist Bioscience, IDT (Integrated DNA Technologies), and GenScript streamlines the incorporation of custom DNA sequences into automated laboratory workflows [4]. For high-throughput applications, robust inventory management capabilities are essential for tracking reagents and components throughout the construction process.

Table 2: Build Phase Implementation Methods

| Method Category | Specific Techniques | Key Applications | Throughput Capacity |
|---|---|---|---|
| DNA Assembly | Gibson Assembly, Golden Gate Cloning, Restriction Enzyme-based Cloning | Construct assembly from multiple DNA fragments | Medium to High |
| DNA Synthesis | Twist Bioscience, IDT, GenScript | Custom gene synthesis, fragment production | High |
| Transformation | Heat shock, Electroporation | Introduction of DNA into host organisms | Medium |
| Quality Control | Colony PCR, Restriction Digestion, Sequencing | Verification of constructed DNA elements | Variable |

A representative Build protocol for Gibson assembly, as implemented in recent synthetic biology projects, involves several key steps. First, the backbone vector is linearized through PCR amplification using reduced template DNA quantities (typically 1:100 dilution) to minimize carryover of the original plasmid. Following amplification, a DpnI digestion step (extended to 60 minutes) degrades methylated template DNA. DNA fragments, including the linearized backbone and insert pieces, are assembled using Gibson assembly master mix with an extended incubation time (60 minutes instead of 30 minutes) to enhance efficiency. The resulting assembly reaction is then transformed into competent host cells (e.g., E. coli MG1655) via heat shock, followed by outgrowth in SOC medium and plating on selective media containing appropriate antibiotics (e.g., kanamycin at 50 µg/mL) [6].

Successful construction is verified through colony PCR using primers spanning junction sites between fragments or through next-generation sequencing for comprehensive sequence confirmation [6]. This rigorous quality control ensures that the physical constructs accurately represent the original digital design before proceeding to the Test phase.

The Test Phase: Experimental Validation and Characterization

The Test phase involves experimental validation and characterization of the built biological systems to assess their functionality and performance. This phase employs a variety of analytical techniques to measure how closely the physical implementation matches the expected design specifications, providing crucial data for evaluating system success [1].

Advanced automation technologies have dramatically enhanced the speed and efficiency of the Test phase. High-throughput screening (HTS) systems, facilitated by automated liquid handling platforms like the Beckman Coulter Biomek Series and Tecan Freedom EVO series, enable precise and rapid assay setups [4]. These systems are complemented by automated plate readers and analyzers such as the EnVision Multilabel Plate Reader from PerkinElmer and the BioTek Synergy HTX Multi-Mode Reader, which efficiently assess diverse assay formats including fluorescence, luminescence, and absorbance measurements [4].

For metabolic engineering applications, Test phase assays typically focus on quantifying the production of target compounds and characterizing host strain performance. In the case of dopamine production in E. coli, analytical methods include:

- Metabolite quantification using HPLC or LC-MS to measure dopamine, L-DOPA, and L-tyrosine concentrations

- Biomass measurements via optical density (OD600) to track cellular growth

- Substrate consumption analysis to determine glucose utilization efficiency

- Time-course experiments to monitor production dynamics over the fermentation period [5]

Additionally, omics technologies play a significant role in comprehensive system characterization. Next-Generation Sequencing (NGS) platforms like Illumina's NovaSeq and Thermo Fisher's Ion Torrent systems provide rapid genotypic analysis, while automated mass spectrometry setups (e.g., Thermo Fisher's Orbitrap) enable proteomic analysis, and NMR-based platforms facilitate metabolomic profiling [4]. These technologies collectively generate multidimensional datasets that capture both the intended design outcomes and unexpected system behaviors.

Diagram 2: Test Phase Methodologies and Technologies

The Learn Phase: Data Analysis and Knowledge Extraction

The Learn phase represents the critical analytical component of the DBTL cycle where experimental data is transformed into actionable knowledge. This phase employs sophisticated data analysis techniques, including statistical evaluation, machine learning, and mechanistic modeling, to interpret Test results and generate insights that will inform the next Design iteration [4] [5].

The Learn phase is increasingly driven by machine learning (ML) algorithms that analyze complex datasets to uncover patterns that are difficult to detect by manual inspection. ML models can be trained on extensive experimental data to make genotype-to-phenotype predictions that guide subsequent metabolic engineering decisions [4]. For example, in the optimization of tryptophan metabolism in yeast, ML models trained on experimental data successfully predicted metabolic outcomes and aided in designing more efficient metabolic pathways [4].

In the knowledge-driven DBTL cycle demonstrated for dopamine production in E. coli, the Learn phase incorporated both in vitro and in vivo analyses to extract mechanistic insights. The learning process included:

- Comparative analysis of enzyme expression levels in cell lysate systems

- Correlation of RBS sequence features with translation efficiency

- Identification of rate-limiting steps in the dopamine biosynthetic pathway

- Quantification of pathway bottlenecks through metabolic flux analysis [5]

This knowledge-driven approach enabled researchers to understand how GC content in the Shine-Dalgarno sequence influences RBS strength and ultimately dopamine production, leading to a 2.6 to 6.6-fold improvement over previous production methods [5]. The learning outcomes directly informed the subsequent Design phase, where RBS sequences were systematically engineered to optimize the relative expression levels of HpaBC and Ddc enzymes in the dopamine pathway.

Without effective learning mechanisms, DBTL cycles risk entering an "involution state" where iterative trial-and-error leads to endless cycling with increased complexity rather than improved productivity [7]. This involution typically occurs because increased reprogramming of cellular metabolism provokes deleterious metabolic performance, and removing one known bottleneck often reveals new rate-limiting steps. Strategic implementation of the Learn phase prevents this stagnation by ensuring each cycle generates meaningful insights that progressively advance system optimization.

Integrated DBTL Workflow: Case Study in Dopamine Production

The practical implementation of integrated DBTL cycles is exemplified by recent work developing an E. coli strain for dopamine production. This project demonstrated a knowledge-driven DBTL approach that combined upstream in vitro investigation with high-throughput in vivo engineering to optimize dopamine biosynthesis [5].

The initial Design phase focused on constructing a dopamine pathway in E. coli using native 4-hydroxyphenylacetate 3-monooxygenase (HpaBC) to convert L-tyrosine to L-DOPA, and heterologous L-DOPA decarboxylase (Ddc) from Pseudomonas putida to catalyze dopamine formation [5]. The Build phase involved plasmid construction using the pET system for heterologous gene expression and the pJNTN plasmid for crude cell lysate system experiments. RBS engineering libraries were created to fine-tune the relative expression of HpaBC and Ddc [5].

In the Test phase, researchers first employed cell-free protein synthesis (CFPS) systems to validate enzyme expression and function before moving to in vivo testing. This in vitro screening allowed rapid evaluation of multiple design variants without cellular constraints. Successful designs were then tested in engineered E. coli FUS4.T2 strains cultivated in minimal medium containing 20 g/L glucose, 10% 2xTY medium, and appropriate antibiotics [5]. Metabolite analysis quantified dopamine, L-DOPA, and L-tyrosine concentrations, revealing critical pathway bottlenecks.

The Learn phase analysis identified optimal RBS sequences that balanced the expression of HpaBC and Ddc, with specific attention to how GC content in the Shine-Dalgarno sequence influenced translation initiation rates. This knowledge informed the redesign of RBS sequences for the subsequent DBTL cycle, ultimately achieving dopamine production of 69.03 ± 1.2 mg/L (34.34 ± 0.59 mg/g biomass), a substantial improvement over previous state-of-the-art production systems [5].
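The two reported figures describe the same result in different units: a volumetric titer (mg/L) and a biomass-specific titer (mg/g). The short calculation below shows how they relate, and it is only a consistency check. The implied biomass concentration is derived from the two reported means and is not a value stated in the source. The baseline estimate additionally assumes that the 2.6-fold improvement mentioned earlier refers to volumetric titer.

```python
# Reported means from the dopamine case study [5].
titer_mg_per_l = 69.03           # volumetric titer
specific_titer_mg_per_g = 34.34  # biomass-specific titer

# specific titer = titer / biomass, so biomass = titer / specific titer.
# Derived value, not reported in the source.
implied_biomass_g_per_l = titer_mg_per_l / specific_titer_mg_per_g
print(f"Implied biomass: {implied_biomass_g_per_l:.2f} g/L")

# Assumption: the 2.6-fold improvement applies to the volumetric titer.
implied_baseline_mg_per_l = titer_mg_per_l / 2.6
print(f"Implied earlier titer: {implied_baseline_mg_per_l:.2f} mg/L")
```

Under these assumptions the culture reached roughly 2 g/L biomass, and the implied earlier benchmark is about 26.6 mg/L.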

Table 3: Dopamine Production Optimization Through DBTL Iterations

| DBTL Cycle | Engineering Strategy | Key Learning | Dopamine Production |
|---|---|---|---|
| Initial Design | Pathway insertion in E. coli | HpaBC activity limits L-DOPA production | <27 mg/L |
| RBS Library V1 | Random RBS sequencing | GC content affects translation efficiency | 41.25 mg/L |
| Optimized Design | Knowledge-driven RBS design | Optimal HpaBC:Ddc expression ratio identified | 69.03 mg/L |
| Final Strain | Host engineering for L-tyrosine overproduction | Precursor availability becomes limiting | 34.34 mg/g biomass |

Research Reagent Solutions for DBTL Implementation

Successful implementation of DBTL cycles relies on specialized research reagents and platforms that streamline each phase of the workflow. The following essential materials represent key solutions for establishing robust DBTL capabilities in metabolic engineering research.

Table 4: Essential Research Reagents and Platforms for DBTL Workflows

| Category | Specific Solution | Function in DBTL Cycle |
|---|---|---|
| DNA Assembly | Gibson Assembly Master Mix | Enzymatic assembly of multiple DNA fragments with homologous ends |
| Cloning Systems | pET Vector System, pSEVA261 Backbone | Protein expression and modular genetic construction |
| Automated Liquid Handling | Tecan, Beckman Coulter, Hamilton Robotics | High-precision pipetting for PCR setup, DNA normalization, plasmid prep |
| DNA Synthesis Providers | Twist Bioscience, IDT, GenScript | Custom gene and fragment synthesis for genetic design implementation |
| Screening Platforms | Illumina NGS, PerkinElmer EnVision Reader | Genotypic verification and phenotypic characterization of constructs |
| Cell-Free Systems | Crude Cell Lysate CFPS | In vitro pathway validation before in vivo implementation |
| Software Platforms | TeselaGen, pySBOL | Workflow management, data integration, and experimental tracking |

These research reagents and platforms collectively enable the integration of individual DBTL components into a cohesive, efficient workflow. Modern biotech R&D increasingly relies on sophisticated software solutions like TeselaGen's platform, which supports the entire DBTL cycle through design algorithms, workflow orchestration, data management, and machine learning-powered analysis [4]. Similarly, computational frameworks like pySBOL provide formalized data structures for managing DBTL workflows, representing Designs, Builds, Tests, and Analyses as interconnected objects with defined relationships [2]. This integration of physical laboratory workflows with digital data management creates a foundation for continuous improvement in metabolic engineering projects.
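The idea of representing Designs, Builds, Tests, and Analyses as linked objects can be sketched with plain Python dataclasses. This is an illustration of the data model only and is not the pySBOL API. All class names, field names, and example values other than the reported 69.03 mg/L titer are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Hypothetical record types; these are NOT pySBOL classes.
@dataclass
class DesignRecord:
    name: str
    parts: List[str]              # ordered genetic parts in the design

@dataclass
class BuildRecord:
    name: str
    design: DesignRecord          # the design this physical construct implements
    sequence_verified: bool = False

@dataclass
class AssayRecord:                # corresponds to a "Test" in DBTL terms
    name: str
    build: BuildRecord            # the construct or strain that was measured
    measurements: Dict[str, float] = field(default_factory=dict)

@dataclass
class AnalysisRecord:             # corresponds to "Learn"
    name: str
    assays: List[AssayRecord]
    conclusion: str = ""

    def designs(self) -> List[DesignRecord]:
        """Trace every analyzed measurement back to its originating design."""
        return [a.build.design for a in self.assays]

design = DesignRecord("dopamine_pathway_v1", ["RBS_a", "hpaBC", "RBS_b", "ddc"])
build = BuildRecord("strain_001", design, sequence_verified=True)
assay = AssayRecord("cultivation_run_1", build, {"dopamine_mg_per_L": 69.03})
analysis = AnalysisRecord("cycle_1_learn", [assay], "placeholder conclusion")
print([d.name for d in analysis.designs()])
```

Because each record holds a reference to its predecessor, any measurement can be traced back to the exact design that produced it, which is the property that makes the Learn phase auditable.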

The Design-Build-Test-Learn cycle represents a systematic framework that has transformed metabolic engineering and synthetic biology by providing a structured methodology for biological engineering. When implemented effectively, the DBTL cycle enables continuous, knowledge-driven optimization of microbial cell factories for diverse applications ranging from pharmaceutical production to sustainable biomaterials. The integration of automation, machine learning, and sophisticated data management platforms throughout the DBTL workflow continues to enhance its efficiency and predictive power, addressing challenges such as DBTL involution where cycles fail to produce meaningful improvements [7].

As the field advances, the DBTL framework is expanding beyond traditional metabolic engineering to embrace broader applications, including systems medicine and healthcare intervention design [8]. This expansion demonstrates the versatility of the engineering cycle approach for addressing complex biological challenges across multiple domains. By maintaining rigorous implementation of all four pillars (Design, Build, Test, and Learn), researchers can systematically advance biological system capabilities, progressively bridging the gap between conceptual designs and functional microbial factories that address pressing industrial and medical needs.

In metabolic engineering, the goal of optimizing microorganisms to function as efficient microbial cell factories is paramount for developing sustainable alternatives to the petrochemical industry. However, this endeavor is fundamentally challenged by the intrinsic biological complexity of cellular systems and the combinatorial explosion of possible genetic designs. Biological complexity arises from the intricate and often non-intuitive interactions within metabolic networks, where perturbations to one pathway element can have unforeseen consequences on the overall flux towards a desired product. Simultaneously, combinatorial explosion occurs when attempting to optimize multiple pathway components (e.g., promoters, ribosomal binding sites, and coding sequences) at once; the number of possible combinations far exceeds what can be feasibly built and tested in a laboratory setting.

For example, simultaneously optimizing a pathway with just 5 enzymes, each with 5 potential expression levels, generates 3,125 (5⁵) unique strain designs. Scaling this to 10 enzymes creates over 9.7 million possible designs. This combinatorial explosion makes exhaustive experimental testing impossible, necessitating a strategic, iterative framework to navigate this vast design space efficiently. The Design-Build-Test-Learn (DBTL) cycle has emerged as the foundational framework to confront these challenges, enabling the systematic and iterative development of high-performing industrial strains.
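The arithmetic behind these figures is simply the number of expression levels raised to the number of enzymes. A short Python sketch makes the growth explicit; the function name and the integer encoding of expression levels are illustrative choices.

```python
from itertools import product

def design_space_size(n_enzymes: int, n_levels: int) -> int:
    """Number of unique designs when each enzyme independently takes one of n_levels."""
    return n_levels ** n_enzymes

# Enumerate the 5-enzyme, 5-level space explicitly (levels encoded as 0..4).
designs = list(product(range(5), repeat=5))

print(len(designs))               # 3125
print(design_space_size(5, 5))    # 3125
print(design_space_size(10, 5))   # 9765625
```

Enumerating the five-enzyme space is trivial in software, but building and testing even those 3,125 strains is a substantial laboratory effort, which is why each DBTL cycle samples only a small subset.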

The DBTL Framework: A Systematic Response

The DBTL cycle is a structured framework used in synthetic biology and metabolic engineering to systematically and iteratively develop and optimize biological systems. The cycle consists of four interconnected phases:

- Design: In this initial phase, researchers define the objectives and computationally design the genetic constructs. This encompasses protein design, genetic design (e.g., translating amino acid sequences into coding sequences, designing ribosome binding sites, and planning operon architecture), and assay design. A critical step is Assembly Design, which involves breaking down plasmids into fragments for construction, considering factors like restriction enzyme sites and GC content to avoid failed experiments [4].

- Build: This phase involves the physical assembly of the designed DNA constructs and their introduction into a microbial chassis (e.g., E. coli or Corynebacterium glutamicum). Automation is crucial here, utilizing high-precision automated liquid handlers and integrated software platforms to manage complex inventory and high-throughput workflows, thereby ensuring precision and efficiency [4].

- Test: The built strains are experimentally characterized to measure performance metrics such as titer, yield, and rate (TYR) of the desired product. This phase relies on high-throughput screening (HTS) facilitated by automated plate readers, analyzers, and omics technologies (e.g., Next-Generation Sequencing and mass spectrometry) to generate large, multi-dimensional datasets [4].

- Learn: In the final phase, data from the Test phase are analyzed to extract insights into pathway behavior. Machine learning (ML) algorithms are increasingly used to identify complex patterns and build predictive models that link genotype to phenotype. These learnings directly inform the design of improved strains for the next cycle [4] [9].

The power of the DBTL framework lies in its iterative nature. Rather than attempting to test all possible combinations simultaneously, researchers use learning from each cycle to make informed decisions about which regions of the combinatorial design space to explore next, thereby converging on an optimal solution more rapidly [3] [9].

Table 1: Core Phases of the DBTL Cycle and Their Key Activities

| DBTL Phase | Key Activities | Technologies & Methods |
|---|---|---|
| Design | Protein & genetic part selection, DNA assembly protocol generation, in silico modeling | Advanced software algorithms, consideration of restriction enzymes & GC content [4] |
| Build | DNA synthesis & assembly, plasmid construction, transformation into chassis | Automated liquid handlers, integration with DNA synthesis providers, high-throughput workflow management [4] |
| Test | High-throughput screening, fermentation, omics data collection (transcriptomics, proteomics) | Automated plate readers, Next-Generation Sequencing (NGS), mass spectrometry, robotic integration [4] |
| Learn | Data analysis, pattern recognition, predictive model building | Machine Learning (e.g., Gradient Boosting, Random Forest), statistical analysis, genotype-to-phenotype prediction [4] [9] |

Quantitative Insights: Data and Performance of DBTL Strategies

The effectiveness of different strategies within the DBTL framework can be quantified through simulation studies. Using mechanistic kinetic models, researchers can benchmark machine learning methods and DBTL cycle strategies without the cost and time constraints of physical experiments.

Table 2: Performance of Machine Learning Models in Simulated DBTL Cycles for Combinatorial Pathway Optimization [9]

| Machine Learning Model | Performance in Low-Data Regime | Robustness to Training Set Bias | Robustness to Experimental Noise |
|---|---|---|---|
| Gradient Boosting | Outperforms other methods | Demonstrated robustness | Demonstrated robustness |
| Random Forest | Outperforms other methods | Demonstrated robustness | Demonstrated robustness |
| Other Tested Models | Lower performance | Not specified | Not specified |

A key finding from such simulations is the impact of cycle strategy on the rate of optimization. When the number of strains to be built is limited, a strategy that starts with a large initial DBTL cycle is more favorable than building the same number of strains in every cycle. This initial investment in data generation provides a richer dataset for the ML models to learn from, accelerating performance gains in subsequent cycles [9].

Experimental Protocols: Methodologies for DBTL Implementation

Protocol for In Vivo Combinatorial Pathway Optimization

This protocol is adapted from high-throughput metabolic engineering workflows for optimizing pathways in live microbial cells, such as E. coli or C. glutamicum [4] [9].

Design of DNA Library:

- Define the target pathway and identify components for optimization (e.g., promoters for each gene).

- Select a library of genetic parts (e.g., promoters with varying strengths) for each component.

- Use specialized software to design the assembly of combinatorial libraries, ensuring compatibility of DNA fragments and optimizing for factors like GC content to avoid assembly failures [4].

Build Library via Automated DNA Assembly:

- Utilize automated liquid handlers (e.g., from Tecan or Beckman Coulter) to perform high-throughput DNA assembly, such as Golden Gate or Gibson assembly.

- Integrate software to orchestrate protocols and track samples across different lab equipment.

- Transform the assembled constructs into the chosen microbial host in a 96- or 384-well format.

High-Throughput Test Phase:

- Inoculate cultures of the transformed strains in deep-well plates with a defined medium.

- Perform micro-scale fermentation using automated systems to maintain consistent environmental conditions (e.g., temperature, shaking).

- After a set time, sample the culture broth.

- Quantify the product titer, yield, and/or rate using high-throughput analytics, such as liquid chromatography-mass spectrometry (LC-MS) or colorimetric/fluorescent assays in multi-well plates read by automated plate readers [4].

Learn Phase with Machine Learning:

- Compile a dataset where the features are the genetic design (e.g., the identity or strength of each promoter used) and the target variable is the performance metric (e.g., product titer).

- Train a machine learning model (e.g., Gradient Boosting or Random Forest) on this dataset.

- Use the trained model to predict the performance of all possible, untested genetic combinations in the design space.

- Select a new set of designs predicted to have high performance (with potential for exploration) for the next DBTL cycle.
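The four Learn-phase steps above can be sketched end to end in Python. To keep the example dependency-free, a simple per-level mean-offset model stands in for Gradient Boosting or Random Forest, so this illustrates the workflow rather than the models benchmarked in [9]. The `measure_titer` function is a hypothetical stand-in for the Test phase and generates simulated values, not real data. The design-space size and the exploit/explore split are also assumptions.

```python
import random
from itertools import product
from statistics import mean

N_GENES, LEVELS = 3, (0, 1, 2, 3)                      # 3 genes x 4 promoter strengths
design_space = list(product(LEVELS, repeat=N_GENES))   # 64 candidate designs

def measure_titer(design, rng):
    """Hypothetical Test-phase stand-in: simulated titer with noise (not real data)."""
    a, b, c = design
    return 10 * a + 6 * b - 2 * (c - 2) ** 2 + rng.gauss(0, 1.0)

def fit_main_effects(data):
    """Fit the overall mean plus a mean offset for each (gene, level) seen in the data."""
    overall = mean(data.values())
    effects = {}
    for gene in range(N_GENES):
        for level in LEVELS:
            ys = [y for d, y in data.items() if d[gene] == level]
            effects[(gene, level)] = mean(ys) - overall if ys else 0.0
    return overall, effects

def predict(model, design):
    """Predicted titer: overall mean plus the offsets of the chosen levels."""
    overall, effects = model
    return overall + sum(effects[(g, lvl)] for g, lvl in enumerate(design))

def recommend(model, tested, n_exploit, n_explore, rng):
    """Pick top-predicted untested designs plus a few random ones for exploration."""
    untested = [d for d in design_space if d not in tested]
    ranked = sorted(untested, key=lambda d: predict(model, d), reverse=True)
    return ranked[:n_exploit] + rng.sample(ranked[n_exploit:], n_explore)

rng = random.Random(0)
tested = {d: measure_titer(d, rng) for d in rng.sample(design_space, 16)}  # cycle 1 data
model = fit_main_effects(tested)
next_round = recommend(model, tested, n_exploit=6, n_explore=2, rng=rng)
print(next_round)
```

Reserving part of each batch for exploration keeps the next cycle from sampling only the region the current model already favors, which matters when the training set is small or biased.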

Protocol for Rapid Cell-Free Testing (LDBT Variation)

An emerging paradigm, sometimes termed LDBT, places Learning first by leveraging machine learning for initial design, and accelerates building and testing using cell-free systems [10].

Learn-Guided Design:

- Use a pre-trained protein language model (e.g., ESM), a structure-based sequence design model (e.g., ProteinMPNN), or a model fine-tuned on relevant data to generate sequences for pathway enzymes predicted to have high activity, stability, or solubility [10].

- Design the DNA sequences encoding these proteins for optimal expression.

Rapid Build with Cell-Free DNA Template Preparation:

- Instead of time-consuming cloning in live cells, synthesize linear DNA templates via PCR that contain the necessary elements for transcription and translation.

- This step bypasses cell transformation and plasmid propagation [10].

Ultra-High-Throughput Test in Cell-Free Systems:

- Express the designed proteins directly in a cell-free gene expression system, which is a crude lysate or purified reconstitution of the cellular transcription-translation machinery.

- Reactions can be scaled down to picoliter volumes in droplet microfluidics platforms, enabling the testing of hundreds of thousands of variants in a single experiment [10].

- Measure enzyme activity or pathway productivity using coupled fluorescent or colorimetric assays.

Data Integration and Model Refinement:

- The massive dataset generated from cell-free testing is used to refine the machine learning models, closing the loop and improving predictive power for subsequent designs.

Visualization of Workflows and Pathways

The Iterative DBTL Cycle for Metabolic Engineering

Diagram: DBTL Workflow

A Simulated Metabolic Pathway for DBTL Benchmarking

This diagram represents a generic, simulated metabolic pathway embedded in a core kinetic model of E. coli physiology, used for in silico testing of DBTL strategies [9].

Diagram: Simulated Metabolic Pathway

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents and Platforms for DBTL Implementation

| Item / Solution | Function in DBTL Workflow | Specific Examples |
|---|---|---|
| Automated Liquid Handlers | Enable high-precision, high-throughput pipetting for DNA assembly, PCR setup, and assay preparation in Build and Test phases. | Labcyte, Tecan Freedom EVO, Beckman Coulter Biomek, Hamilton Robotics [4] |
| DNA Synthesis Providers | Supply custom-designed DNA sequences (e.g., gene fragments, promoters) for constructing genetic libraries in the Build phase. | Twist Bioscience, Integrated DNA Technologies (IDT), GenScript [4] |
| Cell-Free Expression Systems | Provide a rapid, flexible platform for expressing and testing protein variants or pathways without the need for live-cell cultivation, accelerating the Build-Test phases. | Crude lysate systems (E. coli, yeast), purified reconstituted systems [10] |
| High-Throughput Assay Platforms | Facilitate rapid, parallel measurement of strain performance (e.g., product titer, enzyme activity) in the Test phase. | Microplate readers (e.g., PerkinElmer EnVision, BioTek Synergy HTX), droplet microfluidics [4] [10] |
| Next-Generation Sequencing (NGS) | Verify genetic constructs (Build) and perform genotypic analysis of strains (Test). | Illumina NovaSeq, Thermo Fisher Ion Torrent [4] |
| Machine Learning Software | Analyze complex datasets to build predictive models that recommend new strain designs in the Learn phase. | Gradient Boosting, Random Forest, protein language models (e.g., ESM), structure-based sequence design models (e.g., ProteinMPNN) [9] [10] |

The Role of DBTL in Strain Optimization for Biofuels, Drugs, and Specialty Chemicals

The Design-Build-Test-Learn (DBTL) cycle represents a systematic, iterative framework central to modern metabolic engineering and synthetic biology. This engineering-based approach enables researchers to develop and optimize biological systems, such as microbial strains, in a controlled and efficient manner for the production of valuable compounds including biofuels, pharmaceuticals, and specialty chemicals [1]. The paradigm acknowledges that even with rational design, the biological complexity of introducing foreign DNA into a cellular host makes phenotypic outcomes difficult to predict, thus necessitating the testing of multiple genetic permutations [1].

A hallmark of the DBTL framework is its closed-loop nature, where learning from each cycle directly informs the design phase of the subsequent cycle, creating a continuous improvement process. This iterative methodology has become increasingly powerful with the integration of automation, high-throughput technologies, and advanced computational tools, significantly accelerating the pace of biological engineering [4] [11]. The application of this cycle is transforming the development of biomanufacturing processes, making them more predictable and economically viable for a growing catalog of biosustainable products.

The Four Phases of the DBTL Cycle

The DBTL cycle consists of four distinct but interconnected phases. Each phase addresses a critical component of the strain optimization pipeline, and the seamless integration between them is essential for rapid progress.

Design

The Design phase involves the in silico planning and selection of genetic components for a desired metabolic pathway. This stage encompasses several crucial activities:

- Pathway and Enzyme Selection: Using computational tools like RetroPath and Selenzyme to select candidate enzymes and design biosynthetic pathways for the target compound [11].

- Genetic Design: Translating protein designs into coding sequences, designing regulatory elements such as ribosome binding sites (RBS), and planning operon architecture [4].

- Combinatorial Library Design: Creating libraries of genetic constructs by interchanging modular DNA parts (e.g., promoters, RBS, gene orders) to explore a wide design space [1] [11].

- Statistical Design of Experiments (DoE): Applying methods like orthogonal arrays to reduce vast combinatorial libraries into a tractable number of representative constructs for experimental testing, achieving compression ratios as high as 162:1 [11].

A key advancement in this phase is the automation of DNA assembly protocol generation, which minimizes human error and ensures compatibility among DNA fragments by considering factors like restriction enzyme sites and GC content [4].
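
The library compression quoted above is simple arithmetic and can be checked in a few lines. The sketch below assumes one possible factorization of a 2,592-design space (4 vector backbones, 3 promoter options at each of 3 tunable positions, and all 24 orders of four genes). These factor levels and the labels `v1`..`v4` are illustrative assumptions; only the totals of 2,592 designs and 16 built constructs come from the text.

```python
from itertools import permutations, product

# Assumed factor levels (illustrative only; chosen so the product is 2,592).
vector_backbones = ["v1", "v2", "v3", "v4"]      # hypothetical backbone / copy-number options
promoter_options = ["strong", "weak", "none"]    # hypothetical options per tunable position
tunable_positions = 3                            # assumed number of tunable promoter slots
genes = ["PAL", "4CL", "CHS", "CHI"]             # pathway genes named in the case study


def enumerate_designs():
    """Yield every (backbone, promoter tuple, gene order) combination."""
    promoter_sets = product(promoter_options, repeat=tunable_positions)
    gene_orders = permutations(genes)
    yield from product(vector_backbones, promoter_sets, gene_orders)


full_library = sum(1 for _ in enumerate_designs())
built_constructs = 16                            # DoE-selected constructs reported in the text
compression_ratio = full_library / built_constructs

print(f"{full_library} designs -> {built_constructs} constructs "
      f"({compression_ratio:.0f}:1)")
```

Enumerating the full space explicitly is cheap at this size. For larger spaces, a DoE tool would typically sample from the factor definitions directly rather than materialize every design.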

Build

The Build phase focuses on the physical construction of the designed genetic constructs and their introduction into the microbial chassis. Precision and efficiency are critical in this stage, which leverages significant automation:

- Automated DNA Assembly: Robotic platforms using liquid handlers from companies like Tecan, Beckman Coulter, and Hamilton Robotics perform high-precision pipetting for PCR setup, DNA normalization, and plasmid preparation [4].

- High-Throughput Construction: Automated ligase cycling reaction (LCR) for pathway assembly enables the parallel construction of numerous genetic variants [11].

- Integration with DNA Synthesis: Partnerships with DNA synthesis providers (e.g., Twist Bioscience, IDT) streamline the incorporation of custom DNA sequences into the workflow [4].

- Quality Control: Automated clone verification through purification, restriction digest, and sequencing ensures the fidelity of the constructed strains [11].

Automation in the Build phase has dramatically reduced the time, labor, and cost associated with generating multiple constructs, thereby enabling higher throughput and improving overall reproducibility [4] [1].

Test

The Test phase involves culturing the built strains and analyzing their performance in producing the target compound. This characterization phase has been revolutionized by high-throughput technologies:

- High-Throughput Cultivation: Automated 96-deepwell plate systems handle growth and induction protocols under controlled conditions [11].

- Advanced Analytical Chemistry: Rapid quantification of target products and intermediates using techniques like ultra-performance liquid chromatography coupled to tandem mass spectrometry (UPLC-MS/MS) [11].

- Multi-Modal Data Acquisition: Integration of various analytical equipment, including next-generation sequencing (NGS) platforms (e.g., Illumina's NovaSeq) for genotypic analysis and mass spectrometry (e.g., Thermo Fisher's Orbitrap) for proteomic analysis [4].

- Centralized Data Collection: Software platforms serve as hubs for collecting and standardizing data from diverse analytical instruments, facilitating subsequent analysis [4].

The Test phase generates the critical performance data (e.g., titer, yield, productivity) that forms the basis for learning and subsequent design improvements.

Learn

The Learn phase represents the knowledge extraction component of the cycle, where data from the Test phase is analyzed to derive insights and inform the next Design phase:

- Statistical Analysis: Identifying significant relationships between design factors (e.g., promoter strength, gene order) and production outcomes to determine key bottlenecks and optimization levers [11].

- Machine Learning (ML): Applying ML algorithms like gradient boosting and random forests to analyze complex datasets, uncover non-intuitive patterns, and build predictive models that connect genotype to phenotype [4] [9].

- Predictive Modeling: Using trained models to forecast the performance of untested genetic designs and recommend promising candidates for the next DBTL cycle [9].

- Hypothesis Generation: Formulating new biological insights about pathway regulation, enzyme function, and host physiology to refine engineering strategies.

This phase closes the loop, transforming raw experimental data into actionable intelligence for continuous strain improvement.

DBTL Cycle Workflow

The following diagram illustrates the iterative DBTL cycle and the key activities within each phase:

Experimental Protocols for DBTL Implementation

Implementing an effective DBTL cycle requires standardized, robust experimental protocols that ensure reproducibility and scalability. Below are detailed methodologies for key experiments in the DBTL pipeline.

High-Throughput Molecular Cloning Workflow

This protocol enables the parallel construction of numerous genetic variants for combinatorial pathway optimization [1] [11]:

DNA Part Preparation:

- Obtain gene fragments via commercial synthesis (e.g., Twist Bioscience, IDT) or PCR amplification from existing libraries.

- Use automated systems for PCR cleanup and normalization to 50-100 ng/μL concentration.

Automated Assembly Reaction:

- Employ liquid handling robots to set up ligase cycling reactions (LCR) in 96-well or 384-well plates.

- Assembly reaction mixture: 10-20 fmol of each DNA part, 1× LCR buffer, 0.5 μL ligase, nuclease-free water to 10 μL total volume.

- Cycling conditions: 5 minutes at 98°C; 30 cycles of 10 seconds at 98°C and 2-4 minutes at 60°C; hold at 4°C.
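
Setting up the assembly reaction requires converting the molar amounts above into pipetting volumes. The following is a minimal sketch of that conversion, assuming an average mass of about 650 g/mol per base pair of double-stranded DNA; the part names and lengths are hypothetical.

```python
AVG_BP_MASS_G_PER_MOL = 650.0  # approximate average mass of one dsDNA base pair


def fmol_to_ng(fmol: float, length_bp: int) -> float:
    """Convert an amount of dsDNA from femtomoles to nanograms."""
    grams = fmol * 1e-15 * length_bp * AVG_BP_MASS_G_PER_MOL
    return grams * 1e9


def volume_ul(fmol: float, length_bp: int, conc_ng_per_ul: float) -> float:
    """Volume (in microliters) of a normalized stock that delivers `fmol` of a part."""
    return fmol_to_ng(fmol, length_bp) / conc_ng_per_ul


# Hypothetical parts (name -> length in bp), normalized to 50 ng/uL.
parts = {"promoter": 200, "gene_A": 1500, "backbone": 4000}
for name, length in parts.items():
    mass = fmol_to_ng(20, length)
    vol = volume_ul(20, length, 50)
    print(f"{name}: {mass:.2f} ng = {vol:.3f} uL at 50 ng/uL")
```

Short parts can require volumes below what a liquid handler dispenses reliably, in which case an intermediate dilution of the stock is a common workaround.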

Transformation and Clone Selection:

- Transform 2 μL of assembly reaction into competent E. coli cells (e.g., DH5α) by heat shock (42°C for 30 seconds).

- Plate on selective media and incubate overnight at 37°C.

- Pick 2-4 colonies per construct using automated colony pickers for inoculating deepwell culture plates.

Quality Control:

- Perform high-throughput plasmid purification using robotic systems.

- Verify constructs by restriction digest analysis with capillary electrophoresis.

- Confirm critical constructs by Sanger sequencing or next-generation sequencing (NGS) for large libraries.

Small-Scale Cultivation and Metabolite Screening

This protocol enables high-throughput screening of strain performance in 96-deepwell plates [11]:

Inoculum Preparation:

- Inoculate 500 μL of selective media in 2.2 mL deepwell plates with verified colonies.

- Incubate at 37°C with shaking at 800 rpm for 16-18 hours.

Production Phase:

- Transfer 10-50 μL of seed culture to 1 mL of production media in fresh deepwell plates.

- Incubate at appropriate temperature (e.g., 30°C for pathway induction) with shaking.

- Induce pathway expression at mid-exponential phase (OD600 ≈ 0.6-0.8) with optimized inducer concentrations.

Metabolite Extraction:

- Harvest cells by centrifugation at 4,000 × g for 10 minutes.

- Extract metabolites from cell pellet or supernatant using appropriate solvents (e.g., methanol:acetonitrile:water 2:2:1 for intracellular metabolites).

- Remove insoluble material by filtration or centrifugation prior to analysis.

Analytical Quantification:

- Analyze samples using UPLC-MS/MS with appropriate standards for target compounds and key intermediates.

- Use multiple reaction monitoring (MRM) for sensitive quantification of specific metabolites.

- Employ high-resolution mass spectrometry for untargeted metabolite profiling.

Data Analysis and Machine Learning Protocol

This computational protocol extracts meaningful insights from experimental data to guide subsequent DBTL cycles [9]:

Data Preprocessing:

- Normalize production titers to internal standards and cell density (e.g., OD600).

- Handle missing values using appropriate imputation methods (e.g., k-nearest neighbors).

- Perform log transformation for skewed distributions and standard scaling for multivariate analysis.
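
The preprocessing steps above can be sketched with the Python standard library alone. The example below covers biomass normalization, log transformation, and standard scaling; imputation is omitted for brevity. The measurement values and the pseudocount are illustrative assumptions, not data from the cited studies.

```python
import math
import statistics


def normalize_titer(titer_mg_l: float, od600: float) -> float:
    """Biomass-normalize a titer (mg/L per OD600 unit)."""
    if od600 <= 0:
        raise ValueError("OD600 must be positive")
    return titer_mg_l / od600


def log_transform(values, pseudocount=1e-3):
    """Log10-transform skewed values; the pseudocount guards against zeros."""
    return [math.log10(v + pseudocount) for v in values]


def standard_scale(values):
    """Center to zero mean and scale to unit (population) standard deviation."""
    mean = statistics.fmean(values)
    sd = statistics.pstdev(values)
    if sd == 0:
        return [0.0 for _ in values]
    return [(v - mean) / sd for v in values]


# Hypothetical (titer in mg/L, OD600) pairs for four strains.
raw = [(0.002, 1.8), (0.05, 2.1), (0.14, 2.0), (0.01, 1.5)]
specific = [normalize_titer(titer, od) for titer, od in raw]
scaled = standard_scale(log_transform(specific))
print([round(v, 3) for v in scaled])
```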

Statistical Analysis:

- Conduct analysis of variance (ANOVA) to identify significant design factors affecting production.

- Calculate effect sizes for promoters, RBS strengths, and gene order on final titer.

- Perform principal component analysis (PCA) to visualize clustering and outliers in multi-dimensional data.
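
Before running a full ANOVA, a quick main-effects summary often shows which factor matters most. The sketch below computes the mean response per factor level and the spread between levels. It is a simplified precursor to ANOVA, not a significance test, and the four data points are hypothetical.

```python
from collections import defaultdict
from statistics import fmean

# Hypothetical screening results: two factors at two levels each.
designs = [
    {"copy_number": "high", "chi_promoter": "strong", "titer": 0.14},
    {"copy_number": "high", "chi_promoter": "weak", "titer": 0.06},
    {"copy_number": "low", "chi_promoter": "strong", "titer": 0.02},
    {"copy_number": "low", "chi_promoter": "weak", "titer": 0.004},
]


def main_effects(rows, factor, response="titer"):
    """Mean response for each level of one design factor."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[factor]].append(row[response])
    return {level: fmean(values) for level, values in groups.items()}


def effect_range(rows, factor, response="titer"):
    """Difference between the best and worst level means (a crude effect size)."""
    means = main_effects(rows, factor, response).values()
    return max(means) - min(means)


ranking = sorted(["copy_number", "chi_promoter"],
                 key=lambda f: effect_range(designs, f), reverse=True)
for factor in ranking:
    print(factor, main_effects(designs, factor),
          round(effect_range(designs, factor), 3))
```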

Machine Learning Model Training:

- Split data into training (70-80%), validation (10-15%), and test (10-15%) sets.

- Train multiple algorithms including gradient boosting, random forest, and neural networks using cross-validation.

- Optimize hyperparameters via grid search or Bayesian optimization.
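
The data split described above can be sketched as a seeded shuffle followed by slicing. The function name `split_dataset`, the default fractions, and the strain identifiers are illustrative assumptions.

```python
import random


def split_dataset(records, train_frac=0.7, val_frac=0.15, seed=0):
    """Shuffle reproducibly, then slice into train / validation / test lists."""
    if train_frac <= 0 or val_frac < 0 or train_frac + val_frac >= 1:
        raise ValueError("fractions must leave a non-empty test set")
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    n_train = round(train_frac * len(shuffled))
    n_val = round(val_frac * len(shuffled))
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]
    return train, val, test


# Hypothetical strain identifiers standing in for measured designs.
strain_ids = [f"strain_{i:03d}" for i in range(100)]
train, val, test = split_dataset(strain_ids)
print(len(train), len(val), len(test))
```

With the small datasets typical of early DBTL cycles, a fixed hold-out set of this kind can be too small to be informative, so cross-validation on the training portion is often the more useful estimate.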

Model Interpretation and Recommendation:

- Calculate feature importance scores to identify critical genetic elements.

- Use trained models to predict performance of untested genetic combinations in the design space.

- Select top candidates for next DBTL cycle balancing exploitation (high predicted titer) and exploration (genetic diversity).
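
One simple way to balance exploitation and exploration when choosing the next batch is a greedy selection that rewards both predicted titer and distance from designs already chosen. The sketch below is an illustrative heuristic, not the recommendation algorithm from the cited work. The scoring rule, the `diversity_weight` parameter, and the predicted titers are all assumptions.

```python
def hamming(a, b):
    """Number of positions at which two equal-length designs differ."""
    return sum(x != y for x, y in zip(a, b))


def select_candidates(predictions, n_select, diversity_weight=0.5):
    """Greedily pick designs by scaled predicted titer plus a diversity bonus."""
    if not predictions:
        return []
    remaining = dict(predictions)
    best_prediction = max(remaining.values())
    chosen = []
    while remaining and len(chosen) < n_select:
        scores = {}
        for design, predicted in remaining.items():
            exploit = predicted / best_prediction if best_prediction > 0 else 0.0
            explore = 0.0
            if chosen:
                # Fraction of positions differing from the nearest chosen design.
                explore = min(hamming(design, c) for c in chosen) / len(design)
            scores[design] = exploit + diversity_weight * explore
        winner = max(scores, key=scores.get)
        chosen.append(winner)
        del remaining[winner]
    return chosen


# Hypothetical model predictions: (copy number, promoter 1, promoter 2) -> titer.
predicted_titers = {
    ("high", "strong", "strong"): 80.0,
    ("high", "strong", "weak"): 78.0,
    ("high", "weak", "strong"): 60.0,
    ("low", "weak", "weak"): 40.0,
    ("low", "strong", "weak"): 30.0,
}
print(select_candidates(predicted_titers, 2, diversity_weight=0.0))
print(select_candidates(predicted_titers, 2, diversity_weight=1.0))
```

With `diversity_weight=0.0` the selection reduces to picking the top predictions. Raising the weight trades some predicted titer for designs that differ more from those already selected.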

Advanced Applications and Case Studies

The DBTL framework has demonstrated remarkable success in optimizing microbial strains for various applications. The following case studies illustrate its practical implementation and effectiveness.

Flavonoid Production in E. coli

A comprehensive study applied an automated DBTL pipeline to optimize (2S)-pinocembrin production in E. coli, achieving a 500-fold improvement in titer over two DBTL cycles [11]:

First DBTL Cycle:

- Design: A combinatorial library of 2,592 theoretical constructs exploring vector copy number, promoter strengths for four genes (PAL, 4CL, CHS, CHI), and gene order.

- Build: Statistical DoE reduced the library to 16 representative constructs, which were automatically assembled.

- Test: Initial titers ranged from 0.002 to 0.14 mg L⁻¹, with accumulation of the intermediate cinnamic acid observed.

- Learn: Statistical analysis revealed vector copy number had the strongest positive effect (P = 2.00 × 10⁻⁸), followed by CHI promoter strength (P = 1.07 × 10⁻⁷).

Second DBTL Cycle:

- Design: Focused library incorporating learning from cycle 1: high-copy vector, CHI positioned at start of pathway, variable promoters for 4CL and CHS.

- Results: Achieved dramatically improved pinocembrin titers up to 88 mg L⁻¹, demonstrating the power of iterative DBTL cycling.

Combinatorial Pathway Optimization Using Kinetic Modeling

Research has demonstrated the use of mechanistic kinetic models to simulate DBTL cycles for metabolic pathway optimization [9]:

- Framework Development: A kinetic model of a synthetic pathway integrated into the E. coli core metabolism simulated strain behavior and production fluxes.

- Machine Learning Integration: Compared ML algorithms (gradient boosting, random forest, etc.) for predicting strain performance in low-data regimes.

- Key Findings: Gradient boosting and random forest models outperformed other methods, showing robustness to training set biases and experimental noise.

- Recommendation Algorithm: Developed an automated system for selecting strains for subsequent DBTL cycles, optimizing the exploration-exploitation trade-off.

DBTL Experimental Workflow

The following diagram details the integrated experimental workflow of an automated DBTL pipeline:

Essential Research Tools and Reagents

Successful implementation of the DBTL framework relies on specialized tools, reagents, and equipment. The table below details key resources for establishing an automated DBTL pipeline.

Table 1: Essential Research Reagent Solutions for DBTL Implementation

| Category | Specific Products/Platforms | Function in DBTL Pipeline |
|---|---|---|
| DNA Synthesis | Twist Bioscience, IDT, GenScript | Provides high-quality custom DNA fragments for genetic construct assembly [4]. |
| Automated Liquid Handlers | Tecan Freedom EVO, Beckman Coulter Biomek, Hamilton Robotics | Enables high-precision pipetting for PCR setup, DNA normalization, and assembly reactions [4]. |
| DNA Assembly Methods | Gibson Assembly, Golden Gate Cloning, Ligase Cycling Reaction (LCR) | Modular DNA assembly techniques for constructing combinatorial libraries [4] [11]. |
| Analytical Instruments | Illumina NovaSeq (NGS), Thermo Fisher Orbitrap (MS), UPLC-MS/MS | Provides genotypic verification and quantitative analysis of metabolites [4] [11]. |
| Cell Culture Systems | 96-deepwell plates, automated bioreactor arrays | Enables high-throughput cultivation of strain libraries under controlled conditions [11]. |
| Software Platforms | TeselaGen, CLC Genomics, Geneious | Supports end-to-end workflow management from design to data analysis [4]. |

Quantitative Analysis of DBTL Impact

The implementation of DBTL frameworks has demonstrated significant improvements in strain performance and bioprocess efficiency. The following table summarizes key quantitative findings from DBTL applications.

Table 2: Quantitative Performance Metrics in DBTL Applications

| Application | Performance Metric | Baseline | Outcome | DBTL Cycles / Notes |
|---|---|---|---|---|
| Pinocembrin Production in E. coli [11] | Titer (mg L⁻¹) | 0.14 (best initial construct) | 88 | 2 |
| Pinocembrin Improvement Factor [11] | Fold-Increase | 1x | 500x | 2 |
| Combinatorial Library Compression [11] | Library Size Reduction | 2,592 designs | 16 constructs (162:1 ratio) | 1 (Design) |
| Machine Learning Prediction [9] | Model Performance | N/A | Gradient boosting & random forest outperform in low-data regime | Simulation |
| Downstream Processing Market [12] | Market Value (USD) | $34.3 billion (2025) | $100.1 billion (2035) | Market projection, not a DBTL result; CAGR 11.3% |
| Bioprocessing Market Overall [13] | Market Value (USD) | $90.34 billion (2025) | $248.12 billion (2034) | Market projection, not a DBTL result; CAGR 11.88% |

The DBTL framework has established itself as a cornerstone methodology in modern metabolic engineering, enabling the systematic optimization of microbial strains for production of biofuels, pharmaceuticals, and specialty chemicals. By integrating advanced technologies in synthetic biology, automation, and data science, this iterative approach dramatically accelerates the development of biomanufacturing processes.

The continued evolution of the DBTL cycle—particularly through enhanced machine learning algorithms, automated experimental platforms, and integrated data management systems—promises to further reduce development timelines and costs while increasing the success rate of strain engineering projects. As the bioprocessing market continues its robust growth [12] [13], the DBTL framework will remain essential for translating laboratory discoveries into commercially viable bioproduction processes that support a more sustainable and bio-based economy.

The transition from ad-hoc tinkering to systematic rational design represents a paradigm shift in biological engineering. This transformation is embodied by the widespread adoption of the Design-Build-Test-Learn (DBTL) cycle, a framework that brings engineering discipline to biological innovation. The DBTL cycle provides a structured methodology for developing microbial cell factories as sustainable alternatives to traditional petrochemical processes through optimized metabolic pathways [3] [14] [15].

In metabolic engineering research, the DBTL framework has evolved from traditional approaches to advanced systems metabolic engineering that integrates synthetic biology, enzyme engineering, omics technology, and evolutionary engineering [3]. This iterative engineering mindset enables researchers to systematically optimize complex biological systems for producing valuable compounds, from specialty chemicals to pharmaceuticals. By applying this rigorous framework, scientists can accelerate the development of bioprocesses while gaining fundamental insights into cellular mechanisms [5].

The DBTL Cycle: Core Principles and Workflow

The DBTL cycle constitutes a systematic, iterative framework for engineering biological systems that mirrors established engineering disciplines. Each phase serves a distinct purpose in the biological engineering workflow:

- Design: Researchers define objectives and create rational plans based on hypotheses or previous learnings. This phase involves selecting genetic parts (promoters, RBS, coding sequences) and assembling them into functional circuits using standardized methods [16] [10].

- Build: Theoretical designs transition into biological reality through DNA synthesis, plasmid cloning, and transformation of engineered constructs into host organisms [16] [1].

- Test: Engineered systems undergo rigorous characterization through quantitative measurements, including gene expression analysis, cellular microscopy, and biochemical assays to measure metabolic outputs [16].

- Learn: Data from testing phases are analyzed to extract insights about system performance, informing subsequent design phases and propelling the iterative optimization process [16].

The power of the DBTL framework lies in its iterative nature: complex synthetic biology projects rarely succeed in a single attempt and instead progress through sequential, knowledge-accumulating cycles [16].

Visualizing the DBTL Workflow

Figure: DBTL Cycle Workflow. The diagram illustrates the interconnected, cyclical nature of the DBTL framework and the key activities at each stage.

Advanced DBTL Implementations in Metabolic Engineering

Knowledge-Driven DBTL for Dopamine Production

A notable implementation of the knowledge-driven DBTL cycle demonstrated the optimization of dopamine production in Escherichia coli. Dopamine has important applications in emergency medicine, cancer treatment, and wastewater treatment [5]. The traditional chemical synthesis methods are environmentally harmful and resource-intensive, making microbial production an attractive alternative [5].

Experimental Protocol and Implementation:

The knowledge-driven approach incorporated upstream in vitro investigation before full DBTL cycling:

Pathway Design: The dopamine biosynthetic pathway was constructed using the native E. coli gene encoding 4-hydroxyphenylacetate 3-monooxygenase (HpaBC) to convert L-tyrosine to L-DOPA, and L-DOPA decarboxylase (Ddc) from Pseudomonas putida to catalyze dopamine formation [5].

In Vitro Prototyping: Cell-free protein synthesis (CFPS) systems using crude cell lysates enabled testing of different relative enzyme expression levels without whole-cell constraints [5].

In Vivo Translation: Results from in vitro studies informed high-throughput ribosome binding site (RBS) engineering in E. coli host strain FUS4.T2 [5].

Host Engineering: The production host was engineered for enhanced L-tyrosine production through genomic modifications, including depletion of the transcriptional dual regulator TyrR (the L-tyrosine repressor) and a mutation in chorismate mutase/prephenate dehydrogenase (tyrA) that relieves feedback inhibition [5].

Cultivation and Analysis: Dopamine production was evaluated in minimal medium containing 20 g/L glucose, with appropriate antibiotics and inducers. Analytical methods quantified dopamine concentrations and biomass [5].

This knowledge-driven DBTL approach achieved dopamine production at 69.03 ± 1.2 mg/L (equivalent to 34.34 ± 0.59 mg/g biomass), representing a 2.6 to 6.6-fold improvement over previous state-of-the-art in vivo production methods [5].

Machine Learning-Enhanced DBTL for Combinatorial Pathway Optimization

Combinatorial pathway optimization presents significant challenges due to potential combinatorial explosions when simultaneously optimizing multiple pathway genes. Recent advances have integrated machine learning with DBTL cycles to address this complexity [9].

Methodological Framework:

Mechanistic Kinetic Modeling: A kinetic model-based framework using symbolic kinetic models in Python (SKiMpy) represents metabolic pathways embedded in physiologically relevant cell models. This approach captures pathway behaviors including enzyme kinetics, topology, and rate-limiting steps [9].

Combinatorial Library Simulation: The framework simulates combinatorial libraries where enzyme levels are varied with respect to the initial strain, implemented by changing Vmax parameters in the model [9].
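
The idea of a simulated combinatorial library can be sketched as a mapping from relative enzyme levels to scaled Vmax parameters. The example below uses plain Python and does not use the SKiMpy API. The enzyme names, baseline Vmax values, and fold-change levels are hypothetical.

```python
from itertools import product

# Hypothetical baseline Vmax values for the reference strain (arbitrary units).
baseline_vmax = {"enzyme_A": 1.0, "enzyme_B": 0.5, "enzyme_C": 2.0}
# Hypothetical relative enzyme levels available in the library.
fold_changes = [0.5, 1.0, 2.0]


def build_library(baseline, levels):
    """Enumerate every combination of fold changes and scale Vmax accordingly."""
    enzymes = sorted(baseline)
    library = []
    for combo in product(levels, repeat=len(enzymes)):
        design = dict(zip(enzymes, combo))
        vmax = {enzyme: baseline[enzyme] * design[enzyme] for enzyme in enzymes}
        library.append({"design": design, "vmax": vmax})
    return library


library = build_library(baseline_vmax, fold_changes)
print(len(library), "simulated strains")
print(library[0])
```

Each entry's `vmax` dictionary would then be passed to a kinetic model to simulate that strain's production flux. The library grows as levels raised to the power of the number of enzymes, which is the combinatorial explosion that motivates model-guided sampling.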

Machine Learning Integration: In the low-data regime typical of early DBTL cycles, gradient boosting and random forest models have demonstrated superior performance for predicting strain performance. These methods show robustness against training set biases and experimental noise [9].

Recommendation Algorithms: Specialized algorithms recommend new designs using machine learning predictions, optimizing the limited number of strains that can be built and tested experimentally [9].

This approach has revealed that when the total number of strains that can be built is limited, starting with a large initial DBTL cycle is more favorable than building the same number of strains in every cycle [9].

Quantitative Performance of DBTL-Engineered Strains

C5 Chemical Production in Corynebacterium glutamicum

Advanced DBTL approaches have significantly enhanced production of C5 platform chemicals derived from L-lysine in Corynebacterium glutamicum. The table below summarizes performance metrics for various engineered strains:

Table 1: Performance of C. glutamicum Strains Engineered for C5 Chemical Production via DBTL Cycles

| Product | Host Strain | Key Engineering Strategy | Titer (g/L) | Scale | Reference |
|---|---|---|---|---|---|
| Cadaverine | C. glutamicum PKC | Chromosomal integration of H. alvei derived ldcC with strong synthetic H30 promoter | 125 | Fed-batch | [15] |
| Glutarate (GTA) | C. glutamicum BE | Identification/expression of 11 target genes for increasing L-lysine supply; Overexpression of ynfM | 105.3 | Fed-batch | [15] |
| 5-Aminovalerate (5-AVA) | C. glutamicum BE | Introduction of 5-AVA pathway using P. putida davB/davA; N-terminal His6-Tag fusion | 33.1 | Fed-batch | [15] |
| 5-Hydroxyvalerate (5-HV) | C. glutamicum PKC | Introduction of 5-HV pathway using P. putida davTBA and E. coli yahK; ΔgabD deletion | 52.1 | Fed-batch | [15] |
| 1,5-Pentanediol (1,5-PDO) | C. glutamicum PKC ΔgabD2 | Introduction of 1,5-PDO pathway using CAR and GOX1801; CAR enzyme engineering | 43.4 | Fed-batch | [15] |
| Valerolactam (VL) | C. glutamicum GA16 ΔgabT | sRNA knock-down of gdh; engineering of 5-AVA transporter genes; multi-copy chromosomal integration | 76.1 | Fed-batch | [15] |

The substantial titers achieved across multiple C5 chemical products demonstrate how iterative DBTL cycles enable systematic optimization of microbial cell factories for industrial-scale production [15].

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Research Reagent Solutions for DBTL Implementation

| Reagent/Resource | Function in DBTL Workflow | Application Example |
|---|---|---|
| Ribosome Binding Site (RBS) Libraries | Fine-tuning relative gene expression in synthetic pathways | Optimizing dopamine pathway enzyme expression levels [5] |
| Cell-Free Protein Synthesis (CFPS) Systems | Rapid in vitro testing of pathway designs without host constraints | Prototyping dopamine pathway enzyme combinations [5] |
| Mechanistic Kinetic Models | In silico representation of metabolic pathways for simulation | SKiMpy models for combinatorial pathway optimization [9] |
| Promoter Libraries | Varying enzyme expression levels in combinatorial optimization | Simulated in silico by changing Vmax parameters in kinetic models [9] |
| CRISPR/Cas Systems | Precision genome editing for host strain engineering | Gene deletions and integrations in C. glutamicum [15] |
| Analytical Standards | Quantifying metabolic outputs during testing phases | HPLC analysis of dopamine and pathway intermediates [5] |

Emerging Paradigms: LDBT and Future Directions

The Learning-Driven Paradigm Shift

An emerging paradigm proposes reordering the cycle to LDBT (Learn-Design-Build-Test), where machine learning precedes design [10]. This approach leverages the predictive power of pre-trained protein language models (e.g., ESM, ProGen) and structural models (e.g., MutCompute, ProteinMPNN) for zero-shot predictions of protein structure and function [10].

Key Advancements Enabling LDBT:

Protein Language Models: Sequence-based models trained on evolutionary relationships can predict beneficial mutations and infer protein function without additional training [10].

Structure-Based Design Tools: Deep learning approaches like ProteinMPNN predict sequences that fold into specific backbones, achieving nearly 10-fold increases in design success rates when combined with structure assessment tools like AlphaFold [10].

Functional Prediction Models: Specialized models predict protein properties including thermostability (Prethermut, Stability Oracle) and solubility (DeepSol) to guide engineering decisions [10].

Cell-Free Expression Platforms: When combined with liquid handling robots and microfluidics, cell-free systems enable ultra-high-throughput testing of thousands of protein variants, generating massive datasets for model training [10].

This paradigm shift brings synthetic biology closer to a "Design-Build-Work" model that relies on first principles, potentially reducing or eliminating iterative cycling for many applications [10].

Visualizing Machine Learning Integration

Figure: ML-Driven DBTL Evolution. The diagram illustrates how machine learning transforms the traditional DBTL cycle, enabling the emerging LDBT paradigm.

The adoption of the DBTL framework represents a fundamental shift from ad-hoc tinkering to rational design in biological engineering. This engineering mindset, implemented through iterative cycles of design, construction, testing, and learning, has dramatically accelerated the development of microbial cell factories for sustainable chemical production [3] [15].

The continued evolution of DBTL approaches—including knowledge-driven cycles that incorporate upstream in vitro testing [5] and machine-learning enhanced methods that leverage combinatorial optimization [9]—promises to further accelerate biological design. The emerging LDBT paradigm, which places learning first through powerful predictive models, may ultimately transform synthetic biology into a discipline where biological systems can be designed to work on the first attempt, much like established engineering fields [10].

As these frameworks mature and integrate with increasingly sophisticated computational tools and automation platforms, they will undoubtedly unlock new possibilities for sustainable manufacturing, therapeutic development, and fundamental biological discovery.

From Theory to Bioproduction: Methodologies and Real-World Applications of DBTL

The Design phase serves as the critical foundation of the Design-Build-Test-Learn (DBTL) framework in metabolic engineering, where strategic planning of genetic interventions precedes laboratory implementation. This technical guide examines computational methodologies and experimental strategies for selecting genetic parts and designing microbial strains, emphasizing integration within iterative DBTL cycles. We explore how modern biofoundries leverage computational tools, machine learning, and knowledge-driven approaches to efficiently navigate complex biological design spaces, significantly accelerating the development of microbial cell factories for therapeutic compounds and fine chemicals. Through systematic analysis of quantitative data, visualization of workflows, and presentation of experimental protocols, this whitepaper provides researchers with actionable methodologies for optimizing strain design processes, ultimately reducing resource investments while improving production titers across diverse biomanufacturing applications.

The Design-Build-Test-Learn (DBTL) cycle represents an engineering framework that has transformed metabolic engineering from artisanal tinkering to a systematic, iterative discipline. Within this paradigm, the Design phase establishes the computational and conceptual blueprint for all subsequent experimental work. Metabolic engineering has evolved from modifications targeting a handful of genes with clear metabolic network relationships to increasingly complex designs requiring coordinated modification of dozens of genes spanning diverse cellular functions [17]. This expansion in complexity necessitates sophisticated design strategies that can predict system-level consequences of genetic interventions.

The DBTL framework operates as a continuous improvement cycle where each phase informs the next. In the context of metabolic engineering, Design encompasses the selection of genetic targets, pathway construction, and parts selection; Build implements these designs through genetic engineering; Test characterizes the resulting strains; and Learn analyzes the data to inform the next design cycle [17]. The power of this framework lies in its iterative nature, where knowledge accumulates with each cycle, progressively refining microbial strains toward desired performance objectives.

Recent advances have enabled increasingly automated DBTL pipelines that integrate computational design with laboratory automation. These pipelines are designed to be compound-agnostic and can be applied to diverse metabolic engineering targets, from natural products to high-value chemicals [11]. The design phase has been particularly transformed by the development of specialized software tools, mechanistic modeling, and machine learning approaches that enhance predictive capabilities while reducing experimental burden.

Computational Tools for Strain Design

Computational strain design has evolved from manual, literature-driven approaches to sophisticated algorithms that systematically interrogate metabolic networks to identify optimal genetic interventions. These tools can be broadly categorized into constraint-based methods, kinetic modeling approaches, and machine learning techniques, each with distinct strengths and applications.

Constraint-Based Methods

Constraint-Based Reconstruction and Analysis (COBRA) methods form the foundation of many computational strain design approaches. These methods utilize genome-scale metabolic models (GEMs) that incorporate biological knowledge and experimental data to place constraints on intracellular fluxes [18]. The core technique within this framework is flux balance analysis (FBA), which assumes metabolic steady-state and uses optimization to predict flux distributions that maximize specific cellular objectives [18].

Table 1: Constraint-Based Methods for Strain Design

| Method | Key Features | Data Integration | Applications |
|---|---|---|---|
| Classic FBA | Mass balance constraints, assumption of cellular objective | Stoichiometric matrix | Prediction of flux distributions, essentiality analysis |
| ME-models | Incorporates transcription/translation reactions | Transcriptomic, proteomic data | Explicit modeling of enzyme production costs |
| GEM-PRO | Includes protein structural information | Structural proteomics | Proteome allocation constraints |
| GECKO | Incorporates enzyme kinetics | Proteomic data, kinetic parameters | Enzyme-constrained flux predictions |

Recent extensions to the COBRA framework enable integration of multi-omics data to generate more context-specific predictions. For instance, metabolism and gene-expression models (ME-models) explicitly simulate reactions involved in transcription and translation, enabling direct comparison with transcriptomic and proteomic data [18]. Similarly, the GECKO method incorporates literature-derived enzyme kinetic parameters with proteomics data to constrain metabolic fluxes more accurately [18].

Kinetic Modeling and Machine Learning

While constraint-based methods offer genome-scale coverage, kinetic models provide dynamic and more mechanistic representations of metabolic pathways. These models use ordinary differential equations (ODEs) to describe changes in metabolite concentrations over time, with reaction fluxes described by kinetic mechanisms derived from mass action principles [9]. This approach allows in silico perturbation of enzyme concentrations or catalytic properties to predict pathway behavior [9].
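As a rough illustration of this kind of in silico perturbation, the sketch below integrates a hypothetical two-step pathway (substrate S, intermediate I, product P) with mass-action rate laws. The rate constants, enzyme levels, and initial concentration are invented for the example, and a hand-written fourth-order Runge-Kutta integrator is used only to keep the block dependency-free.

```python
def simulate_pathway(e1, e2, k1=1.0, k2=0.5, s0=10.0, t_end=5.0, dt=0.01):
    """Integrate S -> I -> P with mass-action kinetics; returns (S, I, P) at t_end.

    e1 and e2 are relative enzyme levels (hypothetical units) that scale each rate.
    """
    def derivatives(state):
        s, i, _p = state
        v1 = k1 * e1 * s  # flux through step 1
        v2 = k2 * e2 * i  # flux through step 2
        return (-v1, v1 - v2, v2)

    def advance(state, slope, h):
        return tuple(x + h * dx for x, dx in zip(state, slope))

    state = (s0, 0.0, 0.0)
    for _ in range(round(t_end / dt)):
        # Classical fourth-order Runge-Kutta step.
        a = derivatives(state)
        b = derivatives(advance(state, a, dt / 2))
        c = derivatives(advance(state, b, dt / 2))
        d = derivatives(advance(state, c, dt))
        slope = tuple((w + 2 * x + 2 * y + z) / 6 for w, x, y, z in zip(a, b, c, d))
        state = advance(state, slope, dt)
    return state


baseline = simulate_pathway(e1=1.0, e2=1.0)
boosted = simulate_pathway(e1=1.0, e2=2.0)  # double the second enzyme

print("product at t_end, baseline:", round(baseline[2], 3))
print("product at t_end, 2x second enzyme:", round(boosted[2], 3))
```

With these made-up parameters the second step is the slower one, so raising its enzyme level increases the product formed by the end of the simulation. Real pathway models would typically use saturable rate laws and fitted parameters.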

Machine learning has emerged as a powerful complement to mechanistic modeling, particularly when dealing with complex, non-intuitive pathway behaviors. ML algorithms can identify patterns in high-dimensional data that might escape human observation. In one demonstrated framework, gradient boosting and random forest models outperformed other methods in the low-data regime typical of early DBTL cycles and showed robustness to training set biases and experimental noise [9].

The following diagram illustrates the relationship between different computational approaches in the Design phase:

Figure 1: Computational Approaches in the Design Phase. The diagram shows the relationship between major computational methodologies used in metabolic strain design.

Genetic Parts Selection and Pathway Design

The selection of appropriate genetic parts constitutes a critical aspect of pathway design that directly influences metabolic flux and product yield. This process involves choosing regulatory elements, coding sequences, and intergenic regions that collectively determine pathway functionality.

Promoter and RBS Engineering

Promoter engineering and ribosome binding site (RBS) engineering represent two fundamental approaches for fine-tuning gene expression in synthetic pathways. Promoters control transcription initiation rates, while RBS elements modulate translation efficiency. Studies have demonstrated that systematic variation of these elements can lead to substantial improvements in product titers. For example, in a pinocembrin production pathway, statistical analysis revealed that vector copy number had the strongest effect on production levels, followed by promoter strength for specific enzymes in the pathway [11].

RBS engineering has emerged as a particularly powerful technique for precise fine-tuning of relative gene expression in synthetic pathways [5]. Tools like the UTR Designer facilitate modulation of RBS sequences, though many focus primarily on the regions flanking the Shine-Dalgarno (SD) sequence [5]. Simplified approaches that modulate the SD sequence itself without interfering with secondary structures have also proven effective [5]. In one dopamine production study, high-throughput RBS engineering of the pathway demonstrated that GC content in the SD sequence significantly affects RBS strength and, in turn, dopamine production [5].
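The GC content feature mentioned above is simple to compute. The sketch below is illustrative only: apart from AGGAGG, the commonly cited E. coli consensus SD hexamer, the variant sequences are hypothetical, and the ranking says nothing by itself about measured RBS strength.

```python
def gc_content(sequence):
    """Return the fraction of G and C bases in a DNA or RNA sequence."""
    seq = sequence.upper().replace("U", "T")
    if not seq:
        raise ValueError("sequence is empty")
    unexpected = set(seq) - set("ACGT")
    if unexpected:
        raise ValueError(f"unexpected characters: {sorted(unexpected)}")
    return (seq.count("G") + seq.count("C")) / len(seq)


# AGGAGG is the consensus hexamer; the other variants are invented for illustration.
sd_variants = ["AGGAGG", "AAGAGG", "AGGCGG", "AAGAGA"]

# Rank variants by GC fraction as a first-pass sequence feature.
for variant in sorted(sd_variants, key=gc_content, reverse=True):
    print(variant, round(gc_content(variant), 2))
```

A feature table like this can then be joined to measured expression or titer data to check whether the reported GC effect holds in a given library.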

Combinatorial Library Design

Combinatorial approaches enable efficient exploration of design spaces when optimizing multi-gene pathways. Rather than testing individual variants sequentially, combinatorial libraries allow simultaneous assessment of multiple factors. However, comprehensive testing of all possible combinations often leads to combinatorial explosion, making full exploration experimentally infeasible [9].

To address this challenge, design of experiments (DoE) methodologies enable statistical reduction of library size while maintaining representative coverage of the design space. In one application for pinocembrin production, a combinatorial design representing 2592 possible configurations was reduced to just 16 representative constructs using orthogonal arrays combined with a Latin square for positional gene arrangement, a compression ratio of 162:1 [11]. This approach identified the most significant factors influencing production, informing more focused libraries in subsequent DBTL cycles.
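Two pieces of this reduction can be sketched in a few lines: the compression ratio arithmetic and a Latin square for gene positions. The example assumes four genes with placeholder names and uses a simple cyclic construction; the cited study may have built its Latin square and orthogonal arrays differently.

```python
def cyclic_latin_square(items):
    """Build an n x n Latin square by cyclically shifting the item list."""
    n = len(items)
    return [[items[(row + col) % n] for col in range(n)] for row in range(n)]


def is_latin_square(square):
    """Check that every symbol appears exactly once in each row and each column."""
    n = len(square)
    symbols = set(square[0])
    rows_ok = all(len(row) == n and set(row) == symbols for row in square)
    cols_ok = all(set(col) == symbols for col in zip(*square))
    return len(symbols) == n and rows_ok and cols_ok


# Placeholder gene names (assumption: a four-gene pathway).
genes = ["geneA", "geneB", "geneC", "geneD"]
gene_orders = cyclic_latin_square(genes)

# Each row is one gene order; every gene occupies every position exactly once,
# so 4 orders sample positional effects instead of all 4! = 24 permutations.
for order in gene_orders:
    print(" -> ".join(order))

full_design_size = 2592  # figure quoted in the text
reduced_design_size = 16  # figure quoted in the text
compression_ratio = full_design_size / reduced_design_size
print("compression ratio:", compression_ratio)
```

The Latin square balances position across genes with only n orders, which is what lets gene arrangement be treated as one more factor in the reduced design.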

Table 2: Genetic Parts for Pathway Optimization

| Part Type | Design Parameters | Impact on Expression | Tools/Methods |
|---|---|---|---|
| Promoter | Strength, inducibility | Transcription initiation rate | Library screening, native promoter characterization |
| RBS | Shine-Dalgarno sequence, secondary structure | Translation initiation rate | UTR Designer, computational prediction |
| Coding Sequence | Codon usage, GC content | Protein folding, expression level | Codon optimization algorithms |
| Terminator | Efficiency | mRNA stability, transcriptional interference | Library characterization |
| Vector Backbone | Copy number, compatibility | Gene dosage, metabolic burden | Origin engineering, compatibility testing |

Knowledge-Driven Design Strategies

Traditional DBTL cycles often begin with limited prior knowledge, requiring multiple iterations to accumulate sufficient understanding for effective optimization. Knowledge-driven design strategies address this challenge by incorporating upstream investigations to inform initial design decisions, potentially reducing the number of cycles needed to achieve performance targets.

In Vitro Pre-screening

Cell-free protein synthesis (CFPS) systems and crude cell lysate systems enable rapid testing of enzyme combinations and relative expression levels without the constraints of whole-cell systems [5]. These approaches bypass cellular membranes and internal regulation, allowing direct assessment of pathway functionality. In one application for dopamine production, researchers conducted in vitro tests to assess enzyme expression levels before initiating DBTL cycles, creating a knowledge-driven approach that accelerated strain development in E. coli [5].

The knowledge-driven DBTL cycle incorporating in vitro investigation provides both mechanistic understanding and efficient cycling. In the dopamine study, results from the in vitro cell lysate experiments were translated to the in vivo environment through high-throughput RBS engineering, yielding a production strain that reached 69.03 ± 1.2 mg/L dopamine, a 2.6- to 6.6-fold improvement over state-of-the-art production [5].

Automated Workflow Integration

Fully automated DBTL pipelines represent the cutting edge in metabolic engineering design, integrating computational design, DNA assembly, strain construction, and testing with minimal manual intervention. Biofoundries that run these pipelines employ specialized software tools that automate various aspects of the design process:

- RetroPath: Automated pathway and enzyme selection [11]

- Selenzyme: Enzyme selection based on biochemical criteria [11]

- PartsGenie: Design of reusable DNA parts with optimization of RBS and coding regions [11]

These tools enable in silico construction of large combinatorial libraries of pathway designs, which are then statistically reduced to manageable sizes for laboratory construction and screening. Automated worklist generation facilitates seamless transition from design to build phases, with all designs deposited in centralized repositories for tracking and reproducibility [11].
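The idea of automated worklist generation can be sketched as follows. This is a hypothetical format, not the output of any of the tools named above: the column names, construct identifiers, and part names are invented, and a real liquid-handler worklist would also carry source wells and transfer volumes.

```python
import csv
import io
from string import ascii_uppercase


def well_name(index, n_rows=8, n_cols=12):
    """Map a 0-based index to a plate well, filling row by row (A1, A2, ... H12)."""
    if not 0 <= index < n_rows * n_cols:
        raise ValueError("index does not fit on the plate")
    row, col = divmod(index, n_cols)
    return f"{ascii_uppercase[row]}{col + 1}"


def build_worklist(designs):
    """Return CSV text with one line per (construct, part) pair.

    designs maps a construct ID to the ordered list of part IDs to assemble.
    """
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["construct_id", "destination_well", "part_id"])
    for index, (construct_id, parts) in enumerate(designs.items()):
        well = well_name(index)
        for part_id in parts:
            writer.writerow([construct_id, well, part_id])
    return buffer.getvalue()


# Hypothetical designs: each construct is a promoter, an RBS, and a coding sequence.
example_designs = {
    "construct_001": ["promoter_strong", "rbs_03", "cds_geneA"],
    "construct_002": ["promoter_weak", "rbs_07", "cds_geneA"],
}
print(build_worklist(example_designs))
```

Generating the worklist directly from the stored designs keeps the build step traceable back to the design repository, which is the reproducibility benefit the text describes.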

The following workflow illustrates a knowledge-driven DBTL approach:

Figure 2: Knowledge-Driven DBTL Workflow. This approach incorporates upstream in vitro investigation to inform initial design decisions, accelerating strain optimization.

Experimental Protocols and Implementation

Successful implementation of design strategies requires robust experimental protocols for validation and characterization. This section outlines key methodologies for evaluating genetic parts and pathway performance.

Protocol: Combinatorial Pathway Optimization

Objective: Systematically optimize multi-gene pathway expression to maximize product titer using combinatorial library construction and screening.

Materials:

- DNA Library Components: Promoter variants, RBS sequences, coding sequences

- Host Strain: Appropriate microbial chassis (e.g., E. coli production strain)

- Assembly System: DNA assembly reagents (e.g., ligase cycling reaction components)

- Screening Platform: High-throughput cultivation and analytics (e.g., LC-MS)

Procedure:

- Library Design: Define design space encompassing regulatory elements, gene order, and copy number variations

- Statistical Reduction: Apply design of experiments (DoE) to reduce library size while maintaining representativeness

- Automated Assembly: Implement robotic platform for high-throughput DNA assembly

- Transformation: Introduce construct libraries into production host

- Quality Control: Verify constructs through automated purification, restriction digest, and sequencing

- Cultivation: Grow strains in 96-deepwell plates under standardized conditions

- Product Quantification: Analyze culture samples using UPLC-MS/MS with high mass resolution

- Data Analysis: Apply statistical methods to identify relationships between design factors and production levels

Application Note: In pinocembrin pathway optimization, this approach identified vector copy number as the strongest positive factor (P = 2.00 × 10⁻⁸), followed by CHI promoter strength (P = 1.07 × 10⁻⁷) [11].
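The data analysis step can be illustrated with a main-effects calculation on a two-level design. The sketch below uses a synthetic 2×2 data set with made-up titers; it does not reproduce the cited study's data or its statistical model, and it reports only effect sizes, not P values.

```python
from statistics import mean


def main_effects(runs, factors, response="titer"):
    """Main effect of each factor: mean response at +1 minus mean response at -1."""
    effects = {}
    for factor in factors:
        high = [run[response] for run in runs if run[factor] == +1]
        low = [run[response] for run in runs if run[factor] == -1]
        effects[factor] = mean(high) - mean(low)
    return effects


# Synthetic example data: factor levels coded as -1 (low) and +1 (high).
synthetic_runs = [
    {"copy_number": -1, "promoter_strength": -1, "titer": 10},
    {"copy_number": +1, "promoter_strength": -1, "titer": 30},
    {"copy_number": -1, "promoter_strength": +1, "titer": 14},
    {"copy_number": +1, "promoter_strength": +1, "titer": 38},
]

effects = main_effects(synthetic_runs, ["copy_number", "promoter_strength"])

# Rank factors by absolute effect size to decide what to vary in the next cycle.
for factor, effect in sorted(effects.items(), key=lambda kv: abs(kv[1]), reverse=True):
    print(factor, effect)
```

In a balanced design, each main effect is estimated from all runs at once, which is why a small, well-chosen library can still rank factors. Significance testing would additionally require replicates or a fitted linear model.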

Protocol: RBS Library Characterization

Objective: Fine-tune relative gene expression in synthetic pathways through RBS engineering.

Materials:

- RBS Variants: Library of Shine-Dalgarno sequence variants

- Reporter System: Fluorescent proteins or selection markers

- Analytical Tools: Flow cytometer, plate reader, or HPLC for product quantification

Procedure:

- Library Design: Generate RBS variants with modulated SD sequences while preserving secondary structure context

- Construct Assembly: Clone RBS variants upstream of target genes in pathway context

- Transformation: Introduce constructs into production host

- Cultivation: Grow replicates under controlled conditions

- Characterization: Measure gene expression (via reporter) or product formation

- Correlation Analysis: Relate RBS sequence features to expression/output

- Model Building: Develop predictive models for RBS strength based on sequence parameters

Application Note: In dopamine production optimization, this approach revealed the significant impact of GC content in the Shine-Dalgarno sequence on RBS strength and pathway performance [5].
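For the correlation analysis step, one simple starting point is a Pearson correlation between a sequence feature and the measured output. The sketch below uses synthetic GC fractions and reporter values chosen purely for illustration; they are not measurements from the dopamine study.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length numeric sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return covariance / (spread_x * spread_y)


# Synthetic example: SD GC fraction per variant and a made-up reporter signal.
sd_gc_fraction = [0.33, 0.50, 0.50, 0.67, 0.83]
reporter_signal = [120.0, 310.0, 280.0, 540.0, 610.0]

r = pearson_r(sd_gc_fraction, reporter_signal)
print("Pearson r between SD GC fraction and reporter signal:", round(r, 3))
```

A strong correlation in a real library would justify including the feature in a predictive RBS strength model; a weak one would suggest that secondary structure or sequence context dominates.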

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents for Metabolic Engineering Design

| Reagent/Category | Function | Example Applications |
|---|---|---|
| CRISPR-Cas Systems | Genome editing, multiplexed engineering | Gene knockouts, regulatory element integration |
| DNA Assembly Kits | High-throughput pathway construction | Golden Gate assembly, Gibson assembly, LCR |
| Promoter/RBS Libraries | Gene expression fine-tuning | Combinatorial pathway optimization |
| Reporter Proteins | Quantification of gene expression | RBS strength characterization, promoter activity |
| Analytical Standards | Product quantification | LC-MS/MS calibration, metabolite identification |
| Cell-Free Systems | In vitro pathway testing | Rapid prototyping without cellular constraints |
| Biofoundry Platforms | Automated strain construction | Integrated DBTL pipeline implementation |

The Design phase of the DBTL cycle represents a sophisticated integration of computational modeling, bioinformatics, and experimental design that systematically guides metabolic engineering efforts. Through strategic selection of genetic parts, application of knowledge-driven strategies, and implementation of combinatorial optimization approaches, researchers can navigate the complexity of biological systems to develop efficient microbial cell factories. The continuing evolution of computational tools, automated workflows, and machine learning applications promises to further enhance our design capabilities, reducing development timelines while increasing success rates across diverse biomanufacturing applications. As these methodologies mature and become more accessible, they will accelerate the development of sustainable bioprocesses for therapeutic compounds, fine chemicals, and other valuable products.

In the context of the Design-Build-Test-Learn (DBTL) framework for metabolic engineering, the Build phase is the critical step where designed genetic constructs are physically assembled and inserted into a host organism. This phase transforms computational models and designs into tangible biological entities that can be tested and optimized. The efficiency of the Build phase directly determines the speed and scale at which DBTL cycles can be iterated, ultimately accelerating the development of microbial cell factories for sustainable chemical production [3] [5].