The DBTL Cycle in Synthetic Biology: A Complete Guide to Engineering Biology for Drug Development


Abstract

This article provides a comprehensive exploration of the Design-Build-Test-Learn (DBTL) cycle, the core engineering framework of synthetic biology. Tailored for researchers and drug development professionals, it details the foundational principles of each stage, practical methodologies and applications in biomanufacturing and therapy development, strategies for overcoming bottlenecks through automation and AI, and a critical analysis of how this approach is validating new paradigms in biomedical research. The content synthesizes current advancements to offer an actionable guide for implementing and optimizing DBTL workflows in research and development.

What is the DBTL Cycle? Foundational Principles of Engineering Biology

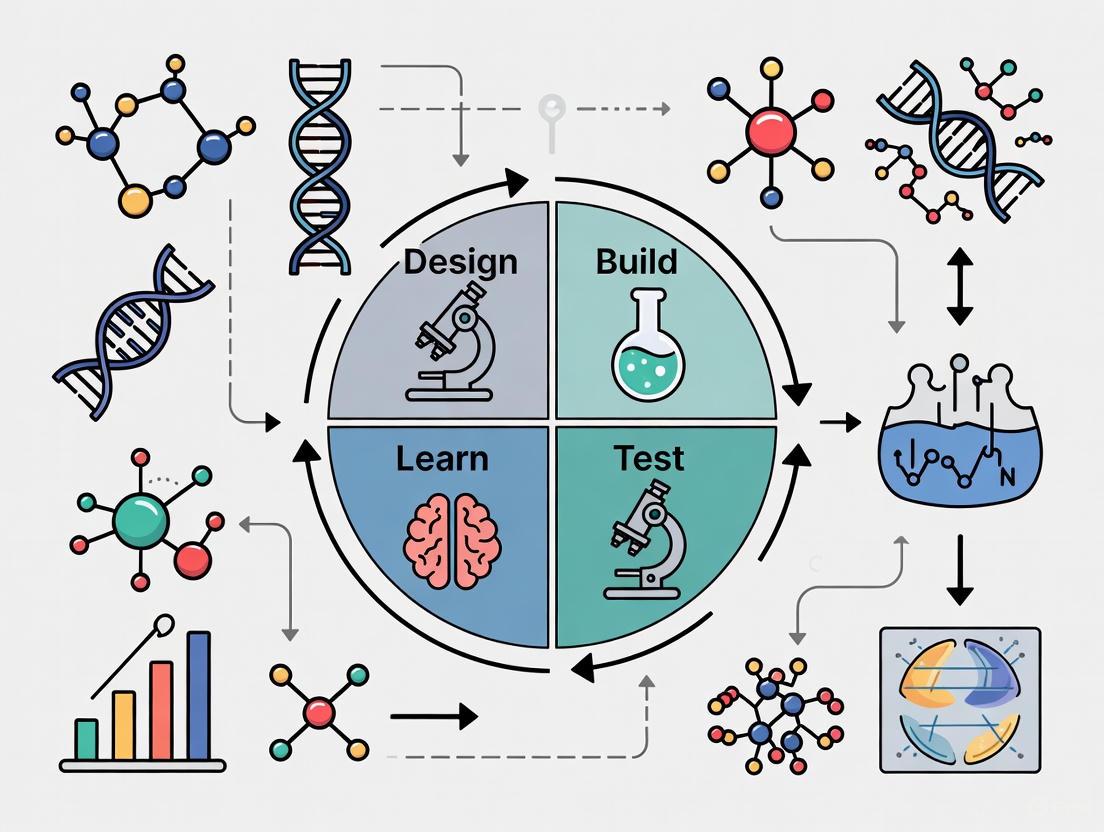

The Design-Build-Test-Learn (DBTL) cycle is a foundational framework in synthetic biology that provides a systematic, iterative methodology for engineering biological systems [1]. This engineering-based approach enables researchers to develop and optimize organisms to perform specific functions, such as producing biofuels, pharmaceuticals, or other valuable compounds [1]. The core principle of DBTL involves cycling through four distinct phases—Design, Build, Test, and Learn—where each iteration incorporates knowledge from previous cycles to refine and improve the biological system until the desired function is achieved [2].

This framework has become increasingly crucial as synthetic biology moves from demonstrating isolated successes to establishing predictable engineering principles. The iterative nature of DBTL allows researchers to navigate the complexity of biological systems, where initial designs rarely perform as expected due to the intricate and often unpredictable interactions within living cells [1]. By applying this structured cycle, synthetic biologists can methodically narrow down possibilities, optimize systems, and gain mechanistic insights into biological function [2] [3].

The Four Phases of the DBTL Cycle

Design Phase

The Design phase constitutes the initial planning stage where researchers define objectives and create a blueprint for the biological system based on a specific hypothesis or learnings from previous cycles [2]. This phase involves:

- Objective Definition: Establishing clear goals for the desired biological function, such as production of a target compound or implementation of a specific genetic circuit [4].

- Part Selection: Choosing appropriate genetic components including promoters, ribosome binding sites (RBS), coding sequences, and terminators [2].

- Circuit Assembly Planning: Determining how selected parts will be assembled into functional genetic circuits or metabolic pathways using standardized methods [2].

- Experimental Protocol Design: Defining precise protocols and success metrics that will be used to evaluate system performance [2].

The Design phase increasingly leverages computational tools and prior knowledge to create more effective initial designs. With advances in machine learning, this phase can now incorporate predictive models that have been trained on large biological datasets, enabling more informed design decisions from the outset [4].

Build Phase

In the Build phase, the theoretical design is translated into physical biological reality through molecular biology techniques [2]. This hands-on component involves:

- DNA Construction: Assembling DNA fragments into complete constructs, using parts designed for straightforward assembly [1].

- Vector Cloning: Cloning assembled constructs into expression vectors appropriate for the chosen host organism [1].

- Transformation: Introducing the engineered constructs into host organisms such as bacteria, yeast, or mammalian cells [2].

- Verification: Confirming successful construction using methods like colony qPCR, sequencing, or next-generation sequencing (NGS) [1].

Automation of the assembly process significantly reduces the time, labor, and cost of generating multiple constructs, enabling higher throughput with an overall shortened development cycle [1]. The emphasis on modular design of DNA parts allows for the assembly of a greater variety of potential constructs by interchanging individual components [1].

Test Phase

The Test phase focuses on robust data collection through quantitative measurements to characterize the behavior of the engineered system [2]. This critical evaluation stage involves:

- Functional Assays: Performing various assays to measure system performance, such as measuring fluorescence to quantify gene expression, conducting microscopy to observe cellular changes, or performing biochemical assays to measure metabolic output [2].

- High-Throughput Screening: Implementing automated screening methods to efficiently test multiple variants or conditions [5].

- Data Collection: Gathering comprehensive performance data using appropriate analytical techniques and instrumentation [2].

The testing process is crucial for generating the necessary data to evaluate whether the design meets the original specifications and to inform subsequent cycles. Automation of testing significantly improves throughput, reliability, and reproducibility [5].

Learn Phase

The Learn phase represents the analytical component where data gathered during testing is analyzed and interpreted to extract meaningful insights [2]. This stage involves:

- Data Analysis: Processing and interpreting experimental results to determine if the design functioned as expected [2].

- Hypothesis Refinement: Identifying reasons for success or failure and formulating improved hypotheses [2].

- Knowledge Integration: Synthesizing new understanding to inform the next Design phase [2].

- Model Development: Creating or refining computational models to better predict system behavior in future cycles [6].

This phase has traditionally been the most weakly supported in the DBTL cycle, but advances in machine learning and data analytics are increasingly strengthening this critical component [6]. The insights gained here, whether from success or failure, are invaluable for directing subsequent engineering efforts [2].

DBTL in Action: Experimental Applications

Case Study: Engineering Dopamine Production in E. coli

A recent study demonstrated the application of a knowledge-driven DBTL cycle to develop and optimize a dopamine production strain in Escherichia coli [3]. The researchers achieved a dopamine production concentration of 69.03 ± 1.2 mg/L, representing a 2.6- to 6.6-fold improvement over state-of-the-art in vivo dopamine production [3]. Their approach combined upstream in vitro investigation with high-throughput RBS engineering to efficiently optimize the metabolic pathway.

Table 1: DBTL Cycles for Dopamine Production Optimization

| DBTL Cycle | Engineering Target | Key Approach | Outcome |
|---|---|---|---|
| Cycle 1 | Host strain development | Genomic engineering of E. coli for increased L-tyrosine production | Created precursor-optimized chassis |
| Cycle 2 | Enzyme expression balancing | In vitro cell lysate studies to test relative expression levels | Identified optimal HpaBC:Ddc expression ratio |
| Cycle 3 | Pathway fine-tuning | High-throughput RBS engineering to control translation initiation | Achieved 69.03 ± 1.2 mg/L dopamine production |

The methodology employed in this case study highlights how the DBTL framework can be adapted to incorporate mechanistic understanding while efficiently optimizing biological systems [3]. The knowledge-driven approach reduced the number of iterations needed by generating targeted insights before extensive in vivo engineering.

Case Study: Discovering a Novel Anti-Adipogenic Protein

Another research project effectively utilized the DBTL cycle to identify and validate a novel anti-adipogenic protein from Lactobacillus rhamnosus [2]. The researchers systematically narrowed down the active component from the whole bacterium to a single, purified protein through three sequential DBTL cycles:

DBTL Cycle 1: Effect of Raw Bacteria

- Design: Test hypothesis that direct contact with Lactobacillus could inhibit adipogenesis by co-culturing six different strains with 3T3-L1 preadipocytes.

- Build: Culture six bacterial strains and establish 7-day adipogenesis protocol with treatment at various multiplicities of infection (MOI).

- Test: Measure lipid accumulation using Oil Red O staining.

- Learn: Most strains, particularly L. rhamnosus, inhibited lipid accumulation by 20-30%, confirming anti-adipogenic effect [2].

DBTL Cycle 2: Effect of Bacterial Supernatant

- Design: Investigate whether secreted extracellular substances were responsible by treating cells with filtered supernatant.

- Build: Collect supernatant from all six strains and apply at concentrations of 25%, 50%, and 75%.

- Test: Quantify lipid accumulation via Oil Red O staining.

- Learn: Only L. rhamnosus supernatant showed significant, concentration-dependent inhibition (up to 45%), narrowing focus to this strain's extracellular components [2].

DBTL Cycle 3: Effect of Bacterial Exosomes

- Design: Isolate active component by testing exosomes as potential carriers of the active molecule.

- Build: Isolate exosomes from supernatant using centrifugation and Amicon tube with 100k MWCO filter.

- Test: Measure lipid accumulation and analyze expression of adipogenesis-related genes (Pparγ, C/ebpα) and AMPK.

- Learn: L. rhamnosus exosomes showed 80% reduction in lipid accumulation and worked through AMPK pathway, confirming exosomes as active component [2].

This case study exemplifies the power of iterative DBTL cycles to systematically narrow down complex biological questions from a broad starting point to a specific mechanistic understanding.
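
The inhibition percentages reported in these cycles are relative to an untreated control. A minimal sketch of that arithmetic follows, assuming the readout is a single absorbance value per condition; the absorbance numbers are hypothetical placeholders, not data from the study, and the helper names are illustrative.

```python
def percent_inhibition(control_signal, treated_signal):
    """Percent reduction in lipid signal relative to the untreated control."""
    if control_signal <= 0:
        raise ValueError("control signal must be positive")
    return (1.0 - treated_signal / control_signal) * 100.0

def is_concentration_dependent(inhibitions):
    """True if inhibition strictly increases with each dose step."""
    return all(later > earlier for earlier, later in zip(inhibitions, inhibitions[1:]))

# Hypothetical Oil Red O absorbance readings (arbitrary units), not data from the study.
control = 1.00
treated_by_dose = {25: 0.85, 50: 0.70, 75: 0.55}  # supernatant concentration (%) -> signal

inhibitions = [percent_inhibition(control, treated_by_dose[dose])
               for dose in sorted(treated_by_dose)]
dose_dependent = is_concentration_dependent(inhibitions)
```

With these placeholder readings the highest dose gives roughly 45% inhibition and the trend rises with dose, which is the kind of pattern the Learn step of Cycle 2 describes.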

Essential Research Reagents and Tools

Successful implementation of DBTL cycles requires a comprehensive suite of research reagents and tools. The table below details essential materials and their functions in synthetic biology workflows.

Table 2: Key Research Reagent Solutions for DBTL Implementation

| Reagent/Tool | Function | Application Examples |
|---|---|---|
| Expression Vectors | DNA vehicles for gene expression in host organisms | pET system for protein expression; pJNTN for library construction [3] |
| DNA Assembly Systems | Modular DNA construction methods | Golden Gate, Gibson Assembly, Ligation Chain Reaction (LCR) [7] |
| Cell-Free Expression Systems | In vitro transcription and translation | Rapid protein synthesis without cellular constraints; pathway prototyping [4] |
| RBS Libraries | Fine-tune gene expression levels | Ribosome Binding Site variants for metabolic pathway optimization [3] |
| Fluorescent Reporters | Quantify gene expression and protein production | GFP, RFP, and other fluorescent proteins for promoter characterization [5] |
| Analytical Standards | Calibrate measurement equipment | Quantification of target molecules via HPLC or mass spectrometry [7] |

Advanced Methodologies and Protocols

Automated Workflow Integration

The integration of automation into DBTL cycles has revolutionized synthetic biology by enabling higher throughput and improved reproducibility. Biofoundries—structured R&D systems where biological design, construction, testing, and modeling are performed following the DBTL cycle—have emerged as key infrastructure for advanced synthetic biology [8]. These facilities implement an abstraction hierarchy for operations:

- Level 0: Project - The overall research objective to be carried out

- Level 1: Service/Capability - Specific functions required from the biofoundry

- Level 2: Workflow - DBTL-based sequence of tasks

- Level 3: Unit Operations - Actual hardware or software performing tasks [8]

This hierarchical structure enables more modular, flexible, and automated experimental workflows while improving communication between researchers and systems [8].
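
One way to picture the four levels is as nested records. The sketch below is an assumption-laden illustration using Python dataclasses; the class names follow the level names in the list above, while every field, device name, and example value is hypothetical and not taken from any biofoundry's software.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class UnitOperation:            # Level 3: hardware or software performing a task
    name: str
    device: str

@dataclass
class Workflow:                 # Level 2: DBTL-based sequence of tasks
    stage: str                  # "Design", "Build", "Test", or "Learn"
    operations: List[UnitOperation] = field(default_factory=list)

@dataclass
class Service:                  # Level 1: capability requested from the biofoundry
    name: str
    workflows: List[Workflow] = field(default_factory=list)

@dataclass
class Project:                  # Level 0: overall research objective
    objective: str
    services: List[Service] = field(default_factory=list)

    def unit_operations(self):
        """Flatten the hierarchy into the ordered list of unit operations."""
        return [op for s in self.services for w in s.workflows for op in w.operations]

# Hypothetical example project; names are placeholders.
project = Project(
    objective="Improve titer of a target compound",
    services=[Service(
        name="Strain construction and screening",
        workflows=[
            Workflow("Build", [UnitOperation("DNA assembly", "liquid handler"),
                               UnitOperation("Transformation", "liquid handler")]),
            Workflow("Test", [UnitOperation("Plate assay", "plate reader")]),
        ],
    )],
)
```

Flattening with `project.unit_operations()` shows how a high-level request resolves into concrete steps that can be scheduled on equipment.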

Machine Learning-Enhanced DBTL

Machine learning (ML) has dramatically transformed the Learn phase of the DBTL cycle and is increasingly influencing the Design phase. Tools like the Automated Recommendation Tool (ART) leverage machine learning and probabilistic modeling to guide synthetic biology in a systematic fashion, without requiring full mechanistic understanding of the biological system [6]. ART uses sampling-based optimization to recommend strains to be built in the next engineering cycle alongside probabilistic predictions of their production levels.
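
The general idea of recommending strains together with an uncertainty estimate can be illustrated with a much simpler stand-in. The sketch below is not ART's algorithm or API: it fits a bootstrap ensemble of straight lines to hypothetical (promoter strength, titer) data and ranks candidates by an upper-confidence-bound score. All function names, the `kappa` parameter, and the data are assumptions made for illustration.

```python
import random
import statistics

def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    if sxx == 0:                       # degenerate resample: all x identical
        return 0.0, my
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    return slope, my - slope * mx

def bootstrap_models(xs, ys, n_models=200, seed=0):
    """Fit one line per bootstrap resample; the spread of fits approximates uncertainty."""
    rng = random.Random(seed)
    n = len(xs)
    models = []
    for _ in range(n_models):
        idx = [rng.randrange(n) for _ in range(n)]
        models.append(fit_line([xs[i] for i in idx], [ys[i] for i in idx]))
    return models

def predict(models, x):
    """Return (mean, standard deviation) of the ensemble prediction at x."""
    preds = [slope * x + intercept for slope, intercept in models]
    return statistics.fmean(preds), statistics.pstdev(preds)

def recommend(models, candidates, k=2, kappa=1.0):
    """Rank candidates by mean + kappa * sd and return the top k with their predictions."""
    scored = []
    for x in candidates:
        mean, sd = predict(models, x)
        scored.append((mean + kappa * sd, x, mean, sd))
    scored.sort(reverse=True)
    return [(x, mean, sd) for _, x, mean, sd in scored[:k]]

# Hypothetical training data: relative promoter strength -> measured titer (mg/L).
strengths = [0.1, 0.3, 0.5, 0.7, 0.9]
titers = [5.0, 12.0, 21.0, 27.0, 38.0]

models = bootstrap_models(strengths, titers)
recommendations = recommend(models, candidates=[0.2, 0.6, 1.0])
```

Each recommendation carries a predicted mean and a spread, so the next Build round can weigh promising designs against how uncertain the model is about them.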

More recently, a paradigm shift from DBTL to LDBT (Learn-Design-Build-Test) has been proposed, where machine learning precedes design [4]. This approach leverages the fact that data that would be "learned" by Build-Test phases may already be inherent in machine learning algorithms, potentially reducing the number of experimental cycles needed.

Diagram 1: DBTL vs. LDBT Cycle Comparison - The paradigm shift from traditional DBTL to machine learning-enhanced LDBT

AI-Powered Autonomous Enzyme Engineering

Recent advances have integrated large language models (LLMs) with biofoundry automation to create fully autonomous enzyme engineering platforms [9]. This generalized platform requires only an input protein sequence and a quantifiable way to measure fitness, enabling:

- Automated Library Design: Using protein LLMs (ESM-2) and epistasis models (EVmutation) to generate diverse, high-quality variant libraries [9]

- Integrated Construction: Implementing HiFi-assembly based mutagenesis methods that eliminate need for intermediate sequence verification [9]

- Continuous Workflow Execution: Dividing protein engineering into seven automated modules that operate without human intervention [9]

This approach has demonstrated substantial improvements in enzyme function, engineering Arabidopsis thaliana halide methyltransferase (AtHMT) for a 90-fold improvement in substrate preference and 16-fold improvement in ethyltransferase activity in just four weeks [9].

Standardized Workflow Visualization

Diagram 2: Detailed DBTL Workflow - The four phases of the DBTL cycle with their key activities

The DBTL framework continues to evolve with technological advancements. Key future directions include:

- Increased Automation: Development of fully autonomous biofoundries that can execute complete DBTL cycles with minimal human intervention [8] [9]

- Enhanced Machine Learning Integration: Deeper incorporation of AI throughout the cycle, from initial design to experimental planning and data interpretation [4] [6]

- Standardization and Interoperability: Establishment of common frameworks and data standards to enable collaboration across biofoundries and research institutions [8] [7]

- Megascale Data Generation: Utilization of cell-free systems and ultra-high-throughput methods to generate the large datasets needed to train more accurate predictive models [4]

The DBTL cycle has proven to be an essential framework for advancing synthetic biology from artisanal practices toward predictable engineering. By providing a structured approach to biological design and optimization, DBTL enables researchers to tackle increasingly complex challenges in bioengineering. As tools and technologies continue to mature, the DBTL framework is likely to remain a core engine of innovation in synthetic biology and its applications across medicine, manufacturing, and environmental sustainability.

The iterative Design-Build-Test-Learn (DBTL) cycle represents a systematic engineering framework that has become fundamental to advancing synthetic biology. Unlike classical engineering disciplines that utilize well-characterized, man-made components, synthetic biology often relies on partially characterized biological parts implemented within the complex and dynamic environment of living cells [10]. This inherent complexity necessitates an iterative approach to engineering biological systems. The DBTL cycle provides this structured framework, enabling the systematic design of biological systems at the genetic level and the elucidation of genetic design rules [10]. By continuously refining designs based on experimental data, researchers can navigate the vast biological design space to optimize microbial strains for the production of fine chemicals, therapeutics, and sustainable materials [11] [12]. This deep dive explores the core principles, technical methodologies, and transformative applications of each stage within the DBTL cycle, providing researchers and drug development professionals with a comprehensive technical guide.

The Design Stage: In Silico Blueprinting of Biological Systems

The Design stage is the foundational phase where computational tools and biological knowledge converge to create blueprints for genetic constructs. This stage encompasses both biological design—specifying desired cellular functions—and operational design—planning experimental procedures and protocols [10]. The objective is to produce one or more DNA sequences composed of multiple genetic parts that will generate the desired functions in a targeted biological context [10].

Advanced software tools are now integral to this process. For any given target compound, tools like RetroPath enable automated pathway selection, while Selenzyme facilitates enzyme selection [12]. Subsequently, reusable DNA parts are designed with simultaneous optimization of bespoke ribosome-binding sites (RBS) and enzyme coding regions using software such as PartsGenie [12]. These genes and regulatory parts are combined in silico into large combinatorial libraries of pathway designs. A critical step in managing this complexity is the application of Design of Experiments (DoE) methodologies, such as orthogonal arrays combined with Latin squares, to statistically reduce these vast libraries into smaller, representative sets that can be tractably constructed and screened in the laboratory [12]. This compression is substantial; for instance, one documented application achieved a 162:1 compression ratio, reducing 2,592 possible configurations to just 16 representative constructs [12].
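
The compression arithmetic and the Latin square idea can be made concrete with a short sketch. The factor names and levels below are hypothetical and were chosen only so that their product matches the 2,592 figure quoted above; the cyclic Latin square is one simple way to pick gene orders in which every gene occupies every position once, and it is not claimed to be the exact construction used in [12].

```python
from itertools import permutations, product

# Hypothetical factor levels; only their counts (4 x 3 x 3 x 3 x 24) matter here.
factors = {
    "vector_backbone": ["v1", "v2", "v3", "v4"],
    "promoter_gene2": ["strong", "weak", "none"],
    "promoter_gene3": ["strong", "weak", "none"],
    "promoter_gene4": ["strong", "weak", "none"],
    "gene_order": list(permutations("ABCD")),   # 24 orderings of four genes
}

full_library = list(product(*factors.values()))
n_selected = 16
compression_ratio = len(full_library) / n_selected

def cyclic_latin_square(symbols):
    """Row r is the symbol list rotated by r, so each symbol hits each column once."""
    n = len(symbols)
    return [[symbols[(row + col) % n] for col in range(n)] for row in range(n)]

gene_orders = cyclic_latin_square(["A", "B", "C", "D"])

print(len(full_library), compression_ratio)   # 2592 162.0
```

Using four balanced gene orders instead of all 24 permutations is the kind of reduction that lets a statistically representative subset stand in for the full combinatorial space.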

Table 1: Key Software Tools for the Design Stage

| Tool Name | Primary Function | Application Context |
|---|---|---|
| RetroPath | Automated pathway selection [12] | Identifying biosynthetic pathways for target compounds |
| Selenzyme | Automated enzyme selection [12] | Selecting optimal enzymes for specified reactions |
| PartsGenie | Design of reusable DNA parts with optimized RBS and coding regions [12] | Creating standardized, optimized genetic components |
| PlasmidGenie | Generation of assembly recipes and robotics worklists [12] | Transitioning from digital design to physical construction |

The Build Stage: From Digital Design to Physical Construct

The Build stage translates digital DNA sequences into physical biological reality through molecular biology techniques, often enhanced by robotic automation [10]. This process involves two main activities: the DNA build, which iteratively assembles the DNA sequence specified in the Design process, and the host build, which involves delivering the genetic construct into the host organism and verifying its presence [10].

The DNA assembly process employs techniques like the ligase cycling reaction (LCR) to combine multiple DNA fragments [12]. Commercial DNA synthesis often provides the starting material, followed by part preparation via PCR [12]. The assembly itself is frequently guided by automated worklists and performed on robotics platforms, ensuring precision and reproducibility. Following assembly, the constructs are transformed into a microbial host, such as Escherichia coli, a workhorse of synthetic biology. The final, crucial step of the Build stage is rigorous verification. This involves quality checks through high-throughput automated plasmid purification, restriction digest analysis by capillary electrophoresis, and definitive sequence verification [12]. This meticulous validation ensures that the physical construct perfectly matches the in silico design before proceeding to costly and time-consuming testing phases.
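
Restriction digest verification compares observed fragment sizes with those predicted from the design. A minimal sketch of the prediction step for a circular plasmid is shown below; the plasmid length, cut positions, observed sizes, and the 5% tolerance are hypothetical values chosen for illustration, and real pipelines would derive cut positions from the enzyme recognition sites in the sequence.

```python
def digest_fragments(plasmid_length, cut_positions):
    """Return fragment sizes (bp, largest first) for a circular plasmid cut at given positions."""
    cuts = sorted(set(p % plasmid_length for p in cut_positions))
    if not cuts:
        return [plasmid_length]                     # uncut plasmid
    fragments = [b - a for a, b in zip(cuts, cuts[1:])]
    # The last fragment wraps around the origin of the circular map.
    fragments.append(plasmid_length - cuts[-1] + cuts[0])
    return sorted(fragments, reverse=True)

def matches_expected(observed, expected, tolerance=0.05):
    """Compare observed and expected sizes within a relative tolerance."""
    if len(observed) != len(expected):
        return False
    pairs = zip(sorted(observed, reverse=True), sorted(expected, reverse=True))
    return all(abs(o - e) <= tolerance * e for o, e in pairs)

# Hypothetical 5,000 bp plasmid with three cut sites.
expected = digest_fragments(5000, [500, 2000, 4200])
observed = [2180, 1520, 1290]                      # hypothetical capillary electrophoresis sizes
construct_ok = matches_expected(observed, expected)

print(expected)   # [2200, 1500, 1300]
```

A mismatch in fragment count or size flags a construct for re-assembly before it reaches sequencing or the Test stage.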

The Test Stage: Functional Validation of Engineered Systems

In the Test stage, researchers assess whether the biological functions encoded by the designed DNA sequence have been successfully achieved by the host organism [10]. For unicellular production hosts, this typically involves growing the engineered organism under controlled conditions and assaying for the desired function, such as the production of a target chemical [10].

Advanced pipelines automate this process using 96-deepwell plate-based growth protocols [12]. The detection and quantification of the target product and key intermediates are critical. This is achieved through automated sample extraction followed by sophisticated analytical techniques, most notably fast ultra-performance liquid chromatography coupled to tandem mass spectrometry (UPLC-MS/MS) with high mass resolution [12]. The data extraction and processing from these analytical methods are often automated using custom-developed scripts, for example, in the R programming language [12]. This stage generates the quantitative performance data—such as product titer, yield, and rate—that fuels the subsequent Learn stage. For bioprocessing, a significant challenge remains in using these small-volume measurements to predict performance in large-scale fermentation, an area of active research [10].
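
Titer, yield, and rate are simple ratios once product and substrate have been quantified. The sketch below uses common textbook definitions (titer as product mass per culture volume, yield as product per substrate consumed, rate as titer per unit time); the function names and all input numbers are hypothetical and not taken from the cited pipeline.

```python
def titer_mg_per_l(product_mg, culture_volume_l):
    """Titer: product mass per unit culture volume (mg/L)."""
    return product_mg / culture_volume_l

def yield_mg_per_g(titer, substrate_consumed_g_per_l):
    """Yield: product formed per gram of substrate consumed (mg/g)."""
    return titer / substrate_consumed_g_per_l

def productivity_mg_per_l_h(titer, hours):
    """Volumetric rate: titer divided by cultivation time (mg/L/h)."""
    return titer / hours

# Hypothetical measurements for one well of a deepwell plate, scaled to per-litre units.
titer = titer_mg_per_l(product_mg=44.0, culture_volume_l=0.5)
product_yield = yield_mg_per_g(titer, substrate_consumed_g_per_l=10.0)
rate = productivity_mg_per_l_h(titer, hours=24.0)
```

Reporting all three together matters because a strain can reach a high titer slowly or at the cost of a large amount of substrate.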

The Learn Stage: Data-Driven Design Optimization

The Learn stage is the analytical core of the iterative cycle, where measured data is transformed into actionable insights for the next design iteration. This process utilizes statistical methods and machine learning to identify the relationships between observed production levels and the various factors incorporated into the genetic design [12].

For example, in a pathway optimization project for the flavonoid (2S)-pinocembrin, statistical analysis of initial test data identified that vector copy number had the strongest significant positive effect on production titers, followed by the promoter strength upstream of the chalcone isomerase (CHI) gene [12]. Weaker, yet still significant, effects were observed for the promoter strengths of other genes in the pathway. These insights directly informed the constraints for the second design cycle, which focused on a more productive region of the design space [12]. The Learn process can also integrate multi-omics data with metabolic models, such as Flux Balance Analysis (FBA), to identify genetic interventions that further improve titer, rate, and yield of engineered pathways [10]. The cycle is repeated, with each iteration incorporating new knowledge, until the user-defined target function is achieved.

Table 2: Example Quantitative Analysis from a DBTL Cycle for Pinocembrin Production

| Design Factor Analyzed | Impact on Pinocembrin Titer | Statistical Significance (P-value) |
|---|---|---|
| Vector Copy Number | Strongest positive effect [12] | 2.00 × 10⁻⁸ |
| CHI Promoter Strength | Strong positive effect [12] | 1.07 × 10⁻⁷ |
| CHS Promoter Strength | Weaker positive effect [12] | 1.01 × 10⁻⁴ |
| 4CL Promoter Strength | Weaker positive effect [12] | 1.01 × 10⁻⁴ |
| PAL Promoter Strength | Weaker positive effect [12] | 3.06 × 10⁻⁴ |
| Relative Gene Order | Not significant [12] | Not significant |
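
Ranking design factors by their influence can be illustrated with a main-effects calculation on a two-level factorial design. This is a simplified stand-in, not the statistical analysis used in [12]: it computes the difference in mean response between the high and low level of each factor and produces no P-values. The factor names echo the table above, but the response values are synthetic.

```python
from itertools import product
from statistics import fmean

def main_effects(runs, factor_names):
    """Main effect of each factor: mean response at level +1 minus mean at level -1."""
    effects = {}
    for i, name in enumerate(factor_names):
        high = [y for levels, y in runs if levels[i] == 1]
        low = [y for levels, y in runs if levels[i] == -1]
        effects[name] = fmean(high) - fmean(low)
    return effects

factor_names = ["copy_number", "chi_promoter", "gene_order"]

# Synthetic titers from a made-up model: 10 + 4*copy_number + 2*chi_promoter + 0*gene_order.
runs = [(levels, 10 + 4 * levels[0] + 2 * levels[1])
        for levels in product((-1, 1), repeat=3)]

effects = main_effects(runs, factor_names)
ranked = sorted(effects, key=lambda name: abs(effects[name]), reverse=True)

print(ranked)   # ['copy_number', 'chi_promoter', 'gene_order']
```

In a real Learn stage the same ranking would be accompanied by significance tests, and the strongest factors would define the constraints for the next design round.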

Integrated Workflow and a Case Study in Flavonoid Production

The power of the DBTL cycle is fully realized when its stages are integrated into a seamless, automated pipeline. The DOT diagram below illustrates the logical flow and iterative nature of a complete DBTL cycle, highlighting key inputs, processes, and outputs at each stage.

A concrete application of this pipeline targeted the microbial production of the flavonoid (2S)-pinocembrin in E. coli [12]. The pathway involved four enzymes converting L-phenylalanine to pinocembrin. The initial Design stage created a combinatorial library of 2,592 possible configurations, which was reduced via DoE to 16 representative constructs. The Build stage assembled these 16 constructs, all of which were successfully sequence-verified. The Test stage revealed pinocembrin titers ranging from 0.002 to 0.14 mg L⁻¹, and the subsequent Learn stage identified key limiting factors, with vector copy number and CHI promoter strength having the most significant effects [12]. A second DBTL cycle, informed by these findings, focused on a refined design space. This iterative process successfully established a production pathway improved by 500-fold, achieving competitive titers of up to 88 mg L⁻¹ [12]. This case study powerfully demonstrates the rapid optimization capability of an integrated DBTL pipeline.

Table 3: Research Reagent Solutions for a Synthetic Biology DBTL Pipeline

| Reagent / Material | Function in the DBTL Cycle |
|---|---|
| Ligase Cycling Reaction (LCR) | An enzymatic method for assembling multiple DNA fragments into a single construct during the Build stage [12]. |
| DNA Oligonucleotides | Commercially synthesized single-stranded DNA fragments used as building blocks for gene and part assembly [12]. |
| Restriction Endonucleases | Enzymes used for analytical digestion to verify the size and structure of assembled DNA plasmids during quality control [12]. |
| Selected/Multiple Reaction Monitoring (SRM/MRM) | A highly specific mass spectrometry technique for targeted quantification of metabolites, proteins, or pathway intermediates during the Test stage [13]. |
| Mass Distribution Vectors (MDVs) | Data derived from isotope labeling experiments; used with Elementary Metabolite Units (EMU) models for Metabolic Flux Analysis (MFA) in the Learn stage [13]. |
| Ribosome Binding Site (RBS) Libraries | Collections of genetic parts with varying sequences to control the translation initiation rate, a key variable optimized in the Design stage [12]. |

The DBTL cycle has firmly established itself as the central paradigm for the rigorous engineering of biological systems. Its iterative, data-driven nature is essential for managing the complexity inherent in living organisms. The ongoing integration of automation, artificial intelligence (AI), and machine learning (ML) is set to dramatically accelerate this cycle, making it faster, cheaper, and more precise [11]. As these technologies mature and community standards for data and parts sharing solidify, the DBTL framework will be instrumental in tackling global challenges, from developing sustainable manufacturing processes and advanced therapeutics to addressing climate change through carbon sequestration [11]. By deconstructing and mastering each stage of the DBTL cycle, researchers and drug development professionals are poised to unlock the full transformative potential of synthetic biology.

Synthetic biology represents a fundamental shift in the life sciences, moving from a descriptive discipline to an engineering practice focused on the design and construction of novel biological systems. This field is defined by the application of rational principles and formal design processes to biological components [1]. The core premise is that biological systems can be understood as objects endowed with a relational logic between their components not fundamentally different from those designed by computational, chemical, or electronic engineers [14]. This engineering perspective allows researchers to address how and why biological systems work by focusing on the physicochemical implementation of functions in space and time, setting aside exclusive focus on evolutionary origins [14]. The adoption of this mindset is crucial for advancing biotechnology and creating next-generation bacterial cell factories and therapeutic solutions [13].

Core Principles of Rational Biological Design

Defining the Engineering Mindset

The rational engineering approach in synthetic biology is characterized by several key principles. First and foremost is the intent to harness our understanding of biology to build a library of well-understood and characterized modular biological parts, such as genes and proteins, whose functions are predictable and reliable [15]. This approach embraces both the engineering mindset and the unique properties of biological systems, accepting "Nature on its own terms and taking advantage of the parts and tools that Nature has given us, with all of their wonderful idiosyncrasies" [15].

A crucial conceptual framework in this engineering approach is the distinction between techno-logy (rational design) and techno-nomy (the appearance of rational engineering in evolved biological systems) [14]. This parallel mirrors Monod's evolutionary paradox of teleology (finality/purpose) versus teleonomy (appearance of finality/purpose) and provides a valuable interpretive lens for understanding the logic of biological objects without implying the intervention of an actual engineer [14].

The Evolutionary Design Spectrum

Engineering design processes can be understood as existing on an evolutionary spectrum, where the number of variants tested and the number of design cycles needed differentiate approaches [16]. All design methodologies combine variation and selection iteratively, differing primarily in how they leverage exploration (searching design space) and exploitation (using prior knowledge) [16].

Table 1: Engineering Design Approaches in Synthetic Biology

| Design Approach | Key Characteristics | Exploratory Power | Knowledge Leverage | Typical Applications |
|---|---|---|---|---|
| Rational Design | High knowledge exploitation, low variant numbers | Low | High prior knowledge | Systems with well-characterized parts and predictable behavior |
| Directed Evolution | High-throughput variant testing, iterative selection | High | Low to moderate | Enzyme engineering, optimizing complex phenotypes |
| Hybrid Approaches | Combines modeling with experimental testing | Moderate to high | Moderate to high | Pathway optimization, circuit design |

Rational design aims for predictable engineering of biological systems using well-characterized modular parts [15], while directed evolution harnesses the power of evolutionary processes to direct the design of synthetic organisms through high-throughput gene editing and random mutation [15]. These approaches are not mutually exclusive but rather "highly complementary" [15], with the choice depending on the specific problem, available knowledge, and constraints.

The Design-Build-Test-Learn (DBTL) Cycle

The DBTL cycle is the fundamental framework for systematic and iterative development in synthetic biology [1]. This engineering mantra provides a structured approach for engineering biological systems to perform specific functions, such as producing biofuels, pharmaceuticals, or other valuable compounds [1]. The cycle consists of four phases:

- Design: Researchers define objectives for desired biological function and design parts or systems using domain knowledge, expertise, and computational modeling [4].

- Build: DNA constructs are synthesized, assembled into plasmids or other vectors, and introduced into characterization systems including in vivo chassis or in vitro cell-free systems [4].

- Test: Experimental measurement of engineered biological constructs' performance to determine efficacy of design and build phases [4].

- Learn: Analysis of test data compared to design objectives to inform subsequent design rounds through additional DBTL iterations [4].

This framework streamlines biological engineering by providing a systematic, iterative methodology that can be repeatedly applied until desired functionality is achieved [4] [1].

The LDBT Paradigm Shift

Recent advances are transforming the classic DBTL cycle, with some researchers proposing a paradigm shift to "LDBT", in which Learning precedes Design [4]. This reordering is enabled by machine learning algorithms that can leverage large biological datasets to make zero-shot predictions (without additional training) that improve protein functionality [4]. In this model, the knowledge that Build-Test phases would otherwise have to generate may already be encoded in pre-trained machine learning models, potentially allowing researchers to "do away with cycling altogether" in some cases and moving synthetic biology closer to a Design-Build-Work model that relies on first principles [4].

Quantitative Methodologies and Experimental Protocols

Data-Driven Engineering Decisions

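Rational engineering of biological systems requires rigorous quantitative analysis to compare system performance and guide design decisions. Appropriate statistical summaries and visualization methods are essential for interpreting experimental results. Table 2 shows such a summary (group means, standard deviations, and sample sizes) for a two-group behavioral dataset from [17]; the same layout applies when comparing, for example, measurements from two strain variants.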

Table 2: Quantitative Comparison of Gorilla Chest-Beating Rates [17]

| Group | Mean Rate (beats per 10 h) | Standard Deviation | Sample Size (n) |
|---|---|---|---|
| Younger Gorillas (<20 years) | 2.22 | 1.270 | 14 |
| Older Gorillas (≥20 years) | 0.91 | 1.131 | 11 |
| Difference | 1.31 | - | - |

For quantitative data comparison between groups, researchers should employ appropriate graphical representations including back-to-back stemplots (for small datasets with two groups), 2-D dot charts (for small to moderate data across multiple groups), and boxplots (for larger datasets across multiple groups) [17]. Boxplots are particularly valuable as they visualize the five-number summary (minimum, first quartile Q1, median Q2, third quartile Q3, and maximum) and identify potential outliers using the IQR rule [17].
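The five-number summary and IQR rule described above can be sketched in a few lines of standard-library Python. This is a minimal illustration, not a prescribed analysis: the `titers` values are hypothetical, the 1.5 × IQR fences are the conventional choice, and the `inclusive` quartile method is one of several conventions that give slightly different Q1 and Q3 values.

```python
import statistics

def five_number_summary(values):
    """Return (minimum, Q1, median, Q3, maximum) for a list of numbers."""
    data = sorted(values)
    # method="inclusive" treats the data as the whole population; other
    # quartile conventions exist and give slightly different Q1/Q3.
    q1, q2, q3 = statistics.quantiles(data, n=4, method="inclusive")
    return data[0], q1, q2, q3, data[-1]

def iqr_outliers(values):
    """Flag points more than 1.5 * IQR below Q1 or above Q3."""
    _, q1, _, q3, _ = five_number_summary(values)
    iqr = q3 - q1
    low, high = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    return [v for v in values if v < low or v > high]

# Hypothetical product titers (arbitrary units) from ten replicate cultures.
titers = [1, 2, 3, 4, 5, 6, 7, 8, 9, 50]
print(five_number_summary(titers))
print(iqr_outliers(titers))
```

These are the same quantities a boxplot draws, so the functions can serve as a quick numerical check before plotting.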

Experimental Workflow Automation

The implementation of automated DBTL cycles is crucial for next-generation bacterial cell factories [13]. Automated biofoundries leverage liquid handling robots and microfluidics to scale the number of reactions and accelerate the DBTL cycle [4]. For example, DropAI leveraged droplet microfluidics and multi-channel fluorescent imaging to screen upwards of 100,000 picoliter-scale reactions [4]. These automated platforms are increasingly incorporating machine learning to create closed-loop design systems where AI agents cycle through experiments [4].

Diagram: Automated DBTL Workflow for Strain Engineering. The diagram illustrates the iterative nature of the DBTL cycle in an automated biofoundry context, highlighting the continuous refinement process enabled by machine learning and high-throughput experimentation [4] [13].

Research Reagent Solutions Toolkit

Table 3: Essential Research Reagents for Synthetic Biology Workflows

| Reagent/System | Function | Application Examples |
|---|---|---|
| Cell-Free Expression Systems | Protein biosynthesis machinery from cell lysates or purified components for in vitro transcription/translation [4] | Rapid protein production (>1 g/L in <4 h), toxic protein expression, high-throughput variant screening [4] |
| DNA Assembly Reagents | Enzymatic tools for constructing DNA vectors (e.g., USER, LCR, MAGE) [13] | Modular assembly of genetic circuits, pathway construction, genome editing [13] |
| Analytical Standards | Isotopically-labeled internal standards for mass spectrometry [13] | Metabolic flux analysis (MFA), proteomic quantification, targeted metabolomics (SRM/MRM) [13] |
| Machine Learning Models | Pre-trained algorithms for protein design and optimization (e.g., ESM, ProGen, ProteinMPNN) [4] | Zero-shot prediction of protein structures, stability optimization, enzyme engineering [4] |

Machine Learning and Cell-Free Systems Integration

Machine Learning-Enhanced Protein Engineering

Machine learning has become a driving force in synthetic biology, with protein language models demonstrating remarkable capability in zero-shot prediction of protein structures and functions [4]. Sequence-based models like ESM and ProGen are trained on evolutionary relationships between protein sequences and can predict beneficial mutations and infer protein functions [4]. Structure-based tools like ProteinMPNN take entire protein structures as input and predict sequences that fold into that backbone, leading to nearly a 10-fold increase in design success rates when combined with structure assessment tools like AlphaFold [4].

Specialized ML tools have been developed for optimizing specific protein properties. Prethermut predicts effects of single- or multi-site mutations on thermodynamic stability, while Stability Oracle predicts the ΔΔG of protein variants using a graph-transformer architecture [4]. DeepSol employs deep learning to predict protein solubility from primary sequences [4]. These tools enable researchers to eliminate destabilizing mutations or identify stabilizing ones in silico before experimental testing.

Cell-Free Platform Advantages

Cell-free expression systems provide a powerful platform for high-throughput testing of ML predictions [4]. These systems offer multiple advantages including rapid protein production (>1 g/L in <4 hours), ability to produce toxic proteins, scalability from picoliter to kiloliter scales, and compatibility with non-canonical amino acids and post-translational modifications [4]. The open nature of cell-free systems facilitates direct sampling and manipulation of the reaction environment, making them ideal for high-throughput sequence-to-function mapping of protein variants [4].

ML-Cell-Free Integration for Protein Engineering

This integration of machine learning and cell-free testing has demonstrated significant successes in protein engineering. Researchers have coupled in vitro protein synthesis with cDNA display to achieve ultra-high-throughput protein stability mapping of 776,000 protein variants [4]. This vast dataset has been extensively utilized to benchmark various zero-shot predictors for model predictability [4]. Similar approaches have been applied to engineer amide synthetases using linear supervised models trained on over 10,000 reactions from iterative rounds of site saturation mutagenesis [4].

The rational engineering mindset represents a transformative approach to biological design that leverages engineering principles, iterative design cycles, and increasingly powerful computational tools. As machine learning and automation continue to advance, the DBTL cycle is evolving toward more predictive engineering that requires fewer iterations [4]. The integration of machine learning with high-throughput experimental platforms like cell-free systems is creating new paradigms for biological engineering that leverage megascale data generation and modeling [4]. This progression moves synthetic biology closer to established engineering disciplines where reliable outcomes can be achieved through first principles design, ultimately accelerating the development of novel therapeutic solutions and sustainable biotechnologies [16] [13].

Synthetic biology represents a fundamental redefinition of humanity's interaction with biological systems, integrating core principles from biology, engineering, and computer science to design and construct novel biological entities or systematically redesign existing systems [18]. This discipline approaches biology with an engineering mindset, aiming to program biological processes with novel functions starting from fundamental genetic components [18]. The systematic development and optimization of these biological systems are guided by the Design-Build-Test-Learn (DBTL) cycle, an iterative framework that combines experimental techniques with computational modeling [18] [1]. This cycle comprises four distinct stages: the Design phase, where researchers propose DNA sequences or cellular modifications intended to achieve specific objectives; the Build phase, involving physical construction of DNA fragments and their incorporation into host cells; the Test phase, where constructs are rigorously evaluated against desired outcomes; and the Learn phase, where results inform subsequent design iterations [18] [1].

The DBTL framework has proven particularly valuable in navigating the inherent complexity of biological systems, which often creates bottlenecks in efficient and predictable engineering [18]. Traditional approaches relying on first-principles biophysical models frequently struggle with non-linear, high-dimensional interactions between genetic parts and host cell machinery, often forcing the engineering process into "ad hoc tinkering" rather than predictive design [18]. The DBTL approach provides a structured methodology to address these challenges, enabling researchers to converge on biological systems with desired functions through systematic iteration [1]. This review examines key historical successes of the DBTL approach, from pioneering genetic circuits to modern AI-enhanced strain engineering, while providing detailed methodological insights and resource guidelines for researchers pursuing DBTL-based synthetic biology campaigns.

The DBTL Cycle: Core Components and Workflow

The DBTL cycle establishes a systematic framework for biological engineering that mirrors design cycles in traditional engineering disciplines. Each phase has distinct objectives, methodologies, and output deliverables that feed into subsequent phases.

Table 1: Core Components of the Traditional DBTL Cycle

| Phase | Key Objectives | Representative Methodologies | Output Deliverables |
|---|---|---|---|
| Design | Define biological objectives; Select genetic parts; Model system behavior | Computational modeling; Parts selection from libraries; Biophysical simulations [18] [4] | DNA sequence designs; System specifications; Predictive models |
| Build | Physical DNA construction; Host cell integration; Library generation | Gene synthesis; CRISPR-Cas9 genome editing; Molecular cloning; DNA assembly [18] [1] | Engineered biological constructs; Plasmid libraries; Transformed strains |
| Test | Characterize system performance; Measure against targets; Identify unintended effects | High-throughput sequencing; Functional assays; 'Omics analyses; Phenotypic screening [18] [19] | Performance metrics; Functional data; Multi-omics datasets |
| Learn | Analyze results; Identify bottlenecks; Inform redesign | Statistical analysis; Machine learning; Pattern recognition; Data integration [18] [19] | Refined hypotheses; Design rules; Optimized parameters for next cycle |

The power of the DBTL framework emerges from its iterative application, where knowledge gained from each cycle informs subsequent iterations, progressively refining the biological system toward desired specifications [18] [1]. This cyclic process continues until the engineered system robustly achieves target functions, whether for basic biological investigation or industrial application. Recent advances have introduced significant modifications to this traditional workflow, including the emerging LDBT paradigm (Learn-Design-Build-Test) that leverages machine learning and large pre-existing datasets to generate initial designs [4].

Diagram 1: The iterative Design-Build-Test-Learn (DBTL) cycle in synthetic biology. The process continues until the engineered biological system achieves the target performance specifications.

Historical Success 1: Pioneering Genetic Circuits - The Toggle Switch and Repressilator

The earliest demonstrations of synthetic biology's engineering potential emerged through the creation of synthetic genetic circuits, with the toggle switch and repressilator representing landmark achievements. These systems established fundamental engineering principles for biological circuit design and demonstrated the effective application of DBTL cycles in constructing programmable cellular behaviors.

The genetic toggle switch constituted one of the first synthetic bistable gene networks, designed to create digital-like memory in living cells [20]. The core design comprised two repressors and two promoters arranged in a mutually inhibitory network - each repressor gene was transcribed from a promoter repressed by the other repressor protein. This configuration enabled the system to stabilize in one of two stable states, with the switch toggling between states in response to specific environmental stimuli. The DBTL process was essential to achieving this functionality: initial designs based on mathematical modeling were built using standard molecular biology techniques, tested through fluorescence and enzymatic assays, and refined through multiple iterations to optimize repressor binding strengths and promoter efficiencies to achieve robust bistability [20].
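To make the bistability concrete, the sketch below integrates a commonly used dimensionless model of two mutually repressing genes. It is an illustration under stated assumptions, not the model or parameters of the cited work: `alpha1`, `alpha2` (effective synthesis rates), `beta`, `gamma` (repression cooperativities), the step size, and the starting concentrations are all illustrative choices.

```python
def simulate_toggle(u0, v0, alpha1=10.0, alpha2=10.0, beta=2.0, gamma=2.0,
                    dt=0.01, steps=5000):
    """Forward-Euler integration of a two-repressor mutual-inhibition model.

    du/dt = alpha1 / (1 + v**beta)  - u
    dv/dt = alpha2 / (1 + u**gamma) - v
    u and v are the (dimensionless) concentrations of the two repressors.
    """
    u, v = u0, v0
    for _ in range(steps):
        du = alpha1 / (1.0 + v ** beta) - u
        dv = alpha2 / (1.0 + u ** gamma) - v
        u, v = u + dt * du, v + dt * dv
    return u, v

# Same parameters, two different starting points (assumed values).
high_u = simulate_toggle(u0=5.0, v0=0.5)   # settles with repressor u dominant
high_v = simulate_toggle(u0=0.5, v0=5.0)   # settles with repressor v dominant
print(high_u, high_v)
```

With identical parameters, the final state depends only on the starting point, which is the memory-like behavior the circuit was designed for. In this toy model, weakening cooperativity (for example `beta = gamma = 1`) removes the second stable state, which hints at why tuning repressor strengths mattered during iteration.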

Concurrently, the repressilator demonstrated the engineering of oscillatory behavior in living cells through a synthetic gene network [20]. This pioneering work implemented a three-repressor negative-feedback loop, where each repressor protein inhibited transcription of the next gene in the cycle. The DBTL cycle guided the optimization of protein degradation rates and transcriptional kinetics necessary to sustain oscillations. Testing required sophisticated single-cell time-lapse microscopy and quantitative fluorescence measurements, with learning phases focusing on matching experimental observations to mathematical models of oscillator dynamics [20]. These foundational circuits established the conceptual and methodological framework for subsequent synthetic biology applications, proving that engineered biological systems could exhibit complex, predictable behaviors.
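The three-repressor negative-feedback loop can be sketched as a small ordinary-differential-equation model with one mRNA and one protein species per gene. The code below is a hedged illustration: the dimensionless parameters (`alpha`, `alpha0`, `beta`, `n`), the initial conditions, and the simple forward-Euler integrator are assumptions chosen for demonstration, not values taken from the cited study.

```python
def simulate_repressilator(alpha=216.0, alpha0=0.216, beta=0.2, n=2.0,
                           dt=0.01, steps=30000):
    """Forward-Euler integration of a three-gene ring oscillator.

    For gene i repressed by protein j (the previous gene in the ring):
        dm_i/dt = -m_i + alpha / (1 + p_j**n) + alpha0
        dp_i/dt = -beta * (p_i - m_i)
    beta is the ratio of protein to mRNA decay rates.
    Returns the time course of protein 0.
    """
    m = [0.0, 0.0, 0.0]
    p = [1.0, 2.0, 3.0]  # asymmetric start, away from the symmetric fixed point
    trace = []
    for _ in range(steps):
        # p[i - 1] wraps around for i = 0, closing the ring.
        dm = [-m[i] + alpha / (1.0 + p[i - 1] ** n) + alpha0 for i in range(3)]
        dp = [-beta * (p[i] - m[i]) for i in range(3)]
        m = [m[i] + dt * dm[i] for i in range(3)]
        p = [p[i] + dt * dp[i] for i in range(3)]
        trace.append(p[0])
    return trace

def count_peaks(series):
    """Count strict local maxima in a sampled time course."""
    return sum(1 for a, b, c in zip(series, series[1:], series[2:]) if a < b > c)

trace = simulate_repressilator()
print(count_peaks(trace), min(trace), max(trace))
```

In this toy model, both the protein-to-mRNA decay ratio (`beta`) and the promoter strength (`alpha`) influence whether oscillations are sustained, which mirrors the degradation-rate and transcriptional-kinetics tuning described above.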

Table 2: Key Research Reagents for Genetic Circuit Engineering

| Reagent/Category | Specific Examples | Function in DBTL Workflow |
|---|---|---|
| Repressor Proteins | TetR, LacI, CI434 | Core components for transcriptional regulation; Provide inhibition logic for circuit function [20] |
| Promoter Parts | PLtetO-1, Ptrc | Engineered regulatory regions; Control timing and magnitude of gene expression [20] |
| Reporter Genes | GFP, RFP, LacZ | Enable quantitative measurement of circuit dynamics; Facilitate high-throughput screening [20] |
| Molecular Cloning Tools | Restriction enzymes, Ligases, Plasmid vectors | Enable physical construction of genetic designs; Allow modular assembly of genetic parts [1] |
| Inducer Molecules | IPTG, aTc | Provide external control of circuit behavior; Allow experimental perturbation of system dynamics [20] |

Historical Success 2: Microbial Cell Factories for Metabolic Engineering

The application of DBTL cycles to microbial cell factories represents a transformative advancement in metabolic engineering, enabling the production of valuable compounds ranging from pharmaceuticals to biofuels. Corynebacterium glutamicum has emerged as a particularly versatile microbial platform, with systems metabolic engineering leveraging the DBTL framework to optimize production pathways for amino acids and derivative C5 platform chemicals [21].

A representative DBTL campaign for developing L-lysine-derived C5 chemical producers involves several iterative cycles [21]. The initial Design phase employs genome-scale metabolic models (GEMs) to identify gene knockout and overexpression targets that redirect metabolic flux toward desired products while maintaining cellular viability [19] [21]. The Build phase implements these designs using advanced DNA assembly techniques and multiplex automated genome engineering (MAGE) to rapidly construct strain libraries [13]. The Test phase employs analytical methods like mass spectrometry and HPLC to quantify product titers, yields, and productivity, complemented by multi-omics analyses to understand systemic cellular responses [19] [21]. The Learn phase integrates these experimental results with computational models, identifying unforeseen bottlenecks and regulatory interactions that inform the next DBTL cycle [21].

A significant challenge in this domain is the involution of the DBTL cycle, where iterative trial-and-error leads to increased complexity without proportional gains in productivity [19]. This often occurs because removing one metabolic bottleneck reveals new rate-limiting steps, or because production stresses create deleterious metabolic imbalances. Addressing this challenge requires expanding the DBTL framework to incorporate multiscale factors, including bioreactor conditions, media composition, and substrate toxicity, which collectively influence strain performance [19]. Successfully navigating these complexities has enabled the development of C. glutamicum strains producing high-value C5 chemicals at industrial scales, demonstrating the power of systematic DBTL implementation in metabolic engineering [21].

Diagram 2: The DBTL cycle for metabolic engineering of microbial cell factories, showing the potential for cycle involution and AI/ML integration to overcome this challenge.

The Modern Toolkit: AI, Automation, and Cell-Free Systems in Next-Generation DBTL

Recent technological advances are fundamentally reshaping DBTL implementation, with artificial intelligence (AI), laboratory automation, and cell-free systems collectively addressing traditional bottlenecks in biological design cycles. Machine learning (ML) approaches have emerged as particularly powerful tools for navigating biological complexity, offering robust computational frameworks to model non-linear, high-dimensional relationships that challenge traditional biophysical models [18] [19].

The integration of AI/ML is transforming each phase of the DBTL cycle. In the Design phase, protein language models (e.g., ESM, ProGen) enable zero-shot prediction of protein structure and function, while tools like ProteinMPNN and MutCompute facilitate sequence optimization based on structural constraints [4]. For the Learn phase, ML algorithms can identify complex patterns in high-dimensional experimental data, extracting design rules that would remain opaque through conventional analysis [18] [19]. This capability is particularly valuable for avoiding DBTL involution, as ML models can incorporate features from multiple biological scales - from enzymatic parameters to bioreactor conditions - to predict strain performance and identify optimal engineering strategies [19].

Concurrently, cell-free expression systems are dramatically accelerating the Build and Test phases. These platforms leverage transcription-translation machinery from cell lysates or purified components to express proteins without time-intensive cloning steps, enabling rapid testing of thousands of design variants [4]. When combined with microfluidics and automated liquid handling, cell-free systems can screen upwards of 100,000 picoliter-scale reactions, generating massive datasets for ML model training [4]. This integration has enabled remarkable engineering achievements, including the development of improved PET hydrolases for plastic degradation and the design of novel antimicrobial peptides [4].

These advances have prompted a fundamental paradigm shift from DBTL to LDBT (Learn-Design-Build-Test), where machine learning on large biological datasets precedes and informs the initial design phase [4]. In this model, pre-trained algorithms generate functional designs that are subsequently validated through rapid cell-free testing, potentially reducing multiple iterative cycles to a single pass. This approach moves synthetic biology closer to the Design-Build-Work model of established engineering disciplines, potentially transforming the efficiency and predictability of biological design [4].

Table 3: Automated and AI-Enhanced Workflows in Modern DBTL Implementation

| Technology Category | Specific Tools/Methods | Impact on DBTL Efficiency |
|---|---|---|
| Protein Language and Structure-Based Design Models | ESM, ProGen, ProteinMPNN | Enable zero-shot prediction of protein structure and function; Accelerate design of novel enzymes [4] |
| Stability and Solubility Prediction Algorithms | Prethermut, Stability Oracle, DeepSol | Predict effects of mutations on protein stability and solubility; Reduce experimental screening burden [4] |
| Cell-Free Expression Systems | In vitro transcription/translation, cDNA display | Enable rapid testing without cloning; Allow high-throughput screening of 100,000+ reactions [4] |
| Automated Strain Engineering | MAGE, automated genome editing | Accelerate construction of genetic variants; Increase reproducibility of build phase [13] |
| Multi-Omics Analytics | RNA-seq, proteomics, metabolomics | Provide comprehensive system characterization; Generate datasets for ML model training [19] |

Essential Research Reagents and Experimental Protocols

Successful implementation of DBTL cycles requires carefully selected research reagents and standardized experimental protocols. This section details key components of the synthetic biology toolkit, with particular emphasis on resources suitable for both academic and industrial research settings.

Table 4: Essential Research Reagent Solutions for DBTL Workflows

| Reagent Category | Specific Examples | Function in DBTL Workflow | Implementation Considerations |
|---|---|---|---|
| DNA Assembly Systems | Golden Gate Assembly, Gibson Assembly, BioBricks | Enable modular construction of genetic designs; Allow rapid part swapping between iterations [1] | Standardization of parts facilitates reproducibility; Automation compatibility varies by method |
| Genome Editing Tools | CRISPR-Cas9, MAGE, USER cloning | Implement precise genetic modifications; Enable multiplexed editing for library generation [13] | Off-target effects require careful validation; Efficiency varies by host organism |
| Analytical Instruments | HPLC, MS, NGS, plate readers | Quantify product titers, sequence constructs, measure performance parameters [19] [21] | Throughput and sensitivity determine testing capacity; Integration with automation platforms varies |
| Cell-Free Systems | E. coli lysates, wheat germ extracts, PURExpress | Provide rapid testing platform for DNA designs; Enable high-throughput screening [4] | Cost per reaction constrains screening scale; Predictive value for in vivo performance requires validation |
| Automation Equipment | Liquid handlers, colony pickers, microfluidics | Increase throughput of build and test phases; Reduce manual labor and improve reproducibility [13] [4] | Significant initial investment; Requires specialized programming and maintenance expertise |

For researchers establishing DBTL workflows, several core experimental protocols have emerged as particularly valuable:

High-Throughput Molecular Cloning Workflow: Modern DBTL implementations employ automated cloning pipelines to increase productivity and reduce bottlenecks [1]. This typically involves in silico design of DNA constructs using standardized parts, followed by automated assembly using restriction enzyme-based or isothermal methods. After assembly, constructs are transformed into host cells, with verification increasingly performed via colony qPCR rather than sequencing to maximize throughput [1]. Automated colony picking systems further enhance throughput by enabling rapid processing of hundreds to thousands of constructs.

Cell-Free Protein Expression Testing: For rapid testing of enzyme variants or genetic circuits, cell-free expression systems provide unparalleled speed [4]. The protocol involves preparing DNA templates via PCR or direct synthesis, setting up transcription-translation reactions with commercial cell-free systems, and quantifying outputs via colorimetric, fluorescent, or mass spectrometry-based assays. This approach can test hundreds to thousands of variants in parallel, generating data within hours rather than days [4].

Multi-Omics Analysis for Learning Phase: Comprehensive system characterization employs integrated transcriptomic, proteomic, and metabolomic analyses [19] [21]. RNA sequencing profiles transcriptional changes, while LC-MS/MS enables protein quantification and metabolite profiling. The resulting datasets are integrated with genome-scale metabolic models to identify bottlenecks and predict beneficial modifications for subsequent DBTL cycles [19].

The historical trajectory of synthetic biology, from pioneering genetic circuits to sophisticated microbial cell factories, demonstrates the transformative power of the DBTL approach as a systematic framework for biological engineering. The iterative application of Design-Build-Test-Learn cycles has enabled researchers to navigate biological complexity and progressively refine synthetic biological systems toward predetermined functions. Current advances in artificial intelligence, laboratory automation, and cell-free testing are further accelerating this paradigm, potentially enabling a fundamental shift from iterative optimization to predictive design. As these technologies mature, the DBTL framework continues to provide the conceptual scaffolding for synthetic biology's progression from empirical tinkering toward true engineering discipline, with profound implications for biomanufacturing, therapeutic development, and basic biological research.

In synthetic biology and metabolic engineering, the path to optimizing biological systems is notoriously non-linear and complex. The classical Design-Build-Test-Learn (DBTL) cycle has long been the foundational framework for this engineering effort, because the inherent unpredictability of biological systems, where minor genetic perturbations can lead to disproportionate and unexpected outcomes, demands an iterative, cyclical approach. This technical guide explores the critical role of iteration in navigating biological complexity, drawing upon recent advances in machine learning and high-throughput experimental technologies. Framed within the context of synthetic biology's DBTL cycle, it provides researchers, scientists, and drug development professionals with a detailed examination of the methodologies and tools that make iterative cycles a powerful strategy for achieving robust biological design.

Biological systems are characterized by a high degree of complexity and non-linearity. Unlike predictable physical systems, they involve intricate, interconnected networks where components interact in ways that are difficult to model from first principles. A change at the genetic level—such as modifying a promoter strength or enzyme sequence—can have cascading effects on metabolic fluxes, protein-protein interactions, and overall cellular physiology, often in a non-intuitive manner [22]. For instance, combinatorial optimization of a simple linear metabolic pathway can reveal that increasing the concentration of an individual enzyme might deplete its substrate and paradoxically decrease the final product flux, while simultaneously increasing the concentrations of two different enzymes could synergistically boost output [22]. This non-linearity makes one-pass design strategies ineffective.

The synthetic biology community addresses this challenge through the Design-Build-Test-Learn (DBTL) cycle, a systematic, iterative framework for engineering biological systems [4] [22]. The power of this framework lies not in a single execution, but in its repeated application. Each cycle generates data and insights that refine the model and inform the design in the subsequent cycle, progressively closing the gap between predicted and observed system behavior. Recent proposals even suggest a paradigm shift to "LDBT," where machine Learning precedes Design, leveraging pre-trained models on vast biological datasets to make more informed initial designs, thereby accelerating the entire process [4]. This guide will dissect the quantitative evidence for iteration, provide detailed experimental protocols, and visualize the key workflows that underpin this essential approach.

Quantitative Evidence: The Data-Driven Case for Iteration

The theoretical value of iteration is well-established; however, its quantitative impact is best demonstrated through simulated and real-world experimental data. Research using mechanistic kinetic models to simulate DBTL cycles shows that iterative machine learning guidance significantly outperforms single-step optimization.

Table 1: Performance of Machine Learning Models in Successive DBTL Cycles for Metabolic Flux Optimization [22]

| DBTL Cycle | Number of Strain Designs Tested | Best Product Flux (Relative to Wild-Type) | Machine Learning Model Used | Key Learning Outcome |
|---|---|---|---|---|
| Cycle 1 | 50 | ~1.5x | Gradient Boosting / Random Forest | Identified initial correlations between enzyme expression levels and product flux. |
| Cycle 2 | 20 | ~2.8x | Gradient Boosting (retrained) | Refined understanding of non-linear enzyme interactions; exploited synergistic effects. |
| Cycle 3 | 20 | ~3.5x | Gradient Boosting (further retrained) | Discovered optimal global configuration of pathway elements, avoiding local maxima. |

Simulation studies reveal that the choice of machine learning model is crucial, especially in the low-data regime typical of early cycles. Gradient boosting and random forest models have been shown to be robust to training set biases and experimental noise, making them particularly suitable for the initial, data-scarce phases of an iterative campaign [22]. Furthermore, the strategy for allocating resources across cycles is critical. Evidence suggests that when the total number of strains to be built is limited, initiating the process with a larger initial DBTL cycle is more favorable for rapid optimization than distributing the same number of strains equally across all cycles [22]. This initial larger investment generates a richer dataset, providing a stronger foundation for machine learning models to make accurate predictions in subsequent, smaller cycles.

Table 2: Comparative Performance of Machine Learning Models in Simulated DBTL Cycles [22]

| Machine Learning Model | Performance in Low-Data Regime | Robustness to Noise | Robustness to Training Set Bias | Key Application |
|---|---|---|---|---|
| Gradient Boosting | High | High | High | Recommending new strain designs based on predictive distribution. |
| Random Forest | High | High | High | Predicting strain performance from combinatorial libraries. |
| Deep Learning | Lower | Medium | Medium | Requires larger datasets; more powerful in later cycles. |
| Support Vector Machines | Medium | Medium | Lower | Less effective for complex, non-linear pathway interactions. |

Experimental Protocols: Implementing Iterative DBTL Cycles

A successful iterative DBTL workflow requires the integration of precise methodologies across the design, build, test, and learn phases. Below is a detailed protocol for a combinatorial pathway optimization campaign, a common challenge in metabolic engineering.

Protocol: Machine Learning-Guided Combinatorial Pathway Optimization

Objective: To maximize the flux through a synthetic metabolic pathway by iteratively optimizing the expression levels of multiple enzymes.

Materials and Reagents:

- DNA Library: A predefined set of genetic parts (e.g., promoters, ribosomal binding sites) of varying strengths for each pathway gene [22].

- Host Chassis: An appropriate microbial host (e.g., Escherichia coli).

- Cell-Free System (Alternative): A cell-free gene expression platform derived from crude cell lysates or purified components for rapid testing [4].

- Analytical Equipment: HPLC, GC-MS, or spectrophotometric assays for quantifying target product and growth metrics.

- Computational Tools: Software for machine learning (e.g., scikit-learn for gradient boosting) and mechanistic modeling (e.g., SKiMpy for kinetic models) [22].

Methodology:

Learn & Design (LD):

- Learn (L): In the first cycle, this phase may involve initializing a machine learning model with a pre-trained protein language model (e.g., ESM, ProGen) or a foundational kinetic model of the core metabolism [4] [22]. In subsequent cycles, the model is retrained on all accumulated experimental data.

- Design (D): Using an algorithm for recommending new designs, the machine learning model's predictive distribution is sampled. For example, an automated recommendation tool can propose a set of strain designs (e.g., 50 for Cycle 1) by selecting specific promoter-gene combinations from the DNA library that are predicted to maximize product flux, balancing exploration and exploitation [22].

Build (B):

- In Vivo: Synthesize and assemble the DNA constructs specified in the Design phase. Introduce them into the host chassis using high-throughput techniques such as Golden Gate assembly or MAGE (multiplex automated genome engineering) [13].

- In Vitro (Accelerated): For ultra-high throughput, use a cell-free expression system. Synthesized DNA templates can be added directly to the cell-free reaction for protein expression without time-consuming cloning steps, enabling the testing of thousands of variants in hours [4].

Test (T):

- Cultivate the built strains in a scaled-down format (e.g., 96-well microplates) or use the cell-free reactions directly.

- Measure the key performance indicators (KPIs), primarily product titer, yield, and rate (TYR). Also collect data on biomass growth and substrate consumption to assess the physiological impact of each design on the host [22].

- For cell-free systems, couple the reactions with colorimetric or fluorescent assays for high-throughput sequence-to-function mapping [4].
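The three TYR quantities can be derived from simple endpoint measurements. The following is a minimal sketch, assuming endpoint (not time-resolved) data, titer reported in g/L, yield defined as grams of product per gram of substrate consumed, and rate defined as average volumetric productivity in g/L/h. The function name and units are illustrative choices and are not taken from the cited protocol.

```python
def titer_yield_rate(product_g_per_l, substrate_consumed_g_per_l, hours):
    """Return (titer, yield, rate) from endpoint measurements.

    titer : final product concentration (g/L)
    yield : g product formed per g substrate consumed (g/g)
    rate  : average volumetric productivity (g/L/h)
    """
    if substrate_consumed_g_per_l <= 0:
        raise ValueError("substrate consumed must be positive")
    if hours <= 0:
        raise ValueError("cultivation time must be positive")

    titer = product_g_per_l
    product_yield = product_g_per_l / substrate_consumed_g_per_l
    rate = product_g_per_l / hours
    return titer, product_yield, rate


if __name__ == "__main__":
    # Hypothetical well: 2 g/L product from 10 g/L glucose consumed in 24 h.
    print(titer_yield_rate(2.0, 10.0, 24.0))
```

Reporting all three values together helps, because a design that maximizes titer may do so at the expense of yield or productivity.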

Learn (L):

- Integrate the new experimental TYR data with the corresponding strain designs (enzyme expression levels) into the growing dataset.

- Retrain the machine learning model (e.g., gradient boosting) on this expanded dataset. Analyze the model to identify feature importance, revealing which enzymes are most influential and uncovering potential non-linear interactions.

- Compare the model's predictions against the new experimental results to assess its accuracy and identify any systematic biases.

Iterate: The cycle (Learn & Design, Build, Test, Learn) is repeated, with each round of designs informed by what was learned in the previous one. The number of strains built per cycle can be tuned, often starting with a larger set to seed the model and using smaller, more targeted sets in later cycles [22].
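The loop described in this protocol can be sketched in a few lines of Python with scikit-learn. This is an illustrative sketch under stated assumptions, not the recommendation tool from [22]: the pathway size, the number of promoter levels, the batch sizes after Cycle 1, and the `simulate_titer` function (a toy stand-in for the Build and Test phases) are all hypothetical. The predictive distribution is approximated here with a bootstrap ensemble of gradient boosting models, and designs are ranked by an upper confidence bound (mean plus a multiple of the standard deviation) to balance exploitation and exploration.

```python
import itertools
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor

rng = np.random.default_rng(0)
N_GENES, N_LEVELS = 4, 5  # assumed: 4 enzymes, 5 promoter strengths each

# Full combinatorial design space: one promoter-strength index per gene.
DESIGN_SPACE = np.array(list(itertools.product(range(N_LEVELS), repeat=N_GENES)))


def simulate_titer(designs):
    """Hypothetical stand-in for Build + Test: a toy non-linear response plus noise."""
    x = designs / (N_LEVELS - 1)  # scale expression levels to [0, 1]
    flux = (x[:, 0]                               # gene 1: more is better
            * (1.0 - 2.0 * (x[:, 1] - 0.5) ** 2)  # gene 2: intermediate optimum
            * (0.3 + 0.7 * x[:, 2])               # gene 3: saturating benefit
            * (1.0 - 0.5 * x[:, 3]))              # gene 4: expression burden
    return flux + rng.normal(0.0, 0.02, len(designs))


def recommend(X, y, tested, n_new, n_models=10, kappa=1.0):
    """Learn + Design: rank untested designs by ensemble mean + kappa * std."""
    preds = []
    for seed in range(n_models):
        idx = rng.integers(0, len(X), len(X))  # bootstrap resample
        model = GradientBoostingRegressor(random_state=seed)
        model.fit(X[idx], y[idx])
        preds.append(model.predict(DESIGN_SPACE))
    preds = np.array(preds)
    score = preds.mean(axis=0) + kappa * preds.std(axis=0)
    score[list(tested)] = -np.inf  # never re-propose a tested design
    return np.argsort(score)[::-1][:n_new]


def run_campaign(n_cycles=3, first_batch=50, later_batch=20):
    # Cycle 1: no data yet, so seed the model with a random batch.
    tested = list(rng.choice(len(DESIGN_SPACE), size=first_batch, replace=False))
    y = list(simulate_titer(DESIGN_SPACE[tested]))
    best_per_cycle = [max(y)]
    for _ in range(n_cycles - 1):
        new = recommend(DESIGN_SPACE[tested], np.array(y), tested, later_batch)
        y.extend(simulate_titer(DESIGN_SPACE[new]))  # Build + Test
        tested.extend(new)
        best_per_cycle.append(max(y))
    # Final Learn step: feature importance per gene from a model fit on all data.
    final = GradientBoostingRegressor(random_state=0)
    final.fit(DESIGN_SPACE[tested], np.array(y))
    return tested, np.array(y), best_per_cycle, final.feature_importances_


if __name__ == "__main__":
    tested, y, best, importances = run_campaign()
    print("best titer after each cycle:", np.round(best, 3))
    print("feature importance per gene:", np.round(importances, 3))
```

In a real campaign, `simulate_titer` would be replaced by strain construction and measurement, and `kappa` would be tuned to favor exploration in early cycles and exploitation in later ones.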

Protocol: Quantifying Microbial Interactions with an Iterative Model

Objective: To infer interaction coefficients between species in a microbial community using relative abundance data.

Materials and Reagents:

- Time-Series Metagenomic Data: Relative abundance data of microbial species across multiple time points.

- Computational Environment: Software for numerical computing (e.g., Python with SciPy).

Methodology:

Problem Framework: The generalized Lotka-Volterra (gLV) model is a standard approach to modeling species interactions, but fitting it requires absolute abundance data, which is rarely available. The iterative Lotka-Volterra (iLV) model is instead designed to work with relative abundance data, which is far more commonly available [23].

Model Implementation:

- The iLV model incorporates compositional constraints into the gLV framework.

- It uses an iterative optimization strategy that combines linear approximations with nonlinear refinements to enhance the accuracy of parameter estimation for interaction coefficients and growth rates [23].

Iterative Refinement:

- The algorithm iteratively refines its parameter estimates by minimizing the difference between the predicted and observed relative abundance trajectories.

- With each iteration, the model more accurately captures the underlying ecological dynamics, such as competition, predation, and mutualism, from the compositional data.

Validation: The model's performance is validated using simulated datasets with known parameters and applied to real-world datasets (e.g., predator-prey systems, cheese microbial communities) to demonstrate its robustness in predicting species trajectories [23].
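To make the problem setting concrete, the sketch below simulates the standard gLV equations, dx_i/dt = x_i (r_i + Σ_j A_ij x_j), with SciPy and converts the resulting absolute abundances to relative abundances. It shows only the forward model and the compositional transformation that discards total biomass. It does not implement the iLV inference algorithm from [23], and the two-species parameters in the usage example are hypothetical.

```python
import numpy as np
from scipy.integrate import solve_ivp


def glv_rhs(t, x, r, A):
    """Generalized Lotka-Volterra right-hand side: dx_i/dt = x_i * (r_i + sum_j A_ij x_j)."""
    return x * (r + A @ x)


def simulate_relative_abundance(x0, r, A, t_eval):
    """Integrate the gLV model and return (absolute, relative) abundance trajectories.

    Both arrays have shape (len(t_eval), n_species). Each row of `relative`
    sums to 1, so information about total community size is lost.
    """
    sol = solve_ivp(
        glv_rhs,
        (t_eval[0], t_eval[-1]),
        x0,
        args=(r, A),
        t_eval=t_eval,
        rtol=1e-8,
        atol=1e-10,
    )
    absolute = sol.y.T
    relative = absolute / absolute.sum(axis=1, keepdims=True)
    return absolute, relative


if __name__ == "__main__":
    # Hypothetical two-species competition with stable coexistence.
    r = np.array([1.0, 0.8])                    # intrinsic growth rates
    A = np.array([[-1.0, -0.5],
                  [-0.3, -1.0]])                # interaction coefficients
    t = np.linspace(0.0, 40.0, 81)
    absolute, relative = simulate_relative_abundance(np.array([0.1, 0.1]), r, A, t)
    print("final absolute abundances:", np.round(absolute[-1], 3))
    print("final relative abundances:", np.round(relative[-1], 3))
```

Because only the `relative` trajectories are typically observed in sequencing data, an inference method has to recover `r` and `A` without direct access to the scale of `absolute`, which is the gap the iLV model is designed to address.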

Visualizing Iterative Workflows

The following diagrams, generated with the Graphviz DOT language, illustrate the core logical relationships and workflows of the iterative cycles discussed in this guide.

Diagram 1: The classic DBTL cycle shows the sequential, iterative process. The "Accelerated Feedback" arrow highlights how modern platforms can short-cycle learning directly back into design.

Diagram 2: The LDBT paradigm positions machine learning at the outset, using pre-existing knowledge to inform the initial design. Testing then generates data that strengthens foundational models for future projects.

Diagram 3: The closed-loop ML workflow shows how data drives model updates, which in turn generate new testable hypotheses, creating a self-improving system.

The Scientist's Toolkit: Essential Research Reagents and Solutions

The effective execution of iterative DBTL cycles relies on a suite of key technologies and reagents that enable high-throughput building and testing.

Table 3: Key Research Reagent Solutions for Iterative Biology

| Tool / Reagent | Function | Application in Iterative Cycles |
|---|---|---|
| Combinatorial DNA Library | A predefined set of genetic parts (promoters, RBS, coding sequences) to systematically vary component properties. | Provides the fundamental design space for exploring genetic variations in each DBTL cycle [22]. |
| Cell-Free Expression System | Protein biosynthesis machinery from cell lysates for in vitro transcription and translation. | Accelerates the Build and Test phases by removing the need for cloning and cell cultivation; enables testing of toxic compounds [4]. |
| Automated Recommendation Tool | An algorithm that uses machine learning models to propose new strain designs for the next cycle. | Automates the Learn-to-Design transition, optimizing the choice of designs to test based on exploration/exploitation trade-offs [22]. |
| Droplet Microfluidics | A technology for creating and manipulating picoliter-scale droplets. | Allows ultra-high-throughput screening by testing >100,000 cell-free or cellular reactions in a single experiment, generating massive datasets [4]. |
| Kinetic Model (e.g., SKiMpy) | A mechanistic model using ODEs to describe metabolic reaction fluxes. | Provides a "digital twin" of the pathway for in silico testing of DBTL strategies and benchmarking machine learning methods [22]. |
| Iterative Lotka-Volterra (iLV) Model | A computational framework to infer microbial interactions from relative abundance data. | Enables iterative learning and model refinement in microbial ecology from commonly available compositional data [23]. |

Iteration is not merely a useful strategy but a fundamental necessity for engineering biological systems. The inherent non-linearity and complexity of life processes mean that success is achieved through a process of progressive refinement, not one-off design. The DBTL cycle, especially when augmented with modern machine learning and accelerated by cell-free testing and biofoundries, provides a structured framework for this iterative learning. As the field evolves towards an LDBT paradigm—where learning from vast datasets precedes design—the cycles will become faster and more efficient. However, the core principle of iteration will remain key, guiding researchers as they navigate the intricate landscape of biological design to develop the next generation of cell factories, therapeutic molecules, and diagnostic tools.

Executing the DBTL Cycle: Methodologies and Applications in Drug Development

The Design-Build-Test-Learn (DBTL) cycle is the fundamental engineering framework that underpins synthetic biology, enabling the systematic and iterative development of biological systems [1]. This cycle begins with the Design phase, where researchers define objectives for a desired biological function and create a conceptual plan for the genetic system intended to achieve it [4]. In traditional DBTL, this phase relies heavily on domain knowledge, expertise, and computational modeling, after which the designed constructs are built, tested, and the resulting data is analyzed to inform the next design round [4]. The Design phase is therefore foundational, setting the trajectory for the entire engineering effort, with its precision directly influencing the number of iterative cycles required to achieve a functional system.

However, a significant paradigm shift is emerging. Given recent advances in machine learning (ML), it has been proposed that the cycle be reordered to LDBT, where "Learning" precedes "Design" [4]. In this model, learning from vast biological datasets via machine learning algorithms directly informs the initial design, potentially enabling functional solutions in a single cycle and moving synthetic biology closer to a "Design-Build-Work" model akin to more established engineering disciplines [4]. This section explores the tools and methodologies that constitute the modern Design stage, from its traditional computational roots to its current transformation through artificial intelligence.

Computational Foundations & Machine Learning in Biological Design

The design of biological systems has been revolutionized by computational tools. Initially, this relied on parametric models based on biophysical principles, but the field is increasingly dominated by machine learning models that can detect complex patterns in high-dimensional biological data [4]. These tools operate at different levels of biological organization, from individual proteins to entire pathways.

Machine Learning Tools for Protein and Pathway Design

Machine learning provides a powerful opportunity for directly engineering proteins and pathways with desired functions, a task that is challenging due to the complex relationship between a protein's sequence, structure, and function [4]. The following table summarizes key classes of computational tools used in the design process.

Table 1: Machine Learning Tools for Biological Design

| Tool Category | Representative Tools | Primary Function | Application Example |
|---|---|---|---|
| Protein Language Models (Sequence-based) | ESM [4], ProGen [4] | Predict beneficial mutations and infer protein function by learning from evolutionary relationships in protein sequences. | Zero-shot prediction of diverse antibody sequences [4]. |
| Structure-based Design Tools | ProteinMPNN [4], MutCompute [4] | Design new protein sequences that fold into a given backbone (ProteinMPNN) or optimize residues based on the local chemical environment (MutCompute). | Designing stabilized hydrolases for PET depolymerization [4]; designing TEV protease variants with improved activity [4]. |
| Functional Prediction Tools | Prethermut [4], Stability Oracle [4], DeepSol [4] | Predict the effects of mutations on thermodynamic stability (ΔΔG) or protein solubility. | Identifying stabilizing mutations to improve protein expression and function [4]. |
| Pathway Optimization Tools | iPROBE (In vitro Prototyping and Rapid Optimization of Biosynthetic Enzymes) [4] | Uses neural networks on pathway combination data to predict optimal pathway sets and enzyme expression levels. | Improving 3-HB production in Clostridium by over 20-fold [4]. |

The effectiveness of these models, particularly large language models, hinges on their scaling properties and in-context learning capabilities, which allow them to be adapted to specialized biological tasks [24]. Furthermore, the rise of multimodal foundation models, trained on diverse data types such as DNA, RNA, protein sequences, and structures, promises to consolidate and enhance design capabilities by providing a more integrated view of biological information [24].

Strategic Workflow for Computational Design

The application of these tools follows a logical sequence from concept to refined design. The workflow begins with objective definition, where the desired biological function (e.g., create a novel enzyme, optimize a metabolic pathway) is clearly specified. Next is tool selection, choosing the appropriate model based on the goal, whether it's de novo protein design, optimizing an existing sequence, or balancing an entire pathway.

The core of the process is in silico design and prediction, where the selected tool is used to generate candidate DNA blueprints. For proteins, this might involve using a structure-based tool like ProteinMPNN to create sequences that fold correctly, followed by a stability predictor like Stability Oracle to filter out destabilizing variants. For pathways, a tool like iPROBE can predict the optimal combination and expression level of enzymes. This step is increasingly powerful with zero-shot predictions, where models can generate functional designs without additional training on specific experimental data, potentially collapsing the number of required DBTL cycles [4]. Finally, the design validation step involves using other computational methods (e.g., AlphaFold for structure prediction) to provide a preliminary check before moving to physical construction.
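The generate-then-filter step described above can be sketched as a small pipeline. This is an illustrative sketch only: it does not call ProteinMPNN, Stability Oracle, or any real tool API. The stability predictor is passed in as a plain callable, the `Candidate` type and `filter_candidates` function are hypothetical names, and the sign convention (negative ΔΔG meaning stabilizing) is an assumption that must be checked against whichever predictor is actually used.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass(frozen=True)
class Candidate:
    name: str
    sequence: str


def filter_candidates(
    candidates: Iterable[Candidate],
    predict_ddg: Callable[[str], float],
    max_ddg: float = 0.0,
) -> List[Candidate]:
    """Keep candidates whose predicted ddG is at most max_ddg, most stable first.

    Assumes negative ddG = stabilizing; flip the comparison if the chosen
    predictor uses the opposite convention.
    """
    scored = [(predict_ddg(c.sequence), c) for c in candidates]
    kept = [(ddg, c) for ddg, c in scored if ddg <= max_ddg]
    kept.sort(key=lambda pair: pair[0])
    return [c for _, c in kept]


if __name__ == "__main__":
    # Hypothetical sequences and scores standing in for real design output.
    designs = [
        Candidate("v1", "MKTAYIAK"),
        Candidate("v2", "MKTAYLAK"),
        Candidate("v3", "MKTPYIAK"),
    ]
    toy_scores = {"MKTAYIAK": -0.4, "MKTAYLAK": -1.2, "MKTPYIAK": 2.1}
    for c in filter_candidates(designs, toy_scores.__getitem__):
        print(c.name)
```

Keeping the predictor as an injected callable means the same filter can be reused when one stability model is swapped for another.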

Diagram 1: Computational design workflow.

Experimental Validation of Designs

Computational designs must be experimentally validated to assess their real-world functionality. This requires transitioning from digital blueprints to physical DNA, a process greatly accelerated by modern high-throughput methods.

High-Throughput Build and Test Platforms

The Build phase involves the physical assembly of the designed DNA constructs. This is often achieved in a high-throughput manner using automated biofoundries, which are facilities that automate design-build-test cycles for synthetic biology [24]. These foundries leverage automation and robotic liquid handling to assemble combinatorial libraries of genetic constructs rapidly, overcoming the limitations of manual, labor-intensive cloning methods [25] [1].