Taming the Scale: Mastering Parameter Optimization in Biological Systems from Models to Therapeutics


Abstract

Parameter scaling issues present a critical and often underestimated challenge in biological optimization, impacting fields from systems biology to drug development. This article provides a comprehensive guide for researchers and scientists on understanding, addressing, and overcoming these challenges. We explore the foundational principles of why biological parameters span multiple scales and how this affects model identifiability and optimizer performance. The content systematically reviews modern optimization methodologies—from evolution strategies and metaheuristics to machine learning approaches—that are specifically designed to handle ill-scaled parameters. Through practical troubleshooting frameworks, validation protocols, and comparative analyses of real-world case studies in kinetic modeling and bioprocess optimization, we deliver actionable strategies for achieving robust, reproducible, and computationally efficient parameter estimation in complex biological systems.

The Scaling Dilemma: Why Biological Parameters Defy Simple Optimization

Defining Parameter Scaling and Its Prevalence in Biological Systems

Definition and Core Concepts

What is Parameter Scaling? Parameter scaling is a computational and theoretical practice used to make complex biological models more tractable by adjusting system parameters—such as population sizes, reaction rates, and generation times—by a constant factor. This technique aims to preserve the model's essential dynamics and output metrics while significantly improving computational efficiency [1].

How does parameter scaling relate to the "parameter space" that living systems must navigate? Biological systems themselves must "set" a vast number of internal parameters—like molecule concentrations and interaction strengths—to function effectively. The process of how organisms navigate this high-dimensional "parameter space" through adaptation, learning, and evolution is a central question in biological physics. Computational parameter scaling is a tool scientists use to study these real biological processes [2].

What is the fundamental trade-off involved in parameter scaling? The primary trade-off is between computational efficiency and model accuracy. While scaling down population sizes and generation times reduces runtime and memory usage, aggressive scaling can distort genetic diversity and dynamics, leading to deviations from the intended model and empirical observations [1].

Prevalence and Manifestations in Biological Systems

Parameter scaling, and the need to manage numerous parameters, appears across multiple scales of biological research.

Table 1: Prevalence of Parameter Scaling Across Biological Domains

| Biological Domain | Manifestation of Parameter Scaling/Management | Key Parameters Involved |
|---|---|---|
| Population Genetics [1] | Scaling down population sizes and generation times in forward-time simulations. | Population size (N), mutation rate (μ), recombination rate (r), selection coefficients (s). |
| Intracellular Signaling & Whole-Cell Modeling [3] [4] | Estimating thousands of unknown reaction rate constants and initial concentrations from experimental data to build large-scale dynamic models. | Reaction rate constants, initial concentrations, scaling/offset factors for heterogeneous data. |
| Neural & Sensory Systems [2] | Biological adaptation adjusting internal parameters (e.g., ion channel expression) to maintain function across a range of environmental stimuli. | Ion channel densities, molecular concentrations, synaptic weights. |
| Epidemiology [5] | Identifying composite indices from multiple parameters that govern system-level outcomes like final epidemic size. | Force of infection, latent period, infectious period, individual mobility rates. |

Troubleshooting Common Parameter Scaling Issues

FAQ: Our scaled population genetics simulations show depleted genetic diversity and distorted Site Frequency Spectra (SFS). What might be going wrong?

- Potential Cause: Excessively large scaling factors can artificially intensify the effects of natural selection. Strongly scaled simulations may cause stronger negative selection against deleterious mutations, which amplifies background selection and purges linked neutral mutations [1].

- Solution:

- Validate with Unscaled Models: If computationally feasible, run a small subset of simulations without scaling to establish a baseline.

- Use Moderate Scaling Factors: The distortion of diversity metrics is often more severe with "dramatic scaling" (e.g., a factor of 1000) compared to "moderate scaling" (e.g., a factor of 10) [1].

- Check Burn-in Length: Ensure your simulation's initial "burn-in" period is long enough (e.g., 20N generations) for lineages to fully coalesce, as a 10N heuristic may be insufficient and alter expected linkage disequilibrium patterns [1].

FAQ: When estimating parameters for a large-scale signaling model, the optimization is slow and fails to converge. How can we improve this?

- Potential Cause: The high dimensionality of the optimization problem, often exacerbated by introducing numerous scaling and offset parameters to align model outputs with relative experimental data, can severely slow down optimizer performance [3] [6].

- Solution:

- Use Data-Driven Normalization (DNS): Instead of adding scaling factors as unknown parameters, normalize your model simulations in the exact same way your experimental data were normalized (e.g., to a control or maximum value). This approach reduces the number of parameters and has been shown to improve convergence speed and reduce non-identifiability [6].

- Employ Hierarchical Optimization: For problems where scaling parameters are necessary, use a hierarchical approach that analytically computes optimal scaling and offset parameters for a given set of dynamic model parameters. This method efficiently handles the problem structure and can be combined with adjoint sensitivity analysis for large-scale models [3].
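The data-driven normalization idea can be sketched in a few lines. This is a minimal illustration, assuming the data were normalized to their maximum value; the function names `normalize_to_max` and `dns_residuals` and all numbers are hypothetical and are not taken from PEPSSBI or from [6].

```python
import numpy as np

def normalize_to_max(values):
    """Divide a trajectory by its maximum, mirroring a 'relative to max' data normalization."""
    values = np.asarray(values, dtype=float)
    return values / values.max()

def dns_residuals(simulated, measured_normalized):
    """Residuals between the normalized simulation and already-normalized data.

    No scaling factor is estimated: the simulation is put on the data's
    relative scale by applying the same normalization to it.
    """
    return normalize_to_max(simulated) - np.asarray(measured_normalized, dtype=float)

# Hypothetical example: the simulation is in nM, the data are in arbitrary units.
simulated_nM = np.array([2.0, 10.0, 20.0, 15.0])
measured_au = np.array([130.0, 640.0, 1300.0, 980.0])
measured_normalized = normalize_to_max(measured_au)

residuals = dns_residuals(simulated_nM, measured_normalized)
print(np.round(residuals, 4))

# Rescaling the simulation by any positive constant leaves the residuals unchanged,
# which is why no scaling parameter needs to be fitted.
rescaled_residuals = dns_residuals(7.0 * simulated_nM, measured_normalized)
```

Because the residuals are invariant to the absolute scale of the simulation, the optimizer only has to search over the dynamic parameters.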

FAQ: In our whole-cell model, we face the challenge of combining independently parametrized submodels. What are the key considerations?

- Potential Cause: Inaccuracies in linking different submodels can propagate uncertainty and compromise the entire compound model, even if each submodel is well-tuned on its own [4].

- Solution:

- Define Shared Variables Carefully: Establish a consistent set of cell variables (e.g., metabolite concentrations, energy levels) that are shared across submodels.

- Account for Extrinsic Noise: Recognize that cellular context matters. Model cell-to-cell heterogeneity by allowing for variations in rate parameters between simulated instances, which can capture the leading effects of unmodeled extrinsic factors [4].

- Perform Integrated Sensitivity Analysis: After coupling submodels, conduct a global sensitivity analysis to identify which parameters and inter-model connections have the largest impact on the overall output.

Experimental Protocols & Workflows

Protocol 1: Assessing Scaling Effects in Population Genetic Simulations

This protocol is adapted from studies investigating scaling in forward-time simulations of organisms like Drosophila melanogaster and humans [1].

1. Objective: To systematically quantify the effects of different scaling factors on genetic diversity metrics and computational efficiency.

2. Materials & Software:

- Software: A forward-in-time simulation platform like SLiM.

- Data: An empirically inferred demographic model for your study species.

3. Experimental Workflow:

- Step 1 - Baseline Simulation: Run an unscaled simulation with the true, empirically estimated parameters (population size N, mutation rate μ, recombination rate r).

- Step 2 - Define Scaling Factors: Choose a range of scaling factors (κ), from moderate (e.g., 10) to aggressive (e.g., 100 or 1000).

- Step 3 - Parameter Scaling: For each scaling factor κ, create a new parameter set:

  - Scaled Population Size: N_scaled = N / κ

  - Scaled Generation Time: t_scaled = t / κ

  - Scaled Mutation Rate: μ_scaled = μ * κ

  - Scaled Recombination Rate: r_scaled = r * κ

- Step 4 - Run Scaled Simulations: Execute multiple simulation replicates for each scaled parameter set.

- Step 5 - Data Collection: For each run, record:

  - Genetic Diversity Metrics: Expected heterozygosity, Watterson's theta, the Site Frequency Spectrum (SFS), and Linkage Disequilibrium (LD).

  - Computational Metrics: Runtime and memory usage.

- Step 6 - Analysis: Compare the diversity metrics from scaled simulations against the unscaled baseline and, if available, real empirical data. Correlate computational savings with the loss of accuracy.

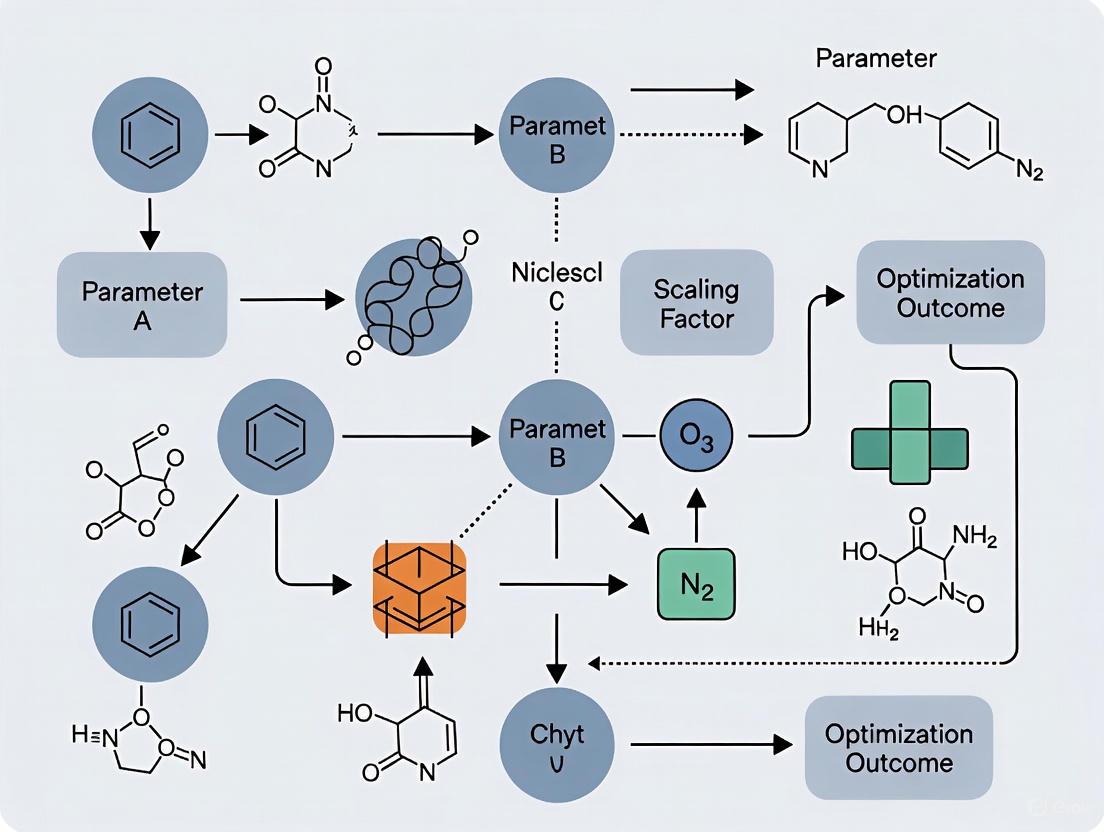

Diagram 1: Scaling assessment workflow.
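The scaling rules in Step 3 can be written down as a small helper. This is a sketch only: the `PopGenParams` container, the `scale_params` function, and the unscaled values are hypothetical, and the result would still need to be translated into a script for the simulator you use.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PopGenParams:
    pop_size: int          # N
    mutation_rate: float   # mu, per site per generation
    recomb_rate: float     # r, per site per generation
    generations: int       # simulated duration t, in generations

def scale_params(p, kappa):
    """Apply the Step 3 scaling rules for a scaling factor kappa."""
    return PopGenParams(
        pop_size=max(1, round(p.pop_size / kappa)),
        mutation_rate=p.mutation_rate * kappa,
        recomb_rate=p.recomb_rate * kappa,
        generations=max(1, round(p.generations / kappa)),
    )

# Hypothetical unscaled values, for illustration only.
base = PopGenParams(pop_size=10_000, mutation_rate=1e-8,
                    recomb_rate=1e-8, generations=100_000)

for kappa in (1, 10, 100):
    s = scale_params(base, kappa)
    # The products N*mu and N*r are what the scaling is meant to hold constant.
    print(kappa, s.pop_size, s.generations,
          s.pop_size * s.mutation_rate, s.pop_size * s.recomb_rate)
```

Checking that N·μ and N·r are unchanged for each κ is a cheap sanity test before launching the scaled runs; it does not guard against the selection-related distortions described in the troubleshooting section.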

Protocol 2: Parameter Estimation for Mechanistic ODE Models with Relative Data

This protocol outlines a hierarchical approach for parameterizing large-scale dynamic models, such as signaling networks, using heterogeneous relative data [3].

1. Objective: To efficiently estimate dynamic (kinetic) parameters and static (scaling, offset) parameters from relative measurements (e.g., Western blots, proteomics).

2. Materials & Software:

- Software: A modeling environment capable of adjoint sensitivity analysis (e.g., Data2Dynamics, pyPESTO).

- Data: Time-course measurements of observables (e.g., phosphoprotein levels) under different experimental conditions.

3. Experimental Workflow:

- Step 1 - Model Definition: Formulate your ODE model: dx/dt = f(x, θ, u), where θ are dynamic parameters.

- Step 2 - Define Observation Function: Link model states x to observables y via an unscaled function h̃(x, θ).

- Step 3 - Hierarchical Optimization:

  - Outer Loop (Dynamic Parameters): An optimizer proposes a set of dynamic parameters θ.

  - Inner Loop (Static Parameters): For the given θ, analytically compute the optimal scaling (s), offset (b), and error model (σ) parameters that minimize the discrepancy between s·h̃(θ) + b and the experimental data ȳ.

  - Gradient Calculation: Use adjoint sensitivity analysis to compute the gradient of the objective function with respect to θ, which is efficient for high-dimensional parameter spaces.

  - Iterate: The outer loop optimizer uses this gradient to propose a new, improved θ.

- Step 4 - Validation: Assess the goodness-of-fit and parameter identifiability using profile likelihood or other methods.

Diagram 2: Hierarchical optimization workflow.
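For intuition, the inner loop has a closed-form solution in the simplest case. The sketch below assumes Gaussian noise with a single constant σ and one observable, so that the optimal s and b are the ordinary least-squares slope and intercept of the data against the unscaled simulation; the function name `optimal_scale_offset` and the numbers are hypothetical, and the formulas in [3] cover more general settings.

```python
import numpy as np

def optimal_scale_offset(h, y):
    """Closed-form inner-loop solution for y ≈ s*h + b under constant Gaussian noise.

    h: unscaled model output for a fixed set of dynamic parameters theta.
    y: measured data on a relative scale.
    Assumes h is not constant (otherwise s is undefined).
    """
    h = np.asarray(h, dtype=float)
    y = np.asarray(y, dtype=float)
    h_centered = h - h.mean()
    s = float(h_centered @ (y - y.mean()) / (h_centered @ h_centered))
    b = float(y.mean() - s * h.mean())
    residuals = y - (s * h + b)
    sigma = float(np.sqrt(np.mean(residuals ** 2)))  # maximum-likelihood noise estimate
    return s, b, sigma

# Hypothetical data generated with s = 2.5 and b = 0.3 and no noise.
h_sim = np.array([0.0, 1.0, 2.0, 3.0])
y_obs = 2.5 * h_sim + 0.3

s_opt, b_opt, sigma_opt = optimal_scale_offset(h_sim, y_obs)
print(s_opt, b_opt, sigma_opt)
```

The outer optimizer then only sees the dynamic parameters θ, since s, b, and σ are recomputed from scratch each time θ changes.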

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Parameter Scaling and Estimation

| Tool / Reagent | Function / Application | Key Feature / Consideration |
|---|---|---|
| SLiM (Selection on Linked Mutations) [1] | A powerful platform for forward-time population genetic simulations. | Allows for explicit scripting of complex demographic and selective scenarios. Crucial for testing scaling effects. |
| Data2Dynamics [3] [6] | A MATLAB-based modeling environment for parameter estimation in dynamic systems biology models. | Supports advanced techniques like hierarchical optimization and adjoint sensitivity analysis for large models. |
| COPASI [6] | A standalone software for simulating and analyzing biochemical networks and their dynamics. | User-friendly interface; suitable for models of small to medium complexity. |
| PEPSSBI [6] | A software tool designed to support parameter estimation with a focus on data-driven normalization of simulations (DNS). | Helps avoid the identifiability issues introduced by scaling factor parameters. |
| Hierarchical Optimization Framework [3] | A mathematical approach, not a specific software, that can be implemented in code. | Separates the estimation of dynamic and static parameters, drastically improving optimizer performance for large-scale models. |

Frequently Asked Questions

What are the most common symptoms of an ill-conditioned problem in biological optimization? The most common symptoms include extreme sensitivity of the model's output to minute changes in input parameters, wildly varying parameter estimates upon repeated optimization runs, and a significant disconnect between training loss and validation performance, indicating poor generalizability. In practice, this can manifest as a drug discovery model that performs perfectly on training data but fails to predict activity in a real biological assay [7].

My model's loss is not decreasing and fluctuates wildly. Is this a convergence issue? Yes, this pattern typically indicates a failure to converge [8]. Common causes are a learning rate that is too high, causing the optimization process to overshoot the minimum, or poorly scaled input features where variables with larger numerical ranges dominate the gradient, destabilizing the learning process [8].

How can I distinguish between slow convergence and premature stagnation? Slow convergence shows a steady but frustratingly slow decrease in the loss function over many epochs. Premature stagnation occurs when the loss plateaus at a high value and shows no further improvement, often because the optimizer is trapped in a local minimum or a saddle point. Plotting the cost function over epochs is the primary method for diagnosing this issue [8].

Why does my multi-target drug discovery model fail to generalize despite good training performance? This is a classic sign of overfitting, often driven by high-dimensional parameter spaces and data sparsity [9]. When the number of model parameters is large relative to the training data (e.g., predicting interactions for millions of compounds against thousands of targets), the model can memorize noise rather than learn the underlying biological principles, leading to poor performance on new, unseen data [7] [9].

What is the role of feature scaling in preventing slow convergence? Feature scaling is critical. Without it, features with larger numerical ranges can dominate the gradient calculations, leading to an ill-conditioned optimization landscape. This forces the optimizer to take inefficient, zig-zagging steps toward the minimum, drastically slowing convergence. Standardization (giving features a mean of zero and variance of one) or normalization (scaling to a fixed range) ensures all input features contribute equally to the learning process [8].
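Both transformations mentioned above are short to implement. The following is a minimal NumPy sketch; the function names and the example matrix are made up for illustration.

```python
import numpy as np

def standardize(X):
    """Z-score each column: zero mean and unit variance."""
    X = np.asarray(X, dtype=float)
    mean = X.mean(axis=0)
    std = X.std(axis=0)
    std[std == 0] = 1.0   # constant columns become all zeros instead of dividing by zero
    return (X - mean) / std

def min_max_scale(X):
    """Rescale each column linearly to the range [0, 1]."""
    X = np.asarray(X, dtype=float)
    lo = X.min(axis=0)
    span = X.max(axis=0) - lo
    span[span == 0] = 1.0
    return (X - lo) / span

# Hypothetical features on very different scales, e.g. a rate constant and a copy number.
X = np.array([[0.001,  5000.0],
              [0.002, 15000.0],
              [0.004, 10000.0]])

Z = standardize(X)
M = min_max_scale(X)
print(Z.mean(axis=0), Z.std(axis=0))
print(M.min(axis=0), M.max(axis=0))
```

In practice the mean, standard deviation, minimum, and range should be computed on the training split only and then reused for validation and test data.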

Troubleshooting Guides

Diagnosing and Remedying Ill-Conditioning

Ill-conditioning arises when a problem's solution is hypersensitive to its inputs, creating a highly irregular optimization landscape.

Primary Symptoms:

- Model performance or parameter values change drastically with tiny changes to the input data or initial conditions.

- The optimization process is unstable and fails to find a consistent solution across multiple runs.

- In physical simulations, vastly different material properties or poor element quality (e.g., high aspect ratios) are known to cause ill-conditioning [10].

Diagnostic Steps:

- Condition Number Estimation: Calculate the condition number of your data's covariance matrix. A very high number (e.g., >10^12) indicates ill-conditioning.

- Sensitivity Analysis: Perform a global sensitivity analysis (GSA), as used in biogeochemical modeling, to identify which parameters your model is most sensitive to. Parameters with dominant "Total effects" are often key contributors to ill-conditioning [11].

- Visualization: For high-dimensional models, use projection methods like PCA to visualize the parameter space; a long, narrow valley suggests ill-conditioning.
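The condition number check from the first diagnostic step can be sketched as follows. The helper name `covariance_condition_number` and the toy data are assumptions; the data are constructed so that the two columns are uncorrelated and differ only in scale.

```python
import numpy as np

def covariance_condition_number(X):
    """Condition number (2-norm) of the column covariance matrix of X."""
    X = np.asarray(X, dtype=float)
    cov = np.cov(X, rowvar=False)   # columns are variables, rows are samples
    return np.linalg.cond(cov)

# Two uncorrelated toy variables with equal variance.
a = np.array([1.0, -1.0, 1.0, -1.0])
b = np.array([1.0, 1.0, -1.0, -1.0])

raw = np.column_stack([a, 1e6 * b])   # second variable recorded in units a million times smaller
rescaled = np.column_stack([a, b])    # same information, comparable scales

cond_raw = covariance_condition_number(raw)
cond_rescaled = covariance_condition_number(rescaled)
print(f"raw: {cond_raw:.3e}, rescaled: {cond_rescaled:.3e}")
```

Here the ill-conditioning comes purely from the choice of units, which is the case that rescaling can fix; a high condition number that persists after rescaling points to genuinely correlated variables instead.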

Solutions and Best Practices:

- Robust Regularization: Implement explicit constraints or regularization techniques like L1 (Lasso) or L2 (Ridge) to penalize extreme parameter values and improve stability [7] [8].

- Advanced Solvers: For certain well-conditioned, blocky structures (e.g., large solid models in finite element analysis), a domain decomposition-based iterative solver can be effective, though it may fail for ill-conditioned systems [10].

- Data Preprocessing: Rigorously clean your data, handle missing values, and apply feature scaling to ensure all input variables are on a comparable scale [8].

Addressing Slow Convergence

Slow convergence is characterized by a consistent but unacceptably gradual reduction in the loss function over many iterations.

Primary Symptoms:

- The loss function decreases at a very slow, often linear rate, requiring an impractical number of epochs to reach a minimum.

- The optimization process appears to be moving in the right direction but is inefficient.

Diagnostic Steps:

- Analyze Learning Curves: Plot the training and validation loss against the number of epochs/iterations to confirm a slow but steady descent.

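- Check Gradient Norms: Monitor the norms of the gradients. Persistently small norms point to a flat or poorly scaled region of the landscape, while healthy gradients paired with tiny parameter updates point to an overly conservative step size.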

Solutions and Best Practices:

- Adaptive Learning Rates: Replace basic Stochastic Gradient Descent (SGD) with adaptive optimizers like Adam, AdamW, or RMSprop. These algorithms automatically adjust the learning rate for each parameter, which can significantly speed up progress in ill-conditioned landscapes [7] [8].

- Learning Rate Tuning: Use techniques like the Learning Rate Range Test to find an optimal value. This involves training with exponentially increasing learning rates and identifying the "elbow" of the loss curve [8].

- Second-Order Methods: Where computationally feasible, consider methods that incorporate curvature information (e.g., natural gradient) to navigate pathological curvatures more efficiently [7].

Escaping Premature Stagnation

Premature stagnation happens when the optimization process halts at a suboptimal solution, such as a local minimum or a saddle point.

Primary Symptoms:

- The loss function plateaus at a high value and shows no further improvement for many consecutive epochs.

- The model exhibits underfitting, failing to capture key patterns in the training data itself.

Diagnostic Steps:

- Loss Plateau Analysis: Identify the point at which the learning curve flattens.

- Local Geometry Analysis: For low-dimensional problems, visualize the loss landscape around the stagnant point to check for local minima or saddle points.

Solutions and Best Practices:

- Learning Rate Schedules: Use learning rate schedules (e.g., cosine annealing) or adaptive optimizers to introduce "jolts" that can help the model escape shallow local minima [7].

- Momentum: Incorporate momentum into your optimizer. This helps the optimizer accelerate through regions of small, consistent gradients and overcome flat regions and saddle points.

- Alternative Algorithms: For complex hyperparameter tuning and feature selection tasks, population-based approaches like Covariance Matrix Adaptation Evolution Strategy (CMA-ES) can be highly effective. These stochastic search strategies are less prone to getting trapped in local optima [7].

- Model Complexity Adjustment: Increase model complexity (e.g., add more layers or neurons in a neural network) if the current model is too simple to capture the underlying relationships in the data [8].
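As an illustration of the learning rate schedule mentioned above, the sketch below implements cosine annealing with periodic warm restarts, where the restart back to the maximum rate provides the "jolt". The function name, cycle length, and rate values are arbitrary choices for the example.

```python
import math

def cosine_annealing_lr(step, cycle_length, lr_max, lr_min=0.0):
    """Cosine-annealed learning rate that restarts at lr_max every `cycle_length` steps."""
    t = step % cycle_length   # position inside the current cycle
    return lr_min + 0.5 * (lr_max - lr_min) * (1.0 + math.cos(math.pi * t / cycle_length))

# Print the schedule at a few steps across two cycles of 100 steps each.
for step in (0, 25, 50, 75, 99, 100, 150):
    print(step, round(cosine_annealing_lr(step, cycle_length=100, lr_max=0.1, lr_min=0.001), 5))
```

Deep learning frameworks ship their own schedulers for this; the standalone function is only meant to make the shape of the schedule explicit.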

Optimization Methods for Biological Research: A Comparative Guide

The table below summarizes key optimization algorithms, their characteristics, and their applicability to common challenges in biological data analysis.

| Method Name | Type | Key Mechanism | Pros | Cons | Best for Biological Challenges |
|---|---|---|---|---|---|
| AdamW [7] | Gradient-based | Decouples weight decay from gradient scaling. | Better generalization than Adam; resolves ineffective regularization. | Can be sensitive to the initial learning rate. | Training deep learning models on molecular data (e.g., drug-target interaction prediction). |
| AdamP [7] | Gradient-based | Projects gradients to avoid ineffective updates for scale-invariant parameters (e.g., in BatchNorm). | Improves optimization in modern deep learning architectures. | More complex implementation. | Training models with normalization layers, common in bioinformatics. |
| LION [7] | Gradient-based | Sign-based optimizer; uses momentum and weight decay. | Memory-efficient; reported to outperform AdamW on some tasks. | Newer algorithm with less established track record. | Large-scale model training with memory constraints. |
| CMA-ES [7] | Population-based | Covariance Matrix Adaptation Evolution Strategy: adapts the covariance matrix of a multivariate normal search distribution. | Excellent for non-convex, ill-conditioned problems; does not require gradients. | Computationally expensive per iteration; slower for high-dimensional problems. | Hyperparameter tuning and optimizing complex, noisy biological simulation parameters [11]. |
| Importance Sampling (iIS) [11] | Bayesian/Iterative | Iterative sampling to constrain model parameters to data. | Provides full posterior distributions, quantifying uncertainty. | Computationally intensive; requires careful setup. | Parameter optimization for complex mechanistic models (e.g., biogeochemical models) [11]. |
| Iterative (FETI) Solver [10] | Linear solver | Domain decomposition; solves sub-domains independently. | Can be faster and use less disk space than direct solvers for very large, well-conditioned models. | Fails on ill-conditioned models (e.g., with thin shells or weak springs). | Large-scale, well-conditioned physical simulations in biomedical engineering. |

Experimental Protocol: Parameter Optimization for a Biogeochemical Model

This protocol is adapted from a study that optimized 95 parameters in a PISCES biogeochemical model using iterative Importance Sampling (iIS) and BGC-Argo float data [11].

- Objective: To constrain a high-dimensional biogeochemical model using observational data to reduce prediction error and quantify parameter uncertainty.

- Key Materials/Data:

  - PISCES Model: A complex marine biogeochemical model with 95 tunable parameters.

  - BGC-Argo Float Data: A rich, multi-variable dataset providing 20 biogeochemical metrics (e.g., chlorophyll, oxygen, nitrate) for a site in the North Atlantic.

- Methodology:

  - Global Sensitivity Analysis (GSA): Perform a GSA to identify the most sensitive parameters. This step is computationally expensive (~40x the cost of the optimization itself) but is crucial for understanding the model's behavior. The study found zooplankton dynamics parameters to be most sensitive [11].

  - Strategy Selection: Choose an optimization strategy:

    - Main Effects: Optimize only the subset of parameters with the strongest direct influence.

    - Total Effects: Optimize a larger subset that includes parameters with strong non-linear interactions.

    - All-Parameters: Optimize all parameters simultaneously (the recommended strategy for robust uncertainty quantification) [11].

  - Iterative Importance Sampling (iIS):

    - Initialization: Define prior distributions for all parameters.

    - Sampling: Draw a large ensemble of parameter sets from the priors.

    - Simulation & Evaluation: Run the model for each parameter set and calculate the Normalized Root Mean Square Error (NRMSE) against the BGC-Argo data.

    - Weighting & Resampling: Assign importance weights to each parameter set based on its NRMSE. Resample the ensemble based on these weights to create a new, refined posterior distribution.

    - Iteration: Repeat the sampling, evaluation, and resampling steps until the posterior distribution stabilizes and the NRMSE is minimized.

- Expected Outcome: The published study achieved a 54-56% reduction in NRMSE and a 16-41% reduction in parameter uncertainty, demonstrating the framework's power for refining complex biological models [11].
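The sample, evaluate, weight, and resample loop can be illustrated on a toy problem. The sketch below is a generic simplification and not the algorithm or configuration used in [11]: the two-parameter decay model stands in for an expensive simulator, and the prior bounds, the Gaussian-style weight function, the `tolerance`, and the jitter step are all assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)

def model(params, t):
    """Toy stand-in for an expensive simulator: a * exp(-k * t)."""
    a, k = params
    return a * np.exp(-k * t)

def nrmse(pred, obs):
    """Root mean square error normalized by the range of the observations."""
    return np.sqrt(np.mean((pred - obs) ** 2)) / (obs.max() - obs.min())

t = np.linspace(0.0, 5.0, 20)
obs = model((2.0, 0.5), t)            # synthetic "observations" with known truth
lower = np.array([0.5, 0.05])         # assumed uniform prior bounds for (a, k)
upper = np.array([5.0, 2.0])

n_samples, n_iter, tolerance = 2000, 5, 0.1
ensemble = rng.uniform(lower, upper, size=(n_samples, 2))   # draw from the prior
history = []

for _ in range(n_iter):
    errors = np.array([nrmse(model(p, t), obs) for p in ensemble])
    history.append(errors.mean())
    weights = np.exp(-0.5 * (errors / tolerance) ** 2)       # assumed weight function
    weights /= weights.sum()
    idx = rng.choice(n_samples, size=n_samples, p=weights)   # importance resampling
    resampled = ensemble[idx]
    # Small jitter keeps the ensemble from collapsing onto duplicated samples.
    jitter = rng.normal(0.0, 0.1 * resampled.std(axis=0), size=resampled.shape)
    ensemble = np.clip(resampled + jitter, lower, upper)

print("mean NRMSE per iteration:", np.round(history, 3))
print("ensemble mean (a, k):", np.round(ensemble.mean(axis=0), 2))
print("ensemble std  (a, k):", np.round(ensemble.std(axis=0), 2))
```

The spread of the final ensemble gives a rough picture of the remaining parameter uncertainty, which is the main reason to prefer a sampling approach over a single point estimate.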

Workflow: From Problem to Solution in Biological Optimization

The diagram below outlines a logical workflow for diagnosing and addressing the computational consequences discussed in this guide.

This table details key data resources and computational tools essential for optimization tasks in biological and drug discovery research.

| Item Name | Type | Function in Optimization | Example in Context |
|---|---|---|---|
| BGC-Argo Float Data [11] | Observational Dataset | Provides the ground-truth data against which model predictions are optimized, enabling parameter constraint. | Used as the target for minimizing NRMSE in the PISCES biogeochemical model optimization [11]. |
| ChEMBL [9] | Bioactivity Database | Provides curated drug-target interaction data used to train and validate machine learning models. | Serves as a source of binding affinity labels for training a multi-target drug prediction model [9]. |
| DrugBank [9] | Drug & Target Database | Offers comprehensive information on drug mechanisms and targets, used for feature engineering and model interpretation. | Used to build a knowledge graph of drug-target-disease relationships for network pharmacology analysis [9]. |
| TensorFlow / PyTorch [7] | ML Framework | Provides the computational backbone with automatic differentiation, essential for calculating gradients in gradient-based optimization. | Used to implement and train deep learning models for tasks like molecular property prediction [7]. |
| Global Sensitivity Analysis (GSA) [11] | Computational Method | Identifies which model parameters have the greatest influence on the output, guiding which parameters to prioritize during optimization. | Prerequisite for the PISCES model optimization to identify sensitive zooplankton parameters [11]. |
| Iterative Importance Sampling (iIS) [11] | Optimization Algorithm | A Bayesian method to fit complex models to data while providing full posterior uncertainty estimates for parameters. | The core algorithm used to optimize all 95 parameters of the PISCES model [11]. |

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between structurally and practically non-identifiable parameters?

A structurally non-identifiable parameter has a correlation that is intrinsic to the model formulation and is independent of the control input parameters; it cannot be resolved with additional or more accurate measurements. In contrast, a practically non-identifiable parameter has a correlation that depends on the control input parameters; its identifiability can potentially be improved with improved experimental design or additional, higher-quality data [12].

Q2: What are the primary causes of practical non-identifiability in kinetic models?

The two main sources are:

- Lack of Influence: The parameter has no significant influence on the measured observables.

- Parameter Interdependence: The effect on the observables of a change in one parameter can be compensated by changes in other parameters [13]. Both issues are related to the sensitivities of the observables to parameter changes.

Q3: Which optimization strategies are effective for parameter estimation in large-scale models?

Robust deterministic local optimization methods (e.g., nl2sol, fmincon), when embedded within a global search strategy like multi-start (MS) or enhanced scatter search (eSS), are highly effective. Combinations such as eSS with fmincon-ADJ (using adjoint sensitivities) or eSS with nl2sol-FWD (using forward sensitivities) have been shown to clearly outperform gradient-free alternatives [12].

Q4: How can I visualize the relationships between identifiable and non-identifiable parameters in a complex model?

The MATLAB toolbox VisId uses a collinearity index and integer optimization to find the largest groups of uncorrelated parameters and identify small groups of highly correlated ones. The results can be visualized using Cytoscape, which shows the identifiable and non-identifiable parameter groups together with the model structure in the same graph [13].

Troubleshooting Guides

Issue 1: Poor Convergence or Infeasible Parameter Estimates During Model Calibration

This is a common symptom of unidentifiable parameters and ill-posed inverse problems.

- Diagnosis: The optimization algorithm fails to find a consistent minimum, or the estimated parameters have unrealistically large confidence intervals. This is often due to parameter correlation (equifinality, where different parameter combinations yield similar model outputs) or insufficient data to constrain all parameters [12].

- Solution Protocol:

- Perform a Practical Identifiability Analysis: Before parameter estimation, use tools like VisId to detect high-order relationships among parameters [13].

- Apply Regularization: Incorporate Tikhonov regularization into the objective function (e.g., Q_LS(θ) + α Γ(θ)) to penalize unrealistic parameter values and make the ill-posed problem well-posed [13] [12].

- Focus on Identifiable Subsets: Calibrate the model by first estimating the largest subset of identifiable parameters, then conditionally estimating the remaining parameters [13].
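To make the regularized objective concrete, the sketch below assumes the common penalty form Γ(θ) = ‖θ − θ_ref‖², where θ_ref is a hypothetical vector of reference (prior guess) values; the cited works may use a weighted or otherwise different penalty. The toy model depends only on the product θ₁·θ₂, so the least-squares term alone cannot separate the two parameters.

```python
import numpy as np

def tikhonov_objective(theta, residual_fn, alpha, theta_ref):
    """Q_LS(theta) + alpha * Gamma(theta), with Gamma = squared distance to theta_ref (assumed form)."""
    theta = np.asarray(theta, dtype=float)
    r = residual_fn(theta)
    deviation = theta - np.asarray(theta_ref, dtype=float)
    return float(r @ r + alpha * (deviation @ deviation))

# Toy non-identifiable model: y = theta1 * theta2 * x, so only the product is constrained by data.
x = np.array([1.0, 2.0, 3.0])
y = 6.0 * x

def residuals(theta):
    return theta[0] * theta[1] * x - y

theta_ref = np.array([2.0, 2.0])   # hypothetical prior guess
alpha = 0.1

fit_a = tikhonov_objective([2.0, 3.0], residuals, alpha, theta_ref)
fit_b = tikhonov_objective([1.0, 6.0], residuals, alpha, theta_ref)
print(fit_a, fit_b)
```

Both candidate parameter sets fit the data exactly, yet the penalty assigns them different objective values, which is how the regularizer singles out one solution from a family of equally good fits. The choice of α and θ_ref encodes prior belief and should be reported alongside the estimates.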

Issue 2: Handling High-Dimensional Parameter Spaces with Correlated Parameters

Large-scale models can contain dozens to hundreds of parameters, making traditional analysis methods computationally prohibitive.

- Diagnosis: Parameter estimation is computationally expensive, and global sensitivity analysis reveals that many parameters have weak direct effects but strong interaction effects [11].

- Solution Protocol:

  - Global Sensitivity Analysis (GSA): Conduct a GSA to rank parameters by their influence (main and total effects) on model outputs. This helps prioritize parameters for optimization [11].

  - Compare Optimization Strategies: Evaluate different approaches:

    - Optimizing only parameters with strong direct effects.

    - Optimizing a larger set that includes parameters with strong interaction effects.

    - Optimizing all parameters simultaneously [11].

  - Exploit Data Richness: Use multi-variable datasets (e.g., from BGC-Argo floats) that provide orthogonal constraints, which can help decouple correlated parameters and shift the problem from "correlated equifinality" to "uncorrelated equifinality" [11].

Issue 3: Managing Computational Cost of Identifiability Analysis and Optimization

The computational burden for large-scale models can be a significant bottleneck.

- Diagnosis: The prerequisite global sensitivity analysis or the parameter estimation process itself requires an infeasible amount of time. For example, the GSA can be ~40 times more computationally expensive than the subsequent optimization [11].

- Solution Protocol:

- Efficient Algorithms: Use computationally efficient methods like the collinearity index to quantify parameter correlation [13].

- Hybrid Optimization: Employ metaheuristics like eSS combined with efficient local search methods (e.g., NL2SOL) to accelerate convergence [13] [12].

- Strategy Selection: If a comprehensive uncertainty quantification for unobserved variables is not the primary goal, optimizing a pre-selected subset of sensitive parameters can achieve similar model skill improvement at a much lower computational cost compared to optimizing all parameters [11].

Experimental Protocols & Methodologies

Protocol 1: Workflow for Practical Identifiability Analysis

This protocol, based on the VisId toolbox, outlines the steps to assess which parameters in a model can be uniquely estimated from available data [13].

Table: Key Metrics for Identifiability Analysis

| Metric | Calculation/Description | Interpretation |

|---|---|---|

| Collinearity Index [13] | Quantifies the correlation between parameters in a group. A high index indicates near-linear dependence. | Index ≈ 1: Parameters are uncorrelated. Index >> 1: Parameters are highly correlated (non-identifiable group). |

| Sensitivity Matrix | Matrix of partial derivatives of model outputs with respect to parameters ((\frac{\partial y}{\partial \theta})) [13]. | Reveals parameters with low influence on any observable (a source of non-identifiability). |

| Largest Uncorrelated Subset | Found via integer optimization using the collinearity index [13]. | Represents the largest set of parameters that can be uniquely identified simultaneously. |
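
As a rough illustration of the collinearity index in the table above, the sketch below uses a common definition: normalize each sensitivity column to unit length, then take the inverse square root of the smallest eigenvalue of the resulting Gram matrix. Whether VisId computes it in exactly this way is an assumption, and the function name and toy matrices are hypothetical.

```python
import numpy as np

def collinearity_index(S):
    """Collinearity index for the parameters whose sensitivities are the columns of S.

    S has shape (n_observations, n_parameters). Assumed definition:
    1 / sqrt(smallest eigenvalue of S_norm^T S_norm), with unit-length columns.
    """
    S = np.asarray(S, dtype=float)
    norms = np.linalg.norm(S, axis=0)
    if np.any(norms == 0):
        raise ValueError("A zero column means that parameter has no influence at all.")
    S_norm = S / norms
    # eigvalsh returns eigenvalues in ascending order for symmetric matrices.
    lam_min = np.linalg.eigvalsh(S_norm.T @ S_norm)[0]
    lam_min = max(lam_min, np.finfo(float).tiny)  # guard against round-off below zero
    return 1.0 / np.sqrt(lam_min)

# Toy sensitivity matrices (illustrative only).
t = np.linspace(0.0, 1.0, 50)
S_independent = np.eye(3)[:, :2]                            # orthogonal columns
S_collinear = np.column_stack([t, 2.0 * t + 1e-2 * t**2])   # nearly proportional columns

index_independent = collinearity_index(S_independent)
index_collinear = collinearity_index(S_collinear)
```

Orthogonal columns give an index of 1, while the nearly proportional pair gives a value far above 1, matching the interpretation column of the table.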

Protocol 2: Combined Global-Local Optimization for Parameter Estimation

This protocol describes a hybrid approach to efficiently find global parameter estimates for large-scale models [13] [12].

Table: Popular Optimization Algorithms for Kinetic Models

| Algorithm | Type | Key Features | Implementation |

|---|---|---|---|

| Enhanced Scatter Search (eSS) [12] | Global Metaheuristic | Population-based, effective for global exploration, often combined with local solvers. | AMIGO2, MEIGO |

| NL2SOL [13] [12] | Local (Gradient-based) | Adaptive, nonlinear least-squares; efficient for ODE models. | MATLAB, FORTRAN |

| fmincon (SQP/Interior-Point) [12] | Local (Gradient-based) | Handles constrained optimization; can use adjoint sensitivity analysis. | MATLAB Optimization Toolbox |

| qlopt [12] | Local (Sensitivity-based) | Combines quasilinearization, sensitivity analysis, and Tikhonov regularization. | GitHub |
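
The global-then-local pattern behind this protocol can be sketched with SciPy. Note the substitutions: `differential_evolution` stands in for eSS and `least_squares` stands in for NL2SOL, since neither of the original solvers ships with SciPy. The exponential-decay model, bounds, and data are hypothetical, and parameters are searched in log10 space so that both span a comparable range.

```python
import numpy as np
from scipy.optimize import differential_evolution, least_squares

t = np.linspace(0.0, 10.0, 25)
true_log_theta = np.array([np.log10(5.0), np.log10(0.3)])  # log10(A), log10(k)

def simulate(log_theta):
    """Toy model y = A * exp(-k * t), parameterized in log10 space."""
    A, k = 10.0 ** np.asarray(log_theta, dtype=float)
    return A * np.exp(-k * t)

y_obs = simulate(true_log_theta)  # noise-free synthetic data, for illustration only

def residuals(log_theta):
    return simulate(log_theta) - y_obs

def sse(log_theta):
    r = residuals(log_theta)
    return float(r @ r)

bounds = [(-3.0, 3.0), (-3.0, 3.0)]

# Stage 1: coarse global exploration (stand-in for eSS).
global_result = differential_evolution(sse, bounds, seed=0, maxiter=50,
                                       tol=1e-8, polish=False)

# Stage 2: local nonlinear least-squares refinement (stand-in for NL2SOL).
local_result = least_squares(residuals, global_result.x,
                             bounds=([-3.0, -3.0], [3.0, 3.0]))
```

Passing residuals rather than a scalar cost to the local stage lets the solver exploit the least-squares structure, which is the main reason this class of local method tends to be efficient for ODE model fitting.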

The Scientist's Toolkit: Essential Research Reagents & Software

Table: Key Software Tools for Identifiability Analysis and Optimization

| Tool Name | Function/Brief Explanation | Reference/Source |

|---|---|---|

| VisId | A MATLAB toolbox for practical identifiability analysis and visualization of large-scale kinetic models. | GitHub [13] |

| AMIGO2 | A MATLAB toolbox for model identification and global optimization of dynamic systems, includes the eSS algorithm. | Source [12] |

| Cytoscape | An open-source platform for complex network analysis and visualization; used to visualize parameter relationships. | Website [13] [14] |

| CVODES | A solver for stiff and non-stiff ODE systems with sensitivity analysis capabilities (forward and adjoint). | SUNDIALS Suite [12] |

| qlopt | A software package implementing a quasilinearization-based method with regularization for parameter identification. | GitHub [12] |

Scaling up bioprocesses from the laboratory to industrial production presents a critical challenge in drug development: maintaining predictive accuracy. The transition from small-scale research experiments to large-scale manufacturing introduces physical and chemical constraints that can significantly alter process performance and product quality. When scaling issues are not properly addressed, they can compromise the very models and parameters used to predict outcomes, leading to failed batches, inconsistent product quality, and substantial financial losses [15] [16].

The core of this challenge lies in the fundamental differences between controlled laboratory environments and industrial-scale bioreactors. Parameters that are easily maintained at benchtop scale—such as temperature, pH, dissolved oxygen, and nutrient distribution—become heterogeneous in large vessels. This heterogeneity directly impacts cell growth, metabolism, and ultimately, the critical quality attributes (CQAs) of the biologic product [17]. Understanding and troubleshooting these scaling effects is therefore essential for researchers and scientists working to translate promising discoveries into commercially viable therapies.

FAQs: Scaling and Predictive Accuracy

Q1: Why do my laboratory-scale predictive models fail when applied to large-scale production?

Laboratory-scale models often fail during scale-up due to physical dissimilarities that are not linearly scalable. While a process parameter like power per unit volume (P/V) might be kept constant, other factors like shear stress, mixing time, and oxygen transfer rate do not scale linearly. For instance, increased agitation in large bioreactors can generate damaging shear forces not present in small-scale vessels, altering cell viability and product formation. Furthermore, the reduced surface-area-to-volume ratio in large tanks can limit oxygen transfer, creating anaerobic zones that negatively impact cell metabolism and compromise the predictive accuracy of models built on well-oxygenated lab-scale data [15] [16].

Q2: How does scaling specifically affect parameter estimation in biological models?

Scaling significantly aggravates issues of practical non-identifiability in systems biology models. When moving to larger scales, the number of unknown parameters often increases. Using a common scaling method that introduces scaling factors (SF) to align simulated data with measured data has been shown to increase the number of directions in the parameter space along which parameters cannot be reliably identified. This means that multiple, very different parameter sets can appear to fit the data equally well, rendering predictions unreliable. Adopting a data-driven normalization of simulations (DNS) approach can mitigate this problem by not introducing additional unknown parameters, thus preserving model identifiability and predictive power during scale-up [6].
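
The difference between the two approaches can be made concrete with a minimal sketch. It assumes the measured data are reported relative to their maximum; the saturating toy model and all names are hypothetical and are not taken from [6].

```python
import numpy as np

def simulate(theta, t):
    """Toy saturating response y = A * (1 - exp(-k * t))."""
    A, k = theta
    return A * (1.0 - np.exp(-k * t))

def residuals_sf(params, t, data, simulate):
    """Scaling-factor (SF) approach: the last entry of params is an extra unknown scale."""
    *theta, scale = params
    return scale * simulate(np.array(theta), t) - data

def residuals_dns(theta, t, data_normalised, simulate):
    """DNS approach: normalise the simulation the same way the data were normalised."""
    sim = simulate(np.asarray(theta, dtype=float), t)
    return sim / sim.max() - data_normalised

t = np.linspace(0.1, 10.0, 20)
theta_true = np.array([2.0, 0.4])
raw_data = 3.7 * simulate(theta_true, t)      # measured in arbitrary units
data_normalised = raw_data / raw_data.max()   # the same rule applied inside residuals_dns

r_sf = residuals_sf([*theta_true, 3.7], t, raw_data, simulate)  # 3 unknowns to estimate
r_dns = residuals_dns(theta_true, t, data_normalised, simulate) # 2 unknowns to estimate
```

With SF the optimizer must estimate three numbers, and in this toy case only the product of `A` and `scale` affects the fit. With DNS the search space has one dimension fewer, although the absolute amplitude still cannot be recovered from relative data alone.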

Q3: What are the most common scaling factors that lead to compromised product quality?

The most common scaling factors that impact product quality include:

- Oxygen Transfer Rate (OTR): Becomes a limiting factor in large bioreactors, potentially leading to anaerobic conditions and altered cell metabolism [15].

- Shear Stress: Increases with heightened agitation and aeration and can damage delicate cells, reducing viability and productivity [15].

- Mixing Time: Increases with scale, leading to heterogeneity in nutrients, pH, and temperature, which can affect cell growth and product characteristics like glycan species and post-translational modifications [17] [16].

- Power per Unit Volume (P/V): A common scaling metric, but its preservation alone does not guarantee similar fluid dynamics or mass transfer coefficients (kLa) at different scales [18].
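
A short calculation shows why holding P/V constant does not hold everything else constant. The sketch assumes geometric similarity and fully turbulent mixing with a constant power number, so that power scales as N³D⁵ and volume as D³. These are textbook assumptions introduced here for illustration, not values from the cited sources.

```python
def constant_pv_scaleup(n_small_rps, d_small_m, d_large_m):
    """Impeller speed and tip-speed ratio when P/V is held constant.

    Assumes P ~ N^3 * D^5 and V ~ D^3, so constant P/V implies N ~ D^(-2/3).
    Tip speed ~ N * D, so it grows as D^(1/3).
    """
    ratio = d_large_m / d_small_m
    n_large_rps = n_small_rps * ratio ** (-2.0 / 3.0)
    tip_speed_ratio = ratio ** (1.0 / 3.0)
    return n_large_rps, tip_speed_ratio

# Hypothetical example: impeller diameter increases 8-fold (0.1 m to 0.8 m).
n_large, tip_ratio = constant_pv_scaleup(n_small_rps=5.0, d_small_m=0.1, d_large_m=0.8)
```

Under these assumptions an 8-fold increase in impeller diameter cuts the stirrer speed to a quarter while doubling the tip speed, so the shear environment changes even though P/V does not.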

Troubleshooting Guides

Guide 1: Diagnosing Predictive Model Failure Post-Scale-Up

When your lab-scale model fails to predict large-scale performance, follow this diagnostic workflow to identify the root cause.

[Diagram: Diagnostic Path for Model Failure]

Corrective Actions:

- For Mass Transfer Issues (D1): Develop a scale-down model that mimics large-scale mixing and mass transfer limitations. Use this to re-calibrate your predictive models with data that reflects the heterogeneous conditions of the production bioreactor [16].

- For Non-Identifiable Parameters (D2): Shift from a Scaling Factor (SF) to a Data-driven Normalization of Simulations (DNS) approach in your parameter estimation routine. This reduces the number of unknown parameters and can alleviate non-identifiability, leading to more robust predictions [6].

- For Process Parameter Variability (D3): Implement advanced Process Analytical Technology (PAT) tools for real-time monitoring of Critical Process Parameters (CPPs). Use this high-resolution data to refine your models and define tighter control ranges for scale-up [19] [16].

Guide 2: Addressing Cell Culture Inconsistencies During Scale-Up

Inconsistent cell culture performance—evidenced by changes in growth, viability, or product titer—is a common scaling problem.

[Diagram: Diagnostic Path for Culture Issues]

Mitigation Strategies:

- For CO2 Accumulation (D1): Optimize aeration strategies and gas mixing ratios. Re-design spargers or adjust agitation rates to enhance CO2 stripping without causing cell-damaging turbulence [18].

- For Shear Damage (D2): Redesign the impeller or lower the agitation rate to the minimum required for adequate mixing and oxygen transfer. Consider using shear-protective additives in the culture media [15].

- For Nutrient Heterogeneity (D3): Re-evaluate feeding strategies, potentially shifting from batch to fed-batch or continuous perfusion to minimize concentration gradients. Optimize impeller design and placement for improved bulk mixing [15] [16].

Quantitative Data: Scaling Challenges & Computational Trade-Offs

Table 1: Impact of Scaling Factors on Predictive Model Accuracy

| Scaling Factor | Laboratory-Scale Value | Large-Scale Impact | Effect on Predictive Accuracy |

|---|---|---|---|

| Oxygen Transfer Rate (kLa) | Easily maintained >100 h⁻¹ | Can become limiting (<50 h⁻¹) [15] | Models fail due to metabolic shifts; inaccurate yield predictions. |

| Power per Unit Volume (P/V) | 1-2 W/L | May be kept constant, but fluid dynamics differ [18] | Altered shear profiles damage cells, violating model assumptions of constant viability. |

| Mixing Time | Seconds | Can increase to minutes [16] | Nutrient gradient zones form, reducing model reliability for growth and titer. |

| Dissolved CO2 (pCO2) | Well-controlled | Can accumulate to inhibitory levels [18] | Cell growth models become inaccurate without accounting for CO2 inhibition. |

| Number of Model Parameters | 10 parameters | Can increase to 74+ parameters [6] | Increased practical non-identifiability; multiple parameter sets fit data equally well. |

Table 2: Computational Trade-offs in Parameter Estimation for Scaling

| Method / Strategy | Computational Efficiency | Parameter Uncertainty | Key Finding / Recommendation |

|---|---|---|---|

| Scaling Factor (SF) Approach | Lower | Increased | Aggravates non-identifiability; not recommended for complex scale-up models [6]. |

| Data-Driven Normalization (DNS) | Higher (up to 10x improvement for 74 parameters) [6] | Reduced | Greatly improves convergence speed and identifiability for large-scale models. |

| Optimizing All Parameters | High cost | Reduced by 16-41% [11] | Provides the most robust uncertainty quantification for unobserved variables [11]. |

| Importance Sampling (iIS) | Prerequisite GSA ~40x cost of optimization [11] | Significantly reduced | A comprehensive but computationally expensive framework for high-parameter models. |

Experimental Protocols

Protocol 1: Developing a Scale-Down Model for Process Validation

Objective: To create a lab-scale system that accurately mimics the stressful environment of a large-scale production bioreactor, enabling the identification and correction of scaling issues before costly manufacturing runs.

Materials:

- Benchtop bioreactor system

- Design-of-Experiments (DoE) software

- Lab-scale cell culture

- Sensors for pH, dO2, pCO2

Methodology:

- Characterize Large-Scale Environment: Profile the spatial and temporal heterogeneity of key parameters (e.g., dO2, pCO2, nutrient levels) in the production-scale bioreactor during a run.

- Translate to Lab Scale: Design an experimental setup in a small-scale bioreactor that replicates the dynamic conditions measured in Step 1. This may involve programmed variations in agitation, aeration, or feed addition to create zones of nutrient limitation or shear stress.

- Validate the Model: Confirm that the cell culture in the scale-down model reproduces the performance metrics (e.g., growth, viability, titer, product quality attributes) observed in the large-scale runs that encountered problems.

- Optimize and Predict: Use the validated scale-down model to test different process adjustments and control strategies. The optimized parameters from this model can then be applied to the large scale with higher confidence, restoring predictive accuracy [16].

Protocol 2: Assessing Parameter Identifiability for Scaling Models

Objective: To determine if the parameters in a dynamic scale-up model can be uniquely estimated from available experimental data, a critical step for ensuring model predictions are trustworthy.

Materials:

- Mathematical model (e.g., ODEs) of the bioprocess

- Parameter estimation software (e.g., PEPSSBI [6])

- Experimental dataset (time-course and perturbation data)

Methodology:

- Select Objective Function: Choose between Least Squares (LS) and Log-Likelihood (LL). Prefer a Data-driven Normalization of Simulations (DNS) over introducing Scaling Factors (SF) to minimize non-identifiability [6].

- Run Optimization Algorithm: Use a suitable algorithm (e.g., LevMar SE or GLSDC) to find the parameter set that minimizes the difference between model simulations and experimental data.

- Perform Practical Identifiability Analysis: After optimization, compute the profile likelihood for each parameter. This involves varying one parameter at a time around its optimal value, re-optimizing all others, and observing the change in the objective function.

- Interpret Results: A parameter is deemed "practically identifiable" if its profile likelihood shows a unique minimum. A flat profile indicates non-identifiability, meaning the parameter cannot be uniquely determined from the data. For non-identifiable parameters, consider model reduction or collecting additional, more informative data [6].
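
The profile-likelihood step can be sketched as follows. This is a minimal illustration using SciPy's Nelder-Mead on a hypothetical three-parameter model in which only the product of the first two parameters affects the output. It is not the PEPSSBI implementation, and a real analysis would also compare the profile against a statistical threshold.

```python
import numpy as np
from scipy.optimize import minimize

def profile_likelihood(objective, theta_hat, index, grid):
    """Fix parameter `index` at each grid value and re-optimize all other parameters."""
    theta_hat = np.asarray(theta_hat, dtype=float)
    free = [i for i in range(theta_hat.size) if i != index]
    profile = []
    for value in grid:
        def restricted(free_values):
            theta = theta_hat.copy()
            theta[index] = value
            theta[free] = free_values
            return objective(theta)
        result = minimize(restricted, theta_hat[free], method="Nelder-Mead")
        profile.append(result.fun)
    return np.array(profile)

# Hypothetical model: y = a * b * exp(-k * t); a and b only appear as a product.
t = np.linspace(0.0, 5.0, 20)
y_obs = 2.0 * np.exp(-0.5 * t)

def objective(theta):
    a, b, k = theta
    return float(np.sum((a * b * np.exp(-k * t) - y_obs) ** 2))

theta_hat = np.array([1.0, 2.0, 0.5])
profile_a = profile_likelihood(objective, theta_hat, index=0, grid=[0.5, 1.0, 2.0])
profile_k = profile_likelihood(objective, theta_hat, index=2, grid=[0.25, 0.5, 1.0])
```

The profile for `a` stays flat because `b` compensates for any change, which is the signature of non-identifiability described above, while the profile for `k` rises on both sides of its optimum.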

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Scaling and Optimization Research

| Item | Function in Scaling Research | Example/Note |

|---|---|---|

| Single-Use Bioreactors | Minimize cross-contamination risk, simplify scale-up studies, and reduce downtime between runs [19] [16]. | Systems like ambr 250 and BIOSTAT STR offer scalability from 250 mL to 2000 L [16]. |

| Process Analytical Technology (PAT) | Enables real-time monitoring of Critical Process Parameters (CPPs) like pH, dO2, and metabolites for better process control and understanding [19]. | In-line sensors and Raman spectroscopy for real-time feedback. |

| Cloud-Based LIMS/ELN | Facilitates efficient data collection, analysis, and collaboration across teams, ensuring data integrity and traceability (ALCOA+ principles) [16]. | Centralized data management for scale-up protocols and results. |

| Parameter Estimation Software | Tools designed for calibrating complex biological models, with support for methods like DNS that improve identifiability [6]. | PEPSSBI, COPASI, Data2Dynamics. |

| High-Throughput Screening (HTS) Systems | Allows rapid screening of cell lines, culture conditions, and media formulations to identify optimal combinations for scalable production [16]. | Uses automation and miniaturization (e.g., 1536-well plates). |

Algorithmic Solutions: From Evolution Strategies to Machine Learning for Scaled Parameters

Frequently Asked Questions (FAQs)

FAQ 1: What types of optimization problems are common in biological research and drug development? Biological optimization often involves complex problems with multiple conflicting objectives, high-dimensional parameter spaces, and mixed variable types (continuous, integer, categorical). Key characteristics include [20] [21] [22]:

- Large-Scale Global Optimization (LSGO): Problems with hundreds or thousands of decision variables (e.g., optimizing cultivation media with 50+ components), leading to an exponentially growing search space and the "curse of dimensionality" [20] [22].

- Multi/Many-Objective Optimization (MOP/MaOP): Simultaneously optimizing multiple, often conflicting, objectives (e.g., maximizing protein yield while minimizing cost and production time). As objectives increase, algorithms may lose selection pressure [20].

- Mixed-Variable Problems: Parameters can be continuous (e.g., temperature), integer (e.g., number of passages), or categorical (e.g., choice of cell line). Standard continuous optimizers are not directly applicable [23] [22].

- Computationally Expensive and Noisy Evaluations: Each experiment or high-fidelity simulation can be time-consuming and costly, and biological data often has high inherent variability [23] [22].

FAQ 2: When should I use a metaheuristic algorithm instead of traditional methods like Design of Experiments (DoE)? Genetic Algorithms (GAs) and other metaheuristics are recommended in the following situations [22]:

- Limited prior knowledge about the problem or unreliable existing models.

- A large number of variables with complex, non-linear interactions.

- A need to minimize the number of expensive laboratory experiments.

- When the goal is to achieve effectiveness (e.g., high yield) rather than to build a detailed explanatory model.

- When the search space needs to be expanded beyond commonly examined areas to discover novel phenomena.

Traditional DoE and Response Surface Methodology (RSM) can struggle with high-dimensional, highly non-linear, or multi-modal problems where constructing a reliable polynomial model is difficult [22].

FAQ 3: My optimization is converging to a suboptimal solution. What could be wrong? Premature convergence is a common challenge in metaheuristics. Potential causes and solutions include [20] [24]:

- Poor Exploration-Exploitation Balance: The algorithm is exploiting local regions too quickly. Solution: Adjust algorithm parameters to favor exploration (e.g., higher mutation rates, larger population size) or choose algorithms with better inherent balance.

- Parameter Sensitivity: Default algorithm parameters (e.g., mutation rate, step size) may be poorly suited to your specific problem landscape. Solution: Perform sensitivity analysis or use adaptive parameter control.

- Loss of Population Diversity: The population becomes too homogeneous, trapping the search. Solution: Implement diversity-preservation mechanisms like niching or crowding.

FAQ 4: How can I handle constraints in my optimization problem (e.g., feasible pH ranges)? Two common strategies are used in advanced optimizers [23]:

- Probability of Feasibility: The algorithm models constraints and calculates the probability that a candidate solution is feasible. It then favors solutions with high probability and good performance. This is generally more effective than penalty functions.

- Penalty Functions: Infeasible solutions are penalized by adding a large value to their objective function, making them less likely to be selected. This requires careful tuning of the penalty weight.
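
The penalty-function strategy is simple enough to sketch directly. The example assumes a minimization problem and constraints written as g(x) <= 0; the pH range, the weight, and the toy objective are hypothetical.

```python
def penalised_objective(objective, constraints, weight=1e3):
    """Wrap an objective so that constraint violations (g(x) > 0) are penalized."""
    def wrapped(x):
        violation = sum(max(0.0, g(x)) ** 2 for g in constraints)
        return objective(x) + weight * violation
    return wrapped

# Hypothetical case: the unconstrained optimum (pH 7.8) lies outside the feasible range 6.8-7.4.
objective = lambda ph: (ph - 7.8) ** 2
constraints = [lambda ph: 6.8 - ph,   # pH must be at least 6.8
               lambda ph: ph - 7.4]   # pH must be at most 7.4
wrapped = penalised_objective(objective, constraints)
```

Feasible points keep their original objective value, while infeasible ones are pushed up in proportion to the squared violation. If the weight is too small, the optimizer may still settle outside the feasible range, which is the tuning burden mentioned above.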

FAQ 5: Are there ways to make metaheuristics more efficient for expensive biological experiments? Yes, a primary strategy is hybridization [21] [25]:

- Surrogate Modeling: Use a machine learning model (e.g., Gaussian Process, Neural Network) as a "surrogate" or approximation of the expensive real experiment. The optimizer works on the cheap surrogate most of the time, only validating promising candidates with the real assay [25].

- Hybrid Algorithms: Combine a global metaheuristic (e.g., GA, PSO) with a local search method for refined exploitation. Another approach is to hybridize a metaheuristic with other computational tools, such as combining Particle Swarm Optimization (PSO) with Sparse Grid for integral approximation in complex model fitting [21].
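
A surrogate-assisted loop might look like the sketch below. It uses SciPy's `RBFInterpolator` as the cheap surrogate instead of a Gaussian Process, and it always evaluates the candidate the surrogate predicts to be best, a purely exploitative choice made here for brevity. The "assay" function is a hypothetical stand-in for a real experiment.

```python
import numpy as np
from scipy.interpolate import RBFInterpolator

def expensive_assay(x):
    """Hypothetical stand-in for a costly experiment or simulation (lower is better)."""
    return (x - 0.63) ** 2 + 0.05 * np.sin(15.0 * x)

candidates = np.linspace(0.0, 1.0, 201)   # design grid that is cheap to enumerate
sampled = [0, 50, 100, 150, 200]          # grid indices of the initial design
values = [float(expensive_assay(candidates[i])) for i in sampled]

for _ in range(10):
    # Fit the surrogate to every expensive evaluation made so far.
    surrogate = RBFInterpolator(candidates[sampled, None], np.array(values))
    prediction = surrogate(candidates[:, None])
    prediction[sampled] = np.inf          # never re-test an already evaluated point
    nxt = int(np.argmin(prediction))
    sampled.append(nxt)
    values.append(float(expensive_assay(candidates[nxt])))

best_x = candidates[sampled[int(np.argmin(values))]]
```

Only 15 expensive evaluations are spent in total, while the 201-point grid is screened on the surrogate at every iteration. A Bayesian optimizer would additionally use the surrogate's uncertainty to decide where to explore.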

Troubleshooting Guides

Issue 1: Algorithm Selection for Complex Biological Problems

Symptoms: Slow convergence, inability to find a satisfactory solution, excessive computational time.

Diagnosis and Resolution:

1. Categorize your problem based on the table below.

| Problem Characteristic | Problem Type | Recommended Algorithm Class |

|---|---|---|

| Many decision variables (100+) | Large-Scale Global Optimization (LSGO) | Cooperative Co-evolution (CC), Problem Decomposition strategies [20] |

| 2-3 conflicting objectives | Multi-Objective Optimization (MOP) | MOEAs (e.g., NSGA-II, SPEA2) [20] |

| 4+ conflicting objectives | Many-Objective Optimization (MaOP) | MOEAs with enhanced selection pressure [20] |

| Many variables AND many objectives | Large-Scale Multi/Many-Objective Opt. (LSMaOP) | Custom MOEAs designed for dual challenges [20] |

| Continuous and integer/categorical variables | Mixed-Integer Problem | Mixed-Integer Evolution Strategies (MIES), Bayesian optimizers for mixed-integer problems [23] |

| Computationally expensive evaluations | Expensive Black-Box Problem | Surrogate-assisted metaheuristics, Bayesian Optimization [25] [23] |

2. Select a specific algorithm. For mixed-integer problems, a Mixed Integer Evolution Strategy (MIES) is a direct fit. For other problem types, consider these commonly used metaheuristics [26] [24]:

- Genetic Algorithm (GA): Robust, good for non-linear/combinatorial spaces [24] [22].

- Particle Swarm Optimization (PSO): Simple, effective for continuous problems; often faster convergence than GA [21] [24].

- Differential Evolution (DE): Powerful for continuous global optimization [26].

- Novel Algorithms (e.g., LMO, GWO): Newer algorithms may offer improved convergence; always verify performance on benchmarks [24].

Issue 2: Handling Parameter Scaling and the "Curse of Dimensionality"

Symptoms: Performance degrades significantly as the number of parameters increases; algorithm stagnates and cannot effectively search the space.

Diagnosis: The search space grows exponentially with each added dimension, making it difficult for standard algorithms to cover effectively. This is a classic challenge in Large-Scale Global Optimization (LSGO) [20].

Resolution:

- Problem Decomposition: Use a Cooperative Co-evolution (CC) framework. This "divide and conquer" strategy breaks the high-dimensional vector into smaller, more manageable sub-components, which are optimized separately but evaluated collaboratively [20].

- Dimension Reduction Techniques: Apply unsupervised learning (e.g., Principal Component Analysis - PCA) to identify if the effective search space has lower intrinsic dimensionality, allowing you to optimize in a reduced space [20] [25].

- Algorithm Enhancement: Choose or develop algorithms specifically designed for LSGO. These often incorporate decomposition, specialized operators, or strategies to maintain diversity and focus search effort [20].

Table: Comparison of Techniques for High-Dimensional Problems

| Technique | Mechanism | Advantages | Limitations |

|---|---|---|---|

| Cooperative Co-evolution (CC) | Divides parameter vector into sub-components | Makes large problems tractable, improves scalability | Performance depends on variable grouping strategy |

| Dimension Reduction (e.g., PCA) | Projects data onto lower-dimensional space | Reduces problem complexity, can remove noise | May lose information if variance is not captured |

| Adaptive Operators | Adjusts search steps based on learning | Improves convergence, reduces parameter tuning | Adds computational overhead to algorithm itself |
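
The decomposition idea behind Cooperative Co-evolution can be illustrated with a deliberately simplified sketch. Each sub-component is improved by a basic accept-if-better random search rather than a full evolutionary algorithm, and the fixed grouping, step schedule, and test function are all assumptions made for illustration.

```python
import numpy as np

def cooperative_coevolution(objective, x0, groups, n_cycles=20, n_trials=30,
                            step=0.5, seed=0):
    """Optimize one variable group at a time while the others stay fixed."""
    rng = np.random.default_rng(seed)
    context = np.asarray(x0, dtype=float).copy()   # shared "context vector"
    best = objective(context)
    for _ in range(n_cycles):
        for group in groups:
            for _ in range(n_trials):
                candidate = context.copy()
                # Perturb only this group; evaluate the full vector collaboratively.
                candidate[group] += rng.normal(0.0, step, size=len(group))
                value = objective(candidate)
                if value < best:
                    context, best = candidate, value
        step *= 0.9                                 # shrink the step each cycle
    return context, best

def sphere(x):
    return float(np.sum(np.asarray(x) ** 2))

x0 = np.full(12, 3.0)
groups = [list(range(i, i + 4)) for i in range(0, 12, 4)]   # three groups of four
context, best = cooperative_coevolution(sphere, x0, groups)
```

The sphere function is fully separable, so any grouping works. On real models with interacting parameters, the grouping strategy largely determines performance, as the limitations column notes.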

Issue 3: Tuning Algorithm Parameters for Robust Performance

Symptoms: Highly variable results between runs; need to re-tune parameters for every new problem.

Diagnosis: Most metaheuristics have parameters that control their behavior (e.g., mutation rate, population size). Default values may not suit your specific problem landscape [24].

Resolution: Follow a structured tuning protocol.

1. Define Parameter Ranges. Base them on literature and problem specifics [24] [22].

2. Choose a Tuning Method.

- Meta-Optimization: Use another optimizer to tune the first one's parameters.

- Reinforcement Learning (RL): Use RL to dynamically adjust parameters during the optimization run based on feedback [25].

- Iterated Racing: An advanced statistical method designed specifically for algorithm configuration.

3. Validate. Test the best-found parameter set on independent problem instances.

Experimental Protocol for Parameter Tuning

- Objective: Find the most robust parameter set for Algorithm X on your problem class.

- Method: Use a Meta-GA (a GA to tune another GA) or a simpler parameter sweep.

- Design: For each parameter set, run the algorithm 30-50 times on a representative benchmark problem to account for stochasticity.

- Metrics: Record the mean best fitness, standard deviation (for robustness), and average number of function evaluations to convergence (for speed).

- Analysis: Select the parameter set that offers the best trade-off between performance, robustness, and computational cost.
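
The "simpler parameter sweep" option of this protocol can be sketched as below. The optimizer being tuned is a hypothetical accept-if-better random search with a single step-size parameter, run on a toy quadratic benchmark. The evaluation budget is fixed, so only the mean and standard deviation of the final fitness are recorded.

```python
import numpy as np

def run_optimizer(step_size, seed, n_evals=200, dim=5):
    """One stochastic run of a toy random-search optimizer; returns the best fitness."""
    rng = np.random.default_rng(seed)
    x = rng.uniform(-5.0, 5.0, dim)
    fx = float(np.sum(x ** 2))
    for _ in range(n_evals):
        candidate = x + rng.normal(0.0, step_size, dim)
        fc = float(np.sum(candidate ** 2))
        if fc < fx:
            x, fx = candidate, fc
    return fx

def sweep(step_sizes, n_runs=30):
    """Repeat each setting n_runs times and summarize mean and spread of the results."""
    summary = {}
    for s in step_sizes:
        finals = np.array([run_optimizer(s, seed) for seed in range(n_runs)])
        summary[s] = (float(finals.mean()), float(finals.std(ddof=1)))
    return summary

summary = sweep([0.001, 0.5, 5.0])
best_setting = min(summary, key=lambda s: summary[s][0])
```

Reusing the same seeds for every setting means each configuration starts from the same initial points, which reduces noise in the comparison.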

Issue 4: Validating and Interpreting Optimization Results in an Experimental Context

Symptoms: Uncertainty about whether the found solution is truly optimal or how to implement it in the lab.

Diagnosis: The stochastic nature of metaheuristics means they find near-optimal solutions, and the "best" solution might be sensitive to noise or model inaccuracies.

Resolution:

- Statistical Validation: Perform multiple independent runs (30+ is common) and apply a statistical test (e.g., t-test, Mann-Whitney U test) to the final results to confirm that the performance is significantly better than the baseline or a previous method [22].

- Sensitivity Analysis: Perturb the optimal solution slightly and re-evaluate. A robust optimum will show little change in performance with small changes in parameters. This helps assess the risk of implementing the solution in a noisy real-world environment.

- Post-Optimality Analysis (for Multi-Objective Problems): Analyze the Pareto front. Instead of a single solution, you have a set of non-dominated solutions. The shape of the front can reveal trade-offs between objectives. Decision-makers can then select a solution based on higher-level priorities [20].
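
The statistical validation step can be sketched with SciPy's Mann-Whitney U test. The two samples below are synthetic placeholders generated from a random number generator, not experimental results. In practice they would be the final objective values from 30 or more independent runs of each method.

```python
import numpy as np
from scipy.stats import mannwhitneyu

rng = np.random.default_rng(0)
# Synthetic placeholder data: final objective values (lower is better) from 30 runs each.
baseline_runs = rng.normal(1.00, 0.10, size=30)
candidate_runs = rng.normal(0.80, 0.10, size=30)

# One-sided test: are the candidate's values stochastically smaller than the baseline's?
result = mannwhitneyu(candidate_runs, baseline_runs, alternative="less")
significant = result.pvalue < 0.05
```

A rank-based test is often a reasonable default here because final fitness values from stochastic optimizers are frequently skewed, which can violate the normality assumption of a t-test.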

The Scientist's Toolkit: Key Research Reagent Solutions

This table details computational and experimental "reagents" essential for implementing modern optimization frameworks in biological research.

| Item / Solution | Function / Explanation | Application Context |

|---|---|---|

| Benchmark Test Suites (e.g., CEC) | Standardized sets of optimization problems with known optima to fairly evaluate and compare algorithm performance before real-world application [20]. | Validating a new MIES implementation; comparing PSO vs. GA performance on LSGO. |

| Sparse Grid Integration | A numerical technique for approximating high-dimensional integrals, crucial for evaluating likelihoods in complex statistical models [21]. | Hybridized with PSO for parameter estimation in nonlinear mixed-effects models (NLMEMs) in pharmacometrics [21]. |

| Nonlinear Mixed-Effects Model (NLMEM) | A statistical framework that accounts for both fixed effects (population-level) and random effects (individual-level variability), common in longitudinal data analysis [21]. | Modeling drug concentration-time data across a population of patients to estimate PK/PD parameters [21]. |

| Global Sensitivity Analysis (GSA) | A set of methods to quantify how the uncertainty in the model output can be apportioned to different input parameters. Identifies which parameters are most influential [11]. | Prior to optimization, to reduce problem dimension by fixing non-influential parameters in a complex biogeochemical model [11]. |

| Surrogate Model (e.g., Gaussian Process) | A machine learning model that approximates the input-output relationship of an expensive function. Used as a cheap proxy during optimization [25]. | Replacing a computationally expensive cell culture simulation to allow for rapid exploration of thousands of parameter combinations. |

FAQ: Addressing Common Experimental Issues

What are the fundamental advantages of combining global stochastic and gradient-based methods?

This hybrid approach addresses core limitations of individual methods. Global stochastic methods like Evolutionary Algorithms (EAs) possess strong global exploration ability, making them excellent for navigating complex, multi-modal search spaces and avoiding local minima without requiring gradient information [27]. However, they often exhibit weak local search ability near the optimum and need large populations and many iterations for large-scale problems, leading to low optimization efficiency [27].

Conversely, gradient-based optimization algorithms can quickly converge to the vicinity of an extreme solution with high efficiency, especially when leveraging adjoint methods for sensitivity analysis [28] [27]. Their primary weakness is dependence on the initial value and gradient information, making them essentially local search algorithms that can easily become trapped in local optima [27].

By hybridizing these methods, you retain favorable global exploration capabilities while enhancing local exploitation. The local search helps direct the global algorithm toward the globally optimal solution, improving overall convergence efficiency and often producing highly accurate solutions [27].

Why is my hybrid optimization stalling or converging slowly, particularly for biological models with poorly scaled parameters?

Poor parameter scaling is a prevalent issue in biological optimization that severely impacts algorithm performance. When parameters (e.g., reaction rates, concentrations, kinetic constants) operate on different orders of magnitude, the result is a distorted loss landscape with ill-conditioned curvature [28]. This disproportionately affects gradient-based steps, as the sensitivity of the objective function can vary wildly across parameters.

Troubleshooting Steps:

- Diagnose Sensitivity Disparities: Before full optimization, perform a global sensitivity analysis to identify parameters with dominant influence on your model output [11]. This helps understand the inherent scaling of your problem.

- Implement Parameter Scaling: Apply scaling transformations to normalize parameter ranges. Common techniques include:

- Min-Max Scaling to a predefined range (e.g., [0, 1]).

- Standardization (Z-score normalization).

- Logarithmic Transformation for parameters spanning several orders of magnitude.

- Adjust Move Limits: In the gradient-based phase, use move limits to restrict design variable changes within a narrow range during each iteration. This stabilizes convergence by protecting the accuracy of local approximations [28]. Start with conservative limits (e.g., 10-20% of the current value) and adjust based on observed convergence behavior [28].
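
The scaling transformations and move limits listed above can be sketched in a few lines of NumPy. The helper names and example values are hypothetical, and the 20% default simply mirrors the conservative range mentioned in the text.

```python
import numpy as np

def to_log10(theta):
    """Map strictly positive parameters spanning many decades onto a comparable scale."""
    return np.log10(np.asarray(theta, dtype=float))

def from_log10(z):
    return 10.0 ** np.asarray(z, dtype=float)

def min_max_scale(theta, lower, upper):
    """Rescale parameters to [0, 1] given their bounds."""
    theta, lower, upper = (np.asarray(v, dtype=float) for v in (theta, lower, upper))
    return (theta - lower) / (upper - lower)

def apply_move_limit(theta_current, theta_proposed, fraction=0.2):
    """Restrict each update to within +/- fraction of the current parameter value."""
    theta_current = np.asarray(theta_current, dtype=float)
    max_change = fraction * np.abs(theta_current)
    return np.clip(theta_proposed, theta_current - max_change, theta_current + max_change)

theta = np.array([1e-6, 1e3])   # e.g. a rate constant and a concentration
z = to_log10(theta)             # both entries are now of order 1 to 10
limited = apply_move_limit([1.0, 100.0], [2.0, 90.0])
```

In log space the two parameters differ by a factor of two in magnitude rather than nine orders, so a single step size or trust region suits both. Note that the relative move limit shown here does nothing for a parameter that is currently exactly zero.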

How can I balance global exploration and local exploitation effectively in my algorithm?

Achieving the right balance is critical. Excessive global search is computationally wasteful, while premature or excessive local exploitation can cause the population to lose diversity and converge to a suboptimal point [27].

Strategies for Effective Balancing:

- Clustered Subpopulation Strategy: Divide the population using a clustering algorithm. Apply more intensive, multi-weight gradient searches only to individuals in densely populated areas (indicating promising regions) and to degraded individuals. Use simpler, single-weight searches for other individuals to conserve computational resources [27].

- Alternating Operators: Use a hybrid algorithm (HMOEA) that retains the global evolutionary operator (e.g., genetic algorithms) and alternates it with the multi-objective gradient operator. This enhances mining and convergence capabilities without overly relying on gradient information, thereby preserving population diversity [27].

- Adaptive Switching Criterion: Implement a trigger for switching from global to local search, such as when the population's improvement rate falls below a threshold or when a certain number of generations pass without significant improvement.
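
The adaptive switching criterion can be expressed as a small stagnation test on the history of best objective values. The window length and tolerance below are hypothetical defaults that would need tuning per problem, and the function assumes minimization.

```python
def should_switch_to_local(best_history, window=10, rel_tol=1e-3):
    """Return True when the best value improved by less than rel_tol over `window` generations."""
    if len(best_history) <= window:
        return False                      # not enough history to judge yet
    old, new = best_history[-window - 1], best_history[-1]
    improvement = (old - new) / max(abs(old), 1e-12)
    return improvement < rel_tol

improving = [100.0 * 0.8 ** i for i in range(30)]   # still making steady progress
stalled = [5.0] * 30                                # no progress at all
```

Calling the function once per generation and handing over to the gradient-based solver on the first `True` keeps the global phase running only while it is still making progress.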

My model has many parameters. Which ones should I prioritize for optimization?

Simultaneously optimizing all parameters, while computationally expensive, can provide a more robust quantification of model uncertainty, especially for unassimilated variables [11]. However, a strategic approach can improve efficiency.

Optimization Strategies:

- All-Parameters Optimization: Directly optimizing all model parameters is often recommended as it explores the full parameter space comprehensively [11]. The prerequisite Global Sensitivity Analysis (GSA) might be computationally expensive (e.g., ~40 times more than the optimization itself), but the resulting optimization is more thorough [11].

- Subset Optimization based on GSA: Use GSA to identify and optimize only a subset of parameters with strong direct influence ("Main effects") or those that are influential through non-linear interactions ("Total effects") [11]. Research on biogeochemical models with 95 parameters has shown that both subset and all-parameter strategies can achieve statistically indistinguishable improvements in model skill (e.g., 54-56% NRMSE reduction) [11].

The choice depends on your computational budget and the need for uncertainty quantification. If resources allow, optimizing all parameters is preferred [11].

Troubleshooting Guides

Problem: Algorithm is Trapped in Local Minima

Symptoms: Convergence to a suboptimal solution that does not adequately explain the experimental data; small changes in initial parameters lead to the same result.

Solution:

- Re-initialize Global Phase: Trigger a restart of the global stochastic search component from a new, randomized population or increase the mutation rate in your Evolutionary Algorithm.

- Review Annealing Schedule: If using a Simulated Annealing component, ensure your cooling schedule is slow enough. A rapid "quench" leads to metastable, local minima, while slow "annealing" allows access to the global ground state [29]. Monitor a metric analogous to specific heat; if it grows, slow the annealing rate to avoid false optimal points [29].

- Hybridization Check: Verify that the handover from the global to the local optimizer does not happen too early. Allow the global method (e.g., EA) to sufficiently explore the search space before activating the local gradient-based refinement [27].
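To make the effect of the cooling schedule concrete, here is a minimal simulated annealing sketch with a geometric schedule. The test function (a one-dimensional Rastrigin-style landscape), the step size, and the two cooling factors are illustrative assumptions, not values from the cited references.

```python
import math
import numpy as np


def simulated_annealing(f, x0, t0=10.0, cooling=0.999, n_steps=5000,
                        step=0.5, seed=0):
    """Minimize a 1-D function f with a geometric cooling schedule."""
    rng = np.random.default_rng(seed)
    x, fx = x0, f(x0)
    best_x, best_f = x, fx
    t = t0
    for _ in range(n_steps):
        cand = x + rng.normal(scale=step)
        fc = f(cand)
        # Always accept improvements; accept worse moves with Boltzmann probability.
        if fc <= fx or rng.random() < math.exp(-(fc - fx) / t):
            x, fx = cand, fc
            if fx < best_f:
                best_x, best_f = x, fx
        t = max(t * cooling, 1e-12)  # floor avoids division by zero after long runs
    return best_x, best_f


def rugged(x):
    """Toy multimodal landscape with its global minimum at x = 0."""
    return x**2 + 10.0 * (1.0 - math.cos(2.0 * math.pi * x))


x_start = 4.0
slow = simulated_annealing(rugged, x_start, cooling=0.999)  # slow "annealing"
fast = simulated_annealing(rugged, x_start, cooling=0.5)    # rapid "quench"
print("slow:", slow, "fast:", fast)
```

With `cooling=0.5` the temperature collapses within a few dozen steps, so the search behaves like greedy descent from the starting basin; comparing the two printed results on your own objective is a quick way to check whether the schedule is too aggressive.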

Problem: Parameter Instability and Oscillations

Symptoms: Wild fluctuations in parameter values between iterations; failure to converge despite many iterations.

Solution:

- Apply Gradient Clipping: For gradient-based steps, clamp the magnitude of the gradient to a maximum value to prevent explosive updates, which are especially common in problems with "ravines" or steep curvature [30]. The update becomes: gradient = clip(gradient, -max_value, max_value) [30].

- Tune Move Limits: Reduce the move limits in your gradient-based optimizer. While advanced approximations may allow limits up to 50%, typical values are around 20% of the current design variable value to protect the accuracy of the approximations [28].

- Introduce Momentum: Use momentum in your gradient descent to smooth updates. Momentum uses a weighted combination of the previous update direction and the current negative gradient (v_{k+1} = μ * v_k - η * ∇J(θ_k)), which can help carry the search over small bumps and imperfections [30]. Be cautious, as too much momentum (μ too high) can cause overshooting [30].

- Implement a Learning Rate Schedule: Decay the learning rate (η) over time according to a schedule (e.g., exponential, stepwise, or linear decay) to ensure smaller, more stable steps as you approach a solution [30].
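The clipping, momentum, and learning-rate decay steps above can be combined in a few lines. The sketch below applies them to a deliberately ill-scaled quadratic (curvatures of 1000 and 1); the function name `stabilized_descent` and all hyperparameter values are assumptions chosen for illustration, not recommended defaults.

```python
import numpy as np


def stabilized_descent(grad_fn, theta0, lr0=1e-3, momentum=0.9,
                       max_grad=10.0, decay=0.999, n_steps=2000):
    """Gradient descent with element-wise clipping, momentum, and exponential decay."""
    theta = np.asarray(theta0, dtype=float).copy()
    velocity = np.zeros_like(theta)
    for k in range(n_steps):
        lr = lr0 * decay**k                                # exponential learning-rate schedule
        g = np.clip(grad_fn(theta), -max_grad, max_grad)   # clip(gradient, -max_value, max_value)
        velocity = momentum * velocity - lr * g            # v_{k+1} = mu * v_k - eta * grad J
        theta = theta + velocity                           # theta_{k+1} = theta_k + v_{k+1}
    return theta


# Toy objective with a steep "ravine": J = 0.5 * (1000 * th0^2 + 1 * th1^2)
curvature = np.array([1000.0, 1.0])


def loss(theta):
    return 0.5 * np.sum(curvature * theta**2)


def grad(theta):
    return curvature * theta


theta_start = np.array([1.0, 1.0])
theta_final = stabilized_descent(grad, theta_start)
print(loss(theta_start), loss(theta_final))
```

In this toy setting the clipping only acts early, while the steep direction still has a large gradient; once the iterate is close to the valley floor the update reduces to ordinary momentum descent with a shrinking step.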

Problem: Prohibitively Long Computation Times

Symptoms: Single optimization run takes days/weeks; impossible to perform necessary replicates or sensitivity analyses.

Solution:

- Leverage Adjoint Methods: For models where gradient calculation is the bottleneck (e.g., complex PDE-based biological models), implement the adjoint method for sensitivity analysis. Unlike finite differences, its computational cost is largely independent of the number of design variables, dramatically improving efficiency for large-scale problems [28] [27].

- Optimize Gradient Method Selection: Choose the correct sensitivity analysis method based on your problem.

- Use the direct method (one forward-backward substitution per design variable) for problems with many constraints but few design variables (common in shape/size optimization) [28].

- Use the adjoint variable method (one forward-backward substitution per retained constraint) for problems with many design variables but few constraints (common in topology optimization) [28].

- Utilize Constraint Screening: In the gradient-based phase, use constraint screening to ignore constraints that are not close to being violated. Calculate sensitivities only for violated or nearly violated constraints, focusing computational effort on critical areas [28].

- Switch to Mini-Batch Gradients: If using stochastic gradients, use mini-batches. This offers a middle ground between the noisy, high-variance updates of Stochastic Gradient Descent (SGD) and the computationally expensive full-batch processing, leading to more frequent and stable updates [31].
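As an illustration of the mini-batch suggestion, the sketch below fits a first-order decay model, ln C(t) = ln C0 - k t, to synthetic data using mini-batch gradient descent. The data, the "true" parameter values, the batch size, and the learning rate are all made up for the example.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic data (hypothetical values): ln C(t) = ln C0 - k * t + noise
true_k, true_lnC0 = 2.0, 0.5
t = rng.uniform(0.0, 1.0, size=256)
log_c = true_lnC0 - true_k * t + rng.normal(scale=0.05, size=t.size)


def minibatch_fit(t, y, batch_size=16, lr=0.1, epochs=200, seed=1):
    """Estimate (k, lnC0) by mini-batch gradient descent on the mean squared error."""
    shuffle_rng = np.random.default_rng(seed)
    k, lnC0 = 0.0, 0.0
    n = t.size
    for _ in range(epochs):
        order = shuffle_rng.permutation(n)          # reshuffle once per epoch
        for start in range(0, n, batch_size):
            idx = order[start:start + batch_size]
            resid = (lnC0 - k * t[idx]) - y[idx]    # prediction minus observation
            grad_k = np.mean(2.0 * resid * (-t[idx]))
            grad_lnC0 = np.mean(2.0 * resid)
            k -= lr * grad_k
            lnC0 -= lr * grad_lnC0
    return k, lnC0


k_hat, lnC0_hat = minibatch_fit(t, log_c)
print(k_hat, lnC0_hat)
```

Each update touches only 16 of the 256 observations, so one pass over the data yields 16 parameter updates instead of one. For models where each evaluation is an expensive simulation, the same idea applies to subsets of experimental conditions rather than individual data points.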

Experimental Protocols & Data Presentation

Protocol: Implementing a Hybrid Multi-Objective Evolutionary Algorithm (HMOEA)

This protocol is adapted from successful applications in aerodynamic design [27] and is applicable to complex biological models.

1. Initialization:

- Generate an initial population of candidate solutions (parameter sets) randomly within biologically plausible bounds.

- Set parameters for both global (EA) and local (gradient) components. For example: population size, crossover/mutation rates, learning rate, move limits.

2. Global Evolutionary Search Phase:

- Evaluation: Compute the multi-objective loss function for each individual in the population.

- Selection & Variation: Apply genetic operators (selection, crossover, mutation) to create a diverse offspring population.

3. Local Gradient Refinement Phase:

- Clustering: Use a clustering algorithm (e.g., k-means) to partition the population based on their location in the objective space.

- Stochastic Gradient Operator:

- For individuals in dense clusters (promising regions), use a multi-weight mode. Generate multiple random weight vectors for the objectives and perform a gradient-based update for each weight.

- For other individuals, use a single-weight mode. Generate a single random weight vector per individual for the gradient update.

- Gradient Update: For each selected individual and weight vector, calculate the weighted gradient of the objectives and update the parameters:

θ_new = θ - η * ∇(weighted_J(θ)), respecting move limits.

4. Selection and Iteration:

- Combine the populations from the evolutionary and gradient phases.

- Select the best individuals for the next generation based on Pareto dominance and diversity criteria (e.g., as in NSGA-III).

- Repeat from Step 2 until convergence criteria are met.
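The protocol can be illustrated with a heavily simplified sketch on a toy two-objective problem. It is not the HMOEA of [27]: clustering and the multi-weight mode are omitted, selection uses a plain domination count instead of NSGA-III, and move limits are applied as an absolute cap rather than a percentage. The objectives, bounds, and all hyperparameters are assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)
A, B = np.array([0.0, 0.0]), np.array([1.0, 1.0])  # toy optima of objectives 1 and 2
LOW, HIGH = -5.0, 5.0                               # stand-in for plausible parameter bounds


def objectives(pop):
    """Two competing squared-distance objectives, one row per individual."""
    return np.stack([((pop - A) ** 2).sum(1), ((pop - B) ** 2).sum(1)], axis=1)


def domination_counts(F):
    """For each individual, count how many others dominate it (0 = non-dominated)."""
    leq = (F[:, None, :] <= F[None, :, :]).all(-1)
    lt = (F[:, None, :] < F[None, :, :]).any(-1)
    return (leq & lt).sum(axis=0)  # entry i counts the j that dominate i


def hybrid_step(pop, lr=0.1, move_limit=0.5, sigma=0.3):
    n = len(pop)
    # Global operator: Gaussian mutation keeps exploring the search space.
    mutated = pop + rng.normal(scale=sigma, size=pop.shape)
    # Local operator (single-weight mode): one random objective weight per individual.
    w = rng.random((n, 1))
    weighted_grad = w * 2.0 * (pop - A) + (1.0 - w) * 2.0 * (pop - B)
    step = np.clip(-lr * weighted_grad, -move_limit, move_limit)  # absolute move limit
    refined = pop + step
    # Combine parents and both offspring sets, then keep the least-dominated individuals.
    pool = np.clip(np.vstack([pop, mutated, refined]), LOW, HIGH)
    order = np.argsort(domination_counts(objectives(pool)), kind="stable")
    return pool[order[:n]]


population = rng.uniform(LOW, HIGH, size=(20, 2))
initial_mean = objectives(population).sum(1).mean()
for _ in range(50):
    population = hybrid_step(population)
final_mean = objectives(population).sum(1).mean()
print(initial_mean, final_mean)
```

Because this selection step has no diversity criterion, the population can crowd into part of the Pareto set; the diversity-aware selection described in Step 4 is what a fuller implementation would add.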

Quantitative Comparison of Optimization Method Characteristics

Table 1: Characteristics of different gradient-based optimization methods. Adapted from information on Altair OptiStruct [28] and deep learning optimizers [31].

| Method | Key Principle | Best Suited For | Advantages | Disadvantages |
|---|---|---|---|---|
| Method of Feasible Directions (MFD) | Seeks improved design along usable-feasible directions | Problems with many constraints but fewer design variables (e.g., size/shape optimization) [28]. | Good for handling constraints. | Can be slow for problems with very many design variables. |
| Sequential Quadratic Programming (SQP) | Solves a quadratic subproblem in each iteration | Problems with equality constraints [28]. | Fast local convergence. | Requires accurate second-order information; high computational cost per iteration. |
| Dual Method (CONLIN, DUAL2) | Solves the problem in the dual space of Lagrange multipliers | Problems with a very large number of design variables but few constraints (e.g., topology optimization) [28]. | High efficiency for problems with very many design variables. | Less suitable for problems with many active constraints. |
| Stochastic Gradient Descent (SGD) | Parameter update after each training example | Very large datasets [31]. | Fast early progress; update noise can help escape shallow local minima. | Noisy updates; high variance; can overshoot [31]. |
| Mini-Batch Gradient Descent | Parameter update after a subset (batch) of examples | General deep learning training [31]. | Balance between stability and speed. | Requires tuning of batch size. |
| Gradient Descent with Momentum | Update is a combination of current gradient and previous update | Navigating loss landscapes with high curvature [30]. | Reduces oscillation; accelerates convergence in relevant directions. | Introduces an additional hyperparameter (momentum term). |

Protocol: Global Sensitivity Analysis as a Precursor to Optimization

As demonstrated in biogeochemical parameter optimization, a GSA is crucial for understanding parameter influence before optimization [11].

1. Parameter Sampling:

- Use a Latin Hypercube Sampling (LHS) or Sobol sequence to generate a space-filling set of parameter samples from the defined prior distributions.

2. Model Evaluation:

- Run your biological model for each parameter set in the sample and record the objective function value(s).

3. Sensitivity Index Calculation:

- Apply a variance-based method (e.g., Sobol indices) to the input-output data.

- Calculate First-Order (Main) Indices: Measure the contribution of each parameter to the output variance alone.

- Calculate Total-Order Indices: Measure the contribution of each parameter including all interactions with other parameters.

4. Parameter Prioritization:

- Rank parameters based on their Total-Order indices. Parameters with the highest indices have the greatest influence on model output and are prime candidates for optimization.
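The four steps can be sketched with NumPy alone. The example below uses a small hand-written Latin Hypercube sampler, the Saltelli (2010) estimator for first-order indices, and the Jansen estimator for total-order indices, applied to a toy linear model on the unit cube. The model, the sample size, and the helper names are assumptions; a real study would substitute its own model and prior ranges, and may prefer an established GSA library.

```python
import numpy as np


def latin_hypercube(n, d, rng):
    """One sample per stratum in every dimension, on the unit cube."""
    u = (rng.random((n, d)) + np.arange(n)[:, None]) / n
    for j in range(d):
        u[:, j] = rng.permutation(u[:, j])  # decouple the strata across dimensions
    return u


def sobol_indices(model, d, n=4096, seed=0):
    """Estimate first-order and total-order Sobol indices for model(X) -> y."""
    rng = np.random.default_rng(seed)
    sample = latin_hypercube(n, 2 * d, rng)
    A, B = sample[:, :d], sample[:, d:]          # two independent sample matrices
    fA, fB = model(A), model(B)
    var = np.var(np.concatenate([fA, fB]))
    first, total = np.empty(d), np.empty(d)
    for i in range(d):
        AB = A.copy()
        AB[:, i] = B[:, i]                       # A with column i taken from B
        fAB = model(AB)
        first[i] = np.mean(fB * (fAB - fA)) / var         # Saltelli (2010) estimator
        total[i] = 0.5 * np.mean((fA - fAB) ** 2) / var   # Jansen estimator
    return first, total


def toy_model(x):
    """Hypothetical model: strong effect of x0, weak effect of x1, none of x2."""
    return 4.0 * x[:, 0] + 1.0 * x[:, 1] + 0.0 * x[:, 2]


first, total = sobol_indices(toy_model, d=3)
ranking = np.argsort(-total)                     # most influential parameter first
print(first, total, ranking)
```

For this additive model the first-order and total-order indices coincide (analytically 16/17, 1/17, and 0); a gap between the two for a given parameter is the signature of interaction effects. Note that the procedure needs n * (d + 2) model runs, which is where the cost of GSA comes from.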

Visualizations

Diagram 1: High-Level Workflow of a Hybrid Optimization Algorithm

Diagram 2: Parameter Scaling and its Impact on Optimization

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Key computational tools and methods for hybrid optimization, particularly in biological contexts.

| Item / Method | Function / Purpose | Key Considerations for Biological Models |
|---|---|---|
| Global Sensitivity Analysis (GSA) | Identifies parameters with the strongest influence on model output (main and total effects) [11]. | Prerequisite for informed parameter prioritization; can be computationally expensive but is highly valuable [11]. |
| Evolutionary Algorithm (EA) | Provides global exploration of parameter space without requiring gradients; good for multi-objective problems [27]. | Population-based search can find promising regions but is inefficient at fine-tuning solutions [27]. |
| Adjoint Method | Efficiently computes gradients of a cost function with respect to all parameters, independent of the number of variables [28] [27]. | Crucial for efficiency in models with many parameters (e.g., PDE-based systems); implementation can be complex. |
| Multi-Objective Gradient Operator | A local search operator that uses stochastic weighting of objectives to generate Pareto-optimal solutions [27]. | Enables gradient-based search to be applied effectively in multi-objective settings, enhancing convergence to the Pareto front. |
| Move Limits | Constraints applied during local search to limit the maximum change of a parameter in one iteration [28]. | Prevents unstable oscillations and protects the accuracy of local approximations; essential for stable convergence (typical: 10-20%) [28]. |
| Importance Sampling (e.g., iIS) | A Bayesian inference method used to constrain model parameters by leveraging rich, multi-variable datasets [11]. | Useful for generating posterior parameter distributions and quantifying uncertainty after optimization [11]. |

In biological optimization research, such as in drug development and experimental design, scientists frequently encounter complex "black-box" functions. These are processes—like predicting compound toxicity or protein binding affinity—where the relationship between input parameters and the output is not known analytically and each evaluation is computationally expensive or time-consuming to measure experimentally [32] [33]. Parameter scaling issues arise when the input variables, or parameters, of these functions operate on vastly different scales or units. This disparity can severely hinder the performance of optimization algorithms, causing them to converge slowly or miss the optimal solution altogether.

Bayesian Optimization (BO) has emerged as a powerful strategy for tackling such expensive black-box optimization problems [32] [33]. Its core strength lies in building a probabilistic surrogate model of the unknown objective function and using an acquisition function to intelligently select the next most promising parameter set to evaluate, thereby balancing exploration of uncertain regions with exploitation of known promising areas [32]. The integration of adaptive surrogate models further enhances this framework by allowing the model to update and improve its accuracy as new data from experiments becomes available, making the entire process more efficient and robust [34] [35].

This technical support center is designed to help researchers and scientists overcome specific challenges they face when implementing these advanced optimization techniques, with a particular focus on resolving parameter scaling issues and selecting appropriate modeling strategies within biological and drug discovery contexts.

Troubleshooting Guides and FAQs

FAQ: Parameter Preprocessing and Scaling

Q: My Bayesian optimization routine is converging poorly on my high-throughput screening data. I suspect it's due to my parameters having different units and scales. What is the best preprocessing method to fix this?

A: Your suspicion is likely correct. Parameter scaling is a critical preprocessing step to ensure the surrogate model, often a Gaussian Process (GP), can properly weigh the influence of all parameters. Without scaling, parameters with larger numerical ranges can dominate the distance calculations in the GP kernel, leading to a biased model.
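A small numerical illustration of the problem, under assumed parameter ranges: a compound concentration spanning 1e-9 to 1e-3 M and a temperature spanning 20 to 40 °C. The bounds, the choice of which parameter to log-transform, and the helper name `to_unit_cube` are hypothetical.

```python
import numpy as np

# Hypothetical screening parameters: [concentration (M), temperature (deg C)]
bounds = np.array([[1e-9, 1e-3],
                   [20.0, 40.0]])
log_scaled = np.array([True, False])  # assumption: only concentration spans decades


def to_unit_cube(X, bounds, log_scaled):
    """Log-transform the flagged columns, then min-max scale every column to [0, 1]."""
    X = np.asarray(X, dtype=float).copy()
    lo, hi = bounds[:, 0].copy(), bounds[:, 1].copy()
    X[:, log_scaled] = np.log10(X[:, log_scaled])
    lo[log_scaled] = np.log10(lo[log_scaled])
    hi[log_scaled] = np.log10(hi[log_scaled])
    return (X - lo) / (hi - lo)


X = np.array([[1e-8, 25.0],   # reference point
              [1e-4, 25.0],   # concentration changed by four orders of magnitude
              [1e-8, 35.0]])  # temperature changed by 10 degrees
Z = to_unit_cube(X, bounds, log_scaled)

raw_dist = np.linalg.norm(X[1:] - X[0], axis=1)     # distances a kernel would see unscaled
scaled_dist = np.linalg.norm(Z[1:] - Z[0], axis=1)  # distances after preprocessing
print(raw_dist, scaled_dist)
```

In raw units the 10,000-fold change in concentration produces a distance of about 1e-4, while the 10-degree temperature change produces a distance of 10, so an isotropic kernel would treat the two concentrations as essentially identical. After scaling, the two moves are of comparable size (about 0.67 and 0.5).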

Recommended Preprocessing Workflow: