Taming the Noise: Advanced Strategies for Robust DBTL Cycles in Biomedical Research


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on managing experimental noise in Design-Build-Test-Learn (DBTL) cycles. It explores the fundamental sources and impacts of noise in high-throughput biological data, presents advanced machine learning methodologies like Bayesian optimization and heteroscedastic modeling for robust data analysis, and offers practical troubleshooting and optimization strategies for automated platforms. Through validation case studies from metabolic engineering and protein expression, it demonstrates how these approaches lead to more reliable, reproducible, and efficient strain and therapy development, ultimately accelerating the translation of biomedical research.

Understanding Experimental Noise: The Fundamental Challenge in Biological DBTL Cycles

Troubleshooting Guide: FAQs on Experimental Noise

Q1: My TR-FRET assay has no assay window. What is the most common cause?

The most common cause of a complete lack of an assay window is improper instrument setup, and within that, the use of incorrect emission filters. Unlike other fluorescence assays, TR-FRET requires the specific emission filters recommended for your instrument; the excitation filter, by contrast, mainly affects the size of the assay window. Always test your microplate reader's TR-FRET setup using reagents you have on hand before beginning experimental work [1].

Q2: How can I determine if a problem in my single-cell RNA-seq experiment is due to technical noise or genuine biological variation?

Technical noise in scRNA-seq, stemming from stochastic RNA loss during cell lysis, reverse transcription, and amplification, can be distinguished from biological noise by using a generative statistical model alongside external RNA spike-ins. These spike-in molecules are added in the same quantity to each cell's lysate and provide an empirical model of the technical noise across the dynamic range of gene expression. This approach allows you to decompose the total observed variance into its technical and biological components, helping to confirm whether observed variability, such as stochastic allele-specific expression, is genuine or a technical artefact [2].

Q3: My experimental results show high variability between replicates. How can I assess if my assay is still robust enough for screening?

Assay window size alone is not a good measure of robustness. The Z'-factor is a key metric that takes into account both the assay window (the difference between the maximum and minimum signals) and the variability (standard deviation) of the data. It is calculated as:

\( Z' = 1 - \frac{3(\sigma_{max} + \sigma_{min})}{|\mu_{max} - \mu_{min}|} \)

where \( \sigma \) is the standard deviation and \( \mu \) is the mean of the high (max) and low (min) controls. A Z'-factor > 0.5 is generally considered suitable for screening. A large assay window with a lot of noise can have a lower Z'-factor than an assay with a small window but little noise [1].
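
The calculation can be sketched in a few lines of Python. This is a minimal illustration, not a validated analysis tool: the function name `z_prime` and the control readings are hypothetical, and the sample standard deviation is assumed to be the appropriate estimator.

```python
from statistics import mean, stdev

def z_prime(high_controls, low_controls):
    """Z'-factor from replicate high (max) and low (min) control readings."""
    mu_max, mu_min = mean(high_controls), mean(low_controls)
    sd_max, sd_min = stdev(high_controls), stdev(low_controls)
    window = abs(mu_max - mu_min)
    if window == 0:
        raise ValueError("controls have identical means; there is no assay window")
    return 1 - 3 * (sd_max + sd_min) / window

# Hypothetical readings: a wide but noisy window vs. a narrow but clean one.
wide_noisy = z_prime([1000, 1300, 700, 1100, 900], [100, 250, 50, 150, 200])
narrow_clean = z_prime([210, 212, 208, 211, 209], [100, 101, 99, 102, 98])
print(f"wide/noisy Z' = {wide_noisy:.2f}, narrow/clean Z' = {narrow_clean:.2f}")
```

With these made-up numbers the narrow, clean assay scores well above 0.5 while the wide, noisy one does not, which is the behaviour described above.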

Q4: What is a structured approach to troubleshooting general lab instrument issues?

A logical, funnel-based approach is effective:

- Start Broadly: Determine if the issue is method-related, mechanical, or operational.

- Ask Preliminary Questions: What was the last action before the issue? How frequent is the problem? Check instrument logbooks and software logs.

- Confirm Parameters: Verify that the method parameters match what is supposed to be run, as they can be accidentally changed.

- Isolate the Issue: Use "half-splitting" on modular instruments to isolate the problem to a specific component (e.g., chromatography side vs. mass spectrometer side).

- Repair Systematically: Start with easy fixes like replacing consumables. Document every step and resist applying multiple fixes at once [3].

Quantifying and Managing Noise in the DBTL Cycle

The Design-Build-Test-Learn (DBTL) cycle is a core iterative process in metabolic engineering and biosystems design. Managing noise is critical for efficient learning and design in subsequent cycles. Fully automated, algorithm-driven platforms are now being used to close the DBTL loop, using machine learning to distinguish robust signals from noisy data and directly inform the next round of experiments [4] [5].

Table 1: Key Metrics for Quantifying Experimental Noise and Data Quality

| Metric | Definition | Application | Interpretation |

|---|---|---|---|

| Z'-factor [1] | A statistical measure that assesses the robustness of an assay by considering both the dynamic range and the data variation. | High-throughput screening assays (e.g., TR-FRET, fluorescence-based assays). | > 0.5: Excellent assay for screening; 0 to 0.5: A marginal to poor assay; < 0: The signals of the high and low controls overlap. |

| Biological Variance [2] [6] | The component of total observed variance in gene expression across cells that is attributable to genuine biological stochasticity (e.g., transcriptional bursting) rather than technical artifacts. | Single-cell RNA-sequencing (scRNA-seq) data analysis. | Helps distinguish true stochastic allele-specific expression or cell-to-cell heterogeneity from noise introduced by low capture efficiency or amplification bias. |

| Transcriptional Burst Frequency and Size [6] | Kinetic parameters describing stochastic gene expression; frequency is how often a gene switches to an "ON" state, and size is the number of transcripts produced per burst. | Analysis of single-cell expression data (e.g., from smFISH or scRNA-seq) to understand the source of phenotypic variability. | Genomic features (e.g., TATA-box, CpG islands) can modulate these parameters, influencing the level of transcriptional variability. |

Experimental Protocol: Decomposing Noise in Single-Cell RNA-Seq

This protocol outlines the use of external RNA spike-ins to quantify technical noise [2].

1. Principle: External RNA Control Consortium (ERCC) spike-in molecules are added in known, identical quantities to the lysis buffer of every single cell. This provides an internal standard that experiences the same technical noise (e.g., stochastic dropout, amplification bias) as the endogenous transcripts but has no biological variation. The difference between the expected and observed spike-in counts is used to model the technical noise.

2. Reagents and Equipment:

- Single-cell suspension

- ERCC spike-in mix

- scRNA-seq library preparation kit

- scRNA-seq platform (e.g., microfluidic or plate-based)

- High-throughput sequencer

3. Procedure:

- Spike-in Addition: Add a precise, identical volume of ERCC spike-in mix to the lysis buffer of each cell, so that the spike-ins pass through every downstream step together with the endogenous transcripts.

- Library Preparation and Sequencing: Proceed with standard scRNA-seq library preparation, including reverse transcription (tagging molecules with Unique Molecular Identifiers, UMIs), amplification, and sequencing.

- Data Processing: Align sequencing reads to a combined reference genome (endogenous genes + ERCC sequences) and quantify transcript counts.

- Generative Modeling: Use a probabilistic model (e.g., as described in [2]) to:

- Estimate cell-specific technical parameters (e.g., capture efficiency) from the spike-in data.

- Fit the mean-variance relationship for technical noise across the expression level dynamic range.

- Calculate the technical variance for each endogenous gene.

- Estimate biological variance as: Biological Variance = Total Observed Variance - Technical Variance.

4. Data Analysis:

- The model outputs an estimate of the proportion of variance for each gene that is due to biological noise versus technical noise.

- This allows for the correct identification of genuine biological phenomena, such as stochastic allele-specific expression, by ensuring that apparent monoallelic expression is not merely a consequence of technical dropout for lowly expressed genes [2].
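
The variance subtraction in the procedure above can be illustrated with a deliberately simplified sketch. It is not the generative model of [2]: it assumes that technical noise follows CV² = a/mean + b, fits that curve to the spike-ins by ordinary least squares, and clips negative biological variance to zero. The function names and toy counts are hypothetical.

```python
from statistics import mean, pvariance

def fit_technical_cv2(spike_counts):
    """Least-squares fit of CV^2 = a / mean + b across spike-in species.

    spike_counts: one list of per-cell counts for each spike-in species.
    Needs at least two species with different, non-zero means.
    """
    xs, ys = [], []
    for counts in spike_counts:
        m = mean(counts)
        if m > 0:
            xs.append(1.0 / m)
            ys.append(pvariance(counts) / m**2)
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    a = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sxx
    b = y_bar - a * x_bar
    return a, b

def decompose(gene_counts, a, b):
    """Return (total, technical, biological) variance for one endogenous gene."""
    m = mean(gene_counts)
    if m == 0:
        return 0.0, 0.0, 0.0
    total = pvariance(gene_counts)
    technical = (a / m + b) * m**2      # technical variance predicted at this mean
    biological = max(total - technical, 0.0)
    return total, technical, biological

# Toy data: two spike-in species and one gene, each measured in two cells.
a, b = fit_technical_cv2([[1, 3], [8, 12]])
total, technical, biological = decompose([0, 20], a, b)
print(total, technical, biological)
```

A real analysis would use many spike-in species and cells, and would also account for cell-specific capture efficiency, which this sketch ignores.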

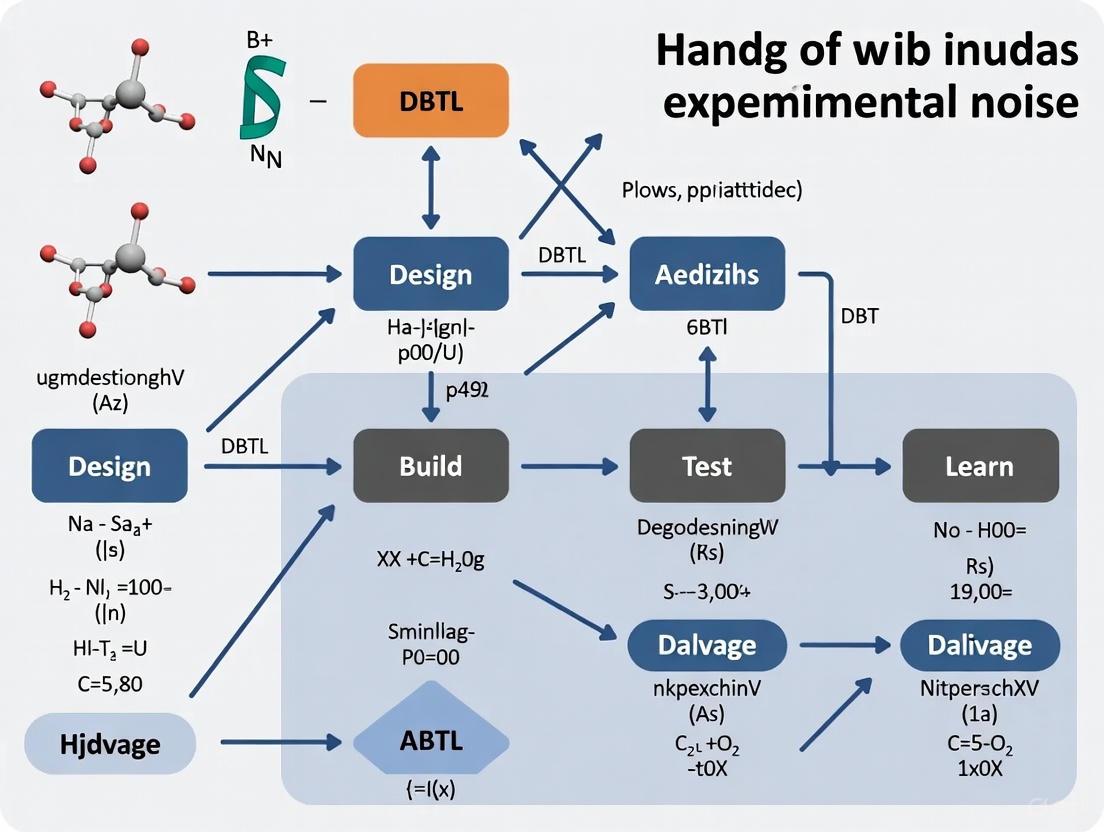

Diagram: Integrating Noise Analysis into the DBTL Cycle

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Materials for Noise Control Experiments

| Item | Function in Noise Control | Example Application |

|---|---|---|

| External RNA Spike-ins (ERCC) [2] | Provides an internal standard to model technical noise across the expression dynamic range. Added in known quantities to each sample. | Quantifying technical noise and decomposing variance in single-cell RNA-sequencing experiments. |

| LanthaScreen TR-FRET Assay Reagents [1] | Terbium (Tb) or Europium (Eu)-labeled donors and acceptors for time-resolved FRET assays. The donor signal serves as an internal reference to normalize for pipetting variance and reagent lot-to-lot variability. | High-throughput screening assays in drug discovery (e.g., kinase activity assays). |

| Unique Molecular Identifiers (UMIs) [2] | Short random nucleotide sequences added to each mRNA molecule during reverse transcription. They allow for the correction of amplification bias by collapsing PCR duplicates, reducing technical noise in sequencing counts. | Any sequencing-based protocol where amplification is involved (e.g., scRNA-seq, bulk RNA-seq). |

| Robotic Automation Platform [4] [5] | Executes repetitive "Test" steps in the DBTL cycle with high precision, minimizing human-introduced operational variability and enabling high-throughput data generation for machine learning. | Automated cultivation, induction, and measurement in metabolic engineering and biosystems design optimization. |

Frequently Asked Questions (FAQs)

FAQ 1: What are the primary sources of data variability in a DBTL cycle?

Data variability in DBTL cycles arises from multiple sources, which can be broadly categorized as follows:

- Biological Noise: Inherent stochasticity in biological systems, such as differences in cell physiology, metabolic burden, and colony-to-colony variability [7] [4].

- Experimental Noise: Variations introduced during manual laboratory workflows, including pipetting inaccuracies and inconsistencies in culturing conditions [4].

- Analytical Noise: Limitations in the precision of analytical instruments and methods. For example, standard bioanalytical methods can have a precision CV% of 15-20%, which directly contributes to the scatter of final results [8].

- Process and Material Variability: Fluctuations in raw materials, such as natural excipients in formulations, where factors like particle size and moisture content can vary even within pharmacopeia specifications [9].

FAQ 2: How does data variability impact the machine learning (ML) phase of the DBTL cycle?

Data variability, or noise, presents a fundamental challenge to machine learning.

- Impaired Model Performance: Noisy data can lead to inaccurate predictive models that fail to identify the true optimal designs. ML requires large, reproducible datasets to function effectively, and variability can drastically slow down the learning process [10] [4].

- Exploration vs. Exploitation Dilemma: Variability makes it difficult for algorithms to distinguish between a genuinely high-performing strain and one that measured high due to random chance. This confusion disrupts the balance between exploring new designs and exploiting promising ones [5].

FAQ 3: What practical steps can I take to mitigate variability in my experiments?

- Automate Workflows: Utilizing robotic platforms for tasks like cultivation and liquid handling significantly increases throughput and data reproducibility, reducing human-introduced errors [4].

- Leverage High-Throughput Data: Generate large, multivariate datasets using plate readers and RNA-sequencing to capture system behavior more comprehensively, making ML models more robust to noise [11] [4].

- Implement Robust Design Principles: Apply Quality by Design (QbD) and Design of Experiment (DoE) principles to understand and control critical material attributes and process parameters early in development [9].

Troubleshooting Guides

Problem: High variability in protein expression yields.

- Potential Cause: Colony-to-colony variation or metabolic burden on the host organism [7].

- Solution:

- Design: Hypothesize that different colonies from the same transformation plate may have varying tolerance to expression.

- Build: Inoculate multiple flasks with different colonies, rather than relying on a single colony.

- Test: Measure growth curves (OD600) and protein yield for each flask.

- Learn: Select and scale up the healthiest colonies with the most consistent expression for subsequent cycles [7].

Problem: High variability in pharmacokinetic (PK) concentration-time data, especially during absorption and distribution phases.

- Potential Cause: The combined effect of physiological variability and analytical method precision [8].

- Solution: A data transformation method can be applied to reduce standard deviation without statistically significantly altering the mean.

- Identify the phase of the PK profile with the lowest relative standard deviation (RSD%), typically the elimination phase.

- Use this lowest RSD% value, along with the known precision of your analytical method (the analytical CV%), to optimize and transform the entire concentration-time dataset.

- This method has been shown to more than halve the SD of PK parameters, providing a clearer and more selective pharmacokinetic profile [8].

Problem: Machine learning recommendations are not converging on an optimal design.

- Potential Cause: The training data is too noisy or biased for the ML algorithm to learn effectively [10].

- Solution:

- Algorithm Selection: In low-data regimes, choose machine learning methods proven to be robust to noise and training set biases, such as Gradient Boosting or Random Forest [10].

- Cycle Strategy: If the number of strains you can build is limited, favor starting with a larger initial DBTL cycle to generate a more substantial baseline dataset for the model to learn from, rather than distributing the same number of builds evenly across multiple cycles [10].

- Platform Integration: Implement a fully automated DBTL platform where an algorithm, such as a Bayesian optimizer, can actively select new designs based on real-time data, effectively managing the noise through sequential, informed experimentation [4] [5].

Quantitative Data on Variability and DBTL Performance

Table 1: Impact of Data Optimization on Pharmacokinetic Variability

This table summarizes the effect of a specific data transformation technique on the variability of pharmacokinetic parameters for a high-variability drug [8].

| Pharmacokinetic Parameter | Variability Before Optimization (SD) | Variability After Optimization (SD) | Reduction in Variability |

|---|---|---|---|

| Overall Concentration Data | High | More than 2x lower | > 50% |

| Absorption & Early Distribution Phase Profile | High variability, less selective | Lower variability, more selective | Significant |

Table 2: Machine Learning Algorithm Performance in Noisy, Low-Data Environments

A simulation-based study evaluated different ML algorithms for combinatorial pathway optimization, a common DBTL challenge [10].

| Machine Learning Algorithm | Performance in Low-Data/Noisy Regime | Key Characteristics for DBTL |

|---|---|---|

| Gradient Boosting | Outperforms other methods | Robust to training set biases and experimental noise [10] |

| Random Forest | Outperforms other methods | Robust to training set biases and experimental noise [10] |

| Other Tested Methods | Lower performance | More susceptible to data variability |

Detailed Experimental Protocols

Protocol 1: Establishing an Autonomous Test-Learn Cycle Using a Robotic Platform

Objective: To autonomously optimize inducer concentration for protein expression in a bacterial system, closing the DBTL loop without human intervention [4].

Methodology:

- System Setup: An E. coli or Bacillus subtilis system with a GFP reporter gene under an inducible promoter is used.

- Cultivation: Bacteria are cultivated in 96-well microtiter plates (MTPs) inside a fully integrated robotic platform, which includes a shake incubator, liquid handling robots, and a plate reader.

- Initial Experiment: The platform executes a pre-defined set of inducer concentrations.

- Data Acquisition: The platform's plate reader measures optical density (OD600) and fluorescence at defined time points.

- Active Learning:

- An importer software component retrieves the measurement data and writes it to a database.

- An optimizer (e.g., a Bayesian algorithm) selects the next set of inducer concentrations to test based on a balance of exploration and exploitation.

- Iteration: The platform directly executes the new experiments suggested by the optimizer, completing multiple full Test-Learn cycles autonomously [4].
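
The Test-Learn loop described above can be sketched in simulation. This is a simplified stand-in, not the optimizer used in [4]: instead of a Gaussian process it keeps an independent estimate per candidate concentration and picks the highest upper confidence bound, assuming a known noise level. The response curve, `NOISE_SD`, the normalized concentrations, and all function names are hypothetical.

```python
import math
import random

NOISE_SD = 0.1  # assumed measurement noise of the plate reader (hypothetical)

def measure(conc, rng):
    """Stand-in for the 'Test' step: a noisy response that peaks at conc = 0.5."""
    signal = math.exp(-((conc - 0.5) ** 2) / 0.05)
    return signal + rng.gauss(0, NOISE_SD)

def select_next(history, candidates, kappa=2.0):
    """'Learn' step: try untested candidates first, then maximize mean + bonus."""
    for c in candidates:
        if not history[c]:
            return c

    def ucb(c):
        obs = history[c]
        # The bonus shrinks as replicates accumulate (standard error of the mean).
        return sum(obs) / len(obs) + kappa * NOISE_SD / math.sqrt(len(obs))

    return max(candidates, key=ucb)

def run_cycles(candidates, n_rounds=20, seed=0):
    rng = random.Random(seed)
    history = {c: [] for c in candidates}
    for _ in range(n_rounds):
        conc = select_next(history, candidates)
        history[conc].append(measure(conc, rng))
    return history

history = run_cycles([0.0, 0.25, 0.5, 0.75, 1.0])
best = max(history, key=lambda c: sum(history[c]) / len(history[c]))
print("best inducer concentration:", best)
```

On a real platform, `measure` would be replaced by the importer reading plate-reader data from the database, and the selection rule by whichever optimizer the platform provides.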

Protocol 2: Data Transformation to Reduce Pharmacokinetic Variability

Objective: To significantly reduce the standard deviation of observed drug concentrations in a pharmacokinetic study without a statistically significant influence on the mean [8].

Methodology:

- Data Collection: Collect full concentration-time (C-T) profiles from subjects after a single oral drug administration.

- Identify Stable Phase: Determine the phase of the PK profile with the lowest relative standard deviation (RSD%), which is typically the elimination phase where the process is dominated by a single mechanism.

- Assess Analytical Precision: Note the precision (CV%) of the bioanalytical method used.

- Transformation: Apply a mathematical transformation to the entire C-T dataset, using the lowest RSD% from the elimination phase and the analytical method precision as reference points to optimize and reduce variability in the more chaotic absorption and distribution phases [8].
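
The "Identify Stable Phase" step can be sketched as follows. The transformation itself is not specified here, so this sketch only covers finding the time point with the lowest RSD%; the function names and concentration values are hypothetical.

```python
from statistics import mean, stdev

def rsd_percent(values):
    """Relative standard deviation (RSD%) of a set of concentrations."""
    return 100 * stdev(values) / mean(values)

def lowest_rsd_timepoint(profiles, times):
    """profiles: one concentration list per subject, aligned with `times`."""
    rsd_by_time = {
        t: rsd_percent([profile[i] for profile in profiles])
        for i, t in enumerate(times)
    }
    t_min = min(rsd_by_time, key=rsd_by_time.get)
    return t_min, rsd_by_time

# Hypothetical concentrations for three subjects at five sampling times (h).
times = [0.5, 1, 2, 4, 8]
profiles = [
    [2, 8, 18, 8, 4.9],
    [6, 12, 20, 10, 5.0],
    [10, 16, 22, 12, 5.1],
]
t_min, rsd_by_time = lowest_rsd_timepoint(profiles, times)
print(t_min, {t: round(r, 1) for t, r in rsd_by_time.items()})
```

In this toy dataset the lowest RSD% falls at the last sampling time, consistent with the expectation that the elimination phase is the most stable.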

DBTL Workflow and System Diagrams

Diagram: Automated DBTL with Noise

Diagram: Automated Platform Architecture

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Reagents and Platforms for Managing DBTL Variability

| Item / Solution | Function in DBTL Cycle | Role in Mitigating Variability |

|---|---|---|

| Robotic Liquid Handling Platform [4] | Automates the "Build" and "Test" phases (e.g., pipetting, cultivation). | Eliminates manual errors and provides high reproducibility for large-scale experiments. |

| Integrated Robotic Platform (e.g., iBioFAB [5], Analytik Jena [4]) | A fully automated biofoundry that integrates incubators, liquid handlers, and plate readers. | Creates a controlled, consistent environment for end-to-end experimentation, minimizing batch effects. |

| Bayesian Optimization Algorithm [5] | Drives the "Learn" phase by selecting the next experiments. | Efficiently navigates noisy experimental landscapes by balancing exploration and exploitation. |

| High-Throughput RNA-seq Platform [11] | Generates comprehensive gene expression data for thousands of molecules. | Provides deep, multi-parametric data that helps build robust ML models less sensitive to noise. |

| QbD Excipient Samples [9] | Provides excipient batches that represent the highest and lowest limits of specification ranges. | Allows formulators to test and build robustness to material variability directly into their drug development process. |

In automated biofoundries, experimental noise—the unwanted variability in data that obscures true biological signals—is a fundamental challenge that can compromise the integrity of the Design-Build-Test-Learn (DBTL) cycle. Noise originates from multiple sources: biological (stochastic fluctuations within cells), technical (instrumentation and protocols), and data-related (computational modeling). Effectively identifying, quantifying, and mitigating these sources is critical for achieving reproducible, high-throughput biological research and development. This guide provides a systematic breakdown of common noise sources and practical troubleshooting strategies for researchers and scientists.

FAQs: Identifying and Troubleshooting Noise

1. What are the most common sources of biological noise in cell-based assays?

Biological noise arises from inherent stochasticity in cellular processes. Key sources and solutions include:

- Genetic Expression Variability: Stochastic fluctuations in transcription and translation lead to cell-to-cell differences in protein and metabolite levels, even in clonal populations [12].

- Cellular Response to Drugs: Non-uniform distribution of macromolecules and varying cellular abilities to sense the environment cause divergent responses to identical drug stimuli [12].

- Circadian Rhythms: About 50% of human genes follow a circadian cycle, influencing drug metabolism and inflammatory responses, which introduces temporal variability if experiments are conducted at different times [12].

- Troubleshooting Steps:

- Implement Controlled Environments: Use incubators that tightly regulate temperature, humidity, and CO₂. For light-sensitive processes, control light-dark cycles.

- Standardize Cell Passaging: Maintain consistent cell passage numbers and confluence levels at the start of experiments to minimize drift in phenotypic behavior.

- Utilize Biosensors: Integrate genetic circuits (e.g., RNA-based riboswitches or transcription factors) to monitor intracellular metabolite levels in real-time, helping to distinguish true biological signals from noise [13].

2. How can I determine if my automated instrumentation is introducing technical noise?

Technical noise originates from the automated platforms and liquid handling systems themselves.

- Liquid Handler Inaccuracy: Improper calibration can lead to systematic errors in dispensed volumes, directly impacting cell growth and metabolite production measurements. For example, in media optimization, an error in a single component like salt (NaCl) can drastically alter production yields [14].

- Sensor Drift in Bioreactors: Probes for pH, dissolved oxygen, or temperature can drift over time, leading to inaccurate environmental control and increased heterogeneity between culture vessels [15].

- Edge Effects in Microplates: Evaporation and temperature gradients in multi-well plates can cause wells on the perimeter to behave differently from interior wells.

- Troubleshooting Steps:

- Perform Regular Calibration: Establish a strict schedule for calibrating pipettes, liquid handlers, and bioreactor probes using traceable standards.

- Run Dye Tests: Use fluorescent or colored dyes in water to visually and quantitatively check for volume dispensation accuracy and consistency across all nozzles and tips of a liquid handler.

- Conduct Blank and Control Experiments: Include negative controls and technical replicates across different plate locations (center vs. edge) to quantify and correct for positional biases.
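
Positional bias from the control experiment above can be quantified with a short sketch. It assumes every well of the plate holds an identical control, so any edge-versus-center difference is technical; the function name and readings are hypothetical.

```python
from statistics import mean

def edge_bias_percent(plate):
    """Percent deviation of mean edge-well signal from mean interior-well signal.

    plate: list of rows, each a list of readings from identical control wells.
    """
    n_rows, n_cols = len(plate), len(plate[0])
    edge, center = [], []
    for r, row in enumerate(plate):
        for c, value in enumerate(row):
            on_edge = r in (0, n_rows - 1) or c in (0, n_cols - 1)
            (edge if on_edge else center).append(value)
    return 100 * (mean(edge) - mean(center)) / mean(center)

# Hypothetical 4 x 4 plate where perimeter wells read 20% low.
plate = [
    [0.8, 0.8, 0.8, 0.8],
    [0.8, 1.0, 1.0, 0.8],
    [0.8, 1.0, 1.0, 0.8],
    [0.8, 0.8, 0.8, 0.8],
]
print(f"edge bias: {edge_bias_percent(plate):.1f}%")
```

A bias of this size would justify excluding the edge wells or applying a positional correction before the data reach the Learn phase.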

3. My machine learning models are not converging during the 'Learn' phase. Could data noise be the cause?

Yes, noise in the training data is a primary reason for poor model performance. Machine learning models, including the Gaussian Processes used in Bayesian optimization, can be highly sensitive to noisy data, particularly when the noise violates the model's assumptions [15] [14].

- Insufficient Data Quality: Models trained on small or highly variable datasets will fail to learn the underlying biological function and instead model the noise [15].

- Heteroscedastic Noise: This is noise whose magnitude is not constant but depends on the input parameters (e.g., measurement error is larger at high metabolite concentrations). Standard models that assume constant noise will perform poorly [15].

- Troubleshooting Steps:

- Increase Replicates: Incorporate more technical and biological replicates to robustly estimate and account for experimental noise.

- Use Noise-Aware Models: Employ Bayesian optimization frameworks that explicitly model heteroscedastic noise. These models can separate signal from noise more effectively, leading to faster convergence on the true optimum [15].

- Dimensionality Reduction: Prior to modeling, use techniques like Principal Component Analysis (PCA) to project high-dimensional data onto a small number of components, discarding low-variance directions that mostly carry noise [16].
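
The idea behind noise-aware modeling can be shown with a much simpler stand-in than a heteroscedastic Gaussian process. The sketch below estimates the noise variance at each input level from replicates, then fits a straight line by inverse-variance weighted least squares so that noisy conditions count for less. The function names and data are hypothetical, and each level needs at least two replicates with non-zero variance.

```python
from statistics import mean, variance

def replicate_noise(data):
    """data: {input_level: [replicates]} -> {input_level: (mean, variance)}."""
    return {x: (mean(ys), variance(ys)) for x, ys in data.items()}

def weighted_line_fit(summary):
    """Fit y = slope * x + intercept with weights 1 / variance per input level."""
    weights = {x: 1.0 / var for x, (_, var) in summary.items()}
    total_w = sum(weights.values())
    x_bar = sum(weights[x] * x for x in summary) / total_w
    y_bar = sum(weights[x] * summary[x][0] for x in summary) / total_w
    sxy = sum(weights[x] * (x - x_bar) * (summary[x][0] - y_bar) for x in summary)
    sxx = sum(weights[x] * (x - x_bar) ** 2 for x in summary)
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar

# Hypothetical titers whose scatter grows with the input level.
data = {
    1: [1.9, 2.0, 2.1],
    2: [3.8, 4.0, 4.2],
    3: [5.0, 6.0, 7.0],
}
summary = replicate_noise(data)
slope, intercept = weighted_line_fit(summary)
print(summary, slope, intercept)
```

The per-level variances make the heteroscedasticity visible before any model is fitted, and the same variances could be passed as per-point noise to a model that supports them.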

4. What strategies can reduce noise when scaling up from microplates to bioreactors?

Scale-up is a major source of noise due to changing physical and chemical environments.

- Environmental Gradients: Large bioreactor vessels develop gradients in nutrients, pH, and dissolved oxygen that are absent in well-mixed microplates [13].

- Population Heterogeneity: The larger cell population in a bioreactor can exhibit increased genotypic and phenotypic diversity.

- Troubleshooting Steps:

- Implement Dynamic Control: Use genetic circuits with biosensors to enable dynamic regulation of pathways. This allows cells to auto-adjust to changing bioreactor conditions, improving robustness and yield [13].

- Characterize Mass Transfer: Profile the kLa (volumetric oxygen transfer coefficient) and mixing times in the bioreactor to ensure homogeneous conditions.

- Employ Scale-Down Models: Create small-scale (e.g., in microplates) models that mimic the environmental fluctuations anticipated at large scale. This allows for high-throughput strain and media optimization under more relevant conditions [14].

The table below summarizes key noise types, their origins, and measurable impacts on data, providing a reference for diagnostics.

| Noise Category | Specific Source | Typical Impact on Data | Mitigation Strategy |

|---|---|---|---|

| Biological | Genetic drift in microbial populations [12] | Increasing variance in production yield (titer) over serial passages | Use cryopreserved master cell banks; limit passaging |

| Biological | Cell-to-cell variation in pathway expression [12] | High coefficient of variation (>20%) in fluorescence from reporter genes | Use flow cytometry to characterize population distribution; implement constitutive expression controls |

| Technical | Liquid handler volumetric inaccuracy [14] | >5% CV in growth (OD600) across technical replicates in a plate | Regular calibration; use of liquid class optimization for different reagents |

| Technical | Edge effects in 48-well plates [14] | 15-30% deviation in growth metrics between edge and center wells | Use plate covers to reduce evaporation; exclude edge wells or use statistical correction |

| Data | Heteroscedastic measurement error [15] | Poor predictive performance (high RMSE) of machine learning models in high-output regions | Use Bayesian optimization with heteroscedastic noise modeling [15] |

| Data | Technical variability in scRNA-seq [12] | Distortion in Highly Variable Genes (HVG) list, masking true biological variation | Use computational tools (e.g., DDG model) to distinguish technical from biological variation [12] |

Experimental Protocols for Noise Quantification

Protocol 1: Quantifying Technical Noise in a Liquid Handling System

- Objective: To measure the accuracy and precision of a liquid handler's dispensation, a key source of technical noise.

- Materials: PBS buffer, fluorescent dye (e.g., fluorescein), microplate reader, black-walled 96-well or 384-well microplates.

- Method:

- Prepare a solution of PBS with a known concentration of fluorescent dye.

- Program the liquid handler to dispense a target volume (e.g., 10 µL) of the dye solution into every well of the microplate (n≥5 replicates per column).

- Use a microplate reader to measure the fluorescence intensity in each well.

- Data Analysis: Calculate the mean fluorescence for each column and the coefficient of variation (CV) across all replicates. A CV > 5% indicates significant technical noise introduced by the liquid handler. Compare the mean measured fluorescence to a standard curve to assess accuracy (systematic error).
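
The data analysis step can be sketched as below. The 5% CV limit comes from the protocol above; the function names and fluorescence readings are hypothetical, and comparison against a standard curve for accuracy is left out.

```python
from statistics import mean, stdev

def cv_percent(values):
    """Coefficient of variation in percent."""
    return 100 * stdev(values) / mean(values)

def check_dispense(plate_columns, cv_limit=5.0):
    """plate_columns: one list of fluorescence readings per plate column."""
    all_wells = [v for column in plate_columns for v in column]
    overall_cv = cv_percent(all_wells)
    return {
        "column_means": [mean(column) for column in plate_columns],
        "overall_cv": overall_cv,
        "within_limit": overall_cv <= cv_limit,
    }

# Hypothetical readings (arbitrary fluorescence units), five wells per column.
good = check_dispense([[100, 102, 98, 101, 99], [100, 101, 99, 100, 100]])
bad = check_dispense([[100, 120, 80, 110, 90]])
print(good["overall_cv"], bad["overall_cv"])
```

Comparing the per-column means can also reveal a single miscalibrated channel that an overall CV would average away.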

Protocol 2: Characterizing Biological Noise in a Production Strain

- Objective: To measure the inherent culture-to-culture and day-to-day variability of a microbial production system.

- Materials: Production strain, appropriate growth medium, deep-well plates, microplate reader, HPLC or GC-MS for product quantification.

- Method:

- Inoculate production strain from a single colony into liquid medium and grow overnight.

- The next day, sub-culture into fresh medium in a deep-well plate. Use at least 12 biological replicates (independent cultures).

- Grow cultures under standard production conditions (e.g., 48 hours, 30°C).

- Measure both the optical density (OD600) and the product titer (e.g., via absorbance or HPLC) for each culture.

- Repeat this experiment on three separate days to capture day-to-day variability.

- Data Analysis: Calculate the CV for both OD and product titer for within-day (biological noise) and between-day (total process noise) datasets. This establishes a baseline for expected noise levels and helps determine the necessary number of replicates for future DBTL cycles.
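
A minimal sketch of this analysis follows. It assumes the between-day figure is the CV of all cultures pooled across days; the function names and titers are hypothetical, and a variance-components model would be the more rigorous choice.

```python
from statistics import mean, stdev

def cv_percent(values):
    """Coefficient of variation in percent."""
    return 100 * stdev(values) / mean(values)

def noise_baseline(titers_by_day):
    """titers_by_day: {day: [titer of each replicate culture]}.

    Returns (within-day CV per day, CV of all cultures pooled across days).
    """
    within_day = {day: cv_percent(values) for day, values in titers_by_day.items()}
    pooled = [t for values in titers_by_day.values() for t in values]
    return within_day, cv_percent(pooled)

# Hypothetical titers (g/L) from three replicate cultures on two days.
within_day, total_cv = noise_baseline({"day1": [9, 10, 11], "day2": [11, 12, 13]})
print(within_day, total_cv)
```

When the pooled CV clearly exceeds the within-day CVs, as in this toy example, day-to-day effects are contributing noise beyond culture-to-culture variation.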

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Noise Management |

|---|---|

| Cell-Free Transcription-Translation (TX-TL) Systems [17] | Bypasses cellular complexity and growth-related variability, providing a highly reproducible and rapid platform for testing genetic parts and circuit behavior. |

| RNA-based Biosensors (Riboswitches/Toehold Switches) [13] | Enable real-time, non-destructive monitoring of specific intracellular metabolites, allowing researchers to track and account for metabolic noise in living cells. |

| Automated Cultivation Platform (e.g., BioLector) [14] | Provides tight control over culture conditions (O2, humidity, shaking) in microplates, minimizing environmental noise and improving data reproducibility for fermentation optimization. |

| Gaussian Process (GP) Models with Heteroscedastic Kernels [15] | A machine learning model that does not assume constant noise; it can learn how measurement uncertainty changes across the experimental space, leading to more robust predictions. |

Workflow Diagram: Managing Noise in the DBTL Cycle

The following diagram illustrates a robust DBTL workflow that integrates noise management strategies at every stage.

Frequently Asked Questions

Q1: What are the primary sources of noise in metabolic pathway data, and how can I quantify their impact?

Cellular noise originates from stochastic biochemical events and results in significant cell-to-cell heterogeneity, even in clonal populations. You can quantify this using high-throughput quantitative mass imaging techniques, such as Spatial Light Interference Microscopy (SLIM), which captures the optical-phase delay (ΔΦ) of the cell cytosol and lipid droplets to calculate dry-mass values with single-cell resolution [18]. This method provides more than 55% higher precision than conventional microscopy, allowing you to precisely measure how resources are partitioned between growth and productivity and to detect subpopulations with distinct metabolic trade-offs [18].

Q2: My DBTL cycles are yielding inconsistent results due to experimental noise. What computational approaches can help?

Implement a nonparametric Bayesian framework using Gaussian Process Regression (GPR) to infer dynamic reaction rates from metabolite concentration measurements without requiring explicit time-dependent flux data [19]. This approach allows you to model metabolic dynamics and perform hierarchical regulation analysis even with noisy data. Furthermore, machine learning methods, particularly gradient boosting and random forest models, have proven robust against training set biases and experimental noise in the low-data regimes typical of initial DBTL cycles [20].

Q3: How can I distinguish between hierarchical and metabolic regulation in my noisy pathway data?

Apply dynamic Hierarchical Regulation Analysis (HRA), which quantifies the contributions of enzyme concentration changes (hierarchical regulation) versus metabolic effector changes (metabolic regulation) to flux control [19]. The time-dependent hierarchical regulation coefficient can be calculated as

\( \rho_h^i(t) = \frac{\ln h(e_i(t)) - \ln h(e_i(t_0))}{\ln v_i(t) - \ln v_i(t_0)} \)

where \( h(e_i) \) represents the enzyme capacity and \( v_i \) is the reaction rate. The metabolic regulation coefficient is then derived as \( \rho_m^i(t) = 1 - \rho_h^i(t) \) [19].
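
As a worked example of these two coefficients, the sketch below evaluates them for a single reaction at one time point. The function name and the input values are hypothetical; in practice the enzyme capacities and rates would come from measurements and the GPR-inferred fluxes.

```python
import math

def regulation_coefficients(h_t, h_t0, v_t, v_t0):
    """Return (hierarchical, metabolic) regulation coefficients.

    h_t, h_t0: enzyme capacity h(e_i) at time t and at the reference time t0.
    v_t, v_t0: reaction rate v_i at time t and at the reference time t0.
    """
    d_ln_v = math.log(v_t) - math.log(v_t0)
    if d_ln_v == 0:
        raise ValueError("the flux did not change, so the coefficients are undefined")
    rho_h = (math.log(h_t) - math.log(h_t0)) / d_ln_v
    return rho_h, 1 - rho_h

# Hypothetical case: enzyme capacity doubles while the flux quadruples.
rho_h, rho_m = regulation_coefficients(h_t=2.0, h_t0=1.0, v_t=4.0, v_t0=1.0)
print(rho_h, rho_m)
```

Here half of the flux change is attributed to the change in enzyme capacity and half to metabolic effects, since ln 2 / ln 4 = 0.5.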

Q4: What experimental design maximizes signal detection when working with noisy single-cell data? Incorporate multiple independent replicate cultures (at least 3 per condition) and aim for large observation numbers (≥10,000 single-cells) to achieve statistical power [18]. Use cross-correlation imaging approaches to suppress cytosolic signal and enhance specific organelle localization, which achieved >98% agreement with fluorescence-based methods while accelerating processing to >1,000 single-cell observations per condition [18].

Experimental Protocols for Noise Quantification

Protocol 1: Single-Cell Trade-Off Analysis Using Quantitative Phase Imaging

Purpose: To quantify metabolic trade-offs between growth and product formation at single-cell resolution while accounting for cellular noise [18].

Materials:

- Yarrowia lipolytica culture (or your target microorganism)

- Spatial Light Interference Microscopy (SLIM) system

- Two coverslips per sample

- U-13C glucose for NanoSIMS validation

Methodology:

- Sample Preparation: Place 2 µL of growing culture between two coverslips without further processing.

- Image Acquisition: Transfer sample to SLIM system and capture both phase-contrast intensity images and quantitative phase images by projecting onto a spatial light modulator.

- Data Conversion: Convert ΔΦ_cytosol and ΔΦ_TAG to dry-mass values using the protein-specific refractive index increment for the cytosol and the Clausius-Mossotti equation for lipid droplets.

- Image Processing: Localize the cell contour and lipid droplets (LDs) via ΔΦ levels, using additional wavefront modulation (π/2 and π) to enhance LD-to-cytosol contrast.

- Validation: Confirm cytosolic and LD elemental composition using NanoSIMS after 13C glucose exposure.

Expected Outcomes: Quantitative bivariate analysis of growth-productivity trade-offs, identification of metabolic subpopulations, and determination of cell-to-cell heterogeneity in macromolecule recycling under nutrient limitation [18].

Protocol 2: Dynamic Flux Inference Using Gaussian Process Regression

Purpose: To accurately infer time-dependent metabolic fluxes from metabolite concentration measurements for dynamic regulation analysis [19].

Materials:

- Time-series metabolite concentration data

- Gaussian Process Regression computational framework

- Network stoichiometry of the target pathway

Methodology:

- Data Preprocessing: Estimate high-resolution time-dependent metabolite profiles from discrete measurements using GPR.

- Derivative Calculation: Approximate derivatives of metabolite concentrations from the GP derivatives.

- Flux Inference: Calculate dynamic reaction rates from derivatives and network stoichiometry using the relationship \( v_i = \dot{x}_{i+1} + \dots + \dot{x}_N + g_N(x_N) \) for linear pathways.

- Pathway Simplification: For branched pathways, combine reaction rates to match the number of metabolites (e.g., \( \tilde{v}_1 = v_1 - v_4 \)).

- Regulation Analysis: Compute time-dependent hierarchical regulation coefficients from inferred reaction rates and enzyme profiles.

Expected Outcomes: Complete temporal hierarchical regulation profiles for each reaction with statistical confidence, without requiring direct flux measurements [19].
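For a linear pathway, the flux inference step reduces to a reverse cumulative sum over the metabolite derivatives. The following sketch assumes the derivatives have already been obtained (in the protocol they come from the GP derivatives) and that the consumption rate of the last metabolite, g_N(x_N), is known; `linear_pathway_rates` and the numbers are illustrative only.

```python
def linear_pathway_rates(derivatives, efflux):
    """Infer v_1..v_{N-1} for a linear pathway x_1 -> x_2 -> ... -> x_N.

    derivatives: [dx_1/dt, ..., dx_N/dt] at a single time point
    efflux: g_N(x_N), the rate at which the last metabolite is consumed
    Implements v_i = dx_{i+1}/dt + ... + dx_N/dt + g_N(x_N).
    """
    rates = []
    running = efflux
    # Walk backwards so each rate reuses the partial sum of the later terms.
    for dx in reversed(derivatives[1:]):
        running += dx
        rates.append(running)
    rates.reverse()
    return rates

# Hypothetical three-metabolite pathway at one time point.
rates = linear_pathway_rates([-1.0, 0.2, 0.3], efflux=0.5)
print(rates)  # [v_1, v_2]
```

Calling the function once per time point on the GP-derived derivatives yields the time-dependent rate profiles used in the regulation analysis.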

Quantitative Data Analysis Tables

Table 1: Correlation Analysis of Single-Cell Parameters in Y. lipolytica

| Parameter Pair | Spearman Correlation Coefficient (ρ) | P-value | Number of Observations | Conditions Tested |

|---|---|---|---|---|

| LD Volume vs. TAG Number-Density | 0.65 | <0.001 | 25,960 individual LDs | 7 different conditions, 3 replicates each [18] |

| Cell Dry-Density vs. Cell Volume | -0.6 | <0.001 | 13,770 individual cells | 7 different conditions, 3 replicates each [18] |

Table 2: Machine Learning Performance in DBTL Cycle Optimization

| ML Method | Performance in Low-Data Regime | Robustness to Training Set Bias | Robustness to Experimental Noise | Recommended Application |

|---|---|---|---|---|

| Gradient Boosting | Superior | High | High | Initial DBTL cycles with limited data [20] |

| Random Forest | Superior | High | High | Initial DBTL cycles with limited data [20] |

| Other Tested Methods | Variable | Variable | Variable | Not recommended for low-data scenarios [20] |

The Scientist's Toolkit: Research Reagent Solutions

| Reagent/Technique | Function | Application Context |

|---|---|---|

| Spatial Light Interference Microscopy (SLIM) | Captures optical-phase delay (ΔΦ) of cellular components | Quantifying dry-mass of cytosol and lipid droplets at single-cell resolution [18] |

| Gaussian Process Regression (GPR) | Nonparametric Bayesian modeling for time-series data | Inferring dynamic metabolic fluxes from metabolite measurements [19] |

| Nanoscale Secondary Ion Mass Spectrometry (NanoSIMS) | Characterizes elemental composition at subcellular level | Validating cytosolic and lipid droplet composition; confirming 13C incorporation [18] |

| U-13C Glucose | Isotope-labeled substrate for metabolic tracing | Tracking carbon allocation and validating mass imaging measurements [18] |

| Clausius-Mossotti Equation | Converts refractive index to molecular number-density | Determining TAG molecule count in lipid droplets from phase imaging data [18] |

Experimental Workflow Diagrams

Diagram 1: DBTL Cycle with Noise Integration

Diagram 2: Single-Cell Mass Imaging Workflow

Machine Learning and Algorithmic Solutions for Noisy Biological Data

Bayesian Optimization as a Robust Framework for Noisy Black-Box Functions

Frequently Asked Questions (FAQs)

Q1: My Bayesian optimization is converging slowly or to a poor solution. What are the most common causes?

Poor convergence in Bayesian optimization (BO) often stems from three common issues: an incorrect prior width, over-smoothing by the surrogate model, or inadequate maximization of the acquisition function [21] [22]. An incorrectly set prior width can misrepresent the function's variability, while over-smoothing occurs when the kernel lengthscale is too large, causing the model to miss important details. Furthermore, if the acquisition function is not maximized effectively, the algorithm may select suboptimal points for evaluation.

Q2: How can I handle experiments that fail and produce no measurable output?

A robust method is the "floor padding trick": when an experiment fails, assign it the worst objective value observed so far in the campaign [23]. This simple and adaptive method informs the surrogate model that the parameter set led to a failure, encouraging the algorithm to avoid similar regions in subsequent iterations without requiring pre-defined, problem-specific constants.

Q3: My experimental measurements are very noisy. How can I make BO more robust?

For noisy observations, consider augmenting your standard acquisition function or implementing a retest policy [24] [25]. A retest policy selectively repeats experiments to confirm the performance of promising candidates, which mitigates the risk of being misled by single, noisy measurements. Noise-augmented acquisition functions are also designed to be more robust to non-Gaussian and high-variance noise processes [25].

Q4: Can I use low-fidelity, cheap experiments to accelerate the optimization of high-fidelity, expensive ones?

Yes, this is the goal of Multifidelity Bayesian Optimization (MF-BO) [26]. MF-BO uses a unified surrogate model to learn the relationship between different experimental fidelities (e.g., computational docking, single-point assays, and dose-response curves). It then uses an acquisition function, like Targeted Variance Reduction, to optimally allocate a fixed budget by deciding whether to perform many cheap low-fidelity experiments or a few expensive high-fidelity ones at each step.

Troubleshooting Guides

Problem 1: The BO algorithm is not exploring the parameter space effectively.

Symptoms: The algorithm gets stuck in a local optimum and fails to discover better regions.

Diagnosis and Solutions:

- Check Hyperparameters: The most common fix is to properly tune the hyperparameters of your Gaussian Process surrogate model, particularly the kernel lengthscale and prior width [21]. An overly large lengthscale can cause over-smoothing, preventing the discovery of local optima.

- Adjust Acquisition Function: If using the Upper Confidence Bound (UCB), try increasing the β parameter, which controls the exploration-exploitation trade-off. A higher β value gives more weight to uncertain regions, promoting exploration [21].

- Validate Model Fit: Ensure your surrogate model's predictions are consistent with your observed data. A poor model fit indicates that the kernel or its hyperparameters may be inappropriate for your specific problem.
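The effect of β is easiest to see on two candidate points. This sketch assumes the common form UCB = mean + β · standard deviation (some references use the square root of β instead); the candidate names and numbers are made up for illustration.

```python
def ucb(mean, std, beta):
    """Upper Confidence Bound for a maximization problem."""
    return mean + beta * std

def pick_next(candidates, beta):
    """candidates: list of (name, predicted_mean, predicted_std) tuples."""
    return max(candidates, key=lambda c: ucb(c[1], c[2], beta))[0]

# Hypothetical surrogate predictions for two regions of the parameter space.
candidates = [
    ("near_known_optimum", 1.0, 0.05),
    ("unexplored_region", 0.6, 0.50),
]

print(pick_next(candidates, beta=0.5))  # near_known_optimum (1.025 vs 0.85)
print(pick_next(candidates, beta=2.0))  # unexplored_region (1.10 vs 1.60)
```

If the optimizer keeps resampling around one point, raising β in this way is a quick test of whether the stall is caused by too little exploration.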

Problem 2: Dealing with failed experiments and missing data.

Symptoms: Experimental runs occasionally fail to produce a result, creating "missing data" that disrupts the optimization loop.

Diagnosis and Solutions:

- Implement the Floor Padding Trick: This is a direct and effective solution [23].

- Methodology: When an experiment at parameter x_n fails, simply set its objective value y_n to the minimum value observed in all previous successful experiments (for a maximization objective): y_n = min(y_1, ..., y_{n-1}).

- Rationale: This automatically labels the failed parameter set as poor without requiring manual tuning of a penalty value. It updates the surrogate model to recognize the region around x_n as low-performing, guiding future iterations away from it.

- Combine with a Failure Predictor: For a more advanced approach, you can train a binary classifier to predict the probability of failure for a given parameter set [23]. This can be used alongside the floor padding trick to actively avoid parameters with a high predicted chance of failure.

Problem 3: Optimization performance is degraded by high experimental noise.

Symptoms: The algorithm appears to make erratic decisions, often selecting points that later prove to be poor, due to unreliable measurements.

Diagnosis and Solutions:

- Employ a Retest Policy: In batched BO, you can dedicate a portion of your batch to retesting previously evaluated candidates [24].

- Methodology: For each batch, identify a subset of the most promising candidates (e.g., those with the highest predicted mean or those that were highly ranked but had high uncertainty) and repeat the experiment. Replace their recorded value with the average of the retests.

- Rationale: This reduces the variance in the data set for critical candidates, leading to a more accurate surrogate model and preventing the algorithm from chasing noise-driven outliers.

- Use a Noise-Robust Acquisition Function: Standard acquisition functions can be deceived by noise. Consider switching to ones specifically designed for noisy settings, which can handle non-Gaussian noise distributions like those found in molecular dynamics simulations or polymer crystallization studies [25].

Problem 4: Optimizing a high-fidelity property is prohibitively expensive.

Symptoms: The target property is very expensive or time-consuming to measure, making a full BO campaign infeasible.

Diagnosis and Solutions:

- Adopt a Multifidelity (MF-BO) Approach: Leverage cheaper, correlated experimental fidelities to guide the optimization [26].

- Methodology:

- Define your experimental fidelities (e.g., Low: computational docking score; Medium: single-point percent inhibition; High: dose-response IC50 value).

- Use a surrogate model (e.g., Gaussian Process with a Tanimoto kernel for molecules) that can learn the correlation between fidelities and molecular structure.

- Use a multifidelity acquisition function like Targeted Variance Reduction (TVR). This function selects the next (molecule, fidelity) pair that maximizes the expected improvement at the high-fidelity level, weighted by the cost of the experiment.

- Rationale: MF-BO allows you to perform many low-cost experiments to explore the search space efficiently, only using the expensive high-fidelity assay to confirm the most promising candidates.

Experimental Protocols & Data

The floor padding protocol below integrates directly into the BO loop.

1. Initialization: Start with an initial dataset of parameters and successful evaluations.

2. BO Iteration:

a. Fit the Gaussian Process surrogate model to the current data.

b. Maximize the acquisition function to select the next parameter x_n.

c. Run the experiment at x_n.

3. Failure Handling:

a. If the experiment is successful, record the true measurement y_n and proceed.

b. If the experiment fails, set y_n = min(y_1, ..., y_{n-1}) (the worst value observed so far).

4. Update: Augment the dataset with (x_n, y_n) and return to Step 2.
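The loop above can be sketched in a few lines. This is a minimal illustration, assuming a maximization objective and an experiment function that returns `None` on failure; `run_campaign`, `propose`, and `toy_experiment` are hypothetical names, and `propose` stands in for fitting the surrogate and maximizing the acquisition function.

```python
def floor_padded_value(successful_values):
    """Worst value seen so far, assuming the objective is maximized."""
    if not successful_values:
        raise ValueError("need at least one successful evaluation to pad with")
    return min(successful_values)

def run_campaign(run_experiment, propose, initial_data, n_iter):
    """initial_data: list of (x, y) pairs from successful runs.

    run_experiment(x) returns a float, or None when the experiment fails.
    propose(data) returns the next x to evaluate.
    """
    data = list(initial_data)
    successes = [y for _, y in data]
    for _ in range(n_iter):
        x = propose(data)
        y = run_experiment(x)
        if y is None:
            # Failure: pad with the worst successful value observed so far.
            y = floor_padded_value(successes)
        else:
            successes.append(y)
        data.append((x, y))
    return data

def toy_experiment(x):
    """Hypothetical assay that fails for x > 0.8 and peaks at x = 0.5."""
    return None if x > 0.8 else -(x - 0.5) ** 2

# A fixed proposal sequence replaces the acquisition step in this toy run.
proposals = iter([0.5, 0.9, 0.1])
data = run_campaign(toy_experiment, lambda d: next(proposals),
                    [(0.3, toy_experiment(0.3))], n_iter=3)
print(data)
```

Because the padded value tracks the running minimum, the penalty adapts as the campaign progresses and no problem-specific constant has to be chosen in advance.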

The retest-policy protocol below is designed for running experiments in batches, as is common in drug discovery.

1. Initialization: Train an initial QSAR model (e.g., Random Forest or Gaussian Process) on a randomly selected batch of molecules.

2. Batch Selection:

a. Use the model to predict the activity and uncertainty of all untested molecules.

b. Rank the molecules using an acquisition function (e.g., Expected Improvement, Upper Confidence Bound).

c. Select the top K molecules for the next batch. To implement the retest policy, replace the lowest-ranked R of these new molecules with R retests of previously tested, high-potential candidates.

3. Experimentation & Update: Perform the batch of experiments (both new and retests). Update the dataset with the new results. If a molecule is retested, update its activity value with the average of all its measurements.

4. Iteration: Retrain the surrogate model on the updated dataset and repeat from Step 2.
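The batch selection and averaging steps can be sketched as follows. The example assumes acquisition scores have already been computed for both untested molecules and retest candidates; `select_batch`, `record`, and the molecule identifiers are hypothetical.

```python
def _ranked(scores):
    """Molecule ids sorted from highest to lowest acquisition score."""
    return sorted(scores, key=scores.get, reverse=True)

def select_batch(untested_scores, retest_scores, k, r):
    """Pick k experiments: the top (k - r) untested molecules plus r retests.

    Dropping the r lowest-ranked of the top-k new molecules is the same as
    keeping the top (k - r). If fewer than r retest candidates exist, the
    batch is simply smaller.
    """
    new = _ranked(untested_scores)[: k - r]
    retests = _ranked(retest_scores)[:r]
    return new, retests

def record(measurements, molecule, value):
    """Store a measurement and return the running mean used as the activity."""
    measurements.setdefault(molecule, []).append(value)
    values = measurements[molecule]
    return sum(values) / len(values)

# Hypothetical scores and one earlier measurement for molecule "t1".
untested = {"m1": 0.9, "m2": 0.7, "m3": 0.5, "m4": 0.2}
retest_pool = {"t1": 0.8, "t2": 0.3}
new, retests = select_batch(untested, retest_pool, k=3, r=1)

measurements = {"t1": [6.0]}
updated_activity = record(measurements, "t1", 8.0)
print(new, retests, updated_activity)
```

The ratio R/K is a tuning choice: more retests reduce the risk of chasing a noisy outlier, at the cost of exploring fewer new molecules per batch.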

Table 1: Comparison of Bayesian Optimization Methods for Handling Noise and Failures.

| Method | Primary Use Case | Key Mechanism | Advantages |

|---|---|---|---|

| Floor Padding Trick [23] | Handling experimental failures | Assigns the worst-seen value to failed experiments | Simple, adaptive, requires no prior knowledge of penalty values |

| Retest Policy [24] | Mitigating experimental noise | Selectively repeats experiments to average out noise | Reduces variance in key candidates, improves model accuracy |

| Multifidelity BO (MF-BO) [26] | Optimizing expensive high-fidelity properties | Uses cheap, low-fidelity data to guide high-fidelity experiments | Dramatically reduces total cost and time of optimization campaign |

| Noise-Augmented Acquisition [25] | Non-Gaussian, high-variance noise | Modifies the acquisition function to be robust to specific noise types | Can handle complex noise distributions (e.g., exponential) |

The Scientist's Toolkit: Key Reagents & Materials

Table 2: Essential Materials for a Multifidelity Drug Discovery Campaign, as described in [26].

| Item / Reagent | Function in the Experiment |

|---|---|

| Genetic Algorithm-Generated Compound Library | Provides a diverse and synthetically feasible search space of candidate drug molecules. |

| Low-Fidelity Assay (e.g., Computational Docking) | A cheap, rapid virtual screen to predict a molecule's binding affinity to the target protein. |

| Medium-Fidelity Assay (e.g., Single-Point % Inhibition) | A physical lab assay providing an initial, medium-cost readout of biological activity. |

| High-Fidelity Assay (e.g., Dose-Response IC50) | The gold-standard, expensive experiment that accurately measures the potency of a compound. |

| Gaussian Process Surrogate Model with Tanimoto Kernel | The machine learning model that learns the relationship between molecular structure and activity across all fidelities. |

Workflow Visualizations

Diagram 1: DBTL Cycle with Robust Bayesian Optimization

Diagram 2: Multifidelity Bayesian Optimization for Drug Discovery

Leveraging Gaussian Processes for Probabilistic Predictions and Uncertainty Quantification

Frequently Asked Questions

Q1: What are the main types of predictive uncertainty, and how are they modeled? A: Gaussian Process (GP) models quantify two fundamental types of uncertainty: aleatoric and epistemic. Aleatoric uncertainty is the inherent noise in the observations themselves, which cannot be reduced with more data. Epistemic uncertainty is the uncertainty in the model due to a lack of knowledge or data; this type can be reduced as more data becomes available. GP models naturally capture both by providing a full predictive distribution for a new input point, where the predictive variance represents the combined uncertainty [27].

Q2: My dataset is large; are GPs still computationally feasible? A: Standard GPs can be slow for large datasets. However, scalable approximations are available. A common method mentioned in recent research is the use of Random Fourier Features (RFF) to approximate the GP kernel. This technique allows for more efficient computation, making GPs applicable to larger datasets common in modern experimental science [27].

Q3: How can I integrate UQ from GPs into an autonomous experimental cycle? A: The uncertainty estimates from a GP are key for autonomous decision-making. The GP model can be used as a surrogate model within a Design-Build-Test-Learn (DBTL) cycle. The optimizer can query the GP to suggest the next experiment by balancing exploration (probing areas of high epistemic uncertainty) and exploitation (testing areas predicted to have high performance), thereby closing the loop autonomously [28].

Q4: What is the practical difference between the predictive mean and variance? A: The predictive mean is the model's best guess for the target value at a new input. The predictive variance quantifies the confidence in that guess. A high variance indicates low confidence, which could be due to the input being far from the training data (high epistemic uncertainty) or high inherent noise (high aleatoric uncertainty). This is crucial for assessing the reliability of a prediction [27].
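The distinction can be made concrete with a small from-scratch GP. The sketch below assumes a zero-mean prior, an RBF kernel, one-dimensional inputs, and fixed (not fitted) hyperparameters; it returns the predictive mean together with the epistemic part (variance of the latent function) and the aleatoric part (noise variance) separately. All names and data are illustrative.

```python
import math

def rbf(a, b, lengthscale, signal_var):
    return signal_var * math.exp(-0.5 * ((a - b) / lengthscale) ** 2)

def cholesky(A):
    """Lower-triangular L with L L^T = A for a symmetric positive-definite A."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = A[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    return L

def solve_lower(L, b):
    """Solve L y = b by forward substitution."""
    y = []
    for i in range(len(b)):
        y.append((b[i] - sum(L[i][k] * y[k] for k in range(i))) / L[i][i])
    return y

def solve_upper_t(L, y):
    """Solve L^T x = y by back substitution."""
    n = len(y)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x

def gp_predict(x_train, y_train, x_new, lengthscale=1.0, signal_var=1.0, noise_var=0.01):
    """Return (mean, epistemic_var, aleatoric_var) at x_new."""
    n = len(x_train)
    K = [[rbf(x_train[i], x_train[j], lengthscale, signal_var)
          + (noise_var if i == j else 0.0) for j in range(n)] for i in range(n)]
    L = cholesky(K)
    alpha = solve_upper_t(L, solve_lower(L, y_train))
    k_star = [rbf(x, x_new, lengthscale, signal_var) for x in x_train]
    mean = sum(k * a for k, a in zip(k_star, alpha))
    v = solve_lower(L, k_star)
    epistemic = signal_var - sum(vi * vi for vi in v)
    return mean, epistemic, noise_var

# Hypothetical measurements; predict at a sampled point and far from the data.
x_train, y_train = [0.0, 1.0, 2.0], [0.0, 0.8, 0.9]
print(gp_predict(x_train, y_train, 1.0))
print(gp_predict(x_train, y_train, 6.0))
```

Near the training data the epistemic term is small and the noise term dominates; far from the data the epistemic term approaches the prior variance while the noise term stays the same. The total predictive variance for a new noisy observation is the sum of the two.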

Q5: How do I know if my uncertainty quantification is well-calibrated? A: A well-calibrated model means that when it predicts a 90% confidence interval, the true value falls within that interval 90% of the time. You can assess this by using held-out test data. Calculate how often the true values fall within the predicted confidence intervals across your test set; the empirical coverage should match the predicted coverage. A GP with a correctly specified kernel and likelihood should produce well-calibrated uncertainties [27].

Troubleshooting Guides

Problem: Poor Predictive Performance and Miscalibrated Uncertainty

Possible Causes and Solutions:

- Incorrect Kernel Choice: The kernel function defines the properties of the functions your GP can learn. If your data shows smooth trends, a Radial Basis Function (RBF) kernel is appropriate. For periodic data, use a periodic kernel. Experiment with different kernels and combinations.

- Hyperparameter Optimization: The kernel's hyperparameters (e.g., length-scale, variance) greatly impact performance. These should not be set arbitrarily. Use optimization techniques like maximum marginal likelihood or Markov Chain Monte Carlo (MCMC) to fit them to your data.

- Inadequate Likelihood Model: If your noise is non-Gaussian (e.g., your data is count-based or binary), a standard GP will be misspecified. Switch to a variant like a Poisson GP for count data or a Bernoulli GP for classification, which use different likelihood functions.

Problem: Model is Too Slow or Does Not Scale

Possible Causes and Solutions:

- Large Training Dataset: The computational complexity of a standard GP scales with the cube of the number of data points (O(n³)), making it slow for large datasets (n > 10,000).

- Solution: Implement scalable approximations such as Sparse GPs (using inducing points) or Random Fourier Features (RFF) [27]. These methods reduce computational complexity, making training and prediction faster.
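As an illustration of the RFF idea, the sketch below builds random cosine features whose inner product approximates an RBF kernel. It assumes the kernel form exp(-||x - y||² / (2ℓ²)), for which frequencies are drawn from a normal distribution with standard deviation 1/ℓ; the function names, feature count, and inputs are illustrative choices.

```python
import math
import random

def make_rff(dim, n_features, lengthscale, seed=0):
    """Return a feature map z(x) with z(x) . z(y) approximating the RBF kernel."""
    rng = random.Random(seed)
    # Frequencies follow the spectral density of the RBF kernel.
    W = [[rng.gauss(0.0, 1.0 / lengthscale) for _ in range(dim)]
         for _ in range(n_features)]
    b = [rng.uniform(0.0, 2.0 * math.pi) for _ in range(n_features)]
    scale = math.sqrt(2.0 / n_features)

    def features(x):
        return [scale * math.cos(sum(w_j * x_j for w_j, x_j in zip(w, x)) + b_i)
                for w, b_i in zip(W, b)]

    return features

def rbf(x, y, lengthscale):
    sq = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-0.5 * sq / lengthscale ** 2)

features = make_rff(dim=2, n_features=2000, lengthscale=1.0)
x, y = [0.2, -0.4], [0.7, 0.1]
approx = sum(p * q for p, q in zip(features(x), features(y)))
exact = rbf(x, y, 1.0)
print(approx, exact)
```

Once the inputs are mapped to D features, the GP can be replaced by Bayesian linear regression on those features, whose cost grows linearly with the number of data points rather than cubically. The approximation error shrinks roughly as one over the square root of D.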

Problem: High Uncertainty on All Predictions

Possible Causes and Solutions:

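- Mis-specified Kernel Length-Scale: If the length-scale hyperparameter is too small, the model treats even nearby points as almost uncorrelated, so the predictive variance reverts to the prior variance a short distance from any training point. An overly large length-scale can also inflate uncertainty indirectly: the over-smoothed fit pushes unexplained structure into the fitted noise variance.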

- Solution: Re-optimize the kernel hyperparameters, paying close attention to the length-scale.

- Lack of Data in a Region: High epistemic uncertainty is a natural and useful output. It indicates that the model has not seen enough data in that region of the input space.

- Solution: This is a feature, not a bug. In an active learning or autonomous DBTL cycle, these high-uncertainty regions should be targeted for new experiments [28].

Experimental Protocols

Protocol 1: Uncertainty Quantification for a Synthetic Biological Circuit

This protocol outlines how to use a GP for UQ when optimizing a biological process, such as protein production in a DBTL cycle [28].

1. Objective: To build a probabilistic model that predicts protein fluorescence output based on input factors (e.g., inducer concentration) and provides a reliable measure of prediction uncertainty to guide autonomous experimentation.

2. Materials:

- Robotic Platform: An integrated system with liquid handlers, a microtiter plate incubator, and a plate reader for high-throughput, automated cultivation and measurement [28].

- Biological System: Engineered E. coli or Bacillus subtilis with an inducible promoter controlling the expression of a reporter protein (e.g., Green Fluorescent Protein, GFP).

- Software Framework: A database to store experimental data and an optimizer module that uses the GP model to select the next set of experimental conditions [28].

3. Method:

- Step 1: Initial Experimental Design. Perform a space-filling design (e.g., Latin Hypercube) or a random search across the input factor space (e.g., inducer levels from 0-1 mM) to collect an initial dataset.

- Step 2: Automated Build & Test.

- The robotic platform prepares cultures in microtiter plates, adds inducers according to the design, and incubates them.

- The platform periodically measures optical density (OD600) and fluorescence.

- Step 3: Learn with Gaussian Process.

- Train a GP model on the collected data, using inputs (e.g., inducer concentration, time) to predict outputs (e.g., fluorescence/OD).

- The GP provides a posterior predictive distribution (mean and variance) for any untested condition.

- Step 4: Autonomous Optimization.

- The optimizer uses an acquisition function (e.g., Expected Improvement, Upper Confidence Bound) that balances the GP's predictive mean (exploitation) and variance (exploration).

- The optimizer selects the condition for the next experiment that maximizes this function.

- Step 5: Close the Loop. The robotic system automatically executes the new experiment(s) suggested in Step 4. The new data is added to the training set, and the cycle (Steps 3-5) repeats autonomously for multiple iterations.

4. Key Measurements:

- Input Variables: Inducer concentration, feed rate, induction time.

- Output/Target Variables: Final fluorescence intensity (GFP), final optical density (OD600).
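The space-filling initial design from Step 1 can be generated with a few lines of standard-library Python. This is a sketch of a basic Latin Hypercube sampler; the 0-1 mM inducer range comes from the protocol, while the second factor (an induction time between 2 and 6 hours) and the sample count are hypothetical.

```python
import random

def latin_hypercube(n_samples, bounds, seed=0):
    """bounds: list of (low, high) per factor. Returns n_samples design points.

    Each factor's range is split into n_samples equal strata and every
    stratum is used exactly once.
    """
    rng = random.Random(seed)
    columns = []
    for low, high in bounds:
        # One draw inside each stratum, then shuffle the order of the strata.
        strata = [(i + rng.random()) / n_samples for i in range(n_samples)]
        rng.shuffle(strata)
        columns.append([low + u * (high - low) for u in strata])
    return [list(point) for point in zip(*columns)]

# Factor 1: inducer concentration in mM; factor 2: induction time in hours.
design = latin_hypercube(8, [(0.0, 1.0), (2.0, 6.0)])
for point in design:
    print(point)
```

Eight points from this design cover every eighth of each factor's range once, which a purely random search of the same size does not guarantee.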

Protocol 2: Benchmarking GP UQ with a Synthetic Dataset

1. Objective: To validate the accuracy of a GP's uncertainty estimates on a known function before applying it to real, noisy experimental data.

2. Method:

- Step 1: Generate Data. Sample inputs, \( x \), from a uniform distribution. Compute outputs from a known function, e.g., \( y = \sin(x) + \epsilon \), where \( \epsilon \) is Gaussian noise.

- Step 2: Train-Test Split. Reserve a portion of the data as a test set.

- Step 3: Model Training. Train a GP on the training data.

- Step 4: Prediction & UQ. Make predictions on the test set to obtain the mean \( \mu_* \) and variance \( \sigma_*^2 \) for each test point.

- Step 5: Calibration Assessment. For a range of confidence levels (e.g., 90%, 95%), compute the empirical coverage: the proportion of test points where the true value lies within \( \mu_* \pm z \cdot \sigma_* \) (where \( z \) is the z-score). Plot empirical vs. predicted coverage to assess calibration.
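The calibration assessment in Step 5 amounts to counting how many test values fall inside each central interval. The sketch below assumes Gaussian predictive distributions and uses `statistics.NormalDist` for the z-score; the test values are made up so that the counts are easy to check by hand.

```python
from statistics import NormalDist

def empirical_coverage(y_true, means, stds, level):
    """Fraction of points whose true value lies inside the central interval."""
    z = NormalDist().inv_cdf(0.5 + level / 2.0)
    hits = sum(abs(y - m) <= z * s for y, m, s in zip(y_true, means, stds))
    return hits / len(y_true)

def calibration_curve(y_true, means, stds, levels=(0.5, 0.8, 0.9, 0.95)):
    """Pairs of (nominal level, empirical coverage) for plotting."""
    return [(level, empirical_coverage(y_true, means, stds, level))
            for level in levels]

# Hypothetical test set: predictions all have mean 0 and standard deviation 1.
y_true = [-2.5, -1.0, 0.0, 1.0, 1.8, 3.0]
means = [0.0] * 6
stds = [1.0] * 6
for level, coverage in calibration_curve(y_true, means, stds):
    print(level, coverage)
```

When the test targets are noisy observations, the standard deviations passed in should include the noise variance as well as the model uncertainty; otherwise the intervals will look too narrow even for a well-specified model.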

Table 1: Standard Color Contrast Ratios for Accessibility in Visualizations [29] [30] [31]

| Element Type | Size / Condition | Minimum Contrast Ratio | Enhanced Contrast Ratio (Level AAA) |

|---|---|---|---|

| Text | Smaller than 18pt or 14pt bold | 4.5:1 | 7:1 |

| Text | 18pt or 14pt bold and larger | 3:1 | 4.5:1 |

| Non-text (UI, graphics) | Any | 3:1 | 3:1 |

Table 2: Key Reagent Solutions for Automated DBTL Experiments [28]

| Reagent / Material | Function in the Experiment |

|---|---|

| Microtiter Plates (MTP) | High-throughput cultivation vessel for bacterial cultures. |

| Inducers (e.g., IPTG, Lactose) | Triggers expression of the target protein from the inducible promoter. |

| Culture Media | Nutrient source for bacterial growth and protein production. |

| Substrate for Feed Release | Polysaccharide that is enzymatically broken down to control glucose release and growth rates. |

| Reporter Protein (e.g., GFP) | Easily measurable output to quantify system performance and model success. |

The Scientist's Toolkit

Table 3: Essential Computational & Modeling Tools

| Tool / Technique | Brief Explanation and Function |

|---|---|

| Scalable GP Approximations | Methods like Random Fourier Features (RFF) or Sparse GPs that reduce computational cost, enabling UQ on large datasets [27]. |

| Monte Carlo Sampling | A method used to estimate the predictive distribution and uncertainties from complex probabilistic models, including GPs [27]. |

| Acquisition Function | A function (e.g., Expected Improvement) used to decide the next experiment by balancing exploration and exploitation in an autonomous cycle [28]. |

| Kernel Function | The core of a GP that defines the covariance between data points, determining the shape and properties of the functions the model can learn. |

Experimental Workflow Visualizations

Implementing Heteroscedastic Noise Models for Non-Constant Biological Variance

FAQs: Understanding Heteroscedastic Noise in Biological Data

1. What is heteroscedastic noise and why is it problematic in biological DBTL cycles? Heteroscedastic noise refers to measurement noise variances that are not constant across all samples in your dataset. Unlike homoscedastic noise (constant variance), heteroscedastic noise means that different measurements have different levels of reliability. This is particularly problematic in Design-Build-Test-Learn (DBTL) cycles because it can lead to incorrect parameter optimization, unreliable model fits, and false conclusions about the significance of your results. When knowledge about noise levels is available, data can be processed in a much more rigorous way, allowing distinctions between what is statistically significant and what is not [32].

2. How can I detect heteroscedastic noise in my experimental data? You can detect heteroscedastic noise by analyzing the residuals of your model fits. Create a residual plot (residuals versus fitted values) and look for patterns such as a funnel shape where the spread of residuals increases or decreases systematically with the magnitude of the measured values. Statistical tests like the Breusch-Pagan test can also formally detect heteroscedasticity. The presence of such patterns indicates that the assumption of constant variance is violated [32].
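A formal check can be sketched without external libraries. The example below implements Koenker's studentized variant of the Breusch-Pagan test with the fitted values as the single auxiliary regressor: the statistic is n times the R² of a regression of squared residuals on fitted values, compared against a chi-square distribution with one degree of freedom. The data are synthetic and the function name is illustrative.

```python
def breusch_pagan_lm(fitted, residuals):
    """LM = n * R^2 from regressing squared residuals on fitted values."""
    n = len(fitted)
    sq = [r * r for r in residuals]
    mean_f = sum(fitted) / n
    mean_s = sum(sq) / n
    sxx = sum((f - mean_f) ** 2 for f in fitted)
    syy = sum((s - mean_s) ** 2 for s in sq)
    if sxx == 0.0 or syy == 0.0:
        # No variation to explain: no evidence of heteroscedasticity.
        return 0.0
    sxy = sum((f - mean_f) * (s - mean_s) for f, s in zip(fitted, sq))
    r_squared = (sxy * sxy) / (sxx * syy)
    return n * r_squared

CHI2_1DF_5PCT = 3.841  # 95th percentile of chi-square with 1 degree of freedom

fitted = [float(i) for i in range(1, 21)]
# Funnel-shaped residuals: spread grows in proportion to the fitted value.
funnel = [(-1) ** i * 0.1 * f for i, f in enumerate(fitted)]
# Constant-spread residuals for comparison.
flat = [(-1) ** i * 0.5 for i in range(20)]

print(breusch_pagan_lm(fitted, funnel) > CHI2_1DF_5PCT)
print(breusch_pagan_lm(fitted, flat) > CHI2_1DF_5PCT)
```

A statistic above the critical value indicates that the residual variance depends on the fitted value, which is the funnel pattern described above. With several explanatory variables, the auxiliary regression and the degrees of freedom would need to be extended accordingly.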

3. What practical methods can I use to estimate heteroscedastic noise variances without replicate measurements? When replicate measurements are not available (which is common due to cost constraints), you can use a grouping approach. The method involves:

- Dividing your data into subsets where the noise variance can be assumed to be constant within each group.

- Starting from the residuals obtained from an initial model of your data.

- Applying a procedure that includes a correction to suppress possible model errors, making the noise variance estimation relatively independent of the specific model used.

This approach copes with heteroscedastic noise variances and a heterogeneous distribution of degrees of freedom [32].

4. How does handling heteroscedastic noise improve autonomous DBTL platforms? In automated robotic platforms used for biological optimization, properly accounting for heteroscedastic noise allows for more accurate assessment of model "goodness," enables the construction of weighted least squares cost functions, and helps detect systematic errors by comparing residuals with estimated uncertainties. This leads to more reliable autonomous decision-making, as the platform can better distinguish between significant effects and noise when selecting the next measurement points [32] [28].

5. Can I model heteroscedastic noise variances parametrically? Yes, one strategy is to model noise variances as a parametric function of the response. However, this approach requires that the class of the variance function (e.g., polynomial, rational) is well known, which is often not the case in biological experiments. When the functional form is unknown, the non-parametric grouping method described above is generally more reliable [32].

Troubleshooting Guides

Issue 1: Poor Model Performance Despite Good Experimental Design

Symptoms:

- Inconsistent parameter estimates across similar experiments

- Poor predictive performance of models developed in DBTL cycles

- Statistical significance of factors changes unpredictably

Solutions:

- Implement Weighted Least Squares: Instead of standard least squares, use a cost function that weights each data point by the inverse of its estimated variance. This ensures that more reliable measurements have greater influence on the parameter estimates [32].

- Estimate Noise Variances from Residuals: Apply the following protocol:

- Fit an initial model to your data using ordinary least squares.

- Divide your dataset into meaningful subsets based on experimental conditions (e.g., different time points, inducer concentrations).

- Calculate the variance of residuals within each subset.

- Apply a correction factor to account for potential model errors [32].

- Validate with Domain Knowledge: Compare the estimated noise variances with expectations from your biological system to identify potential issues.
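The effect of inverse-variance weighting is easy to demonstrate on a straight-line fit. The sketch below uses the closed-form weighted least squares solution for y = a + b·x; the data are hypothetical, with one high-variance outlier, and the variances are assumed to be known or already estimated.

```python
def weighted_line_fit(x, y, variances):
    """Fit y = a + b*x by minimizing sum((y - a - b*x)^2 / variance).

    Returns (intercept, slope).
    """
    w = [1.0 / v for v in variances]
    sw = sum(w)
    mx = sum(wi * xi for wi, xi in zip(w, x)) / sw
    my = sum(wi * yi for wi, yi in zip(w, y)) / sw
    sxx = sum(wi * (xi - mx) ** 2 for wi, xi in zip(w, x))
    sxy = sum(wi * (xi - mx) * (yi - my) for wi, xi, yi in zip(w, x, y))
    slope = sxy / sxx
    return my - slope * mx, slope

# Three precise points on y = x and one very noisy measurement at x = 3.
x = [0.0, 1.0, 2.0, 3.0]
y = [0.0, 1.0, 2.0, 10.0]

ols = weighted_line_fit(x, y, [1.0, 1.0, 1.0, 1.0])     # equal weights
wls = weighted_line_fit(x, y, [0.01, 0.01, 0.01, 100.0])  # inverse-variance weights
print(ols, wls)
```

With equal weights the noisy point drags the slope far above 1, while the weighted fit stays close to the trend defined by the reliable measurements.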

Issue 2: Unreliable Optimization in Autonomous DBTL Platforms

Symptoms:

- Robotic platform makes poor decisions about next experimental conditions

- Failure to converge to optimal solutions in protein expression or metabolite production

- High variability in outcomes across DBTL iterations

Solutions:

- Integrate Noise Estimation into Learning Modules: Ensure your platform's optimizer includes noise variance estimation when selecting next measurement points. The software framework should:

- Retrieve measurement data from platform devices

- Estimate noise levels for different experimental conditions

- Balance exploration and exploitation while accounting for measurement reliability [28]

- Implement Iterative Re-weighting: For continuous optimization, update noise variance estimates between DBTL cycles to refine the weighting of different data types.

- Calibrate with Control Experiments: Include reference points with known expected outcomes to monitor and correct for systematic noise variations.

Issue 3: Handling Biological Variability in High-Throughput Experiments

Symptoms:

- Batch-to-batch differences introduce unexpected noise patterns

- Difficulty distinguishing true biological effects from technical noise

- Inconsistent results when scaling up from microtiter plates to bioreactors

Solutions:

- Structured Noise Modeling: Implement the stepwise variance estimation procedure that:

- Groups observations with similar expected noise characteristics

- Estimates variances within each group separately

- Corrects for model errors to prevent bias in variance estimates [32]

- Design Experiments to Characterize Noise: Include sufficient replication at strategic points in your experimental design to directly estimate variance components.

- Adaptive Sampling: Use initial results to guide more intensive sampling in regions of higher noise or greater biological interest.

Experimental Protocols

Protocol 1: Estimation of Heteroscedastic Noise Variances from Residuals

Purpose: To estimate measurement noise variances that vary across experimental conditions when replicate measurements are not available.

Materials:

- Dataset with measured responses and experimental conditions

- Computational environment (R, Python, or MATLAB)

- Initial model describing the system

Procedure:

- Fit Initial Model: Apply an appropriate model to your dataset using standard regression techniques.

- Calculate Residuals: Compute the residuals (differences between observed and predicted values) for all data points.

- Group Data: Divide your data into subsets where noise variance can be assumed constant. Grouping can be based on:

  - Experimental batches

  - Response magnitude ranges

  - Specific experimental conditions

- Calculate Within-Group Variances: For each subset, calculate the variance of the residuals.

- Apply Model Error Correction: Adjust the variance estimates to account for potential biases introduced by model errors using the formula: Corrected Variance = (Residual Sum of Squares) / (Number of Observations - Effective Parameters)

- Validate Estimates: Check that the estimated variances align with experimental expectations and show appropriate patterns relative to experimental conditions.

Applications: This protocol is particularly valuable in oceanographic data processing, bioprocess optimization, and any experimental context where measurement precision varies systematically [32].
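The grouping and correction steps of Protocol 1 can be sketched as follows. This is a minimal illustration, not the cited authors' implementation: it applies the stated formula (residual sum of squares divided by observations minus effective parameters) separately within each group. How the model's effective parameters are attributed to each group is an assumption the user must supply; the `effective_params` argument is a hypothetical way of passing it in.

```python
from collections import defaultdict


def grouped_noise_variances(residuals, groups, effective_params):
    """Estimate one noise variance per group from model residuals.

    residuals        : observed minus predicted values
    groups           : group label for each residual (batch, magnitude bin, ...)
    effective_params : dict mapping label -> effective number of model
                       parameters attributed to that group (an assumption)
    """
    rss = defaultdict(float)   # residual sum of squares per group
    count = defaultdict(int)   # number of observations per group
    for r, g in zip(residuals, groups):
        rss[g] += r * r
        count[g] += 1

    variances = {}
    for g in rss:
        dof = count[g] - effective_params.get(g, 0.0)
        if dof <= 0:
            raise ValueError(f"group {g!r} has too few observations")
        variances[g] = rss[g] / dof
    return variances


# Example: residuals from low-titer and high-titer conditions.
residuals = [0.1, -0.1, 0.2, -0.2, 1.0, -1.0, 2.0, -2.0]
groups = ["low"] * 4 + ["high"] * 4
print(grouped_noise_variances(residuals, groups, {"low": 1, "high": 1}))
```

The resulting per-group variances can then be inverted to give the weights used in Protocol 2.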

Protocol 2: Implementing Weighted Cost Functions in DBTL Analytics

Purpose: To incorporate heteroscedastic noise variances into the learning phase of DBTL cycles for more robust parameter estimation.

Materials:

- Noise variance estimates from Protocol 1

- DBTL platform with customizable analytics module

- Experimental data from Build and Test phases

Procedure:

- Assign Weights: Calculate weights for each data point as the inverse of its estimated variance.

- Modify Cost Function: Implement a weighted least squares cost function where each squared residual is multiplied by its corresponding weight.

- Parameter Optimization: Optimize model parameters by minimizing the weighted cost function.

- Uncertainty Quantification: Calculate parameter uncertainties using the weighted covariance matrix.

- Iterative Refinement: Use the updated model to generate new residuals and refine variance estimates if necessary.

Applications: Essential for autonomous DBTL platforms optimizing protein expression, metabolite production, or growth conditions in synthetic biology [28] [33].
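As a hedged sketch of Protocol 2, the example below fits a straight line by weighted least squares, using the inverse of each point's estimated variance as its weight. The straight-line model and the function name `weighted_line_fit` are illustrative assumptions; a real DBTL analytics module would substitute its own model and optimizer. The parameter covariance is the inverse of the weighted normal matrix, which is valid when the weights are true inverse variances.

```python
def weighted_line_fit(x, y, variances):
    """Fit y = a + b*x by weighted least squares with weights 1/variance.

    Returns (a, b, cov), where cov is the 2x2 covariance matrix of (a, b).
    """
    w = [1.0 / v for v in variances]
    sw = sum(w)
    swx = sum(wi * xi for wi, xi in zip(w, x))
    swy = sum(wi * yi for wi, yi in zip(w, y))
    swxx = sum(wi * xi * xi for wi, xi in zip(w, x))
    swxy = sum(wi * xi * yi for wi, xi, yi in zip(w, x, y))

    delta = sw * swxx - swx ** 2          # determinant of the normal matrix
    a = (swxx * swy - swx * swxy) / delta
    b = (sw * swxy - swx * swy) / delta

    # Inverse of [[sw, swx], [swx, swxx]] gives the parameter covariance.
    cov = [[swxx / delta, -swx / delta],
           [-swx / delta, sw / delta]]
    return a, b, cov


# Example: the two high-x points are noisier and therefore down-weighted.
a, b, cov = weighted_line_fit([0, 1, 2, 3], [2.1, 4.9, 8.6, 10.2], [1, 1, 4, 4])
print(a, b, cov[0][0] ** 0.5, cov[1][1] ** 0.5)
```

For the iterative refinement step, the residuals from this fit can be regrouped to update the variance estimates, and the fit repeated until the weights stabilize.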

Table 1: Heteroscedastic Noise Estimation Methods Comparison

| Method | Requirements | Advantages | Limitations | Suitable For |

|---|---|---|---|---|

| Replicate Measurements | Multiple measurements at same conditions | Direct variance estimation | Expensive, not always available | All experimental types |

| Parametric Variance Function | Known functional form of variance | Efficient use of data | Requires correct model specification | Systems with well-characterized noise |

| Residual Grouping | Data subsets with constant variance | No replicates needed, handles model errors | Requires sufficient data per group | DBTL cycles, biological optimization |

Table 2: Impact of Proper Noise Handling in DBTL Applications

| Application | Benefit of Heteroscedastic Noise Modeling | Implementation Approach | Result |

|---|---|---|---|

| Flavonoid production in E. coli [33] | More reliable identification of significant factors | Statistical analysis of production data | 500-fold improvement in (2S)-pinocembrin production |

| Automated cultivation optimization [28] | Better selection of next measurement points | Machine learning with noise-aware cost functions | Improved convergence to optimal inducer concentrations |

| Oceanographic data processing [32] | Accurate parameter uncertainties | Grouped variance estimation from residuals | Detection of systematic errors in measurements |

Research Reagent Solutions

Table 3: Essential Materials for Noise-Aware Biological Experiments

| Item | Function | Application Notes |

|---|---|---|

| Microtiter Plates | High-throughput cultivation | Enable parallel testing of multiple conditions; essential for variance estimation |

| Automated Liquid Handlers | Precise reagent delivery | Reduce technical variability; improve reproducibility |

| Plate Readers with OD600 and fluorescence | Biomass and protein production measurement | Critical for collecting quantitative data in DBTL cycles |

| DNA Parts Libraries | Pathway construction | Standardized parts facilitate modular experimental design |

| Inducer Compounds (e.g., IPTG, lactose) | Regulation of gene expression | Key factors in optimization of protein production pathways |

| Statistical Software (R, Python) | Data analysis and noise modeling | Implement weighted regression and variance estimation |

Workflow Diagrams

[Diagram: Heteroscedastic Noise Estimation Workflow]

[Diagram: Autonomous DBTL Cycle with Noise Handling]

Frequently Asked Questions (FAQs)

Q1: What is the core function of the Expected Improvement (EI) acquisition function in a noisy experimental setting?

EI is designed to balance the trade-off between exploration (sampling from uncertain regions) and exploitation (sampling near the current best-known value) when optimizing a black-box function. It calculates the expected value of improvement over the current best observation, naturally taking into account the prediction uncertainty from the surrogate model (like a Gaussian Process). This makes it particularly suited for noisy, expensive-to-evaluate functions common in biological experiments, as it does not just consider the probability of improvement, but the magnitude of a potential improvement as well [34].

Q2: My Bayesian Optimization (BO) with EI is converging slowly. What could be wrong?

Slow convergence can often be attributed to these common issues:

- Incorrect Kernel Choice: The kernel (covariance function) of your Gaussian Process must match the smoothness and patterns of your underlying biological system. A standard Radial Basis Function (RBF) kernel assumes a very smooth (infinitely differentiable) response. If your response changes abruptly or looks rough, a Matern kernel, which allows less smooth functions, might be more appropriate [15].

- Excessive Noise: If your experimental noise (e.g., from biological variability or measurement error) is high, it can overwhelm the signal. Consider using a Gaussian Process with a dedicated noise term or a heteroscedastic noise model to better capture non-constant uncertainty [15].

- Poor Initial Design: BO is sensitive to the initial set of points. Ensure you start with a space-filling design (e.g., Latin Hypercube Sampling) to build a reasonable initial surrogate model before letting EI take over.
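A space-filling initial design such as Latin Hypercube Sampling can be sketched with the standard library alone. This is a minimal illustration rather than a reference implementation: each variable's range is split into as many equal strata as there are points, one value is drawn per stratum, and the strata are shuffled independently per variable. The function name and the example bounds are assumptions.

```python
import random


def latin_hypercube(n_points, bounds, seed=0):
    """Return n_points tuples; each variable hits every stratum exactly once.

    bounds : list of (low, high) pairs, one per input variable
    """
    rng = random.Random(seed)
    columns = []
    for low, high in bounds:
        # One uniform draw inside each of n_points equal-width strata.
        strata = [(i + rng.random()) / n_points for i in range(n_points)]
        rng.shuffle(strata)  # decouple the stratum order between variables
        columns.append([low + u * (high - low) for u in strata])
    return [tuple(col[i] for col in columns) for i in range(n_points)]


# Example: 10 starting conditions over inducer (0 to 1 mM) and temperature (25 to 37 °C).
for point in latin_hypercube(10, [(0.0, 1.0), (25.0, 37.0)]):
    print(point)
```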

Q3: How can I make the EI algorithm more or less exploratory?

Standard EI has no explicit exploration parameter; its exploratory behavior is built into the formula:

EI(x) = σ(x) [s Φ(s) + φ(s)], where s = (μ(x) - f(x⁺)) / σ(x) [34].

Here μ(x) and σ(x) are the surrogate model's predicted mean and standard deviation at x, f(x⁺) is the best value observed so far, and Φ and φ are the standard normal cumulative distribution and density functions. The uncertainty σ(x) drives exploration: a larger σ(x) raises EI even where the predicted mean lies below the current best. If you need explicit control, consider the Upper Confidence Bound (UCB) acquisition function, whose parameter κ sets the exploration-exploitation balance: UCB(x) = μ(x) + κσ(x) [15] [35].
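The two acquisition functions can be written out directly from their formulas. The sketch below uses only the standard library; the function names and the handling of σ(x) = 0 (falling back to the plain improvement) are illustrative choices, not part of the cited sources.

```python
import math


def normal_pdf(s):
    """Standard normal density φ(s)."""
    return math.exp(-0.5 * s * s) / math.sqrt(2.0 * math.pi)


def normal_cdf(s):
    """Standard normal cumulative distribution Φ(s)."""
    return 0.5 * (1.0 + math.erf(s / math.sqrt(2.0)))


def expected_improvement(mu, sigma, best):
    """EI(x) = σ(x) [s Φ(s) + φ(s)] with s = (μ(x) - f(x⁺)) / σ(x), for maximization."""
    if sigma <= 0.0:
        return max(mu - best, 0.0)  # no uncertainty: improvement is deterministic
    s = (mu - best) / sigma
    return sigma * (s * normal_cdf(s) + normal_pdf(s))


def upper_confidence_bound(mu, sigma, kappa=2.0):
    """UCB(x) = μ(x) + κ σ(x); larger κ means more exploration."""
    return mu + kappa * sigma


# Two candidates when the best observed value is 1.0:
# A is predicted at the current best with little uncertainty,
# B is predicted slightly lower but is far more uncertain.
print(expected_improvement(1.0, 0.1, best=1.0))
print(expected_improvement(0.9, 0.5, best=1.0))
```

In this example the uncertain candidate B receives the higher EI, which shows how σ(x) pulls the search toward unexplored conditions.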

Troubleshooting Guide: Expected Improvement

| Symptom | Potential Cause | Recommended Solution |

|---|---|---|

| Slow convergence | Inappropriate kernel choice | Switch from RBF to Matern kernel for less smooth landscapes [15]. |

| Slow convergence | High experimental noise | Incorporate a heteroscedastic noise model into your Gaussian Process [15]. |

| Convergence to local optimum | Over-exploitation | Ensure initial design is space-filling; consider a meta-algorithm that restarts from random points. |

| Poor model prediction | Sparse initial data | Increase the number of points in your initial Design of Experiments (DoE) before starting the BO loop [4]. |

| Unstable recommendations | High noise corrupting the signal | Increase the number of technical replicates at each condition to obtain a more reliable mean and variance estimate [15]. |
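To illustrate the last row of the table, the following sketch summarizes technical replicates at a single condition using Python's `statistics` module. The standard error of the mean shrinks with the square root of the number of replicates, which is why additional replicates stabilize the optimizer's inputs. The function name and the example readings are hypothetical.

```python
import math
import statistics


def summarize_replicates(values):
    """Return mean, sample variance, and standard error of the mean."""
    n = len(values)
    if n < 2:
        raise ValueError("at least two replicates are needed for a variance")
    mean = statistics.mean(values)
    variance = statistics.variance(values)   # sample variance (n - 1 denominator)
    sem = math.sqrt(variance / n)
    return mean, variance, sem


# Example: four fluorescence readings from the same condition.
print(summarize_replicates([10.0, 12.0, 11.0, 13.0]))
```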

Experimental Protocol: Implementing an EI-driven DBTL Cycle

This protocol outlines how to integrate the Expected Improvement algorithm into an automated Design-Build-Test-Learn (DBTL) cycle for optimizing a biological system, such as protein production in a bioreactor [4].

1. Objective Definition:

- Define your input variables (e.g., inducer concentration, temperature, feed rate) and their feasible ranges.

- Define your objective function to maximize or minimize (e.g., GFP fluorescence, product titer, yield).

2. Initial Experimental Design:

- Perform an initial space-filling experimental design (e.g., Latin Hypercube) across your input variables. A minimum of 10 points is recommended to build an initial model.

- Execute these experiments and record the results.

3. Model Training and Point Selection:

- Learn: Train a Gaussian Process (GP) surrogate model on all data collected so far. Use a Matern kernel and a white noise kernel to account for experimental noise.

- Design: Apply the Expected Improvement acquisition function to the GP posterior.

- Identify the next point(s) to evaluate by finding the input configuration that maximizes EI.

4. Automated Execution:

- Build & Test: A robotic platform prepares the new culture conditions and runs the experiments [4].

- The results (e.g., from a plate reader) are automatically fed back into the database.

5. Iteration:

- Repeat steps 3 and 4 until a convergence criterion is met (e.g., a pre-defined number of cycles, or minimal improvement over several cycles).
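The loop in steps 2 to 5 can be sketched end to end on a toy problem. This is a simplified illustration under several assumptions: a single input variable, a hand-written Gaussian Process with a Matern 5/2 kernel and a fixed noise variance on the diagonal (playing the role of a white noise kernel), fixed rather than fitted hyperparameters, an evenly spaced initial design in place of a Latin Hypercube, and a hypothetical `run_experiment` function standing in for the robotic Build and Test steps. It requires NumPy.

```python
import math
import numpy as np


def matern52(a, b, length_scale=0.2, variance=1.0):
    """Matern 5/2 covariance between 1-D input arrays a and b."""
    d = np.abs(a[:, None] - b[None, :]) / length_scale
    return variance * (1 + math.sqrt(5) * d + 5 * d**2 / 3) * np.exp(-math.sqrt(5) * d)


def gp_posterior(x_train, y_train, x_query, noise_var=0.01):
    """Posterior mean and standard deviation of the latent response."""
    y_mean = y_train.mean()
    K = matern52(x_train, x_train) + noise_var * np.eye(len(x_train))
    K_s = matern52(x_train, x_query)
    mu = y_mean + K_s.T @ np.linalg.solve(K, y_train - y_mean)
    var = 1.0 - np.sum(K_s * np.linalg.solve(K, K_s), axis=0)  # prior variance is 1.0
    return mu, np.sqrt(np.clip(var, 1e-12, None))


def expected_improvement(mu, sigma, best):
    """Vectorized EI(x) = σ(x) [s Φ(s) + φ(s)]."""
    s = (mu - best) / sigma
    cdf = 0.5 * (1 + np.vectorize(math.erf)(s / math.sqrt(2)))
    pdf = np.exp(-0.5 * s**2) / math.sqrt(2 * math.pi)
    return sigma * (s * cdf + pdf)


def run_experiment(x, rng):
    """Hypothetical stand-in for Build & Test: noisy response peaking at x = 0.6."""
    return math.exp(-((x - 0.6) ** 2) / 0.02) + rng.normal(0, 0.05)


def ei_dbtl_loop(n_init=5, n_cycles=10, seed=0):
    rng = np.random.default_rng(seed)
    candidates = np.linspace(0, 1, 201)          # feasible input range
    x = np.linspace(0.05, 0.95, n_init)          # initial design (step 2)
    y = np.array([run_experiment(xi, rng) for xi in x])
    for _ in range(n_cycles):
        mu, sigma = gp_posterior(x, y, candidates)            # Learn (step 3)
        ei = expected_improvement(mu, sigma, y.max())         # Design (step 3)
        x_next = candidates[np.argmax(ei)]
        x = np.append(x, x_next)                              # Build & Test (step 4)
        y = np.append(y, run_experiment(x_next, rng))
    return x, y


x, y = ei_dbtl_loop()
print("best condition found:", x[np.argmax(y)], "response:", y.max())
```

Using the highest noisy observation as the current best follows the definition in this protocol but can be optimistic under heavy noise; taking the maximum of the posterior mean at sampled points is a common alternative.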

Workflow Visualization

[Diagram: Expected Improvement Calculation Logic]

Table 1: Key Parameters for Expected Improvement Implementation

| Parameter | Symbol | Typical Value / Range | Description |

|---|---|---|---|

| Current Best Value | f(x⁺) | - | The highest objective function value observed so far. |

| Prediction Mean | μ(x) | - | The Gaussian Process prediction at point x. |

| Prediction Std. Dev. | σ(x) | - | The standard deviation (uncertainty) of the prediction at x. |

| Standardized Score | s | - | s = (μ(x) - f(x⁺)) / σ(x); number of standard deviations the prediction is above the current best [34]. |

| Cumulative Dist. Func. | Φ(s) | 0 to 1 | Probability that a standard normal variable is less than s [34]. |

| Probability Dens. Func. | φ(s) | ≥ 0 | The height of the standard normal distribution at s [34]. |

Table 2: Performance Comparison of Optimization Algorithms in Biological Case Studies

| Algorithm / Study | Problem Dimension | Experiments to Converge | Key Outcome |

|---|---|---|---|

| Bayesian Optimization (EI) [15] | 4 (Transcriptional control) | ~18 | Converged to near-optimum using about 22% of the experiments required by a grid search (~18 vs. 83). |

| Grid Search [15] | 4 (Transcriptional control) | 83 | Exhaustively screened all combinations; guaranteed to find the best grid point but highly inefficient. |

| Active Learning [34] | 1 (Gold mining) | N/A | Focused on model accuracy, not optimization; slower at finding the maximum. |

| Autonomous DBTL [4] | 2 (Inducer & feed) | 4 cycles | Successfully optimized GFP production using a robotic platform in a fully closed loop. |

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Material | Function in Experiment |

|---|---|

| Inducers (e.g., IPTG, Naringenin) | Molecules used to trigger expression of a target gene or pathway in a synthetic biological system [15]. |

| Reporter Proteins (e.g., GFP) | Easily measurable proteins (via fluorescence) that serve as a proxy for the performance of the system being optimized [4]. |

| Marionette-wild E. coli Strain | A specialized chassis organism with genomically integrated, orthogonal inducible transcription factors, ideal for high-dimensional optimization [15]. |

| Microtiter Plates (MTPs) | Standardized plates (e.g., 96-well) for high-throughput cultivation and measurement of many experimental conditions in parallel [4]. |

| Gaussian Process Software | Core computational tool for building the surrogate model; requires selection of a kernel (e.g., Matern) and noise model [15]. |

Troubleshooting Guides

Data-Related Issues

Problem: Experimental noise is corrupting my ML model training, leading to poor performance in the next DBTL cycle.