Systems Biology vs. Synthetic Biology: A Comparative Analysis of Epistemological Approaches in Biomedical Research

Abstract

This article provides a comprehensive comparative analysis of the epistemological foundations of systems and synthetic biology, two transformative disciplines reshaping modern life sciences. Written for researchers, scientists, and drug development professionals, it examines how these complementary approaches generate, validate, and apply biological knowledge. By exploring their philosophical origins, methodological frameworks, optimization challenges, and validation criteria, we show how the analytical, understanding-focused paradigm of systems biology and the design-oriented engineering approach of synthetic biology together create a powerful epistemological cycle for biological discovery and innovation. The analysis synthesizes insights from recent research to demonstrate how these fields address complex biological problems through distinct yet interconnected strategies, with significant implications for therapeutic development and biomedical innovation.

Philosophical Roots and Epistemic Goals: Understanding vs. Designing Biological Systems

The latter part of the 20th century witnessed a profound transformation in biological science—a shift from the reductionist focus of molecular biology to the holistic perspective of systems-level thinking. While molecular biology has been extraordinarily successful in generating knowledge through decomposition and localization of component parts, this approach proved insufficient for understanding the dynamics and organization of many interconnected components [1]. Systems biology emerged as a response to these limitations, complementing reductionist strategies with theoretical frameworks better suited for studying complex biological networks [1]. This transition represents not merely a methodological change but a fundamental epistemological shift in how we study, understand, and engineer living systems.

The emergence of systems biology was catalyzed by several enabling developments: the enormous amount of genetic information derived from the Human Genome Project, the rise of interdisciplinary research efforts, the development of high-throughput technologies for generating 'omics' datasets, and advances in internetworking that facilitated data sharing [2]. This convergence of technological and conceptual advances created the foundation for a new approach to biological investigation—one that focuses on the functional analysis of the structure and dynamics of cells and organisms as integrated systems rather than concentrating solely on isolated components [2].

Historical Evolution of Systems Thinking in Biology

From Molecular Reductionism to Systems Integration

Molecular biology's success was built upon the twin strategies of decomposition and localization of component parts and molecular operations [1]. However, the detailed study of molecular pathways increasingly revealed dynamic interfaces and crosslinks between processes and components previously assigned to distinct mechanisms. This complexity demanded new approaches that could account for vast numbers of interacting components and multiple feedback loops [1].

The evolution of systems biology can be understood through three distinct phases, as observed by Ruedi Aebersold [3]. The initial phase conceptualized systems biology as high-throughput molecular biology, generating unprecedented data resources but limited by a conceptual focus on molecules and lack of scalable methods for integrative analysis. The second phase saw systems biology as network biology, with molecular networks emerging as a generic representation of molecules and their relationships. While valuable for mapping interaction spaces, these static representations struggled to explain living systems' complexities. The current phase approaches systems biology as the study of complex adaptive systems, focusing on how alterations in the state of multiple molecular agents collectively induce adaptation of the system's connectivity and behavior [3].

Key Technological Drivers

The genomic era, which began in the 1990s and culminated in the completion of the Human Genome Project in 2003, provided a critical foundation for systems approaches [2]. The advent of new technologies, including microarrays, mass spectrometry, and various 'omics' platforms, enabled the simultaneous examination of system components, generating the complex, multidimensional datasets that systems biology requires [2]. The development of computational tools and standards, such as the Systems Biology Markup Language (SBML) and the MIRIAM guidelines for model annotation, further enabled the exchange and comparison of models across research communities [4] [5].

Table 1: Historical Timeline of Key Developments in Systems Biology

| Time Period | Major Developments | Key Technologies | Conceptual Advances |
|---|---|---|---|
| 1960s-1990s | Foundations | Systems theory, Early computing | Recognition of biological complexity |
| 1990s-2000s | Genomic Revolution | DNA sequencing, Microarrays | High-throughput data generation |
| 2000-2010 | Network Biology | Protein interaction mapping, SBML standardization | Network representations of biological systems |
| 2010-Present | Complex Adaptive Systems | Single-cell technologies, AI/ML integration | Dynamic, context-specific modeling |

Epistemological Framework: Systems vs. Synthetic Biology

Complementary Approaches to Biological Investigation

Systems and synthetic biology are often described as 'sister-disciplines' or 'cousins' focusing on complementary aims of understanding (systems biology) and designing (synthetic biology) living systems [1]. This relationship represents a fundamental epistemological distinction: systems biology is primarily concerned with analysis and understanding of existing biological systems, while synthetic biology focuses on synthesis and design of novel biological functions [1]. However, in research practice, understanding and design are often interdependent, and no simple distinction between basic and applied science can be maintained [1].

The philosophical foundations of these fields engage with one of the oldest scientific discussions: reductionism versus holism [1]. Systems biology explicitly aims to move beyond what its practitioners perceive as reductionist strategies in molecular biology [1]. Rather than focusing on isolated components, systems biology investigates the interactions and relationships between components, recognizing that emergent properties arise from these interactions that cannot be understood by studying parts in isolation [2]. Synthetic biology, while often employing reductionist approaches to standardize biological parts, ultimately seeks to understand through building—using construction as a means of gaining insight into biological principles [6].

Methodological Distinctions

The methodological approaches of systems and synthetic biology reflect their epistemological orientations. Systems biology typically employs hypothesis-driven approaches that begin with descriptive, graphical, or mathematical models, which are tested through iterations of experimental validation and model refinement [2]. This process continues until experimental data and model predictions align [2]. Research in systems biology often involves the integration of diverse data types, including both quantitative measurements (e.g., time-course data, metabolite concentrations) and qualitative observations (e.g., phenotypic classifications, functional annotations) [7].

Synthetic biology, in contrast, often follows engineering-based design cycles (design-build-test-learn) to create novel biological systems [6] [8]. This approach emphasizes the development of reusable biological "parts" that can be combined in predictable ways, reducing the need to start from scratch for each new application [6]. The field has been revolutionized by distributed biomanufacturing capabilities that offer unprecedented production flexibility in location and timing, enabling rapid responses to emerging needs [6].

Table 2: Epistemological Comparison of Systems and Synthetic Biology

| Dimension | Systems Biology | Synthetic Biology |
|---|---|---|
| Primary Aim | Understand existing systems | Design novel biological systems |
| Central Approach | Analysis of natural systems | Synthesis of artificial systems |
| Methodology | Modeling, network analysis, data integration | Parts standardization, engineering principles |
| Knowledge Focus | Emergent properties, system dynamics | Design rules, predictability, control |
| Relationship to Molecular Biology | Complementing reductionism | Extending genetic engineering |

Experimental Methodologies and Workflows

Systems Biology Workflows

Systems biology relies on sophisticated computational and experimental workflows for model construction and validation. A representative workflow for assembling quantitative parameterized metabolic networks involves multiple stages [4]. The process begins with qualitative network construction using data from MIRIAM-compliant genome-scale models, followed by parameterization of the model with experimental data from kinetic databases and quantitative experimental results [4]. The parameterized models are then calibrated and simulated using analytical tools such as COPASIWS, the web service interface to the COPASI software application for analyzing biochemical networks [4].

The integration of both qualitative and quantitative data represents a particularly important methodological advancement in systems biology parameter identification [7]. In this approach, qualitative data are converted into inequality constraints imposed on model outputs, which are used along with quantitative data points to construct a single scalar objective function that accounts for both datasets [7]. This constrained optimization framework allows researchers to formalize qualitative biological observations as inequality constraints while minimizing the sum-of-squares distance from quantitative data [7].
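
One way to picture this combined objective is a sum-of-squares term plus a penalty for each violated inequality. The sketch below is illustrative only: the saturating toy model, the data values, the two qualitative constraints, the penalty weight, and the grid search are all assumptions made for the example and are not taken from [7].

```python
import math

def model(t, a, k):
    """Hypothetical toy model: saturating response y(t) = a * (1 - exp(-k * t))."""
    return a * (1.0 - math.exp(-k * t))

# Quantitative data: (time, measured value) pairs. Values are made up.
QUANT_DATA = [(1.0, 0.63), (2.0, 0.86), (4.0, 0.98)]

# Qualitative observations written as inequality constraints g(a, k) <= 0.
CONSTRAINTS = [
    lambda a, k: model(0.5, a, k) - 0.5,   # "early response stays below 0.5"
    lambda a, k: 0.9 - model(10.0, a, k),  # "plateau exceeds 0.9"
]

def objective(a, k, penalty_weight=10.0):
    """Single scalar score: sum of squares plus weighted constraint violations."""
    sse = sum((model(t, a, k) - y) ** 2 for t, y in QUANT_DATA)
    violation = sum(max(0.0, g(a, k)) for g in CONSTRAINTS)
    return sse + penalty_weight * violation

def grid_search(n=40, step=0.05):
    """Crude minimizer over a grid of (a, k) values; returns (score, a, k)."""
    best = None
    for i in range(1, n + 1):
        for j in range(1, n + 1):
            a, k = step * i, step * j
            score = objective(a, k)
            if best is None or score < best[0]:
                best = (score, a, k)
    return best
```

At `a = 1.0, k = 1.0` both constraints hold, so the score reduces to the sum-of-squares term. At `k = 2.0` the first constraint is violated and the penalty dominates the score.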

Figure: Systems Biology Research Workflow. An iterative process combining computational and experimental approaches.

Network Analysis Approaches

A distinctive feature of systems biology is its use of network representations to model biological relationships, enabling the application of mathematical tools from Graph Theory [2]. In these representations, nodes typically symbolize system constituents (genes, proteins, metabolites) while links represent interactions or reactions [2]. Biological networks can be constructed through multiple approaches: de novo from direct experimental interactions; by applying known interactions to experimental datasets using specialized software; or through reverse engineering approaches that gather sufficient information to build networks for predicting system dynamics [2].

Research in network biology has revealed common architectural patterns in biological networks, including scale-free network architectures and multi-level hierarchies [1]. Scale-free networks, characterized by a small number of highly connected nodes (hubs) and many poorly connected nodes, exhibit high error tolerance but fragility to attacks on central nodes [1]. The identification of network motifs—patterns of interaction that recur in many contexts—has provided insights into functional modules that perform specific information-processing operations [1].
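
The contrast between tolerance to random failures and fragility to hub removal can be illustrated on a toy graph. The following sketch uses a hypothetical eleven-node network with two hubs, not a real biological dataset, and it takes the size of the largest connected component as a simple, assumed proxy for network integrity.

```python
from collections import deque

def build_graph(edges):
    """Undirected adjacency map from a list of (node, node) pairs."""
    adj = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    return adj

def largest_component_size(adj, removed=frozenset()):
    """Size of the largest connected component after deleting `removed` nodes."""
    seen = set(removed)  # removed nodes are never visited
    best = 0
    for start in adj:
        if start in seen:
            continue
        seen.add(start)
        queue, size = deque([start]), 0
        while queue:  # breadth-first traversal of one component
            node = queue.popleft()
            size += 1
            for neighbor in adj[node]:
                if neighbor not in seen:
                    seen.add(neighbor)
                    queue.append(neighbor)
        best = max(best, size)
    return best

# Hypothetical network: hub1 links five peripheral nodes, hub2 links four,
# and the two hubs are connected to each other.
EDGES = (
    [("hub1", f"p{i}") for i in range(1, 6)]
    + [("hub2", f"p{i}") for i in range(6, 10)]
    + [("hub1", "hub2")]
)

adj = build_graph(EDGES)
hub = max(adj, key=lambda n: len(adj[n]))                # most connected node
intact = largest_component_size(adj)                     # all 11 nodes connected
after_leaf_loss = largest_component_size(adj, {"p3"})    # peripheral node removed
after_hub_loss = largest_component_size(adj, {hub})      # hub removed
```

Removing a peripheral node leaves the rest of the graph connected, whereas removing the most connected node splits it into one smaller cluster and several isolated nodes.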

Essential Research Tools and Reagents

The Scientist's Toolkit

The experimental and computational methodologies of systems and synthetic biology depend on specialized tools, databases, and reagents. The following table details key resources essential for research in these fields.

Table 3: Essential Research Tools and Reagents for Systems and Synthetic Biology

| Tool/Reagent Category | Specific Examples | Function/Application | Field |
|---|---|---|---|
| Network Analysis Software | Cytoscape [2], iCTNet [2] | Network visualization, integration, and analysis | Systems Biology |
| Modeling & Simulation | COPASI [4], CellDesigner [4] | Analysis of biochemical networks, model design | Systems Biology |
| Pathway Databases | KEGG [4], Reactome [4] | Curated information on metabolic pathways | Systems Biology |
| Kinetics Databases | SABIO-RK [4] | Enzyme kinetic parameters and rate laws | Systems Biology |
| Modeling Standards | SBML [4] [5], MIRIAM [4] | Model representation and annotation | Systems Biology |
| DNA Synthesis Technologies | Next-generation DNA synthesis [6] | Writing user-specified DNA sequences | Synthetic Biology |
| Workflow Management | Taverna [4] | Automated workflow design and enactment | Both |
| AI/ML Tools | BioLLMs [6], Biological Design Tools [8] | Protein design, sequence analysis | Both |

Standardization and Reproducibility

A critical challenge in both systems and synthetic biology is obtaining highly reproducible quantitative data for mathematical modeling and engineering [5]. Standardizing experimental protocols, documentation practices, and cellular systems under investigation is essential for generating comparable results across laboratories [5]. For systems biology, this includes standardized procedures for data acquisition and processing, careful documentation of experimental parameters (temperature, pH, reagent lot numbers), and use of defined genetic backgrounds [5]. The establishment of data standards such as SBML for model representation and MIRIAM for model annotation has been crucial for enabling model exchange and comparison [4] [5].

Signaling Pathways and Network Motifs

Representation of Biological Networks

Systems biology employs distinctive representational styles that highlight organizational structure rather than detailed mechanistic relationships. Whereas molecular biology typically uses mechanistic diagrams tracking specific molecular interactions, systems biology representations often display interactions as abstract networks of interconnected nodes and links [1]. This difference is epistemically significant as it facilitates focus on overall system architecture and emergent properties.

Research on network motifs provides a compelling example of how systems biology identifies functional patterns within complex networks. Network motifs are defined as "patterns of interaction that recur in a network in many contexts" [1]. Through comparison of biological networks to random networks, systems biologists have identified statistically significant circuits that perform specific information-processing functions [1].
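
The motif search described above can be sketched as counting feedforward loops (X→Y, Y→Z, X→Z) in a directed graph and comparing the count with randomized graphs. The edge list is invented for illustration, and the null model used here (random graphs with the same node and edge counts) is a simplifying assumption; published motif analyses typically use stricter randomization schemes, such as degree-preserving rewiring.

```python
import random
from itertools import permutations

def count_ffl(edges):
    """Count feedforward loops: ordered triples with X->Y, Y->Z and X->Z."""
    edge_set = set(edges)
    nodes = {n for edge in edge_set for n in edge}
    return sum(
        1
        for x, y, z in permutations(nodes, 3)
        if (x, y) in edge_set and (y, z) in edge_set and (x, z) in edge_set
    )

def random_edges(nodes, n_edges, rng):
    """Draw n_edges distinct directed edges (no self-loops) uniformly at random."""
    candidates = [(u, v) for u in nodes for v in nodes if u != v]
    return rng.sample(candidates, n_edges)

def mean_random_ffl(nodes, n_edges, trials=200, seed=0):
    """Average feedforward-loop count over randomized graphs of the same size."""
    rng = random.Random(seed)
    total = sum(count_ffl(random_edges(nodes, n_edges, rng)) for _ in range(trials))
    return total / trials

# Hypothetical regulatory network with two feedforward loops sharing X and Z.
TOY = [("X", "Y"), ("Y", "Z"), ("X", "Z"), ("X", "W"), ("W", "Z"), ("A", "X")]
NODES = sorted({n for edge in TOY for n in edge})

observed = count_ffl(TOY)
expected_by_chance = mean_random_ffl(NODES, len(TOY))
```

A pattern would be flagged as a candidate motif when `observed` is well above `expected_by_chance`; a real analysis would also report a significance measure rather than a bare comparison of means.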

Figure: Network Motifs in Biological Systems. Feedforward loops performing distinct regulatory functions.

Functional Significance of Network Architectures

The coherent feedforward loop (cFFL) and incoherent feedforward loop (iFFL) illustrate how specific network architectures perform distinct information-processing functions [1]. Mathematical analysis suggests that the cFFL functions as a sign-sensitive delay element that filters out noisy inputs for gene activation, while the iFFL serves as an accelerator that creates rapid pulses of gene expression in response to activation signals [1]. These predicted functions have been experimentally validated in living systems, demonstrating how systems approaches can generate testable hypotheses about biological function [1].
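
A minimal simulation can make these two behaviors concrete. The sketch below assumes step-like (threshold) regulation, unit production and decay rates, AND logic at the cFFL target, a low residual production rate under repression in the iFFL, and forward Euler integration. These are modeling choices made for illustration rather than the analysis reported in [1].

```python
def simulate(kind, pulse_len, t_end=10.0, dt=0.001, threshold=0.5):
    """Return the time course of output Z for a feedforward loop X -> Y -> Z, X -> Z.

    kind: "coherent" (Y activates Z, AND logic) or "incoherent" (Y represses Z).
    pulse_len: duration for which the input X is switched on, starting at t = 0.
    """
    y = z = 0.0
    z_trace = []
    for i in range(int(round(t_end / dt))):
        t = i * dt
        x = 1.0 if t < pulse_len else 0.0
        if kind == "coherent":
            # Z is produced only while X is on AND Y has crossed its threshold.
            z_production = 1.0 if (x > 0 and y > threshold) else 0.0
        else:
            # Z is produced at full rate until Y accumulates, then at a low rate.
            z_production = x * (1.0 if y < threshold else 0.1)
        y += dt * (x - y)             # Y: production driven by X, first-order decay
        z += dt * (z_production - z)  # Z: regulated production, first-order decay
        z_trace.append(z)
    return z_trace

short_pulse = simulate("coherent", pulse_len=0.3)    # brief, noise-like input
long_pulse = simulate("coherent", pulse_len=5.0)     # sustained input
pulse = simulate("incoherent", pulse_len=10.0)       # sustained input, iFFL
```

Under these assumptions the coherent loop ignores the 0.3 time-unit input entirely and responds to the sustained input only after a delay, while the incoherent loop rises quickly and then relaxes to a lower steady level, which gives the pulse-like response.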

Beyond individual motifs, larger-scale network architectures like bow-tie structures connect many inputs and outputs through a central core consisting of a small number of elements [1]. This structure has been associated with efficient information flow but also with fragility toward perturbations of intermediate nodes in the network core [1]. Understanding these architectural principles provides insight into both the robustness and vulnerability of biological systems.

Current Frontiers and Future Directions

The Convergence with Artificial Intelligence

The integration of artificial intelligence, particularly machine learning and large language models, represents a transformative frontier for both systems and synthetic biology [8]. AI-driven tools are accelerating bioengineering workflows, enabling innovations in medicine, agriculture, and sustainability [8]. In synthetic biology, AI capabilities are progressing from predicting protein structure from amino acid sequences to more complex tasks such as predicting physical outcomes from nucleic acid sequences [8]. Biological large language models (BioLLMs) trained on natural DNA, RNA, and protein sequences can generate new biologically significant sequences that serve as starting points for designing useful proteins [6].

This AI-synthetic biology convergence promises dramatically accelerated and democratized biological engineering but also poses significant governance challenges [8]. Reduced knowledge thresholds for carrying out biological engineering tasks create potential scenarios where accidental or intentional design of harmful biological constructs could outpace our ability to anticipate consequences [8]. Responsible development of this convergence necessitates proactive governance based on principles of knowledge cultivation, accountability, transparency, and ethics [8].

Expanding Applications and Implications

Systems and synthetic biology approaches are expanding into new domains with profound implications. In medicine, systems approaches are contributing to personalized medicine through the discovery of biomarkers and therapeutic targets in complex disorders [2]. In agriculture and environmental sustainability, synthetic biology offers potential solutions for cultivating drought-resistant crops, programming cells to manufacture medicines or fuels, and bioremediation of environmental pollutants [6] [9]. The emerging recognition of biology as a general-purpose technology suggests that anything whose synthesis can be encoded in DNA could potentially be grown when and where needed [6].

Looking forward, the next decades promise unprecedented opportunities: harnessing AI-driven insights, exploring systems-level principles of health and disease, and ultimately realizing predictive biology and medicine across molecular and physiological scales [3]. As these fields continue to evolve and converge, they will likely further blur traditional boundaries between biological investigation and engineering, between understanding nature and creating novel biological systems, and between basic science and applied technology.

In the pursuit of biological knowledge, two distinct epistemological orientations have emerged as fundamental strategies: analysis and synthesis. These approaches represent contrasting philosophical frameworks for how we generate, validate, and apply scientific knowledge. Analysis, derived from the Greek ἀνάλυσις meaning "resolution," operates through the decomposition of complex systems into their constituent parts to understand underlying mechanisms [10]. Synthesis (σύνθεσις or "composition") follows an opposing path, constructing understanding by combining components into functional wholes to observe emergent properties [10] [11]. In contemporary biology, these epistemological strategies have crystallized into two powerful, complementary fields: systems biology, which embodies the analytical tradition through its examination of complex biological networks, and synthetic biology, which embraces synthesis as both a methodology and an epistemological stance through the construction of biological systems [11].

The distinction between these approaches reflects deeper philosophical commitments. As articulated in research paradigms, epistemology—how we know what we know—shapes methodological choices [12] [13]. Systems biology operates with the epistemological view that knowledge comes from measuring and understanding a single reality through quantitative methods, aligning with a positivist paradigm [13]. Synthetic biology often embraces a more constructivist epistemology, creating multiple realities through engineering approaches and interpreting their meaning through qualitative assessment [13]. This epistemological divide frames our examination of how these fields approach the fundamental challenges of biological research and drug development.

Philosophical and Historical Foundations

The analytical-synthetical dichotomy has deep roots in Western intellectual history, with Aristotle distinguishing between the resolution of complexes into principles (analysis) and the derivation of conclusions from those principles (synthesis) [10]. This framework evolved through mathematical and scientific thought, with Euclid and Pappus formalizing analytical methods that assume what is sought and trace consequences to established truths, while synthesis follows the reverse path from established truths to new conclusions [10]. The Renaissance solidified this distinction, though with varying interpretations—some viewing analysis and synthesis in terms of parts and wholes, others as movements between principles and conclusions [10].

The 20th century witnessed this philosophical divide playing out in scientific methodology. The rise of molecular biology in the 1950s represented a triumph of analytical approaches, decomposing organisms into macromolecules to reveal fundamental mechanisms [11]. However, this analytical success created a new challenge: understanding how these components function collectively in complex systems. This limitation of pure analysis catalyzed the emergence of systems biology as a discipline that retains analytical epistemology while addressing complexity through computational integration [11].

Simultaneously, synthetic biology emerged as the epistemological successor to the reductionist program, asserting that true understanding comes not merely from taking systems apart but from assembling them anew [11]. This echoes the tradition in chemistry, where a molecule is considered fully described only when it can be synthesized with properties matching its natural counterpart [11]. The epistemological stance here is profoundly different: where analysis seeks to discover existing truths about nature, synthesis creates new truths through construction and tests understanding through functional implementation.

Epistemological Frameworks: Core Principles and Methodologies

Systems Biology: The Analytical Epistemology

Systems biology applies computational and mathematical methods to study complex interactions within biological systems, representing an analytical epistemology that seeks to understand through decomposition and measurement [14]. Its epistemological foundation rests on the principle that biological reality can be understood through comprehensive measurement of system components and their interactions [15]. This approach operates with the ontological view that a single, measurable reality exists—aligning with positivist research paradigms—and that this reality can be progressively revealed through increasingly sophisticated analytical techniques [13].

The field employs several distinctive methodological approaches that reflect its analytical orientation. The bottom-up approach begins with large-volume datasets from omics-based experiments (genomics, transcriptomics, proteomics, metabolomics) and uses mathematical modeling to reconstruct relationships between molecular players [15]. The top-down approach starts with hypotheses about biological systems and uses mathematical modeling to study small-scale molecular interactions, translating pathway interactions into mathematical formats like ordinary differential equations for computational analysis [15]. A hybrid middle-out approach implements both methodologies, focusing on a central subsystem and expanding outward [15].
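
As a minimal sketch of this translation step, a hypothetical linear pathway A → B → C with mass-action kinetics becomes three ordinary differential equations: dA/dt = -k1·A, dB/dt = k1·A - k2·B, and dC/dt = k2·B. The rate constants, initial condition, and forward Euler integrator below are assumptions chosen for brevity.

```python
def simulate_cascade(k1, k2, a0=1.0, t_end=5.0, dt=0.001):
    """Integrate the pathway A -> B -> C and return final (A, B, C) amounts."""
    a, b, c = a0, 0.0, 0.0
    for _ in range(int(round(t_end / dt))):
        da = -k1 * a             # A is consumed by the first reaction
        db = k1 * a - k2 * b     # B is formed from A and consumed to make C
        dc = k2 * b              # C accumulates as the end product
        a, b, c = a + dt * da, b + dt * db, c + dt * dc
    return a, b, c

final_a, final_b, final_c = simulate_cascade(k1=1.0, k2=0.5)
```

Because each term that leaves one equation enters another, the total A + B + C stays constant, which is a quick sanity check on the translation. A production model would normally use an adaptive ODE solver rather than a fixed-step Euler loop.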

Key to systems biology epistemology is the iterative process of model development, which involves four phases: model design to identify key intermolecular activities; model construction into representative mathematical equations; model calibration to fine-tune parameters; and model validation through experimental testing of predictions [15]. This analytical epistemology enables researchers to move from observational data to mechanistic understanding, with the computer serving as a "dry lab" for testing biological hypotheses [15].
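
The four phases can be sketched on a deliberately small example. Here the design is a single degrading species, the construction is a first-order decay equation, calibration is a grid search over the decay constant, and validation checks a held-out time point. All data values, the tolerance, and the function names are hypothetical.

```python
import math

def predict(k_deg, t, x0=10.0):
    """Model construction: first-order decay, x(t) = x0 * exp(-k_deg * t)."""
    return x0 * math.exp(-k_deg * t)

def sum_squared_error(k_deg, data):
    """Distance between model predictions and (time, value) measurements."""
    return sum((predict(k_deg, t) - x) ** 2 for t, x in data)

def calibrate(data, lo=0.0, hi=2.0, steps=2000):
    """Model calibration: pick the decay constant on a grid that best fits the data."""
    candidates = [lo + (hi - lo) * i / steps for i in range(steps + 1)]
    return min(candidates, key=lambda k: sum_squared_error(k, data))

def validate(k_deg, held_out, tolerance=0.5):
    """Model validation: every held-out prediction must fall within the tolerance."""
    return all(abs(predict(k_deg, t) - x) <= tolerance for t, x in held_out)

# Made-up measurements: three early time points for fitting, one late point held out.
TRAINING = [(0.0, 10.0), (1.0, 6.1), (2.0, 3.6), (3.0, 2.3)]
HELD_OUT = [(5.0, 0.8)]

k_fit = calibrate(TRAINING)
model_accepted = validate(k_fit, HELD_OUT)
```

If validation fails, the iterative process returns to the design phase, for example by adding a production term or a second species, before calibrating again.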

Synthetic Biology: The Synthetical Epistemology

Synthetic biology represents a fundamentally different epistemological strategy, embracing synthesis as both methodology and philosophical foundation. Where systems biology asks "How does this biological system work?", synthetic biology asks "Can we build a functioning biological system that behaves as predicted?" [11]. This orientation positions synthesis not merely as a technique but as the ultimate test of biological understanding, embodying the epistemological view that we truly know only what we can create.

The field combines biological science and engineering principles, allowing the design and manipulation of systems for specific applications [16]. Its epistemological foundations include the concept of modularity—viewing biological systems as composed of standardized, interchangeable parts that can be reassembled in novel configurations [11]. This modular approach enables a construction-based epistemology where biological knowledge advances through the design-build-test cycle rather than through observation and decomposition alone.

Synthetic biology's methodology reflects its epistemological commitments through several key approaches. DNA synthesis and assembly techniques such as Gibson assembly, CPEC, and Golden Gate create novel genetic constructs, with costs decreasing to $0.28 per base pair or lower, making genetic manipulation increasingly accessible [16]. Genome engineering tools such as Multiplex Automated Genome Engineering (MAGE) and Conjugative Assembly Genome Engineering (CAGE) enable modification of multiple chromosomal locations simultaneously, generating rich biological diversity for functional testing [16]. Refactoring gene clusters involves recoding essential sequences under synthetic control elements, simplifying native regulation to facilitate engineering in non-native hosts [16].

The epistemological power of synthetic biology lies in its ability to test biological hypotheses through construction. If a system can be built from well-characterized parts and functions as predicted, this provides validation of our understanding in a way that analytical observation alone cannot achieve [11].

Comparative Analysis: Methodology, Applications, and Validation

Methodological Comparison and Research Paradigms

The epistemological differences between systems and synthetic biology manifest in distinct methodological approaches, applications, and validation criteria. The table below summarizes these key distinctions:

Table 4: Epistemological and Methodological Comparison of Systems and Synthetic Biology

| Dimension | Systems Biology | Synthetic Biology |
|---|---|---|
| Core Question | How do biological systems function as integrated networks? | Can we design and construct biological systems with novel functions? |
| Epistemology | Analytical/Positivist: Understanding through decomposition and measurement [13] [15] | Constructivist/Pragmatist: Understanding through construction and testing [11] [13] |
| Primary Methods | High-throughput omics technologies, mathematical modeling, network analysis [14] [15] | DNA synthesis and assembly, genome engineering, modular design [16] |
| Model Role | Describe existing systems, integrate data, generate testable hypotheses [15] | Guide construction, predict system behavior, enable design [11] |
| Validation Criteria | Accurate prediction of system behavior under perturbation [15] | Successful function of constructed systems [11] |
| Knowledge Product | Descriptive models of biological complexity [14] | Engineered biological systems with novel functions [16] |
| Therapeutic Approach | Identify key network nodes for targeted intervention [17] [14] | Build novel therapeutic pathways and organisms [16] |

Applications in Drug Discovery and Development

Both epistemological approaches have demonstrated significant value in pharmaceutical research, though with different applications and contributions.

Systems biology has revolutionized drug discovery by providing analytical frameworks for understanding complex disease mechanisms. It enables network-based drug targeting by identifying hub nodes in biological networks whose perturbation produces desired therapeutic effects [17] [14]. The approach helps elucidate disease mechanisms by characterizing the key pathways that contribute to pathological states, as demonstrated in neuroblastoma, where regulatory network modeling revealed novel insights into retinoid therapy responses [15]. It also enables patient stratification through analysis of multi-scale clinical and molecular data to identify the patient subsets most likely to respond to treatment [14].

Synthetic biology applications reflect its constructive epistemology through direct engineering of biological solutions. These include engineered microbial therapeutics designed as cell factories for producing therapeutic proteins, enzymes, pharmaceuticals, and biofuels [16]. The field enables novel biosensing pathways created to detect disease states or metabolic conditions and trigger therapeutic responses [16]. It also contributes functional genetic tools such as synthetic promoters, ribozymes, and aptamers that enable precise regulation of transcription and translation for therapeutic purposes [16].

Experimental Protocols and Validation Frameworks

The validation of knowledge claims follows fundamentally different pathways in these two epistemological frameworks.

Systems biology employs iterative model refinement through a four-phase process: (1) model design identifying key molecular interactions; (2) model construction into mathematical equations; (3) model calibration to fine-tune kinetic parameters; and (4) model validation through experimental testing of predictions [15]. Network perturbation analysis involves systematically modifying network components (e.g., through RNAi or gene knockout) and measuring system responses to validate model predictions [17]. Multi-omics data integration combines genomic, transcriptomic, proteomic, and metabolomic datasets to build comprehensive models that can be tested against experimental observations [14] [15].

Synthetic biology validation follows engineering principles through the design-build-test cycle: (1) computational design of biological systems; (2) physical construction using DNA assembly and genome engineering tools; (3) functional testing of constructed systems; and (4) redesign based on performance gaps [16] [11]. Functional characterization assesses whether synthetic systems perform intended operations under physiological conditions, with quantitative measurements of performance metrics [16]. Orthogonality testing validates that synthetic systems operate independently from host cellular processes without unintended interactions [16].
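
The cycle can be sketched as a loop in which each iteration redesigns a single parameter based on the measured performance gap. In this sketch the `measure_output` function is a hypothetical stand-in for the build and test steps, which happen at the bench rather than in code, and the saturating response curve, the proportional update rule, and the target value are assumptions for illustration.

```python
def measure_output(promoter_strength):
    """Stand-in for build + test: hypothetical saturating expression response."""
    return 100.0 * promoter_strength / (promoter_strength + 2.0)

def design_build_test(target, strength=1.0, tolerance=1.0, max_cycles=50):
    """Iterate design -> build -> test -> redesign until output is near target.

    Returns a history of (cycle, promoter_strength, measured_output) tuples.
    """
    history = []
    for cycle in range(1, max_cycles + 1):
        output = measure_output(strength)            # build and test the design
        history.append((cycle, strength, output))
        gap = target - output                        # performance gap
        if abs(gap) <= tolerance:
            break                                    # design meets the specification
        # Redesign: scale the parameter in proportion to the relative gap.
        strength = max(0.0, strength * (1.0 + 0.5 * gap / target))
    return history

history = design_build_test(target=60.0)
final_cycle, final_strength, final_output = history[-1]
```

The history makes the learning step explicit: each entry records what was designed and what was measured, so a later redesign rule can use the full record rather than only the latest gap.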

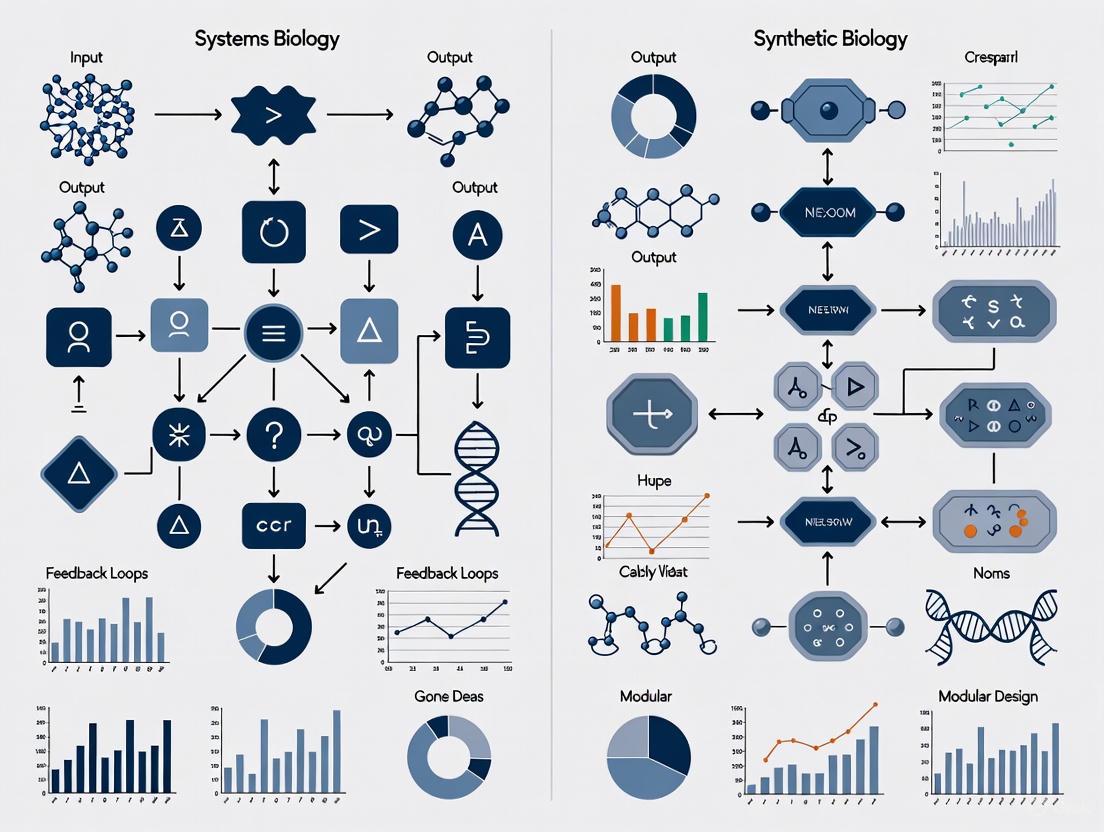

Visualization of Epistemological Approaches and Workflows

Systems Biology Analytical Workflow

The systems biology analytical approach follows a systematic process from data collection to biological insight: model design, model construction, model calibration, and model validation against experimental tests of predictions [15].

Synthetic Biology Design-Build-Test Cycle

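Synthetic biology follows a constructive epistemology centered on the design-build-test cycle: computational design, physical construction, functional testing, and redesign based on performance gaps [16] [11].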

Integrated Epistemological Framework in Drug Discovery

The most powerful applications occur when analytical and synthetic approaches are integrated within drug discovery.

Essential Research Tools and Reagent Solutions

Both epistemological approaches require specialized research tools and reagents that enable their distinctive methodologies. The following table catalogs essential resources for implementing these research strategies:

Table 2: Essential Research Reagent Solutions for Analysis and Synthesis Approaches

| Category | Specific Tools/Reagents | Epistemological Function | Applications |
|---|---|---|---|
| Genomic Tools | Whole genome sequencing platforms, MAGE/CAGE genome engineering, Gibson/CPEC DNA assembly [16] | Enable both analytical characterization and synthetic construction of genetic systems | Gene identification, pathway refactoring, genome-scale modification [16] |
| Transcriptomic Tools | RNA microarray, RNA-Seq technologies, synthetic promoters, ribozymes, RBS calculators [16] | Analyze expression patterns and synthetically regulate transcript levels | Gene function interpretation, dynamic control of synthetic systems [16] |
| Proteomic Tools | Selected Reaction Monitoring (SRM), protein microarrays, modular protein design, computational protein design [16] [17] | Characterize and engineer protein networks and functions | Protein detection, artificial protein construction, activity regulation [16] [17] |
| Metabolomic Tools | GC-MS, LC-MS, NMR platforms, key enzyme engineering, synthetic transporters, FBA/MFA computational tools [16] | Analyze metabolic fluxes and engineer novel metabolic pathways | Pathway identification, bottleneck determination, metabolic optimization [16] |
| Computational Platforms | Network modeling software, dynamical modeling environments, bioinformatics suites [14] [15] | Enable in silico analysis and prediction for both analytical and constructive approaches | Model construction, simulation, design prediction, data integration [14] [15] |

The analysis-synthesis dichotomy represents not merely a methodological division but fundamentally different epistemological orientations toward biological knowledge. Systems biology exemplifies the analytical approach, seeking to understand biological complexity through decomposition, measurement, and modeling [14] [15]. Synthetic biology embodies the constructive approach, advancing knowledge through design, assembly, and functional testing of biological systems [16] [11]. Each strategy offers distinct strengths: analysis provides comprehensive understanding of existing systems, while synthesis tests understanding through creation of novel systems [11].

The most significant advances emerge from integrating these epistemological strategies, creating a virtuous cycle where analytical insights inform synthetic constructions, which in turn generate new analytical questions [11]. This integration is particularly powerful in drug discovery, where systems biology identifies key network vulnerabilities and synthetic biology creates novel therapeutic approaches to target them [14] [15]. As both fields advance, they continue to refine their respective epistemological approaches while increasingly recognizing their complementary nature in addressing the fundamental challenges of biological research and therapeutic development.

The future of biological knowledge will likely involve not choosing between these epistemological orientations but strategically deploying both analytical and synthetic approaches in an integrated framework that leverages their respective strengths. This epistemological integration promises to accelerate progress toward understanding biological complexity and developing novel therapeutic interventions for complex diseases.

Systems biology is fundamentally rooted in an analytical-descriptive epistemic stance, a position dedicated to deconstructing and comprehending the immense complexity of living systems. This approach seeks to understand biological function by analyzing the interactions and dynamics of a system's components, from genes and proteins to entire networks. The core belief is that a systems-level understanding emerges not from examining parts in isolation, but from describing their relationships and collective behaviors [18]. This stance is often summarized by the adage that "the whole is greater than the sum of its parts," focusing on the emergent properties that arise from biological complexity [18].

This stance contrasts with the synthetic biology approach, which is fundamentally constructive. Where systems biology asks "How does this work?", synthetic biology asks "Can I build it to prove my understanding?" The analytical-descriptive method is indispensable for mapping the intricate networks and signaling pathways that underpin health and disease, providing the foundational knowledge upon which constructive engineering approaches can later build [19].

Core Methodologies and Epistemic Tools

The analytical-descriptive stance employs a specific toolkit of methodologies designed to manage biological complexity while extracting meaningful patterns and principles.

The Multi-Model Framework

A cornerstone of this approach is the recognition that multiple, non-identical models can be productively applied to the same biological system. Rather than seeking a single "true" model, systems biologists often combine epistemic tools, each with different constraints and simplifying assumptions. This diversity is not a weakness but rather a strength for knowledge generation, as different models can illuminate different aspects of a complex system [20]. For instance, a simplified model might reveal core design principles, while a more detailed model might generate testable predictions about specific molecular interactions.

This multi-model approach acknowledges that biological systems are too complex to be fully captured by any single representation. The epistemic power comes from the integration of multiple perspectives, each offering partial but valuable insights into the system's behavior [20]. This methodology stands in contrast to approaches that seek a single unified model, instead embracing a productive pluralism in scientific representation.

Handling Uncertainty through Bayesian Multimodel Inference

A sophisticated methodological development exemplifying the analytical-descriptive stance is Bayesian Multimodel Inference (MMI). This approach directly addresses the challenge of model uncertainty in systems biology, where multiple mathematical models can describe the same signaling pathway [21].

MMI increases certainty in predictions by combining models through a weighted average of their predictive capabilities. The consensus estimator is constructed as:

\[ p(q \mid d_{\mathrm{train}}, \mathfrak{M}_K) := \sum_{k=1}^{K} w_k \, p(q_k \mid \mathcal{M}_k, d_{\mathrm{train}}) \]

where the weights \(w_k \geq 0\) sum to one and are assigned based on each model's probability or predictive performance [21]. This methodology produces predictors that are robust to changes in model sets and data uncertainties, embracing rather than eliminating the inherent uncertainty in biological modeling.
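As a rough illustration of the consensus estimator, the sketch below mixes three model-specific predictive densities. It assumes each model's prediction of the quantity q is summarized as a normal density, and the means, standard deviations, and weights are hypothetical values chosen for the example; the weights are taken to be non-negative and to sum to one so that the mixture is a valid density.

```python
import math

def normal_pdf(q, mean, sd):
    """Density of a normal distribution, used here as each model's predictive density."""
    return math.exp(-0.5 * ((q - mean) / sd) ** 2) / (sd * math.sqrt(2 * math.pi))

def consensus_density(q, predictions, weights):
    """Weighted mixture: sum over k of w_k * p(q_k | M_k, d_train)."""
    if any(w < 0 for w in weights) or abs(sum(weights) - 1.0) > 1e-9:
        raise ValueError("weights must be non-negative and sum to 1")
    return sum(w * normal_pdf(q, m, s) for w, (m, s) in zip(weights, predictions))

def consensus_mean(predictions, weights):
    """Mean of the mixture, i.e. the weighted average of the model means."""
    return sum(w * m for w, (m, _) in zip(weights, predictions))

# Hypothetical (mean, sd) predictions from three models and hypothetical weights.
predictions = [(0.40, 0.05), (0.55, 0.10), (0.48, 0.08)]
weights = [0.5, 0.2, 0.3]

mmi_mean = consensus_mean(predictions, weights)
print(f"consensus mean = {mmi_mean:.3f}")
```

Because the result is a full density rather than a point estimate, the spread of the mixture reflects disagreement between models as well as each model's own uncertainty.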

Table: Methods for Bayesian Multimodel Inference in Systems Biology

| Method | Basis for Weighting | Key Advantages | Limitations |
|---|---|---|---|
| Bayesian Model Averaging (BMA) | Probability of each model given the training data [21] | Natural Bayesian approach; theoretically rigorous | Strong dependence on prior information; relies on data-fit alone |
| Pseudo-BMA | Expected predictive performance on unseen data [21] | Focuses on predictive accuracy rather than fit | Computationally intensive; requires cross-validation |
| Stacking | Maximizes predictive performance by combining models [21] | Often superior predictive performance; practical focus | Complex implementation; may not reflect underlying model probabilities |
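
The first two weighting schemes reduce to normalizing exponentiated scores, which the following sketch makes concrete. It assumes that log marginal likelihoods (for BMA) and expected log predictive density estimates (for pseudo-BMA) have already been computed elsewhere; the numbers are hypothetical. Stacking is omitted because it requires solving an optimization problem over the weights.

```python
import math

def softmax(log_scores):
    """Normalize exponentiated scores; subtracting the maximum avoids underflow."""
    top = max(log_scores)
    exps = [math.exp(s - top) for s in log_scores]
    total = sum(exps)
    return [e / total for e in exps]

def bma_weights(log_evidences, log_priors=None):
    """BMA: weight proportional to marginal likelihood times prior model probability."""
    if log_priors is None:
        log_priors = [0.0] * len(log_evidences)  # equal prior model probabilities
    return softmax([e + p for e, p in zip(log_evidences, log_priors)])

def pseudo_bma_weights(elpd_estimates):
    """Pseudo-BMA: weight proportional to exp(expected log predictive density)."""
    return softmax(elpd_estimates)

# Hypothetical scores for three candidate models.
w_bma = bma_weights([-120.0, -121.0, -125.0])
w_pseudo = pseudo_bma_weights([-60.2, -59.8, -63.0])
print([round(w, 3) for w in w_bma], [round(w, 3) for w in w_pseudo])
```

The two schemes can rank models differently, as in this example, because one rewards fit to the training data and the other rewards estimated out-of-sample prediction.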

Exemplary Application: ERK Signaling Pathway Analysis

The analytical-descriptive approach is powerfully illustrated by recent work on the extracellular signal-regulated kinase (ERK) signaling pathway, a crucial regulator of cell growth and differentiation. Researchers applied Bayesian MMI to ten different ERK pathway models, each with distinct structural assumptions and parameterizations [21].

The experimental protocol followed a rigorous workflow:

- Model Selection: Ten established ODE-based models of the core ERK pathway were selected from the BioModels database [21].

- Bayesian Calibration: Each model was calibrated against experimental training data using Bayesian parameter estimation to quantify parametric uncertainty [21].

- Weight Calculation: Models were weighted using BMA, pseudo-BMA, and stacking methods based on their predictive performance and probability [21].

- Multimodel Prediction: A consensus predictor was constructed by combining individual model predictions according to their weights [21].

- Validation: The MMI approach was validated using both synthetic and experimental data, demonstrating increased predictive certainty and robustness [21].

This approach successfully identified mechanisms driving subcellular location-specific ERK activity, suggesting that location-specific differences in both Rap1 activation and negative feedback strength are necessary to capture observed dynamics [21]. The study demonstrated that MMI-based predictions remained robust even when the composition of the model set changed or data uncertainty increased.

The following diagram illustrates the Bayesian Multimodel Inference workflow for the ERK signaling pathway analysis:

Research Reagent Solutions for Systems Biology

The experimental implementation of the analytical-descriptive stance requires specific research tools and reagents. The following table details essential materials used in modern systems biology research, particularly in signaling pathway analysis:

Table: Essential Research Reagents and Tools for Analytical-Descriptive Systems Biology

| Research Tool | Function in Analytical-Descriptive Research | Specific Application Examples |
|---|---|---|
| High-Throughput Sequencing Technologies | Enables comprehensive measurement of transcriptomes, epigenomes, and genomic variations [18] | Characterizing system-wide responses to perturbations; mapping regulatory networks |
| Bayesian Inference Software | Quantifies parametric and model uncertainty; implements multimodel inference [21] | Calibrating ODE models of signaling pathways; calculating model weights for MMI |
| BioModels Database | Repository of curated mathematical models of biological processes [21] | Accessing established ERK pathway models for multimodel inference studies |
| Advanced Microscopy Platforms | Enables spatial and temporal monitoring of signaling activity in live cells [21] | Measuring subcellular location-specific ERK activity dynamics |
| CRISPR Screening Tools | Facilitates functional genomics at system scale [22] | Identifying key components and interactions in biological networks |
| Single-Cell Sequencing Technologies | Resolves cellular diversity and functional states within populations [22] | Deconstructing tissue-level systems into constituent cellular components |

Comparative Analysis with Synthetic Biology Approaches

The analytical-descriptive stance of systems biology differs fundamentally from the constructive epistemology of synthetic biology. Where systems biology analyzes existing natural systems, synthetic biology builds novel biological entities to test understanding [23] [19].

Synthetic biology employs two primary constructive pathways: the top-down approach, which modifies existing natural organisms through genomic reduction (e.g., creating minimal cells from Mycoplasma), and the bottom-up approach, which assembles molecular modules into functional systems [23]. Both approaches share an engineering-oriented epistemology that prioritizes design and construction as a means of validation.

The relationship between these approaches is complementary rather than oppositional. As one analysis notes: "The precision resulting from this synergy eliminates much of the uncertainty and failure associated with biological design and allows for more meaningful conclusions to be drawn from experimental studies" [19]. The analytical-descriptive stance provides the fundamental understanding that enables successful synthetic construction, while synthetic approaches test and validate analytical insights through implementation.

The following diagram contrasts the epistemological approaches of systems and synthetic biology:

The analytical-descriptive stance in systems biology continues to evolve toward more comprehensive and realistic models. Future directions include developing whole-cell models that integrate all cellular functions, creating digital twins of biological systems for personalized medicine, and establishing automated pipelines from raw data to mechanistic models [18]. These ambitious goals will require continued methodological innovation in managing biological complexity.

The fundamental epistemic stance of systems biology—that understanding emerges from analyzing and describing complexity—remains crucial for addressing the most challenging problems in biology and medicine. As biological research generates increasingly large and complex datasets, the analytical-descriptive approach provides the conceptual and methodological framework for extracting meaningful understanding from this wealth of information.

The synergy between systems biology's analytical approach and synthetic biology's constructive approach promises to drive future biological discovery, with each epistemology strengthening and validating the other [19]. This integrated approach will be essential for tackling emerging challenges in health, agriculture, and environmental sustainability, demonstrating the enduring value of the analytical-descriptive stance for biological understanding.

Synthetic biology represents a fundamental paradigm shift in the biological sciences, moving from analytical observation to constructive engineering. This emerging discipline adopts a modular and systemic conception of living organisms, applying engineering principles such as standardization, decoupling, and abstraction to biological systems [23]. Unlike traditional biological approaches that seek to understand existing natural systems, synthetic biology aims to design and construct new biological entities with desired functionalities, treating biology as a substrate for engineering. This design-oriented framework stands in stark contrast to the analytical approach of systems biology, positioning synthetic biology as a true engineering discipline that builds upon physics, computation, and biology [23] [24].

The epistemological foundation of synthetic biology establishes it as a distinct form of knowledge generation, not merely applied biology. Similar to how mechanical engineering predated and drove the development of thermodynamics, biological engineering is emerging as a discipline that will likely deepen our fundamental understanding of biological systems through the process of designing and building them [24]. This constructive approach embodies what can be considered meta-engineering, where engineers design the engineering processes themselves, operating at a higher level of abstraction to create systems capable of their own design processes [24].

Epistemological Frameworks: Synthetic Versus Systems Biology

The fundamental distinction between synthetic and systems biology lies in their core methodologies and objectives. The table below summarizes the key epistemological differences between these two approaches to studying biological systems.

Table 1: Epistemological Comparison of Systems Biology and Synthetic Biology

| Aspect | Systems Biology | Synthetic Biology |
|---|---|---|
| Primary Objective | Understand, model, and analyze complex natural systems | Design, construct, and test novel biological systems |
| Core Methodology | Analytical decomposition and computational modeling | Design-Build-Test-Learn (DBTL) cycles |
| System View | Reverse-engineering of existing networks | Forward-engineering of new networks |
| Knowledge Production | Discovery through observation and analysis | Creation through implementation and testing |
| Biological Perspective | Studies biology as found in nature | Approaches biology as a modular, engineerable substrate |
| Conceptual Foundation | Holism, emergence, complexity | Standardization, abstraction, decoupling |

Systems biology operates primarily through analytical methods, seeking to understand existing biological systems by decomposing them into their constituent parts and analyzing their interactions. This approach embraces the inherent complexity of natural systems and aims to develop predictive models of system behavior. In contrast, synthetic biology employs constructive methods to build new biological systems from standardized components, applying engineering principles to create functional entities that may not exist in nature [23]. This constructive process generates knowledge through implementation and testing, creating an epistemological framework where understanding emerges from the act of creation rather than observation alone [24].

The relationship between these approaches is not purely oppositional; rather, they exist in a complementary dialectic. Synthetic biology's construction-oriented approach often relies on insights from systems biology to inform designs, while the testing of synthetic constructs can generate valuable data for refining systems biology models. This iterative exchange between construction and analysis drives progress in both fields and contributes to a more comprehensive understanding of biological systems [23].

The Synthetic Biology Toolbox: Core Methodologies and Experimental Approaches

The Design-Build-Test-Learn Cycle

The foundational framework for synthetic biology's engineering approach is the Design-Build-Test-Learn (DBTL) cycle, which provides a systematic process for developing biological systems [25]. This iterative engineering cycle mirrors the evolutionary process of variation and selection, creating a structured methodology for exploring biological design spaces [24]. The power of the DBTL cycle lies in its recursive nature, where each iteration builds upon knowledge gained from previous cycles to refine designs and improve system performance.

Table 2: Key Stages of the Design-Build-Test-Learn Cycle

| Stage | Primary Activities | Outputs |
|---|---|---|
| Design | Computational modeling, part selection, circuit design | Detailed biological blueprint |
| Build | DNA assembly, transformation, strain engineering | Physical biological construct |
| Test | Characterization, measurement, functional assays | Quantitative performance data |
| Learn | Data analysis, model refinement, insight generation | Improved design rules and understanding |
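
The recursive character of the four stages can be sketched as a simple loop. The example assumes a single design variable (a promoter strength between 0 and 1) and a hypothetical assay function standing in for the Build and Test stages; a real cycle would involve physical construction and measurement rather than a formula.

```python
def design(center, width, n=5):
    """Design: propose n candidate promoter strengths around the current best guess."""
    step = width / (n - 1)
    return [min(1.0, max(0.0, center - width / 2 + i * step)) for i in range(n)]

def build_and_test(strength):
    """Build + Test: hypothetical assay whose output peaks at a strength of 0.6."""
    return 1.0 - (strength - 0.6) ** 2

def learn(candidates, scores):
    """Learn: identify the best-performing variant from this cycle."""
    best_score, best = max(zip(scores, candidates))
    return best, best_score

def dbtl(cycles=4, center=0.3, width=0.4):
    """Run several cycles, narrowing the design space after each one."""
    history = []
    for _ in range(cycles):
        candidates = design(center, width)
        scores = [build_and_test(c) for c in candidates]
        center, best_score = learn(candidates, scores)
        history.append((center, best_score))
        width /= 2  # search more finely around the best variant next time
    return history

history = dbtl()
for cycle, (best, score) in enumerate(history, start=1):
    print(f"cycle {cycle}: best strength {best:.3f}, score {score:.4f}")
```

Each pass reuses what the previous pass learned, which is the sense in which later iterations build on earlier ones.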

Biofoundries operationalize the DBTL cycle through automated workflows that translate biological designs into physical constructs [25]. These facilities implement an abstraction hierarchy that separates project goals from specific operational details, enabling researchers to work at appropriate levels of abstraction while ensuring technical execution follows standardized protocols. This hierarchical organization includes: Project (Level 0), Service/Capability (Level 1), Workflow (Level 2), and Unit Operation (Level 3) [25].
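One way to picture this four-level hierarchy is as nested data structures. The sketch below assumes Python dataclasses as the representation, and every project, service, workflow, and unit-operation name in it is hypothetical; it is not the schema used by any particular biofoundry.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class UnitOperation:  # Level 3: a single executable step
    name: str

@dataclass
class Workflow:  # Level 2: an ordered series of unit operations
    name: str
    unit_operations: List[UnitOperation] = field(default_factory=list)

@dataclass
class Service:  # Level 1: a capability offered by the facility
    name: str
    workflows: List[Workflow] = field(default_factory=list)

@dataclass
class Project:  # Level 0: the overall goal
    name: str
    services: List[Service] = field(default_factory=list)

    def flatten(self):
        """List every unit operation needed to deliver the project, in order."""
        return [op.name
                for service in self.services
                for workflow in service.workflows
                for op in workflow.unit_operations]

# Hypothetical example project.
assembly = Workflow("DNA assembly", [UnitOperation("liquid transfer"), UnitOperation("thermocycling")])
screening = Workflow("Colony screening", [UnitOperation("plating"), UnitOperation("colony picking")])
project = Project("Pathway prototype", [Service("Strain construction", [assembly, screening])])
print(project.flatten())
```

A researcher working at the project level only needs to name the services required, while the lower levels carry the operational detail.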

Key Engineering Strategies: Top-Down and Bottom-Up Approaches

Synthetic biology employs two primary engineering strategies for constructing biological systems:

The top-down approach begins with existing biological systems and simplifies them through reduction. A prominent example is the creation of minimal genomes by systematically removing non-essential genes from natural organisms [23]. For instance, researchers have progressively reduced the genome of Mycoplasma mycoides to identify the minimal set of genes required for life [23]. This approach benefits from working within functional biological systems while attempting to distill them to their essential components.

The bottom-up approach assembles biological systems from molecular components, creating protocells and synthetic genetic circuits from basic biological parts [23]. This method often utilizes standardized DNA parts known as BioBricks that can be combined in modular fashion [23]. While more challenging than top-down approaches, bottom-up construction offers greater control over system composition and the potential to create fundamentally novel biological entities not found in nature.

Table 3: Comparison of Top-Down and Bottom-Up Engineering Approaches

| Characteristic | Top-Down Approach | Bottom-Up Approach |
|---|---|---|
| Starting Point | Existing living organisms | Molecular components (DNA, proteins, membranes) |
| Methodology | Genome reduction, simplification | Modular assembly, de novo construction |
| Key Examples | Minimal cell projects (Mycoplasma, Mesoplasma) | Protocells, synthetic genetic circuits |
| Advantages | Works within proven biological framework | Maximum design flexibility, novel functions |
| Challenges | Cellular complexity, unknown essential functions | Integration of components into functional wholes |

Experimental Protocols in Synthetic Biology Construction

Protocol 1: Minimal Genome Construction via Top-Down Engineering

This protocol outlines the representative methodology for creating minimal cells through genome reduction [23].

Selection of Host Organism: Choose a simple, fast-growing bacterium with a small genome (e.g., Mycoplasma mycoides, Mesoplasma florum, or Escherichia coli).

Identification of Essential Genes:

- Apply transposon mutagenesis to randomly disrupt genes

- Use high-throughput sequencing to identify disrupted sites

- Determine essential genes as those without transposon insertions

Genome Design and Synthesis:

- Design a reduced genome retaining only essential genes

- Include necessary non-coding regions for replication and transcription

- Synthesize the designed genome in yeast using hierarchical assembly

Genome Transplantation:

- Isolate the synthetic genome from yeast

- Transplant into recipient cells lacking their native genome

- Select for viable transplants using antibiotic resistance markers

Validation and Characterization:

- Verify genome sequence through whole-genome sequencing

- Assess growth characteristics and morphology

- Confirm metabolic capabilities through biochemical assays

This protocol exemplifies the constructive approach of synthetic biology, where biological understanding emerges from the process of building simplified systems rather than merely analyzing complex natural ones [23].
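The essential-gene identification step of Protocol 1 can be sketched as a lookup of insertion sites against gene coordinates. The example assumes that sequencing has already been reduced to a list of insertion positions, and the gene names and coordinates are hypothetical. Real analyses also account for gene length, insertion density, and insertions that leave a gene functional, none of which is modeled here.

```python
from bisect import bisect_left

def essential_gene_candidates(genes, insertion_sites):
    """Return genes with no transposon insertion inside their coordinates.

    genes: dict mapping gene name to (start, end), inclusive positions.
    insertion_sites: iterable of genomic positions with a mapped insertion.
    """
    sites = sorted(insertion_sites)
    candidates = []
    for name, (start, end) in genes.items():
        i = bisect_left(sites, start)  # first insertion at or after the gene start
        disrupted = i < len(sites) and sites[i] <= end
        if not disrupted:
            candidates.append(name)
    return candidates

# Hypothetical annotation and insertion map.
genes = {"geneA": (1, 900), "geneB": (1000, 1800), "geneC": (2000, 2500)}
insertions = [150, 420, 2100]

print(essential_gene_candidates(genes, insertions))
```

Genes that tolerate insertions are treated as dispensable under the tested growth condition, so the resulting list is condition-specific and serves as a starting point for genome design.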

Protocol 2: Modular Genetic Circuit Assembly via Bottom-Up Engineering

This protocol describes the standard methodology for constructing genetic circuits using standardized biological parts [23].

Circuit Design:

- Select appropriate biological parts (promoters, coding sequences, terminators) from repositories

- Arrange parts according to desired logical functions

- Use computational tools to predict circuit behavior

DNA Assembly:

- Amplify individual parts using polymerase chain reaction (PCR)

- Digest parts and vector with restriction enzymes (or use Gibson assembly)

- Ligate parts into destination vector

- Transform assembled construct into E. coli for propagation

Circuit Characterization:

- Measure transfer function of individual components

- Quantify input-output relationships for the complete circuit

- Determine dynamic response to input signals

Model Refinement:

- Compare experimental data with computational predictions

- Adjust model parameters to better fit observed behavior

- Identify sources of context-dependence and unexpected interactions

This bottom-up construction approach demonstrates the modular design principle central to synthetic biology's engineering paradigm, though practitioners must account for contextual effects that can alter part function in different arrangements [26].
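The characterization and model-refinement steps of Protocol 2 can be illustrated with a transfer-function fit. The sketch assumes a Hill function as the model of the input-output relationship, a coarse grid search over the half-maximal constant as the refinement step, and hypothetical measurements in arbitrary units; the protocol itself does not prescribe a specific model form.

```python
import math

def hill(inducer, y_min, y_max, k_half, n):
    """Hill-type transfer function mapping inducer level to circuit output."""
    return y_min + (y_max - y_min) * inducer ** n / (k_half ** n + inducer ** n)

def rmse(predicted, observed):
    """Root-mean-square error between model predictions and measurements."""
    return math.sqrt(sum((p - o) ** 2 for p, o in zip(predicted, observed)) / len(observed))

def predict(inputs, k_half, y_min=10.0, y_max=1000.0, n=2.0):
    return [hill(x, y_min, y_max, k_half, n) for x in inputs]

# Hypothetical characterization data: inducer levels and measured outputs.
inputs = [0.0, 10.0, 50.0, 100.0, 500.0]
observed = [12.0, 45.0, 510.0, 800.0, 985.0]

initial_k = 80.0  # design-stage guess for the half-maximal constant
initial_error = rmse(predict(inputs, initial_k), observed)

# Model refinement: pick the half-maximal constant that best matches the data.
best_k = min(range(10, 201, 5), key=lambda k: rmse(predict(inputs, float(k)), observed))
refined_error = rmse(predict(inputs, float(best_k)), observed)
print(f"initial RMSE {initial_error:.1f}, refined RMSE {refined_error:.1f} at K = {best_k}")
```

A residual mismatch that persists after refitting is one practical signal of the context-dependence mentioned above.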

Visualization of Synthetic Biology Design Processes

The following diagrams illustrate key conceptual frameworks and workflows in synthetic biology's design-oriented paradigm.

Diagram 1: DBTL Cycle in Synthetic Biology

Diagram 2: Biofoundry Abstraction Hierarchy

Diagram 3: Engineering Approaches in Synthetic Biology

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Research Reagents and Materials for Synthetic Biology Construction

| Reagent/Material | Function | Examples & Applications |
|---|---|---|
| Standard Biological Parts (BioBricks) | Modular DNA components for genetic circuit construction | Promoters, RBS, coding sequences, terminators used in transcriptional logic gates [23] |
| DNA Assembly Reagents | Enzymatic assembly of DNA fragments | Restriction enzymes, ligases, Gibson assembly mixes for combinatorial part assembly [23] |
| Synthetic Genetic Codes | Expanded genetic alphabets for novel functions | Unnatural base pairs, synthetic amino acids for orthogonal biological systems [23] |
| Minimal Genome Templates | Simplified cellular chassis for engineering | Reduced-genome E. coli, Mycoplasma strains for predictable circuit operation [23] |
| Standardized Visual Notation (SBOL Visual) | Graphical language for genetic design communication | Promoter, CDS, terminator glyphs for diagramming genetic constructs [27] [28] |
| Aspect-Oriented Design Tools | Computational framework for managing contextual effects | SynBioWeaver for separating core and cross-cutting concerns in genetic circuit design [26] |

Case Studies: Exemplary Achievements of the Construction Paradigm

Minimal Genome Construction

The synthetic Mycoplasma cells built at the J. Craig Venter Institute, sometimes referred to as Mycoplasma laboratorium, represent a landmark achievement in synthetic biology's construction paradigm. Researchers first chemically synthesized a 1.08 megabase pair genome closely based on the naturally occurring Mycoplasma mycoides genome and transplanted it into recipient cells, producing JCVI-syn1.0 [23]. This work demonstrated that living systems could be driven by chemically synthesized genomes and established methodologies for genome design, synthesis, and transplantation. The project then employed iterative design cycles to identify essential genes and remove redundancies through top-down genome reduction, resulting in JCVI-syn3.0, a minimal cell with only 473 genes [23]. This constructive approach generated fundamental insights into the core requirements for cellular life that would have been difficult to obtain through analytical methods alone.

Synthetic Biology Open Language (SBOL) Visual

The development of SBOL Visual provides a standardized graphical language for genetic designs, enabling effective communication of biological constructions [27] [28]. This visual notation system includes symbols for DNA subsequences, regulatory elements, and assembly features, creating a coherent framework for describing synthetic biological systems. SBOL Visual functions as a key enabling technology for the engineering paradigm by allowing researchers to unambiguously communicate designs across laboratories and publications. The standard continues to evolve, with SBOL Visual 2 expanding to include molecular species glyphs and interaction glyphs, further enhancing its ability to represent both structural and functional aspects of biological designs [27].

Synthetic biology represents a fundamental shift in how we approach biological systems, establishing a design-oriented engineering paradigm that complements traditional analytical approaches. Through its constructive methodology, synthetic biology generates knowledge by building and testing biological systems, creating an epistemological framework where understanding emerges from implementation. The discipline's core principles—abstraction, standardization, modularity, and the DBTL cycle—provide a systematic approach to biological engineering that enables the creation of novel biological functions not found in nature [23] [24] [25].

The comparative analysis with systems biology reveals how these two approaches offer complementary perspectives on biological systems: one seeking to understand existing complexity through analysis, the other seeking to create simplified functionality through construction. As synthetic biology continues to mature, its construction-oriented paradigm promises not only practical applications in biotechnology and medicine but also fundamental insights into the principles governing biological systems. By building biological systems from the ground up, synthetic biology tests our understanding of life's fundamental principles and expands the scope of what biological systems can do [23] [24].

Modularity is a foundational design principle that transcends disciplines, yet its application and interpretation vary significantly. In biology, it informs how we understand natural systems; in synthetic biology, it guides how we build new ones; and in technology, it dictates how we construct complex, maintainable software. This guide provides a comparative analysis of how the concept of modularity is applied, the performance it enables, and the practical tools used in systems biology, synthetic biology, and software engineering.

Defining Modularity Across Disciplines

At its core, modularity is a systems design principle that breaks down a complex system into smaller, self-contained units (modules) that communicate through well-defined interfaces [29]. This conceptual foundation, however, takes on distinct meanings and objectives depending on the field.

In Systems Biology, modularity is an observed property of natural biological systems. Researchers analyze networks to identify modules—groups of densely interconnected biomolecules that work together to perform a specific function [29]. The goal is to understand the inherent organization of life, often associating modular structures with properties like robustness and evolvability [29].

In Synthetic Biology, modularity is a design principle for engineering biological systems. It aims to create standardized, interchangeable genetic parts (BioBricks) that can be assembled like Lego bricks to create novel biological functions [16] [30]. The goal is to build predictable and reliable systems, drawing a direct analogy from electrical engineering to biology [30].

In Software Engineering, modularity is an architectural methodology for building complex applications. It involves decomposing software into independent, loosely-coupled modules that can be developed, scaled, and updated separately [31] [32]. The goal is to achieve maintainability, scalability, and agility in development.
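For the systems biology sense of the term, the idea of "densely interconnected" groups can be made quantitative. The sketch below uses Newman's modularity score Q, a common network-science measure that is swapped in here for illustration and is not named in the cited sources; the protein names and interactions are hypothetical.

```python
def modularity(edges, communities):
    """Newman's Q for an undirected, unweighted network.

    Q = sum over communities of (L_c / m) - (d_c / (2 * m)) ** 2, where m is the
    number of edges, L_c the edges inside community c, and d_c its total degree.
    """
    m = len(edges)
    membership = {node: idx for idx, group in enumerate(communities) for node in group}
    internal = [0] * len(communities)
    degree = [0] * len(communities)
    for a, b in edges:
        degree[membership[a]] += 1
        degree[membership[b]] += 1
        if membership[a] == membership[b]:
            internal[membership[a]] += 1
    return sum(l / m - (d / (2 * m)) ** 2 for l, d in zip(internal, degree))

# Hypothetical interaction network: two tightly linked trios joined by one edge.
edges = [("P1", "P2"), ("P2", "P3"), ("P1", "P3"),
         ("P4", "P5"), ("P5", "P6"), ("P4", "P6"),
         ("P3", "P4")]

q_two_modules = modularity(edges, [{"P1", "P2", "P3"}, {"P4", "P5", "P6"}])
q_one_module = modularity(edges, [{"P1", "P2", "P3", "P4", "P5", "P6"}])
print(f"two modules: Q = {q_two_modules:.3f}; one module: Q = {q_one_module:.3f}")
```

A partition with a higher Q places more edges inside groups than a degree-matched random network would, which is the sense in which a module is an observed rather than a designed property.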

The table below summarizes these comparative epistemological approaches.

| Discipline | Primary Perspective on Modularity | Core Objective | Key Analogy or Inspiration |
|---|---|---|---|
| Systems Biology | An observed, emergent property of biological networks [29] | To understand and decipher the natural organization of life | Functional clusters in interaction networks |
| Synthetic Biology | A guiding engineering principle for design and construction [16] [30] | To build novel, predictable biological systems | Standardized parts in electrical engineering (e.g., resistors, capacitors) [30] |
| Software Engineering | A deliberate architectural methodology for managing complexity [31] [33] | To create maintainable, scalable, and agile systems | Composable building blocks (e.g., microservices, playlists) [31] |

Comparative Performance and Experimental Data

The performance and outcomes of applying modularity differ starkly between the constructive approaches of synthetic biology and software engineering versus the analytical approach of systems biology.

Synthetic Biology: Performance vs. Traditional Methods

Synthetic biology's modular approach aims to outperform traditional genetic engineering and chemical production methods. The following table summarizes key performance comparisons.

| Application Area | Synthetic Biology (Modular Approach) | Traditional Methods | Comparative Performance Data |
|---|---|---|---|
| Product Development | mRNA vaccine platform [34] | Traditional vaccine development | Development time reduced from 5-10 years to under 12 months [34] |
| Industrial Production | Bio-based chemicals (e.g., by Genomatica, Amyris) [34] | Petrochemical processes | Cost reduction of 15-30% with a carbon footprint reduction of up to 85% [34] |
| Agricultural Products | Plant-based meat (e.g., Impossible Foods) [34] | Conventional animal agriculture | Requires 96% less land, 87% less water, and produces 89% fewer GHG emissions [34] |
| Ingredient Production | Fermentation-derived vanillin/saffron [34] | Resource-intensive cultivation | Yield improvements of 50-200% with production costs reduced by 40-60% [34] |
| Genetic Circuit Design | MAGE (Multiplex Automated Genome Engineering) [16] | Sequential genetic modifications | Achieved over fivefold increase in lycopene production in E. coli within 3 days [16] |

A core experimental challenge in synthetic biology is the "chassis effect", where the same genetic module behaves differently depending on the host organism it is placed in, due to variations in resource allocation and regulatory crosstalk [35]. This highlights that true modularity in biology is complex and context-dependent.

Experimental Protocol: Multiplex Automated Genome Engineering (MAGE)

Objective: To simultaneously optimize multiple genetic targets within a biological pathway [16].

- Design: Synthesize single-stranded DNA (ssDNA) oligonucleotides encoding the desired mutations (e.g., promoter modifications, RBS substitutions) for multiple genes in a pathway (e.g., DXP biosynthesis pathway for lycopene production).

- Delivery: Introduce these ssDNA oligos cyclically into a population of living E. coli cells equipped with the λ-Red β-protein, which promotes allelic replacement.

- Selection: After each cycle, screen or select for cells that have incorporated the modifications. This is often facilitated by a readily scored phenotype (e.g., the red pigmentation of lycopene-producing colonies) or by a co-expressed reporter.

- Iteration: Repeat the process for many cycles (e.g., 30+ cycles over 3 days) to generate combinatorial genomic diversity.

- Analysis: Isolate high-performing clones and sequence their genomes to identify the combination of mutations that lead to the optimized phenotype (e.g., high lycopene yield) [16].
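The value of the iteration step can be illustrated with a short probability calculation. The sketch below assumes that each cycle converts a given target site independently with a fixed per-cycle efficiency; the function names, the independence assumption, and the example efficiency of 5% are illustrative choices, not values reported in [16].

```python
def fraction_modified(per_cycle_efficiency: float, cycles: int) -> float:
    """Probability that one target site carries the mutation after `cycles` rounds.

    Assumes (hypothetically) that every cycle converts the site independently
    with the same probability, so the chance of remaining unmodified is
    (1 - efficiency) ** cycles.
    """
    if not 0.0 <= per_cycle_efficiency <= 1.0:
        raise ValueError("per_cycle_efficiency must lie between 0 and 1")
    if cycles < 0:
        raise ValueError("cycles must be non-negative")
    return 1.0 - (1.0 - per_cycle_efficiency) ** cycles


def fraction_all_sites_modified(per_cycle_efficiency: float,
                                cycles: int,
                                n_sites: int) -> float:
    """Probability that every one of `n_sites` independent targets is modified."""
    return fraction_modified(per_cycle_efficiency, cycles) ** n_sites


# Illustrative values only: 5% efficiency per cycle, compared at 1, 10, and 30 cycles.
for n_cycles in (1, 10, 30):
    single = fraction_modified(0.05, n_cycles)
    print(f"{n_cycles:>2} cycles: {single:.3f} of cells modified at a given site")
```

Under these assumptions, the fraction of cells modified at any one site grows with every cycle, while the fraction carrying all targeted changes at once stays much smaller. This is one way to see why cycling produces a diverse combinatorial population that must then be screened.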

Systems Biology: Identifying Natural Modules

In systems biology, the "performance" of modularity is measured by its success in explaining biological phenomena. A representative methodology is outlined in the protocol below.

Experimental Protocol: Multi-Omic Integration for Network Modeling

Objective: To define functional modules and their role in complex diseases like Alzheimer's [36].

- Data Generation: Collect multiple high-throughput datasets from relevant models and human tissues. This includes:

  - Genomics: Unbiased genetic screens (e.g., RNAi screen in Drosophila for neurodegeneration genes) [36].

  - Proteomics & Metabolomics: Mass spectrometry-based profiling of proteins, phosphoproteins, and metabolites in disease models [36].

  - Transcriptomics: RNA-sequencing of vulnerable cell populations from human post-mortem brains (e.g., laser-capture microdissected neurons) [36].

- Network Construction: Integrate these disparate datasets using computational network models. Genes/proteins are nodes, and their interactions (functional, physical, or correlative) are edges [36] [29].

- Module Detection: Apply community detection algorithms (e.g., Girvan-Newman, Louvain method) to the network to identify densely interconnected groups of nodes (modules) [29].

- Validation: Computationally predict and experimentally confirm the role of key modules and hub genes. For example, the roles of HNRNPA2B1 and MEPCE in tau-mediated neurotoxicity were confirmed in flies and human stem cell-derived neural progenitor cells [36].
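The module detection step can be sketched in a few lines. The example below is a minimal, dependency-free version of the Girvan-Newman idea (repeatedly remove the edge with the highest betweenness until the network splits), applied to a toy graph. The node names `g1` to `g6` and their interactions are hypothetical placeholders, not data from [36] or [29], and a real analysis would more likely rely on an established network library.

```python
from collections import deque


def connected_components(adj):
    """Return the connected components of an undirected graph as a list of sets."""
    seen, components = set(), []
    for start in adj:
        if start in seen:
            continue
        component, queue = {start}, deque([start])
        while queue:
            node = queue.popleft()
            for neighbor in adj[node]:
                if neighbor not in component:
                    component.add(neighbor)
                    queue.append(neighbor)
        seen |= component
        components.append(component)
    return components


def edge_betweenness(adj):
    """Brandes-style edge betweenness for an unweighted, undirected graph.

    Every node pair is visited from both ends, so scores are twice the
    conventional value; the ranking of edges is unaffected.
    """
    score = {}
    for source in adj:
        dist = {source: 0}
        sigma = {node: 0.0 for node in adj}   # number of shortest paths
        sigma[source] = 1.0
        preds = {node: [] for node in adj}
        order, queue = [], deque([source])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in adj[v]:
                if w not in dist:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = {node: 0.0 for node in adj}
        for w in reversed(order):             # farthest nodes first
            for v in preds[w]:
                credit = sigma[v] / sigma[w] * (1.0 + delta[w])
                edge = frozenset((v, w))
                score[edge] = score.get(edge, 0.0) + credit
                delta[v] += credit
    return score


def girvan_newman_split(edges):
    """Remove highest-betweenness edges until the graph gains one component."""
    adj = {}
    for a, b in edges:
        adj.setdefault(a, set()).add(b)
        adj.setdefault(b, set()).add(a)
    initial = len(connected_components(adj))
    while True:
        scores = edge_betweenness(adj)
        if not scores:                        # no edges left to remove
            break
        a, b = max(scores, key=scores.get)
        adj[a].discard(b)
        adj[b].discard(a)
        if len(connected_components(adj)) > initial:
            break
    return connected_components(adj)


# Toy network: two triangles joined by a single bridging interaction (g3-g4).
toy_edges = [("g1", "g2"), ("g2", "g3"), ("g1", "g3"),
             ("g3", "g4"),
             ("g4", "g5"), ("g5", "g6"), ("g4", "g6")]
modules = girvan_newman_split(toy_edges)
print(sorted(sorted(m) for m in modules))
```

In this toy case the bridging edge carries all shortest paths between the two triangles, so it is removed first and each triangle is returned as a module. Real multi-omic networks are far larger and noisier, which is one reason faster heuristics such as the Louvain method are often preferred in practice.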

The Scientist's Toolkit: Essential Research Reagents and Materials

The practical application of modularity concepts relies on a specific set of tools and reagents.

| Item Name | Field of Use | Function Description |
|---|---|---|
| BioBricks / Standardized Genetic Parts | Synthetic Biology | Standardized DNA sequences (promoters, RBS, coding sequences, terminators) with uniform interfaces that enable modular assembly of genetic circuits [30]. |
| Modular Cloning Systems (e.g., Golden Gate, Gibson Assembly) | Synthetic Biology | Enzyme-based methods (Type IIS restriction enzymes with ligase for Golden Gate; an exonuclease/polymerase/ligase mix for Gibson Assembly) that allow for the scarless, one-pot assembly of multiple DNA fragments into a functional plasmid or pathway [16]. |
| Broad-Host-Range (BHR) Vectors (e.g., SEVA) | Synthetic Biology | Plasmid vectors designed with modular parts (origins of replication, antibiotic markers) to function across a wide range of microbial hosts, facilitating chassis exploration [35]. |
| MAGE/CAGE Platform | Synthetic Biology | A platform using ssDNA oligonucleotides and automated cycling (MAGE) or bacterial conjugation (CAGE) to introduce genome-wide modifications simultaneously, enabling rapid pathway optimization [16]. |
| Cross-Species Chassis Panel | Synthetic Biology | A curated collection of genetically tractable non-model organisms (e.g., Rhodopseudomonas palustris, Halomonas bluephagenesis) used to test and exploit host-specific traits as functional modules [35]. |
| Community Detection Algorithms | Systems Biology | Computational methods (e.g., Girvan-Newman, Louvain) applied to biological networks to identify tightly interconnected groups of genes or proteins, revealing functional modules [29]. |
| Multi-Omic Datasets | Systems Biology | Integrated datasets from genomics, transcriptomics, proteomics, and metabolomics used as input for network models to discover modules in a holistic, unbiased manner [36]. |

Visualizing Workflows and Relationships

The following diagrams illustrate the core workflows and logical relationships in the application of modularity.

[Figures: Systems Biology Multi-Omic Workflow; Synthetic Biology DBTL Cycle; Modularity Concepts Relationship]

The emergence of systems and synthetic biology represents a significant paradigm shift in the life sciences, marking a departure from traditional reductionist approaches toward more integrated, interdisciplinary frameworks. These fields are fundamentally reshaping biological research through the systematic incorporation of principles from engineering, physics, computer science, and mathematics. While often described as "sister disciplines," they pursue complementary yet distinct epistemological approaches: systems biology aims to understand and model existing biological systems, whereas synthetic biology focuses on designing and constructing new biological functions and systems [1]. This comparative analysis examines their interdisciplinary origins, methodological frameworks, and applications in therapeutic development, highlighting how their distinct approaches to biological complexity serve the broader scientific community.

The interdisciplinary nature of both fields is not merely incidental but foundational to their identity and practice. Systems biology has been characterized as operating in both systems-theoretical and pragmatic streams, with the former reviving theoretical questions about living systems and the latter functioning as a powerful extension of molecular biology fueled by genomic data [1]. Similarly, synthetic biology has developed pluralistic research programs, including top-down approaches that re-engineer existing organisms and bottom-up approaches that construct new biological systems from molecular components [23]. This integration of diverse disciplines has created a rich epistemological landscape for tackling complex biological problems, particularly in drug discovery and development.

Epistemological Foundations: Analysis Versus Synthesis

The epistemological distinction between systems and synthetic biology can be understood through their fundamental orientations toward biological systems. Systems biology employs a largely analytical approach that seeks to understand the dynamic networks and organizational principles of natural biological systems. In contrast, synthetic biology embraces a design-based synthesis approach that constructs new biological systems to test hypotheses and create useful functions [1]. This complementary relationship mirrors the cycle of analysis and synthesis common in engineering disciplines.

Philosophical Underpinnings and Research Paradigms

The philosophical foundations of these fields reflect their different engagements with biological complexity:

Systems Biology emerged as a response to limitations in molecular biology's reductionist strategies, emphasizing that understanding the dynamic behavior of complex networks requires computational modeling and quantitative analysis beyond what traditional decomposition approaches can offer [1]. It treats living systems as dynamic networks of interacting components whose collective behavior cannot be fully understood by studying individual parts in isolation [37].

Synthetic Biology adopts what has been termed an "engineering mindset" toward biology, viewing biological components as modules that can be standardized, assembled, and reprogrammed to perform novel functions [23] [1]. This approach is grounded in the conviction that the best way to understand biological systems is to attempt to build them, following Richard Feynman's famous dictum: "What I cannot create, I do not understand."

Table 1: Core Epistemological Distinctions Between Systems and Synthetic Biology

| Aspect | Systems Biology | Synthetic Biology |
|---|---|---|
| Primary Goal | Understand and model natural systems | Design and construct novel biological systems |
| Core Approach | Analysis of existing networks | Synthesis of new functions |
| Relationship to Complexity | Seeks to map and analyze emergent properties | Aims to simplify and standardize complexity through modularity |
| Knowledge Production | Descriptive and predictive models | Prescriptive design rules and functional prototypes |
| Primary Methodologies | High-throughput data collection, network modeling, computational simulation | DNA synthesis and assembly, genetic circuit design, genome engineering |

Interdisciplinary Methodologies: A Comparative Analysis

The interdisciplinary character of systems and synthetic biology is most evident in their methodological toolkits, which integrate techniques and concepts across traditional disciplinary boundaries. Both fields leverage advances in genomics, computational analysis, and engineering principles, but deploy them toward different ends.

Systems Biology's Quantitative Framework

Systems biology employs a multi-scale quantitative approach to biological analysis, integrating data across molecular, cellular, and organismal levels. Key methodological elements include:

Network Analysis and Modeling: Representation of biological components as nodes in large-scale interaction networks, enabling identification of organizational principles like scale-free architectures and network motifs [1]. These approaches reveal common patterns in biological networks and their functional implications for robustness and fragility.

Omics Technologies: High-throughput methods (genomics, transcriptomics, proteomics, metabolomics) that generate large-scale quantitative datasets on biological system components [16] [1]. These technologies enable comprehensive profiling of system states under different conditions.

Computational Simulation and Modeling: Development of dynamic mathematical models that simulate system behavior, predict responses to perturbations, and identify emergent properties not evident from component analysis alone [1] [11]. These models increasingly serve to guide experimental work rather than merely summarize results.
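As a small illustration of dynamic modeling, the sketch below integrates a one-variable gene expression model with the forward Euler method and compares an unregulated gene with a negatively autoregulated one. The model form (constant synthesis or Hill-type repression, first-order degradation), the function name, and all parameter values are assumptions chosen for illustration rather than a model taken from the cited work.

```python
def simulate_expression(production_rate: float,
                        degradation_rate: float,
                        repression_threshold=None,
                        hill: float = 2.0,
                        x0: float = 0.0,
                        dt: float = 0.01,
                        t_end: float = 50.0):
    """Forward-Euler integration of dx/dt = synthesis(x) - degradation_rate * x.

    If `repression_threshold` is None, synthesis is constant (unregulated gene).
    Otherwise the protein represses its own synthesis through a Hill function:
    synthesis = production_rate / (1 + (x / repression_threshold) ** hill).
    """
    x = x0
    trajectory = [x]
    steps = int(round(t_end / dt))
    for _ in range(steps):
        if repression_threshold is None:
            synthesis = production_rate
        else:
            synthesis = production_rate / (1.0 + (x / repression_threshold) ** hill)
        x += dt * (synthesis - degradation_rate * x)
        trajectory.append(x)
    return trajectory


# Illustrative parameters (arbitrary units), not fitted to any dataset.
unregulated = simulate_expression(10.0, 1.0)
autoregulated = simulate_expression(10.0, 1.0, repression_threshold=2.0)

print(f"unregulated steady state:   {unregulated[-1]:.2f}")
print(f"autoregulated steady state: {autoregulated[-1]:.2f}")
```

Changing a parameter such as `production_rate` and rerunning the simulation is the simplest form of the perturbation analysis described above: the model predicts how the steady state shifts before any experiment is performed.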

Synthetic Biology's Design-Build-Test Cycle

Synthetic biology applies engineering principles of modularity, standardization, and abstraction to biological system design:

DNA Synthesis and Assembly: Techniques such as Gibson assembly, circular polymerase extension cloning (CPEC), and Golden Gate enable construction of genetic circuits and pathways from standardized biological parts [16]. These methods allow for scarless assembly of multiple DNA fragments with high efficiency.

Genetic Circuit Engineering: Design and implementation of predictable genetic control systems using standardized components such as promoters, ribosome binding sites, coding sequences, and terminators [16] [1]. This approach treats genetic regulation as a problem of circuit design analogous to electrical engineering.

Genome-Scale Engineering: Methods like Multiplex Automated Genome Engineering (MAGE) and Conjugative Assembly Genome Engineering (CAGE) enable large-scale, targeted modifications across microbial chromosomes [16]. These techniques facilitate rapid optimization of metabolic pathways and cellular functions.
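The engineering principles of modularity, standardization, and abstraction can be made concrete with a small data model. The sketch below treats genetic parts as typed, interchangeable objects and assembles them into a transcription unit only when they appear in the expected order. The `Part` class, the part names, and the short sequences are hypothetical placeholders, not entries from any real parts registry.

```python
from dataclasses import dataclass
from typing import List

# Abstraction: a transcription unit is defined by the kinds of parts it
# contains, in this order, regardless of which specific part fills each slot.
EXPECTED_ORDER = ("promoter", "rbs", "cds", "terminator")


@dataclass(frozen=True)
class Part:
    """A standardized genetic part with a declared kind and a DNA sequence."""
    name: str
    kind: str
    sequence: str


def assemble_transcription_unit(parts: List[Part]) -> str:
    """Concatenate parts into one sequence if they follow EXPECTED_ORDER."""
    kinds = tuple(part.kind for part in parts)
    if kinds != EXPECTED_ORDER:
        raise ValueError(f"expected part order {EXPECTED_ORDER}, got {kinds}")
    return "".join(part.sequence for part in parts)


# Placeholder parts: the sequences are dummy strings, not real biological parts.
strong_promoter = Part("pStrong", "promoter", "TTGACA")
weak_promoter = Part("pWeak", "promoter", "TTGCCA")
rbs = Part("rbsA", "rbs", "AGGAGG")
reporter = Part("reporterX", "cds", "ATGAAATAA")
terminator = Part("termA", "terminator", "GCGCTTTT")

# Modularity: swapping one promoter for another leaves the other parts untouched.
unit_strong = assemble_transcription_unit([strong_promoter, rbs, reporter, terminator])
unit_weak = assemble_transcription_unit([weak_promoter, rbs, reporter, terminator])
print(unit_strong)
print(unit_weak)
```

The check on part order stands in for the uniform interfaces that standardized parts are meant to provide. As the chassis effect discussed earlier suggests, a design that is valid at this level of abstraction may still behave differently once placed in a particular host.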

Table 2: Core Methodologies and Their Applications in Drug Development

| Methodology | Systems Biology Applications | Synthetic Biology Applications |
|---|---|---|
| Genome Sequencing | Gene identification, annotation, understanding genetic basis of disease [16] | Template for synthetic genome design, identification of therapeutic targets |
| DNA Synthesis/Assembly | Validation of genetic components | Construction of synthetic pathways, gene circuits, and engineered genomes [16] |
| Network Analysis | Identification of disease mechanisms, drug targets, and side effect predictions | Design of synthetic circuits that interface with host networks |
| Metabolic Modeling | Understanding disease metabolism, predicting drug effects | Engineering optimized metabolic pathways for therapeutic compound production [37] [16] |
| High-Throughput Screening | Drug target identification, biomarker discovery | Characterization of biological parts, optimization of synthetic systems |

Experimental Design and Workflows