Systems Biology in Biomedicine: From Network Principles to Clinical Innovation

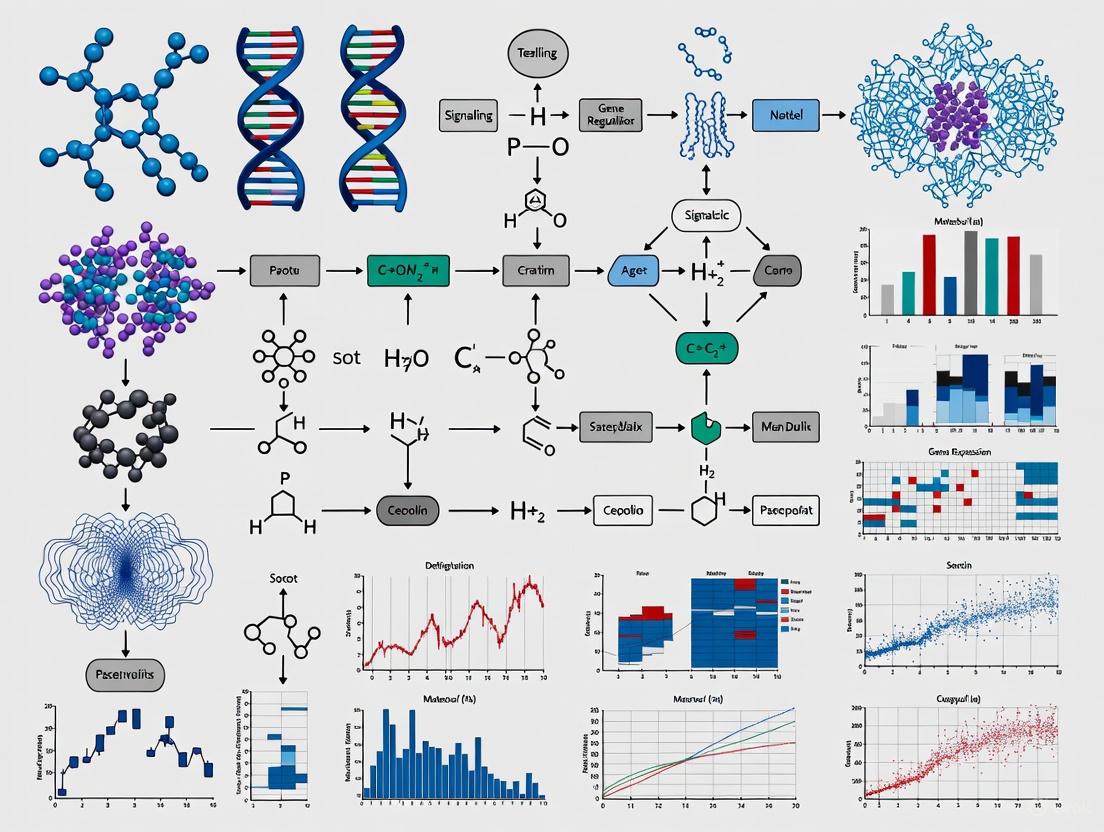


Abstract

This article provides a comprehensive overview of how systems biology principles are revolutionizing biomedical research and drug development. It explores the foundational concepts of analyzing biological complexity as interconnected networks, details cutting-edge methodological approaches like Quantitative Systems Pharmacology (QSP) and Model-Informed Drug Development (MIDD), and addresses key challenges in model training and data integration. Through concrete case studies in oncology, cardiovascular disease, and COVID-19, it validates the power of systems biology to identify novel drug targets, optimize therapeutic strategies, and accelerate the path to precision medicine. Designed for researchers, scientists, and drug development professionals, this review synthesizes current innovations and future trajectories for harnessing biological complexity to create more effective diagnostics and therapies.

Decoding Complexity: Core Systems Principles for Biomedical Research

The historical discourse in biology has long been characterized by a tension between two competing philosophical frameworks: reductionism and holism. Reductionism, which dominated molecular biology throughout the latter half of the 20th century, strives to understand biological phenomena by deconstructing them into their constituent parts, mapping complex functions onto fundamental chemical and physical principles [1]. This approach operates on the premise that complex systems can be understood by analyzing their individual components in isolation, essentially reducing biological explananda to assemblages of more elementary phenomena. By contrast, holism (also termed anti-reductionism) asserts that genuinely novel phenomena emerge from organized levels of biological complexity that possess intrinsic causal power, yielding properties that cannot be fully explained or predicted solely by studying isolated components [1] [2].

This philosophical debate is not merely academic; it fundamentally shapes methodological approaches, experimental design, and interpretive frameworks throughout biological research. The reductionist approach has yielded extraordinary successes, including the characterization of individual genes and proteins, the elucidation of metabolic pathways, and the mapping of the human genome. However, its limitations become apparent when confronting the emergent properties of biological systems—characteristics such as cellular decision-making, organismal development, and ecosystem dynamics that arise from complex, non-linear interactions between components rather than from the properties of individual parts [2]. The contemporary emergence of systems biology represents a deliberate effort to transcend these limitations by embracing holistic principles while leveraging the analytical power of reductionist methods, thereby catalyzing a genuine paradigm shift in how we study, understand, and manipulate biological systems for biomedical innovation.

The Historical Trajectory: From Vitalism to Modern Systems Thinking

The reductionist-holist debate in biology emerged in the 1920s from earlier disputes between mechanists and vitalists, as well as between neo-Darwinians and neo-Lamarckians [2]. Vitalism, championed by figures like embryologist Hans Driesch, posited that a special life-force (élan vital or entelechy) differentiated living from inanimate matter [2]. This position was effectively abandoned by the 1920s, not only due to theoretical limitations but because of its inability to suggest a productive experimental research program. In contrast, mechanism, defended by biochemists like Jacques Loeb, asserted that all living processes could be "unequivocally explained in physicochemical terms" and provided numerous avenues for experimental analysis [2].

The concept of classical holism was formally introduced by Jan Smuts in 1926, describing an innate tendency for stable wholes to form from parts across all levels of organization, from atomic to psychological [2]. Unlike vitalism, which applied only to living matter, Smuts' holism proposed a universal principle driving evolution toward increasingly complex and integrated levels of organization. However, this classical holism was relatively short-lived as a unified theory, though the term persisted as an umbrella designation for various anti-reductionist approaches [2].

Throughout the mid-20th century, reductionism became the dominant paradigm in molecular biology, facilitated by technological advances that enabled the study of biological components in isolation. The discovery of the DNA double helix, the characterization of enzymes, and the elucidation of metabolic pathways all represented triumphs of the reductionist approach. However, by the late 20th century, it became increasingly apparent that this focus on individual components provided an incomplete understanding of biological complexity, leading to the emergence of systems biology as a deliberate synthesis of reductionist and holistic perspectives [3].

The Rise of Systems Biology: A Synthesis of Approaches

Systems biology represents a formalized framework for implementing holistic principles in biological research. It emphasizes "the intricate interconnectedness and interactions of biological components within living organisms" and plays a "crucial role in advancing diagnostic and therapeutic capabilities in biomedical research and precision healthcare" [4]. Rather than rejecting reductionism entirely, systems biology incorporates its analytical power while contextualizing component-level knowledge within an integrative, network-based understanding of biological systems [2].

This synthesis has been facilitated by several technological and conceptual developments:

- High-throughput '-omics' technologies that enable comprehensive measurement of biological components (genes, transcripts, proteins, metabolites) at a systems level

- Advanced computational tools for modeling and simulating complex biological networks and their dynamics

- CRISPR genome engineering, which provides unprecedented capability to experimentally probe system-level responses to targeted perturbations [3]

- Mathematical frameworks for analyzing emergent properties and non-linear dynamics in biological systems

The holistic approach in modern systems biology is characterized by its focus on interactions and networks rather than isolated components, on dynamics rather than static snapshots, and on emergent properties that arise from system organization rather than solely from component properties [2]. This paradigm recognizes that biological function often resides in the patterns of connectivity between elements rather than in the elements themselves, necessitating a shift from purely component-centered to interaction-centered research strategies.

Methodological Framework: Implementing Holistic Research Programs

Core Principles and Experimental Design

Implementing a holistic research program in systems biology requires distinctive methodological approaches that differ from traditional reductionist strategies. The following table summarizes the key methodological shifts involved in this paradigm transition:

Table 1: Methodological Shifts from Reductionism to Holism in Biological Research

| Aspect | Reductionist Approach | Holistic/Systems Approach |
|---|---|---|
| Experimental Design | Isolate individual components for detailed study | Measure multiple system components simultaneously under perturbation |
| Variable Analysis | Control all but one variable to establish causality | Purposefully perturb multiple variables to study network interactions |
| Data Collection | Focus on single data types (e.g., only genomic) | Integrate multiple '-omics' data types (multi-omics) |
| Model Validation | Predict behavior of parts in isolation | Predict emergent system-level properties and dynamics |
| Measurement Tools | Optimized for depth on single components | Balanced depth and breadth across system components |
| Time Resolution | Often static measurements | Frequent dynamic measurements to capture system trajectories |

A fundamental principle of holistic experimental design is the multi-scale integration of data across different levels of biological organization—from molecular to cellular, tissue, organismal, and sometimes even population levels. This requires sophisticated experimental frameworks that can capture data at multiple scales while subjecting the system to controlled perturbations that reveal network properties and dynamics [3] [4].

Quantitative Data Analysis in Holistic Research

The shift to holistic approaches necessitates advanced quantitative methods for analyzing complex datasets. Systems biology generates multidimensional data that require specialized statistical approaches and visualization strategies. The following table outlines core quantitative measures essential for characterizing holistic datasets:

Table 2: Essential Quantitative Measures for Holistic Data Analysis

| Measure Category | Specific Metrics | Application in Systems Biology |
|---|---|---|
| Central Tendency | Mean, Median, Mode [5] | Characterize distributions of molecular abundance across cell populations |
| Data Spread | Standard Deviation, Range, Interquartile Range [5] | Quantify heterogeneity in cellular responses and biological noise |
| Network Properties | Degree Distribution, Clustering Coefficient, Betweenness Centrality | Identify hub genes/proteins and critical pathways in biological networks |
| Dynamic Measures | Correlation Over Time, Cross-Covariance, Time-Delayed Mutual Information | Quantify temporal relationships and feedback loops in signaling pathways |
| Multivariate Measures | Principal Components, Partial Correlations, Canonical Correlations | Reduce dimensionality and identify latent variables in multi-omics data |
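
As a minimal sketch of the network properties listed in Table 2, the following Python computes node degree and the local clustering coefficient for a small undirected graph using only the standard library. The node names and the edge list are hypothetical placeholders rather than data from any study, and a real analysis would more likely rely on a dedicated graph library.

```python
from itertools import combinations


def build_adjacency(edges):
    """Build an undirected adjacency map {node: set(neighbors)} from edge pairs."""
    adj = {}
    for u, v in edges:
        if u == v:
            continue  # self-loops are ignored in this sketch
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    return adj


def degree(adj):
    """Number of interaction partners per node."""
    return {node: len(nbrs) for node, nbrs in adj.items()}


def clustering_coefficient(adj):
    """Fraction of each node's neighbor pairs that are themselves connected."""
    coeffs = {}
    for node, nbrs in adj.items():
        k = len(nbrs)
        if k < 2:
            coeffs[node] = 0.0
            continue
        links = sum(1 for a, b in combinations(nbrs, 2) if b in adj[a])
        coeffs[node] = 2.0 * links / (k * (k - 1))
    return coeffs


# Hypothetical toy network, for illustration only
edges = [("P1", "P2"), ("P1", "P3"), ("P2", "P3"), ("P1", "P4"), ("P4", "P5")]
adj = build_adjacency(edges)
deg = degree(adj)
cc = clustering_coefficient(adj)
hub = max(deg, key=deg.get)  # the highest-degree node is a candidate hub
print(hub, deg[hub], round(cc[hub], 3))
```

Degree highlights candidate hubs, while the clustering coefficient indicates how tightly a node's neighborhood is interconnected. Betweenness centrality is omitted here because it requires shortest-path enumeration.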

Proper handling of missing data is particularly critical in holistic research, as the absence of measurements for even a few components can compromise network inference. Techniques such as multiple imputation, k-nearest neighbors imputation, or matrix completion methods are often employed to address this challenge while preserving the integrity of the dataset [5].
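
To illustrate one of the options named above, here is a minimal sketch of k-nearest neighbors imputation in plain Python. It assumes rows are samples, columns are measured components, and `None` marks a missing value; the distance function and the example matrix are illustrative assumptions, not a prescribed method.

```python
import math


def knn_impute(matrix, k=2):
    """Fill None entries with the mean of the k nearest rows that observed that column."""

    def distance(a, b):
        # Root mean squared difference over columns observed in both rows
        shared = [(x, y) for x, y in zip(a, b) if x is not None and y is not None]
        if not shared:
            return math.inf
        return math.sqrt(sum((x - y) ** 2 for x, y in shared) / len(shared))

    imputed = [list(row) for row in matrix]  # copy so the input is left untouched
    for i, row in enumerate(matrix):
        for j, value in enumerate(row):
            if value is not None:
                continue
            donors = []
            for m, other in enumerate(matrix):
                if m == i or other[j] is None:
                    continue
                d = distance(row, other)
                if d != math.inf:
                    donors.append((d, other[j]))
            donors.sort(key=lambda pair: pair[0])
            nearest = donors[:k]
            if nearest:  # leave the gap if no usable donor exists
                imputed[i][j] = sum(v for _, v in nearest) / len(nearest)
    return imputed


# Hypothetical abundance matrix: 4 samples x 3 components
data = [
    [1.0, 2.0, 3.0],
    [1.1, 2.1, None],
    [0.9, 1.9, 2.8],
    [5.0, 6.0, 9.0],
]
filled = knn_impute(data, k=2)
print(filled[1])
```

The missing entry is filled from the two most similar samples, so the outlying fourth sample does not influence it. Imputed values should still be flagged downstream, since network inference can be sensitive to them.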

Technical Implementation: Genome Engineering as a Gateway to Holistic Understanding

CRISPR-Based Genome Perturbation Strategies

The emergence of CRISPR genome engineering represents a pivotal technological development that enables true holistic experimentation by allowing researchers to systematically perturb biological systems and observe the resulting network-level effects [3]. The following diagram illustrates a generalized workflow for employing CRISPR in holistic research programs:

This workflow proceeds in three stages: systematic CRISPR perturbation of the system, multi-omics profiling of its response, and reconstruction of network relationships from the resulting data. Together, these stages let researchers move beyond correlational observation toward causal inference.
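
The final reconstruction stage can be sketched in a deliberately simplified form. The Python below assumes each knockout has already been summarized as log2 fold changes of readout genes relative to an unperturbed control; the function name, the threshold, and the gene labels are all hypothetical. It does not distinguish direct from indirect effects, which real network inference methods must address.

```python
def infer_edges(perturbation_effects, threshold=1.0):
    """Return sorted (regulator, target, sign) tuples for effects passing the threshold."""
    edges = []
    for knocked_out, effects in perturbation_effects.items():
        for target, log2fc in effects.items():
            if target == knocked_out or abs(log2fc) < threshold:
                continue
            # Losing an activator lowers its target; losing a repressor raises it.
            sign = "activates" if log2fc < 0 else "represses"
            edges.append((knocked_out, target, sign))
    return sorted(edges)


# Hypothetical knockout readouts (log2 fold change vs. control)
effects = {
    "geneA": {"geneA": -5.0, "geneB": -2.0, "geneC": 0.2},
    "geneB": {"geneC": 1.5},
}
for edge in infer_edges(effects):
    print(edge)
```

Each perturbation contributes candidate directed edges, which is what allows this kind of data to support causal rather than purely correlational statements.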

Essential Research Reagents and Tools

Implementing holistic research programs requires specialized reagents and computational tools. The following table catalogues essential resources for systems biology research with genome engineering:

Table 3: Essential Research Reagent Solutions for Holistic Biology

| Reagent/Tool Category | Specific Examples | Function in Holistic Research |
|---|---|---|
| Genome Engineering Tools | CRISPR-Cas9 systems, Base editors, Prime editors [3] | Targeted perturbation of network components to establish causality |
| Multi-omics Profiling Kits | Single-cell RNA-seq, Spatial transcriptomics, Proteomics kits | Simultaneous measurement of multiple molecular species across system |
| Bioinformatics Platforms | Network analysis tools (Cytoscape), Pathway databases (KEGG, Reactome) [4] | Reconstruction and visualization of biological networks from omics data |
| Biological Standards | Synthetic gene circuits, Reference cell lines, Standard biological parts [4] [6] | Benchmarking and normalization across experiments and platforms |
| Visualization Resources | Scientific icon repositories (Bioicons, Noun Project), Graph visualization tools [7] | Creation of graphical abstracts and system diagrams for communication |

These resources collectively enable researchers to perturb biological systems in a targeted manner, comprehensively measure the multidimensional response, computationally reconstruct the underlying networks, and effectively communicate the resulting insights.

Visualization Strategies for Complex Biological Systems

Communicating holistic research findings requires specialized visualization strategies that can convey complex relationships and system-level principles effectively. Graphical abstracts have emerged as a standard tool for summarizing key findings in an immediately accessible format [7]. Effective design principles for graphical abstracts in systems biology include:

- Establish a clear visual hierarchy that guides the viewer through the narrative

- Use consistent visual styles for icons and diagrammatic elements throughout

- Employ standardized biological icons from repositories such as Bioicons, PhyloPic, or Reactome [7]

- Implement appropriate layout strategies that follow natural reading directions (left-to-right for linear processes, circular layouts for cycles)

- Ensure sufficient color contrast between foreground and background elements

The following diagram illustrates a standardized workflow for creating effective graphical abstracts that communicate holistic biological concepts:

Color and Accessibility Guidelines

For effective scientific communication, visualizations should adhere to accessibility standards, particularly regarding color contrast. The Web Content Accessibility Guidelines (WCAG) specify minimum contrast ratios of at least 4.5:1 for normal text and 3:1 for large text or graphical objects [8] [9]. These guidelines help keep visualizations accessible to individuals with low vision or color vision deficiencies; the latter affect approximately 8% of men and 0.4% of women [9].

Tools such as the WebAIM Contrast Checker can verify that color combinations meet these standards [8]. When creating systems biology diagrams, it is critical to explicitly set text colors to ensure high contrast against node background colors, rather than relying on default settings that may provide insufficient contrast for readability.
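
For diagrams generated programmatically, the same check can be scripted. The following is a minimal sketch of the WCAG 2.x contrast ratio calculation, using the relative luminance formula from the guidelines. The function names and the example colors are chosen here for illustration.

```python
def relative_luminance(hex_color):
    """Relative luminance of an sRGB color given as '#RRGGBB', per WCAG 2.x."""
    hex_color = hex_color.lstrip("#")
    channels = [int(hex_color[i:i + 2], 16) / 255 for i in (0, 2, 4)]
    # Undo the sRGB gamma encoding for each channel
    linear = [c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4 for c in channels]
    r, g, b = linear
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground, background):
    """Contrast ratio between two colors, from 1:1 up to 21:1."""
    l1, l2 = relative_luminance(foreground), relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def meets_wcag_aa(foreground, background, large_or_graphical=False):
    """Apply the 4.5:1 (normal text) or 3:1 (large text, graphics) threshold."""
    threshold = 3.0 if large_or_graphical else 4.5
    return contrast_ratio(foreground, background) >= threshold


# Example: dark node label on a light node fill (colors are illustrative)
print(round(contrast_ratio("#000000", "#FFFFFF"), 2))
print(meets_wcag_aa("#777777", "#FFFFFF"))
```

Running such a check over every text and fill color pair in a network diagram catches low-contrast labels before the figure is exported.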

Applications in Biomedical Innovation and Therapeutic Development

The paradigm shift toward holism in biology has profound implications for biomedical research and therapeutic development. Systems biology approaches enable more predictive models of disease pathogenesis, more comprehensive assessment of therapeutic responses, and more strategic identification of intervention points in complex pathological networks [4] [6].

Exemplary applications include:

- Network-based drug discovery that targets emergent vulnerabilities in disease networks rather than single pathogenic factors

- Personalized therapeutic strategies based on multi-omics profiling of individual patients and their disease states

- Toxicology and safety assessment that evaluates system-wide responses to candidate therapeutics rather than limited adverse effect profiles

- Biomarker identification through integrated analysis of molecular networks dysregulated in disease states

Notable successes include the development of programmable oncogene targeting systems [6], novel diagnostic devices for colorectal cancer screening [6], and robust biosensor platforms using synthetic biology approaches [6]. These innovations demonstrate how holistic approaches can yield clinically impactful solutions that might remain inaccessible through purely reductionist strategies.

The integration of holistic principles with biomedical innovation represents a promising frontier for addressing complex diseases such as cancer, neurodegenerative disorders, and metabolic syndromes, which involve dysregulation across multiple biological scales and network systems rather than isolated molecular defects.

The paradigm shift from reductionism to holism in biology represents more than a theoretical debate; it constitutes a fundamental transformation in how we conceptualize, investigate, and manipulate biological systems. This shift has been catalyzed by both technological advances that enable comprehensive measurement and perturbation of biological systems, and by conceptual frameworks that emphasize emergent properties and network dynamics as fundamental principles of biological organization.

The most productive path forward lies not in rejecting reductionism entirely, but in synthesizing its analytical power with holistic perspectives that contextualize component-level knowledge within integrated systems. This integrative approach promises to accelerate biomedical innovation by providing more accurate models of biological complexity, more predictive frameworks for therapeutic intervention, and more comprehensive strategies for diagnostic and therapeutic development.

As systems biology continues to mature, its principles and methodologies will increasingly form the foundation for biological research and its translation into clinical applications. Embracing this holistic paradigm while acknowledging the enduring value of rigorous reductionist analysis offers the most promising approach to addressing the complex biological challenges that confront biomedical science in the 21st century.

Biological networks provide a fundamental framework for understanding the intricate organization and functional dynamics of living organisms. Within the paradigm of systems biology, these networks are not merely collections of individual components but represent the complex, interconnected web of interactions that give rise to biological function. This systems-level perspective is crucial for advancing biomedical innovation, as it enables researchers to move beyond studying isolated elements to understanding how these elements work together in health and disease. The interactome—the comprehensive network of molecular interactions within a cell—allows proteins to communicate and coordinate their activities across cellular compartments, enabling the complex functions essential for life [10].

Among the various types of biological networks, protein-protein interaction (PPI) networks and signaling pathways hold particular significance for biomedical research. PPIs constitute the physical contacts between two or more proteins that occur at specific domain interfaces and can be either transient or stable in nature [10]. These interactions are fundamental to virtually all cellular processes, including signal transduction, metabolic regulation, and structural organization. Signaling networks, which often incorporate PPIs as key components, govern how cells respond to external and internal stimuli through sophisticated phosphorylation cascades, protein translocations, and gene expression changes [11]. Together, these networks form the operational infrastructure of cellular systems, and their disruption is implicated in numerous disease pathologies, making them prime targets for therapeutic intervention.

Protein-Protein Interaction Networks: Architecture and Analysis

Fundamental Principles of PPIs

Protein-protein interactions form the backbone of most cellular signaling machineries. These interactions occur at specific sites on protein surfaces known as domain interfaces and are primarily influenced by the hydrophobic effect [10]. Unlike enzyme active sites, which typically feature deep clefts for substrate binding, PPI interfaces often encompass specific residue combinations, distinct regions, and unique architectural layouts that result in cooperative formations referred to as "hot spots" [10]. These hot spots are defined as residues whose substitution leads to a substantial decrease in the binding free energy (ΔΔG ≥ 2 kcal/mol) of a PPI [10]. The energetic contributions of hot spots stem from their localized networked arrangement within tightly packed "hot" regions, enabling flexibility and the capacity to bind to multiple different partners—a mechanism that explains how a single molecular surface can interact with multiple structurally distinctive partners.
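
The hot spot criterion can be written out explicitly. As a hedged sketch, assume binding free energies are reported so that more negative values mean tighter binding. The superscripts mut and wt are labels introduced here for the substituted and wild-type proteins, and the substitution is conventionally to alanine:

$$
\Delta\Delta G = \Delta G_{\text{bind}}^{\text{mut}} - \Delta G_{\text{bind}}^{\text{wt}} \geq 2\ \text{kcal/mol}
$$

Under this sign convention, a positive ΔΔG means the substitution weakens binding, so a hot spot is a residue whose loss costs at least 2 kcal/mol of binding energy.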

The analysis of PPIs has evolved significantly from early observations of protein complexes to a deep understanding of their underlying mechanisms. This evolution has been marked by several technological milestones, including the first protein structure determination in 1958, the launch of the Human Protein Atlas project in 2003, the cryo-electron microscopy (cryo-EM) revolution in 2013, and the near-simultaneous release of AlphaFold2 and RoseTTAFold in 2021 [10]. These advancements have dramatically accelerated PPI research and therapeutic development, leading to FDA approvals of PPI modulators such as venetoclax, sotorasib, and adagrasib for various diseases [10].

Types of Biological Networks

Biological systems can be represented through several network types, each capturing different aspects of molecular relationships and functions. The table below summarizes the primary classes of biological networks relevant to PPI and signaling pathway analysis.

Table 1: Types of Biological Networks in Systems Biology Research

| Network Type | Nodes Represent | Edges Represent | Primary Research Applications |
|---|---|---|---|
| Protein-Protein Interaction (PPI) Networks | Proteins | Physical interactions between proteins | Mapping interactomes, identifying drug targets, understanding complex formation |
| Genetic Interaction Networks | Genes | Functional relationships (e.g., synthetic lethality) | Identifying gene functions, pathway relationships, combination therapies |
| Metabolic Networks | Metabolites | Biochemical reactions | Modeling flux balance, understanding metabolic diseases, metabolic engineering |
| Gene/Transcriptional Regulatory Networks | Genes, transcription factors | Regulatory relationships | Understanding gene expression control, cellular differentiation, disease mechanisms |
| Cell Signaling Networks | Signaling molecules | Signal transduction events | Modeling cellular decision-making, understanding drug mechanisms, cancer biology |

Each network type provides distinct insights into cellular organization. PPI networks emphasize physical complex formation, while signaling pathways focus on information flow. Genetic interaction networks reveal functional relationships between genes, and metabolic networks map biochemical transformations. The integration of these complementary network types enables a more comprehensive understanding of biological systems [12].

Experimental Methodologies for PPI and Signaling Network Analysis

Mass Spectrometry-Based Approaches

Mass spectrometry (MS) has emerged as a powerful, quantitative, and unbiased approach for studying PPIs and signaling networks under near-physiological conditions [11]. MS applications in network analysis include monitoring protein abundance changes, identifying post-translational modifications (PTMs), and characterizing PPIs through affinity purification followed by mass spectrometry (AP/MS). The decision points when using MS to study signaling events include sample preparation, choice of MS applications, pre-MS strategies, the MS itself, and post-MS data analysis [11].

For quantitative protein abundance analysis, several MS-based technologies have been developed to measure absolute or relative protein levels among different samples. These include traditional semi-quantitative MALDI-TOF and liquid chromatography (LC)-MS/MS approaches, as well as more advanced methods such as isotope-coded affinity tags (ICAT), stable isotope labeling by amino acids in cell culture (SILAC), isobaric tags for relative and absolute quantification (iTRAQ), tandem mass tags (TMTs), and triple-stage mass spectrometry (MS3) [11]. The TMT technology is particularly powerful for network studies because it allows multiple samples (up to 18 with TMTpro reagents) to be labeled with different isobaric tags and analyzed in a single MS run, thereby providing relative protein abundance across multiple conditions or time points [11].
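
How multiplexed reporter-ion intensities become relative abundances can be sketched as follows. This minimal Python example assumes that each protein has one summed, strictly positive intensity per channel and that differences in channel totals reflect unequal sample loading. The function names and the numbers are hypothetical, and production pipelines apply more robust normalization.

```python
import math


def normalize_channels(intensities):
    """Scale each channel so that all channels share the same total intensity."""
    n_channels = len(next(iter(intensities.values())))
    totals = [sum(values[c] for values in intensities.values()) for c in range(n_channels)]
    target = sum(totals) / n_channels
    return {
        protein: [values[c] * target / totals[c] for c in range(n_channels)]
        for protein, values in intensities.items()
    }


def log2_ratios(normalized, reference_channel=0):
    """Log2 abundance of every channel relative to the reference channel."""
    return {
        protein: [math.log2(v / values[reference_channel]) for v in values]
        for protein, values in normalized.items()
    }


# Hypothetical reporter intensities: channel 1 was loaded at 2x, channel 2 at 0.5x
reporter = {
    "protein_1": [100.0, 200.0, 50.0],
    "protein_2": [300.0, 600.0, 150.0],
    "protein_3": [600.0, 1200.0, 300.0],
}
ratios = log2_ratios(normalize_channels(reporter))
print({p: [round(r, 3) for r in rs] for p, rs in ratios.items()})
```

In this toy input the channels differ only by loading, so the normalized log2 ratios collapse to zero. Total-intensity normalization rests on the assumption that most proteins are unchanged between conditions.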

Table 2: Quantitative Mass Spectrometry Methods for Network Analysis

| Method | Principle | Advantages | Limitations | Applications in Network Biology |
|---|---|---|---|---|
| SILAC | Metabolic labeling with stable isotopes | High accuracy; minimal technical variation | Requires cell culture; limited to comparable cell types | Time-course studies of signaling dynamics |
| iTRAQ/TMT | Isobaric chemical tagging of peptides | Multiplexing (up to 18 samples with TMTpro reagents); applicable to tissues | Ratio compression due to contaminating ions | Comparative network analysis across multiple conditions |
| Label-Free Quantification | Comparison of spectral counts or intensities | No chemical labeling; unlimited sample comparisons | Lower accuracy; requires strict standardization | Large-scale interactome mapping |

PTM analysis, particularly phosphoproteomics, represents another crucial application of MS in signaling network research. With proper enrichment strategies and quantitative MS approaches, global phosphoproteomic profiling has characterized numerous signaling pathways, including TGF-β signaling, Wnt signaling, insulin signaling, and proto-oncogene tyrosine-protein kinase Src signaling [11]. These studies provide insights into the regulation of signaling pathways and represent valuable resources for basic and clinical research.

Affinity Purification Mass Spectrometry (AP/MS) Workflow

AP/MS has become a cornerstone technique for identifying PPIs under near-physiological conditions and for characterizing protein complexes rather than just binary interactions [11]. The standard AP/MS protocol involves multiple critical steps that must be optimized for reliable results.

Detailed AP/MS Protocol:

Bait Selection and Tagging: The protein of interest (the "bait") is selected based on its relevance to the signaling pathway or biological process under investigation. The bait gene is cloned with an appropriate affinity tag (e.g., FLAG, HA, GFP, TAP tag) considering tag size, position (N- or C-terminal), and potential impact on protein function and localization.

Cell Culture and Transfection: Appropriate cell lines are selected that endogenously express relevant interaction partners. Cells are transfected or transduced with the tagged bait construct, with empty vector transfections serving as critical controls. Stable cell lines are often generated to ensure consistent expression levels.

Cell Lysis and Affinity Purification: Cells are lysed using conditions that preserve native interactions while minimizing non-specific binding. Lysis buffers typically contain:

- Mild detergents (e.g., 0.1-1% NP-40 or Triton X-100)

- Salt concentrations (e.g., 150 mM NaCl) to control stringency

- Protease and phosphatase inhibitors to preserve protein integrity and PTMs

- Benzonase to digest nucleic acids and reduce non-specific interactions

Affinity purification is performed using tag-specific resins with extensive washing to remove non-specifically bound proteins.

Protein Elution and Digestion: Proteins can be eluted using tag-specific competing peptides (e.g., 3xFLAG peptide) or by low-pH conditions. Alternatively, proteins can be digested directly on-bead using trypsin to release peptides for MS analysis.

Mass Spectrometric Analysis: Eluted peptides are separated by reverse-phase liquid chromatography and analyzed by tandem mass spectrometry (LC-MS/MS). Data-dependent acquisition is commonly used to select the most abundant peptides for fragmentation.

Data Processing and Validation: MS/MS spectra are searched against protein databases using software such as MaxQuant or OpenMS. Statistical frameworks (e.g., SAINT, CompPASS) are applied to distinguish specific interactors from background contaminants using control purifications. Identified interactions should be validated through orthogonal methods such as co-immunoprecipitation or proximity ligation assays.

The AP/MS approach has been successfully applied to study numerous signaling pathways. For example, interactomes of core components of the Wnt signaling pathway, including Dishevelled-1/2/3, β-catenin, AXIN1, APC, and β-TRCP1/2, have been obtained using a streptavidin-based tandem AP/MS approach, uncovering several novel Wnt regulators [11]. Similarly, Smad2-interacting proteins have been profiled under different TGF-β stimulation conditions using multiple MS strategies [11].
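
The logic of separating specific interactors from background in the data processing step can be illustrated with a deliberately simple heuristic. The sketch below ranks prey proteins by fold enrichment of mean spectral counts in bait purifications over control purifications. It is not an implementation of SAINT or CompPASS, which use probabilistic and specificity-based scoring, and the pseudocount, the cutoff, and the protein labels are assumptions made for illustration.

```python
def enrichment_scores(bait_counts, control_counts, pseudocount=1.0):
    """Fold enrichment of mean spectral counts in bait vs. control purifications."""
    scores = {}
    for protein, counts in bait_counts.items():
        bait_mean = sum(counts) / len(counts)
        ctrl = control_counts.get(protein, [0.0])  # absent from controls means zero counts
        ctrl_mean = sum(ctrl) / len(ctrl)
        # The pseudocount avoids division by zero and dampens low-count noise
        scores[protein] = (bait_mean + pseudocount) / (ctrl_mean + pseudocount)
    return scores


def candidate_interactors(scores, min_fold=4.0):
    """Proteins whose enrichment meets the (arbitrary) fold cutoff, sorted by name."""
    return sorted(p for p, s in scores.items() if s >= min_fold)


# Hypothetical spectral counts from two replicate purifications each
bait = {"prey_1": [20, 24], "prey_2": [15, 17], "contaminant_1": [30, 28]}
control = {"prey_2": [1, 0], "contaminant_1": [25, 31]}

scores = enrichment_scores(bait, control)
print(candidate_interactors(scores))
```

The abundant contaminant scores near 1 despite its high counts, which is why empty-vector controls matter more than raw abundance when calling interactors.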

Computational and Visualization Approaches for Network Analysis

Computational Prediction of PPIs and Network Modeling

Computational methods for predicting PPIs have advanced significantly, broadly falling into two categories: homology-based methods and template-free machine learning approaches [10]. Homology-based methods leverage the principle of "guilt by association," where proteins with significant sequence similarity to known interactors are predicted to interact similarly [10]. While accurate for well-characterized proteins, these methods are limited when experimentally determined homologs are unavailable.
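
The homology-based idea can be sketched as a transfer of known interactions through an ortholog map. The Python below assumes the sequence similarity search has already been run and summarized as a mapping from source-species proteins to target-species proteins. All identifiers are hypothetical.

```python
def transfer_interactions(known_interactions, orthologs):
    """Predict target-species pairs whose orthologs interact in the source species."""
    predicted = set()
    for a, b in known_interactions:
        for target_a in orthologs.get(a, ()):
            for target_b in orthologs.get(b, ()):
                if target_a != target_b:
                    # Store pairs in sorted order so (x, y) and (y, x) are not duplicated
                    predicted.add(tuple(sorted((target_a, target_b))))
    return predicted


# Hypothetical source-species interactions and ortholog assignments
source_ppis = [("yA", "yB"), ("yB", "yC"), ("yC", "yD")]
ortholog_map = {"yA": ["hA"], "yB": ["hB1", "hB2"], "yC": ["hC"]}

for pair in sorted(transfer_interactions(source_ppis, ortholog_map)):
    print(pair)
```

The interaction involving `yD` yields no prediction because `yD` has no mapped ortholog, which mirrors the limitation noted above for proteins without characterized homologs.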

Template-free machine learning methods identify patterns in vast datasets of known interacting and non-interacting protein pairs. These patterns are represented as features like amino acid sequences, protein structures, or interaction affinities that "train" the ML model [10]. Common algorithms include Support Vector Machines (SVMs) and Random Forests (RFs) [10]. More recently, large language models (LLMs) and advanced deep learning architectures have shown remarkable performance in predicting PPIs from sequence data alone.

Virtual screening represents another valuable computational approach for identifying PPI modulators. Structure-based virtual screening utilizes structural information of the target protein, while ligand-based virtual screening screens compounds fitting a pre-built pharmacophore model derived from known potent inhibitors [10]. Each approach has limitations—structure-based methods require well-defined binding pockets (often challenging in PPIs), while ligand-based methods depend on existing chemical matter for the target interface.

Network Visualization Principles

Effective visualization is crucial for interpreting biological networks and communicating findings. Several key principles should guide biological network figure creation:

Rule 1: Determine Figure Purpose and Assess Network - Before creating an illustration, establish its purpose and note whether the explanation relates to the whole network, a node subset, temporal aspects, topology, or other features [13]. This analysis should happen before drawing the network because the data included, the figure's focus, and the visual encoding sequence should support the intended explanation.

Rule 2: Consider Alternative Layouts - While node-link diagrams are most common, adjacency matrices offer advantages for dense networks [13]. Matrices list all nodes horizontally and vertically, with edges represented by filled cells at intersections. They excel at showing neighborhoods and clusters when node order is optimized and can encode edge attributes through color or saturation [13].

Rule 3: Beware of Unintended Spatial Interpretations - Node-link diagrams map nodes to spatial locations, and Gestalt principles of grouping influence reader perception [13]. Proximity, centrality, and direction are key principles: nodes drawn in proximity are interpreted as conceptually related; central placement suggests importance; and vertical/horizontal dimensions can represent power, information flow, or development [13].

Rule 4: Provide Readable Labels and Captions - Labels must be legible, with a font size at least as large as that of the caption [13]. When direct labeling causes clutter, alternatives include reference numbers linked to a key or high-resolution versions that readers can zoom into.

Research Reagent Solutions for PPI and Signaling Network Studies

Table 3: Essential Research Reagents for PPI and Signaling Network Analysis

| Reagent Category | Specific Examples | Function and Application | Technical Considerations |
|---|---|---|---|
| Affinity Tags | FLAG, HA, GFP, TAP, Strep | Enable purification of protein complexes under near-physiological conditions | Tag position and size may affect protein function and localization |
| Mass Spectrometry Reagents | iTRAQ, TMT, SILAC amino acids | Enable multiplexed quantitative proteomics | Choice depends on sample type, number of conditions, and required precision |
| Crosslinkers | DSS, BS3, formaldehyde | Stabilize transient interactions for MS detection | Optimization of concentration and reaction time required to preserve complex integrity |
| Phospho-Specific Antibodies | Anti-pSer/pThr/pTyr antibodies | Enrichment of phosphoproteins for signaling studies | Specificity validation crucial; combination with pan antibodies improves coverage |
| Protease Inhibitors | PMSF, protease inhibitor cocktails | Preserve protein integrity during purification | Broad-spectrum cocktails recommended for complex purification |
| Lysis Buffers | RIPA, NP-40, Triton X-114 | Extract proteins while maintaining interactions | Stringency affects complex preservation; mild detergents preferred for native PPIs |
| Bioinformatics Tools | MaxQuant, Cytoscape, STRING | Data analysis, visualization, and network integration | Tool selection depends on data type and biological question |

Therapeutic Targeting of PPI Networks in Biomedical Innovation

Strategies for PPI Modulator Discovery

The therapeutic targeting of PPIs has historically been challenging due to the large, relatively flat nature of many interaction interfaces. However, several strategic approaches have emerged to address these challenges:

High-Throughput Screening (HTS) identifies lead modulators by screening chemically diverse libraries, often enriched with compounds likely to target PPIs [10]. However, HTS can be hindered by the lack of specific hot spots on some interfaces, which has motivated alternative approaches [10].

Fragment-Based Drug Discovery (FBDD) has proven particularly useful for PPI modulator design [10]. The presence of discontinuous hot spots on many PPI interfaces poses challenges for HTS but is amenable to binding smaller, low molecular weight fragments used in FBDD [10]. Interfaces rich in aromatic residues like tyrosine or phenylalanine have shown particular susceptibility to fragment hit identification [10].

Rational Drug Design has demonstrated success in identifying PPI modulators by utilizing structural information from hot spot analysis [10]. Computer modeling techniques coupled with phage display technology have enabled the rational design of peptidomimetics that recapitulate the secondary structure of key peptide helices, sheets, and loops within PPIs [10]. Among secondary structures used to design peptidomimetics, the α-helix has been most widely employed owing to its frequent occurrence and successful targeting [10].

PPI Modulators in Clinical Development

PPIs have transitioned from being considered "undruggable" targets to representing promising therapeutic opportunities. The FDA has approved several PPI modulators for various diseases, including maraviroc, tocilizumab, siltuximab, venetoclax, sarilumab, satralizumab, sotorasib, and adagrasib [10]. These successes demonstrate the feasibility of targeting PPIs and have paved the way for extensive drug development efforts in this area.

PPI modulators can be categorized as either inhibitors or stabilizers. While inhibitors disrupt interaction interfaces, stabilizers enhance existing complexes by binding to specific sites on one or both proteins [10]. Stabilizers present more challenging prospects than inhibitors because they often act allosterically, with binding sites that may not be readily apparent in protein structures [10]. Additionally, the cellular milieu further complicates stabilizer development, as post-translational modifications and other molecules can significantly influence PPI stability [10].

The lessons learned from successful PPI modulator development include the importance of hot spot characterization, the value of combining multiple approaches (HTS, FBDD, rational design), and the need to consider protein dynamics and allosteric mechanisms. These insights continue to inform the design of next-generation PPI modulators for challenging targets in oncology, inflammation, immunomodulation, and antiviral applications [10].

Biological networks, particularly PPI and signaling networks, are foundational to systems biology approaches to biomedical innovation. Their comprehensive analysis requires integrated experimental and computational strategies, ranging from AP/MS and quantitative proteomics to computational prediction and visualization methods. As technologies such as cryo-EM, AlphaFold, and machine learning continue to advance, our ability to map, model, and therapeutically target these networks should improve substantially. The successful development of FDA-approved PPI modulators demonstrates the translational potential of network-based approaches and offers promising avenues for addressing complex diseases through systems-level interventions. Future directions will likely focus on multi-omics integration, dynamic network modeling, and increasingly sophisticated computational tools for deciphering the wiring of cellular systems.

The advent of "network medicine" has fundamentally transformed our understanding of human disease by revealing that most diseases are not consequences of abnormalities in single genes, but rather result from complex interactions and perturbations within vast biological networks [14]. In this context, hub and driver genes have emerged as critical players in disease pathogenesis and progression. These highly connected and influential genes act as central coordinators in biological processes crucial to the host's response to various disease states, making them essential for understanding disease mechanisms and developing targeted therapeutic strategies [15]. The identification of these genes represents a cornerstone of systems biology approaches to biomedical innovation, enabling researchers to move beyond reductionist models toward a more holistic understanding of disease complexity.

Hub genes are typically defined as genes with a high number of connections in biological networks, making them potentially powerful regulators of cellular processes. Driver genes, while sometimes overlapping with hub genes, are specifically defined as genes whose mutations provide a selective growth advantage to cells, thereby driving disease progression. The systematic identification of these key genes through network-based analysis provides a powerful framework for elucidating pathogenic mechanisms, classifying patients into distinct prognostic groups, and identifying potential therapeutic targets [16]. This technical guide provides an in-depth examination of the methodologies, applications, and experimental protocols for identifying and validating hub and driver genes within the framework of systems biology principles for biomedical research.

Core Concepts and Biological Significance

Defining Hub and Driver Genes in Biological Networks

In network-based analyses, hub genes are identified through their topological importance within protein-protein interaction (PPI) networks or co-expression networks. These genes typically exhibit high connectivity degrees, acting as critical intermediaries in cellular communication processes. The biological significance of hub genes stems from their potential to coordinately regulate multiple downstream pathways and biological processes. For instance, in a comprehensive study of Ebola virus disease (EVD) outcomes, researchers identified specific hub genes that differentiated fatal from survival outcomes, including upregulated hub genes (FGB, C1QA, SERPINF2, PLAT, C9, SERPINE1, F3, VWF) enriched in complement and coagulation cascades, and downregulated hub genes (IL1B, IL17RE, XCL1, CXCL6, CCL4, CD8A, CD8B, CD3D) associated with immune cell processes [15].

Driver genes, while conceptually related, are distinct in that they are defined by their causal role in disease progression rather than solely by their network position. In network-based cancer research, driver genes can be identified through controllability analysis of complex networks, which pinpoints the proteins with the highest control power over disease-associated modules [16]. These genes govern the dynamics of disease networks and can serve as leverage points for therapeutic intervention. Together, the two concepts give researchers complementary routes to genes of high biological importance: structural network analysis for hubs and functional impact assessment for drivers.

Methodological Framework for Gene Identification

The identification of hub and driver genes follows a systematic workflow that integrates multiple data types and analytical approaches. Table 1 summarizes the primary data sources and analytical tools used in this process.

Table 1: Essential Resources for Hub and Driver Gene Identification

| Resource Type | Specific Tools/Databases | Primary Function | Key Applications |
|---|---|---|---|
| Gene Networks | STRING, HIPPIE | Protein-protein interaction data | Network construction [16] [17] |
| Expression Data | GEO, TCGA | Gene expression profiles | Differential expression analysis [18] |
| Analytical Tools | Cytoscape with cytoHubba | Network visualization and analysis | Hub gene identification via MCC algorithm [15] |
| Functional Analysis | DAVID, Enrichr | Pathway and GO term enrichment | Biological interpretation [15] |
| Prioritization Methods | Random Walk, Kernelized Score Functions | Gene-disease association scoring | Candidate gene ranking [14] |

A key advancement in the field has been the development of multiplex network approaches that integrate different network layers representing various scales of biological organization. As demonstrated in a landmark study analyzing 3,771 rare diseases, constructing a multiplex network consisting of over 20 million gene relationships organized into 46 network layers across six biological scales (genome, transcriptome, proteome, pathway, biological processes, and phenotype) enables a comprehensive evaluation of the impact of gene defects across biological scales [17]. This cross-scale integration is particularly valuable for contextualizing individual genetic lesions and investigating disease heterogeneity.
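
Among the prioritization methods listed in Table 1, random walk with restart is straightforward to sketch. The version below is a minimal single-layer illustration, assuming an undirected network stored as a dictionary of neighbor sets with no isolated nodes; the gene names, seed set, and restart probability are hypothetical choices, and the multiplex case described above would need additional inter-layer transitions that are not shown.

```python
def random_walk_with_restart(adj, seeds, restart=0.3, tol=1e-10, max_iter=10000):
    """Stationary visiting probabilities of a walker that restarts at the seeds.

    adj:   dict mapping each node to the set of its neighbors (undirected,
           every node assumed to have at least one neighbor).
    seeds: known disease genes; the walker restarts uniformly among them.
    """
    nodes = sorted(adj)
    p0 = {n: (1.0 / len(seeds) if n in seeds else 0.0) for n in nodes}
    p = dict(p0)
    for _ in range(max_iter):
        nxt = {n: restart * p0[n] for n in nodes}
        for u in nodes:
            # Each node spreads its non-restarting mass equally to its neighbors.
            share = (1.0 - restart) * p[u] / len(adj[u])
            for v in adj[u]:
                nxt[v] += share
        delta = sum(abs(nxt[n] - p[n]) for n in nodes)
        p = nxt
        if delta < tol:
            break
    return p

# Hypothetical path-shaped network G1 - G2 - G3 - G4 with G1 as the seed gene.
toy_network = {
    "G1": {"G2"},
    "G2": {"G1", "G3"},
    "G3": {"G2", "G4"},
    "G4": {"G3"},
}
scores = random_walk_with_restart(toy_network, seeds={"G1"})
ranking = sorted((g for g in scores if g != "G1"), key=scores.get, reverse=True)
```

Candidate genes are ranked by their score, so genes that are network-close to the seeds come first.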

Experimental and Computational Protocols

Protocol 1: Identification of Hub Genes from Transcriptomic Data

This protocol outlines the systematic approach for identifying hub genes from gene expression data, as applied in studies of soft tissue sarcoma [18] and Ebola virus disease [15].

Materials and Reagents

- RNA-seq or microarray data from disease and control samples (sources: GEO, TCGA)

- Computational infrastructure: High-performance computing environment with sufficient RAM for large-scale network analysis

- Software tools: R programming environment with WGCNA package, Cytoscape with cytoHubba plugin, DESeq2 for differential expression analysis

- Reference databases: STRING database for PPI information, KEGG and GO for functional annotation

Step-by-Step Methodology

Data Preprocessing and Quality Control

- Obtain expression data and associated clinical data from public repositories (e.g., GSE21122 for sarcoma studies)

- For microarray data, perform background correction and normalization using the Robust Multi-array Average (RMA) algorithm, with expression values on a log2 scale

- Check for batch effects through analysis of expression clusters, box plots, and principal components analysis (PCA)

- Identify sample outliers using sample network analysis based on squared Euclidean distance (threshold: z.k < 0.6)

- Select the top 3,000 genes ranked by median absolute deviation (MAD) to reduce background noise [18]
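
The MAD-based filtering step can be sketched as follows. This is a minimal illustration that assumes expression values are held in a plain dictionary keyed by gene; the gene names and values are invented, and the `n_top` default simply mirrors the 3,000-gene cutoff stated above.

```python
from statistics import median

def mad(values):
    """Median absolute deviation: median of |x - median(x)|."""
    center = median(values)
    return median([abs(v - center) for v in values])

def top_variable_genes(expr, n_top=3000):
    """Return the n_top gene names with the largest MAD across samples."""
    ranked = sorted(expr, key=lambda gene: mad(expr[gene]), reverse=True)
    return ranked[:n_top]

# Hypothetical expression matrix: gene -> values across four samples.
toy_expr = {
    "geneA": [1.0, 1.0, 1.0, 1.0],    # flat profile, MAD = 0
    "geneB": [1.0, 5.0, 9.0, 13.0],   # highly variable, MAD = 4
    "geneC": [2.0, 3.0, 2.0, 3.0],    # mildly variable, MAD = 0.5
}
selected = top_variable_genes(toy_expr, n_top=2)
```

Unlike the standard deviation, MAD is robust to a few extreme samples, which is one reason it is a common choice for this kind of pre-filtering.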

Network Construction and Module Detection

- Construct co-expression network using WGCNA package with appropriate soft power selection to achieve scale-free topology

- Identify modules using dynamic tree-cutting function (deepSplit = 2, minimum size cutoff = 30)

- Calculate module eigengenes (MEs) representing the most representative expression profile for each module

- Correlate MEs with clinical traits to identify disease-relevant modules
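
The core of the co-expression network construction is the soft-thresholding transform, in which the absolute pairwise correlation is raised to a power so that weak correlations are suppressed. The sketch below shows that transform for an unsigned network, plus the resulting per-gene connectivity. It is not a reimplementation of the WGCNA package: the power `beta=6` is an illustrative value (in practice it is chosen by checking scale-free fit), module detection and eigengene calculation are omitted, and the toy data are invented.

```python
import math

def pearson(x, y):
    """Pearson correlation of two equal-length profiles (assumes non-constant input)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def soft_threshold_adjacency(expr, beta=6):
    """Unsigned adjacency a_ij = |cor(x_i, x_j)| ** beta for every gene pair."""
    genes = list(expr)
    adj = {g: {} for g in genes}
    for i, g in enumerate(genes):
        for h in genes[i + 1:]:
            weight = abs(pearson(expr[g], expr[h])) ** beta
            adj[g][h] = weight
            adj[h][g] = weight
    return adj

def connectivity(adj):
    """Sum of adjacency weights per gene; high values mark candidate hubs."""
    return {g: sum(neighbors.values()) for g, neighbors in adj.items()}

# Hypothetical expression profiles across four samples.
toy_expr = {
    "g1": [1.0, 2.0, 3.0, 4.0],
    "g2": [2.0, 4.0, 6.0, 8.0],   # perfectly correlated with g1
    "g3": [1.0, 3.0, 2.0, 4.0],   # correlation 0.8 with g1
}
toy_adj = soft_threshold_adjacency(toy_expr, beta=6)
toy_k = connectivity(toy_adj)
```

Restricting the sum in `connectivity` to genes of the same module gives the intramodular connectivity used in the hub gene identification step.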

Hub Gene Identification

- Define intramodular connectivity for all genes within significant modules

- Select the top 20 genes with highest connectivity as candidate hub genes

- Validate hub genes through PPI network construction using STRING database

- Import PPI network into Cytoscape and identify hub nodes using Maximal Clique Centrality (MCC) algorithm via cytoHubba plugin

- Select genes appearing as hub genes in both co-expression and PPI networks as high-confidence hub genes [18]
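
For readers who want to see what the MCC score measures, here is a minimal sketch. It assumes the commonly cited definition in which a node's MCC is the sum of (|C| - 1)! over all maximal cliques C containing it, so that a node whose neighbors are not connected to each other scores its degree; this is our reading of the method and not code from the cytoHubba plugin. Clique enumeration uses the basic Bron-Kerbosch recursion, which is only practical for small networks. Gene names and the hub cutoff are hypothetical.

```python
from math import factorial

def maximal_cliques(adj):
    """Enumerate maximal cliques with the basic Bron-Kerbosch recursion."""
    cliques = []

    def expand(current, candidates, excluded):
        if not candidates and not excluded:
            cliques.append(current)
            return
        for v in list(candidates):
            expand(current | {v}, candidates & adj[v], excluded & adj[v])
            candidates = candidates - {v}
            excluded = excluded | {v}

    expand(set(), set(adj), set())
    return cliques

def mcc_scores(adj):
    """MCC(v) = sum of (|C| - 1)! over maximal cliques C (size >= 2) containing v."""
    scores = {v: 0 for v in adj}
    for clique in maximal_cliques(adj):
        if len(clique) < 2:
            continue  # isolated nodes contribute nothing
        for v in clique:
            scores[v] += factorial(len(clique) - 1)
    return scores

# Hypothetical PPI network: triangle g1-g2-g3 plus a pendant edge g1-g4.
toy_ppi = {
    "g1": {"g2", "g3", "g4"},
    "g2": {"g1", "g3"},
    "g3": {"g1", "g2"},
    "g4": {"g1"},
}
ppi_scores = mcc_scores(toy_ppi)
ppi_hubs = {g for g, s in ppi_scores.items() if s >= 2}   # illustrative cutoff
coexpression_hubs = {"g1", "g2", "g5"}                    # e.g. from connectivity ranking
high_confidence_hubs = coexpression_hubs & ppi_hubs
```

The final set intersection corresponds to the last step above: only genes flagged in both the co-expression and the PPI network are kept.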

Validation and Functional Analysis

Survival Analysis

- Utilize Gene Expression Profiling Interactive Analysis (GEPIA) for overall survival and disease-free survival analyses

- Divide patients into high and low expression groups based on mean expression level of each hub gene

- Generate Kaplan-Meier survival plots using "survival" package in R
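
The survival steps above can be made concrete with a small sketch of the Kaplan-Meier product-limit estimator applied to groups split at the mean expression level. It is a plain-Python illustration, not the R "survival" package or GEPIA; the follow-up times, event flags, and expression values are invented, and no significance test (such as the log-rank test) is included.

```python
def kaplan_meier(times, events):
    """Product-limit estimate: list of (time, survival) at each event time.

    events[i] is 1 if the event (e.g. death) was observed at times[i],
    0 if the patient was censored at that time.
    """
    data = sorted(zip(times, events))
    at_risk = len(data)
    survival = 1.0
    curve = []
    i = 0
    while i < len(data):
        t = data[i][0]
        deaths = 0
        leaving = 0
        while i < len(data) and data[i][0] == t:
            deaths += data[i][1]
            leaving += 1
            i += 1
        if deaths:
            survival *= 1.0 - deaths / at_risk
            curve.append((t, survival))
        at_risk -= leaving
    return curve

def km_by_expression(times, events, expression):
    """Split patients at the mean expression and estimate one curve per group."""
    mean = sum(expression) / len(expression)
    groups = {"high": [], "low": []}
    for i, value in enumerate(expression):
        groups["high" if value > mean else "low"].append(i)
    return {
        name: kaplan_meier([times[i] for i in idx], [events[i] for i in idx])
        for name, idx in groups.items()
    }

# Hypothetical cohort of five patients (months of follow-up, event flag, expression).
toy_times = [5, 8, 12, 12, 20]
toy_events = [1, 0, 1, 1, 0]
toy_expression = [9.0, 2.0, 8.0, 1.0, 3.0]

overall_curve = kaplan_meier(toy_times, toy_events)
group_curves = km_by_expression(toy_times, toy_events, toy_expression)
```

Plotting each curve as a step function gives the familiar Kaplan-Meier plot; a hub gene is of prognostic interest when the high and low curves separate clearly.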

Functional Characterization

- Perform Gene Set Enrichment Analysis (GSEA) to identify pathways enriched in samples with high module eigengene expression

- Conduct functional enrichment analyses using KEGG and GO databases via the "clusterProfiler" package in R
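
Over-representation analysis of a hub gene list against a pathway is typically based on the hypergeometric distribution. The sketch below computes the one-sided p-value for observing at least the given overlap by chance. It illustrates the statistic only: it is not the internal code of any enrichment package, it does not apply multiple-testing correction, and it is distinct from GSEA, which works on ranked gene lists. The counts in the example are hypothetical.

```python
from math import comb

def enrichment_p_value(n_background, n_pathway, n_selected, n_overlap):
    """P(overlap >= n_overlap) under the hypergeometric null.

    n_background: genes in the background universe
    n_pathway:    background genes annotated to the pathway
    n_selected:   genes in the list being tested (e.g. hub genes)
    n_overlap:    selected genes that are annotated to the pathway
    """
    total = comb(n_background, n_selected)
    upper = min(n_pathway, n_selected)
    tail = sum(
        comb(n_pathway, i) * comb(n_background - n_pathway, n_selected - i)
        for i in range(n_overlap, upper + 1)
    )
    return tail / total

# Hypothetical numbers: 3,000 background genes, 100 in a cell-cycle pathway,
# 20 hub genes tested, 5 of which fall in the pathway.
p_cell_cycle = enrichment_p_value(3000, 100, 20, 5)
```

With many pathways tested at once, the resulting p-values would normally be adjusted, for example with the Benjamini-Hochberg procedure.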

Figure 1: Workflow for Hub Gene Identification from Transcriptomic Data

Protocol 2: Network Controllability Analysis for Driver Gene Identification

This protocol describes the methodology for identifying driver genes through network controllability analysis, as demonstrated in brain cancer research [16].

Materials and Specialized Tools

- Disease-associated gene sets from literature mining (e.g., CORMINE medical database)

- PPI network data from STRING database

- Network analysis tools: Cytoscape with network clustering algorithms

- Controllability analysis framework: Custom implementation for identifying minimum driver sets

Step-by-Step Methodology

Gene Set Compilation

- Collect disease-associated genes from curated databases (e.g., CORMINE) using significance threshold (p-value < 0.05)

- Compile list of proteins corresponding to selected genes for PPI network construction

PPI Network Construction and Analysis

- Build PPI network using STRING database with confidence score threshold (default: 0.4)

- Perform degree centrality analysis to identify hub proteins (top 25 by connectivity)

- Execute network modularization using k-means clustering to identify functional modules

- Select module with highest ratio of hub proteins to total proteins as most relevant to disease
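
The network construction and module selection steps can be sketched in a few lines. The example assumes interactions arrive as (protein, protein, score) triples, applies the 0.4 confidence cutoff mentioned above, ranks proteins by degree, and picks the module with the highest fraction of hubs. Module membership is taken as given (the clustering step itself is not shown), and all protein names, scores, and modules are hypothetical.

```python
from collections import defaultdict

def build_network(scored_edges, min_score=0.4):
    """Keep interactions at or above the confidence threshold (undirected)."""
    adj = defaultdict(set)
    for u, v, score in scored_edges:
        if score >= min_score:
            adj[u].add(v)
            adj[v].add(u)
    return dict(adj)

def top_hubs(adj, k=25):
    """The k proteins with the highest degree (ties broken alphabetically)."""
    ranked = sorted(adj, key=lambda node: (-len(adj[node]), node))
    return set(ranked[:k])

def most_hub_enriched_module(modules, hubs):
    """Name of the module with the highest hub-to-member ratio."""
    return max(modules, key=lambda name: len(modules[name] & hubs) / len(modules[name]))

# Hypothetical scored interactions; the last one falls below the cutoff.
toy_edges = [
    ("P1", "P2", 0.9), ("P1", "P3", 0.8), ("P1", "P4", 0.7),
    ("P2", "P3", 0.6), ("P5", "P6", 0.5), ("P4", "P5", 0.2),
]
network = build_network(toy_edges, min_score=0.4)
hubs = top_hubs(network, k=2)

# Hypothetical module assignment, e.g. produced by a clustering step.
toy_modules = {"M1": {"P1", "P2", "P3", "P4"}, "M2": {"P5", "P6"}}
selected_module = most_hub_enriched_module(toy_modules, hubs)
```

The selected module is then the input to the controllability analysis described next.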

Controllability Analysis for Driver Gene Identification

- Orient edges within selected module based on causal relationships (from literature or regulatory interactions)

- Apply structural controllability analysis to identify minimum set of driver nodes

- Rank driver nodes by control power (ability to control largest portion of network)

- Select top candidate driver genes (e.g., 5 proteins with highest control power) for further validation [16]
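
One widely used way to find a minimum set of driver nodes in a directed network is the maximum-matching formulation of structural controllability: every node that is not the target of a matched edge must be driven directly, and at least one driver is always required. The sketch below implements that idea with a simple augmenting-path matching. It is an assumption that this matches the custom framework referred to above; the minimum driver set is generally not unique, and the control-power ranking step is not shown. Node names and edges are hypothetical.

```python
def driver_nodes(nodes, edges):
    """One minimum driver-node set for a directed network.

    nodes: list of node names
    edges: list of (source, target) pairs
    """
    successors = {n: [] for n in nodes}
    for source, target in edges:
        successors[source].append(target)

    matched_by = {}  # target node -> source node whose edge is in the matching

    def augment(source, visited):
        """Try to match `source` to some target, re-routing earlier matches if needed."""
        for target in successors[source]:
            if target in visited:
                continue
            visited.add(target)
            if target not in matched_by or augment(matched_by[target], visited):
                matched_by[target] = source
                return True
        return False

    for node in nodes:
        augment(node, set())

    unmatched = [n for n in nodes if n not in matched_by]
    # A perfectly matched network (e.g. a cycle) still needs one input.
    return unmatched or [nodes[0]]

# Hypothetical regulatory topologies.
chain_drivers = driver_nodes(["A", "B", "C"], [("A", "B"), ("B", "C")])
star_drivers = driver_nodes(["A", "B", "C"], [("A", "B"), ("A", "C")])
cycle_drivers = driver_nodes(["A", "B"], [("A", "B"), ("B", "A")])
```

A chain can be steered from its head alone, whereas a star needs an extra input because one regulator cannot independently control two targets.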

Therapeutic Targeting Strategy

Drug-Gene Interaction Mapping

- Query drug-gene interaction databases for compounds targeting identified driver genes

- Identify set of drugs effective against each driver gene as potential combination therapy

- Validate biological relevance through essentiality analysis in biological processes

Figure 2: Driver Gene Identification and Therapeutic Application

Case Studies and Applications

Infectious Disease: Ebola Virus Disease Outcomes

A 2024 study demonstrated the power of hub gene analysis to differentiate between fatal and survival outcomes in Ebola virus disease [15]. Researchers analyzed differentially expressed genes between fatal cases, survivors, and healthy controls, identifying:

- 13,198 DEGs in fatal groups and 12,039 DEGs in survival groups compared to healthy controls

- 1,873 DEGs specifically differentiating acute fatal and survivor groups

- Upregulated hub genes in fatal outcomes were enriched in complement and coagulation cascades

- Downregulated hub genes were associated with immune cell processes

This study identified CCL2 and F2 as unique hub genes in fatal outcomes, while CXCL1, HIST1H4F, and IL1A were upregulated hub genes unique to survival outcomes. These findings provide potential targets for developing targeted interventions for distinct EVD outcomes.

Oncology Applications

Brain Cancer Driver Genes

In a comprehensive analysis of brain cancer, researchers identified five proteins with the highest control power as driver genes through network controllability analysis [16]. The methodology included:

- Collection of 1,385 brain cancer-related genes from CORMINE database (p-value < 0.05)

- Construction of PPI network with 39,688 protein-protein interactions from STRING database

- Identification of 25 hub proteins through degree centrality analysis

- Network modularization revealing key functional modules

- Controllability analysis pinpointing driver genes with highest network control power

The resulting driver genes were considered potential targets for combination therapy, with drugs identified through drug-gene interaction analysis.

Soft Tissue Sarcoma Prognostic Markers

A co-expression network analysis of soft tissue sarcoma identified four hub genes (RRM2, BUB1B, CENPF, and KIF20A) associated with poor prognosis [18]. The study:

- Analyzed 156 samples using WGCNA

- Identified 20 network hub genes in the significant blue module

- Found 12 of these were also hub nodes in the PPI network

- Validated 4 genes showing poorer overall survival and disease-free survival

- Demonstrated enrichment in cell cycle and metabolism pathways through GSEA

Table 2 summarizes key findings from these case studies, highlighting the diverse applications of hub and driver gene analysis.

Table 2: Hub and Driver Gene Applications in Disease Research

| Disease Context | Identified Genes | Biological Pathways | Clinical Applications |
|---|---|---|---|
| Ebola Virus Disease | FGB, C1QA, SERPINF2 (up); IL1B, CD8A, CD3D (down) | Complement/coagulation cascades; Immune cell processes | Differentiating fatal vs. survival outcomes; Targeted interventions [15] |
| Brain Cancer | 5 driver proteins (not specified) | Network controllability structures | Combination therapy development [16] |
| Soft Tissue Sarcoma | RRM2, BUB1B, CENPF, KIF20A | Cell cycle and metabolism pathways | Prognostic biomarkers; Therapeutic targets [18] |
| Rare Diseases | Cross-scale network signatures | Multiple biological scales | Disease gene prediction; Mechanistic dissection [17] |

Successful identification of hub and driver genes requires specialized computational tools and biological resources. Table 3 provides a comprehensive list of essential materials and their applications in hub and driver gene research.

Table 3: Essential Research Reagent Solutions for Hub and Driver Gene Studies

| Resource Category | Specific Resource | Key Features/Functions | Application Context |
|---|---|---|---|
| Gene Networks | STRING Database | Known and predicted PPIs; Confidence scoring | Network construction [16] |
| Analytical Platforms | Cytoscape with cytoHubba | Network visualization; Hub gene identification (MCC algorithm) | Topological analysis [15] [18] |
| Expression Data Repositories | GEO (Gene Expression Omnibus) | Public repository of expression data | Data source for analysis [18] |
| Functional Analysis Tools | DAVID (Database for Annotation, Visualization and Integrated Discovery) | Functional enrichment analysis; Pathway mapping | Biological interpretation [15] |
| Prioritization Algorithms | Random Walk with Restart | Network propagation; Gene prioritization | Candidate gene ranking [14] |
| Validation Resources | GEPIA (Gene Expression Profiling Interactive Analysis) | Survival analysis; Expression profiling | Clinical validation [18] |

The identification of hub and driver genes represents a powerful approach within systems biology for unraveling the complexity of human disease. By integrating network-based analyses with functional validation, researchers can pinpoint critical regulatory nodes that govern disease pathogenesis and progression. The experimental protocols outlined in this guide provide a robust framework for conducting such analyses across diverse disease contexts.

Future directions in the field include the development of more sophisticated multiplex network approaches that integrate across biological scales, improved methods for incorporating single-cell data into network analyses, and the creation of more comprehensive databases linking network properties to therapeutic responses. Furthermore, the integration of artificial intelligence and machine learning techniques with network-based analyses promises to enhance our ability to identify clinically relevant hub and driver genes and translate these findings into personalized treatment strategies.

As network medicine continues to evolve, the systematic identification of hub and driver genes will play an increasingly important role in biomedical innovation, ultimately contributing to more effective targeted therapies and personalized treatment approaches for complex diseases.

Controllability theory provides a powerful framework for understanding how internal and external factors influence a system's dynamics, offering a principled approach to identifying intervention points. In the context of systems biology and biomedical innovation, this theory moves beyond traditional single-target approaches to consider the complex, multidimensional nature of biological systems [19]. The foundational principle of controllability theory is that a system's behavior can be directed toward a desired state through strategic manipulation of specific components, whether those components are neural circuits, molecular pathways, or emotional states [20] [19].

The clinical relevance of controllability is profound, with decades of research demonstrating that uncontrollable stress produces significantly more debilitating behavioral and physiological outcomes than equivalent amounts of controllable stress [20] [21]. This distinction explains individual differences in stress resilience and susceptibility to disorders such as depression and anxiety. More recently, computational psychiatry has formalized these concepts using control theory frameworks to quantify how interventions alter a system's intrinsic stability and sensitivity to external inputs [19]. This whitepaper explores how controllability theory, grounded in systems biology principles, provides a mechanistic foundation for developing targeted therapeutic interventions.

Foundational Concepts and Neural Mechanisms

Historical Foundations and Key Experiments

The concept of behavioral controllability emerged from seminal learned helplessness experiments where subjects exposed to uncontrollable adverse events developed profound passivity and learning deficits compared to those who could control the events [20]. The critical insight came from the triadic design experiment, which isolated controllability as the active ingredient in producing these divergent outcomes [20]. In this design, one group (Escapable) could terminate shocks via instrumental response, a second group (Inescapable) received yoked identical shocks but had no control, and a third group received no shocks. Only the Inescapable group later failed to learn escape behaviors in a new environment, demonstrating that psychological impact depends not merely on adverse event exposure but on whether responses can control outcomes [20].

Subsequent research revealed that uncontrollable stress produces a broad range of sequelae beyond poor escape learning, including reduced aggression, altered feeding patterns, disrupted sleep, and exaggerated fear responses [20]. This early work proposed a cognitive explanation: during uncontrollable stress, organisms learn that outcomes are independent of their behavior, forming expectations that undermine future attempts to exert control [20]. However, this original theory struggled to explain why these effects persist for only 2-3 days, prompting further neuroscientific investigation [20].

Neural Circuits of Controllability

Recent neuroscience research has fundamentally reversed the original learned helplessness explanation. Rather than uncontrollability actively producing debilitation, it is prolonged exposure to aversive stimulation itself that drives debilitating outcomes through potent activation of serotonergic neurons in the dorsal raphe nucleus (DRN) [20]. Controllable stressors prevent this outcome by engaging specific prefrontal circuitry that detects control and subsequently inhibits the DRN response [20].

Table 1: Key Neural Structures in Stress Controllability

| Neural Structure | Function in Controllability | Therapeutic Significance |
|---|---|---|
| Dorsal Raphe Nucleus (DRN) | Serotonergic activity drives stress debilitation; primary output for helplessness effects | Potential target for inhibiting stress pathology |
| Medial Prefrontal Cortex (mPFC) | Detects behavioral control; inhibits DRN response to controllable stress | Critical for resilience; can be strengthened through control experiences |
| Amygdala | Processes emotional salience; shows reduced activity during distancing interventions | Regulation via prefrontal connections enhances emotional control |

The critical distinction between controllable and uncontrollable stress is not what the organism learns, but whether the mPFC is activated to inhibit the DRN [20]. This circuit-based explanation resolves puzzling issues in the original theory: the time course of helplessness effects corresponds with DRN sensitization periods, and "immunization" through prior control experience occurs because control alters the prefrontal response to future adverse events, creating long-term resiliency [20]. This neural model suggests that therapeutic interventions should focus on activating or strengthening these control-detection circuits rather than merely correcting maladaptive cognitions.

Computational Frameworks for Quantifying Controllability

Dynamical Systems Approach to Emotional States

Control theory provides a formal framework for quantifying how interventions modify a system's controllability properties [19]. Emotions can be conceptualized as a dynamical system where different states interact and influence each other over time. Within this framework, distancing interventions function by altering both the intrinsic stability of emotional patterns and the extrinsic sensitivity to emotional stimuli [19].

In a landmark study applying this approach, researchers used a Kalman Filter to quantify how multidimensional emotional states changed with standardized emotional inputs (video clips) [19]. Participants reported emotional states across five dimensions repeatedly before and after a distancing intervention. Bayesian model comparison revealed that distancing altered the underlying emotional dynamics through two distinct mechanisms: stabilizing specific emotional patterns and reducing the impact of external emotional stimuli [19]. The controllability Gramian formally quantified how these changes affected the overall controllability of the emotional system [19].
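
To make the controllability Gramian concrete, the sketch below computes the finite-horizon Gramian of a discrete-time linear system x[t+1] = A x[t] + B u[t]. The linear form, the horizon, and the toy matrices are assumptions chosen for illustration and are not taken from the cited study; larger Gramian entries (or a larger trace) mean that less input energy is needed to move the state.

```python
def matmul(a, b):
    """Product of two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def transpose(m):
    return [list(row) for row in zip(*m)]

def controllability_gramian(A, B, horizon):
    """W_T = sum over k = 0..T-1 of A^k B B^T (A^T)^k."""
    n = len(A)
    W = [[0.0] * n for _ in range(n)]
    A_t = transpose(A)
    term = matmul(B, transpose(B))           # k = 0 term: B B^T
    for _ in range(horizon):
        W = [[w + t for w, t in zip(w_row, t_row)] for w_row, t_row in zip(W, term)]
        term = matmul(matmul(A, term), A_t)  # next term: A (previous) A^T
    return W

def trace(m):
    return sum(m[i][i] for i in range(len(m)))

# Hypothetical two-dimensional emotional state; input reaches dimension 1 only.
A = [[0.5, 0.0],
     [0.0, 0.5]]
B_before = [[1.0], [0.0]]   # full sensitivity to the external stimulus
B_after = [[0.5], [0.0]]    # reduced input sensitivity, e.g. after an intervention

W_before = controllability_gramian(A, B_before, horizon=3)
W_after = controllability_gramian(A, B_after, horizon=3)
```

In this toy case, halving the input sensitivity cuts the Gramian trace to a quarter, which is one way to express that the system has become harder to drive from outside.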

Table 2: Computational Approaches to Quantifying Controllability

| Method | Application | Outcome Measures |
|---|---|---|
| Kalman Filter | Tracking multidimensional emotional state trajectories over time | State transitions, persistence, and interactions |
| Bayesian Model Comparison | Identifying intervention effects on system parameters | Changes in intrinsic stability vs. input sensitivity |
| Controllability Gramian | Quantifying overall system controllability | How easily states can be driven to desired values |
| Network Models | Mapping emotional state interactions | Identification of attractor states and transition probabilities |

Experimental Protocols for Assessing Controllability

For researchers investigating controllability in biological systems, the following methodologies provide robust approaches:

Emotional Dynamics Protocol: Participants report multidimensional emotional states repeatedly while viewing standardized emotional video clips. Emotional states are rated along key dimensions (e.g., valence, arousal) at frequent intervals. A Kalman Filter tracks state trajectories, quantifying how states change with inputs, persist over time, and interact with each other [19]. The protocol should include pre- and post-intervention assessments to measure changes in dynamical properties.
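
A scalar version of the Kalman filter used in such a protocol can be sketched as follows. It assumes a one-dimensional state with dynamics x[t] = a x[t-1] + b u[t] + noise and a directly observed rating y[t] = x[t] + noise, where a plays the role of persistence (intrinsic stability) and b the role of input sensitivity. The actual study tracked several emotion dimensions jointly, so this is a simplification, and all parameter values and data below are hypothetical.

```python
def kalman_filter(observations, inputs, a, b, q, r, x0=0.0, p0=1.0):
    """Filtered state estimates for a scalar linear-Gaussian model.

    a: persistence of the state from one report to the next
    b: sensitivity of the state to the external input (e.g. a video clip)
    q: process noise variance, r: observation noise variance
    """
    x, p = x0, p0
    estimates = []
    for y, u in zip(observations, inputs):
        # Predict: propagate the previous estimate through the dynamics.
        x_pred = a * x + b * u
        p_pred = a * a * p + q
        # Update: blend prediction and observation according to the Kalman gain.
        gain = p_pred / (p_pred + r)
        x = x_pred + gain * (y - x_pred)
        p = (1.0 - gain) * p_pred
        estimates.append(x)
    return estimates

# Hypothetical ratings of one emotion dimension while clips of varying
# intensity (inputs) are shown.
ratings = [0.2, 0.9, 1.1, 0.6, 0.3]
clip_intensity = [0.0, 1.0, 1.0, 0.0, 0.0]
filtered = kalman_filter(ratings, clip_intensity, a=0.6, b=0.8, q=0.05, r=0.1)
```

Fitting a and b separately to pre- and post-intervention data, for example by maximizing the likelihood of the observed ratings, is what allows changes in stability and input sensitivity to be compared.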

Stressor Controllability Assessment: Adapted from animal models, this paradigm exposes subjects to controllable versus uncontrollable stressors while measuring neural, physiological, and behavioral outcomes [20]. The essential design includes: (1) Escapable group with instrumental response to terminate stressor; (2) Yoked Inescapable group receiving identical stressor timing but no response efficacy; (3) No-stress control group. Outcome measures include subsequent learning performance, neural activation patterns (particularly mPFC-DRN circuitry), and physiological stress markers.

Intervention Timeline: Baseline assessment → Randomization to intervention or control condition → Intervention period (e.g., distancing training) → Post-intervention assessment using the same protocols as baseline → Computational modeling to quantify changes in system dynamics and controllability properties [19].

Therapeutic Applications and Intervention Strategies

Emotion Regulation as Control Enhancement

Psychotherapeutic interventions increasingly target emotion regulation strategies that enhance perceived control over emotional states [19]. Distancing, a core technique in cognitive behavioral therapies, involves adopting a new perspective to increase psychological distance from emotional stimuli [19]. Computational studies demonstrate that distancing works not by eliminating emotions but by altering the control properties of the emotional system: it makes emotional states less externally controllable by increasing intrinsic stability and reducing sensitivity to external inputs [19].

This framework explains why distancing is associated with decreased amygdala activity beyond the period of active regulation [19]. From a control theory perspective, the intervention modifies the system's dynamics such that external emotional stimuli have diminished impact, reducing the need for ongoing regulatory effort. This mechanism aligns with the neural evidence that control experiences produce lasting changes in prefrontal function that blunt stress responses [20].

Targeted Intervention Points in Biological Systems

Controllability theory suggests several strategic intervention points for biomedical innovation:

Prefrontal Control Circuitry: Interventions that strengthen mPFC function or its inhibitory connections to the DRN can enhance resilience to uncontrollable stress [20]. This might include neuromodulation approaches, pharmacological enhancement of prefrontal function, or behavioral therapies designed to activate these circuits through control experiences.

System Dynamics Modification: Rather than targeting specific symptoms, interventions can aim to modify the overall dynamics of pathological systems. For example, in mood disorders, this might involve destabilizing maladaptive attractor states (e.g., depressive states) while stabilizing healthy states [19].

Input Sensitivity Regulation: Treatments can focus on reducing a system's sensitivity to pathological inputs, analogous to how distancing reduces emotional sensitivity to external stimuli [19]. This approach is particularly relevant for disorders involving heightened sensitivity to stress or emotional triggers.

Research Reagent Solutions

Table 3: Essential Research Materials for Controllability Investigations

| Reagent/Resource | Function | Application Context |
|---|---|---|
| Standardized Emotional Video Clips | Provide controlled emotional inputs with known properties | Assessing emotional dynamics and intervention effects [19] |
| Kalman Filter Modeling Framework | Quantify state trajectories and system parameters | Computational analysis of emotional dynamics [19] |
| fMRI with DRN-specific Protocols | Measure neural activity in deep brainstem structures | Assessing mPFC-DRN circuit engagement during control [20] |
| Triadic Design Experimental Paradigm | Isolate controllability from stressor exposure | Fundamental stressor controllability research [20] |
| Bayesian Model Comparison Pipeline | Identify intervention effects on system parameters | Determining whether interventions affect intrinsic vs. extrinsic dynamics [19] |

Visualizing Controllability Pathways and Workflows

Neural Circuitry of Behavioral Control

Computational Assessment Workflow

Therapeutic Intervention Mechanisms

The contemporary landscape of biomedical innovation is defined by complexity, demanding a workforce capable of moving beyond traditional disciplinary silos. Systems biology represents a fundamental paradigm shift from reductionist biology to an integrative approach that seeks to understand the larger picture—be it at the level of the organism, tissue, or cell—by putting its pieces together [22]. This field leverages interdisciplinary approaches from biology, mathematics, computer science, and engineering to transform our understanding of complex biological processes [23]. The urgent need for professionals skilled in computational and biological integration is underscored by its central role in areas such as drug discovery, multi-omics integration, systems immunology, and clinical decision support tools [23]. Building this workforce requires a clear definition of the core competencies, experimental protocols, and computational tools that enable researchers to tackle the intricate challenges of modern biomedical research.

Core Competencies for an Integrated Workforce

Foundational Knowledge Domains

A professional in this field requires a synthesis of knowledge from traditionally separate domains. The foundational pillars include:

- Molecular and Cellular Biology: Deep knowledge of biological components, including signaling pathways, metabolic networks, and regulatory mechanisms.

- Computational Modeling: Skills in building quantitative models to simulate and predict the behavior of complex biological systems [22].

- Data Sciences: Proficiency in bioinformatics and statistical analysis to manage and interpret large, diverse datasets from technologies like genomics, proteomics, and mass spectrometry [22].

- Systems Theory: Understanding of network analysis, emergent properties, and feedback mechanisms that govern system-level behaviors.

Technical and Analytical Skills

The technical skill set must bridge experimental and computational workflows:

- High-Throughput Experimentation: Experience with genome-wide RNAi screens, mass spectrometry for proteomic analysis, and other technologies to generate key data sets [22].

- Quantitative Data Analysis: Ability to perform quantitative, system-wide analysis of components like the proteome to understand biochemical states and reaction rates [22].

- Software Proficiency: Competence with computational tools for model building and simulation, such as Simmune, which facilitates the construction of realistic multiscale biological processes [22].

Experimental Frameworks for Integrative Biology

The Perturbation-Analysis Cycle

A cornerstone of systems biology is the use of systematic perturbations to decipher the wiring and function of biological systems. As practiced at the NIH's Laboratory of Systems Biology, this involves using various stimuli—from Toll-like receptor (TLR) stimulations to vaccinations and natural genetic variations in humans—as valuable perturbations to deduce the structure of the underlying networks [22]. The process involves:

- Perturbation Application: Introducing controlled, systematic changes to the biological system.

- High-Dimensional Measurement: Using -omics technologies (e.g., genomics, proteomics) to capture genome-wide responses.

- Network Inference: Applying statistical and computational tools to build network models that connect genes, proteins, and epigenetic states from the perturbation data [22].
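The three steps above can be sketched in a few lines. The example below is a toy illustration under stated assumptions: the gene names (`geneA` to `geneD`), the expression values, and the log2 fold-change threshold are all hypothetical, and thresholding fold changes is only one simple stand-in for the statistical tools the text refers to. An edge here means "responds to the perturbation", which may reflect an indirect effect rather than a direct interaction.

```python
import math


def infer_edges(control, perturbed, threshold=1.0):
    """Infer directed edges from single-gene perturbation profiles.

    control:   {gene: expression} in the unperturbed system
    perturbed: {knocked_down_gene: {gene: expression after knockdown}}
    Returns (source, target, log2_fold_change) for every target whose
    expression changes by at least `threshold` in log2 units.
    """
    edges = []
    for source, profile in perturbed.items():
        for target, value in profile.items():
            if target == source:
                continue  # the knockdown itself is not an inferred edge
            lfc = math.log2(value / control[target])
            if abs(lfc) >= threshold:
                edges.append((source, target, round(lfc, 2)))
    return edges


# Hypothetical measurements (made-up numbers for illustration only).
control = {"geneA": 100.0, "geneB": 80.0, "geneC": 120.0, "geneD": 50.0}
perturbed = {
    "geneA": {"geneA": 10.0, "geneB": 78.0, "geneC": 55.0, "geneD": 12.0},
    "geneB": {"geneA": 98.0, "geneB": 8.0, "geneC": 60.0, "geneD": 20.0},
}

edges = infer_edges(control, perturbed)
for source, target, lfc in edges:
    print(f"{source} -> {target}  (log2FC = {lfc})")
```

In practice the threshold would be replaced by a statistical test with replicates and multiple-testing correction; the sketch only shows how perturbation data map onto a candidate network.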

Single-Case (N-of-1) Experimental Designs

While randomized clinical trials (RCTs) determine average treatment effects, single-case experimental designs are crucial for personalized medicine, identifying optimal treatments for individuals [24]. This methodology is particularly useful for patients with rare diseases or comorbidities that exclude them from RCTs and for individualizing preventive measures [24]. The protocol involves:

- Repeated Measurements: Collecting many measurements on a single individual over time to establish a baseline and monitor responses.

- Experimental Manipulation: Introducing and withdrawing treatments according to a pre-determined plan within the same individual.

- Data Analysis: Using visual inspection of graphs and statistical analysis to determine if changes in symptoms were due to the treatment [24]. Results from several personalized trials can be incorporated into a meta-analysis to strengthen confidence in intervention effects across patients.
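As a rough illustration of the analysis step, the sketch below evaluates a hypothetical ABAB (baseline, treatment, withdrawal, treatment) series with a randomization test over phase labels. The symptom scores are invented, the function names are illustrative, and the test is only valid if the phase order was in fact randomized; it is a minimal sketch, not the procedure prescribed in [24].

```python
from itertools import combinations
from statistics import mean


def phase_effect(blocks, treated):
    """Mean outcome in treated blocks minus mean outcome in untreated blocks."""
    on = [y for i, block in enumerate(blocks) if i in treated for y in block]
    off = [y for i, block in enumerate(blocks) if i not in treated for y in block]
    return mean(on) - mean(off)


def randomization_test(blocks, treated):
    """Two-sided exact test over all ways of labelling the same number of blocks."""
    observed = phase_effect(blocks, treated)
    assignments = list(combinations(range(len(blocks)), len(treated)))
    extreme = sum(
        1
        for alt in assignments
        if abs(phase_effect(blocks, set(alt))) >= abs(observed) - 1e-12
    )
    return observed, extreme / len(assignments)


# Hypothetical daily symptom scores for one patient (lower is better).
blocks = [
    [7, 8, 7, 8],  # A1: baseline
    [5, 4, 5, 4],  # B1: treatment
    [7, 7, 8, 8],  # A2: withdrawal
    [4, 5, 4, 3],  # B2: treatment
]
treated = {1, 3}

effect, p_value = randomization_test(blocks, treated)
print(f"treatment effect = {effect:.2f}, randomization p = {p_value:.3f}")
```

With only four phases there are six possible labelings, so the smallest attainable two-sided p-value is 1/3. This is why single-case designs typically need more phase alternations, or pooling across patients as described above, before a statistical conclusion is warranted.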

Computational and Data Integration Methodologies

Multi-Scale Computational Modeling

Computational models are integral for understanding complex biochemical networks that regulate interactions within the immune system and between hosts and pathogens [22]. A robust modeling workflow includes:

- Model Construction: Using tools like Simmune to build multi-scale models of biological processes, from intracellular signaling to intercellular communication [22].

- Simulation and Validation: Running simulations to generate predictions, then iteratively refining the model against fresh experimental data so that it stays anchored to observed biology.

- Standardization: Encoding models of cellular signaling pathways in a standard exchange format such as the Systems Biology Markup Language (e.g., SBML Level 3) to ensure reproducibility and interoperability [22].
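The construct, simulate, and validate loop above can be sketched with a single intracellular reaction. The example assumes a reversible ligand-receptor binding model with ligand in excess, integrated with forward Euler; the rate constants and the "observed" data points are hypothetical. It does not use Simmune or SBML and is meant only to show the shape of the workflow.

```python
def simulate_binding(k_on, k_off, ligand, receptor_total, dt=0.01, t_end=20.0):
    """Forward-Euler integration of dC/dt = k_on*L*(R_total - C) - k_off*C.

    Returns a list in which element i is the complex concentration at time i*dt.
    """
    steps = int(round(t_end / dt))
    complex_conc = 0.0
    trajectory = [complex_conc]
    for _ in range(steps):
        free_receptor = receptor_total - complex_conc
        rate = k_on * ligand * free_receptor - k_off * complex_conc
        complex_conc += dt * rate
        trajectory.append(complex_conc)
    return trajectory


def rmse(trajectory, observations, dt=0.01):
    """Root-mean-square error between simulated and observed time points."""
    errors = [
        (trajectory[int(round(t / dt))] - y) ** 2 for t, y in observations.items()
    ]
    return (sum(errors) / len(errors)) ** 0.5


# Hypothetical parameters and measurements (assumptions for illustration).
params = dict(k_on=1.0, k_off=0.5, ligand=1.0, receptor_total=1.0)
observations = {1.0: 0.52, 2.0: 0.63, 5.0: 0.67}  # time -> measured complex

trajectory = simulate_binding(**params)
steady_state = trajectory[-1]
fit_error = rmse(trajectory, observations)

print(f"simulated steady state = {steady_state:.4f}")
print(f"RMSE against observations = {fit_error:.4f}")
```

A large RMSE would prompt a revision of parameters or model structure before the next round of experiments, which is the iterative refinement the workflow describes.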

Integrative Genomics and Data Analysis

The enormous amount of data from diverse sources requires sophisticated processing and integration. A top-down approach uses inferences from perturbation analyses to probe the large-scale structure of interactions at the cellular, tissue, and organism levels [22]. Key steps include:

- Data Aggregation: Collecting and analyzing data on gene expression, miRNAs, epigenetic modifications, and commensal microbes [22].

- Statistical Integration: Developing and applying statistical tools for large and diverse datasets, such as from microarrays and high-throughput screenings.

- Network Model Building: Constructing models that integrate different data types (genes, proteins, miRNAs, epigenetic states) to form a cohesive understanding of system behavior [22].
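One simple way to picture the aggregation and statistical integration steps is to standardize each data layer and join the layers on shared gene identifiers. The sketch below does exactly that with z-scores; the layer names, gene identifiers, and values are hypothetical, and real pipelines would add normalization, batch correction, and missing-data handling that are omitted here.

```python
from statistics import mean, pstdev


def zscore(layer):
    """Standardize one data layer so layers with different units are comparable."""
    values = list(layer.values())
    mu, sd = mean(values), pstdev(values)
    return {gene: (value - mu) / sd for gene, value in layer.items()}


def integrate_layers(layers):
    """Join layers on the genes they share and return per-gene feature vectors."""
    shared = set.intersection(*(set(layer) for layer in layers.values()))
    scaled = {
        name: zscore({gene: layer[gene] for gene in shared})
        for name, layer in layers.items()
    }
    return {
        gene: {name: scaled[name][gene] for name in layers}
        for gene in sorted(shared)
    }


# Hypothetical measurements from three data types (made-up values).
layers = {
    "mrna": {"geneA": 12.0, "geneB": 8.0, "geneC": 10.0, "geneD": 3.0},
    "protein": {"geneA": 200.0, "geneB": 150.0, "geneC": 100.0},
    "methylation": {"geneA": 0.2, "geneB": 0.5, "geneC": 0.8, "geneE": 0.1},
}

integrated = integrate_layers(layers)
for gene, features in integrated.items():
    print(gene, {name: round(z, 2) for name, z in features.items()})
```

The resulting per-gene vectors are the kind of input a downstream network model could consume; genes missing from any layer are dropped here, which is a design choice rather than a requirement.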

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 1: Key Research Reagents and Materials in Systems Biology

| Reagent/Material | Function in Research |
|---|---|
| Short Interfering RNA (siRNA) Libraries | Enable genome-wide RNAi screens to characterize signaling network relationships and identify key components in cellular networks, such as innate immune pathogen-sensing pathways [22]. |
| Mass Spectrometry Reagents | Facilitate system-wide quantitative analysis of the proteome, including investigations of post-translational modifications like protein phosphorylation to reveal the biochemical state of cells [22]. |
| Phospho-Specific Antibodies | Used in western blotting and immunofluorescence to detect and quantify specific protein phosphorylation events, a common mode of protein-function regulation [22]. |
| Toll-Like Receptor (TLR) Agonists/Antagonists | Well-defined perturbation tools for stimulating innate immune pathways (e.g., TLR4) to study the resulting complex cellular responses and signaling network dynamics [22]. |
| Continuous Glucose Monitors | Provide high-frequency, longitudinal physiological data (e.g., for blood glucose regulation), which are ideal for single-case experimental designs and monitoring individual patient responses [24]. |

Visualizing Systems Biology Workflows

The following diagrams, generated with Graphviz, illustrate core workflows and logical relationships in integrative biological research.

Systems Biology Data Integration

N-of-1 Trial Design

Multi-Scale Biological Modeling

Data Presentation and Visualization Standards

Effective communication of complex data is a critical skill for an integrated workforce. The presentation of quantitative information must follow established principles to ensure clarity and accuracy.

Variable Classification and Presentation

Understanding data types is fundamental to presenting them correctly. Variables are divided into categorical (qualitative) and numerical (quantitative) groups, each with its own presentation requirements [25].

Table 2: Variable Types and Their Presentation in Data Visualization

| Variable Type | Subtype | Description | Recommended Charts |
|---|---|---|---|
| Categorical | Dichotomous (Binary) | Two categories only (e.g., Yes/No) [25] | Bar chart, Pie chart [25] |
| Categorical | Nominal | Three or more categories with no ordering (e.g., blood types) [25] | Bar chart, Pie chart [25] |
| Categorical | Ordinal | Three or more categories with obvious ordering (e.g., Fitzpatrick skin types) [25] | Bar chart (ordered) |
| Numerical | Discrete | Observations that can only take certain numerical values (e.g., age in years) [25] | Histogram, Frequency polygon, Table with cumulative frequencies [25] |
| Numerical | Continuous | Measured on a continuous scale with many possible decimal places (e.g., height, blood pressure) [25] | Histogram (after categorization) [25] |

Guidelines for Effective Data Displays

- Self-Explanatory Graphics: Every table or graph should be understandable without needing to read the referring text. Titles, legends, and other explanatory information must be included on the same page [25] [26].

- Bar Chart Optimization: To make bar charts easily interpretable, augment the visual cue with the actual number placed on or next to the bar, provide a clear scale, order bars by performance, and use easily readable colors [26].

- Color and Accessibility: Color palettes for data visualization must be accessible, considering contrast ratios and color vision deficiencies. The Carbon Design System, for example, requires a 3:1 contrast ratio against the background for its categorical palette and incorporates features like divider lines and tooltips to assist comprehension [27]. A conventional starting point for categorical palettes is 5-7 distinct colors [28].

- Table Size Limitation: Large tables with dozens of data points can be intimidating and difficult to process. It is advisable to show no more than seven providers or seven measures in a single table to avoid overwhelming readers [26].
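The 3:1 contrast requirement mentioned in the guidelines above can be checked programmatically. The sketch below implements the WCAG 2.x relative-luminance and contrast-ratio formulas for sRGB hex colors; the palette entries are hypothetical examples rather than colors taken from the Carbon Design System, and the 3:1 threshold is applied here as a simple pass/fail screen.

```python
def _linearize(channel_8bit: int) -> float:
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color given as '#rrggbb'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(color_a: str, color_b: str) -> float:
    """WCAG contrast ratio, from 1 (identical) to 21 (black on white)."""
    la, lb = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)


# Hypothetical categorical palette checked against a white background.
background = "#ffffff"
palette = ["#000000", "#767676", "#ffff00"]

for color in palette:
    ratio = contrast_ratio(color, background)
    status = "ok" if ratio >= 3.0 else "too low"
    print(f"{color}: {ratio:.2f}:1 ({status})")
```

A check like this covers luminance contrast only; it does not test whether categories remain distinguishable under color vision deficiencies, which needs a separate simulation or redundant encodings such as the divider lines and tooltips noted above.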

Building a workforce proficient in computational and biological integration requires a foundational shift in biomedical education and training. This entails moving from siloed curricula to integrated learning experiences that mirror the collaborative, team-based nature of modern systems biology labs [22]. The next generation of researchers must be fluent in both the languages of biology and computation, capable of designing perturbation experiments, constructing multi-scale models, and interpreting complex datasets. They must also be adept at communicating their findings through clear visualizations and adhering to rigorous experimental protocols, from large-scale trials to N-of-1 designs. By embracing these educational frontiers, the biomedical research community can cultivate the innovators needed to drive the next wave of discovery and translate systems biology principles into tangible health solutions.

The Modeler's Toolkit: QSP, MIDD, and AI-Driven Workflows

Quantitative Systems Pharmacology (QSP) has emerged as a transformative computational discipline that integrates systems biology and pharmacology to advance biomedical innovation. QSP employs mathematical models to characterize biological systems, disease processes, and drug mechanisms, creating a crucial bridge between traditional PK/PD modeling and the complex network biology that underpins physiological and pathological states [29]. This approach represents an evolution beyond conventional pharmacometric methods by incorporating mechanistic, multi-scale representations of biological systems to simulate drug effects from molecular targets to clinical outcomes [30].