Synthetic Biology Toolkits and Registries: A Comprehensive Guide for Researchers and Drug Developers

Abstract

This article provides a comprehensive overview of the rapidly evolving landscape of synthetic biology toolkits and registries, tailored for researchers, scientists, and drug development professionals. It explores the foundational principles of tool registries and their role in the biological design cycle, details methodological applications in bioproduction, biosensing, and therapeutic development, addresses key challenges in troubleshooting and optimization for real-world deployment, and offers a framework for the validation and comparative selection of tools. By synthesizing current resources and emerging trends, this guide aims to help professionals efficiently select and use computational and experimental tools to accelerate innovation in biomedicine.

Navigating the Synthetic Biology Toolkit: Core Principles and Key Resources

Synthetic biology is an interdisciplinary field that aims to transform our ability to probe, manipulate, and interface with living systems by applying engineering principles to biological design [1]. It departs from traditional genetic engineering through its emphasis on standardization, modularization, and abstraction [2] [1]. A key consequence of these principles is that labor, expertise, and complexity can be separated at each level of a biological design hierarchy [2]. Dividing systems into levels (DNA, parts, devices, and systems) lets researchers manage biological complexity and engineer biological functions more efficiently and predictably [1].

The field's theoretical foundation is realized through the biological design cycle, a forward-design approach in which a biological system is specified, modeled, analyzed, assembled, and tested for functionality [2]. This iterative process of design, build, and test is central to synthetic biology workflows [2]. The expansion of the synthetic biology toolkit can be attributed to a dynamic community of academic researchers, iGEM undergraduate students, and do-it-yourself biology (DIYbio) enthusiasts, all contributing to the development of standardized, characterized, and reusable biological components [2]. This guide provides a technical overview of the core elements of the synthetic biology toolkit, framed within modern engineering paradigms.

The Core Toolkit: A Hierarchical Architecture

The synthetic biology toolkit is structured hierarchically, allowing for complexity management through defined abstraction levels. This structure enables the predictable composition of simple biological components into increasingly complex systems.

Foundational Components: Bioparts and Standards

At the molecular level, bioparts are the basic building blocks of synthetic biology. These discrete DNA sequences encode specific biological functions [2]. Examples include promoters, ribosomal binding sites (RBS), coding sequences (CDS), and terminators [2]. The concept of the biopart is fundamental; it allows a particular DNA sequence to be defined by its function, enabling complex biological functions to be conceptually separated from their native sequence contexts [2].

Standardization is critical for ensuring interoperability and predictability. Physical assembly standards, such as the BioBrick standard, flank biological parts with standardized sequences so that parts can be combined interchangeably through repeated rounds of the same restriction-enzyme digestion and ligation [2] [3] [1]. However, the field is increasingly shifting toward assembly methods that do not rely on this kind of restriction-enzyme-mediated cloning, in part because the scar sequences it leaves at part junctions can impair functional assembly [1]. Functional assembly standards instead focus on identifying sequence interfaces that allow predictable functional coupling between parts, independent of their specific sequences [1]. Additionally, measurement and reporting standards ensure reliable characterization data, supporting the sharing and reuse of parts across the research community [1].
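To make the idea of a standardized junction concrete, the sketch below models only the central step of a BioBrick (RFC10-style) assembly: an upstream part cut with SpeI is ligated to a downstream part cut with XbaI. Both enzymes leave the same CTAG overhang, so the ends are compatible, but the ligated junction is a hybrid site that neither enzyme recognizes, leaving the 8-bp scar TACTAGAG between the parts. The prefix and suffix strings are the commonly cited RFC10 sequences; the function names, the toy part sequences, and the omission of the EcoRI/PstI ends, vector backbone, and selection steps are simplifying assumptions of this illustration.

```python
# Sketch of a BioBrick (RFC10-style) junction between two parts, top strand only.
# Simplifications (assumptions): only the SpeI/XbaI junction is modelled; the
# EcoRI/PstI ends, vector backbone and selection steps are ignored.

PREFIX = "GAATTCGCGGCCGCTTCTAGAG"  # RFC10 prefix (EcoRI, NotI, XbaI)
SUFFIX = "TACTAGTAGCGGCCGCTGCAG"   # RFC10 suffix (SpeI, NotI, PstI)

XBAI = "TCTAGA"  # cuts T^CTAGA, leaving a 5'-CTAG overhang
SPEI = "ACTAGT"  # cuts A^CTAGT, leaving the same 5'-CTAG overhang
ILLEGAL_SITES = {"EcoRI": "GAATTC", "XbaI": XBAI, "SpeI": SPEI, "PstI": "CTGCAG"}


def check_part(seq: str) -> None:
    """Raise ValueError if a part contains a restriction site used by the standard."""
    for enzyme, site in ILLEGAL_SITES.items():
        if site in seq:
            raise ValueError(f"part contains an internal {enzyme} site ({site})")


def join_parts(upstream: str, downstream: str) -> str:
    """Top strand after ligating upstream (SpeI-cut) to downstream (XbaI-cut)."""
    check_part(upstream)
    check_part(downstream)
    # Upstream top strand ends right after the 'A' of A^CTAGT in its suffix.
    left = upstream + SUFFIX[: SUFFIX.index(SPEI) + 1]
    # Downstream top strand starts at the 'C' of T^CTAGA in its prefix; this
    # strand carries the CTAG overhang that pairs with the upstream overhang.
    right = PREFIX[PREFIX.index(XBAI) + 1 :] + downstream
    return left + right


if __name__ == "__main__":
    joined = join_parts("ATGAAACCC", "GGGTTTTAA")  # toy sequences, not real parts
    scar = joined[len("ATGAAACCC") : -len("GGGTTTTAA")]
    print(scar)                          # TACTAGAG
    print(XBAI in scar, SPEI in scar)    # False False
```

The resulting scar is 8 bp long (not a multiple of three) and contains a TAG triplet, which is one reason this junction complicates in-frame protein fusions.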

Table 1: Major Registries of Standard Biological Parts

| Registry Name | Key Features | Scale | Primary Maintainer |
|---|---|---|---|
| iGEM Registry of Standard Biological Parts | Open registry with parts of variable quality; mostly uncharacterized | Over 12,000 parts across 20 categories [2] | iGEM Community |
| BIOFAB | Professional registry with expansive libraries of characterized DNA-based regulatory elements [2] | Not specified | BIOFAB |
| SynBITS (Synthetic Biology Index of Tools and Software) | Online community structured according to the design cycle [2] | Not specified | Research community |

From Parts to Devices and Systems

Bioparts are combined to form devices, which are integrated biological units that perform defined functions. Examples include genetic toggle switches and oscillators (repressilators) that encode dynamic, computational operations [2] [1]. These devices can be further integrated into systems that execute complex tasks, such as biosynthetic pathways for chemical production or engineered cellular therapies [1].

A significant challenge in this hierarchical integration is context dependency, where the behavior of a part or device changes depending on its surrounding genetic environment [2]. Developing synthetic passive and active insulator sequences is one strategy to increase predictability by reducing this context dependency [2]. Furthermore, chassis selection—the choice of host organism—is a critical design decision, as the chassis provides the metabolic environment, energy sources, and molecular machinery that directly influence the behavior and function of the synthetic system [2].

The Design Phase: Engineering Predictable Biology

The design phase focuses on specifying biological systems with predictable behaviors, leveraging computational tools and modeling to plan genetic constructs before physical assembly.

Computational Tools and Modeling

Computational biology and modeling are essential for predicting the behavior of synthetic biological systems. In silico modeling allows researchers to simulate system dynamics, optimize designs, and identify potential failures prior to construction [2]. Early successes like the toggle switch and repressilator demonstrated this approach, though they also revealed limitations, as their in vivo behavior displayed stochastic fluctuations not fully captured by initial models [2]. The field is now adopting high-throughput characterization platforms that use automated liquid-handling robots and plate readers to test entire biopart libraries in parallel, generating data to refine models and improve predictability [2].
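As an illustration of the in silico modeling described above, the sketch below integrates the two-repressor model commonly used to describe the genetic toggle switch, du/dt = a1/(1 + v^b) - u and dv/dt = a2/(1 + u^g) - v, where u and v are dimensionless repressor concentrations. The parameter values, the forward-Euler integrator and the step size are illustrative assumptions, not fitted values. Because the model is deterministic, it reproduces bistability (the final state depends on the starting point) but, as noted above, it cannot capture the stochastic fluctuations observed in vivo.

```python
# Deterministic toggle-switch model (two mutually repressing genes), integrated
# with forward Euler. Parameter values are illustrative, not fitted to data.


def simulate_toggle(u0, v0, alpha1=10.0, alpha2=10.0, beta=2.0, gamma=2.0,
                    dt=0.01, t_end=50.0):
    """Return (u, v) after integrating the toggle-switch ODEs from (u0, v0)."""
    u, v = u0, v0
    for _ in range(int(t_end / dt)):
        du = alpha1 / (1.0 + v ** beta) - u   # u is repressed by v and decays
        dv = alpha2 / (1.0 + u ** gamma) - v  # v is repressed by u and decays
        u, v = u + dt * du, v + dt * dv
    return u, v


if __name__ == "__main__":
    # Two different starting points settle into two different stable states.
    print(simulate_toggle(5.0, 0.0))  # u high, v low
    print(simulate_toggle(0.0, 5.0))  # u low, v high
```

A stochastic simulation (for example, a Gillespie-type algorithm) would be the natural next step for studying the cell-to-cell variability that this deterministic sketch ignores.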

Artificial intelligence (AI) is increasingly being applied to biological design. AI-driven tools such as AlphaFold have improved protein structure prediction, while generative AI models are being used for de novo protein design, enabling the creation of novel protein structures with atom-level precision beyond evolutionary constraints [4]. AI-powered platforms are also being used to accelerate gene synthesis and optimize biomanufacturing processes [5] [6].

Software Platforms for Biological Design

Integrated software platforms streamline the design process. For example, TeselaGen's platform provides an automated toolkit for DNA design, sequence alignment, and genetic schematic visualization [7]. Such platforms often include features for:

- Intelligent Sequence Partitioning: Analyzing lengthy DNA sequences and dividing them into optimal fragments for assembly methods like Gibson or Golden Gate [7].

- Combinatorial Design and Validation: Allowing researchers to define validation logic to constrain designs, such as ensuring all coding sequences begin with "ATG," thereby reducing human error [7].

- Inventory Integration: Cross-referencing existing DNA part inventories to identify reusable components, reducing synthesis costs and accelerating project timelines [7].
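The combinatorial-design and validation features listed above can be illustrated with a small, hypothetical sketch (it is not TeselaGen's implementation or API): candidate designs are enumerated as the Cartesian product of part libraries, and a user-defined rule, here the "every CDS begins with ATG" example, filters out invalid combinations before anything is ordered or built. The `Part` type, function names, part names and sequences are toy placeholders.

```python
from dataclasses import dataclass
from itertools import product
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class Part:
    name: str
    kind: str      # e.g. "promoter", "rbs", "cds", "terminator"
    sequence: str


Design = Tuple[Part, ...]


def cds_starts_with_atg(design: Design) -> bool:
    """Validation rule: every coding sequence in the design must begin with ATG."""
    return all(p.sequence.upper().startswith("ATG") for p in design if p.kind == "cds")


def enumerate_designs(libraries: Dict[str, List[Part]], order: List[str],
                      rules: List[Callable[[Design], bool]]) -> List[Design]:
    """Build every combination in the given slot order; keep those passing all rules."""
    candidates = product(*(libraries[slot] for slot in order))
    return [d for d in candidates if all(rule(d) for rule in rules)]


# Toy libraries (hypothetical names and sequences).
libraries = {
    "promoter": [Part("pA", "promoter", "TTGACA"), Part("pB", "promoter", "TTTACA")],
    "rbs": [Part("rbs1", "rbs", "AGGAGG")],
    "cds": [Part("gfp_ok", "cds", "ATGAGTAAAGGA"), Part("gfp_bad", "cds", "GTGAGTAAAGGA")],
    "terminator": [Part("t1", "terminator", "GCGCGC")],
}

valid = enumerate_designs(libraries, ["promoter", "rbs", "cds", "terminator"],
                          [cds_starts_with_atg])
for design in valid:
    print(" -> ".join(p.name for p in design))
```

Because rules are ordinary functions, stricter or looser checks (for example, also accepting GTG, which bacteria can use as a start codon) can be added without changing the enumerator.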

The Build Phase: DNA Assembly Methodologies

The build phase translates designed genetic systems into physical DNA molecules. An expanding repertoire of DNA assembly methodologies, grouped into four broad strategies, enables the construction of genetic circuits, pathways, and even entire genomes.

Table 2: DNA Assembly Methodologies and Techniques

| Assembly Strategy | Key Technique(s) | Principle | Typical Application Scale |
|---|---|---|---|
| Restriction Enzyme-Based | BioBrick Assembly | Uses standardized restriction sites and ligation to combine parts [2]. | Parts to Devices |
| Overlap-Directed | Gibson Assembly, Golden Gate Assembly | Uses homologous overlaps (Gibson) or type IIS restriction enzymes (Golden Gate) for scarless, multi-part assembly [2] [7]. | Devices to Systems |
| Recombination-Based | Transformation-Associated Recombination (TAR), MAGE | Uses homologous recombination in vivo (e.g., in yeast) or in vitro to assemble large constructs or perform genome editing [1]. | Systems to Genomes |
| DNA Synthesis | de novo Gene Synthesis | Chemically synthesizes DNA oligonucleotides and assembles them into gene-length fragments or longer [1]. | Parts to Systems |

Experimental Protocol: Gibson Assembly

Gibson Assembly is a powerful one-step, isothermal in vitro method for assembling multiple DNA fragments. The following provides a detailed methodology [2] [7]:

- Fragment Preparation: Generate DNA fragments whose ends share 20-40 bp of homologous sequence with the adjacent fragments. Inserts are typically generated by PCR with primers designed to add the overlaps; a vector backbone can also be linearized by restriction enzyme digestion.

- Master Mix Preparation: Prepare a master mix containing:

  - T5 Exonuclease: Chews back the 5' ends of the DNA fragments to create single-stranded 3' overhangs.

  - Phusion DNA Polymerase: Fills in the gaps within the annealed fragments.

  - Taq DNA Ligase: Seals the nicks in the assembled DNA backbone.

- Assembly Reaction: Mix the DNA fragments in equimolar amounts and combine them with the master mix. Incubate at 50°C for 15-60 minutes. The isothermal reaction allows all three enzymes to work simultaneously.

- Transformation: Transform the assembled product directly into competent E. coli for propagation.
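The fragment-preparation step above amounts to splitting a target sequence into pieces whose neighbors overlap by 20-40 bp. The sketch below does this with a fixed maximum fragment length and overlap, then simulates a linear assembly by merging fragments on their overlaps to confirm the original sequence is recovered. The function names and parameters are hypothetical, and the approach is deliberately naive: real design tools also consider overlap melting temperature, GC content, repeats and secondary structure, and a circular assembly would additionally need the last fragment to overlap the vector.

```python
import random
from typing import List


def partition_with_overlaps(seq: str, max_len: int, overlap: int) -> List[str]:
    """Split seq into fragments of at most max_len; neighbours share `overlap` bp."""
    if not 20 <= overlap <= 40:
        raise ValueError("Gibson overlaps are typically 20-40 bp")
    if max_len <= overlap:
        raise ValueError("max_len must exceed the overlap length")
    fragments, start = [], 0
    while True:
        end = min(start + max_len, len(seq))
        fragments.append(seq[start:end])
        if end == len(seq):
            return fragments
        start = end - overlap  # next fragment re-uses the last `overlap` bases


def simulate_assembly(fragments: List[str], overlap: int) -> str:
    """Join linear fragments, checking that each neighbouring pair shares its overlap."""
    assembled = fragments[0]
    for frag in fragments[1:]:
        if assembled[-overlap:] != frag[:overlap]:
            raise ValueError("adjacent fragments do not share a homologous overlap")
        assembled += frag[overlap:]
    return assembled


if __name__ == "__main__":
    random.seed(0)
    target = "".join(random.choice("ACGT") for _ in range(1000))  # toy target
    frags = partition_with_overlaps(target, max_len=300, overlap=30)
    print([len(f) for f in frags])  # [300, 300, 300, 190]
    assert simulate_assembly(frags, overlap=30) == target
```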

Experimental Protocol: Multiplex Automated Genome Engineering (MAGE)

MAGE is used for large-scale, targeted genome editing and is highly effective for pathway optimization [1].

- Oligonucleotide Design: Design a pool of single-stranded DNA (ssDNA) oligonucleotides containing the desired allelic replacements (e.g., point mutations, RBS modifications). The oligos must be flanked by homology arms complementary to the target genomic loci.

- Preparation of Cells: Use an E. coli strain that expresses the bacteriophage λ Red β protein, a single-stranded DNA-annealing protein that promotes recombination of the oligos into the genome.

- Cycling Process:

  - The cells are made electrocompetent.

  - The pool of ssDNA oligos is introduced into the cells via electroporation.

  - Cells are allowed to recover and grow, incorporating the mutations.

  - This cycle is repeated multiple times (automation enables high throughput) to accumulate diverse genomic modifications across a population of cells.

- Screening: A high-throughput screening method is essential to identify cell variants with the desired combination of phenotypic traits from the diverse library generated.
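Why repeated cycles matter can be seen with a back-of-the-envelope model. Suppose each targeted allele is converted with the same probability p per cycle, independently of other targets and of earlier cycles; this is a strong simplifying assumption that ignores fitness effects, oligo-specific efficiencies and population dynamics. The chance that a given allele has been converted after c cycles is then 1 - (1 - p)^c, and the number of converted targets per cell follows a binomial distribution. The efficiency value and function names in the sketch are hypothetical.

```python
from math import comb
from typing import List


def per_allele_conversion(p: float, cycles: int) -> float:
    """Probability that one target has been converted after `cycles` rounds."""
    return 1.0 - (1.0 - p) ** cycles


def converted_target_distribution(n_targets: int, p: float, cycles: int) -> List[float]:
    """P(k of n_targets converted) for k = 0..n_targets, assuming independence."""
    q = per_allele_conversion(p, cycles)
    return [comb(n_targets, k) * q ** k * (1.0 - q) ** (n_targets - k)
            for k in range(n_targets + 1)]


if __name__ == "__main__":
    p = 0.2  # hypothetical per-cycle allelic replacement efficiency
    for cycles in (1, 5, 15):
        dist = converted_target_distribution(n_targets=10, p=p, cycles=cycles)
        mean = sum(k * pk for k, pk in enumerate(dist))
        print(f"{cycles:>2} cycles: mean targets converted = {mean:.2f}")
```

The same distribution also indicates how large a population must be screened to find cells carrying a particular number of modifications.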

Diagram: MAGE Workflow for Genome Engineering. This diagram outlines the iterative cycle of introducing genetic modifications using the MAGE platform.

The Test Phase: Rapid Prototyping and Characterization

The test phase involves measuring the performance of the constructed biological system against design specifications, closing the loop in the design cycle.

Measurement and Characterization Tools

Rapid prototyping platforms are crucial for accelerating the test phase. These often integrate automation, such as liquid-handling robots coupled with plate readers, to enable high-throughput characterization of genetic constructs [2]. Microfluidics approaches are also gaining traction for their ability to perform assays at small scales and with high precision [2]. These platforms, when combined with automated data analysis, provide the basis for the rapid feedback required for iterative design improvement.
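A typical first step in the automated analysis of plate-reader data is to subtract medium-only blanks and normalize fluorescence by optical density, so that constructs can be compared per unit of cell density. The sketch below shows that calculation for replicate end-point wells. The construct names and readings are made-up placeholders, and real pipelines usually add calibration to standard units, time-course handling and outlier checks.

```python
from statistics import mean, stdev
from typing import Dict, List, Tuple

# Hypothetical end-point readings: construct -> list of (fluorescence, OD600) replicates.
readings: Dict[str, List[Tuple[float, float]]] = {
    "blank_media": [(120.0, 0.040), (118.0, 0.041), (122.0, 0.039)],
    "construct_A": [(5120.0, 0.540), (5320.0, 0.560), (4920.0, 0.520)],
    "construct_B": [(1620.0, 0.540), (1720.0, 0.560), (1520.0, 0.520)],
}


def normalized_expression(data: Dict[str, List[Tuple[float, float]]],
                          blank_key: str = "blank_media") -> Dict[str, Tuple[float, float]]:
    """Return {construct: (mean, sd)} of blank-corrected fluorescence / blank-corrected OD."""
    blank_fl = mean(fl for fl, _ in data[blank_key])
    blank_od = mean(od for _, od in data[blank_key])
    results = {}
    for name, wells in data.items():
        if name == blank_key:
            continue
        if any(od <= blank_od for _, od in wells):
            raise ValueError(f"{name}: OD not above blank")
        ratios = [(fl - blank_fl) / (od - blank_od) for fl, od in wells]
        results[name] = (mean(ratios), stdev(ratios))
    return results


for name, (m, sd) in normalized_expression(readings).items():
    print(f"{name}: {m:.0f} +/- {sd:.0f} a.u. per OD")
```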

The evaluation of synthetic biological systems extends beyond performance to include biosafety and bioethics. For novel, structurally unprecedented proteins created through de novo design, robust risk assessments are required to address potential risks such as immune reactions, disruptions to native cellular pathways, and environmental persistence [4]. Future methodologies are expected to integrate closed-loop validation with multi-omics profiling for comprehensive risk assessments [4].

The Role of Synthetic Data

Synthetic data—artificially generated datasets that replicate the statistical characteristics of real experimental data—are emerging as a valuable tool in the test phase [8]. They can mitigate concerns about data privacy and accessibility when sharing results. However, a challenge is the lack of standardized evaluation metrics. Tools like SynthRO (Synthetic data Rank and Order) provide user-friendly dashboards for benchmarking synthetic health data across three key metric categories [8]:

- Resemblance Metrics: Evaluate if the correlation structure among features of the original dataset is preserved.

- Utility Metrics: Assess the usability of outcomes from machine learning models trained on the synthetic data.

- Privacy Metrics: Evaluate the risk of disclosing private information from the original dataset.
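To make the resemblance category concrete, one simple check (an illustrative choice, not necessarily how SynthRO computes its metrics) is to compute the pairwise Pearson correlations of the real and synthetic datasets and report the mean absolute difference between them: 0 means the correlation structure is perfectly preserved, and the largest possible value is 2. The function names, column names and values are toy assumptions.

```python
from itertools import combinations
from math import sqrt
from typing import Dict, List


def pearson(x: List[float], y: List[float]) -> float:
    """Pearson correlation coefficient of two equal-length samples."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def correlation_gap(real: Dict[str, List[float]], synth: Dict[str, List[float]]) -> float:
    """Mean absolute difference between pairwise correlations of real vs. synthetic data."""
    pairs = list(combinations(sorted(real), 2))
    diffs = [abs(pearson(real[a], real[b]) - pearson(synth[a], synth[b])) for a, b in pairs]
    return sum(diffs) / len(diffs)


real = {"age": [34, 51, 47, 29, 62], "bmi": [22.1, 27.5, 26.0, 21.4, 30.2],
        "glucose": [88, 110, 104, 85, 125]}
synthetic = {"age": [36, 49, 45, 31, 60], "bmi": [23.0, 26.8, 25.1, 22.0, 29.4],
             "glucose": [90, 107, 101, 87, 121]}
print(f"correlation gap: {correlation_gap(real, synthetic):.3f}")
```

A single summary number like this is easy to rank across generators, but it hides which variable pairs drift, so per-pair differences are worth inspecting as well.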

Applications and Research Reagent Solutions

Synthetic biology toolkits are being applied across diverse sectors, including medicine, industry, and agriculture. In healthcare, they enable precision medicine through tailored therapies, novel drug discovery initiatives, and the creation of engineered tissues [5] [6] [9]. Industrially, they are used to produce biofuels and for the sustainable biomanufacturing of chemicals and materials [5].

Table 3: Essential Research Reagent Solutions in Synthetic Biology

| Reagent/Tool Category | Specific Examples | Function in Research |
|---|---|---|
| Oligonucleotides & Synthetic DNA | Custom DNA/RNA Oligos, Gene Fragments | Essential for gene synthesis, CRISPR-based genome editing, and molecular diagnostics [5] [9]. |
| Cloning & Assembly Kits | Gibson Assembly Kit, Golden Gate Kit | Provide optimized enzymes and buffers for efficient, standardized assembly of DNA parts [9]. |
| Chassis Organisms | E. coli, S. cerevisiae, B. subtilis | Engineered host cells that provide the structural and metabolic framework for synthetic systems [2] [9]. |
| Enzymes | Restriction Enzymes, Polymerases, Ligases | Molecular scissors, copiers, and glue for manipulating DNA in vitro [9]. |
| Software Platforms | TeselaGen, AI-driven Protein Design Tools | Enable digital biological design, project management, and data analysis, reducing human error [4] [7]. |

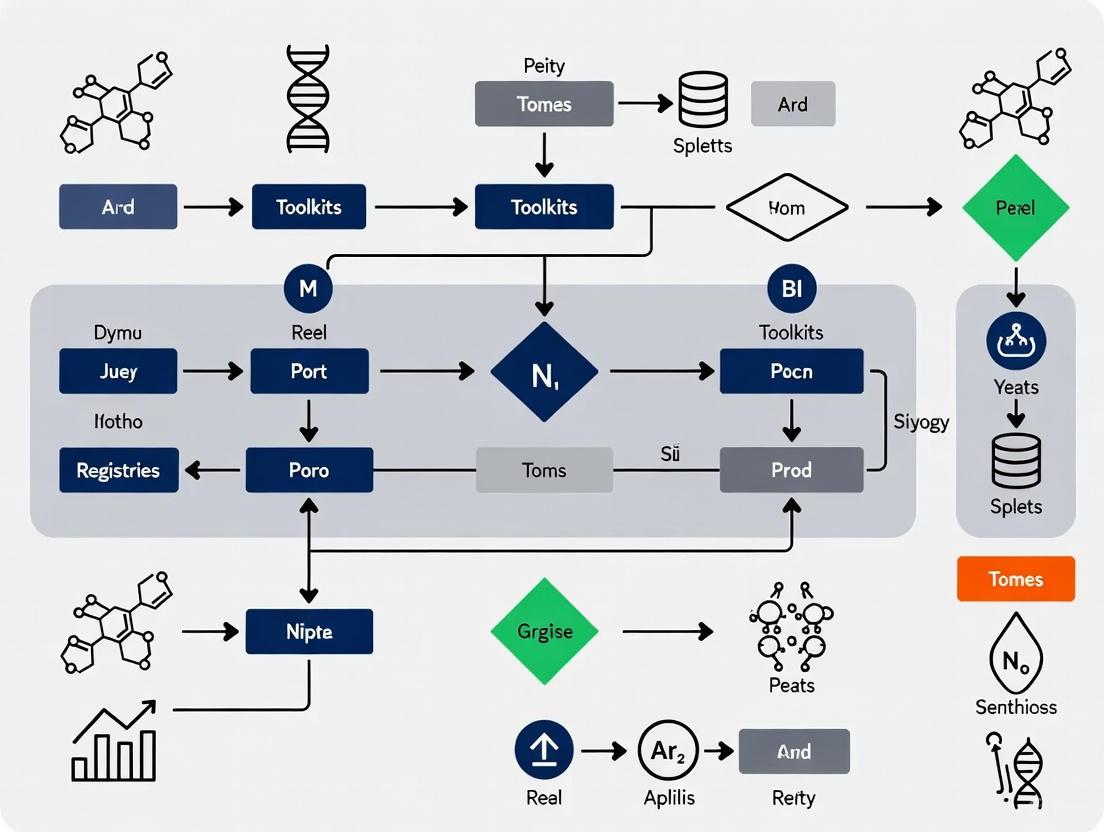

Diagram: DBTL Cycle in Synthetic Biology. The core engineering cycle in synthetic biology is an iterative process of Design, Build, Test, and Learn.

The synthetic biology toolkit has evolved from a collection of ad hoc genetic engineering techniques into a principled engineering discipline founded on standardized bioparts, hierarchical design, and iterative cycles of design, build, and test. The ongoing integration of AI-driven design, automated fabrication, and high-throughput characterization is expected to further advance the field's capacity to address complexity. While challenges in predictability, context dependency, and regulatory frameworks remain, the continued expansion and maturation of the toolkit are paving the way for transformative applications across medicine, manufacturing, and environmental sustainability. Looking ahead, these tools are expected to be integrated into a hierarchical design framework that progresses from tailored de novo functional protein modules to fully synthetic cellular systems [4].

The Role of Tool Registries and the Biological Design Cycle

The engineering of biological systems remains a complex challenge, requiring iterative refinement to achieve desired specifications. The systematic application of the Design-Build-Test-Learn (DBTL) cycle, supported by comprehensive tool registries, is fundamental to advancing synthetic biology from an ad hoc practice to a predictable engineering discipline. This section examines the role tool registries play in supporting each phase of the biological design cycle. It further explores how the integration of machine learning and high-throughput experimental data is beginning to bridge the predictive gap that has traditionally limited the efficiency of biological design. By providing researchers with structured methodologies and curated resources, these frameworks can significantly accelerate the development of novel biologics, sustainable biomaterials, and precision therapies.

The Biological Design Cycle: A Framework for Engineering Living Systems

Synthetic biology research and development predominantly follows an iterative Design-Build-Test-Learn (DBTL) loop [10]. This recursive engineering process allows researchers to progressively refine biological systems until they meet desired specifications for a particular application, such as a target titer, rate, or yield [11].

- Design: In this initial phase, researchers define the biological system to be created and plan the necessary genetic modifications. This involves designing new genetic circuits, selecting standardized biological parts from libraries, or using computational models to simulate system behavior before physical implementation [10].

- Build: The designed genetic sequences are physically realized in this phase. Researchers synthesize or assemble the required DNA using techniques such as gene synthesis, cloning, and CRISPR-Cas9 genome editing, inserting the constructs into microbial chassis or other host organisms [10].

- Test: The constructed biological system is experimentally evaluated to measure its performance against the design goals. This may involve assaying gene expression, measuring the production of a desired molecule, or profiling system behavior using multi-omics technologies (transcriptomics, proteomics, metabolomics) [11] [10].

- Learn: Data collected from the Test phase is analyzed to extract insights into the system's behavior. This critical step informs the next Design phase, enabling more intelligent and effective designs in each subsequent cycle [11] [10]. The Learn phase has traditionally been the most weakly supported, but machine learning (ML) is increasingly used to leverage experimental data for improved prediction and design [11].

Quantifying the Engineering Bottleneck

The DBTL cycle can be analyzed as a search through the vast space of possible biological designs, whose efficiency is governed by the amount of information gained per test cycle [12]. The long development times of pioneering products such as artemisinin and propanediol, which required hundreds of person-years, underscore the historical inefficiency of this search [11]. The primary bottleneck is a critical gap: while high-level design tools and low-level build and test tools have advanced rapidly, predictive models accurate enough to reliably select the best designs for testing are often lacking [12]. This "biological design barrier" results in multiple, costly iterations. The overall engineering time is the product of the time per cycle and the number of cycles required, and, by an Amdahl's-law argument, accelerating only the Build and Test phases shortens each cycle by a bounded amount while leaving the number of cycles unchanged. Even large improvements in Build and Test speed therefore yield diminishing returns if an ineffective Learn phase keeps the number of cycles high [12].
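The argument can be written out explicitly. With N cycles and per-cycle phase durations t_D, t_B, t_T and t_L, the total engineering time and the fraction f of each cycle spent in Build and Test are given below. If only those two phases are accelerated by a factor s, Amdahl's law bounds the speedup of a single cycle; the numbers in the worked example are illustrative, not measured values.

$$
T_{\text{total}} = N\,(t_D + t_B + t_T + t_L), \qquad f = \frac{t_B + t_T}{t_D + t_B + t_T + t_L}
$$

$$
S_{\text{cycle}}(s) = \frac{1}{(1 - f) + f/s} \le \frac{1}{1 - f}
$$

For example, with f = 0.5 and s = 10, S = 1/(0.5 + 0.05) ≈ 1.82, and no Build/Test speedup can exceed 2 per cycle. Because N is unchanged by this acceleration, further reductions in total time must come from better Design and Learn steps that reduce N.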

Diagram: The iterative DBTL cycle in synthetic biology. Tool registries and predictive models directly support the Design phase, which is informed by data from the Learn phase.

Tool registries are curated collections of databases, computational tools, and experimental methods that improve the accessibility, sharing, and reuse of resources critical for synthetic biology [13]. They serve as a foundational infrastructure for the field, helping researchers navigate the rapidly expanding ecosystem of bioinformatics resources.

SynBioTools: A Specialized Registry for Synthetic Biology

SynBioTools is an example of a comprehensive, one-stop facility dedicated specifically to synthetic biology tools [13]. It fills a gap: according to its developers, no previous registry comprehensively covered all aspects of synthetic biology. Its construction involved:

- Data Extraction: Tools were systematically extracted from 37 review articles using a custom scientific table-extraction tool named SCITE (SCIentific Table Extraction) [13].

- Categorization: Resources are grouped into nine application-specific modules: compounds, biocomponents, protein, pathway, gene-editing, metabolic modeling, omics, strains, and others. This functional grouping aids users in selecting the right tool for their specific biosynthetic task [13].

- Enhanced Metadata: Each tool is accompanied by its URL, description, source references, and citation counts, which helps users gauge a tool's popularity and reliability [13].
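The metadata fields listed above (name, module, URL, description, references, citation count) map naturally onto a simple record type. The sketch below is a hypothetical local model, not the SynBioTools schema or API: `ToolEntry` and `shortlist` are illustrative names, the module labels follow the nine modules described above, and the catalog entries and counts are placeholders. It filters entries by module and ranks them by citation count, mirroring how a user might shortlist tools; citation counts are only a rough proxy for reliability.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ToolEntry:
    name: str
    module: str               # one of the nine application modules, e.g. "pathway"
    url: str
    description: str
    citations: int = 0
    references: List[str] = field(default_factory=list)


MODULES = {"compounds", "biocomponents", "protein", "pathway", "gene-editing",
           "metabolic modeling", "omics", "strains", "others"}


def shortlist(entries: List[ToolEntry], module: str, top_n: int = 3) -> List[ToolEntry]:
    """Return up to top_n entries from one module, most-cited first."""
    if module not in MODULES:
        raise ValueError(f"unknown module: {module}")
    in_module = [e for e in entries if e.module == module]
    return sorted(in_module, key=lambda e: e.citations, reverse=True)[:top_n]


catalog = [  # placeholder entries, not real registry data
    ToolEntry("PathToolA", "pathway", "https://example.org/a", "Pathway search", 120),
    ToolEntry("PathToolB", "pathway", "https://example.org/b", "Retrosynthesis", 340),
    ToolEntry("EditToolC", "gene-editing", "https://example.org/c", "gRNA design", 900),
]
for entry in shortlist(catalog, "pathway"):
    print(entry.name, entry.citations)
```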

A critical finding is that approximately 57% of the resources in SynBioTools are not listed in bio.tools, the dominant general-purpose bioinformatics tool registry [13]. This highlights the unique value of specialized registries in uncovering resources that might otherwise be overlooked.

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key categories of tools and reagents essential for executing the synthetic biology DBTL cycle, along with their primary functions.

Table: Key Research Reagent Solutions and Tools in Synthetic Biology

| Tool Category | Specific Examples | Primary Function in DBTL Cycle |
|---|---|---|
| Oligonucleotide/Synthetic DNA [5] [6] | Custom gene fragments | Building blocks for gene construction; essential for the Build phase. |
| Cloning Technology Kits [5] [6] | Assembly kits (e.g., Gibson Assembly) | Standardized methods for assembling DNA parts; used in the Build phase. |
| Genome Editing Technology [5] [10] | CRISPR-Cas9 | Precise modification of an organism's genome; central to the Build phase. |
| Enzymes [5] [6] | Polymerases, restriction enzymes | Catalyze DNA synthesis, digestion, and modification; critical for Build. |
| Chassis Organisms [5] [6] | E. coli, S. cerevisiae | Optimized host organisms that carry the built constructs; the platform for Test. |
| DNA Sequencing [10] | High-throughput sequencing | Verification of constructed DNA sequences; used in Test and Learn. |
| Computational Modeling Tools [13] [11] | Pathway prediction, ML models | In silico design and prediction; supports Design and Learn. |

Quantitative Landscape of Synthetic Biology Tools

The development of databases and computational tools has accelerated rapidly in recent decades. An analysis of the tools cataloged in SynBioTools reveals clear temporal and geographical trends that reflect the growth of the field.

Table: Temporal Distribution of Tool Development by Application Module (Based on SynBioTools Data [13])

| Application Module | Pre-2000 | 2000-2009 | 2010-2019 | 2020-Present |
|---|---|---|---|---|
| Pathway Design | Foundational | Steady Growth | High Growth | Continued Innovation |
| Protein Design | Limited | Emergence | Rapid Growth | AI-Driven Advances |
| Gene Editing | Basic Tools | Key Discoveries | CRISPR Revolution | Precision Editing |
| Metabolic Modeling | Foundational | Constraint-Based | Integration with Omics | ML Enhancement |
| Omics Analysis | Low Throughput | Technologies Emerge | High-Throughput Standard | Single-Cell & Multi-Omics |

These data indicate that while tools in areas such as pathway design have been developed over a longer timeframe, more specialized modules such as protein design and gene editing have seen most of their growth within the last 10-15 years, coinciding with technological breakthroughs [13]. Furthermore, the United States, China, and Germany are the top three countries developing the tools and databases listed in SynBioTools, indicating their leading roles in the field's computational infrastructure [13].

Experimental Protocols: Integrating Machine Learning into the DBTL Cycle

Bridging the predictive gap in the Learn phase is a primary focus of modern synthetic biology. The following protocol details the use of machine learning to enhance this phase, as exemplified by the Automated Recommendation Tool (ART).

Protocol: Machine Learning-Guided Strain Recommendation with ART

Objective: To use machine learning and probabilistic modeling in the Learn phase of the DBTL cycle to recommend genetic modifications that optimize the production of a target molecule [11].

Materials and Reagents:

- Experimental data from previous DBTL cycles (e.g., proteomics data, promoter combination data, production titers).

- Computational environment with installed ART software (https://github.com/JBEI/art).

- Standard reagents for the Build and Test phases as relevant to the project (e.g., those listed in the reagent tables above).

Methodology:

- Data Import and Preprocessing:

  - Import training data into ART. Data can be loaded directly from the Experiment Data Depot (EDD) online tool or from EDD-style CSV files [11].

  - The input data (features, X) can be of various types, such as targeted proteomics measurements of pathway enzymes or combinatorial promoter activities. The response variable (Y) is typically the production titer, rate, or yield of the desired molecule [11].

  - Format the data into a table in which each row represents a tested strain and the columns hold the input features and the output production level.

- Model Training and Uncertainty Quantification:

  - ART employs a Bayesian ensemble approach, combining models from the scikit-learn library, to train a predictive model that links the input features to the output production [11].

  - Unlike models that provide only point estimates, ART outputs a full probability distribution for the predicted production of any new input, quantifying predictive uncertainty. This is crucial for guiding experiments effectively when data are sparse [11].

- Generating Recommendations:

  - Define the engineering objective within ART (e.g., maximize, minimize, or achieve a specified production level).

  - ART uses sampling-based optimization to search the input feature space and provide a set of recommended strains (e.g., proteomic profiles or genetic designs) predicted to achieve the goal [11].

  - The tool also estimates the probability that at least one of the recommendations will be successful, helping researchers prioritize which strains to physically build and test in the next DBTL cycle [11].
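As a simplified illustration of that last estimate: if each of n recommended strains is assigned a success probability p_i (for example, the predicted probability of exceeding a target titer) and the outcomes are treated as independent, then P(at least one success) = 1 - (1 - p_1)(1 - p_2)...(1 - p_n). The independence assumption, the function names and the probabilities below are ours; ART derives its estimate from its own predictive distributions, which this sketch does not reproduce. The second function shows how such a number could guide how many top-ranked strains to build.

```python
from math import prod
from typing import List


def prob_at_least_one_success(probs: List[float]) -> float:
    """P(at least one success) for independent trials with the given probabilities."""
    if not all(0.0 <= p <= 1.0 for p in probs):
        raise ValueError("probabilities must lie in [0, 1]")
    return 1.0 - prod(1.0 - p for p in probs)


def strains_needed(probs: List[float], target: float) -> int:
    """Smallest number of top-ranked recommendations reaching the target probability."""
    ranked = sorted(probs, reverse=True)
    for k in range(1, len(ranked) + 1):
        if prob_at_least_one_success(ranked[:k]) >= target:
            return k
    raise ValueError("target probability not reachable with these recommendations")


# Hypothetical per-strain success probabilities from a predictive model.
recommended = [0.30, 0.25, 0.20, 0.15, 0.10]
print(round(prob_at_least_one_success(recommended), 3))  # 0.679
print(strains_needed(recommended, target=0.5))           # 3
```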

Validation Case Study: In a project to improve tryptophan production in yeast, the integration of ART with genome-scale models led to a 106% increase in productivity from the base strain. This demonstrates the practical efficacy of machine learning in guiding bioengineering even without a full mechanistic model of the underlying biological system [11].

Tool registries and the biological design cycle are deeply intertwined elements of a mature synthetic biology ecosystem. Specialized registries like SynBioTools provide the curated, accessible resources necessary to inform the Design phase effectively. Meanwhile, the formalization of the DBTL cycle, particularly through the enhancement of the Learn phase with machine learning tools like ART, is systematically addressing the critical predictive gap in biological design. As the field continues to grow, driven by advancements in AI, genome editing, and high-throughput technologies [5] [6], the continued development and integration of comprehensive tool registries and sophisticated learning frameworks will be paramount. This synergy is essential for breaking the biological design barrier and unlocking the full potential of synthetic biology across medicine, manufacturing, and environmental sustainability.

The field of synthetic biology relies heavily on computational resources, databases, and standardized biological parts to accelerate research and development. These resources are scattered across various platforms, making discovery and selection challenging for researchers, scientists, and drug development professionals. This section provides an in-depth technical analysis of three major public registries (SynBioTools, bio.tools, and the iGEM Registry) that address this fragmentation through specialized approaches. SynBioTools serves as a comprehensive, manually curated collection specifically for synthetic biology tools, bio.tools operates as a broad, community-driven registry for life sciences tools, and the iGEM Registry functions as the principal community repository for standardized biological parts. Together, these platforms form a critical infrastructure supporting the synthetic biology workflow from part selection to computational analysis. Understanding their complementary scopes, technical architectures, and access methods enables researchers to leverage these resources strategically throughout the drug development pipeline and the biological engineering lifecycle.

Registry Comparative Analysis

Table 4: Core Characteristics and Scope Comparison

| Feature | SynBioTools | bio.tools | iGEM Registry |
|---|---|---|---|
| Primary Focus | Synthetic biology databases, tools, and experimental methods [14] | Broad bioinformatics resources (databases, tools, services) for all life sciences [15] | Standardized Biological Parts for synthetic biology [16] |
| Resource Types | Computational tools, databases, experimental methods (e.g., DNA assembly) [14] | Databases, tools, services, workflows, workbenches [15] | Biological parts (DNA sequences), collections, documentation [16] |
| Classification System | Nine modules based on biosynthetic applications (e.g., compounds, proteins, pathways) [14] | EDAM ontology (topics, operations, data types, formats) [15] | Categories, types, compatibilities [16] |
| Unique Identifier | Not specified | Unique, URL-safe Tool ID [15] | UUID and Part Name [16] |
| Update Mechanism | Extraction from review articles via SCITE tool & manual curation [14] | Community & developer contributions, ELIXIR nodes curation [15] | Community submissions via web interface or API [16] |

| Update Mechanism | Extraction from review articles via SCITE tool & manual curation [14] | Community & developer contributions, ELIXIR nodes curation [15] | Community submissions via web interface or API [16] |

Table 5: Technical Architecture and Access

| Feature | SynBioTools | bio.tools | iGEM Registry |
|---|---|---|---|
| Technology Stack | FastAPI, Bootstrap, MongoDB, Elasticsearch [14] | Not specified | REST API [16] |
| Access Method | Web interface [14] | Web interface, API [15] | Web interface, REST API (with Python wrapper) [16] |
| Data Model | Common and unique fields for tools [14] | Formalized biotoolsSchema with controlled vocabularies [15] | Pydantic models (Part, Annotation, License, etc.) [16] |
| Programmatic Access | Not specified | HTTP-based API for query and updates [17] | Full REST API; igem_registry_api Python package [16] |
| License Information | Included where available [14] | Mandatory open licensing information [15] | License information for parts [16] |
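For programmatic access to bio.tools, the sketch below builds a search URL and extracts a few fields from a JSON response. It assumes the public endpoint `https://bio.tools/api/t/` with `q`, `format=json` and `page` query parameters, and a response containing a `list` of tool records with `biotoolsID`, `name` and `homepage` fields; these reflect our understanding of the bio.tools API and should be checked against its current documentation before use. The helper names are hypothetical. To keep the example runnable offline, the network call is isolated in `search()` and the parser is exercised on a hand-written sample response.

```python
import json
from typing import Dict, List
from urllib.parse import urlencode
from urllib.request import urlopen

BIOTOOLS_API = "https://bio.tools/api/t/"  # assumed endpoint; verify in the API docs


def build_search_url(query: str, page: int = 1) -> str:
    """Compose a bio.tools search URL for a free-text query."""
    return BIOTOOLS_API + "?" + urlencode({"q": query, "format": "json", "page": page})


def parse_tools(payload: Dict) -> List[Dict[str, str]]:
    """Keep only the identifier, name and homepage of each tool record."""
    return [{"id": t.get("biotoolsID", ""), "name": t.get("name", ""),
             "homepage": t.get("homepage", "")}
            for t in payload.get("list", [])]


def search(query: str, page: int = 1) -> List[Dict[str, str]]:
    """Fetch and parse one page of results (requires network access)."""
    with urlopen(build_search_url(query, page), timeout=30) as resp:
        return parse_tools(json.load(resp))


if __name__ == "__main__":
    sample = {"count": 1, "next": None,  # hand-written sample, not real API output
              "list": [{"biotoolsID": "example_tool", "name": "Example Tool",
                        "homepage": "https://example.org"}]}
    print(build_search_url("metabolic pathway design"))
    print(parse_tools(sample))
```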

SynBioTools: A One-Stop Facility

Methodology and Data Acquisition

SynBioTools employs a systematic methodology for aggregating synthetic biology resources, focusing on extraction from scientific review articles. The data acquisition pipeline begins with retrieving tool references from established sources like bio.tools and literature datasets including the Semantic Scholar Open Research Corpus (S2ORC) and PubMed [14]. The platform specifically targets review articles published between 2010 and 2022 that cite more than 100 tools, from which 37 synthetic biology-related reviews were manually selected for tool extraction [14]. A key innovation in their methodology is SCIentific Table Extraction (SCITE), a custom-built tool that combines PaddleOCR for optical character recognition from PDFs and the tidypmc R package for parsing PubMed Central full-text XML files [14]. This hybrid approach enables efficient extraction of tabular data from diverse article formats. Following automated extraction, all data undergoes manual curation to correct inaccuracies and standardize formatting, ensuring each table row corresponds to one tool. The final integration phase supplements extracted data with direct references and common fields (names, modules, citations), resulting in a comprehensively annotated resource collection [14].
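The automated part of the review screening can be expressed as a simple filter. This is a minimal sketch, assuming a hypothetical `ReviewRecord` structure with a precomputed count of cited tools and inclusive year bounds; the subsequent manual selection of the 37 synthetic biology reviews is not modeled.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class ReviewRecord:
    """Hypothetical minimal metadata for a candidate review article."""
    title: str
    year: int
    is_review: bool
    cited_tool_count: int  # distinct tools cited by the article


def select_candidate_reviews(
    records: Iterable[ReviewRecord],
    start_year: int = 2010,
    end_year: int = 2022,
    min_tools: int = 100,
) -> List[ReviewRecord]:
    """Keep reviews published in [start_year, end_year] that cite more than min_tools tools."""
    return [
        r
        for r in records
        if r.is_review
        and start_year <= r.year <= end_year
        and r.cited_tool_count > min_tools
    ]


candidates = select_candidate_reviews([
    ReviewRecord("Review A", 2015, True, 140),
    ReviewRecord("Review B", 2009, True, 220),             # published too early
    ReviewRecord("Research article C", 2018, False, 150),  # not a review
    ReviewRecord("Review D", 2021, True, 100),             # not more than 100 tools
])
print([r.title for r in candidates])  # ['Review A']
```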

Classification and Functional Modules

SynBioTools organizes resources into nine specialized modules based on tool characteristics and potential biosynthetic applications [14]:

- Compounds: Tools for compound selection and analysis

- Biocomponents: Resources for biological element selection

- Protein: Tools for protein selection and design

- Pathway: Resources for pathway mining and design

- Gene-editing: Tools for genetic modification

- Metabolic Modeling: Resources for metabolic network modeling

- Omics: Tools for omics analysis

- Strains: Resources for strain modification and analysis

- Others: Miscellaneous synthetic biology tools

This modular approach allows researchers to quickly navigate to tools relevant to their specific workflow stage, with detailed comparisons of similar tools within each classification to facilitate selection [14].

SynBioTools Data Acquisition Workflow

bio.tools: The ELIXIR Tools Registry

Community-Driven Curation Framework

bio.tools employs a distributed, community-driven curation model supported by the ELIXIR infrastructure. The platform mandates only basic information (name, short description, and homepage) for resource registration but supports rich annotation of approximately 50 scientific, technical, and administrative attributes [15]. All resource descriptions must conform to biotoolsSchema, a formalized schema that implements rigorous semantics and syntax, extensively using controlled vocabularies from the EDAM ontology to ensure consistency and comparability [15]. This ontological framework provides concise, standardized terminology for describing tool topics, operations, input and output data types, and supported formats. The curation process is facilitated through multiple channels: direct contributions from developers and providers, coordinated curation assistance from the core bio.tools team and ELIXIR partners, and community-led workshops [15]. This multi-tiered approach distributes the curation burden while maintaining quality standards. The platform also integrates utilities to pull tool information from workbench environments like Galaxy and code repositories like GitHub, further streamlining the curation process [15].
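To make the minimal-versus-rich distinction concrete, the sketch below pairs a tool description with a check for the three mandatory fields. The nested `topic`/`function` layout is modeled loosely on the JSON that bio.tools serves, using EDAM term labels only (URIs omitted); the tool and its URL are hypothetical, and the biotoolsSchema documentation remains the authority on field names and constraints.

```python
from typing import Dict, List

# Fields that must be non-empty for registration, per the description above.
MANDATORY_FIELDS = ("name", "description", "homepage")


def missing_mandatory_fields(entry: Dict) -> List[str]:
    """Return the mandatory fields that are absent or blank in a tool description."""
    return [f for f in MANDATORY_FIELDS if not str(entry.get(f, "")).strip()]


# Hypothetical tool, annotated with EDAM term labels.
entry = {
    "name": "ExampleAligner",
    "description": "Aligns short DNA reads to a reference genome.",
    "homepage": "https://example.org/examplealigner",
    "topic": [{"term": "Sequence analysis"}],
    "function": [{
        "operation": [{"term": "Sequence alignment"}],
        "input": [{"data": {"term": "Sequence"}, "format": [{"term": "FASTA"}]}],
    }],
}

print(missing_mandatory_fields(entry))              # []
print(missing_mandatory_fields({"name": "Draft"}))  # ['description', 'homepage']
```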

Technical Implementation and Interoperability

bio.tools is designed as an interoperable registry with a focus on integration with computational workflows. The system assigns unique, persistent tool identifiers that provide a pragmatic means for software citation and traceability, particularly valuable for resources without traditional publications [15]. These identifiers form stable URLs that resolve to Tool Cards containing essential resource information. The registry's API supports both query operations and automated creation/update of accessions, enabling programmatic integration [15]. A key interoperability feature is bio.tools' alignment with FAIR data principles, making resources more findable, accessible, and reusable [15]. The platform actively develops services to combine and export bio.tools data in workflow configuration formats used by platforms like Galaxy and the Common Workflow Language [15]. This technical architecture positions bio.tools as a central indexing service rather than merely a static catalog, bridging resource discovery with practical implementation in analytical pipelines.
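A query against the registry API might be assembled as follows. This is a sketch based on our understanding of the public bio.tools endpoint (`/api/tool/` with `q` and `format=json` parameters) and of a search response carrying `count` and a `list` of tool records; both should be verified against the bio.tools API documentation. The network call is left commented out, and the sample payload and `example_tool` ID are hypothetical.

```python
import json
from typing import List, Tuple
from urllib.parse import urlencode

# Base endpoint as we understand the public bio.tools API; verify against its docs.
BIOTOOLS_API = "https://bio.tools/api/tool/"


def build_search_url(query: str, page: int = 1) -> str:
    """Free-text search URL requesting a JSON response."""
    return BIOTOOLS_API + "?" + urlencode({"q": query, "format": "json", "page": page})


def build_tool_url(biotools_id: str) -> str:
    """URL of a single tool record, addressed by its stable bio.tools ID."""
    return f"{BIOTOOLS_API}{biotools_id}/?format=json"


def summarize_search(payload: str) -> Tuple[int, List[Tuple[str, str]]]:
    """Extract the hit count and (biotoolsID, name) pairs from a search response.
    Assumes the response carries 'count' and a 'list' of tool records."""
    data = json.loads(payload)
    hits = [(t["biotoolsID"], t["name"]) for t in data.get("list", [])]
    return data.get("count", len(hits)), hits


# A live query would look like this (network access required):
# from urllib.request import urlopen
# with urlopen(build_search_url("sequence alignment")) as resp:
#     print(summarize_search(resp.read().decode("utf-8")))

sample = '{"count": 1, "next": null, "list": [{"biotoolsID": "example_tool", "name": "Example Tool"}]}'
print(summarize_search(sample))        # (1, [('example_tool', 'Example Tool')])
print(build_tool_url("example_tool"))  # https://bio.tools/api/tool/example_tool/?format=json
```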

iGEM Registry: Standard Biological Parts

API Architecture and Programmatic Access

The iGEM Registry provides a comprehensive REST API that offers programmatic access to all main features of the registry, including parts retrieval, modification, and publishing [16]. To address documentation gaps and high entry barriers, the community has developed a Python wrapper package (igem_registry_api) containing over 7,500 lines of code with extensive inline comments and complete docstrings [16]. This package implements more than 15 Pydantic models—including Part, Annotation, Author, Organisation, License, and Type—that validate API responses and provide a structured, Pythonic interface that mirrors the Registry's architecture [16]. The implementation includes robust session management and automatic handling of the Registry's rate limits, which is particularly crucial for bulk operations like downloading the entire parts catalog [16]. The Python package extends native Registry functionality with additional capabilities such as local BLAST searches against downloaded sequences and integration with bioinformatics pipelines and electronic lab notebooks [16].
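The rate-limit handling described above follows a familiar pattern: space consecutive requests, back off when the server throttles, and walk pages until none remain. This is a minimal, generic sketch, not the igem_registry_api implementation; `fetch_page` and `RateLimitError` are hypothetical stand-ins for whatever call and error the client actually exposes, and the 0.5-second interval is an arbitrary placeholder rather than the Registry's documented limit.

```python
import time
from typing import Callable, Iterator, List, Tuple


class RateLimitError(Exception):
    """Hypothetical error raised by fetch_page when the server throttles (e.g. HTTP 429)."""


def iterate_all_parts(
    fetch_page: Callable[[int], Tuple[List[dict], bool]],
    min_interval_s: float = 0.5,
    max_retries: int = 3,
    sleep: Callable[[float], None] = time.sleep,
) -> Iterator[dict]:
    """Yield every item from a paginated endpoint, spacing requests at least
    min_interval_s apart and retrying throttled pages with exponential backoff."""
    page = 1
    last_call = None
    while True:
        for attempt in range(max_retries + 1):
            if last_call is not None:
                wait = min_interval_s - (time.monotonic() - last_call)
                if wait > 0:
                    sleep(wait)  # client-side throttle between consecutive requests
            last_call = time.monotonic()
            try:
                items, has_more = fetch_page(page)
                break
            except RateLimitError:
                if attempt == max_retries:
                    raise
                sleep(min_interval_s * 2 ** attempt)  # back off before retrying
        yield from items
        if not has_more:
            return
        page += 1
```

Injecting `sleep` keeps the function testable without real delays; a bulk download would simply call `list(iterate_all_parts(real_fetch))`.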

Table 3: iGEM Registry API Python Models and Functions

| Component Type | Name | Function |
|---|---|---|
| Pydantic Models | Part | Represents part data with sequence and metadata |
| | Annotation | Handles biological annotations |
| | Author | Manages author information |
| | Organisation | Handles institutional affiliations |
| | License | Manages usage rights |
| | Type | Categorizes part types |
| Client Methods | connect() | Establishes anonymous connection |
| | sign_in() | Authenticates with credentials |
| | fetch() | Retrieves parts with pagination |
| Extended Features | Local BLAST | Sequence similarity searching |
| | Rate limit handling | Manages API request throttling |
| | Bulk operations | Enables large-scale data retrieval |

Experimental Protocol: Programmatic Parts Retrieval and Analysis

This protocol describes how to use the iGEM Registry API for systematic retrieval and analysis of standardized biological parts, supporting reproducible research workflows.

Materials and Reagents

- Computational Environment: Python 3.8+ environment with the igem_registry_api package installed [16]

- Authentication Credentials: iGEM account (optional for public parts, required for private data) [16]

- Analysis Tools: BLAST+ executable for local sequence analysis (if using BLAST functionality) [16]

Procedure

Installation and Setup

Connection and Authentication

Parts Retrieval

Advanced Analysis
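For the Advanced Analysis step, one plausible route to the local BLAST searches mentioned above is to write downloaded sequences to FASTA and index them with BLAST+. The sketch assumes each retrieved part is available as a mapping with hypothetical `name` and `sequence` keys; it does not use the igem_registry_api package itself. The `makeblastdb` and `blastn` options in the comments are standard BLAST+ flags.

```python
import textwrap
from typing import Iterable, Mapping


def parts_to_fasta(parts: Iterable[Mapping[str, str]], width: int = 60) -> str:
    """Render parts as FASTA: a '>name' header, then the sequence wrapped at `width`."""
    lines = []
    for part in parts:
        seq = "".join(part["sequence"].split()).upper()  # drop whitespace, normalize case
        if not seq:
            continue  # parts without sequence data cannot be indexed
        lines.append(">" + part["name"])
        lines.extend(textwrap.wrap(seq, width))
    return "\n".join(lines) + "\n"


fasta = parts_to_fasta([
    {"name": "part_1", "sequence": "acgt" * 20},  # hypothetical 80-nt part
    {"name": "part_2", "sequence": ""},           # skipped: no sequence
])

# Save `fasta` as parts.fasta, then with BLAST+ on PATH:
#   makeblastdb -in parts.fasta -dbtype nucl -out parts_db
#   blastn -query query.fasta -db parts_db -outfmt 6 -evalue 1e-5
print(fasta)
```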

iGEM Registry API Interaction Flow

Research Reagent Solutions

Table 4: Essential Computational Tools and Resources

| Resource Name | Type | Function | Registry |
|---|---|---|---|
| BLAST | Computational Tool | Sequence similarity searching [14] | SynBioTools, bio.tools |
| KEGG | Database | Pathway and functional information [14] | SynBioTools, bio.tools |
| GO (Gene Ontology) | Database | Gene function standardization [14] | SynBioTools, bio.tools |
| STRING | Database | Protein-protein interaction networks [14] | SynBioTools, bio.tools |
| NCBI | Database | Comprehensive biological data [14] | SynBioTools, bio.tools |
| MAFFT | Computational Tool | Multiple sequence alignment [14] | SynBioTools, bio.tools |
| Graphviz | Library | Diagram visualization [16] | External dependency |
| PaddleOCR | Toolkit | Optical character recognition [14] | External dependency |
| iGEM Part | Biological Part | Standardized DNA sequence [16] | iGEM Registry |

SynBioTools, bio.tools, and the iGEM Registry represent complementary pillars of the synthetic biology infrastructure ecosystem. Each registry addresses distinct needs within the research workflow: SynBioTools provides specialized discovery of computational tools through its curated, application-focused modules; bio.tools offers a comprehensive, interoperable registry spanning the entire life sciences domain; and the iGEM Registry delivers standardized biological parts with sophisticated programmatic access. For researchers and drug development professionals, strategic utilization of these platforms can significantly accelerate project timelines—from initial bioinformatics analysis and tool selection to biological part identification and experimental implementation. The ongoing development of these registries, particularly in API functionalities, cross-platform integration, and community-driven curation, continues to enhance their utility as essential resources powering innovation in synthetic biology and therapeutic development.

Specialized Databases for Targeted Applications (e.g., Plant Synthetic BioDatabase)

Synthetic biology applies engineering principles to redesign biological systems, offering innovative solutions across medicine, agriculture, and industrial biotechnology [18]. The field relies on standardized, well-characterized biological parts ("bioparts") such as promoters, coding sequences, and regulatory elements [19]. However, a significant challenge hindering progress, particularly in plant synthetic biology, has been the scarcity of specialized databases that provide these characterized components compared to the resources available for microbial systems [20].

Specialized databases address this gap by offering curated, application-focused biological data. They mobilize and integrate research data from diverse sources, providing standardized information and tools that are essential for rational design [21]. For plant synthetic biology, which lags behind microbial counterparts due to fewer well-characterized bioparts, these resources are particularly critical for advancing the redesign and construction of novel biological devices [20]. This guide provides a technical overview of leading specialized databases, their quantitative content, and methodologies for their application in research and development.

The landscape of specialized biological databases is diverse, spanning general genomic repositories, organism-specific resources, and application-focused platforms for synthetic biology. The following table summarizes key databases and their quantitative data holdings.

Table 1: Specialized Biological Databases and Their Data Holdings

| Database Name | Primary Focus | Key Data Holdings | Quantitative Scope |
|---|---|---|---|
| Plant Synthetic BioDatabase (PSBD) [20] | Plant Synthetic Biology | Catalytic bioparts, regulatory elements, species, chemicals | 1,677 catalytic bioparts, 384 regulatory elements, 309 species, 850 chemicals |
| DSCI [22] | Innate Immunity Synthetic Biology | Innate immune signaling components, regulatory relationships | 1,240 independent components, >4,000 specific entries from literature |
| RDBSB [18] [23] | General Synthetic Biology (Catalytic Bioparts) | Bioparts for synthetic biology | Focus on catalytically active parts with experimental evidence |
| Ensembl Plants [24] | Plant Genomics | Genome assembly, annotation, variation, regulation | Multiple plant genomes of scientific interest |
| Plant DNA C-values [24] | Plant Genomics | Genome size (C-value) data | C-values for 8,510 plant species |
| Phytozome [24] | Plant Comparative Genomics | Sequenced and annotated plant genomes | Access to 58 sequenced and annotated green plant genomes |

Beyond the application-specific databases, core biodata resources provide foundational data that supports synthetic biology research. These include The Alliance of Genome Resources for model organisms, BRENDA for enzyme functional data, and UniProt for protein sequence and functional information [21]. The Registry of Standard Biological Parts (parts.igem.org) also serves as a foundational community repository for bioparts, particularly from the iGEM competition [22].

Database Construction and Curation Methodologies

The utility of a specialized database hinges on its data quality, curation methodology, and standardization. The construction of high-quality resources like DSCI and PSBD involves rigorous, multi-layered literature mining and data integration workflows.

Table 2: Comparative Experimental Protocols for Database Curation

| Protocol Step | DSCI Methodology [22] | PSBD Methodology [20] |
|---|---|---|
| 1. Literature Mining | Three-layer process: 1. Broad retrieval via keywords (e.g., "innate immunity"). 2. Detailed retrieval for regulatory relationships (e.g., "ubiquitination"). 3. Protein-centric search to ensure data integrity (e.g., "RIG-I"). | Data collected from published literature and other biological databases to catalog bioparts and regulatory elements. |
| 2. Data Extraction & Annotation | Manual curation of 12 data items from figures and text, including signaling proteins, interactions, modifications, sites, enzymes, references, expression, function, stability, stimuli, and biological process. | Curation of parts with functional information, including catalytic activity and regulatory function. |
| 3. Experimental Validation | Data sourced from experimentally validated literature. Evidence extracted from Western Blot (protein stability), RT-qPCR (expression), and Mass Spectrometry (modification sites). | Functionality of incorporated bioparts is supported by experimental characterization (e.g., taxadiene synthase). |
| 4. Data Integration & Standardization | Protein annotations (sequence, localization) integrated from UniProt/NCBI. Data managed in MySQL. | Integration of part information with species and chemical data. Online tools (BLAST, phylogenetics) provided. |
| 5. Visualization & Access | Regulatory networks and signaling motifs visualized using Echarts. Web interface built with HTML, CSS, JavaScript. | Web-based platform with tools for rational design of genetic circuits. |
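The three-layer literature mining step can be sketched as query construction against PubMed. DSCI's exact search strings and combination logic are not given in the source, so restricting layers 2 and 3 to the broad topic is an assumption here; the keywords are the examples from Table 2, the PubMed IDs are placeholders, and the URL targets NCBI's public E-utilities `esearch` endpoint.

```python
from typing import Iterable, List
from urllib.parse import urlencode

ESEARCH = "https://eutils.ncbi.nlm.nih.gov/entrez/eutils/esearch.fcgi"


def any_of(terms: Iterable[str]) -> str:
    """OR together quoted phrases, e.g. ("a" OR "b")."""
    return "(" + " OR ".join(f'"{t}"' for t in terms) + ")"


def layered_queries(broad, regulatory, proteins) -> List[str]:
    """One query per layer; layers 2 and 3 are restricted to the broad topic (assumption)."""
    topic = any_of(broad)
    return [topic, f"{topic} AND {any_of(regulatory)}", f"{topic} AND {any_of(proteins)}"]


def esearch_url(term: str, retmax: int = 100) -> str:
    """NCBI E-utilities esearch URL returning PubMed IDs as JSON."""
    return ESEARCH + "?" + urlencode({"db": "pubmed", "term": term, "retmax": retmax, "retmode": "json"})


def merge_pmids(*layers: Iterable[str]) -> List[str]:
    """Union of PubMed IDs across layers, preserving first-occurrence order."""
    seen, merged = set(), []
    for layer in layers:
        for pmid in layer:
            if pmid not in seen:
                seen.add(pmid)
                merged.append(pmid)
    return merged


queries = layered_queries(["innate immunity"], ["ubiquitination"], ["RIG-I"])
print(queries[1])                                       # ("innate immunity") AND ("ubiquitination")
print(merge_pmids(["1", "2"], ["2", "3"], ["3", "4"]))  # ['1', '2', '3', '4']
```

Deduplicating across layers matters because the protein-centric layer deliberately re-retrieves papers already found by the broader searches.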

The following workflow diagram generalizes the core process for building a specialized database, as implemented in these resources.

Application in Research: Experimental Workflows

Specialized databases enable specific, advanced research workflows. The following demonstrates a functional characterization and circuit design process using PSBD.

Case Study: Utilizing PSBD for Enhanced Protein Expression

Researchers demonstrated PSBD's utility by functionally characterizing a taxadiene synthase 2 gene and implementing its quantitative regulation in tobacco leaves [20]. The workflow involved:

- Part Identification: The target gene, taxadiene synthase 2, was identified and its sequence was used as a query against PSBD.

- Circuit Design: Using the regulatory elements (e.g., promoters, terminators) cataloged in PSBD, more powerful synthetic transcriptional devices were designed and assembled. These devices were engineered to amplify transcriptional signals.

- Implementation and Testing: The genetic circuits were introduced into tobacco plants. Quantitative measurements confirmed that these PSBD-informed designs enabled enhanced expression of target proteins, such as flavivirus non-structural protein 1 (NS1) [20].

This case illustrates how database-driven design can make outcomes more predictable in complex genetic engineering projects.

The Scientist's Toolkit: Key Research Reagent Solutions

The experimental workflows and database curation efforts rely on a core set of research reagents and materials. The following table details these essential tools and their functions.

Table 3: Essential Research Reagents and Materials for Synthetic Biology

| Research Reagent / Material | Function in R&D |
|---|---|
| Plasmid Vectors [25] | Backbone for cloning and maintaining genetic circuits; different vectors offer varied replication origins and selection markers. |
| Inducible & Constitutive Promoters [25] | Regulate the timing and level of gene expression; a library of characterized promoters is crucial for tunable control. |
| CRISPR Interference (CRISPRi) System [25] | Provides targeted gene repression (knockdown) for functional studies and metabolic engineering without permanent knockout. |
| BioBrick Parts [25] | Standardized DNA sequences that facilitate the modular assembly of complex genetic circuits from functional units. |
| Antibiotics for Selection [22] | Maintain selective pressure to ensure plasmid retention in bacterial and eukaryotic cultures during construction and testing. |
| Polymerases (for PCR, RT-qPCR) [22] | Amplify DNA fragments and quantify gene expression levels, essential for both construction and validation phases. |
| Antibodies for Western Blot [22] | Detect and quantify specific protein expression and post-translational modifications (e.g., phosphorylation). |

Specialized databases represent a critical infrastructure for the advancement of targeted applications in synthetic biology. Resources like the Plant Synthetic BioDatabase (PSBD) and DSCI move beyond simple data repositories by offering curated, experimentally validated components within their functional contexts, alongside integrated bioinformatics tools. The rigorous, multi-layered curation methodologies these databases employ ensure high data quality and reliability for research. As the field progresses, the continued development and enrichment of such specialized, application-focused databases will be paramount in translating the promise of synthetic biology into real-world solutions across medicine, agriculture, and industrial biotechnology.

In data-intensive and technically complex fields, workflow efficiency is a critical determinant of pace, scalability, and reproducibility. Standardization—the establishment of common protocols, data formats, and definitions—and abstraction—the organization of complex systems into simplified, hierarchical layers—are two interdependent engineering principles that powerfully address this need. This whitepaper examines their transformative impact, with a specific focus on their application within synthetic biology biofoundries and clinical data registries. In synthetic biology, the lack of standardized workflows has been identified as a major limitation to the scalability and efficiency of research [26]. Similarly, in healthcare, fragmented approaches to clinical data abstraction create inconsistencies that hinder quality improvement initiatives [27]. By exploring frameworks and quantitative evidence from these domains, this guide provides researchers and drug development professionals with actionable methodologies for implementing standardization and abstraction to accelerate discovery and development.

An abstraction hierarchy organizes a system's activities into discrete, interoperable levels, effectively separating the "what" from the "how." This separation streamlines communication, enhances modularity, and facilitates automation.

Research published in Nature Communications proposes a four-level abstraction hierarchy to address interoperability challenges in synthetic biology biofoundries [26]. This model effectively structures the entire Design-Build-Test-Learn (DBTL) cycle, a core engineering paradigm in the field.

Table 1: Four-Level Abstraction Hierarchy for Biofoundry Operations

| Level | Name | Description | Example |
|---|---|---|---|
| Level 0 | Project | The overarching goal to be fulfilled for an external user. | Engineering a microbial strain to produce a novel therapeutic. |
| Level 1 | Service/Capability | The specific functions the biofoundry provides to fulfill the project. | Modular long-DNA assembly; AI-driven protein engineering. |
| Level 2 | Workflow | A modular, DBTL-stage-specific sequence of tasks to deliver a service. | "DNA Oligomer Assembly" (Build); "Microplate Reading" (Test). |
| Level 3 | Unit Operation | The smallest unit of experimental or computational task, performed by a specific hardware or software. | "Liquid Transfer" (by a liquid handler); "Protein Structure Generation" (by RFdiffusion software). |

This hierarchical model allows engineers and biologists working at the project level (Level 0) to operate without needing deep expertise in the unit operations (Level 3) that will execute their vision [26]. The workflows (Level 2) are designed to be highly abstracted and modular, allowing for their reconfiguration and reuse to achieve different functional outcomes. For instance, the same "Liquid Media Cell Culture" workflow could be used for simple DNA amplification or a more complex cell-based enzyme assay, depending on the project's needs [26].

Figure 1: Abstraction Hierarchy for Biofoundries. This model separates high-level project goals from low-level operational details, streamlining the DBTL cycle [26].
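The four levels map naturally onto nested data types. This sketch is an illustration, not the data model from [26]: the class names, the "Plate Read" unit operation, the service names, and the DBTL stage assigned to the culture workflow are hypothetical. It shows one workflow object reused by two services, which is the kind of reconfiguration the hierarchy is meant to allow.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class UnitOperation:  # Level 3: smallest executable task
    name: str
    executor: str     # hardware or software that performs it


@dataclass
class Workflow:       # Level 2: DBTL-stage-specific sequence of unit operations
    name: str
    stage: str        # "Design", "Build", "Test" or "Learn"
    operations: List[UnitOperation]


@dataclass
class Service:        # Level 1: capability offered by the biofoundry
    name: str
    workflows: List[Workflow]


@dataclass
class Project:        # Level 0: the external user's goal
    goal: str
    services: List[Service] = field(default_factory=list)

    def unit_operations(self) -> List[UnitOperation]:
        """Flatten the hierarchy into the Level 3 steps an execution layer would run."""
        return [op for s in self.services for w in s.workflows for op in w.operations]


transfer = UnitOperation("Liquid Transfer", "liquid handler")
read = UnitOperation("Plate Read", "plate reader")                   # hypothetical name
culture = Workflow("Liquid Media Cell Culture", "Build", [transfer])  # stage label illustrative
reading = Workflow("Microplate Reading", "Test", [read])

project = Project(
    goal="Engineer a microbial strain to produce a novel therapeutic",
    services=[
        Service("DNA amplification", [culture]),                 # same workflow object...
        Service("Cell-based enzyme assay", [culture, reading]),  # ...reused here
    ],
)
print([op.name for op in project.unit_operations()])
```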

A parallel concept is evident in healthcare, where the Health Outcomes Management Evaluation (HOME) model provides a structured framework for using clinical registry data for quality improvement [28]. This model also follows a cyclical, hierarchical process:

- Monitoring of Outcomes: Data is systematically collected from patient records.

- Identification of Improvement Potential: Data is analyzed, often with risk adjustment, to identify areas for improvement.

- Selection of Improvement Initiatives: Specific initiatives are chosen based on data insights.

- Implementation of Improvement Initiatives: Changes are applied to clinical practice.
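The HOME model's specific risk-adjustment method is not described here. As one common illustration, indirect standardization compares observed events with the sum of model-predicted per-patient risks; the outcomes and risks below are invented, and in practice the risks would come from a validated risk model.

```python
from typing import Sequence


def observed_to_expected(outcomes: Sequence[int], predicted_risks: Sequence[float]) -> float:
    """O/E ratio: observed events divided by the sum of predicted per-patient risks.
    Values above 1 suggest more events than the case mix would predict."""
    if len(outcomes) != len(predicted_risks) or not outcomes:
        raise ValueError("outcomes and predicted_risks must be non-empty and aligned")
    return sum(outcomes) / sum(predicted_risks)


def risk_adjusted_rate(outcomes, predicted_risks, reference_rate: float) -> float:
    """Scale a reference (e.g. registry-wide) event rate by the unit's O/E ratio."""
    return observed_to_expected(outcomes, predicted_risks) * reference_rate


# Hypothetical unit: 5 patients, 1 = complication occurred
outcomes = [1, 0, 0, 1, 0]
risks = [0.3, 0.1, 0.1, 0.4, 0.1]  # expected events = 1.0
print(round(observed_to_expected(outcomes, risks), 2))      # 2.0
print(round(risk_adjusted_rate(outcomes, risks, 0.08), 3))  # 0.16
```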

This "improvement cycle" is supported by an organizational context that includes strategy, governance, and infrastructure [28]. The process begins with clinical data abstraction, which involves capturing key administrative and clinical data elements from medical records for purposes including quality improvement and patient registries [27]. The qualifications of the abstractor are critical; this function is often performed by coders, nurses, and Health Information Management (HIM) professionals who possess the necessary clinical knowledge and attention to detail [27].

Quantitative Evidence: Market Growth and Performance Metrics

The adoption of standardized and automated platforms is driving significant market growth and operational improvements, providing quantitative evidence of enhanced workflow efficiency.

Synthetic Biology Platforms Market Forecast

The global synthetic biology platforms market is experiencing rapid expansion, reflecting the growing reliance on standardized, automated workflows. This market encompasses enabling technologies, software platforms, and services that form the foundation of efficient biofoundries.

Table 2: Synthetic Biology Platforms Market Data and Segmentation (2025-2035)

| Metric | Value | Source/Notes |
|---|---|---|
| Market Size (2025) | USD 26.7 Billion | [29] |
| Projected Market Size (2035) | USD 54.27 Billion | [29] |
| Compound Annual Growth Rate (CAGR) | 19.4% | (2025-2035) [29] |
| Key Growth Drivers | Automated genome engineering, AI-controlled pathway optimization, high-throughput strain development. | [30] |
| Key Product Segments | Oligonucleotides, Enzymes, Cloning & Assembly Kits, Chassis Organisms, Software Platforms. | [29] |
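A CAGR links two market sizes through V_2035 = V_2025 (1 + r)^10. Applying that formula to the table's own figures is a useful consistency check: the two endpoints imply about 7.35% per year, whereas 19.4% sustained for ten years from USD 26.7 billion would give roughly USD 157 billion. The three values may therefore rest on different baselines or report editions, and they are worth checking against [29] before reuse.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate linking two values `years` apart."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding `rate` annually for `years` years."""
    return start_value * (1 + rate) ** years


implied_rate = cagr(26.7, 54.27, 10)     # rate implied by the 2025 and 2035 sizes
implied_2035 = project(26.7, 0.194, 10)  # 2035 size implied by the stated CAGR
print(f"{implied_rate:.2%}")  # 7.35%
print(f"{implied_2035:.0f}")  # 157
```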

This growth is propelled by technologies that directly contribute to standardization and abstraction. Modular biofoundries, cloud-based laboratory management systems, and automated DNA assembly platforms are transforming industrial biotechnology and pharmaceuticals by speeding up manufacturing and increasing precision [30]. Furthermore, strategic partnerships and scalable production technologies are key factors in the market's expansion.

In healthcare data management, the impact of standardized processes and expert human abstraction is measured through accuracy metrics. For example, specialized abstractors can achieve inter-rater reliability (IRR) scores exceeding 95% after targeted training and quality oversight, a significant improvement from baseline scores around 80-81% [31]. High accuracy is paramount, as a single misclassified procedure or overlooked complication can skew clinical metrics and impact patient care decisions [31].
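The IRR figures above are reported as percentages, and the cited sources do not state how they were computed. A common definition is simple percent agreement between two abstractors on the same records; Cohen's kappa is shown alongside as a chance-corrected complement. The ratings below are invented for illustration.

```python
from collections import Counter
from typing import Hashable, Sequence


def percent_agreement(a: Sequence[Hashable], b: Sequence[Hashable]) -> float:
    """Share of abstracted data elements on which two abstractors agree."""
    if len(a) != len(b) or not a:
        raise ValueError("ratings must be non-empty and aligned")
    return sum(x == y for x, y in zip(a, b)) / len(a)


def cohens_kappa(a: Sequence[Hashable], b: Sequence[Hashable]) -> float:
    """Agreement corrected for the agreement expected by chance."""
    n = len(a)
    p_observed = percent_agreement(a, b)
    counts_a, counts_b = Counter(a), Counter(b)
    p_chance = sum(counts_a[k] * counts_b[k] for k in counts_a.keys() | counts_b.keys()) / (n * n)
    if p_chance == 1:
        return float("nan")  # undefined when both raters use a single identical category
    return (p_observed - p_chance) / (1 - p_chance)


# Hypothetical abstraction of 10 records ("Y" = complication documented)
abstractor_1 = ["Y", "Y", "N", "N", "Y", "N", "Y", "Y", "N", "Y"]
abstractor_2 = ["Y", "Y", "N", "Y", "Y", "N", "Y", "Y", "N", "Y"]
print(percent_agreement(abstractor_1, abstractor_2))       # 0.9
print(round(cohens_kappa(abstractor_1, abstractor_2), 2))  # 0.78
```

The gap between 0.9 and 0.78 shows why kappa is often reported alongside raw agreement: when one category dominates, two abstractors agree often by chance alone.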

The methods used for abstraction also reveal efficiency trade-offs. A 2021 survey found that manual abstraction (58%) remains the primary method in healthcare organizations, followed by natural language processing (NLP) (18%) and simple query (12%) [27]. Manual abstraction persists because human abstractors can interpret complex documentation and contextual nuances that automated systems may miss, preserving data integrity at a higher resource cost [32] [31].

Experimental Protocols: Implementing Standardized Workflows

The theoretical benefits of abstraction and standardization are realized through concrete, well-defined experimental protocols. The following section details a specific example from synthetic biology.

Protocol: CRISPRi Repression System Characterization

This protocol outlines the characterization of a modular CRISPR interference (CRISPRi) platform for tunable gene repression in a bacterial host, such as Acinetobacter baumannii, as part of a synthetic biology toolkit development [25]. The goal is to standardize the process for evaluating genetic parts and their performance in a genetic circuit.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for CRISPRi Characterization

| Item | Function/Description |
|---|---|
| Plasmid Vectors | Backbone for hosting the CRISPRi system (dCas9 gene) and sgRNA expression. |
| Inducible Promoters | Regulate the expression of the dCas9 protein (e.g., with anhydrotetracycline). |
| Constitutive Promoters | Drive consistent expression of the sgRNA. |
| sgRNA Expression Cassettes | Target the dCas9 protein to specific genomic loci for repression. |
| Reporter Gene | A gene (e.g., GFP) under the control of a target promoter; its knockdown indicates CRISPRi efficacy. |
| qPCR Assay | Quantitatively measure the repression of the target gene at the mRNA level. |

Methodology:

Component Cloning:

- Assemble the CRISPRi system by cloning a library of constitutive and inducible promoters upstream of the dCas9 gene into a plasmid vector.

- Clone a set of sgRNAs, targeting both non-essential and essential genes (e.g., biofilm-related genes), into a compatible plasmid vector [25].

Transformation and Culture:

- Co-transform the dCas9 and sgRNA plasmids into the target bacterial strain.

- Inoculate transformed colonies into liquid media containing appropriate antibiotics and the inducer molecule (if using an inducible system). Use a gradient of inducer concentrations to characterize tunability.

Functional Assessment (Testing):

- Flow Cytometry: For reporters like GFP, measure the fluorescence intensity of cells over time to quantify the level of repression relative to a control (non-targeting sgRNA).

- qPCR Analysis: Harvest cells at mid-logarithmic phase. Extract total RNA, synthesize cDNA, and perform qPCR using primers for the target gene. Calculate repression efficiency by comparing expression levels to controls using the 2^–ΔΔCt method.

- Phenotypic Assay: For targets like biofilm-forming genes, perform a crystal violet assay to correlate gene repression with reduced biofilm formation.
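The 2^–ΔΔCt calculation named in the qPCR step can be written out directly. This is a minimal sketch, assuming mean Ct values per condition, a reference (housekeeping) gene for normalization (the protocol does not name one), the non-targeting sgRNA strain as the calibrator, and near-100% amplification efficiency for both primer pairs, which the method requires. The Ct values are illustrative.

```python
def fold_change_ddct(ct_target_sample: float, ct_ref_sample: float,
                     ct_target_control: float, ct_ref_control: float) -> float:
    """Relative target expression (sample vs. control) by the 2^-ddCt method."""
    d_ct_sample = ct_target_sample - ct_ref_sample     # normalize to reference gene
    d_ct_control = ct_target_control - ct_ref_control
    dd_ct = d_ct_sample - d_ct_control                 # compare with non-targeting control
    return 2 ** (-dd_ct)


def repression_percent(fold: float) -> float:
    """Knockdown expressed as percent reduction relative to the control."""
    return (1 - fold) * 100


# Illustrative Ct values: CRISPRi strain vs. non-targeting sgRNA control
fold = fold_change_ddct(27.0, 18.0, 23.0, 18.0)  # ddCt = 4
print(fold)                      # 0.0625
print(repression_percent(fold))  # 93.75
```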

Data Integration (Learning):

- Aggregate the flow cytometry, qPCR, and phenotypic data.

- Model the input (inducer concentration/sgRNA identity) to output (repression level) relationship for the CRISPRi system.

- This characterized data becomes part of a standardized repository (registry) to inform future genetic circuit designs.
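The input-to-output modeling step needs a response function, which the protocol does not prescribe. A Hill-type repression curve is a common assumption for inducer titrations, and the sketch below fits one by exhaustive grid search so it runs without dependencies; `scipy.optimize.curve_fit` would be the usual choice for continuous fitting. The data are synthetic, generated from known parameters.

```python
import itertools


def hill_repression(inducer: float, r_max: float, k: float, n: float) -> float:
    """Fraction of target expression repressed at a given inducer concentration,
    assuming a Hill response (r_max: maximal repression, k: half-max inducer, n: Hill coefficient)."""
    return r_max * inducer ** n / (k ** n + inducer ** n)


def fit_hill_grid(concs, observed, r_max_grid, k_grid, n_grid):
    """Exhaustive least-squares search over parameter grids; returns (sse, r_max, k, n)."""
    best = None
    for r_max, k, n in itertools.product(r_max_grid, k_grid, n_grid):
        sse = sum((hill_repression(c, r_max, k, n) - y) ** 2 for c, y in zip(concs, observed))
        if best is None or sse < best[0]:
            best = (sse, r_max, k, n)
    return best


# Synthetic titration generated from r_max=0.9, k=10 (inducer units), n=2
concs = [0, 2.5, 5, 10, 20, 40, 80]
observed = [hill_repression(c, 0.9, 10, 2) for c in concs]
best = fit_hill_grid(concs, observed, [0.7, 0.8, 0.9, 1.0], [2.5, 5, 10, 20, 40], [1, 1.5, 2, 3])
print(best)
```

The fitted k and n summarize tunability in two numbers, which is the kind of compact, reusable characterization a registry entry can store.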

Figure 2: DBTL Workflow for Genetic Toolkit Characterization. A standardized experimental protocol follows the DBTL cycle to generate reproducible, reusable data for genetic circuit design [25].

The implementation of standardization and abstraction frameworks directly translates into measurable gains in workflow efficiency, characterized by increased throughput, improved reproducibility, and accelerated innovation.

In synthetic biology, the abstraction hierarchy decouples high-level design from low-level execution, enabling automation and reusability. A defined unit operation like "Liquid Transfer" can be consistently executed by a liquid-handling robot across countless different workflows, from PCR setup to cell culture feeding [26]. This modularity prevents "reinventing the wheel" for each new project. Furthermore, standardized data formats, such as the Synthetic Biology Open Language (SBOL), are crucial for ensuring that information flows seamlessly between different levels of the hierarchy and between different biofoundries, facilitating collaboration and data reuse [26].

In healthcare, the move towards structured abstraction models—whether centralized under HIM or Quality departments—helps eliminate the inefficiencies and errors of fragmented, decentralized data collection [27]. While manual abstraction leverages critical human expertise, the integration of NLP and other automated methods points to a future of hybrid workflows that balance accuracy with speed [27] [31]. The ultimate impact is on the quality of care: accurate data abstraction enables clinicians to identify high-risk patients and prioritize interventions, directly improving patient outcomes [32] [31].

In conclusion, the strategic application of standardization and abstraction is not merely a technical exercise but a fundamental driver of efficiency and quality. For researchers and drug development professionals, adopting these principles through structured frameworks, standardized protocols, and specialized toolkits is essential for navigating the complexity of modern biology and healthcare, ultimately accelerating the translation of discovery into application.

From Code to Cell: Applying Toolkits in Bioproduction, Biosensing, and Therapeutics

Implementing the Design-Build-Test-Learn (DBTL) Cycle

The Design-Build-Test-Learn (DBTL) cycle is a systematic, iterative framework that serves as the cornerstone of modern synthetic biology and metabolic engineering. This engineering-based approach provides a structured methodology for developing and optimizing biological systems, enabling researchers to engineer organisms for specific functions such as producing biofuels, pharmaceuticals, or other valuable compounds [33]. The cycle's power lies in its iterative nature, where complex biological projects rarely succeed on the first attempt but instead make progressive refinements through multiple, sequential cycles [34].

As a discipline, synthetic biology applies rational engineering principles to the design and assembly of biological components. However, the impact of introducing foreign DNA into a cell can be difficult to predict, creating the need to test multiple permutations to obtain desired outcomes [33]. The DBTL framework addresses this challenge directly by emphasizing modular design of DNA parts, automation of assembly processes, and systematic learning from experimental data [33]. This methodology has become increasingly vital as the field advances, with recent innovations incorporating machine learning and cell-free systems to accelerate the engineering process [35].
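The iterative logic of the cycle can be illustrated with a toy loop. This is a sketch under simplifying assumptions: designs are integer indices into an ordered library (for example, promoters ranked by predicted strength), `measure` is a simulated stand-in for building and assaying a construct, and the Learn step is reduced to focusing the next batch around the best result so far. Real cycles typically use a statistical or machine learning model in that step.

```python
import random
from typing import Callable, Dict, List, Tuple


def dbtl_optimize(
    candidates: List[int],
    measure: Callable[[int], float],
    rounds: int = 3,
    batch_size: int = 4,
    seed: int = 0,
) -> Tuple[int, float, Dict[int, float]]:
    """Toy DBTL loop over an ordered design space; `measure` stands in for Build + Test."""
    rng = random.Random(seed)
    results: Dict[int, float] = {}
    pool = list(candidates)
    for _ in range(rounds):
        untested = [c for c in pool if c not in results]
        if not untested:
            break
        batch = rng.sample(untested, min(batch_size, len(untested)))  # Design
        for design in batch:
            results[design] = measure(design)                         # Build + Test
        best = max(results, key=results.get)                          # Learn
        i = candidates.index(best)
        pool = candidates[max(0, i - 2): i + 3]  # focus next round near the best design
    best = max(results, key=results.get)
    return best, results[best], results


# Simulated titer that peaks at design 13 (purely illustrative)
best, titer, tested = dbtl_optimize(list(range(20)), lambda d: -(d - 13) ** 2)
print(best, titer, len(tested))
```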

The Core DBTL Framework

Phase 1: Design

The Design phase initiates the DBTL cycle by defining clear objectives for the desired biological function and creating a rational plan based on specific hypotheses or learnings from previous cycles [34]. This phase relies on domain knowledge, expertise, and computational modeling approaches to select and arrange genetic parts such as promoters, ribosomal binding sites (RBS), and coding sequences into functional circuits or devices [35] [34]. During this stage, researchers also define precise experimental protocols and metrics that will be used to assess success [34].

The design process occurs at multiple levels. On the abstract level, researchers define the objects of construction and modification, while on the practical level, they develop detailed plans for experimental implementation [36]. Computational tools have become increasingly important for managing the complexity of biosystems, despite the persistent challenge of limited predictive models [36]. Modern design strategies often incorporate machine learning algorithms and protein language models that can capture evolutionary relationships and predict structure-function relationships, enabling more efficient and scalable biological design [35].

Phase 2: Build

In the Build phase, theoretical designs are translated into physical, biological reality through hands-on molecular biology techniques [34]. This involves DNA synthesis, plasmid cloning, and transformation of engineered constructs into host organisms [34]. The building process can follow either a bottom-up approach, constructing new systems from standardized parts, or a top-down approach, modifying existing biological systems through genome engineering [36].

Automation has dramatically enhanced the Build phase, with biofoundries now enabling high-throughput construction of biological systems [36]. These facilities leverage robust DNA assembly methods and versatile genome engineering tools to generate large libraries of biological strains [33]. Traditional cloning often relied on manual colony screening with sterile pipette tips, toothpicks, or inoculation loops. This process was labor-intensive, time-consuming, and prone to human error [33]. Automated workflows have overcome these limitations, significantly increasing throughput while reducing costs and development timelines [33] [36].
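In an automated build, every construct in a library needs a physical position before a liquid handler can act on it. The following minimal sketch assumes a standard 96-well plate filled row by row (A1 to A12, then B1, and so on). `plate_map` and the construct IDs are hypothetical, and real biofoundry worklist formats differ by instrument.

```python
from string import ascii_uppercase

ROWS, COLS = 8, 12  # standard 96-well layout: rows A-H, columns 1-12


def well_name(index: int) -> str:
    """Convert a 0-based index to a row-major well name (0 -> 'A1', 12 -> 'B1')."""
    row, col = divmod(index, COLS)
    return f"{ascii_uppercase[row]}{col + 1}"


def plate_map(construct_ids, wells_per_plate=ROWS * COLS):
    """Assign each construct to (plate number, well), filling plates in row-major order."""
    layout = {}
    for i, cid in enumerate(construct_ids):
        plate, pos = divmod(i, wells_per_plate)
        layout[cid] = (plate + 1, well_name(pos))
    return layout


# Hypothetical library of 100 construct IDs; the last four spill onto a second plate.
ids = [f"construct_{n:03d}" for n in range(100)]
layout = plate_map(ids)
print(layout["construct_000"], layout["construct_095"], layout["construct_096"])
```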

Phase 3: Test

The Test phase focuses on robust data collection through quantitative measurements of the engineered system's performance [34]. Various assays characterize system behavior, including measuring fluorescence to quantify gene expression, performing microscopy to observe cellular changes, or conducting biochemical assays to measure metabolic pathway outputs [34]. This experimental validation is crucial for determining the efficacy of the Design and Build phases [35].
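When fluorescence serves as a reporter of gene expression, raw plate-reader values are usually corrected for background and then divided by optical density. Otherwise, differences in cell growth can be mistaken for differences in expression. The sketch below uses blank-subtracted fluorescence per OD600 as the metric. The readings and blank values are hypothetical.

```python
from statistics import mean


def normalized_expression(fluorescence, od600, blank_fluor, blank_od):
    """Background-subtract fluorescence and OD600, then return fluorescence per OD unit."""
    f = fluorescence - blank_fluor
    od = od600 - blank_od
    if od <= 0:
        raise ValueError("OD600 is at or below the blank; cannot normalize")
    return f / od


# Hypothetical plate-reader readings (fluorescence, OD600) for three replicate wells.
replicates = [(5200.0, 0.55), (5000.0, 0.53), (5400.0, 0.57)]
BLANK_FLUOR, BLANK_OD = 200.0, 0.05  # media-only well

values = [normalized_expression(f, od, BLANK_FLUOR, BLANK_OD) for f, od in replicates]
print(round(mean(values), 1))
```

With these made-up readings, all three replicates normalize to roughly 10,000 fluorescence units per OD unit. Their raw fluorescence values still differ because their cell densities differ.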

Despite advancements in other phases, testing often remains the throughput bottleneck in DBTL cycles [36]. However, recent technological innovations have enabled multi-omics analysis with improved efficiency and speed [36]. Advanced analytical technologies now allow characterization at multiple system scales, including genetic constructs, genome, transcriptome, proteome, and metabolome [36]. For genotyping, methods have evolved from gel electrophoresis and Sanger sequencing to more sophisticated approaches such as colony qPCR and Next-Generation Sequencing (NGS) [33].

Phase 4: Learn

In the Learn phase, data gathered during testing is analyzed and interpreted to extract meaningful insights [34]. Researchers determine whether the design functioned as expected, what principles were confirmed, and in cases of failure, identify the underlying reasons [34]. This analytical process transforms raw experimental data into knowledge that directly informs the next Design phase [35].

The learning process has been revolutionized by computational tools and machine learning approaches that can detect patterns in high-dimensional biological data [35]. With the increasing complexity of biological systems and experiments, human analysis alone is often insufficient [36]. Computational learning methods now enable researchers to build predictive models, identify statistical patterns, and generate hypotheses for the next DBTL cycle [36]. This knowledge creation forms the critical bridge that closes the DBTL loop, enabling continuous improvement across iterations.
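As a minimal illustration of the Learn step, the sketch below fits an ordinary least-squares line between one design variable and a measured output. It then ranks untested candidates for the next Design round. The linear model stands in for the richer machine-learning models discussed above. The promoter-strength and titer values are hypothetical.

```python
from statistics import mean


def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


# Hypothetical Test-phase data: relative promoter strength vs. product titer (g/L).
strength = [0.1, 0.3, 0.5, 0.7]
titer = [0.25, 0.55, 0.85, 1.15]

slope, intercept = fit_line(strength, titer)

# Learn -> Design: rank untested candidate strengths by predicted titer.
candidates = [0.2, 0.6, 0.9]
ranked = sorted(candidates, key=lambda s: slope * s + intercept, reverse=True)
print(f"slope={slope:.2f}, intercept={intercept:.2f}, next design order={ranked}")
```

Ranking candidate 0.9 first is an extrapolation beyond the tested range of 0.1 to 0.7. The next Build and Test rounds exist to check predictions of exactly this kind.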

DBTL Workflow Visualization

Figure: DBTL Cycle Workflow, illustrating the cyclical nature and key activities of the DBTL framework.

Experimental Protocols and Methodologies

Case Study: DBTL in Action for Anti-adipogenic Protein Discovery

A research study demonstrates the practical application of iterative DBTL cycles to identify and validate a novel anti-adipogenic protein from Lactobacillus rhamnosus [34]. The systematic approach narrowed the search for the active component step by step, working from the whole bacterium toward a single, purified protein. The three consecutive DBTL cycles described here move from whole bacteria to culture supernatant to exosomes.

DBTL Cycle 1: Effect of Raw Lactobacillus Bacteria

- Design: Test the hypothesis that direct contact with Lactobacillus inhibits adipogenesis by co-culturing six different Lactobacillus strains with 3T3-L1 preadipocytes during differentiation at multiplicities of infection (MOI) of 1, 10, and 100 [34].

- Build: Culture the six bacterial strains and establish a 7-day protocol for inducing adipogenesis in 3T3-L1 cells. Bacteria are added at media changes, followed by gentamicin treatment after 24 hours [34].

- Test: Measure lipid accumulation by Oil Red O staining and statistically compare bacteria-treated adipocytes with negative controls [34].

- Learn: Most tested strains, particularly L. delbrueckii, L. casei, L. crispatus, L. rhamnosus, and L. gasseri, inhibited lipid accumulation by 20-30%, confirming anti-adipogenic effects and prompting investigation into the mechanism [34].
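The MOI values in the Design step translate directly into inoculum volumes. The number of bacteria needed is MOI × host cells, and dividing that by the density of the bacterial stock gives the volume. The sketch below assumes 1 × 10⁵ preadipocytes per well and a stock of 1 × 10⁹ CFU/mL. Both values and the helper name are hypothetical and are not taken from [34].

```python
def inoculum_volume_ul(moi, host_cells, bacteria_per_ml):
    """Volume (microliters) of bacterial suspension that delivers `moi` bacteria per host cell."""
    bacteria_needed = moi * host_cells
    return bacteria_needed / bacteria_per_ml * 1000.0  # mL -> uL


# Hypothetical values: 1e5 preadipocytes per well, bacterial stock at 1e9 CFU/mL.
HOST_CELLS = 1e5
STOCK_CFU_PER_ML = 1e9

for moi in (1, 10, 100):
    volume = inoculum_volume_ul(moi, HOST_CELLS, STOCK_CFU_PER_ML)
    print(f"MOI {moi}: {volume:.1f} uL of stock")
```

At MOI 1 the computed volume is only 0.1 µL. For this reason, stocks are usually diluted before low-MOI conditions are dosed.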

DBTL Cycle 2: Effect of Bacterial Supernatant

- Design: Determine if secreted extracellular substances mediated the observed effect by treating 3T3-L1 cells with filtered supernatant from bacterial cultures at concentrations of 25%, 50%, and 75% [34].

- Build: Collect and filter supernatant from all six bacterial cultures, then add it at the specified concentrations within the established 7-day adipogenesis protocol [34].

- Test: Quantify lipid accumulation via Oil Red O staining and compare effects across different supernatants and concentrations [34].

- Learn: Only L. rhamnosus supernatant showed significant, concentration-dependent inhibition of lipid accumulation (up to 45%), narrowing focus to extracellular components of this specific strain [34].
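A concentration-dependent effect, like the one reported for L. rhamnosus supernatant, can be expressed as percent inhibition relative to the untreated control. A follow-up check then confirms that inhibition rises with dose. The sketch below treats Oil Red O absorbance as the readout. The absorbance values are hypothetical and are not the study's data.

```python
def percent_inhibition(treated_abs, control_abs):
    """Percent reduction in Oil Red O signal relative to the untreated control."""
    return (1.0 - treated_abs / control_abs) * 100.0


# Hypothetical Oil Red O absorbance readings (arbitrary units).
CONTROL = 1.00
treated = {25: 0.90, 50: 0.78, 75: 0.62}  # supernatant concentration (%) -> absorbance

inhibition = {c: percent_inhibition(a, CONTROL) for c, a in treated.items()}
levels = [inhibition[c] for c in sorted(inhibition)]
concentration_dependent = all(a < b for a, b in zip(levels, levels[1:]))
print({c: round(v, 1) for c, v in inhibition.items()}, concentration_dependent)
```

A strictly-increasing check works only as a quick screen. A real analysis would use replicate wells and a statistical test.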

DBTL Cycle 3: Effect of Bacterial Exosomes

- Design: Isolate the active component within the L. rhamnosus supernatant by testing the hypothesis that exosomes (extracellular vesicles) were the active agent, treating 3T3-L1 cells with exosomes at 2, 5, and 10 × 10⁷ nanoparticles/mL [34].

- Build: Isolate exosomes from the supernatant of each Lactobacillus strain using centrifugation and an Amicon tube with a 100 kDa MWCO filter, then treat 3T3-L1 cells with these exosomes [34].

- Test: Quantify effects on lipid accumulation and analyze expression of the adipogenesis-related genes PPARγ and C/EBPα and of the regulator AMPK [34].

- Learn: L. rhamnosus exosomes reduced lipid accumulation by 80%, down-regulating PPARγ and C/EBPα while up-regulating AMPK. This indicates that the active substance acts through the AMPK pathway [34].
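The source does not state how gene expression was quantified. If qRT-PCR with a housekeeping reference gene was used, the widely used 2^(-ΔΔCt) (Livak) method would convert Ct values into fold changes relative to untreated controls, as sketched below. All Ct values are hypothetical. The method also assumes near-100% amplification efficiency for both the target and reference genes.

```python
def fold_change_ddct(ct_target_treated, ct_ref_treated, ct_target_control, ct_ref_control):
    """Relative expression by the 2^-ddCt (Livak) method."""
    d_ct_treated = ct_target_treated - ct_ref_treated
    d_ct_control = ct_target_control - ct_ref_control
    dd_ct = d_ct_treated - d_ct_control
    return 2.0 ** (-dd_ct)


# Hypothetical Ct values; a housekeeping gene serves as the reference.
genes = {
    # gene: (Ct treated, Ct reference treated, Ct control, Ct reference control)
    "PPARg": (26.0, 18.0, 25.0, 18.0),
    "CEBPa": (27.0, 18.0, 26.0, 18.0),
    "AMPK": (23.0, 18.0, 24.0, 18.0),
}
for gene, cts in genes.items():
    fc = fold_change_ddct(*cts)
    print(f"{gene}: {fc:.2f}-fold ({'down' if fc < 1 else 'up'})")
```

A fold change below 1 indicates down-regulation, as for PPARγ and C/EBPα at 0.5 here. A fold change above 1 indicates up-regulation, as for AMPK at 2.0. These values mirror the direction of change described in the Learn step.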

Experimental Workflow Visualization

Figure: Experimental Progression Through DBTL Cycles (whole bacteria, then culture supernatant, then exosomes).

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful implementation of DBTL cycles requires specific research reagents and tools. The following table summarizes key components essential for synthetic biology workflows, particularly those applied in genetic circuit engineering and metabolic pathway optimization.

Table 1: Essential Research Reagent Solutions for DBTL Implementation

| Tool Category | Specific Examples | Function in DBTL Workflow |
|---|---|---|
| Genetic Parts | Promoters, RBS, coding sequences, terminators | Modular components for genetic circuit design and assembly [25] [34] |
| Cloning Systems | BioBrick vectors, plasmid systems, DNA assembly kits | Standardized platforms for constructing and replicating genetic designs [25] |
| Host Organisms | E. coli, Corynebacterium glutamicum, Acinetobacter baumannii | Chassis organisms for hosting engineered genetic circuits and pathways [25] [37] |
| Genome Editing Tools | CRISPR-Cas9, CRISPRi, homologous recombination systems | Precision engineering of host genomes and regulatory control [25] |
| Analytical Tools | Colony qPCR, NGS, RNA-seq, mass spectrometry | Verification and functional characterization of engineered systems [33] [36] |
| Cell-Free Systems | In vitro transcription/translation systems | Rapid prototyping of genetic designs without cellular constraints [35] |

Advanced Applications and Current Innovations

Machine Learning-Enhanced DBTL Cycles

The integration of artificial intelligence and machine learning is revolutionizing traditional DBTL approaches. Sequence-based protein language models such as ESM and ProGen are trained on the evolutionary relationships between protein sequences, which lets them predict beneficial mutations and infer protein functions [35]. These models have proven adept at zero-shot prediction of diverse antibody sequences and at predicting solvent-exposed and charged amino acids [35].
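A common approach to zero-shot variant scoring with a protein language model compares the log-probability the model assigns to the mutant residue against the log-probability of the wild-type residue at the same position. The sketch below shows only this scoring arithmetic. The probability table is hypothetical and stands in for real ESM or ProGen output, which would come from those models' own software.

```python
import math

# Hypothetical per-position amino-acid probabilities standing in for language-model output.
# A real model returns a distribution over all 20 residues at each position.
position_probs = {
    10: {"A": 0.50, "G": 0.30, "S": 0.20},
    11: {"L": 0.10, "I": 0.60, "V": 0.30},
}
wild_type = {10: "A", 11: "L"}


def log_odds_score(pos, mutant):
    """Zero-shot score: log p(mutant) - log p(wild type) at one position."""
    probs = position_probs[pos]
    return math.log(probs[mutant]) - math.log(probs[wild_type[pos]])


mutations = [(10, "G"), (11, "I"), (11, "V")]
scores = {f"{wild_type[p]}{p}{aa}": log_odds_score(p, aa) for p, aa in mutations}
for name, s in sorted(scores.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: {s:+.2f}")  # positive = model prefers the mutant over wild type
```

A positive score flags a substitution the model prefers over wild type. Here L11I ranks first and A10G is disfavored.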

Structural models like MutCompute and ProteinMPNN learn from expanding databases of experimentally determined structures to enable powerful zero-shot design strategies [35]. For example, MutCompute uses a deep neural network trained on protein structures to associate amino acids with their surrounding chemical environment, predicting stabilizing and functionally beneficial substitutions [35]. This method successfully engineered a hydrolase for polyethylene terephthalate (PET) depolymerization with increased stability and activity compared to wild-type [35].

The combination of machine learning with high-throughput experimental data has enabled new engineering paradigms. As researchers note, "Machine learning provides a new opportunity for directly engineering proteins and pathways with desired functions" [35]. This integration has led to proposals for reordering the traditional cycle to LDBT (Learn-Design-Build-Test), where learning from large datasets precedes and informs the design phase [35].

Cell-Free Systems for Accelerated DBTL

Cell-free synthetic biology has emerged as a powerful platform for accelerating DBTL cycles by enabling biological reactions outside living cells [35]. These systems offer faster prototyping, improved biosynthetic control, and reduced biomanufacturing variability compared to traditional cellular approaches [35]. Cell-free gene expression leverages protein biosynthesis machinery from crude cell lysates or purified components to activate in vitro transcription and translation [35].

The advantages of cell-free systems include:

- Rapid production: Achieving >1 g/L protein in <4 hours [35]

- Toxic product tolerance: Enabling production of compounds toxic to living cells [35]

- Scalability: Operating from picoliter to kiloliter scales [35]

- Modularity: Facilitating customization of reaction environments [35]
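The headline figures above convert to simple rates. Producing more than 1 g/L of protein in under 4 hours means the average volumetric productivity exceeds 0.25 g/L/h. The picoliter-to-kiloliter range spans about 15 orders of magnitude. The short calculation below spells out both steps. The function name is illustrative.

```python
import math


def volumetric_productivity(titer_g_per_l, hours):
    """Average volumetric productivity in g/L/h."""
    return titer_g_per_l / hours


# Bounds from the figures above: >1 g/L in <4 h, so the true rate exceeds this value.
rate = volumetric_productivity(1.0, 4.0)
print(f"productivity > {rate:.2f} g/L/h")

# Scale span from picoliter (1e-12 L) to kiloliter (1e3 L).
span = math.log10(1e3 / 1e-12)
print(f"scale span: about {span:.0f} orders of magnitude")
```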

When combined with liquid handling robots and microfluidics, cell-free systems can dramatically increase throughput. For example, the DropAI platform leveraged droplet microfluidics and multi-channel fluorescent imaging to screen upwards of 100,000 picoliter-scale reactions [35]. These capabilities make cell-free systems particularly valuable for building large datasets to train machine learning models and test in silico predictions [35].
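A screen of around 100,000 reactions ends with a hit-selection step that keeps only the strongest signals for follow-up. The sketch below runs on simulated fluorescence values (hypothetical data, fixed random seed). It uses `heapq.nlargest`, which finds the top k in O(n log k) time without sorting the whole screen. It does not describe the DropAI pipeline itself.

```python
import heapq
import random


def top_hits(readouts, k):
    """Return the k (droplet_id, fluorescence) pairs with the highest signal, best first."""
    return heapq.nlargest(k, readouts.items(), key=lambda item: item[1])


# Simulated fluorescence for 100,000 droplets (hypothetical data, fixed seed).
rng = random.Random(42)
readouts = {f"drop_{i}": rng.gauss(100.0, 15.0) for i in range(100_000)}

hits = top_hits(readouts, k=10)
for droplet_id, signal in hits[:3]:
    print(droplet_id, round(signal, 1))
```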

Automated Biofoundries

Biofoundries represent the industrialization of synthetic biology, integrating automation throughout the DBTL cycle to enable high-throughput biological engineering [36]. These facilities address critical limitations in conventional biological research by substituting human labor with machines, improving consistency and speed while reducing costs [36]. As noted in metabolic engineering literature, "Automation has been proposed as a solution to improve consistency and speed, as well as to reduce labor costs and help researchers to focus more on intellectual tasks" [36].

Biofoundries face unique challenges in biological automation, including high variability in experimental protocols and high failure rates that require constant handling of exceptions [36]. However, recent advances in metabolic engineering, synthetic biology, and bioinformatics have enabled these facilities to overcome many of these limitations [36]. These advances include robust DNA assembly methods, versatile genome engineering tools, and powerful retrobiosynthesis algorithms.
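Much of biofoundry software is devoted to exception handling. One common pattern retries a failed step a bounded number of times and then flags it for a human operator. A minimal sketch of this pattern follows. `run_step`, `StepFailed`, and the simulated colony-picking step are hypothetical and do not correspond to any specific biofoundry platform.

```python
import logging

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("workcell")


class StepFailed(Exception):
    """Raised by a hypothetical instrument step that did not complete."""


def run_step(step, retries=2):
    """Run one protocol step, retrying on failure, then flag it for manual review."""
    for attempt in range(1, retries + 2):  # first try plus `retries` retries
        try:
            return step()
        except StepFailed as err:
            log.warning("attempt %d failed: %s", attempt, err)
    log.error("step exhausted retries; flagging for operator")
    return None


# Hypothetical flaky step: fails twice (e.g., no colony detected), then succeeds.
attempts = {"n": 0}


def pick_colony():
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise StepFailed("no colony detected")
    return "colony picked"


result = run_step(pick_colony)
print(result)
```

Bounding the retries stops a jammed or failing step from stalling the whole run. The final log entry keeps a record of the exception for manual follow-up.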

Quantitative Data and Performance Metrics

The impact of DBTL-driven synthetic biology can be gauged through quantitative metrics at several levels. The following table summarizes representative figures, ranging from field-wide market and investment data to application-level performance measures.

Table 2: Quantitative Performance Metrics in DBTL Implementation

| Metric Category | Specific Measurement | Representative Data/Values |
|---|---|---|
| Market Growth | Global synthetic biology market size | $23.60 billion (2025) to $53.13 billion (2033 projected) at 10.7% CAGR [5] |
| Cycle Acceleration | Cell-free protein production | >1 g/L protein in <4 hours [35] |
| Screening Throughput | Microfluidic screening capacity | >100,000 picoliter-scale reactions [35] |
| Engineering Efficiency | Lipid accumulation reduction | 80% reduction achieved through 3 DBTL cycles [34] |
| Data Reduction | AI-driven protein design | 99% reduction in protein design data points [5] |
| Investment Scale | Biofoundry funding | $200 million raised for synthetic biology tools expansion [5] |
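The market row in Table 2 can be checked with the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1. The sketch below assumes an eight-year compounding window from 2025 to 2033.

```python
def cagr(start, end, years):
    """Compound annual growth rate between two values."""
    return (end / start) ** (1.0 / years) - 1.0


def project(start, rate, years):
    """Value after compounding `rate` annually for `years` years."""
    return start * (1.0 + rate) ** years


implied = cagr(23.60, 53.13, 2033 - 2025)
print(f"implied CAGR: {implied:.1%}")
print(f"2033 value at 10.7%: ${project(23.60, 0.107, 8):.2f} billion")
```

The implied rate comes out at roughly 10.7%, which matches the stated CAGR. Compounding $23.60 billion at exactly 10.7% gives about $53.2 billion, and the small gap reflects rounding in the reported rate.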