Synthetic Biology for Chronic Disease Modeling: Engineered Systems for Personalized Diagnosis and Therapeutic Innovation

Abstract

This article explores the transformative role of synthetic biology in modeling and treating complex chronic diseases. It provides researchers, scientists, and drug development professionals with a comprehensive analysis of how engineered biological systems—from genetic circuits in microbial therapeutics to AI-integrated diagnostic platforms—are revolutionizing our approach to conditions like metabolic disorders, cancers, and gastrointestinal diseases. The scope spans foundational principles, cutting-edge methodological applications, critical troubleshooting for real-world deployment, and rigorous validation frameworks. By synthesizing recent advances and addressing persistent challenges, this resource aims to bridge the gap between laboratory innovation and clinically viable, personalized chronic disease management solutions.

The Foundation of Synthetic Biology in Chronic Disease Research

Synthetic biology represents a paradigm shift in biological engineering, moving from the analysis of existing biological systems to the design and construction of novel biological systems with enhanced or entirely new functions. For researchers modeling chronic diseases, this discipline provides an unprecedented capacity to program biological systems that can mimic, interrogate, and ultimately correct the complex pathophysiology underlying conditions such as cancer, neurodegenerative disorders, and autoimmune diseases. At its core, synthetic biology employs a toolkit of standardized, interoperable parts that can be assembled into sophisticated genetic circuits capable of processing information and executing logical functions within living cells. This toolkit has evolved dramatically from its origins with simple, standardized DNA parts like BioBricks to the current era of precision genome editing powered by CRISPR-based technologies. This technical guide provides an in-depth examination of the core components of the synthetic biology toolkit, with specific emphasis on their application in constructing more accurate models of chronic human diseases for therapeutic discovery and development.

The Foundational Toolkit: Components and Standards

BioBricks and Standardized Biological Parts

The BioBrick framework established the foundational principle of synthetic biology: treating biological components as standardized, interchangeable parts that can be assembled into increasingly complex systems. BioBricks are DNA sequences stored in a standardized format within plasmid vectors, featuring uniform prefix and suffix sequences that enable reliable physical assembly using restriction enzymes and ligases. This standardization allows researchers to share and combine genetic parts across laboratories with predictable behavior, creating a true engineering discipline for biology.
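
One practical consequence of prefix/suffix standardization is that a part must not itself contain the recognition sites of the assembly enzymes. The following is a minimal sketch of such a check, assuming the four enzymes named later in this guide (EcoRI, XbaI, SpeI, PstI); the function names are illustrative and not part of any registry software.

```python
# Recognition sites of the four assembly enzymes. All four are palindromic,
# so scanning one strand is enough to find sites on both strands.
RESERVED_SITES = {
    "EcoRI": "GAATTC",
    "XbaI": "TCTAGA",
    "SpeI": "ACTAGT",
    "PstI": "CTGCAG",
}


def find_reserved_sites(part_seq: str) -> dict:
    """Return 0-based start positions of each reserved site found in a part."""
    seq = part_seq.upper()
    hits = {}
    for enzyme, site in RESERVED_SITES.items():
        positions = [
            i for i in range(len(seq) - len(site) + 1)
            if seq[i:i + len(site)] == site
        ]
        if positions:
            hits[enzyme] = positions
    return hits


def is_assembly_compatible(part_seq: str) -> bool:
    """A part is compatible only if it contains none of the reserved sites."""
    return not find_reserved_sites(part_seq)
```

A part that fails this check would typically be recoded with synonymous codons before being submitted as a standardized part.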

Table: Core Components of a Synthetic Biology Toolkit

| Component Category | Key Examples | Primary Function | Application in Disease Modeling |
|---|---|---|---|
| Standardized Parts | BioBrick promoters, RBS, coding sequences, terminators | Modular assembly of genetic circuits | Construction of reporter systems for disease-associated pathways |
| Vector Systems | Plasmid backbones with origin of replication, selection markers | Maintenance and delivery of genetic constructs | Stable introduction of synthetic circuits into host cells |
| Promoter Library | Constitutive (e.g., J23100), inducible (e.g., pTet, pLac) | Control of gene expression timing and level | Tunable expression of disease-related genes or therapeutic proteins |
| CRISPR Tools | Cas9 nucleases, dCas9 effectors, gRNA expression cassettes | Targeted genome editing & transcriptional control | Knock-in of disease mutations; gene activation/repression studies |

Vector Systems and Delivery Mechanisms

Effective deployment of synthetic genetic circuits requires specialized plasmid vectors and delivery methods. For bacterial systems like Acinetobacter baumannii, toolkit characterization often involves high-copy and low-copy number plasmids to modulate gene dosage effects [1]. In mammalian systems relevant to human disease modeling, viral vectors (lentivirus, AAV) or physical methods (electroporation, microinjection) are typically employed. Recent advances in non-viral delivery, including lipid nanoparticles and polymer-based complexes, are addressing critical bottlenecks in therapeutic application, particularly for primary human cells that are often recalcitrant to genetic modification.

The CRISPR Revolution: Beyond Cutting

The advent of CRISPR-Cas systems has transformed synthetic biology from a discipline focused on adding new genetic material to one capable of precisely rewriting existing genomic information. While early CRISPR applications focused primarily on gene knockout via targeted double-strand breaks, the field has rapidly evolved to encompass a far more sophisticated toolkit that operates beyond simple DNA cleavage.

From Scissors to Swiss Army Knife: The Expanded CRISPR Toolkit

CRISPR technology has evolved into a versatile "Swiss Army Knife" for cellular engineering [2]. This expansion includes:

- CRISPR Interference (CRISPRi): Utilizing catalytically dead Cas9 (dCas9), either alone as a steric block to transcription (typical in bacteria) or fused to repressor domains such as KRAB (typical in mammalian cells), for targeted gene silencing without altering DNA sequence. This system has been successfully deployed in bacterial pathogens like A. baumannii to downregulate biofilm-related genes implicated in antibiotic resistance [1].

- CRISPR Activation (CRISPRa): Employing dCas9 fused to transcriptional activation domains for targeted gene upregulation, enabling gain-of-function studies in disease models.

- Base Editing: Using Cas9 nickase fused to deaminase enzymes to directly convert one DNA base to another (C→T or A→G) without double-strand breaks, enabling precise modeling of single-nucleotide polymorphisms associated with chronic disease.

- Prime Editing: Employing Cas9 nickase-reverse transcriptase fusions programmed with prime editing guide RNAs (pegRNAs) to mediate targeted insertions, deletions, and all base-to-base conversions without double-strand breaks and with minimal indel formation.

- Epigenetic Editing: Utilizing dCas9 fused to chromatin-modifying enzymes (methyltransferases, acetyltransferases) to rewrite epigenetic marks, enabling study of epigenetic contributions to chronic diseases like cancer and neurodegeneration.

Table: Advanced CRISPR Tool Variants and Applications

| Tool Variant | Core Components | Key Feature | Disease Modeling Application |
|---|---|---|---|
| CRISPRi/a | dCas9 + KRAB repressor domain (CRISPRi) or activation domains, e.g., recruited via SunTag (CRISPRa) | Reversible transcriptional control | Studying gene dosage effects in cancer |
| Base Editors | Cas9 nickase + cytidine or adenosine deaminase | Single-nucleotide changes without DSBs | Introducing precise point mutations |
| Prime Editors | Cas9 nickase-RT fusion + pegRNA | Precise small edits without donor template | Modeling small indels found in genetic disorders |
| Epigenetic Editors | dCas9 + DNA methyltransferase/histone modifier | Heritable epigenetic modifications | Modeling epigenetic dysregulation in disease |

Experimental Protocol: CRISPRi-Mediated Gene Repression for Bacterial Pathogenesis Studies

The following detailed methodology outlines implementation of a CRISPRi system for studying antibiotic resistance mechanisms in bacterial chronic infections, based on toolkit development for A. baumannii [1]:

gRNA Design and Cloning:

- Design 20-nucleotide guide sequences complementary to the target gene's promoter or early coding region using computational tools (e.g., CHOPCHOP).

- Clone annealed oligonucleotides into a CRISPRi plasmid vector containing the gRNA scaffold under a constitutive promoter.

- Verify sequence integrity through Sanger sequencing.
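
The guide-design step above can be sketched computationally. The example below enumerates 20-nucleotide candidates on both strands that sit immediately 5' of an NGG PAM. The NGG requirement is an assumption (it applies to the commonly used S. pyogenes dCas9; the source does not state which dCas9 is used), and dedicated tools such as CHOPCHOP add scoring that this sketch omits.

```python
import re


def reverse_complement(seq: str) -> str:
    """Reverse complement of a DNA sequence (A/C/G/T only are translated)."""
    return seq.upper().translate(str.maketrans("ACGT", "TGCA"))[::-1]


def find_guides(target: str, guide_len: int = 20) -> list:
    """List (strand, start, guide, pam) for each guide_len-mer followed by NGG.

    `start` is the 0-based position within the scanned strand, so for the
    "-" strand it refers to the reverse-complemented sequence.
    """
    hits = []
    # A lookahead is used so that overlapping candidates are all reported.
    pattern = rf"(?=([ACGT]{{{guide_len}}})([ACGT]GG))"
    for strand, seq in (("+", target.upper()), ("-", reverse_complement(target))):
        for match in re.finditer(pattern, seq):
            hits.append((strand, match.start(), match.group(1), match.group(2)))
    return hits
```

For CRISPRi, candidates would then be filtered to those overlapping the promoter or early coding region of the target gene before oligonucleotides are ordered.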

CRISPRi Plasmid Assembly:

- Transform the verified gRNA plasmid into expression strains containing a compatible plasmid with dCas9 under control of an inducible promoter (e.g., anhydrotetracycline-inducible).

- Select for double-transformants using appropriate antibiotics.

Repression Efficiency Validation:

- Induce dCas9 expression with optimal inducer concentration (determined empirically).

- Measure target gene expression 24 hours post-induction via RT-qPCR.

- Normalize expression to appropriate housekeeping genes.

- Confirm phenotypic effects (e.g., reduced biofilm formation for biofilm-related genes).
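
The normalization step above is commonly computed with the 2^-ΔΔCt method. The sketch below assumes roughly 100% amplification efficiency for both target and housekeeping genes and a single reference gene; the function and argument names are illustrative.

```python
def relative_expression(ct_target_induced: float, ct_ref_induced: float,
                        ct_target_control: float, ct_ref_control: float) -> float:
    """Fold change of the target gene (induced vs. uninduced) by 2^-ddCt."""
    # Normalize each condition to its housekeeping (reference) gene.
    d_ct_induced = ct_target_induced - ct_ref_induced
    d_ct_control = ct_target_control - ct_ref_control
    # Compare the induced condition against the uninduced control.
    dd_ct = d_ct_induced - d_ct_control
    return 2.0 ** (-dd_ct)


def percent_repression(fold_change: float) -> float:
    """Convert a fold change (1.0 = unchanged) into percent knockdown."""
    return (1.0 - fold_change) * 100.0
```

For example, a target whose Ct rises by three cycles relative to the reference gene after induction corresponds to a fold change of 0.125, or 87.5% repression.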

Off-Target Assessment:

- Perform RNA-seq on induced vs. uninduced cells to identify potential off-target transcriptional effects.

- Focus analysis on genes with partial complementarity to the gRNA sequence.
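
To prioritize genes with partial complementarity to the guide, one simple option is a mismatch-tolerant scan of candidate sequences. This is a minimal sketch under stated assumptions: it scans only the strand provided, ignores PAM requirements, and uses a hypothetical mismatch threshold of three.

```python
def hamming(a: str, b: str) -> int:
    """Number of mismatched positions between two equal-length strings."""
    return sum(x != y for x, y in zip(a, b))


def near_matches(guide: str, sequences: dict, max_mismatches: int = 3) -> dict:
    """Return {gene: [(position, mismatches), ...]} for near-matching windows."""
    guide = guide.upper()
    n = len(guide)
    result = {}
    for gene, seq in sequences.items():
        seq = seq.upper()
        hits = []
        # Slide a guide-length window along the sequence.
        for i in range(len(seq) - n + 1):
            mismatches = hamming(guide, seq[i:i + n])
            if mismatches <= max_mismatches:
                hits.append((i, mismatches))
        if hits:
            result[gene] = hits
    return result
```

Genes returned by such a scan can then be cross-referenced against the differentially expressed genes from the RNA-seq comparison.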

This protocol enables tunable, reversible gene repression, which is critical for studying essential genes in chronic infections, where complete knockout would be lethal. It therefore provides a powerful approach for identifying novel antibiotic targets.

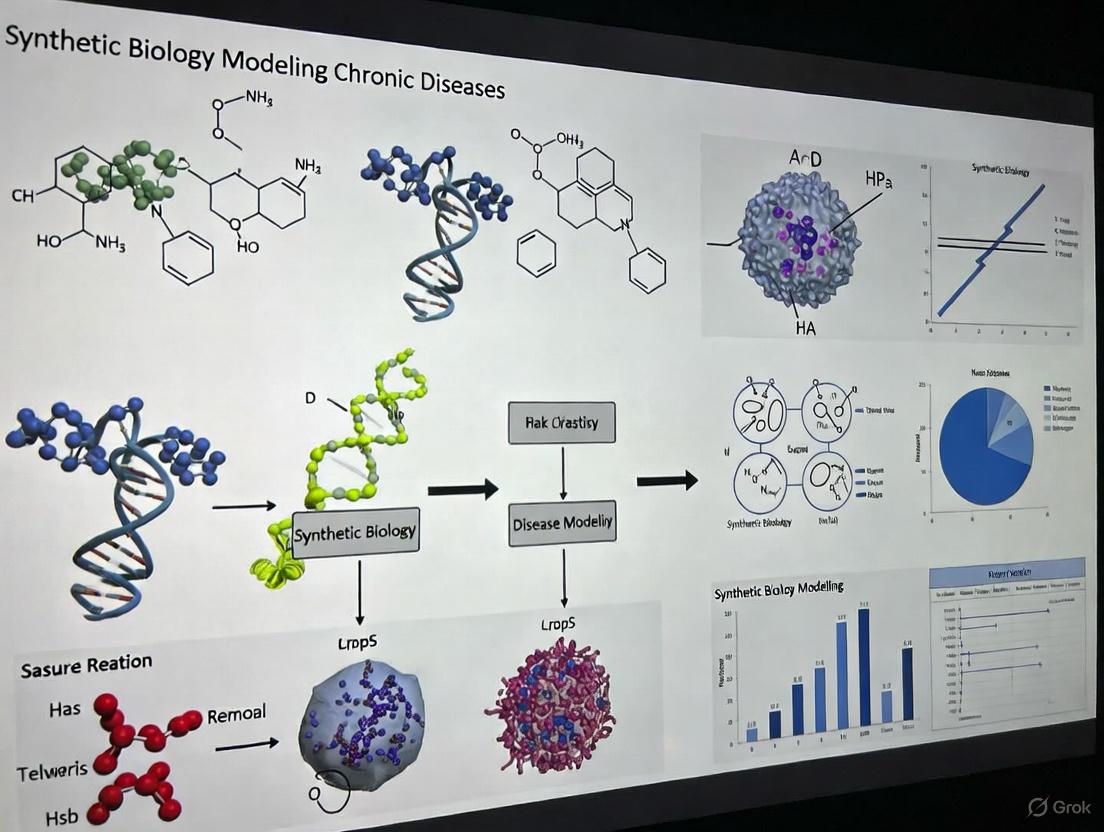

Visualization of Synthetic Circuit Design and Implementation

The following diagrams illustrate key workflows and logical relationships in synthetic biology toolkit implementation for chronic disease research.

Diagram: Workflow for Constructing a Synthetic Genetic Circuit

Diagram: CRISPR Toolkit Components and Applications

The Scientist's Toolkit: Essential Research Reagents

Implementation of synthetic biology approaches requires specific, high-quality research reagents. The following table details essential materials for constructing and testing genetic circuits in chronic disease research.

Table: Essential Research Reagents for Synthetic Biology in Disease Modeling

| Reagent Category | Specific Examples | Function | Application Notes |
|---|---|---|---|
| Standardized DNA Parts | BioBrick promoter collection (J23100 series), RBS library, fluorescent protein coding sequences | Modular circuit construction | Enable predictable, composable circuit design; characterize in target cell type |
| Cloning Systems | Restriction enzymes (EcoRI, XbaI, SpeI, PstI), T4 DNA ligase, Gibson Assembly master mix | Physical assembly of DNA constructs | BioBrick standard uses prefix/suffix assembly; newer systems (Golden Gate) offer higher throughput |
| Vector Systems | Plasmid backbones with appropriate origins, antibiotic resistance markers, inducible dCas9 expression systems | Maintenance and control of genetic circuits | Select origin compatible with host; inducible systems enable temporal control |
| Delivery Reagents | Electroporation kits, lipid nanoparticles, viral packaging systems (lentiviral, AAV) | Introduction of constructs into target cells | Choice depends on host cell type (bacterial, mammalian, primary cells); optimize efficiency vs. toxicity |
| Reporter Systems | Fluorescent proteins (GFP, RFP, YFP), luciferase enzymes, secreted embryonic alkaline phosphatase (SEAP) | Circuit functionality readout | Enable real-time monitoring in live cells; SEAP allows non-destructive temporal sampling |
| Selection Agents | Antibiotics for bacterial selection (ampicillin, kanamycin) and for mammalian cell selection (puromycin, G418) | Enrichment for successfully modified cells | Dose-response determination critical for primary cells; consider inducible kill switches for biocontainment |

Applications in Chronic Disease Modeling and Therapeutic Development

Synthetic biology toolkits are revolutionizing chronic disease research by enabling the creation of more physiologically relevant models and novel therapeutic modalities. Key applications include:

Advanced Disease Modeling

The programmable nature of synthetic genetic circuits allows researchers to engineer cellular systems that recapitulate key aspects of chronic disease pathophysiology. For neurodegenerative diseases, CRISPR-based genome editing enables precise introduction of disease-associated mutations (e.g., APP, MAPT, LRRK2) into human iPSCs, which can then be differentiated into relevant neuronal subtypes for mechanistic studies and drug screening. In cancer biology, synthetic circuits can be designed to mimic oncogenic signaling pathways, allowing controlled investigation of tumor initiation and progression in a genetically defined background.

Engineered Cellular Therapeutics

Synthetic biology provides the foundation for next-generation cell-based therapies, particularly for cancer and autoimmune diseases. The integration of synthetic receptors (e.g., the chimeric antigen receptors used in CAR-T cells), programmable gene circuits, and safety switches enables creation of smart therapeutic cells that can detect disease-specific signals, execute sophisticated response logic, and self-regulate to minimize off-target effects [3]. Recent advances include circuits that allow T cells to recognize multiple tumor antigens simultaneously, reducing the likelihood of tumor escape through antigen loss.

Smart Drug Delivery Systems

The convergence of synthetic biology with biomaterial engineering has enabled development of stimulus-responsive drug delivery platforms for chronic disease management. For example, cellulose-based drug delivery systems can be engineered with synthetic genetic circuits to release therapeutic agents in response to disease-specific biomarkers such as pH changes in tumor microenvironments or inflammatory signals in autoimmune conditions [4]. These systems offer the potential for automated, closed-loop drug delivery that maintains therapeutic drug levels while minimizing side effects.

The synthetic biology toolkit continues to evolve at an accelerated pace, with several emerging trends poised to further enhance its utility for chronic disease research. The integration of artificial intelligence with design automation is streamlining the process of circuit design and optimization, while multi-omics technologies are providing unprecedented insights into circuit performance and host-circuit interactions. The ongoing development of novel delivery modalities, including advanced viral vectors and non-viral nanoparticles, will address critical bottlenecks in therapeutic application. Furthermore, the increasing emphasis on biocontainment strategies and ethical frameworks will ensure the safe and responsible deployment of these powerful technologies.

In conclusion, the synthetic biology toolkit has matured from a collection of simple standardized parts to a sophisticated platform encompassing precision genome editing, programmable epigenetic modification, and complex cellular computing. For researchers focused on chronic diseases, these tools provide unprecedented capabilities to create predictive models, elucidate disease mechanisms, and develop next-generation therapeutics with enhanced precision and efficacy. As the toolkit continues to expand and integrate with complementary technologies from engineering and computational sciences, it promises to fundamentally transform our approach to understanding and treating complex chronic diseases.

Chronic non-communicable diseases (NCDs) represent a critical global health burden, responsible for approximately 74% of all deaths worldwide according to World Health Organization statistics [5]. Traditional approaches to chronic disease modeling and management face fundamental limitations in addressing disease complexity, particularly their inability to capture multimorbidity dynamics, temporal disease progression, and individualized risk trajectories. These models typically operate in siloed frameworks, analyzing diseases in isolation despite overwhelming clinical evidence of strong pathophysiological interconnections. For instance, diabetes increases the risk of heart disease and stroke, while hypertension and stroke are closely interrelated [6]. The conventional single-disease paradigm fails to account for these critical interactions, leading to incomplete risk assessment and suboptimal intervention strategies.

The integration of synthetic biology with advanced computational approaches presents a transformative opportunity to overcome these limitations. Where traditional models provide static snapshots, synthetic biology enables dynamic, multi-scale analysis of disease mechanisms through engineered biological systems. This paradigm shift from observation to manipulation of biological components allows researchers to construct sophisticated disease models that mirror the complexity of human pathophysiology [7]. This technical guide examines the specific shortcomings of traditional methodologies while detailing the experimental frameworks and synthetic biology platforms advancing chronic disease research.

Critical Limitations of Traditional Modeling Approaches

Inability to Capture Multimorbidity and Disease Interrelationships

Traditional chronic disease prediction models have predominantly focused on single-disease outcomes, requiring separate models for different conditions—an approach that demands significant computational resources and time while ignoring clinically significant comorbidities [6]. This limitation is particularly problematic given that nearly 25% of individuals aged 14 or older suffer from multiple chronic diseases, with multimorbidity prevalence reaching up to 30% in some populations [6]. The failure to model these interactions results in several critical shortcomings:

- Fragmented Risk Prediction: Single-disease models cannot identify shared risk factors or compensatory mechanisms across conditions

- Therapeutic Blind Spots: Interventions targeting one condition may exacerbate or improve comorbidities, effects undetectable in isolated models

- Resource Inefficiency: Developing and maintaining separate models for each disease duplicates efforts and increases computational overhead

The fundamental limitation stems from modeling diseases as independent entities rather than components of an interconnected physiological network with shared pathways and feedback mechanisms.

Lack of Temporal Dynamics in Disease Progression

Chronic diseases evolve through complex temporal patterns that traditional cross-sectional approaches cannot capture. Existing models often fail to incorporate how past medical conditions and temporal interactions influence disease development trajectories [6]. This represents a significant methodological gap, as the sequence, duration, and intensity of health events provide critical information for predicting disease onset and progression. Without capturing these temporal dimensions, models provide incomplete pathological pictures that lack predictive precision for individual patients.

Table 1: Limitations of Traditional Chronic Disease Models

| Limitation Category | Specific Technical Shortcomings | Impact on Research & Clinical Translation |
|---|---|---|
| Model Architecture | Single-disease focus; Isolated prediction frameworks | Inability to capture multimorbidity (up to 30% prevalence in some populations); Fragmented therapeutic insights |
| Temporal Modeling | Static, cross-sectional analyses; Ignoring historical medical patterns | Limited prognostic capability; Missed early intervention opportunities |
| Data Integration | Siloed medical data; Incompatible clinical and public health datasets | Systemic biases; Underrepresentation of marginalized populations in digital health records |
| Technical Implementation | High computational resource demands for multiple models; No shared representation learning | Resource-intensive deployment; Limited scalability in real-world settings |

Data Fragmentation and Systemic Biases

Integrated clinical-public health data systems face inherent constraints from heterogeneous data quality across multi-source inputs, including inconsistencies in completeness, accuracy, and temporal resolution [5]. These challenges are compounded by technical interoperability barriers stemming from incompatible data standards (e.g., HL7 FHIR vs. openEHR), organizational fragmentation in data governance, and semantic discrepancies between preventive health terminologies and clinical ontologies [5]. Furthermore, selection biases skew analyses, particularly through underrepresentation of marginalized populations in digital health records and the confounding effects of differential healthcare-seeking behaviors.

Synthetic Biology Solutions for Enhanced Disease Modeling

Advanced Multi-Task Learning Frameworks

Multitask learning (MTL) multimodal networks represent a paradigm shift in chronic disease prediction by simultaneously modeling multiple conditions to capture their inherent correlations. This approach utilizes shared representations to enhance generalization across tasks while maintaining strong predictive performance with reduced features [6]. The MTL architecture employs both hard parameter sharing (sharing hidden layers among tasks) and soft parameter sharing (independent models with constrained parameters), effectively addressing the seesaw phenomenon where improvements in one task cause decline in another [6].

For chronic disease prediction, the strong interrelationships between conditions like diabetes, heart disease, stroke, and hypertension make MTL particularly effective. The model captures shared risk factors and pathophysiological pathways while learning disease-specific features through task-specific layers. This architecture shows how computational models can mirror the interconnected nature of human physiology rather than treating each disease as an independent entity.
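
Hard parameter sharing can be illustrated with a small forward pass: one shared hidden layer feeds a separate output head per disease, and the per-task losses are summed. This is a minimal NumPy sketch with hypothetical layer sizes and random weights, not the architecture reported in [6], and it omits training.

```python
import numpy as np

rng = np.random.default_rng(0)


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


class HardSharingMTL:
    """One shared hidden layer feeding one logistic head per task."""

    def __init__(self, n_features, n_hidden, tasks):
        self.W_shared = rng.normal(0.0, 0.1, (n_features, n_hidden))
        self.b_shared = np.zeros(n_hidden)
        # Each task owns only its small output head: (weights, bias).
        self.heads = {t: (rng.normal(0.0, 0.1, n_hidden), 0.0) for t in tasks}

    def forward(self, X):
        # Shared representation (ReLU), reused by every task.
        h = np.maximum(0.0, X @ self.W_shared + self.b_shared)
        return {t: sigmoid(h @ w + b) for t, (w, b) in self.heads.items()}


def multitask_loss(preds, labels, weights=None):
    """Weighted sum of per-task binary cross-entropy losses."""
    total = 0.0
    for task, p in preds.items():
        y = labels[task]
        p = np.clip(p, 1e-7, 1.0 - 1e-7)
        bce = -np.mean(y * np.log(p) + (1 - y) * np.log(1 - p))
        total += (weights or {}).get(task, 1.0) * bce
    return total
```

The per-task weights are one place where the seesaw effect can be managed: raising the weight of a lagging task shifts the shared layer toward features that serve it.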

Diagram 1: Multi-Task Learning Architecture for Chronic Disease Prediction

Whole-Cell and Cell-Free Synthetic Biology Platforms

Synthetic biology offers both whole-cell and cell-free platforms for chronic disease modeling, each with distinct advantages and application scenarios. Whole-cell platforms utilize engineered microorganisms (e.g., Pichia pastoris) as biological factories for producing therapeutic proteins and diagnostic components [7]. These systems benefit from self-replication and complex metabolic capabilities but face challenges with long-term viability/stability and toxicity of analytes or reaction components [7].

Cell-free platforms bypass the need for viable cells through open reaction environments that facilitate manipulation of metabolism, transcription, and translation [7]. These systems can detect or produce compounds typically toxic to cells and focus resource utilization solely on reactions of interest. However, they face limitations including short reaction durations (typically hours), high reagent costs, and difficulties in folding complex protein products [7].

Table 2: Synthetic Biology Platforms for Disease Modeling

| Platform Type | Technical Advantages | Implementation Challenges | Chronic Disease Applications |
|---|---|---|---|
| Whole-Cell Systems | Self-replication; Complex metabolic capacity; Consolidated complex assays | Long-term stability; Analyte toxicity; Time delays for cell growth | Continuous therapeutic production; Living diagnostics; Closed-loop delivery systems |
| Cell-Free Systems | Bypass viability requirements; Toxic compound compatibility; Open reaction environment | Short reaction duration (hours); High reagent costs; Complex protein folding difficulties | Point-of-care diagnostics; On-demand bioproduction; Metabolic pathway modeling |
| Biotic/Abiotic Interfaces | Enhanced portability/stability; 3D-printed hydrogel encapsulation; Resilience to extreme stresses | Integration complexity; Material compatibility; Functional stability across climates | On-demand antibiotic production; Remote therapeutic synthesis; Environmental-responsive systems |

Multimodal Data Integration and Processing Frameworks

Modern chronic disease modeling requires sophisticated data processing pipelines that integrate heterogeneous healthcare data from electronic medical records, wearable sensors, genomic repositories, and population health databases [5]. The methodological framework for constructing predictive models involves five critical phases:

- Multi-source Data Acquisition: Aggregating multimodal inputs providing comprehensive digital representation of patient health trajectories

- Preprocessing Pipeline: Data cleansing (outlier removal, missing value imputation), dimensionality reduction (PCA, t-SNE), and normalization

- Feature Engineering and Selection: Statistical filtering (chi-square tests, mutual information) and algorithmic selection (recursive feature elimination with tree-based classifiers)

- Predictive Model Development: Ensemble learning architectures with deep neural networks incorporating attention mechanisms for temporal dependencies

- Clinical Implementation: Deployed models serving risk stratification, precision prevention, and population analytics functions [5]

Diagram 2: Multimodal Data Processing Pipeline for Chronic Disease Modeling

Experimental Protocols for Advanced Chronic Disease Modeling

Multitask Learning Multimodal Network Implementation

The MTL multimodal network for chronic disease prediction utilizes a nationwide dataset from Taiwan's Health and Welfare Data Science Center, incorporating medical records and personal information from two million individuals [6]. The experimental protocol involves:

Data Preparation Phase:

- Collect medical records from "Ambulatory Care Expenditures" and personal information from "Registry for Beneficiaries"

- Select patients without target disease diagnoses during the first 10-year observation period

- Construct disease features by selecting the three most frequently occurring diseases every 2 months over 10 years (180 features total)

- Replace missing values with a dedicated placeholder token and convert International Classification of Diseases (ICD) codes into embeddings using a Word2Vec-based embedding layer
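
The feature count follows from the windowing: 10 years at one window per 2 months gives 60 windows, and 3 codes per window gives 180 features. The sketch below shows one way to build such a sequence. The `PAD` token name, the month-index input format, and the example ICD codes are assumptions for illustration, not details from [6].

```python
from collections import Counter

PAD = "PAD"  # hypothetical placeholder token; the source's token name is not shown


def build_features(visits, n_months=120, window=2, top_k=3):
    """Build a fixed-length code sequence from (month_index, icd_code) visits.

    Each window contributes its top_k most frequent codes, padded with PAD
    when fewer than top_k distinct codes were recorded.
    """
    n_windows = n_months // window
    per_window = [Counter() for _ in range(n_windows)]
    for month, code in visits:
        if 0 <= month < n_months:
            per_window[month // window][code] += 1
    features = []
    for counts in per_window:
        top = [code for code, _ in counts.most_common(top_k)]
        features.extend(top + [PAD] * (top_k - len(top)))
    return features
```

The resulting token sequence is what an embedding layer would then map to vectors, with the placeholder token receiving its own embedding.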

Model Architecture:

- Implement multi-head self-attention (MHSA) mechanisms to capture temporal interactions among diseases

- Adapt multimodal attention network for dementia (MAND) framework for multiple chronic disease prediction

- Employ both hard and soft parameter sharing approaches to balance shared representation and task-specific learning

- Analyze attention scores to identify key risk factors and disease comorbidities aligned with clinical literature
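
The core of multi-head self-attention is scaled dot-product attention applied in parallel over several subspaces. The following NumPy sketch shows that computation and returns the attention weights that would be inspected for interpretability. It omits the final output projection, masking, and training, and the weight matrices are placeholders rather than anything from the MAND framework.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def multi_head_self_attention(X, Wq, Wk, Wv, n_heads):
    """X: (seq_len, d_model); Wq, Wk, Wv: (d_model, d_model).

    Returns (output, attention) with shapes (seq_len, d_model) and
    (n_heads, seq_len, seq_len).
    """
    seq_len, d_model = X.shape
    d_head = d_model // n_heads

    def split(M):
        # (seq_len, d_model) -> (n_heads, seq_len, d_head)
        return M.reshape(seq_len, n_heads, d_head).transpose(1, 0, 2)

    Q, K, V = split(X @ Wq), split(X @ Wk), split(X @ Wv)
    scores = Q @ K.transpose(0, 2, 1) / np.sqrt(d_head)
    attention = softmax(scores, axis=-1)  # each row sums to 1
    output = (attention @ V).transpose(1, 0, 2).reshape(seq_len, d_model)
    return output, attention
```

Row i of each head's attention matrix indicates how strongly time step i draws on every other step, which is the quantity examined when relating attention scores to known comorbidities.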

Validation Framework:

- Utilize stratified k-fold cross-validation (k = 5 or 10) to obtain reliable performance estimates and detect overfitting while maintaining class distribution in each fold

- Implement Bayesian optimization for hyperparameter tuning in high-dimensional parameter spaces

- Quantify model efficacy through discrimination (AUC-ROC with 95% confidence intervals), calibration (Brier scores), and clinical utility (decision curve analysis)
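
The discrimination and calibration metrics above have compact definitions. The sketch below gives dependency-free versions of stratified fold assignment, the Brier score, and AUC-ROC (computed as the probability that a positive case outranks a negative one). It is for illustration only; confidence intervals and decision curve analysis are not covered, and established libraries would normally be used in practice.

```python
import random


def stratified_folds(labels, k=5, seed=0):
    """Assign sample indices to k folds while keeping class ratios similar."""
    rnd = random.Random(seed)
    folds = [[] for _ in range(k)]
    for cls in sorted(set(labels)):
        idx = [i for i, y in enumerate(labels) if y == cls]
        rnd.shuffle(idx)
        # Deal each class round-robin across the folds.
        for j, i in enumerate(idx):
            folds[j % k].append(i)
    return folds


def brier_score(y_true, y_prob):
    """Mean squared difference between predicted probability and outcome."""
    return sum((p - y) ** 2 for y, p in zip(y_true, y_prob)) / len(y_true)


def auc_roc(y_true, y_prob):
    """Probability that a random positive scores above a random negative."""
    pos = [p for y, p in zip(y_true, y_prob) if y == 1]
    neg = [p for y, p in zip(y_true, y_prob) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))
```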

Outside-the-Lab Deployment Platforms

Deploying synthetic biology technologies for chronic disease management in resource-limited settings requires specialized platforms that maintain functionality outside controlled laboratory environments [7]. Experimental protocols for these deployments include:

Preservation and Stability Testing:

- Encapsulate Bacillus subtilis spores within 3D-printed agarose hydrogels for enhanced stress resilience

- Conduct long-term stability testing under variable storage conditions (temperature, humidity, resource limitations)

- Validate genetic and functional stability through accelerated aging studies and challenge tests

Integrated Production Systems:

- Develop table-top microfluidic reactors (e.g., Integrated Scalable Cyto-Technology platform) for point-of-care therapeutic production

- Implement continuous perfusion fermentation to reduce bioreactor footprint from industrial 1000+ liter to sub-liter scales

- Engineer Pichia pastoris with inducible, switchable production of multiple biologics (e.g., rHGH and IFNα2b) within 24 hours

- Automate downstream separation and purification modules for end-to-end production of clinical-grade therapeutics

Performance Validation:

- Verify production yields against laboratory benchmarks under simulated field conditions

- Test system functionality with limited electrical power and pure oxygen inputs

- Validate analytical performance across diverse environmental scenarios (temperature fluctuations, vibration, intermittent usage)

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents for Synthetic Biology Chronic Disease Models

| Research Reagent | Technical Function | Application Context |
|---|---|---|
| Word2Vec-based ICD Embedding Layer | Converts medical diagnosis codes into numerical representations capturing clinical relationships | Feature engineering from electronic health records for multimodal disease prediction networks |
| 3D-Printed Agarose Hydrogels | Biocompatible encapsulation matrix for preserving synthetic biology systems in harsh environments | Outside-the-lab deployment platforms for diagnostic and therapeutic applications |
| Multi-Head Self-Attention (MHSA) Modules | Neural network components that weight the importance of different medical events in patient histories | Temporal pattern recognition in disease progression trajectories |
| Methylotrophic Yeast (P. pastoris) Platforms | Recombinant protein production host capable of eukaryotic post-translational modifications, including glycosylation (human-like patterns require glycoengineered strains) | On-demand bioproduction of therapeutic proteins in resource-limited settings |
| Continuous Perfusion Fermentation Systems | Miniaturized bioreactors maintaining continuous nutrient flow and waste removal | Small-footprint manufacturing of biologics at point-of-care |
| Explainable AI (XAI) Visualization Tools | Model interpretation techniques (SHAP values) for clinical validation of predictions | Translating model outputs into clinically actionable insights for chronic disease risk stratification |

The integration of synthetic biology platforms with advanced computational approaches represents a fundamental shift in chronic disease modeling, addressing critical limitations of traditional methodologies. By capturing disease interrelationships through multi-task learning frameworks, incorporating temporal dynamics via attention mechanisms, and enabling deployment outside controlled laboratory settings, these approaches provide more accurate, scalable, and clinically relevant models. The experimental protocols and research reagents detailed in this technical guide provide scientists and drug development professionals with the foundational elements for implementing these advanced methodologies. As synthetic biology continues to evolve, its convergence with artificial intelligence and materials science will further transform our approach to understanding and addressing chronic disease complexity, ultimately enabling more personalized, predictive, and accessible healthcare solutions.

Synthetic biology applies engineering principles to biological systems, creating genetically programmed devices that sense, compute, and respond to disease signals. This approach is revolutionizing the modeling and treatment of chronic diseases by introducing unprecedented precision and controllability. For metabolic diseases, cancers, and gastrointestinal (GI) disorders—conditions characterized by complex, dynamic pathophysiology—synthetic biology provides tools to construct living diagnostic systems and programmable therapeutic platforms. These engineered systems function as both investigative tools to decode disease mechanisms and therapeutic agents that operate with cellular precision, moving beyond traditional static models and one-size-fits-all treatments [8].

The foundational principle involves designing genetic circuits that perform logical operations within living cells. These circuits, composed of promoters, regulators, and effector genes, can be programmed to detect specific disease biomarkers and execute predetermined responses, such as the production of therapeutic molecules. This capability enables the development of dynamic disease models that respond to physiological changes in real-time, offering more clinically relevant insights than conventional cell culture systems. For researchers and drug development professionals, these tools provide sophisticated experimental platforms for mechanistic discovery, target validation, and therapeutic screening, accelerating the translation of basic research into clinical applications [3] [8].

Technical Foundations: Core Synthetic Biology Toolkits

Genetic Circuit Design Principles

The engineering of biological systems relies on standardized, modular genetic parts that can be assembled into circuits with predictable functions. These components include:

- Sensors: Specialized promoters or two-component systems that detect intracellular and extracellular signals (e.g., metabolites, cytokines, hypoxia, reactive oxygen species).

- Processors: Logic gates (AND, OR, NOT) that integrate multiple sensor inputs using recombinases, CRISPR/dCas9 systems, or transcriptional regulators.

- Actuators: Effector genes that produce therapeutic outputs (e.g., enzymes, cytokines, antibodies, apoptotic agents) in response to processed signals.

- Reporters: Visualizable outputs (fluorescent proteins, luciferases, pigments) that enable tracking of circuit activity and disease status.

Advanced circuit designs now incorporate memory functions that record transient biological events, and closed-loop control systems that automatically adjust therapeutic output based on disease biomarker fluctuations. These sophisticated systems achieve autonomous regulation of disease processes, moving beyond simple on/off switching to proportional, dynamic control that mirrors physiological feedback loops [3] [8].
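The sensor, processor, and actuator roles listed above can be illustrated with a small numerical sketch. It assumes each sensor follows a Hill activation curve and that the processor behaves as an AND gate by multiplying the two sensor activities. The input names (`hypoxia`, `lactate`), thresholds, and output scale are hypothetical choices for illustration, not values from the cited work.

```python
def hill(x, k, n):
    """Fraction of maximal activation for input level x (Hill activation curve)."""
    return x**n / (k**n + x**n)


def and_gate_output(hypoxia, lactate, k_hyp=1.0, k_lac=1.0, n=2.0, max_output=100.0):
    """Steady-state actuator output when BOTH sensors must be active.

    Multiplying the two Hill terms approximates AND logic: the product is
    close to max_output only if both inputs are well above their thresholds.
    All parameter values are illustrative assumptions.
    """
    return max_output * hill(hypoxia, k_hyp, n) * hill(lactate, k_lac, n)


if __name__ == "__main__":
    # Walk through the four corners of the truth table (low = 0.1, high = 10).
    for hyp, lac in [(0.1, 0.1), (10.0, 0.1), (0.1, 10.0), (10.0, 10.0)]:
        out = and_gate_output(hyp, lac)
        print(f"hypoxia={hyp:5.1f} lactate={lac:5.1f} -> output={out:6.2f}")
```

Replacing the product with a sum (capped at `max_output`) would give OR-like behavior instead, which is one reason the processor stage is usually designed separately from the sensors.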

Delivery Chassis and Engineering Platforms

The functional implementation of genetic circuits requires selection of appropriate cellular chassis optimized for specific disease applications:

Table: Synthetic Biology Chassis and Their Applications

| Chassis Organism | Primary Disease Application | Key Engineering Tools | Clinical Examples |
|---|---|---|---|
| Escherichia coli Nissle 1917 | Metabolic disorders, GI inflammation | CRISPR-Cas9, plasmid systems, genome integration | SYNB1618 (PKU), SYNB8802 (enteric hyperoxaluria) |
| Lactococcus lactis | Oral mucositis, inflammatory bowel disease | Conjugative plasmids, chromosomal integration | AG013 (oral mucositis), AG019 (type 1 diabetes) |
| Bacteroides thetaiotaomicron | GI disorders, metabolic diseases | CRISPR-Cas12a, conjugative transfer | NOV-001 (enteric hyperoxaluria) |
| Engineered T-cells | Cancer immunotherapy | Lentiviral/retroviral vectors, transposons | Logic-gated CAR-T, armored CAR-T |
| Lactobacillus reuteri | Infectious disease, GI disorders | Quorum sensing circuits, plasmid systems | Pathogen detection biosensors |

The engineering workflow employs advanced genome editing technologies, with CRISPR-Cas systems particularly revolutionizing the field due to their precision and versatility across diverse bacterial species and human cells. For clinical translation, considerations of genetic stability and biocontainment are critical, often addressed through chromosomal integration of circuits and implementation of auxotrophies that limit microbial proliferation outside target environments [9] [10] [11].

Application Area 1: Metabolic Diseases

Disease Modeling and Therapeutic Intervention Strategies

Synthetic biology approaches to metabolic disorders focus on creating systems that detect metabolite imbalances and execute corrective responses in real-time. These platforms typically employ engineered microorganisms or human cells programmed with specialized genetic circuits that sense pathological metabolite levels and respond with therapeutic outputs.

Table: Engineered Systems for Metabolic Disorders

| Disease Target | Engineering Approach | Sensing Mechanism | Therapeutic Output | Response Time |
|---|---|---|---|---|
| Phenylketonuria (PKU) | Engineered E. coli Nissle 1917 | Passive phenylalanine diffusion | Phenylalanine ammonia-lyase (PAL), L-amino acid deaminase (LAAD) | Hours [9] [10] |
| Type 1 Diabetes | Engineered Lactococcus lactis | Microenvironment sensing | Human proinsulin, immunomodulators | Hours [10] |
| Type 1/2 Diabetes | Engineered human cells (StimExo system) | FDA-approved drug (grazoprevir) | Rapid insulin exocytosis | Minutes [12] |
| Enteric Hyperoxaluria | Engineered E. coli Nissle 1917 | Oxalate sensing | Oxalate degradation enzymes | Hours [10] |
| General Metabolite Disorders | Artificial microbial consortia | Multi-input sensing | Short-chain fatty acid production | Hours [13] |

Experimental Protocols and Workflows

Protocol 1: Engineering Microbial Systems for Phenylketonuria (PKU) Treatment

This protocol details the creation of SYNB1618, an engineered E. coli Nissle 1917 strain for phenylalanine degradation [9] [10]:

Circuit Design:

- Identify phenylalanine-degrading enzymes: phenylalanine ammonia-lyase (PAL) from Photorhabdus luminescens and L-amino acid deaminase (LAAD) from Proteus mirabilis.

- Clone the genes under well-characterized promoters with optimized ribosome binding sites.

- Use anaerobically induced promoters where expression must be active in the low-oxygen gut environment.

Strain Engineering:

- Transform E. coli Nissle 1917 with engineered plasmids or integrate genes into the chromosome using CRISPR-Cas9.

- Implement auxotrophic mutations (e.g., diaminopimelic acid (DAP) dependence) for biocontainment.

- Validate genetic stability through serial passage without antibiotic selection.

In Vitro Validation:

- Culture engineered strain in anaerobic conditions with phenylalanine supplementation.

- Quantify phenylalanine degradation using HPLC and measure enzymatic activity.

- Assess strain viability under simulated gastrointestinal conditions.

In Vivo Evaluation:

- Administer to murine PKU models (Pahenu2) via oral gavage.

- Monitor blood phenylalanine levels longitudinally.

- Analyze bacterial colonization dynamics and host inflammatory responses.
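As a rough illustration of the in vitro quantification step, the sketch below reduces an HPLC time course to one degradation rate per unit of cells by fitting a straight line through the measurements. The function names, the units (µmol, hours, 10^9 cells), and the example numbers are hypothetical. A linear fit is only reasonable while substrate is in excess.

```python
def linear_slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx


def phe_degradation_rate(times_h, phe_umol, cells_e9):
    """Phenylalanine consumed per hour per 1e9 cells (positive = degradation).

    times_h  : sampling times in hours
    phe_umol : remaining phenylalanine at each time point, in micromoles
    cells_e9 : cell number in the assay, in units of 1e9 cells
    """
    return -linear_slope(times_h, phe_umol) / cells_e9


if __name__ == "__main__":
    # Hypothetical measurements, not data from the cited studies.
    times = [0.0, 1.0, 2.0, 3.0]
    phe = [40.0, 36.0, 32.0, 28.0]
    rate = phe_degradation_rate(times, phe, cells_e9=2.0)
    print(f"Estimated rate: {rate:.2f} umol/h per 1e9 cells")
```

Normalizing by cell number makes strains and batches comparable, which matters when the same readout is later used to choose doses for the in vivo stage.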

Protocol 2: StimExo System for On-Demand Insulin Secretion

The StimExo platform enables minute-scale therapeutic protein secretion in response to small molecule triggers [12]:

System Design:

- Create bipartite STIM1 constructs:

- EF-hand domain replaced with synthetic oligomeric proteins (e.g., Azami-Green tetramer).

- Split system with conditional protein-protein interaction pairs (e.g., grazoprevir-dependent dimerization).

- Clone proinsulin gene with appropriate secretion signals.

Cell Engineering:

- Transduce human cell lines (e.g., HEK293 or the β-cell line EndoC-βH1) with lentiviral vectors encoding StimExo components.

- Select stable clones using antibiotic resistance.

- Validate calcium flux using fluorescent indicators (e.g., Fura-2) upon trigger addition.

Functional Characterization:

- Measure insulin secretion via ELISA following grazoprevir administration.

- Assess kinetics using perfused cell systems to simulate physiological delivery.

- Evaluate blood glucose control in diabetic mouse models with implanted microencapsulated cells after trigger administration.

Application Area 2: Cancers

Engineering Precision Anti-Tumor Responses

Synthetic biology approaches to cancer focus on creating systems that distinguish malignant from healthy tissue through complex biomarker sensing, then execute spatially-precise therapeutic responses. These platforms include engineered immune cells, bacteria with natural tumor tropism, and sophisticated gene circuits that perform Boolean logic operations to maximize tumor specificity [14] [8].

Table: Synthetic Biology Platforms for Cancer Immunotherapy

| Platform Type | Engineering Strategy | Targeting Mechanism | Therapeutic Payload | Development Status |
|---|---|---|---|---|
| Engineered Bacteria | Attenuated E. coli Nissle 1917, Salmonella | Hypoxia tropism, tumor microenvironment sensing | Cytokines, nanobodies, checkpoint inhibitors | Preclinical, Phase 1 (SYNB1891) [10] [14] |
| Logic-Gated CAR-T | T-cells with synthetic receptors | Multi-antigen sensing (AND, NOT gates) | Cytotoxic machinery, cytokine production | Preclinical development [14] |
| Armored CAR-T | T-cells with enhanced persistence | Tumor antigen recognition | IL-12, IL-15, dominant-negative cytokine receptors | Clinical trials [14] |
| Synthetic Gene Circuits | Mammalian cells with programmable regulators | Intracellular cancer biomarkers | Apoptotic inducers, surface markers | Preclinical development [8] |

Experimental Protocols and Workflows

Protocol 1: Engineering Bacteria for Tumor Microenvironment Targeting

This protocol details the development of bacteria that selectively colonize tumors and deliver immunomodulatory payloads [14]:

Bacterial Attenuation and Safety Engineering:

- Delete virulence genes (e.g., LPS biosynthesis genes) from E. coli Nissle 1917 or Salmonella strains.

- Implement auxotrophic mutations (e.g., purine, amino acid dependence) to limit replication outside tumors.

- Add "kill switches" based on temperature or nutrient sensing for additional biocontainment.

Tumor-Sensing Circuit Design:

- Clone promoters activated by tumor microenvironment signals (hypoxia, necrosis, high lactate) upstream of therapeutic genes.

- Incorporate quorum sensing systems to activate payload delivery only at sufficient bacterial density.

- Use inducible systems for temporal control (e.g., tetracycline-regulated expression).
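The quorum-sensing step in the circuit design above can be sketched as a toy simulation: bacteria grow logistically, secrete a signal molecule, and switch the payload on once the signal crosses a threshold. The model form, the parameter values, and the function names are illustrative assumptions rather than a description of any cited strain.

```python
def simulate_quorum_switch(n0=0.01, capacity=1.0, growth=1.0, k_prod=1.0,
                           k_deg=0.5, threshold=1.0, dt=0.01, t_end=20.0):
    """Forward-Euler simulation of density-dependent payload activation.

    Returns a list of (time, density, signal, payload_on) tuples.
    capacity stands in for how many bacteria the niche supports
    (high inside a tumor, low elsewhere); all units are arbitrary.
    """
    n, signal, t = n0, 0.0, 0.0
    history = []
    for _ in range(int(t_end / dt)):
        history.append((t, n, signal, signal >= threshold))
        dn = growth * n * (1.0 - n / capacity)   # logistic growth
        ds = k_prod * n - k_deg * signal          # signal production and decay
        n += dn * dt
        signal += ds * dt
        t += dt
    return history


def first_activation_time(history):
    """Time at which the payload first switches on, or None if it never does."""
    for t, _, _, payload_on in history:
        if payload_on:
            return t
    return None


if __name__ == "__main__":
    tumor = simulate_quorum_switch(capacity=1.0)
    healthy = simulate_quorum_switch(capacity=0.3)
    print("tumor-like niche, payload on at t =", first_activation_time(tumor))
    print("low-density niche, payload on at t =", first_activation_time(healthy))
```

In this sketch the steady-state signal equals `k_prod * capacity / k_deg`, so the threshold can be placed between the values expected in target and non-target tissue.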

Therapeutic Payload Integration:

- Engineer secretion systems for cytokines (IL-2, IL-12), checkpoint inhibitors (anti-PD-L1 nanobodies), or prodrug-converting enzymes.

- Validate payload functionality and specificity in vitro using tumor cell co-cultures.

In Vivo Validation:

- Administer engineered bacteria intravenously or intratumorally to tumor-bearing mice.

- Quantify bacterial localization using bioluminescence imaging.

- Monitor tumor regression and immune cell infiltration (flow cytometry, IHC).

- Assess systemic toxicity and bacterial clearance from non-target organs.

Protocol 2: Constructing Logic-Gated CAR-T Cells

This protocol describes the creation of T-cells with enhanced tumor specificity through Boolean logic operations [14]:

Circuit Architecture Design:

- Select tumor-associated antigens (TAAs) and healthy tissue exclusion markers.

- Design synthetic receptors with AND-gate logic:

- Primary CAR with CD3ζ signaling domain.

- Secondary costimulatory receptor with complementary signaling domain (e.g., CD28, 4-1BB).

- Implement NOT-gate logic using inhibitory CARs (iCARs) that recognize healthy tissue antigens.
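The receptor architecture above reduces to a simple Boolean rule, shown here as a sketch: the cell activates only when both tumor-associated antigens are present and the healthy-tissue marker is absent. The function and argument names are hypothetical, and real receptor signaling is graded rather than strictly binary.

```python
from itertools import product


def t_cell_activates(antigen_a, antigen_b, healthy_marker):
    """AND gate over two tumor antigens, vetoed by an inhibitory CAR (NOT gate)."""
    return bool(antigen_a and antigen_b and not healthy_marker)


def truth_table():
    """All eight input combinations with the resulting activation decision."""
    return [(a, b, h, t_cell_activates(a, b, h))
            for a, b, h in product([False, True], repeat=3)]


if __name__ == "__main__":
    print("antigen_a antigen_b healthy -> activates")
    for a, b, h, out in truth_table():
        print(f"{a!s:9} {b!s:9} {h!s:7} -> {out}")
```

Only one of the eight input combinations triggers activation, which is the specificity gain this design aims for and the pattern the single-antigen versus combination co-cultures are meant to confirm.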

Vector Construction and T-Cell Engineering:

- Clone CAR constructs into lentiviral or retroviral vectors with appropriate promoters.

- Transduce activated human primary T-cells (e.g., after anti-CD3/CD28 stimulation) using spinoculation with polybrene.

- Expand transduced cells with IL-2/IL-15 and validate surface expression by flow cytometry.

Functional Characterization:

- Co-culture with antigen-positive and antigen-negative tumor cell lines.

- Measure cytokine production (IFN-γ, IL-2) and cytotoxic activity (real-time cell killing assays).

- Verify specificity using cells expressing single antigens versus antigen combinations.

- Assess in vivo efficacy in xenograft models with tumor mixtures.

Application Area 3: Gastrointestinal Disorders

Reengineering Gut Microbiome for Therapeutic Applications

Synthetic biology approaches to GI disorders focus on engineering microbial communities that can sense inflammation, correct metabolic imbalances, and restore gut barrier function. These systems leverage the natural colonization capabilities of gut commensals while adding sophisticated therapeutic functions through genetic programming [9] [11] [13].

Table: Engineered Microbiome Solutions for GI Disorders

| Disease Target | Engineering Approach | Therapeutic Mechanism | Key Components | Efficacy Evidence |
|---|---|---|---|---|
| Inflammatory Bowel Disease | Engineered E. coli Nissle 1917 | Inflammation sensing, anti-inflammatory cytokine delivery | Tetrathionate-responsive promoters, IL-10 production | Preclinical models [9] [8] |
| Dysbiosis Restoration | Artificial microbial consortia | Multi-species complementation | Short-chain fatty acid production, bile acid metabolism | In vitro validation [11] [13] |
| GI Pathogen Infections | Engineered Lactobacillus spp. | Pathogen detection, antimicrobial production | Quorum sensing circuits, bacteriocin secretion | Preclinical models [8] |
| Gut-Brain Axis Disorders | Engineered neurotransmitter producers | Neuroactive metabolite synthesis | GABA, serotonin biosynthesis pathways | Preclinical models [13] |

Experimental Protocols and Workflows

Protocol 1: Engineering Inflammation-Responsive Biosensors

This protocol details the creation of bacterial systems that detect and report on gastrointestinal inflammation [9] [8]:

Inflammation Sensor Design:

- Identify promoters responsive to gut inflammation biomarkers:

- Tetrathionate-responsive systems (e.g., the TtrS/TtrR two-component system from Salmonella, which activates the ttrBCA promoter).

- Nitrate-responsive promoters (e.g., narG from E. coli).

- Thiosulfate-sensitive promoters.

- Clone these promoters upstream of reporter genes (GFP, luxABCDE) or therapeutic genes.

Circuit Integration and Optimization:

- Incorporate memory functions using recombinase-based switches (e.g., FimE, Bxb1) that record inflammation exposure.

- Implement amplification circuits using positive feedback loops to enhance sensitivity.

- Tune expression levels using promoter and ribosome binding site libraries.

In Vitro Characterization:

- Expose engineered strains to inflammation biomarkers at physiological concentrations.

- Quantify reporter output using flow cytometry or plate readers.

- Determine dynamic range, sensitivity, and specificity against related compounds.
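The characterization metrics named above can be computed from a dose-response series along the following lines. This sketch takes dynamic range as the fold change between the highest and lowest reporter signal, and estimates the half-maximal concentration (EC50) by interpolating on a log10 concentration axis. The function names and example values are hypothetical. A full Hill-curve fit would be the more rigorous option when enough data points are available.

```python
import math


def dynamic_range(signals):
    """Fold change between the largest and smallest reporter signal."""
    return max(signals) / min(signals)


def ec50_by_interpolation(concs, signals):
    """Concentration giving a half-maximal response.

    Assumes concs are sorted ascending and positive, and that signals
    increase monotonically with concentration. Interpolation is linear in
    signal and logarithmic in concentration.
    """
    low, high = signals[0], signals[-1]
    half = low + 0.5 * (high - low)
    points = list(zip(concs, signals))
    for (c0, s0), (c1, s1) in zip(points, points[1:]):
        if s0 <= half <= s1:
            if s1 == s0:
                return c0
            frac = (half - s0) / (s1 - s0)
            log_c = math.log10(c0) + frac * (math.log10(c1) - math.log10(c0))
            return 10 ** log_c
    raise ValueError("half-maximal response is not bracketed by the data")


if __name__ == "__main__":
    # Hypothetical plate-reader data: inducer (mM) vs fluorescence (a.u.).
    concs = [0.01, 0.1, 1.0, 10.0]
    signals = [100.0, 200.0, 800.0, 900.0]
    print(f"dynamic range: {dynamic_range(signals):.1f}-fold")
    print(f"EC50 estimate: {ec50_by_interpolation(concs, signals):.3f} mM")
```

Running the same two functions on structurally related compounds gives a simple specificity comparison: a selective sensor should show a much smaller fold change or a much higher EC50 for off-target inputs.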

In Vivo Validation:

- Administer engineered strains to mouse colitis models (DSS, TNBS).

- Monitor reporter signals non-invasively (bioluminescence imaging) or in feces.

- Correlate bacterial signals with gold standard inflammation markers (histology, calprotectin).

- Assess therapeutic efficacy if strains deliver anti-inflammatory molecules.

Protocol 2: Constructing Artificial Microbial Consortia for Dysbiosis

This protocol describes the rational design and assembly of synthetic microbial communities for gut microbiome restoration [11] [13]:

Consortium Design Principles:

- Select complementary species with cross-feeding capabilities (e.g., mucin degraders and butyrate producers).

- Include species with diverse metabolic capabilities to enhance community resilience.

- Engineer strains to fill specific functional niches (vitamin production, pathogen inhibition).

Strain Engineering and Optimization:

- Modify individual strains to produce therapeutic metabolites:

- Butyrate production in Bacteroides thetaiotaomicron.

- GABA production in Lactobacillus spp.

- Conjugated linoleic acid production in Bifidobacterium.

- Implement quorum sensing systems for population control.

- Enhance gut persistence through adhesion factor expression.

Community Assembly and Testing:

- Co-culture engineered strains in gut-on-a-chip systems or anaerobic bioreactors.

- Monitor community stability using species-specific qPCR or flow cytometry.

- Measure metabolic outputs (SCFAs, neurotransmitters) via LC-MS/MS.

- Test in gnotobiotic mouse models with humanized microbiomes.

Therapeutic Efficacy Assessment:

- Administer consortium to disease models (IBD, metabolic syndrome).

- Evaluate host parameters: gut barrier integrity, inflammation markers, metabolic profiles.

- Analyze microbiome composition post-treatment to assess engraftment.

- Perform multi-omics integration (metagenomics, metabolomics, transcriptomics).

The development and implementation of synthetic biology solutions for chronic diseases requires specialized reagents and tools. The following table catalogs essential research materials referenced across the applications discussed.

Table: Essential Research Reagents for Synthetic Biology in Chronic Disease Modeling

| Reagent Category | Specific Examples | Research Function | Key Applications |
|---|---|---|---|
| Chassis Organisms | Escherichia coli Nissle 1917, Lactococcus lactis, Bacteroides thetaiotaomicron | Platform for circuit implementation | Metabolic engineering, gut microbiome modulation [9] [10] |
| Genetic Editing Tools | CRISPR-Cas9, CRISPR-Cas12a, Lambda Red recombineering | Precise genome modification | Circuit integration, gene knockout, pathway engineering [9] [11] [13] |
| Inducible Promoters | Tetrathionate-responsive (ttr), nitrate-sensitive (narG), hypoxia-inducible | Environmental signal sensing | Inflammation detection, tumor microenvironment targeting [8] [13] |
| Signaling Modules | STIM1/Orai1 calcium channels, quorum sensing systems (LuxI/LuxR) | Signal transduction and amplification | Controlled exocytosis, population-level coordination [12] |
| Therapeutic Effectors | Phenylalanine ammonia-lyase (PAL), interleukin-10 (IL-10), anti-PD-L1 nanobodies | Disease-modifying activities | Metabolic correction, immunomodulation, checkpoint inhibition [9] [10] [14] |
| Reporters and Biosensors | GFP, luciferase, pigment-producing enzymes | Circuit activity monitoring | Diagnostic readouts, in vivo tracking [8] |
| Containment Systems | Auxotrophic mutations (DAP-), kill switches (coupled to temperature) | Biological safety | Environmental containment, controlled persistence [10] [11] |
| Computational Design Tools | Genome-scale metabolic models (GEMs), circuit simulation software | In silico prediction and optimization | Consortium design, metabolic flux analysis [13] |

Future Directions and Translational Considerations

The field of synthetic biology for chronic disease modeling is advancing toward increasingly sophisticated systems with enhanced clinical applicability. Key emerging trends include the development of multi-input sensing circuits that can integrate multiple disease biomarkers for improved specificity, and personalized microbial consortia designed based on individual patient microbiome profiles. The integration of artificial intelligence with synthetic biology is accelerating the design-build-test-learn cycle, enabling more predictive modeling of circuit behavior in complex biological environments [13].

Significant challenges remain in the clinical translation of these technologies, particularly regarding safety and biocontainment of engineered organisms, immune system evasion for persistent function, and manufacturing scalability of complex living therapeutics. Regulatory frameworks are evolving to address these unique therapeutic modalities, with early clinical trials (e.g., SYNB1618 for PKU) providing important precedent [10]. The convergence of synthetic biology with tissue engineering, particularly through organs-on-chips and 3D tissue models, promises more physiologically relevant testing platforms that may bridge the gap between traditional cell culture and animal models [15].

As the field matures, the focus is shifting from proof-of-concept demonstrations to the development of robust, reliable therapeutic platforms that can deliver on the promise of precision medicine. This will require close collaboration between synthetic biologists, clinicians, regulatory specialists, and patients to ensure these innovative technologies can safely and effectively address the complex challenges of chronic diseases.

The field of synthetic biology has undergone a profound transformation, evolving from the construction of simple genetic circuits in single cells to the sophisticated modeling of complex human chronic diseases. This evolution represents a paradigm shift in how researchers approach biological design and medical research. Initially focused on foundational elements like toggle switches and oscillators, the discipline now builds multi-cellular systems that can simulate disease pathophysiology and predict therapeutic outcomes. This journey mirrors the broader trajectory in biomedical research, where traditional compartmental models of disease, which often used fixed compartments to represent different states of individuals, have shown limitations in accurately reflecting real-world conditions [16]. The integration of synthetic biology principles with advanced computational frameworks has enabled the development of more nuanced models that capture the dynamic, heterogeneous, and continuous nature of chronic disease progression, offering new hope for managing conditions such as cardiovascular diseases, diabetes, and cancer, which collectively account for a significant majority of global mortality [17].

The Era of Simple Genetic Circuits

The foundational period of synthetic biology was characterized by the design and implementation of simple, modular genetic circuits in model microorganisms. These circuits provided the basic logic gates that underpinned more complex constructions and established the core engineering principles of the field.

Fundamental Components and Design Principles

Early genetic circuits were built from a limited toolkit of biological parts: promoters, ribosome binding sites, coding sequences, and terminators. These components were assembled to create predictable functions, such as transcriptional activation and repression. The design process was heavily influenced by electrical engineering concepts, treating genes and proteins as components in a circuit diagram. Two key breakthroughs were the toggle switch, a bistable circuit that can flip between two stable states, and the repressilator, a synthetic oscillator that generates periodic pulses of gene expression [16]. These systems demonstrated that engineered cellular behavior could be predictable and programmable, laying the conceptual groundwork for more ambitious biological programming.
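The bistability of a toggle switch can be reproduced with a minimal sketch. It assumes the dimensionless two-repressor model often used for this circuit, du/dt = α1/(1 + v^β) − u and dv/dt = α2/(1 + u^γ) − v, where u and v are the two repressor levels. The parameter values and the forward-Euler integration are illustrative choices, not taken from the cited source.

```python
def simulate_toggle(u0, v0, alpha1=10.0, alpha2=10.0, beta=2.0, gamma=2.0,
                    dt=0.01, t_end=50.0):
    """Integrate the mutual-repression model and return the final (u, v).

    u represses synthesis of v and v represses synthesis of u; each
    repressor also decays (or is diluted) at unit rate.
    """
    u, v = u0, v0
    for _ in range(int(t_end / dt)):
        du = alpha1 / (1.0 + v ** beta) - u
        dv = alpha2 / (1.0 + u ** gamma) - v
        u += du * dt
        v += dv * dt
    return u, v


if __name__ == "__main__":
    # Same circuit, two different starting points, two different end states.
    for u0, v0 in [(5.0, 0.1), (0.1, 5.0)]:
        u, v = simulate_toggle(u0, v0)
        print(f"start (u={u0}, v={v0}) -> settles at (u={u:.2f}, v={v:.2f})")
```

Which repressor ends up dominant depends only on the initial condition. That dependence is what lets a transient inducer pulse set the state and have the circuit remember it afterwards.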

Table: Fundamental Genetic Circuit Components

| Component Type | Core Function | Example from Early Circuits |
|---|---|---|
| Promoter | Initiates transcription of a gene | Repressible/inducible promoters (e.g., PLtetO-1, lac) |
| Repressor Protein | Binds to operator sites to block transcription | LacI, TetR, lambda cI repressor |
| Activator Protein | Enhances transcription initiation | Engineered variants of natural transcription factors |
| Reporter Gene | Produces a detectable output (e.g., fluorescence) | Green Fluorescent Protein (GFP) |

Methodologies for Circuit Construction and Testing

The construction of these early circuits relied on standard molecular biology techniques: restriction enzyme-based cloning, PCR, and plasmid transformation. Characterization was typically performed in batch cultures, with measurements of fluorescent reporter proteins over time providing the primary data on circuit performance. The workflow involved an iterative cycle of design, build, test, and model refinement. A significant challenge was dealing with cellular context and noise, where factors such as resource competition, gene copy number variation, and stochastic biochemical reactions could lead to divergence between predicted and actual circuit behavior.

The Shift Towards Complex Biological Systems

As the field matured, the focus expanded from single cells and simple circuits to multi-cellular systems and more complex biological functions. This transition was driven by advances in DNA synthesis, genome editing, and computational modeling, enabling engineers to tackle more ambitious problems, including the emulation of disease processes.

Incorporating Real-World Data and Machine Learning

A critical enabler of this shift was the integration of large-scale, real-world data. The adoption of the Common Data Model (CDM) allowed for the standardization of electronic medical record data, facilitating its use in model training and validation [18]. Machine learning algorithms performed well in predicting chronic disease onset. For instance, one study using the CDM reported that the Extreme Gradient Boosting algorithm achieved area under the curve (AUC) values ranging from 0.84 to 0.93 for predicting diabetes, hypertension, hyperlipidemia, and cardiovascular disease within a 10-year horizon [18]. This data-driven approach provided a more nuanced understanding of disease risk factors that could be incorporated into biological models.

Table: Machine Learning Performance in Chronic Disease Prediction (CDM-Based Study)

| Chronic Disease | Best-Performing Algorithm | Accuracy | AUC (range reported across the four diseases) |
|---|---|---|---|
| Diabetes | Extreme Gradient Boosting | >80% | 0.84 - 0.93 |
| Hypertension | Extreme Gradient Boosting | >80% | 0.84 - 0.93 |
| Hyperlipidemia | Extreme Gradient Boosting | >80% | 0.84 - 0.93 |
| Cardiovascular Disease | Extreme Gradient Boosting | >80% | 0.84 - 0.93 |
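For readers less familiar with the AUC metric reported above, the sketch below computes it from first principles as the probability that a randomly chosen positive case receives a higher risk score than a randomly chosen negative case, with ties counted as one half. The labels and scores are made-up examples, unrelated to the cited study. In practice a library routine would be used instead of this quadratic-time loop.

```python
def roc_auc(labels, scores):
    """Area under the ROC curve via pairwise comparison of positives and negatives.

    labels : iterable of 0/1 outcomes (1 = disease onset)
    scores : predicted risk scores, higher meaning higher predicted risk
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


if __name__ == "__main__":
    labels = [0, 0, 1, 1]
    scores = [0.1, 0.4, 0.35, 0.8]
    print(f"AUC = {roc_auc(labels, scores):.2f}")
```

An AUC of 0.5 corresponds to random ranking and 1.0 to perfect separation, so values between 0.84 and 0.93 indicate that most, but not all, case-control pairs are ranked correctly.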

The Rise of Hybrid and Quantum-Inspired Models

The increasing complexity of systems also spurred the development of novel computational frameworks. Quantum-inspired models have been proposed to overcome limitations of traditional compartmental models. The Quantum Healthy-Infected Model (QHIM), for example, uses the principle of quantum superposition, where an individual's state is represented as a linear combination of healthy and infected basis states, |ψ⟩ = a|0⟩ + b|1⟩, with |a|² and |b|² giving the probabilities of observing each state [16]. This allows the system to exist in multiple states simultaneously, collapsing into a definite state only upon observation, thereby offering a more flexible framework for capturing the uncertainty and heterogeneity inherent in biological systems and disease progression [16].
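The superposition idea can be made concrete with a short classical sketch. It illustrates only the standard measurement rule for a two-state system (outcome probabilities are the squared magnitudes of the normalized amplitudes) and is not an implementation of the QHIM from the cited work. The function names and the "healthy"/"infected" labels are assumptions for illustration.

```python
import random


def measurement_probabilities(a, b):
    """Probabilities of observing |0> (healthy) and |1> (infected).

    a and b may be real or complex amplitudes; they are normalized here so
    that the two probabilities sum to one.
    """
    norm = abs(a) ** 2 + abs(b) ** 2
    if norm == 0:
        raise ValueError("at least one amplitude must be non-zero")
    return abs(a) ** 2 / norm, abs(b) ** 2 / norm


def observe(a, b, rng):
    """Simulate one observation, collapsing the state to a definite outcome."""
    p_healthy, _ = measurement_probabilities(a, b)
    return "healthy" if rng.random() < p_healthy else "infected"


if __name__ == "__main__":
    a, b = 3.0, 4.0  # unnormalized example amplitudes
    p0, p1 = measurement_probabilities(a, b)
    print(f"P(healthy) = {p0:.2f}, P(infected) = {p1:.2f}")
    rng = random.Random(0)
    samples = [observe(a, b, rng) for _ in range(1000)]
    print("observed infected fraction:", samples.count("infected") / 1000)
```

The difference from a compartmental model is that each individual carries a pair of amplitudes rather than a fixed label, and a definite state is assigned only when an observation is simulated.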

Synthetic Biology for Chronic Disease Modeling

The current frontier of synthetic biology directly addresses the complexity of chronic diseases by engineering cellular systems that can sense, record, and respond to disease-related signals within a physiological context.

Engineering Cellular Systems as Disease Sensors

Modern synthetic biology approaches are designing cells to function as sophisticated biosensors. These engineered systems can detect specific biomarkers, such as metabolites, cytokines, or abnormal physiological states, and produce a quantifiable readout or therapeutic response. A prominent application is in diabetes management, where the integration of Internet of Things (IoT) mobile sensing devices with machine learning has led to the development of wearable blood glucose monitoring systems that enable continuous monitoring and improve patients' quality of life [17]. The workflow for developing these systems is highly interdisciplinary, closing the loop between computational prediction and biological implementation.

Experimental Protocols for Engineered Disease Models

The development of a synthetic biology model for a chronic disease involves a rigorous, multi-stage protocol.

Protocol 1: Biosensor Development for Metabolic Disease Monitoring

- Identify and Isolate Promoters: Select natural or engineer synthetic promoters that are responsive to the target metabolite (e.g., glucose, ketones, specific lipids). This can be achieved through screening genomic libraries or computational design.

- Construct Genetic Circuit: Assemble the biosensor circuit using high-fidelity DNA assembly methods (e.g., Gibson Assembly, Golden Gate). The core circuit typically consists of:

- Sensor: The metabolite-responsive promoter.

- Amplifier: A transcriptional cascade to enhance signal-to-noise ratio.

- Actuator/Reporter: A gene encoding a therapeutic protein (e.g., insulin) or a reporter protein (e.g., luciferase, GFP).

- Delivery and Genomic Integration: Transfer the constructed circuit into the target chassis, using transfection or viral transduction for mammalian cells (e.g., stem cells) and transformation or conjugation for probiotic bacteria. Aim for site-specific genomic integration to ensure stable, long-term expression and avoid issues related to episomal plasmid replication.

- Functional Validation in Vitro: Culture the engineered cells and expose them to a gradient of the target metabolite. Quantify the output (e.g., reporter fluorescence, secreted protein) using plate readers, flow cytometry, or ELISA. Compare the dynamic range and sensitivity against clinical gold-standard assays.

Protocol 2: In Vivo Validation in a Disease Model

- Model System Preparation: Introduce the engineered cells into an appropriate animal model (e.g., murine models for diabetes, cardiovascular disease). This can be done via implantation of encapsulated cells or colonization with engineered probiotics.

- Disease State Induction: Depending on the model, induce the disease state through diet (e.g., high-fat diet), chemical means (e.g., streptozotocin for diabetes), or genetic manipulation.

- Real-Time Monitoring and Sampling: Monitor disease progression and system function in real-time. For IoT-enabled systems, this involves collecting data from wearable sensors (e.g., continuous glucose monitors, heart rate sensors) [17]. Periodically collect biological samples (blood, tissue) for deeper analysis.

- Endpoint Analysis and Model Refinement: At the end of the study, perform histological analysis of tissues and quantify engraftment and function of the engineered system. Compare the results with the predictions from the initial computational model (e.g., the QHIM or ML model) and use this data to refine the model parameters for the next design-build-test cycle [16].

The Scientist's Toolkit: Research Reagent Solutions

The advancement of complex disease modeling relies on a suite of specialized reagents and tools that enable the precise construction and analysis of biological systems.

Table: Essential Reagents for Synthetic Biology Disease Models

| Research Reagent / Tool | Core Function | Application in Disease Modeling |
|---|---|---|
| Common Data Model (CDM) | Standardizes electronic health record data from diverse sources [18]. | Provides structured, real-world data for training and validating machine learning models that identify disease risk factors. |
| IoT Mobile Sensing Devices | Collects real-time physiological data (e.g., glucose, heart rate, blood oxygen) [17]. | Enables continuous patient monitoring and supplies dynamic input data for biosensor systems and model refinement. |
| Machine Learning Algorithms (e.g., XGBoost) | Identifies complex, non-linear patterns in large datasets [18]. | Generates high-performance predictive models for chronic disease onset, informing which pathways to target synthetically. |
| Quantum Circuit Simulators | Simulates the behavior of quantum systems on classical computers. | Allows for testing and development of quantum-inspired models (e.g., QHIM) for disease progression before implementation on quantum hardware [16]. |
| Genome Editing Tools (e.g., CRISPR-Cas9) | Enables precise, targeted modifications to genomic DNA. | Used to stably integrate synthetic genetic circuits (biosensors, actuators) into the genome of host cells for long-term function. |

The historical evolution from simple circuits to complex disease modeling marks the maturation of synthetic biology into a discipline capable of directly confronting major challenges in human health. The integration of data-driven insights from clinical databases and IoT devices with the programmability of biological systems has created a powerful, iterative feedback loop for understanding and intervening in chronic diseases [18] [17]. Emerging paradigms, including quantum-inspired modeling and organs-on-chips, promise to further increase the fidelity and predictive power of these systems [16] [15]. As these tools converge, the vision of creating personalized, predictive models of a patient's disease—and deploying engineered cellular sentinels to manage it—moves from the realm of science fiction into a tangible, albeit complex, engineering frontier. This progression firmly establishes synthetic biology as a cornerstone of next-generation biomedical research and therapeutic development.

The Design-Build-Test-Learn (DBTL) cycle is a core engineering framework in synthetic biology and biomedical research, enabling the systematic and iterative development of biological systems. This approach allows researchers to reprogram organisms with desired functionalities through rational engineering principles, drawing inspiration from the assembly of electronic circuits [19]. The cycle begins with the in silico design of biological parts, proceeds to their physical assembly, tests their function, and concludes with data analysis to inform the next design iteration. This structured methodology has become fundamental for advancing applications from therapeutic development to the creation of microbial cell factories for fine chemical production [20].

When applied to chronic disease research, the DBTL framework provides a powerful tool for deciphering complex disease mechanisms and developing targeted interventions. Chronic diseases, characterized by multifactorial etiology and progressive pathophysiology, present challenges that align well with the iterative, systems-oriented nature of the DBTL approach. By applying DBTL cycles, researchers can construct and analyze synthetic biological models that capture key aspects of chronic disease processes, enabling more precise investigation of disease pathways and accelerating the development of novel therapeutics [21].

The Four Phases of the DBTL Cycle

Design Phase

The Design phase involves the computational planning of biological systems using standardized biological parts. This stage encompasses several crucial activities: Protein Design (selecting natural enzymes or designing novel proteins), Genetic Design (translating amino acid sequences into coding sequences, designing regulatory elements, and planning genetic architecture), and Assembly Design (strategically breaking down genetic constructs into fragments for assembly) [22]. Central to this phase is the use of bioinformatics tools to select candidate enzymes and design DNA parts with optimized expression levels [20].

Advanced software tools have been developed to automate and enhance this phase. For pathway design, RetroPath enables automated enzyme selection, while Selenzyme facilitates specific enzyme choice. For DNA part design, PartsGenie optimizes ribosome-binding sites and coding sequences, and PlasmidGenie generates assembly recipes and robotics worklists [20]. These tools allow researchers to create combinatorial libraries of pathway designs that can be statistically reduced using Design of Experiments (DoE) methodologies, making laboratory testing tractable [20]. The Design phase outputs a set of blueprint genetic constructs ready for physical assembly.
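The library-reduction step can be illustrated with a short sketch. It assumes four hypothetical design factors with three levels each (the factor names and levels are placeholders, not the ones used in [20]) and uses a standard L9 orthogonal array as one simple DoE scheme; the cited pipeline may rely on a different design.

```python
from collections import Counter
from itertools import combinations, product

# Hypothetical design factors (illustrative names and levels only).
FACTORS = {
    "vector_copy_number": ["low", "medium", "high"],
    "promoter_gene1": ["weak", "medium", "strong"],
    "promoter_gene2": ["weak", "medium", "strong"],
    "rbs_gene3": ["weak", "medium", "strong"],
}


def full_factorial(factors):
    """Enumerate every combination of factor levels (the combinatorial library)."""
    names = list(factors)
    return [dict(zip(names, combo)) for combo in product(*factors.values())]


def l9_orthogonal_array():
    """Rows of an L9(3^4) orthogonal array, built with arithmetic modulo 3."""
    return [(a, b, (a + b) % 3, (a + 2 * b) % 3)
            for a in range(3) for b in range(3)]


def reduced_design(factors):
    """Map the orthogonal array rows onto the named factor levels."""
    names = list(factors)
    return [{name: factors[name][level] for name, level in zip(names, row)}
            for row in l9_orthogonal_array()]


def is_pairwise_balanced(rows):
    """Check that every pair of columns shows each level pair exactly once."""
    for i, j in combinations(range(4), 2):
        counts = Counter((row[i], row[j]) for row in rows)
        if len(counts) != 9 or set(counts.values()) != {1}:
            return False
    return True


if __name__ == "__main__":
    print(len(full_factorial(FACTORS)), "designs in the full library")
    print(len(reduced_design(FACTORS)), "designs after DoE reduction")
```

The reduced set still pairs every level of each factor with every level of each other factor exactly once, which is what allows main effects to be estimated from far fewer constructs than the full library.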

Build Phase

The Build phase translates in silico designs into physical biological constructs. This stage has been revolutionized by advances in DNA synthesis and assembly technologies, with automated platforms enabling high-throughput construction of genetic variants [19]. Key technologies include automated liquid handlers from manufacturers such as Tecan, Beckman Coulter, and Hamilton Robotics, which provide high-precision pipetting for processes like PCR setup, DNA normalization, and plasmid preparation [22].

DNA assembly is typically accomplished using modern methodologies such as Gibson assembly or Golden Gate cloning, which overcome limitations of traditional cloning methods and enable seamless assembly of combinatorial genetic parts [19] [22]. Integration with commercial DNA synthesis providers like Twist Bioscience and IDT streamlines the incorporation of custom DNA sequences into automated workflows [22]. Software platforms such as TeselaGen orchestrate the entire build process, managing protocols and tracking samples across different laboratory equipment while maintaining robust inventory management systems [22]. The output of this phase is a collection of engineered biological constructs ready for functional testing.

Test Phase

The Test phase characterizes the functionality and performance of built constructs through high-throughput analytical methods. This phase employs high-throughput screening (HTS) systems such as automated liquid handling platforms (e.g., Beckman Coulter Biomek series) and plate readers (e.g., PerkinElmer EnVision) to assess diverse assay formats [22]. Multi-omics technologies play a crucial role, with Next-Generation Sequencing (NGS) platforms like Illumina's NovaSeq providing genotypic analysis, and mass spectrometry setups such as Thermo Fisher's Orbitrap enabling proteomic and metabolomic profiling [22].

In the context of chronic disease research, testing may involve specialized models such as organs-on-chips that replicate key aspects of human physiology. For example, researchers are developing artificial blood vessel models to study clot formation in cardiovascular disease, which accounts for almost a third of global mortality [15]. These advanced test systems generate quantitative data on biological performance, which is collected and standardized through software platforms that transform raw data into formats ready for analysis and machine learning [22].

Learn Phase

The Learn phase is the knowledge-extraction step, in which experimental data are analyzed to generate insights for subsequent DBTL cycles. This phase has been transformed by machine learning (ML) algorithms that identify complex patterns in large datasets beyond human analytical capability [19] [21]. For instance, ML models trained on experimental data can make accurate genotype-to-phenotype predictions, which in turn guide metabolic engineering decisions [22].

The learning process involves identifying relationships between observed production levels and design factors through statistical methods and machine learning [20]. Explainable ML approaches are particularly valuable as they provide both predictions and reasons for proposed designs, deepening understanding of biological relationships and accelerating the learning process [19]. This phase closes the DBTL loop by generating refined hypotheses and design rules that initiate subsequent cycles, progressively optimizing system performance toward desired specifications.
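As a minimal illustration of relating production levels to design factors, the sketch below computes main effects, that is, the mean response at each level of each factor. The records, factor names, and titer values are invented for the example and do not come from the cited studies.

```python
from collections import defaultdict
from statistics import mean


def main_effects(records, response="titer"):
    """Return {factor: {level: mean response}} for every factor in the records."""
    grouped = defaultdict(lambda: defaultdict(list))
    for record in records:
        for factor, level in record.items():
            if factor != response:
                grouped[factor][level].append(record[response])
    return {factor: {level: mean(values) for level, values in levels.items()}
            for factor, levels in grouped.items()}


def effect_ranges(effects):
    """Spread between the best and worst level mean; larger means more influence."""
    return {factor: max(means.values()) - min(means.values())
            for factor, means in effects.items()}


# Hypothetical test results from one DBTL cycle (titers in mg/L, made up).
RECORDS = [
    {"copy_number": "high", "promoter": "strong", "titer": 10.0},
    {"copy_number": "high", "promoter": "weak", "titer": 8.0},
    {"copy_number": "low", "promoter": "strong", "titer": 2.0},
    {"copy_number": "low", "promoter": "weak", "titer": 1.0},
]

if __name__ == "__main__":
    effects = main_effects(RECORDS)
    for factor, spread in effect_ranges(effects).items():
        print(factor, effects[factor], "range:", spread)
```

In this toy dataset the copy number has a much larger effect range than the promoter, so a next design round would plausibly fix the copy number at its better level and explore other factors.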

DBTL Cycle Operationalization and Automation

Implementing an Automated DBTL Pipeline

Fully automated DBTL pipelines represent the state of the art in synthetic biology infrastructure. One such pipeline, demonstrated for microbial production of fine chemicals, combines technologies chosen so that the workflow is compound-agnostic and automated throughout [20]. The pipeline incorporates specialized software tools for each stage: RetroPath and Selenzyme for pathway and enzyme selection in the Design phase, automated DNA assembly via ligase cycling reaction on robotics platforms in the Build phase, quantitative screening using UPLC-MS/MS in the Test phase, and statistical analysis coupled with machine learning in the Learn phase [20].

This integrated approach enables rapid iterative cycling, as demonstrated by the optimization of a (2S)-pinocembrin pathway in E. coli, where two DBTL cycles improved the production pathway 500-fold and reached competitive titers of up to 88 mg L⁻¹ [20]. The pipeline's modular design allows individual components or protocols to be replaced while preserving the overall principles, so the pipeline can be updated as technologies advance.

Biofoundries and Standardization Frameworks

Biofoundries represent specialized facilities that operationalize DBTL cycles through high-throughput, automated workflows. The 2019 formation of the Global Biofoundry Alliance established a collaborative network for sharing resources and addressing common challenges [19] [23]. These facilities provide tiered services ranging from equipment access to full DBTL cycle support, categorized as follows [23]:

| Tier | Service Description | Example Applications |
|---|---|---|
| Tier 1 | Access to individual automated equipment | Liquid handling robots for user training |
| Tier 2 | Focus on single DBTL stage | Protein sequence library design using Protein MPNN |
| Tier 3 | Combination of multiple DBTL stages | AI model training followed by protein design |
| Tier 4 | Full DBTL cycle support | Enzyme discovery/engineering for greenhouse gas bioconversion |

Recent advances propose abstraction hierarchies to standardize biofoundry operations across four levels: Project (Level 0), Service/Capability (Level 1), Workflow (Level 2), and Unit Operation (Level 3) [23]. This framework enables modular, flexible, and automated experimental workflows, improving communication between researchers and systems while supporting reproducibility and software tool integration.
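One way to picture the four-level hierarchy is as nested data structures. The sketch below is only an illustration: the class names, fields, and the example project are assumptions made for this example and are not a schema defined in [23].

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class UnitOperation:
    """Level 3: a single step performed on one piece of equipment or software."""
    name: str
    equipment: str


@dataclass
class Workflow:
    """Level 2: an ordered set of unit operations within one DBTL stage."""
    name: str
    dbtl_stage: str
    unit_operations: List[UnitOperation] = field(default_factory=list)


@dataclass
class Service:
    """Level 1: a capability offered by the biofoundry, built from workflows."""
    name: str
    workflows: List[Workflow] = field(default_factory=list)


@dataclass
class Project:
    """Level 0: the user-facing goal, fulfilled by one or more services."""
    name: str
    services: List[Service] = field(default_factory=list)

    def unit_operations(self):
        """Flatten the hierarchy into the list of steps to schedule."""
        return [op for service in self.services
                for workflow in service.workflows
                for op in workflow.unit_operations]


def example_project():
    """Build a small hypothetical project (all names are illustrative)."""
    build = Workflow("plasmid assembly", "Build", [
        UnitOperation("reaction setup", "liquid handler"),
        UnitOperation("incubation", "thermocycler"),
    ])
    test = Workflow("titer screening", "Test", [
        UnitOperation("absorbance measurement", "plate reader"),
    ])
    service = Service("pathway prototyping", [build, test])
    return Project("demo pathway optimization", [service])


if __name__ == "__main__":
    for op in example_project().unit_operations():
        print(op.name, "on", op.equipment)
```

Keeping unit operations as the smallest reusable element is what lets the same step appear in several workflows without being redefined.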

Computational and Machine Learning Approaches

Overcoming the "Learning Bottleneck" with Machine Learning

The "Learn" phase has traditionally presented a bottleneck in DBTL cycles due to biological complexity and heterogeneity [19]. Machine learning has emerged as a powerful solution, building predictive models from large datasets by selecting appropriate features and uncovering patterns that are otherwise hard to detect [19]. In the low-data regime, gradient boosting and random forest models perform particularly well and are robust to training set biases and experimental noise [24].

ML applications span multiple DBTL stages, from improving biological components like promoters and enzymes to facilitating system-level prediction of biological designs [19]. For metabolic engineering, ML can recommend new strain designs by learning from experimentally probed input designs, enabling (semi)-automated iterative optimization [24]. The Automated Recommendation Tool exemplifies this approach, using an ensemble of ML models to create predictive distributions from which it samples new designs based on exploration/exploitation parameters [24].
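The idea of recommending designs from an ensemble under an exploration/exploitation setting can be sketched in a few lines. This is not the Automated Recommendation Tool's code or API: the crude additive model, the bootstrap ensemble, the scoring rule, and the parameter name `alpha` are all assumptions chosen to keep the example self-contained.

```python
import random
from statistics import mean, pstdev


def fit_additive(records):
    """Fit a crude additive model: overall mean plus per-(factor, level) offsets."""
    overall = mean(y for _, y in records)
    residuals = {}
    for design, y in records:
        for key in design.items():
            residuals.setdefault(key, []).append(y - overall)
    offsets = {key: mean(values) for key, values in residuals.items()}
    return lambda design: overall + sum(offsets.get(k, 0.0) for k in design.items())


def bootstrap_ensemble(records, n_models=25, seed=0):
    """Train each ensemble member on a resample (with replacement) of the data."""
    rng = random.Random(seed)
    return [fit_additive([rng.choice(records) for _ in records])
            for _ in range(n_models)]


def recommend(candidates, ensemble, alpha=0.2, top_k=2):
    """Rank candidates by (1 - alpha) * predicted mean + alpha * ensemble spread.

    alpha = 0 is pure exploitation; larger alpha favours uncertain designs.
    """
    scored = []
    for design in candidates:
        predictions = [model(design) for model in ensemble]
        score = (1 - alpha) * mean(predictions) + alpha * pstdev(predictions)
        scored.append((score, design))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [design for _, design in scored[:top_k]]


# Hypothetical measured designs (titers made up) and untested candidates.
MEASURED = [
    ({"promoter": "weak", "rbs": "weak"}, 1.0),
    ({"promoter": "weak", "rbs": "strong"}, 3.0),
    ({"promoter": "strong", "rbs": "weak"}, 4.0),
    ({"promoter": "medium", "rbs": "medium"}, 5.0),
]
CANDIDATES = [
    {"promoter": "strong", "rbs": "strong"},
    {"promoter": "medium", "rbs": "strong"},
    {"promoter": "medium", "rbs": "weak"},
]

if __name__ == "__main__":
    ensemble = bootstrap_ensemble(MEASURED)
    print(recommend(CANDIDATES, ensemble, alpha=0.2))
```

The spread of predictions across ensemble members serves as a rough uncertainty estimate, so raising `alpha` shifts the recommendations toward designs the models disagree on.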

Kinetic Modeling Frameworks

Kinetic modeling provides a mechanistic foundation for simulating DBTL cycles and benchmarking ML methods. By describing changes in intracellular metabolite concentrations over time through ordinary differential equations, kinetic models can simulate the effects of genetic perturbations on metabolic flux [24]. These models capture non-intuitive pathway behaviors, such as instances where increasing enzyme concentrations decreases flux due to substrate depletion, highlighting the importance of combinatorial optimization [24].
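A toy version of such a model is sketched below: a two-step linear pathway (substrate to intermediate to product) with Michaelis-Menten kinetics, integrated by the explicit Euler method. The pathway topology, parameter values, and function names are assumptions for illustration and are far simpler than the models used in [24]; in particular, this linear pathway does not reproduce the non-intuitive flux decrease described above.

```python
def michaelis_menten(kcat, enzyme, substrate, km):
    """Reaction rate v = kcat * E * S / (Km + S)."""
    return kcat * enzyme * substrate / (km + substrate)


def simulate_pathway(e1, e2, s0=10.0, t_end=5.0, dt=0.01, kcat=1.0, km=0.5):
    """Integrate dS/dt = -v1, dI/dt = v1 - v2, dP/dt = v2 with explicit Euler.

    e1 and e2 are relative enzyme concentrations for the two steps; changing
    them mimics a genetic perturbation such as a promoter swap.
    Returns the final (substrate, intermediate, product) concentrations.
    """
    s, i, p = s0, 0.0, 0.0
    for _ in range(int(round(t_end / dt))):
        v1 = michaelis_menten(kcat, e1, s, km)
        v2 = michaelis_menten(kcat, e2, i, km)
        s -= v1 * dt
        i += (v1 - v2) * dt
        p += v2 * dt
    return s, i, p


if __name__ == "__main__":
    for e2 in (0.5, 1.0, 2.0):
        s, i, p = simulate_pathway(e1=1.0, e2=e2)
        print(f"E2={e2}: substrate={s:.2f} intermediate={i:.2f} product={p:.2f}")
```

Because the three rate terms sum to zero, total mass is conserved at every step, which gives a quick sanity check on the integration.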

Kinetic model-based frameworks allow researchers to test and optimize ML approaches over multiple DBTL cycles without the cost and time constraints of real-world experiments [24]. This approach enables systematic comparison of DBTL cycle strategies, such as determining whether building a large initial library is more effective than distributing the same number of constructs across multiple cycles [24].
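The kind of strategy comparison described here can be mocked up as follows. The response function is a made-up stand-in for a kinetic model, and both strategies (random sampling versus a greedy neighbourhood search) are simplifications chosen for brevity rather than the procedures evaluated in [24].

```python
import random
from itertools import product

LEVELS = (0.5, 1.0, 2.0)  # hypothetical relative expression levels per enzyme
SPACE = list(product(LEVELS, repeat=3))  # 27 possible three-enzyme designs


def simulated_titer(design):
    """Stand-in for a kinetic model: an arbitrary response, for illustration only."""
    e1, e2, e3 = design
    return e1 * e2 / (1.0 + e1 * e1) + 0.5 * e3 / (1.0 + abs(e2 - e3))


def one_big_cycle(budget, rng):
    """Spend the whole build budget on one randomly sampled library."""
    return max(simulated_titer(d) for d in rng.sample(SPACE, budget))


def several_small_cycles(budget, n_cycles, rng):
    """Split the budget across cycles; after the first cycle, test untested
    designs that differ from the current best in exactly one enzyme level."""
    per_cycle = budget // n_cycles
    tested = {d: simulated_titer(d) for d in rng.sample(SPACE, per_cycle)}
    for _ in range(n_cycles - 1):
        best = max(tested, key=tested.get)
        neighbours = [d for d in SPACE if d not in tested
                      and sum(a != b for a, b in zip(d, best)) == 1]
        rng.shuffle(neighbours)
        for d in neighbours[:per_cycle]:
            tested[d] = simulated_titer(d)
    return max(tested.values())


if __name__ == "__main__":
    big = [one_big_cycle(12, random.Random(seed)) for seed in range(50)]
    small = [several_small_cycles(12, 3, random.Random(seed)) for seed in range(50)]
    print("mean best titer, one cycle of 12:", sum(big) / len(big))
    print("mean best titer, three cycles of 4:", sum(small) / len(small))
```

Which strategy wins depends on the response surface and the learning step, which is the reason for running such comparisons in simulation before committing laboratory resources.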

DBTL Applications in Chronic Disease Research

Disease Modeling and Therapeutic Development

DBTL cycles are advancing chronic disease research through improved disease models and therapeutic approaches. For cardiovascular disease, researchers are applying DBTL principles to develop artificial blood vessels that replicate key aspects of human circulation, including blood flow, vessel structure, and clot formation [15]. The ARTEMIS project (ARTificial blood vessels for Thrombosis, Endothelial Modeling and artificial Intelligence Simulation) aims to create reliable, non-animal methods for drug discovery that can reduce and potentially eliminate the use of animal models [15].

In broader chronic disease contexts, DBTL cycles support P4 medicine (predictive, preventive, personalized, participatory) by drawing on systems biology methods [25]. This framework integrates comprehensive molecular information (genomic, proteomic, metabolomic) with computational modeling to shift healthcare from reactive treatment toward proactive wellness management [25].

Metabolic Engineering for Therapeutic Compound Production

DBTL cycles enable optimized microbial production of complex therapeutic compounds, as demonstrated by the successful optimization of flavonoid and alkaloid pathways [20]. The iterative DBTL approach allows researchers to systematically identify and overcome metabolic bottlenecks, balance pathway expression, and optimize host physiology for compound production [20]. This capability is particularly valuable for chronic disease treatments requiring complex natural products or sustained therapeutic delivery.

Essential Research Tools and Reagents

The following table details key research reagent solutions and essential materials used in automated DBTL pipelines for synthetic biology:

| Category | Specific Tools/Reagents | Function in DBTL Cycle |
|---|---|---|
| DNA Synthesis Providers | Twist Bioscience, IDT, GenScript | Provide custom DNA sequences for assembly in the Build phase [22] |
| Software Platforms | TeselaGen, CLC Genomics Workbench, Geneious | Orchestrate workflows, manage protocols, and analyze data across DBTL stages [22] |
| DNA Assembly Methods | Gibson Assembly, Golden Gate Cloning, Ligase Cycling Reaction | Enable seamless assembly of combinatorial genetic parts in the Build phase [19] [20] |
| Analytical Instruments | Illumina NovaSeq (NGS), Thermo Fisher Orbitrap (Mass Spectrometry), UPLC-MS/MS | Provide genotypic and phenotypic characterization in the Test phase [22] [20] |
| Automation Equipment | Tecan Freedom EVO, Beckman Coulter Biomek, Hamilton Robotics | Enable high-throughput liquid handling for Build and Test phases [22] [20] |

Workflow Visualization

Automated DBTL Pipeline