Streamlining Biomanufacturing: A Guide to DoE-Driven Library Reduction in DBTL Cycles

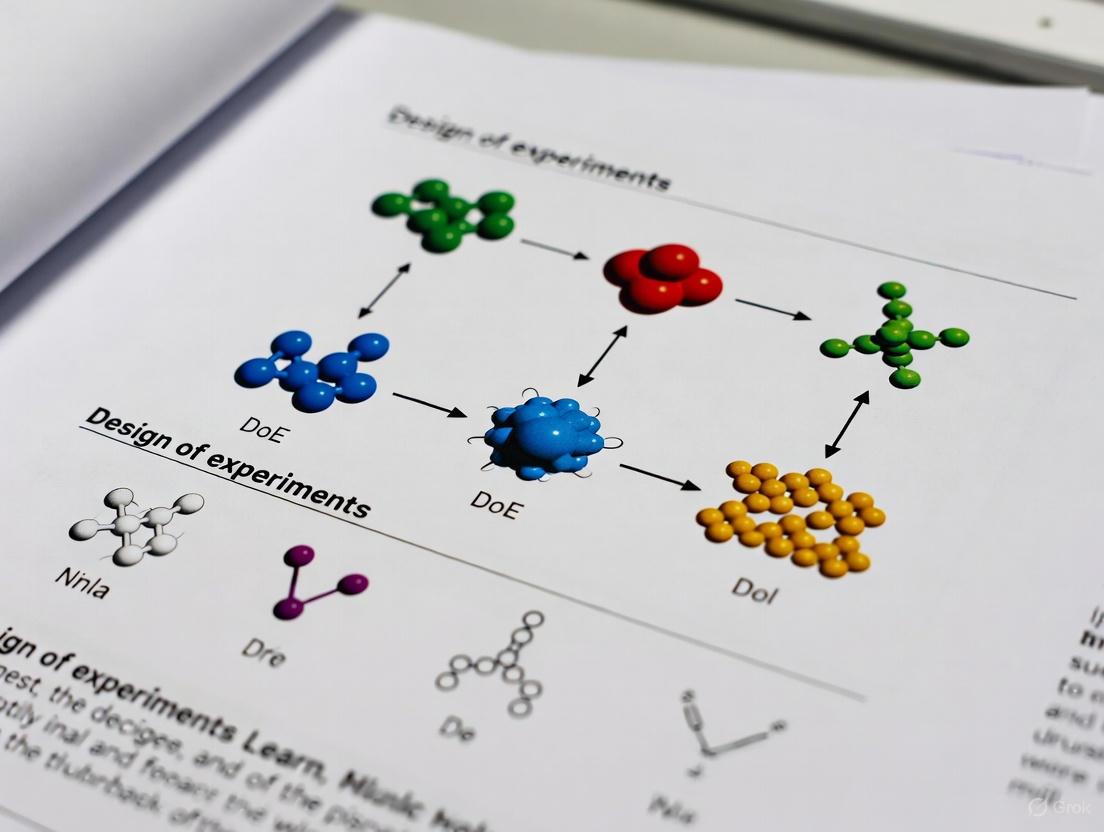

This article explores the strategic integration of Design of Experiments (DoE) to efficiently reduce the combinatorial library size in Design-Build-Test-Learn (DBTL) cycles for biomedical research and drug development.

Abstract

This article explores the strategic integration of Design of Experiments (DoE) to efficiently reduce the combinatorial library size in Design-Build-Test-Learn (DBTL) cycles for biomedical research and drug development. It provides a foundational understanding of the challenges in optimizing complex biological systems, such as metabolic pathways for drug production. The piece details methodological approaches, including fractional factorial and definitive screening designs, and offers troubleshooting strategies for common pitfalls. Through validation and comparative analysis of different DoE techniques, it demonstrates how researchers can achieve significant resource savings and accelerate the development of microbial cell factories and therapeutic compounds, ultimately enhancing the efficiency of biomanufacturing pipelines.

The Combinatorial Challenge: Why DoE is Essential for Modern DBTL Pipelines

The Intractability of Full Factorial Designs in Biological Optimization

Managing combinatorial complexity is a fundamental challenge when applying statistical design of experiments (DoE) to Design-Build-Test-Learn (DBTL) cycles. Full factorial designs, in which every possible combination of factor levels is tested experimentally, are the most comprehensive way to estimate main effects and interaction effects [1]. However, in biological optimization, from metabolic engineering to stem cell bioprocessing, such designs are often intractable because of the sheer number of experiments required [2] [3]. For k factors tested at l levels each, the number of experimental runs grows exponentially as l^k, creating a prohibitive bottleneck for the "Build" and "Test" phases of the DBTL cycle [1]. This application note details the inherent challenges of full factorial designs in biological systems and provides structured protocols and alternative strategies for navigating this complexity efficiently.

The Combinatorial Challenge in Biology

Biological systems are inherently multivariate. Optimizing a metabolic pathway or a bioprocess involves navigating a vast design space that includes genetic elements (e.g., promoters, RBSs, gene sources), environmental conditions (e.g., pH, temperature, media components), and process parameters [2] [3].

The core of the intractability problem is combinatorial explosion. For a system with k factors, each at only 2 levels, a full factorial design requires 2^k experimental runs [4] [1]. The table below illustrates how this number becomes unmanageable as factor count increases, a common scenario in biology.

Table 1: Exponential Growth of Experimental Runs in a Full Factorial Design (2^k)

| Number of Factors (k) | Number of Experimental Runs (2^k) |
|---|---|
| 2 | 4 |
| 3 | 8 |
| 4 | 16 |
| 5 | 32 |
| 8 | 256 |
| 10 | 1,024 |
| 15 | 32,768 |
| 28 | 268,435,456 |

This problem is starkly evident in metabolic engineering. For instance, designing an 8-gene pathway with just 3 expression levels per gene would require 3^8 = 6,561 genetic designs [2]. For a more complex pathway, such as the 28-gene pathway for vitamin B12 synthesis in E. coli, the number of possible sequences balloons to an astronomical 3^28 (approximately 2.3 x 10^13) [2]. Executing a full factorial exploration of such a space is practically impossible with standard laboratory resources and timeframes.
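
The run counts quoted above follow directly from l^k. As a minimal sketch, the Python snippet below reproduces them; the helper name `full_factorial_runs` is illustrative and does not come from the cited sources.

```python
def full_factorial_runs(levels: int, factors: int) -> int:
    """Number of runs in a full factorial design: levels ** factors."""
    if levels < 2 or factors < 1:
        raise ValueError("need at least 2 levels and 1 factor")
    return levels ** factors


# Two-level designs, matching the factor counts listed in Table 1
for k in (2, 3, 4, 5, 8, 10, 15, 28):
    print(f"k={k:>2}: {full_factorial_runs(2, k):,} runs")

# Three-level pathway examples from the text
print(f"8-gene pathway:  {full_factorial_runs(3, 8):,} designs")
print(f"28-gene pathway: {full_factorial_runs(3, 28):,} designs")
```

Even at a throughput of thousands of constructs per week, the 28-gene case stays far out of reach, which is why the screening designs described next matter.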

Furthermore, the traditional one-factor-at-a-time (OFAT) approach, while simpler, is inefficient and likely to yield suboptimal results because it fails to account for interaction effects between factors [2] [3]. This often leads to researchers becoming trapped in local optima rather than finding the global optimum for the system.

Application Note & Experimental Protocols

Screening for Influential Factors

The primary strategy to overcome intractability is to first perform a screening design to identify the few critical factors from a large set of potential candidates.

Table 2: Key Screening Design Methodologies

| Methodology | Description | Ideal Use Case | Key Advantage |
|---|---|---|---|
| Plackett-Burman Design | A highly fractional factorial design that allows screening of a large number of factors (N-1 factors with N runs) with very few experimental runs [2]. | Early-stage screening when many factors (e.g., 10-20) are being considered and interactions are assumed negligible. | Extreme efficiency in run reduction. |
| Definitive Screening Design (DSD) | A modern, efficient design that enables screening of many factors and can also model some quadratic effects, unlike Plackett-Burman [2]. | Screening when curvature in the response is suspected, providing more robust analysis without a large run increase. | Combines screening and optimization capabilities. |
| 2^k Fractional Factorial Design | A design that studies k factors at 2 levels but only uses a fraction (e.g., 1/2, 1/4) of the full factorial runs. It confounds (aliases) some interactions [4]. | Screening a moderate number of factors (e.g., 5-8) where some interaction effects are of interest but must be carefully considered. | Balances run efficiency with the ability to estimate some interactions. |
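
To make the idea of aliasing in a fractional factorial concrete, here is a small sketch of a half fraction for four factors (8 runs instead of 16). The generator D = ABC is a common textbook choice and is an assumption here, not something specified by the cited sources.

```python
from itertools import product


def half_fraction_4_factors():
    """2^(4-1) design: full factorial in A, B, C; D is set by the generator D = ABC."""
    return [(a, b, c, a * b * c) for a, b, c in product((-1, 1), repeat=3)]


design = half_fraction_4_factors()
for run in design:
    print(run)

# Aliasing check: the AB interaction column is identical to the CD column,
# so these two effects cannot be separated with this design.
ab = [a * b for a, b, c, d in design]
cd = [c * d for a, b, c, d in design]
print("AB aliased with CD:", ab == cd)
```

The saving in runs is paid for with this confounding, which is why the table recommends fractional designs only when the aliased interactions can be interpreted with care.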

Protocol 1: Screening Media Components for Microbial Metabolite Production

- Objective: Identify which of 8 media components significantly influence the yield of a target metabolite.

- Materials:

- Strain: Engineered microbial strain.

- Basal Media: Minimal salts medium.

- Components: 8 different carbon, nitrogen, and vitamin sources to be screened.

- Analytical Equipment: HPLC or GC-MS for metabolite quantification.

- Procedure:

- Design Setup: Select a Plackett-Burman design for 8 factors. This may require only 12 experimental runs, plus center points for error estimation.

- Factor Levels: Define a "high" (+1) and "low" (-1) level for the concentration of each media component.

- Experiment Execution: Inoculate shake flasks according to the design matrix. Cultivate under controlled conditions (temperature, pH, agitation).

- Response Measurement: Harvest samples at a fixed time point and measure the final metabolite titer.

- Statistical Analysis:

- Fit a linear model to the experimental data.

- Calculate the main effect of each factor (the average change in response when moving from the low to high level) [4].

- Use ANOVA or t-tests to identify factors with statistically significant effects (p-value < 0.05).

- Output: A Pareto chart or a ranked list of factors by the magnitude of their effect. The 2-3 most influential factors are selected for further optimization.
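
The design setup and main-effect calculation in Protocol 1 can be sketched as follows. The 12-run matrix is built from the standard Plackett-Burman generator row by cyclic shifting; the response values are hypothetical, noise-free numbers invented purely for illustration, and the function names are not taken from any library.

```python
# Standard generator row for the 12-run Plackett-Burman design
GENERATOR = [1, 1, -1, 1, 1, 1, -1, -1, -1, 1, -1]


def plackett_burman_12(n_factors):
    """Return a 12-run design (list of rows) for up to 11 two-level factors."""
    if not 1 <= n_factors <= 11:
        raise ValueError("the 12-run design supports 1 to 11 factors")
    rows = [GENERATOR[i:] + GENERATOR[:i] for i in range(11)]  # cyclic shifts
    rows.append([-1] * 11)  # final run with every factor at its low level
    return [row[:n_factors] for row in rows]


def main_effects(design, responses):
    """Main effect per factor: mean response at +1 minus mean response at -1."""
    effects = []
    for j in range(len(design[0])):
        high = [y for row, y in zip(design, responses) if row[j] == 1]
        low = [y for row, y in zip(design, responses) if row[j] == -1]
        effects.append(sum(high) / len(high) - sum(low) / len(low))
    return effects


design = plackett_burman_12(8)

# Hypothetical titers: only components 1 and 4 influence the response here.
titers = [10 + 2.0 * row[0] - 1.5 * row[3] for row in design]

effects = main_effects(design, titers)
ranked = sorted(enumerate(effects, start=1), key=lambda item: abs(item[1]), reverse=True)
for component, effect in ranked:
    print(f"component {component}: effect = {effect:+.2f}")
```

With real data the effects would be noisy, so the ranking should be paired with the significance tests described in the protocol before discarding any component.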

Optimization via Response Surface Methodology (RSM)

Once critical factors are identified, Response Surface Methodology (RSM) is used to find their optimal levels. RSM is a collection of statistical and mathematical techniques for building models, designing experiments, and optimizing processes [2] [3].

Table 3: Common RSM Designs for Optimization

| Design | Description | Runs for 3 Factors | Key Feature |
|---|---|---|---|
| Central Composite Design (CCD) | The most popular RSM design. It consists of a factorial or fractional factorial core (2^k), axial (star) points, and center points [2] [1]. | 15-20 runs | Excellent for fitting a second-order (quadratic) model and locating a stationary point (optimum). |
| Box-Behnken Design (BBD) | A spherical, rotatable design based on incomplete factorial blocks. It does not contain corner points [2] [1]. | 15 runs | More efficient than CCD; useful when performing experiments at the extreme factor levels (corners) is impractical or expensive. |
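
The run counts in Table 3 can be checked by generating the design points directly. The sketch below works in coded units and makes two assumptions: the CCD uses the rotatable axial distance alpha = (2^k)^(1/4), and the Box-Behnken points use the pairwise construction that applies to three factors. The number of center points is a free choice, which is why the CCD count is given as a range.

```python
from itertools import combinations, product


def central_composite(k, n_center, alpha=None):
    """Factorial corners + 2k axial points at +/-alpha + n_center center points."""
    if alpha is None:
        alpha = (2 ** k) ** 0.25  # rotatable choice for a full factorial core
    corners = [list(p) for p in product((-1.0, 1.0), repeat=k)]
    axial = []
    for i in range(k):
        for value in (-alpha, alpha):
            point = [0.0] * k
            point[i] = value
            axial.append(point)
    centers = [[0.0] * k for _ in range(n_center)]
    return corners + axial + centers


def box_behnken(k, n_center):
    """Pairwise construction: +/-1 on each pair of factors, 0 on the rest."""
    points = []
    for i, j in combinations(range(k), 2):
        for a, b in product((-1.0, 1.0), repeat=2):
            point = [0.0] * k
            point[i], point[j] = a, b
            points.append(point)
    return points + [[0.0] * k for _ in range(n_center)]


print("CCD, 3 factors, 1 center point: ", len(central_composite(3, 1)))
print("CCD, 3 factors, 6 center points:", len(central_composite(3, 6)))
print("BBD, 3 factors, 3 center points:", len(box_behnken(3, 3)))
```

Note that no Box-Behnken point has all three factors at an extreme level simultaneously, which is the property the table highlights.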

Protocol 2: Optimizing Inducer Concentration and Temperature for Recombinant Protein Expression

- Objective: Determine the levels of inducer concentration and temperature that maximize protein expression yield in a yeast system.

- Materials:

- Strain: Yeast strain with integrated expression construct.

- Inducer: e.g., Galactose or Methanol.

- Bioreactor/Shake Flask System: For precise environmental control.

- Assay Kits: SDS-PAGE, Bradford assay, or activity assay for protein quantification.

- Procedure:

- Design Setup: For 2 factors, a Central Composite Design (CCD) with 5 levels per factor (requiring ~13 runs) is appropriate.

- Experiment Execution: Run cultures according to the CCD matrix, ensuring precise control of temperature and inducer addition.

- Response Measurement: Quantify the final protein concentration and/or specific activity.

- Model Building & Analysis:

- Fit a second-order polynomial model to the data (e.g., Yield = β₀ + β₁A + β₂B + β₁₁A² + β₂₂B² + β₁₂AB).

- Use ANOVA to check the model's significance and lack-of-fit.

- Generate 2D contour plots or 3D response surface plots to visualize the relationship between factors and the response.

- Optimization: Use the model to predict the factor settings (inducer concentration, temperature) that yield the maximum protein expression. Validate the prediction with confirmatory experiments.
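
Once the second-order model in the protocol has been fitted, the predicted optimum is the stationary point where both partial derivatives vanish. The sketch below solves that 2x2 linear system in coded units; the coefficient values are hypothetical placeholders, not results from any experiment, and the function names are illustrative.

```python
def stationary_point(b1, b2, b11, b22, b12):
    """Solve dY/dA = 0 and dY/dB = 0 for Y = b0 + b1*A + b2*B + b11*A^2 + b22*B^2 + b12*A*B."""
    # Gradient equations: 2*b11*A + b12*B = -b1  and  b12*A + 2*b22*B = -b2
    det = 4 * b11 * b22 - b12 ** 2
    if det == 0:
        raise ValueError("no unique stationary point (singular system)")
    a = (-b1 * 2 * b22 + b2 * b12) / det
    b = (-b2 * 2 * b11 + b1 * b12) / det
    if det < 0:
        kind = "saddle point"
    elif b11 < 0:
        kind = "maximum"
    else:
        kind = "minimum"
    return a, b, kind


def predict(b0, b1, b2, b11, b22, b12, a, b):
    """Evaluate the fitted quadratic model at coded settings (a, b)."""
    return b0 + b1 * a + b2 * b + b11 * a ** 2 + b22 * b ** 2 + b12 * a * b


# Hypothetical fitted coefficients (coded units), for illustration only
coef = dict(b0=50.0, b1=1.0, b2=0.5, b11=-2.0, b22=-1.0, b12=1.0)

a_opt, b_opt, kind = stationary_point(coef["b1"], coef["b2"], coef["b11"], coef["b22"], coef["b12"])
print(f"{kind} at A = {a_opt:.3f}, B = {b_opt:.3f}")
print(f"predicted yield = {predict(a=a_opt, b=b_opt, **coef):.2f}")
```

A stationary point that falls outside the tested region, or that turns out to be a saddle point, is a signal to extend the design rather than to trust the extrapolation, and any predicted optimum still needs the confirmatory runs described above.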

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Research Reagents and Materials for DoE in Biological Optimization

| Item | Function in DoE | Application Example |

|---|---|---|

| Library of Genetic Parts | Provides the variation in genetic factors (promoters, RBSs, terminators) to be tested in combinatorial designs [2]. | Varying promoter strength to optimize flux through a metabolic pathway. |

| Chemically Defined Media | Allows for precise, independent manipulation of individual media components (carbon, nitrogen, salts, vitamins) as factors in a screening design [5]. | Identifying which trace element limits growth or product yield. |

| Inducers & Inhibitors | Used as factors to control the timing and level of gene expression or to modulate specific enzymatic activities within a pathway [5]. | Optimizing IPTG concentration and induction time for recombinant protein production. |

| High-Throughput Analytics | Enables rapid quantification of responses (e.g., product titer, cell density, enzyme activity) for the many samples generated by a DoE campaign [6]. | Using HPLC-MS or plate-based spectrophotometry to analyze 100s of samples from a screening design. |

| Statistical Software | Essential for generating design matrices, randomizing run orders, analyzing data, building models, and creating visualizations (contour plots) [2] [6]. | Tools like JMP, R, Python (with pyDOE2, scikit-learn), or specialized platforms for experimental design. |

The intractability of full factorial designs in complex biological optimization is a significant hurdle. However, by integrating a structured DoE approach within the DBTL cycle, researchers can efficiently navigate this complexity. The strategic use of initial screening designs (e.g., Plackett-Burman, DSD) to identify critical factors, followed by optimization designs (e.g., CCD, BBD) to model and locate optimal conditions, provides a powerful and resource-efficient framework. This methodology moves biological optimization beyond the limitations of OFAT and unfeasible full factorial explorations, enabling more rapid and reliable development of robust bioprocesses and engineered biological systems.

Contrasting DoE with the One-Factor-at-a-Time (OFAT) Approach

In scientific and industrial research, the choice of experimental strategy determines how efficiently resources are used and how robust the resulting knowledge is. Two predominant methodologies are the One-Factor-at-a-Time (OFAT) approach and the Design of Experiments (DoE) framework. OFAT, a traditional method, involves varying a single factor while holding all others constant, and is widely taught due to its straightforward nature [7]. In contrast, DoE is a systematic, statistically driven approach that simultaneously varies multiple factors according to a structured plan, enabling a comprehensive exploration of the experimental space and of interactions between factors [8]. Within the Design-Build-Test-Learn (DBTL) cycle for library reduction research, the choice of experimental methodology directly impacts the efficiency of knowledge acquisition and process optimization. This application note provides a detailed comparison of these methodologies, supported by quantitative data, experimental protocols, and implementation resources tailored for researchers, scientists, and drug development professionals.

Conceptual Foundations and Comparative Analysis

One-Factor-at-a-Time (OFAT) Approach

The OFAT approach, also known as the classical or hold-one-factor-at-a-time method, involves sequentially changing one input variable while maintaining all others at fixed, constant levels [9]. After completing tests for one factor, the experimenter resets the factor to its baseline before proceeding to investigate the next variable of interest. This process continues until all factors have been tested individually [9]. Historically, OFAT gained popularity due to its simplicity and ease of implementation without requiring complex experimental designs or advanced statistical analysis techniques [9].

Design of Experiments (DoE) Framework

DoE is a systematic, structured approach to investigating the relationship between input factors and output responses through carefully designed test sequences [8] [10]. Rooted in statistical principles first introduced by Sir Ronald Fisher in the early twentieth century, DoE helps build quality into product development by supporting systems thinking, an understanding of variation, a theory of knowledge, and psychology [10]. The methodology employs various experimental designs (including factorial designs, response surface methodologies, and screening designs) to efficiently capture main effects, interaction effects, and curvature in the response surface [8] [9]. The pharmaceutical industry has increasingly adopted DoE as a cornerstone of Quality by Design (QbD) initiatives, where it facilitates the establishment of a design space linking Critical Process Parameters (CPPs) and Critical Material Attributes (CMAs) to Critical Quality Attributes (CQAs) [10].

Fundamental Differences Structured in DBTL Context

The table below summarizes the core differences between OFAT and DoE within the Design-Build-Test-Learn cycle for library reduction:

Table 1: Fundamental Differences Between OFAT and DoE Approaches

| Aspect | OFAT Approach | DoE Approach |
|---|---|---|
| Factor Variation | Sequential, one factor at a time | Simultaneous, multiple factors varied together |
| Experimental Space Coverage | Limited, along a single path | Comprehensive, systematic coverage of multi-dimensional space |
| Interaction Detection | Cannot detect or quantify interactions between factors | Explicitly models and quantifies interaction effects |
| Statistical Foundation | Minimal, relies on direct comparison | Strong, based on principles of randomization, replication, and blocking |
| Resource Efficiency | Inefficient, requires many runs for limited information | Highly efficient, maximizes information per experimental run |
| Optimization Capability | Limited to suboptimal, local improvements | Enables global optimization through predictive modeling |
| DBTL Integration | Slow learning cycles, limited knowledge generation | Accelerated learning, comprehensive process understanding |

Quantitative Comparison: Experimental Efficiency and Outcomes

Case Study: Chemical Process Optimization

A direct comparison from a chemical process optimization study demonstrates the practical differences between these approaches. Researchers aimed to maximize yield by optimizing temperature and pH, with the true optimum existing at 80°C and pH 9.0, yielding 83% [11].

Table 2: Experimental Outcomes for Yield Optimization Study

| Approach | Number of Experiments | Identified "Optimum" | Actual Yield at Identified Conditions | Missed Optimization Opportunity |
|---|---|---|---|---|
| OFAT | 15 (9 temperature + 6 pH) | 40°C, pH 6.0 | 71% | 12 percentage points (true maximum: 83%) |
| DoE | 5 (factorial with center point) | 80°C, pH 9.0 | 83% | 0 percentage points |

The OFAT approach required three times as many experimental runs yet failed to identify the true process optimum, converging instead on a suboptimal local maximum [11]. This case shows how OFAT can yield misleading conclusions about a system's true behavior while using resources inefficiently.
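
The mechanism behind this kind of failure can be illustrated with a toy model. The response function below is hypothetical (it is not the data from the cited case study) and is written in coded units with a strong A×B interaction; the sketch compares an OFAT search from the low/low baseline with a 2^2 factorial.

```python
def toy_yield(a, b):
    """Hypothetical response in coded units with a strong A*B interaction."""
    return 50 + 5 * a + 5 * b + 10 * a * b


# OFAT from the baseline (-1, -1): vary A first, keep the better level, then vary B.
best_a = max((-1, 1), key=lambda a: toy_yield(a, -1))
best_b = max((-1, 1), key=lambda b: toy_yield(best_a, b))
ofat_result = (best_a, best_b, toy_yield(best_a, best_b))

# 2^2 factorial: all four corner combinations are run.
runs = {(a, b): toy_yield(a, b) for a in (-1, 1) for b in (-1, 1)}
factorial_best = max(runs, key=runs.get)

# Effects estimated from the factorial runs
effect_a = (runs[(1, -1)] + runs[(1, 1)] - runs[(-1, -1)] - runs[(-1, 1)]) / 2
interaction_ab = (runs[(1, 1)] + runs[(-1, -1)] - runs[(1, -1)] - runs[(-1, 1)]) / 2

print("OFAT settles on:", ofat_result)
print("Factorial best: ", factorial_best, runs[factorial_best])
print("Main effect of A:", effect_a, "| A*B interaction:", interaction_ab)
```

In this toy system, raising either factor alone lowers the response, so OFAT never leaves the baseline, while the factorial finds the high/high corner and quantifies the interaction that explains it.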

Statistical Efficiency in Multi-Factor Scenarios

The efficiency gap between OFAT and DoE widens as the number of experimental factors increases. The table below compares approximate run counts for studying k factors; the factorial columns assume two levels per factor:

Table 3: Experimental Requirements Scale with Factor Number

| Number of Factors | OFAT Experiments | Full Factorial DoE | Fractional Factorial DoE |
|---|---|---|---|
| 2 | ~13 tests [8] | 4 tests | 4 tests |
| 3 | ~25 tests | 8 tests | 4 tests |
| 4 | ~49 tests | 16 tests | 8 tests |
| 5 | ~81 tests | 32 tests | 16 tests |
| 7 | ~225 tests | 128 tests | 16-32 tests |

While OFAT appears to test each factor in detail, it provides no information about interactions between factors and becomes progressively more resource-intensive compared to DoE as complexity increases [8] [9]. DoE designs maintain statistical power while dramatically reducing experimental burden through structured fractionation when full factorial designs become prohibitive.

Disadvantages and Limitations

Critical Limitations of OFAT

The OFAT approach suffers from several fundamental limitations that impact its effectiveness in complex experimental settings:

Interaction Blindness: OFAT cannot detect or quantify interactions between factors, which are often critical in complex biological and chemical systems [8] [9]. For example, in the temperature-pH case study, OFAT failed to detect the interaction effect that caused the response surface to twist and rise toward the true optimum [8].

Inefficient Resource Utilization: OFAT requires a large number of experimental runs to investigate factors individually, making poor use of limited resources [7] [9]. This inefficiency becomes particularly problematic when experimental runs are time-consuming or expensive.

Suboptimal Solutions: OFAT frequently identifies local rather than global optima, as it only explores a limited trajectory through the experimental space [12] [11]. The sequential nature of investigation means that early factor settings may constrain later optimization directions.

No Comprehensive Understanding: Without capturing interactions or exploring the full experimental region, OFAT provides only a fragmented understanding of system behavior [9]. This limits its utility for establishing robust design spaces required in regulated industries like pharmaceuticals.

Implementation Challenges of DoE

While DoE offers significant advantages, practitioners should acknowledge its implementation considerations:

Initial Learning Curve: DoE requires specific knowledge for proper experimental planning and results analysis [12]. Researchers need training in statistical principles and design selection strategies.

Software Dependency: Effective implementation typically requires specialized software for design generation and analysis, though free tools like ValChrom provide accessible options [12].

Upfront Planning: DoE experiments demand careful consideration of factors, levels, and responses before execution, which can represent a cultural shift for organizations accustomed to sequential experimentation [12] [11].

Minimum Experiment Number: Although more efficient than OFAT, DoE typically requires a minimum of roughly 10 experiments to establish meaningful models, which may represent a psychological barrier despite the superior information return [7].

Experimental Protocols and Workflows

OFAT Protocol for Process Optimization

Protocol Title: One-Factor-at-a-Time Approach for Bioprocess Optimization

Objective: To determine the optimal settings of critical process parameters (e.g., temperature, pH, media concentration) for maximizing product yield.

Materials and Reagents:

- Reaction components (substrates, buffers, catalysts)

- Equipment with parameter control (bioreactor, spectrophotometer)

- Analytical tools for response measurement (HPLC, ELISA)

Procedure:

- Baseline Establishment: Run the process at baseline conditions and measure the response.

- Factor Selection: Choose one factor to vary while holding others constant.

- Sequential Testing: Systematically test different levels of the selected factor:

- For continuous factors (temperature, pH): Test at least 5-7 levels across the operating range

- For categorical factors (vendor, catalyst type): Test all relevant options

- Response Measurement: Record the response at each tested condition.

- Factor Reset: Return the tested factor to its baseline level.

- Repeat: Move to the next factor and repeat the Sequential Testing, Response Measurement, and Factor Reset steps until all factors have been tested individually.

- Data Analysis: Identify the "optimal" level for each factor based on individual response patterns.

DoE Protocol for Systematic Process Characterization

Protocol Title: Design of Experiments Approach for Comprehensive Process Understanding

Objective: To efficiently characterize the relationship between multiple process parameters and critical quality attributes, enabling identification of interactions and global optimization.

Materials and Reagents:

- Experimental units (reaction vessels, cell culture flasks)

- Parameter control systems (pH stat, temperature controller)

- Response measurement tools (HPLC, MS, activity assays)

- DoE software (JMP, ValChrom, MODDE)

Procedure:

- Define Objectives: Clearly state experimental goals (screening, optimization, robustness testing).

- Select Factors and Ranges: Identify input factors to study and establish practical operating ranges based on prior knowledge.

- Choose Experimental Design: Select appropriate design based on objectives:

- Screening: Fractional factorial or Plackett-Burman designs

- Optimization: Central composite or Box-Behnken designs

- Robustness testing: Full factorial designs

- Randomize Run Order: Generate randomized execution sequence to minimize bias.

- Execute Experiments: Conduct runs according to randomized schedule, carefully controlling and recording all factor levels.

- Measure Responses: Collect comprehensive response data for all quality attributes.

- Statistical Analysis:

- Fit empirical model relating factors to responses

- Identify significant main effects and interactions

- Evaluate model adequacy (R², Q², residual analysis)

- Knowledge Extraction:

- Interpret factor effects through Pareto charts

- Visualize relationships with contour and response surface plots

- Establish design space for robust operation

- Confirmation Experiments: Run additional tests at predicted optimum to verify model predictions.
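
The design-generation and randomization steps of this protocol can be sketched with the Python standard library. The factor names and levels below are hypothetical placeholders, and the fixed seed is there only so that the run sheet can be regenerated and audited later.

```python
import random
from itertools import product


def randomized_run_sheet(factors, seed):
    """Build a full factorial from {name: levels} and shuffle the execution order."""
    names = list(factors)
    runs = [dict(zip(names, combo)) for combo in product(*factors.values())]
    rng = random.Random(seed)  # seeded so the same sheet can be reproduced
    rng.shuffle(runs)
    return [{"run_order": i + 1, **run} for i, run in enumerate(runs)]


# Hypothetical factors and levels, for illustration only
factors = {
    "temperature_C": (30, 37),
    "pH": (6.5, 7.5),
    "inducer_mM": (0.1, 1.0),
}

sheet = randomized_run_sheet(factors, seed=42)
for row in sheet:
    print(row)
```

Executing runs in this shuffled order, rather than in the tidy order the design was generated in, keeps drift in equipment or reagents from lining up with any single factor.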

Essential Research Reagents and Software Solutions

Successful implementation of DoE requires both laboratory materials and specialized software tools. The following table outlines key resources for pharmaceutical and biotech applications:

Table 4: Essential Research Reagents and Software Solutions for DoE Implementation

| Category | Specific Items | Function in DoE Studies |
|---|---|---|
| Chromatography Reagents | Mobile phase buffers, pH modifiers, salt solutions | Systematically vary chromatographic conditions to optimize separation |
| Cell Culture Materials | Media components, growth factors, induction agents | Study multifactorial effects on cell growth and protein production |
| Protein Analysis Tools | ELISA kits, activity assays, stability buffers | Measure multiple quality attributes as responses to factor changes |
| DoE Software | JMP, ValChrom, MODDE, Design-Expert | Generate optimal designs, analyze results, build predictive models |
| Statistical Packages | R, Python with specialized libraries | Advanced analysis and custom design generation |
| Data Management | Electronic lab notebooks, data warehouses | Maintain experimental integrity and data traceability |

For researchers new to DoE, the free ValChrom software provides an accessible entry point without registration requirements [12]. JMP offers comprehensive functionality with extensive learning resources, including a free online course "Statistical Thinking for Industrial Problem Solving" [11].

Implementation in Pharmaceutical Development and DBTL Cycles

The pharmaceutical industry has increasingly embraced DoE as a cornerstone of Quality by Design (QbD) initiatives, moving away from traditional OFAT approaches [10]. In the context of Design-Build-Test-Learn cycles for library reduction, DoE provides a structured framework for efficient knowledge generation and process optimization.

Application in Biopharmaceutical Development:

- Protein Expression Optimization: Systematic variation of factors including temperature, induction conditions, media composition, and gas exchange to maximize recombinant protein yield [11].

- Purification Process Development: Concurrent optimization of multiple chromatography parameters (pH, salt concentration, gradient slope, resin type) to achieve target purity with minimal steps [12].

- Formulation Development: Efficient identification of robust formulation conditions by studying interactions between buffer composition, excipients, and storage conditions [10].

DBTL Integration Benefits:

- Reduced Cycle Time: Simultaneous factor evaluation accelerates the learn phase, informing subsequent design iterations more efficiently than sequential OFAT approaches.

- Library Reduction: Statistical models derived from DoE data enable virtual screening of parameter combinations, reducing the experimental burden for library validation.

- Knowledge Retention: Empirical models capture system understanding in a transferable, scalable format that persists beyond individual experiments.

The comparative analysis presented in this application note shows that Design of Experiments is better suited than the One-Factor-at-a-Time approach for most research and development applications, particularly within DBTL frameworks for library reduction. While OFAT offers simplicity and minimal upfront knowledge requirements, its inability to detect factor interactions, its inefficient use of resources, and its tendency to identify suboptimal conditions limit its value in complex experimental settings. DoE provides a systematic, statistically sound framework that maximizes information gain per experimental run, enables detection of critical interaction effects, and supports the establishment of robust design spaces. For researchers in drug development and related fields, investment in DoE training and implementation yields substantial returns in accelerated development timelines, enhanced process understanding, and improved product quality. As the pharmaceutical industry continues its transition toward Quality by Design paradigms, DoE stands as an essential methodology for efficient, knowledge-driven development.

In the context of the Design-Build-Test-Learn (DBTL) cycle for biological research, the strategic implementation of Design of Experiments (DoE) is paramount for efficient library reduction and systematic inquiry. DoE provides a structured framework for investigating complex biological systems by simultaneously exploring multiple variables, a capability that is particularly valuable when navigating intractably large genetic design spaces [2]. At its core, DoE involves the identification and manipulation of factors (input variables), their corresponding levels (specific settings), and the measurement of response variables (output measurements) to build statistical models that explain system behavior [13] [14]. This approach stands in stark contrast to traditional One-Factor-at-a-Time (OFAT) methods, which often miss significant interactions between variables and can lead to suboptimal results [2]. In biological research, where systems are characterized by inherent complexity and variability, DoE enables researchers to efficiently screen numerous factors and optimize processes while minimizing experimental runs [14].

Defining Core Concepts in a Biological Context

Factors

In biological experiments, factors are variables that are hypothesized to influence the outcome or response of the system under investigation [13]. These can be broadly classified into different categories with distinct characteristics:

Continuous factors represent quantitative parameters that can assume any value within a defined range. In biological systems, examples include temperature (°C), pH, concentration of media components (g/L), incubation time (hours), and oxygenation rate (%). Recent advances also allow the treatment of genetic elements as continuous factors; for instance, promoter and ribosome-binding site (RBS) strengths can be quantitatively characterized using reporter assays and fluorescence measurements, enabling their treatment as continuous rather than ordinal variables [2].

Categorical factors represent qualitative attributes that divide experimental conditions into distinct groups. These can be further subdivided into:

- Nominal variables: Categories without inherent order or ranking, such as strain types (e.g., E. coli BL21 vs. DH10B), plasmid types (e.g., high-copy vs. low-copy), media types (e.g., LB vs. minimal media), and carbon sources (e.g., glucose vs. glycerol) [2].

- Ordinal variables: Categories with a specific sequence or ranking but lacking consistent intervals between them, such as the order of genes in an operon or the sequence of processing steps [2].

Levels

Levels represent the specific values or settings at which factors are tested during an experiment [2]. The strategic selection of appropriate levels is critical for generating meaningful data. For continuous factors, levels are discretized into specific set points within the biologically plausible range. For example, in a bacterial protein expression optimization study, temperature might be tested at levels of 30°C, 37°C, and 42°C, representing low, standard, and high conditions [15]. For categorical factors, levels represent the distinct categories or types being compared, such as comparing the performance of constitutive versus inducible promoters (nominal) or testing different gene orders in a metabolic pathway (ordinal) [2].
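
For analysis, continuous levels are usually rescaled to coded units in which the low and high settings map to -1 and +1. A minimal sketch of that conversion, applied to the temperature example above (the helper names are illustrative):

```python
def to_coded(value, low, high):
    """Map an actual setting onto the coded scale where low -> -1 and high -> +1."""
    center = (low + high) / 2
    half_range = (high - low) / 2
    return (value - center) / half_range


def to_actual(coded, low, high):
    """Inverse mapping from coded units back to the actual setting."""
    center = (low + high) / 2
    half_range = (high - low) / 2
    return center + coded * half_range


for temperature in (30, 37, 42):
    print(f"{temperature} °C -> coded {to_coded(temperature, 30, 42):+.3f}")
```

Because 37°C is not the midpoint of 30°C and 42°C, its coded value is slightly above zero. That asymmetry is harmless for model fitting, but it matters if the middle level is meant to serve as a true center point.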

Response Variables

Response variables, also referred to as output variables or dependent variables, are the measured outcomes that reflect the system's performance or behavior [13] [14]. In biological contexts, these must be carefully selected to provide meaningful insights into the process under investigation and must be measurable with sufficient precision and accuracy. Examples include:

- Product titer (e.g., g/L of a target metabolite)

- Protein expression level (e.g., mg/L of a recombinant protein)

- Cell density (OD600)

- Enzyme activity (U/mg)

- Product yield (g product/g substrate)

The measurement system for response variables must be properly calibrated and maintained throughout the experiment, with particular attention to noise (reproducibility) and sensitivity (detection range) considerations [15].

Table 1: Classification of Experimental Factors in Biological Systems

| Factor Type | Definition | Biological Examples | Considerations for Level Selection |
|---|---|---|---|
| Continuous | Quantitative measurements with infinite values within a range | Temperature, pH, concentration, time, promoter strength | Select biologically plausible ranges; avoid combinations that create implausible conditions |
| Categorical (Nominal) | Qualitative categories without inherent order | Strain type, plasmid backbone, media composition, carbon source | Ensure categories are mutually exclusive; include biologically relevant alternatives |
| Categorical (Ordinal) | Qualitative categories with specific sequence | Gene order in operon, sequence of processing steps | Recognize that intervals between categories may not be equal or quantifiable |

Experimental Protocols for DoE Implementation in Biological Systems

Preliminary Factor Screening Protocol

Objective: To identify the most significant factors affecting system performance from a large set of potential variables.

Methodology:

- Define Experimental Scope: List all potentially influential factors based on prior knowledge and literature review. For a metabolic engineering project, this might include genetic elements (promoters, RBSs, gene order) and environmental conditions (temperature, pH, media components) [2].

- Select Appropriate Screening Design: Choose a fractional factorial design such as Plackett-Burman, which allows efficient examination of many factors with minimal experimental runs [2].

- Establish Factor Levels: Set two levels for each factor (high and low) that represent biologically plausible extremes. For example, when testing media components, set levels that bracket concentrations typically used in similar systems [15].

- Randomize Run Order: Generate a randomized execution order for all experimental runs to minimize confounding effects of external variables [14].

- Include Controls: Incorporate appropriate positive and negative controls that are separate from the DoE design points [15].

- Execute Experiments: Conduct all runs according to the predetermined design, maintaining consistent measurement protocols across all conditions [14].

- Statistical Analysis: Analyze results using ANOVA to identify factors with statistically significant effects on response variables [14].

Table 2: Example Factor Screening Setup for Recombinant Protein Expression

| Factor | Type | Low Level | High Level | Justification |

|---|---|---|---|---|

| Temperature | Continuous | 30°C | 42°C | Brackets standard E. coli growth range |

| Inducer Concentration | Continuous | 0.1 mM IPTG | 1.0 mM IPTG | Represents typical induction range |

| Media Type | Categorical (Nominal) | LB | Minimal | Tests nutrient richness impact |

| Promoter Strength | Continuous | Weak | Strong | Uses quantitatively characterized parts |

| Oxygenation | Continuous | 20% DO | 80% DO | Tests aerobic vs. microaerobic conditions |
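
As a rough illustration of the screening protocol, the sketch below builds an 8-run two-level fractional factorial for the five factors in Table 2 and randomizes the run order. The choice of a 2^(5-2) design with generators D = AB and E = AC is an assumption made for the example (the source does not prescribe one), and the fixed random seed is there only so the output is repeatable.

```python
import itertools
import random

# Factor names and (low, high) settings copied from Table 2.
FACTORS = {
    "Temperature": ("30°C", "42°C"),
    "Inducer Concentration": ("0.1 mM IPTG", "1.0 mM IPTG"),
    "Media Type": ("LB", "Minimal"),
    "Promoter Strength": ("Weak", "Strong"),
    "Oxygenation": ("20% DO", "80% DO"),
}


def fractional_factorial_2_5_2():
    """Return the 8 coded runs of a 2^(5-2) design (assumed generators D = AB, E = AC)."""
    runs = []
    for a, b, c in itertools.product((-1, 1), repeat=3):
        runs.append((a, b, c, a * b, a * c))
    return runs


def decode(run):
    """Translate coded levels (-1 / +1) into the settings listed in Table 2."""
    return {name: levels[(code + 1) // 2]
            for (name, levels), code in zip(FACTORS.items(), run)}


coded_runs = fractional_factorial_2_5_2()

# Randomize the execution order; the seed is fixed only for repeatability.
rng = random.Random(42)
run_order = coded_runs[:]
rng.shuffle(run_order)

for position, run in enumerate(run_order, start=1):
    print(position, decode(run))
```

Eight runs replace the 32 of the full two-level factorial. The price is that this is a Resolution III fraction, so main effects are aliased with two-factor interactions.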

Response Surface Methodology Protocol

Objective: To model the relationship between significant factors and response variables for system optimization.

Methodology:

- Factor Selection: Based on screening results, select 2-4 most significant factors for detailed optimization [2].

- Design Selection: Choose an appropriate response surface design such as Central Composite Design (CCD) or Box-Behnken Design (BBD) [2].

- Level Refinement: Establish 3-5 levels for each factor, focusing on the region of interest identified during screening.

- Experimental Execution: Conduct experiments in randomized order, with replication at center points to estimate experimental error [14].

- Model Building: Use regression analysis to build a mathematical model describing the relationship between factors and responses.

- Optimization: Utilize the fitted model to identify factor level combinations that optimize the response variables [14].

- Validation: Conduct confirmation experiments at predicted optimal conditions to verify model accuracy [14].
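
The design, model-building and optimization steps above can be sketched for two factors as follows. This is a minimal example using NumPy, not a substitute for dedicated DoE software: the rotatable axial distance, the three center points, and the noise-free response function are all assumptions chosen so that the fitted optimum can be checked by hand.

```python
import itertools
import numpy as np


def central_composite(k, n_center=3):
    """Coded CCD: 2^k factorial points, 2k axial points and n_center center points."""
    alpha = (2 ** k) ** 0.25  # rotatable axial distance (an assumed choice)
    factorial = np.array(list(itertools.product((-1.0, 1.0), repeat=k)))
    axial = np.vstack([alpha * np.eye(k), -alpha * np.eye(k)])
    center = np.zeros((n_center, k))
    return np.vstack([factorial, axial, center])


def quadratic_terms(X):
    """Model matrix for two factors: 1, x1, x2, x1*x2, x1^2, x2^2."""
    x1, x2 = X[:, 0], X[:, 1]
    return np.column_stack([np.ones(len(X)), x1, x2, x1 * x2, x1 ** 2, x2 ** 2])


design = central_composite(k=2)

# Hypothetical response with a maximum at (0.5, -0.25) in coded units;
# it stands in for measured titers.
y = 10 - (design[:, 0] - 0.5) ** 2 - 2 * (design[:, 1] + 0.25) ** 2

# Least-squares fit of the full quadratic model.
beta, *_ = np.linalg.lstsq(quadratic_terms(design), y, rcond=None)

# Stationary point of the fitted surface: solve b + 2 B x = 0.
b = beta[1:3]
B = np.array([[beta[4], beta[3] / 2],
              [beta[3] / 2, beta[5]]])
optimum = np.linalg.solve(-2 * B, b)
print("coded optimum:", optimum)
```

With real data the stationary point may be a saddle or lie outside the tested region, so the eigenvalues of `B` and the design bounds should be checked before running confirmation experiments.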

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Biological DoE

| Reagent/Material | Function | Application Example |

|---|---|---|

| Quantified Genetic Parts | Provides characterized biological components with known performance metrics | Promoters and RBSs with quantitatively measured strengths enable treatment as continuous factors [2] |

| Automated Liquid Handling Systems | Enables precise, high-throughput dispensing of reagents and cultures | Essential for executing complex DoE layouts with multiple factor combinations and small volume variations [15] |

| DOE Software Platforms | Facilitates experimental design, randomization, and statistical analysis | Reduces mathematical errors and makes DoE accessible to non-statisticians; allows design assessment and iteration planning [15] |

| Reporter Assay Systems | Provides quantitative measurement of biological activity | Fluorescence-based reporters enable precise quantification of promoter activity and gene expression levels [2] |

| Defined Growth Media Components | Allows systematic manipulation of nutritional environment | Testing individual media components as factors to identify optimal concentrations and interactions [15] |

Application in DBTL Library Reduction

The application of DoE within the DBTL cycle is particularly valuable for managing the combinatorial explosion inherent in biological design spaces. For example, an eight-gene pathway with just three different regulatory elements per gene generates 3^8 = 6,561 possible designs [2]. Through strategic DoE implementation, researchers can efficiently navigate this vast space by:

- Screening Designs: Identifying the most influential factors from a large set of potential variables using fractional factorial designs, significantly reducing the number of designs requiring full testing [2].

- Iterative Optimization: Applying response surface methodology to refine the critical factors identified during screening, converging on optimal combinations with minimal experimental effort [2].

- Model-Guided Design: Using statistical models derived from DoE results to predict the performance of untested genetic combinations, further reducing the experimental burden [2].
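
To make the scale of the reduction concrete, the short sketch below compares the size of the full combinatorial library with the number of parameters in a main-effects-only model, which is the theoretical minimum number of runs needed to estimate that model. The function names are illustrative, and practical designs would use more runs than this minimum to estimate error and guard against interactions.

```python
def full_factorial_size(levels_per_factor):
    """Number of distinct designs when every combination of levels is built."""
    size = 1
    for levels in levels_per_factor:
        size *= levels
    return size


def main_effects_parameters(levels_per_factor):
    """Intercept plus (levels - 1) contrasts for each factor."""
    return 1 + sum(levels - 1 for levels in levels_per_factor)


# Eight genes, three regulatory elements per gene (the example from the text).
pathway = [3] * 8
full = full_factorial_size(pathway)
minimum = main_effects_parameters(pathway)

print(f"full library: {full} designs")
print(f"main-effects model: {minimum} parameters")
```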

DoE in the DBTL Cycle

Case Study: Protein Expression Optimization

A practical application of these concepts can be illustrated through optimization of recombinant protein expression in bacteria:

Factors and Levels:

- Genetic factors: Promoter type (constitutive, inducible), plasmid copy number (high, low)

- Environmental factors: Induction temperature (25°C, 30°C, 37°C), induction OD600 (0.5, 1.0, 1.5), post-induction time (2h, 4h, 6h)

Response Variables:

- Protein titer (mg/L)

- Protein solubility (soluble fraction %)

- Cell density (OD600)

Experimental Approach: Initial screening using a fractional factorial design identified induction temperature and post-induction time as the most significant factors. Subsequent optimization using a Central Composite Design modeled the relationship between these factors and protein titer, identifying an optimal combination that increased yield 3.2-fold compared to baseline conditions [15].

Factor-Response Relationships

The strategic application of core DoE concepts—factors, levels, and response variables—within biological research provides a powerful framework for navigating complex design spaces efficiently. By implementing systematic experimental designs that investigate multiple factors simultaneously, researchers can accelerate the DBTL cycle, significantly reduce library sizes, and develop predictive models of biological system behavior. The structured approach outlined in this protocol enables comprehensive exploration of biological design spaces while minimizing experimental resources, ultimately facilitating more efficient optimization of biological systems for research and industrial applications.

Defining the Design Space for Genetic Pathways and Fermentation Processes

In the development of biologics and recombinant proteins, systematically defining the design space for genetic pathways and fermentation processes is a critical component of successful process characterization and scale-up. The design space, as defined by ICH Q8 (R2) guidelines, is the multidimensional combination and interaction of input variables and process parameters that have been demonstrated to provide assurance of quality [16]. This systematic approach moves beyond traditional one-factor-at-a-time (OFAT) experimentation, which often fails to capture complex interactions between critical process parameters (CPPs) and critical quality attributes (CQAs) [16]. For researchers and drug development professionals, establishing this space is not merely an academic exercise but a practical necessity for ensuring process robustness, regulatory compliance, and economic viability in biological manufacturing.

The integration of statistical Design of Experiments (DoE) within the Design-Build-Test-Learn (DBTL) cycle provides a structured framework for exploring the complex relationship between genetic modifications and their phenotypic expression in fermentation systems. This approach is particularly valuable for library reduction strategies, where the experimental burden of screening vast genetic variant libraries must be minimized without sacrificing the identification of high-performing constructs or process conditions. By applying DoE principles, researchers can efficiently navigate the multidimensional design space, model process responses, and identify optimal operating conditions that ensure consistent product quality and yield.

Statistical Framework for Design Space Exploration

Fundamentals of Design of Experiments (DoE) in Bioprocessing

The application of DoE in fermentation process development enables researchers to systematically investigate the effects of multiple factors and their interactions on key process outputs simultaneously. This methodology is fundamentally superior to OFAT approaches, which are not only time-consuming but also likely to miss critical interaction effects between process parameters [16]. For a typical fermentation process with multiple CPPs, a full factorial DoE can characterize the entire design space, but this often requires a prohibitive number of experimental runs. In practice, fractional factorial designs and response surface methodologies (RSM) are employed to reduce the experimental burden while still capturing essential main effects and interactions.

The model-building process typically involves several key steps: creating the experimental design, selecting appropriate models (e.g., factorial or polynomial), and validating the chosen models using statistical criteria such as the corrected Akaike information criterion (AICc) and Bayesian information criterion (BIC) [16]. Additional validation metrics include high R² and adjusted R² values, low predicted residual error sum of squares (PRESS), and non-significant lack-of-fit p-values. This rigorous statistical approach ensures that the empirical models derived from experimental data reliably predict process behavior within the defined design space.
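
The selection criteria named above can be computed directly from a least-squares fit. The sketch below is one possible implementation under stated assumptions: it uses the Gaussian least-squares forms of AICc and BIC with additive constants dropped, counts the error variance as a parameter (conventions differ between software packages), and runs on invented data with deliberate curvature. A lack-of-fit test is omitted because it requires replicated runs.

```python
import numpy as np


def fit_metrics(X, y):
    """Least-squares fit plus R2, adjusted R2, PRESS, AICc and BIC."""
    n, p = X.shape
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    rss = float(resid @ resid)
    tss = float(((y - y.mean()) ** 2).sum())

    # Leverages from the hat matrix H = X (X'X)^-1 X' give PRESS without refitting.
    H = X @ np.linalg.pinv(X.T @ X) @ X.T
    press = float(((resid / (1 - np.diag(H))) ** 2).sum())

    k = p + 1  # coefficients plus error variance (assumed convention)
    aic = n * np.log(rss / n) + 2 * k
    return {
        "R2": 1 - rss / tss,
        "adj_R2": 1 - (rss / (n - p)) / (tss / (n - 1)),
        "PRESS": press,
        "AICc": aic + 2 * k * (k + 1) / (n - k - 1),
        "BIC": n * np.log(rss / n) + k * np.log(n),
    }


# Hypothetical data: a curved response with small deterministic "noise".
x = np.linspace(-1, 1, 9)
noise = 0.1 * np.array([1, -1, 0.5, -0.5, 0, 0.5, -0.5, -1, 1])
y = 5 + 2 * x + 3 * x ** 2 + noise

linear = fit_metrics(np.column_stack([np.ones_like(x), x]), y)
quadratic = fit_metrics(np.column_stack([np.ones_like(x), x, x ** 2]), y)

for name, metrics in (("linear", linear), ("quadratic", quadratic)):
    print(name, {key: round(value, 3) for key, value in metrics.items()})
```

Lower AICc, BIC and PRESS together with higher adjusted R² favor the quadratic model here, which is expected because the invented data contain a squared term.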

DoE within the DBTL Cycle for Library Reduction

In the context of genetic pathway engineering and strain development, the DBTL cycle provides an iterative framework for continuous improvement. DoE plays a crucial role in the "Learn" phase, where data from the "Test" phase are analyzed to build predictive models that inform subsequent "Design" and "Build" phases. For library reduction strategies, DoE helps identify the most influential genetic elements or process parameters, enabling researchers to focus experimental efforts on the most promising regions of the design space.

This approach is particularly valuable when dealing with large genetic libraries, where testing all possible variants is practically impossible. By applying DoE, researchers can screen a representative subset of variants and build models that predict the performance of untested combinations. This strategy significantly reduces experimental timelines and resource requirements while still identifying optimal genetic constructs and process conditions. The table below summarizes key DoE applications in fermentation process characterization.

Table 1: Design of Experiments Applications in Fermentation Process Characterization

| DoE Application | Objective | Typical Model | Key Outputs |

|---|---|---|---|

| Screening Experiments | Identify critical process parameters from a large set | Fractional Factorial or Plackett-Burman | Significant main effects on yield and quality |

| Response Surface Methodology | Characterize nonlinear relationships and identify optima | Central Composite or Box-Behnken | Quadratic models for predicting process behavior |

| Mixture Designs | Optimize culture medium composition | Scheffé Polynomials | Optimal nutrient concentrations and ratios |

| Optimal Designs | Address constrained experimental spaces | D- or I-optimal | Predictive models with limited experimental runs |

Defining the Genetic Pathway Design Space

Key Genetic Elements and Their Modulation

The design space for genetic pathways encompasses the various molecular components that control gene expression and protein production in host organisms. For microbial systems such as E. coli and Pichia pastoris, these elements include promoter strength, ribosome binding sites, gene copy number, plasmid stability systems, and selection markers [17]. Each of these elements represents a dimension in the genetic design space that can be modulated to optimize protein expression.

Defining the genetic design space requires understanding how these elements interact to influence metabolic burden, protein folding, and post-translational modifications. For example, strong promoters may drive high expression but can lead to metabolic stress or the formation of inclusion bodies [17]. Similarly, high-copy plasmids may increase gene dosage but can negatively impact cell growth and plasmid stability. The use of inducible expression systems, such as IPTG-inducible promoters, adds another layer of control by separating the growth and production phases [16].

High-Throughput Screening and Library Reduction Strategies

Advanced techniques such as CRISPR screens and multi-omics integration enable systematic exploration of genetic design spaces [18]. CRISPR-based approaches allow for precise perturbation of genetic elements, while multi-omics data (genomics, transcriptomics, proteomics, metabolomics) provides a comprehensive view of cellular responses to genetic modifications [18].

The integration of machine learning (ML) with DoE has revolutionized library reduction strategies. ML algorithms can analyze high-dimensional data from initial screening experiments to build models that predict the performance of genetic variants, prioritizing the most promising candidates for further testing [19] [18]. This approach significantly reduces the experimental burden while increasing the probability of identifying optimal genetic configurations.

Table 2: Key Research Reagent Solutions for Genetic Pathway Engineering

| Reagent/Category | Function | Example Application |

|---|---|---|

| Expression Vectors | Carry the target gene and regulatory elements | pET series for E. coli; pPIC series for P. pastoris [16] |

| Inducers | Control timing and level of gene expression | IPTG for lac-based systems [16] |

| Selection Antibiotics | Maintain selective pressure for plasmid retention | Ampicillin, Kanamycin in bacterial systems [17] |

| Host Strains | Provide genetic background for protein production | E. coli BL21(DE3) for T7-based expression [16] |

| CRISPR Systems | Enable precise genome editing | Gene knock-outs, promoter swaps, pathway engineering [18] |

Characterizing the Fermentation Process Design Space

Critical Process Parameters and Their Interactions

The fermentation process design space encompasses the bioprocess parameters that directly influence cell growth, metabolic activity, and product formation. Key parameters include temperature, pH, dissolved oxygen (DO), agitation rate, nutrient concentrations, and induction conditions [16] [17]. These parameters often interact in complex, nonlinear ways, making DoE essential for understanding their combined effects on process outcomes.

At large scales, parameters such as oxygen transfer rate and heat management become increasingly critical. As noted by industry experts, "For fast-growing bacterial cultures, it is necessary to ensure that sufficient oxygen transfer occurs throughout the entire culture volume to maximize growth, which in turn requires sufficient mixing and airflow" [17]. Similarly, temperature control within ±1-2°C is essential for maintaining process consistency and product quality. These challenges highlight the importance of characterizing scale-dependent effects when defining the design space.

Advanced Monitoring and Control Strategies

The implementation of Process Analytical Technologies (PAT) enables real-time monitoring of critical process parameters and quality attributes [17]. PAT tools, including in-line sensors and spectroscopic methods, provide rich data streams that support design space characterization and process control. As noted by industry experts, "Use of PAT during a fermentation run enables detection of potentially problematic variations and allows for manual or automatic corrections to bring the process back to the center of the validated operating space" [17].

The emergence of digital twin technology further enhances design space characterization by creating virtual representations of the fermentation process [20]. These models integrate first-principles knowledge with empirical data to simulate process behavior under different conditions, enabling in-silico exploration of the design space and optimization of process parameters.

Diagram 1: Fermentation process design space characterization workflow

Integrated Framework: Connecting Genetic and Process Design Spaces

Modeling Interactions Between Genetic and Process Parameters

The integration of genetic and process design spaces represents a significant opportunity for optimizing bioprocess performance. Genetic modifications often alter cellular metabolism and physiology, which in turn affects how cells respond to process conditions. For example, engineered strains with high metabolic fluxes may have different oxygen demands or nutrient requirements compared to wild-type strains [17]. Similarly, the optimal induction strategy for recombinant protein production depends on both the genetic construct and process conditions [16].

DoE provides a powerful framework for investigating these interactions through factorial designs that include both genetic and process factors. These integrated experiments can reveal how the effects of genetic modifications depend on process conditions, and vice versa. The resulting models enable the identification of robust operating regions where process performance is maintained despite minor variations in genetic background or process parameters.

Knowledge Management and Decision Support

As the biopharmaceutical industry transitions toward Quality by Design (QbD) principles, effective knowledge management becomes essential for design space definition and utilization [16]. The models and data generated during design space characterization should be documented in a structured manner to support regulatory submissions and technology transfer.

The application of hybrid modeling approaches, which combine mechanistic models with machine learning, enhances the predictive capability and interpretability of design space models [19]. Mechanistic models capture fundamental biological and engineering principles, while machine learning components adapt to strain-specific or process-specific peculiarities. This combination is particularly valuable for scaling up fermentation processes from laboratory to commercial scale.

Table 3: Representative Experimental Results from Fermentation Process Optimization

| Factor Combination | Volumetric Yield (mg/L) | Total Yield (mg) | Purity (%) | Significance |

|---|---|---|---|---|

| Base Case | 150 | 750 | 95.2 | Reference point |

| High Induction, Low Temp | 320 | 1600 | 94.8 | 113% yield increase |

| Low Induction, High Temp | 190 | 950 | 90.1 | Purity below spec |

| Medium Induction, Medium Temp | 280 | 1400 | 96.5 | Balanced optimization |

| High Agitation, Low DO | 165 | 825 | 95.8 | Minimal impact |
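
The arithmetic behind the "Significance" column can be checked with a few lines of Python. The yields are copied from Table 3; the working volume is not stated in the source and is inferred here from the ratio of total to volumetric yield.

```python
# (volumetric yield in mg/L, total yield in mg) copied from Table 3.
results = {
    "Base Case": (150, 750),
    "High Induction, Low Temp": (320, 1600),
    "Low Induction, High Temp": (190, 950),
    "Medium Induction, Medium Temp": (280, 1400),
    "High Agitation, Low DO": (165, 825),
}

base_volumetric = results["Base Case"][0]

percent_change = {}
implied_volume_l = {}
for name, (volumetric, total) in results.items():
    # Relative change in volumetric yield versus the base case.
    percent_change[name] = 100 * (volumetric - base_volumetric) / base_volumetric
    # Working volume implied by the two yield columns (an inference, not a source value).
    implied_volume_l[name] = total / volumetric

for name in results:
    print(f"{name}: {percent_change[name]:+.0f}% vs. base, "
          f"implied volume {implied_volume_l[name]:.1f} L")
```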

Application Notes and Protocols

Protocol 1: DoE for Fermentation Media Optimization

Objective: To optimize culture medium composition for recombinant protein production in E. coli using response surface methodology.

Materials and Equipment:

- E. coli BL21(DE3) harboring pET19b-MI001-S vector [16]

- Chemically defined media components (carbon source, nitrogen source, salts, trace elements)

- Firstek fermenter (FB-10B or equivalent) with temperature, pH, and DO control [16]

- IPTG (isopropyl β-D-1-thiogalactopyranoside) for induction [16]

Procedure:

- Factor Selection: Identify 3-5 critical media components (e.g., glucose, yeast extract, phosphates) based on prior knowledge or screening experiments.

- Experimental Design: Generate a Central Composite Design (CCD) or Box-Behnken Design using statistical software (e.g., Design Expert, JMP).

- Inoculum Preparation: Prepare seed cultures in shake flasks overnight at 37°C.

- Fermentation Runs: Execute experimental runs according to the design matrix. Control temperature at 37°C, pH at 7.0, and maintain DO above 30% air saturation.

- Induction: Add IPTG to a final concentration of 0.1-1.0 mM during mid-exponential phase (OD600 ≈ 4-6).

- Sampling: Collect samples periodically for analysis of OD600, substrate concentration, and product titer.

- Product Quantification: Determine recombinant protein concentration using A280 nm measurement with extinction coefficient predicted by ProtParam [16].

- Data Analysis: Fit response surface models to the experimental data. Identify optimal medium composition that maximizes volumetric yield while maintaining product quality.

Statistical Analysis:

- Use ANOVA to assess model significance and lack-of-fit.

- Generate response surface plots to visualize factor effects and interactions.

- Apply desirability functions for multi-objective optimization (e.g., maximizing yield while minimizing cost).
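
A desirability function maps each response onto a 0-1 scale and combines the scales with a geometric mean, so that a condition failing on any one response scores zero overall. The sketch below shows the "larger is better" form only. The yield and purity values are borrowed from Table 3 purely as sample numbers, and the lower limits and targets are hypothetical; a "smaller is better" response such as cost would use the mirrored formula.

```python
import math


def larger_is_better(y, low, target, weight=1.0):
    """Desirability rising from 0 at `low` to 1 at `target`."""
    if y <= low:
        return 0.0
    if y >= target:
        return 1.0
    return ((y - low) / (target - low)) ** weight


def overall_desirability(values):
    """Geometric mean of individual desirabilities; any zero gives zero."""
    return math.prod(values) ** (1.0 / len(values))


# (volumetric yield in mg/L, purity in %) taken from Table 3 as sample data.
candidates = {
    "Base Case": (150, 95.2),
    "High Induction, Low Temp": (320, 94.8),
    "Low Induction, High Temp": (190, 90.1),
    "Medium Induction, Medium Temp": (280, 96.5),
    "High Agitation, Low DO": (165, 95.8),
}

# Hypothetical acceptance limits and targets, chosen only for illustration.
YIELD_LOW, YIELD_TARGET = 150, 320
PURITY_LOW, PURITY_TARGET = 94.0, 97.0

scores = {
    name: overall_desirability([
        larger_is_better(yield_mg_l, YIELD_LOW, YIELD_TARGET),
        larger_is_better(purity, PURITY_LOW, PURITY_TARGET),
    ])
    for name, (yield_mg_l, purity) in candidates.items()
}

best = max(scores, key=scores.get)
for name, score in sorted(scores.items(), key=lambda item: -item[1]):
    print(f"{score:.3f}  {name}")
```

Under these assumed limits the compromise condition ranks first even though it does not have the highest yield, which is the behavior a multi-objective score is meant to produce.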

Protocol 2: High-Throughput Screening for Genetic Construct Evaluation

Objective: To screen a library of genetic variants using DoE to identify optimal expression constructs.

Materials and Equipment:

- Library of genetic variants (e.g., promoter variants, RBS libraries, fusion tags)

- Microtiter plates or deep-well plates

- Microplate reader with absorbance and fluorescence capabilities

- Automated liquid handling system

Procedure:

- Library Design: Apply fractional factorial design to select a representative subset of variants for screening (library reduction).

- Strain Construction: Build selected variants using molecular biology techniques (e.g., Gibson assembly, Golden Gate cloning).

- Cultivation: Inoculate variants into deep-well plates containing appropriate medium. Incubate with shaking at appropriate temperature.

- Monitoring: Measure OD600 periodically to monitor growth.

- Induction: Add inducer at appropriate cell density.

- Analysis: Measure product titer using plate-based assays (e.g., fluorescence, activity assays, immunoassays).

- Data Collection: Record growth parameters (max OD, growth rate) and product-related metrics (titer, productivity).

- Model Building: Use machine learning algorithms (e.g., random forest, gradient boosting) to build predictive models linking genetic elements to performance metrics.

- Validation: Select top-performing predicted variants for validation in bench-scale bioreactors.
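
The library-reduction logic of this protocol can be sketched end to end on a toy library. Several simplifications are assumed: four two-level genetic factors with hypothetical names, a half fraction defined by D = ABC, an invented additive function standing in for the plate assay, and a main-effects linear model used in place of the random forest or gradient boosting models mentioned above.

```python
import itertools

# Hypothetical two-level genetic factors, coded -1 / +1.
FACTORS = ["promoter", "rbs", "fusion_tag", "copy_number"]


def hypothetical_titer(variant):
    """Stand-in for a plate assay; an invented additive response."""
    a, b, c, d = variant
    return 50 + 10 * a - 6 * b + 4 * c + 2 * d


# Full library: 2^4 = 16 variants.
library = list(itertools.product((-1, 1), repeat=4))

# Library reduction: build only the half fraction where D = ABC (8 variants).
built = [v for v in library if v[3] == v[0] * v[1] * v[2]]
measured = {v: hypothetical_titer(v) for v in built}

# Main-effects model; with an orthogonal design each coefficient is a simple contrast.
n = len(built)
intercept = sum(measured.values()) / n
coefs = [sum(v[j] * measured[v] for v in built) / n for j in range(len(FACTORS))]


def predict(variant):
    """Predicted titer for any variant, including ones that were never built."""
    return intercept + sum(c * x for c, x in zip(coefs, variant))


ranked = sorted(library, key=predict, reverse=True)
best = ranked[0]
print("top predicted variant:", dict(zip(FACTORS, best)))
print("already built:", best in built)
```

In this toy case the highest-ranked variant is not among the eight that were built, which illustrates why predicted candidates need the validation step. Real data contain noise and interactions that a main-effects model will not capture.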

Diagram 2: High-throughput screening with DoE-based library reduction

Protocol 3: Scale-Up Verification of Design Space

Objective: To verify the design space identified at laboratory scale during scale-up to pilot and production scales.

Materials and Equipment:

- Optimized strain from laboratory-scale studies

- Pilot-scale fermenter (e.g., 100 L to 1000 L scale) with comparable control capabilities

- PAT tools for real-time monitoring (e.g., in-line pH, DO, biomass sensors)

Procedure:

- Scale-Down Model Validation: Establish that laboratory-scale systems accurately reproduce performance observed at pilot scale.

- Design Space Verification: Execute batches at different operating conditions within the proposed design space.

- Edge of Failure Studies: Intentionally operate at or beyond the design space boundaries to establish proven acceptable ranges.

- Data Collection: Monitor CPPs and measure CQAs using validated analytical methods.

- Comparative Analysis: Compare performance metrics (titer, yield, productivity, quality attributes) across scales.

- Model Refinement: Adjust scale-up models based on experimental results.

- Control Strategy Definition: Establish process control strategies to maintain operation within the design space.

Considerations:

- Address scale-dependent parameters such as oxygen transfer rate (kLa), mixing time, and heat transfer.

- Implement single-use technologies where appropriate to reduce downtime between runs [17].

- For large-scale stainless steel vessels, consider modifications such as internal cooling baffles to improve heat transfer [17].

The systematic definition of design spaces for genetic pathways and fermentation processes represents a paradigm shift in bioprocess development, moving from empirical optimization to science-based understanding and control. The integration of statistical DoE within the DBTL framework enables efficient exploration of complex biological systems while managing experimental resources through strategic library reduction. This approach is particularly valuable in the biopharmaceutical industry, where understanding the relationship between process parameters and product quality is essential for regulatory compliance and manufacturing success.

As the field advances, the incorporation of machine learning, multi-omics data, and digital twin technologies will further enhance our ability to define and utilize design spaces across scales. These innovations, combined with a rigorous statistical foundation, will accelerate the development of robust, efficient bioprocesses for the production of next-generation biologics.

Practical DoE Strategies for Efficient Library Reduction and Screening

In the context of Design-Build-Test-Learn (DBTL) cycles for research, efficient library reduction is paramount. Plackett-Burman (PB) designs serve as a powerful statistical screening methodology to identify the "vital few" significant factors from a large set of potential variables with minimal experimental effort. Originally developed by statisticians Robin Plackett and J.P. Burman in 1946, these designs belong to the family of fractional factorial designs and are specifically intended for early experimentation stages when knowledge about the system is limited [21] [22]. The primary strength of PB designs is their ability to study up to N-1 factors in just N experimental runs, where N is a multiple of 4 (e.g., 4, 8, 12, 16, 20, etc.) [21] [23]. This makes them exceptionally economical for screening a large number of factors to determine which ones have significant main effects on a response variable, thereby effectively reducing the design space for subsequent, more detailed DBTL cycles.

PB designs operate under the fundamental assumption that main effects dominate over interaction effects during initial screening [21]. They are classified as Resolution III designs, meaning that while main effects are not confounded with each other, they are partially aliased (confounded) with two-factor interactions [23] [24]. This confounding pattern is a calculated trade-off that enables significant resource savings. The designs are ideally suited for situations where researchers need to quickly prioritize a subset of factors for further optimization, a common requirement in fields like pharmaceutical development, metabolic engineering, and materials science where the initial variable space can be overwhelmingly large [25] [2].

Key Characteristics and Statistical Basis

Fundamental Properties

Plackett-Burman designs possess several defining characteristics that make them uniquely suited for screening applications. First, they are two-level designs, meaning each factor is tested at a high (+1) and a low (-1) setting [21]. This allows for the efficient estimation of linear main effects. The design matrix itself is orthogonal, ensuring that all main effects can be estimated independently of one another [21] [24]. Many PB matrices are built by cyclically shifting a tabulated generator row of +1 and -1 values and appending a final row of all -1s. A PB design can additionally be "folded over": a second block of N runs with every sign reversed is appended, giving 2N runs in total and separating main effects from two-factor interactions at the cost of doubling the experiment [21].

A critical differentiator from standard fractional factorial designs is the run size flexibility. While standard fractional factorials have run sizes that are powers of two (e.g., 8, 16, 32), PB designs have run sizes that are multiples of four (e.g., 8, 12, 16, 20, 24) [23]. This provides researchers with more granular control over experimental size, allowing for more efficient resource allocation when screening 9, 10, or 11 factors, for example, where a 12-run design can be used instead of a 16-run fractional factorial [23].
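
The run-size comparison can be expressed as two small helper functions. Both are illustrative: they return the smallest candidate run size that leaves room for k factors and assume that a tabulated design of that size is available.

```python
def plackett_burman_runs(k):
    """Smallest multiple of 4 strictly greater than the number of factors k."""
    return 4 * (k // 4 + 1)


def two_level_fractional_runs(k):
    """Smallest power of two strictly greater than k (saturated Resolution III fraction)."""
    n = 4
    while n <= k:
        n *= 2
    return n


for k in (7, 9, 10, 11, 15):
    print(f"{k} factors: PB {plackett_burman_runs(k)} runs, "
          f"fractional factorial {two_level_fractional_runs(k)} runs")
```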

Confounding and Assumptions

The statistical efficiency of PB designs comes with a specific limitation: the confounding of main effects with two-factor interactions. In a PB design, every main effect is partially confounded with many two-factor interactions not involving itself [23] [24]. For instance, in a 12-run design for 10 factors, the main effect of one factor might be confounded with 36 different two-factor interactions [23]. This complex aliasing structure means that if a significant effect is detected, it could be due to the main effect, one of its confounded interactions, or a combination thereof.

Therefore, the validity of conclusions drawn from a PB screening experiment hinges on the sparsity of effects principle and the effect heredity principle [24]. The sparsity principle assumes that only a few factors are actively influencing the response. The effect heredity principle suggests that interactions are most likely to be significant when at least one of their parent factors also has a significant main effect. Consequently, PB designs are most reliably interpreted when interaction effects are assumed to be weak or negligible compared to main effects [26] [23]. If this assumption is violated, there is a risk of misidentifying the active factors or misinterpreting the direction of their effects [24].
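
The partial aliasing described in this section can be inspected numerically. The sketch below builds the 12-run design from the widely tabulated generator row by cyclic shifting, assigns ten factors to the first ten columns (an arbitrary choice), and computes how strongly the first factor's column correlates with each two-factor interaction that does not involve it.

```python
import itertools

# Widely tabulated first row of the 12-run Plackett-Burman design.
GENERATOR_12 = [1, 1, -1, 1, 1, 1, -1, -1, -1, 1, -1]


def plackett_burman_12():
    """Eleven cyclic shifts of the generator row plus a final row of -1s."""
    rows = [GENERATOR_12[-i:] + GENERATOR_12[:-i] for i in range(11)]
    rows.append([-1] * 11)
    return rows


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


design = plackett_burman_12()
# Use the first ten columns for ten factors; the eleventh stays unassigned.
columns = [list(col) for col in zip(*design)][:10]

# Main-effect columns are mutually orthogonal.
main_orthogonal = all(dot(columns[i], columns[j]) == 0
                      for i, j in itertools.combinations(range(10), 2))

# Two-factor interactions that do not involve factor 0.
pairs = list(itertools.combinations(range(1, 10), 2))
alias = {
    (j, k): dot(columns[0], [b * c for b, c in zip(columns[j], columns[k])]) / 12
    for j, k in pairs
}

print("orthogonal main effects:", main_orthogonal)
print("interactions partially aliased with factor 0:", len(alias))
print("distinct alias magnitudes:", sorted({round(abs(a), 4) for a in alias.values()}))
```

Each of the 36 interactions contributes a fraction of its effect to the estimated main effect, which is why a large apparent main effect cannot be attributed to the factor alone unless interactions are small.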

Experimental Protocol for Implementing Plackett-Burman Designs

Step-by-Step Workflow

The successful application of a Plackett-Burman design follows a structured workflow that integrates planning, execution, and analysis. The following diagram illustrates the key stages in this process.

Protocol Details

Step 1: Define Experimental Objective and Response Metrics

Clearly articulate the goal of the screening study. Define the primary response variable(s) (Y) to be measured. In a DBTL context, these are the quantities measured in the "Test" phase. Responses should be quantifiable, reproducible, and relevant to the overall research goal. Examples include product yield, purity, particle size, dissolution rate, or enzymatic activity [25] [27].

Step 2: Select Factors and Define Levels

Identify all potential factors (k) to be screened. Based on prior knowledge or preliminary experiments, set the high (+1) and low (-1) levels for each continuous factor. For categorical factors (e.g., catalyst type), assign appropriate level labels. The difference between levels should be large enough to potentially produce a detectable effect but not so large as to be impractical or unsafe [21] [23].

Step 3: Determine Appropriate Run Size (N)

The number of experimental runs (N) must be a multiple of 4 and greater than the number of factors (k). Standard sizes include N=8 (for up to 7 factors), N=12 (for up to 11 factors), and N=16 (for up to 15 factors) [21] [23]. It is considered good practice to include center points (e.g., 3-5 replicates) to check for curvature and estimate pure error, though this is not part of the original PB structure [26].

Step 4: Generate the Design Matrix

Use statistical software (e.g., JMP, Minitab, Design-Expert, R) to generate the design matrix. The software will create an N × k matrix of +1 and -1 values specifying the factor level for each run [21] [23]. For analysis, this matrix is typically augmented with a constant column of +1s, which estimates the overall mean (intercept) [21].
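For readers without access to the packages listed above, a 12-run design can also be built by hand. The following is a minimal sketch, assuming the standard cyclic construction for N=12: a fixed first row is shifted cyclically to give 11 rows, and a final row of all -1 is appended. The function names are illustrative and not taken from any library.

```python
# Standard first row of the 12-run Plackett-Burman design
PB12_GENERATOR = [+1, +1, -1, +1, +1, +1, -1, -1, -1, +1, -1]

def pb12_design(k: int) -> list[list[int]]:
    """Return a 12 x k matrix of +1/-1 levels for k <= 11 factors."""
    if not 1 <= k <= 11:
        raise ValueError("a 12-run design screens between 1 and 11 factors")
    n = len(PB12_GENERATOR)
    # Rows 1-11: cyclic shifts of the generator row
    rows = [[PB12_GENERATOR[(j - i) % n] for j in range(n)] for i in range(n)]
    # Row 12: every factor at its low level
    rows.append([-1] * n)
    # Keep the first k columns; the unused columns act as dummy factors
    return [row[:k] for row in rows]

def columns_orthogonal(design: list[list[int]]) -> bool:
    """True if every column is balanced and every pair of columns is orthogonal."""
    cols = list(zip(*design))
    balanced = all(sum(c) == 0 for c in cols)
    pairwise = all(
        sum(a * b for a, b in zip(cols[i], cols[j])) == 0
        for i in range(len(cols))
        for j in range(i + 1, len(cols))
    )
    return balanced and pairwise

design = pb12_design(9)
print(len(design), "runs,", len(design[0]), "factors, orthogonal:", columns_orthogonal(design))
```

The orthogonality check is a useful sanity test whichever tool generates the matrix: each factor should appear six times at each level, and no two factor columns should be correlated.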

Step 5: Randomize and Execute Experimental Runs

Randomize the order of the N runs to protect against systematic biases and ensure independence of observations [21]. Execute the experiments according to the randomized list, carefully controlling all factors at their designated levels.
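Randomization is usually handled by the design software, but it can be illustrated with the Python standard library. In this sketch the function name and the seed value are arbitrary choices; recording the seed simply makes the run sheet reproducible.

```python
import random

def randomized_run_order(n_runs: int, seed: int) -> list[int]:
    """Return design-row indices 0..n_runs-1 in a random execution order."""
    rng = random.Random(seed)  # fixed seed so the order can be regenerated
    order = list(range(n_runs))
    rng.shuffle(order)
    return order

order = randomized_run_order(12, seed=2024)
for position, design_row in enumerate(order, start=1):
    print(f"execute #{position}: design row {design_row}")
```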

Step 6: Measure the Response

For each completed experimental run, measure the value of the pre-defined response variable(s). Ensure measurement consistency and accuracy across all runs.

Step 7: Analyze Main Effects

Calculate the main effect for each factor. The main effect is the difference between the average response when the factor is at its high level and the average response when it is at its low level [21] [26].

Main Effect (Factor A) = Ȳ(A+) - Ȳ(A-)
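The calculation can be sketched as follows. To keep the example short it uses a toy 2^3 design rather than a PB matrix, and the responses are synthetic, noise-free values generated from a known model (Y = 10 + 3A - 2B), not experimental data.

```python
from itertools import product

def main_effects(design, responses):
    """Main effect per column: mean(y at +1) minus mean(y at -1)."""
    effects = []
    for col in range(len(design[0])):
        high = [y for row, y in zip(design, responses) if row[col] == +1]
        low = [y for row, y in zip(design, responses) if row[col] == -1]
        effects.append(sum(high) / len(high) - sum(low) / len(low))
    return effects

# Toy 2^3 design (factors A, B, C) with synthetic responses
design = [list(levels) for levels in product((-1, +1), repeat=3)]
responses = [10 + 3 * a - 2 * b for a, b, c in design]

effects = main_effects(design, responses)
print(dict(zip("ABC", effects)))
```

Because each factor moves two coded units between its low and high level, a main effect computed this way is twice the corresponding coefficient in a regression on the coded (+1/-1) factors.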

Step 8: Identify Significant Factors

Judge the significance of the calculated effects. This can be done using:

- Statistical Significance Testing: Perform ANOVA or use t-tests with a pre-defined significance level (α). A higher α (e.g., 0.10) is often used in screening to avoid missing active factors (Type II error) [23].

- Half-Normal Probability Plot: Plot the ordered absolute values of the effects against the corresponding half-normal quantiles. Inactive (null) effects fall along a straight line, and significant effects deviate from it [26].

- Pareto Chart: Plot the absolute values of the standardized effects in descending order. A reference line helps identify which effects are statistically significant [27].
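As an illustration of the graphical approach, the coordinates of a half-normal plot can be computed without any plotting library. This sketch assumes one common plotting-position convention, p = 0.5 + 0.5(i - 0.5)/m for the i-th of m ordered absolute effects; the effect values are invented for the example.

```python
from statistics import NormalDist

def half_normal_points(effects: dict[str, float]):
    """Pair each ordered |effect| with its half-normal quantile."""
    ordered = sorted((abs(e), name) for name, e in effects.items())
    m = len(ordered)
    points = []
    for i, (magnitude, name) in enumerate(ordered, start=1):
        p = 0.5 + 0.5 * (i - 0.5) / m  # plotting position in (0.5, 1)
        points.append((name, NormalDist().inv_cdf(p), magnitude))
    return points

# Hypothetical effect estimates for seven factors
effects = {"A": 0.4, "B": -6.1, "C": 0.2, "D": -0.5, "E": 7.9, "F": 0.3, "G": -0.1}
for name, quantile, magnitude in half_normal_points(effects):
    print(f"{name}: quantile={quantile:.2f}, |effect|={magnitude:.1f}")
```

Plotting |effect| against the quantile would show the small effects lying close to a line and the two large ones (B and E in this invented data set) standing well above it. The same ordered magnitudes, sorted in descending order, are what a Pareto chart displays.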

Step 9: Plan Subsequent DBTL Cycles

The significant factors identified become the focus for the next "Learn" and "Design" phases. Subsequent cycles often employ full factorial or Response Surface Methodology (RSM) designs like Central Composite Design (CCD) to model interactions and locate optima [23] [2].
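Once the factor list is short, enumerating a full factorial becomes practical. The sketch below assumes three shortlisted two-level factors; the factor names are placeholders, and an RSM design such as a CCD would need additional axial and center points not shown here.

```python
from itertools import product

def full_factorial(levels_by_factor: dict[str, tuple]) -> list[dict]:
    """Every combination of the supplied factor levels, one dict per run."""
    names = list(levels_by_factor)
    return [dict(zip(names, combo)) for combo in product(*levels_by_factor.values())]

# Placeholder names for three factors retained after screening
shortlisted = {"factor_A": (-1, +1), "factor_B": (-1, +1), "factor_C": (-1, +1)}
runs = full_factorial(shortlisted)
print(len(runs), "runs")
for run in runs:
    print(run)
```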

Case Studies and Data Presentation

Case Study 1: Pharmaceutical Formulation Development

A study focused on optimizing an extended-release formulation for hot melt extrusion used a Plackett-Burman design to screen nine critical factors [25]. The objective was to identify which factors significantly impacted the drug release profile (T90: time to release 90% of the drug) and the release mechanism (n value).

Table 1: Factors and Levels for Pharmaceutical Formulation Screening

| Factor Code | Factor Name | Low Level (-1) | High Level (+1) |
|---|---|---|---|
| X1 | Poly (ethylene oxide) Molecular Weight | 6 × 10⁵ | 7 × 10⁶ |
| X2 | Poly (ethylene oxide) Amount | 100.00 mg | 300.00 mg |
| X4 | Ethylcellulose Amount | 0.00 mg | 50.00 mg |
| X5 | Drug Solubility | 9.91 mg/mL | 136.00 mg/mL |
| X6 | Drug Amount | 100.00 mg | 200.00 mg |
| X7 | Sodium Chloride Amount | 0.00 mg | 20.00 mg |
| X8 | Citric Acid Amount | 0.00 mg | 5.00 mg |
| X9 | Polyethylene Glycol Amount | 0.00 mg | 5.00 mg |
| X11 | Glycerin Amount | 0.00 mg | 5.00 mg |

A 12-run PB design was employed. Analysis of Variance (ANOVA) of the results identified that only three of the nine factors had a statistically significant effect on T90: Poly (ethylene oxide) amount (X2), Ethylcellulose amount (X4), and Drug solubility (X5) [25]. This screening successfully reduced the number of critical factors from nine to three, allowing for a focused optimization study in the next DBTL cycle.

Case Study 2: Green Synthesis of Silver Nanoparticles

A 2024 study employed a PB design to screen seven physico-chemical variables affecting the green synthesis of silver nanoparticles (SNPs) using orange peel extract [27]. The goal was to engineer SNPs with enhanced properties for antimicrobial applications.

Table 2: Factors and Responses for Nanoparticle Synthesis Screening

| Category | Details |
|---|---|
| Screened Factors | Temperature, pH, Shaking Speed, Incubation Time, Peel Extract Concentration, AgNO₃ Concentration, Extract/AgNO₃ Volume Ratio |
| Design | 7 factors in a 12-run + 1 center point Plackett-Burman design |
| Responses Measured | Maximum Absorption Wavelength, Zeta Size, Zeta Potential, Nanoparticle Concentration |
| Key Finding | pH was the only variable with a statistically significant effect on the synthesis process. |
| Outcome | Optimized SNPs had a Zeta size of 11.44 nm and demonstrated potent antimicrobial activity against E. coli and others. |

This case demonstrates the power of PB design to efficiently identify a single dominant factor from several candidates, preventing wasted resources on non-influential variables and accelerating the path to an optimized process [27].

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential materials and software commonly used in experiments designed with Plackett-Burman methodology.

Table 3: Essential Research Reagents and Tools for Screening Experiments

| Item | Function/Application | Example Use |
|---|---|---|
| Statistical Software | Generates design matrix, randomizes run order, and analyzes results. | JMP, Minitab, Design-Expert, R (package: FrF2) [25] [23] [27] |
| Chemical Reagents | Act as factors or components in the process being studied. | Polymers (e.g., Polyethylene Oxide), Metal Salts (e.g., AgNO₃), Acids/Bases for pH control [25] [27] |
| Characterization Instruments | Measure the response variables of the system. | UV-Vis Spectrophotometer, Zeta Potential/Sizer, HPLC, Atomic Absorption Spectrometer [27] |
| Biological Materials | Used in biotechnological or pharmaceutical applications. | Microbial Strains (e.g., E. coli), Plant Extracts (e.g., Citrus peel), Enzymes [27] [2] |
| Process Equipment | The physical setup where the experimental process is executed. | Bioreactors, Hot Melt Extruders, Chemical Reactors [25] |

Plackett-Burman designs are an indispensable tool for the initial "screening" phase within the DBTL framework, enabling researchers to navigate large and complex experimental spaces with remarkable efficiency. By deliberately confounding interactions with main effects, these designs allow for the identification of the critical few factors that drive a system's behavior from a vast pool of potential variables using a minimal number of experimental runs. The structured protocol, from defining objectives and generating the design matrix to analyzing effects via statistical and graphical methods, ensures rigorous and interpretable results. The presented case studies from pharmaceutical development and nanotechnology underscore the practical utility of PB designs in real-world research scenarios, leading to significant library reduction. The identified significant factors provide a solid, data-driven foundation for subsequent DBTL cycles, where more detailed models, including interactions and quadratic effects, can be explored to fully optimize the system.

In the context of Design-Build-Test-Learn (DBTL) cycles for research, particularly in fields like metabolic engineering and drug development, the number of factors to investigate can become intractably large. A full factorial design—testing every possible combination of all factors—quickly becomes prohibitively expensive and time-consuming as the number of factors increases [2]. Fractional factorial designs (FFDs) are a class of classic screening experiments that address this challenge by testing only a carefully chosen subset, or fraction, of the full factorial design [28]. This approach enables researchers to screen a large set of potentially important treatment components economically and efficiently, a crucial first step in the multiphase optimization strategy for developing new interventions [29]. The core value of FFDs lies in their ability to balance the acquisition of meaningful information with the practical constraints of experimental effort, making them indispensable for initial DBTL cycles aimed at library reduction and identifying the most influential factors [30].

Theoretical Foundations: Resolution and Aliasing

The utility of a fractional factorial design is governed by its resolution, which measures the degree of confounding (aliasing) between different effects and determines which effects can be estimated independently [28]. The resolution is denoted by a Roman numeral, with common levels being III, IV, and V.

- Resolution III Designs: In these designs, main effects are not confounded with other main effects but are confounded with two-factor interactions [28] [31]. They are useful for screening a large number of factors when interactions are assumed to be negligible. However, if significant interactions exist, the estimates for main effects can be misleading.

- Resolution IV Designs: Main effects are clear of other main effects and two-factor interactions. However, two-factor interactions are confounded with other two-factor interactions [28] [31]. This makes Resolution IV designs stronger than Resolution III for identifying active main effects without being biased by interactions.

- Resolution V Designs: Main effects and all two-factor interactions are clear of each other. Two-factor interactions are confounded with three-factor interactions, which are often assumed to be negligible [28]. These designs provide more detailed information but require more experimental runs.

Aliasing occurs when the design does not allow two effects to be estimated independently. For example, in a Resolution III design, a main effect might be aliased with a two-way interaction (e.g., X1 = X2*X3), meaning the estimated effect is actually a combination of both [28]. The choice of design resolution is a direct trade-off between information gained and experimental resources required [30].
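The aliasing in this example can be made concrete with a half fraction of a 2^3 design. In this minimal sketch the third factor is generated as X3 = X1*X2, which is one standard way to construct such a fraction; the variable names are illustrative.

```python
from itertools import product

# Half fraction of a 2^3 design: X3 is set equal to the product X1*X2
design = [(x1, x2, x1 * x2) for x1, x2 in product((-1, +1), repeat=2)]

# Column used to estimate the X1 main effect
x1_column = [x1 for x1, x2, x3 in design]
# Column that would be used to estimate the X2*X3 interaction
x2x3_column = [x2 * x3 for x1, x2, x3 in design]

print("runs:", design)
print("X1 column:   ", x1_column)
print("X2*X3 column:", x2x3_column)
print("aliased:", x1_column == x2x3_column)
```

Because the two columns are identical in every run, no analysis of these four experiments can separate the X1 main effect from the X2*X3 interaction. Only additional runs, or the assumption that the interaction is negligible, resolves the ambiguity.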

Table 1: Comparison of Common Two-Level Fractional Factorial Design Resolutions

| Design Resolution | Confounding (Aliasing) Structure | Typical Use Case | Key Advantage | Key Limitation |
|---|---|---|---|---|
| Resolution III | Main effects are confounded with 2-factor interactions [31]. | Screening many factors with minimal runs [30]. | High efficiency; minimal number of experiments. | Cannot distinguish main effects from 2FI [28]. |
| Resolution IV | Main effects are clear of 2FI; 2FI are confounded with other 2FI [28] [31]. | Screening when main effects are of primary interest [30]. | Main effects are reliably estimated. | Cannot separate confounded 2FI [29]. |
| Resolution V | Main effects and 2FI are clear of each other; 2FI are confounded with 3FI [28]. | Characterizing a smaller number of factors in more detail. | Provides reliable estimates of main effects and 2FI. | Requires a larger number of experimental runs [30]. |

Abbreviations: 2FI, two-factor interactions; 3FI, three-factor interactions.

Application Protocols for Screening Experiments

Protocol 1: Screening a Large Number of Factors Using a Resolution III Design

This protocol is designed for the initial screening phase of a DBTL cycle, where the goal is to identify the few critical factors from a large set of candidates.

- Define Factors and Levels: List all factors to be screened (e.g., different promoters, RBSs, nutrients, or culture conditions). Define two levels for each factor (e.g., high/low, present/absent, type A/type B) [2].

- Select the Appropriate Fraction: Choose a Resolution III design, such as a Plackett-Burman design, which requires a number of runs that is a multiple of 4, making it highly efficient for screening [29] [2]. For k factors, the number of runs, N, will be far smaller than the 2^k runs required by a full factorial.

- Generate the Design Matrix: Use statistical software (e.g., JMP, Minitab, R) to generate the design matrix. This matrix specifies the exact settings for each factor in every experimental run.

- Randomize and Execute Experiments: Randomize the run order to minimize the impact of confounding variables. Execute the experiments according to the design matrix and measure the response(s) of interest.

- Analyze Data and Identify Important Effects:

  - For Saturated Models: In designs with no degrees of freedom for error (a saturated model), use a half-normal plot together with Lenth's Pseudo Standard Error (PSE) to identify significant effects. Effects that deviate substantially from the straight line in the plot are considered important [28].

  - Follow the Heredity Principle: An important interaction effect is more likely to be present if its constituent main effects are also important. Use this principle, along with subject matter expertise, to interpret which effects are active [28].
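Lenth's PSE, mentioned in the analysis step above, is simple enough to compute directly. The sketch below follows the usual two-stage definition (an initial scale of 1.5 times the median absolute effect, then 1.5 times the median of the effects smaller than 2.5 times that scale). The effect values are invented, and the final cut-off is deliberately left out because it depends on a t-quantile that is normally taken from software or tables.

```python
from statistics import median

def lenth_pse(effects: list[float]) -> float:
    """Lenth's pseudo standard error for a set of effect estimates."""
    magnitudes = [abs(e) for e in effects]
    s0 = 1.5 * median(magnitudes)                       # initial robust scale
    trimmed = [m for m in magnitudes if m < 2.5 * s0]   # drop likely active effects
    return 1.5 * median(trimmed)

# Hypothetical effect estimates from an 11-column, 12-run screening design
effects = [0.5, -0.3, 8.0, 0.2, -0.6, 0.4, -6.0, 0.1, 0.3, -0.2, 0.5]
pse = lenth_pse(effects)

# Ratio of each effect to the PSE, largest first
ratios = sorted(((abs(e) / pse, i) for i, e in enumerate(effects)), reverse=True)
for ratio, index in ratios[:3]:
    print(f"column {index}: |effect| / PSE = {ratio:.1f}")
```

Effects whose ratio is far larger than the rest are the candidates for active factors; applying the heredity principle then helps decide whether an aliased interaction or the main effect itself is the more plausible explanation.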

Protocol 2: Pathway Optimization Using a Resolution IV Design

For optimizing a defined pathway with a moderate number of genes (e.g., a 7-gene pathway), a Resolution IV design offers a robust balance, as it provides unbiased estimates of main effects, which are often the primary drivers of system performance [30].
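As an illustration for the 7-gene example, one widely tabulated Resolution IV option is the 16-run 2^(7-3) design. The sketch below assumes the generators E = ABC, F = BCD, and G = ACD; the choice of this particular fraction is an assumption made for the example, not something prescribed by the protocol. It also checks that every main-effect column is orthogonal to every two-factor-interaction column.

```python
from itertools import combinations, product

def res4_design_7_factors() -> list[tuple[int, ...]]:
    """16-run 2^(7-3) design with generators E=ABC, F=BCD, G=ACD (assumed)."""
    runs = []
    for a, b, c, d in product((-1, +1), repeat=4):
        e, f, g = a * b * c, b * c * d, a * c * d
        runs.append((a, b, c, d, e, f, g))
    return runs

design = res4_design_7_factors()
columns = list(zip(*design))

# Resolution IV property: no main effect is aliased with a two-factor interaction
main_effects_clear = all(
    sum(columns[i][r] * columns[j][r] * columns[k][r] for r in range(len(design))) == 0
    for i in range(7)
    for j, k in combinations(range(7), 2)
)

print(len(design), "runs; main effects clear of 2FI:", main_effects_clear)
```

In a pathway context, each of the seven columns would correspond to one gene, with -1 and +1 mapped to a low and a high expression level (for example, a weak and a strong promoter), giving 16 constructs instead of the 128 needed for the full factorial.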

- Define Pathway Factors: Identify the factors to optimize, typically the expression levels of individual genes in a pathway. Each gene is a factor.