Strategic Balance: Mastering Exploration and Exploitation in Machine Learning for Efficient DBTL Cycles in Biomedicine

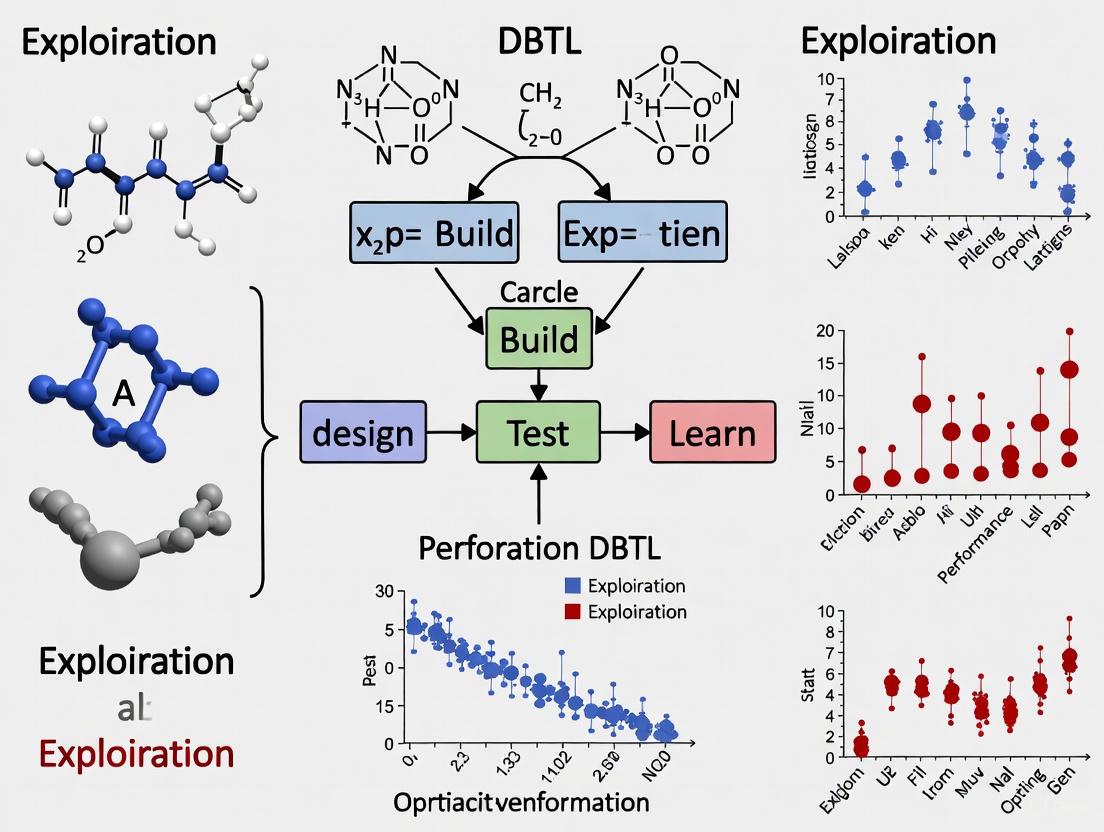

This article provides a comprehensive guide for researchers and drug development professionals on integrating the exploration-exploitation dilemma from machine learning into Design-Build-Test-Learn (DBTL) cycles.


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on integrating the exploration-exploitation dilemma from machine learning into Design-Build-Test-Learn (DBTL) cycles. It covers the foundational principles of directed and random exploration, details methodological implementations like Bayesian optimization and multi-armed bandit strategies within metabolic engineering workflows, and addresses common troubleshooting challenges such as data scarcity and algorithmic stagnation. Furthermore, it presents validation frameworks and comparative analyses of machine learning models, offering actionable insights for optimizing bioprocess development and accelerating therapeutic discovery.

The Core Dilemma: Understanding Exploration and Exploitation in Biological Systems

Defining the Exploration-Exploitation Trade-Off in Machine Learning and Biology

Frequently Asked Questions

What is the exploration-exploitation trade-off? The exploration-exploitation dilemma describes the fundamental conflict between choosing the best-known option based on current knowledge (exploitation) and trying new, uncertain options that might lead to better outcomes in the future (exploration). Finding the optimal balance is crucial for maximizing long-term benefits in decision-making processes [1].

Why is this trade-off important in machine learning and biology? In machine learning, particularly in Reinforcement Learning (RL), an agent must balance exploring the environment to learn more about it with exploiting its current knowledge to maximize rewards [1] [2]. In biology, this trade-off is fundamental to survival, governing behaviors from animal foraging for food to human memory search and social innovation [3]. Both fields face the same core problem: the need to make decisions with incomplete information.

What are common challenges when balancing exploration and exploitation? Several problems can make effective exploration difficult [1]:

- Sparse Rewards: When rewards occur infrequently, an agent may not persist in exploring.

- Deceptive Rewards: Immediate small rewards can lure an agent away from actions that yield larger, delayed rewards.

- Noisy TV Problem: An agent can become trapped exploring parts of the environment that generate unpredictable or random feedback.

My agent is not performing optimally. Is it over-exploring or over-exploiting? Diagnosing this issue requires examining your agent's behavior and the environment.

- Signs of over-exploitation: The agent converges quickly to a sub-optimal policy, seems to "get stuck" using the same actions, and fails to discover higher-reward strategies. This is like a gambler only ever playing the same slot machine without testing others [4] [5].

- Signs of over-exploration: The agent behaves erratically, fails to consistently choose known high-reward actions, and shows slow or no improvement in average reward over time. This is like a gambler constantly switching machines without ever settling on the best one [4] [6].

Troubleshooting Guides

Guide 1: Implementing Core Balancing Strategies

This guide outlines standard methodologies for managing the exploration-exploitation trade-off.

Protocol: Epsilon-Greedy Strategy. This is a simple and widely used method where the agent primarily exploits but randomly explores with a small probability [1] [4] [2].

- Initialize: For each action, initialize the estimated value (e.g., to zero) and a counter for how many times it has been chosen.

- Loop for each decision step `t`:

  - With probability `1 - ε`, choose the action with the highest estimated value (Exploitation).

  - With probability `ε`, choose a random action (Exploration).

- Update: After taking action `a` and receiving reward `R`, update the action's estimated value `Q(a)`: `Q(a) = Q(a) + (1/N(a)) * (R - Q(a))`, where `N(a)` is the number of times action `a` has been chosen [4].
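The epsilon-greedy steps above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name `epsilon_greedy_bandit` and the convention that each arm is a callable taking a random generator are assumptions made for this example.

```python
import random


def epsilon_greedy_bandit(reward_fns, epsilon=0.1, steps=1000, seed=0):
    """Run epsilon-greedy over a list of arms.

    reward_fns is a hypothetical interface: each entry is a callable that
    takes a random.Random instance and returns a numeric reward.
    """
    rng = random.Random(seed)
    n_actions = len(reward_fns)
    q = [0.0] * n_actions  # estimated value Q(a)
    n = [0] * n_actions    # number of times each action was chosen, N(a)

    for _ in range(steps):
        if rng.random() < epsilon:
            a = rng.randrange(n_actions)                    # explore
        else:
            a = max(range(n_actions), key=lambda i: q[i])   # exploit
        r = reward_fns[a](rng)
        n[a] += 1
        q[a] += (r - q[a]) / n[a]  # Q(a) = Q(a) + (1/N(a)) * (R - Q(a))
    return q, n


# Illustrative arms: Bernoulli rewards with made-up success rates.
arms = [lambda rng, p=p: 1.0 if rng.random() < p else 0.0 for p in (0.2, 0.5, 0.8)]
estimates, counts = epsilon_greedy_bandit(arms, epsilon=0.1, steps=2000)
print(estimates, counts)
```

In a DBTL setting, an "arm" would correspond to a design option and the reward to a measured outcome such as titer; that mapping is an interpretation, not something the protocol prescribes.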

Protocol: Upper Confidence Bound (UCB) Strategy. This method uses uncertainty to balance exploration and exploitation mathematically [1] [4].

- Initialize: For each action `a`, initialize `N(a) = 0` and `Q(a) = 0`.

- Loop for each decision step `t = 1, 2, ...`:

  - For each action `a`, calculate its UCB score: `Q(a) + sqrt( (2 * ln t) / N(a) )`. The score is undefined while `N(a) = 0`, so each action is tried once before the formula is applied.

  - Select the action with the highest score: `a_t = argmax_a [ Q(a) + sqrt( (2 * ln t) / N(a) ) ]`. The `Q(a)` term promotes exploitation, while the square root term promotes exploration of less-tried actions [4].

- Update: Update `Q(a_t)` and `N(a_t)` after receiving the reward.
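A minimal Python sketch of the UCB protocol follows. It assumes the common convention of pulling every untried action once before applying the score, since the bonus term divides by `N(a)`. The function name `ucb1_bandit` and the arm interface (callables that take a random generator) are hypothetical choices for this example.

```python
import math
import random


def ucb1_bandit(reward_fns, steps=1000, seed=0):
    """Select actions by Q(a) + sqrt(2 * ln t / N(a)), trying each action once first."""
    rng = random.Random(seed)
    k = len(reward_fns)
    q = [0.0] * k  # estimated value Q(a)
    n = [0] * k    # pull count N(a)

    for t in range(1, steps + 1):
        untried = [a for a in range(k) if n[a] == 0]
        if untried:
            a = untried[0]  # avoid dividing by N(a) = 0
        else:
            a = max(
                range(k),
                key=lambda i: q[i] + math.sqrt(2.0 * math.log(t) / n[i]),
            )
        r = reward_fns[a](rng)
        n[a] += 1
        q[a] += (r - q[a]) / n[a]  # incremental mean update
    return q, n


# Illustrative arms with fixed, made-up rewards.
arms = [lambda rng, v=v: v for v in (0.1, 0.5, 0.9)]
estimates, counts = ucb1_bandit(arms, steps=2000)
print(estimates, counts)
```

Unlike epsilon-greedy, there is no exploration rate to tune here: the bonus shrinks for an action as its count grows, so exploration tapers off on its own.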

Comparison of Core Strategies

| Strategy | Core Mechanism | Pros | Cons |

|---|---|---|---|

| Epsilon-Greedy [4] [2] | Fixed probability (ε) of taking a random action. | Simple to implement and understand. | Does not prioritize promising explorations; requires tuning of ε. |

| Upper Confidence Bound (UCB) [1] [4] | Optimism in the face of uncertainty; selects actions with high upper confidence bounds. | Efficient, theoretically grounded, and automatically reduces exploration over time. | Can be more complex to implement than epsilon-greedy. |

| Thompson Sampling [1] [4] | Bayesian approach; samples a model from a posterior distribution and acts optimally according to the sample. | Strong empirical and theoretical performance. | Requires maintaining a posterior distribution, which can be computationally heavy. |
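Thompson Sampling appears in the table without a protocol, so here is a minimal sketch for the simplest case: binary (success/failure) rewards with a Beta posterior per action. The Beta(1, 1) prior, the function name `thompson_bernoulli`, and the use of known success probabilities to simulate rewards are all assumptions made for illustration.

```python
import random


def thompson_bernoulli(success_probs, steps=2000, seed=0):
    """Thompson Sampling for Bernoulli rewards with a Beta(1, 1) prior per action.

    success_probs are the (normally unknown) true success rates, used here
    only to simulate the environment.
    """
    rng = random.Random(seed)
    k = len(success_probs)
    alpha = [1.0] * k  # 1 + observed successes
    beta = [1.0] * k   # 1 + observed failures
    pulls = [0] * k

    for _ in range(steps):
        # Sample one plausible success rate per action from its posterior,
        # then act greedily with respect to the sample.
        samples = [rng.betavariate(alpha[i], beta[i]) for i in range(k)]
        a = max(range(k), key=lambda i: samples[i])
        reward = 1 if rng.random() < success_probs[a] else 0
        alpha[a] += reward
        beta[a] += 1 - reward
        pulls[a] += 1
    return alpha, beta, pulls


alpha, beta, pulls = thompson_bernoulli([0.1, 0.5, 0.9])
print(pulls)
```

For this conjugate case the posterior update is just two counters, so the computational cost noted in the table mainly applies to richer models where the posterior has no closed form.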

Guide 2: Addressing Advanced Scenarios

Scenario: Poor performance in environments with sparse or deceptive rewards. Standard methods like epsilon-greedy can fail in complex environments. The solution is to use intrinsic motivation, where the agent gives itself an internal reward for exploring novel or uncertain states [1].

Protocol: Intrinsic Curiosity Module (ICM). This method trains a model to predict the consequence of the agent's actions and uses the prediction error as an intrinsic reward signal [1].

- Featurize State: Use a neural network `φ` to encode the current state `s_t` and next state `s_{t+1}` into features.

- Train Inverse Dynamics Model: Train a model `g` that predicts the action `a_t` taken given the feature representations `φ(s_t)` and `φ(s_{t+1})`.

- Train Forward Dynamics Model: Train a model `f` that predicts the next state's features `φ(s_{t+1})` given `φ(s_t)` and `a_t`.

- Calculate Intrinsic Reward: The intrinsic reward `r_t^i` is the error between the predicted and actual next-state features: `r_t^i = || f(φ(s_t), a_t) - φ(s_{t+1}) ||^2`.

- Combine Rewards: The total reward for the agent is `r_total = r_t^e + β * r_t^i`, where `r_t^e` is the external reward from the environment and `β` is a scaling factor [1].
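The reward arithmetic in the last two steps can be shown without any neural network. The sketch below assumes the feature vectors have already been produced by some encoder and forward model; the function names and the plain-list representation of features are hypothetical, and the training of `φ`, `g`, and `f` is deliberately left out.

```python
def intrinsic_reward(predicted_features, actual_features):
    """Squared L2 error between predicted and actual next-state features (r_t^i)."""
    return sum((p - a) ** 2 for p, a in zip(predicted_features, actual_features))


def total_reward(r_ext, r_int, beta=0.1):
    """Combine external and intrinsic reward: r_total = r_t^e + beta * r_t^i."""
    return r_ext + beta * r_int


# Illustrative values only: a forward model that mispredicts the second feature.
predicted = [1.0, 2.0]   # stands in for f(phi(s_t), a_t)
actual = [1.0, 0.0]      # stands in for phi(s_{t+1})
r_int = intrinsic_reward(predicted, actual)
print(total_reward(r_ext=1.0, r_int=r_int, beta=0.5))
```

The scaling factor `beta` controls how strongly curiosity competes with the task reward; its value here is arbitrary.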

Diagram: Intrinsic Curiosity Module Workflow

Guide 3: Adopting Novel Research Perspectives

Emerging research suggests the traditional trade-off can be re-examined. One novel approach involves analyzing the agent's behavior in its hidden state space, proposing that exploration and exploitation can be decoupled and enhanced simultaneously [7].

Protocol: Cognitive Consistency (CoCo) Framework. This framework rethinks the trade-off by conducting "pessimistic exploration" and "optimistic exploitation" [8].

- Optimistic Exploitation (Self-Imitating Distribution Correction): Prioritize learning from high-performing trajectories. The policy is updated to reinforce actions that have led to high rewards, focusing computational effort on promising strategies rather than confirming the inadequacy of poor ones [8].

- Pessimistic Exploration (Inconsistency Minimization): Guide exploration conservatively around the currently learned effective policy. This is achieved by introducing a loss function that minimizes the discrepancy between the agent's current policy and a target, encouraging stable and consistent learning without wildly diverging into unknown states [8].

- Integration: These two components are integrated into a single objective function for training, often using a reweighted, uniformly sampled loss [8].

Diagram: CoCo Framework Principles

The Scientist's Toolkit: Research Reagents & Solutions

This table details key algorithmic components and their functions for studying the exploration-exploitation trade-off.

| Item | Function / Definition | Application Context |

|---|---|---|

| Effective Rank (ER) [7] | A quantity measuring the exploration capacity in the semantically rich hidden-state space of a model. | Used in advanced analyses (e.g., VERL method) to move beyond token-level metrics and understand exploration in latent representations. |

| Effective Rank Acceleration (ERA) [7] | The second-order derivative of the Effective Rank, capturing the dynamics of exploitation. | Used as a predictive meta-controller to prospectively shape the RL advantage function, reinforcing gains. |

| NoisyNet [1] | A method where parameters of a neural network are perturbed with noise, making the exploration state-dependent and adaptive. | Provides a more structured exploration strategy compared to simple epsilon-greedy, integrated directly into the policy network. |

| Intrinsic Reward Signal [1] | An internally generated reward (e.g., based on prediction error or state novelty) that encourages the agent to explore. | Critical for environments with sparse or no external rewards, such as hard-exploration video games or robotics in uncharted terrain. |

| Forward Dynamics Model [1] | A function that predicts the next state of the environment given the current state and action. | Core to many intrinsic motivation algorithms like ICM; the prediction error drives curiosity. |

Quantitative Results from Recent Research

| Method / Framework | Key Metric Improvement | Test Environment / Benchmark |

|---|---|---|

| Velocity-Exploiting Rank-Learning (VERL) [7] | Up to 21.4% absolute accuracy improvement | Gaokao 2024 dataset [7] |

| Cognitive Consistency (CoCo) [8] | Substantial improvement in sample efficiency and performance | Mujoco tasks, Atari games [8] |

Technical Support Center: Troubleshooting the ML-DBTL Cycle

This support center provides targeted guidance for researchers navigating the Design-Build-Test-Learn (DBTL) cycle, particularly when integrating machine learning to balance exploration and exploitation. Below are common challenges and their solutions, framed within this core research thesis.

Troubleshooting Guides

1. Guide: Poor Strain Performance Despite High In-Silico Predictions

- Issue or Problem Statement: A microbial strain, designed for metabolite production (e.g., dopamine), shows low yield in vivo even though ML models predicted high performance [9] [10].

- Symptoms or Error Indicators: Final product titer is significantly lower than expected; model prediction accuracy is poor for new genetic designs.

- Environment Details: E. coli production host; high-throughput screening data; ML model trained on historical RBS library data [10].

- Possible Causes:

- Over-exploitation: The ML model is overfitting to a narrow region of the genetic design space it is confident about, missing potentially better, unexplored designs [10].

- Context Dependence: The model was trained on data from a different genetic background or environmental condition, reducing its predictive power for new contexts [9].

- Inadequate Training Data: The initial dataset used to train the ML model is too small or lacks diversity, leading to poor generalization [10].

- Step-by-Step Resolution Process:

- Diagnose the ML Policy: Check the acquisition function (e.g., Upper Confidence Bound) used in the Design phase. A low emphasis on "exploration" can cause this [10].

- Increase Exploration: In the next DBTL cycle, deliberately design and build a batch of variants from less-explored regions of the sequence space, as determined by the model's uncertainty estimates [10].

- Validate In Vitro: For metabolic engineering, use a cell-free protein synthesis (CFPS) system to test pathway enzyme levels and interactions rapidly before committing to full in-vivo strain construction [9].

- Retrain the Model: Integrate the new experimental data from both high-performing and poor-performing strains into the training set to improve the model's accuracy and coverage in the next Learn phase [10].

- Escalation Path: If iterative cycling does not improve performance, consult a machine learning specialist to re-evaluate the model's features, kernel, or acquisition function.

- Validation or Confirmation Step: The next batch of designed strains should include candidates with both high predicted performance and high uncertainty, leading to the discovery of improved variants.
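One simple way to build a batch that contains both high-prediction and high-uncertainty candidates is to fill part of it from each ranking. The sketch below assumes the model already supplies a predicted performance and an uncertainty estimate per candidate; the function name `select_batch` and the fixed split between the two groups are illustrative choices, not a method taken from the cited work.

```python
def select_batch(predicted, uncertainty, n_exploit=2, n_explore=2):
    """Return (exploit, explore) lists of candidate indices.

    exploit: candidates with the highest predicted performance.
    explore: among the remaining candidates, those with the highest uncertainty.
    """
    by_prediction = sorted(
        range(len(predicted)), key=lambda i: predicted[i], reverse=True
    )
    exploit = by_prediction[:n_exploit]
    remaining = [i for i in range(len(predicted)) if i not in exploit]
    explore = sorted(remaining, key=lambda i: uncertainty[i], reverse=True)[:n_explore]
    return exploit, explore


# Made-up model outputs for five candidate designs.
predicted = [0.9, 0.2, 0.8, 0.1, 0.5]
uncertainty = [0.05, 0.9, 0.1, 0.7, 0.3]
print(select_batch(predicted, uncertainty))
```

Shifting `n_explore` up or down between cycles is a direct, if coarse, way to act on the diagnosis in the resolution steps above.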

2. Guide: Inefficient Foraging Behavior in Animal Models

- Issue or Problem Statement: In a study on foraging behavior, food-deprived animals do not show the expected increase in rhythmic foraging activity, confounding the analysis of the exploration-exploitation dynamic [11].

- Symptoms or Error Indicators: No significant change in general activity or foraging; disrupted daily rhythmic pattern of behavior.

- Environment Details: Home-Cage monitoring system; 12h/12h light/dark cycle; food deprivation protocol.

- Possible Causes:

- Insufficient Energy Deficit: The duration of food deprivation was not long enough to trigger the energy-seeking motivational state [11].

- Confounding Food Cues: The presence of non-nutritive food cues (odor, sight) without actual energy availability may be distorting the natural behavioral rhythm [11].

- Neurological Inactivity: Neuronal activity in the paraventricular hypothalamic nucleus (PVH), a key regulator of this behavior, may not be adequately modulated [11].

- Step-by-Step Resolution Process:

- Confirm Energy Status: Verify and potentially extend the food deprivation period to ensure a significant energy deficit.

- Control for Cues: Systematically introduce or remove food-related sensory cues (e.g., odor, mouthfeel) to isolate their effect from the energy deficit itself [11].

- Monitor Neuronal Activity: Use immunohistochemical staining (e.g., for c-fos) to confirm that PVH neuronal activity is increased during the expected foraging periods [11].

- Modulate Activity: Employ chemogenetic actuators (e.g., DREADDs) to selectively activate or inhibit PVH neurons to confirm their causal role in the behavior and rescue the phenotype [11].

- Escalation Path: If the issue persists, conduct metabolic profiling to rule out underlying health issues in the animal model and ensure the Home-Cage system is calibrated correctly.

- Validation or Confirmation Step: Successful activation of PVH neurons should restore or enhance the rhythmic foraging pattern in the animals.

Frequently Asked Questions (FAQs)

Q1: In the context of an ML-DBTL cycle, when should my team prioritize exploration over exploitation? A1: Prioritize exploration when: 1) Starting a new project with limited initial data. 2) Performance has plateaued, suggesting a local optimum. 3) Moving to a new host organism or genetic context. Exploitation is favored when you have a large, high-quality dataset and need to fine-tune a nearly optimal design for maximum yield [10].

Q2: What is a "knowledge-driven DBTL" cycle and how does it differ from a standard one? A2: A knowledge-driven DBTL incorporates upstream, mechanistic investigations—such as testing pathways in cell-free systems—before the first full in-vivo cycle. This generates critical data to inform the initial Design phase, making the subsequent cycles more efficient than a standard DBTL that might start with random or statistically designed variants [9].

Q3: Our research bridges animal behavior and synthetic biology. What is a core analogy between foraging and DBTL? A3: The core analogy is the exploration-exploitation dilemma. A foraging animal must balance exploring new areas for food (high energy cost, high uncertainty) with exploiting a known food source (low cost, predictable reward) [11]. Similarly, in a DBTL cycle, you must balance exploring new regions of genetic design space (which might fail) with exploiting known, high-performing designs to refine them [10]. Both are governed by the need to optimize a resource (energy or research funding/time) under uncertainty.

Experimental Data & Protocols

Table 1: Quantitative Data on Foraging Behavior Modulation [11]

| Intervention | Foraging Behavior Amplitude (Change vs. Control) | PVH Neuronal Activity (c-fos+ cells) | Key Finding |

|---|---|---|---|

| Food Deprivation (Energy Deficit) | Significantly Increased | Significantly Increased | Potentiates rhythmic foraging |

| Food Cues Only (No Energy) | Modulated | Not Specified | Insufficient without energy deficit |

| Chemogenetic PVH Activation | Enhanced | Artificially Increased | Directly enhances foraging |

| Chemogenetic PVH Inactivation | Decreased & Rhythm Impaired | Artificially Decreased | Impairs rhythmic foraging |

Table 2: ML-Guided RBS Engineering Performance Data [10]

| DBTL Cycle | Number of RBS Variants Tested | Best Performance (TIR) vs. Benchmark | Key ML Action |

|---|---|---|---|

| Initial | ~100-150 | Baseline | Initial data collection for model training |

| 1 | ~150 | +10-15% | Model-guided design begins |

| 2 | ~150 | +20-25% | Exploitation of high-confidence predictions |

| 3 & 4 | ~150 | +34% | Balanced exploration-exploitation finds optimum |

Detailed Protocol: Immunohistochemical Staining for Neuronal Activity (c-fos) [11]

- Purpose: To visualize and quantify trends in neuronal activity in brain regions like the PVH.

- Methodology:

- Perfusion and Fixation: Deeply anesthetize mice and perfuse transcardially with 4% paraformaldehyde (PFA). Remove brains and post-fix in 4% PFA for 0-14 hours.

- Sectioning: Using a vibrating microtome (e.g., Leica VT1200S), collect 30-μm-thick coronal brain sections containing the region of interest.

- Immunostaining: Incubate free-floating sections with a primary antibody against c-fos (e.g., 1:500 dilution). Then, incubate with a biotinylated secondary antibody.

- Visualization: Treat sections with the 3,3'-diaminobenzidine (DAB) chromogen to produce a visible precipitate at the site of c-fos expression.

- Imaging & Analysis: Acquire images using a light microscope. Count c-fos positive cells in the target regions (e.g., PVH, ARC, LH) for quantitative comparison between experimental groups.

Detailed Protocol: High-Throughput RBS Library Construction & Screening [10]

- Purpose: To build and test a large library of RBS variants to optimize translation initiation rate (TIR) for a pathway enzyme.

- Methodology:

  - Design: Using an ML algorithm (e.g., Gaussian Process Regression with a Bandit algorithm), design a batch of RBS sequence variants focusing on the core Shine-Dalgarno sequence.

  - Build (Automated Cloning):

    - Use automated DNA assembly (e.g., Golden Gate or Gibson Assembly) to clone each RBS variant upstream of a reporter gene (e.g., GFP) in an expression plasmid.

    - Transform the plasmid library into the production E. coli strain. This step is often automated using liquid handling robots.

  - Test (High-Throughput Assay):

    - Grow deep-well plates of the transformed library with induction.

    - Use high-throughput flow cytometry to measure fluorescence (GFP) of each variant, which serves as a proxy for TIR and protein expression level.

  - Learn: Feed the RBS sequence and corresponding TIR data back into the ML model. The model learns the sequence-function relationship and recommends a new, improved batch of variants for the next cycle.
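To make the Design and Learn steps concrete, the sketch below pairs a tiny Gaussian process with a UCB acquisition rule. It is a toy under stated assumptions: each variant is reduced to a single numeric feature (a stand-in for an encoded RBS sequence), the prior mean is zero, the kernel is RBF, and every function name is hypothetical. A real pipeline would normally use a maintained GP library and a proper sequence encoding.

```python
import math


def rbf(x1, x2, length_scale=1.0):
    """RBF kernel on scalar features."""
    return math.exp(-0.5 * ((x1 - x2) / length_scale) ** 2)


def cholesky(a):
    """Lower-triangular Cholesky factor of a symmetric positive-definite matrix."""
    n = len(a)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(low[i][k] * low[j][k] for k in range(j))
            if i == j:
                low[i][j] = math.sqrt(a[i][i] - s)
            else:
                low[i][j] = (a[i][j] - s) / low[j][j]
    return low


def solve_lower(low, b):
    """Forward substitution: solve L y = b."""
    y = []
    for i in range(len(b)):
        y.append((b[i] - sum(low[i][k] * y[k] for k in range(i))) / low[i][i])
    return y


def solve_upper_t(low, y):
    """Back substitution: solve L^T x = y."""
    n = len(y)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(low[k][i] * x[k] for k in range(i + 1, n))) / low[i][i]
    return x


def gp_posterior(x_train, y_train, x_query, noise=1e-2, length_scale=1.0):
    """Posterior mean and standard deviation of a zero-mean GP at each query point."""
    n = len(x_train)
    k = [[rbf(x_train[i], x_train[j], length_scale) + (noise if i == j else 0.0)
          for j in range(n)] for i in range(n)]
    low = cholesky(k)
    alpha = solve_upper_t(low, solve_lower(low, y_train))
    means, stds = [], []
    for xq in x_query:
        ks = [rbf(xq, xt, length_scale) for xt in x_train]
        v = solve_lower(low, ks)
        var = max(rbf(xq, xq, length_scale) - sum(vi * vi for vi in v), 0.0)
        means.append(sum(ks[i] * alpha[i] for i in range(n)))
        stds.append(math.sqrt(var))
    return means, stds


def ucb_select(x_train, y_train, candidates, kappa=2.0, batch_size=3):
    """Rank candidates by mean + kappa * std and return the top batch."""
    means, stds = gp_posterior(x_train, y_train, candidates)
    scores = [m + kappa * s for m, s in zip(means, stds)]
    ranked = sorted(range(len(candidates)), key=lambda i: scores[i], reverse=True)
    return [candidates[i] for i in ranked[:batch_size]]


# Made-up data: two measured variants and three untested candidates.
print(ucb_select([0.0, 1.0], [0.0, 1.0], [0.0, 0.5, 10.0], kappa=2.0, batch_size=2))
```

Setting `kappa` to zero makes the selection purely exploitative (highest predicted value), while larger values favor candidates far from anything already measured.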

Workflow and Pathway Visualizations

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Featured Experiments

| Item | Function / Application | Specific Example / Note |

|---|---|---|

| Home-Cage Monitoring System | Automated, long-term behavioral tracking of animals (e.g., foraging, general activity) in their home environment without human disruption [11]. | Systems like Shanghai Vanbi's Home-Cage with Tracking Master software. |

| Chemogenetic Actuators (DREADDs) | To selectively modulate (activate/inhibit) neuronal activity in specific brain regions in vivo to establish causality in behavior [11]. | Used with Clozapine-N-Oxide (CNO) injection; targets PVH neurons. |

| c-fos Antibody | Immunohistochemical marker for detecting and quantifying recent neuronal activity in tissue sections following specific stimuli or behaviors [11]. | e.g., Abcam ab214672. |

| Cell-Free Protein Synthesis (CFPS) System | A crude cell lysate system for rapid in-vitro testing of metabolic pathways and enzyme expression levels before full in-vivo strain engineering [9]. | Used for knowledge-driven DBTL entry point. |

| Ribosome Binding Site (RBS) Library | A set of genetic variants to fine-tune the translation initiation rate (TIR) of genes in a metabolic pathway, optimizing enzyme expression and product yield [10]. | Designed via ML; built via automated cloning. |

| Gaussian Process Regression (GPR) Model | A machine learning algorithm used in the "Learn" phase that predicts performance and, crucially, provides uncertainty estimates for genetic designs [10]. | Enables balancing exploration vs. exploitation. |

In both machine learning and scientific research domains like Design-Build-Test-Learn (DBTL) cycles, a fundamental challenge is the exploration-exploitation dilemma. This refers to the trade-off between gathering new information (exploration) and using existing knowledge to maximize rewards (exploitation) [1]. Researchers have identified two primary strategies that humans and algorithms use to explore: directed exploration (purposeful information-seeking) and random exploration (strategic randomization of choice) [12] [13]. Understanding and implementing these strategies is crucial for optimizing research processes, from drug discovery to reinforcement learning agent training. This guide provides troubleshooting and methodological support for researchers applying these concepts.

The table below summarizes the key characteristics of directed and random exploration.

| Feature | Directed Exploration | Random Exploration |

|---|---|---|

| Core Principle | Purposeful information-seeking; biased towards informative options [12]. | Strategic introduction of decision noise to try new options by chance [12]. |

| Driving Force | Information bonus (e.g., uncertainty-driven) [12]. | Random noise added to value calculations [12]. |

| Computational Analogy | Upper Confidence Bound (UCB) algorithms [12]. | Epsilon-Greedy or Thompson Sampling algorithms [12] [4]. |

| Key Neural Correlate | Right Frontopolar Cortex (FPC) [14]. | Neural variability; potentially modulated by norepinephrine [12] [15]. |

| Response to Time Horizon | Increases with a longer future time horizon (more choices remain) [12] [13]. | Increases with a longer future time horizon [12] [13]. |

| Primary Use Case | When the value of information is high and can be quantified. | In complex environments where optimal information-seeking is computationally intractable [15]. |

Experimental Protocols & Methodologies

The Horizon Task: Quantifying Both Strategies

The Horizon Task is a behavioral paradigm designed to independently measure directed and random exploration in human participants [13] [14].

Workflow: The diagram below illustrates the core structure and decision logic of the Horizon Task.

Detailed Protocol:

- Task Setup: Participants play a series of games where they choose between two "slot machines" (bandits) with different, unknown reward distributions [14].

- Key Manipulation 1 - Time Horizon:

  - In a Horizon 1 (H1) game, the participant makes only one free choice. This favors exploitation, as there is no future in which to use gathered information.

  - In a Horizon 6 (H6) game, the participant makes six sequential free choices. This favors exploration, because information gained early can be used for better choices later [13] [14].

- Key Manipulation 2 - Information Condition:

  - Before the free-choice phase, participants undergo four forced-choice trials to control their initial information.

  - Equal Information [2-2]: Participants see two outcomes from each bandit. This condition helps measure random exploration as the probability of choosing the option with the lower estimated reward.

  - Unequal Information [1-3]: Participants see one outcome from one bandit and three from the other. This condition helps measure directed exploration as the probability of choosing the less-known, higher-information option [14].

- Data Analysis:

  - A cognitive model is fit to the choice data to extract two key parameters: the information bonus (βinfo), which quantifies directed exploration, and the decision noise (η), which quantifies random exploration [13].
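A cognitive model of this kind is often written as a logistic choice rule with an information bonus and a decision-noise term. The sketch below shows one plausible form; the exact parameterization used in the cited studies may differ, and the function name, argument names, and the optional side-bias term are assumptions made for this illustration.

```python
import math


def choice_probability(delta_reward, delta_info, info_bonus, noise, side_bias=0.0):
    """Probability of choosing option A over option B on the first free choice.

    delta_reward: observed mean reward of A minus that of B.
    delta_info:   +1 if A is the less-sampled option, -1 if B is, 0 if equal.
    info_bonus:   weight on information (directed exploration).
    noise:        decision noise, must be positive (random exploration).
    """
    z = (delta_reward + info_bonus * delta_info + side_bias) / noise
    return 1.0 / (1.0 + math.exp(-z))


# Equal observed means: only the information bonus tilts the choice.
print(choice_probability(delta_reward=0.0, delta_info=1, info_bonus=5.0, noise=4.0))
# Same reward difference, higher noise: choices move closer to chance.
print(choice_probability(delta_reward=10.0, delta_info=0, info_bonus=0.0, noise=20.0))
```

Fitting would then estimate `info_bonus` and `noise` separately for each horizon, so that a horizon effect shows up as a change in either parameter.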

Pharmacological Intervention: Targeting the Norepinephrine System

This protocol tests the causal role of the norepinephrine (NE) system in random exploration [15].

Detailed Protocol:

- Design: Double-blind, placebo-controlled, crossover study.

- Intervention: Administration of a single dose of atomoxetine (40-60 mg), a selective norepinephrine transporter blocker, versus a placebo.

- Participants: Healthy human volunteers.

- Task: Participants perform the Horizon Task (or a similar explore-exploit task) after drug administration.

- Measurement: The effects of atomoxetine on the model-based parameters for random exploration (decision noise) and directed exploration (information bonus) are analyzed [15].

- Expected Outcome: Atomoxetine is hypothesized to selectively reduce random exploration without significantly affecting directed exploration, supporting the role of NE in modulating decision noise [15].

Neuromodulation: Inhibiting the Frontopolar Cortex

This protocol uses brain stimulation to test the causal role of the frontopolar cortex in directed exploration [14].

Detailed Protocol:

- Technique: Continuous Theta-Burst Transcranial Magnetic Stimulation (cTBS).

- Target: Right Frontopolar Cortex (RFPC). A control site (e.g., vertex) is also stimulated in a separate session.

- Participants: Healthy human volunteers.

- Task: Participants perform the Horizon Task after undergoing cTBS.

- Measurement: Compare the information bonus (directed exploration) and decision noise (random exploration) between the RFPC stimulation and control sessions.

- Expected Outcome: Inhibition of the RFPC via cTBS is expected to selectively reduce directed exploration while leaving random exploration intact [14].

Troubleshooting Guides & FAQs

FAQ 1: In my reinforcement learning model for molecular discovery, the agent converges on a suboptimal candidate too quickly. How can I improve the search?

- Problem: The algorithm is over-exploiting and lacks a mechanism to discover novel, potentially superior candidates.

- Solution:

- Implement Directed Exploration: Incorporate an information bonus or Upper Confidence Bound (UCB) strategy. This will encourage the agent to select options where uncertainty about the reward (e.g., bioactivity) is high [12] [1].

- Adjust Exploration Schedule: If using a simple epsilon-greedy strategy with a small fixed ε, switch to a schedule that starts with a high ε and decays it gradually, or to an adaptive method like Thompson Sampling. This keeps exploration active for longer early in the search, preventing premature convergence [4] [16].
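A decaying exploration rate can be as small as one function. The sketch below uses an exponential decay with a floor; the function name and the default values are illustrative assumptions and would need tuning for a real molecular search.

```python
def decayed_epsilon(step, eps_start=1.0, eps_min=0.05, decay=0.995):
    """Exploration rate that starts high, decays exponentially, and never drops below eps_min."""
    return max(eps_min, eps_start * decay ** step)


# Exploration rate at a few points in the search.
for step in (0, 100, 500, 2000):
    print(step, decayed_epsilon(step))
```

The floor `eps_min` keeps a small amount of exploration alive indefinitely, which guards against locking onto an early candidate.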

FAQ 2: My research process (e.g., high-throughput screening) is inefficient, exploring too many options with low success. How can I make it more targeted?

- Problem: The process is over-exploring without effectively leveraging accumulated knowledge.

- Solution:

- Shift Towards Exploitation: Use early results to build a predictive model. Prioritize candidates or experiments that the model predicts will be high-performing, effectively adding an exploitation bias [4].

- Adopt a Hybrid Strategy: Implement a strategy like Activity-Directed Synthesis (ADS), which is inherently function-driven. It uses initial broad exploration (promiscuous reactions) but quickly channels resources into exploiting and optimizing only the reactions that show promising bioactivity [17].

FAQ 3: My behavioral experiment failed to find a horizon effect on exploration. What could have gone wrong?

- Problem: The manipulation of the time horizon was not effective, or exploration strategies were not properly isolated.

- Solution:

- Control for Confounds: Ensure that the expected reward value and the information value of options are decorrelated in your task design, as in the Horizon Task's forced-choice phase. A common confound is that participants naturally gain more information about higher-value options because they choose them more often [14].

- Verify Task Instructions: Confirm that participants understand how many choices they have in each game (the horizon). The utility of exploration is only high if they know they have future choices to use the information in [13].

FAQ 4: A pharmacological agent (e.g., atomoxetine) affected behavior, but I cannot tell if it impacted directed or random exploration. How can I dissociate these strategies?

- Problem: The behavioral task used does not provide independent measures of the two exploration strategies.

- Solution:

- Use a Deconfounded Task: Employ a task like the Horizon Task that independently manipulates information and reward [15] [14].

- Fit a Computational Model: Extract parameters for information bonus (βinfo) and decision noise (η). A selective effect on η would point to a change in random exploration, while a change in βinfo would indicate an effect on directed exploration [12] [15].

The Scientist's Toolkit: Key Reagents & Materials

The table below lists essential "research reagents," both computational and biological, for studying exploration strategies.

| Reagent / Material | Function / Description | Relevance to Exploration Research |

|---|---|---|

| Horizon Task | A behavioral paradigm to deconfound reward and information. | The primary tool for independently quantifying directed and random exploration in humans [13] [14]. |

| Computational Model (e.g., from Wilson et al.) | A cognitive model with information bonus and decision noise parameters. | Used to analyze task data and extract quantitative measures of directed (βinfo) and random (η) exploration [13]. |

| Atomoxetine | A selective norepinephrine transporter (NET) blocker. | A pharmacological tool for manipulating the norepinephrine system to test its causal role in random exploration [15]. |

| Transcranial Magnetic Stimulation (TMS) | A non-invasive brain stimulation technique. | Used to temporarily inhibit (e.g., via cTBS) brain regions like the right frontopolar cortex to test their causal role in directed exploration [14]. |

| Multi-Armed Bandit (MAB) Framework | A formal mathematical framework for the explore-exploit dilemma. | Provides the theoretical foundation and algorithms (e.g., UCB, Thompson Sampling) that mirror human exploration strategies [12] [4] [1]. |

Computational Intractability and the Need for Approximate Solutions

Frequently Asked Questions (FAQs)

FAQ 1: What does "computational intractability" mean in the context of drug design? Computational intractability describes problems that cannot be solved within a reasonable timeframe, even with the most powerful classical computers. These problems require exponential computational resources relative to the input size, rendering them practically unsolvable for large instances. In drug design, this often manifests in tasks like de novo molecular generation, where the number of possible molecular structures is vast, making exhaustive search for an optimal candidate impossible [18] [19].

FAQ 2: How does the exploration-exploitation dilemma relate to intractable problems? Optimal solutions to the explore-exploit dilemma are intractable in all but the simplest cases. The reason is that optimal solutions require massive simulations of the future—considering how choices impact future outcomes and how those outcomes will impact future choices. Because of this computational complexity, researchers turn to approximate strategies like directed and random exploration [12].

FAQ 3: What is the practical consequence of intractability for my simulation-based research? When simulations (e.g., involving partial-differential-equation models with fine spatiotemporal discretization) are computationally expensive, "many-query" problems like uncertainty quantification or design optimization become intractable. This limits the scope of complex optimizations in areas like global climate modeling, advanced materials design, and ecological system predictions [18] [20].

FAQ 4: What can I do if my problem is proven to be intractable? Intractability does not mean a problem is unsolvable, but that an exact, efficient solution for all cases is unlikely. The standard approach is to shift focus towards finding a "good enough" approximate solution. This can be achieved through approximation algorithms, heuristic methods, surrogate models, or new computational paradigms like quantum computing [18] [19].

Troubleshooting Guides

Problem: My molecular generation algorithm gets stuck in local minima, producing low-diversity candidates. This is a classic symptom of an imbalance between exploration and exploitation.

- Potential Cause 1: Over-exploitation of known, high-scoring regions of the chemical space.

- Solution: Integrate a mean-variance framework that explicitly optimizes for both the scoring function (exploitation) and the diversity of the proposed solutions (exploration) [21].

- Solution: Implement a hybrid exploration strategy like Max-Boltzmann, which has been shown to provide more stable and effective outcomes in high-risk, complex domains compared to pure epsilon-greedy or Boltzmann methods [22].

Problem: The error in my surrogate or reduced-order model grows uncontrollably over time. Dynamical systems pose a unique challenge as errors exhibit dependence on non-local quantities, meaning the error at a given time depends on the past history of the system.

- Potential Cause 1: Using an error quantification method (like a simple residual norm) that is only sensitive to local, instantaneous errors.

- Solution: Adopt a Time-Series Machine-Learning Error Modeling (T-MLEM) method. This uses recursive, time-series-prediction models (e.g., autoregressive models, recurrent neural networks) with time-local error indicators as features to capture the non-local error dynamics [20].

Problem: My reinforcement learning agent fails to discover successful states in a sparse-reward environment. This is known as the "hard-exploration" problem, where random exploration rarely discovers states that provide meaningful feedback.

- Potential Cause 1: Lack of intrinsic motivation to guide the agent towards novel or informative states.

- Solution: Augment the environment's extrinsic reward with an intrinsic exploration bonus. This bonus can be based on the novelty of a state, estimated using pseudo-counts from a density model or locality-sensitive hashing (LSH) to track state visits in high-dimensional spaces [23].
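A count-based bonus of this kind can be sketched in a few lines. The example below is a minimal illustration, assuming a SimHash-style random projection as the locality-sensitive hash and a bonus of the form beta / sqrt(count). The class name `SimHashCounter`, the bit width, and the value of `beta` are hypothetical choices for illustration, not taken from [23].

```python
import numpy as np
from collections import defaultdict

class SimHashCounter:
    """Count state visits by hashing continuous states to short binary codes."""

    def __init__(self, state_dim, n_bits=16, beta=0.1, seed=0):
        rng = np.random.default_rng(seed)
        # Random projection: nearby states tend to share the same sign pattern.
        self.projection = rng.standard_normal((n_bits, state_dim))
        self.beta = beta                      # assumed bonus scale
        self.counts = defaultdict(int)

    def _code(self, state):
        bits = (self.projection @ np.asarray(state, dtype=float)) > 0
        return bits.tobytes()

    def bonus(self, state):
        """Record a visit and return the intrinsic bonus beta / sqrt(count)."""
        key = self._code(state)
        self.counts[key] += 1
        return self.beta / np.sqrt(self.counts[key])

counter = SimHashCounter(state_dim=4)
state = np.array([0.2, -1.0, 0.5, 3.0])
first = counter.bonus(state)     # first visit to this hash bucket
second = counter.bonus(state)    # repeat visit: the bonus shrinks
extrinsic_reward = 0.0           # sparse environment reward (none here)
shaped_reward = extrinsic_reward + second
```

The agent is then trained on `shaped_reward` rather than the extrinsic reward alone, so rarely visited hash buckets remain attractive even when the environment gives no feedback.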

Experimental Protocols & Methodologies

Protocol: Constructing a Time-Series Machine-Learning Error Model (T-MLEM)

Purpose: To accurately model the error of approximate solutions (e.g., from reduced-order models) for parameterized dynamical systems, where errors have non-local, time-dependent dynamics [20].

Methodology:

- Data Generation: Run the high-fidelity and approximate models for a training set of parameters. Collect sequences of time-local error indicators (e.g., residual norms) as features and the corresponding true normed state errors as the response variable.

- Feature Engineering: Use error indicators like residual samples that are cheaply computable during the online use of the approximate solution.

- Regression Model Training: Train a recursive time-series-prediction model (e.g., an Autoregressive model or Recurrent Neural Network) to map the sequence of features to the error response. For comparison, a non-recursive model (e.g., a feed-forward neural network) can also be trained.

- Noise Model Construction: Model the residual uncertainty not captured by the regression model. This is often done by fitting a mean-zero Gaussian distribution whose variance is the sample variance of the prediction error on a test set.

- Validation: The trained T-MLEM model provides a statistical error prediction (a random variable) that can be used to quantify uncertainty in the approximate solution.
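The steps above can be sketched with a deliberately small stand-in. The example assumes synthetic data in place of real high-fidelity and reduced-order model runs, a linear autoregressive regression fitted by least squares, and a single residual-norm feature. All names (`simulate`, `design_matrix`, `predict_error`) and coefficients are hypothetical, and the sketch is not the implementation described in [20].

```python
import numpy as np

rng = np.random.default_rng(0)

def simulate(n_steps):
    """Synthetic stand-in for one trajectory: residual-norm features and true errors."""
    residual = np.abs(rng.standard_normal(n_steps))
    error = np.zeros(n_steps)
    for t in range(1, n_steps):
        # The true error accumulates over time (non-local dependence).
        error[t] = 0.9 * error[t - 1] + 0.5 * residual[t] + 0.01 * rng.standard_normal()
    return residual, error

def design_matrix(residual, error):
    """Rows are [previous error, current residual, 1]; the target is the current error."""
    X = np.column_stack([error[:-1], residual[1:], np.ones(len(error) - 1)])
    return X, error[1:]

# Regression model: fit on a training trajectory by least squares.
X_train, y_train = design_matrix(*simulate(500))
coef, *_ = np.linalg.lstsq(X_train, y_train, rcond=None)

# Noise model: mean-zero Gaussian with the sample variance of held-out prediction errors.
X_test, y_test = design_matrix(*simulate(200))
noise_var = np.var(y_test - X_test @ coef)

def predict_error(residual):
    """Recursive online prediction: each step feeds back the previous prediction."""
    pred = np.zeros(len(residual))
    for t in range(1, len(residual)):
        pred[t] = coef @ np.array([pred[t - 1], residual[t], 1.0])
    return pred, np.sqrt(noise_var)

new_residual, new_true_error = simulate(100)
predicted, predicted_sd = predict_error(new_residual)
```

Note that the regression is trained on true previous errors but run online on its own predictions, so the one-step noise variance is likely to understate uncertainty over long rollouts.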

Protocol: Implementing Directed and Random Exploration in a Bandit Task

Purpose: To empirically study and dissect the exploration strategies used by human or artificial agents in a controlled setting [12].

Methodology:

- Task Design: Use a multi-armed bandit task where the reward probabilities of options are initially unknown. Key manipulations include:

- Time Horizon: Vary the number of trials left in the game. A longer horizon should increase exploration.

- Novel Options: Introduce completely new options at specific points to measure directed exploration towards novelty.

- Uncertainty: Control the initial uncertainty or the volatility of the reward distributions.

- Modeling Behavior: Fit computational models to the choice data to quantify the contribution of each strategy.

- Directed Exploration Model: Q(a) = r(a) + IB(a), where IB(a) is an information bonus, often proportional to the uncertainty about option a.

- Random Exploration Model: Q(a) = r(a) + η(a), where η(a) is zero-mean random noise added to the value estimate.

- Analysis: Identify directed exploration by increased information-seeking (e.g., choosing novel or uncertain options) when the time horizon is long. Identify random exploration by an increase in the randomness of choices (e.g., a higher softmax temperature parameter) under the same conditions.
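The two choice models can be combined in one small function to see how each term changes behavior. This is a minimal sketch, assuming an information bonus that scales as 1 / sqrt(number of observations) and Gaussian decision noise. The function name `choose` and the parameter values are hypothetical and are not the fitted model from [12].

```python
import numpy as np

def choose(mean_reward, n_observations, info_weight, noise_sd, rng):
    """Pick the option maximizing Q(a) = r(a) + IB(a) + eta(a)."""
    mean_reward = np.asarray(mean_reward, dtype=float)
    n_observations = np.asarray(n_observations, dtype=float)
    # Information bonus: larger for options that were sampled less often (assumed form).
    info_bonus = info_weight / np.sqrt(n_observations)
    # Decision noise: zero-mean Gaussian added to each value estimate.
    noise = rng.normal(0.0, noise_sd, size=mean_reward.shape)
    return int(np.argmax(mean_reward + info_bonus + noise))

rng = np.random.default_rng(1)
means = [0.60, 0.55]   # option 0 looks slightly better...
counts = [9, 1]        # ...but option 1 has been sampled far less

exploit = choose(means, counts, info_weight=0.0, noise_sd=0.0, rng=rng)
directed = choose(means, counts, info_weight=0.3, noise_sd=0.0, rng=rng)
random_rate = np.mean([choose(means, counts, 0.0, 0.2, rng) for _ in range(2000)])
```

With both terms switched off the agent always exploits option 0. The information bonus alone flips the choice to the less-sampled option deterministically, while noise alone makes the agent pick option 1 on a sizeable fraction of trials without any systematic preference for information.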

Workflow Visualization

[Figure: Approximation Workflow]

The Scientist's Toolkit: Research Reagent Solutions

Table 1: Key computational components and their functions for tackling intractability.

| Research Reagent | Function & Purpose |

|---|---|

| Surrogate Model (e.g., Reduced-Order Model) | Replaces a computationally expensive high-fidelity model (e.g., a PDE) to generate low-cost approximate solutions, making many-query problems tractable [20]. |

| Error Model (e.g., T-MLEM) | A statistical model that maps cheaply computable error indicators (e.g., residual norms) to a prediction of the error incurred by an approximate solution, quantifying its uncertainty [20] [24]. |

| Upper Confidence Bound (UCB) | A directed exploration strategy that adds an "information bonus" to the value of an option, proportional to its uncertainty, thereby systematically guiding exploration towards informative choices [12]. |

| Boltzmann (Softmax) Policy | A random exploration strategy that selects actions probabilistically based on their estimated Q-values, regulated by a temperature parameter. Higher temperature increases exploration [25] [23]. |

| Density Model (e.g., PixelCNN) | A model that estimates the probability density of states, allowing for the calculation of pseudo-counts. This is used to generate intrinsic rewards for count-based exploration in large state spaces [23]. |

| Locality-Sensitive Hashing (LSH) | A hashing technique that maps similar states to similar hash codes, enabling efficient counting of state visits in high-dimensional continuous spaces for count-based exploration bonuses [23]. |

The Design-Build-Test-Learn (DBTL) cycle is a cornerstone framework in synthetic biology and biotechnology research and development, enabling the systematic and iterative engineering of biological systems [26]. This cyclical process allows researchers to rationally design biological components, assemble them into functional systems, rigorously test their performance, and learn from the data to inform the next, improved design round [27].

Automation and machine learning (ML) are now transforming the DBTL cycle, helping to overcome traditional bottlenecks and enhancing its efficiency and predictive power [28] [27]. A critical challenge within this iterative process is the exploration-exploitation dilemma—the strategic decision between exploring new, uncertain designs to gather more information and exploiting known, high-performing designs to maximize immediate results [29] [12]. This article provides troubleshooting guidance and FAQs to help researchers navigate the practical challenges of implementing the DBTL cycle, with a special focus on integrating ML to balance exploration and exploitation.

FAQs: Navigating the DBTL Cycle

What is the fundamental purpose of the DBTL cycle in synthetic biology?

The DBTL cycle provides a structured framework for engineering organisms to perform specific functions, such as producing biofuels, pharmaceuticals, or other valuable compounds [26]. Its iterative nature allows researchers to systematically approach the complexity of biological systems, where the impact of introducing foreign DNA is often difficult to predict, making multiple testing permutations necessary to achieve a desired outcome [26].

How can machine learning (ML) improve the DBTL cycle?

ML has gained significant traction for overcoming bottlenecks, particularly in the "Learn" phase [27]. By processing large, complex datasets generated from high-throughput experiments, ML models can:

- Uncover unseen patterns and provide predictive models by choosing appropriate features to represent a phenomenon of interest [27].

- Facilitate system-level prediction of biological designs with desired characteristics by elucidating the associations between phenotypes and various combinations of genetic parts and genotypes [27].

- Guide metabolic engineering by learning from experimental datasets to make accurate genotype-to-phenotype predictions, thereby accelerating the design of more efficient biological pathways [28] [30].

What is the exploration-exploitation dilemma in this context?

The exploration-exploitation dilemma is a fundamental trade-off faced when making sequential decisions under uncertainty [29] [12].

- Exploitation involves choosing the best-known option based on current information to maximize immediate reward.

- Exploration involves trying less-known or novel options to gather more information, which may lead to better rewards in the long run [31].

In a DBTL cycle, this translates to the decision between exploiting a known, well-performing genetic design and exploring new, potentially superior but uncertain designs. Optimal solutions to this dilemma are computationally complex, leading to the use of approximate strategies [29].

What are the main strategies for balancing exploration and exploitation?

Research shows that humans, animals, and effective artificial intelligence algorithms often combine two major strategies to solve this dilemma [29] [12]:

- Directed Exploration (Information-Seeking): This strategy deterministically biases choice towards more informative options. A common computational method is to add an "information bonus" to the value of uncertain options, making them more attractive [12].

- Random Exploration (Behavioral Variability): This strategy introduces randomness or noise into the decision-making process. This can be implemented by adding random noise to the computed value of each option before selecting the one with the highest value [12].

These strategies are not mutually exclusive and can be integrated into a holistic approach for more robust performance [29].

Troubleshooting Common DBTL Workflow Challenges

Problem: Low Throughput and Efficiency in the "Build" and "Test" Phases

Symptoms: The rate of constructing and testing biological designs is slow, creating a bottleneck that limits the number of DBTL iterations you can perform.

Solutions:

- Implement Automation: Integrate automated liquid handlers (e.g., from Tecan, Beckman Coulter, or Hamilton Robotics) for high-precision, high-throughput pipetting, PCR setup, and plasmid preparation [28].

- Use Orchestration Software: Adopt platforms like TeselaGen to manage complex protocols, track samples across different lab equipment, and maintain inventory efficiently [28].

- Partner with DNA Synthesis Providers: Streamline the process by integrating with providers like Twist Bioscience or IDT for seamless incorporation of custom DNA sequences into your workflows [28].

Problem: Inability to Effectively "Learn" from Large, Complex Datasets

Symptoms: Despite generating large amounts of multi-omics data (from NGS, mass spectrometry, etc.), extracting meaningful, actionable insights to guide the next design cycle is challenging.

Solutions:

- Adopt a Centralized Data Hub: Use a unified software platform to collect, standardize, and manage data from all analytical equipment and design phases [28].

- Apply Machine Learning: Employ ML algorithms to analyze experimental data, uncover complex patterns, and build predictive models that can forecast the performance of future biological designs, such as predicting genotype-to-phenotype relationships [28] [27].

- Establish ML-Friendly Data Standards: To lay the groundwork for effective ML, implement common standards for data generation and formatting across experiments [27].

Problem: Strategic Uncertainty in the "Design" Phase

Symptoms: Difficulty deciding whether to optimize a known, promising genetic construct (exploit) or to test a radically new design with uncertain potential (explore).

Solutions:

- Formalize the Trade-off: Explicitly frame your design choices within the exploration-exploitation dilemma.

- Quantify Uncertainty: Use computational models that estimate the uncertainty or potential information gain of each design option.

- Implement Adaptive Exploration Strategies: Instead of fixed rules, use strategies that dynamically adjust the level of exploration based on the stage of your project. For example:

- Use Value-Difference Based Exploration (VDBE), which adapts the exploration probability based on the difference in estimated values between options, reflecting the agent's uncertainty [31].

- Use Max-Boltzmann strategies, which exploit the best-known option most of the time and, when exploring, sample from a Softmax (Boltzmann) distribution over value estimates rather than uniformly at random [31].

The following table summarizes these adaptive strategies and their applications within a DBTL context.

| Strategy Name | Core Mechanism | Application in DBTL Cycle |

|---|---|---|

| ε-Greedy [31] | With probability ε, explore a random action; otherwise, exploit the best-known action. | A simple baseline for introducing randomness in design selection. |

| Decreasing ε-Greedy [31] | The exploration probability ε decreases linearly over time. | Useful for initial DBTL rounds; exploration is high early on and reduces as knowledge accumulates. |

| Value-Difference Based Exploration (VDBE) [31] | The exploration probability ε is dynamically adjusted based on the difference in Q-values (value estimates), increasing when the agent is uncertain. | Adapts exploration based on the confidence in the performance of different genetic designs. |

| Max-Boltzmann [31] | Exploits the best-known option with probability 1 − ε; otherwise samples from a Softmax (Boltzmann) distribution over value estimates instead of uniformly at random. | Balances the choice between top-performing designs (exploit) and value-weighted sampling of other options (explore). |
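Three of the strategies in the table above can be sketched as selection functions over a list of value estimates. This is an illustrative sketch under stated assumptions: the Max-Boltzmann variant shown is one common formulation (greedy with probability 1 − ε, Softmax sampling otherwise), and the schedule endpoints, temperature, and `predicted_titer` values are hypothetical.

```python
import numpy as np

def epsilon_greedy(q_values, epsilon, rng):
    """With probability epsilon pick uniformly at random, else pick the best."""
    if rng.random() < epsilon:
        return int(rng.integers(len(q_values)))
    return int(np.argmax(q_values))

def linear_epsilon(round_index, n_rounds, start=0.5, end=0.05):
    """Linearly decreasing exploration probability across DBTL rounds."""
    frac = min(round_index / max(n_rounds - 1, 1), 1.0)
    return start + frac * (end - start)

def max_boltzmann(q_values, epsilon, temperature, rng):
    """Greedy with probability 1 - epsilon; otherwise sample from a softmax."""
    q = np.asarray(q_values, dtype=float)
    if rng.random() >= epsilon:
        return int(np.argmax(q))
    logits = (q - q.max()) / temperature      # subtract max for numerical stability
    probs = np.exp(logits) / np.exp(logits).sum()
    return int(rng.choice(len(q), p=probs))

rng = np.random.default_rng(0)
predicted_titer = [1.2, 3.4, 2.9, 0.5]        # hypothetical value estimates for four designs
picks = [max_boltzmann(predicted_titer, epsilon=0.3, temperature=0.5, rng=rng)
         for _ in range(1000)]
```

Unlike plain ε-greedy, the exploratory picks here concentrate on the runner-up design (index 2) rather than being spread evenly over clearly poor designs, which can matter when each build-and-test round is expensive.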

Problem: Low Protein Expression in a Microbial Chassis

Symptoms: Transformed bacterial colonies grow slowly, and protein yields are very low, hindering downstream purification and functional assays [32].

Solutions:

- Hypothesis: Different colonies from the same transformation might vary in their ability to tolerate the expression of the foreign protein [32].

- Iterative DBTL Protocol:

- Design: Test the hypothesis by designing an experiment that screens multiple colonies, not just one.

- Build: Inoculate separate culture flasks with a range of different colonies from your transformation plate.

- Test: Measure the growth curves (OD₆₀₀) and protein expression levels (e.g., via SDS-PAGE) for each flask.

- Learn: Identify and select the healthiest, highest-expressing colonies for scaling up. This turns colony variability from a problem into a selection tool [32].

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential materials and their functions for executing automated, data-driven DBTL cycles, particularly for metabolic pathway engineering as demonstrated in the dopamine production case study [30].

| Item / Reagent | Function / Explanation |

|---|---|

| Ribosome Binding Site (RBS) Libraries | A key tool for rational fine-tuning of gene expression levels within a synthetic pathway without altering the coding sequence itself [30]. |

| pET Plasmid System | A common and robust vector system for high-level, inducible expression of heterologous genes in E. coli [30]. |

| E. coli FUS4.T2 | An example of a specialized production host strain, often genetically engineered for high precursor (e.g., l-tyrosine) production [30]. |

| Cell-Free Protein Synthesis (CFPS) System | A crude cell lysate system used for upstream in vitro testing of enzyme expression and pathway functionality, bypassing cellular constraints and accelerating initial design [30]. |

| HpaBC (4-hydroxyphenylacetate 3-monooxygenase) | A native E. coli enzyme that converts l-tyrosine to l-DOPA, a key precursor in the dopamine production pathway [30]. |

| Ddc (l-DOPA decarboxylase) | A heterologous enzyme from Pseudomonas putida that catalyzes the formation of dopamine from l-DOPA [30]. |

Workflow Visualization: The ML-Enhanced DBTL Cycle

The following diagram illustrates the integrated, machine-learning-enhanced DBTL cycle, highlighting the critical decision point of exploration versus exploitation.

Experimental Protocol: Knowledge-Driven DBTL for Metabolite Production

This protocol is adapted from a study that successfully optimized dopamine production in E. coli using a knowledge-driven DBTL cycle with high-throughput RBS engineering [30].

Objective

To develop and optimize a microbial strain for the high-yield production of a target metabolite (dopamine) by fine-tuning the expression of pathway enzymes.

Materials

- Bacterial Strains: E. coli DH5α for cloning; a specialized production strain like E. coli FUS4.T2 [30].

- Plasmids: pET system for gene storage; a compatible plasmid (e.g., pJNTN) for library construction and in vivo expression [30].

- Genes: Heterologous genes for the metabolic pathway (e.g., hpaBC and ddc for dopamine production) [30].

- Media: 2xTY medium for cloning; a defined minimal medium for production experiments [30].

- Equipment: Automated liquid handling system, plate reader, HPLC or MS for metabolite quantification.

Methodology

Step 1: In Vitro Knowledge Gathering (Pre-DBTL)

- Design: Create plasmids for individual expression of pathway enzymes (e.g., pJNTNhpaBC, pJNTNddc).

- Build: Transform plasmids into a suitable strain and cultivate for cell lysate production.

- Test: Use a cell-free protein synthesis (CFPS) system to express the enzymes in a reaction buffer containing the substrate (l-tyrosine). Measure the formation of the intermediate (l-DOPA) and final product (dopamine) to determine baseline enzyme activities and identify potential bottlenecks [30].

- Learn: Analyze the in vitro data to hypothesize the optimal relative expression levels for the two enzymes in the full pathway.

Step 2: First In Vivo DBTL Cycle

- Design: Based on the in vitro learnings, design a library of genetic constructs where the expression of the two pathway genes is fine-tuned using a Ribosome Binding Site (RBS) library. This library is created by modulating the Shine-Dalgarno sequence [30].

- Build: Use high-throughput molecular cloning (e.g., Golden Gate or Gibson Assembly) and automated liquid handlers to assemble the RBS library constructs into the production vector and transform into the production host [30].

- Test: Cultivate the library variants in a high-throughput format (e.g., deep-well plates). Monitor growth and quantitatively analyze final metabolite production using HPLC or MS [30].

- Learn: Statistically analyze the production data to identify top-performing RBS combinations. Use this data to train an initial machine learning model to predict production yields based on RBS sequence features.

Step 3: Iterative DBTL Cycling with ML-Guided Exploration/Exploitation

- Design: The trained ML model is used to propose a new set of designs for the next cycle.

- Exploitation: The model can propose designs that are similar to the known high-performers but with slight optimizations.

- Exploration: The model can also propose designs in unexplored regions of the genetic design space that it predicts could be high-performing (directed exploration), or you can incorporate random sampling of the space (random exploration) [29] [12].

- Build, Test, Learn: Repeat the build and test phases with the new designs. Use the resulting data to retrain and improve the ML model, enhancing its predictive power for subsequent cycles [27] [30].

This knowledge-driven, ML-enhanced approach efficiently navigates the vast design space, balancing the exploration of novel designs with the exploitation of known successful strategies to rapidly converge on an optimally performing production strain.
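The design step of such a cycle can be sketched as a batch proposal that splits the next round between exploitation, directed exploration, and random exploration. The example below is a hedged illustration and not the method of [30]: it assumes numeric design features (for instance, two relative RBS strengths), a bootstrap ensemble of ridge regressions as a rough uncertainty estimate, and a mean-plus-kappa-times-spread score for directed exploration. All function names, the synthetic data, and the batch split are hypothetical.

```python
import numpy as np

def fit_ridge(X, y, alpha=1.0):
    """Closed-form ridge regression weights (bias handled via a constant column)."""
    A = X.T @ X + alpha * np.eye(X.shape[1])
    return np.linalg.solve(A, X.T @ y)

def ensemble_predict(X_train, y_train, X_cand, n_models=50, seed=0):
    """Bootstrap ensemble: mean prediction and spread as a rough uncertainty."""
    rng = np.random.default_rng(seed)
    preds = []
    for _ in range(n_models):
        idx = rng.integers(len(y_train), size=len(y_train))
        preds.append(X_cand @ fit_ridge(X_train[idx], y_train[idx]))
    preds = np.array(preds)
    return preds.mean(axis=0), preds.std(axis=0)

def propose_batch(mean, std, n_exploit, n_directed, n_random, kappa=2.0, seed=0):
    """Split the next round between exploitation, directed and random exploration."""
    rng = np.random.default_rng(seed)
    chosen = list(np.argsort(-mean)[:n_exploit])          # highest predicted yield
    for i in np.argsort(-(mean + kappa * std)):            # optimistic score
        if len(chosen) >= n_exploit + n_directed:
            break
        if i not in chosen:
            chosen.append(i)
    rest = [i for i in range(len(mean)) if i not in chosen]
    chosen += list(rng.choice(rest, size=n_random, replace=False))
    return [int(i) for i in chosen]

# Synthetic stand-in for one tested round and a pool of untested candidates.
rng = np.random.default_rng(42)
X_tested = np.column_stack([rng.uniform(0, 1, (24, 2)), np.ones(24)])
y_tested = (3 * X_tested[:, 0] - 2 * (X_tested[:, 1] - 0.5) ** 2
            + 0.1 * rng.standard_normal(24))
X_candidates = np.column_stack([rng.uniform(0, 1, (200, 2)), np.ones(200)])

mean, std = ensemble_predict(X_tested, y_tested, X_candidates)
batch = propose_batch(mean, std, n_exploit=4, n_directed=3, n_random=1)
```

Shifting the split toward `n_exploit` in later rounds mirrors a decreasing exploration schedule, and a Gaussian process or another model with calibrated uncertainty could replace the bootstrap ensemble.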

Information Gain vs. Immediate Reward in Experimental Design

FAQs: Balancing Exploration and Exploitation

FAQ 1: What is the exploration-exploitation dilemma in experimental research? The exploration-exploitation dilemma describes the conflict between gathering new information (exploration) and using known information for immediate reward (exploitation). In research, this translates to choosing between testing a new, uncertain hypothesis that could yield valuable insights (information gain) versus repeating a proven protocol to obtain a reliable result (immediate reward). Computational studies show humans use two distinct strategies to solve this: a bias for information ('directed exploration') and the randomization of choice ('random exploration') [33] [34].

FAQ 2: How does this dilemma relate to the Design-Build-Test-Learn (DBTL) cycle? The DBTL cycle is inherently driven by this balance. Each "Test" phase can be exploitative (validating a known high-performing design) or exploratory (gathering data on new designs to inform future learning). A paradigm shift towards "LDBT" (Learn-Design-Build-Test) proposes using machine learning first to leverage large existing datasets, making the initial design more informed and reducing the need for extensive exploratory testing cycles. This places a higher value on initial information gain to streamline the entire process [35].

FAQ 3: My experiment failed. How do I troubleshoot whether the issue was with my exploratory or exploitative approach? Effective troubleshooting requires a structured method to identify the root cause [36] [37]:

- Identify the Problem: Clearly define what went wrong without assuming the cause (e.g., "No protein expression" not "The new polymerase is bad").

- List Possible Explanations: Consider causes related to both exploration (e.g., an unvalidated new reagent, an uncertain protocol step) and exploitation (e.g., a miscalculation in a standard buffer recipe, contaminated common stock).

- Collect Data: Review your experimental controls. Did both positive and negative controls perform as expected? Check reagent storage conditions and your procedure against established protocols [37].

- Eliminate Explanations: Use the collected data to rule out incorrect explanations.

- Check with Experimentation: Design a targeted experiment to test the remaining likely causes.

- Identify the Cause: Conclude the most probable root cause and implement a fix.

FAQ 4: When should I prioritize information gain over immediate reward? Prioritize information gain (exploration) when [34]:

- Entering a new research area with high uncertainty.

- Standard protocols are consistently failing for your specific application.

- You are building large datasets for machine learning models.

- The potential long-term benefit of discovering a new, more efficient method outweighs the short-term need for a result.

Prioritize immediate reward (exploitation) when [34]:

- You are in the final validation stages of a project.

- You need to generate reproducible data for a publication.

- Resource constraints (time, funding) are a primary concern.

FAQ 5: What computational models describe how researchers balance this trade-off? Computational strategies can be summarized as follows [34]:

| Strategy | Core Principle | Best Applied When... |

|---|---|---|

| Standard Reinforcement Learning (sRL) | Learns to maximize only immediate, expected reward based on past outcomes. The decision process can include random noise ("random exploration"). | The research environment is stable, and the goal is to reliably reproduce a known high-yield result. |

| Knowledge Reinforcement Learning (kRL) | Augments reward learning by assigning a value to information itself. Actively seeks to reduce uncertainty about options ("directed exploration"). | Working with poorly characterized systems, designing new protocols, or when preparing data for predictive computational models. |

Studies comparing these models show that humans engage in significant directed exploration, more frequently choosing options they have less information about, even when it is associated with lower short-term gains [34].

Troubleshooting Guides

Guide 1: Troubleshooting a Failed Exploratory Experiment

Scenario: You tested a novel protein expression system based on a machine learning prediction (LDBT cycle), but yield is unexpectedly low.

- Step 1: Verify the "Learn" Phase. Are the machine learning model's predictions reliable for this type of protein? Check the model's training data and known limitations [35].

- Step 2: Analyze the "Build" Phase.

- Reagent Solutions: Confirm the DNA template sequence and concentration. For cell-free systems, check the lysate activity and storage conditions [35].

- Controls: Include a positive control (a DNA template known to express well in any system) and a negative control (no template) to isolate the problem to the new design.

- Step 3: Analyze the "Test" Phase.

- Methodology: Ensure your assay (e.g., SDS-PAGE, activity assay) is functioning correctly. Run a known standard.

- Data Collection: Check for subtle signs of activity you might have missed. The experiment may have provided valuable information gain despite low yield (e.g., clues about protein instability) [35].

Guide 2: Troubleshooting a Failed Exploitative Experiment

Scenario: A standard PCR protocol that has worked for months suddenly produces no product.

- Step 1: Check All Controls [37]. This is the most critical step for exploitative protocols.

- Positive Control: Did it work? If not, the problem is systemic (e.g., the thermal cycler, master mix).

- Negative Control: Is it clean? If not, there is contamination.

- Step 2: List Possible Causes. Focus on components and equipment [37]:

- Taq DNA Polymerase: Activity loss? Incorrect storage?

- Primers: Degraded? Concentration correct?

- Template DNA: Quality and concentration?

- Thermal Cycler: Calibration off? Block temperature uniform?

- Step 3: Collect Data. Check expiration dates. Re-measure DNA concentrations. Verify the cycler's calibration [37].

- Step 4: Experiment. Set up a new reaction with fresh aliquots of all reagents, carefully following the proven protocol. If it works, the issue was a degraded reagent. If not, the thermal cycler may be at fault.

Experimental Protocols for Balancing Strategies

Protocol: A Directed Exploration Experiment to Characterize a New Enzyme

Objective: Gain maximum information on the activity of a novel hydrolase under different conditions.

- Design: Use a machine learning model (e.g., MutCompute, ProteinMPNN) to predict stabilizing mutations and informative point mutations [35]. The design should include a wide range of conditions (pH, temperature, substrates) rather than just optimizing for one high-yield condition.

- Build: Synthesize and clone the wild-type and key variant genes. For rapid testing, use a cell-free expression system to bypass time-consuming cell culture [35].

- Test: Use a high-throughput assay (e.g., in microtiter plates) to measure activity across all conditions and variants in parallel. The dependent variable is specific activity, and the independent variables are pH, temperature, and substrate.

- Learn: Analyze the dataset to build a predictive model of the enzyme's function. The goal is not a single high-yield point, but a comprehensive understanding (high information gain) to inform future projects [35].

Protocol: An Exploitative Experiment for High-Yield Protein Production

Objective: Reliably produce a high quantity of a well-characterized protein (immediate reward).

- Design: Use the known, optimal expression construct (e.g., plasmid with strong promoter) and growth conditions (media, temperature) from previous cycles.

- Build: Transform the plasmid into a proven expression chassis (e.g., E. coli BL21). Inoculate a starter culture and then a large production culture.

- Test: Induce protein expression at the optimal cell density. Harvest cells, lyse, and purify the protein using a standard method (e.g., affinity chromatography). The key metric is final pure yield (mg/L).

- Learn: The learning is minimal and confirmatory. Note any minor deviations from the expected yield. The process is repeated with high fidelity to achieve the reward.

The Scientist's Toolkit

| Research Reagent Solution | Function in Exploration/Exploitation |

|---|---|

| Cell-Free Expression System | A tool for rapid exploration. Allows expression of proteins without cloning, enabling ultra-high-throughput testing of thousands of variants for informational gain [35]. |

| Machine Learning Models (e.g., ESM, ProteinMPNN) | Used in the "Learn" phase to generate informed hypotheses (LDBT), reducing uncertainty before any physical experiment is conducted [35]. |

| Positive & Negative Controls | Fundamental for exploitation and troubleshooting. They validate that a known protocol is working correctly and help isolate the cause when it fails [37] [38]. |

| High-Throughput Screening Platforms (e.g., Microfluidics) | Essential for directed exploration. Enables the collection of large, information-rich datasets on many conditions or variants simultaneously [35]. |

| Stable Cell Line/Proven Plasmid | A key resource for exploitation. Provides a reliable and reproducible system to achieve consistent, high-yield results [38]. |

From Theory to Bioreactors: Implementing ML Strategies in DBTL Workflows

Frequently Asked Questions (FAQs)

Q1: What is the exploration-exploitation dilemma, and why is it critical in biological research? The exploration-exploitation dilemma describes the challenge of choosing between testing new options to gather more information (exploration) and using known options that currently yield the best results (exploitation). In biological research, such as drug development or media optimization, this is critical because experiments are costly and time-consuming. A poor balance can lead to wasted resources, slow discovery, or even ethical concerns in clinical settings if patients receive suboptimal treatments for too long [39] [40].

Q2: When should I choose Thompson Sampling over UCB for my experiment? You should choose Thompson Sampling when you are working with complex, non-stationary environments (where reward distributions change over time) or when you prefer an algorithm that requires minimal parameter tuning [41] [42]. UCB is often preferable when you need strict, deterministic confidence bounds and can afford a more exploratory initial phase. Thompson Sampling has been shown to be particularly effective in clinical trial simulations and biological optimization tasks [41] [43].
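To make the contrast concrete, the sketch below runs both algorithms on a simulated three-arm problem with binary outcomes. It assumes Bernoulli rewards, a uniform Beta(1, 1) prior for Thompson Sampling, and the standard UCB1 bonus. The success rates and the helper names are hypothetical and are not drawn from the cited studies.

```python
import numpy as np

def thompson_choice(successes, failures, rng):
    """Sample one success rate per arm from its Beta posterior; pick the largest."""
    samples = rng.beta(successes + 1, failures + 1)   # Beta(1, 1) uniform prior
    return int(np.argmax(samples))

def ucb1_choice(successes, failures, t):
    """Deterministic UCB1: empirical mean plus a confidence-width bonus."""
    pulls = successes + failures
    if np.any(pulls == 0):
        return int(np.argmin(pulls))                  # try every arm once first
    means = successes / pulls
    return int(np.argmax(means + np.sqrt(2 * np.log(t) / pulls)))

def run(policy, true_rates, n_trials=2000, seed=0):
    """Simulate one experiment and return the number of pulls per arm."""
    rng = np.random.default_rng(seed)
    k = len(true_rates)
    s, f = np.zeros(k), np.zeros(k)
    for t in range(1, n_trials + 1):
        if policy == "thompson":
            arm = thompson_choice(s, f, rng)
        else:
            arm = ucb1_choice(s, f, t)
        reward = float(rng.random() < true_rates[arm])
        s[arm] += reward
        f[arm] += 1.0 - reward
    return s + f

rates = [0.2, 0.5, 0.8]                # hypothetical success rates of three conditions
ts_pulls = run("thompson", rates)
ucb_pulls = run("ucb1", rates)
```

Thompson Sampling has no exploration parameter to tune here beyond the prior, whereas the UCB1 choice is fully deterministic given the data, which is the practical difference the answer above points to.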

Q3: How do I handle non-stationary reward distributions in biological data, like in adaptive clinical trials? Non-stationary rewards are common in biology, for example, when a pathogen evolves or patient responses shift. To handle this, you can employ algorithms specifically designed for non-stationary environments. Bio-inspired neural models and some variants of bandit algorithms can adapt to drifting reward probabilities over time [42]. Furthermore, using a sliding window of recent data or incorporating discount factors that weight recent rewards more heavily can help the algorithm adapt to changing conditions [39].
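
The discount-factor idea can be illustrated with a small sketch. It assumes binary (success/failure) rewards and a Beta(1, 1) prior; the class name `DiscountedThompsonSampler` and the default `discount=0.95` are illustrative choices, not taken from the cited work.

```python
import random


class DiscountedThompsonSampler:
    """Beta-Bernoulli Thompson Sampling with exponential forgetting (illustrative)."""

    def __init__(self, n_arms, discount=0.95, seed=None):
        self.discount = discount          # assumed default; 1.0 disables forgetting
        self.successes = [0.0] * n_arms   # discounted success counts per arm
        self.failures = [0.0] * n_arms    # discounted failure counts per arm
        self.rng = random.Random(seed)

    def select_arm(self):
        # Draw once from each arm's Beta posterior and play the largest draw.
        draws = [self.rng.betavariate(1.0 + s, 1.0 + f)
                 for s, f in zip(self.successes, self.failures)]
        return max(range(len(draws)), key=draws.__getitem__)

    def update(self, arm, reward):
        # Shrink every count so old evidence fades, then add the new observation.
        self.successes = [self.discount * s for s in self.successes]
        self.failures = [self.discount * f for f in self.failures]
        if reward:
            self.successes[arm] += 1.0
        else:
            self.failures[arm] += 1.0
```

Because every count is multiplied by `discount` at each update, the effective memory is roughly `1 / (1 - discount)` observations; a smaller discount adapts faster to drift but gives noisier estimates.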

Q4: What are Contextual Bandits, and how can they improve personalized medicine research? Contextual Bandits are an extension of multi-armed bandits that incorporate "context"—additional information about each specific situation—into the decision-making process. In personalized medicine, the context can be a patient's genetic profile, biomarker levels, or clinical history. This allows the algorithm to learn which treatments work best for specific patient subtypes simultaneously, dramatically accelerating the identification of personalized therapeutic strategies and improving patient outcomes compared to context-free approaches [39].

Troubleshooting Guides

Issue 1: Algorithm Fails to Identify the Optimal Treatment or Condition

Symptoms:

- The algorithm's performance (e.g., final yield or success rate) plateaus at a suboptimal level.

- High cumulative regret, meaning the algorithm consistently selects poor options [42].

Possible Causes and Solutions:

- Cause: Insufficient Exploration. The algorithm is exploiting known, mediocre options too greedily and never discovers the true best arm.

- Cause: Over-exploration. The algorithm spends too much time testing suboptimal options, reducing overall efficiency.

- Solution: For Epsilon-Greedy, decrease the value of ε over time. For Thompson Sampling, verify that the prior distributions are correctly specified. Using a decoupled approach like Top-Two Thompson Sampling can more directly balance this trade-off [43].

- Cause: Non-stationary Environment. The best option has changed over the course of the experiment, but the algorithm is stuck with its old beliefs.

- Solution: Implement a bandit algorithm designed for non-stationary environments, which can forget old information and adapt to new data more quickly [42].
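
As a rough illustration of decreasing ε over time in Epsilon-Greedy, the sketch below uses an exponential schedule with a floor. The function names and the values `eps_start`, `eps_min`, and `decay` are assumptions chosen for the example and would need tuning for a real experiment.

```python
import random


def epsilon_at(step, eps_start=1.0, eps_min=0.05, decay=0.99):
    """Exponentially decaying exploration rate with a lower floor (assumed schedule)."""
    return max(eps_min, eps_start * decay ** step)


def select_arm(mean_rewards, step, rng=random):
    """Epsilon-Greedy choice using the decayed epsilon for the current step."""
    if rng.random() < epsilon_at(step):
        # Explore: pick any arm uniformly at random.
        return rng.randrange(len(mean_rewards))
    # Exploit: pick the arm with the highest observed mean reward.
    return max(range(len(mean_rewards)), key=mean_rewards.__getitem__)
```

Keeping a non-zero floor (`eps_min`) preserves a little exploration late in the campaign, which is one simple guard against the stagnation described above.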

Issue 2: Poor Performance with a Large Number of Options (Arms)

Symptoms:

- The algorithm takes an impractically long time to converge.

- Performance is significantly worse than with a smaller number of arms.

Possible Causes and Solutions:

- Cause: Priming Rounds Overhead. Algorithms like UCB and Optimistic Greedy require trying each arm once before making informed decisions. With 1000 arms, this means 1000 initial experiments with no optimization [41].

- Cause: Lack of Context. With many arms, simple bandits lack the information to generalize.

- Solution: Implement a Contextual Bandit. By using features of the arms or the experimental conditions (e.g., chemical properties of drugs, strain genotypes), the algorithm can learn a policy that generalizes across arms, drastically improving sample efficiency [39].
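
One way to picture how arm features let a bandit generalize is a linear UCB model with a single weight vector shared by all arms. The sketch below is a minimal version under that linearity assumption; `SharedLinUCB`, `alpha`, and `ridge` are illustrative names and values, and NumPy is assumed to be available.

```python
import numpy as np


class SharedLinUCB:
    """Linear UCB with one weight vector shared across all arms (illustrative)."""

    def __init__(self, n_features, alpha=1.0, ridge=1.0):
        self.alpha = alpha                    # exploration strength (assumed value)
        self.A = ridge * np.eye(n_features)   # regularized Gram matrix of seen features
        self.b = np.zeros(n_features)         # reward-weighted sum of seen features

    def select_arm(self, arm_features):
        """arm_features: array of shape (n_arms, n_features)."""
        A_inv = np.linalg.inv(self.A)
        theta = A_inv @ self.b                # ridge-regression weight estimate
        mean = arm_features @ theta           # predicted reward for each arm
        # Per-arm uncertainty: sqrt(x^T A^-1 x)
        width = np.sqrt(np.einsum("ij,jk,ik->i", arm_features, A_inv, arm_features))
        return int(np.argmax(mean + self.alpha * width))

    def update(self, features, reward):
        # Fold one observed (features, reward) pair into the sufficient statistics.
        self.A += np.outer(features, features)
        self.b += reward * features
```

Because the model learns weights over features rather than a separate estimate per arm, an arm that has never been tested still receives a prediction, so no per-arm priming round is required.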

Issue 3: Algorithm is Overly Sensitive to Parameter Settings

Symptoms:

- Small changes in hyperparameters (like ε or the UCB confidence parameter) lead to large swings in performance.

- Difficulty in finding a single parameter set that works across different experimental batches.

Possible Causes and Solutions:

- Cause: Fixed Hyperparameters. Using a static, non-adaptive value for parameters like ε in Epsilon-Greedy.

- Solution: Use adaptive methods. For example, the Value-Difference Based Exploration (VDBE) strategy dynamically adjusts ε according to the size of recent changes in the value estimates. Alternatively, Thompson Sampling is often more robust because it inherently adapts its exploration based on the uncertainty (variance) of its posterior distributions and typically requires fewer parameters to tune [41] [42].

Quantitative Algorithm Comparison

The following table summarizes the key characteristics of the three core algorithms to guide your selection.

| Algorithm | Key Mechanism | Best For | Strengths | Weaknesses |

|---|---|---|---|---|

| Epsilon-Greedy | With probability ε, explore a random arm; otherwise, exploit the best-known arm. | Simple, quick-to-implement prototypes; stationary environments with a small number of arms [41] [40]. | Simple to understand and implement. | Performance is highly sensitive to the choice of ε; can waste pulls on clearly suboptimal arms [41]. |

| Upper Confidence Bound (UCB) | Selects the arm with the highest upper confidence bound, balancing estimated reward and uncertainty. | Scenarios where deterministic confidence bounds are needed; problems with a well-defined horizon [44]. | Provides a deterministic, principled bound for exploration. | Requires an initial play of all arms; can be slow to start with a very large number of arms [41]. |

| Thompson Sampling | Uses Bayesian inference; selects an arm by sampling from the posterior distribution of each arm's reward. | Complex, non-stationary environments; high-dimensional problems; when parameter tuning is difficult [41] [43] [42]. | Highly performant and robust; naturally incorporates uncertainty. | Computationally more intensive than Epsilon-Greedy; requires specifying a prior distribution [41]. |
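
To make the UCB row concrete, here is a minimal sketch of the classic UCB1 selection rule, including the priming step that plays every untried arm once. The function name `ucb1_select` and the constant `c` are illustrative; `c=2.0` corresponds to the standard UCB1 bonus.

```python
import math


def ucb1_select(counts, means, c=2.0):
    """Pick an arm by UCB1: mean reward plus an uncertainty bonus.

    counts[i] is how often arm i was played; means[i] is its average reward.
    """
    # Priming: any arm that has never been played is tried first.
    for arm, n in enumerate(counts):
        if n == 0:
            return arm
    total = sum(counts)
    # The bonus shrinks as an arm is played more often relative to the total.
    scores = [m + math.sqrt(c * math.log(total) / n)
              for m, n in zip(means, counts)]
    return max(range(len(scores)), key=scores.__getitem__)
```

With equal means, the rule prefers the less-sampled arm, which is the deterministic exploration behavior listed in the table.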

Experimental Protocol: Media Optimization via Active Learning

This protocol is adapted from a study that used the Automated Recommendation Tool (ART) to optimize flaviolin production in Pseudomonas putida [45].

1. Objective: To identify the optimal concentrations of media components to maximize the titer of a target metabolite.

2. Experimental Setup:

- Host Organism: Engineered Pseudomonas putida KT2440.

- Target Metabolite: Flaviolin.

- Culture Platform: Automated cultivation in a BioLector system (48-well plates).

- Analysis: Absorbance at 340 nm as a high-throughput proxy for flaviolin concentration.

3. Algorithm Integration (Active Learning Loop):

- Step 1 (Design): The ML algorithm (e.g., a bandit model or ART) suggests a batch of ~15 new media designs (i.e., specific concentration combinations of components like salts, carbon, and nitrogen sources).

- Step 2 (Build): An automated liquid handler physically prepares the suggested media in replicates according to the design.

- Step 3 (Test): The media are inoculated and cultivated in the BioLector for 48 hours. The production titer is measured.

- Step 4 (Learn): The production data and media designs are stored in a database (e.g., Experiment Data Depot). This data is used to retrain the ML model, which then generates improved recommendations for the next cycle.

- This DBTL cycle is repeated until performance plateaus or the experimental budget is exhausted [45].

4. Key Findings:

- The active learning process led to a 60-70% increase in titer and a 350% increase in process yield.

- Explainable AI techniques identified that common salt (NaCl) was the most important component, with an optimal concentration near the tolerance limit of the bacteria [45].
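
The Design-Build-Test-Learn loop described in step 3 can be sketched in a few lines. This is not ART: the sketch swaps in a simple nearest-neighbor surrogate with a novelty bonus, and `simulated_titer` is a made-up response surface standing in for the physical Build and Test steps. All function names, the two-component design space, and the parameter values are assumptions for illustration.

```python
import math
import random


def simulated_titer(design):
    """Stand-in for Build + Test: an invented response surface, not real data."""
    nacl, glucose = design
    return math.exp(-((nacl - 0.8) ** 2 + (glucose - 0.3) ** 2) / 0.1)


def predict(design, history):
    """Nearest-neighbor surrogate: predicted titer and distance to the closest tested design."""
    nearest_design, nearest_titer = min(
        history, key=lambda rec: math.dist(design, rec[0]))
    return nearest_titer, math.dist(design, nearest_design)


def design_batch(history, batch_size, rng, n_candidates=500, explore_weight=0.5):
    """Design: rank random candidates by predicted titer plus a novelty bonus."""
    candidates = [(rng.random(), rng.random()) for _ in range(n_candidates)]

    def score(candidate):
        titer, novelty = predict(candidate, history)
        return titer + explore_weight * novelty

    return sorted(candidates, key=score, reverse=True)[:batch_size]


def run_dbtl(n_cycles=5, batch_size=15, seed=0):
    """Run a fixed budget of DBTL cycles and return the best (design, titer) found."""
    rng = random.Random(seed)
    # Initial cycle: random designs, because no data exists yet.
    first = [(rng.random(), rng.random()) for _ in range(batch_size)]
    history = [(d, simulated_titer(d)) for d in first]
    for _ in range(n_cycles):
        batch = design_batch(history, batch_size, rng)        # Design
        results = [(d, simulated_titer(d)) for d in batch]    # Build + Test
        history.extend(results)                               # Learn: surrogate reads history
    return max(history, key=lambda rec: rec[1])
```

Raising `explore_weight` pushes each batch toward untested regions of the design space, while lowering it concentrates the batch around the best media found so far.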

Workflow Visualization

The following diagram illustrates the integration of a bandit algorithm into an automated Design-Build-Test-Learn (DBTL) cycle for biological optimization.

Research Reagent Solutions

The table below lists key computational and experimental "reagents" essential for implementing bandit algorithms in biological DBTL research.

| Item | Function/Description | Example Use Case |

|---|---|---|

| Automated Cultivation System (e.g., BioLector) | Provides highly reproducible culture conditions and online monitoring of growth and production metrics [45]. | Essential for the "Test" phase, generating the high-quality, consistent data needed for ML models. |

| Automated Liquid Handler | Precisely dispenses media components and inoculants according to digital designs generated by the algorithm [45]. | Critical for the "Build" phase, enabling rapid and error-free physical implementation of suggested experiments. |

| Data Repository (e.g., Experiment Data Depot - EDD) | A centralized database to store all experimental metadata, conditions, and outcome data [45]. | Serves as the memory for the DBTL cycle, ensuring data is structured and accessible for the "Learn" phase. |

| Thompson Sampling Library (e.g., in Python) | A pre-built implementation of the Thompson Sampling algorithm for Bernoulli or other relevant reward distributions. | Allows researchers to integrate a powerful bandit algorithm into their active learning loop without building it from scratch. |

| Contextual Feature Set | A curated list of measurable features (e.g., genetic markers, protein expressions, chemical properties) that describe each experimental unit [39]. | Enables the use of Contextual Bandits for personalized medicine or stratified optimization. |

Bayesian Optimization as a Superior Framework for DBTL Cycles

Frequently Asked Questions

Q1: What is the primary advantage of using Bayesian Optimization over simpler methods like Grid or Random Search in a DBTL cycle? Bayesian Optimization (BO) is superior in scenarios where each function evaluation is expensive, such as building and testing a new microbial strain. Unlike Grid or Random Search, which choose every evaluation point without using the results of earlier evaluations, BO uses a probabilistic surrogate model to approximate the objective function and an acquisition function to intelligently select the next most promising parameters to evaluate. This informed approach allows it to focus on high-performance regions of the parameter space, typically requiring far fewer experimental cycles to find the optimal solution [46] [47].

Q2: How does BO balance the exploration of new regions with the exploitation of known promising areas? BO manages the exploration-exploitation trade-off through its acquisition function. Exploration involves sampling areas of high uncertainty in the surrogate model, while exploitation focuses on areas likely to give a better result than the current best. Functions like Expected Improvement (EI) and Upper Confidence Bound (UCB) naturally balance this trade-off by mathematically combining the predicted mean (exploitation) and uncertainty (exploration) of the surrogate model [48] [49] [47].
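
For readers who want to see how the mean and the uncertainty are combined, the sketch below gives the standard closed forms of EI and UCB for a maximization problem with a Gaussian posterior at a single candidate point. The parameter names `xi` and `kappa` and their default values are illustrative assumptions.

```python
import math


def _norm_pdf(z):
    """Standard normal density."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def _norm_cdf(z):
    """Standard normal cumulative distribution."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mean, std, best_so_far, xi=0.0):
    """EI for maximization, from the surrogate's posterior mean and std at one point."""
    gain = mean - best_so_far - xi
    if std <= 0.0:
        # No uncertainty: improvement is simply the (non-negative) predicted gain.
        return max(gain, 0.0)
    z = gain / std
    # First term rewards a high predicted mean; second term rewards uncertainty.
    return gain * _norm_cdf(z) + std * _norm_pdf(z)


def upper_confidence_bound(mean, std, kappa=2.0):
    """UCB acquisition: a larger kappa weights uncertainty (exploration) more heavily."""
    return mean + kappa * std
```

In both functions a larger `std` raises the score of a point whose mean is below the current best, which is how unexplored regions remain candidates for the next experiment.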

Q3: Our initial data is limited. Can BO still be effective in such a low-data regime? Yes. Evidence from simulated DBTL cycles shows that machine learning methods like Random Forest and Gradient Boosting, which can be used within a BO-like framework, are robust and perform well even when starting with limited data. These methods are particularly effective for combinatorial pathway optimization before large amounts of experimental data have been collected [50].

Q4: Why might my BO process fail to find the global optimum, and how can I fix it? Common pitfalls in BO include an incorrect prior width, over-smoothing, and inadequate maximization of the acquisition function [51].

- Incorrect Prior Width: If the prior assumptions about the function are too narrow or too wide, the model may converge to a local optimum or learn too slowly. Fix: Adjust the length-scale and amplitude parameters of the kernel function to better match the characteristics of your system.

- Over-smoothing: This occurs when the model fails to capture important, sharp variations in the response landscape. Fix: Consider using a combination of kernels or adjusting kernel parameters to allow for more flexibility.

- Inadequate Acquisition Maximization: If the search for the maximum of the acquisition function is not thorough, a sub-optimal point may be selected. Fix: Ensure you are using a robust optimizer for this inner loop and consider using multiple restarts to find the global maximum of the acquisition function [51].
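
The multiple-restart fix for acquisition maximization might look like the following sketch. It uses a simple stochastic hill climb from several random starting points rather than a gradient-based optimizer; the function name and the values of `n_restarts`, `n_steps`, and `step` are assumptions chosen for illustration.

```python
import random


def maximize_with_restarts(acquisition, bounds, n_restarts=20, n_steps=200,
                           step=0.05, seed=0):
    """Maximize `acquisition` over a box by hill climbing from several random starts.

    bounds is a list of (low, high) pairs, one per dimension.
    """
    rng = random.Random(seed)
    best_x, best_val = None, float("-inf")
    for _ in range(n_restarts):
        # Each restart begins at an independent random point inside the bounds.
        x = [rng.uniform(lo, hi) for lo, hi in bounds]
        val = acquisition(x)
        for _ in range(n_steps):
            # Propose a small Gaussian move, clipped to the bounds.
            cand = [min(hi, max(lo, xi + rng.gauss(0.0, step * (hi - lo))))
                    for xi, (lo, hi) in zip(x, bounds)]
            cand_val = acquisition(cand)
            if cand_val > val:
                x, val = cand, cand_val
        # Keep the best local optimum found across all restarts.
        if val > best_val:
            best_x, best_val = x, val
    return best_x, best_val
```

A single start can stall on a minor peak of the acquisition surface; comparing the end points of many starts makes it much more likely that the selected experiment sits at the global maximum.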

Q5: How can we accelerate the traditionally slow Build-Test phases of the DBTL cycle to generate data faster for BO? Integrating cell-free expression systems can dramatically accelerate the Build-Test phases. These systems allow for rapid, high-throughput synthesis and testing of proteins or pathways without the need for live cells, enabling megascale data generation. This provides the large, high-quality datasets needed to efficiently train and validate machine learning models, including those used in BO [35].

Comparison of Acquisition Functions in Bayesian Optimization

The choice of acquisition function is critical as it directly governs the trade-off between exploration and exploitation. The table below summarizes key functions.

| Acquisition Function | Mechanism | Best For | Key Parameter(s) |

|---|---|---|---|