Strain Engineering DBTL Cycles: Performance Comparison, Optimization Strategies, and Impact on Biopharmaceutical Development

Abstract

This article provides a comprehensive analysis of Design-Build-Test-Learn (DBTL) cycle performance in microbial strain engineering for biomedical and biopharmaceutical applications. It explores foundational principles, compares traditional and next-generation methodologies like LDBT and bio-intelligent cycles, and details practical applications from pathway optimization to enzyme production. Through case studies and troubleshooting guidance, it demonstrates how optimized DBTL workflows enable rapid strain development, significantly improve product titers—such as achieving 10-fold yield increases in vaccine enzyme production—and accelerate the translation of research into scalable manufacturing processes for drug development professionals.

The DBTL Framework: Core Principles and Evolutionary Shifts in Strain Engineering

Defining the Design-Build-Test-Learn Cycle in Synthetic Biology

The Design-Build-Test-Learn (DBTL) cycle is a systematic, iterative framework central to synthetic biology for developing and optimizing biological systems [1]. It lets researchers engineer organisms to perform specific functions, such as producing biofuels, pharmaceuticals, or other valuable compounds [1]. The cycle's power lies in its structured process: researchers design biological components, build DNA constructs, test their functionality, and learn from the data to inform the next design iteration, progressively refining the system until the desired performance is achieved [1]. Automation and the modular design of DNA parts have greatly strengthened the cycle by increasing throughput and shortening development timelines [1]. This guide compares the performance of different DBTL implementations within strain engineering, providing experimental data and methodologies to inform research practice.

Core Components and Workflow

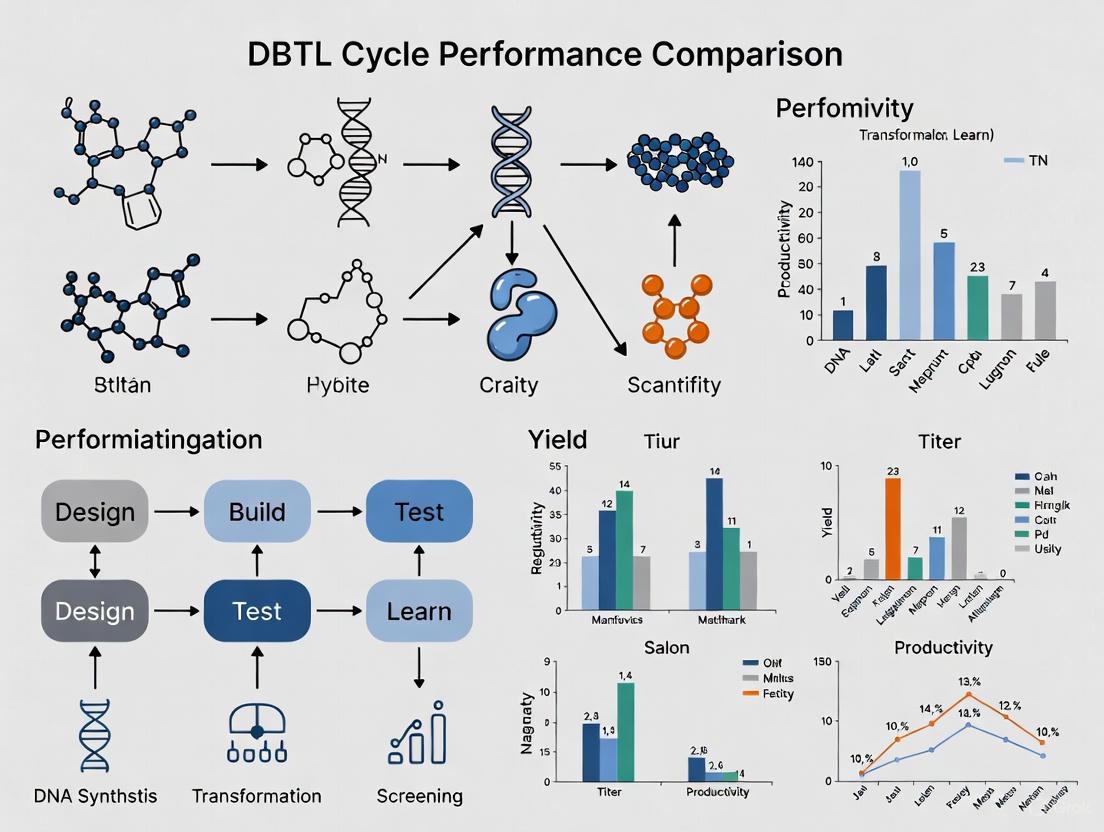

A typical DBTL cycle consists of four distinct phases. Figure 1 illustrates the logical flow and key activities for each stage.

Figure 1: The Iterative DBTL Cycle in Synthetic Biology. This workflow shows the four core phases and the decision point that determines whether to finalize a strain or begin another iteration.

- Design: In this initial phase, researchers define objectives for the desired biological function and design the system using biological parts, often relying on computational modeling and domain expertise [2]. For metabolic engineering, this involves selecting enzymes and designing metabolic pathways.

- Build: This phase involves the physical construction of the biological system. DNA constructs are synthesized and assembled into plasmids or other vectors, which are then introduced into a characterization system (e.g., bacterial chassis, cell-free systems) [2].

- Test: The built constructs are experimentally analyzed to measure performance against the design objectives. This involves a variety of functional assays, such as measuring product titers, growth rates, or fluorescence signals [1] [3].

- Learn: Data from the testing phase is analyzed and compared to the initial objectives. The insights gained inform the next round of design, creating a feedback loop for continuous improvement [2].
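
The four phases and the decision point in Figure 1 can be read as a simple loop. The sketch below is a toy illustration only: the single design variable, the `toy_assay` response curve, and the window-narrowing rule are all invented for this example and do not come from any of the cited studies.

```python
from dataclasses import dataclass


@dataclass
class Result:
    design: float  # hypothetical design variable, e.g. a relative expression strength
    titer: float   # simulated assay output in arbitrary units


def toy_assay(design: float) -> float:
    """Stand-in for Build + Test: a made-up response curve that peaks at 0.7."""
    return 100.0 - 400.0 * (design - 0.7) ** 2


def dbtl(target: float, max_cycles: int = 10, n_designs: int = 5):
    """Run toy DBTL cycles until the best simulated titer reaches the target."""
    low, high = 0.0, 1.0
    history = []
    for cycle in range(1, max_cycles + 1):
        # Design: spread candidates evenly over the current search window.
        step = (high - low) / (n_designs - 1)
        designs = [low + i * step for i in range(n_designs)]
        # Build + Test: here replaced by the simulated assay.
        results = [Result(d, toy_assay(d)) for d in designs]
        # Learn: keep the best design of this cycle.
        best = max(results, key=lambda r: r.titer)
        history.append(best)
        # Decision point: finalize the strain or iterate again.
        if best.titer >= target:
            return cycle, best, history
        # Narrow the window around the best design for the next Design phase.
        half = (high - low) / 4
        low = max(0.0, best.design - half)
        high = min(1.0, best.design + half)
    return max_cycles, max(history, key=lambda r: r.titer), history


if __name__ == "__main__":
    cycles, best, _ = dbtl(target=99.5)
    print(f"stopped after {cycles} cycle(s) with design {best.design:.4f}")
```

In real projects the Learn step is rarely this simple, but the structure is the same: each pass reuses the previous pass's data to decide what to design next.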

Quantitative Performance Comparison

The effectiveness of a DBTL approach is best demonstrated through its application in real strain engineering projects. Performance is typically measured by key metrics such as product titer (concentration), yield, and productivity. Table 1 summarizes the outcomes of two distinct DBTL applications, highlighting the achieved metrics.

Table 1: Performance Outcomes of DBTL Cycles in Strain Engineering

| Engineering Project | Key Metric | Initial/State-of-the-Art Performance | Performance After DBTL Optimization | Fold Improvement | Reference / Source |

|---|---|---|---|---|---|

| Dopamine Production in E. coli | Final Titer | 27 mg/L | 69.03 ± 1.2 mg/L | 2.6-fold | [4] |

| Dopamine Production in E. coli | Biomass-specific Yield | 5.17 mg/g biomass | 34.34 ± 0.59 mg/g biomass | 6.6-fold | [4] |

| PFOA Biosensor in E. coli | Functional Output | N/A (Initial design failed assembly) | Successful detection signal with inducible promoters | N/A | [3] |
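
As a quick check of the fold-improvement column, the short sketch below recomputes the ratios from the before and after values in Table 1. The function name `fold_improvement` is just an illustrative helper.

```python
def fold_improvement(before: float, after: float) -> float:
    """Ratio of optimized to baseline performance."""
    if before <= 0:
        raise ValueError("baseline must be positive")
    return after / before


# Values taken from Table 1 (dopamine production in E. coli).
titer_fold = fold_improvement(27.0, 69.03)   # final titer, mg/L
yield_fold = fold_improvement(5.17, 34.34)   # biomass-specific yield, mg/g biomass

print(f"titer: {titer_fold:.1f}-fold")   # prints "titer: 2.6-fold"
print(f"yield: {yield_fold:.1f}-fold")   # prints "yield: 6.6-fold"
```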

Case Study: High-Yield Dopamine Production

- Experimental Objective: To develop an optimized E. coli strain for the environmentally friendly production of dopamine, a compound with applications in medicine and material science [4].

- DBTL Workflow & Methodology:

- Design: A knowledge-driven DBTL approach was adopted. The study focused on optimizing a two-enzyme pathway (HpaBC and Ddc) converting L-tyrosine to dopamine. The design phase involved in silico planning and in vitro tests using crude cell lysate systems to assess enzyme expression and function before moving to in vivo engineering [4].

- Build: The build phase involved high-throughput ribosome binding site (RBS) engineering to fine-tune the expression levels of the genes hpaBC and ddc in the production host, E. coli FUS4.T2. This was achieved via automated molecular cloning [4].

- Test: Dopamine production was quantified from cultured strains. Analytical methods, likely involving chromatography, measured the final titer (mg/L) and biomass-specific yield (mg/g biomass) [4].

- Learn: Data from the RBS library screening identified optimal RBS sequences that balanced enzyme expression, leading to the 2.6-fold and 6.6-fold improvements in titer and yield, respectively [4].

Case Study: PFOA Biosensor Development

- Experimental Objective: To construct a specific and sensitive biosensor in E. coli for detecting the environmental pollutant PFOA [3].

- DBTL Workflow & Methodology:

- Design: The initial design (Design 1.1) involved a complex system with a split-lux operon for bioluminescence output, controlled by PFOA-responsive promoters (Pb0002 and Pb3021), and included fluorescent proteins (mCherry, GFP) as secondary reporters for troubleshooting [3].

- Build: The team attempted to build the plasmid using Gibson assembly, a method for joining multiple DNA fragments, and transformed it into E. coli MG1655 [3].

- Test: Transformants were tested for fluorescence and luminescence. Colony PCR and plasmid sequencing were used to verify successful assembly [3].

- Learn & Iterate (Cycle 1.1): The initial test revealed that the Gibson assembly failed, yielding only empty plasmids. The team learned that the complexity of assembling four long fragments was a major hurdle. They applied a "mini" DBTL cycle to optimize the protocol (e.g., longer DpnI digestion, longer Gibson assembly incubation), but these failed. The key learning was to simplify the strategy [3].

- Re-design & Re-build (Cycle 1.2): The plasmid was ordered from a commercial synthesis provider (Azenta-Genewiz). This bypassed the technical build problem. The new construct was successfully transformed [3].

- Re-test & Final Learn: The commercially built plasmid was validated by sequencing and functional assays with inducers (IPTG, ATC). The biosensor design was confirmed to be functional, producing a luminescent signal primarily under double induction [3].

The Scientist's Toolkit: Essential Research Reagents

Successful execution of DBTL cycles relies on a suite of essential reagents and tools. Table 2 details key solutions used in the featured experiments.

Table 2: Key Research Reagent Solutions for DBTL Workflows

| Item | Function in DBTL Cycle | Specific Example / Note |

|---|---|---|

| Cell-Free Protein Synthesis (CFPS) System | Rapidly tests enzyme expression and pathway function in vitro before in vivo strain engineering; used for high-throughput testing [2] [4]. | Crude cell lysate systems supply metabolites and energy, allowing for functional pathway analysis [4]. |

| Gibson Assembly | An isothermal molecular cloning method for seamlessly assembling multiple DNA fragments into a vector in a single reaction [3]. | Prone to failure with complex, multi-fragment assemblies, as seen in the biosensor case study [3]. |

| RBS Library Kit | Enables high-throughput fine-tuning of gene expression levels within an operon or pathway without altering the coding sequence [4]. | Crucial for optimizing metabolic flux in the dopamine production case [4]. |

| Commercial Gene Synthesis | Outsources the "Build" phase for complex DNA constructs, ensuring accuracy and bypassing difficult in-house assembly steps [3]. | Used to overcome assembly failures and save time, as demonstrated in the biosensor project [3]. |

| Reporter Genes (e.g., Lux, GFP, mCherry) | Provides a measurable output (e.g., luminescence, fluorescence) during the "Test" phase to quantify system performance and functionality [3]. | The split-Lux operon was designed for a biosensor, while GFP/mCherry served as diagnostic reporters [3]. |

| Analytical Instruments (e.g., Plate Reader) | Precisely quantifies the output of functional assays, such as fluorescence and luminescence intensity, for robust data collection [3]. | A Tecan plate reader was used to measure reporter signals in the biosensor project [3]. |

Advanced Workflows and Emerging Trends

The classic DBTL cycle is being transformed by two major trends: the integration of machine learning (ML) and the adoption of cell-free systems. Figure 2 contrasts the traditional DBTL cycle with the emerging LDBT paradigm.

Figure 2: Comparison of Traditional DBTL and Emerging LDBT Paradigms. The LDBT model leverages machine learning at the outset to generate designs, potentially reducing the need for multiple iterative cycles.

- Machine Learning and the LDBT Shift: Machine learning models, particularly protein language models (e.g., ESM, ProGen) and structure-based tools (e.g., ProteinMPNN), can now make reasonably accurate "zero-shot" predictions for protein design [2]. This allows the cycle to start with Learning, where a model pre-trained on vast biological datasets informs the initial Design, leading to a proposed Learn-Design-Build-Test (LDBT) sequence. This approach can generate functional parts in fewer cycles, or even a single cycle, moving synthetic biology closer to a "Design-Build-Work" ideal [2].

- Cell-Free Systems for Rapid Building and Testing: Cell-free protein synthesis (CFPS), also called cell-free gene expression, uses the transcription-translation machinery from cell lysates in vitro. It accelerates the Build and Test phases by eliminating the need for time-consuming cell transformation and cultivation [2]. DNA templates can be directly added to express proteins within hours, enabling ultra-high-throughput testing that is ideal for gathering large datasets to train ML models [2].

The Design-Build-Test-Learn (DBTL) cycle is a foundational, iterative framework in synthetic biology and strain engineering that enables the systematic development of microbial cell factories for chemical and therapeutic production [5]. It gives biological engineering a structured sequence of steps and treats each setback as feedback for the next iteration rather than as a failure [6]. As a cornerstone of rational strain engineering, the traditional DBTL cycle allows researchers to gradually refine genetic constructs and cultivation processes through repeated cycles of hypothesis-driven experimentation, moving from initial designs to optimized production strains capable of synthesizing valuable compounds ranging from emergency medicines to bio-based chemicals [5].

The cyclic nature of this process is deliberate—early attempts rarely work as planned, but each iteration generates valuable data to improve subsequent designs [6]. This review examines the performance of the traditional DBTL workflow through comparative analysis with emerging alternatives, providing experimental data and methodological details to illustrate its application in contemporary strain engineering research for drug development professionals and synthetic biologists.

Core Principles and Workflow Implementation

The Linear Iterative Framework

The traditional DBTL cycle follows a sequential, linear progression through four distinct phases, with each completion of the cycle informing the next iteration [2]. In the Design phase, researchers define objectives for desired biological function and design genetic parts or systems using domain knowledge, expertise, and computational approaches [2]. The Build phase involves DNA synthesis, assembly into plasmids or other vectors, and introduction into characterization systems such as bacterial, yeast, or mammalian chassis [2] [7]. During the Test phase, engineered biological constructs are experimentally measured to determine their performance against design objectives [2]. Finally, the Learn phase focuses on analyzing collected data to inform the next design round, creating a continuous feedback loop for system optimization [6] [2].

This framework closely mirrors established approaches in traditional engineering disciplines such as mechanical engineering, where iteration involves gathering information, processing it, identifying design revisions, and implementing those changes [2]. In synthetic biology, this workflow streamlines and simplifies efforts to build biological systems by providing a systematic, iterative framework for engineering, though the field continues to rely heavily on empirical iteration rather than predictive engineering [2].

Experimental Visualization of Traditional DBTL Workflow

Diagram: the sequential, linear progression of the traditional DBTL cycle (Design, Build, Test, Learn) and its key activities at each stage.

Performance Analysis: Quantitative Comparison of DBTL Applications

Comparative Performance Metrics in Strain Engineering

Table 1: Quantitative performance outcomes from traditional DBTL implementation in strain engineering projects

| Application Area | DBTL Cycles | Key Optimization Parameters | Performance Improvement | Experimental Validation |

|---|---|---|---|---|

| Dopamine Production in E. coli [5] | Multiple knowledge-driven cycles | RBS engineering, enzyme expression balancing | 69.03 ± 1.2 mg/L titer (2.6-fold increase) and 34.34 ± 0.59 mg/g biomass yield (6.6-fold increase) over prior art | HPLC analysis, in vitro-in vivo translation |

| Verazine Biosynthesis in Yeast [7] | Automated DBTL screening | 32 gene library, high-throughput transformation | 2- to 5-fold increase in normalized titer | LC-MS quantification, 96-well format validation |

| Arsenic Biosensor Development [8] | 7 iterative cycles | Plasmid concentration ratios (1:10), incubation conditions | 5-100 ppb dynamic range for detection | Fluorescence assays, household scenario simulation |

| PFAS Biosensor Engineering [3] | 2+ detection cycles | Promoter selection (b0002, b3021), split-lux operon design | Specificity and sensitivity optimization | Bioluminescence and fluorescence measurements |

Experimental Duration and Resource Requirements

Table 2: Time investment and experimental scale requirements for traditional DBTL cycles

| DBTL Phase | Typical Duration | Key Activities | Resource Requirements | Automation Potential |

|---|---|---|---|---|

| Design [5] | Days to weeks | Pathway design, computational modeling, part selection | Bioinformatics tools, DNA design software | Medium (AI-assisted design) |

| Build [7] | 1-3 weeks | DNA assembly, transformation, strain construction | Molecular biology reagents, cloning strains | High (Robotic integration) |

| Test [5] | 1-2 weeks | Cultivation, sampling, analytical measurements | Bioreactors, LC-MS, plate readers | High (Automated screening) |

| Learn [9] | Days to weeks | Data analysis, statistical modeling, hypothesis generation | Statistical software, bioinformatics | Medium (Machine learning) |

Experimental Protocols and Methodologies

Standardized Workflow for Metabolic Pathway Optimization

Protocol 1: Knowledge-Driven DBTL Cycle for Dopamine Production in E. coli [5]

Initial Design Phase:

- Select heterologous genes (hpaBC from E. coli for L-tyrosine to L-DOPA conversion; ddc from Pseudomonas putida for L-DOPA to dopamine conversion)

- Design constructs with modular RBS sequences for expression tuning

- Apply UTR Designer for RBS sequence modulation focusing on Shine-Dalgarno sequence

Build Phase:

- Clone genes into pET plasmid system for individual expression testing

- Assemble bi-cistronic operon in pJNTN vector for coordinated expression

- Transform into E. coli FUS4.T2 production strain with high L-tyrosine production capability

Test Phase:

- Cultivate strains in minimal medium (20 g/L glucose, 10% 2xTY, MOPS buffer)

- Conduct fed-batch fermentation with controlled feeding strategy

- Sample at regular intervals for HPLC analysis of dopamine titers

- Measure biomass concentration for yield calculations (mg product/g biomass)

Learn Phase:

- Analyze correlation between RBS strength and dopamine production

- Identify pathway bottlenecks through enzyme activity assays

- Design next-generation constructs with optimized RBS combinations
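
The first Learn-phase step, relating RBS strength to dopamine production, can be sketched with a rank correlation. The code below is a minimal, dependency-free illustration. The choice of Spearman correlation is an assumption made here, not a method reported in [5], and the numbers in `rbs_strength` and `titer_mg_per_l` are invented placeholders, not measurements from the study.

```python
from math import sqrt


def ranks(values):
    """Return 1-based ranks, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


# Placeholder data for illustration only (NOT from the cited study).
rbs_strength = [0.1, 0.3, 0.5, 0.7, 0.9]          # hypothetical relative strengths
titer_mg_per_l = [12.0, 30.0, 55.0, 61.0, 40.0]   # hypothetical titers

rho = spearman(rbs_strength, titer_mg_per_l)
print(f"Spearman rho = {rho:.2f}")
```

A rank-based measure is used because the relationship between expression strength and titer need not be linear. A coefficient well below 1 despite a generally rising trend would be one hint that the strongest RBS variants are not the best producers.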

Protocol 2: Automated High-Throughput DBTL for Yeast Pathway Engineering [7]

Design Phase:

- Select gene candidates from native and heterologous pathways

- Design pESC-URA plasmids with GAL1 promoter for inducible expression

- Plan combinatorial library screening approach

Build Phase (Automated):

- Program Hamilton Microlab VANTAGE for high-throughput transformation

- Set up 96-well format lithium acetate/ssDNA/PEG transformation protocol

- Integrate off-deck hardware (plate sealer, thermal cycler, plate peeler)

- Run at a capacity of ~400 transformations per day

Test Phase:

- Automated colony picking using QPix 460 system

- High-throughput culturing in 96-deep-well plates with selective media

- Zymolyase-mediated cell lysis and organic solvent extraction

- Rapid LC-MS analysis (19-minute runtime) for verazine quantification

Learn Phase:

- Statistical analysis of gene library performance

- Identification of top-performing constructs (erg26, dga1, cyp94n2)

- Design of follow-up cycles for combinatorial optimization
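
The throughput figures in this protocol can be combined into a rough planning calculation. The sketch below uses the ~400 transformations per day, the 96-well format, and the 19-minute LC-MS runtime from the protocol. The five-day working week and the serial, one-injection-per-well LC-MS schedule (with no blanks or standards) are assumptions made for the example.

```python
import math

TRANSFORMATIONS_PER_DAY = 400   # from the protocol
WELLS_PER_PLATE = 96            # from the protocol
LCMS_MINUTES_PER_SAMPLE = 19    # from the protocol
WORKING_DAYS_PER_WEEK = 5       # assumption for this example


def plates_needed(samples: int, wells: int = WELLS_PER_PLATE) -> int:
    """Number of plates required to hold the given number of samples."""
    return math.ceil(samples / wells)


def lcms_hours(samples: int, minutes_per_sample: int = LCMS_MINUTES_PER_SAMPLE) -> float:
    """Instrument time for serial injections, ignoring blanks and standards."""
    return samples * minutes_per_sample / 60


plates_per_day = plates_needed(TRANSFORMATIONS_PER_DAY)
weekly_transformations = TRANSFORMATIONS_PER_DAY * WORKING_DAYS_PER_WEEK
hours_per_plate = lcms_hours(WELLS_PER_PLATE)

print(plates_per_day)            # 5 plates per day
print(weekly_transformations)    # 2000 transformations per week
print(f"{hours_per_plate:.1f}")  # 30.4 hours of LC-MS time per full plate
```

Under these assumptions the weekly total matches the 2,000 transformations per week quoted for the automated platform, and the analytical step, at roughly 30 hours per full plate, takes far longer than the build step that fills the plate.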

Research Reagent Solutions for DBTL Implementation

Table 3: Essential research reagents and materials for traditional DBTL workflows

| Reagent/Material | Specific Function | Application Examples | Experimental Considerations |

|---|---|---|---|

| pET Plasmid System [5] | T7 promoter-driven expression vector for heterologous gene expression | Dopamine pathway enzyme expression | Compatible with E. coli expression systems; IPTG-inducible |

| pESC-URA Yeast Vector [7] | Galactose-inducible expression in S. cerevisiae | Verazine biosynthetic pathway expression | Enables high-throughput screening with auxotrophic selection |

| Hamilton Microlab VANTAGE [7] | Robotic liquid handling and automation platform | High-throughput yeast transformation | Enables 2,000 transformations/week with integrated off-deck hardware |

| Cell-Free Protein Synthesis Systems [2] | In vitro transcription/translation without cellular constraints | Rapid prototyping of enzyme combinations | Bypasses cell membrane limitations; enables toxic product synthesis |

| RBS Library Variants [5] | Fine-tuning translation initiation rates | Optimizing relative enzyme expression levels in pathways | SD sequence modulation without altering secondary structure |

| Liquid Chromatography-Mass Spectrometry [7] | Quantitative analysis of metabolic products | Verazine and dopamine quantification | Method runtime optimization critical for high-throughput (19-50 minutes) |

Comparative Analysis with Emerging Alternatives

Traditional DBTL vs. LDBT Paradigm

The emergence of machine learning has prompted a proposed paradigm shift from the traditional DBTL cycle to an LDBT (Learn-Design-Build-Test) approach, where "Learning" precedes "Design" [2]. This reordering leverages large biological datasets and machine learning algorithms to make zero-shot predictions that improve the initial design phase, potentially reducing the number of iterative cycles required.

Key Advantages of Traditional DBTL:

- Established framework with proven success across multiple applications [6] [5] [7]

- Accessible to laboratories without extensive computational resources

- Hypothesis-driven approach provides mechanistic insights [5]

- Compatible with both manual and automated implementation

Limitations of Traditional DBTL:

- Multiple cycles required to gain sufficient knowledge for optimization [2]

- Build-Test phases represent significant time and resource investments [7]

- Reliance on empirical iteration rather than predictive engineering [2]

- Limited exploration of design space due to practical constraints [9]

Integration of Automation in Traditional DBTL

Automation has significantly accelerated the traditional DBTL cycle, particularly in the Build and Test phases. Automated biofoundries can increase throughput by an order of magnitude, from approximately 200 manual yeast transformations per week to 2,000 automated transformations per week [7]. Automation keeps the linear, iterative structure of traditional DBTL while substantially improving its efficiency and scalability.

The integration of robotic systems like the Hamilton Microlab VANTAGE with customized user interfaces enables modular, high-throughput execution of strain construction protocols while preserving the fundamental DBTL sequence [7]. This approach combines the systematic framework of traditional DBTL with the practical benefits of automation, making it particularly valuable for screening large gene libraries and optimizing multi-gene pathways.

The traditional DBTL workflow remains a cornerstone of synthetic biology and strain engineering, providing a systematic, iterative framework for developing microbial production strains. While emerging approaches like LDBT propose paradigm shifts by leveraging machine learning, the linear, iterative structure of Design-Build-Test-Learn continues to deliver substantial performance improvements in diverse applications, from pharmaceutical precursor synthesis to environmental biosensor development. The integration of automation and high-throughput methodologies has enhanced the efficiency of traditional DBTL cycles, maintaining their relevance in contemporary bioengineering research. As the field advances, the traditional DBTL mantra continues to serve as both a practical engineering framework and a foundational concept upon which next-generation approaches are being built.

The iterative process of Design-Build-Test-Learn (DBTL) has long been a cornerstone of synthetic biology and metabolic engineering. However, a paradigm shift is emerging, recasting this cycle as Learn-Design-Build-Test (LDBT), where machine learning (ML) and advanced computational models precede physical design. This comparison guide objectively evaluates the performance of the traditional DBTL framework against the nascent LDBT approach, with a specific focus on applications in strain engineering research. We present quantitative experimental data, detailed methodologies, and essential resource information to equip researchers and drug development professionals with a clear understanding of this transformative transition.

The conventional DBTL cycle begins with the Design of genetic constructs based on existing knowledge, proceeds to Build these constructs in biological systems, then to Test their performance empirically, and concludes with Learning from the results to inform the next design iteration [10] [2]. This process, while systematic, often involves multiple costly and time-consuming cycles to achieve optimal strains for metabolic engineering.

The proposed LDBT framework fundamentally reorders this sequence by placing Learn first [10] [2]. This initial learning phase leverages sophisticated machine learning models trained on vast biological datasets—including protein sequences, structural information, and historical experimental results—to make predictive designs before any laboratory work begins. The subsequent Design, Build, and Test phases then serve to execute and validate these computationally informed designs, potentially achieving desired functionality in fewer iterations or even a single cycle [2].

Table 1: Core Conceptual Comparison Between DBTL and LDBT Frameworks

| Feature | Traditional DBTL Cycle | LDBT Cycle |

|---|---|---|

| Initial Phase | Design based on existing knowledge & hypotheses | Learn from comprehensive datasets using ML |

| Primary Driver | Empirical experimentation & iteration | Predictive computational modeling |

| Data Utilization | Data generated from previous Test phases informs next Design | Pre-existing megascale datasets train initial models |

| Cycle Goal | Converge toward solution through multiple iterations | Achieve functional design in minimal cycles |

| Resource Emphasis | Laboratory throughput & experimental efficiency | Computational power & data quality |

Performance Comparison: Experimental Data

Recent studies directly compare the effectiveness of machine learning-enhanced cycles against traditional approaches in metabolic engineering and protein design. The data demonstrates significant advantages in prediction accuracy, experimental efficiency, and success rates when adopting an LDBT-inspired methodology.

Machine Learning Method Performance in Metabolic Engineering

A 2023 framework for simulating DBTL cycles in metabolic engineering provides direct evidence of ML performance. Researchers used a mechanistic kinetic model to test various ML methods over multiple cycles, with a particular focus on combinatorial pathway optimization—a common challenge in strain engineering [11].

Table 2: Machine Learning Method Performance in Simulated DBTL Cycles for Pathway Optimization

| Machine Learning Method | Performance in Low-Data Regime | Robustness to Training Set Bias | Robustness to Experimental Noise |

|---|---|---|---|

| Gradient Boosting | Top performer | High | High |

| Random Forest | Top performer | High | High |

| Other Tested Methods | Lower performance | Variable | Variable |

The study demonstrated that these top-performing methods were effective even with limited initial data, a crucial advantage for applications where experimental data is scarce or expensive to generate [11]. The research also introduced an algorithm for recommending new designs based on ML predictions and found that, when the number of strains that can be built is limited, starting with a large initial cycle is more favorable than distributing the same number of strains across multiple cycles [11].

Case Study: Protein Engineering with LDBT Principles

While not explicitly labeled LDBT, several recent protein engineering campaigns exemplify the learn-first approach with striking results:

Table 3: Performance Metrics in Protein Engineering Case Studies

| Engineering Project | Traditional Approach | ML-Enhanced (LDBT-like) Approach | Result |

|---|---|---|---|

| PET Hydrolase Engineering | Multiple rounds of site-directed mutagenesis | MutCompute structure-based deep learning predictions [2] | Increased stability and activity compared to wild-type [2] |

| TEV Protease Engineering | Directed evolution with extensive screening | ProteinMPNN sequence design with AlphaFold structure assessment [2] | Nearly 10-fold increase in design success rates [2] |

| Antimicrobial Peptide Design | Library screening & characterization | Deep learning sequence generation, with 500 candidates selected from 500,000 for experimental screening [2] | 6 promising designs identified [2] |

Experimental Protocols & Methodologies

Protocol: ML-Guided Metabolic Pathway Optimization

This protocol is adapted from the simulated DBTL study that compared machine learning methods for metabolic engineering [11].

- Initial Data Collection (Learn): Compile historical data on pathway variants, including gene combinations, expression levels, and corresponding metabolite production measurements. If no prior data exists, generate an initial diverse set of 50-100 pathway variants.

- Model Training (Learn): Train gradient boosting or random forest models using the collected data. Use pathway configurations (e.g., promoter strengths, RBS sequences, gene variants) as features and metabolic flux or product yield as the target variable.

- Design Proposal (Design): Use the trained model to predict the performance of 10,000+ virtual pathway combinations in silico. Select 20-50 top-performing candidates for experimental construction, including some with high predicted uncertainty to explore novel design space.

- Strain Construction (Build): Build selected pathway variants using high-throughput DNA assembly and transformation protocols in the desired microbial host.

- Performance Assay (Test): Cultivate constructed strains in microtiter plates and measure target metabolite production using HPLC, GC-MS, or enzymatic assays.

- Model Refinement: Add the new experimental data to the training dataset and retrain the ML model for the next cycle.
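
The Design Proposal step above, which picks top-predicted candidates plus a few uncertain ones, can be sketched as follows. This is a minimal illustration, not the recommendation algorithm from [11]: it assumes that per-candidate predictions from several ensemble members (for example, the individual trees of a random forest) are already available, and it uses their spread as a stand-in for uncertainty. The function name `select_candidates` and the example predictions are hypothetical.

```python
from statistics import mean, pstdev


def select_candidates(ensemble_predictions, n_exploit, n_explore):
    """Pick designs to build next.

    ensemble_predictions maps a design ID to the list of predictions made
    for it by the individual members of an ensemble model.
    Returns (exploit, explore): the designs with the highest mean prediction,
    and, among the rest, those with the largest spread between members.
    """
    stats = {d: (mean(p), pstdev(p)) for d, p in ensemble_predictions.items()}
    by_mean = sorted(stats, key=lambda d: stats[d][0], reverse=True)
    exploit = by_mean[:n_exploit]
    remaining = [d for d in by_mean if d not in exploit]
    explore = sorted(remaining, key=lambda d: stats[d][1], reverse=True)[:n_explore]
    return exploit, explore


# Hypothetical predictions (arbitrary units) from a three-member ensemble.
predictions = {
    "A": [9.0, 9.2, 8.8],
    "B": [7.0, 7.1, 6.9],
    "C": [2.0, 8.0, 5.0],   # members disagree strongly: high uncertainty
    "D": [4.0, 4.1, 3.9],
    "E": [1.0, 6.0, 2.0],
}

exploit, explore = select_candidates(predictions, n_exploit=2, n_explore=1)
print(exploit, explore)
```

Reserving a few build slots for high-uncertainty designs trades some expected performance in the current cycle for data that can improve the model in the next one.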

Protocol: Zero-Shot Protein Design with Cell-Free Testing

This protocol exemplifies the LDBT paradigm by leveraging pre-trained models before any building or testing occurs [2].

- Model Selection (Learn): Select a pre-trained protein language model (e.g., ESM, ProGen) or structure-based design tool (e.g., ProteinMPNN, MutCompute) based on the engineering goal [2].

- Zero-Shot Design (Design): Input wild-type sequence or structural information into the model to generate candidate variants with predicted improved properties (e.g., stability, activity, expression).

- DNA Template Preparation (Build): Order gene fragments or synthesize DNA templates encoding the designed protein variants without cloning into expression vectors.

- Cell-Free Expression (Test): Express protein variants directly using cell-free transcription-translation systems [2].

- High-Throughput Screening: Assay protein function directly in the cell-free reaction or after minimal purification using fluorescent, colorimetric, or activity-based assays. Microfluidics can be employed to screen thousands of picoliter-scale reactions [2].

- Validation: Confirm the performance of top hits using traditional purified protein assays and, if applicable, in vivo testing.

Workflow Visualization: DBTL vs. LDBT

Diagrams: (1) Traditional DBTL Cycle; (2) LDBT Cycle with Optional Refinement.

The Scientist's Toolkit: Essential Research Reagents & Platforms

Implementation of efficient LDBT cycles requires specific reagents and platforms that enable high-throughput building and testing, particularly when guided by computational predictions.

Table 4: Key Research Reagent Solutions for LDBT Implementation

| Resource | Type | Primary Function in LDBT |

|---|---|---|

| Cell-Free Transcription-Translation Systems | Biochemical Reagent | Rapid protein expression without cloning; enables direct testing of DNA templates [10] [2] |

| Protein Language Models (ESM, ProGen) | Computational Tool | Zero-shot prediction of protein function and design based on evolutionary sequences [2] |

| Structure-Based Design Tools (ProteinMPNN, MutCompute) | Computational Tool | Generate sequences that fold into desired structures or optimize local residue environments [2] |

| Gradient Boosting / Random Forest Libraries | Computational Tool | Build predictive models for metabolic pathway performance from experimental data [11] |

| Droplet Microfluidics Systems | Equipment Platform | Ultra-high-throughput screening of >100,000 reactions in picoliter volumes [2] |

| Automated DNA Synthesis & Assembly | Service/Platform | Rapid construction of designed genetic constructs without traditional cloning [10] |

Discussion & Future Outlook

The experimental evidence and case studies presented indicate a clear trend: the integration of machine learning at the beginning of the engineering cycle—the LDBT paradigm—demonstrates measurable improvements in both the efficiency and success rates of strain engineering projects. The ability of models like gradient boosting and random forests to perform well even with limited data, their robustness to experimental noise, and the dramatic success of zero-shot protein design all point toward a future where learning from existing data fundamentally precedes and guides experimental design.

The adoption of cell-free systems for rapid testing addresses a critical bottleneck in traditional DBTL, enabling the validation of computationally generated designs at unprecedented scales [10] [2]. As these technologies mature and integrate with automation, the vision of a single, efficient LDBT cycle producing functional strains moves closer to reality, potentially transforming the bioeconomy by bringing synthetic biology closer to a "Design-Build-Work" model [2]. For researchers in metabolic engineering, the evidence suggests that exploring an LDBT approach, particularly with the identified best-performing ML methods and experimental platforms, offers a compelling path to accelerating strain development.

The Design-Build-Test-Learn (DBTL) cycle is a fundamental engineering framework in synthetic biology used to systematically develop microbial strains for producing valuable biochemicals. This iterative process involves designing genetic modifications, building the engineered strains, testing their performance, and learning from the data to inform the next design cycle. As strain engineering faces increasingly complex challenges, novel approaches are essential to improve success rates and reduce development times, helping promising innovations escape the "valley-of-death" between laboratory research and industrial application [12] [13].

The recent emergence of bio-intelligent DBTL (biDBTL) represents a transformative evolution of this framework. This advanced approach integrates artificial intelligence (AI), digital twins, and automation to create a self-optimizing system that significantly accelerates strain and bioprocess engineering [12] [14]. By incorporating bio-intelligent elements such as biosensors, bioactuators, and bidirectional communication at biological-technical interfaces, biDBTL enables more predictable and efficient development of sustainable biomanufacturing processes [13].

This guide provides a comprehensive comparison of conventional and intelligent DBTL methodologies, supported by experimental data and detailed protocols to assist researchers in selecting appropriate strategies for their metabolic engineering projects.

Comparative Analysis of DBTL Framework Performance

The evolution from conventional to intelligent DBTL frameworks has marked a significant advancement in strain engineering capabilities. The table below provides a systematic comparison of four prominent approaches, highlighting their core methodologies, implementation requirements, and demonstrated performance.

Table 1: Performance Comparison of DBTL Frameworks in Strain Engineering

| DBTL Framework | Core Methodology | Key Technologies | Implementation Requirements | Reported Performance |
|---|---|---|---|---|
| Conventional DBTL | Sequential iteration with statistical analysis [4] | Molecular cloning, HPLC, basic data analysis [4] | Standard lab equipment, foundational bioinformatics skills [4] | Multiple cycles needed; limited by design complexity [4] |
| Knowledge-Driven DBTL | Mechanistic understanding through upstream in vitro investigation [4] | Cell-free transcription-translation systems, high-throughput RBS engineering [4] | Automated liquid handling, UTR Designer, advanced analytics [4] | 2.6 to 6.6-fold improvement in dopamine production [4] |
| Bio-Intelligent DBTL (biDBTL) | AI-driven hybrid learning with digital twins [12] [14] | Biosensors, bioactuators, AI/ML, robotic automation, digital twins [12] [13] | Biofoundry infrastructure, AI/ML expertise, IoT connectivity [12] [15] | Targets 50% cycle time reduction; enables autonomous bioprocesses [12] [15] |
| LDBT Paradigm | Machine learning precedes design (Learn-Design-Build-Test) [16] | Protein language models (ESM, ProGen), cell-free systems, zero-shot prediction [16] | Pre-trained ML models, microfluidics, high-throughput screening [16] | 10-fold increase in protein design success rates; 20-fold pathway improvement [16] |

Performance Insights and Applications

The comparative data reveals a clear trajectory toward intelligent automation and data-driven prediction across DBTL frameworks. The knowledge-driven approach demonstrates substantial efficiency gains, as evidenced by its application in dopamine production where strategic in vitro prototyping enabled precise optimization of enzyme expression levels, yielding 69.03 ± 1.2 mg/L dopamine (34.34 ± 0.59 mg/g biomass) – a 2.6 to 6.6-fold improvement over previous methods [4].
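The two reported figures can be cross-checked against each other. As a small worked example, dividing the volumetric titer by the specific yield gives the biomass concentration they jointly imply; this derived value is not stated in the source and assumes both numbers refer to the same culture and time point.

```python
# Reported values for the dopamine strain (from the text above)
titer_mg_per_l = 69.03           # mg dopamine per litre of culture
specific_yield_mg_per_g = 34.34  # mg dopamine per gram of biomass

# (mg/L) / (mg/g) = g/L of biomass; a derived figure, not one reported in the source
implied_biomass_g_per_l = titer_mg_per_l / specific_yield_mg_per_g

print(f"Implied biomass: {implied_biomass_g_per_l:.2f} g/L")  # about 2.01 g/L
```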

The emerging LDBT paradigm represents a fundamental reordering of the traditional cycle, placing learning first through zero-shot predictions from protein language models. This approach has demonstrated remarkable success in protein engineering campaigns, with one application showing a nearly 10-fold increase in design success rates and another achieving 20-fold improvement in 3-HB production through cell-free pathway prototyping [16].

Bio-intelligent DBTL frameworks aim for even more substantial efficiency gains through comprehensive digitization. The EU BIOS project exemplifies this approach by creating digital twins mimicking cellular and process levels, enabling hybrid learning that combines AI predictions with experimental data to accelerate the development of P. putida producer strains for terpenes, polyolefins, and methylacrylates [12] [13].

Experimental Protocols for DBTL Implementation

Knowledge-Driven DBTL for Dopamine Production

Table 2: Key Research Reagents for Knowledge-Driven DBTL

| Reagent/Cell Line | Function/Application | Key Features/Benefits |
|---|---|---|
| E. coli FUS4.T2 | Dopamine production host [4] | High L-tyrosine producer; engineered with TyrR depletion and feedback-resistant tyrA [4] |
| HpaBC enzyme | Converts L-tyrosine to L-DOPA [4] | Native E. coli gene; 4-hydroxyphenylacetate 3-monooxygenase activity [4] |
| Ddc from P. putida | Converts L-DOPA to dopamine [4] | Heterologous L-DOPA decarboxylase; catalyzes final dopamine synthesis step [4] |
| RBS Library | Fine-tuning gene expression [4] | Modulates translation initiation rate; varies Shine-Dalgarno sequence GC content [4] |
| Crude Cell Lysate System | In vitro pathway prototyping [4] | Bypasses cellular membranes and regulation; enables rapid enzyme testing [4] |

Phase 1: In Vitro Pathway Prototyping

- Prepare crude cell lysates from E. coli FUS4.T2 strains expressing HpaBC and Ddc at varying ratios [4]

- Set up reaction mixtures containing phosphate buffer (50 mM, pH 7), 0.2 mM FeCl₂, 50 μM vitamin B6, and 1 mM L-tyrosine or 5 mM L-DOPA [4]

- Incubate reactions at 37°C with continuous shaking at 250 rpm for 4-6 hours [4]

- Quantify dopamine production using HPLC with UV detection at 280 nm [4]

- Identify optimal enzyme expression ratios that maximize dopamine yield while minimizing intermediate accumulation [4]
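Setting up the reaction mixture in the second step comes down to the dilution relation C1·V1 = C2·V2. The sketch below computes pipetting volumes for the final concentrations given in the protocol; the stock concentrations and the 1 mL reaction volume are assumptions for illustration and are not taken from the source.

```python
def stock_volume_ul(final_conc_mm, stock_conc_mm, final_volume_ul):
    """Solve C1*V1 = C2*V2 for the stock volume V1 (in microlitres)."""
    if final_conc_mm > stock_conc_mm:
        raise ValueError("stock must be at least as concentrated as the final mix")
    return final_conc_mm * final_volume_ul / stock_conc_mm

FINAL_VOLUME_UL = 1000.0  # assumed reaction volume

# Final concentrations from the protocol (mM); the L-tyrosine variant is shown
final_mm = {"phosphate buffer": 50.0, "FeCl2": 0.2, "vitamin B6": 0.05, "L-tyrosine": 1.0}

# Hypothetical stock concentrations (mM); adjust to the stocks actually on hand
stock_mm = {"phosphate buffer": 500.0, "FeCl2": 20.0, "vitamin B6": 5.0, "L-tyrosine": 2.0}

volumes = {name: stock_volume_ul(final_mm[name], stock_mm[name], FINAL_VOLUME_UL)
           for name in final_mm}

# Whatever is left is available for crude lysate and water
remaining_ul = FINAL_VOLUME_UL - sum(volumes.values())

for name, vol in volumes.items():
    print(f"{name}: {vol:.1f} uL")
print(f"lysate + water: {remaining_ul:.1f} uL")
```

With these assumed stocks, the additives take up 620 µL, leaving 380 µL for lysate and water.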

Phase 2: In Vivo Strain Engineering

- Design RBS variants with modulated Shine-Dalgarno sequences using the UTR Designer tool to achieve expression levels identified in in vitro studies [4]

- Assemble bicistronic constructs with hpaBC and ddc genes controlled by the optimized RBS variants via Golden Gate assembly [4]

- Transform constructs into E. coli FUS4.T2 high-tyrosine production chassis using electroporation [4]

- Culture engineered strains in minimal medium containing 20 g/L glucose, 10% 2xTY, MOPS buffer, and appropriate antibiotics [4]

- Induce expression with 1 mM IPTG during mid-log phase and monitor dopamine production over 24-48 hours [4]

Diagram 1: Knowledge-Driven DBTL Workflow

Bio-Intelligent DBTL Implementation

Digital Twin Development

- Create cellular-level digital twins by integrating multi-omics data (genomics, transcriptomics, proteomics, metabolomics) with kinetic models of central metabolism [12] [13]

- Develop process-level digital twins by incorporating bioreactor hydrodynamics, mass transfer limitations, and nutrient gradient effects [12]

- Implement real-time data integration from online biosensors monitoring key parameters (substrate consumption, product formation, dissolved oxygen) [13]

- Establish bidirectional communication between physical and digital systems using bioactuators for automated process control [12]
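To make the idea of a process-level model concrete, the sketch below simulates a batch culture with Monod growth kinetics. It is a deliberately minimal stand-in: a real digital twin as described above would add hydrodynamics, mass transfer, and live sensor data. The function name and all parameter values are illustrative assumptions, not values from the cited project.

```python
def simulate_batch(x0, s0, mu_max, ks, yxs, hours, dt=0.01):
    """Euler integration of Monod batch growth.

    dX/dt = mu * X,  dS/dt = -mu * X / Yxs,  mu = mu_max * S / (Ks + S)
    X: biomass (g/L), S: substrate (g/L), Yxs: biomass yield on substrate (g/g).
    """
    x, s = x0, s0
    trajectory = [(0.0, x, s)]
    steps = int(round(hours / dt))
    for i in range(1, steps + 1):
        mu = mu_max * s / (ks + s)
        # Substrate used this step, capped so S never goes negative
        ds = min(mu * x * dt / yxs, s)
        x += ds * yxs
        s -= ds
        trajectory.append((i * dt, x, s))
    return trajectory

# Assumed parameters for illustration only
traj = simulate_batch(x0=0.1, s0=20.0, mu_max=0.5, ks=0.1, yxs=0.4, hours=24.0)
final_t, final_x, final_s = traj[-1]
print(f"t = {final_t:.1f} h, biomass = {final_x:.2f} g/L, substrate = {final_s:.3f} g/L")
```

In a twin, the same state variables would be corrected at each step with biosensor readings, and the model's predictions would drive the actuators; neither coupling is shown here.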

AI-Driven Hybrid Learning

- Train ensemble machine learning models on historical experimental data to predict strain performance from genetic designs [17]

- Implement Bayesian optimization to recommend genetic modifications with high probability of success [17]

- Combine physics-based models with data-driven approaches to create hybrid models that improve prediction accuracy with limited data [12]

- Utilize Automated Recommendation Tools (ART) to bridge Learn and Design phases by providing probabilistic predictions of production levels [17]
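The Learn-to-Design hand-off in the steps above can be illustrated with a toy recommender. This is not ART and not a full Bayesian optimization: it swaps in a bootstrap ensemble of straight-line fits to obtain a mean and a spread for each candidate, then ranks candidates by an upper-confidence-bound score (mean plus `kappa` times the spread). The single design feature, the data points, and all names are assumptions made for the example.

```python
import random
from statistics import mean, pstdev

def fit_line(points):
    """Ordinary least squares for y = a + b*x on a list of (x, y) pairs."""
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    if sxx == 0:  # degenerate resample: every x identical
        return y_bar, 0.0
    slope = sum((x - x_bar) * (y - y_bar) for x, y in points) / sxx
    return y_bar - slope * x_bar, slope

def recommend(observed, candidates, n_models=200, kappa=1.0, seed=0):
    """Rank candidate designs by mean prediction + kappa * ensemble spread."""
    rng = random.Random(seed)
    # Each ensemble member is fitted to a bootstrap resample of the observations
    models = [fit_line([rng.choice(observed) for _ in observed])
              for _ in range(n_models)]
    scored = []
    for x in candidates:
        preds = [a + b * x for a, b in models]
        mu, sigma = mean(preds), pstdev(preds)
        scored.append((x, mu, sigma, mu + kappa * sigma))
    return sorted(scored, key=lambda row: row[3], reverse=True)

# Hypothetical data: (relative expression strength, titer in mg/L)
observed = [(0.1, 12.0), (0.3, 25.0), (0.5, 41.0), (0.7, 52.0)]
candidates = [0.2, 0.6, 0.9]

ranking = recommend(observed, candidates)
for x, mu, sigma, score in ranking:
    print(f"design {x}: predicted {mu:.1f} +/- {sigma:.1f}, score {score:.1f}")
```

The spread term rewards candidates the ensemble disagrees on, which is the exploration behaviour that probabilistic recommendation tools aim for; a production workflow would use richer models and features.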

Diagram 2: Bio-Intelligent DBTL Architecture

The integration of AI and digital twins into DBTL cycles represents a paradigm shift in strain engineering and bioprocess development. The comparative analysis suggests that bio-intelligent approaches are positioned to improve prediction accuracy, development speed, and success rates relative to conventional methods, although the biDBTL figures reported above are project targets rather than measured outcomes.

While knowledge-driven DBTL provides a strategic intermediate option with proven efficacy for pathway optimization, the full biDBTL framework enables autonomous bioprocess development through hybrid learning and digital twins. The LDBT paradigm further accelerates this evolution by leveraging pre-trained machine learning models for zero-shot design, potentially reducing the number of experimental cycles required.

For research teams with access to biofoundry infrastructure and computational resources, implementing bio-intelligent DBTL cycles can significantly enhance productivity and success in developing sustainable biomanufacturing processes. The experimental protocols and reagent specifications provided in this guide offer practical starting points for adopting these advanced methodologies in strain engineering projects.

Design-Build-Test-Learn (DBTL) cycles are the cornerstone of modern synthetic biology and strain engineering, providing an iterative framework for developing microbial cell factories. This guide objectively compares the performance of different DBTL cycle implementations, supported by experimental data, to inform researchers and drug development professionals in selecting and optimizing their engineering strategies.

In synthetic biology, DBTL cycles enable the systematic engineering of biological systems. The Design phase involves planning genetic constructs; Build implements these designs in biological chassis; Test characterizes the resulting strains; and Learn analyzes data to inform the next cycle [18]. Recent advancements have introduced variations like knowledge-driven DBTL and LDBT (Learn-Design-Build-Test) cycles that leverage machine learning and cell-free systems to accelerate development [5] [2]. Evaluating DBTL cycle effectiveness requires standardized metrics across critical performance dimensions, including engineering efficiency, product yield, and resource utilization. This analysis compares these metrics across documented implementations to establish benchmarks for strain engineering research.

Performance Metrics Comparison

The table below summarizes key performance metrics from published DBTL cycle implementations, providing a comparative baseline for strain engineering projects.

Table 1: Comparative Performance Metrics of DBTL Cycle Implementations

| Application / Study | Final Production Titer / Output | Performance Improvement | Cycle Duration / Efficiency | Key Success Factors |
|---|---|---|---|---|
| Dopamine Production [5] | 69.03 ± 1.2 mg/L (34.34 ± 0.59 mg/g biomass) | 2.6 to 6.6-fold improvement over state-of-the-art | Knowledge-driven approach with upstream in vitro testing | RBS engineering, GC content optimization in Shine-Dalgarno sequence |
| Biosensor Development [3] | Functional inducible biosensor validated | Success after switching from complex Gibson assembly to commercial synthesis | Multiple failed assembly attempts before successful build | Simplified design, commercial gene synthesis, low-copy number backbone |
| Combinatorial Pathway Optimization [19] | In silico framework for metabolic flux optimization | Machine learning recommendations improved design selection | Simulated cycles for benchmarking | Gradient boosting/random forest models effective in low-data regime |
| Cell-Free ML Integration [2] | Various protein engineering successes | Near 10-fold increase in design success rates with structure-based AI | Rapid testing (protein production in <4 hours) | Cell-free expression, zero-shot machine learning predictions |

Detailed Experimental Protocols

Knowledge-Driven DBTL Cycle for Dopamine Production

This protocol details the methodology for implementing a knowledge-driven DBTL cycle with upstream in vitro investigation, as used to develop an efficient dopamine production strain in E. coli [5].

Table 2: Key Research Reagent Solutions for Dopamine Production

| Reagent / Material | Function in Experiment | Specifications / Composition |
|---|---|---|
| E. coli FUS4.T2 strain | Dopamine production host | Engineered for high L-tyrosine production |
| pJNTN plasmid system | Library construction for pathway engineering | Bi-cistronic expression of hpaBC and ddc genes |
| Minimal medium | Cultivation for production experiments | 20 g/L glucose, 10% 2xTY, MOPS buffer, trace elements |
| Phosphate reaction buffer | Cell-free lysate system | 50 mM, pH 7, with FeCl₂, vitamin B₆, L-tyrosine/L-DOPA |
| HpaBC enzyme | Converts L-tyrosine to L-DOPA | 4-hydroxyphenylacetate 3-monooxygenase from native E. coli |
| Ddc enzyme | Converts L-DOPA to dopamine | L-DOPA decarboxylase from Pseudomonas putida |

Experimental Workflow:

In Vitro Pathway Investigation: Prepare crude cell lysate systems from production strains to test different relative enzyme expression levels before full DBTL cycling. Use phosphate reaction buffer supplemented with 0.2 mM FeCl₂, 50 μM vitamin B₆, and 1 mM L-tyrosine or 5 mM L-DOPA.

Strain Design: Based on in vitro results, design RBS libraries for fine-tuning expression of hpaBC and ddc genes. Consider GC content in Shine-Dalgarno sequence as key parameter affecting RBS strength.

Strain Construction: Use high-throughput RBS engineering to build variant libraries. Employ appropriate antibiotics for selection (ampicillin 100 μg/mL, kanamycin 50 μg/mL).

Testing and Analysis: Cultivate strains in minimal medium with 20 g/L glucose. Measure dopamine production titers using appropriate analytical methods (e.g., HPLC). Run each experiment in triplicate (n=3) so that variability between replicates can be estimated.

Learning and Re-design: Analyze the relationship between RBS sequence variations, enzyme expression levels, and dopamine production. Identify optimal expression balance for maximal flux through the pathway.
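Because the workflow treats GC content of the Shine-Dalgarno sequence as a key design parameter, a small helper for computing it is useful when organising an RBS library. The sketch below is only a descriptor calculation: it does not predict RBS strength, and the variant names and sequences are hypothetical examples rather than sequences from the cited study.

```python
def gc_content(seq):
    """Fraction of G and C bases in a nucleotide sequence (0.0 to 1.0)."""
    seq = seq.upper()
    if not seq or set(seq) - set("ACGTU"):
        raise ValueError("sequence must be non-empty and contain only A, C, G, T or U")
    return (seq.count("G") + seq.count("C")) / len(seq)

# Hypothetical Shine-Dalgarno variants for illustration
sd_variants = {
    "sd_1": "AGGAGG",
    "sd_2": "AGGAGA",
    "sd_3": "AAGAGA",
    "sd_4": "AGGCGG",
}

# Order the library from highest to lowest GC content
ranked = sorted(sd_variants, key=lambda name: gc_content(sd_variants[name]), reverse=True)

for name in ranked:
    print(f"{name} {sd_variants[name]} GC = {gc_content(sd_variants[name]):.2f}")
```

In the Learn step, these GC values would be paired with measured expression levels and titers to examine the relationship empirically.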

Automated DBTL for Biosensor Refactoring

This protocol outlines an automated DBTL approach for biosensor engineering, which improves throughput, reliability, and reproducibility compared to manual methods [20].

Experimental Workflow:

Design: Using computational tools, design refactored biosensor components with standardized biological parts. For PFAS biosensors [3], select responsive promoters (e.g., b0002 and b3021 for PFOA) and split-lux operon reporter system.

Build: Implement automated DNA assembly using liquid handling robots. For complex assemblies [3], consider commercial gene synthesis when Gibson assembly fails. Use low-copy number backbone (e.g., pSEVA261) to minimize background signal.

Test: Characterize biosensor performance through high-throughput screening. Measure specificity (response to target vs. non-target molecules), sensitivity (detection limit), and dynamic range using plate readers for fluorescence and luminescence.

Learn: Apply data analysis algorithms to identify performance bottlenecks. For PFAS biosensors [3], this revealed promoter leakiness issues requiring redesign.
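The Test and Learn steps rely on a few summary metrics that are easy to compute from plate-reader data. The sketch below shows one plausible set of definitions: fold induction as a ratio of mean signals, a detection threshold of blank mean plus three standard deviations (a common convention, assumed here), and a target-to-non-target specificity ratio. All readings are invented for illustration.

```python
from statistics import mean, stdev

def fold_induction(induced, uninduced):
    """Ratio of mean induced signal to mean uninduced (background) signal."""
    return mean(induced) / mean(uninduced)

def detection_threshold(blank_signals, k=3.0):
    """Signal level regarded as detectable: mean(blank) + k * SD(blank)."""
    return mean(blank_signals) + k * stdev(blank_signals)

def specificity_ratio(target_signals, non_target_signals):
    """Mean response to the target divided by mean response to a non-target molecule."""
    return mean(target_signals) / mean(non_target_signals)

# Illustrative luminescence readings (arbitrary units), three replicates each
blank = [200.0, 210.0, 190.0]        # no analyte: reflects promoter leakiness
target = [1000.0, 1100.0, 900.0]     # target analyte added
non_target = [250.0, 240.0, 260.0]   # structurally related non-target added

fold = fold_induction(target, blank)
threshold = detection_threshold(blank)
specificity = specificity_ratio(target, non_target)

print(f"fold induction: {fold:.1f}")
print(f"detection threshold: {threshold:.0f} a.u.")
print(f"specificity ratio: {specificity:.1f}")
```

A high blank signal lowers fold induction directly, which is how leakiness of the kind described above would show up in these numbers.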

DBTL Workflow Visualization

Diagram 1: Traditional DBTL Cycle

Diagram 2: LDBT Paradigm with Learning First

Analysis of DBTL Implementation Strategies

Knowledge-Driven vs. Conventional DBTL Approaches

The knowledge-driven DBTL cycle demonstrated significantly improved efficiency in dopamine production strain development [5]. By incorporating upstream in vitro investigation, researchers achieved a 2.6 to 6.6-fold improvement over state-of-the-art methods. This approach reduced iterative cycling by front-loading mechanistic understanding, contrasting with conventional DBTL that often relies on design of experiment or randomized selection of engineering targets. The key advantage emerged from using cell-free lysate systems to test enzyme expression levels before full pathway implementation in vivo, de-risking the Build and Test phases.

Impact of Automation and Machine Learning

Biofoundries implementing automated DBTL cycles demonstrate substantially increased throughput capabilities. One notable example constructed 1.2 Mb DNA, built 215 strains across five species, established two cell-free systems, and performed 690 assays within 90 days for 10 target molecules [18]. Machine learning integration further enhances cycle efficiency; gradient boosting and random forest models outperform other methods in low-data regimes common in early DBTL cycles [19] [21]. When the number of strains is limited, starting with a large initial DBTL cycle proves more favorable than distributing the same number of strains across multiple cycles [19].

Cell-Free Systems for Accelerated Testing

The integration of cell-free expression systems dramatically compresses DBTL cycle timelines. These platforms enable protein production exceeding 1 g/L in under 4 hours, bypassing time-intensive cloning and transformation steps [2]. When combined with microfluidics, researchers can screen up to 100,000 picoliter-scale reactions, generating massive datasets for machine learning training [2]. This approach proves particularly valuable for testing protein variants and pathway prototypes before committing to full cellular implementation.

This comparative analysis identifies key performance differentiators among DBTL cycle implementations. Knowledge-driven approaches with upstream in vitro testing [5], automation-enabled biofoundries [18], and machine learning-guided design [19] [2] demonstrate superior efficiency and success rates compared to conventional artisanal methods. The most significant performance improvements emerge from strategies that reduce Build and Test phase bottlenecks through automation, cell-free systems, and computational prediction. Researchers can leverage these comparative metrics to select appropriate DBTL implementations for specific strain engineering objectives, resource constraints, and timeline requirements. As synthetic biology advances, the continued integration of machine learning and accelerated testing platforms promises to further compress development timelines, potentially evolving toward single-cycle LDBT paradigms that approach first-principles engineering.

From Rational Design to Automated Biofoundries: Implementing High-Performance DBTL Workflows

The Design–Build–Test–Learn (DBTL) framework has established itself as a cornerstone of modern strain engineering, providing an iterative, systematic process for developing high-performing industrial microbial strains. Within this framework, rational strain engineering represents a hypothesis-driven approach that leverages prior knowledge and computational models to design specific genetic interventions, contrasting with purely random methods such as classical mutagenesis. The growing bioeconomy, projected to contribute up to $30 trillion to the global economy by 2030, necessitates efficient strain development to produce biofuels, pharmaceuticals, and specialty chemicals competitively [22]. Rational engineering strategies are particularly valuable for minimizing development time and resources by focusing experimental efforts on the most promising genetic targets.

This guide objectively compares the performance of different rational strain engineering methodologies implemented within DBTL cycles, supported by quantitative data from recent experimental studies. We examine specific applications in producing valuable compounds such as anthranilate, dopamine, and pinene, providing detailed protocols and analytical frameworks for researchers engaged in metabolic engineering and drug development.

Comparative Performance Analysis of Rational Engineering Approaches

The table below summarizes the performance outcomes of three distinct rational engineering approaches applied to different production targets in E. coli, highlighting the specific strategies and quantitative improvements achieved.

Table 1: Performance Comparison of Rational Strain Engineering Approaches

| Production Target | Host Organism | Rational Engineering Strategy | Key Genetic Interventions | Resulting Performance | Reference |
|---|---|---|---|---|---|
| Anthranilate | E. coli W3110 trpD9923 | NOMAD framework for minimal phenotype perturbation | Multi-target interventions identified via kinetic modeling | Superior in-silico performance vs. experimental strategies; maintained robust physiology | [23] |
| Dopamine | E. coli FUS4.T2 | Knowledge-driven DBTL with upstream in vitro testing | RBS engineering of hpaBC and ddc; L-tyrosine pathway deregulation | 69.03 ± 1.2 mg/L (2.6 to 6.6-fold improvement over state-of-the-art) | [4] |
| α-Pinene | E. coli HSY012 | Rational design model for chromosomal integration site & copy number | CRISPR/Cas9 integration of MVA pathway & pinene synthase (PG1) at optimized genomic loci | 436.68 mg/L in bioreactor (14.55 mg/L/h mean productivity) | [24] |

Analysis of Comparative Performance Data

The data demonstrates that hypothesis-driven strategies consistently yield substantial improvements in product titer and productivity. The NOMAD framework [23] highlights the importance of maintaining host robustness by keeping engineered strains phenotypically close to the reference strain, ensuring vitality alongside productivity. The knowledge-driven DBTL cycle for dopamine production [4] shows the efficacy of using upstream in vitro experiments (e.g., cell-free lysate systems) to inform in vivo engineering, reducing the number of iterative cycles needed. Finally, the rational chromosomal integration strategy for pinene [24] underscores that the location and copy number of pathway genes are critical parameters for maximizing metabolic flux toward the desired product.
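As a quick consistency check on the pinene figures, the reported titer and mean productivity together imply a process duration. The calculation assumes that mean productivity was defined as final titer divided by total run time; the source does not state this, so the result is indicative only.

```python
# Reported bioreactor figures for alpha-pinene (from the table above)
final_titer_mg_per_l = 436.68
mean_productivity_mg_per_l_h = 14.55

# Assumes productivity = final titer / total run time
implied_duration_h = final_titer_mg_per_l / mean_productivity_mg_per_l_h

print(f"Implied run time: {implied_duration_h:.1f} h")  # about 30 h
```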

Experimental Protocols for Key Rational Engineering Methodologies

Protocol 1: NOMAD Framework for Minimal Phenotype Perturbation

The NOMAD (NOnlinear dynamic Model Assisted rational metabolic engineering Design) framework employs kinetic models to devise reliable genetic interventions while maintaining cellular physiology [23].

- Kinetic Model Generation: Generate a population of thousands of putative kinetic models consistent with experimental omics data, network topology, and physicochemical laws using tools like ORACLE.

- Model Screening: Screen models for quality:
  - Steady-state consistency with observed fluxes and metabolite concentrations.
  - Local stability around the steady state.
  - Accurate reproduction of dynamic behavior in batch fermentation simulations.
  - Robustness to random perturbations in enzyme activities.

- Strain Design via Optimization: Cast strain design as a mixed-integer linear programming (MILP) problem using Network Response Analysis (NRA). The objective is to identify a set of genetic interventions (e.g., enzyme over-expression or down-regulation) that maximize product synthesis while constraining the model to keep metabolite concentrations and fluxes close to the reference strain's phenotype.

- In-silico Validation: Test and rank the proposed designs using nonlinear dynamic bioreactor simulations that mimic real fermentation conditions.
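The model generation and screening steps can be illustrated on a toy system. The sketch below samples parameter sets for a two-step Michaelis-Menten pathway, discards those with no feasible steady state, and keeps those whose Jacobian indicates local stability. It is not NOMAD or ORACLE: the pathway, the parameter ranges, and every function name are assumptions, and genome-scale kinetic models need proper eigenvalue solvers rather than the 2x2 shortcut used here.

```python
import random

def rates(s, p):
    """Toy pathway: constant influx -> S1 -> S2 -> out, with Michaelis-Menten steps."""
    s1, s2 = s
    v1 = p["vmax1"] * s1 / (p["km1"] + s1)
    v2 = p["vmax2"] * s2 / (p["km2"] + s2)
    return (p["v_in"] - v1, v1 - v2)

def steady_state(p):
    """Analytical steady state, or None if an enzyme cannot carry the influx."""
    if p["vmax1"] <= p["v_in"] or p["vmax2"] <= p["v_in"]:
        return None
    return (p["km1"] * p["v_in"] / (p["vmax1"] - p["v_in"]),
            p["km2"] * p["v_in"] / (p["vmax2"] - p["v_in"]))

def jacobian(s, p, h=1e-6):
    """Central finite-difference Jacobian of the two-variable system."""
    jac = [[0.0, 0.0], [0.0, 0.0]]
    for j in range(2):
        up, down = list(s), list(s)
        up[j] += h
        down[j] -= h
        f_up, f_down = rates(up, p), rates(down, p)
        for i in range(2):
            jac[i][j] = (f_up[i] - f_down[i]) / (2 * h)
    return jac

def is_locally_stable(jac):
    """2x2 case: both eigenvalues have negative real part iff trace < 0 and det > 0."""
    trace = jac[0][0] + jac[1][1]
    det = jac[0][0] * jac[1][1] - jac[0][1] * jac[1][0]
    return trace < 0 and det > 0

def screen(n_models=1000, seed=1):
    """Sample parameter sets and keep those with a feasible, locally stable steady state."""
    rng = random.Random(seed)
    kept = []
    for _ in range(n_models):
        p = {"v_in": 1.0,
             "vmax1": rng.uniform(0.5, 3.0), "vmax2": rng.uniform(0.5, 3.0),
             "km1": rng.uniform(0.1, 2.0), "km2": rng.uniform(0.1, 2.0)}
        ss = steady_state(p)
        if ss is not None and is_locally_stable(jacobian(ss, p)):
            kept.append(p)
    return kept

kept_models = screen()
print(f"{len(kept_models)} of 1000 sampled models passed screening")
```

The surviving population would then feed the design optimization and the dynamic bioreactor simulations described in the remaining steps, neither of which is sketched here.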

Protocol 2: Knowledge-Driven DBTL with In Vitro Pathway Validation

This approach accelerates the learning phase by incorporating mechanistic insights from cell-free systems before in vivo implementation [4].

- In Vitro Pathway Assembly: Clone genes of the target pathway (e.g., hpaBC and ddc for dopamine) into an expression plasmid. Transform into a suitable production host (e.g., E. coli).

- Crude Cell Lysate Preparation: Cultivate the production strain, harvest cells, and prepare a crude cell lysate system containing endogenous metabolites, cofactors, and the expressed enzymes.

- In Vitro Reaction Monitoring: Incubate the lysate with the pathway substrate (e.g., L-tyrosine) in a defined reaction buffer. Monitor intermediate consumption and product formation over time to assess pathway flux and identify potential bottlenecks.

- In Vivo Translation and RBS Engineering: Translate the findings into the in vivo environment by designing a library of constructs with varying Ribosome Binding Site (RBS) strengths to fine-tune the expression levels of pathway enzymes.

- Strain Cultivation and Analysis: Cultivate the engineered strains in a defined minimal medium. Sample periodically to measure cell density (OD600) and product titer using analytical methods like HPLC or LC-MS.
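For the cultivation step, periodic OD600 samples are typically turned into a specific growth rate. A minimal sketch is shown below, assuming both samples fall within exponential growth; the sample values are invented for illustration.

```python
import math

def specific_growth_rate(t1_h, od1, t2_h, od2):
    """mu = ln(OD2 / OD1) / (t2 - t1), in 1/h; valid only during exponential growth."""
    if od1 <= 0 or od2 <= 0 or t2_h <= t1_h:
        raise ValueError("need positive OD values and t2 > t1")
    return math.log(od2 / od1) / (t2_h - t1_h)

def doubling_time(mu_per_h):
    """Doubling time in hours: ln(2) / mu."""
    return math.log(2) / mu_per_h

# Hypothetical samples: OD600 of 0.2 at 2 h and 0.8 at 4 h
mu = specific_growth_rate(2.0, 0.2, 4.0, 0.8)
td = doubling_time(mu)

print(f"mu = {mu:.3f} 1/h, doubling time = {td:.2f} h")
```

Comparing growth rates across RBS variants alongside titers helps separate gains in pathway flux from differences in growth burden.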

Protocol 3: Rational Chromosomal Integration for Pathway Optimization

This protocol details a model-driven approach to optimize the copy number and genomic location of heterologous pathways [24].

- Pathway Element Design: Simplify heterologous pathways into standardized cassettes (e.g., upper MVA pathway, lower MVA pathway, and product synthase).

- Initial Pathway Screening: Transform production hosts with plasmid-based versions of different pathway variants (e.g., PG1, PG2, PG3) to identify the most efficient one. Selection can be based on competitive fitness, such as reduced native pathway product (e.g., lycopene) indicating higher precursor drain towards the target product.

- Rational Design Modeling: Use a rational design model to predict optimal copy numbers and genomic integration sites that maximize gene expression and stability.

- CRISPR/Cas9-Mediated Integration: Use CRISPR/Cas9 and λ-Red recombineering to sequentially integrate the selected expression cassette (e.g., PG1) into pre-determined non-essential genomic loci (e.g., regions 8, 44, 58, 23).

- Bioreactor Scale-Up: Cultivate the final integrated strain in a bioreactor under optimized conditions (controlled pH, dissolved oxygen, feeding strategy) to assess performance at a higher scale.

Pathway Diagrams and Workflows

The following diagrams illustrate the core logical workflows and metabolic pathways involved in the rational engineering strategies discussed.

Diagram 1: The integrated DBTL cycle for rational strain engineering. The cycle iterates through computational design, genetic construction, phenotypic testing, and data-driven learning to progressively improve strain performance [22] [23] [4].

Diagram 2: Engineered dopamine biosynthesis pathway in E. coli. The heterologous enzymes HpaBC and Ddc are introduced to convert the endogenous precursor L-tyrosine to dopamine [4].

The Scientist's Toolkit: Essential Research Reagents and Solutions

The successful application of rational strain engineering relies on a suite of specialized reagents, computational tools, and experimental systems.

Table 2: Key Research Reagent Solutions for Rational Strain Engineering

| Tool/Reagent | Category | Specific Function | Example Application |
|---|---|---|---|
| CRISPR/Cas9 System | Genome Editing | Enables precise gene knock-in, knock-out, and replacement. | Integrating pinene synthase pathway into specific genomic loci [24]. |
| λ-Red Recombinase | Genome Editing | Facilitates homologous recombination for genetic modifications. | Used in conjunction with CRISPR/Cas9 for marker-free integration [24]. |
| NOMAD Framework | Computational Tool | Scopes design space using kinetic models for robust strain design. | Identifying multi-target strategies for anthranilate overproduction [23]. |
| UTR Designer | Computational Tool | Designs RBS sequences to fine-tune translation initiation rate. | Modulating the expression levels of pathway enzymes like HpaBC and Ddc [4]. |
| Crude Cell Lysate System | In Vitro Tool | Mimics intracellular environment for rapid pathway prototyping. | Testing relative enzyme expression levels for dopamine synthesis before in vivo work [4]. |
| ORACLE | Computational Tool | Generates populations of kinetic models consistent with omics data. | Building a reference model of E. coli W3110 trpD9923 physiology [23]. |
| pSEVA261 Backbone | Molecular Biology | A medium-low copy number plasmid to reduce background expression. | Used as a backbone for biosensor construction to minimize leaky promoter activity [3]. |
| LuxCDEAB Operon | Reporter System | Provides a bioluminescent output for biosensor applications. | Served as a reporter in a split-operon biosensor design for PFOA detection [3]. |

This guide compares the performance of the knowledge-driven Design-Build-Test-Learn (DBTL) cycle, which incorporates upstream in vitro investigations, against other established DBTL approaches in strain engineering. The comparison is framed within a broader thesis on optimizing DBTL cycle performance for microbial strain development, focusing on objective performance data and methodological details.

The Design-Build-Test-Learn (DBTL) cycle is a foundational framework in synthetic biology for the systematic engineering of biological systems. Traditional DBTL cycles begin with an in silico design phase, followed by physical construction (Build) of genetic designs, experimental validation (Test), and data analysis to inform the next cycle (Learn) [16] [18]. However, reliance on initial designs created without prior experimental data for the specific system can lead to multiple, time-consuming iterations.

Innovative variations have emerged to enhance the efficiency of this iterative process. The knowledge-driven DBTL cycle introduces a critical preliminary step: upstream in vitro investigations using tools like cell-free lysate systems to gather mechanistic insights and inform the initial design phase [5]. This approach contrasts with the bio-intelligent DBTL (biDBTL), which heavily integrates artificial intelligence and digital twins at all stages [12], and the LDBT paradigm, which proposes reordering the cycle to start with "Learning" from existing machine learning models to enable zero-shot designs, potentially reducing the need for cycling altogether [16]. This guide objectively compares the performance of the knowledge-driven approach against these and other alternatives.

Performance Comparison of DBTL Methodologies

The table below summarizes the key characteristics and performance outcomes of different DBTL methodologies as applied in recent strain engineering research.

Table 1: Comparative Performance of DBTL Cycle Methodologies in Strain Engineering

| DBTL Methodology | Key Differentiating Feature | Reported Application / Product | Performance Outcome / Improvement | Cycle Efficiency / Key Advantage |
|---|---|---|---|---|
| Knowledge-Driven DBTL | Upstream in vitro investigation using cell lysates [5] | Dopamine production in E. coli [5] | 69.03 ± 1.2 mg/L (34.34 ± 0.59 mg/g biomass); 2.6-fold and 6.6-fold improvement over previous state-of-the-art in vivo production [5] | Provides mechanistic understanding before first in vivo cycle; efficient translation from in vitro to in vivo [5] |
| Traditional DBTL (Biofoundry) | Fully automated, high-throughput in vivo cycling [18] | 10 target molecules for DARPA challenge [18] | Successful production for 6/10 target molecules within 90 days [18] | High-throughput capability for rapid, large-scale prototyping [18] |
| LDBT (AI-First) | Machine Learning precedes Design ("Learning-Design-Build-Test") [16] | Protein engineering (e.g., hydrolases, antimicrobial peptides) [16] | Enables zero-shot prediction; nearly 10-fold increase in protein design success rates in some cases [16] | Potential for single-cycle success; leverages large biological datasets for prediction [16] |
| Bio-Intelligent DBTL (biDBTL) | Integration of AI, biosensors, and digital twins [12] | Terpenes, polyolefins, and methylacrylate production in P. putida [12] | Aims to increase speed and success rate via hybrid learning (project active) [12] | Enables hybrid learning for autonomous, self-controlled bioprocesses [12] |
| Iterative DBTL (iGEM) | Sequential, problem-solving cycles with protocol adjustments [8] | Cell-free arsenic biosensor [8] | Achieved a dynamic range of 5–100 ppb arsenic after 7 major cycle iterations [8] | Adaptable to constraints; enables pivots based on new insights and technical hurdles [8] |

Experimental Protocols for Key Methodologies

Core Protocol: Knowledge-Driven DBTL for Metabolite Production

The following protocol details the key experimental steps for implementing a knowledge-driven DBTL cycle, as used for optimizing dopamine production in E. coli [5].

Table 2: Key Research Reagent Solutions for Knowledge-Driven DBTL

| Reagent / Material | Function in the Protocol |
|---|---|
| E. coli FUS4.T2 | Engineered production host strain with high L-tyrosine production [5]. |
| pJNTN Plasmid System | Vector used for constructing plasmids for the crude cell lysate system and library construction [5]. |
| hpaBC and ddc Genes | Genes encoding the key pathway enzymes: HpaBC (from E. coli) converts L-tyrosine to L-DOPA, and Ddc (from Pseudomonas putida) converts L-DOPA to dopamine [5]. |
| Crude Cell Lysate System | In vitro system derived from cell lysates, supplying metabolites and energy equivalents to test enzyme expression and pathway functionality bypassing whole-cell constraints [5]. |
| Phosphate Reaction Buffer | Buffer (50 mM, pH 7) supplemented with FeCl₂, vitamin B6, and pathway precursors (L-tyrosine or L-DOPA) to support the enzymatic reactions in the lysate system [5]. |
| Minimal Medium | Defined medium for cultivation experiments, containing glucose, salts, MOPS, trace elements, and appropriate antibiotics and inducers [5]. |

1. Upstream In Vitro Investigation (Knowledge Generation):

- Prepare Crude Cell Lysate: Generate a cell-free protein synthesis (CFPS) system from crude cell lysates of the production host (E. coli FUS4.T2) to supply essential metabolites and energy [5].

- In Vitro Pathway Assembly: Express the key enzymes (HpaBC and Ddc) individually or together in the CFPS system using appropriate plasmids (e.g., pJNTNhpaBC, pJNTNddc) [5].

- Reaction and Analysis: Incubate the expressed enzymes in a reaction buffer containing L-tyrosine. Quantify the production of L-DOPA and dopamine using analytical methods like HPLC to determine the optimal relative expression levels and enzyme kinetics in vitro [5].

2. Design & Build (Translation to In Vivo):

- RBS Library Design: Based on the in vitro results, design a library of ribosome binding site (RBS) sequences to fine-tune the translation initiation rates of hpaBC and ddc in the bicistronic operon in vivo. Modulation can focus on the Shine-Dalgarno sequence to alter RBS strength without introducing complex secondary structures [5].

- Strain Construction: Use high-throughput molecular biology techniques to assemble the RBS library into the production strain. Automated platforms can be employed for this build phase [5].

3. Test & Learn (In Vivo Validation and Iteration):

- High-Throughput Screening: Cultivate the library of engineered strains in minimal medium and screen for dopamine production [5].

- Data Analysis: Identify top-performing strains. Analyze the sequence-function relationship of the RBS variants, for instance, correlating GC content of the Shine-Dalgarno sequence with dopamine yield [5].

- Cycle Iteration: The learning from this first in vivo cycle can be used to design a more refined RBS library for further optimization if necessary [5].
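The Design and Learn steps above can be illustrated with a minimal sketch, assuming a degenerate Shine-Dalgarno template and a small screening readout. The template `AGGNNG`, the helper names, and the values in `mock_titers` are hypothetical placeholders chosen for illustration; they are not sequences or measurements from the study [5].

```python
from itertools import product
from statistics import mean


def sd_variants(template: str, alphabet: str = "ACGT") -> list[str]:
    """Expand every 'N' in a Shine-Dalgarno template into all four bases."""
    slots = [alphabet if base == "N" else base for base in template]
    return ["".join(combo) for combo in product(*slots)]


def gc_content(seq: str) -> float:
    """Fraction of G and C bases in a sequence."""
    return sum(base in "GC" for base in seq) / len(seq)


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient of two equal-length samples."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


# Design: hypothetical template with two randomized positions -> 4^2 variants.
library = sd_variants("AGGNNG")

# Learn: placeholder readout in arbitrary units (NOT measured data).
mock_titers = {"AGGAAG": 1.0, "AGGAGG": 2.5, "AGGCGG": 3.0, "AGGGCG": 3.5}

gc_values = [gc_content(seq) for seq in mock_titers]
r = pearson(gc_values, list(mock_titers.values()))
print(f"{len(library)} variants designed; GC-vs-titer Pearson r = {r:.2f}")
```

With real screening data, the same correlation would indicate whether GC content of the Shine-Dalgarno sequence is a useful design variable for the next library; the sign and size of `r` here reflect only the placeholder values.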

Contrasting Protocol: LDBT for Protein Engineering

For comparison, the LDBT (Learn-Design-Build-Test) cycle employs a different starting point, as seen in AI-driven protein engineering [16].

1. Learn (Model-Based Knowledge Generation):

- Utilize pre-trained protein language models (e.g., ESM, ProGen) or structure-based models (e.g., ProteinMPNN, MutCompute) to "learn" from vast datasets of protein sequences and structures. These models predict sequences that will fold into desired structures or possess enhanced properties like stability or activity, often in a "zero-shot" manner without project-specific training data [16].

2. Design:

- The output from the machine learning models serves as the design, generating a list of candidate protein sequences expected to perform the desired function [16].

3. Build & Test:

- Build: Synthesize the DNA sequences encoding the top AI-designed protein variants. Cell-free expression systems are particularly advantageous here for rapid, high-throughput synthesis without cloning [16].

- Test: Express the proteins and screen for the target function (e.g., enzyme activity, binding affinity). Microfluidics and liquid handling robots can screen thousands of variants, generating large datasets [16].
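The learn-first ordering can be sketched as follows: candidates are scored before anything is built, and only a shortlist proceeds to Build and Test. This is a minimal sketch under stated assumptions. `placeholder_score` is a hypothetical stand-in for a model score (for example, a likelihood from a protein language model); it is not a real predictor and does not call any real model API, and the parent sequence is invented for illustration.

```python
from typing import Callable

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def single_site_variants(parent: str) -> list[str]:
    """Enumerate every single amino-acid substitution of the parent."""
    variants = []
    for pos, wild_type in enumerate(parent):
        for aa in AMINO_ACIDS:
            if aa != wild_type:
                variants.append(parent[:pos] + aa + parent[pos + 1:])
    return variants


def placeholder_score(seq: str) -> float:
    """Hypothetical stand-in for a model score: fraction of hydrophobic residues.

    A real LDBT workflow would replace this with a trained model's output.
    """
    return sum(aa in "AILMFVW" for aa in seq) / len(seq)


def select_for_build(
    parent: str, score_fn: Callable[[str], float], top_k: int
) -> list[str]:
    """Learn/Design: rank all single-site variants and keep the top_k."""
    ranked = sorted(single_site_variants(parent), key=score_fn, reverse=True)
    return ranked[:top_k]


parent = "MKTAYG"  # hypothetical 6-residue peptide, for illustration only
shortlist = select_for_build(parent, placeholder_score, top_k=5)
print(shortlist)  # sequences that would be sent to Build and Test
```

The design choice worth noting is that the scoring function is passed in as a parameter, so the same selection step works unchanged whichever predictive model supplies the scores.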

Diagram 1: Workflow comparison of DBTL methodologies.

Comparative Analysis and Strategic Application

The performance data and protocols reveal a clear trade-off between the depth of preliminary mechanistic knowledge and the speed of testing hypotheses. The knowledge-driven approach, with its upstream in vitro phase, provides a strong foundational understanding of pathway kinetics and enzyme interactions, which can de-risk subsequent in vivo engineering and lead to highly efficient strains, as demonstrated by the 2.6-fold and 6.6-fold improvements in dopamine production [5]. In contrast, the LDBT and high-throughput biofoundry models prioritize scale and speed: LDBT front-loads model predictions to narrow the design space before any construct is built, while biofoundries test thousands of designs in parallel to converge on a solution [16] [18].

The choice of DBTL strategy should be guided by project goals:

- Use Knowledge-Driven DBTL when working with novel pathways or chassis hosts where mechanistic understanding is poor, when project goals require high product titers and efficient resource use, and when cell-free and automated screening capabilities are available.

- Employ LDBT or Automated DBTL when extensive, high-quality datasets or robust predictive models already exist for the system (e.g., for well-characterized proteins or hosts), when the primary goal is to explore a vast design space rapidly, and for applications like protein engineering where zero-shot AI models have shown strong performance [16].

- Adopt Iterative DBTL for projects with frequently changing requirements, significant technical unknowns, or when working under specific constraints (e.g., safety regulations requiring a pivot from GMO to cell-free systems) [8].

Diagram 2: Knowledge-driven DBTL workflow for strain engineering.

The knowledge-driven DBTL cycle, characterized by its strategic upstream use of in vitro investigations, has proven to be a highly effective strategy for strain engineering, achieving multi-fold improvements in product yield as demonstrated in dopamine production. Its performance is competitive when compared to other modern approaches like LDBT and automated biofoundry cycles, with each methodology offering distinct advantages depending on the specific research context, availability of pre-existing data, and project objectives. The future of strain engineering likely lies in the flexible integration of these approaches, such as combining the mechanistic insights from knowledge-driven methods with the predictive power of AI from the LDBT paradigm, to further accelerate the development of robust microbial cell factories.

Robotic platforms have become indispensable in synthetic biology and strain engineering, fundamentally accelerating the Design-Build-Test-Learn (DBTL) cycle. In the Build and Test phases, high-throughput automation enables the rapid construction and evaluation of thousands of genetic variants, transforming the efficiency and scale of biological research. This guide compares the performance of different automation approaches and provides a detailed look at the methodologies empowering modern drug discovery and microbial engineering.

Robotic Platforms and Their Core Components

At the heart of high-throughput automation are integrated systems that handle repetitive laboratory tasks with precision and minimal human intervention. These platforms are particularly crucial for high-throughput screening (HTS), which allows for the simultaneous testing of hundreds of thousands of compounds or genetic constructs against biological targets [25].

Key Robotic Modules and Their Functions

The functionality of a robotic platform depends on the integration of several core modules [25] [26]:

| Module Type | Primary Function in HTS | Key Requirement |
|---|---|---|
| Liquid Handler | Precise fluid dispensing and aspiration | Sub-microliter accuracy; low dead volume |
| Plate Incubator | Temperature and atmospheric control | Uniform heating across microplates |
| Microplate Reader | Signal detection (e.g., fluorescence, luminescence) | High sensitivity and rapid data acquisition |
| Plate Washer | Automated washing cycles | Minimal residual volume and cross-contamination control |
| Robotic Arm | Moves microplates between modules | High precision and reliability for continuous operation |

These modules are orchestrated by sophisticated scheduling software, which acts as the central nervous system of the operation, managing the timing and sequencing of all actions to enable continuous, 24/7 operation [25].
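What such scheduling software does can be sketched with a simple greedy scheduler. This is a simplified illustration, not the algorithm of any particular vendor: it assumes each module handles one plate at a time, ignores robotic-arm transfer time, and uses hypothetical step durations in minutes.

```python
def schedule(plates: list[str], steps: list[tuple[str, int]]) -> dict:
    """Greedily assign start and end times for each plate on each module.

    steps is an ordered list of (module, duration_min). Each module is
    treated as a single-plate resource (a simplifying assumption).
    Returns {plate: [(module, start, end), ...]}.
    """
    module_free_at = {module: 0 for module, _ in steps}
    timeline = {}
    for plate in plates:
        plate_ready_at = 0
        entries = []
        for module, duration in steps:
            # A step starts once both the plate and the module are free.
            start = max(plate_ready_at, module_free_at[module])
            end = start + duration
            module_free_at[module] = end
            plate_ready_at = end
            entries.append((module, start, end))
        timeline[plate] = entries
    return timeline


# Hypothetical workflow: dispense, incubate, read.
workflow = [("liquid_handler", 2), ("incubator", 30), ("reader", 5)]
timeline = schedule(["P1", "P2"], workflow)
for plate, entries in timeline.items():
    print(plate, entries)
```

In this toy workflow the incubator is the bottleneck: the second plate is dispensed early but waits for the incubator to free up. Production schedulers handle multi-plate incubators, transfer times, and error recovery, which this sketch omits.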

Performance Comparison of Automation Approaches

The implementation of automation in the DBTL cycle can be categorized into traditional large-scale systems and more flexible, collaborative robots ("cobots"). The table below summarizes their key performance characteristics based on current market and research trends [27]:

| Feature | Traditional Robotic Systems | Collaborative Robots (Cobots) |
|---|---|---|
| Throughput | Very high, ideal for large, fixed workflows | High, but more suited for batch processing and dynamic workflows |
| Precision & Stability | Excellent; known for consistent performance in repetitive tasks | High precision, with advanced sensors for interactive tasks |
| Flexibility & Deployment | Lower; often require fixed, isolated workcells | High; user-friendly, quick to deploy, and can work alongside humans |
| Typical Workflow Integration | Deeply integrated, end-to-end automation systems | Easily integrated into existing lab infrastructure without major overhaul |
| Ideal Use Case | Large-scale, unchanging HTS protocols for lead compound identification | Agile labs, specialized multi-step assays, and R&D with frequently changing protocols |

Supporting Experimental Data: A fully integrated robotic system at the National Institutes of Health's Chemical Genomics Center (NCGC) exemplifies the power of traditional systems. This platform, which includes online compound library storage carousels and multifunctional reagent dispensers, is designed for quantitative HTS (qHTS) [26]. In this paradigm, each compound in a library is tested at multiple concentrations, generating full concentration-response curves. This system has the capacity to store over 2.2 million compound samples and has generated over 6 million concentration-response curves from more than 120 assays in a three-year period, demonstrating immense productivity and reliability [26].
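To make the qHTS idea concrete, the sketch below generates a titration series and evaluates a four-parameter Hill model, a common way to describe concentration-response curves. The source does not state which curve model the NCGC platform uses, so the model choice, the parameter names, and all numeric values here are assumptions for illustration.

```python
def dilution_series(top_conc: float, fold: float, n_points: int) -> list[float]:
    """Concentrations for a serial dilution, highest first."""
    return [top_conc / fold**i for i in range(n_points)]


def hill_response(
    conc: float, bottom: float, top: float, ac50: float, hill_slope: float
) -> float:
    """Four-parameter Hill model: response at a given concentration.

    At conc == ac50 the response is halfway between bottom and top.
    """
    return bottom + (top - bottom) / (1 + (ac50 / conc) ** hill_slope)


# Hypothetical compound: 7-point, 5-fold titration starting at 50 uM.
concentrations = dilution_series(top_conc=50.0, fold=5.0, n_points=7)
curve = [
    hill_response(c, bottom=0.0, top=100.0, ac50=2.0, hill_slope=1.0)
    for c in concentrations
]
for c, resp in zip(concentrations, curve):
    print(f"{c:10.4f} uM -> {resp:6.2f} % activity")
```

Testing every compound across such a series is what turns a single-point hit call into a full curve, at the cost of several wells per compound.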

Experimental Protocol: A DBTL Case Study in Strain Engineering

The following workflow and detailed methodology are based on a published study that used a knowledge-driven DBTL cycle to optimize dopamine production in E. coli [5] [28]. This case provides a concrete example of how automation is applied in the Build and Test phases.

Diagram: DBTL cycle with in vitro kinetics.

Detailed Experimental Methodology

1. Upstream In Vitro Investigation (Informing the Design Phase)

- Objective: To inform the initial Design by determining the optimal relative expression levels of enzymes (HpaBC and Ddc) in the dopamine pathway without the complexities of a living cell [5].

- Protocol:

- Cell-Free Protein Synthesis (CFPS): The genes encoding the key enzymes, HpaBC and Ddc, were individually cloned into plasmids and expressed in a crude E. coli cell lysate system. This system provides the necessary machinery for transcription and translation [5].

- Reaction Buffer: The CFPS reactions were run in a phosphate buffer (pH 7) supplemented with 0.2 mM FeCl₂, 50 µM vitamin B6, and the precursor molecule, L-tyrosine (1 mM) or L-DOPA (5 mM) [5].