Predictive Power: How Computational Models Are Decoding Cell Self-Organization and Morphogenesis

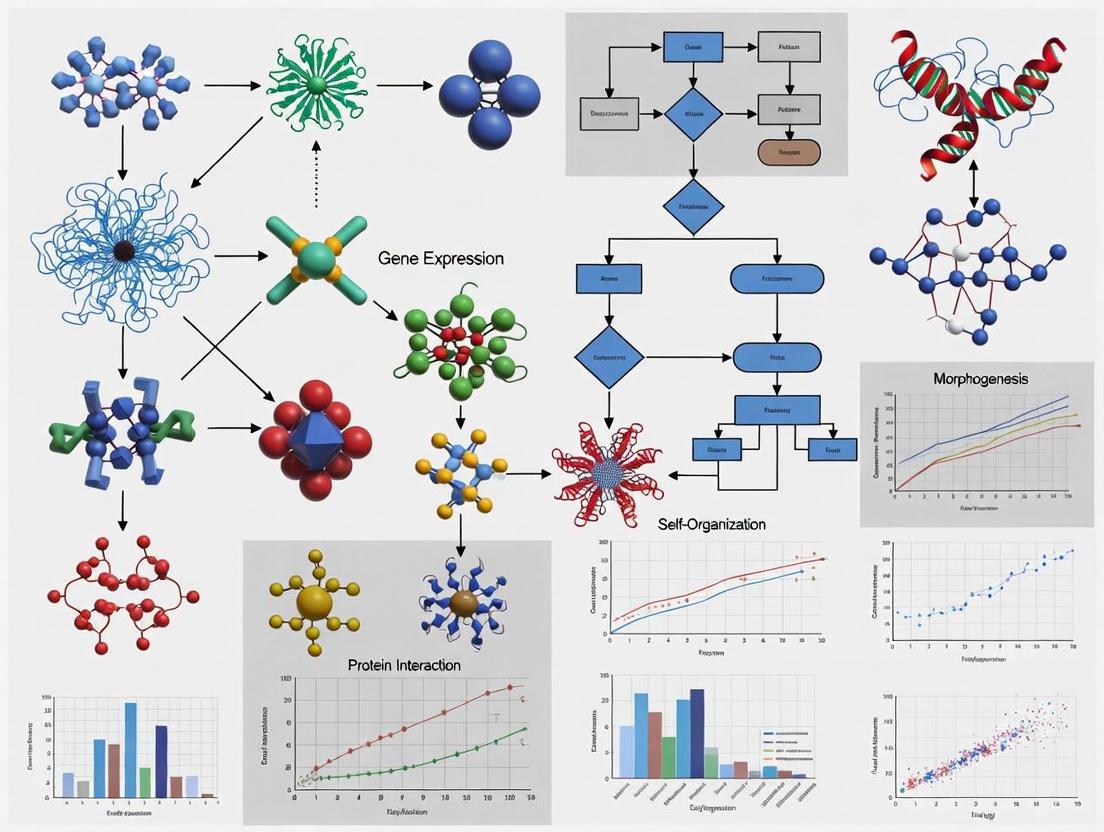


Abstract

This article explores the transformative role of computational models in predicting and understanding cell self-organization and morphogenesis—the process by which cells form complex tissues and organs. Aimed at researchers, scientists, and drug development professionals, it provides a comprehensive overview from foundational theories to cutting-edge applications. We examine the core physical and biochemical principles that models encapsulate, detail a spectrum of methodological approaches from continuum mechanics to deep learning, and address key challenges in model optimization and validation. By synthesizing insights from recent advances, this article serves as a guide for leveraging computational predictions to enhance tissue engineering, drug development, and the fundamental understanding of developmental biology.

The Blueprint of Life: Core Principles Governing Morphogenesis

In the developing embryo, tissues differentiate, deform, and move in an orchestrated manner to generate various biological shapes. This process, known as morphogenesis, is driven by the complex interplay between genetic, epigenetic, and environmental factors. A resurgence of interest in recent decades has solidified the understanding that mechanical forces are not merely a passive outcome but a primary driver and regulator of embryonic development [1]. Biomechanical forces form the critical bridge that connects genetic and molecular-level events to tissue-level deformations, ultimately sculpting the embryo [1]. Furthermore, feedback from the cellular mechanical environment actively influences gene expression and cell differentiation, creating a dynamic bidirectional relationship between mechanics and biology [1].

The emergence of sophisticated computational models has revolutionized our ability to study and understand these mechanical processes. These models provide a quantitative, unbiased framework for testing physical mechanisms and generating experimentally verifiable predictions [1]. This knowledge is invaluable for biomedical researchers aiming to prevent and treat congenital malformations, as well as for tissue engineers working to create functional replacement tissues. This review explores the fundamental mechanical theories of morphogenesis, examines specific developmental processes, details experimental methodologies, and discusses emerging computational frameworks that are pushing the boundaries of predictive developmental biology.

Fundamental Mechanical Theories of Morphogenesis

Continuum Mechanics and Tissue Material Properties

The mechanical behavior of embryonic tissues is predominantly analyzed using continuum mechanics, in which tissue is treated as a continuous material rather than as discrete cells. This framework centers on the concepts of stress (force per unit area) and strain (relative change in length or angle), which must obey equilibrium, geometric compatibility, mass conservation, and constitutive (stress-strain) equations [1]. The earliest quantitative theories of morphogenesis were largely biochemical rather than mechanical; the best known is Turing's reaction-diffusion model, which proposed that spatial patterns emerge from interactions between a short-range activator and a long-range inhibitor morphogen [1].

Oster, Murray, and colleagues presented a continuum mechanics formulation that integrated both mechanical and chemical phenomena, providing a comprehensive framework for analyzing tissue deformation [1]. This approach recognizes that developmental processes must simultaneously obey the laws of mechanics, thermodynamics, and biochemistry.

The Differential Adhesion Hypothesis

The Differential Adhesion Hypothesis (DAH), proposed by Steinberg, explains cell sorting phenomena through physical principles. When embryonic cells are disaggregated and allowed to recoalesce, they behave similarly to immiscible fluids, sorting into distinct homogeneous clusters with one cell type often engulfing another [1]. This behavior is governed by differences in cell-cell adhesion, with cell mixtures undergoing phase separation to achieve minimum interfacial and surface free energies [1]. The DAH has been experimentally validated across numerous systems and has been supported by multiple computer simulations.
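The energy-minimization argument behind the DAH can be illustrated with a toy lattice simulation. The sketch below is not Steinberg's formulation or any published simulation; it assumes two cell types on a periodic square lattice, a single hypothetical energy penalty `J_hetero` for every unlike contact, and greedy neighbour swaps that are kept only when they do not raise the total interfacial energy.

```python
import random

def interface_energy(grid, J_hetero=1.0):
    """Total energy: J_hetero for every unlike nearest-neighbour contact (periodic lattice)."""
    n = len(grid)
    energy = 0.0
    for i in range(n):
        for j in range(n):
            if grid[i][j] != grid[(i + 1) % n][j]:
                energy += J_hetero
            if grid[i][j] != grid[i][(j + 1) % n]:
                energy += J_hetero
    return energy

def sort_cells(n=10, sweeps=50, seed=0):
    """Greedy (zero-temperature) neighbour swaps on an n x n lattice, n even."""
    rng = random.Random(seed)
    cells = [0, 1] * (n * n // 2)          # equal numbers of the two cell types
    rng.shuffle(cells)                     # start from a random mixture
    grid = [cells[i * n:(i + 1) * n] for i in range(n)]
    e_start = energy = interface_energy(grid)
    for _ in range(sweeps * n * n):
        i, j = rng.randrange(n), rng.randrange(n)
        di, dj = rng.choice([(0, 1), (1, 0), (0, -1), (-1, 0)])
        k, l = (i + di) % n, (j + dj) % n
        if grid[i][j] == grid[k][l]:
            continue                       # swapping like cells changes nothing
        grid[i][j], grid[k][l] = grid[k][l], grid[i][j]
        trial = interface_energy(grid)
        if trial <= energy:
            energy = trial                 # keep swaps that do not raise the energy
        else:
            grid[i][j], grid[k][l] = grid[k][l], grid[i][j]  # undo the swap
    return e_start, energy, grid
```

Calling `sort_cells()` returns the interfacial energy before and after sorting together with the final lattice. Because swaps only exchange positions, cell numbers are conserved and the energy can never increase, which mirrors the phase-separation picture described above. Published models of cell sorting typically allow thermal fluctuations and distinct adhesion energies per cell-type pair, which this sketch omits.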

Computational Modeling Approaches

Computational models have largely replaced physical models for testing hypotheses about mechanical forces in development. These approaches range from simple networks of elastic elements (springs), viscous elements (dashpots), and contractile elements to sophisticated continuum models [1]. The choice of model depends on the specific research question and the level of complexity required to capture essential behaviors while remaining computationally tractable.
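As a concrete instance of the simplest model class mentioned here, the following sketch integrates a single Kelvin-Voigt element (a spring of stiffness `E` in parallel with a dashpot of viscosity `eta`) under a constant applied stress. The parameter names and the choice of explicit Euler integration are illustrative assumptions, not taken from the cited work.

```python
import math

def kelvin_voigt_creep(stress, E, eta, dt, steps):
    """Explicit-Euler integration of eta * d(strain)/dt = stress - E * strain."""
    strain = 0.0
    history = [strain]
    for _ in range(steps):
        strain += dt * (stress - E * strain) / eta
        history.append(strain)
    return history

def kelvin_voigt_exact(stress, E, eta, t):
    """Closed-form creep response for a stress applied at t = 0."""
    return (stress / E) * (1.0 - math.exp(-E * t / eta))
```

The strain relaxes toward `stress / E` with time constant `eta / E`, so comparing `kelvin_voigt_creep(...)[-1]` against `kelvin_voigt_exact(...)` at the same final time is a quick check on the time step. Networks of such elements, with added contractile terms, are the building blocks of the discrete tissue models listed in the table that follows.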

Table: Fundamental Theories in Developmental Mechanics

| Theory/Model | Key Principles | Biological Applications |
|---|---|---|
| Continuum Mechanics | Treats tissues as continuous materials; analyzes stress, strain, and material properties | Tissue deformation, bending, folding during neurulation and organogenesis |
| Differential Adhesion Hypothesis (DAH) | Cell sorting driven by interfacial tension and adhesion energy minimization | Cell sorting, tissue boundary formation, germ layer organization |
| Reaction-Diffusion (Turing Patterns) | Pattern formation via interacting morphogens (short-range activator, long-range inhibitor) | Periodic patterning, digit formation, hair follicle spacing |
| Spring-Dashpot Models | Discrete element modeling of cell networks using mechanical analogs | Epithelial sheet deformation, cell packing, collective cell migration |

Mechanical Forces in Specific Developmental Processes

Gastrulation

Gastrulation represents a pivotal event in embryonic development where extensive cell rearrangements establish the three germ layers. Recent research on chick gastrulation has revealed a novel form of collective migration where mesenchymal cells self-organize into a dynamic meshwork structure while moving away from the primitive streak [2]. Through live imaging and topological data analysis, researchers observed that these highly motile mesenchymal cells maintain connections and coordinate their movements despite their dispersed nature.

The formation of this meshwork structure depends on several key parameters, as identified by agent-based theoretical models: cell elongation, cell-cell adhesion, and cell density [2]. Experimental perturbation of N-cadherin, a cell adhesion molecule, demonstrated its critical role in collective migration. Overexpression of a mutant form of N-cadherin reduced the speed of tissue progression and directionality of collective cell movement, while individual cell speed remained unchanged [2]. This highlights how mechanical interactions between cells, mediated by adhesion molecules, coordinate large-scale tissue movements during gastrulation.

Neurulation

Neurulation involves the folding of the neural plate to form the neural tube, which gives rise to the brain and spinal cord. This process exemplifies how coordinated mechanical forces transform a flat epithelial sheet into a three-dimensional structure. The primary mechanical driver of neurulation is apical constriction, where coordinated contraction of actomyosin networks at the apical surface of neuroepithelial cells creates wedge-shaped cells that promote tissue bending [1].

Mechanical models of neurulation incorporate multiple force-generating mechanisms, including apical constriction, basal tension, and external forces from surrounding tissues. These models treat the neuroepithelium as a continuum material with specific mechanical properties, successfully predicting the formation of neural folds and their eventual fusion into a tube.

Organogenesis

During organogenesis, mechanical forces continue to shape emerging organs through complex interactions between epithelia and mesenchyme. Branching morphogenesis in organs like the lung, kidney, and mammary gland involves repetitive branching and budding driven by a combination of localized proliferation, mechanical tension, and fluid pressure [1].

Computational models have been particularly valuable for understanding how global tissue architecture emerges from local cellular mechanics. These models incorporate feedback between mechanical strain and cell proliferation, where stretched cells proliferate more rapidly, creating a self-reinforcing pattern of branching growth.

Experimental Methodologies for Measuring Developmental Mechanics

Quantifying Cell and Tissue Mechanics

Understanding developmental mechanics requires precise measurement of mechanical properties and forces at cellular and tissue scales. Several advanced techniques have been developed for this purpose:

- Traction Force Microscopy: Measures forces exerted by cells on their substrate using deformable hydrogels with embedded fluorescent beads. As cells exert forces, they deform the substrate, and the displacement of beads allows calculation of traction forces [1].

- Laser Ablation: Uses focused lasers to sever specific cytoskeletal elements or cell-cell junctions, with subsequent recoil velocity providing information about pre-existing tension [1].

- Atomic Force Microscopy (AFM): Employs a microscopic cantilever to probe tissue stiffness at high spatial resolution, providing direct measurements of local mechanical properties [1].

- Fluorescent Tension Sensors: Genetically encoded molecular sensors that change fluorescence properties in response to mechanical tension, allowing visualization of forces across specific proteins in living cells [1].

Live Imaging and Quantitative Analysis

Modern live imaging techniques, particularly light-sheet microscopy and confocal microscopy, enable four-dimensional tracking of cell behaviors during development [2]. When combined with computational approaches like topological data analysis (TDA), these methods can reveal emergent patterns in collective cell migration that might not be apparent through qualitative observation alone [2].
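To give a flavour of what topological data analysis measures, the sketch below computes the simplest topological summary of a set of cell positions: the number of connected components (Betti-0) as a function of a linking radius. This is only an illustration under the assumption that cells are represented as 2D points; the analysis in [2] is not reproduced here, and detecting the loops of a meshwork (Betti-1) requires a full persistent-homology library.

```python
import math

def count_components(points, radius):
    """Number of connected components when points within `radius` are linked."""
    parent = list(range(len(points)))

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]   # path halving keeps trees shallow
            i = parent[i]
        return i

    for i in range(len(points)):
        for j in range(i + 1, len(points)):
            if math.dist(points[i], points[j]) <= radius:
                parent[find(i)] = find(j)   # merge the two clusters
    return len({find(i) for i in range(len(points))})

def betti0_curve(points, radii):
    """Component count at each radius; drops mark the scales at which clusters merge."""
    return [count_components(points, r) for r in radii]
```

For example, four cells at `(0, 0)`, `(1, 0)`, `(5, 0)` and `(6, 0)` form four components at radius 0.5, two at radius 1.0 and one at radius 4.0. The radii at which the count changes are the scale-dependent features that persistence-based methods track.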

Table: Essential Research Reagents and Tools for Developmental Mechanics

| Reagent/Tool | Category | Primary Function | Example Application |
|---|---|---|---|
| N-cadherin Mutants | Molecular Tool | Perturb cell-cell adhesion | Test adhesion role in collective migration [2] |
| FRET-based Tension Sensors | Biosensor | Visualize mechanical tension across proteins | Measure forces across cell junctions in live embryos |
| Deformable Hydrogels | Substrate | Quantify cellular traction forces | Traction force microscopy for cell-ECM forces [1] |
| Photoactivatable Proteins | Optogenetic Tool | Spatiotemporally control protein activity | Precisely manipulate contractility in specific cells |
| Topological Data Analysis (TDA) | Computational Method | Identify patterns in complex cell movements | Analyze meshwork formation in migrating mesoderm [2] |

Emerging Computational Frameworks

Automatic Differentiation for Morphogenesis

A groundbreaking computational framework recently developed by Harvard applied physicists uses automatic differentiation—a technique originally developed for training neural networks—to decipher the rules of cellular self-organization [3] [4]. This approach treats the control of cellular organization and morphogenesis as an optimization problem that can be solved with powerful machine learning tools [3].

The framework learns genetic networks that guide cell behavior, including chemical signaling and physical forces like adhesion and repulsion [3]. Automatic differentiation enables the computer to efficiently compute the precise effect that small changes in any part of the gene network would have on the behavior of the entire cell collective [4]. This represents a significant advancement over traditional trial-and-error approaches in tissue engineering.
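The core idea of automatic differentiation can be shown in a few lines. The sketch below uses forward-mode differentiation with dual numbers, chosen here for brevity; neural-network frameworks generally use reverse mode, and nothing below is taken from the framework described in [3] [4]. The one-gene response function `gene_response` and its parameters are hypothetical.

```python
import math

class Dual:
    """A value paired with its derivative with respect to one chosen input."""
    def __init__(self, val, der=0.0):
        self.val, self.der = val, der

    @staticmethod
    def _lift(other):
        return other if isinstance(other, Dual) else Dual(other)

    def __add__(self, other):
        o = Dual._lift(other)
        return Dual(self.val + o.val, self.der + o.der)
    __radd__ = __add__

    def __mul__(self, other):
        o = Dual._lift(other)
        # product rule: (uv)' = u'v + uv'
        return Dual(self.val * o.val, self.der * o.val + self.val * o.der)
    __rmul__ = __mul__

def tanh(x):
    """tanh with the chain rule applied to the derivative part."""
    t = math.tanh(x.val)
    return Dual(t, (1.0 - t * t) * x.der)

def gene_response(weight, signal=0.8, bias=-0.2):
    """Hypothetical one-gene response: tanh(weight * signal + bias)."""
    return tanh(weight * signal + bias)

# Seed the derivative of `weight` with 1 to obtain d(response)/d(weight).
out = gene_response(Dual(1.5, 1.0))
```

After this runs, `out.val` holds the response and `out.der` its exact sensitivity to the weight, obtained without finite differences. Scaling the same bookkeeping to every weight of a gene network and every step of a simulation is what makes gradient-based optimization of cell collectives feasible.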

Predictive Model Inversion for Tissue Engineering

A particularly powerful aspect of this new computational approach is its potential for inversion. As explained by researchers, "Once you have a model that can predict what happens when you have a certain combination of cells, genes or molecules that interact, can we then invert that model and say, 'We want these cells to come together and do this particular thing. How do we program them to do that?'" [3]. This capability could ultimately enable researchers to design living tissues with specific functions or shapes by working backward from a desired outcome to determine the necessary cellular programming [4].

The long-term goal of this research is to achieve predictive control sufficient to engineer the growth of organs—considered the holy grail of computational bioengineering [4]. While currently a proof of concept, these methods could eventually be combined with experimental approaches to understand and control how organisms develop from the cellular level.

Mechanical forces play a fundamental and indispensable role in shaping the developing embryo, from large-scale tissue deformations during gastrulation and neurulation to the intricate patterning of organs. The integration of mechanical theories with advanced computational models provides a powerful framework for deciphering the complex physical principles governing morphogenesis. Emerging approaches, particularly those leveraging automatic differentiation and machine learning, offer promising paths toward predictive control of tissue development and regeneration. As these computational methods become increasingly sophisticated and integrated with experimental data, they hold the potential to transform our ability to engineer tissues and organs, advancing both regenerative medicine and our fundamental understanding of life's physical blueprint.

The emergence of complex biological patterns from homogeneous beginnings represents one of the most fundamental problems in developmental biology. At the heart of this process lies a sophisticated biochemical landscape where morphogens—signaling molecules that dictate cell fate based on concentration—interact through reaction-diffusion systems to create the intricate structures observed in living organisms. Alan Turing's seminal 1952 paper, "The Chemical Basis of Morphogenesis," first proposed that simple physical laws could explain biological pattern formation through the interaction of diffusing morphogens [5]. His revolutionary insight was that diffusion, typically considered a stabilizing process, could actually destabilize a homogeneous equilibrium and drive pattern formation when coupled with appropriate chemical reactions [5] [6].

Seventy years later, Turing's theoretical framework has evolved into a robust field of computational biology that seeks to predict and control cellular self-organization. Modern approaches integrate mathematical modeling with experimental data to reverse-engineer the rules governing morphogenesis [3] [7]. This whitepaper examines the core principles of Turing patterns, morphogen dynamics, and reaction-diffusion systems within the context of contemporary computational models for predicting cell self-organization, with particular emphasis on applications in drug development and regenerative medicine [8] [9].

Theoretical Foundations: From Turing's Insight to Modern Pattern Formation

Turing's Reaction-Diffusion Theory

Alan Turing's groundbreaking work demonstrated that pattern formation could arise spontaneously from the interaction of two morphogens with different diffusion rates. He showed that a stable homogeneous steady state could become unstable when diffusion is introduced, leading to spontaneous pattern formation—a process now known as diffusion-driven instability [5] [6]. Turing's model proposed that morphogen gradients emerge from local sources and move through tissues, creating concentration gradients that establish positional information for developing cells [5].

The mathematical foundation of Turing's model consists of a system of partial differential equations that describe the spatial and temporal evolution of morphogen concentrations:

$$\frac{\partial U}{\partial t} = F(U,V) + D_u \nabla^2 U$$

$$\frac{\partial V}{\partial t} = G(U,V) + D_v \nabla^2 V$$

Where $U$ and $V$ represent morphogen concentrations, $F$ and $G$ define their reaction kinetics, $D_u$ and $D_v$ are diffusion coefficients, and $\nabla^2$ is the Laplacian operator describing diffusion [10]. Turing's key insight was that when one morphogen acts as an activator and the other as an inhibitor, with the inhibitor diffusing faster than the activator, small random fluctuations can amplify into stable, spatially periodic patterns [6].
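A minimal numerical sketch of these equations in one spatial dimension is given below. It assumes a uniform grid with periodic boundaries and forward-Euler time stepping, both chosen for simplicity; the function names are illustrative, and the reaction terms `F` and `G` are passed in as ordinary Python functions.

```python
def laplacian_1d(field, dx):
    """Second-difference approximation of the Laplacian with periodic boundaries."""
    n = len(field)
    return [(field[(i - 1) % n] - 2.0 * field[i] + field[(i + 1) % n]) / dx ** 2
            for i in range(n)]

def rd_step(U, V, F, G, Du, Dv, dx, dt):
    """One forward-Euler step of dU/dt = F + Du*lap(U) and dV/dt = G + Dv*lap(V)."""
    lap_u, lap_v = laplacian_1d(U, dx), laplacian_1d(V, dx)
    U_new = [u + dt * (F(u, v) + Du * lu) for u, v, lu in zip(U, V, lap_u)]
    V_new = [v + dt * (G(u, v) + Dv * lv) for u, v, lv in zip(U, V, lap_v)]
    return U_new, V_new
```

With the reaction terms set to zero the step reduces to pure diffusion, which conserves the total amount of each morphogen on a periodic domain. The explicit scheme is only stable when `dt` is at most `dx**2 / (2 * max(Du, Dv))`, so a fast-diffusing inhibitor forces small time steps.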

The Activator-Inhibitor Principle

While Turing's original equations were mathematically elegant, they had biological limitations, including the potential for negative concentrations that lacked physical meaning [6]. In 1972, Gierer and Meinhardt refined Turing's concept by explicitly formulating the conditions for pattern formation: local self-enhancement coupled with long-range inhibition [6]. This activator-inhibitor principle states that pattern formation requires three ingredients:

- An activator morphogen undergoes an autocatalytic feedback loop that amplifies its own production

- The activator stimulates production of an inhibitor morphogen

- The inhibitor diffuses more rapidly than the activator and suppresses activator production

This mechanism generates stable patterns from random fluctuations because any small local increase in activator concentration self-amplifies while simultaneously producing inhibitor that spreads to prevent similar activation in neighboring regions [6]. The resulting patterns can take the form of spots, stripes, or gradients depending on system parameters, domain size, and boundary conditions.
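The requirement that the inhibitor diffuse sufficiently faster than the activator can be made quantitative with the standard linear-stability conditions for diffusion-driven instability. The sketch below applies them to a commonly used simplified form of activator-inhibitor kinetics, f = a²/h − μa and g = a² − νh, which is an assumption made here for illustration and not necessarily the exact form used in [6]; `mu` and `nu` are the hypothetical decay rates of activator and inhibitor.

```python
def turing_unstable(fa, fh, ga, gh, Da, Dh):
    """Linear-stability test for diffusion-driven instability.

    fa, fh, ga, gh are partial derivatives of the reaction terms at the
    homogeneous steady state; Da and Dh are the diffusion coefficients.
    """
    trace, det = fa + gh, fa * gh - fh * ga
    stable_without_diffusion = trace < 0 and det > 0
    cross = Dh * fa + Da * gh
    unstable_with_diffusion = cross > 0 and cross ** 2 > 4 * Da * Dh * det
    return stable_without_diffusion and unstable_with_diffusion

def activator_inhibitor_jacobian(mu, nu):
    """Jacobian of f = a^2/h - mu*a, g = a^2 - nu*h at a0 = nu/mu, h0 = nu/mu^2."""
    a0, h0 = nu / mu, nu / mu ** 2
    fa = 2.0 * a0 / h0 - mu       # self-enhancement of the activator
    fh = -(a0 / h0) ** 2          # inhibitor suppresses the activator
    ga = 2.0 * a0                 # activator drives inhibitor production
    gh = -nu                      # inhibitor decay
    return fa, fh, ga, gh
```

With `mu = 1` and `nu = 2`, the homogeneous state is stable without diffusion, and `turing_unstable(*activator_inhibitor_jacobian(1.0, 2.0), Da=1.0, Dh=20.0)` evaluates to true, whereas `Dh=5.0` does not: for these kinetics the inhibitor must diffuse roughly twelve times faster than the activator before patterns can grow.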

Table 1: Core Principles of Biological Pattern Formation

| Principle | Mathematical Basis | Biological Requirement | Example System |
|---|---|---|---|
| Local Self-Enhancement | Autocatalytic feedback (e.g., a² term) | Nonlinear production kinetics | Nodal dimer formation [6] |
| Long-Range Inhibition | Higher diffusion coefficient for inhibitor | Rapidly diffusing inhibitor | Lefty2 diffusion [6] |
| Stable Patterning | Non-linear saturation terms | Limited resources or decay mechanisms | Saturated activator production [6] |
| Threshold Response | Switch-like activation | Cooperative binding | Gene regulatory networks [7] |

Computational Frameworks for Predictive Morphogenesis

Modern Optimization Approaches

Recent advances in computational power have enabled new approaches to inverse design in developmental systems. Harvard researchers have developed methods that frame cellular organization as an optimization problem solvable with machine learning tools [3]. Their technique uses automatic differentiation—algorithms originally developed for training deep neural networks—to efficiently compute how small changes in gene networks affect collective cell behavior [3]. This approach allows researchers to discover local interaction rules that yield desired emergent characteristics in growing tissues.

The underlying computational framework models tissues as collections of cells capable of division, growth, mechanical stress sensing, and morphogen secretion/detection [7]. Each cell contains an internal genetic network that processes local environmental information to guide cellular decisions. The entire simulation is designed to be automatically differentiable, enabling gradient-based optimization in high-dimensional parameter spaces that would be intractable with traditional parameter sweep methods [7].

Differentiable Programming for Morphogenesis

Differentiable programming represents a paradigm shift in computational morphogenesis, allowing efficient navigation of complex parameter spaces to discover biological rules. As demonstrated in recent work, this approach can learn gene circuits that control complex developmental processes such as directed axial elongation, cell type homeostasis, and mechanical stress response [7].

The optimization process employs score-function gradient estimators such as REINFORCE to handle the intrinsic stochasticity of proliferation dynamics [7]. Because individual division events are random and discrete, gradients cannot be propagated through them directly; instead, the system gradually learns which division events are most favorable and increases their probability in subsequent simulations. Through this iterative process, the model discovers interpretable genetic networks that reproduce target morphogenetic outcomes, which can then be simplified by removing small-weight connections to highlight the functional backbone [7].
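The REINFORCE idea can be sketched for a single stochastic decision. The toy below is not the training loop of [7]: it assumes one parameter `theta` that sets a division probability through a sigmoid, a made-up reward of 1 whenever the cell divides, and a fixed baseline of 0.5 to reduce variance.

```python
import math
import random

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def train_division_policy(steps=500, lr=0.5, baseline=0.5, seed=0):
    """Learn theta so that p(divide) = sigmoid(theta) maximizes expected reward."""
    rng = random.Random(seed)
    theta = 0.0
    for _ in range(steps):
        p = sigmoid(theta)
        divide = 1 if rng.random() < p else 0      # sampled, non-differentiable event
        reward = 1.0 if divide else 0.0            # toy objective: division is favourable
        score = divide - p                         # d/dtheta of log-prob of the sampled action
        theta += lr * (reward - baseline) * score  # REINFORCE update
    return sigmoid(theta)
```

Starting from a division probability of 0.5, `train_division_policy()` returns a probability close to 1, because every sampled outcome nudges `theta` toward the rewarded action. The gradient never passes through the random draw itself; only the log-probability of the draw is differentiated, which is what makes the estimator usable for discrete events such as cell division.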

Experimental Protocols and Methodologies

In Vitro Reconstitution of Turing Patterns

The experimental validation of Turing patterns has advanced significantly since Turing's theoretical proposal. The following protocol outlines key methodology for establishing and analyzing reaction-diffusion systems in biological contexts:

Protocol 1: Establishing 3D Stem Cell Cultures for Morphogenesis Studies

Cell Aggregate Formation:

- Utilize forced aggregation methods (hanging drop, microwell centrifugation) or self-assembly via random association in bulk suspension cultures to form 3D cellular aggregates [11].

- For pluripotent stem cells (PSCs), form embryoid bodies (EBs) through E-cadherin-mediated self-assembly to create high-density cellular environments that mimic embryonic development [11].

Pattern Induction:

- Induce specific differentiation programs through precise biochemical induction. For example, induction of Rx+ neuroepithelium in 3D PSC spheroids generates spatially distinct patterns resembling the native optic cup [11].

- Modulate Wnt/β-catenin signaling through controlled aggregate assembly kinetics to direct mesoderm differentiation [11].

Pattern Analysis:

- Fix samples at specific timepoints and perform whole-mount immunofluorescence for key morphogens and differentiation markers.

- Quantify pattern periodicity and amplitude using spatial autocorrelation analysis or Fourier transforms.

- Perturb systems through inhibitor dilution or genetic manipulation to test Turing mechanism requirements [6].
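The Fourier-based quantification of pattern periodicity mentioned under Pattern Analysis can be sketched as follows. The example assumes a 1D intensity profile sampled at uniform spacing (for instance, a line scan across an immunofluorescence image); the function name and the direct O(n²) transform are illustrative choices, and a production pipeline would normally use an FFT library.

```python
import cmath
import math

def dominant_wavelength(profile, spacing=1.0):
    """Wavelength of the strongest non-zero Fourier mode of a 1D intensity profile."""
    n = len(profile)
    mean = sum(profile) / n
    centered = [x - mean for x in profile]         # remove the uniform background
    best_k, best_power = 1, -1.0
    for k in range(1, n // 2 + 1):
        coeff = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                    for i, x in enumerate(centered))
        power = abs(coeff) ** 2
        if power > best_power:
            best_k, best_power = k, power
    return n * spacing / best_k
```

For a profile that repeats every 8 samples, such as `[1 + math.sin(2 * math.pi * i / 8) for i in range(64)]`, the function returns a wavelength of 8 sample spacings. Comparing this value between control and perturbed samples gives a simple readout for the perturbation tests listed above.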

Protocol 2: Computational Identification of Turing Parameters

System Calibration:

- Measure diffusion coefficients of candidate morphogens using fluorescence recovery after photobleaching (FRAP) or similar techniques.

- Quantify expression kinetics through live imaging of reporter constructs.

Model Fitting:

- Implement automatic differentiation to efficiently compute parameter sensitivities [3] [7].

- Optimize genetic network parameters using gradient-based methods (e.g., Adam optimizer) to minimize discrepancy between simulated and experimental patterns [7].

- Employ REINFORCE or a similar score-function gradient estimator to handle stochasticity in proliferation dynamics [7].

Validation:

- Test model predictions through targeted genetic perturbations.

- Assess robustness to initial conditions and parameter variations.
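The model-fitting step of Protocol 2 can be illustrated with a one-parameter example. The sketch below fits a hypothetical morphogen decay rate `k` to synthetic data using a hand-written Adam update with the commonly used default hyperparameters; the exponential-decay model, the synthetic data, and the analytic gradient stand in for the simulated patterns and automatic differentiation used in [7].

```python
import math

def loss_and_grad(k, times, data):
    """Squared error between exp(-k t) and data, with its analytic derivative in k."""
    loss = grad = 0.0
    for t, y in zip(times, data):
        pred = math.exp(-k * t)
        loss += (pred - y) ** 2
        grad += 2.0 * (pred - y) * (-t * pred)
    return loss, grad

def adam_fit(times, data, k0=1.0, lr=0.01, steps=3000,
             beta1=0.9, beta2=0.999, eps=1e-8):
    """Minimize the loss over k with the Adam update rule."""
    k, m, v = k0, 0.0, 0.0
    for step in range(1, steps + 1):
        _, g = loss_and_grad(k, times, data)
        m = beta1 * m + (1 - beta1) * g            # running mean of gradients
        v = beta2 * v + (1 - beta2) * g * g        # running mean of squared gradients
        m_hat = m / (1 - beta1 ** step)            # bias correction
        v_hat = v / (1 - beta2 ** step)
        k -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return k

times = [0.5 * i for i in range(21)]
data = [math.exp(-0.3 * t) for t in times]         # synthetic "measurements", true k = 0.3
k_fit = adam_fit(times, data)
```

Here `k_fit` ends up close to the value of 0.3 used to generate the data. In a real calibration the loss would compare simulated and imaged patterns, and the validation steps above (perturbations, robustness checks) would be needed before trusting the fitted parameters.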

Table 2: Key Research Reagents and Computational Tools

| Category | Specific Reagents/Tools | Function/Application | Example Use |
|---|---|---|---|
| Biological Systems | Pluripotent Stem Cells (PSCs) | 3D aggregate formation for morphogenesis studies | Embryoid body formation to study early patterning [11] |
| Signaling Modulators | Nodal/Lefty2 system | Activator-inhibitor pair for mesoderm patterning | Sea urchin oral field formation [6] |
| Computational Frameworks | JAX library | Automatic differentiation for parameter optimization | Learning genetic networks for axial elongation [7] |
| Cell Culture Methods | Hanging drop technique | Controlled 3D spheroid formation | Modulating cardiomyocyte differentiation efficiency [11] |
| Mechanical Models | Morse potential models | Simulating cell-cell adhesion and repulsion | Modeling tissue mechanics in proliferating clusters [7] |
| Extracellular Matrix | Hyaluronan and versican | Biochemical signal presentation in 3D microenvironments | Supporting mesenchymal differentiation in EBs [11] |
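The Morse potential listed in Table 2 combines short-range repulsion with longer-range attraction in a single expression. The sketch below uses the standard functional form; the parameter names and default values (`depth`, `width`, `r_eq`) are illustrative and are not the values used in [7].

```python
import math

def morse_potential(r, depth=1.0, width=2.0, r_eq=1.0):
    """V(r) = depth * (x^2 - 2x) with x = exp(-width * (r - r_eq)); minimum -depth at r_eq."""
    x = math.exp(-width * (r - r_eq))
    return depth * (x * x - 2.0 * x)

def morse_force(r, depth=1.0, width=2.0, r_eq=1.0):
    """Radial force -dV/dr: positive pushes cells apart, negative pulls them together."""
    x = math.exp(-width * (r - r_eq))
    return 2.0 * depth * width * (x * x - x)
```

Two cell centres closer than `r_eq` repel, centres farther apart attract, and the force vanishes at `r_eq`, where the energy reaches its minimum of `-depth`. Raising `depth` for particular cell-type pairs is one simple way to encode differential adhesion in a particle-based tissue model.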

Biological Instantiations of Turing Patterns

Verified Turing Systems in Development

While Turing's mechanism was initially met with skepticism, several biological systems have been experimentally verified to operate through genuine reaction-diffusion mechanisms:

Vertebrate Mesoderm Patterning: The Nodal/Lefty2 system represents a canonical example of a Turing network [6]. Nodal, an activator, forms dimers that positively feedback on its own production—satisfying the nonlinear autocatalysis requirement. Lefty2, the inhibitor, is under the same regulatory control but diffuses more rapidly and interrupts the self-enhancement by blocking the receptor required for activation [6]. This system patterns the mesoderm and establishes left-right asymmetry in vertebrates.

Periodic Patterning in Hydra: Turing's original paper specifically addressed the periodic arrangement of structures in Hydra [6]. Recent work has confirmed that activator-inhibitor mechanisms govern tentacle spacing in these organisms, with the head (hypostome) acting as an organizing region that establishes the body axis [6].

Mammalian Palate Development: The spaced transverse ridges of the palate in mammals form through Turing mechanisms, with disruptions leading to patterning defects [5]. This system demonstrates how reaction-diffusion can create complex, species-specific patterns in mammalian development.

Synthetic Biology Approaches

Engineering synthetic Turing systems provides the most direct validation of the theory. Recent advances include:

- Programmed formation of multicellular structures using synthetic gene circuits that implement activator-inhibitor logic [7].

- Rationally designed cell communication systems using contact-based or chemical signaling to achieve target patterns [7].

- Engineered cellular assemblies that form precisely controlled shapes through optimized local interactions [7].

Applications in Drug Development and Regenerative Medicine

Predictive Toxicology and Efficacy Modeling

The pharmaceutical industry faces significant challenges in predicting drug efficacy and toxicity, with late-stage failures representing enormous costs [9]. Multiscale computational models based on morphogenetic principles offer promising approaches to this problem:

Multiscale Modeling of Drug Effects: Drug toxicity and efficacy are emergent properties arising from interactions across multiple biological scales [9]. Molecules interact with specific targets, but these targets are embedded within complex signaling networks that process these interactions into cellular outcomes, which subsequently influence tissue and organ function [9]. Computational frameworks that capture these emergent behaviors can predict clinical outcomes from molecular interventions.

Quantitative Systems Pharmacology (QSP): This approach integrates mechanistic modeling with machine learning to predict drug behavior [9]. QSP models are built on physiological and pathophysiological knowledge, then calibrated using experimental data. The integration of ML helps address data gaps and improves individual-level predictions, enhancing model robustness and generalizability [9].
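The smallest mechanistic building block of such models links drug exposure to effect. The sketch below pairs a one-compartment intravenous-bolus pharmacokinetic model with an Emax pharmacodynamic model; it is a textbook illustration with hypothetical parameter names, far simpler than the multiscale QSP models discussed in [9].

```python
import math

def concentration(t, dose, volume, k_elim):
    """One-compartment IV-bolus model: C(t) = (dose / volume) * exp(-k_elim * t)."""
    return (dose / volume) * math.exp(-k_elim * t)

def emax_effect(conc, e_max, ec50):
    """Emax model: effect saturates at e_max and is half-maximal when conc equals ec50."""
    return e_max * conc / (ec50 + conc)

def effect_over_time(times, dose, volume, k_elim, e_max, ec50):
    """Chain exposure and response to obtain the predicted effect at each time point."""
    return [emax_effect(concentration(t, dose, volume, k_elim), e_max, ec50)
            for t in times]
```

Because the effect saturates, halving the concentration does not halve the response, which is one simple way that nonlinear behavior at the target level shapes outcomes at higher scales. Calibration against experimental data, as described above, would replace the hypothetical parameters with fitted values.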

Tissue Engineering and Regenerative Medicine

Reaction-diffusion principles guide emerging approaches in tissue engineering:

Organoid Development: Three-dimensional stem cell cultures spontaneously undergo morphogenesis when provided with appropriate biochemical and biophysical cues [11]. For example, induction of Rx+ neuroepithelium in 3D pluripotent stem cell spheroids generates spatially distinct patterns resembling the native optic cup, with dynamic structural changes including evagination and invagination creating distinct retinal layers [11].

Engineered Morphogenesis: Computational models enable the inverse design of cellular systems to achieve target structures [7]. By optimizing parameters governing genetic networks, researchers can program cell clusters to undergo specific morphogenetic events such as axial elongation, mimicking natural developmental processes like limb bud outgrowth [7].

Table 3: Computational Approaches in Pharmaceutical Development

| Approach | Key Features | Strengths | Limitations |
|---|---|---|---|
| Quantitative Systems Pharmacology (QSP) | Mechanistic, multiscale models | Biologically grounded predictions | High complexity; parameter identifiability |
| Machine Learning Integration | Pattern recognition in large datasets | Handling high-dimensional data | Limited mechanistic insight |
| Automatic Differentiation | Efficient parameter sensitivity analysis | Scalable to complex models | Requires differentiable models |
| Physiologically Based Pharmacokinetic (PBPK) Modeling | Whole-body drug distribution | Clinical translation | Limited cellular resolution |
| Reaction-Diffusion Models | Spatial patterning prediction | Captures emergent tissue-level effects | Computational intensity for large systems |

Future Directions and Community Efforts

Emerging Computational Paradigms

The field of computational morphogenesis is rapidly evolving, with several promising directions:

Generative Models for Morphogenesis: Deep learning frameworks, physics-informed neural networks, and agent-based simulations provide powerful tools to capture the dynamic, multiscale nature of morphogenesis [8]. These models can replicate tissue patterning, growth, and differentiation in silico, generating novel hypotheses about self-organization mechanisms [8].

Community-Driven Model Improvement: Enhancing predictive modeling requires coordinated community efforts [9]. Initiatives such as the ASME V&V 40 standard, FDA guidance documents, the NIH-supported Center for Reproducible Biomedical Modeling (CRBM), and FAIR (Findable, Accessible, Interoperable, and Reusable) principles promote model transparency, reproducibility, and trustworthiness [9].

Technical Challenges and Solutions

Key challenges remain in computational prediction of morphogenesis:

Bridging Scales: Models must connect molecular regulations to tissue-level architecture [8]. Multiscale frameworks that efficiently couple processes across spatial and temporal scales are essential for capturing emergent behaviors [9].

Integrating Mechanics and Biochemistry: Morphogenesis involves both biochemical signaling and physical forces [7] [11]. Successful models must integrate biomechanics with reaction-diffusion systems to fully capture developmental processes.

Validation and Credibility: As noted in recent reviews, "Developing credible and actionable predictive models remains a deeply challenging endeavor" [9]. Setting proper expectations is crucial—models should be viewed as tools that support scientific dialogue rather than perfect replicas of biological systems [9].

The continued integration of computational and experimental approaches, supported by community standards and shared resources, promises to advance our understanding of biological pattern formation and our ability to harness it for therapeutic applications.

Biological morphogenesis, the process by which cells and tissues develop their shape and structure, represents one of the most fundamental mysteries in developmental biology. At its core lies self-organization—a process by which interacting cells organize and arrange themselves into higher-order structures and patterns without external direction [12]. This process is governed by reciprocal causality, a form of causal relationship distinct from linear chains, where causes and effects continuously influence one another across spatial and temporal scales [13]. In practical terms, this means that developing organisms are not solely products, but also active causes, of their own evolutionary and developmental trajectories [14].

The significance of these processes extends beyond basic developmental biology to profound clinical applications. Congenital disorders and cancers often arise from malfunctions in these precisely coordinated behaviors [12]. Understanding how cells collectively build and maintain complex structures could revolutionize regenerative medicine, enabling the engineering of tissues and organs through controlled self-organization principles [3]. This whitepaper examines the mechanistic basis of self-organization and reciprocal causality through the lens of computational modeling, providing researchers with both theoretical frameworks and practical methodologies for advancing this transformative field.

Theoretical Foundations: From Single Cells to Emergent Patterns

Core Principles of Self-Organization

Self-organization in biological systems operates through several interconnected principles that transform disordered cellular states into structured tissues:

Local Interactions Generate Global Order: Complex functional patterns such as tissues and organisms emerge not from a central controller but from local interactions between individual cells. No single cell comprehends the overall structure, yet collective behavior produces sophisticated organization [12].

Symmetry Breaking and Pattern Formation: A defining step in self-organization occurs when initially identical cells differentiate and establish lineage segregation. This symmetry-breaking transition moves the system from a symmetric but disordered state into defined, asymmetric states with specialized functions [12]. This process correlates with functional specialization across multiple scales—from molecular assemblies to whole body axis formation [12].

Cell-to-Cell Variability as a Functional Feature: Cell populations maximize collective performance rather than optimizing each cell individually. This inherent variability gives tissues the flexibility to develop and maintain homeostasis in diverse environments [12].

Reciprocal Causality in Developmental Systems

Reciprocal causation represents a fundamental shift from linear causal models to systems where causality operates bidirectionally:

Beyond Unidirectional Causation: Traditional evolutionary theory often emphasized unidirectional causation (genes → traits → selection). Reciprocal causation acknowledges that organisms actively modify their environments, which in turn alters selective pressures, creating feedback cycles where "process A is a cause of process B and, subsequently, process B is a cause of process A" [14].

Multi-Scale Interactions: Reciprocal causality operates across scales—from gene-environment interactions to population-level dynamics [14]. This cross-scale influence means that understanding morphogenesis requires simultaneous analysis of multiple organizational levels [13].

Constructive Development: Through reciprocal causation, developing organisms actively construct their own developmental and evolutionary niches, blurring traditional distinctions between internal and external factors [14].

Computational Frameworks for Predicting Self-Organization

Modeling Approaches and Their Applications

Computational models provide indispensable tools for understanding and predicting self-organizing systems whose complexity defies intuitive analysis. The table below summarizes major modeling approaches and their specific applications to self-organization research:

Table 1: Computational Modeling Approaches in Self-Organization Research

| Model Type | Key Features | Application Examples | Limitations |
|---|---|---|---|
| Physics-Based Models | Incorporates biophysical forces; cell packing effects; mechanical tension | Tissue morphogenesis; cell sorting; lumen formation [15] | Requires precise parameterization; computationally intensive |
| Gene Regulatory Networks | Models genetic controls; signaling pathways; molecular interactions | Pattern formation; stem cell differentiation; symmetry breaking [12] | Often oversimplifies cellular context; limited spatial representation |
| Optimization Frameworks | Uses automatic differentiation; inverse design; predictive control | Organ engineering; predicting cellular programming [3] | Currently proof-of-concept; requires experimental validation |
| Multi-Scale Models | Integrates molecular, cellular, and tissue levels; cross-scale causality | Supracellular organization; traveling wave propagation [13] | Extreme complexity; challenging to validate empirically |

Emerging Computational Methodologies

Recent advances in computational power and algorithms have enabled novel approaches to modeling self-organization:

Automatic Differentiation for Inverse Design: Harvard researchers have developed methods using automatic differentiation—algorithms originally designed for training neural networks—to extract the rules cells follow during self-organization. This approach treats morphological control as an optimization problem that can be solved with machine learning tools [3]. The computer learns these rules in the form of genetic networks that guide cellular behavior, including chemical signaling and physical forces governing cell adhesion [3].

Predictive Model Integration: These computational frameworks enable researchers to invert the modeling process, asking: "We want these cells to come together and do this particular thing. How do we program them to do that?" [3]. This represents a fundamental shift from descriptive to prescriptive modeling in developmental biology.

Handling Cellular Complexity: Computational models must account for the crowded, heterogeneous cellular environment where molecular components navigate a complex landscape to function at appropriate times and places. This is particularly challenging for self-assembling systems with high-order kinetics that are sensitive to concentration gradients and stochastic noise [16].
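To make the inverse-design idea concrete, the sketch below uses forward-mode automatic differentiation (dual numbers) to tune a single parameter of a toy model by gradient descent. It is not the method of [3]: the exponential morphogen profile `exp(-k*x)`, the target values, and all names (`Dual`, `dexp`, `loss`, `fit_decay_rate`) are illustrative assumptions chosen so the example runs with the standard library alone.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Dual:
    """Dual number: a value together with its derivative w.r.t. one parameter."""
    value: float
    deriv: float = 0.0

    @staticmethod
    def lift(x):
        return x if isinstance(x, Dual) else Dual(float(x))

    def __add__(self, other):
        o = Dual.lift(other)
        return Dual(self.value + o.value, self.deriv + o.deriv)

    __radd__ = __add__

    def __sub__(self, other):
        o = Dual.lift(other)
        return Dual(self.value - o.value, self.deriv - o.deriv)

    def __rsub__(self, other):
        return Dual.lift(other) - self

    def __mul__(self, other):
        o = Dual.lift(other)
        # Product rule.
        return Dual(self.value * o.value,
                    self.deriv * o.value + self.value * o.deriv)

    __rmul__ = __mul__

    def __neg__(self):
        return Dual(-self.value, -self.deriv)


def dexp(x):
    """exp for dual numbers (chain rule)."""
    e = math.exp(x.value)
    return Dual(e, e * x.deriv)


def loss(k, x_target=2.0, c_target=0.5):
    """Squared mismatch between the toy profile exp(-k*x) and a target level."""
    diff = dexp(-(k * x_target)) - c_target
    return diff * diff


def fit_decay_rate(k0=1.0, lr=0.5, steps=200):
    """Gradient descent on `loss`; the gradient is read from the dual part."""
    k = k0
    for _ in range(steps):
        k -= lr * loss(Dual(k, 1.0)).deriv
    return k


k_fit = fit_decay_rate()
print(f"fitted decay rate: {k_fit:.4f}")
```

For this toy problem the answer is known in closed form, k = ln(2)/2, which makes it easy to check that the differentiated model is steering the parameter correctly. Realistic applications differentiate through an entire simulation with many parameters, where reverse-mode differentiation is the usual choice.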

[Diagram: computational workflow for applying automatic differentiation to predict and program cellular self-organization]

Key Experimental Methodologies for Quantifying Self-Organization

Quantitative Morphological Characterization

Robust quantitative metrics are essential for characterizing cell phenotypic characteristics unambiguously. These methodologies enable comparison of data across laboratories and experimental conditions:

Morphological Metrics: Quantitative assessment of cell shape characteristics, including aspect ratio, perimeter length, and surface area, provides objective descriptors that replace ambiguous qualitative terms [17].

Cell-Cell Interaction Analysis: Methods for quantifying the nature and strength of interactions between adjacent cells, including contact inhibition dynamics and adhesion properties [17] [12].

Population Growth Dynamics: Analysis of growth rates within cell populations under varying conditions reveals how local interactions influence global tissue properties [17].

Mechanosensing Pathways Evaluation: Experimental assessment of membrane tension sensing pathways (Yap1, Piezo, Misshapen-Yorkie) that transduce physical cues into cellular responses [12].
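As an illustration of the morphological metrics listed above, the following sketch computes area, perimeter, and aspect ratio from a segmented cell outline given as an ordered list of (x, y) vertices. The function names and the bounding-box definition of aspect ratio are assumptions for this example; published pipelines often use fitted ellipses instead.

```python
import math


def polygon_area(vertices):
    """Shoelace formula; vertices are (x, y) pairs in order around the outline."""
    n = len(vertices)
    twice_area = sum(
        vertices[i][0] * vertices[(i + 1) % n][1]
        - vertices[(i + 1) % n][0] * vertices[i][1]
        for i in range(n)
    )
    return abs(twice_area) / 2.0


def polygon_perimeter(vertices):
    """Sum of edge lengths, closing the outline back to the first vertex."""
    n = len(vertices)
    return sum(math.dist(vertices[i], vertices[(i + 1) % n]) for i in range(n))


def aspect_ratio(vertices):
    """Long side over short side of the axis-aligned bounding box."""
    xs = [x for x, _ in vertices]
    ys = [y for _, y in vertices]
    width, height = max(xs) - min(xs), max(ys) - min(ys)
    return max(width, height) / min(width, height)


# Hypothetical elongated cell outline (arbitrary length units).
cell = [(0.0, 0.0), (4.0, 0.0), (4.0, 1.0), (0.0, 1.0)]
print(polygon_area(cell), polygon_perimeter(cell), aspect_ratio(cell))
```

Reporting these numbers instead of qualitative labels such as "elongated" or "rounded" is what allows measurements to be compared across laboratories.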

Symmetry-Breaking and Pattern Formation Assays

To experimentally investigate the initial stages of self-organization, researchers employ several specialized protocols:

Morphogen Gradient Reconstruction: Establishment of controlled concentration gradients of signaling molecules (e.g., Wnt3a in intestinal stem cell niches) to observe how cells interpret positional information [12].

Cell Polarity Determination: Tracing differential inheritance of cellular components (e.g., apical domains in mouse trophectoderm formation) to understand initial symmetry breaking [12].

Lumen Formation Protocols: Using lumen formation as a mechanism to study how cells locally restrict and coordinate communication between selected groups, as demonstrated in zebrafish lateral line development [12].

[Diagram: experimental workflow for analyzing self-organization processes from cellular to tissue scales]

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful investigation of self-organization and reciprocal causality requires specialized reagents and tools. The following table details essential materials and their applications in this research domain:

Table 2: Essential Research Reagents for Self-Organization Studies

| Reagent Category | Specific Examples | Research Applications | Technical Considerations |
|---|---|---|---|
| Morphogen Signaling Modulators | Recombinant Wnt3a, BMP4, FGF inhibitors | Manipulating positional information; gradient establishment [12] | Concentration-dependent effects; temporal specificity critical |
| Cell Polarity Markers | Phosphorylated PLCζ, Par complex antibodies | Tracing asymmetric division; symmetry breaking events [12] | Fixed tissue limitations; live imaging alternatives preferred |
| Mechanosensing Pathway Reagents | Yap1 inhibitors, Piezo activators, MSR antibodies | Probing physical force transduction; cell packing effects [12] | Multiple parallel pathways require combinatorial approaches |
| Cell-Cell Contact Probes | E-cadherin GFP fusions, Clusterin inhibitors | Studying contact inhibition; adhesion dynamics [12] | Real-time monitoring essential for dynamic processes |
| Live Imaging Compatible Reporters | FUCCI cell cycle indicators, membrane-targeted GFP | Quantifying division patterns; population dynamics [17] | Phototoxicity concerns with prolonged imaging |
| Extracellular Matrix Components | Synthetic laminin gradients, collagen concentration arrays | Testing microenvironment effects; scaffold engineering [16] | Matrix stiffness co-varies with biochemical properties |

Signaling Pathways Governing Self-Organization

Core Pathway Interactions

Self-organization emerges from the integration of multiple interconnected signaling pathways that enable cells to sense and respond to their environment:

Morphogen Sensing and Interpretation: Cells detect their position within tissue through morphogen gradients (e.g., Dpp in fly wing development, Wnt3a in mouse intestinal stem cell niches) [12]. The precision and robustness of these systems require spatio-temporally coordinated self-organized processes where cells both respond to and modify these gradients [12].

Mechanotransduction Pathways: Physical cues from the microenvironment, including cell packing effects and membrane tension, are transduced through pathways such as Yap1, Piezo, and Misshapen-Yorkie [12]. These pathways connect external physical forces to internal genetic programs.

Contact-Dependent Signaling: Local environment sensing through mechanisms like contact inhibition regulates proliferation based on cell density and motility [12]. This involves pathways including increased Clusterin secretion and E-cadherin-mediated control of cell proliferation [12].
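How a gradient can encode position is often illustrated with a textbook idealization, used here as an assumption rather than a result from [12]: a morphogen held at concentration $c_0$ at the source ($x = 0$) diffuses with constant $D$ and is degraded at rate $k$. At steady state,

$$
D\,\frac{d^{2}c}{dx^{2}} - k\,c = 0
\quad\Longrightarrow\quad
c(x) = c_0\,e^{-x/\lambda},
\qquad
\lambda = \sqrt{D/k},
$$

with $c \to 0$ far from the source. A cell that switches fate at a threshold concentration $c_T$ then sits at

$$
x_T = \lambda\,\ln\!\left(\frac{c_0}{c_T}\right).
$$

In this simplified picture the decay length $\lambda$ sets the spatial scale of the pattern, so cells that change $D$ or $k$ (for example by binding or internalizing the morphogen) shift the positions at which thresholds are read. This is one way to express the statement that cells both respond to and modify the gradient.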

[Diagram: integrated signaling network that enables cellular self-organization through reciprocal causation]

Cross-Scale Integration of Signaling Information

The true sophistication of self-organizing systems lies in their ability to integrate information across scales:

Temporal Integration: Cells combine immediate signaling inputs with longer-term historical information, such as counting proliferation rounds in mammalian hematopoietic stem cells [12].

Spatial Integration: Individual cells compute local information on cell density, motility, and division rates to trigger population-level responses like contact inhibition [12].

Functional Specialization: The combination of intrinsic and extrinsic cues establishes feedback loops that move entire populations to new states, generating complex architectures seen in neuronal development where extensive progenitor proliferation switches to asymmetric division when progenitors reach the correct size [12].

Future Directions and Clinical Applications

Emerging Research Paradigms

The field of self-organization and reciprocal causality is rapidly evolving with several promising research directions:

Predictive Organ Engineering: The holy grail of computational bioengineering is to use predictive models to specify desired tissue characteristics and then derive the cellular programming required to achieve them [3]. This inverse design approach could eventually enable engineering of complex organs through controlled self-organization.

Multi-Scale Model Integration: Developing frameworks that simultaneously analyze multiple organizational levels, acknowledging that reciprocal causality operates across length-scales from molecular interactions to tissue-level patterns [13].

Dynamic Microenvironment Control: Creating experimental systems that allow real-time manipulation of both biochemical and biophysical cues to dissect their relative contributions to self-organization.

Therapeutic Implications

Understanding self-organization and reciprocal causality has profound clinical implications:

Regenerative Medicine Applications: Harnessing self-organization principles for tissue engineering and organ regeneration, potentially using computational models to optimize scaffold design and cellular composition [3].

Cancer Biology Insights: Since malfunction in coordinated cellular behaviors underlies many cancers, understanding how these processes normally maintain tissue homeostasis could reveal new therapeutic targets [12].

Congenital Disorder Prevention: Elucidating how self-organization fails during embryogenesis could lead to interventions for preventing congenital disorders caused by errors in pattern formation [12].

As research continues to unravel the complex interplay between self-organization and reciprocal causality, computational models will play an increasingly vital role in bridging our understanding across spatial, temporal, and functional scales—ultimately enabling the prediction and programming of biological form for both basic science and clinical applications.

In the developing embryo, cellular self-organization is governed by the fundamental interplay between two principal tissue types: epithelia and mesenchyme [1]. These distinct cellular arrangements exhibit unique mechanical properties and behavioral programs that drive the complex process of morphogenesis. Epithelia consist of tightly adherent, polarized sheets that serve as barriers and organized templates for development, while mesenchyme comprises loosely organized, migratory cells embedded in extracellular matrix that provide the cellular material for building complex three-dimensional structures [18] [1]. The transitions between these states—through epithelial-mesenchymal transition (EMT) and mesenchymal-epithelial transition (MET)—create a dynamic cellular repertoire that enables the emergence of anatomical complexity from simple cellular sheets [19]. Understanding the distinct self-organizing principles of these tissue types is essential for computational modeling of morphogenesis and has significant implications for regenerative medicine and therapeutic development.

Defining Characteristics and Behavioral Programs

Epithelial Organization and Morphogenetic Capabilities

Epithelial cells are characterized by their stationary nature and organization into two-dimensional sheets with strong intercellular adhesion [18]. They exhibit apical-basal polarity with specialized junctional complexes including adherens junctions, tight junctions, and gap junctions [18]. A defining feature is their association with an underlying basal lamina composed of extracellular matrix proteins such as laminin and fibronectin [18]. The strong adhesiveness between epithelial cells provides integrity and mechanical rigidity to tissues while allowing limited remodeling through junctional rearrangement [18].

Epithelia undergo morphogenesis through several conserved mechanisms:

- Apical Constriction: Coordinated contraction of apical actomyosin bands bends epithelial sheets by constricting the apex and giving cells a wedge-like profile [1].

- Convergent Extension: Cell intercalation in the epithelial plane produces tissue shortening along one axis and extension along the perpendicular axis [1].

- Collective Migration: Due to strong cell-cell contacts, epithelia move as continuous sheets during morphogenetic events [1].

Mesenchymal Organization and Migratory Behaviors

Mesenchymal cells display a fundamentally different organization, lacking apical-basal polarity and organized junctional complexes [18]. They exhibit a loosely packed configuration with significant extracellular matrix between cells and form focal contacts rather than continuous adhesions [1]. This structural organization enables two primary migratory modes: individual cell migration or chain migration, both characterized by front end-back end polarity [18].

Mesenchymal cells navigate through their environment using several guidance mechanisms:

- Chemotaxis: Migration along chemical gradients [1]

- Haptotaxis: Movement along adhesion gradients in the extracellular matrix [1]

- Durotaxis: Migration guided by stiffness variations in the substrate [1]

The migratory capacity of mesenchymal cells provides a vehicle for cell rearrangement, dispersal, and novel cell-cell interactions essential for building complex tissues [18].

Quantitative Comparison of Tissue Properties

Table 1: Comparative Properties of Epithelial and Mesenchymal Tissues

| Property | Epithelial | Mesenchymal |
|---|---|---|
| Cellular Organization | Stationary sheets with strong cell-cell adhesion | Loosely packed with significant ECM between cells |
| Polarity | Apical-basal polarity | Front end-back end polarity (when migratory) |
| Junctional Complexes | Adherens junctions, tight junctions, gap junctions | Focal contacts |
| Basal Lamina | Present underlying the tissue | Absent |
| Migratory Behavior | Collective sheet migration | Individual or chain migration |
| Primary Morphogenetic Mechanisms | Apical constriction, convergent extension, collective migration | Chemotaxis, haptotaxis, durotaxis |
| Characteristic Markers | E-cadherin, cytokeratins | N-cadherin, vimentin, fibronectin |

Table 2: EMT and MET Characteristics in Early Mouse Embryo

| Process | Key Events | Molecular Regulation |
|---|---|---|
| EMT (Ingression) | Loss of apical-basal polarity; Dismantling of cell-cell junctions; Basal membrane disruption; Downregulation of E-cadherin; Upregulation of N-cadherin and vimentin; Cell shape change and protrusion extension [18] | Wnt/β-catenin pathway; TGF-β signaling; Snail genes activating metalloproteases; RhoA regulation via Net1; FERM proteins (e.g., Epb4.1.5) for cytoskeletal organization [18] |
| MET (Egression) | Downregulation of mesenchymal markers; Upregulation of epithelial factors; Acquisition of epithelial morphology; Formation of cell-cell junctions; Establishment of apical-basal polarity [18] | WNT6 for somite formation; Repression of EMT-inducing signals; Cadherin switching [18] |

Experimental Models and Methodologies

Mouse Gastrulation as a Model for EMT

The mouse embryo provides an exemplary model for studying epithelial-mesenchymal transitions during gastrulation, which occurs between embryonic day (E) 6.25 and E8.5 [18]. The primitive streak serves as the site of epiblast cell ingression, where carefully orchestrated cellular and molecular events transform epithelial cells into migratory mesenchyme.

Detailed Experimental Protocol for Analyzing EMT in Mouse Gastrulation:

- Embryo Collection and Staging: Collect mouse embryos at precisely timed intervals from E6.25 to E8.5, staging morphologically from early streak to 8-10 somite stages [18].

- Histological Processing: Fix embryos in 4% paraformaldehyde, embed in paraffin or optimal cutting temperature compound, and section at 5-8 μm thickness.

- Immunofluorescence Analysis: Perform antigen retrieval and stain with the following antibody panel:

  - Anti-E-cadherin to visualize epithelial integrity loss

  - Anti-N-cadherin to detect mesenchymal acquisition

  - Anti-vimentin as a mesenchymal marker

  - Anti-laminin or anti-fibronectin to assess basal lamina integrity

  - Phalloidin staining to visualize actin cytoskeleton rearrangements

- Imaging and Quantification: Capture high-resolution confocal images and quantify the following parameters:

  - Cell shape index (apical:basal ratio)

  - Junction disassembly scoring

  - Basement membrane fragmentation percentage

  - Migration distance from primitive streak

Computational Modeling Approaches

Computational models provide powerful tools for understanding the mechanical principles governing epithelial and mesenchymal behaviors [1]. These models employ various theoretical frameworks to simulate tissue self-organization.

Methodology for Constructing Computational Models of Tissue Self-Organization:

Continuum Mechanics Approach:

- Treat tissue as a continuous material rather than discrete particles

- Define stress (force per unit area) and strain (relative deformation) relationships

- Implement constitutive equations specific to epithelial or mesenchymal material properties

- Solve equilibrium, geometric compatibility, and mass conservation equations [1]

Discrete Cell Modeling:

- Represent individual cells as interacting elements

- Model cell-cell adhesion using differential adhesion hypothesis principles

- Simulate cell sorting based on surface tension minimization

- Incorporate cytoskeletal dynamics and contractility [1]

Hybrid Continuum-Discrete Frameworks:

- Combine continuum descriptions of extracellular matrix with discrete cellular elements

- Model traction forces exerted by mesenchymal cells on their substrate

- Simulate collective epithelial migration with maintained cell-cell contacts
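The discrete cell modeling steps above can be sketched with a minimal lattice model in the spirit of the differential adhesion hypothesis. Two cell types occupy a periodic grid, the "energy" is the number of contacts between unlike cells, and neighbouring cells swap places under a Metropolis rule. The energy function, the swap rule, and all names are simplifying assumptions for illustration; this is not the specific model described in [1], and it omits cell shape and cytoskeletal dynamics.

```python
import math
import random


def heterotypic_bonds(grid):
    """Count neighbouring pairs of unlike cell types (periodic boundaries)."""
    n = len(grid)
    count = 0
    for i in range(n):
        for j in range(n):
            count += grid[i][j] != grid[(i + 1) % n][j]
            count += grid[i][j] != grid[i][(j + 1) % n]
    return count


def sort_cells(grid, sweeps=50, temperature=0.0, seed=0):
    """Swap neighbouring cells in place; return the final unlike-bond count."""
    rng = random.Random(seed)
    n = len(grid)
    energy = heterotypic_bonds(grid)
    for _ in range(sweeps * n * n):
        i, j = rng.randrange(n), rng.randrange(n)
        di, dj = rng.choice([(0, 1), (1, 0), (0, -1), (-1, 0)])
        k, l = (i + di) % n, (j + dj) % n
        if grid[i][j] == grid[k][l]:
            continue  # swapping identical cells changes nothing
        grid[i][j], grid[k][l] = grid[k][l], grid[i][j]
        new_energy = heterotypic_bonds(grid)
        delta = new_energy - energy
        accept = delta <= 0 or (
            temperature > 0 and rng.random() < math.exp(-delta / temperature)
        )
        if accept:
            energy = new_energy
        else:
            grid[i][j], grid[k][l] = grid[k][l], grid[i][j]  # undo the swap
    return energy


# Start from a random mixture of two cell types (0 and 1).
mix_rng = random.Random(1)
grid = [[mix_rng.randrange(2) for _ in range(10)] for _ in range(10)]
before = heterotypic_bonds(grid)
after = sort_cells(grid)
print(f"unlike contacts: {before} -> {after}")
```

With `temperature=0.0` only swaps that do not increase the number of unlike contacts are accepted, so the mixture can only coarsen into like-with-like domains. A small positive temperature lets the system escape locally trapped configurations, at the cost of occasional unfavourable swaps. Recomputing the full energy after every swap keeps the code short but is inefficient; a practical implementation would update only the affected bonds.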

Diagram 1: Signaling Pathways Regulating EMT During Gastrulation. The process involves coordinated molecular signaling that drives the transition from epithelial to mesenchymal state.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents for Epithelial-Mesenchymal Studies

| Reagent/Category | Specific Examples | Function/Application |
|---|---|---|
| Epithelial Markers | E-cadherin antibodies, Cytokeratin antibodies, ZO-1 antibodies | Identify and validate epithelial phenotype; Assess junctional integrity |
| Mesenchymal Markers | N-cadherin antibodies, Vimentin antibodies, Fibronectin antibodies | Confirm mesenchymal transition; Track cell fate changes |
| EMT-Inducing Factors | Recombinant TGF-β, BMP proteins, Wnt ligands | Induce epithelial-mesenchymal transition in experimental models |
| Signaling Inhibitors | SB431542 (TGF-β inhibitor), IWP-2 (Wnt inhibitor), Y-27632 (ROCK inhibitor) | Block specific pathways to test functional requirements |
| Extracellular Matrix | Matrigel, Collagen I, Fibronectin, Laminin | Provide substrates for cell migration and differentiation assays |
| Live Imaging Reagents | CellTracker dyes, GFP-tagged cytoskeletal proteins, E-cadherin-GFP constructs | Visualize dynamic cell behaviors in real-time |

Computational Modeling of Self-Organization Principles

Theoretical Frameworks for Tissue Mechanics

Computational models of morphogenesis integrate mechanical theories with biological data to simulate how epithelial and mesenchymal tissues self-organize [1]. The mechanical behavior of soft tissues is typically analyzed using continuum mechanics principles, where tissue is treated as a continuous material characterized by stress-strain relationships [1]. For epithelial tissues, models often incorporate cell-based discrete elements that account for junctional tensions, apical constriction, and collective behaviors [1]. Mesenchymal tissues are frequently modeled as viscous or viscoelastic materials that respond to traction forces, matrix stiffness, and chemical gradients [1].

Key modeling frameworks include:

- Differential Adhesion Hypothesis: Simulates cell sorting based on surface tension minimization and differential adhesion [1]

- Reaction-Diffusion Systems: Models pattern formation through interacting morphogens [1]

- Mechanical Feedback Loops: Incorporates how mechanical stress influences gene expression and cell behavior [1]
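As a concrete instance of a reaction-diffusion system, the sketch below integrates the Schnakenberg activator-substrate model in one dimension with explicit Euler steps. The choice of this model, the parameter values, and the function names are assumptions made for illustration and are not taken from [1]. The parameters are picked so that the uniform state is stable without diffusion but unstable once the substrate diffuses much faster than the activator, which is the hallmark of a Turing instability.

```python
import math


def laplacian(field, dx):
    """Second difference with periodic boundaries."""
    n = len(field)
    return [
        (field[(i - 1) % n] - 2.0 * field[i] + field[(i + 1) % n]) / dx**2
        for i in range(n)
    ]


def schnakenberg_step(u, v, dt, dx, a=0.1, b=0.9, du=1.0, dv=40.0):
    """One explicit Euler step for activator u and substrate v."""
    lap_u, lap_v = laplacian(u, dx), laplacian(v, dx)
    new_u, new_v = [], []
    for ui, vi, lu, lv in zip(u, v, lap_u, lap_v):
        reaction = ui * ui * vi  # autocatalytic term u^2 * v
        new_u.append(ui + dt * (du * lu + a - ui + reaction))
        new_v.append(vi + dt * (dv * lv + b - reaction))
    return new_u, new_v


def simulate(n=50, dx=1.0, dt=0.01, steps=500, amplitude=0.01, mode=4):
    """Perturb the uniform steady state with one cosine mode and integrate."""
    u0, v0 = 1.0, 0.9  # uniform steady state for a = 0.1, b = 0.9
    u = [u0 + amplitude * math.cos(2.0 * math.pi * mode * i / n)
         for i in range(n)]
    v = [v0] * n
    for _ in range(steps):
        u, v = schnakenberg_step(u, v, dt, dx)
    return u, v


u, v = simulate()
print(f"activator amplitude after integration: {(max(u) - min(u)) / 2:.3f}")
```

The initial ripple has an amplitude of about 0.01, and the printed amplitude is several times larger, showing that diffusion amplifies the perturbation rather than smoothing it. The explicit scheme is only stable for small time steps (roughly `dt < dx**2 / (2 * dv)`), so stiffer problems call for implicit or semi-implicit integrators.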

Diagram 2: Computational Modeling Framework for Tissue Self-Organization. Integrated models simulate epithelial and mesenchymal behaviors using distinct mechanical principles.

Implications for Predictive Morphogenesis

The distinct self-organizing behaviors of epithelia and mesenchyme provide the fundamental building blocks for predictive computational models of morphogenesis [1]. By quantifying the mechanical properties, adhesion characteristics, and migratory behaviors of these tissue types, researchers can develop increasingly accurate simulations of embryonic development [1]. These models have significant applications in understanding congenital malformations, developing regenerative medicine approaches, and creating engineered tissues in the laboratory [1]. The integration of computational modeling with experimental validation creates a powerful framework for deciphering the complex interplay between genetic regulation and mechanical forces that shapes the developing embryo.

The Modeler's Toolkit: From Continuum Mechanics to AI-Driven Prediction

Continuum mechanics models provide a powerful framework for simulating biological tissues as materials, enabling the prediction of their behavior across multiple spatial and temporal scales. This approach treats tissues not as discrete collections of cells, but as continuous materials with specific mechanical properties, thereby bridging the gap between cellular-level phenomena and tissue-level outcomes. Within the broader context of computational models for predicting cell self-organization and morphogenesis, these models are indispensable for unraveling how genetic, chemical, and physical factors integrate to shape developing organisms [20]. The fundamental premise lies in applying principles of solid and fluid mechanics to biological systems, treating tissues as materials with properties like elasticity, viscosity, and poroelasticity that emerge from their cellular components and extracellular matrix (ECM).

The significance of this approach is profoundly evident in tissue engineering and regenerative medicine, where achieving reliable and durable outcomes for structural cardiovascular implants like vascular grafts and heart valves requires a deeper understanding of the fundamental mechanisms driving tissue evolution during in vitro maturation [21]. Similarly, in developmental biology, continuum models help decipher morphogenesis—the process by which organisms develop their shape—by integrating growth, elasticity, chemical factors, and hydraulic effects into a unified theoretical framework [20]. For researchers and drug development professionals, these models offer predictive capabilities that can reduce costly experimental optimization and provide insights into pathological processes where mechanobiology plays a pivotal role, such as in cancer, fibrosis, and cardiovascular disorders [22].

Theoretical Foundations of Tissue Mechanics

Key Continuum Modeling Frameworks

Continuum models of tissues employ several specialized theoretical frameworks, each suited to capturing different aspects of tissue behavior. These frameworks share a common foundation in the kinematics of continuous bodies but diverge in how they conceptualize and mathematically describe tissue-specific processes.

Morphoelasticity serves as a cornerstone theory for modeling biological growth. It is based on nonlinear solid mechanics and describes growth through a multiplicative split of the deformation gradient into elastic and inelastic (growth) parts. This approach allows researchers to simulate volumetric changes in tissues, such as the expansion of a developing limb or the thickening of a heart valve leaflet [21]. The theory effectively captures how biological tissues achieve tensional homeostasis—equilibrating at a specific level of internal stress—which drives changes in tissue shape and the reorientation of structural components like collagen fibers [21].

Poroelasticity theory characterizes the mechanical behavior of fluid-saturated solids, making it particularly suitable for modeling plant and animal tissues where hydraulic effects play a crucial role. This framework treats tissues as porous materials through which fluid can flow, generating pressure gradients that influence overall mechanical behavior. A particularly comprehensive approach combines poroelasticity with morphoelasticity into a hydromechanical field theory that captures the complex interplay between fluid flow, solid mechanics, and growth in developing plant tissues [20].

For modeling tissue fusion and aggregation processes—highly relevant in biofabrication and developmental biology—continuum models often borrow from the hydrodynamics of highly viscous liquids. These models treat clusters of cohesive cells as an incompressible viscous fluid on the time scale of hours, successfully predicting the post-printing evolution of 3D bioprinted constructs built from tissue spheroids or organoids [23].

Table 1: Fundamental Continuum Modeling Frameworks for Tissues

| Modeling Framework | Fundamental Principle | Primary Applications | Key Advantages |
|---|---|---|---|
| Morphoelasticity | Multiplicative split of deformation gradient into elastic and growth components | Volumetric growth, tissue development, residual stress evolution | Naturally incorporates finite deformations and residual stresses |
| Poroelasticity | Treats tissue as fluid-saturated porous solid | Hydraulic effects, fluid transport, swelling processes | Captures time-dependent response to loading and fluid flow |
| Viscous Hydrodynamics | Models tissue as incompressible highly viscous fluid | Tissue spheroid fusion, cell sorting, aggregate coalescence | Predicts large-scale morphological changes during development |
| Constrained Mixture Models | Tracks individual tissue constituents with different deposition times | Arterial wall mechanics, tissue remodeling | Accounts for history-dependent material behavior |

Governing Equations and Mathematical Formulation

The mathematical foundation of continuum tissue models typically begins with the definition of kinematic relationships. In morphoelasticity, the central kinematic assumption is the multiplicative decomposition of the deformation gradient, $\mathbf{F} = \mathbf{F}_e \cdot \mathbf{F}_g$, where $\mathbf{F}$ is the total deformation gradient, $\mathbf{F}_g$ represents the growth part, and $\mathbf{F}_e$ is the elastic part that ensures compatibility and generates stresses [21]. The evolution of the growth tensor $\mathbf{F}_g$ is governed by constitutive relationships often based on the concept of a homeostatic stress surface, which defines the stress state at which tissues neither grow nor resorb.
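A small numerical example may help show what the multiplicative split implies. Given a total deformation gradient and a growth tensor, the elastic part follows by multiplying the total deformation by the inverse of the growth tensor. The 2x2 setting, the specific numbers, and the helper names below are assumptions for illustration only.

```python
def matmul(a, b):
    """Product of two 2x2 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def inverse(m):
    """Inverse of a 2x2 matrix."""
    det = m[0][0] * m[1][1] - m[0][1] * m[1][0]
    return [[m[1][1] / det, -m[0][1] / det],
            [-m[1][0] / det, m[0][0] / det]]


def elastic_part(f_total, f_growth):
    """Recover the elastic part from total = elastic * growth."""
    return matmul(f_total, inverse(f_growth))


# Hypothetical case: the tissue "wants" to grow 20% in every direction but is
# held at its original size, so the total deformation is the identity.
growth = [[1.2, 0.0], [0.0, 1.2]]
total = [[1.0, 0.0], [0.0, 1.0]]
elastic = elastic_part(total, growth)
print(elastic)  # diagonal entries below 1: the tissue is elastically compressed
```

Because the constrained tissue cannot realize its grown shape, the elastic part carries a compressive stretch even though nothing has visibly moved. This is the mechanism by which growth generates residual stress in morphoelastic models.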

The balance laws for mass, momentum, and energy complete the theoretical framework. For poroelastic materials, these include additional equations governing fluid transport through the solid matrix. The resulting system of partial differential equations is typically solved numerically using techniques like the Finite Element Method (FEM), which can handle complex geometries, material heterogeneity, and anisotropy [24].

Recent advances have focused on ensuring thermodynamic consistency of these models—a crucial requirement for physical realism. Contemporary frameworks couple evolution equations for volumetric growth with equations describing collagen density evolution and fiber reorientation, creating comprehensive models that capture the interdependent phenomena shaping tissue development and adaptation [21].

Computational Implementation and Methodologies

Finite Element Method for Soft Tissues

The Finite Element Method (FEM) is the predominant numerical technique for implementing continuum mechanics models of tissues, largely because it can handle complex geometries, material heterogeneity, and anisotropy [25] [24]. In structural analysis, FEM is a well-established tool for modeling and simulating structures with complex geometry under arbitrary boundary and initial conditions. Biological tissues often exhibit nonlinear material behavior, large deformations, and complex boundary conditions, and FEM provides the flexibility needed to achieve clinically and biologically relevant simulations of them.

Implementing FEM for soft tissues presents unique computational challenges, especially when simulating high-frequency harmonic excitations as used in diagnostic techniques like Vibroacoustography (VA) and Magnetic Resonance Elastography (MRE). The Helmholtz-type equations used to model such systems suffer from an additional numerical error, known as "pollution", when the excitation frequency becomes high relative to tissue stiffness [24]. This pollution effect can dominate the FEM error unless it is addressed through specialized approaches. The error bound estimate for the weak-form Galerkin FEM applied to the Helmholtz equation shows that the polynomial order (p) of the element basis functions has a significant effect on accuracy, making high-order elements particularly advantageous for such problems [24].
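A minimal sketch of the pollution effect, under simplifying assumptions: a 1D Helmholtz equation, linear elements on a uniform mesh, and a consistent mass matrix. For this textbook setting the wavenumber actually propagated by the discrete scheme has a closed form, so the accumulated phase error can be evaluated directly. The function names are hypothetical, and the setup is far simpler than the tissue models discussed above.

```python
import numpy as np

def discrete_wavenumber(k, h):
    """Wavenumber propagated by 1D linear Galerkin FEM (uniform mesh size h)."""
    a = (k * h) ** 2
    return np.arccos((1.0 - a / 3.0) / (1.0 + a / 6.0)) / h

def phase_error(k, points_per_wavelength, length=1.0):
    """Accumulated phase error |k_h - k| * L over a domain of the given length."""
    h = 2.0 * np.pi / (k * points_per_wavelength)
    return abs(discrete_wavenumber(k, h) - k) * length

# Same resolution (10 nodes per wavelength), increasing wavenumber.
for k in (10.0, 100.0, 1000.0):
    print(f"k = {k:7.1f}  phase error = {phase_error(k, 10):.4f} rad")
```

Although the mesh resolves every wavelength equally well in all three cases, the accumulated phase error grows in proportion to the wavenumber. Keeping the number of nodes per wavelength fixed is therefore not enough at high frequency, which is the motivation for higher-order elements.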

Spectral Finite Element Methods (SEM) have emerged as a powerful approach to bridge the gap between single-domain spectral methods and classical low-order FEM. These methods utilize tensor product elements (quad or brick elements) of high-order Lagrangian polynomials with non-uniformly distributed Gauss-Lobatto-Legendre (GLL) nodal points [24]. For a prescribed level of accuracy, SEM require far fewer degrees of freedom than lower-order methods to represent solution structures associated with wave propagation in soft tissues. This computational efficiency, combined with reduced artificial dispersion and dissipation, makes SEM particularly appealing for problems characterized by large separation of scales, such as the propagation of finer-scale waves through very large tissue domains.
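To show what the non-uniform GLL points look like, the sketch below computes them on the reference interval [-1, 1] as the two endpoints plus the roots of the derivative of the Legendre polynomial of the chosen order. It uses NumPy's Legendre utilities; the function name `gll_nodes` is an assumption made for this illustration.

```python
import numpy as np
from numpy.polynomial import legendre

def gll_nodes(order):
    """GLL nodes on [-1, 1]: the endpoints plus the roots of P_N'(x)."""
    if order < 1:
        raise ValueError("order must be >= 1")
    if order == 1:
        return np.array([-1.0, 1.0])
    interior = legendre.Legendre.basis(order).deriv().roots()
    return np.concatenate(([-1.0], np.sort(np.real(interior)), [1.0]))

for n in (2, 3, 6):
    print(n, np.round(gll_nodes(n), 4))
```

For order 3 the interior nodes sit at ±1/√5. As the order increases, the nodes cluster toward the element ends, which keeps high-order Lagrange interpolation well conditioned compared with equally spaced nodes.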

Alternative Computational Approaches

While FEM dominates the landscape of continuum tissue modeling, several alternative approaches offer unique advantages for specific applications. Mass-spring systems represent a relatively simple heuristic approach where concentrated masses are interconnected by a set of springs, effectively replacing a 3D continuum body with a truss structure [25]. The advantages of this approach lie in its simple formulation and computational efficiency, which made it particularly attractive in the 1990s and early 2000s when hardware capabilities were more limited. These systems have found extensive application in medical simulations, including orthodontics, robotic surgery, real-time muscle simulation, and various surgery simulators [25]. However, a significant challenge with mass-spring models is the ambiguity in how to distribute the point masses and choose the connection topology so that the model adequately reproduces the actual physical behavior.
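The idea can be sketched with the simplest possible case: a damped 1D chain of point masses joined by linear springs, clamped at one end and pulled at the other. All parameter values and the function name `simulate_chain` are assumptions chosen for illustration; real surgical simulators use 3D networks and more elaborate integration schemes.

```python
import numpy as np

def simulate_chain(n_masses=5, k=100.0, m=1.0, damping=2.0,
                   rest_length=1.0, pull=10.0, dt=1e-3, steps=20000):
    """Damped 1D chain: first mass clamped, constant force `pull` on the last."""
    x = np.arange(n_masses) * rest_length   # initial positions (springs at rest)
    v = np.zeros(n_masses)                  # initial velocities
    for _ in range(steps):
        tension = k * (np.diff(x) - rest_length)  # force carried by each spring
        f = np.zeros(n_masses)
        f[:-1] += tension                   # a stretched spring pulls its left node right
        f[1:] -= tension                    # and its right node left
        f[-1] += pull                       # external load on the free end
        f -= damping * v                    # viscous damping on every mass
        v += dt * f / m                     # semi-implicit Euler: velocity first,
        x += dt * v                         # then position with the new velocity
        x[0], v[0] = 0.0, 0.0               # enforce the clamp
    return x

positions = simulate_chain()
print(np.round(positions, 3))
```

At static equilibrium each of the four springs carries the full load and stretches by pull/k = 0.1, so the free end should settle close to 4.4. The ambiguity noted above shows up even here: the spring constant and mass per node have to be chosen by the modeler rather than derived from measured tissue properties.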

Agent-based models (ABMs) offer a different paradigm by focusing on cellular-level behaviors and interactions, with tissue-level properties emerging from these discrete interactions. While not strictly continuum approaches, ABMs can be integrated with continuum models to create multiscale simulations that capture both individual cell behaviors and tissue-level mechanical responses. In the context of cell cycle research and tumor development, ABMs have been utilized to assess the role of the tumor immune microenvironment in influencing immunotherapy outcomes [26].

Table 2: Computational Methods for Tissue Mechanics Simulation

| Computational Method | Theoretical Basis | Computational Efficiency | Ideal Application Context |
|---|---|---|---|
| Standard Finite Element Method (FEM) | Continuum mechanics, variational methods | Moderate to high depending on mesh density and element order | General purpose tissue mechanics; static and slow dynamic processes |
| Spectral Finite Element Method (SEM) | High-order polynomial basis functions | High for problems with smooth solutions | Wave propagation in soft tissues; problems with minimal dissipation |
| Mass-Spring Systems | Discrete lumped parameters | Very high | Real-time simulation for surgery; plausible physical behavior |
| Agent-Based Models (ABMs) | Discrete cellular automata | Low to moderate depending on cell count | Multicellular systems; emergence of tissue patterns from cell rules |

Emerging Computational Paradigms

The field of computational tissue mechanics is being transformed by the integration of machine learning techniques with traditional continuum models. Automatic differentiation, a computational technique that forms the backbone of training deep learning models in artificial intelligence, is now being applied to problems in cellular self-organization [3]. This method allows computers to efficiently compute highly complex functions and detect the precise effect that small changes in any part of a gene network would have on the behavior of the whole cell collective. Harvard applied physicists have successfully used automatic differentiation to translate the complex process of cell growth into an optimization problem that computers can solve, effectively extracting the rules that cells follow as they grow to achieve a desired collective function [3].
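A toy illustration of the underlying idea, assuming forward-mode automatic differentiation with dual numbers (deep learning frameworks typically use reverse mode, but the principle of propagating exact derivatives through a simulation is the same). The logistic growth model, the `Dual` class, and all parameter values are hypothetical stand-ins and not the framework described in [3].

```python
from dataclasses import dataclass

@dataclass
class Dual:
    """A value together with its derivative with respect to one parameter."""
    val: float
    der: float = 0.0

    def _lift(self, other):
        return other if isinstance(other, Dual) else Dual(float(other))

    def __add__(self, other):
        o = self._lift(other)
        return Dual(self.val + o.val, self.der + o.der)
    __radd__ = __add__

    def __sub__(self, other):
        o = self._lift(other)
        return Dual(self.val - o.val, self.der - o.der)

    def __rsub__(self, other):
        return self._lift(other) - self

    def __mul__(self, other):
        o = self._lift(other)
        # Product rule carries the derivative through the multiplication.
        return Dual(self.val * o.val, self.der * o.val + self.val * o.der)
    __rmul__ = __mul__

def final_population(rate, n0=1.0, capacity=100.0, dt=0.01, steps=500):
    """Explicit-Euler logistic growth; accepts plain floats or Dual numbers."""
    n = n0
    for _ in range(steps):
        n = n + dt * rate * n * (1.0 - n * (1.0 / capacity))
    return n

# Seed the derivative d(rate)/d(rate) = 1 and run the simulation once.
out = final_population(Dual(1.0, 1.0))
print("final population:", out.val)
print("sensitivity to growth rate:", out.der)
```

A single pass through the simulation yields both the outcome and its exact sensitivity to the growth rate, with no finite-difference step size to tune. An optimizer can then use such derivatives to adjust parameters toward a desired collective behavior.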

Another recent development is the Physics-based Inelastic Constitutive Artificial Neural Networks framework, which has shown promising results in modeling volumetric growth [21]. These approaches combine the physical consistency of traditional continuum models with the adaptive learning capabilities of neural networks, potentially offering new pathways for simulating complex tissue behaviors that have proven difficult to capture with conventional constitutive models.

Experimental Validation and Parameter Identification

Measuring Mechanical Properties in Biological Tissues

Validating continuum mechanics models requires precise quantification of the mechanical properties of biological tissues through carefully designed experimental protocols. A critical advancement in this domain is the development of Bayesian Inversion Stress Microscopy (BISM), which enables direct measurement of intercellular stresses in living tissues [27]. This methodology involves culturing cells on soft elastic substrates embedded with fluorescent markers, imaging the displacement of these markers due to cellular forces, and computationally reconstructing the underlying stress field using Bayesian statistical methods. The protocol requires high-resolution microscopy, sophisticated image analysis to track substrate deformations, and computational inversion algorithms that can resolve the force balances within the tissue.

For characterizing wave propagation properties in tissues, as relevant to diagnostic techniques like MRE and VA, researchers employ harmonic excitation tests combined with phase-contrast imaging [24]. The experimental protocol involves applying controlled harmonic mechanical excitation to tissue samples across a range of frequencies (typically tens of Hz to kHz), while simultaneously measuring the resulting displacement fields using techniques such as laser Doppler vibrometry or MRI. The complex shear modulus (storage and loss moduli) can then be extracted by fitting the measured wave propagation data to appropriate viscoelastic models, providing essential parameters for continuum models simulating dynamic tissue responses.
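As a simplified sketch of the last step, the code below assumes a plane shear wave in a homogeneous medium, for which the complex modulus follows directly from the measured wave speed and attenuation through G* = ρω²/k*², with complex wavenumber k* = ω/c − iα. The function name, the default density of 1000 kg/m³, and the input values are assumptions for illustration; practical inversions fit full displacement fields rather than a single plane wave.

```python
import numpy as np

def complex_shear_modulus(freq_hz, wave_speed, attenuation, density=1000.0):
    """Storage and loss moduli (Pa) from plane shear wave measurements.

    freq_hz      excitation frequency in Hz
    wave_speed   phase velocity in m/s
    attenuation  amplitude decay coefficient in 1/m
    density      tissue density in kg/m^3 (assumed close to water)
    """
    omega = 2.0 * np.pi * freq_hz
    k = omega / wave_speed - 1j * attenuation   # complex wavenumber
    G = density * omega**2 / k**2               # G* = G' + i G''
    return G.real, G.imag

# Assumed example measurement: 100 Hz, 2 m/s, 50 1/m.
storage, loss = complex_shear_modulus(100.0, 2.0, 50.0)
print(f"G' = {storage:.1f} Pa, G'' = {loss:.1f} Pa")
```

With zero attenuation the expression reduces to the elastic relation G' = ρc², which is a convenient sanity check. Repeating the calculation across frequencies gives the dispersion data against which a viscoelastic model can be fitted.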

The mechanical characterization of developing tissues requires specialized approaches to capture evolving properties. For tissue-engineered cardiovascular implants, biaxial mechanical testing coupled with digital image correlation provides the necessary data to calibrate growth and remodeling models [21]. The protocol involves mounting tissue samples in a biaxial testing apparatus, applying controlled multiaxial loading paths while tracking surface deformations with high-resolution cameras, and extracting anisotropic material parameters through inverse finite element analysis. This approach has been instrumental in identifying homeostatic stress targets that drive tissue adaptation in computational models.

Integrating Imaging and Mechanical Testing

A powerful validation strategy combines advanced imaging techniques with mechanical testing to correlate structural features with mechanical function. Fluorescence microscopy tensor imaging is a notable innovation in this domain: it produces whole-organ, tensor-valued representations of local structural descriptors derived from fluorescence data [28]. The method processes binarized imaging datasets to extract morphological descriptors, uses them to build a local voxel-wise variance-covariance matrix, and from these matrices generates a volumetric tensor-valued representation of the imaging dataset. The approach is analogous to diffusion tensor imaging (DTI) in MRI but extends the concept to fluorescence microscopy data, allowing detailed reconstruction of organizational tracts in biological structures such as the cardiac microvasculature [28].

The experimental workflow for this technique involves several critical steps: (1) sample preparation and optical clearing to enable deep imaging, (2) image acquisition by fluorescence confocal microscopy at sub-cellular resolution, (3) computational pre-processing to maximize signal-to-noise ratio and contrast, (4) 3D segmentation using custom-designed supervised neural networks, (5) skeletonization to extract centerline information, and (6) tensor computation from the spatial distribution of morphological features [28]. The resulting tensor fields quantitatively characterize tissue organization across multiple scales, providing rich data for validating computational models of tissue mechanics and growth.
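Step (6) can be sketched in a few lines, assuming the morphological feature is simply the set of 3D skeleton point coordinates that fall inside one voxel neighborhood. The covariance matrix of those points acts as the local tensor, its leading eigenvector gives the dominant orientation, and a fractional anisotropy borrowed from DTI summarizes how aligned the structure is. The function names and the synthetic data are hypothetical, and the descriptors used in [28] may differ.

```python
import numpy as np

def local_tensor(points):
    """Variance-covariance matrix (3x3) of skeleton points in one neighborhood."""
    return np.cov(np.asarray(points, dtype=float), rowvar=False)

def principal_direction(tensor):
    """Eigenvector of the largest eigenvalue, plus all eigenvalues (ascending)."""
    eigvals, eigvecs = np.linalg.eigh(tensor)
    return eigvecs[:, -1], eigvals

def fractional_anisotropy(eigvals):
    """DTI-style anisotropy: 0 for isotropic, near 1 for a single direction."""
    dev = eigvals - eigvals.mean()
    return np.sqrt(1.5 * np.sum(dev**2) / np.sum(eigvals**2))

# Synthetic neighborhood: a vessel segment running along x with small jitter.
rng = np.random.default_rng(0)
t = rng.uniform(-1.0, 1.0, size=200)
pts = np.outer(t, [1.0, 0.0, 0.0]) + 0.01 * rng.normal(size=(200, 3))

T = local_tensor(pts)
direction, eigvals = principal_direction(T)
fa = fractional_anisotropy(eigvals)
print("dominant direction:", np.round(direction, 3))
print("fractional anisotropy:", round(float(fa), 3))
```

Repeating this computation over every voxel neighborhood yields a tensor field that can be compared directly with the fiber or vessel orientations assumed in an anisotropic continuum model.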

Applications in Predictive Morphogenesis and Tissue Engineering

Predicting Cell Self-Organization and Tissue Patterning

Continuum mechanics models have proven useful for predicting cell self-organization and tissue patterning, key processes in morphogenesis and tissue engineering. A notable application comes from Harvard's research using automatic differentiation to uncover the rules that cells use to self-organize [3]. Their computational framework translates the complex process of cell growth into an optimization problem that can be solved with machine learning tools. In effect, it extracts the genetic networks that guide cell behavior, including how cells signal chemically to each other and the physical forces that make them stick together or pull apart [3]. This approach represents a shift from descriptive to predictive modeling in developmental biology.

The predictive capability of continuum models extends to explaining mechanical cell competition, a tissue surveillance mechanism for eliminating unwanted cells that is indispensable in development, infection, and tumorigenesis. Recent research has revealed that force transmission capability serves as a master regulator of mechanical cell competition, selecting for cell types with stronger intercellular adhesion [27]. Direct force measurements in ex vivo tissues and different cell lines show increased mechanical activity at the interface between competing cell types, leading to large stress fluctuations that result in upward forces and cell elimination. Continuum models incorporating these findings can predict competition outcomes based on differences in mechanical properties, providing insights into tissue boundary maintenance and cell invasion pathology [27].

In tissue engineering, continuum models have proven valuable for predicting the post-printing evolution of 3D bioprinted constructs. Models based on the continuum hydrodynamics of highly viscous liquids can accurately simulate the fusion process of tissue spheroids, helping achieve desirable outcomes without expensive optimization experiments [23]. These models treat clusters of cohesive cells as incompressible viscous fluids on the time scale of hours, successfully predicting the morphological changes that occur as individual spheroids coalesce into integrated tissue constructs. The differential adhesion hypothesis provides the main morphogenetic mechanism underlying these predictive capabilities, with continuum models effectively capturing how surface tension-driven flows minimize interfacial energy between cell populations with different adhesive properties.

Optimizing Tissue-Engineered Implants

Continuum mechanics models are playing an increasingly important role in the design and optimization of tissue-engineered implants, particularly for cardiovascular applications. These models provide a virtual testing environment for exploring how in vitro culture conditions influence the development of mechanical properties in engineered tissues. A recent thermodynamically consistent model predicts tissue evolution and mechanical response throughout the in vitro maturation of passive, load-bearing soft collagenous constructs, using a stress-driven homeostatic surface to capture volumetric growth coupled with an energy-based approach to describe collagen densification via the strain energy of the fibers [21].