Predictive Biology Simulation Software: The Complete Guide for Researchers and Drug Developers

Abstract

This guide provides researchers, scientists, and drug development professionals with a comprehensive overview of predictive biology simulation software. It covers foundational concepts, from protein structure prediction with tools like AlphaFold2 to systems-biology and simulation platforms such as KBase and RunBioSimulations. The article details practical methodologies for applications in drug discovery and personalized medicine, offers solutions for common troubleshooting and performance optimization, and establishes a framework for model validation and comparative analysis of techniques. By synthesizing current tools and best practices, this guide aims to empower scientists to leverage computational modeling for more efficient and predictive biomedical research.

What is Predictive Biology Software? Core Concepts and Capabilities

Predictive modeling in biology represents a fundamental paradigm shift from traditional descriptive approaches to a quantitative, model-driven science. It involves the use of mathematical formulations and computational algorithms to simulate biological systems, forecast their behavior under various conditions, and generate testable hypotheses. This field integrates biology, mathematics, statistics, and computer science to explore collective behaviors in biological systems that elude traditional molecular approaches [1]. The core premise is that by constructing accurate computational representations of biological processes—from molecular interactions to entire ecosystems—researchers can simulate experiments in silico, predict outcomes of biological processes, and accelerate the pace of discovery across biotechnology, drug development, and personalized medicine [1].

The scope of predictive modeling spans all biological scales. At the molecular scale, models illuminate biochemical processes, cell signaling, protein interactions, and gene regulation. Cellular-scale models explore cell interactions, communication, and population dynamics, while organ and tissue-level models capture emergent physiological behaviors. At the broadest scales, models address population dynamics, ecological interactions, and evolutionary trajectories [1]. This multi-scale integration enables researchers to connect genetic variations to physiological outcomes, model disease progression, and design targeted therapeutic interventions with greater precision.

Mathematical Foundations of Predictive Modeling

Core Modeling Approaches

Predictive modeling employs diverse mathematical frameworks, each suited to specific biological questions and data types. These approaches can be broadly categorized by their treatment of time, space, and stochasticity.

Table 1: Core Mathematical Modeling Approaches in Biology

| Model Type | Mathematical Basis | Primary Applications | Key Advantages |
|---|---|---|---|
| Ordinary Differential Equations (ODEs) | Systems of differential equations dx/dt = f(x) | Biochemical kinetics, metabolic pathways, population dynamics | Captures continuous, deterministic dynamics; well-established analytical tools |
| Partial Differential Equations (PDEs) | Differential equations with multiple independent variables | Spatial gradient modeling, morphogen diffusion, tissue development | Incorporates spatial information; models transport and diffusion phenomena |
| Boolean Networks | Logical operators (AND, OR, NOT) | Gene regulatory networks, signaling pathways | Handles qualitative data; computationally efficient for large networks |
| Stochastic Models | Probability distributions, master equations | Gene expression, cellular decision-making, rare events | Captures intrinsic noise and variability in biological systems |
| Agent-Based Models | Rule-based interactions between discrete entities | Tumor growth, immune system responses, ecological systems | Models emergent behavior; flexible representation of heterogeneity |
| Constraint-Based Models | Linear optimization within physiological constraints | Metabolic network analysis, flux balance analysis | Predicts steady-state metabolic behaviors; genome-scale capabilities |
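To make the contrast between the ODE and Stochastic Models rows concrete, the sketch below simulates one hypothetical birth-death model of an mRNA species in two ways. Synthesis occurs at a constant rate `k` and degradation at rate `gamma` per molecule; both values are illustrative, not measured. The deterministic version integrates dx/dt = k - gamma·x by forward Euler, and the stochastic version uses Gillespie's direct method to draw exact trajectories of the underlying jump process.

```python
import random


def simulate_ode(k, gamma, x0=0.0, t_end=50.0, dt=0.01):
    """Forward-Euler integration of dx/dt = k - gamma * x; returns x(t_end)."""
    x = x0
    for _ in range(int(round(t_end / dt))):
        x += dt * (k - gamma * x)
    return x


def simulate_ssa(k, gamma, x0=0, t_end=50.0, seed=0):
    """Gillespie direct method for 0 -> X (rate k) and X -> 0 (rate gamma * x).

    Returns the molecule count at t_end.
    """
    rng = random.Random(seed)
    t, x = 0.0, x0
    while True:
        a_birth = k
        a_death = gamma * x
        a_total = a_birth + a_death
        # Waiting time to the next reaction is exponential with rate a_total.
        t += rng.expovariate(a_total)
        if t > t_end:
            return x  # no further reaction before t_end, so the state is unchanged
        # Pick which reaction fires, with probability proportional to its propensity.
        if rng.random() * a_total < a_birth:
            x += 1
        else:
            x -= 1


if __name__ == "__main__":
    k, gamma = 10.0, 0.5  # illustrative rates
    print("ODE steady state:", round(simulate_ode(k, gamma), 3))  # approaches k / gamma
    samples = [simulate_ssa(k, gamma, seed=s) for s in range(200)]
    mean = sum(samples) / len(samples)
    var = sum((s - mean) ** 2 for s in samples) / (len(samples) - 1)
    print("SSA mean:", round(mean, 2), "variance:", round(var, 2))
```

For this particular model the stationary distribution of the stochastic version is Poisson with mean and variance k/gamma. The ensemble mean should therefore land near the ODE value, while individual runs scatter around it. That scatter is the intrinsic noise a deterministic ODE cannot represent.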

Multi-Scale and Hybrid Modeling

A significant breakthrough in computational biology is the creation of multi-scale models that integrate multiple biological levels within unified frameworks [1]. These hybrid approaches combine different mathematical techniques to capture both discrete and continuous aspects of biological systems. For example, a model might use agent-based modeling to represent individual cells, ODE systems to model intracellular signaling, and PDEs to capture spatial concentration gradients [1]. This multi-scale approach was exemplified in a model of Helicobacter pylori colonization of gastric mucosa that employed agent-based modeling, ODE, and PDE approaches to effectively capture immune response dynamics [1]. Similarly, a multi-scale model of CD4 T cell response to influenza infection integrated molecular, cellular, and systemic scales [1].
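The hybrid structure described above can be sketched in a few dozen lines. The toy model below is an illustration under stated assumptions, not a reconstruction of the H. pylori or CD4 T cell models. A 1D diffusion PDE, discretized with explicit finite differences, carries a hypothetical signal held fixed at one boundary. Each cell is an agent at a grid position, and each agent integrates a one-variable ODE for an intracellular activation level driven by the local signal. Agents divide when activation crosses a threshold, unless the neighboring site is full. All constants, rules, and names are hypothetical.

```python
import random
from dataclasses import dataclass

# All values are hypothetical and chosen only to illustrate the coupling between layers.
N_GRID = 50            # spatial grid points for the signal field
DX = 1.0               # grid spacing
D = 1.0                # diffusion coefficient (PDE layer)
DECAY = 0.05           # first-order decay of the signal
SOURCE = 1.0           # fixed signal level at the left boundary
DT = 0.1               # time step; D * DT / DX**2 = 0.1 < 0.5 keeps explicit Euler stable
K_ACT, K_DEACT = 0.5, 0.1  # rates of the intracellular ODE
DIVIDE_AT = 2.0        # activation threshold for division (agent rule)
MAX_PER_SITE = 5       # contact inhibition: no division into a full site


@dataclass
class Cell:
    position: int            # grid index occupied by the agent
    activation: float = 0.0  # intracellular state governed by an ODE


def step_field(c):
    """One explicit finite-difference step of dc/dt = D * d2c/dx2 - DECAY * c."""
    new = c[:]
    for i in range(1, N_GRID - 1):
        laplacian = (c[i - 1] - 2 * c[i] + c[i + 1]) / DX**2
        new[i] = c[i] + DT * (D * laplacian - DECAY * c[i])
    new[0] = SOURCE       # Dirichlet boundary: constant source
    new[-1] = new[-2]     # zero-flux boundary on the right
    return new


def step_cells(cells, c, rng):
    """Advance each agent's ODE by forward Euler, then apply the division rule."""
    occupancy = [0] * N_GRID
    for cell in cells:
        occupancy[cell.position] += 1
    daughters = []
    for cell in cells:
        cell.activation += DT * (K_ACT * c[cell.position] - K_DEACT * cell.activation)
        if cell.activation >= DIVIDE_AT:
            target = min(max(cell.position + rng.choice((-1, 1)), 0), N_GRID - 1)
            if occupancy[target] < MAX_PER_SITE:
                cell.activation /= 2  # activation is split between mother and daughter
                occupancy[target] += 1
                daughters.append(Cell(target, cell.activation))
    return cells + daughters


def run(steps=500, n_cells=10, seed=1):
    rng = random.Random(seed)
    field = [0.0] * N_GRID
    field[0] = SOURCE
    cells = [Cell(i * N_GRID // n_cells) for i in range(n_cells)]  # evenly spaced start
    for _ in range(steps):
        field = step_field(field)                # PDE layer
        cells = step_cells(cells, field, rng)    # ODE + agent layers
    return field, cells


if __name__ == "__main__":
    field, cells = run()
    near = sum(cell.position < N_GRID // 2 for cell in cells)
    print(f"{len(cells)} cells in total, {near} in the half nearest the signal source")
```

Each layer keeps its own update rule, and the layers exchange only a small, explicit interface: the field value at each agent's position. That separation is what lets multi-scale frameworks swap in different solvers per layer.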

Current Methodologies and Experimental Protocols

Model Development Workflow

The development of predictive models follows a systematic methodology to ensure robustness and biological relevance. The standard workflow encompasses several critical phases:

Problem Formulation and Scope Definition: Clearly define the biological question, system boundaries, and modeling objectives. Determine the appropriate scale (molecular, cellular, tissue, organism) and mathematical framework based on available data and research goals.

Data Collection and Curation: Gather relevant quantitative data from experimental measurements, omics technologies, or literature sources. This may include kinetic parameters, concentration measurements, gene expression profiles, or physiological readouts. Implement rigorous data quality control and normalization procedures to ensure consistency [2].

Model Construction: Implement the mathematical structure using appropriate software tools. This involves defining state variables, parameters, and interaction rules. For data-driven models, this step includes feature selection and dimensionality reduction to minimize overfitting and improve generalizability [3].

Parameter Estimation and Model Calibration: Use optimization algorithms to estimate unknown parameters by fitting model outputs to experimental data. Techniques include maximum likelihood estimation, Bayesian inference, and least squares optimization. Implement sensitivity analysis to identify the parameters with the greatest influence on model behavior.

Model Validation and Testing: Evaluate model performance using independent datasets not used during parameter estimation. Assess predictive accuracy, discriminatory power, and calibration using appropriate statistical measures [3]. Employ cross-validation techniques to assess generalizability beyond the training data.

Model Analysis and Simulation: Execute simulations to generate predictions, test hypotheses, and explore system behavior under various perturbations. Perform bifurcation analysis to identify critical transition points and stability analysis to characterize steady-state behaviors.

Iterative Refinement: Continuously update and refine the model as new experimental data becomes available, following an iterative cycle of prediction, experimental testing, and model improvement.
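As a minimal illustration of the calibration and sensitivity steps, the sketch below fits a hypothetical first-order decay model, x(t) = x0·exp(-k·t), to synthetic noisy measurements. Because this model is log-linear, the sketch uses ordinary least squares on ln x. That shortcut only works for models that can be linearized this way; general models need the nonlinear least squares, maximum likelihood, or Bayesian approaches listed above. The sketch then estimates normalized local sensitivities (d ln x / d ln p) by central finite differences.

```python
import math
import random


def model(t, x0, k):
    """Hypothetical first-order decay model."""
    return x0 * math.exp(-k * t)


def fit_loglinear(times, values):
    """Least squares on ln(x) = ln(x0) - k * t; returns (x0, k)."""
    ys = [math.log(v) for v in values]
    n = len(times)
    t_mean = sum(times) / n
    y_mean = sum(ys) / n
    sxy = sum((t - t_mean) * (y - y_mean) for t, y in zip(times, ys))
    sxx = sum((t - t_mean) ** 2 for t in times)
    slope = sxy / sxx
    intercept = y_mean - slope * t_mean
    return math.exp(intercept), -slope


def normalized_sensitivity(t, params, name, rel_step=1e-4):
    """Central finite-difference estimate of d ln(x) / d ln(p) at time t."""
    up, down = dict(params), dict(params)
    up[name] *= 1 + rel_step
    down[name] *= 1 - rel_step
    dlnx = math.log(model(t, **up)) - math.log(model(t, **down))
    dlnp = math.log(1 + rel_step) - math.log(1 - rel_step)
    return dlnx / dlnp


if __name__ == "__main__":
    rng = random.Random(42)
    true = {"x0": 100.0, "k": 0.3}
    times = [0.5 * i for i in range(1, 13)]
    # Synthetic data with 5% multiplicative noise (illustrative only).
    data = [model(t, **true) * math.exp(rng.gauss(0, 0.05)) for t in times]
    x0_hat, k_hat = fit_loglinear(times, data)
    print(f"estimated x0={x0_hat:.1f}, k={k_hat:.3f}")
    fitted = {"x0": x0_hat, "k": k_hat}
    for name in fitted:
        print(name, "sensitivity at t=5:", round(normalized_sensitivity(5.0, fitted, name), 3))
```

For this model the normalized sensitivities are known analytically (1 for x0 and -k·t for k), which makes it a convenient check for a finite-difference routine before it is applied to models without closed-form answers.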

Validation and Reproducibility Protocols

Robust validation is essential for establishing model credibility and ensuring reproducible predictions. The following protocols represent best practices in predictive modeling:

Internal Validation Techniques:

- Random Split Validation: Divide available data into training (typically 70-80%) and validation (20-30%) sets [3].

- k-Fold Cross-Validation: Partition data into k subsets, using k-1 for training and one for validation, rotating through all subsets [3].

- Monte Carlo Cross-Validation: Perform multiple random splits to generate a distribution of model performance metrics [3].

External Validation:

- Validate models using completely independent datasets from different sources or experimental conditions.

- Assess model transportability across different populations or environmental contexts.

Reproducibility Safeguards:

- Document all data preprocessing steps, parameter values, and computational procedures.

- Implement version control for model code and documentation.

- Adhere to the TRIPOD guidelines for transparent reporting of prediction model studies [4].

- Practice open science by making analysis code available to enable verification of results [2].
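The internal validation schemes above differ mainly in how sample indices are partitioned. The sketch below implements k-fold and Monte Carlo splits in plain Python. It scores a deliberately simple one-variable least-squares line on synthetic data, and the data and model are hypothetical. In practice a library would normally handle the splitting, for example scikit-learn's `KFold` and `ShuffleSplit` or R's caret.

```python
import random
import statistics


def kfold_indices(n, k, seed=0):
    """Shuffle 0..n-1 once and yield (train, test) index lists for each of k folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    for i, test in enumerate(folds):
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, test


def monte_carlo_indices(n, test_fraction, repeats, seed=0):
    """Repeated independent random train/test splits (Monte Carlo cross-validation)."""
    rng = random.Random(seed)
    n_test = max(1, round(n * test_fraction))
    for _ in range(repeats):
        idx = list(range(n))
        rng.shuffle(idx)
        yield idx[n_test:], idx[:n_test]


def fit_line(xs, ys):
    """Ordinary least squares for y = a + b * x."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    return my - b * mx, b


def mse_on_split(xs, ys, train, test):
    """Fit on the training indices only, then score mean squared error on the test indices."""
    a, b = fit_line([xs[i] for i in train], [ys[i] for i in train])
    return statistics.fmean((ys[i] - (a + b * xs[i])) ** 2 for i in test)


if __name__ == "__main__":
    rng = random.Random(1)
    xs = [rng.uniform(0, 10) for _ in range(60)]
    ys = [2.0 + 0.5 * x + rng.gauss(0, 1.0) for x in xs]  # synthetic data
    kfold = [mse_on_split(xs, ys, tr, te) for tr, te in kfold_indices(len(xs), 5)]
    mc = [mse_on_split(xs, ys, tr, te) for tr, te in monte_carlo_indices(len(xs), 0.25, 50)]
    print(f"5-fold MSE: {statistics.fmean(kfold):.2f}")
    print(f"Monte Carlo MSE: {statistics.fmean(mc):.2f} +/- {statistics.stdev(mc):.2f}")
```

The k-fold scheme uses every sample for testing exactly once. The Monte Carlo scheme lets samples recur across test sets, but it yields as many performance estimates as desired, and their spread is the "distribution of model performance metrics" mentioned above.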

Advanced Applications in Biological Research

Multi-Scale Integration in Biological Systems

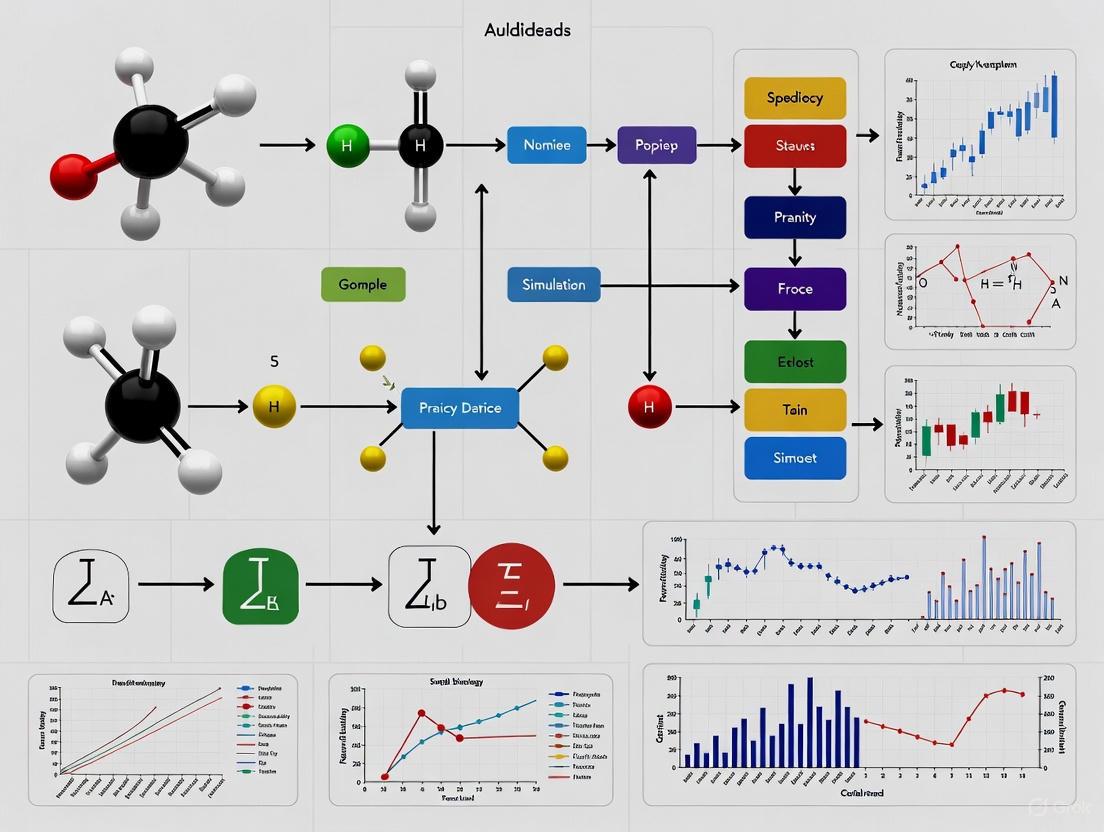

Predictive modeling excels at integrating biological processes across multiple scales, from molecular interactions to physiological outcomes. The diagram below illustrates how different modeling approaches connect across biological hierarchies:

Drug Discovery and Development Applications

Predictive modeling has transformed pharmaceutical research by enabling in silico prediction of drug-target interactions, reducing reliance on traditional trial-and-error methods [1]. Systems pharmacology models aid in determining dosing regimens, patient stratification, understanding drug mechanism of action, and disease modeling [1]. Specific applications include:

- Virtual Clinical Trials: Simulation of drug effects across heterogeneous patient populations to optimize trial design and identify responsive subpopulations.

- Mechanism of Action Analysis: Deconvolution of complex drug effects on biological networks using network pharmacology approaches.

- Toxicology Prediction: Forecasting adverse drug reactions through modeling of off-target effects and metabolic activation pathways.

- Therapeutic Optimization: Personalizing treatment schedules and combination therapies based on individual patient characteristics and disease dynamics.
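A virtual clinical trial can be sketched as repeated evaluation of a mechanistic model over sampled virtual patients. The example below assumes a one-compartment intravenous bolus model, for which exposure is AUC = Dose / CL. It draws clearance from a log-normal distribution to represent between-patient variability. The doses, typical clearance, variability, and target AUC are all hypothetical. Candidate doses are compared by the fraction of virtual patients who reach the target exposure.

```python
import math
import random


def auc_iv_bolus(dose_mg, clearance_l_per_h):
    """AUC (mg*h/L) for a one-compartment IV bolus: AUC = Dose / CL."""
    return dose_mg / clearance_l_per_h


def sample_clearances(n, typical_cl, cv, seed=0):
    """Log-normal clearances with median typical_cl and coefficient of variation cv."""
    rng = random.Random(seed)
    omega = math.sqrt(math.log(1 + cv**2))  # log-scale SD that reproduces the CV
    return [typical_cl * math.exp(rng.gauss(0, omega)) for _ in range(n)]


def fraction_on_target(dose_mg, clearances, target_auc):
    """Share of virtual patients whose AUC reaches the target."""
    hits = sum(auc_iv_bolus(dose_mg, cl) >= target_auc for cl in clearances)
    return hits / len(clearances)


if __name__ == "__main__":
    population = sample_clearances(1000, typical_cl=5.0, cv=0.4, seed=7)  # hypothetical
    for dose in (50, 100, 150):
        frac = fraction_on_target(dose, population, target_auc=20.0)
        print(f"dose {dose} mg: {frac:.0%} of virtual patients reach AUC >= 20 mg*h/L")
```

Reusing the same sampled population for every dose keeps the comparison paired, so differences between doses reflect the dose rather than sampling noise. Stratifying the population by covariates would be the natural next step toward identifying responsive subpopulations.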

Single-Cell Technologies and Digital Twins

The advent of single-cell technologies has revolutionized predictive modeling by revealing previously unappreciated cellular heterogeneity [1]. Integrating single-cell RNA sequencing (scRNA-seq) data with computational models enables granular views of biological processes at cellular resolution, facilitating understanding of cellular heterogeneity, differentiation pathways, and cell lineage relationships [1]. RNA velocity analysis, based on genome-wide inference of kinetic models on cell populations, allows prediction of gene expression evolution and reconstruction of developmental trajectories [1].
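The steady-state variant of RNA velocity, popularized by the velocyto package, shows concretely how a kinetic model is inferred from a cell population. For a single gene it assumes du/dt = α - βu and ds/dt = βu - γs for unspliced (u) and spliced (s) counts and sets β = 1. It then estimates γ as the slope of u against s through the origin, using cells in the lower and upper tails of spliced expression, which are assumed to be closest to steady state. Velocity is reported as v = u - γs. The plain-Python sketch below uses made-up counts and a hypothetical quantile cutoff. Real analyses (velocyto, scVelo) add normalization and neighbor smoothing, and scVelo's dynamical mode fits the full kinetics.

```python
def fit_gamma(u, s, quantile=0.1):
    """Slope of u versus s through the origin, fitted on cells in the lower and upper
    `quantile` tails of spliced expression (assumed closest to steady state)."""
    order = sorted(range(len(s)), key=lambda i: s[i])
    m = max(1, int(len(s) * quantile))
    extremes = order[:m] + order[-m:]
    num = sum(u[i] * s[i] for i in extremes)
    den = sum(s[i] ** 2 for i in extremes)
    return num / den


def velocities(u, s, gamma):
    """Steady-state RNA velocity with beta = 1: v = u - gamma * s."""
    return [ui - gamma * si for ui, si in zip(u, s)]


if __name__ == "__main__":
    # Made-up counts: most cells lie on u = 0.5 * s; the last two carry excess unspliced RNA.
    s = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 2.0, 3.0]
    u = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0, 2.5, 3.5]
    gamma = fit_gamma(u, s, quantile=0.2)
    for i, v in enumerate(velocities(u, s, gamma)):
        print(f"cell {i}: velocity {v:+.2f}")
```

A positive velocity (more unspliced RNA than the fitted steady-state line predicts) suggests the gene is being induced in that cell, and a negative value suggests repression. Aggregating such per-gene velocities across many genes is what allows future cell states and developmental trajectories to be extrapolated.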

More complex models of complete biological systems, referred to as 'digital twins', are being designed with sufficient fidelity for computational experiments that predict real-life outcomes, such as disease treatment scenarios [1]. These virtual representations of individual patients can simulate disease progression and treatment effects at a personal level, enabling more effective and targeted therapies [1]. The Chan Zuckerberg Initiative has identified building AI-based virtual cell models as a grand challenge, aiming to create powerful models for predicting and designing cellular behavior to speed drug development and therapeutic discovery [5].

Computational Tools and Platforms

Table 2: Essential Software Tools for Predictive Biological Modeling

| Tool Name | Primary Function | Modeling Strengths | Access |
|---|---|---|---|
| COPASI | Biochemical network simulation | ODE-based kinetics, metabolic control analysis | Open source |
| Virtual Cell (VCell) | Multi-scale spatial modeling | Reaction-diffusion systems, electrophysiology | Free web-based |
| BioNetGen | Rule-based network modeling | Large-scale signaling networks, combinatorial complexity | Open source |
| NEURON | Neural electrophysiology | Neuronal dynamics, synaptic integration | Open source |
| SBML Toolbox | Model interoperability | SBML format support, tool integration | Open source |
| Scikit-learn | Machine learning | Predictive algorithms, feature selection | Open source (Python) |
| Caret | Predictive modeling | Unified framework for R machine learning | Open source (R) |
| Anaconda Distribution | Platform management | Integrated data science environment | Open source |

Data Standards and Exchange Formats

Standardized data formats are critical for model reproducibility and sharing. The COmputational Modeling in BIology NEtwork (COMBINE) initiative coordinates community standards for all aspects of modeling in biology [6]. Key formats include:

- SBML (Systems Biology Markup Language): XML-based format for representing biochemical network models, supported by over 100 modeling tools [6].

- BioPAX (Biological Pathway Exchange): Format for pathway data sharing and integration across databases [6].

- SBGN (Systems Biology Graphical Notation): Standard for visual representation of biological processes [6].

- CellML: Open standard for representing mathematical models, particularly suited for electrophysiology and mechanical models [6].

- NeuroML: Format for defining and exchanging models of neuronal systems [6].
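To show what an SBML file actually contains, the sketch below embeds a minimal hand-written SBML Level 3 Version 2 document. It describes a hypothetical one-reaction degradation model (S degraded with mass-action rate k·S) and reads the document back with Python's standard `xml.etree.ElementTree`. This is an unvalidated illustration. Production workflows would normally create and check such files with libSBML or a modeling tool's exporter.

```python
import xml.etree.ElementTree as ET

# Hand-written SBML L3V2 model (hypothetical): S -> (nothing), rate k * S * cell.
SBML_DOC = """<?xml version="1.0" encoding="UTF-8"?>
<sbml xmlns="http://www.sbml.org/sbml/level3/version2/core" level="3" version="2">
  <model id="degradation">
    <listOfCompartments>
      <compartment id="cell" size="1" spatialDimensions="3" constant="true"/>
    </listOfCompartments>
    <listOfSpecies>
      <species id="S" compartment="cell" initialConcentration="10"
               hasOnlySubstanceUnits="false" boundaryCondition="false" constant="false"/>
    </listOfSpecies>
    <listOfParameters>
      <parameter id="k" value="0.1" constant="true"/>
    </listOfParameters>
    <listOfReactions>
      <reaction id="degrade" reversible="false">
        <listOfReactants>
          <speciesReference species="S" stoichiometry="1" constant="true"/>
        </listOfReactants>
        <kineticLaw>
          <math xmlns="http://www.w3.org/1998/Math/MathML">
            <apply>
              <times/>
              <ci> k </ci>
              <ci> S </ci>
              <ci> cell </ci>
            </apply>
          </math>
        </kineticLaw>
      </reaction>
    </listOfReactions>
  </model>
</sbml>
"""

NS = {"sbml": "http://www.sbml.org/sbml/level3/version2/core",
      "m": "http://www.w3.org/1998/Math/MathML"}


def summarize(doc):
    """Parse an SBML string and list its level/version, species, parameters, and reactions."""
    root = ET.fromstring(doc.encode("utf-8"))
    model = root.find("sbml:model", NS)
    species = [sp.get("id") for sp in model.iterfind("sbml:listOfSpecies/sbml:species", NS)]
    params = {p.get("id"): float(p.get("value"))
              for p in model.iterfind("sbml:listOfParameters/sbml:parameter", NS)}
    reactions = {}
    for rxn in model.iterfind("sbml:listOfReactions/sbml:reaction", NS):
        reactants = [r.get("species")
                     for r in rxn.iterfind("sbml:listOfReactants/sbml:speciesReference", NS)]
        # Identifiers referenced inside the MathML kinetic law
        symbols = [ci.text.strip() for ci in rxn.iterfind(".//m:ci", NS)]
        reactions[rxn.get("id")] = (reactants, symbols)
    return {"level": root.get("level"), "version": root.get("version"),
            "species": species, "parameters": params, "reactions": reactions}


if __name__ == "__main__":
    print(summarize(SBML_DOC))
```

The kinetic law multiplies by the compartment size because S denotes a concentration here, while SBML reaction rates are expressed as amount per time. Small conventions like this are why the COMBINE standards are usually handled through dedicated libraries rather than ad hoc XML.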

Future Perspectives and Grand Challenges

The future of predictive modeling in biology is intrinsically linked to advancements in artificial intelligence, multi-scale integration, and data generation technologies. Major initiatives like the Chan Zuckerberg Initiative's grand challenges aim to build AI-based virtual cell models, develop novel imaging technologies to map complex biological systems, create tools for real-time measurement of inflammation within tissues, and harness the immune system for early disease detection and prevention [5]. These efforts highlight the growing convergence of biology with computational sciences and engineering.

Key frontier areas include:

- Whole-Cell Modeling: Expanding beyond the first whole-cell model of Mycoplasma genitalium to more complex organisms and human systems [1].

- AI-Augmented Model Discovery: Using machine learning to automatically infer model structures from high-dimensional biological data [6].

- Personalized Predictive Medicine: Developing patient-specific models that incorporate individual genomic, proteomic, and clinical data for treatment personalization.

- Real-Time Predictive Monitoring: Creating systems that can integrate continuous data streams from wearable sensors and medical devices for dynamic health forecasting.

- Ethical Data Sharing Frameworks: Establishing protocols for secure, privacy-preserving data sharing to accelerate model development while protecting patient confidentiality [2].

As predictive modeling continues to mature, it will increasingly serve as the foundation for precision medicine, enabling healthcare interventions tailored to individual molecular profiles, lifestyles, and environmental contexts. The integration of predictive models into clinical decision support systems represents the next frontier in evidence-based medicine, potentially transforming how diseases are prevented, diagnosed, and treated across global populations.

Predictive biology uses computational models to simulate complex biological systems and forecast outcomes, which is crucial for advancing biomedical research and therapeutic development. The field relies on distinct yet complementary mathematical frameworks—statistical, kinetic, machine learning (ML), and logical models—each with unique strengths for specific applications. Statistical models infer relationships from data patterns, kinetic models describe dynamic system behaviors through differential equations, ML algorithms learn complex mappings from high-dimensional datasets, and logical models provide qualitative insights into network topology and regulation. Framing these approaches within clinical bioinformatics reveals their shared role in translating 'omics' data into clinically relevant predictions for diagnostics, prognostics, and therapy decisions [7]. This guide provides an in-depth technical examination of these core frameworks, their experimental protocols, and their integration in predictive biology simulation software.

Core Modeling Frameworks: A Comparative Analysis

Table 1: Comparative Overview of Key Modeling Frameworks in Computational Biology

| Modeling Framework | Core Description | Primary Applications | Data Requirements | Key Advantages | Key Limitations |
|---|---|---|---|---|---|
| Statistical | Scoring and probability functions assuming specific data distributions [7]. | Continuous quantification, hypothesis testing [7]. | Data for parameter estimation; depends on sample size [7]. | Provides probability estimates and confidence intervals; well-established theoretical foundation. | Relies on strict assumptions about data distribution; limited capacity for complex pattern recognition. |
| Kinetic | Systems of nonlinear differential equations based on biochemical rate laws [7] [8]. | Dynamic simulation of metabolic pathways, drug metabolism [8]. | Reported or estimated kinetic parameters; does not depend on sample size [7]. | Mechanistically represents system dynamics and time-dependent responses [8]. | Parameter estimation is often challenging and computationally intensive [8]. |
| Logical | Logical equations based on predefined rules for component interactions [7] [9]. | Binary classification, signaling network analysis [7] [9]. | Relational knowledge of system components; does not depend on sample size [7]. | Operates without precise kinetic parameters; intuitive representation of network topology [9]. | Lacks quantitative precision for concentration dynamics. |
| Machine Learning | Algorithms that learn patterns from data to make predictions [10]. | Expression forecasting, classification, biomarker discovery [10]. | Large datasets for training and validation [7] [10]. | Handles high-dimensional data and identifies complex nonlinear relationships. | Requires substantial training data; risk of overfitting; "black box" interpretation challenges. |
| Regression | Fitting of mathematical equations (linear, polynomial, etc.) to data [7]. | Binary classification, continuous outcome prediction [7]. | Data for model fitting; depends on sample size [7]. | Simple implementation and interpretation; clear relationship between inputs and outputs. | Limited flexibility for capturing complex biological relationships. |
| Random Forests | Supervised ML algorithm averaging multiple decision trees [7]. | Binary classification [7]. | Data for training and validation; requires large datasets [7]. | Handles high-dimensional data well; robust to outliers and noise. | Limited interpretability of individual predictions. |
| Support Vector Machines | Supervised ML algorithm that separates classes with a maximum-margin hyperplane, optionally in a kernel-induced feature space [7]. | Binary classification [7]. | Data for training and validation; requires large datasets [7]. | Effective in high-dimensional spaces; memory efficient through support vectors. | Less effective with noisy data; performance depends on kernel choice. |
| Neural Networks | Supervised ML with layered neuron-like architectures [7]. | Binary classification [7]. | Data for training and validation; requires large datasets [7]. | Exceptional capacity for learning complex patterns and relationships. | High computational requirements; prone to overfitting; minimal interpretability. |

Framework-Specific Methodologies and Applications

Kinetic Modeling with Generative Machine Learning

Kinetic models characterize metabolic states by explicitly linking metabolite concentrations, metabolic fluxes, and enzyme levels through mechanistic relationships [8]. The RENAISSANCE (REconstruction of dyNAmIc models through Stratified Sampling using Artificial Neural networks and Concepts of Evolution strategies) framework addresses the key challenge of parameterizing large-scale kinetic models by efficiently determining kinetic parameters that match experimental observations [8].

Experimental Protocol: RENAISSANCE Framework for Kinetic Model Parameterization

- Input Preparation: The process begins with a steady-state profile of metabolite concentrations and metabolic fluxes. These are computed by integrating structural properties of the metabolic network (stoichiometry, regulatory structure, and rate laws) with available multi-omics data (metabolomics, fluxomics, proteomics, and transcriptomics) [8].

- Generator Initialization: A population of feed-forward neural networks (generators) is initialized with random weights. The complexity of these generator networks is dictated by the size and complexity of the target kinetic model [8].

- Parameter Generation and Model Evaluation: Each generator takes multivariate Gaussian noise as input and produces a batch of kinetic parameters. These parameter sets are used to parameterize the kinetic model. The dynamics of each parameterized model are evaluated by computing the eigenvalues of its Jacobian matrix and corresponding dominant time constants, assessing whether they match experimentally observed timescales [8].

- Reward Assignment and Iterative Optimization: Based on the dynamic evaluation, models are classified as biologically relevant (valid) or not (invalid), and each generator receives a reward proportional to its incidence of valid models. Using Natural Evolution Strategies (NES), the weights of all generators are updated based on their normalized rewards, with higher-performing generators having greater influence. This process iterates until the generator meets user-defined objectives, such as maximizing the incidence of valid models [8].

Application: This approach has successfully characterized intracellular metabolic states in Escherichia coli, accurately estimating missing kinetic parameters and reconciling them with sparse experimental data, substantially reducing parameter uncertainty [8].

Workflow of the RENAISSANCE kinetic modeling framework
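The RENAISSANCE optimization loop can be illustrated with a heavily simplified toy, which is not the published implementation. Here each "generator" is just a vector of weights, the mean log-values of three kinetic parameters, that turns Gaussian noise into parameter sets; it stands in for the feed-forward networks. The kinetic model is a hypothetical two-metabolite pathway, and a parameter set counts as valid when its Jacobian eigenvalues all have negative real parts and the dominant (slowest) time constant is below `TAU_MAX`. The weights are updated with a basic natural-evolution-strategies rule that uses z-scored rewards equal to the incidence of valid models. The threshold and every hyperparameter are assumptions.

```python
import cmath
import math
import random

# All settings are hypothetical and chosen only to make the toy example converge quickly.
TAU_MAX = 2.0     # slowest acceptable time constant (stand-in for an observed timescale)
BATCH = 64        # parameter sets drawn per generator evaluation
POP = 20          # perturbed generators evaluated per NES iteration
SIGMA = 0.3       # NES perturbation scale on the generator weights
LR = 0.2          # NES learning rate
SPREAD = 0.8      # fixed log-space spread of the toy generator's output


def eigenvalues_2x2(a, b, c, d):
    """Eigenvalues of [[a, b], [c, d]] from the trace and determinant."""
    tr, det = a + d, a * d - b * c
    disc = cmath.sqrt(tr * tr - 4 * det)
    return (tr + disc) / 2, (tr - disc) / 2


def is_valid(k1, k2, f):
    """Jacobian of a linearized pathway x1 -> x2 -> out, with x2 feeding back negatively
    on x1 (strength f); valid if locally stable and fast enough."""
    lams = eigenvalues_2x2(-k1, -f, k1, -k2)
    if any(lam.real >= 0 for lam in lams):
        return False
    dominant_tau = 1.0 / min(-lam.real for lam in lams)  # slowest time constant
    return dominant_tau <= TAU_MAX


def incidence(weights, rng):
    """Toy generator: Gaussian noise -> log-parameters; returns the fraction of valid models."""
    valid = 0
    for _ in range(BATCH):
        k1, k2, f = (math.exp(w + SPREAD * rng.gauss(0, 1)) for w in weights)
        valid += is_valid(k1, k2, f)
    return valid / BATCH


def nes(weights, iterations=40, seed=0):
    """Natural evolution strategies on the generator weights, with z-scored rewards."""
    rng = random.Random(seed)
    history = []
    for _ in range(iterations):
        eps = [[rng.gauss(0, 1) for _ in weights] for _ in range(POP)]
        rewards = [incidence([w + SIGMA * e for w, e in zip(weights, ep)], rng) for ep in eps]
        mean_r = sum(rewards) / POP
        std_r = (sum((r - mean_r) ** 2 for r in rewards) / POP) ** 0.5
        if std_r > 0:  # skip the update when all rewards tie
            norm = [(r - mean_r) / std_r for r in rewards]
            grad = [sum(n * ep[j] for n, ep in zip(norm, eps)) / (POP * SIGMA)
                    for j in range(len(weights))]
            weights = [w + LR * g for w, g in zip(weights, grad)]
        history.append(incidence(weights, rng))
    return weights, history


if __name__ == "__main__":
    start = [math.log(0.3)] * 3  # initial weights: log k1, log k2, log f
    print("initial incidence:", incidence(start, random.Random(1)))
    final, history = nes(start)
    print("final incidence:", history[-1], "weights:", [round(w, 2) for w in final])
```

Normalizing rewards within each population makes the update depend on how generators rank against one another rather than on the absolute incidence. This is one reason higher-performing generators carry more influence without any hand-tuned reward scale.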

Machine Learning for Expression Forecasting

Machine learning approaches for expression forecasting aim to predict effects of genetic perturbations (e.g., gene knockouts or knockins) on the transcriptome. The Grammar of Gene Regulatory Networks (GGRN) and its benchmarking platform PEREGGRN provide a modular framework for this purpose [10].

Experimental Protocol: GGRN for Expression Forecasting

- Data Splitting: A non-standard data split ensures no perturbation condition occurs in both training and test sets. Randomly chosen perturbation conditions and all controls are allocated to training data, while a distinct set of perturbation conditions is allocated to test data [10].

- Baseline Establishment: The average expression of all control samples is computed as the baseline [10].

- Perturbation Application: For the test perturbations, the targeted gene's expression is set to 0 (for knockout) or its observed value after intervention (for knockdown or overexpression) [10].

- Model Prediction: Supervised machine learning models predict expression of all genes except the directly targeted gene, based on the expression of candidate regulators [10].

- Performance Evaluation: Multiple metrics assess performance, including mean absolute error (MAE), mean squared error (MSE), Spearman correlation, proportion of genes with correctly predicted direction of change, and accuracy in classifying cell type [10].

Application: Expression forecasting provides a cheaper, less labor-intensive alternative to Perturb-seq and similar assays for nominating, ranking, or screening genetic perturbations with interesting effects on cell state [10].
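The protocol can be mirrored with a deliberately tiny linear model. Everything below is hypothetical: three genes, a known ground-truth network in which TF activates G1 and represses G2, and per-gene ordinary least squares standing in for the supervised learners GGRN supports. The code does not use GGRN itself. It only follows the steps listed above: a condition-level split, a control baseline, setting the target gene to 0, predicting the remaining genes from the candidate regulator, and scoring with MAE and direction of change.

```python
import random
import statistics

GENES = ("TF", "G1", "G2")


def simulate_sample(tf_level, rng):
    """Hypothetical ground truth: TF activates G1 and represses G2 (linear, with noise)."""
    return {"TF": tf_level,
            "G1": 1.0 + 2.0 * tf_level + rng.gauss(0, 0.1),
            "G2": 5.0 - 1.0 * tf_level + rng.gauss(0, 0.1)}


def make_dataset(seed=0):
    """Return (condition, sample) pairs: 20 controls plus 5 samples per perturbation."""
    rng = random.Random(seed)
    data = [("control", simulate_sample(1.0 + rng.gauss(0, 0.2), rng)) for _ in range(20)]
    for condition, tf_level in (("TF_overexpression", 3.0), ("TF_knockdown", 0.5),
                                ("TF_knockout", 0.0)):
        data += [(condition, simulate_sample(tf_level, rng)) for _ in range(5)]
    return data


def split_by_condition(data, test_conditions):
    """No perturbation condition appears in both sets; all controls go to training."""
    train = [s for c, s in data if c not in test_conditions]
    test = {c: [s for cc, s in data if cc == c] for c in test_conditions}
    return train, test


def fit_ols(xs, ys):
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    return my - slope * mx, slope


def forecast_knockout(data, target="TF", test_condition="TF_knockout"):
    train, test = split_by_condition(data, {test_condition})
    others = [g for g in GENES if g != target]
    # One regression per non-target gene, with the target as the only candidate regulator.
    models = {g: fit_ols([s[target] for s in train], [s[g] for s in train]) for g in others}
    baseline = {g: statistics.fmean(s[g] for c, s in data if c == "control") for g in others}
    predicted = {g: a + b * 0.0 for g, (a, b) in models.items()}  # knockout: target set to 0
    observed = {g: statistics.fmean(s[g] for s in test[test_condition]) for g in others}
    mae = statistics.fmean(abs(predicted[g] - observed[g]) for g in others)
    direction = statistics.fmean(
        float((predicted[g] - baseline[g]) * (observed[g] - baseline[g]) > 0) for g in others)
    return predicted, observed, mae, direction


if __name__ == "__main__":
    pred, obs, mae, direction = forecast_knockout(make_dataset())
    print("predicted:", {g: round(v, 2) for g, v in pred.items()})
    print("observed: ", {g: round(v, 2) for g, v in obs.items()})
    print(f"MAE={mae:.3f}, correct direction for {direction:.0%} of genes")
```

Holding out whole perturbation conditions is what makes the test meaningful: the model never sees a TF knockout during training, so a good score reflects extrapolation to an unseen intervention rather than memorization.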

Logical Modeling of Biological Networks

Logical models, particularly logic-based differential equations, provide a valuable middle ground between qualitative Boolean approaches and quantitative kinetic modeling. These approaches do not require precisely measured kinetic parameters but can predict graded crosstalk between pathways, unlike traditional Boolean methods [9].

Experimental Protocol: Network Simulation with Netflux

- Network Construction: Species (genes or proteins) and reactions (activating or inhibiting interactions) are defined based on literature mining and experimental data. Netflux provides a graphical user interface for this construction without requiring programming [9].

- Parameter Setting: For each species, key parameters include initial value (yinit), maximum value (ymax), and time constant (tau) describing its rate of change. For each reaction, parameters include relationship strength (weight), cooperativity (n), and half-maximal effective concentration (EC50) [9].

- Simulation Execution: Simulations are run through the Netflux interface, which uses logic-based differential equations with normalized Hill functions to calculate species activities over time [9].

- Perturbation Analysis: Network responses to perturbations (e.g., gene knockouts, drug treatments) are simulated by modifying input reactions or species parameters, showing how perturbations propagate through signaling networks [9].

Application: Netflux has been used to construct predictive network models for various biological processes, including a mechano-signaling network for heart cells that identified mechanisms by which increased stretch increases cell area, a maladaptive change in heart cell physiology [9].

Logical modeling workflow using Netflux
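The normalized-Hill formulation behind logic-based differential equations can be reproduced in a few lines. The sketch below follows the published form as we understand it, not Netflux's own code. Activation is f(x) = w·B·x^n/(K^n + x^n), with B = (EC50^n - 1)/(2·EC50^n - 1) and K^n = B - 1, so that f(0) = 0, f(EC50) = w/2, and f(1) = w. Inhibition is taken as w - f(x), an AND gate as a product, and each species follows dy/dt = (f·ymax - y)/tau. The five-reaction network, the default values (w = 1, n = 1.4, EC50 = 0.5, tau = 1), and the function names are illustrative assumptions.

```python
def hill_act(x, w=1.0, n=1.4, ec50=0.5):
    """Normalized Hill activation: f(0) = 0, f(ec50) = w / 2, f(1) = w."""
    b = (ec50**n - 1) / (2 * ec50**n - 1)
    k_n = b - 1  # plays the role of K**n
    return w * b * x**n / (k_n + x**n)


def hill_inhib(x, w=1.0, n=1.4, ec50=0.5):
    """Inhibition as the complement of activation."""
    return w - hill_act(x, w, n, ec50)


SPECIES = ("A", "B", "C", "D", "E")


def simulate(w_input=1.0, ymax=None, tau=1.0, t_end=30.0, dt=0.01):
    """Forward-Euler integration of dy/dt = (f * ymax - y) / tau for a hypothetical network:
    => A (input with weight w_input); A => B; A => C; B & C => D; !D => E."""
    ymax = {**{s: 1.0 for s in SPECIES}, **(ymax or {})}
    y = {s: 0.0 for s in SPECIES}  # yinit = 0 for every species
    for _ in range(int(round(t_end / dt))):
        f = {
            "A": w_input,                               # input reaction
            "B": hill_act(y["A"]),
            "C": hill_act(y["A"]),
            "D": hill_act(y["B"]) * hill_act(y["C"]),   # AND gate as a product
            "E": hill_inhib(y["D"]),                    # inhibition by D
        }
        y = {s: y[s] + dt * (f[s] * ymax[s] - y[s]) / tau for s in SPECIES}
    return y


if __name__ == "__main__":
    for label, result in (("control", simulate()), ("B knockout", simulate(ymax={"B": 0.0}))):
        print(label, {s: round(v, 3) for s, v in result.items()})
```

Setting a species' ymax to 0 is a simple way to mimic a knockout. The perturbation then propagates through the network, silencing D and releasing E from inhibition, which is the kind of perturbation analysis described in the protocol above.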

Table 2: Key Software Tools and Resources for Predictive Biology Modeling

| Tool/Resource Name | Primary Function | Supported Frameworks | Key Features |
|---|---|---|---|
| RENAISSANCE | Kinetic model parameterization [8]. | Kinetic, Machine Learning | Uses generative ML and natural evolution strategies to efficiently parameterize large-scale kinetic models without requiring training data [8]. |
| GGRN/PEREGGRN | Expression forecasting and benchmarking [10]. | Machine Learning, Statistical | Modular software for forecasting gene expression changes after perturbations; includes benchmarking platform with multiple datasets and metrics [10]. |
| Netflux | Logic-based network modeling and simulation [9]. | Logical | User-friendly, programming-free tool for constructing and simulating biological networks using logic-based differential equations [9]. |
| CompuCell3D | Multicellular virtual-tissue modeling [11]. | Kinetic, Logical | Open-source environment for building and simulating multicellular models using Cellular Potts Model and related frameworks [11]. |
| COPASI | Biochemical network simulation and analysis [6]. | Kinetic, Statistical | Software for simulation and analysis of biochemical networks and their dynamics. |
| Virtual Cell (VCell) | Multiscale spatial modeling of cellular physiology [6]. | Kinetic, Statistical | Web-based and standalone platform for constructing and simulating cell biological models. |
| Tellurium | Modeling and simulation of biochemical networks [11]. | Kinetic, Statistical | Python environment for reproducible dynamical modeling of biological networks with support for COMBINE archives [11]. |
| BioNetGen | Rule-based modeling of complex biological systems [6]. | Logical, Kinetic | Software for constructing and simulating computational models using the BioNetGen Language (BNGL) [6]. |

Integrated Framework for Predictive Modeling in Drug Development

Effective predictive modeling in drug development requires integrating multiple frameworks to capture emergent properties across biological scales, from molecular interactions to clinical outcomes [12]. Success depends on strong foundations in physiology, pharmacology, and molecular biology, combined with strategic application of computational tools [12].

Key Integration Strategies:

- Combining QSP and Machine Learning: Quantitative Systems Pharmacology (QSP) provides biologically grounded mechanistic frameworks, while ML excels at pattern recognition in large datasets. Together, they can address data gaps, improve individual-level predictions, and enhance model robustness [12].

- Balancing Quantitative and Qualitative Features: Effective models must capture both quantitative kinetics and qualitative system behaviors, such as bistability in signal-response systems with positive feedback, which requires specific model structures beyond standard Hill equations [12].

- Strategic Model Adaptation: Proactively adapting existing literature models after critical assessment of their biological assumptions, represented pathways, parameter estimation methods, and implementation can be more efficient than developing entirely new models [12].
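One common way to combine mechanistic and data-driven components is a grey-box or residual model: the mechanistic layer supplies the structural prediction, and a learned layer corrects what it misses using patient covariates. The sketch below is hypothetical throughout. The mechanistic part is the one-compartment IV bolus relation AUC = Dose / CL with a fixed population clearance. The learned part is a one-feature least-squares fit of log(observed / predicted) on log body weight, standing in for an ML model, and the data are synthetic. Real QSP-ML workflows use far richer models on both sides; the point is only the division of labor.

```python
import math
import random
import statistics

POP_CL = 5.0  # hypothetical population clearance (L/h) assumed by the mechanistic layer


def mechanistic_auc(dose):
    """Mechanistic layer: one-compartment IV bolus, AUC = Dose / CL."""
    return dose / POP_CL


def fit_residual_model(weights, doses, observed):
    """Data-driven layer: least squares of log(observed / mechanistic) on log(weight / 70)."""
    xs = [math.log(w / 70.0) for w in weights]
    rs = [math.log(o / mechanistic_auc(d)) for d, o in zip(doses, observed)]
    mx, mr = statistics.fmean(xs), statistics.fmean(rs)
    b = sum((x - mx) * (r - mr) for x, r in zip(xs, rs)) / sum((x - mx) ** 2 for x in xs)
    return mr - b * mx, b


def hybrid_auc(dose, weight, coef):
    """Mechanistic prediction scaled by the learned residual correction."""
    a, b = coef
    return mechanistic_auc(dose) * math.exp(a + b * math.log(weight / 70.0))


def synthetic_patients(n=200, seed=0):
    """Synthetic 'truth' in which clearance scales with weight, which the mechanistic layer ignores."""
    rng = random.Random(seed)
    weights = [rng.uniform(50, 110) for _ in range(n)]
    doses = [100.0] * n
    observed = [d / (POP_CL * (w / 70.0) ** 0.75) * math.exp(rng.gauss(0, 0.1))
                for d, w in zip(doses, weights)]
    return weights, doses, observed


def mean_abs_log_error(predictions, observed):
    return statistics.fmean(abs(math.log(o / p)) for p, o in zip(predictions, observed))


if __name__ == "__main__":
    weights, doses, observed = synthetic_patients()
    coef = fit_residual_model(weights, doses, observed)
    mech = mean_abs_log_error([mechanistic_auc(d) for d in doses], observed)
    hybrid = mean_abs_log_error([hybrid_auc(d, w, coef) for d, w in zip(doses, weights)], observed)
    print(f"learned weight exponent: {coef[1]:.2f}")
    print(f"mean |log error|: mechanistic {mech:.3f}, hybrid {hybrid:.3f}")
```

For brevity the errors are computed on the same patients used for fitting. A real evaluation would use the held-out validation schemes described earlier in this guide.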

The scientific community is increasingly coordinating efforts to improve model credibility and reproducibility through initiatives such as the ASME V&V 40 standard, FDA guidance documents, the Center for Reproducible Biomedical Modeling (CRBM), and FAIR (Findable, Accessible, Interoperable, and Reusable) principles [12].

The field of predictive biology is being transformed by sophisticated software platforms that enable researchers to model and simulate complex biological systems. KBase, RunBioSimulations, and AlphaFold DB represent three leading platforms, each with distinct architectures and capabilities tailored to different research needs. KBase provides a comprehensive, narrative-driven environment for systems biology, RunBioSimulations specializes in standardized simulation of biological models, and AlphaFold DB offers unprecedented access to AI-predicted protein structures. Together, these platforms are accelerating drug discovery, basic biological research, and the development of personalized medicine by providing scientists with powerful computational tools that complement traditional experimental approaches.

Predictive biology represents a paradigm shift in life sciences research, leveraging computational power to model, simulate, and predict biological system behavior. The global computational biology market, valued at $9.13 billion in 2025 and projected to reach $28.4 billion by 2032, reflects the growing dominance of these approaches [13]. This growth is fueled by increasing demand for data-driven drug discovery, personalized medicine, and the integration of artificial intelligence and machine learning with biological research [14] [15] [13].
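As a quick consistency check, the two market figures imply a compound annual growth rate (CAGR) that can be computed directly, assuming steady compounding over the seven years from 2025 to 2032:

$$
\text{CAGR} = \left(\frac{28.4}{9.13}\right)^{1/7} - 1 \approx 3.111^{1/7} - 1 \approx 0.176
$$

That is, roughly 17.6% growth per year under this assumption.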

These platforms share a common goal of making complex biological analyses more accessible, reproducible, and scalable. However, they differ significantly in their technical implementations, specialized capabilities, and target research communities. Understanding these distinctions enables researchers to select the most appropriate tools for their specific investigative needs, whether studying individual protein structures, metabolic pathways, or whole-cell models.

The table below provides a structured comparison of the three featured platforms across key technical and operational dimensions:

Table 1: Platform Comparison Overview

| Feature | KBase | RunBioSimulations | AlphaFold DB |
|---|---|---|---|
| Primary Focus | Systems biology & microbiome analysis [16] [17] | Biological model simulation & reproducibility [18] | Protein structure prediction & access [19] [20] |
| Core Technology | Integrated bioinformatics apps & Narratives [16] | BioSimulators standardized containers [18] | Deep learning AI (AlphaFold models) [19] [20] |
| Key Capabilities | Shareable, reproducible workflows; Data integration [16] | Runs simulations from diverse modeling formats [18] | Provides over 200 million protein structures [19] [20] |
| Access Model | Freely available open-source platform [16] [17] | Open-source (MIT license) [18] | Free database (CC-BY-4.0); Restricted server access [19] [21] |
| Computing Resources | DOE high-performance computing [16] | Cloud-based application [18] | Cloud-based predictions via AlphaFold Server [20] [21] |

Table 2: Research Application Suitability

| Research Need | Recommended Platform | Rationale |
|---|---|---|
| Metabolic Pathway Modeling | KBase [16] | Integrated 'omics analysis tools and genome-scale modeling apps |
| Running Standardized Simulations | RunBioSimulations [18] | Central registry of containerized tools supporting COMBINE/OMEX standards |
| Protein Structure Analysis | AlphaFold DB [19] [20] | Comprehensive database of predicted structures, many approaching experimental accuracy |
| Collaborative Workflow Sharing | KBase [16] [17] | Narrative interface enables sharing of data, code, and commentary |
| Predicting Protein-Ligand Interactions | AlphaFold Server [20] [21] | AlphaFold 3 extends modeling to interactions with other molecules |

Deep Dive: KBase (The Department of Energy Systems Biology Knowledgebase)

Platform Architecture and Workflow

KBase is a comprehensive knowledge creation environment designed for biologists and bioinformaticians, integrating diverse data and analysis tools into a unified platform [16] [17]. Its architecture leverages scalable Department of Energy computing infrastructure to perform sophisticated systems biology analyses that would be challenging for individual researchers to implement [16]. The platform's core organizing principle is the "Narrative" interface – digital notebooks that allow users to combine data, analytical tools, visualizations, and commentary into shareable, reproducible research stories [16] [17]. This approach addresses the critical need for reproducibility in computational biology while facilitating collaboration across research teams and institutions.

The data model within KBase is designed around FAIR principles (Findable, Accessible, Interoperable, and Reusable), enabling researchers to analyze their own data in the context of public data from DOE resources and other public repositories [17]. The platform is developer-extensible, allowing bioinformatics developers to add open-source analysis tools that become available to all users, creating a growing ecosystem of analytical capabilities [17]. This community-driven approach has positioned KBase as a leading platform for systems biology research, particularly in areas relevant to DOE missions such as bioenergy, environmental science, and microbiome research.

Key Experimental Protocols

KBase Metabolic Modeling Protocol:

- Data Input and Assembly: Begin by importing your genome sequence data into KBase. Utilize the platform's assembly and annotation apps to process raw sequencing reads into annotated genomes.

- Model Reconstruction: Employ the Model Reconstruction interface to automatically generate a genome-scale metabolic model from your annotated genome. KBase integrates tools like ModelSEED to facilitate this process.

- Gap Filling and Curation: Use KBase's gap-filling algorithms to identify and address metabolic gaps in the draft model. Manually curate the model based on experimental data or literature evidence using the built-in curation tools.

- Simulation Design: Define the simulation conditions by specifying environmental constraints, nutrient availability, and growth objectives using the Flux Balance Analysis (FBA) app.

- Analysis and Visualization: Run the simulation and utilize KBase's visualization tools to interpret the results, including flux distributions, nutrient uptake rates, and growth predictions.

- Narrative Publication: Document the entire workflow, parameters, and results in a KBase Narrative. Share this Narrative with collaborators or publish it with a persistent DOI to ensure reproducibility [16] [17].

Diagram: KBase Metabolic Modeling Workflow. This flowchart illustrates the step-by-step process for building and analyzing metabolic models in KBase.
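The Flux Balance Analysis step can be illustrated outside KBase. The sketch below, which assumes SciPy is available and uses a hypothetical four-reaction network rather than a reconstructed genome-scale model, states FBA as a linear program: maximize the biomass flux subject to steady state (S v = 0) and per-reaction flux bounds. It does not use any KBase API; the reaction names `EX_A`, `R1`, `R2`, and `BIOMASS` are illustrative.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical toy network (not a KBase model): metabolites A and B.
REACTIONS = ["EX_A", "R1", "R2", "BIOMASS"]
# Stoichiometric matrix S: rows are metabolites, columns are reactions.
# Negative entries are consumed, positive entries are produced.
S = np.array([
    [1.0, -1.0, -1.0, 0.0],   # A: supplied by uptake EX_A, consumed by R1 and R2
    [0.0, 1.0, 2.0, -1.0],    # B: 1 per unit R1 flux, 2 per unit R2 flux, drained by BIOMASS
])
# (lower, upper) flux bounds; R2 is a high-yield but capacity-limited route.
DEFAULT_BOUNDS = {"EX_A": (0, 10), "R1": (0, 1000), "R2": (0, 5), "BIOMASS": (0, 1000)}


def run_fba(bounds, objective="BIOMASS"):
    """Maximize one flux subject to steady state (S v = 0) and flux bounds."""
    c = np.zeros(len(REACTIONS))
    c[REACTIONS.index(objective)] = -1.0  # linprog minimizes, so negate the objective
    res = linprog(c, A_eq=S, b_eq=np.zeros(S.shape[0]),
                  bounds=[bounds[r] for r in REACTIONS], method="highs")
    if res.status != 0:
        raise RuntimeError(res.message)
    return -res.fun, dict(zip(REACTIONS, res.x))


growth, fluxes = run_fba(DEFAULT_BOUNDS)
# Simulate a knockout of R2 by forcing its flux to zero.
growth_ko, fluxes_ko = run_fba({**DEFAULT_BOUNDS, "R2": (0, 0)})
print(f"wild type growth flux: {growth:.1f}")
print(f"R2 knockout growth flux: {growth_ko:.1f}")
```

Setting a reaction's bounds to (0, 0) is the usual way to simulate a gene knockout in constraint-based models; in this toy network, removing the high-yield route R2 lowers the predicted growth flux from 15 to 10 flux units.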

Deep Dive: RunBioSimulations

Platform Architecture and Workflow

RunBioSimulations addresses a fundamental challenge in computational biology: the difficulty of sharing and reusing biological models and simulations due to incompatible formats and tools [18]. The platform is part of a larger ecosystem that includes BioSimulators (a registry of containerized simulation tools) and BioSimulations (a platform for sharing modeling studies) [18]. This integrated approach provides researchers with a consistent interface for running simulations across a broad range of modeling frameworks and formats, including those standardized by COMBINE initiatives such as SBML (Systems Biology Markup Language) and SED-ML (Simulation Experiment Description Markup Language) [18].

The technical architecture of RunBioSimulations is implemented in TypeScript using Angular, NestJS, MongoDB, and Mongoose [18]. This modern web stack enables the platform to provide a user-friendly web interface that eliminates the need for researchers to install and configure specialized simulation software. By leveraging containerization technologies, RunBioSimulations ensures that simulations are reproducible and consistent across different computing environments. This focus on standardization and reproducibility makes the platform particularly valuable for research validation, educational purposes, and collaborative projects where different teams need to verify and build upon each other's work.

Key Experimental Protocols

RunBioSimulations Standardized Simulation Protocol:

- Model Preparation: Prepare your computational model in a supported format (e.g., SBML, CellML) or select a pre-existing model from a public repository.

- Simulation Experiment Description: Create a Simulation Experiment Description (SED-ML) file that specifies the simulation setup, including model references, simulation parameters, and output definitions.

- Platform Upload: Access the RunBioSimulations web interface and upload your model file and SED-ML document. Alternatively, use the platform's interface to select from available public models.

- Tool Selection: Choose from the registry of BioSimulators containerized simulation tools that are compatible with your model type and the desired simulation algorithm.

- Execution and Monitoring: Run the simulation through the web interface. The platform will execute the simulation using the selected tool and provide status updates.

- Results Retrieval: Download the simulation results in standardized formats. Results can be visualized within the platform or exported for further analysis using external tools.

- Study Sharing: Optionally, share your complete modeling study (including model, simulation experiment, and results) through the BioSimulations platform to enable other researchers to reproduce and build upon your work [18].

Diagram: RunBioSimulations Standardized Workflow. This chart outlines the process for running reproducible biological simulations using standardized formats and containerized tools.
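The model-preparation and SED-ML steps can be sketched with the Python standard library alone. The example below is a minimal sketch, assuming SED-ML Level 1 Version 3 and an SBML Level 3 model stored as `model.xml`. It writes a SED-ML file describing a uniform time course solved with CVODE (KiSAO:0000019) and a report of time plus one species, then bundles both into a COMBINE/OMEX archive with the `manifest.xml` that the format requires. The species ID `S1`, the file names, the helper functions, and the placeholder SBML string are hypothetical; substitute a real model before uploading the archive to RunBioSimulations.

```python
import os
import tempfile
import zipfile
import xml.etree.ElementTree as ET

SEDML_NS = "http://sed-ml.org/sed-ml/level1/version3"
SBML_NS = "http://www.sbml.org/sbml/level3/version1/core"
MATHML_NS = "http://www.w3.org/1998/Math/MathML"
SPEC = "http://identifiers.org/combine.specifications/"


def sub(parent, tag, **attrs):
    """Append a child element, converting attribute values to strings."""
    return ET.SubElement(parent, tag, {k: str(v) for k, v in attrs.items()})


def build_sedml(model_file, species_id, end_time=10.0, n_points=100):
    """Minimal SED-ML L1V3: one SBML model, one time course, a report of time + one species."""
    # Namespaces are written as literal attributes so the XPath prefix "sbml" is declared.
    root = ET.Element("sedML", {"xmlns": SEDML_NS, "xmlns:sbml": SBML_NS,
                                "level": "1", "version": "3"})
    sub(sub(root, "listOfModels"), "model", id="model",
        language="urn:sedml:language:sbml", source=model_file)
    sim = sub(sub(root, "listOfSimulations"), "uniformTimeCourse", id="sim",
              initialTime=0, outputStartTime=0, outputEndTime=end_time,
              numberOfPoints=n_points)
    sub(sim, "algorithm", kisaoID="KISAO:0000019")  # CVODE
    sub(sub(root, "listOfTasks"), "task", id="task",
        modelReference="model", simulationReference="sim")
    generators = sub(root, "listOfDataGenerators")
    datasets = sub(sub(sub(root, "listOfOutputs"), "report", id="report"), "listOfDataSets")
    variables = {
        "time": {"symbol": "urn:sedml:symbol:time"},
        species_id: {"target": "/sbml:sbml/sbml:model/sbml:listOfSpecies/"
                               f"sbml:species[@id='{species_id}']"},
    }
    for name, ref in variables.items():
        dg = sub(generators, "dataGenerator", id=f"dg_{name}")
        sub(sub(dg, "listOfVariables"), "variable", id=f"var_{name}",
            taskReference="task", **ref)
        math = sub(dg, "math", xmlns=MATHML_NS)
        sub(math, "ci").text = f"var_{name}"
        sub(datasets, "dataSet", id=f"ds_{name}", label=name, dataReference=f"dg_{name}")
    return ET.tostring(root, encoding="unicode")


def build_omex(path, files, master):
    """Write files (name -> text) plus manifest.xml into a COMBINE/OMEX zip archive."""
    formats = {".xml": SPEC + "sbml", ".sedml": SPEC + "sed-ml"}  # assumes SBML uses .xml
    manifest = ET.Element("omexManifest", {"xmlns": SPEC + "omex-manifest"})
    sub(manifest, "content", location=".", format=SPEC + "omex")
    sub(manifest, "content", location="./manifest.xml", format=SPEC + "omex-manifest")
    for name in files:
        attrs = {"location": f"./{name}", "format": formats[os.path.splitext(name)[1]]}
        if name == master:
            attrs["master"] = "true"
        sub(manifest, "content", **attrs)
    with zipfile.ZipFile(path, "w", zipfile.ZIP_DEFLATED) as zf:
        zf.writestr("manifest.xml", ET.tostring(manifest, encoding="unicode"))
        for name, text in files.items():
            zf.writestr(name, text)


# Placeholder SBML document; replace with a real model before simulating.
sbml_stub = f'<sbml xmlns="{SBML_NS}" level="3" version="1"><model id="m"/></sbml>'
archive = os.path.join(tempfile.mkdtemp(), "study.omex")
build_omex(archive, {"model.xml": sbml_stub,
                     "simulation.sedml": build_sedml("model.xml", "S1", 20.0, 200)},
           master="simulation.sedml")
```

Marking the SED-ML file as `master` tells simulation tools which file to execute. This sketch does not validate the files against the SED-ML or SBML schemas, so validating the archive before submission is advisable.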

Deep Dive: AlphaFold DB

Platform Architecture and Workflow

AlphaFold DB represents one of the most significant advances in computational biology, providing open access to over 200 million protein structure predictions generated by DeepMind's AlphaFold AI system [19] [20]. The database is the product of a partnership between Google DeepMind and EMBL's European Bioinformatics Institute (EMBL-EBI), making these predictions freely available to the global scientific community [19]. For many proteins, the underlying AlphaFold system achieves accuracy competitive with experimental methods such as X-ray crystallography and cryo-electron microscopy, cutting the time needed to obtain a structural model from the years an experimental determination can take to minutes [19] [20].

The technological breakthrough of AlphaFold lies in its ability to predict a protein's 3D structure from its amino acid sequence with remarkable accuracy [20]. The database is continuously updated with structures for newly discovered protein sequences and improved functionality based on user feedback [19]. Recent developments include AlphaFold 3, accessible through AlphaFold Server, which extends modeling capabilities to a broader spectrum of molecular structures including ligands, ions, and post-translational modifications [21]. The database also now includes custom annotation features that enable researchers to integrate and visualize sequence annotations alongside structure predictions [19]. While the database is freely available, access to the most advanced capabilities like AlphaFold Server (which predicts protein interactions with other molecules) is currently limited to non-commercial research purposes [20] [21].

Key Experimental Protocols

AlphaFold DB Structure Retrieval and Analysis Protocol:

- Sequence Identification: Identify the protein sequence of interest through databases like UniProt. Note the specific isoform and any known sequence variants.

- Database Query: Navigate to the AlphaFold Protein Structure Database and search using the protein identifier (e.g., UniProt ID) or by uploading the amino acid sequence.

- Structure Retrieval: Access the predicted structure, which includes the 3D coordinates and per-residue confidence scores (pLDDT). Download the structure in PDB or mmCIF format.

- Quality Assessment: Examine the pLDDT scores to assess prediction confidence across different regions of the protein. Low scores may indicate flexible or disordered regions.

- Structure Visualization and Analysis: Use molecular visualization software (e.g., PyMOL, ChimeraX) to analyze the predicted structure. Examine active sites, binding pockets, and structural domains.

- Custom Annotation Integration: For advanced analysis, use the integrated annotation feature to visualize custom sequence annotations (e.g., mutation sites, functional domains) alongside the 3D structure and pLDDT track [19].

- Experimental Validation: Design wet-lab experiments (e.g., crystallography, mutagenesis) to validate computational insights, particularly for regions with lower confidence scores or novel structural features.

Diagram: AlphaFold DB Analysis Workflow. This flowchart shows the process for retrieving, assessing, and analyzing AI-predicted protein structures from the AlphaFold database.
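The quality-assessment step can be automated once a model file has been downloaded. AlphaFold model files in PDB format store the per-residue pLDDT in the B-factor column, so a few lines of Python are enough to recover it. The sketch below assumes a single-chain model without insertion codes; the helper names are hypothetical, and the confidence bands follow the thresholds AlphaFold DB uses for its colouring (above 90, 70 to 90, 50 to 70, below 50).

```python
def plddt_per_residue(pdb_text):
    """Map residue number -> pLDDT, read from the CA atoms of an AlphaFold PDB file.

    AlphaFold model files store per-residue pLDDT in the B-factor column
    (PDB columns 61-66), so every atom of a residue carries the same value.
    Assumes a single chain without insertion codes.
    """
    scores = {}
    for line in pdb_text.splitlines():
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            scores[int(line[22:26])] = float(line[60:66])
    return scores


def confidence_band(plddt):
    """Bands used by AlphaFold DB's confidence colouring."""
    if plddt > 90:
        return "very high"
    if plddt > 70:
        return "confident"
    if plddt > 50:
        return "low"
    return "very low"


def low_confidence_segments(scores, cutoff=70.0):
    """Contiguous residue ranges with pLDDT below cutoff, as (start, end) pairs."""
    segments, start, prev = [], None, None
    for resnum in sorted(scores):
        low = scores[resnum] < cutoff
        if low and start is None:
            start = resnum                     # a low-confidence run begins
        elif not low and start is not None:
            segments.append((start, prev))     # the run ended at the previous residue
            start = None
        prev = resnum
    if start is not None:
        segments.append((start, prev))
    return segments
```

For example, after downloading the model for human antithrombin (UniProt P01008; AlphaFold DB file names follow a pattern such as `AF-P01008-F1-model_v4.pdb`, although the version suffix changes between releases), `low_confidence_segments(plddt_per_residue(text))` lists the regions to prioritize in the experimental validation step.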

Integrated Research Applications

Case Study: Investigating a Disease-Causing Mutation

The power of these platforms is magnified when used in combination. Consider a research project investigating a novel mutation in the SERPINC1 gene (encoding antithrombin) associated with thrombophilia:

Initial Analysis with AlphaFold DB: Retrieve and analyze the wild-type and mutated antithrombin structures. While a 2024 study noted that AlphaFold may not always predict conformational changes from mutations, it provides crucial initial structural context and highlights regions of interest [22].

Functional Modeling with RunBioSimulations: Create a model of the mutated antithrombin's interaction with its target proteases and simulate the kinetic differences compared to wild-type using standardized simulation tools.

Systems Biology Context with KBase: Place the findings within a broader systems biology context by modeling how the mutation affects relevant coagulation pathways and metabolic processes using KBase's integrated tools.

This integrated approach demonstrates how these complementary platforms can accelerate the journey from genetic variant identification to functional characterization and systems-level understanding.
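The kinetic comparison in the functional-modeling step can be prototyped before a standardized SBML model is built. The sketch below, assuming SciPy, treats antithrombin (I) inhibiting thrombin (E) as a single irreversible second-order step, E + I → EI, and compares the free thrombin remaining after ten minutes for two association rate constants. The rate constants, the 10-fold loss of function assigned to the mutant, and the concentrations are hypothetical placeholders, not measured values for any SERPINC1 variant, and the model ignores heparin acceleration and other target proteases.

```python
import numpy as np
from scipy.integrate import solve_ivp


def inhibition_rhs(t, y, k_ass):
    """Mass-action rates for the irreversible step E + I -> EI."""
    e, i = y
    rate = k_ass * e * i
    return [-rate, -rate]


def residual_protease(k_ass, e0=10.0, i0=2500.0, t_end=600.0):
    """Fraction of free protease left after t_end seconds (concentrations in nM)."""
    sol = solve_ivp(inhibition_rhs, (0.0, t_end), [e0, i0], args=(k_ass,),
                    method="LSODA", rtol=1e-8, atol=1e-12)
    return sol.y[0, -1] / e0


# Hypothetical association rate constants, converted from M^-1 s^-1 to nM^-1 s^-1.
k_wt = 1e4 * 1e-9
k_mut = 1e3 * 1e-9   # assumed 10-fold loss of function, for illustration only
for label, k in [("wild type", k_wt), ("mutant", k_mut)]:
    print(f"{label}: {residual_protease(k):.2e} of thrombin still free after 10 min")
```

Once the mechanism is settled, the same reaction can be encoded in SBML and paired with a SED-ML file so that the comparison runs reproducibly on RunBioSimulations.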

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents and Computational Tools

| Item | Function/Application | Relevance to Platforms |
|---|---|---|
| Protein Data Bank (PDB) Files | Standard format for 3D structural data; used for visualization and comparative analysis | Primary download format for AlphaFold DB structures [19] |
| SED-ML (Simulation Experiment Description Markup Language) | XML format that describes the simulation setup, including model and simulation parameters | Standardized input for RunBioSimulations to ensure reproducible simulations [18] |
| SBML (Systems Biology Markup Language) | Standard representation for computational models of biological processes | Supported model format in RunBioSimulations; used in KBase for constraint-based modeling [18] |
| FASTA Sequence Files | Standard text-based format for representing nucleotide or peptide sequences | Input format for AlphaFold structure prediction; used in KBase for genomic analyses [16] [19] |
| COMBINE/OMEX Archives | Containers bundling all files related to a modeling study (models, data, scripts) | Supported format in RunBioSimulations for comprehensive model sharing and reproducibility [18] |

Future Directions in Predictive Biology

The future trajectory of predictive biology platforms points toward increased integration, accessibility, and expanded capabilities. We anticipate several key developments:

Enhanced AI Integration: Platforms will increasingly incorporate AI and machine learning not just for structure prediction but for guiding simulation parameters, interpreting results, and generating hypotheses [14] [15] [13]. The success of AlphaFold has demonstrated AI's transformative potential, with newer versions already expanding to model protein interactions with other molecules [21].

Convergence Toward Unified Platforms: The distinction between specialized platforms may blur as they incorporate each other's capabilities. We may see KBase integrating AlphaFold predictions into its narrative workflows or RunBioSimulations incorporating more AI-guided simulation approaches.

Democratization Through Cloud Computing: The shift toward cloud-based platforms will continue, making sophisticated biological simulations accessible to researchers without specialized computing infrastructure [14] [15]. This trend particularly benefits researchers in low and middle-income countries, as evidenced by AlphaFold's significant user base in these regions [20].

Addressing Current Limitations: Future versions will need to address current limitations, such as AlphaFold's challenges in predicting conformational changes in certain proteins like serpins [22] and the need for improved standardization across biological data formats [13].

As these platforms evolve, they will further transform biological research from a predominantly experimental discipline to one that seamlessly integrates computation and experimentation, accelerating discoveries across basic research, drug development, and personalized medicine.

The integration of multi-omics data represents a paradigm shift in biomedical research, moving from isolated data analysis to a holistic understanding of biological systems. This approach combines diverse datasets—genomics, transcriptomics, proteomics, metabolomics, and clinical records—to create comprehensive computational models that can simulate biological behavior with unprecedented accuracy [23]. For researchers and drug development professionals, this integration is foundational to predictive biology, enabling the simulation of disease progression, treatment response, and complex cellular interactions before moving to wet-lab validation.

The core value proposition lies in overcoming the limitations of single-omics approaches. Where genomics alone might reveal disease predisposition and transcriptomics might show active processes, multi-omics integration reveals how these layers interact dynamically [23] [24]. This is particularly crucial for understanding complex diseases like cancer, which are driven by intricate interactions between various cellular regulatory layers that cannot be captured by any single data type [25]. By building predictive models on this integrated data foundation, researchers can accelerate therapeutic development from target identification through clinical trial optimization, ultimately creating more effective personalized treatment strategies [23] [26].

Core Challenges in Multi-Omics Data Integration

Technical and Analytical Hurdles

Successfully integrating multi-omics data for simulation requires overcoming significant technical challenges stemming from the inherent complexity and scale of the data. These obstacles represent critical points where integration pipelines can fail without proper design and execution.

Data Heterogeneity and Scale: Each biological layer generates data with distinct formats, scales, and statistical characteristics. Genomics (DNA) provides static structural information, transcriptomics (RNA) reveals dynamic gene expression, proteomics (proteins) reflects functional states, and metabolomics captures real-time physiological activity [23]. This creates a high-dimensionality problem with far more features than samples, increasing the risk of spurious correlations and overwhelming conventional analysis methods [23].

Normalization and Batch Effects: Data from different laboratories, platforms, and processing batches contain systematic technical variations that can obscure true biological signals. Sophisticated normalization techniques (e.g., TPM for RNA-seq, intensity normalization for proteomics) and statistical correction methods like ComBat are essential prerequisites for reliable integration [23]. Without these steps, batch effects can render integrated datasets useless for downstream simulation tasks.
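As a concrete reference for the normalization step, the sketch below (assuming NumPy) computes TPM from raw RNA-seq counts and gene lengths, then applies a deliberately simple per-batch mean-centering to log-scaled values. The centering is a location-only stand-in used for illustration, not ComBat: ComBat additionally models batch-specific variance and shrinks both estimates with empirical Bayes, so a dedicated implementation should be used in practice. The counts, lengths, and batch labels are hypothetical.

```python
import numpy as np


def tpm(counts, lengths_bp):
    """Transcripts per million from a genes x samples count matrix.

    Reads are first divided by gene length in kilobases (reads per kilobase),
    then each sample is scaled so that its values sum to one million.
    """
    counts = np.asarray(counts, dtype=float)
    rpk = counts / (np.asarray(lengths_bp, dtype=float)[:, None] / 1e3)
    return rpk / rpk.sum(axis=0, keepdims=True) * 1e6


def center_batches(x, batches):
    """Location-only batch adjustment on a features x samples matrix (not ComBat).

    Subtracts each batch's per-feature mean and adds back the overall mean,
    so every batch ends up with the same per-feature average.
    """
    x = np.asarray(x, dtype=float)
    batches = np.asarray(batches)
    grand_mean = x.mean(axis=1, keepdims=True)
    out = x.copy()
    for b in np.unique(batches):
        cols = batches == b
        out[:, cols] = x[:, cols] - x[:, cols].mean(axis=1, keepdims=True) + grand_mean
    return out


counts = np.array([[10, 20, 40, 35],
                   [30, 60, 90, 120],
                   [0, 5, 10, 8]])
lengths = [1000, 3000, 500]           # hypothetical effective lengths in bp
log_tpm = np.log2(tpm(counts, lengths) + 1)
adjusted = center_batches(log_tpm, batches=["run1", "run1", "run2", "run2"])
```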

Missing Data and Sparsity: It's common for samples to have incomplete data across omics layers, with certain measurements missing entirely. Simple deletion of incomplete cases can seriously bias analysis, while imputation methods like k-nearest neighbors (k-NN) or matrix factorization must be carefully selected based on the missingness mechanism and data structure [23].
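One way to make the imputation choice less arbitrary is to hide values that were actually measured, impute them, and compare. The sketch below, assuming scikit-learn's `KNNImputer` and synthetic correlated features standing in for matched omics measurements, scores several neighbour counts this way; the helper `masked_rmse` is hypothetical. Random masking only mimics values missing completely at random. When an entire omics layer is absent for a sample, as is common, this check is optimistic and block-aware methods deserve consideration.

```python
import numpy as np
from sklearn.impute import KNNImputer

rng = np.random.default_rng(0)
# Synthetic stand-in: 60 samples x 8 features driven by two shared latent factors.
latent = rng.normal(size=(60, 2))
X = latent @ rng.normal(size=(2, 8)) + 0.2 * rng.normal(size=(60, 8))


def masked_rmse(X, n_neighbors, frac=0.1, seed=1):
    """Hide a random fraction of observed entries, impute them, return RMSE on those entries."""
    mask_rng = np.random.default_rng(seed)
    hide = ~np.isnan(X) & (mask_rng.random(X.shape) < frac)
    X_masked = X.copy()
    X_masked[hide] = np.nan
    X_imputed = KNNImputer(n_neighbors=n_neighbors).fit_transform(X_masked)
    return float(np.sqrt(np.mean((X_imputed[hide] - X[hide]) ** 2)))


for k in (1, 3, 5, 10):
    print(f"k={k}: RMSE on hidden entries = {masked_rmse(X, k):.3f}")
```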

Computational and Interpretation Challenges

Beyond technical processing, researchers face substantial obstacles in computational infrastructure and biological interpretation when working with multi-omics data.

Computational Scalability: Multi-omics datasets routinely reach petabyte scales, with single whole genomes generating hundreds of gigabytes of raw data [23]. Scaling to thousands of patients across multiple omics layers demands cloud-based solutions and distributed computing architectures that many research institutions lack [23] [26]. The shift to single-cell multi-omics further exacerbates these demands by increasing resolution to millions of individual cells per experiment [26].

Interpretation Complexity: With thousands of correlated variables across omics layers, distinguishing true biological signals from noise becomes statistically challenging [24]. The integration of multiple data types can obscure real biological relationships, while conducting thousands of statistical tests without predefined hypotheses creates a high false-positive rate [24]. Furthermore, spatial and temporal variations in omics measurements add additional dimensions of complexity that many current methods struggle to analyze effectively [24].
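The false-positive problem is easy to see numerically. The sketch below, assuming NumPy, implements the Benjamini-Hochberg step-up procedure, a standard way to control the false discovery rate across many tests, and applies it to simulated p-values: 10,000 features with no real effect plus 50 with strong, hypothetical effects.

```python
import numpy as np


def benjamini_hochberg(pvalues, alpha=0.05):
    """Boolean mask of discoveries with the false discovery rate controlled at alpha."""
    p = np.asarray(pvalues, dtype=float)
    m = p.size
    order = np.argsort(p)
    # Compare the i-th smallest p-value with alpha * i / m.
    passes = p[order] <= alpha * np.arange(1, m + 1) / m
    reject = np.zeros(m, dtype=bool)
    if passes.any():
        k = np.flatnonzero(passes).max()   # largest rank that passes (step-up)
        reject[order[: k + 1]] = True      # reject it and every smaller p-value
    return reject


rng = np.random.default_rng(0)
null_p = rng.uniform(size=10_000)              # features with no real effect
signal_p = rng.uniform(0, 1e-5, size=50)       # hypothetical strong effects
p = np.concatenate([null_p, signal_p])
print("naive p < 0.05:", int((p < 0.05).sum()))
print("BH discoveries:", int(benjamini_hochberg(p).sum()))
```

Thresholding at p < 0.05 would be expected to flag roughly 500 null features alongside the real ones, while the step-up procedure keeps the expected proportion of false discoveries at or below alpha.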

Reproducibility and Standardization: Many multi-omics results fail replication due to inconsistent methodologies and insufficient documentation of software versions and parameter settings [24]. Establishing robust protocols for data integration is crucial to ensuring reliability, yet methods often must be tailored to each specific dataset and research question [24].

Table 1: Key Challenges in Multi-Omics Data Integration for Simulations

| Challenge Category | Specific Obstacles | Impact on Simulations |
|---|---|---|
| Data Heterogeneity | Different formats, scales, and biases across omics layers [23] | Compromises model accuracy and biological relevance |
| Computational Demand | Petabyte-scale data storage and processing requirements [23] | Limits accessibility and increases infrastructure costs |
| Statistical Complexity | High dimensionality, missing data, batch effects [23] [24] | Increases false discovery rates and reduces predictive power |
| Interpretation Difficulties | Correlated variables, unclear causal relationships [24] | Hinders extraction of biologically meaningful insights |
| Reproducibility Issues | Inconsistent methodologies, inadequate documentation [24] | Undermines validation and clinical translation |

Methodologies for Multi-Omics Integration

AI and Machine Learning Strategies

Artificial intelligence and machine learning provide the essential computational foundation for multi-omics integration, acting as sophisticated pattern recognition systems that detect subtle connections across millions of data points [23]. The selection of integration strategy significantly influences what types of biological relationships can be captured in subsequent simulations.

Early Integration (Feature-Level): This approach merges all raw features from different omics layers into a single massive dataset before analysis [23]. While computationally intensive and susceptible to the curse of dimensionality, early integration preserves all raw information and can capture complex, unforeseen interactions between modalities that might be lost in other approaches [23].

Intermediate Integration: Methods in this category first transform each omics dataset into a more manageable representation, then combine these representations [23]. Network-based methods are particularly powerful, constructing biological networks (e.g., gene co-expression, protein-protein interactions) for each omics layer and then integrating these networks to reveal functional relationships and modules driving disease [23]. This approach reduces complexity while incorporating valuable biological context.

Late Integration (Model-Level): This strategy builds separate predictive models for each omics type and combines their predictions at the end [23]. Using methods like weighted averaging or stacking, this ensemble approach is robust, computationally efficient, and handles missing data well [23]. However, it may miss subtle cross-omics interactions that aren't strong enough to be captured by any single model.
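The difference between early and late integration is easiest to see side by side. The sketch below assumes scikit-learn and uses synthetic "RNA" and "protein" blocks in which a few features shift with a binary label. It fits one logistic regression on the concatenated features (early integration) and, separately, one model per block whose predicted probabilities are averaged (late integration, with equal weights as a simplifying assumption). Intermediate, network-based integration is not shown.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
n = 300
y = rng.integers(0, 2, size=n)
# Synthetic omics blocks: only the first few columns of each carry signal.
rna = rng.normal(size=(n, 200))
rna[:, :5] += 0.8 * y[:, None]
protein = rng.normal(size=(n, 50))
protein[:, :3] += 0.8 * y[:, None]
blocks = {"rna": rna, "protein": protein}

train, test = train_test_split(np.arange(n), test_size=0.3, random_state=0, stratify=y)


def fit_predict(X):
    """Scale on the training rows, fit logistic regression, return test-set probabilities."""
    scaler = StandardScaler().fit(X[train])
    model = LogisticRegression(max_iter=1000).fit(scaler.transform(X[train]), y[train])
    return model.predict_proba(scaler.transform(X[test]))[:, 1]


# Early integration: one model on all features concatenated.
early = fit_predict(np.hstack(list(blocks.values())))
# Late integration: one model per block, predictions averaged with equal weights.
late = np.mean([fit_predict(X) for X in blocks.values()], axis=0)

for name, prob in [("early", early), ("late", late)]:
    print(f"{name} integration AUC: {roc_auc_score(y[test], prob):.3f}")
```

In the early model, the 200-feature RNA block contributes four times as many inputs as the protein block, which is one reason block-wise scaling or weighting is common; the late model sidesteps this imbalance but cannot learn interactions between blocks.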

Table 2: Multi-Omics Integration Strategies Comparison

| Integration Strategy | Timing of Integration | Advantages | Limitations |
|---|---|---|---|
| Early Integration | Before analysis | Captures all cross-omics interactions; preserves raw information [23] | Extremely high dimensionality; computationally intensive [23] |
| Intermediate Integration | During analysis | Reduces complexity; incorporates biological context through networks [23] | Requires domain knowledge; may lose some raw information [23] |
| Late Integration | After individual analysis | Handles missing data well; computationally efficient [23] | May miss subtle cross-omics interactions [23] |

State-of-the-Art Machine Learning Techniques

Several advanced machine learning architectures have proven particularly effective for multi-omics data integration, each offering distinct advantages for specific research contexts.

Autoencoders (AEs) and Variational Autoencoders (VAEs): These unsupervised neural networks compress high-dimensional omics data into dense, lower-dimensional latent spaces [23]. This dimensionality reduction makes integration computationally feasible while preserving key biological patterns, creating a unified representation where data from different omics layers can be effectively combined [23].
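A compact way to see the idea is a single-hidden-layer autoencoder. The sketch below, assuming scikit-learn, trains `MLPRegressor` to reproduce its own standardized, concatenated input through a four-unit bottleneck and reads the bottleneck activations out as the shared latent representation. It is a plain, not variational, autoencoder on synthetic data, and the helper `autoencoder_embedding` is hypothetical; real VAEs are usually built with a deep-learning framework.

```python
import numpy as np
from sklearn.neural_network import MLPRegressor
from sklearn.preprocessing import StandardScaler


def autoencoder_embedding(blocks, latent_dim=4, seed=0):
    """Standardize each omics block, concatenate, and train X -> X through a narrow layer.

    Returns the bottleneck activations (one latent vector per sample), the model,
    and the standardized input matrix.
    """
    X = np.hstack([StandardScaler().fit_transform(b) for b in blocks])
    ae = MLPRegressor(hidden_layer_sizes=(latent_dim,), activation="tanh",
                      max_iter=2000, random_state=seed)
    ae.fit(X, X)  # the target is the input itself
    # Encoder half: first weight matrix and bias, followed by the same tanh activation.
    latent = np.tanh(X @ ae.coefs_[0] + ae.intercepts_[0])
    return latent, ae, X


rng = np.random.default_rng(0)
factors = rng.normal(size=(100, 3))                     # hidden biological programmes
rna = factors @ rng.normal(size=(3, 40)) + 0.3 * rng.normal(size=(100, 40))
methylation = factors @ rng.normal(size=(3, 25)) + 0.3 * rng.normal(size=(100, 25))
latent, ae, X = autoencoder_embedding([rna, methylation])
print(latent.shape)  # (100, 4)
```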

Graph Convolutional Networks (GCNs): Specifically designed for network-structured data, GCNs represent genes and proteins as nodes and their interactions as edges [23]. These networks learn from biological structure by aggregating information from a node's neighbors to make predictions, proving particularly effective for clinical outcome prediction in complex conditions like cancer [23].
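The neighbourhood aggregation that defines a GCN fits in a few lines. The sketch below, assuming NumPy, implements one layer using the symmetric-normalized propagation rule popularized by Kipf and Welling's GCN; the four-gene network, the per-gene omics features, and the random (untrained) weights are all hypothetical. Stacking such layers and training the weights against an outcome gives the predictive models described above.

```python
import numpy as np


def gcn_layer(adjacency, features, weights):
    """One graph-convolution layer: ReLU(D^-1/2 (A + I) D^-1/2 H W).

    Adding self-loops (A + I) keeps each node's own features; the symmetric
    degree normalization rescales contributions so highly connected nodes
    do not dominate the sum.
    """
    a_hat = adjacency + np.eye(adjacency.shape[0])
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    a_norm = a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]
    return np.maximum(a_norm @ features @ weights, 0.0)


# Hypothetical 4-gene interaction network: gene0-gene1-gene2 form a chain, gene3 is isolated.
A = np.array([[0, 1, 0, 0],
              [1, 0, 1, 0],
              [0, 1, 0, 0],
              [0, 0, 0, 0]], dtype=float)
# Per-gene input features, e.g. expression, copy number, methylation (synthetic values).
H = np.array([[1.0, 0.2, 0.5],
              [0.3, 1.0, 0.1],
              [0.8, 0.4, 0.9],
              [0.5, 0.5, 0.5]])
rng = np.random.default_rng(0)
W = rng.normal(size=(3, 2))   # untrained weights; in practice learned by backpropagation
print(gcn_layer(A, H, W))
```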

Similarity Network Fusion (SNF): This approach creates patient-similarity networks from each omics layer separately, then iteratively fuses them into a single comprehensive network [23]. The process strengthens robust similarities while removing weak ones, enabling more accurate disease subtyping and prognosis prediction [23].
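The fusion step can be sketched compactly. The code below, assuming NumPy and SciPy, follows the published SNF recipe in outline: a scaled exponential kernel per omics layer, a normalized full kernel and a sparse k-nearest-neighbour kernel per layer, and iterative cross-diffusion in which each layer's network is updated through its own neighbourhood structure using the average of the other layers. The function names, the parameter values (`k`, `mu`, iteration count), and the synthetic data are assumptions for illustration; established SNF implementations are preferable for real studies.

```python
import numpy as np
from scipy.spatial.distance import cdist


def affinity(x, k=10, mu=0.5):
    """Scaled exponential kernel: distances are rescaled by local neighbourhood density."""
    dist = cdist(x, x)
    knn_mean = np.sort(dist, axis=1)[:, 1:k + 1].mean(axis=1)   # skip self (distance 0)
    eps = (knn_mean[:, None] + knn_mean[None, :] + dist) / 3
    return np.exp(-dist ** 2 / (mu * eps))


def normalize(w):
    """Off-diagonal entries of each row sum to 1/2, the diagonal is 1/2; then symmetrize."""
    p = w.copy()
    np.fill_diagonal(p, 0.0)
    row = p.sum(axis=1, keepdims=True)
    row[row == 0] = 1.0
    p = p / (2 * row)
    np.fill_diagonal(p, 0.5)
    return (p + p.T) / 2


def knn_kernel(w, k):
    """Keep each row's k largest affinities (self included), then row-normalize."""
    s = np.zeros_like(w)
    rows = np.arange(w.shape[0])[:, None]
    top = np.argsort(-w, axis=1)[:, :k]
    s[rows, top] = w[rows, top]
    return s / s.sum(axis=1, keepdims=True)


def snf(views, k=10, iterations=20):
    """Fuse per-view patient-similarity networks into one network."""
    status = [normalize(affinity(v, k)) for v in views]
    local = [knn_kernel(p, k) for p in status]
    m = len(views)
    for _ in range(iterations):
        # Every view is updated from the previous iteration's networks of the other views.
        status = [normalize(local[v] @ (sum(status[u] for u in range(m) if u != v) / (m - 1))
                            @ local[v].T) for v in range(m)]
    return normalize(sum(status) / m)


rng = np.random.default_rng(0)
labels = np.repeat([0, 1], 15)   # two hypothetical patient subtypes
# Two synthetic omics views that each separate the subtypes, with independent noise.
expr = rng.normal(size=(30, 20)) + 3.0 * labels[:, None]
meth = rng.normal(size=(30, 10)) - 2.0 * labels[:, None]
fused = snf([expr, meth], k=5)
```

Clustering the fused matrix, for example with spectral clustering, then yields candidate patient subtypes.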

Transformers: Originally developed for natural language processing, transformer architectures adapt effectively to biological data through their self-attention mechanisms [23]. These systems learn to weigh the importance of different features and data types, identifying which modalities matter most for specific predictions and extracting critical biomarkers from noisy datasets [23].
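The mechanism that lets a transformer weigh modalities is scaled dot-product attention. In the sketch below, assuming NumPy, each omics modality of one patient is represented as a single token embedding, and one attention head computes how strongly each modality attends to the others. Treating each modality as one token is a simplifying assumption (real models often use many tokens per modality), and the embeddings and projection matrices are random rather than trained.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)   # subtract the max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def self_attention(tokens, w_q, w_k, w_v):
    """Single-head scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V.

    Row i of the returned weight matrix says how much token i draws on every token.
    """
    q, k, v = tokens @ w_q, tokens @ w_k, tokens @ w_v
    weights = softmax(q @ k.T / np.sqrt(k.shape[-1]), axis=-1)
    return weights @ v, weights


rng = np.random.default_rng(0)
modalities = ["rna", "methylation", "cnv", "protein"]
d_model, d_k = 16, 8
# One embedding per modality for a single patient (synthetic; normally produced by
# modality-specific encoders).
tokens = rng.normal(size=(len(modalities), d_model))
w_q, w_k, w_v = (rng.normal(size=(d_model, d_k)) for _ in range(3))
context, attn = self_attention(tokens, w_q, w_k, w_v)
for name, row in zip(modalities, attn):
    print(name, np.round(row, 2))
```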

Knowledge Graphs and Semantic Technologies

Knowledge graphs provide a powerful framework for structuring multi-omics data by representing biological entities as nodes (genes, proteins, metabolites, diseases) and their relationships as edges (interactions, regulations, associations) [24] [27]. This explicit representation of relationships enables more sophisticated querying and reasoning about biological systems.

When enhanced with Graph Retrieval-Augmented Generation (GraphRAG), knowledge graphs enable AI systems to make sense of large, heterogeneous datasets by combining retrieval with structured graph representations [24]. This approach converts unstructured and multi-modal data into knowledge graphs where relationships are explicit and easier to retrieve, significantly improving contextual depth and reducing hallucinations in AI-generated content [24]. For multi-omics research, GraphRAG allows datasets and scientific literature to be jointly embedded in the same retrieval space, enabling seamless cross-validation of findings across data types [24].

Practical Implementation and Workflows

Integrated Multi-Omics Analysis Pipeline

Implementing a robust multi-omics integration pipeline requires careful attention to each processing stage, from raw data to biological insights. The protocols below outline current practice for preparing simulation-ready data.

Experimental Protocols and Methodologies

Protocol 1: Bulk Multi-Omics Integration Using Flexynesis

Flexynesis represents a state-of-the-art deep learning toolkit specifically designed for bulk multi-omics data integration in precision oncology and beyond. This protocol outlines its implementation for predictive modeling tasks [25].

Objective: Integrate multiple omics datasets to predict clinical outcomes such as drug response, disease subtypes, or patient survival.

Input Data Requirements:

- Multiple omics data matrices (genomics, transcriptomics, epigenomics, proteomics) from the same sample set

- Clinical annotation data including outcome variables (e.g., treatment response, survival time, disease status)

- Training set: 70% of samples, Test set: 30% of samples

Methodology:

- Data Preprocessing: Normalize each omics dataset separately using platform-specific methods (e.g., TPM for RNA-seq, beta values for methylation arrays)

- Feature Selection: Apply variance-based filtering and remove low-variance features across all omics layers

- Model Architecture Selection: Choose from fully connected or graph-convolutional encoders based on data structure and sample size

- Supervisor Attachment: Connect multi-layer perceptron (MLP) heads for specific tasks:

  - Regression: Linear output with mean squared error loss for continuous variables (e.g., drug sensitivity)

  - Classification: Softmax output with cross-entropy loss for categorical variables (e.g., disease subtype)

  - Survival: Cox Proportional Hazards loss function for time-to-event data

- Multi-task Configuration: For complex predictive tasks, attach multiple supervisor MLPs that jointly shape the embedding space

- Training Protocol: Implement training/validation splits with early stopping and hyperparameter optimization

Validation: Benchmark against classical machine learning methods (Random Forest, Support Vector Machines, XGBoost, Random Survival Forest) to verify whether the deep learning models actually outperform these baselines [25].
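For the survival supervisor, the Cox proportional hazards objective can be written directly. The sketch below, assuming NumPy, computes the negative log partial likelihood from a model's predicted log-hazards. It is a generic formulation for illustration, not Flexynesis's own implementation, and it assumes no tied event times (tied data need Breslow or Efron corrections). In a multi-task setup, this term would typically be added, with a weight, to the losses of the other supervisor heads.

```python
import numpy as np


def cox_loss(log_hazard, time, event):
    """Negative Cox log partial likelihood, averaged over observed events.

    log_hazard: model output per sample; time: follow-up time;
    event: 1 if the event was observed, 0 if censored. Assumes no tied times.
    """
    order = np.argsort(-np.asarray(time))          # longest follow-up first
    eta = np.asarray(log_hazard, dtype=float)[order]
    observed = np.asarray(event)[order] == 1
    # Running log-sum-exp gives log(sum of exp(eta_j) over the risk set time_j >= time_i).
    log_risk_set = np.logaddexp.accumulate(eta)
    return -np.sum(eta[observed] - log_risk_set[observed]) / max(observed.sum(), 1)


rng = np.random.default_rng(0)
time = rng.exponential(scale=10.0, size=8)
event = np.array([1, 0, 1, 1, 0, 1, 0, 1])
log_hazard = rng.normal(size=8)    # stand-in for a survival head's output
print(round(cox_loss(log_hazard, time, event), 4))
```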

Protocol 2: Knowledge Graph Construction for Multi-Omics Data

This protocol details the construction of biological knowledge graphs for enhanced data integration and retrieval, particularly when combined with GraphRAG methodologies [24] [27].

Objective: Create a structured knowledge representation that connects entities across omics layers and enables sophisticated querying for biological discovery.

Data Sources:

- Multi-omics experimental data (genomic variants, gene expression, protein abundance)

- Public biological databases (protein-protein interactions, pathway databases, gene-disease associations)

- Scientific literature (text mining outputs, established relationships)

Construction Methodology:

- Entity Extraction: Identify and normalize biological entities from each data source:

  - Genes: HGNC nomenclature

  - Proteins: UniProt identifiers

  - Metabolites: HMDB or ChEBI identifiers

  - Diseases: OMIM or MONDO identifiers

- Relationship Definition: Establish typed relationships between entities:

  - Molecular: "interacts_with", "regulates", "expressed_as"

  - Functional: "participates_in_pathway", "has_function"

  - Clinical: "associated_with", "biomarker_for"

- Graph Population: Create nodes for each entity and edges for each relationship, storing quantitative attributes (z-scores, p-values, effect sizes) as node or edge properties

- Community Detection: Partition the graph into biologically meaningful communities by tissue, cancer type, or gene family to improve retrieval efficiency

- GraphRAG Integration: Implement retrieval mechanisms that traverse the graph structure to answer complex biological queries

Application: Use the constructed knowledge graph for hypothesis generation, biomarker discovery, and drug repurposing by identifying previously unrecognized connections across omics layers [24].
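The construction steps map directly onto a property-graph library. The sketch below, assuming the NetworkX package, builds a tiny multi-relational graph with typed nodes and edges, runs a two-hop query, and applies modularity-based community detection as a stand-in for the partitioning step. The node identifiers are readable prefixed labels rather than HGNC or MONDO IDs, the edge attributes are hypothetical placeholders, the query helpers are illustrative, and the GraphRAG retrieval layer is not shown.

```python
import networkx as nx
from networkx.algorithms.community import greedy_modularity_communities

kg = nx.MultiDiGraph()
# Nodes carry a type; prefixed labels are illustrative (use HGNC, UniProt, ChEBI, MONDO IDs).
for node, kind in [("gene:TP53", "gene"), ("gene:MDM2", "gene"), ("gene:CDKN1A", "gene"),
                   ("pathway:p53_signaling", "pathway"), ("disease:Li-Fraumeni", "disease"),
                   ("gene:BRCA1", "gene"), ("disease:breast_cancer", "disease")]:
    kg.add_node(node, kind=kind)

# Typed edges; quantitative attributes such as z_score are hypothetical placeholders.
edges = [
    ("gene:TP53", "gene:MDM2", "regulates", {"z_score": 3.1}),
    ("gene:TP53", "gene:CDKN1A", "regulates", {"z_score": 2.7}),
    ("gene:MDM2", "gene:TP53", "interacts_with", {}),
    ("gene:TP53", "pathway:p53_signaling", "participates_in_pathway", {}),
    ("gene:MDM2", "pathway:p53_signaling", "participates_in_pathway", {}),
    ("gene:TP53", "disease:Li-Fraumeni", "associated_with", {"p_value": 1e-6}),
    ("gene:BRCA1", "disease:breast_cancer", "associated_with", {"p_value": 1e-8}),
]
for source, target, relation, attrs in edges:
    kg.add_edge(source, target, relation=relation, **attrs)


def related(graph, node, relation):
    """Targets reached from node along edges of the given relation type."""
    return sorted(v for _, v, rel in graph.out_edges(node, data="relation") if rel == relation)


def genes_linked_to_disease(graph, disease):
    """Two-hop query: genes associated with a disease, plus the genes they regulate."""
    direct = [u for u, _, rel in graph.in_edges(disease, data="relation")
              if rel == "associated_with"]
    return {g: related(graph, g, "regulates") for g in direct}


print(genes_linked_to_disease(kg, "disease:Li-Fraumeni"))
# Collapse to a simple undirected graph before modularity-based community detection.
communities = greedy_modularity_communities(nx.Graph(kg))
print([sorted(c) for c in communities])
```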

Research Reagent Solutions for Multi-Omics Studies

Table 3: Essential Research Reagents and Platforms for Multi-Omics Experiments

| Reagent/Platform | Function | Application in Multi-Omics |
|---|---|---|
| Next-Generation Sequencing (NGS) | High-throughput DNA/RNA sequencing | Genomics (WGS, WES), transcriptomics (RNA-seq), epigenomics (ChIP-seq, ATAC-seq) [23] |
| Mass Spectrometry | Protein and metabolite identification and quantification | Proteomics (LC-MS/MS), metabolomics (LC-MS, GC-MS) [23] |
| Single-Cell Multi-Omics Platforms | Simultaneous measurement of multiple omics layers from single cells | Single-cell RNA-seq + ATAC-seq, CITE-seq (RNA + protein) [26] |
| Spatial Transcriptomics | Gene expression analysis within tissue context | Integrating molecular profiles with histological structure [26] |
| Liquid Biopsy Assays | Non-invasive sampling of circulating biomarkers | Analysis of cfDNA, RNA, proteins, metabolites from blood [26] |
| Cell Line Encyclopedias (e.g., CCLE) | Reference databases of characterized cell lines | Pre-clinical models for drug response prediction [25] |

Applications in Predictive Biology and Drug Development

Clinical Translation and Therapeutic Applications

The integration of multi-omics data has generated significant impact across multiple therapeutic areas, particularly in oncology, where it enables more precise patient stratification and treatment selection.

Precision Oncology: Multi-omics profiling allows researchers to move beyond histopathological classification to molecular subtyping of cancers. For example, integrating genomic, transcriptomic, and proteomic data has enabled identification of novel cancer subtypes with distinct clinical outcomes and therapeutic vulnerabilities [23] [25]. In glioblastoma and lower-grade glioma, multi-omics integration has improved survival prediction accuracy by capturing the complex interplay between genetic drivers and transcriptional programs [25].

Drug Response Prediction: By modeling how genomic alterations propagate through transcriptional and proteomic networks to influence therapeutic sensitivity, multi-omics integration significantly improves drug response prediction. Studies have demonstrated high correlation between predicted and actual drug sensitivity in cancer cell lines when models incorporate both genomic (copy number variations) and transcriptomic data [25]. This approach is particularly valuable for targeted therapies where patient selection based on single biomarkers has shown limited success.

Biomarker Discovery: Multi-omics approaches have uncovered novel biomarkers that would remain invisible in single-omics analyses. For instance, integrating gene expression and promoter methylation profiles enables accurate classification of microsatellite instability (MSI) status in gastrointestinal and gynecological cancers, a crucial predictor of response to immunotherapy [25]. Similarly, combining proteomic and metabolomic data has identified composite biomarkers with superior diagnostic and prognostic performance compared to single-analyte markers.

Emerging Trends and Future Directions

The field of multi-omics integration continues to evolve rapidly, with several emerging trends shaping its future applications in predictive biology and drug development.

Single-Cell Multi-Omics: The transition from bulk to single-cell analyses represents a fundamental shift in resolution, enabling researchers to deconvolve cellular heterogeneity and identify rare cell populations driving disease processes [26]. Technological advances now allow genomic, transcriptomic, and epigenomic information to be measured simultaneously in the same cells, so that specific molecular changes can be correlated within individual cells rather than inferred from population averages [26].

Temporal and Spatial Integration: Incorporating time-series data and spatial context adds critical dimensions to multi-omics analyses. Longitudinal sampling can capture disease progression dynamics, while spatial technologies preserve architectural relationships between cells in tissues [26]. These approaches are particularly valuable for understanding tumor microenvironment interactions and therapy resistance evolution.

Federated Learning and Privacy-Preserving Analysis: As data privacy concerns grow, federated computing approaches enable collaborative model training without sharing sensitive patient data [23] [26]. This is especially important for multi-omics studies requiring diverse patient populations to ensure biomarker discoveries are broadly applicable across ethnic and geographic groups [26].
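One widely used scheme is federated averaging (FedAvg): each site trains on its own data and shares only model parameters, which a coordinator averages, weighted by site size. The minimal sketch below assumes a linear least-squares model and three hypothetical sites with synthetic data; production systems typically add safeguards such as secure aggregation or differential privacy, which are omitted here.

```python
import numpy as np

def local_update(w, X, y, lr=0.1, epochs=20):
    """Gradient descent on one site's private data (mean squared error loss)."""
    w = w.copy()
    for _ in range(epochs):
        w -= lr * X.T @ (X @ w - y) / len(y)
    return w

def fed_avg(sites, n_features, rounds=30):
    """Only model weights leave each site; raw data never does."""
    w_global = np.zeros(n_features)
    total = sum(len(y) for _, y in sites)
    for _ in range(rounds):
        local_ws = [local_update(w_global, X, y) for X, y in sites]
        # Server step: average local models, weighted by each site's sample count
        w_global = sum(len(y) / total * w for (_, y), w in zip(sites, local_ws))
    return w_global

rng = np.random.default_rng(1)
true_w = np.array([1.5, -2.0, 0.5])
sites = []
for n in (40, 80, 120):  # three hypothetical hospitals of different sizes
    X = rng.normal(size=(n, 3))
    y = X @ true_w + rng.normal(scale=0.1, size=n)
    sites.append((X, y))

w = fed_avg(sites, n_features=3)
print(np.round(w, 2))
```

The coordinator never sees `X` or `y`, yet the averaged model lands close to the weights a pooled analysis would estimate, because all three synthetic sites share the same underlying relationship.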

Clinical Decision Support Systems: The integration of multi-omics data with electronic health records (EHRs) is creating comprehensive clinical decision support tools that incorporate molecular profiles alongside traditional clinical parameters [23]. These systems enable personalized treatment planning based on both static genetic risk factors and dynamic molecular states, potentially transforming routine clinical practice.

Table 4: Multi-Omics Applications in Drug Development Pipeline

| Drug Development Stage | Multi-Omics Application | Impact |
|---|---|---|
| Target Identification | Integration of genomic, transcriptomic, and proteomic data to identify dysregulated pathways [24] | More therapeutic targets with stronger biological validation |
| Pre-clinical Validation | Multi-omics profiling of disease models (cell lines, animal models) [25] | Better prediction of efficacy and toxicity before human trials |
| Clinical Trial Design | Patient stratification based on multi-omics signatures [23] | Increased trial success rates through enrichment strategies |
| Biomarker Development | Discovery of composite biomarkers across omics layers [23] [24] | Companion diagnostics with higher sensitivity and specificity |
| Post-market Surveillance | Longitudinal multi-omics monitoring of treatment response [26] | Earlier detection of resistance mechanisms and adverse events |

The integration of multi-omics data represents a fundamental enabling technology for predictive biology, transforming how researchers simulate complex biological systems and develop therapeutic interventions. By combining diverse molecular measurements into unified computational models, this approach captures much of the biological complexity that single-omics methods cannot address. The technical challenges, from data heterogeneity to computational scalability, remain substantial, but advances in AI, knowledge graphs, and specialized tools such as Flexynesis are steadily addressing these limitations [25].

For drug development professionals and researchers, mastering multi-omics integration is becoming increasingly essential for harnessing the full potential of biomedical data. As the field evolves toward single-cell resolution, temporal dynamics, and clinical integration, multi-omics approaches will continue to enhance the predictive power of biological simulations, ultimately accelerating the development of personalized therapies and improving patient outcomes across diverse disease areas.

The Protein Folding Problem

Proteins are fundamental components of all living organisms, responsible for critical functions including material transport, energy conversion, and catalytic reactions [28]. A protein's function is intrinsically determined by its three-dimensional structure, which emerges through protein folding, the process by which a linear chain of amino acids spontaneously folds into a complex, functional conformation [28] [29]. For decades, predicting a protein's 3D structure from its amino acid sequence alone has stood as a grand challenge in computational biology, often referred to as the "protein folding problem" [30].

The significance of this problem is underscored by the staggering disparity between known protein sequences and experimentally determined structures. While databases contain over 200 million known protein sequences, only approximately 200,000 structures have been determined through experimental methods such as X-ray crystallography, nuclear magnetic resonance (NMR), and cryo-electron microscopy (cryo-EM) [28] [29]. These experimental approaches, while considered the gold standard, are often costly, time-consuming, and technically demanding, creating a critical bottleneck in structural biology [30] [28].

The Levinthal paradox highlights the computational complexity of this challenge, noting that if a protein were to sample all possible conformations randomly to find its native structure, it would take an astronomically long time. Yet, proteins in nature fold reliably in microseconds to seconds, suggesting specific folding pathways rather than random conformational searches [28]. This paradox has motivated scientists for over 50 years to develop computational approaches that can predict protein structures accurately and efficiently, bridging the sequence-structure gap [30].
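The scale of the paradox is easy to check with the classic back-of-the-envelope numbers usually quoted for it. The values below (100 residues, three backbone states per residue, 10^13 conformations sampled per second) are illustrative assumptions, not measurements.

```python
# Classic textbook assumptions (illustrative, not measured values)
N_RESIDUES = 100          # chain length
STATES_PER_RESIDUE = 3    # backbone conformations allowed per residue
SAMPLING_RATE = 1e13      # conformations tested per second
SECONDS_PER_YEAR = 3.156e7
UNIVERSE_AGE_YEARS = 1.38e10

conformations = STATES_PER_RESIDUE ** N_RESIDUES   # exact integer count
search_seconds = conformations / SAMPLING_RATE
search_years = search_seconds / SECONDS_PER_YEAR

print(f"conformations: {conformations:.2e}")
print(f"exhaustive search: {search_years:.1e} years")
print(f"ratio to age of universe: {search_years / UNIVERSE_AGE_YEARS:.1e}")
```

Under these assumptions an exhaustive search needs on the order of 10^27 years, roughly 10^17 times the age of the universe, which is why folding must proceed along biased pathways rather than by random search.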

The Pre-AI Landscape of Computational Prediction

Before the advent of modern AI systems, computational protein structure prediction methods primarily fell into three categories: template-based modeling (TBM), template-free modeling (TFM), and ab initio approaches [28].

Template-based modeling relied on identifying known protein structures as templates, typically through sequence or structural homology. Key steps in TBM included identifying homologous template structures, creating sequence alignments, mapping target sequences onto template structures, and iterative quality assessment [28]. Tools such as MODELLER and Swiss-PdbViewer represented this approach, which worked well when homologous structures were available but struggled with novel folds lacking structural templates [28].
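The first TBM step, ranking candidate templates by how well they align to the target, can be sketched with a textbook Needleman-Wunsch global alignment and percent identity. This is a simplification under stated assumptions: the scoring (match +1, mismatch -1, linear gap -2) is illustrative, whereas real pipelines use substitution matrices and profile-based searches, and the sequences and template IDs below are hypothetical.

```python
def global_align(a, b, match=1, mismatch=-1, gap=-2):
    """Needleman-Wunsch global alignment with a linear gap penalty."""
    def sub(x, y):
        return match if x == y else mismatch

    n, m = len(a), len(b)
    score = [[0] * (m + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        score[i][0] = i * gap
    for j in range(1, m + 1):
        score[0][j] = j * gap
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            score[i][j] = max(score[i - 1][j - 1] + sub(a[i - 1], b[j - 1]),
                              score[i - 1][j] + gap,
                              score[i][j - 1] + gap)
    # Trace back from the bottom-right cell to recover one optimal alignment
    out_a, out_b, i, j = [], [], n, m
    while i > 0 or j > 0:
        if i > 0 and j > 0 and score[i][j] == score[i - 1][j - 1] + sub(a[i - 1], b[j - 1]):
            out_a.append(a[i - 1])
            out_b.append(b[j - 1])
            i, j = i - 1, j - 1
        elif i > 0 and score[i][j] == score[i - 1][j] + gap:
            out_a.append(a[i - 1])
            out_b.append("-")
            i -= 1
        else:
            out_a.append("-")
            out_b.append(b[j - 1])
            j -= 1
    return "".join(reversed(out_a)), "".join(reversed(out_b))

def percent_identity(aln_a, aln_b):
    """Identical residues as a percentage of aligned (gap-free) columns."""
    pairs = [(x, y) for x, y in zip(aln_a, aln_b) if x != "-" and y != "-"]
    return 100.0 * sum(x == y for x, y in pairs) / max(len(pairs), 1)

def pick_template(target, templates):
    """Return (template_id, percent identity) of the best-matching candidate."""
    scored = {tid: percent_identity(*global_align(target, seq))
              for tid, seq in templates.items()}
    best = max(scored, key=scored.get)
    return best, scored[best]

target = "MKTAYIAKQRQISFVKSHFSRQ"
templates = {  # hypothetical template IDs and sequences
    "tmpl_B": "MKTAHIAKQRQLSFVKSHWSRQ",
    "tmpl_C": "GSHMLEDPVAGQWTRN",
}
best, identity = pick_template(target, templates)
print(best, round(identity, 1))  # tmpl_B 86.4
```

The selected template's coordinates would then be mapped onto the target through this alignment; low identity is an early warning that TBM may fail and a template-free method is needed.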

Template-free modeling predicted protein structures directly from sequence without using global template information, instead leveraging multiple sequence alignments to extract co-evolutionary signals and correlation patterns [28].
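A simple way to see a co-evolutionary signal is to score pairs of MSA columns by mutual information (MI) and apply the average product correction (APC), which subtracts background signal shared by all pairs involving a column. This is a minimal sketch on a hypothetical six-sequence alignment; modern TFM methods rely on stronger statistical models (such as direct coupling analysis) or deep networks rather than raw MI.

```python
import math
from collections import Counter

def column_mi(msa, i, j):
    """Mutual information (nats) between alignment columns i and j."""
    n = len(msa)
    p_i = Counter(seq[i] for seq in msa)
    p_j = Counter(seq[j] for seq in msa)
    p_ij = Counter((seq[i], seq[j]) for seq in msa)
    return sum((c / n) * math.log((c / n) / ((p_i[a] / n) * (p_j[b] / n)))
               for (a, b), c in p_ij.items())

def apc_corrected_mi(msa):
    """Pairwise MI matrix with average product correction (APC) applied."""
    L = len(msa[0])
    mi = [[0.0] * L for _ in range(L)]
    for i in range(L):
        for j in range(i + 1, L):
            mi[i][j] = mi[j][i] = column_mi(msa, i, j)
    # Diagonal is 0, so dividing by L - 1 averages over j != i
    row_mean = [sum(mi[i]) / (L - 1) for i in range(L)]
    overall = sum(row_mean) / L
    if overall == 0:
        return mi
    return [[0.0 if i == j else mi[i][j] - row_mean[i] * row_mean[j] / overall
             for j in range(L)] for i in range(L)]

# Hypothetical alignment: columns 1 and 3 co-vary (K pairs with E, R pairs with D)
msa = ["MKAEG", "MRADG", "MKVEA", "MRVDA", "MKAES", "MRVDS"]
scores = apc_corrected_mi(msa)
L = len(msa[0])
top_pair = max(((i, j) for i in range(L) for j in range(i + 1, L)),
               key=lambda p: scores[p[0]][p[1]])
print(top_pair)  # (1, 3)
```

The fully conserved first column carries no MI at all, while the co-varying pair stands out after correction; in real alignments such top-scoring pairs are candidate spatial contacts.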

Ab initio methods represented the true "free modeling" approach, based purely on physicochemical principles without reliance on existing structural information. These methods faced significant challenges due to the computational complexity of simulating protein folding physics [28].

The Critical Assessment of protein Structure Prediction (CASP) experiments, launched in 1994, provided a rigorous blind testing framework to evaluate the accuracy of computational methods [29]. For years, progress was incremental, with the best methods achieving Global Distance Test (GDT) scores of only about 40 out of 100 for the most difficult proteins as recently as 2016 [29]. This landscape changed dramatically with the introduction of artificial intelligence approaches.
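GDT_TS, the usual headline form of the GDT score, averages the percentage of C-alpha atoms lying within 1, 2, 4 and 8 Å of their reference positions. The sketch below assumes the two structures are already superimposed; the official calculation additionally searches over superpositions for each cutoff, so values from this simplified version are not directly comparable to CASP results.

```python
import numpy as np

def gdt_ts(pred_ca, ref_ca, cutoffs=(1.0, 2.0, 4.0, 8.0)):
    """GDT_TS for pre-superimposed C-alpha coordinate arrays of shape (L, 3)."""
    dist = np.linalg.norm(pred_ca - ref_ca, axis=1)   # per-residue deviation
    fractions = [(dist <= c).mean() for c in cutoffs]  # fraction within each cutoff
    return 100.0 * float(np.mean(fractions))

# Toy example: five residues displaced by known distances along x
ref = np.zeros((5, 3))
pred = ref.copy()
pred[:, 0] = [0.5, 1.5, 3.0, 6.0, 10.0]
print(round(gdt_ts(pred, ref), 1))  # 50.0
```

Here 20%, 40%, 60% and 80% of residues fall within the four cutoffs, so the average is 50 out of 100, close to the roughly 40 that the best methods reached on the hardest targets before deep learning.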

The AI Revolution: Deep Learning Enters the Field

AlphaFold1: The Initial Breakthrough

DeepMind's initial version of AlphaFold, introduced in 2018, represented a significant advance in protein structure prediction. AlphaFold1 placed first in the overall rankings of the 13th Critical Assessment of protein Structure Prediction (CASP13) in December 2018 [29]. The system was particularly successful at predicting accurate structures for the most difficult targets, for which no existing template structures were available [29].

AlphaFold1 built on work from the early 2010s that analyzed large databanks of related sequences to find correlated changes between residues that are not consecutive in the main chain, suggesting that those residues are physically close [29]. AlphaFold1 extended this approach by estimating probability distributions over the distances between residues, effectively turning contact maps into distance maps, and used a deep neural network to make these inferences [29]. Despite its success, this initial version had limitations in overall accuracy and practical applicability.
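The shift from contact maps to distance distributions ("distograms") can be illustrated by collapsing per-pair bin probabilities into an expected distance and a contact probability. This is a sketch, not AlphaFold1's code: the binning (64 equal bins between 2 and 22 Å) is an assumption for illustration, and the logits, which a trained network would produce, are hand-set here.

```python
import numpy as np

def softmax(logits, axis=-1):
    z = logits - logits.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

# Assumed binning: 64 equal-width distance bins between 2 and 22 Angstrom
edges = np.linspace(2.0, 22.0, 65)
centers = 0.5 * (edges[:-1] + edges[1:])

def summarize_distogram(logits, contact_cutoff=8.0):
    """Collapse (L, L, 64) bin logits into expected distances and contact probabilities."""
    probs = softmax(logits, axis=-1)
    expected = probs @ centers                                   # mean of each distance distribution
    contact = probs[..., centers < contact_cutoff].sum(axis=-1)  # P(distance < cutoff)
    return expected, contact

# Hand-set "network output": a confident ~5 A prediction for residues 0 and 1
L = 2
logits = np.zeros((L, L, 64))
bin_5A = np.argmin(np.abs(centers - 5.0))
logits[0, 1, bin_5A] = logits[1, 0, bin_5A] = 20.0
expected, contact = summarize_distogram(logits)
print(round(float(expected[0, 1]), 2), round(float(contact[0, 1]), 3))  # 4.97 1.0
```

Unlike a binary contact map, each pair keeps a full distribution: the same prediction yields a distance estimate with its uncertainty and a contact probability at any chosen cutoff. Pairs with flat (uninformative) logits, such as the diagonal entries here, simply fall back to the mean bin center.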

AlphaFold2: Achieving Atomic Accuracy

The 2020 version, AlphaFold2, was a complete architectural redesign that dramatically improved prediction accuracy. In the CASP14 assessment in November 2020, AlphaFold2 achieved a level of accuracy far exceeding any other method, scoring above 90 on CASP's global distance test for approximately two-thirds of proteins [29]. The system demonstrated atomic accuracy competitive with experimental methods in a majority of cases, with a median backbone accuracy of 0.96 Å r.m.s.d.95 (C-alpha root-mean-square deviation at 95% residue coverage), compared with 2.8 Å for the next best method [30].
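RMSD, the metric behind these accuracy figures, is computed after optimally superimposing the predicted and experimental coordinates. A minimal NumPy sketch of the Kabsch algorithm is shown below; note that it computes plain RMSD over all residues, whereas the r.m.s.d.95 figure uses only the best-fitting 95% of residues.

```python
import numpy as np

def kabsch_rmsd(P, Q):
    """RMSD between (L, 3) coordinate sets after optimal rigid superposition."""
    P = P - P.mean(axis=0)                   # remove translation
    Q = Q - Q.mean(axis=0)
    H = P.T @ Q                              # 3x3 cross-covariance matrix
    U, S, Vt = np.linalg.svd(H)
    d = np.sign(np.linalg.det(Vt.T @ U.T))   # guard against an improper reflection
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T  # rotation taking P onto Q
    diff = P @ R.T - Q
    return float(np.sqrt((diff ** 2).sum(axis=1).mean()))

# Toy check: a rotated and translated copy should superimpose perfectly
rng = np.random.default_rng(0)
ref = rng.normal(size=(50, 3)) * 10
theta = 0.7
rot = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                [np.sin(theta), np.cos(theta), 0.0],
                [0.0, 0.0, 1.0]])
moved = ref @ rot.T + np.array([5.0, -3.0, 2.0])
print(kabsch_rmsd(moved, ref))  # effectively zero (floating-point error only)
```

Superposition matters because a correct fold placed in a different orientation would otherwise look wildly wrong; only the residual, non-rigid differences count toward RMSD.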

AlphaFold2 introduced several key technical innovations. The system employs an end-to-end deep learning architecture that jointly embeds multiple sequence alignments and pairwise features [30]. At the core of its design is the Evoformer module, a novel neural network block that exchanges information between the MSA and pair representations, allowing direct reasoning about spatial and evolutionary relationships [30]. The structure module then introduces an explicit 3D structure in the form of a rotation and translation for each residue, rapidly refining these from a trivial initial state into a highly accurate protein structure with precise atomic details [30].
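The structure module's representation can be sketched as one rigid-body frame (a rotation R and translation t) per residue: backbone atoms are stored in idealized local coordinates and placed globally as R·x + t, and each refinement step composes an update onto the current frame. Everything below is an illustrative assumption: the local coordinates are approximate idealized values, the per-residue updates are hand-set rather than predicted by a network, and the names `compose` and `frames_to_atoms` are hypothetical.

```python
import numpy as np

# Approximate idealized backbone positions in a residue's local frame (assumed values, Angstrom)
LOCAL_BACKBONE = np.array([
    [-0.525, 1.363, 0.0],  # N
    [0.0, 0.0, 0.0],       # CA at the frame origin
    [1.526, 0.0, 0.0],     # C along the local x-axis
])

def rotation_z(theta):
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])

def compose(R, t, dR, dt):
    """Apply an update expressed in the residue's local frame: T <- T o dT."""
    return R @ dR, R @ dt + t

def frames_to_atoms(Rs, ts):
    """Place local backbone atoms globally: x_global = R @ x_local + t."""
    return np.einsum("lij,aj->lai", Rs, LOCAL_BACKBONE) + ts[:, None, :]

L = 4
Rs = np.tile(np.eye(3), (L, 1, 1))  # trivial initial state: identity rotations...
ts = np.zeros((L, 3))               # ...and every residue at the origin

# One hypothetical refinement step; a trained network would predict dR and dt
for i in range(L):
    Rs[i], ts[i] = compose(Rs[i], ts[i], rotation_z(0.3 * i), np.array([3.8 * i, 0.0, 0.0]))

atoms = frames_to_atoms(Rs, ts)     # shape (L residues, 3 atoms, 3 coordinates)
```

Because atoms are always generated from rigid frames, local geometry such as the N-CA bond length is preserved no matter how the frames move; refinement only has to decide where each residue sits and how it is oriented.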

Unlike the initial version, AlphaFold2 operates as a single, differentiable, end-to-end model based on pattern recognition and trained in an integrated manner [29]. It uses a form of attention network that allows the AI to identify the relevant parts of a larger problem and then piece them together into an overall solution [29]. Training used over 170,000 proteins from the Protein Data Bank and between 100 and 200 GPUs [29].
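The core operation behind such attention networks is scaled dot-product attention: every position scores every other position, and the softmax-normalized scores decide how information is pooled. The single-head NumPy sketch below also adds an optional pair bias to the scores, loosely echoing how AlphaFold2 lets pair features steer attention over the MSA; it is a generic illustration with random weights and a hand-set bias, not AlphaFold2's implementation.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def attention(x, Wq, Wk, Wv, pair_bias=None):
    """Single-head scaled dot-product attention over L residues.

    x: (L, d) per-residue features; pair_bias: optional (L, L) additive bias.
    """
    q, k, v = x @ Wq, x @ Wk, x @ Wv
    logits = q @ k.T / np.sqrt(q.shape[-1])
    if pair_bias is not None:
        logits = logits + pair_bias
    weights = softmax(logits, axis=-1)  # each row sums to 1
    return weights @ v, weights

rng = np.random.default_rng(0)
L, d, dh = 6, 16, 8
x = rng.normal(size=(L, d))
Wq, Wk, Wv = (rng.normal(size=(d, dh)) / np.sqrt(d) for _ in range(3))
bias = np.zeros((L, L))
bias[0, 5] = 10.0  # hypothetical strong pair signal: residue 0 should attend to residue 5
out, w = attention(x, Wq, Wk, Wv, pair_bias=bias)
```

With the bias in place, row 0 of `w` concentrates almost all of its weight on residue 5, showing how pairwise information can redirect which positions exchange features.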

Expanding Capabilities: AlphaFold3 and Beyond

In May 2024, DeepMind announced AlphaFold3, which extends capabilities beyond single-chain proteins to predict the structures of complexes of proteins with DNA, RNA, various ligands, and ions [29]. AlphaFold3 introduces the "Pairformer" architecture, described as similar to, but simpler than, the Evoformer used in AlphaFold2, and employs a diffusion model that begins with a cloud of atoms and iteratively refines their positions to generate 3D molecular structures [29]. DeepMind reported at least a 50% improvement in accuracy for interactions between proteins and other molecules, with prediction accuracy effectively doubling for certain key categories of interactions [29].
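The diffusion idea of starting from a random cloud of atoms and iteratively denoising it can be sketched with a generic Euler sampler of the kind used in score-based diffusion models. This is not AlphaFold3's diffusion module: the noise schedule is an assumption, and the trained, conditioned denoising network is replaced by a hypothetical "oracle" that simply returns known target coordinates, so the example shows only the mechanics of the sampling loop.

```python
import numpy as np

def sample(denoiser, shape, sigmas, rng):
    """Euler integration from pure noise (sigmas[0]) down to noise level 0."""
    x = rng.normal(size=shape) * sigmas[0]    # random cloud of atom positions
    for sigma, sigma_next in zip(sigmas[:-1], sigmas[1:]):
        d = (x - denoiser(x, sigma)) / sigma  # direction from the clean estimate toward x
        x = x + (sigma_next - sigma) * d      # step down to the next noise level
    return x

# Hypothetical target: coordinates of a 5-atom toy molecule (Angstrom)
target = np.array([[0.0, 0.0, 0.0], [1.5, 0.0, 0.0], [2.0, 1.4, 0.0],
                   [3.5, 1.4, 0.3], [4.0, 2.8, 0.3]])

def oracle_denoiser(x, sigma):
    """Stand-in for a trained network: always predicts the true structure."""
    return target

sigmas = np.append(np.geomspace(80.0, 0.05, 30), 0.0)  # assumed decreasing schedule
rng = np.random.default_rng(0)
structure = sample(oracle_denoiser, target.shape, sigmas, rng)
print(np.abs(structure - target).max())  # effectively zero
```

With a perfect denoiser each step shrinks the distance to the target by the ratio of successive noise levels, so the final step (noise level 0) lands on it; a learned denoiser returns only an estimate that improves as the noise level falls.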

The revolutionary impact of AlphaFold was recognized with the 2024 Nobel Prize in Chemistry: Demis Hassabis and John Jumper of Google DeepMind shared half of the prize for protein structure prediction, with the other half awarded to David Baker for computational protein design [31] [29]. This achievement represented the realization of Hassabis's long-stated goal of winning Nobel prizes with the company's AI tools [31].

Alternative Approaches and the Open-Source Ecosystem

While AlphaFold has dominated attention in the field, several alternative approaches and open-source initiatives have emerged, fostering diversity and accessibility in AI-driven protein structure prediction.

RoseTTAFold, developed in David Baker's laboratory at the University of Washington, is another significant deep learning model for protein structure prediction. The Rosetta Commons community continues to drive innovation in biomolecular modeling and recently released RoseTTAFold2-PPI for predicting protein-protein interactions [32]. This ecosystem emphasizes open, reproducible science and collaborative development.

OpenFold3 has emerged as a crucial open-source alternative to AlphaFold3. Created by a large consortium of researchers led by Mohammed AlQuraishi at Columbia University, OpenFold3 provides a facsimile of the AlphaFold3 platform that can be used for commercial purposes, including drug development [33]. This addresses a significant limitation of AlphaFold3, which can only be used by individuals, non-commercial organizations, or journalists [33]. The Federated OpenFold3 Initiative has brought together pharmaceutical companies to train the AI model on proprietary data while maintaining company confidentiality [33].

D-I-TASSER is a hybrid approach that integrates multisource deep learning potentials with iterative threading fragment assembly simulations [34]. It introduces a domain splitting and assembly protocol for automated modeling of large multidomain protein structures. In recently reported benchmark tests, D-I-TASSER outperformed both AlphaFold2 and AlphaFold3 on single-domain and multidomain proteins, and it folded 81% of protein domains and 73% of full-chain sequences in the human proteome [34]. Its results are highly complementary to AlphaFold2 models, highlighting the value of integrating deep learning with physics-based folding simulations [34].
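Because comparisons like these are often reported as TM-scores, a short sketch of the metric may help: each residue contributes 1/(1 + (d_i/d0)^2), where d_i is its distance from the reference position and d0 is a length-dependent scale, and the sum is divided by the reference length. The version below assumes pre-superimposed C-alpha coordinates, whereas the official TM-score program also searches for the superposition that maximizes the score. Values range up to 1, and scores above about 0.5 are commonly taken to indicate the same fold.

```python
import numpy as np

def tm_score(pred_ca, ref_ca):
    """TM-score of pre-superimposed C-alpha coordinates, normalized by reference length."""
    L = len(ref_ca)
    d0 = max(0.5, 1.24 * np.cbrt(L - 15) - 1.8)  # length-dependent scale (Angstrom), floored at 0.5
    d = np.linalg.norm(pred_ca - ref_ca, axis=1)
    return float(np.sum(1.0 / (1.0 + (d / d0) ** 2)) / L)

rng = np.random.default_rng(0)
ref = rng.normal(size=(100, 3)) * 10
print(tm_score(ref, ref))  # 1.0 for a perfect prediction

d0 = 1.24 * np.cbrt(100 - 15) - 1.8
shifted = ref + np.array([d0, 0.0, 0.0])
print(round(tm_score(shifted, ref), 3))  # 0.5: every residue sits exactly d0 away
```

The length-dependent d0 is what makes TM-scores comparable across proteins of different sizes, unlike RMSD, which tends to grow with chain length.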

Table 1: Performance Comparison of Protein Structure Prediction Methods

| Method | Approach Type | Key Features | Reported TM-Score | Key Limitations |