Performance Benchmarking of Engineered Strains: A Framework for Industrial Standardization in Biomanufacturing and Drug Development

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on establishing robust performance benchmarking for engineered microbial strains. It covers the foundational principles of benchmarking, explores advanced methodological frameworks like the Design-Build-Test-Learn (DBTL) cycle, and details strategies for troubleshooting common pitfalls in strain validation. By presenting best practices for comparative analysis against industrial standards, this resource aims to accelerate the development of high-performing, commercially viable strains for biomanufacturing and therapeutic production, ultimately de-risking scale-up and regulatory approval.

Establishing the Foundation: Core Principles and Industrial Imperatives of Strain Benchmarking

Defining Performance Benchmarking in Industrial Strain Engineering

Performance benchmarking in industrial strain engineering is a systematic process for evaluating and comparing the effectiveness of microbial strains against standardized metrics and established industrial baselines. It transforms strain development from an art into a quantitative science, enabling researchers to make data-driven decisions for optimizing biomanufacturing processes for drugs, biofuels, and sustainable chemicals.

The DBTL Cycle: The Core Benchmarking Framework

The Design–Build–Test–Learn (DBTL) cycle is the dominant framework for performance benchmarking in modern strain engineering [1]. This iterative process structures the journey from a genetic design to a high-performing industrial strain.

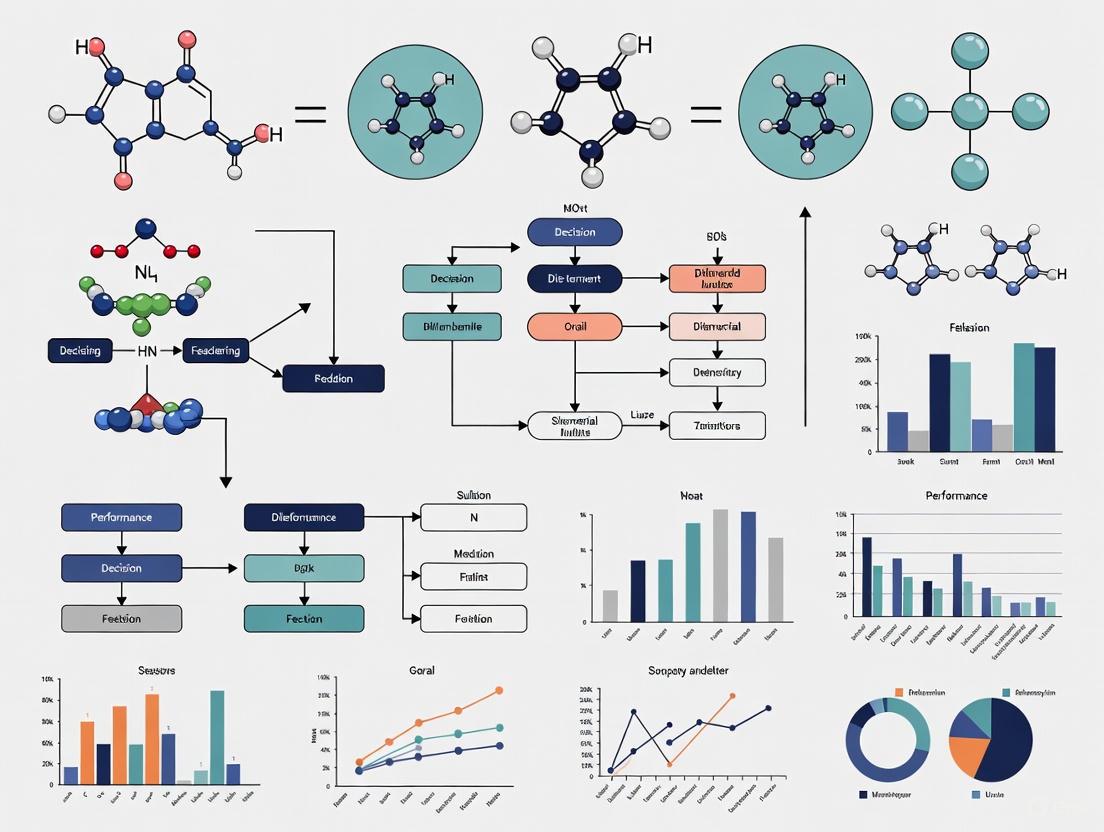

The diagram above illustrates the continuous, iterative workflow of the DBTL framework and the key activities at each stage.

Stage 1: Design

The "Design" stage formulates the genetic engineering strategy, which exists on a spectrum from fully rational to random approaches [1]:

- Rational Design: Involves introducing specific, predefined genetic edits based on prior knowledge. This is often used for well-understood pathways.

- Semi-Rational Design: Targets hundreds to thousands of hypothesis-driven genetic targets, such as enzyme variants.

- Random Approaches: Utilize methods like chemical mutagenesis or Adaptive Laboratory Evolution (ALE) to generate genetic diversity without a pre-conceived hypothesis, useful for complex traits like tolerance.

Stage 2: Build

The "Build" stage encompasses the physical implementation of the genetic designs. Key methods include [1]:

- Classical Methods: Techniques like chemical or UV mutagenesis are easy to implement and access the whole genome but generate random changes that are difficult to deconvolute.

- Precise Editing: Methods such as CRISPR-based editing, recombineering, and saturation mutagenesis allow for specific, defined genetic modifications, though they often require more time and expertise.

Stage 3: Test

The "Test" stage involves phenotyping the engineered strains to measure performance against key benchmarks. This includes [1]:

- Titer: The final concentration of the target product (e.g., in g/L).

- Yield: The conversion efficiency of the feedstock into the product.

- Productivity: The rate of product formation (e.g., in g/L/h).

- Robustness: Strain performance under scale-up conditions, including tolerance to inhibitors and other stressors.

Stage 4: Learn

The "Learn" stage uses computational tools to analyze the "Test" data. The goal is to connect the genotype (the genetic change) to the phenotype (the observed performance) and build predictive models to inform the "Design" of the next, improved strain cycle [1].

Quantitative Benchmarking Metrics and Industrial Standards

For a benchmarking exercise to be meaningful, qualitative observations must be translated into quantitative data. The table below summarizes the key performance indicators (KPIs) used to benchmark industrial strains.

Table 1: Key Quantitative Metrics for Benchmarking Engineered Strains

| Metric Category | Specific Metric | Industrial Significance & Benchmarking Context |
|---|---|---|
| Production Performance | Titer (g/L) | Indicates the maximum achievable product concentration in a fermentation broth; directly impacts downstream purification costs and volumetric efficiency [1]. |
| Production Performance | Yield (g product / g substrate) | Measures metabolic efficiency and conservation of mass; critical for cost-effectiveness, especially with expensive feedstocks [1]. |
| Production Performance | Productivity (g/L/h) | Reflects the synthesis rate of the target molecule; determines the output of a production bioreactor over time [1]. |
| Strain Robustness | Tolerance to Inhibitors / Stressors | Quantifies growth and production under real-world conditions (e.g., in hydrolysates or high product concentrations); often measured as IC50 or relative growth rate [1]. |
| Strain Robustness | Genetic Stability | Assesses the ability to maintain productivity over multiple generations in prolonged fermentation; essential for consistent industrial performance. |
| Scale-Up Potential | Performance in Bioreactors | Benchmarks the translation of lab-scale performance (e.g., in shake flasks) to controlled, large-scale bioreactors; a key de-risking step [1]. |
| Scale-Up Potential | Oxygen Uptake & Heat Generation | Critical parameters for designing and scaling fermentation processes, impacting reactor design and operational costs. |

Experimental Protocols for Benchmarking

A standardized experimental workflow is essential for generating comparable and reliable benchmarking data.

- Strain Cultivation: Inoculate strains in a defined medium and grow under controlled, standardized conditions (temperature, pH, agitation) to ensure reproducibility.

- Fermentation in Bioreactors: Conduct experiments in bench-top (1-10 L) bioreactors with tight control over dissolved oxygen, pH, temperature, and feed rates. This provides data relevant to industrial scale-up, unlike shake flasks [1].

- Analytical Sampling: Take periodic samples throughout the fermentation process to track metrics over time.

- Product Quantification: Analyze samples using techniques like High-Performance Liquid Chromatography (HPLC) or Gas Chromatography (GC) to accurately measure the concentration of the target product and metabolic byproducts [1].

- Data Analysis: Calculate the key metrics (Titer, Yield, Productivity) and compare them against a baseline control strain and other engineered variants.
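The data-analysis step above can be sketched in a few lines. This is a minimal illustration, not a prescribed implementation: the `RunResult` fields, the function names, and the numbers are all hypothetical, and yield is computed from concentrations, which assumes a roughly constant broth volume.

```python
from dataclasses import dataclass


@dataclass
class RunResult:
    """Endpoint data from one fermentation run (field names are illustrative)."""
    strain: str
    product_g_per_l: float             # final product concentration (titer)
    substrate_consumed_g_per_l: float  # substrate consumed per litre of broth
    process_time_h: float              # total fermentation time


def kpis(run: RunResult) -> dict:
    """Return titer (g/L), yield (g/g) and volumetric productivity (g/L/h)."""
    if run.substrate_consumed_g_per_l <= 0 or run.process_time_h <= 0:
        raise ValueError("substrate consumed and process time must be positive")
    return {
        "titer": run.product_g_per_l,
        "yield": run.product_g_per_l / run.substrate_consumed_g_per_l,
        "productivity": run.product_g_per_l / run.process_time_h,
    }


def relative_to_baseline(candidate: RunResult, baseline: RunResult) -> dict:
    """Express each KPI of the candidate as a ratio to the baseline control."""
    cand, base = kpis(candidate), kpis(baseline)
    return {name: cand[name] / base[name] for name in cand}


# Made-up numbers, for illustration only.
baseline = RunResult("control", 40.0, 100.0, 80.0)
variant = RunResult("variant-A", 60.0, 120.0, 100.0)
ratios = relative_to_baseline(variant, baseline)
```

Reporting ratios to the control run in the same experiment, rather than absolute values alone, keeps comparisons meaningful when media lots or equipment differ between campaigns.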

Research Reagent Solutions for Strain Benchmarking

Successful benchmarking relies on a suite of specialized reagents and tools.

Table 2: Essential Research Reagents and Tools for Strain Benchmarking

| Reagent / Tool | Function in Benchmarking |

|---|---|

| CRISPR-Cas Systems | Enables precise genome editing for the "Build" phase, allowing for knockout, knock-in, and fine-tuning of gene expression in a high-throughput manner [1]. |

| Defined Growth Media | Provides a consistent and reproducible chemical environment for the "Test" phase, eliminating variability introduced by complex, undefined media components. |

| HPLC/GC Systems | The gold standard for the accurate separation, identification, and quantification of target molecules and metabolites in culture samples during the "Test" phase [1]. |

| DNA Sequencing (NGS) | Used in the "Learn" phase for genotyping engineered strains to confirm intended edits and identify potential unintended mutations that could affect performance [1]. |

| Pathway Modeling Software | Computational tools that use genomic and metabolic data to predict flux distributions in the "Learn" phase, helping to identify new targets for the next "Design" cycle [1]. |

| Fiber Optic Strain Sensors | Sensors that measure mechanical strain (physical deformation, not microbial strains) on impellers or internal bioreactor structures in real time during scale-up studies [2]. |

The Critical Role of Benchmarking in De-risking Drug Development and Bioprocess Scale-Up

In the high-stakes landscape of biopharmaceutical development, benchmarking serves as a critical navigational tool, enabling organizations to mitigate profound financial and technical risks. The average capitalized research and development (R&D) cost to bring a new biopharmaceutical to market has escalated to approximately $2.8 billion, with an overall clinical success rate from Phase I to approval languishing at around 12% [3]. Within this daunting financial framework, Chemistry, Manufacturing, and Controls (CMC) activities—encompassing process development and manufacturing—represent a significant and non-negotiable cost component, constituting 13–17% of the total R&D budget from pre-clinical trials to approval [3]. This translates to a required allocation of approximately $60 million for early-phase (pre-clinical to Phase II) and $70 million for late-phase (Phase III to regulatory review) material preparation to ensure one market success annually [3]. For complex therapeutics like monoclonal antibodies (mAbs), these figures underscore a simple reality: without robust benchmarking against industrial standards to guide decision-making, organizations risk catastrophic resource misallocation and project failure.

Benchmarking extends beyond mere cost accounting; it is a multidimensional practice that de-risks development by providing objective, data-driven frames of reference for evaluating engineered biological systems. In the context of bioprocess scale-up, it involves the systematic comparison of a novel process or producer strain against a well-defined Industrial Standard Platform. This platform is not a single entity but a composite benchmark, typically defined by a combination of key performance indicators (KPIs) including titer (g/L), yield (g product/g substrate), volumetric productivity (g/L/h), and final product quality [4] [5]. For mammalian cell culture processes, the industrial standard for commercial mAbs has seen titers rise from 0.2 g/L in the 1990s to over 3.5 g/L in 2023, with more than 12% of commercial biologics now exceeding 6 g/L [4]. Adopting advanced platform technologies like the WuXiUI ultra-intensified fed-batch platform can increase productivity 3- to 6-fold and drug substance output by up to 500% at a similar production scale [4]. This rigorous comparative analysis allows researchers and development scientists to answer a pivotal question: "Does our new, innovative process or strain offer a meaningful advantage over the established, de-risked state-of-the-art?"

Benchmarking in Practice: A Structured Workflow for Strain and Process Evaluation

Implementing an effective benchmarking strategy requires a disciplined, staged approach that moves from controlled laboratory conditions to a comprehensive evaluation mirroring commercial production realities. The following workflow outlines the critical stages for de-risking an engineered strain or process through systematic comparison.

Figure 1: A structured workflow for de-risking bioprocess development through iterative benchmarking. KPIs: Key Performance Indicators; LCA: Life Cycle Assessment; CDMO: Contract Development and Manufacturing Organization.

Laboratory-Scale Benchmarking

The initial stage involves a head-to-head comparison under controlled, small-scale conditions (e.g., bench-scale bioreactors). The objective is to collect robust preliminary data on critical growth and production metrics. The engineered strain is evaluated alongside the industrial benchmark strain in a standardized medium and under defined process conditions (e.g., fed-batch). Key performance indicators (KPIs) such as maximum cell density, specific growth rate, product titer, and yield are measured and statistically compared [5]. This stage acts as a primary filter, identifying candidates that merit the significant investment of further scale-up studies.
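The text above says KPIs are statistically compared but does not name a test. As one possible choice (an assumption, not the source's method), the sketch below runs an exact two-sided permutation test on the difference in mean titer between replicate runs of a candidate and the benchmark strain. The function name and the replicate values are hypothetical.

```python
from itertools import combinations
from statistics import mean


def permutation_test(candidate, benchmark):
    """Two-sided exact permutation test on the difference in means.

    Returns the fraction of all relabelings of the pooled data whose absolute
    mean difference is at least as large as the observed one.
    """
    pooled = list(candidate) + list(benchmark)
    n = len(candidate)
    observed = abs(mean(candidate) - mean(benchmark))
    total = 0
    extreme = 0
    for idx in combinations(range(len(pooled)), n):
        chosen = set(idx)
        group_a = [pooled[i] for i in chosen]
        group_b = [pooled[i] for i in range(len(pooled)) if i not in chosen]
        total += 1
        # Small tolerance so the observed split always counts as "extreme".
        if abs(mean(group_a) - mean(group_b)) >= observed - 1e-12:
            extreme += 1
    return extreme / total


# Made-up triplicate titers (g/L), for illustration only.
p_value = permutation_test([52.1, 50.8, 53.0], [45.2, 46.0, 44.7])
```

With three replicates per strain there are only 20 possible relabelings, so even perfectly separated groups give a p-value of 0.1. That is a practical argument for running more than triplicates when this stage is used as a go/no-go filter.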

Controlled Scale-Up Evaluation

Candidates that pass laboratory screening advance to pilot-scale evaluation under conditions that model the manufacturing environment. This stage assesses how strain performance translates to larger, more industrially relevant scales (e.g., pilot-scale bioreactors of 50-1000 L). The focus expands to include power input (kW/m³), oxygen mass transfer (kLa), shear sensitivity, and process consistency across multiple batches [4] [6]. A key deliverable is demonstrating a 98% success rate in manufacturing batches, a benchmark achieved by top-tier manufacturers using single-use bioreactors (SUBs) at scales up to 16,000 L [4]. This phase is critical for identifying scale-up liabilities that are not apparent at the benchtop.

Techno-Economic and Environmental Assessment

The final pre-transfer stage is a holistic evaluation of economic viability and sustainability. A techno-economic analysis (TEA) models the full cost of goods (COG) at commercial scale, while a life cycle assessment (LCA) evaluates environmental impact [7] [5]. The process is benchmarked against industry COG standards, which for mAbs have decreased from over $10,000 per gram in the 1980s-90s to tens to hundreds of dollars per gram today [3]. For bio-based chemicals like succinic acid, the benchmark is production cost, with a current target of $1.6–1.9/kg to compete with petrochemical routes [5]. Successful passage of this stage provides the financial and strategic justification for technology transfer and commercial development.
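One relationship a TEA always contains is the link between yield and feedstock cost: each kilogram of product consumes 1/yield kilograms of substrate. The sketch below illustrates only that relationship. The substrate price and the feedstock share of total cost are hypothetical placeholders, not figures from the cited sources, and a real TEA would add utilities, capital, labor, and downstream processing.

```python
def feedstock_cost_per_kg_product(substrate_price_per_kg, yield_g_per_g):
    """Substrate cost attributable to 1 kg of product (same currency as the price)."""
    if substrate_price_per_kg < 0:
        raise ValueError("price must be non-negative")
    if yield_g_per_g <= 0:
        raise ValueError("yield must be positive")
    return substrate_price_per_kg / yield_g_per_g


def max_substrate_price(target_cost_per_kg, feedstock_share, yield_g_per_g):
    """Highest substrate price that keeps feedstock within a share of the cost target."""
    return target_cost_per_kg * feedstock_share * yield_g_per_g


# Hypothetical substrate price of $0.20/kg, evaluated at two yields (g/g).
cost_low_yield = feedstock_cost_per_kg_product(0.20, 0.40)
cost_high_yield = feedstock_cost_per_kg_product(0.20, 0.53)

# Assuming (hypothetically) that feedstock may take half of a $1.6/kg target.
price_ceiling = max_substrate_price(1.6, 0.5, 0.40)
```

Under these assumed inputs, raising yield from 0.40 to 0.53 g/g lowers the feedstock contribution from about $0.50 to about $0.38 per kilogram of product, which is why yield is benchmarked alongside titer rather than after it.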

Quantitative Benchmarking: Comparing Engineered Yeasts for Succinic Acid Production

The following table provides a concrete example of benchmarking in action, comparing the performance of various engineered Yarrowia lipolytica yeast strains against each other and industrial benchmarks for the production of succinic acid (SA), a key platform chemical for bioplastics.

Table 1: Benchmarking performance of engineered Yarrowia lipolytica strains for succinic acid production against industrial targets [5].

| Strain / Benchmark | Genotype Modifications | Carbon Source | Titer (g/L) | Yield (g/g) | Productivity (g/L/h) |
|---|---|---|---|---|---|
| Y. lipolytica Y-4215 | sdh2Δ, Chemical Mutagenesis & ALE | Glucose | 50.2 | 0.43 | 0.93 |
| Y. lipolytica PGC01003 | sdh5Δ | Crude Glycerol | 160.2 | 0.40 | 0.40 |
| Y. lipolytica RIY420 | GUT1 in PGC01003 | Glycerol | 178.0 | 0.46 | 0.44 |
| Y. lipolytica PGC202 | YlSCS2 in PGC62 (sdh5Δ, ach1Δ, ScPCK) | Glycerol | 110.7 | 0.53 | 0.80 |
| Y. lipolytica PSA02004 | sdh5Δ, ALE in Glucose | Food Waste Hydrolysate | 140.6 | 0.47 | 0.44 |
| Industrial Bacterial Strains | Various (e.g., E. coli) | Glucose & CO₂ | >100 | Varies | High |
| Industrial Economic Target | N/A | Lignocellulosic Sugars | N/A | N/A | (Cost: <$2.0/kg) |

The data reveals a clear trajectory of performance enhancement through successive rounds of metabolic engineering. The best-performing yeast strains, such as RIY420 and PGC202, now achieve titers (178 g/L and 110.7 g/L, respectively) and yields (0.46 g/g and 0.53 g/g) that are competitive with industrial bacterial systems [5]. Furthermore, the successful utilization of non-food feedstocks like crude glycerol and food waste hydrolysates by several top strains demonstrates progress toward the critical economic benchmark of reducing production costs below $2.0/kg, a key threshold for competing with petrochemical-derived succinic acid [5].
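A short script makes the trade-offs in the table explicit. The values below are transcribed from the yeast rows above. The implied process time (titer divided by productivity) assumes that the reported productivity is averaged over the whole run, which the table does not state, so treat those figures as rough.

```python
# (strain, carbon source, titer g/L, yield g/g, productivity g/L/h)
STRAINS = [
    ("Y-4215", "Glucose", 50.2, 0.43, 0.93),
    ("PGC01003", "Crude glycerol", 160.2, 0.40, 0.40),
    ("RIY420", "Glycerol", 178.0, 0.46, 0.44),
    ("PGC202", "Glycerol", 110.7, 0.53, 0.80),
    ("PSA02004", "Food waste hydrolysate", 140.6, 0.47, 0.44),
]

TITER, YIELD, PRODUCTIVITY = 2, 3, 4  # column indices into each row


def best_by(column):
    """Name of the strain with the highest value in the given column."""
    return max(STRAINS, key=lambda row: row[column])[0]


def implied_process_time_h(titer, productivity):
    """Run length implied by titer and run-averaged volumetric productivity."""
    return titer / productivity


leaders = {
    "titer": best_by(TITER),
    "yield": best_by(YIELD),
    "productivity": best_by(PRODUCTIVITY),
}
run_hours = {
    row[0]: implied_process_time_h(row[TITER], row[PRODUCTIVITY]) for row in STRAINS
}
```

No single strain leads on all three metrics: RIY420 has the highest titer, PGC202 the highest yield, and Y-4215 the highest productivity. Under the stated assumption, the highest-titer strains also imply runs of roughly 400 hours, which is the kind of trade-off a benchmark against an economic target has to weigh.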

Essential Methodologies for Robust Benchmarking Experiments

To generate reliable and comparable benchmarking data, standardized experimental protocols are non-negotiable. The following section details core methodologies for evaluating key performance parameters.

Fed-Batch Fermentation for Titer and Productivity Assessment

This protocol is the industry standard for determining maximum product concentration (titer) and overall process rate (volumetric productivity) [5].

- Principle: An initial batch volume of medium is provided, followed by the controlled addition of a concentrated nutrient feed solution during the production phase. This prevents catabolite repression, controls growth rate, and extends the production phase to achieve high cell densities and product titers.

- Procedure:

  - Inoculum Preparation: Grow seed cultures of the benchmark and engineered strains in shake flasks for 24-48 hours.

  - Bioreactor Inoculation: Transfer the seed culture to a bench-scale (e.g., 1-5 L) bioreactor with a defined initial working volume.

  - Environmental Control: Maintain constant temperature, dissolved oxygen (DO > 30%), and pH (e.g., 3.0 or 6.0, depending on the organism and pathway) throughout the run [5].

  - Initiate Feeding: Begin the nutrient feed once the initial carbon source is nearly depleted, typically indicated by a spike in DO.

  - Sampling and Analysis: Take periodic samples to measure cell density (OD600), substrate concentration (e.g., via HPLC), and product titer (e.g., via HPLC or GC).

  - Harvest: Terminate the fermentation when productivity ceases or the maximum run time is reached.

- Data Analysis: Calculate the final titer (g/L), yield (g product/g substrate), and volumetric productivity (g/L/h = final titer / total process time).
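The feed-initiation step in the procedure above relies on spotting a DO spike. The sketch below shows one simple way that rule could be expressed in code. The function name, the 20-point rise threshold, and the three-reading window are illustrative assumptions and would need tuning for a real sensor and process.

```python
def find_feed_start(times_h, do_percent, min_rise=20.0, window=3):
    """Return the first time at which DO has risen by at least `min_rise`
    percentage points above the minimum of the previous `window` readings,
    or None if no such spike is seen."""
    if len(times_h) != len(do_percent):
        raise ValueError("times and DO readings must have the same length")
    for i in range(window, len(do_percent)):
        recent_min = min(do_percent[i - window:i])
        if do_percent[i] - recent_min >= min_rise:
            return times_h[i]
    return None


# Made-up trace: DO falls as cells grow, then jumps when the carbon source runs out.
times = [0, 2, 4, 6, 8, 10, 12]
do_trace = [95, 70, 45, 35, 32, 31, 68]
feed_start = find_feed_start(times, do_trace)
```

Comparing each reading with the minimum of a short trailing window, rather than with the previous reading alone, makes the trigger less sensitive to single noisy samples.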

Metabolic Pathway Flux Analysis

Understanding the internal distribution of metabolic resources is key to identifying bottlenecks and guiding further strain engineering.

- Principle: Uses isotopic tracers (e.g., ¹³C-labeled glucose) and analytical techniques like Mass Spectrometry (MS) to quantify the flow of carbon through central metabolism, thereby inferring the fluxes in various pathways.

- Procedure:

  - Pulse Labeling: Grow cells on a mixture of natural and ¹³C-labeled substrate during the production phase.

  - Rapid Sampling and Quenching: Take a culture sample and immediately quench metabolism (e.g., in cold methanol) to "freeze" the metabolic state.

  - Metabolite Extraction: Extract intracellular metabolites.

  - MS Analysis: Analyze the extracts using Gas Chromatography-MS (GC-MS) or Liquid Chromatography-MS (LC-MS) to determine the isotopic labeling patterns in key metabolic intermediates.

- Data Analysis: Use computational models to interpret the mass isotopomer distributions and calculate the flux (mmol/gDCW/h) through major pathways like glycolysis, TCA cycle, and the target product pathway.
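Full flux estimation needs a metabolic model and dedicated fitting software, but the first processing step on the MS data is simple enough to sketch. The example below normalizes raw isotopologue intensities (M+0 to M+n) into a mass isotopomer distribution and computes the average fractional ¹³C enrichment. It deliberately omits correction for natural isotope abundance, which real workflows require, and the intensities are made up.

```python
def normalize_mid(intensities):
    """Scale raw M+0..M+n intensities so that they sum to 1."""
    total = sum(intensities)
    if total <= 0:
        raise ValueError("total intensity must be positive")
    return [x / total for x in intensities]


def fractional_enrichment(mid):
    """Mean fraction of labeled carbons: sum(i * m_i) / n for an n-carbon fragment."""
    n_carbons = len(mid) - 1
    if n_carbons < 1:
        raise ValueError("need at least M+0 and M+1")
    return sum(i * m for i, m in enumerate(mid)) / n_carbons


# Made-up intensities for a four-carbon metabolite such as succinate (M+0 .. M+4).
raw = [500, 200, 150, 100, 50]
mid = normalize_mid(raw)
enrichment = fractional_enrichment(mid)
```

Here a quarter of the carbon positions in the pool are labeled on average. Comparing such enrichments between a benchmark and an engineered strain gives a quick, model-free hint of where carbon routing has changed before a full flux fit is attempted.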

Table 2: Key research reagent solutions for bioprocess benchmarking [4] [7] [5].

| Reagent / Solution | Function in Benchmarking | Example / Industrial Standard |

|---|---|---|

| Proprietary Media Formulations | Provides optimized nutrients for high cell density and productivity; a key differentiator for CDMOs. | Custom in-house medium formulations used with platforms like WuXiUI to intensify processes [4]. |

| Single-Use Bioreactors (SUBs) | Flexible, scalable manufacturing platform that cuts setup and turnaround times by over 40% vs. stainless steel [4]. | 4,000L - 6,000L SUBs used in a scale-out strategy for flexible manufacturing [4]. |

| Process Analytical Technology (PAT) | Tools for real-time monitoring and control of critical process parameters (e.g., pH, DO, metabolites). | In-line sensors and automated control loops for precise, high-productivity process control [4]. |

| Crude Glycerol / Hydrolysates | Low-cost, non-food renewable feedstocks used to benchmark production cost and sustainability. | Crude glycerol (by-product of biodiesel) and food waste hydrolysates can reduce SA production costs to $1.6–1.9/kg [5]. |

| High-Productivity Cell Line Platforms | Engineered host systems providing a baseline for evaluating new strains or processes. | WuXia TrueSite platform achieves average mAb titers >8g/L with superior stability [4]. |

Visualizing the Engineered Succinic Acid Pathway for Benchmarking

A critical aspect of benchmarking engineered strains is mapping their modified metabolic pathways against those of wild-type or benchmark organisms. This visual comparison helps identify the specific genetic alterations and their intended functional impacts.

Figure 2: Metabolic pathway engineering in yeast for enhanced succinic acid production. Key modifications include overexpression of reductive TCA cycle enzymes (PYC, MAE) and disruption of the succinic acid consumption pathway (SDH knockout) [5].

Strategic Implementation: Integrating Benchmarking into Biopharma Development

Ultimately, the value of benchmarking is realized through its integration into strategic decision-making across the drug development lifecycle. This integration occurs at multiple levels:

- Portfolio Management: Quantitative benchmarks, such as the $60M/$70M budget allocation required for early/late-phase material per market success, enable data-driven portfolio prioritization [3]. Companies can use these figures to balance high-risk, high-reward projects with those having a higher probability of technical success.

- CDMO and Technology Selection: Benchmarking manufacturing strategies is crucial. The industry is transitioning from large-volume stainless-steel reactors to flexible, single-use bioreactors (SUBs), which can reduce setup times by over 40% [4]. When evaluating a Contract Development and Manufacturing Organization (CDMO), benchmarks such as a technology-transfer-to-PPQ timeline of 3.5–6 months (vs. an industry standard of 9–12 months) are critical differentiators for accelerating speed-to-market [4].

- Regional and Global Strategy: On a macro scale, benchmarking helps regions position their life-science ecosystems. Analysis reveals that regions like Ireland and Singapore compete via high biomanufacturing-capacity-to-GDP ratios, while others like the UK and Canada compete through strong pipeline-asset-to-capacity ratios, indicating a focus on R&D and invention [6]. Understanding these strategic benchmarks guides national investment in infrastructure versus research.

In the pursuit of biologics and bio-based chemical development, benchmarking is the indispensable discipline that transforms subjective technical optimism into objective, de-risked business and scientific reality. It provides the critical data needed to navigate the immense financial pressures—where CMC contributions can exceed $130 million per successful drug—and technical complexities of scale-up [3]. By systematically comparing engineered strains and processes against industrial standards for titer, yield, productivity, and cost, organizations can make informed Go/No-Go decisions, allocate resources efficiently, and ultimately increase the probability of commercial success. As the industry advances with more complex modalities like multi-specific antibodies and ADCs, and as pressures for sustainable manufacturing intensify, the role of rigorous, multi-dimensional benchmarking will only become more critical in bridging the gap between laboratory innovation and robust, life-saving commercial products.

Key Industrial Standards and Metrics for Strain Performance (Titer, Yield, Productivity, Robustness)

In industrial biotechnology, the performance of microbial strains is quantitatively assessed using four key metrics: titer, yield, productivity, and robustness. These parameters form the foundation for evaluating the economic viability and scalability of bioprocesses, from laboratory discovery to commercial manufacturing. Titer represents the final concentration of a target compound, typically measured in grams per liter (g/L), indicating the process efficiency and directly influencing downstream purification costs. Yield quantifies the conversion efficiency of substrates into products, expressed as grams of product per gram of substrate (g/g), reflecting the metabolic efficiency of the engineered strain. Productivity measures the production rate, calculated as grams per liter per hour (g/(L·h)), determining the manufacturing throughput and facility utilization. Robustness has emerged as a critical fourth metric, representing a strain's ability to maintain stable performance despite environmental perturbations, genetic instability, or scale-up challenges.

The growing emphasis on robustness stems from the observed performance gaps between laboratory-optimized strains and their behavior in industrial settings. As noted in recent studies, "Microbial robustness refers to the ability of the microbe to maintain constant production performance (defined as titers, yields, and productivity) regardless of the various stochastic and predictable perturbations that occur in a scale-up bioprocess" [8]. This comprehensive review provides researchers with standardized frameworks for strain evaluation, comparative performance data across production systems, experimental protocols for metric quantification, and emerging tools for robustness engineering, enabling more effective benchmarking of engineered strains against industrial standards.

Quantitative Performance Benchmarking Across Production Systems

Performance Metrics for Succinic Acid Production in Yeasts

Table 1: Comparative performance of engineered yeast strains for succinic acid production

| Strain | Genotype | Carbon Source | Titer (g/L) | Yield (g/g) | Productivity (g/(L·h)) | Reference |

|---|---|---|---|---|---|---|

| Y. lipolytica Y-4215 | Chemical mutagenesis & ALE of Y-3314 | Glucose | 50.2 | 0.43 | 0.93 | [5] |

| Y. lipolytica PGC01003 | sdh5Δ | Crude Glycerol | 160.2 | 0.40 | 0.40 | [5] |

| Y. lipolytica PGC202 | YlSCS2 in PGC62 | Glycerol | 110.7 | 0.53 | 0.80 | [5] |

| Y. lipolytica RIY420 | GUT1 in PGC01003 | Glycerol | 178.0 | 0.46 | 0.44 | [5] |

| S. cerevisiae | Engineered for CO₂ fixation | Glucose + CO₂ | 16.8 | 0.17 | 0.21 | [5] |

The data reveals that engineered Yarrowia lipolytica strains generally achieve superior titers and productivity compared to Saccharomyces cerevisiae platforms. The highest titer of 178 g/L was achieved using a glycerol carbon source, highlighting the potential of using low-cost feedstocks. The PGC202 strain demonstrates an exceptional yield of 0.53 g/g, approaching the theoretical maximum for succinic acid production, coupled with high productivity of 0.80 g/(L·h). These metrics are critical for economic feasibility, as the cost of bio-based succinic acid remains higher than its chemical-based counterpart (€2.61/kg vs. €2.25/kg) [5].

High-Performance Strain Case Study: Ergothioneine Production

Table 2: Performance metrics for ergothioneine production in engineered E. coli

| Strain Engineering Strategy | Methyl Donor | Sulfur Source | Titer (g/L) | Scale | TRL |

|---|---|---|---|---|---|

| Betaine-based methyl supply + inorganic sulfur module | Betaine | Sodium thiosulfate | 7.2 | 5-L fermenter | 5-6 |

| Conventional amino acid supplementation | Methionine | Cysteine | 0.456 | Shake flasks | 3-4 |

Recent innovations in E. coli engineering for ergothioneine production demonstrate how metabolic reconstruction dramatically improves performance metrics. By implementing a betaine-driven methyl supply system and an inorganic sulfur source module, researchers eliminated the need for costly methionine and cysteine supplementation, substantially reducing production costs [9]. The engineered strain achieved a record ergothioneine titer of 7.2 g/L in a 5-L fermenter, representing a nearly 16-fold improvement over shake-flask performance with conventional amino acid supplementation. This advancement highlights the critical importance of precursor supply optimization in enhancing strain performance, with the technology now reaching TRL 5-6, indicating validation in a relevant industrial environment.

Experimental Protocols for Metric Quantification

Cultivation Conditions and Performance Assessment

Standardized cultivation protocols are essential for generating comparable performance metrics across different strains and laboratories. For aerobic high-throughput screening, researchers typically employ systems such as the BioLector I (m2p-labs GmbH) using CELLSTAR black clear-bottom 96-well microtiter plates with a working volume of 200 μL, sealed with AeraSeal films to prevent evaporation [10]. Cultures are incubated at 30°C with 85% humidity and shaking at 900 rpm for 24-36 hours, depending on the growth characteristics of the strain. For oxygen-limited conditions, cultures are grown in non-baffled flasks sealed with glycerol-filled trap loops, with shakers set to 150 rpm (rotation radius of 12.5 mm) [10]. Growth is continuously monitored using systems like the Cell Growth Quantifier, which records scattered light measurements every 10 minutes, enabling precise determination of growth parameters.

Specific growth rate (μ) is calculated from the exponential phase of growth curves using the formula: μ = (lnOD₂ - lnOD₁)/(t₂ - t₁), where OD₁ and OD₂ represent optical density measurements at times t₁ and t₂ during exponential growth. For anaerobic screenings, samples are collected at the beginning (t₀) and end (t₄₈ₕ) of cultivation for determining cell dry weight, glycerol, and ethanol yields [10]. Yield calculations are performed as the amount of product (e.g., glycerol or ethanol) divided by the consumed substrate amount, with pentoses excluded from yield computation for strains incapable of metabolizing them.
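The growth-rate formula above translates directly into code. The sketch below implements the two-point form as written and, as an addition not described in the source, a least-squares fit of ln(OD) against time, which is less sensitive to noise in any single reading. The doubling-time helper uses the standard relation ln(2)/μ. All function names and data are illustrative.

```python
import math


def mu_two_point(od1, od2, t1, t2):
    """Specific growth rate from two exponential-phase readings (per hour)."""
    return (math.log(od2) - math.log(od1)) / (t2 - t1)


def mu_regression(times_h, ods):
    """Least-squares slope of ln(OD) versus time over the exponential phase."""
    ys = [math.log(od) for od in ods]
    n = len(times_h)
    t_mean = sum(times_h) / n
    y_mean = sum(ys) / n
    sxx = sum((t - t_mean) ** 2 for t in times_h)
    sxy = sum((t - t_mean) * (y - y_mean) for t, y in zip(times_h, ys))
    return sxy / sxx


def doubling_time_h(mu):
    """Time for biomass to double at specific growth rate mu."""
    return math.log(2) / mu


# Made-up readings from an ideal exponential phase (OD doubles every 2 h).
mu = mu_two_point(0.1, 0.8, 2.0, 8.0)
mu_fit = mu_regression([2.0, 4.0, 6.0, 8.0], [0.1, 0.2, 0.4, 0.8])
```

Both estimates only make sense on readings taken within the exponential phase, so the time window has to be chosen before either function is applied.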

Robustness Quantification Methodology

Robustness quantification employs a Fano factor-based method that is dimensionless, free from arbitrary control conditions, and frequency-independent [10]. This methodology, known as Trivellin's formula, measures the variation of performance with respect to its average across multiple perturbations, resulting in a dimensionless, non-positive number where zero represents a completely robust, non-changing phenotype [11]. The formula is:

R = -(σ²/μ) · (1/m)

Where R represents robustness, σ² is the variance of the performance metric across perturbations, μ is the mean performance across the same perturbations (not to be confused with the specific growth rate), and m is the mean of the metric across all strains, which normalizes the Fano factor σ²/μ and makes R dimensionless. This equation allows identification of robust functions (e.g., specific growth rate or product yields) among tested strains, as well as performance-robustness trade-offs in a perturbation space composed of single stress conditions from industrial environments [10].
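A minimal sketch of a Fano-factor-based robustness score follows, matching the description above of a non-positive number that equals zero for a non-changing phenotype. It computes the negated variance-to-mean ratio of one strain's metric across perturbations and divides it by a normalizer, taken here to be the mean of the metric across all strains so that the score is dimensionless. The use of population variance, the function name, and the data are assumptions made for illustration.

```python
from statistics import mean, pvariance


def robustness(values, normalizer):
    """Negated Fano factor (variance / mean) of one strain's metric across
    perturbations, divided by `normalizer` to make the score dimensionless."""
    avg = mean(values)
    if avg <= 0 or normalizer <= 0:
        raise ValueError("mean performance and normalizer must be positive")
    return -(pvariance(values) / avg) / normalizer


# Made-up specific growth rates (1/h) of two strains across three perturbations.
growth = {
    "strain_A": [0.30, 0.30, 0.30],   # unchanged by the perturbations
    "strain_B": [0.10, 0.30, 0.50],   # same mean, large variation
}
m = mean(mean(v) for v in growth.values())   # mean of the metric across strains
scores = {name: robustness(v, m) for name, v in growth.items()}
```

Both strains have the same mean growth rate, yet strain_A scores zero while strain_B scores about -0.30, which is exactly the distinction a performance-only benchmark would miss.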

The perturbation space should simulate industrial conditions, including inhibitors present in lignocellulosic hydrolysates (furfural, acetic acid, phenolic compounds), osmotic stress (NaCl), product inhibition (ethanol), and temperature fluctuations [11]. For each strain, multiple phenotypes (specific growth rate, lag phase, final cell dry weight, biomass yield, and ethanol yield) are calculated across all perturbations. Evaluating each phenotype across the full perturbation space also helps separate measurement artefacts from the true behavior of the strain [12].

Figure 1: Strain Robustness Quantification Workflow. This diagram illustrates the systematic process for assessing strain robustness across defined industrial perturbation spaces, culminating in computational analysis using Trivellin's formula.

Robustness as a Critical Industrial Metric

Performance-Robustness Trade-offs in Industrial Strains

Robustness quantification has revealed fundamental trade-offs between maximum performance and stability across varying conditions. Experimental studies with Saccharomyces cerevisiae strains have demonstrated negative correlations between performance and robustness for ethanol yield, biomass yield, and cell dry weight [11]. Conversely, specific growth rate performance positively correlated with robustness, presumably because of evolutionary selection for robust, fast-growing cells [11]. These trade-offs explain why strains optimized for maximum performance in laboratory conditions are often less capable of coping with environmental stresses and fluctuations in industrial settings.
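A trade-off of this kind is usually checked by correlating mean performance with the robustness score across strains. The sketch below computes a Pearson correlation coefficient by hand. The cited studies may have used a different statistic, and the numbers are invented purely to show the calculation.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length (at least 2 points)")
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    syy = sum((y - y_mean) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for constant data")
    return sxy / math.sqrt(sxx * syy)


# Invented per-strain values: mean ethanol yield (g/g) and its robustness score.
mean_yield = [0.30, 0.35, 0.40, 0.45]
robustness_score = [-0.01, -0.02, -0.03, -0.04]
r = pearson_r(mean_yield, robustness_score)
```

A coefficient near -1, as in this contrived data set, would indicate that higher-yielding strains are systematically less robust. A positive coefficient, as reported above for specific growth rate, would indicate the opposite.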

The industrial strain Ethanol Red exemplifies the ideal balance, exhibiting both high performance and robustness across multiple phenotypes, making it a superior candidate for bioproduction in tested perturbation spaces [11]. In contrast, the PE2 strain achieved the highest mean ethanol yield (more than double the average across all strains) but displayed significantly lower robustness and higher population heterogeneity [10]. This dichotomy highlights the importance of evaluating both performance and robustness during strain selection, as strongly performing cells under one condition may be less robust in others, potentially leading to process failures during scale-up.

Engineering Strategies for Enhanced Robustness

Table 3: Strategies for improving microbial robustness in industrial strains

| Engineering Approach | Mechanism | Example Application | Outcome | Citation |

|---|---|---|---|---|

| Global Transcription Machinery Engineering (gTME) | Reprogramming gene networks via mutations in transcription machinery | E. coli σ70 factor engineering | Improved tolerance to 60 g/L ethanol, high SDS | [8] |

| Transcription Factor Engineering | Overexpression of global or specific stress response regulators | S. cerevisiae Haa1 overexpression | Enhanced acetic acid tolerance | [8] |

| Adaptive Laboratory Evolution (ALE) | Natural selection under stress conditions | Y. lipolytica for low pH SA production | Improved performance at pH 3.0 | [5] |

| Membrane/Transporter Engineering | Modifying membrane composition and transport capabilities | Engineered transporters in C. glutamicum | Enhanced nutrient uptake and stress resistance | [8] |

| Betaine-based Methyl Supply | Reconstruction of methylation metabolism | E. coli ergothioneine production | Elimination of costly methionine supplementation | [9] |

Advanced engineering strategies focus on enhancing robustness without compromising performance. Global Transcription Machinery Engineering (gTME) introduces mutations in generic transcription-related proteins to reprogram gene networks and cellular metabolism, successfully improving tolerance to various stressors in multiple organisms [8]. For example, engineering the housekeeping sigma factor σ70 improved E. coli tolerance to 60 g/L ethanol and high concentrations of SDS, while maintaining high yields of target products [8]. Similarly, engineering the cAMP receptor protein (CRP), which regulates more than 400 genes in E. coli, has improved alcohol tolerance, acid tolerance, and biosynthetic capacities for compounds like vanillin, naringenin, and caffeic acid [8].

Adaptive Laboratory Evolution (ALE) represents a complementary approach that leverages natural selection under stress conditions to enhance robustness. For succinic acid production in Yarrowia lipolytica, ALE under low pH conditions generated strain PSA3 capable of maintaining productivity at pH 3.0, significantly simplifying downstream processing [5]. Such robustness engineering strategies are essential for bridging the gap between laboratory performance and industrial reliability, ensuring consistent production despite the predictable and stochastic disturbances encountered in scale-up bioprocesses.

The Scientist's Toolkit: Essential Research Reagents and Methods

Table 4: Key research reagents and methods for strain performance characterization

| Reagent/Method | Function | Application Example | Performance Metric | Citation |

|---|---|---|---|---|

| ScEnSor Kit | Fluorescent biosensors for 8 intracellular parameters | Monitoring oxidative stress, UPR in S. cerevisiae | Robustness quantification | [10] |

| Bonded Foil Strain Gauges | Strain measurement in composite materials | Assessing creep in CFRP tendons | Mechanical stability | [12] |

| Distributed Fibre Optic Sensing | High-resolution strain distribution mapping | Detecting localised strain peaks in composites | Material reliability | [12] |

| Digital Image Correlation | Non-contact deformation measurement | Surface strain analysis during testing | Precision metrics | [12] |

| Diluted Lignocellulosic Hydrolysates | Complex perturbation space simulation | Stress testing industrial yeast strains | Robustness assessment | [10] |

| 3D Hashin Failure Criterion | Composite material damage initiation | Predicting failure in fiber-reinforced composites | Structural reliability | [13] |

The experimental characterization of strain performance requires specialized reagents and methodologies. The ScEnSor Kit enables monitoring of eight intracellular parameters using fluorescent biosensors, providing real-time data on pH, ATP, glycolytic flux, oxidative stress, unfolded protein response, ribosome abundance, pyruvate metabolism, and ethanol consumption [10]. This comprehensive profiling allows researchers to investigate population heterogeneity and intracellular environment stability under different hydrolysate conditions. For physical strain measurements in composite materials, bonded foil strain gauges, distributed fibre optic sensing, and digital image correlation offer complementary approaches with varying precision and application ranges [12].

Complex perturbation spaces can be simulated using diluted lignocellulosic hydrolysates (typically 50-60% vol/vol for screening), which contain inhibitory compounds, osmotic stressors, and product inhibition agents representative of industrial conditions [10]. These are supplemented with standard nutrients ((NH₄)₂SO₄, KH₂PO₄, MgSO₄·7H₂O, trace metals, and vitamins) and adjusted to pH 5.0 before filter sterilization. For structural analysis, the 3D Hashin failure criterion provides a validated method for predicting damage initiation in fiber-reinforced composites, incorporating fiber and matrix behaviors under tensile and compressive stresses [13].

Figure 2: Microbial Robustness Engineering Pathways. This diagram illustrates the cellular response mechanisms to industrial perturbations and targeted engineering interventions (blue) that enhance robustness.

The systematic evaluation of titer, yield, productivity, and robustness provides a multidimensional framework for benchmarking engineered strains against industrial standards. While high titer remains essential for economic viability, and yield reflects metabolic efficiency, robustness has emerged as the critical determinant of successful scale-up from laboratory to industrial implementation. The quantitative robustness assessment methodology using Trivellin's formula enables researchers to precisely evaluate strain stability across perturbation spaces representative of industrial conditions.

Future strain development will increasingly focus on balancing these four metrics rather than maximizing individual parameters, recognizing the inherent trade-offs between peak performance and stability. Advanced engineering strategies including gTME, transcription factor engineering, ALE, and metabolic pathway reconstruction provide powerful tools for enhancing robustness without compromising productivity. As the field progresses, standardized implementation of these assessment protocols across research institutions and industrial facilities will enable more accurate prediction of scale-up performance, accelerating the development of robust microbial cell factories for sustainable bioproduction.

The Design-Build-Test-Learn (DBTL) cycle is a systematic, iterative framework central to synthetic biology and metabolic engineering for developing and optimizing biological systems [14]. This engineering-based approach enables researchers to efficiently create and refine engineered strains for specific applications, such as producing biofuels, pharmaceuticals, or other valuable compounds [14]. By applying rational design principles and learning from each iteration, the DBTL cycle provides a structured path for benchmarking strain performance against industrial targets, ultimately reducing the time and cost associated with traditional trial-and-error methods [14] [15].

The Core Principles of the DBTL Cycle

The DBTL cycle is built on four interconnected phases that form an iterative loop for continuous improvement.

Design: Researchers define the objectives for the desired biological function and create a blueprint for the biological system. This phase involves selecting genetic parts (promoters, coding sequences, RBS), planning assembly strategies, and designing experimental assays [16] [17]. The design can be based on prior knowledge, hypotheses, or computational models.

Build: The digital design is translated into physical biological reality. This involves DNA synthesis, assembly of genetic constructs into vectors, and their introduction into a host organism (chassis) such as bacteria or yeast [14] [18]. Automation through liquid handling robots is often used to increase throughput and reproducibility [16].

Test: The constructed strains undergo rigorous experimental characterization to measure performance against the objectives set in the Design phase. This can include functional assays, -omics analyses, and high-throughput screening to quantify metrics like product titer, yield, and productivity [14] [18].

Learn: Data from the Test phase is analyzed to extract insights into the system's behavior. Researchers identify successes, failures, and bottlenecks. This new knowledge informs the design of the next cycle, helping to refine hypotheses and strategies [16] [15].

The power of the DBTL framework lies in its iterative nature; complex biological systems are rarely perfected in a single attempt. Each cycle builds upon knowledge from the previous one, progressively steering the engineering process toward an optimal solution [19] [18].

Figure: DBTL Cycle Workflow

Experimental Implementation and Protocols

Successful application of the DBTL cycle relies on robust, well-documented experimental methodologies. The table below summarizes key protocols from published studies that utilized the DBTL framework for strain engineering.

Table 1: Key Experimental Protocols in DBTL Cycle Applications

| Study Objective | Host Organism | Key Protocol Steps | Performance Metrics | Reference |

|---|---|---|---|---|

| Dopamine Production | Escherichia coli | 1. In vitro pathway testing with cell lysates. 2. RBS library construction for tuning gene expression. 3. Cultivation in minimal medium with targeted metabolites. 4. HPLC analysis for dopamine quantification. | 69.03 ± 1.2 mg/L dopamine (2.6-fold improvement over state-of-the-art) [20]. | [20] |

| Arsenic Biosensor Optimization | Cell-free system | 1. Plasmid assembly for sense & reporter constructs. 2. Cell-free reaction assembly with lysate, plasmids, and buffer. 3. Kinetic fluorescence measurement in microplate reader. 4. Data analysis for sensitivity and dynamic range. | 5–100 ppb dynamic range for arsenic detection [21]. | [21] |

| Combinatorial Pathway Optimization | In silico Model | 1. Library design of enzyme expression levels. 2. Kinetic model simulation of pathway flux. 3. Machine learning analysis (e.g., Gradient Boosting, Random Forest). 4. Strain recommendation for next DBTL cycle. | Robust predictions in low-data regime; identification of optimal non-intuitive enzyme combinations [19]. | [19] |

Detailed Experimental Protocol: In Vivo Dopamine Production

The development of an E. coli strain for dopamine production exemplifies a knowledge-driven DBTL cycle [20]. The methodology can be summarized as follows:

Strain and Plasmid Design: A high L-tyrosine producing E. coli host (FUS4.T2) was used. Heterologous genes for the dopamine pathway—hpaBC (from E. coli) for conversion of L-tyrosine to L-DOPA and ddc (from Pseudomonas putida) for conversion of L-DOPA to dopamine—were cloned into a pET plasmid system under an inducible promoter.

RBS Library Construction for Fine-Tuning: A critical step was modulating the translation initiation rate of the genes. This was achieved by constructing a library of RBS sequences with varying Shine-Dalgarno sequences to alter the strength of ribosome binding without disrupting the surrounding secondary structure.

Cultivation and Production: Engineered strains were cultivated in a defined minimal medium containing glucose, MOPS buffer, and essential trace elements. Gene expression was induced with IPTG. Cells were harvested during the exponential growth phase.

Analytical Methods - Quantification: Dopamine production was quantified using High-Performance Liquid Chromatography (HPLC). This allowed for precise measurement of the final product titer (mg/L) and yield (mg/g biomass), which are key performance indicators for benchmarking against industrial standards.
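The RBS library step in this protocol can be illustrated by enumerating the variants encoded by a degenerate Shine-Dalgarno design. This is only a sketch: the source does not give the library sequences, so the template `AGGRNG` is a hypothetical example, and expansion of IUPAC degenerate codes is used here as a generic way to describe such a library, not as the method of the cited study.

```python
from itertools import product

# Standard IUPAC nucleotide codes mapped to the bases they represent.
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "AG", "Y": "CT", "S": "CG", "W": "AT", "K": "GT", "M": "AC",
    "B": "CGT", "D": "AGT", "H": "ACT", "V": "ACG", "N": "ACGT",
}


def expand_degenerate(seq):
    """List every concrete sequence encoded by a degenerate template."""
    pools = [IUPAC[base] for base in seq.upper()]
    return ["".join(combo) for combo in product(*pools)]


# Hypothetical Shine-Dalgarno template: R = A/G, N = any base.
sd_template = "AGGRNG"
library = expand_degenerate(sd_template)

print(f"{sd_template} encodes {len(library)} variants")
for variant in library:
    print(variant)
```

Restricting degeneracy to a few positions inside the Shine-Dalgarno core keeps the library small enough to screen exhaustively while leaving the flanking sequence unchanged.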

Quantitative Benchmarking of Engineered Strains

A core objective of the DBTL framework is the systematic improvement of strain performance. Quantitative data from multiple cycles allows for direct benchmarking of progress. The following table compares performance metrics from different DBTL-driven engineering projects.

Table 2: Performance Benchmarking of DBTL-Engineered Strains

| Engineering Project / Host | Key Industrial Metric | Initial / State-of-the-Art Performance | Performance After DBTL Optimization | Fold Improvement & Key Enabler |

|---|---|---|---|---|

| Dopamine / E. coli | Titer (mg/L) | 27 mg/L [20] | 69.0 mg/L [20] | 2.6-fold; knowledge-driven RBS fine-tuning [20] |

| Dopamine / E. coli | Yield (mg/g biomass) | 5.17 mg/g [20] | 34.3 mg/g [20] | 6.6-fold; high-throughput RBS engineering [20] |

| Anti-adipogenic Effect / L. rhamnosus Exosomes | Lipid Accumulation Inhibition | ~30% (Raw Bacteria) [18] | ~80% (Purified Exosomes) [18] | ~2.7-fold; component isolation & mechanism analysis [18] |

| Tryptophan Metabolism / Yeast | Pathway Flux | Not reported | Significant increase reported | Not quantified; ML-guided genotype-to-phenotype predictions [16] |
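The fold-improvement column can be reproduced directly from the baseline and optimized values in the table. The short sketch below does that arithmetic for the three rows that report numbers. The function name and row labels are illustrative, and the approximate lipid-inhibition percentages are used as given.

```python
def fold_improvement(baseline, optimized):
    """Ratio of optimized to baseline performance."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return optimized / baseline


# (label, baseline, optimized) taken from the table above.
rows = [
    ("Dopamine titer (mg/L)", 27.0, 69.0),
    ("Dopamine yield (mg/g biomass)", 5.17, 34.3),
    ("Lipid accumulation inhibition (%)", 30.0, 80.0),
]

for label, baseline, optimized in rows:
    ratio = fold_improvement(baseline, optimized)
    print(f"{label}: {baseline} -> {optimized} ({ratio:.1f}-fold)")
```

Reporting the ratio next to both raw values matters because the same fold change can describe very different absolute gains.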

The Scientist's Toolkit: Essential Research Reagents and Solutions

The efficiency of DBTL cycles is heavily dependent on the tools and reagents used at each stage. The following table catalogs key solutions reported in the cited studies.

Table 3: Essential Research Reagent Solutions for the DBTL Cycle

| Reagent / Solution / Tool | Function in DBTL Workflow | Specific Application Examples |

|---|---|---|

| Automated Liquid Handlers (Tecan, Beckman Coulter) | Build, Test | High-precision pipetting for PCR setup, DNA normalization, and assay setup in plate formats [16]. |

| DNA Synthesis Providers (Twist Bioscience, IDT) | Build | Provision of high-quality, custom-designed DNA fragments and gene sequences for construct assembly [16]. |

| Cell-Free Expression Systems | Build, Test | Rapid in vitro protein synthesis and pathway prototyping without cloning into a live host [17]. |

| Next-Generation Sequencing (NGS) (Illumina platforms) | Test | Genotypic verification of constructed strains and deep analysis of genetic modifications [14]. |

| Machine Learning Platforms (TeselaGen) | Learn, Design | Data analysis, predictive modeling, and design recommendation for subsequent DBTL cycles [16]. |

| Specialized Databases & ML Models (ProteinMPNN, ESM) | Learn, Design | Zero-shot prediction of protein structures and functions to inform initial designs [17]. |

Emerging Trends and the Future of DBTL

The DBTL cycle is continuously evolving, with two trends poised to significantly accelerate biological engineering.

The Rise of AI and Machine Learning: Machine learning (ML) is transforming the Learn and Design phases. By analyzing large datasets from previous cycles, ML models can predict genotype-phenotype relationships and recommend optimal designs for the next iteration, potentially breaking the cycle of "involution" where iterative improvements become increasingly complex without major gains [15]. The use of pre-trained protein language models (e.g., ESM, ProGen) enables "zero-shot" design, where functional proteins can be proposed without additional model training [17].

Paradigm Shift: LDBT and Closed-Loop Automation: The traditional order of the cycle is being challenged. With the success of zero-shot predictors, a new paradigm dubbed "LDBT" (Learn-Design-Build-Test) has been proposed [17]. In this model, the cycle starts with Learn, leveraging vast datasets inherent in machine learning algorithms to inform the initial Design. This approach, combined with fully automated biofoundries, aims to create a "Design-Build-Work" model, reducing the need for multiple, time-consuming DBTL iterations [17] [22].

Figure: The Evolving DBTL Workflow

Aligning Benchmarking Goals with Commercial Viability and Regulatory Pathways

In the development of advanced biotherapeutics, particularly those utilizing engineered microbial strains, performance benchmarking is frequently treated as a standalone research activity. However, to deliver commercially successful and clinically viable products, benchmarking must be strategically aligned with both regulatory requirements and commercial objectives from the earliest development stages. This alignment ensures that the data generated not only demonstrates scientific superiority but also satisfies the evolving evidence requirements of global regulatory bodies while supporting a compelling value proposition for payers and patients.

The modern pharmaceutical landscape demands this integrated approach. With development costs soaring and therapeutic areas becoming increasingly crowded, simply having a genetically modified strain with enhanced characteristics is insufficient [23]. Organizations must demonstrate that their engineered strains offer measurable advantages over existing standards through rigorously designed benchmarking studies that satisfy multiple stakeholders simultaneously. This guide provides a structured framework for designing and executing benchmarking studies that meet these dual objectives, enabling researchers to build robust evidence packages that accelerate development and facilitate market access.

Foundational Principles of Strategic Benchmarking

Defining Performance Benchmarking for Engineered Strains

Performance benchmarking for engineered strains represents a systematic process of comparing a novel strain's critical quality attributes (CQAs) and performance metrics against established industrial standards or competitor strains [24]. This goes beyond simple side-by-side comparison, instead employing statistically powered experimental designs to generate data that informs development decisions, regulatory strategies, and commercial positioning.

Effective benchmarking serves multiple functions throughout the product lifecycle. During early research stages, it identifies potential competitive advantages and informs platform selection. Through development, it provides data to optimize processes and define control strategies. At the commercialization stage, it generates evidence to support regulatory submissions and market differentiation [23]. This multi-purpose function necessitates careful planning to ensure the resulting data meets diverse stakeholder needs, from regulatory agencies requiring rigorous validation to commercial teams needing compelling competitive messaging.

The Commercial-Regulatory Nexus in Benchmarking Strategy

The most successful benchmarking programs recognize the intrinsic connection between regulatory requirements and commercial success. Regulatory pathways increasingly demand demonstration of comparative effectiveness, while market access depends on proving superior value against established alternatives [25]. This creates a strategic imperative to design benchmarking studies that simultaneously satisfy both requirements.

Regulatory agencies have heightened their scrutiny of early-phase clinical endpoints, with differing expectations across regions [25]. The U.S. FDA may accept surrogate biomarkers for accelerated approval, while European regulators typically require demonstrable clinical benefit. These divergent requirements necessitate benchmarking strategies that balance biomarker data with clinically meaningful measures. Similarly, commercial viability depends on demonstrating durability of response, which has become a key consideration for payers assessing long-term value [25]. Forward-looking organizations therefore design benchmarking studies with these dual requirements in mind, ensuring the resulting data supports both regulatory approval and favorable reimbursement decisions.

Designing Comprehensive Benchmarking Frameworks

Key Performance Metrics for Engineered Strains

Strategic benchmarking requires measuring parameters that matter to both regulators and commercial stakeholders. The table below outlines essential metric categories for engineered strain evaluation, aligned with critical development and commercialization objectives.

Table 1: Key Performance Metrics for Engineered Strain Benchmarking

| Metric Category | Specific Parameters | Commercial Significance | Regulatory Relevance |

|---|---|---|---|

| Productivity & Yield | Titer (g/L), Productivity (g/L/h), Yield (g product/g substrate) | Determines COGS and manufacturing footprint; impacts profitability and scalability [25] | Critical for Chemistry, Manufacturing, and Controls (CMC) documentation; demonstrates process consistency |

| Product Quality | Purity (% primary product), Impurity profiles, Post-translational modifications | Impacts efficacy, safety profile, and positioning against competitor products [26] | Key quality attribute for marketing authorization; requires rigorous control strategy |

| Process Performance | Fermentation duration, Nutrient utilization, Downstream processing recovery | Influences facility throughput, capacity planning, and operational costs [25] | Evidence of manufacturing process robustness and control; required for process validation |

| Genetic Stability | Plasmid retention rate, Genetic drift over generations, Phenotypic consistency | Affects production consistency, regulatory compliance, and commercial lifespan [23] | Expectation for all genetically modified production systems; documented in regulatory submissions |

Establishing Experimental Protocols for Comparative Analysis

Robust benchmarking requires standardized, reproducible experimental designs that generate high-quality, comparable data. The following protocols provide frameworks for key comparative assessments.

Protocol 1: Fermentation Performance Benchmarking

Objective: Compare productivity and growth characteristics between engineered and reference strains under industrially-relevant conditions.

Methodology:

- Strain Preparation: Revive cryopreserved master cell bank vials of both test and reference strains following standardized resuscitation protocols.

- Seed Train Expansion: Execute identical seed train expansion in defined media, with sampling for viability and purity testing at each transfer point.

- Bioreactor Operation: Inoculate parallel, controlled bioreactors (minimum n=3 per strain) at standardized initial biomass concentrations. Maintain identical process parameters (pH, temperature, dissolved oxygen, feeding strategy) throughout the fermentation.

- Monitoring & Sampling: Collect samples at predetermined intervals for analysis of cell density, substrate concentration, metabolite accumulation, and product titer.

- Endpoint Analysis: Harvest cultures at consistent criteria (e.g., time, carbon exhaustion) for comprehensive product quantification and quality attribute assessment.

Data Analysis: Calculate key parameters including maximum specific growth rate (μmax), product titer, volumetric productivity, yield coefficients, and final product quality attributes. Perform statistical comparison using appropriate methods (e.g., t-tests for normally distributed data) with pre-defined significance thresholds.
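The calculations named in this data analysis step can be sketched with the standard library alone. The example below estimates μmax as the least-squares slope of ln(biomass) against time, computes volumetric productivity and a yield coefficient, and compares replicate titers with Welch's t statistic. Welch's variant is chosen here because it does not assume equal variances; the protocol itself only says "t-tests". All data values and function names are hypothetical.

```python
import math
from statistics import mean, variance


def max_specific_growth_rate(times_h, biomass):
    """Slope of ln(biomass) vs time (1/h); assumes every point is in exponential phase."""
    logs = [math.log(x) for x in biomass]
    t_bar, y_bar = mean(times_h), mean(logs)
    num = sum((t - t_bar) * (y - y_bar) for t, y in zip(times_h, logs))
    den = sum((t - t_bar) ** 2 for t in times_h)
    return num / den


def volumetric_productivity(titer_g_per_l, duration_h):
    """Product formed per litre per hour (g/L/h)."""
    return titer_g_per_l / duration_h


def yield_coefficient(product_g, substrate_consumed_g):
    """Product formed per gram of substrate consumed (g/g)."""
    return product_g / substrate_consumed_g


def welch_t(a, b):
    """Welch's t statistic and its approximate degrees of freedom."""
    va, vb = variance(a) / len(a), variance(b) / len(b)
    t = (mean(a) - mean(b)) / math.sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df


# Hypothetical exponential-phase samples growing at 0.5 1/h.
times = [0.0, 1.0, 2.0, 3.0]
od = [0.1 * math.exp(0.5 * t) for t in times]
mu_max = max_specific_growth_rate(times, od)

# Hypothetical final titers (g/L) from n=3 bioreactors per strain.
test_titers = [10.2, 9.8, 10.0]
ref_titers = [8.1, 7.9, 8.0]
t_stat, dof = welch_t(test_titers, ref_titers)

print(f"mu_max = {mu_max:.3f} 1/h")
print(f"productivity = {volumetric_productivity(mean(test_titers), 48.0):.3f} g/L/h")
print(f"yield = {yield_coefficient(10.0, 50.0):.2f} g/g")
print(f"Welch t = {t_stat:.2f}, df = {dof:.2f}")
```

Turning the t statistic into a p-value needs the t distribution, which the standard library does not provide; where SciPy is available, `scipy.stats.ttest_ind(a, b, equal_var=False)` performs the same test and returns the p-value.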

Protocol 2: Genetic Stability Assessment

Objective: Evaluate strain stability over extended cultivation, simulating manufacturing-scale propagation.

Methodology:

- Extended Passage Study: Initiate serial passage cultures from master cell banks, employing representative production media and culture conditions.

- Sampling Strategy: Archive samples at predetermined generation points (e.g., every 10 generations) for comprehensive analysis.

- Phenotypic Monitoring: Assess productivity and growth characteristics at each sampling point using standardized micro-scale fermentation assays.

- Genotypic Analysis: Employ whole-genome sequencing on samples from key timepoints to identify potential genetic mutations or rearrangements.

- Plasmid Retention: For plasmid-based systems, quantify retention rates using selective vs. non-selective plating and PCR-based methods.

Data Analysis: Determine rates of productivity loss, genetic drift, and relationship between genotypic changes and phenotypic outcomes. Establish stability thresholds based on commercial manufacturing requirements.
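The plasmid retention and generation counts used in this analysis reduce to a few lines of arithmetic. The sketch below computes generations per passage from inoculation and harvest densities, and retention from colony counts on selective versus non-selective plates. It also estimates a per-generation loss rate under the simplifying assumption that a constant fraction of cells loses the plasmid each generation. That model and the passage data are assumptions for illustration, not part of the protocol.

```python
import math


def generations(initial_density, final_density):
    """Number of doublings between inoculation and harvest of one passage."""
    return math.log2(final_density / initial_density)


def plasmid_retention(cfu_selective, cfu_nonselective):
    """Percentage of colony-forming cells that still carry the plasmid."""
    return 100.0 * cfu_selective / cfu_nonselective


def loss_per_generation(retention_percent, n_generations):
    """Constant per-generation loss p, assuming retention = (1 - p) ** n."""
    return 1.0 - (retention_percent / 100.0) ** (1.0 / n_generations)


# A 1:1024 dilution regrown to the starting density gives 10 generations.
print(f"generations per passage: {generations(1.0, 1024.0):.1f}")

# Hypothetical data: (cumulative generations, CFU selective, CFU non-selective).
passages = [(10, 198, 200), (20, 188, 200), (30, 171, 200)]
for n, selective, nonselective in passages:
    retained = plasmid_retention(selective, nonselective)
    loss = loss_per_generation(retained, n)
    print(f"{n} generations: {retained:.1f}% retained, ~{loss:.4f} lost per generation")
```

A loss rate that rises across sampling points, as in this made-up series, would suggest that plasmid-free cells are also outgrowing plasmid-bearing ones, which the constant-loss model does not capture.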

Visualization of Strategic Benchmarking Alignment

The following diagram illustrates the integrated relationship between benchmarking activities, regulatory strategy, and commercial objectives throughout the therapeutic development lifecycle.

Figure: Strategic Benchmarking Alignment

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 2: Key Research Reagent Solutions for Strain Benchmarking

| Reagent/Category | Function in Benchmarking | Strategic Considerations |

|---|---|---|

| Reference Standards | Provide benchmark comparators for product quality and potency assays | Source from recognized standards organizations when available; critical for demonstrating competitive advantages [27] |

| Defined Media Components | Enable consistent fermentation performance evaluation | Formulations should reflect commercial manufacturing intent; document all components for regulatory filing |

| Analytical Standards | Support quantification and characterization of products and impurities | Quality and traceability are essential for regulatory acceptance; invest in well-characterized references |

| Cell Culture Reagents | Maintain consistent growth conditions across comparative studies | Standardize sources and qualifications to minimize variability; document all reagents for method transfer |

| DNA Sequencing Kits | Enable genetic stability assessment and construct verification | Utilize validated methods acceptable to regulatory agencies; whole-genome approaches provide comprehensive data |

Implementing a Data-Driven Benchmarking Program

Establishing a Continuous Improvement Cycle

Effective benchmarking is not a one-time activity but rather an ongoing process of measurement, analysis, and refinement [24]. Organizations should implement a structured approach to continuously collect benchmarking data, analyze results against predetermined targets, and identify opportunities for strain or process improvement. This cyclical process aligns with quality-by-design principles and supports both process optimization and regulatory documentation requirements.

Product benchmarking principles emphasize the importance of creating user journey maps that represent the typical customer journey through your funnel [24]. In the context of engineered strains, this translates to mapping the strain's performance throughout its entire lifecycle – from initial construction through manufacturing implementation. By overlaying critical metrics throughout this lifecycle, organizations can identify friction points or performance limitations and target improvement efforts accordingly.

Technology and Automation Enablers

Strategic benchmarking programs benefit significantly from technological enablers that enhance data quality, reproducibility, and analysis efficiency. As identified in pharmaceutical development contexts, targeted technology solutions can include "packages for autogenerating data sets for tables, listings, and figures with standardized metadata" and "quality-control software that can validate terminology, ensure consistent formatting, and check graphics against source data" [26].

Manufacturing industries are increasingly investing in smart manufacturing technologies, including "automation hardware, data analytics, sensors, and cloud computing" to improve competitiveness [28]. These technologies similarly benefit benchmarking programs through enhanced data capture, reduced manual intervention, and improved analytical capabilities. When implementing benchmarking platforms, prioritize solutions that offer integration capabilities, data standardization, and reporting functionalities that directly support regulatory submission requirements.

Strategic benchmarking of engineered strains represents a critical competency for organizations navigating the complex intersection of science, regulation, and commerce. By aligning benchmarking goals with both regulatory pathways and commercial objectives from the earliest development stages, organizations can generate evidence that accelerates development, facilitates regulatory approval, and supports compelling market positioning. The frameworks, metrics, and methodologies outlined in this guide provide a foundation for implementing benchmarking programs that deliver strategic value throughout the therapeutic development lifecycle. As the competitive landscape intensifies and regulatory expectations evolve, organizations that excel at generating and leveraging comparative performance data will maintain a distinct advantage in delivering innovative biotherapeutics to patients.

From Theory to Practice: Methodologies and Application of Dynamic Benchmarking Frameworks

Implementing the DBTL Cycle for Iterative Strain Improvement

The Design-Build-Test-Learn (DBTL) cycle is a systematic framework central to modern synthetic biology and metabolic engineering for developing and optimizing biological systems [14]. This iterative process enables researchers to engineer microorganisms to perform specific functions, such as producing valuable compounds including biofuels, pharmaceuticals, and specialty chemicals [14] [29]. The DBTL approach begins with the rational design of genetic constructs, proceeds to the high-throughput assembly of these designs, then to the rigorous testing of the resulting strains, and concludes with data analysis to inform the next design cycle [14]. This methodology is particularly valuable for combinatorial pathway optimization, where simultaneously optimizing multiple pathway genes often leads to combinatorial explosions that make exhaustive experimental testing infeasible [19].
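The scale of that combinatorial explosion is easy to quantify. As a minimal sketch, assume each pathway gene can be set to one of five expression levels and that one cycle can build 96 strains; both numbers are hypothetical. The design space then grows as levels raised to the number of genes:

```python
def design_space_size(n_genes, levels_per_gene):
    """Number of distinct designs when each gene takes one of several expression levels."""
    return levels_per_gene ** n_genes


builds_per_cycle = 96  # hypothetical: one microplate of strains per DBTL cycle
levels = 5             # hypothetical: promoter/RBS strengths available per gene

for n_genes in (2, 4, 6, 8):
    size = design_space_size(n_genes, levels)
    coverage = min(1.0, builds_per_cycle / size)
    print(f"{n_genes} genes x {levels} levels: {size} designs, "
          f"{coverage:.2%} covered per cycle")
```

Under these assumptions a single cycle covers an eight-gene pathway only to a fraction of a percent, which is the gap that model-guided recommendation is meant to close.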

The growing bioeconomy, which could contribute up to $30 trillion to the global economy by 2030, depends heavily on our ability to manufacture high-performing microbial strains in a time- and cost-effective manner [29]. Strain optimization through DBTL cycles aims to develop production strains iteratively, with each cycle incorporating learning from the previous one [19]. Machine learning methods have emerged as powerful tools to learn from experimental data and propose optimized designs for subsequent DBTL cycles, potentially accelerating the strain development process significantly [19]. However, radical reduction in strain development time and cost requires optimizing the entire DBTL cycle rather than simply increasing throughput at individual stages [29].

Performance Benchmarking of DBTL Methodologies

Different implementations of the DBTL cycle have demonstrated varying levels of efficiency and effectiveness in strain improvement projects. The table below compares three distinct approaches documented in recent scientific literature.

Table 1: Performance Comparison of DBTL Implementation Strategies

| DBTL Approach | Target Product | Host Organism | Key Engineering Strategy | Reported Performance | Industrial Relevance |

|---|---|---|---|---|---|

| Knowledge-Driven DBTL [20] | Dopamine | Escherichia coli | In vitro pathway analysis + RBS library | 69.03 ± 1.2 mg/L (2.6-fold improvement) | Medium - demonstrates rational design principles |

| Mechanistic Model-Guided DBTL [19] | Hypothetical Metabolite G | Escherichia coli (in silico) | Kinetic modeling + machine learning recommendations | N/A (simulation study) | High - framework for optimizing ML in DBTL cycles |

| Industrial Strain Engineering [29] | Various bio-based molecules | Multiple industrial hosts | Integrated DBTL with scale-up prediction | Case-dependent | High - focuses on scale-up challenges |

The knowledge-driven DBTL approach exemplifies how incorporating upstream in vitro investigation can enhance pathway understanding before committing to full DBTL cycling [20]. This methodology produced a 2.6-fold improvement in dopamine production over previous state-of-the-art in vivo production methods [20]. Meanwhile, the mechanistic model-guided framework provides a simulated environment for testing machine learning methods over multiple DBTL cycles, addressing the scarcity of public multi-cycle datasets [19]. Industrial implementations emphasize the importance of integrating all four DBTL stages and incorporating scale-up performance predictions early in the strain development process [29].

Table 2: Analysis of Machine Learning Performance in DBTL Cycles

| Machine Learning Method | Performance in Low-Data Regime | Robustness to Training Set Bias | Noise Tolerance | Implementation Complexity |
|---|---|---|---|---|
| Gradient Boosting [19] | High | High | High | Medium |
| Random Forest [19] | High | High | High | Medium |
| Automated Recommendation Tool [19] | Variable | Medium | Medium | Low |
| Other Tested Methods [19] | Lower | Lower | Lower | Variable |

Experimental Protocols for DBTL Implementation

Knowledge-Driven DBTL with Upstream In Vitro Investigation

The knowledge-driven DBTL cycle begins with comprehensive in vitro testing to inform the initial design phase, reducing the number of DBTL iterations required [20]. For dopamine production, researchers first established a crude cell lysate system to express pathway enzymes under various conditions, allowing rapid assessment of different relative expression levels without cellular constraints [20]. The protocol involves:

- Preparation of cell lysate: Harvest E. coli cells by centrifugation, resuspend in phosphate buffer, and lyse using sonication or French press [20].

- In vitro reaction assembly: Combine cell lysate with reaction buffer containing 0.2 mM FeCl₂, 50 μM vitamin B₆, and 1 mM l-tyrosine or 5 mM l-DOPA in 50 mM phosphate buffer (pH 7) [20].

- Pathway analysis: Monitor conversion rates from l-tyrosine to l-DOPA and subsequently to dopamine using analytical methods such as HPLC or LC-MS [20].

- Translation to in vivo system: Based on optimal expression ratios identified in vitro, design RBS libraries for in vivo implementation using high-throughput techniques [20].

This approach enables mechanistic understanding of pathway limitations before committing to resource-intensive in vivo engineering, potentially saving significant time and resources [20].
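The reaction assembly step can be turned into pipetting volumes with the usual C1·V1 = C2·V2 dilution rule. The sketch below is illustrative only: the final concentrations are the ones quoted in the protocol, while the stock concentrations, the 1 mL reaction volume, and the function names are assumptions introduced here, not values from [20].

```python
# Final concentrations (mM) follow the protocol text; 50 uM vitamin B6 = 0.05 mM.
FINAL_MM = {"FeCl2": 0.2, "vitamin B6": 0.05, "L-tyrosine": 1.0}

# Stock concentrations (mM) are hypothetical and chosen only for illustration.
STOCK_MM = {"FeCl2": 20.0, "vitamin B6": 5.0, "L-tyrosine": 2.0}


def stock_volumes_ul(final_mm, stock_mm, total_ul):
    """Apply C1*V1 = C2*V2 to get the volume of each stock to add (in uL)."""
    volumes = {}
    for name, c_final in final_mm.items():
        c_stock = stock_mm[name]
        if c_stock <= c_final:
            raise ValueError(f"stock for {name} must be more concentrated than the target")
        volumes[name] = total_ul * c_final / c_stock
    return volumes


def remaining_volume_ul(volumes, total_ul):
    """Volume left over for cell lysate plus 50 mM phosphate buffer (pH 7)."""
    rest = total_ul - sum(volumes.values())
    if rest < 0:
        raise ValueError("stock volumes exceed the total reaction volume")
    return rest


TOTAL_UL = 1000.0  # assumed reaction volume
volumes = stock_volumes_ul(FINAL_MM, STOCK_MM, TOTAL_UL)
rest = remaining_volume_ul(volumes, TOTAL_UL)

for name, vol in volumes.items():
    print(f"{name}: {vol:.1f} uL")
print(f"lysate + buffer: {rest:.1f} uL")
```

For the l-DOPA variant of the reaction, the `L-tyrosine` entry would be swapped for a 5 mM l-DOPA target with its own stock concentration.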

High-Throughput RBS Engineering for Pathway Optimization

RBS engineering serves as a powerful tool for fine-tuning relative gene expression in synthetic pathways [20]. The implementation protocol includes:

- Library design: Modulate the Shine-Dalgarno sequence without interfering with secondary structures, using simplified RBS engineering approaches [20].

- Library construction: Employ automated molecular cloning workflows, potentially utilizing biofoundries for high-throughput assembly [20] [29].

- Strain cultivation: Grow engineered strains in minimal medium containing 20 g/L glucose, 10% 2xTY, phosphate salts, 15 g/L MOPS, and appropriate antibiotics [20].

- Phenotypic screening: Assess dopamine production using high-throughput analytics, often leveraging automation for consistent data collection [20].
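As a rough illustration of the library design step, the sketch below enumerates Shine-Dalgarno variants from a degenerate template. The template `AGNNGG` (the canonical AGGAGG core with two randomized positions) and the helper name `sd_library` are assumptions made for this example; the actual library design used in [20] may differ.

```python
from itertools import product


def sd_library(template, choices="ACGT"):
    """Expand every 'N' in the template into each base listed in `choices`."""
    slots = [choices if base == "N" else base for base in template]
    return ["".join(variant) for variant in product(*slots)]


# Hypothetical template: two randomized positions give 4**2 = 16 variants.
library = sd_library("AGNNGG")
print(len(library), "variants, e.g.", library[:4])
```

Keeping the randomized window short and confined to the Shine-Dalgarno core keeps the library small enough to screen exhaustively and leaves the flanking sequence, and therefore most secondary structure, unchanged.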

This methodology enabled the development of a dopamine production strain achieving 34.34 ± 0.59 mg/g biomass, a 6.6-fold improvement over previous reports, alongside the volumetric titer of 69.03 ± 1.2 mg/L (2.6-fold improvement) listed in Table 1 [20].
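The two ways of reporting dopamine performance for this strain (volumetric titer in mg/L and specific yield in mg/g biomass) can be cross-checked with simple arithmetic. The sketch below back-calculates the implied biomass concentration and the implied earlier baselines from the reported figures; these derived numbers are approximations computed here, not values quoted from [20].

```python
# Reported values for the knowledge-driven DBTL dopamine strain [20].
titer_mg_per_l = 69.03       # volumetric titer
specific_mg_per_g = 34.34    # specific yield per gram of biomass
fold_titer = 2.6             # reported fold improvement in titer
fold_specific = 6.6          # reported fold improvement in specific yield

# Titer divided by specific yield gives the implied biomass concentration.
biomass_g_per_l = titer_mg_per_l / specific_mg_per_g

# Dividing by the fold improvement gives the implied earlier baselines.
baseline_titer = titer_mg_per_l / fold_titer
baseline_specific = specific_mg_per_g / fold_specific

print(f"implied biomass: {biomass_g_per_l:.2f} g/L")
print(f"implied baseline titer: {baseline_titer:.2f} mg/L")
print(f"implied baseline specific yield: {baseline_specific:.2f} mg/g")
```

The different fold improvements (2.6 versus 6.6) are compatible because the two metrics are normalized differently: a strain can improve much more per gram of biomass than per liter of culture if it grows to a lower cell density than the comparison strain.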

Model-Guided Machine Learning for Design Recommendation

The mechanistic kinetic model-based framework provides a simulated environment for optimizing machine learning approaches in DBTL cycles [19]. The experimental approach involves:

- Kinetic model development: Implement a mechanistic kinetic model of the metabolic pathway embedded in a physiologically relevant cell model using platforms like SKiMpy [19].

- In silico library generation: Simulate combinatorial libraries by varying enzyme levels through adjustments to Vmax parameters [19].

- Machine learning training: Use simulated data from initial cycles to train models like gradient boosting or random forests [19].

- Recommendation algorithm application: Implement algorithms that balance exploration and exploitation to propose optimized strains for subsequent cycles [19].
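The in silico library and recommendation steps can be sketched with a deliberately small toy model. The code below is not the SKiMpy-based framework from [19]: it assumes a two-step pathway S -> I -> P with irreversible Michaelis-Menten kinetics, integrates it with a plain Euler scheme, and uses a naive "top designs plus random picks" rule in place of a trained machine learning model. All parameter values and function names are hypothetical.

```python
import random
from itertools import product


def simulate_product(vmax1, vmax2, s0=10.0, km1=1.0, km2=1.0, t_end=5.0, dt=0.01):
    """Euler-integrate S -> I -> P and return the product formed by t_end."""
    s, i, p = s0, 0.0, 0.0
    for _ in range(int(round(t_end / dt))):
        v1 = vmax1 * s / (km1 + s)   # rate of S -> I
        v2 = vmax2 * i / (km2 + i)   # rate of I -> P
        s -= v1 * dt
        i += (v1 - v2) * dt
        p += v2 * dt
    return p


def build_library(levels=(0.5, 1.0, 2.0, 4.0)):
    """Simulate every combination of relative Vmax levels for the two enzymes."""
    return {(a, b): simulate_product(a, b) for a, b in product(levels, repeat=2)}


def recommend(scores, n_exploit=2, n_explore=2, seed=0):
    """Return the best-scoring designs plus random others (naive explore/exploit)."""
    ranked = sorted(scores, key=scores.get, reverse=True)
    rng = random.Random(seed)
    explore = rng.sample(ranked[n_exploit:], n_explore)
    return ranked[:n_exploit] + explore


library = build_library()
picks = recommend(library)
print("recommended (Vmax1, Vmax2) designs:", picks)
```

In a real DBTL loop the `scores` passed to `recommend` would be model predictions for untested designs rather than simulated outcomes, and the split between exploitation and exploration would itself be a tunable choice.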

This approach has demonstrated that gradient boosting and random forest models outperform other methods in the low-data regime typical of early DBTL cycles and show robustness to training set biases and experimental noise [19].

Essential Research Reagent Solutions

Successful implementation of DBTL cycles requires specific reagents, tools, and platforms that enable high-throughput and reproducible experimentation.

Table 3: Essential Research Reagents and Tools for DBTL Implementation

| Reagent/Tool Category | Specific Examples | Function in DBTL Cycle |
|---|---|---|
| Molecular Cloning Tools [20] | pET plasmid system, pJNTN vector | Storage and expression of heterologous genes in production hosts |
| Host Strains [20] | E. coli DH5α (cloning), E. coli FUS4.T2 (production) | Providing optimized genetic backgrounds for cloning and production |
| Selection Agents [20] | Ampicillin (100 µg/mL), Kanamycin (50 µg/mL) | Maintaining plasmid stability and selecting for successful transformations |
| Induction Systems [20] | IPTG (1 mM) | Controlling expression timing and levels of pathway enzymes |
| Analytical Tools [30] | HPLC, LC-MS, Spectrometry | Quantifying metabolite concentrations and pathway performance |
| Cell-Free Systems [20] | Crude cell lysate systems | Rapid in vitro pathway prototyping before in vivo implementation |
| Statistical Analysis Tools [30] [31] | t-tests, F-tests, Empirical Likelihood methods | Determining significance of observed differences between strains |
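As an example of the statistical tools in the last row, the sketch below computes Welch's unequal-variance t statistic and its Welch-Satterthwaite degrees of freedom for two sets of replicate titers. The replicate values are made up for illustration and are not data from any cited study; converting the statistic to a p-value would additionally need a t-distribution routine, which is left out here.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(a, b):
    """Return Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    va = variance(a) / len(a)   # squared standard error of sample a
    vb = variance(b) / len(b)   # squared standard error of sample b
    t = (mean(a) - mean(b)) / sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df


# Made-up replicate titers (mg/L), for illustration only.
engineered = [68.1, 69.4, 70.2]
reference = [26.0, 27.1, 26.5]

t_stat, df = welch_t(engineered, reference)
print(f"t = {t_stat:.2f}, df = {df:.2f}")
```

Welch's form is used here because engineered and reference strains often show different replicate variability, so assuming equal variances is hard to justify.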

Workflow Visualization of DBTL Implementation

The following diagram illustrates the comprehensive knowledge-driven DBTL workflow that incorporates upstream in vitro investigation:

Diagram 1: Knowledge-driven DBTL workflow with upstream in vitro investigation

The mechanistic model-guided DBTL cycle with integrated machine learning follows a slightly different workflow, particularly in the Learn and Design phases:

Diagram 2: Model-guided DBTL cycle with machine learning integration

The implementation of the DBTL cycle for iterative strain improvement represents a paradigm shift in metabolic engineering and synthetic biology. Through systematic comparison of different approaches, it is evident that knowledge-driven strategies that incorporate upstream in vitro investigation can significantly reduce development time and resources [20]. Similarly, model-guided approaches that leverage machine learning provide powerful frameworks for optimizing the recommendation of designs for subsequent DBTL cycles, especially in the low-data regimes typical of early-stage projects [19].

The performance benchmarking data indicate that no single DBTL implementation strategy is universally superior; rather, the optimal approach depends on the specific application, available resources, and stage of development. Industrial-scale implementation requires particular attention to scale-up considerations early in the DBTL process to de-risk technology transfer to manufacturing environments [29]. As the bioeconomy continues to expand, further refinement of DBTL methodologies, particularly through enhanced automation, improved machine learning algorithms, and more sophisticated kinetic models, will be essential for achieving the radical reductions in strain development time and cost necessary to meet global sustainability goals.

Untargeted metabolomics has emerged as a transformative approach in systems biology, enabling researchers to comprehensively profile the small molecule metabolites within a biological system. This method provides a direct functional readout of cellular activity and physiological status. For researchers and scientists engaged in performance benchmarking of engineered strains against industrial standards, untargeted metabolomics offers a powerful tool to decipher the metabolic consequences of genetic modifications. However, the immense complexity of the data generated, which can encompass tens to hundreds of thousands of observations, necessitates sophisticated data analysis workflows for functional interpretation [32].

A critical step in this process is Metabolic Pathway Enrichment Analysis (MPEA), which helps researchers move beyond lists of significant metabolites to understand the biological pathways and processes that are perturbed. By identifying metabolite sets or pathways that are overrepresented in the data, MPEA provides functional context, aiding in the elucidation of mechanisms of action [32]. Several computational methods for MPEA are available, but their performance can vary significantly, so the choice of method directly affects the conclusions drawn in strain benchmarking. This guide compares three popular enrichment analysis approaches, Over Representation Analysis (ORA), Metabolite Set Enrichment Analysis (MSEA), and Mummichog, using the results of a recent experimental study, and provides the data and methodologies needed to inform your analytical choices [32] [33].

Comparative Analysis of Popular MPEA Methods

The three methods compared here employ distinct statistical frameworks for identifying altered metabolic pathways.

- Over Representation Analysis (ORA): This is a straightforward method that tests whether a pre-defined set of metabolites (e.g., those belonging to a specific pathway) appears more frequently within a list of statistically significant metabolites than would be expected by chance alone. It typically uses a hypergeometric test or Fisher's exact test [32].

- Metabolite Set Enrichment Analysis (MSEA): Adapted from Gene Set Enrichment Analysis (GSEA), MSEA considers the entire ranked list of metabolites (e.g., based on p-values or fold changes) rather than just a significant subset. It evaluates whether the members of a predefined metabolite set are randomly distributed throughout the ranked list or concentrated at the top or bottom, thereby identifying pathways with coordinated but subtle changes [32].

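- Mummichog: This method bypasses the need for rigorous metabolite identification prior to enrichment analysis. It operates directly on the m/z features and their p-values from the untargeted mass spectrometry data. Mummichog matches each feature to candidate metabolites by mass, maps these tentative matches onto pathways, and tests for enrichment by comparing the result for the significant features with results for feature lists drawn at random from the full dataset. The underlying premise is that genuine biological activity produces matches that concentrate in pathways, whereas spurious matches scatter randomly [32].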
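To make the ORA test concrete, the sketch below computes the upper-tail hypergeometric p-value for one pathway using only the Python standard library. The function name and the example counts are hypothetical; platforms such as MetaboAnalyst wrap the same kind of test in their own interfaces and add multiple-testing correction across pathways.

```python
from math import comb


def ora_p_value(n_background, n_pathway, n_significant, n_overlap):
    """Upper-tail hypergeometric p-value, P(X >= n_overlap).

    n_background  : metabolites in the reference set
    n_pathway     : background metabolites that belong to the pathway
    n_significant : metabolites called significant
    n_overlap     : significant metabolites that belong to the pathway
    """
    upper = min(n_pathway, n_significant)
    total = comb(n_background, n_significant)
    tail = sum(
        comb(n_pathway, k) * comb(n_background - n_pathway, n_significant - k)
        for k in range(n_overlap, upper + 1)
    )
    return tail / total


# Hypothetical counts: 200 annotated metabolites, 20 in the pathway,
# 15 significant overall, 6 of which fall in the pathway.
p = ora_p_value(200, 20, 15, 6)
print(f"ORA p-value: {p:.3g}")
```

The result depends strongly on what is counted as the background set, which is one reason ORA outcomes can differ between analyses of the same data.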

Experimental Protocol for Method Benchmarking

The comparative data presented in this guide are derived from a dedicated benchmarking study [32]. The experimental protocol is summarized below and illustrated in Figure 1.

1. Cell Culture and Compound Treatment:

- Biological System: Hep-G2 cells (a human hepatoblastoma cell line widely used in toxicology and pharmacology) were cultivated in RPMI 1640 medium supplemented with 10% fetal bovine serum [32].

- Test Compounds: Cells were treated with 11 compounds with five distinct, well-characterized mechanisms of action (MoAs). This design allowed for the assessment of method correctness and consistency across similar MoAs. Key compounds included:

  - Glycolysis Inhibitors: 2-Deoxyglucose, 3-Bromopyruvic acid, Metrizamide [32].

  - Electron Transport Chain Disruptors: Antimycin A, FCCP [32].

  - ROS Generators: Menadione, Phenanthrene-9,10-dione [32].

  - Cholesterol Biosynthesis Inhibitors: Mevastatin, Simvastatin [32].

  - Pyrimidine Metabolism/DNA Damage Agents: 5-Fluorouracil, Trifluorothymidine [32].

- Dosing: Cells were exposed to subtoxic concentrations (IC₁₀) of each compound to ensure metabolic changes were not secondary to overt cell death [32].

2. Sample Preparation and Metabolite Profiling:

- Sample Harvesting: Following a 2-hour treatment, cells were harvested and metabolites were extracted using a methanol-based solvent system, optimized for capturing a broad range of polar and semi-polar metabolites [32].

- Instrumental Analysis: The metabolome was profiled using an Elute UHPLC system coupled to a timsTOF Pro mass spectrometer (Bruker) [32].

- Data Processing: Raw spectral data were processed using MetaboScape (Bruker) software for feature detection, alignment, and annotation. Metabolite annotation was performed using spectral library matching [32].

3. Enrichment Analysis:

- The processed datasets were subjected to enrichment analysis using the three methods (ORA, MSEA, and Mummichog) within the widely used MetaboAnalyst platform [32].
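The IC₁₀ dosing used in step 1 can be related to a fitted dose-response curve. The sketch below assumes a simple Hill model of inhibition, which is an assumption made here for illustration; the source does not state how the IC₁₀ values were derived, and the IC₅₀ and Hill slope in the example are hypothetical.

```python
def ic_x(ic50, hill, x_percent):
    """Concentration giving x% inhibition under a Hill model.

    Inverts f = C**h / (IC50**h + C**h), where f is the fraction inhibited,
    to C = IC50 * (f / (1 - f)) ** (1 / h).
    """
    if not 0.0 < x_percent < 100.0:
        raise ValueError("x_percent must lie strictly between 0 and 100")
    frac = x_percent / 100.0
    return ic50 * (frac / (1.0 - frac)) ** (1.0 / hill)


# Hypothetical compound with IC50 = 90 uM and a Hill slope of 1.
ic10 = ic_x(ic50=90.0, hill=1.0, x_percent=10.0)
print(f"IC10 is about {ic10:.1f} uM")
```

With a Hill slope of 1 the IC₁₀ is one ninth of the IC₅₀; steeper curves bring the two values closer together, so a subtoxic dose cannot be read off the IC₅₀ alone.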

Performance Comparison: Consistency and Correctness

The performance of ORA, MSEA, and Mummichog was evaluated based on two key metrics: (i) the consistency of results among methods and among compounds with a similar MoA, and (ii) the correctness of the identified pathways in relation to the known MoA of the test compounds [32] [33].

Table 1: Summary of Method Performance Characteristics

| Method | Underlying Approach | Requires Full Metabolite ID? | Consistency Across Similar MoAs | Overall Correctness |
|---|---|---|---|---|
| Mummichog | Predicts pathways from m/z features | No | High | Best |
| MSEA | Ranks entire list of metabolites | Yes | Moderate | Moderate |
| ORA | Analyzes significant metabolite subset | Yes | Low | Lower |
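One simple way to put a number on "consistency across similar MoAs" is the mean pairwise Jaccard overlap between the enriched pathway sets obtained for compounds that share a mechanism. This is offered as an illustrative metric only; the source does not specify that the study [32] scored consistency this way, and the pathway sets below are invented for the example.

```python
from itertools import combinations


def jaccard(a, b):
    """Jaccard index of two collections of pathway names."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def mean_pairwise_jaccard(pathway_sets):
    """Average Jaccard index over all pairs of pathway sets."""
    pairs = list(combinations(pathway_sets, 2))
    if not pairs:
        raise ValueError("need at least two pathway sets to compare")
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


# Invented enrichment results for two compounds with the same MoA.
compound_a = {"Glycolysis", "Pentose phosphate pathway", "TCA cycle"}
compound_b = {"Glycolysis", "TCA cycle", "Pyruvate metabolism"}

score = mean_pairwise_jaccard([compound_a, compound_b])
print(f"consistency score: {score:.2f}")
```

A score near 1 means a method returns nearly the same pathways for compounds with a shared mechanism, while a score near 0 means the results barely overlap.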