Pathway Engineering and Refactoring: A Comprehensive Guide for Biomedical Researchers


Abstract

This article provides a comprehensive overview of the foundational concepts, methodologies, and applications of pathway engineering and refactoring, tailored for researchers, scientists, and drug development professionals. It explores the evolutionary design principles underpinning the field, details high-throughput construction and optimization techniques like Golden Gate assembly and combinatorial optimization, and addresses common challenges through advanced troubleshooting strategies. Further, it covers the critical validation and comparative analysis of refactored pathways, illustrating their impact through case studies in natural product discovery and therapeutic development. By synthesizing current trends, including the integration of synthetic biology, machine learning, and laboratory automation, this guide serves as a vital resource for leveraging these powerful technologies to accelerate biomedical innovation and drug discovery.

The Foundations of Pathway Engineering: From Rational Design to Evolutionary Principles

In both software and biological engineering, the challenge of evolving complex systems without compromising their core function is paramount. Two disciplines, pathway engineering and refactoring, provide the foundational principles for managing this evolution. Though they originate in different fields (pathway engineering in synthetic biology, refactoring in software development), they share a common goal: the systematic improvement of a system's internal architecture to enhance its performance, maintainability, and utility. Pathway engineering focuses on the design and construction of novel biochemical pathways or the redesign of existing ones in living organisms to achieve targeted production of compounds [1]. Refactoring, conversely, is the disciplined process of restructuring existing code without altering its external behavior to improve non-functional attributes like readability and maintainability [2] [3]. Within a research context, a deep understanding of these concepts is not merely academic; it is a prerequisite for innovation, reproducibility, and scaling laboratory discoveries into tangible applications, such as the efficient production of a novel therapeutic.

Core Concept: Pathway Engineering

Definition and Objectives

Pathway engineering is a cornerstone of synthetic biology and metabolic engineering. It involves the deliberate modification and optimization of metabolic pathways within a host organism to enable the synthesis of target molecules. This process entails introducing, deleting, or modulating genes that code for specific enzymes to redirect metabolic flux towards a desired product [1]. The core objectives are multifaceted, aiming to achieve:

- High Titer, Yield, and Productivity: Maximizing the concentration (titer), conversion efficiency (yield), and production rate of the target compound [4].

- Expanded Product Range: Enabling the synthesis of "new-to-nature" compounds or substances not naturally produced by the host organism.

- Sustainable Production: Creating biological routes for chemical synthesis, reducing reliance on petrochemicals and harsh industrial processes [4].

- Utilization of Alternative Feedstocks: Engineering pathways to utilize inexpensive and renewable carbon sources, such as lignocellulosic biomass or waste products.
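The three headline metrics in the first objective are simple ratios, and it helps to keep their units distinct. The sketch below is a minimal illustration assuming end-point measurements from a single cultivation; the `FermentationResult` class and all numbers are hypothetical. Yield is expressed here as grams of product per gram of substrate consumed; it is also often quoted as a fraction of the theoretical maximum, which additionally requires the pathway stoichiometry.

```python
from dataclasses import dataclass


@dataclass
class FermentationResult:
    """End-point measurements from one cultivation (hypothetical fields)."""
    product_g: float             # total product formed (g)
    substrate_consumed_g: float  # total carbon source consumed (g)
    volume_l: float              # final broth volume (L)
    duration_h: float            # process time (h)


def titer(r: FermentationResult) -> float:
    """Product concentration in g/L."""
    return r.product_g / r.volume_l


def yield_on_substrate(r: FermentationResult) -> float:
    """Mass yield in g product per g substrate consumed."""
    return r.product_g / r.substrate_consumed_g


def volumetric_productivity(r: FermentationResult) -> float:
    """Average volumetric productivity in g/L/h (titer divided by process time)."""
    return titer(r) / r.duration_h


if __name__ == "__main__":
    # Hypothetical numbers, chosen only to show the arithmetic.
    run = FermentationResult(product_g=12.0, substrate_consumed_g=60.0,
                             volume_l=1.5, duration_h=48.0)
    print(f"titer        = {titer(run):.2f} g/L")                    # 8.00 g/L
    print(f"yield        = {yield_on_substrate(run):.3f} g/g")       # 0.200 g/g
    print(f"productivity = {volumetric_productivity(run):.3f} g/L/h")  # 0.167 g/L/h
```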

Key Methodologies and Experimental Protocols

The pathway engineering workflow is an iterative cycle of design, build, test, and learn. The following protocol outlines the standard approach for establishing and optimizing a heterologous pathway in a microbial host like E. coli.

Protocol 1: Establishing and Optimizing a Heterologous Biosynthetic Pathway

Host Selection and Preparation:

- Objective: Select an appropriate microbial chassis (e.g., E. coli, S. cerevisiae, or C. glutamicum) with favorable growth characteristics, genetic tractability, and precursor availability [4] [1].

- Method: Select a standard laboratory strain (e.g., E. coli BL21(DE3) for protein expression). Prepare competent cells for transformation.

Pathway Design and Gene Sourcing:

- Objective: Identify the sequence of enzymatic reactions required to produce the target metabolite from endogenous host precursors.

- Method: Mine genomic and transcriptomic data from natural producers to identify candidate genes [1]. Codon-optimize genes for the chosen host and synthesize them de novo [4].
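As a rough illustration of codon optimization, the sketch below performs the simplest possible back-translation: each amino acid is encoded by a single preferred codon. The `PREFERRED_CODON` table is an assumption for illustration (codons commonly favoured in E. coli), not a measured codon-usage table. Practical optimization tools also weigh GC content, mRNA secondary structure, repeats, and unwanted restriction sites, so treat this as a conceptual sketch only.

```python
# Assumed "one preferred codon per amino acid" table for E. coli (illustrative only;
# substitute a measured codon-usage table for real designs). "*" is the stop codon.
PREFERRED_CODON = {
    "A": "GCG", "R": "CGT", "N": "AAC", "D": "GAT", "C": "TGC",
    "Q": "CAG", "E": "GAA", "G": "GGC", "H": "CAT", "I": "ATT",
    "L": "CTG", "K": "AAA", "M": "ATG", "F": "TTT", "P": "CCG",
    "S": "AGC", "T": "ACC", "W": "TGG", "Y": "TAT", "V": "GTG",
    "*": "TAA",
}


def back_translate(protein: str, table: dict = PREFERRED_CODON) -> str:
    """Naive back-translation: encode every residue with its single preferred codon."""
    protein = protein.upper()
    unknown = sorted(set(protein) - set(table))
    if unknown:
        raise ValueError(f"no codon defined for residue(s): {unknown}")
    return "".join(table[aa] for aa in protein)


if __name__ == "__main__":
    print(back_translate("MKV*"))  # ATGAAAGTGTAA
```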

Vector Construction and Transformation:

- Objective: Assemble the genetic constructs that will express the pathway enzymes in the host.

- Method: Clone the synthesized genes into expression plasmids under the control of inducible promoters (e.g., pTac, T7). Use techniques like Gibson Assembly or Golden Gate cloning for multi-gene constructs. Co-transform all required plasmids into the prepared competent host cells [4].
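Golden Gate cloning relies on Type IIS restriction enzymes (such as BsaI) that cut outside their recognition site, leaving short single-stranded overhangs, typically 4 nt, that dictate the order in which parts ligate. A poorly chosen overhang set is a common cause of mis-assembly. The hypothetical helper below checks a few basic rules for a planned set of overhangs; it is a simplified sketch, and dedicated overhang-design tools additionally account for mismatch-tolerant ligation between near-identical overhangs.

```python
from itertools import combinations

_COMPLEMENT = str.maketrans("ACGT", "TGCA")


def revcomp(seq: str) -> str:
    """Reverse complement of a DNA sequence (A/C/G/T)."""
    return seq.upper().translate(_COMPLEMENT)[::-1]


def overhang_problems(overhangs: list) -> list:
    """Flag 4-nt fusion sites likely to cause mis-assembly (simplified rules).

    * each overhang must be exactly 4 nt of A/C/G/T;
    * no overhang may be palindromic (it could ligate to a copy of itself);
    * no two overhangs may be identical or reverse complements of each other.
    """
    problems = []
    for oh in overhangs:
        if len(oh) != 4 or set(oh.upper()) - set("ACGT"):
            problems.append(f"{oh}: not a 4-nt A/C/G/T overhang")
        elif oh.upper() == revcomp(oh):
            problems.append(f"{oh}: palindromic")
    for a, b in combinations(overhangs, 2):
        if a.upper() == b.upper():
            problems.append(f"{a}/{b}: duplicate")
        elif a.upper() == revcomp(b):
            problems.append(f"{a}/{b}: reverse complements")
    return problems


if __name__ == "__main__":
    print(overhang_problems(["AATG", "GCTT", "AAGC", "GATC"]))
```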

Screening and Initial Validation:

- Objective: Identify successfully engineered clones and confirm the production of the target metabolite.

- Method: Screen colonies on selective media. Inoculate positive clones in liquid culture and induce gene expression. Analyze culture extracts using Liquid Chromatography-Mass Spectrometry (LC-MS) or similar methods to detect the target compound [4] [1].
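Confirming production usually means quantifying it. One common approach, assumed here, is external calibration: standards of the authentic compound are injected at known concentrations, a straight line is fitted to peak area versus concentration, and sample peak areas are back-calculated. The sketch below uses an ordinary least-squares fit with hypothetical numbers; it assumes a linear detector response within the calibrated range and ignores matrix effects and internal standards.

```python
def fit_standard_curve(concs: list, areas: list) -> tuple:
    """Ordinary least-squares line: area = slope * conc + intercept."""
    n = len(concs)
    if n < 2 or n != len(areas):
        raise ValueError("need at least two paired standards")
    mean_x = sum(concs) / n
    mean_y = sum(areas) / n
    sxx = sum((x - mean_x) ** 2 for x in concs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(concs, areas))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def quantify(area: float, slope: float, intercept: float, dilution: float = 1.0) -> float:
    """Back-calculate a sample concentration from its peak area.

    `dilution` is the factor by which the extract was diluted before injection.
    Only meaningful if `area` falls inside the range spanned by the standards.
    """
    return (area - intercept) / slope * dilution


if __name__ == "__main__":
    # Hypothetical standards (mg/L) and peak areas.
    slope, intercept = fit_standard_curve([0.5, 1, 2, 5, 10], [550, 1050, 2050, 5050, 10050])
    print(round(quantify(3050, slope, intercept, dilution=10), 3))  # 30.0 mg/L
```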

Pathway Optimization:

- Objective: Alleviate metabolic bottlenecks and maximize flux toward the product.

- Method: Employ strategies such as:

- Combinatorial Screening: Test different homologs of key enzymes (e.g., indigoidine synthases) and their cognate activating enzymes (e.g., phosphopantetheinyl transferases) to identify the most efficient combination [4].

- Promoter and RBS Engineering: Fine-tune the expression level of each pathway gene to balance metabolic flux and avoid intermediate accumulation [1].

- Membrane Engineering: For products that accumulate in the cell membrane or periplasm, overexpress genes related to lipid membrane supply (e.g., plsX, plsC) to enhance storage capacity and reduce toxicity [4].
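Combinatorial screening quickly produces large design spaces, because every independent choice multiplies the number of constructs. The sketch below enumerates a full-factorial library with `itertools.product`; all part names are hypothetical placeholders. Even 3 synthase homologs, 2 PPTases, 3 promoters, and 2 RBSs already give 36 constructs, which is why larger design spaces are often subsampled rather than built exhaustively.

```python
from itertools import product

# Hypothetical part names, used only for illustration.
SYNTHASES = ["synthase_A", "synthase_B", "synthase_C"]
PPTASES = ["pptase_1", "pptase_2"]
PROMOTERS = ["weak", "medium", "strong"]
RBS = ["rbs_low", "rbs_high"]


def design_library():
    """Yield every synthase x PPTase x promoter x RBS combination as a dict."""
    for synthase, pptase, promoter, rbs in product(SYNTHASES, PPTASES, PROMOTERS, RBS):
        yield {"synthase": synthase, "pptase": pptase, "promoter": promoter, "rbs": rbs}


if __name__ == "__main__":
    library = list(design_library())
    print(len(library))  # 3 * 2 * 3 * 2 = 36 constructs
    print(library[0])
```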

Fermentation Scale-Up:

- Objective: Translate shake-flask production to controlled bioreactors for higher yields.

- Method: Transfer the engineered strain to a bioreactor for fed-batch fermentation. Optimize parameters including dissolved oxygen, pH, temperature, and feeding strategy to maximize biomass and product titer [4].
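One widely used fed-batch feeding strategy, assumed here as an example, is exponential feeding: substrate is supplied at a rate that lets biomass grow at a chosen specific growth rate, usually set below the maximum to limit overflow metabolism (for example, acetate formation in E. coli). The function below implements the standard substrate mass balance under a quasi-steady-state assumption; the parameter values in the usage note are hypothetical.

```python
import math


def exponential_feed_rate(t_h: float, mu_set: float, x0_g_per_l: float, v0_l: float,
                          yield_xs: float, s_feed_g_per_l: float,
                          maintenance: float = 0.0) -> float:
    """Feed rate (L/h) that holds the specific growth rate at mu_set (1/h).

    Assumes fed substrate is consumed immediately and total biomass X*V grows
    as exp(mu_set * t) from X0*V0 at the start of feeding (t = 0):
        F(t) = (mu_set / Y_XS + m) * X0 * V0 * exp(mu_set * t) / S_F
    yield_xs: biomass yield on substrate (g/g); maintenance m: g substrate/g biomass/h;
    s_feed_g_per_l: substrate concentration in the feed.
    """
    biomass_g = x0_g_per_l * v0_l * math.exp(mu_set * t_h)
    return (mu_set / yield_xs + maintenance) * biomass_g / s_feed_g_per_l
```

For example, `exponential_feed_rate(0.0, mu_set=0.1, x0_g_per_l=10.0, v0_l=1.0, yield_xs=0.5, s_feed_g_per_l=500.0)` gives 0.004 L/h (4 mL/h) at the start of the feed phase, and the rate then rises by a factor of e every 10 h.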

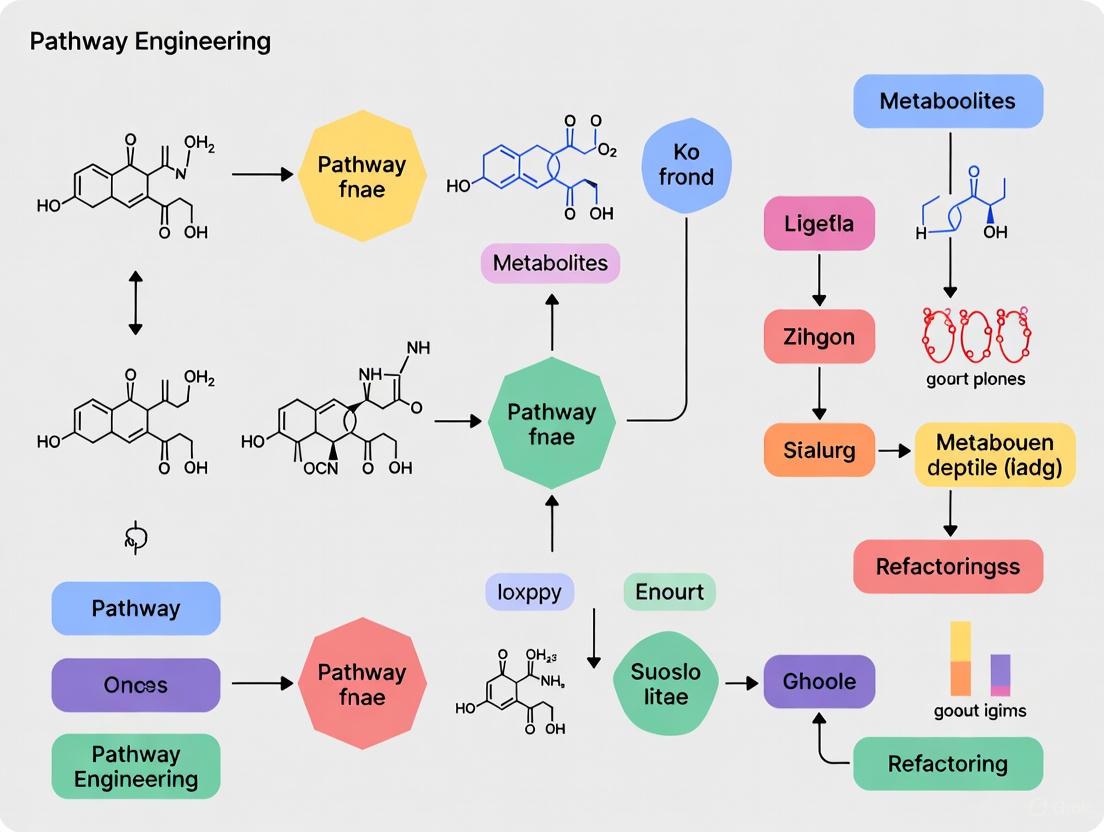

The following diagram visualizes the core experimental workflow for this protocol.

Research Reagent Solutions for Pathway Engineering

The following table details key reagents and materials essential for executing pathway engineering experiments.

Table 1: Essential Research Reagents for Pathway Engineering

| Reagent/Material | Function/Explanation | Example Use Case |
|---|---|---|
| Codon-Optimized Genes | Synthesized DNA sequences whose codons are adapted to the codon usage bias of the host organism to improve translation efficiency and protein yield. | Critical for high-level expression of heterologous enzymes in a non-native host like E. coli [4]. |
| Expression Plasmids | Circular DNA vectors containing regulatory elements (promoters, terminators, selectable markers) for controlled gene expression in the host. | pET or pTac-based vectors for T7 or Tac-promoter driven expression in bacterial systems [4]. |
| Non-ribosomal Peptide Synthetase (NRPS) | A large multi-domain enzyme that assembles peptide-derived natural products, such as the blue pigment indigoidine, independently of the ribosome. | Key enzyme for producing peptide-derived natural products; requires activation by a PPTase [4]. |
| Phosphopantetheinyl Transferase (PPTase) | An activator enzyme that converts inactive NRPS (apo-form) into its active (holo-) form by transferring a phosphopantetheinyl group from Coenzyme A. | Co-expression is essential for the functionality of heterologous NRPS pathways in engineered hosts [4]. |
| Inducible Promoters | Genetic switches that allow precise temporal control of gene expression in response to a chemical (e.g., IPTG) or environmental cue. | Used to decouple cell growth from product synthesis, which is vital for expressing proteins that may be toxic to the host. |

Core Concept: Refactoring

Definition and Objectives

In software engineering, refactoring is the process of restructuring existing source code to improve its internal structure while rigorously preserving its external behavior [2] [3]. It is not about adding new features or fixing bugs, but about reducing technical debt and making the codebase more resilient to future changes. The primary objectives include:

- Enhanced Maintainability: Making the code easier to understand, modify, and debug, which reduces long-term costs [2] [5].

- Improved Readability: Transforming code that is only comprehensible to machines into code that is easily read and understood by developers [3].

- Reduced Complexity: Breaking down large, monolithic methods or classes into smaller, single-purpose units [2].

- Increased Architectural Flexibility: Preparing the code for future enhancements by improving its modularity and adherence to design principles, which can indirectly improve scalability and reliability [2].

Key Methodologies and Refactoring Techniques

Refactoring is typically an incremental process involving small, verified changes. The following protocol outlines a standard, safe approach to refactoring a legacy codebase.

Protocol 2: Refactoring a Legacy Code Module

Establish a Test Suite:

- Objective: Create a safety net that verifies the software's external behavior remains unchanged after each refactoring step.

- Method: Develop a comprehensive set of unit and integration tests that cover the key functionalities of the module to be refactored. Ensure the test suite passes before beginning refactoring [3].

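Identify "Code Smells":

- Objective: Locate the structural weaknesses that make the module hard to understand or change.

- Method: Review the module for common code smells, such as long methods, large classes, duplicated code, unclear names, and complex conditional or switch logic, and prioritize those that most impede maintenance.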

Apply Targeted Refactoring Techniques:

- Objective: Address identified code smells with specific, proven refactoring transformations.

- Method: Apply techniques one at a time. Common techniques include [3]:

- Extract Method: Break a long method into smaller, well-named methods.

- Rename Method/Variable: Use clear and descriptive names.

- Replace Conditional with Polymorphism: Remove complex switch statements or conditionals.

- Decompose Conditional: Break down complex conditional expressions into simpler, well-named methods.
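A compact illustration of two of these techniques, assuming a hypothetical legacy function that selects a feeding rule with an if/elif chain: the conditional is replaced with polymorphism (one class per case), and inline magic numbers become named constants. The final loop plays the role of the test suite from the first step, checking that the refactored code returns exactly what the legacy code returned.

```python
from abc import ABC, abstractmethod


# --- Before: one function with a type-switch conditional (hypothetical legacy code) ---
def legacy_feed_volume(mode: str, hours: float) -> float:
    if mode == "batch":
        return 0.0
    elif mode == "constant":
        return 0.05 * hours
    elif mode == "pulsed":
        return 0.1 * (hours // 4)
    raise ValueError(mode)


# --- After: Replace Conditional with Polymorphism ---
class FeedStrategy(ABC):
    @abstractmethod
    def volume(self, hours: float) -> float: ...


class BatchFeed(FeedStrategy):
    def volume(self, hours: float) -> float:
        return 0.0


class ConstantFeed(FeedStrategy):
    RATE_L_PER_H = 0.05  # magic number replaced by a named constant

    def volume(self, hours: float) -> float:
        return self.RATE_L_PER_H * hours


class PulsedFeed(FeedStrategy):
    PULSE_L = 0.1     # volume added per pulse
    INTERVAL_H = 4    # hours between pulses

    def volume(self, hours: float) -> float:
        return self.PULSE_L * (hours // self.INTERVAL_H)


STRATEGIES = {"batch": BatchFeed(), "constant": ConstantFeed(), "pulsed": PulsedFeed()}


def feed_volume(mode: str, hours: float) -> float:
    try:
        return STRATEGIES[mode].volume(hours)
    except KeyError:
        raise ValueError(mode) from None


if __name__ == "__main__":
    # Safety net: external behavior must be unchanged for every tested input.
    for mode in STRATEGIES:
        for hours in (0, 3.5, 4, 10, 24):
            assert feed_volume(mode, hours) == legacy_feed_volume(mode, hours)
    print("refactoring preserved behavior")
```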

Run the Test Suite:

- Objective: Verify that the refactoring did not introduce any functional regressions.

- Method: After each small refactoring step, run the entire test suite. If any test fails, immediately address the issue before proceeding.

Iterate:

- Objective: Continuously improve the code structure.

- Method: Repeat the smell-identification, refactoring, and testing steps, addressing the next most critical code smell each time. This aligns with the "Boy Scout Rule" of leaving the code cleaner than you found it [2].

The options for choosing between refactoring and more drastic measures are compared below.

Comparative Analysis: Refactoring, Reengineering, and Rewriting

It is crucial to distinguish refactoring from more extensive approaches. The following table provides a comparative overview of these strategies, adapting the software-centric concepts for a broader engineering research context [2] [6] [5].

Table 2: Comparative Analysis of System Improvement Strategies

| Feature | Refactoring | Reengineering | Rewriting (Rebuilding) |
|---|---|---|---|
| Primary Goal | Improve internal structure without changing external behavior; manage technical debt. | Enhance structure to support significant new capabilities without a full rebuild. | Replace the system entirely to overcome fundamental limitations and create a future-proof foundation [2]. |
| Scope of Change | Incremental, localized modifications. Architecture is preserved. | Major structural changes to specific components. Core framework is retained but significantly altered. | Extensive; a complete overhaul of the codebase, architecture, and often the technology stack [2] [5]. |
| Analogy | Tuning an engine and cleaning the interior of a car [2]. | Remodeling and expanding a kitchen by moving walls [2]. | Demolishing an old building and constructing a new one on the same site [5]. |
| Risk Level | Low risk of major failure when backed by tests. | Moderate risk, as changes are deeper but contained. | High risk of project failure, delays, and budget overruns [6] [5]. |
| Ideal Use Case | Code is functional but messy, hard to maintain, or contains "code smells". | The architecture is unscalable for new requirements, or bug fixes cause ripple effects [2]. | Existing architecture is obsolete, technical debt is overwhelming, or new requirements are incompatible with the old design [2] [6]. |
| API/Pathway Stability | External API (or metabolic output) must remain strictly stable. | Efforts are made to maintain API stability, e.g., through facades or versioning. | API stability is a low priority; a new API is often designed, requiring a transition strategy [2]. |
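The "Ideal Use Case" row can be read as a rough decision rule. The sketch below encodes it with three hypothetical yes/no questions; it is a rule of thumb for illustration, not a validated assessment method, and real decisions also weigh budget, risk tolerance, and schedule.

```python
from dataclasses import dataclass


@dataclass
class SystemAssessment:
    """Hypothetical yes/no answers mirroring the 'Ideal Use Case' row."""
    works_correctly: bool                             # functional, even if messy
    architecture_supports_new_requirements: bool      # can it scale to what is needed next?
    architecture_obsolete_or_debt_overwhelming: bool  # beyond reasonable repair?


def recommend_strategy(a: SystemAssessment) -> str:
    """Rule-of-thumb mapping from an assessment to an improvement strategy."""
    if a.architecture_obsolete_or_debt_overwhelming:
        return "rewrite"
    if not a.architecture_supports_new_requirements:
        return "reengineer"
    if a.works_correctly:
        return "refactor"
    # Refactoring preserves behavior, so defects are fixed as a separate step.
    return "fix defects first, then refactor"


if __name__ == "__main__":
    print(recommend_strategy(SystemAssessment(True, True, False)))  # refactor
```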

Interrelationship and Application in Research

The paradigms of pathway engineering and refactoring are deeply interconnected in advanced research. Pathway engineering often relies on a refactoring-like approach once an initial pathway is established. For example, a first-generation strain engineered to produce indigoidine may be "refactored" by optimizing the expression levels of the Sc-indC and Sc-indB genes, switching to more efficient enzyme homologs, or engineering the cell's membrane to enhance product accumulation—all without changing the fundamental biochemical role of the pathway [4]. This iterative optimization is analogous to code refactoring.

Furthermore, the concept of "reengineering" serves as a bridge between the two. In software, reengineering involves significant structural changes to accommodate new features without starting from scratch [2]. In biology, this is equivalent to introducing novel abstractions or modularity. For instance, a researcher might reengineer a pathway by introducing a regulatory circuit to dynamically control flux, thereby changing its internal "architecture" for greater stability and yield without rebuilding the entire host's metabolism. This holistic view, where refactoring, reengineering, and rebuilding are points on a spectrum of intervention, provides a powerful framework for planning and executing complex research and development projects in drug development and beyond.
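To make the idea of a dynamic regulatory circuit concrete, the toy model below (entirely hypothetical kinetics and parameters) compares an open-loop pathway with one in which an intermediate represses its own upstream enzyme through a Hill-type term. It is an illustrative sketch integrated with explicit Euler steps, not a model of any specific published circuit.

```python
def simulate_intermediate(feedback: bool, v_in: float = 10.0, k_out: float = 0.1,
                          k_repress: float = 20.0, hill_n: float = 2.0,
                          dt: float = 0.1, t_end: float = 200.0) -> float:
    """Return the intermediate level I(t_end) in a two-step toy pathway.

    dI/dt = v_in * f(I) - k_out * I
    open loop: f(I) = 1
    feedback:  f(I) = 1 / (1 + (I / k_repress) ** hill_n)   (I represses step 1)
    Integrated with explicit Euler steps of size dt, starting from I = 0.
    """
    level = 0.0
    for _ in range(round(t_end / dt)):
        supply = v_in / (1.0 + (level / k_repress) ** hill_n) if feedback else v_in
        level += dt * (supply - k_out * level)
    return level


if __name__ == "__main__":
    print(f"open loop: I = {simulate_intermediate(False):.1f}")
    print(f"feedback:  I = {simulate_intermediate(True):.1f}")
```

With the default parameters, the open-loop intermediate rises toward v_in / k_out = 100, while feedback holds it near 30. Because the downstream step in this toy is first-order in the intermediate, capping the intermediate also lowers throughput, which illustrates the trade-off a real dynamic-control design has to balance against intermediate toxicity.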

Metabolic engineering emerged in the early 1990s as a formalized discipline focused on directed modification of cellular metabolism to achieve specific production goals. The term was coined by Bailey and Stephanopoulos in 1991, establishing a new framework for employing biological entities for chemical production beyond traditional fermentation [7]. This field represented a paradigm shift from simply exploiting naturally occurring microbial processes to actively redesigning metabolic networks through genetic manipulation. The evolution of metabolic engineering has since progressed through three distinct waves characterized by increasingly sophisticated approaches to understanding and manipulating cellular metabolism. Initially focusing on rational modification of individual pathways, the field has expanded to encompass systems-level understanding and ultimately synthetic biology approaches that enable comprehensive redesign of metabolic networks [8] [7]. This progression has transformed metabolic engineering from a collection of elegant demonstrations to a systematic engineering discipline with well-defined principles and tools, enabling the development of microbial cell factories for sustainable production of fuels, chemicals, and pharmaceuticals.

The First Wave: Rational Design and Early Genetic Manipulation

The first wave of metabolic engineering (approximately 1991-early 2000s) was characterized by rationally designed strategies focused on modifying specific metabolic pathways through genetic manipulation. During this period, metabolic engineers primarily worked on over-producing natively synthesized metabolites in established industrial hosts like E. coli and S. cerevisiae [8]. The foundational approach involved identifying metabolic bottlenecks through techniques like metabolic flux analysis and then applying targeted genetic modifications to alleviate these constraints [8] [7].

Core Principles and Methodologies

Early metabolic engineering followed a systematic methodology:

- Pathway Identification: Researchers identified and analyzed the metabolic pathways leading to desired products, focusing on central metabolism including glycolysis, TCA cycle, and major biosynthetic routes [7].

- Bottleneck Detection: Using metabolic control analysis, scientists determined which enzymatic steps limited flux through the pathway [8].

- Genetic Modification: Engineers applied targeted genetic interventions including gene deletion to eliminate competing pathways, gene overexpression to amplify flux through desired pathways, and heterologous gene introduction to add new capabilities [8] [7].

- Analytical Validation: Modified strains were analyzed using chromatography and mass spectrometry to measure metabolic fluxes and product yields [7].
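Metabolic control analysis expresses how strongly each step limits pathway flux through the flux control coefficient, C_i = (∂J/J)/(∂E_i/E_i), the fractional change in flux J per fractional change in enzyme level E_i; for an unbranched pathway these coefficients sum to 1 (the summation theorem). The sketch below estimates them numerically for a toy linear chain, assuming reversible steps with linear kinetics v_i = g_i (X_{i-1} - X_i), where the conductance g_i is proportional to E_i. That assumption is chosen only because it gives a closed-form flux; real pathways need a full kinetic model.

```python
import math


def linear_chain_flux(conductances: list, x_in: float = 10.0, x_out: float = 0.0) -> float:
    """Steady-state flux through steps v_i = g_i * (X_{i-1} - X_i) in series.

    With fixed boundary concentrations the steps behave like resistors in series:
        J = (x_in - x_out) / sum(1 / g_i)
    """
    return (x_in - x_out) / sum(1.0 / g for g in conductances)


def flux_control_coefficients(conductances: list, h: float = 1e-4) -> list:
    """Estimate C_i = dlnJ/dlnE_i by central finite differences on each g_i."""
    coeffs = []
    for i, g in enumerate(conductances):
        up, down = list(conductances), list(conductances)
        up[i], down[i] = g * (1 + h), g * (1 - h)
        dln_j = math.log(linear_chain_flux(up)) - math.log(linear_chain_flux(down))
        coeffs.append(dln_j / (math.log(1 + h) - math.log(1 - h)))
    return coeffs


if __name__ == "__main__":
    g = [5.0, 0.5, 2.0, 10.0]           # hypothetical: step 2 has the least capacity
    c = flux_control_coefficients(g)
    print([round(x, 3) for x in c])     # largest coefficient at index 1
    print(round(sum(c), 6))             # summation theorem: coefficients sum to 1
```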

Key Experimental Protocols

A representative experimental protocol from this era for engineering a production host included:

- Pathway Analysis: Map the complete metabolic pathway from substrate to product, identifying all enzymes, cofactors, and potential branching points.

- Promoter Engineering: Replace native promoters with constitutive or inducible systems to control gene expression levels.

- Gene Knockout: Use homologous recombination to delete genes encoding enzymes in competing pathways.

- Vector Design: Construct plasmid vectors containing heterologous genes or multiple copies of native genes under control of strong promoters.

- Transformation and Screening: Introduce constructs into host organism and screen for high-producing clones.

- Fed-Batch Fermentation: Cultivate engineered strains in bioreactors with controlled nutrient feeding to maximize product formation.

Table 1: Key Research Reagents in First-Wave Metabolic Engineering

| Reagent/Tool | Function | Application Examples |
|---|---|---|
| pET Expression Vectors | Strong T7 promoter system for high-level gene expression | Overproduction of pathway enzymes in E. coli |
| Homologous Recombination | Targeted gene deletion or insertion | Knockout of competing metabolic pathways |
| Constitutive Promoters | Continuous gene expression without induction | Maintenance of metabolic flux in production hosts |
| Gel Electrophoresis | Analysis of DNA and protein samples | Verification of genetic constructs and expression |
| GC-MS/LC-MS | Separation and identification of metabolites | Analysis of metabolic fluxes and pathway intermediates |

The Second Wave: Systems Metabolic Engineering

The second wave of metabolic engineering (approximately early 2000s-2010s) emerged as a response to the limitations of single-pathway approaches. Dubbed "systems metabolic engineering," this paradigm recognized that metabolism functions as an interconnected network rather than isolated pathways [8] [7]. The shift was enabled by the genomics revolution, which provided complete genome sequences for production hosts and advanced analytical techniques for measuring system-wide metabolic changes.

Multivariate Modular Metabolic Engineering (MMME)

A seminal framework from this period, Multivariate Modular Metabolic Engineering (MMME), addressed the complex regulation of secondary metabolism by treating metabolic networks as collections of distinct modules [8]. A landmark study on taxane production in E. coli demonstrated the approach by dividing the terpenoid biosynthetic pathway into two modules: an upstream module that forms the precursors and a downstream module that converts them into the terpenoid [8]. By independently optimizing each module and then systematically testing combinations of expression levels, researchers achieved unprecedented titers of taxadiene, a key taxane precursor, challenging the view that E. coli was a poor host for terpenoid production [8].
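In an MMME experiment each point of the expression grid is a strain that has to be built and measured. To keep the sketch runnable, the function `toy_titer` below stands in for those measurements with a made-up response surface; only the grid-search structure (vary the expression level of each module, score every combination, keep the best) reflects the approach described above.

```python
from itertools import product

LEVELS = (1, 2, 4, 8, 16, 32)  # relative module expression levels (hypothetical)


def toy_titer(upstream: float, downstream: float) -> float:
    """Hypothetical response surface standing in for measured titers.

    * flux is capped by the weaker module: min(upstream, downstream);
    * excess upstream flux builds up an inhibitory intermediate;
    * total expression imposes a quadratic metabolic burden.
    """
    flux = min(upstream, downstream)
    excess = max(0.0, upstream - downstream)
    burden = 0.01 * (upstream + downstream) ** 2
    return flux / (1.0 + excess / 5.0) - burden


def best_module_balance(levels=LEVELS) -> tuple:
    """Score every (upstream, downstream) pair and return the best one."""
    return max(product(levels, repeat=2), key=lambda pair: toy_titer(*pair))


if __name__ == "__main__":
    print(best_module_balance())  # (16, 16) for this toy surface
```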

Genome-Scale Metabolic Modeling

The development of genome-scale metabolic models (GEMs) represented another cornerstone of the second wave. The first GEMs for E. coli and S. cerevisiae enabled researchers to simulate metabolic fluxes across the entire cellular network [7]. These computational models integrated genomic, transcriptomic, proteomic, and metabolomic data to predict how genetic modifications would affect system-wide metabolic fluxes, moving beyond the single-pathway focus of the first wave.
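Genome-scale models are most often used for flux simulation through flux balance analysis (FBA), a constraint-based linear program that assumes metabolic steady state. In the standard formulation, S is the m × n stoichiometric matrix, v the vector of reaction fluxes, c the objective weights (often a biomass or product reaction), and the bounds encode reversibility and uptake limits. The block below gives that formulation followed by a hand-solvable toy instance on a hypothetical five-reaction network (uptake v_0 to A; A to B via v_1; A to C via v_2, which must carry at least 2 units; B to product via v_3; C exported via v_4).

```latex
% Standard FBA linear program
\begin{aligned}
\max_{\mathbf{v}} \quad & \mathbf{c}^{\top}\mathbf{v} \\
\text{s.t.} \quad & \mathbf{S}\,\mathbf{v} = \mathbf{0} \\
& \mathbf{v}_{\mathrm{lb}} \le \mathbf{v} \le \mathbf{v}_{\mathrm{ub}}
\end{aligned}

% Toy instance (hypothetical network), one mass balance per metabolite A, B, C
\begin{aligned}
\max \quad & v_3 \\
\text{s.t.} \quad & v_0 - v_1 - v_2 = 0, \qquad v_1 - v_3 = 0, \qquad v_2 - v_4 = 0 \\
& 0 \le v_0 \le 10, \qquad v_2 \ge 2, \qquad v_1,\, v_3,\, v_4 \ge 0 \\
\Rightarrow \quad & v_3^{*} = v_0^{\max} - v_2^{\min} = 10 - 2 = 8
\end{aligned}
```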

Table 2: Quantitative Advances Enabled by Systems Metabolic Engineering

| Organism | Engineering Approach | Product | Reported Improvement or Outcome | Reference |
|---|---|---|---|---|
| E. coli | MMME of terpenoid pathway | Taxadiene | ~1,000-fold increase over baseline | [8] |
| S. cerevisiae | Genome-scale model-guided engineering | Sesquiterpene | 14.4-fold increase over control | [7] |
| E. coli | Modular co-culture engineering | Flavonoids | 4.3-fold increase in naringenin | [8] |
| S. cerevisiae | Systems biology of xylose utilization | Ethanol | ~85% xylose-to-ethanol conversion | [9] |

Figure 1: Multivariate Modular Metabolic Engineering (MMME) Workflow. This approach divides complex pathways into discrete modules that are optimized independently before systematic combination and flux balance analysis.

The Third Wave: Synthetic Biology Integration

The third wave of metabolic engineering (approximately 2010s-present) is characterized by the deep integration of synthetic biology, enabling unprecedented precision in cellular engineering. This era has been defined by the development of powerful tools like CRISPR-Cas systems for precise genome editing, de novo pathway design, and the application of artificial intelligence for predictive bioengineering [9] [10] [7]. Rather than merely modifying existing pathways, third-wave metabolic engineering focuses on designing and implementing entirely new metabolic routes that may not exist in nature.

CRISPR-Cas Enabled Genome Engineering

The adaptation of CRISPR-Cas systems for genome editing revolutionized metabolic engineering by enabling precise, multiplexed genetic modifications. In CRISPR-Cas9 editing, a guide RNA carrying a 20-nucleotide targeting sequence directs the Cas9 nuclease to a matching genomic location with high specificity, greatly simplifying genetic engineering [10]. This technology has been applied to create complex microbial cell factories with numerous targeted modifications that would have been impractical with previous technologies. For example, researchers have used CRISPR to simultaneously regulate eight pathway genes in S. cerevisiae, optimizing squalene and heme production through fine-tuned expression control [11].
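SpCas9 targets a 20-nt protospacer that lies immediately 5' of an NGG protospacer-adjacent motif (PAM) on the same strand. The hypothetical helper below scans both strands of a sequence for such sites; it is a first-pass sketch only, since practical guide design also scores off-target matches, GC content, and predicted activity.

```python
_COMPLEMENT = str.maketrans("ACGT", "TGCA")


def revcomp(seq: str) -> str:
    """Reverse complement of an upper-case DNA sequence."""
    return seq.translate(_COMPLEMENT)[::-1]


def find_spcas9_protospacers(seq: str, length: int = 20) -> list:
    """List candidate SpCas9 protospacers (`length` nt directly 5' of an NGG PAM).

    Returns (strand, start, protospacer) tuples. `start` is the 0-based index of
    the protospacer's first base on the strand being scanned; for "-" hits it is
    a position in the reverse complement of `seq`.
    """
    seq = seq.upper()
    hits = []
    for strand, s in (("+", seq), ("-", revcomp(seq))):
        # The PAM "NGG" occupies s[p:p+3]; the protospacer is s[p-length:p].
        for p in range(length, len(s) - 2):
            if s[p + 1:p + 3] == "GG":
                hits.append((strand, p - length, s[p - length:p]))
    return hits


if __name__ == "__main__":
    demo = "AAAAA" + "ACGTACGTACGTACGTACGT" + "TGG" + "AAAAA"
    print(find_spcas9_protospacers(demo))
```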

AI-Driven Strain Optimization

Artificial intelligence and machine learning have emerged as powerful tools for predicting optimal genetic configurations. Machine learning strategies can now predict the impact of metabolic gene deletions with high accuracy, enabling in silico design of optimized production strains [11]. AI-powered high-throughput screening platforms, such as digital colony pickers, can rapidly identify productive microbial strains based on multi-modal phenotypic data, dramatically accelerating the design-build-test-learn cycle [11].

Experimental Protocol: CRISPR-Mediated Pathway Optimization

A modern protocol for metabolic pathway optimization using CRISPR-dCas12a systems includes:

- gRNA Library Design: Computational design of guide RNA libraries targeting multiple points in the metabolic network.

- Multiplexed CRISPR Interference and Activation: Simultaneous repression of competing pathways and activation of target pathways using catalytically dead Cas12a (dCas12a), with activator-domain fusions providing the activation.

- Fluorescence-Activated Cell Sorting: High-throughput screening based on fluorescent reporters linked to production metrics.

- Single-Cell RNA Sequencing: Validation of expression changes in selected clones.

- Fermentation Profiling: Assessment of production kinetics in controlled bioreactors.
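Cas12a can process a single transcribed pre-crRNA array into individual crRNAs, which makes it convenient for multiplexed repression from one construct. The sketch below assembles such an array by placing a direct repeat before each spacer. The layout, the 20-24 nt spacer-length check, and the function name are assumptions for illustration; the direct-repeat sequence depends on the Cas12a ortholog, so it is passed in rather than hard-coded, and published array designs differ in details such as a trailing repeat.

```python
def build_crrna_array(spacers: list, direct_repeat: str,
                      min_len: int = 20, max_len: int = 24) -> str:
    """Concatenate (direct repeat + spacer) units into one crRNA array sequence.

    Assumed layout: DR-spacer1-DR-spacer2-...-DR-spacerN.
    `direct_repeat` must be supplied for the Cas12a ortholog in use.
    """
    if not spacers:
        raise ValueError("at least one spacer is required")
    repeat = direct_repeat.upper()
    units = []
    for spacer in spacers:
        spacer = spacer.upper()
        if not min_len <= len(spacer) <= max_len:
            raise ValueError(f"spacer {spacer!r} is {len(spacer)} nt; expected {min_len}-{max_len}")
        if repeat in spacer:
            # A spacer containing the repeat could be cleaved inside the spacer.
            raise ValueError(f"spacer {spacer!r} contains the direct repeat")
        units.append(repeat + spacer)
    return "".join(units)
```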

Table 3: Synthetic Biology Toolkit for Third-Wave Metabolic Engineering

| Tool/Technology | Mechanism | Applications in Metabolic Engineering |
|---|---|---|
| CRISPR-Cas9/dCas9 | RNA-guided DNA targeting | Gene knockouts, transcriptional activation/repression |
| Multiplex Automated Genome Engineering (MAGE) | Oligonucleotide-based recombination | Multiplex genome editing across chromosomal locations |
| Genome-Scale Metabolic Models (GEMs) | Constraint-based modeling | Prediction of metabolic fluxes, identification of engineering targets |
| AI-Powered Digital Colony Picker | Machine learning image analysis | High-throughput screening of microbial strains |
| Orthogonal Riboswitches | Synthetic RNA regulators | Dynamic control of gene expression with minimal crosstalk with native host regulation |

Figure 2: Third-Wave Metabolic Engineering Cycle. The integrated design-build-test-learn cycle leverages synthetic biology tools, AI-powered screening, and machine learning to rapidly optimize metabolic pathways.

Applications and Case Studies

Biofuel Production

The evolution of metabolic engineering is particularly evident in biofuel production, where each wave has addressed limitations of previous approaches. First-generation biofuels relied on food crops, raising sustainability concerns [9]. Second-generation biofuels utilized non-food lignocellulosic biomass but faced challenges with biomass recalcitrance and inhibitor tolerance [9] [10]. Third-wave metabolic engineering has enabled next-generation biofuels through engineered microorganisms capable of producing advanced biofuels like butanol, isoprenoids, and jet fuel analogs with superior energy density and compatibility with existing infrastructure [9].

Notable achievements include engineered Clostridium species with 3-fold increased butanol yields, S. cerevisiae strains achieving ∼85% xylose-to-ethanol conversion, and 91% biodiesel conversion efficiency from microbial lipids [9]. These advances were made possible by third-wave technologies such as CRISPR-Cas systems for rapid strain optimization and de novo pathway engineering to create synthetic metabolic routes [9] [10].

Pharmaceutical and Natural Product Synthesis

Metabolic engineering has revolutionized production of plant-derived pharmaceuticals by transferring complex biosynthetic pathways into microbial hosts. Engineering the biosynthesis of the anticancer drug precursor baccatin III required expression of 17 genes in a heterologous host, demonstrating the sophisticated multi-gene engineering capabilities of third-wave metabolic engineering [1]. Similarly, reconstruction of the N-formyldemecolcine pathway from Gloriosa superba involved 16 genes and achieved production titers of 6.3 ± 1.3 μg/g dry weight in the heterologous host [1].

Future Perspectives

The future of metabolic engineering lies in increasingly integrated and automated approaches. Key emerging trends include:

- AI-Driven Design: Machine learning algorithms will increasingly predict optimal pathway configurations, enzyme variants, and cultivation conditions, reducing the need for extensive experimental screening [7] [11].

- Consortium Engineering: Designed microbial communities will divide metabolic labor for more efficient conversion of complex substrates, as demonstrated in lignocellulosic biomass processing [11].

- C1 Metabolism: Engineering microorganisms, including synthetic formatotrophs, to utilize one-carbon compounds (CO2, CO, formate) as feedstocks represents a frontier in sustainable bioproduction [11].

- Cell-Free Systems: In vitro metabolic engineering using purified enzyme systems offers advantages for toxic compounds and simplified purification [12].

As the field continues to evolve, the integration of metabolic engineering with synthetic biology, systems biology, and artificial intelligence promises to accelerate the development of sustainable bioprocesses for producing the next generation of fuels, materials, and therapeutics.

The complex and adaptive nature of biological systems presents a fundamental challenge to traditional engineering paradigms. This technical guide explores the framework of evolutionary design, which treats evolution not as an obstacle but as a powerful engineering methodology. We detail how biological evolution and engineering design follow analogous cyclic processes of variation, selection, and iteration. By situating various bioengineering methodologies within a unified Evolutionary Design Spectrum, this guide provides researchers and drug development professionals with a conceptual foundation and practical toolkit for pathway engineering and refactoring. The core thesis is that accounting for, and actively engineering, evolutionary properties is not optional but essential for creating robust, predictable, and successful biological designs.

Synthetic biology aims to apply engineering principles to create biological systems with novel functionalities [13]. However, success in engineering complex biological systems remains limited, partly due to technical challenges but more fundamentally because engineered biological systems are living, adaptive, and evolving [13]. Unlike static engineering substrates like steel or electronics, designed biosystems continue to change after manufacture; the bioengineer is inherently designing future lineages [14]. This reality demands a shift from classical engineering principles toward a new kind of meta-engineering, where the engineering process itself is designed to accommodate and exploit evolution [13].

The conventional application of principles like standardization, decoupling, and abstraction has proven insufficient for taming biological complexity [13]. Engineering failures, such as bacterial antibiotic resistance or the unintended spread of hyper-aggressive engineered organisms, underscore the risks of designing immediate traits without considering evolutionary futures [14]. This guide formalizes the alternative: a design philosophy that aligns engineering goals with evolutionary processes, enabling more predictable and resilient bioengineering outcomes.

Theoretical Foundation: Design as an Evolutionary Process

The Unified Cyclic Process

At its core, the engineering design process is intrinsically evolutionary. Multiple formal descriptions of design, including the design-build-test cycle and CK theory, share a common structure with biological evolution: they are cyclic, iterative processes where concepts are generated, prototyped, tested, and the best candidates are selected for further iteration [13].

- Conceptual Analogy: In directed evolution, genetic diversity (variation) is introduced into a population, which is then screened or selected for desired traits (selection). The best performers are used as templates for the next cycle (inheritance). This process directly mirrors Darwinian evolution [13].

- The Technosphere: Evolutionary trends are evident at a macro scale, where technologies advance through the modification and recombination of existing technologies, which are then selected by market forces, forming clear lineages [13].
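The variation-selection-inheritance loop described above can be written down directly. In the toy sketch below, bit strings stand in for gene variants, random bit flips stand in for mutagenesis, and the number of positions matching a hidden target stands in for a screening assay; all of these are hypothetical simplifications. Because the parents are carried over unchanged (elitism), the best fitness per generation can never decrease.

```python
import random


def directed_evolution(target: str, pop_size: int = 50, n_parents: int = 5,
                       mutation_rate: float = 0.05, generations: int = 40,
                       seed: int = 0) -> list:
    """Toy variation-selection-inheritance loop; returns best fitness per generation."""
    rng = random.Random(seed)
    length = len(target)

    def fitness(variant: str) -> int:
        # Stand-in "assay": number of positions matching the hidden target.
        return sum(a == b for a, b in zip(variant, target))

    def mutate(parent: str) -> str:
        # Variation: flip each bit independently with probability mutation_rate.
        return "".join(("1" if b == "0" else "0") if rng.random() < mutation_rate else b
                       for b in parent)

    population = ["".join(rng.choice("01") for _ in range(length)) for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        # Selection: keep the top performers from the screen.
        parents = sorted(population, key=fitness, reverse=True)[:n_parents]
        history.append(fitness(parents[0]))
        # Inheritance: parents survive unchanged and seed the next library.
        population = parents + [mutate(rng.choice(parents))
                                for _ in range(pop_size - n_parents)]
    return history


if __name__ == "__main__":
    print(directed_evolution("1" * 30))
```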

This fundamental similarity allows for a unified framework, the Evolutionary Design Spectrum, which encompasses all design methods from random trial-and-error to rational design [13].

The Evotype: Engineering Evolutionary Dispositions

To systematically engineer the evolutionary properties of a biosystem, the concept of the "evotype" has been developed. Analogous to genotype and phenotype, the evotype is defined as the set of evolutionary properties of a designed biosystem [14]. It is determined by three interdependent processes:

- Genetic Variation: The nature of genetic change (mutation, recombination) and how it is distributed across the genome.

- Genotype-Phenotype Map: How genetic changes translate into functional changes in the organism.

- Fitneity: A function that combines the organism's natural fitness (reproductive success) and its utility (success relative to the engineer's design goals) [14].

The evotype can be visualized as an adaptive landscape. Bioengineering, therefore, becomes the process of "sculpting" this landscape to make desired evolutionary outcomes more accessible and to ensure the stability of designed functions over time [14].

The Evolutionary Design Spectrum: A Quantitative Framework

We propose that all bioengineering design methodologies can be characterized within a two-dimensional spectrum defined by throughput (the number of design variants that can be created and tested in a single cycle) and generation count (the number of iterative cycles performed). The product of these two dimensions defines the exploratory power of a design approach [13].
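
Taken literally, this product is the total number of variants a design campaign evaluates. The sketch below makes the arithmetic explicit. The throughput and generation values are hypothetical placeholders, not figures from [13].

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DesignMethod:
    """A design approach positioned on the two axes of the spectrum."""
    name: str
    throughput: int   # variants built and tested per cycle
    generations: int  # number of design-build-test cycles

    @property
    def exploratory_power(self) -> int:
        # Exploratory power is the product of the two axes.
        return self.throughput * self.generations


# Hypothetical numbers, chosen only to illustrate the arithmetic.
methods = [
    DesignMethod("rational design", throughput=5, generations=2),
    DesignMethod("directed evolution", throughput=10_000, generations=8),
]

for m in sorted(methods, key=lambda m: m.exploratory_power):
    print(f"{m.name}: {m.exploratory_power:,} variants explored")
```

Under this reading, two methods with very different throughputs can reach the same exploratory power by running different numbers of generations.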

Table 1: Positioning of Bioengineering Methodologies on the Evolutionary Design Spectrum

| Design Methodology | Throughput | Generation Count | Exploratory Power | Primary Knowledge Leverage |
|---|---|---|---|---|
| Rational Design | Low | Low | Low | Exploitation (Prior Knowledge) |
| Random Trial and Error | Medium | Low | Low | Exploration |
| Directed Evolution | High | High | High | Exploration |
| Model-Guided Design | Medium | Medium | Medium | Exploitation & Exploration |

A design method can "learn" in two ways, and the balance between them shapes how much exploratory power it needs:

- Exploration: The search process performed by the design method as it roams the fitness landscape (e.g., testing random mutants).

- Exploitation: The leverage of prior knowledge to constrain and guide the search (e.g., using a protein structure model to guide site-directed mutagenesis) [13].

Natural evolution exploits eons of past adaptation; bioengineers can exploit prior scientific knowledge and computational models to achieve design goals more efficiently.

Experimental Protocols for Evolutionary Design

Protocol for a Basic Directed Evolution Experiment

This protocol is foundational for optimizing or creating novel biomolecular functions, such as improving enzyme catalytic efficiency or altering substrate specificity.

1. Gene Diversity Generation:

- Mutagenesis: Create a diverse library of the target gene sequence. Common methods include:

- Error-Prone PCR: Using PCR conditions that reduce fidelity (e.g., unbalanced dNTP concentrations, Mn²⁺) to introduce random point mutations across the gene.

- DNA Shuffling: Digesting a family of related homologous genes with DNase I, then reassembling them using a PCR-like process without primers to create chimeric genes.

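- Library Size: Aim for a library size of 10⁴ to 10⁶ variants to sample the mutational neighborhood of the parent gene adequately; no practical library covers more than a vanishing fraction of the full sequence space, which grows exponentially with gene length.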

2. Selection or Screening:

- Selection: Link the desired function directly to survival or replication. For example, expressing an antibiotic resistance gene only upon successful cleavage of a target substrate by an engineered enzyme.

- High-Throughput Screening: When selection is not feasible, use robotic automation to assay individual clones in microtiter plates. Employ fluorescence-activated cell sorting (FACS) if the function can be linked to a fluorescent output.

3. Amplification and Reiteration:

- Isolate the genetic material from the top-performing variants (e.g., from selected cells or sorted populations).

- Use this material as the template for the next round of diversity generation (back to Step 1).

- Typically, 3-10 rounds of evolution are performed until a satisfactory performance level is reached.
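
The three steps above form a loop that can be mimicked in a few lines. This is a minimal sketch on a hypothetical toy landscape: bit strings are scored by how many positions match an arbitrary target, random bit flips stand in for error-prone PCR, and truncation selection stands in for screening. It illustrates the control flow of the protocol, not any real fitness landscape.

```python
import random

# Hypothetical toy landscape: a "gene" is a bit string and fitness counts
# positions matching an arbitrary target. Real landscapes are far more rugged.
GENE_LENGTH = 40
TARGET = [1] * GENE_LENGTH


def fitness(gene):
    return sum(g == t for g, t in zip(gene, TARGET))


def mutagenize(gene, rate, rng):
    # Stand-in for error-prone PCR: each position flips with probability `rate`.
    return [1 - g if rng.random() < rate else g for g in gene]


def directed_evolution(rounds=5, library_size=1000, keep=10, rate=0.02, seed=0):
    rng = random.Random(seed)
    parents = [[0] * GENE_LENGTH]          # starting (wild-type) sequence
    best_per_round = []
    for _ in range(rounds):
        # Step 1: diversity generation from the current parents.
        library = [mutagenize(rng.choice(parents), rate, rng)
                   for _ in range(library_size)]
        # Step 2: screening; parents are re-screened so the best is never lost.
        ranked = sorted(library + parents, key=fitness, reverse=True)
        # Step 3: amplification; the top variants template the next round.
        parents = ranked[:keep]
        best_per_round.append(fitness(parents[0]))
    return best_per_round


print(directed_evolution())
```

Because the parents are re-screened each round, the best fitness never decreases. Raising `rate` widens the search per round at the cost of more deleterious variants, a trade-off that mirrors choosing the mutation load in error-prone PCR.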

Protocol for Sculpting the Evotype in a Microbial Chassis

This advanced protocol focuses on engineering the evolutionary properties of a host organism to stabilize a designed pathway.

1. Modulating Genetic Variation:

- Genome Reduction: Delete non-essential genes, mobile genetic elements, and prophages from the host genome to minimize sources of unstable genetic variation.

- Orthogonal DNA Polymerase: Introduce an engineered DNA polymerase with higher fidelity to act on the engineered pathway, reducing its mutation rate.

2. Engineering the Genotype-Phenotype Map:

- Refactoring the Pathway: Recode the metabolic pathway to eliminate native regulatory elements (e.g., replace native promoters and RBSs with synthetic, orthogonal versions). This decouples pathway expression from the host's natural regulatory network, reducing unintended functional changes from host mutations.

- Additive Functions: Introduce negative feedback loops or toxin-antitoxin systems linked to pathway function to penalize mutants that lose the designed function.

3. Aligning Fitness and Utility via Fitneity:

- Essential Gene Coupling: Make the expression of an essential host gene dependent on the function of the engineered pathway. This directly aligns survival (fitness) with the design goal (utility).

- Auxotrophic Complementation: Engineer the pathway to complement a host auxotrophy (e.g., a required amino acid), so only cells maintaining a functional pathway can grow in minimal media.
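
Why coupling fitness to utility stabilizes a pathway can be seen in a deliberately simple population model. The sketch below assumes a fixed growth burden for producer cells, a constant per-generation probability of losing the pathway, and, in the coupled case, complete growth arrest of non-producers. All parameter values are hypothetical.

```python
def producer_fraction(generations, burden=0.1, mu=1e-3, coupled=False):
    """Fraction of cells still carrying a functional pathway.

    Hypothetical toy model: producers grow at relative rate (1 - burden) and
    lose the pathway with probability `mu` per generation. If `coupled`, loss
    of the pathway also removes an essential function (essential gene coupling
    or auxotrophic complementation), so non-producers cannot grow.
    """
    producers, nonproducers = 1.0, 0.0
    w_producer = 1.0 - burden
    w_nonproducer = 0.0 if coupled else 1.0   # escapees are unburdened unless coupled
    for _ in range(generations):
        born = producers * w_producer
        new_producers = born * (1.0 - mu)
        new_nonproducers = born * mu + nonproducers * w_nonproducer
        total = new_producers + new_nonproducers
        producers, nonproducers = new_producers / total, new_nonproducers / total
    return producers


for coupled in (False, True):
    frac = producer_fraction(200, coupled=coupled)
    print(f"coupled={coupled}: producer fraction after 200 generations = {frac:.3f}")
```

Without coupling, unburdened escape mutants take over within a few hundred generations even at a loss rate of 10⁻³. With coupling, the producer fraction stays close to 1 - mu, because each escapee is purged as soon as it arises.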

Visualization of Core Concepts

The Evolutionary Design Cycle

This diagram illustrates the fundamental iterative cycle unifying biological evolution and engineering design.

The Evolutionary Design Spectrum

This diagram maps different bioengineering methodologies based on their throughput and generational capacity.

The Evotype Concept

This diagram deconstructs the components of the evotype, showing how genetic variation, the genotype-phenotype map, and selection interact to form the evolutionary landscape.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents and Materials for Evolutionary Design Experiments

| Reagent / Material | Function in Evolutionary Design | Specific Example / Kit |
|---|---|---|
| Diversity Generation Kits | Facilitate the creation of mutant libraries for directed evolution. | Error-Prone PCR Kit (e.g., from Agilent or NEB), DNA Shuffling Kit |
| High-Throughput Screening System | Enables rapid testing of thousands to millions of variants for a desired function. | Fluorescence-Activated Cell Sorter (FACS), Microfluidic Droplet Sorter, Robotic liquid handling systems |
| Fidelity-Tuned DNA Polymerases | Polymerases with altered fidelity (high or low) to control mutation rates in specific genetic constructs. | Mutazyme II (for epPCR), High-Fidelity Polymerases (e.g., Phusion) for stable cloning |
| Synthetic Gene Fragments | Completely synthesized genes with customized sequences for refactoring pathways (e.g., codon optimization, regulatory element removal). | gBlocks Gene Fragments (IDT), Full-length gene synthesis services |
| Model Organism Chassis | Genetically tractable host organisms with reduced genomes or engineered for greater genetic stability. | E. coli MG1655 ΔrecA, B. subtilis MGB874, P. putida EM42 |
| CRISPR-based Editors | Enable precise, targeted genomic modifications for pathway refactoring and host genome engineering (e.g., deleting unstable elements). | CRISPR-Cas9 systems (e.g., from Addgene), Base Editors, Prime Editors |

The evolutionary design spectrum provides a unifying framework that reframes bioengineering challenges. By recognizing that all design is evolutionary, researchers can more consciously select and combine methodologies based on their exploratory power and their leverage of prior knowledge. Pathway engineering and refactoring, when viewed through this lens, become exercises in sculpting the evotype—not just designing for immediate function, but for evolutionary stability and adaptability. As the field progresses, the integration of sophisticated computational models and machine learning with high-throughput experimental evolution will further expand our ability to navigate the evolutionary design spectrum, ultimately leading to more predictable and powerful bioengineering outcomes for therapeutics and beyond.

Microbial cell factories (MCFs) represent a paradigm shift in industrial biotechnology, serving as eco-friendly platforms for producing chemicals, fuels, and therapeutics using renewable resources [15]. These biological "workhorses" are regarded as the "chips" of biomanufacturing that will fuel the emerging bioeconomy era [16]. As climate change and fossil fuel depletion accelerate the global need for sustainable production systems, MCFs offer a viable alternative by harnessing engineered microorganisms to convert biomass into valuable products while reducing environmental impact [15] [16]. The development of efficient MCFs relies on sophisticated pathway engineering and refactoring strategies that systematically redesign microbial metabolism to optimize production metrics: titer (product concentration), productivity (production rate), and yield (substrate conversion efficiency) [17].

Within this framework, systems metabolic engineering has emerged as a multidisciplinary approach that integrates synthetic biology, systems biology, and evolutionary engineering with traditional metabolic engineering [17]. This integration enables researchers to overcome the natural limitations of microbial hosts by reprogramming their metabolic networks through targeted genetic modifications. The core challenge lies in selecting optimal host strains, reconstructing efficient metabolic pathways, and optimizing metabolic fluxes—processes that traditionally required significant time, effort, and costs [15] [17]. Recent advances in computational tools, particularly genome-scale metabolic models (GEMs), have revolutionized this field by enabling in silico prediction of metabolic behaviors before undertaking laborious experimental work [15] [17].

Foundational Concepts in Pathway Engineering

Pathway Modeling and Standards

Effective pathway engineering begins with robust modeling frameworks that capture biological knowledge in computationally accessible formats. Pathway models are defined as sets of interactions among biological entities (e.g., proteins and metabolites) curated and organized to illustrate specific processes [18]. These models serve dual purposes: providing intuitive visualizations for human comprehension and supplying annotated, metadata-rich resources for computational analysis according to FAIR (Findable, Accessible, Interoperable, Reusable) principles [18].

Standardized naming conventions and identifiers are critical for pathway model interoperability. Biological entities often have numerous synonyms: for example, the official gene symbol NET1 (neuroepithelial cell transforming 1) is also a common alias for the sodium-dependent noradrenaline transporter (official symbol SLC6A2), while common chemicals like paracetamol/acetaminophen have over 500 vendor-specific names globally [18]. Implementation of consistent vocabularies through resources like the HUGO Gene Nomenclature Committee (HGNC) for gene symbols, ChEBI for chemical compounds, and UniProt for specific proteins enables unambiguous computational processing [18]. Proper annotation requires using the most precise identifiers available, with proteins identified by UniProt accessions, genes by Ensembl or NCBI identifiers, and metabolites by ChEBI or LIPID MAPS identifiers, all resolvable through identifiers.org [18].
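
In code, this kind of normalization usually reduces to mapping every synonym onto a single (prefix, accession) pair, validating the accession format, and emitting a resolvable URL. The sketch below assumes the identifiers.org compact-identifier form `prefix:accession`. The synonym table is a hypothetical hand-curated example, and its ChEBI accession should be checked against ChEBI before reuse.

```python
import re

# Accession patterns per registry prefix. The UniProt pattern follows the
# published accession format; the others are simplified.
ACCESSION_PATTERNS = {
    "CHEBI": re.compile(r"^\d+$"),
    "uniprot": re.compile(
        r"^([OPQ][0-9][A-Z0-9]{3}[0-9]|[A-NR-Z][0-9]([A-Z][A-Z0-9]{2}[0-9]){1,2})$"
    ),
    "ncbigene": re.compile(r"^\d+$"),
}

# Hypothetical, hand-curated synonym table: every free-text name maps to one
# (prefix, accession) pair. Verify each accession against its registry.
SYNONYMS = {
    "paracetamol": ("CHEBI", "46195"),
    "acetaminophen": ("CHEBI", "46195"),
}


def is_valid(prefix: str, accession: str) -> bool:
    pattern = ACCESSION_PATTERNS.get(prefix)
    return bool(pattern and pattern.match(accession))


def resolve(name: str) -> str:
    """Map a free-text name to a resolvable identifiers.org URL."""
    prefix, accession = SYNONYMS[name.strip().lower()]
    if not is_valid(prefix, accession):
        raise ValueError(f"{accession!r} is not a valid {prefix} accession")
    # Compact identifier of the form prefix:accession.
    return f"https://identifiers.org/{prefix}:{accession}"


print(resolve("Acetaminophen"))  # same URL as resolve("paracetamol")
```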

Pathway Scope and Multi-Pathway Integration

Determining appropriate scope and detail level represents a fundamental consideration in pathway modeling. The scope should reflect the biological process being illustrated, with decisions about which reactions and entities to include based on their relevance to the research question [18]. For metabolic conversions, this may involve including only main reaction participants while omitting proton/electron donors/acceptors to reduce visual clutter. In signaling pathways, central cascades with mutated genes might be illustrated in detail while condensing downstream events [18].

Many biological processes span multiple pathways, necessitating integrated visualization approaches. Pathway collages address this need by enabling construction of personalized multi-pathway diagrams that depict customized collections of interacting pathways [19]. These collages fill a gap between individual pathway diagrams and full metabolic network maps, allowing researchers to highlight specific fragments of cellular metabolism relevant to their investigations [19]. Unlike automated super-pathway layouts, pathway collages provide user control over pathway selection, layout, and styling, supporting medium-sized metabolic network fragments typically comprising 5-10 pathways [19].
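
A collage can be thought of as a user-chosen subset of pathways plus the links between them. The sketch below defines a link as any metabolite shared by two pathways. The pathway contents are simplified, illustrative sets rather than curated database entries, and the size warning reflects the 5-10 pathway range mentioned above.

```python
from itertools import combinations

# Simplified, illustrative pathway contents (metabolites only), not curated data.
collage = {
    "glycolysis": {"glucose", "glucose-6-phosphate", "pyruvate"},
    "pyruvate oxidation": {"pyruvate", "acetyl-CoA"},
    "TCA cycle": {"acetyl-CoA", "citrate", "oxaloacetate"},
    "aspartate family": {"oxaloacetate", "aspartate", "lysine"},
}

RECOMMENDED_MAX = 10   # upper end of the typical 5-10 pathway collage


def connections(pathways):
    """Link every pair of pathways that shares at least one metabolite."""
    if len(pathways) > RECOMMENDED_MAX:
        print(f"warning: {len(pathways)} pathways; large collages render slowly")
    links = {}
    for a, b in combinations(sorted(pathways), 2):
        shared = pathways[a] & pathways[b]
        if shared:
            links[(a, b)] = sorted(shared)
    return links


for (a, b), shared in connections(collage).items():
    print(f"{a} <-> {b}: {', '.join(shared)}")
```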

Computational Framework for Strain Selection

Genome-Scale Metabolic Modeling

Genome-scale metabolic models (GEMs) have emerged as indispensable tools for evaluating microbial production capabilities in silico. These mathematical representations reconstruct an organism's complete metabolic network based on its genomic information, enabling systematic analysis of metabolic fluxes through computer simulations [15]. GEMs encapsulate gene-protein-reaction associations, creating predictive models that can identify gene knockout targets, characterize strain variations, construct biosynthetic pathways, and analyze metabolic resource allocations without extensive experimental effort [17].

The application of GEMs has transformed strain selection from a trial-and-error process to a rational design endeavor. For example, in silico knockout simulations can systematically identify gene deletion targets for improved production, as demonstrated with l-valine production in E. coli [17]. GEMs also enable analysis of strain performance across different environmental conditions (aerobic, microaerobic, anaerobic) and carbon sources (glucose, glycerol, xylose, etc.), providing comprehensive metabolic capacity assessments before laboratory implementation [17].

Comparative Metabolic Capacity Analysis

Selecting optimal production hosts requires comparative analysis of microbial metabolic capabilities. A comprehensive 2025 study evaluated five representative industrial microorganisms—Escherichia coli, Saccharomyces cerevisiae, Bacillus subtilis, Corynebacterium glutamicum, and Pseudomonas putida—for producing 235 bio-based chemicals [15] [17]. This systematic assessment established criteria for identifying suitable strains based on calculated yield metrics:

- Maximum Theoretical Yield (YT): The maximum production of target chemical per given carbon source when all resources are allocated to production, ignoring cell growth and maintenance [17].

- Maximum Achievable Yield (YA): The maximum production considering cell growth requirements and non-growth-associated maintenance energy (NGAM), representing a more realistic production capacity [17].
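
The gap between the two metrics can be illustrated with a back-of-envelope carbon balance. This is not how a GEM computes them (a GEM solves a linear program over the whole network); it is a single-split approximation in which the substrate first pays for growth and NGAM, and the remainder is converted at the pathway's stoichiometric yield. All parameter values are hypothetical.

```python
# Back-of-envelope contrast of YT and YA under hypothetical parameters.
def theoretical_yield(pathway_yield):
    # YT: every mole of substrate goes to product (no growth, no maintenance).
    return pathway_yield


def achievable_yield(pathway_yield, uptake, growth_rate, biomass_yield,
                     ngam_atp, atp_per_substrate):
    # Substrate diverted to biomass (mu / Yxs) and to NGAM (ATP demand / ATP per substrate).
    to_growth = growth_rate / biomass_yield
    to_maintenance = ngam_atp / atp_per_substrate
    to_product = uptake - to_growth - to_maintenance
    if to_product <= 0:
        return 0.0
    # YA: product per mole of substrate taken up, after growth and NGAM are paid for.
    return pathway_yield * to_product / uptake


yt = theoretical_yield(pathway_yield=0.8)               # mol product / mol substrate
ya = achievable_yield(pathway_yield=0.8, uptake=10.0,    # mmol/gDW/h
                      growth_rate=0.1,                   # 1/h
                      biomass_yield=0.09,                # gDW per mmol substrate
                      ngam_atp=3.0,                      # mmol ATP/gDW/h
                      atp_per_substrate=30.0)            # mol ATP per mol substrate
print(f"YT = {yt:.3f}, YA = {ya:.3f}")
```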

Table 1: Metabolic Capacities of Industrial Microorganisms for Selected Chemicals

| Target Chemical | Application | E. coli YA (mol/mol) | S. cerevisiae YA (mol/mol) | C. glutamicum YA (mol/mol) | B. subtilis YA (mol/mol) | P. putida YA (mol/mol) |
|---|---|---|---|---|---|---|
| l-Lysine | Animal feed, nutritional supplements | 0.7985 | 0.8571 | 0.8098 | 0.8214 | 0.7680 |
| l-Glutamate | Food additive, neurotransmitter | 0.7501 | 0.8182 | 0.8426 | 0.7933 | 0.7214 |
| Sebacic Acid | Biopolymer precursor | 0.6543 | 0.5987 | 0.6124 | 0.6892 | 0.6013 |
| Propan-1-ol | Bulk chemical, solvent | 0.7215 | 0.6542 | 0.5987 | 0.6321 | 0.5894 |
| Mevalonic Acid | Natural product precursor | 0.5124 | 0.6895 | 0.4563 | 0.4987 | 0.4326 |

Hierarchical clustering of host performance reveals that while most chemicals achieve highest yields in S. cerevisiae, certain compounds display clear host-specific superiority [17]. For instance, pimelic acid production is optimal in B. subtilis, while l-glutamate achieves maximal yields in C. glutamicum despite S. cerevisiae's overall superiority [17]. These findings underscore the importance of chemical-specific evaluation rather than applying universal host selection rules.
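
The per-chemical comparison can be reproduced directly from the YA values in Table 1. The sketch below picks the best host for each chemical and reports its margin over the runner-up.

```python
# YA values (mol/mol) transcribed from Table 1 above.
HOSTS = ("E. coli", "S. cerevisiae", "C. glutamicum", "B. subtilis", "P. putida")
YA = {
    "l-Lysine":       (0.7985, 0.8571, 0.8098, 0.8214, 0.7680),
    "l-Glutamate":    (0.7501, 0.8182, 0.8426, 0.7933, 0.7214),
    "Sebacic Acid":   (0.6543, 0.5987, 0.6124, 0.6892, 0.6013),
    "Propan-1-ol":    (0.7215, 0.6542, 0.5987, 0.6321, 0.5894),
    "Mevalonic Acid": (0.5124, 0.6895, 0.4563, 0.4987, 0.4326),
}


def best_host(values):
    """Return (best host, margin over the runner-up) for one chemical."""
    ranked = sorted(zip(HOSTS, values), key=lambda hv: hv[1], reverse=True)
    (host, top), (_, runner_up) = ranked[0], ranked[1]
    return host, top - runner_up


for chemical, values in YA.items():
    host, margin = best_host(values)
    print(f"{chemical}: {host} (+{margin:.4f} mol/mol over runner-up)")
```

In this five-chemical subset, S. cerevisiae wins only for l-lysine and mevalonic acid, and for three of the five chemicals the winning margin is under 0.04 mol/mol. Margins that small leave room for factors such as product tolerance or available genetic tools to change the final choice.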

Diagram: Computational Framework for Rational Strain Selection

Metabolic Engineering Strategies

Pathway Reconstruction and Cofactor Engineering

Reconstructing efficient biosynthetic pathways often requires introducing heterologous reactions from other organisms. Research demonstrates that for over 80% of 235 target chemicals, fewer than five heterologous reactions were needed to establish functional biosynthetic pathways in host strains [17]. Specifically, 88.24%, 84.56%, 88.97%, 85.29%, and 90.81% of chemicals required fewer than five heterologous reactions for B. subtilis, C. glutamicum, E. coli, P. putida, and S. cerevisiae, respectively [17]. This indicates most bio-based chemicals can be synthesized with minimal metabolic network expansion.
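
The per-host percentages can be cross-checked arithmetically: a percentage reported to two decimals should map to a whole count when multiplied by the correct denominator. The sketch below tests both the 235 chemicals and the 272 pathways described in the Host Strain Evaluation Protocol. Only 272 fits, which suggests (the source does not say so explicitly) that the shares are per pathway rather than per chemical.

```python
# Reported share (%) of pathways needing fewer than five heterologous reactions.
reported_pct = {
    "B. subtilis": 88.24, "C. glutamicum": 84.56, "E. coli": 88.97,
    "P. putida": 85.29, "S. cerevisiae": 90.81,
}


def fits_denominator(percentages, denominator):
    """True if every 2-decimal percentage maps to a whole count of `denominator`."""
    tolerance = denominator * 0.005 / 100   # worst-case error from rounding to 0.01 %
    for pct in percentages:
        count = pct / 100 * denominator
        if abs(count - round(count)) > tolerance:
            return False
    return True


print(fits_denominator(reported_pct.values(), 235))   # per chemical?
print(fits_denominator(reported_pct.values(), 272))   # per pathway?
print({host: round(pct / 100 * 272) for host, pct in reported_pct.items()})
```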

Cofactor engineering represents another powerful strategy for enhancing pathway efficiency. Systematic analysis of cofactor exchanges in native metabolic reactions demonstrates that swapping cofactors (e.g., NADH/NADPH) can increase yields beyond innate metabolic capacities [15]. This approach has proven particularly effective for production of industrially important chemicals including mevalonic acid, propanol, fatty acids, and isoprenoids [15]. By redesigning cofactor specificity of key enzymes, engineers can rebalance redox metabolism and overcome thermodynamic constraints that limit pathway efficiency.

Flux Control and Regulatory Rewiring

Metabolic flux optimization requires identifying key regulatory nodes that control carbon distribution. Computational approaches enable quantitative analysis of relationships between enzyme reactions and chemical production, determining which reactions should be up- or down-regulated to maximize yields [15]. These strategies consider both theoretical maximum yields and actual production capacities under industrial conditions.

The hexosamine biosynthesis pathway exemplifies the complex regulatory challenges in pathway engineering. This pathway produces valuable compounds like glucosamine, N-acetylglucosamine, and UDP-N-acetylglucosamine—key precursors for human milk oligosaccharides (HMOs) with applications in infant nutrition and therapeutics [20]. Natural regulation occurs at multiple levels:

- Transcriptional control through transcription factors (e.g., NagR in Bacillus subtilis) and σ-factors [20]

- Translational control via riboswitches (e.g., the glmS ribozyme, which cleaves its own mRNA in response to GlcN6P) [20]

- Post-translational control through allosteric regulation (e.g., feedback inhibition of human glutamine-fructose-6-phosphate amidotransferase by glucosamine-6-phosphate) [20]

Refactoring these control mechanisms involves replacing native regulatory parts with orthogonal systems, removing feedback inhibition through enzyme engineering, and decoupling pathway expression from host regulation [20].
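
The effect of removing feedback inhibition can be illustrated with textbook Michaelis-Menten kinetics plus non-competitive inhibition, v = Vmax·S/(Km + S)/(1 + I/Ki). In this minimal sketch, a feedback-resistant variant is modeled only as a 100-fold larger Ki. The parameter values are hypothetical, and real mutations often also change Vmax or Km.

```python
# Michaelis-Menten rate with pure non-competitive feedback inhibition.
# Hypothetical parameters; a "feedback-resistant" enzyme differs only in Ki here.
def rate(substrate, inhibitor, vmax=1.0, km=0.5, ki=0.1):
    return vmax * substrate / (km + substrate) / (1.0 + inhibitor / ki)


for product_level in (0.0, 1.0, 5.0):   # mM of feedback inhibitor (e.g., end product)
    wild_type = rate(2.0, product_level, ki=0.1)
    resistant = rate(2.0, product_level, ki=10.0)
    print(f"[I]={product_level} mM: wild type {wild_type:.3f}, "
          f"feedback-resistant {resistant:.3f}")
```

At 1 mM inhibitor, the wild-type rate falls to about 9% of its uninhibited value while the hypothetical resistant variant keeps about 91%, the behavior that motivates feedback-resistance engineering.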

Table 2: Metabolic Flux Optimization Strategies

| Strategy | Mechanism | Application Example |
|---|---|---|
| Heterologous Pathway Introduction | Incorporation of non-native reactions from other organisms | Introduction of mevalonate pathway in E. coli for isoprenoid production [15] |
| Cofactor Exchange | Swapping cofactor specificity to balance redox metabolism | Engineering NADPH-dependent enzymes to use NADH for improved flux [15] |
| Transcriptional Deregulation | Replacement of native promoters with constitutive/inducible variants | Substitution of NagR-regulated promoters for hexosamine pathway expression [20] |
| Allosteric Regulation Removal | Site-directed mutagenesis to eliminate feedback inhibition | Engineering feedback-resistant glutamine-fructose-6-phosphate amidotransferase [20] |
| Riboswitch Engineering | Modification or replacement of natural riboswitches | Bypassing glmS ribozyme control for glucosamine production [20] |

Experimental Protocols and Workflows

Host Strain Evaluation Protocol

Objective: Systematically evaluate microbial strains for production of target chemicals using genome-scale metabolic models.

Materials:

- Genome-scale metabolic models for E. coli, S. cerevisiae, C. glutamicum, B. subtilis, P. putida

- Constraint-based reconstruction and analysis (COBRA) toolbox

- Rhea database for mass- and charge-balanced reaction equations

Methodology:

- Pathway Construction: Identify or construct biosynthetic pathways for target chemicals using known biochemical reactions. For reactions not in Rhea, manually construct mass- and charge-balanced equations [17].

- GEM Development: Build separate GEMs for each chemical biosynthesis pathway in each host, incorporating heterologous reactions when necessary. A comprehensive study constructed 1360 GEMs (272 pathways × 5 hosts), with 1092 requiring heterologous reactions [17].

- Yield Calculation: Compute both YT and YA for each chemical under different conditions (aerobic, microaerobic, anaerobic) and carbon sources (glucose, glycerol, xylose, etc.) [17].

- Strain Ranking: Rank strains based on metabolic capacity (YA), considering additional factors like chemical tolerance, genetic stability, and scale-up potential [17].

Pathway Refactoring Protocol

Objective: Refactor native pathways to eliminate regulatory bottlenecks and enhance flux.

Materials:

- CRISPR-Cas9 system for genome editing

- Serine recombinase-assisted genome engineering (SAGE) tools [17]

- Synthetic DNA fragments with redesigned regulatory elements

- Plasmid vectors for expression optimization

Methodology:

- Regulatory Mapping: Identify transcriptional, translational, and post-translational control mechanisms in the target pathway through literature review and experimental analysis [20].

- Promoter Replacement: Substitute native promoters with orthogonal regulatory elements unaffected by host regulation. For the hexosamine pathway, replace NagR-regulated promoters with constitutive/inducible alternatives [20].

- Riboswitch Bypassing: Engineer 5' UTRs to remove natural riboswitches while maintaining translation efficiency [20].

- Feedback Resistance Engineering: Use site-directed mutagenesis to eliminate allosteric inhibition sites in key enzymes [20].

- Expression Balancing: Fine-tune gene expression levels using ribosomal binding site (RBS) libraries and promoter variants to optimize flux distribution [20].
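
The size of such a library grows multiplicatively with the number of parts and genes. The sketch below enumerates a hypothetical design space of three promoters and three RBSs per gene for a three-gene pathway. Part names and relative strengths are placeholders, and treating expression as promoter strength times RBS strength is a crude simplifying assumption.

```python
from itertools import product

# Hypothetical part collections with placeholder relative strengths.
PROMOTERS = {"P_weak": 0.1, "P_medium": 0.5, "P_strong": 1.0}
RBS_VARIANTS = {"RBS_a": 0.2, "RBS_b": 0.6, "RBS_c": 1.0}
GENES = ("geneA", "geneB", "geneC")

# Each gene receives one promoter and one RBS.
per_gene_options = list(product(PROMOTERS, RBS_VARIANTS))        # 3 x 3 = 9
library = list(product(per_gene_options, repeat=len(GENES)))     # 9 ** 3 = 729


def relative_expression(design):
    # Crude assumption: expression scales as promoter strength x RBS strength.
    return {gene: PROMOTERS[p] * RBS_VARIANTS[r] for gene, (p, r) in zip(GENES, design)}


print(len(per_gene_options), len(library))
print(relative_expression(library[-1]))
```

Adding a fourth gene multiplies the design space ninefold, to 6,561 constructs, which is why such spaces are typically sampled rather than built exhaustively.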

Diagram: Integrated Workflow for Developing Microbial Cell Factories

Visualization and Data Integration Tools

Pathway Visualization Platforms

Effective pathway visualization requires specialized tools that balance informational content with interpretability. Escher is a web application for building, viewing, and sharing metabolic pathway maps, with three key features: (1) rapid pathway design with suggestions based on user data and genome-scale models, (2) visualization of omics datasets (transcriptomics, proteomics, metabolomics, fluxomics), and (3) use of modern web technologies for adaptability and sharing [21].

The application supports multiple visualization modes:

- Viewer mode for panning, zooming, and data visualization

- Builder mode for adding reactions, moving pathway components, adding annotations, and adjusting canvas layout [21]

- Data integration for coloring pathway elements based on experimental data (e.g., reaction fluxes, metabolite concentrations, gene expression)

Escher employs gene reaction rules to connect gene data to metabolic reactions, using AND logic for protein complexes and OR logic for isoenzymes [21]. Recent enhancements include reaction data animation using GSAP (GreenSock Animation Platform) to visualize metabolic flux intensity and direction, with adjustable animation speed and line styles [21].
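
The AND/OR convention can be made concrete with a small parser for gene-reaction rules. This sketch assumes one common mapping: minimum for AND (a complex is limited by its scarcest subunit) and sum for OR (isoenzymes add capacity). Other conventions, such as averaging for AND, are also used, and the gene values are hypothetical.

```python
import re

TOKEN = re.compile(r"\(|\)|[^\s()]+")


def evaluate_gpr(rule, gene_values):
    """Map gene-level data onto a reaction via its gene-reaction rule.

    Convention assumed here: AND (subunits of a complex) -> min,
    OR (isoenzymes) -> sum. Genes absent from `gene_values` count as 0.
    """
    tokens = TOKEN.findall(rule)
    pos = 0

    def peek():
        return tokens[pos].lower() if pos < len(tokens) else None

    def take():
        nonlocal pos
        if pos >= len(tokens):
            raise ValueError("unexpected end of rule")
        pos += 1
        return tokens[pos - 1]

    def parse_or():
        value = parse_and()
        while peek() == "or":
            take()
            value += parse_and()
        return value

    def parse_and():
        value = parse_atom()
        while peek() == "and":
            take()
            value = min(value, parse_atom())
        return value

    def parse_atom():
        token = take()
        if token == "(":
            value = parse_or()
            if take() != ")":
                raise ValueError("unbalanced parentheses")
            return value
        if token == ")" or token.lower() in ("and", "or"):
            raise ValueError(f"unexpected token {token!r}")
        return gene_values.get(token, 0.0)

    result = parse_or()
    if pos != len(tokens):
        raise ValueError(f"unexpected token {tokens[pos]!r}")
    return result


expression = {"b0001": 5.0, "b0002": 2.0, "b0003": 1.5}   # hypothetical values
print(evaluate_gpr("(b0001 and b0002) or b0003", expression))
```

AND binds more tightly than OR, so `b0001 and b0002 or b0003` evaluates the same way as the parenthesized rule.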

Multi-Pathway Integration with Pathway Collages

Pathway collages address the limitation of single-pathway views by enabling construction of personalized multi-pathway diagrams [19]. The implementation combines server-side pathway layout generation using Pathway Tools algorithms with client-side manipulation through a Cytoscape.js-based web application [19]. This architecture enables:

- Interactive pathway repositioning and styling customization

- Definition of connections between pathways

- Overlay of metabolomics, transcriptomics, and fluxomics data

- Export to publication-quality formats (SVG, PNG)

Performance analysis indicates optimal handling of 5-10 pathways (50-100 metabolites and enzymes), with generation and rendering requiring approximately 10 seconds on standard hardware [19]. Larger assemblies (40+ pathways) experience performance degradation, with rendering times extending to several minutes [19].

Research Reagent Solutions

Table 3: Essential Research Reagents and Tools for Microbial Cell Factory Development

| Category | Specific Tools/Resources | Function/Application |
|---|---|---|
| Genome-Scale Models | GEMs for E. coli iJO1366, S. cerevisiae iMM904, B. subtilis iYO844, C. glutamicum iCW773, P. putida iJN746 | In silico prediction of metabolic capabilities and engineering targets [17] |
| Pathway Databases | Reactome, WikiPathways, BioCyc, KEGG, Pathway Commons, Rhea | Access to curated metabolic pathways and reaction information [18] |
| Genetic Engineering Tools | CRISPR-Cas9 systems, SAGE (serine recombinase-assisted genome engineering), Golden Gate assembly | Precise genome editing and pathway integration [17] |
| Visualization Software | Escher, Pathway Tools, Cytoscape.js, PathVisio, CellDesigner | Pathway construction, visualization, and data overlay [18] [19] [21] |
| Identifier Resources | UniProt, Ensembl, NCBI Gene, ChEBI, LIPID MAPS, miRBase | Standardized biological identifiers for data integration [18] |
| Modeling Standards | SBGN (Systems Biology Graphical Notation), SBML (Systems Biology Markup Language), BioPAX | Standard formats for model exchange and reproducibility [18] |

The development of microbial cell factories for chemicals, fuels, and therapeutics represents a cornerstone of the emerging bioeconomy. The integration of computational and experimental approaches—from genome-scale modeling to pathway refactoring—has dramatically accelerated the design-build-test-learn cycle for strain development [15] [17]. Future advances will likely focus on several key areas: (1) integration of automation and artificial intelligence with biotechnology to facilitate development of customized artificial synthetic MCFs [16], (2) expansion to non-model organisms with native capabilities for target molecule production [17], and (3) dynamic regulation systems that automatically adjust metabolic flux in response to changing cultivation conditions [20].

The resources and methodologies outlined in this technical guide provide a comprehensive framework for researchers engaged in pathway engineering and refactoring. By applying systematic approaches to host selection, pathway design, and flux optimization, scientists can develop efficient microbial cell factories that translate laboratory success to industrial-scale production, ultimately contributing to more sustainable manufacturing paradigms across chemical, fuel, and therapeutic sectors.

Metabolic engineering is the science of improving product formation or cellular properties through the modification of specific biochemical reactions or the introduction of new genes using recombinant DNA technology [22]. The field has evolved through three distinct waves of technological innovation, transforming from a rational discipline to a systematic, data-driven science. The first wave of metabolic engineering, beginning in the 1990s, relied on rational approaches to pathway analysis and flux optimization to redirect cellular metabolism toward desired products. A classic example from this era is the overproduction of lysine in Corynebacterium glutamicum, where simultaneous expression of pyruvate carboxylase and aspartokinase increased flux into and out of the Tricarboxylic Acid (TCA) cycle, resulting in a 150% increase in lysine productivity [22].

The second wave emerged in the 2000s, incorporating systems biology technologies such as genome-scale metabolic models. This holistic approach enabled researchers to bridge mechanistic genotype-phenotype relationships and explore the full metabolic potential of cell factories [22]. The third wave, which continues today, began with pioneering work on complete pathway design and optimization using synthetic biology tools. This approach enables the production of both natural and non-natural chemicals that may not be inherent to the host organism, exemplified by the production of artemisinin, a potent antimalarial compound [22]. Within this modern framework, the Design-Build-Test-Learn (DBTL) cycle and hierarchical metabolic engineering have emerged as central dogmas for systematic pathway engineering and refactoring research.

The Design-Build-Test-Learn (DBTL) Cycle: A Framework for Systematic Engineering

Core Principles and Workflow

The DBTL cycle represents an iterative framework for strain optimization that incorporates learning from each successive cycle to progressively develop improved production strains [23]. This approach is particularly valuable for combinatorial pathway optimization, where simultaneous optimization of multiple pathway genes often leads to combinatorial explosions that make exhaustive experimental testing infeasible [23]. The power of the DBTL cycle lies in its recursive nature, allowing researchers to continuously refine their designs based on experimental data.

The cycle consists of four interconnected phases:

- Design: Selection of genetic elements and pathway configurations using computational tools and prior knowledge

- Build: Construction of strain designs using genetic engineering tools

- Test: Characterization of strain performance through fermentation and analytical methods

- Learn: Analysis of data to extract insights that inform the next design phase
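
The four phases can be wired into a minimal loop. The sketch below is hypothetical throughout: Build and Test are replaced by a simulated titer landscape with noise, Learn fits the simplest possible additive model (the mean titer per gene and expression level), and Design ranks untested candidates by that model.

```python
import random
from itertools import product
from statistics import mean

LEVELS = (0, 1, 2)                                    # hypothetical low / medium / high
N_GENES = 3
DESIGN_SPACE = list(product(LEVELS, repeat=N_GENES))  # 27 candidate designs


def build_and_test(design, rng):
    # Stand-in for Build + Test on a hypothetical titer landscape with noise.
    # Assumption: the optimum is gene 0 high, gene 1 medium, gene 2 low.
    optimum = (2, 1, 0)
    penalty = sum((d - o) ** 2 for d, o in zip(design, optimum))
    return 10.0 - penalty + rng.gauss(0.0, 0.3)


def learn(data):
    # Learn: additive model, i.e. mean observed titer for each (gene, level).
    effects = {}
    for gene in range(N_GENES):
        for level in LEVELS:
            observed = [titer for d, titer in data.items() if d[gene] == level]
            effects[(gene, level)] = mean(observed) if observed else 0.0
    return effects


def design_next(effects, tested, batch):
    # Design: rank untested candidates by their summed predicted effects.
    def predicted(d):
        return sum(effects[(gene, level)] for gene, level in enumerate(d))
    candidates = [d for d in DESIGN_SPACE if d not in tested]
    return sorted(candidates, key=predicted, reverse=True)[:batch]


def run_dbtl(cycles=3, batch=5, seed=1):
    rng = random.Random(seed)
    data = {}
    designs = rng.sample(DESIGN_SPACE, batch)   # first cycle: no prior knowledge
    best_so_far = []
    for _ in range(cycles):
        for d in designs:
            data[d] = build_and_test(d, rng)
        best_so_far.append(max(data.values()))
        designs = design_next(learn(data), data, batch)
    return best_so_far, data


best, data = run_dbtl()
print([round(b, 2) for b in best])
```

Because unobserved levels score zero, this learner is purely exploitative; practical DBTL pipelines typically use richer models and deliberately reserve part of each batch for exploration.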

Table 1: Key Components of the DBTL Cycle in Metabolic Engineering

| Phase | Key Activities | Tools & Technologies | Outputs |
|---|---|---|---|
| Design | Pathway design, computational modeling, target identification | Genome-scale models, UTR Designer, promoter libraries | DNA library designs, engineering targets |
| Build | DNA assembly, molecular cloning, genome editing | Golden Gate assembly, CRISPR-Cas9, automated strain construction | Engineered microbial strains |
| Test | Fermentation, analytics, omics data collection | HPLC, MS, NMR, RNA-seq, proteomics | Titer, yield, productivity (TYR) data |
| Learn | Data analysis, pattern recognition, hypothesis generation | Machine learning, statistical modeling, kinetic analysis | New design rules, optimized targets |

The Knowledge-Driven DBTL Cycle: A Case Study in Dopamine Production

Recent advances have introduced the knowledge-driven DBTL cycle, which incorporates upstream in vitro investigation to provide mechanistic understanding before embarking on full DBTL cycling [24]. This approach was successfully applied to optimize dopamine production in Escherichia coli, resulting in a strain capable of producing dopamine at concentrations of 69.03 ± 1.2 mg/L (34.34 ± 0.59 mg/g biomass) – a 2.6 to 6.6-fold improvement over previous state-of-the-art production systems [24].

The dopamine production pathway was engineered as a bicistronic system: the native E. coli hpaBC genes encode 4-hydroxyphenylacetate 3-monooxygenase (HpaBC), which converts L-tyrosine to L-DOPA, and L-DOPA decarboxylase (Ddc) from Pseudomonas putida then converts L-DOPA to dopamine [24]. The knowledge-driven approach began with in vitro testing in crude cell lysate systems to assess enzyme expression levels before moving to in vivo optimization, enabling more informed design decisions.

Diagram 1: Knowledge-driven DBTL cycle for dopamine production

Experimental Protocol: Dopamine Production Strain Development

Materials and Methods [24]:

Bacterial Strains and Plasmids:

- Production host: E. coli FUS4.T2 with genomic modifications for enhanced L-tyrosine production (TyrR depletion and feedback inhibition mutation in tyrA)

- Cloning strain: E. coli DH5α

- Plasmid system: pET system for gene storage, pJNTN for crude cell lysate system and library construction

Media and Cultivation:

- Minimal medium containing: 20 g/L glucose, 10% 2xTY medium, phosphate buffer, MOPS, vitamin B6, phenylalanine, FeCl₂, and trace elements

- Antibiotics: ampicillin (100 µg/mL), kanamycin (50 µg/mL)

- Inducer: IPTG (1 mM)

In Vitro Testing:

- Crude cell lysate system prepared in 50 mM phosphate buffer (pH 7)

- Reaction buffer supplemented with 0.2 mM FeCl₂, 50 µM vitamin B6, and 1 mM L-tyrosine or 5 mM L-DOPA

- Enzyme expression levels tested before in vivo implementation

RBS Library Construction:

- RBS engineering focused on modulating the Shine-Dalgarno sequence without interfering with secondary structures

- High-throughput construction of bicistronic designs for simultaneous optimization of HpaBC and Ddc expression levels
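The combinatorial side of the RBS library step can be sketched in a few lines. This sketch assumes, hypothetically, that each cistron's Shine-Dalgarno region is written as a degenerate IUPAC template (the templates below are invented, not the sequences used in [24]). It enumerates every concrete variant and every HpaBC × Ddc pairing. It does not model mRNA secondary structure, which a real design would still need to check with an RNA folding tool.

```python
from itertools import product

# IUPAC nucleotide codes -> allowed bases
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "AG", "Y": "CT", "S": "CG", "W": "AT", "K": "GT", "M": "AC",
    "B": "CGT", "D": "AGT", "H": "ACT", "V": "ACG", "N": "ACGT",
}


def expand_degenerate(template: str) -> list[str]:
    """Enumerate every concrete sequence encoded by a degenerate IUPAC template."""
    choices = [IUPAC[base] for base in template.upper()]
    return ["".join(bases) for bases in product(*choices)]


def bicistronic_library(sd_gene1: str, sd_gene2: str) -> list[tuple[str, str]]:
    """Pair every SD variant of the first cistron with every variant of the second."""
    return list(product(expand_degenerate(sd_gene1), expand_degenerate(sd_gene2)))


if __name__ == "__main__":
    # Hypothetical degenerate SD templates for the two cistrons.
    hpabc_sd = "AGGARR"   # 4 variants
    ddc_sd = "AGGNGG"     # 4 variants
    library = bicistronic_library(hpabc_sd, ddc_sd)
    print(len(library))   # 16
    print(library[0])     # ('AGGAAA', 'AGGAGG')
```

Library size grows multiplicatively with each degenerate position and each cistron, which is why high-throughput construction (and later ML-guided sampling) matters.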

Analytical Methods:

- Dopamine quantification via HPLC

- Biomass measurement for yield calculations

Hierarchical Metabolic Engineering: Rewiring Cellular Metabolism at Multiple Scales

The Five Hierarchies of Metabolic Engineering

Hierarchical metabolic engineering operates across multiple biological scales to efficiently reprogram cellular metabolism. This approach recognizes that successful pathway engineering requires optimization at different levels of biological organization [22]. The mainstream strategies of hierarchical metabolic engineering can be categorized into five distinct levels:

Part Level: Engineering individual biological components such as enzymes, ribosome binding sites, and promoters. Key strategies include:

- Enzyme engineering: Improving catalytic efficiency, substrate specificity, and stability

- Cofactor engineering: Modifying cofactor requirements and regeneration systems

- Promoter engineering: Tuning expression levels with precision

Pathway Level: Optimizing complete metabolic pathways through modular design and balancing. Implementation strategies include:

- Modular pathway engineering: Dividing complex pathways into functional modules

- Precursor engineering: Enhancing supply of starting metabolites

- Transport engineering: Managing influx of substrates and efflux of products

Network Level: Engineering at the scale of metabolic networks to manage systemic interactions:

- Cofactor balancing: Optimizing ATP, NADH, NADPH regeneration and utilization

- Regulatory network engineering: Modifying transcription factors and regulatory circuits

- Signaling transplant engineering: Introducing novel regulatory mechanisms

Genome Level: Implementing chromosomal modifications for stable and efficient production:

- Genome editing: Using CRISPR-Cas systems for precise modifications

- High-throughput genome engineering: Automated methods for multiplexed edits

- Codon optimization: Enhancing translation efficiency across the genome

Cell Level: Engineering at the whole-cell level to improve overall cellular fitness:

- Chassis engineering: Optimizing host physiology for production

- Tolerance engineering: Enhancing resistance to toxic compounds and products

- Substrate engineering: Expanding the range of utilizable carbon sources

Table 2: Representative Achievements in Hierarchical Metabolic Engineering

| Product | Host Organism | Titer/Yield/Productivity | Key Hierarchical Strategies | Application Area |
|---|---|---|---|---|
| 3-Hydroxypropionic acid | C. glutamicum | 62.6 g/L, 0.51 g/g glucose | Substrate engineering, Genome editing | Bulk chemical |
| L-Lactic acid | C. glutamicum | 212 g/L, 0.979 g/g glucose | Modular pathway engineering | Bulk chemical |
| Succinic acid | E. coli | 153.36 g/L, 2.13 g/L/h | Modular pathway engineering, High-throughput genome engineering | Bulk chemical |
| Lysine | C. glutamicum | 223.4 g/L, 0.68 g/g glucose | Cofactor engineering, Transporter engineering | Amino acid |
| Valine | E. coli | 59 g/L, 0.39 g/g glucose | Transcription factor engineering, Cofactor engineering | Amino acid |
| Artemisinin | S. cerevisiae | N/A | Synthetic pathway construction, Enzyme engineering | Pharmaceutical |
| Opioids | Engineered yeast | N/A | Complete pathway refactoring, Heterologous expression | Pharmaceutical |
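Mass-yield figures can be sanity-checked against stoichiometric maxima. Homolactic fermentation converts one glucose (180.16 g/mol) into two lactic acid (90.08 g/mol), so the theoretical maximum is 1.0 g/g and any reported lactate yield must fall at or below it. The helper below is a minimal sketch that assumes the molar stoichiometry is supplied by the user; pathway-specific maxima for products such as lysine or succinate require redox and ATP balancing that it does not attempt.

```python
# Standard molar masses (g/mol)
MW_GLUCOSE = 180.156      # C6H12O6
MW_LACTIC_ACID = 90.078   # C3H6O3


def max_mass_yield(mol_product_per_mol_substrate, mw_product, mw_substrate=MW_GLUCOSE):
    """Theoretical maximum mass yield (g product / g substrate) for a given stoichiometry."""
    return mol_product_per_mol_substrate * mw_product / mw_substrate


def fraction_of_theoretical(observed_g_per_g, theoretical_g_per_g):
    """Share of the stoichiometric maximum actually achieved; should not exceed 1."""
    return observed_g_per_g / theoretical_g_per_g


if __name__ == "__main__":
    y_max = max_mass_yield(2, MW_LACTIC_ACID)                # glucose -> 2 lactic acid
    print(round(y_max, 3))                                   # 1.0
    print(round(fraction_of_theoretical(0.95, y_max), 3))    # 0.95 for an invented 0.95 g/g
```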

Integrated Workflow for Hierarchical Metabolic Engineering

The hierarchical approach to metabolic engineering follows a systematic workflow that integrates across the five levels, from part selection to cell-level optimization. This integrated methodology enables comprehensive rewiring of cellular metabolism for enhanced production of target compounds.

Diagram 2: Hierarchical metabolic engineering workflow

Advanced Tools and Methodologies for Pathway Engineering

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful implementation of DBTL cycles and hierarchical metabolic engineering requires a comprehensive toolkit of research reagents and methodologies. The table below details essential materials and their applications in pathway engineering research.

Table 3: Research Reagent Solutions for Metabolic Engineering

| Category | Specific Items | Function & Application | Examples from Literature |
|---|---|---|---|
| Genetic Tools | RBS libraries, Promoter collections, Plasmid systems (pET, pJNTN) | Fine-tuning gene expression, Pathway balancing, Gene expression control | RBS engineering for dopamine pathway [24], Modular pathway engineering [22] |
| Host Strains | E. coli FUS4.T2 (tyrosine overproducer), C. glutamicum production strains | Providing metabolic background, Precursor supply, Tolerance to products | E. coli FUS4.T2 for dopamine [24], C. glutamicum for lysine [22] |
| Enzyme Systems | HpaBC, Ddc, Feedback-resistant enzymes (TyrA) | Catalyzing specific reactions, Overcoming regulatory constraints | HpaBC (L-tyrosine to L-DOPA), Ddc (L-DOPA to dopamine) [24] |
| Analytical Tools | HPLC, MS, NMR, GC-MS | Quantifying products, Metabolic profiling, Pathway analysis | Metabolomics for pathway elucidation [25] [1] |
| Culture Media | Minimal medium with defined components, SOC medium, Phosphate buffers | Supporting cell growth, Maintaining pH, Providing essential nutrients | Minimal medium for dopamine production [24] |
| Inducers & Antibiotics | IPTG, Ampicillin, Kanamycin | Controlling gene expression, Selective pressure | IPTG (1 mM) for induction [24] |

Machine Learning in DBTL Cycles

Machine learning has emerged as a powerful tool for guiding metabolic engineering, particularly in the "Learn" phase of DBTL cycles. In combinatorial pathway optimization, ML methods help navigate large design spaces where testing all possible combinations is experimentally infeasible [23]. Studies comparing ML algorithms have shown that gradient boosting and random forest models outperform other methods in the low-data regime typical of early DBTL cycles [23]. These methods have demonstrated robustness to training set biases and experimental noise, making them particularly valuable for real-world applications.

The application of machine learning in DBTL cycles follows a structured process:

- Data Collection: Experimental data from initial strain characterization

- Model Training: Using ML algorithms to identify patterns and relationships

- Prediction: Forecasting performance of untested genetic designs

- Recommendation: Prioritizing designs for the next DBTL cycle
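The four steps above can be sketched as one Learn-and-recommend iteration. To stay dependency-free, this sketch implements a small random forest (bootstrap-aggregated regression trees with a random feature subset at each split) in plain Python rather than calling a library such as scikit-learn. The design encoding (three genes at four hypothetical expression levels) and the toy titer function are invented stand-ins for real strain data.

```python
import random
from itertools import product
from statistics import mean


def _sse(values):
    """Sum of squared deviations from the mean (regression-tree impurity)."""
    m = mean(values)
    return sum((v - m) ** 2 for v in values)


def _grow(X, y, depth, max_depth, min_leaf, n_feat, rng):
    """Grow one regression tree; leaves are floats, splits are (feature, threshold, left, right)."""
    if depth >= max_depth or len(y) < 2 * min_leaf or len(set(y)) == 1:
        return mean(y)
    best = None  # (impurity, feature, threshold)
    for f in rng.sample(range(len(X[0])), n_feat):  # random feature subset per split
        for t in sorted({row[f] for row in X})[:-1]:
            left = [v for row, v in zip(X, y) if row[f] <= t]
            right = [v for row, v in zip(X, y) if row[f] > t]
            if len(left) < min_leaf or len(right) < min_leaf:
                continue
            score = _sse(left) + _sse(right)
            if best is None or score < best[0]:
                best = (score, f, t)
    if best is None:
        return mean(y)
    _, f, t = best

    def child(keep):
        idx = [i for i, row in enumerate(X) if keep(row[f])]
        return _grow([X[i] for i in idx], [y[i] for i in idx],
                     depth + 1, max_depth, min_leaf, n_feat, rng)

    return (f, t, child(lambda v: v <= t), child(lambda v: v > t))


def _predict(node, x):
    while isinstance(node, tuple):
        f, t, left, right = node
        node = left if x[f] <= t else right
    return node


def fit_forest(X, y, n_trees=100, max_depth=4, min_leaf=2, n_feat=2, seed=0):
    """Train trees on bootstrap resamples (with replacement) of the tested designs."""
    rng = random.Random(seed)
    forest = []
    for _ in range(n_trees):
        idx = [rng.randrange(len(X)) for _ in X]
        forest.append(_grow([X[i] for i in idx], [y[i] for i in idx],
                            0, max_depth, min_leaf, n_feat, rng))
    return forest


def predict(forest, x):
    return mean(_predict(tree, x) for tree in forest)


def recommend(forest, candidates, k=5):
    """Rank untested designs by predicted titer and return the top k."""
    return sorted(candidates, key=lambda x: predict(forest, x), reverse=True)[:k]


if __name__ == "__main__":
    rng = random.Random(1)
    space = list(product(range(4), repeat=3))  # hypothetical levels for 3 genes

    def toy_titer(x):
        # Invented noisy response surface with an optimum at (2, 3, 1).
        a, b, c = x
        return 10 - (a - 2) ** 2 - (b - 3) ** 2 - 0.5 * (c - 1) ** 2 + rng.gauss(0, 0.3)

    tested = rng.sample(space, 20)                                # Build/Test
    forest = fit_forest(tested, [toy_titer(x) for x in tested])   # Learn
    untested = [x for x in space if x not in tested]
    print(recommend(forest, untested, k=5))                       # next-cycle designs
```

Because each forest prediction is an average of training-set values, it can never extrapolate beyond the best titer observed so far; that is one reason recommendation schemes often mix exploitation with some exploration.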

A key advancement in this area is the development of mechanistic kinetic model-based frameworks that combine first-principles understanding with data-driven approaches. These frameworks enable in silico testing and optimization of machine learning methods over multiple DBTL cycles, addressing the challenge of limited publicly available multi-cycle datasets [23].
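To make the idea concrete, a kinetic model can stand in for the laboratory when generating data for in silico DBTL cycles. The sketch below is a deliberately small, hypothetical example, not the framework of [23]: a two-enzyme pathway S → I → P with Michaelis-Menten rates integrated by explicit Euler steps, plus an assumed fixed enzyme budget split between the two steps so that the optimum is not trivially "express everything". All rate constants are arbitrary placeholders.

```python
def simulate_pathway(e1, e2, s0=10.0, kcat1=1.0, km1=1.0, kcat2=1.0, km2=1.0,
                     dt=0.01, t_end=10.0):
    """Toy two-step pathway S -(E1)-> I -(E2)-> P with Michaelis-Menten rates.

    Integrated with explicit Euler steps; returns the final (S, I, P) concentrations.
    """
    s, i, p = s0, 0.0, 0.0
    for _ in range(round(t_end / dt)):
        v1 = kcat1 * e1 * s / (km1 + s)
        v2 = kcat2 * e2 * i / (km2 + i)
        s -= v1 * dt
        i += (v1 - v2) * dt
        p += v2 * dt
    return s, i, p


def best_split(budget=2.0, steps=19, **kinetics):
    """Scan the fraction f of an assumed fixed enzyme budget given to E1 (E2 gets 1 - f)."""
    fractions = [(k + 1) / (steps + 1) for k in range(steps)]
    return max(fractions,
               key=lambda f: simulate_pathway(f * budget, (1 - f) * budget, **kinetics)[2])


if __name__ == "__main__":
    # In silico "Test" data: product titer over a grid of enzyme levels.
    levels = [0.25, 0.5, 1.0, 2.0]
    dataset = [((e1, e2), simulate_pathway(e1, e2)[2]) for e1 in levels for e2 in levels]
    print(len(dataset), "simulated designs")
    print("best E1 fraction, equal kinetics:", best_split())
    print("best E1 fraction, slow E2:", best_split(kcat2=0.1))
```

A dataset like this can be fed to any learner, and because the ground truth is known, the quality of its recommendations can be scored exactly over as many simulated cycles as needed.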

Applications and Future Perspectives

Industrial Applications of DBTL and Hierarchical Engineering

The integration of DBTL cycles with hierarchical metabolic engineering has enabled production of diverse valuable compounds across multiple industries:

Pharmaceuticals and Therapeutics:

- Artemisinin: Antimalarial compound produced in engineered yeast

- Opioids: Pain management drugs synthesized in engineered microorganisms

- Vinblastine: Anticancer compound produced through complex pathway engineering

- QS-21: Vaccine adjuvant produced in engineered plant systems [22]

Bulk Chemicals and Materials:

- 1,4-Butanediol: Chemical intermediate for polymer production

- Succinic acid: Platform chemical with applications in food, pharma, and materials

- Poly(lactate-co-glycolate): Biodegradable polymer for medical applications

Biofuels and Energy:

- Bioethanol: Traditional biofuel with improved production efficiency

- Advanced biofuels: Isobutanol, fatty acid-derived biofuels from engineered microbes

Emerging Trends and Future Directions

The field of metabolic engineering continues to evolve with several emerging trends shaping its future:

Integration of Multi-Omics Data: The combination of genomics, transcriptomics, proteomics, and metabolomics data provides comprehensive views of cellular physiology, enabling more informed engineering decisions [25].

Automation and High-Throughput Technologies: Automated biofoundries are accelerating the DBTL cycle by enabling rapid construction and testing of thousands of genetic designs [24].

Expansion of Chemical Space: Advances in enzyme engineering and pathway design are enabling production of increasingly complex molecules, including "new-to-nature" compounds with novel properties [22].

Model-Guided Engineering: The development of more sophisticated computational models, including kinetic models and genome-scale models, is improving our ability to predict cellular behavior and identify optimal engineering strategies [23].

As these trends continue, the DBTL cycle and hierarchical metabolic engineering will remain the central frameworks for systematically rewiring cellular metabolism for the sustainable production of valuable chemicals, materials, and therapeutics.

Methodologies and Workflows: Practical Tools for Pathway Construction and Refactoring

Pathway refactoring serves as an indispensable synthetic biology tool for natural product discovery, characterization, and engineering, particularly valuable for activating silent biosynthetic gene clusters (BGCs) that are tightly controlled by complex native regulations [26] [27]. The fundamental principle involves decoupling pathway expression from sophisticated native regulatory networks and replacing them with standardized, well-characterized genetic parts that function predictably in heterologous hosts [27]. This engineering approach enables researchers to bypass the traditional laborious processes required to elicit pathway expression, which often demands extensive manipulation of culture parameters or case-by-case regulatory engineering [27].

The emergence of high-throughput DNA assembly methods, particularly Golden Gate assembly, has dramatically accelerated pathway refactoring. The Golden Gate reaction is a DNA assembly technique based on Type IIS restriction enzymes, which cut outside their recognition sites to generate single-stranded DNA overhangs that guide the corresponding DNA fragments to ligate in a designated order [26]. Because digestion and ligation proceed together in a single "one-pot" reaction, Golden Gate assembly is exceptionally amenable to automation, facilitating the generation of numerous constructs in a massively parallel manner [28]. The integration of these molecular techniques with modular design principles has established plug-and-play refactoring as a powerful platform for combinatorial biosynthesis and natural product research.
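The ordering principle can be illustrated in silico: if every fragment carries a unique 4-nt overhang at each end, there is only one way to chain them. The function below is a hypothetical design check, not a published tool. AATG and CGGT are the gene overhangs of the two-tier workflow in this section, while GGAG and GCTT are invented vector overhangs. Real design tools additionally screen for palindromic and near-identical overhangs, which this sketch ignores.

```python
def assembly_order(parts, start, end):
    """Chain Golden Gate fragments by matching 4-nt overhangs (top-strand notation).

    parts: dict mapping fragment name -> (left_overhang, right_overhang).
    start/end: overhangs exposed by the opened destination vector.
    Returns fragment names in ligation order; raises ValueError if the design is
    ambiguous (duplicate overhang), broken (no matching fragment), or leaves parts unused.
    """
    by_left = {}
    for name, (left, _right) in parts.items():
        if left in by_left:
            raise ValueError(f"overhang {left} is shared by {by_left[left]} and {name}")
        by_left[left] = name
    order, current = [], start
    while current != end:
        if current not in by_left:
            raise ValueError(f"no fragment starts with overhang {current}")
        name = by_left.pop(current)
        order.append(name)
        current = parts[name][1]
    if by_left:
        raise ValueError(f"unused fragments: {sorted(by_left.values())}")
    return order


if __name__ == "__main__":
    # Dict order is deliberately scrambled; overhangs alone determine the result.
    parts = {
        "terminator": ("CGGT", "GCTT"),
        "promoter": ("GGAG", "AATG"),
        "cds": ("AATG", "CGGT"),
    }
    print(assembly_order(parts, start="GGAG", end="GCTT"))
    # ['promoter', 'cds', 'terminator']
```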

Core Methodology: A Two-Tier Assembly Workflow

Workflow Architecture and Component Design

The plug-and-play pathway refactoring workflow employs a two-tier Golden Gate reaction system, catalyzed by BbsI (1st tier) and BsaI (2nd tier) respectively [26]. This hierarchical approach enables systematic assembly of complex pathways from basic genetic components:

Biosynthetic Gene Preparation: Target genes are synthesized or PCR-amplified with BbsI cleavage sites at both ends, generating general overhangs AATG (start codon side) and CGGT (stop codon side). Internal BbsI and BsaI sites must be removed via silent mutations to prevent interference [26].

Helper Plasmid Construction: Preassembled helper plasmids contain promoters and terminators flanking a counter-selection marker (ccdB) with BbsI cleavage sites. These plasmids provide the transcriptional control elements for pathway expression [26].

Spacer Plasmid Implementation: A key innovation is the use of spacer plasmids, which share the same 4-bp overhangs as the corresponding helper plasmids but carry only a 20-bp random DNA sequence. These spacers let the system accommodate pathways with varying numbers of genes by "filling gaps" when helper plasmids are unused [26].
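The gap-filling logic of the three components above can be sketched as follows. The slot overhangs are invented placeholders (the actual helper and spacer overhangs are defined in [26]), genes are assumed to occupy the first slots in order, and each spacer is a 20-nt random sequence that, as an added design choice, is resampled if it happens to contain a BsaI or BbsI site.

```python
import random

TYPE_IIS_SITES = ("GGTCTC", "GAGACC", "GAAGAC", "GTCTTC")  # BsaI / BbsI, both strands


def random_spacer(rng, length=20):
    """Random spacer DNA, resampled until it contains no BsaI or BbsI site."""
    while True:
        seq = "".join(rng.choices("ACGT", k=length))
        if not any(site in seq for site in TYPE_IIS_SITES):
            return seq


def fill_slots(genes, slot_overhangs, rng):
    """Place genes in the first helper slots and fill the remaining slots with spacers.

    slot_overhangs: list of (left, right) overhangs, one per helper slot, where each
    slot's right overhang must equal the next slot's left overhang.
    Returns (slot_number, part_name, left, right, spacer_sequence_or_None) tuples.
    """
    if len(genes) > len(slot_overhangs):
        raise ValueError("more genes than helper slots")
    for (_, right), (left, _) in zip(slot_overhangs, slot_overhangs[1:]):
        if right != left:
            raise ValueError(f"slots are not chained: {right} != {left}")
    layout = []
    for k, (left, right) in enumerate(slot_overhangs):
        if k < len(genes):
            layout.append((k + 1, genes[k], left, right, None))
        else:
            layout.append((k + 1, f"spacer{k + 1}", left, right, random_spacer(rng)))
    return layout


if __name__ == "__main__":
    # Four hypothetical slots for a two-gene pathway.
    slots = [("GGAG", "TACT"), ("TACT", "CCAT"), ("CCAT", "AGCG"), ("AGCG", "GCTT")]
    for row in fill_slots(["geneA", "geneB"], slots, random.Random(7)):
        print(row)
```

Because every slot is always occupied, by a gene or a spacer, the second-tier overhang chain stays intact regardless of pathway length.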

The first tier involves a BbsI-catalyzed Golden Gate reaction where the ccdB marker on the helper plasmid is replaced by the biosynthetic gene, creating a complete expression cassette [26]. The AATG overhang between promoter and biosynthetic gene is strategically designed with the "A" originating from the promoter's last nucleotide followed by the "ATG" start codon, enabling seamless connection [26].
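Gene preparation and the AATG seam can be checked in silico before ordering DNA. The sketch scans a coding sequence for BbsI (GAAGAC, cutting 2/6 nt downstream) and BsaI (GGTCTC, cutting 1/5 nt downstream) sites on both strands, then adds BbsI flanks so that digestion exposes AATG at the start codon and CGGT at the 3' end. The two-base padding between each site and its overhang, and placing CGGT directly after the stop codon, are assumptions for illustration.

```python
COMPLEMENT = str.maketrans("ACGT", "TGCA")

# Type IIS enzymes: recognition site, then top/bottom-strand cut offsets past the site.
ENZYMES = {"BsaI": ("GGTCTC", 1, 5), "BbsI": ("GAAGAC", 2, 6)}


def revcomp(seq):
    return seq.translate(COMPLEMENT)[::-1]


def find_sites(seq, enzyme):
    """List (position, strand, 4-nt overhang) for each site of `enzyme` on either strand."""
    site, top, bottom = ENZYMES[enzyme]
    rc_site, n = revcomp(site), len(site)
    hits = []
    for i in range(len(seq) - n + 1):
        window = seq[i:i + n]
        if window == site:        # top-strand site: cuts downstream
            hits.append((i, "+", seq[i + n + top:i + n + bottom]))
        elif window == rc_site:   # bottom-strand site: cuts upstream on the top strand
            hits.append((i, "-", seq[max(i - bottom, 0):max(i - top, 0)]))
    return hits


def add_bbsi_flanks(cds, pad="AA"):
    """Flank a domesticated CDS so BbsI digestion exposes AATG (start) and CGGT (3' end).

    The leading 'A' of AATG is added here; ATG comes from the CDS itself.
    """
    if not cds.startswith("ATG"):
        raise ValueError("CDS must start with ATG")
    internal = {e: find_sites(cds, e) for e in ENZYMES}
    if any(internal.values()):
        raise ValueError(f"remove internal sites by silent mutation first: {internal}")
    bbsi = ENZYMES["BbsI"][0]
    return bbsi + pad + "A" + cds + "CGGT" + pad + revcomp(bbsi)


if __name__ == "__main__":
    cds = "ATGAAACCCGGGTTTTAA"  # toy CDS, not a real gene
    fragment = add_bbsi_flanks(cds)
    print(fragment)
    print(find_sites(fragment, "BbsI"))  # [(0, '+', 'AATG'), (33, '-', 'CGGT')]
```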

Modular Assembly and Pathway Reconstruction