Overcoming 2025's Biggest Hurdles in Automated DBTL Cycles for Accelerated Drug Discovery

Abstract

This article provides a comprehensive guide for researchers and drug development professionals tackling the core challenges in automating the Design-Build-Test-Learn (DBTL) cycle. It explores the foundational barriers of legacy systems and data silos, details emerging methodologies like AI-driven data automation and agentic AI, offers strategies for optimizing collaboration and ROI, and establishes a framework for robust clinical validation and regulatory compliance. The goal is to equip scientific teams with the knowledge to create faster, more reliable, and scalable automated discovery pipelines.

Navigating the Foundational Hurdles: Legacy Systems, Data Silos, and Workforce Resistance

The Legacy System Integration Problem in Biomedical Research

Technical Support Center

Troubleshooting Guides

Issue 1: System Integration Failures in Automated DBTL Cycles

Problem: Legacy systems fail to communicate with modern biofoundry equipment during Design-Build-Test-Learn (DBTL) automation, causing pipeline interruptions.

Symptoms:

- Inability to transfer DNA sequence designs from legacy systems to automated DNA assembly platforms

- Data format mismatches between older Laboratory Information Management Systems (LIMS) and modern analytical tools

- Failure to execute automated protocols due to communication timeouts

Resolution Steps:

- Implement API Bridges: Deploy healthcare APIs as middleware to enable communication between legacy systems and modern biofoundry equipment. These act as "code-based messengers" to facilitate safe, secure data transfer between applications [1].

- Data Standardization: Convert all data inputs and outputs to CDISC standards (SDTM, ADaM, ODM) before processing through the DBTL cycle to ensure interoperability [2].

- Protocol Validation: Test integration points with pilot projects before full implementation. Start with non-critical workflows to identify compatibility issues [3].

Prevention:

- Conduct regular system audits to identify integration vulnerabilities

- Implement real-time data monitoring tools to detect communication failures early

- Establish data governance frameworks to maintain format consistency [2]

Issue 2: Data Quality Degradation in Research Data Management

Problem: Manual data entry and legacy system limitations introduce errors that compromise research data integrity.

Symptoms:

- Inconsistent data formatting across different research sites

- Missing critical data points in experimental results

- Typographical errors in dosage recordings and measurement units

Resolution Steps:

- Electronic Data Capture (EDC) Implementation: Deploy EDC systems with built-in validation checks to reduce manual entry errors, improving data accuracy by over 30% [2].

- Standardized Procedures: Create uniform SOPs and data dictionaries ensuring all research sites follow identical data entry protocols [2].

- Automated Validation: Implement real-time data monitoring tools that immediately flag inconsistencies for correction [2].
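As a rough illustration of the automated validation step above, the sketch below flags missing fields, unrecognized units, and out-of-range values in a single record. The field names, allowed units, and dose bounds are hypothetical placeholders rather than features of any particular EDC product or data standard.

```python
from typing import Any

# Hypothetical rules; a real study would derive these from its data dictionary.
REQUIRED_FIELDS = ("sample_id", "site", "dose", "dose_unit")
ALLOWED_UNITS = {"mg", "ug", "mL"}
DOSE_RANGE = (0.0, 1000.0)  # assumed plausible bounds, in the recorded unit


def validate_record(record: dict[str, Any]) -> list[str]:
    """Return a list of problems; an empty list means the record passed."""
    problems = []
    for field in REQUIRED_FIELDS:
        if record.get(field) in (None, ""):
            problems.append(f"missing field: {field}")
    unit = record.get("dose_unit")
    if unit not in (None, "") and unit not in ALLOWED_UNITS:
        problems.append(f"unrecognized unit: {unit!r}")
    dose = record.get("dose")
    if dose not in (None, ""):
        try:
            value = float(dose)
        except (TypeError, ValueError):
            problems.append(f"dose is not numeric: {dose!r}")
        else:
            low, high = DOSE_RANGE
            if not low <= value <= high:
                problems.append(f"dose out of range: {value}")
    return problems


good = {"sample_id": "S-001", "site": "A", "dose": "12.5", "dose_unit": "mg"}
bad = {"sample_id": "S-002", "site": "", "dose": "12,5", "dose_unit": "mgs"}
print(validate_record(good))
print(validate_record(bad))
```

Returning a list of problems, instead of raising on the first one, lets a monitoring tool show every issue in a record at once so that it can be corrected in a single pass.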

Prevention:

- Regular training programs for staff to ensure proper protocol understanding

- Establish data quality metrics and monitoring dashboards

- Implement automated data cleaning scripts for common error patterns

Issue 3: Legacy System Security Vulnerabilities

Problem: Outdated operating systems and software in research environments create cybersecurity risks that can compromise sensitive research data.

Symptoms:

- System vulnerabilities to ransomware and cyberattacks

- Inability to implement modern encryption standards

- Compliance violations with data protection regulations

Resolution Steps:

- Security Assessment: Conduct regular security audits to identify vulnerabilities in legacy systems [4].

- Access Controls: Implement stringent access limitations to ensure only authorized personnel can access critical systems [4].

- Encryption Implementation: Apply advanced encryption to sensitive research data both at rest and in transit [5].

- System Patching: Keep all system software updated with the latest security patches, despite compatibility challenges [4].

Prevention:

- Develop comprehensive incident response plans

- Provide ongoing security awareness training

- Consider phased modernization, starting with the most vulnerable systems [4]

Frequently Asked Questions (FAQs)

Q: Why are legacy systems still prevalent in biomedical research environments? A: According to a HIMSS survey, 73% of healthcare providers still use legacy operating systems, primarily due to cost constraints, complexity of migrating research data, and resistance to change from staff accustomed to existing workflows. The financial investment required for system replacement can be prohibitive for research institutions with limited budgets [5].

Q: What are the specific impacts of legacy systems on automated DBTL cycle iteration? A: Legacy systems disrupt DBTL cycles by creating data silos that prevent seamless information flow between the Design, Build, Test, and Learn phases. They slow processes through excessive manual data entry, raise error rates through inefficient documentation, and limit interoperability, which prevents effective data sharing between departments or research facilities. Together, these effects significantly slow iteration speed in synthetic biology research [5] [6].

Q: How can we integrate AI and machine learning capabilities with our existing legacy research systems? A: Successful AI integration requires a strategic approach: (1) Begin with assessment and planning to evaluate existing systems and identify capability gaps; (2) Partner with AI technology providers who specialize in your research domain; (3) Start with pilot projects to test integration in a controlled environment before full-scale implementation. Companies like Pfizer and Roche have successfully leveraged this approach to enhance manufacturing and quality control processes [3].

Q: What are the compliance risks associated with maintaining legacy systems in regulated research environments? A: Legacy systems struggle to keep pace with evolving standards like HIPAA, GDPR, and FDA requirements, potentially resulting in substantial fines and penalties. According to BDO's 2024 Healthcare CFO Outlook Survey, 51% of healthcare CFOs say data breaches are a bigger risk than in previous years, highlighting increasing regulatory concerns [4].

Q: What quantitative improvements can we expect from modernizing our legacy research systems? A: Successful modernization projects have demonstrated significant gains: up to 50% improvement in operational agility, 30% performance enhancement, over 40% increase in bug resolution efficiency, and elimination of security incidents post-update. These metrics translate to faster research cycles and more reliable experimental results [7].

Quantitative Data Analysis

Legacy System Prevalence in Biomedical Research

Table 1: Legacy Operating System Usage in Healthcare and Research Environments

| Operating System | Usage Percentage | Primary Research Impact |
|---|---|---|
| Windows Server 2008 | 35% | Security vulnerabilities, integration limitations |
| Windows 7 | 34% | Compliance risks, unsupported features |
| Legacy Medical Device OS | 25% | Data siloing, interoperability issues |
| Windows XP | 20% | Critical security risks, inability to modernize |
| Windows Server 2003 | 19% | Performance bottlenecks, maintenance costs |

Source: HIMSS Survey Data [5]

Data Management Challenges in Research Settings

Table 2: Data Management Challenges and Prevalence in Biomedical Research

| Challenge Category | Prevalence | Impact on Research Quality |
|---|---|---|
| Data handling problems | 84% of researchers | Delayed research timelines, inaccurate results |
| Manual data entry errors | Up to 40% reduction with EDC | Compromised data integrity, statistical bias |
| Lack of Laboratory Information Management Systems | 86% of labs | Inefficient data tracking, provenance issues |
| Protocol complexity issues | >50% of data issues | Reduced reproducibility, implementation variability |

Source: Academic Biomedical Research Needs Assessment [8]

Experimental Protocols for System Integration

Protocol 1: API Integration Methodology for Legacy Systems

Purpose: To establish secure communication between legacy research systems and modern biofoundry equipment.

Materials:

- Legacy system with database access

- API middleware solution

- Modern biofoundry equipment (liquid handlers, sequencers)

- Authentication and encryption tools

Procedure:

- Collaborative Discovery Phase: Conduct comprehensive analysis of existing legacy system architecture and data flows [1].

- Secure API Design: Develop APIs following healthcare security best practices, including HIPAA-compliant data transmission protocols [1].

- Testing & Iterative Deployment: Implement pilot project to validate integration, starting with non-critical data transfers [1].

- Ongoing Management: Establish monitoring and support protocols for continuous integration performance [1].

Validation: Successful data transfer measured by complete record migration with zero data loss and transaction speeds meeting biofoundry throughput requirements.
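One possible way to check the "zero data loss" part of this validation criterion is to compare a fingerprint of every record on both sides of the bridge. The sketch below assumes records are available as dictionaries with a unique `id` key, which is a hypothetical layout; it does not measure transaction speed, which would need separate timing against the actual equipment.

```python
import hashlib
import json


def fingerprint(record: dict) -> str:
    """Hash a record's canonical JSON form so field order does not matter."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def compare_migration(source: list[dict], target: list[dict], key: str = "id") -> dict:
    """Report records that are missing from, or altered in, the target system."""
    src = {r[key]: fingerprint(r) for r in source}
    dst = {r[key]: fingerprint(r) for r in target}
    missing = sorted(k for k in src if k not in dst)
    altered = sorted(k for k in src if k in dst and src[k] != dst[k])
    return {"missing": missing, "altered": altered, "ok": not missing and not altered}


# Toy records: record 3 is lost and record 2 is changed during transfer.
legacy = [{"id": 1, "seq": "ATGC"}, {"id": 2, "seq": "GGCC"}, {"id": 3, "seq": "TTAA"}]
migrated = [{"seq": "ATGC", "id": 1}, {"id": 2, "seq": "GGCT"}]
print(compare_migration(legacy, migrated))
```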

Protocol 2: Legacy System Modernization for Research Environments

Purpose: To update aging research systems while preserving critical historical data and maintaining research continuity.

Materials:

- Legacy system inventory

- Modern technology stack (Angular 2+, Node.js)

- Data migration tools

- Testing frameworks

Procedure:

- Problem Mapping: Identify specific instability issues, functionality gaps, and security vulnerabilities [7].

- Technology Migration: Transition from obsolete frameworks (AngularJS) to modern equivalents (Angular 2+) [7].

- Functionality Enhancement: Incorporate new features addressing contemporary research challenges while maintaining existing workflows [7].

- Comprehensive Testing: Conduct unit tests, end-to-end validation, and user acceptance testing before full deployment [7].

Validation: System performance metrics showing at least 30% improvement in processing speed and 40% reduction in critical errors.

Workflow Visualization

Current Problem State: Legacy System Disruption in DBTL Cycles

Solution State: Integrated Research Environment

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Materials and Functions for Legacy System Integration

| Tool/Technology | Function | Application Context |
|---|---|---|
| Healthcare APIs | Enable communication between disparate systems | Legacy-to-modern system integration [1] |
| Electronic Data Capture (EDC) Systems | Digitize data collection with validation checks | Research data management modernization [2] |
| Angular 2+ Framework | Modern web application development | Legacy user interface modernization [7] |
| Node.js | Server-side JavaScript runtime | Backend system enhancement [7] |
| CDISC Standards (SDTM, ADaM) | Standardized data models for clinical research | Data format interoperability [2] |
| Cloud Data Repository | Scalable, secure data storage | Centralized research data management [2] |
| Machine Learning Algorithms | Predictive analysis and pattern recognition | Enhanced Learn phase in DBTL cycles [9] |
| Cell-Free Expression Systems | Rapid protein synthesis without cloning | Accelerated Build-Test phases [9] |

Confronting Data Fragmentation and Siloed Workflows

Troubleshooting Guide

This guide addresses common technical issues that disrupt the automated Design-Build-Test-Learn (DBTL) cycle, helping researchers maintain efficient and iterative bioengineering workflows.

Q: Our automated DBTL pipeline is failing due to inconsistent data formats between the "Build" and "Test" phases. How can we resolve this?

- A: Data format mismatches often arise from siloed instrumentation software. Implement a unified data schema and use integration platforms to create seamless data flow.

- Protocol: Establish a standardized data schema (e.g., using JSON or XML) for all experimental data. Utilize integration platforms or custom scripts to create real-time data pipelines that automatically convert instrument outputs into this common schema before they enter the central database. This ensures that the "Test" phase can reliably consume data from the "Build" phase.
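A minimal sketch of the conversion step described in this protocol might look like the following. The `Measurement` schema, the two input formats, and all field names are invented for illustration; real instrument exports will differ and each would need its own converter.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class Measurement:
    """Hypothetical common schema shared by the Build and Test phases."""
    construct_id: str
    well: str
    quantity: str
    value: float
    unit: str


def from_assembler_row(row: dict) -> Measurement:
    # Assumed Build-side export: {"Construct": ..., "Well": ..., "Conc_ng_ul": ...}
    return Measurement(row["Construct"], row["Well"].upper(),
                       "dna_concentration", float(row["Conc_ng_ul"]), "ng/uL")


def from_plate_reader_line(line: str) -> Measurement:
    # Assumed Test-side export: "construct;well;OD600" as semicolon-separated text
    construct, well, od600 = line.strip().split(";")
    return Measurement(construct, well.upper(), "od600", float(od600), "AU")


records = [
    from_assembler_row({"Construct": "pX-001", "Well": "a1", "Conc_ng_ul": "48.2"}),
    from_plate_reader_line("pX-001;a1;0.73\n"),
]
# Serialize every record into the one JSON shape the central database expects.
payload = json.dumps([asdict(r) for r in records], indent=2)
print(payload)
```

Keeping one small converter per instrument means a new device only requires a new function, while everything downstream continues to read the same schema.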

Q: Our machine learning models for the "Learn" phase are underperforming due to poor-quality, fragmented training data. What can we do?

- A: This indicates a need for robust data governance and consolidation. Incomplete or siloed data severely limits the predictive power of ML models [10].

- Protocol: Create a centralized data repository, such as a data lake, to aggregate structured and unstructured data from all DBTL cycles [11]. Enforce data governance policies that define roles, responsibilities, and quality checks (e.g., required metadata fields, allowable value ranges) for all data entries. This provides the clean, comprehensive datasets needed for effective model training.

Q: How can we prevent workflow silos when different teams use specialized tools for "Design" (CAD software) and "Build" (automation scripts)?

- A: Strategic integration is key to breaking down barriers between departments and disconnected software systems [12].

- Protocol: Adopt tools with native integration capabilities or use APIs to connect them. For example, tools like AssemblyTron integrate DNA assembly design outputs directly with liquid handling robots for automated execution [6]. Foster a culture of cross-functional collaboration by aligning team goals and KPIs to encourage data and resource sharing.

Q: Our automated workflows are brittle and break whenever a software tool updates its API. How can we create more resilient systems?

- A: This fragility stems from over-reliance on point-to-point integrations. Moving to a more modular architecture can mitigate this risk.

- Protocol: Instead of hardcoding connections, use a middleware layer or an orchestration framework (e.g., Apache Airflow, Nextflow) to manage tasks. Containerize software components (e.g., using Docker) to ensure consistent execution environments. This abstraction makes the workflow more robust to changes in individual tools.
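The abstraction idea behind this advice can be illustrated without any orchestration framework: the workflow depends on a small internal interface, and each vendor API version is isolated in its own adapter. This is a sketch only; the two "vendor" clients below are hypothetical stand-ins that return strings instead of driving real hardware, and it does not use Apache Airflow, Nextflow, or Docker.

```python
from typing import Protocol


class LiquidHandler(Protocol):
    """Internal interface the workflow depends on; vendors are hidden behind it."""
    def transfer(self, source: str, dest: str, volume_ul: float) -> str: ...


class VendorV1Adapter:
    """Wraps a hypothetical v1 client whose call takes volumes in microliters."""
    def transfer(self, source: str, dest: str, volume_ul: float) -> str:
        return f"v1.move(src={source}, dst={dest}, ul={volume_ul})"


class VendorV2Adapter:
    """Wraps a hypothetical v2 client that renamed the call and uses nanoliters."""
    def transfer(self, source: str, dest: str, volume_ul: float) -> str:
        return f"v2.dispense(from={source}, to={dest}, nl={round(volume_ul * 1000)})"


def run_assembly(handler: LiquidHandler, plan: list[tuple[str, str, float]]) -> list[str]:
    """Workflow logic; it stays the same whichever adapter is plugged in."""
    return [handler.transfer(src, dst, vol) for src, dst, vol in plan]


plan = [("A1", "B1", 2.5), ("A2", "B1", 5.0)]
print(run_assembly(VendorV1Adapter(), plan))
print(run_assembly(VendorV2Adapter(), plan))
```

When a tool changes its API, only the matching adapter needs to be rewritten; `run_assembly` and the plan format are untouched.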

Frequently Asked Questions (FAQs)

Q: What are the most critical initial steps to reduce data fragmentation in a new biofoundry?

Q: We are considering a "Learn-Design-Build-Test" (LDBT) approach. What is its main advantage?

- A: The LDBT cycle begins with a machine learning-driven Learn phase that analyzes existing data to predict optimal designs before moving to the lab [13]. This "learn-first" ethos can dramatically accelerate development by reducing costly trial-and-error in the Build-Test phases, converging on high-performance constructs with fewer iterations [13].

Q: How can we evaluate if a new software tool will exacerbate our siloed workflows?

- A: During vendor evaluation, ask:

- Does it offer native integrations with our core systems (e.g., LIMS, data warehouses)?

- Does it support open standards and APIs for easy data exchange?

- Is it designed to scale with our organization and regularly update its integration capabilities? [12]

A "no" to these questions suggests the tool may create a new silo.

Q: What are the quantifiable business impacts of data silos we can use to justify consolidation projects?

- A: Data silos have measurable negative impacts, including poor data quality that can cost organizations an average of $12.9 million annually [12]. Furthermore, data fragmentation can cost industries like healthcare tens to hundreds of billions of dollars per year through inefficiencies and errors [11].

Experimental Protocols for Key Cited Studies

1. Protocol for a Machine Learning-Driven LDBT Cycle [13]

- Objective: To accelerate genetic construct optimization by reordering the cycle to begin with a machine learning-based "Learn" phase.

- Methodology:

- Learn: Train machine learning models (e.g., neural networks) on historical DBTL data. Features include promoter sequences, RBS strengths, and codon usage. The model predicts expression levels for new designs.

- Design: Use the model's predictions to generate, in silico, a focused set of high-potential genetic construct sequences.

- Build: Synthesize the top-predicted sequences using high-throughput methods, such as automated DNA assembly.

- Test: Rapidly characterize the constructs using a cell-free transcription-translation (TX-TL) system, obtaining protein expression data in hours.

- Iterate: Feed the new experimental data back into the ML model to refine its predictions and initiate the next LDBT cycle.
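The control flow of the steps above can be sketched as a short loop. To stay self-contained, this toy version swaps the neural network for a simple per-part average and replaces the cell-free assay with a simulated function built on invented numbers, so it shows only the order of operations, not the cited study's models or data.

```python
from itertools import product
from statistics import mean

PROMOTERS = ["pA", "pB", "pC"]
RBSS = ["r1", "r2", "r3"]
# Invented ground truth, used only to simulate the Build and Test phases.
_TRUE = {"pA": 1.0, "pB": 3.0, "pC": 2.0, "r1": 0.5, "r2": 2.5, "r3": 1.5}


def assay(construct):
    """Stand-in for building a construct and measuring its expression."""
    promoter, rbs = construct
    return _TRUE[promoter] * _TRUE[rbs]


def learn(data):
    """Learn: score each part by the mean expression of constructs containing it."""
    overall = mean(data.values())
    scores = {}
    for part in PROMOTERS + RBSS:
        seen = [y for construct, y in data.items() if part in construct]
        scores[part] = mean(seen) if seen else overall  # unseen parts get the overall mean
    return scores


def propose(scores, data, batch=2):
    """Design: rank untested constructs by the sum of their part scores."""
    untested = [c for c in product(PROMOTERS, RBSS) if c not in data]
    return sorted(untested, key=lambda c: scores[c[0]] + scores[c[1]], reverse=True)[:batch]


# Historical data seeds the first Learn phase.
data = {c: assay(c) for c in [("pA", "r1"), ("pB", "r3"), ("pC", "r2")]}
for cycle in range(2):
    scores = learn(data)                          # Learn
    batch = propose(scores, data)                 # Design
    data.update({c: assay(c) for c in batch})     # Build + Test (simulated)
    print(cycle, batch, max(data.values()))       # Iterate with the enlarged dataset
```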

2. Protocol for a High-Throughput DBTL Pressure Test [6]

- Objective: To rapidly research, design, and develop microbial strains for the production of 10 target molecules within 90 days.

- Methodology:

- Design: Utilize a suite of bioinformatics tools (e.g., RetroPath 2.0 for retrosynthesis) to design pathways for diverse molecules, from simple chemicals to complex natural products with unknown pathways.

- Build: Employ automated strain construction across multiple host species (e.g., E. coli, S. cerevisiae). The referenced team built 215 strains and synthesized 1.2 Mb of DNA.

- Test: Develop and run high-throughput, molecule-specific assays (e.g., in-house developed assays for the target molecules) to screen for production. The team performed 690 assays.

- Learn: Analyze production data to identify successful strains and pathways, iterating on designs for molecules not initially successful.

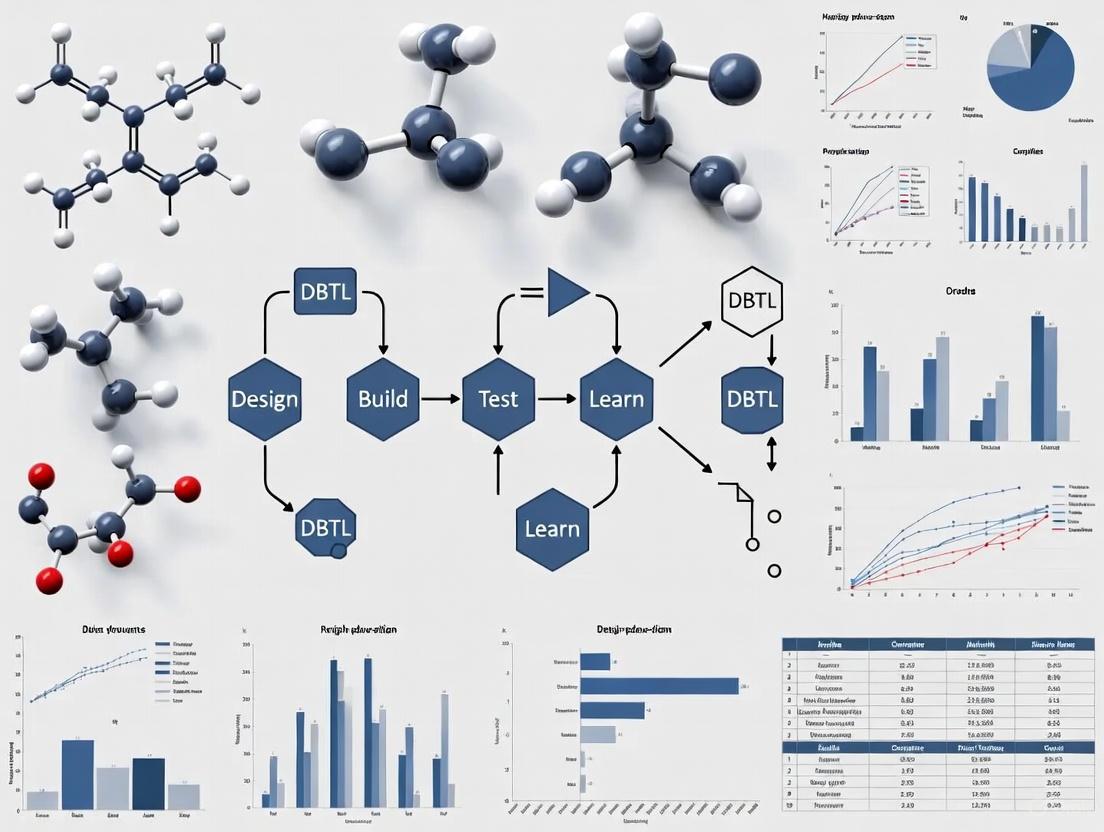

Workflow Visualization

The following diagrams illustrate the core workflows discussed in this guide.

Traditional DBTL Cycle Workflow

Machine Learning-Driven LDBT Cycle

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential materials and tools for implementing advanced, automated DBTL cycles.

| Item | Function in DBTL Cycle |
|---|---|
| Cell-Free Transcription-Translation (TX-TL) Systems | Enables rapid, high-throughput testing of genetic circuits without the complexities of living cells, drastically speeding up the "Test" phase [13]. |
| j5 DNA Assembly Design Software | An open-source tool for automating the design of DNA assembly strategies, standardizing and accelerating the "Design" phase [6]. |
| AssemblyTron | An open-source Python package that integrates j5 design outputs with Opentrons liquid handling robots, bridging the "Design" and automated "Build" phases [6]. |
| SynBiopython | An open-source software library developed by the Global Biofoundry Alliance to standardize DNA design and assembly efforts across different platforms and labs [6]. |
| Cameo & RetroPath 2.0 | Computational tools used for in silico design of metabolic engineering strategies and retrosynthesis, supporting the data-driven "Learn" and "Design" phases [6]. |

Workforce Resistance and the Skills Gap in Automated Environments

Troubleshooting Guide: Common Issues in Automated DBTL Environments

FAQ 1: Our team is experiencing a high rate of failed strain constructions in the automated "Build" phase. How can we troubleshoot this?

Answer: A high failure rate in automated strain construction often indicates a process optimization issue rather than a fundamental scientific problem. Follow this systematic approach:

- Confirm Process Calibration: First, verify that all liquid handling robots and synthesis instruments are correctly calibrated. Run standard control sequences to confirm pipetting accuracy and volume delivery.

- Review DNA Library Quality: Check the quality and concentration of your input DNA parts library. Degraded or impure DNA is a common cause of assembly failure in high-throughput workflows [14]. Implement stringent quality control checks before assembly.

- Simplify and Test a Subset: Reduce complexity by testing a smaller subset of constructs with known performance. If these fail, the issue is likely with your current assembly reaction conditions or instrumentation.

- Investigate Cross-Contamination: In highly automated, parallel workflows, cross-contamination between samples can occur. Use negative controls to identify if contamination is skewing your results.

FAQ 2: Our researchers are resistant to adopting new AI and machine learning tools for the "Learn" phase. How can we address this?

Answer: Resistance to AI/ML tools often stems from a skills gap and lack of trust in algorithmic outputs. Address this with a phased approach:

- Start with Assisted, Not Autonomous, Tools: Begin by implementing AI tools that assist with data analysis and hypothesis generation rather than replacing human decision-making. This allows researchers to build confidence gradually [15].

- Provide Context-Specific Training: Offer hands-on workshops and seminars focused on applying AI/ML to specific biological problems, such as predicting metabolic flux or optimizing pathway designs, rather than abstract programming concepts [16].

- Demonstrate Quick Wins: Use a pilot project to demonstrate how ML can successfully recommend new strain designs for the next DBTL cycle, leading to a measurable improvement in product titer or yield [15].

- Establish Cross-Functional Partnerships: Pair biologists with data scientists. This fosters mutual understanding—biologists gain AI literacy, while data scientists deepen their domain knowledge, leading to more effective tool development [17].

FAQ 3: The data generated from our automated "Test" phase is inconsistent and difficult to interpret. What should we check?

Answer: Inconsistent high-throughput screening data can derail the entire DBTL cycle. Focus on these areas:

- Standardize Cultivation Conditions: In microbial strain engineering, even minor fluctuations in microtiter plate cultivation conditions (temperature, humidity, oxygen transfer) can cause significant variance. Ensure your automated incubators and bioreactors are properly maintained and monitored [15].

- Validate Analytical Assays: Re-calibrate all in-line sensors and analytical instruments (e.g., plate readers, HPLC). Use a standardized control strain in every experimental run to normalize and validate the data.

- Review Data Pipeline Integrity: Automating the data flow from instruments to analysis platforms is critical. Check for errors in data formatting, transfer, or parsing that could introduce noise or corrupt the dataset.

- Correlate with Low-Throughput Validation: Periodically validate your high-throughput results with low-throughput, gold-standard methods (e.g., flask studies followed by GC-MS) to ensure your automated platform is generating physiologically relevant data.
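The control-strain normalization mentioned in the list above can be sketched as follows. The record layout (`run`, `strain`, `signal`) and the control label `CTRL` are assumptions made for the example, and the readings are toy values.

```python
from statistics import mean


def normalize_to_control(readings, control_id="CTRL"):
    """Express each strain's signal as a fold change over the control wells of the same run."""
    by_run = {}
    for r in readings:
        by_run.setdefault(r["run"], []).append(r)
    normalized = []
    for run, rows in by_run.items():
        controls = [r["signal"] for r in rows if r["strain"] == control_id]
        if not controls:
            # A run without controls cannot be validated, so fail loudly.
            raise ValueError(f"run {run!r} has no control wells")
        baseline = mean(controls)
        for r in rows:
            if r["strain"] != control_id:
                normalized.append({**r, "fold_change": r["signal"] / baseline})
    return normalized


readings = [
    {"run": "plate1", "strain": "CTRL", "signal": 100.0},
    {"run": "plate1", "strain": "CTRL", "signal": 120.0},
    {"run": "plate1", "strain": "S1", "signal": 220.0},
    {"run": "plate2", "strain": "CTRL", "signal": 50.0},
    {"run": "plate2", "strain": "S1", "signal": 100.0},
]
for row in normalize_to_control(readings):
    print(row["run"], row["strain"], round(row["fold_change"], 2))
```

In this toy data the raw signal for strain S1 differs more than twofold between plates, yet the fold change over the control is the same on both, which is the kind of run-to-run drift that normalization is meant to absorb.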

FAQ 4: We are struggling to find and retain staff with the right mix of biology and engineering skills. What long-term strategies can we employ?

Answer: Bridging the interdisciplinary talent gap requires a strategic combination of hiring, development, and new organizational models.

- Prioritize Upskilling Existing Staff: Invest in reskilling your current biology workforce. Hands-on training in data science, automation programming, and the use of AI-powered tools is essential to close the internal skills gap [16] [17].

- Adopt a Hybrid Hiring Model: Recognize that finding "unicorn" candidates with deep expertise in both biology and engineering is difficult. Instead, build interdisciplinary teams composed of biologists who are tech-literate and engineers who understand biological principles [16].

- Leverage Strategic Outsourcing: For highly specialized tasks, consider a hybrid outsourcing model. Partner with functional service providers (FSPs) or CROs to access specific AI and data science expertise without the long lead time and cost of a full-time hire [16] [17].

- Create Clear Career Paths: Define and communicate career trajectories for hybrid roles. 29% of frontline workers state that clearly defined career paths are crucial for them to thrive and remain in their roles [16].

Skills Gap and Workforce Data Analysis

The following table summarizes key quantitative findings on the current skills gap and workforce challenges in automated life sciences environments.

| Metric | Finding/Situation | Implication for DBTL Cycles |
|---|---|---|
| AI Skills Priority [17] | AI expertise is a top-3 hiring priority for 85% of large pharma leaders. | DBTL "Learn" phases are increasingly dependent on AI, creating a competitive talent market. |
| Workforce Preparedness [16] | 51% of biopharmaceutical leaders identify a critical need to hire AI experts in the next 3-5 years. | A significant shortage of in-house skills is hindering the optimization of iterative DBTL cycles. |
| New Roles Emergence [17] | 57.8% of required roles in life sciences are new, driven by AI and automation. | Traditional biologist roles are insufficient; teams need data scientists and automation specialists. |
| Hiring Timelines [17] | Filling an AI/ML Specialist role takes 4-6 months on average. | Project delays are likely if the talent strategy relies solely on external hiring. |
| Training Preference [16] | Most industry leaders believe in-person, hands-on training is more effective than online courses for upskilling. | Effective reskilling for automated DBTL requires practical, experiential learning. |

Experimental Protocol: Benchmarking a Machine Learning-Powered DBTL Cycle

This protocol provides a methodology for evaluating a new machine learning tool's performance in guiding iterative strain engineering, a common challenge where skills gaps often emerge.

Objective: To systematically compare the effectiveness of a new ML-based recommendation engine against a traditional, researcher-driven approach for combinatorial pathway optimization over three DBTL cycles.

Materials:

- Strain Library: A defined library of DNA parts (promoters, RBSs, CDSs) for a pathway of interest.

- Automated Strain Construction System: e.g., a benchtop DNA printer or automated molecular biology platform [18].

- High-Throughput Screening System: Microtiter plates, automated incubators, and plate readers for measuring strain performance (e.g., product titer).

- ML Recommendation Software: The ML tool to be evaluated (e.g., based on gradient boosting or random forest models as used in simulated studies [15]).

Procedure:

- Initial Design (Cycle 0): Create an initial training set by constructing and screening a diverse set of 50 strains from the library. Measure the product titer for each strain.

- Cycle 1 - Control Arm: Provide the dataset to an experienced research team. Ask them to use their expertise to select the next 50 strains to build and test.

- Cycle 1 - Experimental Arm: Provide the same dataset to the ML recommendation engine. Use its top 50 predictions to select strains for construction and testing.

- Subsequent Cycles (2 & 3): Repeat the "Build" and "Test" phases for both arms. For each new cycle, provide all accumulated data to both the research team and the ML tool to inform their next set of design choices.

- Analysis: After three complete cycles, compare the performance of the best-producing strain from each arm and the overall performance trajectory (e.g., the average titer per cycle). Evaluate the resource efficiency of each approach.
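The analysis step could be organized along the lines of the sketch below, which computes the best titer, the per-cycle mean, and the number of strains built for each arm. The titers are invented placeholders (three strains per cycle instead of 50) that only demonstrate the bookkeeping; they are not results from any study.

```python
from statistics import mean


def summarize_arm(titers_by_cycle):
    """titers_by_cycle maps cycle number -> list of measured titers for that cycle."""
    trajectory = {c: mean(t) for c, t in sorted(titers_by_cycle.items())}
    all_titers = [t for titers in titers_by_cycle.values() for t in titers]
    return {
        "best_titer": max(all_titers),       # best-producing strain in this arm
        "mean_by_cycle": trajectory,         # performance trajectory across cycles
        "strains_built": len(all_titers),    # crude proxy for resource use
    }


# Invented placeholder titers (mg/L) for illustration only.
control_arm = {1: [10.0, 12.0, 14.0], 2: [13.0, 15.0, 17.0], 3: [16.0, 18.0, 20.0]}
ml_arm = {1: [9.0, 12.0, 18.0], 2: [15.0, 19.0, 23.0], 3: [20.0, 25.0, 30.0]}
for name, arm in (("control", control_arm), ("ml", ml_arm)):
    print(name, summarize_arm(arm))
```

A real comparison would also need replicate measurements and a statistical test before concluding that one arm outperforms the other.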

The Scientist's Toolkit: Key Reagents & Materials for Automated DBTL

| Item | Function in Automated DBTL |
|---|---|
| Benchtop DNA Printer/Synthesizer | Enables in-lab, on-demand production of DNA constructs, accelerating the "Build" phase and maintaining confidentiality of proprietary sequences [18]. |
| Standardized DNA Part Library | A collection of characterized biological components (promoters, genes, terminators) that are functionally modular and can be reliably assembled in different combinations [14]. |
| Automated Liquid Handling Robot | Performs repetitive pipetting tasks (e.g., PCR setup, transformation) with high precision and speed, enabling high-throughput "Build" and "Test" phases [14]. |
| Machine Learning Recommendation Tool | Software that analyzes experimental data from the "Test" phase to predict and recommend the most promising strain designs for the next "Design" cycle, optimizing the "Learn" phase [15]. |
| Microtiter Plate Bioreactors | Small-scale cultivation vessels that allow for parallel fermentation of hundreds of microbial strains under controlled conditions, facilitating high-throughput phenotyping during the "Test" phase [15]. |

Workflow Diagram: Integrating Human Expertise & Automation

The diagram below visualizes the optimized DBTL cycle, highlighting points of human-machine collaboration to overcome workforce resistance and skills gaps.

Identifying and Prioritizing DBTL Processes for Automation

Core DBTL Concept and Automation Challenges

The Design-Build-Test-Learn (DBTL) cycle is an iterative framework central to modern scientific fields like synthetic biology and metabolic engineering [19]. It involves four key phases: Design (planning genetic constructs or pathways), Build (physical assembly), Test (experimental validation), and Learn (data analysis to inform the next cycle) [20] [21].

Automating this cycle is crucial for overcoming the "involution" state, where numerous iterative cycles generate vast amounts of information without leading to performance breakthroughs [22]. However, researchers face significant challenges during automation, including data integration from disparate sources, selecting appropriate machine learning models, and managing the high computational and equipment costs associated with high-throughput platforms [22] [19] [21].

Troubleshooting Common DBTL Automation Issues

Design Phase Roadblocks

Problem: How can I efficiently explore a vast combinatorial design space without exhaustive testing? The number of possible genetic designs (e.g., promoter-gene combinations) can be enormous. Manually selecting designs to build and test is inefficient.

Solution: Employ statistical design of experiments (DoE) to create a reduced, representative library of constructs [21]. For instance, in a pinocembrin production pathway, a combinatorial design of 2592 possible configurations was reduced to 16 representative constructs using DoE, achieving a 500-fold improvement in production titer after two DBTL cycles [21].
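To make the idea of a reduced, representative library concrete, the sketch below shrinks a small full factorial (3 factors with 4 levels each, 64 combinations) to 16 runs using a Latin-square construction, in which every pair of levels for any two factors appears exactly once. The factors and levels are hypothetical, and this is not the design used in the cited pinocembrin study, whose 2592-configuration space is not reproduced here.

```python
from collections import Counter
from itertools import combinations, product

# Hypothetical factors with four levels each; not the factors of the cited pathway.
FACTORS = {
    "promoter_gene1": ["P1", "P2", "P3", "P4"],
    "promoter_gene2": ["P1", "P2", "P3", "P4"],
    "vector": ["V1", "V2", "V3", "V4"],
}
names = list(FACTORS)

full_factorial = list(product(*FACTORS.values()))

# Latin-square construction: the third factor's level index is (i + j) mod 4.
reduced = [
    (FACTORS[names[0]][i], FACTORS[names[1]][j], FACTORS[names[2]][(i + j) % 4])
    for i in range(4) for j in range(4)
]


def is_pairwise_balanced(design, levels=4):
    """True if, for every pair of factors, all level pairs occur equally often."""
    n_factors = len(design[0])
    for a, b in combinations(range(n_factors), 2):
        counts = Counter((run[a], run[b]) for run in design)
        if len(counts) != levels ** 2 or len(set(counts.values())) != 1:
            return False
    return True


print(len(full_factorial), "->", len(reduced), "constructs")
print("pairwise balanced:", is_pairwise_balanced(reduced))
```

Because the subset is balanced, the average effect of each factor level can still be estimated from 16 constructs, at the cost of not resolving higher-order interactions.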

Problem: My predictive models for biological systems are inaccurate. Traditional mechanistic models often struggle with the complexity and non-linearity of biological systems.

Solution: Integrate Machine Learning (ML) with mechanistic models. ML can capture complex, non-linear relationships from experimental data that are difficult to model explicitly [22] [19]. Use tools like RetroPath for automated pathway selection and Selenzyme for enzyme selection to enhance the design phase [21].

Build & Test Phase Hurdles

Problem: Manual genetic assembly is slow, error-prone, and doesn't scale. Traditional cloning methods are a major bottleneck for high-throughput DBTL cycles.

Solution: Automate DNA assembly using liquid-handling robots and standardized protocols. The Opentrons OT-2 robot is a cost-effective platform for automated synthesis of genetic constructs [20]. Utilize standardized assembly methods like the Ligase Cycling Reaction (LCR), for which automated worklists can be generated [21].

Problem: Low-throughput testing creates a data bottleneck. Testing a few constructs at a time severely limits the learning rate of each DBTL cycle.

Solution: Implement high-throughput testing platforms. For colorimetric gas sensors, a platform capable of testing 384 sensing units simultaneously was developed [20]. For metabolic engineering, use 96-deepwell plates and automated, quantitative screening methods like fast UPLC-MS/MS for analyzing target products and key intermediates [21].

Learn Phase Obstacles

Problem: I cannot identify the key factors affecting system performance from my data. With multiple variables (e.g., promoter strength, gene order), it's difficult to determine which factors are most influential.

Solution: Apply statistical analysis and machine learning to test results. In the pinocembrin case, statistical analysis of the first DBTL cycle identified that vector copy number and the promoter strength of the CHI gene had the strongest significant effects on production, directly guiding the redesign for the second cycle [21].

Problem: Data from different cycles and experiments is siloed and unusable. Without a structured database, historical data cannot be leveraged for future ML.

Solution: Build a centralized, structured database for all DBTL data. Use repositories like JBEI-ICE to track all DNA parts and plasmid assemblies with unique IDs [21]. Develop a structured biomanufacturing database from scientific literature to facilitate knowledge mining and feature engineering for ML [22].

Frequently Asked Questions (FAQs)

Q1: What is the single most important factor for a successful automated DBTL pipeline? A seamless data flow is critical. The pipeline must be designed so that data and learnings from one phase automatically inform the next. This requires integrated software tools, automated data tracking, and a centralized data repository [19] [21].

Q2: My automated platform is producing more data than we can analyze. What should we do? Focus on automating the "Learn" phase. Implement custom data processing scripts (e.g., in R or Python) for automated data extraction and analysis. Use ML not just for design but also to automatically identify patterns and relationships in the test data [21].

Q3: How do we prioritize which process in our lab to automate first? Adopt a value-driven or bottleneck prioritization method [23].

- Value-Driven: Automate the process that, if improved, would deliver the highest financial or scientific return (e.g., the rate-limiting step in your research).

- Bottleneck: Target the process that is clearly impeding overall workflow efficiency, such as a manual cloning step that slows down all projects. Use process flow models to identify where the biggest delays occur [23].

Q4: Are there cost-effective options for automating the "Build" phase? Yes. The Opentrons OT-2 is a relatively low-cost, open-source liquid-handling robot. It has been used for the automated synthesis of materials such as colorimetric sensor formulations, which makes automation more accessible [20].

Q5: How can we manage the intellectual property (IP) of AI-generated designs or data from automated systems? This is a recognized challenge. It's crucial to establish robust data-sharing mechanisms and comprehensive IP protections for algorithms and generated designs early in the process. The field is still adapting to these new challenges [24].

Experimental Protocol: An Automated DBTL Pipeline for Metabolic Engineering

This protocol is adapted from an automated DBTL pipeline for optimizing microbial production of fine chemicals [21].

Objective

To iteratively optimize a biosynthetic pathway in E. coli for enhanced production of a target compound (e.g., the flavonoid pinocembrin) using a fully integrated and automated DBTL cycle.

Methodology

Design Stage:

- Pathway & Enzyme Selection: Use the computational tools RetroPath [24] and Selenzyme to select candidate enzymes for the target pathway.

- Combinatorial Library Design: Design a library of genetic constructs varying key parameters such as:

- Vector backbone (copy number: High/ColE1, Medium/p15a, Low/pSC101).

- Promoter strength (Strong/Ptrc, Weak/PlacUV5, None) for each gene.

- Gene order (permutations of the pathway genes).

- Library Reduction: Apply Design of Experiments (DoE) based on orthogonal arrays to reduce the combinatorial library to a tractable number of representative constructs (e.g., from 2592 to 16).
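The library-reduction step can be illustrated with a small orthogonal array. The sketch below is not the design used in [21]: it assumes four hypothetical three-level factors (the names in `FACTORS` are placeholders) and uses the standard L9(3^4) array, which covers an 81-design full factorial with 9 runs while keeping every pair of factors balanced.

```python
from itertools import combinations, product

# Hypothetical three-level factors; placeholders, not the factor set from [21].
FACTORS = {
    "copy_number": ["high", "medium", "low"],
    "promoter_gene_A": ["strong", "weak", "none"],
    "promoter_gene_B": ["strong", "weak", "none"],
    "promoter_gene_C": ["strong", "weak", "none"],
}


def l9_orthogonal_array():
    """Return the 9-run, 4-column, 3-level orthogonal array L9(3^4)."""
    return [(a, b, (a + b) % 3, (a + 2 * b) % 3)
            for a, b in product(range(3), repeat=2)]


def is_orthogonal(array, levels=3):
    """True if every pair of columns contains each level pair equally often."""
    expected = len(array) // (levels * levels)
    for i, j in combinations(range(len(array[0])), 2):
        counts = {}
        for row in array:
            counts[(row[i], row[j])] = counts.get((row[i], row[j]), 0) + 1
        if len(counts) != levels * levels or set(counts.values()) != {expected}:
            return False
    return True


def reduced_library(factors):
    """Map each array row onto named factor levels."""
    names = list(factors)
    return [{name: factors[name][level] for name, level in zip(names, row)}
            for row in l9_orthogonal_array()]


library = reduced_library(FACTORS)
full_size = 3 ** len(FACTORS)
print(f"{len(library)} of {full_size} possible designs selected")
for design in library:
    print(design)
```

Because each factor level appears equally often against every level of every other factor, main effects can still be estimated from the reduced set. Interactions between factors are confounded in such a small array.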

Build Stage:

- DNA Synthesis: Source genes via commercial DNA synthesis.

- Automated Assembly:

- Use PartsGenie software to design reusable DNA parts with optimized ribosome-binding sites.

- Generate robotic worklists for the Ligase Cycling Reaction (LCR) assembly.

- Execute the assembly on a liquid-handling robotics platform (e.g., Opentrons OT-2).

- Quality Control (QC): Perform automated plasmid purification, restriction digest analysis via capillary electrophoresis, and sequence verification.
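As a rough illustration of worklist generation in the Build stage, the sketch below turns a list of constructs into a generic transfer table. The column names, part names, and the 2 µL volume are assumptions made for this example. The output is not the input format of any particular robot or of the pipeline in [21].

```python
import csv
import io

# Hypothetical column layout; real platforms define their own worklist formats.
FIELDS = ["construct", "part", "source_well", "dest_well", "volume_ul"]


def plate_wells(rows="ABCDEFGH", n_cols=12):
    """Return 96-well plate positions in row-major order: A1, A2, ..., H12."""
    return [f"{r}{c}" for r in rows for c in range(1, n_cols + 1)]


def build_worklist(constructs, part_locations, volume_ul=2.0):
    """Create one transfer per (construct, part), one destination well per construct."""
    wells = plate_wells()
    if len(constructs) > len(wells):
        raise ValueError("more constructs than destination wells")
    worklist = []
    for dest, (construct_id, parts) in zip(wells, constructs.items()):
        for part in parts:
            worklist.append({
                "construct": construct_id,
                "part": part,
                "source_well": part_locations[part],  # KeyError flags an unknown part
                "dest_well": dest,
                "volume_ul": volume_ul,
            })
    return worklist


def to_csv(worklist):
    """Serialise the worklist as CSV text."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS, lineterminator="\n")
    writer.writeheader()
    writer.writerows(worklist)
    return buffer.getvalue()


# Hypothetical parts and constructs for illustration only.
part_locations = {"backbone_high": "A1", "promoter_strong": "B1",
                  "gene_1": "C1", "gene_2": "C2"}
constructs = {
    "construct_01": ["backbone_high", "promoter_strong", "gene_1", "gene_2"],
    "construct_02": ["backbone_high", "promoter_strong", "gene_2", "gene_1"],
}
worklist = build_worklist(constructs, part_locations)
csv_text = to_csv(worklist)
print(csv_text)
```

Generating the table from the design records, rather than typing it by hand, keeps the Build stage traceable back to the construct IDs chosen in the Design stage.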

Test Stage:

- Culture & Induction: Transform constructs into the production chassis (E. coli). Use a robotic platform to inoculate cultures in 96-deepwell plates and execute growth/induction protocols.

- Product Analysis:

- Perform automated metabolite extraction.

- Quantify target product and key intermediates using fast UPLC-MS/MS.

Learn Stage:

- Data Processing: Use custom R scripts for automated data extraction and processing.

- Statistical Analysis & Machine Learning: Apply statistical methods (e.g., Analysis of Variance) to identify the main design factors (e.g., promoter strength, gene order) that significantly influence production titers.

- Redesign: Use the statistical insights to define new, more constrained design rules for the next DBTL cycle.
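A simple way to see which design factors matter is a main-effects screen: average the response at each level of each factor and compare the spread. The sketch below uses invented titers for three hypothetical factors. It is a descriptive first pass, not the Analysis of Variance from [21], and it reports no significance tests.

```python
from statistics import mean

# Hypothetical titers (mg/L) for a 2x2x2 design; invented for illustration.
results = [
    ({"copy_number": "high", "chi_promoter": "strong", "gene_order": "order_1"}, 10.0),
    ({"copy_number": "high", "chi_promoter": "strong", "gene_order": "order_2"}, 10.5),
    ({"copy_number": "high", "chi_promoter": "weak", "gene_order": "order_1"}, 6.0),
    ({"copy_number": "high", "chi_promoter": "weak", "gene_order": "order_2"}, 6.5),
    ({"copy_number": "low", "chi_promoter": "strong", "gene_order": "order_1"}, 4.0),
    ({"copy_number": "low", "chi_promoter": "strong", "gene_order": "order_2"}, 4.5),
    ({"copy_number": "low", "chi_promoter": "weak", "gene_order": "order_1"}, 2.0),
    ({"copy_number": "low", "chi_promoter": "weak", "gene_order": "order_2"}, 2.5),
]


def main_effects(results):
    """Mean titer per level of each factor, plus the range across levels."""
    effects = {}
    for factor in results[0][0]:
        by_level = {}
        for design, titer in results:
            by_level.setdefault(design[factor], []).append(titer)
        level_means = {level: mean(values) for level, values in by_level.items()}
        effects[factor] = {
            "level_means": level_means,
            "range": max(level_means.values()) - min(level_means.values()),
        }
    return effects


effects = main_effects(results)
# Rank factors by how much the mean titer moves between their levels.
ranked = sorted(effects, key=lambda name: effects[name]["range"], reverse=True)
for name in ranked:
    print(name, effects[name]["level_means"], round(effects[name]["range"], 2))
```

Factors with a large range are candidates to fix at their best level in the next cycle, while factors with a small range can be dropped from the design space.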

Key Research Reagent Solutions

Table: Essential materials for automated metabolic engineering pipeline

| Item | Function in the Protocol | Example/Specification |

|---|---|---|

| Liquid Handling Robot | Automates liquid transfers for DNA assembly and culture setup. | Opentrons OT-2 [20] |

| DNA Assembly Method | High-efficiency, robot-friendly method for constructing plasmids. | Ligase Cycling Reaction (LCR) [21] |

| Production Chassis | The host organism for expressing the biosynthetic pathway. | Escherichia coli (e.g., strain DH5α) [21] |

| High-Throughput Screening Platform | Enables parallel testing of many constructs. | 96-deepwell plates [21]; 384-unit parallel testing platform [20] |

| Analytical Instrumentation | Precisely quantifies target compounds and intermediates. | UPLC-MS/MS (Ultra-Performance Liquid Chromatography-Tandem Mass Spectrometry) [21] |

| Statistical Software | Analyzes experimental data to identify key performance factors. | R or Python with statistical/ML libraries [21] |

DBTL Workflow and Optimization Cycle Visualization

DBTL Cyclic Workflow: This diagram illustrates the core iterative process of the Design-Build-Test-Learn cycle, where insights from each iteration feed directly into the next design phase.

Manual vs Automated DBTL Impact: This diagram contrasts the traditional manual DBTL process, which risks stagnation ("involution"), with the automated, data-driven pipeline that enables rapid optimization and discovery.

Establishing Clear Automation Goals and Success Metrics

For researchers, scientists, and drug development professionals, the automated Design-Build-Test-Learn (DBTL) cycle represents a paradigm shift in metabolic engineering and synthetic biology. While automation accelerates the construction of microbial cell factories, its success hinges on establishing clear, quantifiable goals and metrics from the outset [25]. Without a rigorous framework for defining objectives and measuring outcomes, laboratories risk investing in expensive automation platforms that fail to deliver reproducible, high-quality results. This technical support guide provides a structured approach to goal-setting and performance measurement for automated DBTL iteration, enabling research teams to maximize their return on investment and advance next-generation drug development pipelines.

Key Performance Indicators for Automated DBTL Cycles

Effective monitoring of an automated DBTL platform requires tracking metrics across its entire workflow. The following table summarizes essential quantitative indicators for evaluating performance at each stage.

Table 1: Key Performance Indicators for Automated DBTL Cycle Monitoring

| DBTL Stage | Key Metric | Definition | Target Benchmark |

|---|---|---|---|

| Design | In silico Design Success Rate | Percentage of designed constructs flagged as viable by genome-scale metabolic models (GSMM) and AI-based tools [25]. | >95% |

| Build | Assembly Throughput | Number of genetic constructs successfully assembled per unit time (e.g., per week) by automated genome engineering systems (e.g., MAGE) [25]. | Varies by system; aim for consistent week-over-week increase. |

| Build | Cloning Efficiency | Percentage of assembled constructs that yield correct sequences upon verification (e.g., via sequencing) [25]. | >90% |

| Test | Analytical Throughput | Number of samples processed per day via automated analytical techniques (e.g., FIA, SWATH-MS) [25]. | Varies by assay; target maximum platform capacity. |

| Test | Data Quality Score | A composite score based on signal-to-noise ratio, replicate consistency, and adherence to quality control standards in omics data [25]. | >95% pass rate against predefined QC thresholds. |

| Learn | Model Prediction Accuracy | The accuracy of machine learning (ML) models (e.g., GNNs, PINNs) in predicting experimental outcomes, measured against holdout test data [25]. | >85% for iterative model improvement. |

| Overall Cycle | Cycle Time | Total time required to complete one full DBTL iteration, from initial design to data-driven hypothesis for the next cycle [25]. | Progressive reduction with each optimized iteration. |
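Several of the indicators in Table 1 are simple ratios or date differences, so they can be computed directly from per-cycle records. The sketch below assumes a hypothetical `CycleRecord` structure and invented counts. Only the two percentage benchmarks stated in the table are encoded as targets.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class CycleRecord:
    """Hypothetical per-cycle bookkeeping record."""
    designed: int           # constructs proposed in Design
    flagged_viable: int     # designs passing in silico checks
    assembled: int          # constructs assembled in Build
    sequence_verified: int  # assembled constructs with the correct sequence
    start: date
    end: date


# Benchmarks taken from Table 1 (values must exceed the target).
TARGETS = {"design_success_rate": 0.95, "cloning_efficiency": 0.90}


def kpis(record):
    """Compute ratio and duration metrics for one DBTL cycle."""
    return {
        "design_success_rate": record.flagged_viable / record.designed,
        "cloning_efficiency": record.sequence_verified / record.assembled,
        "cycle_time_days": (record.end - record.start).days,
    }


def below_target(values, targets=TARGETS):
    """Names of metrics that do not exceed their benchmark."""
    return sorted(name for name, target in targets.items() if values[name] <= target)


# Invented numbers for illustration.
cycle_1 = CycleRecord(designed=48, flagged_viable=47, assembled=40,
                      sequence_verified=34, start=date(2025, 1, 6), end=date(2025, 2, 3))
values = kpis(cycle_1)
print(values)
print("Needs attention:", below_target(values))
```

Recording these values for every iteration makes the "progressive reduction" goal for cycle time checkable rather than anecdotal.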

Frequently Asked Questions (FAQs)

1. Our automated strain construction has high throughput, but our learning phase is the bottleneck. What metrics can help diagnose this?

The issue likely lies in data integration and model training. Focus on these metrics:

- Data Processing Lag Time: Measure the time between raw data generation from the "Test" phase and its availability in a structured format for ML models. The goal should be minimal lag [25].

- Model Training/Retraining Cycle Time: Track how often your ML models (e.g., SVMs, GANs) are updated with new experimental data. Long cycles prevent rapid learning [25].

- Hypothesis Generation Rate: Monitor the number of testable, data-driven hypotheses the "Learn" phase produces per week. A low rate indicates a weak learning feedback loop.

2. How can we set realistic but ambitious goals for improving our DBTL cycle time?

Use a phased approach:

- Phase 1 - Baseline Establishment: For the first 1-2 cycles, simply measure the current total cycle time and the time spent in each segment without making changes.

- Phase 2 - Target Setting: Analyze the baseline data to identify the slowest segment. Set a Specific, Measurable, Achievable, Relevant, and Time-bound (SMART) goal to reduce the time in that segment by a specific percentage (e.g., 15-20%) over the next two cycles [26].

- Phase 3 - Systematic Reduction: Once the largest bottleneck is improved, identify and target the next slowest segment. This iterative method ensures continuous, measurable progress toward a fully optimized cycle [25] [26].
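The phased approach above reduces to a short calculation once baseline timings exist. In this sketch the segment durations are invented, and the 15% reduction is the lower end of the example range given in Phase 2.

```python
def slowest_segment(baseline_days):
    """Return the DBTL segment with the longest baseline duration."""
    return max(baseline_days, key=baseline_days.get)


def reduction_target(baseline_days, reduction=0.15):
    """Return (segment, target duration) after the requested fractional reduction."""
    segment = slowest_segment(baseline_days)
    return segment, baseline_days[segment] * (1 - reduction)


# Hypothetical baseline measured over the first one or two cycles (days).
baseline = {"design": 3.0, "build": 12.0, "test": 8.0, "learn": 5.0}
segment, target = reduction_target(baseline)
print(f"Target: reduce '{segment}' from {baseline[segment]} to {target:.1f} days")
```

After the target is met, rerunning the same calculation on updated timings identifies the next segment to address.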

3. What are the most critical success metrics for securing further funding for our automated biofoundry?

Beyond technical metrics, funders need evidence of efficiency and return on investment. Emphasize:

- Cost Per Construct: The fully burdened cost to design, build, and validate a single genetic construct. A downward trend demonstrates improving efficiency and cost-control.

- Experiment Success Rate: The percentage of initiated DBTL cycles that successfully generate actionable learning or a validated hypothesis. This shows overall platform reliability [25].

- Researcher Throughput: Measure the number of distinct research projects or hypotheses a single scientist can test per quarter using the automated platform. This highlights the multiplicative effect of the infrastructure [26].

Troubleshooting Guides

Issue: Stagnating Model Prediction Accuracy in the Learn Phase

Symptoms: Machine learning models used to design new constructs are no longer improving in accuracy, leading to diminishing returns from DBTL iterations.

Diagnosis and Resolution:

- Audit Data Quality and Quantity:

- Action: Check the Data Quality Score (from Table 1) for recent cycles. Ensure data from the "Test" phase meets quality thresholds.

- Solution: If data quality is high but volume is low, you may need to run more "Test" experiments to generate a larger, more diverse training dataset for the ML models [25].

- Check for Data Drift:

- Action: Compare the statistical distributions of input features (e.g., promoter strengths, metabolite levels) between the data used to train the model and newly generated data.

- Solution: If drift is detected, retrain your models (e.g., VAEs, GNNs) on a combined dataset that includes both historical and new data to keep the models current [25].

- Re-evaluate Model Features:

- Action: Perform feature importance analysis on your models. The relevant biological features may have changed as the research advances.

- Solution: Work with domain experts to incorporate new biological knowledge or omics measurements (e.g., from flux balance analysis) into the model's feature set [25].
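One common way to compare feature distributions for the drift check is the two-sample Kolmogorov-Smirnov statistic, the largest gap between the two empirical cumulative distribution functions. The sketch below implements it with the standard library. The feature names, values, and the 0.3 alert threshold are assumptions chosen for illustration, not recommendations from [25].

```python
from bisect import bisect_right


def ks_statistic(reference, new):
    """Two-sample Kolmogorov-Smirnov statistic: max gap between empirical CDFs."""
    ref, cur = sorted(reference), sorted(new)
    if not ref or not cur:
        raise ValueError("both samples must be non-empty")
    gap = 0.0
    # The largest gap between two step functions occurs at an observed value.
    for x in ref + cur:
        cdf_ref = bisect_right(ref, x) / len(ref)
        cdf_cur = bisect_right(cur, x) / len(cur)
        gap = max(gap, abs(cdf_ref - cdf_cur))
    return gap


def drifted_features(train, recent, threshold=0.3):
    """Names of features whose KS statistic exceeds the (assumed) threshold."""
    return sorted(name for name in train
                  if ks_statistic(train[name], recent[name]) > threshold)


# Invented feature values for illustration.
train = {
    "promoter_strength": [0.1, 0.2, 0.3, 0.4, 0.5],
    "metabolite_level": [1.0, 1.1, 1.2, 1.3, 1.4],
}
recent = {
    "promoter_strength": [0.15, 0.25, 0.3, 0.45, 0.5],
    "metabolite_level": [2.0, 2.1, 2.2, 2.3, 2.4],
}
print(drifted_features(train, recent))
```

With realistic sample sizes, a significance test or a threshold calibrated on historical cycles would be more defensible than a fixed cut-off.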

Diagram 1: Troubleshooting stagnating model accuracy in the Learn phase.

Issue: Low Cloning Efficiency in the Automated Build Phase

Symptoms: A high percentage of assembled genetic constructs fail verification sequencing, wasting reagents and time and leaving too few verified constructs to progress to the Test phase.

Diagnosis and Resolution:

- Verify Reagent Integrity:

- Action: Check the expiration dates and storage conditions of all enzymes (e.g., ligases, polymerases), buffers, and oligos used in the automated assembly process (e.g., LCR, USER) [25].

- Solution: Replace any reagents that are outdated or have not been stored correctly. Implement a first-in-first-out (FIFO) inventory system.

- Calibrate Liquid Handling Robots:

- Action: Run calibration and precision tests on the automated liquid handlers. Check for volume inaccuracies, especially with small volumes and viscous liquids.

- Solution: Recalibrate instruments according to manufacturer specifications. Consider using a dye-based assay to visually confirm dispensing accuracy across the entire platform.

- Validate Assembly Protocol Parameters:

- Action: Review the thermal cycler protocols (time, temperature) and reaction mixture compositions in the automated workflow. Compare them to manually verified protocols.

- Solution: Optimize protocol parameters specifically for the automated environment, which may differ from manual bench protocols. Introduce a positive control construct in every run to isolate protocol failures from random errors.
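A dye-based dispensing check can be quantified as well as inspected visually, for example by computing the coefficient of variation (CV) of plate-reader absorbance per pipetting channel. The readings and the 5% limit below are assumptions for illustration. Acceptable limits depend on the instrument and the volume dispensed.

```python
from statistics import mean, stdev


def cv_percent(readings):
    """Coefficient of variation in percent (sample standard deviation / mean)."""
    return 100.0 * stdev(readings) / mean(readings)


def flag_channels(absorbance_by_channel, max_cv=5.0):
    """Channels whose replicate dispenses vary more than the (assumed) limit."""
    return sorted(channel for channel, readings in absorbance_by_channel.items()
                  if cv_percent(readings) > max_cv)


# Invented absorbance readings from replicate dye dispenses.
absorbance = {
    "channel_1": [0.50, 0.51, 0.49, 0.50],
    "channel_2": [0.50, 0.40, 0.60, 0.55],
}
for channel, readings in absorbance.items():
    print(channel, f"CV = {cv_percent(readings):.1f}%")
print("Recalibrate:", flag_channels(absorbance))
```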

Diagram 2: Resolving low cloning efficiency in the automated Build phase.

The Scientist's Toolkit: Key Research Reagent Solutions

The following reagents and materials are fundamental for executing automated DBTL cycles. Their consistent quality is critical for reproducible results.

Table 2: Essential Reagents for Automated DBTL Workflows

| Reagent/Material | Function in DBTL Cycle | Critical Quality Metrics for Automation |

|---|---|---|

| High-Fidelity DNA Polymerases | "Build": Accurate amplification of genetic parts and assemblies [25]. | Low error rate, compatibility with automated liquid handling buffers, stability at 4°C. |

| Automation-Grade Restriction Enzymes & Ligases | "Build": Modular DNA assembly using standardized methods (e.g., Golden Gate) [25]. | Fast reaction kinetics, high specificity, uniform buffer system to enable complex mixtures. |

| Synthetic Oligonucleotides | "Build": Primers for assembly and sequencing; probes for screening [25]. | High purity (HPLC-grade), accurate concentration, low well-to-well variation in 96- or 384-well plates. |

| Liquid Handling Calibration Dyes | "Build"/"Test": Verification of dispensing accuracy across all nozzles of an automated liquid handler. | High contrast, chemical inertness, viscosity matching aqueous buffers. |

| Cell Lysis Reagents | "Test": Preparing samples for metabolomic or proteomic analysis [25]. | Rapid, consistent lysis; compatibility with downstream analytical techniques (e.g., Mass Spectrometry). |

| Internal Standards (Isotope-Labeled) | "Test": Quantifying metabolites in Mass Spectrometry (e.g., SRM/MRM, DIA) for precise Metabolic Flux Analysis (MFA) [25]. | Chemical purity, precise concentration, minimal isotopic impurity. |

| LC-MS Grade Solvents | "Test": Mobile phase for Liquid Chromatography coupled to Mass Spectrometry to minimize background noise [25]. | Ultra-high purity, low particulate content, consistent lot-to-lot composition. |

Implementing Next-Gen Solutions: AI, Data Automation, and Process Intelligence

Leveraging AI and Machine Learning for Smarter Data Ingestion and Analysis

Troubleshooting Guides and FAQs

This technical support center is designed for researchers and scientists working to optimize the Design-Build-Test-Learn (DBTL) cycle in bioprocess development, particularly in fields like metabolic engineering and drug development. The following guides address common challenges encountered when integrating AI and ML into these automated, data-intensive workflows.

Data Ingestion and Quality

Q: Our automated data ingestion pipelines are bringing in inconsistent or corrupt data, which is causing our downstream ML models to fail. What are the first things we should check?

Inconsistent data is a frequent culprit behind poor model performance. A structured approach to troubleshooting is key [27].

A1: Audit Your Data Sources and Preprocessing: Begin by verifying the integrity of your raw data. Check for common issues like missing values, inconsistent formatting due to mismanagement, or data corruption when combining streams from incompatible sources [27]. Implement a preprocessing checklist:

- Handle Missing Data: For features with a few missing values, impute using the mean, median, or mode. Remove data entries if multiple features are missing [27].

- Detect and Handle Outliers: Use box plots to identify values that stand out from the dataset, as these can skew model results [27].

- Normalize or Standardize Features: Ensure all features are on the same scale to prevent models from giving undue weight to those with larger magnitudes [27].
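The three checklist items can be sketched with the standard library alone. The titer values are invented, and the 1.5 x IQR fence is the usual box-plot convention rather than a rule taken from [27].

```python
from statistics import mean, median, pstdev, quantiles


def impute_median(values):
    """Replace missing entries (None) with the median of the observed values."""
    fill = median(v for v in values if v is not None)
    return [fill if v is None else v for v in values]


def iqr_outliers(values, k=1.5):
    """Indices of values outside the box-plot fences Q1 - k*IQR and Q3 + k*IQR."""
    q1, _, q3 = quantiles(values, n=4)
    low, high = q1 - k * (q3 - q1), q3 + k * (q3 - q1)
    return [i for i, v in enumerate(values) if v < low or v > high]


def standardize(values):
    """Rescale to zero mean and unit variance (z-scores)."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


# Invented titer measurements (mg/L) with one gap and one suspicious value.
titers = [10.2, None, 9.8, 10.5, 55.0, 10.1]
imputed = impute_median(titers)
outliers = iqr_outliers(imputed)
z_scores = standardize(imputed)
print("Imputed:", imputed)
print("Outlier indices:", outliers)
```

Flagged values deserve a look at the raw instrument data before removal, since an apparent outlier can also be a genuinely high-performing construct.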

A2: Check for Data Imbalance: Imbalanced data, where one target class is over-represented, can lead to models with high accuracy but poor predictive power for the minority class. This is a critical edge-case consideration [27]. Techniques to address this include resampling the dataset (oversampling the minority class or undersampling the majority class) or data augmentation [27].

A3: Implement a Version Control System for Data: As your DBTL cycles iterate, your data will change. Using a data version control system (e.g., lakeFS) allows you to track changes to large datasets, revert to previous states, and maintain reproducibility across experiments [28].

Q: We are incorporating unstructured data (e.g., research notes, historical documents) into our analysis. How can we monitor its quality for AI use?

Unlike structured data, unstructured data has traditionally been a blind spot for quality monitoring [29].

- A1: Implement Unstructured Data Monitoring: Adopt solutions that bring automated quality checks to text and image fields. This involves monitoring for issues like empty vector arrays, incomplete metadata, or corrupted text, which can severely degrade the context provided to AI models [29].

- A2: Monitor Embedding Quality: When using text data for AI, it is converted into numerical representations called embeddings. Monitor these embeddings for critical failures such as empty arrays, wrong dimensionality, or corrupted vector values. Poor embedding quality is often misdiagnosed as model "hallucination" [29].
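The embedding failures listed above (empty arrays, wrong dimensionality, corrupted values) are cheap to test for before vectors reach an index. The following is a minimal sketch. The document IDs and the three-dimensional vectors are placeholders, and the all-zero check is an extra heuristic assumed here rather than one named in [29].

```python
import math


def embedding_issues(vector, expected_dim):
    """Return a list of problems found in one embedding vector."""
    if not vector:
        return ["empty"]
    issues = []
    if len(vector) != expected_dim:
        issues.append("wrong_dimension")
    if any(not isinstance(x, (int, float)) or not math.isfinite(x) for x in vector):
        issues.append("non_finite_value")
    elif all(x == 0 for x in vector):
        issues.append("all_zero")  # assumed heuristic for a failed encoding
    return issues


def audit(embeddings, expected_dim):
    """Map document ID to issues, for documents with at least one issue."""
    report = {}
    for doc_id, vector in embeddings.items():
        issues = embedding_issues(vector, expected_dim)
        if issues:
            report[doc_id] = issues
    return report


# Placeholder vectors; real embeddings have hundreds of dimensions.
embeddings = {
    "note_001": [0.1, 0.2, 0.3],
    "note_002": [],
    "note_003": [0.1, 0.2],
    "note_004": [0.1, float("nan"), 0.3],
}
report = audit(embeddings, expected_dim=3)
print(report)
```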

Model Performance and Training

Q: Our ML model performed well on training data but generalizes poorly to new experimental data from the DBTL cycle. What could be the cause?

This is a classic problem often stemming from the model's relationship with the training data [27].

- A1: Diagnose Overfitting or Underfitting:

- Overfitting occurs when a model is too complex and learns the training data, including its noise, too closely. The result is a low-bias, high-variance model that fails on new data [27].

- Underfitting occurs when a model is too simple to capture the underlying trends, resulting in a high-bias, low-variance model that performs poorly even on training data [27].

- A2: Utilize Cross-Validation: Use k-fold cross-validation to evaluate your model's performance more reliably. This technique involves dividing the data into k subsets, training the model k times (each time using a different subset as the validation set and the rest as training data), and averaging the results. This helps ensure the final model can generalize to new data [27].

- A3: Perform Feature Selection: Not all input features contribute to a meaningful output. Input data can contain hundreds of features, many of which may be irrelevant. Selecting the most important features improves model performance and reduces training time. Techniques include [27]:

- Statistical Tests: Use univariate or bivariate selection (e.g., correlation, ANOVA) to find features with a strong relationship to the output variable.

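- Principal Component Analysis (PCA): A dimensionality reduction algorithm that projects the data onto a small number of new, uncorrelated components capturing most of the variance. Strictly speaking, it constructs new features rather than selecting existing ones.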

- Feature Importance: Leverage algorithms like Random Forest to rank features based on their contribution to predictions.
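The k-fold procedure described under cross-validation can be written out in a few lines. The sketch below is independent of any ML library: `fit_mean` and `mean_absolute_error` are hypothetical stand-ins for a real model and metric, and the data are invented.

```python
import random


def k_fold_indices(n_samples, k, seed=0):
    """Yield (train, validation) index lists; each sample is validated exactly once."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and the number of samples")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    for i, validation in enumerate(folds):
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, validation


def cross_validate(xs, ys, fit, score, k=5):
    """Train k times, score on the held-out fold each time, and average the scores."""
    scores = []
    for train, validation in k_fold_indices(len(xs), k):
        model = fit([xs[i] for i in train], [ys[i] for i in train])
        scores.append(score(model, [xs[i] for i in validation],
                            [ys[i] for i in validation]))
    return sum(scores) / len(scores)


def fit_mean(xs, ys):
    """Hypothetical baseline model: always predict the mean training response."""
    return sum(ys) / len(ys)


def mean_absolute_error(model, xs, ys):
    """Average absolute gap between the constant prediction and the observations."""
    return sum(abs(y - model) for y in ys) / len(ys)


# Invented data: promoter strength versus titer.
promoter_strength = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0]
titer = [1.0, 1.9, 3.2, 3.8, 5.1, 6.0, 6.8, 8.1, 9.0, 9.9]
cv_error = cross_validate(promoter_strength, titer, fit_mean, mean_absolute_error)
print(f"Cross-validated MAE of the baseline: {cv_error:.2f}")
```

A large gap between training error and the cross-validated error is the practical signal of overfitting. A candidate model that cannot beat a baseline like this one is likely underfitting.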

Q: For our specific DBTL cycle, should we use a single powerful model or a system of smaller, specialized models?

The choice depends on the specific tasks and operational constraints. In 2025, the trend is shifting towards more efficient, specialized models [30].

- A1: Consider Small Language Models (SLMs) and Agentic Workflows: SLMs (1M-10B parameters) offer advantages in cost efficiency, edge deployment, data privacy, and easier customization for specific domains [30]. They are particularly well-suited for agentic AI systems, where multiple specialized SLMs can be orchestrated to tackle complex, multi-step processes within a DBTL cycle [29] [30].

- A2: Evaluate the Trade-offs: The following table compares the two approaches for DBTL applications:

| Characteristic | Large Foundation Models | Small Language Models (SLMs) & Agentic Workflows |

|---|---|---|

| Cost & Infrastructure | Higher computational cost and infrastructure demands [30]. | Lower operational costs, can run on local devices or edge infrastructure [30]. |

| Customization | Less flexible for domain-specific fine-tuning. | Easier to fine-tune and specialize for specific tasks (e.g., predicting enzyme kinetics) [30]. |

| Functionality | Single, powerful model for broad tasks. | System of multiple agents, each handling a discrete task (e.g., one for design recommendation, another for anomaly detection in tests) [29]. |

| Data Privacy | Often requires cloud processing. | Local processing eliminates data transmission, addressing privacy concerns [30]. |

AI and Workflow Integration

Q: The AI/ML recommendations from our DBTL cycle are inconsistent and difficult to trust for critical decisions like strain engineering. How can we improve reliability?

Trust is built on transparency, data quality, and a robust iterative process.

- A1: Adopt a Knowledge-Driven DBTL Cycle: Move beyond purely data-driven cycles. One effective method is to integrate upstream in vitro investigation (e.g., using cell-free protein synthesis systems) to test enzyme expression levels and pathway behavior before moving to costly in vivo experiments. This provides mechanistic insights and creates prior knowledge to guide the initial design phase, leading to more efficient cycling [31].

- A2: Ensure Proper "Context Engineering": The quality of the data provided to the model (its context) is paramount. Context engineering is the systematic process of preparing, optimizing, and maintaining this context data [29]. This involves ensuring complete metadata, proper data chunking, and clean vector arrays. Poor context is a major source of unreliable AI outputs [29].

- A3: Implement a Robust MLOps Framework: A mature MLOps practice is critical for production-ready AI. This includes [28] [30]:

- Version Control: For data, models, and code.

- Real-Time Monitoring: For model performance, data drift, and concept drift.

- Automated Pipelines: For training, evaluation, and deployment.

- Security-First Design: Incorporating controls and audit trails to protect AI systems [30].

Q: The integration between our data sources, AI models, and laboratory execution systems is complex and fragile. Are there standards to simplify this?

Yes, the ecosystem is moving towards standardization to reduce integration complexity.

- A1: Leverage the Model Context Protocol (MCP): MCP is emerging as a universal standard, acting as a "USB-C for AI." It allows AI applications to connect to any data source or tool (e.g., databases, CRMs, laboratory instruments) without requiring custom integrations for each one. This simplifies architecture and maintenance while also standardizing governance and logging [29].

- A2: Use a Unified Data Catalog: To prevent vendor lock-in and ensure platform interoperability, consider using a neutral data catalog like AWS Glue. It can serve as a flexible data access layer when integrated with platforms like Databricks and Snowflake, allowing you to maintain control over your data strategy [28].

Experimental Protocols & Methodologies

Protocol: Simulating DBTL Cycles for ML Model Benchmarking

This protocol, adapted from scientific literature, allows for the systematic testing and optimization of machine learning methods over multiple DBTL cycles without the cost of real-world experiments [15].

1. Objective: To create a framework for comparing the performance of different machine learning models and recommendation algorithms in guiding iterative combinatorial pathway optimization.

2. Background: Public data from multiple, real DBTL cycles is scarce. Simulated data using mechanistic models overcomes this limitation and allows for the controlled benchmarking of strategies [15].

3. Methodology:

- Step 1: Construct a Mechanistic Kinetic Model.

- Develop or use an existing kinetic model (e.g., in the SKiMpy package) that represents your metabolic pathway of interest embedded in a physiologically relevant cell model [15].

- The model should use ordinary differential equations (ODEs) to describe changes in metabolite concentrations. Kinetic parameters should represent biologically relevant quantities (e.g., enzyme concentrations, catalytic rates) [15].

- Integrate the pathway model into a basic bioprocess model (e.g., a batch reactor) to simulate realistic conditions [15].

- Step 2: Define the Design Space and Perturbations.

- Identify the pathway enzymes to be optimized.

- Define a library of possible perturbations (e.g., 5 different expression levels for each enzyme) achieved by modulating parameters like V_max in the model, simulating the effect of using different promoters or RBS sequences [15].

- Step 3: Generate Initial Training Data.

- Randomly sample a set of initial strain designs (e.g., 50 different combinations of enzyme levels) from the defined library.

- Use the kinetic model to simulate the product flux (titer/yield/rate) for each design, generating the target variable for ML training [15].

- Step 4: Train, Validate, and Recommend.

- Train a suite of ML models (e.g., Gradient Boosting, Random Forest) on the initial dataset to predict product flux from enzyme levels [15].

- Use a recommendation algorithm on the trained models to propose a new set of promising strain designs for the next "build" phase, balancing exploration and exploitation [15].

- Step 5: Iterate and Benchmark.

- Simulate the next DBTL cycle by generating data for the recommended designs using the kinetic model.

- Update the ML models with the new data and repeat. Compare the performance of different ML and recommendation strategies over multiple simulated cycles based on the time taken to find the optimal production strain [15].
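The five steps above can be mimicked end to end with a toy example. Everything in the sketch below is a simplification chosen to keep it self-contained. `simulate_flux` is an invented limiting-step function standing in for a kinetic model such as one built with SKiMpy. A k-nearest-neighbour predictor replaces the Gradient Boosting and Random Forest models named in Step 4. The recommendation rule simply reserves a fixed share of each batch for random exploration.

```python
import itertools
import random

LEVELS = [0.25, 0.5, 1.0, 2.0, 4.0]  # hypothetical relative expression levels
N_ENZYMES = 3
DESIGN_SPACE = list(itertools.product(range(len(LEVELS)), repeat=N_ENZYMES))


def simulate_flux(design):
    """Toy stand-in for a kinetic model: limiting-step flux minus an expression burden."""
    capacities = [k * LEVELS[i] for k, i in zip((1.0, 0.5, 2.0), design)]
    burden = 0.05 * sum(LEVELS[i] for i in design)
    return max(min(capacities) - burden, 0.0)


def predict(design, data, k=3):
    """k-nearest-neighbour regression over level indices (Manhattan distance)."""
    nearest = sorted(data, key=lambda d: sum(abs(a - b) for a, b in zip(d, design)))[:k]
    return sum(data[d] for d in nearest) / len(nearest)


def recommend(data, batch, rng, explore=0.2):
    """Exploit the best predictions, keeping a share of the batch for random designs."""
    untested = [d for d in DESIGN_SPACE if d not in data]
    n_explore = int(batch * explore)
    ranked = sorted(untested, key=lambda d: predict(d, data), reverse=True)
    chosen = ranked[: batch - n_explore]
    remaining = [d for d in untested if d not in chosen]
    return chosen + rng.sample(remaining, min(n_explore, len(remaining)))


def run_cycles(n_cycles=4, initial=20, batch=10, seed=0):
    """Simulate an initial random library followed by model-guided DBTL cycles."""
    rng = random.Random(seed)
    data = {d: simulate_flux(d) for d in rng.sample(DESIGN_SPACE, initial)}
    best_per_cycle = [max(data.values())]
    for _ in range(n_cycles):
        for design in recommend(data, batch, rng):
            data[design] = simulate_flux(design)  # simulated Build and Test
        best_per_cycle.append(max(data.values()))
    return data, best_per_cycle


data, best_per_cycle = run_cycles()
global_best = max(simulate_flux(d) for d in DESIGN_SPACE)
print("Best flux after each cycle:", [round(b, 3) for b in best_per_cycle])
print("Best flux in the full design space:", round(global_best, 3))
```

Swapping in a different `predict` or `recommend` function and comparing `best_per_cycle` across several seeds gives a crude version of the benchmarking in Step 5.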

Key Workflow Diagram

This diagram illustrates the automated, knowledge-driven DBTL cycle for metabolic engineering, integrating both in vitro and in vivo experimentation [31].

Protocol: Troubleshooting an AI-Powered Chatbot for Experimental Data Querying

Many research teams deploy AI assistants to help query internal data. This protocol provides a triage flow for when these systems provide wrong or inconsistent answers [32].

1. Objective: To systematically diagnose and resolve issues of inaccuracy in an AI-powered research data assistant.

2. Methodology:

- Step 1: Reproduce and Isolate the Issue.

- Step 2: Inspect the Retrieval System (Most Common Cause). The problem is often weak "grounding," where the AI lacks access to the correct information [32].

- Check Retrieval Hit Rate: Verify that the system is actually retrieving relevant documents for the query.

- Inspect Top-K Docs: Examine the top documents (e.g., the top 5) that were fed into the model's context. Are they the correct and most up-to-date research documents or data summaries?

- Check Index Freshness: Is the document index stale? Has new experimental data been added that hasn't been indexed? [32].

- Step 3: Inspect the Model Call.

- Check Token Counts: Excessively long prompts can lead to high latency and may cause the model to ignore later context.

- Review Parameters: Check if the temperature parameter is set too high, introducing excessive randomness. Lowering it makes outputs more deterministic [32].

- Analyze the System Prompt: Ensure the instructions to the model are clear and unambiguous.

- Step 4: Implement Fixes.

- Fast Fixes: Narrow the assistant's scope, require citations for answers, lower the temperature, and add a safe fallback message for low-confidence answers [32].

- Long-Term Fixes: Implement Retrieval Augmented Generation (RAG). Improve document chunking strategies and add better metadata. Establish a schedule to regularly rebuild the search index [32].
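Two of the retrieval checks in Step 2, hit rate and index freshness, can be measured with a small labelled query set. The sketch below assumes hypothetical query and document IDs and a hand-labelled `relevant` mapping. It does not depend on any particular retrieval framework.

```python
from datetime import date


def hit_rate_at_k(retrieved, relevant, k=5):
    """Fraction of queries whose top-k results include at least one relevant document."""
    hits = sum(1 for query, docs in retrieved.items()
               if set(docs[:k]) & relevant.get(query, set()))
    return hits / len(retrieved)


def stale_documents(indexed_on, modified_on):
    """Documents modified after their last indexing date, or never indexed."""
    return sorted(doc for doc, modified in modified_on.items()
                  if doc not in indexed_on or indexed_on[doc] < modified)


# Hypothetical evaluation set: ranked results per query and hand-labelled answers.
retrieved = {"q1": ["d3", "d1", "d9"], "q2": ["d4", "d5"], "q3": ["d7"]}
relevant = {"q1": {"d1"}, "q2": {"d2"}, "q3": {"d7"}}

# Hypothetical index metadata.
indexed_on = {"d1": date(2025, 3, 1), "d2": date(2025, 3, 1)}
modified_on = {"d1": date(2025, 2, 20), "d2": date(2025, 3, 15), "d8": date(2025, 3, 10)}

print(f"Hit rate@5: {hit_rate_at_k(retrieved, relevant):.2f}")
print("Needs re-indexing:", stale_documents(indexed_on, modified_on))
```

A low hit rate points to chunking, metadata, or index freshness. A high hit rate combined with wrong answers shifts suspicion to the model call inspected in Step 3.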

Data Validation Workflow Diagram

This flowchart outlines the logical steps for validating data quality and model inputs within an AI-driven analytical pipeline, a critical step before model training or inference.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key resources and computational tools essential for implementing AI-driven data analysis within automated DBTL cycles.

| Item | Function / Application |
|---|---|
| Cell-Free Protein Synthesis (CFPS) System | A crude cell lysate system used for upstream in vitro testing of metabolic pathways. It bypasses cellular membranes and internal regulation, allowing for rapid prototyping and optimization of enzyme expression levels before moving to more time-consuming in vivo strain engineering [31]. |
| Mechanistic Kinetic Model (e.g., SKiMpy) | A computational model using ordinary differential equations to represent a metabolic pathway embedded in cell physiology. It is used to simulate DBTL cycles, generate training data for ML models, and benchmark optimization strategies without the cost of wet-lab experiments [15]. |
| Ribosome Binding Site (RBS) Library | A key tool for in vivo fine-tuning of synthetic biological pathways. A library of RBS sequences with varying strengths (e.g., modulated by changing the Shine-Dalgarno sequence) allows for precise control of the translation initiation rate of multiple enzymes simultaneously, enabling combinatorial optimization of pathway flux [31]. |
| Data Version Control (e.g., lakeFS) | A system that applies git-like version control to large datasets stored in data lakes. It is critical for maintaining reproducibility across DBTL iterations, enabling branching, merging, and rolling back of data, and implementing engineering best practices for data products [28]. |
| Model Context Protocol (MCP) | A universal standard protocol that acts as "USB-C for AI," allowing AI applications to connect seamlessly to various data sources, databases, and laboratory instrument APIs without requiring custom integrations for each one. This drastically reduces integration complexity and maintenance [29]. |
| Vector Database | A specialized database designed to store and query high-dimensional vector embeddings of data (e.g., text from research papers, protein sequences). It is the de facto infrastructure for Retrieval-Augmented Generation (RAG) applications, which ground AI responses in relevant source material [29]. |

Performance Metrics Table

Tracking the right metrics is essential for evaluating the success of your AI-enhanced DBTL pipeline. The following table summarizes key quantitative indicators.

| Metric | Description | Target / Application |
|---|---|---|
| Product Titer/Yield/Rate (TYR) | The concentration, yield, and production rate of the target molecule (e.g., dopamine, a therapeutic protein) [15]. | The primary optimization objective in metabolic engineering and bioprocess development. Example: A dopamine production strain achieving 69.03 ± 1.2 mg/L [31]. |
| Grounded Accuracy | The percentage of AI-generated answers that correctly match and are supported by the provided source data [32]. | Critical for AI research assistants. Should be measured using human-labeled data with a target as close to 100% as possible [32]. |
| Containment Rate | The percentage of conversations or queries solved entirely by an AI assistant without requiring human intervention [32]. | A key efficiency metric for AI support tools. The target should be set by use case and complexity [32]. |
| ROI from MLOps | The return on investment from implementing a mature MLOps framework. | Organizations report 189% to 335% ROI over three years from improved deployment efficiency and reduced operational costs [30]. |
| Data Scientist Productivity | The improvement in the output efficiency of data science teams. | Comprehensive MLOps strategies can lead to a 25% improvement in data scientist productivity by automating workflows and standardizing processes [30]. |
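The two assistant-facing metrics in the table reduce to simple ratios over reviewed interactions. The sketch below shows one way they might be computed; the `Interaction` record and its two boolean fields are a hypothetical schema chosen for illustration, not a format defined by the cited sources.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    """One assistant query with review labels (hypothetical schema)."""
    grounded: bool      # a human reviewer judged the answer supported by the sources
    needed_human: bool  # the query had to be escalated to a person


def grounded_accuracy(interactions):
    """Fraction of answers labeled as supported by the source data."""
    if not interactions:
        raise ValueError("no interactions to score")
    return sum(i.grounded for i in interactions) / len(interactions)


def containment_rate(interactions):
    """Fraction of queries resolved without human intervention."""
    if not interactions:
        raise ValueError("no interactions to score")
    return sum(not i.needed_human for i in interactions) / len(interactions)


# Illustrative labels only, not measured data.
sample = [
    Interaction(grounded=True, needed_human=False),
    Interaction(grounded=True, needed_human=True),
    Interaction(grounded=False, needed_human=False),
    Interaction(grounded=True, needed_human=False),
]
print(f"grounded accuracy: {grounded_accuracy(sample):.0%}")
print(f"containment rate:  {containment_rate(sample):.0%}")
```

Both functions depend on labels assigned by people, which matches the table's recommendation to measure grounded accuracy against human-labeled data.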

The Role of Real-Time Data Streaming and Processing in the 'Test' Phase

The Design-Build-Test-Learn (DBTL) cycle is a foundational framework in metabolic engineering and strain development for optimizing the production of compounds such as pharmaceuticals or biofuels [31] [15]. In the 'Test' phase, constructed microbial strains are evaluated to generate performance data. Integrating real-time data streaming and processing into this phase transforms it from a static, endpoint assessment into a continuous source of actionable insights. Researchers can monitor bioprocesses as they run, detect anomalies with minimal delay, and make data-driven decisions that guide the subsequent 'Learn' and 'Design' phases more effectively [33] [34]. This technical support article addresses common challenges and provides protocols for implementing these tools within automated DBTL research.

Key Concepts and Definitions

- Real-Time Data Streaming: The continuous ingestion, processing, and analysis of data as it is generated, with minimal latency between data creation and availability for insights [33] [35].

- Stream Processing: The technology that performs real-time analysis on data streams, allowing for transformations, aggregations, and anomaly detection as data flows [33].

- DBTL Cycle: An iterative workflow for microbial strain development comprising Design (planning genetic modifications), Build (genetic construction), Test (strain characterization and bioprocessing), and Learn (data analysis to inform the next cycle) [31] [15].

Frequently Asked Questions (FAQs)

1. How can real-time data streaming specifically accelerate our 'Test' phase experiments?

Real-time data streaming directly shortens the feedback loop within the DBTL cycle. Instead of waiting for a batch process to conclude and then running time-consuming offline analyses, you can monitor key process indicators (such as biomass, substrate consumption, or product formation) live. This allows for:

- Immediate Intervention: Detect and correct process anomalies (e.g., sudden pH changes, oxygen depletion) before they ruin an experiment [33] [35].

- Dynamic Control: Adjust process parameters in real-time to maintain optimal production conditions, maximizing yield for every experimental run [33].

- Faster Cycle Times: Because data is processed and available with minimal delay, the subsequent 'Learn' phase can begin sooner, accelerating the overall iteration of DBTL cycles [15].

2. Our data comes from multiple bioreactors and analyzers. How do we ensure consistency and quality in the streaming data?

This is a common challenge. A robust streaming architecture is key to managing data from diverse sources [33] [36].

- Standardize Data Ingestion: Use a scalable ingestion tool like Apache Kafka or Amazon Kinesis to collect data from all sources into a single platform [33] [35].

- Implement Pre-Processing: Perform data validation, cleansing, and standardization (e.g., unit conversion, timestamp synchronization) as the first step in your stream processing pipeline. This ensures that downstream analyses are based on consistent, high-quality data [33] [36].

- Handle Out-of-Order Data: Choose a stream processing framework (e.g., Apache Flink) that can buffer and re-order data packets based on the actual event time to ensure analytical accuracy [35] [36].
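The pre-processing and re-ordering steps above can be sketched in plain Python. This is a minimal illustration and not the API of Apache Flink or Kafka: the reading fields (`timestamp`, `value`, `unit`), the `ReorderBuffer` class, and the two-second lateness bound are all assumptions made for the example.

```python
import heapq
from datetime import datetime, timezone


def normalize(reading):
    """Return a copy with an epoch `event_time` and temperature in Celsius."""
    out = dict(reading)
    ts = datetime.fromisoformat(reading["timestamp"])
    if ts.tzinfo is None:
        ts = ts.replace(tzinfo=timezone.utc)  # assumption: naive stamps are UTC
    out["event_time"] = ts.timestamp()
    if reading.get("unit") == "F":
        out["value"] = round((reading["value"] - 32) * 5 / 9, 3)
        out["unit"] = "C"
    return out


class ReorderBuffer:
    """Release readings in event-time order, tolerating bounded lateness."""

    def __init__(self, max_lateness_s):
        self.max_lateness_s = max_lateness_s
        self._heap = []
        self._seq = 0  # tie-breaker so dicts are never compared
        self._max_seen = float("-inf")

    def push(self, reading):
        """Buffer one reading and return those now safe to emit."""
        self._max_seen = max(self._max_seen, reading["event_time"])
        heapq.heappush(self._heap, (reading["event_time"], self._seq, reading))
        self._seq += 1
        watermark = self._max_seen - self.max_lateness_s
        ready = []
        while self._heap and self._heap[0][0] <= watermark:
            ready.append(heapq.heappop(self._heap)[2])
        return ready

    def flush(self):
        """Emit everything still buffered, in event-time order."""
        return [heapq.heappop(self._heap)[2] for _ in range(len(self._heap))]


raw = [
    {"sensor": "temp-1", "timestamp": "2025-01-01T10:00:00+00:00", "value": 98.6, "unit": "F"},
    {"sensor": "temp-1", "timestamp": "2025-01-01T10:00:02+00:00", "value": 37.1, "unit": "C"},
    # Arrives last but was measured before the previous reading.
    {"sensor": "temp-1", "timestamp": "2025-01-01T10:00:01+00:00", "value": 37.0, "unit": "C"},
]

buffer = ReorderBuffer(max_lateness_s=2)
ordered = []
for r in raw:
    ordered.extend(buffer.push(normalize(r)))
ordered.extend(buffer.flush())
print([(r["event_time"], r["value"]) for r in ordered])
```

The lateness bound trades latency for ordering: a larger value tolerates slower sources but delays every result. A reading that arrives later than the bound is still emitted here, just out of order; a production pipeline would typically route it to a separate path for review.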

3. What are the best practices for storing and managing the high-volume data generated from continuous bioprocess monitoring?

- Adopt a Multi-Tiered Strategy [35]:

  - Hot Storage: Use in-memory databases or the internal storage of your streaming platform for real-time access to the most recent data for live dashboards and alerting.

  - Warm Storage: Use cloud object stores (e.g., AWS S3) for recent data that requires quick retrieval for further analysis.

  - Cold Storage: Archive historical data in cost-effective storage solutions for long-term trend analysis and regulatory purposes.

- Use Efficient Compression: Apply compression algorithms to reduce data size and minimize storage costs and bandwidth usage [35].
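As a rough illustration of the tiering and compression advice, the sketch below assigns a storage tier from the age of a record and compresses a batch of readings with gzip from the Python standard library. The age cut-offs (one hour, thirty days) and the record layout are assumptions for the example; suitable values depend on your dashboards, analysis habits, and retention requirements.

```python
import gzip
import json

HOT_MAX_AGE_S = 3600             # assumption: the last hour stays hot
WARM_MAX_AGE_S = 30 * 24 * 3600  # assumption: the last 30 days stay warm


def storage_tier(age_s):
    """Pick a tier name from the age of a record in seconds."""
    if age_s < 0:
        raise ValueError("age cannot be negative")
    if age_s <= HOT_MAX_AGE_S:
        return "hot"
    if age_s <= WARM_MAX_AGE_S:
        return "warm"
    return "cold"


def serialize(readings):
    """Encode readings as newline-delimited JSON bytes."""
    return "\n".join(json.dumps(r, sort_keys=True) for r in readings).encode("utf-8")


def compress_batch(readings):
    """Serialize and gzip-compress a batch before archiving it."""
    return gzip.compress(serialize(readings))


def decompress_batch(blob):
    """Inverse of compress_batch."""
    lines = gzip.decompress(blob).decode("utf-8").splitlines()
    return [json.loads(line) for line in lines]


# Synthetic readings for illustration only.
batch = [{"sensor": "pH-1", "t": i, "value": 7.0} for i in range(1000)]
blob = compress_batch(batch)
raw_size = len(serialize(batch))

print(storage_tier(120), storage_tier(86_400), storage_tier(90 * 86_400))
print(f"{raw_size} bytes uncompressed, {len(blob)} bytes compressed")
```

Time-series sensor data is usually highly repetitive, so general-purpose compression tends to work well on it; columnar formats are a common alternative when the archive also needs to be queried.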

Troubleshooting Guides

Issue 1: High Latency in Data Processing Pipeline

Symptoms: Alerts for process anomalies are delayed; real-time dashboards are not updating promptly.

Possible Causes and Solutions:

| Cause | Solution |
|---|---|
| I/O Bottlenecks | Minimize disk I/O by processing data in-memory wherever possible. Avoid excessive use of intermediate topics in message brokers like Kafka [34]. |
| Insufficient Resources | Monitor system metrics and use autoscaling (e.g., via Kubernetes) to dynamically add more processing nodes as the data load increases [35] [36]. |
| Inefficient Processing Logic | Optimize stream processing algorithms and leverage in-memory computations. Use tools with native support for time-series data to reduce processing overhead [34] [36]. |
| Network Protocol Overhead | Optimize data serialization/deserialization and use efficient data transport protocols [35]. |
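Before working through the causes above, it can help to quantify the delay. The sketch below computes end-to-end latency percentiles from two timestamps per record; the field names `event_ms` and `processed_ms` are hypothetical and would map to whatever source and sink timestamps your pipeline records.

```python
import math


def percentile(sorted_values, pct):
    """Nearest-rank percentile of an ascending list (pct between 1 and 100)."""
    if not sorted_values:
        raise ValueError("no values")
    rank = math.ceil(len(sorted_values) * pct / 100)
    return sorted_values[max(rank, 1) - 1]


def latency_summary(records):
    """Summarize the delay between measurement and processing, in milliseconds."""
    latencies = sorted(r["processed_ms"] - r["event_ms"] for r in records)
    return {
        "p50": percentile(latencies, 50),
        "p95": percentile(latencies, 95),
        "max": latencies[-1],
    }


# Synthetic records: most are processed after 200 ms, two are much slower.
records = [{"event_ms": 1000 * i, "processed_ms": 1000 * i + 200} for i in range(18)]
records.append({"event_ms": 18_000, "processed_ms": 19_500})
records.append({"event_ms": 19_000, "processed_ms": 23_000})

summary = latency_summary(records)
print(summary)
```

Tracking the tail (p95 and maximum) alongside the median matters for alerting, because a delayed anomaly alert is usually caused by the slowest records and not the typical ones.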

Issue 2: Data Gaps and Corrupted Readings in the Stream

Symptoms: Gaps in data streams; nonsensical readings disrupting analytical models.

Possible Causes and Solutions:

| Cause | Solution |
|---|---|
| Unreliable Network Connectivity | Implement edge processing. Deploy lightweight algorithms to edge devices or local gateways to perform initial data filtering, reduction, and caching when the connection is lost [36]. |
| Faulty Sensors or Calibration Drift | Establish a regular sensor calibration and maintenance schedule. Implement data validation rules at the ingestion point to flag and route anomalous readings for review [33]. |
| Packet Loss or Out-of-Order Delivery | Use a streaming platform that can buffer, shuffle, and re-order data packets based on the original event timestamp before analysis [36]. |
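The validation rules and gap checks mentioned in the table can be sketched as follows. The plausible ranges, the `kind`, `t`, and `value` fields, and the 1.5x tolerance on the sampling interval are assumptions for illustration; real limits should come from your sensor specifications.

```python
# Assumed plausibility limits per measurement kind.
PLAUSIBLE_RANGE = {
    "pH": (0.0, 14.0),
    "DO_percent": (0.0, 100.0),
    "temp_C": (0.0, 60.0),
}


def validate(reading):
    """Return a list of rule violations; an empty list means accepted."""
    problems = []
    value = reading.get("value")
    bounds = PLAUSIBLE_RANGE.get(reading.get("kind"))
    if bounds is None:
        problems.append("unknown measurement kind")
    elif not isinstance(value, (int, float)) or value != value:  # NaN != NaN
        problems.append("missing or non-numeric value")
    elif not bounds[0] <= value <= bounds[1]:
        problems.append("out of range")
    return problems


def route(readings):
    """Split readings into accepted ones and ones flagged for review."""
    accepted, review = [], []
    for r in readings:
        problems = validate(r)
        if problems:
            review.append((r, problems))
        else:
            accepted.append(r)
    return accepted, review


def find_gaps(timestamps, expected_interval_s, tolerance=1.5):
    """Return (start, end) pairs where consecutive samples are too far apart."""
    ordered = sorted(timestamps)
    limit = expected_interval_s * tolerance
    return [(a, b) for a, b in zip(ordered, ordered[1:]) if b - a > limit]


# Synthetic readings sampled every 10 s, with one gap and two bad values.
readings = [
    {"kind": "pH", "t": 0, "value": 7.0},
    {"kind": "pH", "t": 10, "value": 7.1},
    {"kind": "pH", "t": 20, "value": 15.2},
    {"kind": "pH", "t": 50, "value": 7.0},
    {"kind": "pH", "t": 60, "value": float("nan")},
]

accepted, review = route(readings)
gaps = find_gaps([r["t"] for r in readings], expected_interval_s=10)
print(len(accepted), "accepted;", len(review), "flagged; gaps:", gaps)
```

Routing flagged readings to a review queue, instead of silently dropping them, preserves evidence of sensor drift that a calibration schedule can act on.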

Issue 3: Difficulty Scaling the Platform with Experimental Throughput

Symptoms: System performance degrades as more bioreactors or analytical instruments are brought online.

Possible Causes and Solutions:

| Cause | Solution |
|---|---|
| Non-Scalable Architecture | Design your system with horizontal scaling in mind. Use a platform that supports easy partitioning of data streams, allowing multiple processes to handle different segments in parallel [33] [35]. |
| Improper Resource Allocation | Use container orchestration tools (e.g., Kubernetes) to deploy streaming components. Implement continuous monitoring and autoscaling policies to adjust CPU and memory resources based on real-time demand [35]. |
| Monolithic Processing Pipelines | Break down processing into smaller, independent microservices. This allows each service to be scaled independently based on its specific load [35]. |
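Stream partitioning, as recommended above, usually means mapping each record to a partition by a stable key so that every reading from one bioreactor is handled by the same worker. The sketch below illustrates the idea with a SHA-256 hash; it is not the partitioner used by Kafka or any other platform, and the `reactor_id` key is a hypothetical field.

```python
import hashlib
from collections import defaultdict


def partition_for(key, num_partitions):
    """Map a key to a partition index that is stable across processes."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % num_partitions


def assign(readings, num_partitions):
    """Group readings by the partition of their reactor ID."""
    partitions = defaultdict(list)
    for r in readings:
        partitions[partition_for(r["reactor_id"], num_partitions)].append(r)
    return partitions


# Synthetic stream: six reactors, ten readings each, interleaved.
readings = [{"reactor_id": f"BR-{i % 6:02d}", "seq": i} for i in range(60)]
partitions = assign(readings, num_partitions=4)

for index in sorted(partitions):
    reactors = sorted({r["reactor_id"] for r in partitions[index]})
    print(f"partition {index}: {len(partitions[index])} readings from {reactors}")
```

Keying by reactor keeps per-reactor ordering and state local to one worker. The trade-off is that a few very active reactors can overload a single partition, so key choice deserves a check against real traffic.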

Experimental Protocols for the 'Test' Phase

Protocol 1: Real-Time Monitoring of a Fed-Batch Fermentation for Metabolite Production

Objective: To continuously track key process variables and product formation to maintain optimal production conditions and gather high-quality data for the 'Learn' phase.

Methodology:

- Instrument Setup: Calibrate in-line sensors for pH, dissolved oxygen (DO), and temperature. Connect an automated sampling system to an HPLC or mass spectrometer for near-real-time quantification of metabolites and substrates.

- Data Ingestion: Configure a stream ingestion tool (e.g., Apache Kafka) to collect data from all sensors and analyzers. Each data point should be timestamped at the source.

- Stream Processing:

  - Use a processing framework (e.g., Apache Flink) to clean and synchronize the incoming data streams.

  - Calculate derived metrics in real time, such as specific growth rate or product yield.

  - Implement a machine learning model for anomaly detection to flag deviations from expected process trajectories [33] [34].

- Output and Action:

  - Feed processed data to a real-time dashboard for visualization.

  - Set up automated alerts to notify researchers if key parameters (e.g., DO, product titer) fall outside predefined thresholds.

  - Implement simple feedback control loops (e.g., to add base to control pH).
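Three of the steps in this protocol lend themselves to a short sketch: a derived metric (specific growth rate, μ = ln(X₂/X₁)/Δt), threshold-based alerts, and an on/off pH control rule. The threshold values, the pH setpoint and deadband, and the function names are assumptions for illustration. The fixed thresholds here stand in for the alerting step only and do not replace the machine learning anomaly model the protocol calls for.

```python
import math


def specific_growth_rate(x_prev, x_now, dt_h):
    """mu = ln(X_now / X_prev) / dt, in 1/h, from two biomass readings."""
    if x_prev <= 0 or x_now <= 0 or dt_h <= 0:
        raise ValueError("biomass and time step must be positive")
    return math.log(x_now / x_prev) / dt_h


# Assumed alert limits; set these from your own process knowledge.
THRESHOLDS = {"DO_percent": (20.0, 100.0), "pH": (6.8, 7.2)}


def check_alerts(sample):
    """Return a message for every monitored value outside its limits."""
    alerts = []
    for name, (low, high) in THRESHOLDS.items():
        value = sample.get(name)
        if value is not None and not low <= value <= high:
            alerts.append(f"{name}={value} outside [{low}, {high}]")
    return alerts


def base_pump_on(ph, setpoint=7.0, deadband=0.1, currently_on=False):
    """On/off pH control with hysteresis: dose base when pH drops below the band."""
    if ph < setpoint - deadband:
        return True           # too acidic: start or keep dosing
    if ph >= setpoint:
        return False          # setpoint reached: stop dosing
    return currently_on       # inside the band: keep the current state


mu = specific_growth_rate(x_prev=1.0, x_now=2.0, dt_h=2.0)
print(f"specific growth rate: {mu:.4f} 1/h")
print(check_alerts({"DO_percent": 15.0, "pH": 7.0}))
print(base_pump_on(6.85), base_pump_on(6.95, currently_on=True), base_pump_on(7.05, currently_on=True))
```

The hysteresis band keeps the pump from switching rapidly when pH hovers near the setpoint. Bioreactor controllers commonly use PID control for this purpose; the on/off rule is shown only because it is the simplest loop that matches the protocol's wording.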

Protocol 2: High-Throughput Screening with Real-Time Data Integration

Objective: To rapidly process and analyze data from microtiter plates or similar high-throughput formats, enabling immediate prioritization of strains for further study.

Methodology:

- Automated Assays: Use robotic systems to perform assays (e.g., fluorescence, absorbance) on many strains in parallel.

- Continuous Data Collection: Configure plate readers and other instruments to stream raw data output continuously to a central ingestion point as measurements are taken.

- Real-Time Analysis:

  - Apply Streaming SQL or similar technology to calculate performance metrics (e.g., fluorescence intensity per OD600) for each strain as the data arrives [34].

  - Use a stateful stream processing application to aggregate results per strain across multiple time points.

- Instantaneous Ranking: Implement a logic module that ranks strains based on pre-defined performance criteria (e.g., highest product yield, fastest growth) in real time. The top-performing strains can be automatically flagged for the 'Build' phase of the next DBTL cycle.
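The stateful aggregation and ranking steps can be sketched as a small in-memory class that is updated as each plate-reader measurement arrives. This stands in for a stream processing job and is not Streaming SQL; the `StrainRanker` name, the strain labels, and the measurement values are illustrative assumptions.

```python
from collections import defaultdict


class StrainRanker:
    """Keep a running mean of fluorescence per OD600 for each strain."""

    def __init__(self):
        self._sum = defaultdict(float)
        self._count = defaultdict(int)

    def update(self, strain, fluorescence, od600):
        """Fold one measurement into the per-strain state."""
        if od600 <= 0:
            return  # skip wells without measurable growth
        self._sum[strain] += fluorescence / od600
        self._count[strain] += 1

    def mean_score(self, strain):
        """Mean normalized fluorescence over all time points seen so far."""
        return self._sum[strain] / self._count[strain]

    def top(self, n):
        """Return the n best strains by current mean score."""
        ranked = sorted(self._count, key=self.mean_score, reverse=True)
        return ranked[:n]


# Synthetic measurements: (strain, fluorescence in a.u., OD600).
stream = [
    ("A", 1000.0, 0.5),
    ("B", 900.0, 0.3),
    ("A", 2400.0, 0.8),
    ("C", 500.0, 0.5),
    ("B", 2100.0, 0.6),
]

ranker = StrainRanker()
for strain, fluorescence, od600 in stream:
    ranker.update(strain, fluorescence, od600)

print(ranker.top(2))
```

Because the state is only a sum and a count per strain, the ranking can be queried after any measurement without reprocessing earlier data, which is the property a stateful stream processor provides at scale.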

The Scientist's Toolkit: Research Reagent Solutions

The following table details key technologies and materials essential for implementing real-time data streaming in the 'Test' phase.

| Item | Function in the 'Test' Phase |
|---|---|
| Apache Kafka | A distributed event streaming platform used for high-throughput, reliable ingestion of data from multiple sources into a unified data pipeline [33] [35]. |
| Apache Flink | A powerful stream processing framework that supports complex event processing, stateful computations, and low-latency analytics, crucial for real-time bioprocess monitoring [33] [35]. |
| In-line Sensors (pH, DO) | Generate continuous, real-time data on critical process parameters, providing the fundamental input for the streaming pipeline [35]. |
| Cloud Object Storage (e.g., AWS S3) | Provides scalable and cost-effective storage for the vast amounts of time-series data generated during experiments, supporting long-term data retention for the 'Learn' phase [35]. |
| Streaming SQL | A query language that allows researchers and data engineers to define data transformations and analyses on continuous data streams using a familiar SQL-like syntax, speeding up development [34]. |

The following table summarizes quantitative data on the growth and performance of the real-time analytics and streaming tools market, underscoring the strategic importance of this technology.