Optimizing the DBTL Cycle: Accelerating Therapeutic Development Through AI and Automation

Abstract

This article explores the strategic optimization of the Design-Build-Test-Learn (DBTL) cycle to accelerate and enhance therapeutic development. Tailored for researchers, scientists, and drug development professionals, it provides a comprehensive framework spanning from foundational principles to advanced applications. We examine the core components of the DBTL framework, detail cutting-edge methodologies including automated biofoundries and machine learning, address critical troubleshooting and optimization strategies for high-throughput workflows, and present validation case studies from recent research. The synthesis of these insights aims to equip practitioners with the knowledge to implement more efficient, predictive, and successful biotherapeutic development pipelines.

The DBTL Framework: Core Principles for Therapeutic Innovation

Defining the Design-Build-Test-Learn (DBTL) Cycle in Synthetic Biology

The Design-Build-Test-Learn (DBTL) cycle is a systematic, iterative framework central to synthetic biology, enabling the engineering of biological systems for specific functions such as producing therapeutic compounds [1]. This engineering approach applies rational principles to design and assemble biological components, acknowledging that introducing foreign DNA into a cell often produces unpredictable outcomes, thus necessitating testing multiple permutations [1]. The cycle begins with Design, where researchers define objectives and create plans using domain knowledge and computational models [2]. This is followed by the Build phase, where DNA constructs are synthesized and assembled into vectors for introduction into characterization systems like bacteria, yeast, or cell-free platforms [2]. The Test phase involves experimentally measuring the performance of the engineered constructs against the initial objectives [2]. Finally, the Learn phase involves analyzing the collected data to inform the next design round, creating a continuous loop of refinement until the desired biological function is achieved [1] [2]. This iterative process is fundamental to streamlining biological engineering, making it more predictable and efficient.

The DBTL framework is particularly powerful in therapeutic development, where it accelerates the optimization of microbial hosts for drug production, engineering of therapeutic proteins like antibodies, and development of novel antimicrobial peptides [3] [4]. Emphasizing modular design of DNA parts allows researchers to assemble a greater variety of potential constructs by interchanging individual components, while automation reduces the time, labor, and cost of generating these constructs [1]. This structured approach to biological engineering has transformed the field's capacity to address complex challenges in biomanufacturing and therapeutic development.

Core Components of the DBTL Framework

Design Phase

The Design phase establishes the computational and biological framework for the entire DBTL cycle. In this initial stage, researchers define precise objectives for the desired biological function and design the biological parts or system required to achieve it [2]. This may involve introducing novel genetic components or redesigning existing biological parts for new therapeutic applications. The phase heavily relies on domain expertise, biological knowledge, and increasingly sophisticated computational approaches for modeling and prediction [2]. For metabolic engineering, this involves planning genetic modifications to host organisms; for protein engineering, it entails designing sequences with improved or novel functions.

Modern Design phases increasingly incorporate machine learning (ML) and artificial intelligence (AI) tools to enhance predictive capabilities. Protein language models such as ESM (Evolutionary Scale Modeling) and Ankh are trained on evolutionary relationships between protein sequences and can predict beneficial mutations and infer protein function [3] [2]. Structural models like ProteinMPNN use deep learning to design protein sequences that fold into specific backbone structures, while tools like MutCompute optimize residues based on local chemical environments [2]. These computational approaches enable more informed design decisions, potentially reducing the number of DBTL iterations needed to achieve therapeutic goals.

Build Phase

The Build phase translates designed genetic constructs into physical biological entities. This phase involves synthesizing DNA fragments, assembling them into plasmids or other vectors, and introducing them into characterization systems [2]. Traditional Build methods employ in vivo chassis such as bacteria (E. coli, Pseudomonas putida), eukaryotic cells, mammalian cells, or plants [2]. However, cell-free expression systems are increasingly adopted for their speed and flexibility, leveraging protein biosynthesis machinery from cell lysates or purified components to activate in vitro transcription and translation without time-intensive cloning steps [2].

Automation is revolutionizing the Build phase, enabling high-throughput construction of biological systems. Automated liquid handlers and biofoundries facilitate the combinatorial assembly of modular gene fragments from prepared repositories into diverse linear and plasmid constructs [4]. This automation significantly increases throughput while reducing human error in molecular cloning workflows [1]. For therapeutic development, the Build phase must produce constructs reliably and at scales appropriate for subsequent testing, whether for pathway prototyping, enzyme engineering, or therapeutic protein production.

Test Phase

The Test phase quantitatively evaluates the performance of built biological systems against design objectives. This involves experimentally measuring key performance indicators through functional assays specific to the application [2]. For metabolic engineering, this might include measuring titers, rates, and yields (TRY) of target therapeutic compounds using analytical methods like HPLC or GC-MS [5]. For protein engineering, tests might assess activity, stability, solubility, or specificity through colorimetric, fluorescent, or functional assays [2].

High-throughput methodologies are transforming the Test phase. Automated cultivation platforms like the BioLector provide reproducible data through tight control of culture conditions (O₂ transfer, shake speed, humidity) while generating results that scale to higher production volumes [5]. Cell-free systems paired with liquid handling robots and microfluidics can screen thousands of reactions, as demonstrated by the DropAI platform which screened over 100,000 picoliter-scale reactions [2]. For antimicrobial peptide development, the iGEM Jiangnan-China team implemented rigorous testing of their CytoGuard prediction model on independent test sets, achieving a Spearman correlation of 0.8543 and Pearson correlation of 0.9105 [3]. These advanced testing methodologies generate the high-quality data essential for informative Learn phases.
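For readers less familiar with the metrics quoted above, the following is a minimal, dependency-free sketch of how Pearson correlation, Spearman correlation, and RMSE can be computed for predicted versus measured values. It is not the CytoGuard evaluation code; the function names are chosen for this example.

```python
import math

def pearson(x, y):
    """Pearson correlation: covariance divided by the product of spreads."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def ranks(values):
    """Return 1-based ranks, assigning tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result

def spearman(x, y):
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))

def rmse(predicted, measured):
    """Root-mean-square error between predictions and measurements."""
    n = len(predicted)
    return math.sqrt(sum((p - m) ** 2 for p, m in zip(predicted, measured)) / n)
```

Spearman rewards getting the ordering of candidates right, while Pearson and RMSE also reflect how close the predicted values are to the measurements, which is why reporting them together gives a fuller picture of model quality.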

Learn Phase

The Learn phase transforms experimental results into actionable insights for subsequent DBTL cycles. Researchers analyze data collected during testing, compare outcomes to design objectives, and extract knowledge about biological system behavior [2]. This analysis ranges from identifying optimal media components to understanding sequence-function relationships in engineered proteins. The Learn phase increasingly employs explainable artificial intelligence techniques to pinpoint critical factors influencing system performance [5].

In one media optimization case study, learning revealed that sodium chloride (NaCl) was the most important component influencing flaviolin production in Pseudomonas putida, with optimal concentrations near seawater salinity [5]. For the CytoFlow platform, learning identified that multi-model fusion outperformed single embeddings (Spearman: 0.8543 vs 0.71-0.79) and that dynamic k-mer selection (k=3,4) effectively captured structural dependencies [3]. These insights directly inform subsequent DBTL iterations, enabling progressive refinement of biological designs. The Learn phase completes the DBTL cycle while initiating the next, creating a continuous improvement loop essential for optimizing complex biological systems for therapeutic applications.

Quantitative Applications in Therapeutic Development

The DBTL cycle delivers measurable improvements in therapeutic development campaigns. The table below summarizes performance metrics from recent applications.

Table 1: Quantitative Outcomes of DBTL Implementation in Therapeutic Development

| Application Area | DBTL Implementation | Key Outcomes | Reference |
|---|---|---|---|
| Media Optimization for flaviolin production in P. putida | Machine learning-guided active learning with semi-automated pipeline | 60-70% increase in titer; 350% increase in process yield | [5] |
| Antimicrobial Peptide (AMP) Prediction | Hypergraph neural network (CytoGuard) integrating multi-model embeddings | Spearman correlation: 0.8543; Pearson correlation: 0.9105; RMSE: 0.1806 | [3] |
| Protein Stability Engineering | Stability Oracle trained on stability data and protein structures | Accurate prediction of ΔΔG for protein stability | [2] |
| Enzyme Engineering | ProteinMPNN for sequence design with AlphaFold for structure assessment | Nearly 10-fold increase in design success rates | [2] |

These quantitative improvements demonstrate how structured DBTL cycles can accelerate and enhance therapeutic development. The 350% increase in process yield for flaviolin production highlights the potential impact on manufacturing efficiency for therapeutic compounds [5]. Similarly, the nearly 10-fold improvement in protein design success rates shows how machine learning integration can improve the efficiency of engineering biological therapeutics [2].
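Percent increases and fold changes are easy to conflate when comparing results like these. As a small worked example (helper names are ours), a percent increase converts to a fold change by adding the baseline back in:

```python
def fold_change_from_percent_increase(percent):
    """Convert a percent increase over baseline into a fold change."""
    return 1.0 + percent / 100.0

def percent_increase(baseline, improved):
    """Percent increase of an improved value relative to its baseline."""
    return (improved - baseline) / baseline * 100.0

if __name__ == "__main__":
    for pct in (60, 70, 350):
        fold = fold_change_from_percent_increase(pct)
        print(f"{pct}% increase = {fold:.1f}-fold of baseline")
```

Under this convention, a 350% increase corresponds to 4.5 times the baseline value, and a 60-70% increase corresponds to 1.6 to 1.7 times the baseline.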

Table 2: Machine Learning Models in the DBTL Cycle for Therapeutic Development

| ML Model | DBTL Phase | Function in Therapeutic Development | Performance Metrics |
|---|---|---|---|
| ESM-2/Ankh/ProtT5 | Design | Protein language models for predicting beneficial mutations and inferring function | Zero-shot prediction of diverse antibody sequences [2] |
| Stability Oracle | Design/Learn | Predicts ΔΔG of proteins using graph-transformer architecture | Trained on collection of stability data and protein structures [2] |
| Hypergraph Neural Networks (CytoGuard) | Test/Learn | Predicts antimicrobial activity by integrating multi-model embeddings | Spearman correlation 0.8543; RMSE 0.1806 [3] |
| Reinforcement Learning (CytoEvolve) | Learn | Policy networks with diffusion architecture guide sequence mutations | Generates LL-37 variants with improved antimicrobial activity [3] |

Machine learning models enhance every DBTL phase, from initial design to final learning. These tools enable researchers to navigate complex biological design spaces more efficiently, extracting meaningful patterns from high-dimensional data to inform therapeutic development decisions.

Detailed Experimental Protocols

Protocol: Machine Learning-Led Media Optimization for Secondary Metabolite Production

This protocol describes a semi-automated, active learning process for optimizing culture media to enhance production of therapeutic metabolites, adapted from a study that increased flaviolin production by 60-70% [5].

Materials and Equipment

Table 3: Research Reagent Solutions for Media Optimization

| Reagent/Equipment | Function/Application | Specifications |
|---|---|---|
| Automated Liquid Handler | Prepares media with precise component concentrations | Enables testing of 15+ media designs in parallel |
| BioLector or Similar Automated Cultivation System | Provides controlled, reproducible cultivation conditions | Controls O₂ transfer, shake speed, humidity |
| Microplate Reader | Measures product formation via absorbance/fluorescence | High-throughput alternative to HPLC/GC-MS |
| ART (Automated Recommendation Tool) | ML algorithm that recommends improved media designs | Implements active learning to minimize experiments |
| EDD (Experiment Data Depot) | Stores experimental designs and results | Central repository for DBTL data management |
| 12-15 Media Components (e.g., salts, carbon sources, nitrogen sources) | Variables for optimization | 2-3 components fixed, 12-13 variables |

Procedure

Initial Design (1-2 days):

- Select 12-13 media components as variables for optimization, keeping 2-3 components fixed at standard concentrations.

- Use the Automated Recommendation Tool (ART) or similar active learning algorithm to generate an initial set of 15 media designs spanning the experimental space.

- Program the liquid handler with stock solution concentrations to automate media preparation.
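One common way to generate an initial set of designs that spans the experimental space is Latin hypercube sampling. The sketch below is an assumption about how such a set could be produced; it is not ART's own sampling routine, and the component names and concentration bounds are hypothetical placeholders.

```python
import random

# Hypothetical components and concentration ranges, for illustration only.
HYPOTHETICAL_BOUNDS = {
    "NaCl_mM": (0.0, 500.0),
    "glucose_g_per_L": (5.0, 40.0),
    "NH4Cl_mM": (1.0, 50.0),
}

def latin_hypercube(n_designs, bounds, seed=0):
    """Draw n_designs media recipes with one sample per stratum per component.

    Each component's range is cut into n_designs equal strata; every stratum
    is sampled exactly once, and strata are shuffled independently per
    component so the designs spread across the whole space.
    """
    rng = random.Random(seed)
    designs = [dict() for _ in range(n_designs)]
    for name, (low, high) in bounds.items():
        points = [(i + rng.random()) / n_designs for i in range(n_designs)]
        rng.shuffle(points)
        for recipe, point in zip(designs, points):
            recipe[name] = low + point * (high - low)
    return designs

if __name__ == "__main__":
    for recipe in latin_hypercube(15, HYPOTHETICAL_BOUNDS):
        print({name: round(value, 1) for name, value in recipe.items()})
```

Compared with purely random sampling, this guarantees that no region of any single component's range is left unsampled in the first cycle, which is useful when only about 15 designs can be run at once.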

Build Phase (4-6 hours hands-on):

- Use the automated liquid handler to combine stock solutions according to the generated media designs.

- Dispense each media design in triplicate or quadruplicate wells of a 48-well plate.

- Inoculate each well with the engineered production strain (e.g., P. putida KT2440 for flaviolin).
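Translating a media design into liquid-handler transfers comes down to the dilution relation C_stock × V_stock = C_target × V_well. A minimal sketch follows, assuming all concentrations for a given component share the same unit and ignoring the inoculum volume; the function and the example numbers are illustrative, not taken from the cited study.

```python
def transfer_volumes(targets, stocks, well_volume_ul):
    """Compute the stock volume (in µL) to dispense for each component.

    targets and stocks map component name -> concentration (same units per
    component). The remaining volume is filled with water.
    """
    volumes = {}
    for name, target in targets.items():
        stock = stocks[name]
        if target > stock:
            raise ValueError(f"{name}: target exceeds stock concentration")
        volumes[name] = target / stock * well_volume_ul
    used = sum(volumes.values())
    if used > well_volume_ul:
        raise ValueError("component volumes exceed the well volume")
    volumes["water"] = well_volume_ul - used
    return volumes

if __name__ == "__main__":
    # Hypothetical design: 100 mM NaCl from a 1000 mM stock, 20 g/L glucose
    # from a 200 g/L stock, in a 1000 µL well.
    print(transfer_volumes(
        {"NaCl": 100.0, "glucose": 20.0},
        {"NaCl": 1000.0, "glucose": 200.0},
        well_volume_ul=1000.0,
    ))
```

The two checks catch the most common planning errors: a stock that is too dilute to reach the target, and a recipe whose combined transfers would overfill the well.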

Test Phase (3 days cultivation + 4 hours analysis):

- Cultivate plates in the BioLector or similar system for 48 hours under controlled conditions.

- Measure product formation using a microplate reader (e.g., Abs₃₄₀ for flaviolin as a high-throughput proxy).

- Validate key results with authoritative assays like HPLC for definitive quantification.

- Upload media designs and production data to the Experiment Data Depot (EDD).

Learn Phase (1-2 days computational analysis):

- ART collects data from EDD and uses explainable AI techniques to identify the most important components influencing production.

- The algorithm recommends the next set of media designs likely to improve performance.

- Initiate the next DBTL cycle with the improved designs.
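To make the Learn step concrete, the sketch below averages replicate measurements per design and then proposes new designs by perturbing the best performers within the allowed bounds. This is a deliberately simple stand-in for a recommendation algorithm and is not how ART works internally; all names and parameters here are assumptions for illustration.

```python
import random
from statistics import mean

def summarize(replicates):
    """Average replicate measurements: [(design_id, value), ...] -> {id: mean}."""
    grouped = {}
    for design_id, value in replicates:
        grouped.setdefault(design_id, []).append(value)
    return {design_id: mean(values) for design_id, values in grouped.items()}

def recommend(designs, scores, bounds, n_new=15, top_k=3, explore=0.1, seed=0):
    """Propose n_new designs by jittering the top_k best-scoring designs.

    designs: {design_id: {component: concentration}}
    scores:  {design_id: mean measured production}
    bounds:  {component: (low, high)}
    explore: jitter standard deviation as a fraction of each component range
    """
    rng = random.Random(seed)
    parents = sorted(scores, key=scores.get, reverse=True)[:top_k]
    proposals = []
    for i in range(n_new):
        parent = designs[parents[i % len(parents)]]
        child = {}
        for name, (low, high) in bounds.items():
            jitter = rng.gauss(0.0, explore * (high - low))
            # Clamp so every proposal stays inside the allowed range.
            child[name] = min(high, max(low, parent[name] + jitter))
        proposals.append(child)
    return proposals
```

A real active learning tool would typically fit a predictive model with uncertainty estimates and balance exploration against exploitation more carefully; the `explore` parameter here only hints at that trade-off.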

Critical Notes

- The semi-automated pipeline enables completion of approximately 15 media conditions in triplicate within one week.

- Use absorbance or fluorescence as high-throughput proxies when possible, with periodic HPLC validation.

- The active learning process typically requires 3-5 DBTL cycles to identify significantly improved media formulations.

- Explainable AI components help identify biologically relevant factors, such as the unexpected importance of NaCl concentration in flaviolin production [5].

Protocol: Cell-Free DBTL for Antimicrobial Peptide Development

This protocol implements a rapid DBTL cycle for developing antimicrobial peptides (AMPs) using cell-free expression systems and machine learning, based on the CytoFlow platform developed by iGEM Jiangnan-China [3].

Materials and Equipment

Table 4: Research Reagent Solutions for AMP Development

| Reagent/Equipment | Function/Application | Specifications |
|---|---|---|
| Cell-Free Protein Synthesis System | Rapid expression of AMP variants without cloning | >1 g/L protein in <4 hours [2] |
| Hypergraph Neural Network (CytoGuard) | Predicts antimicrobial activity from sequence | Integrates ESM-2, Ankh, ProtT5 embeddings |
| Reinforcement Learning Model (CytoEvolve) | Optimizes AMP sequences through iterative mutation | Uses policy network with diffusion architecture |
| Liquid Handling Robot + Microfluidics | Enables ultra-high-throughput screening | Screen >100,000 reactions (e.g., DropAI) [2] |
| Activity Assay Components | Measures antimicrobial efficacy | Minimum Inhibitory Concentration (MIC) determination |

Procedure

Design Phase (1-2 days computational):

- Use pre-trained protein language models (ESM-2, Ankh, ProtT5) to generate initial AMP sequence designs or analyze existing templates like LL-37.

- Apply CytoGuard (hypergraph neural network) to predict antimicrobial activity of designed sequences, selecting the most promising variants for testing.

- For sequence optimization, implement CytoEvolve reinforcement learning to guide mutations toward higher predicted activity.

Build Phase (1 day):

- Synthesize DNA templates encoding selected AMP variants without cloning steps.

- Express AMPs using cell-free protein synthesis systems, leveraging their rapid production capabilities (protein in hours without cloning).

- Scale reactions appropriately for subsequent testing (pL to mL scale depending on throughput needs).

Test Phase (1-2 days):

- Assess AMP activity against target pathogens using minimum inhibitory concentration (MIC) assays or high-throughput viability assays.

- For stability assessment, evaluate protein solubility using tools like DeepSol or thermal stability assays.

- Test cytotoxicity against human cell lines for therapeutic safety assessment.

- Quantify results and compile datasets for model training.
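The MIC readout in the Test phase can be derived from a dilution series as the lowest concentration that still prevents growth. The following is a minimal sketch, assuming optical density readings per concentration; the blank value and growth cutoff are illustrative defaults, not values from the cited work.

```python
def minimum_inhibitory_concentration(od_by_conc, blank_od=0.05, growth_cutoff=0.1):
    """Return the lowest concentration with no growth, or None if none inhibits.

    od_by_conc maps peptide concentration -> optical density after incubation.
    A well counts as "growth" when its blank-corrected OD reaches growth_cutoff.
    Concentrations are scanned from highest to lowest, stopping at first growth.
    """
    mic = None
    for conc in sorted(od_by_conc, reverse=True):
        if od_by_conc[conc] - blank_od >= growth_cutoff:
            break
        mic = conc
    return mic

if __name__ == "__main__":
    # Hypothetical two-fold dilution series (µg/mL -> OD600).
    series = {64: 0.05, 32: 0.06, 16: 0.07, 8: 0.45, 4: 0.80}
    print(minimum_inhibitory_concentration(series))
```

Scanning from the highest concentration downward avoids reporting a spuriously low MIC when a single low-concentration well happens to read as clear.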

Learn Phase (2-3 days computational):

- Feed experimental results back into CytoGuard to refine activity prediction models.

- Use reinforcement learning (CytoEvolve) to analyze sequence-activity relationships and generate improved designs.

- Key Learning Metrics: Multi-model fusion outperforms single embeddings; dynamic k-mer selection (k=3,4) effectively captures structural dependencies [3].
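As an illustration of what k-mer features with k=3 and k=4 look like, the sketch below extracts overlapping k-mers from the LL-37 sequence. How CytoGuard selects or weights k-mers "dynamically" is not described here, so this only shows the basic extraction step.

```python
from collections import Counter

# Human cathelicidin LL-37 (37 residues).
LL37 = "LLGDFFRKSKEKIGKEFKRIVQRIKDFLRNLVPRTES"

def kmers(sequence, k):
    """Return all overlapping substrings of length k, in order."""
    return [sequence[i:i + k] for i in range(len(sequence) - k + 1)]

def kmer_profile(sequence, ks=(3, 4)):
    """Count k-mers of every requested length in a single Counter."""
    counts = Counter()
    for k in ks:
        counts.update(kmers(sequence, k))
    return counts

if __name__ == "__main__":
    print(kmers(LL37, 3)[:5])
    print(kmer_profile(LL37).most_common(3))
```

A sequence of length n yields n - k + 1 overlapping k-mers, so LL-37 contributes 35 three-residue and 34 four-residue fragments.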

Critical Notes

- Cell-free expression bypasses cloning and transformation steps, dramatically accelerating the Build phase.

- Combining droplet microfluidics with multi-channel fluorescent imaging enables screening of >100,000 AMP variants [2].

- Experience replay in reinforcement learning stabilizes convergence during sequence optimization.

- This approach successfully generated improved LL-37 variants with enhanced predicted antimicrobial activity [3].

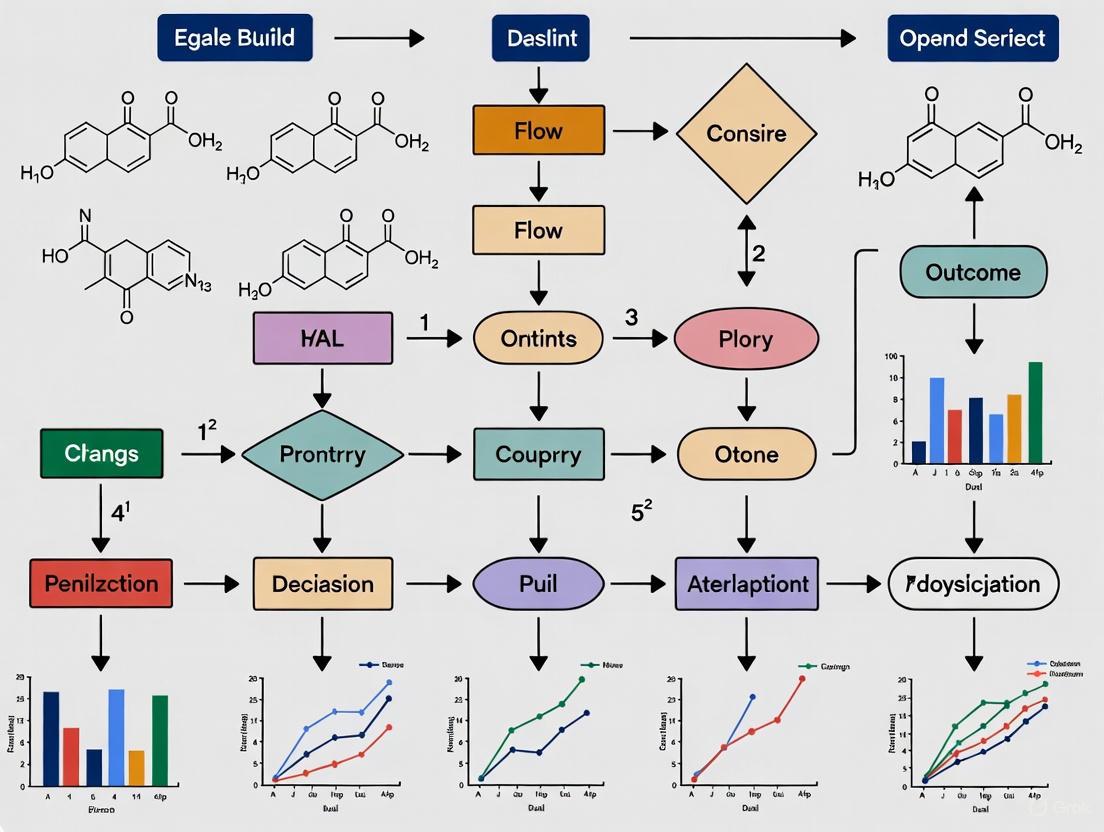

Visualizing DBTL Workflows

Core DBTL Cycle for Therapeutic Development

Diagram Title: Core DBTL Cycle

The fundamental DBTL cycle illustrates the iterative process where learning from previous experiments directly informs new design phases. This continuous refinement loop is essential for optimizing complex biological systems for therapeutic production, allowing researchers to systematically approach desired functions through successive approximation [1] [2].

Machine Learning-Enhanced DBTL with Cell-Free Systems

Diagram Title: ML-Enhanced LDBT Workflow

The machine learning-enhanced workflow demonstrates the emerging LDBT paradigm (Learn-Design-Build-Test), where machine learning models pre-trained on large biological datasets precede and inform the design phase [2]. This approach leverages zero-shot predictions to generate functional designs without additional training, potentially reducing the number of cycles needed to achieve therapeutic goals. Integration with cell-free systems accelerates building and testing phases, enabling megascale data generation for further model refinement [2].

The Design-Build-Test-Learn cycle represents a foundational framework that brings engineering discipline to biological innovation, particularly in therapeutic development. Through its iterative, systematic approach, DBTL enables researchers to navigate the complexity of biological systems with increasing precision and efficiency. The integration of machine learning technologies and automation platforms is transforming traditional DBTL into more predictive and scalable workflows, potentially evolving toward LDBT paradigms where learning precedes design [2]. For therapeutic development researchers, mastering DBTL methodologies provides a powerful strategy for accelerating the development of novel antimicrobial peptides, optimizing biomanufacturing processes for therapeutic compounds, and engineering proteins with enhanced therapeutic properties. The structured experimental protocols and quantitative assessment frameworks presented in this application note offer practical guidance for implementing these approaches in research programs aimed at addressing pressing challenges in therapeutic development.

The Role of DBTL in Overcoming Biological Complexity for Drug Development

The Design-Build-Test-Learn (DBTL) cycle is a systematic framework central to synthetic biology and modern drug discovery, enabling researchers to navigate and overcome the inherent complexity of biological systems. This iterative engineering approach applies rational principles to the design and assembly of biological components to reprogram organisms with desired therapeutic functionalities [6] [1]. In pharmaceutical applications, the DBTL framework impacts all stages of drug discovery and development, from initial target validation and assay development to hit finding, lead optimization, chemical synthesis, and the development of cellular therapeutics [7]. The cycle begins with the rational design of biological systems, followed by the construction of these systems using genetic engineering tools, functional testing through various assays, and finally analysis of data to inform the next design iteration [1]. This structured approach is particularly valuable in addressing the traditionally slow and costly nature of drug discovery, where development timelines typically span 10-15 years with high attrition rates [8]. By implementing iterative DBTL cycles, researchers can progressively refine therapeutic designs, optimize metabolic pathways for drug production, and develop more effective treatments with greater efficiency and predictability.

DBTL Framework and Its Pharmaceutical Applications

The Core Components of the DBTL Cycle

The DBTL cycle consists of four interconnected stages that form an iterative engineering process for biological systems. The Design phase involves the rational planning of biological components using computational tools and prior knowledge to achieve desired functions [6] [9]. This includes selecting genetic parts, designing metabolic pathways, and modeling expected behaviors. The Build phase translates these designs into physical biological constructs using genetic engineering techniques such as DNA synthesis, assembly, and genome editing [6] [10]. This stage has been significantly accelerated by advances in DNA synthesis technologies and automated assembly methodologies. The Test phase involves experimental validation of the constructed biological systems through high-throughput screening and functional assays to characterize performance and output [1] [9]. Finally, the Learn phase utilizes data analysis and machine learning to extract insights from experimental results, identify patterns, and generate improved designs for the next cycle [6] [11]. This iterative process enables continuous refinement of biological systems, progressively enhancing their therapeutic potential while deepening understanding of underlying biological mechanisms.

Applications in Therapeutic Development

The DBTL framework has demonstrated significant value across multiple therapeutic domains, enabling more efficient development of various treatment modalities. In small molecule drug discovery, DBTL cycles facilitate the optimization of microbial production strains for complex drug compounds and the design of novel drug candidates with improved properties [6] [8]. For therapeutic peptides, the framework guides the generation of functional sequences and de novo structures with enhanced stability and reduced immunogenicity [8]. In cellular therapeutics, DBTL enables the programming of microbes with sensing and response capabilities, such as microorganisms engineered to sense and kill cancer cells or produce drugs in vivo based on diagnostic signals [6] [7]. The framework has also proven valuable in developing enzymatic therapeutics and biologics, where iterative optimization of expression systems and protein engineering can significantly enhance production yields and therapeutic efficacy [12] [9]. The application of DBTL cycles in these diverse areas highlights their versatility in addressing various challenges in pharmaceutical development, from initial drug candidate identification to optimization of production strains for manufacturing.

Table 1: DBTL Cycle Applications in Different Therapeutic Modalities

| Therapeutic Modality | DBTL Application Examples | Key Benefits |
|---|---|---|
| Small Molecules | Metabolic pathway optimization for drug production; Structure-based drug design [6] [8] | Improved production titers; Enhanced drug binding affinity |
| Therapeutic Peptides | Sequence optimization for stability; De novo peptide design [8] | Reduced proteolysis; Minimized immunogenicity |
| Cellular Therapeutics | Engineering sensing circuits; Optimizing drug production in vivo [6] [7] | Targeted delivery; Autonomous function |
| Enzymes & Biologics | Expression optimization; Protein engineering [12] [9] | Increased yield; Enhanced catalytic efficiency |

Case Study: Optimizing Dopamine Production in E. coli

Experimental Background and Objectives

Dopamine is a crucial organic compound with applications in emergency medicine, cancer diagnosis and treatment, lithium anode production, and wastewater treatment [12]. Traditional chemical synthesis methods for dopamine are environmentally harmful and resource-intensive, creating a need for more sustainable production approaches. This case study demonstrates the development and optimization of a dopamine production strain in Escherichia coli using a knowledge-driven DBTL cycle that combines upstream in vitro investigation with high-throughput ribosomal binding site (RBS) engineering [12]. The experimental objective was to create an efficient dopamine production strain by constructing a synthetic pathway that converts the precursor L-tyrosine to L-DOPA via the native E. coli enzyme 4-hydroxyphenylacetate 3-monooxygenase (HpaBC), then to dopamine using L-DOPA decarboxylase (Ddc) from Pseudomonas putida [12]. This approach achieved a remarkable 2.6 to 6.6-fold improvement over state-of-the-art in vivo dopamine production methods, ultimately developing a strain capable of producing dopamine at concentrations of 69.03 ± 1.2 mg/L (equivalent to 34.34 ± 0.59 mg/g biomass) [12].

DBTL Protocol for Dopamine Strain Optimization

Design Phase Methodology:

- Pathway Design: Select the dopamine biosynthetic pathway genes (hpaBC and ddc) and appropriate expression vectors (pET and pJNTN plasmid systems) [12].

- Host Strain Engineering: Genomically engineer E. coli FUS4.T2 for increased L-tyrosine production by depleting the transcriptional dual regulator L-tyrosine repressor TyrR and mutating chorismate mutase/prephenate dehydrogenase (tyrA) to relieve its feedback inhibition [12].

- RBS Library Design: Design a library of RBS sequences with modulated Shine-Dalgarno sequences to fine-tune translation initiation rates without interfering with secondary structures [12].

Build Phase Methodology:

- DNA Assembly: Clone hpaBC and ddc genes into expression vectors using standard molecular cloning techniques with E. coli DH5α as the cloning strain [12].

- Strain Transformation: Transform the engineered dopamine production constructs into the optimized E. coli FUS4.T2 production strain [12].

- Library Construction: Generate the RBS variant library for bi-cistronic expression optimization using high-throughput DNA assembly methods [12].

Test Phase Methodology:

- Cultivation Conditions: Grow production strains in minimal medium containing 20 g/L glucose, 10% 2xTY medium, and appropriate supplements at specified conditions [12].

- Dopamine Quantification: Analyze dopamine production using appropriate analytical methods (e.g., HPLC) after cultivation [12].

- Data Collection: Measure final dopamine titers, biomass yields, and process parameters for each strain variant [12].

Learn Phase Methodology:

- Data Analysis: Evaluate the performance of each RBS variant in terms of dopamine production and biomass yield [11].

- Mechanistic Insight Analysis: Correlate RBS sequence features (particularly GC content in the Shine-Dalgarno sequence) with translation efficiency and dopamine production [12].

- Design Refinement: Identify optimal RBS combinations and propose further genetic modifications for subsequent DBTL cycles [12].
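The RBS analysis above relates Shine-Dalgarno (SD) sequence features, particularly GC content, to production. A minimal sketch of that feature calculation is shown below. The single-substitution library around the consensus SD sequence AGGAGG is a hypothetical example and does not reproduce the library used in the cited study.

```python
SD_CONSENSUS = "AGGAGG"  # canonical E. coli Shine-Dalgarno core

def gc_content(sequence):
    """Fraction of G and C bases in a DNA sequence."""
    sequence = sequence.upper()
    return sum(base in "GC" for base in sequence) / len(sequence)

def single_substitution_variants(sequence, alphabet="ACGT"):
    """All sequences differing from the input at exactly one position."""
    variants = []
    for i, original in enumerate(sequence):
        for base in alphabet:
            if base != original:
                variants.append(sequence[:i] + base + sequence[i + 1:])
    return variants

if __name__ == "__main__":
    library = single_substitution_variants(SD_CONSENSUS)
    for variant in sorted(library, key=gc_content):
        print(variant, round(gc_content(variant), 2))
```

Once measured titers are available for each variant, the GC fraction computed here can be plotted or correlated against production to look for the kind of trend the Learn phase describes.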

Table 2: Dopamine Production Optimization Through DBTL Iterations

| DBTL Cycle | Key Genetic Modifications | Dopamine Production (mg/L) | Fold Improvement |
|---|---|---|---|
| Initial State | Reference strain from literature [12] | 27.0 | 1.0x |
| Cycle 1 | Introduction of basic hpaBC-ddc pathway [12] | 35.2 | 1.3x |
| Cycle 2 | RBS engineering of hpaBC gene [12] | 48.5 | 1.8x |
| Cycle 3 | RBS engineering of ddc gene [12] | 58.7 | 2.2x |
| Cycle 4 | Combinatorial RBS optimization [12] | 69.0 | 2.6x |
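The fold-improvement column can be reproduced directly from the titers in the table, and the two reported final values (69.03 mg/L and 34.34 mg/g biomass) together imply a biomass concentration of roughly 2 g/L. The short sketch below works through both calculations; the helper names are ours.

```python
# Titers (mg/L) copied from the table above.
TITERS_MG_PER_L = {
    "Initial State": 27.0,
    "Cycle 1": 35.2,
    "Cycle 2": 48.5,
    "Cycle 3": 58.7,
    "Cycle 4": 69.0,
}

def fold_improvement(titers, baseline_key="Initial State"):
    """Fold improvement of each entry relative to the baseline, to 1 decimal."""
    baseline = titers[baseline_key]
    return {name: round(value / baseline, 1) for name, value in titers.items()}

def implied_biomass_g_per_l(titer_mg_per_l, specific_titer_mg_per_g):
    """Biomass concentration implied by a volumetric and a specific titer."""
    return titer_mg_per_l / specific_titer_mg_per_g

if __name__ == "__main__":
    print(fold_improvement(TITERS_MG_PER_L))
    print(round(implied_biomass_g_per_l(69.03, 34.34), 2), "g/L biomass")
```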

Dopamine Biosynthetic Pathway

Machine Learning and Automation in DBTL Cycles

Machine Learning for DBTL Acceleration

Machine learning (ML) has emerged as a powerful tool for overcoming the bottleneck in the "Learn" phase of the DBTL cycle, particularly when dealing with the complexity and heterogeneity of biological systems [6]. ML processes large biological datasets and provides predictive models by selecting appropriate features and uncovering unseen patterns [6]. In metabolic engineering for drug development, ML algorithms such as gradient boosting and random forest have demonstrated superior performance in the low-data regime common in early DBTL cycles [11]. These methods have proven robust against training set biases and experimental noise, making them particularly valuable for pharmaceutical applications where data may be limited or variable [11]. ML approaches can facilitate system-level prediction of biological designs with desired characteristics by elucidating associations between phenotypes and various combinations of genetic parts and genotypes [6]. As explainable ML advances, these systems provide both predictions and reasons for proposed designs, deepening understanding of biological relationships and significantly accelerating the "Learn" stage of the DBTL cycle [6]. This capability is especially valuable in drug discovery, where understanding structure-activity relationships is crucial for developing effective therapeutics.

Biofoundries and Automated Workflows

Biofoundries represent the physical implementation of automated DBTL cycles, providing integrated facilities where biological design, construction, functional assessment, and mathematical modeling are performed using automated equipment [9]. These facilities address the challenges of scaling DBTL processes by implementing standardized workflows and unit operations that enable high-throughput experimentation [9]. The Global Biofoundry Alliance, established in 2019, has brought together key facilities worldwide to share experiences and resources while addressing common challenges in synthetic biology [6] [9]. Biofoundries employ an abstraction hierarchy that organizes activities into four interoperable levels: Project, Service/Capability, Workflow, and Unit Operation, effectively streamlining the DBTL cycle [9]. This framework enables more modular, flexible, and automated experimental workflows, improves communication between researchers and systems, supports reproducibility, and facilitates better integration of software tools and artificial intelligence [9]. For drug development, this automation is particularly valuable in enabling rapid iteration through DBTL cycles, with studies demonstrating that when the number of strains to be built is limited, starting with a large initial DBTL cycle is favorable over building the same number of strains for every cycle [11].
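The four-level abstraction hierarchy (Project, Service/Capability, Workflow, Unit Operation) maps naturally onto nested data structures. The sketch below is one possible representation under our own naming; it is not a standard schema from the Global Biofoundry Alliance, and the example entries are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class UnitOperation:
    """Smallest executable step, e.g. a single liquid transfer or plate read."""
    name: str

@dataclass
class Workflow:
    """Ordered unit operations belonging to one DBTL phase."""
    name: str
    phase: str  # "Design", "Build", "Test", or "Learn"
    unit_operations: List[UnitOperation] = field(default_factory=list)

@dataclass
class Service:
    """A capability offered by the biofoundry, made of one or more workflows."""
    name: str
    workflows: List[Workflow] = field(default_factory=list)

@dataclass
class Project:
    """Top level: a research goal served by one or more services."""
    name: str
    services: List[Service] = field(default_factory=list)

    def all_unit_operations(self) -> List[UnitOperation]:
        """Flatten the hierarchy into the sequence of unit operations."""
        return [
            op
            for service in self.services
            for workflow in service.workflows
            for op in workflow.unit_operations
        ]

def example_project() -> Project:
    """Build a small hypothetical project for illustration."""
    assembly = Workflow("DNA assembly", "Build", [
        UnitOperation("dispense parts"),
        UnitOperation("thermocycle"),
    ])
    screening = Workflow("Plate assay", "Test", [UnitOperation("read absorbance")])
    service = Service("Strain construction and screening", [assembly, screening])
    return Project("Example production strain", [service])
```

Keeping each level as its own type makes it straightforward to reuse a workflow across services or to hand the flattened list of unit operations to a scheduler.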

ML-Enhanced DBTL Cycle

Implementation Tools and Reagent Solutions

Essential Research Reagents and Materials

Successful implementation of DBTL cycles in drug development relies on a suite of specialized research reagents and molecular tools. The table below details key resources essential for executing DBTL-based therapeutic development projects.

Table 3: Essential Research Reagents for DBTL-Based Drug Development

| Reagent/Material | Function in DBTL Cycle | Specific Examples |

|---|---|---|

| DNA Synthesis & Assembly Tools | Build phase: Construction of genetic designs | Gibson assembly [6]; Biofoundry-automated DNA assembly [9] |

| Expression Vectors | Build phase: Host delivery of genetic constructs | pET plasmid system; pJNTN plasmid [12] |

| Engineering Host Strains | Build/Test phases: Chassis for pathway expression | E. coli FUS4.T2 (dopamine production) [12] |

| Enzyme Libraries | Design phase: Source of biological parts | HpaBC (native E. coli); Ddc (Pseudomonas putida) [12] |

| Analytical Standards | Test phase: Compound quantification | Dopamine hydrochloride; L-tyrosine; L-DOPA [12] |

| Cell-Free Protein Synthesis Systems | Learn phase: Rapid pathway testing | Crude cell lysate systems [12] |

Computational and Automation Infrastructure

Effective DBTL implementation requires sophisticated computational tools and automation infrastructure to manage the iterative design and testing processes. Machine learning platforms incorporating gradient boosting, random forest, and other algorithms are essential for analyzing complex datasets and generating predictive models for subsequent design cycles [11]. Biofoundry automation systems including liquid handling robots, plate readers, and high-throughput screening equipment enable the rapid construction and testing of multiple design variants [9]. DNA design software and computational modeling tools facilitate the initial design phase by predicting the behavior of biological systems before physical construction [6] [9]. Data management systems are crucial for tracking iterations across multiple DBTL cycles, maintaining experimental metadata, and ensuring reproducibility [9]. Specialized cultivation equipment such as automated bioreactors and high-throughput culture systems enable precise control of environmental conditions during the test phase [12]. These computational and automation tools collectively reduce the time and cost associated with therapeutic development by enabling parallel processing of multiple design variants and enhancing the quality of insights gained from each DBTL cycle.

The DBTL cycle represents a powerful framework for addressing biological complexity in drug development, enabling systematic iteration toward optimized therapeutic solutions. By implementing knowledge-driven DBTL approaches that combine upstream in vitro investigation with high-throughput genetic engineering, researchers can significantly accelerate strain development for drug production, as demonstrated by the 2.6-fold improvement in dopamine production [12]. The integration of machine learning, particularly gradient boosting and random forest algorithms that perform well in low-data regimes, further enhances the efficiency of DBTL cycles by improving predictive modeling and design recommendation [11]. Looking forward, the full potential of DBTL in pharmaceutical applications will be realized through increased automation in biofoundries, development of more sophisticated abstraction hierarchies for workflow standardization, and enhanced AI integration that bridges modality-specific gaps between small molecule and therapeutic peptide development [9] [8]. These advances will ultimately shift the drug discovery paradigm from exploratory screening to targeted creation of novel therapeutics, potentially reducing development timelines and costs while increasing success rates in bringing effective treatments to market. As DBTL methodologies continue to evolve and become more accessible through benchtop DNA synthesis technologies and standardized protocols, they are poised to significantly transform pharmaceutical development across multiple therapeutic modalities.

The Design-Build-Test-Learn (DBTL) cycle is a cornerstone of modern synthetic biology, enabling the iterative development of genetically programmed cells for therapeutic applications. This framework involves designing genetic constructs, building them in biological systems, testing their performance, and learning from the data to inform the next design cycle. In therapeutic development, this process is crucial for programming cells to correct genetic diseases, serve as living therapeutics in the human microbiome, and produce therapeutic molecules with high precision [13]. However, quantitative genetic circuit design has been hampered by the limited modularity of biological parts and the significant metabolic burden imposed on chassis cells as complexity increases [14]. Recent advancements have introduced a paradigm-shifting approach: the Learn-Design-Build-Test (LDBT) cycle, which begins with a machine learning-driven learning phase to predict meaningful design parameters before construction commences [15]. This application note details the key components, methodologies, and analytical tools for implementing both DBTL and LDBT frameworks to optimize genetic circuit development for therapeutic applications.

Genetic Part Design: Components and Quantitative Characterization

The foundation of any genetic circuit lies in its individual parts—DNA sequences that control gene expression. For therapeutic applications, precise control over both the timing and level of gene expression is essential [13].

Core Genetic Components

- Promoters: Synthetic promoters, especially those engineered with tandem operator sites for transcription factor binding, provide the regulatory basis for complex circuits. Their strength determines the maximum possible expression level and must be matched to the application [14].

- Transcriptional Regulators: These DNA-binding proteins, including repressors, anti-repressors, and activators, control the flow of RNA polymerase. A key advancement is the development of Transcriptional Programming (T-Pro), which utilizes synthetic anti-repressors to achieve Boolean logic operations with fewer genetic components, a process known as circuit compression [14].

- Ribosome Binding Sites (RBS): These sequences control translational efficiency and significantly impact protein expression levels. Machine learning models in the LDBT cycle use RBS sequences as key features for predicting circuit performance [15].

- Terminators: These sequences signal the end of transcription and prevent read-through, ensuring genetic insulation between adjacent genetic parts.

Table 1: Characterization of Major Transcriptional Regulator Classes Used in Genetic Circuit Design

| Regulator Class | Control Mechanism | Example Systems | Therapeutic Application Examples |

|---|---|---|---|

| DNA-Binding Proteins | Recruit or block RNA polymerase [13] | TetR, LacI, CI homologues [13]; synthetic TFs (e.g., for IPTG, D-ribose, cellobiose) [14] | Biosensors for disease markers; pulse generators for drug delivery [13] |

| CRISPR/dCas Systems | dCas9 binding blocks transcription or recruits activators [13] | CRISPRi, CRISPRa [13] | Multiplexed gene regulation; fine-tuning metabolic pathways [13] |

| Invertases/Recombinases | Flip DNA segments between specific sites, changing genetic output permanently [13] | Cre, Flp, serine integrases (e.g., Bxb1) [13] | Biological memory for recording cell history; irreversible activation of therapeutic genes [13] [14] |

Protocol 1: Quantitative Characterization of Genetic Parts

Objective: To measure the transfer function of an inducible promoter, determining its dynamic range, leakiness, and response threshold.

Materials:

- Plasmid DNA: Construct with the promoter of interest driving a fluorescent reporter gene (e.g., GFP).

- Chassis: Appropriate microbial or mammalian cells.

- Inducer: Ligand or molecule that regulates the promoter.

- Equipment: Flow cytometer or plate reader, culture incubator, microcentrifuge.

Method:

- Transformation/Transfection: Introduce the plasmid construct into the chassis cells.

- Induction Curve: Inoculate cultures and grow them to mid-log phase. Aliquot cultures into different flasks and induce with a concentration gradient of the inducer (e.g., 0, 0.1, 1, 10 mM IPTG).

- Measurement: Grow cultures for a fixed, standardized period (e.g., 6-8 hours or until steady state is reached). Measure the fluorescence intensity and optical density (OD600) of each culture using a flow cytometer or plate reader.

- Data Analysis:

  - Calculate the mean fluorescence intensity (MFI) for each sample.

  - For bulk plate-reader measurements, normalize fluorescence by the OD600 to account for cell density; flow cytometry MFI is already a per-cell value.

  - Plot normalized fluorescence versus inducer concentration on a logarithmic scale.

  - Fit a dose-response curve (e.g., Hill function) to determine key parameters: OFF state (leakiness), ON state (saturation), Hill coefficient (cooperativity), and EC50 (effective concentration for 50% response).
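
The curve-fitting step in Protocol 1 can be sketched in plain Python. This is a minimal illustration, not a recommended fitting routine: it fixes the OFF and ON states to the lowest and highest observed signals and finds EC50 and the Hill coefficient by a coarse grid search, whereas a real analysis would normally use a nonlinear least-squares fitter. The function names, grid ranges, and synthetic data are all assumptions made for the example.

```python
def hill(x, off, on, ec50, n):
    """Hill activation curve: predicted output at inducer concentration x."""
    if x <= 0:
        return off
    return off + (on - off) * x ** n / (ec50 ** n + x ** n)


def fit_hill(conc, signal):
    """Coarse least-squares grid search for EC50 and Hill coefficient.

    OFF and ON are fixed to the observed minimum and maximum signal,
    which is a simplification that only works when the curve saturates.
    """
    off, on = min(signal), max(signal)
    best = None
    for i in range(-30, 21):          # EC50 from 1e-3 to 1e2, 0.1 log10 steps
        ec50 = 10 ** (i / 10)
        for j in range(5, 41):        # Hill coefficient from 0.5 to 4.0
            n = j / 10
            sse = sum((hill(x, off, on, ec50, n) - y) ** 2
                      for x, y in zip(conc, signal))
            if best is None or sse < best[0]:
                best = (sse, ec50, n)
    _, ec50, n = best
    return {"off": off, "on": on, "ec50": ec50, "n": n,
            "dynamic_range": on / off}


# Synthetic, noise-free data generated from known parameters (illustrative).
conc_mM = [0, 0.01, 0.1, 0.3, 1, 3, 10, 100]
signal = [hill(x, off=50, on=5000, ec50=1.0, n=2.0) for x in conc_mM]
fit = fit_hill(conc_mM, signal)
```

The ratio of ON to OFF reported as `dynamic_range` is the fold induction; leakiness is the OFF value itself.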

Circuit Build and Test: Assembly and Rapid Prototyping

Circuit Build Methodologies

Advanced DNA assembly techniques, such as Golden Gate assembly or Gibson assembly, are used to compose multiple genetic parts into a single, functional circuit. For complex circuits, computational tools are now available that algorithmically enumerate designs to guarantee the smallest possible circuit (maximum compression) for a given Boolean logic operation, minimizing metabolic burden [14].

Protocol 2: Rapid Testing Using Cell-Free Transcription-Translation (TX-TL) Systems

Objective: To rapidly prototype and test genetic circuit performance without the constraints of living cells, accelerating the Test phase.

Materials:

- Cell-Free Extract: Commercially available or homemade E. coli or HEK293 cell lysate.

- Template DNA: PCR product or plasmid containing the genetic circuit.

- Energy Solution: Contains amino acids, nucleotides, and energy sources (e.g., phosphoenolpyruvate).

- Equipment: 96-well plate, real-time PCR machine with fluorescence detection, or plate reader.

Method:

- Reaction Setup: On ice, mix the cell-free extract with the energy solution and your template DNA (5-20 nM) in a 96-well plate. Include a negative control (no DNA) and a positive control (a well-characterized construct).

- Kinetic Measurement: Place the plate in a real-time PCR machine or plate reader preheated to 30°C (for E. coli TX-TL) or 37°C (for mammalian TX-TL). Measure fluorescence (e.g., GFP) and absorbance (to monitor resource consumption) every 5-10 minutes for 6-16 hours.

- Data Analysis:

  - Plot fluorescence over time to observe the circuit's dynamics (e.g., onset time, amplitude, steady-state).

  - Compare the output of different circuit designs or inducer concentrations to characterize performance.
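
The kinetic quantities named in the data analysis step can be extracted from a single well's time course as follows. This is a hedged sketch: the function name, the 10% onset threshold, and the example readings are assumptions, and the no-DNA negative control is used here as a simple point-by-point blank.

```python
def summarize_kinetics(times_min, fluorescence, blank, onset_fraction=0.1):
    """Summarize one TX-TL fluorescence time course.

    times_min      -- read times in minutes
    fluorescence   -- raw readings for the circuit well
    blank          -- readings from the no-DNA control at the same times
    onset_fraction -- fraction of peak signal that defines onset (assumed)
    """
    corrected = [f - b for f, b in zip(fluorescence, blank)]
    amplitude = max(corrected)
    threshold = onset_fraction * amplitude
    # First read at which the blank-corrected signal crosses the threshold.
    onset = next((t for t, y in zip(times_min, corrected) if y >= threshold), None)
    # Steepest rise between consecutive reads (signal units per minute).
    points = list(zip(times_min, corrected))
    rates = [(y2 - y1) / (t2 - t1) for (t1, y1), (t2, y2) in zip(points, points[1:])]
    return {
        "amplitude": amplitude,
        "onset_min": onset,
        "max_rate_per_min": max(rates),
        "endpoint": corrected[-1],
    }


# Illustrative readings taken every 10 minutes.
times = [0, 10, 20, 30, 40, 50, 60]
well = [100, 105, 150, 400, 800, 950, 1000]
no_dna = [100] * 7
summary = summarize_kinetics(times, well, no_dna)
```

Running the same summary over every well gives a small table of onset, rate, and amplitude that can be compared across designs or inducer concentrations.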

This cell-free approach is a key enabler of the LDBT cycle, providing high-throughput, reproducible data that is decoupled from cellular complexities, thereby enriching the training datasets for machine learning models [15].

Phenotype Analysis: From HPO Terms to Diagnostic Prioritization

For therapeutic development, particularly in Mendelian diseases, analyzing the phenotypic outcome of genetic perturbations—whether natural or treatment-induced—is critical. Computational tools that link patient phenotypes to genetic causes are essential for diagnosis and evaluating therapeutic efficacy.

Phenotype-Driven Analysis Tools

Deep learning-based toolkits like PhenoDP represent the state of the art in phenotype-driven diagnosis. PhenoDP integrates three modules to streamline analysis [16]:

- Summarizer: A fine-tuned large language model (LLM) that generates patient-centered clinical summaries from lists of Human Phenotype Ontology (HPO) terms.

- Ranker: Prioritizes potential Mendelian diseases by combining information content-based, phi-based, and semantic similarity measures between the patient's HPO terms and disease-associated terms.

- Recommender: Uses contrastive learning to suggest additional HPO terms for clinicians to check, thereby improving differential diagnosis accuracy.
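
To give a feel for what an information content-based measure does, the sketch below ranks diseases by the summed information content of the terms they share with a patient. It is not PhenoDP's implementation or API: the disease names, term labels, and annotations are toy placeholders, and the ontology hierarchy that real HPO-based measures exploit is ignored entirely.

```python
import math

# Toy disease-to-term annotations (hypothetical; not real HPO or OMIM data).
ANNOTATIONS = {
    "disease_A": {"term_seizure", "term_ataxia", "term_short_stature"},
    "disease_B": {"term_seizure", "term_hearing_loss"},
    "disease_C": {"term_seizure", "term_ataxia"},
    "disease_D": {"term_short_stature"},
}


def information_content(term):
    """IC = -ln(fraction of diseases annotated with the term).

    Terms shared by many diseases carry little information; rare terms
    carry more.
    """
    n = sum(term in terms for terms in ANNOTATIONS.values())
    return -math.log(n / len(ANNOTATIONS)) if n else 0.0


def rank_diseases(patient_terms):
    """Score each disease by the summed IC of terms shared with the patient."""
    scores = {
        disease: sum(information_content(t) for t in patient_terms & terms)
        for disease, terms in ANNOTATIONS.items()
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


ranking = rank_diseases({"term_seizure", "term_hearing_loss"})
```

In this toy set the rare term dominates the score, which is the behaviour an information content weighting is meant to produce: a common finding such as a seizure barely separates the candidates, while a rarer one does.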

Table 2: Key Research Reagent Solutions for Genetic Circuit Design and Phenotype Analysis

| Item / Reagent | Function / Application | Example Use Case |

|---|---|---|

| Synthetic Transcription Factors (TFs) | Engineered repressors/anti-repressors that respond to orthogonal signals (e.g., IPTG, cellobiose) [14] | Implementing Boolean logic in compressed genetic circuits for cellular computation [14] |

| Cell-Free TX-TL Systems | Lysate-based systems for rapid, high-throughput testing of genetic circuits outside of living cells [15] | Accelerating the Test phase; generating data for machine learning model training in LDBT cycles [15] |

| CRISPR-dCas9 Modules | Catalytically dead Cas9 fused to effector domains for programmable transcriptional regulation [13] | Building complex, multi-input genetic circuits without altering the underlying DNA sequence [13] |

| Serine Integrases | Unidirectional recombinases that flip DNA segments to create permanent genetic memory [13] | Recording exposure to a therapeutic agent or disease-specific stimulus within a cell [13] [14] |

| Human Phenotype Ontology (HPO) | Standardized vocabulary of phenotypic abnormalities encountered in human disease [16] | Mapping patient symptoms for computational analysis and diagnosis using tools like PhenoDP [16] |

Protocol 3: Phenotype-Based Disease Prioritization with PhenoDP

Objective: To rank potential Mendelian diseases based on a patient's clinical features (HPO terms) and receive suggestions for further diagnostic clarification.

Materials:

- Input Data: A set of HPO terms describing the patient's phenotype.

- Software: PhenoDP toolkit, installed locally or accessed via web interface.

- Computing Environment: Standard workstation capable of running Python.

Method:

- Input Preparation: Compile the patient's clinical symptoms into a list of official HPO IDs (e.g., HP:0001250 for Seizure).

- Running PhenoDP:

  - Summarizer: Input the HPO list to generate a coherent, patient-focused clinical summary.

  - Ranker: Execute the Ranker module with the HPO list. The tool will compute similarity scores against known diseases in databases like OMIM and Orphanet, outputting a ranked list.

  - Recommender: For the top-ranked candidate diseases, run the Recommender to get a list of suggested additional HPO terms that would help distinguish between these candidates.

- Clinical Correlation: The generated summary, ranked disease list, and suggested terms are reviewed by a clinician. The suggested terms can guide further physical examination or questioning of the patient.

- Iterative Refinement: As new phenotypic information is gathered, the process is repeated to refine the diagnosis.

Integrating LDBT and Phenotype Analysis for Therapeutic Development

The integration of a machine-learning-first LDBT cycle with advanced phenotype analysis tools creates a powerful, closed-loop framework for accelerating therapeutic development. The LDBT cycle enables the rapid, predictive design of genetic circuits intended to correct pathological phenotypes. These circuits can be optimized for biosensing, drug production, or direct cellular reprogramming. Subsequently, the phenotypic outcomes of these interventions—whether in preclinical models or clinical settings—can be rigorously analyzed using tools like PhenoDP. The rich phenotypic data generated then feeds back into the initial "Learn" phase of the next LDBT cycle, creating a virtuous cycle of continuous improvement and refinement for therapeutic strategies. This integrated approach promises to dramatically shorten development timelines and improve the predictability and efficacy of genetic therapies [15] [16].

The Impact of DNA Synthesis and Sequencing Cost Reductions on DBTL Accessibility

The Design-Build-Test-Learn (DBTL) cycle is a foundational framework in modern therapeutic development, enabling the iterative engineering of biological systems. In the context of drug development, this cycle involves designing novel genetic constructs or cellular therapies, building these designs using DNA synthesis and assembly techniques, testing their efficacy and safety through sequencing and functional assays, and learning from the data to inform the next design iteration. The pace and success of these cycles are critically dependent on the cost, speed, and accessibility of core technologies, particularly DNA synthesis and DNA sequencing.

Recent technological advancements have driven unprecedented reductions in the cost and time required for both DNA synthesis and sequencing. Estimates of the global DNA synthesis market range from USD 3.7 billion to USD 5.32 billion for 2024-2025, with projected compound annual growth rates (CAGR) of 14% to 17.9% and forecast market sizes of USD 13.7 billion to USD 27.61 billion by 2032-2035 [17] [18] [19]. Concurrently, next-generation sequencing (NGS) costs have plummeted from billions of dollars per human genome to under $1,000, compressing sequencing timelines from years to mere hours [20]. This application note examines how these cost reductions are democratizing and accelerating DBTL cycles, with a specific focus on optimizing therapeutic development research.

Market and Cost Trends for DNA Synthesis and Sequencing

Quantitative Analysis of DNA Synthesis and Sequencing Costs

Table 1: DNA Synthesis Market Size and Growth Projections

| Base Year | Base Year Market Size (USD Billion) | Forecast Year | Projected Market Size (USD Billion) | CAGR (%) | Source |

|---|---|---|---|---|---|

| 2024 | 4.56 | 2032 | 16.08 | 17.5 | [18] |

| 2025 | 3.7 | 2035 | 13.7 | 14.0 | [17] |

| 2025 | 5.32 | 2035 | 27.61 | 17.9 | [19] |
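
The projections in Table 1 follow the standard compound-growth relation, final size = base size × (1 + CAGR)^years. The short sketch below reproduces the two rows that share a 2025 base year and a 2035 forecast year; the function names are illustrative.

```python
def project_market(base_size, cagr, years):
    """Compound a base-year market size forward at a constant annual rate."""
    return base_size * (1 + cagr) ** years


def implied_cagr(base_size, final_size, years):
    """Back out the constant annual growth rate linking two market sizes."""
    return (final_size / base_size) ** (1 / years) - 1


# Table 1 rows with a 2025 base year and 2035 forecast year (10 years).
row_17 = project_market(3.7, 0.140, 10)    # source [17]
row_19 = project_market(5.32, 0.179, 10)   # source [19]
rate_17 = implied_cagr(3.7, 13.7, 10)      # recovers roughly 14%
```

Both projections land within rounding of the tabulated USD 13.7 billion and USD 27.61 billion, so those two rows are internally consistent.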

Table 2: DNA Sequencing Cost and Performance Evolution

| Parameter | Human Genome Project (c. 2000) | Circa 2025 | Improvement Factor |

|---|---|---|---|

| Cost per Genome | ~$3 billion | <$1,000 | ~3,000,000x |

| Time per Genome | 13 years | Hours | ~10,000x |

| Technology | Sanger Sequencing | NGS | Massively parallel |

| Applications | Single reference genome | Widespread clinical & research use | Revolutionary |

The staggering cost reductions in DNA sequencing have transformed it from a monumental scientific undertaking to a routine tool. Next-generation sequencing (NGS) can now process millions of genetic fragments simultaneously, making it thousands of times faster than traditional methods [20]. The global clinical NGS market, valued at USD 6.2 billion in 2024, is projected to reach USD 15.2 billion by 2032, registering a CAGR of 13.6% [21]. This growth is fueled by increasing demand for personalized medicine and significant investments in research and development.

For DNA synthesis, the most dramatic cost innovations are emerging from decentralized workflows. Research demonstrates that labs can now perform large-scale, high-fidelity DNA construction in-house, delivering sequence-confirmed constructs in as little as four days at a fraction of outsourcing costs [22]. This approach reduces raw DNA costs by three- to five-fold compared to ordering double-stranded DNA fragments from commercial vendors, fundamentally altering the economics of the "Build" phase in DBTL cycles [22].

Regional and Segment Analysis

North America currently dominates the DNA synthesis market with a 55.04% share in 2024 [18], propelled by robust research infrastructure, substantial genomic research funding, and the strong presence of key market players. The services segment leads the product and service landscape due to the demand for cost-efficient and customized synthesis solutions [18]. By application, the research and development segment holds the largest share (54.6%), underscoring the critical role of DNA synthesis as a backbone for R&D in molecular biology, genetics, and biopharmaceutical development [17].

Accelerated Workflows and Experimental Protocols

Protocol 1: Decentralized Gene Synthesis via Golden Gate Assembly

This protocol enables rapid, cost-effective gene construction in research laboratories, compressing the "Build" phase of the DBTL cycle from weeks to days [22].

Principle: The workflow utilizes a combination of pooled oligonucleotides, computational fragment design optimization, and one-pot Golden Gate Assembly to construct complex DNA sequences with high fidelity.

Table 3: Research Reagent Solutions for Decentralized Gene Synthesis

| Item Name | Function/Description | Key Features/Benefits |

|---|---|---|

| NEBridge SplitSet Lite High-Throughput Web Tool | Divides input gene sequences into codon-optimized fragments | Determines optimal break points; assigns unique barcode primers for retrieval |

| Data-Optimized Assembly Design (DAD) | Computational framework for optimal overhang selection | Data-driven ligation fidelity prediction; enables complex multi-fragment assemblies |

| Type IIS Restriction Enzymes (e.g., BsaI-HFv2, BsmBI-v2) | Cleaves DNA at positions offset from recognition sites | Generates custom 4-base overhangs; recognition sites removed after assembly |

| NEBridge Golden Gate Assembly System | One-pot assembly of DNA fragments | Simultaneous, directional ligation of multiple fragments; seamless constructs |

| Pooled Oligonucleotides | Starting material for gene construction | Cost-effective; enables parallel retrieval of hundreds of gene designs via multiplexed PCR |

Step-by-Step Procedure:

- Design and Fragment Retrieval: Input the target gene sequence into the NEBridge SplitSet Lite High-Throughput webtool. The tool divides the sequence into codon-optimized fragments, appends Type IIS restriction sites, and assigns unique barcodes, with fragment design guided by DAD for optimal ligation fidelity. Order the designed oligonucleotides as a pool from a vendor. Retrieve specific fragments from the pool via a single round of multiplex PCR using a single primer pair, followed by purification.

- DAD-Guided Golden Gate Assembly: Assemble the retrieved fragments in a one-pot reaction using a Type IIS restriction enzyme (e.g., BsaI-HFv2) and T4 DNA Ligase. The DAD-optimized overhangs ensure correct fragment ordering and high assembly efficiency. Incubate the reaction using a thermocycler program (e.g., 37°C for 5 minutes, 16°C for 5 minutes, repeated for 25-30 cycles, followed by a final digestion at 37°C for 15 minutes and enzyme inactivation at 80°C for 20 minutes).

- Transformation and Verification: Transform the assembled constructs into competent E. coli cells. Screen colonies for correct assembly, typically via colony PCR or restriction digest. Verify the sequence of positive clones through Sanger sequencing or next-generation sequencing.
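
Before ordering oligonucleotides, a few rule-based sanity checks on the design can catch obvious problems. The sketch below is not the DAD algorithm, which relies on measured ligation-fidelity data; it only applies three widely used Golden Gate design rules: overhangs are four bases long, no overhang is palindromic, and no overhang duplicates or is the reverse complement of another. It also scans fragments for an internal BsaI recognition site (GGTCTC) on either strand. Function names and example overhangs are illustrative.

```python
COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq):
    """Return the reverse complement of a DNA sequence."""
    return seq.upper().translate(COMPLEMENT)[::-1]


def check_overhangs(overhangs):
    """Return a list of human-readable problems found in an overhang set."""
    problems = []
    seen = set()
    for overhang in (o.upper() for o in overhangs):
        rc = reverse_complement(overhang)
        if len(overhang) != 4:
            problems.append(f"{overhang}: not 4 bases long")
        if overhang == rc:
            problems.append(f"{overhang}: palindromic, can ligate to itself")
        if overhang in seen:
            problems.append(f"{overhang}: duplicate overhang")
        elif rc in seen:
            problems.append(f"{overhang}: reverse complement of an earlier overhang")
        seen.add(overhang)
    return problems


def has_internal_site(fragment, site="GGTCTC"):
    """True if the Type IIS recognition site occurs on either strand."""
    fragment = fragment.upper()
    return site in fragment or reverse_complement(site) in fragment


ok_set = check_overhangs(["AATG", "GCTT", "CGAA"])
bad_set = check_overhangs(["AATG", "CATT", "ACGT"])
```

A set that passes these checks can still ligate with low fidelity, which is the gap that data-driven overhang selection is meant to close.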

Validation and Scaling: In a validation study, this workflow successfully constructed 343 out of 458 target genes, assembling 389 kilobases of functional DNA. It proved particularly effective for sequences rejected by commercial vendors due to extreme GC content (>70% or <30%), high repeat content, or predicted structural complexity [22].

Diagram 1: Decentralized gene synthesis workflow showing the integrated "Build" and "Test" phases of a DBTL cycle, enabling sequence-verified constructs in four days.

Protocol 2: NGS-Based High-Throughput Functional Characterization

This protocol leverages reduced sequencing costs for the high-throughput "Test" phase of DBTL cycles, enabling comprehensive functional characterization of synthetic genetic constructs.

Principle: NGS technologies enable the parallel analysis of thousands to millions of DNA sequences, providing deep insights into the outcomes of genetic engineering efforts in a single experiment.

Key NGS Platforms and Selection Criteria:

- Short-Read Sequencing (e.g., Illumina): Ideal for variant calling, transcriptome analysis (RNA-Seq), and targeted sequencing due to high accuracy (>99%) and low cost per base. Best suited for applications where a reference genome is available.

- Long-Read Sequencing (e.g., PacBio, Oxford Nanopore): Essential for resolving complex genomic regions, detecting large structural variations, and de novo genome assembly. Reads can span thousands to millions of base pairs, providing context that short reads cannot.

Step-by-Step Procedure:

- Library Preparation: The specific protocol varies by application. For variant validation in a pooled library, shear or amplify the synthesized DNA constructs, then attach platform-specific adapter sequences to the fragments. For single-cell RNA-Seq to "Test" therapeutic cell function (e.g., CAR-T cells), use specialized kits to barcode cDNA from individual cells.

- Cluster Generation and Sequencing (Illumina Example): Load the DNA library onto a flow cell where fragments bind to the surface and are amplified into clusters. Perform sequencing-by-synthesis using fluorescently tagged nucleotides. A camera captures the color of each cluster after each nucleotide addition, determining the sequence of millions of fragments in parallel.

- Data Analysis: Convert raw image data into sequence reads (base calling). Align reads to a reference genome or assemble them de novo. For a pooled library screen, quantify the abundance of each barcode to determine variant fitness. For single-cell RNA-Seq, use bioinformatics tools to cluster cells by gene expression and identify differentially expressed genes.
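
For a pooled library screen, the barcode quantification step reduces to counting reads per barcode before and after selection and comparing frequencies. The sketch below assumes barcodes have already been extracted from the reads; the pseudocount, function name, and read lists are illustrative choices rather than part of any specific pipeline.

```python
import math
from collections import Counter


def barcode_log2_enrichment(pre_reads, post_reads, pseudocount=1.0):
    """Log2 change in barcode frequency between two sequencing samples.

    pre_reads / post_reads -- iterables of barcode strings, one per read
    pseudocount            -- added to every count so that barcodes missing
                              from one sample do not cause division by zero
    """
    pre, post = Counter(pre_reads), Counter(post_reads)
    barcodes = set(pre) | set(post)
    pre_total = sum(pre.values()) + pseudocount * len(barcodes)
    post_total = sum(post.values()) + pseudocount * len(barcodes)
    enrichment = {}
    for barcode in barcodes:
        freq_pre = (pre[barcode] + pseudocount) / pre_total
        freq_post = (post[barcode] + pseudocount) / post_total
        enrichment[barcode] = math.log2(freq_post / freq_pre)
    return enrichment


# Toy read lists: variant A expands under selection, variant B is depleted.
before = ["A"] * 50 + ["B"] * 50
after = ["A"] * 90 + ["B"] * 10
scores = barcode_log2_enrichment(before, after)
```

Positive scores indicate variants that became more frequent under selection, so the resulting dictionary can serve directly as a fitness label for the "Learn" phase.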

Integration with DBTL: The massive data output from NGS directly feeds the "Learn" phase. Computational analysis can reveal structure-function relationships, identify optimal genetic designs, and predict the behavior of novel designs in silico, thus accelerating the iterative design process.

Case Studies in Therapeutic Development

Engineering of Synthetic Receptors for Cell Therapies

The development of Chimeric Antigen Receptor (CAR) T-cell therapies exemplifies the power of accelerated DBTL cycles. CARs are synthetic receptors that reprogram T cells to target and kill cancer cells [23]. The evolution from first to fifth-generation CARs illustrates an iterative DBTL process:

- Design: Successive CAR designs incorporated additional intracellular signaling domains (e.g., from CD28, 4-1BB) to enhance T-cell persistence and cytotoxicity [23].

- Build: DNA synthesis technologies enabled the rapid construction of these complex genetic circuits.

- Test: NGS-based tracking of CAR-T cells in vivo and single-cell RNA-Seq of tumor microenvironments provided critical functional data.

- Learn: Data revealed mechanisms of tumor resistance and cytokine release syndrome, informing the next generation of safer, more effective designs.

Advanced synthetic receptors like synNotch further demonstrate this principle. These receptors can be programmed to activate only in the presence of multiple tumor antigens (AND logic gates), thereby improving specificity and reducing "on-target, off-tumor" toxicity [23]. The testing of these sophisticated designs relies heavily on NGS to monitor T-cell differentiation and function at the transcriptional level.

AI-Driven Gene Synthesis for Optimized Biologics

Artificial intelligence is now being integrated into the "Design" and "Learn" phases to further optimize DBTL cycles. Companies are leveraging AI to predict and resolve potential synthesis issues in silico before the "Build" phase begins. For instance:

- AI-Powered Sequence Optimization: AI algorithms analyze and optimize gene sequences for synthesis success, codon usage for high protein expression, and avoidance of problematic secondary structures [24].

- Impact: This intelligent design significantly improves the success rate for synthesizing complex sequences (e.g., those with high GC content or repetitive sequences), which are common in therapeutic targets. It reduces the number of costly and time-consuming DBTL iterations required to arrive at a functional product.
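
The kind of pre-synthesis screening described above can be approximated, at its simplest, by rule-based checks. The sketch below is not an AI model and does not represent any vendor's tool: it flags sequences whose GC content falls outside the 30% to 70% window mentioned earlier for vendor-rejected sequences, and sequences with long single-base runs. The homopolymer limit is an assumed illustrative value.

```python
import re


def gc_fraction(seq):
    """Fraction of G and C bases in a DNA sequence."""
    seq = seq.upper()
    return (seq.count("G") + seq.count("C")) / len(seq)


def flag_sequence(seq, low=0.30, high=0.70, max_homopolymer=8):
    """Return a list of synthesis-risk flags for a sequence.

    low / high       -- GC window outside which synthesis is considered risky
    max_homopolymer  -- longest tolerated single-base run (assumed value)
    """
    seq = seq.upper()
    flags = []
    gc = gc_fraction(seq)
    if gc < low or gc > high:
        flags.append("extreme_gc")
    # A base followed by at least max_homopolymer copies of itself.
    if re.search(r"([ACGT])\1{%d,}" % max_homopolymer, seq):
        flags.append("long_homopolymer")
    return flags


balanced = flag_sequence("ATGCATGCAT")
gc_rich = flag_sequence("GCGCGCGCGC")
poly_a = flag_sequence("ATGC" + "A" * 10 + "GCGC")
```

Learned models extend this idea by predicting synthesis success from many such features at once instead of applying fixed thresholds.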

Diagram 2: The optimized DBTL cycle, showing the integration of cost-reduced technologies and AI, leading to accelerated therapeutic development.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 4: Key Research Reagent Solutions for Modern DBTL Cycles

| Category/Item | Function in DBTL Cycle | Key Application in Therapeutic Development |

|---|---|---|

| Synthesis & Cloning | | |

| NEBridge Golden Gate Assembly System | "Build": Seamless, one-pot assembly of multiple DNA fragments. | Assembly of complex genetic circuits (e.g., CAR constructs, gene editing vectors). |

| Type IIS Restriction Enzymes (BsaI, BsmBI) | "Build": Generate unique, sequence-independent overhangs for modular assembly. | Essential for standardized assembly of therapeutic DNA modules. |

| Pooled Oligonucleotides | "Build": Cost-effective starting material for synthesizing numerous gene variants in parallel. | Construction of variant libraries for antibody optimization or protein engineering. |

| Sequencing & Analysis | | |

| Illumina NGS Platforms | "Test": High-accuracy, short-read sequencing for variant calling and expression profiling. | Tumor DNA sequencing, CAR-T cell persistence tracking, single-cell transcriptomics. |

| Long-Read Sequencers (Nanopore, PacBio) | "Test": Resolve complex genomic regions and detect structural variations. | Full-length antibody sequencing, characterization of complex transgene integration sites. |

| AI-Based Design Tools (e.g., CI, NG Codon) | "Design/Learn": In silico optimization of sequences for synthesis and expression. | Optimizing biotherapeutic protein expression and stability before synthesis. |

| Therapeutic Cell Engineering | | |

| synNotch Receptor System | "Design/Build": Programmable receptor for sensing multiple antigens and controlling therapeutic payload release. | Engineering safer T-cell therapies with AND-gate logic for precise tumor targeting [23]. |

| CAR Signaling Domains | "Design/Build": Intracellular components that enhance T-cell persistence and function. | Engineering 4th/5th generation CARs with improved antitumor activity and reduced exhaustion [23]. |

The convergence of dramatically reduced costs for DNA synthesis and sequencing is fundamentally transforming the accessibility and efficiency of DBTL cycles in therapeutic research. The emergence of decentralized synthesis workflows places the power of rapid gene construction directly in the hands of researchers, while the ubiquity of affordable NGS enables deep, data-rich characterization. This technological synergy accelerates the iterative process of biological design, compressing development timelines from years to months.

For researchers and drug development professionals, this means that ambitious projects—such as engineering multi-specific synthetic receptors or optimizing entire genetic pathways—are no longer constrained by prohibitive costs or slow turnaround times. The integration of AI and machine learning into this streamlined pipeline promises further gains, creating a future where DBTL cycles are not only faster and cheaper but also inherently smarter. By adopting these advanced protocols and tools, therapeutic development teams can maximize their experimental throughput and more rapidly deliver novel treatments to patients.

Establishing the Basis for Iterative, Knowledge-Driven Strain Engineering

This application note provides a detailed protocol for implementing a knowledge-driven Design-Build-Test-Learn (DBTL) cycle, with a specific focus on optimizing microbial strains for the production of therapeutic compounds. The framework accelerates strain development by integrating upstream in vitro investigations to generate mechanistic understanding before embarking on full in vivo DBTL cycling. A case study for the production of dopamine, a compound with applications in emergency medicine and cancer treatment, is used to illustrate the protocol [25].

The core innovation lies in preceding the traditional DBTL cycle with a preliminary learning phase that uses cell-free protein synthesis (CFPS) systems to rapidly inform the initial design. This "LDBT" approach (Learn-Design-Build-Test) leverages machine learning and rapid in vitro prototyping to de-risk and accelerate the subsequent engineering of living production chassis [2]. This method has demonstrated a 2.6-fold improvement in dopamine titer and a 6.6-fold improvement in specific yield compared to previous state-of-the-art in vivo methods [25].

Table 1: Key Performance Indicators for Dopamine Production Strain Optimization

| Performance Metric | State-of-the-Art (Prior to Study) | This Study's Results | Fold Improvement |
|---|---|---|---|
| Dopamine Titer (mg/L) | 27 mg/L [25] | 69.03 ± 1.2 mg/L [25] | 2.6-fold [25] |
| Specific Yield (mg/g biomass) | 5.17 mg/g [25] | 34.34 ± 0.59 mg/g [25] | 6.6-fold [25] |
| Host Strain Modifications | — | TyrR depletion; feedback inhibition mutation in tyrA [25] | — |
| Key Tuning Strategy | — | High-throughput RBS engineering of GC content in Shine-Dalgarno sequence [25] | — |
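
The fold-improvement column in Table 1 is simply the ratio of the new value to the prior baseline. As a minimal sketch (the function name `fold_improvement` is illustrative, not taken from the cited study), the two reported figures can be recomputed from the table values:

```python
def fold_improvement(new_value: float, baseline: float) -> float:
    """Return new_value / baseline, i.e. the fold change relative to the baseline."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return new_value / baseline


# Mean values taken from Table 1 [25]; the reported ± spread is ignored here.
titer_fold = fold_improvement(69.03, 27.0)   # dopamine titer, mg/L
yield_fold = fold_improvement(34.34, 5.17)   # specific yield, mg/g biomass

print(f"Titer improvement: {titer_fold:.1f}-fold")
print(f"Specific yield improvement: {yield_fold:.1f}-fold")
```

Rounded to one decimal place, these ratios reproduce the 2.6-fold and 6.6-fold values listed in the table.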

Table 2: Core Reagents and Research Solutions for Knowledge-Driven DBTL

| Reagent / Solution | Function / Purpose | Example / Composition |
|---|---|---|
| Production Chassis | Host organism for in vivo dopamine synthesis. | E. coli FUS4.T2 [25] |
| Pathway Enzymes | Conversion of L-tyrosine to dopamine. | HpaBC (from E. coli), Ddc (from Pseudomonas putida) [25] |
| Cell-Free Protein Synthesis (CFPS) System | In vitro prototyping of enzyme expression and pathway balance without cellular constraints [2]. | Crude cell lysate providing metabolites and energy equivalents [25] |
| RBS Library Kit | High-throughput fine-tuning of gene expression levels in the synthetic pathway. | Tools for modulating Shine-Dalgarno sequence [25] |
| Specialized Growth Medium | Supports high-density growth and precursor availability for dopamine production. | Minimal medium with 20 g/L glucose, 10% 2xTY, MOPS, vitamins, and trace elements [25] |
| Inducer | Controls expression of heterologous genes in the production strain. | Isopropyl β-D-1-thiogalactopyranoside (IPTG) at 1 mM [25] |

Detailed Experimental Protocols

Protocol 1: Upstream Knowledge Generation Using Cell-Free Lysate Systems

Objective: To rapidly test the expression and functionality of pathway enzymes and determine their optimal relative expression levels in vitro before strain construction [25] [2].

Materials:

- Crude cell lysate from the production host (e.g., E. coli FUS4.T2) [25]

- DNA templates for target genes (hpaBC, ddc)

- Prepared reaction buffer (50 mM phosphate buffer pH 7, 0.2 mM FeCl₂, 50 µM vitamin B₆, 1 mM L-tyrosine) [25]

- Incubator or thermal cycler

Procedure:

1. Prepare Reaction Mixture: Combine crude cell lysate, DNA templates, and reaction buffer in a microcentrifuge tube. A typical reaction volume is 50 µL.

2. Incubate for Protein Synthesis: Incubate the reaction mixture at 30°C for 4-6 hours to allow for transcription and translation [2].

3. Analyze Pathway Output: Quantify the conversion of L-tyrosine to L-DOPA and subsequently to dopamine using High-Performance Liquid Chromatography (HPLC) or a similar analytical method.

4. Vary Expression Ratios: Repeat steps 1-3 with varying amounts or ratios of DNA templates for different enzymes to identify the expression balance that maximizes dopamine yield. This data directly informs the design of RBS variants for the in vivo strain.
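
The ratio screen described in the last step can be planned programmatically. The sketch below is one possible way to do it, under stated assumptions: the total DNA mass per reaction (400 ng), the list of ratios, and the function names are hypothetical and not taken from the cited protocol, and the split is by mass rather than by molar amount (a molar split would additionally need the template lengths).

```python
def template_amounts(total_ng: float, ratios: list[tuple[int, int]]) -> list[dict]:
    """Split a fixed total DNA mass between the hpaBC and ddc templates.

    Each ratio is (hpaBC parts, ddc parts); the split is by mass.
    """
    designs = []
    for hpa_parts, ddc_parts in ratios:
        parts = hpa_parts + ddc_parts
        designs.append({
            "ratio": f"{hpa_parts}:{ddc_parts}",
            "hpaBC_ng": total_ng * hpa_parts / parts,
            "ddc_ng": total_ng * ddc_parts / parts,
        })
    return designs


def best_design(designs: list[dict], dopamine_by_ratio: dict[str, float]) -> dict:
    """Return the design with the highest measured dopamine value (e.g. from HPLC)."""
    return max(designs, key=lambda d: dopamine_by_ratio[d["ratio"]])


# Hypothetical screen: 400 ng total template DNA per 50 µL reaction.
designs = template_amounts(400.0, [(3, 1), (1, 1), (1, 3)])
for design in designs:
    print(design)
```

Once HPLC measurements are available, passing them to `best_design` as a mapping from ratio label to dopamine concentration returns the condition to carry forward into RBS design.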

Protocol 2: In Vivo Strain Construction & High-Throughput RBS Engineering

Objective: To translate the optimal expression levels identified in vitro into an in vivo production strain via ribosome binding site (RBS) engineering [25].

Materials:

- Cloning strain: E. coli DH5α [25]

- Production strain with enhanced L-tyrosine production (e.g., E. coli FUS4.T2 with TyrR depletion and tyrA mutation) [25]

- Plasmid vectors for gene expression

- PCR reagents and equipment for DNA assembly

- SOC medium and antibiotic selection plates

Procedure:

- Design RBS Library: Based on the in vitro results, design a library of RBS sequences for the hpaBC and ddc genes. Focus on modulating the GC content of the Shine-Dalgarno sequence to fine-tune translation initiation rates without altering the coding sequence [25].

- Build DNA Constructs: Use automated DNA assembly techniques (e.g., Golden Gate assembly, Gibson assembly) to clone the RBS library variants into your expression plasmid(s) containing the dopamine pathway genes.

- Transform Production Strain: Transform the assembled plasmid library into the high L-tyrosine production strain.

- Cultivation and Test:

  - Inoculate single colonies into deep-well plates containing 1 mL of minimal medium with appropriate antibiotics and 20 g/L glucose [25].

  - Induce protein expression with 1 mM IPTG during the mid-exponential phase.

  - Grow cultures for 24-48 hours at 30-37°C with shaking.

- Quantify Production: Measure final dopamine titers and biomass from each culture using HPLC and optical density (OD600) measurements, respectively.
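
The library design step above tunes translation by changing the GC content of the Shine-Dalgarno (SD) sequence. A minimal sketch of how such a library could be enumerated and binned by GC content follows. The degenerate template `AGGNNG` (the canonical AGGAGG core with two randomized positions) and the function names are illustrative assumptions, not the sequences used in the cited study.

```python
from collections import Counter
from itertools import product


def gc_content(seq: str) -> float:
    """Fraction of G and C bases in a DNA sequence."""
    seq = seq.upper()
    return sum(base in "GC" for base in seq) / len(seq)


def sd_variants(template: str) -> list[str]:
    """Expand every 'N' in an SD template into all four bases."""
    choices = ["ACGT" if base == "N" else base for base in template.upper()]
    return ["".join(combo) for combo in product(*choices)]


# Hypothetical template: two randomized positions inside the SD core.
library = sd_variants("AGGNNG")

# Rank variants from lowest to highest GC content and count variants per GC level.
ranked = sorted(library, key=gc_content)
gc_bins = Counter(round(gc_content(seq), 3) for seq in library)

print(f"{len(library)} variants")
for gc, count in sorted(gc_bins.items()):
    print(f"GC {gc:.3f}: {count} variants")
```

Sampling a few variants from each GC bin gives a compact library that spans the accessible range instead of testing every sequence.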

Workflow and Pathway Visualization

From Code to Cell: Implementing Automated and AI-Enhanced DBTL Workflows

Leveraging Automated Biofoundries for High-Throughput Strain Construction

Automated biofoundries represent a transformative advancement in synthetic biology, integrating robotic automation, computational design, and data analytics to accelerate the engineering of biological systems. This protocol details the application of automated biofoundries for high-throughput strain construction, specifically within the context of optimizing the Design-Build-Test-Learn (DBTL) cycle for therapeutic development. We present a detailed methodology for the automated construction of Saccharomyces cerevisiae strains, a key chassis for biopharmaceutical production, achieving a throughput of up to 2,000 transformations per week [26]. The document provides a comprehensive framework comprising application notes, a step-by-step experimental protocol, and essential resource guides to enable researchers to implement and leverage these advanced capabilities for accelerating therapeutic strain development.

Application Notes

Integration within the DBTL Cycle for Therapeutic Development

The engineering of microbial strains for therapeutic compound production, such as steroidal alkaloids or anticancer agents, is a central pursuit in biotechnology. Automated strain construction directly enhances the Build phase of the DBTL cycle, which has traditionally been a major bottleneck. By drastically increasing the speed and reproducibility of strain generation, it enables more rapid iteration through the entire DBTL cycle, compressing development timelines from years to months [26] [27].

A prominent success story involved a biofoundry tasked by the U.S. Defense Advanced Research Projects Agency (DARPA) to produce 10 target molecules, including complex therapeutics like the anticancer agent rebeccamycin, within 90 days. The foundry successfully constructed 215 strains across five species and assembled 1.2 Mb of DNA, demonstrating the power of automated workflows to tackle diverse and challenging therapeutic targets [27].

Quantitative Workflow Advantages

The implementation of an automated workflow for strain construction provides significant quantitative advantages over manual methods, directly impacting the efficiency of therapeutic development research.

Table 1: Comparative Analysis of Manual vs. Automated Strain Construction Workflows

| Performance Metric | Manual Workflow | Automated Workflow | Key Implication for DBTL Cycle |
|---|---|---|---|
| Throughput | ~100-200 transformations/week | ~2,000 transformations/week [26] | Drastically expands design space exploration per cycle. |
| Process Integration | Disconnected steps requiring manual intervention | Modular, integrated protocol with a central robotic arm [26] | Reduces human error and increases reproducibility. |
| Data Generation | Limited, slower data acquisition | Rapid, large-scale data generation for machine learning [28] [29] | Enables more powerful learning phases and predictive models. |
| Parameter Customization | Prone to inconsistency | On-demand customization via user-friendly software interface [26] | Allows for flexible and complex experimental designs. |
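
To make the throughput row concrete, the time needed for one Build phase can be estimated from the weekly transformation rates in the table. The library size of 6,000 transformations below is a hypothetical example, and the estimate ignores setup time and failed transformations.

```python
import math


def weeks_to_build(n_transformations: int, per_week: int) -> int:
    """Whole weeks needed to complete n_transformations at a given weekly rate."""
    if per_week <= 0:
        raise ValueError("per_week must be positive")
    return math.ceil(n_transformations / per_week)


library_size = 6000  # hypothetical library, not a figure from the source

manual_weeks = weeks_to_build(library_size, 200)       # upper end of the manual range
automated_weeks = weeks_to_build(library_size, 2000)   # automated throughput [26]

print(f"Manual: {manual_weeks} weeks, automated: {automated_weeks} weeks")
```

Under these assumptions the same library takes about ten times fewer weeks on the automated platform, which is the basis for the claim that more designs can be explored per cycle.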

Key Reagents and Research Solutions

The following reagents and hardware are critical for establishing a robust automated strain construction pipeline.

Table 2: Research Reagent Solutions for Automated Strain Construction

| Item Name | Function/Description | Application in Protocol |
|---|---|---|
| Hamilton Microlab VANTAGE | Central liquid handling robot with a robotic arm for integrating off-deck hardware. | Core platform for executing the automated transformation protocol [26]. |
| VENUS Software | User interface software for the Hamilton system. | Allows on-demand customization of experimental parameters (e.g., DNA amounts, incubation times) [26]. |
| S. cerevisiae Strain | A well-characterized eukaryotic host (e.g., engineered for verazine production). | Production chassis for therapeutic intermediates; easily genetically manipulated [26]. |
| Linear DNA Cassettes/Plasmids | DNA templates containing the genes for the biosynthetic pathway. | Introduced into the host via transformation to construct the production strain. |
| j5 & AssemblyTron | DNA assembly design software (j5) and an open-source Python package (AssemblyTron). | Streamlines the design of DNA assembly strategies and translates them into commands for liquid handlers [27]. |

Experimental Protocol

This protocol describes an automated method for constructing Saccharomyces cerevisiae strains, optimized for high-throughput screening of biosynthetic pathways.

The automated workflow integrates discrete hardware and biochemical steps into a seamless, programmable operation. The following diagram illustrates the logical flow and system integration.

Detailed Step-by-Step Methodology

Phase 1: Pre-Automation Setup

- Strain and DNA Preparation:

  - Inoculate a fresh culture of the recipient S. cerevisiae strain (e.g., engineered for verazine production) and grow overnight in appropriate medium.

  - Prepare the linear DNA cassettes or plasmids containing the gene library to be screened. Ensure DNA is purified and quantified.

- Workflow Programming:

  - Using the VENUS software on the Hamilton system, load the automated protocol.

  - Customize parameters in the user interface as needed for the experiment, including DNA concentrations (e.g., 100-500 ng per transformation), culture volumes, and incubation times.
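
Before entering values into the instrument software, it can help to check them against the ranges stated in this protocol. The sketch below is a plain Python pre-check and does not use or represent the VENUS API; the class name, field names, and example values are hypothetical. Only the 100-500 ng DNA range and the 40-minute heat shock and 90-minute recovery defaults come from this protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TransformationParams:
    """Hypothetical container for user-adjustable run settings (not a VENUS object)."""

    culture_volume_ul: float        # overnight culture per well; the protocol gives no value
    dna_ng: float = 200.0           # DNA mass per transformation, ng
    heat_shock_min: float = 40.0    # heat shock duration at 42°C
    recovery_min: float = 90.0      # recovery duration at 30°C

    def __post_init__(self) -> None:
        # Enforce the 100-500 ng range given in the protocol text.
        if not 100.0 <= self.dna_ng <= 500.0:
            raise ValueError(f"dna_ng={self.dna_ng} is outside the 100-500 ng range")
        for name in ("culture_volume_ul", "heat_shock_min", "recovery_min"):
            if getattr(self, name) <= 0:
                raise ValueError(f"{name} must be positive")


# Example values are placeholders chosen for illustration only.
params = TransformationParams(culture_volume_ul=500.0, dna_ng=250.0)
print(params)
```

Making the object immutable (`frozen=True`) means a validated parameter set cannot be changed silently between planning and execution.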

Phase 2: Automated Transformation Protocol

The following steps are executed by the Hamilton Microlab VANTAGE system.

- Cell Harvesting and Washing:

  - Transfer a defined volume of overnight yeast culture to a deep-well plate.

  - Centrifuge the plate (using an integrated off-deck centrifuge) and aspirate the supernatant.

  - Resuspend the cell pellet in 200 µL of sterile lithium acetate (LiOAc) solution (0.1 M) by automated pipetting. Repeat the wash step once.

- Competent Cell Preparation:

  - After the final wash, resuspend the cell pellet in 50 µL of LiOAc solution (0.1 M).

- Transformation Mix Assembly:

  - To the cells, add the following in sequence:

    - Prepared DNA (variable volume to achieve desired mass).

    - 50 µL of 50% (w/v) PEG-3350 solution.

    - 5 µL of carrier DNA (e.g., sheared salmon sperm DNA, 10 mg/mL).

  - The robot mixes the components thoroughly by repeated pipetting.

- Heat Shock:

  - Incubate the transformation mix on a heated deck integrated into the system at 42°C for 40 minutes.

- Cell Recovery:

  - Centrifuge the plate to pellet the cells and carefully remove the transformation mix via aspiration.

  - Resuspend the cells in 200 µL of recovery medium (e.g., YPD or synthetic complete medium).

  - Incubate the plate on a temperature-controlled shaker at 30°C for 90 minutes to allow for cell recovery and expression of the selection marker.
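
Because the DNA is added as a "variable volume to achieve desired mass", the per-well volume and the resulting mix composition follow from simple arithmetic. The sketch below works this through for the volumes stated in Phase 2. The DNA stock concentration and target mass in the example are hypothetical, the function names are illustrative, and the volume of the cell pellet itself is neglected.

```python
CELLS_UL = 50.0            # cells resuspended in 0.1 M LiOAc
PEG_UL = 50.0              # 50% (w/v) PEG-3350
PEG_STOCK_PERCENT = 50.0
CARRIER_UL = 5.0           # carrier DNA
CARRIER_UG_PER_UL = 10.0   # 10 mg/mL is the same as 10 µg/µL


def dna_volume_ul(target_ng: float, stock_ng_per_ul: float) -> float:
    """Volume of DNA stock needed to deliver target_ng of DNA."""
    if stock_ng_per_ul <= 0:
        raise ValueError("stock concentration must be positive")
    return target_ng / stock_ng_per_ul


def mix_summary(target_ng: float, stock_ng_per_ul: float) -> dict:
    """Total volume, final PEG percentage, and carrier DNA mass for one well."""
    dna_ul = dna_volume_ul(target_ng, stock_ng_per_ul)
    total_ul = CELLS_UL + dna_ul + PEG_UL + CARRIER_UL
    return {
        "dna_ul": dna_ul,
        "total_ul": total_ul,
        "peg_final_percent": PEG_STOCK_PERCENT * PEG_UL / total_ul,
        "carrier_ug": CARRIER_UL * CARRIER_UG_PER_UL,
    }


# Hypothetical example: 200 ng of DNA from a 100 ng/µL stock.
summary = mix_summary(target_ng=200.0, stock_ng_per_ul=100.0)
print(summary)
```

A calculation like this also shows how strongly a dilute DNA stock would lower the final PEG concentration, which is worth checking when DNA volumes vary widely across a plate.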

Phase 3: Post-Automation Procedures

- Plating and Selection:

  - Using the liquid handler, transfer the entire recovery culture onto solid selection agar plates.

  - Incubate the plates at 30°C for 2-3 days until colonies appear.

- Screening and Analysis (Test Phase):

  - Pick individual colonies for high-throughput screening. For verazine pathway optimization, this involves culturing in deep-well plates and measuring product yield using LC-MS or other analytical methods [26].

- Data Integration (Learn Phase):

Troubleshooting Guide

| Problem | Potential Cause | Suggested Solution |
|---|---|---|
| Low Transformation Efficiency | Inadequate heat shock temperature or duration. | Verify and calibrate the temperature of the heated deck. Ensure consistent incubation timing in the protocol script. |
| High Contamination Rate | Non-sterile reagents or plate handling. | Ensure all reagents are filter-sterilized. Use sealed plates where possible and validate the sterilization cycle of the robotic deck. |
| Inconsistent Cell Pellet During Washes | Improper centrifugation settings. | Calibrate the integrated centrifuge for speed and time to ensure a firm pellet is formed without compromising cell viability. |

Integrating Machine Learning for Predictive Pathway and Protein Design