Optimizing Microbial Strains: A Comprehensive Guide to the Design-Build-Test-Learn (DBTL) Cycle

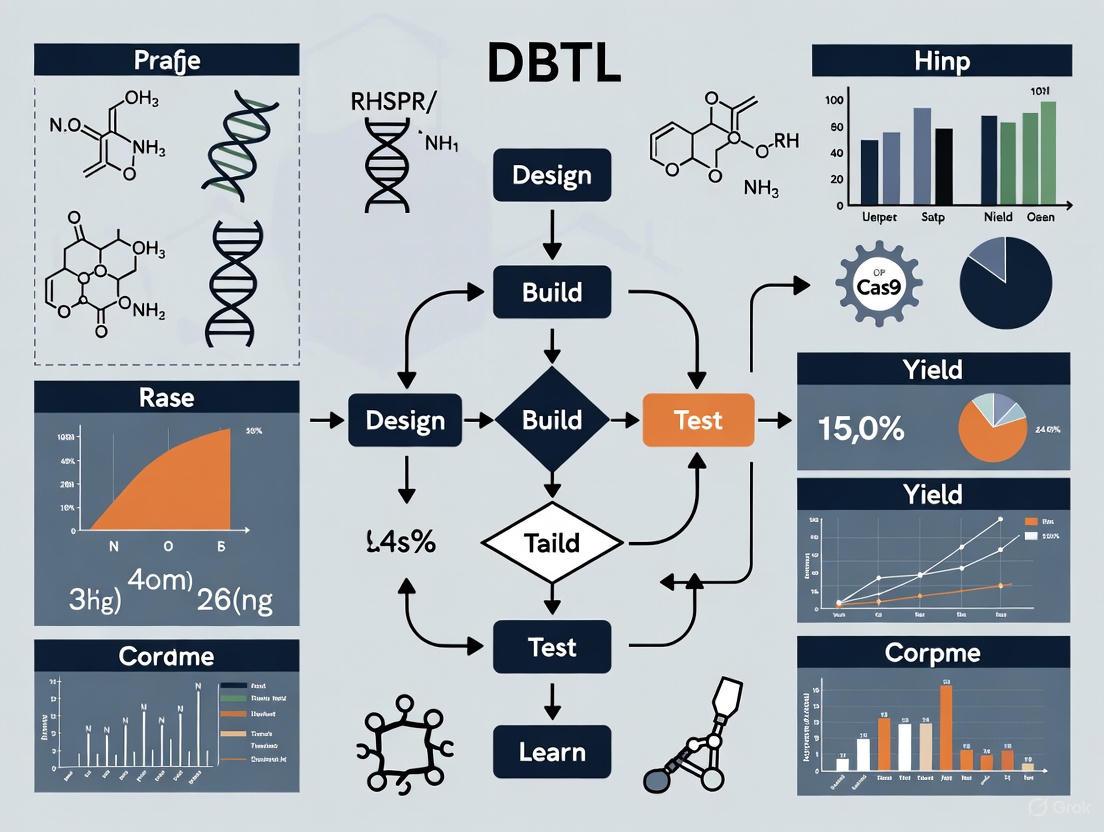

Abstract

This article provides a comprehensive overview of the Design-Build-Test-Learn (DBTL) cycle, a foundational and iterative framework in synthetic biology for microbial strain development. Tailored for researchers, scientists, and drug development professionals, it explores the core principles of the DBTL cycle, details its methodological application in creating strains for therapeutics and fine chemicals, addresses common troubleshooting and optimization challenges, and validates the approach through comparative case studies. The scope extends from foundational concepts to advanced, automated workflows, highlighting how iterative DBTL cycling accelerates the engineering of high-performing production strains for biomedical applications.

The DBTL Cycle Demystified: Foundational Principles for Rational Strain Engineering

In the field of synthetic biology and strain development, the Design-Build-Test-Learn (DBTL) cycle serves as a foundational framework for systematically engineering biological systems. This iterative process enables researchers and drug development professionals to efficiently develop microbial strains for producing valuable compounds, from pharmaceuticals to biofuels. By providing a structured approach to biological engineering, the DBTL cycle accounts for the inherent variability of biological systems and allows for continuous refinement until a strain meets desired performance specifications [1]. The true power of this framework lies in its iterative nature—complex projects rarely succeed on the first attempt but instead make progress through multiple, sequential cycles of refinement [2]. This article explores the four core phases of the DBTL framework, examining their application in modern strain development research through specific experimental protocols, data analysis techniques, and emerging technological innovations.

The Four Phases of the DBTL Cycle

Design: The Conceptual Blueprint

The Design phase initiates the DBTL cycle by establishing a clear objective and developing a rational plan based on specific hypotheses or learnings from previous cycles. This stage involves the strategic selection of genetic parts—promoters, ribosome binding sites (RBS), and coding sequences—and their assembly into functional circuits or devices using standardized methods [2]. Researchers define precise experimental protocols and success metrics during this phase.

In strain development, Design often encompasses:

- Pathway Design: Selecting and arranging genes to create biosynthetic pathways for target compounds

- Genetic Optimization: Engineering regulatory elements like RBS sequences to fine-tune gene expression levels [3]

- Host Selection: Choosing appropriate microbial chassis (e.g., E. coli, S. cerevisiae) based on compatibility with target pathways

Advanced biofoundries now integrate artificial intelligence and machine learning to enhance design precision. Large Language Models (LLMs) and foundation models can generate thousands of potential molecule candidates in days—a task that would traditionally take researchers years [1]. These tools help researchers quickly grasp key concepts across vast amounts of scientific literature and assist in generating scientific hypotheses.

Table 1: Key Design Tools and Applications in Strain Development

| Tool Category | Specific Tools | Application in Strain Design |
|---|---|---|
| DNA Assembly Design | j5 DNA assembly software, AssemblyTron, SynBiopython | Automated design of DNA assembly protocols for complex constructs [4] |
| Pathway Design | Cameo, RetroPath 2.0 | In silico design of metabolic engineering strategies for cell factories [4] |
| Circuit Design | Cello | Genetic circuit design for predictable behavior [4] |
| AI-Assisted Design | CRISPR-GPT, BioGPT, IBM's Biomedical Foundation Models | Automated experiment design and biological component selection [1] |

Build: From Digital Design to Biological Reality

The Build phase translates theoretical designs into physical biological entities through hands-on molecular biology techniques. This involves DNA synthesis, plasmid cloning, and transformation of engineered constructs into host organisms [2]. In strain development, this phase focuses on physically assembling the genetic constructs designed in the previous phase.

Modern automated biofoundries have dramatically accelerated the Build phase. For example, in a recent automated strain construction workflow for Saccharomyces cerevisiae, researchers programmed a Hamilton Microlab VANTAGE system to integrate off-deck hardware via a central robotic arm, achieving a throughput of 2,000 transformations per week—a 10-fold increase over manual operations [5].

Key Build processes in strain development include:

- DNA Assembly: Constructing plasmids and pathways using methods like Gibson assembly or Golden Gate cloning

- Transformation: Introducing DNA constructs into host cells

- Strain Library Construction: Creating diverse variant libraries for screening

Table 2: Automated Build Phase Components in a High-Throughput Yeast Engineering Pipeline

| System Component | Function | Implementation Example |
|---|---|---|
| Robotic Platform | Central liquid handling and coordination | Hamilton Microlab VANTAGE with iSWAP robotic arm [5] |
| External Devices | Specialized processing steps | Integration with plate sealer, plate peeler, and thermal cycler [5] |
| Software Interface | Workflow control and customization | Hamilton VENUS with modular dialog boxes for parameter adjustment [5] |
| Process Steps | Transformation workflow | (1) Transformation setup and heat shock; (2) Washing; (3) Plating [5] |

Test: Rigorous Evaluation of Engineered Strains

The Test phase centers on robust data collection through quantitative measurements of the engineered system's performance [2]. In strain development, this involves characterizing the behavior of engineered strains through various assays to evaluate productivity, growth characteristics, and metabolic activity.

Advanced testing methodologies include:

- Analytical Chemistry: LC-MS for quantifying metabolite production

- Growth Assays: Measuring biomass accumulation and substrate consumption

- Omics Technologies: Genomics, proteomics, and metabolomics for comprehensive characterization

A recent verazine production study exemplifies rigorous testing protocols. Researchers developed a rapid LC-MS method that reduced the per-sample runtime from 50 minutes to 19 minutes, enabling high-throughput quantification across strain libraries [5]. Similarly, in the RiceGuard arsenic biosensor project, researchers implemented real-time kinetic analysis over 90 minutes to observe transcription dynamics and response plateaus [6].

Table 3: Test Phase Analytical Methods in Strain Development

| Analysis Type | Specific Methods | Measured Parameters |
|---|---|---|
| Genotypic Analysis | Next-Generation Sequencing (NGS), colony qPCR | DNA sequence verification, construct validation [7] [8] |
| Product Analysis | LC-MS, HPLC, automated mass spectrometry | Metabolite titers, pathway intermediates [5] |
| Growth Phenotyping | Plate readers, high-throughput culturing | OD measurements, growth rates, substrate consumption [2] [9] |
| Pathway-Specific Assays | Fluorescence-based reporters, enzymatic assays | Pathway activity, gene expression levels [6] |
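As an illustration of the growth phenotyping row above, the following minimal sketch estimates a specific growth rate from plate-reader OD readings by fitting a straight line to ln(OD) against time. It assumes the readings come from the exponential phase and are already blank-corrected; the function names and the synthetic data are hypothetical and are not taken from any of the cited studies.

```python
import math

def specific_growth_rate(times_h, od_values):
    """Return the slope of ln(OD) versus time (1/h) by ordinary least squares.

    Assumes all readings are positive, blank-corrected, and taken during
    exponential growth.
    """
    if len(times_h) != len(od_values) or len(times_h) < 2:
        raise ValueError("need at least two matched time/OD points")
    log_od = [math.log(od) for od in od_values]
    n = len(times_h)
    mean_t = sum(times_h) / n
    mean_y = sum(log_od) / n
    sxx = sum((t - mean_t) ** 2 for t in times_h)
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(times_h, log_od))
    return sxy / sxx

def doubling_time_h(mu_per_h):
    """Convert a specific growth rate (1/h) into a doubling time (h)."""
    return math.log(2) / mu_per_h

# Synthetic readings generated with a known rate of 0.5 1/h (illustrative only).
times = [0, 1, 2, 3, 4]
ods = [0.05 * math.exp(0.5 * t) for t in times]

mu = specific_growth_rate(times, ods)
print(round(mu, 3), round(doubling_time_h(mu), 3))
```

Real growth curves include lag and stationary phases, so in practice the fit window would need to be restricted to the log-linear region before applying a calculation like this.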

Learn: Extracting Insights for the Next Cycle

The Learn phase represents the critical analytical component where data gathered during testing is interpreted to inform subsequent design iterations [2]. This phase determines whether the design performed as expected and extracts fundamental principles from both successes and failures.

In strain development, Learning involves:

- Data Integration: Combining multi-omics datasets to form comprehensive system understanding

- Pattern Recognition: Identifying correlations between genetic modifications and phenotypic outcomes

- Model Refinement: Updating computational models to improve predictive accuracy

The integration of machine learning has transformed the Learn phase. For example, TeselaGen's Discover Module employs predictive models to forecast biological product phenotypes using quantitative and qualitative data, with advanced embeddings representing DNA, proteins, and chemical compounds [8]. In one application, ML models trained on experimental data made accurate genotype-to-phenotype predictions that guided metabolic engineering strategies [8].

A notable evolution in this phase is the emerging LDBT paradigm (Learn-Design-Build-Test), where machine learning algorithms trained on large biological datasets can make zero-shot predictions, potentially enabling functional parts and circuits to be generated in a single cycle [10].

Case Study: Knowledge-Driven DBTL for Dopamine Production

A recent study demonstrates the effective application of the DBTL cycle in developing an E. coli strain for dopamine production [3]. The approach employed a knowledge-driven DBTL cycle involving upstream in vitro investigation to inform strain design.

Experimental Overview and Results:

- Objective: Develop an efficient dopamine production strain using a rational DBTL approach

- Strategy: Combined in vitro pathway design with high-throughput RBS engineering

- Outcome: Achieved dopamine production of 69.03 ± 1.2 mg/L (34.34 ± 0.59 mg/g biomass), a 2.6- to 6.6-fold improvement over previous in vivo production methods [3]
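The two reported figures can be related by simple arithmetic. The sketch below divides the volumetric titer by the biomass-specific titer to obtain the biomass concentration they jointly imply. This assumes both values describe the same culture and time point, which the summary above does not state explicitly, so the result is a consistency check only and not a reported measurement.

```python
def implied_biomass_g_per_l(titer_mg_per_l, specific_titer_mg_per_g):
    """Biomass concentration (g/L) implied by a volumetric and a specific titer."""
    return titer_mg_per_l / specific_titer_mg_per_g

# Mean values quoted in the text; uncertainties are ignored in this sketch.
biomass = implied_biomass_g_per_l(69.03, 34.34)
print(round(biomass, 2))  # 2.01
```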

DBTL Implementation:

- Design: Engineered a dopamine pathway using heterologous genes (hpaBC and ddc) with RBS variations for expression optimization

- Build: Constructed plasmid libraries and transformed into an E. coli FUS4.T2 production strain with enhanced L-tyrosine production

- Test: Evaluated dopamine production using LC-MS analysis and measured pathway enzyme expression levels

- Learn: Identified optimal RBS sequences and discovered the impact of GC content in the Shine-Dalgarno sequence on RBS strength, informing subsequent design iterations [3]
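To make the Learn step concrete, here is a minimal sketch of how GC content could be computed for a set of Shine-Dalgarno variants before comparing it against measured RBS strength. The sequences below are hypothetical placeholders and are not the variants used in the study; the function name is likewise an assumption.

```python
def gc_content(seq):
    """Fraction of G and C bases in a DNA or RNA sequence (0.0 to 1.0)."""
    seq = seq.upper()
    if not seq:
        raise ValueError("sequence must not be empty")
    invalid = set(seq) - set("ACGTU")
    if invalid:
        raise ValueError(f"unexpected characters: {sorted(invalid)}")
    return (seq.count("G") + seq.count("C")) / len(seq)

# Hypothetical Shine-Dalgarno variants for illustration only.
sd_variants = ["AGGAGG", "AGGAGA", "AAGAGA", "GGGAGG"]

# Rank variants from highest to lowest GC content.
ranked = sorted(sd_variants, key=gc_content, reverse=True)
for sd in ranked:
    print(sd, round(gc_content(sd), 3))
```

In an actual analysis, these values would be paired with measured expression or titer data to test whether the relationship reported in [3] holds for a given library.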

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 4: Key Research Reagents and Their Applications in DBTL Workflows

| Reagent Category | Specific Examples | Function in DBTL Workflows |
|---|---|---|
| Cloning Systems | pESC-URA plasmid, pESC-LEU plasmid, 2μ vectors with auxotrophic markers | Selection and maintenance of genetic constructs in microbial hosts [5] |
| Cell-Free Systems | Crude cell lysates, transcription/translation machinery | Rapid testing of genetic circuits and pathway variants without cellular constraints [10] |
| Analytical Standards | Dopamine hydrochloride, verazine, DFHBI-1T fluorescent dye | Quantification of target compounds and reporter gene activity [6] [3] |
| Induction Systems | GAL1 promoter, IPTG-inducible systems | Controlled gene expression for metabolic pathway regulation [5] |
| Specialized Media | Minimal media with MOPS buffer, SOC medium, selective media with antibiotics | Optimized growth conditions for engineered strains and selection of successful transformants [3] |

The DBTL cycle remains the cornerstone of modern synthetic biology and strain engineering, providing a systematic framework for developing biological systems with predictable functions. As the field advances, the integration of automation, artificial intelligence, and high-throughput technologies continues to accelerate each phase of the cycle. Biofoundries with fully automated DBTL capabilities are pushing the boundaries of what's possible in strain development, as demonstrated by success stories like the DARPA challenge where researchers produced 6 out of 10 target molecules within a 90-day timeframe [4].

The emergence of new paradigms like LDBT (Learn-Design-Build-Test), which places machine learning at the forefront of biological design, suggests an exciting future where predictive engineering may reduce the need for multiple iterative cycles [10]. However, the fundamental principles of the DBTL framework—rational design, rigorous testing, and knowledge-driven refinement—will continue to guide researchers in developing novel microbial strains for pharmaceutical applications, sustainable biomanufacturing, and addressing global challenges through biological innovation.

The Design-Build-Test-Learn (DBTL) cycle represents a foundational framework in synthetic biology and metabolic engineering, enabling the systematic development of microbial cell factories for producing valuable compounds. This iterative engineering paradigm allows researchers to progressively refine genetic designs by incorporating data-driven insights from each cycle, accelerating strain optimization while deepening fundamental understanding of biological systems. This whitepaper examines the core principles of the DBTL framework, its implementation in diverse biological systems, and emerging enhancements through artificial intelligence and automation, providing researchers with comprehensive methodological guidance for effective strain development.

The DBTL cycle is a systematic, iterative framework that has become synonymous with rational biological engineering. It provides a structured approach for developing and optimizing biological systems, such as engineered microbial strains for producing biofuels, pharmaceuticals, and other valuable compounds [7]. The cycle begins with Design, where researchers define objectives and create genetic blueprints based on domain knowledge and computational modeling. In the Build phase, DNA constructs are synthesized and assembled into vectors before being introduced into host chassis. The Test phase functionally characterizes these constructs to measure performance against objectives, and the Learn phase analyzes collected data to inform subsequent design iterations [10]. This continuous refinement process allows engineering of biological systems with predictable functions, significantly reducing development timelines compared to traditional ad hoc approaches.
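The control flow of the cycle described above can be sketched as a simple loop. This is a toy simulation under stated assumptions: `assay_titer` is a made-up response surface standing in for a wet-lab assay, designs are reduced to two expression levels between 0 and 1, and the Learn step is a plain keep-the-best rule instead of a statistical or machine learning model.

```python
import random

def assay_titer(design):
    """Toy stand-in for the Test phase: a hypothetical titer that peaks
    when both expression levels are near 0.6 (arbitrary units)."""
    a, b = design
    return 100.0 - 200.0 * ((a - 0.6) ** 2 + (b - 0.6) ** 2)

def design_candidates(center, spread, n, rng):
    """Design: propose n expression-level pairs around the current best."""
    return [
        tuple(min(1.0, max(0.0, c + rng.uniform(-spread, spread))) for c in center)
        for _ in range(n)
    ]

def run_dbtl(cycles=5, per_cycle=8, seed=0):
    rng = random.Random(seed)
    best_design = (0.1, 0.1)
    best_titer = assay_titer(best_design)
    history = [best_titer]
    spread = 0.3
    for _ in range(cycles):
        candidates = design_candidates(best_design, spread, per_cycle, rng)  # Design
        library = candidates  # Build: placeholder for strain construction
        results = [(assay_titer(d), d) for d in library]  # Test
        top_titer, top_design = max(results)  # Learn: keep the best variant
        if top_titer > best_titer:
            best_titer, best_design = top_titer, top_design
        spread *= 0.7  # narrow the search as knowledge accumulates
        history.append(best_titer)
    return best_design, best_titer, history

best_design, best_titer, history = run_dbtl()
print([round(t, 1) for t in history])
```

The best titer recorded after each cycle never decreases because each round only replaces the incumbent design when a tested variant outperforms it.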

The power of the DBTL framework lies in its iterative nature: with each cycle, knowledge accumulates, enabling progressively more sophisticated designs. In metabolic engineering specifically, DBTL cycles have proven invaluable for optimizing complex performance metrics such as product titer, yield, and rate (productivity), collectively referred to as TYR values, which typically depend on multiple genetic modifications [11]. The framework's structure is particularly suited to the combinatorial explosion in design space that occurs when multiple pathway components are optimized simultaneously, as it allows focused exploration of the most promising regions based on empirical data [11].
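The combinatorial growth mentioned above is easy to quantify. The sketch below counts and enumerates full-pathway designs when each gene can take one of several regulatory parts. The gene and part names are hypothetical placeholders chosen for illustration.

```python
from itertools import product
from math import prod

# Hypothetical part options per pathway gene (illustrative names only).
design_options = {
    "gene_A": ["rbs_weak", "rbs_medium", "rbs_strong"],
    "gene_B": ["rbs_weak", "rbs_medium", "rbs_strong"],
    "gene_C": ["rbs_weak", "rbs_medium", "rbs_strong"],
}

def design_space_size(options):
    """Number of distinct full-pathway designs (product of options per gene)."""
    return prod(len(parts) for parts in options.values())

def enumerate_designs(options):
    """Yield every combination as a dict mapping gene to chosen part."""
    genes = list(options)
    for combo in product(*(options[g] for g in genes)):
        yield dict(zip(genes, combo))

print(design_space_size(design_options))  # 27

# With three options per gene, the space grows as 3 ** n_genes.
for n_genes in (3, 6, 10):
    print(n_genes, 3 ** n_genes)  # 27, 729, 59049
```

Even with only three options per gene, a ten-gene pathway already exceeds what most screening campaigns can test exhaustively, which is why data-guided sampling of the design space matters.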

The Four Phases of the DBTL Cycle

Design: Computational Planning of Biological Systems

The Design phase establishes the foundational blueprint for genetic engineering campaigns. This stage leverages both domain expertise and computational tools to specify genetic elements, their configurations, and regulatory components. For metabolic pathways, this typically involves identifying target genes, selecting regulatory parts (promoters, ribosomal binding sites), and planning assembly strategies. The design phase has been revolutionized by standardized design tools that enable seamless interoperability across biofoundries, facilitating protocol sharing and reproducibility [12].

Modern design strategies increasingly incorporate machine learning to enhance predictive capabilities. Protein language models such as ESM and ProGen, trained on evolutionary relationships between millions of protein sequences, enable zero-shot prediction of beneficial mutations and protein functions [10]. Structure-based deep learning tools like ProteinMPNN can design protein variants by predicting sequences that fold into desired backbone structures, significantly increasing design success rates [10]. For metabolic engineering, the design phase must also consider pathway topology and thermodynamic properties, as these factors critically influence flux distribution and potential rate-limiting steps [11].

Build: High-Throughput DNA Assembly and Strain Construction

The Build phase translates computational designs into physical biological entities through DNA assembly and host transformation. Automation has dramatically accelerated this phase, with biofoundries implementing robotic platforms that increase throughput and reproducibility. For example, an automated pipeline for Saccharomyces cerevisiae transformation achieved a capacity of ~400 transformations per day and up to 2,000 per week—a 10-fold increase over manual operations [5].

Key advances in the Build phase include:

- Automated DNA assembly: Integrated robotic systems execute complex protocols with minimal human intervention, improving success rates [12].

- Standardized genetic toolkits: Universal, reproducible pipelines for biofoundries enable reliable part interchangeability [12].

- CRISPR/Cas9 systems: Implemented across diverse hosts including bacteria, yeast, and filamentous fungi to enable precise genome editing [13].

- Modular cloning systems: Facilitate rapid assembly of multi-gene constructs through standardized parts and rules [7].

For challenging hosts like filamentous fungi, Build phase optimization has included developing strains with disrupted non-homologous end joining (NHEJ) pathways by knocking out ku70, ku80, or ligD genes, dramatically increasing homologous recombination efficiency to over 90% in some species [13].

Test: Functional Characterization of Engineered Strains

The Test phase quantitatively assesses performance of engineered strains through functional assays and analytical methods. This phase generates the critical data that fuels the learning process. Advanced test platforms range from high-throughput cell-free systems to automated bioreactor platforms.

Cell-free expression systems have emerged as particularly powerful tools for rapid testing, as they bypass cellular constraints and enable direct measurement of enzyme activities and pathway function [10]. These systems can produce >1 g/L of protein in under 4 hours and can be scaled from picoliter to kiloliter volumes, enabling massive parallelization [10]. When combined with liquid handling robots and microfluidics, cell-free platforms can screen hundreds of thousands of variants, as demonstrated by DropAI, which screened over 100,000 picoliter-scale reactions using droplet microfluidics [10].

For in vivo testing, analytical methods such as liquid chromatography-mass spectrometry (LC-MS) provide precise quantification of metabolic products. In one verazine production study, researchers developed a rapid LC-MS method that reduced analysis time from 50 to 19 minutes while maintaining accurate quantification, enabling high-throughput screening of strain libraries [5].
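A quick back-of-the-envelope calculation shows what the shorter method means for instrument throughput. The sketch assumes one instrument running continuously for 24 hours with no equilibration, blank, or downtime between injections, which is an idealization.

```python
MINUTES_PER_DAY = 24 * 60

def samples_per_day(runtime_min):
    """Whole injections that fit into 24 h, ignoring equilibration and downtime."""
    return MINUTES_PER_DAY // runtime_min

old_capacity = samples_per_day(50)
new_capacity = samples_per_day(19)
speedup = 50 / 19

print(old_capacity, new_capacity, round(speedup, 2))  # 28 75 2.63
```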

Learn: Data Analysis and Knowledge Extraction

The Learn phase transforms experimental data into actionable insights for subsequent DBTL cycles. This phase employs statistical analysis, machine learning, and mechanistic modeling to identify patterns, predict improved designs, and generate biological understanding.

Machine learning algorithms have proven particularly valuable for analyzing complex biological data. In metabolic engineering, gradient boosting and random forest models have demonstrated strong performance in the low-data regime typical of early DBTL cycles, showing robustness to training set biases and experimental noise [11]. These models can identify non-intuitive relationships between genetic modifications and metabolic flux that might escape human observation.

The learning process also generates mechanistic insights into pathway regulation and limitations. For example, in a dopamine production study, researchers discovered that GC content in the Shine-Dalgarno sequence significantly influenced ribosome binding site strength—knowledge that directly informed subsequent design iterations [14]. Similarly, kinetic modeling of metabolic pathways can reveal how perturbations to individual enzyme concentrations affect overall flux, explaining why sequential debottlenecking often fails to identify global optima [11].

DBTL in Action: Case Studies in Metabolic Engineering

Dopamine Production in Escherichia coli

A knowledge-driven DBTL cycle was implemented to develop an efficient dopamine production strain in E. coli, resulting in a 2.6- to 6.6-fold improvement over state-of-the-art production [14]. The approach integrated upstream in vitro investigation with high-throughput ribosome binding site (RBS) engineering to optimize expression levels of the heterologous pathway enzymes HpaBC and Ddc.

Table 1: DBTL Cycle for Dopamine Production in E. coli

| DBTL Phase | Key Activities | Outcomes |
|---|---|---|
| Design | Selected heterologous genes hpaBC and ddc; Designed RBS variants for pathway balancing | Identified optimal expression level combinations in cell-free system |
| Build | High-throughput RBS engineering; Constructed variant library in high-tyrosine production host | Created diversified strain library with varying enzyme expression ratios |
| Test | Cultivation in minimal medium; Dopamine quantification via HPLC | Identified optimal strain producing 69.03 ± 1.2 mg/L dopamine |
| Learn | Analyzed relationship between GC content in Shine-Dalgarno sequence and RBS strength | Discovered key mechanistic insight for future design iterations |

The workflow incorporated a cell-free protein synthesis system to prototype pathway behavior before in vivo implementation, accelerating the learning process. This "knowledge-driven" approach provided mechanistic understanding that enabled more intelligent design in subsequent cycles, contrasting with traditional statistical approaches that may require more iterations [14].

Verazine Production in Saccharomyces cerevisiae

An automated DBTL platform was applied to optimize verazine biosynthesis in yeast, identifying several gene overexpression targets that enhanced production by 2- to 5-fold [5]. The study screened a library of 32 genes involved in sterol metabolism and transport, demonstrating the power of high-throughput approaches for identifying non-obvious bottlenecks.

Table 2: Automated Strain Engineering for Verazine Production

| Parameter | Manual Workflow | Automated Workflow |
|---|---|---|
| Throughput | ~200 transformations/week | ~2,000 transformations/week |
| Transformation method | Lithium acetate/ssDNA/PEG in tubes | Adapted to 96-well format with robotic liquid handling |
| Key integration points | Manual intervention at each step | Full integration of plate sealer, peeler, and thermal cycler |
| Colony picking | Manual selection | Automated with QPix 460 system |

The top-performing strains overexpressed erg26, dga1, cyp94n2, ldb16, gabat1v2, or dhcr24, genes spanning diverse functional categories including sterol biosynthesis, lipid droplet formation, and cytochrome P450 reactions [5]. This demonstrated the value of exploring multiple engineering targets simultaneously rather than focusing only on obvious pathway enzymes.
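Hit calling in a screen of this kind usually comes down to computing fold changes against a control strain and applying a threshold. The following sketch shows one way to do that. The strain names and titers are placeholder values in arbitrary units and are not data from the verazine study; the 2-fold threshold simply mirrors the lower end of the range quoted above.

```python
def fold_changes(titers, control):
    """Titer of each strain divided by the control strain's titer."""
    base = titers[control]
    if base <= 0:
        raise ValueError("control titer must be positive")
    return {name: t / base for name, t in titers.items() if name != control}

def call_hits(titers, control, threshold=2.0):
    """Strains at or above the fold-change threshold, best first."""
    fc = fold_changes(titers, control)
    passing = [name for name, value in fc.items() if value >= threshold]
    return sorted(passing, key=lambda name: fc[name], reverse=True)

# Placeholder titers in arbitrary units (illustrative only).
example_titers = {
    "control": 1.0,
    "strain_1": 2.4,
    "strain_2": 0.9,
    "strain_3": 5.0,
    "strain_4": 1.8,
}

hits = call_hits(example_titers, "control")
print(hits)  # ['strain_3', 'strain_1']
```

A production pipeline would also account for replicate variability before declaring a hit, which this sketch leaves out.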

Emerging Paradigms and Future Directions

The LDBT Shift: Learning Before Design

An emerging paradigm proposes reordering the cycle to LDBT (Learn-Design-Build-Test), where machine learning and prior knowledge guide the initial design phase [10]. This approach leverages protein language models and zero-shot predictors to generate functional designs without requiring experimental data from previous cycles. The availability of megascale biological datasets now enables these models to make accurate predictions about sequence-structure-function relationships, potentially reducing the number of experimental iterations needed.

In this revised framework, learning occurs before physical construction through computational analysis of existing biological knowledge [10]. This shifts synthetic biology closer to a "Design-Build-Work" model used in established engineering disciplines, where first principles reliably predict system behavior.

AI-Enabled Automation and Integrated Workflows

The integration of artificial intelligence with automated biofoundries is creating increasingly sophisticated DBTL implementations. AI-guided systems can now dynamically optimize assembly protocols, diagnose failures, and close the DBTL loop through real-time learning [12]. These systems continuously improve through iteration, establishing a new paradigm for biological engineering.

Future developments will likely focus on workflow integration across multiple platforms and data systems. As noted in recent advances, "experiments continuously improve through iteration, promising to accelerate both fundamental research and industrial applications" [12]. This requires seamless data flow between design software, robotic execution platforms, and analytical instruments—a challenge being addressed through standardized data models and communication protocols.

Essential Research Reagent Solutions

Successful implementation of DBTL cycles relies on specialized reagents and tools that enable precise genetic manipulation and characterization. The following table catalogs key solutions used in advanced metabolic engineering studies.

Table 3: Essential Research Reagent Solutions for DBTL Implementation

| Reagent/Tool | Function | Application Examples |
|---|---|---|
| CRISPR/Cas9 systems | Precision genome editing through targeted double-strand breaks | Gene knockouts, promoter replacements in fungi and yeast [13] |
| RBS variant libraries | Fine-tuning translation initiation rates | Metabolic pathway balancing in E. coli dopamine production [14] |
| Cell-free expression systems | Rapid in vitro prototyping of pathway enzymes | Testing enzyme combinations without cellular constraints [10] |
| Selectable markers (ptrA, hph, ble) | Selection of successfully transformed strains | Multiple rounds of fungal engineering through marker recycling [13] |
| Standardized DNA assembly toolkits | Modular, reproducible construction of genetic circuits | High-throughput biofoundry operations [12] |
| Promoter systems (PGAL1, PTEF1) | Controlled gene expression | Inducible and constitutive expression in yeast and fungi [5] [13] |

Visualizing DBTL Workflows

DBTL Cycle Workflow - The iterative engineering process showing how knowledge from each cycle informs subsequent designs, with multiple iterations converging toward an optimized strain.

Enhanced DBTL with AI and Automation - Modern DBTL implementations where machine learning informs the design phase and cell-free systems accelerate the build-test phases, creating faster, more predictive cycles.

The DBTL cycle has established itself as an indispensable framework for systematic strain development in synthetic biology and metabolic engineering. Its power derives from the structured iteration of design, construction, characterization, and analysis phases, each generating knowledge that progressively refines biological designs. Current advances in machine learning, automation, and experimental platforms are further accelerating DBTL implementations, enabling more complex engineering challenges to be addressed efficiently. As these technologies mature, the paradigm is shifting toward more predictive engineering approaches that require fewer iterations, promising to significantly reduce the time and cost required to develop production strains for pharmaceutical, chemical, and biotechnology applications.

This technical guide explores the strategic pivot from traditional whole-cell biosensors to cell-free systems within the framework of the Design-Build-Test-Learn (DBTL) cycle. While genetically modified organisms (GMOs) have long served as the foundation for biological sensing, constraints including cellular membrane barriers, stringent viability requirements, and extended development timelines often hinder their efficiency and application scope [15] [16]. Cell-free biosensors, which utilize transcription and translation machinery in vitro, present a paradigm shift by overcoming these limitations and accelerating the DBTL cycle [15] [17]. This whitepaper provides an in-depth analysis of this transition, supported by quantitative data, detailed experimental protocols, and visual workflows, specifically tailored for researchers and drug development professionals engaged in strain development and biosensor engineering.

The Design-Build-Test-Learn (DBTL) cycle is a systematic, iterative framework central to synthetic biology and metabolic engineering for developing and optimizing biological systems [7] [18]. In the context of biosensor development, this cycle involves: (1) Design: Planning genetic constructs using modular DNA parts; (2) Build: Assembling constructs and engineering microbial strains; (3) Test: Functionally characterizing the constructs in a relevant biological system; and (4) Learn: Analyzing data to inform the next design iteration [11] [7] [14].

Traditional DBTL cycles relying on GMOs often face significant bottlenecks. Cellular membranes restrict the transport of solid substrates or toxic compounds, while the need to maintain cell viability imposes constraints on experimental conditions and screening throughput [15] [16]. Furthermore, the iterative process of in vivo strain development can be slow, sometimes leading to an "involution state" where cycles increase in complexity without corresponding gains in productivity [18].

Cell-free gene expression (CFE) systems have emerged as a transformative technology that mitigates these challenges. By using cellular machinery outside the cell, either as crude extracts or as purified components such as ribosomes, transcription and translation factors, and energy sources, CFE systems enable protein synthesis and biosensor operation without the constraints of living cells [15] [17]. This pivot allows for more rapid prototyping, direct detection of analytes inaccessible to whole cells, and integration with high-throughput automation, thereby streamlining the entire DBTL pipeline [16] [17].

Comparative Analysis: GMO vs. Cell-Free Biosensor Performance

The following table summarizes key performance characteristics of GMO-based and cell-free biosensors, highlighting the advantages of the cell-free approach for specific applications.

Table 1: Performance Comparison of GMO-Based and Cell-Free Biosensors

| Feature | GMO-Based Biosensors | Cell-Free Biosensors |
|---|---|---|
| Setup Complexity | Requires cloning, transformation, and cell culture [7] | Rapid activation; uses pre-prepared extracts [17] |
| Viability Requirements | Strict viability and growth conditions necessary [15] | No viability constraints; functions in toxic environments [15] |
| Response Time | Slower (hours), depends on cell growth and regulation [15] | Faster (minutes to hours), direct activation [16] [17] |
| Substrate Accessibility | Limited by cell membrane permeability [16] | Open system; ideal for solid substrates like microcrystalline cellulose [16] |
| High-Throughput Potential | Lower, due to culturing and viability steps [7] | High, easily integrated with automated liquid handlers [16] |
| Real-World Deployment | Challenging due to containment and stability issues [19] | Portable; suitable for lyophilized, paper-based field tests [15] |

Experimental Protocol: Developing a Cell-Free Biosensor

This section outlines a generalizable protocol for creating and testing a transcription factor (TF)-based cell-free biosensor, exemplified by a system designed to detect cellobiose—a product of cellulose degradation [16].

Design and Build Phase

Objective: To construct a genetic circuit that produces a detectable signal (e.g., fluorescence) in the presence of a target analyte.

Plasmid Design: The core sensor element is a plasmid containing two key components:

- Reporter Gene: A gene encoding a fluorescent protein (e.g., superfolder GFP, sfGFP) under the control of a constitutive or inducible promoter (e.g., T7 promoter).

- Operator Site: The specific DNA sequence (operator) recognized by the transcription factor (TF) must be placed strategically within the promoter region to regulate reporter gene expression [16].

- Example: For a cellobiose sensor, the operator site for the TF CelR (CelO) is inserted into the plasmid pIVEX-PT7-CelO-sfGFP [16].

Protein Preparation (Transcription Factor): The TF (e.g., CelR) must be expressed and purified separately.

- The TF gene is cloned into an expression vector (e.g., pET28-CelR) and transformed into an E. coli expression strain like BL21(DE3).

- Protein expression is induced with IPTG. Cells are lysed via sonication, and the TF is purified using affinity chromatography (e.g., Ni-column for His-tagged proteins) [16].

Test Phase

Objective: To characterize the biosensor's response to the target analyte in a cell-free environment.

Reaction Assembly: The cell-free biosensor reaction is set up by combining the following components in a microplate well:

- Cell-Free Extract: A commercial E. coli-based CFPS kit (e.g., PURExpress) or a homemade S30 lysate [16] [17].

- Sensory Plasmid: The constructed plasmid (e.g., pIVEX-PT7-CelO-sfGFP).

- Purified Transcription Factor: The purified TF (e.g., CelR).

- Substrate/Analyte: The target analyte (e.g., cellobiose) or, for enzyme-screening applications, the solid substrate (e.g., microcrystalline cellulose) along with the enzyme to be tested (e.g., cellobiohydrolase) [16].

- Nuclease-Free Water: To reach the desired final volume.
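As a minimal sketch of the final assembly step, the water volume can be computed from the volumes of the other components. The component names and volumes below are hypothetical placeholders, not values from [16]; real volumes depend on the CFPS kit or lysate protocol in use.

```python
def water_volume_ul(final_volume_ul, component_volumes_ul):
    """Return the nuclease-free water volume (µL) needed to reach the final volume."""
    used = sum(component_volumes_ul.values())
    water = final_volume_ul - used
    if water < 0:
        raise ValueError(
            f"Components total {used} µL, which exceeds the final volume "
            f"of {final_volume_ul} µL"
        )
    return water


# Hypothetical per-well volumes in µL (assumed for illustration only).
components = {
    "cell_free_extract": 5.0,
    "sensor_plasmid": 1.0,
    "purified_tf": 1.0,
    "analyte": 1.0,
}

water = water_volume_ul(10.0, components)
print(f"Add {water} µL nuclease-free water per well")
```

Raising an error when the components exceed the target volume catches over-filled recipes before they reach a liquid handler.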

Incubation and Measurement:

- The reaction mixture is incubated at a constant temperature (e.g., 30-37°C) for several hours.

- Fluorescence (Ex/Em: 485/535 nm for sfGFP) is measured at regular intervals using a plate reader [16].

Learn Phase and DBTL Iteration

Objective: To analyze sensor performance and refine the design.

- Data Analysis: Calculate the fold-change in fluorescence (signal with analyte relative to the no-analyte background) and determine the limit of detection (LOD) for the analyte. For enzyme screening, fluorescence intensity correlates directly with enzyme activity [16].

- Machine Learning Integration: Sensor output data can be fed into machine learning models (e.g., gradient boosting, random forest) to predict the performance of new genetic designs, thereby guiding the selection of components for the next DBTL cycle [11] [18]. This data-driven learning helps avoid repeated cycles that yield little improvement and makes it easier to navigate the vast combinatorial design space.
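The data-analysis step can be sketched as follows. This is an illustration under stated assumptions: the fluorescence readings are made-up numbers, and the LOD is taken as the lowest tested concentration whose mean signal exceeds the blank mean plus three standard deviations, a common convention that is not necessarily the one used in [16].

```python
from statistics import mean, stdev


def fold_change(signal, background):
    """Mean signal with analyte divided by mean signal without analyte."""
    return mean(signal) / mean(background)


def lod_threshold(blank, k=3.0):
    """Signal threshold: blank mean + k * blank standard deviation (assumed convention)."""
    return mean(blank) + k * stdev(blank)


def limit_of_detection(readings_by_conc, blank, k=3.0):
    """Lowest tested concentration whose mean signal exceeds the threshold, or None."""
    threshold = lod_threshold(blank, k)
    for conc in sorted(readings_by_conc):
        if mean(readings_by_conc[conc]) > threshold:
            return conc
    return None


# Hypothetical triplicate fluorescence readings (arbitrary units).
blank = [100.0, 110.0, 90.0]
readings = {
    0.1: [105.0, 115.0, 110.0],     # concentrations in mM (assumed unit)
    1.0: [150.0, 160.0, 140.0],
    10.0: [900.0, 1000.0, 1100.0],
}

lod = limit_of_detection(readings, blank)
fc_max = fold_change(readings[10.0], blank)
print(f"LOD: {lod} mM, fold-change at 10 mM: {fc_max:.1f}")
```

Because this definition only considers tested concentrations, the reported LOD is bounded by the resolution of the dilution series.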

The following diagram visualizes this integrated, iterative DBTL workflow for a cell-free biosensor project.

The Scientist's Toolkit: Essential Reagents and Materials

Successful implementation of a cell-free biosensor project relies on a suite of specialized reagents and tools. The table below details key solutions and their functions.

Table 2: Key Research Reagent Solutions for Cell-Free Biosensor Development

| Research Reagent | Function/Benefit |
|---|---|
| Cell-Free Protein Synthesis (CFPS) System | Provides the core transcriptional/translational machinery. Commercial kits (e.g., PURExpress) offer reliability, while homemade S30 lysate allows for customization and cost reduction [16] [17]. |
| Acoustic Liquid Handler (e.g., Echo 525) | Enables non-contact, nanoliter-scale dispensing for high-throughput assembly of cell-free reactions in microplates, minimizing reagent consumption and improving reproducibility [16]. |
| Allosteric Transcription Factors (aTFs) | The core sensing element. aTFs undergo conformational change upon binding an analyte, regulating transcription. They can be engineered for sensitivity and specificity [15]. |
| Supported Lipid Bilayers & Hydrogels | Artificial matrices used for spatial organization and microcompartmentalization of cell-free reactions, enhancing stability and enabling complex signal processing [15]. |
| Lyophilization (Freeze-Drying) Reagents | Trehalose and other stabilizers allow for long-term, room-temperature storage of cell-free biosensors on paper or other substrates, which is critical for field deployment [15]. |

The pivot from GMO-based to cell-free systems represents a significant evolution in biosensor development, directly addressing critical bottlenecks in the traditional DBTL cycle. By removing the constraints of the cell membrane and viability, cell-free biosensors unlock new possibilities for detecting a wider range of analytes, including solid substrates, and enable unprecedented speeds of prototyping and testing. The integration of these systems with automation, machine learning, and structured data management creates a powerful, iterative engineering platform. This approach not only accelerates the development of biosensors for applications in diagnostics, environmental monitoring, and biomanufacturing but also provides a more efficient and fundamentally more flexible framework for biological design.

The iterative Design-Build-Test-Learn (DBTL) cycle provides a fundamental framework for strain development in synthetic biology and metabolic engineering [11] [7]. In traditional DBTL approaches, each cycle begins with genetic designs based on previous experimental results, often relying on statistical models or randomized selection when prior knowledge is limited [3]. However, this conventional approach can lead to multiple iterative cycles, consuming substantial time, money, and resources [3]. A transformative evolution of this paradigm—the knowledge-driven DBTL cycle—incorporates upstream in vitro investigations to create a more mechanistic and rational foundation for strain engineering decisions.

This knowledge-driven approach employs cell-free systems and in vitro testing to de-risk and inform the initial design phase of the DBTL cycle, enabling more predictive strain optimization before moving to live organisms [3]. By bridging the gap between theoretical design and practical implementation through empirical in vitro data, researchers can accelerate the development of microbial cell factories for producing valuable compounds, including pharmaceuticals, biofuels, and specialty chemicals [3].

The Foundation: DBTL Cycles in Strain Development

The Core DBTL Framework

The DBTL cycle represents a systematic framework for engineering biological systems [7]. In strain development, this involves:

- Design: Planning genetic modifications using modular biological parts to achieve desired metabolic functions [7]

- Build: Implementing designs through DNA assembly and strain construction, increasingly automated with advanced genetic engineering tools [3]

- Test: Analyzing constructed strains through functional assays and performance metrics [7]

- Learn: Extracting insights from experimental data to inform subsequent design phases [11]

This cyclical process continues until a strain meets target performance specifications [7]. Automation of these phases, carried out in facilities known as biofoundries, is becoming central to synthetic biology [3].
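The loop structure described above can be sketched in a few lines. This is a toy illustration only: `simulated_titer` is a hypothetical stand-in for the Build and Test phases, and the single numeric "design" parameter replaces what would be a genetic design in practice.

```python
def simulated_titer(design):
    """Stand-in for Build + Test: a toy response surface peaking at design = 7."""
    return 100.0 - (design - 7) ** 2


def run_dbtl(target_titer, start_design=0, max_cycles=10):
    """Iterate Design -> Build/Test -> Learn until the target is met or cycles run out."""
    best_design = start_design
    best_titer = simulated_titer(best_design)
    for cycle in range(1, max_cycles + 1):
        # Design: propose neighbours of the best design found so far.
        candidates = [best_design - 1, best_design + 1]
        # Build + Test: "construct" and "measure" each candidate.
        results = {d: simulated_titer(d) for d in candidates}
        # Learn: keep the best candidate if it improves on the current best.
        top = max(results, key=results.get)
        if results[top] > best_titer:
            best_design, best_titer = top, results[top]
        if best_titer >= target_titer:
            return cycle, best_design, best_titer
    return max_cycles, best_design, best_titer


cycles, design, titer = run_dbtl(target_titer=90.0)
print(f"Target met after {cycles} cycles: design={design}, titer={titer}")
```

The stopping condition mirrors the text: the loop ends when the performance specification is reached, with a cycle limit as a practical safeguard.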

Limitations of Conventional DBTL Approaches

Traditional DBTL cycles often face significant challenges in the initial rounds where prior mechanistic understanding is limited [3]. Without sufficient knowledge of pathway kinetics, enzyme interactions, and cellular context, engineering targets may be selected via design of experiment or randomized approaches [3]. This knowledge gap can result in suboptimal design choices, necessitating more iterations and extensive resource consumption before identifying optimal strain configurations [11] [3].

The Knowledge-Driven DBTL Framework

Conceptual Foundation

The knowledge-driven DBTL cycle addresses fundamental limitations of conventional approaches by incorporating upstream in vitro investigation as a critical component of the learning phase [3]. This methodology employs mechanistic understanding rather than relying solely on statistical correlations, creating a more rational foundation for engineering decisions.

This approach is particularly valuable for optimizing complex metabolic pathways where combinatorial explosions of possible design variations make exhaustive experimental testing infeasible [11]. By first testing pathway elements in cell-free systems, researchers can gather crucial data on enzyme kinetics, expression level effects, and potential inhibitory interactions before committing to full strain construction [3].

Integrated Workflow

The knowledge-driven approach creates a bridge between in vitro and in vivo environments through a structured workflow:

- Upstream In Vitro Investigation: Testing pathway components in cell-free systems

- Mechanistic Learning: Extracting quantitative relationships from in vitro data

- Informed In Vivo Design: Translating findings to strain engineering strategies

- Validation and Refinement: Testing engineered strains and iterating based on performance [3]

This workflow effectively narrows the design space for in vivo engineering, increasing the probability of success in early DBTL cycles [3].

Diagram 1: The Knowledge-Driven DBTL workflow integrates upstream in vitro investigation with traditional DBTL cycles to create a more mechanistic learning foundation.

Technical Implementation: A Dopamine Production Case Study

A recent application demonstrating the effectiveness of the knowledge-driven approach involved developing an Escherichia coli strain for dopamine production [3]. This case study illustrates the practical implementation and quantitative benefits of this methodology.

Background and Challenge

Dopamine has important applications in emergency medicine, cancer diagnosis/treatment, and materials science [3]. While microbial production offers an environmentally friendly alternative to chemical synthesis, previous in vivo dopamine production in E. coli achieved only 27 mg/L, leaving significant room for improvement [3].

The dopamine biosynthesis pathway from the precursor l-tyrosine involves two key enzymes: 4-hydroxyphenylacetate 3-monooxygenase (HpaBC, native to E. coli) converts l-tyrosine to l-DOPA, and l-DOPA decarboxylase (Ddc, from Pseudomonas putida) then catalyzes dopamine formation [3].

Diagram 2: Dopamine biosynthesis pathway in engineered E. coli, showing the two-enzyme conversion from l-tyrosine to dopamine.

Experimental Protocols

In Vitro Investigation Phase

Cell-Free Protein Synthesis System Preparation [3]:

- Crude Cell Lysate Preparation: Grow E. coli FUS4.T2 in 2xTY medium, harvest cells, and lyse via sonication

- Reaction Buffer Composition: 50 mM phosphate buffer (pH 7) supplemented with 0.2 mM FeCl₂, 50 μM vitamin B₆, and 1 mM l-tyrosine or 5 mM l-DOPA

- Pathway Testing: Combine lysate with reaction buffer and pathway enzymes to test different relative expression levels

Key Measurements:

- Enzyme expression levels under different regulatory sequences

- Substrate conversion rates

- Metabolite profiling to identify potential bottlenecks

Translation to In Vivo Engineering

Ribosome Binding Site (RBS) Engineering [3]:

- RBS Library Design: Modulate Shine-Dalgarno sequences without interfering with secondary structures

- High-Throughput Assembly: Automated construction of RBS variants

- Strain Transformation: Introduce variant libraries into dopamine production host (E. coli FUS4.T2)

- Screening: Evaluate dopamine production across RBS variants
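To illustrate what a small RBS library might look like, the sketch below enumerates all single-base variants of a Shine-Dalgarno core. It assumes the consensus E. coli core AGGAGG as the starting sequence; the actual library design in [3] is not reproduced here, and the sketch does not check for mRNA secondary-structure effects.

```python
def single_base_variants(sd_core):
    """Return every sequence that differs from sd_core at exactly one position."""
    bases = "ACGT"
    variants = []
    for position, original in enumerate(sd_core):
        for base in bases:
            if base != original:
                variants.append(sd_core[:position] + base + sd_core[position + 1:])
    return variants


# Consensus Shine-Dalgarno core, used here as an assumed starting point.
sd_core = "AGGAGG"
library = single_base_variants(sd_core)
print(f"{len(library)} variants, e.g. {library[:3]}")
```

A six-base core gives 6 x 3 = 18 single-substitution variants, a library size that fits comfortably within one 96-well screening plate.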

Analytical Methods:

- HPLC for dopamine quantification

- Biomass measurements for yield calculations

- Statistical evaluation of production variance

Key Reagents and Research Solutions

Table 1: Essential Research Reagents for Knowledge-Driven DBTL Implementation

| Reagent/Resource | Function in Workflow | Specific Application in Dopamine Case Study |
|---|---|---|
| Crude Cell Lysate System | In vitro pathway testing bypassing cellular constraints | E. coli FUS4.T2 lysate for testing dopamine pathway enzymes [3] |
| RBS Engineering Tools | Fine-tuning relative gene expression in synthetic pathways | Modulating Shine-Dalgarno sequences for HpaBC and Ddc [3] |
| Analytical Platforms | Quantifying pathway intermediates and products | HPLC for dopamine quantification [3] |
| Specialized Media | Supporting specific metabolic functions | Minimal medium with MOPS buffer, vitamin B₆, phenylalanine [3] |
| Automated Strain Construction | High-throughput assembly of genetic variants | Automated RBS library construction [3] |

Performance Results and Comparative Analysis

The knowledge-driven approach yielded significant improvements over previous dopamine production efforts:

Table 2: Quantitative Comparison of Dopamine Production Strains

| Production Strain / Approach | Dopamine Titer (mg/L) | Dopamine Yield (mg/g biomass) | Fold Improvement |
|---|---|---|---|
| Previous State-of-the-Art | 27.0 | 5.17 | 1.0x |
| Knowledge-Driven DBTL | 69.03 ± 1.2 | 34.34 ± 0.59 | 2.6x (titer), 6.6x (yield) |

The knowledge-driven approach achieved a 2.6-fold improvement in titer and a 6.6-fold improvement in yield compared to previous state-of-the-art in vivo dopamine production [3]. This demonstrates the efficacy of using upstream in vitro data to inform in vivo engineering decisions.
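The fold improvements follow directly from the reported values, as this short check shows. Only the numbers from the comparison above are used; the function name is illustrative.

```python
def fold_improvement(new_value, baseline):
    """Ratio of the new value to the baseline value."""
    return new_value / baseline


# Values reported for the dopamine case study [3].
titer_fold = fold_improvement(69.03, 27.0)   # mg/L
yield_fold = fold_improvement(34.34, 5.17)   # mg/g biomass

print(f"Titer: {titer_fold:.1f}x, yield: {yield_fold:.1f}x")
```

The yield improves more than the titer, which indicates that the new strain makes more dopamine per unit of biomass rather than simply growing to a higher cell density.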

Implementation Guidelines

When to Apply the Knowledge-Driven Approach

The knowledge-driven DBTL strategy is particularly advantageous in these scenarios:

- Novel Pathway Engineering: When introducing heterologous pathways with limited prior functional data

- Complex Metabolic Optimization: When pathway kinetics are non-intuitive and sequential optimization is suboptimal [11]

- Toxic Intermediate Production: When pathway intermediates may impact cell viability

- Resource-Constrained Situations: When minimizing iterative cycles is economically critical [3]

Practical Considerations for Implementation

Cell-Free System Design:

- Use crude cell lysates to maintain metabolite pools and energy equivalents [3]

- Match in vitro conditions to anticipated in vivo environment as closely as possible

- Include relevant cofactors and precursors to support pathway function

Data Translation:

- Focus on relative expression levels rather than absolute values when moving from in vitro to in vivo

- Account for cellular context differences, including membrane transport and regulatory networks

- Use appropriate statistical models to predict in vivo performance from in vitro data [11]

Integration with Automation:

- Implement high-throughput in vitro screening to maximize data generation

- Utilize automated DNA assembly for rapid variant construction [3]

- Employ robotic systems for consistent assay execution

Future Perspectives

The knowledge-driven DBTL approach continues to evolve with several promising directions:

Machine Learning Integration: Combining in vitro data with machine learning models can further enhance predictive capabilities. Recent studies show that gradient boosting and random forest models outperform other methods in low-data regimes common in early DBTL cycles [11].

Expanded In Vitro Systems: Advanced human-based in vitro methods are being developed for more physiologically relevant testing, particularly for pharmaceutical applications [20]. Similar innovations could enhance microbial strain development.

Multi-Omics Data Integration: Incorporating proteomic, metabolomic, and transcriptomic data with in vitro results can provide a more comprehensive systems biology perspective for strain design.

The knowledge-driven DBTL cycle represents a significant advancement over conventional iterative approaches in strain development. By incorporating upstream in vitro investigation to build mechanistic understanding before committing to full strain construction, this methodology reduces resource consumption and accelerates the development timeline.

The dopamine production case study demonstrates that this approach can achieve substantial improvements in both titer and yield—2.6-fold and 6.6-fold improvements respectively—highlighting its practical efficacy [3]. As synthetic biology continues to tackle more complex engineering challenges, the knowledge-driven integration of in vitro data to inform in vivo engineering will play an increasingly vital role in developing efficient microbial cell factories for sustainable chemical production.

From Code to Cell: Methodological Applications of DBTL in Strain Development

The Design-Build-Test-Learn (DBTL) cycle serves as a foundational framework in synthetic biology and metabolic engineering for systematically developing and optimizing microbial strains. This iterative process enables researchers to engineer organisms for specific functions, such as producing biofuels, pharmaceuticals, and other valuable compounds [7]. In modern biotechnology, automating the DBTL cycle has become crucial for accelerating strain development, enhancing reproducibility, and managing the complexity of biological engineering [21] [8]. The integration of software, robotics, and advanced analytics has transformed this cycle from a largely manual, time-consuming process into a high-throughput, data-driven pipeline capable of rapidly exploring vast genetic design spaces that would be impossible to address through traditional methods.

This technical guide examines the core components and implementation of automated DBTL pipelines, focusing on their application in strain development research. We explore the specific technologies enabling each phase, present quantitative performance data from real-world applications, and provide detailed experimental methodologies that demonstrate the power of this integrated approach for advancing microbial metabolic engineering.

Core Components of the Automated DBTL Pipeline

The Four Phases of the DBTL Cycle

Table 1: The Four Phases of the Automated DBTL Cycle

| Phase | Key Activities | Enabling Technologies |
|---|---|---|
| Design | Pathway design, enzyme selection, DNA part specification, combinatorial library design | Bioinformatics software (RetroPath, Selenzyme), DNA assembly design tools (PartsGenie), Design of Experiments (DoE) |
| Build | DNA synthesis, pathway assembly, transformation, strain construction | Automated liquid handlers, robotic integration, high-throughput cloning, DNA synthesizers |
| Test | Cultivation, product extraction, analytical screening, data collection | High-throughput fermentation, automated mass spectrometry, LC-MS/MS, next-generation sequencing |
| Learn | Data analysis, pattern recognition, predictive modeling, design recommendation | Machine learning algorithms, statistical analysis, deep neural networks, AI-driven recommendation systems |

Enabling Technologies and Integration Framework

Automated DBTL pipelines rely on sophisticated integration of computational and physical systems. Biofoundries represent the pinnacle of this integration, featuring computer-aided design, synthetic biology tools, and robotic automation working in concert [5]. The modular nature of these pipelines allows laboratories to adapt specific components while maintaining the overall workflow integrity. Key integration points include standardized data transfer protocols (such as RESTful APIs), instrument-specific software drivers, and centralized sample tracking systems that maintain chain of custody from digital design to physical strain [8].
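A centralized sample-tracking record of the kind described above might look like the following sketch. The class and field names are hypothetical and do not correspond to any vendor's schema or API; the JSON output stands in for the payload a RESTful service could exchange between instruments.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class SampleRecord:
    """Links a physical sample to its digital design and logs each handling step."""
    sample_id: str
    design_id: str
    events: list = field(default_factory=list)

    def log(self, phase, location):
        """Append one chain-of-custody entry (DBTL phase and physical location)."""
        self.events.append({"phase": phase, "location": location})

    def to_json(self):
        """Serialize the record, e.g. as the body of an API request."""
        return json.dumps(asdict(self), sort_keys=True)


# Hypothetical identifiers, for illustration only.
record = SampleRecord(sample_id="S-0001", design_id="D-0042")
record.log("build", "assembly_plate_1:A1")
record.log("test", "culture_plate_3:A1")
print(record.to_json())
```

Keeping the design identifier on every sample record is what allows Test-phase measurements to be joined back to the original design during the Learn phase.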

Software platforms like TeselaGen provide end-to-end management of the DBTL cycle, offering flexible deployment options (cloud or on-premises) to address varied security, regulatory, and compliance needs within the biotech industry [8]. These systems generate detailed DNA assembly protocols, manage laboratory inventory, orchestrate robotic workflows, and provide advanced analytics capabilities essential for interpreting complex experimental results.

Automated DBTL Workflow: This diagram illustrates the integrated, cyclical nature of the automated Design-Build-Test-Learn pipeline, showing how data flows between phases to inform subsequent iterations.

Quantitative Performance of Automated DBTL Implementation

Throughput and Efficiency Metrics

Automation dramatically accelerates strain construction and evaluation. In a representative example, an automated yeast strain engineering pipeline achieved a capacity of approximately 400 transformations per day and up to 2,000 transformations per week [5]. This represents a 10-fold increase compared to manual throughput, where a human operator typically completes about 40 transformations per day (200 reactions per week) [5]. This enhanced throughput enables researchers to explore significantly larger design spaces in shorter timeframes.

Table 2: Performance Metrics from Automated DBTL Implementations

| Application | Strain/Product | Throughput/Cycle Efficiency | Key Improvement |
|---|---|---|---|
| Flavonoid Production [22] | (2S)-pinocembrin in E. coli | 16 constructs per cycle | 500-fold production increase over 2 DBTL cycles |
| Alkaloid Pathway Screening [5] | Verazine in S. cerevisiae | 400 transformations/day | Identified genes increasing production 2-5 fold |
| Dopamine Production [14] | Dopamine in E. coli | N/A | 2.6-fold (titer) and 6.6-fold (yield) improvement over state-of-the-art |
| Combinatorial Pathway Optimization [11] | Metabolic flux optimization | Simulated 50 designs/cycle | Gradient boosting outperformed other ML methods |

Case Study: Automated Flavonoid Production

The application of an automated DBTL pipeline for flavonoid production demonstrates the cycle's effectiveness. In this implementation, researchers applied design of experiments (DoE) based on orthogonal arrays, combined with a Latin square for gene positional arrangement, to reduce 2,592 possible combinatorial configurations to 16 representative constructs, a compression ratio of 162:1 [22]. This strategic reduction made exploration of the design space experimentally tractable.
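The Latin-square idea for gene positions can be illustrated with a cyclic construction, in which every gene appears exactly once in every position across the selected orderings. This is a sketch of the general technique, not the published design from [22]; apart from CHI, the gene names are placeholders.

```python
def cyclic_latin_square(items):
    """Build an n x n Latin square by cyclically shifting the item order."""
    n = len(items)
    return [[items[(row + col) % n] for col in range(n)] for row in range(n)]


# Placeholder gene names; only CHI is named in the text above.
genes = ["gene_A", "gene_B", "gene_C", "CHI"]
square = cyclic_latin_square(genes)

for order in square:
    print(" -> ".join(order))

# Compression ratio reported in [22]: 2,592 configurations reduced to 16 constructs.
compression_ratio = 2592 // 16
print(f"Compression ratio: {compression_ratio}:1")
```

With four genes, the square selects 4 balanced orderings out of the 24 possible permutations, so positional effects can be estimated without building every order.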

Through two iterative DBTL cycles, the pipeline established a production pathway improved by 500-fold, with competitive titers reaching 88 mg L⁻¹ of (2S)-pinocembrin [22]. Statistical analysis of the first cycle identified that vector copy number had the strongest significant effect on pinocembrin levels (P value = 2.00 × 10⁻⁸), followed by a positive effect of the chalcone isomerase (CHI) promoter strength (P value = 1.07 × 10⁻⁷) [22]. This learning informed the second cycle design, which focused on the most impactful parameters to further optimize production.

Detailed Experimental Protocols for Automated DBTL Implementation

Protocol 1: Automated High-Throughput Yeast Transformation

The automated yeast strain construction protocol exemplifies the Build phase in DBTL cycles [5]. This modular, integrated method enables high-throughput transformation in Saccharomyces cerevisiae using the Hamilton Microlab VANTAGE platform:

Transformation Setup and Heat Shock: Program the robotic system to prepare transformation mixtures in 96-well format using the lithium acetate/ssDNA/PEG method. The system automatically:

- Transfers competent yeast cells to reaction plates

- Adds plasmid DNA (customizable volume and concentration)

- Adds transformation mix components in optimized ratios

- Seals plates using an integrated plate sealer

- Incubates plates in a thermal cycler for heat shock (temperature and duration customizable)

Washing: After heat shock, the system:

- Removes plate seals using an integrated plate peeler

- Centrifuges plates to pellet cells

- Aspirates supernatant

- Resuspends cells in recovery media

- Incubates plates to allow cell recovery

Plating: The automated system:

- Transfers transformed cells to selective agar plates

- Spreads cells evenly across plate surfaces

- Incubates plates for colony development

This protocol achieves approximately 96 transformations per run with ~2 hours of robotic execution time, including 1.5 hours of automated setup and hands-off heat shock [5]. Critical to this process is the optimization of liquid classes for viscous reagents like PEG, which required adjustment of aspiration and dispensing speeds, air gaps, and pre- and post-dispensing parameters to ensure accurate pipetting [5].

Protocol 2: Knowledge-Driven DBTL for Dopamine Production

A knowledge-driven DBTL approach incorporating upstream in vitro investigation accelerated dopamine production strain development in E. coli [14]:

In Vitro Pathway Validation:

- Prepare crude cell lysate systems from production hosts

- Express pathway enzymes (HpaBC and Ddc) using cell-free protein synthesis

- Test different relative expression levels in reaction buffer containing FeCl₂, vitamin B₆, and l-tyrosine precursor

- Quantify dopamine production to identify optimal enzyme ratios

In Vivo Strain Construction:

- Translate optimal expression ratios to in vivo environment through RBS engineering

- Design RBS variants focusing on Shine-Dalgarno sequence modulation

- Construct plasmid libraries using high-throughput cloning techniques

- Transform engineered E. coli FUS4.T2 production strain

High-Throughput Screening:

- Cultivate strains in 96-deepwell plates containing minimal medium with appropriate antibiotics and inducers

- Incubate with shaking for standardized growth period

- Perform chemical extraction using Zymolyase-mediated cell lysis followed by organic solvent extraction

- Analyze dopamine production using rapid LC-MS methods (19-minute runtime)

This knowledge-driven approach yielded a strain producing 69.03 ± 1.2 mg/L dopamine (34.34 ± 0.59 mg/g biomass), a 2.6-fold improvement in titer and a 6.6-fold improvement in yield over previous state-of-the-art in vivo production [14].

Knowledge-Driven DBTL Approach: This workflow illustrates the integration of upstream in vitro investigation to inform and accelerate the subsequent in vivo DBTL cycles for strain development.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagent Solutions for Automated DBTL Workflows

| Reagent/Solution | Function | Application Example |
|---|---|---|
| Lithium Acetate/ssDNA/PEG Transformation Mix | Enables DNA uptake in yeast | High-throughput yeast transformation [5] |
| Zymolyase-based Lysis Buffer | Enzymatic cell wall degradation | Chemical extraction from yeast for metabolite analysis [5] |
| MOPS-based Minimal Medium | Defined growth conditions | Cultivation experiments for metabolite production [14] |
| Cell-Free Protein Synthesis System | In vitro protein expression | Testing enzyme expression levels before in vivo implementation [14] |
| DNA Assembly Enzyme Systems | DNA assembly | Golden Gate, Gibson assembly, ligase cycling reaction [22] [8] |
| LC-MS Mobile Phase Solvents | Chromatographic separation | Metabolite quantification (e.g., verazine, dopamine) [5] [14] |

Advanced Analytics and Machine Learning in the Learn Phase

Machine Learning for Predictive Modeling

The Learn phase has evolved from basic statistical analysis to sophisticated machine learning applications that drive predictive modeling. In combinatorial pathway optimization, gradient boosting and random forest models have demonstrated superior performance in the low-data regime typical of early DBTL cycles [11]. These methods show robustness against training set biases and experimental noise, making them particularly valuable for biological applications where clean, extensive datasets are often unavailable.

Mechanistic kinetic model-based frameworks provide a powerful approach for testing and optimizing machine learning methods in iterative metabolic engineering [11]. These models use ordinary differential equations to describe changes in intracellular metabolite concentrations over time, allowing in silico simulation of pathway behavior under different engineering scenarios. This enables researchers to compare machine learning methods and DBTL strategies without the cost and time requirements of physical experiments.
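A minimal example of such a kinetic model is a two-step pathway (substrate to intermediate to product) with Michaelis-Menten rate laws, integrated here with a simple fixed-step Euler scheme. All parameter values are hypothetical and in arbitrary units; this is a sketch of the modelling idea, not the framework used in [11].

```python
def simulate_pathway(vmax1, vmax2, km1=0.5, km2=0.5, s0=1.0, dt=0.01, t_end=10.0):
    """Integrate dS/dt = -r1, dI/dt = r1 - r2, dP/dt = r2 with explicit Euler steps."""
    s, i, p = s0, 0.0, 0.0
    steps = int(round(t_end / dt))
    for _ in range(steps):
        r1 = vmax1 * s / (km1 + s)   # rate of enzyme 1: substrate -> intermediate
        r2 = vmax2 * i / (km2 + i)   # rate of enzyme 2: intermediate -> product
        s -= r1 * dt
        i += (r1 - r2) * dt
        p += r2 * dt
    return s, i, p


# Two hypothetical designs that differ only in the capacity of the second enzyme.
bottleneck = simulate_pathway(vmax1=1.0, vmax2=0.1)
balanced = simulate_pathway(vmax1=1.0, vmax2=1.0)

print(f"Bottleneck design: intermediate={bottleneck[1]:.3f}, product={bottleneck[2]:.3f}")
print(f"Balanced design:   intermediate={balanced[1]:.3f}, product={balanced[2]:.3f}")
```

In this toy setting, raising the capacity of the second enzyme relieves intermediate accumulation, which is the type of in silico comparison that lets DBTL strategies be evaluated without physical experiments. A production model would use an adaptive ODE solver rather than fixed-step Euler.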

Heterogeneity-Powered Learning

Advanced analytical approaches are leveraging biological heterogeneity to enhance learning. The RespectM method enables microbial single-cell level metabolomics, detecting metabolites at a rate of 500 cells per hour with high efficiency [23]. By analyzing 4,321 single-cell metabolomics data points representing metabolic heterogeneity, researchers trained deep neural networks to establish heterogeneity-powered learning (HPL) models [23].

This approach addresses a fundamental challenge in the Learn phase: the extreme asymmetry between sparse testing data and chaotic metabolic networks [23]. By leveraging naturally occurring heterogeneity, researchers generate sufficient data to power deep learning algorithms, enabling more accurate predictions of biological system behavior. In one application, an HPL-based model achieved high accuracy (Training MSE: 0.0009546, Test MSE: 0.0009198) in predicting optimal metabolic engineering strategies for triglyceride production [23].

The integration of software, robotics, and analytics in automated DBTL pipelines has fundamentally transformed strain development research. By systematically addressing each phase of the cycle with specialized technologies and maintaining data continuity throughout the process, these pipelines enable unprecedented exploration of biological design space. Quantitative results demonstrate order-of-magnitude improvements in throughput, efficiency, and production outcomes across diverse applications.

As the field advances, several emerging trends promise to further enhance DBTL capabilities: increased integration of single-cell analytics to leverage biological heterogeneity, development of more sophisticated recommendation algorithms for the Design phase, and enhanced data infrastructure to support machine learning across multiple DBTL cycles. These advancements will continue to accelerate the engineering of microbial cell factories for sustainable production of pharmaceuticals, chemicals, and materials, solidifying the automated DBTL pipeline's role as a cornerstone of modern biotechnology research and development.

The quest for sustainable and efficient production methods for high-value fine chemicals has positioned microbial metabolic engineering at the forefront of industrial biotechnology. Within this field, the Design-Build-Test-Learn (DBTL) cycle has emerged as a powerful, iterative framework for strain development, enabling the systematic optimization of complex biological systems. This whitepaper elucidates the application of the DBTL cycle in the context of a landmark achievement: the dramatic enhancement of flavonoid production in engineered Escherichia coli. Flavonoids, such as (2S)-pinocembrin, are plant-derived specialized metabolites with recognized therapeutic potential, including anti-oxidative and anti-apoptotic effects that are valuable for drug development [24]. We detail how a modular metabolic strategy, implemented through rigorous DBTL cycling, facilitated the direct production of these compounds from glucose, establishing a robust platform for microbial manufacturing.

The DBTL Cycle in Strain Development

The DBTL cycle is an iterative engineering paradigm that structures the process of biological optimization. Its application to microbial strain development is foundational to modern synthetic biology [14].

- Design: In this initial phase, informed by prior knowledge and data, researchers design genetic modifications. Strategies can range from rational design (based on known pathway information) to the use of machine learning models and protein language models to predict high-fitness enzyme variants or optimal pathway configurations [25]. For metabolic pathways, a common approach is modular design, where large pathways are partitioned into smaller, manageable segments [24].

- Build: This phase involves the physical construction of the genetically engineered strains. Automated biofoundries are increasingly used to execute this step with high throughput and reproducibility, employing techniques such as molecular cloning, plasmid assembly, and genome editing [14] [25].

- Test: The constructed strains are cultivated and their performance is rigorously evaluated. Automated facilities conduct high-throughput screening to measure key performance indicators like product titer, yield, and productivity. Analytical techniques such as mass spectrometry are central to this phase and also support label-free, untargeted discovery of new enzyme products [26] [27].

- Learn: Data collected from the testing phase are analyzed to extract mechanistic insights and identify bottlenecks. This learning phase can be guided by traditional statistical analysis or sophisticated machine learning models that correlate genetic changes with phenotypic outcomes. The insights gained directly inform the next Design phase, closing the loop and initiating a new, more informed cycle of engineering [14] [25].

A key enhancement to this cycle is the "knowledge-driven DBTL" approach, which incorporates upstream in vitro investigations, such as testing enzyme expression levels in cell-free lysate systems, to generate mechanistic understanding before committing to resource-intensive in vivo strain construction [14].

Case Study: Engineering E. coli for High-Yield (2S)-Pinocembrin Production

Background and Objective

(2S)-Pinocembrin is a flavonoid of significant pharmaceutical interest, studied for its potential to alleviate cerebral ischemic injury [24]. Previous microbial production methods relied on supplementation with expensive precursors like L-phenylalanine or cinnamic acid, which is commercially unfavorable. The objective of this engineering effort was to develop an E. coli strain capable of efficiently producing (2S)-pinocembrin directly from glucose, thereby eliminating the need for costly additives and creating a more sustainable production process [24].

Modular Pathway Design and Implementation

A modular metabolic strategy was employed to balance the extensive pathway required for de novo (2S)-pinocembrin synthesis. This approach partitions the overall pathway into discrete, co-regulated modules to alleviate metabolic burden and avoid the accumulation of toxic intermediates [24].

The overall pathway was divided into four modules as shown in the diagram below:

The following table summarizes the functional role of each module in the engineered pathway.

Table 1: Modular Pathway Strategy for (2S)-Pinocembrin Production in E. coli

| Module | Function | Key Enzymes/Gene(s) | Engineering Strategy |
|---|---|---|---|
| Upstream Pathway | Conversion of glucose to L-phenylalanine | Feedback inhibition-resistant AroG (AroGfbr) | Enhancement of native L-phenylalanine biosynthesis capacity [24] |
| Module 1 | Conversion of L-phenylalanine to cinnamic acid | Phenylalanine ammonia lyase (PAL) | Introduction of heterologous plant-derived enzyme [24] |
| Module 2 | Activation of cinnamic acid to its CoA ester | 4-coumarate:CoA ligase (4CL) | Introduction of heterologous enzyme for activation [24] |
| Module 3 | Supply of malonyl-CoA | Acetyl-CoA carboxylase (ACC) | Enhancement of malonyl-CoA precursor supply [24] |
| Module 4 | Assembly of (2S)-pinocembrin | Chalcone synthase (CHS), Chalcone isomerase (CHI) | Introduction of heterologous flavonoid assembly enzymes [24] |

DBTL Cycle in Action

The development of the high-yield strain was a product of iterative DBTL cycling.

- Design (Round 1): The initial design involved selecting appropriate heterologous enzymes (PAL, 4CL, CHS, CHI) with proven activity in E. coli and partitioning them into the four modules. To balance expression, the modules were placed on plasmids with different copy numbers [24].

- Build (Round 1): The genetic constructs were assembled using a system of compatible vectors (e.g., pETDuet-1, pCDFDuet-1, pRSFDuet-1, pACYCDuet-1) and transformed into an E. coli host strain [24].

- Test (Round 1): The initial strain was cultivated, and (2S)-pinocembrin production was quantified, establishing a baseline titer [24].

- Learn (Round 1): Analysis revealed that pathway imbalance and metabolic burden from maintaining four plasmids were primary limitations.

- Design (Round 2): Informed by the initial results, the pathway was re-balanced. This involved fine-tuning the expression of the modules by varying plasmid copy numbers and optimizing the codon usage of the heterologous genes to maximize flux towards the final product [24].

- Build & Test (Round 2): The optimized constructs were built and tested. This iterative process of balancing the modules led to a final strain capable of producing (2S)-pinocembrin at 40.02 mg/L directly from glucose [24].

This case demonstrates a pre-biofoundry, rational implementation of the DBTL cycle. Modern applications would leverage full automation for the Build and Test phases, dramatically accelerating the iterative process [25].
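The copy-number balancing described in the Design rounds can be sketched as a small enumeration problem. The snippet below is an illustration only: the copy-number figures are rough nominal values assumed for the four Duet vectors and should be checked against vendor documentation, and the one-module-per-plasmid layout is a simplification, not the assignment reported in [24].

```python
from itertools import permutations

# Assumed, approximate copy numbers per cell (illustrative only).
VECTOR_COPY_NUMBER = {
    "pACYCDuet-1": 10,
    "pCDFDuet-1": 20,
    "pETDuet-1": 40,
    "pRSFDuet-1": 100,
}

MODULES = ["Module 1 (PAL)", "Module 2 (4CL)", "Module 3 (ACC)", "Module 4 (CHS, CHI)"]


def enumerate_assignments(modules, vectors):
    """Return every way of placing each module on its own distinct vector."""
    return [dict(zip(modules, chosen)) for chosen in permutations(vectors, len(modules))]


assignments = enumerate_assignments(MODULES, list(VECTOR_COPY_NUMBER))
print(len(assignments))  # 24 candidate layouts (4! permutations)

# Show the assumed gene dosage of each module for the first candidate layout.
for module, vector in assignments[0].items():
    print(f"{module}: {vector} (~{VECTOR_COPY_NUMBER[vector]} copies)")
```

With only 24 layouts, this design space is small enough to build and test exhaustively, which is part of why plasmid copy number was a practical tuning knob before automated biofoundries were available.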

Advanced Tools and Methodologies

The field has evolved significantly since the foundational (2S)-pinocembrin study. The following workflow illustrates a modern, automated biofoundry approach to the DBTL cycle for protein and pathway engineering.

Key Technologies Driving Modern DBTL Cycles

- Automated Biofoundries: Integrated robotic workcells automate the Build and Test phases, handling tasks from colony picking and microculture cultivation to sample preparation and analysis. This ensures high reproducibility and throughput, enabling the processing of thousands of variants [26] [25].

- High-Throughput Screening (HTS): Label-free mass spectrometry techniques, such as MALDI-TOF MS, can analyze samples at a rate of seconds per sample, enabling the ultra-high-throughput screening necessary for evaluating large variant libraries [26]. Whole-cell biosensors have also been developed through directed evolution of transcription factors to detect intracellular products like alcohols, facilitating real-time, in situ product detection for screening [28].

- Machine Learning and Protein Language Models (PLMs): PLMs like ESM-2 can perform "zero-shot" prediction of beneficial protein variants, providing an informed starting point for the Design phase without requiring prior structural knowledge [25]. As experimental data are collected, supervised ML models (e.g., multi-layer perceptrons) are trained as fitness predictors that guide the selection of variants in subsequent DBTL cycles, progressively moving through the fitness landscape toward higher-performing variants [25].
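The Learn-to-Design handoff described in the last item can be illustrated with a deliberately simple stand-in. The sketch below is not the multi-layer perceptron or ESM-2 workflow from [25]: it swaps in a crude additive model that estimates each mutation's effect from marginal means, and the mutation labels and fitness values are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def fit_additive_model(measured):
    """Estimate a per-mutation effect as (mean fitness of carriers) - (overall mean)."""
    baseline = mean(measured.values())
    fitness_by_mutation = defaultdict(list)
    for variant, fitness in measured.items():
        for mutation in variant:
            fitness_by_mutation[mutation].append(fitness)
    effects = {m: mean(values) - baseline for m, values in fitness_by_mutation.items()}
    return baseline, effects


def predict(model, variant):
    """Predicted fitness: baseline plus the summed effects of known mutations."""
    baseline, effects = model
    return baseline + sum(effects.get(m, 0.0) for m in variant)


def rank_candidates(model, candidates, top_n):
    """Pick the top_n untested variants to build in the next cycle."""
    return sorted(candidates, key=lambda v: predict(model, v), reverse=True)[:top_n]


# Hypothetical round-1 data: wild type plus three single mutants (relative activity).
round_1 = {
    frozenset(): 1.0,
    frozenset({"A10G"}): 1.5,
    frozenset({"L45F"}): 0.6,
    frozenset({"S88T"}): 1.3,
}
model = fit_additive_model(round_1)

# Untested combinations proposed for round 2.
candidates = [
    frozenset({"A10G", "L45F"}),
    frozenset({"A10G", "S88T"}),
    frozenset({"L45F", "S88T"}),
    frozenset({"A10G", "L45F", "S88T"}),
]
next_round = rank_candidates(model, candidates, top_n=2)
print([sorted(v) for v in next_round])
```

An additive model ignores epistasis between mutations, which is one reason more flexible supervised models are preferred once enough measurements have accumulated.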

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Reagents and Tools for Metabolic Engineering

| Reagent / Tool | Function / Application | Example Use Case |
|---|---|---|
| Compatible Plasmid Systems (e.g., Duet vectors) | Allows simultaneous, balanced expression of multiple genes from a single strain. | Engineering the four-module (2S)-pinocembrin pathway in E. coli [24]. |
| Error-Prone PCR & Site-Saturation Mutagenesis Kits | Introduces random or targeted diversity into a gene for directed evolution. | Creating mutant libraries of a cyclodipeptide synthase (CDPS) to produce new diketopiperazine compounds [26]. |
| Cell-Free Protein Synthesis (CFPS) Systems | Rapid in vitro testing of enzyme expression and pathway function without host constraints. | Used in knowledge-driven DBTL to test enzyme levels before in vivo strain construction [14]. |
| Ribosome Binding Site (RBS) Libraries | Fine-tunes the translation initiation rate and thereby the expression level of a target gene. | Optimizing the relative expression of bicistronic genes in a dopamine production pathway [14]. |
| Protein Language Models (e.g., ESM-2) | Zero-shot prediction of high-fitness protein variants to seed initial libraries. | Designing 96 variants of a tRNA synthetase to initiate an automated DBTL cycle, leading to a 2.4-fold activity improvement [25]. |
| Transcription Factor Biosensors | Real-time, in situ detection of a target metabolite within a living cell for HTS. | Evolved AlkS transcription factor variants used to screen for high-isopentanol-producing strains [28]. |

Optimizing microbial cell factories for fine chemical production is a complex undertaking, and the DBTL cycle gives it structure. The case of flavonoid production in E. coli demonstrates the power of a systematic, modular approach to metabolic engineering. Integrating this framework with automated biofoundries, protein language models, and machine learning is accelerating the pace of biological design. These advances are turning the DBTL cycle from a sequence of separate steps into a tightly integrated, self-improving system that can reach engineering goals, such as substantially higher product titers, faster and with less experimental effort. For researchers and drug development professionals, mastering this evolving toolkit is essential for advancing sustainable chemical production.

The Design-Build-Test-Learn (DBTL) cycle is a systematic framework in synthetic biology and metabolic engineering for developing and optimizing microbial strains. This iterative process enables researchers to efficiently navigate the vast design space of genetic modifications to achieve desired metabolic functions, such as the high-yield production of valuable compounds [7]. Strain optimization is therefore often performed using iterative DBTL cycles, with the goal of progressively developing a production strain by incorporating learning from each previous cycle [11]. This approach is particularly powerful, and often necessary, for combinatorial pathway optimization, where multiple pathway components are adjusted simultaneously. Due to the large set of library components, a combinatorial explosion of the design space often occurs, making it experimentally infeasible to test every design [11]. The DBTL framework provides a structure to manage this complexity.
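To make the scale of this combinatorial explosion concrete, the short sketch below counts the designs for a hypothetical pathway. The gene count, the numbers of promoters and RBSs, and the 96-well plate format are illustrative assumptions, not values taken from [11].

```python
from math import ceil, prod


def design_space_size(variants_per_gene):
    """Number of distinct pathway designs when each gene is tuned independently."""
    return prod(variants_per_gene)


# Hypothetical library: 5 genes, each with 6 promoters x 8 RBSs = 48 expression variants.
variants_per_gene = [6 * 8] * 5
total_designs = design_space_size(variants_per_gene)

# Assuming one design per well of a 96-well plate.
plates_needed = ceil(total_designs / 96)

print(total_designs)  # 254803968
print(plates_needed)  # 2654208
```

Even this modest library would need millions of plates to test exhaustively, which is why each cycle samples only a small, carefully chosen part of the design space.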

The Design Phase: Strategic Planning of Experiments

The Design phase involves planning which genetic modifications to create and which experimental conditions to test. For combinatorial pathway optimization, this means deciding which genes to tune and what expression levels to explore. In parallel, Design of Experiments (DoE) is used to structure the exploration of factors, such as media composition and culture temperature, that interact with the genetic background [29].

Combinatorial Library Design

Combinatorial libraries for pathway optimization are constructed from a large DNA library consisting of promoters, ribosomal binding sites (RBSs), and coding sequences that affect enzyme properties or concentrations [11]. A key challenge is designing libraries that are small enough to be experimentally practical yet smart enough to effectively sample the expression level space. The RedLibs (Reduced Libraries) algorithm addresses this by designing partially degenerate RBS sequences that produce a uniform distribution of Translation Initiation Rates (TIRs) across a user-specified library size [30]. This rational design minimizes experimental effort while maximizing the coverage of possible expression levels, ensuring that a high density of functional clones is present in the library [30].
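The two quantities at stake here, library size and evenness of TIR coverage, can be illustrated with a minimal sketch. This is not the RedLibs implementation: the degenerate sequence and the TIR values are hypothetical, no TIR predictor is included, and the Kolmogorov-Smirnov distance is used only as one plausible way to score how far a set of TIRs is from a uniform spread.

```python
from itertools import product

# Standard IUPAC nucleotide codes (DNA alphabet).
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "AG", "Y": "CT", "S": "CG", "W": "AT", "K": "GT", "M": "AC",
    "B": "CGT", "D": "AGT", "H": "ACT", "V": "ACG", "N": "ACGT",
}


def library_size(degenerate_seq):
    """Number of distinct sequences encoded by a degenerate sequence."""
    size = 1
    for code in degenerate_seq:
        size *= len(IUPAC[code])
    return size


def expand(degenerate_seq):
    """List every concrete sequence encoded by a degenerate sequence."""
    choices = [IUPAC[code] for code in degenerate_seq]
    return ["".join(bases) for bases in product(*choices)]


def uniformity_gap(values, low, high):
    """Kolmogorov-Smirnov distance between values and a uniform distribution on [low, high]."""
    scaled = sorted((v - low) / (high - low) for v in values)
    n = len(scaled)
    return max(
        max(abs((i + 1) / n - u), abs(i / n - u))
        for i, u in enumerate(scaled)
    )


rbs = "AGGRNN"  # hypothetical degenerate RBS fragment: R = A or G, N = any base
variants = expand(rbs)
print(library_size(rbs), len(variants))  # 32 32

# Hypothetical normalized TIRs for two candidate libraries of four members each.
even_tirs = [0.125, 0.375, 0.625, 0.875]
clustered_tirs = [0.1, 0.1, 0.1, 0.1]
print(uniformity_gap(even_tirs, 0.0, 1.0))       # 0.125
print(uniformity_gap(clustered_tirs, 0.0, 1.0))  # 0.9
```

A smaller gap means the library samples the expression range more evenly, so fewer clones are wasted on near-identical expression levels.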

Design of Experiments (DoE) for Process and Media

Statistical Design of Experiments (DoE) is a core methodology for simultaneously optimizing genetic and environmental factors. It allows for a structured exploration of the relationships between experimental variables (factors) and the measured response (e.g., product titer) [29].

- Full Factorial Designs: Test all possible combinations of factor levels, allowing for full characterization of factor effects and interactions. The number of experiments grows as the product of the level counts ($L_1 \times L_2 \times \cdots \times L_f$ for $f$ factors) [29].

- Fractional Factorial Designs: Reduce the number of experiments by testing a carefully selected subset of all possible combinations. This preserves the ability to estimate main effects when higher-order interactions are negligible, but interactions may be confounded (aliased) with each other or with main effects. The resolution of the design (e.g., Resolution III, IV) indicates which effects are confounded [29].

Table 1: Types of Experimental Designs

| Design Type | Key Characteristic | Advantage | Disadvantage |
|---|---|---|---|
| Full Factorial | Tests all factor level combinations | Characterizes all main effects and interactions | Number of experiments can be prohibitively large |
| Fractional Factorial | Tests a subset of all combinations | Reduces experimental workload; efficient for screening | Interactions may be confounded with each other or main effects |
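The difference between the two design types can be shown with a short sketch. The factor names and levels are hypothetical, and the half fraction uses the textbook construction for two-level factors, in which the last factor is set to the product of the others in coded units (-1 for low, +1 for high). With three factors this yields a Resolution III design.

```python
from itertools import product


def full_factorial(levels):
    """Every combination of factor levels; `levels` maps factor name -> list of levels."""
    names = list(levels)
    return [dict(zip(names, combo)) for combo in product(*(levels[n] for n in names))]


def half_fraction(base_factors, generated_factor):
    """Two-level half fraction in coded units: generated factor = product of base factors."""
    runs = []
    for combo in product((-1, 1), repeat=len(base_factors)):
        run = dict(zip(base_factors, combo))
        sign = 1
        for value in combo:
            sign *= value
        run[generated_factor] = sign
        runs.append(run)
    return runs


# Hypothetical factors and levels for a cultivation experiment.
levels = {
    "temperature_C": [30, 37],
    "glucose_g_per_L": [10, 20],
    "inducer_mM": [0.1, 1.0],
}
full = full_factorial(levels)
half = half_fraction(["temperature_C", "glucose_g_per_L"], "inducer_mM")

print(len(full))  # 8 runs: 2 x 2 x 2
print(len(half))  # 4 runs: half of the full design
for run in half:
    print(run)
```

In this half fraction the inducer column is identical to the temperature-by-glucose interaction column, so the two effects cannot be separated. That is the price paid for halving the number of runs.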

The Build and Test Phases: Experimental Execution

Building Strain Libraries

In the Build phase, the designed DNA constructs are assembled and introduced into a host microorganism [11]. High-throughput molecular cloning workflows are essential for generating the diverse libraries of biological strains required for effective DBTL cycling [7]. For example, in a study optimizing the violacein biosynthesis pathway, RBS libraries were constructed via simple PCR and/or assembly strategies using the degenerate sequences identified by the RedLibs algorithm, enabling easy one-pot library generation [30].

Testing Strain Performance