Optimizing Cell Self-Organization: A Guide to Predictive Computational Frameworks

Abstract

This article surveys computational frameworks for studying and controlling cellular self-organization. Aimed at researchers, scientists, and drug development professionals, it details how techniques from machine learning, particularly automatic differentiation, are being used to decode the genetic and biophysical rules guiding morphogenesis. We cover foundational concepts, practical methodologies for model implementation, strategies for troubleshooting and optimization, and a comparative analysis of different modeling approaches. Together, these areas provide a roadmap for using computational models to design tissues predictively and to understand disease, with implications for regenerative medicine and therapeutic development.

The Principles of Self-Organization: From Biological Complexity to Computational Optimization

Troubleshooting Guides

Guide 1: Addressing Variability in Organoid Cultures

Problem: High batch-to-batch variability in organoid morphology and differentiation.

- Potential Cause 1: Inconsistent Matrigel Quality

- Solution: Use lot-qualified, growth factor-reduced (GFR) Matrigel and avoid repeated thawing/refreezing. Ensure the Matrigel concentration is at least 8 mg/mL to create stable domes [1].

- Potential Cause 2: Stem Cell Population Instability

- Solution: Regularly monitor the expression of stem cell markers (e.g., LGR5+). Perform marker expression and karyotype analysis every 5-10 passages to ensure organoid quality and identity. Passage organoids before they become too large or necrotic, typically every 7-12 days [1].

- Potential Cause 3: Suboptimal Passaging

- Solution: For more uniform cultures, use single-cell passaging with reagents like TrypLE Express, supplemented with 10 µM ROCK inhibitor (Y-27632) to promote cell viability. This can produce more consistent results than mechanical dissociation [1].

Guide 2: Managing Computational Reproducibility

Problem: Inability to reproduce computational analyses of self-organization data.

- Potential Cause 1: Manual Data Handling and Non-Scripted Workflows

- Solution: Implement end-to-end automated computational workflows using tools like Snakemake, Nextflow, or Jupyter Notebooks. This avoids error-prone manual steps like spreadsheet manipulation [2].

- Potential Cause 2: Lack of Compute Environment Control

- Solution: Use containerization technologies (e.g., Docker, Singularity) to capture the complete computational environment, including all software dependencies and versions [2].

- Potential Cause 3: Inadequate Documentation

- Solution: Practice literate programming by combining code with human-readable narratives in R Markdown or Jupyter Notebooks. This ensures the analysis process is transparent and understandable [2].

Guide 3: Handling Contamination and Cell Health Issues

Problem: Microbial contamination or poor viability in organoid cultures.

- Potential Cause 1: Compromised Aseptic Technique

- Solution: Use biosafety cabinets and enclosed containers. Implement standardized protocols for tissue sampling and media preparation. Include regular checks for bacteria, fungi, yeast, and mycoplasma [3].

- Potential Cause 2: Improper Cryopreservation or Thawing

- Solution: Pre-treat organoids with ROCK inhibitor (Y-27632) before freezing. Use controlled freezing containers and optimized freezing media. When thawing, remove cryoprotectants promptly through centrifugation [1].

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between a spheroid and an organoid? A1: Organoids are derived from stem cells or primary tissue, contain multiple cell types, exhibit complex structures, and have a theoretically unlimited lifespan when cultured in a hydrogel like Matrigel. Spheroids are derived from immortalized cell lines, typically consist of a single cell type, form simple aggregates, and are cultured as freely floating clusters in low-adhesion plates with a limited lifespan [1].

Q2: What are the key principles that make organoids a "complex system"? A2: Organoids exhibit several key principles of complex biological systems [4]:

- Self-organization: Stem cells can spontaneously form ordered structures without external guidance.

- Emergence: Complex patterns and functionalities (e.g., neural electrical activity, crypt-villus structures) arise from interactions between constituent cells.

- Nonlinearity: Small changes in culture conditions or genetic makeup can lead to disproportionately large effects on development.

- Adaptation: They can adjust and evolve in response to changing environmental conditions.

Q3: How can computational models help optimize cellular self-organization? A3: Computational frameworks can treat the control of cellular organization as an optimization problem. Automatic differentiation, the technique used to train neural networks, can pinpoint how small changes in genetic networks or cellular signals affect the collective behavior of cells. This allows researchers to invert the problem and ask: "What cellular programming is needed to achieve a specific tissue function or shape?" [5]. Hybrid models that combine physics-based principles with data-driven approaches are also emerging [6].

Q4: What are the best practices for ensuring data quality in bioinformatics analyses of self-organization? A4: Adhere to the "Five Pillars of Reproducible Computational Research" [2]:

- Literate Programming: Combine code and narrative in documents (e.g., Jupyter Notebooks, R Markdown).

- Code Version Control and Sharing: Use Git and share code via public repositories.

- Compute Environment Control: Use containerization to manage software environments.

- Persistent Data Sharing: Archive data in stable, public repositories.

- Documentation: Thoroughly document all steps from data collection to analysis.

Q5: My organoids show high variability in size and shape after passaging. How can I reduce this? A5: To reduce variation:

- Use single-cell passaging with enzymatic dissociation (e.g., TrypLE) instead of mechanical "chunking" methods, and add a ROCK inhibitor to the media to support viability.

- Manually select and maintain organoids of similar sizes and morphologies during culture.

- Seed equivalent numbers of cells or organoid fragments per well to standardize starting conditions [1].

Q6: What are the critical components for a standard organoid culture medium? A6: While the exact formulation varies by organoid type, serum-free media is standard and often includes a base like Advanced DMEM/F12, supplemented with critical factors such as [1]:

- N-2 and B-27 supplements for essential nutrients.

- Growth factors like EGF, FGF, Noggin, and R-Spondin-1. These can be added as recombinant proteins or via conditioned media (e.g., from L-WRN cells).

- Small molecule inhibitors such as A-83-01, SB202190, and CHIR99021 to modulate key signaling pathways.

Table 1: Common Challenges in Organoid Culture and Recommended Quality Control (QC) Metrics

| Challenge | Recommended QC Metric | Frequency of Testing | Ideal Outcome / Acceptable Range |
|---|---|---|---|
| Genetic Drift & Misidentification [3] | STR Profiling / Cell Authentication | At initiation and every 10-15 passages [3] | Match to original cell line or tissue source |
| Loss of Stem Cell Population [1] | Marker Expression (e.g., LGR5+) | Every 5-10 passages [1] | Consistent expression of key stem cell markers |
| Microbial Contamination [3] | Mycoplasma Testing | Regularly (e.g., monthly) and for new incoming lines [3] | Negative for bacteria, fungi, yeast, and mycoplasma |
| Assay Variability [1] | Uniform Seeding (Single Cells) | With every experimental passage [1] | High viability post-seeding; consistent organoid size and number per well |

Table 2: Essential Research Reagent Solutions for Organoid Self-Organization Studies

| Reagent / Material | Function in Self-Organization Experiments | Key Considerations |
|---|---|---|
| GFR Matrigel [1] | Provides a 3D extracellular matrix (ECM) scaffold rich in signaling cytokines and structural proteins, essential for proper growth and patterning. | Use high concentration (≥8 mg/mL); qualify lots for consistency. |
| ROCK Inhibitor (Y-27632) [1] | Promotes cell survival during passaging, freezing, and thawing by inhibiting apoptosis, crucial for maintaining cell numbers for self-organization. | Add at 10 µM concentration during stressful manipulations. |
| N-2 & B-27 Supplements [1] | Provide essential nutrients and hormones for cell survival, growth, and neural differentiation, supporting the metabolic needs of complex structures. | Standard component of serum-free organoid media. |
| WNT Agonists (e.g., R-Spondin-1) [1] | Activate the WNT signaling pathway, a critical cue for stem cell maintenance and axial patterning during self-organization. | Can be used as recombinant protein or via conditioned media. |
| L-WRN Conditioned Media [1] | Cost-effective source of WNT-3A, R-Spondin-3, and Noggin, three key signaling molecules that direct intestinal and other organoid fate. | Must be titrated and quality-controlled for different organoid types. |

Experimental Protocols

Protocol 1: Establishing a Reproducible Organoid Passaging Workflow

This protocol aims to minimize variability for downstream analysis or expansion.

- Pre-conditioning: 1-2 hours before passaging, add ROCK inhibitor (Y-27632) to the culture media to a final concentration of 10 µM [1].

- Matrigel Dissolution: Aspirate the culture media from the well. Add an appropriate volume of Cell Recovery Solution or cold PBS to the Matrigel dome. Incubate at 4°C for 30-60 minutes to dissolve the Matrigel [1].

- Cell Collection and Washing: Gently pipette the dissolved Matrigel-cell mixture and transfer to a conical tube. Wash the well with cold basal media to collect any remaining organoids. Centrifuge the pooled suspension at 1100 rpm for 5 minutes. Carefully aspirate the supernatant, including the thin layer of dissolved Matrigel [1].

- Dissociation:

- For clump passaging: Resuspend the pellet in cold basal media and mechanically break up the organoids into small fragments by pipetting vigorously with a P1000 pipette tip.

- For single-cell passaging: Resuspend the pellet in a gentle enzymatic dissociation reagent like TrypLE Express and incubate at 37°C for 5-15 minutes. Neutralize with complete media [1].

- Centrifugation and Reseeding: Centrifuge again at 1100 rpm for 5 minutes. Aspirate the supernatant. Resuspend the cell pellet in a small volume of cold GFR Matrigel. Seed as domes in a pre-warmed plate and allow to polymerize for 20-30 minutes at 37°C. Finally, gently add complete organoid media on top of the dome, including ROCK inhibitor if the organoids were dissociated to single cells [1].

Protocol 2: Implementing a Computational Reproducibility Pipeline

This protocol outlines steps for creating a reproducible analysis of self-organization data.

- Literate Programming Setup: Begin your analysis in a Jupyter Notebook or R Markdown document. Write the narrative and methodology alongside the code from the start [2].

- Version Control Initialization: Initialize a Git repository for your project. Make frequent, descriptive commits as the analysis progresses. Use a remote repository (e.g., GitHub, GitLab) for backup and sharing [2].

- Environment Capture: Create a container (e.g., Dockerfile) that specifies all operating system dependencies, software, and package versions required for the analysis. Alternatively, use a package manager like Conda to export the environment specification [2].

- Workflow Automation: Script the entire analysis from raw data ingestion to final figure and table generation. Use a workflow management system (e.g., Snakemake, Nextflow) or a master script to coordinate all steps. Set a fixed random seed for any stochastic algorithms [2].

- Data and Code Archiving: Upon completion, archive the final version of the code and the raw data in a persistent, public repository (e.g., Zenodo, Figshare) and link the two with a permanent DOI [2].
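Two of the steps above, fixing the random seed and recording exactly which raw data and interpreter produced a result, can be sketched in a few lines of standard-library Python. This is a minimal illustration under stated assumptions, not a prescribed tool: the function names, the manifest fields, and the toy stochastic step are all hypothetical and would be replaced by the project's own analysis code.

```python
import hashlib
import json
import platform
import random
import sys
from pathlib import Path


def sha256_of_file(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(raw_data: Path, seed: int) -> dict:
    """Record what is needed to re-run one analysis step (hypothetical fields)."""
    return {
        "raw_data": raw_data.name,
        "raw_data_sha256": sha256_of_file(raw_data),
        "random_seed": seed,
        "python_version": sys.version.split()[0],
        "platform": platform.platform(),
    }


def write_manifest(manifest: dict, out_path: Path) -> None:
    """Save the manifest as sorted, indented JSON so diffs stay readable."""
    out_path.write_text(json.dumps(manifest, indent=2, sort_keys=True))


def run_stochastic_step(seed: int, n: int = 5) -> list:
    """Toy stand-in for a stochastic analysis such as bootstrapping."""
    rng = random.Random(seed)  # local generator: no hidden global state
    return [rng.random() for _ in range(n)]
```

Committing the manifest alongside the code gives a reviewer a quick way to confirm they are starting from the same inputs before comparing outputs. It complements, and does not replace, a container or Conda environment file.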

Signaling Pathways and Experimental Workflows

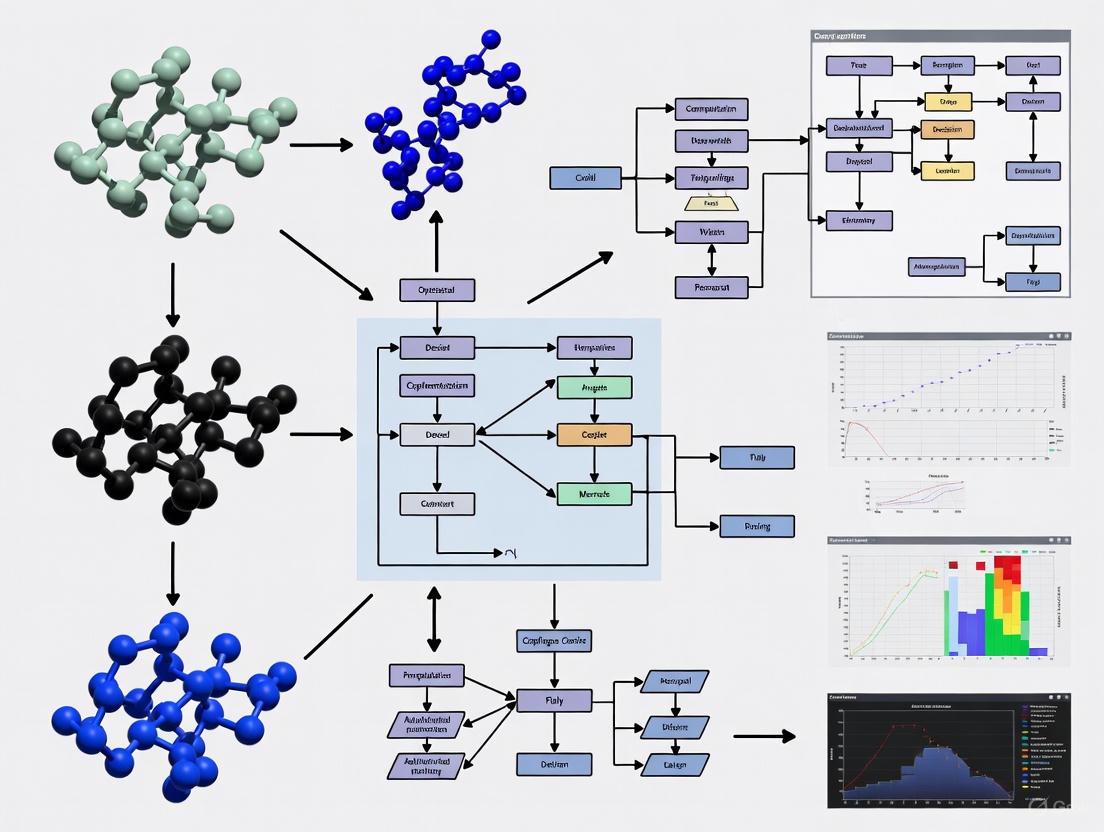

Diagram 1: Core self-organization process in organoids.

Diagram 2: Computational framework for predicting self-organization.

Troubleshooting Guides

Guide 1: Addressing Poor Predictive Performance in Morphogenesis Models

Problem: Your computational model of cellular self-organization fails to accurately predict the final tissue shape or structure.

Solutions:

- Check Gradient Calculations: If using automatic differentiation, verify that the gradients of your objective function with respect to genetic network parameters are computed correctly. Incorrect gradients will lead the optimization astray [5].

- Review Model Constraints: Ensure that physical constraints, such as mass conservation, limits on cell density, and realistic reaction kinetics, are properly implemented in your model. Overly simplified constraints can reduce predictive power [7].

- Calibrate with Experimental Data: Refine your model by calibrating it against experimental data. A model that is not calibrated on real biological data will struggle to make accurate predictions [5] [8].
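One common way to carry out the gradient check in the first solution is to compare the gradient under test against a central finite-difference estimate. The sketch below assumes a toy objective (a Hill-type response with arbitrary constants) and a hand-derived gradient standing in for the output of an automatic differentiation library; neither comes from the cited work.

```python
import math


def finite_difference_gradient(f, params, eps=1e-6):
    """Central-difference estimate of the gradient of f at params."""
    grad = []
    for i in range(len(params)):
        up = list(params)
        down = list(params)
        up[i] += eps
        down[i] -= eps
        grad.append((f(up) - f(down)) / (2.0 * eps))
    return grad


def max_relative_error(grad_a, grad_b, floor=1e-12):
    """Largest per-component relative disagreement between two gradients."""
    return max(
        abs(a - b) / max(abs(a), abs(b), floor)
        for a, b in zip(grad_a, grad_b)
    )


SIGNAL, TARGET = 2.0, 0.8  # arbitrary illustrative values


def toy_objective(params):
    """Squared error of a Hill-type response; params = [k, n]."""
    k, n = params
    response = SIGNAL**n / (k**n + SIGNAL**n)
    return (response - TARGET) ** 2


def toy_gradient(params):
    """Hand-derived gradient of toy_objective, standing in for AD output."""
    k, n = params
    s_n, k_n = SIGNAL**n, k**n
    denom = (k_n + s_n) ** 2
    response = s_n / (k_n + s_n)
    d_response_dk = -s_n * n * k ** (n - 1) / denom
    d_response_dn = s_n * k_n * (math.log(SIGNAL) - math.log(k)) / denom
    residual = 2.0 * (response - TARGET)
    return [residual * d_response_dk, residual * d_response_dn]
```

A relative error well below about 1e-5 at several parameter settings suggests the two gradients agree. A value near 1 or 2 usually points to a sign error or a missing term. Finite differences are too slow for the optimization itself but are adequate as a spot check.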

Guide 2: Managing Computational Complexity in Large-Scale Models

Problem: The optimization process becomes computationally intractable when scaling to large networks or cell populations.

Solutions:

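- Leverage Automatic Differentiation: Use automatic differentiation, a technique from machine learning, to compute gradients efficiently even in highly complex models [5] [8]. In reverse mode, the gradient of a scalar objective with respect to all parameters costs a small constant multiple of one simulation, whereas finite-difference approximations need at least one extra simulation per parameter.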

- Implement Parallel Computing: Harness parallel computing architectures to distribute the computational load, especially when simulating large cell colonies or running multiple parameter sets [9].

- Start with a Reduced Model: Begin with a simplified model that captures core network interactions before progressively adding complexity. This helps identify key drivers without immediate computational overload [7].

Guide 3: Controlling Charge Heterogeneity in Recombinant Protein Production

Problem: The charge variant profile of your monoclonal antibody (mAb) is inconsistent between batches, affecting therapeutic quality.

Solutions:

- Optimize Culture Conditions: Use Machine Learning (ML) models to identify the optimal combination of culture parameters (pH, temperature, duration) to minimize undesirable charge variants [10].

- Analyze Medium Components: Employ ML-driven analysis to understand the complex, non-linear effects of medium components (e.g., glucose, metal ions) on post-translational modifications that cause charge heterogeneity [10].

- Adopt a Quality-by-Design (QbD) Framework: Implement an adaptive, ML-driven optimization strategy aligned with QbD principles to proactively control critical quality attributes [10].

Frequently Asked Questions (FAQs)

FAQ 1: What is the core computational technique used to translate cell growth into an optimization problem? The core technique is automatic differentiation. Best known as the engine behind neural network training, it allows researchers to efficiently compute how small changes in a cell's genetic network or signaling pathways impact the emergent behavior of the entire cell collective. This transforms the process of understanding cell organization into a tractable optimization problem that a computer can solve [5] [8].

FAQ 2: How can we ensure that a computational model of the cell cycle is biologically relevant? Choosing the right modeling framework is crucial. The table below compares different computational paradigms to help you select the most appropriate one for your research goal.

| Modeling Paradigm | Key Strengths | Primary Applications | Key Considerations |
|---|---|---|---|
| Ordinary Differential Equations (ODEs) [9] | Captures deterministic dynamics of biochemical networks; well-established analytical methods. | Studying cyclin/CDK network dynamics, DNA replication and repair mechanisms [9]. | Requires accurate kinetic parameters; can become computationally heavy for large systems. |
| Agent-Based Models (ABMs) [9] [11] | Models individual cell behavior, heterogeneity, and spatial interactions within a tissue or tumor microenvironment. | Studying tumor-immune interactions, cell population dynamics, and spatial organization [9]. | High computational cost for large cell numbers; analysis can be complex. |
| Machine Learning (ML) Models [10] | Discovers complex, non-linear relationships in large datasets without requiring a pre-defined mechanistic model. | Optimizing cell culture conditions to control charge variants in mAbs; predicting cell behavior from data [10]. | Dependent on high-quality, large-scale data; model interpretability can be a challenge. |

FAQ 3: What are common pitfalls when applying machine learning to bioprocess optimization? Common pitfalls include:

- Insufficient Data Quality: ML models require large, high-quality datasets. Noisy or biased data will lead to unreliable predictions [10].

- Poor Model Interpretability: A "black box" model that predicts well but offers no biological insight is of limited use for understanding underlying mechanisms [10].

- Ignoring Nonlinear Interactions: Traditional methods like one-factor-at-a-time (OFAT) fail to capture complex interactions between process parameters, which ML is designed to handle [10].

FAQ 4: Can principles of cellular self-organization be applied beyond tissue engineering? Yes. The principles of self-organization, where local interactions give rise to global order, are observed across biological scales. For example, the "peak selection" model shows how modular structures in the brain (e.g., grid cell modules) and distinct species clusters in ecosystems can self-organize from a smooth gradient combined with local competitive interactions [12]. This suggests a universal principle that can inform computational models across neuroscience and ecology.

Experimental Protocols

Protocol 1: Differentiable Programming for Engineering Cellular Morphogenesis

This protocol outlines a computational method to discover genetic rules that guide cells into target shapes [5] [8].

1. Problem Formulation:

- Define Target Outcome: Specify the desired macroscopic outcome, such as a spheroid of a specific size or a horizontally elongated cell cluster.

- Formulate as Optimization: Frame the search for the genetic network that achieves this outcome as an optimization problem, where the goal is to minimize the difference between the simulated and target structures.

2. Model Setup:

- Define Cell Types: In the simulation, establish at least two cell archetypes (e.g., stationary "source cells" that emit growth factors and "proliferating cells" that divide in response) [8].

- Parameterize Gene Network: Create a model of a gene regulatory network where parameters (e.g., gene expression thresholds, interaction strengths) are the variables to be optimized.

3. Optimization via Automatic Differentiation:

- Simulate and Compare: Run the simulation and compare the resulting cell cluster shape to the target.

- Compute Gradients: Use automatic differentiation to efficiently calculate how each parameter in the gene network influences the final shape.

- Iterate and Converge: Update the network parameters based on the gradients to reduce the error. Repeat until the model reliably produces the target structure.

4. Validation and Analysis:

- Extract Rules: Analyze the optimized model to uncover the learned genetic rules. For example, it might reveal a motif where a receptor gene suppresses division in high-growth-factor regions [8].

- Experimental Testing: Synthesize cells with the prescribed genetic circuits and conduct real-world experiments to validate the computational predictions [8].
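The simulate, differentiate, and update loop of step 3 can be illustrated with a deliberately small example. The sketch below is an assumption-laden toy, not the cited method: it replaces the gene network with two scalar parameters (a division rate and a capacity at which division is suppressed), uses a hand-written forward-mode automatic differentiation class (`Dual`) so that it runs without any library, and picks the learning rate by hand for this toy problem. A real model with many parameters would normally rely on a reverse-mode library such as JAX or PyTorch.

```python
class Dual:
    """A value paired with its derivative with respect to one chosen input."""

    def __init__(self, val, der=0.0):
        self.val = float(val)
        self.der = float(der)

    @staticmethod
    def lift(x):
        return x if isinstance(x, Dual) else Dual(x)

    def __add__(self, other):
        o = Dual.lift(other)
        return Dual(self.val + o.val, self.der + o.der)

    __radd__ = __add__

    def __sub__(self, other):
        o = Dual.lift(other)
        return Dual(self.val - o.val, self.der - o.der)

    def __rsub__(self, other):
        return Dual.lift(other) - self

    def __mul__(self, other):
        o = Dual.lift(other)
        return Dual(self.val * o.val, self.der * o.val + self.val * o.der)

    __rmul__ = __mul__

    def __truediv__(self, other):
        o = Dual.lift(other)
        return Dual(self.val / o.val,
                    (self.der * o.val - self.val * o.der) / (o.val * o.val))

    def __rtruediv__(self, other):
        return Dual.lift(other) / self


def simulate_cluster_size(rate, capacity, steps=20, start=1.0):
    """Toy growth rule: division slows as the cluster nears `capacity`."""
    size = start
    for _ in range(steps):
        size = size + rate * size * (1.0 - size / capacity)
    return size


def loss(rate, capacity, target):
    """Squared difference between simulated and target cluster size."""
    diff = simulate_cluster_size(rate, capacity) - target
    return diff * diff


def loss_gradient(rate, capacity, target):
    """Forward-mode AD: seed one input's derivative with 1.0 per pass."""
    d_rate = loss(Dual(rate, 1.0), capacity, target).der
    d_capacity = loss(rate, Dual(capacity, 1.0), target).der
    return d_rate, d_capacity


def fit(rate, capacity, target, learning_rate=5e-6, iterations=500):
    """Plain gradient descent on the two parameters."""
    for _ in range(iterations):
        d_rate, d_capacity = loss_gradient(rate, capacity, target)
        rate -= learning_rate * d_rate
        capacity -= learning_rate * d_capacity
    return rate, capacity
```

Calling `fit(0.3, 50.0, target=30.0)` returns parameters whose simulated final size is close to the target. Forward mode needs one pass per parameter, which is acceptable for two parameters but is the reason reverse mode is preferred once a network has many.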

Protocol 2: Machine Learning-Driven Optimization of mAb Charge Heterogeneity

This protocol describes using ML to control a critical quality attribute (charge heterogeneity) in biopharmaceutical manufacturing [10].

1. Data Collection and Preprocessing:

- Gather Historical Data: Collect high-quality data from past bioreactor runs, including process parameters (pH, temperature, nutrient levels) and the resulting charge variant profiles (acidic, main, and basic species).

- Clean and Normalize Data: Preprocess the data to handle missing values and normalize features to a common scale.

2. Model Training and Validation:

- Select ML Algorithm: Choose a supervised learning regression algorithm (e.g., Random Forest, Gradient Boosting) capable of modeling non-linear relationships.

- Train Model: Use the historical data to train the model to predict the charge variant profile from the process parameters.

- Validate Model: Test the model's predictive accuracy on a held-out dataset not used during training.

3. Model Inversion for Optimization:

- Define Target Profile: Specify the ideal charge variant profile for your monoclonal antibody.

- Invert the Model: Use the trained ML model to identify the set of culture conditions (pH, temperature, duration, etc.) that are predicted to yield the target profile.

4. Implementation and Monitoring:

- Run Bioreactor: Execute a bioreactor run using the ML-prescribed conditions.

- Analyze Output: Measure the actual charge variant profile of the produced mAb.

- Refine Model: Use the results from this run to further refine and recalibrate the ML model, creating an adaptive optimization loop [10].
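The inversion in step 3 can be as simple as searching a grid of candidate conditions for the prediction closest to the target profile. The sketch below assumes an exhaustive grid search and uses a placeholder predictor, `toy_charge_model`, whose coefficients are arbitrary and carry no biological meaning. In practice the trained regression model would be called in its place, and the grid values here are illustrative only.

```python
from itertools import product


def toy_charge_model(ph, temp_c, days):
    """Placeholder predictor returning (acidic, main, basic) fractions.

    The coefficients are arbitrary; a real workflow would call the
    trained regression model here instead.
    """
    acidic = 0.20 + 0.10 * (ph - 7.0) + 0.010 * (temp_c - 35.0) + 0.005 * (days - 12)
    basic = 0.10 - 0.05 * (ph - 7.0) + 0.002 * (days - 12)
    return acidic, 1.0 - acidic - basic, basic


def squared_error(profile, target):
    """Sum of squared differences between two charge variant profiles."""
    return sum((p - t) ** 2 for p, t in zip(profile, target))


def invert_model(predict, grid, target):
    """Exhaustive search: return the grid point whose prediction is closest.

    Grid keys must be listed in the same order as predict's arguments.
    """
    best_conditions, best_error = None, float("inf")
    for conditions in product(*grid.values()):
        error = squared_error(predict(*conditions), target)
        if error < best_error:
            best_conditions, best_error = conditions, error
    return dict(zip(grid.keys(), best_conditions)), best_error


# Illustrative candidate conditions, not recommended setpoints.
GRID = {
    "ph": [6.8, 6.9, 7.0, 7.1, 7.2],
    "temp_c": [33.0, 34.0, 35.0, 36.0, 37.0],
    "days": [10, 12, 14],
}
```

Grid search is easy to audit and works with any predictor, including tree ensembles that have no useful gradients. Its cost grows quickly with the number of parameters, so larger design spaces may call for a different search strategy.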

Visualizations

Diagram 1: Differentiable Programming Workflow for Cell Engineering

Diagram 2: Key Signaling in Growth Factor-Driven Morphogenesis

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Research |
|---|---|
| Automatic Differentiation Libraries (e.g., PyTorch, JAX) | Enables efficient gradient computation for optimizing complex models of genetic networks and cellular interactions [5] [8]. |
| Cell Colony Simulators (e.g., gro simulator) | Provides an agent-based modeling environment to simulate the behavior and communication of individual cells in a growing colony, useful for testing genetic circuits [11]. |
| CHO (Chinese Hamster Ovary) Cell Lines | The industry standard host cell line for the production of recombinant therapeutic proteins, including monoclonal antibodies [10]. |
| Cation Exchange Chromatography (CEX) | An essential analytical technique for separating and quantifying the different charge variants (acidic, main, basic) of a monoclonal antibody [10]. |
| Fluorescent Ubiquitination-Based Cell Cycle Indicator (FUCCI) | A live-cell imaging tool that allows real-time visualization of cell cycle progression in individual cells, useful for validating cell cycle models [9]. |

Frequently Asked Questions & Troubleshooting

Q1: Our computational model fails to reproduce key experimental results on tissue morphogenesis. How can we diagnose the issue? This is often a problem of reproducibility (re-running the same analysis) versus replicability (obtaining consistent results with a new, independent setup) [13]. To diagnose:

- Action: First, check for computational reproducibility. Use the same raw data and code to rebuild the analysis files and implement the same statistical analysis [14]. A discrepancy at this stage points to issues in data processing, statistical tools, or accidental errors.

- Action: If computationally reproducible, the issue may be with replicability. Ensure your model and the experimental setup share a close fit between the hypothesis and the experimental design/data [14]. Inconsistencies here often prolong validation or terminate projects [14].

Q2: How can we effectively quantify the structural complexity of a self-organized cell cluster? Traditional single-scale entropy measures often overlook hierarchical patterns. A multiscale entropy framework is better suited for this task.

- Action: Apply spectral graph coarsening to your network representation of the cell cluster [15]. This method aggregates groups of connected nodes into supernodes, creating a hierarchy of reduced graphs that preserve key structural properties [15].

- Action: Compute a compression-based entropy measure at each scale of the reduced graph [15]. The evolution of entropy across scales reveals structural regimes and provides a more complete characterization of the cluster's complexity [15].

Q3: Our model successfully predicts cell behavior in isolation, but fails when cells interact in a cluster. What could be wrong? The problem likely lies in not fully capturing the rules that govern collective cellular behavior.

- Action: Reframe the control of cellular organization as an optimization problem [5]. Use automatic differentiation to efficiently compute how infinitesimal changes in a gene regulatory network influence the emergent behavior of the entire tissue [5] [8]. This helps the computer learn the genetic and biochemical rules cells follow to achieve a collective function [5].

Q4: How can we reduce the computational cost of calculating entropy for very large networks of cells? Calculating compression-based entropy is computationally expensive. A multiscale approach can significantly reduce this cost.

- Action: Perform entropy analysis on spectrally coarsened versions of your original network [15]. By working with significantly smaller graphs, you can obtain a useful entropy-based metric at a fraction of the computational cost, enabling the analysis of much larger networks [15].

Experimental Protocols & Methodologies

1. Protocol for Multiscale Entropy Analysis of a Cellular Network

This protocol quantifies the structural complexity of a cell cluster across different hierarchical levels [15].

- Input: A graph $G = (V, E)$ representing the cell cluster, where nodes are cells and edges represent interactions.

- Procedure:

- Graph Coarsening: Apply the spectral graph coarsening framework [5] [16] to $G$ to produce a series of reduced graphs $G_c$. The Laplacian matrix of the coarsened graph is defined as $L_c = C^{\mp} L C^{+}$, where $C$ is the coarsening matrix and $L$ is the original Laplacian [15].

- Graph Encoding: At each scale, encode the graph's adjacency matrix into a binary sequence.

- Entropy Calculation: Use a universal compression algorithm (e.g., arithmetic coding) on the binary sequence to estimate the compression-based entropy.

- Normalization: Normalize the entropy value at each scale using a randomized Erdős-Rényi graph baseline of the same size and density [15].

- Output: A multiscale entropy profile showing how structural complexity evolves as the network is coarsened.
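The encoding, compression, and normalization steps can be sketched with the Python standard library. Several substitutions are made here and should be read as assumptions rather than the cited method: `zlib` (DEFLATE) stands in for arithmetic coding, the random baseline fixes the exact edge count rather than an edge probability, and `pairwise_coarsen` is a crude node-pairing placeholder that does not preserve spectral properties the way spectral graph coarsening does. All function names are hypothetical.

```python
import random
import zlib


def adjacency_bits(n, edges):
    """Upper triangle of the adjacency matrix as a b'0'/b'1' byte string."""
    edge_set = {frozenset(e) for e in edges}
    return bytes(
        49 if frozenset((i, j)) in edge_set else 48  # ASCII '1' / '0'
        for i in range(n) for j in range(i + 1, n)
    )


def compressed_size(n, edges):
    """Compressed length in bytes: a proxy for compression-based entropy."""
    return len(zlib.compress(adjacency_bits(n, edges), 9))


def normalized_entropy(n, edges, trials=20, seed=0):
    """Ratio to the mean over random graphs with equal node and edge counts."""
    rng = random.Random(seed)
    n_edges = len({frozenset(e) for e in edges})
    pairs = [(i, j) for i in range(n) for j in range(i + 1, n)]
    baseline = sum(
        compressed_size(n, rng.sample(pairs, n_edges)) for _ in range(trials)
    ) / trials
    return compressed_size(n, edges) / baseline


def pairwise_coarsen(n, edges):
    """Crude stand-in for coarsening: merge nodes 2k and 2k+1 into node k."""
    merged = {frozenset((u // 2, v // 2)) for u, v in edges if u // 2 != v // 2}
    return (n + 1) // 2, [tuple(sorted(e)) for e in merged]


def multiscale_profile(n, edges, levels=2):
    """Normalized entropy at the original scale and each coarsened scale."""
    profile = []
    for _ in range(levels + 1):
        profile.append(normalized_entropy(n, edges))
        n, edges = pairwise_coarsen(n, edges)
    return profile


def two_cliques(size):
    """Example input: two disconnected cliques of `size` nodes each."""
    return [(i, j) for base in (0, size)
            for i in range(base, base + size)
            for j in range(i + 1, base + size)]
```

For a highly ordered input such as `two_cliques(30)`, the normalized value falls well below 1 because the adjacency string compresses far better than a random graph of the same density. Values near 1 indicate structure that is indistinguishable from random at that scale.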

2. Protocol for Differentiable Programming of Cell Clusters

This protocol uses automatic differentiation to discover the genetic rules that guide cells to self-organize into a target shape [5] [8].

- Input: A target tissue shape or collective cell behavior.

- Procedure:

- Model Setup: Construct a simulation with clusters of cells, categorizing them into types (e.g., stationary source cells and proliferating cells) [8].

- Gradient Computation: Use automatic differentiation to compute the gradient of the final tissue shape with respect to the parameters of the intracellular gene network. This reveals how small changes in genes affect the collective outcome [5] [8].

- Parameter Optimization: Iteratively adjust the gene network parameters to minimize the difference between the simulated and target morphogenesis.

- Rule Extraction: Analyze the optimized gene network to identify the regulatory motif (e.g., a receptor gene that activates upon sensing an external growth factor and suppresses cell division) [8].

- Output: A learned gene regulatory network that instructs cells to form the target structure.
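The procedure above can be written compactly as a gradient-based optimization. The notation below is introduced here for illustration and is not taken from the cited work: $\theta$ denotes the gene network parameters, $S_T(\theta)$ the simulated cell configuration after $T$ steps, $S^{\mathrm{target}}$ the target shape, $d(\cdot,\cdot)$ a chosen shape-distance measure, and $\eta$ a step size.

```latex
\theta^{*} = \arg\min_{\theta} \mathcal{L}(\theta),
\qquad
\mathcal{L}(\theta) = d\big(S_T(\theta),\, S^{\mathrm{target}}\big),
\qquad
\theta_{k+1} = \theta_{k} - \eta \, \nabla_{\theta} \mathcal{L}(\theta_{k})
```

Automatic differentiation supplies $\nabla_{\theta} \mathcal{L}$ by propagating derivatives through every step of the simulation. This requires each simulation step to be differentiable, or to be replaced by a differentiable approximation.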

The table below compares key entropy measures used to quantify complexity in biological systems.

Table 1: Entropy Measures for Quantifying Biological Complexity

| Entropy Measure | Scale of Application | Key Principle | Primary Use Case |
|---|---|---|---|
| Compression-based Graph Entropy [15] [17] | Network | Quantifies the information content needed to encode a graph's structure after compression. | Characterizing the structural complexity and predictability of networks, such as cell interaction networks. |
| Multiscale Graph Entropy [15] | Multiscale Network | Extends compression entropy by applying graph reduction, showing how complexity evolves across hierarchical scales. | Uncovering consistent entropy profiles and structural regimes (stable, increasing, hybrid) in hierarchical biological networks. |
| Local Entropy-Weighted Binary Pattern [16] | Image/Texture | Uses two-dimensional entropy to weight local binary patterns, enhancing feature discriminability. | Classifying textures in biological images, such as microscopic or medical images. |
| Local Entropy (for Financial Patterns) [18] | Time Series / Data Cluster | Measures the uncertainty or purity of outcomes within a local cluster of data points. | Identifying high-quality, non-overlapping patterns with consistent behavior; adaptable for analyzing dynamic cell behavior. |

The table below outlines common challenges in computational research on cellular self-organization and potential solutions.

Table 2: Troubleshooting Guide for Computational Bioengineering

| Problem Area | Specific Challenge | Proposed Solution | Underlying Principle |
|---|---|---|---|
| Reproducibility [14] [19] | Inability to duplicate prior results with the same materials and data. | Ensure full computational reproducibility by sharing and re-running the exact analytical dataset, computer code, and metadata [14]. | Reproducibility is a minimum necessary condition for a finding to be believable and informative [14]. |
| Model Generalization | Model works in simulation but not with new experimental data or conditions. | Test for replicability by developing an independent implementation or applying the model to a new, independently collected dataset [13]. | Replicability assesses whether the finding can be duplicated under different experimental conditions [13]. |
| Structural Complexity | Single-scale complexity measures fail to capture hierarchical tissue organization. | Implement a multiscale entropy framework using spectral graph coarsening to analyze complexity across scales [15]. | Real-world networks display patterns that are only apparent at certain scales; a multiscale tool better captures structural complexity [15]. |
| Predictive Control | Inability to inversely program cells to achieve a specific target shape. | Use automatic differentiation to translate morphogenesis into an optimization problem and discover required gene network rules [5] [8]. | Automatic differentiation efficiently computes gradients of complex functions, enabling the inversion of a predictive model to dictate cellular programming [5]. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Reagents for Optimizing Cell Self-Organization

| Tool / Reagent | Function / Explanation | Application Context |

|---|---|---|

| Automatic Differentiation [5] [8] | An algorithm that efficiently computes the gradient (sensitivity) of a complex function's output with respect to its inputs. | Core engine for inverting cell behavior models; determines how to change genetic inputs to achieve a target tissue output. |

| Spectral Graph Coarsening [15] | A graph reduction method that aggregates nodes, preserving the spectral properties of the original graph's Laplacian matrix. | Creates multiscale representations of cell networks for efficient entropy analysis and complexity profiling. |

| Compression-Based Entropy [15] [17] | An information-theoretic measure that estimates the structural complexity of a system by the length of its compressed binary encoding. | Quantifies the structural complexity and inherent predictability of cell interaction networks. |

| Differentiable Programming | A paradigm where entire simulation programs are made differentiable, allowing end-to-end gradient-based optimization. | Provides the framework for combining physical models of cell adhesion/mechanics with learnable gene networks. |
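
To make the compression-based entropy entry in the table above concrete, the following minimal sketch compares how well two toy contact networks compress. It assumes `zlib` as a stand-in encoder and a simple upper-triangle serialisation of the adjacency matrix; the encoders used in [15] [17] are not specified here, and both networks are hypothetical.

```python
import random
import zlib


def upper_triangle_bits(n_nodes, has_edge):
    """Serialise the upper triangle of an undirected adjacency matrix as a 0/1 string."""
    return "".join(
        "1" if has_edge(i, j) else "0"
        for i in range(n_nodes)
        for j in range(i + 1, n_nodes)
    )


def compression_ratio(bits):
    """Compressed size / raw size. Lower values indicate more regular structure."""
    raw = bits.encode("ascii")
    return len(zlib.compress(raw, 9)) / len(raw)


N_CELLS = 200
rng = random.Random(0)

# Ordered toy network: two alternating cell types, contacts only between unlike types.
ordered_bits = upper_triangle_bits(N_CELLS, lambda i, j: (i + j) % 2 == 1)
# Disordered toy network: each possible contact is present with probability 0.5.
random_bits = upper_triangle_bits(N_CELLS, lambda i, j: rng.random() < 0.5)

ordered_ratio = compression_ratio(ordered_bits)
random_ratio = compression_ratio(random_bits)
print(f"ordered: {ordered_ratio:.3f}  random: {random_ratio:.3f}")
```

The ordered network compresses to a much smaller fraction of its raw size than the random one, which is the intuition behind using compressed length as a proxy for structural complexity.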

FAQs and Troubleshooting Guides

General Concepts

What are the key biological processes controlling cell self-organization?

Cell self-organization is guided by the interplay of three core processes:

- Genetic Networks: Internal genetic programs that define a cell's potential behaviors and responses.

- Chemical Signaling: Molecules such as ligands and morphogens that transmit information between cells.

- Physical Forces: Mechanical cues like tension and compression that instruct cell fate and tissue shape.

A pivotal study revealed that chemical signals like BMP4 alone are insufficient to guide gastrulation; the transformation began only when cells were also under the correct mechanical conditions, demonstrating a fundamental interdependence [20].

Why should I use a computational model for my self-organization experiments?

Computational models translate the complex process of cell growth into an optimization problem a computer can solve. They can predict how small changes in genes or cellular signals affect the final tissue design, moving beyond trial-and-error approaches. A recent framework using automatic differentiation can extract the rules cells follow to achieve a collective function, which could someday be used to design living tissues with specific shapes [5].

Troubleshooting Common Experimental Issues

My synthetic embryo model fails to generate the correct cell layers (e.g., mesoderm and endoderm). What could be wrong?

A common cause is that mechanical conditions are overlooked. As demonstrated in optogenetic studies, mesoderm and endoderm can fail to form in unconfined, low-tension cell cultures even with proper BMP4 activation [20].

Troubleshooting Guide:

| Possible Cause | Diagnostic Check | Recommended Solution |

|---|---|---|

| Insufficient Mechanical Confinement | Check if cell colonies are allowed to spread freely without physical constraint. | Culture cells in confined colonies or embed them in tension-inducing hydrogels [20]. |

| Absence of Mechanosensory Protein Activity | Measure nuclear localization of YAP1. | Verify that nuclear YAP1, which acts as a molecular brake on gastrulation, is appropriately regulated by mechanical tension [20]. |

| Purely Biochemical Induction | Review protocol: are you relying solely on morphogens? | Ensure your experimental system integrates both biochemical (BMP4, WNT, Nodal) and physical priming steps [20]. |

I am analyzing a genetic network, but my tokenization of genomic "words" does not reveal meaningful patterns. What alternative methods exist?

The common presuppositions that the genomic alphabet has only four letters and that words are always triplets can limit analysis. Consider an alternative "contextual tokenization" that uses a seven-symbol alphabet accounting for codon degeneracy [21].

Comparison of Genomic Tokenization Methods:

| Method | Core Principle | Alphabet Size | "Word" Unit | Best Use Case |

|---|---|---|---|---|

| Frame Tokenization (TF) | Sliding window of fixed size n [21]. | 4 (A, T, C, G) | N-nucleotide sequences | Baseline analysis of texts with unknown punctuation. |

| Triplet Tokenization (TT) | Partitioning sequence into consecutive non-overlapping triplets [21]. | 4 (A, T, C, G) | Codons | Standard genetic code analysis. |

| Contextual Tokenization | Accounts for codon degeneracy based on nucleotide position [21]. | 7 (A, T, C, G, Y, X, *) | Variable length | Detecting deeper semiotic information and power-law distributions. |

Y (any purine), X (any pyrimidine), * (any nucleotide) [21].
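
The two fixed-length methods in the table can be sketched in a few lines. This is a minimal illustration, assuming the sliding window advances one nucleotide at a time and that a trailing partial codon is discarded; the function names and the example sequence are hypothetical. Contextual tokenization is omitted because its position-dependent rewriting rules are defined in [21] and not reproduced here.

```python
def frame_tokens(seq, n=3):
    """Frame tokenization: every window of length n, advancing one nucleotide at a time."""
    return [seq[i:i + n] for i in range(len(seq) - n + 1)]


def triplet_tokens(seq):
    """Triplet tokenization: consecutive, non-overlapping codons (partial tail dropped)."""
    usable = len(seq) - len(seq) % 3
    return [seq[i:i + 3] for i in range(0, usable, 3)]


cds = "ATGGCCTTAGGA"  # made-up 12-nt sequence, for illustration only
print(frame_tokens(cds, n=3))
print(triplet_tokens(cds))
```

For a sequence of length 12, frame tokenization with n = 3 yields 10 overlapping words, while triplet tokenization yields 4 codons.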

How can I computationally extract the rules of self-organization from my experimental data?

A modern approach uses automatic differentiation, a technique from machine learning, not to train neural networks but to analyze physics-based models of your system. It lets you efficiently compute how a small change in any part of a gene network would affect the collective behavior of the cell population, thereby "learning" the underlying rules [5].

Experimental Protocols

Detailed Protocol: Optogenetic Induction of Gastrulation with Mechanical Priming

This protocol allows remote control of embryonic development using light to activate key developmental proteins, enabling the study of mechanical forces [20].

Workflow Diagram: Optogenetic Gastrulation Induction

Key Materials:

- Human Embryonic Stem Cells (hESCs): The foundational cell type capable of forming all embryonic layers.

- Optogenetic BMP4 Construct: Genetically engineered system where BMP4 expression is controlled by light.

- Confinement Micropatterns/Hydrogels: Substrates designed to impart specific mechanical tension to the cells.

- Immunofluorescence Assays: For detecting and quantifying the mechanosensory protein YAP1.

Methodology:

- Cell Engineering: Engineer human embryonic stem cells (hESCs) to express an optogenetic actuator for BMP4, allowing precise, light-controlled activation [20].

- Mechanical Priming: Plate the engineered cells into different mechanical environments:

- Control: Unconfined, low-tension culture dishes.

- Experimental: Confined colonies or hydrogel substrates that induce cellular tension [20].

- Optogenetic Induction: Apply a specific wavelength of light to the cultures to activate the BMP4 signaling pathway [20].

- Outcome Assessment: After 48-72 hours, analyze the cells for the presence of specific germ layers. Only samples under correct mechanical tension will robustly form mesoderm and endoderm. Quantify nuclear vs. cytoplasmic YAP1 as a readout of mechanical competence [20].
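
The YAP1 readout in the final step can be reduced to a single number per cell. The sketch below assumes that nuclear and cytoplasmic pixel intensities have already been segmented from the immunofluorescence image; the function name, the background-subtraction step, and the intensity values are illustrative assumptions, not part of the protocol in [20].

```python
from statistics import mean


def yap_nc_ratio(nuclear, cytoplasmic, background=0.0):
    """Nuclear-to-cytoplasmic ratio of background-subtracted mean intensities."""
    nuc = mean(nuclear) - background
    cyto = mean(cytoplasmic) - background
    if cyto <= 0:
        raise ValueError("cytoplasmic signal must exceed background")
    return nuc / cyto


# Made-up pixel intensities (arbitrary units) for one cell.
ratio = yap_nc_ratio([120, 130, 125], [60, 65, 55], background=10)
print(round(ratio, 2))
```

Comparing the distribution of this ratio between the control and experimental conditions gives a quantitative view of how confinement shifts YAP1 localization.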

Detailed Protocol: Contextual Tokenization of Genomic Sequences

This method tests the hypothesis that the genomic alphabet contains seven semiotic symbols, leading to a more natural tokenization of genetic "words" that may follow a power-law distribution [21].

Workflow Diagram: Genomic Sequence Tokenization Analysis

Key Materials:

- Genomic Datasets: Coding sequences (CDS) from databases like NCBI. For robust analysis, use sequences of at least 3 Mbp in length [21].

- Computational Resources: Software or custom scripts (e.g., in Python/R) for sequence processing, tokenization, and statistical analysis.

- Reference Data: Artificially generated random nucleotide sequences of the same length as a negative control [21].

Methodology:

- Data Preparation: Download and compile coding sequences (CDS) from your organism of interest into a continuous text of 3 Mbp. Generate a control random sequence of the same length [21].

- Tokenization: Process the sequences using three different methods:

- Frame Tokenization (TF): Use a sliding window of a fixed size (e.g., 3-6 nucleotides) to extract "words" [21].

- Triplet Tokenization (TT): Divide the sequence into consecutive, non-overlapping three-nucleotide codons [21].

- Contextual Tokenization: Rewrite the sequence using a seven-symbol alphabet (A, T, C, G, Y, X, *), where the symbol meaning depends on its position in a codon (e.g., the third position in several codons is represented by '*', meaning "any nucleotide"). Then, tokenize the sequence based on the occurrence of these asserted symbols [21].

- Frequency-Range Analysis: For each tokenization method, calculate the frequency of every unique word. Rank the words from most frequent (rank 1) to least frequent [21].

- Power-Law Assessment: Plot the frequency against the rank on a double logarithmic scale. A linear decay indicates conformity to Zipf's law, suggesting a meaningful, language-like structure. The method whose plot best fits a power law is likely the most semantically valid [21].
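
The frequency-rank and power-law steps above can be sketched as follows. This is a minimal illustration that fits a least-squares line to log frequency against log rank; the function names and the synthetic vocabulary are assumptions, and [21] may use a different fitting procedure. A slope near -1 with a high R² is consistent with Zipf's law, although a straight line on a log-log plot is not by itself proof of a power law.

```python
import math
from collections import Counter


def rank_frequency(tokens):
    """Return token counts sorted from most to least frequent (rank 1 first)."""
    return sorted(Counter(tokens).values(), reverse=True)


def loglog_fit(frequencies):
    """Least-squares line through (log rank, log frequency); returns (slope, r_squared)."""
    xs = [math.log(rank) for rank in range(1, len(frequencies) + 1)]
    ys = [math.log(freq) for freq in frequencies]
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / sxx, (sxy * sxy) / (sxx * syy)


# Synthetic check: 50 words whose counts are approximately 1000 / rank.
tokens = []
for rank in range(1, 51):
    tokens.extend([f"w{rank}"] * round(1000 / rank))

slope, r2 = loglog_fit(rank_frequency(tokens))
print(f"slope={slope:.2f}, R^2={r2:.3f}")
```

Running the same two functions on the output of each tokenization method, and on the random control sequence, gives directly comparable slopes and fit qualities.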

The Scientist's Toolkit: Research Reagent Solutions

| Research Area | Essential Reagent / Tool | Function |

|---|---|---|

| Genetic Networks | Contextual Tokenization Scripts | Enables analysis of genomic sequences with a seven-symbol alphabet to uncover deeper linguistic structures and power-law distributions [21]. |

| Chemical Signaling | Optogenetic Actuators (e.g., light-controlled BMP4) | Provides unprecedented spatiotemporal precision in activating specific signaling pathways during development, moving beyond static chemical addition [20]. |

| Physical Forces | Tension-Inducing Hydrogels / Micropatterned Substrates | Supplies the critical mechanical priming required for proper morphogenesis. Converts biochemical signals into successful, structured outcomes [20]. |

| Computational Integration | Automatic Differentiation Frameworks | A computational technique that efficiently inverts models to predict how to program cells (e.g., which genes to alter) to achieve a desired collective tissue shape [5]. |

| Mechanosensing Readouts | YAP/TAZ Localization Assays | An immunofluorescence readout of nuclear versus cytoplasmic YAP, used to verify that cells are in a mechanically competent state permissive for differentiation [20]. |

Methodologies in Action: Machine Learning and Hybrid Models for Predictive Control

Core Concepts: Automatic Differentiation in Computational Biology

What is Automatic Differentiation and why is it pivotal for modern computational biology?

Automatic Differentiation (AD) is a computational technique that applies the chain rule to compute derivatives of functions expressed as computer programs, accurately and efficiently. While it forms the backbone for training deep learning models by calculating gradients for optimization algorithms, its application has expanded significantly. In computational biology, AD translates the complex process of cell growth and self-organization into an optimization problem that computers can solve. It allows researchers to predict how small changes in genes or cellular signals affect the final tissue design or organizational outcome, enabling the inverse engineering of biological systems [5].
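
To show what "applying the chain rule to a program" means in practice, here is a minimal forward-mode AD sketch built on dual numbers. The `Dual` class and the toy exponential-growth model are illustrative assumptions; frameworks such as PyTorch and JAX provide far more general engines and typically rely on reverse mode when there are many parameters.

```python
import math
from dataclasses import dataclass


@dataclass
class Dual:
    """A value together with its derivative with respect to one chosen input."""
    val: float
    dot: float = 0.0

    def __add__(self, other):
        other = _lift(other)
        return Dual(self.val + other.val, self.dot + other.dot)

    __radd__ = __add__

    def __mul__(self, other):
        other = _lift(other)
        # Product rule.
        return Dual(self.val * other.val, self.dot * other.val + self.val * other.dot)

    __rmul__ = __mul__


def _lift(x):
    """Wrap plain numbers as constants (derivative zero)."""
    return x if isinstance(x, Dual) else Dual(float(x))


def exp(x):
    # Chain rule: d/dt exp(x) = exp(x) * dx/dt.
    e = math.exp(x.val)
    return Dual(e, e * x.dot)


def final_size(growth_rate, hours=10.0, initial=1.0):
    """Toy model: exponential growth of a cell cluster."""
    return initial * exp(growth_rate * hours)


# Seed dot=1.0 to differentiate with respect to growth_rate.
out = final_size(Dual(0.1, 1.0))
print(out.val, out.dot)
```

The derivative in `out.dot` matches the analytic result, `hours * exp(growth_rate * hours)`, without any symbolic manipulation or finite-difference approximation.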

How is AD applied beyond neural networks in biological research?

AD is being repurposed for fundamental biological challenges. Harvard physicists have used AD to uncover the rules cells use to self-organize. Their computational framework can extract the genetic networks that guide cell behavior by controlling how cells signal each other chemically and the physical forces that make them stick together or pull apart. This approach is a promising path toward the predictive control needed to eventually engineer the growth of organs [5]. Similarly, AD has been used to model the dynamics of chromosome organization in minimal bacterial cells, creating computational frameworks for systems of replicating bacterial chromosomes [22].

Technical Implementation Guide

What are the primary computational frameworks supporting AD?

Table: Key Deep Learning Frameworks Supporting Automatic Differentiation

| Framework | Primary Developer | Key Features | Best Suited For |

|---|---|---|---|

| TensorFlow | Google | Production-scale deployment, extensive ecosystem | Industry applications, large-scale models |

| PyTorch | Meta (Facebook) | Dynamic computation graphs, intuitive syntax | Research prototyping, academic use |

| JAX | Google | Composable transformations, high-performance | Scientific computing, numerical research |

| MXNet | Apache Foundation | Multi-language support, scalable distributed computing | Cross-platform applications |

These frameworks provide the essential infrastructure for implementing AD in both neural network training and biological system modeling [23].

What are the essential research reagents and computational tools?

Table: Essential Research Reagent Solutions for Cell Behavior Experiments

| Reagent/Tool | Function/Purpose | Application Example |

|---|---|---|

| Human Induced Pluripotent Stem Cells (hiPSCs) | Starting biological material for differentiation studies | Generating cortical neural networks |

| Crosslinked Gelatin Nanofiber Membranes | Substrate providing stiffness and permeability modulation | Promoting self-organization of neural clusters |

| CEN-SELECT System | Combines centromere inactivation with selection marker | Studying chromosome segregation errors |

| Associative GRN Model (AGRN) | Neural network-based framework storing gene expression profiles | Modeling cell-fate decisions and development |

| Variational Autoencoder (VAE) | Unsupervised learning for feature extraction and clustering | Analyzing cellular response to mechanical stimuli |

These tools enable both wet-lab experimentation and computational modeling of cell behavior [24] [25] [26] [29].

Troubleshooting Common Experimental Challenges

Why does my model fail to predict accurate cell behavior patterns?

Issue: Models producing biologically implausible cell organization patterns or failing to converge.

Solutions:

- Verify Data Quality: Ensure single-cell expression data is properly normalized and batch effects are corrected. In calcium signaling studies, implement rigorous background subtraction and smoothing of fluorescence traces [26].

- Check Model Capacity: Increase network complexity gradually. For gene regulatory networks, ensure the associative memory model has sufficient capacity to store all developmental stage vectors [25].

- Adjust Optimization Parameters: Modify learning rates and regularization. Biological systems often require different optimization strategies than standard computer vision tasks.

- Validate with Known Pathways: Test models on well-established biological pathways before applying to novel systems.

How can I improve synchronization in neural network formation?

Issue: Differentiated neural cells fail to form synchronous clusters with coordinated activities.

Solutions:

- Optimize Substrate Properties: Use arrayed monolayers of crosslinked gelatin nanofiber membranes rather than glass substrates, as they provide better modulation of stiffness and permeability [24].

- Automate the Differentiation Culture: Use automated culture systems to avoid undesired shaking, which critically affects the formation of synchronous neural clusters [24]. (Here "differentiation" refers to cell differentiation, not the AD technique.)

- Extend Differentiation Period: Allow sufficient time for neural precursor cells to develop inter-connected cortical neural clusters, typically requiring long-term culture maintenance.

- Verify Precursor Cell Quality: Ensure neural precursor cells are properly derived from hiPSCs with appropriate marker expression before plating on nanofiber membranes.

What are common data visualization pitfalls in cell behavior analysis?

Issue: Visualization fails to effectively communicate patterns in single-cell data or developmental trajectories.

Solutions:

- Apply Appropriate Color Palettes:

- Use qualitative palettes with varying hues for categorical data (e.g., different cell types)

- Use sequential palettes with varying luminance for continuous numeric data (e.g., expression levels)

- Use diverging palettes for numeric data with a meaningful midpoint (e.g., log fold change separating upregulated from downregulated genes) [27]

- Limit Color Usage: Stick to seven or fewer colors in a single visualization to avoid overwhelming viewers [28].

- Ensure Accessibility: Check color choices for compatibility with color vision deficiencies by using tools that simulate different forms of colorblindness [27].

- Maintain Consistency: Use the same color associations throughout related visualizations (e.g., orange for safety performance, green for profit) [28].

Experimental Protocols

Protocol 1: Automated Differentiation of hiPSCs toward Synchronous Neural Networks

Purpose: Generate regular, inter-connected cortical neural clusters with synchronized activities from hiPSCs [24].

Materials:

- Human induced pluripotent stem cells (hiPSCs)

- Honeycomb microframe array with monolayer of crosslinked gelatin nanofiber membranes

- Neural induction medium

- Cell culture equipment with automated environmental control

Methodology:

- Neural Precursor Cell Derivation: Differentiate hiPSCs into neural precursor cells (NPCs) using standard neural induction protocols.

- Array Seeding: Place NPCs on the nanofiber membranes within honeycomb compartments.

- Automated Differentiation: Maintain cultures in an automated system for an extended period (typically 4-6 weeks) with minimal disturbance.

- Activity Monitoring: Assess neural activities through calcium imaging or electrode arrays to confirm synchronization.

Key Considerations:

- Most cells should localize to center areas of honeycomb compartments due to substrate modulation

- Regular, inter-connected clusters indicate successful self-organization

- Neural activities should show synchronization across clusters

- Compare against control substrates (glass, nanofiber-covered glass) to verify efficiency

Protocol 2: Chromosome Segregation Error Analysis Using CEN-SELECT

Purpose: Systematically interrogate structural landscape of missegregated chromosomes and their genomic consequences [29].

Materials:

- DLD-1 colorectal cancer cells (or other p53-inactivated line)

- CENP-A mutant cell lines with auxin-inducible degron system

- CRISPR/Cas9 components for NeoR gene insertion

- G418 selection antibiotic

- Fluorescence in situ hybridization (FISH) probes

Methodology:

- Cell Line Engineering:

- Insert neomycin-resistance gene (NeoR) at Y-chromosome AZFa locus using CRISPR/Cas9

- Integrate single-copy, doxycycline-inducible CENP-A constructs

- Centromere Inactivation:

- Add indole-3-acetic acid (IAA) and doxycycline (DOX) to induce CENP-A degradation and replacement with mutant

- Selection and Recovery:

- Apply G418 selection to identify cells retaining Y chromosome

- Wash out drugs to allow centromere reactivation

- Structural Analysis:

- Perform metaphase spread preparations with chromosome painting

- Conduct whole-genome sequencing to identify rearrangement types

Key Considerations:

- Expect ~59% of cells to undergo Y chromosome loss within three days of centromere inactivation

- ~56% of retained Y chromosomes should localize to micronuclei

- Transient inactivation with selection reduces clonogenic survival by ~89%

- Majority of recovered cells (>90%) should show Y chromosome retention in main nucleus

Signaling Pathways and Experimental Workflows

Developmental Gene Regulatory Network Framework

Cell Behavior Analysis Pipeline Using Variational Autoencoders

Advanced Applications and Future Directions

How can I adapt these methods for drug development applications?

Target Identification: Use AD-optimized models to identify critical regulatory nodes in disease-associated gene networks. The AGRN framework can predict which transcription factors drive pathological cell states, suggesting intervention points [25].

Toxicity Screening: Implement chromosome segregation analysis to assess genomic instability potential of candidate compounds. The CEN-SELECT system provides a sensitive measure of structural chromosomal abnormalities [29].

Mechanism of Action Studies: Apply VAE-based analysis to classify cellular response patterns to different drug treatments, connecting short-term signaling events with long-term outcomes [26].

What are the computational requirements for implementing these approaches?

Hardware Considerations:

- GPUs: Essential for training large models, particularly for VAEs processing thousands of time series

- Memory: 16 GB+ RAM recommended for whole-cell modeling and chromosome dynamics

- Storage: High-speed SSDs for large imaging datasets and sequencing files

Software Stack:

- Deep Learning Frameworks: TensorFlow or PyTorch for AD implementation

- Specialized Libraries: Cell tracking algorithms (modified Crocker and Grier), bioinformatics tools

- Visualization: Seaborn/Matplotlib for data visualization with perceptually uniform colormaps

As AD continues to bridge machine learning and biological discovery, these troubleshooting guidelines and experimental frameworks provide researchers with practical tools to advance the computational understanding of cell self-organization and behavior.

The integration of Agent-Based Modeling (ABM) with deep learning represents a paradigm shift in computational biology, moving from traditional, rule-based simulations to intelligent, predictive systems that can learn directly from experimental data. These hybrid frameworks are designed to optimize the understanding and control of cellular self-organization, a critical process in tissue development and regenerative medicine [5] [30] [8].

The following table summarizes the key characteristics of the primary computational frameworks used in this domain.

| Framework Name/Type | Primary Methodology | Key Application in Cell Self-Organization | Core Advantage |

|---|---|---|---|

| Differentiable Programming [5] [8] | Automatic Differentiation | Discovers genetic network rules for target morphogenesis (e.g., cluster elongation). | Translates cellular organization into an optimization problem; enables reverse-engineering of desired tissue shapes. |

| ABM + Deep Reinforcement Learning (DDQN) [30] | Double Deep Q-Network (DDQN) | Predicts dynamic cell migration (e.g., barotaxis) in response to environmental pressure gradients. | Learns cell behavior directly from experimental data without pre-defined rules; generalizes to new geometries. |

| NVIDIA PhysicsNeMo [31] | Physics-Informed Neural Networks (PINNs), Graph Neural Networks (GNNs) | Building scalable AI surrogate models and digital twins for biological systems. | Provides an open-source, enterprise-scale platform for combining physics-driven causality with simulation/observed data. |

| Generative AI + Active Learning [32] | Variational Autoencoder (VAE) with nested Active Learning cycles | Context: Drug Design - Optimizes molecular structures for target engagement (e.g., for CDK2, KRAS). | Generates novel, synthesizable, drug-like molecules by iteratively refining predictions with physics-based oracles. |

Frequently Asked Questions (FAQs)

Framework Selection and Design

Q1: When should I choose an ABM with Reinforcement Learning (RL) framework over a Differentiable Programming approach for my cell organization project?

The choice hinges on the specific biological question and the nature of the available data.

- ABM with RL is particularly powerful for modeling dynamic decision-making processes of individual cells in complex, heterogeneous environments. For example, it has been successfully used to model how a single cell decides its migration path based on continuously sensed pressure gradients (barotaxis) in a microfluidic device [30]. Use this framework when you have high-resolution, time-lapse data tracking individual cell behaviors and want the model to learn the optimal policy for cell actions.

- Differentiable Programming excels at reverse-engineering the underlying rules (often genetic or biochemical networks) that lead to an emergent tissue-level shape. The Harvard SEAS framework, for instance, optimized parameters to make a cell cluster elongate horizontally by learning a rule where a receptor gene suppresses division in response to a source cell's signal [5] [8]. Choose this framework when you have a target macroscopic outcome (e.g., a specific tissue shape) and need to discover the microscopic rules that can achieve it.

Q2: What is the role of a physics-based model in these hybrid frameworks?

Physics-based models provide a crucial causal backbone that enhances the robustness and generalizability of data-driven AI models. They ground the learning process in established physical principles, which is especially important when experimental data is sparse or expensive to obtain.

- As an Oracle: In the generative AI drug design framework, physics-based molecular docking simulations act as an "affinity oracle" to evaluate and filter generated molecules, ensuring they are physically plausible and have high predicted binding affinity [32].

- As the Environment: In the ABM-RL model for cell migration, a Computational Fluid Dynamics (CFD) simulation calculates the pressure field within the microchannel. This physically accurate environment serves as the input signal that the cell agent senses and responds to [30].

- As a Constraint: In NVIDIA PhysicsNeMo, physics-informed neural networks (PINNs) directly incorporate physical laws (in the form of partial differential equations) into the loss function of the neural network, guiding it to produce solutions that are not only data-driven but also physically consistent [31].
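
The "constraint" role can be written as a composite loss. The expression below is the generic form of a physics-informed loss, not a formula taken from [31]; the symbols are assumptions introduced for illustration: $u_\theta$ is the network with parameters $\theta$, $(x_i, u_i)$ are $N_d$ measured data points, $x_j$ are $N_r$ collocation points, $\mathcal{N}[\cdot]$ is the differential operator of the governing PDE written so that $\mathcal{N}[u] = 0$ for an exact solution, and $\lambda$ weights the physics term.

$$
\mathcal{L}(\theta) =
\underbrace{\frac{1}{N_d}\sum_{i=1}^{N_d} \left\lVert u_\theta(x_i) - u_i \right\rVert^2}_{\text{data misfit}}
+ \lambda\,
\underbrace{\frac{1}{N_r}\sum_{j=1}^{N_r} \left\lVert \mathcal{N}[u_\theta](x_j) \right\rVert^2}_{\text{PDE residual}}
$$

When experimental data is sparse, the residual term still penalizes physically inconsistent solutions at the collocation points, which is why such models tend to generalize better than purely data-driven ones.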

Implementation and Troubleshooting

Q3: My ABM-RL model is failing to converge during training. What are the common pitfalls?

Failure to converge in an ABM-RL setup like the DDQN used for cell migration can stem from several issues [30]:

- Poorly Designed Reward Function: The reward function is the primary guidance for the RL agent. If it does not accurately and incrementally reflect the desired biological behavior, the agent will not learn effectively. For example, the barotaxis model used a reward function that encouraged movement towards a goal location (outlet), which was aligned with the higher pressure gradient.

- Inadequate State Representation: The information you provide to the agent about its environment (the "state") must be sufficient for decision-making. In the successful model, the state was represented by pressure readings at multiple equidistant points around the cell membrane, effectively mimicking cellular mechanosensing. An overly simplistic state representation would lack the necessary information.

- Hyperparameter Tuning: RL algorithms are sensitive to hyperparameters such as the learning rate, discount factor, and exploration-exploitation schedule (e.g., epsilon decay). These must be carefully tuned for the specific problem. Refer to the published parameters from the barotaxis study as a starting point [30].
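
Two of the ingredients named above, the exploration schedule and the discount factor, can be sketched in isolation. This is a minimal illustration of an exponentially decayed epsilon and of the Double DQN target, in which the online network selects the next action and the target network evaluates it. All numeric values are placeholders rather than the published parameters from [30], and the Q-value lists stand in for network outputs.

```python
def epsilon_at(step, eps_start=1.0, eps_end=0.05, decay=0.995):
    """Exponentially decayed exploration rate, floored at eps_end."""
    return max(eps_end, eps_start * decay ** step)


def double_dqn_target(reward, next_q_online, next_q_target, gamma=0.99, done=False):
    """Double DQN target: the online net picks the action, the target net scores it."""
    if done:
        return reward
    best_action = max(range(len(next_q_online)), key=next_q_online.__getitem__)
    return reward + gamma * next_q_target[best_action]


# Made-up Q-values for four movement directions in the next state.
q_online = [0.2, 0.9, 0.1, 0.4]
q_target = [0.3, 0.5, 0.8, 0.2]
target = double_dqn_target(reward=1.0, next_q_online=q_online, next_q_target=q_target)
print(target)  # 1.0 + 0.99 * 0.5
print(epsilon_at(0), epsilon_at(10_000))
```

Note that the target uses the target network's value for the online network's preferred action (0.5), not the target network's own maximum (0.8). This decoupling is what reduces the overestimation bias of a standard DQN.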

Q4: How can I ensure the genetic rules discovered by my differentiable model are biologically plausible and experimentally testable?

The output of a differentiable model is a computational proof-of-concept. Translating it into biology requires careful design and validation.

- Incorporate Prior Knowledge: Constrain the search space of possible genetic networks based on known biology. The model should explore plausible interactions (e.g., activation, suppression) rather than arbitrary functions.

- Analyze the Learned Motif: Examine the discovered rule for interpretable patterns. The Harvard model, for example, found an elegant motif where a receptor gene activated by an external signal suppressed cell division [8]. Signal-dependent repression of this kind is a biologically plausible regulatory motif.

- Design Validation Experiments: The model's output should directly suggest a wet-lab experiment. For instance, if the model identifies a key receptor gene, the follow-up experiment would involve knocking down that gene and observing if the predicted disruption in self-organization (e.g., failed elongation) occurs [5] [8].

Troubleshooting Guides

Problem: Model Fails to Generalize to New Experimental Conditions

Symptoms: The model performs well on its training data but produces inaccurate predictions when applied to a new geometry, a different protein target, or slightly altered biochemical conditions.

Possible Causes and Solutions:

| Cause | Solution |

|---|---|

| Overfitting to Training Data | Implement stronger regularization techniques (e.g., L1/L2 regularization, dropout) within your neural networks. For generative models, actively promote diversity by using filters that penalize molecules too similar to those in the training set [32]. |

| Insufficient Physics Constraints | Move towards a more strongly physics-informed framework. Use NVIDIA PhysicsNeMo to build models that hard-code physical laws, or use physics-based simulations (CFD, molecular docking) as oracles to ground the predictions [31] [30] [32]. |

| Narrow Training Data Distribution | Ensure your training data encompasses a wide range of variability. Use data augmentation techniques for images or simulation data. In an AL cycle, explicitly sample from diverse regions of the parameter space to build a more robust model [32]. |

Problem: Computationally Prohibitive Training Times

Symptoms: Training a single model takes days or weeks, severely slowing down the research iteration cycle.

Possible Causes and Solutions:

| Cause | Solution |

|---|---|

| Inefficient Data Loading and Preprocessing | Utilize GPU-accelerated data pipelines (e.g., NVIDIA DALI) to ensure the GPU is never idle waiting for data. Precompute and cache expensive simulation results where possible. |

| Suboptimal Hardware Utilization | Leverage multi-GPU and distributed training frameworks. NVIDIA PhysicsNeMo is explicitly designed for scalable, multi-node training, enabling you to handle problems like a "50 million node mesh" [31]. |

| Overly Complex Model for the Task | Start with a simpler model architecture (e.g., a simpler NN in your ABM) and increase complexity only if needed. Consider using a pre-trained model from the NVIDIA NGC catalog and fine-tuning it for your specific problem, which can drastically reduce training time [31]. |

Experimental Protocols & Workflows

Workflow 1: Differentiable Programming for Optimizing Cell Cluster Morphogenesis

This protocol is based on the research from Harvard SEAS that reframes cellular self-organization as an optimization problem [5] [8].

Title: Differentiable Morphogenesis Workflow

Detailed Steps:

- Define Target Morphology: Quantitatively specify the desired outcome of the cell cluster, such as "achieve a horizontal elongation with a length-to-width ratio of 3:1" [5] [8].

- Initialize Computational Model:

- Build a simulation with two cell types: source cells (stationary, emit a growth factor) and proliferating cells (respond to the signal) [8].

- Parameterize the gene regulatory network that controls how proliferating cells respond to the growth factor. These parameters are the "knobs" the optimization will adjust.

- Run Simulation: Execute the model to simulate the growth and organization of the cell cluster over time.

- Calculate Loss: Compare the final simulated shape of the cluster to the target morphology defined in the first step using a loss function (e.g., Mean Squared Error on the shape metrics).

- Apply Automatic Differentiation: Use the automatic differentiation engine to compute the gradient of the loss function with respect to every parameter in the gene network. This identifies how each parameter influenced the final shape [5].

- Update Parameters: Adjust the gene network parameters using gradient-based optimization (e.g., Adam optimizer) to minimize the loss.

- Iterate: Repeat the Run Simulation through Update Parameters steps until the simulation consistently produces the target morphology. The final set of parameters represents the learned genetic rules for achieving that shape.
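
The loop described in these steps can be sketched end to end on a deliberately tiny problem. This is not the Harvard SEAS model: it assumes a toy cluster described only by its length and width, a single learnable parameter `w` standing in for the gene network, a hand-written forward-mode `Dual` class in place of a real AD engine, and plain gradient descent in place of Adam. All names and constants are hypothetical.

```python
import math


class Dual:
    """Value plus derivative with respect to one parameter (forward-mode AD)."""

    def __init__(self, val, dot=0.0):
        self.val, self.dot = float(val), float(dot)

    @staticmethod
    def lift(x):
        return x if isinstance(x, Dual) else Dual(x)

    def __add__(self, o):
        o = Dual.lift(o)
        return Dual(self.val + o.val, self.dot + o.dot)

    __radd__ = __add__

    def __sub__(self, o):
        o = Dual.lift(o)
        return Dual(self.val - o.val, self.dot - o.dot)

    def __rsub__(self, o):
        return Dual.lift(o) - self

    def __mul__(self, o):
        o = Dual.lift(o)
        return Dual(self.val * o.val, self.dot * o.val + self.val * o.dot)

    __rmul__ = __mul__

    def __truediv__(self, o):
        o = Dual.lift(o)
        return Dual(self.val / o.val,
                    (self.dot * o.val - self.val * o.dot) / (o.val * o.val))


def sigmoid(x):
    s = 1.0 / (1.0 + math.exp(-x.val))
    return Dual(s, s * (1.0 - s) * x.dot)


def simulate(w, steps=10, growth=0.2):
    """Toy cluster: horizontal growth is free, vertical growth is suppressed by sigmoid(w)."""
    length, width = Dual(1.0), Dual(1.0)
    suppression = sigmoid(w)
    for _ in range(steps):
        length = length * (1.0 + growth)
        width = width * (1.0 + growth * (1.0 - suppression))
    return length / width  # aspect ratio


def loss_fn(w, target_ratio=3.0):
    diff = simulate(w) - target_ratio
    return diff * diff  # squared error on the shape metric


w = 0.0  # single learnable "gene network" parameter
learning_rate = 0.05
for _ in range(500):
    loss = loss_fn(Dual(w, 1.0))   # seed derivative dw/dw = 1
    w -= learning_rate * loss.dot  # plain gradient descent step

final_ratio = simulate(Dual(w)).val
print(f"w={w:.3f}, aspect ratio={final_ratio:.3f}")
```

The optimizer never sees the target shape directly. It only follows the gradient of the loss through every step of the simulation, which is the essence of treating morphogenesis as an optimization problem.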

Workflow 2: ABM with Deep RL for Predicting Cell Migration

This protocol outlines the process for training an intelligent agent to replicate cell migration behavior, as demonstrated in barotaxis research [30].

Title: ABM Reinforcement Learning Workflow

Detailed Steps:

- Define Microenvironment: Create a 2D or 3D geometry representing the experimental setup (e.g., a microfluidic channel with a bifurcation) [30].

- Compute Physics Field: Perform Computational Fluid Dynamics (CFD) simulations on the geometry to calculate the spatial pressure field, P(x), which will act as the environmental cue [30].

- Initialize ABM and RL Agent:

- ABM: Set up a simulation where a single cell agent can move through the environment.

- RL Agent: Implement a Double Deep Q-Network (DDQN). The agent's state is a vector of pressure values sensed at multiple points around the cell. Its actions are discrete movement directions [30].

- Training Loop:

- Observe: The cell agent senses the local pressure field.

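- Act: The DDQN's neural network processes the state and outputs an estimated Q-value (expected cumulative reward) for each action, not a probability. The agent then selects a migration direction from these values (e.g., using an epsilon-greedy policy, which usually picks the highest-valued action and occasionally explores a random one).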

- Execute: The chosen action is executed in the ABM, moving the cell to a new location.

- Reward: The agent receives a reward. In the barotaxis model, this was based on moving closer to the goal (outlet). A large positive reward is given for success [30].

- Learn: The experience (state, action, reward, next state) is stored in a replay memory. The DDQN is periodically trained by sampling batches from this memory to update the network weights, improving its decision-making policy.

- Validation: After training, the model is validated by deploying the trained agent in new, unseen microdevice geometries and comparing its migration paths to experimental data [30].
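
Three pieces of the training loop above are small enough to sketch directly: building the state vector from the pressure field, epsilon-greedy action selection, and the Double DQN learning target. The example is a minimal sketch under stated assumptions. The function names, the circular sensor layout, and the linear pressure field are hypothetical, and the Q-values are passed in as plain lists rather than produced by a neural network.

```python
import math
import random


def sense_pressure(pressure, pos, radius=1.0, n_sensors=4):
    """State vector: pressure sampled at n_sensors points on a circle around the cell."""
    x, y = pos
    return [
        pressure(x + radius * math.cos(2 * math.pi * k / n_sensors),
                 y + radius * math.sin(2 * math.pi * k / n_sensors))
        for k in range(n_sensors)
    ]


def epsilon_greedy(q_values, epsilon, rng):
    """Pick a random action with probability epsilon, else the highest-valued one."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=q_values.__getitem__)


def ddqn_target(reward, next_q_online, next_q_target, done, gamma=0.99):
    """Double DQN target: the online network picks the action, the target network scores it."""
    if done:
        return reward
    best = max(range(len(next_q_online)), key=next_q_online.__getitem__)
    return reward + gamma * next_q_target[best]


# Hypothetical pressure field that drops linearly toward an outlet at large x.
state = sense_pressure(lambda x, y: 10.0 - x, (0.0, 0.0))
action = epsilon_greedy([0.1, 0.7, 0.3], epsilon=0.1, rng=random.Random(0))
target = ddqn_target(1.0, [0.2, 0.9, 0.1], [0.5, 0.3, 2.0], done=False, gamma=0.9)
```

Decoupling action selection from action evaluation is what distinguishes this target from the standard DQN one, which would take the maximum over the target network's own values (2.0 in the last line instead of 0.3).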

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table details key computational and experimental "reagents" essential for working with these hybrid frameworks.

| Item Name | Type | Function / Application | Example / Note |
|---|---|---|---|
| Automatic Differentiation Engine [5] | Software Library | The core computational tool that calculates gradients for optimization; enables the "differentiable" in differentiable programming. | Built into deep learning frameworks like PyTorch and JAX. |
| NVIDIA PhysicsNeMo [31] | Open-Source AI Framework | Provides a specialized toolkit for building, training, and deploying physics-ML models at scale across various domains (CFD, structural mechanics). | Includes model architectures like PINNs, GNNs, and Fourier Neural Operators. |
| Computational Fluid Dynamics (CFD) Solver [30] | Physics Simulation Software | Calculates pressure and fluid flow fields in a microenvironment; provides physical input signals for ABM-RL agents. | Used to simulate pressure gradients in microfluidic devices for barotaxis studies. |
| Double Deep Q-Network (DDQN) [30] | Reinforcement Learning Algorithm | Reduces the overestimation of Q-values seen in standard deep Q-learning, which stabilizes training; used to train intelligent cell agents. | An enhancement of the classic Deep Q-Network. |
| Variational Autoencoder (VAE) [32] | Generative AI Model | Learns a compressed, continuous latent representation of molecular structures; enables generation of novel molecules. | Integrated with active learning for drug design. |
| Active Learning (AL) Cycles [32] | Machine Learning Strategy | Iteratively refines a model by selecting the most informative data points for evaluation, maximizing efficiency. | Uses "oracles" (e.g., docking scores, synthesizability filters) to guide molecular generation. |
| Microfluidic Device [30] | Experimental Platform | Provides a controlled in vitro environment with defined geometries and pressure gradients to study cell migration (e.g., barotaxis). | Enables collection of high-quality, quantitative data for model training and validation. |

This case study applies a differentiable computational framework to a fundamental morphogenetic process: the guided horizontal elongation of a cell cluster. The work sits within the broader effort to optimize cell self-organization computationally, which aims to reverse-engineer the local rules that let cells collectively form complex, pre-specified structures. The core innovation is to reframe biological development as an optimization problem that machine learning tools, specifically automatic differentiation, can solve. This approach lets researchers efficiently discover the genetic network parameters that each cell must execute for the whole system to develop into a target shape, moving beyond traditional trial-and-error methods in bioengineering [5] [8]. The successful design of an elongating tissue shows how this methodology can inform experimental work in regenerative medicine and drug development, with the long-term aim of growing functional tissues in vitro.

The challenge of morphogenesis—how cells self-organize into functional tissues and organs—is a major unsolved problem in biology. Traditional experimental approaches often rely on manually crafted, qualitative rules, which can be slow and lack robustness [33]. The research presented here addresses this by leveraging a powerful computational technique: automatic differentiation (AD).

Best known today as the engine behind deep neural network training, AD is a family of algorithms that efficiently compute the derivatives (sensitivities) of a complex system's output with respect to its inputs [5] [34]. In this biological context, the entire process of tissue growth, including cell division, mechanical interaction, and chemical signaling, is modeled as a computer simulation written to be "differentiable." This means the computer can determine precisely how a tiny change in a single parameter (e.g., the strength of a connection in a genetic network) will influence the final, emergent shape of the tissue [5] [33].

The ultimate goal is inverse design: specifying a desired tissue shape (like a horizontally elongated cluster) and allowing the computer to work backwards to discover the local cellular rules that will achieve it. This is formulated as an optimization problem where a loss function, quantifying the difference between the simulated and target structures, is minimized using gradient-based methods [34] [33].

Experimental Protocol & Methodology

Core Computational Model

The following workflow outlines the key components and steps of the differentiable simulation used to engineer morphogenesis.

Diagram 1: Differentiable Simulation Workflow

Forward Model of Tissue Growth

The system models a tissue as a collection of cells interacting in a 3D space [33].

- Cell Representation & Mechanics: Cells are modeled as soft spheres that interact via a Morse potential, which includes both repulsive (for volume exclusion) and attractive (for cell-cell adhesion) components [34] [33].

- Cell Lifecycle: Cells grow at a fixed rate until they reach a maximum size, after which they can stochastically divide, producing two daughter cells with half the volume of the mother [34] [33].

- Chemical Signaling: Cells can secrete and sense diffusible chemical factors (morphogens), creating concentration gradients across the tissue [34].
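
The mechanical part of this forward model can be made concrete with the standard Morse form, V(r) = D (exp(-2a(r - r0)) - 2 exp(-a(r - r0))), where r is the center-to-center distance between two cells. The sketch below is illustrative only: the parameter values and function names are assumptions, not those used in the cited work.

```python
import math


def morse_energy(r, depth=1.0, alpha=2.0, r_eq=1.0):
    """Morse pair energy; the minimum value -depth is reached at r = r_eq."""
    e = math.exp(-alpha * (r - r_eq))
    return depth * (e * e - 2.0 * e)


def morse_force(r, depth=1.0, alpha=2.0, r_eq=1.0):
    """Radial force F = -dV/dr; a positive value pushes the two cells apart."""
    e = math.exp(-alpha * (r - r_eq))
    return 2.0 * alpha * depth * (e * e - e)


# Overlapping cells repel, separated cells attract, and the force vanishes at r_eq.
for r in (0.8, 1.0, 1.5):
    print(f"r={r:.1f}  V={morse_energy(r):+.3f}  F={morse_force(r):+.3f}")
```

Here `depth` sets the adhesion strength, `alpha` sets how quickly the interaction falls off with distance, and `r_eq` is the preferred spacing between cell centers.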

The Internal Genetic Network

Each cell contains a simplified internal genetic network that processes local information to make decisions.

- Inputs: The network receives inputs including local concentrations of morphogens, estimates of chemical gradients, and mechanical stress signals [34].

- Processing: The network consists of a set of genes with trainable excitatory or inhibitory couplings.

- Outputs: The network's output determines the cell's behavioral policies, primarily its division propensity and rates of chemical secretion [33].
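
One way to picture such a network is as a small set of gene activities coupled by a weight matrix, where positive entries are excitatory and negative entries are inhibitory. The sketch below assumes a simple rate equation, dg_i/dt = sigmoid(sum_j W_ij g_j + input_i) - decay * g_i, integrated with Euler steps. This functional form, the two-gene layout, and all names are hypothetical choices for illustration, not the model from the cited work.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def step_genes(genes, couplings, inputs, dt=0.1, decay=1.0):
    """One Euler step of dg_i/dt = sigmoid(sum_j W_ij g_j + input_i) - decay * g_i."""
    new = []
    for i, g in enumerate(genes):
        drive = sum(w * gj for w, gj in zip(couplings[i], genes)) + inputs[i]
        new.append(g + dt * (sigmoid(drive) - decay * g))
    return new


def division_propensity(morphogen, couplings, n_steps=200):
    """Relax the network under a constant morphogen input and read out the last gene."""
    genes = [0.0] * len(couplings)
    inputs = [morphogen] + [0.0] * (len(couplings) - 1)
    for _ in range(n_steps):
        genes = step_genes(genes, couplings, inputs)
    return genes[-1]


# Two genes: gene 0 senses the morphogen, gene 1 sets the division propensity.
# Row i lists the couplings INTO gene i; the -4.0 entry is an inhibitory link.
W = [[0.0, 0.0],
     [-4.0, 0.0]]
low_signal = division_propensity(0.0, W)   # weak morphogen input
high_signal = division_propensity(5.0, W)  # strong morphogen input
```

In an optimization setting, the entries of `W` would be the trainable parameters that the gradient updates adjust.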

Inverse Design via Optimization

The key to the framework is its ability to perform inverse design.

- Loss Function: For horizontal elongation, the objective was to minimize the squared sum of the x-coordinates of all cells, effectively penalizing cells for being close to the center and encouraging spread along the x-axis [33].

- Automatic Differentiation: The entire simulation is written using the JAX library, making it automatically differentiable. This allows for the efficient calculation of the gradient of the loss function with respect to every parameter in the genetic network [34] [33].

- Handling Stochasticity: Cell division events are discrete and random, so gradients cannot be propagated through them directly. Score-function gradient estimators such as REINFORCE are used instead: rewards and penalties are assigned to division actions to guide the optimization process [33].
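
To show how a REINFORCE-style estimator handles a random division decision, the sketch below treats division as a single Bernoulli choice with probability sigmoid(logit) and estimates the gradient of the expected reward with respect to that logit. It is a minimal example under assumed names and a made-up reward (1 if the cell divides, 0 otherwise). A real simulation would assign rewards from the final tissue shape and sum over many division events.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def reinforce_gradient(logit, reward_fn, n_samples, rng):
    """Score-function estimate of d E[reward] / d logit for a Bernoulli division decision."""
    p = sigmoid(logit)
    rewards, scores = [], []
    for _ in range(n_samples):
        divide = 1 if rng.random() < p else 0
        rewards.append(reward_fn(divide))
        scores.append(divide - p)  # d log pi(divide) / d logit for a Bernoulli policy
    baseline = sum(rewards) / n_samples  # mean-reward baseline to reduce variance
    return sum((r - baseline) * s for r, s in zip(rewards, scores)) / n_samples


rng = random.Random(0)
estimate = reinforce_gradient(0.0, lambda divide: float(divide), 20000, rng)
exact = sigmoid(0.0) * (1.0 - sigmoid(0.0))  # analytic gradient for this toy reward
```

The estimator never differentiates through the sampled decision itself. It only needs the derivative of the log-probability of the action taken, which is why it works for discrete events.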

Key Research Reagents and Computational Tools

Table 1: Essential Components for the Computational Experiment

| Item Name | Type | Function in the Experiment |
|---|---|---|
| Source Cells | Biological Model Component | Non-proliferating cells that secrete a diffusible morphogen to establish a chemical gradient [33]. |
| Proliferating Cells | Biological Model Component | Cells that sense the morphogen and use their internal genetic network to modulate their division propensity based on its concentration [33]. |
| Diffusible Morphogen | Modeled Chemical Factor | A signaling molecule that creates a concentration gradient, providing positional information to cells within the cluster [33]. |
| Genetic Network | Computational Model | A trainable, internal program for each cell that maps sensory inputs (morphogen level) to behavioral outputs (division propensity) [34] [33]. |
| JAX Library | Computational Tool | A high-performance numerical computing library used to implement the differentiable simulation and calculate gradients via automatic differentiation [33]. |
| Morse Potential | Physical Model | Defines the mechanical interactions between cells, including adhesion and repulsion, within the molecular dynamics simulation [34] [33]. |

The Learned Mechanism for Horizontal Elongation

The optimization process discovered an elegant and interpretable genetic network that drives horizontal elongation. The system was composed of two cell types: stationary source cells (marked red) that secrete a morphogen, and proliferating cells (marked gray) that sense the morphogen and decide when to divide [33].

The following diagram illustrates the core signaling pathway and cellular response that emerged from the optimization.

Diagram 2: Morphogen Gradient Signaling Pathway

The mechanism functions as a form of chemical-based positional control:

- Gradient Establishment: Source cells secrete a diffusible factor, creating a steady-state chemical gradient that is highest near the source cells and decreases with distance [33].

- Inhibitory Signaling: The learned genetic network in proliferating cells contains a strong inhibitory link from the morphogen sensor to the division output. This means high morphogen concentration strongly suppresses cell division [33].

- Spatial Patterning of Growth: Due to this inhibition, proliferating cells located in regions of high chemical concentration (proximal to the source) exhibit low division rates. Conversely, cells in regions of low chemical concentration (distal to the source) experience less inhibition and thus divide at a higher rate [33].

- Emergent Elongation: This spatial bias in division propensity concentrates growth at the distal end of the cluster, pushing the tissue outward and away from the source cells, resulting in a self-reinforcing process of horizontal elongation [33].
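
The four steps above can be checked numerically with a one-dimensional toy. The sketch assumes an exponentially decaying steady-state morphogen profile and a single sigmoid readout with a negative (inhibitory) weight. The decay length, weight, bias, and function names are illustrative assumptions, not learned values from the study.

```python
import math


def morphogen(distance, c0=1.0, decay_length=2.0):
    """Assumed steady-state profile: exponential decay away from the source."""
    return c0 * math.exp(-distance / decay_length)


def division_propensity(concentration, weight=-6.0, bias=2.0):
    """Sigmoid readout; the negative weight encodes the inhibitory link."""
    return 1.0 / (1.0 + math.exp(-(weight * concentration + bias)))


distances = [0.0, 1.0, 2.0, 4.0, 8.0]
profile = [division_propensity(morphogen(d)) for d in distances]

# Division propensity rises with distance from the source cells.
for d, p in zip(distances, profile):
    print(f"distance={d:4.1f}  morphogen={morphogen(d):.3f}  division propensity={p:.3f}")
```

Cells next to the source almost never divide in this toy, while cells several decay lengths away divide at close to the maximum rate the bias allows, which is the spatial bias that drives growth at the distal end.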

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: The simulation fails to converge on an elongating shape. The cell cluster remains spherical. What could be wrong?

- A: This is often a problem with the initial conditions or the loss function. First, verify that your source cells and proliferating cells are correctly initialized in their distinct spatial compartments. Second, check the implementation of your loss function; for horizontal elongation, it should penalize the squared x-coordinates. Finally, review the learning rate of your optimizer—a rate that is too high can cause instability, while one that is too low can result in no learning.

Q2: The learned genetic network is overly complex and not interpretable. How can I simplify it?

- A: The research team employed network pruning as a standard post-processing step [34] [33]. After optimization, you can systematically remove all edges (connections) in the genetic network whose weights fall below a specific threshold in magnitude, so that strong inhibitory (negative) links are kept. This simplifies the network to its functional "backbone" while preserving the optimized tissue-level behavior, making the core regulatory motif (like the strong inhibitory link) much clearer.

Q3: The growth is elongated but not robust; small perturbations cause malformed structures. How can I improve robustness?

- A: Robustness is a key challenge. Introduce stochasticity during the training process itself. Instead of training on a single, fixed initial condition, run the optimization across multiple simulations with minor variations in the starting configuration (e.g., slight random displacements of initial cell positions). This forces the model to learn a general policy that works across a family of similar scenarios, not just one specific instance.
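
In practice this means averaging the loss over a batch of slightly perturbed starting layouts before each parameter update. The sketch below shows only that averaging step. The jitter size, the two-cell layout, and the placeholder `toy_loss` are assumptions for illustration, and in a real setup `toy_loss` would be replaced by a full simulation run followed by the shape loss.

```python
import random


def perturbed_starts(base_positions, n_variants, jitter, rng):
    """Copies of the initial layout with a small random displacement per cell."""
    return [
        [(x + rng.uniform(-jitter, jitter), y + rng.uniform(-jitter, jitter))
         for x, y in base_positions]
        for _ in range(n_variants)
    ]


def robust_loss(loss_fn, starts):
    """Average a per-simulation loss over all perturbed starting configurations."""
    return sum(loss_fn(start) for start in starts) / len(starts)


def toy_loss(positions):
    """Placeholder loss; stands in for running the simulation and scoring the shape."""
    return sum(y * y for _, y in positions)


rng = random.Random(1)
base = [(0.0, 0.0), (1.0, 0.0)]
starts = perturbed_starts(base, n_variants=8, jitter=0.05, rng=rng)
averaged = robust_loss(toy_loss, starts)
```

Drawing fresh perturbations at every optimization step, rather than reusing one fixed batch, further reduces the chance of fitting the parameters to a particular set of starting layouts.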

Q4: How can I validate this computational model with real biological experiments?