Network Robustness to Perturbation: Evaluation Frameworks and Biomedical Applications for Resilient System Design

This article provides a comprehensive framework for evaluating the robustness of diverse network architectures against perturbations, tailored for researchers and drug development professionals.

Abstract

This article provides a comprehensive framework for evaluating the robustness of diverse network architectures against perturbations, tailored for researchers and drug development professionals. It explores foundational concepts like effective graph resistance and attack tolerance, details cutting-edge methodological advances including genetic algorithms and convolutional neural networks for robustness optimization, and addresses critical troubleshooting aspects such as certifying Graph Convolutional Network (GCN) reliability and mitigating scalability limits. The content further synthesizes validation strategies and comparative analyses of real-world biomedical AI platforms, offering actionable insights for building more robust, reliable computational and biological networks in clinical research and development.

Foundations of Network Robustness: Core Concepts, Metrics, and Attack Tolerance

Network robustness is a pivotal property determining a system's ability to maintain core functions amidst perturbations, with evaluation approaches spanning from structural integrity to functional sustainability assessments. In biological and pharmaceutical contexts, precisely defining and measuring robustness enables researchers to predict cellular response to genetic perturbations, drug treatments, and disease states. Structural integrity focuses predominantly on topological metrics—how network connectivity persists as components fail. In contrast, functional sustainability emphasizes the maintenance of biological processes, signaling flows, and phenotypic outcomes despite perturbations [1] [2]. This distinction is particularly crucial in drug development, where a therapeutic agent might disrupt a protein-protein interaction network's structure without immediately compromising its essential biological functions, or vice versa. The integration of these perspectives provides a more comprehensive framework for evaluating how biological networks respond to genetic, environmental, and pharmacological perturbations, ultimately enabling more predictive models of drug efficacy and toxicity.

The evaluation landscape encompasses diverse methodologies, from percolation-theoretic approaches that model cascade failures in network connectivity to machine learning frameworks that predict functional degradation from topological features [1] [3]. For research scientists, selecting appropriate robustness metrics is foundational to experimental design, influencing whether studies capture mere structural vulnerability or genuine functional collapse in targets ranging from intracellular signaling networks to epidemiological models. This guide systematically compares these approaches, their experimental implementations, and their applications in pharmaceutical research.

Quantitative Comparison of Robustness Evaluation Frameworks

Table 1: Core Methodologies for Network Robustness Evaluation

| Evaluation Approach | Key Metrics | Applicable Network Types | Data Requirements | Strengths | Limitations |

|---|---|---|---|---|---|

| Topological Analysis [1] | Largest Connected Component (LCC) size, Average path length, Connectivity measures | Large-scale topological networks (protein-protein, genetic) | Network adjacency matrix | Computational efficiency, Clear structural interpretation | May not reflect functional outcomes, High computational cost for dynamic networks |

| Percolation Theory [3] | Critical node fraction, Phase transition point | Random graphs, Erdős-Rényi models | Network structure and edge probability | Theoretical foundation, Statistical properties | Primarily for large networks, Assumes network collapse state |

| Matrix Spectra Methods [1] | Spectral radius, Spectral gap, Natural connectivity | Connected networks with defined adjacency | Matrix representations | Straightforward computation | Relationship with robustness not well-established for all biological networks |

| Functional Resilience Assessment [2] | Ecosystem service maintenance, Functional connectivity | Ecological networks, Supply chain networks | Functional capacity data, Flow measurements | Captures system performance | Complex to quantify biological functions |

| Dynamic Least-Squares MRA (DL-MRA) [4] | Edge sign and directionality, Feedback loop integrity, Dynamic behavior | Small signaling networks (2-3 nodes), Gene regulatory networks | Perturbation time-course data | Captures dynamic, signed directed edges with cycles | Scales linearly but challenging for large networks |

| Convolutional Neural Networks [1] | Predicted LCC sequence (attack curves) | Various synthetic and empirical networks | Training datasets of network structures | Instantaneous evaluation after training, Generalization capability | Performance depends on training data and specific scenarios |

Table 2: Performance Comparison Across Network Types

| Network Architecture | Optimal Evaluation Method | Robustness to Random Failure | Robustness to Targeted Attack | Experimental Validation Status |

|---|---|---|---|---|

| Erdős-Rényi Random Graphs [3] | Finite-size percolation theory | High for dense graphs | Low (vulnerable to high-degree removal) | Theoretically and empirically validated |

| Scale-Free Networks [1] | Topological analysis with targeted attacks | High | Very low (vulnerable to hub targeting) | Empirical studies ongoing |

| Small Biological Networks [4] | DL-MRA | Varies with connectivity patterns | Varies with feedback loops | Validated with simulated data |

| Ecological Networks [2] | Functional-structural integration | Moderate | High for central corridor removal | Case study in Yangtze River Delta |

| Intracellular Signaling Networks [4] | DL-MRA with partial perturbations | High for redundant pathways | Moderate to low | Limited to specific pathways |

| Gene Regulatory Networks [4] [5] | DL-MRA with knockdown data | Low for minimal connectivity | High for master regulators | Validated in inference contexts |

Experimental Protocols for Robustness Assessment

Topological Robustness Evaluation via Largest Connected Component

Objective: Quantify structural robustness by measuring the largest connected component size during progressive node removal.

Methodology:

- Network Preparation: Represent the biological network as a graph G(N, E) with N nodes and E edges. For protein-protein interaction networks, nodes represent proteins and edges represent interactions.

- Removal Simulation: Implement two primary removal strategies:

- Random Failure: Remove nodes uniformly at random

- Targeted Attack: Remove nodes in descending order of degree (highest degree first)

- LCC Calculation: After each removal iteration (from p=0 to p=(T-1)/T, where T is total removal steps), compute the relative size of the LCC using:

( G_n(p) = n_p / N )

where ( n_p ) is the LCC size after removing proportion p of nodes [1].

- Robustness Quantification: Calculate the robustness value R as the area under the curve of LCC sizes across all removal steps:

( R = \frac{1}{T} \sum_{p=0}^{(T-1)/T} G_n(p) ) [1].

Applications: This method is particularly valuable for assessing structural vulnerability in protein interaction networks and metabolic networks, identifying critical nodes whose removal fragments the network.
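
The protocol above can be sketched in a few lines of plain Python. This is a minimal illustration rather than the cited implementation: it assumes one node is removed per step (so T = N), uses a static degree ranking for the targeted attack, and the helper names (`largest_component_size`, `robustness_index`) and the toy star network are hypothetical.

```python
import random
from collections import deque


def largest_component_size(adj, removed):
    """Size of the largest connected component among nodes not in `removed` (BFS)."""
    seen = set(removed)
    best = 0
    for start in adj:
        if start in seen:
            continue
        seen.add(start)
        queue = deque([start])
        size = 0
        while queue:
            node = queue.popleft()
            size += 1
            for nb in adj[node]:
                if nb not in seen:
                    seen.add(nb)
                    queue.append(nb)
        best = max(best, size)
    return best


def robustness_index(adj, order):
    """R = (1/T) * sum of G_n(p) for p = 0, 1/T, ..., (T-1)/T, assuming T = N."""
    n = len(adj)
    removed = set()
    total = 0.0
    for node in order:
        # Step k records the relative LCC size after k removals.
        total += largest_component_size(adj, removed) / n
        removed.add(node)
    return total / n


def random_failure_order(adj, seed=0):
    """Uniformly random removal order (seeded for reproducibility)."""
    nodes = list(adj)
    random.Random(seed).shuffle(nodes)
    return nodes


def targeted_attack_order(adj):
    """Static ranking: highest initial degree first."""
    return sorted(adj, key=lambda v: len(adj[v]), reverse=True)


# Toy star network: hub 0 connected to leaves 1..4.
star = {0: {1, 2, 3, 4}, 1: {0}, 2: {0}, 3: {0}, 4: {0}}
r_targeted = robustness_index(star, targeted_attack_order(star))
r_random = robustness_index(star, random_failure_order(star))
```

On the star network, removing the hub first is the worst case, so the targeted value (0.36) is a lower bound for any random ordering. In practice the random-failure value would be averaged over many seeds.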

Dynamic Least-Squares Modular Response Analysis

Objective: Infer signed, directed network structures with cycles from perturbation time-course data.

Methodology:

- Experimental Design: For an n-node network, perform n perturbation time-course experiments, with one perturbation per node [4].

- Perturbation Implementation: Apply reasonably specific perturbations (e.g., siRNA knockdown, small molecule inhibition) that predominantly affect the targeted node.

- Time-Course Measurement: Measure node activities (e.g., phosphorylation states, transcription levels) at 7-11 evenly distributed time points [4].

- Jacobian Matrix Estimation: Formulate ordinary differential equations describing node dynamics:

( \frac{dx_i}{dt} \equiv f_i(x_1(k), x_2(k), \ldots, x_n(k), S_{i,ex}, S_{i,b}) )

where ( x_i(k) ) is the activity of node i at time point ( t_k ), and estimate the Jacobian matrix J containing the network edge weights [4]:

( J \equiv \begin{pmatrix} F_{11} & F_{12} \\ F_{21} & F_{22} \end{pmatrix} \equiv \begin{pmatrix} \frac{\partial f_1}{\partial x_1} & \frac{\partial f_1}{\partial x_2} \\ \frac{\partial f_2}{\partial x_1} & \frac{\partial f_2}{\partial x_2} \end{pmatrix} ).

- Network Inference: Use least-squares fitting to estimate Jacobian elements from perturbation responses, capturing edge directionality, sign, and strength.

Applications: DL-MRA is particularly effective for reconstructing small signaling networks and gene regulatory circuits from phosphoproteomic or transcriptional data, capturing feedback and feedforward loops critical to biological function.
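
The core idea of estimating Jacobian elements by least squares can be illustrated with a deliberately simplified stand-in. The sketch below is not the published DL-MRA algorithm: it assumes a linearised system dx/dt = J x with no explicit perturbation terms, represents each experiment as a displaced initial state, and uses forward differences. The function names and the 2-node example network are hypothetical.

```python
import numpy as np


def simulate(jacobian, x0, dt, steps):
    """Forward-Euler time course of the linearised system dx/dt = J x."""
    xs = [np.asarray(x0, dtype=float)]
    for _ in range(steps):
        xs.append(xs[-1] + dt * (jacobian @ xs[-1]))
    return np.array(xs)  # shape (steps + 1, n)


def estimate_jacobian(time_courses, dt):
    """Least-squares fit of J from forward differences, pooling all experiments."""
    states, derivs = [], []
    for xs in time_courses:
        states.append(xs[:-1])
        derivs.append((xs[1:] - xs[:-1]) / dt)
    X = np.vstack(states)   # (samples, n)
    dX = np.vstack(derivs)  # (samples, n)
    # Each row satisfies dx^T = x^T J^T, so solve X @ J.T ~= dX for J.T.
    jt, *_ = np.linalg.lstsq(X, dX, rcond=None)
    return jt.T


# Hypothetical 2-node network: node 1 activates node 2, node 2 inhibits node 1.
J_true = np.array([[-1.0, -0.5],
                   [0.8, -1.0]])
dt, steps = 0.1, 10  # 11 time points per experiment
# One "perturbation" per node, modelled here as a displaced initial state.
courses = [simulate(J_true, x0, dt, steps) for x0 in ([1.0, 0.0], [0.0, 1.0])]
J_est = estimate_jacobian(courses, dt)
```

Because the toy data are generated with the same Euler scheme used for differencing, the fit recovers the signs and magnitudes of all four edges essentially exactly. Real time-course data would add measurement noise and nonlinear dynamics, which is where the choice of time points and the specificity of the perturbations become important.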

CNN-Based Robustness Prediction

Objective: Leverage convolutional neural networks to predict network robustness from structural features.

Methodology:

- Training Data Generation: Create diverse network topologies and compute their attack curves (LCC sequences) through simulation.

- Network Representation: Convert each network's adjacency matrix into an image-like representation suitable for CNN processing [1].

- Model Architecture: Implement a CNN with Spatial Pyramid Pooling (SPP-net) to handle networks of different sizes, using the attack curves and robustness values as training targets [1].

- Model Training: Optimize CNN parameters to minimize the difference between predicted and simulated attack curves.

- Robustness Prediction: Apply the trained model to new network structures for instantaneous robustness evaluation.

Applications: This approach enables rapid screening of network robustness across large datasets, such as comparing vulnerability across multiple disease-associated networks or synthetic biological circuits.

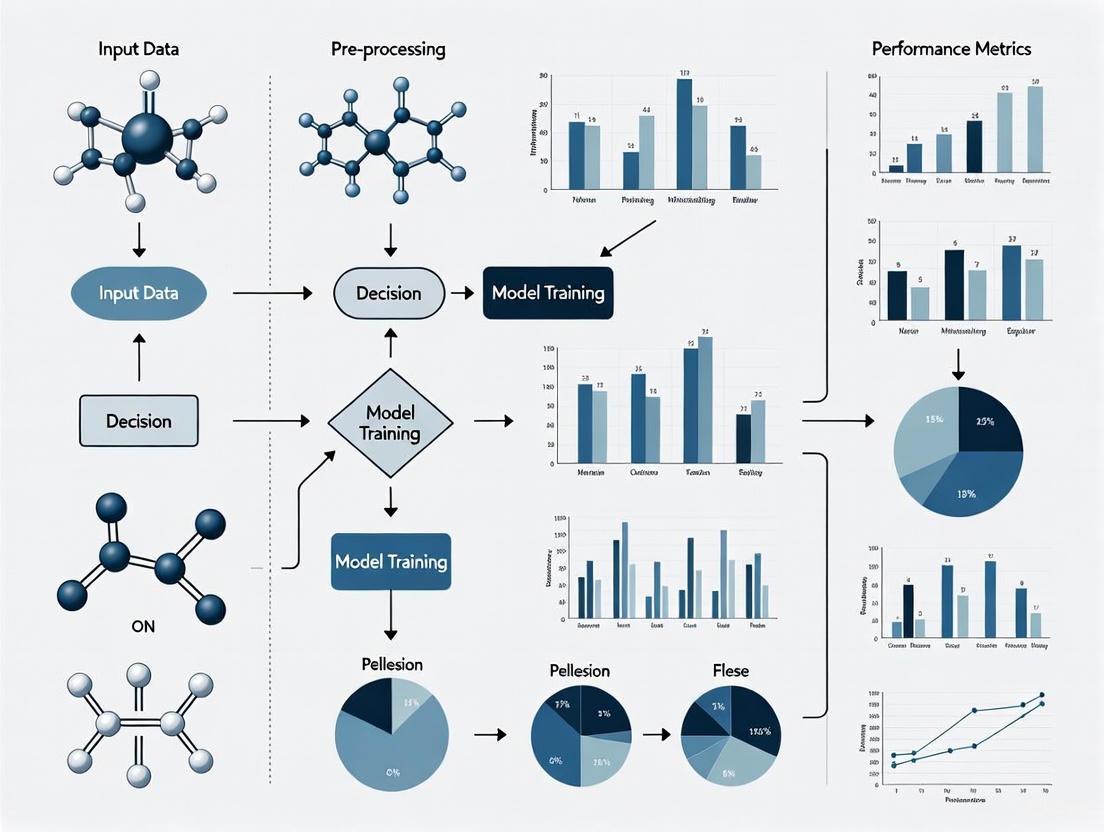

Visualization of Robustness Assessment Workflows

Diagram 1: Structural robustness assessment methodology for biological networks.

Diagram 2: Functional robustness evaluation using perturbation time-course data.

Table 3: Key Research Reagents and Computational Tools for Network Perturbation Studies

| Reagent/Tool | Function | Application Context | Considerations |

|---|---|---|---|

| siRNA/shRNA Libraries [5] | Gene-specific knockdown | Low and high-dimensional phenotyping screens | Specificity controls essential; multiple siRNAs per gene recommended |

| CRISPR-Cas9 Knockout Collections [5] | Complete gene knockout | Essentiality screens, synthetic lethality | Off-target effects monitoring required |

| Small Molecule Inhibitors [4] | Targeted protein inhibition | Signaling network perturbation | Dose optimization critical for specificity |

| Phospho-Specific Antibodies [4] | Monitoring signaling node activity | Dynamic network inference | Validation for specific modifications needed |

| Linkage Mapper Toolbox [2] | Ecological network construction | Structural connectivity analysis | Adapted for biological network mapping |

| NetworkX Python Library [2] | Network analysis and metrics | Structural stability calculation | Flexible for custom metric implementation |

| DL-MRA Computational Framework [4] | Network inference from perturbations | Signaling and regulatory network reconstruction | Handles small networks (2-3 nodes) effectively |

| CNN with SPP-net Architecture [1] | Robustness prediction from structure | Large network vulnerability screening | Requires training dataset generation |

The comprehensive evaluation of network robustness requires integration of both structural and functional approaches, as these complementary perspectives reveal different aspects of biological system vulnerability. Structural metrics efficiently identify topological fragility points, while functional assessments capture the dynamic, context-dependent nature of biological resilience. For drug development professionals, this integrated approach enables more predictive models of how therapeutic interventions might propagate through biological systems, potentially identifying both efficacious targets and vulnerable rescue pathways that could lead to resistance.

Future methodologies will likely combine high-dimensional phenotyping with advanced network inference to map the complex relationship between structural perturbation and functional collapse. As network biology continues to inform therapeutic discovery, robust evaluation frameworks will be essential for predicting intervention outcomes across diverse biological contexts, from cancer signaling networks to metabolic disease states. The experimental protocols and comparative analyses presented here provide a foundation for selecting appropriate robustness assessment strategies based on network type, available data, and research objectives.

The evaluation of network robustness is a critical task in network science, with direct applications in protecting infrastructures such as power grids, transportation systems, and communication networks from random failures and targeted attacks. Robustness fundamentally measures a network's ability to maintain structural integrity and functional performance when components fail or are deliberately compromised. While numerous metrics exist to quantify this property, three have emerged as particularly fundamental: Effective Graph Resistance, the Size of the Largest Connected Component (LCC), and Algebraic Connectivity. Each metric captures distinct yet complementary aspects of network robustness, from structural cohesion to spectral properties and electrical analogies.

Each metric operates on different theoretical foundations and captures unique aspects of network robustness. Effective Graph Resistance draws from electrical network theory, modeling the network as a system of resistors and quantifying overall connectivity. Algebraic Connectivity, derived from spectral graph theory, measures how difficult it is to disconnect a graph into components. The Largest Connected Component represents a more direct, empirical measure of functional network size after damage. Understanding the strengths, limitations, and appropriate application contexts for each metric is essential for researchers evaluating network architectures across scientific domains, from biological networks to critical infrastructures.

Metric Fundamentals and Theoretical Foundations

Effective Graph Resistance

Effective Graph Resistance, also known as total effective resistance or Kirchhoff index, originates from electrical circuit theory applied to graph structures. The metric imagines a graph as an electrical network where each edge represents a 1 Ohm resistor. The effective resistance between any two nodes is then computed as the potential difference needed to pass 1 Ampere of current between them. The total effective graph resistance is the sum of these pairwise resistances across all node pairs in the network [6] [7].

Mathematically, Effective Graph Resistance is computed using the pseudoinverse of the Laplacian matrix. For a graph (G) with Laplacian matrix (L), the resistance between nodes (a) and (b) is given by (R_{ab} = (e_a - e_b)^T L^+ (e_a - e_b)), where (L^+) denotes the Moore-Penrose pseudoinverse of (L), and (e_i) is the standard basis vector with 1 in the (i)-th position and 0 elsewhere [6]. The total effective resistance is then (R_{total} = \sum_{a<b} R_{ab}), the sum over all unordered node pairs.

This metric has two important properties: it decreases whenever an edge is added (indicating improved robustness), and it incorporates information about both the number of paths between nodes and their lengths. Intuitively, the effective resistance becomes small when there are many short paths between two vertices, so that removing an edge hardly disrupts connectivity because alternative paths exist [7].
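
As a small worked example of the pseudoinverse formula, the following sketch computes the total effective resistance with NumPy. It assumes an unweighted, connected graph with nodes labelled 0..n-1; the function names are illustrative, not taken from the cited works.

```python
import numpy as np


def laplacian(n, edges):
    """Unweighted graph Laplacian L = D - A for nodes 0..n-1."""
    L = np.zeros((n, n))
    for a, b in edges:
        L[a, a] += 1
        L[b, b] += 1
        L[a, b] -= 1
        L[b, a] -= 1
    return L


def effective_resistance(L_pinv, a, b):
    """R_ab = (e_a - e_b)^T L^+ (e_a - e_b), expanded entrywise."""
    return L_pinv[a, a] + L_pinv[b, b] - 2 * L_pinv[a, b]


def total_effective_resistance(n, edges):
    """Sum of R_ab over all unordered pairs a < b (connected graphs only)."""
    L_pinv = np.linalg.pinv(laplacian(n, edges))
    return sum(effective_resistance(L_pinv, a, b)
               for a in range(n) for b in range(a + 1, n))


path3 = [(0, 1), (1, 2)]     # 0 - 1 - 2
triangle = path3 + [(0, 2)]  # add the closing edge
r_path = total_effective_resistance(3, path3)
r_triangle = total_effective_resistance(3, triangle)
```

For the three-node path the pairwise resistances are 1, 1 and 2 (two unit resistors in series), giving a total of 4. Closing the triangle reduces every pairwise resistance to 2/3 and the total to 2, which illustrates how an added edge lowers the metric.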

Algebraic Connectivity

Algebraic Connectivity, denoted as (a_1(G)) or (\lambda_2), is defined as the second-smallest eigenvalue of the Laplacian matrix of a graph. This metric was introduced by Fiedler and serves as a fundamental spectral measure of connectivity [8]. The smallest Laplacian eigenvalue of any graph is zero, so the second-smallest eigenvalue is the first one that carries information about how well connected the graph is overall [9].

A key property of Algebraic Connectivity is that it is positive if and only if the graph is connected. Higher values indicate greater robustness, as they correspond to graphs that are more difficult to disconnect. The metric also relates directly to numerous graph properties; for instance, it provides bounds on the graph's diameter, vertex connectivity, and expansion properties [9] [8].

For d-dimensional generic frameworks, researchers have generalized the concept to generalized algebraic connectivity ((a_d(G))), which extends the applicability to problems in structural rigidity, sensor network localization, and formation control in multi-robot systems [9]. In one dimension, (a_1(G)) coincides with the standard algebraic connectivity, where generic rigidity and connectivity are equivalent [9].
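
The standard (one-dimensional) algebraic connectivity can be computed directly from the Laplacian spectrum. The sketch below assumes a small unweighted graph and uses a dense eigen-solver, which is only practical for modest network sizes; the function name is illustrative.

```python
import numpy as np


def algebraic_connectivity(n, edges):
    """Second-smallest eigenvalue of the unweighted Laplacian L = D - A."""
    L = np.zeros((n, n))
    for a, b in edges:
        L[a, a] += 1
        L[b, b] += 1
        L[a, b] -= 1
        L[b, a] -= 1
    # eigvalsh returns the eigenvalues of a symmetric matrix in ascending order.
    return np.linalg.eigvalsh(L)[1]


lam_path = algebraic_connectivity(3, [(0, 1), (1, 2)])
lam_k4 = algebraic_connectivity(
    4, [(0, 1), (0, 2), (0, 3), (1, 2), (1, 3), (2, 3)])
lam_split = algebraic_connectivity(4, [(0, 1), (2, 3)])  # two components
```

The three-node path gives 1, the complete graph on four nodes gives 4, and the disconnected graph gives 0 (up to floating-point error), consistent with the property that the value is positive only for connected graphs.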

Largest Connected Component (LCC)

The Largest Connected Component metric measures the relative size of the biggest connected subgraph remaining after node or edge removals. Unlike the previous spectral measures, LCC is a direct, intuitive structural measure that quantifies the proportion of nodes that remain mutually accessible after network damage [1] [10].

The LCC size, often denoted as (G_n(p)), where (p) is the proportion of removed nodes, forms the basis for the robustness index (R_n = \frac{1}{T} \sum_{p=0}^{(T-1)/T} G_n(p)), which averages the LCC size across all failure stages [1]. This metric is particularly valuable because it directly reflects the functional scale of the network's main body that maintains normal functionality after failures [1].

Recomputing the LCC after every removal can become costly for large networks under sequential attack, although each individual computation is linear in network size. The LCC curve nonetheless provides the most straightforward visualization of network disintegration. It serves as the reference benchmark for many other robustness metrics and has become the most widely employed metric in empirical robustness evaluation [1].

Table 1: Fundamental Properties of Network Robustness Metrics

| Property | Effective Graph Resistance | Algebraic Connectivity | Largest Connected Component |

|---|---|---|---|

| Theoretical Basis | Electrical circuit theory | Spectral graph theory | Graph connectivity |

| Mathematical Formulation | (R_{total} = \sum_{a<b} R_{ab}) | Second smallest eigenvalue of Laplacian matrix | Relative size of largest connected subgraph |

| Computational Complexity | (O(n^3)) due to pseudoinversion | (O(n^3)) for full eigen-decomposition | (O(n+m)) per failure scenario |

| Range of Values | ((0, \infty)) - lower is better | ([0, \infty)) - higher is better | ([0, 1]) - higher is better |

| Handles Disconnected Graphs | No (infinite between components) | No (zero for disconnected) | Yes |

Experimental Methodologies and Protocols

Standard Attack Scenarios and Evaluation Frameworks

Researchers typically evaluate network robustness metrics under standardized attack scenarios to enable meaningful comparisons. These scenarios systematically remove network components while tracking metric responses:

- Random Node Failure (RNF): Nodes are removed in uniformly random order, simulating random equipment failures or errors [1].

- Malicious Node Attack with Highest Degree Adaptive Attack (HDAA): Nodes are removed in descending order of degree, recalculating degrees after each removal to simulate intelligent attacks targeting critical nodes [1].

- Random Edge Failure (REF): Edges are removed randomly, simulating random connection failures [1].

- Malicious Edge Attack with Highest Edge Degree Adaptive Attack (HEDAA): Edges are removed targeting those with highest centrality or connectivity measures [1].

The robustness evaluation process typically involves incremental and irreversible attacks, where network components are removed sequentially while measuring the metrics at each step. For statistical reliability, multiple runs (typically 100-500) are performed for random failure scenarios to account for process variability [10].
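
The adaptive variant of the degree-based attack differs from a static ranking in that degrees are recomputed after every removal. A minimal sketch in plain Python follows; it assumes an undirected graph stored as a dictionary of neighbour sets, breaks degree ties by dictionary order, and uses hypothetical function names.

```python
from collections import deque


def lcc_size(adj):
    """Size of the largest connected component of `adj` (BFS)."""
    seen, best = set(), 0
    for start in adj:
        if start in seen:
            continue
        seen.add(start)
        queue, size = deque([start]), 0
        while queue:
            node = queue.popleft()
            size += 1
            for nb in adj[node]:
                if nb not in seen:
                    seen.add(nb)
                    queue.append(nb)
        best = max(best, size)
    return best


def hdaa_attack_curve(adj):
    """Relative LCC size at p = 0, 1/N, ..., (N-1)/N under adaptive degree attack."""
    adj = {v: set(nbs) for v, nbs in adj.items()}  # work on a copy
    n = len(adj)
    curve = [lcc_size(adj) / n]
    while len(adj) > 1:
        # Degrees reflect the current, already damaged network.
        target = max(adj, key=lambda v: len(adj[v]))
        for nb in adj.pop(target):
            adj[nb].discard(target)
        curve.append(lcc_size(adj) / n)
    return curve


# Toy path network 0 - 1 - 2 - 3 - 4.
path = {0: {1}, 1: {0, 2}, 2: {1, 3}, 3: {2, 4}, 4: {3}}
curve = hdaa_attack_curve(path)
robustness = sum(curve) / len(curve)
```

On the five-node path, the attack first removes node 1 and then node 3, whose degree is still 2 after the first removal, so the relative LCC size falls from 1.0 to 0.6 to 0.2. For random-failure scenarios the same curve would be averaged over many shuffled removal orders.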

Robustness Surface Framework

To address the challenge of combining multiple robustness metrics, researchers have developed the robustness surface framework, which employs Principal Component Analysis (PCA) to extract the most informative robustness metric for a given failure scenario [10]. The process involves:

- Defining a set of percentage failures (P = \{1\%, 2\%, \ldots, |P|\%\}) and a number of failure configurations (m)

- Computing an (m \times n) matrix (A_p) for each percentage failure (p), where (n) is the number of robustness metrics

- Calculating the covariance matrix (C_p) for each (A_p) and averaging them to obtain a unified covariance matrix (\overline{C})

- Computing eigenvectors (V) and eigenvalues (D) of (\overline{C}) and selecting the most relevant principal component (v)

- Normalizing (v) to obtain (\overline{v} = \frac{v}{t_0^T v}), where (t_0) is the vector of metric values for the network with no failures

- Computing the R*-value as (R^* = t_p^T \overline{v}) for each failure scenario, where (t_p) is the vector of metric values under that scenario [10]

This framework allows comparison of network robustness across different failure scenarios and addresses the challenges of metric dimensionality unification and weight assignment [10].
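
The steps listed above can be sketched with NumPy as follows. This is an illustrative reading of the procedure, not the reference implementation from [10]: it assumes that the "most relevant" principal component is the eigenvector with the largest eigenvalue, that metrics are already on comparable scales, and the function name and toy numbers are hypothetical.

```python
import numpy as np


def robustness_surface(metric_tables, t0):
    """Return R* values with shape (number of percentages, m).

    metric_tables: list of (m x n) arrays A_p, one per failure percentage,
                   with one row per failure configuration and one column per metric.
    t0: length-n vector of metric values on the intact network.
    """
    # Covariance of the n metrics for each percentage, then the average.
    c_bar = np.mean([np.cov(a, rowvar=False) for a in metric_tables], axis=0)
    eigenvalues, eigenvectors = np.linalg.eigh(c_bar)
    v = eigenvectors[:, np.argmax(eigenvalues)]  # leading principal component
    v_bar = v / (t0 @ v)                         # intact network maps to R* = 1
    return np.array([a @ v_bar for a in metric_tables])


# Toy data: n = 2 metrics, m = 3 failure configurations, two failure percentages.
t0 = np.array([1.0, 1.0])
A_1 = np.array([[0.9, 0.8], [0.8, 0.6], [0.7, 0.4]])
A_2 = np.array([[0.7, 0.5], [0.6, 0.3], [0.5, 0.1]])
r_star = robustness_surface([A_1, A_2], t0)
```

Dividing by t_0^T v also removes the sign ambiguity of the eigenvector, so the result does not depend on which orientation the eigen-solver returns. In the toy data the second metric varies twice as much as the first, so the normalized component is (1/3, 2/3) and each R* value is the corresponding weighted average of the two metrics.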

Diagram 1: Experimental workflow for network robustness evaluation showing the sequential process from network input through attack simulation to result analysis.

Comparative Performance Analysis

Metric Behavior Under Different Attack Scenarios

Extensive experimental studies have revealed distinctive behaviors for each robustness metric under various attack scenarios:

Effective Graph Resistance demonstrates superior sensitivity in early failure stages, making it particularly valuable for detecting initial network degradation. Studies show it correlates strongly with node and link connectivity, often converging to a distribution identical to the minimal nodal degree in random graphs [8] [7]. Its electrical analogy provides intuitive explanations for network robustness - networks with lower total effective resistance maintain connectivity through multiple alternative paths, making them more resilient to both random and targeted attacks [7].

Algebraic Connectivity exhibits a sharp phase transition behavior, suddenly dropping to zero when the network becomes disconnected. This binary characteristic makes it particularly useful for identifying the critical threshold of network disintegration [8]. However, its inability to distinguish between different disconnected states (all having zero algebraic connectivity) limits its utility in advanced failure stages. Research has shown that algebraic connectivity increases with improving node and link connectivity, justifying its role as a robustness measure [8].

Largest Connected Component provides the most intuitive visualization of network disintegration, typically following a characteristic S-curve with gradual decline under random attacks and abrupt collapse under targeted attacks [1]. The LCC-based robustness index (R_n = \frac{1}{T} \sum_{p=0}^{(T-1)/T} G_n(p)) provides a single scalar value that effectively captures the average performance across all failure stages, making it highly practical for network comparisons [1].

Computational Considerations and Scalability

Computational requirements vary significantly across the three metrics, impacting their practical application to large-scale networks:

Effective Graph Resistance faces the most severe computational challenges, requiring (O(n^3)) time for the pseudoinversion of the Laplacian matrix [7]. This cubic complexity limits direct application to networks with more than a few thousand nodes. Researchers have developed approximation techniques including combinatorial and algebraic connections to speed up gain computations, randomized sampling methods, and greedy heuristics to make the metric applicable to larger networks [7].

Algebraic Connectivity also requires (O(n^3)) operations for full eigen-decomposition, though specialized algorithms can compute only the second smallest eigenvalue more efficiently. For very large networks, approximation methods become necessary [8].

Largest Connected Component computation is relatively efficient, requiring (O(n+m)) time per failure scenario using breadth-first or depth-first search. However, when simulating multiple attack sequences and configurations, the computational burden can still become significant [1]. Recent approaches using convolutional neural networks with spatial pyramid pooling (SPP-net) have demonstrated promising results in predicting LCC sizes, potentially bypassing the need for extensive simulations [1].

Table 2: Experimental Performance Comparison Across Network Types

| Network Type | Effective Graph Resistance | Algebraic Connectivity | Largest Connected Component |

|---|---|---|---|

| Erdős-Rényi Random Graphs | Closely tracks minimal nodal degree; predicts connectivity accurately | Mean and variance can be estimated via minimum nodal degree approximation | Shows smooth decay under random attacks; abrupt collapse under targeted attacks |

| Scale-Free Networks | Highly sensitive to targeted attacks on hubs; reveals vulnerability | Rapid decrease when high-degree nodes are targeted | Exhibits robustness to random attacks but fragility to targeted attacks |

| Multilayer Networks | Captures inter-layer dependency robustness | Generalizes to inter-layer spectral properties | Effectively tracks cascading failures across layers |

| Regular Lattices | High resistance values indicating lower robustness | Low values reflecting structural rigidity | Gradual, predictable reduction under attacks |

| Real-World Infrastructure Networks | Identifies critical bottlenecks effectively | Provides early warning of connectivity loss | Most interpretable for practical assessment |

Research Reagents and Computational Tools

Essential Research Components

Network robustness research requires both conceptual components and computational implementations:

Graph Laplacian Matrix: Fundamental mathematical construct defined as (L = D - A), where (D) is the degree diagonal matrix and (A) is the adjacency matrix [6]. Serves as the foundation for both effective resistance and algebraic connectivity.

Moore-Penrose Pseudoinverse ((L^+)): Critical for computing effective graph resistance when the Laplacian is non-invertible [6]. Implemented via singular value decomposition or specialized combinatorial methods.

Eigenvalue Solvers: Computational algorithms for extracting specific eigenvalues (particularly the second smallest) of large sparse matrices without full decomposition [8].

Connected Component Algorithms: Efficient graph traversal methods (BFS/DFS) for tracking LCC size during attack simulations [1].

Attack Simulation Frameworks: Software environments for implementing RNF, HDAA, REF, and HEDAA scenarios with statistical analysis of results [1] [10].

Emerging Methodologies

Recent advances have introduced several innovative approaches to robustness evaluation:

Convolutional Neural Networks with SPP-net: Machine learning approach that treats adjacency matrices as images to predict attack curves, offering significant speed advantages once trained [1].

Robustness Surface (Ω) Framework: PCA-based methodology that unifies multiple metrics, addressing the dimensionality and weighting challenges of combining them [10].

Greedy Optimization Algorithms: Heuristic approaches for robustness improvement through optimal edge addition, using stochastic techniques to reduce computational complexity [7].

Higher-Order Network Models: Extended network representations incorporating simplex structures beyond pairwise interactions, requiring specialized robustness assessment techniques [11].

Diagram 2: Research toolkit for network robustness evaluation showing the relationship between mathematical foundations, computational tools, and theoretical frameworks.

The three robustness metrics—Effective Graph Resistance, Algebraic Connectivity, and Largest Connected Component—each provide valuable but distinct perspectives on network robustness. Effective Graph Resistance offers the most comprehensive theoretical foundation through its electrical analogy, capturing both the number and quality of alternative paths. Algebraic Connectivity serves as an excellent early warning indicator with its sharp transition at the critical disconnection point. The Largest Connected Component provides the most intuitive and practically relevant measure of functional network scale after damage.

Selection of appropriate metrics depends on research goals, network characteristics, and computational resources. For theoretical analysis of small to medium networks, Effective Graph Resistance provides unparalleled insights. For identifying critical thresholds, Algebraic Connectivity is optimal. For practical assessment of large-scale networks, particularly when computational efficiency is crucial, LCC-based measures remain the most viable option.

Table 3: Metric Selection Guidelines for Different Research Scenarios

| Research Scenario | Recommended Primary Metric | Supplementary Metrics | Rationale |

|---|---|---|---|

| Theoretical Analysis of Network Structure | Effective Graph Resistance | Algebraic Connectivity | Captures most comprehensive connectivity information |

| Large-Scale Network Practical Assessment | Largest Connected Component | Robustness index (R_n) | Computational efficiency and interpretability |

| Critical Threshold Identification | Algebraic Connectivity | LCC size | Sharp phase transition clearly identifies disintegration point |

| Robustness Optimization Algorithms | Effective Graph Resistance | Node/edge connectivity | Differentiable nature supports optimization approaches |

| Multilayer Network Analysis | All three metrics with adaptations | Multilayer extensions | Each captures different aspects of cross-layer robustness |

Emerging approaches that combine these metrics through unified frameworks like the robustness surface, or that leverage machine learning to predict metric behavior, represent promising directions for future research. As network complexity grows with higher-order interactions and multilayer structures, the development of more sophisticated robustness metrics that can efficiently handle these complexities while providing actionable insights will remain an active and critical research area.

In the field of network science, evaluating the robustness of various network architectures to perturbations is a fundamental task, particularly in domains like computational biology and drug discovery where networks model complex cellular interactions. Perturbations—changes to a network's structure—can be broadly categorized as either random failures or targeted malicious attacks. Random failures involve the stochastic removal of nodes or edges, simulating natural breakdowns or unbiased experimental noise. In contrast, targeted attacks deliberately remove the most critical nodes or edges (e.g., those with highest connectivity), mimicking coordinated adversarial action or the specific knockout of a key biological entity. Understanding how different network inference and reconstruction methods respond to these two perturbation types is critical for developing reliable models in biological research. This guide provides a structured comparison of these perturbation models, detailing their impact on network robustness and offering protocols for their experimental simulation.

Theoretical Foundations of Network Perturbations

Network robustness analysis typically models a system as a graph ( G = (V, E) ), where ( V ) represents nodes (e.g., genes, proteins) and ( E ) represents edges (e.g., interactions, regulatory relationships). Perturbations alter this structure by removing nodes or edges.

- Random Failures: This model removes nodes or edges uniformly at random. It simulates natural noise, experimental error, or unpredictable hardware failures. In biological contexts, it can represent random gene knockouts or unsystematic measurement inaccuracies in high-throughput experiments.

- Targeted Malicious Attacks: This model removes nodes or edges based on a specific strategy designed to maximize network disruption. Common strategies include targeting nodes with the highest degree (number of connections) or betweenness centrality (frequency of lying on shortest paths). In adversarial machine learning, the closest analogue is the data poisoning attack, in which malicious samples are injected into training data to corrupt the learned model [12].

The core difference lies in intent and execution: random failures are stochastic and unbiased, while targeted attacks are strategic and exploit the network's topological vulnerabilities. The cascading failure model, often studied in physical networks like power grids or underwater unmanned swarms, demonstrates how the removal of a critical node can overload and subsequently fail neighboring nodes, leading to a catastrophic collapse of network connectivity [13].

Quantitative Comparison of Robustness Under Perturbation

The performance of network inference methods varies significantly under different perturbation types and datasets. The following tables summarize quantitative findings from large-scale benchmarking efforts, which evaluated methods on both statistical and biologically-motivated metrics.

Table 1: Performance of Network Inference Methods on the K562 Cell Line Dataset Under Perturbation

| Method Category | Method Name | Precision (Biological) | Recall (Biological) | F1 Score (Biological) | Mean Wasserstein Distance | False Omission Rate (FOR) |

|---|---|---|---|---|---|---|

| Challenge (Interventional) | Mean Difference (Top 1k) | 0.241 | 0.219 | 0.229 | 0.081 | 0.781 |

| Challenge (Interventional) | Guanlab (Top 1k) | 0.238 | 0.233 | 0.235 | 0.074 | 0.792 |

| Observational | GRNBoost | 0.094 | 0.451 | 0.155 | 0.062 | 0.549 |

| Observational | NOTEARS (MLP) | 0.184 | 0.142 | 0.160 | 0.073 | 0.827 |

| Interventional | GIES | 0.166 | 0.147 | 0.156 | 0.070 | 0.831 |

| Interventional | DCDI-G | 0.175 | 0.152 | 0.163 | 0.071 | 0.823 |

Table 2: Performance of Network Inference Methods on the RPE1 Cell Line Dataset Under Perturbation

| Method Category | Method Name | Precision (Biological) | Recall (Biological) | F1 Score (Biological) | Mean Wasserstein Distance | False Omission Rate (FOR) |

|---|---|---|---|---|---|---|

| Challenge (Interventional) | Mean Difference (Top 1k) | 0.237 | 0.221 | 0.229 | 0.082 | 0.779 |

| Challenge (Interventional) | Guanlab (Top 1k) | 0.235 | 0.230 | 0.232 | 0.075 | 0.785 |

| Observational | GRNBoost | 0.091 | 0.448 | 0.151 | 0.061 | 0.551 |

| Observational | NOTEARS (MLP) | 0.180 | 0.139 | 0.157 | 0.072 | 0.833 |

| Interventional | GIES | 0.162 | 0.144 | 0.152 | 0.069 | 0.836 |

| Interventional | DCDI-G | 0.172 | 0.149 | 0.159 | 0.070 | 0.829 |

Key for Metrics:

- Precision/Recall/F1: Biology-driven evaluation approximating ground truth.

- Mean Wasserstein Distance: A statistical metric; higher values indicate predicted interactions correspond to stronger causal effects.

- False Omission Rate (FOR): A statistical metric; lower values are better, indicating fewer real causal interactions are missed by the model.
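The biology-driven metrics in the key above reduce to set comparisons between predicted edges and a reference edge set. The following is a minimal sketch under stated assumptions: the function names, the three-gene example, and the candidate edge universe are hypothetical, and the false omission rate here uses the generic definition (missed real edges divided by all unreported edges), whereas the benchmark in [14] is described as estimating it statistically rather than from a fixed graph.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    total = precision + recall
    return 2 * precision * recall / total if total else 0.0


def edge_metrics(predicted, reference, candidates):
    """Score a predicted edge set against a reference edge set.

    predicted, reference: sets of (source, target) pairs.
    candidates: every edge the method could have reported; assumed to
    contain both other sets.
    """
    true_pos = len(predicted & reference)
    precision = true_pos / len(predicted) if predicted else 0.0
    recall = true_pos / len(reference) if reference else 0.0
    unreported = candidates - predicted
    missed = len(unreported & reference)       # real edges left out
    false_omission = missed / len(unreported) if unreported else 0.0
    return precision, recall, f1_score(precision, recall), false_omission


# Hypothetical three-gene example: all ordered pairs are candidates.
genes = ["A", "B", "C"]
candidates = {(s, t) for s in genes for t in genes if s != t}
reference = {("A", "B"), ("B", "C")}
predicted = {("A", "B"), ("A", "C")}

precision, recall, f1, false_omission = edge_metrics(predicted, reference, candidates)
print(precision, recall, f1, false_omission)

# Consistency check on one reported row (Mean Difference, K562 table).
print(round(f1_score(0.241, 0.219), 3))
```

The last line recomputes F1 from the reported precision and recall of the Mean Difference row, which is a quick way to sanity-check tabulated values.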

The data reveals several critical insights. First, methods like GRNBoost achieve high recall but low precision, indicating they discover many true interactions but also include many false positives; this structure may be more resilient to random failures but vulnerable to targeted attacks on its numerous false edges. Second, the top-performing methods from the CausalBench challenge (e.g., Mean Difference, Guanlab) show a better balance, typically yielding higher F1 scores and Mean Wasserstein distances. This suggests that methods leveraging interventional data and designed for scalability are generally more robust to the inherent perturbations and noise in real-world biological data [14]. Finally, a key finding is that traditional interventional methods (e.g., GIES, DCDI-G) often fail to outperform their observational counterparts on real-world data, contrary to theoretical expectations, highlighting a significant performance gap under realistic perturbation scenarios [14].

Experimental Protocols for Perturbation Analysis

To systematically evaluate network robustness, researchers can employ the following detailed experimental protocols, drawing from established benchmarking suites and empirical studies.

Protocol 1: Benchmarking with Real-World Perturbation Data

This protocol leverages large-scale, real-world perturbation datasets to move beyond synthetic experiments, which often do not reflect real-world performance.

- Dataset Curation: Utilize openly available large-scale perturbation datasets. The CausalBench suite, for instance, uses single-cell RNA sequencing data from two cell lines (K562 and RPE1), comprising over 200,000 interventional data points from genetic perturbations (e.g., CRISPRi gene knockdowns) [14].

- Perturbation Integration: The dataset inherently contains both observational (control) and interventional (perturbed) data. The interventional data serves as a proxy for targeted perturbations on specific network nodes (genes).

- Method Evaluation:

  - Input: Train network inference methods on a mix of observational and interventional data.

  - Evaluation Metrics: Use a dual approach:

    - Biology-Driven Metrics: Compare inferred networks to biologically approximated ground truth to calculate precision, recall, and F1 score.

    - Statistical Causal Metrics: Compute the Mean Wasserstein Distance and False Omission Rate (FOR) to assess the strength and completeness of predicted causal interactions without relying on a fixed ground truth graph [14].

- Robustness Analysis: Compare the performance degradation of different methods (e.g., NOTEARS, GIES, GRNBoost, challenge methods) across these metrics. Methods that maintain higher performance are deemed more robust to the perturbations present in the real-world data.
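One plausible reading of the Mean Wasserstein Distance in this protocol is: for each predicted edge, compare the target gene's expression distribution in control cells with its distribution in cells where the source gene was perturbed, then average over edges. The sketch below follows that reading and is not the benchmark's own implementation; the data layout (`control`, `perturbed`) and all values are hypothetical.

```python
def wasserstein_1d(xs, ys):
    """1-Wasserstein distance between two empirical 1-D samples.

    Computed as the area between the two empirical CDFs.
    """
    xs, ys = sorted(xs), sorted(ys)
    points = sorted(set(xs) | set(ys))
    area, i, j = 0.0, 0, 0
    for left, right in zip(points, points[1:]):
        # Advance both CDFs to include every sample <= left.
        while i < len(xs) and xs[i] <= left:
            i += 1
        while j < len(ys) and ys[j] <= left:
            j += 1
        area += abs(i / len(xs) - j / len(ys)) * (right - left)
    return area


def mean_wasserstein(edges, control, perturbed):
    """Average shift of each target gene when its predicted regulator is perturbed."""
    shifts = [wasserstein_1d(control[target], perturbed[source][target])
              for source, target in edges]
    return sum(shifts) / len(shifts)


# Hypothetical expression values; perturbed["A"] holds cells where gene A was knocked down.
control = {"B": [5.0, 6.0, 7.0], "C": [2.0, 2.0, 2.0]}
perturbed = {"A": {"B": [1.0, 2.0, 3.0], "C": [2.0, 2.0, 2.0]}}

print(mean_wasserstein([("A", "B")], control, perturbed))  # B shifts strongly
print(mean_wasserstein([("A", "C")], control, perturbed))  # C does not move
```

A predicted edge whose target barely moves under perturbation contributes little to the mean, which is why higher values suggest that the predicted interactions correspond to stronger causal effects.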

Protocol 2: Simulating Random and Targeted Attacks

This protocol involves actively perturbing an inferred or known network structure to test its resilience.

- Network Inference: Begin by inferring a baseline network ( G_{\text{base}} ) from a dataset using a chosen method.

- Perturbation Simulation:

  - Random Failure: For a chosen fraction ( p ) of nodes/edges, remove them uniformly at random from ( G_{\text{base}} ) to create ( G_{\text{random}} ).

  - Targeted Attack: Calculate a node importance metric (e.g., degree centrality, betweenness centrality). Remove the top ( p ) fraction of nodes based on this metric from ( G_{\text{base}} ) to create ( G_{\text{targeted}} ) [13].

- Impact Assessment: Quantify the impact of perturbations using metrics like:

  - Global Efficiency: The average inverse shortest-path length over all node pairs, which measures the efficiency of information exchange across the network.

  - Largest Connected Component (LCC) Size: The proportion of nodes in the largest connected subgraph. A rapid drop in LCC size as ( p ) grows indicates low robustness.

  - Network Survivability: Under a multi-level connectivity scheme, analyze how dynamic topology, load, and recovery delays affect the network's ability to maintain core functions [13].

- Robustness Scoring: A method or architecture is considered robust if the performance drop (in terms of the chosen metrics) from ( G_{\text{base}} ) to ( G_{\text{random}} ) and ( G_{\text{targeted}} ) is minimal. Networks with heterogeneous, hub-dominated degree distributions are typically much more resilient to random failures than to targeted attacks.
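The perturbation and assessment steps of this protocol can be sketched in a few lines. This is a minimal illustration, assuming an undirected network stored as a dictionary of neighbour sets, node removal only, and degree as the importance metric; the function names and the hub-and-spoke toy graph are hypothetical.

```python
import random
from collections import deque


def lcc_fraction(adj, removed):
    """Largest connected component size as a fraction of the original node count."""
    seen, best = set(removed), 0          # removed nodes are never visited
    for start in adj:
        if start in seen:
            continue
        seen.add(start)
        queue, size = deque([start]), 0
        while queue:
            node = queue.popleft()
            size += 1
            for neighbour in adj[node]:
                if neighbour not in seen:
                    seen.add(neighbour)
                    queue.append(neighbour)
        best = max(best, size)
    return best / len(adj)


def random_failure(adj, p, rng):
    """Pick round(p * N) nodes uniformly at random."""
    return set(rng.sample(sorted(adj), round(p * len(adj))))


def targeted_attack(adj, p):
    """Pick the round(p * N) highest-degree nodes (ties broken by node id)."""
    ranked = sorted(adj, key=lambda n: (-len(adj[n]), n))
    return set(ranked[:round(p * len(adj))])


# Hypothetical G_base: one hub (node 0) connected to nine leaves.
g_base = {0: set(range(1, 10))}
g_base.update({leaf: {0} for leaf in range(1, 10)})

rng = random.Random(0)
after_random = lcc_fraction(g_base, random_failure(g_base, 0.1, rng))
after_targeted = lcc_fraction(g_base, targeted_attack(g_base, 0.1))
print("baseline:", lcc_fraction(g_base, set()))
print("random  :", after_random)
print("targeted:", after_targeted)
```

On this toy graph the targeted attack always removes the hub and isolates every leaf, while a random removal hits the hub only one time in ten. In practice the random case should be averaged over many seeds.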

Protocol 3: Adversarial Training for Enhanced Robustness

This protocol focuses on improving model robustness by incorporating perturbations during the training phase, a technique proven effective in deep learning.

- Perturbation Generation: During model training, generate adversarial examples. In image classification, this can be done via algorithms like the Fast Gradient Sign Method (FGSM) or Projected Gradient Descent (PGD) [15]. For network inference, this could involve corrupting training data with noise or biased samples.

- Robust Training: Integrate these adversarial examples or noisy data into the training loop. This is the core of adversarial training, which minimizes the worst-case loss within a perturbation region, forcing the model to learn more robust features [12] [15].

- Validation: Evaluate the adversarially trained model on a held-out test set that contains both clean data and data subjected to various perturbation types (e.g., random noise, targeted adversarial attacks).

- Analysis: Empirical studies show that adversarial training generally improves robustness against the attacks it was trained on. However, it can lead to overfitting to a specific attack algorithm and may not generalize perfectly to novel attack strategies [15]. The trade-off between standard accuracy and adversarial robustness must be carefully managed.
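To make the perturbation-generation and robust-training steps concrete, the sketch below applies FGSM to a logistic-regression classifier, where the input gradient of the cross-entropy loss has a closed form. It is an illustrative toy rather than a network inference model; the weights, inputs, and the `adversarial_train` helper are hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(x, w, b):
    """Probability of class 1 under a logistic-regression model."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def fgsm(x, y, w, b, eps):
    """One FGSM step: move each feature by eps in the direction that raises the loss.

    For cross-entropy loss the input gradient is (predict(x) - y) * w.
    """
    error = predict(x, w, b) - y
    grad = [error * wi for wi in w]
    return [xi + eps * ((g > 0) - (g < 0)) for xi, g in zip(x, grad)]


def adversarial_train(data, eps, epochs=100, lr=0.1):
    """SGD on each clean example plus an FGSM copy crafted against the current model."""
    w, b = [0.0] * len(data[0][0]), 0.0
    for _ in range(epochs):
        for x, y in data:
            for sample in (x, fgsm(x, y, w, b, eps)):
                error = predict(sample, w, b) - y
                w = [wi - lr * error * si for wi, si in zip(w, sample)]
                b -= lr * error
    return w, b


# Hypothetical fixed model and input, to show the attack flipping a prediction.
w, b = [2.0, -1.0], 0.0
x, y = [1.0, 1.0], 1
x_adv = fgsm(x, y, w, b, eps=0.5)
print(predict(x, w, b), predict(x_adv, w, b))
```

The single FGSM step moves the input across the decision boundary of the fixed model. PGD would repeat smaller steps and project back into the allowed perturbation region after each one.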

Experimental Workflow for Network Robustness Evaluation

Building a robust network analysis pipeline requires a suite of computational tools and datasets. The following table details key resources for conducting perturbation research.

Table 3: Essential Research Reagents and Resources for Perturbation Analysis

| Resource Name | Type | Primary Function in Research | Relevance to Perturbation Models |

|---|---|---|---|

| CausalBench Suite | Software & Dataset | Provides a benchmark suite with real-world single-cell perturbation data and biologically-motivated metrics for evaluating network inference methods. | Serves as a standard testbed for assessing method robustness to real biological perturbations [14]. |

| PC Algorithm | Software Algorithm | A constraint-based causal discovery method used for inferring network structures from observational data. | A baseline observational method to compare against interventional methods under perturbation [14]. |

| GIES Algorithm | Software Algorithm | An extension of GES for causal discovery from a mix of observational and interventional data. | Tests the hypothesis that interventional data should improve robustness to targeted perturbations [14]. |

| NOTEARS | Software Algorithm | A continuous optimization-based method for learning the structure of directed acyclic graphs (DAGs) from data. | Represents a modern differentiable approach whose robustness can be benchmarked [14]. |

| Adversarial Training Library (e.g., ART, TorchAttacks) | Software Library | Provides implementations of common adversarial attack algorithms (FGSM, PGD) and adversarial training loops. | Used to enhance model robustness by training on perturbed data, defending against targeted attacks [12] [15]. |

| Single-Cell Perturbation Data (e.g., from CRISPRi screens) | Dataset | Large-scale datasets measuring gene expression under genetic perturbations, such as those from the CausalBench suite. | Provides the foundational "wet-lab" data containing real node (gene) perturbations for realistic benchmarking [14]. |

| Critical ε Value Analysis | Analysis Method | A technique from formal verification to find the maximum perturbation magnitude an input can withstand before misclassification. | Quantifies the intrinsic robustness of a network model to input perturbations [16]. |

Toolkit for Network Robustness Research

The comparative analysis between random failures and targeted malicious attacks reveals a fundamental truth: network architectures and inference methods are disproportionately vulnerable to strategic attacks on their critical components. The experimental data consistently shows that while methods like GRNBoost offer high recall, their low precision indicates a structure potentially riddled with false edges vulnerable to exploitation. In contrast, modern, scalable methods developed for interventional data, such as those emerging from the CausalBench challenge, demonstrate a more balanced and robust performance profile. For researchers in drug development, selecting a network inference method must involve a rigorous evaluation of its robustness to both random noise, which is inevitable in biological experiments, and targeted perturbations, which model the specific knockout of a disease-relevant gene or pathway. Incorporating adversarial training and robustness verification techniques from broader AI security research presents a promising path toward building more reliable and trustworthy biological network models, ultimately accelerating the hypothesis generation process in early-stage drug discovery.

The robustness of complex networks, defined as their ability to maintain connectivity and functionality when nodes or edges fail, represents a cornerstone of network science research. Within this field, scale-free networks have garnered significant attention due to their unique structural properties and implications for real-world systems. Characterized by power-law degree distributions where a few highly connected hubs coexist with many poorly connected nodes, scale-free architectures are frequently observed across biological, technological, and social domains [17] [18]. The foundational work by Albert, Jeong, and Barabási in 2000 first established the "robust yet fragile" nature of these networks: while remarkably resilient to random failures, they exhibit pronounced vulnerability to targeted attacks on hub nodes [17] [19]. This paradoxical behavior has profound implications for designing and protecting critical infrastructure, from biological networks in drug development to technological systems supporting pharmaceutical research. Understanding both the strengths and limitations of scale-free robustness provides essential insights for evaluating network architectures against perturbations, ultimately informing more resilient designs for complex systems in scientific and industrial applications.

Foundational Principles of Scale-Free Network Robustness

The degree distribution serves as the primary differentiator of scale-free networks from other architectural types. Unlike random or exponential networks where node degrees cluster around a characteristic value, scale-free networks follow a power-law distribution ( P(k) \sim k^{-\lambda} ), where the probability of a node having degree ( k ) decreases polynomially as ( k ) increases [17] [18]. This fundamental structural property gives rise to two complementary robustness phenomena that define scale-free network behavior.

The robustness to random failures emerges statistically from the predominance of low-degree nodes. Since the vast majority of nodes possess few connections, the random removal of nodes (or edges) most likely eliminates these structurally unimportant components, leaving the overall network connectivity largely unaffected [19]. The connected hubs continue to maintain the network's giant component, preserving global connectivity even under substantial random node removal. This property makes scale-free networks naturally resilient to undirected perturbations or random component failures.

Conversely, the fragility to targeted attacks stems directly from the disproportionate importance of highly connected hubs. These rare but critical nodes act as central connectors maintaining network integrity. When attackers possess perfect information about network topology and deliberately remove nodes in decreasing degree order, the elimination of just a small fraction of hubs can catastrophically fragment the network [19]. This "Achilles' heel" effect demonstrates how strategic attacks exploiting the very heterogeneity that provides random failure robustness can induce catastrophic failures, creating a fundamental trade-off in network security design.

Table 1: Key Properties of Scale-Free Networks and Their Impact on Robustness

| Network Property | Structural Manifestation | Impact on Random Failure Robustness | Impact on Targeted Attack Robustness |

|---|---|---|---|

| Power-law degree distribution | Few hubs, many low-degree nodes | High | Low |

| Degree heterogeneity | High variance in node connections | Preferential failure of unimportant nodes | Critical vulnerability of key hubs |

| Small-world structure | Short average path lengths | Maintained despite random removal | Rapid collapse when hubs are targeted |

| Hierarchical organization | Modular structure with connector hubs | Localized damage containment | Critical dependency on inter-module connectors |

Quantitative Framework: Measuring and Modeling Network Robustness

The mathematical foundation for analyzing network robustness relies heavily on generating functions and percolation theory. Generating functions provide a powerful mathematical framework for representing probability distributions of node degrees and analyzing their combinatorial properties. For a degree distribution ( p_k ), the generating function is defined as ( G_0(x) = \sum_{k=0}^{\infty} p_k x^k ), which enables the calculation of key network metrics through differentiation and functional composition [17]. This approach allows researchers to compute the mean component size, giant component existence, and other critical robustness indicators without exhaustive simulation.
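As a small illustration of how differentiation of the generating function yields network metrics, the sketch below evaluates the degree generating function and its first two derivatives for a finite degree distribution. It assumes a configuration-model style random graph, uses the standard criterion that a giant component exists when the excess-degree generating function has slope greater than 1 at x = 1, and all function names and example distributions are hypothetical.

```python
def g0(p, x):
    """G0(x) = sum_k p_k x^k for a degree distribution given as {k: p_k}."""
    return sum(pk * x ** k for k, pk in p.items())


def g0_prime(p, x):
    """First derivative G0'(x); G0'(1) is the mean degree <k>."""
    return sum(k * pk * x ** (k - 1) for k, pk in p.items() if k >= 1)


def g0_second(p, x):
    """Second derivative G0''(x); G0''(1) equals <k^2> - <k>."""
    return sum(k * (k - 1) * pk * x ** (k - 2) for k, pk in p.items() if k >= 2)


def has_giant_component(p):
    """Giant component criterion G1'(1) = G0''(1) / G0'(1) > 1."""
    return g0_second(p, 1.0) > g0_prime(p, 1.0)


# Hypothetical distributions: half the nodes have degree 1 and half degree 3,
# versus a network where every node has exactly one neighbour.
mixed = {1: 0.5, 3: 0.5}
pairs = {1: 1.0}

print(g0(mixed, 1.0))            # normalisation: the probabilities sum to 1
print(g0_prime(mixed, 1.0))      # mean degree
print(has_giant_component(mixed), has_giant_component(pairs))
```

The second distribution describes isolated pairs of nodes, so it cannot contain a giant component, and the criterion correctly rejects it.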

The robustness metric ( R ) quantitatively captures a network's resilience to targeted attacks by measuring the preserved connectivity during sequential hub removal. Formally, ( R = \frac{1}{N} \sum_{q=1}^{N} s(q) ), where ( N ) is the total number of nodes and ( s(q) ) represents the fraction of nodes in the largest connected component after removing ( q ) nodes in decreasing degree order [20]. This metric ranges from ( 1/N ) (extremely fragile, as in a star graph) to 0.5 (highly robust, the limit approached by a fully connected graph). The same sum can be evaluated for a random removal order, and scale-free networks typically score significantly higher under random failure than under targeted attack.
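The definition of ( R ) translates directly into code. The sketch below is a minimal version that ranks nodes once by their initial degree; a variant that recomputes degrees after every removal is equally common and would give different values. The graph representation and both five-node examples are hypothetical.

```python
def lcc_size(adj, removed):
    """Number of nodes in the largest connected component once `removed` is deleted."""
    seen, best = set(removed), 0
    for start in adj:
        if start in seen:
            continue
        seen.add(start)
        stack, size = [start], 0
        while stack:
            node = stack.pop()
            size += 1
            for neighbour in adj[node]:
                if neighbour not in seen:
                    seen.add(neighbour)
                    stack.append(neighbour)
        best = max(best, size)
    return best


def robustness_r(adj):
    """R = (1/N) * sum_{q=1..N} s(q), removing nodes in decreasing initial degree."""
    n = len(adj)
    order = sorted(adj, key=lambda v: (-len(adj[v]), v))
    removed, total = set(), 0.0
    for node in order:
        removed.add(node)
        total += lcc_size(adj, removed) / n    # s(q) after q removals
    return total / n


# Hypothetical five-node graphs: a star (one hub) and a complete graph.
star = {0: {1, 2, 3, 4}, 1: {0}, 2: {0}, 3: {0}, 4: {0}}
complete = {v: {u for u in range(5) if u != v} for v in range(5)}

print(robustness_r(star), robustness_r(complete))
```

For these two graphs the sum works out to 4/25 for the star and 2/5 for the complete graph, matching the fragile and robust ends of the range for small ( N ).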

Percolation theory provides the theoretical foundation for understanding network fragmentation thresholds. The critical removal fraction ( f_c ) marks the phase transition point where the giant component disintegrates, with scale-free networks exhibiting notably different ( f_c ) values for random versus targeted removal scenarios [19]. Analytical approaches building on the generating function formalism allow researchers to predict these critical thresholds for arbitrary degree distributions, creating a mathematical toolkit for robustness assessment without resource-intensive computational simulations.
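For random node removal in an uncorrelated random network, the standard percolation result expresses the threshold through the first two moments of the degree distribution: ( f_c = 1 - 1/(\kappa - 1) ) with ( \kappa = \langle k^2 \rangle / \langle k \rangle ). The sketch below evaluates this formula for two hypothetical degree sequences; it is an analytical estimate under that assumption, not a substitute for simulation on a specific empirical network.

```python
def critical_fraction_random(degrees):
    """f_c = 1 - 1 / (kappa - 1), with kappa = <k^2> / <k>.

    Assumes an uncorrelated random network with the given degree sequence.
    Returns 0.0 when kappa <= 2, i.e. when no giant component exists to begin with.
    """
    n = len(degrees)
    mean_k = sum(degrees) / n
    mean_k2 = sum(k * k for k in degrees) / n
    kappa = mean_k2 / mean_k
    return 1.0 - 1.0 / (kappa - 1.0) if kappa > 2.0 else 0.0


# Hypothetical degree sequences with similar mean degree.
homogeneous = [3] * 100                  # every node has degree 3
heterogeneous = [1] * 90 + [20] * 10     # a few hubs, many leaves

print(critical_fraction_random(homogeneous))
print(critical_fraction_random(heterogeneous))
```

Although both sequences have a mean degree close to 3, the hub-dominated one has a much larger second moment and therefore a much higher random-failure threshold, which is the quantitative core of the robustness to random failures described here.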

Experimental Methodologies for Robustness Assessment

Standardized Attack Protocols

Researchers employ standardized experimental protocols to quantitatively evaluate network robustness, with distinct methodologies for different attack scenarios:

Targeted Node Attack Protocol:

1. Calculate and rank all nodes by a centrality measure (typically degree or betweenness)

2. Remove the highest-ranked node and all its incident edges

3. Recalculate the size of the largest connected component (LCC)

4. Recompute node centralities in the resulting network (for recalculated strategies)

5. Repeat steps 2-4 until no nodes remain

6. Record the LCC size after each removal to generate robustness curves [21] [20]

Random Failure Protocol:

- Randomly select a node with uniform probability

- Remove the selected node and all its incident edges

- Record the LCC size after removal

- Repeat until no nodes remain, averaging results over multiple trials to account for stochasticity
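Both protocols above produce a robustness curve: the LCC size after each successive removal. The sketch below implements the recalculated-degree variant of the targeted protocol and the trial-averaged random protocol. It assumes degree as the centrality measure and an undirected graph stored as neighbour sets; the function names and the star graph are hypothetical.

```python
import random


def lcc_size(adj, alive):
    """Size of the largest connected component among the surviving nodes."""
    seen, best = set(), 0
    for start in alive:
        if start in seen:
            continue
        seen.add(start)
        stack, size = [start], 0
        while stack:
            node = stack.pop()
            size += 1
            for neighbour in adj[node]:
                if neighbour in alive and neighbour not in seen:
                    seen.add(neighbour)
                    stack.append(neighbour)
        best = max(best, size)
    return best


def targeted_curve(adj):
    """LCC size after each removal, always deleting the current highest-degree node."""
    alive, curve = set(adj), []
    while alive:
        # Recalculated strategy: degree is counted among surviving nodes only.
        victim = max(sorted(alive), key=lambda n: sum(v in alive for v in adj[n]))
        alive.remove(victim)
        curve.append(lcc_size(adj, alive))
    return curve


def random_curve(adj, trials, rng):
    """LCC size after each removal, averaged over `trials` random removal orders."""
    sums = [0.0] * len(adj)
    for _ in range(trials):
        order = sorted(adj)
        rng.shuffle(order)
        alive = set(adj)
        for step, victim in enumerate(order):
            alive.remove(victim)
            sums[step] += lcc_size(adj, alive)
    return [total / trials for total in sums]


# Hypothetical five-node star: node 0 is the hub.
star = {0: {1, 2, 3, 4}, 1: {0}, 2: {0}, 3: {0}, 4: {0}}
print(targeted_curve(star))
print(random_curve(star, trials=200, rng=random.Random(0)))
```

Plotting both curves against the fraction of removed nodes gives the usual visual comparison; the targeted curve for the star collapses after a single removal, while the averaged random curve decays gradually.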

Information Disturbance Attack Model: This sophisticated approach introduces imperfect attack information by adding noise to node degree data. The displayed degree ( \tilde{d}_i ) follows a uniform distribution ( U(a, b) ) where ( a = d_i \alpha_i + m(1-\alpha_i) ) and ( b = d_i \alpha_i + M(1-\alpha_i) ), with ( \alpha_i \in [0,1] ) representing the attack information perfection parameter [19]. This model creates a continuum between perfect information (( \alpha = 1 ), pure targeted attack) and no information (( \alpha = 0 ), effectively random failure).
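The displayed-degree model can be sketched as follows. Two simplifying assumptions are made and should be checked against [19]: a single ( \alpha ) is shared by all nodes, and ( m ) and ( M ) are taken to be the minimum and maximum true degree in the network. The function names and the three-node degree table are hypothetical.

```python
import random


def displayed_degrees(degrees, alpha, rng):
    """Draw each node's displayed degree from U(a, b) as defined in the text."""
    # Assumption: m and M are the smallest and largest true degree.
    m, big_m = min(degrees.values()), max(degrees.values())
    shown = {}
    for node, d in degrees.items():
        a = d * alpha + m * (1 - alpha)
        b = d * alpha + big_m * (1 - alpha)
        shown[node] = rng.uniform(a, b)
    return shown


def attack_order(degrees, alpha, rng):
    """Order in which an attacker who only sees displayed degrees removes nodes."""
    shown = displayed_degrees(degrees, alpha, rng)
    return sorted(shown, key=lambda node: -shown[node])


# Hypothetical true degrees.
degrees = {"hub": 8, "mid": 4, "leaf": 1}
rng = random.Random(0)

print(attack_order(degrees, 1.0, rng))   # perfect information: true degree order
print(attack_order(degrees, 0.0, rng))   # no information: order is arbitrary
```

With ( \alpha = 1 ) the interval collapses to the true degree, so the attacker recovers the exact degree ranking. With ( \alpha = 0 ) every node draws from the same interval and the ranking carries no information about the topology.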

Experimental Workflow for Network Robustness Assessment

[Figure: Experimental workflow for assessing network robustness under different attack scenarios]

Comparative Experimental Data and Analysis

Robustness Under Different Attack Scenarios

Empirical studies consistently demonstrate the "robust yet fragile" dichotomy in scale-free networks. Under random failure conditions, scale-free networks maintain connectivity until exceptionally high node removal fractions, while targeted attacks trigger rapid disintegration with minimal hub removal.

Table 2: Comparative Robustness Metrics Across Network Topologies

| Network Type | Power-law Exponent (λ) | Critical Removal Fraction (f_c) Random | Critical Removal Fraction (f_c) Targeted | Robustness Metric (R) |

|---|---|---|---|---|

| Scale-free (biological) | 2.1 - 2.3 | 0.80 - 0.92 | 0.18 - 0.26 | 0.24 - 0.31 |

| Scale-free (technological) | 2.2 - 2.5 | 0.75 - 0.88 | 0.15 - 0.24 | 0.21 - 0.28 |

| Random network | Exponential | 0.65 - 0.75 | 0.60 - 0.72 | 0.28 - 0.35 |

| Small-world | Exponential | 0.70 - 0.82 | 0.55 - 0.68 | 0.30 - 0.38 |

The critical removal fraction (( f_c )) represents the proportion of nodes that must be removed to dismantle the giant component [19]. The dramatic disparity between random and targeted ( f_c ) values in scale-free networks highlights their unique vulnerability profile. For example, when the minimum degree ( m = 2 ), reducing the attack information perfection parameter from ( α = 1 ) (perfect information) to ( α = 0.8 ) (moderately disturbed information) increases ( f_c ) from 23% to 63%, demonstrating how information quality fundamentally alters robustness [19].

The Impact of Modularity on Robustness

Modular structure significantly influences scale-free network robustness, particularly under targeted attacks. Networks with high modularity (distinct community structure) exhibit different failure dynamics compared to non-modular scale-free networks:

Table 3: Modularity Effects on Scale-Free Network Robustness

| Modularity Level | Percolation Transition Type | Efficacy of Degree-Based Attack | Efficacy of Betweenness Attack | Critical Modules |

|---|---|---|---|---|

| Non-modular/Low | 2nd order (continuous) | High | Moderate | N/A |

| Medium Modularity | Mixed | High | High | Emerging |

| High Modularity | 1st order (abrupt) | Moderate | Very High | Critical |

Research indicates that in highly modular scale-free networks, betweenness-based attacks become more effective than degree-based attacks at fragmenting the network [21]. This occurs because betweenness centrality identifies connector nodes that link different modules, whose removal causes abrupt network fragmentation. Additionally, highly modular networks exhibit first-order percolation transitions with sudden collapse, unlike the continuous degradation observed in non-modular networks [21]. These findings demonstrate how organizational principles beyond degree distribution significantly impact robustness characteristics.

Enhancement Strategies for Scale-Free Robustness

Information Disturbance Approach

The information disturbance strategy enhances robustness by deliberately reducing the quality of topological information available to attackers. By introducing uncertainty in node degree information through the parameter ( α ), this approach effectively converts targeted attacks toward random failures, dramatically improving robustness [19]. Counterintuitively, optimal disturbance strategies preferentially target "poor nodes" (low-degree) rather than "rich nodes" (hubs), as disturbing the attack information for low-degree nodes provides greater overall protection [19]. This approach enhances robustness without altering the actual network topology, making it particularly valuable for protecting existing infrastructure.

Intelligent Rewiring Mechanisms

Intelligent rewiring algorithms proactively modify network topology to enhance robustness while preserving the scale-free degree distribution. The INTR (Intelligent Rewiring) mechanism specifically optimizes connections between high and low-degree nodes to reduce hub vulnerability [20]. This approach demonstrates performance superior to simulated annealing and ROSE algorithms, improving robustness metrics by 17.8% and 10.7% respectively while maintaining the original degree distribution [20]. The mechanism employs closeness centrality for efficient node importance identification, balancing optimization effectiveness with computational feasibility for large-scale networks.

Comparison of Enhancement Strategies

Table 4: Performance Comparison of Robustness Enhancement Strategies

| Enhancement Strategy | Robustness Improvement | Topology Preservation | Computational Complexity | Key Mechanism |

|---|---|---|---|---|

| Information Disturbance | High (f_c: 23%→63%) | Complete | Low | Attack information degradation |

| Intelligent Rewiring (INTR) | High (R: +10.7-17.8%) | Degree distribution only | Medium | Strategic edge rewiring |

| Simulated Annealing | Moderate | Degree distribution only | High | Probabilistic optimization |

| Multiple Population GA | High | Degree distribution only | Very High | Evolutionary optimization |

Caveats and Limitations of Scale-Free Robustness

The Rarity of True Scale-Free Networks

Recent rigorous statistical analyses challenge the presumed ubiquity of scale-free networks across real-world systems. Comprehensive examination of nearly 1000 networks across social, biological, technological, transportation, and information domains revealed that strongly scale-free structure is empirically rare, with most networks better described by log-normal distributions [18]. Social networks appear at best weakly scale-free, while only a handful of technological and biological networks display strong scale-free properties [18]. This distributional diversity highlights limitations in generalizing the "robust-yet-fragile" principle across all complex networks without empirical verification.

Finite-Size Effects and Alternative Metrics

Finite-size effects may obscure underlying scale-free topology in empirical networks, as real systems necessarily contain limited nodes [22]. Finite-size scaling analysis suggests that many networks rejected as non-scale-free under strict statistical tests may actually exhibit underlying scale invariance clouded by sampling limitations [22]. Additionally, the degree-degree distance ( η ) has been proposed as an alternative scale-freeness indicator that may better capture underlying scale-free properties than traditional degree distributions [23]. These methodological considerations highlight ongoing refinements in how scale-free properties are identified and characterized.

Context-Dependent Robustness

Network robustness depends on structural features beyond degree distribution, including clustering coefficients, degree correlations, and spatial constraints. For spatial scale-free networks where connection probability decreases with distance according to a rate ( δd ), robustness requires ( τ < 2 + 1/δ ) for the power-law exponent ( τ ) [24]. This demonstrates how robustness criteria become more complex in spatially-embedded networks, with topological and geometric properties jointly determining resilience. Similarly, higher clustering coefficients generally reduce robustness to targeted attacks, suggesting structural trade-offs in network design [21].

The Scientist's Toolkit: Research Reagent Solutions

Table 5: Essential Methodological Tools for Network Robustness Research

| Research Tool | Function | Application Context | Key Considerations |

|---|---|---|---|

| Generating Functions | Mathematical representation of degree distributions | Analytical calculation of robustness metrics | Enables exact solutions for configuration model networks |

| Percolation Theory Framework | Models network fragmentation processes | Determining critical removal thresholds | Provides theoretical foundation for phase transitions |

| Finite-Size Scaling Analysis | Accounts for limited network size effects | Testing scale-free hypothesis in empirical data | Distinguishes true scaling from finite-sample artifacts |

| INTR Algorithm | Intelligent rewiring for robustness enhancement | Optimizing existing network topologies | Preserves degree distribution while enhancing robustness |

| Information Disturbance Model | Introduces attack information imperfection | Simulating realistic attack scenarios | Converts targeted attacks toward random failures |

| Modularity Detection Algorithms | Identify community structure | Analyzing robustness of modular networks | Reveals organizational principles beyond degree distribution |

The "robust-yet-fragile" paradigm of scale-free networks continues to provide fundamental insights into network resilience, though with important caveats and limitations. Foundational studies have established the mathematical principles underlying this paradoxical behavior, while contemporary research has refined our understanding of its boundary conditions and practical implications. For researchers and drug development professionals, these lessons highlight both the potential benefits and risks of scale-free architectures in biological and technological systems. The experimental methodologies and enhancement strategies reviewed here offer practical approaches for assessing and improving network robustness in real-world applications. As statistical analyses reveal the empirical rarity of strongly scale-free networks and methodological refinements address finite-size effects, the field continues to evolve toward more nuanced understanding of network robustness across diverse architectural types. This progression enables more informed design and protection of critical networks in pharmaceutical research, healthcare systems, and biological discovery pipelines.

In computational biology, the concept of robustness—a system's ability to maintain function despite perturbations—is a fundamental property of both biological and algorithmic systems. Protein-protein interaction (PPI) networks and drug-target interaction (DTI) graphs form the backbone of modern drug discovery, yet their predictive utility depends critically on their resilience to various forms of disturbance. Biological networks inherently exhibit distributed robustness, where functionality is preserved through alternative pathways when individual components fail [25]. Similarly, computational models must demonstrate stability against distribution shifts, including noisy data, adversarial attacks, and natural biological variation [26] [27]. This review systematically evaluates the robustness of different network architectures to perturbation, providing researchers with comparative performance data and methodological insights to guide tool selection for drug development pipelines.

The rise of biomedical foundation models creates new hurdles in testing and authorization, given their broad capabilities and susceptibility to complex distribution shifts [26]. Current evaluations reveal significant gaps in robustness assessment, with approximately 31.4% of biomedical foundation models containing no robustness evaluations at all [26] [27]. This underscores the critical need for standardized robustness testing frameworks tailored to biomedical applications where model failures can have serious consequences.

Comparative Analysis of Network Architectures and Their Robustness

Performance Metrics Across Network Types

Table 1: Quantitative performance comparison of network architectures under perturbation

| Network Architecture | Primary Application | Performance Metric | Performance (Unperturbed) | Performance (Perturbed) | Robustness Retention |
|---|---|---|---|---|---|
| GraphDTI [28] | Drug-target prediction | AUC | 0.996 (validation) | 0.939 (unseen data) | 94.3% |
| Multi-Objective EA with FS-PTO [29] | Protein complex detection | F1-Score | 0.82 (original PPI) | 0.76 (20% noise) | 92.7% |
| GO-Informed Mutation [29] | Protein complex detection | F1-Score | 0.85 (original PPI) | 0.81 (20% noise) | 95.3% |
| Retrieval-Augmented LLMs [30] | Biomedical NLP tasks | Accuracy | Varies by task | Significant degradation under counterfactuals | Limited |
| Standard LLMs [30] | Biomedical NLP tasks | Accuracy | Varies by task | Severe degradation | Poor |
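
The Robustness Retention column in Table 1 appears to be the perturbed score expressed as a percentage of the unperturbed score. That reading is an inference from the reported numbers, not a definition stated in the cited sources. A minimal sketch of the calculation, using a hypothetical helper name `robustness_retention`:

```python
def robustness_retention(unperturbed: float, perturbed: float) -> float:
    """Return perturbed performance as a percentage of unperturbed performance.

    Assumption: retention = 100 * perturbed / unperturbed, inferred from Table 1.
    """
    if unperturbed <= 0:
        raise ValueError("unperturbed score must be positive")
    return 100.0 * perturbed / unperturbed


# (unperturbed, perturbed) scores copied from the quantitative rows of Table 1.
table_rows = {
    "GraphDTI": (0.996, 0.939),
    "Multi-Objective EA with FS-PTO": (0.82, 0.76),
    "GO-Informed Mutation": (0.85, 0.81),
}

retention = {
    name: round(robustness_retention(unperturbed, perturbed), 1)
    for name, (unperturbed, perturbed) in table_rows.items()
}

for name, value in retention.items():
    print(f"{name}: {value}%")
```

Under this assumption the computed values match the table (94.3%, 92.7%, 95.3%). Note that the GraphDTI row compares validation data with unseen data, whereas the two evolutionary-algorithm rows compare an original network with a 20% noise variant, so the percentages are not directly comparable across rows.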

Robustness Characteristics by Network Type

Table 2: Qualitative robustness characteristics across network architectures

| Network Type | Strengths | Vulnerabilities | Optimal Application Context |
|---|---|---|---|
| PPI Networks (Biological) [25] | Distributed robustness via redundant pathways; Hub-based architecture provides stability | Central-lethality: Hub deletion causes systemic failure; Missing/spurious interactions [29] | Cellular signaling analysis; Target identification |
| Graph Neural Networks (e.g., GraphDTI) [28] | Integrates heterogeneous data; Generalizes to unseen data with high AUC | Limited testing against adversarial attacks; Dependency on data quality | Drug-target interaction prediction; Polypharmacology studies |
| Evolutionary Algorithms (e.g., MOEA with FS-PTO) [29] | Resilient to noisy edges in PPI networks; Identifies sparse functional modules | Computational intensity; Limited scalability to very large networks | Protein complex detection; Functional module identification |
| Retrieval-Augmented LLMs [30] | Reduces hallucinations in biomedical NLP; Access to external knowledge | Struggles with counterfactual scenarios; Limited self-awareness | Biomedical literature analysis; Question-answering systems |

Experimental Protocols for Robustness Assessment

Robustness Testing Framework for Biomedical Foundation Models

Effective robustness evaluation requires a pragmatic framework addressing two central aspects: (1) the degradation mechanism behind a distribution shift, and (2) the task performance metric requiring protection against the shift [26] [27]. The specification should break robustness evaluation down into operationalizable units that can be converted into quantitative tests with guarantees. The subsections below describe the main components of this framework.

Knowledge Integrity Testing

For knowledge-based models like biomedical LLMs, testing should focus on knowledge integrity checks using realistic transforms rather than random perturbations [26] [27]. For text inputs, prioritize typos and distracting domain-specific information involving biomedical entities. For image inputs, prioritize common imaging and scanner artifacts, and alterations in organ morphology and orientation [26]. Experimental protocols should include:

- Biomedical entity substitution: Replace specific drug, protein, or disease names with semantically similar alternatives to test contextual understanding [26] [27]

- Scientific finding negation: Modify factual statements in prompts to determine if models detect inconsistencies [26]

- Patient history manipulation: Deliberately misinform models about patient data to assess reasoning robustness [26] [27]
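
The entity-substitution check above can be sketched as a small prompt transform. This is an illustrative sketch, not the procedure from [26] [27]: the substitution map, the function name `substitute_entities`, and the example prompt are all hypothetical, and a real test would draw substitution pairs from a curated biomedical vocabulary.

```python
import re

# Hypothetical substitution map (illustrative only): each drug is swapped for
# another drug from the same broad therapeutic class.
SUBSTITUTIONS = {
    "warfarin": "apixaban",
    "metformin": "glipizide",
}


def substitute_entities(prompt: str, mapping: dict[str, str]) -> tuple[str, list[str]]:
    """Replace whole-word, case-insensitive entity mentions in a prompt.

    Returns the perturbed prompt and the lowercased entities that were replaced,
    in order of appearance.
    """
    replaced: list[str] = []

    def swap(match: re.Match) -> str:
        key = match.group(0).lower()
        replaced.append(key)
        return mapping[key]

    pattern = re.compile(
        r"\b(" + "|".join(re.escape(name) for name in mapping) + r")\b",
        re.IGNORECASE,
    )
    return pattern.sub(swap, prompt), replaced


original_prompt = "Patient on Warfarin and metformin reports dizziness."
perturbed_prompt, swapped = substitute_entities(original_prompt, SUBSTITUTIONS)
print(perturbed_prompt)
print(swapped)
```

The original and perturbed prompts would then be sent to the model under test and the two answers compared. Whether the answer should change or stay the same depends on the task, so that comparison is deliberately left out of the sketch.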

Population Structure Evaluation

Biomedical data often contain explicit or implicit group structures organized by age, ethnicity, socioeconomic strata, or medical study cohorts [26]. Evaluation protocols should include:

- Group robustness assessment: Measure performance gaps between best- and worst-performing subpopulations

- Instance robustness testing: Identify corner cases where models fail consistently

- Longitudinal robustness: Evaluate performance consistency across temporal distribution shifts
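
As a rough illustration of the group robustness assessment listed above, the gap between the best- and worst-performing subpopulations can be computed from per-example outcomes. The group labels and records below are made-up placeholders, and accuracy stands in for whatever task metric is being protected.

```python
from collections import defaultdict


def group_accuracy(records):
    """Compute accuracy per group from (group_label, is_correct) pairs."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for group, is_correct in records:
        totals[group] += 1
        hits[group] += int(is_correct)
    return {group: hits[group] / totals[group] for group in totals}


def worst_group_gap(records):
    """Return (best_group, worst_group, accuracy gap between them)."""
    accuracy = group_accuracy(records)
    best = max(accuracy, key=accuracy.get)
    worst = min(accuracy, key=accuracy.get)
    return best, worst, accuracy[best] - accuracy[worst]


# Placeholder evaluation records: (cohort label, whether the prediction was correct).
records = (
    [("cohort_A", True)] * 4
    + [("cohort_B", True)] * 3 + [("cohort_B", False)] * 1
    + [("cohort_C", True)] * 2 + [("cohort_C", False)] * 2
)

best, worst, gap = worst_group_gap(records)
print(f"best={best}, worst={worst}, gap={gap:.2f}")
```

Reporting the worst-group score alongside the gap is usually more informative than the average alone, since a high mean can hide a subpopulation on which the model fails.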

Noise Resilience Testing for PPI Network Analysis

The multi-objective evolutionary algorithm for protein complex detection employs a rigorous protocol for assessing robustness to network perturbations [29]:

Artificial Network Generation

To assess PPI network robustness, researchers create artificial networks by introducing different noise levels into original Saccharomyces cerevisiae (yeast) PPI networks [29]. This evaluates how perturbations in protein interactions affect algorithmic performance compared to other approaches. The protocol includes:

- Controlled edge manipulation: Remove existing interactions (10-30%) and introduce spurious connections at comparable rates

- Topological preservation: Maintain overall network properties while altering specific connections

- Functional similarity integration: Apply Gene Ontology-based metrics to guide mutation operators
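
The controlled edge manipulation step can be sketched as follows. This is a minimal illustration under stated assumptions, not the protocol from [29]: the function name `perturb_edges` is hypothetical, spurious edges are sampled uniformly at random, and only the total edge count is preserved. A faithful topological-preservation step (for example, keeping the degree distribution) would need a more careful rewiring scheme.

```python
import random


def perturb_edges(edges, nodes, noise=0.2, seed=0):
    """Remove a `noise` fraction of edges and add the same number of spurious edges.

    Edges are undirected and assumed to contain no self-loops.
    Returns (noisy_edges, removed_edges, spurious_edges) as sets of frozensets.
    """
    rng = random.Random(seed)
    original = {frozenset(edge) for edge in edges}
    node_list = sorted(nodes)
    n_changes = round(noise * len(original))

    # Guard against an endless loop when too few non-edges exist.
    max_edges = len(node_list) * (len(node_list) - 1) // 2
    if n_changes > max_edges - len(original):
        raise ValueError("not enough absent node pairs to add spurious edges")

    # Sort before sampling so results are reproducible for a given seed.
    removed = set(rng.sample(sorted(original, key=sorted), n_changes))

    spurious = set()
    while len(spurious) < n_changes:
        candidate = frozenset(rng.sample(node_list, 2))
        if candidate not in original:
            spurious.add(candidate)

    return (original - removed) | spurious, removed, spurious


# Toy stand-in for a PPI network: a 10-node ring.
nodes = range(10)
edges = [(i, (i + 1) % 10) for i in nodes]
noisy, removed, spurious = perturb_edges(edges, nodes, noise=0.2, seed=42)
print(len(noisy), len(removed), len(spurious))
```

Running the detection algorithm on `noisy` at several noise levels (10% to 30% in the protocol above) and comparing the F1-score with the score on the original network gives a robustness curve for each method.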

FS-PTO Mutation Operator

The Functional Similarity-Based Protein Translocation Operator (FS-PTO) enhances collaboration between canonical models and Gene Ontology-informed mutation strategies [29]. This operator:

- Leverages GO semantic similarity metrics to guide protein translocation during mutation

- Improves detection of biologically meaningful complexes despite noisy data

- Increases quality of detected complexes over other evolutionary algorithm-based methods

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key research reagents and computational tools for robustness evaluation

| Tool/Resource | Type | Primary Function | Application in Robustness Research |
|---|---|---|---|
| Gene Ontology (GO) Annotations [29] | Biological Database | Standardized representation of gene functions | Provides biological constraints for mutation operators; enhances complex detection accuracy |
| FS-PTO Operator [29] | Algorithmic Component | Gene ontology-based mutation in evolutionary algorithms | Improves robustness to noisy PPI data; increases biological relevance of predictions |
| RoMA Framework [31] | Assessment Framework | Quantifies model robustness without parameter access | Measures LLM resilience to adversarial inputs; enables model comparison for specific applications |
| WCAG Contrast Guidelines [32] | Visualization Standard | Defines minimum contrast ratios for visual elements | Ensures accessibility and interpretability of network visualizations and tool interfaces |
| Biomedical RAG Benchmark [30] | Evaluation Framework | Comprehensive assessment of retrieval-augmented models | Tests robustness across biomedical NLP tasks; evaluates performance under counterfactual scenarios |
| PPI Network Databases (MIPS, etc.) [29] | Data Resource | Curated protein-protein interaction data | Provides ground truth for robustness testing; enables controlled noise introduction studies |
| Robustness Specification Template [26] [27] | Methodological Framework | Tailors robustness tests to task-dependent priorities | Connects abstract AI regulatory frameworks with concrete testing procedures |

The comparative analysis presented herein demonstrates significant variability in robustness characteristics across different network architectures for biomedical applications. Graph-based approaches like GraphDTI show impressive resilience to distribution shifts in drug-target prediction, while evolutionary algorithms with biological constraints excel in noisy PPI environments. The emerging generation of biomedical foundation models shows promise but requires more rigorous robustness testing, particularly for knowledge integrity and counterfactual scenarios [26] [30].

Future directions should prioritize the development of standardized robustness specifications that integrate both domain-specific and general robustness considerations [26] [27]. Additionally, methods that explicitly incorporate biological knowledge—such as Gene Ontology annotations in mutation operators or functional constraints in neural network training—consistently demonstrate enhanced robustness to perturbations [29]. As biomedical networks continue to grow in scale and complexity, ensuring their robustness will be paramount for translating computational predictions into clinically actionable insights.

Advanced Methods for Robustness Analysis and Optimization in Complex Networks

The evaluation of robustness across different network architectures—from biological and social systems to artificial intelligence models—is a cornerstone of reliable computational research. In the context of network science, robustness is defined as the ability of a system to maintain its structural integrity and core functionality when subjected to perturbations, whether random failures or targeted attacks [33] [34]. The development of strategies to enhance this robustness is critical, as cascading failures can lead to the severe impairment or complete collapse of entire networks [33]. This guide provides an objective comparison of three principal computational strategies for robustness enhancement: link addition, protection, and rewiring. By synthesizing current research and experimental data, we aim to offer researchers, scientists, and development professionals a clear framework for selecting and implementing these strategies based on specific network architectures and perturbation threats.

The table below summarizes the core principle, key mechanism, and reported efficacy of the three primary robustness enhancement strategies discussed in this guide.

Table 1: Comparative Overview of Robustness Enhancement Strategies

| Strategy | Core Principle | Key Mechanism | Reported Efficacy/Impact |
|---|---|---|---|
| Link Addition [33] | Augment network connectivity to provide alternative pathways and mitigate cascade effects. | Strategically adding higher-order structures (hyperedges) within or between communities. | Transforms collapse from first-order to second-order phase transitions; effectiveness depends on community structure clarity. |
| Protection [33] | Shield critical network components from failure to prevent initial disruption. | Employing cooperative protection models that safeguard a portion of edges within higher-order structures (e.g., 2-simplices). | Preserves functionality of key components, maintaining network connectivity and delaying the onset of cascading failures. |
| Rewiring [34] | Dynamically reconfigure connections post-disruption to restore or maintain connectivity. | "Bypass rewiring": Reconnecting neighbors of a removed node with a stochastic probability \( \alpha \). | Creates a trade-off between cost (number of new links) and robustness; preferentially reconnecting high-degree nodes is most effective. |

Detailed Analysis of Strategies and Experimental Protocols

Link Addition for Higher-Order Networks

Strategic edge addition focuses on enhancing robustness by proactively introducing new connections, particularly in networks with higher-order interactions (e.g., simplicial complexes) and community structures [33].

Experimental Protocol: The efficacy of link addition is typically evaluated using a load redistribution model that simulates cascading failures. This model differentiates between load redistribution within communities and among them. Researchers then test various edge addition strategies:

- Within-Community Addition: Adding higher-order structures inside existing communities.

- Among-Community Addition: Adding higher-order structures that connect different communities.

- Mixed Addition: A combination of the two.

The network's robustness is measured by tracking the relative size of the largest connected component throughout the cascading failure process against the fraction of removed nodes [33].
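
The measurement step can be illustrated with a small sketch that records the relative size of the largest connected component as nodes are removed. It makes several simplifying assumptions that depart from [33]: it uses ordinary pairwise edges, not hyperedges, it removes nodes in a fixed order with no load-redistribution cascade, and the toy graph (two triangles joined by one bridge) and all function names are hypothetical.

```python
from collections import deque


def build_adjacency(edges):
    """Build an undirected adjacency map {node: set(neighbors)} from edge pairs."""
    adjacency = {}
    for u, v in edges:
        adjacency.setdefault(u, set()).add(v)
        adjacency.setdefault(v, set()).add(u)
    return adjacency


def largest_component_size(adjacency, alive):
    """Size of the largest connected component among the surviving nodes (BFS)."""
    seen, best = set(), 0
    for start in alive:
        if start in seen:
            continue
        seen.add(start)
        queue, size = deque([start]), 0
        while queue:
            node = queue.popleft()
            size += 1
            for neighbor in adjacency[node]:
                if neighbor in alive and neighbor not in seen:
                    seen.add(neighbor)
                    queue.append(neighbor)
        best = max(best, size)
    return best


def lcc_curve(adjacency, removal_order):
    """Return [(fraction removed, relative LCC size)] after each removal."""
    n = len(adjacency)
    alive = set(adjacency)
    curve = [(0.0, largest_component_size(adjacency, alive) / n)]
    for step, node in enumerate(removal_order, start=1):
        alive.discard(node)
        curve.append((step / n, largest_component_size(adjacency, alive) / n))
    return curve


# Two triangle "communities" {0, 1, 2} and {3, 4, 5} joined by the bridge 2-3.
base_edges = [(0, 1), (1, 2), (0, 2), (3, 4), (4, 5), (3, 5), (2, 3)]
# Among-community addition: one extra link between the communities.
augmented_edges = base_edges + [(0, 5)]

removal_order = [2, 3]  # remove the two bridge endpoints
base_curve = lcc_curve(build_adjacency(base_edges), removal_order)
augmented_curve = lcc_curve(build_adjacency(augmented_edges), removal_order)
print(base_curve)
print(augmented_curve)
```

In this toy case the single among-community link keeps the two communities connected after the bridge endpoints are removed, so the augmented curve stays above the base curve at every step.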

Supporting Data: The strategy's success is highly contingent on the underlying network structure. For networks with prominent community structures, adding edges among communities is more effective, as it enhances inter-community connectivity and can change the nature of network collapse. Conversely, for networks with indistinct community structures, adding edges within communities yields superior robustness enhancement [33].

Component Protection Strategies

Protection strategies aim to harden the network by making key components less vulnerable to failure from the outset.

Experimental Protocol: A prominent method is the higher-order structure cooperative protection model. In this approach, a certain fraction of edges within protected higher-order structures (like 2-simplices) are reinforced and thus made immune to failure. The robustness is then tested under various attack scenarios (e.g., random node removal or targeted attacks on high-degree nodes) by measuring the percolation threshold or the robustness index \( R_{\text{TA}} \), which quantifies the retained network functionality as nodes are removed [33] [34].
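
A common way to define a robustness index of this kind is \( R = \frac{1}{N} \sum_{q=1}^{N} S(q) \), where \( S(q) \) is the relative size of the largest connected component after \( q \) attack steps. Whether [33] [34] define \( R_{\text{TA}} \) exactly this way is an assumption here. The sketch below applies that formula under a static degree-targeted attack and, as a further simplification, protects whole nodes, not edges within 2-simplices. An attack on a protected node fails but still counts as a step, so both runs cover the same number of steps. All names and the star-graph example are hypothetical.

```python
from collections import deque


def largest_component_size(adjacency, alive):
    """Size of the largest connected component among the surviving nodes (BFS)."""
    seen, best = set(), 0
    for start in alive:
        if start in seen:
            continue
        seen.add(start)
        queue, size = deque([start]), 0
        while queue:
            node = queue.popleft()
            size += 1
            for neighbor in adjacency[node]:
                if neighbor in alive and neighbor not in seen:
                    seen.add(neighbor)
                    queue.append(neighbor)
        best = max(best, size)
    return best


def robustness_index(adjacency, protected=frozenset()):
    """Average relative LCC size over N degree-targeted attack steps.

    Nodes are attacked in order of decreasing initial degree (ties broken by
    node id). Attacks on protected nodes fail, but the step is still counted.
    """
    n = len(adjacency)
    attack_order = sorted(adjacency, key=lambda node: (-len(adjacency[node]), node))
    alive = set(adjacency)
    total = 0.0
    for node in attack_order:
        if node not in protected:
            alive.discard(node)
        total += largest_component_size(adjacency, alive) / n
    return total / n


# Star graph: hub 0 connected to leaves 1..4.
star = {0: {1, 2, 3, 4}, 1: {0}, 2: {0}, 3: {0}, 4: {0}}

r_unprotected = robustness_index(star)
r_hub_protected = robustness_index(star, protected={0})
print(round(r_unprotected, 2), round(r_hub_protected, 2))
```

On the star graph, losing the hub at the first step fragments the network immediately, while protecting the hub lets the largest component shrink only one leaf at a time, so the protected index is several times larger.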

Supporting Data: Studies show that selectively protecting critical nodes or higher-order structures significantly helps in maintaining the connectivity of the network. For instance, Zhang et al. demonstrated that designating and reinforcing key nodes can preserve global connectivity by ensuring these nodes remain functional during a cascade [33].

Link-Limited Bypass Rewiring

Bypass rewiring is a reactive strategy that dynamically reconfigures the network immediately after a node fails.