Modular by Design: Engineering Principles for Next-Generation Synthetic Biology Tools

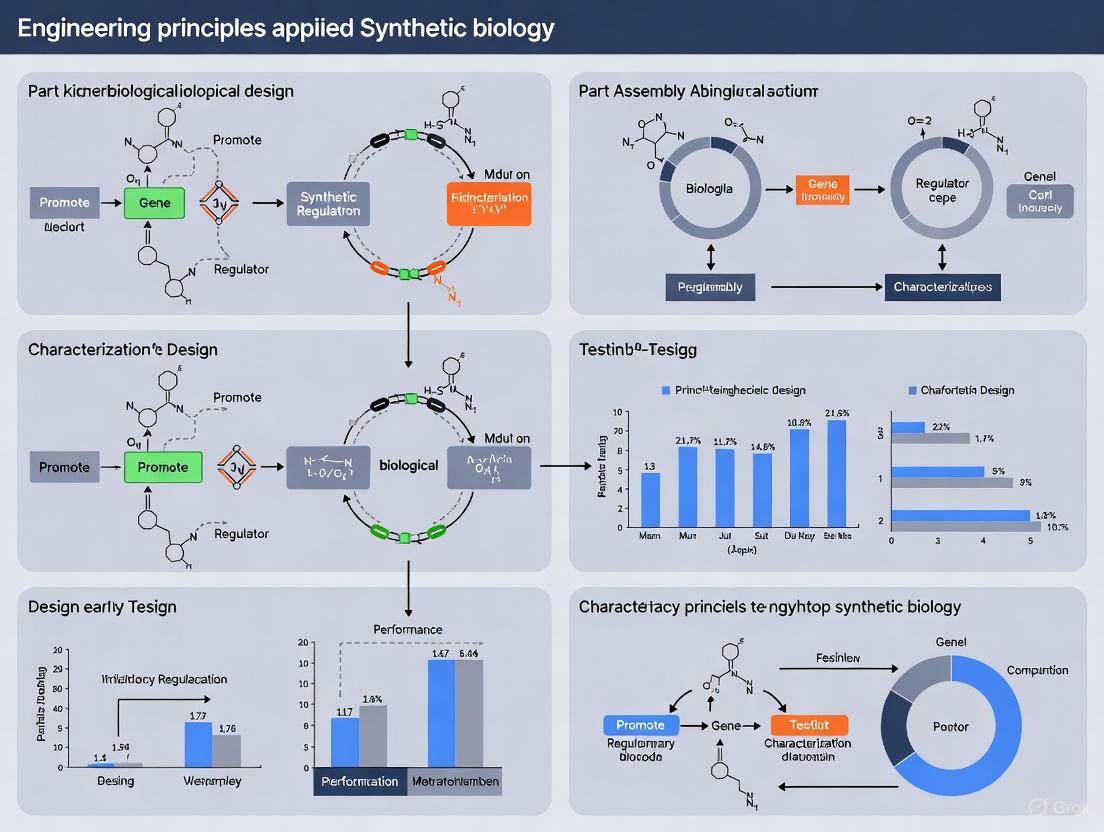



Abstract

This article explores the foundational engineering principles driving the design of modular biological tools in synthetic biology. It examines the core concepts of standardization and abstraction that enable a parts-based approach, from genetic devices to functional synthetic cells. The scope extends to methodological advances in creating compressed genetic circuits, de novo proteins, and synthetic enzyme assemblies, alongside critical troubleshooting strategies for system integration and interoperability. Further, it covers the frameworks for validating tool performance and comparing design paradigms. Tailored for researchers, scientists, and drug development professionals, this review synthesizes current state-of-the-art research to guide the rational construction of predictable biological systems for therapeutic and biotechnological applications.

Core Concepts: Standardization, Abstraction, and the SynCell Vision

Defining Modularity and Engineering Principles in Biological Systems

Modularity is a fundamental organizational principle observed at every scale of biological organization, from molecular interactions to entire organisms [1]. In biological terms, modularity refers to the organization of a system into discrete, individual units, which increases the overall efficiency of network activity and allows selective forces to act on parts of the network rather than only on the whole [1]. This compartmentalized architecture allows complex biological systems to function in a robust, evolvable, and reconfigurable manner. The concept parallels engineering design, where complex systems are built from standardized, interchangeable components that can be mixed and matched to create different functionalities. In evolutionary biology, modularity provides a crucial advantage: it allows a system to 'save its work' while permitting further adaptation and evolution [2]. This review explores the theoretical foundations of biological modularity, its engineering applications in synthetic biology, and the practical methodologies for designing and analyzing modular biological systems.

Theoretical Foundations of Biological Modularity

Evolutionary Origins and Maintenance

The evolutionary origins of biological modularity have been extensively debated since the 1990s. Several competing and complementary theories explain how modularity arises and is maintained in biological systems through various evolutionary modes of action [1]. One prominent framework suggests that modularity emerges through the interaction of four primary evolutionary forces: (1) Selection for the rate of adaptation, where complexes evolving at different rates reach fixation in a population at different times; (2) Constructional selection, where genes existing in many duplicated copies are maintained due to their numerous connections (pleiotropy); (3) Stabilizing selection, which acts as a counter-force against the evolution of modularity by maintaining previously established interactions; and (4) The compounded effect of stabilizing and directional selection, which creates evolutionary "corridors" that allow systems to move toward optimum states along defined paths [1].

Beyond purely selective forces, research by Clune and colleagues (2013) introduced the concept of "connectivity costs" as a factor driving modular organization [1]. Their models demonstrated that networks evolved under pressure to limit the number of connections, which produces sparser and more compartmentalized topologies, consistently outperformed non-modular counterparts. This suggests that modularity may form spontaneously because of inherent constraints on network connectivity, not only through direct selective advantages. Neutral theories of modularity emergence propose alternative mechanisms, including duplication-differentiation processes, where gene or network duplication followed by functional specialization leads to modular structures without immediate selective pressure [2]. Additionally, neutral modular restructuring allows for the reduction of pleiotropic constraints through neutral changes in gene architecture, creating genotypic modularity that may provide selective advantages when environments change [2].

Quantitative Definitions and Metrics

Modularity can be quantified using various graph-theoretical approaches that measure the extent to which a network can be partitioned into densely connected subsystems with sparse between-system connections. The table below summarizes key quantitative theories and models explaining the emergence of modularity in biological systems:

Table 1: Quantitative Theories on the Emergence of Biological Modularity

| Theory/Model | Key Mechanism | Mathematical Basis | Biological Evidence |
|---|---|---|---|
| Selection-Based Models [1] | Direct selection for traits that enhance adaptability and evolvability | Population genetics models; Corridor model of phenotype space | Evolutionary trajectories in protein networks; Compartmentalization in metabolic pathways |
| Connectivity Cost Models [1] | Minimization of connection costs between network nodes | Network topology optimization; Cost-performance tradeoffs | Neural connectivity patterns; Protein-protein interaction networks |
| Neutral Duplication-Differentiation [2] | Gene duplication followed by functional divergence | Graph growth models with duplication operators | Hierarchical structure in yeast protein-protein interaction networks |
| Rugged Landscape Theory [2] | Adaptation on rugged fitness landscapes promotes modular solutions | NK fitness landscape models | Modularity in gene regulatory networks under varying environmental conditions |
| Horizontal Gene Transfer [2] | Exchange of genetic material between organisms | Network analysis of gene flow | Increased modularity in bacterial metabolic networks |
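One widely used graph-theoretical measure of the kind described before Table 1 is the Newman-Girvan modularity score Q, which compares the fraction of edges that fall inside modules with the fraction expected if edges were placed at random. The article does not say which metric it has in mind, so the Python sketch below is only an illustration; the toy network and the module labels are hypothetical.

```python
from collections import defaultdict


def modularity(edges, community):
    """Newman-Girvan modularity Q for an undirected, unweighted graph.

    edges: list of (u, v) pairs; community: dict mapping node -> module label.
    Q = sum over modules of (intra-module edge fraction) - (degree fraction)^2.
    """
    m = len(edges)
    intra = defaultdict(int)   # edges with both ends in the same module
    degree = defaultdict(int)  # summed node degree per module
    for u, v in edges:
        degree[community[u]] += 1
        degree[community[v]] += 1
        if community[u] == community[v]:
            intra[community[u]] += 1
    return sum(intra[c] / m - (degree[c] / (2 * m)) ** 2 for c in degree)


# Hypothetical toy network: two triangles joined by one bridge edge.
edges = [("a", "b"), ("b", "c"), ("a", "c"),
         ("d", "e"), ("e", "f"), ("d", "f"),
         ("c", "d")]
two_modules = {"a": 0, "b": 0, "c": 0, "d": 1, "e": 1, "f": 1}
one_module = {node: 0 for node in two_modules}

q_split = modularity(edges, two_modules)  # partition into the two triangles
q_whole = modularity(edges, one_module)   # everything in a single module
print(f"Q (two modules) = {q_split:.3f}, Q (one module) = {q_whole:.3f}")
```

A partition that follows the two triangles scores well above zero, while lumping all nodes into one module scores zero, which is the sense in which Q rewards dense within-module and sparse between-module connectivity.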

The fundamental advantage of modular organization lies in its ability to transform the NP-hard problem of searching all of biological configuration space into one that is tractable in polynomial time through compartmentalization [2]. By breaking down complex systems into nearly independent components, evolution can optimize modules separately and recombine them in novel configurations, dramatically accelerating the discovery of functional solutions.
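A simple counting argument gives a feel for this reduction. The sketch below is a toy illustration rather than a complexity-theoretic result: it assumes components with a fixed number of states and modules that are fully independent, which real biological modules only approximate. The function names and the numbers are chosen for illustration.

```python
def exhaustive_configs(n_components, states=2):
    """Configurations to test when all components must be searched jointly."""
    return states ** n_components


def modular_configs(n_components, n_modules, states=2):
    """Configurations to test when equal-sized modules are optimized separately."""
    if n_components % n_modules:
        raise ValueError("components must divide evenly into modules")
    module_size = n_components // n_modules
    return n_modules * states ** module_size


n = 40                             # hypothetical number of binary components
joint = exhaustive_configs(n)      # 2**40 joint configurations
modular = modular_configs(n, 4)    # 4 modules of 10 components: 4 * 2**10
print(joint, modular, joint // modular)
```

With 40 binary components, a joint search covers 2^40 configurations, whereas four independent ten-component modules require only 4 × 2^10 = 4096 evaluations.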

Engineering Principles for Synthetic Biology

Core Engineering Concepts

Synthetic biology formally applies engineering principles to biological system design, with standardization, modularity, and abstraction forming its foundational pillars [3]. These principles enable predictable design and reliable prototyping of biological systems:

Standardization: Biological parts are characterized according to consistent specifications, enabling reliable composition and performance prediction. Standardization encompasses physical composition (DNA sequences), measurement units, and functional characterization.

Modularity: Biological systems are decomposed into discrete, functional units (bio-parts, devices, and systems) that can be combined in various configurations [3]. Like toy building blocks, compatible modular designs enable bioparts to be combined and optimized easily.

Abstraction: Complex biological systems are designed using hierarchical abstraction layers that separate concerns between DNA parts, devices, circuits, and systems. This allows researchers to work at appropriate complexity levels without needing to manage all underlying biological details simultaneously.

Synthetic biology implements these principles through an iterative Design-Build-Test-Learn (DBTL) cycle [3]. Computers are used at all stages, from mathematical modeling through robotic automation of assembly and experimentation. This engineering framework has enabled the construction of increasingly complex biological systems, from genetic circuits to metabolic pathways and synthetic cells.
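The abstraction hierarchy described above can be mirrored directly in software. The following Python sketch is a minimal illustration, not an established data model: the class names `Part` and `Device`, the role vocabulary, and the placeholder sequences are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Part:
    """Lowest abstraction layer: a characterized DNA part."""
    name: str
    role: str       # e.g. "promoter", "rbs", "cds", "terminator"
    sequence: str


@dataclass(frozen=True)
class Device:
    """Next layer up: an ordered composition of parts."""
    name: str
    parts: tuple

    @property
    def sequence(self):
        # The device sequence is derived from its parts, never stored separately.
        return "".join(p.sequence for p in self.parts)

    def roles(self):
        return [p.role for p in self.parts]


def is_expression_cassette(device):
    """Design rule checked at the device level, without inspecting sequences."""
    return device.roles() == ["promoter", "rbs", "cds", "terminator"]


# Placeholder sequences, not real biological parts.
promoter = Part("pExample", "promoter", "TTGACA")
rbs = Part("rbsExample", "rbs", "AGGAGG")
cds = Part("reporterExample", "cds", "ATGAAATAA")
terminator = Part("tExample", "terminator", "GCCGCC")

reporter = Device("reporter_device", (promoter, rbs, cds, terminator))
print(reporter.sequence, is_expression_cassette(reporter))
```

The design rule operates on part roles only, which is the point of abstraction: a designer working at the device layer does not need to handle the underlying sequences.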

Case Study: Engineering Artificial Platelets

The engineering of artificial platelets exemplifies the modular design approach in synthetic biology [4]. This ambitious project aims to create lipid bilayer vesicles that recapitulate essential platelet functions, particularly in catalyzing secondary hemostasis. The design incorporates four distinct functional modules:

- Targeting Module: Enables binding to collagen exposed at vascular injury sites

- Activation Module: Couples collagen binding to membrane changes through shear-stress dependent calcium-induced membrane fusion

- Catalytic Surface Module: Exposes phosphatidylserine (PS) to recruit activated coagulation factors V and X

- Coagulation Module: Promotes the conversion of prothrombin to thrombin, leading to fibrin formation

This modular architecture allows for independent optimization of each functional component and creates a system that can be reprogrammed for related applications. The artificial platelet concept demonstrates how complex biological functionality can be reverse-engineered through rational modular design rather than direct replication of natural systems.

Experimental Protocols and Methodologies

Quantitative Analysis of Biological Dynamics

The systematic analysis of modular biological systems requires specialized methodologies for quantifying spatiotemporal dynamics. The Systems Science of Biological Dynamics database (SSBD) provides a centralized resource for storing and sharing quantitative data on biological dynamics across multiple scales [5]. The experimental workflow typically involves:

Live-Cell Imaging and Data Acquisition

- Sample Preparation: Biological systems (cells, tissues, organisms) are prepared with appropriate fluorescent tags or labels for the structures or molecules of interest

- Time-Lapse Microscopy: Images are acquired at specified time intervals using appropriate microscopy techniques (e.g., confocal, light-sheet, DIC)

- Image Processing: Computational image analysis techniques quantitatively extract numerical data from microscopy images, tracking the dynamics of biological objects (single molecules, nuclei, cells)

Data Formatting and Sharing

- Biological Dynamics Markup Language (BDML): Quantitative data is formatted using this standardized format to enable data sharing and reuse [5]

- Data Repository: Processed data is deposited in specialized databases like SSBD, which at the time of the cited report provided 311 sets of quantitative data for single molecules, nuclei, and whole organisms [5]

- Data Access: Data is accessible through BDML files or a REST API, enabling further computational analysis and modeling

This methodology has been successfully applied to diverse biological systems, including nuclear division dynamics in C. elegans embryos, behavioral dynamics of adult C. elegans, and spatiotemporal dynamics of single molecules in E. coli cells [5].

Research Reagents and Computational Tools

The design and analysis of modular biological systems requires specialized research reagents and computational tools. The table below details essential resources for synthetic biology and modular design research:

Table 2: Essential Research Reagents and Computational Tools for Modular Biological Design

| Category | Specific Tools/Reagents | Function/Application | Key Features |
|---|---|---|---|
| DNA Assembly & Engineering | Golden Gate Assembly; Gibson Assembly; CRISPR-Cas9 | Construction of genetic circuits from standardized parts | High efficiency; Modular part compatibility; Scarless assembly |
| Cell-Free Systems | PURExpress; Reconstituted transcription-translation systems | Rapid prototyping of genetic circuits without cellular constraints | Bypass cell viability constraints; Direct observation of dynamics |
| Microfluidic Platforms | Droplet generators; Vesicle formation chips | Encapsulation of cell-free systems in lipid membranes | High-throughput; Monodisperse vesicle formation; Controlled environments |
| Visualization Software | Cytoscape; yEd [6] | Biological network layout and visualization | Multiple layout algorithms; Data integration; Plugin architecture |
| Data Repositories | SSBD [5]; BioStudies; Cell Image Library | Storage and sharing of quantitative biological data | Standardized formats; REST API access; Image-data linkage |
| Modeling Tools | Virtual Cell; COPASI; BioNetGen | Mathematical modeling of modular biological systems | Multi-scale modeling; Parameter estimation; Stochastic simulation |

These resources collectively enable the design, construction, testing, and analysis of modular biological systems across multiple scales of complexity.

Visualization Principles for Biological Networks

Effective visualization is crucial for understanding and communicating the structure and dynamics of modular biological systems. Biological network figures are ubiquitous in the literature but present significant design challenges [6]. The following principles guide the creation of effective biological network visualizations:

Determine Figure Purpose and Assess Network Characteristics: Before creating an illustration, establish its purpose and assess the characteristics of the network, such as its size and density [6]. The visual representation should align with the explanatory goal, whether that is to emphasize network functionality, structure, or specific attributes.

Consider Alternative Layouts: While node-link diagrams are most common, alternative representations like adjacency matrices may be superior for dense networks [6]. Matrices excel at showing neighborhoods, clusters, and edge attributes without the clutter typical of complex node-link diagrams.

Beware of Unintended Spatial Interpretations: Spatial arrangement strongly influences perception of network information [6]. Principles of proximity, centrality, and direction should align with the intended message, using layout algorithms that optimize according to relevant similarity measures.

Provide Readable Labels and Captions: Labels must be legible at publication size, set in a font at least as large as the caption font [6]. When direct labeling isn't feasible, high-resolution online versions should be provided.

Utilize Color and Channel Effectiveness: Color should be used purposefully to represent data attributes, choosing schemes appropriate to the data type (sequential, divergent, or qualitative) [6]. Ensure sufficient contrast between text and background colors, with a minimum contrast ratio of 4.5:1 for large text and 7:1 for standard text, which corresponds to the enhanced (level AAA) contrast criterion of the WCAG accessibility standard [7].
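The contrast ratio quoted above can be checked programmatically before a figure is submitted. The sketch below follows the relative-luminance and contrast-ratio definitions published in WCAG 2.x; the function names and the example colors are illustrative choices.

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb):
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground, background):
    """Ratio of lighter to darker luminance, each offset by 0.05."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


WHITE, BLACK, MID_GRAY = (255, 255, 255), (0, 0, 0), (118, 118, 118)

black_on_white = contrast_ratio(BLACK, WHITE)
gray_on_white = contrast_ratio(MID_GRAY, WHITE)
print(f"black on white: {black_on_white:.1f}:1")
print(f"gray on white clears 4.5:1: {gray_on_white >= 4.5}, clears 7:1: {gray_on_white >= 7}")
```

In this example the mid-gray label text clears the 4.5:1 threshold but not the 7:1 threshold, so under the criterion quoted above it would be acceptable only for large text.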

Specialized tools like Cytoscape and yEd provide rich selections of layout algorithms tailored to biological network visualization [6]. These tools enable researchers to apply these principles effectively, creating visualizations that accurately communicate the modular organization of biological systems.
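The adjacency-matrix alternative to node-link diagrams mentioned above is straightforward to prototype. The following Python sketch is a minimal, text-only illustration and does not use the Cytoscape or yEd APIs; the toy network is hypothetical, and the nodes are deliberately listed module by module so that modules appear as dense blocks along the diagonal.

```python
def adjacency_matrix(nodes, edges):
    """Symmetric 0/1 matrix for an undirected graph, rows ordered as `nodes`."""
    index = {node: i for i, node in enumerate(nodes)}
    matrix = [[0] * len(nodes) for _ in nodes]
    for u, v in edges:
        matrix[index[u]][index[v]] = 1
        matrix[index[v]][index[u]] = 1
    return matrix


def render(nodes, matrix):
    """Plain-text rendering: '#' marks an edge, '.' marks no edge."""
    lines = ["  " + " ".join(nodes)]
    for node, row in zip(nodes, matrix):
        lines.append(node + " " + " ".join("#" if cell else "." for cell in row))
    return "\n".join(lines)


# Hypothetical network: modules {a, b, c} and {d, e, f} linked by one edge.
nodes = ["a", "b", "c", "d", "e", "f"]
edges = [("a", "b"), ("b", "c"), ("a", "c"),
         ("d", "e"), ("e", "f"), ("d", "f"),
         ("c", "d")]

matrix = adjacency_matrix(nodes, edges)
print(render(nodes, matrix))
```

Because row and column order determines which patterns are visible, the node ordering plays the role that the layout algorithm plays in a node-link diagram.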

Modularity represents a fundamental organizational principle that bridges biological evolution and engineering design. The theoretical frameworks explaining its emergence—through selective advantage, connectivity minimization, or neutral processes—provide a foundation for understanding biological complexity. Synthetic biology has successfully harnessed this principle through standardization, modularity, and abstraction, enabling the engineering of biological systems with predictable functions.

Future research directions will likely focus on several key challenges: (i) developing more sophisticated computational models that better predict the behavior of modular biological systems across scales; (ii) creating new standards and abstraction layers that enable more complex system engineering; (iii) addressing the "overabundance of visualization tools using schematic or straight-line node-link diagrams" by developing more powerful alternatives [8]; and (iv) integrating advanced network analysis techniques beyond basic graph descriptive statistics into visualization tools [8]. As these capabilities advance, the engineering principles of modular design will continue to transform our ability to program biological systems for applications in therapeutics, biosensing, and sustainable bioproduction.

Synthetic cells (SynCells) are artificial constructs meticulously engineered from molecular components to mimic the functions of biological cells. This bottom-up approach, which involves assembling non-living building blocks into life-like systems, offers profound insights into fundamental biology and promises significant impacts in medicine, biotechnology, and bioengineering [9]. The field is driven by diverse motivations, from understanding the intricate processes of life in a simplified context and probing origins-of-life theories, to creating minimal, controllable biomimetic systems for applications in therapeutics, energy production, and biomanufacturing [9]. A primary, inspiring goal for the community is the creation of a living system from non-living parts, characterized by the ability to self-reproduce and evolve, thereby testing our fundamental understanding of life itself [9].

Core Engineering Principles in Synthetic Biology

The design and construction of SynCells are deeply rooted in core engineering principles, which enable the systematic and efficient creation of complex biological systems.

- Standardization: The use of well-characterized, interchangeable biological parts allows for predictable system behavior and reproducibility across different laboratories and experiments [10].

- Modularity: Complex systems are broken down into simpler, self-contained functional units or modules [11]. A module is defined as "an essential and self-contained functional unit relative to the product of which it is part," with standardized interfaces that allow for product composition through combination [11]. This modular approach inspires the current strategy for building SynCells, leading to a vast catalog of key functional modules and structural chassis [9].

- Abstraction: This principle allows designers to work at one level of a system without needing to understand all the underlying complexities of lower levels, significantly streamlining the design process [10].

These principles are implemented through an iterative Design–Build–Test–Learn (DBTL) cycle, often assisted by computers and robotics, to accelerate the development of functional synthetic systems [10].

Key Modules for a Functional Synthetic Cell

Achieving a functional SynCell requires the integration of multiple, distinct functional modules that recapitulate essential life-like properties. The table below summarizes the core modules, their functions, and the current state of the art.

Table 1: Essential Functional Modules for a Bottom-Up Synthetic Cell

| Module | Primary Function | Key Components | Current State-of-the-Art |
|---|---|---|---|
| Compartmentalization | Defines physical boundary & separates interior from environment [9] | Phospholipid vesicles, emulsion droplets, polymersomes, proteinosomes [9] | Widely explored; various chassis developed [9] |
| Information Processing | Couples genotype to phenotype; executes genetic programs [9] | TX-TL systems (cell extracts or purified components like PURE), DNA/RNA [9] | TX-TL systems assembled & integrated with compartments [9] |
| Growth & Self-Replication | Enables self-sustenance and replication [9] | Systems for ribosome biogenesis, lipid synthesis, genomic DNA replication [9] | Major challenge; far from achieving doubling of all cellular components [9] |
| Autonomous Division | Splits a grown SynCell into daughter cells [9] | Synthetic divisome (e.g., contractile rings, abscission machinery) [9] | Individual elements realized; controlled synthetic divisome not yet achieved [9] |
| Metabolism & Transportation | Provides energy, building blocks, and waste removal [9] | Metabolic networks, transport systems for molecular fuels/wastes [9] | Metabolic networks reconstituted & integrated with genetic modules; improvements in flux & efficiency needed [9] |

The Integration Challenge

A defining characteristic of a living SynCell is a functionally integrated cell cycle, where processes like DNA replication, segregation, growth, and division are seamlessly coordinated [9]. The primary scientific challenge in the field is no longer just creating individual modules, but overcoming the incompatibilities between these diverse chemical and synthetic sub-systems to integrate them into a single, interoperable whole [9]. The complexity of this integration scales exponentially with the number of modules, and the parameter space of possible combinations is too vast to explore without robust theoretical frameworks to predict system behavior and robustness [9].

Experimental Protocols for Key SynCell Modules

Protocol 1: Assembling a Transcription-Translation (TX-TL) System in a Lipid Vesicle

This protocol details the encapsulation of a cell-free gene expression system within a lipid bilayer, a foundational step for endowing SynCells with information processing capabilities.

Workflow Diagram: TX-TL in Vesicles

Materials and Reagents:

- Lipids: 1-palmitoyl-2-oleoyl-sn-glycero-3-phosphocholine (POPC) and other desired lipids (e.g., POPG, cholesterol) [9].

- TX-TL System: Commercially available PURE system* or homemade E. coli extract [9].

- DNA Template: Plasmid containing gene of interest under a T7 or other suitable promoter.

- Hydration Buffer: HEPES or Tris-based buffer, often with sugars like trehalose for osmolarity control and stabilization.

- Equipment: Rotary evaporator, vacuum desiccator, extruder apparatus with polycarbonate membranes (e.g., 100 nm, 400 nm, or 1 μm pore size), thermomixer.

Detailed Procedure:

- Lipid Film Preparation: Dissolve lipid mixtures in an organic solvent (e.g., chloroform) in a glass vial. Use a rotary evaporator to remove the solvent, forming a thin lipid film on the vial walls. Place the vial under vacuum in a desiccator for several hours (or overnight) to remove any residual solvent.

- Hydration: Hydrate the dried lipid film with an aqueous solution containing the complete TX-TL reaction mix and your DNA template. Gently agitate the mixture (e.g., vortex, rotating) above the lipid phase transition temperature for ~1 hour to form multilamellar vesicles (MLVs). The solution will appear cloudy.

- Size Reduction and Unilamellar Vesicle Formation: Subject the MLV suspension to several cycles of freezing in liquid nitrogen and thawing in a warm water bath (e.g., 5-10 cycles). This helps to break the lipid layers. Then, pass the suspension through a polycarbonate membrane of the desired pore size (e.g., 400 nm for large unilamellar vesicles, LUVs) using an extruder apparatus. Perform multiple passes (e.g., 11-21) to achieve a homogeneous size distribution.

- Expression and Analysis: Incubate the vesicles at a constant temperature (e.g., 30-37°C) for the required time to allow for gene expression. Monitor protein synthesis by fluorescence (if using a GFP reporter) or analyze the contents post-incubation by techniques like SDS-PAGE, Western blot, or fluorescence-activated cell sorting (FACS) after purifying the vesicles from the external solution.

*The PURE (Protein Synthesis Using Recombinant Elements) system is a reconstituted TX-TL system composed of purified components, including ribosomes, tRNAs, aminoacyl-tRNA synthetases, translation factors, and energy sources, offering greater controllability and reduced biological noise compared to crude extracts [9].
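When planning the encapsulation step of this protocol, it helps to estimate how many DNA template molecules a vesicle of a given size is likely to contain. The sketch below is a back-of-the-envelope estimate under stated assumptions: the vesicle is treated as a sphere of the nominal extrusion diameter (ignoring bilayer thickness), encapsulation is assumed to follow Poisson statistics, and the 10 nM template concentration is a hypothetical value not taken from the protocol.

```python
import math

AVOGADRO = 6.02214076e23  # molecules per mole


def mean_copies(diameter_nm, concentration_nM):
    """Expected number of molecules inside a spherical vesicle."""
    radius_m = diameter_nm * 1e-9 / 2
    volume_litres = (4 / 3) * math.pi * radius_m ** 3 * 1000  # m^3 to litres
    return concentration_nM * 1e-9 * AVOGADRO * volume_litres


def fraction_occupied(mean):
    """Poisson probability that a vesicle holds at least one molecule."""
    return 1 - math.exp(-mean)


lam = mean_copies(diameter_nm=400, concentration_nM=10)  # assumed values
occupied = fraction_occupied(lam)
print(f"mean copies per vesicle: {lam:.2f}; fraction with template: {occupied:.0%}")
```

Under these assumptions a 400 nm vesicle holds about 0.2 template copies on average, so most vesicles would contain no DNA at all; larger vesicles or higher template concentrations shift this balance.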

Protocol 2: Implementing Dynamic DNA Barcoding for Lineage Tracing

This protocol, adapted from research in C. elegans, outlines a method for using CRISPR/Cas9 to create heritable, dynamic DNA barcodes to track cell lineage relationships within a population or tissue [12].

Workflow Diagram: Lineage Tracing

Materials and Reagents:

- CRISPR/Cas9 System: Purified Cas9 nuclease, and single-guide RNAs (sgRNAs) targeting multiple specific, non-overlapping sites within a neutral or reporter genomic locus [12].

- Delivery System: Microinjection apparatus for precise delivery into cells or embryos; alternatively, electroporation or lipid-based transfection reagents for cell lines.

- Lysis Buffer: To isolate genomic DNA from sampled cells.

- PCR and Sequencing Primers: Designed to amplify the ~500 bp region encompassing all targeted sites for high-throughput sequencing [12].

Detailed Procedure:

- Target Selection and RNP Complex Formation: Design and synthesize sgRNAs targeting 10 or more specific sites within a compact genomic region (e.g., within a 500 bp segment of a reporter gene like EGFP). Form ribonucleoprotein (RNP) complexes by pre-incubating Cas9 protein with each sgRNA.

- Delivery to Progenitor Cell: Introduce the pooled RNP complexes into a single progenitor cell (e.g., a fertilized egg or a stem cell) at time T=0. Microinjection is a common and effective method for precise delivery.

- Cell Division and Barcode Generation: Allow the injected cell to divide and proliferate. As divisions proceed, CRISPR/Cas9 will stochastically create insertion-deletion mutations (indels) via non-homologous end joining (NHEJ) at the targeted sites in the genomes of the daughter cells. Once a site is mutated, it becomes unavailable for further cutting, and the unique combination of indels is inherited by all subsequent progeny, forming a dynamic barcode [12].

- Sampling and Sequencing: After several generations, isolate individual progeny cells or pools of cells from different tissues or locations. Lyse the cells, amplify the target genomic region by PCR, and subject the amplicons to next-generation sequencing (e.g., paired-end sequencing).

- Lineage Analysis: Analyze the sequencing data to identify the unique barcode (set of indels) for each sampled cell. Construct a lineage tree based on the shared mutations between barcodes; cells that share a common indel are considered to have descended from a common progenitor cell in which that mutation occurred [12].
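The lineage-analysis step can be sketched in a few lines of Python. This is a minimal greedy heuristic, not the method used in [12]: it repeatedly splits the sampled cells on the indel shared by the largest subset, and it assumes that identical indels never arise independently in separate lineages. The cell names and barcodes are hypothetical, with each mutation written as a (site, indel) pair.

```python
def build_lineage(barcodes):
    """Return a nested-tuple tree; leaves are cell names.

    barcodes: dict mapping cell name -> set of (site, indel) mutations.
    """
    cells = sorted(barcodes)
    if len(cells) == 1:
        return cells[0]
    counts = {}
    for cell in cells:
        for mutation in barcodes[cell]:
            counts[mutation] = counts.get(mutation, 0) + 1
    # Only mutations shared by some, but not all, cells can split the group.
    informative = {m: c for m, c in counts.items() if 1 < c < len(cells)}
    if not informative:
        return tuple(cells)  # unresolved: no shared mutation separates them
    best = max(sorted(informative), key=informative.get)
    inside = {c: barcodes[c] for c in cells if best in barcodes[c]}
    outside = {c: barcodes[c] for c in cells if best not in barcodes[c]}
    return (build_lineage(inside), build_lineage(outside))


# Hypothetical barcodes read from four sampled cells.
barcodes = {
    "A": {(1, "-3"), (4, "+1")},
    "B": {(1, "-3"), (4, "+1"), (7, "-2")},
    "C": {(1, "-3"), (2, "-5")},
    "D": {(3, "+2")},
}

tree = build_lineage(barcodes)
print(tree)
```

Cells A and B group first because they share two indels, C joins them through the indel at site 1, and D, which shares nothing with the others, branches off at the root. Published pipelines typically use parsimony or likelihood methods instead of this greedy split.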

The Scientist's Toolkit: Essential Research Reagents

The following table catalogs key reagents and materials fundamental to bottom-up synthetic cell research.

Table 2: Key Research Reagent Solutions for SynCell Construction

| Reagent/Material | Function/Description | Example Use Case |
|---|---|---|
| PURE System | A reconstituted cell-free protein synthesis system composed of purified components [9]. | Providing a controllable and minimal platform for gene expression inside vesicles [9]. |
| POPC & Other Phospholipids | Synthetic or natural lipids used to form the bilayer membrane of vesicle-based SynCells [9]. | Creating the primary structural chassis (liposomes) for compartmentalization [9]. |
| Polymersomes | Synthetic vesicles made from block copolymers, often offering greater stability than lipid membranes [9]. | Constructing robust SynCell chassis that can withstand harsh conditions [9]. |
| CRISPR/Cas9 System | A programmable genome editing tool consisting of the Cas9 nuclease and a guide RNA (sgRNA) [12]. | Implementing dynamic DNA barcoding for synthetic lineage tracing [12]. |
| Metabolic Pathway Kits | Pre-assembled sets of enzymes and cofactors for specific biochemical reactions (e.g., ATP generation). | Reconstituting core metabolic modules for energy production and anabolism inside SynCells [9]. |

Advanced Applications and Data-Driven Design

The construction of SynCells is increasingly leveraging data-driven approaches. Artificial intelligence (AI) and machine learning (ML) are being applied to address key challenges, such as predicting protein function, optimizing metabolic pathways, estimating missing kinetic parameters, and designing non-natural biosynthesis pathways [13]. The integration of these data-driven methods with mechanistic models is poised to accelerate the development of sophisticated synthetic strains and SynCells for industrial biomanufacturing [13]. In therapeutic applications, SynCells are being engineered as minimal and well-controllable systems for targeted drug delivery and as biosensors [9]. The landmark development of CAR-T cell therapy, where a patient's own T cells are synthetically engineered to fight cancer, exemplifies the power of synthetic biology in medicine [10], a principle that bottom-up SynCells aim to emulate and extend.

The foundational principle of synthetic biology is the application of rigorous engineering concepts to biological systems. Central to this approach is modular design, a paradigm that enables the rapid, efficient, and reproducible construction of complex biological systems [14]. This methodology involves breaking down complex systems into standardized, interchangeable parts that can be combined in various configurations to achieve predictable functions. The convergent knowledge from natural biological systems and engineered modular systems provides a powerful toolset for addressing emergent challenges in health, food, energy, and the environment [14].

This technical guide examines the trajectory of biological standardization, from the basic coding sequences of DNA parts to the sophisticated three-dimensional architectures of protein modules. We explore the core principles, quantitative data, experimental protocols, and computational frameworks that are establishing a new era of predictable biological engineering, framing this progress within the broader thesis of implementing proven engineering principles in biotechnology.

DNA Parts: The Foundation of Modularity

The Synthesis Technology Landscape

The ability to "write" DNA is as crucial as sequencing it ("reading" DNA) for advancing synthetic biology. The field has progressed from labor-intensive, low-yield DNA synthesis methods to automated, high-throughput technologies capable of industrial-scale production [15]. This evolution is critical for supporting applications ranging from gene therapy to sustainable biomanufacturing.

Table 1: DNA Synthesis Market Landscape and Growth Projections

| Market Segment | 2014 Market Value | 2025 Market Value | 2035 Projected Value | Key Players / Technologies |
|---|---|---|---|---|
| Gene Synthesis | $137 million [15] | >$2 billion [15] | - | GenScript, GenTitan platform, IDT, Twist Bioscience |
| Oligonucleotide Synthesis | $241 million (single-stranded) [15] | ~$4 billion [15] | - | DNA Script, Molecular Assemblies, Column-phase synthesis |
| Total DNA Synthesis | - | ~$6 billion [15] | ~$30 billion [15] | Enzymatic synthesis, Chip-based semiconductor synthesis |

Two primary technological advancements are driving this growth:

- Automated and High-Throughput Platforms: Technologies like GenScript's GenTitan, which leverages a miniature semiconductor platform, enable high-throughput, cost-effective production of diverse DNA products [15].

- Enzymatic Synthesis: This method is emerging as a challenger to traditional phosphoramidite chemistry. It offers faster production rates, reactions under milder conditions, and improved accessibility and economy, particularly for long sequences [15]. Recent advances have doubled the size of custom genetic sequences that can be generated [16].

Standardized Assembly Methodologies

Standardized assembly systems are crucial for combining DNA parts into functional constructs. Golden Gate cloning is a highly robust and efficient method based on Type IIS restriction enzymes that allows for the seamless, directional assembly of multiple DNA fragments in a single one-pot reaction [17]. This principle underpins several standardized systems, including Modular Cloning (MoClo) and the Modular Protein Expression Toolbox (MoPET).

The MoPET platform exemplifies the application of modular design. It uses pre-defined, standardized functional DNA modules categorized into eight classes (e.g., promoters, signal peptides, tags, linkers, plasmid backbones) [17]. These modules can be flexibly combined to rationally design hundreds of thousands of different expression constructs. A key feature is the design of fusion sites that connect modules without adding undesired amino acids to the final protein product, a critical consideration for function [17].
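The combinatorial reach of such a toolbox follows from multiplying the number of modules available in each class. The sketch below illustrates that arithmetic in Python; the per-class module counts are hypothetical, and because only five of the eight class names are given above, the remaining three are left as generic placeholders.

```python
import math

# Hypothetical number of available modules in each of the eight classes.
library = {
    "promoter": 5,
    "signal_peptide": 6,
    "tag": 8,
    "linker": 4,
    "plasmid_backbone": 5,
    "class_6": 4,  # placeholder: class name not given in the text
    "class_7": 3,  # placeholder
    "class_8": 4,  # placeholder
}

# One module is chosen from each class, so the design space is the product.
total_constructs = math.prod(library.values())
print(f"{len(library)} classes -> {total_constructs:,} possible constructs")
```

Even with fewer than ten modules per class, the design space in this example exceeds 200,000 constructs, consistent in scale with the "hundreds of thousands" quoted above.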

Protocol 1: Standardized Golden Gate Assembly for Modular Constructs

- Principle: Simultaneous digestion and ligation using Type IIS restriction enzymes (e.g., BsaI, BpiI), which cut outside their recognition sites, enabling seamless fusion of DNA parts.

- Reaction Setup:

  - ~30 fmol (approx. 100 ng for a 5 kb plasmid) of each plasmid module.

  - 1X T4 DNA Ligase Buffer.

  - 10 U of Type IIS restriction enzyme (e.g., BsaI or BpiI).

  - 10 U of high-concentration T4 DNA Ligase.

  - Deionized water to a final volume of 20 µL.

- Thermocycling Conditions:

  - Incubate for 2 hours at 37°C.

  - Follow with 5 minutes at 50°C.

  - Finally, heat-inactivate at 80°C for 5 minutes.

- Downstream Processing: Transform the reaction product into competent E. coli, plate on selective media, and screen colonies for correct assemblies [17].
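
The "~30 fmol is roughly 100 ng for a 5 kb plasmid" figure in the reaction setup follows from a simple mole-to-mass conversion. The sketch below reproduces it, assuming an average molar mass of about 650 g/mol per base pair of double-stranded DNA (a common rule of thumb, not a value taken from the protocol); the function names are illustrative only.

```python
# Assumption: ~650 g/mol per base pair is a widely used approximation for dsDNA.
AVG_BP_MASS_G_PER_MOL = 650.0


def fmol_to_ng(amount_fmol: float, length_bp: int) -> float:
    """Convert an amount of dsDNA (femtomoles) to mass (nanograms)."""
    grams = amount_fmol * 1e-15 * length_bp * AVG_BP_MASS_G_PER_MOL
    return grams * 1e9


def ng_to_fmol(mass_ng: float, length_bp: int) -> float:
    """Convert a mass of dsDNA (nanograms) to an amount (femtomoles)."""
    moles = mass_ng * 1e-9 / (length_bp * AVG_BP_MASS_G_PER_MOL)
    return moles * 1e15


if __name__ == "__main__":
    # 30 fmol of a 5 kb plasmid, as in the reaction setup above.
    print(f"{fmol_to_ng(30, 5000):.1f} ng")  # 97.5 ng
```

Because the required mass scales linearly with plasmid length, modules of different sizes need different masses to reach the same molar amount.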

DNA-Based Functional Modules for Sensing and Computation

Beyond storing genetic information, DNA can be engineered into molecular devices that sense and process information within biological systems. The predictable thermodynamics of Watson-Crick base pairing and the strand-displacement reaction form the basis for these dynamic systems [18].

In a strand-displacement reaction, an input single-stranded DNA (invader) binds to a complementary strand in a double-stranded complex, displacing and releasing an output strand through a process called branch migration. This output can then trigger downstream reactions, creating a cascade of logic operations [18].
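
The logic of toehold-mediated strand displacement can be sketched at the sequence level. The example below is a deliberately simplified model, not a thermodynamic or kinetic simulation: it assumes perfect Watson-Crick complementarity, a single contiguous toehold, and a hypothetical minimum toehold length of 5 nt. The sequences and function names are invented for illustration.

```python
COMPLEMENT = {"A": "T", "T": "A", "G": "C", "C": "G"}


def reverse_complement(seq: str) -> str:
    """Return the reverse complement of a DNA sequence written 5'->3'."""
    return "".join(COMPLEMENT[base] for base in reversed(seq))


def displaces(invader: str, substrate: str, incumbent: str,
              min_toehold: int = 5) -> bool:
    """Return True if the invader can release the incumbent (output) strand.

    Simplified rule: the incumbent must be hybridized to part of the
    substrate, leaving a single-stranded toehold of at least `min_toehold`
    nucleotides, and the invader must pair with the entire substrate.
    """
    covered = reverse_complement(incumbent)  # substrate region under the incumbent
    if covered not in substrate:
        return False  # incumbent is not bound to this substrate
    toehold_len = len(substrate) - len(covered)
    return invader == reverse_complement(substrate) and toehold_len >= min_toehold


# Hypothetical domains: a 6-nt toehold and a 20-nt branch-migration domain.
toehold = "TCTCCA"
branch = "CCCTCATTCAATACCCTACG"
invader = toehold + branch
substrate = reverse_complement(invader)
incumbent = branch  # output strand, bound to the branch domain only

if __name__ == "__main__":
    print(displaces(invader, substrate, incumbent))                   # True
    print(displaces(branch, reverse_complement(branch), incumbent))   # False: no toehold
```

The second call returns False because, without an exposed toehold, the invader has no single-stranded region on which to initiate branch migration.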

Table 2: DNA-Based Functional Modules for Molecular Information Sensing

| Target Information | DNA Module Type | Operating Principle | Example Application |

|---|---|---|---|

| Molecular Identity | Aptamer-based Sensors/Switches | Target binding induces conformational change, exposing or releasing a reporter sequence [18]. | Detection of antibodies, small molecules (e.g., ATP) [18]. |

| Molecular Concentration | Thresholds & Selectors | Kinetics of strand displacement are tuned by toehold length/sequence to respond at specific concentration thresholds [18]. | Pattern recognition networks, concentration-dependent signal processing [18]. |

| Temporal Order | Sequencers & Selectors | Modules are designed to be activated only when molecular inputs arrive in a specific sequence [18]. | Monitoring the order of transcription factor appearance in developmental pathways [18]. |
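
The concentration-threshold behavior listed in the table can be illustrated with an idealized steady-state abstraction. This sketch assumes that a threshold species consumes the input stoichiometrically and much faster than the downstream gate reacts, so only the excess input produces output; that assumption, the function name, and the concentrations are illustrative and are not taken from [18].

```python
def thresholded_output(input_nM: float, threshold_nM: float, gate_nM: float) -> float:
    """Idealized output of a threshold followed by a displacement gate.

    Input below the threshold is absorbed completely; any excess releases
    output one-for-one until the gate is exhausted.
    """
    excess = max(0.0, input_nM - threshold_nM)
    return min(gate_nM, excess)


if __name__ == "__main__":
    for signal in (50, 80, 200):
        out = thresholded_output(signal, threshold_nM=60, gate_nM=100)
        print(f"input {signal} nM -> output {out} nM")
```

In a real system the sharpness of this response depends on the relative strand-displacement rates, which are tuned through toehold length and sequence.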

Protein Modules: Architectural Control in Synthetic Biology

De Novo Protein Design with Atomic-Level Precision

The field has moved beyond repurposing natural proteins to designing entirely novel protein structures from first principles, unbound by evolutionary constraints [19]. This revolution is powered by artificial intelligence (AI)-driven computational frameworks, such as deep learning-based generative tools (e.g., RFdiffusion), which enable the creation of protein structures with atom-level precision for customized functions [19] [20].

A major advance is the bond-centric modular design of protein assemblies. This approach, inspired by the predictable valencies and geometries of atomic bonds, involves designing rigid protein building blocks with pre-specified interaction "bonds" [20]. These building blocks can then self-assemble into complex, multi-component architectures guided by simple geometric principles.

Protocol 2: Computational Pipeline for De Novo Protein Assembly Design

- Step 1: Architecture Definition: Select the target architecture (e.g., cage, lattice). Define the structural modules (homo-oligomeric cores) and the complementary bonding modules (e.g., LHD heterodimers). Specify the desired spatial arrangement and degrees of freedom [20].

- Step 2: Junction Generation: Generate rigid junction modules that connect the structural and bonding modules in the desired orientation. This is achieved using:

  - WORMS Protocol: Fuses predesigned helical structural modules to generate required geometries [20].

  - RFdiffusion: A deep generative neural network that creates novel protein backbones to rigidly link the core and bonding modules, often resulting in more compact structures suitable for 2D/3D lattices [20].

- Step 3: Sequence Design & Validation: Design amino acid sequences for the newly generated backbone segments. The designed sequences are then filtered and validated using structure prediction tools like AlphaFold2 to ensure they fold and assemble as intended before experimental testing [20].

Experimental Characterization of Designed Protein Assemblies

Computationally designed proteins require rigorous experimental validation. The success of the bond-centric design approach is demonstrated by the high experimental success rate (10%–50%) in forming target architectures like polyhedral cages, 2D arrays, and 3D lattices [20].
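
A reported success rate translates directly into how many designs are worth ordering and testing. The sketch below estimates the number of designs needed to obtain at least one working assembly with a chosen confidence, assuming each design succeeds independently with the same probability. This is a simplification, and the function name is illustrative.

```python
import math


def designs_needed(success_rate: float, confidence: float = 0.95) -> int:
    """Smallest n such that P(at least one success in n designs) >= confidence.

    Uses P = 1 - (1 - p)^n, assuming independent designs with equal success rate p.
    """
    if not 0.0 < success_rate < 1.0:
        raise ValueError("success_rate must be strictly between 0 and 1")
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be strictly between 0 and 1")
    return math.ceil(math.log(1.0 - confidence) / math.log(1.0 - success_rate))


if __name__ == "__main__":
    print(designs_needed(0.10))  # 29 designs at a 10% success rate
    print(designs_needed(0.50))  # 5 designs at a 50% success rate
```

Across the 10%-50% range quoted above, a 95% chance of at least one hit requires on the order of 5 to 29 designs under these assumptions.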

Protocol 3: Experimental Workflow for Validating Protein Assemblies

- Bicistronic Expression: Express the two (or more) designed protein building blocks in E. coli using a bicistronic vector, with one component fused to a polyhistidine tag [20].

- Affinity Purification & Screening: Use Nickel-NTA affinity chromatography to pull down potential complexes. Initial assembly is confirmed by SDS-PAGE, visualizing bands for all partner building blocks [20].

- In Vitro Assembly & Purification: Purify individual building blocks via Size Exclusion Chromatography (SEC). Assemble complexes in vitro by mixing partners at equimolar ratios and re-analyze by SEC to confirm stable complex formation [20].

- Structural Validation:

  - Negative-Stain EM (nsEM): For initial, rapid characterization of particle formation and homogeneity [20].

  - Cryo-Electron Microscopy (cryo-EM): For high-resolution structural validation. Successful designs, such as the T33-549 tetrahedral cage and O42-24 octahedral cage, have yielded sub-nanometer resolution reconstructions that closely match the computational design models [20].

The Scientist's Toolkit: Key Reagents and Technologies

Table 3: Research Reagent Solutions for Modular Biology

| Item / Technology | Function / Application | Key Features |

|---|---|---|

| MoPET Toolbox [17] | Standardized assembly of protein expression constructs. | 53 predefined DNA modules; enables generation of >790,000 construct variants; Golden Gate cloning. |

| LHD Heterodimers [20] | Programmable, high-affinity "bonds" for protein assemblies. | Polar interfaces; specificity from shape complementarity and hydrophobic burial; used in bond-centric design. |

| GenTitan Gene Synthesis [15] | High-throughput production of custom DNA fragments. | Semiconductor-based platform; commercial gene synthesis service. |

| Gibco OncoPro Medium [21] | 3D tumoroid culture for biologically relevant cancer models. | Improves accessibility and standardization of 3D cancer models for drug testing. |

| DynaGreen Magnetic Beads [21] | Sustainable protein purification. | Reduces environmental impact without sacrificing performance (e.g., Protein A beads). |

| RFdiffusion & AlphaFold2 [20] | Computational protein design and validation. | AI-driven tools for generating novel protein backbones (RFdiffusion) and validating designed structures (AlphaFold2). |

The systematic standardization of biological parts, from DNA to protein modules, marks a paradigm shift in biotechnology. The principles of modular design, abstraction, and standardization—long established in traditional engineering—are now yielding tangible results in biology, as evidenced by the robust construction of genetic circuits, functional DNA devices, and complex protein nanomaterials [14] [18] [20]. The integration of AI-powered design and automated experimental workflows is accelerating the DBTL cycle, reducing development time and increasing the complexity of systems that can be engineered [15].

The future of this field lies in the deeper integration of these standardized parts into increasingly complex systems. This includes the creation of reconfigurable protein interaction networks [20], the application of de novo designed proteins as modular toolkits for building synthetic cellular systems [19], and the use of advanced DNA-based modules for sophisticated sensing and computation inside living cells [18]. As these technologies mature, robust biosafety and bioethics evaluations will be paramount to address potential risks associated with novel, structurally unprecedented proteins and engineered biological systems [19]. The ongoing industrialization of biology, fueled by standardization, promises to unlock transformative applications across medicine, materials science, and environmental sustainability.

The construction of a synthetic cell (SynCell) from non-living molecular components is one of the most ambitious goals in synthetic biology. This bottom-up approach aims to assemble life-like systems that mimic cellular functions, offering insights into fundamental biology and promising applications in medicine, biotechnology, and bioengineering [9]. A foundational paradigm in this endeavor is modular design, a proven engineering principle that involves constructing complex systems from smaller, self-contained functional units with standardized interfaces [14] [11]. Applying this principle to synthetic biology allows researchers to deconstruct the immense complexity of a cell into manageable, engineerable modules that can be developed, tested, and optimized independently before integration into a cohesive whole [11]. This section provides an in-depth technical guide to three core functional modules essential for a living SynCell: growth, division, and metabolism. We frame this discussion within the broader thesis of engineering biology, emphasizing how modular design accelerates the systematic development of robust biological systems and tools for research and therapeutic applications.

The Growth Module: Achieving Self-Sustenance

The growth module is responsible for the de novo production and self-replication of all essential cellular components, a fundamental characteristic of living systems. The current state of the art is still far from doubling all cellular components, making this one of the most significant challenges in the SynCell effort [9].

Core Components and Machinery

At the heart of the growth module lies the reconstitution of the central dogma. The primary workhorse for this is cell-free protein synthesis, which can be implemented using cellular extracts or systems composed of purified elements, such as the PURE (Protein Synthesis Using Recombinant Elements) system [9]. A critical milestone for a self-sustaining growth module is the creation of a self-replicating PURE system—where the system itself can produce all its protein and RNA components. Workshop attendees anticipated that, with sufficient funding, this could be achieved within the next 5-10 years [22]. Beyond the core transcription-translation machinery, growth requires the synthesis of other essential macromolecules, including:

- Lipid synthesis for membrane generation [9].

- Ribosome biogenesis to create the translation machinery itself [9].

- Replication of genomic DNA to propagate genetic information [9].

Experimental Protocols and Key Challenges

A standard protocol for establishing a basic growth module involves encapsulating the PURE system, along with a DNA template and necessary substrates, within a lipid vesicle or other chassis [9]. The functionality is typically assessed by measuring the expression of a reporter protein, such as green fluorescent protein (GFP), over time.

The major scientific hurdles for the growth module include:

- Limited Efficiency and Controllability: Maximizing the protein synthesis capacity and achieving controllability comparable to living systems remains a substantial challenge [9].

- Ribosome Complexity: The E. coli ribosome consists of 54 ribosomal proteins and 3 rRNAs, and its complex assembly process is not fully understood, making artificial ribosome construction a critical unsolved step [22].

- System Integration: Reconstituting all needed components for a full, self-sustaining central dogma is immensely complex. Our understanding of the architecture of a fully functional minimal genome, estimated to require 200-500 genes, remains limited [9].

Table 1: Key Research Reagents for the Growth Module

| Research Reagent | Function in Experimentation |

|---|---|

| PURE System | A reconstituted cell-free protein synthesis system used as the core engine for protein production. |

| Giant Unilamellar Vesicles (GUVs) | A common chassis for compartmentalizing SynCell reactions and modules. |

| Lipid Precursors (e.g., fatty acids, glycerol) | Molecular building blocks for the synthesis of new membrane material. |

| NTPs (Nucleoside Triphosphates) | Energy-rich substrates for RNA synthesis that also serve as an energy currency. |

| Amino Acids | The fundamental building blocks for protein synthesis. |

| DNA Template | Encodes genetic instructions for proteins to be expressed. |

The Division Module: Orchestrating Reproduction

Autonomous division is the process that enables a SynCell to propagate. It is a biophysical process requiring the coordination of multiple proteins to achieve large-scale mechanical deformation and rearrangement of the membrane [9].

Pathways to Synthetic Cell Division

Two primary strategies are being explored to achieve SynCell division:

- Biological Division: This involves the reconstitution of a minimal divisome—the set of proteins that orchestrate cell division in natural cells. This includes proteins that form a contractile ring, such as FtsZ, which constricts the cell, and proteins that mediate the final abscission event that separates the daughter cells [9]. While certain elements like ring formation have been realized, a fully controlled synthetic divisome has not yet been achieved [9].

- Physically-Induced Division: Given the difficulty of reconstituting biological division, physical stimuli offer a more feasible near-term solution. Techniques such as applying osmotic shock or using microfluidic devices to deform and pinch vesicles can lead to division [22]. With continued effort, division by such methods is expected to be achievable within the next 5 years [22].

Experimental Protocols and Key Challenges

A typical experiment for studying biological division might involve encapsulating FtsZ proteins and their associated regulators inside GUVs. The assembly of the contractile ring and any subsequent membrane deformation can be visualized using fluorescence microscopy.

The major challenges for the division module are:

- Integration with Growth: Division must be tightly coupled with the growth module to ensure it occurs at the appropriate time and that resources are partitioned correctly between daughter cells.

- Spatial Organization: Proper division requires spatial control to position the division machinery correctly, which is a non-trivial engineering problem in a synthetic environment [9].

- Membrane Mechanics: The synthetic chassis must be both stable enough to maintain integrity and flexible enough to allow for the dramatic shape changes required for division [22].

The Metabolism Module: Powering the Cell

Metabolism is the engine of the SynCell, providing the building blocks, energy, and redox balance to support the self-regeneration of all macromolecules [22]. It keeps the system out of thermodynamic equilibrium, which is essential for life [9].

Energy Supply and Metabolic Strategies

A key challenge is supplying energy to operate genetic circuits and protein expression for extended periods. Multiple strategies have been developed, which can be used in combination [23].

Table 2: Strategies for Powering Synthetic Cell Metabolism

| Strategy | Mechanism | Key Components | Experimental Considerations |

|---|---|---|---|

| Continuous External Feeding | Microfluidic devices continuously supply fresh substrates and remove waste. | Microfluidic chemostat, energy solution (e.g., creatine phosphate, NTPs). | Partially mimics nutrient uptake; not fully autonomous. |

| Reconstituted ATP Regeneration | Enzyme cascades recycle phosphate to regenerate ATP from ADP. | Phosphoenolpyruvate (PEP)/3-PGA, polyphosphate, corresponding kinases. | Can extend operation but faces catalyst poisoning and instability. |

| Light-Driven Systems | Light-sensitive proton pumps create a gradient to drive ATP synthesis. | Bacteriorhodopsin, ATP synthase, lipids/polymersomes. | Renewable, externally controllable input; requires membrane co-reconstitution. |

| Substrate-Level Phosphorylation | Minimal metabolic pathways directly generate ATP from energy-rich substrates. | Arginine breakdown pathway (arginine, ornithine, carbamate kinase). | Simpler than full respiratory chains; requires membrane transporters. |

| Cofactor Recycling | Enzymatic systems regenerate essential cofactors like NADH/NADPH. | Dehydrogenases, electron donors. | Maintains redox balance for sustained metabolic reactions. |

Experimental Protocols and Key Challenges

A common experiment for a light-driven energy module involves co-reconstituting bacteriorhodopsin and ATP synthase into the membrane of a liposome or polymersome. Upon illumination, the establishment of a proton gradient and subsequent ATP production can be measured using luciferase-based assays.

The major challenges for the metabolism module are:

- Metabolic Regulation: It is difficult to control the relative expression levels of enzymes and to dynamically regulate multi-step metabolic cascades in synthetic cells [22].

- Waste Management: The accumulation of inhibitory byproducts (e.g., inorganic phosphate) can halt metabolism. Programmable degradation and recycling systems are needed [9].

- Membrane Permeability: The membrane must retain large biomolecules while allowing the selective import of nutrients and export of waste, often achieved by incorporating pore-forming proteins like α-hemolysin [23].

- Balanced Design: Unlike traditional metabolic engineering that focuses on maximizing a single product, SynCell metabolism requires generating a coordinated and balanced system that supports the entire cell [22].

The Integration Challenge and an Evolutionary Design Framework

A defining characteristic of a living SynCell is the seamless coordination and integration of all its modules to create a functional cell cycle [9]. The complexity of combining components scales exponentially with the number of modules, and the parameter space is too large to explore exhaustively [9]. This underscores the need for robust theoretical frameworks and sophisticated design processes.
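
The scale of this combinatorial problem is easy to underestimate. The sketch below counts the designs in a full factorial screen, assuming each tunable parameter is tested at a fixed number of discrete levels; the parameter counts, level counts, and throughput figure are hypothetical.

```python
def design_space_size(n_parameters: int, levels_per_parameter: int) -> int:
    """Number of distinct designs in a full factorial screen."""
    return levels_per_parameter ** n_parameters


def exhaustive_screen_days(n_designs: int, variants_per_day: int) -> float:
    """Days needed to test every design at a fixed daily throughput."""
    return n_designs / variants_per_day


if __name__ == "__main__":
    # Hypothetical: each module exposes 5 tunable parameters, 4 levels each.
    for n_modules in (1, 2, 3):
        size = design_space_size(5 * n_modules, 4)
        days = exhaustive_screen_days(size, variants_per_day=10_000)
        print(f"{n_modules} module(s): {size:,} designs, {days:,.1f} days to screen")
```

Under these assumptions, three modules already give 4^15 = 1,073,741,824 designs, which illustrates why exhaustive exploration is impractical.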

A powerful perspective for addressing this is to view engineering as evolution [24]. In this framework, design and evolution both follow a cyclic process of variation, selection, and iteration. All design methods, from traditional rational design to directed evolution and random trial-and-error, exist on an evolutionary design spectrum characterized by their throughput (how many variants can be tested) and the number of design cycles [24]. This unified view allows bioengineers to act as "meta-engineers," strategically choosing and combining design methods to efficiently navigate the vast design space of a SynCell. For instance, rational design can be used to create initial module blueprints based on biological knowledge (exploiting prior information), while high-throughput directed evolution can be deployed to optimize poorly understood subsystem interactions (exploring the solution space) [24].
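
The variation-selection-iteration cycle can be written as a short loop in which throughput and the number of cycles are explicit parameters. The following is a toy sketch rather than a method from [24]: the fitness landscape, the Gaussian mutation scheme, and all names are hypothetical stand-ins for a real build-and-test pipeline.

```python
import random


def design_cycle(fitness, seed_design, throughput, cycles, step=0.1, rng=None):
    """Iterate variation and selection; return the best design and fitness history.

    throughput: number of variants generated and tested per cycle.
    cycles:     number of design iterations.
    """
    rng = rng or random.Random(0)  # fixed seed for reproducibility
    best = list(seed_design)
    history = [fitness(best)]
    for _ in range(cycles):
        # Variation: perturb every parameter of the current best design.
        variants = [[x + rng.gauss(0.0, step) for x in best]
                    for _ in range(throughput)]
        # Selection: keep the fittest candidate, retaining the parent if no
        # variant improves on it.
        best = max(variants + [best], key=fitness)
        history.append(fitness(best))
    return best, history


def toy_fitness(design):
    """Hypothetical landscape with a single optimum at all-ones."""
    return -sum((x - 1.0) ** 2 for x in design)


if __name__ == "__main__":
    # A rational-design-like regime: few variants, few cycles.
    _, low = design_cycle(toy_fitness, [0.0, 0.0, 0.0], throughput=2, cycles=5)
    # A directed-evolution-like regime: many variants, many cycles.
    _, high = design_cycle(toy_fitness, [0.0, 0.0, 0.0], throughput=50, cycles=20)
    print(f"final fitness, low throughput:  {low[-1]:.3f}")
    print(f"final fitness, high throughput: {high[-1]:.3f}")
```

In this picture a good starting point from rational design corresponds to a better `seed_design`, while directed evolution corresponds to raising `throughput` and `cycles`.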

Building a functional SynCell from the bottom up by engineering the core modules of growth, division, and metabolism is a monumental task that requires global, multidisciplinary collaboration. The modular design approach provides a structured pathway toward this goal, breaking down the problem into tractable units. However, the ultimate challenge lies in the integration of these modules into a system that is more than the sum of its parts—one capable of self-sustenance, reproduction, and open-ended evolution. The convergence of advanced experimental techniques, quantitative theoretical frameworks, and an evolutionary perspective on design offers a promising path forward. As these technologies mature, they will not only deepen our understanding of the fundamental principles of life but also unlock novel applications in biomedicine, such as intelligent drug delivery systems and programmable therapeutic cells, ultimately revolutionizing the landscape of drug development and biotechnology.

The pursuit of constructing synthetic cells (SynCells) from molecular components is an ambitious, multidisciplinary aim at the forefront of synthetic biology [9]. This field leverages the engineering principles of standardization, modularity, and abstraction to dismantle and reassemble biological cells and processes into novel systems that perform useful functions [10]. A synthetic chassis, the foundational compartment that mimics the cellular boundary, serves as the essential physical platform for hosting these life-like functions. The design, construction, and implementation of these chassis are guided by the iterative Design–Build–Test–Learn (DBTL) cycle, a framework that enables the systematic development and optimization of biological systems [10] [25]. This section provides an in-depth examination of the three primary synthetic chassis platforms (lipid vesicles, polymersomes, and coacervates), framed within the context of engineering principles for modular biological tool design.

Diagram: The DBTL (Design-Build-Test-Learn) cycle, a core engineering framework in synthetic biology for the systematic development of synthetic chassis [10] [25].

Core Chassis Platforms: Materials, Properties, and Formation

Lipid Vesicles: The Biomimetic Standard

Lipid vesicles, or liposomes, are spherical assemblies comprising one or more phospholipid bilayers, closely mimicking the structure of natural cell membranes [26] [27]. Their formation is driven by the amphiphilic nature of phospholipids, which feature a hydrophilic head group and hydrophobic hydrocarbon tails [27]. This structure enables the spontaneous assembly in aqueous solutions to form compartments that separate an internal volume from the external environment.

Material Composition and Properties: The physicochemical properties of lipid vesicles—including membrane fluidity, surface charge, and permeability—are dictated by the specific lipids used. Zwitterionic lipids like DOPC (1,2-dioleoyl-sn-glycero-3-phosphocholine) are commonly employed, while the incorporation of charged lipids allows for modulation of surface properties [27]. A critical parameter is the gel-to-liquid phase transition temperature (Tm), which must be considered during formation and experimentation to ensure the bilayer is in the desired fluid state [27].

Vesicle Classification by Size:

Polymersomes: The Engineered Workhorse

Polymersomes are vesicles formed from amphiphilic block copolymers [28] [27]. Their structure features an aqueous core enclosed by a thicker, more robust polymeric membrane, offering superior stability and tunability compared to lipid-based systems [28].

- Material Composition and Properties: The characteristics of polymersomes—including size, membrane thickness, permeability, and degradation kinetics—are controlled by the molecular weight and chemistry of the constituent hydrophilic and hydrophobic polymer blocks [28]. This tunability makes them particularly attractive for applications requiring prolonged stability and controlled release, such as drug delivery to challenging environments like the eye [28] [29]. Their chemical and physical adaptability allows for precise control over parameters critical for traversing biological barriers [29].

Coacervates: The Dynamic Alternative

Coacervates are membraneless droplets that form via liquid-liquid phase separation (LLPS), typically driven by the associative interaction of oppositely charged polyelectrolytes, such as polymers, peptides, or proteins [30] [31]. They serve as models for biomolecular condensates, which are membraneless organelles found in natural cells [31].

- Material Composition and Properties: Coacervates are characterized by a condensed internal phase that can spontaneously concentrate a wide range of biomolecules, including nucleic acids and proteins, through partitioning [30]. This creates a unique molecularly crowded microenvironment that can enhance biochemical reactivity [31]. A significant advancement is the development of coacervate vesicles, which encapsulate coacervate droplets within a membrane, combining the dynamic molecular uptake of coacervates with the structural definition of vesicles [30].

Table 1: Comparative Analysis of Synthetic Chassis Platforms

| Parameter | Lipid Vesicles (Liposomes) | Polymersomes | Coacervates |

|---|---|---|---|

| Primary Material | Phospholipids (e.g., DOPC) [27] | Amphiphilic block copolymers [28] [27] | Polyelectrolytes, peptides (e.g., FF-OMe) [30] [31] |

| Structure | Lipid bilayer (~3-5 nm thick) [27] | Polymeric bilayer (thicker than lipids) [28] | Membraneless droplet or membrane-bound vesicle [30] |

| Key Formation Driver | Hydrophobic effect & self-assembly [27] | Hydrophobic effect & self-assembly [27] | Liquid-Liquid Phase Separation (LLPS) [30] [31] |

| Stability | Moderate; can be fragile | High; chemical & physical robustness [28] | Variable; can be low without stabilization [30] |

| Permeability | Tunable with lipid composition [26] | Tunable with polymer design [28] | Innately high; selective partitioning [31] |

| Key Advantage | High biomimicry & biocompatibility [27] | High stability & tunability [28] [29] | Biomolecular crowding & dynamic function [30] [31] |

| Primary Challenge | Limited chemical robustness | Potential complexity in synthesis & biodegradation | Controlling stability & coalescence [30] |

Experimental Protocols for Chassis Construction and Analysis

Formation of Giant Unilamellar Vesicles (GUVs) via Electroformation

GUVs are a cornerstone model for artificial cells due to their cell-like size, enabling observation under standard microscopy [27].

Materials:

- Phospholipids: DOPC is a common choice for a neutral, fluid bilayer.

- Solvent: Chloroform or a chloroform/methanol mixture.

- Apparatus: Electroformation chamber with indium tin oxide (ITO)-coated glass slides.

- Buffer: An aqueous solution with low ionic strength (e.g., sucrose solution).

Step-by-Step Protocol:

- Lipid Film Preparation: Dissolve lipids in chloroform (~1-2 mg/mL). Spread a small volume (~20-50 µL) evenly onto the conductive surface of an ITO slide. Evaporate the solvent under an inert gas stream (e.g., nitrogen or argon) to form a thin, dry lipid film. Further desiccate under vacuum for at least 1 hour to remove residual solvent.

- Chamber Assembly: Assemble the electroformation chamber by sealing a second ITO slide over the first, with a spacer (e.g., silicone gasket) in between, creating a cavity.

- Hydration and Swelling: Fill the chamber cavity with the desired aqueous buffer (e.g., 200 mOsm sucrose solution). Apply a low-frequency AC electric field (e.g., 1-2 V, 10 Hz) for 1-2 hours at a temperature above the Tm of the lipids. The electric field facilitates gentle hydration and swelling of the lipid film, promoting the formation of giant unilamellar vesicles.

- Harvesting: Carefully drain the GUV suspension from the chamber. GUVs can be collected for immediate use or stored at 4°C for short periods.
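
The amount of lipid deposited in the film-preparation step follows from the stock concentration and the spread volume. The sketch below performs that arithmetic for the ranges quoted above, assuming a DOPC molar mass of about 786.1 g/mol (a commonly quoted value, not stated in the protocol); the function name is illustrative.

```python
# Assumption: commonly quoted molar mass of DOPC, in g/mol.
DOPC_MOLAR_MASS_G_PER_MOL = 786.1


def lipid_deposited(conc_mg_per_ml: float, volume_ul: float):
    """Return (mass in micrograms, amount in nanomoles) of lipid on the slide."""
    mass_ug = conc_mg_per_ml * volume_ul  # 1 mg/mL equals 1 ug/uL
    amount_nmol = mass_ug / DOPC_MOLAR_MASS_G_PER_MOL * 1000.0  # ug/(g/mol) = umol
    return mass_ug, amount_nmol


if __name__ == "__main__":
    for conc, vol in ((1.0, 20.0), (2.0, 50.0)):
        mass, amount = lipid_deposited(conc, vol)
        print(f"{conc} mg/mL x {vol} uL -> {mass:.0f} ug ({amount:.1f} nmol)")
```

The protocol's ranges therefore correspond to roughly 20-100 µg of lipid per slide, which helps when comparing film thickness between preparations.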

Microfluidic Formation of Polymersomes

Microfluidic techniques offer superior control over the size and monodispersity of synthesized vesicles [27].

Materials:

- Amphiphilic Copolymer: Dissolved in a water-immiscible organic solvent (e.g., mineral oil, octanol).

- Aqueous Buffer: For the inner and outer continuous phases.

- Apparatus: Microfluidic device with a flow-focusing or T-junction geometry and precision syringe pumps.

Step-by-Step Protocol:

- Phase Preparation: Prepare the polymer solution in the organic solvent and the aqueous buffer.

- Device Priming and Flow Setup: Load the solutions into syringes and connect them to the inlets of the microfluidic device. Use syringe pumps to precisely control the flow rates.

- Droplet Jetting and Self-Assembly: The organic polymer solution (dispersed phase) is injected into a stream of the aqueous solution (continuous phase). At the junction, shear forces break the polymer stream into monodisperse droplets. As the solvent diffuses out of the droplet into the aqueous phase, the polymers concentrate and self-assemble into a bilayer, forming a polymersome.

- Collection and Purification: Collect the polymersome suspension from the outlet. Remove residual solvent and oil, potentially using techniques like dialysis or centrifugation, depending on the system [27].

Preparation of Dipeptide Coacervates

Short peptide-based coacervates represent a simplified and biocompatible model system [31].

Materials:

- Dipeptide: FF-OMe (diphenylalanine methyl ester, i.e., diphenylalanine with its C-terminus capped as a methyl ester) [31].

- Buffer: HEPES buffer, pH ~6.

- pH Modulator: NaOH solution (e.g., 0.1 M).

Step-by-Step Protocol:

- Stock Solution Preparation: Dissolve FF-OMe in HEPES buffer at a concentration of 10 mg/mL. At a pH of ~6, the dipeptide will be fully soluble [31].

- Phase Separation Trigger: Induce LLPS by increasing the pH to ~7 or higher by adding small volumes of 0.1 M NaOH solution with gentle mixing. The deprotonation of the amino group reduces electrostatic repulsion and solvation, triggering the formation of liquid coacervate droplets.

- Characterization: Observe droplet formation via optical microscopy. Droplets typically range from 1-10 μm and exhibit classic liquid behaviors like fusion and coalescence [31].

- Reversibility Check: Confirm the dynamic nature of the system by re-acidifying the solution. The coacervates should dissolve back into a homogeneous solution, and the process can be cycled [31].
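
The pH trigger in this protocol can be rationalized with the Henderson-Hasselbalch relation, which gives the fraction of amino groups that remain protonated (charged) at a given pH. The sketch below is illustrative only: the pKa of 7.0 is a hypothetical placeholder, not a measured value for FF-OMe, and the function name is invented.

```python
def protonated_fraction(ph: float, pka: float) -> float:
    """Fraction of an ionizable amine that is protonated at a given pH.

    Henderson-Hasselbalch: fraction = 1 / (1 + 10^(pH - pKa)).
    """
    return 1.0 / (1.0 + 10.0 ** (ph - pka))


if __name__ == "__main__":
    HYPOTHETICAL_PKA = 7.0  # assumption for illustration only
    for ph in (6.0, 7.0, 8.0):
        frac = protonated_fraction(ph, HYPOTHETICAL_PKA)
        print(f"pH {ph}: {frac:.2f} protonated")
```

As the pH rises past the pKa, the charged fraction drops steeply, consistent with the loss of electrostatic repulsion that drives droplet formation and with the reversibility seen on re-acidification.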

Functionalization and Applications in Modular Design

The true power of a synthetic chassis is unlocked through functionalization, creating modules that can be combined to mimic life-like behaviors. This aligns with the core engineering principle of modularity, where complex systems are built from exchangeable units of self-contained functionality [11].

Diagram: The modular design principle in synthetic biology, where self-contained functional units are integrated into a core synthetic chassis [9] [11].

Information Processing and Gene Expression: A foundational module is the integration of transcription-translation (TX-TL) systems within the chassis. These systems, based on cellular extracts or reconstituted from purified components (e.g., the PURE system), enable the expression of proteins from encapsulated DNA, coupling genotype to phenotype [9]. This allows synthetic cells to be programmed for specific functions, such as sensing environmental signals and responding dynamically [9].

Metabolism and Energy Supply: Sustaining functionality requires energy. Metabolic pathways that generate adenosine triphosphate (ATP), such as glycolysis, have been reconstituted in vitro and integrated with genetic modules [9]. This creates a metabolic module that keeps the system out of thermodynamic equilibrium, powering other processes. Improvements in metabolic flux and the coupling of complementary pathways are active areas of research [9].

Communication and Signaling: Synthetic cells can be designed to communicate with each other and with natural living cells. This is achieved by incorporating modules for the production, secretion, and detection of signaling molecules, mimicking quorum sensing or other biological signaling pathways [9] [27]. This functionality is key to building complex multi-vesicle networks and for therapeutic applications where synthetic cells interact with host tissues.

Bioorthogonal Catalysis: A cutting-edge application involves using synthetic chassis as microreactors to perform non-biological chemistry inside cells. For example, dipeptide coacervates with a hydrophobic microenvironment can encapsulate transition metal catalysts [31]. When internalized by living cells, these artificial organelles can catalyze bioorthogonal reactions, such as the intracellular production of an active fluorophore, thereby introducing new-to-nature functions [31].

Table 2: The Scientist's Toolkit: Essential Reagents and Materials

| Item Name | Function/Application | Technical Notes |
|---|---|---|
| DOPC Lipid | Primary building block for biomimetic lipid bilayers [27] | Zwitterionic; low phase transition temperature (Tm ~ -17°C) for fluid membranes [27]. |
| PURE System | Reconstituted cell-free transcription-translation [9] | Purified components for protein expression; offers high controllability [9]. |
| FF-OMe Dipeptide | Building block for simple, tunable peptide coacervates [31] | Forms pH-responsive coacervates (forms at pH >7); creates a hydrophobic microenvironment [31]. |
| Amphiphilic Block Copolymer | Building block for polymersomes (e.g., PEG-PLA) [28] | Provides high stability and tunable membrane properties for demanding applications [28]. |
| Microfluidic Device | High-throughput, monodisperse vesicle production [27] | Enables formation of GUVs and polymersomes with precise size control [27]. |
| Electroformation Chamber | Standard method for GUV production [27] | Uses AC field to swell lipid films; ideal for basic research with GUVs [27]. |
| Morphogenic Agent (e.g., POM) | Induces coacervate-to-vesicle transition [30] | Densely charged species that reorganizes coacervate droplets into stable coacervate vesicles [30]. |

The exploration of lipid vesicles, polymersomes, and coacervates provides a versatile toolkit for constructing synthetic cells based on modular design principles. Lipid vesicles offer unparalleled biomimicry, polymersomes deliver engineered robustness, and coacervates open doors to dynamic, lifelike condensates. The convergence of these platforms, such as in the development of membrane-bound coacervate vesicles, points toward a future of increasingly complex and functional hybrid systems [30].

The major scientific challenge ahead lies in integration—seamlessly combining functional modules for growth, division, metabolism, and information processing into a single, interoperable system capable of self-reproduction and evolution [9]. Overcoming the inherent incompatibilities between disparate chemical subsystems is paramount. Success in this endeavor will rely on the continued application of rigorous engineering principles, including the DBTL cycle and standardization, fostering global collaboration to guide the responsible development of synthetic biology from the ground up [9] [10].

Building the Toolbox: From Genetic Circuits to De Novo Proteins

Transcriptional Programming (T-Pro) for Compact Genetic Circuits

The field of synthetic biology is guided by core engineering principles such as modularity, predictability, and resource efficiency. However, the biological parts used to construct synthetic genetic circuits have historically offered limited modularity, and they impose a growing metabolic burden on host cells as circuit complexity increases. This creates a fundamental engineering challenge: how to build sophisticated biological computing systems without overloading the host chassis [32].

Transcriptional Programming (T-Pro) addresses these challenges through circuit compression, a design strategy that enables higher-state decision-making using significantly fewer genetic parts. By leveraging engineered systems of synthetic transcription factors and promoters, T-Pro moves beyond intuitive, labor-intensive design approaches toward predictive engineering of cellular functions [32] [33]. This section examines the core principles, methodologies, and applications of T-Pro as a framework for engineering modular biological tools in synthetic biology research and therapeutic development.

Core Principles of T-Pro and Circuit Compression

Fundamental Concepts and Definitions

Transcriptional Programming utilizes synthetic transcription factors (TFs) and synthetic promoters to implement logical control over gene expression. Unlike traditional inversion-based genetic circuits that require multiple components to implement basic Boolean operations, T-Pro employs engineered repressors and anti-repressors that coordinate binding to cognate synthetic promoters, fundamentally reducing part count [32].

Circuit compression refers to the process of designing genetic circuits that achieve equivalent or enhanced functionality with fewer genetic components. T-Pro compression circuits are, on average, approximately four times smaller than canonical inverter-type genetic circuits while maintaining precise quantitative performance [32].

The Engineering Advantage: T-Pro Versus Traditional Architectures

Traditional genetic circuit design relies heavily on inversion to achieve NOT/NOR Boolean operations, requiring multiple promoters and regulators for complex functions. In contrast, T-Pro utilizes synthetic anti-repressors to facilitate objective NOT/NOR operations with reduced component count [32]. This architectural difference translates to significant advantages in predictive design and metabolic efficiency.

The compression achieved through T-Pro is not merely a quantitative reduction in parts but represents a qualitative improvement in design capability. By minimizing cross-talk and context dependencies, T-Pro circuits exhibit more predictable behaviors, enabling researchers to move beyond design-by-eye approaches toward quantitative prediction of genetic circuit performance [32].

Expanding T-Pro Wetware for 3-Input Boolean Logic

Engineering Cellobiose-Responsive Transcription Factors

Scaling T-Pro from 2-input to 3-input Boolean logic required developing additional orthogonal repressor/anti-repressor sets. Researchers expanded the T-Pro wetware toolbox by engineering a complete set of cellobiose-responsive synthetic transcription factors based on the CelR scaffold, which operates orthogonally to existing IPTG and D-ribose responsive systems [32].

The engineering workflow involved:

- Verifying synthetic TF compatibility with tandem operator promoter designs

- Selecting the E+TAN repressor based on dynamic range and ON-state performance

- Engineering anti-CelR variants through a structured protein engineering pipeline [32]

Anti-Repressor Engineering Pipeline

The development of anti-repressors followed an established engineering workflow [32]:

- Super-repressor generation: Creating variants that retain DNA binding but become ligand-insensitive through site saturation mutagenesis (producing ESTAN variant L75H)

- Error-prone PCR: Introducing low mutation rates into the super-repressor template

- FACS screening: Identifying functional anti-repressors (EA1TAN, EA2TAN, EA3TAN) from a library of ~10^8 variants

- ADR expansion: Equipping anti-CelRs with additional alternate DNA recognition functions (YQR, NAR, HQN, KSL)

This systematic approach yielded a high-performing set of EA1ADR anti-repressors, where ADR represents TAN, YQR, NAR, HQN, or KSL DNA-binding domains [32]. The expansion to 3-input Boolean logic enables 256 distinct truth tables, dramatically increasing the computational capacity of genetic circuits while maintaining compression principles [32].
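The truth-table counts quoted here follow from simple combinatorics: an n-input Boolean function assigns one of two outputs to each of its 2^n input rows, so there are 2^(2^n) possible functions. The short Python sketch below enumerates them explicitly; the function name `truth_tables` is illustrative and is not part of any T-Pro software.

```python
from itertools import product

def truth_tables(n_inputs):
    """Return every Boolean truth table for n_inputs as a tuple of output bits."""
    n_rows = 2 ** n_inputs  # one row per combination of input states
    # Each truth table picks an output (0 or 1) for every row.
    return list(product((0, 1), repeat=n_rows))

two_input = truth_tables(2)
three_input = truth_tables(3)
print(len(two_input), len(three_input))
```

Moving from two to three inputs therefore multiplies the number of target behaviors sixteen-fold, before any choice of circuit implementation is considered.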

T-Pro Software: Algorithmic Design of Compression Circuits

The Computational Challenge of 3-Input Circuit Design

The expansion from 2-input (16 Boolean operations) to 3-input (256 Boolean operations) biocomputing creates a combinatorial design space on the order of 10^14 putative circuits [32]. This complexity eliminates the possibility of intuitive circuit design and requires sophisticated computational approaches.

To address this challenge, researchers developed a generalizable algorithmic enumeration method that models circuits as directed acyclic graphs and systematically enumerates circuits in sequential order of increasing complexity [32]. This approach guarantees identification of the most compressed circuit implementation for any given truth table.
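The idea of enumerating circuits in order of increasing complexity can be illustrated with a toy model. The sketch below is not the T-Pro algorithm: it assumes a generic 2-input NOR gate as the only building block (a stand-in for the actual repressor/anti-repressor and promoter components), represents each signal as a truth-table bitmask, and searches breadth-first by gate count, so the first circuit found for a target is minimal within this simplified model. All names are hypothetical.

```python
from itertools import combinations_with_replacement

def min_nor_gates(target, n_inputs=2, max_gates=6):
    """Smallest number of 2-input NOR gates needed to compute `target`.

    Signals are truth tables encoded as bitmasks: bit r holds the output for
    input row r, where input i has the value (r >> i) & 1 in that row.
    """
    n_rows = 1 << n_inputs
    mask = (1 << n_rows) - 1
    inputs = tuple(
        sum(((r >> i) & 1) << r for r in range(n_rows)) for i in range(n_inputs)
    )
    # Each search state is the set of signals available in a partial circuit (a DAG).
    frontier = {frozenset(inputs)}
    for gates in range(max_gates + 1):
        # Breadth-first by gate count: the first hit uses the fewest gates.
        if any(target in signals for signals in frontier):
            return gates
        next_frontier = set()
        for signals in frontier:
            for a, b in combinations_with_replacement(sorted(signals), 2):
                out = ~(a | b) & mask  # NOR of two existing signals
                if out not in signals:
                    next_frontier.add(signals | {out})
        frontier = next_frontier
    return None  # not found within max_gates

# 2-input targets (rows ordered 00, 10, 01, 11 for inputs A, B)
targets = {"NOR": 0b0001, "OR": 0b1110, "AND": 0b1000, "XNOR": 0b1001}
for name, table in targets.items():
    print(name, min_nor_gates(table))
```

Because states are deduplicated as sets of signals, gate outputs can be reused by several downstream gates, which is what distinguishes a graph-based enumeration from a search over formula trees. The same exhaustive strategy becomes impractical for larger component sets without the kind of structured enumeration described in the text.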

Key Features of the T-Pro Design Algorithm

The T-Pro design software incorporates several innovative features [32]:

- Generalized component description: Allows for >5 orthogonal protein-DNA interactions

- Scalable promoter architecture: Wetware can be expanded to ~10^3 unique protein-DNA interactions if needed

- Compression-first enumeration: Prioritizes minimal part count solutions

- A priori orthogonal specification: Ensures component compatibility

Table 1: Key Features of T-Pro Design Algorithm

| Feature | Description | Impact on Design Capacity |
|---|---|---|
| Sequential Enumeration | Circuits enumerated by increasing complexity | Guarantees identification of most compressed design |
| Directed Acyclic Graph Model | Represents circuits as computational graphs | Enables systematic exploration of design space |
| Scalable ADR Specification | Supports expansion of DNA recognition functions | Allows circuit complexity to scale beyond current wetware |
| Orthogonality Verification | Checks for cross-talk between components | Ensures predictable circuit performance |

Quantitative Performance and Predictive Design

Performance Metrics and Prediction Accuracy

The T-Pro framework enables quantitative prediction of genetic circuit performance with high accuracy. Experimental validation across >50 test cases demonstrated an average error below 1.4-fold between predictions and measurements, establishing T-Pro as a predictive design tool rather than an iterative optimization platform [32].

Key performance metrics include:

- Digital performance: Faithful implementation of Boolean truth tables

- Analog performance: Precise control over expression levels

- Setpoint achievement: Accurate matching of desired expression thresholds
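A fold-error figure of the kind quoted above can be computed from paired predictions and measurements. The sketch below assumes a symmetric definition (the larger of predicted/measured and measured/predicted) averaged arithmetically; the source does not specify the exact definition used, and the example values are hypothetical placeholders rather than data from [32].

```python
def fold_error(predicted, measured):
    """Symmetric fold error: at least 1, and exactly 1 for a perfect prediction."""
    if predicted <= 0 or measured <= 0:
        raise ValueError("expression values must be positive")
    return max(predicted / measured, measured / predicted)

def mean_fold_error(pairs):
    """Arithmetic mean of the fold error over (predicted, measured) pairs."""
    errors = [fold_error(p, m) for p, m in pairs]
    return sum(errors) / len(errors)

# Hypothetical (predicted, measured) expression levels in arbitrary units.
example_pairs = [(100.0, 125.0), (40.0, 32.0), (900.0, 900.0)]
print(round(mean_fold_error(example_pairs), 3))
```

A symmetric ratio treats over- and under-prediction equally, which suits expression data that span orders of magnitude.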

Context-Aware Design Workflows

A critical innovation in T-Pro is the development of workflows that account for genetic context in quantifying expression levels. These workflows enable predictive design of T-Pro circuits with prescriptive quantitative performance, moving beyond qualitative operation to precise control over expression setpoints [32].

Table 2: T-Pro Performance Validation Across Applications

| Application Domain | Circuit Type | Performance Metric | Result |
|---|---|---|---|
| Biocomputing | 3-Input Boolean Logic | Truth Table Accuracy | Faithful implementation of 256 Boolean operations |
| Synthetic Memory | Recombinase Circuit | Activity Setpoint Achievement | Precise control of memory state switching thresholds |
| Metabolic Engineering | Enzyme Pathway | Flux Control | Predictive tuning of metabolic flux through toxic pathway |

Experimental Protocols and Methodologies

Transcription Factor Engineering Protocol

The following detailed methodology outlines the engineering of anti-repressor transcription factors [32]:

Initial Repressor Characterization:

- Clone synthetic transcription factors into expression vectors with standard promoters and RBS sequences

- Measure dose-response curves using flow cytometry for fluorescence quantification

- Calculate dynamic range (ON/OFF ratio) and absolute ON-state expression level

- Select lead repressor based on dynamic range >10-fold and high ON-state expression
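The selection step above can be expressed as a small filter. This is a minimal sketch under stated assumptions: the candidate names and fluorescence values are hypothetical, and the tie-breaking rule (highest ON-state level among candidates that clear the 10-fold threshold) is one reasonable reading of the criteria, not a rule taken from [32].

```python
def dynamic_range(on_level, off_level):
    """ON/OFF ratio of reporter expression."""
    return on_level / off_level

def select_lead(candidates, min_fold=10.0):
    """Pick the candidate with the highest ON level among those above min_fold.

    candidates maps a name to an (on_level, off_level) pair.
    Returns None when no candidate clears the threshold.
    """
    passing = {
        name: levels
        for name, levels in candidates.items()
        if dynamic_range(*levels) > min_fold
    }
    if not passing:
        return None
    return max(passing, key=lambda name: passing[name][0])

# Hypothetical flow-cytometry medians (arbitrary fluorescence units).
candidates = {
    "variant_A": (5000.0, 100.0),   # 50-fold
    "variant_B": (8000.0, 1000.0),  # 8-fold, fails the threshold
    "variant_C": (3000.0, 50.0),    # 60-fold, but lower ON state
}
print(select_lead(candidates))
```

Here the variant with the brightest ON state is rejected because its leaky OFF state gives it an insufficient dynamic range.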

Super-Repressor Generation:

- Perform site-saturation mutagenesis at critical allosteric control residues (e.g., position 75 in CelR scaffold)

- Screen library for variants that maintain repression but lose inducer response

- Identify and sequence confirmed super-repressor variants (e.g., ESTAN L75H)

Anti-Repressor Development:

- Conduct error-prone PCR on super-repressor template with low mutation rate (1-3 amino acid substitutions per variant)

- Clone mutated library into expression vectors and transform into reporter strains

- Use FACS to sort for the anti-repressor phenotype (reporter expressed in the absence of ligand and repressed when ligand is present)

- Isolate and sequence individual anti-repressor clones (e.g., EA1TAN, EA2TAN, EA3TAN)

- Characterize dose-response profiles of confirmed anti-repressors
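When characterizing dose-response profiles, a Hill-type model is a common way to summarize the data. The sketch below assumes such a model (the source does not state which model was fitted) and uses hypothetical parameter values; it shows the qualitative difference between a repressor, whose output rises with ligand, and an anti-repressor, whose output falls.

```python
def hill(ligand, k_half, n):
    """Fractional ligand response between 0 and 1 (Hill function)."""
    return ligand ** n / (k_half ** n + ligand ** n)

def repressor_output(ligand, basal, maximal, k_half, n):
    """Repressor: ligand relieves repression, so output rises with ligand."""
    return basal + (maximal - basal) * hill(ligand, k_half, n)

def anti_repressor_output(ligand, basal, maximal, k_half, n):
    """Anti-repressor: ligand promotes repression, so output falls with ligand."""
    return maximal - (maximal - basal) * hill(ligand, k_half, n)

# Hypothetical parameters: arbitrary fluorescence units, ligand in mM.
params = dict(basal=10.0, maximal=1000.0, k_half=1.0, n=2.0)
for dose in (0.0, 0.1, 1.0, 10.0):
    rep = repressor_output(dose, **params)
    anti = anti_repressor_output(dose, **params)
    print(f"{dose:>5} mM  repressor={rep:7.1f}  anti-repressor={anti:7.1f}")
```

In this parameterization the two curves are mirror images that cross at the half-maximal ligand concentration; real variants will generally differ in all four parameters.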

Circuit Assembly and Testing Protocol

Qualitative Circuit Assembly:

- Identify required Boolean operation and input states

- Use T-Pro algorithmic software to generate compressed circuit design

- Assemble synthetic promoters and TF genes in standard vector backbone

- Include appropriate reporter genes (GFP, RFP) for functional characterization

Quantitative Performance Validation:

- Transform assembled circuits into appropriate chassis cells (e.g., E. coli)

- Measure input-output responses using flow cytometry or plate readers

- Quantify expression levels across multiple input combinations

- Compare experimental results with computational predictions

- Iterate through design-build-test cycles if performance deviates from predictions
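The comparison between measured outputs and the intended Boolean operation can be sketched as a thresholding step followed by a row-by-row check. The threshold, the measured values, and the function names below are hypothetical; a real analysis would derive the ON/OFF threshold from control measurements.

```python
from itertools import product

def digitize(measured, threshold):
    """Call each input state ON (1) or OFF (0) from its measured expression."""
    return {inputs: int(level >= threshold) for inputs, level in measured.items()}

def matches_truth_table(measured, expected, threshold):
    """True when every thresholded output agrees with the expected truth table."""
    called = digitize(measured, threshold)
    return all(called[inputs] == expected[inputs] for inputs in expected)

# Expected 3-input AND: output ON only when all three inputs are present.
expected_and = {bits: int(all(bits)) for bits in product((0, 1), repeat=3)}

# Hypothetical reporter measurements (arbitrary units) for each input state.
measured = {bits: (950.0 if all(bits) else 40.0) for bits in expected_and}

print(matches_truth_table(measured, expected_and, threshold=300.0))
```

A check like this covers only digital performance; matching quantitative setpoints requires comparing expression levels directly, for example with a fold-error measure.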

Research Reagent Solutions

Table 3: Essential Research Reagents for T-Pro Implementation

| Reagent Category | Specific Examples | Function/Application |

|---|---|---|

| Synthetic Transcription Factors | E+TAN, EA1TAN, EA2TAN, EA3TAN | Implement logical operations through DNA binding and regulation |