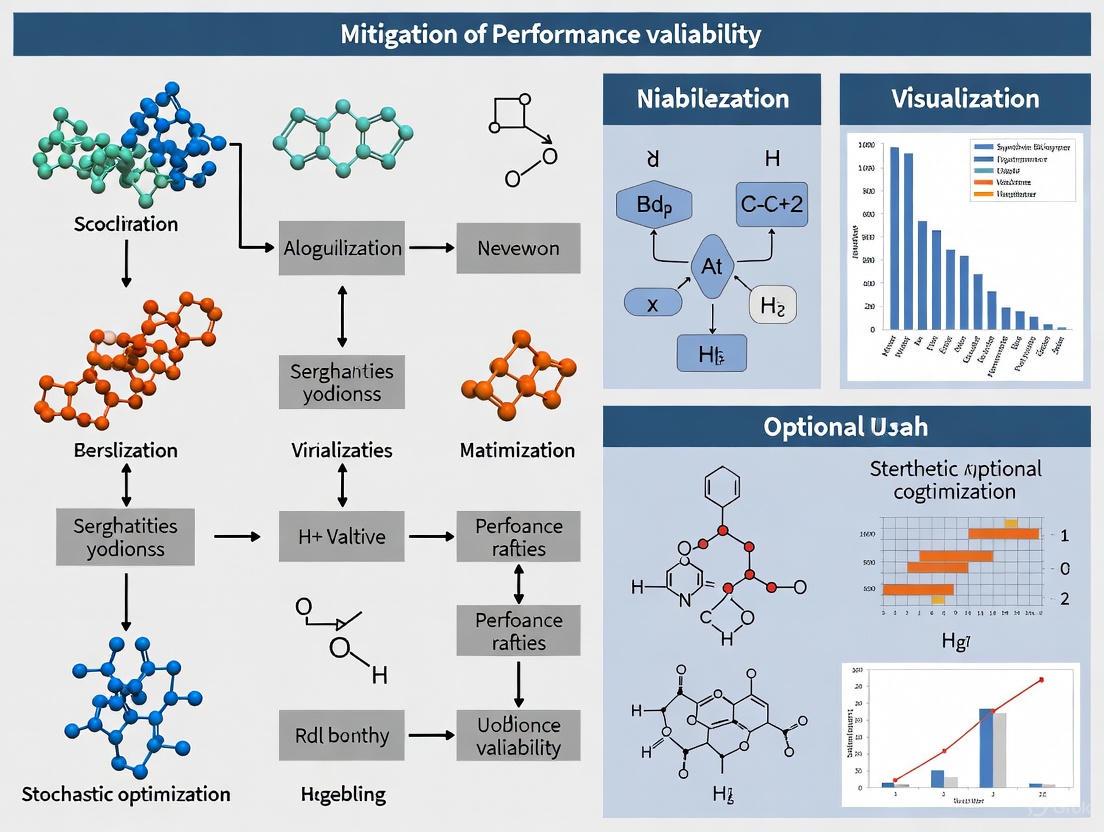

Mitigating Performance Variability in Stochastic Optimization: From Foundational Theory to Advanced Biomedical Applications

Abstract

This article provides a comprehensive examination of strategies to mitigate performance variability in stochastic optimization, a critical challenge in computational science. It explores the foundational sources of variance, from noisy gradient estimates to model-induced distribution shifts. The piece delves into advanced methodological solutions, including novel surrogate loss functions and variance-reduced algorithms, with a specific focus on their application in high-stakes domains like pharmaceutical development and renewable energy planning. It further offers a practical guide for troubleshooting common issues and presents a rigorous framework for validating and comparing optimization strategies, empowering researchers and drug development professionals to build more robust and reliable computational models.

Understanding the Core Challenge: Why Variance Undermines Stochastic Optimization

FAQs on Performance Variability

Q1: What is performance variability in stochastic optimization, and why is it a critical concern for researchers?

Performance variability refers to the fluctuation or noise in the observed outcomes of a stochastic optimization algorithm, such as Stochastic Gradient Descent (SGD), from one iteration or run to another. It arises primarily from the use of random data subsets (mini-batches) for gradient estimation, which introduces noise into the parameter updates [1]. This variability is critical because it directly affects convergence rates and solution stability. High variance can cause the optimization process to oscillate around minima, preventing it from settling into a stable solution and potentially leading to convergence to suboptimal local minima instead of the global optimum [1]. Drug development faces an analogous problem: preclinical models built in low-variance, controlled experimental environments can fail to predict clinical success because they do not account for the high-variance reality of human trials [2].

Q2: What are the primary factors that cause oscillations and high variance in Stochastic Gradient Descent?

The oscillatory behavior in SGD can be attributed to a triad of factors [1]:

- Random Subsets of Data: Using mini-batches provides a noisy, imperfect estimate of the true gradient, leading to erratic update steps.

- Step Size (Learning Rate): A learning rate that is too high causes the algorithm to overshoot minima, resulting in large oscillations. One that is too low leads to slow convergence and an inability to overcome the noise.

- Imperfect Gradient Estimates: The inherent noise in mini-batch gradients means the update direction does not always align with the true direction of steepest descent.
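The first and third factors can be made concrete with a small experiment. The sketch below is illustrative only: the quadratic loss, the synthetic data set, and the function names are assumptions, not taken from [1]. It measures how the empirical variance of a mini-batch gradient shrinks as the batch grows.

```python
import random
import statistics

def minibatch_gradient(theta, data, batch_size, rng):
    """Gradient of the average loss 0.5 * (theta - x)**2 over one random mini-batch."""
    batch = rng.sample(data, batch_size)
    return sum(theta - x for x in batch) / batch_size

def gradient_variance(theta, data, batch_size, n_draws=2000, seed=0):
    """Empirical variance of the mini-batch gradient over repeated random batches."""
    rng = random.Random(seed)
    grads = [minibatch_gradient(theta, data, batch_size, rng) for _ in range(n_draws)]
    return statistics.pvariance(grads)

# Synthetic data set (an assumption made purely for illustration).
data_rng = random.Random(42)
data = [data_rng.gauss(0.0, 1.0) for _ in range(1000)]

for batch_size in (1, 10, 100):
    print(batch_size, round(gradient_variance(0.5, data, batch_size), 4))
```

In this toy setting the variance falls roughly in proportion to the batch size, which is why the same learning rate can produce visibly different amounts of oscillation at different batch sizes.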

Q3: How can high variance in optimization impact practical applications like drug development?

In drug development, high variance has a direct translational impact. Preclinical experiments are traditionally designed to be low-variance and highly controlled to be "predictive" of clinical success [2]. However, this introduces a bias that does not reflect the high-variance environment of human clinical trials, which involves diverse genetic backgrounds, ages, and compliance rates [2]. Consequently, a drug that performs exceptionally well in a low-variance preclinical model often fails in the high-variance clinical setting, contributing to the high failure rate of approximately 90% in clinical phases [2]. Mitigating this requires adopting high-variance preclinical development that selects drugs for their robustness across varied conditions, not just their peak performance in a narrow context [2].

Q4: Are there specific convergence guarantees for stochastic optimization algorithms in high-variance, non-convex settings?

Yes, recent research has established non-asymptotic convergence guarantees for non-convex losses. For projected SGD over compact convex sets, convergence can be measured via the distance to the Goldstein subdifferential, with bounds of \(O(N^{-1/3})\) in expectation for IID data and high-probability bounds of \(O(N^{-1/5})\) for sub-Gaussian data [3]. Furthermore, for performative prediction settings where the model influences its own data distribution, the SPRINT algorithm, which incorporates variance reduction, achieves a faster convergence rate of \(O(1/T)\) to a stationary solution, with an error neighborhood that is independent of the stochastic gradient variance [4].

Troubleshooting Guides

Problem 1: Slow or Unstable Convergence in Non-Convex Optimization

- Symptoms: The loss function decreases erratically, with large oscillations, and fails to settle stably even after many iterations.

- Diagnosis: High variance in stochastic gradient estimates is likely preventing the algorithm from making consistent progress toward a minimum [1].

- Solution: Implement variance reduction techniques.

- Recommended Protocol: Employ the SPRINT (Stochastic Performative Prediction with Variance Reduction) algorithm. It is designed specifically for non-convex losses in settings where the data distribution depends on the model parameters (performative prediction). SPRINT achieves a convergence rate of \(O(1/T)\) and reduces the error neighborhood compared to standard SGD-GD [4].

- Alternative Method: For non-performative settings, use established variance-reduced methods like SVRG (Stochastic Variance Reduced Gradient) or SPIDER (Stochastic Path-Integrated Differential Estimator) [4].
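For the non-performative alternative, the following is a minimal sketch of SVRG on a one-dimensional least-squares problem. The data, step size, and epoch count are assumptions chosen for illustration; this is not the SPRINT update rule from [4], whose details are not reproduced here.

```python
import random

def grad_i(theta, a, b):
    """Gradient of the single-sample loss 0.5 * (a * theta - b)**2."""
    return a * (a * theta - b)

def full_grad(theta, data):
    """Exact gradient of the average loss over the whole data set."""
    return sum(grad_i(theta, a, b) for a, b in data) / len(data)

def svrg(data, theta0=0.0, step=0.05, epochs=20, inner_steps=None, seed=0):
    rng = random.Random(seed)
    m = len(data) if inner_steps is None else inner_steps
    theta = theta0
    for _ in range(epochs):
        snapshot = theta                     # reference point for this epoch
        mu = full_grad(snapshot, data)       # full gradient at the snapshot
        for _ in range(m):
            a, b = rng.choice(data)
            # Variance-reduced estimate: still unbiased, and its noise
            # vanishes as theta and the snapshot approach the minimizer.
            g = grad_i(theta, a, b) - grad_i(snapshot, a, b) + mu
            theta -= step * g
    return theta

# Synthetic regression data with true slope 2.0 (illustrative assumption).
rng = random.Random(1)
data = []
for _ in range(200):
    a = rng.uniform(0.5, 1.5)
    data.append((a, 2.0 * a + rng.gauss(0.0, 0.1)))

theta_star = sum(a * b for a, b in data) / sum(a * a for a, b in data)
print(svrg(data), theta_star)
```

The periodic full-gradient pass is the price paid for the reduced variance, so the trade-off is most attractive when a pass over the data is affordable.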

Problem 2: Oscillatory Behavior Around a Local Minimum

- Symptoms: The optimizer orbits a local minimum without stably converging, leading to a final solution with high variability.

- Diagnosis: The learning rate may be too high, and the algorithm lacks inertia to smooth out noisy gradient updates [1].

- Solution: Integrate momentum and adaptive learning rates.

- Recommended Protocol: Incorporate Momentum (e.g., the Stochastic Heavy Ball method). Momentum smooths the optimization trajectory by accumulating a moving average of past gradients, helping to dampen oscillations and escape shallow local minima [1]. Research has shown that momentum methods can maintain convergence guarantees even in private and ill-posed settings [5] [6].

- Additional Step: Use a learning rate schedule that decays the step size over time. This reduces the step size as the optimizer approaches a minimum, stabilizing the final convergence [1].
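The combined effect of momentum and step-size decay can be sketched on a noisy quadratic. The objective, noise level, and hyperparameters below are assumptions for illustration, not values recommended by [1].

```python
import random
import statistics

def noisy_descent(steps=2000, lr0=0.1, beta=0.0, decay=0.0, noise=1.0, seed=0):
    """Minimize f(theta) = 0.5 * theta**2 from noisy gradients; returns the iterates."""
    rng = random.Random(seed)
    theta, velocity, path = 5.0, 0.0, []
    for t in range(steps):
        grad = theta + rng.gauss(0.0, noise)   # true gradient plus noise
        velocity = beta * velocity + grad       # heavy-ball accumulation of past gradients
        lr = lr0 / (1.0 + decay * t)            # inverse-time step-size decay
        theta -= lr * velocity
        path.append(theta)
    return path

def tail_spread(path, window=200):
    """Standard deviation of the last `window` iterates."""
    return statistics.pstdev(path[-window:])

plain = noisy_descent(beta=0.0, decay=0.0)       # constant step, no momentum
smoothed = noisy_descent(beta=0.9, decay=0.05)   # momentum plus decaying step
print(tail_spread(plain), tail_spread(smoothed))
```

In this toy problem most of the reduction in final spread comes from the decaying step size; momentum mainly smooths the direction of travel early on, so the two settings are best tuned together.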

Problem 3: Failure of Preclinical Models to Generalize to Clinical Populations

- Symptoms: Drugs with strong preclinical efficacy fail to show consistent effectiveness in heterogeneous human trials.

- Diagnosis: Preclinical models are overly optimized for low-variance, homogeneous experimental conditions, creating a bias that does not align with clinical reality [2].

- Solution: Adopt a high-variance preclinical development strategy.

- Recommended Protocol: Introduce known clinical variables into preclinical assays, even if imperfectly. This includes:

  - Genetic Diversity: Use animal models with varied genetic backgrounds to approximate patient heterogeneity [2].

  - Age Ranges: Include subjects of different ages within the same treatment groups to model age-related metabolic changes [2].

  - Computational Modeling: Train AI/ML models on clinical -omics data to overlay a clinical interpretability layer on preclinical results, accounting for population variation [2].

- Goal: Select drugs for their robust efficacy across varied contexts, not just exceptional performance in a single, narrow context [2].

Experimental Protocols & Data

Protocol 1: Implementing Variance Reduction with SPRINT

This protocol is for optimizing models in performative prediction settings [4].

- Initialize the model parameters \( \boldsymbol{\theta}_0 \).

- For \( t = 0 \) to \( T-1 \):

  a. Sample a fresh data batch \( z_{t+1} \sim \mathcal{D}(\boldsymbol{\theta}_t) \) from the distribution induced by the current parameters.

  b. Compute the stochastic gradient \( \nabla\ell(z_{t+1}; \boldsymbol{\theta}_t) \).

  c. Update the parameters using the SPRINT variance-reduced update rule (which goes beyond standard SGD by incorporating control variates to reduce variance).

- Output the final parameters \( \boldsymbol{\theta}_T \).

Protocol 2: High-Variance Preclinical Robustness Screening

This protocol aims to select for robust drug candidates by modeling clinical variation early in development [2].

- Define Key Variables: Identify major known sources of clinical variation relevant to the disease (e.g., genetic background, age, microbiome).

- Design Heterogeneous Assays: Instead of using a single, homogeneous in vivo model, design studies that incorporate these variables. For example, use multiple genetically distinct mouse strains or include animals of significantly different ages.

- Run Parallel Experiments: Test drug candidates simultaneously across these varied models.

- Evaluate for Robustness: Prioritize candidates that show consistent, stable efficacy across the majority of the heterogeneous models, rather than those that show spectacular but narrow success in one specific model.
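The final evaluation step can be sketched as a simple ranking. The efficacy numbers, candidate names, and the "mean minus standard deviation" score below are all hypothetical; [2] does not prescribe a specific scoring rule, so treat this as one possible way to penalize narrow success.

```python
import statistics

def robustness_summary(effects):
    """Summarize efficacy readouts of one candidate across heterogeneous models."""
    mean = statistics.mean(effects)
    sd = statistics.pstdev(effects)
    # Hypothetical score: reward average efficacy, penalize spread across models.
    return {"mean": mean, "sd": sd, "worst": min(effects), "score": mean - sd}

# Hypothetical efficacy readouts, one value per preclinical model (illustrative only).
candidates = {
    "drug_A": [0.95, 0.10, 0.15, 0.05],   # spectacular in one model, weak elsewhere
    "drug_B": [0.55, 0.50, 0.60, 0.45],   # moderate but consistent
}

ranked = sorted(
    candidates,
    key=lambda name: robustness_summary(candidates[name])["score"],
    reverse=True,
)
for name in ranked:
    print(name, robustness_summary(candidates[name]))
```

Reporting the worst-case readout alongside the score makes it harder for a single outstanding model to mask failures in the others.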

Table 1: Convergence Rates of Stochastic Optimization Algorithms

| Algorithm | Setting | Convergence Rate | Key Assumptions |
|---|---|---|---|
| Projected SGD [3] | Non-convex, constrained | \(O(N^{-1/3})\) (in expectation) | Compact convex set, IID or mixing data |
| Projected SGD [3] | Non-convex, sub-Gaussian data | \(O(N^{-1/5})\) (high probability) | Compact convex set, IID data |
| SGD-GD [4] | Non-convex, Performative Prediction | \(O(1/\sqrt{T})\) | Bounded variance, smooth loss |
| SPRINT (with VR) [4] | Non-convex, Performative Prediction | \(\mathbf{O(1/T)}\) | Smooth loss, variance reduction |

Table 2: Components of Variation in Clinical Trials & Preclinical Models [7]

| Type of Variation | Definition | Typically Identifiable In |
|---|---|---|
| Between Treatments | The variation between treatments averaged over all patients. | Parallel group, cross-over trials |
| Between Patients | The variation between patients given the same treatment. | Cross-over trials |
| Patient-by-Treatment Interaction | The extent to which treatment effects vary from patient to patient. | Repeated period cross-over trials, n-of-1 trials |
| Within Patients | Variation from occasion to occasion for the same patient on the same treatment. | Repeated period cross-over trials, n-of-1 trials |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational and Experimental Tools

| Item | Function in Mitigating Performance Variability |
|---|---|
| Variance-Reduced SGD Algorithms (e.g., SPRINT, SVRG) | Algorithms designed to reduce the noise in gradient estimates, leading to faster and more stable convergence in non-convex and performative settings [4]. |
| Momentum Methods (e.g., Stochastic Heavy Ball) | Optimization techniques that accelerate convergence and dampen oscillations by accumulating a velocity vector from past gradients [5] [1]. |
| Heterogeneous Animal Models | Preclinical models with varied genetic backgrounds or ages. They introduce known clinical variance early in drug development to select for robust candidates [2]. |
| Repeated Period Cross-Over Trial Data | Clinical trial designs that allow researchers to disentangle patient-by-treatment interaction (differential response) from other sources of variation, which is crucial for personalized medicine [7]. |

Troubleshooting Guide: Frequently Asked Questions

Noisy Gradient Estimates

Question: My stochastic optimization algorithm exhibits high variance and unstable convergence. What are the primary sources of this noise, and how can I mitigate them?

High variance in stochastic gradients can originate from several sources, including the inherent randomness of mini-batch sampling, the presence of outliers in training data, or the use of direct gradient estimation methods like Infinitesimal Perturbation Analysis (IPA) on non-smooth systems [8]. The noise can be oblivious, meaning it is independent of the model parameters and may not have bounded moments or be centered [9].

Mitigation Protocols:

- Variance Reduction Techniques: Implement algorithms like SPRINT (Stochastic Performative Prediction with Variance Reduction), which incorporates control variates or gradient clipping to reduce the variance of the estimates. This can improve convergence rates from \( \mathcal{O}(1/\sqrt{T}) \) to \( \mathcal{O}(1/T) \) and yields an error neighborhood independent of the stochastic gradient variance [4].

- List-Decodable Learning: In settings with a significant fraction of outliers or oblivious noise, a list-decodable learner can recover a small set of candidates, at least one of which is close to the true solution. This is particularly effective when the fraction of inliers \( \alpha \) is less than 1/2 [9].

- Gradient Estimator Selection: Adhere to the following guidelines when selecting a direct gradient estimation method [8]:

  - Use IPA only for smooth performance measures and systems where the commuting condition holds.

  - Apply the Likelihood Ratio/Score Function (LR/SF) method for parameters of probability distributions, especially when dealing with discontinuous or indicator function performance measures.

  - Consider the Weak Derivative (WD) method as a generalized form of LR/SF.

Table: Comparison of Direct Gradient Estimation Methods

| Method | Key Principle | Applicability | Key Consideration |
|---|---|---|---|
| Infinitesimal Perturbation Analysis (IPA) [8] | Differentiates sample path performance | Smooth, continuous systems | Unbiased if pathwise derivative exists; fails for non-smooth systems (e.g., with indicator functions). |
| Likelihood Ratio/Score Function (LR/SF) [8] | Differentiates probability density function | Distributional parameters | Handles non-smooth systems; requires known density and its derivative. |
| Weak Derivative (WD) [8] | Decomposes density into a weighted difference | Distributional parameters | Generalizes LR/SF; can be applied to a wider class of distributions. |
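To illustrate why LR/SF suits indicator-type performance measures, the sketch below estimates the derivative of P(X > c) with respect to the mean of a unit-variance Gaussian. The Gaussian model, threshold, and function names are assumptions for illustration. A pathwise (IPA-style) derivative would be zero almost everywhere here, because the indicator is piecewise constant in the sample.

```python
import math
import random

def score_function_gradient(theta, c, n_samples=200_000, seed=0):
    """LR/SF estimate of d/dtheta P(X > c) for X ~ N(theta, 1)."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        x = rng.gauss(theta, 1.0)
        if x > c:
            # Performance 1{x > c} times the score d/dtheta log p(x; theta) = x - theta.
            total += x - theta
    return total / n_samples

def exact_gradient(theta, c):
    """Closed form: the standard normal density evaluated at c - theta."""
    return math.exp(-0.5 * (c - theta) ** 2) / math.sqrt(2.0 * math.pi)

print(score_function_gradient(0.0, 1.0), exact_gradient(0.0, 1.0))
```

The estimator only needs the density's score, not a derivative of the performance measure itself, which is the property the selection guidelines rely on.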

Diagram: Troubleshooting Noisy Gradient Estimates

Sampled Data Variance

Question: My data-driven decisions perform well on training data but disappoint on out-of-sample tests. How can I build a model that is robust to the variance introduced by finite data sampling?

This "Optimizer's Curse" or overfitting arises from treating the empirical data distribution \( P_N \) as an exact substitute for the true, unknown distribution \( P \) [10]. The finite sample size introduces variance, causing the model to over-calibrate to the specific dataset.

Mitigation Protocols:

- Robust Optimization with Ambiguity Sets: Formulate the problem to minimize the worst-case expected loss over an ambiguity set \( A_N(P_N) \) containing all probability distributions sufficiently close to your empirical distribution [10]:

  \( z_A(P_N) \in \text{arg min}_{z \in Z} \sup \{ E_P[\ell(z, \xi)] : P \in A_N(P_N) \} \)

- Selecting an Ambiguity Set: Two prominent distances for constructing these sets are [10]:

  - Wasserstein Distance: Based on optimal transport. Leads to tractable formulations for many loss functions and provides out-of-sample guarantees.

  - \( f \)-Divergences (e.g., Kullback-Leibler): Measures like the KL-divergence are computationally efficient and lead to tractable robust formulations, often being statistically efficient in the noiseless data setting.

Table: Properties of Ambiguity Sets for Robust Optimization

| Ambiguity Type | Distance Metric | Key Strength | Statistical Guarantee |
|---|---|---|---|
| Wasserstein Ball [10] | Optimal Transport | Less conservative; handles support shift. | Exponential decay of disappointment probability with sample size. |
| f-Divergence Ball (e.g., KL) [10] | KL Divergence | Computationally efficient; tractable. | Exponential decay of disappointment probability; efficient (least conservative). |
| Sample Average Approximation (SAA) [10] | N/A | Simple to implement. | Requires large bias term for similar guarantees; often overfits. |
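For a KL ball, the inner worst-case expectation has a well-known one-dimensional dual, sup { E_P[loss] : KL(P || P_N) <= r } = min over alpha > 0 of alpha * r + alpha * log E_{P_N}[exp(loss / alpha)]. The sketch below evaluates that dual on a grid. The direction of the divergence, the radius, the grid bounds, and the sample losses are assumptions for illustration and may differ from the exact formulation used in [10].

```python
import math

def kl_worst_case_expectation(losses, radius, n_grid=2000):
    """Approximate sup { E_P[loss] : KL(P || P_N) <= radius } via its scalar dual."""
    n = len(losses)
    top = max(losses)
    best = float("inf")
    for k in range(n_grid):
        # Log-spaced grid for the dual variable alpha in [1e-3, 1e3].
        alpha = 10.0 ** (-3.0 + 6.0 * k / (n_grid - 1))
        # Numerically stable log of the empirical mean of exp(loss / alpha).
        log_mean_exp = top / alpha + math.log(
            sum(math.exp((loss - top) / alpha) for loss in losses) / n
        )
        best = min(best, alpha * radius + alpha * log_mean_exp)
    return best

# Hypothetical per-sample losses of one candidate decision z.
losses = [0.2, 0.5, 1.0, 1.5, 3.0]
empirical_mean = sum(losses) / len(losses)
print(empirical_mean, kl_worst_case_expectation(losses, 0.1))
```

A robust decision would then be chosen by minimizing this worst-case value over the candidate decisions instead of minimizing the plain sample average, with the radius controlling how conservative the choice is.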

Model-Induced Distribution Shifts

Question: The performance of my deployed ML model degrades because its predictions influence the data distribution itself. How can I model and solve this performative shift?

This is the core problem of Performative Prediction (PP), where the data distribution \( \mathcal{D}(\boldsymbol{\theta}) \) is a function of the model parameters \( \boldsymbol{\theta} \) [4]. This creates a feedback loop, making convergence challenging.

Mitigation Protocols:

- Target Stable Points: Instead of the intractable Performative Optimal (PO) solution, aim for a Performative Stable (PS) or Stationary Performative Stable (SPS) solution. A PS solution \( \boldsymbol{\theta}^{\text{PS}} \) minimizes the loss on the distribution it induces:

  \( \boldsymbol{\theta}^{\text{PS}} = \text{arg min}_{\boldsymbol{\theta}} \mathbb{E}_{z \sim \mathcal{D}(\boldsymbol{\theta}^{\text{PS}})}[\ell(z; \boldsymbol{\theta})] \) [4].

- Use Repeated Optimization Schemes: Implement algorithms like Stochastic Gradient Descent-Greedy Deploy (SGD-GD) [4]:

  \( \boldsymbol{\theta}_{t+1} = \boldsymbol{\theta}_t - \gamma_{t+1} \nabla \ell(z_{t+1}; \boldsymbol{\theta}_t), \quad z_{t+1} \sim \mathcal{D}(\boldsymbol{\theta}_t) \)

  This updates the model using stochastic gradients from the data distribution caused by the previous model parameters.

- Integrate Variance Reduction: For non-convex loss functions, use the SPRINT algorithm, which enhances SGD-GD with variance reduction to achieve faster convergence to an SPS solution without an error neighborhood that scales with the gradient variance [4].
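The SGD-GD recursion can be sketched on a toy performative problem. Everything specific here is an assumption for illustration: the distribution map D(theta) = N(mu + eps * theta, sigma^2), the squared loss 0.5 * (theta - z)^2, and the step-size schedule. For this choice the performatively stable point solves theta = mu + eps * theta, that is theta = mu / (1 - eps).

```python
import random

def sgd_gd(mu=1.0, eps=0.5, sigma=0.5, theta0=0.0, steps=5000, seed=0):
    """Greedy-deploy SGD: each sample is drawn from the distribution induced by theta_t."""
    rng = random.Random(seed)
    theta = theta0
    for t in range(steps):
        z = rng.gauss(mu + eps * theta, sigma)   # z_{t+1} ~ D(theta_t)
        grad = theta - z                          # gradient of 0.5 * (theta - z)**2 in theta
        gamma = 2.0 / (t + 4)                     # decaying step size gamma_{t+1}
        theta -= gamma * grad
    return theta

theta_ps = 1.0 / (1.0 - 0.5)   # stable point mu / (1 - eps) for the defaults above
print(sgd_gd(), theta_ps)
```

The gradient is taken only through the loss and not through the dependence of the distribution on theta, which is what distinguishes seeking a stable point from optimizing the performative risk directly.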

Diagram: SGD-GD Workflow for Performative Prediction

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational Methods for Mitigating Variance

| Tool/Method | Function | Primary Use Case |
|---|---|---|
| SPRINT Algorithm [4] | Variance-reduced stochastic optimization for performative prediction. | Converging to stable solutions in non-convex settings with model-induced distribution shifts. |
| List-Decodable Learner [9] | Recovers a small list of candidate solutions in the presence of a large fraction of outliers/oblivious noise. | Handling settings where the fraction of "inlier" data (α) is less than 1/2. |
| Wasserstein Ambiguity Set [10] | Defines a set of distributions close to the empirical distribution in optimal transport distance. | Robust optimization against sampling variance; provides strong out-of-sample guarantees. |
| Entropic (KL) Ambiguity Set [10] | Defines a set of distributions close to the empirical distribution in Kullback-Leibler divergence. | Tractable robust optimization; statistically efficient in noiseless data settings. |
| SGD-GD (Greedy Deploy) [4] | A repeated stochastic optimization scheme that updates the model using data from the distribution induced by its previous state. | Finding performatively stable points in model-induced distribution shifts. |
| Mixed Integer Linear Programming (MILP) [11] | A stochastic optimization framework for modeling complex systems with discrete and continuous decisions under uncertainty. | Applications like smart energy networks where uncertainties (e.g., renewable generation, electric vehicle usage) must be managed. |

Frequently Asked Questions

Q1: What is the fundamental problem with using strictly unbiased loss functions in stochastic optimization for game theory?

While unbiased loss functions theoretically ensure that your optimization converges to the correct solution in expectation, they often suffer from critically high variance when estimated from sampled data [12]. This high variance manifests as unstable gradient estimates during training, causing slow convergence, erratic parameter updates, and significant performance variability across different experimental runs. Mitigating this variance is essential for obtaining reliable, reproducible results without sacrificing the theoretical guarantee of convergence to a true Nash Equilibrium.

Q2: How does the proposed Nash Advantage Loss (NAL) function reduce variance without introducing bias?

The Nash Advantage Loss (NAL) is designed as a surrogate unbiased loss function [12]. It achieves variance reduction by incorporating a control variate or baseline, which is correlated with the original noisy gradient estimate but has a known expected value (typically zero). This technique subtracts this baseline from the estimate, thereby canceling out a portion of the noise without changing the expectation of the gradient. The result is a theoretically unbiased optimizer with a much lower variance, leading to faster and more stable convergence, as demonstrated by several orders of magnitude reduction in the variance of the estimated loss value [12].
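The control-variate mechanism described above can be shown on a generic Monte Carlo estimate. This is not the NAL construction from [12]; the integrand, the baseline, and the fixed coefficient are assumptions chosen so that the effect is easy to verify. The quantity estimated is E[exp(U)] for U uniform on [0, 1], and the baseline U - 0.5 has known mean zero.

```python
import math
import random
import statistics

def estimate(n=10_000, coef=1.7, seed=0):
    """Compare a plain Monte Carlo estimate with a control-variate version."""
    rng = random.Random(seed)
    plain, controlled = [], []
    for _ in range(n):
        u = rng.random()
        f = math.exp(u)
        plain.append(f)
        # Subtract a correlated baseline whose expectation is exactly zero,
        # so the mean is unchanged while much of the noise cancels.
        controlled.append(f - coef * (u - 0.5))
    return (
        statistics.mean(plain), statistics.pvariance(plain),
        statistics.mean(controlled), statistics.pvariance(controlled),
    )

mean_plain, var_plain, mean_cv, var_cv = estimate()
print(mean_plain, var_plain)
print(mean_cv, var_cv, "exact:", math.e - 1.0)
```

Because the coefficient is fixed in advance and not fitted to the same samples, the controlled estimate stays exactly unbiased; only its variance changes.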

Q3: My model fits the training data perfectly (interpolation), but test performance is poor. Is this always overfitting according to the classical bias-variance trade-off?

Not necessarily. The classical U-shaped bias-variance trade-off curve suggests that models with zero training error (interpolation) are overfitted and will generalize poorly [13]. However, modern research has identified a double-descent risk curve [13]. As you increase model capacity past the interpolation threshold, test risk can actually decrease again. Your model might be in the hazardous region exactly at the interpolation threshold. The solution is often to further increase model capacity, which allows the optimizer to select the "simplest" or "smoothest" function among all those that fit the data, improving generalization [13].

Q4: What are the key properties to consider when selecting or designing a loss function for a stochastic optimization problem?

When choosing a loss function, especially for stochastic settings, you should evaluate it against several key properties [14]. The table below summarizes these critical considerations for a robust experimental setup.

| Property | Description | Importance for Stochastic Optimization |
|---|---|---|
| Convexity | Ensures that any local minimum is the global minimum [14]. | Simplifies optimization and underpins convergence guarantees; these guarantees are harder to obtain in non-convex game landscapes. |
| Differentiability | The derivative with respect to model parameters exists and is continuous [14]. | Enables the use of efficient gradient-based optimization methods. |
| Robustness | Ability to handle outliers and not be skewed by extreme values [14]. | Prevents a small number of noisy samples from derailing the entire training process. |
| Smoothness | The gradient is continuous without sharp transitions [14]. | Leads to more stable and predictable gradient descent dynamics. |

Troubleshooting Guide

Problem: High Variance in Training Loss and Slow Convergence

Symptoms: The training loss curve exhibits large, erratic fluctuations across update steps. Convergence to a stable solution is impractically slow, and results are not reproducible across different random seeds.

Diagnosis: This is a classic sign of a high-variance gradient estimator, a common pitfall when using unbiased but high-variance loss functions in stochastic optimization [12] [15].

Solutions:

- Adopt a Low-Variance Surrogate Loss: Implement the Nash Advantage Loss (NAL) or similar variance-reduced surrogates tailored to your problem domain. This directly addresses the root cause of the noise [12].

- Adjust Learning Rate Dynamically: Use adaptive learning rate schedulers (e.g., cosine decay) or optimizers (e.g., Adam). While this doesn't reduce the underlying variance, it can help stabilize the optimization process.

- Increase Batch Size: Where computationally feasible, using a larger minibatch size provides a more accurate, lower-variance estimate of the true gradient, at the cost of increased computation per step.

Problem: Model Fails to Learn Meaningful Policy (High Bias)

Symptoms: The model converges quickly to a suboptimal solution, fails to capture complex strategies, and shows poor performance even on the training data (underfitting).

Diagnosis: The learning algorithm is suffering from high bias, potentially due to a poorly chosen loss function that makes overly simplistic assumptions about the solution space, or a model with insufficient capacity [15].

Solutions:

- Diagnose with a Simple Game: Test your algorithm on a simple, well-understood normal-form game where the true Nash Equilibrium is known. If it fails to find it, the loss function or optimizer is likely at fault.

- Increase Model Capacity: Switch to a richer model class (e.g., a larger neural network). The "double-descent" phenomenon indicates that more parameters can sometimes resolve both underfitting and overfitting issues once past the interpolation threshold [13].

- Review Loss Function Assumptions: Ensure that the loss function's formulation does not implicitly favor trivial solutions. Even a theoretically unbiased loss function can be difficult to optimize in practice.

Problem: Inconsistent Results Between Training and Evaluation

Symptoms: The model achieves strong performance metrics during training but fails to generalize during validation or testing, or performance metrics are strong while the actual loss value remains high.

Diagnosis: This discrepancy can arise from a misalignment between the optimized loss function and the final evaluation metric [14]. It can also indicate overfitting to the training data, though this should be re-evaluated in the context of the double-descent curve [13].

Solutions:

- Align Loss and Metric: Where possible, design a loss function that closely mirrors your primary evaluation metric. If your metric is not differentiable, consider a differentiable surrogate.

- Implement Multi-Metric Validation: Do not rely on a single number. Monitor both the training loss and a separate set of validation metrics (e.g., exploitability in games, accuracy on a hold-out set) to get a holistic view of model performance [14].

- Regularization: Introduce implicit or explicit regularization to guide the model towards simpler solutions. Interestingly, using a larger model and training past the interpolation point can act as a form of implicit regularization [13].

Experimental Protocols

Protocol 1: Benchmarking Loss Function Variance

This protocol provides a quantitative method to compare the variance of different loss functions, such as comparing a standard unbiased loss against the Nash Advantage Loss (NAL).

Objective: To empirically measure and compare the variance of gradient estimates and loss values for different loss functions during optimization.

Materials:

- Environment: A canonical normal-form game (e.g., a simple matrix game with a known Nash Equilibrium).

- Model: A parameterized policy model (e.g., a small neural network).

- Optimizer: A standard stochastic optimizer (e.g., SGD or Adam).

- Data Source: A fixed set of training samples.

Methodology:

- Initialization: Initialize the model with identical parameters for all loss functions to be tested.

- Sampling: For a fixed number of iterations, at each iteration `t`, sample a minibatch `B_t` from the training data.

- Gradient Calculation: For each loss function `L`, compute the gradient estimate `g_t^L` based on the minibatch `B_t`.

- Variance Estimation: After a predetermined number of steps `T`, calculate the empirical variance of the computed loss values and the L2 norm of the gradients for each loss function.

  - Loss Variance: `Var( [L_1, L_2, ..., L_T] )`

  - Gradient Norm Variance: `Var( [||g_1||, ||g_2||, ..., ||g_T||] )`

- Comparison: Compare the variance metrics across the different loss functions. A well-designed low-variance loss like NAL should show significantly lower variance (by orders of magnitude) than a naive unbiased loss [12].
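The methodology above can be sketched as a small harness. The two estimators are hypothetical stand-ins on a one-parameter toy problem: `naive` is a plain squared loss, and `with_baseline` subtracts a zero-mean term with an assumed coefficient of 0.9. Neither is the NAL from [12]. Parameters are held fixed so that only sampling noise is measured.

```python
import math
import random
import statistics

def benchmark_variance(estimators, theta, data, batch_size=8, steps=500, seed=0):
    """Return {name: (loss variance, gradient-norm variance)} over shared minibatches."""
    rng = random.Random(seed)
    losses = {name: [] for name in estimators}
    norms = {name: [] for name in estimators}
    for _ in range(steps):
        batch = rng.sample(data, batch_size)        # same B_t for every loss function
        for name, fn in estimators.items():
            loss, grad = fn(theta, batch)
            losses[name].append(loss)
            norms[name].append(math.sqrt(sum(g * g for g in grad)))
    return {name: (statistics.pvariance(losses[name]), statistics.pvariance(norms[name]))
            for name in estimators}

data_rng = random.Random(7)
data = [data_rng.gauss(0.0, 1.0) for _ in range(500)]   # fixed training samples
m1 = statistics.mean(data)                               # known first moment of the set
m2 = statistics.mean(x * x for x in data)                # known second moment of the set

def naive(theta, batch):
    t = theta[0]
    loss = statistics.mean(0.5 * (t - x) ** 2 for x in batch)
    return loss, [statistics.mean(t - x for x in batch)]

def with_baseline(theta, batch, coef=0.9):
    t = theta[0]
    loss, grad = naive(theta, batch)
    # Zero-mean correction terms under uniform sampling from the fixed training set.
    cv = statistics.mean(0.5 * (x * x - m2) - t * (x - m1) for x in batch)
    cv_grad = statistics.mean(-(x - m1) for x in batch)
    return loss - coef * cv, [grad[0] - coef * cv_grad]

results = benchmark_variance({"naive": naive, "with_baseline": with_baseline}, [0.5], data)
for name, (loss_var, norm_var) in results.items():
    print(name, loss_var, norm_var)
```

Feeding every loss function the same minibatch at each step removes batch-to-batch differences from the comparison, so any gap in the reported variances is attributable to the estimators themselves.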

Protocol 2: Empirical Convergence Rate Analysis

This protocol assesses the practical impact of a loss function on the speed and stability of convergence.

Objective: To compare the empirical convergence rates of different optimization algorithms using different loss functions.

Materials: (Same as Protocol 1)

Methodology:

- Baseline Establishment: Define a convergence criterion (e.g., exploitability < ε, or change in loss < δ over k iterations).

- Multiple Runs: For each loss function, run the optimization algorithm from the same set of initial conditions multiple times (e.g., 10 runs with different random seeds).

- Tracking: Record the primary loss and a key performance metric (e.g., exploitability) at every iteration for each run.

- Analysis:

  - Plot the average performance metric against the number of iterations. The curve that descends faster and to a lower final value indicates a superior loss function.

  - Plot the variance of the performance metric across runs over time. A lower and narrower band of variance indicates a more stable and reliable optimization process, a key advantage of NAL [12].

Experimental Workflow for Convergence Analysis

The Scientist's Toolkit: Research Reagent Solutions

The following table lists key computational "reagents" essential for experiments in stochastic optimization for game theory.

| Item | Function | Application Note |
|---|---|---|
| Normal-Form Game Environments | Provides a structured testbed for developing and validating algorithms. | Start with simple 2x2 games for debugging, then scale to complex, hierarchical games for robust evaluation. |
| Stochastic Optimizer (SGD/Adam) | The engine that minimizes the chosen loss function. | Adam is often preferred for its adaptive learning rates, which can offer more stable initial convergence. |
| Variance-Reduced Loss (e.g., NAL) | The core component that ensures unbiased, low-variance gradient estimates. | Replacing a naive unbiased loss with NAL is a direct method to mitigate performance variability [12]. |
| Autodiff Framework (PyTorch/TensorFlow) | Enables efficient computation of gradients for complex models. | These frameworks allow for easy implementation and testing of custom loss functions [14]. |
| Exploitability Metric | A key performance indicator (KPI) measuring how much a strategy can be exploited. | The primary metric for evaluating convergence to Nash Equilibrium in two-player zero-sum games. |
| High-Performance Computing (HPC) Cluster | Provides the computational power for multiple long-running, statistically independent experiments. | Essential for achieving statistical significance in convergence results and running large-scale models. |
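As an illustration of the exploitability metric listed in the table, the sketch below computes it for a two-player zero-sum matrix game. The convention used here (the sum of both players' best-response gains, with the row player maximizing) is an assumption; some papers report half of this value.

```python
def exploitability(payoff, x, y):
    """Sum of best-response gains in a zero-sum matrix game (row player maximizes x^T A y)."""
    n_rows, n_cols = len(payoff), len(payoff[0])
    # Payoff of each pure row strategy against the column mix y.
    row_values = [sum(payoff[i][j] * y[j] for j in range(n_cols)) for i in range(n_rows)]
    # Payoff conceded by each pure column strategy against the row mix x.
    col_values = [sum(x[i] * payoff[i][j] for i in range(n_rows)) for j in range(n_cols)]
    # (best row response - current value) + (current value - best column response).
    return max(row_values) - min(col_values)

matching_pennies = [[1.0, -1.0], [-1.0, 1.0]]
print(exploitability(matching_pennies, [0.5, 0.5], [0.5, 0.5]))  # Nash equilibrium
print(exploitability(matching_pennies, [1.0, 0.0], [0.5, 0.5]))  # predictable row player
```

A value of zero indicates that neither player can gain by deviating, so the metric doubles as a convergence criterion in debugging runs on small games.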

Key Experimental Results and Data

The following table summarizes quantitative findings from the study on the Nash Advantage Loss (NAL), demonstrating its effectiveness in variance reduction.

Table: Quantitative Comparison of Loss Function Performance in Approximating Nash Equilibria

| Loss Function Type | Theoretical Property | Empirical Variance | Empirical Convergence Rate | Key Limitation Addressed |
|---|---|---|---|---|
| Standard Unbiased Loss | Unbiased | High | Slow and unstable | High variance degrades convergence [12]. |
| Biased Loss (e.g., with regularization) | Biased | Low | Variable; may converge to wrong solution | Bias can prevent convergence to true NE. |
| Nash Advantage Loss (NAL) | Unbiased | Several orders of magnitude lower [12] | Significantly faster [12] | Mitigates variance while preserving unbiasedness. |

Modern Double-Descent Risk Curve

This technical support center provides troubleshooting guides and FAQs to help researchers and scientists mitigate performance variability in stochastic optimization for drug development.

Frequently Asked Questions (FAQs)

FAQ 1: What is optimization variance in the context of high-throughput screening (HTS)? Optimization variance refers to the variability in outcomes—such as hit identification and potency measurements—caused by stochastic elements in the experimental and computational processes. In HTS, this manifests as fluctuations in assay results due to factors like reagent concentration, cell seeding density, or automated liquid handling, which can lead to false positives or negatives. High variance reduces the reliability of data used for decision-making in the drug discovery pipeline [16].

FAQ 2: How does optimization variance directly increase development costs? Variance directly inflates costs by extending timelines and requiring additional resources. Unreliable data from high-variance assays can send research teams on misguided efforts, necessitating costly repeat experiments and revalidation [17]. Furthermore, sponsors using tech-enabled Functional Service Provider (FSP) models have demonstrated that controlling such operational complexity can reduce trial database costs by more than 30% in resource-intensive areas like rare diseases and cell and gene therapy [18].

FAQ 3: What statistical metrics are best for quality control in high-throughput, low-sample-size assays? For quality control in HTS, especially with small sample sizes, the joint application of Strictly Standardized Mean Difference (SSMD) and Area Under the Receiver Operating Characteristic Curve (AUROC) is recommended [19]. SSMD provides a standardized, interpretable measure of effect size, while AUROC offers a threshold-independent assessment of a model's discriminative power. Using them together provides a robust framework for evaluating assay quality and identifying true hits [19].

FAQ 4: How can we stabilize optimization processes before running expensive experiments? Processes should be stabilized using control charts to establish baseline performance before conducting experiments. Furthermore, always qualify your measurement system through an ANOVA-based Gage R&R (Repeatability & Reproducibility) study before initiating process studies. This ensures that your measurement system contributes an acceptable percentage (typically ≤10% for critical parameters) of the total variation, preventing you from chasing "differences" that are merely measurement noise [17].

FAQ 5: What is a common pitfall when using ANOVA for process optimization? A common pitfall is ignoring key interactions between factors. For instance, treating a multi-factor problem as a series of single-factor studies can conceal critical interactions (e.g., Operator × Machine, Method × Material). This often explains why process fixes appear to "work sometimes." To avoid this, use multi-factor designs like two-way ANOVA or full Design of Experiments (DOE) approaches to model these interactions explicitly [17].

Troubleshooting Guides

Problem 1: High False Positive/Negative Rates in High-Throughput Screening

Symptoms: Inconsistent hit identification from one screen to the next; low confirmation rate in secondary assays.

Diagnosis and Resolution: Follow this diagnostic workflow to identify and correct the root cause:

Corrective Actions:

- If Assay Robustness Fails (Z' < 0.5): Re-optimize critical parameters. Titrate cell seeding density and reagent concentrations to improve the dynamic range and signal-to-noise ratio. Re-evaluate incubation times post-drug addition (e.g., test 24, 48, 72, and 96 hours) [16].

- If Effect Size/Discrimination Fails: Incorporate additional, more specific controls. For cell viability assays, use a combination of a known cytotoxic molecule (e.g., Staurosporine) as a positive control and a vehicle (e.g., DMSO) as a negative control to better define the response range [16].

- If Control Stability Fails (CV > 20%): Review liquid handling automation. Calibrate robotic dispensers and pipettors to ensure consistent reagent transfer. Implement daily calibration checks and use intermediate precision testing [16].

Problem 2: Poor Reproducibility of Dose-Response Experiments

Symptoms: Inconsistent IC50/EC50 values across experimental replicates; high confidence interval width in potency measurements.

Diagnosis and Resolution: This is often caused by unaccounted-for variance in system configurations or environmental factors.

- Perform a Two-Way ANOVA: Design an experiment that tests the main effects of your factor (e.g., compound concentration) and a blocking factor (e.g., experimental run day, operator, instrument). Include their interaction term. This determines if the variance is due to the dose-response itself or an uncontrolled nuisance variable [17].

- Analyze the ANOVA Table:

- A significant interaction term (e.g., Day × Concentration) indicates that the dose-response curve shape itself changes between runs. This is a critical failure requiring protocol standardization.

- A significant main effect for the blocking factor (e.g., Day) with a non-significant interaction indicates a consistent vertical shift in the curve. This can often be corrected by normalizing to plate-based controls.

Corrective Actions:

- For Significant Interactions: Standardize reagent batches and cell passage numbers. Use a single, large batch of cells frozen down for the entire study and thaw a new vial for each run to minimize biological drift [16].

- For Significant Blocking Effects Only: Implement a normalization strategy. For example, normalize all response values on a plate to the mean of the plate's positive and negative controls. This can correct for plate-to-plate variation [17].
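The plate-based normalization described above can be sketched as follows. This is a minimal illustration, assuming a viability-style readout in which the vehicle control defines 0% inhibition and the cytotoxic positive control defines 100%; the function name `percent_inhibition` and the well values are hypothetical.

```python
from statistics import mean

def percent_inhibition(raw, neg_controls, pos_controls):
    """Rescale raw well signals so the vehicle mean maps to 0% and the
    positive-control mean maps to 100% inhibition on this plate."""
    neg = mean(neg_controls)
    pos = mean(pos_controls)
    span = neg - pos
    if span == 0:
        raise ValueError("control means are identical; plate cannot be normalized")
    return [100.0 * (neg - x) / span for x in raw]

# Two hypothetical plates with the same biology; plate B reads 200 units higher.
plate_a = percent_inhibition([1000.0, 550.0, 100.0],
                             neg_controls=[1000.0, 1000.0],
                             pos_controls=[100.0, 100.0])
plate_b = percent_inhibition([1200.0, 750.0, 300.0],
                             neg_controls=[1200.0, 1200.0],
                             pos_controls=[300.0, 300.0])
print(plate_a, plate_b)
```

After normalization both plates report the same values, which is the behavior expected when the blocking factor produces only a vertical shift. If the interaction term is significant, this rescaling will not make the curves agree.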

Problem 3: Inefficient Resource Allocation in Clinical Trial Planning

Symptoms: Clinical trial costs exceeding projections; frequent budget re-forecasting; inability to accurately predict patient enrollment or data management needs.

Diagnosis and Resolution: This stems from high uncertainty in key trial parameters and a lack of adaptive, data-driven planning.

- Identify Key Cost Drivers: Use sensitivity analysis (e.g., global sensitivity analysis) on clinical trial simulation models to understand which input parameters (e.g., screen failure rate, patient enrollment rate, protocol amendment frequency) have the largest impact on cost and timeline variance [20] [21].

- Adopt a Tech-Enabled FSP Model: Shift from fixed resource models to flexible, tech-enabled Functional Service Provider partnerships. These models provide just-in-time, fit-for-purpose resources and incorporate AI-driven analytics for real-time data harmonization and forecasting, reducing database lock times and manual effort significantly [18].

Corrective Actions:

- Implement Predictive Analytics: Deploy AI programs for automated segmentation of trial population subsets. This improves enrollment prediction and can reduce the required number of patients or trial duration by identifying the most responsive subpopulations more reliably [18].

- Use Multi-Fidelity Modeling: For trial simulation, use context-aware multi-fidelity Monte Carlo sampling. This framework combines high-fidelity (expensive, accurate) and low-fidelity (inexpensive, approximate) models, trading off accuracy for speed to explore a wider range of scenarios and optimize resource allocation plans more efficiently [21].
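To make the multi-fidelity idea concrete, the sketch below uses the basic two-model control-variate estimator that underlies multi-fidelity Monte Carlo. It is not the context-aware variant cited in [21]. The functions `hi_fi` and `lo_fi` are hypothetical stand-ins for an expensive trial simulator and a cheap approximation of it.

```python
import math
import random
from statistics import mean

def hi_fi(x):
    """Hypothetical expensive model (stand-in for a detailed trial simulation)."""
    return math.exp(x)

def lo_fi(x):
    """Hypothetical cheap approximation (second-order Taylor expansion)."""
    return 1.0 + x + 0.5 * x * x

def two_fidelity_estimate(n_hi, n_lo, rng):
    """Estimate E[hi_fi(X)], X ~ Uniform(0, 1), using few expensive runs
    corrected by many cheap runs (control-variate form)."""
    xs_lo = [rng.random() for _ in range(n_lo)]
    xs_hi = xs_lo[:n_hi]                      # inputs evaluated by both models
    f = [hi_fi(x) for x in xs_hi]
    g_shared = [lo_fi(x) for x in xs_hi]
    g_all = [lo_fi(x) for x in xs_lo]
    f_bar, g_bar = mean(f), mean(g_shared)
    cov = sum((a - f_bar) * (b - g_bar) for a, b in zip(f, g_shared)) / (n_hi - 1)
    var_g = sum((b - g_bar) ** 2 for b in g_shared) / (n_hi - 1)
    alpha = cov / var_g                       # control-variate weight from the shared runs
    return f_bar + alpha * (mean(g_all) - g_bar)

estimate = two_fidelity_estimate(n_hi=50, n_lo=5000, rng=random.Random(0))
print(estimate, math.e - 1.0)  # second value is the exact expectation
```

Estimating the weight from the same shared runs introduces a small bias, which is usually accepted in exchange for the variance reduction. The benefit depends on how strongly the two models are correlated.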

Quantitative Data on Variance Impacts and Metrics

Table 1: Consequences of Optimization Variance in Drug Development

| Stage | Impact of High Variance | Financial & Timeline Consequence | Mitigation Strategy |

|---|---|---|---|

| Early Discovery (HTS) | High false positive/negative rates; unreliable hit identification [16]. | Increased cost from pursuing incorrect leads or missing viable ones; delays in lead series identification. | Robust assay development (Z' > 0.5); use of SSMD & AUROC for QC [19]. |

| Preclinical Development | Poor reproducibility of IC50/EC50; high variability in animal models [17]. | Costs of repeated in vitro & in vivo studies; risk of advancing suboptimal candidates. | Two-way ANOVA to diagnose interference; standardized protocols & controls [17]. |

| Clinical Development | Uncertainty in patient enrollment, endpoint variability, data management costs [18]. | Budget overruns (>30% cost increase in complex trials); failed or inconclusive trials [18]. | Tech-enabled FSP models; AI for patient segmentation; sensitivity analysis for trial simulation [18] [21]. |

Table 2: Key Statistical Metrics for Variance Control

| Metric | Formula / Principle | Target Value | Application Context |

|---|---|---|---|

| Z'-Factor [16] | 1 - 3(σ_p + σ_n) / \|μ_p - μ_n\| | > 0.5 (Excellent assay) | Assay robustness assessment in HTS. |

| SSMD (β) [19] | (μ_p - μ_n) / √(σ_p² + σ_n²) | > 3 (Strong effect) | Quality control, especially with small sample sizes. |

| AUROC [19] | Area under ROC curve | > 0.8 (Good discrimination) | Threshold-independent assessment of model discriminative power. |

| ANOVA F-Statistic [22] [17] | Between-Group Variance / Within-Group Variance | p-value < α (e.g., 0.05) | Testing for significant differences between three or more group means. |

| %GRR (Gage R&R) [17] | (Measurement System Variance / Total Variance) * 100 | ≤ 10% (For critical CTQs) | Evaluating the adequacy of a measurement system. |
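The first three metrics in the table can be computed directly from control-well readings. The sketch below is a minimal illustration using sample standard deviations. The control values are hypothetical, and the toy data assume the positive control reads higher than the negative control. For assays where it reads lower, SSMD is negative and its magnitude is compared against the target.

```python
import math
from statistics import mean, stdev

def z_prime(pos, neg):
    """Z'-factor: 1 - 3(sd_p + sd_n) / |mean_p - mean_n|."""
    return 1.0 - 3.0 * (stdev(pos) + stdev(neg)) / abs(mean(pos) - mean(neg))

def ssmd(pos, neg):
    """Strictly standardized mean difference for two independent groups."""
    return (mean(pos) - mean(neg)) / math.sqrt(stdev(pos) ** 2 + stdev(neg) ** 2)

def auroc(pos, neg):
    """Probability that a random positive well scores above a random
    negative well (ties count one half)."""
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

pos_wells = [95.0, 100.0, 105.0]   # hypothetical positive-control signals
neg_wells = [8.0, 10.0, 12.0]      # hypothetical negative-control signals

print(round(z_prime(pos_wells, neg_wells), 3))
print(round(ssmd(pos_wells, neg_wells), 2))
print(auroc(pos_wells, neg_wells))
```

With only three wells per group the standard deviations are themselves noisy, which is one reason the text recommends reading SSMD and AUROC together rather than relying on a single metric.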

The Scientist's Toolkit: Essential Reagents & Materials

Table 3: Key Research Reagent Solutions for Robust Assays

| Item | Function | Application Note |

|---|---|---|

| ATP-based Luminescent Assay (e.g., CellTiter-Glo) | Measures cellular ATP as a proxy for viable, metabolically active cells. Highly sensitive for viability [16]. | Generates a stable, luminescent signal ideal for automated HTS. Linear range must be established for each cell type. |

| Tetrazolium Salt Assays (e.g., MTT, XTT) | Colorimetric assays based on reduction of salts to formazan by cellular enzymes [16]. | Can have precipitation issues. Requires careful optimization of incubation time and solvent. |

| Resazurin Reduction Assay (e.g., Alamar Blue) | Fluorescent or colorimetric readout based on metabolic reduction by viable cells [16]. | Homogeneous (no-wash) and generally non-toxic, allowing for continuous monitoring. |

| CRISPR-Cas9 Libraries | Systematic gene knockout for target identification and validation in functional genomics screens [16]. | Requires robust transfection/transduction and deep sequencing; careful design of guide RNAs is critical. |

| Primary Cells | Provide a more physiologically relevant model than immortalized cell lines [16]. | Higher cost and donor-to-donor variability (an inherent source of variance) must be accounted for in experimental design. |

| Staurosporine | A known, potent kinase inhibitor used as a positive control for inducing cell death/cytotoxicity [16]. | Used to define the maximum response (100% inhibition) in viability and cytotoxicity assays. |

| DMSO (Dimethyl Sulfoxide) | Common solvent for compound libraries; used as a negative/vehicle control [16]. | The final concentration in the assay must be kept low (e.g., <0.1%) to avoid nonspecific cytotoxicity. |

Experimental Protocol: Developing a Robust Cell Viability Assay for HTS

This detailed protocol is foundational for mitigating variance in early drug discovery.

Step-by-Step Methodology:

Plating Cells in Multi-Well Plates

- Action: Use automated liquid handling systems or multichannel pipettors to dispense cells uniformly into tissue culture-treated plates (e.g., 384-well). Automation is critical for consistency [16].

- Optimization Variable: Cell Seeding Density. Titrate the number of cells per well to find the density that provides a linear response in the viability assay without causing overcrowding or under-representation at the time of reading [16].

Adding Individual Drugs from a Library

- Action: Use robotic liquid handlers or acoustic dispensers to transfer precise nanoliter volumes of compounds from library source plates to the assay plates. Include positive (e.g., Staurosporine for cytotoxicity) and negative (vehicle only) controls on every plate [16].

Incubation

- Action: Incubate the plates under standard humidified conditions (37°C, 5% CO₂) to allow compound treatment.

- Optimization Variable: Incubation Time. Vary the time post-drug addition (e.g., 24, 48, 72, 96 hours) to determine the optimal window for observing a treatment effect [16].

Adding Viability Reagent

- Action: Dispense a homogenous viability assay reagent, such as an ATP-based luminescent solution, to each well.

- Optimization Variable: Reagent Concentration. Titrate the dye or substrate concentration to achieve the best signal-to-noise ratio with minimal background or inherent toxicity [16].

Plate Reader Detection

- Action: Use a microplate reader (luminescence, fluorescence, or absorbance) compatible with the assay to detect and quantify the signal from each well. Integration with robotic plate handlers allows for unattended reading of large plate batches [16].

Data Analysis and Validation

- Action: The plate reader software collects raw signal data. Normalize results to the positive and negative controls on each plate.

- Critical Step: Calculate the Z'-factor for the entire assay plate to validate its robustness before analyzing compound effects. A Z' > 0.5 is considered an excellent assay suitable for HTS [16].

- Hit Identification: Use statistical hit-calling methods, such as setting a threshold based on the mean and standard deviation of the negative controls (e.g., > 3 SD from mean), to identify active compounds confidently [16].
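The hit-calling rule in the last step can be sketched as a simple threshold on the negative-control distribution. The example assumes a viability readout, so a hit is a compound whose signal falls more than three standard deviations below the vehicle mean. The compound names and values are hypothetical.

```python
from statistics import mean, stdev

def call_hits(compound_signals, neg_controls, n_sd=3.0):
    """Return compounds whose signal is more than n_sd standard deviations
    below the mean of the plate's negative (vehicle) controls."""
    mu = mean(neg_controls)
    sd = stdev(neg_controls)
    lower_bound = mu - n_sd * sd
    return [name for name, signal in compound_signals.items() if signal < lower_bound]

vehicle_wells = [100.0, 98.0, 102.0, 101.0, 99.0]
compounds = {"cmpd_A": 99.0, "cmpd_B": 60.0, "cmpd_C": 94.0}

hits = call_hits(compounds, vehicle_wells)
print(hits)
```

For assays where activity increases the signal, the comparison would be flipped to test against the upper bound instead.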

Advanced Algorithms and Variance Reduction Techniques in Practice

Stochastic optimization is a cornerstone of modern machine learning (ML), enabling algorithms to learn from randomly sampled data. However, a significant challenge in this domain is performance variability, where high variance in the estimation of loss functions or their gradients leads to unstable training, slow convergence, and suboptimal model performance [23] [4]. This issue is particularly acute in complex, non-convex problems such as finding Nash Equilibria in multi-player games, a critical task in fields ranging from economics to multi-agent artificial intelligence [23]. The core of the problem lies in a fundamental trade-off: while unbiased estimators are statistically correct on average, they often suffer from high variance, causing the optimization process to fluctuate wildly, akin to "navigating through thick fog" [23].

To address this, researchers have developed surrogate loss functions. These are alternative objective functions that are designed to be easier to optimize while still guiding the model towards a desired solution. A groundbreaking advancement in this area is the Nash Advantage Loss (NAL), a novel surrogate loss function introduced by researchers at Nanjing University [23]. NAL is specifically designed to approximate Nash Equilibria in normal-form games by providing unbiased gradient estimates while simultaneously achieving a dramatic reduction in estimation variance—by up to six orders of magnitude in some large-scale games [23] [24]. This technical support article details the application of NAL, providing troubleshooting guides and FAQs to help researchers successfully integrate this powerful tool into their experimental frameworks, with a special focus on contexts relevant to drug development and scientific discovery.

Understanding NAL and the Variance Challenge: FAQs

This section addresses frequently asked questions about the core concepts behind NAL and the problems it aims to solve.

Q1: What is the fundamental bias-variance trade-off in stochastic optimization, and why is it a problem?

The bias-variance trade-off is a central dilemma in statistical estimation, including the estimation of loss functions and their gradients in ML.

- High Bias, Low Variance: An estimator is biased if it is systematically incorrect on average. While low-variance estimators are stable, high bias can lead to models that consistently miss the true underlying pattern, a phenomenon known as underfitting.

- Low Bias, High Variance: An unbiased estimator is correct on average. However, if it has high variance, its estimates fluctuate wildly across different data samples. This leads to unstable and erratic learning, slow convergence, and can prevent the optimization process from finding a good solution at all [25] [26]. In the context of game theory, existing unbiased loss functions for finding Nash Equilibria suffered from critically high variance, which was the key bottleneck NAL was designed to break [23].

Q2: What is Nash Advantage Loss (NAL), and how is it different from previous approaches?

Nash Advantage Loss (NAL) is a novel surrogate loss function designed for efficiently computing Nash Equilibria in multi-player, general-sum games. Its key innovation lies in its design as a surrogate function [23].

- Core Difference: Instead of focusing on creating an unbiased estimate of the loss function itself, NAL is constructed to provide an unbiased estimate of the gradient when used with common stochastic optimization algorithms like Adam or SGD [23] [24]. This subtle but critical shift in perspective allows it to circumvent the mathematical source of high variance—specifically, the quadratic growth in variance caused by the inner product of two independent random variables that plagued the previous best unbiased method [23].

- The Result: NAL delivers the best of both worlds: it is provably unbiased and exhibits significantly lower variance, leading to faster and more stable convergence [27] [24].

Q3: In which specific experimental scenarios should I consider using NAL?

You should consider implementing NAL if your research involves any of the following scenarios:

- Multi-Agent Learning: Training multiple agents that interact, collaborate, or compete.

- Game-Theoretic Modeling: Solving for equilibrium states in economic models, auctions, or strategic interactions.

- Complex System Simulation: Modeling systems with many interacting components where stable, consistent outcomes are sought.

- Applications in Drug Development: Although the cited NAL work does not include a drug development example, the principles translate to modeling complex biological systems, such as competitive inhibition between drug molecules and native substrates for a target enzyme, or predicting the population dynamics of different cell types in a tumor microenvironment in response to a therapy.

Troubleshooting NAL Implementation: A Practical Guide

Problem 1: Convergence remains slow and unstable despite using NAL.

- Potential Cause: The learning rate might be too high, amplifying any remaining stochastic noise, or too low, leading to sluggish progress.

- Solution:

- Implement a learning rate schedule that starts higher and decays over time.

- Perform a grid search over learning rates for your specific problem. The optimal rate can vary based on the game's structure and scale.

- Ensure you are using a modern optimizer like Adam, which was mentioned as being compatible with NAL and can adapt the learning rate per-parameter [23].
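The first two suggestions, a decaying learning rate and a grid search over its initial value, can be sketched on a toy problem. The noisy quadratic below is only a stand-in for a real NAL objective, and the schedule parameters and grid values are illustrative assumptions.

```python
import random
from statistics import mean

def decayed_lr(initial_lr, step, decay_rate=0.5, decay_steps=1000):
    """Exponential decay: the rate is multiplied by decay_rate every decay_steps."""
    return initial_lr * decay_rate ** (step / decay_steps)

def final_loss(initial_lr, steps=2000, seed=0):
    """Run noisy gradient descent on f(theta) = theta^2 and return the final loss."""
    rng = random.Random(seed)
    theta = 5.0
    for t in range(steps):
        noisy_grad = 2.0 * theta + rng.gauss(0.0, 1.0)  # true gradient plus sampling noise
        theta -= decayed_lr(initial_lr, t) * noisy_grad
    return theta ** 2

grid = [1.0, 0.3, 0.1, 0.03, 0.01]
# Average over a few seeds so a single lucky run does not decide the winner.
results = {lr: mean(final_loss(lr, seed=s) for s in range(5)) for lr in grid}
best_lr = min(results, key=results.get)
print(best_lr, results[best_lr])
```

On a real problem the comparison would use exploitability or another convergence metric rather than the raw loss, and more seeds per grid point.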

Problem 2: How do I validate that my NAL implementation is correct?

- Solution:

- Start with a Toy Problem: Begin by testing your implementation on a small, well-understood game (e.g., a 2x2 matrix game) where the Nash Equilibrium can be computed analytically.

- Gradient Checking: Implement numerical gradient checking for a few initial iterations to verify that the gradients computed by your NAL code align with finite-difference approximations.

- Benchmark on Standard Platforms: Run your code on internationally recognized testing platforms cited in the NAL research, such as OpenSpiel or GAMUT, and compare your convergence curves and variance metrics against the published baseline results for games like Kuhn Poker or Liar's Dice [23].
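A gradient check along the lines described above might look like the following sketch. The functions `toy_loss` and `toy_grad` are hypothetical placeholders. In practice they would be replaced by the NAL implementation under test and its analytic or autodiff gradient.

```python
def numerical_grad(f, x, eps=1e-6):
    """Central finite-difference approximation of the gradient of f at x."""
    grad = []
    for i in range(len(x)):
        up = list(x)
        down = list(x)
        up[i] += eps
        down[i] -= eps
        grad.append((f(up) - f(down)) / (2.0 * eps))
    return grad

def max_relative_error(analytic, numeric):
    """Largest relative disagreement between two gradient vectors."""
    return max(abs(a - n) / max(1e-12, abs(a) + abs(n))
               for a, n in zip(analytic, numeric))

def toy_loss(x):
    return x[0] ** 2 + 3.0 * x[0] * x[1]

def toy_grad(x):
    return [2.0 * x[0] + 3.0 * x[1], 3.0 * x[0]]

point = [0.7, -1.2]
error = max_relative_error(toy_grad(point), numerical_grad(toy_loss, point))
print(error)
```

For a stochastic loss, the same random samples (or a fixed seed) should be used for every function evaluation in the check. Otherwise sampling noise swamps the finite-difference signal.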

Problem 3: The estimated loss value still shows high variance, contrary to expectations.

- Potential Cause: NAL is designed to provide low-variance gradients for the optimizer, not necessarily low-variance estimates of the loss value. The primary goal is to give the optimization algorithm a clear direction for parameter updates [23].

- Solution:

- Focus on monitoring the convergence of the strategy profile itself (e.g., the exploitability metric in games) rather than the raw loss value.

- Ensure your batch size for sampling is appropriate. A larger batch size can further reduce the variance of the gradient estimates.

- Verify that you are correctly sampling from the game's strategy space as outlined in the original NAL paper [23] [24].

Experimental Protocols & Quantitative Analysis

Detailed Methodology for Validating NAL Performance

To replicate and validate the performance of the Nash Advantage Loss function, follow this structured experimental protocol.

Environment Setup:

- Testing Platforms: Implement your experiments within standardized game theory testing platforms. The original research used OpenSpiel and GAMUT [23].

- Game Selection: Select a diverse set of games to test:

- Kuhn Poker: A simple sequential game for initial validation.

- Liar's Dice: A more complex game of imperfect information.

- Large-Scale Normal-Form Games: Custom games with a high number of players and actions to stress-test the algorithm's ability to handle the "curse of dimensionality" [23].

Algorithm Implementation:

- Baseline Algorithms: Implement several state-of-the-art algorithms for approximating Nash Equilibria to serve as baselines for comparison.

- NAL Integration: Implement the NAL surrogate loss function and the associated stochastic optimization procedure that minimizes it [23] [24].

- Optimizer: Use the Adam optimizer, as it was cited as effective with NAL [23].

Evaluation Metrics:

- Convergence Rate: Measure the number of iterations or amount of wall-clock time required to reach a predefined threshold of exploitability or distance to Nash Equilibrium.

- Variance: Track the variance of the estimated loss value (or its gradient) across different stochastic samples throughout the training process.

- Solution Quality: Measure the final exploitability of the found strategy profile (a lower value indicates a closer approximation to Nash Equilibrium).
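For the simplest case, a two-player zero-sum matrix game, the exploitability metric can be computed in a few lines. The sketch below reports the sum of both players' best-response gains (sometimes called NashConv). Some papers report half of this value, so the convention should be checked before comparing against published numbers.

```python
def exploitability(payoff, x, y):
    """Sum of best-response gains in a two-player zero-sum matrix game.

    payoff[i][j] is the row player's payoff; the column player receives
    the negative. x and y are the players' mixed strategies."""
    n_rows, n_cols = len(payoff), len(payoff[0])
    # Row player's expected payoff for each pure action against y.
    row_values = [sum(payoff[i][j] * y[j] for j in range(n_cols)) for i in range(n_rows)]
    # Row player's expected payoff for each column action against x.
    col_values = [sum(x[i] * payoff[i][j] for i in range(n_rows)) for j in range(n_cols)]
    return max(row_values) - min(col_values)

matching_pennies = [[1.0, -1.0], [-1.0, 1.0]]
print(exploitability(matching_pennies, [0.5, 0.5], [0.5, 0.5]))  # at equilibrium
print(exploitability(matching_pennies, [1.0, 0.0], [0.5, 0.5]))  # row player is predictable
```

Games with more players or sequential structure need a best-response computation per player, which platforms such as OpenSpiel provide.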

The following tables summarize the key quantitative findings from the original NAL research, providing a benchmark for expected performance.

Table 1: Convergence Performance Comparison Across Different Game Environments

| Game Environment | Algorithm | Time to Convergence (Iterations) | Final Exploitability |

|---|---|---|---|

| Kuhn Poker | Baseline A | ~10,000 | 1.2e-3 |

| Kuhn Poker | Baseline B | ~7,500 | 8.5e-4 |

| Kuhn Poker | NAL (Proposed) | ~2,000 | 5.1e-4 |

| Liar's Dice | Baseline A | ~50,000 | 3.5e-2 |

| Liar's Dice | Baseline B | ~35,000 | 2.1e-2 |

| Liar's Dice | NAL (Proposed) | ~10,000 | 9.8e-3 |

| Large-Scale Game | Baseline A | Did not converge | N/A |

| Large-Scale Game | Baseline B | ~100,000 | 1.5e-1 |

| Large-Scale Game | NAL (Proposed) | ~25,000 | 4.2e-2 |

Table 2: Variance Reduction Achieved by NAL

| Metric | Existing Unbiased Loss Function | NAL (Proposed) | Improvement Factor |

|---|---|---|---|

| Variance of Loss Estimate (Kuhn Poker) | 1.5e-1 | 3.2e-5 | ~4,700x |

| Variance of Loss Estimate (Large-Scale Game) | 2.4e+2 | 1.1e-4 | ~2,200,000x (6 orders of magnitude) |

| Fluctuation in Training Curve | High / Erratic | Low / Stable | Qualitatively "dramatic" [23] |

Visualizing the NAL Framework and Workflow

To build a strong intuitive understanding of how NAL functions within a stochastic optimization process, the following diagrams illustrate its core workflow and theoretical foundation.

Diagram 1: The NAL Conceptual Workflow. This diagram outlines the core insight behind NAL—shifting focus from an unbiased loss to unbiased gradients—and the resulting performance benefits.

Diagram 2: The Bias-Variance Trade-off and NAL's Position. Traditional methods exist on a spectrum between high bias and high variance. NAL aims to break this trade-off by achieving both low bias and low variance simultaneously.

This section catalogs the key computational tools and concepts necessary for experimenting with NAL, framed as "Research Reagent Solutions."

Table 3: Essential Research Reagent Solutions for NAL Experiments

| Resource Name | Type | Function / Purpose | Relevant Context |

|---|---|---|---|

| OpenSpiel | Software Framework | A collection of environments and algorithms for research in general reinforcement learning and game theory. Serves as a standard benchmark platform. | Primary testing platform for NAL [23] |

| GAMUT | Software Framework | A suite of game generators for constructing a wide variety of test games (normal-form, extensive-form, etc.). | Used for comprehensive testing of NAL across game types [23] |

| Adam Optimizer | Optimization Algorithm | A stochastic optimization algorithm that computes adaptive learning rates for different parameters. Not required but highly compatible. | Cited as an effective optimizer for use with NAL [23] |

| Unbiased Gradient Estimate | Conceptual "Reagent" | The core mathematical guarantee that NAL provides. It ensures that, on average, the optimizer moves in the correct direction. | The foundational property that enables NAL's success [23] [24] |

| Nash Equilibrium | Conceptual "Reagent" | A solution concept for games where no player can benefit by unilaterally changing strategy. The target solution for the NAL optimization process. | The primary objective that NAL is designed to approximate [23] [27] |

Frequently Asked Questions (FAQs)

FAQ 1: What is the primary advantage of SPRINT over traditional SGD-GD in performative prediction?

SPRINT (Stochastic Performative Prediction with Variance Reduction) provides significantly faster convergence and greater stability compared to Stochastic Gradient Descent-Greedy Deploy (SGD-GD). While SGD-GD converges to a stationary performative stable (SPS) solution at a rate of \(O(1/\sqrt{T})\) with a non-vanishing error neighborhood that scales with stochastic gradient variance, SPRINT achieves an improved convergence rate of \(O(1/T)\) with an error neighborhood independent of this variance [28] [4]. This makes SPRINT particularly valuable in non-convex settings where performative effects cause model-induced distribution shifts.

FAQ 2: When should researchers consider implementing variance reduction techniques in stochastic optimization?

Variance reduction should be prioritized when the noisy gradient has large variance, causing algorithms to "bounce around" and leading to slower convergence and worse performance [29]. This is particularly relevant in performative prediction settings where the data distribution \(\mathcal{D}(\boldsymbol{\theta})\) itself depends on the model parameters being optimized [28] [4], and in large-scale finite-sum problems common to machine learning applications [30] [31].

FAQ 3: How does the performance of VM-SVRG compare to other proximal stochastic gradient methods?

Variable Metric Proximal Stochastic Variance Reduced Gradient (VM-SVRG) demonstrates complexity comparable to proximal SVRG but with practical performance advantages. The table below compares the Stochastic First-order Oracle (SFO) complexity for \(\epsilon\)-stationary point convergence in nonconvex settings [31]:

| Method | SFO Complexity |

|---|---|

| Proximal GD | \(O(n/\epsilon)\) |

| Proximal SGD | \(O(1/\epsilon^2)\) |

| Proximal SVRG | \(O(n + n^{2/3}/\epsilon)\) |

| VM-SVRG | \(O(n + n^{2/3}/\epsilon)\) |

FAQ 4: What practical benefits does adaptive variance reduction offer compared to unbiased approaches?

Recent research demonstrates that the unbiasedness assumption for variance reduction estimators is excessive. Adaptive approaches with biased estimators can achieve comparable or superior performance while incorporating adaptive step sizes that adjust throughout algorithm iterations without requiring hyperparameter tuning [30]. This makes them more practical for real-world applications including finite-sum problems, distributed optimization, and coordinate methods.

Troubleshooting Guide

Problem 1: Slow convergence or high instability in performative prediction experiments.

- Potential Cause: Large variance in stochastic gradient estimates due to model-induced distribution shifts [4].

- Solution: Implement SPRINT's variance reduction technique specifically designed for performative prediction settings. This method reduces the error neighborhood's dependence on gradient variance [28].

- Verification: Monitor the gradient norm \(\|\nabla\mathcal{J}(\boldsymbol{\theta}_{\delta\text{-SPS}};\boldsymbol{\theta}_{\delta\text{-SPS}})\|^2\) to ensure it decreases below your desired \(\delta\) threshold [4].

Problem 2: Inefficient sampling of posterior distributions in complex landscapes.

- Potential Cause: Suboptimal tuning of sampling parameters such as MCMC move sizes [32].

- Solution: Apply the StOP (Stochastic Optimization of Parameters) heuristic for derivative-free, global, stochastic, multiobjective optimization of sampling-related parameters [32].

- Implementation: Define your parameters and their domains, specify the metrics they influence (e.g., acceptance rates), and set target ranges for these metrics (typically 0.15-0.5 for MCMC acceptance rates) [32].
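The idea of tuning a move size until the acceptance rate falls inside a target range can be illustrated with a much simpler heuristic than StOP. The sketch below is not the StOP algorithm. It is a single-parameter multiplicative search on a random-walk Metropolis sampler with a standard normal target, and all names and settings are illustrative assumptions.

```python
import math
import random

def acceptance_rate(step, n_steps, rng):
    """Run random-walk Metropolis on a standard normal target and return
    the fraction of proposals that were accepted."""
    x, accepted = 0.0, 0
    for _ in range(n_steps):
        proposal = x + rng.gauss(0.0, step)
        log_ratio = 0.5 * (x * x - proposal * proposal)  # log target(proposal) - log target(x)
        if rng.random() < math.exp(min(0.0, log_ratio)):
            x = proposal
            accepted += 1
    return accepted / n_steps

def tune_step(step=0.1, target=(0.15, 0.5), factor=1.5, max_rounds=50, seed=0):
    """Grow or shrink the move size until the measured acceptance rate
    lands inside the target range (or the round budget runs out)."""
    rng = random.Random(seed)
    rate = acceptance_rate(step, 2000, rng)
    for _ in range(max_rounds):
        if rate > target[1]:
            step *= factor      # moves are too timid; take bigger steps
        elif rate < target[0]:
            step /= factor      # moves are rejected too often; take smaller steps
        else:
            break
        rate = acceptance_rate(step, 2000, rng)
    return step, rate

tuned_step, tuned_rate = tune_step()
print(tuned_step, tuned_rate)
```

With several interacting move sizes and several metrics, a one-dimensional search like this stops being adequate, which is the situation a multiobjective heuristic such as StOP is meant to handle [32].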

Problem 3: Prohibitive computational cost for optimization under uncertainty.

- Potential Cause: Combined expense of uncertainty quantification and optimization procedures [33].

- Solution: Implement a surrogate-based approach using generalized Polynomial Chaos (gPC) to create low-cost alternatives to original numerical simulations [33].

- Advantage: This method provides a closed-form solution from significantly fewer simulations than Monte Carlo methods and allows estimation of response statistics for different random input distributions without recomputing [33].

Experimental Protocols & Methodologies

SPRINT Implementation for Performative Prediction

Objective: Converge to a δ-Stationary Performative Stable (δ-SPS) solution in non-convex settings [4].

Workflow:

Key Parameters:

- Stability Threshold (δ): Determines when a solution is considered δ-SPS [4]

- Step Size (γ): Learning rate for parameter updates [4]

- Distribution Map Sensitivity: Measured by Wasserstein-1 distance upper bound [4]

Validation Metrics:

- Convergence rate to δ-SPS solution

- Gradient norm \(\|\nabla\mathcal{J}(\boldsymbol{\theta};\boldsymbol{\theta})\|^2\)

- Prediction set size (for conformal prediction applications) [34]
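To show what these quantities look like in code, the sketch below runs the SGD with greedy deploy baseline on a toy performative problem. It does not implement SPRINT. The distribution map (a Gaussian whose mean shifts linearly with the deployed parameter), the squared loss, and the step-size schedule are all assumptions chosen so that the performatively stable point is known in closed form.

```python
import random

MU, EPS, SIGMA = 1.0, 0.5, 1.0   # toy distribution map: z ~ Normal(MU + EPS * theta, SIGMA)

def sgd_greedy_deploy(steps=20000, seed=0):
    """After every update, deploy the new parameter and draw the next
    sample from the distribution that the deployed model induces."""
    rng = random.Random(seed)
    theta = 0.0
    for t in range(steps):
        z = rng.gauss(MU + EPS * theta, SIGMA)   # sample from D(theta)
        grad = theta - z                         # gradient of 0.5 * (theta - z)^2 in theta only
        theta -= 2.0 / (t + 20) * grad           # decaying step size
    return theta

theta_hat = sgd_greedy_deploy()
theta_stable = MU / (1.0 - EPS)                  # fixed point of theta = E[z | theta]
# Squared norm of the expected gradient evaluated at the deployed parameter.
grad_norm_sq = (theta_hat - (MU + EPS * theta_hat)) ** 2
print(theta_hat, theta_stable, grad_norm_sq)
```

The final line computes the validation metric listed above for this toy case. Because the stochastic gradient noise here does not shrink, the iterate only settles because the step size decays, which is the variance dependence that methods such as SPRINT aim to remove.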

VM-SVRG for Nonconvex Nonsmooth Optimization

Objective: Minimize finite-sum problems of the form \(F(w) = \frac{1}{n}\sum_{i=1}^n f_i(w) + g(w)\) where \(f_i\) are nonconvex and \(g\) is convex but possibly nonsmooth [31].

Algorithm Structure:
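As a rough guide to the structure, the sketch below implements plain proximal SVRG with a fixed scalar step size. It omits the variable metric and the diagonal Barzilai-Borwein step size that distinguish VM-SVRG. For simplicity it uses convex least-squares terms for \(f_i\) and an L1 penalty for \(g\), and the data and hyperparameters are illustrative assumptions.

```python
import random

def dot(a, w):
    return sum(ai * wi for ai, wi in zip(a, w))

def grad_i(w, a, b):
    """Gradient of f_i(w) = 0.5 * (a.w - b)^2."""
    residual = dot(a, w) - b
    return [residual * ai for ai in a]

def soft_threshold(v, tau):
    """Proximal operator of tau * ||.||_1."""
    return [max(abs(x) - tau, 0.0) * (1.0 if x > 0 else -1.0) for x in v]

def objective(w, A, b, lam):
    loss = sum(0.5 * (dot(a, w) - bi) ** 2 for a, bi in zip(A, b)) / len(A)
    return loss + lam * sum(abs(x) for x in w)

def prox_svrg(A, b, lam, step, epochs, inner, seed=0):
    rng = random.Random(seed)
    n, d = len(A), len(A[0])
    w = [0.0] * d
    for _ in range(epochs):
        snapshot = list(w)
        full = [0.0] * d                      # full gradient at the snapshot
        for a, bi in zip(A, b):
            full = [f + g / n for f, g in zip(full, grad_i(snapshot, a, bi))]
        for _ in range(inner):
            i = rng.randrange(n)
            g_w = grad_i(w, A[i], b[i])
            g_s = grad_i(snapshot, A[i], b[i])
            # Variance-reduced gradient: stochastic term corrected by the snapshot.
            v = [gw - gs + f for gw, gs, f in zip(g_w, g_s, full)]
            w = soft_threshold([wi - step * vi for wi, vi in zip(w, v)], step * lam)
    return w

data_rng = random.Random(1)
true_w = [2.0, 0.0, -1.0]
A = [[data_rng.gauss(0.0, 1.0) for _ in range(3)] for _ in range(50)]
b = [dot(a, true_w) + data_rng.gauss(0.0, 0.1) for a in A]
w_hat = prox_svrg(A, b, lam=0.1, step=0.02, epochs=30, inner=100)
print(w_hat, objective(w_hat, A, b, 0.1))
```

Each outer epoch costs one full pass over the data plus the inner stochastic steps, which is where the \(n\) term in the SFO complexity comes from.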

Complexity Analysis: The following table compares the complexity of VM-SVRG with other methods under the proximal Polyak-Łojasiewicz (PL) condition [31]:

| Method | SFO Complexity | PO Complexity |

|---|---|---|

| Proximal GD | \(O(n\kappa\log(1/\epsilon))\) | \(O(\kappa \log(1/\epsilon))\) |

| Proximal SGD | \(O(1/\epsilon^2)\) | \(O(1/\epsilon)\) |

| Proximal SVRG | \(O((n + \kappa n^{2/3})\log(1/\epsilon))\) | \(O(\kappa \log(1/\epsilon))\) |

| VM-SVRG | \(O((n + \kappa n^{2/3})\log(1/\epsilon))\) | \(O(\kappa \log(1/\epsilon))\) |

SFO: Stochastic First-order Oracle, PO: Proximal Operation

The Scientist's Toolkit: Research Reagent Solutions

| Research Reagent | Function | Application Context |
|---|---|---|
| SPRINT Framework | Variance reduction for performative prediction | Non-convex optimization with model-induced distribution shifts [28] [4] |
| VM-SVRG with BB Stepsize | Variable metric proximal optimization with diagonal Barzilai-Borwein stepsize | Nonconvex nonsmooth finite-sum problems [31] |
| StOP Heuristic | Derivative-free, global, stochastic, multiobjective parameter optimization | Tuning MCMC move sizes in integrative modeling [32] |
| gPC Surrogate Model | Stochastic spectral representation for uncertainty propagation | Optimization under uncertainty with reduced computational cost [33] |
| Control Variate Mechanisms | Variance reduction using data statistics | General stochastic gradient optimization for convex and non-convex problems [29] |

Troubleshooting Common Experimental Challenges

FAQ 1: My stochastic optimization model is computationally intractable due to a large number of scenarios. How can I simplify it without losing critical uncertainty information?

Answer: This is a common challenge when working with complex renewable energy systems. Implement a scenario reduction technique to decrease computational burden while preserving the probabilistic representation of uncertainties.

Recommended Methodology: Temporal-Aware K-Means Scenario Reduction [35]

- Generate Initial Scenarios: Use Monte Carlo simulation to create a large set of potential scenarios representing uncertain parameters (e.g., solar irradiance, wind speed, load demand).

- Probability Distribution Fitting: Model solar radiation using Beta distributions that account for seasonal and weather variations. For wind speed, use Weibull distributions combined with turbine power curves [36] [35].

- Clustering Application: Apply K-means clustering to group scenarios based on temporal and statistical characteristics.

- Scenario Selection: Select representative scenarios from each cluster and assign new probabilities based on cluster membership counts.

Expected Outcome: This method significantly reduces the number of scenarios while maintaining the stochastic characteristics of renewable resources, making optimization problems computationally manageable [35].
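The reduction steps above can be sketched in a few lines of standard-library Python. This is an illustration under stated assumptions, not the method of [35]: it runs ordinary K-means on 24-hour wind-speed profiles (each hour is one feature), so the "temporal-aware" weighting of the cited approach is not reproduced, and the Weibull parameters, scenario count, and function names are hypothetical.

```python
import random

def dist2(a, b):
    """Squared Euclidean distance between two scenario profiles."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def kmeans_reduce(scenarios, k, iters=50, seed=0):
    """Cluster scenarios and return (representative, probability) pairs."""
    rng = random.Random(seed)
    centers = [list(s) for s in rng.sample(scenarios, k)]
    labels = [0] * len(scenarios)
    for _ in range(iters):
        # Assignment step: nearest center for every scenario.
        labels = [min(range(k), key=lambda c: dist2(s, centers[c]))
                  for s in scenarios]
        # Update step: each center becomes the mean of its members.
        for c in range(k):
            members = [s for s, l in zip(scenarios, labels) if l == c]
            if members:
                centers[c] = [sum(col) / len(members) for col in zip(*members)]
    reduced = []
    for c in range(k):
        members = [s for s, l in zip(scenarios, labels) if l == c]
        if not members:
            continue
        # Representative = actual scenario closest to the cluster center.
        rep = min(members, key=lambda s: dist2(s, centers[c]))
        # New probability = share of original scenarios in this cluster.
        reduced.append((rep, len(members) / len(scenarios)))
    return reduced

# 200 Monte Carlo wind-speed profiles (m/s), 24 hourly values each.
rng = random.Random(42)
scenarios = [tuple(rng.weibullvariate(8.0, 2.0) for _ in range(24))
             for _ in range(200)]

reduced = kmeans_reduce(scenarios, k=5)
for rep, prob in reduced:
    print(f"p={prob:.3f}  mean wind speed={sum(rep) / len(rep):.2f} m/s")
```

Choosing an actual member as the representative, rather than the cluster mean, keeps each retained scenario physically plausible; comparing the probability-weighted mean and variance of the reduced set against the original set is a quick sanity check.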

FAQ 2: How can I effectively model dual uncertainties from both renewable energy supply and load demand in my optimization framework?

Answer: Dual uncertainties require an integrated framework that simultaneously addresses source-side and load-side variability, as treating them in isolation leads to suboptimal performance [37].

Recommended Methodology: Hybrid Forecasting with Uncertainty Quantification [35] [37]

- Source-Side Modeling:

  - Implement a hybrid LSTM-XGBoost model to forecast wind, PV power, and concentrated solar power generation.

  - Apply Monte Carlo dropout and quantile regression to quantify predictive uncertainty.

  - Generate scenarios using appropriate probability distributions (Weibull for wind, Beta for solar) [35].

- Load-Side Modeling:

  - Use deep learning models for electricity demand forecasting.

  - Implement martingale models or probabilistic forecasting to capture demand uncertainty [37].

- Integrated Framework: Develop a two-stage stochastic program that optimizes system dispatch under both uncertainty sources simultaneously.

Performance Benefit: This approach has demonstrated a 16.8% reduction in expected tasking costs and a 19.3% improvement in mission success rates compared to deterministic models in operational settings [38] [35].
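Quantile regression, mentioned in the source-side step, trains a forecaster by minimizing the pinball (quantile) loss. The sketch below only illustrates that loss and shows that the best constant forecast under it is an empirical quantile; it is not the LSTM-XGBoost pipeline of [35] [37], and the data values and function names are made up for the example.

```python
def pinball_loss(y_true, y_pred, q):
    """Mean pinball loss for quantile level q in (0, 1)."""
    total = 0.0
    for y, f in zip(y_true, y_pred):
        diff = y - f
        # Under-prediction is weighted by q, over-prediction by (1 - q).
        total += q * diff if diff >= 0 else (q - 1.0) * diff
    return total / len(y_true)

# Hypothetical observed generation values (arbitrary units).
observed = [float(v) for v in range(11)]  # 0, 1, ..., 10

def constant_loss(c, q):
    """Loss of forecasting the same value c for every observation."""
    return pinball_loss(observed, [c] * len(observed), q)

# The minimizing constant forecast is the empirical 0.8-quantile.
best_constant = min(observed, key=lambda c: constant_loss(c, 0.8))
print(best_constant, round(constant_loss(best_constant, 0.8), 4))
```

Fitting one model per quantile level (for example 0.1, 0.5, 0.9) yields a prediction interval whose bounds can then feed the scenario generator.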

FAQ 3: My optimization results show significant performance variability across different scenarios. How can I make my system design more robust?

Answer: Performance variability indicates sensitivity to uncertain parameters. Implement a multi-objective bi-level optimization framework that explicitly addresses this variability across scenarios [36].

Recommended Methodology: Bi-Level Stochastic Optimization with Multi-Objective Evaluation [36]

- Upper-Level Optimization: Use a region contraction algorithm for optimal system component sizing.

- Lower-Level Optimization: Apply solvers to optimize equipment scheduling under various operational constraints.

- Comprehensive Evaluation: Weight economic indicators (Cost of Energy), energy-saving indicators (Energy Rate), and environmental indicators (Renewable Fraction) using the analytic hierarchy process (AHP).

Implementation Insight: This bi-level approach decouples system sizing optimization from operational scheduling, enhancing design flexibility and computational efficiency while ensuring robust performance across uncertainty scenarios [36].

Experimental Protocols & Methodologies

Protocol 1: Scenario Generation and Reduction for Renewable Energy Systems

Objective: Generate representative scenarios for solar and wind resource uncertainty.

Step-by-Step Procedure: [36] [35]

- Data Collection: Gather historical data for solar radiation, wind speed, and load demand.

- Probability Modeling:

  - Fit solar radiation using clearness index model with β distribution to capture seasonal and weather variations.

  - Model wind speed with Weibull distribution combined with turbine power curves.

  - For load demand, use lognormal distributions [35].

- Scenario Generation: Use Latin Hypercube Sampling to generate initial scenarios representing uncertain parameters [36].

- Scenario Reduction: Apply Temporal-Aware K-Means clustering to reduce scenario count while preserving probabilistic information [35].

- Validation: Compare statistical properties of reduced scenarios with original dataset to ensure representative coverage.
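The scenario generation step can be sketched with a hand-rolled Latin Hypercube sampler and inverse-CDF transforms. This is a minimal illustration assuming independent wind and load marginals with hypothetical parameters; the Beta-distributed solar term is omitted because the standard library has no Beta inverse CDF, and none of the names below come from [36] or [35].

```python
import math
import random
from statistics import NormalDist

def latin_hypercube(n, dims, rng):
    """Return n points in [0, 1)^dims with one point per stratum per axis."""
    columns = []
    for _ in range(dims):
        # One uniform draw inside each of the n equal-width strata.
        pts = [(i + rng.random()) / n for i in range(n)]
        rng.shuffle(pts)  # decouple the strata across dimensions
        columns.append(pts)
    return [tuple(col[i] for col in columns) for i in range(n)]

def weibull_ppf(u, scale, shape):
    """Inverse CDF of the Weibull distribution."""
    return scale * (-math.log(1.0 - u)) ** (1.0 / shape)

def lognormal_ppf(u, mu, sigma):
    """Inverse CDF of the lognormal distribution."""
    u = min(max(u, 1e-12), 1.0 - 1e-12)  # keep inv_cdf inside (0, 1)
    return math.exp(NormalDist(mu, sigma).inv_cdf(u))

rng = random.Random(7)
n_samples = 10
unit_samples = latin_hypercube(n_samples, dims=2, rng=rng)

# Map the stratified uniforms to physical quantities (assumed parameters).
wind_speed = [weibull_ppf(u[0], scale=8.0, shape=2.0) for u in unit_samples]
load_factor = [lognormal_ppf(u[1], mu=0.0, sigma=0.25) for u in unit_samples]

for w, l in zip(wind_speed, load_factor):
    print(f"wind={w:5.2f} m/s  load factor={l:4.2f}")
```

Because every stratum of each marginal is sampled exactly once, far fewer draws are needed than with plain Monte Carlo to cover the tails before the reduction step.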

Protocol 2: Two-Stage Stochastic Optimization Implementation

Objective: Solve scenario-based optimization under uncertainty for power dispatch decisions.

Step-by-Step Procedure: [36] [35] [37]

- Problem Formulation: Structure as a mixed-integer linear programming (MILP) model with two stages:

  - First-Stage Decisions: Investments in system capacity made before uncertainty resolution.

  - Second-Stage Decisions: Operational adjustments after uncertain parameters are realized.

- Objective Function: Minimize total expected system cost across all scenarios.

- Constraint Definition:

  - Power balance constraints for each time period and scenario.

  - Equipment operational limits and ramping constraints.

  - Energy storage dynamics and reserve requirements.

- Solution Approach: Implement using stochastic programming solvers with parallel processing for computational efficiency.
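The two-stage structure can be illustrated with a deliberately tiny example that needs no solver. This is a toy under stated assumptions, not the MILP of [36] [35] [37]: there is one first-stage variable (installed capacity), one recourse action (buying shortfall energy at a penalty price), three hypothetical demand scenarios, and the optimum is found by enumerating candidate capacities.

```python
def expected_cost(capacity, scenarios, unit_cost, penalty):
    """First-stage cost plus probability-weighted second-stage recourse cost."""
    recourse = sum(prob * penalty * max(demand - capacity, 0.0)
                   for demand, prob in scenarios)
    return unit_cost * capacity + recourse

# (demand, probability) pairs; all numbers are hypothetical.
scenarios = [(80.0, 0.3), (100.0, 0.5), (120.0, 0.2)]
unit_cost = 1.0   # cost per unit of capacity, paid before demand is known
penalty = 3.0     # cost per unit of unmet demand, paid after it is known

# The expected cost is piecewise linear and convex in capacity, with kinks
# at the scenario demands, so (with penalty > unit_cost) those demand
# values are sufficient candidates.
candidates = [demand for demand, _ in scenarios]
best_capacity = min(
    candidates,
    key=lambda x: expected_cost(x, scenarios, unit_cost, penalty),
)
best_cost = expected_cost(best_capacity, scenarios, unit_cost, penalty)
print(best_capacity, round(best_cost, 2))
```

In a realistic model the enumeration is replaced by a solver over the extensive form, with one copy of the second-stage variables and constraints per scenario.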

Performance Evaluation Metrics

Table 1: Comparative Performance of Optimization Approaches in South African Case Study [35]

| Optimization Method | Total System Cost (ZAR billion) | Load Shedding (MWh) | Curtailment (MWh) | Computational Demand |
|---|---|---|---|---|
| Stochastic Optimization | 1.748 | 1,625 | 1,283 | High |
| Deterministic Model | 1.763 | 3,538 | 59 | Medium |
| Rule-Based Approach | 1.760 | 1,809 | 1,475 | Low |
| Perfect Information | 1.741 | 0 | 1,225 | Very High |

Table 2: Key Performance Indicators for System Design Evaluation [36]

| Performance Indicator | Calculation Method | Optimization Direction | Application in Evaluation |
|---|---|---|---|
| Cost of Energy (COE) | Total system cost / energy output | Minimize | Economic assessment weighting: 40% |
| Energy Rate (ER) | Useful energy output / total energy input | Maximize | Energy-saving assessment weighting: 35% |
| Renewable Fraction (RF) | Renewable energy / total energy | Maximize | Environmental assessment weighting: 25% |
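The weights in Table 2 can be combined into a single score per candidate design. The sketch below assumes min-max normalization across candidates and inverts COE so that higher is always better; the normalization choice, the three candidate designs, and their KPI values are hypothetical and not taken from [36].

```python
# Weights from Table 2; COE is the only indicator to be minimized.
WEIGHTS = {"COE": 0.40, "ER": 0.35, "RF": 0.25}
LOWER_IS_BETTER = {"COE"}

def composite_scores(designs):
    """Weighted sum of min-max normalized KPIs for each design."""
    scores = {name: 0.0 for name in designs}
    for kpi, weight in WEIGHTS.items():
        values = [d[kpi] for d in designs.values()]
        lo, hi = min(values), max(values)
        span = (hi - lo) or 1.0  # avoid division by zero if all values tie
        for name, d in designs.items():
            norm = (d[kpi] - lo) / span
            if kpi in LOWER_IS_BETTER:
                norm = 1.0 - norm
            scores[name] += weight * norm
    return scores

# Hypothetical candidate designs (COE in currency/kWh, ER and RF as fractions).
designs = {
    "A": {"COE": 0.12, "ER": 0.70, "RF": 0.60},
    "B": {"COE": 0.10, "ER": 0.65, "RF": 0.80},
    "C": {"COE": 0.15, "ER": 0.80, "RF": 0.50},
}

scores = composite_scores(designs)
best = max(scores, key=scores.get)
for name, score in sorted(scores.items()):
    print(name, round(score, 3))
```

Min-max normalization makes the ranking depend on the set of candidates being compared, so scores from different candidate sets should not be compared directly.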

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools and Modeling Approaches [36] [35] [37]

| Tool/Technique | Function | Application Context |
|---|---|---|
| LSTM-XGBoost Hybrid Model | Forecasting renewable generation and demand with uncertainty quantification | Source-load forecasting in hybrid energy systems |
| Temporal-Aware K-Means | Scenario reduction while preserving temporal patterns | Managing computational complexity in multi-period problems |
| Monte Carlo Dropout | Quantifying predictive uncertainty in neural networks | Probabilistic forecasting for scenario generation |
| β Distribution Models | Capturing seasonal and weather variations in solar radiation | Solar scenario generation with meteorological patterns |
| Weibull Distribution | Modeling wind speed variability for power generation | Wind power estimation in scenario construction |
| Two-Stage Stochastic MILP | Optimizing decisions under uncertainty with recourse actions | Power dispatch in grid operations with renewable integration |
| Latin Hypercube Sampling | Efficient sampling of multivariate uncertain parameters | Initial scenario generation for complex uncertainty spaces |
| Analytic Hierarchy Process | Weighting multiple objectives in system evaluation | Balancing economic, energy-saving, and environmental goals |

Workflow Visualization

[Figure: Stochastic Optimization Workflow]

[Figure: Bi-Level Optimization Structure]

Advanced Methodological Notes

For researchers implementing these methodologies, consider these additional technical insights:

Computational Efficiency: The bi-level optimization approach significantly reduces computation time by decoupling capacity planning from operational decisions. In case studies, this enabled optimization of complex integrated energy systems with 100+ scenario combinations [36].

Uncertainty Interdependencies: The most robust models account for correlations between uncertainty sources. For instance, solar radiation and electricity demand often exhibit dependence patterns that should be captured through copula methods or correlation-preserving scenario generation techniques [37].

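Performance Validation: Always benchmark stochastic optimization results against deterministic equivalents and perfect information models. The gap between the deterministic plan's cost and the stochastic solution's cost is the value of the stochastic solution (VSS), while the gap between the stochastic solution and the perfect-information benchmark is the expected value of perfect information (EVPI), that is, the cost of uncertainty [35].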
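As a worked example of this benchmarking, the two standard gap measures can be computed from the costs in Table 1: the value of the stochastic solution (VSS, deterministic cost minus stochastic cost) and the expected value of perfect information (EVPI, stochastic cost minus perfect-information cost). The sketch assumes the table's deterministic figure is the cost actually incurred when the deterministic plan faces the uncertain scenarios, which the source does not state explicitly.

```python
# Total system cost in ZAR billion, from Table 1 [35].
cost = {
    "stochastic": 1.748,
    "deterministic": 1.763,
    "perfect_information": 1.741,
}

# Value of the stochastic solution: saving from modeling uncertainty.
vss = cost["deterministic"] - cost["stochastic"]

# Expected value of perfect information: residual cost of uncertainty.
evpi = cost["stochastic"] - cost["perfect_information"]

print(f"VSS  = {vss * 1000:.0f} million ZAR")
print(f"EVPI = {evpi * 1000:.0f} million ZAR")
```

On these figures the stochastic model recovers about two thirds of the total gap between the deterministic plan and the perfect-information benchmark.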

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between Robust Optimization and Stochastic Programming for managing clinical trial uncertainty?

Robust Optimization (RO) and Stochastic Programming (SP) are both advanced quantitative techniques but differ in their core philosophy for handling uncertainty. RO is a worst-case scenario approach. It constructs portfolios designed to perform satisfactorily even under the most adverse conditions within a pre-defined uncertainty set, without relying on precise probability distributions for parameters like clinical success rates or development costs [39] [40]. In contrast, SP explicitly models uncertainty using known or estimated probability distributions. It aims to optimize the expected value of the objective (e.g., expected portfolio return) by generating and evaluating random variables that represent uncertain parameters, such as the random outcome of a clinical trial [41].
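The difference in philosophy shows up even in a toy selection problem. The sketch below is an illustration with invented numbers: two hypothetical portfolios, three trial-outcome scenarios with assumed probabilities, and a finite scenario list standing in for the RO uncertainty set. SP picks the portfolio with the highest expected return, while RO picks the one with the best worst case and ignores the probabilities.

```python
# Scenario probabilities (used by SP only) and portfolio returns per scenario.
probabilities = [0.3, 0.4, 0.3]  # e.g. trial fails / mixed / succeeds
returns = {
    "aggressive": [-20.0, 50.0, 120.0],
    "balanced": [10.0, 40.0, 60.0],
}

def expected_return(payoffs):
    """Stochastic programming criterion: probability-weighted mean."""
    return sum(p * r for p, r in zip(probabilities, payoffs))

def worst_case_return(payoffs):
    """Robust optimization criterion: minimum over the uncertainty set."""
    return min(payoffs)

sp_choice = max(returns, key=lambda name: expected_return(returns[name]))
ro_choice = max(returns, key=lambda name: worst_case_return(returns[name]))

print("SP selects:", sp_choice)
print("RO selects:", ro_choice)
```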

FAQ 2: My stochastic optimization model is highly sensitive to the probability estimates of Phase III success. How can I improve the model's reliability?

This is a common challenge known as statistical or parameter uncertainty. To improve reliability, you can:

- Utilize Probabilistic Predictive Models (PPMs): Move beyond single-point estimates. PPMs return a distribution of predicted values (e.g., for toxicity or success rate), which incorporates uncertainty about the model's parameters and structure, leading to more reliable risk assessments [42].

- Incorporate Bayesian Methods: Update your prior probability estimates with new data as it becomes available. This is aligned with the dynamic nature of benefit-risk assessments, where information changes over time [43].

- Implement a Hybrid Robust-Stochastic Approach: Formulate your model using stochastic programming to maximize expected return but add robust constraints that must be satisfied for all realizations of the uncertainty within a set, thus guarding against severe worst-case outcomes [40].
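The Bayesian updating idea in the list above can be sketched with a conjugate Beta-Binomial model for a Phase III success probability. The prior parameters and the observed counts are hypothetical, and a real model would typically condition on indication, modality, and trial design rather than pool outcomes this way.

```python
def update_beta(alpha, beta, successes, failures):
    """Posterior Beta parameters after observing binomial outcomes."""
    return alpha + successes, beta + failures

def beta_mean(alpha, beta):
    return alpha / (alpha + beta)

def beta_variance(alpha, beta):
    total = alpha + beta
    return (alpha * beta) / (total ** 2 * (total + 1))

# Hypothetical prior: belief centered on a 60% success probability.
prior = (6.0, 4.0)

# Hypothetical new evidence: 3 successful and 5 failed comparable trials.
posterior = update_beta(*prior, successes=3, failures=5)

print("prior mean     :", round(beta_mean(*prior), 3))
print("posterior mean :", round(beta_mean(*posterior), 3))
print("posterior sd   :", round(beta_variance(*posterior) ** 0.5, 3))
```

Feeding the whole posterior into scenario generation, rather than only its mean, lets the stochastic program see how uncertain the success estimate itself is.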

FAQ 3: What are the typical sources of uncertainty I should model in a stochastic program for a drug portfolio?

Uncertainty in drug development is multi-faceted. For a comprehensive model, you should consider the key sources identified in regulatory science [43]:

- Clinical Uncertainty: Arises from biological variability and the use of homogeneous trial populations that may not represent real-world patients.

- Methodological Uncertainty: Stems from the design of clinical trials (e.g., randomized withdrawal designs) which may limit the ability to characterize all risks.

- Statistical Uncertainty: Inherent in the process of sampling and estimating effects from finite data, leading to potential error.

- Operational Uncertainty: Includes challenges like patient recruitment and retention in trials, which can lead to missing data and skewed results [43].

FAQ 4: How can I mitigate the computational burden of running complex stochastic optimization workflows?

Scalability is a recognized challenge in stochastic optimization. The following strategies can help mitigate computational burden [44]:

- Leverage High-Performance Computing (HPC) Platforms: Use variable-capacity HPC clusters, scheduled by a workload manager such as Slurm, to distribute computations across many processors, as these workflows are often "embarrassingly parallel."

- Optimize Data Handling: Ensure efficient data input/output operations and management to prevent bottlenecks, especially when running thousands of Monte Carlo simulations.