Machine Learning vs. Random Search: A Strategic Guide to Optimizing DBTL Cycles in Drug Discovery

This article provides a comprehensive analysis for researchers and drug development professionals on integrating Machine Learning (ML) and Random Search into Design-Build-Test-Learn (DBTL) cycles.

Machine Learning vs. Random Search: A Strategic Guide to Optimizing DBTL Cycles in Drug Discovery

Abstract

This article provides a comprehensive analysis for researchers and drug development professionals on integrating Machine Learning (ML) and Random Search into Design-Build-Test-Learn (DBTL) cycles. It explores the foundational principles of DBTL and the role of hyperparameter optimization, detailing how ML models and Random Search are applied in real-world drug discovery scenarios, from target identification to lead optimization. The content offers practical strategies for troubleshooting and optimizing these approaches, alongside a comparative validation of their performance, efficiency, and suitability for different project stages. The goal is to equip scientists with the knowledge to strategically select and implement these methods, thereby accelerating the development of microbial cell factories and novel therapeutic candidates.

Understanding DBTL Cycles and Hyperparameter Optimization in Modern Drug Discovery

The Design-Build-Test-Learn (DBTL) cycle represents a foundational framework in synthetic biology and metabolic engineering, providing a systematic, iterative workflow for engineering biological systems [1]. This engineering-inspired approach has become crucial for developing microbial cell factories as sustainable alternatives to the petrochemical industry by optimizing metabolic pathways for valuable compound production [2]. The DBTL cycle enables researchers to rationally design genetic modifications, build DNA constructs, test their functionality in biological systems, and learn from the results to inform subsequent design iterations [1].

Recent advances have transformed the traditional DBTL cycle through integration of machine learning (ML), automation, and novel computational approaches. While classical DBTL follows a sequential process, some researchers now propose a reordering to "LDBT" (Learn-Design-Build-Test), where machine learning and prior knowledge precede the design phase, potentially accelerating the path to functional solutions [3]. This evolution positions the DBTL framework at the center of a methodological shift toward data-driven biological design, enabling more precise engineering of organisms for pharmaceuticals, biofuels, and specialty chemicals [4].

Core DBTL Cycle Components and Workflow

The DBTL framework consists of four interconnected phases that form an iterative engineering cycle. Each phase addresses distinct aspects of the strain development process while contributing to continuous improvement through multiple iterations.

Design Phase

The Design phase involves defining objectives for desired biological function and creating detailed blueprints for genetic modifications [3]. Researchers select appropriate biological parts, such as promoters, ribosome binding sites (RBS), coding sequences, and terminators, then design their assembly into functional genetic circuits [5]. This phase increasingly relies on computational tools, models, and prior knowledge to predict which genetic configurations might achieve the desired metabolic outcomes. In modern metabolic engineering, the Design phase may incorporate machine learning predictions or leverage large biological datasets to inform initial designs [3] [4].

Build Phase

In the Build phase, designed DNA constructs are physically assembled and introduced into host organisms [1]. This process involves DNA synthesis, assembly into plasmids or other vectors, and transformation into microbial chassis such as Escherichia coli or Corynebacterium glutamicum [2] [3]. Automation and standardized assembly techniques have dramatically accelerated this phase, enabling construction of extensive variant libraries for testing. Advanced genetic toolkits and genome editing techniques now allow manipulation of a wide range of organisms, including non-model species that were previously difficult to engineer [4].

Test Phase

The Test phase characterizes the performance of engineered strains through experimental measurements [1]. This involves cultivating modified organisms and analyzing product formation, growth characteristics, and other relevant phenotypes using analytical chemistry methods like HPLC, mass spectrometry, or biosensors [5]. Automation and high-throughput screening methods have significantly increased testing capacity, with biofoundries enabling rapid evaluation of thousands of variants [6] [4]. Testing generates the crucial performance data that drives the learning process in subsequent phases.

Learn Phase

The Learn phase focuses on analyzing experimental data to extract insights about system behavior and identify improvements for the next cycle [3]. Researchers compare measured performance against design objectives, identify bottlenecks in metabolic pathways, and formulate hypotheses for overcoming limitations [5]. Traditionally relying on statistical analysis and researcher expertise, this phase increasingly incorporates machine learning to identify complex patterns in large datasets and generate predictive models for future designs [4] [7]. The quality of learning directly determines the efficiency of subsequent DBTL iterations.

Table 1: Core Components of the DBTL Cycle

| Phase | Key Activities | Technologies & Methods | Outputs |

|---|---|---|---|

| Design | Defining objectives; Selecting genetic parts; Pathway modeling | Computational modeling; Machine learning; Bioinformatics | DNA sequence designs; Assembly plans |

| Build | DNA synthesis; Vector assembly; Host transformation | DNA synthesis; Gibson assembly; CRISPR; Automation | Engineered microbial strains; Variant libraries |

| Test | Cultivation; Performance assays; Analytics | HPLC; MS; NGS; Biosensors; High-throughput screening | Performance metrics; Omics data |

| Learn | Data analysis; Pattern recognition; Hypothesis generation | Statistical analysis; Machine learning; Modeling | Design rules; New hypotheses; Next targets |

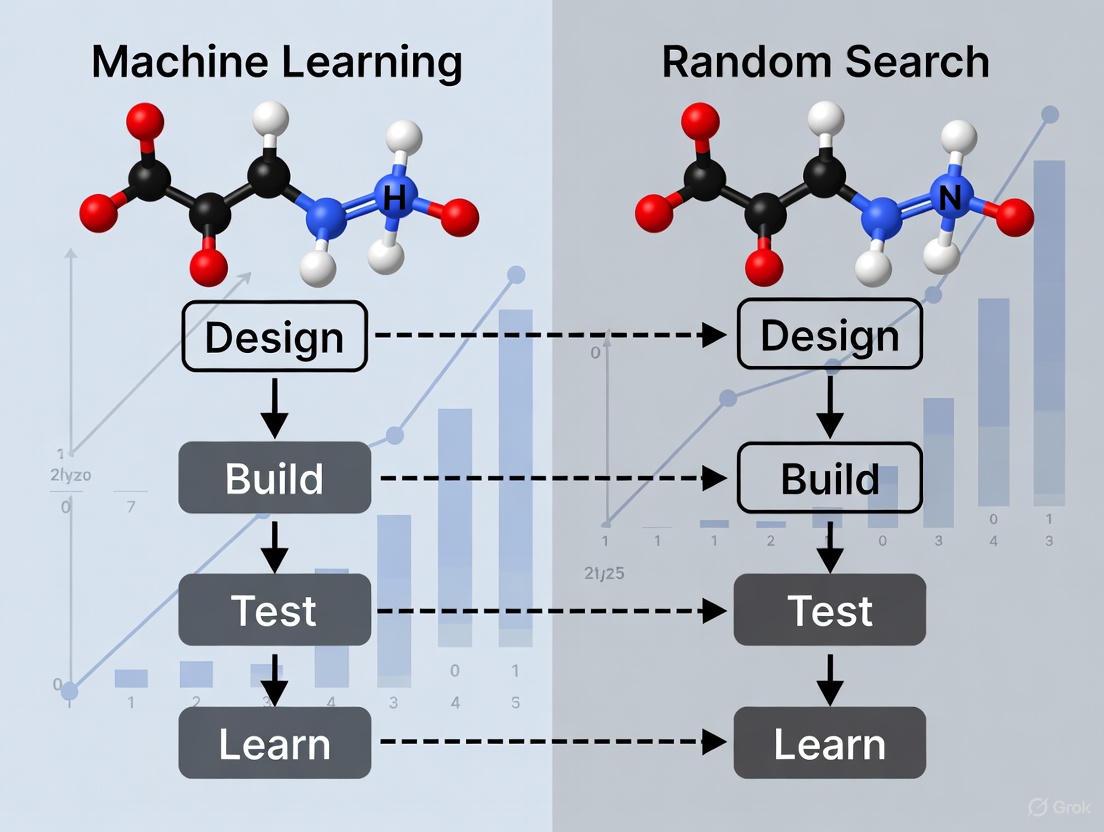

Machine Learning vs. Random Search in DBTL Optimization

The integration of machine learning into DBTL cycles represents a paradigm shift in metabolic engineering strategy. Traditionally, strain optimization often relied on random search or design of experiment approaches, which could lead to more iterations and extensive consumption of time, money, and resources [5]. Machine learning offers a more efficient path by leveraging biological datasets to build predictive models and identify non-obvious design patterns.

Machine Learning-Enhanced DBTL

Machine learning transforms the DBTL cycle by enabling data-driven predictions and designs. ML algorithms can process large biological datasets to identify patterns and relationships that are difficult for humans to discern [8]. In protein engineering, sequence-based language models (ESM, ProGen) and structure-based tools (ProteinMPNN, MutCompute) enable zero-shot prediction of beneficial mutations without additional experimental training [3]. For metabolic pathway optimization, ML methods like gradient boosting and random forest have demonstrated strong performance in the low-data regime, showing robustness to training set biases and experimental noise [7].

The emerging "LDBT" paradigm places learning at the beginning of the cycle, leveraging pre-trained models and existing biological knowledge to generate initial designs [3]. This approach can potentially reduce the number of experimental iterations needed to achieve desired performance. ML also enhances the Learn phase by providing more sophisticated analysis of complex datasets, enabling researchers to extract deeper insights from each DBTL cycle [4].

Random Search Approach

Random search methods involve testing variants selected without prior knowledge or predictive modeling. While sometimes effective, this approach often requires screening large libraries to identify improved strains, making it resource-intensive and time-consuming [5]. In combinatorial pathway optimization, where the number of possible genetic configurations grows exponentially, random search becomes increasingly inefficient as design complexity increases [7]. However, random search remains useful when biological understanding is limited or when exploring completely new design spaces without existing data for training ML models.

Table 2: Machine Learning vs. Random Search in DBTL Optimization

| Aspect | Machine Learning Approach | Random Search Approach |

|---|---|---|

| Design Strategy | Predictive modeling using biological data and patterns | Random selection or design of experiment |

| Data Efficiency | Improves with more data; can leverage existing datasets | Does not benefit from prior knowledge |

| Iteration Speed | Faster convergence to optimal designs | Requires more DBTL cycles |

| Best Application | Complex optimization with available training data | Initial exploration of unknown design spaces |

| Limitations | Requires sufficient data; model training complexity | Resource-intensive; limited by screening capacity |

| Performance | Gradient boosting/random forest outperform in low-data regimes [7] | Less efficient as design complexity increases [7] |

Case Study: DBTL-Driven Dopamine Production inE. coli

A recent study demonstrates the application of a knowledge-driven DBTL cycle to optimize dopamine production in Escherichia coli, resulting in significant improvements over previous efforts [5]. This case study illustrates the practical implementation of DBTL principles and provides a comparative framework for evaluating metabolic engineering strategies.

Experimental Protocol and Workflow

The researchers employed a systematic approach to engineer an efficient dopamine production strain:

Host Strain Engineering: The base E. coli strain FUS4.T2 was engineered for enhanced L-tyrosine production by depleting the transcriptional dual regulator TyrR and introducing feedback-resistant mutations in chorismate mutase/prephenate dehydrogenase (TyrA) [5].

Pathway Design: The dopamine biosynthetic pathway was constructed using the native E. coli gene encoding 4-hydroxyphenylacetate 3-monooxygenase (HpaBC) to convert L-tyrosine to L-DOPA, and Pseudomonas putida-derived L-DOPA decarboxylase (Ddc) to catalyze dopamine formation [5].

In Vitro Prototyping: Before in vivo implementation, the researchers conducted cell-free protein synthesis tests using crude cell lysate systems to evaluate different relative enzyme expression levels, identifying potential bottlenecks [5].

RBS Library Construction: Based on in vitro results, the team created a ribosome binding site (RBS) library to fine-tune the expression of hpaBC and ddc genes. The library was generated by modulating the Shine-Dalgarno sequence while maintaining constant flanking regions to minimize secondary structure effects [5].

High-Throughput Screening: Automated cultivation in minimal medium with appropriate antibiotics and inducers was followed by dopamine quantification. The production strain was cultivated in minimal medium containing 20 g/L glucose, 10% 2xTY, phosphate buffer, MOPS, vitamin B6, phenylalanine, and trace elements [5].

Analytical Methods: Dopamine concentrations were measured using HPLC, with biomass determined by optical density measurements at 600 nm (OD600) [5].

Figure 1: Knowledge-Driven DBTL Workflow for Dopamine Production Optimization

Performance Results and Comparison

The implementation of the knowledge-driven DBTL cycle yielded significant improvements in dopamine production:

Table 3: Dopamine Production Performance Comparison

| Strain / Approach | Dopamine Titer (mg/L) | Specific Productivity (mg/g biomass) | Fold Improvement |

|---|---|---|---|

| Previous State-of-the-Art | 27.0 | 5.17 | 1.0x (baseline) |

| DBTL-Optimized Strain | 69.03 ± 1.2 | 34.34 ± 0.59 | 2.6-6.6x |

The DBTL-optimized strain achieved dopamine concentrations of 69.03 ± 1.2 mg/L, equivalent to 34.34 ± 0.59 mg/g biomass [5]. This represents a 2.6-fold improvement in titer and a 6.6-fold improvement in specific productivity compared to previous state-of-the-art production strains [5]. The researchers attributed this success to their knowledge-driven approach, which combined upstream in vitro investigation with high-throughput RBS engineering to efficiently optimize the metabolic pathway.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful implementation of DBTL cycles in metabolic engineering requires specific reagents, tools, and platforms. The following table summarizes key solutions used in advanced metabolic engineering studies:

Table 4: Essential Research Reagents and Solutions for DBTL Implementation

| Reagent / Solution | Function | Application Example |

|---|---|---|

| Cell-Free Protein Synthesis Systems | Rapid in vitro prototyping of pathway enzymes without cellular constraints | Testing enzyme expression levels before in vivo implementation [5] |

| Ribosome Binding Site (RBS) Libraries | Fine-tuning translation initiation rates for metabolic pathway balancing | Optimizing relative expression of HpaBC and Ddc in dopamine pathway [5] |

| Automated DNA Assembly Platforms | High-throughput construction of genetic variants | Building large libraries of pathway variants for screening [6] |

| Analytical Chromatography (HPLC) | Precise quantification of target compounds and metabolites | Measuring dopamine production titers in culture supernatants [5] |

| CRISPR-Cas Genome Editing Tools | Precise genomic modifications in host strains | Engineering host metabolism for enhanced precursor supply [5] |

| Machine Learning Algorithms | Predictive modeling of sequence-function relationships | Zero-shot prediction of beneficial protein mutations [3] |

Emerging Trends: ML-Driven DBTL and Future Directions

The integration of machine learning is transforming traditional DBTL cycles into more efficient, predictive workflows. Several emerging trends are shaping the future of DBTL in metabolic engineering:

From DBTL to LDBT: A Paradigm Shift

The conventional DBTL cycle is evolving toward an "LDBT" (Learn-Design-Build-Test) paradigm, where learning precedes design through machine learning and prior knowledge [3]. This approach leverages pre-trained models on large biological datasets to generate initial designs, potentially reducing the number of experimental iterations needed. Protein language models trained on evolutionary relationships (ESM, ProGen) and structure-based design tools (ProteinMPNN) enable zero-shot prediction of beneficial mutations without additional training [3]. As these models improve, they may enable first-pass success in biological design, moving synthetic biology closer to the "Design-Build-Work" model seen in established engineering disciplines [3].

Cell-Free Systems for Accelerated Building and Testing

Cell-free expression platforms are emerging as powerful tools for accelerating the Build and Test phases of DBTL cycles [3]. These systems enable rapid protein synthesis without time-intensive cloning steps, with capabilities to produce >1 g/L protein in <4 hours [3]. When combined with liquid handling robots and microfluidics, cell-free systems allow ultra-high-throughput testing of thousands of variants. For example, DropAI leveraged droplet microfluidics to screen over 100,000 picoliter-scale reactions [3]. These platforms are particularly valuable for generating large training datasets for machine learning models, creating a virtuous cycle of improvement.

Automated Biofoundries and Closed-Loop DBTL

The automation of DBTL cycles through biofoundries represents another significant trend [6] [4]. These facilities integrate robotic systems for DNA assembly, strain cultivation, and performance characterization, dramatically increasing throughput and reproducibility. Automated biofoundries enable systematic exploration of large design spaces that would be impossible with manual methods [6]. Furthermore, closed-loop systems that integrate ML agents with automated experimentation can continuously propose and test new designs without human intervention [3]. As these platforms mature, they will enable more complex metabolic engineering projects with reduced development timelines.

Figure 2: Evolution from Traditional to ML-Enhanced DBTL Cycles

The DBTL framework continues to be a pillar of systematic metabolic engineering, providing a structured approach to biological design that balances rational planning with empirical optimization. The integration of machine learning, automation, and computational modeling is transforming DBTL from a sequential process into an integrated, data-driven workflow with the potential to dramatically accelerate strain development. As these technologies mature, the DBTL cycle will become increasingly predictive and efficient, enabling more sophisticated metabolic engineering projects and expanding the range of products accessible through biotechnology. The continued evolution of the DBTL framework promises to enhance our ability to program biology for sustainable manufacturing, therapeutic applications, and fundamental biological discovery.

Table of Contents

- The Critical Role of Hyperparameters in Machine Learning

- Quantitative Comparison of Tuning Strategies

- Experimental Protocols in Hyperparameter Optimization

- Visualizing Hyperparameter Optimization Workflows

- The Scientist's Toolkit: Research Reagent Solutions

The Critical Role of Hyperparameters in Machine Learning

In the context of Design-Build-Test-Learn (DBTL) cycles for drug development, the "Learn" phase involves refining models to guide the next "Design." Hyperparameter tuning is the cornerstone of this phase, transforming a model from a conceptual framework into a predictive tool with real-world impact. Hyperparameters are the configuration settings that govern the machine learning training process itself, set before learning begins [9] [10]. They are distinct from model parameters, which are learned from the data during training [10].

The necessity of tuning stems from the direct influence hyperparameters have on a model's ability to generalize from training data to unseen validation and test sets, a property paramount for predicting compound efficacy or toxicity. A poorly tuned model is often overfit—it performs well on its training data but fails spectacularly in the real world, a phenomenon described as the "silent killer of ROI" in industrial applications [9]. Conversely, an undertuned model may be underfit, failing to capture essential relationships in the data [10]. Systematic hyperparameter optimization minimizes the loss function, bridging the gap between a "meh" model and one that creates tangible value [9]. This is not merely about squeezing out a few extra points of accuracy; a performance jump from 85% to 94% in a fraud detection model, for instance, represented a 60% reduction in error and saved millions [9]. In drug discovery, such improvements can translate to significantly higher success rates in virtual screening or more accurate toxicity predictions, directly accelerating the DBTL cycle.

Quantitative Comparison of Tuning Strategies

The choice of hyperparameter optimization strategy involves a critical trade-off between computational resources and the performance of the final model. The table below summarizes the key characteristics of three primary tuning methods.

Table 1: Comparison of Hyperparameter Tuning Methods

| Method | Core Principle | Computational Efficiency | Best-Suited Scenario | Key Advantage | Key Disadvantage |

|---|---|---|---|---|---|

| Grid Search [11] [12] [10] | Exhaustively evaluates all combinations in a predefined grid. | Low; becomes infeasible with many parameters ("curse of dimensionality"). | Small, well-understood search spaces with few hyperparameters. | Guaranteed to find the best combination within the defined grid. | Computationally expensive and slow, especially with a large number of hyperparameters. |

| Random Search [11] [12] [10] | Randomly samples a fixed number of combinations from defined distributions. | Medium; does not suffer from the curse of dimensionality. | Larger search spaces where some parameters are more important than others. | Often finds good configurations faster than Grid Search; more efficient for a large number of hyperparameters. | Not guaranteed to find the optimal combination; can miss the best hyperparameters. |

| Bayesian Optimization [9] [13] [10] | Builds a probabilistic model to predict promising hyperparameters based on past results. | High; aims to find excellent configurations with far fewer iterations. | Expensive-to-train models (e.g., deep learning) with large, complex search spaces. | Highly sample-efficient; intelligently balances exploration and exploitation. | Sequential nature can lead to longer wall-clock time; more complex to implement. |

Table 2: Illustrative Performance Data on a Wine Quality Dataset [12]

| Tuning Method | Sampled Hyperparameters | Best Accuracy | Computational Note |

|---|---|---|---|

| Grid Search | Exhaustive search over 216 combinations (max_depth: [3,5,10,None], n_estimators: [10,100,200], etc.) |

0.74 | Evaluates all combinations, which is computationally intensive. |

| Random Search | 500 iterations sampled from broader distributions (e.g., n_estimators: 10 to 500) |

0.74 | Achieved comparable accuracy to Grid Search with a more efficient search. |

The data in Table 2 highlights a key insight: Random Search can achieve performance on par with Grid Search but with significantly greater efficiency, as it does not waste resources on unpromising regions of the search space [11]. For the most computationally intensive models in deep learning, Bayesian Optimization is the state-of-the-art method, capable of slashing search time by 10x or more by using a model to guide the search [9].

Experimental Protocols in Hyperparameter Optimization

A rigorous and reproducible experimental protocol is non-negotiable for reliable hyperparameter tuning. The following methodology is standard practice and should be integrated into the DBTL workflow.

1. Data Partitioning: The dataset is first split into training, validation, and hold-out test sets. The training set is used to learn model parameters for each hyperparameter configuration. The validation set is used to evaluate and compare the performance of these different hyperparameter configurations. The test set is used only once, at the very end, to provide an unbiased estimate of the final model's generalization error [14].

2. Defining the Search Space: The researcher must define the hyperparameters to tune and their range of values. This can be a discrete list for Grid Search (e.g., learning_rate: [0.001, 0.01, 0.1]) or a statistical distribution for Random and Bayesian search (e.g., learning_rate: loguniform(1e-5, 1e-2)) [11] [13].

3. Cross-Validation: To mitigate overfitting and provide a more robust performance estimate, k-fold cross-validation is typically employed on the training set. The training data is split into k folds (e.g., 5); the model is trained on k-1 folds and validated on the remaining fold. This process is repeated k times, and the average validation performance is used to score the hyperparameter set [11] [14].

4. Execution and Evaluation: The chosen search algorithm (Grid, Random, or Bayesian) is executed. This involves training and validating a model for each candidate hyperparameter set. The set achieving the best average validation score is selected as the winner.

5. Final Assessment: The final model is retrained on the entire training and validation dataset using the optimal hyperparameters. Its performance is then evaluated on the held-out test set to report the final, unbiased performance metric [14].

Visualizing Hyperparameter Optimization Workflows

The following diagrams illustrate the logical relationships and workflows for the general tuning process and the specific strategies discussed.

General Hyperparameter Tuning Workflow

Comparison of Tuning Strategy Principles

The Scientist's Toolkit: Research Reagent Solutions

Successfully implementing hyperparameter tuning requires a suite of software tools and libraries. The table below details essential "research reagents" for your computational experiments.

Table 3: Essential Software Tools for Hyperparameter Tuning

| Tool / Library | Primary Function | Application in Tuning |

|---|---|---|

| Scikit-learn [11] [14] | A core machine learning library for Python. | Provides the GridSearchCV and RandomizedSearchCV classes for easy implementation of these methods with built-in cross-validation. |

| Optuna [9] | A dedicated hyperparameter optimization framework. | Enables efficient Bayesian optimization with advanced features like pruning (early stopping of poorly performing trials) for complex models like neural networks. |

| Hyperopt [15] | A Python library for serial and parallel optimization. | Similar to Optuna, it allows for Bayesian optimization over complex search spaces for deep learning and other demanding tasks. |

| Cross-Validation [11] [14] | A statistical resampling technique. | Not a software tool per se, but a critical methodological component integrated into tuning workflows to prevent overfitting and ensure robust parameter selection. |

The pharmaceutical industry is undergoing a profound transformation, driven by the integration of artificial intelligence (AI) and machine learning (ML). This shift addresses a critical challenge known as "Eroom's Law"—the paradoxical decline in R&D efficiency despite technological advancements, where the cost of developing a new drug now exceeds $2.23 billion and the process can take 10-15 years with a failure rate of approximately 90% [16]. The traditional drug discovery pipeline, a linear and sequential process of design, build, test, and learn (DBTL), is being superseded by a new, data-driven paradigm. Machine learning algorithms can now parse vast biological and chemical datasets to identify patterns, predict outcomes, and generate novel hypotheses at a scale and complexity beyond human cognition, effectively shifting the center of gravity from the wet lab to the computer—from in vitro to in silico [16].

This overview explores the role of machine learning in drug discovery, framed within a broader thesis on its performance compared to traditional optimization methods like random search within DBTL cycles. By examining experimental data, protocols, and specific case studies, we will demonstrate how ML is not merely accelerating existing processes but is fundamentally recoding the future of pharmaceutical research [16].

Machine Learning vs. Random Search in DBTL Optimization

The classic Design-Build-Test-Learn (DBTL) cycle is the fundamental framework for systematic engineering in synthetic biology and drug discovery. Recently, a paradigm shift has been proposed: LDBT, where "Learning" precedes "Design" [17]. This is made possible by machine learning models that have been pre-trained on massive biological datasets, enabling them to make informed, "zero-shot" predictions to guide the initial design phase [17].

A core research question is how ML-guided learning compares to simpler optimization methods like random search. The key differentiator is that random search explores a design space blindly, whereas ML models learn from iteratively acquired data to predict promising candidates, balancing exploration of unknown regions with exploitation of known high-performing areas [18].

Table: Core Comparison of ML-Guided vs. Random Search Optimization

| Feature | Machine Learning-Guided | Random Search |

|---|---|---|

| Approach | Active learning; predictive models guide next experiments | Passive; random selection from predefined space |

| Data Usage | Learns from cumulative data to refine hypotheses | Ignores data from previous iterations |

| Efficiency | Higher; focuses resources on high-probability candidates | Lower; success is proportional to library size |

| Best For | Complex, high-dimensional optimization problems | Simple problems or establishing a baseline performance |

The implementation of these cycles has been accelerated by technologies like cell-free expression systems for rapid testing and robotic platforms for automation. These platforms can execute fully autonomous "test-learn" cycles, where software analyzes data and directly schedules the next round of experiments without human intervention [17] [18].

Experimental Comparison: A Case Study in Metabolic Engineering

A 2024 study on optimizing p-Coumaric Acid (pCA) production in Saccharomyces cerevisiae provides a clear experimental comparison of ML-guided and random search strategies within a DBTL cycle [19].

Experimental Protocol and Workflow

- Objective: To improve the titer and yield of pCA, a valuable aromatic compound.

- Strain Engineering: Combinatorial libraries were built in yeast, focusing on a 7-gene cluster. Libraries were created by varying both the coding sequences (e.g., feedback-resistant enzyme variants) and regulatory elements (promoters) for key genes in the prephenate pathway [19].

- Library Generation: A one-pot library generation method was used to create diverse strain variants.

- Screening & Data Collection: A subset of the library was screened for pCA production. The resulting genotype-phenotype data was used to train machine learning models.

- ML Model Training: The trained models used feature importance and SHAP (Shapley Additive exPlanations) values to identify which genetic factors most influenced production [19].

- Next-Cycle Design: The ML predictions were used to design a second, optimized library for a subsequent DBTL cycle.

The workflow for this approach is outlined below.

Key Findings and Performance Data

The study demonstrated the superior efficiency of the ML-guided approach. In the first DBTL cycle, which established a baseline, the best-producing strain from the PAL pathway achieved a pCA titer of 0.31 g/L. The ML model, trained on this initial data, was then used to predict a new set of genetic combinations likely to yield higher production [19].

The results from the second DBTL cycle confirmed the model's accuracy. The best strain from this ML-informed library achieved a final pCA titer of 0.52 g/L, representing a 68% increase in production over the first cycle [19]. This direct comparison within a single study provides compelling evidence for the power of ML in pathway optimization.

Table: Performance Outcomes from pCA Optimization DBTL Cycles [19]

| DBTL Cycle | Guidance Strategy | Best pCA Titer (g/L) | Yield on Glucose (g/g) | Key Takeaway |

|---|---|---|---|---|

| Cycle 1 | Initial library screening | 0.31 | Not Specified | Establishes baseline performance and training data. |

| Cycle 2 | Machine Learning prediction | 0.52 | 0.03 | 68% improvement in titer, demonstrating ML efficacy. |

Broader Applications of ML in the Drug Discovery Pipeline

The success of ML in metabolic engineering is mirrored across the entire drug discovery value chain. The following applications highlight its transformative impact.

Target Identification and Validation

ML algorithms analyze complex biological data from genomics, proteomics, and disease pathways to identify and prioritize novel drug targets [20]. For example, Insilico Medicine leveraged AI to identify a novel target for fibrosis in a matter of months, a process that traditionally takes years [20]. Tools like CLAPE-SMB predict protein-DNA binding sites using only sequence data, accelerating this critical first step [21].

Compound Screening and Optimization

AI enables virtual screening of millions of compounds in silico, dramatically outperforming the physical throughput of traditional high-throughput screening (HTS) [22] [20]. Once a "hit" is identified, ML guides the "hit-to-lead" optimization. In a 2025 study, deep graph networks were used to generate over 26,000 virtual analogs, leading to sub-nanomolar inhibitors with a >4,500-fold potency improvement over the initial hit [22]. Predictive models for ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) properties, such as AttenhERG for cardiotoxicity, are also crucial for early risk assessment [21].

Clinical Trial Acceleration

ML streamlines clinical development by optimizing patient recruitment through the analysis of electronic health records, predicting patient response, and reducing dropout rates [23] [20]. It also enables smarter trial design by running virtual scenarios and facilitates real-time monitoring of trial data for early detection of safety signals [20].

The Frontier: Generative AI and Virtual Humans

The field is advancing beyond predictive models to generative AI. Platforms like GALILEO can generate entirely novel drug candidates from scratch. In a 2025 study, GALILEO designed 12 antiviral compounds, achieving a 100% hit rate in subsequent in vitro validation [24]. Looking further ahead, researchers are proposing the development of a "programmable virtual human," an AI-driven systemic model that predicts how a new drug affects the entire human body, not just an isolated target, potentially revolutionizing preclinical safety and efficacy testing [25].

The Scientist's Toolkit: Essential Research Reagents and Platforms

The implementation of ML-driven drug discovery relies on a suite of specialized reagents, software, and hardware.

Table: Key Research Reagent Solutions for ML-Driven Drug Discovery

| Tool Name / Category | Type | Primary Function | Example Use Case |

|---|---|---|---|

| Cell-Free Expression Systems | Wet-lab Reagent Platform | Rapid in vitro protein synthesis and testing without cloning. | Megascale data generation for training ML models; testing protein variants [17]. |

| CETSA (Cellular Thermal Shift Assay) | Wet-lab Assay | Validates direct drug-target engagement in intact cells/tissues. | Providing quantitative, system-level validation of mechanism of action [22]. |

| Protein Language Models (e.g., ESM, ProGen) | Software / Algorithm | Predicts protein structure and function from sequence; designs novel proteins. | Zero-shot prediction of beneficial mutations and antibody sequences [17]. |

| Structure-Based Design Tools (e.g., ProteinMPNN) | Software / Algorithm | Designs new protein sequences that fold into a given backbone structure. | Engineering TEV protease variants with improved catalytic activity [17]. |

| Automated Robotic Platforms | Hardware / Platform | Executes liquid handling, cultivation, and measurement fully autonomously. | Running closed-loop, autonomous DBTL cycles without human intervention [18]. |

| ADMET Prediction Tools (e.g., AttenhERG, Stability Oracle) | Software / Algorithm | Predicts pharmacokinetics, toxicity, and stability of molecules in silico. | Early identification of compounds with hERG liability or poor solubility [17] [21]. |

Machine learning is fundamentally recoding the drug discovery process, moving it from a realm reliant on serendipity and brute-force screening to one driven by predictive, data-driven intelligence. The experimental evidence, particularly from direct comparisons within DBTL cycles, clearly demonstrates that ML-guided optimization outperforms traditional methods like random search in efficiency and outcomes, as shown by the significant increase in product titers in metabolic engineering case studies [19]. The proliferation of ML applications—from target identification and virtual screening to generative AI and autonomous robotic platforms—underscores its role as the central nervous system of modern pharmaceutical R&D [23]. While challenges in data quality, model interpretability, and regulatory acceptance remain, the paradigm has irrevocably shifted. The future of drug discovery lies in the continued integration and refinement of these intelligent systems, promising to deliver life-saving therapies to patients faster and more efficiently than ever before.

In the high-stakes field of drug development, where attrition rates are staggering and development cycles regularly span 10-15 years, efficiency in every process is paramount [26]. The emerging paradigm of Model-Informed Drug Development (MIDD) leverages quantitative approaches to streamline discovery and reduce costly late-stage failures [27]. Within this framework, machine learning (ML) models have become indispensable tools, from predicting drug-target interactions to optimizing clinical trial designs. However, the performance of these ML models hinges critically on their hyperparameter configurations—the settings that govern the learning process itself. The process of identifying these optimal configurations, known as hyperparameter optimization, represents a significant computational challenge. This article examines two fundamental approaches to this challenge—Grid Search and Random Search—within the context of drug development research. We demonstrate how Random Search provides a computationally efficient alternative to Grid Search, enabling researchers to extract maximum predictive power from their models while conserving valuable computational resources that can be redirected toward other critical aspects of the drug development pipeline.

Hyperparameter Fundamentals: Parameters vs. Hyperparameters

In machine learning, a critical distinction exists between model parameters and hyperparameters. Parameters are the internal variables of a model that are learned directly from the training data, such as weight coefficients in linear regression or connection weights in neural networks [28]. These values are optimized during the training process and are not set manually by the researcher. In contrast, hyperparameters are external configuration variables that govern the overall learning process. They are set before the training begins and control aspects such as model capacity, learning speed, and regularization strength [28].

Key Hyperparameters in Machine Learning Algorithms

- Random Forest: nestimators (number of trees), maxdepth (maximum tree depth), minsamplessplit (minimum samples required to split a node) [28]

- Support Vector Machines: C (regularization parameter), kernel (kernel function type), gamma (kernel coefficient) [28] [29]

- Neural Networks: learningrate (step size in gradient descent), batchsize (number of samples per gradient update), hiddenlayercount (number of hidden layers) [28]

- XGBoost: learningrate, nestimators, maxdepth, minchild_weight, subsample [29]

The primary goal of hyperparameter optimization is to identify configurations that provide the optimal balance between overfitting and underfitting, thereby maximizing a model's generalization performance on unseen data [28]. Empirical studies show that hyperparameter selection can have as much impact on model performance as parameter optimization itself, making systematic hyperparameter optimization methodologies essential for developing robust machine learning models [28].

Grid Search: The Systematic Approach

Principles and Methodology

Grid Search represents the most exhaustive and systematic approach to hyperparameter optimization. This method operates on a simple but computationally intensive principle: it performs an exhaustive search across a predefined set of hyperparameter values, evaluating every possible combination [28] [30]. The algorithm follows four key steps: (1) parameter space definition, where discrete value sets are specified for each hyperparameter; (2) Cartesian product calculation, which systematically generates all parameter combinations; (3) model evaluation, where each combination is assessed using cross-validation methodology; and (4) optimal configuration selection, where the parameter set with the highest validation score is chosen [28].

Table 1: Grid Search Workflow Example for SVM Tuning

| Step | Action | Example for SVM |

|---|---|---|

| 1. Define Grid | Specify discrete values for each hyperparameter | C: [0.1, 1, 10, 100], gamma: [1, 0.1, 0.01, 0.001], kernel: ['rbf', 'poly'] |

| 2. Generate Combinations | Create Cartesian product of all values | 4×4×2 = 32 unique combinations |

| 3. Evaluate Models | Train and validate each combination | 5-fold cross-validation = 32×5 = 160 model fits |

| 4. Select Best | Choose configuration with highest performance | Best parameters and score returned |

Advantages and Limitations

Grid Search's primary strength lies in its comprehensive nature—it guarantees finding the global optimum within the defined discrete parameter space, provided the optimal configuration exists within the grid [28] [30]. This deterministic nature produces reproducible results, which is valuable in regulated environments like pharmaceutical research. Additionally, the method is embarrassingly parallelizable, as each parameter combination can be evaluated independently [28].

However, Grid Search contains significant disadvantages in terms of computational complexity. The total number of evaluations grows exponentially with the number of parameters (d) and the number of values per parameter (n), following O(n^d) [28]. This "curse of dimensionality" makes the method impractical for high-dimensional parameter spaces. Furthermore, Grid Search requires sub-optimization in continuous parameter spaces due to its discrete value limitation and can waste computational resources on insignificant parameters [28].

Random Search: The Efficient Alternative

Principles and Methodology

Random Search, proposed by Bergstra and Bengio (2012) as an empirical solution to Grid Search's computational costs, introduces a probabilistic approach to hyperparameter optimization [28]. Instead of exhaustively exploring a predefined grid, Random Search performs the search by randomly sampling parameter combinations from predefined probability distributions [28] [30]. The algorithm follows these steps: (1) parameter distribution definition, where probability distributions are specified for each hyperparameter; (2) sampling strategy, where random combinations are generated according to the n_iter parameter; (3) performance evaluation, where model performance is measured for each sample combination using cross-validation; and (4) optimum selection, where the parameter set with the highest validation score is chosen [28].

Table 2: Random Search Workflow Example for SVM Tuning

| Step | Action | Example for SVM |

|---|---|---|

| 1. Define Distributions | Specify probability distributions for each hyperparameter | C: loguniform(0.1, 100), gamma: loguniform(0.001, 1), kernel: ['rbf', 'poly'] |

| 2. Sample Combinations | Randomly select n_iter parameter sets | n_iter = 20 → 20 unique combinations |

| 3. Evaluate Models | Train and validate each combination | 5-fold cross-validation = 20×5 = 100 model fits |

| 4. Select Best | Choose configuration with highest performance | Best parameters and score returned |

Theoretical Advantages

Random Search's effectiveness stems from the heterogeneous distribution of parameter effects in most machine learning models [28]. In practice, performance is typically determined by a few critical parameters, while others show marginal effects. Random Search benefits from the fact that it does not waste evaluations on exhaustively exploring unimportant parameters, unlike Grid Search which expends equal resources on all dimensions [28]. Mathematically, if the parameter space is d-dimensional and the optimal region lies in a d-subspace, Random Search's probability of discovering the optimal region is generally higher than Grid Search for a fixed computational budget [28].

Direct Comparison: Grid Search vs. Random Search

Computational Efficiency

Random Search demonstrates superior computational efficiency compared to Grid Search, particularly in high-dimensional spaces. In a practical experiment tuning a Random Forest classifier, Grid Search evaluated 648 parameter combinations with 3-fold cross-validation, resulting in 1,944 total model fits [30]. The best score achieved was 0.9648, with a test accuracy of 0.9649 [30]. In contrast, Random Search can achieve competitive performance with significantly fewer evaluations. For instance, in another experiment, Random Search found effective hyperparameters with just 30 samples, requiring only 150 model fits with 5-fold cross-validation—dramatically reducing computation time while maintaining performance [31].

Table 3: Performance Comparison of Grid Search vs. Random Search

| Metric | Grid Search | Random Search |

|---|---|---|

| Search Pattern | Exhaustive, systematic | Random sampling from distributions |

| Parameter Space | Discrete, predefined values | Continuous and discrete distributions |

| Computational Cost | Exponential O(n^d) | Linear O(n) |

| Best for | Small parameter spaces (1-3 dimensions) | High-dimensional spaces (4+ dimensions) |

| Optimal Solution | Guaranteed within grid | Probabilistic, not guaranteed |

| Parallelizability | Excellent (embarrassingly parallel) | Excellent (embarrassingly parallel) |

| Key Advantage | Comprehensive coverage | Broad exploration with fixed budget |

Practical Performance

Empirical evidence consistently shows that Random Search can find equal or better hyperparameter configurations with significantly fewer computational resources. In a case study using the Wine dataset and SVM model tuning, Random Search outperformed Grid Search in accuracy, achieving a score of 0.7569 compared to Grid Search's 0.7459 [32]. This performance advantage arises because Random Search can explore a broader range of values for each hyperparameter, rather than being restricted to a fixed grid [32]. This flexibility is particularly valuable when the relationships between hyperparameters and objective functions are unknown or complex, which is often the case in drug discovery applications involving high-dimensional biological data [32].

Experimental Protocols and Implementation

Standard Implementation Code

Implementing Random Search is straightforward using popular machine learning libraries. The following code example demonstrates Random Search implementation using scikit-learn's RandomizedSearchCV:

This code example showcases random search using scikit-learn's RandomizedSearchCV [31]. Instead of fixed lists, probability distributions enable continuous and flexible exploration of the parameter space. Rather than evaluating thousands of combinations as in a traditional grid search, sampling just 30 dramatically reduces computation while still finding competitive solutions [31].

Experimental Workflow Visualization

The following diagram illustrates the logical workflow of a Random Search optimization process for hyperparameter tuning:

Research Reagent Solutions: Essential Tools for Optimization Experiments

Table 4: Essential Research Tools for Hyperparameter Optimization

| Tool/Category | Specific Examples | Function in Research |

|---|---|---|

| Programming Languages | Python, R | Core implementation languages for optimization algorithms |

| Machine Learning Libraries | scikit-learn, XGBoost, TensorFlow, PyTorch | Provide implemented ML models and tuning capabilities |

| Hyperparameter Optimization Frameworks | Scikit-learn's RandomizedSearchCV, GridSearchCV | Implement standard tuning methods with cross-validation |

| Probability Distributions | scipy.stats (randint, uniform, loguniform) | Define parameter search spaces for random sampling |

| Visualization Tools | Matplotlib, Seaborn, Plotly | Analyze and present optimization results |

| Computational Resources | Multi-core CPUs, Cloud computing platforms | Enable parallel evaluation of parameter configurations |

Advanced Optimization Techniques

Beyond Random Search: Bayesian Optimization

While Random Search represents a significant improvement over Grid Search, more advanced techniques like Bayesian Optimization can provide further efficiencies. Bayesian optimization uses a probabilistic model to approximate the relationship between hyperparameters and model performance, focusing sampling on regions more likely to contain optimal values [31] [29]. This approach is particularly valuable when computational resources are limited and each evaluation is expensive. Studies have shown Bayesian optimization can find optimal hyperparameters in as few as 67 iterations, outperforming both Grid and Random Search methods in terms of efficiency [29].

Quasi-Random Search

Another advanced approach is Quasi-random Search, based on low-discrepancy sequences. This method can be thought of as "jittered, shuffled grid search" that uniformly but randomly explores a given search space, spreading out search points more effectively than pure random search [33]. The advantages include consistent and statistically reproducible behavior, non-adaptive sampling that enables flexible post hoc analysis, and better performance in high-parallelism environments where many trials run simultaneously [33].

Applications in Drug Development and Research

In pharmaceutical research, efficient hyperparameter optimization directly translates to reduced computational costs and accelerated model development. For example, in drug classification and target identification tasks—critical yet challenging steps in drug discovery—properly tuned models can achieve accuracy exceeding 95% [34]. The proposed optSAE + HSAPSO framework integrates a stacked autoencoder for robust feature extraction with a hierarchically self-adaptive particle swarm optimization algorithm for parameter optimization, demonstrating how advanced optimization techniques can enhance pharmaceutical research outcomes [34].

The efficiency of Random Search becomes particularly valuable in drug development contexts where computational resources are often allocated across multiple parallel research efforts. By reducing the time and resources required for model optimization, researchers can iterate more quickly, test more hypotheses, and ultimately accelerate the drug discovery process. This aligns with the broader industry trend toward Model-Informed Drug Development (MIDD), which uses quantitative approaches to improve decision-making across all stages of drug development [27].

Random Search represents a fundamentally more efficient approach to hyperparameter optimization compared to Grid Search, particularly in the high-dimensional parameter spaces commonly encountered in drug discovery research. Its ability to explore broader parameter ranges with fixed computational budget, coupled with its simplicity and excellent parallelization capabilities, makes it particularly suitable for resource-constrained research environments. While advanced techniques like Bayesian optimization offer further refinements, Random Search remains an essential tool in the machine learning practitioner's toolkit—striking an effective balance between implementation complexity and computational efficiency. As drug development increasingly relies on complex machine learning models for tasks ranging from target identification to clinical trial optimization, efficient hyperparameter tuning methods like Random Search will play an increasingly important role in accelerating pharmaceutical research and development timelines.

For years, synthetic biology and bioengineering have been governed by the Design-Build-Test-Learn (DBTL) cycle, an iterative framework that, while systematic, often relies on time-consuming and costly empirical experimentation. The emergence of sophisticated machine learning (ML) and artificial intelligence (AI) is fundamentally challenging this paradigm. A transformative shift is underway, recasting the traditional cycle into a Learn-Design-Build-Test (LDBT) framework. This new paradigm leverages powerful computational models to learn from vast biological datasets before any physical design begins, promising to dramatically accelerate the development of new biological parts, pathways, and therapeutics.

This guide objectively compares the performance of the established DBTL cycle against the emerging LDBT framework, situating the analysis within the broader research context of machine learning versus traditional search and optimization methods, such as random search, in biological engineering.

Understanding the Paradigms: DBTL vs. LDBT

The core difference between the two frameworks lies in the starting point and the role of computational prediction.

The Traditional DBTL Cycle

The conventional Design-Build-Test-Learn (DBTL) cycle is an iterative process [17]:

- Design: Researchers define objectives and design biological constructs (e.g., DNA sequences, proteins) based on domain knowledge and existing models.

- Build: The designed constructs are synthesized and assembled in a biological system (e.g., bacteria, yeast) or in a cell-free environment.

- Test: The performance of the built constructs is experimentally measured (e.g., protein expression, enzyme activity).

- Learn: Data from the test phase are analyzed to inform the next round of design, and the cycle repeats until the desired function is achieved.

This process is inherently empirical and can require multiple lengthy and resource-intensive cycles to converge on a successful solution [17].

The Emerging LDBT Paradigm

The Learn-Design-Build-Test (LDBT) framework represents a fundamental reordering, placing learning at the forefront [17] [35]:

- Learn: The process begins with machine learning models that have been pre-trained on massive biological datasets (e.g., millions of protein sequences, structures, and functional data). These models learn the complex relationships between sequence, structure, and function.

- Design: The trained ML models are used to generate and design new biological constructs with a high probability of success, often through "zero-shot" predictions that do not require additional experimental training data.

- Build & Test: The top-predicted designs are then physically built and tested, often using rapid, high-throughput platforms like cell-free systems.

This approach leverages prior knowledge embedded in ML models to make highly informed initial designs, potentially reducing or eliminating the need for multiple iterative cycles [17].

The following diagram illustrates the fundamental structural differences between these two engineering workflows.

Performance Comparison: LDBT vs. DBTL

The transition from DBTL to LDBT is driven by demonstrated improvements in key performance metrics. The table below summarizes a quantitative comparison based on recent research.

Table 1: Quantitative Performance Comparison of DBTL vs. LDBT Frameworks

| Performance Metric | Traditional DBTL | LDBT Framework | Context & Experimental Evidence |

|---|---|---|---|

| Design Cycles to Candidate | Often requires multiple cycles [17] | Single-cycle success demonstrated [17] | LDBT aims for "single cycle" functional parts via zero-shot prediction [17]. |

| Compounds Synthesized | Thousands to tens of thousands [36] | Hundreds or fewer [36] | Exscientia's AI-designed CDK7 inhibitor required only 136 synthesized compounds [36]. |

| Hit Rate | Low (typical of HTS) | Very High (up to 100% reported) | Model Medicines' GALILEO AI platform achieved a 100% hit rate (12/12 compounds) in antiviral assays [24]. |

| Timeline (Discovery to Candidate) | ~5 years (traditional pharma) [36] | <2 years demonstrated [36] | Insilico Medicine's IPF drug progressed from target to Phase I trials in 18 months [36]. |

| Primary Search Method | Empirical iteration, random/mutagenic sampling [17] | Intelligent navigation of design space [35] | ML uses active learning to select the most informative variants, maximizing information gain per experiment [35]. |

Experimental Protocols & Key Methodologies

The superior performance of the LDBT framework is enabled by specific, advanced methodologies at each stage.

Core LDBT Workflow Protocol

A typical LDBT workflow for a protein engineering campaign involves the following detailed steps:

Learn Phase (Model Training & Foundation):

- Objective: Create a model that maps genetic or protein sequences to functional outputs.

- Procedure: a. Data Curation: Gather a large-scale dataset for training. This can include public databases (e.g., UniProt for sequences, PDB for structures) or proprietary experimental data. b. Model Selection: Choose an appropriate ML architecture. Common choices include: * Protein Language Models (pLMs) like ESM-2 or ProGen, trained on evolutionary sequence data to predict structure and function [17]. * Structure-based Models like ProteinMPNN (for sequence design given a backbone) or AlphaFold2 (for structure prediction) [17]. * Hybrid Physics-Informed ML that incorporates biophysical principles into the model [17]. c. Training: Train the model on the curated dataset to learn the underlying sequence-function relationships.

Design Phase (AI-Driven Generation):

- Objective: Generate novel sequences predicted to have the desired function.

- Procedure: a. In Silico Generation: Use the trained model for zero-shot generation of thousands to billions of candidate sequences. For example, ProGen can generate novel protein sequences conditioned on specific desired properties [17]. b. In Silico Screening: Filter the generated library using predictive models for specific properties like solubility (e.g., with DeepSol), stability (e.g., with Stability Oracle), or target binding (e.g., with molecular docking) [17] [22]. c. Selection: A small set of top-ranking candidates (often tens to hundreds) is selected for experimental validation.

Build Phase (Rapid Synthesis):

Test Phase (High-Throughput Validation):

- Objective: Experimentally measure the function of the built candidates.

- Procedure: Conduct high-throughput assays in line with the target function (e.g., enzyme activity assays, binding assays). Cell-free systems are often coupled directly with robotic liquid handlers and microfluidics (e.g., DropAI platform) to screen >100,000 reactions simultaneously [17].

This integrated workflow is depicted in the following diagram, showing the flow of information and materials between the computational and physical experimental stages.

Case Study: Antimicrobial Peptide Design

A 2025 study exemplifies the power of the LDBT paradigm [17]:

- Learn: Researchers trained deep learning models on datasets of known antimicrobial peptides (AMPs).

- Design: The model computationally surveyed over 500,000 potential AMPs and selected 500 optimal variants for experimental testing.

- Build & Test: The 500 candidates were synthesized and tested.

- Result: The LDBT process identified 6 promising AMP designs with high accuracy, demonstrating efficient navigation of a vast design space that would be prohibitively expensive to explore empirically.

The Scientist's Toolkit: Essential Research Reagents & Platforms

Implementing a robust LDBT pipeline requires a suite of specialized computational and experimental tools.

Table 2: Essential Research Reagents and Platforms for LDBT

| Category | Item / Platform | Function in LDBT Workflow |

|---|---|---|

| Computational Models | Protein Language Models (e.g., ESM, ProGen) [17] | Learn from evolutionary data to predict protein structure and function; enable zero-shot design. |

| Structure-based Design Tools (e.g., ProteinMPNN, RosettaFold) [17] | Design protein sequences that fold into a specific backbone structure. | |

| Property Predictors (e.g., DeepSol, Stability Oracle) [17] | Predict key biophysical properties like solubility and thermodynamic stability from sequence. | |

| Rapid Build/Test Systems | Cell-Free Transcription-Translation (TX-TL) Systems [17] [35] | Rapidly express proteins without live cells, enabling high-throughput building and testing. |

| Microfluidic/Droplet Platforms (e.g., DropAI) [17] | Allow ultra-high-throughput screening of reactions (e.g., >100,000 picoliter-scale reactions). | |

| Automated Biofoundries [17] | Integrated robotic facilities that automate the Build and Test phases for massive parallelism. |

Context: Machine Learning vs. Random Search in DBTL Optimization

The shift from DBTL to LDBT is, at its core, a shift from relying on empirical search methods to leveraging intelligent, model-guided optimization.

- Random Search in Traditional DBTL: The classic DBTL cycle, especially when exploring uncharted biological space, often functions similarly to a random search. While more structured than pure trial-and-error, its efficiency is limited because each cycle does not necessarily inform the next in a globally optimal way. The learning is often local and heuristic, leading to slow convergence and a high risk of becoming trapped in local optima [17].

- Machine Learning in LDBT: Machine learning, particularly active learning, transforms this process. ML models are trained to understand the complex, high-dimensional landscape of biological sequence space. They can predict which regions of this vast space are most likely to be successful, effectively pruning away unproductive avenues of research before any lab work begins [35]. This represents a move from exploring a landscape in the dark to exploring it with a predictive map.

- Quantitative Advantage: The performance metrics in Table 1, such as the drastic reduction in compounds synthesized and the dramatically increased hit rates, provide concrete evidence of ML's superiority over traditional, more stochastic search methods for navigating biological complexity.

The evidence from cutting-edge research paints a clear picture: the LDBT framework is outperforming the traditional DBTL cycle on critical metrics of efficiency, speed, and success rate. By placing machine learning at the beginning of the workflow, researchers can leverage vast biological knowledge to make smarter initial designs, dramatically reducing the need for costly and time-consuming iterative cycles. While the DBTL cycle remains a valuable and foundational engineering concept, the integration of AI and rapid experimentation platforms in the LDBT paradigm represents the future of bioengineering. This shift enables a more predictive, first-principles approach to biological design, poised to accelerate breakthroughs in drug discovery, enzyme engineering, and synthetic biology.

Implementing ML and Random Search in Drug Discovery DBTL Pipelines

Random Search for Efficient Model Tuning in QSAR and Virtual Screening

In modern drug discovery, Quantitative Structure-Activity Relationship (QSAR) modeling and virtual screening have become indispensable tools for identifying promising therapeutic candidates. The performance of machine learning models in these applications heavily depends on selecting appropriate hyperparameters, which control the learning process itself. While sophisticated optimization algorithms continue to emerge, Random Search remains a surprisingly effective and widely adopted approach for hyperparameter tuning in QSAR workflows, particularly given the computational constraints and structured data characteristics common to cheminformatics problems.

This guide provides an objective comparison of Random Search against competing hyperparameter optimization methods within the context of machine learning-driven QSAR and virtual screening. We present experimental data, detailed protocols, and practical recommendations to help researchers select appropriate tuning strategies for their specific drug discovery pipelines.

Theoretical Foundations of Hyperparameter Optimization Methods

Random Search Fundamentals

Random Search operates by evaluating random combinations of hyperparameters sampled from predefined distributions over the parameter space. Unlike systematic approaches, it doesn't attempt to model the performance landscape but relies on statistical probability to find good configurations. The algorithm's effectiveness stems from the empirical observation that for most machine learning models, a small subset of parameters truly drives performance variance. By testing more distinct values for each parameter across the entire search space, Random Search often discovers high-performing regions more efficiently than methods that exhaustively search limited dimensions [37].

Alternative Optimization Strategies

Several competing approaches offer different trade-offs between computational efficiency and solution quality:

- Grid Search: Systematically explores all combinations of a predefined set of values for each hyperparameter. While thorough, it suffers from the "curse of dimensionality" – computational requirements grow exponentially as parameters increase [38].

- Bayesian Optimization: Builds a probabilistic model of the objective function to direct future sampling toward promising regions. It typically requires fewer evaluations but has higher computational overhead per iteration [38].

- Tree-structured Parzen Estimator (TPE): Models the performance landscape using Gaussian distributions to focus sampling on hyperparameter values that previously yielded good results [38].

- Hyperband: Utilizes an early-stopping strategy to quickly discard underperforming configurations, efficiently allocating computational resources [38].

Comparative Performance Analysis

Experimental Framework and Dataset Characteristics

To objectively evaluate optimization methods, we synthesized data from multiple published studies comparing hyperparameter tuning approaches across various machine learning tasks and dataset types. The table below summarizes key dataset characteristics used in these benchmark studies:

Table 1: Dataset Characteristics in Hyperparameter Optimization Studies

| Dataset Name | Samples | Features | Task Type | ML Algorithms Evaluated |

|---|---|---|---|---|

| Iris Flower | 150 | 4 | Multiclass Classification | K-NN, K-means, Neural Networks, SVM |

| Pima Indians Diabetes | 768 | 8 | Binary Classification | K-NN, K-means, Neural Networks, SVM |

| MNIST Handwritten Digits | 70,000 | 784 | Multiclass Classification | K-NN, K-means, Neural Networks, SVM |

| ChEMBL T. cruzi Inhibitors | 1,183 | 1,804 | Regression | SVM, ANN, Random Forest |

Quantitative Performance Comparison

The following table synthesizes performance metrics across multiple studies comparing Random Search to other optimization methods:

Table 2: Performance Comparison of Hyperparameter Optimization Methods

| Optimization Method | Average Accuracy Gain | Computational Efficiency | Implementation Complexity | Best Suited Scenarios |

|---|---|---|---|---|

| Random Search | Baseline | High | Low | Limited computational resources, High-dimensional parameter spaces |

| Random Search Plus | 5-30% improvement over Random Search [37] | High (10% of RS time for equivalent results) [37] | Medium | All problem types, especially when accuracy prioritizes |

| Grid Search | Comparable to Random Search | Low (exponential complexity) | Low | Very low-dimensional spaces, Exhaustive search required |

| Bayesian Optimization | 0-15% improvement over Random Search | Medium (high per-iteration cost) | High | Expensive function evaluations, Low-dimensional spaces |

| Hyperband | Comparable to Random Search | Very High (early stopping) | Medium | Large-scale neural architectures, Distributed computing |

Case Study: Random Search Plus Enhancement

A 2020 study introduced Random Search Plus, which incorporates hyperparameter space separation to improve upon traditional Random Search. The method works by dividing the search space into regions and allocating trials more strategically. Empirical evaluation demonstrated that this enhancement could:

- Find better hyperparameters than traditional Random Search, improving accuracy by 5-30% on supervised learning tasks [37]

- Achieve equivalent optimization as Random Search in only 10% of the time with appropriate space separation strategies [37]

- Provide more globally optimal solutions compared to the sometimes locally-constrained results of traditional Random Search [37]

Experimental Protocols for Hyperparameter Optimization in QSAR

Standard Random Search Implementation Protocol

For researchers implementing Random Search in QSAR workflows, the following protocol provides a robust starting point:

Define Hyperparameter Space: Specify distributions for each hyperparameter (e.g., uniform, log-uniform) based on theoretical constraints and empirical experience.

Set Iteration Budget: Determine the number of random combinations to evaluate based on computational resources (typically 50-200 iterations).

Configure Cross-Validation: Implement k-fold cross-validation (typically k=5 or 10) to evaluate each hyperparameter combination's performance.

Execute Parallel Trials: Evaluate different hyperparameter combinations concurrently to maximize resource utilization.

Select Optimal Configuration: Identify the hyperparameter set yielding the best cross-validated performance metric (e.g., RMSE, MAE, Pearson R²).

Final Model Training: Train the final model using the optimal hyperparameters on the entire training set.

QSAR-Specific Evaluation Metrics

When applying these methods to QSAR and virtual screening, researchers should select evaluation metrics aligned with their specific objectives:

- For regression models predicting continuous activity values (e.g., pIC50, pKi), use RMSE, MAE, and Pearson Correlation Coefficient [39] [40].

- For classification models in virtual screening, prioritize Positive Predictive Value (PPV) over balanced accuracy when screening ultra-large chemical libraries, as it better reflects hit identification performance in early discovery [41].

Integrated Workflow for QSAR Model Development

The following diagram illustrates the complete QSAR model development workflow, highlighting the role of hyperparameter optimization within the broader context:

Table 3: Essential Tools for QSAR Modeling and Hyperparameter Optimization

| Tool/Resource | Type | Function in QSAR/Hyperparameter Optimization | Example Applications |

|---|---|---|---|

| Scikit-learn | Python Library | Provides ML algorithms and hyperparameter optimization implementations | Implementation of Random Search, Grid Search, and cross-validation [39] |

| PaDEL-Descriptor | Software | Calculates molecular descriptors and fingerprints from chemical structures | Generation of 1,804 molecular descriptors for QSAR modeling [39] |

| ChEMBL Database | Chemical Database | Provides curated bioactivity data for model training | Source of 1,183 T. cruzi inhibitors for anti-Chagas disease modeling [39] |

| Random Forest | ML Algorithm | Ensemble method effective for structured data; robust to hyperparameter choices | Classification of active/inactive compounds in virtual screening [42] |

| Support Vector Machine (SVM) | ML Algorithm | Powerful for nonlinear classification; requires careful hyperparameter tuning | Modeling complex structure-activity relationships with RBF kernel [39] |

| Artificial Neural Networks | ML Algorithm | Flexible function approximators; highly sensitive to architecture hyperparameters | Development of high-performance QSAR models with CDK fingerprints [39] |

Based on our comprehensive analysis of hyperparameter optimization methods in the context of QSAR and virtual screening, we provide the following evidence-based recommendations:

Prioritize Random Search Plus over traditional Random Search for most QSAR applications, as it provides significant accuracy improvements (5-30%) or equivalent performance in substantially less time (up to 90% reduction) [37].

Reserve Grid Search for scenarios with very few hyperparameters (typically ≤3), where exhaustive search remains computationally feasible without compromising project timelines.

Consider Bayesian Optimization when function evaluations are computationally expensive and the hyperparameter space is low-dimensional, despite its higher implementation complexity.

Select evaluation metrics aligned with project goals – particularly for virtual screening, where Positive Predictive Value (PPV) better reflects success in identifying true hits from ultra-large chemical libraries than traditional balanced accuracy [41].

Random Search and its enhanced variants continue to offer compelling performance for hyperparameter optimization in QSAR modeling, balancing computational efficiency with competitive results. As drug discovery increasingly relies on large-scale virtual screening of expansive chemical libraries, efficient model tuning remains essential for accelerating the identification of novel therapeutic candidates.

De Novo Drug Design with Deep Learning and Reinforcement Learning

The integration of artificial intelligence (AI) into drug discovery represents a paradigm shift, offering the potential to significantly reduce the time and cost associated with traditional drug development. De novo drug design, the computational generation of novel drug-like molecules from scratch, has emerged as a particularly promising application of AI. This field leverages deep learning architectures and reinforcement learning (RL) to explore the vast chemical space and design molecules with specific pharmacological properties. Within the broader context of machine learning versus random search for Design-Build-Test-Learn (DBTL) cycle optimization, AI-driven methods demonstrate a fundamental advantage: they use learned chemical knowledge to make informed decisions, moving beyond the stochastic sampling of random search to efficiently navigate complex structure-activity relationships. This guide provides an objective comparison of the performance, methodologies, and applications of state-of-the-art deep learning and reinforcement learning frameworks in de novo drug design.

Comparative Analysis of Methodologies and Performance

The performance of AI-driven de novo design models is evaluated against a multitude of criteria, including the bioactivity, synthesizability, and novelty of the generated molecules, as well as their ability to model complex pharmacological phenomena.

Key Model Architectures and Their Experimental Performance

Table 1: Comparison of Key Deep Learning and RL Frameworks for De Novo Drug Design

| Model/Framework | Core Approach | Key Innovation | Reported Experimental Outcome | Primary Advantage |

|---|---|---|---|---|

| DRAGONFLY [43] | Interactome-based Deep Learning (GTNN + LSTM) | "Zero-shot" learning from a drug-target interactome; no application-specific fine-tuning needed. | Generated potent PPARγ partial agonists; crystal structure confirmed anticipated binding mode. | Integrates both ligand- and structure-based design; outperformed fine-tuned RNNs on synthesizability, novelty, and bioactivity [43]. |

| ACARL [44] | Activity Cliff-Aware Reinforcement Learning | Formulates an Activity Cliff Index (ACI) and uses a contrastive RL loss to prioritize activity cliff compounds. | Surpassed state-of-the-art algorithms in generating high-affinity molecules for multiple protein targets [44]. | Explicitly models critical structure-activity relationship (SAR) discontinuities, which are often overlooked. |

| Diversity-Aware RL [45] | Reinforcement Learning with Intrinsic Motivation | Combines structure- and prediction-based methods to penalize rewards and enhance diversity. | Effectively increased the diversity of the set of generated molecules without sacrificing high rewards [45]. | Prevents the optimization process from becoming stuck in local optima. |

| REINVENT/Reg. MLE [46] | Regularized Maximum Likelihood Estimation (RL) | Keeps the agent's policy close to a pre-trained prior policy while focusing on high-scoring sequences. | Demonstrated good sample efficiency and performance in generating predicted active molecules against DRD2 [46]. | Balances the exploration of novel chemical space with the exploitation of known chemical rules. |

Addressing Critical Challenges in Molecular Generation

A significant challenge in the field is the evaluation of generative models. A large-scale analysis of approximately one billion generated molecules revealed that the size of the generated molecular library is a critical confounder. Common evaluation metrics like the Fréchet ChemNet Distance (FCD) can be misleading if the number of designs is too small, with convergence often requiring over 10,000 designs—a larger number than is typically used in many studies [47]. This underscores the importance of standardized, large-scale evaluation for fair model comparison.

Furthermore, traditional methods struggle with activity cliffs, a phenomenon where small structural changes lead to significant shifts in biological activity. The ACARL framework directly addresses this by identifying activity cliff compounds using a quantitative Activity Cliff Index and dynamically enhancing their impact during RL training through a tailored contrastive loss function [44]. This approach allows the model to focus optimization on high-impact regions of the SAR landscape.

Detailed Experimental Protocols

To ensure reproducibility and provide a clear basis for comparison, this section outlines the standard protocols and workflows used in the cited studies.

General DBTL Workflow for AI-Driven Drug Design

The following diagram illustrates the standard Design-Build-Test-Learn (DBTL) cycle, which is foundational to both ML-guided and random search approaches in enzyme engineering and drug design [48].

The ACARL Framework Workflow