LDBT vs DBTL: How Machine Learning is Redefining the Synthetic Biology Cycle

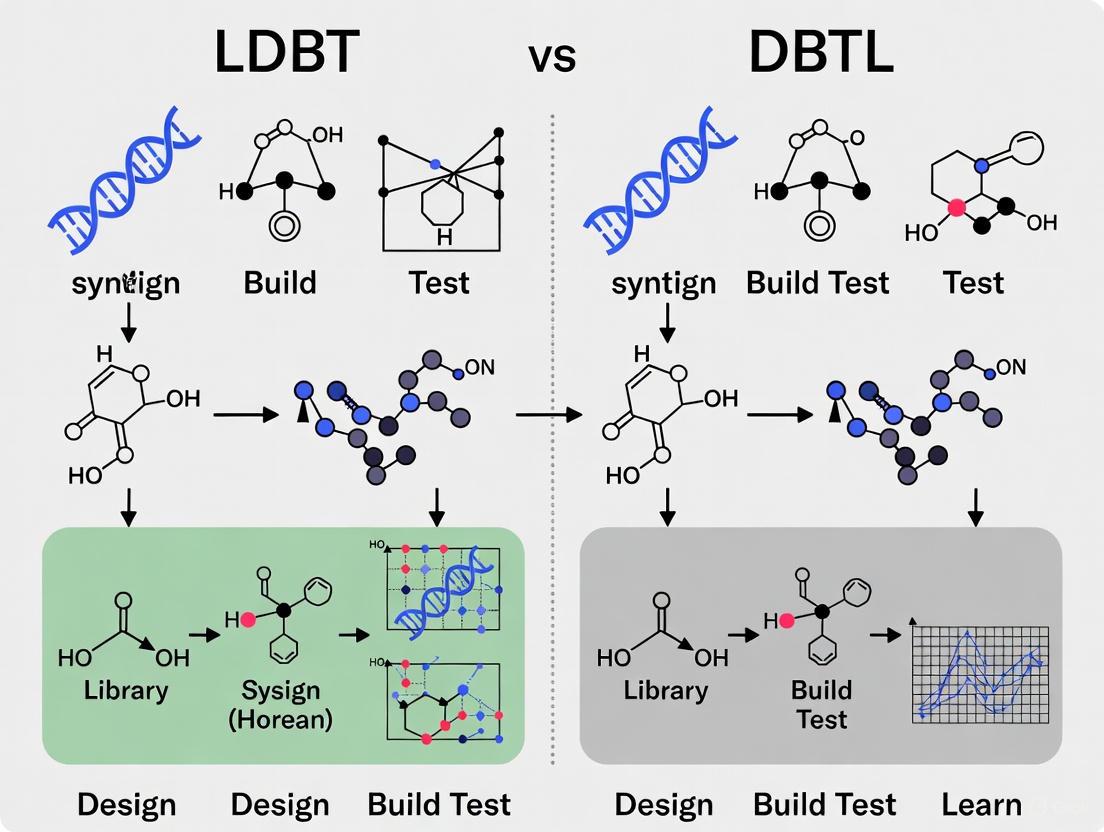

Abstract

This article explores the paradigm shift from the traditional Design-Build-Test-Learn (DBTL) cycle to a new Learn-Design-Build-Test (LDBT) framework in synthetic biology. Driven by advances in machine learning (ML) and artificial intelligence (AI), this reordering places data-driven learning at the forefront, enabling more predictive and precise biological design. We will examine the foundational principles of both cycles, detail the key ML technologies and high-throughput 'Build' and 'Test' methods that make LDBT possible, address critical troubleshooting and optimization challenges, and validate the approach through comparative analysis of its impact on efficiency and success rates. Aimed at researchers, scientists, and drug development professionals, this review synthesizes how LDBT accelerates therapeutic discovery, optimizes protein engineering, and paves the way for a more predictive engineering biology.

From DBTL to LDBT: Understanding the Foundational Shift in Engineering Biology

The Design-Build-Test-Learn (DBTL) cycle has long served as the foundational framework for systematic biological engineering, providing a structured approach to designing and optimizing biological systems. This iterative process consists of designing genetic constructs, building them in biological systems, testing their performance, and learning from the results to inform subsequent design iterations [1]. However, recent advances in machine learning (ML) and high-throughput testing platforms are fundamentally reshaping this paradigm. A new framework, dubbed "LDBT" (Learn-Design-Build-Test), proposes reordering the cycle to begin with machine learning, potentially accelerating biological design by leveraging predictive algorithms before physical construction [2]. This paradigm shift promises to transform synthetic biology from an empirical, trial-and-error discipline toward a more predictive engineering science.

The tension between traditional DBTL and the emerging LDBT framework represents a critical juncture for synthetic biology research and drug development. Where DBTL relies on empirical iteration to gain knowledge, LDBT leverages machine learning models pre-trained on vast biological datasets to generate initial designs, potentially reducing the number of physical cycles needed to achieve desired biological functions [2] [3]. This comparative analysis examines both frameworks through experimental data, methodological protocols, and practical implementations to guide researchers in selecting appropriate strategies for their biological engineering challenges.

Framework Fundamentals: DBTL vs. LDBT

The Traditional DBTL Cycle

The conventional DBTL cycle follows a sequential, iterative process. The Design phase involves defining objectives and designing genetic parts or systems using domain knowledge and computational modeling. The Build phase focuses on physical construction through DNA synthesis, assembly, and introduction into characterization systems (e.g., bacterial, mammalian, or cell-free systems). The Test phase experimentally measures the performance of engineered biological constructs. Finally, the Learn phase analyzes collected data to inform the next design iteration, repeating until desired functionality is achieved [2] [1].

This approach has proven effective but often requires multiple cycles to gain sufficient knowledge for optimal designs, with the Build-Test phases creating significant bottlenecks in timeline and resources [2]. The process is further constrained by the vast combinatorial space of biological sequences; for an average 300-residue protein, just three substitutions can yield approximately 3.1 × 10¹⁰ possible combinations, making exhaustive exploration impractical [4].
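The size of that combinatorial space follows from a simple count: choose which positions are substituted, then choose one of the 19 alternative amino acids at each. The sketch below reproduces the figure; the function name `variant_count` is illustrative and not taken from the cited work.

```python
from math import comb

def variant_count(length: int, radius: int, alphabet: int = 20) -> int:
    """Number of variants with exactly `radius` substituted positions."""
    # Choose the mutated positions, then one of (alphabet - 1)
    # alternative residues at each chosen position.
    return comb(length, radius) * (alphabet - 1) ** radius

print(f"{variant_count(300, 3):.2e}")  # 3.06e+10
```

The same function with a length of 238 residues (the length of avGFP) gives roughly 1.52 × 10¹⁰ triple mutants.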

The Emerging LDBT Paradigm

The LDBT framework repositions "Learn" as the initial phase, leveraging machine learning models pre-trained on large biological datasets to generate initial designs. These models can capture complex patterns in high-dimensional spaces, enabling more efficient navigation of the biological design space before physical construction [2] [3]. Specifically, protein language models like ESM and ProGen, trained on millions of protein sequences, can perform zero-shot predictions of beneficial mutations and infer protein functions without additional training [2].

This learn-first approach is further enhanced by integrating cell-free transcription-translation (TX-TL) systems for rapid testing. These systems circumvent complexities of living cells, enabling swift assessment of genetic circuit performance within hours rather than days or weeks [3]. When coupled with machine learning predictions, they create a synergistic framework that accelerates validation while enriching training datasets for improved algorithmic learning [2] [3].

Table 1: Core Conceptual Differences Between DBTL and LDBT Frameworks

| Aspect | Traditional DBTL | LDBT Paradigm |
|---|---|---|
| Starting Point | Design based on existing knowledge | Learning from pre-trained ML models on large datasets |
| Primary Driver | Empirical iteration | Predictive algorithms |
| Knowledge Acquisition | Gradual, through multiple cycles | Leveraged from foundational models at outset |
| Testing Approach | Often in vivo systems | Heavy utilization of rapid cell-free platforms |
| Cycle Goal | Converge through iteration | Achieve functionality in fewer cycles |

Experimental Comparisons and Performance Metrics

Case Study: Protein Engineering with DeepDE

A rigorous comparison of the frameworks emerges from protein engineering applications. Traditional directed evolution follows the DBTL approach, requiring labor-intensive screening of thousands of mutants over multiple rounds [4]. In contrast, the DeepDE algorithm exemplifies the LDBT approach, leveraging deep learning on a compact library of ~1,000 mutants as a training set [4].

When applied to GFP from Aequorea victoria, DeepDE achieved a 74.3-fold increase in activity over four rounds of evolution, far surpassing the benchmark superfolder GFP (40.2-fold increase) that required multi-year engineering efforts [4]. This demonstrates how machine-learning-guided approaches can significantly accelerate optimization cycles while achieving superior results.

The algorithm employed a mutation radius of three (triple mutants), enabling exploration of a much greater sequence space compared to single or double mutants in each iteration. This approach explored a combinatorial library of approximately 1.5 × 10¹⁰ variants, a space impractical for traditional methods [4].

Case Study: Dopamine Production in E. coli

A knowledge-driven DBTL approach was used to optimize dopamine production in E. coli, demonstrating the traditional framework's capabilities when enhanced with mechanistic insights [5]. Researchers developed a high-throughput RBS engineering strategy to fine-tune expression levels of dopamine pathway enzymes.

The optimized strain achieved dopamine production of 69.03 ± 1.2 mg/L (equivalent to 34.34 ± 0.59 mg/g biomass), representing a 2.6- to 6.6-fold improvement over previous state-of-the-art in vivo production methods [5]. This success highlights how traditional DBTL cycles, when informed by upstream in vitro investigations and high-throughput engineering, can efficiently optimize complex metabolic pathways.

Table 2: Quantitative Performance Comparison of Engineering Approaches

| Engineering Approach | Target System | Performance Improvement | Screening Scale | Iterations/Timeframe |
|---|---|---|---|---|
| Traditional Directed Evolution (DBTL) | Various proteins | Variable, often requires extensive optimization | Thousands to millions of variants | Multiple rounds over months/years |
| DeepDE (LDBT) | avGFP | 74.3-fold increase in activity | ~1,000 mutants per round | 4 rounds [4] |
| Knowledge-Driven DBTL | Dopamine production in E. coli | 69.03 mg/L (2.6- to 6.6-fold improvement) | High-throughput RBS library | Not specified [5] |
| AI-Guided + Cell-Free (LDBT) | Antimicrobial peptides | 6 promising designs from a computational survey of 500,000 candidates | 500 variants validated | Single round with computational pre-screening [2] |

Methodological Protocols

Protocol: DeepDE Implementation for Protein Engineering

The DeepDE algorithm exemplifies the LDBT approach through iterative deep learning-guided directed evolution [4]:

Training Data Curation: Compile a supervised training dataset of approximately 1,000 single or double mutants with associated fitness measurements. For avGFP, this dataset covered 219 of 238 sites.

Model Training: Implement three deep learning methods (unsupervised, weak-positive only, and supervised learning) using the curated dataset. Predictive performance improves with training dataset size: Spearman's correlation coefficients increase from 0.30 to 0.74 as the dataset grows from 24 to 2,000 mutants.

Mutant Prediction: Set a mutation radius of three for each evolution round. For the "mutagenesis by direct prediction" approach, compute all possible double mutants, identify top performers, calculate mutation frequency per site, and generate triple mutant combinations for prediction.

Experimental Validation: Synthesize and assay top-ranked triple mutants (e.g., top 10 predictions). For the "mutagenesis coupled with screening" approach, experimentally construct libraries of triple mutants for screening.

Iterative Cycling: Use best-performing mutants as templates for subsequent rounds, repeating the process for 4-5 rounds with the same training dataset before potentially transitioning to a different dataset.
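The "mutagenesis by direct prediction" step can be sketched in a few lines. This is a toy illustration under stated assumptions, not the DeepDE implementation: `predict_fitness` is a hypothetical stand-in for a trained model, the four-letter alphabet and eight-residue parent keep the enumeration small, and the cut-offs `top_doubles`, `top_sites`, and `n_out` are arbitrary.

```python
from collections import Counter
from itertools import combinations, product

ALPHABET = "ACDE"  # toy alphabet (assumption); real proteins use 20 residues

def predict_fitness(seq: str) -> float:
    """Hypothetical model stand-in: rewards 'D' at even-numbered sites."""
    return sum(1.0 for i, aa in enumerate(seq) if aa == "D" and i % 2 == 0)

def mutants(parent: str, sites: tuple) -> list:
    """All variants of `parent` in which every listed site is substituted."""
    choices = [[aa for aa in ALPHABET if aa != parent[i]] for i in sites]
    variants = []
    for combo in product(*choices):
        seq = list(parent)
        for i, aa in zip(sites, combo):
            seq[i] = aa
        variants.append("".join(seq))
    return variants

def propose_triples(parent, top_doubles=50, top_sites=4, n_out=10):
    # 1. Score every possible double mutant with the model.
    doubles = [(predict_fitness(m), pair)
               for pair in combinations(range(len(parent)), 2)
               for m in mutants(parent, pair)]
    doubles.sort(key=lambda item: item[0], reverse=True)
    # 2. Count how often each site occurs among the top-scoring doubles.
    freq = Counter(site for _, pair in doubles[:top_doubles] for site in pair)
    hot_sites = sorted(site for site, _ in freq.most_common(top_sites))
    # 3. Enumerate triple mutants over the frequent sites and rank them.
    triples = [m for trio in combinations(hot_sites, 3)
               for m in mutants(parent, trio)]
    triples.sort(key=predict_fitness, reverse=True)
    return triples[:n_out]

best = propose_triples("AAAAAAAA")[0]
print(best, predict_fitness(best))
```

Restricting the triple-mutant search to frequently selected sites is what keeps the enumeration tractable; in a real round, the top-ranked sequences would then be synthesized and assayed.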

Protocol: Knowledge-Driven DBTL for Metabolic Engineering

The dopamine production case study demonstrates an enhanced DBTL approach [5]:

Upstream In Vitro Investigation: Conduct cell lysate studies to assess enzyme expression levels and pathway interactions before in vivo implementation. This mechanistic understanding informs initial design decisions.

Host Strain Engineering: Develop a high-production host (e.g., E. coli FUS4.T2 for dopamine) with precursor enhancement (e.g., L-tyrosine overproduction through genomic modifications like TyrR depletion and feedback inhibition mutation).

Pathway Optimization: Implement high-throughput RBS engineering to fine-tune relative expression of pathway enzymes. Modulate Shine-Dalgarno sequences, while avoiding interfering secondary structures, to control translation initiation rates predictably.

Automated Strain Construction: Utilize automated molecular cloning and cultivation processes to accelerate the Build and Test phases.

Multi-Omics Analysis: Integrate transcriptomic, proteomic, and metabolomic data during the Learn phase to identify bottlenecks and inform subsequent design iterations.
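As a rough illustration of what an RBS library for the pathway-optimization step might look like, the sketch below enumerates every sequence within a small number of substitutions of a Shine-Dalgarno core. The starting core `AGGAGG` (the E. coli consensus) and the mismatch limit are assumptions chosen for the example, not the library design used in [5]; a real workflow would also rank variants by predicted translation initiation rate and screen for secondary structure.

```python
from itertools import combinations, product

BASES = "ACGT"

def sd_variants(core: str, max_mismatches: int) -> set:
    """All sequences within `max_mismatches` substitutions of `core`."""
    variants = {core}
    for k in range(1, max_mismatches + 1):
        for sites in combinations(range(len(core)), k):
            # At each chosen site, allow the three non-original bases.
            options = [[b for b in BASES if b != core[i]] for i in sites]
            for combo in product(*options):
                seq = list(core)
                for i, base in zip(sites, combo):
                    seq[i] = base
                variants.add("".join(seq))
    return variants

library = sd_variants("AGGAGG", max_mismatches=2)
print(len(library))  # 154 = 1 + 6*3 + 15*9
```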

Visualization Frameworks

DBTL Cycle Workflow

LDBT Paradigm Workflow

Research Reagent Solutions

Table 3: Essential Research Tools for DBTL and LDBT Implementation

| Reagent/Platform | Function | Framework Application |
|---|---|---|
| Cell-Free TX-TL Systems | Rapid protein expression without living cells | LDBT: Enables high-throughput testing of ML predictions in hours [2] [3] |
| Protein Language Models | Predict protein structure-function relationships | LDBT: Pre-trained models (ESM, ProGen) enable zero-shot design [2] |
| UTR Designer Tools | Modulate RBS sequences for expression tuning | DBTL: Fine-tune metabolic pathway enzymes in iterative optimization [5] |
| Droplet Microfluidics | Ultra-high-throughput screening platform | Both: Enables screening of >100,000 picoliter-scale reactions [2] |
| Automated Biofoundries | Integrated robotic assembly and testing | Both: Full automation of DBTL/LDBT cycles for scaling [2] |
| Deep Learning Algorithms | Navigate vast protein sequence spaces | LDBT: Tools like DeepDE, ProteinMPNN predict optimized variants [2] [4] |

The choice between DBTL and LDBT frameworks depends on specific research contexts and available resources. The traditional DBTL cycle remains valuable when limited training data exists for machine learning models, when engineering well-characterized biological systems, and when working with constrained computational resources. The knowledge-driven DBTL approach, incorporating upstream in vitro investigations, demonstrates how traditional cycles can be enhanced for efficient optimization [5].

The emerging LDBT paradigm offers distinct advantages for exploring vast design spaces, engineering poorly characterized systems, and accelerating development timelines. Its strength lies in leveraging pre-existing biological knowledge embedded in foundational models, potentially achieving functionality in fewer physical cycles [2] [4]. For drug development professionals, LDBT approaches show particular promise for antibody engineering, enzyme optimization, and metabolic pathway design where large sequence datasets enable robust model training.

The future of biological engineering likely involves hybrid approaches that leverage the strengths of both frameworks. As the field advances, the distinction may blur into adaptive cycles that dynamically reorder phases based on available knowledge and resources. What remains clear is that the integration of machine learning and rapid experimental platforms is fundamentally transforming biological engineering from an empirical art toward a predictive science.

Synthetic biology operates on a core engineering mantra known as the Design-Build-Test-Learn (DBTL) cycle, a systematic framework intended to streamline the engineering of biological systems [6]. In this paradigm, researchers Design biological parts with desired functions, Build DNA constructs and introduce them into living systems, Test the resulting constructs to measure performance, and Learn from the data to inform the next design iteration [2]. This approach has enabled remarkable achievements over the past two decades, from basic genetic oscillators to microbial production of therapeutic compounds [6]. However, as the field advances toward more complex challenges, the traditional DBTL framework is revealing significant limitations in its ability to efficiently navigate biological complexity.

The fundamental weakness lies in what might be termed "the learning bottleneck" – the cycle's inability to effectively extract predictive knowledge from the growing volumes of biological data [6]. While synthetic biologists can now generate draft blueprints of desired biological systems, many still resort to top-down approaches based on likelihoods and trial-and-error to determine optimal designs [6]. This deviation from synthetic biology's aspiration of rational design stems from the fact that biological processes in cells are often highly dynamic and inscrutable "black boxes" [6]. As a result, even with massive improvements in DNA synthesis and testing capabilities, the learning phase has failed to keep pace, creating a critical bottleneck that limits the entire engineering process.

The Learning Bottleneck: Where DBTL Stumbles

Fundamental Limitations in Handling Biological Complexity

The DBTL cycle struggles particularly with the multidimensional complexity inherent to biological systems. Three key factors contribute to this learning bottleneck:

System Heterogeneity and Component Interactions: Biological systems exhibit extraordinary complexity and heterogeneity, with numerous interacting components that create emergent properties not easily predicted from individual parts [6]. The traditional DBTL approach often oversimplifies these interactions, leading to designs that fail when scaled from individual parts to systems.

Data Interpretation Challenges: The "Learn" phase faces difficulties due to "variations in experimental setups" and the challenge of integrating multi-omics data [6]. Without standardized approaches to data generation and analysis, knowledge gained from one cycle often fails to transfer effectively to the next.

Trial-and-Error Inefficiency: The current paradigm frequently deviates into "top-down approaches based on likelihoods and trial-and-error" [6]. This empirical approach contrasts with the foundational vision of synthetic biology as a discipline built on rational design principles.

The Throughput Mismatch: Building and Testing Outpacing Learning

Technical advancements have dramatically accelerated the Build and Test phases while leaving Learning behind. DNA sequencing costs have plummeted from approximately $10 million per human genome in 2007 to around $600 today [6]. This cost reduction has enabled the accumulation of vast genomic databases, while innovations in DNA synthesis and assembly methodologies allow researchers to rapidly construct complex genetic systems [6].

The establishment of biofoundries worldwide has further accelerated this process through high-throughput automated assembly and screening methods [6]. These facilities can generate enormous amounts of multi-omics data at single-cell resolution, creating a deluge of information that outpaces traditional analytical approaches [6]. The result is a fundamental mismatch between data generation capacity and knowledge extraction capabilities – the core of the learning bottleneck.

Table: Throughput Comparison Across DBTL Stages

| DBTL Stage | Traditional Approach | Modern Capabilities | Limitations |
|---|---|---|---|
| Design | Manual, experience-based | Computational modeling | Limited by biological understanding |
| Build | Manual cloning | Automated DNA synthesis & assembly | Cost-effective but limited by design quality |
| Test | Low-throughput assays | High-throughput multi-omics | Data volume exceeds analysis capacity |
| Learn | Manual data interpretation | Basic statistical analysis | Inability to extract complex patterns |

LDBT: A Paradigm Shift to Overcome the Bottleneck

Reordering the Cycle: Learning Before Design

A paradigm shift is emerging in synthetic biology that directly addresses the learning bottleneck: the LDBT framework, which repositions "Learning" at the beginning of the cycle [2]. This approach leverages machine learning (ML) models trained on vast biological datasets to make predictive designs before any building or testing occurs. Rather than relying on iterative experimental cycles to accumulate knowledge, LDBT starts with knowledge embedded in pre-trained models capable of "zero-shot" predictions – generating functional designs without additional training [2].

This reorientation represents more than a simple procedural change; it fundamentally alters the relationship between data generation and knowledge application. As researchers note, "the data that would be 'learned' by Build-Test phases may already be inherent in machine learning algorithms" [2]. This approach brings synthetic biology closer to established engineering disciplines like civil engineering, which rely on first principles to create functional designs without extensive iterative testing [2].

Machine Learning and Foundational Models in LDBT

The LDBT paradigm is enabled by specialized machine learning approaches trained on biological data:

Protein Language Models: Sequence-based models like ESM and ProGen are trained on evolutionary relationships between protein sequences across phylogeny [2]. These models can predict beneficial mutations and infer protein function, enabling zero-shot prediction of diverse antibody sequences and other protein engineering tasks [2].

Structure-Based Design Tools: Approaches like ProteinMPNN use deep learning to design protein sequences that fold into specific backbone structures [2]. When combined with structure-assessment tools like AlphaFold, these methods have demonstrated a "nearly 10-fold increase in design success rates" compared to traditional methods [2].

Functional Prediction Models: Specialized models focus on predicting key protein properties like thermostability (Prethermut, Stability Oracle) and solubility (DeepSol) [2]. These tools help eliminate potentially problematic designs before the Build phase.
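To make "zero-shot" scoring concrete, one commonly used heuristic ranks a substitution by the log-odds the model assigns to the mutant residue versus the wild-type residue at that position. The sketch below assumes that heuristic and replaces the language model with a hand-written probability table (`position_probs`), so every number in it is hypothetical; it is not the scoring procedure of any specific tool named above.

```python
import math

def zero_shot_score(wild_type, mutations, position_probs):
    """Sum over mutated sites of log p(mutant aa) - log p(wild-type aa)."""
    score = 0.0
    for pos, new_aa in mutations:  # pos is a 0-based index
        probs = position_probs[pos]
        score += math.log(probs[new_aa]) - math.log(probs[wild_type[pos]])
    return score

# Hypothetical per-position probabilities standing in for model output.
wild_type = "MKV"
position_probs = [
    {"M": 0.90, "L": 0.10},
    {"K": 0.20, "R": 0.80},
    {"V": 0.50, "I": 0.50},
]
# Keys use conventional 1-based mutation names; tuples use 0-based indices.
candidates = {"M1L": [(0, "L")], "K2R": [(1, "R")], "V3I": [(2, "I")]}

ranked = sorted(
    candidates,
    key=lambda name: zero_shot_score(wild_type, candidates[name], position_probs),
    reverse=True,
)
print(ranked)  # ['K2R', 'V3I', 'M1L']
```

A positive score means the model finds the substitution more plausible than the wild-type residue; no fitness labels for the target protein are needed, which is what makes the prediction zero-shot.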

Table: Machine Learning Approaches in LDBT

| ML Approach | Training Data | Key Capabilities | Demonstrated Applications |
|---|---|---|---|
| Protein Language Models (ESM, ProGen) | Millions of protein sequences | Predict beneficial mutations, infer function | Antibody sequence prediction, enzyme engineering |
| Structure-Based Design (ProteinMPNN) | Experimentally determined structures | Design sequences for specific folds | TEV protease optimization (increased activity) |
| Stability Prediction (Stability Oracle) | Protein stability data with structures | Predict ΔΔG of mutations | Enzyme stabilization for industrial applications |
| Hybrid Approaches | Multiple data types combined | Enhanced predictive power | PET hydrolase engineering with improved performance |

Comparative Analysis: DBTL vs. LDBT in Practice

Experimental Workflows and Methodologies

The fundamental difference between DBTL and LDBT approaches becomes evident in their experimental workflows:

The traditional DBTL cycle (top) follows a sequential, iterative process where learning occurs only after building and testing. In contrast, the LDBT paradigm (bottom) begins with learning through machine learning analysis of existing biological data, enabling more informed design before any building occurs [2].

Performance Metrics and Experimental Outcomes

Direct comparisons between DBTL and LDBT approaches demonstrate significant advantages for the machine learning-driven paradigm:

Table: Quantitative Comparison of DBTL vs. LDBT Performance

| Performance Metric | Traditional DBTL | LDBT Approach | Improvement Factor |
|---|---|---|---|
| Design Cycles Required | 4-6 iterations | 1-2 iterations | 2-3x faster |
| Compounds Synthesized | Thousands for lead optimization | 10x fewer compounds [7] | 10x efficiency gain |
| Success Rate | Industry baseline | ~10x increase for some protein designs [2] | Significant improvement |
| Data Utilization | Limited to project-specific data | Leverages evolutionary knowledge across species | Vastly expanded context |

Case studies highlight these advantages in practical applications. In protein engineering, combining ProteinMPNN with structure-assessment tools like AlphaFold has demonstrated a "nearly 10-fold increase in design success rates" compared to traditional methods [2]. In pharmaceutical development, companies like Exscientia report "in silico design cycles ~70% faster and requiring 10× fewer synthesized compounds than industry norms" [7]. One specific program achieved a clinical candidate "after synthesizing only 136 compounds, whereas traditional programs often require thousands" [7].

Enabling Technologies: The Scientist's Toolkit

The implementation of LDBT relies on specialized research reagents and platforms that enable rapid building and testing of computationally generated designs:

Table: Essential Research Reagent Solutions for LDBT Implementation

| Tool/Category | Function | Key Applications |
|---|---|---|
| Cell-Free Expression Systems | Rapid protein synthesis without living cells | High-throughput testing of protein variants [2] |
| DNA Synthesis Platforms | Automated production of designed DNA sequences | Rapid construction of genetic circuits [6] |
| Automated Liquid Handlers | High-throughput reagent distribution and sample processing | Scaling testing to thousands of variants [2] |
| Microfluidics/Droplet Systems | Ultra-high-throughput screening in picoliter volumes | Screening >100,000 protein variants [2] |
| Multi-omics Analysis Kits | Comprehensive molecular profiling | Generating training data for ML models [6] |

Cell-free expression systems deserve particular emphasis as they enable "rapid (>1 g/L protein in <4 h)" production and can be "readily scaled from the pL to kL scale" [2]. These systems allow direct testing of protein variants without time-consuming cloning steps, making them ideal for validating LDBT-generated designs [2]. When combined with automated platforms like biofoundries, these tools create an integrated infrastructure for implementing the LDBT paradigm.

Implementation Pathway: Adopting LDBT in Research Programs

Practical Framework for Transition

Shifting from DBTL to LDBT requires strategic changes in research operations and infrastructure. Research organizations should consider these implementation phases:

Data Foundation Development: The LDBT paradigm depends on "ML-friendly data" with "common standards for designing and generating" datasets suitable for machine learning [6]. This requires establishing consistent experimental protocols and data formats across projects to create training datasets.

Computational Infrastructure Investment: Successful LDBT implementation requires "deep learning models trained on vast biological datasets" [2]. This necessitates investment in computational resources and expertise, including partnerships between "dry- and wet-laboratory researchers" [6].

Integrated Workflow Design: The most successful implementations combine computational design with rapid experimental validation, such as "closed-loop design platforms that leverage AI agents to cycle through experiments" [2]. These systems connect computational design directly with automated building and testing.
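The closed-loop idea in the last phase can be shown with a deliberately small sketch: a model ranks untested designs, the top picks are "built and tested", and the results are fed back before the next round. Everything here is a stand-in chosen for illustration: `assay` plays the role of the automated Build and Test steps, `predict` is a nearest-neighbour toy model rather than a deep network, and designs are reduced to a single number.

```python
def assay(x: float) -> float:
    """Hypothetical Build + Test stand-in: response peaks at x = 0.7."""
    return 1.0 - (x - 0.7) ** 2

def predict(x: float, measured: dict) -> float:
    """Toy Learn step: reuse the value of the nearest measured design."""
    nearest = min(measured, key=lambda m: abs(m - x))
    return measured[nearest]

def closed_loop(candidates, seed, rounds=3, batch=2):
    measured = {x: assay(x) for x in seed}  # initial training data
    for _ in range(rounds):
        untested = [x for x in candidates if x not in measured]
        if not untested:
            break
        # Design: rank untested candidates by predicted response.
        ranked = sorted(untested, key=lambda x: predict(x, measured), reverse=True)
        # Build + Test the top picks, then feed the results back.
        for x in ranked[:batch]:
            measured[x] = assay(x)
    best = max(measured, key=measured.get)
    return best, measured

best, data = closed_loop([i / 10 for i in range(11)], seed=[0.0, 1.0])
print(best, len(data))  # 0.7 8
```

This loop is purely greedy; practical platforms typically also reserve part of each batch for exploration so the model does not get stuck near its first good guess.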

Validation and Quality Control in LDBT

As with any methodological shift, maintaining rigorous validation is essential when adopting LDBT approaches:

The validation framework for LDBT employs a multi-stage approach that begins with computational predictions, moves through increasingly rigorous experimental testing, and feeds results back to improve models [2]. This tiered validation strategy balances speed with reliability, enabling rapid iteration while maintaining scientific rigor.

The transition from DBTL to LDBT represents more than a procedural adjustment – it marks a fundamental shift in how we approach biological engineering. By positioning learning at the forefront of the design process, synthetic biologists can leverage the vast accumulated knowledge of biological systems to create more predictive and reliable designs. The evidence suggests that this paradigm shift can deliver substantial improvements in efficiency, success rates, and cost-effectiveness across multiple applications, from therapeutic development to sustainable biomaterials [2].

While challenges remain in standardized data generation, model transparency, and interdisciplinary collaboration [6], the LDBT framework offers a promising path forward for overcoming the learning bottleneck that has long constrained synthetic biology. As machine learning capabilities continue to advance and biological datasets expand, this approach may ultimately realize the field's original aspiration: a true engineering discipline for biology, capable of reliably designing complex biological systems to address humanity's most pressing challenges.

The engineering of biological systems has long been guided by the Design-Build-Test-Learn (DBTL) cycle, a systematic, iterative framework that streamlines efforts to build functional biological systems [8]. In this established paradigm, researchers first define objectives and design biological parts, then build DNA constructs, test their performance experimentally, and finally learn from the data to inform the next design round [8]. However, recent advances in machine learning (ML) and high-throughput testing platforms are fundamentally transforming this workflow, prompting a significant paradigm shift from DBTL to LDBT (Learn-Design-Build-Test) [8] [3]. This reordering places machine learning at the forefront of the biological engineering process, creating a learn-first ethos that leverages large biological datasets to make predictive designs before committing to experimental work [8] [3].

The LDBT paradigm represents more than a simple reordering of steps—it constitutes a fundamental change in approach that leverages the predictive power of machine learning models trained on vast biological datasets. Instead of relying on empirical iteration, LDBT uses computational intelligence to directly inform and optimize designs, potentially generating functional biological parts and circuits in a single cycle [8]. This approach is made possible by the growing success of zero-shot predictions from protein language models and the availability of rapid cell-free testing platforms that can validate computational predictions at unprecedented scales [8] [3]. The resulting paradigm brings synthetic biology closer to a "Design-Build-Work" model that relies more heavily on first principles, similar to established engineering disciplines [8].

Comparative Analysis: DBTL vs. LDBT Workflows and Performance

The fundamental difference between the traditional DBTL cycle and the emerging LDBT paradigm lies in their starting points and underlying methodologies. The table below outlines the core distinctions in workflow, timing, data utilization, and overall approach between these two frameworks.

Table 1: Core Workflow Comparison Between DBTL and LDBT Paradigms

| Feature | Traditional DBTL Cycle | LDBT Paradigm |
|---|---|---|
| Starting Point | Design phase based on domain knowledge and expertise [8] | Learning phase powered by machine learning analysis of existing data [8] [3] |
| Primary Driver | Empirical iteration and experimental validation [8] | Predictive modeling and computational intelligence [8] [3] |
| Cycle Duration | Multiple iterations requiring weeks to months [8] [3] | Potential for single-cycle success with days to weeks [8] [3] |
| Data Utilization | Learning occurs after testing in each cycle [8] | Learning precedes design using accumulated datasets [8] [3] |
| Experimental Approach | Relies on cellular systems with associated biological constraints [8] | Leverages cell-free systems for rapid, parallel testing [8] [3] |
| Resource Allocation | Build-Test phases can be slow and resource-intensive [8] | Computational screening minimizes experimental burden [8] |

The performance advantages of the LDBT approach become particularly evident when examining specific engineering metrics. Research has demonstrated substantial improvements in the efficiency and success rates of biological design projects implementing the learn-first methodology.

Table 2: Performance Metrics Comparison Between DBTL and LDBT Approaches

| Performance Metric | Traditional DBTL | LDBT Approach | Improvement Factor |
|---|---|---|---|
| Design Success Rates | Baseline | Nearly 10-fold increase with structure-based deep learning [8] | ~10x |
| Screening Throughput | ~10²-10³ variants per cycle [8] | >10⁵ variants using cell-free droplet microfluidics [8] | 100-1000x |
| Protein Expression Time | Days to weeks (in vivo) [8] | <4 hours (cell-free) with >1 g/L yields [8] | >10x faster |
| Data Generation Scale | Limited by cellular transformation and culturing [8] | 776,000 protein variants mapped in one study [8] | Orders of magnitude higher |
| Time to Functional Solution | Multiple cycles required [8] | Single-cycle convergence possible [8] [3] | Significant reduction |

The following workflow diagram illustrates the fundamental structural differences between these two approaches, highlighting how machine learning is repositioned from a downstream analytical tool to an upstream predictive engine in the LDBT paradigm.

Experimental Protocols and Methodologies in LDBT Implementation

Machine Learning Framework for Protein Design

The learning phase of LDBT employs sophisticated machine learning models trained on evolutionary and structural biological data. The experimental protocol for implementing these models typically follows a structured approach:

Data Curation and Preprocessing: Researchers assemble large-scale datasets of protein sequences (e.g., from UniRef, NCBI) and structures (e.g., from Protein Data Bank) encompassing millions of biological examples [8]. Sequence-based protein language models such as ESM (Evolutionary Scale Modeling) and ProGen are trained on evolutionary relationships between protein sequences to capture long-range dependencies and phylogenetic patterns [8]. Structure-based models like ProteinMPNN and MutCompute utilize deep neural networks trained on experimentally determined protein structures to associate amino acids with their local chemical environments [8].

Model Architecture and Training: For sequence-based prediction, transformer architectures with attention mechanisms are implemented to process amino acid sequences and predict beneficial mutations or infer protein function [8]. Structure-based approaches employ graph neural networks or 3D convolutional networks that take entire protein structures as input and output sequences likely to fold into target backbones [8]. Hybrid models combine evolutionary information with biophysical principles, such as incorporating force-field algorithms with large language models trained on enzyme homologs [8].

Validation and Benchmarking: Rigorous evaluation protocols test model generalizability by withholding entire protein superfamilies from training and assessing performance on these novel targets [9]. Models are validated against experimental data from cell-free expression systems, creating benchmark datasets of thousands of protein variants with measured stability and activity metrics [8]. This validation approach simulates real-world scenarios where models must make accurate predictions for novel protein families not encountered during training [9].
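The superfamily hold-out described above can be sketched as a group-wise split. This is a minimal illustration and not the protocol of the cited work [9]; the record layout, the `superfamily` key, and the function name `split_by_superfamily` are assumptions made for the example.

```python
def split_by_superfamily(records, held_out):
    """Split records so that held-out superfamilies never appear in training.

    records: iterable of dicts with hypothetical keys "id" and "superfamily".
    held_out: set of superfamily labels reserved for testing.
    """
    train, test = [], []
    for rec in records:
        (test if rec["superfamily"] in held_out else train).append(rec)
    return train, test


# Toy records; the labels are placeholders, not real annotations.
records = [
    {"id": "p1", "superfamily": "A"},
    {"id": "p2", "superfamily": "A"},
    {"id": "p3", "superfamily": "B"},
    {"id": "p4", "superfamily": "C"},
]
train, test = split_by_superfamily(records, held_out={"B"})
train_families = {r["superfamily"] for r in train}
test_families = {r["superfamily"] for r in test}
```

Splitting by group rather than by individual sequence keeps close homologs of a test protein out of the training set, which is the point of withholding whole superfamilies.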

Cell-Free Expression and High-Throughput Testing

The Build-Test phases in LDBT utilize cell-free transcription-translation (TX-TL) systems to rapidly validate computational predictions:

Cell-Free System Preparation: Protein biosynthesis machinery is obtained from crude cell lysates (e.g., from E. coli, wheat germ, or insect cells) or purified components [8]. Reaction mixtures are assembled containing necessary transcription/translation components: RNA polymerase, ribosomes, tRNAs, amino acids, nucleotides, energy regeneration systems, and cofactors [8]. DNA templates designed in the computational phase are added directly without intermediate cloning steps, enabling rapid expression without cellular transformation [8].

High-Throughput Screening Implementation: Liquid handling robots and automated workstations (e.g., from Tecan, Beckman Coulter, Hamilton Robotics) dispense nanoliter to microliter reactions into multi-well plates or microfluidic devices [8] [10]. Droplet microfluidics platforms, such as DropAI, compartmentalize individual reactions in picoliter-scale droplets, enabling screening of >100,000 variants in parallel [8]. Functional assays are integrated with expression systems, employing colorimetric, fluorescent, or bioluminescent reporters to quantify protein expression, stability, or activity in real-time [8].

Data Collection and Analysis: Automated plate readers (e.g., PerkinElmer EnVision, BioTek Synergy HTX) measure assay signals across thousands of samples simultaneously [10]. Next-generation sequencing (NGS) platforms (e.g., Illumina NovaSeq, Thermo Fisher Ion Torrent) genotype variant libraries, linking sequence to function [8] [10]. Custom software platforms (e.g., TeselaGen) manage experimental workflows, track samples, and integrate data from multiple instruments for centralized analysis [10].
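Linking sequence to function amounts to joining genotype calls with assay readouts on a shared sample identifier. The sketch below assumes both data sources are keyed by well ID; the function name, the dictionaries, and the toy values are hypothetical and do not reflect any vendor's export format.

```python
def link_sequence_to_function(genotypes, signals):
    """Join per-well genotype calls with per-well assay signals.

    genotypes: dict mapping well ID -> variant sequence (from sequencing).
    signals: dict mapping well ID -> measured assay signal (from a plate reader).
    Returns (sequence, signal) pairs for wells present in both inputs,
    ordered by well ID.
    """
    shared = sorted(genotypes.keys() & signals.keys())
    return [(genotypes[well], signals[well]) for well in shared]


# Toy inputs: well A3 has no signal and well B1 has no genotype.
genotypes = {"A1": "MKT", "A2": "MKV", "A3": "MRT"}
signals = {"A1": 0.82, "A2": 1.47, "B1": 0.05}
pairs = link_sequence_to_function(genotypes, signals)
```

Wells missing from either source are dropped rather than filled in, so every pair in the output is backed by both a sequence and a measurement.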

The following diagram illustrates the integrated workflow of the LDBT cycle, highlighting the seamless connection between computational prediction and experimental validation that characterizes this approach.

Essential Research Reagents and Solutions for LDBT Implementation

The successful implementation of the LDBT paradigm requires specialized reagents, computational tools, and instrumentation. The following table details key research solutions that enable the learn-design-build-test workflow in synthetic biology.

Table 3: Essential Research Reagent Solutions for LDBT Implementation

| Category | Specific Solution | Function in LDBT Workflow |

|---|---|---|

| Machine Learning Models | ESM (Evolutionary Scale Modeling) [8] | Protein language model trained on evolutionary sequences for zero-shot prediction of structure-function relationships |

| Machine Learning Models | ProGen [8] | Protein language model capable of generating functional protein sequences and predicting beneficial mutations |

| Machine Learning Models | ProteinMPNN [8] | Structure-based deep learning tool that designs protein sequences for specific backbone structures |

| Machine Learning Models | MutCompute [8] | Deep neural network that identifies stabilizing mutations based on local chemical environments |

| Cell-Free Systems | TX-TL Transcription-Translation Systems [8] | Cell-free protein synthesis machinery enabling rapid expression without cellular constraints |

| Automation Equipment | Automated Liquid Handlers (Tecan, Beckman Coulter) [10] | High-precision pipetting systems for assembling DNA constructs and setting up screening reactions |

| Automation Equipment | Droplet Microfluidics (DropAI) [8] | Picoliter-scale reaction compartmentalization enabling ultra-high-throughput screening of >100,000 variants |

| DNA Synthesis Providers | Twist Bioscience, IDT, GenScript [10] | Custom DNA sequence providers integrated with automated workflows for seamless construct building |

| Analytical Instruments | High-Throughput Plate Readers (PerkinElmer EnVision) [10] | Multi-mode detectors for measuring fluorescent, colorimetric, or luminescent signals from thousands of samples |

| Analytical Instruments | Next-Generation Sequencers (Illumina NovaSeq) [10] | Rapid genotypic analysis of variant libraries, linking DNA sequence to functional output |

| Software Platforms | TeselaGen [10] | End-to-end DBTL/LDBT management software orchestrating design, inventory, workflow automation, and data analysis |

Case Studies: Experimental Validation of LDBT Efficacy

Protein Engineering with Zero-Shot Prediction

A compelling demonstration of LDBT's power comes from protein engineering campaigns that utilize zero-shot machine learning predictions:

PET Hydrolase Engineering: Researchers employed MutCompute, a structure-based deep learning tool, to identify stabilizing mutations in a polyethylene terephthalate (PET) depolymerization enzyme [8]. The model was trained on protein structures to associate amino acids with their local chemical environments, enabling prediction of beneficial substitutions without additional experimental data [8]. The resulting engineered hydrolase variants demonstrated significantly increased stability and activity compared to the wild-type enzyme, validating the computational predictions [8]. This approach was further refined using large language models trained on PET hydrolase homologs combined with force-field algorithms, effectively exploring the evolutionary landscape to improve enzyme performance [8].

TEV Protease Optimization: ProteinMPNN was used to design variants of TEV protease with improved catalytic activity [8]. The model took the entire protein structure as input and predicted new sequences likely to fold into the target backbone [8]. When combined with deep learning-based structure assessment tools like AlphaFold and RoseTTAFold, this approach achieved a nearly 10-fold increase in design success rates compared to traditional methods [8]. This case exemplifies how the integration of multiple machine learning tools within the LDBT framework can dramatically accelerate the engineering of functional proteins.

Ultra-High-Throughput Stability Mapping

The scalability of LDBT has been demonstrated through massive protein stability mapping efforts:

Comprehensive ΔG Determination: Researchers coupled cell-free protein synthesis with cDNA display to calculate folding free energy (ΔG) for 776,000 protein variants in a single experimental campaign [8]. This unprecedented dataset provided experimental validation for thousands of computational predictions simultaneously, creating a robust benchmark for evaluating zero-shot predictors [8]. The scale of this dataset—orders of magnitude larger than traditional approaches—highlights how LDBT's integration of high-throughput experimentation enables comprehensive exploration of sequence-function relationships.
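For orientation, a ΔG value can be related to the folded population under a two-state model. This is textbook thermodynamics and not the analysis pipeline of the cited study [8]. The sign convention (ΔG of unfolding, so positive values mean a stable fold), the units (kcal/mol), and the function name are assumptions made for the example.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)


def fraction_folded(dg_unfold_kcal, temp_k=298.15):
    """Folded fraction for a two-state protein.

    dg_unfold_kcal: free energy of unfolding in kcal/mol (positive = stable).
    Uses K_unfold = exp(-dG/RT) and fraction folded = 1 / (1 + K_unfold).
    """
    k_unfold = math.exp(-dg_unfold_kcal / (R_KCAL * temp_k))
    return 1.0 / (1.0 + k_unfold)


marginal = fraction_folded(0.0)   # equal folded and unfolded populations
stable = fraction_folded(3.0)     # mostly folded
unstable = fraction_folded(-3.0)  # mostly unfolded
```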

Antimicrobial Peptide Design: Deep learning sequence generation was paired with cell-free expression to computationally survey over 500,000 antimicrobial peptide (AMP) variants [8]. From this vast sequence space, researchers selected 500 optimal candidates for experimental validation, resulting in six promising AMP designs with confirmed activity [8]. This approach demonstrates LDBT's ability to efficiently navigate massive design spaces that would be intractable using traditional DBTL methods, focusing experimental resources on the most promising candidates identified through computational learning.
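The funnel in the antimicrobial peptide example can be made concrete with a short calculation. It uses only the figures quoted above; because the source says "over 500,000" variants were surveyed, the fraction advanced to testing is an upper bound.

```python
surveyed = 500_000  # lower bound: the source reports "over 500,000"
tested = 500
hits = 6

tested_fraction = tested / surveyed  # upper bound on the fraction tested
hit_rate = hits / tested

print(f"Fraction advanced to testing: at most {tested_fraction:.2%}")
print(f"Hit rate among tested designs: {hit_rate:.1%}")
```

Roughly one design in a thousand reached the bench, and about one in a hundred of those was reported as promising.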

The LDBT paradigm represents a fundamental shift in how we approach biological engineering, moving from empirical iteration to predictive design. By placing machine learning at the forefront of the biological design process, LDBT leverages the vast and growing repositories of biological data to make informed predictions before experimental work begins [8] [3]. This learn-first approach, combined with rapid cell-free testing platforms, enables researchers to navigate the enormous complexity of biological sequence space with unprecedented efficiency [8] [3].

While significant challenges remain—including model generalizability to novel protein families and the cost of large-scale experimentation—the LDBT framework provides a clear path forward for synthetic biology [9]. As machine learning models continue to improve and experimental platforms become increasingly automated, the integration of computational intelligence with biological design will likely become the standard approach for engineering biological systems [8] [3] [10]. This convergence of data science and biotechnology promises to accelerate the development of novel therapeutics, sustainable biomaterials, and bio-based manufacturing processes, ultimately transforming how we design and interact with biological systems.

The Role of Foundational Models and Zero-Shot Predictions in Enabling LDBT

The synthetic biology field has traditionally operated on the Design-Build-Test-Learn (DBTL) framework, an iterative cycle that systematically engineers biological systems. However, recent advances in artificial intelligence and machine learning are driving a paradigm shift toward Learn-Design-Build-Test (LDBT), where computational learning precedes physical implementation. This transformation is primarily enabled by foundation models capable of zero-shot predictions—generating accurate biological designs without prior task-specific training. This article compares the capabilities of various AI architectures and their zero-shot performance in synthetic biology applications, providing experimental data and methodologies that demonstrate how LDBT accelerates biological engineering.

Traditional DBTL cycles begin with designing biological parts based on existing knowledge, then building DNA constructs, testing them in biological systems, and finally learning from the results to inform the next design iteration [8] [1]. This empirical approach, while systematic, often requires multiple time-consuming and resource-intensive cycles to achieve desired functions.

The emerging LDBT paradigm fundamentally reorders this process by placing Learning first, leveraging foundation models trained on vast biological datasets to generate initial designs [8] [3]. These models utilize zero-shot prediction capabilities to propose functional biological constructs without requiring additional training on specific tasks. The subsequent Design, Build, and Test phases then serve to validate and refine these computational predictions in a single, efficient cycle [8].

This paradigm shift brings synthetic biology closer to established engineering disciplines where designs are based on first principles and reliably work on the first implementation, moving toward a "Design-Build-Work" model [8].

Comparative Analysis of Foundation Models for Biological Design

Foundation models trained on diverse biological datasets have demonstrated remarkable capabilities in understanding and designing biological sequences and structures. The table below compares major model architectures relevant to synthetic biology applications.

Table 1: Comparison of Foundation Model Architectures for Biological Design

| Model | Architecture Type | Key Innovation | Training Data | Relevant Biological Applications |

|---|---|---|---|---|

| ESM [8] | Protein Language Model | Evolutionary scale modeling | Millions of protein sequences | Predicting beneficial mutations, inferring protein function |

| ProGen [8] | Protein Language Model | Conditional protein generation | Diverse protein families | Zero-shot prediction of antibody sequences |

| ProteinMPNN [8] | Structure-based Deep Learning | Inverse folding from structure | Protein structures | Designing sequences that fold into specific backbone structures |

| AlphaFold [8] | Structural Prediction | Geometric deep learning | Protein Data Bank structures | Assessing designed protein structures |

| MutCompute [8] | Environment-aware Neural Network | Residue-level optimization | Protein structures with local environments | Predicting stabilizing mutations |

These models vary in their approaches—some learn from evolutionary patterns in sequence data, while others focus on structural relationships—but collectively enable zero-shot prediction of biological designs.

Zero-Shot Prediction Capabilities Across Modalities

The performance of foundation models in zero-shot settings varies significantly across different biological tasks. The following table summarizes quantitative performance metrics from recent evaluations.

Table 2: Zero-Shot Performance Across Biological Tasks

| Task Domain | Model/Approach | Performance Metric | Result | Reference |

|---|---|---|---|---|

| Face Verification | Vision-Language Models | TMR @ FMR=1% (LFW dataset) | 96.77% | [11] |

| Iris Recognition | Vision-Language Models | TMR @ FMR=1% (IITD-R-Full dataset) | 97.55% | [11] |

| PET Hydrolase Engineering | MutCompute | Stability & Activity | Increased vs. wild-type | [8] |

| TEV Protease Design | ProteinMPNN + AlphaFold | Catalytic Activity | Improved vs. parent sequence | [8] |

| Antimicrobial Peptides | Deep Learning + Cell-free Testing | Success Rate | 6/500 promising designs | [8] |

Performance variability is a recognized characteristic of zero-shot prediction. Research indicates that when foundation models perform well on base prediction tasks, their predicted probabilities become stronger signals for individual-level accuracy [12]. This underscores the importance of task-specific evaluation before full implementation.

Experimental Protocols for LDBT Implementation

Protein Engineering via Structure-Based Models

Objective: Engineer enhanced PET hydrolase enzymes for improved plastic degradation [8].

Methodology:

- Learning: Utilize MutCompute, a deep neural network trained on protein structures, to identify probable stabilizing mutations based on local chemical environments [8].

- Design: Select mutations predicted to improve stability and activity while maintaining catalytic function.

- Build: Synthesize DNA sequences encoding the designed variants and express them in cell-free systems [8] [3].

- Test: Measure depolymerization activity against PET substrates and compare thermostability to wild-type enzyme [8].

Key Tools: MutCompute for mutation prediction; cell-free expression system for rapid protein production; activity assays for functional validation [8].

Antimicrobial Peptide Design via Sequence-Based Models

Objective: Design novel antimicrobial peptides (AMPs) with predicted activity [8].

Methodology:

- Learning: Employ deep learning sequence generation models to computationally survey over 500,000 potential AMP sequences [8].

- Design: Select 500 optimal variants based on model predictions for experimental validation.

- Build: Synthesize peptide sequences using high-throughput methods.

- Test: Evaluate antimicrobial activity against target pathogens using cell-free assays [8] [3].

Key Tools: Deep learning sequence generation; high-throughput peptide synthesis; cell-free antimicrobial activity assays [8].
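The Design step above, which selects a fixed number of candidates from a much larger scored pool, can be sketched as a top-k selection. The scores and sequences here are toy placeholders, and the function name is hypothetical; the source does not specify the selection criteria that were actually used.

```python
import heapq


def select_top_candidates(scored, k):
    """Return the k highest-scoring (sequence, score) pairs, best first."""
    return heapq.nlargest(k, scored, key=lambda pair: pair[1])


# Toy pool; in practice the scores would come from a trained model.
scored = [
    ("KLAK", 0.91),
    ("GIGK", 0.35),
    ("RWRW", 0.78),
    ("AAAA", 0.02),
]
top_two = select_top_candidates(scored, k=2)
```

`heapq.nlargest` avoids sorting the full pool, which helps when the pool holds hundreds of thousands of candidates and only a few hundred are kept.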

Metabolic Pathway Optimization via iPROBE

Objective: Improve 3-HB production in Clostridium hosts [8].

Methodology:

- Learning: Apply iPROBE (in vitro prototyping and rapid optimization of biosynthetic enzymes) framework using neural networks trained on pathway combinations and enzyme expression levels [8].

- Design: Predict optimal pathway sets and expression levels for enhanced production.

- Build: Construct pathway variants in appropriate vectors.

- Test: Measure 3-HB production yields in host systems [8].

Key Tools: iPROBE neural network for pathway prediction; cell-free pathway prototyping; metabolic flux analysis [8].
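The design space that a pathway model ranks is combinatorial: one enzyme choice and one expression level per pathway step. The sketch below enumerates such a space and ranks it with a placeholder score. The step names, homolog labels, expression levels, and `toy_score` are all invented for illustration and are not the iPROBE model or its inputs.

```python
from itertools import product

# Hypothetical design space: homolog choices per pathway step and
# relative expression levels. None of these names come from the source.
homologs = {
    "step1": ["E1a", "E1b"],
    "step2": ["E2a", "E2b", "E2c"],
}
levels = [0.5, 1.0]


def enumerate_designs(homologs, levels):
    """Yield every combination of one homolog and one level per step."""
    steps = sorted(homologs)
    for enzymes in product(*(homologs[s] for s in steps)):
        for expr in product(levels, repeat=len(steps)):
            yield tuple(zip(steps, enzymes, expr))


def toy_score(design):
    """Placeholder for a trained predictor: sums the expression levels."""
    return sum(level for _, _, level in design)


designs = list(enumerate_designs(homologs, levels))
best = max(designs, key=toy_score)
```

Even this small example yields 24 designs (2 × 3 homolog combinations times 2² expression settings), which shows how quickly the space outgrows what can be built and tested exhaustively.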

Visualizing the LDBT Workflow

Diagram 1: LDBT Workflow Integration. This diagram illustrates how foundation models enable the Learn-first approach in synthetic biology, generating zero-shot predictions that inform the design phase, with cell-free systems accelerating build and test phases.

Diagram 2: DBTL vs LDBT Paradigm Comparison. The traditional iterative cycle (left) contrasts with the learning-first approach (right) where foundation models enable single-pass implementation.

Essential Research Reagent Solutions for LDBT Implementation

Table 3: Key Research Reagents and Platforms for LDBT Workflows

| Reagent/Platform | Function in LDBT | Application Examples |

|---|---|---|

| Cell-Free Transcription-Translation Systems [8] [3] | Rapid protein synthesis without living cells | High-throughput testing of protein variants |

| DropAI Microfluidics [8] | Ultra-high-throughput screening in picoliter droplets | Screening >100,000 protein variants |

| cDNA Display Platforms [8] | In vitro protein stability mapping | ΔG calculations for 776,000 protein variants |

| Automated Liquid Handling Robots [8] | Accelerated build and test phases | Automated assembly of DNA constructs |

| Foundation Model APIs (ESM, ProGen, ProteinMPNN) [8] | Zero-shot biological design | Generating novel protein sequences |

The integration of foundation models with zero-shot prediction capabilities represents a transformative advancement in synthetic biology methodology. The LDBT paradigm, enabled by these technologies, shifts the innovation bottleneck from empirical iteration to computational prediction, potentially reducing development timelines from months to days. As foundation models continue to improve in accuracy and biological relevance, and as cell-free testing platforms increase in throughput and accessibility, the LDBT framework promises to democratize and accelerate synthetic biology research across academic, industrial, and therapeutic domains.

Synthetic biology, a field dedicated to reprogramming organisms with novel functionalities, has long been guided by the Design-Build-Test-Learn (DBTL) cycle [1] [6]. This iterative framework, while systematic, often relies on empirical trial-and-error due to the profound complexity of biological systems, making the engineering process slow and costly [13] [14]. However, a paradigm shift is underway, mirroring the evolution of older engineering disciplines from craftsmanship to predictive, computer-aided design [14]. Fueled by the convergence of artificial intelligence (AI) and high-throughput biology, the emerging Learn-Design-Build-Test (LDBT) framework is reordering the cycle to place data-driven learning first, promising to transform synthetic biology into a predictive engineering discipline [2] [3].

The Established DBTL Cycle and Its Bottlenecks

The traditional DBTL cycle has been the backbone of synthetic biology development. Its four stages form a continuous loop for engineering biological systems:

- Design: Researchers define objectives and design genetic constructs using domain knowledge and computational modeling [2] [13].

- Build: DNA constructs are physically assembled and introduced into a host chassis (e.g., bacteria, yeast) or cell-free systems [2] [14].

- Test: The performance of the engineered system is experimentally measured and characterized [1] [13].

- Learn: Data from testing is analyzed to inform the next design iteration, refining the approach until the desired function is achieved [2] [6].

A significant limitation of this cycle is that the "learn" phase comes last. Learning is therefore reactive: it depends on the data generated by the "build" and "test" phases of that same cycle [6]. Furthermore, the "build" and "test" phases, particularly when using living cells, can be time-consuming and low-throughput, which creates a bottleneck and limits the number of design iterations possible [2] [14].

The Emergence of the LDBT Paradigm

The LDBT paradigm proposes a fundamental reordering of the cycle, placing "Learn" at the forefront [2] [3]. This shift is powered by machine learning (ML) models trained on vast biological datasets, which can make powerful predictions before any new physical construction begins.

- Learn (First): In LDBT, the cycle starts with machine learning models that have been pre-trained on large-scale biological data, including protein sequences, structures, and functional assays [2] [13]. These models can learn the complex, non-linear relationships between sequence, structure, and function.

- Design (Informed by Learning): The design phase is directly guided by the ML models. Researchers use tools like ProteinMPNN (for sequence design) and AlphaFold (for structure prediction) to generate optimal genetic designs that are likely to succeed, often in a "zero-shot" manner without additional model training [2].

- Build & Test (Rapid Validation): The designed constructs are then rapidly built and tested using high-throughput platforms. Cell-free transcription-translation (TX-TL) systems are particularly valuable here, as they allow for rapid protein expression without the delays of cellular culture, enabling the testing of thousands of variants in hours [2] [3].

This approach creates a more efficient funnel, where a vast digital design space is navigated computationally, and only the most promising candidates are physically validated [3].

Comparative Analysis: DBTL vs. LDBT

The table below summarizes the core differences between the two paradigms across key aspects of the engineering workflow.

| Feature | Traditional DBTL Cycle | LDBT Paradigm |

|---|---|---|

| Cycle Order | Design → Build → Test → Learn | Learn → Design → Build → Test |

| Primary Driver | Empirical, iterative experimentation | Data-driven, predictive computation |

| Knowledge Base | Project-specific data from previous cycles | Foundational models trained on megascale biological data (e.g., protein sequences, structures) [2] [13] |

| Role of ML/AI | Analyzes data at the end of the cycle to inform next design | Precedes and directly informs the initial design; enables zero-shot predictions [2] |

| Build/Test Platform | Often relies on in vivo (cellular) systems | Heavily leverages rapid, high-throughput cell-free systems [2] [3] |

| Throughput | Lower, limited by cellular growth and cloning steps | Very high, enabled by cell-free and automation |

| Predictivity | Lower, relies on trial-and-error | Higher, aims for "first-principles" design [2] |

Experimental Protocols & Supporting Data

The practical superiority of the LDBT paradigm is demonstrated in recent research that integrates specific machine learning models with rapid cell-free testing.

Experimental Workflow for LDBT

The following diagram illustrates the integrated workflow of the LDBT cycle, showcasing the seamless flow from computational learning to physical testing and model refinement.

Diagram 1: The LDBT (Learn-Design-Build-Test) experimental workflow. The cycle begins with foundational learning from large datasets, which directly informs the computational design of biological parts. These designs are rapidly built and tested in cell-free systems, with the resulting experimental data used to update and refine the machine learning models, creating a continuous improvement loop [2] [3].

Detailed Methodology for a Key Experiment

A representative application of LDBT uses a pre-trained model, such as the protein language model ESM (Evolutionary Scale Modeling) or the structure-based tool MutCompute, to design and test novel enzyme variants [2].

- Objective: Engineer an enzyme (e.g., a hydrolase for PET plastic depolymerization) with enhanced stability and activity [2].

- Learn Phase: A pre-trained protein language model (e.g., ESM) or a structure-based tool (e.g., MutCompute) is used to analyze evolutionary and biophysical constraints. The model predicts amino acid substitutions that are likely to be stabilizing and functionally beneficial without being explicitly trained on this specific enzyme [2].

- Design Phase: The top in silico predictions are selected to generate a library of variant DNA sequences. This step uses computational tools to codon-optimize the sequences for expression [2].

- Build Phase: The DNA sequences for the wild-type and predicted variant enzymes are synthesized via high-throughput gene synthesis. These DNA templates are then used directly in a cell-free transcription-translation (TX-TL) system, bypassing time-consuming steps of cloning into plasmids and transforming living cells [2] [3].

- Test Phase: The cell-free reactions express the enzyme variants. Function is assessed using a colorimetric or fluorescent assay that measures the degradation of a substrate (e.g., PET). Fluorescence-activated droplet sorting or microplate readers can be used for high-throughput quantification. Stability is measured by incubating enzymes at different temperatures and assessing residual activity [2].
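The stability readout in the Test phase can be summarized numerically. The sketch below computes residual activity relative to an untreated control and interpolates the temperature at which half the activity remains. Summarizing the curve as a single half-activity temperature is a common convention assumed here, not something the source specifies, and all values are toy data.

```python
def residual_activity(treated, untreated):
    """Activity remaining after heat incubation, as a fraction of the control."""
    return treated / untreated


def t50(temps, residuals):
    """Linearly interpolate the temperature where residual activity crosses 0.5.

    temps must be in increasing order. Returns None if 0.5 is never crossed.
    """
    points = list(zip(temps, residuals))
    for (t_lo, r_lo), (t_hi, r_hi) in zip(points, points[1:]):
        if r_lo >= 0.5 >= r_hi and r_lo != r_hi:
            return t_lo + (r_lo - 0.5) * (t_hi - t_lo) / (r_lo - r_hi)
    return None


# Toy residual-activity curves (fraction of untreated activity).
temps = [40, 50, 60, 70]
wild_type = [1.0, 0.8, 0.2, 0.0]
variant = [1.0, 0.95, 0.7, 0.3]

t50_wild_type = t50(temps, wild_type)
t50_variant = t50(temps, variant)
```

In this toy data the variant retains half its activity at a higher temperature than the wild type, which is the kind of comparison the protocol uses to call a mutation stabilizing.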

Performance Data Comparison

The table below quantifies the performance gains achieved by the LDBT paradigm in specific experimental use cases, compared to traditional DBTL approaches.

| Application / Metric | DBTL Performance | LDBT Performance | Key Enabling Technologies |

|---|---|---|---|

| Enzyme Engineering (PET Hydrolase) [2] | Multiple iterative rounds required; improved stability/activity | Single-round success with zero-shot models; increased stability & activity vs. wild-type | MutCompute, Protein Language Models (ESM), Cell-free Testing |

| Antimicrobial Peptide (AMP) Design [2] | Limited library size; low hit rate | 500 variants tested from >500,000 surveyed; 6 promising designs identified | Deep Learning Sequence Generation, Cell-free Expression |

| Pathway Optimization (3-HB in Clostridium) [2] | Iterative host engineering; slower yield improvement | >20-fold production increase predicted via neural network | iPROBE, Cell-free Pathway Prototyping |

| General Cycle Turnover Time | Weeks to months per cycle [14] | Hours for test phase using cell-free systems [2] [3] | Cell-free TX-TL, Automation, Microfluidics |

The Scientist's Toolkit: Essential Research Reagents & Materials

The implementation of the LDBT paradigm relies on a specific set of computational and experimental tools.

| Tool Category | Item / Solution | Function in LDBT Workflow |

|---|---|---|

| Computational (Learn/Design) | Protein Language Models (e.g., ESM, ProGen) [2] | Pre-trained on evolutionary data for zero-shot prediction of protein function and beneficial mutations. |

| Computational (Learn/Design) | Structure-Based Design Tools (e.g., ProteinMPNN, AlphaFold) [2] | Generate sequences that fold into a desired backbone (ProteinMPNN) and predict 3D protein structures (AlphaFold). |

| Computational (Learn/Design) | Stability Prediction Tools (e.g., Prethermut, Stability Oracle) [2] | Predict the thermodynamic stability change (ΔΔG) of protein variants to screen for stabilizing mutations. |

| Experimental (Build/Test) | Cell-Free TX-TL System [2] [3] | A reconstituted biochemical machinery for rapid, high-yield protein synthesis without living cells. |

| Experimental (Build/Test) | DNA Template | Synthesized linear DNA or plasmids encoding the variant to be expressed; the direct input for the cell-free system. |

| Experimental (Build/Test) | Metabolic Assay Kits (e.g., NADPH/NADP⁺) | Quantify cofactor turnover or metabolic flux in cell-free prototyped pathways. |

| Experimental (Build/Test) | Droplet Microfluidics Setup [2] | Encapsulate single cell-free reactions in picoliter droplets for ultra-high-throughput screening. |

Future Outlook and Implications

The transition from DBTL to LDBT signifies a broader movement toward the industrialization of biology. As foundational models grow more sophisticated and high-throughput testing becomes even more accessible, the LDBT cycle is expected to accelerate, potentially converging on a "Design-Build-Work" model reminiscent of mature engineering disciplines like civil engineering [2]. This progression will be crucial for tackling complex challenges in drug development, sustainable manufacturing, and climate change, enabling the creation of biological solutions with a speed and precision previously unimaginable.

Key Technologies and Workflows Powering the LDBT Framework

The foundational framework of synthetic biology has long been the Design-Build-Test-Learn (DBTL) cycle, an iterative process where biological systems are designed, constructed, experimentally validated, and insights from data are used to inform the next design round [8] [1]. However, recent advances in machine learning (ML) are instigating a paradigm shift. The proliferation of large-scale biological data and sophisticated computational models now enables a reordering of this cycle into LDBT (Learn-Design-Build-Test), where machine learning precedes design [8].

In the LDBT framework, "Learning" is moved to the forefront. Vast, pre-existing biological knowledge is captured by machine learning models trained on millions of protein sequences and structures. This allows researchers to make zero-shot predictions—designing proteins with desired functions without any initial target-specific experimental data [8]. The subsequent Build and Test phases then serve to validate these computational predictions, potentially reducing the number of costly experimental cycles required. This paradigm brings synthetic biology closer to a "Design-Build-Work" model, akin to more mature engineering disciplines [8].

This guide objectively compares the performance of key machine learning tools driving this shift: protein language models (ESM, ProGen) and structure-based design tools (ProteinMPNN, MutCompute).

Protein Language Models (PLMs) for Zero-Shot Design

Protein language models are deep learning systems pre-trained on massive datasets of protein sequences. By learning evolutionary patterns and statistical relationships between amino acids, they can predict the effects of mutations and generate novel, functional protein sequences from scratch without target-specific training data [8] [15].

ESM (Evolutionary Scale Modeling)

The ESM family of models, including ESM-1b and ESM-2, are transformer-based protein language models trained on millions of diverse protein sequences. They learn to represent the evolutionary constraints and biophysical properties that shape proteins [8] [15].

- Core Methodology: ESM models use a self-supervised training objective, often a masked language model task, where random amino acids in a sequence are hidden, and the model must predict them based on the surrounding context. This forces the model to internalize complex dependencies within protein sequences [15].

- Zero-Shot Function: The model's output log-likelihood for a given sequence or mutation can be used as a fitness score, estimating how "natural" and likely-to-fold the variant appears based on evolutionary data [8] [16].

- Key Applications: ESM has been applied to predict beneficial mutations, infer protein function, and predict solvent-exposed and charged amino acids. It has proven adept at zero-shot prediction of diverse antibody sequences [8].
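
The zero-shot scoring idea described above can be sketched in a few lines. This is a minimal illustration, not the ESM API: it assumes a masked language model has already returned a probability distribution over amino acids for each position, and the toy probabilities and the function names `mutation_score` and `pseudo_log_likelihood` are hypothetical. The score used here is the log-odds of the mutant residue over the wild-type residue, one common heuristic for this kind of ranking.

```python
import math

# Hypothetical per-position probabilities, as a masked language model might
# return after masking each position of the wild-type sequence in turn.
# All values are made up for illustration.
position_probs = [
    {"M": 0.90, "L": 0.05, "V": 0.05},
    {"K": 0.40, "R": 0.50, "E": 0.10},
    {"T": 0.70, "S": 0.25, "A": 0.05},
]
wild_type = "MKT"


def mutation_score(probs, wt_seq, pos, mutant):
    """Log-odds of the mutant residue versus the wild-type residue at `pos` (0-based)."""
    p = probs[pos]
    return math.log(p[mutant]) - math.log(p[wt_seq[pos]])


def pseudo_log_likelihood(probs, seq):
    """Sum of per-position log-probabilities of the residues in `seq`.

    Assumes `probs` was obtained by masking each position of this same sequence.
    """
    return sum(math.log(p[aa]) for p, aa in zip(probs, seq))


# K2R is favored by the toy model (positive score); M1L is disfavored (negative).
print("K2R:", mutation_score(position_probs, wild_type, 1, "R"))
print("M1L:", mutation_score(position_probs, wild_type, 0, "L"))
print("wild-type pseudo-log-likelihood:", pseudo_log_likelihood(position_probs, wild_type))
```

A positive score means the model considers the substitution more "natural" than the wild-type residue in that context; in practice, the probabilities would come from a pretrained model rather than a hand-written table.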

Table 1: Performance Summary of Protein Language Models

| Model | Training Data | Key Applications | Reported Performance |
|---|---|---|---|
| ESM-1b/ESM-2 | Millions of protein sequences from UniRef [15] | Protein function prediction, mutation effect prediction, zero-shot fitness inference [8] [15] | Outperformed traditional methods in CAFA challenge; widely used as a state-of-the-art feature encoder [15] |
| ProGen | Millions of protein sequences, including control tags for function [8] | Generation of functional protein sequences with controlled properties [8] | Successfully generated functional lysozymes; capable of zero-shot prediction of diverse antibody sequences [8] |

ProGen

ProGen is another protein language model trained on a large corpus of protein sequences. Its distinctive feature is the inclusion of control tags (e.g., for protein family or function) during training, enabling conditional generation of novel protein sequences tailored for specific purposes [8].

- Core Methodology: Similar to ESM, ProGen uses a transformer architecture. It was trained on a dataset of ~280 million protein sequences, learning the underlying "grammar and style" of proteins across different families [8].

- Zero-Shot Function: ProGen can generate entirely new protein sequences that do not exist in nature by sampling from the learned distribution, guided by user-specified control tags to steer the function of the generated protein [8].

Structure-Based Deep Learning Design Tools

Unlike PLMs that primarily use sequence information, structure-based tools leverage 3D structural data to inform the design process, focusing on how a sequence will fold and function in a structural context.

ProteinMPNN

ProteinMPNN is a deep neural network for protein sequence design. Given a protein backbone structure as input, it predicts amino acid sequences that are likely to fold into that structure [8] [17].

- Core Methodology: ProteinMPNN uses a message-passing neural network architecture that operates on a graph representation of the protein structure. It considers the spatial relationships between residues to design sequences that are energetically favorable and compatible with the desired fold [8].

- Zero-Shot Function: Researchers can input a novel backbone structure or a modified existing structure, and ProteinMPNN will output one or more sequences predicted to fold into it, without requiring any prior experimental data for that specific scaffold [8].

- Key Applications: It has been used to design variants of TEV protease with improved catalytic activity and to redesign enzymes like the Fe(II)/αKG enzyme tP4H for greater stability and solubility while retaining native activity [8] [17]. When combined with structure prediction tools like AlphaFold, it has led to a nearly 10-fold increase in design success rates [8].
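
To make the "graph representation of the protein structure" more concrete, the sketch below builds a k-nearest-neighbor graph over residue coordinates, which is one common way to turn a backbone into a graph. It is an illustration under stated assumptions: the coordinates are toy values, `knn_graph` is a hypothetical helper, the choice of `k=2` is arbitrary, and the text does not specify ProteinMPNN's exact featurization, which is richer than plain neighbor indices.

```python
import math


def knn_graph(coords, k):
    """For each residue, return the indices of its k nearest residues by Euclidean distance."""
    neighbors = []
    for i, ci in enumerate(coords):
        # Distance from residue i to every other residue, paired with that residue's index
        dists = [(math.dist(ci, cj), j) for j, cj in enumerate(coords) if j != i]
        dists.sort()
        neighbors.append([j for _, j in dists[:k]])
    return neighbors


# Toy C-alpha coordinates (in angstroms) for five residues; values are illustrative only.
ca_coords = [
    (0.0, 0.0, 0.0),
    (3.8, 0.0, 0.0),
    (7.6, 0.0, 0.0),
    (7.6, 3.8, 0.0),
    (3.8, 3.8, 0.0),
]

graph = knn_graph(ca_coords, k=2)
for i, nbrs in enumerate(graph):
    print(f"residue {i} -> neighbors {nbrs}")
```

In a message-passing network, each residue would then exchange information along these edges, so residues that are close in space influence each other's predicted amino acid even when they are far apart in sequence.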

Table 2: Performance Summary of Structure-Based Tools

| Tool | Input | Key Applications | Reported Performance |
|---|---|---|---|
| ProteinMPNN | Protein backbone structure [8] | De novo sequence design, enzyme stabilization, protein binder design [8] [17] | ~10-fold increase in design success rates when combined with AlphaFold for structure assessment [8] |
| MutCompute | Protein structure and local chemical environment [8] | Residue-level optimization for stability and activity [8] | Engineered a hydrolase for PET depolymerization with increased stability and activity vs. wild-type [8] |

MutCompute

MutCompute is a structure-based deep learning tool that focuses on residue-level optimization. It identifies probable mutations given the local chemical environment of a residue within a protein structure [8].

- Core Methodology: MutCompute uses a deep neural network trained on protein structures from the PDB. It learns to associate an amino acid with its surrounding chemical environment (e.g., neighboring atoms, secondary structure, solvent exposure), allowing it to predict mutations that are likely to be stabilizing or functionally beneficial [8].

- Zero-Shot Function: By analyzing a protein's structure, MutCompute can propose single or multiple point mutations that are predicted to enhance stability or other properties, without first requiring functional assays on the target protein [8].
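
The residue-level logic can be illustrated with a short sketch. It assumes a structure-based model has already produced, for each position, a probability distribution over amino acids given the local chemical environment, and it flags positions where the model's preferred residue clearly disagrees with the wild type. The probabilities, the `min_gain` threshold, and the function name `suggest_mutations` are hypothetical; this is not MutCompute's actual interface or selection rule.

```python
def suggest_mutations(wild_type, env_probs, min_gain=0.3):
    """Flag positions where the predicted best residue beats the wild type by at least min_gain."""
    suggestions = []
    for pos, (wt_aa, probs) in enumerate(zip(wild_type, env_probs), start=1):
        best_aa = max(probs, key=probs.get)
        gain = probs[best_aa] - probs.get(wt_aa, 0.0)
        if best_aa != wt_aa and gain >= min_gain:
            suggestions.append((f"{wt_aa}{pos}{best_aa}", round(gain, 3)))
    # Largest disagreement with the wild type first
    return sorted(suggestions, key=lambda s: s[1], reverse=True)


wild_type = "SAGL"
# Made-up environment-conditioned probabilities for each of the four positions
env_probs = [
    {"S": 0.80, "T": 0.15, "A": 0.05},  # model agrees with the wild type
    {"A": 0.10, "P": 0.75, "G": 0.15},  # model strongly prefers P
    {"G": 0.45, "A": 0.50, "S": 0.05},  # weak preference, below the threshold
    {"L": 0.20, "I": 0.60, "V": 0.20},  # model prefers I
]

for mutation, gain in suggest_mutations(wild_type, env_probs):
    print(mutation, gain)
```

Positions where the wild-type residue looks out of place in its environment become candidate mutation sites, which is why no functional assay data on the target protein is needed to produce a first shortlist.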

Comparative Performance Analysis

The following table compares these tools based on their performance in published experimental validations.

Table 3: Experimental Performance and Validation Data

| Tool | Type | Experimental Validation Example | Experimental Outcome |
|---|---|---|---|
| ESM | Protein Language Model | Zero-shot prediction of beneficial antibody mutations [8] | Successful prediction of functional antibody sequences without target-specific training [8] |
| ProGen | Protein Language Model | Generation of novel antimicrobial peptides (AMPs) [8] | From 500 computationally surveyed AMPs, 6 promising designs were validated experimentally [8] |
| ProteinMPNN | Structure-Based Design | Redesign of TEV protease [8] | Designed variants showed improved catalytic activity compared to the parent sequence [8] |
| MutCompute | Structure-Based Design | Engineering a hydrolase for PET depolymerization [8] | MutCompute-designed proteins had increased stability and activity compared to wild-type [8] |
| FSFP (Fine-tuning) | Hybrid Approach | Engineering Phi29 DNA polymerase [16] | Fine-tuned ESM-1v model led to a 25% increase in the positive rate of functional polymerase variants [16] |

Experimental Protocols for Zero-Shot Validation

To validate zero-shot predictions, a typical DBTL cycle is employed, where the "Design" phase is heavily influenced by the computational model.

Protocol for Validating a PLM-Generated Protein

This protocol outlines the steps for experimentally testing a novel protein sequence generated by a model like ProGen or a stabilized variant designed by ProteinMPNN.

- In Silico Design: Generate a set of candidate sequences using the zero-shot model (e.g., ProGen for a novel AMP, ProteinMPNN for a stabilized enzyme scaffold). The model's internal scoring (e.g., log-likelihood, confidence score) is used for initial ranking [8] [17].

- DNA Synthesis and Cloning: The selected nucleotide sequences are synthesized de novo and cloned into an appropriate expression vector. Automated biofoundries can streamline this process for high-throughput workflows [8] [14].

- Cell-Free Expression or In Vivo Expression: The built DNA constructs are expressed. Cell-free expression systems are particularly valuable here for their speed (>1 g/L protein in <4 h), scalability (pL to kL), and ability to express proteins that might be toxic in living cells [8].

- Functional Assay: The expressed proteins are tested for the desired function.

- Data Analysis and Learning: Experimental results are analyzed. Successful variants confirm the zero-shot prediction, while failures provide data that can be used to fine-tune the model for future cycles, closing the LDBT loop [8] [16].
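
The ranking and bookkeeping steps of this protocol can be sketched as follows. The candidate names and scores are made up, and `select_top` and `hit_rate` are hypothetical helpers; the only figures taken from the text are the 6 validated designs out of 500 surveyed AMPs reported in Table 3, read here as hits per candidate surveyed.

```python
def select_top(candidates, n):
    """Return the n candidates with the highest model score (higher is assumed to be better)."""
    return sorted(candidates, key=lambda c: c["score"], reverse=True)[:n]


def hit_rate(n_hits, n_tested):
    """Fraction of tested designs that passed the functional assay."""
    return n_hits / n_tested


# Hypothetical candidates with made-up log-likelihood scores from a zero-shot model
candidates = [
    {"name": "design_A", "score": -41.2},
    {"name": "design_B", "score": -35.7},
    {"name": "design_C", "score": -52.9},
    {"name": "design_D", "score": -38.1},
]

# In Silico Design: keep only the best-scoring designs for DNA synthesis
to_build = select_top(candidates, n=2)
print("send to synthesis:", [c["name"] for c in to_build])

# Data Analysis and Learning: summarize the outcome of the Test phase
print(f"hit rate: {hit_rate(6, 500):.1%}")
```

Tracking the hit rate per round gives a simple measure of whether fine-tuning the model on the failures actually improves the next batch of designs.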

[Figure: Zero-Shot LDBT Cycle for Protein Design]

Protocol for a Stability Engineering Campaign Using ProteinMPNN

This specific protocol is adapted from a study that used ProteinMPNN to stabilize the Fe(II)/αKG enzyme tP4H [17].

- Structure Preparation: Obtain a 3D structure of the target protein. If an experimental structure is unavailable, use a predicted model from AlphaFold2 [17].

- Define Fixed Residues: To preserve catalytic function, identify and "fix" (prevent mutation of) residues critical for activity (e.g., active site residues, cofactor-binding residues). This can be done using the AlphaFold2 model and comparisons to homologs with known structures [17].

- Sequence Design with ProteinMPNN: Run ProteinMPNN on the structure, specifying the fixed residues. Generate a large number (e.g., 48) of designed sequences [17].

- In Silico Ranking: Rank the designed sequences based on ProteinMPNN's confidence scores and/or other criteria (e.g., computational stability metrics) [17].

- Build, Test, and Learn: Synthesize and clone top-ranked designs. Express and purify the proteins. Test for:

- Retained Native Function: Ensure the stabilized design has not lost its original catalytic activity.

- Improved Stability: Measure metrics like melting temperature (Tm) or soluble expression yield.

- Non-Native Function: If applicable, screen for improved performance in a promiscuous, industrially-relevant reaction (e.g., C-H hydroxylation) [17].
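
The "fix residues, design, then rank" logic of this protocol can be sketched without calling ProteinMPNN itself. The sequence, the fixed positions, the designs, and the scores below are all hypothetical, and the score is assumed to be "higher is better"; the sign convention of a real tool's output should be checked before ranking.

```python
def designable_positions(length, fixed):
    """1-based positions that the design step is allowed to change."""
    return [i for i in range(1, length + 1) if i not in fixed]


def violates_fixed(wild_type, design, fixed):
    """True if the design differs from the wild type at any fixed (1-based) position."""
    return any(wild_type[i - 1] != design[i - 1] for i in fixed)


def count_mutations(wild_type, design):
    """Number of positions where the design differs from the wild type."""
    return sum(a != b for a, b in zip(wild_type, design))


wild_type = "MHDEAKLG"
fixed = {2, 3}  # hypothetical catalytic residues H2 and D3

# Hypothetical designs: name -> (sequence, confidence score)
designs = {
    "d1": ("MHDQAKIG", -1.10),
    "d2": ("MHEEAKLG", -0.90),  # mutates fixed position 3, so it is rejected
    "d3": ("LHDEARLG", -0.95),
}

# Keep only designs that preserve every fixed residue, then rank by score
kept = {
    name: (seq, score)
    for name, (seq, score) in designs.items()
    if not violates_fixed(wild_type, seq, fixed)
}
ranked = sorted(kept, key=lambda name: kept[name][1], reverse=True)

print("designable positions:", designable_positions(len(wild_type), fixed))
for name in ranked:
    seq, score = kept[name]
    print(name, seq, score, "mutations:", count_mutations(wild_type, seq))
```

A check like `violates_fixed` is a cheap safeguard before ordering DNA: it confirms that residues marked as critical for activity were indeed left untouched by the design step.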

[Figure: Workflow for Protein Stabilization with ProteinMPNN]

Essential Research Reagent Solutions

The experimental validation of zero-shot designs relies on a suite of core reagents and platforms.

Table 4: Key Research Reagents and Platforms

| Item / Solution | Function in Workflow | Key Characteristics |
|---|---|---|
| Cell-Free Expression System [8] | Rapid protein synthesis for high-throughput testing of designed variants. | Fast (>1 g/L in <4 h), scalable, bypasses cell viability, allows toxic protein production. |
| AlphaFold2 Model [18] [17] | Provides a reliable 3D protein structure for structure-based tools when experimental structures are unavailable. | High accuracy (average error ~1 Å), covers entire protein sequences. |
| Automated Biofoundry [8] [14] | Automates the Build and Test phases (DNA assembly, transformation, culturing, assays). | Increases throughput, reduces human error and labor, enables closed-loop DBTL/LDBT cycles. |
| Droplet Microfluidics [8] | Ultra-high-throughput screening of protein variants. | Enables screening of >100,000 picoliter-scale reactions in parallel. |
| Ultra-Large Virtual Compound Libraries (e.g., REAL Database) [18] | Provides a vast chemical space for in silico screening of small molecule binders for designed proteins. | Billions of make-on-demand compounds, expands hit discovery and chemical diversity. |
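
A quick back-of-the-envelope calculation helps put the cell-free and droplet figures in the table into perspective. The 1 g/L titer and the 100,000-reaction count come from the table; the specific reaction volumes (a 1 pL droplet and a 10 uL well) are assumptions chosen only to illustrate the scale, and the helper names are hypothetical.

```python
def protein_mass_g(titer_g_per_l, volume_l):
    """Protein mass (grams) produced in one reaction at a given titer and volume."""
    return titer_g_per_l * volume_l


def total_volume_l(n_reactions, volume_l):
    """Combined volume (liters) of n reactions of equal size."""
    return n_reactions * volume_l


TITER = 1.0  # g/L, the lower bound quoted for cell-free expression

# Assumed reaction volumes, in liters
volumes = {
    "1 pL droplet": 1e-12,
    "10 uL well": 10e-6,
    "1 L batch": 1.0,
}

for label, vol in volumes.items():
    print(f"{label}: {protein_mass_g(TITER, vol):.3g} g of protein")

# Total reagent volume for 100,000 droplets, assuming 1 pL each
print(f"100,000 droplets: {total_volume_l(100_000, 1e-12):.3g} L in total")
```

Under these assumptions, a single droplet yields on the order of a picogram of protein, and an entire 100,000-droplet screen consumes well under a microliter of reaction mix, which is what makes this scale of testing practical.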

Synthetic biology has traditionally operated on the Design-Build-Test-Learn (DBTL) cycle, a systematic framework for engineering biological systems [1]. In this paradigm, researchers design genetic constructs, build them in the laboratory, test their functionality, and learn from the results to inform the next design iteration. However, this process can be time-consuming, with the Build and Test phases often creating significant bottlenecks. A new paradigm, the "LDBT" cycle, is emerging, where Learning precedes Design [8]. This shift is powered by machine learning (ML) models that can make zero-shot predictions, generating viable initial designs based on vast biological datasets. This places even greater importance on the subsequent Build and Test phases, which must be rapid and high-throughput to validate these computational predictions efficiently [8]. Within this context, cell-free gene expression (CFE) systems and automated DNA synthesis have become critical technologies for accelerating the Build phase, enabling the rapid physical realization of designed genetic constructs and paving the way for a more agile engineering biology.

The DBTL Cycle and its Bottlenecks

The classic DBTL cycle is a cornerstone of synthetic biology [5]. The process begins with Design, where researchers define objectives and design the necessary biological parts using computational tools [8]. This is followed by the Build phase, which involves the physical construction of DNA constructs, their assembly into vectors, and introduction into a living chassis (e.g., bacteria, yeast) or a cell-free system for characterization [8]. The Test phase involves experimental measurement of the construct's performance, and the Learn phase analyzes this data to refine the design for the next cycle [8]. A major bottleneck in this workflow has been the Build phase, particularly when relying on in vivo chassis. Cloning, transforming, and cultivating living cells is a slow process, often taking days and creating a disconnect with the rapidly generated in silico designs from the new LDBT paradigm [8].

Cell-Free Expression Systems: Bypassing the Cell to Accelerate Building and Testing

What is Cell-Free Gene Expression?

Cell-free gene expression (CFE) is a methodology for performing transcription and translation in vitro using the protein synthesis machinery extracted from cells [19]. Unlike traditional in vivo methods, CFE bypasses the need for living cells, using lysates or purified components from organisms like E. coli to directly convert synthesized DNA templates into proteins [8] [19]. This offers a direct and rapid path from a designed DNA sequence to a functional protein product.

Key Advantages for the Build-Test Phases

The unique features of CFE make it exceptionally well-suited for accelerating synthetic biology workflows, particularly within an LDBT framework:

- Speed and Directness: CFE reactions can produce proteins in less than four hours, dramatically faster than in vivo methods, which require cloning and cell cultivation [8]. Synthesized DNA can be added directly to the reaction without time-consuming cloning steps [8].

- High-Throughput Capability: The open nature of CFE reactions makes them highly compatible with automation. They can be miniaturized to picoliter volumes and scaled to hundreds of thousands of reactions using liquid handling robots and microfluidics, enabling massive parallel testing [8]. Platforms like DropAI can screen over 100,000 reactions in a single run [8].