LDBT vs. DBTL: How Machine Learning and Cell-Free Systems Are Reshaping Synthetic Biology

Abstract

This article provides a comparative analysis for researchers and drug development professionals on the evolving engineering cycles in synthetic biology. It explores the foundational principles of the traditional Design-Build-Test-Learn (DBTL) cycle and the emerging, data-driven Learn-Design-Build-Test (LDBT) paradigm. The scope covers the methodological shift driven by machine learning and rapid cell-free testing, addresses practical challenges and optimization strategies, and offers a critical validation of both approaches through performance metrics and application case studies, ultimately outlining the future implications for biomedical research and therapeutic development.

Core Concepts: Deconstructing the DBTL Cycle and the New LDBT Paradigm

The Design-Build-Test-Learn (DBTL) cycle represents a foundational framework in synthetic biology and engineering biology, applying rigorous engineering principles to the design and assembly of biological components [1] [2]. This iterative process provides a systematic methodology for developing biological systems with predicted functions, enabling researchers to engineer organisms for specific applications such as producing biofuels, pharmaceuticals, and other valuable compounds [1]. A fundamental challenge in biological engineering lies in the inherent complexity of living systems, where even rationally designed biological components can behave unpredictably when introduced into cellular environments [1] [2]. Unlike classical engineering disciplines that utilize well-characterized, man-made building blocks, synthetic biology often relies on partially characterized biological parts implemented within dynamic living systems that remain poorly understood [2].

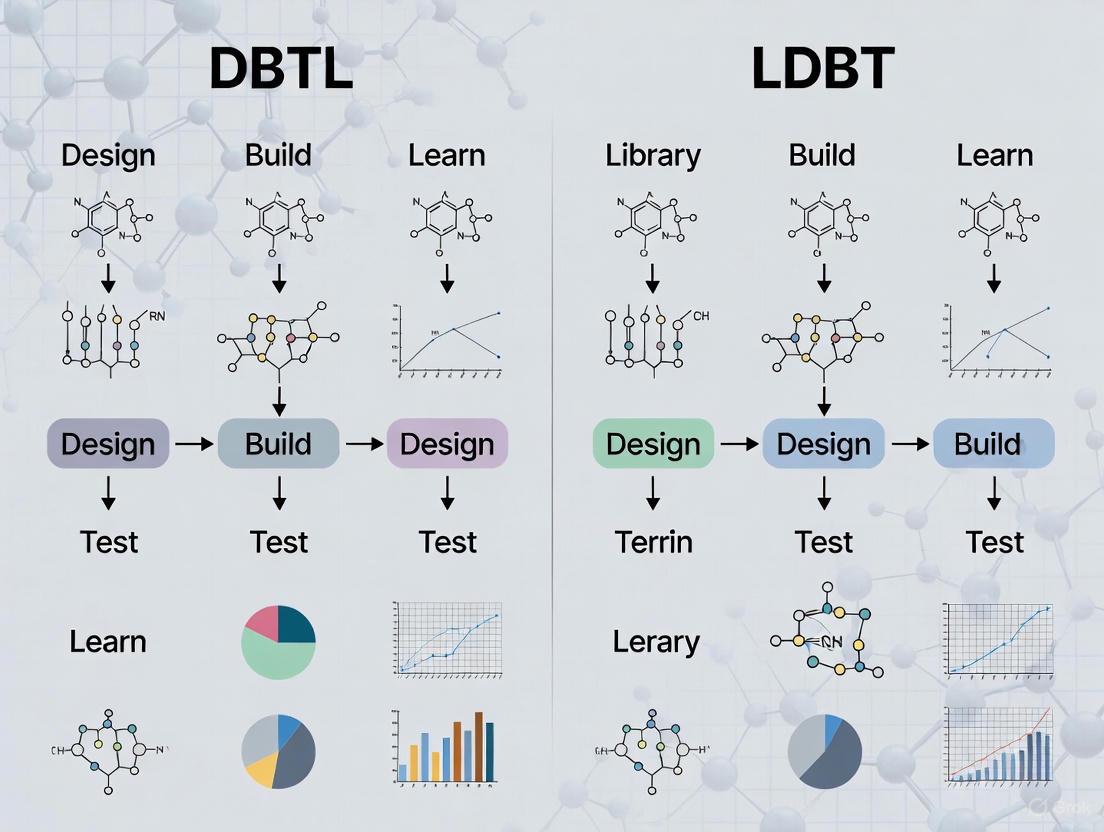

The DBTL framework addresses this challenge through continuous iteration, where each cycle generates new data and insights to inform subsequent designs [3]. This approach has become synonymous with advanced synthetic biology workflows, particularly with the rise of automated biofoundries that streamline each phase of the cycle [4]. The structured nature of DBTL allows researchers to navigate the vast design space of biological systems methodically, gradually converging on optimal solutions through empirical testing and data-driven learning [3]. As the field evolves, new variations such as the LDBT (Learn-Design-Build-Test) cycle are emerging, proposing a reordering of the traditional sequence to leverage machine learning predictions before experimental building and testing [5] [6]. Nevertheless, the established DBTL cycle remains the predominant framework for engineering biological systems across academic and industrial contexts.

The Four Phases of the DBTL Cycle

Design Phase

The Design phase initiates the DBTL cycle by defining objectives for the desired biological function and specifying the genetic components needed to achieve it [5]. This stage encompasses both biological design and operational design [2]. Biological design involves specifying desired cellular functions, such as producing a target compound or generating detectable signals in response to analytes [2]. Operational design focuses on creating experimental procedures and protocols that will efficiently generate the required data [2].

During Design, researchers identify appropriate biological parts—including enzymes, reporters, and regulatory sequences—and determine how to assemble them to implement the desired function [2]. This process draws upon domain knowledge, expertise, and computational modeling approaches [5]. With the growing universe of characterized biological parts, standardized registries that catalog these components under various biological contexts become increasingly valuable [2]. The end product of the Design phase is one or more DNA sequences comprising multiple genetic parts that are predicted to generate the target functions in a specific biological context [2].

Table 1: Key Activities and Outputs in the Design Phase

| Activity Category | Specific Activities | Primary Outputs |
|---|---|---|
| Biological Design | Define target functions; Identify biological parts; Computational modeling | Functional specifications; DNA sequence designs |
| Operational Design | Design experimental protocols; Define performance specifications; Plan data capture | Experimental plans; Measurement protocols |
| Computational Support | Design-of-experiment (DoE) approaches; Optimization algorithms; DNA assembly planning | Optimized design libraries; Assembly strategies |
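
The combinatorial libraries referred to under Computational Support can be illustrated with a full factorial enumeration. The Python sketch below is a minimal example under stated assumptions: the part names are hypothetical placeholders rather than registry entries, and it shows only how quickly library size grows, not how a DoE tool would choose a subset.

```python
from itertools import product

# Hypothetical part names; a real design would pull characterized parts from a registry.
promoters = ["pLow", "pMed", "pHigh"]
rbss = ["rbs1", "rbs2", "rbs3", "rbs4"]
terminators = ["t1", "t2"]


def full_factorial(*factors):
    """Return every combination that takes exactly one choice from each factor."""
    return list(product(*factors))


library = full_factorial(promoters, rbss, terminators)
print(len(library))  # 3 * 4 * 2 = 24 single-gene constructs
print(library[0])    # ('pLow', 'rbs1', 't1')

# If each gene of a three-gene pathway gets its own promoter and RBS, the
# per-gene choices multiply again: (3 * 4) ** 3 = 1728 pathway designs.
per_gene = full_factorial(promoters, rbss)
pathway_designs = full_factorial(per_gene, per_gene, per_gene)
print(len(pathway_designs))  # 1728
```

Because library size multiplies with every added factor, DoE approaches typically test a structured fraction of such a library rather than all of it.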

Build Phase

The Build phase translates designed DNA sequences into physical biological constructs [2]. This process primarily consists of DNA assembly, incorporation of the assembled DNA into a host organism, and verification of the correctly assembled sequence [2]. DNA assembly uses molecular biology techniques—often enhanced by robotic automation—to combine multiple DNA fragments according to the specifications from the Design phase [1] [2]. Complex constructs frequently require multiple hierarchical assembly rounds, where initial rounds assemble individual transcriptional units or large genes, and subsequent rounds combine these into complete pathways or circuits [2].

A key innovation in modern Build processes is the emphasis on modular design of DNA parts, which allows a greater variety of constructs to be assembled by interchanging individual components [1]. Automation has dramatically reduced the time, labor, and cost of generating multiple constructs, allowing for increased throughput with shortened development cycles [1]. After assembly, constructs are typically cloned into expression vectors and verified using colony qPCR, Next-Generation Sequencing (NGS), or other analytical methods [1]. The final output is a physical DNA molecule or library of DNA molecules comprising the specified sequence(s), ready for functional testing [2].
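
Modular assembly methods such as Golden Gate, which relies on Type IIS restriction enzymes, use short single-stranded overhangs to direct parts into the correct order. As a hedged illustration, the Python sketch below checks a planned set of 4-nt overhangs against three basic rules: no duplicates, no palindromic overhangs, and no overhang that is the reverse complement of another. The function names and the overhang plan are hypothetical, and practical overhang design also draws on measured ligation-fidelity data, which this sketch ignores.

```python
COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq: str) -> str:
    """Reverse complement of a DNA string containing only A/C/G/T."""
    return seq.upper().translate(COMPLEMENT)[::-1]


def overhang_problems(overhangs):
    """Flag duplicated, palindromic, or mutually complementary overhangs."""
    problems = []
    seen = set()
    for oh in (o.upper() for o in overhangs):
        rc = reverse_complement(oh)
        if oh == rc:  # would ligate to a copy of itself
            problems.append(f"{oh} is palindromic")
        if oh in seen:  # two junctions would be interchangeable
            problems.append(f"{oh} is duplicated")
        if rc != oh and rc in seen:  # would pair with another junction
            problems.append(f"{oh} is the reverse complement of another overhang")
        seen.add(oh)
    return problems


# Hypothetical overhang plan for a five-junction assembly.
plan = ["AATG", "GCTT", "GATC", "CATT", "GCTT"]
for issue in overhang_problems(plan):
    print(issue)
```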

Test Phase

In the Test phase, researchers experimentally assess whether the built biological constructs perform their intended functions [5]. This involves introducing the constructs into characterization systems—which may include in vivo chassis like bacteria, yeast, or mammalian cells, or in vitro cell-free systems—and measuring their performance against objectives defined during the Design phase [5]. For metabolic engineering applications, testing typically involves growing engineered organisms and assaying for desired functions, such as quantifying product formation or measuring metabolic activity [2].

Comprehensive testing may require sophisticated analytical techniques including proteomics, liquid chromatography-mass spectrometry, gas chromatography-mass spectrometry, and next-generation DNA/RNA sequencing [2]. The Test phase generates critical performance data—such as product titer, yield, rate, enzyme activities, and dynamic response ranges—that enable assessment of the current design's efficacy [2]. Advances in high-throughput screening methodologies, including liquid handling robots and microfluidics, have significantly accelerated this phase by enabling parallel testing of thousands of variants [1] [5]. Cell-free transcription-translation systems have emerged as particularly valuable testing platforms because they circumvent complexities of living cells, allowing rapid assessment of genetic circuit performance within hours rather than days [5] [6].
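
The titer, yield, and rate figures named above are simple ratios once the raw measurements are available. A minimal sketch, assuming a batch culture with constant volume in which product starts at zero and substrate consumption has been measured; the class name and input numbers are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class BatchResult:
    product_g_per_L: float             # final product concentration
    substrate_consumed_g_per_L: float  # e.g. glucose consumed over the run
    duration_h: float                  # cultivation time


def try_metrics(r: BatchResult) -> dict:
    """Titer, mass yield, and average volumetric productivity for one batch."""
    return {
        "titer_g_per_L": r.product_g_per_L,
        "yield_g_per_g": r.product_g_per_L / r.substrate_consumed_g_per_L,
        "productivity_g_per_L_h": r.product_g_per_L / r.duration_h,
    }


# Made-up numbers: 2 g/L product from 20 g/L glucose over 48 h.
metrics = try_metrics(BatchResult(2.0, 20.0, 48.0))
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```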

Learn Phase

The Learn phase closes the DBTL cycle by analyzing data collected during testing to extract actionable insights for the next iteration [5]. This stage involves comparing experimental results with design objectives to identify successful elements and limitations of the current design [1] [5]. Learning mechanisms range from traditional statistical evaluations to advanced machine learning techniques that identify complex patterns within high-dimensional data [7] [3].

In the Learn phase, researchers develop or refine mathematical models—both statistical and mechanistic—of the engineered biological system [2]. The integration of multi-omics data with metabolic models, for instance, has proven valuable for identifying genetic interventions that improve titer, rate, and yield of engineered pathways [2]. These insights directly inform the Design phase of the subsequent DBTL cycle, creating a continuous improvement loop [1]. The learning generated also contributes to broader biological knowledge, helping to address fundamental challenges in predicting how foreign DNA will affect cellular function [1].
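
The iterative structure of the cycle can be made concrete with a toy loop in which each phase is a function. This is a hypothetical sketch, not a model of any real pathway: the single tunable parameter is an abstract expression level between 0 and 1, `assay_step` stands in for the Test phase with an invented response curve that peaks at 0.5 (mimicking a productivity versus burden trade-off), and `learn_step` simply keeps the best performer and narrows the search.

```python
def design_step(center, step):
    """Design: propose five expression levels around the current best, clipped to [0, 1]."""
    return [min(1.0, max(0.0, center + k * step)) for k in (-2, -1, 0, 1, 2)]


def build_step(levels):
    """Build: placeholder for DNA assembly; here a 'construct' is just its level."""
    return list(levels)


def assay_step(constructs):
    """Test: invented response with a burden trade-off that peaks at level 0.5."""
    return {c: 4 * c * (1 - c) for c in constructs}


def learn_step(results, step):
    """Learn: keep the best performer and halve the search step for the next cycle."""
    best = max(results, key=results.get)
    return best, step / 2


center, step = 0.1, 0.1
for cycle in range(1, 6):
    results = assay_step(build_step(design_step(center, step)))
    center, step = learn_step(results, step)
    print(f"cycle {cycle}: best level {center:.4f}, response {results[center]:.4f}")
```

Each pass starts from the previous cycle's best result, which is the improvement loop in its simplest form; real Learn phases replace the keep-the-best rule with statistical or mechanistic models.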

DBTL in Action: Experimental Protocol for Metabolic Engineering

To illustrate the practical implementation of a DBTL cycle, consider the optimization of dopamine production in Escherichia coli as documented in a 2025 study [7]. This example demonstrates a knowledge-driven DBTL approach that incorporates upstream in vitro investigation before full cycling.

Design and Build Protocol

The dopamine pathway was designed using two key enzymes: 4-hydroxyphenylacetate 3-monooxygenase (HpaBC), native to E. coli, to convert L-tyrosine to L-DOPA, and L-DOPA decarboxylase (Ddc) from Pseudomonas putida to catalyze dopamine formation [7]. The host strain (E. coli FUS4.T2) was engineered for high L-tyrosine production through genomic modifications, including depletion of the transcriptional dual regulator TyrR and a mutation relieving feedback inhibition of the bifunctional chorismate mutase/prephenate dehydrogenase (TyrA) [7].

DNA Assembly and Verification:

- Genes were cloned into pET and pJNTN plasmid systems using standard molecular biology techniques [7].

- Constructs were verified through colony qPCR and sequencing before transformation into the production strain [7].

- Ribosome Binding Site (RBS) engineering was employed to fine-tune the relative expression levels of HpaBC and Ddc, using the UTR Designer tool to modulate RBS sequences [7].
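
The GC content of the Shine-Dalgarno sequence, which this study's Learn step identified as influential, is straightforward to compute when screening RBS variants. The sketch below uses hypothetical six-nucleotide SD variants built around the canonical E. coli core AGGAGG; it reports GC content only and is not a substitute for an RBS strength model such as the UTR Designer.

```python
def gc_content(seq: str) -> float:
    """Fraction of G and C bases in a DNA sequence."""
    seq = seq.upper()
    return (seq.count("G") + seq.count("C")) / len(seq)


# Hypothetical SD variants; AGGAGG is the canonical E. coli Shine-Dalgarno core.
sd_variants = {"v1": "AGGAGG", "v2": "AGGAGA", "v3": "AAGAGA"}
for name, sd in sd_variants.items():
    print(f"{name} {sd} GC = {gc_content(sd):.2f}")
```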

Test and Learn Protocol

Cultivation Conditions:

- Minimal medium containing 20 g/L glucose, 10% 2xTY medium, MOPS buffer, vitamin B6, phenylalanine, and trace elements was used [7].

- Cultures were supplemented with appropriate antibiotics and induced with 1 mM IPTG [7].

Dopamine Quantification:

- Dopamine production was measured using high-performance liquid chromatography (HPLC) with electrochemical detection [7].

- Results were normalized to biomass (mg product/g biomass) and volume (mg/L) [7].

Learning and Iteration:

- Initial testing revealed the impact of GC content in the Shine-Dalgarno sequence on RBS strength and translation efficiency [7].

- This learning informed subsequent RBS redesign, culminating in a dopamine production strain achieving 69.03 ± 1.2 mg/L (34.34 ± 0.59 mg/g biomass), a 2.6- to 6.6-fold improvement over the previous state of the art [7].
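
The two reported figures for the final strain can be cross-checked with one division. Assuming both values were measured on the same cultures, dividing the volumetric titer by the biomass-specific titer gives the implied biomass concentration; this is an illustrative back-calculation, not a value reported in the study.

```python
titer_mg_per_L = 69.03      # reported volumetric titer of the final strain [7]
specific_mg_per_g = 34.34   # reported biomass-specific titer [7]

# Both share the numerator (mg dopamine), so the ratio leaves g biomass per L.
# Assumes both figures come from the same cultures.
implied_biomass_g_per_L = titer_mg_per_L / specific_mg_per_g
print(f"implied biomass: {implied_biomass_g_per_L:.2f} g/L")  # implied biomass: 2.01 g/L
```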

The Scientist's Toolkit: Essential Research Reagents and Platforms

Successful implementation of DBTL cycles relies on specialized reagents, tools, and platforms that streamline each phase of the workflow.

Table 2: Essential Research Reagents and Platforms for DBTL Implementation

| Category | Specific Tools/Reagents | Function in DBTL Cycle |
|---|---|---|
| DNA Assembly & Parts | JBEI-ICE Registry; SynBioHub; Type IIS restriction enzymes (Golden Gate) | Catalog biological parts; Standardize assembly; Manage design metadata [8] |
| Host Engineering | E. coli production strains (e.g., FUS4.T2); Chromosomal integration systems; CRISPR-Cas9 tools | Provide metabolic background; Enable stable genetic modifications [7] |
| Analytical Methods | HPLC with electrochemical detection; LC-MS/MS; NGS; Colony qPCR | Quantify metabolites; Verify sequences; Validate constructs [7] [1] |
| Cell-Free Systems | TX-TL transcription-translation kits; Crude cell lysates; Non-canonical amino acids | Rapid testing of protein function; Bypass cellular complexity [5] [6] |
| Automation & Software | Liquid handling robots; Microfluidics; SBOLDesigner; UTR Designer | Increase throughput; Enable high-throughput screening; Facilitate design [1] [5] [8] |

Emerging Evolution: From DBTL to LDBT

The established DBTL cycle faces ongoing refinement, most notably through the proposed LDBT (Learn-Design-Build-Test) paradigm that reorders the cycle to prioritize learning [5] [6]. This approach leverages machine learning models trained on large biological datasets to make zero-shot predictions about protein structure and function before experimental design and building [5]. Advances in protein language models (ESM, ProGen), structure-based design tools (ProteinMPNN, MutCompute), and functional predictors (Prethermut, DeepSol) enable increasingly accurate computational predictions that can guide biological design [5].

When combined with rapid cell-free testing platforms, LDBT promises to accelerate biological engineering by reducing dependency on costly and time-consuming trial-and-error experimentation [5] [6]. This evolution toward learning-first approaches represents a potential paradigm shift from iterative empirical optimization toward predictive engineering, potentially moving synthetic biology closer to the Design-Build-Work model used in established engineering disciplines like civil engineering [5]. Nevertheless, the core principles and workflow of the traditional DBTL cycle remain fundamental to synthetic biology, providing the foundational framework upon which these next-generation methodologies are being built.

The DBTL cycle's established framework continues to enable systematic engineering of biological systems, with ongoing enhancements through automation, data management platforms, and machine learning integration further increasing its power and efficiency for biotechnology applications.

Synthetic biology has long been governed by the Design-Build-Test-Learn (DBTL) cycle, a systematic framework for engineering biological systems. This iterative process involves designing genetic constructs, building them in the laboratory, testing their performance, and learning from the results to inform the next design iteration [1]. While this approach has enabled significant advances, it is inherently constrained by its reactive nature; learning occurs only after resource-intensive build and test phases, leading to multiple time-consuming and costly cycles [5]. However, a transformative paradigm shift is now underway, fueled by advances in machine learning (ML) and high-throughput experimental platforms. The emerging Learn-Design-Build-Test (LDBT) framework reorders this sequence, placing Learning at the forefront through data-driven prediction and zero-shot design [5]. This repositioning transforms synthetic biology from an empirical, trial-and-error discipline toward a more predictive engineering science, potentially collapsing multiple DBTL cycles into a single, efficient LDBT cycle that brings the field closer to a "Design-Build-Work" model [5] [6]. For researchers and drug development professionals, this shift promises to dramatically accelerate the development of therapeutic proteins, optimized biosynthetic pathways, and other bio-based products.

The Limitation of the Traditional DBTL Cycle

The traditional DBTL cycle, while systematic, faces significant challenges in predictability and efficiency. The core limitation lies in biological complexity: the impact of introducing foreign DNA into a cell is often difficult to predict due to non-linear, high-dimensional interactions between genetic parts and host cell machinery [9]. This complexity forces the engineering process away from rational design and into a regime of ad hoc tinkering [9].

- The Build-Test Bottleneck: The physical construction of genetic designs and their experimental testing are often the slowest and most resource-intensive phases. In vivo testing, in particular, is hampered by cellular processes like transformation and culturing, which are time-consuming and can be complicated by factors such as metabolic burden and toxicity to the host cell [5] [1].

- Iterative Learning Delay: In the DBTL cycle, learning is retrospective. Insights that could guide design are only generated after builds are tested, creating a lag in knowledge acquisition. This often necessitates several rounds of iteration to converge on a functional system, making the overall process costly and slow [5] [3].

Table 1: Core Challenges in the Traditional DBTL Cycle

| Challenge | Impact on Engineering Workflow |
|---|---|
| Unpredictable Biological Interactions | Limits the power of purely rational design, requiring extensive experimental iteration [9]. |
| Slow In Vivo Build/Test Phases | Creates a bottleneck, extending project timelines from weeks to months or years [5]. |
| Retrospective Learning | Delays the incorporation of critical insights into the design process, increasing the number of required cycles [3]. |
| Combinatorial Explosion | Vast design spaces make it experimentally infeasible to test all promising variants [3]. |

The LDBT Paradigm: A Machine-Learning First Approach

The LDBT paradigm addresses the core limitations of DBTL by leveraging modern machine learning to pre-emptively generate knowledge. In this new framework, the cycle begins with the Learn phase, where ML models trained on vast biological datasets are used to make zero-shot predictions about sequence-structure-function relationships before any physical design is initiated [5] [6].

The "Learn-First" Rationale

The rationale for this reordering is the growing success of ML models in making accurate functional predictions from sequence or structural data alone. These models have been trained on very large collections of protein sequences and, in some cases, structures, effectively internalizing evolutionary and biophysical constraints [5]. This allows researchers to "interrogate" the model to generate designs with a high probability of success, effectively compressing the learning from many potential DBTL cycles into a single, upfront computational step [5] [9]. This approach is particularly powerful for navigating the vast combinatorial space of biological sequences, where testing all variants is impossible [3].

Key Machine Learning Technologies

The LDBT approach is enabled by several classes of machine learning models:

- Protein Language Models (e.g., ESM, ProGen): Trained on evolutionary relationships across millions of protein sequences, these models can predict beneficial mutations, infer function, and design novel, functional protein sequences in a zero-shot manner [5].

- Structure-Based Models (e.g., ProteinMPNN, MutCompute): These tools take protein structures as input and design sequences that fold into that backbone or optimize residues for stability and function. When combined with structure-prediction tools like AlphaFold, they significantly increase design success rates [5].

- Functional Prediction Models (e.g., Prethermut, Stability Oracle, DeepSol): These supervised learning models are trained on specific functional properties like thermodynamic stability or solubility, allowing for the in silico screening of designs for desired characteristics [5].
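
A common way to use a protein language model for zero-shot mutation scoring is to compare the probability the model assigns to the mutant residue with that of the wild-type residue at the same position. The Python sketch below shows only that scoring arithmetic: the per-position probability table is hypothetical and stands in for model output, so no ESM or ProGen API is called. Positive log-ratio scores mean the (toy) model prefers the substitution.

```python
import math

# Hypothetical per-position probabilities standing in for a protein language model's
# output on the toy wild-type sequence "MKV". Real models return a distribution over
# all 20 amino acids at every position; only a few entries are shown here.
WILD_TYPE = "MKV"
POSITION_PROBS = [
    {"M": 0.90, "L": 0.05, "I": 0.05},
    {"K": 0.40, "R": 0.50, "E": 0.10},
    {"V": 0.30, "I": 0.60, "D": 0.10},
]


def llr_score(mutation: str) -> float:
    """Score a substitution such as 'K2R' as log p(mutant) - log p(wild type)."""
    wt, pos, mut = mutation[0], int(mutation[1:-1]), mutation[-1]
    if WILD_TYPE[pos - 1] != wt:  # mutation strings use 1-based positions
        raise ValueError(f"{mutation}: wild-type residue does not match sequence")
    probs = POSITION_PROBS[pos - 1]
    return math.log(probs[mut]) - math.log(probs[wt])


candidates = ["K2R", "K2E", "V3I", "V3D"]
ranked = sorted(candidates, key=llr_score, reverse=True)
for m in ranked:
    print(m, round(llr_score(m), 3))
```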

The LDBT Workflow in Practice

The practical implementation of the LDBT cycle integrates computational learning with rapid experimental validation. Because the initial Learn phase front-loads much of the knowledge, the process is intended to run as a streamlined, largely single-pass workflow, described phase by phase below.

Phase 1: Learn

The cycle begins by harnessing machine learning models that have been pre-trained on massive biological datasets. These models encapsulate complex patterns of sequence evolution, structural stability, and functional fitness [5] [9]. Researchers can query these models to predict the properties of hypothetical sequences or to generate entirely new sequences with desired functions, a capability known as zero-shot design [5]. For example, a protein language model can be prompted to generate novel antimicrobial peptide sequences, which are then filtered for predicted activity and low toxicity before any DNA is synthesized [5].
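
The generate-then-filter step can be sketched as a pipeline of scoring functions applied before any DNA is ordered. In the hypothetical example below, two crude physicochemical heuristics stand in for the trained activity and toxicity predictors the text describes: net positive charge as a proxy for antimicrobial activity, and hydrophobic fraction as a proxy for toxicity risk. The residue set and thresholds are assumptions chosen for illustration, and the sequences are examples rather than model outputs.

```python
HYDROPHOBIC = set("AILMFVW")  # one common choice of hydrophobic residues; definitions vary


def net_charge(seq: str) -> int:
    """Crude net charge near pH 7: K/R count +1, D/E count -1; His and termini ignored."""
    return sum(seq.count(a) for a in "KR") - sum(seq.count(a) for a in "DE")


def hydrophobic_fraction(seq: str) -> float:
    """Fraction of residues that belong to the HYDROPHOBIC set."""
    return sum(a in HYDROPHOBIC for a in seq) / len(seq)


def passes_filter(seq: str, min_charge: int = 2, max_hydrophobic: float = 0.7) -> bool:
    """Hypothetical thresholds: cationic enough to suggest activity,
    not so hydrophobic that toxicity becomes a concern."""
    return net_charge(seq) >= min_charge and hydrophobic_fraction(seq) <= max_hydrophobic


# Example sequences standing in for generative-model output.
generated = ["KWKLFKKIGAVLKVL", "DEEKLLAAG", "KKLLLLWWFFIIV"]
kept = [s for s in generated if passes_filter(s)]
print(kept)  # ['KWKLFKKIGAVLKVL']
```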

Phase 2: Design

In this phase, the insights and pre-validated designs from the Learn phase are translated into specific, buildable DNA sequences. The design process is now guided and constrained by the ML predictions, ensuring a higher probability of success. This may involve selecting optimal codons for expression, assembling genetic circuits from parts with predicted compatibility, or designing libraries focused on the most promising regions of sequence space as identified by the models [6].

Phase 3: Build

The designed DNA constructs are synthesized and prepared for testing. To maintain the speed of the LDBT cycle, this phase often leverages automated DNA synthesis and assembly workflows [1]. The use of cell-free systems is particularly advantageous here, as they eliminate the need for time-consuming steps like cloning and transformation into living cells. Synthesized DNA can be directly added to cell-free reactions for expression, bypassing a major bottleneck of in vivo methods [5].

Phase 4: Test

The final phase involves the high-throughput experimental validation of the built designs. Cell-free transcription-translation (TX-TL) systems are a cornerstone of the LDBT approach for this purpose [5] [6]. These systems:

- Are highly rapid, producing proteins in less than 4 hours [5].

- Are scalable, supporting reaction volumes from picoliters to kiloliters and parallel testing of thousands of reactions [5].

- Offer fine control over the reaction environment, improving reproducibility [6].

- Can be coupled with robotics and microfluidics for ultra-high-throughput screening, as demonstrated by platforms like DropAI that screen over 100,000 reactions [5].

The data generated from this testing phase can be fed back to further refine and retrain the ML models, creating a virtuous cycle of improving predictive power for future LDBT campaigns.

Quantitative Comparison: DBTL vs. LDBT

The theoretical advantages of the LDBT cycle are borne out in practical metrics and experimental benchmarks. The following table summarizes key performance differentiators.

Table 2: Performance Comparison of DBTL vs. LDBT Approaches

| Metric | Traditional DBTL | LDBT Approach |
|---|---|---|
| Cycle Time | Weeks to months per cycle [1] | Days to weeks, with cell-free testing in hours [5] |
| Primary Learning Mode | Retrospective (post-testing) analysis [3] | Prospective, zero-shot prediction from pre-trained models [5] |
| Typical Cycles to Success | Multiple iterative cycles required [3] | Potential for single-cycle success [5] |
| Throughput of Test Phase | Limited by in vivo transformation and growth [1] | Ultra-high-throughput; >100,000 variants using microfluidics [5] |
| Handling of Design Space | Limited exploration due to low throughput [3] | Capable of navigating vast combinatorial spaces computationally [5] [3] |
| Resource Intensity | High (repeated cycles, labor, materials) [1] | Lower per project (faster convergence), but requires computational investment [6] |

Experimental Evidence and Case Studies

Simulation-based studies and real-world experiments demonstrate the efficacy of the LDBT approach:

- Enzyme Engineering: A pre-trained protein language model was used to design libraries for engineering a biocatalyst, resulting in successful enantioselective bond formation. This showcases the zero-shot design capability without additional model training [5].

- Antimicrobial Peptide (AMP) Discovery: Researchers combined deep learning-based sequence generation with cell-free expression. They computationally surveyed over 500,000 AMP sequences, selected 500 optimal variants for experimental testing, and identified six promising designs, demonstrating efficient navigation of a massive sequence space [5].

- Pathway Optimization: The iPROBE (in vitro prototyping and rapid optimization of biosynthetic enzymes) method uses a neural network trained on pathway combinations to predict optimal enzyme sets and expression levels, leading to a 20-fold improvement in product yield in a host organism [5].

- In Silico DBTL Benchmarking: A kinetic model-based framework demonstrated that ML methods like gradient boosting and random forest are highly effective in low-data regimes for guiding combinatorial pathway optimization, underscoring the power of learning in iterative strain improvement [3].
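
The recommend-next-designs step of such an in silico DBTL loop can be sketched in a few lines. The benchmark cited above found tree ensembles such as random forest and gradient boosting effective; to keep this example dependency-light it swaps in ordinary least squares on one-hot features (NumPy only), so it illustrates the loop, not those specific methods. The three-enzyme, three-level pathway, the first-cycle training set, and the hidden titer function are all hypothetical.

```python
import itertools

import numpy as np

LEVELS = (0, 1, 2)  # hypothetical low / medium / high expression for each enzyme
ALL_DESIGNS = list(itertools.product(LEVELS, repeat=3))  # 27 designs for 3 enzymes


def features(design):
    """Intercept plus a one-hot block per enzyme encoding its expression level."""
    x = [1.0]
    for level in design:
        x.extend(1.0 if level == choice else 0.0 for choice in LEVELS)
    return x


def hidden_titer(design):
    """Invented ground truth that the model only sees through tested designs."""
    e1, e2, e3 = design
    return 1.0 + 0.5 * e1 + 2.0 * e2 - 0.3 * (e3 == 1) - 0.8 * (e3 == 2)


# Designs built and tested in the first cycle (hypothetical choice).
tested = [(0, 0, 0), (1, 0, 1), (2, 1, 0), (0, 2, 1),
          (1, 1, 2), (2, 2, 2), (0, 1, 1), (2, 0, 2)]
X = np.array([features(d) for d in tested])
y = np.array([hidden_titer(d) for d in tested])
coef, *_ = np.linalg.lstsq(X, y, rcond=None)  # minimum-norm solution if rank-deficient

# Predict every untested design and recommend the three best for the next Build round.
untested = [d for d in ALL_DESIGNS if d not in tested]
predicted = np.array([features(d) for d in untested]) @ coef
top = np.argsort(predicted)[::-1][:3]
recommended = [untested[i] for i in top]
print(recommended)
```

Because the toy response is additive, a linear model recovers it exactly from eight measurements; real pathways show interactions between enzymes, which is one reason nonlinear learners are preferred in practice.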

Essential Tools and Protocols for Implementing LDBT

Adopting the LDBT framework requires a suite of computational and experimental tools. The following table details key resources that constitute a modern LDBT toolkit.

Table 3: Research Reagent Solutions for the LDBT Workflow

| Tool Category | Example Solutions | Function in LDBT Workflow |
|---|---|---|
| Machine Learning Models | Protein Language Models (ESM, ProGen), Structure-Based Design Tools (ProteinMPNN, MutCompute), Stability Predictors (Stability Oracle) [5] | Enables the "Learn" phase by generating and pre-validating designs with desired properties. |
| Cell-Free Expression Systems | TX-TL systems from E. coli, wheat germ, or mammalian cell lysates; purified component systems [5] | Accelerates the "Build" and "Test" phases by enabling rapid, high-throughput protein expression without living cells. |
| High-Throughput Screening Platforms | Droplet microfluidics (e.g., DropAI), automated liquid handlers, microplate readers [5] | Allows parallel testing of thousands of designs, generating large datasets for model validation or retraining. |
| DNA Synthesis & Assembly | Automated gene synthesis, high-throughput molecular cloning workflows [1] | Facilitates the rapid physical construction of computationally designed DNA sequences. |

Detailed Experimental Protocol: Coupling ML Design with Cell-Free Testing

This protocol outlines a standard workflow for validating machine-learning-generated protein variants.

A. Learn & Design Phase:

- Objective Definition: Clearly define the target protein property (e.g., improved thermostability, higher enzymatic activity, novel binding).

- Model Selection & Interrogation:

  - For zero-shot design, use a protein language model (e.g., ESM) or a structure-based tool (e.g., ProteinMPNN) to generate a library of candidate sequences.

  - For optimization, use a predictive model (e.g., Stability Oracle) to score and filter a large mutational library, selecting the top-ranked variants for testing.

- DNA Sequence Design: Convert the selected protein sequences into DNA sequences with optimal codons for the chosen cell-free expression system.
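
The simplest form of this codon step is "one amino acid, one codon" back-translation using the host's most frequently used codon for each residue. The table below is an approximate, illustrative E. coli preference list, relevant only if the cell-free extract is E. coli-derived, and should be checked against a codon usage table before use. Dedicated tools also balance codon usage, avoid internal restriction sites needed for assembly, and limit secondary structure near the start codon; this sketch does none of that.

```python
# Approximate most frequently used codon per amino acid in E. coli (illustrative only;
# confirm against a codon usage table for the extract's source organism).
PREFERRED_CODON = {
    "A": "GCG", "R": "CGC", "N": "AAC", "D": "GAT", "C": "TGC",
    "Q": "CAG", "E": "GAA", "G": "GGC", "H": "CAT", "I": "ATT",
    "L": "CTG", "K": "AAA", "M": "ATG", "F": "TTT", "P": "CCG",
    "S": "AGC", "T": "ACC", "W": "TGG", "Y": "TAT", "V": "GTG",
}
STOP_CODON = "TAA"


def back_translate(protein: str) -> str:
    """'One amino acid, one codon' back-translation, with a stop codon appended."""
    return "".join(PREFERRED_CODON[aa] for aa in protein.upper()) + STOP_CODON


dna = back_translate("MKV")
print(dna)  # ATGAAAGTGTAA
```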

B. Build Phase:

- DNA Synthesis: Order the designed sequences as linear DNA fragments or as cloned genes from a commercial synthesis provider.

- Template Preparation: If using linear fragments, amplify them via PCR to ensure sufficient quantity and purity. If using plasmids, purify them using standard miniprep or maxiprep kits.
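
When templates are dosed by mass, as in the ~10-20 ng/µL used during reaction setup, the molar template concentration depends on construct length, so a linear fragment and a plasmid at the same ng/µL are not equivalent. A minimal conversion sketch, assuming an average mass of about 650 g/mol per base pair of double-stranded DNA; the function name and construct lengths are hypothetical.

```python
BP_MASS_G_PER_MOL = 650.0  # approximate average mass of one base pair of dsDNA


def dsdna_nM(conc_ng_per_uL: float, length_bp: int) -> float:
    """Convert a double-stranded DNA mass concentration to nanomolar."""
    grams_per_L = conc_ng_per_uL * 1e-3  # 1 ng/uL equals 1 mg/L
    mol_per_L = grams_per_L / (length_bp * BP_MASS_G_PER_MOL)
    return mol_per_L * 1e9


# Hypothetical templates: a 1.2 kb linear PCR product and a 4.5 kb plasmid.
for name, length in [("linear", 1200), ("plasmid", 4500)]:
    print(f"{name}: {dsdna_nM(10, length):.1f} nM at 10 ng/uL")
```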

C. Test Phase:

- Cell-Free Reaction Setup:

  - Use a commercial cell-free kit or a homemade E. coli lysate system [5].

  - In a 96-well or 384-well microplate, mix the cell-free reaction master mix with each DNA template (~10-20 ng/µL final concentration).

  - Include positive and negative controls (e.g., a known well-expressing protein and a no-DNA control).

- Incubation and Measurement:

  - Incubate the reaction plate at 30°C for 4-16 hours with shaking.

  - Monitor expression if using a fluorescent protein. For enzymes, stop the reaction and assay for activity using a colorimetric or fluorescent substrate in a high-throughput plate reader.

- Data Analysis: Compare the activity or yield of the ML-designed variants to the wild-type or parent control. The top performers identified in this single round of testing are strong candidates for further development.
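
The data analysis step can be sketched as background subtraction with the no-DNA control followed by normalization to the parent. The triplicate readings and variant names below are hypothetical, and the sketch omits steps a real analysis would need, such as checking replicate variability and calibrating signals when results are compared across plates.

```python
import statistics

# Hypothetical endpoint fluorescence readings (arbitrary units), triplicate wells.
readings = {
    "no_DNA": [105, 98, 101],
    "parent": [1500, 1460, 1540],
    "variant_A": [2980, 3100, 3050],
    "variant_B": [900, 880, 950],
    "variant_C": [1800, 1750, 1900],
}

background = statistics.mean(readings["no_DNA"])
parent_signal = statistics.mean(readings["parent"]) - background


def fold_over_parent(name):
    """Background-subtracted mean signal divided by the background-subtracted parent signal."""
    return (statistics.mean(readings[name]) - background) / parent_signal


variants = [n for n in readings if n.startswith("variant")]
ranked = sorted(variants, key=fold_over_parent, reverse=True)
for n in ranked:
    print(n, round(fold_over_parent(n), 2))
```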

The transition from DBTL to LDBT represents a fundamental maturation of synthetic biology. By placing machine learning at the forefront of the design process, the LDBT framework leverages the vast and growing body of biological data to make predictive, zero-shot engineering a reality. When combined with the experimental acceleration provided by cell-free systems and high-throughput screening, this paradigm significantly shortens the path from concept to functional biological system. For the field of drug development, this shift is particularly impactful, promising to streamline the discovery and optimization of therapeutic proteins, vaccines, and biosynthetic pathways for small-molecule drugs. As machine learning models become more sophisticated and cell-free platforms more robust, the LDBT approach is poised to become the standard for a new era of predictable, efficient, and scalable biological design.

Synthetic biology has established itself as a premier engineering discipline by adopting and adapting core principles from traditional engineering fields. The foundational framework for this biological engineering endeavor has been the Design-Build-Test-Learn (DBTL) cycle, a systematic, iterative process that has streamlined efforts to build biological systems [10]. This cyclic methodology closely resembles approaches used in established engineering disciplines such as mechanical engineering, where iteration involves gathering information, processing it, identifying design revisions, and implementing those changes [10]. However, the field is now undergoing a paradigm shift driven by computational advances. The emergence of machine learning (ML) is prompting a fundamental rethinking of this established workflow, potentially reorganizing it into a Learn-Design-Build-Test (LDBT) cycle where learning precedes design [10] [6]. This transition represents a significant evolution in how engineers approach biological design, moving from a build-then-learn to a learn-then-build philosophy.

The DBTL cycle begins with the Design phase, where researchers define objectives and design biological parts or systems using domain knowledge and computational modeling [10]. In the Build phase, DNA constructs are synthesized and introduced into characterization systems, which can include in vivo chassis or in vitro cell-free systems [10]. The Test phase experimentally measures the performance of the engineered constructs, while the Learn phase analyzes these data to inform the next design iteration [10]. This cyclic process has formed the backbone of synthetic biology's progress, but its reliance on empirical iteration limits its efficiency and predictability.

The Established Paradigm: Design-Build-Test-Learn (DBTL)

Theoretical and Practical Foundations

The DBTL framework finds its theoretical roots in broader engineering design theory. The process is not unique to synthetic biology but closely mirrors approaches in mechanical engineering, where physical laws model parameters like damping and stiffness [10]. More fundamentally, all design processes, including DBTL, can be viewed as evolutionary in nature [11]. They follow a cyclic iterative process where concepts are modified or recombined, prototyped, tested for utility, and the best candidates are selected for further iteration—directly analogous to biological evolution through natural selection [11].

This evolutionary perspective reveals that all design methods exist on a spectrum characterized by population size (throughput) and generation count (number of iterations) [11]. The exploratory power of any design approach is the product of these two factors, yet this power always pales compared to the vastness of biological design space [11]. Successful navigation of this space relies on two forms of learning: exploration (searching the fitness landscape) and exploitation (using prior knowledge to constrain and guide the search) [11].
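
The product of population size and generation count can be compared directly with the size of a sequence space. The numbers below are illustrative assumptions: ten cycles of a 96-well plate, three rounds of a droplet screen at the 100,000-reaction scale mentioned for DropAI, and a 100-residue protein drawing on 20 amino acids.

```python
import math


def exploratory_power(population: int, generations: int) -> int:
    """Total designs evaluated: throughput per cycle times number of cycles."""
    return population * generations


def log10_design_space(length: int, alphabet: int = 20) -> float:
    """log10 of the number of possible sequences of the given length."""
    return length * math.log10(alphabet)


plate = exploratory_power(population=96, generations=10)          # 960 designs
droplets = exploratory_power(population=100_000, generations=3)   # 300,000 designs
space = log10_design_space(100)                                   # 100-residue protein
print(plate, droplets, f"design space ~ 10^{space:.0f}")
```

Even the larger campaign samples a vanishing fraction of the roughly 10^130 possible sequences, which is why the exploitation of prior knowledge described above is needed to constrain the search.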

Implementation and Challenges

In practical implementation, the DBTL cycle has been propelled by massive improvements in DNA sequencing and synthesis technologies. The cost of sequencing a human genome dropped from approximately $10 million in 2007 to around $600, enabling the accumulation of vast genomic databases that form the basis for redesigning biological systems [12]. Similarly, advances in DNA assembly methodologies like Gibson assembly have overcome limitations of conventional cloning, enabling seamless assembly of combinatorial genetic parts and even entire synthetic chromosomes [12].

Despite these technical advances, significant challenges have persisted in the DBTL cycle. The "learning" stage has proven particularly difficult due to the complexity and heterogeneity of biological systems, interactions between components, and variations in experimental setups [12]. While synthetic biologists can decipher data to create draft blueprints, many still resort to top-down approaches based on likelihoods and trial-and-error rather than genuine rational design [12]. This limitation has motivated the integration of more sophisticated computational approaches, particularly machine learning, to overcome these bottlenecks.

Table 1: Key Stages of the Traditional DBTL Cycle

| Stage | Core Activities | Primary Tools & Technologies | Major Challenges |
|---|---|---|---|
| Design | Define objectives; Design parts/system using domain knowledge | Computational modeling; Domain expertise; Biophysical principles | Limited predictive power of models; Complexity of biological systems |
| Build | Synthesize DNA; Assemble constructs; Introduce into chassis | DNA synthesis; Cloning; Genome editing; Cell-free systems | Time-consuming cloning; Cellular toxicity; Genetic instability |
| Test | Measure performance experimentally | Omics technologies; Fluorescence assays; Analytics | Low throughput; Cellular context effects; Difficulty of measurement |
| Learn | Analyze data; Compare to objectives; Inform next design | Statistical analysis; Data interpretation | Complexity & heterogeneity; Black box nature of biology; Incomplete knowledge |

The Emerging Paradigm: Learn-Design-Build-Test (LDBT)

The Machine Learning Revolution

The proposed LDBT cycle represents a fundamental reordering of the synthetic biology workflow, placing "Learning" at the forefront through machine learning [10]. This shift is made possible by the development of sophisticated protein language models and structural prediction tools that can leverage vast biological datasets to detect patterns in high-dimensional spaces, enabling more efficient and scalable design [10]. These models are trained on millions of protein sequences or hundreds of thousands of structures, allowing researchers to make increasingly accurate zero-shot predictions that improve the functionality of protein parts without additional training [10].

Several classes of machine learning models are driving this transition. Sequence-based protein language models such as ESM and ProGen are trained on evolutionary relationships between protein sequences embedded across phylogeny [10]. These models excel at predicting beneficial mutations and inferring protein functions, having proven adept at zero-shot prediction of diverse antibody sequences [10]. Structure-based models like MutCompute and ProteinMPNN use deep neural networks trained on protein structures to associate amino acids with their chemical environments, enabling prediction of stabilizing and functionally beneficial substitutions [10]. When combined with structure assessment tools like AlphaFold, these approaches have demonstrated nearly 10-fold increases in design success rates [10].
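Models such as ESM are commonly used for zero-shot variant scoring by comparing the log-probability the model assigns to the mutant residue with that of the wild-type residue at the same position. The sketch below shows only that scoring arithmetic. It does not call ESM or any real model: as an assumption, a position-specific frequency table built from a small, made-up alignment of homologs stands in for the language model's per-position probabilities, and the mutation notation (for example A5G) and helper names are hypothetical.

```python
import math
from collections import Counter

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def site_log_probs(alignment: list[str], pseudocount: float = 1.0) -> list[dict[str, float]]:
    """Per-position log-probabilities from aligned homologs (a toy stand-in for a PLM)."""
    total = len(alignment) + pseudocount * len(AMINO_ACIDS)
    table = []
    for pos in range(len(alignment[0])):
        counts = Counter(seq[pos] for seq in alignment)
        table.append({aa: math.log((counts[aa] + pseudocount) / total) for aa in AMINO_ACIDS})
    return table


def score_mutation(log_probs: list[dict[str, float]], wild_type: str, mutation: str) -> float:
    """Log-odds of mutant vs wild-type residue for a mutation like 'A5G' (1-based).

    Positive values mean the model prefers the mutant residue at that position.
    """
    wt_aa, position, mut_aa = mutation[0], int(mutation[1:-1]) - 1, mutation[-1]
    if wild_type[position] != wt_aa:
        raise ValueError(f"{mutation}: wild type has {wild_type[position]} at that position")
    return log_probs[position][mut_aa] - log_probs[position][wt_aa]


# Hypothetical aligned homologs and wild-type sequence.
homologs = ["MKVLA", "MKILA", "MRVLG", "MKVLG", "MKILG"]
wild_type = "MKVLA"
log_probs = site_log_probs(homologs)
for mutation in ["A5G", "V3I", "K2W"]:
    print(mutation, round(score_mutation(log_probs, wild_type, mutation), 2))
```

In this toy alignment, A5G scores positive because G is more common than A at that position among the homologs, while K2W scores strongly negative because W never occurs there.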

Enabling Technologies: Cell-Free Systems and Automation

The practical implementation of the LDBT paradigm is facilitated by the parallel development of high-throughput cell-free transcription-translation (TX-TL) systems [10] [6]. These systems circumvent complexities associated with living host cells—such as metabolic burden and genetic instability—enabling rapid assessment of genetic circuit performance within hours rather than days or weeks [6]. Cell-free expression leverages protein biosynthesis machinery from crude cell lysates or purified components to activate in vitro transcription and translation, producing more than 1 g/L of protein in under 4 hours [10].

The integration of cell-free systems with liquid handling robots and microfluidics has dramatically scaled testing capabilities. For example, DropAI leveraged droplet microfluidics and multi-channel fluorescent imaging to screen over 100,000 picoliter-scale reactions [10]. Biofoundries worldwide have institutionalized these high-throughput automated workflows, with facilities collaborating through the Global Biofoundry Alliance established in 2019 [12]. This infrastructure provides the massive, high-quality datasets required to train effective machine learning models for biological design.

Table 2: Machine Learning Approaches in the LDBT Paradigm

| ML Approach | Representative Tools | Primary Application | Key Strengths |
|---|---|---|---|
| Sequence-Based Language Models | ESM [10], ProGen [10] | Predicting beneficial mutations; Inferring protein function | Captures long-range evolutionary dependencies; Zero-shot prediction capability |
| Structure-Based Models | MutCompute [10], ProteinMPNN [10] | Residue-level optimization; Sequence design for target structures | Associates amino acids with local chemical environment; High success rates when combined with structure assessment |
| Stability Prediction | Prethermut [10], Stability Oracle [10] | Predicting thermodynamic stability changes of mutants | Predicts ΔΔG of proteins; Identifies stabilizing/destabilizing mutations |
| Solubility Prediction | DeepSol [10] | Predicting protein solubility from primary sequence | Maps sequence features (k-mers) to solubility; Helps screen expressible variants |
| Hybrid Approaches | Physics-informed ML [10] | Combining statistical power with physical principles | Leverages both data patterns and biophysical principles; Enhanced explanatory capability |

Comparative Analysis: DBTL vs. LDBT

Workflow and Philosophical Differences

The fundamental distinction between DBTL and LDBT lies in their starting points and underlying philosophies. The traditional DBTL cycle begins with design based on existing domain knowledge and hypotheses, representing a hypothesis-driven approach [10]. In contrast, the LDBT cycle starts with learning from vast datasets, employing a data-driven approach that uses machine learning to uncover hidden patterns and relationships before any design occurs [6]. This learn-first approach enables researchers to refine design hypotheses before constructing biological parts, potentially circumventing costly trial-and-error [6].

This philosophical shift also changes the role of iteration in the engineering process. While DBTL requires multiple cycles to gain knowledge, with Build-Test phases being particularly slow, LDBT aims to leverage pre-existing knowledge embedded in machine learning models to reduce iteration needs [10]. Given the increasing success of zero-shot predictions, it may be possible to reorganize the cycle such that Learn-Design allows an initial set of answers to be quickly built and tested, potentially generating functional parts and circuits in a single cycle [10]. This brings synthetic biology closer to a Design-Build-Work model that relies on first principles, similar to disciplines like civil engineering [10].

Practical Implementation and Efficiency Gains

The efficiency advantages of LDBT manifest most clearly in its handling of vast biological design spaces. The combinatorial nature of potential DNA sequence variations generates a landscape of possibilities too extensive for exhaustive exploration [6]. LDBT's machine learning component navigates this space intelligently through active learning techniques, strategically selecting the most informative sequence variants to test experimentally [6]. This approach maximizes information gain per experiment, reducing redundancy and focusing efforts on promising design regions [6].
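To make the combinatorial explosion concrete, the sketch below counts the designs in a hypothetical three-gene pathway library in which each gene's promoter, ribosome binding site, and coding-sequence homolog are chosen independently. The part counts and the 10,000-variant campaign size are illustrative assumptions, not figures from the cited work.

```python
import math
from itertools import product


def library_size(options_per_slot: list[int]) -> int:
    """Number of distinct designs when every slot is filled independently."""
    return math.prod(options_per_slot)


# Hypothetical library: 3 genes x (12 promoters, 10 RBSs, 4 coding-sequence homologs).
slots = [12, 10, 4] * 3
n_designs = library_size(slots)
print(f"{n_designs:,} combinatorial designs")  # 110,592,000

# Enumeration agrees with the formula for a single gene (480 designs) ...
assert len(list(product(range(12), range(10), range(4)))) == library_size([12, 10, 4])

# ... but a hypothetical 10,000-variant campaign covers only a tiny fraction of the full space.
print(f"fraction testable: {10_000 / n_designs:.1e}")
```

With only 26 parts per gene the library already exceeds 10^8 designs, so even a sizeable campaign samples under 0.01% of it; this is the gap that informative variant selection is meant to close.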

Case studies demonstrate LDBT's practical efficacy. Researchers have paired deep-learning sequence generation with cell-free expression to computationally survey over 500,000 antimicrobial peptides, selecting 500 optimal variants for experimental validation, resulting in 6 promising designs [10]. In pathway engineering, in vitro prototyping and rapid optimization of biosynthetic enzymes (iPROBE) uses neural networks with training sets of pathway combinations to predict optimal pathway sets, improving 3-HB production in Clostridium by over 20-fold [10]. These examples showcase LDBT's ability to achieve rapid convergence on high-performance constructs with fewer iterations than conventional methods.
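The antimicrobial peptide numbers above can be read as a screening funnel. The short sketch below (the helper name is hypothetical, and 500,000 is used as the lower bound of "over 500,000") computes the fraction retained at each stage: 500 of the surveyed sequences (0.1%) were tested, and 6 of those 500 (1.2%) emerged as promising designs.

```python
def funnel(stages: list[tuple[str, int]]) -> list[tuple[str, float]]:
    """Fraction of the previous stage retained at each later stage."""
    return [(name, count / previous)
            for (name, count), (_, previous) in zip(stages[1:], stages)]


amp_campaign = [
    ("computationally surveyed", 500_000),
    ("selected for cell-free testing", 500),
    ("promising designs", 6),
]
for stage, fraction in funnel(amp_campaign):
    print(f"{stage}: {fraction:.1%} of the previous stage")
```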

The Scientist's Toolkit: Essential Research Reagents and Methodologies

Core Experimental Platforms

The implementation of both DBTL and LDBT cycles relies on a sophisticated toolkit of experimental platforms and reagents. Cell-free gene expression systems form a cornerstone of the emerging LDBT paradigm, enabling rapid testing without the constraints of living cells [10]. These systems leverage protein biosynthesis machinery from various organisms and can be customized through modular reagent exchanges [10]. Their flexibility allows incorporation of non-canonical amino acids and post-translational modifications, positioning them as versatile platforms for high-throughput synthesis and testing [10].

Automation and microfluidics constitute another critical component. Robotic liquid handling systems enable the scale-up of assembly and testing protocols, while droplet microfluidics allows massive parallelization of reactions [10] [6]. These technologies interface closely with advanced analytical methods, including next-generation sequencing and mass spectrometry, to collect multi-omics data at single-cell resolution [12]. The integration of these platforms in biofoundries provides the industrial-scale infrastructure needed for modern biological engineering.

Mathematical modeling remains an essential tool for studying gene regulatory circuits, whether in traditional DBTL or ML-enhanced LDBT approaches [13]. Models serve as logical machines to derive the implications of biological hypotheses, with mathematical language providing a powerful reasoning system for building arguments too intricate to hold in our heads [13]. The definition of a circuit—representing interactions between entities and the computing logic of such interactions—provides a map for building mathematical models where nodes represent molecular species and edges denote interactions or biochemical reactions [13].
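As a minimal illustration of the node-and-edge view, the sketch below encodes a hypothetical two-node circuit, a constitutively expressed repressor R that represses a reporter G, as an edge list and integrates the resulting equations, dR/dt = β_R − γ_R·R and dG/dt = β_G / (1 + (R/K)^n) − γ_G·G, with a simple forward-Euler scheme. The rate constants, Hill parameters, and choice of Euler integration are assumptions made for illustration; a real study would use a dedicated ODE solver and fitted parameters.

```python
def hill_repression(x: float, k: float, n: float) -> float:
    """Fraction of maximal expression remaining at repressor level x."""
    return 1.0 / (1.0 + (x / k) ** n)


def simulate(edges, params, t_end: float = 200.0, dt: float = 0.01) -> dict[str, float]:
    """Forward-Euler integration of a repression-only circuit.

    edges:  (repressor, target, K, n) tuples, one per interaction (graph edge).
    params: species -> (beta, gamma); each species (graph node) is produced at rate
            beta times its incoming repression terms and degraded at rate gamma.
    """
    state = {species: 0.0 for species in params}
    for _ in range(int(t_end / dt)):
        derivatives = {}
        for species, (beta, gamma) in params.items():
            production = beta
            for source, target, k, n in edges:
                if target == species:
                    production *= hill_repression(state[source], k, n)
            derivatives[species] = production - gamma * state[species]
        for species in state:
            state[species] += dt * derivatives[species]
    return state


# Hypothetical parameters: R is constitutive, and R represses G (R -| G).
params = {"R": (10.0, 1.0), "G": (50.0, 0.5)}
edges = [("R", "G", 2.0, 2.0)]
final = simulate(edges, params)

r_ss = 10.0 / 1.0
g_ss = 50.0 / (0.5 * (1.0 + (r_ss / 2.0) ** 2))
print(round(final["R"], 3), round(final["G"], 3), round(g_ss, 3))
```

Comparing the simulated endpoint with the analytical steady state, G* = β_G / (γ_G (1 + (R*/K)^n)) = 50/13 ≈ 3.85, is a quick check that the model encodes the intended circuit logic.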

For machine learning implementation, specific architectures have proven particularly valuable. Neural networks alongside classic ensemble methods capture nonlinear relationships between sequence features and functional outputs [6]. These models are trained on biological features encompassing promoter strengths, ribosome binding site sequences, codon usage biases, and secondary structure propensities [6]. The continuous improvement of these models through iterative experimental validation creates a virtuous cycle of enhanced predictive capability.
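The sketch below shows one way the listed feature types could be turned into a fixed-length numeric vector: GC content, one-hot encoding, and dinucleotide counts for a ribosome binding site, codon-usage fractions for the coding sequence, and a promoter strength supplied as an already-measured number. The feature choices, sequences, and function names are hypothetical, and secondary-structure propensities are omitted because they would require an RNA folding tool.

```python
from collections import Counter
from itertools import product

BASES = "ACGT"


def one_hot(seq: str) -> list[int]:
    """Flattened one-hot encoding: four entries (A, C, G, T) per nucleotide."""
    return [1 if base == b else 0 for base in seq for b in BASES]


def kmer_counts(seq: str, k: int = 2) -> list[int]:
    """Counts of every possible k-mer, in a fixed lexicographic order."""
    counts = Counter(seq[i:i + k] for i in range(len(seq) - k + 1))
    return [counts["".join(kmer)] for kmer in product(BASES, repeat=k)]


def gc_content(seq: str) -> float:
    return (seq.count("G") + seq.count("C")) / len(seq)


def codon_usage(cds: str) -> list[float]:
    """Frequency of each of the 64 codons among the complete in-frame codons."""
    codons = [cds[i:i + 3] for i in range(0, len(cds) - 2, 3)]
    counts = Counter(codons)
    return [counts["".join(c)] / len(codons) for c in product(BASES, repeat=3)]


def featurize(rbs: str, cds: str, promoter_strength: float) -> list[float]:
    """Concatenate the feature groups into one fixed-length vector."""
    return ([promoter_strength, gc_content(rbs), gc_content(cds)]
            + one_hot(rbs) + kmer_counts(rbs) + codon_usage(cds))


# Hypothetical construct: a Shine-Dalgarno-like RBS and a short coding sequence.
vector = featurize("AGGAGG", "ATGGCTGCAAAA", promoter_strength=0.8)
print(len(vector))  # 3 + 6 * 4 + 16 + 64 = 107
```

The resulting vector (3 scalar features, 24 one-hot entries, 16 dinucleotide counts, 64 codon fractions) is the kind of input a tree ensemble or neural network could be trained on.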

Table 3: Essential Research Reagent Solutions for Synthetic Biology Workflows

| Reagent/Platform | Primary Function | Application in DBTL/LDBT | Key Advantages |
|---|---|---|---|
| Cell-Free TX-TL Systems | In vitro transcription-translation | Rapid testing in Build-Test phases; Dataset generation for ML | Bypasses living cells; High throughput; Tunable environment |
| CRISPR-Based Editing | Targeted genome modification | Building constructs in host chassis; Creating mutator strains | Precision; Versatility across organisms; Multiplexing capability |
| DNA Synthesis & Assembly | De novo DNA construction | Building genetic designs; Library construction | Scalability; Speed; Independence from template availability |
| Droplet Microfluidics | Miniaturized reaction compartments | Ultra-high-throughput screening; Single-cell analysis | Massive parallelization; Reduced reagent costs |
| Protein Language Models | Protein sequence-function prediction | Learning phase; Zero-shot design | Evolutionary insight; No required structural data |
| Structure Prediction Tools | Protein structure/function prediction | Learning and Design phases | Environmental context; Stabilizing mutation identification |
| Multi-Omics Analytics | Comprehensive molecular profiling | Testing and Learning phases | Systems-level insight; Data richness for ML training |

Future Perspectives and Implications

Technological Convergence and Democratization

The convergence of machine learning with synthetic biology promises to fundamentally reshape biotechnology development timelines. The ability to quickly iterate designs based on predictive learning could dramatically shorten development windows for bio-based products, from pharmaceuticals to sustainable chemicals [6]. Furthermore, the reduced dependency on labor-intensive cloning and cellular culturing steps may democratize synthetic biology research, opening avenues for smaller labs and startups to participate in cutting-edge bioengineering without extensive infrastructure [6].

This technological convergence also enables more nuanced understanding of genotype-to-phenotype relationships. Traditional methods often struggle with the stochasticity and context-dependence inherent to biological systems, but the iterative learning and validation offered by the LDBT cycle helps disentangle these complexities through continual refinement of predictive models [6]. Each loop through the cycle yields improved biological insight and enhanced design rationales, fostering a virtuous circle of discovery and engineering [6].

Meta-Synthetic Biology and Evolutionary Engineering

Looking forward, the field appears to be moving toward what might be termed "meta-synthetic biology": controlling not just biological function but the evolutionary processes themselves [14]. Research has demonstrated that mutation rates and spectra can be manipulated both globally across genomes and locally at specific genes or genomic regions [14]. Under specific conditions, mutational space can be considerably reduced (for instance, predominantly to G→T mutations on transcribed strands), constraining evolutionary paths and making outcomes more predictable [14].

This control over evolutionary processes enables new engineering paradigms. Rather than solely designing static biological systems, engineers can now design systems with specified evolutionary trajectories [14]. This might include creating microbes that are genetically hyper-stable for robust performance in bioreactors, or microbiome therapies that evolve in the gut to become personalized to host genetics [14]. Such approaches represent an ultimate synthesis of engineering and evolution, potentially resolving the apparent paradox between rational design and evolutionary tinkering [15].

The evolution of engineering paradigms in synthetic biology from DBTL to LDBT represents more than just a reordering of workflow stages—it signifies a fundamental shift in how biological engineers approach design. The traditional DBTL cycle, with its roots in classical engineering disciplines, has provided a systematic framework for biological innovation [10]. However, the integration of machine learning and high-throughput testing platforms is now enabling a more data-driven, predictive approach that places learning at the forefront of biological design [10] [6].

This paradigm shift promises to accelerate synthetic biology toward its ultimate goal: high-precision biological design with predictable outcomes [12]. By leveraging the growing power of machine learning models trained on expanding biological datasets, and combining these with rapid experimental validation through cell-free systems and automation, the field appears poised to overcome the limitations of iterative trial-and-error that have constrained its progress [10] [6] [12]. The continued convergence of biological engineering with computational intelligence and experimental ingenuity sets the stage for transforming how biological systems are understood, designed, and deployed for human benefit [6].

The Design-Build-Test-Learn (DBTL) cycle has long been the foundational framework of synthetic biology, representing an iterative process where researchers design biological systems, build them, test their functionality, and learn from the outcomes to inform the next design round [5]. However, recent advancements in machine learning (ML) and data generation technologies are catalyzing a fundamental restructuring of this paradigm. The emerging Learn-Design-Build-Test (LDBT) cycle represents a transformative approach where machine learning precedes design, leveraging vast biological datasets to make predictive, zero-shot designs that dramatically accelerate biological engineering [5] [6]. This shift from a build-test-learn cycle to a learn-first methodology is poised to reshape synthetic biology, moving the field closer to a "Design-Build-Work" model reminiscent of more established engineering disciplines [5]. This technical guide examines the core drivers enabling this transition, with particular focus on the integration of machine learning and megascale data generation through cell-free testing platforms.

The LDBT Framework: Core Components and Workflow

The LDBT framework fundamentally reorders the synthetic biology workflow, placing learning at the forefront of the engineering process. This reorientation leverages pre-existing knowledge encoded in machine learning models to generate more intelligent initial designs, potentially reducing or eliminating the need for multiple iterative cycles.
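The reordering can be illustrated with a toy loop in which a model exists before any experiment is run. In the sketch below, prior_score stands in for a pre-trained model and true_fitness for a cell-free assay; both, along with the two-letter "sequences", are hypothetical and chosen so the result can be checked by hand.

```python
from itertools import product
from typing import Callable


def ldbt_round(candidates: list[str],
               prior_score: Callable[[str], float],
               assay: Callable[[str], float],
               batch_size: int) -> dict[str, float]:
    """One Learn-Design-Build-Test pass.

    Learn: prior_score stands in for a pre-trained model, available before any experiment.
    Design: rank every candidate by predicted score and keep the top batch.
    Build/Test: 'measure' the batch with assay, a stand-in for cell-free testing.
    """
    designed = sorted(candidates, key=prior_score, reverse=True)[:batch_size]
    return {seq: assay(seq) for seq in designed}


# Toy problem: all 64 binary 'sequences' of length 6 over {A, C}.
pool = ["".join(p) for p in product("AC", repeat=6)]


def true_fitness(seq: str) -> float:
    """Hypothetical ground truth: each A adds one unit of activity."""
    return float(seq.count("A"))


def prior_score(seq: str) -> float:
    """Imperfect hypothetical model: it only 'sees' the first four positions."""
    return float(seq[:4].count("A"))


measured = ldbt_round(pool, prior_score, true_fitness, batch_size=10)
batch_mean = sum(measured.values()) / len(measured)
pool_mean = sum(true_fitness(s) for s in pool) / len(pool)
print(f"learn-first batch: {batch_mean:.2f}, pool average: {pool_mean:.2f}")
```

Although the stand-in model ignores two of the six positions, its first batch averages 4.5 activity units against a pool average of 3.0, which is the sense in which learning first concentrates Build and Test effort on higher-probability designs.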

The LDBT Workflow Diagram

Figure: core structure and information flow of the LDBT paradigm (diagram not reproduced).

Comparative Analysis: DBTL vs. LDBT Cycles

Table 1: Fundamental differences between traditional DBTL and the emerging LDBT paradigm

| Aspect | Traditional DBTL Cycle | LDBT Cycle |
|---|---|---|
| Starting Point | Design phase based on limited knowledge and hypotheses | Learning phase leveraging pre-trained ML models on vast datasets [5] |
| Primary Driver | Empirical experimentation and iterative testing | Predictive computational modeling and zero-shot design [5] |
| Data Utilization | Data generated from previous cycles informs subsequent designs | Pre-existing megascale datasets and foundational models enable intelligent first-pass designs [5] [6] |
| Cycle Duration | Multiple lengthy iterations often required | Potential for single-cycle success through accurate prediction [6] |
| Resource Intensity | High resource consumption across multiple build-test phases | Resource concentration on validated, high-probability designs [6] |
| Knowledge Foundation | Domain expertise and incremental learning from own experiments | Collective biological knowledge encoded in ML models [5] |
| Experimental Approach | Heavy reliance on in vivo systems and cellular constraints | Cell-free testing platforms for rapid, parallel validation [5] [6] |

Machine Learning as the Foundation of the Learn Phase

The initial "Learn" phase in LDBT is powered by sophisticated machine learning models trained on massive biological datasets. These models capture complex relationships between biological sequences, structures, and functions that would be impossible to discern through traditional analysis.

Key Machine Learning Approaches in LDBT

Table 2: Machine learning model types and their applications in the LDBT Learn phase

| Model Type | Examples | Training Data | Key Applications | Capabilities |
|---|---|---|---|---|
| Protein Language Models | ESM [5], ProGen [5] | Evolutionary relationships in protein sequences [5] | Predicting beneficial mutations [5], inferring protein function [5], designing antibody sequences [5] | Zero-shot prediction of diverse sequences [5], capturing long-range evolutionary dependencies [5] |
| Structure-Based Models | MutCompute [5], ProteinMPNN [5] | Experimentally determined protein structures [5] | Residue-level optimization [5], designing sequences for specific backbones [5], enzyme engineering [5] | Predicting stabilizing mutations [5], associating amino acids with local chemical environment [5] |
| Functional Prediction Models | Prethermut [5], Stability Oracle [5], DeepSol [5] | Experimental measurements of protein properties [5] | Predicting thermodynamic stability changes [5], predicting protein solubility [5], multi-property optimization [5] | Predicting ΔΔG of mutations [5], mapping sequence-fitness landscapes [5] |
| Hybrid & Augmented Models | Physics-informed ML [5], evolutionary-augmented models [5] | Combined datasets (sequences, structures, biophysics) [5] | Exploring evolutionary landscapes [5], engineering specialized enzymes [5], simultaneous multi-parameter optimization [5] | Combining predictive power of statistical models with explanatory strength of physical principles [5] |

Technical Implementation of ML Models in LDBT

The machine learning infrastructure supporting LDBT requires specialized implementation approaches:

Data Preparation and Feature Engineering

- Biological Feature Extraction: ML models leverage a broad spectrum of biological features including promoter strengths, ribosome binding site sequences, codon usage biases, and secondary structure propensities [6]. These features are encoded in high-dimensional vectors that capture both local and global sequence properties.

- Training Data Curation: Models are trained on diverse datasets ranging from millions of protein sequences [5] to hundreds of thousands of structures [5]. Data quality assurance involves removing redundant sequences, balancing representation across protein families, and integrating experimental measurements from standardized assays.
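Redundancy removal is commonly done by greedy clustering at a sequence-identity threshold, as in tools such as CD-HIT or MMseqs2. The sketch below keeps the greedy idea but, as a simplifying assumption, replaces alignment-based identity with the similarity ratio from Python's difflib; the 0.9 threshold and example sequences are illustrative.

```python
from difflib import SequenceMatcher


def similarity(a: str, b: str) -> float:
    """Crude similarity in [0, 1] from difflib; a stand-in for alignment identity."""
    return SequenceMatcher(None, a, b).ratio()


def remove_redundant(seqs: list[str], threshold: float = 0.9) -> list[str]:
    """Greedy filtering: keep a sequence only if it is below threshold to all kept ones.

    Longer sequences are considered first, mirroring greedy incremental clustering.
    """
    kept: list[str] = []
    for seq in sorted(seqs, key=len, reverse=True):
        if all(similarity(seq, rep) < threshold for rep in kept):
            kept.append(seq)
    return kept


sequences = [
    "MKTAYIAKQRQISFVKSHFSRQ",
    "MKTAYIAKQRQISFVKSHFSRE",  # one substitution from the first
    "MSLNFLDFEQPIAELEAKIDSL",
    "MSLNFLDFEQPIAELEAKIDS",   # truncated copy of the third
    "GAVLIPFMWSTCYNQDEKRH",
]
print(remove_redundant(sequences))
```

Here the single-substitution variant of the first sequence and the truncated copy of the third are dropped, leaving three representatives.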

Model Architectures and Training

- Neural Network Architectures: State-of-the-art neural network architectures alongside classic ensemble methods capture nonlinear relationships between sequence features and functional outputs [6]. Graph-transformer architectures learn pairwise representations of residues for predicting protein stability [5].

- Active Learning Integration: ML components employ active learning techniques to intelligently navigate vast genetic design spaces by strategically selecting the most informative sequence variants to test experimentally [6]. This approach maximizes information gain per experiment, reducing redundancy and focusing efforts on promising design regions.
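The cited work does not specify which active learning strategy is used, so the following is only one common option, query-by-committee: several models are fit to bootstrap resamples of the labeled data, and the unlabeled variants on which they disagree most are proposed for the next experiment. The k-nearest-neighbour committee members, Hamming distance, and example data are assumptions chosen to keep the sketch dependency-free.

```python
import random
import statistics


def hamming(a: str, b: str) -> int:
    """Number of mismatched positions between equal-length sequences."""
    return sum(x != y for x, y in zip(a, b))


def knn_predict(train: list[tuple[str, float]], query: str, k: int = 3) -> float:
    """Average label of the k training variants closest to query."""
    nearest = sorted(train, key=lambda item: hamming(item[0], query))[:k]
    return statistics.mean(label for _, label in nearest)


def select_batch(labeled: list[tuple[str, float]], unlabeled: list[str],
                 batch_size: int, n_models: int = 10, seed: int = 0) -> list[str]:
    """Query-by-committee: return the unlabeled variants the committee disagrees on most.

    Each committee member is a k-NN model fit to a bootstrap resample of the labeled data;
    disagreement is the population standard deviation of the members' predictions.
    """
    rng = random.Random(seed)
    committee = [[rng.choice(labeled) for _ in labeled] for _ in range(n_models)]

    def disagreement(seq: str) -> float:
        return statistics.pstdev([knn_predict(member, seq) for member in committee])

    return sorted(unlabeled, key=disagreement, reverse=True)[:batch_size]


# Hypothetical measurements (variant, activity) and untested candidates.
labeled = [("AAAA", 1.0), ("AAAC", 1.1), ("AACA", 0.9),
           ("CCCC", 0.0), ("CCCA", 5.0), ("CCAC", 9.0)]
unlabeled = ["ACAA", "CACC", "AAAG", "CCCG", "GGGG"]
print(select_batch(labeled, unlabeled, batch_size=2))
```

The selected variants are then built and tested, their measurements are appended to the labeled set, and the committee is refit, so each round spends experiments where the current models are least certain.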

Zero-Shot Prediction Capabilities

The most significant advancement enabling LDBT is the development of models capable of zero-shot prediction: generating functional designs without additional training on specific targets [5]. For example:

- Protein language models trained on evolutionary relationships can predict beneficial mutations and infer protein function without target-specific fine-tuning [5].

- Structure-based models like ProteinMPNN can design sequences that fold into specified backbones with nearly 10-fold increased success rates when combined with structure assessment tools like AlphaFold [5].

Megascale Data Generation Through Cell-Free Testing Platforms

The "Build" and "Test" phases in LDBT are accelerated through cell-free transcription-translation (TX-TL) systems, which enable rapid, high-throughput experimental validation of computationally designed biological parts.

Cell-Free Testing Workflow

Figure: automated experimental workflow for cell-free testing in LDBT (diagram not reproduced).

Technical Specifications of Cell-Free Testing Platforms

Cell-free systems provide a biochemical environment containing the essential components for transcription and translation without the constraints of living cells.

System Components and Configuration

- Cellular Machinery Source: Protein biosynthesis machinery obtained from crude cell lysates or purified components [5]. Systems can be derived from organisms across the tree of life, including E. coli, yeast, and mammalian cells [5].

- Reaction Customization: Modular reaction environments allow facile customization through addition of specific energy sources, nucleotide triphosphates, amino acids, and cofactors [5]. This enables optimization for different protein types and experimental goals.

- Non-Canonical Incorporation: Capable of incorporating non-canonical amino acids and performing post-translational modifications like glycosylation and phosphorylation [5], expanding the chemical diversity of testable designs.

Performance Characteristics and Capabilities

- Speed and Yield: Rapid protein production achieving >1 g/L protein in <4 hours [5], dramatically faster than in vivo expression systems.

- Throughput Capacity: Systems like DropAI leverage droplet microfluidics to screen upwards of 100,000 picoliter-scale reactions [5], enabling truly megascale data generation.

- Tolerance and Flexibility: Capable of expressing products toxic to living cells and testing under diverse conditions that would be lethal to whole organisms.
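Because yield scales with reaction volume, the >1 g/L titer translates into very different absolute amounts across reaction formats, which bounds what each assay must be able to detect. The sketch below does that unit conversion; it assumes the titer holds unchanged at every scale, and the well and nanoliter volumes are illustrative choices (only the picoliter droplet scale comes from the text).

```python
def protein_mass_g(titer_g_per_l: float, volume_l: float) -> float:
    """Protein mass produced by one reaction, assuming titer does not depend on volume."""
    return titer_g_per_l * volume_l


TITER_G_PER_L = 1.0  # the >1 g/L figure above, used as a lower bound

# Hypothetical reaction formats; only the picoliter droplet scale is named in the text.
formats_l = {
    "10 µL plate well": 10e-6,
    "100 nL dispensed reaction": 100e-9,
    "10 pL droplet": 10e-12,
}

for name, volume in formats_l.items():
    mass = protein_mass_g(TITER_G_PER_L, volume)
    print(f"{name}: at least {mass:.0e} g of protein")
```

A 10 pL droplet therefore holds on the order of 10 pg of product even at 1 g/L, which is consistent with droplet screens such as DropAI relying on fluorescent imaging rather than bulk analytics.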

Quantitative Advantages of Cell-Free Testing

Table 3: Performance comparison between traditional in vivo testing and cell-free testing platforms

| Parameter | Traditional In Vivo Testing | Cell-Free Testing Platforms | Improvement Factor |
|---|---|---|---|
| Testing Cycle Time | Days to weeks (including cloning, transformation, and cellular growth) [6] | Hours (direct template addition to reactions) [5] [6] | 10-100x faster [6] |
| Throughput Capacity | ~10^3-10^4 variants per campaign | ~10^5-10^6 variants using microfluidics [5] | 100-1000x higher throughput [5] |
| Resource Consumption | High (media, antibiotics, cellular growth requirements) | Minimal (nanoliter-scale reactions) [5] | 1000x reduction in reagent use [5] |
| Environmental Control | Limited by cellular homeostasis and metabolic constraints | Precise control over redox potential, energy charge, and molecular composition [6] | Unprecedented parameter control |
| Data Generation Rate | Limited by cellular growth rates and assay scalability | Ultra-high-throughput mapping (e.g., 776,000 variants for stability mapping) [5] | Megascale data acquisition |
| Assay Flexibility | Constrained by cellular viability and genetic stability | Compatible with diverse conditions, including toxic compounds [5] | Expanded experimental design space |

Integrated LDBT Implementation: Case Studies and Performance Metrics

The integration of machine learning and cell-free testing creates a synergistic framework where each component enhances the other's capabilities in a virtuous cycle of improvement.

Experimental Protocol for Integrated LDBT Implementation

Phase 1: Learn-First Design Initiation

- Objective Definition: Clearly specify functional requirements for the biological system, including performance metrics, environmental constraints, and optimization priorities.

- Model Selection: Choose appropriate pre-trained ML models based on the design challenge (e.g., protein language models for sequence optimization, structure-based models for stability engineering).

- Zero-Shot Design Generation: Utilize selected models to generate initial design candidates without additional training, leveraging the evolutionary and structural knowledge embedded in the models [5].

Phase 2: High-Throughput Experimental Validation

- DNA Template Preparation: Synthesize DNA templates for top candidate designs using high-throughput oligo synthesis and assembly methods.

- Cell-Free Reaction Assembly: Utilize liquid handling robots to assemble cell-free reactions in 96, 384, or 1536-well plates, or employ microfluidic devices for picoliter-scale reactions [5].

- Expression and Assaying: Incubate reactions to enable protein expression, followed by functional assaying using colorimetric, fluorescent, or other high-throughput detection methods [5].

- Data Collection: Implement automated data acquisition systems to quantitatively measure performance metrics for each variant.

Phase 3: Model Refinement and Iteration

- Data Processing and Quality Control: Apply automated processing pipelines to normalize data, remove outliers, and compile results into structured datasets.

- Model Retraining (Optional): For particularly challenging design problems, use the newly generated data to fine-tune models for improved prediction on similar systems.

- Design Prioritization: Apply updated models to identify the most promising candidates for further development or scale-up.
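Phase 3's normalization and outlier-removal steps can be sketched as follows. As assumptions, raw readings are rescaled against negative and positive control wells on the same plate, and replicate outliers are flagged with the median-absolute-deviation modified z-score using the commonly cited 3.5 cutoff; the readings and function names are hypothetical.

```python
import statistics


def normalize_to_controls(signal: list[float], negative: list[float],
                          positive: list[float]) -> list[float]:
    """Rescale readings so the mean negative control maps to 0 and the mean positive to 1."""
    neg, pos = statistics.mean(negative), statistics.mean(positive)
    return [(x - neg) / (pos - neg) for x in signal]


def mad_outliers(values: list[float], cutoff: float = 3.5) -> list[int]:
    """Indices whose modified z-score, 0.6745 * |x - median| / MAD, exceeds cutoff."""
    med = statistics.median(values)
    mad = statistics.median([abs(x - med) for x in values])
    if mad == 0:
        return []  # no spread: the score is undefined, so flag nothing
    return [i for i, x in enumerate(values) if 0.6745 * abs(x - med) / mad > cutoff]


# Hypothetical fluorescence readings for one variant and its plate controls.
reads = [1200.0, 1180.0, 1250.0, 1210.0, 4000.0]
negative_controls = [100.0, 110.0, 90.0]
positive_controls = [2100.0, 2090.0, 2110.0]

normalized = normalize_to_controls(reads, negative_controls, positive_controls)
outliers = set(mad_outliers(reads))
kept = [v for i, v in enumerate(normalized) if i not in outliers]
variant_score = statistics.mean(kept)
print(sorted(outliers), round(variant_score, 3))
```

The fifth replicate (4000) is flagged and excluded, and the variant's normalized score is the mean of the remaining four, 0.555. Using the median and MAD rather than the mean and standard deviation keeps a single aberrant well from masking itself.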

Case Study: Enzyme Engineering via LDBT

A demonstrated application of LDBT involves engineering a hydrolase for polyethylene terephthalate (PET) depolymerization [5]:

- Learning Phase: MutCompute, a deep neural network trained on protein structures, identified probable mutations given the local chemical environment [5].

- Design Phase: The model proposed specific amino acid substitutions expected to increase stability and activity [5].

- Build Phase: DNA templates for selected variants were synthesized and expressed in cell-free systems [5].

- Test Phase: Expressed variants were rapidly assayed for PET depolymerization activity, confirming that proteins with MutCompute-designed mutations showed increased stability and activity compared to wild-type [5].

Further optimization was achieved by augmenting the approach with large language models trained on PET hydrolase homologs and force-field-based algorithms, enabling more comprehensive exploration of the evolutionary landscape [5].

Performance Metrics and Validation

Table 4: Quantitative outcomes from LDBT implementation in protein engineering campaigns

| Engineering Campaign | Traditional DBTL Results | LDBT Approach Results | Improvement Factor |
|---|---|---|---|
| PET Hydrolase Engineering | Not specified in literature | MutCompute-designed variants showed increased stability and activity vs wild-type [5] | Significant improvement in key parameters [5] |
| TEV Protease Engineering | Not specified in literature | ProteinMPNN-designed variants improved catalytic activity vs parent sequence [5] | Design success rates increased nearly 10-fold with structure assessment [5] |
| Antimicrobial Peptide Design | Traditional screening of limited libraries | 500 optimal variants selected from computational survey of >500,000; 6 promising designs validated [5] | Highly efficient design-to-validation pipeline [5] |
| Biosynthetic Pathway Optimization | Multi-round iterative strain engineering | iPROBE used neural network on pathway combinations to improve 3-HB titer in Clostridium by >20-fold [5] | Dramatic reduction in optimization time [5] |
| General Protein Engineering | Multiple rounds of site-saturation mutagenesis | Linear supervised models trained on >10,000 reactions accelerated identification of favorable variants [5] | Data-driven acceleration of engineering campaigns [5] |

Essential Research Reagents and Tools for LDBT Implementation

Successful implementation of the LDBT framework requires specific research reagents and computational tools that enable the seamless integration of machine learning and rapid experimental validation.

Research Reagent Solutions for LDBT

Table 5: Essential reagents, tools, and platforms for implementing LDBT workflows

| Category | Specific Tools/Reagents | Function in LDBT Pipeline | Key Features |
|---|---|---|---|
| Machine Learning Models | ESM [5], ProGen [5], ProteinMPNN [5], MutCompute [5] | Learn-phase: Generating intelligent designs based on patterns in biological data | Zero-shot prediction capabilities [5], attention mechanisms for long-range dependencies [5], structure-based design [5] |
| Cell-Free Systems | TX-TL systems [5], PURE system [5], species-specific lysates [5] | Build/Test-phase: Rapid expression and testing of designed variants | Bypass cellular growth constraints [5], high-throughput compatibility [5], customizable reaction conditions [5] |
| Automation Platforms | Liquid handling robots [5], microfluidic devices [5] | Build/Test-phase: Enabling megascale parallel experimentation | Nanoliter-to-microliter reaction assembly [5], integration with screening assays [5], walk-away operation [5] |
| Assay Technologies | cDNA display [5], fluorescence-based assays [5], colorimetric readouts [5] | Test-phase: Quantitative measurement of function and properties | Ultra-high-throughput compatibility [5], quantitative output for ML training [5], minimal cross-reactivity [5] |
| Data Integration Tools | Automated data processing pipelines [6], active learning algorithms [6] | Learn-phase: Continuous model improvement from experimental data | Strategic selection of informative variants [6], maximized information gain per experiment [6], closed-loop optimization [6] |
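Table 5 lists active learning algorithms for the strategic selection of informative variants. As a minimal sketch of one common acquisition strategy, the function below ranks candidate designs by an upper confidence bound (UCB): the ensemble mean prediction plus `beta` times the ensemble spread. UCB is our illustrative choice and not necessarily the method used in [6]; the variant names, predictions, and `beta` value are hypothetical.

```python
from statistics import mean, pstdev


def ucb_select(ensemble_preds: dict[str, list[float]], batch_size: int,
               beta: float = 1.0) -> list[str]:
    """Pick the batch of variants with the highest upper confidence bound.

    ensemble_preds maps a variant name to predictions from several models
    (e.g. bootstrapped regressors). The ensemble mean estimates fitness, the
    spread estimates uncertainty, and beta trades exploitation vs exploration.
    """
    scores = {
        variant: mean(preds) + beta * pstdev(preds)
        for variant, preds in ensemble_preds.items()
    }
    # Highest score first; ties broken by name so the result is deterministic.
    ranked = sorted(scores, key=lambda v: (-scores[v], v))
    return ranked[:batch_size]


if __name__ == "__main__":
    # Hypothetical predictions from a 3-member ensemble for four designs.
    preds = {
        "A12V": [1.0, 1.1, 0.9],   # good and confident
        "G45D": [0.2, 1.8, 1.0],   # uncertain, possibly very good
        "L78P": [0.1, 0.2, 0.15],  # poor
        "K90R": [0.8, 0.8, 0.8],   # moderate and certain
    }
    print(ucb_select(preds, batch_size=2))
```

With `beta=1.0`, the uncertain design `G45D` is chosen ahead of the confident `A12V`, so the next experiment also tests where the models disagree most. Lowering `beta` shifts the selection toward pure exploitation of the mean prediction.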

The LDBT framework represents a shift in synthetic biology methodology from empirical iteration toward predictive engineering. By placing learning first, through machine learning models trained on megascale biological data, and by accelerating validation with cell-free testing platforms, LDBT can substantially shorten the design process for biological systems. Integrating these technologies creates a virtuous cycle: each experiment improves the predictive models, which in turn generate better-informed designs for the next round of testing. As the paradigm gains broader adoption, it promises to move synthetic biology from a trial-and-error discipline toward a truly predictive engineering science, enabling faster development of novel therapeutics, sustainable materials, and bio-based solutions to global challenges. Future advances will likely focus on fully automated closed-loop systems that combine AI-driven design with robotic experimentation, further shortening development timelines and expanding the complexity of the biological engineering problems that can be addressed.

Execution and Impact: Machine Learning and Cell-Free Systems in Action

The engineering of biological systems has traditionally been guided by the Design-Build-Test-Learn (DBTL) cycle, an iterative framework in which insights from testing one design inform the next round of design hypotheses [10]. While systematic, this process can be time-consuming and resource-intensive, often requiring multiple rounds of iteration to converge on a functional protein or genetic circuit. The rapid integration of advanced machine learning (ML) is now reshaping this paradigm. A new framework, termed LDBT (Learn-Design-Build-Test), places learning at the forefront [10] [6]. In this model, ML algorithms learn directly from large biological datasets (evolutionary sequences, structural information, and experimental measurements) to generate informed designs before any physical building or testing occurs [10]. This "learn-first" approach enables zero-shot design, in which models generate novel, functional protein sequences for a specific task without additional training or iterative experimental feedback [10] [16]. This section provides a technical guide to the key machine learning tools that make this shift possible, protein language models and structure-based design tools, and that help researchers accelerate the development of novel therapeutics and enzymes.

Protein Language Models for Zero-Shot Design

Protein language models (pLMs) treat amino acid sequences as text written in a 20-letter alphabet. By training on millions of natural protein sequences, they learn the underlying "grammar" and "syntax" of proteins, capturing evolutionary constraints and functional patterns. This allows them to generate novel, functional sequences from scratch or predict the effects of mutations without explicit structural or functional data.

ESM (Evolutionary Scale Modeling) Models

The ESM family of models, based on the Transformer architecture, is trained on a masked language modeling objective, learning to predict randomly omitted amino acids in a sequence based on their context [16]. This process forces the model to internalize complex biophysical and evolutionary relationships.

- Core Architecture and Training: ESM models are bidirectional encoders. During pre-training, they process sequences with a portion of residues masked, learning to generate information-rich, context-aware embeddings for every position in a sequence. The largest ESM-2 model has 15 billion parameters, while the newer ESM-3 has scaled to 98 billion parameters [16].

- Zero-Shot Applications: A key application is the zero-shot prediction of mutational effects. Models like ESM-1v are designed to score the likelihood of sequence variants, effectively predicting fitness landscapes without experimental data [16] [17]. Furthermore, the embeddings generated by ESM models can be used directly as input features for lightweight downstream models (e.g., predicting stability or function) without fine-tuning the language model itself, a form of transfer learning [16].

- Performance and Scaling: Studies show that transfer-learning performance scales with model size but also depends on data availability. Larger models such as ESM-2 15B perform best with large datasets, whereas medium-sized models such as ESM-2 650M and ESM C 600M offer a practical balance of performance and computational cost, especially when data are limited [16]. For embedding-based tasks, mean pooling (averaging embeddings across all sequence positions) has been found to be a simple yet effective compression strategy that outperforms more complex alternatives [16].
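The two ESM uses described above, zero-shot variant scoring and mean-pooled embeddings, can be sketched in plain Python. The sketch assumes you already have, for each position, the model's log-probabilities over amino acids with that position masked (the "masked marginal" heuristic often used with ESM-1v), plus per-residue embedding vectors. The toy numbers are hypothetical stand-ins for real model output, and the helper names are ours, not part of any ESM library.

```python
import math


def masked_marginal_score(log_probs: list[dict[str, float]], wt_seq: str,
                          mutations: list[str]) -> float:
    """Sum of log p(mutant) - log p(wild type) over the mutated positions.

    log_probs[i] is a (hypothetical) model's log-probability distribution
    over amino acids at position i when that position is masked.
    Mutations use 1-based notation such as "A2G" (Ala at position 2 -> Gly).
    A positive score means the model prefers the mutant over wild type.
    """
    score = 0.0
    for m in mutations:
        wt, pos, mt = m[0], int(m[1:-1]), m[-1]
        i = pos - 1
        if wt_seq[i] != wt:
            raise ValueError(f"{m}: wild-type residue at {pos} is {wt_seq[i]}")
        score += log_probs[i][mt] - log_probs[i][wt]
    return score


def mean_pool(per_residue: list[list[float]]) -> list[float]:
    """Average per-residue embeddings into one fixed-length sequence vector."""
    n = len(per_residue)
    dim = len(per_residue[0])
    return [sum(vec[d] for vec in per_residue) / n for d in range(dim)]


if __name__ == "__main__":
    # Toy masked-position distributions for the sequence "MAK".
    toy = [
        {"M": math.log(0.9), "L": math.log(0.1)},
        {"A": math.log(0.5), "G": math.log(0.4), "P": math.log(0.1)},
        {"K": math.log(0.6), "R": math.log(0.4)},
    ]
    print(masked_marginal_score(toy, "MAK", ["A2G"]))  # log(0.4/0.5) < 0
    print(mean_pool([[1.0, 2.0], [3.0, 4.0]]))
```

The pooled vector has the same length regardless of sequence length, which is what lets it feed a standard regression or classification model.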

ProGen Models

In contrast to ESM's masked modeling, the ProGen family employs an autoregressive, generative approach, similar to GPT models in natural language processing. It is trained to predict the next amino acid in a sequence, which makes it naturally suited to generating entire novel protein sequences from scratch.

- Core Architecture and Training: ProGen3 is a suite of models using a sparse mixture-of-experts architecture. It was trained on the Profluent Protein Atlas v1 (PPA-1), a curated dataset of 3.4 billion protein sequences totaling 1.1 trillion amino acid tokens [18] [19]. Model sizes range from 112 million to 46 billion parameters [18].

- Zero-Shot Applications: ProGen3 can perform de novo protein generation and infilling, that is, generating a missing segment of a sequence conditioned on the surrounding residues, for example to redesign the region around a fixed functional motif [18] [19]. Its training enables it to generate diverse, functional proteins across numerous families, including complex proteins such as antibodies and gene editors, in a zero-shot manner.

- Performance and Scaling: Profluent's research demonstrates clear scaling laws for biological design. Larger ProGen3 models produce more diverse, valid, and functional sequences. For instance, the ProGen3-46B model generated 59% more diverse sequences (measured by unique sequences at 30% identity) than a 3B parameter model [18]. Furthermore, these large models benefit most from alignment with limited laboratory data, allowing their outputs to be fine-tuned for specific properties like stability or binding affinity, with one study showing a correlation with protein fitness improving from 33.1% to 67.3% after alignment [18].
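To make the autoregressive objective concrete, here is a toy sampling loop: at each step the model scores every possible next token given the prefix, and one token is drawn from a temperature-scaled softmax. `toy_next_token_logits` is a hypothetical stand-in for a trained model such as ProGen3, and the `*` end token and starting methionine are assumptions of this sketch, not ProGen3's actual tokenization.

```python
import math
import random
from typing import Callable

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
END = "*"  # hypothetical end-of-sequence token


def toy_next_token_logits(prefix: str) -> dict[str, float]:
    """Hypothetical stand-in for a trained autoregressive model.

    Slightly favours hydrophobic residues and makes ending more likely as the
    sequence grows; a real model would condition on the full prefix.
    """
    logits = {aa: (0.5 if aa in "AILMFVW" else 0.0) for aa in AMINO_ACIDS}
    logits[END] = -3.0 + 0.1 * len(prefix)
    return logits


def sample_sequence(next_logits: Callable[[str], dict[str, float]],
                    temperature: float = 1.0, max_len: int = 50,
                    seed: int = 0) -> str:
    """Generate one sequence token by token, left to right."""
    rng = random.Random(seed)
    seq = "M"  # assumption: start every design with methionine
    while len(seq) < max_len:
        logits = next_logits(seq)
        tokens = list(logits)
        # Temperature-scaled softmax; subtract the max for numerical stability.
        scaled = [logits[t] / temperature for t in tokens]
        top = max(scaled)
        weights = [math.exp(s - top) for s in scaled]
        token = rng.choices(tokens, weights=weights, k=1)[0]
        if token == END:
            break
        seq += token
    return seq


if __name__ == "__main__":
    for s in range(3):
        print(sample_sequence(toy_next_token_logits, temperature=0.8, seed=s))
```

Lower temperatures concentrate sampling on the highest-scoring tokens, while higher temperatures increase sequence diversity at the cost of more low-likelihood choices.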

Table 1: Comparison of Major Protein Language Model Families

| Feature | ESM (e.g., ESM-2, ESM-3) | ProGen (e.g., ProGen3) |
|---|---|---|
| Primary Architecture | Bidirectional Encoder (BERT-like) | Autoregressive Decoder (GPT-like) |
| Training Objective | Masked Language Modeling | Next Token Prediction |
| Core Strength | Context-aware embeddings, variant effect prediction | De novo sequence generation, infilling |
| Typical Zero-Shot Use | Predicting fitness of mutants, transfer learning via embeddings | Generating novel, full-length functional proteins |
| Model Scale | Up to 98B parameters (ESM3) | Up to 46B parameters |
| Key Differentiator | Excels at understanding and scoring existing sequences | Excels at creating entirely new sequences |

Structure-Based Tools for Zero-Shot Design

While pLMs operate primarily on sequence information, another class of tools uses protein structural data to design sequences that fold into specific three-dimensional shapes or interact with target molecules.

ProteinMPNN

ProteinMPNN is a deep learning-based protein sequence design tool that takes a protein backbone structure as input and outputs sequences that are predicted to fold into that structure.

- Core Methodology: It uses a graph neural network architecture in which each residue is a node and edges are defined by spatial proximity [20]. The network performs message passing to incorporate information from the local structural environment, then decodes the optimal amino acid identity for each position. A key design choice is autoregressive decoding in a randomly sampled residue order, which allows efficient design of symmetric proteins (by tying positions across symmetric chains) and the generation of diverse sequence solutions for a single backbone [20].

- Zero-Shot Application: Given a backbone structure (from de novo design or a natural protein), ProteinMPNN can generate a high-fidelity sequence in a single pass, without requiring iterative optimization. It has been successfully used to design novel proteins, including variants of TEV protease with improved activity [10].
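The two ideas in the ProteinMPNN description, a graph whose edges come from spatial proximity and decoding in a random residue order, can be sketched as follows. This is an illustration under simplifying assumptions, not ProteinMPNN's code: residues are represented only by their C-alpha coordinates, `k` is a free parameter, and placing fixed positions at the start of the order is one simple way to let every designed residue condition on them.

```python
import math
import random


def knn_graph(ca_coords: list[tuple[float, float, float]],
              k: int) -> dict[int, list[int]]:
    """Connect each residue to its k nearest neighbours by C-alpha distance."""
    graph = {}
    for i, ci in enumerate(ca_coords):
        # (distance, index) pairs sort by distance, then by index on ties.
        dists = sorted(
            (math.dist(ci, cj), j) for j, cj in enumerate(ca_coords) if j != i
        )
        graph[i] = [j for _, j in dists[:k]]
    return graph


def random_decoding_order(n_residues: int, fixed: set[int],
                          seed: int = 0) -> list[int]:
    """Random decoding order with fixed (not redesigned) positions first,
    so that every designed position is decoded after them."""
    rng = random.Random(seed)
    free = [i for i in range(n_residues) if i not in fixed]
    rng.shuffle(free)
    return sorted(fixed) + free


if __name__ == "__main__":
    # Four residues on a line, 4 Angstrom apart (toy coordinates).
    coords = [(0.0, 0.0, 0.0), (4.0, 0.0, 0.0), (8.0, 0.0, 0.0), (12.0, 0.0, 0.0)]
    print(knn_graph(coords, k=2))
    print(random_decoding_order(6, fixed={0, 5}, seed=42))
```

Because each new seed yields a different order, repeated decoding of the same backbone naturally produces a set of alternative sequences.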

LigandMPNN

LigandMPNN is a generalization of ProteinMPNN that explicitly incorporates the atomic context of non-protein molecules, making it well suited to designing enzymes, binders, and sensors.

- Core Methodology: The architecture extends ProteinMPNN by adding two additional graphs: a protein-ligand graph and a fully connected ligand graph [20]. This allows the model to perform message passing between ligand atoms and from ligand atoms to protein residues, explicitly modeling interactions like hydrogen bonding and metal coordination. It was trained on protein structures with associated small molecules, nucleotides, and metals from the PDB [20].

- Zero-Shot Application and Performance: LigandMPNN enables the zero-shot design of protein sequences that bind specific small molecules, DNA, or metals. It significantly outperforms both Rosetta and the original ProteinMPNN on native sequence recovery for residues interacting with these molecules [20]. For example, its sequence recovery for residues near metals is 77.5%, compared to 40.6% for ProteinMPNN, and it has been used to design over 100 experimentally validated binders [20].
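Native sequence recovery, the metric quoted above, is the fraction of positions at which the designed residue matches the native one. A minimal sketch restricted to ligand-contacting residues is below. Representing each residue by a single coordinate and using a 5 Å cutoff are assumptions for illustration, not the exact definition used in [20].

```python
import math

Point = tuple[float, float, float]


def ligand_contact_residues(res_coords: list[Point], ligand_coords: list[Point],
                            cutoff: float = 5.0) -> list[int]:
    """Indices of residues whose representative coordinate lies within
    `cutoff` (in Angstrom) of any ligand atom."""
    return [
        i for i, r in enumerate(res_coords)
        if any(math.dist(r, a) <= cutoff for a in ligand_coords)
    ]


def sequence_recovery(native: str, designed: str, positions: list[int]) -> float:
    """Fraction of the given positions where designed matches native."""
    if len(native) != len(designed):
        raise ValueError("native and designed sequences must be the same length")
    if not positions:
        raise ValueError("no positions to evaluate")
    matches = sum(native[i] == designed[i] for i in positions)
    return matches / len(positions)


if __name__ == "__main__":
    residues = [(0.0, 0.0, 0.0), (10.0, 0.0, 0.0), (3.0, 0.0, 0.0)]
    ligand = [(0.0, 0.0, 4.0)]
    near = ligand_contact_residues(residues, ligand)
    print(near, sequence_recovery("HCD", "HCE", near))
```

Computing recovery only at contact positions isolates how well a model reproduces the binding-site chemistry, which is where ligand-aware and ligand-unaware models are expected to differ.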

MutCompute

MutCompute is a deep learning tool focused on residue-level optimization within a structural context. It identifies probable mutations that stabilize a protein or enhance its function based on the local chemical environment.

- Core Methodology: MutCompute uses a deep neural network trained on protein structures to associate an amino acid with its surrounding chemical environment, learning the statistical preferences for specific residues in specific structural contexts [10].

- Zero-Shot Application: It predicts stabilizing or functionally beneficial substitutions in a zero-shot manner. Its success is demonstrated in projects like engineering a hydrolase for PET depolymerization, where designs from MutCompute showed increased stability and activity compared to the wild-type enzyme [10].
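How a residue-environment model like MutCompute is typically used can be sketched as follows: for each position, compare the predicted probability of the wild-type residue with that of the top-ranked residue, and flag positions where the model strongly prefers a substitution. The probability table and the `min_log_ratio` threshold (here a two-fold preference) are hypothetical; real predictions would come from the trained network.

```python
import math


def suggest_substitutions(wt_seq: str, probs: list[dict[str, float]],
                          min_log_ratio: float = math.log(2)) -> list[tuple[str, float]]:
    """Rank candidate point mutations by log(p_best / p_wild_type).

    probs[i] is a (hypothetical) predicted distribution over amino acids at
    position i given its surrounding structural environment.
    Returns (mutation, log_ratio) pairs in 1-based notation, best first.
    """
    suggestions = []
    for i, wt in enumerate(wt_seq):
        dist = probs[i]
        best = max(dist, key=dist.get)
        if best == wt:
            continue  # the model already favours the wild-type residue
        p_wt = max(dist.get(wt, 0.0), 1e-9)  # guard against log(0)
        log_ratio = math.log(dist[best] / p_wt)
        if log_ratio >= min_log_ratio:
            suggestions.append((f"{wt}{i + 1}{best}", log_ratio))
    suggestions.sort(key=lambda s: -s[1])
    return suggestions


if __name__ == "__main__":
    toy_probs = [
        {"S": 0.7, "T": 0.3},
        {"A": 0.1, "P": 0.6, "G": 0.3},
        {"T": 0.3, "V": 0.5, "I": 0.2},
    ]
    print(suggest_substitutions("SAT", toy_probs))
```

In this toy case only `A2P` passes the two-fold threshold; `T3V` is preferred by the model but by less than a factor of two, so it is not flagged.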

Table 2: Comparison of Structure-Based Protein Design Tools

| Tool | Primary Input | Core Methodology | Key Strength | Demonstrated Zero-Shot Application |
|---|---|---|---|---|
| ProteinMPNN | Protein Backbone Structure | Graph Neural Network with message passing | Fast, robust sequence design for a given backbone | Designing stable de novo proteins, enzyme variants with improved activity [10] [20] |
| LigandMPNN | Backbone + Ligand Atoms | Extension of ProteinMPNN with ligand-to-protein graphs | Designing proteins that interact with small molecules, DNA, metals | Creating high-affinity small-molecule binders and sensors [20] |
| MutCompute | Protein Structure | Deep neural network trained on local structural environments | Identifying stabilizing mutations and local functional enhancements | Engineering a PET-depolymerizing hydrolase with improved stability and activity [10] |

Integrated Experimental Protocols for Validation

The transition from in silico zero-shot designs to physically validated proteins requires robust experimental workflows. The following protocols are commonly used to test and validate the outputs of ML models.

Protocol for High-Throughput Protein Characterization using Cell-Free Systems

This protocol uses cell-free transcription-translation (TX-TL) systems to rapidly express and test designed protein sequences, which fits the accelerated LDBT cycle [10] [6].

- DNA Template Preparation: Designed protein sequences are codon-optimized for the expression system. DNA templates are generated via high-throughput gene synthesis or PCR assembly.

- Cell-Free Reaction Setup: Reactions are assembled in microtiter plates using commercial or homemade cell-free extracts (e.g., from E. coli). Each reaction contains the DNA template, nucleotides, amino acids, energy sources, and an appropriate buffer.

- Protein Expression: Reactions are incubated for several hours (typically 4-6 hours) at a constant temperature (e.g., 30-37°C) to allow for protein synthesis.

- Functional Assay:

- For enzymes: Reactions are supplemented with a fluorogenic or chromogenic substrate. Activity is measured by monitoring fluorescence or absorbance over time using a plate reader.

- For binding proteins (e.g., antibodies): An immunoassay (e.g., ELISA) can be configured directly in the plate. For example, following expression, the plate is transferred to a refrigerator to stop the reaction, then a target antigen is added to bind to the synthesized antibody, which is detected via a labeled secondary reagent.
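For the fluorogenic or chromogenic enzyme assays above, activity is commonly summarized as an initial rate: the slope of signal versus time over the early, linear part of the progress curve, minus the slope of a control well (assumed here to be a reaction without DNA template). The sketch below fits that slope by ordinary least squares; using the first five readings as the linear window is an assumption and should be chosen per assay.

```python
def initial_rate(times: list[float], signal: list[float], n_points: int = 5) -> float:
    """Least-squares slope of signal vs time over the first n_points readings."""
    if n_points < 2 or len(times) < n_points or len(signal) < n_points:
        raise ValueError("need at least 2 readings inside the fitting window")
    t = times[:n_points]
    y = signal[:n_points]
    t_mean = sum(t) / n_points
    y_mean = sum(y) / n_points
    sxx = sum((ti - t_mean) ** 2 for ti in t)
    sxy = sum((ti - t_mean) * (yi - y_mean) for ti, yi in zip(t, y))
    return sxy / sxx


def background_corrected_rate(sample: list[float], control: list[float],
                              times: list[float], n_points: int = 5) -> float:
    """Initial rate of a design well minus that of a control well."""
    return initial_rate(times, sample, n_points) - initial_rate(times, control, n_points)


if __name__ == "__main__":
    # Hypothetical plate-reader time course (minutes, fluorescence units).
    times = [0, 1, 2, 3, 4, 5, 6]
    sample = [10, 12, 14, 16, 18, 19, 19.5]  # linear, then plateaus
    control = [5, 5.5, 6, 6.5, 7, 7.5, 8]    # slow background increase
    print(background_corrected_rate(sample, control, times))
```

Restricting the fit to the early window avoids underestimating activity once substrate depletion flattens the curve, and the per-well rates form the quantitative labels that downstream models are trained on.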