Knowledge-Driven DBTL Cycles: Unlocking Mechanistic Insights for Accelerated Biomanufacturing and Drug Discovery


Abstract

This article explores the transformative impact of knowledge-driven Design-Build-Test-Learn (DBTL) cycles in synthetic biology and biopharmaceutical development. Tailored for researchers, scientists, and drug development professionals, it provides a comprehensive examination of how integrating upstream mechanistic investigations, artificial intelligence, and automation is reshaping traditional bio-engineering workflows. We cover the foundational principles distinguishing knowledge-driven from statistical approaches, detail methodologies like in vitro prototyping and high-throughput RBS engineering, address troubleshooting and optimization challenges, and present validation case studies with comparative performance metrics. The synthesis offers a forward-looking perspective on how these integrated cycles accelerate strain optimization, enhance predictive power, and drive innovation in biomedical research.

Beyond Trial and Error: Establishing the Principles of Knowledge-Driven DBTL

Defining the Knowledge-Driven DBTL Cycle and Its Core Components

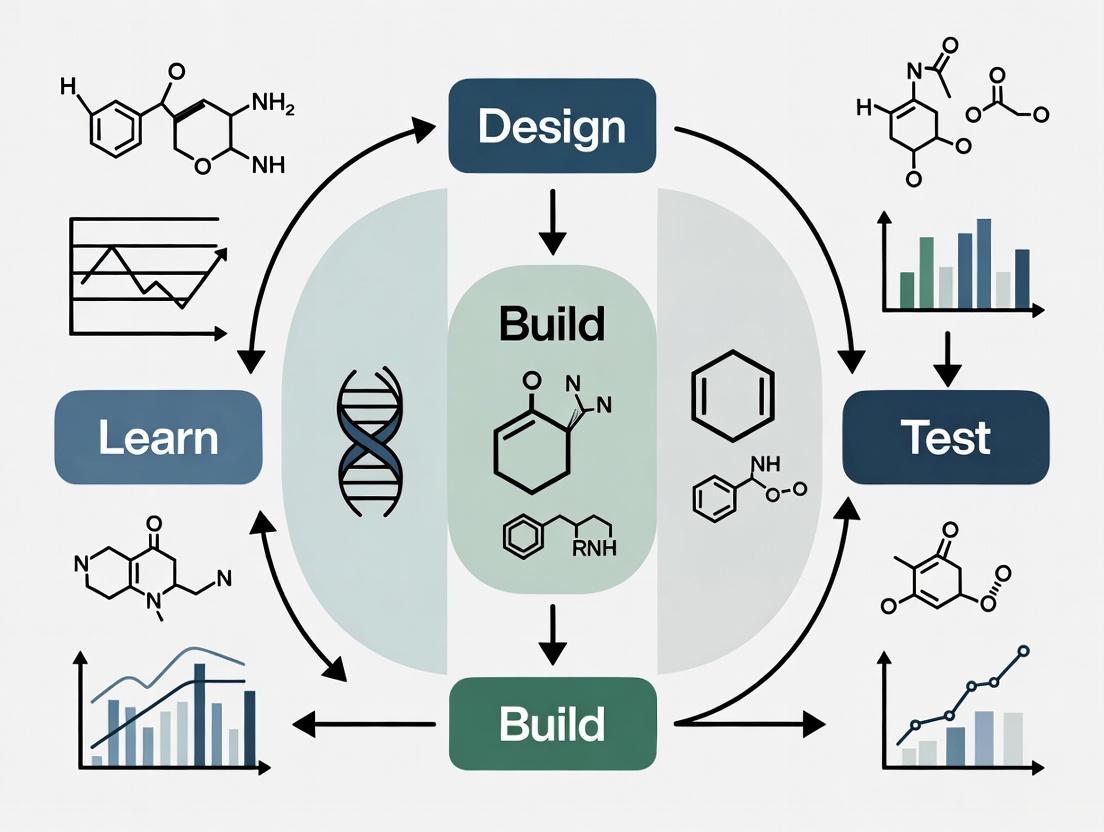

The Design-Build-Test-Learn (DBTL) cycle is a cornerstone methodology in synthetic biology, providing a systematic framework for engineering biological systems [1]. Traditionally, this iterative process begins with a design phase based on existing knowledge or hypotheses, followed by physical construction of genetic designs, testing of the constructed systems, and learning from the results to inform the next design cycle [2].

The knowledge-driven DBTL cycle represents an advanced evolution of this framework, characterized by the integration of upstream in vitro investigations and mechanistic insights before embarking on full DBTL cycles in vivo [3]. This approach addresses a fundamental challenge in traditional DBTL implementation: the initial cycle typically starts without substantial prior knowledge, potentially leading to multiple iterative cycles that consume significant time and resources [3]. By incorporating targeted preliminary experiments and leveraging computational tools, the knowledge-driven approach enables more rational strain engineering with reduced experimental overhead.

This application note delineates the core components, experimental protocols, and practical implementation strategies for the knowledge-driven DBTL cycle, with specific examples from metabolic engineering applications.

Core Components of the Knowledge-Driven DBTL Cycle

The knowledge-driven DBTL framework modifies the traditional cycle through strategic additions that enhance its efficiency and mechanistic depth.

Modified Workflow Architecture

The knowledge-driven approach incorporates two crucial elements that precede the standard DBTL cycle:

- Upstream In Vitro Investigation: Preliminary testing of enzyme expression and pathway functionality in cell-free systems or crude cell lysates

- Mechanistic Pathway Analysis: Detailed examination of enzyme kinetics, expression levels, and pathway flux before in vivo implementation

These elements feed critical data into the initial Design phase, creating a more informed starting point for DBTL cycling [3].

Table 1: Core Components of Knowledge-Driven DBTL Cycle

| Component | Description | Function in Workflow |
|---|---|---|
| Upstream In Vitro Investigation | Testing pathway enzymes in cell lysate systems | Bypasses cellular constraints to assess enzyme functionality and interactions |
| Mechanistic Analysis | Detailed study of enzyme expression, kinetics, and pathway flux | Provides quantitative understanding of pathway limitations and optimization targets |
| Enhanced Design Phase | Computational and RBS tools for pathway optimization | Translates in vitro findings into informed genetic designs for in vivo testing |
| Automated Build-Test | High-throughput genetic engineering and screening | Accelerates construction and evaluation of engineered strains |
| Data Integration & Learning | Statistical and model-guided assessment of performance | Generates actionable insights for subsequent engineering cycles |

Knowledge-Driven DBTL Workflow

In the integrated workflow, the upstream in vitro investigation and the mechanistic pathway analysis feed into the Design phase. Design is followed by automated Build and Test phases, and the Learn phase returns its findings to the next Design round.

Experimental Protocol: Implementing Knowledge-Driven DBTL for Metabolic Pathway Optimization

This section provides a detailed protocol for implementing the knowledge-driven DBTL cycle, using dopamine production in Escherichia coli as a case study [3].

Upstream In Vitro Investigation Phase

Preparation of Crude Cell Lysate System

Purpose: To create a cell-free environment for testing enzyme combinations and pathway functionality without cellular constraints [3].

Materials:

- E. coli production strain (e.g., FUS4.T2)

- Phosphate buffer (50 mM, pH 7.0)

- Lysozyme

- DNase I

- Protease inhibitor cocktail

- Reaction buffer components: 0.2 mM FeCl₂, 50 μM vitamin B₆, 1 mM L-tyrosine or 5 mM L-DOPA

Procedure:

- Culture E. coli production strain in 2xTY medium with appropriate antibiotics at 37°C with shaking (220 rpm) until OD₆₀₀ reaches 0.6-0.8

- Harvest cells by centrifugation at 4,000 × g for 15 minutes at 4°C

- Resuspend cell pellet in ice-cold phosphate buffer (50 mM, pH 7.0)

- Add lysozyme to final concentration of 1 mg/mL and incubate on ice for 30 minutes

- Disrupt cells by sonication on ice (5 cycles of 30 seconds pulse, 30 seconds rest)

- Add DNase I (10 μg/mL) and protease inhibitor cocktail according to manufacturer's instructions

- Remove cell debris by centrifugation at 12,000 × g for 30 minutes at 4°C

- Collect supernatant (crude cell lysate) and aliquot for immediate use or storage at -80°C

In Vitro Pathway Assembly and Testing

Purpose: To assess relative enzyme expression levels and pathway functionality before in vivo implementation [3].

Materials:

- Crude cell lysate system (prepared as described under "Preparation of Crude Cell Lysate System")

- Plasmid constructs containing pathway genes (e.g., pJNTNhpaBC, pJNTNddc for dopamine pathway)

- Reaction buffer: Phosphate buffer supplemented with 0.2 mM FeCl₂, 50 μM vitamin B₆, and 1 mM L-tyrosine

- HPLC system for dopamine quantification

Procedure:

- Express individual pathway enzymes in separate crude cell lysate reactions

- Combine lysates containing different pathway enzymes in systematic ratios

- Initiate reactions by adding L-tyrosine substrate (1 mM final concentration)

- Incubate at 30°C with shaking (200 rpm) for 4-24 hours

- Collect samples at predetermined time points (e.g., 0, 2, 4, 8, 24 hours)

- Quench reactions by adding equal volume of ice-cold methanol

- Remove precipitated proteins by centrifugation at 15,000 × g for 10 minutes

- Analyze supernatant for dopamine production using HPLC with UV detection (280 nm)
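The step of combining lysates "in systematic ratios" can be sketched as a small volume planner. This is a minimal illustration only: the ratio series, the 100 µL combined lysate volume, and the function name `lysate_mix_volumes` are assumptions and do not come from the source protocol.

```python
def lysate_mix_volumes(ratios, total_lysate_ul=100.0):
    """Return (hpaBC_ul, ddc_ul) lysate volumes for each HpaBC:Ddc ratio.

    ratios: iterable of (hpaBC_parts, ddc_parts) tuples, e.g. (1, 2).
    total_lysate_ul: combined lysate volume per reaction (assumed value).
    """
    plan = []
    for hpa_parts, ddc_parts in ratios:
        if hpa_parts < 0 or ddc_parts < 0 or hpa_parts + ddc_parts == 0:
            raise ValueError(f"invalid ratio {hpa_parts}:{ddc_parts}")
        total_parts = hpa_parts + ddc_parts
        hpa_ul = total_lysate_ul * hpa_parts / total_parts
        ddc_ul = total_lysate_ul * ddc_parts / total_parts
        plan.append((hpa_ul, ddc_ul))
    return plan


# Hypothetical ratio series spanning HpaBC-rich to Ddc-rich mixtures.
RATIOS = [(4, 1), (2, 1), (1, 1), (1, 2), (1, 4)]

for (hpa, ddc), (v_hpa, v_ddc) in zip(RATIOS, lysate_mix_volumes(RATIOS)):
    print(f"HpaBC:Ddc {hpa}:{ddc} -> {v_hpa:.1f} uL + {v_ddc:.1f} uL")
```

Keeping the combined lysate volume constant across ratios means that differences in dopamine formation reflect the enzyme ratio rather than the total lysate input.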

Knowledge-Driven Design Phase

Ribosome Binding Site (RBS) Engineering Based on In Vitro Data

Purpose: To translate optimal enzyme expression ratios identified in vitro to in vivo systems through RBS modulation [3].

Materials:

- UTR Designer software or similar computational tools

- Plasmid backbone (e.g., pET system for single gene expression)

- Synthetic DNA fragments with modified RBS sequences

- Restriction enzymes and ligase for molecular cloning

Procedure:

- Analyze in vitro data to identify optimal expression ratios for pathway enzymes

- Use computational tools (e.g., UTR Designer) to design RBS sequences with varying translation initiation rates (TIR)

- Design RBS libraries focusing on Shine-Dalgarno sequence modulation while maintaining secondary structure stability

- Synthesize DNA constructs with designed RBS variants

- Clone RBS variants into appropriate expression vectors

- Verify sequences by Sanger sequencing before proceeding to Build phase
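The Shine-Dalgarno modulation step can be illustrated by enumerating variants of a core sequence and grouping them by GC content. This sketch does not call UTR Designer and does not predict translation initiation rates. It assumes the canonical E. coli core `AGGAGG` as the starting point and restricts itself to single-base substitutions, both of which are illustrative choices rather than the library design used in the source study.

```python
SD_CONSENSUS = "AGGAGG"  # canonical E. coli Shine-Dalgarno core (assumed start point)


def gc_content(seq):
    """Fraction of G and C bases in a DNA sequence."""
    seq = seq.upper()
    if not seq:
        raise ValueError("sequence must not be empty")
    return sum(base in "GC" for base in seq) / len(seq)


def single_substitution_variants(sd=SD_CONSENSUS):
    """All sequences that differ from `sd` at exactly one position."""
    variants = set()
    for i, base in enumerate(sd):
        for alt in "ACGT":
            if alt != base:
                variants.add(sd[:i] + alt + sd[i + 1:])
    return sorted(variants)


def bin_by_gc(variants):
    """Group variants by GC content, rounded to three decimals."""
    bins = {}
    for variant in variants:
        bins.setdefault(round(gc_content(variant), 3), []).append(variant)
    return dict(sorted(bins.items()))


for gc, members in bin_by_gc(single_substitution_variants()).items():
    print(f"GC {gc:.3f}: {len(members)} variants")
```

Binning by GC content makes it straightforward to pick a few representatives per bin, so that a small library still covers the full GC range.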

Build-Test-Learn Cycle Implementation

High-Throughput Strain Construction and Screening

Purpose: To efficiently build and test multiple engineered strains in parallel [3].

Materials:

- E. coli cloning strain (e.g., DH5α) and production strain (e.g., FUS4.T2)

- Plasmid libraries with RBS variants

- Minimal medium for cultivation: 20 g/L glucose, 10% 2xTY, salts, MOPS buffer, trace elements

- Antibiotics: ampicillin (100 μg/mL), kanamycin (50 μg/mL)

- Inducer: IPTG (1 mM)

- Microtiter plates and automated liquid handling systems

Procedure:

- Transform production strain with RBS variant libraries

- Plate transformed cells on selective media and incubate at 37°C overnight

- Pick individual colonies into deep-well plates containing minimal medium

- Grow cultures at 30°C with shaking (800 rpm) until OD₆₀₀ reaches 0.6-0.8

- Induce pathway expression with IPTG (1 mM final concentration)

- Continue incubation for 24-48 hours for metabolite production

- Measure biomass (OD₆₀₀) and dopamine production via HPLC

- Analyze data to identify top-performing RBS combinations

- Sequence validated hits to confirm RBS sequences
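The hit-selection step can be sketched as a ranking by specific productivity (titer divided by biomass). The sketch assumes that OD₆₀₀ converts linearly to dry cell weight through a calibration factor. The factor used in the usage example is a placeholder that would have to be measured for the actual strain and instrument, and the strain names and values are invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StrainResult:
    strain_id: str
    titer_mg_l: float  # dopamine titer from HPLC
    od600: float       # biomass proxy


def specific_productivity(result, gdcw_per_l_per_od):
    """Titer per gram of biomass (mg/g), using an assumed OD-to-biomass factor."""
    if result.od600 <= 0 or gdcw_per_l_per_od <= 0:
        raise ValueError("OD600 and conversion factor must be positive")
    biomass_g_l = result.od600 * gdcw_per_l_per_od
    return result.titer_mg_l / biomass_g_l


def rank_strains(results, gdcw_per_l_per_od, top_n=3):
    """Return the top_n strains ordered by descending specific productivity."""
    ranked = sorted(
        results,
        key=lambda r: specific_productivity(r, gdcw_per_l_per_od),
        reverse=True,
    )
    return ranked[:top_n]


# Placeholder screening data; not measurements from the source study.
screen = [
    StrainResult("rbs_A", 60.0, 5.0),
    StrainResult("rbs_B", 40.0, 2.0),
    StrainResult("rbs_C", 10.0, 4.0),
]
for hit in rank_strains(screen, gdcw_per_l_per_od=0.4):
    print(hit.strain_id, round(specific_productivity(hit, 0.4), 2))
```

Ranking by specific productivity rather than raw titer avoids favoring strains that simply grew to a higher density.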

Research Reagent Solutions and Essential Materials

Successful implementation of the knowledge-driven DBTL cycle requires specific reagents and tools optimized for high-throughput metabolic engineering.

Table 2: Essential Research Reagents for Knowledge-Driven DBTL Implementation

| Reagent/Tool | Specifications | Application in Workflow |
|---|---|---|
| Crude Cell Lysate System | Derived from production strain; contains essential metabolites and cofactors | Upstream in vitro pathway testing and optimization |
| Plasmid Systems | pET for gene storage; pJNTN for lysate studies and library construction | Genetic parts storage and pathway expression |
| RBS Engineering Tools | UTR Designer; synthetic DNA with modulated Shine-Dalgarno sequences | Fine-tuning relative gene expression in synthetic pathways |
| Production Strain | Engineered E. coli FUS4.T2 with high L-tyrosine production | Dopamine production chassis with enhanced precursor supply |
| Analytical Tools | HPLC with UV detection; automated sampling systems | Quantitative measurement of pathway performance and metabolites |
| Automation Platforms | Liquid handling robots; high-throughput screening systems | Accelerated Build and Test phases for rapid DBTL cycling |

Results and Performance Metrics

Implementation of the knowledge-driven DBTL cycle for dopamine production has demonstrated significant improvements over traditional approaches.

Quantitative Performance Assessment

Table 3: Performance Comparison of DBTL Approaches for Dopamine Production

| Engineering Approach | Dopamine Titer (mg/L) | Specific Productivity (mg/g biomass) | Fold Improvement Over State-of-the-Art | Key Innovation |
|---|---|---|---|---|
| Traditional DBTL (previous state-of-the-art) | 27.0 | 5.17 | 1.0 (baseline) | Standard iterative engineering |
| Knowledge-Driven DBTL | 69.03 ± 1.2 | 34.34 ± 0.59 | 2.6 (titer) / 6.6 (specific productivity) | Upstream in vitro investigation guiding RBS engineering |

Critical success factors: in vitro testing in crude cell lysates, high-throughput RBS engineering, GC content optimization in the Shine-Dalgarno sequence, and an integrated knowledge-driven workflow.

Case Study: Dopamine Production Optimization

The application of knowledge-driven DBTL to dopamine production in E. coli exemplifies the power of this approach. The pathway utilizes native E. coli 4-hydroxyphenylacetate 3-monooxygenase (HpaBC) to convert L-tyrosine to L-DOPA, followed by heterologous expression of L-DOPA decarboxylase (Ddc) from Pseudomonas putida to form dopamine [3].

The knowledge-driven approach enabled:

- Identification of optimal HpaBC:Ddc expression ratios through in vitro lysate studies

- Strategic RBS engineering to achieve identified optimal ratios in vivo

- Development of a production strain achieving 69.03 ± 1.2 mg/L dopamine

- 2.6-fold improvement in titer and 6.6-fold improvement in specific productivity compared to previous state-of-the-art
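The two fold-improvement figures follow directly from the reported means, as the short calculation below shows. Only the four numbers quoted in the text are used. The implied biomass values are derived here by dividing titer by specific productivity and are not stated in the source.

```python
def fold_improvement(new_value, baseline_value):
    """Ratio of a new measurement to its baseline."""
    if baseline_value <= 0:
        raise ValueError("baseline must be positive")
    return new_value / baseline_value


# Mean values reported in the text above.
titer_fold = fold_improvement(69.03, 27.0)         # dopamine titer, mg/L
productivity_fold = fold_improvement(34.34, 5.17)  # mg/g biomass

# Biomass implied by each titer / specific-productivity pair, in g/L.
implied_biomass_new = 69.03 / 34.34
implied_biomass_old = 27.0 / 5.17

print(f"titer fold improvement:        {titer_fold:.2f}")
print(f"productivity fold improvement: {productivity_fold:.2f}")
print(f"implied biomass (new / old):   {implied_biomass_new:.2f} / {implied_biomass_old:.2f} g/L")
```

The ratios round to the reported 2.6 and 6.6. The larger gain in specific productivity is consistent with the lower implied biomass of the new strain (about 2.0 g/L versus about 5.2 g/L).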

Advanced Applications and Integration with Emerging Technologies

The knowledge-driven DBTL framework is increasingly enhanced through integration with automation and artificial intelligence.

Integration with Biofoundry Platforms

Biofoundries provide automated, integrated facilities that significantly accelerate the DBTL cycle through robotic automation and computational analytics [2]. These facilities enable:

- High-throughput DNA construction using automated assembly protocols

- Rapid strain characterization through integrated analytical platforms

- Data management systems that facilitate the Learn phase

- Implementation of multiple parallel DBTL cycles

Machine Learning and AI Enhancement

Machine learning tools are transforming the Learn and Design phases of the DBTL cycle:

- Automated Recommendation Tools (ART): Leverage machine learning and probabilistic modeling to recommend optimal strain designs based on previous cycle data [4]

- Protein Language Models: Enable zero-shot prediction of protein function and stability for improved part selection [5]

- Bayesian Optimization: Efficiently navigates complex biological design spaces with limited experimental data [6]

Paradigm Shift: LDBT Cycle

Emerging approaches propose reordering the cycle to "LDBT" (Learn-Design-Build-Test), where machine learning models trained on large biological datasets precede and inform the initial design phase, potentially enabling functional solutions in a single cycle [7].

The knowledge-driven DBTL cycle represents a significant advancement in synthetic biology methodology, addressing key limitations of traditional approaches through strategic incorporation of upstream in vitro investigations and mechanistic analyses. By front-loading the workflow with critical pathway knowledge, this approach enables more rational design decisions, reduces the number of iterative cycles required, and accelerates development of high-performance production strains.

The detailed protocols and reagent specifications provided in this application note offer researchers a practical framework for implementing knowledge-driven DBTL in diverse metabolic engineering applications, from therapeutic compound production to sustainable biomanufacturing.

Contrasting Knowledge-Driven and Traditional Statistical DBTL Approaches

The Design-Build-Test-Learn (DBTL) cycle is a foundational framework in synthetic biology and metabolic engineering for systematically developing microbial strains for bioproduction [8] [9]. While traditional implementations have often relied on statistical analysis of large datasets, an emerging knowledge-driven approach incorporates upstream mechanistic investigations to guide the design process more efficiently [8] [10]. This paradigm shift aims to replace randomized trial-and-error with rational, insight-driven engineering, potentially reducing the number of DBTL cycles required to achieve performance targets. Within the context of mechanistic insights research, the knowledge-driven approach specifically seeks to understand the underlying biological principles governing strain performance, thereby generating transferable knowledge that can inform future engineering efforts across different host organisms and metabolic pathways.

Conceptual Framework Comparison

Table 1: Fundamental Contrasts Between DBTL Approaches

| Aspect | Knowledge-Driven DBTL | Traditional Statistical DBTL |
|---|---|---|
| Primary Basis for Design | Mechanistic understanding from upstream investigations [8] | Statistical models, design of experiment (DoE), or randomized selection [8] |
| Learning Focus | Understanding biological mechanisms and causal relationships [8] | Identifying correlations and statistical patterns in data [9] |
| Data Requirements | Prioritizes targeted, informative data for mechanistic insights [8] | Often requires large, comprehensive datasets for statistical power [11] |
| Typical Entry Point | Prior knowledge from in vitro studies or mechanistic hypotheses [8] | Often begins without prior knowledge [8] |
| Interpretability | High - focuses on understanding biological causality [8] | Variable - statistical models can be "black boxes" [11] [12] |
| Handling of Nonlinearity | Can incorporate nonlinear relationships through mechanistic understanding [13] | Traditional statistical methods often assume linearity [11] [12] |

Case Study: Knowledge-Driven Dopamine Production in E. coli

A knowledge-driven DBTL implementation substantially increased dopamine production in Escherichia coli [8]. Researchers used crude cell lysate systems for upstream in vitro investigation and applied the results to in vivo strain engineering, achieving dopamine titers of 69.03 ± 1.2 mg/L (equivalent to 34.34 ± 0.59 mg/g biomass) [8] [14]. Relative to the previous state-of-the-art, this is a 2.6-fold improvement in titer and a 6.6-fold improvement in specific productivity [8].

Table 2: Dopamine Production Performance Comparison

| Strain/Method | Dopamine Titer (mg/L) | Specific Productivity (mg/g biomass) | Fold Improvement |
|---|---|---|---|
| Previous State-of-the-Art | 27 | 5.17 | 1x (baseline) |
| Knowledge-Driven DBTL Strain | 69.03 ± 1.2 | 34.34 ± 0.59 | 2.6x (titer) / 6.6x (specific productivity) |

Detailed Experimental Protocol

Protocol 1: In Vitro Pathway Prototyping Using Crude Cell Lysates

Purpose: To test dopamine pathway enzyme expression levels and interactions before in vivo implementation [8].

Materials:

- Production Host Strain: E. coli FUS4.T2 with high L-tyrosine production [8]

- Reaction Buffer: 50 mM phosphate buffer (pH 7) with 0.2 mM FeCl₂, 50 μM vitamin B₆, and 1 mM L-tyrosine or 5 mM L-DOPA [8]

- Enzyme Components: HpaBC (4-hydroxyphenylacetate 3-monooxygenase) and Ddc (L-DOPA decarboxylase) [8]

Procedure:

- Lysate Preparation: Cultivate production host strain to mid-log phase, harvest cells, and prepare crude cell lysate using standard lysis protocols [8].

- Pathway Assembly: Combine lysates containing the expressed pathway enzymes (HpaBC and Ddc) with the supplemented reaction buffer.

- Reaction Incubation: Maintain reactions at optimal temperature (typically 30-37°C) with shaking for 4-24 hours.

- Metabolite Analysis: Quantify dopamine production using HPLC or LC-MS methods with appropriate standards.

- Mechanistic Analysis: Assess enzyme expression compatibility, cofactor utilization, and potential inhibitory interactions.
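The metabolite quantification step depends on a calibration against standards. A minimal sketch of a linear standard curve is given below, assuming detector response is linear over the working range. The standard concentrations and peak areas in the usage example are invented placeholders, and `dilution_factor` is a hypothetical parameter for samples diluted before injection (for example, 2.0 after an equal-volume quench).

```python
def fit_standard_curve(concs_mg_l, peak_areas):
    """Least-squares fit of peak_area = slope * concentration + intercept."""
    n = len(concs_mg_l)
    if n < 2 or n != len(peak_areas):
        raise ValueError("need at least two paired standards")
    mean_x = sum(concs_mg_l) / n
    mean_y = sum(peak_areas) / n
    sxx = sum((x - mean_x) ** 2 for x in concs_mg_l)
    if sxx == 0:
        raise ValueError("standards must span more than one concentration")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(concs_mg_l, peak_areas))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def area_to_concentration(peak_area, slope, intercept, dilution_factor=1.0):
    """Convert a sample peak area to mg/L, correcting for sample dilution."""
    if slope == 0:
        raise ValueError("slope must be non-zero")
    return (peak_area - intercept) / slope * dilution_factor


# Placeholder dopamine standards (mg/L) and detector peak areas.
standards = [0.0, 10.0, 50.0, 100.0]
areas = [5.0, 105.0, 505.0, 1005.0]

slope, intercept = fit_standard_curve(standards, areas)
sample = area_to_concentration(355.0, slope, intercept, dilution_factor=2.0)
print(f"slope={slope:.2f}, intercept={intercept:.2f}, sample={sample:.1f} mg/L")
```

Samples whose peak areas fall outside the range covered by the standards should be diluted and re-measured rather than extrapolated.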

Protocol 2: In Vivo Translation via RBS Engineering

Purpose: To translate optimal expression ratios identified in vitro to stable production strains [8].

Materials:

- Cloning Strain: E. coli DH5α for genetic construction [8]

- Expression Vectors: Plasmid systems with inducible promoters (e.g., IPTG-inducible) [8]

- RBS Library: Variant Shine-Dalgarno sequences with modulated GC content [8]

Procedure:

- RBS Design: Design ribosome binding site variants focusing on Shine-Dalgarno sequence modifications while maintaining surrounding secondary structure [8].

- Genetic Construction: Assemble bicistronic operons encoding hpaBC and ddc genes with variant RBS sequences using high-throughput DNA assembly methods.

- Strain Transformation: Introduce construct libraries into production host E. coli FUS4.T2.

- High-Throughput Screening: Cultivate strains in 96-well format with minimal medium (20 g/L glucose, 10% 2xTY, MOPS buffer) [8].

- Performance Validation: Analyze dopamine production in shake flask scale with the same minimal medium composition.
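For the 96-well screening step, a plate map that records which strain sits in which well keeps results traceable back to RBS variants. The layout below is a sketch under assumptions: row-major filling, two control wells (`blank` and `parent_strain`), and strain identifiers of the form `rbs_N` are all hypothetical conventions, not part of the source protocol.

```python
import string


def plate_map(strain_ids, rows=8, cols=12, controls=("blank", "parent_strain")):
    """Assign controls first, then strains, to wells in row-major order (A1, A2, ...)."""
    wells = [
        f"{string.ascii_uppercase[r]}{c}"
        for r in range(rows)
        for c in range(1, cols + 1)
    ]
    samples = list(controls) + list(strain_ids)
    if len(samples) > len(wells):
        raise ValueError(
            f"{len(samples)} samples do not fit on a {rows}x{cols} plate"
        )
    return dict(zip(wells, samples))


# Hypothetical library of 94 RBS variants plus the two default controls.
layout = plate_map([f"rbs_{i}" for i in range(94)])
print(layout["A1"], layout["A2"], layout["A3"], layout["H12"])
```

Replicates and randomized well positions are common refinements that reduce plate-position effects, and they can be added by changing how `samples` is built.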

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Reagent Solutions for Knowledge-Driven DBTL Implementation

| Reagent/Category | Specific Examples | Function/Application |
|---|---|---|
| Production Host Strains | E. coli FUS4.T2 (high L-tyrosine producer) [8] | Engineered host with enhanced precursor supply for target compound synthesis |
| Enzyme Components | HpaBC (from E. coli), Ddc (from Pseudomonas putida) [8] | Pathway enzymes catalyzing conversion of L-tyrosine to L-DOPA and subsequently to dopamine |
| Cell-Free Systems | Crude cell lysates [8] | In vitro prototyping platform for testing enzyme expression and pathway functionality without cellular constraints |
| Genetic Toolboxes | RBS libraries with modulated Shine-Dalgarno sequences [8] | Fine-tuning gene expression levels in synthetic pathways |
| Analytical Standards | Dopamine hydrochloride, L-tyrosine, L-DOPA [8] | Quantification references for target compounds and precursors via HPLC or LC-MS |
| Culture Media | Minimal medium with MOPS buffer, trace elements [8] | Defined cultivation conditions for reproducible strain performance evaluation |

Emerging Paradigm: Learn-Design-Build-Test (LDBT)

A proposed extension of knowledge-driven DBTL is the LDBT framework, in which "Learn" precedes "Design" [10]. This approach uses machine learning models trained on large biological datasets to make zero-shot predictions for protein and pathway design before physical construction [10]. Protein language models (ESM, ProGen) and structure-based tools (ProteinMPNN, MutCompute) can predict beneficial mutations and generate functional sequences, potentially enabling a Design-Build-Work paradigm that reduces iterative cycling [10]. Combined with cell-free expression systems for rapid testing, LDBT could substantially change how researchers approach strain engineering and optimization [10].

Comparative Performance Analysis

Systematic comparisons in other fields show that learning-based methods do not uniformly outperform traditional statistics. In building performance prediction, machine learning algorithms outperformed traditional statistical methods on both classification and regression metrics across 56 comparative studies [12]. In contrast, a meta-analysis of cancer survival prediction found equivalent performance between machine learning models and traditional Cox regression [15]. Advanced methods therefore do not guarantee better results and should be selected according to the requirements of the specific application.

The knowledge-driven DBTL approach represents a significant evolution beyond traditional statistical methods in metabolic engineering. By prioritizing mechanistic understanding through upstream investigations and targeted experimentation, researchers can generate fundamental insights that accelerate strain development while deepening biological understanding. The dopamine production case study demonstrates how this approach achieves substantial performance improvements while elucidating fundamental principles like the impact of GC content on RBS strength [8]. As synthetic biology continues to mature, integrating knowledge-driven strategies with emerging machine learning capabilities promises to further transform biological engineering into a more predictive, knowledge-intensive discipline.

The Critical Role of Mechanistic Understanding in Rational Strain Engineering

Rational strain engineering is a cornerstone of modern industrial biotechnology, essential for developing robust microbial cell factories. While high-throughput technologies have accelerated the construction and testing of engineered strains, achieving desired performance often requires more than iterative, random approaches. The knowledge-driven Design-Build-Test-Learn (DBTL) cycle has emerged as a powerful framework that leverages upstream mechanistic insights to guide engineering strategies, significantly reducing development time and resource expenditure [3]. This paradigm shift from random mutagenesis to informed design relies on a deep understanding of the complex biological networks and constraints within the host organism [16]. By integrating computational modeling, advanced analytics, and targeted experimentation, researchers can now probe the underlying physiological mechanisms that govern strain performance, enabling more predictable and successful engineering outcomes for applications ranging from small molecule production to therapeutic protein expression [17] [18].

The Knowledge-Driven DBTL Framework

The knowledge-driven DBTL cycle represents a significant evolution from traditional DBTL approaches by incorporating upstream mechanistic investigation to inform the initial design phase. This framework creates a virtuous cycle where each iteration yields deeper biological insights that subsequently guide more effective engineering strategies [3] [14].

Core Components and Workflow

Knowledge-Driven Design: This initial phase utilizes in vitro studies and computational modeling to generate testable hypotheses about pathway optimization and potential bottlenecks before any genetic modifications are made [3]. For example, in vitro cell lysate systems can be used to rapidly assess enzyme expression levels and interactions without cellular regulatory constraints [3].

Build: The construction phase implements the designed strategies using high-throughput genetic engineering tools. Ribosome Binding Site (RBS) engineering has proven particularly effective for fine-tuning gene expression in synthetic pathways [3]. This approach allows for precise modulation of translation initiation rates without altering secondary structures that might impact functionality [3].

Test: Analytical methods that observe the system during operation allow researchers to move beyond correlative observations toward causal relationships. In the hydrogen evolution catalyst case study in this article, operando X-ray absorption spectroscopy and ambient pressure X-ray photoelectron spectroscopy provided real-time insight into catalytic processes and electronic structure changes [19]. For microbial strains, the corresponding measurements are biomass and metabolite assays such as HPLC [3].

Learn: Data analysis in this phase focuses on extracting mechanistic understanding rather than merely identifying statistical correlations. Machine learning approaches can then leverage these insights to generate more accurate predictions for subsequent DBTL cycles [3] [17].

Computational Integration

The NOMAD (NOnlinear dynamic Model Assisted rational metabolic engineering Design) framework exemplifies the integration of computational modeling into rational strain engineering [16]. This approach employs kinetic models to predict metabolic responses to genetic perturbations while ensuring the engineered strain maintains robustness by keeping its phenotype close to the reference strain [16]. By imposing constraints on fluxes, metabolite concentrations, and enzyme level changes, NOMAD enables more accurate representation and design of microbial hosts, capturing both steady-state and dynamic metabolic behaviors with greater fidelity [16].
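The idea of keeping an engineered phenotype close to the reference strain can be illustrated with a simple fold-change screen over candidate designs. This is not the NOMAD implementation, which relies on kinetic models. The data layout, the function names, and the fold-change limits (5-fold for enzyme levels, 2-fold for metabolite concentrations) are all assumptions made for illustration.

```python
def fold_change_ok(value, reference, max_fold):
    """True if value lies within [reference / max_fold, reference * max_fold]."""
    if value <= 0 or reference <= 0 or max_fold < 1:
        raise ValueError("values must be positive and max_fold >= 1")
    ratio = value / reference
    return 1.0 / max_fold <= ratio <= max_fold


def passes_robustness_screen(design, reference,
                             max_enzyme_fold=5.0, max_metabolite_fold=2.0):
    """Check that a design stays near the reference strain (assumed limits)."""
    for name, ref_level in reference["enzymes"].items():
        if not fold_change_ok(design["enzymes"][name], ref_level, max_enzyme_fold):
            return False
    for name, ref_conc in reference["metabolites"].items():
        if not fold_change_ok(design["metabolites"][name], ref_conc, max_metabolite_fold):
            return False
    return True


# Hypothetical relative enzyme levels and metabolite concentrations.
reference = {"enzymes": {"E1": 1.0, "E2": 1.0}, "metabolites": {"M1": 1.0}}
mild = {"enzymes": {"E1": 3.0, "E2": 0.5}, "metabolites": {"M1": 1.5}}
drastic = {"enzymes": {"E1": 8.0, "E2": 1.0}, "metabolites": {"M1": 1.2}}

print(passes_robustness_screen(mild, reference))
print(passes_robustness_screen(drastic, reference))
```

In a model-based workflow, the design values would come from simulated responses to genetic perturbations rather than being supplied by hand.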

Application Notes: Successful Implementation Case Studies

Enhanced Hydrogen Evolution Catalysis

The same mechanism-first principle applies outside microbiology. In materials science, strain engineering refers to mechanical lattice strain rather than microbial strains, and it has been used to optimize catalysts for the hydrogen evolution reaction (HER). Researchers constructed a strain-tunable nanoporous MoS2-based Ru single-atom catalyst system in which tensile strain was precisely controlled by adjusting ligament sizes [19].

Table 1: Performance Metrics of Strained Ru/np-MoS2 Catalyst for Hydrogen Evolution

| Catalyst System | Overpotential at 10 mA cm⁻² (mV) | Tafel Slope (mV dec⁻¹) | Key Engineering Strategy |
|---|---|---|---|
| Ru/np-MoS2 (strained) | 30 | 31 | Strain-amplified synergy between S vacancies and Ru sites |
| Conventional SACs | Typically >50 | Typically >40 | Single-atom sites without strain optimization |

Through systematic strain engineering, researchers amplified the synergistic effect between sulfur vacancies and single-atom Ru sites, resulting in exceptional catalytic performance [19]. Theoretical calculations revealed that applied strain enhanced reactant density in sulfur vacancies and accelerated both water dissociation and H-H coupling on Ru sites [19]. This mechanistic understanding was crucial for optimizing the catalyst design, demonstrating how physical principles can be harnessed to improve electrochemical performance.

Dopamine Production in Escherichia coli

The knowledge-driven DBTL cycle was used to develop an efficient dopamine production strain in E. coli, achieving a 2.6-fold improvement in titer and a 6.6-fold improvement in specific productivity over previous state-of-the-art in vivo production methods [3] [14].

Table 2: Dopamine Production Performance in Engineered E. coli Strains

| Strain/Approach | Dopamine Concentration (mg/L) | Yield (mg/g biomass) | Key Innovation |
|---|---|---|---|
| Knowledge-driven DBTL | 69.03 ± 1.2 | 34.34 ± 0.59 | In vitro pathway optimization + RBS engineering |
| Previous in vivo production | 27 | 5.17 | Conventional metabolic engineering |

The experimental protocol began with in vitro cell lysate studies to assess enzyme interactions and identify optimal expression levels without cellular constraints [3]. Key steps included:

Pathway Design: Construction of a dopamine biosynthetic pathway from L-tyrosine using 4-hydroxyphenylacetate 3-monooxygenase (HpaBC) for L-DOPA production and L-DOPA decarboxylase (Ddc) for dopamine synthesis [3].

Host Engineering: Implementation of a high L-tyrosine production host through deletion of the transcriptional dual regulator TyrR and mutation of the feedback inhibition in chorismate mutase/prephenate dehydrogenase (TyrA) [3].

RBS Library Construction: Creation of a targeted RBS library with modulation of GC content in the Shine-Dalgarno sequence to fine-tune translation initiation rates [3].

High-Throughput Screening: Screening of library members using targeted enzyme titer and activity assays to identify top-performing strains [3].

This approach demonstrated that GC content in the Shine-Dalgarno sequence significantly impacts RBS strength, providing a generalizable principle for pathway optimization [3].

Enzyme Expression Systems for Vaccine Production

In an industrial application, Ginkgo Bioworks implemented a targeted DBTL approach to overcome critical enzyme supply constraints for vaccine manufacturing [18]. The methodology focused on developing an E. coli expression system with enhanced protein yield through rational strain engineering combined with fermentation process development [18].

Table 3: Enzyme Expression Strain Engineering Parameters and Outcomes

| Engineering Parameter | Initial Approach | Optimized Approach | Impact |
|---|---|---|---|
| Library Size | ~300 constructs | Targeted design | Reduced screening burden |
| Engineering Elements | DNA recoding, promoters, plasmid backbones, RBSs | Combined optimization | 5-fold yield improvement |
| Process Integration | Sequential | Concurrent strain and process engineering | 10-fold overall improvement |

The protocol employed a highly targeted library of approximately 300 DNA expression constructs testing different DNA recodings, promoters, plasmid backbones, and RBS variants [18]. This focused approach enabled the identification of top-performing strains within a single DBTL cycle, achieving a 5-fold yield improvement in the first six months [18]. Concurrent fermentation process development ensured that laboratory successes translated to scalable manufacturing processes, ultimately delivering a 10-fold increase in protein yield within one year [18].
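A library that varies recodings, promoters, backbones, and RBSs can be enumerated combinatorially, as sketched below. The source states only that roughly 300 constructs covered these four element types. The element counts (5 × 5 × 3 × 4), the part names, and the full-factorial layout are assumptions chosen so that the total comes to 300, and the actual library may have been a targeted subset.

```python
from itertools import product


def build_library(recodings, promoters, backbones, rbs_variants):
    """Enumerate every combination of the four engineering elements."""
    return [
        {"recoding": rec, "promoter": pro, "backbone": bb, "rbs": rbs}
        for rec, pro, bb, rbs in product(recodings, promoters, backbones, rbs_variants)
    ]


# Hypothetical element counts chosen so that 5 * 5 * 3 * 4 = 300.
recodings = [f"recode_{i}" for i in range(1, 6)]
promoters = [f"promoter_{i}" for i in range(1, 6)]
backbones = [f"backbone_{i}" for i in range(1, 4)]
rbs_variants = [f"rbs_{i}" for i in range(1, 5)]

library = build_library(recodings, promoters, backbones, rbs_variants)
print(len(library), "constructs")
```

Because library size is the product of the element counts, adding a single option to any one element type grows the library multiplicatively, which is why targeted selection keeps the screening burden manageable.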

Essential Protocols for Mechanistic Investigation

Protocol: In Vitro Pathway Optimization Using Cell Lysate Systems

Purpose: To rapidly assess enzyme expression levels and pathway interactions without cellular constraints prior to implementation in vivo [3].

Materials:

- Reaction Buffer: 50 mM phosphate buffer (pH 7.0) supplemented with 0.2 mM FeCl₂, 50 μM vitamin B₆, and 1 mM L-tyrosine or 5 mM L-DOPA [3]

- Crude Cell Lysate: Prepared from production host strain (e.g., E. coli FUS4.T2)

- Expression Plasmids: pJNTN system for single gene or bicistronic expression [3]

Procedure:

- Prepare concentrated reaction buffer with 5× supplements

- Combine cell lysate with expression constructs in reaction buffer

- Incubate at optimal growth temperature (e.g., 37°C for E. coli) with shaking

- Monitor substrate consumption and product formation over time

- Analyze enzyme activities and interactions to determine optimal expression ratios

Application Notes: This upstream investigation provides critical mechanistic insights into pathway bottlenecks and enzyme compatibility, informing the design of RBS libraries for in vivo implementation [3].

Protocol: High-Throughput RBS Engineering for Pathway Tuning

Purpose: To precisely modulate translation initiation rates for optimal pathway flux without altering enzyme coding sequences [3].

Materials:

- UTR Designer or similar computational tools for RBS sequence design [3]

- High-Throughput Cloning System: Golden Gate assembly or similar modular DNA assembly method

- Production Host: Engineered host with optimized precursor supply (e.g., high L-tyrosine E. coli for dopamine production) [3]

Procedure:

- Design RBS library with variations in Shine-Dalgarno sequence GC content

- Implement modular DNA assembly for high-throughput construct generation

- Transform library into production host

- Screen for product formation using targeted assays (e.g., HPLC for dopamine quantification)

- Isolate top-performing strains for characterization

- Sequence validated strains to correlate RBS sequences with performance

Application Notes: Focus on modulating the SD sequence without interfering with secondary structures to achieve predictable translation initiation rates [3].
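As an illustration of the library design step, the following minimal sketch expands a degenerate Shine-Dalgarno template and sorts the resulting variants by GC content. The template `AGGNNG` is a hypothetical example built around the consensus SD core AGGAGG; it is not the library used in [3], and the sketch does not model secondary structure or predict translation initiation rates.

```python
from itertools import product


def gc_content(seq: str) -> float:
    """Fraction of G and C bases in a nucleotide sequence."""
    seq = seq.upper()
    return sum(base in "GC" for base in seq) / len(seq)


def sd_variants(template: str) -> list:
    """Expand a degenerate template in which 'N' stands for A, C, G, or T."""
    choices = ["ACGT" if base == "N" else base for base in template.upper()]
    return ["".join(combo) for combo in product(*choices)]


# Hypothetical template: two randomized positions inside the SD core.
variants = sd_variants("AGGNNG")
by_gc = sorted(variants, key=gc_content)

for seq in by_gc:
    print(seq, round(gc_content(seq), 2))
```

Sorting by GC content makes it straightforward to pick variants that span the full range when only a subset of the library can be built and screened.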

The Scientist's Toolkit: Essential Research Reagents

Table 4: Key Research Reagent Solutions for Rational Strain Engineering

| Reagent/Resource | Function/Application | Example Implementation |
|---|---|---|
| Crude Cell Lysate Systems | Rapid in vitro pathway testing bypassing cellular constraints | Pre-DBTL cycle pathway validation [3] |
| RBS Library Variants | Fine-tuning translation initiation rates without altering coding sequences | Bicistronic pathway optimization [3] |
| Kinetic Modeling Platforms (ORACLE, NOMAD) | Predicting metabolic responses to genetic perturbations | Robust strain design with minimal phenotype perturbation [16] |
| Operando Spectroscopy Techniques (XAS, AP-XPS) | Real-time monitoring of catalytic processes and electronic structures | Mechanistic studies of single-atom catalysts [19] |
| Targeted DNA Library Constructs | Hypothesis-driven exploration of design space | Enzyme expression optimization [18] |

Workflow Visualization

[Figure: Knowledge-Driven DBTL Workflow]

[Figure: NOMAD Framework for Robust Design]

Leveraging Upstream In Vitro Investigations to Inform In Vivo Design

The transition from in vitro findings to in vivo efficacy remains a significant challenge in biomedical research and drug development. The knowledge-driven Design-Build-Test-Learn (DBTL) cycle provides a structured framework to address this challenge by incorporating upstream in vitro investigations that yield mechanistic insights before embarking on costly in vivo studies. This approach enables researchers to make data-driven decisions when designing in vivo experiments, enhancing predictive accuracy while optimizing resource allocation.

This application note details practical methodologies for implementing upstream in vitro investigations within a knowledge-driven DBTL framework, complete with experimental protocols and analytical techniques for informing in vivo design.

The Knowledge-Driven DBTL Framework for In Vitro to In Vivo Translation

The conventional DBTL cycle in synthetic biology and strain engineering begins with initial designs often based on limited prior knowledge, potentially leading to multiple iterative cycles. The knowledge-driven DBTL framework enhances this process by incorporating targeted upstream in vitro investigations that generate critical mechanistic understanding before proceeding to in vivo experimentation [3].

This approach is particularly valuable for metabolic pathway optimization, enzyme characterization, and biomarker identification, where understanding component interactions and kinetics at the in vitro level provides essential insights for effective in vivo implementation. In the dopamine case study described below, this methodology raised the production titer in Escherichia coli 2.6-fold and the biomass-specific yield 6.6-fold relative to prior state-of-the-art in vivo production [3] [14].

Application Case Study: Optimizing Dopamine Production in E. coli

Background and Objective

Dopamine has important applications in emergency medicine, cancer diagnosis and treatment, lithium anode production, and wastewater treatment [3]. Developing an efficient microbial production strain for dopamine presents challenges in balancing metabolic pathway expression while maintaining host viability. Traditional DBTL approaches might require multiple in vivo cycles to identify optimal expression levels for the enzymes in the dopamine biosynthetic pathway.

Experimental Workflow and Results

The knowledge-driven approach utilized upstream in vitro investigations in crude cell lysate systems to determine rate-limiting steps and optimal enzyme ratios before moving to in vivo strain construction [3]. This methodology significantly accelerated the optimization process and enhanced understanding of pathway kinetics.

Table 1: Dopamine Production Optimization Through Knowledge-Driven DBTL

| Engineering Step | Approach | Key Parameters Tested | Outcome |
|---|---|---|---|
| Upstream In Vitro Investigation | Crude cell lysate system | Relative enzyme expression levels; Cofactor requirements; Substrate concentrations | Identification of rate-limiting steps; Optimal enzyme ratio determination |
| In Vivo Translation | RBS library engineering | GC content in Shine-Dalgarno sequence; RBS strength variants; Biomass yield | Development of production strain with enhanced dopamine titers |
| Performance Metrics | Fed-batch cultivation | Production titer (mg/L); Yield (mg/g biomass); Productivity | 69.03 ± 1.2 mg/L dopamine (2.6-fold improvement); 34.34 ± 0.59 mg/g biomass (6.6-fold improvement) over prior in vivo production [3] |

Detailed Experimental Protocols

Protocol 1: In Vitro Pathway Prototyping Using Crude Cell Lysates

Purpose: To characterize enzyme kinetics and identify potential bottlenecks in metabolic pathways before in vivo implementation [3].

Materials:

- Production Strain: E. coli FUS4.T2 with high L-tyrosine production capacity [3]

- Plasmids: pJNTN system for single gene expression (pJNTNhpaBC, pJNTNddc) [3]

- Reaction Buffer: 50 mM phosphate buffer (pH 7.0) supplemented with 0.2 mM FeCl₂, 50 μM vitamin B₆, and 1 mM L-tyrosine or 5 mM L-DOPA [3]

Procedure:

- Lysate Preparation:

  - Cultivate production strain in appropriate medium with necessary antibiotics and inducers

  - Harvest cells at mid-log phase (OD₆₀₀ ≈ 0.6-0.8) by centrifugation (4,000 × g, 10 min, 4°C)

  - Resuspend cell pellet in lysis buffer and disrupt using sonication or French press

  - Clarify lysate by centrifugation (12,000 × g, 20 min, 4°C) and retain supernatant

- In Vitro Reaction Setup:

  - Combine clarified lysate with reaction buffer in 1:1 ratio

  - Incubate at 30°C with shaking at 200 rpm

  - Collect samples at predetermined timepoints (0, 15, 30, 60, 120 min)

- Analytical Methods:

  - Quantify dopamine production using HPLC with electrochemical detection

  - Monitor substrate depletion and intermediate accumulation

  - Calculate conversion rates and enzyme kinetics parameters
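The conversion-rate calculation in the last step can be sketched as follows. The dopamine concentrations are invented placeholder values at the sampling timepoints listed above, not data from [3]. The initial rate is estimated as the least-squares slope over the early, roughly linear part of the time course, and the conversion assumes 1 mM L-tyrosine as starting substrate with 1:1 stoichiometry to dopamine.

```python
def linear_slope(x, y):
    """Ordinary least-squares slope of y against x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    return sxy / sxx


# Sampling timepoints from the protocol; concentrations are hypothetical.
time_min = [0, 15, 30, 60, 120]
dopamine_mM = [0.00, 0.10, 0.20, 0.36, 0.55]
substrate_mM = 1.0  # 1 mM L-tyrosine supplied in the reaction buffer

# Initial rate from the first three timepoints (assumed linear phase).
initial_rate = linear_slope(time_min[:3], dopamine_mM[:3])  # mM per minute

# Fraction of substrate converted to product at the final timepoint.
conversion = dopamine_mM[-1] / substrate_mM

print(f"initial rate: {initial_rate:.4f} mM/min, conversion: {conversion:.0%}")
```

Which timepoints count as the linear phase is a judgment call; in practice one would inspect the time course before fixing the fitting window.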

Protocol 2: RBS Library Design and High-Throughput Screening

Purpose: To translate optimal enzyme ratios identified in vitro to in vivo implementation through ribosomal binding site engineering [3].

Materials:

- RBS Library Design Tool: UTR Designer or similar computational tool [3]

- Cloning System: pJNTN plasmid with multiple cloning site for pathway genes [3]

- Screening Medium: Minimal medium with 20 g/L glucose, 10% 2xTY, and appropriate supplements [3]

Procedure:

- RBS Library Construction:

  - Design RBS variants with modulated Shine-Dalgarno sequences using computational tools

  - Generate variant libraries for hpaBC and ddc genes using degenerate primers

  - Clone RBS variants into expression vectors using Golden Gate assembly or Gibson assembly

  - Transform library into production host (E. coli FUS4.T2)

- High-Throughput Screening:

  - Array individual clones in 96-well or 384-well plates

  - Cultivate clones in screening medium with appropriate inducers

  - Monitor growth kinetics and dopamine production spectrophotometrically or via HPLC

  - Select top-performing variants for further characterization

- Validation and Scale-Up:

  - Validate performance of selected variants in shake flask cultures

  - Analyze biomass yield and dopamine production titers

  - Scale up promising strains to bioreactor for fed-batch cultivation
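One simple way to select top-performing variants from plate data is to rank clones by product formed per unit of biomass. The sketch below assumes a mapping from well position to an (OD₆₀₀, dopamine mg/L) pair; the well names and readings are hypothetical, and dopamine per OD unit is only one of several reasonable ranking criteria.

```python
def top_variants(plate, n=3):
    """Rank wells by dopamine titer per OD600 unit and return the best n.

    Wells with no measurable growth (OD600 <= 0) are skipped to avoid
    division by zero and to exclude failed cultures.
    """
    specific = {
        well: titer / od
        for well, (od, titer) in plate.items()
        if od > 0
    }
    return sorted(specific, key=specific.get, reverse=True)[:n]


# Hypothetical readings: well -> (OD600, dopamine in mg/L)
plate = {
    "A1": (2.0, 20.0),
    "A2": (1.5, 30.0),
    "A3": (2.5, 12.5),
    "A4": (0.0, 0.0),   # no growth
    "A5": (1.0, 18.0),
}

print(top_variants(plate, n=2))
```

Ranking on specific production rather than raw titer avoids favoring clones that simply grew to a higher density in the well.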

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 2: Key Research Reagents for Knowledge-Driven DBTL Implementation

| Reagent/Category | Specific Examples | Function/Application |
|---|---|---|
| Cell-Free Protein Synthesis Systems | Crude cell lysates; Purified enzyme systems | In vitro pathway prototyping; Enzyme kinetics characterization; Cofactor requirement determination [3] |
| Genetic Engineering Tools | RBS library variants; Promoter libraries; Plasmid systems (pET, pJNTN) | Fine-tuning gene expression; Pathway optimization; Modular cloning [3] |
| Analytical Platforms | HPLC with electrochemical detection; Spectrophotometry; Mass spectrometry | Metabolite quantification; Pathway flux analysis; Product characterization [3] [20] |
| Bioinformatics Resources | UTR Designer; Machine learning algorithms; Pathway modeling software | Predictive design; Data analysis; Pattern recognition in high-throughput datasets [3] |
| Specialized Production Strains | E. coli FUS4.T2 (high L-tyrosine producer); Engineered host strains | Providing metabolic precursors; Optimizing carbon flux toward target compounds [3] |

Integration with Broader Research Applications

The principles of leveraging upstream in vitro investigations extend beyond metabolic engineering to various domains in biomedical research:

Drug Discovery and Development

In pharmaceutical research, live cell imaging applications and high-content screening platforms enable dynamic monitoring of cellular responses to pharmacological interventions, providing temporal profiling of phenotypic responses that inform subsequent in vivo study design [21]. These approaches reveal transient responses and adaptive mechanisms that might be missed in traditional fixed endpoint assays.

Biomarker Discovery and Validation

Integrating in vitro and in vivo approaches enhances biomarker development strategies. Systems biology approaches that combine molecular profiling across in silico, in vitro, and in vivo models maximize opportunities for discovering clinically relevant biomarkers [22]. This integrated framework allows for correlation of pharmacological responses with genomic patterns, enabling patient stratification strategies before clinical trials.

Diagnostic Development

In vitro diagnostic (IVD) instrument development leverages similar principles, where methodology optimization precedes clinical implementation. Technologies including electrochemical analysis, spectral analysis, and chromatography are refined through systematic in vitro testing before translation to clinical diagnostic applications [20].

The knowledge-driven DBTL cycle with upstream in vitro investigation represents a powerful paradigm for enhancing in vivo design across multiple domains of biological research. By systematically generating mechanistic insights before proceeding to complex in vivo systems, researchers can make informed decisions that accelerate development timelines, improve success rates, and deepen understanding of biological mechanisms.

The protocols and methodologies detailed in this application note provide actionable frameworks for implementation across various research contexts, from metabolic engineering to pharmaceutical development. As automated platforms and analytical technologies continue to advance, the integration of upstream in vitro investigations will become increasingly central to efficient research translation.

Synergizing AI and Foundational Biological Knowledge for Predictive Modeling

The convergence of artificial intelligence (AI) and foundational biological knowledge is reshaping predictive modeling in biomedical research and creating a new paradigm for the knowledge-driven Design-Build-Test-Learn (DBTL) cycle. This synergy enhances the mechanistic understanding of biological systems while accelerating the development of therapeutic compounds and bioproduction strains [23] [3]. Traditional DBTL cycles often lack a well-informed entry point because of limited prior knowledge, leading to multiple iterative cycles that consume significant time and resources [3]. The integration of AI with established biological principles addresses this limitation by incorporating upstream investigations that generate critical mechanistic insights before full-cycle implementation [3] [14].

This approach is particularly valuable in drug discovery and development, where AI technologies can analyze vast datasets to identify novel drug targets, predict molecular interactions, and optimize lead compounds with greater speed and accuracy [23] [24]. By leveraging machine learning (ML), deep learning (DL), and other AI methodologies alongside fundamental biological knowledge, researchers can construct more predictive models that not only identify correlations but also elucidate causal mechanisms, thereby bridging the gap between empirical observation and theoretical understanding in complex biological systems.

Application Notes: AI-Biology Integration in the Knowledge-Driven DBTL Cycle

Fundamental Principles of Integration

The effective integration of AI with foundational biological knowledge within the DBTL cycle operates on several core principles. First, AI serves as an augmenting tool that enhances rather than replaces domain expertise, with the most successful implementations featuring tight iteration between wet and dry lab teams where "it's hard to even tell where the line is between these groups" [25]. Second, data quality supersedes algorithmic complexity in importance, as evidenced by Amgen's AMPLIFY model, which achieves impressive performance with fewer parameters through high-quality training data [25]. Third, mechanistic interpretability is prioritized over black-box prediction, ensuring that AI-derived insights contribute to fundamental biological understanding rather than merely generating outputs [3]. This principles-based approach ensures that AI applications remain grounded in biological reality while leveraging computational power to explore complex relationships beyond human analytical capacity.

Quantitative Impact of AI-Biology Synergy

Table 1: Performance Metrics of AI-Driven Drug Discovery Platforms

| Platform/Company | Discovery Timeline | Compounds Synthesized | Therapeutic Area | Development Stage |
|---|---|---|---|---|
| Insilico Medicine | 18 months from target to Phase I [26] | Not specified | Idiopathic pulmonary fibrosis [26] | Phase I trials [26] |
| Exscientia | ~70% faster design cycles [26] | 10× fewer compounds than industry norms [26] | Oncology, immunology [26] | Phase I/II trials [26] |
| Exscientia (CDK7 inhibitor) | Substantially faster than industry standards [26] | 136 compounds [26] | Solid tumors [26] | Phase I/II trials [26] |
| BenevolentAI | Not specified | Not specified | COVID-19 (repurposing) [23] | Emergency use authorization [23] |

Table 2: Knowledge-Driven DBTL Impact on Dopamine Production in E. coli

| Strain Engineering Approach | Dopamine Concentration (mg/L) | Dopamine Yield (mg/g biomass) | Fold Improvement |
|---|---|---|---|
| State-of-the-art in vivo production (prior art) | Not specified | 5.17 [3] | Baseline |
| Knowledge-driven DBTL with RBS engineering | 69.03 ± 1.2 [3] | 34.34 ± 0.59 [3] | 2.6-fold (titer); 6.6-fold (yield) [3] |
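The yield figures in the table can be checked with a short calculation. The sketch below recomputes the fold improvement in yield and propagates the reported standard deviation, treating the prior-art baseline as exact (an assumption, since no uncertainty is given for it). The baseline titer is not specified in the table; the value computed here is only back-calculated from the 2.6-fold figure that this article attributes to titer elsewhere, and is not a reported measurement.

```python
def fold_improvement(new, baseline):
    """Ratio of the new value to the baseline value."""
    return new / baseline


# Values from Table 2 [3]
knowledge_driven_yield = 34.34   # mg/g biomass
yield_sd = 0.59                  # mg/g biomass
prior_art_yield = 5.17           # mg/g biomass
knowledge_driven_titer = 69.03   # mg/L

yield_fold = fold_improvement(knowledge_driven_yield, prior_art_yield)

# Relative uncertainty carried over from the new measurement only;
# the baseline is treated as exact (assumption).
yield_fold_sd = yield_fold * (yield_sd / knowledge_driven_yield)

# Back-calculated, not reported: baseline titer implied by a 2.6-fold gain.
implied_prior_titer = knowledge_driven_titer / 2.6

print(f"yield fold improvement: {yield_fold:.2f} ± {yield_fold_sd:.2f}")
print(f"implied baseline titer: {implied_prior_titer:.1f} mg/L")
```

The recomputed yield ratio of roughly 6.6 agrees with the upper figure quoted in the table.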

Implementation Framework

The knowledge-driven DBTL cycle implementation follows a structured framework that begins with upstream in vitro investigation to inform the initial design phase [3]. This preliminary knowledge generation step distinguishes it from conventional DBTL approaches and provides critical mechanistic insights before committing to full strain construction or compound development. The framework subsequently proceeds through iterative optimization cycles where AI models are continuously refined with experimental data, enabling increasingly accurate predictions of biological behavior [3] [25]. This methodology has demonstrated particular success in bioproduction strain development, where the knowledge-driven DBTL cycle improved dopamine titer 2.6-fold and biomass-specific yield 6.6-fold relative to state-of-the-art in vivo production [3]. The integration of AI tools throughout this framework enhances each phase, from designing genetic constructs to predicting metabolic flux and optimizing pathway regulation.

Protocols

Protocol 1: AI-Augmented Target Identification and Validation

Purpose: To identify novel therapeutic targets by integrating AI-driven analysis with established biological knowledge.

Materials:

- Multi-omics datasets (genomics, transcriptomics, proteomics)

- AI platforms (e.g., Insilico Medicine, BenevolentAI)

- Validation assay systems (cell-based, biochemical)

- High-performance computing infrastructure

Procedure:

- Data Curation and Integration

  - Collect and pre-process heterogeneous biological data from public repositories and proprietary sources

  - Annotate datasets using established biological ontologies and pathway databases

  - Apply natural language processing (NLP) to extract structured information from unstructured scientific literature [23]

- Knowledge Graph Construction

  - Build biological knowledge graphs integrating protein-protein interactions, signaling pathways, and disease associations

  - Implement graph neural networks to identify novel target-disease relationships [26]

  - Prioritize targets based on druggability, safety profile, and biological plausibility

- Mechanistic Modeling

- Experimental Validation

  - Design validation experiments based on AI-generated hypotheses

  - Implement cell-based assays to confirm target-disease relationship

  - Evaluate target tractability using established screening approaches
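The target prioritization step in the procedure above can be illustrated with a simple weighted score. Everything in this sketch is an assumption made for illustration: the target names, the 0 to 1 scores, and the weights are hypothetical, and real platforms use considerably richer models than a linear combination.

```python
# Hypothetical weights for the three criteria named in the protocol.
WEIGHTS = {"druggability": 0.4, "safety": 0.3, "plausibility": 0.3}


def priority_score(scores):
    """Weighted sum of per-criterion scores, each expected in [0, 1]."""
    return sum(WEIGHTS[criterion] * scores[criterion] for criterion in WEIGHTS)


# Hypothetical candidate targets with illustrative scores.
targets = {
    "TARGET_A": {"druggability": 0.9, "safety": 0.5, "plausibility": 0.8},
    "TARGET_B": {"druggability": 0.6, "safety": 0.9, "plausibility": 0.9},
    "TARGET_C": {"druggability": 0.3, "safety": 0.8, "plausibility": 0.4},
}

ranked = sorted(targets, key=lambda name: priority_score(targets[name]), reverse=True)

for name in ranked:
    print(name, round(priority_score(targets[name]), 2))
```

Keeping the weights explicit makes the ranking auditable, which fits the emphasis on interpretability over black-box prediction.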

Troubleshooting Tips:

- If AI predictions show poor biological coherence, review training data for biases and incorporate additional domain knowledge

- When validation results contradict predictions, analyze discrepancy to refine AI models

- For targets with limited structural information, leverage homology modeling and molecular dynamics simulations

Protocol 2: Knowledge-Driven Strain Optimization for Bioproduction

Purpose: To engineer microbial strains for enhanced compound production using knowledge-driven DBTL cycles.

Table 3: Research Reagent Solutions for Microbial Strain Engineering

| Reagent/Resource | Function | Application Example |
|---|---|---|
| E. coli FUS4.T2 strain [3] | Dopamine production host | Engineered for high L-tyrosine production as dopamine precursor [3] |
| pET plasmid system [3] | Storage vector for heterologous genes | Single gene insertion (hpaBC, ddc) for dopamine pathway [3] |
| pJNTN plasmid [3] | Library construction and crude cell lysate systems | Bi-cistronic expression of dopamine pathway genes [3] |
| Ribosome Binding Site (RBS) libraries [3] | Fine-tuning gene expression | Optimization of relative enzyme expression levels in dopamine pathway [3] |
| Crude cell lysate systems [3] | In vitro pathway testing | Bypass cellular constraints to assess enzyme expression and function [3] |

Materials:

- Microbial chassis (e.g., E. coli FUS4.T2)

- Pathway engineering plasmids

- RBS library variants

- Cell-free transcription-translation systems

- Analytical equipment (HPLC, mass spectrometry)

Procedure:

- In Vitro Pathway Analysis

  - Establish crude cell lysate system expressing pathway enzymes

  - Measure reaction kinetics and metabolic intermediates

  - Identify rate-limiting steps and regulatory bottlenecks [3]

- Computational Design

  - Model metabolic flux using constraint-based reconstruction and analysis

  - Apply machine learning to predict optimal enzyme expression levels

  - Design RBS variants for pathway balancing using UTR Designer tools [3]

- High-Throughput Construction

  - Implement automated DNA assembly for pathway variants

  - Transform optimized constructs into production host

  - Validate genetic modifications through sequencing

- Performance Evaluation

  - Cultivate engineered strains in controlled bioreactors

  - Measure product titers, yields, and productivity

  - Analyze metabolomic profiles to confirm pathway operation

- Learning and Model Refinement

  - Integrate experimental results with computational models

  - Identify discrepancies between predicted and actual performance

  - Refine AI models for improved prediction in subsequent cycles
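The discrepancy check in the learning step can be sketched as a comparison of predicted and measured titers per design. The design names, titers, and the 25% relative tolerance below are hypothetical; the point is only to show how designs that a model predicts poorly might be flagged for closer inspection or retraining.

```python
def flag_discrepancies(predicted, measured, rel_tol=0.25):
    """Return designs whose measured value deviates from the prediction
    by more than rel_tol, mapped to their relative error."""
    flagged = {}
    for design, pred in predicted.items():
        rel_err = abs(measured[design] - pred) / pred
        if rel_err > rel_tol:
            flagged[design] = round(rel_err, 3)
    return flagged


# Hypothetical model predictions and bioreactor measurements (mg/L).
predicted = {"rbs_01": 40.0, "rbs_02": 60.0, "rbs_03": 50.0}
measured = {"rbs_01": 38.0, "rbs_02": 30.0, "rbs_03": 66.0}

print(flag_discrepancies(predicted, measured))
```

Flagged designs are natural candidates for the next cycle's training data, since they mark regions of the design space where the model is least reliable.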

Troubleshooting Tips:

- If pathway intermediate accumulation occurs, rebalance enzyme expression using RBS engineering

- When host viability is compromised, implement dynamic regulation or subpopulation control

- For poor protein expression, optimize codon usage and mRNA stability

Visualizations

[Figure: Knowledge-Driven DBTL Cycle Workflow]

[Figure: AI-Biology Integration in Predictive Modeling]

[Figure: Dopamine Production Pathway Engineering]

From Theory to Bench: Implementing Knowledge-Driven DBTL in Research and Development

Strategic Integration of Cell-Free Systems for Rapid Pathway Prototyping

The knowledge-driven Design-Build-Test-Learn (DBTL) cycle represents a paradigm shift in synthetic biology and metabolic engineering. By integrating upstream in vitro investigations, this approach accelerates strain development and provides deep mechanistic insights into pathway performance [3]. Cell-free systems (CFS) have emerged as a pivotal platform within this framework, enabling researchers to bypass the constraints of whole cells. These systems utilize purified cellular components or crude cell extracts to execute complex metabolic and genetic programs in a controlled, open environment [28]. The fundamental advantage lies in their ability to rapidly probe biochemical reactions without the confounding influences of cellular growth, regulation, or viability, thus offering an unparalleled context for predictive pathway prototyping [28] [3].

The versatility of cell-free systems spans two primary configurations: purified systems with well-defined reaction networks, and crude cell extracts that capture a snapshot of native metabolic networks at the moment of cell lysis [28]. This flexibility allows for precise manipulation of reaction conditions, enzyme combinations, and cofactor concentrations, facilitating the high-throughput exploration of biological and chemical diversity. As a result, cell-free prototyping has shown good agreement with in vivo performance, with studies reporting coefficients of determination (R²) as high as 0.75 for resource competition and growth burden when translated to living systems [28].
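For readers who want to quantify how well cell-free measurements track in vivo results in their own data, the sketch below computes R² for a simple linear relationship as the squared Pearson correlation. The paired values are hypothetical and are not the data behind the 0.75 figure in [28].

```python
from math import sqrt


def r_squared(x, y):
    """Squared Pearson correlation between two equally long sequences.

    For a simple linear regression of y on x this equals the
    coefficient of determination.
    """
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    r = sxy / sqrt(sxx * syy)
    return r * r


# Hypothetical paired measurements for the same set of constructs.
cell_free_signal = [1.0, 2.0, 3.0, 4.0]
in_vivo_signal = [1.0, 3.0, 2.0, 4.0]

print(round(r_squared(cell_free_signal, in_vivo_signal), 2))
```

With only a handful of constructs, R² is sensitive to single outliers, so it is worth inspecting the scatter plot alongside the summary statistic.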

Core Principles and Advantages of Cell-Free Pathway Prototyping

Key Characteristics of Cell-Free Systems

Cell-free systems offer several distinct advantages that make them ideally suited for pathway prototyping within knowledge-driven DBTL cycles. The open reaction environment allows direct access to the reaction milieu, enabling real-time monitoring, facile substrate addition, and product removal that would be impossible in intact cells [28] [29]. This openness also permits precise control over the redox environment, pH, and energy regeneration systems, which is crucial for optimizing pathways involving oxygen-sensitive enzymes or complex co-factor dependencies [28].

Another significant advantage is the decoupling of protein production from cell viability. This enables the expression of toxic proteins or pathways that would otherwise inhibit cell growth in vivo [29]. Furthermore, the absence of cell walls and membranes eliminates the barrier to substrate uptake and product secretion, particularly beneficial for non-native substrates or pathways with intracellular transport limitations [28]. The substantial reduction in design-build-test cycle times – from weeks to mere days – allows for iterative optimization of enzyme variants and ratios under different conditions, dramatically accelerating the prototyping phase [28] [30].

System Configuration Options

Table 1: Comparison of Cell-Free System Configurations for Pathway Prototyping

| System Type | Key Components | Advantages | Ideal Applications |
|---|---|---|---|
| Crude Cell Extracts | Lysate containing native metabolic networks, enzymes, cofactors [28] | Cost-effective; contains native chaperones and metabolites; suitable for complex pathway assembly [3] | Primary metabolic pathways; rapid screening of enzyme combinations; mimicking native host context [28] [3] |
| Purified Systems (PURE) | Recombinantly expressed, purified components of transcription and translation [28] [31] | Defined composition; minimized proteolytic degradation; precise control over components [28] | Functional studies of individual enzymes; toxic protein production; standardized reactions [28] |
| Hybrid Systems | Mixed extracts from multiple organisms or supplemented with purified enzymes [28] | Access to diverse metabolic capabilities; complementation of missing functions [28] [30] | Non-model organism pathways; complex natural product biosynthesis; C1 metabolism [28] [30] |

Experimental Protocol: Implementing Cell-Free Pathway Prototyping

Preparation of Crude Cell Extracts from E. coli

This protocol details the preparation of crude cell extracts from E. coli, the most commonly used and well-characterized cell-free platform [28] [29]. The entire procedure requires approximately 8-10 hours.

Materials and Equipment:

- E. coli strain (e.g., BL21, MG1655, or production-specific strains like FUS4.T2 for dopamine prototyping [3])

- Lysogeny Broth (LB) medium

- French press or high-pressure homogenizer

- Centrifuge and ultracentrifuge capable of 30,000 × g

- Dialysis membrane or desalting columns

- Buffer A: 10 mM Tris-acetate (pH 8.2), 14 mM magnesium acetate, 60 mM potassium acetate

- Buffer B: 10 mM Tris-acetate (pH 8.2), 14 mM magnesium acetate, 60 mM potassium glutamate

Procedure:

- Cell Culture: Inoculate 1 L of LB medium with the selected E. coli strain and incubate at 37°C with vigorous shaking (250 rpm). Monitor growth until mid-log phase (OD600 ≈ 0.6-0.8).

- Harvesting: Chill culture on ice for 15 minutes, then centrifuge at 5,000 × g for 15 minutes at 4°C. Discard supernatant and wash cell pellet with cold Buffer A.

- Cell Lysis: Resuspend cell pellet in a minimal volume of Buffer A (approximately 1 mL per gram of wet cells). Lyse cells using a French press at 20,000 psi with two passes. Maintain samples on ice throughout the process.

- Clarification: Centrifuge the lysate at 12,000 × g for 10 minutes at 4°C to remove cell debris. Transfer supernatant to a fresh tube.

- Run-Off Reaction: Incubate the supernatant at 37°C for 80 minutes with gentle shaking (120 rpm) to deplete endogenous mRNA and run off ribosomes.

- Dialysis: Transfer the extract to dialysis tubing with a 10-14 kDa molecular weight cutoff. Dialyze against 50 volumes of Buffer B for 3 hours at 4°C with one buffer change.

- Aliquoting and Storage: Divide the extract into small aliquots, flash-freeze in liquid nitrogen, and store at -80°C until use.

Quality Control Assessment:

- Determine protein concentration using Bradford assay (typical range: 30-50 mg/mL)

- Test protein synthesis capability using a reporter gene (e.g., GFP)

- Confirm minimal ATP depletion during storage

Cell-Free Reaction Setup for Metabolic Pathway Prototyping

This protocol describes the assembly of cell-free reactions for prototyping metabolic pathways, using the dopamine biosynthesis pathway as an exemplary application [3].

Reaction Components:

- Cell Extract: 30% of final reaction volume [3]

- Energy Regeneration System: 10-20 mM magnesium glutamate, 50-100 mM potassium glutamate, 1.5 mM ATP, 0.9 mM each of GTP, UTP, CTP, 35 mM phosphoenolpyruvate (PEP) [28]

- Amino Acids: 2 mM each of 20 standard amino acids

- Cofactors: 0.1 mM NADP+, 0.1 mM NAD+, 0.05 mM coenzyme A, 0.1 mM thiamine pyrophosphate

- DNA Template: 10-20 ng/µL plasmid DNA or PCR product encoding target pathway

- Substrates: Pathway-specific substrates (e.g., 1 mM L-tyrosine for dopamine prototyping [3])

- Supplemental Buffer: 50 mM HEPES or phosphate buffer (pH 7.0-8.0) [3]

Assembly Procedure:

- Prepare a master mix containing all components except cell extract and DNA template.

- Pre-warm the master mix at the desired reaction temperature (typically 30-37°C).

- Add cell extract and DNA template to initiate the reaction.

- Incubate with gentle shaking (120-150 rpm) for 4-8 hours.

- Monitor reaction progress through periodic sampling for substrate consumption and product formation.
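Setting up these reactions involves routine dilution arithmetic (volume of stock = total volume × final concentration / stock concentration). The minimal sketch below computes pipetting volumes for a few of the listed components and makes up the remainder with water. The final concentrations and the 30% extract fraction come from the component list above; the stock concentrations and the 50 µL reaction volume are hypothetical and would need to match the stocks actually on hand.

```python
def reaction_volumes(total_ul, components, extract_fraction=0.30):
    """Compute per-component volumes (µL) for one cell-free reaction.

    components maps a name to (stock_mM, final_mM). The cell extract is
    added as a fixed volume fraction and water fills the remainder.
    """
    volumes = {
        name: total_ul * final_mM / stock_mM
        for name, (stock_mM, final_mM) in components.items()
    }
    volumes["cell extract"] = total_ul * extract_fraction
    water = total_ul - sum(volumes.values())
    if water < 0:
        raise ValueError("Stocks are too dilute for the requested volume")
    volumes["water"] = water
    return volumes


# Final concentrations from the component list; stock values are assumptions.
components = {
    "ATP": (100.0, 1.5),
    "PEP": (500.0, 35.0),
    "NAD+": (10.0, 0.1),
}

volumes = reaction_volumes(50.0, components)
for name, vol in volumes.items():
    print(f"{name}: {vol:.2f} µL")
```

Raising an error when the water volume goes negative catches the common case where a stock is too dilute to reach the target concentration in the available volume.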

Analytical Methods:

- HPLC Analysis: For dopamine detection, use C18 reverse-phase column with mobile phase of 50 mM phosphate buffer (pH 3.0) and methanol (95:5), flow rate 1 mL/min, detection at 280 nm [3]

- Mass Spectrometry: LC-MS for identification and quantification of pathway intermediates

- Colorimetric Assays: Specific detection of cofactor turnover or product formation

Case Study: Knowledge-Driven DBTL for Dopamine Production

Application of Cell-Free Prototyping

A recent study demonstrated the power of knowledge-driven DBTL cycling with cell-free prototyping for optimizing dopamine production in E. coli [3] [14]. The pathway consisted of two key enzymes: 4-hydroxyphenylacetate 3-monooxygenase (HpaBC) from native E. coli metabolism for converting L-tyrosine to L-DOPA, and L-DOPA decarboxylase (Ddc) from Pseudomonas putida for the final conversion to dopamine [3].

The initial in vitro prototyping phase utilized crude cell lysate systems to test different relative expression levels of HpaBC and Ddc, bypassing the time-consuming in vivo cloning and cultivation steps. The cell-free reactions were conducted in phosphate buffer (50 mM, pH 7.0) supplemented with 0.2 mM FeCl₂, 50 µM vitamin B₆, and 1 mM L-tyrosine or 5 mM L-DOPA as substrates [3]. This approach allowed rapid assessment of enzyme kinetics, compatibility, and potential bottlenecks before moving to in vivo implementation.

Quantitative Results and Translation to In Vivo Systems

Table 2: Performance Metrics for Dopamine Production in Cell-Free vs. In Vivo Systems

| System Configuration | Dopamine Concentration | Product Yield | Key Optimization Parameters |
|---|---|---|---|
| Cell-Free Prototyping | Not reported | Not reported | Enzyme ratios; cofactor concentrations; Fe²⁺ supplementation [3] |
| Initial In Vivo Strain | Baseline (reference) | Baseline (reference) | None (starting point) [3] |
| Optimized In Vivo Strain | 69.03 ± 1.2 mg/L [3] [14] | 34.34 ± 0.59 mg/g biomass [3] [14] | RBS engineering; SD sequence GC content [3] |
| Fold Improvement | 2.6-fold increase [3] [14] | 6.6-fold increase [3] [14] | Knowledge-driven DBTL with upstream in vitro investigation [3] |

The cell-free prototyping results informed the subsequent in vivo implementation through high-throughput ribosome binding site (RBS) engineering [3]. The critical learning from the in vitro studies was translated to fine-tune the expression levels of HpaBC and Ddc in the production strain. Notably, the research demonstrated the significant impact of GC content in the Shine-Dalgarno (SD) sequence on translation efficiency, enabling precise metabolic flux control toward dopamine synthesis [3].

Visualization of Workflows and Pathways

Figure: Knowledge-Driven DBTL Cycle with Integrated Cell-Free Prototyping

Figure: Dopamine Biosynthesis Pathway for Cell-Free Prototyping

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagent Solutions for Cell-Free Pathway Prototyping

| Reagent Category | Specific Examples | Function in Pathway Prototyping |
|---|---|---|
| Cell-Free Extracts | E. coli extract, B. subtilis extract, hybrid/extract mixtures [28] [30] | Provide foundational enzymatic machinery, cofactors, and energy systems for in vitro reactions [28] |
| Energy Regeneration Systems | Phosphoenolpyruvate (PEP), creatine phosphate, 3-phosphoglyceric acid [28] | Sustain ATP-dependent processes; drive transcription, translation, and energy-requiring enzymatic reactions [28] |
| Specialized Cofactors | NAD(P)+, NAD(P)H, Coenzyme A, Thiamine pyrophosphate, Pyridoxal phosphate [3] | Enable specific enzyme activities; essential for oxidase, dehydrogenase, and decarboxylase functions [3] |
| Pathway-Specific Substrates | L-tyrosine, L-DOPA, C1 substrates (formate, methanol, CO₂) [28] [3] | Serve as starting materials or intermediates for target pathways; enable testing of substrate utilization [28] [3] |
| DNA Template Systems | Plasmid vectors, linear expression templates, gBlocks Gene Fragments [32] | Encode pathway enzymes; enable rapid testing of genetic designs without cloning [32] |

Advanced Applications and Future Perspectives

The integration of cell-free systems with the knowledge-driven DBTL cycle extends beyond conventional metabolic engineering. Recent advances demonstrate their application in natural product biosynthesis [30], where cell-free platforms enable the characterization of biosynthetic pathways for compounds including ribosomal peptides, non-ribosomal peptides, polyketides, and terpenoids [30]. This approach is particularly valuable for accessing "silent" or "cryptic" biosynthetic gene clusters that are not expressed under standard laboratory conditions [30].

Future developments will likely focus on expanding the scope of cell-free metabolism to include non-model organisms and engineered extracts with augmented capabilities [28]. The incorporation of non-natural chemistries and the utilization of sustainable substrates such as C1 compounds (CO₂, formate, methanol), plastic waste, and lignin derivatives represent promising directions for environmentally conscious bioproduction [28]. Additionally, the integration of machine learning algorithms with high-throughput cell-free experimentation will further accelerate the optimization of pathway performance and predictive modeling [33].

As the field progresses, standardization of cell-free systems and development of modular workflows will enhance reproducibility and accessibility. The synergy between cell-free prototyping and automated biofoundries will establish a new paradigm for rapid biological design, fundamentally transforming how we approach metabolic engineering and synthetic biology challenges [3] [31].

High-Throughput Ribosome Binding Site (RBS) Engineering for Precise Metabolic Control

Metabolic engineering is increasingly adopting a knowledge-driven Design-Build-Test-Learn (DBTL) cycle to develop microbial cell factories efficiently. This approach uses upstream mechanistic investigations to inform rational strain engineering, moving beyond purely statistical or random methods [3]. Within this framework, high-throughput ribosome binding site (RBS) engineering is a powerful tool for implementing the "Build" phase with precision, enabling fine-tuning of metabolic pathway fluxes without relying on random mutagenesis [3] [14]. RBS sequences control the translation initiation rate (TIR) by modulating ribosome accessibility to the mRNA, directly influencing protein expression levels [34]. By systematically engineering RBS libraries, researchers can optimize the expression levels of multiple enzymes in a biosynthetic pathway, balancing metabolic flux to maximize product titer, yield, and productivity [35] [36]. This protocol details the application of high-throughput RBS engineering within a knowledge-driven DBTL framework and demonstrates its utility for precise metabolic control in Escherichia coli, Corynebacterium glutamicum, and Bacillus subtilis for the production of valuable compounds including dopamine, 4-hydroxyisoleucine (4-HIL), and lycopene [36] [3] [37].

Application Notes: RBS Engineering for Metabolic Pathway Optimization

Key Principles and Strategic Implementation

The effectiveness of RBS engineering stems from its direct impact on translation initiation, a key rate-limiting step in protein synthesis. Even minor modifications of 6-8 base pairs within the RBS core region can dramatically alter protein expression levels by changing the secondary structure accessibility and the complementarity to the 16S rRNA [34]. In a knowledge-driven DBTL cycle, preliminary in vitro investigations using cell-free transcription-translation systems can provide crucial mechanistic insights into enzyme expression and function before committing to extensive in vivo engineering [3]. These insights directly inform the design of smarter, more focused RBS libraries for chromosomal integration, significantly accelerating the strain optimization process [3] [14].
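The role of 16S rRNA complementarity can be illustrated with a deliberately simplified sketch. It derives the sequence that would pair perfectly with the 3' end of the E. coli 16S rRNA (the anti-SD region, 5'-ACCUCCUUA-3') and scores a candidate RBS core by its best ungapped match to that ideal sequence. The function names are illustrative, and the score ignores G-U wobble pairs, spacing to the start codon, and mRNA secondary structure, so it is a teaching proxy rather than a translation initiation model.

```python
# 3' end of the E. coli 16S rRNA (anti-SD region), written 5'->3'.
ANTI_SD = "ACCUCCUUA"
COMPLEMENT = {"A": "U", "U": "A", "G": "C", "C": "G"}


def reverse_complement(rna: str) -> str:
    """Reverse complement of an RNA sequence."""
    return "".join(COMPLEMENT[base] for base in reversed(rna))


# mRNA sequence that would pair perfectly with the anti-SD region.
IDEAL_SD = reverse_complement(ANTI_SD)


def best_match_count(candidate: str) -> int:
    """Maximum number of positions identical to IDEAL_SD over all ungapped offsets."""
    cand = candidate.upper().replace("T", "U")
    best = 0
    for offset in range(-(len(cand) - 1), len(IDEAL_SD)):
        matches = sum(
            1
            for i, base in enumerate(cand)
            if 0 <= i + offset < len(IDEAL_SD) and IDEAL_SD[i + offset] == base
        )
        best = max(best, matches)
    return best


# Hypothetical RBS cores, for illustration only.
for core in ("AGGAGG", "TAAGGAGGT", "AAAAAA"):
    print(core, best_match_count(core))
```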

Combinatorial RBS engineering of multiple genes within a pathway has proven particularly powerful for overcoming metabolic bottlenecks. Recent advances enable the generation of highly diverse RBS variant libraries across numerous genomic loci without donor templates. For instance, the bsBETTER system for Bacillus subtilis uses base editing to create up to 255 of 256 theoretical RBS combinations per target gene directly on the chromosome, enabling massive parallel optimization of pathway flux [37].
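A short calculation shows why combinatorial RBS libraries call for high-throughput screening. The sketch below multiplies the per-gene variant count across genes and estimates what fraction of that space a screen could sample. It assumes, as an upper bound, that variants at different genes combine independently. The screen size of one million clones is a hypothetical figure, while 255 variants per gene and 12 genes follow the bsBETTER example cited in this article.

```python
def combinatorial_space(variants_per_gene: int, n_genes: int) -> int:
    """Upper bound on distinct multi-gene RBS combinations."""
    if variants_per_gene < 1 or n_genes < 1:
        raise ValueError("both arguments must be positive integers")
    return variants_per_gene ** n_genes


def sampled_fraction(n_screened: int, variants_per_gene: int, n_genes: int) -> float:
    """Largest fraction of the design space that n_screened clones could cover."""
    space = combinatorial_space(variants_per_gene, n_genes)
    return min(1.0, n_screened / space)


space_12_genes = combinatorial_space(255, 12)
print(f"theoretical combinations for 12 genes: {space_12_genes:.3e}")

# Hypothetical screen of one million clones.
print(f"fraction sampled: {sampled_fraction(10**6, 255, 12):.3e}")
```

Because the space grows exponentially with the number of genes, any practical screen samples only a tiny fraction of it, which is one reason focused, knowledge-informed libraries are attractive.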

Quantitative Outcomes of RBS Engineering Applications

Table 1: Performance Metrics of RBS Engineering in Various Microbial Hosts

| Host Organism | Target Product | Engineering Strategy | Key Performance Outcome | Reference |
|---|---|---|---|---|
| Escherichia coli | Dopamine | Knowledge-driven DBTL with RBS fine-tuning of hpaBC and ddc | 69.03 ± 1.2 mg/L (34.34 ± 0.59 mg/g biomass); 2.6-fold (titer) and 6.6-fold (yield) improvement over previous state-of-the-art | [3] [14] |
| Corynebacterium glutamicum | 4-Hydroxyisoleucine (4-HIL) | RBS engineering of ido combined with odhI and vgb expression | 139.82 ± 1.56 mM 4-HIL; demonstrates critical synchronicity of cosubstrate supply | [36] |
| Bacillus subtilis | Lycopene | Multiplex base editing of RBSs across 12 MEP pathway genes (bsBETTER system) | 6.2-fold increase in lycopene production compared to genomic overexpression | [37] |
| Escherichia coli | Riboflavin (Vitamin B2) | GLOS-based RBS library integration in MMR-proficient strains | Efficient sampling of functional expression space without off-target mutations | [34] |

Experimental Workflow for Knowledge-Driven RBS Engineering

Figure: Integrated experimental workflow combining knowledge-driven DBTL with high-throughput RBS engineering

Detailed Experimental Protocols

Protocol 1: GLOS-Based RBS Library Design and Chromosomal Integration

Principle: This protocol enables unbiased RBS library integration in mismatch repair (MMR)-proficient strains using the Genome-Library-Optimized-Sequences (GLOS) rule, which avoids MMR recognition by designing oligonucleotides with at least 6 bp mismatches [34].

Materials:

- Bacterial strain (e.g., an MMR-proficient E. coli strain such as EcNR1)

- RedLibs algorithm for library design [34]

- CRMAGE system (CRISPR-optimized MAGE) [34]

- Oligonucleotides with 6+ bp mismatches targeting RBS region

Procedure:

- Target Identification: Select the RBS region at positions -15 to -10 relative to the start codon of the target gene.

- GLOS Library Design:

  - Apply the GLOS rule using the RedLibs algorithm to generate a library in which every variant carries at least 6 bp of mismatches.

  - Ensure all oligonucleotides have the same mismatch length (≥6 bp) to maintain similar allelic replacement efficiency.

  - Pre-screen for oligonucleotides with optimal folding energies (ΔG > -5 kcal/mol) to maximize integration efficiency [34].

- Library Integration:

  - Prepare electrocompetent cells expressing λ Red recombinase and Cas9.

  - Co-transform with the GLOS oligonucleotide library and a target-specific sgRNA plasmid.

  - Select for transformants using appropriate antibiotics.

  - Verify integration via colony PCR and Sanger sequencing of 96+ randomly selected clones.

- Quality Control:

  - Measure allelic replacement efficiency (target >95% in MMR+ strains with GLOS).

  - Quantify library diversity by sequencing to ensure >90% of designed variants are represented.

  - Check for off-target indels (expected <8% in MMR+ strains) [34].
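The design and quality-control steps above can be sketched in a few lines. This example expands a degenerate RBS sequence written in IUPAC codes, keeps only variants with at least 6 mismatches to the wild-type sequence, and computes allelic replacement efficiency and library coverage from a list of sequenced clones. It is not the RedLibs algorithm and does not evaluate folding energies. The wild-type sequence, the degenerate design, the clone list, and all function names are hypothetical.

```python
from itertools import product

# Standard IUPAC nucleotide codes.
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "AG", "Y": "CT", "S": "CG", "W": "AT", "K": "GT", "M": "AC",
    "B": "CGT", "D": "AGT", "H": "ACT", "V": "ACG", "N": "ACGT",
}


def expand(degenerate: str) -> list:
    """Enumerate every concrete sequence encoded by a degenerate sequence."""
    return ["".join(p) for p in product(*(IUPAC[b] for b in degenerate.upper()))]


def mismatches(a: str, b: str) -> int:
    """Number of mismatched positions between two equal-length sequences."""
    if len(a) != len(b):
        raise ValueError("sequences must have equal length")
    return sum(x != y for x, y in zip(a, b))


def glos_filter(wild_type: str, candidates: list, min_mismatches: int = 6) -> list:
    """Keep candidates with at least min_mismatches relative to the wild type."""
    return [c for c in candidates if mismatches(wild_type, c) >= min_mismatches]


def library_qc(designed: list, clones: list, wild_type: str) -> tuple:
    """Return (allelic replacement efficiency, fraction of designed variants seen)."""
    if not designed or not clones:
        raise ValueError("designed library and clone list must be non-empty")
    edited = [seq for seq in clones if seq != wild_type]
    efficiency = len(edited) / len(clones)
    coverage = len(set(edited) & set(designed)) / len(designed)
    return efficiency, coverage


WILD_TYPE = "CTTCTCAA"            # hypothetical native RBS region
library = expand("AGGAGSNN")      # hypothetical degenerate design
passing = glos_filter(WILD_TYPE, library)

# Hypothetical sequencing result: one unedited clone plus nine edited clones.
clones = [WILD_TYPE] + passing[:9]
efficiency, coverage = library_qc(passing, clones, WILD_TYPE)
print(len(library), len(passing), f"{efficiency:.2f}", f"{coverage:.2f}")
```

In practice the efficiency and coverage values would be compared against the targets stated in the quality-control step (>95% replacement and >90% of designed variants represented).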

Protocol 2: Combinatorial RBS Engineering for Pathway Optimization

Principle: This protocol enables simultaneous tuning of multiple pathway genes using base editor-guided systems like bsBETTER, which generates diverse RBS combinations without donor templates [37].

Materials:

- Base editing system (e.g., bsBETTER for B. subtilis)

- sgRNA library targeting RBS regions of multiple pathway genes

- High-throughput screening capability (FACS or robotic screening)

Procedure:

- Multi-Gene Target Selection: Identify 4-12 genes in the target metabolic pathway for combinatorial RBS engineering [37].

- sgRNA Library Design: Design sgRNAs targeting the RBS regions of all selected genes.

- Base Editor Transformation: Introduce the base editor system with sgRNA library into the host strain.

- Library Generation:

  - Induce base editor activity to generate RBS variants.

  - Allow sufficient generations for stable variant formation.

  - The bsBETTER system can generate up to 255 of 256 theoretical RBS combinations per gene [37].

- High-Throughput Screening:

  - For pigmented products (e.g., lycopene), use colorimetric screening.

  - For non-pigmented products, employ FACS with biosensors or label-free techniques.

  - Isolate top 0.1-1% of producers for further analysis.

- Validation and Scale-Up:

  - Sequence RBS regions of top performers to correlate RBS strength with productivity.

  - Validate hits in shake-flask fermentations.

  - Conduct multi-omics analysis to understand flux rewiring [37].
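The hit-selection step in the screening procedure above (isolating the top 0.1-1% of producers) amounts to ranking clones by their screening signal and keeping a fixed fraction. A minimal sketch follows. The clone identifiers and signal values are synthetic, and the function name `top_fraction` is an assumption for illustration.

```python
import math


def top_fraction(signals: dict, fraction: float) -> list:
    """Return clone IDs in the top `fraction` of signals, best first.

    At least one clone is always returned.
    """
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in the interval (0, 1]")
    n_keep = max(1, math.ceil(len(signals) * fraction))
    ranked = sorted(signals, key=signals.get, reverse=True)
    return ranked[:n_keep]


# Synthetic screening data: 1000 clones with distinct signals from 0 to 999.
signals = {f"clone_{i:04d}": float((i * 37) % 1000) for i in range(1000)}

hits = top_fraction(signals, fraction=0.01)  # top 1%
print(len(hits), hits[0], signals[hits[0]])
```

The selected clone IDs can then be carried into the validation step, where RBS regions are sequenced and compared against productivity.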

Protocol 3: RBS Engineering with Cofactor Balancing

Principle: This protocol specifically addresses the synchronization of main pathway enzymes with cofactor-supplying enzymes, as demonstrated for 4-HIL production where α-ketoglutarate and O₂ supply were critical [36].

Materials:

- Plasmid system with multiple RBS variants

- Genes for cofactor regeneration/balancing (e.g., odhI for α-ketoglutarate, vgb for oxygen)

- Microaerobic cultivation equipment

Procedure:

- Pathway Analysis: Identify main pathway enzymes and their cofactor requirements.

- Dual RBS Library Construction:

  - Create RBS libraries for both the key pathway enzyme (e.g., ido for 4-HIL) and cofactor-supplying enzyme (e.g., odhI).

  - Use RBS sequences spanning high, medium, and low strength variations [36].

- Strain Construction: Transform production host with combinatorial RBS libraries.

- Cultivation Optimization:

  - Employ stratified oxygen conditions to test cofactor synchronization.

  - For oxygen-dependent enzymes, consider introducing bacterial hemoglobin (vgb) to enhance O₂ supply [36].

- Byproduct Reduction:

  - Identify and delete genes encoding competing pathways (e.g., avtA, ldhA-pyk2 in C. glutamicum).

  - Measure reduction in byproducts and improvement in target product yield [36].
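The dual-library idea in the procedure above can be made explicit by enumerating every pairing of RBS strength classes for the pathway enzyme and the cofactor-supplying enzyme. The sketch below assumes three strength classes per gene, as described in the protocol. The function name and the use of plain labels instead of actual RBS sequences are simplifications for illustration.

```python
from itertools import product

RBS_STRENGTHS = ("low", "medium", "high")


def dual_library(genes=("ido", "odhI"), strengths=RBS_STRENGTHS) -> list:
    """Enumerate all assignments of one RBS strength class to each gene."""
    return [
        dict(zip(genes, combo))
        for combo in product(strengths, repeat=len(genes))
    ]


designs = dual_library()
print(f"{len(designs)} combinations")
for design in designs:
    print(design)
```

With two genes and three strength classes the design space is small enough (9 strains) to test exhaustively; adding a third gene such as vgb would raise it to 27.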

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Reagents for High-Throughput RBS Engineering

| Reagent/System | Function | Application Example | Key Features |
|---|---|---|---|
| RedLibs Algorithm | Designs smart RBS libraries with uniform TIR distribution | E. coli lacZ and riboflavin pathway optimization [34] | GLOS rule compliance; reduced library size with high functional diversity |
| CRMAGE System | CRISPR-optimized MAGE for efficient allelic replacement | Chromosomal RBS library integration in E. coli [34] | >95% allelic replacement efficiency; counterselection against wild-type |
| bsBETTER System | Base editor-guided multiplex RBS editing | B. subtilis lycopene pathway optimization [37] | Template-free; up to 255 of 256 theoretical RBS combinations per gene; scalable multiplexing |