How to Choose a Synthetic Biology Simulation Platform: A 2025 Guide for Researchers


Abstract

This guide provides researchers, scientists, and drug development professionals with a structured framework for selecting a synthetic biology simulation platform. It covers foundational principles, from the core Design-Build-Test-Learn (DBTL) cycle and key technologies such as AI and automation to methods for applying platforms to specific research goals. It also details strategies for troubleshooting and optimizing experimental workflows and offers a comparative analysis for validating platform performance, helping scientists make informed decisions that accelerate R&D.

Understanding the Core of Synthetic Biology Simulation

Defining the Synthetic Biology Simulation Platform

Synthetic biology simulation platforms are integrated computational tools and technologies designed to enable the engineering of biological systems for specific purposes. These platforms combine DNA synthesis, computational biology, and advanced automation to create, modify, or enhance genetic constructs, supporting applications in biotechnology, medicine, agriculture, and environmental sustainability [1]. They serve as a critical bridge between digital design and biological implementation, allowing researchers to model, test, and optimize genetic designs in silico before physical implementation. This digital approach significantly accelerates the design-build-test-learn (DBTL) cycle, reducing development costs and timeframes while increasing the predictability of biological engineering outcomes.

The core function of these platforms is to provide a virtual environment where biological components, such as DNA sequences, genetic circuits, enzymes, and metabolic pathways, can be assembled and their behavior simulated. This capability is particularly valuable given the complexity and inherent variability of biological systems. By leveraging computational models, researchers can explore a much wider design space than would be feasible through experimental methods alone, identifying promising candidates for further laboratory validation.
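As a minimal sketch of what "simulating behavior" can mean at the smallest scale, the following example integrates a two-variable model of constitutive gene expression (mRNA and protein) with the forward-Euler method. The model form is a common textbook simplification, and every parameter value here is an illustrative assumption rather than a measured rate; real platforms use far richer models and solvers.

```python
def simulate_expression(k_tx, k_tl, d_m, d_p, t_end, dt=0.01):
    """Forward-Euler integration of a simple gene expression model.

    dm/dt = k_tx - d_m * m      (mRNA: transcription minus decay)
    dp/dt = k_tl * m - d_p * p  (protein: translation minus decay/dilution)
    Returns a list of (time, mRNA, protein) tuples.
    """
    m, p = 0.0, 0.0
    steps = int(round(t_end / dt))
    trajectory = [(0.0, m, p)]
    for i in range(1, steps + 1):
        dm = k_tx - d_m * m
        dp = k_tl * m - d_p * p
        m += dm * dt
        p += dp * dt
        trajectory.append((i * dt, m, p))
    return trajectory


# Illustrative parameters only (arbitrary units per minute), not measured values.
K_TX, K_TL, D_M, D_P = 2.0, 5.0, 0.2, 0.05
traj = simulate_expression(K_TX, K_TL, D_M, D_P, t_end=300.0)
t_final, m_final, p_final = traj[-1]

# Analytical steady state for comparison: m* = k_tx / d_m, p* = k_tl * m* / d_p
m_ss = K_TX / D_M
p_ss = K_TL * m_ss / D_P
print(f"protein at t={t_final:.0f}: {p_final:.1f} (analytical steady state {p_ss:.1f})")
```

Comparing the simulated endpoint against the analytical steady state is a cheap sanity check of the kind worth asking of any platform's solver before trusting it on larger circuits.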

Core Components and Market Landscape

A synthetic biology simulation platform is typically composed of several interconnected technological layers. Key components include genome editing tools (e.g., CRISPR-Cas9), DNA assembly technologies, and bioinformatics software for design and analysis [1]. The platform integrates capabilities for genetic design, mathematical modeling of biological systems, and often connects with laboratory automation systems for physical implementation.

The global synthetic biology platforms market, which includes these simulation environments, is experiencing rapid growth. The market was valued at USD 5.23 billion in 2024 and is projected to reach USD 19.77 billion by 2032, growing at a compound annual growth rate (CAGR) of 18.07% during the forecast period of 2025 to 2032 [1]. This growth is fueled by increasing demand across pharmaceuticals, food and agriculture, and environmental sectors, alongside government initiatives supporting bio-based economies.
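The quoted growth rate can be checked against the two market values with the standard compound annual growth rate formula. The short sketch below assumes eight compounding years (2024 to 2032); the function name is illustrative.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values over a number of years."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Market values in USD billions as quoted above; 2024 -> 2032 is eight years.
rate = cagr(5.23, 19.77, 2032 - 2024)
print(f"Implied CAGR: {rate:.2%}")
```

The result agrees with the cited figure to within rounding, which suggests the forecast is internally consistent.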

Table: Global Synthetic Biology Platforms Market Segmentation

| Segmentation Basis | Categories and Key Elements |
|---|---|
| By Tool and Technology | Tools: Oligonucleotides, Enzymes, Cloning Technology Kits, Chassis Organisms, Xeno-Nucleic Acids (XNA) [1]. Technologies: Gene Synthesis, Genome Engineering, Cloning and Sequencing, Next-Generation Sequencing, Microfluidics, Computational Modelling [1]. |
| By Application | Medical Applications (Pharmaceuticals, Drug Discovery, Artificial Tissue), Industrial Applications (Biofuel, Biomaterials, Industrial Enzymes), Food and Agriculture, Environmental Applications (Bioremediation) [1]. |
| By Product | Core Products (Synthetic DNA, Synthetic Genes), Enabling Products [1]. |
| Key Market Players | Thermo Fisher Scientific Inc., Merck KGaA, Ginkgo Bioworks, Twist Bioscience, GenScript, Agilent Technologies, Inc. [1]. |

Quantitative Data and Technology Trends

The expansion of the synthetic biology platforms market is driven by several key factors. There is an increasing demand for personalized medicine, where synthetic biology enables tailored drug development based on individual genetic profiles, such as in CAR-T cell therapies for cancer [1]. Furthermore, the expansion of industrial biotechnology is promoting the production of sustainable, bio-based chemicals as alternatives to petrochemicals, reducing environmental impact [1].

A significant trend is the increasing integration of Artificial Intelligence (AI) with synthetic biology platforms. AI enhances computational modeling, automates workflows, and optimizes genetic designs. For instance, companies like Ginkgo Bioworks use AI-powered platforms to design custom organisms for applications in biofuels and pharmaceuticals, reducing the time and costs associated with complex genetic engineering processes [1]. Other transformative advancements include the integration of CRISPR-based gene-editing tools with AI algorithms and the adoption of droplet-based microfluidics for high-throughput screening [1].

Table: Key Technologies in Synthetic Biology Simulation Platforms

| Technology | Function in the Platform | Specific Example |
|---|---|---|
| Computational Modelling & AI | Uses algorithms to predict the behavior of biological systems, optimizing designs before construction. | Predicting metabolic flux in an engineered pathway for biofuel production [1]. |
| Gene Synthesis | The digital design and subsequent chemical creation of DNA sequences from scratch. | Creating a novel gene sequence for a therapeutic protein [1]. |
| Genome Engineering | Tools for making targeted modifications to an organism's native DNA. | Using CRISPR-Cas9 to knock out a gene in a chassis organism [1]. |
| Microfluidics | Technology for miniaturizing and automating experiments, enabling high-throughput testing. | Screening thousands of engineered enzyme variants in parallel [1]. |
| Measurement & Modelling | Tools for gathering quantitative data from biological systems to inform and refine models. | Using RNA-seq data to update a model of a genetic circuit's dynamics [1]. |

A Framework for Platform Selection: The Scientist's Toolkit

Selecting an appropriate synthetic biology simulation platform requires a strategic evaluation of project needs against platform capabilities. This decision is critical as it influences the efficiency, success, and scalability of the research. The following framework outlines the core considerations, including the essential "research reagent solutions" that the platform must effectively model and manage.

Chassis Organism Selection

The choice of chassis organism—the host cell that will carry the engineered genetic construct—is a foundational decision that the simulation platform must support. Key selection criteria include [2]:

- Genetic Tractability: How easily the organism can be genetically manipulated, including the availability of genetic tools, transformation protocols, vectors, and genome-editing technologies.

- Growth Characteristics: The organism's growth rate, nutrient requirements, and tolerance to stress conditions, which impact the feasibility of large-scale production.

- Safety: The organism should typically be non-pathogenic and Generally Recognized As Safe (GRAS), especially for applications with potential for environmental release or in food and therapeutics.

- Pathway Compatibility: The organism's native metabolic pathways must support—or at least not interfere with—the intended synthetic function.

Table: Common Chassis Organisms and Their Applications

| Chassis Organism | Best-Suited Project Types | Key Advantages | Notable Limitations |
|---|---|---|---|
| Escherichia coli | Rapid prototyping, protein production, metabolic engineering of small molecules [2]. | Fast growth, well-characterized genetics, extensive toolkit available [2]. | Limited ability to perform eukaryotic post-translational modifications. |
| Saccharomyces cerevisiae | Eukaryotic protein production, complex metabolic pathways, synthetic biology requiring eukaryotic processes [2]. | GRAS status, performs complex post-translational modifications, well-understood [2]. | Slower growth than E. coli, more complex genetics. |
| Bacillus subtilis | Secretion of proteins, industrial enzyme production [2]. | Efficient protein secretion, GRAS status, naturally competent. | Smaller genetic toolbox than E. coli or yeast. |
| Pseudomonas putida | Bioremediation, metabolism of complex aromatic compounds [2]. | Metabolic versatility, robust, tolerant to solvents and stresses. | Can be more difficult to engineer genetically. |
| Cyanobacteria | Photosynthetic applications, CO2 capture, solar-driven chemical production [2]. | Converts sunlight into chemical energy, fixes CO2. | Slow growth, challenges in genetic manipulation. |
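The four selection criteria listed earlier can be combined into a simple weighted decision matrix. The sketch below is one hypothetical way to do this: the 1-5 scores and the weights are placeholder assumptions that a project team would set for its own goals, not values drawn from the cited sources.

```python
CRITERIA = ("tractability", "growth", "safety", "pathway_fit")


def rank_chassis(scores, weights):
    """Rank candidate chassis by the weighted average of their criterion scores."""
    total_weight = sum(weights[c] for c in CRITERIA)
    ranked = []
    for name, per_criterion in scores.items():
        weighted = sum(weights[c] * per_criterion[c] for c in CRITERIA) / total_weight
        ranked.append((name, round(weighted, 2)))
    # Highest score first
    return sorted(ranked, key=lambda item: item[1], reverse=True)


# Hypothetical scores (1 = poor, 5 = excellent) for a small-molecule project.
scores = {
    "E. coli": {"tractability": 5, "growth": 5, "safety": 3, "pathway_fit": 3},
    "S. cerevisiae": {"tractability": 4, "growth": 3, "safety": 5, "pathway_fit": 4},
    "P. putida": {"tractability": 2, "growth": 3, "safety": 3, "pathway_fit": 5},
}
# Hypothetical weights reflecting a rapid-prototyping priority.
weights = {"tractability": 0.4, "growth": 0.2, "safety": 0.1, "pathway_fit": 0.3}

ranking = rank_chassis(scores, weights)
for name, score in ranking:
    print(f"{name}: {score}")
```

Shifting weight toward safety or pathway compatibility changes the ranking, which is the point of making the trade-off explicit before committing to a host.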

The Research Reagent Solutions Toolkit

The simulation platform must accurately model the behavior and interactions of core biological reagents. The following table details essential materials and their functions that are central to synthetic biology experiments [3] [1] [2].

Table: Essential Research Reagent Solutions for Synthetic Biology

| Reagent / Material | Core Function | Technical Specification & Use-Case |
|---|---|---|
| Oligonucleotides | Short, single-stranded DNA/RNA fragments used as primers, probes, or for gene synthesis. | Used in PCR, sequencing, and as building blocks for gene assembly. The platform must model specificity and melting temperature. |
| Cloning Kits | Pre-assembled reagents for molecular cloning techniques (e.g., restriction digestion, ligation, Gibson assembly). | Simplify and standardize the process of inserting DNA fragments into vectors. The platform should simulate assembly fidelity. |
| Enzymes | Protein catalysts for specific biochemical reactions (e.g., polymerases, ligases, restriction endonucleases). | Essential for PCR, DNA assembly, and DNA modification. Platform models must account for enzyme kinetics and fidelity. |
| Chassis Organisms | The host cell (microbial, yeast, mammalian) that harbors the engineered genetic system [2]. | Serves as the foundational platform for synthetic functions. The platform simulates cellular context and system behavior [2]. |
| Non-Canonical Amino Acids | Unnatural amino acids incorporated into proteins to confer new properties. | Used for expanding the genetic code and creating novel enzymes. Platform must handle altered codon tables and chemical properties [3]. |
| Xeno-Nucleic Acids | Synthetic genetic polymers with alternative sugar-phosphate backbones [3] [1]. | Used for creating aptamers and catalysts with enhanced stability. Platform must model base-pairing and polymer properties [3]. |
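To make the melting-temperature requirement for oligonucleotides concrete, the sketch below applies the Wallace rule, a rough approximation intended for short oligos (roughly 14-20 nt). It is shown only as an illustration; production platforms generally rely on nearest-neighbor thermodynamic models with salt corrections, and the example primer is hypothetical.

```python
def wallace_tm(seq):
    """Estimate oligo melting temperature (deg C) with the Wallace rule.

    Tm = 2 * (A + T) + 4 * (G + C); a rough estimate for short oligos only.
    """
    seq = seq.upper()
    if not seq or set(seq) - set("ACGT"):
        raise ValueError("sequence must be non-empty and contain only A, C, G, T")
    at = seq.count("A") + seq.count("T")
    gc = seq.count("G") + seq.count("C")
    return 2 * at + 4 * gc


primer = "ATGCGTACCGGTTAGC"  # hypothetical 16-nt primer
print(f"{primer}: estimated Tm = {wallace_tm(primer)} C")
```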

Automation and Workflow Integration

Modern simulation platforms are increasingly integrated with physical laboratory automation, creating a seamless digital-to-physical pipeline. This integration is crucial for translating in silico designs into tangible results efficiently and reproducibly. A persistent obstacle is the "programming-barrier-to-entry" that often prevents biologists from leveraging advanced robotic systems [4].

Emerging solutions include the use of Large Language Models (LLMs) to interpret natural language instructions and convert them into executable robotic commands. This allows scientists to design complex experiments through a chat-based interface, which is then translated into unambiguous code for liquid handlers and other automated systems [4]. The CRISPR.BOT is an example of an autonomous, low-cost robotic system built from LEGO Mindstorms that can perform genetic engineering protocols such as bacterial transformation and lentiviral transduction, demonstrating the potential for accessible automation [5].

The simulation platform's role is to act as the central planner, taking high-level experimental intent and generating detailed, error-checked workflows that specify calculations, well plate layouts, liquid handling decisions, and device-specific operations [4].
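One small piece of such a generated workflow is the well plate layout. The sketch below assigns samples to wells in row-major order (A1, A2, ..., H12 on a 96-well plate). The function names and the row-major convention are assumptions made for illustration; a real planner would also reserve control wells and follow the conventions of the target device.

```python
import string


def well_name(index, rows=8, cols=12):
    """Convert a 0-based, row-major index into a well label such as 'A1'."""
    if not 0 <= index < rows * cols:
        raise ValueError("index is outside the plate")
    row, col = divmod(index, cols)
    return f"{string.ascii_uppercase[row]}{col + 1}"


def plate_layout(samples, rows=8, cols=12):
    """Map sample names to wells, filling the plate row by row."""
    if len(samples) > rows * cols:
        raise ValueError("more samples than wells")
    return {well_name(i, rows, cols): sample for i, sample in enumerate(samples)}


# Hypothetical sample names for a 14-construct experiment.
layout = plate_layout([f"construct_{i:02d}" for i in range(1, 15)])
print(layout["A1"], layout["B2"])
```

Passing `rows=16, cols=24` gives the equivalent labels for a 384-well plate.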

Computational and Modeling Capabilities

The computational core of a simulation platform encompasses the algorithms and models that predict system behavior. A critical function is supporting directed evolution experiments, a powerful protein engineering method. The platform must manage the core cycle of creating genetic diversity and applying selective pressure, while maintaining a strong phenotype-genotype linkage to ensure variants with desired functions can be identified and recovered [3].
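The diversify-and-select cycle can be illustrated with a toy in silico loop. In the sketch below, fitness is simply the number of positions matching a hypothetical target sequence, which stands in for a real assay readout; the mutation rate, library size, and sequences are all illustrative assumptions. Each variant's sequence travels with its score, mirroring the genotype-phenotype linkage described above.

```python
import random

ALPHABET = "ACDEFGHIKLMNPQRSTVWY"  # the 20 canonical amino acids


def fitness(seq, target):
    """Toy phenotype: number of positions matching a hypothetical target."""
    return sum(a == b for a, b in zip(seq, target))


def mutate(seq, rng, rate=0.1):
    """Create diversity by substituting each residue with probability `rate`."""
    return "".join(rng.choice(ALPHABET) if rng.random() < rate else aa for aa in seq)


def evolve(parent, target, rounds=20, library_size=50, keep=5, seed=0):
    """Run rounds of mutation and selection; return the best variant and score history."""
    rng = random.Random(seed)
    population = [parent]
    history = []
    for _ in range(rounds):
        # Diversify: keep the current survivors and add mutated copies of them.
        library = list(population)
        while len(library) < library_size:
            library.append(mutate(rng.choice(population), rng))
        # Select: apply "selective pressure" by keeping only the top scorers.
        library.sort(key=lambda s: fitness(s, target), reverse=True)
        population = library[:keep]
        history.append(fitness(population[0], target))
    return population[0], history


PARENT, TARGET = "AAAAAAAAAA", "MKTAYIAKQR"  # hypothetical sequences
best, history = evolve(PARENT, TARGET, seed=1)
print(best, history[-1])
```

Because survivors are carried into each new library, the best score never decreases from one round to the next, a property worth confirming in any platform's directed-evolution module.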

Platforms must also be capable of multi-scale modeling, from the molecular level (e.g., protein structure prediction) to the cellular and population levels (e.g., metabolic network modeling and population dynamics). The rise of Generative AI is creating new opportunities in this space, such as using Variational Autoencoders (VAEs) and Generative Adversarial Networks (GANs) for de novo design of biological parts, predictive analytics for disease progression, and generating synthetic biomedical data for training other models [6]. Furthermore, Graph Neural Networks (GNNs) are proving powerful for analyzing biological networks, such as protein-protein interactions and metabolic pathways, to drive discoveries in drug repurposing and patient stratification [6].

Selecting a synthetic biology simulation platform is a strategic decision that hinges on aligning the platform's capabilities with the specific goals and constraints of the research project. A structured evaluation should focus on four pillars: the platform's ability to model and select appropriate chassis organisms; its integration with a comprehensive experimental toolbox; its connectivity to automation systems for robust execution; and the sophistication of its underlying computational and modeling capabilities. As the field evolves, platforms that effectively leverage AI, machine learning, and seamless digital-physical integration will be instrumental in overcoming current challenges in predictability and scalability, ultimately empowering researchers to navigate the complexity of biological design with greater confidence and efficiency.

The Central Role of the Design-Build-Test-Learn (DBTL) Cycle

The Design-Build-Test-Learn (DBTL) cycle is a foundational framework in synthetic biology that provides a systematic, iterative approach for engineering biological systems [7]. This engineering-inspired methodology enables researchers to develop organisms with novel functions, such as producing biofuels, pharmaceuticals, or other valuable compounds, through repeated cycles of refinement [7]. The cycle begins with rational design, proceeds to physical assembly, moves to rigorous experimental validation, and concludes with data analysis that informs the next design iteration. This structured process is crucial because introducing foreign DNA into a cellular environment creates complex, often unpredictable interactions that require multiple permutations to achieve desired outcomes [7].

The DBTL framework has become increasingly vital as synthetic biology ambitions have grown more complex. While rational principles guide initial designs, biological systems contain immense complexity that often necessitates several iterations to optimize system performance [7]. The manual execution of these cycles, however, presents significant limitations in terms of time and labor resources [8]. Recent advances in automation, artificial intelligence, and machine learning are transforming how DBTL cycles are implemented, dramatically accelerating the pace of biological engineering and opening new possibilities for rapid prototyping of genetic systems [8] [9].

The Four Phases of DBTL

Design Phase

The Design phase establishes the computational blueprint for the biological system to be engineered. This stage involves defining objectives for desired biological function and creating detailed plans for genetic parts or systems [9]. Key activities include protein design (selecting natural enzymes or designing novel proteins), genetic design (translating amino acid sequences into coding sequences, designing ribosome binding sites, and planning operon architecture), and assay design (establishing biochemical reaction conditions for subsequent testing) [10]. A critical component is assembly design, which involves deconstructing plasmids into fragments and planning their assembly with consideration of factors like restriction enzyme sites, overhang sequences, and GC content [10].
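Two of the assembly-design checks mentioned above, GC content and the presence of restriction enzyme sites, are straightforward to sketch. The example below scans both strands of a fragment for a recognition sequence; the fragment itself is hypothetical, while GGTCTC (BsaI) and GAATTC (EcoRI) are the standard recognition sequences for those enzymes.

```python
COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq):
    """Return the reverse complement of a DNA sequence."""
    return seq.upper().translate(COMPLEMENT)[::-1]


def gc_content(seq):
    """Fraction of bases that are G or C."""
    seq = seq.upper()
    return (seq.count("G") + seq.count("C")) / len(seq)


def find_sites(seq, site):
    """Return sorted 0-based start positions of `site` on either strand."""
    seq = seq.upper()
    # A set avoids double-counting palindromic sites such as GAATTC.
    patterns = {site.upper(), reverse_complement(site)}
    hits = []
    for pattern in patterns:
        start = seq.find(pattern)
        while start != -1:
            hits.append(start)
            start = seq.find(pattern, start + 1)
    return sorted(hits)


fragment = "ATGGTCTCAGAATTCGGCGAGACCTAA"  # hypothetical fragment
print(f"GC content: {gc_content(fragment):.2f}")
print("BsaI sites at:", find_sites(fragment, "GGTCTC"))
print("EcoRI sites at:", find_sites(fragment, "GAATTC"))
```

A Golden Gate design, for example, would typically flag internal BsaI sites like these for removal before assembly.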

Automation has revolutionized the Design phase through advanced software that generates detailed DNA assembly protocols tailored to specific project needs [10]. These tools automatically select appropriate cloning methods (e.g., Gibson assembly or Golden Gate cloning) and strategically arrange DNA fragments in assembly reactions, significantly enhancing precision while reducing human error [10]. The integration of machine learning has further transformed this phase, with protein language models (e.g., ESM, ProGen) and structure-based tools (e.g., ProteinMPNN, MutCompute) now enabling zero-shot prediction of protein structures and functions [9]. These AI-driven approaches can capture evolutionary relationships and predict beneficial mutations, allowing researchers to explore design spaces that would be impractical through manual methods.

Build Phase

The Build phase translates computational designs into physical biological constructs. This stage involves synthesizing DNA sequences, assembling them into plasmids or other vectors, and introducing them into characterization systems [9]. These systems can include in vivo chassis (bacterial, eukaryotic, and mammalian cells, or plants) or in vitro platforms (cell-free systems and synthetic cells) [9]. The Build phase requires high precision, as even minor errors in DNA assembly can lead to significant functional deviations in the final constructs [10].

Automation plays a crucial role in enhancing precision and throughput during the Build phase. Automated liquid handlers from companies like Tecan, Beckman Coulter, and Hamilton Robotics provide high-precision pipetting essential for processes including PCR setup, DNA normalization, and plasmid preparation [10]. Integration with DNA synthesis providers (e.g., Twist Bioscience, IDT, GenScript) streamlines the incorporation of custom DNA sequences into automated workflows [10]. Sophisticated software platforms orchestrate these processes by managing protocols, tracking samples across lab equipment, and maintaining inventory systems [10]. These automated solutions are particularly valuable for managing high-throughput, plate-based workflows where manual execution would be prohibitively time-consuming and prone to error.

Test Phase

The Test phase experimentally measures the performance of engineered biological constructs to determine the efficacy of the Design and Build phases [9]. This stage employs various functional assays to characterize the constructs against predefined objectives and performance metrics. High-throughput screening (HTS) represents a cornerstone of the modern Test phase, facilitated by automated liquid handling systems (e.g., Beckman Coulter Biomek series, Tecan Freedom EVO series) and automated plate readers (e.g., PerkinElmer EnVision, BioTek Synergy HTX) [10]. These systems enable rapid, parallel assessment of thousands of variants, generating comprehensive datasets on construct performance.

The integration of omics technologies has significantly expanded Test-phase capabilities. Next-Generation Sequencing (NGS) platforms (e.g., Illumina NovaSeq, Thermo Fisher Ion Torrent) provide rapid genotypic analysis, while automated mass spectrometry setups (e.g., Thermo Fisher Orbitrap) enable detailed proteomic profiling [10]. NMR-based platforms similarly facilitate metabolomic analyses [10]. Cell-free transcription-translation (TX-TL) systems have emerged as a particularly powerful testing platform because they circumvent complications of living host cells, such as metabolic burden and genetic instability [9] [11]. These systems allow genetic circuit performance to be assessed within hours rather than days or weeks, and they provide finer control over environmental parameters, which yields more reproducible and interpretable data [11].

Learn Phase

The Learn phase involves analyzing data collected during testing and comparing it against objectives established in the Design stage [9]. This critical stage transforms raw experimental results into actionable insights that inform subsequent DBTL cycles. Researchers identify patterns, correlations, and causal relationships between design features and functional outcomes, enabling them to refine their hypotheses and design rules. In traditional DBTL cycles, this learning process is driven primarily by human interpretation of experimental data, which becomes a bottleneck as the complexity and scale of modern synthetic biology projects grow.

The integration of machine learning (ML) has revolutionized the Learn phase by enabling sophisticated analysis of vast, high-dimensional datasets that exceed human analytical capabilities [10]. ML algorithms can uncover complex patterns and relationships within experimental data, generating predictive models that connect genotypic designs to phenotypic outcomes [10]. For example, in optimizing tryptophan metabolism in yeast, ML models trained on extensive experimental data made accurate genotype-to-phenotype predictions that guided metabolic engineering strategies [10]. These computational models become increasingly accurate with each DBTL iteration, progressively reducing the need for extensive experimental screening and accelerating the path to optimized biological systems.
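At its simplest, a genotype-to-phenotype model is a regression from a design feature to a measured outcome. The sketch below fits an ordinary least-squares line in plain Python as a stand-in for the far more capable ML models cited above; the feature (relative promoter strength), the outcome (titer), and all data points are hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical Test-phase data: relative promoter strength vs. product titer.
promoter_strength = [1, 2, 3, 4, 5]
titer = [2.1, 3.9, 6.2, 7.8, 10.1]

slope, intercept = fit_line(promoter_strength, titer)


def predict(strength):
    """Predict titer for an untested design from the fitted line."""
    return slope * strength + intercept


print(f"predicted titer at strength 6: {predict(6):.2f}")
```

In a real Learn phase the prediction for untested designs would feed the next Design round, and the model would be refit as each cycle adds data.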

Table 1: Key Automation Technologies Enhancing the DBTL Cycle

| DBTL Phase | Technology Category | Specific Tools/Platforms | Key Function |
|---|---|---|---|
| Design | DNA Design Software | j5, Cello, AssemblyTron, Cameo | Automated genetic construct design [12] [10] |
| Design | Machine Learning Models | ESM, ProGen, ProteinMPNN, MutCompute | Protein design and function prediction [9] |
| Build | Automated Liquid Handlers | Tecan, Beckman Coulter, Hamilton Robotics | High-precision liquid handling for DNA assembly [10] |
| Build | DNA Synthesis Providers | Twist Bioscience, IDT, GenScript | Custom DNA sequence production [10] |
| Test | High-Throughput Screening | Biomek series, Freedom EVO series | Automated assay setup and execution [10] |
| Test | Cell-Free TX-TL Systems | PURE system, various cell extracts | Rapid protein expression without living cells [9] [11] |
| Learn | Data Analysis Platforms | TeselaGen, CLC Genomics, Geneious | Experimental data management and analysis [10] |
| Learn | Machine Learning Algorithms | Neural networks, ensemble methods | Pattern recognition and predictive modeling [11] [10] |

The Evolving DBTL Paradigm: LDBT and Advanced Automation

The Shift to LDBT

A significant paradigm shift is emerging in synthetic biology with the proposal to reorder the traditional cycle to LDBT (Learn-Design-Build-Test), placing learning at the forefront [9] [11]. This approach leverages machine learning models that have been pre-trained on vast biological datasets to make predictive designs before any physical construction occurs [9]. The LDBT cycle begins with a comprehensive learning phase where ML algorithms interpret existing biological data to predict meaningful design parameters, enabling researchers to refine design hypotheses before committing resources to building biological parts [11]. This learning-first approach potentially circumvents much of the costly trial-and-error that has traditionally characterized biological engineering.

The LDBT framework leverages the growing capabilities of zero-shot prediction methods, where AI models can design functional biological parts without additional training on specific experimental data [9]. Protein language models trained on evolutionary relationships between millions of protein sequences can now predict beneficial mutations and infer protein functions directly from sequence data [9]. Structural models like MutCompute and ProteinMPNN use deep neural networks trained on protein structures to associate amino acids with their local chemical environments, predicting stabilizing and functionally beneficial substitutions [9]. The success of these methods is demonstrated in various applications, including engineering hydrolases for PET depolymerization and designing TEV protease variants with improved catalytic activity [9].

Automation and Biofoundries

Biofoundries represent the physical implementation of automated DBTL cycles, integrating robotic automation, computational analytics, and high-throughput instrumentation to streamline synthetic biology workflows [12]. These facilities strategically combine automation technologies with bioinformatics to accelerate the engineering of biological systems [12]. The core concept involves creating integrated pipelines where the DBTL cycle can be executed with minimal human intervention, dramatically increasing throughput and reproducibility while reducing costs and development timelines [12].

The transformative potential of biofoundries was demonstrated in a timed pressure test administered by DARPA, in which a biofoundry was tasked with researching, designing, and developing strains to produce 10 small molecules in 90 days [12]. Although the team was not told the target molecules in advance and had no prior experience with them, it succeeded in producing the target molecule or a close analog for six of the ten targets [12]. This achievement highlighted the power of automated DBTL cycles to tackle complex biological engineering challenges on timelines that would be impractical with traditional manual approaches. The Global Biofoundry Alliance (GBA), established in 2019 with over 30 member institutions worldwide, continues to drive standards and resource sharing to advance biofoundry capabilities [12].

Table 2: Quantitative Impact of DBTL Automation Technologies

| Technology | Performance Metric | Traditional Method | Automated Method |
|---|---|---|---|
| Cell-Free Testing | Testing Timeframe | Days or weeks [9] | Hours [9] [11] |
| Robotic Liquid Handling | Pipetting Precision | Variable (manual skill-dependent) [7] | Sub-microliter precision [10] |
| Protein Language Models | Design Variants Surveyed | 10s-100s [9] | 100,000+ [9] |
| Drop-based Microfluidics | Reactions Screened | 100s-1,000s [9] | >100,000 [9] |
| Automated Strain Engineering | Strains Built (90 days) | 10s [12] | 215+ across 5 species [12] |
| DNA Assembly Design | Design Time (Complex Library) | Days [10] | Hours [10] |

Implementation Guide: Experimental Protocols and Workflows

Automated Genetic Construct Assembly Protocol

This protocol details the automated assembly of genetic constructs using high-throughput DNA assembly methods, suitable for building combinatorial libraries of genetic variants.

Materials Required:

- Automated Liquid Handling System: Tecan Freedom EVO, Beckman Coulter Biomek, or equivalent [10]

- DNA Parts: Synthesized oligonucleotides or DNA fragments from providers (Twist Bioscience, IDT, GenScript) [10]

- Assembly Master Mix: Typically includes DNA ligase, exonuclease, polymerase, and buffer components specific to assembly method [10]

- Destination Vectors: Linearized plasmid backbones with appropriate antibiotic resistance markers

- Microplates: 96-well or 384-well PCR-compatible plates

- Software: DNA assembly design software (j5, AssemblyTron) with liquid handler integration [12] [10]

Procedure:

- Design Integration: Export assembly designs from DNA design software in format compatible with liquid handler scheduling system [10].

- Reagent Setup: Program liquid handler to distribute appropriate assembly master mix to each well of microplate based on assembly complexity.

- DNA Transfer: Using automated liquid handler, transfer DNA parts (50-100 ng each) and destination vectors (25-50 ng) to designated wells following assembly design specifications.

- Incubation: Transfer plates to thermal cycler for appropriate assembly reaction conditions (e.g., 50°C for 60 minutes for Gibson assembly).

- Transformation: Program liquid handler to transfer assembly reactions to competent cells prepared in 96-well format.

- Selection: Plate transformation reactions on selective media using automated plating system.

- Verification: Pick colonies using automated colony picker for sequence verification via colony PCR or next-generation sequencing [10].

Troubleshooting Notes:

- Failed assemblies may require optimization of DNA concentration ratios, which can be systematically tested using design-of-experiment approaches in subsequent DBTL cycles.

- For complex assemblies, consider dividing construction into hierarchical steps with intermediate verification checkpoints.
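The DNA Transfer step, and the concentration-ratio optimization mentioned in the troubleshooting notes, both depend on converting target masses into pipetting volumes and molar amounts. The sketch below uses the common approximation of about 650 Da per base pair of double-stranded DNA; the stock concentrations and fragment lengths are hypothetical.

```python
def transfer_volume_ul(target_ng, stock_ng_per_ul):
    """Volume of stock (uL) needed to deliver a target DNA mass (ng)."""
    if stock_ng_per_ul <= 0:
        raise ValueError("stock concentration must be positive")
    return target_ng / stock_ng_per_ul


def ng_to_pmol(mass_ng, length_bp, da_per_bp=650.0):
    """Convert dsDNA mass to picomoles, assuming ~650 Da per base pair."""
    return mass_ng * 1000.0 / (length_bp * da_per_bp)


# Hypothetical example: 75 ng of a 1,000 bp insert and 50 ng of a 5,000 bp vector.
insert_volume = transfer_volume_ul(75, stock_ng_per_ul=25)
vector_volume = transfer_volume_ul(50, stock_ng_per_ul=25)
molar_ratio = ng_to_pmol(75, 1000) / ng_to_pmol(50, 5000)

print(f"insert: {insert_volume} uL, vector: {vector_volume} uL")
print(f"insert:vector molar ratio = {molar_ratio:.1f}:1")
```

Because equal masses of fragments with different lengths give very different molar amounts, working in picomoles rather than nanograms makes ratio optimization across a library more systematic.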

Cell-Free Transcription-Translation Testing Protocol

This protocol describes the use of cell-free systems for rapid testing of genetic constructs, enabling high-throughput characterization without cell culture steps.

Materials Required:

- Cell-Free System: Commercially available cell-free transcription-translation mix (PURE system or cell extracts) [9] [11]

- DNA Templates: Purified plasmids or PCR products encoding genetic circuits

- Reaction Plates: 96-well or 384-well microplates with optical clarity for absorbance/fluorescence measurements

- Plate Reader: Multi-mode microplate reader capable of kinetic measurements (e.g., PerkinElmer EnVision, BioTek Synergy HTX) [10]

- Liquid Handler: Automated system for precise small-volume dispensing

Procedure:

- Reaction Setup: Program liquid handler to dispense cell-free reaction mix (10-20 μL per well) into microplate wells.

- DNA Template Addition: Add DNA templates (5-50 nM final concentration) to appropriate wells using automated liquid handler.

- Incubation: Transfer plate to temperature-controlled plate reader pre-set to optimal reaction temperature (typically 30-37°C).

- Kinetic Measurement: Program plate reader to take periodic measurements (every 5-15 minutes) of fluorescence/absorbance for relevant reporters over 4-24 hours.

- Data Export: Automatically export time-course data to analysis software for processing.

- Quality Control: Include appropriate controls (no DNA, positive controls, negative controls) in each plate.
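For the DNA Template Addition step, the volume of template stock needed to reach a final concentration follows from the dilution relation C1·V1 = C2·V2. The sketch below assumes the stated reaction volume is the final volume including the template; the function name and example concentrations are illustrative.

```python
def template_volume_ul(final_nm, stock_nm, reaction_ul):
    """Stock volume (uL) that gives `final_nm` in a reaction of `reaction_ul` total.

    Uses C1 * V1 = C2 * V2, with reaction_ul as the final volume.
    """
    if stock_nm <= final_nm:
        raise ValueError("stock must be more concentrated than the final target")
    return final_nm * reaction_ul / stock_nm


# Hypothetical example: 10 nM final from a 100 nM stock in a 20 uL reaction.
volume = template_volume_ul(final_nm=10, stock_nm=100, reaction_ul=20)
print(f"add {volume} uL of template stock")
```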

Data Analysis:

- Calculate expression kinetics (lag time, rate, maximum yield) from time-course data.

- Normalize signals using internal standards or control reactions.

- Apply statistical models to determine significant differences between constructs.

- Feed results back to Learn phase for model refinement and next design iteration.
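The first analysis step, extracting lag time, maximum rate, and maximum yield, can be sketched with simple finite differences. The definitions used here are assumptions for illustration: lag time is taken as the first time point at which the signal reaches 10% of its maximum, and the input is assumed to be background-subtracted. The time course is hypothetical.

```python
def summarize_kinetics(times, signal, lag_fraction=0.1):
    """Summarize a background-subtracted expression time course.

    max_yield: highest signal observed
    max_rate:  steepest slope between consecutive time points
    lag_time:  first time the signal reaches lag_fraction * max_yield
    """
    if len(times) != len(signal) or len(times) < 2:
        raise ValueError("need matching time and signal lists with at least 2 points")
    max_yield = max(signal)
    max_rate = max(
        (signal[i + 1] - signal[i]) / (times[i + 1] - times[i])
        for i in range(len(times) - 1)
    )
    threshold = lag_fraction * max_yield
    lag_time = next(t for t, s in zip(times, signal) if s >= threshold)
    return {"lag_time": lag_time, "max_rate": max_rate, "max_yield": max_yield}


# Hypothetical fluorescence readings (arbitrary units) every 10 minutes.
times = [0, 10, 20, 30, 40, 50, 60]
signal = [0, 5, 40, 120, 180, 198, 200]

summary = summarize_kinetics(times, signal)
print(summary)
```

Curve fitting (for example, to a logistic model) would give smoother estimates on noisy data; the finite-difference version is mainly useful as a quick first pass.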

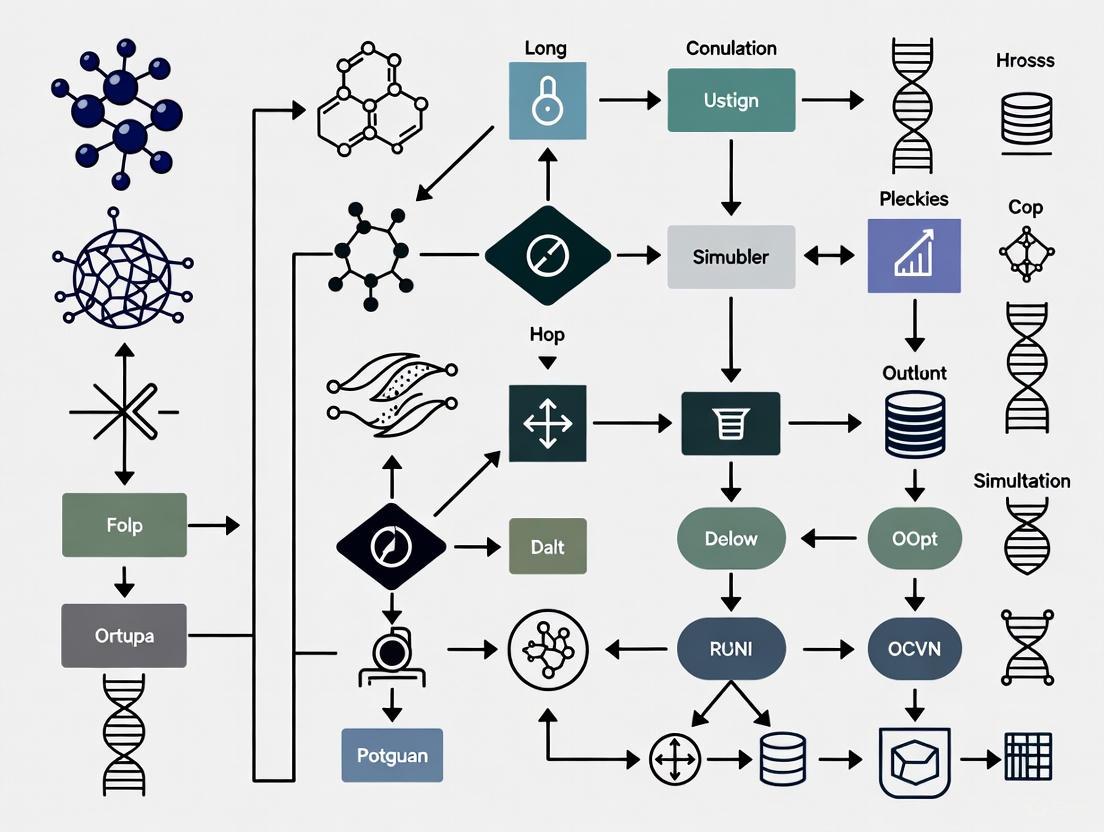

Diagram 1: DBTL Cycle Workflow. This diagram illustrates the iterative four-phase Design-Build-Test-Learn cycle in synthetic biology, showing how knowledge gained in each cycle informs subsequent iterations until desired biological functions are achieved.

Essential Research Reagent Solutions

Table 3: Key Research Reagent Solutions for DBTL Implementation

| Reagent Category | Specific Examples | Function in DBTL Cycle | Considerations for Platform Selection |
|---|---|---|---|
| DNA Assembly Master Mixes | Gibson Assembly Mix, Golden Gate Assembly Mix | Enzymatic assembly of DNA fragments into functional genetic constructs [10] | Compatibility with automation; storage stability; success rate with complex assemblies |
| Cell-Free TX-TL Systems | PURE System, E. coli extracts, wheat germ extracts | Rapid protein expression without living cells for high-throughput testing [9] [11] | Cost per reaction; protein yield; support for post-translational modifications |
| Competent Cells | High-efficiency E. coli strains, yeast competent cells | Transformation of assembled DNA constructs for amplification and in vivo testing [10] | Transformation efficiency; compatibility with automation; genotype requirements |
| Fluorescent Reporters | GFP, RFP, luciferase variants | Quantitative measurement of gene expression and circuit performance [9] | Brightness; stability; compatibility with detection equipment; spectral overlap |
| Selection Markers | Antibiotic resistance genes, auxotrophic markers | Selection of successful transformants and maintenance of genetic constructs [10] | Compatibility with host chassis; selection stringency; cost of selective agents |
| NGS Library Prep Kits | Illumina DNA Prep, Swift Accel Amplicon | Verification of constructed sequences and analysis of population diversity [10] | Automation compatibility; hands-on time; sequence bias; cost per sample |

Implications for Synthetic Biology Simulation Platform Selection

Selecting an appropriate synthetic biology simulation platform requires careful consideration of how the platform supports each phase of the DBTL cycle. The ideal platform should provide integrated capabilities that span the entire engineering lifecycle rather than focusing on isolated phases. Based on the evolving DBTL paradigm, several critical factors emerge as essential for platform selection.

Integration with Experimental Automation: The platform must seamlessly connect computational design with physical implementation through compatibility with automated laboratory instrumentation [10]. This includes support for standard file formats used by DNA design software (e.g., j5 outputs), liquid handling systems, and DNA synthesis providers [12] [10]. Platforms that offer application programming interfaces (APIs) for connecting with laboratory information management systems (LIMS) and robotic equipment enable more streamlined workflows between digital designs and physical execution [10].

Machine Learning Capabilities: As the field shifts toward LDBT cycles with learning at the forefront, simulation platforms must incorporate robust machine learning functionalities [9] [11]. This includes both pre-trained models for zero-shot prediction and infrastructure for training custom models on experimental data [9]. Support for embedding biological sequences (DNA, proteins) and representing chemical compounds is particularly valuable for predicting structure-function relationships [10]. The platform should facilitate iterative model improvement by automatically incorporating experimental results from Test phases into updated predictive models [10].

Data Management and Analysis: Given the massive datasets generated by high-throughput testing methodologies, effective data management is crucial [10]. Simulation platforms should offer comprehensive solutions for storing, organizing, and analyzing diverse data types, from sequence information to kinetic measurements and omics data [10]. Features should include automated data validation, customizable assay descriptors, and integrated visualization tools that help researchers identify patterns and extract meaningful insights from complex datasets [10].

Deployment Flexibility: The choice between cloud-based and on-premises deployment depends on specific research requirements [10]. Cloud solutions offer superior scalability, collaboration features for distributed teams, and easier access to computational resources for data-intensive ML tasks [10]. On-premises deployment provides greater control over sensitive intellectual property and may be preferred for projects with strict data governance requirements [10]. Some platforms offer hybrid approaches that combine advantages of both deployment models.

Support for Emerging Technologies: As synthetic biology advances, simulation platforms must adapt to support emerging methodologies like cell-free systems [9] [11] and complex multi-module integration [13]. Platforms should incorporate predictive models for cell-free expression yields and support design of synthetic cells with multiple integrated functional modules [13]. The ability to simulate both in vivo and cell-free environments within the same platform provides greater flexibility for experimental planning.

The convergence of artificial intelligence (AI), machine learning (ML), and automation is fundamentally reshaping synthetic biology, creating a new generation of powerful simulation and engineering platforms. For researchers and drug development professionals, understanding these core technologies is no longer optional but a prerequisite for selecting a platform that can accelerate the design-build-test-learn (DBTL) cycle, enhance predictive accuracy, and scale biological engineering to industrial levels. This technical guide provides an in-depth analysis of these pivotal technologies, detailing how they function individually and synergistically within modern biofoundries and software platforms. By framing this analysis within the critical context of platform selection, it equips scientists with the necessary framework to evaluate and choose a synthetic biology simulation platform that aligns with their research complexity, data requirements, and desired throughput, ultimately bridging the gap between in silico design and tangible biological outcomes.

Synthetic biology is undergoing a paradigm shift, moving from a craft-based discipline reliant on manual trial-and-error to a data-driven engineering science powered by sophisticated software and automation. This transformation is orchestrated by the integration of three core technological pillars: AI/ML for predictive design and learning, automation for high-throughput execution, and a software layer for data integration and control. These technologies coalesce into integrated platforms, often manifested as biofoundries: automated facilities that execute the DBTL cycle with minimal human intervention [14]. The strategic importance of this convergence lies in its ability to manage the profound complexity and context-dependency of biological systems, which has traditionally hindered predictable engineering. For the researcher, the choice of a platform dictates the scope of what is possible, influencing the scale of experiments, the sophistication of designs, and the speed from concept to validated construct. This guide examines each technological pillar to provide a foundation for making an informed platform selection.

Core Technology Pillars

Artificial Intelligence and Machine Learning

AI and ML serve as the intellectual core of modern synthetic biology platforms, transforming vast and complex datasets into predictive models and actionable designs.

Predictive Modeling of Biological Systems: AI techniques, particularly deep learning, are used to build models that predict the behavior of synthetic genetic circuits before physical assembly. These models can forecast protein expression levels, identify potential off-target effects or metabolic burden, and pinpoint failure points in silico [15]. This capability shifts the engineering process from being reactive to proactive, saving considerable resources.

Generative AI for De Novo Design: Moving beyond prediction, generative AI models like Generative Adversarial Networks (GANs) and Variational Autoencoders (VAEs) are employed to create novel biological parts and systems. In drug discovery, GANs can generate novel molecular structures that target specific biological activities while adhering to desired pharmacological and safety profiles [16]. Similarly, Large Language Models (LLMs), trained on vast biological sequence data, are being repurposed to design novel DNA, RNA, and protein sequences, exploring the biological design space far beyond human intuition [17].

Sequence and Pathway Optimization: ML-based optimization engines are critical for refining genetic designs. These tools analyze factors such as codon usage, mRNA folding, regulatory sequence configurations, and host-specific genomic traits [15]. By learning from experimental datasets, these models recommend high-performing genetic designs with a greater likelihood of success in the lab. Furthermore, AI can map target molecules to biosynthetic pathways, rank candidate enzymes for efficiency, and recommend optimal host chassis organisms [15].

Automation and Robotic Systems

Automation provides the physical infrastructure to execute the designs generated by AI at a scale and precision unattainable through manual methods. This pillar is embodied in the architecture of biofoundries.

Biofoundry Architectures and Workflows: A biofoundry is an integrated, automated platform that facilitates high-throughput DBTL cycles [14]. Its core function is to execute synthetic biology workflows—such as DNA assembly, strain transformation, and cellular analysis—in a highly parallelized format (e.g., using 96- or 384-well plates) [14]. The degree of automation can be categorized as follows:

- Single-Robot, Single-Workflow (SR-SW): One robot handles a specific, sequential workflow.

- Multi-Robot, Single-Workflow (MR-SW): Multiple robots, each specializing in a different task (e.g., liquid handling, incubation, analysis), are integrated into a single, continuous workflow line.

- Multi-Robot, Multi-Workflow (MR-MW) and Modular Continuous Workflows (MCW): These represent the most advanced architectures, enabling flexible, parallel execution of multiple different experimental workflows, maximizing throughput and resource utilization [14].

The Self-Driving Lab: The ultimate expression of automation is the "self-driving lab," where the DBTL cycle is fully closed and operated with minimal human intervention. Platforms like BioAutomata use AI algorithms, such as Gaussian processes, to automatically design experiments, interpret results, and select the next best set of parameters to test, creating an autonomous optimization loop for challenges like culture medium improvement or enzyme engineering [14].

Table 1: Categories of Laboratory Automation in Biofoundries

| Automation Category | Key Characteristics | Typical Applications |
|---|---|---|
| Single-Robot, Single-Workflow (SR-SW) | One robot dedicated to a specific, sequential protocol. | Automated plasmid construction, routine sample preparation. |
| Multi-Robot, Single-Workflow (MR-SW) | Multiple specialized robots integrated into a single, continuous line. | A fully automated pipeline for DNA assembly, transformation, and cell culturing. |
| Multi-Robot, Multi-Workflow (MR-MW) | Flexible system capable of managing and executing multiple different workflows in parallel. | Simultaneously running protein expression screening and metabolic pathway optimization. |

Data Integration and Software Platforms

The third pillar is the software layer that unifies AI and automation, managing the immense data flow and enabling intelligent control.

The Design-Build-Test-Learn (DBTL) Cycle Software: Central to any modern platform is software that supports the entire DBTL cycle. This includes tools for computer-assisted design (CAD) of biological constructs, software for planning and executing experiments on automated hardware (e.g., Aquarium, Galaxy-SynBioCAD), and data management systems that aggregate results from the "Test" phase [14]. This integrated data is then fed into ML models for the "Learn" phase, creating a virtuous cycle of continuous improvement.

Semantic Search and Data Accessibility: Generative AI-powered semantic search, driven by LLMs, is revolutionizing how researchers access information. Unlike traditional keyword search, it interprets the user's intent and context, pulling relevant documents, protocols, and internal data even without exact phrasing matches. This capability dramatically reduces the time scientists spend finding and synthesizing information, allowing them to focus on analysis and design [18].

A Framework for Selecting a Simulation Platform

Choosing the right synthetic biology platform requires a strategic assessment of how its technological components align with your research goals. The following framework provides a structured approach for researchers and drug development professionals.

Evaluating Predictive Modeling Capabilities

The core of a modern platform is its predictive power. Key evaluation criteria include:

- Supported Biological Scales: Determine if the platform specializes in a specific scale (e.g., molecular, genetic circuit, metabolic network, cellular, or multicellular) or offers integrated multi-scale modeling. For instance, advanced platforms are now incorporating 3D multicellular simulators that account for spatiotemporal behavior and cellular interactions, which is crucial for tissue engineering and therapeutic development [19].

- AI Model Transparency and Validation: Scrutinize the underlying AI models. Are they "black boxes," or do they provide insights into the rationale behind their predictions? Platforms that offer model confidence scores, feature importance analysis, and are validated on independent external datasets are generally more reliable [16].

- Generative Design Features: For pioneering research in novel biologic or therapeutic design, a platform with robust generative AI capabilities is essential. Evaluate the flexibility and control you have over the design constraints (e.g., potency, selectivity, expressibility) and the platform's track record of generating viable, lab-validated constructs.

Assessing Integration with Automation and Physical Workflows

A platform's digital capabilities are most valuable when they are tightly coupled with physical execution.

- Biofoundry Compatibility and Connectivity: If your research aims for high-throughput validation, the software platform must seamlessly integrate with biofoundry automation. Investigate its compatibility with standard laboratory automation systems (e.g., Opentrons OT-2, Hamilton) and its ability to translate digital designs into machine-readable instructions for liquid handlers, sequencers, and analyzers [14].

- Support for Autonomous Workflows: For the highest efficiency, consider platforms that support active learning and autonomous operation. Assess whether the platform includes or integrates with tools like the Automated Recommendation Tool (ART) that can automatically analyze test results and propose the next round of experiments, effectively closing the DBTL loop [14].

Analyzing Data Management and Interoperability

The platform's ability to handle and leverage data is a critical differentiator.

- Data Standardization and Curation: The platform should enforce data standards (e.g., SBML for models) and provide robust curation tools. High-quality, well-annotated data is the fuel for accurate AI/ML models. Platforms that automate data capture from instruments and use curated datasets for their AI tools are preferable to avoid the "garbage in, garbage out" problem [16] [18].

- Extensibility and Customization: No platform is perfect for every unique research need. Evaluate the availability of APIs, software development kits (SDKs), and modular architectures that allow you to integrate custom ML models, proprietary algorithms, or new data sources, ensuring the platform can evolve with your research.

Table 2: Quantitative Market and Performance Metrics for Platform Assessment

| Metric Category | Current Benchmark Data | Strategic Implication for Platform Choice |
|---|---|---|
| Market Growth & Investment | The global synthetic biology market is valued at $16-18 billion (2024), with a projected CAGR of 20.6-28.63% [20]. | Indicates a rapidly maturing sector; choose platforms from vendors with strong financial footing and a clear R&D roadmap. |
| Sequencing/Synthesis Cost | Consistent reduction in DNA sequencing and synthesis costs [20]. | Enables more ambitious, high-throughput projects; platform should facilitate easy design and ordering of genetic constructs. |
| Computational Performance | Stochastic algorithms (e.g., Gillespie SSAs) are more principled for modeling biological noise but are computationally expensive, often requiring HPC [19]. | For complex stochastic models, ensure the platform has access to sufficient cloud or on-premises high-performance computing (HPC) resources. |
| Automation Throughput | Biofoundries operate workflows in 96- or 384-well plates, with advanced systems (MCW) enabling parallel, multi-workflow execution [14]. | Match the platform's supported throughput with your project's scale. High-throughput demands full MR-MW/MCW architecture compatibility. |
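
To make the computational-cost point concrete, the following is a minimal sketch of the Gillespie stochastic simulation algorithm for the simplest gene-expression model: constant production and first-order degradation of one species. The model, rate values, and function name are assumptions chosen for illustration. Because every reaction event is simulated individually, run time grows with the number of events, which is why larger networks tend to need substantial compute.

```python
import random


def gillespie_birth_death(k_prod, k_deg, t_end, n0=0, seed=None):
    """Simulate 0 -> X (rate k_prod) and X -> 0 (rate k_deg * n) event by event.

    Returns two lists: event times and the copy number after each event.
    """
    rng = random.Random(seed)
    t, n = 0.0, n0
    times, counts = [t], [n]
    while True:
        a_prod = k_prod          # propensity of production
        a_deg = k_deg * n        # propensity of degradation
        a_total = a_prod + a_deg
        if a_total == 0:
            break                # nothing can happen any more
        t += rng.expovariate(a_total)  # exponential waiting time to next event
        if t > t_end:
            break
        # Choose which reaction fired, in proportion to its propensity.
        if rng.random() * a_total < a_prod:
            n += 1
        else:
            n -= 1
        times.append(t)
        counts.append(n)
    return times, counts


# Hypothetical rates; the long-run mean copy number of this model is k_prod / k_deg.
times, counts = gillespie_birth_death(k_prod=10.0, k_deg=0.1, t_end=200.0, seed=1)
print(f"{len(times) - 1} reaction events simulated, final copy number {counts[-1]}")
```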

Essential Research Reagent Solutions and Materials

The following toolkit details critical reagents and materials whose properties and performance are often predicted and optimized by the AI/ML and automation platforms described above.

Table 3: Key Research Reagent Solutions for AI-Driven Synthetic Biology

| Reagent/Material | Core Function in Experimental Workflow |
|---|---|
| Oligonucleotides & Synthetic Genes | The foundational building blocks for genetic construct assembly; AI platforms design optimal sequences for synthesis [15] [21]. |
| Enzyme Libraries | Diverse collections of enzymes screened by AI for specific catalytic activities in novel biosynthetic pathways [15]. |
| Engineered Host Chassis | Optimized microbial (e.g., E. coli, P. putida, C. glutamicum) or yeast cells, selected and engineered by platforms to efficiently host and express synthetic pathways [14]. |
| CRISPR Guide RNA Libraries | Designed in silico using platform tools to enable precise, multiplexed genome editing for strain engineering [14]. |
| Cell-Free Synthesis Systems | Extracts containing the transcriptional and translational machinery for rapid prototyping of genetic circuits without the complexity of living cells, often used in automated testing [14] [21]. |
| Specialized Growth Media | Formulations, often optimized by AI-driven active learning, to support the production of specific target compounds or enhance the growth of engineered strains [14]. |

Experimental Protocol: An Automated DBTL Cycle for Metabolic Pathway Optimization

This protocol details a standard methodology for optimizing a biosynthetic pathway in a microbial host, representative of workflows executed in an AI-powered biofoundry.

Objective: To engineer a microbial strain for the high-yield production of a target molecule (e.g., a therapeutic precursor or biofuel) through iterative, automated DBTL cycles.

1. Design Phase:

- In Silico Pathway Design: Use the platform's generative and predictive tools to design a library of variant pathways. This includes:

  - Enzyme Selection: Using an ML model to rank homologous enzymes from databases (e.g., KEGG, UniProt) based on predicted activity, stability, and compatibility in the chosen host.

  - DNA Sequence Optimization: Using an ML-based codon optimization engine to tailor the coding sequences for each selected enzyme to the host organism, maximizing expression and folding [15].

  - Regulatory Element Design: Designing a library of promoters and ribosome binding sites with varying predicted strengths to balance the expression levels of multiple pathway enzymes [15].

- Output: A list of DNA sequences for synthesis.
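
The combinatorial nature of the Design phase can be sketched in a few lines. The example below enumerates every combination of enzyme variant, promoter, and ribosome binding site for a two-gene pathway. All part names are hypothetical placeholders; a real platform would draw them from its own registry and would typically prune this full-factorial space with predictive models rather than build every design.

```python
from itertools import product

# Hypothetical part names, used only for illustration.
enzyme_variants = {"geneA": ["A_v1", "A_v2"], "geneB": ["B_v1", "B_v2", "B_v3"]}
promoters = ["P_weak", "P_medium", "P_strong"]
rbs_sites = ["RBS_low", "RBS_high"]


def build_design_library(enzymes, promoters, rbs_sites):
    """Enumerate every pathway design: one (variant, promoter, RBS) cassette per gene."""
    per_gene_options = []
    for gene, variants in enzymes.items():
        options = [
            {"gene": gene, "variant": v, "promoter": p, "rbs": r}
            for v, p, r in product(variants, promoters, rbs_sites)
        ]
        per_gene_options.append(options)
    # A design is one cassette choice for each gene in the pathway.
    return [list(design) for design in product(*per_gene_options)]


library = build_design_library(enzyme_variants, promoters, rbs_sites)
print(len(library))  # (2*3*2) * (3*3*2) = 12 * 18 = 216
```

Even this small example yields 216 designs, which illustrates why the Learn phase is needed to choose a tractable subset for each Build round.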

2. Build Phase:

- Automated DNA Assembly: The platform's software generates instructions for an automated liquid handling robot (e.g., using Opentrons OT-2 or RoboMoClo protocols) to assemble the designed DNA constructs from synthesized oligonucleotides or gene fragments [14].

- High-Throughput Strain Engineering: The assembled constructs are transformed into the host chassis (e.g., via electroporation) in a 96-well format. Robots manage the entire process, from cell culture preparation to plating on selective media.

3. Test Phase:

- Cultivation and Metabolite Analysis: Automated systems inoculate and grow engineered strains in deep-well plates in a controlled incubator. After a specified period, a liquid handler samples the culture broth.

- Analysis: The samples are analyzed using integrated, high-throughput analytical instruments, typically liquid chromatography-mass spectrometry (LC-MS) or spectrophotometric assays, to quantify the titer of the target molecule and key intermediates [14].

4. Learn Phase:

- Data Aggregation and Modeling: The results from the "Test" phase (e.g., product titers, growth rates) are automatically uploaded to the platform's data management system and linked to the corresponding genetic designs.

- AI-Driven Analysis: An active learning algorithm (e.g., a Gaussian process or random forest model) analyzes the dataset to identify the relationships between genetic design variables (enzyme variants, promoter strengths) and performance outcomes [14].

- Recommendation: The AI model recommends a new set of designs for the next DBTL cycle, strategically proposing combinations that are predicted to outperform the current best or to explore under-sampled areas of the design space.
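
The Learn-phase loop can be illustrated with a small Gaussian process surrogate and an upper-confidence-bound ranking, written here in plain Python so it is self-contained. This is a sketch under stated assumptions, not the method of any particular platform: the RBF kernel, the fixed length scale and noise level, the UCB acquisition rule, the encoding of designs as (promoter strength, RBS strength) pairs, and all data values are choices made for this example.

```python
import math


def rbf(a, b, length_scale=1.0):
    """Squared-exponential kernel between two design vectors."""
    sq = sum((x - y) ** 2 for x, y in zip(a, b))
    return math.exp(-0.5 * sq / length_scale ** 2)


def solve(matrix, rhs):
    """Solve matrix @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [row[:] + [rhs[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (aug[i][n] - sum(aug[i][j] * x[j] for j in range(i + 1, n))) / aug[i][i]
    return x


def gp_posterior(train_x, train_y, query, noise=1e-3):
    """Posterior mean and variance of a zero-mean GP at one query point."""
    k_train = [[rbf(a, b) + (noise if i == j else 0.0)
                for j, b in enumerate(train_x)] for i, a in enumerate(train_x)]
    k_query = [rbf(query, a) for a in train_x]
    alpha = solve(k_train, train_y)   # (K + noise*I)^-1 y
    v = solve(k_train, k_query)       # (K + noise*I)^-1 k*
    mean = sum(k * a for k, a in zip(k_query, alpha))
    var = rbf(query, query) - sum(k * w for k, w in zip(k_query, v))
    return mean, max(var, 0.0)


def recommend(train_x, train_y, candidates, kappa=2.0, batch=3):
    """Rank untested candidates by upper confidence bound: mean + kappa * std."""
    scored = []
    for c in candidates:
        mean, var = gp_posterior(train_x, train_y, c)
        scored.append((mean + kappa * math.sqrt(var), c))
    scored.sort(key=lambda s: s[0], reverse=True)
    return [c for _, c in scored[:batch]]


# Hypothetical data: (promoter strength, RBS strength) -> product titer.
tested = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
titers = [0.2, 0.9, 0.4, 1.1]
mean_titer = sum(titers) / len(titers)
centered = [t - mean_titer for t in titers]  # the GP above assumes zero mean
candidates = [(0.5, 0.5), (2.0, 1.0), (1.0, 2.0), (2.0, 2.0)]
print(recommend(tested, centered, candidates, batch=2))
```

The `kappa` parameter controls the trade-off described above: larger values favor under-sampled regions of the design space, smaller values favor designs predicted to beat the current best.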


The integration of AI, ML, and automation has given rise to a new class of synthetic biology platforms that are fundamentally more powerful, predictive, and productive than their predecessors. For the modern researcher, the critical task is to move beyond viewing these technologies in isolation and to instead evaluate the integrated platform's ability to execute a robust, data-productive DBTL cycle. The choice of platform will dictate the pace and ambition of your research program. By applying the framework outlined in this guide—assessing predictive capabilities, integration with automation, and data management strengths—scientists and drug developers can make a strategic decision that aligns technological capability with research objectives, positioning themselves to not only navigate but also lead in the rapidly evolving landscape of synthetic biology.

The field of synthetic biology has undergone a profound transformation, evolving from a discipline reliant on manual experimentation to one powered by integrated computational and automated systems. This shift is embodied in the Design-Build-Test-Learn (DBTL) cycle, an engineering framework that has become the cornerstone of modern biological engineering [22]. The convergence of artificial intelligence (AI) and synthetic biology is revolutionizing each stage of this cycle, enabling researchers to move from in silico design to AI-powered biofoundries with unprecedented speed and precision [17] [23].

The core challenge facing researchers today is no longer just the biological engineering itself, but selecting the right computational platforms to power these workflows. This decision critically influences the scalability, success, and translational potential of synthetic biology projects. This guide provides a technical framework for evaluating and selecting synthetic biology simulation platforms, focusing on their capabilities to bridge in silico design with high-throughput automated execution. We examine the core technologies, data requirements, and validation methodologies essential for leveraging these powerful systems in therapeutic development.

Core Components of a Synthetic Biology Simulation Platform

Computational and Data Foundations

At its core, molecular biology simulation software relies on a combination of specialized hardware and software. High-performance computing (HPC) infrastructure, including multi-core CPUs and GPUs, provides the necessary processing power for complex calculations involving protein structures or genetic sequences. These simulations often require significant RAM and storage to process and store massive datasets [24].

On the software side, these platforms incorporate sophisticated algorithms based on principles from physics, chemistry, and biology. Key computational techniques include:

- Molecular dynamics (MD) for simulating physical movements of atoms and molecules

- Quantum mechanics/molecular mechanics (QM/MM) for modeling enzymatic reactions

- Monte Carlo methods for exploring molecular conformations

- Machine learning (ML) and deep learning for predictive modeling and pattern recognition [24]

Modern platforms emphasize interoperability through adherence to standards like SBML (Systems Biology Markup Language) and BioPAX, which facilitate data exchange between different tools and platforms. Application Programming Interfaces (APIs) enable integration with laboratory information management systems (LIMS), data repositories, and visualization tools, creating seamless workflows from design to validation [24].
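
As a small illustration of why a shared format helps, the sketch below reads species definitions from an SBML Level 3 document using only the Python standard library. The embedded model is a deliberately tiny, hand-written example, and `list_species` is a name introduced here; production code would more likely rely on a dedicated SBML library such as libSBML, which also validates the model.

```python
import xml.etree.ElementTree as ET

# Namespace of SBML Level 3 Version 1 Core.
SBML_NS = {"sbml": "http://www.sbml.org/sbml/level3/version1/core"}

# Illustrative, hand-written model fragment (species only, no reactions).
SBML_TEXT = """
<sbml xmlns="http://www.sbml.org/sbml/level3/version1/core" level="3" version="1">
  <model id="reporter_expression">
    <listOfCompartments>
      <compartment id="cell" constant="true"/>
    </listOfCompartments>
    <listOfSpecies>
      <species id="mRNA" compartment="cell" initialAmount="0"
               hasOnlySubstanceUnits="true" boundaryCondition="false" constant="false"/>
      <species id="GFP" compartment="cell" initialAmount="0"
               hasOnlySubstanceUnits="true" boundaryCondition="false" constant="false"/>
    </listOfSpecies>
  </model>
</sbml>
"""


def list_species(sbml_text):
    """Return (species id, initial amount) pairs found in an SBML string."""
    root = ET.fromstring(sbml_text.strip())
    model = root.find("sbml:model", SBML_NS)
    species = model.findall("sbml:listOfSpecies/sbml:species", SBML_NS)
    return [(s.get("id"), float(s.get("initialAmount", "0"))) for s in species]


print(list_species(SBML_TEXT))  # [('mRNA', 0.0), ('GFP', 0.0)]
```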

AI and Machine Learning Integration

AI has become a central element of synthetic biology's technology platform, creating a powerful three-component loop with engineering and biology [23]. The integration occurs across multiple dimensions:

Large Language Models (LLMs) have been adapted to the lexicon of biology by replacing words with nucleotide bases (adenine, cytosine, thymine, and guanine). This enables LLMs to optimize experiments and generate new DNA sequences precisely, quickly, and cheaply in response to human prompts [23]. For instance, CRISPR-GPT represents an LLM capable of automating and enhancing gene editing experiments [23].

Generative AI models are being used to create novel biological designs rather than just predicting outcomes. These systems can generate new protein sequences, genetic circuits, and metabolic pathways optimized for specific functions. Companies like Profluent use the same large language models employed by chatbots to design and optimize proteins, while Dreamfold uses generative algorithms to design drugs that precisely match the shape of their molecular targets [22].

Table 1: AI Applications Across the Synthetic Biology Workflow

| Workflow Stage | AI Capability | Representative Tools/Companies |
|---|---|---|
| Design | Protein structure prediction, DNA sequence generation | AlphaFold, Profluent, CRISPR-GPT |
| Build | Automated benchtop work and QC | Asimov, LabGenius |
| Test | High-throughput data analysis, pattern recognition | Carterra LSA, CellVoyant |
| Learn | Predictive modeling, multi-omics integration | Absci, Generate Biomedicines |

From In Silico Models to Biofoundries

The transition from digital designs to physical biological systems occurs through biofoundries - automated laboratories that integrate robotic platforms with advanced analytics to execute high-throughput genetic engineering experiments. These facilities represent the physical manifestation of integrated simulation platforms, where in silico designs are translated into tangible biological constructs with minimal human intervention [25].

Companies like Ginkgo Bioworks and Zymergen have pioneered the biofoundry approach, leveraging AI-driven platforms to design microorganisms for specific industrial applications. The Carterra LSA platform exemplifies this integration, offering high-throughput screening that can analyze up to 150,000 interactions per assay, generating massive datasets to train AI models for improved antibody design [25].

The emergence of digital twin technology represents the next frontier in this space, creating virtual replicas of biological systems that can be manipulated and studied in silico before physical implementation. Crown Bioscience is exploring this approach for hyper-personalized therapy simulations, creating digital models of patient-specific biology to predict treatment outcomes [26].

Technical Guide: Platform Evaluation and Selection

Quantitative Assessment Framework

Selecting an appropriate synthetic biology platform requires careful evaluation of both technical specifications and alignment with research objectives. The market for synthetic biology platforms is growing rapidly, with an estimated value of $5.04 billion in 2025 and projected to reach $14.10 billion by 2030, representing a compound annual growth rate (CAGR) of 22.81% [27]. This growth is fueled by increasing adoption across pharmaceutical, agricultural, and industrial biotechnology sectors.

Table 2: Synthetic Biology Platforms Market Segmentation (2025-2030)

| Segment | Key Technologies | Projected Growth | Representative Companies |
|---|---|---|---|
| By Offering | DNA Sequencing, DNA Synthesis, mRNA Synthesis | CAGR of 22.81% | Twist Bioscience, DNA Script |
| By Application | Antibody Discovery & NGS, Cell & Gene Therapy, Vaccine Development | Market value reaching $14.10B by 2030 | Ginkgo Bioworks, LanzaTech |
| By End User | Pharmaceutical & Life Science, Agriculture, Food & Beverage | Driven by personalized medicine | Illumina, Codexis |

When evaluating platforms, consider these critical technical parameters:

- Data Integration Capabilities: Assess the platform's ability to incorporate multi-omics data (genomics, transcriptomics, proteomics, metabolomics). Crown Bioscience's approach demonstrates how integrating these datasets captures tumor biology complexity for more accurate predictions [26].

- Throughput and Scalability: Evaluate processing capabilities for large-scale simulations. The Carterra LSA platform exemplifies high-throughput capacity, analyzing up to 150,000 interactions per assay [25].

- AI/ML Functionality: Determine the sophistication of built-in machine learning algorithms for predictive modeling and design optimization.

- Interoperability: Verify support for standard data formats (SBML, BioPAX) and API connectivity for integration with existing laboratory infrastructure [24].

Experimental Validation Protocols

Validating computational predictions with experimental data remains a critical step in platform selection. The following methodology outlines a robust framework for assessing platform accuracy:

Protocol: Cross-Validation of In Silico Predictions with Experimental Models

In Silico Prediction Phase:

- Design 200-500 biological constructs (e.g., protein variants, genetic circuits) using the platform's AI-driven design tools

- Run simulations to predict behavior and performance metrics

- Rank constructs based on predicted efficacy

Experimental Validation Phase:

- Synthesize top 50 predicted constructs and 10 negative controls

- Test using appropriate biological assays (e.g., binding affinity, enzymatic activity, gene expression)

- For oncology applications, utilize patient-derived xenografts (PDXs), organoids, and tumoroids as validated by Crown Bioscience [26]

Data Correlation Analysis:

- Calculate correlation coefficients between predicted and observed results

- Establish performance thresholds for platform accuracy before running the comparison (e.g., a correlation coefficient above 0.8 between predicted and observed values for high-value targets)

- Iterate DBTL cycles to refine AI models based on validation results
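
The correlation step can be sketched as follows. Pearson's r measures linear agreement between predicted and observed values, while Spearman's rho measures agreement in ranking, which is often the more relevant question when the platform is used to prioritize constructs. The function names, the 0.8 threshold, and the example scores are assumptions made for illustration.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def ranks(values):
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = avg
        i = j + 1
    return result


def spearman_rho(xs, ys):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson_r(ranks(xs), ranks(ys))


# Made-up example: platform scores versus measured activity for five constructs.
predicted = [0.9, 0.8, 0.6, 0.4, 0.2]
observed = [1.8, 1.9, 1.1, 0.9, 0.3]
r = pearson_r(predicted, observed)
rho = spearman_rho(predicted, observed)
print(f"Pearson r = {r:.3f}, Spearman rho = {rho:.3f}")
print("meets threshold" if r >= 0.8 else "below threshold")
```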

This validation approach ensures that in silico predictions translate to real-world biological activity, highlighting platforms that effectively bridge the digital-physical divide.

Implementation Workflow

The integration of a synthetic biology platform follows a structured workflow that connects computational design with physical implementation. The diagram below illustrates this integrated process:

Integrated DBTL Workflow with AI

This workflow demonstrates how modern platforms create a continuous cycle of improvement, where data from each experiment enhances AI models, leading to progressively more accurate predictions and efficient designs.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of synthetic biology platforms requires careful selection of supporting reagents and materials. The following table details essential components for establishing robust experimental workflows:

Table 3: Essential Research Reagents for Synthetic Biology Workflows

| Reagent/Material | Function | Application Examples |
|---|---|---|
| DNA Synthesis/Sequencing Kits | DNA reading and writing | Library construction, variant validation (Twist Bioscience, DNA Script) [27] |
| Patient-Derived Xenografts (PDXs) | Human tumor models in mice | Validation of oncology targets and therapeutic efficacy [26] |
| Organoids/Tumoroids | 3D in vitro tissue models | High-throughput screening of drug candidates [26] |
| Non-Standard Amino Acids | Expand genetic code for novel functions | Engineering proteins with enhanced properties (GRO Biosciences) [22] |
| CRISPR-Cas Systems | Precision gene editing | Genetic circuit implementation, knock-in/knock-out studies [17] |
| Cell-Free Transcription-Translation Systems | Rapid protein expression without cells | Prototype testing of genetic designs [28] |

Biofoundry Operations and Automation

AI-powered biofoundries represent the physical implementation of optimized synthetic biology workflows. These automated facilities translate digital designs into biological reality through coordinated robotic systems. The operational framework of a modern biofoundry can be visualized as follows:

[Figure: AI-Powered Biofoundry Architecture]

This automated pipeline enables rapid iteration through DBTL cycles. Companies like LabGenius have implemented robotics platforms capable of autonomous experimentation through the entire DBTL cycle in cell-based assays to discover high-performing antibodies [22]. Similarly, Asimov has created a platform that integrates engineered cells, computer-aided design and simulation, multiomics analysis, and QC to advance the design of RNA, gene, and cell therapies [22].

Challenges and Future Directions

Despite significant advances, several challenges remain in the full realization of AI-powered synthetic biology platforms:

Data Quality and Quantity: High-quality, curated datasets are essential for training accurate AI models. Incomplete or biased datasets can lead to inaccurate predictions. Companies like Crown Bioscience address this by curating datasets from diverse sources, including global biobanks and proprietary experimental results [26].

Model Interpretability: AI models often function as "black boxes," making it difficult to understand their decision-making processes. Explainable AI techniques, such as feature importance analyses, are being implemented to ensure transparency in predictive frameworks [26].

Dual-Use Risks and Ethical Considerations: The democratization of synthetic biology tools through AI lowers barriers for potential misuse. Robust governance frameworks, including international safety protocols and synthesis screening, are essential to mitigate risks while promoting beneficial innovation [17] [23].

Scalability and Computational Requirements: Simulating biological systems across large datasets demands significant computational resources. Cloud-based solutions and high-performance computing clusters are addressing these challenges, making advanced simulations accessible to smaller laboratories [24] [26].

Looking ahead, key developments will shape the next generation of synthetic biology platforms:

- Digital Twin Technology: Creating virtual replicas of biological systems for hyper-personalized therapy simulations [26]

- CRISPR-Based Simulations: Incorporating CRISPR editing data to predict effects of genetic modifications [26]

- Multi-Scale Modeling: Integrating data from molecular, cellular, and tissue levels for comprehensive views of biological dynamics [26]

- Generative AI Algorithms: Advancing beyond predictive capabilities to create novel biological designs optimized for specific functions

The integration of in silico design tools with AI-powered biofoundries represents a paradigm shift in synthetic biology research and therapeutic development. Selecting an appropriate platform requires careful consideration of computational capabilities, experimental validation frameworks, and scalability for specific research applications. As the field continues to evolve at a rapid pace, platforms that effectively bridge the digital-physical divide while maintaining rigorous validation standards will offer the greatest value for advancing precision medicine and biotechnological innovation. The convergence of AI and synthetic biology promises to accelerate the development of novel therapeutics, but success hinges on choosing platforms that align with both immediate research needs and long-term strategic goals in an increasingly automated and data-driven landscape.

Matching Platform Capabilities to Your Research Goals

Selecting a synthetic biology simulation platform is a strategic decision that directly impacts research efficiency, scalability, and translational success. This technical guide provides researchers, scientists, and drug development professionals with a structured framework for aligning platform capabilities with three primary application areas: Therapeutics, Biomanufacturing, and Discovery. By comparing quantitative performance metrics, detailing experimental protocols, and visualizing key workflows, this document supports data-driven platform selection within a comprehensive research strategy. The integration of artificial intelligence (AI) and automated workflows is transforming all three domains, enabling more predictive simulations and accelerating the design-build-test-learn (DBTL) cycle [14] [29].

Application-Specific Platform Requirements

Synthetic biology applications impose distinct requirements on simulation platforms. The table below summarizes core capabilities, key performance metrics, and representative tools for each domain.

Table 1: Platform Requirements by Application Area

| Application | Core Simulation Capabilities | Key Performance Metrics | Representative Tools/Platforms |
|---|---|---|---|
| Therapeutics | Patient-specific biosimulation, Pharmacokinetic/Pharmacodynamic (PK/PD) modeling, Clinical trial simulation, Toxicity prediction | Clinical trial success rate, Reduction in development timeline, Preclinical prediction accuracy | MIDD tools [30], Turbine's Simulated Cell [31], Digital twin platforms [29] |
| Biomanufacturing | Metabolic flux analysis, Strain optimization, Fermentation process modeling, Scale-up simulation | Product yield (titer, rate), Reduction in production costs, Strain engineering cycle time | Galaxy-SynBioCAD [32], Biofoundry platforms [14], Ginkgo Bioworks platforms [29] |
| Discovery | De novo molecular design, Target identification, Pathway enumeration, Binding affinity prediction | Novel candidate identification speed, Compound library size screened, Target validation accuracy | Generative AI platforms [33] [29], Retrosynthesis software [32], Multimodal AI [29] |

Therapeutics-focused platforms prioritize clinical translatability, incorporating models for human physiology and disease mechanisms. The focus is on reducing late-stage failures, with AI-powered platforms reportedly reducing preclinical development costs by up to 30% and timelines by 40-50% [29]. Biomanufacturing platforms emphasize predictive metabolic engineering and process optimization, operating within automated biofoundries that support high-throughput DBTL cycles [14]. The synthetic biology market in healthcare, a key enabler for this sector, is projected to grow from USD 5.15 billion in 2025 to USD 10.43 billion by 2032 [34]. Discovery platforms leverage generative AI and expansive biological databases to explore novel chemical and genetic space, with some platforms compressing target identification from years to days [29].

Quantitative Data and Market Landscape

Understanding the economic and performance landscape provides critical context for platform investment decisions. The biosimulation market, which underpins these applications, is experiencing robust growth driven by the escalating costs of traditional drug development and regulatory acceptance of model-informed approaches [35].

Table 2: Performance Metrics and Market Outlook

| Metric Category | Therapeutics | Biomanufacturing | Discovery |
|---|---|---|---|
| Timeline Impact | Reduces 12-year average drug development timeline by 40-50% [29] [36] | Accelerates strain engineering DBTL cycles via automation [14] | Compresses target identification from years to days [29] |
| Economic Impact | AI can reduce preclinical costs by ~30%; total drug development cost ~$2.6B [29] [36] | Synthetic biology market projected to grow from $11.4B (2023) to >$40B by 2028 [29] | AI-driven discovery can reduce R&D costs by 25-40% [29] |
| Market Data | Global synthetic biology in healthcare market to reach $10.43B by 2032 (12.7% CAGR) [34] | | AI in drug discovery market valued at $1.5B in 2023, 29.7% CAGR expected [36] |
| Success Metrics | Increases success rates via improved target selection and patient stratification [30] [36] | Achieves high (e.g., 83%) success rates in retrieving validated pathways for engineering [32] | Generates novel molecular structures for previously "undruggable" targets [29] |

Experimental Protocols and Workflows

Protocol for Therapeutics: Model-Informed Drug Development (MIDD)

Model-Informed Drug Development (MIDD) is an essential framework that uses quantitative modeling to guide drug development and regulatory decisions [30]. The following workflow integrates modeling and simulation throughout the development lifecycle.

[Figure: MIDD Workflow for Therapeutics]

Key Methodologies:

- Quantitative Systems Pharmacology (QSP): Integrates disease pathophysiology with drug mechanisms to simulate clinical outcomes across virtual patient populations [30].

- Physiologically Based Pharmacokinetic (PBPK) Modeling: Mechanistically models drug absorption, distribution, metabolism, and excretion based on human physiology and drug properties [30].

- Exposure-Response (ER) Analysis: Quantitatively characterizes the relationship between drug exposure levels and both efficacy and safety endpoints to inform dosing regimens [30].
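To make the exposure-response idea concrete, the sketch below pairs a one-compartment pharmacokinetic model (first-order absorption and elimination) with an Emax response curve. It is a deliberately simplified stand-in for the PBPK and QSP models described above, which are far more mechanistic. Every name and parameter value here (`PK_PARAMS`, the doses, `emax`, `ec50_mg_l`) is hypothetical and chosen only for illustration.

```python
from math import exp

# Hypothetical PK parameters, for illustration only.
PK_PARAMS = {"f_bioavail": 0.8, "ka_per_h": 1.0, "ke_per_h": 0.1, "vd_l": 40.0}


def concentration(t_h, dose_mg, f_bioavail, ka_per_h, ke_per_h, vd_l):
    """Plasma concentration (mg/L) at time t_h after a single oral dose,
    using the closed-form one-compartment (Bateman) equation."""
    if ka_per_h == ke_per_h:
        raise ValueError("ka and ke must differ for this closed form")
    scale = (f_bioavail * dose_mg * ka_per_h) / (vd_l * (ka_per_h - ke_per_h))
    return scale * (exp(-ke_per_h * t_h) - exp(-ka_per_h * t_h))


def emax_effect(conc_mg_l, emax, ec50_mg_l):
    """Emax model: effect saturates at emax and is half-maximal at ec50."""
    return emax * conc_mg_l / (ec50_mg_l + conc_mg_l)


def peak_exposure(dose_mg, hours=24.0, step_h=0.5, **pk):
    """Return (t_max, c_max) found on a fixed time grid."""
    steps = int(hours / step_h)
    profile = [
        (i * step_h, concentration(i * step_h, dose_mg, **pk))
        for i in range(steps + 1)
    ]
    return max(profile, key=lambda point: point[1])


# Compare peak exposure and predicted effect across three candidate doses.
for dose in (50, 100, 200):
    t_max, c_max = peak_exposure(dose, **PK_PARAMS)
    effect = emax_effect(c_max, emax=100.0, ec50_mg_l=1.0)
    print(f"dose={dose} mg  t_max={t_max} h  c_max={c_max:.2f} mg/L  effect={effect:.1f}")
```

In this toy model exposure scales linearly with dose while the effect saturates, which is the kind of relationship an exposure-response analysis uses to argue that a higher dose may add risk without adding much benefit.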

Research Reagent Solutions:

- Virtual Patient Populations: Computational cohorts with defined physiological and genetic variability used to simulate clinical trial outcomes and predict subgroup responses [30].

- QSAR Models: Computational models that predict biological activity based on chemical structure, used for early toxicity and efficacy screening [30].

- Pathway Databases: Curated biological pathway information (e.g., metabolic, signaling) used to contextualize drug targets and mechanism of action [32].

Protocol for Biomanufacturing: Automated Strain Engineering

This protocol outlines the biofoundry-based workflow for engineering microbial strains to produce target compounds, implementing a fully automated Design-Build-Test-Learn (DBTL) cycle [14] [32].

[Figure: Automated DBTL Cycle for Biomanufacturing]

Detailed Methodologies:

- Pathway Design (Design): Utilize retrosynthesis tools (e.g., RetroPath2.0) to enumerate biosynthetic pathways from target compound to host chassis metabolites. Rank pathways using multiple criteria (thermodynamics, predicted yield, enzyme availability) [32].

- DNA Assembly (Build): Convert selected pathways into DNA assembly designs using a standardized format such as the Synthetic Biology Open Language (SBOL). Generate robotic scripts (e.g., via Aquarium or DNA-BOT) to automate DNA part assembly and host strain transformation [14] [32].

- High-Throughput Screening (Test): Cultivate engineered strains in automated microtiter plate fermenters. Monitor growth and product formation using integrated analytics (e.g., HPLC, mass spectrometry) [14] [37].

- Data Integration & Learning (Learn): Apply machine learning (e.g., Gaussian process models) to analyze strain performance data. Identify genetic modifications (promoter/RBS combinations, gene deletions) for improved yield in the next DBTL cycle [14] [32].
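The multi-criteria ranking in the Design step can be illustrated with a simple weighted-sum score. The sketch below is an assumption about how such a ranking might be set up, not the scoring used by RetroPath2.0 or Galaxy-SynBioCAD: the pathway names, criterion values, and weights are all hypothetical. Each criterion is min-max normalized so that 1.0 is always the best value, then combined using user-chosen weights.

```python
# Criteria and whether a higher raw value is better. All names are hypothetical.
CRITERIA = {
    "delta_g_kj_mol": False,       # more negative Gibbs energy change is more favorable
    "predicted_yield": True,       # fraction of theoretical maximum
    "enzyme_availability": True,   # fraction of steps with a known enzyme
}


def normalize(values, higher_is_better):
    """Min-max scale values to [0, 1] so that 1.0 is always the best score."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.5] * len(values)  # criterion cannot discriminate between pathways
    scaled = [(v - lo) / (hi - lo) for v in values]
    return scaled if higher_is_better else [1.0 - s for s in scaled]


def rank_pathways(pathways, weights):
    """Return (name, score) pairs sorted from best to worst weighted score."""
    scores = {p["name"]: 0.0 for p in pathways}
    for criterion, higher_is_better in CRITERIA.items():
        column = normalize([p[criterion] for p in pathways], higher_is_better)
        for pathway, value in zip(pathways, column):
            scores[pathway["name"]] += weights[criterion] * value
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Hypothetical candidate pathways produced by a retrosynthesis run.
pathways = [
    {"name": "pathway_A", "delta_g_kj_mol": -45.0, "predicted_yield": 0.62, "enzyme_availability": 0.80},
    {"name": "pathway_B", "delta_g_kj_mol": -20.0, "predicted_yield": 0.75, "enzyme_availability": 0.50},
    {"name": "pathway_C", "delta_g_kj_mol": -60.0, "predicted_yield": 0.40, "enzyme_availability": 1.00},
]
weights = {"delta_g_kj_mol": 0.3, "predicted_yield": 0.4, "enzyme_availability": 0.3}

for name, score in rank_pathways(pathways, weights):
    print(f"{name}: {score:.3f}")
```

A weighted sum is easy to audit but sensitive to the choice of weights, so it is worth re-running the ranking with a few different weightings to see whether the top pathways stay the same before committing build capacity.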

Research Reagent Solutions:

- Standardized Biological Parts: Characterized DNA sequences (promoters, RBS, coding sequences) stored in repositories for reproducible genetic design [32].

- Liquid Handling Robots: Automated systems for accurate transfer of liquids, enabling high-throughput molecular biology techniques (PCR, DNA assembly, transformation) [14] [37].

- Microtiter Plate Fermenters: Miniaturized bioreactors that enable parallel cultivation of hundreds of microbial strains under controlled conditions [37].

Protocol for Discovery: AI-Driven Target and Molecule Identification

This protocol leverages generative AI and multimodal learning for novel target identification and molecular design, significantly accelerating early discovery [33] [29].

[Figure: AI-Driven Discovery Workflow]

Detailed Methodologies:

- Multimodal Data Integration: Aggregate and harmonize diverse datasets including genomic sequences, protein structures, disease associations, and clinical data. Knowledge graphs often integrate these data to represent complex biological relationships [29].

- Target Hypothesis Generation: Apply machine learning to identify novel disease targets from integrated data. For example, Insilico Medicine used AI to identify a novel target for idiopathic pulmonary fibrosis [33].

- Generative Molecular Design: Train deep learning models on chemical databases and structure-activity relationships to generate novel molecular structures satisfying target product profiles (potency, selectivity, ADME properties) [33] [29].

- In silico Validation: Use molecular docking, free energy calculations, and predictive toxicity models to prioritize generated compounds for synthesis. Companies like Exscientia report achieving clinical candidates with 10x fewer synthesized compounds than industry norms [33].

Research Reagent Solutions:

- Knowledge Graphs: Structured databases connecting genes, proteins, diseases, and compounds to infer novel relationships and identify druggable targets [33] [29].

- Generative AI Models: Neural network architectures (e.g., GANs, VAEs) trained on molecular structures to generate novel drug-like compounds with optimized properties [29].
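As a rough illustration of how a knowledge graph supports target identification, the sketch below stores typed relationships between diseases, genes, proteins, and compounds and then walks them to find compounds linked to a disease. The `KnowledgeGraph` class, the relation names, and all entities (`disease:D1`, `gene:GENE_A`, and so on) are hypothetical placeholders. Production knowledge graphs are far larger and typically use dedicated graph databases and learned embeddings rather than exact traversal.

```python
from collections import deque


class KnowledgeGraph:
    """Tiny in-memory graph of (source, relation, target) triples."""

    def __init__(self):
        self._edges = {}  # node -> list of (relation, neighbor)

    def add(self, source, relation, target):
        """Store a triple plus its inverse so edges can be walked both ways."""
        self._edges.setdefault(source, []).append((relation, target))
        self._edges.setdefault(target, []).append((f"inverse_{relation}", source))

    def follow(self, nodes, relation):
        """Return the set of nodes reached from `nodes` via `relation` edges."""
        return {
            neighbor
            for node in nodes
            for rel, neighbor in self._edges.get(node, [])
            if rel == relation
        }

    def shortest_path(self, start, goal):
        """Breadth-first search; returns [node, relation, node, ...] or None."""
        queue = deque([[start]])
        visited = {start}
        while queue:
            path = queue.popleft()
            node = path[-1]
            if node == goal:
                return path
            for relation, neighbor in self._edges.get(node, []):
                if neighbor not in visited:
                    visited.add(neighbor)
                    queue.append(path + [relation, neighbor])
        return None


# Hypothetical triples; none of these entities refer to real biology.
kg = KnowledgeGraph()
kg.add("disease:D1", "associated_with", "gene:GENE_A")
kg.add("disease:D1", "associated_with", "gene:GENE_B")
kg.add("gene:GENE_A", "encodes", "protein:PROT_A")
kg.add("gene:GENE_B", "encodes", "protein:PROT_B")
kg.add("compound:CMPD_1", "binds", "protein:PROT_A")
kg.add("compound:CMPD_2", "binds", "protein:PROT_C")

# Disease -> associated genes -> encoded proteins -> compounds that bind them.
genes = kg.follow({"disease:D1"}, "associated_with")
proteins = kg.follow(genes, "encodes")
compounds = kg.follow(proteins, "inverse_binds")

print("candidate compounds:", sorted(compounds))
print("evidence path:", kg.shortest_path("disease:D1", "compound:CMPD_1"))
```

Returning the evidence path alongside each candidate keeps the result explainable: a reviewer can see which gene and protein link a compound to the disease. Proteins with no known binder, such as the placeholder `protein:PROT_B` here, are natural inputs for the generative design step.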