Gradient Boosting vs. Random Forest: A Guide to Machine Learning in DBTL Cycles for Low-Data Drug Discovery

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on leveraging machine learning, specifically Gradient Boosting and Random Forest, within Design-Build-Test-Learn (DBTL) cycles under data-scarce conditions. We explore the foundational principles of these ensemble methods, detail their methodological application in metabolic engineering and QSAR modeling, and offer practical troubleshooting and optimization strategies. Through a comparative analysis of their performance, robustness, and computational efficiency, we deliver validated insights to inform model selection and implementation, enabling more efficient and predictive bioengineering and drug discovery pipelines.

Machine Learning in DBTL Cycles: Tackling the Low-Data Challenge in Biomedicine

What is the DBTL cycle and why is it fundamental to synthetic biology?

The Design-Build-Test-Learn (DBTL) cycle is a systematic, iterative framework used in synthetic biology and metabolic engineering to develop and optimize biological systems. This engineering-based approach allows researchers to create organisms with specific functions, such as producing biofuels, pharmaceuticals, or other valuable compounds. The cycle consists of four key phases: in the Design phase, researchers create a conceptual plan and select biological parts; in the Build phase, DNA constructs are assembled and introduced into host cells; in the Test phase, the constructed biological systems are experimentally evaluated; and in the Learn phase, data from testing is analyzed to inform the next design iteration. This iterative process accounts for the inherent variability of biological systems and helps researchers progressively refine their designs until they achieve the desired performance [1] [2].

How is the traditional DBTL cycle being transformed by computational advances?

Recent computational advances, particularly in machine learning (ML), are transforming the traditional DBTL cycle in two significant ways. First, machine learning models are increasingly being used to enhance the Learn phase by identifying patterns in complex biological data that would be difficult for humans to discern. Second, a paradigm shift termed "LDBT" (Learn-Design-Build-Test) has been proposed, where the cycle begins with machine learning algorithms that leverage vast biological datasets to generate initial designs, potentially reducing the number of experimental iterations needed. The integration of cell-free systems further accelerates the Build and Test phases by enabling rapid, high-throughput experimentation without the constraints of living cells [3].

Troubleshooting Common DBTL Workflow Challenges

What should I do when my experimental results don't match expectations?

When experimental results don't match expectations, a systematic troubleshooting approach is essential:

- Repeat the experiment: Unless cost or time prohibitive, always repeat the experiment first to rule out simple human error in protocol execution [4].

- Verify the experimental validity: Consider whether there might be scientifically valid reasons for the unexpected results, such as low protein expression in specific tissue types, rather than assuming protocol failure [4].

- Check your controls: Ensure you have included appropriate positive and negative controls. If a positive control fails, it likely indicates a protocol issue rather than a meaningful biological result [4].

- Inspect equipment and reagents: Check that all reagents have been stored properly and haven't degraded. Verify equipment calibration and function [5] [4].

- Change one variable at a time: When modifying your protocol, isolate variables systematically. Test one potential factor at a time to clearly identify what resolves the issue [4].

- Document everything: Maintain detailed records of all changes and outcomes in your lab notebook to track troubleshooting efforts and solutions [4].

How can I improve the efficiency of my DBTL cycles, especially with limited data?

In low-data regimes commonly encountered in early DBTL cycles, specific strategies can significantly improve efficiency:

- Select appropriate machine learning methods: Research indicates that gradient boosting and random forest models outperform other methods when training data is limited, and they demonstrate robustness to experimental noise and training set biases [6].

- Implement automated recommendation tools: Use algorithms that can propose new strain designs based on machine learning model predictions, particularly when the number of strains you can physically build and test is limited [6].

- Consider cycle strategy: Evidence suggests that when resources are constrained, starting with a larger initial DBTL cycle is more favorable than distributing the same number of builds evenly across multiple cycles [6].

- Leverage cell-free systems: For rapid prototyping, implement cell-free expression platforms that allow high-throughput testing without time-intensive cloning steps, enabling megascale data generation for model training [3].

What are common issues in molecular cloning within the Build phase and how can I resolve them?

Molecular cloning bottlenecks frequently occur in the Build phase, particularly in high-throughput workflows:

- Problem: Traditional colony screening methods (using sterile pipette tips, toothpicks, or inoculation loops) are causing bottlenecks.

- Solution: Implement automated assembly processes to reduce time, labor, and cost while increasing throughput and shortening the overall development cycle [1].

- Problem: High variance or unexpected results in biological assays.

- Solution: Focus on technique consistency. For example, in cell viability assays, inconsistent aspiration during wash steps can cause high variance. Standardize techniques across experiments and personnel [5].

Machine Learning in DBTL: Frequently Asked Questions

Which machine learning methods perform best in low-data regimes for DBTL applications?

In the context of DBTL cycles for combinatorial pathway optimization, specific machine learning methods have shown superior performance when data is limited:

Table 1: Machine Learning Method Performance in Low-Data Regimes

| Method | Key Strengths | Considerations | Best Applications |

|---|---|---|---|

| Gradient Boosting | High predictive accuracy, handles imbalanced data, effective with complex relationships [7] [6] | Prone to overfitting without careful tuning, longer training times, sensitive to hyperparameters [7] | Accuracy-critical tasks, imbalanced datasets, complex problem spaces [7] |

| Random Forest | Robust to overfitting, handles missing data well, easier to implement and tune [7] [6] | Can become complex and less interpretable, potentially slower predictions with large forests [7] | Fast baseline models, large datasets, when interpretability is important [7] |

Research using simulated DBTL cycles has demonstrated that both gradient boosting and random forest models outperform other tested methods in low-data conditions and remain robust to training set biases and experimental noise [6].

How can I implement machine learning for predictive modeling in my DBTL workflow?

Implementing machine learning in DBTL workflows involves both methodological and practical considerations:

- Data Generation: Leverage high-throughput automated systems and cell-free platforms to generate large, high-quality datasets necessary for training effective models [2] [3].

- Model Selection: Choose algorithms based on your data characteristics. For high-dimensional longitudinal data (common in time-series omics studies), consider specialized methods like Mixed-Effect Gradient Boosting (MEGB), which accounts for within-subject correlations while handling numerous predictors [8].

- Feature Engineering: Represent biological entities in computationally friendly formats, such as using sequential representations of proteins or Simplified Molecular Input Line Entry System (SMILES) for chemical structures, which are compatible with various machine learning models [2].

- Validation: Always couple AI-driven predictions with experimental validation to account for biological variability not captured in models [2].
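
As a small illustration of the feature-engineering step above, the sketch below one-hot encodes a protein sequence so that tree-based models can consume it. The function name `one_hot_sequence` and the three-residue peptide are hypothetical and chosen only for illustration; real workflows may prefer learned embeddings or descriptor-based features.

```python
# Minimal sketch: one-hot encoding of a protein sequence (stdlib only).
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard residues
AA_INDEX = {aa: i for i, aa in enumerate(AMINO_ACIDS)}


def one_hot_sequence(seq):
    """Flatten a protein sequence into a list of 0/1 features (20 per residue)."""
    features = []
    for residue in seq.upper():
        row = [0] * len(AMINO_ACIDS)
        row[AA_INDEX[residue]] = 1  # raises KeyError for non-standard residues
        features.extend(row)
    return features


peptide = "MKT"  # hypothetical example sequence
encoded = one_hot_sequence(peptide)
print(len(encoded), sum(encoded))  # 20 features per residue, one "hot" bit each
```

Fixed-length encodings like this assume all sequences in the dataset have the same length (or have been aligned or padded), which is an assumption to check before training.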

Research Reagent Solutions for DBTL Experiments

Table 2: Essential Research Reagents and Their Applications in DBTL Workflows

| Reagent/Resource | Function in DBTL Workflow | Example Applications |

|---|---|---|

| Ribosome Binding Site (RBS) Libraries | Fine-tune relative gene expression in synthetic pathways [9] | Optimizing enzyme expression levels in metabolic pathways for dopamine production [9] |

| Cell-Free Expression Systems | Rapid protein synthesis without cloning; high-throughput testing [3] | Prototyping pathway combinations, expressing toxic proteins, incorporating non-canonical amino acids [3] |

| Promoter Libraries | Modulate transcription initiation rates for pathway balancing [6] | Combinatorial optimization of multiple pathway genes simultaneously [6] |

| CRISPR-GPT | LLM-assisted automated design of gene-editing experiments [2] | Designing precise genetic modifications for strain engineering [2] |

| Specialized Model Organisms | Engineered chassis strains with optimized precursor supply | E. coli FUS4.T2 with high l-tyrosine production for dopamine synthesis [9] |

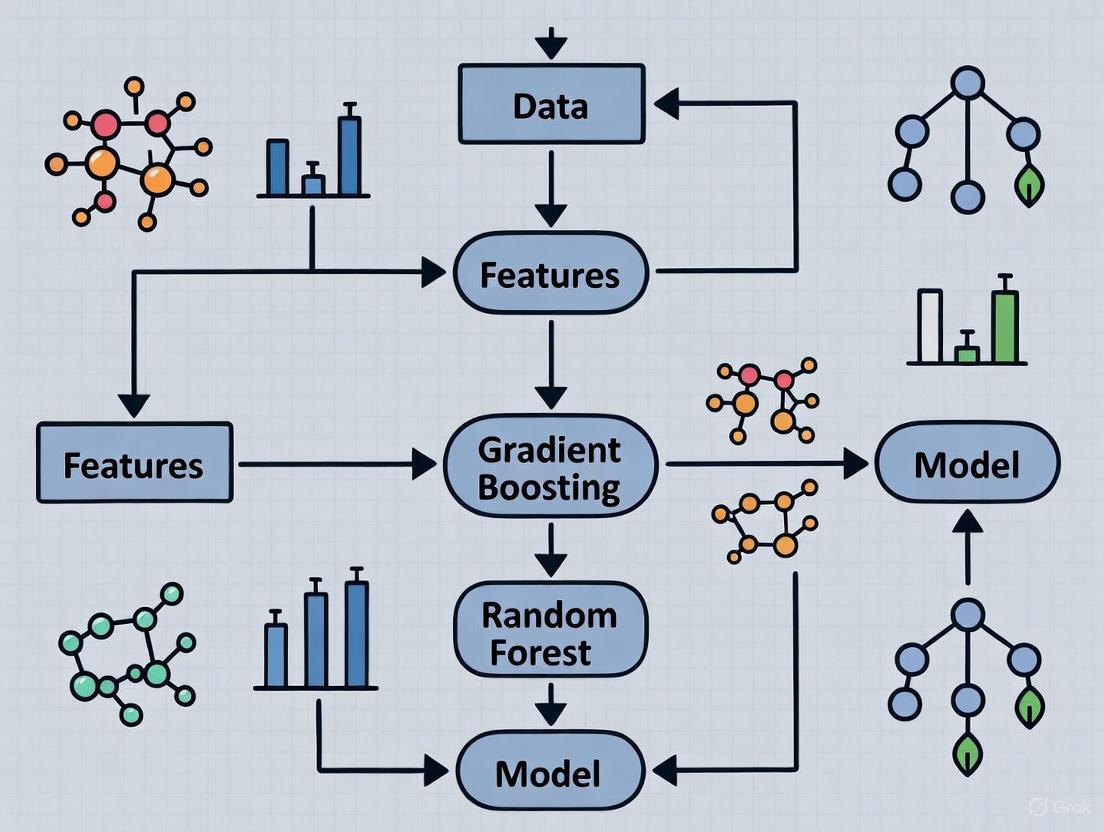

Workflow Visualization

[Figure: Standard DBTL Cycle]

[Figure: Machine Learning-Enhanced LDBT Cycle]

FAQs: Core Concepts and Problem Definition

Q1: What are "combinatorial explosions" in metabolic engineering, and why are they a critical bottleneck?

In metabolic engineering, combinatorial explosions refer to the exponential increase in the number of genetic variant combinations that need to be tested when simultaneously optimizing multiple pathway components. As the number of components (e.g., genes, promoters, RBS) to be optimized increases, the number of permutations grows exponentially, rendering full factorial searches experimentally infeasible. This creates a major bottleneck in the development of microbial cell factories for producing chemicals, fuels, and pharmaceuticals [10].
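
To make the scale concrete, the following sketch counts the designs in a full factorial library. The component names and the number of variants per component are hypothetical; only the arithmetic (the product of the option counts) is the point.

```python
from itertools import product
from math import prod

# Hypothetical design space: number of available variants per pathway component.
design_space = {
    "gene_A_promoter": 6,
    "gene_B_promoter": 6,
    "gene_C_promoter": 6,
    "gene_A_rbs": 4,
    "gene_B_rbs": 4,
    "gene_C_rbs": 4,
}


def full_factorial_size(space):
    """Number of strains in a full factorial design (product of option counts)."""
    return prod(space.values())


total = full_factorial_size(design_space)  # 6**3 * 4**3
print(f"Full factorial designs: {total}")

# Enumerating designs in silico stays cheap even when building them is not.
small_space = {"promoter": ["p1", "p2", "p3"], "rbs": ["r1", "r2"]}
designs = list(product(*small_space.values()))
print(len(designs), designs[0])
```

With only three genes, six promoters, and four RBS variants each, the full factorial space already holds 13,824 designs, far more than a typical cycle can build and test.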

Q2: How does a "low-data regime" affect machine learning applications in metabolic engineering?

A low-data regime describes a scenario where the number of available experimental data points (e.g., strain performance measurements) is very small relative to the complexity of the system being modeled. This is a common challenge in metabolic engineering where building and testing strains is time-consuming and expensive. In these regimes, complex models like deep neural networks often overfit and fail to generalize, whereas certain ensemble methods like gradient boosting and random forests have been shown to be more robust and perform better [11].

Q3: What is the advantage of using ensemble ML models like Gradient Boosting over traditional methods for this problem?

Ensemble ML models combine multiple weaker models to create a single, more robust, and accurate predictor. This is particularly advantageous in low-data regimes with complex, non-linear relationships often found in biological systems. Gradient boosting iteratively builds models to correct the errors of previous ones, making it highly effective at capturing complex patterns from limited data. Random forests reduce overfitting by averaging predictions from multiple decorrelated decision trees. A recent study demonstrated that both gradient boosting and random forest models outperform other methods in the low-data regime, showing robustness to training set biases and experimental noise [11].

Q4: How does the DBTL cycle integrate with machine learning for combinatorial pathway optimization?

The Design-Build-Test-Learn (DBTL) cycle is an iterative framework for metabolic engineering. Machine learning integrates most directly into the "Learn" phase, where data from the "Test" phase is used to train an ML model. This model then informs the next "Design" phase by predicting which genetic combinations might yield improved performance. Using ML to guide these cycles helps to strategically explore the vast combinatorial space, focusing experimental effort on the most promising candidates [11].

Troubleshooting Guides

Problem 1: Poor Model Performance and Overfitting in Initial DBTL Cycles

Symptoms: Your machine learning model performs well on training data but poorly when predicting new strain designs. Predictions are inaccurate and do not lead to improved strains in the next cycle.

Solutions:

- Action: Prioritize simpler models and strong regularization.

- Details: In initial cycles with very little data, start with simpler models like Random Forests or Gradient Boosting with strong regularization parameters. These models are less prone to overfitting than deep neural networks. For Gradient Boosting, reduce the model complexity by using a smaller `max_depth` for trees and a higher `l2_regularization` parameter [12] [11].

- Action: Implement early stopping.

- Details: Use early stopping during model training to halt the process as soon as performance on a hold-out validation set stops improving. This prevents the model from over-optimizing to the noise in the small training dataset [12].

- Action: Leverage cross-validation.

- Details: Use techniques like k-fold cross-validation to get a more reliable estimate of your model's performance and for more robust hyperparameter tuning, even with limited data [13].

Problem 2: Navigating Combinatorial Explosion with Limited Experimental Budget

Symptoms: The number of potential genetic variant combinations is impossibly large, and you can only build and test a small number of strains per DBTL cycle.

Solutions:

- Action: Apply smart diversification strategies.

- Details: Do not diversify all pathway components at once. Use prior knowledge to identify the most rate-limiting steps (e.g., promoters for key genes, homologs for a specific enzyme) and focus combinatorial libraries on these. Strategies include varying coding sequences (gene homologs), expression levels (promoters, RBS), and gene dosage [10].

- Action: Use an algorithmic recommendation system.

- Details: Employ an algorithm that uses the trained ML model's predictions to recommend a shortlist of the most promising strains for the next DBTL cycle. Research indicates that when the total number of strains you can build is limited, it can be more effective to start with a larger initial DBTL cycle to provide the ML model with a better foundational dataset [11].

Problem 3: Model Failure in Subsequent DBTL Cycles

Symptoms: The model was effective in the first few cycles but is no longer generating improved designs, or performance has plateaued.

Solutions:

- Action: Retrain the model with accumulated data.

- Details: Avoid using a static model. The model should be retrained at the beginning of each DBTL cycle using all available data from all previous cycles. This allows the model to continuously learn and refine its understanding of the genotype-phenotype landscape [11].

- Action: Check for data distribution shifts.

- Details: As DBTL cycles progress, the new strains being tested may occupy a different region of the combinatorial space than the initial strains. Ensure your training data is representative of the space you are trying to explore. If not, actively design experiments to fill knowledge gaps.
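
One simple way to spot such a shift for categorical designs is to check how many candidate designs use parts the model has never seen. The helper below is a hypothetical illustration (the function name and the toy part labels are assumptions), not a method taken from the cited work.

```python
def unseen_level_fraction(train_designs, candidate_designs):
    """Per design position, the fraction of candidates using a part absent from training."""
    n_positions = len(train_designs[0])
    seen = [{design[i] for design in train_designs} for i in range(n_positions)]
    fractions = []
    for i in range(n_positions):
        unseen = sum(1 for design in candidate_designs if design[i] not in seen[i])
        fractions.append(unseen / len(candidate_designs))
    return fractions


# Toy example: each design is (promoter, RBS).
train = [("p1", "r1"), ("p1", "r2"), ("p2", "r1")]
candidates = [("p3", "r1"), ("p3", "r2"), ("p2", "r2"), ("p1", "r1")]

print(unseen_level_fraction(train, candidates))  # [0.5, 0.0]
```

Here half of the candidates rely on promoter `p3`, which never appears in the training data, so predictions for those designs are extrapolations and a few `p3` strains may be worth building deliberately.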

The following table summarizes key quantitative findings from a foundational study that simulated DBTL cycles to evaluate machine learning methods for combinatorial pathway optimization [11].

Table 1: Comparative Performance of ML Methods in Simulated Metabolic Engineering DBTL Cycles

| Machine Learning Method | Performance in Low-Data Regime | Robustness to Training Set Bias | Robustness to Experimental Noise | Key Characteristics |

|---|---|---|---|---|

| Gradient Boosting | Outperforms other tested methods | Robust | Robust | High accuracy, handles complex non-linear relationships |

| Random Forest | Outperforms other tested methods | Robust | Robust | Reduces overfitting, stable performance |

| Deep Neural Networks | Lower performance | Less Robust | Less Robust | Data-hungry; prone to overfitting with small data |

| Linear Models | Lower performance | N/A | N/A | Interpretable but often too simple for biological complexity |

Detailed Experimental Protocol: ML-Guided DBTL Cycle

This protocol outlines the steps for implementing a single iteration of a machine learning-guided DBTL cycle for combinatorial pathway optimization.

Objective: To use machine learning (Gradient Boosting/Random Forest) to select the best set of strain variants to build and test in the next cycle, with the goal of maximizing product titer/yield while minimizing experimental effort.

Materials and Reagents:

- Strain Library: A library of characterized genetic parts (e.g., promoter libraries, RBS libraries, gene homologs).

- Microbial Chassis: The host organism (e.g., E. coli, S. cerevisiae).

- DNA Assembly Reagents: Enzymes and kits for molecular cloning (e.g., Gibson assembly, Golden Gate assembly).

- Analytical Equipment: HPLC, GC-MS, or spectrophotometer for quantifying target product and growth metrics.

Procedure:

Learn: Model Training and Validation

- Input Data Preparation: Compile a dataset from all previous cycles. The dataset should consist of feature vectors (e.g., genetic part combinations, promoter strengths) and corresponding target variables (e.g., product titer, yield, growth rate).

- Model Training: Train a Gradient Boosting or Random Forest model on the compiled dataset. Use a train/validation split (e.g., 80/20) or k-fold cross-validation.

- Hyperparameter Tuning: Optimize key parameters using the validation set or cross-validation.

- Performance Assessment: Evaluate the final model on a held-out test set that was not used for training or tuning, or via cross-validation, to confirm it has not overfit.

Design: In Silico Prediction and Recommendation

- In Silico Library Generation: Use the trained model to predict the performance of a large, in silico library of all possible genetic combinations within the defined design space.

- Strain Selection: Run a recommendation algorithm to select the top N (e.g., 50-100) most promising strain designs from the in silico library for experimental construction. The selection can be based on the highest predicted performance, or can also incorporate exploration of uncertain regions to improve the model.

Build: Library Construction

- Strain Engineering: Use high-throughput DNA assembly and genome engineering techniques (e.g., CRISPR-based methods, multiplex automated genome engineering) to construct the selected N strain variants in the microbial host [10].

Test: Phenotypic Characterization

- Cultivation and Assay: Grow the constructed strains in a controlled, high-throughput format (e.g., microtiter plates).

- Data Collection: Measure key performance indicators (KPIs) such as product titer, yield, and cellular growth for each strain variant.

- Data Curation: Organize the new experimental data (genotype and phenotype) for the next "Learn" phase.

The cycle then repeats from the Learn phase, incorporating the new data.
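
The Learn and Design phases of this protocol can be sketched as follows. Everything specific here is an assumption made for illustration: the three-gene design space, the `simulated_titer` function standing in for real measurements, the use of the spread across trees as an uncertainty proxy, and the exploration weight of 1.0.

```python
from itertools import product

import numpy as np
from sklearn.ensemble import RandomForestRegressor

# Hypothetical design space: 3 genes, each with one of 5 promoter strengths.
strengths = [0.1, 0.3, 0.5, 0.7, 0.9]
library = np.array(list(product(strengths, repeat=3)))  # 125 in silico designs


def simulated_titer(X, rng):
    """Stand-in for the Test phase; a real cycle would use measured titers."""
    signal = X[:, 0] * (1 - np.abs(X[:, 1] - 0.5)) + 0.5 * X[:, 2]
    return signal + rng.normal(0, 0.05, size=len(X))


rng = np.random.default_rng(1)
tested_idx = rng.choice(len(library), size=24, replace=False)  # previous cycles
X_train = library[tested_idx]
y_train = simulated_titer(X_train, rng)

# Learn: retrain on all data accumulated so far.
model = RandomForestRegressor(n_estimators=200, random_state=0)
model.fit(X_train, y_train)

# Design: predict the whole library and score each design.
per_tree = np.stack([tree.predict(library) for tree in model.estimators_])
mean_pred = per_tree.mean(axis=0)
spread = per_tree.std(axis=0)        # disagreement between trees
score = mean_pred + 1.0 * spread     # exploitation plus exploration (weight assumed)
score[tested_idx] = -np.inf          # do not recommend strains already built

top_n = np.argsort(score)[::-1][:10]
recommended = library[top_n]
print(recommended)
```

Setting the exploration weight to zero reduces this to picking the highest predicted titers; larger values spend more of the build budget on regions where the model is unsure.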

Workflow and Pathway Diagrams

[Figure: DBTL Cycle with ML Integration]

[Figure: Combinatorial Diversification Strategies]

Research Reagent Solutions

Table 2: Key Research Reagents and Tools for Combinatorial Pathway Engineering

| Reagent / Tool | Function / Description | Application in Workflow |

|---|---|---|

| Promoter & RBS Libraries | Pre-characterized sets of genetic parts with varying strengths to fine-tune gene expression levels. | Design: Used to create diversity in expression levels for pathway genes to balance flux [10]. |

| Gene Homolog Libraries | A collection of alternative coding sequences from different species for the same enzymatic function. | Design: Provides diversity in enzyme kinetics and stability to overcome rate-limiting steps [10]. |

| CRISPR-Cas Systems | Tools for precise and multiplexed genome editing. | Build: Enables simultaneous modification of multiple genomic loci to construct complex variant strains [10]. |

| DNA Assembly Kits (e.g., Gibson, Golden Gate) | Enzyme mixes for seamlessly assembling multiple DNA fragments. | Build: Essential for high-throughput construction of pathway variants and genetic constructs [10]. |

| Genome-Scale Metabolic Models (GEMs) | Computational models of entire cellular metabolism. | Learn/Design: Provides a structured knowledge base and can be used to generate initial hypotheses and constrain ML models [15]. |

Frequently Asked Questions

Q1: My single decision tree model is overfitting, especially with my limited dataset. What is the simplest ensemble method to fix this?

A1: Bagging (Bootstrap Aggregating) is an excellent starting point. It reduces model variance and overfitting by training multiple decision trees on different random subsets of your training data (drawn with replacement) and then averaging their predictions [16] [17]. The Random Forest algorithm is an extension of bagging that further improves performance by also randomly selecting a subset of features at each split, creating more diverse and robust trees [16] [18].
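
The bootstrap step itself is easy to illustrate with the standard library. In the sketch below (the helper name `bootstrap_sample` is hypothetical), each tree would be trained on the in-bag indices, and the remaining out-of-bag indices are left over for validation.

```python
import random


def bootstrap_sample(n, rng):
    """Draw n indices with replacement; also return the indices never drawn."""
    in_bag = [rng.randrange(n) for _ in range(n)]
    out_of_bag = sorted(set(range(n)) - set(in_bag))
    return in_bag, out_of_bag


rng = random.Random(0)
n = 1000
in_bag, oob = bootstrap_sample(n, rng)

unique_fraction = len(set(in_bag)) / n
print(f"unique in-bag fraction: {unique_fraction:.3f}, out-of-bag count: {len(oob)}")
```

For large n the unique in-bag fraction approaches 1 - 1/e (about 63%), so each tree leaves roughly a third of the data unseen.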

Q2: I have a model where even small errors are costly. I want to sequentially improve my model's performance by focusing on hard-to-predict samples. Which method should I use?

A2: Boosting is designed for this exact scenario. Unlike bagging which runs models in parallel, boosting builds models sequentially, with each new model focusing on the errors made by the previous ones [17] [19]. Gradient Boosting, in particular, is a powerful technique that fits new models to the residual errors of the current ensemble, effectively minimizing the overall loss function in a gradient descent fashion [20] [21].

Q3: In a low-data regime, is it better to use Bagging or Boosting?

A3: Both can be adapted, but their approaches differ. Bagging draws bootstrap samples (random subsets with replacement) to create multiple training sets from a single limited dataset, so the ensemble averages over many resampled views of the same data [16] [22]. Boosting works sequentially to get the most out of every data point by concentrating on the instances the current ensemble predicts poorly in each iteration [19]. In practice, the choice depends on your specific data and problem; empirical testing with cross-validation is often necessary to determine which performs better for your use case.
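
A minimal sketch of such an empirical comparison, assuming scikit-learn and a synthetic 50-sample dataset (the data-generating function and all hyperparameters are illustrative assumptions):

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor, RandomForestRegressor
from sklearn.model_selection import RepeatedKFold, cross_val_score

# Synthetic low-data problem: 50 samples, 5 features, non-linear target.
rng = np.random.default_rng(42)
X = rng.uniform(0, 1, size=(50, 5))
y = np.sin(3 * X[:, 0]) + X[:, 1] * X[:, 2] + rng.normal(0, 0.1, size=50)

models = {
    "random_forest": RandomForestRegressor(n_estimators=200, random_state=0),
    "gradient_boosting": GradientBoostingRegressor(
        n_estimators=200, learning_rate=0.05, max_depth=2, random_state=0
    ),
}

# Repeated k-fold reduces the influence of any single lucky or unlucky split.
cv = RepeatedKFold(n_splits=5, n_repeats=3, random_state=0)

results = {}
for name, model in models.items():
    scores = cross_val_score(model, X, y, cv=cv, scoring="neg_mean_absolute_error")
    results[name] = (-scores.mean(), scores.std())
    print(f"{name}: MAE = {results[name][0]:.3f} (std {results[name][1]:.3f})")
```

If the two mean errors differ by less than their spread across folds, the data probably do not support a firm preference for either method.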

Q4: My ensemble model is becoming too complex and slow to train. How can I prevent overfitting and manage training time?

A4:

- For Gradient Boosting: Use early stopping. Monitor the model's performance on a validation set and halt training when performance stops improving [20]. Also, tune the learning rate; a smaller learning rate often requires more trees but can lead to better generalization [20].

- For Random Forest: While generally more resistant to overfitting, you can control complexity by tuning hyperparameters like `max_depth` (maximum tree depth) and `min_samples_leaf` (minimum samples required at a leaf node) [18]. Leverage Out-of-Bag (OOB) samples as an internal validation set to estimate performance without needing a separate dataset [16] [18].
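
As a rough sketch of the Random Forest advice, scikit-learn's `RandomForestRegressor` can report an out-of-bag estimate when `oob_score=True`. The synthetic data and the particular values of `max_depth` and `min_samples_leaf` below are assumptions for illustration only.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

# Synthetic small dataset: 80 samples, 4 features.
rng = np.random.default_rng(7)
X = rng.uniform(0, 1, size=(80, 4))
y = 3 * X[:, 0] + X[:, 1] ** 2 + rng.normal(0, 0.1, size=80)

forest = RandomForestRegressor(
    n_estimators=300,
    max_depth=4,          # cap tree depth to limit complexity
    min_samples_leaf=3,   # require several samples per leaf
    bootstrap=True,       # required for out-of-bag estimation
    oob_score=True,       # score each sample using trees that did not see it
    random_state=0,
)
forest.fit(X, y)

oob_r2 = forest.oob_score_  # R^2 computed on out-of-bag predictions
print(f"OOB R^2: {oob_r2:.2f}")
```

Because the OOB estimate reuses the training data, no samples need to be set aside, which matters most when every measurement is expensive.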

Q5: How can I combine fundamentally different models (e.g., a decision tree and a logistic regression) for better performance?

A5: Use Stacking (Stacked Generalization). This advanced technique involves training multiple different (heterogeneous) base models in parallel. Then, their predictions are used as input features to train a final meta-model (e.g., a linear regression) that learns how to best combine the base models' predictions [17] [19]. A related technique called Blending uses a small holdout set instead of cross-validation for this last step [17].
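
A minimal sketch of stacking, assuming scikit-learn's `StackingClassifier` and a synthetic classification dataset; the choice of a logistic regression as the meta-model is an assumption made here for a classification task.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import StackingClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# Synthetic binary classification data (illustrative only).
X, y = make_classification(
    n_samples=200, n_features=8, n_informative=4, random_state=0
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

stack = StackingClassifier(
    estimators=[
        ("tree", DecisionTreeClassifier(max_depth=3, random_state=0)),
        ("logreg", LogisticRegression(max_iter=1000)),
    ],
    final_estimator=LogisticRegression(max_iter=1000),  # meta-model
    cv=5,  # meta-model is trained on cross-validated base predictions
)
stack.fit(X_train, y_train)

accuracy = stack.score(X_test, y_test)
print(f"held-out accuracy: {accuracy:.2f}")
```

The internal cross-validation keeps the meta-model from being trained on predictions the base models made for samples they had already seen.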

The Scientist's Toolkit: Essential Algorithms & Libraries

The table below details key algorithms and libraries for implementing ensemble methods in a research environment.

| Name | Type | Primary Function | Key Consideration for Low-Data Regimes |

|---|---|---|---|

| Random Forest [16] [18] | Bagging | Creates an ensemble of decorrelated decision trees via bagging and feature randomness. | Bootstrap sampling efficiently utilizes limited data. OOB error provides a reliable validation estimate [16]. |

| Gradient Boosting (GBM) [20] [21] | Boosting | Sequentially builds an ensemble by fitting new models to the residual errors of the current ensemble. | Highly effective but requires careful tuning (learning rate, tree depth) and techniques like early stopping to prevent overfitting [20]. |

| AdaBoost [17] [19] | Boosting | An early boosting algorithm that re-weights misclassified data points for subsequent models. | Simpler than GBM, can be a good baseline. Focuses on hard examples, which can be beneficial with limited data. |

| Scikit-learn [17] | Library | Provides easy-to-use implementations for Random Forest, AdaBoost, and a basic Gradient Boosting classifier/regressor. | Ideal for prototyping and comparing different ensemble methods with a consistent API. |

| XGBoost [20] | Library | Optimized implementation of Gradient Boosting designed for speed and performance. | Often achieves state-of-the-art results. Excellent for fine-tuning and computational efficiency. |

| LightGBM [20] | Library | Another high-performance Gradient Boosting framework using novel techniques for faster training on large datasets. | Can be more efficient than XGBoost in some scenarios, useful when computational resources are a constraint. |

Experimental Protocols & Comparisons

Comparative Analysis of Bagging vs. Boosting

This table summarizes the core methodological differences between the two main ensemble paradigms, which is critical for selecting the right approach for an experiment.

| Aspect | Bagging (e.g., Random Forest) | Boosting (e.g., Gradient Boosting) |

|---|---|---|

| Core Objective | Reduce variance and overfitting [16] [22] | Reduce bias and improve accuracy [17] [19] |

| Data Sampling | Bootstrap samples (random with replacement); each model sees a different data subset [16] [17] | Whole dataset, but instances are re-weighted or errors are focused on sequentially [19] [22] |

| Model Training | Parallel and independent [19] | Sequential and dependent [19] |

| Base Model Type | Typically high-variance, complex models (e.g., deep decision trees) [16] | Typically high-bias, simple models (e.g., shallow decision trees/stumps) [22] |

| Aggregation | Averaging (regression) or Majority Voting (classification) [17] | Weighted combination based on model performance (as in AdaBoost) [17]; in Gradient Boosting, a sum of trees each scaled by the learning rate |

Visual Workflow: Bagging vs. Boosting

The diagram below illustrates the fundamental structural differences in the workflows for Bagging and Boosting algorithms.

[Figure: Workflow comparison of parallel Bagging versus sequential Boosting.]

Protocol: Implementing a Basic Gradient Boosting Regressor

This protocol outlines the key steps for implementing a Gradient Boosting model, which is particularly relevant for research in predictive modeling.

- Initialization: Start with a constant model (equivalent to a decision tree with a single node). For regression, the initial prediction is typically the average of the target values [21].

- Loop for M iterations (one per new tree):

- Step A: Compute Residuals. For each instance in the training set, calculate the difference between the observed value and the current model's prediction. For squared-error loss, these residuals are the negative gradients of the loss [20] [21].

- Step B: Fit a Weak Learner. Train a new weak learner (e.g., a decision tree) to predict these residuals. The tree is typically constrained by parameters like `max_depth` (e.g., 3-8) [20].

- Step C: Update the Model. Add the new weak learner to the current ensemble. Its contribution is scaled by a `learning_rate` parameter to prevent overfitting [20]. The update rule is: `F_new(x) = F_old(x) + ν * h_m(x)`, where `ν` is the learning rate and `h_m(x)` is the new tree [21].

- Termination: The loop stops when a pre-set number of trees (`n_estimators`) is reached, or when performance on a validation set stops improving (early stopping) [20].
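
The steps above can be sketched from scratch in plain Python. This is a teaching sketch under simplifying assumptions: a single input feature, squared-error loss, depth-1 trees (stumps) as weak learners, and a fixed number of iterations without early stopping. The function names are hypothetical.

```python
def fit_stump(xs, residuals):
    """Weak learner: the one-threshold split on a 1-D feature with lowest squared error."""
    best = None
    for threshold in sorted(set(xs))[:-1]:  # skip the max so the right side is never empty
        left = [r for x, r in zip(xs, residuals) if x <= threshold]
        right = [r for x, r in zip(xs, residuals) if x > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        sse = sum((r - left_mean) ** 2 for r in left)
        sse += sum((r - right_mean) ** 2 for r in right)
        if best is None or sse < best[0]:
            best = (sse, threshold, left_mean, right_mean)
    _, threshold, left_mean, right_mean = best
    return lambda x: left_mean if x <= threshold else right_mean


def gradient_boost(xs, ys, n_estimators=50, learning_rate=0.1):
    """Return a prediction function F(x) = F_0 + learning_rate * sum of stumps."""
    f0 = sum(ys) / len(ys)                      # Initialization: mean of targets
    predictions = [f0] * len(ys)
    stumps = []
    for _ in range(n_estimators):
        residuals = [y - p for y, p in zip(ys, predictions)]   # Step A
        stump = fit_stump(xs, residuals)                       # Step B
        stumps.append(stump)
        predictions = [p + learning_rate * stump(x)            # Step C
                       for p, x in zip(predictions, xs)]
    return lambda x: f0 + learning_rate * sum(s(x) for s in stumps)


def mse(predict, xs, ys):
    return sum((predict(x) - y) ** 2 for x, y in zip(xs, ys)) / len(ys)


# Toy data: y = x^2 on 20 evenly spaced points.
xs = [i / 10 for i in range(20)]
ys = [x ** 2 for x in xs]

mean_y = sum(ys) / len(ys)
baseline_mse = mse(lambda x: mean_y, xs, ys)   # error of the initial constant model
boosted_mse = mse(gradient_boost(xs, ys), xs, ys)
print(f"baseline MSE: {baseline_mse:.4f}, boosted MSE: {boosted_mse:.4f}")
```

The training error shown here only demonstrates that the loop reduces the loss; judging generalization still requires a validation set or cross-validation.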

Visual Workflow: Gradient Boosting Steps

The following diagram details the sequential, iterative process of the Gradient Boosting algorithm.

[Figure: The iterative model correction process of Gradient Boosting.]

Frequently Asked Questions (FAQs)

Q1: What exactly is meant by a "low-data regime" in machine learning for research? A: A "low-data regime" refers to situations where obtaining a large number of reliable, high-quality labeled data samples is challenging due to constraints such as time, cost, ethics, privacy, security, or technical limitations in data acquisition [23]. In such regimes, the number of training samples is so small that the ability of standard machine learning (ML) models to learn effectively sharply decreases, often resulting in poor predictive performance and a high risk of overfitting [23].

Q2: Between Gradient Boosting and Random Forest, which is more suitable for low-data scenarios? A: Random Forest is often recommended for initial low-data models because it is robust, fast to train, and less prone to overfitting due to its bagging approach, which builds multiple independent trees and averages their results [7]. Gradient Boosting, while often achieving higher accuracy, is more prone to overfitting with noisy or limited data and requires careful hyperparameter tuning, which can be difficult without sufficient data for validation [7]. For a very small number of labeled samples (e.g., a few dozen), specialized multi-task learning approaches may be necessary [24].

Q3: What are the common pitfalls when applying Gradient Boosting to imbalanced datasets with low event rates? A: The primary pitfall is not the algorithm itself but using inappropriate evaluation metrics. With low event rates (e.g., 1%), metrics like Accuracy can be misleading [25]. It is crucial to use metrics like Area Under the Precision-Recall Curve (AUCPR) or Brier score, which provide a more accurate picture of model performance [25]. Furthermore, the predicted probabilities from Gradient Boosting models may need calibration to reliably capture tendencies in the data [25].
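
A short worked example shows why accuracy misleads at a 1% event rate. The dataset and the two trivial "models" below are constructed purely for illustration.

```python
# 1,000 samples with a 1% event rate (10 positives).
labels = [1] * 10 + [0] * 990

always_negative = [0] * 1000        # a "model" that never predicts the event
prior_probability = [0.01] * 1000   # a "model" that always outputs the base rate


def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def recall(y_true, y_pred):
    positives = sum(y_true)
    true_positives = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    return true_positives / positives


def brier_score(y_true, probabilities):
    """Mean squared difference between predicted probability and outcome."""
    return sum((p - t) ** 2 for t, p in zip(y_true, probabilities)) / len(y_true)


acc = accuracy(labels, always_negative)
rec = recall(labels, always_negative)
brier_prior = brier_score(labels, prior_probability)

print(f"accuracy = {acc:.2f}, recall = {rec:.2f}")   # 0.99 and 0.00
print(f"Brier score of base-rate model = {brier_prior:.4f}")
```

A model that never detects a single event still reaches 99% accuracy. The base-rate Brier score (about 0.0099 here) gives a reference value that a useful probabilistic model should beat.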

Q4: My dataset has multiple related properties, but each has very few measurements. How can I build a reliable model? A: Multi-task Learning (MTL) is designed for this scenario. It leverages correlations among related properties (tasks) to improve predictive performance for each individual task [24]. However, with imbalanced data, classical MTL can suffer from "negative transfer," where updates from one task harm another. Advanced training schemes like Adaptive Checkpointing with Specialization (ACS) can mitigate this by saving task-specific model checkpoints to protect against detrimental interference [24].

Q5: What practical steps can I take to improve model performance when my labeled data is severely limited? A: Several advanced ML strategies have been developed specifically for low-data challenges [23]:

- Transfer Learning: Initialize a model with knowledge from a related, data-rich domain and fine-tune it on your small dataset.

- Data Augmentation: Create new, synthetic training samples based on physical models or knowledge of the domain [23].

- Self-Supervised Learning (SSL): The model generates its own labels from the structure of unlabeled data, learning useful representations before fine-tuning on the limited labeled data [23].

- Semi-Supervised Learning: Leverage any available unlabeled data in addition to the small set of labeled data to improve learning [23].

Troubleshooting Guides

Issue 1: Model Performance is Poor on a Small, Imbalanced Dataset

Problem: Your Gradient Boosting or Random Forest model fails to produce meaningful outputs or shows poor predictive power on a dataset with a low event rate.

| Step | Action | Diagnostic Question | Solution / Next Step |

|---|---|---|---|

| 1 | Evaluate Metrics | Are you using accuracy? | Switch to metrics robust to imbalance: AUCPR, Brier Score, or F1-Score [25]. |

| 2 | Check Data Balance | What is the ratio of minority to majority class? | Employ stratified sampling or assign inverse prior weights during training [25]. |

| 3 | Validate Model Calibration | Are the predicted probabilities reliable? | Apply probability calibration techniques (e.g., Platt scaling, isotonic regression) to the model's output [25]. |

| 4 | Simplify the Model | Is the model overfitting? | For Random Forest, reduce tree depth. For Gradient Boosting, increase regularization, use a lower learning rate, or perform hyperparameter tuning [7]. |
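The four steps in the table can be sketched in a few lines. This is a minimal illustration, assuming scikit-learn is available and using a synthetic dataset with roughly 5% positives as a stand-in for real assay data; the hyperparameter values are placeholders rather than recommendations from [25]. Note that `average_precision_score` is used here as an estimate of AUCPR.

```python
import numpy as np
from sklearn.calibration import CalibratedClassifierCV
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.metrics import accuracy_score, average_precision_score, brier_score_loss
from sklearn.model_selection import train_test_split
from sklearn.utils.class_weight import compute_sample_weight

# Synthetic stand-in for an imbalanced dataset (assumption: ~5% positives).
X, y = make_classification(n_samples=2000, n_features=20, n_informative=5,
                           weights=[0.95, 0.05], random_state=0)

# Step 2: a stratified split keeps the event rate similar in both partitions.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0)

# Step 2: inverse-prior ("balanced") weights up-weight the rare class.
weights = compute_sample_weight(class_weight="balanced", y=y_train)

# Step 4: shallow trees and a low learning rate keep the model simple.
params = dict(n_estimators=200, learning_rate=0.05, max_depth=2, random_state=0)
gbm = GradientBoostingClassifier(**params).fit(X_train, y_train, sample_weight=weights)

# Step 3: Platt scaling ("sigmoid") fitted with internal 3-fold cross-validation.
calibrated = CalibratedClassifierCV(
    GradientBoostingClassifier(**params), method="sigmoid", cv=3)
calibrated.fit(X_train, y_train)

proba_weighted = gbm.predict_proba(X_test)[:, 1]
proba_calibrated = calibrated.predict_proba(X_test)[:, 1]

# Step 1: accuracy of always predicting "no event" shows why accuracy misleads.
majority_accuracy = accuracy_score(y_test, np.zeros_like(y_test))
report = {
    "accuracy": accuracy_score(y_test, gbm.predict(X_test)),
    "aucpr": average_precision_score(y_test, proba_weighted),
    "brier_weighted": brier_score_loss(y_test, proba_weighted),
    "brier_calibrated": brier_score_loss(y_test, proba_calibrated),
}
print(f"majority-class accuracy: {majority_accuracy:.3f}")
for name, value in report.items():
    print(f"{name}: {value:.3f}")
```

Comparing the two Brier scores is one way to check whether class weighting has distorted the predicted probabilities enough to justify a calibration step.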

Issue 2: Multi-Task Learning is Underperforming or Harming Individual Tasks

Problem: You are using MTL to jointly predict several molecular properties, but the overall performance is worse than training separate models.

| Step | Action | Diagnostic Question | Solution / Next Step |

|---|---|---|---|

| 1 | Identify Negative Transfer | Is performance on a specific task dropping during training? | Implement a training scheme like Adaptive Checkpointing (ACS) that saves task-specific model parameters to avoid detrimental updates [24]. |

| 2 | Analyze Task Relatedness | Are the tasks truly related? | Quantify task similarity. If tasks are unrelated, consider using separate models or an architecture with higher capacity to learn divergent tasks [24]. |

| 3 | Address Data Imbalance | Do the tasks have vastly different amounts of data? | Techniques like loss masking for missing labels can help, but advanced methods like ACS are specifically designed to handle severe task imbalance [24]. |

Quantitative Data on Low-Data Performance

The table below summarizes quantitative benchmarks for low-data regime performance from published research, providing a practical reference for expectations.

Table 1: Performance Benchmarks in Low-Data Regimes

| Dataset / Context | Model / Method | Data Scale | Key Performance Result | Reference |

|---|---|---|---|---|

| Molecular Property Prediction (e.g., ClinTox, SIDER) | Adaptive Checkpointing with Specialization (ACS) | As few as 29 labeled samples | Enabled accurate prediction of sustainable aviation fuel properties; outperformed single-task learning by 8.3% on average [24]. | [24] |

| General Small Data Challenges | Traditional ML (RF, SVM) vs. Deep Learning (CNN, ANN) | Very few training samples | DL models face severe overfitting; strategies like Transfer Learning, Data Augmentation, and Combining DL with traditional ML are essential [23]. | [23] |

| Imbalanced Dataset Classification | Gradient Boosting Machines | ~1.2% event rate in >4M samples | Model failed with default settings; required stratified sampling and inverse prior weights, highlighting sensitivity to imbalance [25]. | [25] |

Experimental Protocol: Implementing ACS for Multi-Task Learning

Objective: To train a robust multi-task graph neural network (GNN) on a dataset with severely imbalanced labels across tasks, mitigating negative transfer.

Materials:

- Dataset: A multi-task molecular property dataset (e.g., ClinTox, SIDER, or Tox21 from MoleculeNet).

- Software Framework: Python with deep learning libraries (e.g., PyTorch, Deep Graph Library).

- Computing Resources: GPU-enabled computing environment.

Methodology:

- Architecture Setup:

Training Loop:

- Train the entire model (shared backbone + all heads) on all tasks simultaneously.

- Monitor the validation loss for each individual task separately.

Adaptive Checkpointing:

- Throughout training, whenever the validation loss for a specific task reaches a new minimum, checkpoint (save) the current shared backbone parameters along with that task's specific head parameters [24].

- This ensures each task retains the best model state that worked for it, even if subsequent training updates are harmful.

Specialization:

- After training is complete, for each task, load its corresponding best-performing checkpoint to create a specialized model for deployment [24].
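The checkpointing and specialization logic can be sketched independently of any deep learning framework. The class below is a hypothetical helper, not the reference implementation from [24]: model parameters are represented as plain dictionaries, the task names and validation losses are made up, and a real training loop would pass framework state (for example a PyTorch `state_dict`) instead.

```python
import copy


class AdaptiveCheckpointer:
    """Keeps, for each task, the parameters seen at that task's best validation loss."""

    def __init__(self, task_names):
        self.best_loss = {task: float("inf") for task in task_names}
        self.best_state = {task: None for task in task_names}

    def update(self, val_losses, backbone_state, head_states):
        """Checkpoint backbone + head for every task that reached a new minimum."""
        improved = []
        for task, loss in val_losses.items():
            if loss < self.best_loss[task]:
                self.best_loss[task] = loss
                self.best_state[task] = {
                    "backbone": copy.deepcopy(backbone_state),
                    "head": copy.deepcopy(head_states[task]),
                }
                improved.append(task)
        return improved

    def specialize(self, task):
        """Return the saved backbone/head pair to load for deployment on one task."""
        return self.best_state[task]


# Simulated per-epoch validation losses for two hypothetical tasks.
tasks = ["toxicity", "solubility"]
simulated_val = [
    {"toxicity": 0.90, "solubility": 0.80},
    {"toxicity": 0.70, "solubility": 0.60},
    {"toxicity": 0.75, "solubility": 0.50},  # toxicity starts to degrade here
    {"toxicity": 0.85, "solubility": 0.45},
]

checkpointer = AdaptiveCheckpointer(tasks)
for epoch, val_losses in enumerate(simulated_val):
    # Placeholders for the real shared-backbone and task-head parameters.
    backbone = {"epoch": epoch}
    heads = {task: {"epoch": epoch} for task in tasks}
    checkpointer.update(val_losses, backbone, heads)

for task in tasks:
    print(task, "-> checkpoint from epoch", checkpointer.specialize(task)["backbone"]["epoch"])
```

In this toy run the two tasks end up with checkpoints from different epochs, which is the behavior that protects one task from later updates that only help the other.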

Workflow Visualization

Low-Data Modeling Strategy Selection

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Low-Data Regime Research

| Tool / Technique | Function in Low-Data Research | Key Consideration |

|---|---|---|

| Random Forest | Provides a robust, fast baseline model. Less prone to overfitting, making it a safe first choice for exploration [7]. | Performance may plateau; less effective at capturing complex, sequential dependencies compared to boosting methods [7]. |

| Gradient Boosting | Offers high predictive power and flexibility. Can handle complex, non-linear relationships if tuned correctly [7]. | Highly sensitive to hyperparameters and noisy data. Requires more computational resources and expertise to avoid overfitting [7] [25]. |

| Multi-Task Learning (MTL) | Alleviates data bottlenecks by leveraging correlations among related tasks, improving data efficiency [24]. | Risk of "Negative Transfer" if tasks are not sufficiently related or data is severely imbalanced [24]. |

| Adaptive Checkpointing (ACS) | A training scheme that mitigates negative transfer in MTL, allowing reliable modeling with ultra-low data (e.g., <30 samples per task) [24]. | Increases training complexity and requires careful monitoring of validation loss for each task [24]. |

| Transfer Learning | Uses knowledge from a data-rich source task to improve learning on a low-data target task, effectively expanding the useful training set [23]. | Success depends on the relatedness between the source and target domains. |

| Self-Supervised Learning (SSL) | Learns useful data representations from unlabeled data, which can then be fine-tuned with limited labels, maximizing utility from available data [23]. | Requires defining a "pretext task" for the model to solve on unlabeled data. |

Frequently Asked Questions

FAQ: Machine Learning in Metabolic Engineering

Q1: Which machine learning methods are most effective for metabolic pathway optimization with limited experimental data?

A1: In low-data regimes commonly encountered in early-stage metabolic engineering, gradient boosting and random forest models have been demonstrated to outperform other machine learning methods. These approaches are particularly valuable because they show robustness to training set biases and experimental noise, which are common challenges with small datasets. Furthermore, when the number of strains you can build is limited, starting with a larger initial Design-Build-Test-Learn (DBTL) cycle is more favorable than distributing the same number of strains equally across all cycles [11].

Q2: What is a DBTL cycle and how does machine learning integrate with it?

A2: The Design-Build-Test-Learn (DBTL) cycle is an iterative framework for strain optimization in metabolic engineering. Its purpose is to progressively develop a production strain by incorporating learning from each previous cycle, thereby avoiding the "combinatorial explosion" that occurs when simultaneously optimizing many pathway genes. Machine learning integrates into this cycle by using data from the "Test" phase to "Learn" and propose improved genetic "Designs" for the next iteration, creating a data-driven feedback loop [11] [26].

Q3: How can I predict the activity of a novel compound for a specific biological target?

A3: This is achieved through Quantitative Structure-Activity Relationship (QSAR) modeling. QSAR models mathematically link a compound's molecular structure, represented by numerical descriptors, to its biological activity. You can train a model on a dataset of known compounds and then use it to predict the activity of new, unseen compounds. For critical tasks like predicting activity against a specific target (e.g., DHODH or TYMS in cancer research), support vector machines (SVM) with radial basis functions have shown high predictive performance (R² > 0.8 on unseen data) [27].

Q4: What are the common pitfalls when building a QSAR model and how can I avoid them?

A4: Common pitfalls include:

- Overfitting: Building a model that memorizes the training data but fails on new compounds. Solution: Use feature selection to reduce the number of descriptors and rigorously validate the model with a separate test set and cross-validation [28] [29].

- Poor Data Quality: Models are only as good as the data they are trained on. Solution: Carefully curate your dataset by removing duplicates, standardizing chemical structures, and handling missing values appropriately [28] [30].

- Ignoring the Applicability Domain: Using the model to predict compounds that are structurally very different from its training set. Solution: Define the chemical space of your training data and only apply the model to new compounds within that domain [28].

FAQ: QSAR Modeling

Q5: My QSAR model performs well on the training data but poorly on the test set. What is wrong?

A5: This is a classic sign of overfitting. Your model has likely learned the noise in the training data rather than the generalizable relationship between structure and activity. To address this:

- Simplify the model: Reduce the number of molecular descriptors using feature selection techniques.

- Use cross-validation: Tune your model's hyperparameters based on cross-validation performance within the training set, not on the test set.

- Gather more data: If possible, increase the size and diversity of your training dataset [28] [29].

Q6: What software tools are available for calculating molecular descriptors for QSAR?

A6: Several software packages can calculate a wide array of molecular descriptors. Common tools include:

- RDKit and Mordred (Open-source)

- PaDEL-Descriptor (Open-source)

- Dragon (Commercial)

- ChemAxon (Commercial) [28]

Troubleshooting Guides

Problem: Low Predictive Accuracy in a QSAR Model Built with a Small Dataset

This guide addresses the challenge of building a reliable QSAR model when you have a limited amount of bioactivity or property data.

| Step | Action | Rationale & Technical Details |

|---|---|---|

| 1 | Apply Data Augmentation | For deep learning models, represent each compound with multiple valid SMILES strings. The Maxsmi approach demonstrates that this augmentation improves model accuracy and allows the prediction's standard deviation across different SMILES to serve as an uncertainty measure [31]. |

| 2 | Select Robust Algorithms | Prioritize Gradient Boosting or Random Forest algorithms. These ensemble methods are known to perform well in low-data regimes and are less prone to overfitting compared to more complex models like deep neural networks on small datasets [11]. |

| 3 | Use Simple Descriptors | Calculate a manageable set of 200+ molecular descriptors (e.g., constitutional, topological) using tools like RDKit. Avoid generating thousands of complex descriptors that can easily lead to overfitting when data is scarce [29]. |

| 4 | Implement Rigorous Validation | Use k-fold cross-validation on your training data for model selection and keep a strict hold-out test set for final evaluation. This provides a more reliable estimate of how the model will perform on new, unseen compounds [28]. |

Problem: Inefficient DBTL Cycles for Combinatorial Pathway Optimization

This guide helps optimize the DBTL process when faced with a vast combinatorial space of possible genetic modifications.

| Symptom | Possible Cause | Solution |

|---|---|---|

| Slow progress between cycles; learning does not effectively inform next designs. | The learning phase is not systematically leveraging data to propose high-potential designs. | Integrate a machine learning recommendation algorithm. Use model predictions to guide the selection of which strains to build in the next cycle, focusing resources on the most promising parts of the design space [11]. |

| High experimental noise obscuring the signal from genetic changes. | The "Test" data is too variable, making it difficult for ML models to discern meaningful patterns. | Run experimental replicates and use ML methods such as random forest, which have been shown to be robust to a certain level of experimental noise [11]. |

| Limited number of strains can be built per cycle. | Resources are spread too thinly across many cycles. | Allocate a larger proportion of your total resources to the initial DBTL cycle. A larger initial dataset provides a stronger foundation for the ML model to learn from, which is more efficient than many small cycles [11] [32]. |

Experimental Protocols & Data

Protocol 1: Building a Basic QSAR Model using Random Forest

This protocol provides a step-by-step methodology for constructing a predictive QSAR model [29].

- Define Goal & Curate Data: Compile a dataset of chemical structures (as SMILES strings) and their associated biological activities (e.g., IC50, pIC50). Clean the data by standardizing structures and removing duplicates.

- Calculate Molecular Descriptors: Use a software tool like RDKit to compute numerical descriptors (e.g., molecular weight, logP, topological indices) for every compound in the dataset.

- Split Data: Divide the dataset into a training set (e.g., 80%) for model building and a test set (e.g., 20%) for final validation. The test set must be held out and not used in any model training.

- Train Model: Train a Random Forest model on the training set, using the molecular descriptors as features and the biological activity as the target variable.

- Validate Model: Use the trained model to predict the activity of the compounds in the test set. Calculate performance metrics (e.g., R², RMSE) by comparing predictions to the true values.
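Steps 3 to 5 of the protocol can be sketched as follows. To keep the example self-contained, the descriptor matrix and pIC50-like target are randomly generated placeholders; in practice `X` would come from a descriptor calculator such as RDKit and `y` from curated assay data. The hyperparameters are illustrative assumptions.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_squared_error, r2_score
from sklearn.model_selection import train_test_split

# Placeholder data: 120 "compounds" x 30 "descriptors" (stand-in for step 2 output).
rng = np.random.default_rng(0)
n_compounds, n_descriptors = 120, 30
X = rng.normal(size=(n_compounds, n_descriptors))
# Toy activity that depends on two descriptors plus measurement noise.
y = 6.0 + 0.8 * X[:, 0] - 0.5 * X[:, 1] ** 2 + rng.normal(scale=0.3, size=n_compounds)

# Step 3: hold out 20% of compounds; the test set is never used for training.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0)

# Step 4: train the Random Forest on descriptors -> activity.
model = RandomForestRegressor(n_estimators=300, min_samples_leaf=2, random_state=0)
model.fit(X_train, y_train)

# Step 5: evaluate on the held-out compounds.
y_pred = model.predict(X_test)
r2 = r2_score(y_test, y_pred)
rmse = float(np.sqrt(mean_squared_error(y_test, y_pred)))
print(f"test R2 = {r2:.2f}, test RMSE = {rmse:.2f}")
```

With only 24 held-out compounds, the test metrics carry considerable uncertainty, so repeating the split with different seeds gives a better sense of their spread.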

Workflow: QSAR Model Building

Protocol 2: Implementing an ML-Driven DBTL Cycle for Pathway Optimization

This protocol outlines how to integrate machine learning into iterative metabolic engineering cycles [11] [26].

- Design: Based on prior knowledge or ML recommendations, design a library of genetic variants (e.g., with different promoter/gene combinations).

- Build: Use molecular biology techniques to construct the designed strains.

- Test: Ferment the constructed strains and measure the output metrics (e.g., metabolite titer, yield, productivity).

- Learn: Use the collected "Build" and "Test" data to train a machine learning model (e.g., Gradient Boosting) to predict strain performance from genetic design.

- Recommend: Use the trained ML model to predict the performance of new, untested genetic designs and select the most promising ones for the next "Design" phase.
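The Learn and Recommend steps can be illustrated with a toy design space. Everything below is an assumption made for the sketch: three pathway genes with four promoter options each, a made-up `run_experiment` function standing in for Build and Test, and a simple "pick the top predictions" rule rather than the recommendation algorithm used in [11].

```python
import itertools

import numpy as np
from sklearn.ensemble import GradientBoostingRegressor

rng = np.random.default_rng(1)
N_GENES, N_PROMOTERS = 3, 4

# Full combinatorial design space: 4^3 = 64 promoter assignments.
designs = np.array(list(itertools.product(range(N_PROMOTERS), repeat=N_GENES)))


def one_hot(d):
    """Encode each gene's promoter choice as a one-hot block (categorical feature)."""
    return np.eye(N_PROMOTERS)[d].reshape(len(d), -1)


def run_experiment(d):
    """Hypothetical stand-in for Build + Test: returns a noisy toy titer."""
    optimum = np.array([3, 1, 2])
    return 10.0 - np.abs(d - optimum).sum(axis=1) + rng.normal(scale=0.5, size=len(d))


# Cycle 1 (Design/Build/Test): measure a random subset of 16 strains.
tested_idx = rng.choice(len(designs), size=16, replace=False)
titers = run_experiment(designs[tested_idx])

# Learn: fit a small, regularized Gradient Boosting model on the tested strains.
model = GradientBoostingRegressor(n_estimators=100, learning_rate=0.05,
                                  max_depth=2, subsample=0.8, random_state=0)
model.fit(one_hot(designs[tested_idx]), titers)

# Recommend: rank all untested designs and keep the top predictions.
untested_idx = np.setdiff1d(np.arange(len(designs)), tested_idx)
predicted = model.predict(one_hot(designs[untested_idx]))
top_k = 8
recommended_idx = untested_idx[np.argsort(predicted)[::-1][:top_k]]
recommended_designs = designs[recommended_idx]
print(recommended_designs)
```

A purely greedy ranking like this exploits the current model; mixing in a few exploratory designs is a common variation when the first cycle is small.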

Workflow: ML-Driven DBTL Cycle

Table 1: Machine Learning Algorithm Performance in Low-Data Regime Metabolic Engineering [11]

| Algorithm | Performance in Low-Data Regime | Key Strengths |

|---|---|---|

| Gradient Boosting | Outperforms other methods | Robust to noise and training set bias. |

| Random Forest | Outperforms other methods | Robust to noise and training set bias. |

| Other Tested ML Methods | Lower performance | Less effective with limited data. |

Table 2: Key Research Reagent Solutions for Featured Experiments

| Reagent / Tool | Function / Application |

|---|---|

| gmctool (R application) | Identifies metabolic vulnerabilities in cancer cells by calculating genetic Minimal Cut Sets (gMCSs) using RNA-seq data [27]. |

| TCGAbiolinks (R library) | Retrieves and preprocesses transcriptomic data (e.g., RNA-seq) from The Cancer Genome Atlas (TCGA) for analysis [27]. |

| PaDEL-Descriptor, RDKit | Software tools to calculate molecular descriptors from chemical structures for QSAR modeling [28]. |

| OECD QSAR Toolbox | Software that supports chemical hazard assessment through read-across and categorization, incorporating metabolic simulators [33]. |

| Uni-QSAR (Auto-ML Tool) | An automated machine learning tool that combines multiple molecular representations (1D, 2D, 3D) for improved molecular property prediction [34]. |

Implementing Gradient Boosting and Random Forest in Your Research Pipeline

Gradient Boosting Machines (GBMs) are a powerful ensemble technique that builds models sequentially, with each new model correcting the errors of its predecessors. In drug development and other research that runs Design-Build-Test-Learn (DBTL) cycles in low-data regimes, GBMs are attractive because they can capture complex patterns from limited datasets. This technical support guide addresses the specific challenges researchers and scientists face when implementing GBMs in resource-constrained environments.

Core Concepts and Terminology

What is Gradient Boosting?

Gradient boosting is an ensemble learning method that builds models sequentially, where each new model in the sequence focuses on correcting the errors made by the previous models [35]. The algorithm combines multiple weak learners (typically decision trees) to create a strong predictive model [36]. This approach differs fundamentally from parallel ensemble methods like Random Forests, where trees are built independently [37].

Key Mechanism: Sequential Residual Modeling

The fundamental principle behind gradient boosting involves iteratively fitting new models to the residuals (errors) of the previous ensemble [38]. In regression tasks with squared error loss, this translates to directly modeling the differences between observed values and current predictions [39]. For other loss functions, the algorithm fits to pseudo-residuals, which represent the negative gradient of the loss function [21].
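To make the residual-fitting idea concrete, here is a from-scratch sketch of gradient boosting for squared error loss using single-split trees (stumps) on one input feature. It is a teaching example under simplifying assumptions (1-D input, NumPy only, toy sine data), not how production libraries implement boosting.

```python
import numpy as np


def fit_stump(x, residuals):
    """Find the single split on 1-D input that best fits the residuals (least squares)."""
    best = None
    for threshold in np.unique(x)[:-1]:  # dropping the largest value keeps both sides non-empty
        left = residuals[x <= threshold]
        right = residuals[x > threshold]
        sse = ((left - left.mean()) ** 2).sum() + ((right - right.mean()) ** 2).sum()
        if best is None or sse < best[0]:
            best = (sse, threshold, left.mean(), right.mean())
    _, threshold, left_value, right_value = best
    return lambda q: np.where(q <= threshold, left_value, right_value)


def boost(x, y, n_rounds=50, learning_rate=0.1):
    """Sequentially fit stumps to the residuals of the current ensemble."""
    prediction = np.full_like(y, y.mean(), dtype=float)  # start from the mean
    stumps, history = [], []
    for _ in range(n_rounds):
        # For loss 0.5 * (y - F)^2 the negative gradient w.r.t. F is the residual.
        residuals = y - prediction
        stump = fit_stump(x, residuals)
        prediction = prediction + learning_rate * stump(x)  # shrunken update
        stumps.append(stump)
        history.append(float(np.mean((y - prediction) ** 2)))
    return y.mean(), stumps, history


rng = np.random.default_rng(0)
x = rng.uniform(0, 6, size=40)
y = np.sin(x) + rng.normal(scale=0.1, size=40)

baseline, stumps, mse_history = boost(x, y)
print(f"training MSE after round 1:  {mse_history[0]:.4f}")
print(f"training MSE after round 50: {mse_history[-1]:.4f}")
```

Swapping the line that computes `residuals` for the negative gradient of another loss is what turns this into the pseudo-residual form described above.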

Frequently Asked Questions (FAQs)

How does gradient boosting differ from random forests?

The table below summarizes the key differences between these two popular ensemble methods:

| Feature | Gradient Boosting | Random Forest |

|---|---|---|

| Model Building | Sequential, trees built one after another [37] | Parallel, trees built independently [37] |

| Bias-Variance | Lower bias, higher variance thus more prone to overfitting [37] | Lower variance, less prone to overfitting [37] |

| Training Approach | Each new tree corrects errors of previous ones [38] | Each tree built on random data and feature subsets [37] |

| Training Time | Slower due to sequential nature [37] | Faster due to parallel training [37] |

| Robustness to Noise | More sensitive to outliers and noise [37] | Less sensitive to outliers and noise [37] |

| Hyperparameter Sensitivity | High sensitivity requires careful tuning [37] | Less sensitive, more robust to suboptimal settings [37] |

When should I choose gradient boosting over random forests in low-data regimes?

For DBTL research with limited data, consider gradient boosting when:

- High predictive accuracy is crucial and you have relatively clean data [37]

- You need to capture complex nonlinear relationships in small to medium datasets [14]

- You have computational resources for extensive hyperparameter tuning [37]

- Interpretability is secondary to pure predictive performance [37]

What are the most critical hyperparameters to tune in gradient boosting?

The most impactful hyperparameters include:

- Learning rate: Controls contribution of each tree (typical range: 0.001-0.3) [39]

- Number of trees: Too few underfit, too many overfit [35]

- Tree depth: Controls model complexity (1-6 splits common for weak learners) [39]

- Minimum samples split: Prevents overfitting by limiting node splits [35]

Troubleshooting Guides

Problem: Model Overfitting in Small Datasets

Symptoms:

- Excellent training performance but poor validation results

- Large gap between training and validation metrics

- Unrealistic predictions on new data

Solutions:

- Increase regularization: Reduce tree depth, increase minimum samples per leaf

- Lower the learning rate (shrinkage): Scale down the contribution of each tree, using values between 0.01 and 0.1 together with more trees [40]

- Use early stopping: Halt training when validation performance plateaus [35]

- Implement stochastic boosting: Use random subsamples of data for each tree [39]
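The solutions above map onto constructor arguments of scikit-learn's `GradientBoostingRegressor`. The sketch below assumes that library and uses synthetic data; the specific values are illustrative starting points, not tuned settings.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.model_selection import train_test_split

# Small synthetic dataset (assumption: 150 samples, 10 features, one interaction term).
rng = np.random.default_rng(0)
X = rng.normal(size=(150, 10))
y = X[:, 0] - 2.0 * X[:, 1] * X[:, 2] + rng.normal(scale=0.5, size=150)
X_train, X_val, y_train, y_val = train_test_split(X, y, test_size=0.25, random_state=0)

model = GradientBoostingRegressor(
    n_estimators=1000,        # upper bound; early stopping usually halts sooner
    learning_rate=0.05,       # shrinkage: small contribution per tree
    max_depth=2,              # shallow trees as weak learners
    min_samples_leaf=5,       # regularization: no tiny leaves
    subsample=0.8,            # stochastic boosting: 80% of rows per tree
    validation_fraction=0.2,  # internal hold-out used for early stopping
    n_iter_no_change=20,      # stop after 20 rounds without improvement
    random_state=0,
)
model.fit(X_train, y_train)

trees_used = model.n_estimators_
train_r2 = model.score(X_train, y_train)
val_r2 = model.score(X_val, y_val)
print(f"trees used: {trees_used}, train R2: {train_r2:.2f}, validation R2: {val_r2:.2f}")
```

The gap between `train_r2` and `val_r2` is the quantity to watch: if it stays large, tightening `max_depth` or `min_samples_leaf` further is usually the next thing to try.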

Experimental Protocol:

Problem: Long Training Times and High Computational Cost

Symptoms:

- Experiment iteration cycles become impractically long

- Hyperparameter tuning consumes excessive resources

- Model development delays DBTL cycles

Solutions:

- Use shallower trees: Limit depth to 2-4 for faster computation [39]

- Reduce feature space: Apply feature selection prior to modeling

- Implement early stopping: Avoid unnecessary iterations [35]

- Leverage GPU acceleration: Use implementations like XGBoost with GPU support

- Start with smaller subsets: Prototype with data samples before full training

Problem: Handling Noisy Data in Experimental Measurements

Symptoms:

- Model performance fluctuates significantly with small data changes

- High sensitivity to measurement outliers

- Inconsistent feature importance rankings

Solutions:

- Use robust loss functions: For regression, consider Huber loss instead of MSE [41]

- Lower the subsample (bagging) fraction: Train each tree on a smaller random subsample so that individual outliers influence fewer trees

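- Apply feature scaling only where it matters: Tree splits are unaffected by monotonic rescaling of inputs, so normalize or standardize features mainly when the pipeline also includes scale-sensitive models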

- Implement cross-validation: Use robust performance estimation strategies

- Remove extreme outliers: Preprocess data to remove measurement errors
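As a small illustration of the robust-loss suggestion, the sketch below trains two otherwise identical scikit-learn models, one with the default squared error loss and one with Huber loss, on synthetic data with a few injected outliers. The data, outlier magnitudes, and hyperparameters are assumptions chosen only to make the comparison visible.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.metrics import mean_absolute_error

rng = np.random.default_rng(0)


def true_signal(X):
    """Noise-free toy relationship used to score both models."""
    return 3.0 * X[:, 0] + X[:, 1] ** 2


# Training data with measurement noise and 8 gross outliers (simulated errors).
X_train = rng.uniform(-2, 2, size=(120, 5))
y_train = true_signal(X_train) + rng.normal(scale=0.2, size=120)
outlier_idx = rng.choice(120, size=8, replace=False)
y_train[outlier_idx] += rng.choice([-15.0, 15.0], size=8)

# Clean test data to measure how well each model recovers the signal.
X_test = rng.uniform(-2, 2, size=(200, 5))
y_test = true_signal(X_test)

common = dict(n_estimators=200, learning_rate=0.05, max_depth=2,
              subsample=0.7, random_state=0)
squared_model = GradientBoostingRegressor(**common).fit(X_train, y_train)
huber_model = GradientBoostingRegressor(loss="huber", alpha=0.9, **common).fit(X_train, y_train)

mae = {
    "squared_error": mean_absolute_error(y_test, squared_model.predict(X_test)),
    "huber": mean_absolute_error(y_test, huber_model.predict(X_test)),
}
for name, value in mae.items():
    print(f"{name}: test MAE = {value:.3f}")
```

Here `alpha` sets the quantile of the residuals beyond which the Huber loss switches from quadratic to linear, so lower values treat more points as potential outliers.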

Workflow Visualization

Gradient Boosting Sequential Training Process

Bias-Variance Tradeoff in Sequential Learning

The Researcher's Toolkit: Essential Components

Key Hyperparameters and Their Functions

| Component | Function | Impact on Low-Data Regimes |

|---|---|---|

| Learning Rate | Controls contribution of each tree to the ensemble [40] | Critical for preventing overfitting; lower values (0.01-0.1) preferred with limited data |

| Tree Depth | Determines complexity of individual weak learners [39] | Shallower trees (2-4 levels) reduce variance in small datasets |

| Number of Trees | Total iterations in the sequential process [35] | Requires careful tuning; too many trees overfit small datasets |

| Subsample Ratio | Fraction of data used for each tree (stochastic boosting) [39] | Introduces diversity and reduces overfitting in limited data |

| Minimum Samples Split | Smallest number of observations required to split a node [35] | Higher values prevent modeling noise in small datasets |

Experimental Design Considerations for DBTL Research

When implementing gradient boosting in low-data drug development contexts:

- Prioritize Cross-Validation: Use leave-one-out or repeated k-fold CV for reliable performance estimation [23]

- Focus on Regularization: Emphasize hyperparameters that control model complexity

- Implement Early Stopping: Automate stopping criteria to prevent overfitting [35]

- Leverage Domain Knowledge: Incorporate biological constraints into feature engineering

- Plan Iterative Refinement: Design experiments to sequentially improve data quality

Advanced Techniques for Low-Data Environments

Transfer Learning Applications

In DBTL frameworks where initial data is scarce, transfer learning can help leverage related domains or previous experiments to bootstrap models [23]. Pre-training on larger public datasets followed by fine-tuning on specific experimental data can improve performance in data-limited scenarios.

Hybrid Modeling Approaches

Combining gradient boosting with traditional machine learning models or physical models can enhance performance when data is limited [23]. These hybrid approaches leverage both data-driven patterns and domain knowledge to compensate for small sample sizes.

Performance Optimization Checklist

- Implement early stopping to prevent overfitting

- Use learning rate values between 0.01-0.1 for small datasets

- Limit tree depth to 2-4 for weak learners

- Apply stochastic boosting with subsampling

- Use robust cross-validation strategies

- Regularize using minimum samples per split/leaf

- Monitor training vs validation performance gaps

- Consider robust loss functions for noisy experimental data

By understanding these core principles, troubleshooting common issues, and implementing the recommended strategies, researchers can effectively leverage gradient boosting in DBTL cycles and low-data regime research to advance drug development initiatives.

Frequently Asked Questions (FAQs)

Q1: My Random Forest model performs nearly perfectly on training data but poorly on test data. Am I overfitting, and how can I prevent this?

Yes, this indicates overfitting. To prevent it:

- Tune Hyperparameters: Optimize parameters that control tree growth, such as:

  - mtry: The number of features to consider at each split.

  - nodesize or min_samples_leaf: The minimum number of samples required to be at a leaf node.

  - max_depth: The maximum depth of the tree [42].

- Use Correct Training Performance Metric: For the training data, always use the Out-of-Bag (OOB) error, an unbiased estimate calculated from samples not used to build a given tree. Do not use predict(model, newdata=train), which creates artificially high scores [42].

- Employ Cross-Validation: Use k-fold cross-validation to robustly evaluate model performance and guide hyperparameter tuning [42].
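The difference between the OOB estimate and scoring on the training data can be seen directly in scikit-learn, where `max_features` plays the role of mtry and `min_samples_leaf` the role of nodesize. The data below is synthetic and the settings are illustrative assumptions.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

# Small noisy dataset: one informative feature, seven pure-noise features.
rng = np.random.default_rng(0)
X = rng.normal(size=(80, 8))
y = X[:, 0] + rng.normal(scale=1.0, size=80)

rf = RandomForestRegressor(
    n_estimators=300,
    max_features=0.5,     # counterpart of mtry: half of the features per split
    min_samples_leaf=3,   # counterpart of nodesize
    oob_score=True,       # score each sample using only trees that did not see it
    random_state=0,
)
rf.fit(X, y)

resubstitution_r2 = rf.score(X, y)  # predicting the training data: optimistic
oob_r2 = rf.oob_score_              # out-of-bag R2: closer to held-out performance
print(f"R2 on training data: {resubstitution_r2:.2f}")
print(f"OOB R2:              {oob_r2:.2f}")
```

The training-data score is inflated because every tree has already seen most of the samples it is asked to predict.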

Q2: Why are my Random Forest regression predictions never outside the range of the target values seen in the training data?

This is a fundamental characteristic of the algorithm. A Random Forest for regression averages the predictions of its individual decision trees [43] [44]. Each tree's prediction is the mean of the samples in a leaf node [45]. Therefore, the final averaged prediction cannot exceed the maximum or minimum values present in the training set. Random Forests are poor at extrapolating outside the training data range [45]. For tasks requiring trend extrapolation, consider linear models, Support Vector Regression (SVR), or neural networks [45].
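A quick check of this behavior, assuming scikit-learn and a noise-free linear toy relationship: the Random Forest is trained on inputs between 0 and 10 and then asked to predict well outside that range, alongside a linear model for comparison.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.linear_model import LinearRegression

# Training data: y = 2x + 1 on x in [0, 10], so targets range from 1 to 21.
X_train = np.linspace(0, 10, 50).reshape(-1, 1)
y_train = 2.0 * X_train.ravel() + 1.0

# Query points far outside the training range.
X_new = np.array([[12.0], [20.0], [50.0]])

rf = RandomForestRegressor(n_estimators=100, random_state=0).fit(X_train, y_train)
linear = LinearRegression().fit(X_train, y_train)

rf_pred = rf.predict(X_new)
lin_pred = linear.predict(X_new)

print("largest training target:", y_train.max())
print("random forest predictions:", np.round(rf_pred, 2))  # capped by training targets
print("linear model predictions: ", np.round(lin_pred, 2))  # follows the trend
```

Because every leaf value is a mean of training targets, the forest's outputs stay at or below the largest training target no matter how far the query point moves.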

Q3: In a low-data regime for drug-target interaction (DTI) prediction, how can I make the most of Random Forest?

- Leverage Bootstrap Aggregation: The inherent bagging in Random Forest is beneficial for low-data scenarios. Each tree is trained on a different bootstrap sample of the limited data, effectively creating diversity and making the ensemble more robust [43] [46].

- Prioritize Feature Selection: Use feature selection techniques (e.g., based on XGBoost importance or domain knowledge) to reduce the feature space before training the Random Forest. This prevents overfitting and improves model focus on the most relevant molecular descriptors [47].

- Innovative Feature Engineering: Create powerful, informative features. For example, one study used 3D molecular fingerprints and transformed molecular similarity matrices into probability distributions using Kullback-Leibler divergence (KLD) as features for the Random Forest, achieving high accuracy in DTI prediction even with a limited number of targets [48].

Troubleshooting Guide

| Problem | Symptom | Likely Cause | Solution |

|---|---|---|---|

| Overfitting | High accuracy/AUC on training set (>99%), but significantly lower performance on test/hold-out set [42]. | Trees grown too deep without sufficient regularization; model learns noise in training data. | Increase min_samples_leaf or nodesize. Restrict tree depth by lowering max_depth. Tune mtry via cross-validation [42]. |

| Poor Extrapolation | Regression predictions for new data are consistently constrained within the min/max range of the training target values [45]. | Algorithmic limitation; predictions are averages of training data outcomes. | Use an alternative model (Linear Regression, SVR) or a hybrid/stacked model that combines Random Forest with a linear algorithm [45]. |

| Low Predictive Accuracy | Model performance is poor on both OOB/test samples and new validation data. | Uninformative features, noisy data, or suboptimal hyperparameters. | Perform feature selection and engineering. Optimize all key hyperparameters (mtry, nodesize, n_estimators) using grid/random search with cross-validation. |

| Long Training Times | Model takes excessively long to train, especially on larger datasets. | Large number of trees (n_estimators), large dataset, or too many features considered at splits. | Start with a smaller subset for prototyping. Use a computing environment with parallel processing, as Random Forest training can be parallelized [44]. |

Experimental Protocol: Predicting Drug-Target Interactions (DTI) in a Low-Data Regime

This protocol outlines a methodology for building a Random Forest model to predict interactions between drug compounds and biological targets, which is particularly relevant for drug repurposing and understanding polypharmacology in a low-data context [48].

Workflow Diagram

Key Steps

Data Curation:

Molecular Featurization:

Advanced Feature Engineering (KLD Features):

- Q-Q Matrix: For each target protein, compute the pairwise 3D similarity of all its known ligands. This matrix characterizes the target's "uniqueness" [48].

- Q-L Vector: For a query compound and a target, compute the pairwise 3D similarities between the query and all the target's known ligands [48].

- Use Kernel Density Estimation (KDE) to transform the similarity scores of both the Q-Q matrix and Q-L vectors into probability density functions.

- Calculate the Kullback-Leibler divergence (KLD) between the Q-L vector's distribution and the Q-Q matrix's distribution. The KLD serves as a "quasi-distance" and becomes the novel, powerful feature vector for the Random Forest classifier [48].
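The KDE and KLD steps can be sketched as below. This is an illustration under several assumptions rather than the pipeline from [48]: similarity scores are drawn from Beta distributions instead of being computed from 3D fingerprints, the densities are compared on a fixed grid over [0, 1], the Q-Q distribution uses the upper triangle of the similarity matrix, and the divergence is taken in the direction D(Q-L || Q-Q). The function name `kld_feature` is hypothetical.

```python
import numpy as np
from scipy.stats import gaussian_kde


def kld_feature(ql_similarities, qq_similarities, grid_size=200, eps=1e-10):
    """KL divergence D(P_QL || P_QQ) between KDE-smoothed similarity distributions."""
    grid = np.linspace(0.0, 1.0, grid_size)          # similarity scores lie in [0, 1]
    p = gaussian_kde(ql_similarities)(grid) + eps    # eps avoids log(0) and division by 0
    q = gaussian_kde(qq_similarities)(grid) + eps
    p /= p.sum()                                     # normalize to discrete distributions
    q /= q.sum()
    return float(np.sum(p * np.log(p / q)))


rng = np.random.default_rng(0)
n_ligands = 25

# Toy Q-Q matrix for one target: pairwise similarities among its known ligands.
qq_matrix = rng.beta(5, 3, size=(n_ligands, n_ligands))
qq = qq_matrix[np.triu_indices(n_ligands, k=1)]      # unique ligand pairs only

# Toy Q-L vectors: one query resembling the ligand set, one that does not.
ql_similar = rng.beta(5, 3, size=n_ligands)
ql_dissimilar = rng.beta(2, 6, size=n_ligands)

kld_similar = kld_feature(ql_similar, qq)
kld_dissimilar = kld_feature(ql_dissimilar, qq)
print(f"KLD, query similar to known ligands:    {kld_similar:.3f}")
print(f"KLD, query dissimilar to known ligands: {kld_dissimilar:.3f}")
```

Repeating the calculation for each target gives one KLD value per target, and those values together form the feature vector for a query compound.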

Model Training and Validation:

Performance Data

Comparative Algorithm Performance in Drug Discovery

| Algorithm | Application Context | Key Performance Metric | Result | Reference |

|---|---|---|---|---|

| Random Forest | Anti-breast cancer drug candidate bioactivity prediction | Prediction Accuracy | 0.745 | [47] |

| XGBoost | Anti-breast cancer drug candidate bioactivity prediction | Prediction Accuracy | Comparable to RF | [47] |

| Gradient Boosting | Anti-breast cancer drug candidate bioactivity prediction | Prediction Accuracy | Comparable to RF | [47] |

| Support Vector Machine (SVM) | Anti-breast cancer drug candidate bioactivity prediction | Prediction Accuracy | Worst among tested | [47] |

| Random Forest (with KLD) | Drug-Target Interaction prediction for 17 targets | Mean Accuracy / OOB Score / ROC AUC | 0.882 / 0.876 / 0.990 | [48] |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in the Experimental Protocol |

|---|---|

| ChEMBL Database | A public repository of bioactive molecules with drug-like properties, providing curated bioactivity data (e.g., IC50) for training the model [48]. |

| E3FP Fingerprint | A 3D molecular fingerprint that captures the radial distribution of atomic features around each atom, providing a comprehensive representation of a molecule's 3D structure for similarity calculations [48]. |

| Kullback-Leibler Divergence (KLD) | A statistical measure of how one probability distribution diverges from a second. It is used as a feature engineering tool to quantify the relationship between a query molecule and a target's ligand set [48]. |

| Out-of-Bag (OOB) Score | An unbiased internal validation metric for Random Forests. It estimates the model's prediction error using data points not included in the bootstrap sample for a given tree, reducing the need for a separate validation set [43]. |

Frequently Asked Questions (FAQs)

Algorithm Selection & Performance

Q: When should I choose Gradient Boosting over Random Forest in a low-data metabolic engineering context?

A: The choice depends on your data characteristics and priority. For small datasets composed mainly of categorical variables, Random Forest (bagging) often provides more stable and accurate predictions [49]. However, Gradient Boosting (boosting) can achieve excellent predictive performance for certain specific prediction tasks, despite being generally less stable on small data [49]. Empirical studies across 165 datasets confirm that both are top-performing ensemble methods, but neither is a universal winner [50].

Q: Why does my ML model perform poorly even after tuning, and how can I improve it?

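A: Poor performance after tuning often reflects the "no free lunch" theorem in ML: no single algorithm performs best on every problem, so a well-tuned model can still be the wrong model for your data. Key improvement strategies include: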

- Systematic Algorithm Spot-Checking: Test a suite of algorithms, as performance is problem-dependent [50].

- Comprehensive Hyperparameter Tuning: This can improve accuracy by 3-50%, depending on the algorithm and dataset [50].

- Feature Engineering: Model performance is significantly affected by the characteristics of the features used for development [49].

Implementation & Technical Issues

Q: What are the best practices for validating ML models on limited experimental data?

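A: For small datasets, use Leave-One-Out Cross-Validation (LOOCV) [49]. In LOOCV, each sample is held out once as the test point while the model is trained on the remaining n-1 samples, so every sample contributes to both training and testing. This provides a robust performance estimate when data is scarce. Avoid single train-test splits, whose estimates can be unreliable with limited samples.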
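
A minimal sketch of LOOCV for comparing the two ensemble methods, using scikit-learn's `LeaveOneOut` and `cross_val_predict`. The synthetic 30-sample dataset and the model settings are assumptions made for illustration only.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor, RandomForestRegressor
from sklearn.metrics import mean_absolute_error
from sklearn.model_selection import LeaveOneOut, cross_val_predict

# Synthetic stand-in for a small experimental dataset (assumption).
X, y = make_regression(n_samples=30, n_features=5, noise=10.0, random_state=0)

models = {
    "random_forest": RandomForestRegressor(n_estimators=50, random_state=0),
    "gradient_boosting": GradientBoostingRegressor(n_estimators=50, random_state=0),
}

loo = LeaveOneOut()  # one fold per sample: train on n-1, predict the held-out one
loocv_mae = {}
for name, model in models.items():
    # Collect the held-out prediction for every sample, then score them together.
    preds = cross_val_predict(model, X, y, cv=loo)
    loocv_mae[name] = mean_absolute_error(y, preds)

for name, mae in loocv_mae.items():
    print(f"{name}: LOOCV MAE = {mae:.2f}")
```

The held-out predictions are pooled before scoring because metrics such as R² are undefined on a single test sample.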

Q: How can I integrate biosensor data effectively into my DBTL cycle for dynamic control?

A: Effectively integrate biosensors by characterizing their key performance parameters [51]:

- Dynamic Range and Operating Range: Ensure the biosensor covers the relevant metabolite concentrations.

- Response Time: Slow responses hinder real-time controllability.

- Signal-to-Noise Ratio: High noise obscures subtle metabolic differences.

For dynamic regulation, consider hybrid approaches combining slower, stable systems with faster-acting components like riboswitches to improve overall performance [51].

Data Handling & Visualization

Q: How should I visualize high-dimensional ML results for metabolic engineering applications?

A: Follow these core principles for effective visualization [52]:

- Prioritize Clarity and Accuracy: Ensure visuals are easy to interpret and build trust.

- Provide Context: Use titles and annotations to explain the "so what".

- Know Your Audience: Tailor depth and presentation to researchers or decision-makers.

Choose the chart type based on the story you need to tell. For model performance comparison, use bar charts. For tracking optimization across DBTL cycles, use line charts [52].

Q: My data is highly skewed. Will this negatively impact Random Forest or Gradient Boosting models?

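A: Both Random Forest and Gradient Boosting can handle skewed data reasonably well compared to models like linear regression [53]. Tree splits depend only on the ordering of feature values, so skewed predictor distributions matter much less than they do for linear models. Their ensemble nature and built-in feature importance analysis add robustness and interpretability with non-normally distributed data.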

Troubleshooting Guides

Poor ML Model Accuracy

| Step | Action | Diagnostic Check |

|---|---|---|

| 1 | Verify Data Quality & Preprocessing | Check for outliers, perform normalization, and ensure proper handling of categorical variables [49]. |

| 2 | Spot-Check Multiple Algorithms | Test at least 5 different algorithms: Gradient Boosting, Random Forest, Support Vector Machines, Extra Trees, and Logistic Regression [50]. |

| 3 | Perform Hyperparameter Tuning | Use grid search or similar methods; tuning can yield 3-50% accuracy gains [50]. |

| 4 | Evaluate with Proper Validation | Use LOOCV for small datasets to ensure reliable performance estimates [49]. |

| 5 | Analyze Feature Importance | Use built-in functions from tree-based models to identify and focus on the most impactful features [49] [53]. |

Inconsistent Results Across DBTL Cycles

| Symptom | Possible Cause | Solution |

|---|---|---|

| High variance in model performance between cycles | Lack of standardized experimental protocols and data collection. | Implement Standard Operating Procedures (SOPs) for all experimental steps and data recording. |

| Model fails to generalize to new cycles | Overfitting to noise or specific conditions of initial cycles. | Increase dataset size via data augmentation, apply stronger regularization in ML models, and use ensemble methods. |

| Inability to compare results across cycles | Missing metadata and inconsistent context for data points. | Create a standardized metadata template capturing all relevant experimental conditions for each data point. |

Biosensor Integration Challenges

| Problem | Root Cause | Resolution |

|---|---|---|

| Slow or delayed response in dynamic control | Inherently slow biosensor response kinetics. | Implement hybrid approaches (e.g., combine with fast-acting riboswitches) or engineer biosensors for improved response time [51]. |

| High signal noise obscures metabolic differences | Non-optimal biosensor design or external interference. | Characterize signal-to-noise ratio, use filtering techniques, and employ biosensors with higher specificity [51]. |

| Limited detection window for metabolite concentrations | Biosensor dynamic/operating range mismatched with metabolite levels. | Re-select or engineer biosensors with an operating range that covers the required metabolite concentration window [51]. |

Experimental Protocols & Data

Standardized Protocol for Consistent ML Comparison

This protocol ensures fair and reproducible comparison of ML algorithms across simulated DBTL cycles in low-data regimes.

1. Data Preprocessing and Standardization

- Collect and preprocess data, eliminating outliers [49].

- Normalize or standardize features to ensure consistent scaling across variables [49].

- For categorical variables, use appropriate encoding techniques.

2. Model Training with Hyperparameter Tuning

- Select a diverse set of ML algorithms for initial spot-checking [50].

- For each algorithm, perform hyperparameter tuning using a defined grid of values [50].

- Use a consistent resampling method (e.g., 5-fold CV) during tuning for comparability [53].
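
The tuning step can be sketched with scikit-learn's `GridSearchCV` and a fixed 5-fold splitter. The grid values, scoring choice, and synthetic data below are illustrative assumptions rather than the settings used in [50] or [53].

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.model_selection import GridSearchCV, KFold

# Synthetic stand-in for a small DBTL dataset (assumption).
X, y = make_regression(n_samples=40, n_features=6, noise=5.0, random_state=0)

# A small, explicitly defined grid (values are placeholders).
param_grid = {
    "n_estimators": [50, 100],
    "learning_rate": [0.05, 0.1],
    "max_depth": [2, 3],
}

# Reusing the same seeded splitter for every algorithm keeps folds comparable.
cv = KFold(n_splits=5, shuffle=True, random_state=0)

search = GridSearchCV(
    GradientBoostingRegressor(random_state=0),
    param_grid,
    cv=cv,
    scoring="neg_root_mean_squared_error",
)
search.fit(X, y)

best_params = search.best_params_
best_rmse = -search.best_score_  # scorer is negated RMSE, so flip the sign
print(best_params, f"CV RMSE = {best_rmse:.2f}")
```

Passing the same `cv` object to the search for each candidate algorithm is one simple way to keep the resampling consistent across models.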

3. Model Validation and Evaluation

- Evaluate final model performance using Leave-One-Out Cross-Validation (LOOCV) to maximize data usage in small-sample settings [49].

- Calculate a standard set of performance metrics: R² (coefficient of determination), RMSE (Root Mean Square Error), MAE (Mean Absolute Error), and Pearson's R [49].

- Rank algorithm performance on each dataset to identify the most suitable one [50].
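
The four metrics listed above can be computed from pooled predictions (for example, the held-out LOOCV predictions) as in the following sketch. The function name `regression_metrics` and the toy values are illustrative assumptions.

```python
import math

def regression_metrics(y_true, y_pred):
    """Return R², RMSE, MAE, and Pearson's R for paired observations."""
    n = len(y_true)
    mean_t = sum(y_true) / n
    mean_p = sum(y_pred) / n

    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))  # residual sum of squares
    ss_tot = sum((t - mean_t) ** 2 for t in y_true)             # total sum of squares
    ss_pred = sum((p - mean_p) ** 2 for p in y_pred)
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred))

    return {
        "r2": 1.0 - ss_res / ss_tot,
        "rmse": math.sqrt(ss_res / n),
        "mae": sum(abs(t - p) for t, p in zip(y_true, y_pred)) / n,
        "pearson_r": cov / math.sqrt(ss_tot * ss_pred),
    }

# Toy example with hypothetical measured vs. predicted values.
y_true = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.5, 2.0, 2.5, 4.0]
metrics = regression_metrics(y_true, y_pred)
print(metrics)
```

Note that R² and the square of Pearson's R coincide only for an ordinary least-squares fit evaluated on its own training data; on held-out predictions they can differ, which is one reason to report both.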

ML Algorithm Performance in Low-Data Regimes

Table: Comparative performance of ensemble algorithms on small datasets, relevant to metabolic engineering applications.

| Algorithm | Ensemble Type | Key Strengths | Performance on Small Categorical Data | Considerations |

|---|---|---|---|---|

| Random Forest (RF) | Bagging | High stability, robust to outliers, handles imbalanced classes [49]. | Predictions are more stable and accurate [49]. | Less prone to overfitting; lower computational time [53]. |

| Gradient Boosting (GBM) | Boosting | Effectively reduces bias and variance, high predictive power when tuned [49]. | Can demonstrate excellent performance for specific tasks [49]. | Can be more sensitive to hyperparameters and noisy data [49]. |

Table: Results from a large-scale study comparing 13 algorithms across 165 datasets [50].

| Algorithm | Average Rank (Lower is Better) | Significance Group | Recommended for Initial Spot-Checking? |

|---|---|---|---|

| Gradient Boosting | 1 (Best) | Top Performer | Yes [50] |

| Random Forest | 2 | Top Performer | Yes [50] |

| Support Vector Machines | 3 | High Performer | Yes [50] |

| Extra Trees | 4 | High Performer | Yes [50] |

| Logistic Regression | 5 | High Performer | Yes [50] |

Workflow & Pathway Visualizations

DBTL Cycle with Integrated ML Analysis

Bagging vs. Boosting Workflow

Research Reagent Solutions

Table: Key biosensor types for dynamic monitoring and control in metabolic engineering DBTL cycles [51].

| Reagent / Tool | Type | Primary Function in Metabolic Engineering |

|---|---|---|

| Transcription Factor (TF)-based Biosensors | Protein-based Sensor | Links metabolite concentration to measurable outputs (e.g., fluorescence) for high-throughput screening of strain libraries [51]. |

| Riboswitches | RNA-based Sensor | Provides dynamic, modular control of gene expression via ligand-induced conformational changes, enabling real-time regulation of metabolic fluxes [51]. |

| Two-Component Systems (TCSs) | Protein-based Sensor | Enables detection of extracellular/intracellular signals (ions, pH, small molecules) and signal transduction for environmental monitoring [51]. |

| Toehold Switches | RNA-based Sensor | Provides programmable, logic-gated control of metabolic pathways via RNA-RNA interaction, increasing production efficiency [51]. |

Troubleshooting Guides and FAQs

FAQ 1: Which gradient boosting implementation should I choose for my QSAR project?

The choice depends on your specific priorities regarding predictive performance, training speed, and dataset size [54].

- For the best predictive performance: XGBoost is generally recommended, as it consistently achieved the best predictive performance in a large-scale benchmark study [54] [55].

- For the fastest training on large datasets: LightGBM is the optimal choice, as it requires the least training time, making it ideal for high-throughput screening (HTS) data [54].

- For small datasets or to reduce overfitting risk: CatBoost and its ordered boosting approach can be more robust, especially in low-data regimes [54].

FAQ 2: How can I prevent my model from overfitting, especially with a small dataset?

Overfitting is a common challenge, but several strategies can mitigate it [54] [56] [57].

- Apply Regularization: Utilize the built-in L1 (Lasso) and L2 (Ridge) regularization in algorithms like XGBoost to penalize complex models [54] [56].

- Constrain the Trees: Limit the depth of the decision trees (`max_depth`) and the number of leaves. Simpler, "weaker" trees are less prone to overfitting [56].

- Use Shrinkage: Incorporate a learning rate (shrinkage) to slow down the learning process, making the model more robust [56].

- Employ Stochastic Gradient Boosting: Train each tree on a random subset of the data (rows) and/or features (columns) to reduce the correlation between trees and improve generalization [56].

- Tune Hyperparameters Extensively: The relevance of each hyperparameter varies across datasets. Optimizing as many hyperparameters as possible is crucial for maximizing performance and avoiding overfitting [54].
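
Several of these strategies map directly onto constructor arguments, as the sketch below shows with scikit-learn's `GradientBoostingRegressor`. All values and the synthetic dataset are placeholders. This estimator does not expose L1/L2 penalties; in XGBoost's scikit-learn interface those correspond to `reg_alpha` and `reg_lambda`, which are not used here.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.model_selection import cross_val_score

# Small, noisy, high-dimensional stand-in dataset (assumption).
X, y = make_regression(
    n_samples=60, n_features=20, n_informative=5, noise=15.0, random_state=0
)

constrained_gbm = GradientBoostingRegressor(
    n_estimators=200,
    learning_rate=0.05,  # shrinkage: smaller steps per tree
    max_depth=2,         # constrain the trees: shallow, "weak" learners
    subsample=0.7,       # stochastic boosting: each tree sees 70% of the rows
    max_features=0.5,    # consider half of the features at each split
    random_state=0,
)

scores = cross_val_score(constrained_gbm, X, y, cv=5, scoring="r2")
mean_r2 = scores.mean()
print(f"5-fold R² = {mean_r2:.3f} ± {scores.std():.3f}")
```

A lower learning rate usually needs more trees to reach the same fit, so `learning_rate` and `n_estimators` are best tuned together.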

FAQ 3: My dataset has many highly correlated molecular descriptors. Is this a problem?

Gradient Boosting models are inherently robust to descriptor intercorrelation (multi-collinearity) due to their decision-tree-based architecture, which naturally prioritizes informative splits and down-weights redundant descriptors [57]. This makes them well-suited for high-dimensional descriptor sets. However, if overfitting is still evident, you can:

- Use Recursive Feature Elimination (RFE): A supervised method that iteratively removes the least important descriptors based on model performance [57].

- Remove low-variance and highly correlated descriptors as a pre-processing step, though this may sometimes discard meaningful features [57].
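
The pre-processing option in the last bullet can be sketched as a simple filter with NumPy. The thresholds, the greedy keep-first strategy, and the function name `filter_descriptors` are assumptions for illustration; [57] does not prescribe specific values.

```python
import numpy as np

def filter_descriptors(X, var_threshold=1e-8, corr_threshold=0.95):
    """Return indices of columns kept after variance and correlation filtering.

    Greedy rule (an assumption of this sketch): scan columns left to right
    and keep a column only if its absolute Pearson correlation with every
    already-kept column is below corr_threshold.
    """
    X = np.asarray(X, dtype=float)
    # Step 1: drop (near-)constant descriptors.
    keep = [j for j in range(X.shape[1]) if X[:, j].var() > var_threshold]
    if len(keep) < 2:
        return keep
    # Step 2: drop descriptors highly correlated with an earlier kept one.
    corr = np.abs(np.corrcoef(X[:, keep], rowvar=False))
    selected_pos = []
    for pos in range(len(keep)):
        if all(corr[pos, s] < corr_threshold for s in selected_pos):
            selected_pos.append(pos)
    return [keep[pos] for pos in selected_pos]

# Toy descriptor matrix: column 1 nearly duplicates column 0, column 3 is constant.
rng = np.random.default_rng(0)
a = rng.normal(size=50)
b = rng.normal(size=50)
X = np.column_stack([a, 2 * a + 0.001 * rng.normal(size=50), b, np.ones(50)])

selected = filter_descriptors(X)
print(selected)
```

Which member of a correlated pair survives depends on column order, so the filter can discard the more meaningful descriptor; this is the trade-off noted in the bullet above.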

FAQ 4: Can I trust the feature importance rankings from my model?

While gradient boosting models provide feature importance scores, they should be interpreted with caution. Different implementations (XGBoost, LightGBM, CatBoost) can surprisingly rank molecular features differently due to differences in their regularization techniques and decision tree structures [54]. Therefore, expert knowledge must always be employed when evaluating these data-driven explanations of bioactivity. The rankings are a useful guide, but not an absolute truth [54].

FAQ 5: How well does gradient boosting perform in a low-data regime?

Evidence suggests that gradient boosting can be effective even with limited data. One study on demolition waste prediction, which used a small dataset of 690 samples, found that while Random Forest (a bagging algorithm) provided more stable predictions, Gradient Boosting Machine (GBM) models demonstrated excellent predictive performance for some specific predictive tasks [58]. Furthermore, in the context of metabolic engineering, gradient boosting and random forest were shown to outperform other methods in the low-data regime for combinatorial pathway optimization [59].

Experimental Protocols & Data Presentation

Large-Scale Benchmarking Protocol for GBM Implementations