Genome-Scale Metabolic Modeling for Strain Design: A Computational Guide for Researchers and Scientists

Abstract

Genome-scale metabolic models (GEMs) provide a powerful computational framework for predicting the metabolic capabilities of organisms, revolutionizing rational strain design for biotechnology and biomedicine. This article explores the foundational principles of GEMs, from reconstruction using tools like ModelSEED and RAVEN to simulation via Flux Balance Analysis (FBA). It details practical methodologies for applying GEMs to engineer high-yield microbial cell factories, discusses strategies for troubleshooting and optimizing model predictions, and reviews critical practices for model validation and selection. Aimed at researchers, scientists, and drug development professionals, this guide synthesizes current tools and best practices to bridge the gap between in silico predictions and successful experimental outcomes in metabolic engineering.

The Foundations of Genome-Scale Metabolic Modeling

Genome-scale metabolic models (GEMs) represent comprehensive computational reconstructions of metabolic networks within living organisms, integrating genomic annotation with biochemical knowledge to enable predictive simulations of cellular behavior. These models have become indispensable tools in systems biology, providing a mathematical framework for analyzing genotype-phenotype relationships through gene-protein-reaction (GPR) associations. By encompassing the entire metabolic repertoire of target organisms—from bacteria and archaea to complex eukaryotes—GEMs facilitate the prediction of metabolic fluxes under various genetic and environmental conditions. Their application spans multiple fields including strain engineering for industrial biotechnology, drug target identification in pathogens, and understanding human diseases. This technical guide examines the core components, reconstruction methodologies, and applications of GEMs, with particular emphasis on their transformative role in strain design research.

Core Components of Genome-Scale Metabolic Models

GEMs are structured knowledgebases that mathematically represent an organism's metabolism through several interconnected components. Each element plays a critical role in ensuring the model's biological accuracy and computational functionality.

Fundamental Elements

Metabolites: These are the chemical substances participating in metabolic reactions. Each metabolite is uniquely identified and associated with information about its chemical formula and charge, which enables mass and charge balance analysis. The complete set of metabolites defines the chemical space of the model [1] [2].

Reactions: Biochemical transformations that convert substrates to products are represented as reactions, complete with stoichiometric coefficients that quantify reactant and product relationships. Reactions are characterized by their directionality (reversible or irreversible) and are organized into metabolic pathways that reflect the organism's biochemical capabilities [2].

Genes: The model includes all metabolic genes identified through genome annotation. These genetic elements provide the genomic basis for the metabolic network and enable the prediction of phenotypic consequences resulting from genetic perturbations [3] [2].

Gene-Protein-Reaction (GPR) Associations: GPR rules formally connect genes to their corresponding metabolic reactions through Boolean logic statements (e.g., "gene1 AND gene2" or "gene3 OR gene4"). These associations capture essential genetic and regulatory information, including enzyme complexes (AND relationships) and isoenzymes (OR relationships) [1] [2].

Biomass Objective Function: The biomass reaction represents the metabolic requirements for cellular growth by quantifying the necessary precursors and energy in appropriate proportions. This function serves as the primary objective in most metabolic simulations, particularly when predicting growth phenotypes [3].

Constraints: GEMs incorporate multiple constraint types that define the operating boundaries of the metabolic network. These include reaction capacity constraints based on enzyme kinetics, environmental constraints that define nutrient availability, and thermodynamic constraints that ensure biochemical feasibility [3] [2].

Table 1: Core Components of a Genome-Scale Metabolic Model

| Component | Description | Functional Role |
|---|---|---|
| Metabolites | Chemical substances participating in metabolic reactions | Define the chemical space and enable mass balance |
| Reactions | Biochemical transformations with stoichiometric coefficients | Represent metabolic pathways and fluxes |
| Genes | Metabolic genes from genome annotation | Provide genomic basis for network capabilities |
| GPR Associations | Boolean relationships connecting genes to reactions | Link genotype to metabolic phenotype |
| Biomass Objective | Synthetic reaction representing growth requirements | Primary objective function for growth simulations |
| Constraints | Physicochemical and environmental boundaries | Define feasible operating space for metabolic fluxes |
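
The Boolean GPR rules described above can be illustrated with a small evaluator. This is a minimal sketch, not the implementation used by any particular toolbox: the function name `reaction_is_active` is hypothetical, and it assumes gene identifiers are valid Python names (for example `gene1` or `b0001`) and that rules use only `AND`, `OR`, and parentheses.

```python
import ast


def reaction_is_active(gpr_rule: str, deleted_genes: set[str]) -> bool:
    """Return True if a reaction can still be catalyzed after deleting genes.

    AND models an enzyme complex (every subunit is required);
    OR models isoenzymes (any one gene is sufficient).
    """
    if not gpr_rule.strip():
        # Assumption: reactions without a GPR (e.g. spontaneous) stay active.
        return True

    # Python's parser understands lowercase and/or, so normalise the operators.
    expression = gpr_rule.replace(" AND ", " and ").replace(" OR ", " or ")
    tree = ast.parse(expression, mode="eval")

    def evaluate(node: ast.AST) -> bool:
        if isinstance(node, ast.Expression):
            return evaluate(node.body)
        if isinstance(node, ast.BoolOp):
            results = [evaluate(value) for value in node.values]
            return all(results) if isinstance(node.op, ast.And) else any(results)
        if isinstance(node, ast.Name):
            # A gene contributes True as long as it has not been deleted.
            return node.id not in deleted_genes
        raise ValueError(f"Unsupported GPR syntax: {ast.dump(node)}")

    return evaluate(tree)


complex_lost = reaction_is_active("gene1 AND gene2", {"gene1"})   # False
isozyme_kept = reaction_is_active("gene3 OR gene4", {"gene3"})    # True
```

Walking the parsed syntax tree rather than calling `eval` keeps the sketch from executing arbitrary text found in a model file.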

Network Properties and Stoichiometric Matrix

The core mathematical structure of a GEM is the stoichiometric matrix (S), where rows represent metabolites and columns represent reactions. Each element Sij corresponds to the stoichiometric coefficient of metabolite i in reaction j (with negative values for substrates and positive values for products). This matrix formulation enables steady-state analysis of metabolic networks through the equation S · v = 0, where v is the flux vector representing reaction rates [3] [2].

The stoichiometric matrix encapsulates the topology of the metabolic network and enables the application of constraint-based reconstruction and analysis (COBRA) methods. Under the steady-state assumption, the internal metabolite concentrations remain constant over time, meaning that metabolite production and consumption rates are balanced [2].
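
To make the steady-state condition concrete, the sketch below builds S for a toy three-reaction network and checks whether a flux vector satisfies S · v = 0. The network, the reaction names, and the helper functions are illustrative assumptions and do not come from any published model.

```python
# Toy network (hypothetical): uptake -> A, A -> B, B -> export.
METABOLITES = ["A", "B"]
REACTIONS = {
    "R1_uptake": {"A": 1},             # produces A
    "R2_convert": {"A": -1, "B": 1},   # consumes A, produces B
    "R3_export": {"B": -1},            # consumes B
}


def build_stoichiometric_matrix(metabolites, reactions):
    """Rows are metabolites, columns are reactions (in dict order)."""
    return [
        [stoichiometry.get(metabolite, 0) for stoichiometry in reactions.values()]
        for metabolite in metabolites
    ]


def net_production(S, v):
    """Compute S . v, the net production rate of each metabolite."""
    return [sum(s_ij * v_j for s_ij, v_j in zip(row, v)) for row in S]


S = build_stoichiometric_matrix(METABOLITES, REACTIONS)
balanced = net_production(S, [2.0, 2.0, 2.0])     # [0.0, 0.0]: steady state
unbalanced = net_production(S, [2.0, 1.0, 1.0])   # [1.0, 0.0]: A accumulates
```

A nonzero entry in the result means the corresponding metabolite would accumulate or deplete, so that flux vector violates the steady-state assumption.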

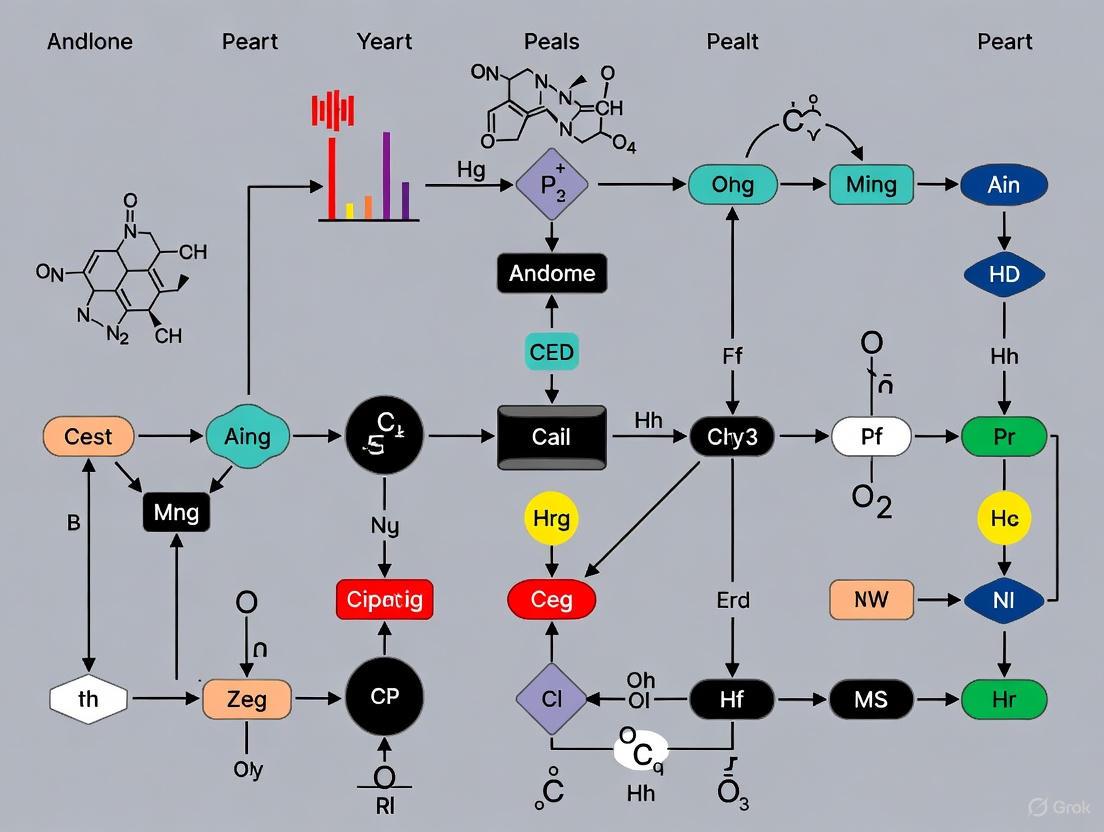

Figure 1: Logical relationships between core components of a GEM, showing the flow from genetic information to metabolic function.

GEM Reconstruction Methodologies

The construction of high-quality GEMs follows a systematic workflow that integrates automated computational approaches with manual curation based on experimental evidence.

Reconstruction Workflow

Table 2: Key Stages in GEM Reconstruction and Validation

| Stage | Key Procedures | Outputs |
|---|---|---|
| 1. Genome Annotation | Functional assignment of genes using RAST, ModelSEED, KEGG | Draft list of metabolic genes, proteins, and functions |
| 2. Draft Reconstruction | Automatic generation of reactions and GPRs from annotation; homology mapping with template models | Initial network with metabolites, reactions, and GPR associations |
| 3. Network Refinement | Manual curation to fill metabolic gaps; mass and charge balancing; addition of transport reactions | Functional network capable of producing all biomass components |
| 4. Model Validation | Comparison of simulated growth phenotypes and gene essentiality with experimental data | Quantitative assessment of model predictive accuracy |

Detailed Experimental Protocols

Protocol 1: Draft Model Construction via ModelSEED

- Genome Annotation: Submit the target genome to the RAST (Rapid Annotation using Subsystem Technology) server for automated annotation of metabolic genes [3].

- Automated Reconstruction: Input the RAST annotation into the ModelSEED pipeline to automatically generate a draft metabolic model containing initial reactions, metabolites, and GPR associations [3].

- Homology-Based Enhancement: Identify homologous genes in related reference organisms with existing high-quality GEMs (e.g., Bacillus subtilis, Staphylococcus aureus) using BLAST with thresholds of ≥40% identity and ≥70% query coverage [3].

- Data Integration: Manually integrate GPR associations from automated and homology-based methods into a unified draft model using spreadsheet software or specialized computational tools [3].
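
The homology thresholds in the protocol above (≥40% identity and ≥70% query coverage) can be applied to BLAST output with a short filter. This sketch assumes the search was run with a custom tabular format such as `-outfmt "6 qseqid sseqid pident qcovs bitscore"`; the column order, the locus tags in the example, and the function name `filter_homologs` are assumptions made for illustration.

```python
import csv
import io

MIN_IDENTITY = 40.0   # percent identity threshold from the protocol
MIN_COVERAGE = 70.0   # percent query coverage threshold from the protocol


def filter_homologs(blast_tsv: str) -> dict[str, str]:
    """Return the best-scoring reference hit per query gene that passes both thresholds.

    Assumed columns (tab-separated): qseqid, sseqid, pident, qcovs, bitscore.
    """
    best: dict[str, tuple[float, str]] = {}
    for row in csv.reader(io.StringIO(blast_tsv), delimiter="\t"):
        if len(row) < 5:
            continue  # skip blank or malformed lines
        query, subject = row[0], row[1]
        identity, coverage, bitscore = float(row[2]), float(row[3]), float(row[4])
        if identity < MIN_IDENTITY or coverage < MIN_COVERAGE:
            continue
        if query not in best or bitscore > best[query][0]:
            best[query] = (bitscore, subject)
    return {query: subject for query, (_, subject) in best.items()}


# Hypothetical hits: only geneA has a hit passing both thresholds.
EXAMPLE_HITS = "\n".join([
    "geneA\tBSU_0001\t55.2\t91\t310",
    "geneA\tBSU_0002\t42.0\t75\t120",
    "geneB\tBSU_0003\t38.5\t95\t200",   # identity too low
    "geneC\tSA_0004\t61.0\t64\t250",    # coverage too low
])
homologs = filter_homologs(EXAMPLE_HITS)   # {"geneA": "BSU_0001"}
```

The resulting query-to-reference mapping is what would be used to transfer GPR associations from the template model into the draft.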

Protocol 2: Manual Curation and Gap Analysis

- Gap Identification: Use computational tools like the `gapAnalysis` function in the COBRA Toolbox to detect metabolic gaps that prevent the synthesis of essential biomass components [3].

- Gap Filling: Systematically add missing biochemical reactions based on literature evidence, transporter annotations from the Transporter Classification Database (TCDB), and new gene functions assigned via BLASTp against UniProtKB/Swiss-Prot [3].

- Mass and Charge Balancing: Verify and correct all reactions to ensure mass and charge conservation using the `checkMassChargeBalance` program, adding H2O or H+ as necessary to balance equations [3].

- Biomass Composition Definition: Compile organism-specific biomass composition data including percentages of proteins, DNA, RNA, lipids, and other cellular components based on experimental measurements or phylogenetic inference from closely related organisms [3].
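
The idea behind the mass and charge balancing step can be shown with a standalone check. This is a simplified sketch rather than the COBRA Toolbox routine: the helper names are hypothetical, formulas are assumed to be simple element-count strings without parentheses, and the hexokinase example uses charged formulas in the style of common model databases.

```python
import re
from collections import Counter


def parse_formula(formula: str) -> Counter:
    """Count atoms in a simple formula such as 'C6H12O6' (no parentheses)."""
    counts = Counter()
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] += int(number) if number else 1
    return counts


def reaction_imbalance(stoichiometry, formulas, charges):
    """Return net element and charge totals (products minus substrates).

    An empty dict means the reaction is mass- and charge-balanced.
    """
    totals = {}
    for metabolite, coefficient in stoichiometry.items():
        for element, count in parse_formula(formulas[metabolite]).items():
            totals[element] = totals.get(element, 0) + coefficient * count
        totals["charge"] = totals.get("charge", 0) + coefficient * charges[metabolite]
    return {key: value for key, value in totals.items() if value != 0}


FORMULAS = {"glc": "C6H12O6", "atp": "C10H12N5O13P3", "g6p": "C6H11O9P",
            "adp": "C10H12N5O10P2", "h": "H"}
CHARGES = {"glc": 0, "atp": -4, "g6p": -2, "adp": -3, "h": 1}

# Hexokinase written with and without the proton on the product side.
with_proton = {"glc": -1, "atp": -1, "g6p": 1, "adp": 1, "h": 1}
without_proton = {"glc": -1, "atp": -1, "g6p": 1, "adp": 1}

balanced = reaction_imbalance(with_proton, FORMULAS, CHARGES)       # {}
missing_h = reaction_imbalance(without_proton, FORMULAS, CHARGES)   # {"H": -1, "charge": -1}
```

The second result shows the typical signature of a missing proton: one hydrogen and one unit of charge short on the product side, which is exactly the kind of imbalance corrected by adding H+.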

Protocol 3: Model Simulation and Validation

- Implementation: Employ mathematical optimization solvers like GUROBI through the MATLAB interface to perform flux balance analysis (FBA) simulations [3].

- Growth Condition Testing: Simulate growth phenotypes under different nutrient conditions by constraining the model with appropriate uptake rates for carbon, nitrogen, phosphorus, and sulfur sources [3].

- Gene Essentiality Analysis: Predict essential genes by sequentially setting the flux of reactions corresponding to each gene to zero and calculating the growth ratio (grRatio). Genes with grRatio <0.01 are classified as essential [3].

- Experimental Validation: Compare model predictions with experimental growth assays in chemically defined media with controlled nutrient availability, measuring optical density at 600 nm over time to determine growth rates [3].
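
The essentiality criterion in the protocol above (grRatio < 0.01) reduces to a simple calculation once knockout growth rates have been simulated. The sketch below assumes those growth rates are already available from FBA runs; the function name, gene names, and numbers are illustrative only.

```python
ESSENTIALITY_THRESHOLD = 0.01  # grRatio cutoff stated in the protocol


def classify_essential_genes(wild_type_growth, knockout_growth,
                             threshold=ESSENTIALITY_THRESHOLD):
    """Compute grRatio = knockout growth / wild-type growth for each gene.

    Returns the ratios and the sorted list of genes classified as essential.
    """
    if wild_type_growth <= 0:
        raise ValueError("Wild-type growth rate must be positive")
    ratios = {gene: rate / wild_type_growth for gene, rate in knockout_growth.items()}
    essential = sorted(gene for gene, ratio in ratios.items() if ratio < threshold)
    return ratios, essential


# Hypothetical simulated growth rates (1/h) for three single-gene knockouts.
ratios, essential = classify_essential_genes(
    wild_type_growth=0.80,
    knockout_growth={"geneA": 0.0, "geneB": 0.79, "geneC": 0.004},
)
# essential == ["geneA", "geneC"]
```

Comparing the `essential` list against experimentally determined essential genes gives the accuracy metrics used in the validation step.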

Figure 2: GEM reconstruction workflow from genome annotation to validated model.

Successful development and application of GEMs requires specialized computational tools, databases, and analytical frameworks.

Table 3: Essential Research Reagents and Computational Tools for GEM Development

| Resource Category | Specific Tools/Databases | Primary Function |
|---|---|---|
| Annotation Platforms | RAST, KEGG, UniProtKB/Swiss-Prot | Automated genome annotation and functional prediction |
| Reconstruction Software | ModelSEED, RAVEN Toolbox, CarveMe | Automated draft model generation from genomic data |
| Simulation Environments | COBRA Toolbox, COBRApy, GUROBI solver | Constraint-based modeling and flux analysis |
| Biochemical Databases | TCDB, BRENDA, MetaCyc | Reaction kinetics, transporter classification, pathway information |
| Reference Models | E. coli iML1515, S. cerevisiae Yeast 8, Human1 | High-quality templates for homology-based reconstruction |
| Analysis Methods | iMAT, FBA, dFBA, MTSA | Context-specific model extraction and simulation |

Applications in Strain Design and Biotechnology

GEMs have revolutionized strain design by enabling systematic prediction of genetic modifications that enhance production of target compounds while maintaining cellular viability.

Industrial Biotechnology Applications

- Bio-based Chemical Production: GEMs successfully predict gene knockout targets that redirect metabolic flux toward industrially valuable compounds. For E. coli, model-driven interventions have enhanced yields of biofuels, bioplastics, and pharmaceutical precursors [2].

- Amino Acid Production: In Corynebacterium glutamicum, GEMs identified amplification targets in the L-lysine biosynthetic pathway, resulting in industrial strains with significantly improved production efficiency [2].

- Enzyme and Protein Synthesis: Bacillus subtilis GEMs (iBsu1144) simulated the effects of oxygen transfer rates on serine alkaline protease and recombinant protein production, guiding bioprocess optimization [2].

Metabolic Engineering Methodologies

- OptKnock Algorithm: Identifies gene deletion strategies that couple growth with chemical production by solving a bi-level optimization problem [2].

- Flux Scanning: Systematically scans reaction fluxes to identify potential overexpression or knockout targets that enhance product yield [2].

- Thermodynamic Analysis: Incorporates Gibbs free energy calculations to eliminate thermodynamically infeasible flux distributions and improve prediction accuracy [2].

Advanced Applications and Future Directions

The continued evolution of GEMs has enabled increasingly sophisticated applications across biological research and biotechnology.

Multi-Strain and Community Modeling

- Pan-genome Analysis: Multi-strain GEMs capture metabolic diversity across different isolates of the same species, revealing strain-specific metabolic capabilities and niche adaptations [1].

- Host-Pathogen Interactions: Integrated models of Mycobacterium tuberculosis and human alveolar macrophages simulate metabolic interactions during infection, identifying potential therapeutic targets [2].

- Microbiome Modeling: Community GEMs reconstruct metabolic interactions in complex microbial ecosystems, enabling prediction of community stability and metabolic cross-feeding [1].

Integration with Machine Learning and Multi-omics Data

- Context-Specific Model Extraction: Methods like iMAT (Integrative Metabolic Analysis Tool) construct tissue- or condition-specific models by integrating transcriptomic data with global reconstructions [4].

- Metabolic Biomarker Discovery: Machine learning classifiers (e.g., random forest) combined with GEMs identify metabolic signatures that distinguish between physiological states, such as healthy versus cancerous tissues [4].

- Thermodynamic Vulnerability Analysis: Novel approaches like Metabolic Thermodynamic Sensitivity Analysis (MTSA) assess temperature-dependent metabolic vulnerabilities in pathological states [4].

Genome-scale metabolic models represent a mature computational framework for decoding the complex relationships between genotype and metabolic phenotype. Their structured composition—integrating genes, proteins, reactions, and metabolites within a stoichiometric matrix—enables predictive simulation of metabolic behavior under various genetic and environmental conditions. As reconstruction methodologies continue to advance through improved automation and curation, and as applications expand through integration with machine learning and multi-omics data, GEMs will play an increasingly central role in strain engineering, drug discovery, and fundamental biological research. The ongoing development of more sophisticated modeling frameworks, including those accounting for metabolic regulation, protein allocation constraints, and multi-cellular interactions, promises to further enhance their predictive power and biomedical relevance.

Genome-scale metabolic reconstructions (GENREs) are structured knowledge-bases that consolidate existing biochemical, genetic, and genomic information about an organism's metabolism into a mathematical model [5]. These reconstructions represent the biochemical reactions occurring within a cell, their association with gene products, and the relationships between these reactions and metabolic pathways. The process of reconstructing a metabolic network begins with annotated genomic data and progresses through iterative stages of refinement and validation to produce a computational model capable of predicting metabolic behavior under various conditions.

In the context of strain design for industrial biotechnology, metabolic reconstructions have become indispensable tools. They enable systematic analysis of cellular metabolism and guide rational strain design, thereby reducing experimental trial-and-error [6]. For instance, metabolic models have successfully identified genetic interventions to enhance production of compounds like succinic acid in Yarrowia lipolytica and Escherichia coli [6] [7]. The development of resources like the APOLLO database, which contains 247,092 microbial genome-scale metabolic reconstructions spanning 19 phyla, demonstrates the increasing scope and application of these models in studying diet-host-microbiome-disease interactions [8].

The Reconstruction Pipeline: A Step-by-Step Methodology

The reconstruction of a metabolic network follows a systematic, iterative process that transforms genomic information into a predictive computational model.

Automated Draft Generation

The initial phase involves creating a draft reconstruction from an annotated genome:

- Genome Annotation: The process begins with identifying all metabolic genes within the organism's genome and determining their functional roles, including enzyme commission numbers and association with specific biochemical reactions.

- Reaction Database Mining: Annotated genes are linked to biochemical reactions through databases such as KEGG, MetaCyc, and BiGG. This step establishes the biochemical transformation network that the organism can potentially catalyze.

- Compartmentalization Assignment: Reactions are assigned to specific subcellular locations (e.g., cytosol, mitochondria, peroxisome) based on localization signals and experimental evidence, creating an intracellular topological map of metabolism.

Table 1: Key Databases for Metabolic Network Reconstruction

| Database Name | Primary Content | Application in Reconstruction |
|---|---|---|
| KEGG | Pathway maps, reaction modules | Draft network generation, pathway completeness verification |
| MetaCyc | Curated metabolic pathways and enzymes | Reaction stoichiometry, thermodynamic data |
| BiGG Models | Curated genome-scale metabolic models | Reaction identifiers, compartmentalization |
| UniProt | Protein functional information | Gene-protein-reaction associations |
| ModelSEED | Biochemical reaction database | Automated draft reconstruction |

For organisms with well-characterized close relatives, a scaffold-based approach can be employed, leveraging existing curated models of phylogenetically close organisms as templates. This method uses orthology-based model transfer, wherein a well-curated GENRE serves as a scaffold for generating a draft model of the target organism based on gene homology and functional conservation [6].

Manual Curation and Network Refinement

The automated draft reconstruction requires extensive manual curation to ensure biological fidelity:

- Gap Analysis: Identification of metabolic gaps, that is, metabolites that are produced but never consumed (or consumed but never produced), which indicate missing reactions or transport processes.

- Literature Mining: Comprehensive review of organism-specific biochemical literature to validate reaction presence, determine directionality, and establish tissue-specific or condition-specific metabolic capabilities.

- Gene-Protein-Reaction (GPR) Association Refinement: Establishment of logical relationships between genes, their protein products, and the reactions they catalyze, including complex isozyme and subunit relationships.

- Biomass Composition Definition: Quantification of the molecular components required for cell growth, including amino acids, nucleotides, lipids, cofactors, and their stoichiometric proportions.

Mathematical Formulation and Model Validation

The curated metabolic network is converted into a mathematical format for computational analysis:

- Stoichiometric Matrix Construction: The network is represented as an m×n stoichiometric matrix S, where m represents metabolites and n represents reactions. Each element Sij corresponds to the stoichiometric coefficient of metabolite i in reaction j.

- Constraint Implementation: Physical and biochemical constraints are applied, including mass balance (S·v = 0), capacity constraints (vmin ≤ v ≤ vmax), and thermodynamic constraints.

- Model Validation: The reconstruction is validated by testing its ability to predict known physiological functions, such as growth capability on different nutrient sources, essential gene requirements, and metabolic secretion profiles under various conditions.

The following diagram illustrates the comprehensive workflow for metabolic network reconstruction:

Network Analysis and Simulation Techniques

Once reconstructed, metabolic networks can be simulated using various computational techniques to predict physiological behavior and metabolic capabilities.

Constraint-Based Reconstruction and Analysis (COBRA)

The COBRA methodology provides a framework for simulating metabolic networks under physiological constraints:

- Flux Balance Analysis (FBA): This fundamental COBRA technique calculates flux distributions in metabolic networks by optimizing an objective function (e.g., biomass production) subject to mass balance and capacity constraints. FBA assumes metabolic steady-state and utilizes linear programming to identify optimal reaction fluxes [5].

- Gene Deletion Analysis: Simulation of knockout mutants to identify essential genes and reactions, providing insights into network robustness and potential drug targets.

- Pathway Analysis: Identification of elementary flux modes or extreme pathways representing minimal functional metabolic units.
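
The FBA problem described above can be written compactly as a linear program. In this formulation, S and v are the stoichiometric matrix and flux vector used throughout this guide, and v_min and v_max are the capacity bounds; the objective weight vector c is notation introduced here for illustration (for growth prediction it would typically have a 1 at the biomass reaction and 0 elsewhere).

```latex
\begin{aligned}
\max_{v} \quad & c^{\mathsf{T}} v \\
\text{subject to} \quad & S\,v = 0, \\
& v_{\min} \le v \le v_{\max}
\end{aligned}
```

Because both the objective and the constraints are linear in v, any standard linear programming solver can find an optimal flux distribution, although that optimum is generally not unique.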

Integration of Omics Data

Modern reconstruction efforts increasingly incorporate multi-omics data to create condition-specific models:

- Transcriptomic Integration: Methods like E-Flux and GIMME use gene expression data to constrain reaction fluxes, elevating or lowering flux bounds based on expression levels [5].

- Proteomic Constraints: Approaches such as GECKO incorporate enzyme abundance data and catalytic rates to impose enzyme capacity constraints on metabolic fluxes [5].

- Metabolomic Data Integration: Thermodynamic metabolic flux analysis utilizes metabolite concentration data to determine reaction directionality and thermodynamic driving forces.

- Fluxomic Validation: 13C metabolic flux analysis data provides direct measurements of intracellular fluxes for model validation and refinement [5].
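
The idea of adjusting flux bounds from expression data can be sketched in a few lines. This is a deliberately simplified illustration inspired by expression-based bounding, not the published E-Flux or GIMME algorithm: it assumes a single expression value has already been assigned to each reaction (for example via its GPR rule), and the function and reaction names are hypothetical.

```python
def scale_upper_bounds(upper_bounds, expression):
    """Scale each reaction's upper flux bound by its relative expression level.

    Reactions with the highest expression keep their full bound; reactions
    without expression data are left unchanged (an assumption of this sketch).
    """
    max_expression = max(expression.values())
    if max_expression <= 0:
        raise ValueError("At least one expression value must be positive")
    scaled = {}
    for reaction, bound in upper_bounds.items():
        if reaction in expression:
            scaled[reaction] = bound * expression[reaction] / max_expression
        else:
            scaled[reaction] = bound
    return scaled


# Hypothetical bounds (mmol/gDW/h) and reaction-level expression values.
bounds = {"PGI": 1000.0, "PFK": 1000.0, "EX_glc": 10.0}
expression = {"PGI": 50.0, "PFK": 200.0}
constrained = scale_upper_bounds(bounds, expression)
# {"PGI": 250.0, "PFK": 1000.0, "EX_glc": 10.0}
```

The tightened bounds would then replace the default capacity constraints before running FBA, producing a condition-specific flux prediction.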

Table 2: Computational Tools for Metabolic Network Analysis

| Tool Name | Methodology | Primary Application |
|---|---|---|
| OptFlux | Flux Balance Analysis | Strain design optimization |
| COBRA Toolbox | Constraint-Based Modeling | Multi-purpose metabolic analysis |
| OptKnock | Bilevel Optimization | Growth-coupled production strain design |
| GIMME | Expression Data Integration | Condition-specific model creation |
| iBioSim | SBML Model Analysis | Petri net conversion and simulation [9] |

Applications in Strain Design and Engineering

Metabolic reconstructions provide powerful platforms for systematic strain design in metabolic engineering.

Model-Guided Strain Optimization

GENREs enable identification of genetic modifications that enhance production of target compounds:

- Growth-Coupled Production: Algorithms like OptKnock identify reaction knockouts that couple production of the desired compound to cellular growth, so that the cell must make the product in order to grow [7].

- Metabolic Engineering Strategies: The OptDesign framework selects regulation candidates based on flux differences between wild-type and production strains, then computes optimal design strategies combining regulation and knockout manipulations [7].

- Redox and Energy Balancing: Models identify cofactor imbalances and suggest interventions to optimize energy metabolism and redox state.
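
The bilevel structure behind OptKnock-style growth coupling can be summarized as follows. This is a schematic of the commonly cited formulation rather than a complete specification: the binary variables y_j (0 if reaction j is knocked out, 1 otherwise) and the knockout budget K are notation introduced here, and practical implementations add further constraints such as a minimum growth requirement.

```latex
\begin{aligned}
\max_{y} \quad & v_{\text{product}} \\
\text{subject to} \quad
& \left[
\begin{aligned}
\max_{v} \quad & v_{\text{biomass}} \\
\text{subject to} \quad & S\,v = 0, \\
& v_{\min,j}\, y_j \le v_j \le v_{\max,j}\, y_j \quad \forall j
\end{aligned}
\right] \\
& \sum_{j} (1 - y_j) \le K, \qquad y_j \in \{0, 1\}
\end{aligned}
```

The inner problem represents the cell maximizing its own growth, while the outer problem chooses which reactions to remove so that this growth-optimal state also carries a high product flux.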

A notable application includes the reconstruction of a GENRE for Yarrowia lipolytica strain W29 (iWT634), which contains 634 metabolic genes, 1130 metabolites, and 1364 reactions distributed across eight cellular compartments. This model successfully identified succinate dehydrogenase (SDH) and acetate production (ACH) as key knockout targets to improve succinic acid production, predicting a production rate of 4.36 mmol/gDW/h without compromising growth [6].

Multi-Omics Integration in the DBTL Cycle

Metabolic reconstructions form the computational core of the Design-Build-Test-Learn (DBTL) cycle in modern metabolic engineering:

- Learn Phase: Computational techniques interpret multi-omics datasets from engineered strains to understand mechanisms driving phenotypic changes [5].

- Design Phase: Model-aided design integrates experimental data to generate effective and non-intuitive genetic intervention strategies.

- Predictive Modeling: Advanced methods augment flux balance analysis with constraints from fluxomic, genomic, and metabolomic datasets to improve prediction accuracy [5].

The following diagram illustrates how metabolic network reconstruction integrates with the strain engineering DBTL cycle:

Table 3: Research Reagent Solutions for Metabolic Reconstruction

| Resource Category | Specific Tools | Function in Reconstruction Process |
|---|---|---|
| Reconstruction Software | ModelSEED, RAVEN, CarveMe | Automated draft reconstruction from annotated genomes |
| Simulation Environments | COBRA Toolbox, OptFlux, COBRApy | Constraint-based analysis and flux simulation |
| Data Integration Tools | GECKO, iMAT, INIT | Incorporation of omics data into metabolic models |
| Strain Design Algorithms | OptKnock, OptForce, OptDesign | Identification of genetic interventions for strain improvement [7] |
| Model Exchange Formats | SBML, SBOL, Petri Net markup | Standardized representation and sharing of models [9] |
| Quality Assessment | MEMOTE, SEMPRE | Systematic evaluation of model quality and completeness |

The field of metabolic reconstruction continues to evolve with several emerging trends:

- Machine Learning Integration: Predictive modeling of metabolic behavior using machine learning approaches trained on multi-omics data and existing reconstruction databases [5].

- Multi-Tissue and Community Modeling: Development of metabolic models for complex systems, including human tissues and microbial communities, enabled by resources like the APOLLO database of 247,092 microbial reconstructions [8].

- Kinetic Model Incorporation: Integration of enzymatic kinetic parameters to create more predictive, dynamic models that can simulate metabolic responses to perturbations.

- Automated Curation Tools: Development of artificial intelligence systems to accelerate the manual curation process through natural language processing of scientific literature.

In conclusion, genome-scale metabolic reconstruction provides a critical bridge between genomic information and predictive models of metabolic function. Through systematic reconstruction pipelines, sophisticated analysis techniques, and integration with experimental data, these models have become indispensable tools for strain design in biotechnology and pharmaceutical applications. As reconstruction methods continue to advance, they will enable increasingly sophisticated engineering of biological systems for chemical production, therapeutic development, and fundamental understanding of cellular physiology.

Genome-scale metabolic modeling has emerged as a cornerstone of modern metabolic engineering and strain design research. These computational models enable researchers to predict the metabolic behavior of microorganisms under various genetic and environmental conditions, significantly accelerating the development of industrial biotechnology strains. The construction and refinement of these models rely heavily on curated metabolic databases that provide essential information about biochemical reactions, metabolic pathways, gene-protein-reaction relationships, and metabolite properties. Among the numerous available resources, KEGG, MetaCyc, BiGG, and Model SEED have established themselves as fundamental tools for systems biologists and metabolic engineers. This whitepaper provides an in-depth technical analysis of these four core databases, focusing on their distinctive features, data structures, and applications in genome-scale metabolic modeling for strain design research.

Core Characteristics and Applications

Table 1: Fundamental Characteristics of Metabolic Databases

| Database | Primary Focus | Data Curation Approach | Organism Coverage | Key Applications in Strain Design |
|---|---|---|---|---|
| KEGG | Molecular interaction networks, pathways, and genomes [10] | Manual and computational curation [10] | Extensive (~1,200 species in MicrobesFlux implementation) [11] | Draft network generation, pathway analysis, enzyme function annotation |
| MetaCyc | Experimentally elucidated metabolic pathways [12] [13] | Literature-based manual curation [13] [14] | 3,443 different organisms [13] | Reference for pathway prediction, metabolic engineering, enzyme database |
| BiGG | Genome-scale metabolic network reconstructions [15] [16] | Manual curation of organism-specific models [16] | 7+ curated models (in 2010) [16] | Constraint-based modeling, flux analysis, model standardization |
| Model SEED | Automated generation of metabolic models [11] | Computational prediction based on RAST annotations [11] | ~5,000 genomes [11] | High-throughput model generation, gap-filling, initial model drafts |

Quantitative Database Content

Table 2: Comparative Quantitative Content of Metabolic Databases

| Database | Pathways | Reactions | Metabolites | Organism-Specific Models |
|---|---|---|---|---|
| KEGG | Comprehensive collection of pathway maps [10] | Not explicitly quantified | Not explicitly quantified | Organism-specific pathways generated via KO conversion [10] |
| MetaCyc | 3,128 metabolic pathways (manually curated) [13] | 18,819 enzymatic reactions [13] | Not explicitly quantified | Used to generate >5,700 organism-specific PGDBs in BioCyc [14] |
| BiGG | Integrated into published genome-scale metabolic networks [16] | 10,000+ reactions across models [17] | 5,000+ metabolites across models [17] | 7+ integrated genome-scale metabolic reconstructions [16] |
| Model SEED | Automatically predicted based on annotations | Automatically generated | Automatically generated | ~5,000 organisms [11] |

Technical Specifications and Data Structure

Table 3: Technical Specifications and Data Access

| Database | Identifier System | Export Formats | Programming Interface | Update Frequency |
|---|---|---|---|---|
| KEGG | KEGG Orthology (KO) numbers, EC numbers, Reaction IDs [10] | KGML, custom text formats | KEGG API, Web services [10] | Regular updates (last noted: November 2025) [10] |
| MetaCyc | MetaCyc IDs, links to UniProt, CAS, etc. [13] | SBML, Pathway Tools data files [13] | Pathway Tools APIs (Python, Java, Perl, Lisp) [13] | Continuous curation [13] |
| BiGG | Standardized BiGG IDs for reactions, metabolites, genes [15] [16] | SBML, MAT, JSON [15] | Web API [15] | As new reconstructions are added [15] |
| Model SEED | Model SEED identifiers | SBML [11] | Web interface [11] | With RAST annotation updates [11] |

Detailed Database Architectures and Applications

KEGG (Kyoto Encyclopedia of Genes and Genomes)

KEGG serves as a comprehensive resource integrating sixteen main databases categorized into systems information, genomic information, and chemical information [10]. Its pathway database consists of manually drawn pathway maps representing molecular interaction, reaction, and relation networks. A critical feature for strain design is KEGG's pathway classification system, which includes metabolism, genetic information processing, environmental information processing, cellular processes, organismal systems, human diseases, and drug development [10].

KEGG employs a sophisticated identifier system where each pathway map is identified by a combination of 2-4 letter prefix code and 5-digit number. The prefix indicates the pathway type: "map" for reference pathway, "ko" for pathway highlighting KOs, "ec" for metabolic pathway highlighting EC numbers, "rn" for reference metabolic pathway highlighting reactions, and organism codes for organism-specific pathways [10]. This systematic approach enables researchers to precisely track metabolic capabilities across organisms.
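
The identifier scheme described above lends itself to a small parser. The sketch below splits a pathway identifier into its prefix and five-digit number and reports the pathway type; the function name and the assumption that prefixes are lowercase letters are illustrative choices, not part of any KEGG client library.

```python
import re

# Prefix meanings as listed in the text; any other prefix is treated
# as an organism code.
PREFIX_MEANING = {
    "map": "reference pathway",
    "ko": "pathway highlighting KOs",
    "ec": "metabolic pathway highlighting EC numbers",
    "rn": "reference metabolic pathway highlighting reactions",
}

# 2-4 lowercase letters followed by exactly five digits.
PATHWAY_ID = re.compile(r"^([a-z]{2,4})(\d{5})$")


def parse_pathway_id(pathway_id: str) -> tuple[str, str, str]:
    """Split a KEGG pathway ID into (prefix, number, pathway type)."""
    match = PATHWAY_ID.match(pathway_id)
    if match is None:
        raise ValueError(f"Not a valid pathway identifier: {pathway_id!r}")
    prefix, number = match.groups()
    kind = PREFIX_MEANING.get(prefix, "organism-specific pathway")
    return prefix, number, kind


reference = parse_pathway_id("map00010")   # ("map", "00010", "reference pathway")
organism = parse_pathway_id("eco00010")    # ("eco", "00010", "organism-specific pathway")
```

Because the five-digit number is shared across prefixes, the same pathway can be compared between the reference map and any organism-specific version by matching on the number alone.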

For strain design applications, KEGG facilitates the identification of conserved metabolic modules and orthologous enzyme functions. The database enables researchers to compare metabolic pathways across different microorganisms, identify potential heterologous pathways for engineering, and pinpoint gene knock-in/knock-out targets. Tools like MicrobesFlux leverage KEGG to automatically generate metabolic models for approximately 1,200 microorganisms by downloading metabolic networks from KEGG and converting them to metabolic model drafts [11].

MetaCyc

MetaCyc distinguishes itself through its rigorous literature-based curation process, focusing exclusively on experimentally elucidated metabolic pathways [13]. This evidence-based approach makes it particularly valuable for strain design projects requiring high-confidence biochemical data. The curation process captures extensive information including pathway summaries, taxonomic range, key reactions, enzyme kinetics, substrate specificity, optimal pH and temperature, and literature citations [13].

The database architecture inter-relates information about pathways, reactions, compounds, proteins, and genes, with each object name serving as a hyperlink to detailed description pages [13]. This interconnected structure enables efficient navigation through complex metabolic networks, which is crucial when designing novel metabolic routes in engineered strains.

For strain design, MetaCyc serves four primary functions: (1) as a reference database for computationally predicting metabolic networks from sequenced genomes using tools like PathoLogic; (2) as an encyclopedic reference on pathways and enzymes for educational and basic research purposes; (3) as a resource for metabolic engineers seeking well-characterized enzymes and pathways for genetic engineering; and (4) as a metabolite database that aids metabolomics research through its collection of metabolites with full structure and monoisotopic mass data [13] [18].

MetaCyc is particularly valuable for identifying non-native pathways that can be introduced into production hosts. For example, when engineering a microorganism to produce a compound not naturally synthesized by the host, researchers can query MetaCyc to identify all known biosynthetic routes for the target compound across different organisms, along with the specific enzymes required for each route [18].

BiGG Models

BiGG Models represents a knowledgebase of genome-scale metabolic network reconstructions that are biochemically, genetically, and genomically structured [15] [16]. Unlike KEGG and MetaCyc, which focus on biochemical pathways, BiGG specializes in constraint-based metabolic models that are ready for computational analysis. The database integrates multiple published genome-scale metabolic networks into a single resource with standardized nomenclature, enabling direct comparison of metabolic components across different organisms [16].

A fundamental feature of BiGG is its representation of Gene-Protein-Reaction (GPR) associations using Boolean logic. These associations define how genes encode proteins that catalyze metabolic reactions, describing relationships such as enzyme complexes (AND relationships) and isozymes (OR relationships) [16]. This structured representation is essential for predicting the metabolic consequences of gene knockouts and other genetic modifications in strain design projects.
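As a rough illustration of how Boolean GPR rules translate gene deletions into reaction losses, the sketch below evaluates a rule string against a set of knocked-out genes. It is not BiGG's or any toolbox's implementation. It assumes rules are written with lowercase `and`/`or` and that gene IDs are valid Python identifiers, and the gene labels used are hypothetical.

```python
import ast


def reaction_is_active(gpr_rule: str, knocked_out: set) -> bool:
    """Evaluate a Boolean GPR rule given a set of deleted gene IDs.

    "and" joins subunits of an enzyme complex (all are required);
    "or" joins isozymes (any one is sufficient).
    """
    if not gpr_rule.strip():
        # No gene association (e.g. a spontaneous reaction): stays active.
        return True

    def evaluate(node):
        if isinstance(node, ast.BoolOp):
            values = [evaluate(child) for child in node.values]
            return all(values) if isinstance(node.op, ast.And) else any(values)
        if isinstance(node, ast.Name):
            # A gene contributes True unless it has been knocked out.
            return node.id not in knocked_out
        raise ValueError(f"unsupported element in GPR rule: {ast.dump(node)}")

    return evaluate(ast.parse(gpr_rule, mode="eval").body)


# Hypothetical rule: a two-subunit complex, or a single isozyme.
rule = "(subunitA and subunitB) or isozymeC"
print(reaction_is_active(rule, {"subunitA"}))              # isozyme still present
print(reaction_is_active(rule, {"subunitA", "isozymeC"}))  # both routes lost
```

In a constraint-based simulation, a reaction whose rule evaluates to inactive would typically have both flux bounds set to zero before the model is re-optimized.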

BiGG supports flux balance analysis (FBA) and related constraint-based modeling techniques by providing stoichiometrically balanced models with well-defined compartmentalization, reaction bounds, and biomass objectives [16]. The models in BiGG undergo extensive manual curation and testing to ensure biological functionality, including gap analysis to identify dead-end metabolites and validation through growth prediction under different conditions [16].

For strain design, BiGG enables researchers to: (1) simulate the metabolic impact of gene knockouts before experimental implementation; (2) predict maximum theoretical yields of target metabolites; (3) identify essential genes and reactions; and (4) design optimal metabolic engineering strategies through in silico prototyping [16].

Model SEED

Model SEED represents an automated approach to genome-scale metabolic reconstruction, addressing the challenge that manual reconstruction is "slow, tedious and labor-intensive" involving "over 90 steps from assembling genome annotations to validating the metabolic model" [11]. The platform can automatically generate metabolic models for thousands of genomes based on annotations from the RAST (Rapid Annotation using Subsystem Technology) system [11].

The Model SEED pipeline begins with genome annotation, identifies metabolic reactions based on annotated genes, assembles these reactions into metabolic networks, and performs gap-filling to ensure network functionality [11]. This high-throughput approach enables researchers to quickly obtain initial metabolic models for poorly characterized organisms, which is particularly valuable when working with non-model microorganisms with industrial potential.

While automatically generated models require manual refinement for accurate predictions, they provide a valuable starting point for strain design projects targeting novel production hosts. Model SEED includes tools for comparing metabolic capabilities across multiple organisms, identifying unique metabolic features, and predicting essential metabolic functions [11].

Integrated Workflow for Strain Design

Figure: Metabolic Modeling Workflow for Strain Design. Diagram illustrating how the four databases integrate into a comprehensive metabolic modeling workflow for strain design.

Experimental Protocol: Metabolic Pathway Enrichment Analysis for Target Identification

Metabolic Pathway Enrichment Analysis (MPEA) has emerged as a powerful methodology for identifying strain engineering targets using metabolomics data [19]. The following protocol details the application of MPEA for succinate production improvement in E. coli, as demonstrated in recent research:

Objective: Identify significantly modulated metabolic pathways during succinate fermentation to prioritize genetic targets for strain improvement.

Materials and Equipment:

- E. coli production strains

- Fermentation bioreactor with monitoring capabilities

- LC-MS system (high-resolution accurate mass preferred)

- Metabolomics processing software (e.g., XCMS, MS-DIAL)

- Statistical analysis environment (R, Python)

- Metabolic databases (KEGG, MetaCyc)

Procedure:

Bioprocess Operation and Sampling:

- Conduct triplicate fermentations of E. coli succinate production process under controlled conditions

- Collect samples throughout fermentation timecourse, with emphasis on transition between growth and production phases

- Monitor extracellular metabolites (glucose, organic acids) via HPLC-UV/Vis-RI analysis

- Quench intracellular metabolism rapidly and extract metabolites for LC-MS analysis

Metabolomic Data Acquisition:

- Perform combined targeted and untargeted metabolomics using HRAM mass spectrometry

- Acquire data in both positive and negative ionization modes

- Include quality control samples (pooled quality controls) to monitor instrument performance

- Identify metabolites by matching accurate mass and retention times to databases

Data Preprocessing and Statistical Analysis:

- Process raw LC-MS data using peak detection, alignment, and normalization

- Perform univariate statistical analysis (ANOVA, t-tests) to identify significantly changing metabolites across fermentation phases

- Apply false discovery rate correction for multiple testing

- Utilize multivariate analysis (PCA, PLS-DA) to visualize metabolic trajectory

Pathway Enrichment Analysis:

- Map significantly altered metabolites to metabolic pathways using KEGG and MetaCyc databases

- Perform metabolic pathway enrichment analysis using Fisher's exact test or over-representation analysis

- Rank pathways by statistical significance (p-value with multiple test correction)

- Calculate impact factors based on pathway topology

Target Identification and Validation:

- Select significantly modulated pathways (p < 0.05) with high impact factors

- Cross-reference with literature knowledge of succinate production biochemistry

- Prioritize engineering targets based on pathway modulation and mechanistic plausibility

- Validate targets through genetic modification and subsequent fermentation analysis

Expected Results: Application of this protocol to E. coli succinate production identified three significantly modulated pathways: pentose phosphate pathway, pantothenate and CoA biosynthesis, and ascorbate and aldarate metabolism [19]. The first two pathways were consistent with previous engineering efforts, while ascorbate and aldarate metabolism represented a novel target for succinate production optimization.
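The enrichment and multiple-testing steps of the protocol can be sketched with the standard library alone. This is a minimal illustration, not the method used in [19]: it uses the one-sided hypergeometric tail (equivalent to a right-tailed Fisher's exact test) and assumes Benjamini-Hochberg as the false discovery rate procedure, which the protocol does not specify. The pathway names and counts are invented placeholders.

```python
from math import comb


def hypergeom_enrichment_p(hits_in_pathway, pathway_size, total_hits, background_size):
    """One-sided p-value P(X >= hits_in_pathway) under the hypergeometric null.

    background_size: all measured metabolites that map to any pathway
    total_hits:      how many of those are significantly altered
    pathway_size:    background metabolites belonging to this pathway
    """
    upper = min(pathway_size, total_hits)
    denom = comb(background_size, total_hits)
    tail = sum(
        comb(pathway_size, k) * comb(background_size - pathway_size, total_hits - k)
        for k in range(hits_in_pathway, upper + 1)
    )
    return tail / denom


def benjamini_hochberg(p_values):
    """Return BH-adjusted p-values (q-values) in the original input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so adjusted values stay monotone.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


# Hypothetical counts, for illustration only: (significant hits, pathway size).
pathways = {"pathway_A": (6, 20), "pathway_B": (2, 30), "pathway_C": (1, 25)}
total_hits, background = 15, 300

names = list(pathways)
p_values = [
    hypergeom_enrichment_p(hits, size, total_hits, background)
    for hits, size in pathways.values()
]
q_values = benjamini_hochberg(p_values)

# Rank pathways by raw p-value and report the corrected value alongside.
for name, p, q in sorted(zip(names, p_values, q_values), key=lambda t: t[1]):
    print(f"{name}: p={p:.3g}, q={q:.3g}")
```

The choice of background matters: restricting it to metabolites the platform can actually detect, rather than every compound in the database, avoids inflating significance.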

Research Reagent Solutions for Metabolic Engineering

Table 4: Essential Research Reagents and Resources for Metabolic Engineering Studies

| Reagent/Resource | Function/Application | Example Implementation |

|---|---|---|

| LC-MS Systems | Metabolite identification and quantification in untargeted/targeted metabolomics | High-resolution accurate mass spectrometry for succinate production monitoring [19] |

| SBML Files | Standardized model exchange between databases and simulation tools | Exporting models from BiGG for constraint-based analysis [15] [16] |

| Pathway Tools Software | PGDB creation, curation, and visualization | Editing organism-specific databases based on MetaCyc reference [13] |

| COBRA Toolbox | Constraint-based reconstruction and analysis | FBA simulation of gene knockout effects using BiGG models [16] |

| RAST Annotation Server | Automated genome annotation for draft reconstruction | Providing input annotations for Model SEED pipeline [11] |

| IPOPT Optimizer | Nonlinear optimization for constraint-based modeling | Solving flux balance problems in MicrobesFlux platform [11] |

KEGG, MetaCyc, BiGG, and Model SEED provide complementary functionalities that collectively support the entire metabolic modeling pipeline for strain design. KEGG offers extensive pathway maps and orthology information for draft reconstruction; MetaCyc provides high-confidence experimentally validated pathways for manual curation; BiGG delivers standardized, ready-to-use metabolic models for simulation; and Model SEED enables high-throughput generation of initial models for non-characterized organisms. The integration of these resources, particularly through emerging methodologies such as metabolic pathway enrichment analysis, empowers researchers to systematically identify engineering targets and optimize microbial strains for industrial biotechnology applications. As these databases continue to evolve with expanded content and improved interoperability, they will play an increasingly vital role in accelerating the design-build-test-learn cycle for next-generation bioprocess development.

Genome-scale metabolic models (GEMs) are powerful computational frameworks that represent the complete set of metabolic reactions within an organism, based on its genomic annotation. These models encapsulate the totality of metabolic functions for a given organism, connecting genetic information to phenotypic capabilities [20] [21]. GEMs are composed of a list of reactions associated with the enzymes and transporters found in a given organism's genome, connected into a comprehensive metabolic network [20]. The metabolic network in a GEM is converted into a mathematical format—a stoichiometric matrix (S matrix)—where columns represent reactions, rows represent metabolites, and each entry is the corresponding coefficient of a particular metabolite in a reaction [21].
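To make the stoichiometric matrix concrete, the following sketch builds S for a three-reaction toy network and checks whether a flux vector leaves every metabolite balanced. The network, reaction names, and helper functions are hypothetical and chosen only for illustration.

```python
# Toy network: each reaction maps metabolite -> stoichiometric coefficient
# (negative = consumed, positive = produced).
reactions = {
    "EX_A_in": {"A": 1},          # uptake:      -> A
    "A_to_B": {"A": -1, "B": 1},  # conversion: A -> B
    "EX_B_out": {"B": -1},        # secretion:  B ->
}


def build_stoichiometric_matrix(reactions):
    """Return (metabolite IDs, reaction IDs, S) with rows = metabolites."""
    metabolites = sorted({m for stoich in reactions.values() for m in stoich})
    reaction_ids = list(reactions)
    S = [[reactions[r].get(m, 0) for r in reaction_ids] for m in metabolites]
    return metabolites, reaction_ids, S


def net_production(S, fluxes):
    """Compute S . v: the net production rate of each metabolite."""
    return [sum(s_ij * v_j for s_ij, v_j in zip(row, fluxes)) for row in S]


metabolites, reaction_ids, S = build_stoichiometric_matrix(reactions)
print(S)                                # rows A, B; columns in reaction order
print(net_production(S, [10, 10, 10]))  # balanced: all zeros
print(net_production(S, [10, 5, 5]))    # A accumulates, so not a steady state
```

A flux vector is a steady-state solution only when every entry of S · v is zero, which is the mass-balance condition used by constraint-based methods.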

The primary computational method for analyzing GEMs is Flux Balance Analysis (FBA), a constraint-based optimization technique that computes metabolic flux distributions through the network by solving a linear optimization problem [22] [21]. FBA identifies optimal flux distributions that maximize or minimize an objective function (typically biomass production for growth simulations) while respecting constraints such as reaction reversibility, nutrient availability, and enzyme capacities [22]. GEMs have evolved significantly from their initial formulations, with iterative updates continually improving their predictive performance for critical model organisms [21]. Recent advancements have expanded GEM capabilities to include expression constraints and reaction thermodynamics, with models such as yETFL for S. cerevisiae incorporating enzymatic constraints, proteome allocation, and compartmentalization [22].
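In its standard textbook form, the FBA problem described above can be written as a linear program. The symbols c (objective weights, usually 1 for the biomass reaction and 0 elsewhere), v (flux vector), and the lower and upper bounds are notation introduced here for illustration; S is the stoichiometric matrix.

```latex
\begin{aligned}
\max_{v} \quad & c^{\top} v \\
\text{subject to} \quad & S v = 0, \\
& v^{\mathrm{lb}}_j \le v_j \le v^{\mathrm{ub}}_j \quad \text{for every reaction } j
\end{aligned}
```

The equality enforces steady-state mass balance, while the bounds encode reaction reversibility (a lower bound of zero for irreversible reactions), nutrient availability (bounds on exchange reactions), and capacity limits.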

Comparative Analysis of Model Organisms

Key Characteristics and Historical Development

Table 1: Evolution of Genome-Scale Metabolic Models for Key Model Organisms

| Organism | Representative Model | Reactions | Genes | Metabolites | Key Features | References |

|---|---|---|---|---|---|---|

| Bacillus subtilis | iBsu1103 | 1,437 | 1,103 | - | SEED annotations, improved reaction directionality | [23] |

| Bacillus subtilis | iBSU1209 | 1,948 | - | - | Expansion from iBsu1103 | [20] |

| Bacillus subtilis | Pan-genome model (2024) | 2,239 | 2,315 | 1,874 | Represents 481 strains, pan-genome scale | [20] |

| Saccharomyces cerevisiae | Initial reconstruction (2003) | 1,175 | 708 | 584 | First eukaryotic GEM, compartmentalization | [24] |

| Saccharomyces cerevisiae | Yeast8 | 3,991 | 1,149 | 1,326 | Comprehensive model with 14 compartments | [22] |

| Saccharomyces cerevisiae | yETFL | - | 1,393+ | 2,691 | Incorporates expression constraints and thermodynamics | [22] |

| Escherichia coli | Multiple iterations | - | - | - | Gold standard for GEM development | [21] |

Metabolic Capabilities and Experimental Validation

Model organisms are selected for their well-characterized biology, genetic tractability, and representative metabolic features. Bacillus subtilis, a Gram-positive bacterium, is known for its industrial applications and safety profile, with several strains labeled as "generally recognized as safe" (GRAS) by the FDA [20]. Its metabolism has been extensively modeled, with recent pan-genome scale models capturing the diversity across 481 strains, identifying 2,315 orthologous gene clusters, 1,874 metabolites, and 2,239 reactions [20]. The average B. subtilis strain model contains 2,175 reactions, with 92% of reactions being core features present in over 99% of strains [20].

Saccharomyces cerevisiae, as a eukaryotic model, presents unique challenges with its compartmentalized cellular structure. The yETFL model accounts for this complexity by including multiple RNA polymerases and ribosomes—specifically, RNA polymerase II for nuclear genes, mitochondrial RNA polymerase, and three distinct ribosomes (one for mitochondrial genes and two with different compositions for nuclear genes) [22]. This compartmentalization requires explicit modeling of transport steps between cellular compartments, a significant advancement beyond bacterial models [22] [24].

Escherichia coli remains the gold standard for metabolic modeling, with continuous iterative improvements to its GEMs. The methodologies developed for E. coli have served as templates for other organisms, including the ETFL formulation that efficiently integrates RNA and protein synthesis with traditional GEMs [22].

Experimental Protocols and Methodologies

Genome-Scale Metabolic Model Reconstruction

The reconstruction process for GEMs is a non-automated, iterative process that requires significant curation effort. For S. cerevisiae, the initial reconstruction involved several key steps: (1) downloading gene catalogs from KEGG metabolic pathways; (2) identifying ORF names, gene names, enzyme names, and EC numbers; (3) determining reaction stoichiometry from the Enzyme nomenclature database; (4) verifying missing genes using MIPS Comprehensive Yeast Genome Database and Saccharomyces Genome Database; (5) identifying organism-specific substrates and products; (6) determining cofactor specificity; (7) localizing reactions to appropriate cellular compartments; and (8) establishing reaction directionality [24].

For the B. subtilis pan-genome model, researchers followed the protocol of Norsigian et al., starting with gathering and re-annotating publicly available genomes, then grouping protein sequences into clusters of orthologous genes to reduce redundancy [20]. The pan-genome construction identified 20,315 orthologous gene families, which were partitioned into core features (present in >99% of strains) and accessory genes (less frequent) [20]. The resulting pan-model was gap-filled to ensure individual strains could grow in known conditions, using defined media, prior Biolog experiments, and new Biolog experiments for eight additional strains [20].
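The core/accessory partition can be sketched as a simple frequency cut-off. The function and data layout below are hypothetical and do not reproduce the pipeline used in [20]; only the >99% threshold comes from the text.

```python
def partition_pan_genome(presence, n_strains, core_threshold=0.99):
    """Split gene families into core and accessory sets.

    presence maps a gene-family ID to the set of strains that carry it.
    A family is core when it occurs in more than core_threshold of strains.
    """
    core, accessory = set(), set()
    for family, strains in presence.items():
        frequency = len(strains) / n_strains
        if frequency > core_threshold:
            core.add(family)
        else:
            accessory.add(family)
    return core, accessory


# Hypothetical presence/absence data for 200 strains.
n_strains = 200
all_strains = {f"strain_{i}" for i in range(n_strains)}
presence = {
    "family_universal": set(all_strains),                # 200/200 strains
    "family_near_universal": set(sorted(all_strains)[:199]),  # 199/200 strains
    "family_sporadic": set(sorted(all_strains)[:150]),   # 150/200 strains
}

core, accessory = partition_pan_genome(presence, n_strains)
print(sorted(core))
print(sorted(accessory))
```

The same cut-off logic applies to reactions once gene families have been mapped to the reactions they enable.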

Model Validation and Refinement Protocols

Table 2: Key Experimental Methods for Model Validation

| Method | Application | Key Outcomes | Examples |

|---|---|---|---|

| High-throughput phenotyping | Validate predicted growth capabilities | Comparison of computational predictions with experimental results | Biolog PM1 experiments for B. subtilis [20] |

| Gene essentiality studies | Test model predictions against knockout mutants | Identification of essential genes for growth | Comparison with experimental essentiality data [25] |

| Fluxomics | Measure intracellular metabolic fluxes | Validation of predicted flux distributions | Comparison with experimental flux data [22] |

| Carbon utilization experiments | Refine and validate metabolic predictions | Strain-specific nutrient utilization profiles | Experimental data for 8 B. subtilis strains [20] |

| Thermodynamic curation | Ensure thermodynamic feasibility | Gibbs free energy values for reactions | yETFL model with thermodynamic constraints [22] |

For the B. subtilis pan-genome model, experimental refinement utilized Biolog PM1 experiments to validate carbon source utilization predictions across eight strains [20]. When model predictions failed to match experimental results, gap-filling procedures were implemented: "To fill a strain-specific gap, the most common reactions from the pan-reactome were iteratively added until the model could grow, then trimmed away until a minimal set of new reactions was found" [20].
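The add-then-trim procedure quoted above can be sketched on a toy network. This is an illustration under strong simplifying assumptions, not the implementation from [20]: growth is approximated by whether a target metabolite is reachable from the medium, rather than by an FBA solution, and all reaction names and frequencies are invented.

```python
def can_grow(reactions, medium, target):
    """Toy growth test: is `target` producible from `medium` by forward expansion?"""
    available = set(medium)
    changed = True
    while changed:
        changed = False
        for substrates, products in reactions.values():
            # Fire a reaction once all substrates exist and it adds something new.
            if substrates <= available and not products <= available:
                available |= products
                changed = True
    return target in available


def gap_fill(model, candidates, frequency, medium, target):
    """Add candidates by decreasing pan-reactome frequency, then trim.

    Returns the list of added reaction IDs, or None if growth cannot be restored.
    """
    filled = dict(model)
    added = []
    for rid in sorted(candidates, key=lambda r: frequency[r], reverse=True):
        if can_grow(filled, medium, target):
            break
        filled[rid] = candidates[rid]
        added.append(rid)
    if not can_grow(filled, medium, target):
        return None
    # Trim: drop each added reaction whose removal still permits growth.
    for rid in list(added):
        trial = {k: v for k, v in filled.items() if k != rid}
        if can_grow(trial, medium, target):
            filled = trial
            added.remove(rid)
    return added


# Hypothetical strain model missing two steps on the route to biomass.
model = {"R1": ({"glc"}, {"pyr"})}
candidates = {
    "R2": ({"pyr"}, {"accoa"}),
    "R3": ({"glc"}, {"x"}),  # common in the pan-reactome but irrelevant here
    "R4": ({"accoa"}, {"biomass"}),
}
frequency = {"R2": 480, "R3": 450, "R4": 300}  # strains carrying each reaction

print(gap_fill(model, candidates, frequency, {"glc"}, "biomass"))
```

The single trimming pass yields a set from which no individual reaction can be removed, which is not necessarily the smallest possible set overall.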

The yETFL model for S. cerevisiae incorporated thermodynamic constraints using the group-contribution method to estimate Gibbs free energies of formation for metabolites and reactions [22]. This enabled thermodynamic-based flux analysis (TFA) that enforces coupling between reaction directionality and corresponding Gibbs free energy to eliminate thermodynamically infeasible predictions [22].
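The coupling between directionality and Gibbs free energy can be stated compactly. The notation below is a generic form introduced here for illustration and is not taken from the yETFL formulation: n_ij is the stoichiometric coefficient of metabolite i in reaction j, x_i its activity (approximated by concentration), R the gas constant, and T the temperature.

```latex
\Delta_r G'_j = \Delta_r G'^{\circ}_j + RT \sum_i n_{ij} \ln x_i,
\qquad
v_j > 0 \;\Rightarrow\; \Delta_r G'_j < 0,
\qquad
v_j < 0 \;\Rightarrow\; \Delta_r G'_j > 0
```

A reaction can therefore carry forward flux only when its transformed Gibbs free energy is negative within the allowed concentration ranges, which removes flux distributions that would otherwise satisfy mass balance alone.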

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents and Resources for Metabolic Modeling

| Reagent/Resource | Function | Application Examples |

|---|---|---|

| SEED annotations | Genome annotation platform | Basis for iBsu1103 B. subtilis model [23] |

| AGORA2 | Repository of curated GEMs for gut microbes | Source for 7,302 strain-level GEMs [26] |

| COBRA Toolbox | MATLAB package for constraint-based modeling | FBA and other GEM analysis methods [21] |

| COBRApy | Python package for constraint-based modeling | Alternative to MATLAB COBRA Toolbox [21] |

| Pathway Tools | Software for pathway analysis and model construction | Used in BioCyc database collection [27] |

| Non-standard amino acids | Genetic code expansion | 20 distinct nsAAs incorporated in B. subtilis [28] |

| Orthologous gene clusters | Pan-genome analysis | 2,315 clusters across 481 B. subtilis strains [20] |

Applications in Strain Design and Metabolic Engineering

Industrial and Therapeutic Applications

GEMs have enabled sophisticated metabolic engineering strategies across model organisms. For B. subtilis, metabolic models have informed engineering strategies for producing menaquinone-7, asparaginase enzyme, and riboflavin [20]. The pan-genome model specifically allows for assessing strain-to-strain differences related to nutrient utilization, fermentation outputs, and robustness, dividing B. subtilis strains into five groups with distinct metabolic behavior patterns [20].

For S. cerevisiae, GEMs have been instrumental in optimizing this eukaryote for industrial production of fuels, specialty chemicals, and therapeutic proteins [22]. The yETFL model with expression constraints enables more realistic predictions of metabolic capabilities by accounting for enzymatic and proteomic limitations [22].

A promising application is in the development of live biotherapeutic products (LBPs), where GEMs guide the selection and design of microbial strains for therapeutic use [26]. GEMs can predict strain functionality, host interactions, and microbiome compatibility, helping identify strains that produce beneficial metabolites or inhibit pathogens [26].

Genome Transfer and Synthetic Biology

B. subtilis serves as an important platform for genome transfer technologies, enabling manipulation of large DNA fragments and whole genomes [29]. The BGM (Bacillus Genome Mediated) vector system allows cloning and transfer of large genomic fragments, with methods like domino cloning enabling assembly of DNA sequences through homologous recombination [29]. These technologies are crucial for synthetic biology applications, including the transfer of entire synthetic genomes.

Figure 1: Microbial Genome Transfer Platforms. Diagram illustrating the three primary model organisms used as platforms for genome transfer technologies and their associated methods.

The field of genome-scale metabolic modeling continues to evolve with several emerging trends. Pan-genome scale modeling, as demonstrated with B. subtilis, represents a shift from single-strain to population-level metabolic representations [20] [21]. Integration of multi-omics data—including transcriptomics, proteomics, and metabolomics—into constrained models is enhancing predictive accuracy [22] [27]. For eukaryotic models, incorporation of compartmentalization and multiple expression systems (nuclear and mitochondrial) presents both challenges and opportunities for more realistic simulations [22].

Machine learning approaches are being combined with GEMs, as seen in the unsupervised clustering of B. subtilis strains into distinct functional groups based on metabolic capabilities [20]. The development of improved computational frameworks that efficiently integrate expression constraints, reaction thermodynamics, and proteome allocation will further enhance model predictive capabilities [22].

Figure 2: GEM Development Workflow. The iterative process of genome-scale metabolic model reconstruction, validation, and application.

In conclusion, E. coli, B. subtilis, and S. cerevisiae each provide unique advantages as model organisms for metabolic modeling and strain design. E. coli offers the most mature modeling infrastructure, B. subtilis provides Gram-positive representation and industrial utility, and S. cerevisiae enables eukaryotic compartmentalization studies. The continuous refinement of GEMs for these organisms, incorporating pan-genome diversity, multi-omics data, and sophisticated computational frameworks, will further enhance their utility in basic research and applied biotechnology. As these tools evolve, they will accelerate the development of novel microbial chassis for sustainable biomanufacturing, therapeutic applications, and fundamental biological discovery.

From Reconstruction to Application: Tools and Workflows for Strain Design

Genome-scale metabolic models (GEMs) are genome-wide representations of an organism's metabolism that computationally describe gene-protein-reaction associations for the organism's entire set of metabolic genes [2]. Since the first GEM, for Haemophilus influenzae, was reported in 1999, GEMs have been developed and simulated for an increasing number of organisms across all domains of life [2]. These models have become indispensable tools in systems biology and metabolic engineering, serving as platforms for integrating and analyzing various types of omics data to predict metabolic fluxes using optimization techniques like flux balance analysis (FBA) [2].

For strain design research, GEMs provide a powerful framework for predicting metabolic engineering targets that maximize the production of valuable compounds. They enable researchers to simulate the effects of genetic modifications (e.g., gene knockouts, overexpression) on metabolic capabilities and growth performance before conducting laborious laboratory experiments [30]. The reconstruction of high-quality GEMs has therefore become a critical step in rational strain design, allowing for in silico experimentation and hypothesis generation that dramatically accelerates the engineering of microbial cell factories.

The manual reconstruction of GEMs is a complex and time-consuming process that can take from several months to years [31]. To address this challenge, several automated reconstruction tools have been developed, each with its own approach, strengths, and limitations. This guide focuses on four prominent tools (CarveMe, RAVEN, Model SEED, and merlin) that represent the state of the art in genome-scale metabolic reconstruction.

Table 1: Core Characteristics of Automated Reconstruction Tools

| Tool | Primary Approach | Core Databases | Interface | License |

|---|---|---|---|---|

| CarveMe | Top-down reconstruction from universal template | BiGG | Command-line (Python) | Open-source |

| RAVEN | Semi-automated reconstruction from multiple sources | KEGG, MetaCyc, Published models | MATLAB toolbox | Open-source |

| Model SEED | Automated pipeline with integrated annotation | RAST, Model SEED database | Web interface | Open-source |

| merlin | Comprehensive manual and automatic reconstruction | KEGG, TCDB, MetaCyc | Graphical User Interface (Java) | Open-source |

These tools employ different strategies for reconstructing metabolic networks. CarveMe uses a unique top-down approach that involves creating models from a manually curated universal template, prioritizing reactions with stronger genetic evidence [32]. In contrast, RAVEN allows for semi-automated reconstruction based on protein homology using published models and/or KEGG and MetaCyc databases [33]. Model SEED provides a fully automated web-based pipeline that integrates genome annotation with model reconstruction [32], while merlin offers a balance between automated procedures and manual curation with its curation-oriented graphical interface [31].

In-Depth Tool Analysis

CarveMe

CarveMe is a command-line Python-based tool designed to create genome-scale metabolic models ready for flux balance analysis in just a few minutes [32]. Its distinctive top-down approach begins with a BiGG-based, manually curated universal template, which is subsequently "carved" out based on organism-specific genetic evidence [32]. This methodology prioritizes the incorporation of reactions with stronger genetic evidence through its own gap-filling algorithm.

The tool is particularly valued for its speed and efficiency in generating models that demonstrate performance similar to manually curated models [32]. CarveMe's command-line interface makes it suitable for automated workflows and high-throughput reconstruction projects, though it may present a steeper learning curve for users unfamiliar with command-line operations.

RAVEN Toolbox

The RAVEN (Reconstruction, Analysis and Visualization of Metabolic Networks) Toolbox is a software suite for MATLAB that enables semi-automated reconstruction of genome-scale models [33]. RAVEN can utilize published models and/or KEGG and MetaCyc databases, coupled with extensive gap-filling and quality control features [33]. The toolbox also contains methods for visualizing simulation results and omics data, along with a range of methods for performing simulations and analyzing results [33] [34].

A key strength of RAVEN is its versatility in reconstruction sources. Unlike the initial version that only supported KEGG, RAVEN 2.0 and later versions allow de novo reconstruction using MetaCyc and template models, with algorithms to merge networks from multiple databases [32]. This flexibility enables researchers to leverage the strengths of different database systems. Additionally, RAVEN includes the ftINIT algorithm for generating context-specific models from single-cell RNA-Seq data, expanding its utility for specialized applications [33].

Model SEED

Model SEED is a web resource that provides automated reconstruction of genome-scale metabolic models for both microorganisms and plants [32]. The platform integrates genome annotation performed by RAST (Rapid Annotation using Subsystem Technology) with model reconstruction, enabling users to create models in less than 10 minutes, including annotation time [32]. Users can select or create custom media conditions to be used during the gap-filling process.

The web-based interface of Model SEED makes it accessible to users without programming expertise, while the platform provides aliases and synonyms for reactions and metabolites across multiple databases, enhancing interoperability [32]. This comprehensive approach from annotation to functional model makes Model SEED particularly valuable for researchers seeking a streamlined, all-in-one solution for metabolic reconstruction.

merlin

merlin is an open-source, Java-based application that provides comprehensive features for genome-scale metabolic reconstruction, emphasizing manual curation support [31]. Since its initial release, merlin has undergone significant updates, with version 4.0 featuring deep architectural changes, improved database management, and a redesigned graphical interface that enhances user-friendliness and manual evaluation capabilities [31].

A distinctive feature of merlin is its extensive toolkit for genome functional annotation, which includes algorithms for selecting gene products and Enzyme Commission numbers from BLAST or Diamond alignment results [31]. merlin incorporates TranSyT (Transporter Systems Tracker), which uses the Transporter Classification Database (TCDB) to annotate transport systems, including information on substrates, mechanisms, and transport direction [31]. The platform also supports compartmentalization through integration with subcellular localization tools like WolfPSORT, PSORTb3, and LocTree3 [31].

Table 2: Specialized Features and Applications

| Tool | Unique Features | Best Applications | Limitations |

|---|---|---|---|

| CarveMe | Top-down approach, Rapid reconstruction, Priority on genetic evidence | High-throughput projects, Quick draft generation | Less suitable for extensive manual curation during reconstruction |

| RAVEN | MATLAB integration, Multi-database support, ftINIT for context-specific models | Integration with omics data, Systems-wide analysis | Requires MATLAB license |

| Model SEED | Integrated RAST annotation, Web-based interface, Plant and microbial models | Users without programming background, Rapid annotation to model pipeline | Less flexible for custom curation during automated process |

| merlin | Curation-oriented GUI, Transporter annotation (TranSyT), Compartmentalization tools | High-quality curated models, Eukaryotic organisms | Steeper learning curve due to extensive features |

Comparative Performance and Selection Criteria

Performance Benchmarks

Systematic assessments of genome-scale metabolic reconstruction tools have revealed that no single tool outperforms others across all evaluation criteria [32] [35]. The performance of each tool varies depending on the target organism, the completeness of genome annotation, and the intended application of the resulting model.

A comparative analysis of seven automated reconstruction tools applied to multicellular eukaryotes demonstrated that the similarity of obtained metabolic networks is highly influenced by the databases each method uses for predictions, sometimes more so than phylogenetic considerations [35]. This finding underscores the importance of selecting tools that leverage databases most relevant to the target organism.
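One simple way to quantify how similar two draft networks are is the Jaccard index over their reaction sets. This is offered as an assumption for illustration; the metric used in [35] is not stated here. The draft contents below are invented, and the comparison is only meaningful after reaction identifiers have been mapped to a common namespace.

```python
def jaccard(a, b):
    """Jaccard index |A & B| / |A | B| between two collections of IDs."""
    a, b = set(a), set(b)
    if not a and not b:
        return 1.0  # two empty networks are treated as identical
    return len(a & b) / len(a | b)


# Hypothetical drafts of the same organism built from different databases,
# with reaction IDs already translated to one shared namespace.
drafts = {
    "tool_X": {"R1", "R2", "R3", "R4"},
    "tool_Y": {"R2", "R3", "R4", "R5"},
    "tool_Z": {"R1", "R6"},
}

names = sorted(drafts)
for i, first in enumerate(names):
    for second in names[i + 1:]:
        score = jaccard(drafts[first], drafts[second])
        print(f"{first} vs {second}: {score:.2f}")
```

Low pairwise scores between tools applied to the same genome point to database-driven differences rather than biological ones.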

Tool Selection Guidelines

Choosing the appropriate reconstruction tool depends on multiple factors, including the target organism, available data, and research objectives. Based on comparative evaluations, the following guidelines emerge:

- For well-studied model organisms: Tools with template-based approaches like CarveMe may produce more reliable models by leveraging existing knowledge from related organisms [32].

- For non-model or less-studied organisms: De novo reconstruction tools like RAVEN and merlin that rely on genome annotation may be more appropriate, as they are less dependent on existing metabolic models of related organisms [31].

- For high-throughput projects: CarveMe and Model SEED offer advantages in speed and automation, generating models within minutes to hours [32].

- For high-quality curated models: merlin provides extensive curation-oriented features that support manual refinement, which is still recognized as essential for developing high-quality GEMs [31].

- For integration with omics data: RAVEN offers specialized algorithms for constructing context-specific models from transcriptomic data [33].

Integrated Workflow for Strain Design

The process of metabolic reconstruction and strain design follows a systematic workflow that integrates automated tools with manual curation and experimental validation.

[Figure: Integrated workflow for metabolic reconstruction and strain design]

Implementation Protocol

Genome Annotation and Draft Reconstruction

- Input genome sequence in FASTA format

- Annotate genome using RAST (for Model SEED) or external tools (for other platforms)

- Run automated reconstruction using selected tool(s)

- Generate draft metabolic network in SBML format

Model Curation and Refinement

- Perform gap-filling to ensure network connectivity

- Add organism-specific biomass composition

- Define transport reactions and exchange boundaries

- Validate core metabolic functionality (e.g., ATP production, growth on known substrates)
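One connectivity check that often precedes gap-filling is a search for dead-end metabolites, that is, metabolites that are produced but never consumed, or the reverse. The sketch below illustrates the idea on a hypothetical five-reaction draft network. The reaction and metabolite names are invented for illustration, and every reaction is assumed to be irreversible, which real models do not guarantee.

```python
from collections import defaultdict

# Hypothetical toy draft network: reaction id -> {metabolite: coefficient}.
# Negative coefficients are consumed, positive are produced.
# All reactions are treated as irreversible (a simplifying assumption).
reactions = {
    "EX_glc": {"glc": 1},              # uptake reaction supplying glucose
    "R1": {"glc": -1, "g6p": 1},
    "R2": {"g6p": -1, "pyr": 1},
    "R3": {"pyr": -1, "x": 1},         # 'x' is produced but has no consumer
    "EX_pyr": {"pyr": -1},             # export reaction removing pyruvate
}


def find_dead_ends(reactions):
    """Return metabolites that lack either a producing or a consuming reaction."""
    producers = defaultdict(set)
    consumers = defaultdict(set)
    for reaction_id, stoichiometry in reactions.items():
        for metabolite, coefficient in stoichiometry.items():
            if coefficient > 0:
                producers[metabolite].add(reaction_id)
            elif coefficient < 0:
                consumers[metabolite].add(reaction_id)
    metabolites = set(producers) | set(consumers)
    return {
        "only_produced": sorted(m for m in metabolites if not consumers[m]),
        "only_consumed": sorted(m for m in metabolites if not producers[m]),
    }


dead_ends = find_dead_ends(reactions)
print(dead_ends)
```

In this toy network only `x` is flagged. In a real draft model, each flagged metabolite would be a candidate for a missing reaction, a missing transporter, or an annotation error.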

Model Simulation and Strain Design

- Define objective function (e.g., biomass production, target metabolite synthesis)

- Perform flux balance analysis under relevant conditions

- Identify gene knockout targets using optimization algorithms (e.g., OptKnock)

- Predict overexpression targets to redirect metabolic flux
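Knockout simulations rely on the gene-protein-reaction (GPR) associations to decide which reactions are lost when a gene is deleted. The following sketch evaluates Boolean GPR rules for a set of knocked-out genes. The gene and reaction identifiers are hypothetical, the rule syntax (`and`, `or`, parentheses) is an assumption modeled on common conventions, and gene identifiers are assumed to be valid Python names.

```python
import ast


def gpr_active(rule, knocked_out):
    """Return True if the GPR rule is still satisfied after the knockouts."""
    if not rule.strip():
        return True  # no gene association: assume the reaction is unaffected

    def evaluate(node):
        if isinstance(node, ast.Expression):
            return evaluate(node.body)
        if isinstance(node, ast.BoolOp):
            values = [evaluate(child) for child in node.values]
            # 'and' models enzyme complexes, 'or' models isozymes
            return all(values) if isinstance(node.op, ast.And) else any(values)
        if isinstance(node, ast.Name):
            return node.id not in knocked_out
        raise ValueError(f"Unsupported GPR syntax: {rule!r}")

    return evaluate(ast.parse(rule, mode="eval"))


def blocked_reactions(gprs, knocked_out):
    """List the reactions whose GPR rule fails for the given knockouts."""
    knocked_out = set(knocked_out)
    return sorted(r for r, rule in gprs.items() if not gpr_active(rule, knocked_out))


# Hypothetical GPR rules for illustration only
gprs = {
    "R_isozyme": "g1 or g2",            # either gene product suffices
    "R_complex": "g3 and g4",           # all subunits are required
    "R_mixed": "(g5 and g6) or g7",
    "R_spontaneous": "",                # no associated gene
}

print(blocked_reactions(gprs, {"g1"}))              # isozyme g2 compensates
print(blocked_reactions(gprs, {"g3"}))              # the complex loses a subunit
print(blocked_reactions(gprs, {"g1", "g2", "g5"}))
```

Reactions returned by `blocked_reactions` would have their flux bounds set to zero before re-running FBA, which is the basic step that knockout-search algorithms such as OptKnock build on.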

Experimental Validation and Iterative Refinement

- Implement suggested genetic modifications in laboratory strains

- Measure growth phenotypes and metabolite production

- Compare experimental results with model predictions

- Refine model to improve predictive accuracy
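Comparing experimental results with model predictions is often summarized as a confusion matrix of growth versus no-growth calls. The sketch below shows one way to tabulate this. The strain names and outcomes are invented placeholders, not experimental data.

```python
def compare_growth_calls(predicted, observed):
    """Tabulate agreement between predicted and observed growth (True/False)."""
    shared = sorted(predicted.keys() & observed.keys())
    counts = {"TP": 0, "FP": 0, "TN": 0, "FN": 0}
    mismatches = []
    for strain in shared:
        p, o = predicted[strain], observed[strain]
        if p and o:
            counts["TP"] += 1
        elif p and not o:
            counts["FP"] += 1      # model predicts growth, experiment shows none
            mismatches.append(strain)
        elif o:
            counts["FN"] += 1      # model predicts no growth, strain grows
            mismatches.append(strain)
        else:
            counts["TN"] += 1
    accuracy = (counts["TP"] + counts["TN"]) / len(shared) if shared else float("nan")
    return counts, accuracy, mismatches


# Hypothetical placeholder outcomes for a wild type and four knockout strains
predicted = {"WT": True, "ko_A": True, "ko_B": False, "ko_C": False, "ko_D": True}
observed = {"WT": True, "ko_A": False, "ko_B": False, "ko_C": True, "ko_D": True}

counts, accuracy, mismatches = compare_growth_calls(predicted, observed)
print(counts, accuracy, mismatches)
```

The mismatched strains are the natural starting points for refinement: false positives can point to missing regulatory or capacity constraints, while false negatives can point to missing reactions or isozymes.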

Research Reagent Solutions

Table 3: Essential Research Reagents and Resources

| Reagent/Resource | Function in Metabolic Reconstruction | Example Sources |
|---|---|---|
| Genome Sequence (FASTA) | Primary input for all reconstruction tools | NCBI GenBank, Ensembl Bacteria [30] |
| KEGG Database | Reference for pathway information and reaction stoichiometry | KEGG PATHWAY [30] |
| MetaCyc Database | Curated metabolic pathway database | BioCyc [30] |
| BiGG Models | Curated genome-scale metabolic models | BiGG Database [32] |
| UniProt | Protein functional annotation | UniProt [30] |
| TCDB | Transporter classification and annotation | Transporter Classification Database [31] |
| SBML | Standard format for model representation | Systems Biology Markup Language [32] |

The field of automated metabolic reconstruction continues to evolve with several emerging trends. The development of pan-genome scale modeling approaches, such as pan-Draft, addresses challenges in reconstructing models for unculturable species by leveraging genetic evidence from multiple genomes within species-level clusters [36]. These approaches mitigate issues arising from incompleteness and contamination in individual metagenome-assembled genomes (MAGs) [36].

Integration of machine learning methods with traditional constraint-based approaches shows promise for improving gap-filling algorithms and predicting reaction probabilities based on genomic context [36]. Additionally, the expansion of reconstruction tools to support multicellular eukaryotes and host-pathogen systems opens new applications in biomedical research and biotechnology [35] [2].

In conclusion, CarveMe, RAVEN, Model SEED, and merlin each offer distinct advantages for genome-scale metabolic reconstruction in strain design research. The selection of an appropriate tool should be guided by the specific research context, target organism, and desired model quality. As these tools continue to mature, they will play an increasingly vital role in accelerating the design of microbial cell factories for sustainable bioproduction, drug development, and fundamental biological discovery. Researchers are encouraged to combine multiple tools and leverage their complementary strengths to generate high-quality metabolic models tailored to their specific applications.

Flux Balance Analysis (FBA) is a mathematical approach for analyzing the flow of metabolites through a metabolic network: it finds the flux distribution that optimizes a user-defined objective while satisfying user-defined constraints [37]. This computational technique has become a cornerstone of systems biology for studying genome-scale metabolic models (GEMs), which contain all known metabolic reactions in an organism and the genes that encode each enzyme [38]. FBA calculates the flow of metabolites through these metabolic networks, enabling researchers to predict organism growth rates or the production rates of biotechnologically important metabolites without requiring difficult-to-measure kinetic parameters [38]. The method is built on linear programming (LP), a well-established, general-purpose mathematical technique for solving optimization problems [37].

The power of FBA lies in its ability to analyze metabolic networks using constraints rather than kinetic parameters. This constraint-based approach differentiates FBA from theory-based models that rely on biophysical equations requiring extensive parameterization [38]. By focusing on stoichiometric balances and flux constraints, FBA can rapidly predict metabolic behaviors in large-scale networks, making it particularly valuable for metabolic engineering and strain design. The versatility of FBA is evidenced by its diverse applications, from understanding metabolic gene essentiality and stress tolerance to designing microbial cell factories [39]. As metabolic models continue to expand for numerous organisms, FBA remains an essential tool for harnessing the knowledge encoded in these comprehensive network reconstructions.

Mathematical Foundations of Flux Balance Analysis

Core Mathematical Principles

The mathematical foundation of FBA begins with the representation of metabolic reactions as a stoichiometric matrix (S) of size m × n, where m represents the number of unique metabolites and n represents the number of reactions in the network [38]. Each column in this matrix corresponds to a reaction and contains the stoichiometric coefficients of the metabolites involved, with negative coefficients indicating consumed metabolites and positive coefficients indicating produced metabolites. Reactions that do not involve a particular metabolite receive a coefficient of zero, resulting in a sparse matrix structure [38].

The fundamental equation governing FBA is the mass balance equation at steady state:

Sv = 0

In this equation, v represents the flux vector through all reactions in the network, and the steady-state assumption requires that the total production and consumption of each metabolite must balance, preventing unrealistic accumulation or depletion [37] [38]. This steady-state constraint defines a solution space containing all possible flux distributions that satisfy mass conservation. For realistic large-scale metabolic models where reactions outnumber metabolites (n > m), the system is underdetermined, meaning there is no unique solution to this system of equations [38].
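A minimal worked example can make the steady-state condition concrete. The sketch below builds S for a hypothetical three-reaction chain (uptake, conversion, export) over two metabolites and checks whether candidate flux vectors satisfy Sv = 0. The network and all names are illustrative assumptions, not part of any published model.

```python
# Hypothetical toy network with m = 2 metabolites and n = 3 reactions:
#   uptake:  -> A      convert: A -> B      export: B ->
metabolites = ["A", "B"]
reactions = {
    "uptake": {"A": 1},
    "convert": {"A": -1, "B": 1},
    "export": {"B": -1},
}


def build_stoichiometric_matrix(metabolites, reactions):
    """Rows are metabolites, columns are reactions; absent metabolites get 0."""
    return [
        [stoichiometry.get(metabolite, 0) for stoichiometry in reactions.values()]
        for metabolite in metabolites
    ]


def mass_balance(S, v):
    """Compute S v, the net production rate of each metabolite."""
    return [sum(s_ij * v_j for s_ij, v_j in zip(row, v)) for row in S]


S = build_stoichiometric_matrix(metabolites, reactions)
print(S)                             # [[1, -1, 0], [0, 1, -1]]
print(mass_balance(S, [2, 2, 2]))    # [0, 0]: steady state holds
print(mass_balance(S, [2, 1, 1]))    # [1, 0]: metabolite A accumulates
```

Because n > m here, any vector of the form (k, k, k) satisfies Sv = 0, which shows on a small scale why the mass balance alone does not pin down a unique flux distribution.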

Linear Programming Formulation

Flux Balance Analysis uses linear programming to identify specific optimal points within the constrained solution space. The LP formulation for FBA consists of three key components:

Objective function: A linear combination of fluxes represented as Z = c^T v, where c is a vector of weights indicating how much each reaction contributes to the objective [38]. Common objectives include maximizing biomass production (simulating growth) or maximizing the production of a target metabolite.

Constraints: These include the steady-state mass balance constraints (Sv = 0) and inequality constraints that impose lower and upper bounds on reaction fluxes (α_i ≤ v_i ≤ β_i) [38]. These bounds define minimum and maximum allowable fluxes based on physiological considerations.

Optimization: Linear programming algorithms identify the flux distribution that maximizes or minimizes the objective function while satisfying all constraints [37].

The general linear programming problem for FBA can be summarized as:

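$$
\begin{aligned}
\text{Maximize:} \quad & c^{T} v \\
\text{Subject to:} \quad & S v = 0 \\
\text{and:} \quad & \alpha_i \le v_i \le \beta_i \quad \text{for all reactions } i
\end{aligned}
$$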

Table 1: Key Components of the Linear Programming Problem in FBA

| Component | Mathematical Representation | Biological Interpretation |
|---|---|---|
| Decision Variables | Vector v | Flux through each metabolic reaction |
| Stoichiometric Constraints | Matrix S | Metabolic network structure |
| Mass Balance | Sv = 0 | Steady-state assumption |
| Flux Bounds | α_i ≤ v_i ≤ β_i | Physiological capacity limits |
| Objective Function | c^T v | Biological goal (e.g., growth) |
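The linear program above can be solved with any general-purpose LP solver. The following is a minimal sketch that uses SciPy's `linprog` on a hypothetical five-reaction network. The network, the bounds, and the reaction names are assumptions chosen for illustration. Dedicated packages such as COBRApy wrap the same formulation for full genome-scale models.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical toy network (not taken from any published model).
# Columns: uptake (-> A), R1 (A -> B), R2 (A -> C), biomass (B ->), export_C (C ->)
reaction_ids = ["uptake", "R1", "R2", "biomass", "export_C"]
S = np.array([
    [1, -1, -1,  0,  0],   # metabolite A
    [0,  1,  0, -1,  0],   # metabolite B
    [0,  0,  1,  0, -1],   # metabolite C
], dtype=float)

# Flux bounds (alpha_i, beta_i); uptake is limited to 10 and R1 to 6
bounds = [(0, 10), (0, 6), (0, 1000), (0, 1000), (0, 1000)]

# Objective vector c: weight 1 on the biomass reaction, 0 elsewhere
c = np.zeros(len(reaction_ids))
c[reaction_ids.index("biomass")] = 1.0

# linprog minimizes, so maximize c^T v by minimizing -c^T v
result = linprog(-c, A_eq=S, b_eq=np.zeros(S.shape[0]), bounds=bounds, method="highs")

max_biomass = -result.fun
fluxes = dict(zip(reaction_ids, result.x))
print(f"Optimal biomass flux: {max_biomass:.2f}")
print(fluxes)
```

In this toy case the capacity of R1 limits the biomass flux to 6 even though uptake allows 10. The remaining fluxes are not uniquely determined, which mirrors the alternative optima that are common in genome-scale FBA solutions.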

FBA Workflow and Computational Implementation

Core FBA Methodology

The implementation of Flux Balance Analysis follows a systematic workflow that transforms a metabolic network reconstruction into quantitative predictions of metabolic flux. The process begins with a genome-scale metabolic model (GEM) that includes all known metabolic reactions for an organism. The well-curated iML1515 model of E. coli K-12 MG1655, for instance, includes 1,515 open reading frames, 2,719 metabolic reactions, and 1,192 metabolites [40].

The key steps in performing FBA include:

Network Reconstruction: Compiling all known metabolic reactions into a stoichiometric matrix that defines the network structure [38].

Constraint Definition: Applying mass balance constraints and setting physiologically relevant flux bounds based on environmental conditions or genetic modifications [38].

Objective Specification: Defining appropriate biological objectives relevant to the research question, such as maximizing biomass production or metabolite synthesis [38].

Linear Programming Solution: Using computational algorithms to identify the optimal flux distribution that satisfies all constraints while optimizing the objective function [37].

Result Interpretation: Analyzing the flux distribution to draw biological insights and make experimental predictions.

[Figure: Core workflow of Flux Balance Analysis]

Advanced FBA Techniques

Several advanced FBA techniques have been developed to address specific research questions in strain design and metabolic engineering:

Enzyme-Constrained Modeling: Incorporating enzyme constraints ensures that fluxes through pathways are capped by enzyme availability and catalytic efficiency, avoiding arbitrarily high flux predictions. Tools like ECMpy add total enzyme constraints without altering the GEM structure, improving prediction accuracy compared to traditional FBA [40].

Lexicographic Optimization: This approach addresses situations where optimizing for a single objective (e.g., product export) leads to unrealistic biological predictions (e.g., zero growth). The model is first optimized for biomass growth and then constrained to require a percentage of that growth while optimizing for the production objective [40].

Integration with Kinetic Models: Recent advances enable the integration of kinetic pathway models with genome-scale metabolic models, allowing simulation of local nonlinear dynamics of pathway enzymes and metabolites informed by the global metabolic state predicted by FBA [41].
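The two-step procedure described above under lexicographic optimization can be sketched with two successive linear programs. The example below uses SciPy's `linprog` on a hypothetical network in which biomass and product compete for the same precursor. The network, the uptake bound, and the 30% growth fraction are illustrative assumptions, not values from the cited study.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical toy network: precursor A is split between growth and product P.
# Columns: uptake (-> A), biomass (A ->), synthesis (A -> P), export_P (P ->)
reaction_ids = ["uptake", "biomass", "synthesis", "export_P"]
S = np.array([
    [1, -1, -1,  0],   # metabolite A
    [0,  0,  1, -1],   # metabolite P
], dtype=float)
base_bounds = [(0, 10), (0, None), (0, None), (0, None)]


def maximize(target, bounds):
    """Maximize the flux through `target` subject to S v = 0 and the bounds."""
    c = np.zeros(len(reaction_ids))
    c[reaction_ids.index(target)] = -1.0   # linprog minimizes, so negate
    result = linprog(c, A_eq=S, b_eq=np.zeros(S.shape[0]), bounds=bounds, method="highs")
    if not result.success:
        raise RuntimeError(result.message)
    return result.x[reaction_ids.index(target)], result.x


# Step 1: optimize for growth alone
max_growth, _ = maximize("biomass", base_bounds)

# Step 2: require a fraction of that growth, then optimize for product export
growth_fraction = 0.3                      # assumed value for illustration
constrained_bounds = list(base_bounds)
constrained_bounds[reaction_ids.index("biomass")] = (growth_fraction * max_growth, None)
max_product, fluxes = maximize("export_P", constrained_bounds)

print(f"Maximum growth: {max_growth:.2f}")
print(f"Maximum product export at >= 30% of maximum growth: {max_product:.2f}")
```

Without the growth constraint, maximizing `export_P` in this network would route all of A to product and predict zero growth. With it, the solver keeps a growth flux of 3 and reports a product flux of 7.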

FBA Applications in Strain Design and Metabolic Engineering

Industrial and Therapeutic Strain Design

Flux Balance Analysis has become an indispensable tool for metabolic engineers seeking to design microbial strains for industrial and therapeutic applications. A representative case study involves the engineering of E. coli for L-cysteine overproduction [40]. In this application, researchers used FBA to model how mutated enzymes in L-cysteine biosynthesis pathways affect overall production and to determine optimal medium conditions. The implementation required specific modifications to the base iML1515 model, including updates to kinetic parameters and the addition of missing reactions through gap-filling methods.

Table 2: Key Modifications for L-Cysteine Overproduction Strain Design [40]

| Parameter | Gene/Reaction | Original Value | Modified Value | Rationale |

|---|---|---|---|---|

| Kcat_forward | PGCD | 20 1/s | 2000 1/s | Remove feedback inhibition |

| Kcat_forward | SERAT | 38 1/s | 101.46 1/s | Increased enzyme activity |