From Lab to Market: A Techno-Economic Framework for Engineering Industrial Microbial Strains


Abstract

This article provides a comprehensive economic and technical analysis for developing engineered microbial strains for industrial-scale production. Tailored for researchers, scientists, and drug development professionals, it synthesizes current methodologies to bridge the gap between laboratory innovation and commercial viability. The scope covers foundational economic drivers, advanced strain engineering workflows like the Design-Build-Test-Learn (DBTL) cycle, strategies for troubleshooting scale-up challenges, and robust validation through Techno-Economic Analysis (TEA) and Life Cycle Assessment (LCA). The objective is to offer an actionable framework for de-risking bioprocess development and accelerating the transition of bio-based products to market.

The Bioeconomy Imperative: Market Drivers and Economic Potential of Engineered Strains

The application of engineered microbial strains in industrial production represents a cornerstone of the modern bioeconomy. However, the strategic approach to strain design, process development, and economic optimization differs profoundly between the production of high-value therapeutics and bulk chemicals. High-value therapeutics, such as pharmaceuticals and biologics, are low-volume, high-price products for which production cost is often secondary to speed to market, precision, and efficacy. In contrast, bulk chemical production is a high-volume, low-margin business where economic viability is exquisitely sensitive to factors like feedstock cost, carbon conversion efficiency, and volumetric productivity [1]. This guide provides an objective comparison of the performance requirements and engineering paradigms for engineered strains across these two distinct sectors, framed within an economic analysis for researchers and drug development professionals.

The global chemical industry is currently navigating a complex transition. The overall bulk chemical market is substantial (projected to grow from USD 715 billion in 2025 to over USD 1,022 billion by 2035), but profit margins are under pressure from overcapacity and soft demand [2] [3]. Conversely, specialty sectors, particularly biomanufacturing for therapeutics, are experiencing robust growth. The biomanufacturing specialty chemicals market for applications like pharmaceuticals is expected to grow at a CAGR of 9.04%, roughly two and a half times the 3.64% rate projected for the overall bulk chemical market [3] [4]. This divergence is driving a strategic rebalancing of portfolios, with many companies shifting focus from commoditized base chemicals toward high-margin specialties and sustainable alternatives [2] [5].
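The growth comparison can be checked directly from the quoted market figures. The following is a minimal sketch; the function name `cagr` is illustrative, and the end value of USD 1,022.63 billion is taken from Table 1.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate, returned as a fraction (0.05 means 5%)."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Bulk chemical market figures quoted in the text (USD billion, 2025 -> 2035).
bulk_cagr = cagr(715.0, 1022.63, years=10)

# Specialty biomanufacturing CAGR as quoted in the text.
specialty_cagr = 0.0904

ratio = specialty_cagr / bulk_cagr
print(f"Implied bulk-chemical CAGR: {bulk_cagr:.2%}")
print(f"Specialty-to-bulk growth-rate ratio: {ratio:.2f}x")
```

The implied bulk rate reproduces the 3.64% listed in Table 1, which puts the specialty segment at about 2.5 times the bulk growth rate.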

Economic & Performance Landscape

The fundamental economic drivers for biological production vary dramatically between therapeutics and bulk chemicals. The following table summarizes the key performance metrics and their relative economic impact for each sector.

Table 1: Key Performance and Economic Metric Comparison

| Metric | High-Value Therapeutics | Bulk Chemicals |
|---|---|---|
| Typical Product Value | Very High (e.g., APIs, Biologics) | Low (e.g., Organic Acids, Solvents) |
| Primary Economic Driver | Speed to Market, Product Efficacy, Purity | Feedstock Cost, Carbon Yield, Titer & Productivity [1] |
| Acceptable Production Cost | High (Cost is a small fraction of product price) | Must be cost-competitive with petrochemical routes [1] |
| Key Market Trend | Growth in biologics, cell & gene therapies [6] | Shift towards bio-based and sustainable chemicals [3] |
| Market Size & Growth | Biomanufacturing Specialty Chem. Market: USD 26.99 Bn by 2034 (CAGR 9.04%) [4] | Bulk Chemical Market: USD 1,022.63 Bn by 2035 (CAGR 3.64%) [3] |

A more detailed analysis of the technical performance requirements highlights the stark contrast in strain engineering priorities.

Table 2: Technical Performance Targets for Engineered Strains

| Performance Parameter | High-Value Therapeutics | Bulk Chemicals | Criticality for Economic Viability |
|---|---|---|---|
| Titer (g/L) | Moderate (1-10) often sufficient | High (>50) is essential [7] | High for Bulk Chemicals |
| Yield (g product/g substrate) | Moderate | Maximum theoretical yield critical [1] | High for Bulk Chemicals |
| Productivity (g/L/h) | Focus on reproducibility | High is essential for low CAPEX [1] | High for Bulk Chemicals |
| Downstream Processing | Highly complex, cost-tolerant (e.g., chromatography) | Must be simple and low-cost (e.g., distillation) [1] | High for Both |
| Feedstock Flexibility | Low (typically defined, high-purity media) | High (must utilize low-cost sugars, C1 gases, or biomass) [8] [1] | High for Bulk Chemicals |

For bulk chemicals, feedstock cost is the dominant factor, often comprising over 50% of the operating expenditure (OPEX) [1]. Engineering strains to utilize low-cost feedstocks that do not compete with food, such as lignocellulosic biomass or one-carbon (C1) molecules (e.g., CO₂, methanol), is therefore a major research focus. However, a significant barrier for C1 biomanufacturing is its low carbon conversion efficiency, often below 10%, which necessitates larger, more capital-intensive bioreactor systems to compensate for poor productivity [1]. In the therapeutic sector, the cost of goods sold is less prohibitive, allowing the use of pure, first-generation sugar feedstocks and placing a greater emphasis on precision and complex pathway engineering.

Comparative Experimental Analysis

Case Study 1: Oleic Acid Production (Bulk Chemical)

This case study is based on a techno-economic analysis of oleic acid production from lignocellulosic biomass, representing a bulk chemical production model [7].

- Objective: To engineer a yeast strain for high-yield oleic acid production from glucose and xylose in lignocellulosic hydrolysate and evaluate its industrial-scale economic viability.

- Experimental Strain: Engineered Yarrowia lipolytica strain YSXID for co-utilization of glucose and xylose.

- Control: Wild-type or previous generation strains with lower yield.

- Culture Conditions: Flask fermentation and scalable bioreactor processes using hydrolysate from 2,000 metric tons per day of rice straw biomass.

- Key Performance Metrics:

  - Titer: Total lipid production of 10.5 g/L, with an oleic acid titer of 5.98 g/L [7].

  - Yield: A yield of 0.18 g oleic acid per gram of sugar, representing a 50% improvement over previous reports [7].

  - Content: Oleic acid composition in total lipids maintained at 69-71% across different sugar conditions [7].

- Techno-Economic Conclusion: At scale, the process requires ~34 tons of biomass per ton of oleic acid, with a minimum selling price of US$6.4-7.89 per kg, highlighting the critical link between yield and economic viability [7].
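The link between yield and feedstock demand in this case study can be made explicit with a short calculation. The sketch below is illustrative only: the helper name and the alternative yields in the sensitivity loop are hypothetical, and the "implied sugar recovery" is a derived ratio that assumes the reported 0.18 g/g yield also holds in the plant-scale model. It lumps together biomass composition, hydrolysis losses, and any lab-to-plant yield difference, and it is not a figure reported in [7].

```python
def sugar_required_per_tonne(yield_g_per_g: float) -> float:
    """Tonnes of fermentable sugar consumed per tonne of product."""
    if yield_g_per_g <= 0:
        raise ValueError("yield must be positive (g product per g sugar)")
    return 1.0 / yield_g_per_g


reported_yield = 0.18      # g oleic acid per g sugar, from the case study
biomass_per_tonne = 34.0   # t rice straw per t oleic acid, from the case study

sugar_t = sugar_required_per_tonne(reported_yield)

# Assumption: the reported yield applies at plant scale, so this ratio is the
# sugar effectively converted per tonne of straw (all losses lumped together).
implied_sugar_recovery = sugar_t / biomass_per_tonne

print(f"Sugar needed per tonne of oleic acid: {sugar_t:.2f} t")
print(f"Implied sugar recovered per tonne of straw: {implied_sugar_recovery:.3f} t")

# Hypothetical sensitivity: biomass demand if yield improved, recovery unchanged.
for y in (0.18, 0.25, 0.30):
    biomass = sugar_required_per_tonne(y) / implied_sugar_recovery
    print(f"yield {y:.2f} g/g -> {biomass:.1f} t biomass per t product")
```

Because feedstock demand scales with the reciprocal of yield, each increment in yield removes a proportionally larger amount of biomass handling, pretreatment, and hydrolysis capacity from the process.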

Case Study 2: 3-HP Production via C1 Assimilation (Chemical Platform)

This study examines the production of 3-hydroxypropionic acid (3-HP), a platform chemical, from C1 feedstocks, illustrating the challenges of a nascent bulk production pathway [1].

- Objective: To assess the techno-economic and environmental feasibility of producing 3-HP from waste C1 feedstocks (steel mill off-gas or electrochemically derived methanol).

- Experimental Setup:

  - Route 1: Two-stage biological system using steel mill off-gas.

  - Route 2: Integrated hybrid system combining electrochemical conversion of CO₂ to methanol followed by microbial conversion.

- Key Findings:

  - The C1 feedstock-to-chemical conversion efficiency for both routes was found to be below 10% [1].

  - This low efficiency is a major economic barrier, increasing both capital expenditures (CAPEX) and operating expenditures (OPEX) by requiring larger infrastructure and more raw material [1].

  - Fermentation-related equipment accounted for the largest share (>92%) of equipment costs in related processes, with costs being inversely proportional to overall carbon yield and productivity [1].
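The inverse relationship between productivity and fermentation capacity follows from a simple mass balance. The sketch below computes the total working volume needed for a target output; the plant size, productivities, and 8,000 operating hours per year are hypothetical values chosen for illustration, not data from [1]. It estimates volume only, not cost, since translating volume into equipment cost requires a separate cost correlation.

```python
def required_fermenter_volume_m3(
    annual_output_t: float,
    productivity_g_per_l_h: float,
    operating_hours_per_year: float = 8000.0,  # assumed plant uptime
) -> float:
    """Total working volume (m^3) needed to reach an annual output."""
    grams_per_year = annual_output_t * 1e6
    litres = grams_per_year / (productivity_g_per_l_h * operating_hours_per_year)
    return litres / 1000.0


# Hypothetical 10,000 t/year plant at three volumetric productivities.
for productivity in (0.5, 1.0, 2.0):
    volume = required_fermenter_volume_m3(10_000, productivity)
    print(f"{productivity:.1f} g/L/h -> {volume:,.0f} m^3 working volume")
```

Halving productivity doubles the installed working volume for the same output, which is why low-efficiency C1 routes carry such a heavy fermentation CAPEX burden.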

Detailed Methodologies

Strain Engineering for C1 Assimilation in Bulk Chemicals

Engineering non-model microbial hosts to utilize C1 feedstocks is an advanced methodology for reducing the feedstock cost burden in bulk chemical production [8].

- Principle: Enable sustainable bioproduction by engineering versatile "polytrophic" microbes to assimilate C1 molecules like CO₂, methanol, or formate, which can be derived from waste gases or renewable energy.

- Procedure:

  - Host Selection: Select a non-model host (e.g., Pseudomonas putida, Cupriavidus necator) based on desired native traits like stress resistance, substrate tolerance, or genetic stability [8].

  - Omics-Driven Profiling: Use metabolomics, fluxomics, and transcriptomics to map the host's native metabolic network and central carbon fluxes [8].

  - Computational Modeling: Employ Flux Balance Analysis (FBA) and Max-min Driving Force (MDF) models to identify suitable synthetic C1 assimilation pathways (e.g., reductive glycine pathway) and predict flux distributions [8].

  - Pathway Implementation: Introduce the selected C1 assimilation pathway into the host chassis using genetic tools.

  - Fermentation Optimization: Conduct lab-scale fermentation under defined conditions (aerobic/anaerobic, bioreactor type) to validate strain performance [8].

- Supporting Experimental Data: This approach relies on integrating metabolic modeling with experimental validation. For example, computational models can predict the theoretical yield and thermodynamic feasibility of a synthetic C1 pathway before any genetic engineering is performed [8].
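A full FBA or MDF model needs a stoichiometric network and a solver, but the idea of a theoretical yield ceiling can be illustrated with a much simpler technique: a degree-of-reduction (electron) balance combined with a carbon balance. The sketch below is not FBA. It ignores ATP and cofactor costs, biomass formation, and pathway stoichiometry, and it assumes no external CO₂ fixation, so it gives only an upper bound. The glucose-to-oleic-acid pairing is borrowed from Case Study 1 as an example.

```python
ATOMIC_MASS = {"C": 12.011, "H": 1.008, "O": 15.999}
# Available electrons per atom, with CO2 and H2O as the reference state.
REDUCTANCE = {"C": 4, "H": 1, "O": -2}


def molar_mass(formula: dict) -> float:
    return sum(ATOMIC_MASS[element] * count for element, count in formula.items())


def degree_of_reduction(formula: dict) -> int:
    return sum(REDUCTANCE[element] * count for element, count in formula.items())


def max_yield_g_per_g(substrate: dict, product: dict) -> float:
    """Upper bound on mass yield from electron and carbon balances."""
    electron_limit = degree_of_reduction(substrate) / degree_of_reduction(product)
    carbon_limit = substrate["C"] / product["C"]  # assumes no CO2 fixation
    mol_per_mol = min(electron_limit, carbon_limit)
    return mol_per_mol * molar_mass(product) / molar_mass(substrate)


GLUCOSE = {"C": 6, "H": 12, "O": 6}
OLEIC_ACID = {"C": 18, "H": 34, "O": 2}

bound = max_yield_g_per_g(GLUCOSE, OLEIC_ACID)
print(f"Electron-balance yield bound: {bound:.3f} g oleic acid per g glucose")
print(f"Reported 0.18 g/g as a share of the bound: {0.18 / bound:.0%}")
```

Under these assumptions the reported 0.18 g/g yield sits at roughly half of the electron-balance ceiling. Pathway-level models such as FBA would give a tighter, lower limit once cofactor and energy demands are included.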

Process Design & Scale-Up Workflow

A structured scale-up workflow is critical for translating laboratory success to industrial production, particularly for cost-sensitive bulk chemicals [8] [1].

- Principle: Adopt a goal-oriented design mindset ("beginning with the end in mind") to de-risk scale-up and ensure economic viability is considered from the earliest R&D stages.

- Procedure:

  - Bioprocess Context Definition: Define the carbon and energy inputs, target molecule, and candidate pathways. Establish initial economic and sustainability benchmarks [8].

  - Strain Selection & Engineering: Based on the bioprocess context, select and engineer the host strain, as detailed under "Strain Engineering for C1 Assimilation in Bulk Chemicals" above [8].

  - Preliminary TEA/LCA: Perform initial Techno-Economic Analysis and Life Cycle Assessment to identify major cost drivers and environmental hotspots (e.g., fermentation CAPEX, feedstock cost). This guides subsequent engineering priorities [8] [1].

  - Fermentation Optimization & Scale-Up: Optimize fermentation parameters (e.g., fed-batch strategies, O₂ transfer) and progressively scale from lab to pilot to demonstration scale [4].

  - Iterative Design: Use data from each scale-up step to refine the TEA/LCA and, if necessary, inform further strain or process engineering in an iterative loop [1].

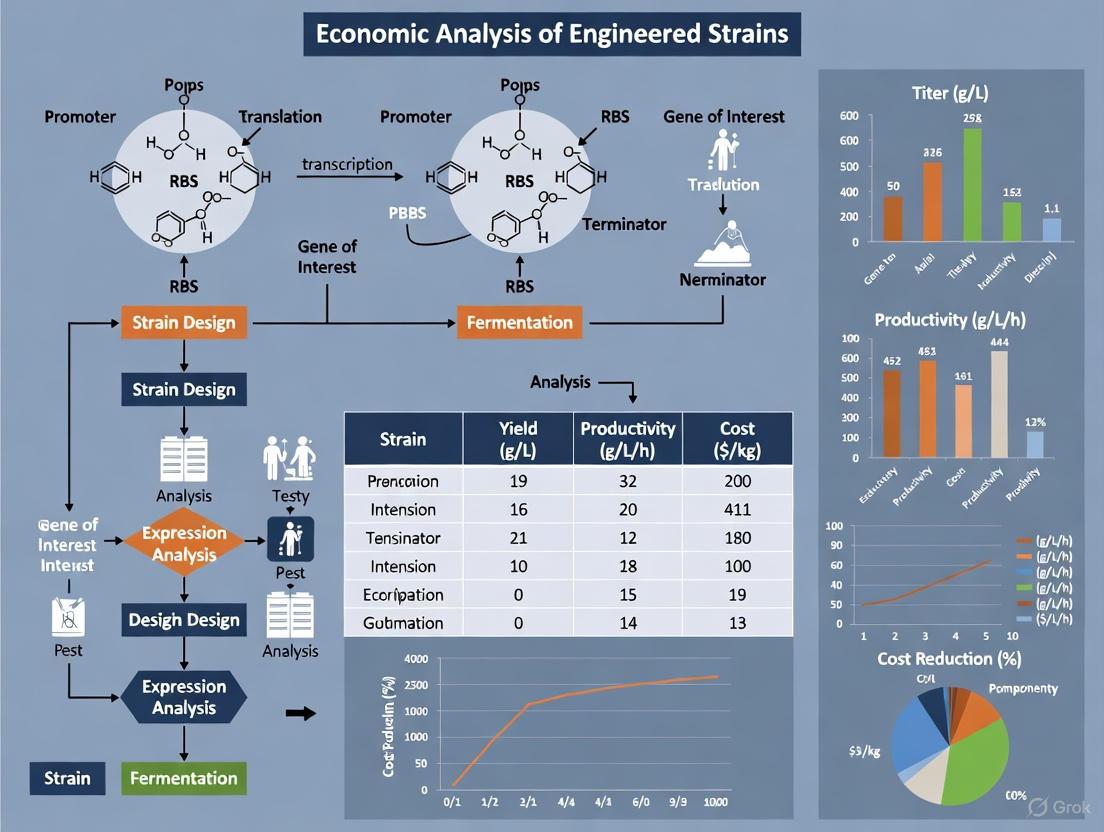

Diagram 1: Bioprocess scale-up workflow with iterative economic feedback.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key reagents, materials, and software tools essential for research in engineering industrial production strains.

Table 3: Essential Research Tools for Strain and Process Development

| Tool / Reagent | Function / Application | Relevance in Research |
|---|---|---|
| Lignocellulosic Biomass (e.g., Rice Straw) | A second-generation, non-food feedstock for sustainable bulk chemical production. | Used in hydrolysis processes to generate fermentable sugars (glucose, xylose) [7]. |
| C1 Feedstocks (Methanol, Formate, CO₂/CO/Syngas) | Next-generation carbon substrates for decarbonized biomanufacturing. | Critical for cultivating and engineering methylotrophic or synthetic C1-assimilating strains [8] [1]. |
| Oleaginous Yeast (e.g., Yarrowia lipolytica) | A GRAS (Generally Recognized As Safe) microbial host for high-lipid production. | A common chassis for engineering production of oleochemicals like oleic acid and biofuels [7]. |
| Metabolic Modeling Software (e.g., for FBA, MDF) | Computational tools for predicting metabolic flux and pathway thermodynamics. | Used in silico to design and optimize metabolic pathways for yield and efficiency before strain engineering [8]. |
| Fed-Batch Bioreactor Systems | Scalable fermentation equipment for process intensification. | Essential for achieving high cell densities and product titers in both lab-scale optimization and industrial production [7] [1]. |

The landscape of engineered strains for industrial production is defined by a fundamental economic dichotomy. The development of high-value therapeutics prioritizes precision, complexity, and speed, with production costs being a secondary concern. In stark contrast, the production of bulk chemicals is an exercise in economic optimization, where success is dictated by achieving maximum yield, titer, and productivity from the lowest-cost feedstocks.

The future of bulk biomanufacturing hinges on overcoming critical techno-economic barriers, particularly the low carbon conversion efficiency of promising next-generation feedstocks like C1 gases [1]. For researchers, this implies that early and iterative use of Techno-Economic Analysis is not merely an academic exercise but a crucial tool to guide metabolic engineering efforts towards economically viable outcomes. The convergence of advanced metabolic engineering, innovative process design, and rigorous economic modeling will be essential to enable the widespread adoption of biological production routes for both the therapeutics that save lives and the bulk chemicals that underpin our material world.

The global industrial landscape is undergoing a significant transformation, shaped by three powerful forces: evolving policy frameworks, accelerating sustainability mandates, and expanding market opportunities. For researchers, scientists, and drug development professionals working with engineered strains for industrial production, understanding these macroeconomic drivers is crucial for guiding research investment and technology development. Current economic analysis reveals a complex environment where strategic policy incentives are aligning with robust market growth in sustainable technologies, creating unprecedented opportunities for biotechnological innovation.

In 2025, the manufacturing sector faces a challenging yet opportunistic environment. The Institute for Supply Management’s manufacturing index remained below 50 for much of the year, signaling sector contraction, while trade policy uncertainty and tariffs emerged as top concerns for manufacturers [9]. Despite these headwinds, targeted technology investments and new policy incentives are creating fertile ground for innovation, particularly in sustainable biomanufacturing [9]. This guide provides a structured economic analysis of these converging drivers, offering researchers a framework for evaluating the commercial potential of engineered production strains within this evolving context.

Quantitative Market Analysis: Growth Projections and Economic Impact

The sustainable manufacturing and materials markets are demonstrating remarkable growth trajectories, underpinned by technological advancement and increasing regulatory pressure. The global sustainable manufacturing market, valued between $215.43 billion and $231.86 billion in 2025, is projected to reach $367.18 billion to $601.17 billion by 2029-2034, representing a compound annual growth rate (CAGR) of 11.1% to 11.3% [10] [11]. This robust expansion is mirrored in the sustainable materials market, which was estimated at $333.31 billion in 2024 and is expected to grow at a CAGR of 12.41% to reach approximately $1,073.73 billion by 2034 [12].

Table 1: Comparative Sustainable Market Size Projections

| Market Segment | 2024-2025 Base Value (USD Billion) | Projection, 2029-2034 (USD Billion) | CAGR (%) | Key Growth Drivers |
|---|---|---|---|---|
| Sustainable Manufacturing | $215.43 - $231.86 [10] [11] | $367.18 - $601.17 [10] [11] | 11.1 - 11.3 [10] [11] | Regulatory pressure, circular economy transition, consumer demand for eco-friendly products [10] [11] |
| Sustainable Materials | $333.31 [12] | ~$1,073.73 [12] | 12.41 [12] | Green building certifications, EV infrastructure expansion, corporate sustainability commitments [12] |
| Recycled Plastics | N/A | N/A | N/A | Versatility, reduced environmental impact, cost savings vs. virgin plastics [11] |
| Bioplastics & Biopolymers | N/A | N/A | N/A | Strict measures on single-use plastics, corporate environmental objectives [12] |

Regional analysis reveals distinct growth patterns, with North America capturing the largest market share (34.87%) in sustainable manufacturing in 2025, while the Asia-Pacific region is anticipated to grow at the fastest CAGR of 12.46% during the forecast period [10]. This geographic variation reflects differing policy environments, industrial capabilities, and consumer preferences that researchers must consider when developing market-entry strategies for products derived from engineered strains.

Policy and Regulatory Drivers: Incentives and Compliance Mandates

Policy frameworks have emerged as powerful economic drivers, creating both opportunities and constraints for industrial biotechnology. Recent legislative developments, notably the passage of a major tax and spending bill commonly called the One Big Beautiful Bill Act, introduce several tax provisions that could lower costs and encourage manufacturing investment [9]. These policies are reinforced by the Trump administration's America's AI Action Plan, which aims to accelerate data center construction and the demand for related manufactured components while promoting deregulation and streamlined permitting for new semiconductor manufacturing facilities [9].

The international policy landscape is equally impactful, with over 130 countries setting goals to reach net-zero emissions by 2050, creating regulatory pressure on industries to adopt sustainable raw materials [12]. The European Union's Circular Economy Action Plan and "Fit for 55" package are pouring billions into renewable-powered manufacturing facilities and clean technology innovation, making compliance economically favorable for manufacturers who adopt sustainable practices [10]. For researchers developing engineered strains, these policy signals indicate strong future demand for production systems that can demonstrably reduce carbon emissions and resource consumption.

Table 2: Key Policy Drivers and Their Economic Impacts

| Policy Initiative | Region | Key Provisions | Research & Development Implications |
|---|---|---|---|
| One Big Beautiful Bill Act [9] | United States | Retention of 21% corporate tax rate, full expensing for new equipment, immediate expensing of domestic R&D [9] | Enhances return on investment for capital-intensive biomanufacturing facilities; incentivizes domestic research spending |
| America's AI Action Plan [9] | United States | Streamlined permitting for advanced manufacturing facilities; promotion of AI integration [9] | Accelerates adoption of AI-powered bioprocess optimization and scale-up |
| Inflation Reduction Act [10] [13] | United States | $369 billion in clean energy incentives [14] | Supports development of bio-based energy production and waste-to-value bioprocesses |
| EU Green Deal [10] | European Union | €1 trillion sustainable investment; Circular Economy Action Plan [10] [14] | Creates demand for circular bioeconomy solutions using engineered strains |
| Corporate Sustainability Reporting Directive (CSRD) [13] | European Union | Mandatory sustainability reporting using "double materiality" concept [13] | Increases need for quantifiable sustainability metrics in biomanufacturing |

Sustainability as an Economic Driver: From Cost Center to Value Creation

Sustainability has transitioned from a peripheral concern to a central economic driver in industrial production. This shift is reflected in corporate investment patterns: a 2025 Deloitte survey of 600 manufacturing executives found that 80% plan to invest 20% or more of their improvement budgets in smart manufacturing initiatives, focusing on foundational tools and technologies such as automation hardware, data analytics, sensors, and cloud computing [9]. Beyond compliance, manufacturers are recognizing the economic value of sustainable practices, including reduced resource consumption, operational resilience, and enhanced brand reputation.

The transition from linear production to circular value chains represents a particularly significant opportunity for engineered biological systems. Manufacturing is rapidly moving away from conventional linear models toward circular value ecosystems that emphasize material reuse and recycling [10]. Designs now prioritize longevity, while modular component architectures facilitate easier recovery and reassembly. This structural transition in global industrial strategies is creating demand for biological production systems that can utilize waste streams as feedstocks and produce biodegradable materials, positioning engineered strains as critical enabling technologies for the circular economy.

Technological Enablers: AI and Smart Manufacturing

Advanced technologies are serving as powerful accelerators for sustainable biomanufacturing. Artificial Intelligence, machine learning, and digital twin technologies are fundamentally changing sustainability approaches by enabling real-time monitoring and predictive optimization of energy consumption, emissions, and waste output [10]. Digital twins virtually replicate factory operations, allowing manufacturers to simulate scenarios, identify inefficiencies, and deploy energy-efficient configurations without disrupting physical production [10].

Agentic Artificial Intelligence, characterized by its ability to reason, plan, and take autonomous action, is poised to elevate smart manufacturing and operations [9]. Industry adoption is likely to grow considerably in the next few years, with agentic AI applications including identifying alternative suppliers in response to supply chain disruptions, capturing institutional knowledge from retiring employees, maximizing production uptime with autonomously generated shift handover reports, and improving customer experience by simplifying equipment repair [9]. For researchers, these capabilities suggest future production environments where AI-powered systems can optimize the performance of engineered strains in real-time, adapting process parameters to maximize yield and minimize resource intensity.

Diagram 1: Economic drivers for engineered strains research

Experimental Framework for Economic Evaluation of Engineered Strains

Methodology for Techno-Economic Analysis

Evaluating the economic viability of engineered production strains requires a structured experimental approach that integrates both technical performance and economic metrics. The following protocol provides a standardized methodology for comparative analysis of strain performance under industrial-relevant conditions:

- Strain Cultivation and Baseline Characterization: Inoculate engineered strains and reference controls in 1 L bioreactors using standardized media formulated to mimic industrial feedstock costs. Monitor growth kinetics, substrate consumption rates, and maximum biomass density over 72 hours [9] [11].

- Productivity Assessment Under Process Conditions: Evaluate product titer, yield, and productivity under controlled conditions that reflect manufacturing environments, including pH gradients, dissolved oxygen limitations, and temperature shifts. Perform runs in triplicate so that differences between strains can be tested statistically [11].

- Resource Efficiency Quantification: Measure key sustainability metrics including water consumption per unit product, energy input requirements, carbon dioxide equivalent emissions, and waste generation. Compare against industry benchmarks for conventional production methods [10] [13].

- Scale-Up Projection Modeling: Utilize digital twin technology to simulate performance at commercial scale (10,000 L+), identifying potential bottlenecks in mass transfer, heat dissipation, and nutrient distribution that might impact economic viability [10].

- Economic Modeling: Integrate performance data into discounted cash flow models that account for capital expenditure, operating costs, tax incentives, and potential carbon pricing scenarios. Calculate minimum selling price and compare to incumbent production methods [9] [12].
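The minimum selling price (MSP) in a discounted cash flow model is the product price at which net present value (NPV) equals zero. The following is a deliberately simplified sketch: it assumes all capital is spent at time zero, constant annual output and operating cost, and no taxes, incentives, depreciation, or carbon pricing. All input figures and function names are hypothetical.

```python
def annuity_factor(rate: float, years: int) -> float:
    """Present value of 1 unit received at the end of each year."""
    return sum(1.0 / (1.0 + rate) ** t for t in range(1, years + 1))


def npv(price, capex, annual_opex, annual_output_kg, rate, years):
    """NPV with CAPEX at year 0 and constant yearly net cash flow."""
    annual_cash_flow = price * annual_output_kg - annual_opex
    return -capex + annual_cash_flow * annuity_factor(rate, years)


def minimum_selling_price(capex, annual_opex, annual_output_kg, rate, years):
    """Price per kg at which NPV is zero (NPV is linear in price)."""
    annualized_capex = capex / annuity_factor(rate, years)
    return (annualized_capex + annual_opex) / annual_output_kg


# Hypothetical plant: USD 50 M CAPEX, USD 12 M/year OPEX, 5,000 t/year output,
# 10% discount rate, 15-year project life.
inputs = dict(capex=50e6, annual_opex=12e6, annual_output_kg=5e6,
              rate=0.10, years=15)

msp = minimum_selling_price(**inputs)
print(f"Minimum selling price: USD {msp:.2f} per kg")
print(f"NPV at MSP: {npv(msp, **inputs):.2f}")
```

A fuller model would add a construction period, tax and depreciation schedules, and price scenarios for carbon, each of which shifts the break-even price.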

Essential Research Reagents and Platforms

Table 3: Key Research Reagent Solutions for Economic Strain Evaluation

| Reagent/Platform | Function | Economic Relevance |
|---|---|---|
| Industrial Simulation Media | Mimics cost structure of commercial feedstocks | Enables accurate production cost forecasting early in R&D cycle |
| High-Throughput Microbioreactor Systems | Parallel strain screening under controlled conditions | Reduces development timeline; lowers preliminary research costs |
| Process Analytical Technology (PAT) | Real-time monitoring of critical process parameters | Provides data for process intensification and operational expense reduction |
| LC-MS/MS Analytical Systems | Quantification of target molecules and byproducts | Enables yield calculations and purification cost projections |
| Digital Twin Software | Virtual simulation of commercial-scale production | Identifies scale-up challenges before capital investment; de-risks technology transfer |
| Life Cycle Assessment (LCA) Databases | Quantifies environmental impacts across value chain | Supports sustainability claims essential for regulatory compliance and market access |

Investment Flows and Funding Landscape

Strategic investment patterns provide crucial indicators of economic momentum in sustainable manufacturing technologies. Global clean energy investment is forecasted to exceed $1.77 trillion in 2025, representing 41% growth over 2024 figures [14]. Similarly, artificial intelligence is projected to reach a $407 billion market in 2025, up from $142 billion in 2023, with significant implications for bioprocess optimization and strain engineering [14].

Corporate venture capital is increasingly targeting technologies that align with both sustainability and efficiency objectives. Research indicates that companies combining AI diagnostics with telemedicine platforms are achieving customer acquisition costs 76% below industry averages, demonstrating the economic advantage of integrated technological solutions [14]. For researchers, these investment trends highlight the importance of developing engineered strains that not only demonstrate technical superiority but also align with broader digitalization and sustainability initiatives that attract capital deployment.

Diagram 2: Value creation pathway from R&D to economic impact

The convergence of policy support, sustainability imperatives, and robust market growth creates a favorable economic environment for advanced biomanufacturing technologies. Researchers and drug development professionals can leverage these economic drivers to prioritize development efforts toward engineered strains with the greatest potential for commercial success and industrial impact. The quantitative projections and experimental frameworks presented in this analysis provide a structured approach for evaluating research priorities within this evolving economic context.

Future research should focus on integrating advanced computational methods, including AI and digital twins, with biological design to accelerate strain development and scale-up while minimizing resource consumption. Additionally, researchers should increasingly consider circular economy principles in strain design, developing production systems that can utilize waste carbon streams and generate biodegradable products. By aligning technical development with these powerful economic drivers, the research community can maximize the commercial impact and sustainability benefits of engineered production strains for industrial applications.

In the industrial production of bio-based chemicals, pharmaceuticals, and fuels, feedstock selection represents a pivotal cost determinant that can ultimately dictate commercial viability. Despite significant advances in strain engineering and bioprocess optimization, the economic burden of feedstocks continues to dominate production economics, often accounting for the majority of operational expenditures. This economic reality persists across diverse biomanufacturing sectors, from sustainable aviation fuel production to pharmaceutical precursor synthesis. The complex interplay between feedstock composition, strain metabolism, and process scaling creates a multidimensional optimization problem that extends beyond mere biological performance. Within the broader context of economic analysis of engineered strains for industrial production, understanding this feedstock-cost dynamic is not merely advantageous—it is fundamental to strategic research planning and commercial deployment. This analysis systematically compares conventional and next-generation feedstocks through both economic and performance lenses, providing researchers with a structured framework for feedstock evaluation and selection.

Feedstock Economics: Quantitative Comparative Analysis

The economic assessment of feedstocks extends beyond simple per-kilogram costs to encompass availability, processing requirements, and compatibility with industrial-scale operations. The table below synthesizes key economic and performance characteristics across major feedstock categories relevant to industrial bioprocessing.

Table 1: Economic and Technical Comparison of Feedstocks for Industrial Bioproduction

| Feedstock Category | Example Feedstocks | Production Cost Range | Key Advantages | Primary Limitations | Technology Readiness |
|---|---|---|---|---|---|
| Conventional Sugar-Based | Molasses, Sucrose, Glucose | Low to Moderate [15] | Established supply chains, High fermentability [15] | Food-fuel competition, Price volatility [16] | Commercial [15] |
| Lignocellulosic | Agricultural residues, Wood waste, Bagasse [17] | Moderate [16] | Non-food resources, High availability [16] [17] | Recalcitrance to hydrolysis, Requires pretreatment [18] | Demonstration [18] |
| Next-Generation (NGFs) | CO₂, Methanol, Formic acid [15] | Highly Variable [15] | Potential carbon circularity, Avoid land use [15] | Low energy density, Emerging conversion pathways [15] | R&D to Pilot [15] |
| Waste-Based | Glycerol (from biodiesel), Municipal solid waste [15] [17] | Low (especially waste streams) [15] | Low-cost, Circular economy benefits [15] | Composition variability, Contamination risks [15] | Commercial to Demonstration [15] |
| Lipid-Rich | Vegetable oils, Animal fats, Algae [17] | Moderate to High | Direct conversion to fuels, High energy density | Seasonal availability, High pretreatment costs | Commercial [17] |

The economic landscape reveals clear trade-offs between feedstock cost, processing complexity, and technology maturity. Molasses and waste glycerol consistently demonstrate favorable economic and environmental performance [15], while the promise of CO₂ and other next-generation feedstocks remains constrained by immaturity of conversion technologies [15]. Particularly telling is the finding that without subsidies, production costs for biogas from non-food feedstocks like grass, crop residues, and manure typically exceed those from food crops, highlighting the critical role of policy in advancing advanced biofuels [16].

Experimental Protocols for Feedstock Performance Evaluation

Standardized Feedstock Screening Methodology

Robust evaluation of feedstock performance requires systematic experimental protocols that generate comparable data across diverse substrate categories. The following methodology provides a standardized approach for initial feedstock screening:

Feedstock Preparation and Characterization

- Physical Processing: Reduce particle size to <2mm where applicable (e.g., biomass residues) using laboratory milling equipment to ensure homogeneity.

- Compositional Analysis: Quantify key components: carbohydrates (glucose, xylose, arabinose), lignin, protein, lipid content, and ash using standardized NREL laboratory analytical procedures (LAP).

- Sterilization: Autoclave heat-stable feedstocks (121°C, 15-20 min) or filter-sterilize (0.2 μm) heat-labile soluble components, as appropriate for microbial culture.

Inoculum Preparation

- Prepare seed cultures of the production strain in defined medium with laboratory-grade glucose.

- Harvest cells at mid-exponential phase (OD600 ≈ 2-4), wash twice with sterile saline, and resuspend to a standardized cell density for consistent inoculation.

Fermentation Conditions

- Use controlled bioreactors with a working volume of 1-2 L, maintained at optimal process conditions (temperature, pH, dissolved oxygen).

- Employ a defined basal medium with the test feedstock as the primary carbon source.

- Maintain consistent feedstock loading on a total carbon equivalent basis where possible (e.g., 20g/L total fermentable sugars).

- Implement fed-batch protocols for feedstocks with potential substrate inhibition.
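
The carbon-equivalent loading rule above can be sketched in a few lines. This is a minimal illustration, assuming pure substrates with known molecular formulas; the substrate list and the 20 g/L glucose reference are illustrative choices, and real feedstocks such as molasses or hydrolysates would need measured carbon content instead.

```python
# Sketch: match feedstock loadings on a total-carbon basis.
# Assumption: pure substrates; complex feedstocks need measured carbon content.
ATOMIC_MASS = {"C": 12.011, "H": 1.008, "O": 15.999}

# Illustrative substrates only (elemental composition per molecule).
SUBSTRATES = {
    "glucose": {"C": 6, "H": 12, "O": 6},
    "glycerol": {"C": 3, "H": 8, "O": 3},
    "methanol": {"C": 1, "H": 4, "O": 1},
}

def carbon_fraction(formula):
    """Mass fraction of carbon in a compound."""
    total = sum(ATOMIC_MASS[el] * n for el, n in formula.items())
    return ATOMIC_MASS["C"] * formula["C"] / total

def loading_for_equal_carbon(reference, ref_g_per_l):
    """Loading (g/L) of each substrate that supplies the same g C/L as the reference."""
    target_c = ref_g_per_l * carbon_fraction(SUBSTRATES[reference])
    return {name: target_c / carbon_fraction(f) for name, f in SUBSTRATES.items()}

loadings = loading_for_equal_carbon("glucose", 20.0)
for name, g_per_l in loadings.items():
    print(f"{name}: {g_per_l:.2f} g/L")
```

More reduced substrates such as methanol need a slightly higher mass loading to deliver the same carbon, which is one reason to compare yields on a carbon-mole basis rather than per gram of substrate.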

Analytical Monitoring

- Sample at 3-6 hour intervals for offline analysis of substrate consumption, product formation, and potential inhibitors.

- Quantify metabolites via HPLC (organic acids, alcohols, sugars) and GC (volatiles, gases) with appropriate standards.

- Monitor cell growth spectrophotometrically (OD600) and via dry cell weight determination.
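
The offline measurements listed above are typically reduced to a few comparable performance indicators. The sketch below assumes a simple batch run with paired time, substrate, product, and OD600 readings; the function name, dictionary keys, and data values are hypothetical and serve only to show the arithmetic.

```python
import math

def fermentation_kpis(times_h, substrate_g_l, product_g_l, od600):
    """Overall yield, volumetric productivity, and maximum specific growth rate."""
    elapsed = times_h[-1] - times_h[0]
    consumed = substrate_g_l[0] - substrate_g_l[-1]
    produced = product_g_l[-1] - product_g_l[0]
    # Specific growth rate between consecutive samples: ln(OD2/OD1) / (t2 - t1).
    mu_max = max(
        math.log(od600[i + 1] / od600[i]) / (times_h[i + 1] - times_h[i])
        for i in range(len(times_h) - 1)
    )
    return {
        "yield_g_per_g": produced / consumed if consumed > 0 else float("nan"),
        "productivity_g_per_l_h": produced / elapsed,
        "mu_max_per_h": mu_max,
    }

# Hypothetical data sampled every 3 hours.
kpis = fermentation_kpis(
    times_h=[0, 3, 6, 9, 12],
    substrate_g_l=[20.0, 18.0, 13.0, 6.0, 1.0],
    product_g_l=[0.0, 0.8, 3.0, 6.2, 8.55],
    od600=[0.1, 0.3, 0.9, 2.0, 3.0],
)
print(kpis)
```

OD600 is used here as a proxy for biomass; converting to dry cell weight with a strain-specific calibration would give growth rates that are comparable across feedstocks with different optical backgrounds.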

Scale-Up Simulation Protocol

To assess strain performance under conditions mimicking industrial scale, researchers should implement scale-down simulations that expose strains to anticipated heterogeneities:

- Dissolved Oxygen Gradient Simulation: Implement cyclic (30-300s period) dissolved oxygen variations between 0% and 100% air saturation to mimic gradients in large-scale fermenters [19].

- Substrate Pulse Challenge: Introduce concentrated substrate pulses to create temporary high-osmolarity zones, monitoring recovery kinetics and stress response markers.

- pH Gradient Exposure: Apply controlled pH fluctuations (±0.5-1.0 pH units) at frequencies relevant to production-scale mixing times.

- Metabolic Response Profiling: Quantify transcriptomic (RNA-seq) and metabolomic changes in response to these simulated industrial conditions to identify potential scale-up limitations [19].
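
As an illustration of the dissolved oxygen cycling described above, the following sketch generates a square-wave setpoint profile. The function name, the 120 s default period, and the 50% duty cycle are assumptions for the example; an actual scale-down rig would drive its controller through the vendor's own interface.

```python
def do_setpoint(t_s, period_s=120.0, low=0.0, high=100.0, high_fraction=0.5):
    """Square-wave dissolved oxygen setpoint (% air saturation) at time t_s."""
    if not 30.0 <= period_s <= 300.0:
        raise ValueError("period outside the 30-300 s range used in the protocol")
    phase = (t_s % period_s) / period_s  # position within the current cycle, 0..1
    return high if phase < high_fraction else low

# Setpoints every 30 s over two full cycles.
schedule = [(t, do_setpoint(t)) for t in range(0, 241, 30)]
for t, setpoint in schedule:
    print(f"{t:>3d} s -> {setpoint:.0f}%")
```

A square wave is the harshest case; a ramped or sinusoidal profile could be substituted if circulation-time measurements suggest gentler transitions at production scale.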

Table 2: Key Analytical Methods for Feedstock and Strain Performance Characterization

| Analysis Type | Specific Methods | Key Parameters Measured | Application in Feedstock Evaluation |

|---|---|---|---|

| Feedstock Composition | NREL LAP, HPLC-RI, GC-MS | Structural carbohydrates, lignin, extractives, ash | Quantify fermentable components and potential inhibitors |

| Process Metabolites | HPLC-UV/RID, GC-FID/TCD | Sugars, organic acids, alcohols, gases | Determine substrate consumption rates and product yields |

| Strain Physiology | Flow cytometry, qPCR, ELISA | Viability, stress markers, recombinant protein expression | Assess strain fitness and productivity under different feedstocks |

| Omics Analysis | RNA-seq, LC-MS metabolomics | Global gene expression, metabolic fluxes, stress responses | Identify metabolic bottlenecks and adaptation mechanisms |

The Strain Engineering Workflow: Integrating Feedstock Considerations

The Design-Build-Test-Learn (DBTL) framework has proven highly effective for industrial strain engineering, with feedstock performance representing a critical selection criterion throughout this iterative process [18]. The following diagram illustrates how feedstock considerations integrate with each stage of strain development.

Diagram: Feedstock-Integrated Strain Engineering Cycle

This integrated approach emphasizes that feedstock selection cannot be decoupled from strain development. During the Design phase, engineering strategies must account for the specific composition of target feedstocks, including potential inhibitors in lignocellulosic hydrolysates or C1 substrate assimilation challenges [18]. The Build phase employs appropriate genetic tools to implement these designs, with CRISPR-based editing particularly valuable for exploring non-traditional feedstock utilization pathways [18]. In the Test phase, strains are evaluated not only on pure substrates but also on actual industrial feedstock samples under conditions that simulate production-scale heterogeneity [19]. Finally, the Learn phase leverages machine learning and multi-omics data to identify genetic determinants of feedstock performance and predict scale-up behavior [18].

Research Reagent Solutions for Feedstock Evaluation

Table 3: Essential Research Tools for Feedstock and Strain Performance Analysis

| Reagent/Kit | Primary Function | Application Context |

|---|---|---|

| NREL Standard Methods | Standardized protocols for biomass composition analysis | Feedstock characterization for lignocellulosic and waste materials |

| HPLC/GC Standards | Quantification of substrates, products, and inhibitors | Process monitoring across diverse feedstock types |

| CRISPR-Cas9 Systems | Precision genome editing for pathway engineering | Optimizing feedstock utilization pathways in production hosts |

| RNA-seq Kits | Transcriptomic profiling of strain responses | Identifying metabolic adaptations to different feedstocks |

| Viability Stains | Flow cytometry-based cell health assessment | Monitoring stress responses in scale-down simulations |

| Metabolomics Kits | Comprehensive metabolite profiling | Mapping metabolic fluxes with different carbon sources |

| Microplate Assays | High-throughput substrate utilization screening | Rapid comparison of multiple feedstock conditions |

The dominance of feedstock costs in production economics necessitates a strategic approach to feedstock selection that aligns with both strain capabilities and process objectives. This analysis demonstrates that while sugar-based feedstocks like molasses currently offer the most favorable economic profile for conventional bioprocesses [15], the long-term sustainability of biomanufacturing depends on advancing next-generation feedstocks including waste streams and C1 substrates. Critically, the successful implementation of any feedstock requires early integration with strain engineering efforts, using the DBTL framework to develop robust production strains capable of maintaining performance at industrial scale. For researchers, this implies parallel development of feedstocks and strains rather than sequential optimization. Future advancements in systems and synthetic biology, particularly AI-powered design and machine learning prediction of scale-up performance, promise to accelerate this integrated development approach, potentially reducing both the time and cost barriers that currently challenge the bioeconomy's expansion [18].

In the economic analysis of engineered strains for industrial production, two methodological frameworks are indispensable for assessing viability and sustainability: Techno-Economic Analysis (TEA) and Life Cycle Assessment (LCA). TEA is a systematic methodology for evaluating the technical performance and economic feasibility of a process, product, or technology [20]. It integrates process design engineering with economic analysis to estimate production costs and investment requirements. LCA, by contrast, is a standardized methodology (ISO 14040) for the "compilation and evaluation of the inputs, outputs and the potential environmental impacts of a product system throughout its life cycle" [20]. It provides a comprehensive assessment of environmental burdens across all stages from raw material extraction to disposal.

For researchers developing industrial bioprocesses, the integration of TEA and LCA is crucial for sustainable process design, enabling systematic analysis of relationships between technical, economic, and environmental performance [20]. This integrated approach is particularly valuable for assessing emerging biotechnologies at early development stages, where design decisions have significant long-term implications for both economic competitiveness and environmental footprint.

Core Principles and Methodological Frameworks

Techno-Economic Analysis (TEA) Fundamentals

TEA employs a rigorous, model-based approach grounded in chemical engineering fundamentals. The methodology typically follows these key phases:

- Process Design and Modeling: Developing detailed process flow diagrams and using simulation software (e.g., ASPEN Plus) to generate complete mass and energy balances [21].

- Capital Cost Estimation: Calculating total investment costs (TIC), including equipment, installation, and indirect costs.

- Operating Cost Estimation: Assessing costs of raw materials, utilities, labor, maintenance, and overhead.

- Economic Performance Indicators: Calculating key metrics such as minimum selling price (MSP), return on investment, and payback period [21].

TEA results provide critical benchmarks for technology comparison. For example, in biorefining, enzymatic hydrolysis pathways show TIC of $100–200 million and MSP of $799–$1,013 per ton, while acid hydrolysis pathways demonstrate lower TIC ($40–80 million) and MSP ($530–$545 per ton) but with higher technical risks [21].

Life Cycle Assessment (LCA) Fundamentals

LCA follows a structured four-phase framework per ISO 14044 standards:

- Goal and Scope Definition: Defining system boundaries, functional unit, and assessment objectives.

- Life Cycle Inventory (LCI): Compiling quantitative input-output data for all processes within the system boundaries.

- Life Cycle Impact Assessment (LCIA): Evaluating potential environmental impacts using categorized indicators (e.g., global warming potential).

- Interpretation: Analyzing results, checking sensitivity, and providing conclusions and recommendations.

In application, LCA reveals critical environmental trade-offs. For biorefining pathways, the global warming potential (GWP) can range from 200 to 900 kg CO₂ equivalent per ton of sugars, with energy integration and biogenic fuel sources identified as key mitigation strategies [21].

Integrated TEA-LCA Approach

The integration of TEA and LCA enables simultaneous economic and environmental evaluation, addressing the critical need for understanding trade-offs during technology development [20]. This integrated framework aligns goal, scope, data, and system elements to reduce inconsistencies that can arise from separate analyses. The synergy between these methodologies is particularly powerful for prospective assessment of emerging technologies at low technology readiness levels (TRLs), where design parameters remain flexible and optimization opportunities are greatest [20].

Figure 1: Integrated TEA-LCA Framework for Sustainable Technology Assessment

Comparative Analysis: TEA vs. LCA

Table 1: Fundamental Comparison Between TEA and LCA Methodologies

| Aspect | Techno-Economic Analysis (TEA) | Life Cycle Assessment (LCA) |

|---|---|---|

| Primary Focus | Technical feasibility and economic viability [20] | Environmental impacts throughout product life cycle [20] |

| Core Methodology | Process modeling, cost estimation, profitability analysis [21] | Inventory analysis, impact assessment, interpretation [20] |

| Typical System Boundaries | Gate-to-gate or cradle-to-gate [22] | Cradle-to-grave or cradle-to-cradle [20] |

| Key Metrics | Minimum selling price (MSP), return on investment, capital and operating costs [21] | Global warming potential (GWP), resource depletion, eutrophication, acidification [21] |

| Standardization | No universal ISO standards; follows established engineering practices [23] | ISO 14040 and 14044 standards [20] |

| Typical Applications | Technology benchmarking, process optimization, investment decisions [24] | Environmental product declarations, eco-design, policy development [20] |

Table 2: TEA and LCA Applications in Different Technology Readiness Levels (TRLs)

| TRL Range | TEA Approach & Challenges | LCA Approach & Challenges | Integrated Assessment Value |

|---|---|---|---|

| TRL 1-4 (Early Research) | Screening-level cost analysis; High uncertainty due to limited data [20] | Conceptual LCA using proxy data; Focus on hotspot identification [23] | Identifies critical R&D directions; Prevents regrettable investments [20] |

| TRL 5-6 (Technology Development) | Detailed process modeling; Cost sensitivity analysis [20] | Prospective LCA with scenario analysis; Allocation methods critical [23] | Enables simultaneous optimization of economic and environmental parameters [20] |

| TRL 7-9 (Commercial Scale) | Accurate capital and operating cost estimation; Business case development [24] | Comprehensive inventory data; Validation with operational data [20] | Supports investment decisions and environmental marketing claims [25] |

Experimental Protocols and Assessment Workflows

Standard TEA Methodology for Bioprocess Evaluation

The following protocol outlines a comprehensive TEA methodology appropriate for evaluating industrial bioprocesses involving engineered strains:

Process Design and Base Case Establishment

- Develop detailed process flow diagrams encompassing all major unit operations

- Define design basis and capacity (e.g., 150 t/d of dry feedstock) [21]

- Establish operating conditions (temperature, pressure, conversion rates) based on experimental data

Process Simulation and Mass/Energy Balancing

- Utilize process simulation software (e.g., ASPEN Plus) to model the entire system [21]

- Generate comprehensive mass and energy balances for all streams

- Identify utility requirements (steam, electricity, cooling water)

Equipment Sizing and Capital Cost Estimation

- Size major equipment based on simulation results

- Calculate total installed capital (TIC) using established factoring methods [21]

- Estimate working capital and other startup costs
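
Factoring methods of the kind mentioned above usually combine a capacity-scaling exponent with an installation multiplier. The sketch below uses the common "six-tenths" scaling exponent as a default; the equipment costs, capacities, and the installation factor of 3.0 are placeholders, not values from the cited studies, and should be replaced with vendor quotes or published factors for the process in question.

```python
def scale_equipment_cost(base_cost, base_capacity, new_capacity, exponent=0.6):
    """Scale a purchased equipment cost to a new capacity (power-law rule)."""
    return base_cost * (new_capacity / base_capacity) ** exponent

def total_installed_cost(purchased_costs, installation_factor):
    """Apply a single multiplier to the summed purchased equipment costs."""
    return installation_factor * sum(purchased_costs)

# Hypothetical example: a 100 m3 fermenter quoted at $1.0M, scaled to 200 m3.
fermenter_200 = scale_equipment_cost(1.0e6, base_capacity=100, new_capacity=200)
centrifuge = 0.4e6  # hypothetical purchased cost
tic = total_installed_cost([fermenter_200, centrifuge], installation_factor=3.0)
print(f"Scaled fermenter: ${fermenter_200:,.0f}; installed total: ${tic:,.0f}")
```

Because the exponent is below one, doubling capacity raises equipment cost by roughly 50% rather than 100%, which is the economy of scale that drives bulk-chemical plants toward large fermenters.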

Operating Cost Estimation

- Calculate raw material costs based on consumption rates and market prices

- Estimate utility costs from energy balances

- Determine labor, maintenance, and overhead costs

Economic Analysis

- Calculate minimum selling price (MSP) or minimum fuel selling price (MFSP) [21]

- Perform sensitivity analysis on key parameters (yield, capacity, resource costs)

- Identify major cost drivers and potential optimization targets
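
The economic analysis steps above can be approximated with an annualized-cost model. The sketch below is a simplification: it annualizes capital with a capital recovery factor and ignores taxes, depreciation schedules, and construction time, which a full discounted cash flow analysis would include. All input values, the 10% discount rate, and the 20-year life are hypothetical.

```python
def capital_recovery_factor(rate, years):
    """Fraction of capital charged per year to recover it at the given rate."""
    growth = (1 + rate) ** years
    return rate * growth / (growth - 1)

def minimum_selling_price(tic, annual_opex, annual_output_t, rate=0.10, years=20):
    """Simplified MSP ($/t): annualized capital plus operating cost per ton."""
    annual_capital = capital_recovery_factor(rate, years) * tic
    return (annual_capital + annual_opex) / annual_output_t

# Hypothetical base case.
BASE = {"tic": 150e6, "annual_opex": 30e6, "annual_output_t": 50_000}

def sensitivity(base, swing=0.2):
    """One-at-a-time sensitivity: MSP change for -swing and +swing on each input."""
    base_msp = minimum_selling_price(**base)
    rows = []
    for key in base:
        low = minimum_selling_price(**{**base, key: base[key] * (1 - swing)})
        high = minimum_selling_price(**{**base, key: base[key] * (1 + swing)})
        rows.append((key, low - base_msp, high - base_msp))
    # Largest absolute effect first, as in a tornado chart.
    return sorted(rows, key=lambda r: max(abs(r[1]), abs(r[2])), reverse=True)

print(f"Base MSP: ${minimum_selling_price(**BASE):,.0f}/t")
for name, d_low, d_high in sensitivity(BASE):
    print(f"{name:>16}: {d_low:+8.1f} / {d_high:+8.1f} $/t")
```

In this hypothetical case annual output, which depends on titer, yield, and uptime, moves the MSP more than capital cost does, which illustrates why strain performance is often the first optimization target.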

Standard LCA Methodology for Bioprocess Evaluation

The LCA protocol follows ISO 14044 standards with specific considerations for bioprocesses:

Goal and Scope Definition

- Define functional unit (e.g., 1 ton of product, 1 MJ of fuel) appropriate for comparison [22]

- Establish system boundaries (cradle-to-gate or cradle-to-grave)

- Specify cut-off criteria and allocation procedures for co-products

Life Cycle Inventory (LCI) Compilation

- Collect primary data from process simulations and experimental measurements

- Supplement with secondary data from commercial LCA databases

- Document all material/energy inputs and emissions for each process unit

Life Cycle Impact Assessment

- Select appropriate impact categories (global warming, eutrophication, etc.)

- Apply characterization factors to convert emissions to impact equivalents

- Conduct hotspot analysis to identify significant environmental contributors
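
Characterization and hotspot analysis, as outlined above, amount to a weighted sum per life cycle stage. The sketch below assumes 100-year global warming potentials from the IPCC Fifth Assessment Report (without climate-carbon feedbacks); the inventory figures and stage names are invented for illustration, and the factors should be replaced by those of whichever LCIA method the study adopts.

```python
# Assumption: IPCC AR5 GWP100 factors (kg CO2e per kg emitted).
GWP100 = {"CO2": 1.0, "CH4": 28.0, "N2O": 265.0}

def gwp_by_stage(inventory):
    """Convert per-stage emissions (kg) to kg CO2-equivalent."""
    return {
        stage: sum(GWP100[gas] * kg for gas, kg in emissions.items())
        for stage, emissions in inventory.items()
    }

# Hypothetical inventory per ton of product (kg of each gas).
inventory = {
    "feedstock supply": {"CO2": 120.0, "N2O": 0.2},
    "fermentation": {"CO2": 250.0, "CH4": 0.5},
    "downstream": {"CO2": 90.0},
}

impacts = gwp_by_stage(inventory)
total = sum(impacts.values())
hotspot = max(impacts, key=impacts.get)
print(f"Total: {total:.0f} kg CO2e/t; hotspot: {hotspot}")
```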

Interpretation and Sensitivity Analysis

- Evaluate result significance and data quality

- Perform sensitivity analysis on key parameters (allocation methods, energy sources)

- Compare results with benchmark systems or alternative technologies

Essential Research Reagent Solutions and Tools

Table 3: Essential Tools and Resources for TEA and LCA Studies

| Tool/Resource Category | Specific Examples | Application & Function |

|---|---|---|

| Process Simulation Software | ASPEN Plus [21] | Models complete mass and energy balances for technical design |

| TEA Guidelines & Frameworks | Global CO2 Initiative TEA/LCA Toolkit [26], NREL/NETL methodologies [23] | Provide standardized approaches for conducting assessments |

| LCA Database & Software | GREET Model [23], Commercial LCA databases | Supply secondary data for life cycle inventory compilation |

| Integrated Assessment Platforms | AssessCCUS platform [23] | Aggregate resources for techno-economic and life cycle assessment |

| Harmonization Guidelines | TEA and LCA Guidelines for CO2 Utilization [25] | Ensure consistent methodological choices for comparative studies |

Advanced Applications in Industrial Bioprocessing

Multi-Criteria Decision Analysis Framework

For complex decisions in strain engineering and bioprocess development, integrated TEA-LCA can be incorporated into structured multi-criteria analysis frameworks. These approaches combine technical, economic, and environmental performance metrics with weightings based on stakeholder priorities [21] [22]. The analytical hierarchy process (AHP) is one method that enables systematic comparison of alternatives across multiple dimensions, facilitating transparent decision-making that balances cost, environmental impact, and technical feasibility [22].
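
A minimal sketch of the AHP weighting step follows. It uses the row geometric mean approximation of the priority vector rather than the principal eigenvector, and it omits the consistency check a full AHP would include. The criteria, pairwise judgments, and normalized strain scores are hypothetical.

```python
import math

def ahp_weights(pairwise):
    """Approximate AHP priority weights via normalized row geometric means."""
    geo_means = [math.prod(row) ** (1 / len(row)) for row in pairwise]
    total = sum(geo_means)
    return [g / total for g in geo_means]

criteria = ["cost", "GWP", "technical feasibility"]
# pairwise[i][j]: how much more important criterion i is than j (hypothetical).
pairwise = [
    [1, 3, 5],
    [1 / 3, 1, 2],
    [1 / 5, 1 / 2, 1],
]
weights = ahp_weights(pairwise)

# Hypothetical scores, already normalized so that higher is better.
scores = {"Strain A": [0.8, 0.4, 0.9], "Strain B": [0.6, 0.9, 0.7]}

def weighted_score(name):
    return sum(w * s for w, s in zip(weights, scores[name]))

ranked = sorted(scores, key=weighted_score, reverse=True)
print(dict(zip(criteria, (round(w, 3) for w in weights))), ranked)
```

Rerunning the ranking with different stakeholder judgments in the pairwise matrix is a quick way to see how robust a strain or process choice is to shifting priorities.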

Prospective Assessment of Emerging Biotechnologies

The integration of TEA and LCA is particularly valuable for prospective assessment of emerging technologies at low technology readiness levels (TRLs). While traditional assessments focus on mature technologies, prospective application at early development stages allows technology developers to:

- Understand implications of different design choices on future economic and environmental performance [20]

- Optimize process parameters to maximize economic benefits while minimizing environmental burdens [20]

- Identify potential showstoppers before significant resources are invested

- Guide R&D priorities toward performance improvements with the greatest sustainability impact

Technology Learning Curve Integration

For emerging biotechnologies, integrating technology learning curves (TLCs) into TEA and LCA enables forecasting of future environmental and economic performance as technologies mature and benefit from cumulative experience and scale [23]. This approach provides more realistic projections compared to static assessments and helps identify pathways to competitiveness with incumbent technologies.
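
One common way to express a technology learning curve is the single-factor experience curve, in which unit cost falls by a fixed fraction (the learning rate) with each doubling of cumulative production. The sketch below assumes that form; the 20% learning rate and the starting cost are illustrative, not values from the cited work.

```python
import math

def projected_cost(initial_cost, initial_cumulative, future_cumulative, learning_rate):
    """Unit cost after cumulative production grows, for a given learning rate."""
    exponent = math.log2(1 - learning_rate)  # negative for positive learning rates
    return initial_cost * (future_cumulative / initial_cumulative) ** exponent

# Hypothetical: $1,000/t today, 20% cost reduction per doubling of cumulative output.
after_one_doubling = projected_cost(1000.0, 1.0, 2.0, 0.20)
after_three_doublings = projected_cost(1000.0, 1.0, 8.0, 0.20)
print(round(after_one_doubling), round(after_three_doublings))
```

Feeding the projected cost back into the TEA, and the corresponding efficiency gains into the LCA inventory, gives a time-dependent view rather than a single static estimate.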

Techno-Economic Analysis and Life Cycle Assessment are complementary methodologies that together provide a comprehensive framework for evaluating the sustainability of industrial bioprocesses. While TEA focuses on technical feasibility and economic viability, LCA assesses environmental impacts across the entire value chain. Their integration enables informed decision-making that balances economic and environmental considerations, which is particularly valuable during the development of engineered strains for industrial production. As standardization efforts through initiatives like the Global CO2 Initiative continue to mature [26] [25], these methodologies will play an increasingly critical role in guiding the transition toward sustainable bioprocess technologies.

The Strain Engineering Toolkit: DBTL Cycles, Omics, and AI for Predictive Design

Implementing the Design-Build-Test-Learn (DBTL) Framework for Rapid Iteration

In the competitive landscape of industrial biotechnology, the Design-Build-Test-Learn (DBTL) framework serves as the foundational methodology for developing efficient microbial cell factories. This iterative cycle is crucial for optimizing biological systems to produce valuable compounds, from renewable biofuels to pharmaceutical precursors [18] [27]. The economic implications of streamlined DBTL cycles are profound; traditional metabolic engineering projects have required enormous investments, such as the 150 person-years needed to produce the antimalarial precursor artemisinin and 575 person-years for DuPont's propanediol [27]. With the global bioeconomy projected to reach $30 trillion by 2030, radical reductions in strain development time and cost through optimized DBTL implementation have become imperative for capturing market opportunities across all sectors [18].

Recent advances have introduced transformative variations on the traditional DBTL approach, most notably the LDBT (Learn-Design-Build-Test) paradigm, which moves machine learning to the start of the cycle [28] [29]. This reordering, combined with high-throughput technologies and automation, accelerates the entire biomanufacturing development pipeline. For researchers and drug development professionals, understanding these methodologies and their comparative performance is essential for making strategic decisions in engineered strain development for industrial production. This guide provides an objective comparison of these frameworks, supported by experimental data and implementation protocols.

Comparative Analysis of DBTL Cycle Implementations

Traditional DBTL versus LDBT Paradigms

The table below compares the fundamental characteristics of the traditional DBTL cycle against the emerging machine learning-driven LDBT paradigm.

Table 1: Comparison of Traditional DBTL and Modern LDBT Frameworks

| Aspect | Traditional DBTL Cycle | LDBT Cycle (ML-First) |

|---|---|---|

| Sequence | Design → Build → Test → Learn [28] | Learn → Design → Build → Test [28] [29] |

| Primary Driver | Domain knowledge & hypothesis [28] | Machine learning predictions & existing data [28] [29] |

| Build Phase Approach | In vivo chassis (bacteria, yeast) [28] | Cell-free systems & in vivo [28] [29] |

| Testing Throughput | Moderate (days to weeks) [18] | High (hours) with cell-free systems [28] [29] |

| Learning Mechanism | Manual data analysis [27] | Automated ML algorithms [28] [27] |

| Data Requirements | Cycle-specific | Large datasets for pre-training [28] [30] |

| Initial Investment | Lower | Higher (computational resources) |

| Iteration Speed | Weeks to months [18] | Days to weeks [28] [29] |

Workflow Visualization

The following diagrams illustrate the fundamental differences in workflow between the traditional DBTL cycle and the modern LDBT approach.

Diagram 1: Traditional DBTL Cycle. The classic four-stage iterative process begins with Design and progresses sequentially to Build, Test, and Learn, with Learn informing the next Design phase [28] [31].

Diagram 2: LDBT Cycle. The machine learning-enhanced paradigm begins with Learning from existing data, followed by Design, Build, and Test, potentially requiring fewer iterations [28] [29].

Performance Comparison and Experimental Data

Quantitative Performance Metrics

Experimental implementations of both traditional and enhanced DBTL cycles demonstrate significant differences in performance and efficiency. The following table summarizes key quantitative findings from published studies.

Table 2: Experimental Performance Metrics of DBTL Implementations

| Application | DBTL Approach | Cycle Time | Strains Tested | Performance Improvement | Reference |

|---|---|---|---|---|---|

| Tryptophan production | ML-guided (ART) | 2 cycles | Not specified | 106% increase from base strain | [27] |

| PET hydrolase engineering | Structure-based ML (MutCompute) | Not specified | Not specified | Increased stability and activity vs. wild-type | [28] |

| TEV protease engineering | ProteinMPNN + AlphaFold | Not specified | Not specified | 10-fold increase in design success rates | [28] |

| Antimicrobial peptides | DL + cell-free testing | Single design round | 500 variants tested | 6 promising AMP designs from 500,000 surveyed | [28] |

| 3-HB production | iPROBE (neural network) | Not specified | Pathway combinations | 20-fold improvement in Clostridium host | [28] |

| Fatty acids production | ML-guided (ART) | Multiple cycles | Library | Successful guidance of engineering | [27] |

Machine Learning Algorithm Performance

A critical component of modern DBTL implementations is the machine learning approach used in the Learn phase. Research has compared various algorithms for their effectiveness in predicting strain performance.

Table 3: Machine Learning Algorithm Performance in DBTL Cycles

| Machine Learning Method | Best For | Performance Characteristics | Experimental Validation |

|---|---|---|---|

| Gradient Boosting | Low-data regimes [32] | Robust to training set biases and experimental noise [32] | Outperformed other methods in simulated DBTL cycles [32] |

| Random Forest | Low-data regimes [32] | Robust to training set biases and experimental noise [32] | Outperformed other methods in simulated DBTL cycles [32] |

| Automated Recommendation Tool (ART) | Recommending new strain designs [27] | Provides probabilistic predictions of production levels [27] | Successfully applied to biofuels, fatty acids, and tryptophan [27] |

| Protein Language Models (ESM, ProGen) | Zero-shot protein design [28] | Captures evolutionary relationships in sequences [28] | Designed enantioselective biocatalysts [28] |

| Structure-based Models (ProteinMPNN) | Protein sequence design [28] | Input: protein structure; Output: sequences predicted to fold into that structure [28] | Improved TEV protease catalytic activity [28] |

Detailed Experimental Protocols

Machine Learning-Guided DBTL for Metabolic Engineering

Objective: To optimize microbial strain performance for target metabolite production using machine learning-guided DBTL cycles.

Materials and Reagents:

- Microbial chassis (e.g., E. coli, Corynebacterium glutamicum)

- DNA library components (promoters, RBS, coding sequences)

- CRISPR-Cas9 genome editing system

- Analytical equipment (HPLC, GC-MS) for metabolite quantification

Protocol:

- Initial Design: Select target pathway and identify 5-10 enzyme coding sequences with associated regulatory elements from a standardized library [32].

- Build Phase: Use high-throughput genome engineering (e.g., CRISPR-based editing) to assemble 50-100 variant strains with combinatorial perturbations of enzyme expression levels [32] [18].

- Test Phase: Cultivate strains in parallel micro-bioreactors for 24-72 hours. Quantify metabolite concentrations using HPLC/GC-MS and measure enzyme expression levels via targeted proteomics [32] [27].

- Learn Phase: Train gradient boosting or random forest models on the collected dataset, using enzyme expression levels as inputs and metabolite production as the output [32]. Use the Automated Recommendation Tool (ART) to predict optimal enzyme expression levels for increased production [27].

- Iteration: Implement top 5-10 recommended strain designs in the next DBTL cycle. Repeat for 2-3 cycles or until performance targets are met [32] [27].
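
To make the Build step of the protocol above concrete, the sketch below enumerates a combinatorial design space of expression levels and draws a random first-round library from it. The enzyme names, the three expression bins, and the library size of 96 are hypothetical; the Learn step (training a model on measured titers) is not shown.

```python
import itertools
import random

# Hypothetical pathway: five enzymes, each placed at one of three expression levels.
ENZYMES = ["enzA", "enzB", "enzC", "enzD", "enzE"]
EXPRESSION_LEVELS = ["low", "medium", "high"]

def sample_designs(n, seed=0):
    """Draw n distinct designs uniformly from the full combinatorial space."""
    space = list(itertools.product(EXPRESSION_LEVELS, repeat=len(ENZYMES)))
    rng = random.Random(seed)  # fixed seed so the library is reproducible
    return [dict(zip(ENZYMES, combo)) for combo in rng.sample(space, n)]

designs = sample_designs(96)
print(f"{len(designs)} of {len(EXPRESSION_LEVELS) ** len(ENZYMES)} possible designs")
print(designs[0])
```

Uniform random sampling is only one option for the first cycle; space-filling or fractional factorial designs may cover the expression space more evenly for the same number of strains.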

Cell-Free LDBT for Protein Engineering

Objective: To rapidly engineer proteins with enhanced properties using cell-free transcription-translation systems.

Materials and Reagents:

- Cell-free TX-TL system (commercial or lab-made)

- DNA templates for target protein variants

- Microfluidic droplet generation equipment

- High-throughput assay reagents (fluorescence, absorbance)

Protocol:

- Learn Phase: Utilize pre-trained protein language models (ESM, ProGen) or structure-based tools (ProteinMPNN) to generate 500-1000 protein sequence variants predicted to have improved function [28].

- Design Phase: Select 200-500 top candidates based on computational predictions and synthesize DNA templates using high-throughput oligo synthesis [28].

- Build Phase: Express protein variants directly in cell-free TX-TL reactions arrayed in 96- or 384-well plates, or using picoliter-scale droplet microfluidics for >100,000 reactions [28] [29].

- Test Phase: Measure protein functionality in vitro within 4-24 hours using coupled enzymatic assays, fluorescence-based stability probes, or cDNA display for binding affinity [28].

- Optional Iteration: Use results to refine machine learning models for subsequent design rounds if necessary [28] [29].
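
The Design and Build steps above involve ranking computational candidates and arraying them on plates. The sketch below shows that bookkeeping, assuming each variant already has a predicted score from an upstream model; the variant IDs and scores are synthetic placeholders, and the row-by-row well order is an arbitrary choice.

```python
import string

def select_top(predictions, n):
    """Return the n variant IDs with the highest predicted scores."""
    return sorted(predictions, key=predictions.get, reverse=True)[:n]

def plate_layout(variant_ids, wells=384):
    """Assign variants to (plate number, well ID), filling each plate row by row."""
    rows, cols = {96: (8, 12), 384: (16, 24)}[wells]
    layout = {}
    for index, variant in enumerate(variant_ids):
        plate, offset = divmod(index, wells)
        row, col = divmod(offset, cols)
        layout[variant] = (plate + 1, f"{string.ascii_uppercase[row]}{col + 1}")
    return layout

# Synthetic scores for 1000 hypothetical variants (stand-in for model output).
predictions = {f"var{i:04d}": (i * 37 % 1000) / 1000 for i in range(1000)}

top = select_top(predictions, 500)
layout = plate_layout(top, wells=384)
print(top[0], layout[top[0]], layout[top[-1]])
```

In practice a few wells per plate would be reserved for wild-type and blank controls, which this sketch leaves out.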

Essential Research Reagent Solutions

The successful implementation of DBTL cycles requires specialized reagents and tools. The following table catalogues key solutions for establishing robust DBTL workflows.

Table 4: Essential Research Reagent Solutions for DBTL Implementation

| Reagent/Tool | Function | Application Context |

|---|---|---|

| Cell-free TX-TL systems | In vitro transcription-translation | Rapid protein expression without living cells [28] [29] |

| CRISPR-Cas9 editing systems | Precise genome engineering | Introducing targeted genetic modifications in vivo [18] |

| DNA Library Parts | Standardized genetic elements | Modular assembly of genetic constructs [32] [31] |

| Automated Recommendation Tool (ART) | Machine learning for strain design | Predicting optimal strain configurations from data [27] |

| Protein Language Models (ESM, ProGen) | Zero-shot protein design | Predicting functional protein sequences [28] |

| Droplet Microfluidics | Ultra-high-throughput screening | Screening >100,000 cell-free reactions [28] |

| Multi-omics Analytics | Systems-level characterization | Understanding strain physiology and pathway dynamics [33] |

Economic Analysis and Industrial Scaling Considerations

The economic viability of engineered strains for industrial production depends heavily on the efficiency of the DBTL process. Research indicates that strain development costs can be reduced by 30-50% through the implementation of automated, ML-guided DBTL cycles compared to traditional approaches [18]. This acceleration is particularly critical for achieving competitive production costs in commodity chemicals markets, where profit margins are slim and extreme strain performance is required [18].

A key consideration in DBTL implementation is the strategic allocation of resources across cycles. Simulation studies demonstrate that when the number of strains to be built is limited, starting with a large initial DBTL cycle is favorable over building the same number of strains for every cycle [32]. This approach maximizes the initial data generation for machine learning models, enabling more informed recommendations in subsequent cycles.

For industrial-scale cultivation, DBTL cycles must incorporate scale-up considerations early in the process. Laboratory-scale cultures often differ significantly from large-scale bioreactors in terms of nutrient gradients, gas transfer, and stress responses [19]. Integrating systems biology tools that model these large-scale conditions during the Learn and Design phases can dramatically improve the success rate of scale-up operations, reducing both time and cost in technology transfer to manufacturing [18] [19].

The integration of automated biofoundries represents the most advanced implementation of the DBTL framework, combining computational design, robotic automation, and machine learning to achieve radical reductions in development timelines [30] [33]. These facilities enable continuous DBTL cycling with minimal human intervention, potentially reducing strain development time from years to months and providing a significant competitive advantage in the rapidly evolving bioeconomy [33].

In the economic landscape of industrial biotechnology, the development of high-yielding microbial production strains is a critical determinant of commercial viability. The journey from a wild-type microorganism to a robust industrial workhorse relies on strategic genetic improvement. For decades, random mutagenesis served as the cornerstone of strain development, relying on non-targeted genetic changes and high-throughput screening to identify improved variants. The emergence of CRISPR-based genome editing has revolutionized this field, introducing unprecedented precision and efficiency in strain engineering programs. This guide provides an objective comparison of these foundational strategies, evaluating their performance, applications, and economic implications for researchers and scientists engaged in industrial production research. We present experimental data and standardized protocols to inform strategic decisions in strain development pipelines.

Fundamental Mechanisms

Random Mutagenesis encompasses classical techniques that introduce untargeted genetic changes across the microbial genome. Methods include chemical mutagens (e.g., ethyl methanesulfonate), ultraviolet (UV) radiation, and ionizing radiation, which induce stochastic mutations throughout the genome without specificity. This approach generates vast genetic diversity from which improved phenotypes are selected through iterative screening cycles. The primary strength of this method lies in its ability to generate beneficial mutations without requiring prior knowledge of the genome or metabolic pathways, a principle successfully applied for decades to enhance enzyme yields in industrial strains [34].

CRISPR Genome Editing represents a paradigm shift toward precision genetics. Derived from a bacterial adaptive immune system, the technology uses a guide RNA (gRNA) to direct a Cas nuclease (e.g., Cas9) to a specific DNA sequence, where it induces a double-strand break (DSB). The cell repairs this break via either the error-prone non-homologous end joining (NHEJ) pathway, which often results in small insertions or deletions (indels), or the precise homology-directed repair (HDR) pathway when a donor DNA template is provided [35] [36]. This system allows for targeted gene knock-outs, knock-ins, and precise nucleotide substitutions. Recent advances include CRISPR-based base editing, which fuses a catalytically impaired Cas nuclease to a DNA deaminase enzyme and enables direct conversion of one base pair to another (e.g., C•G to T•A) without requiring DSBs or donor templates. Combined with gRNA libraries, base editors also support base editor-mediated targeted random mutagenesis (BE-TRM), which expands the toolset for directed evolution [37].

Quantitative Performance Comparison

The table below summarizes key performance metrics for random mutagenesis and CRISPR-based editing, based on published experimental data.

Table 1: Performance Comparison of Random Mutagenesis and CRISPR Editing

| Performance Metric | Random Mutagenesis | CRISPR Genome Editing | References |
|---|---|---|---|
| Typical Mutation Frequency | Variable; global mutations | High at target locus (e.g., ~73% in tomato ALC gene) | [36] |
| Precision & Control | Non-targeted, genome-wide | Single-nucleotide precision possible | [37] [38] |
| Editing Efficiency (HDR) | Not applicable | Relatively low (e.g., 7.69% in tomato) | [36] |
| Multiplexing Capacity | Not applicable | High (multiple gRNAs for pathway engineering) | [38] |
| Off-Target Effects | High, genome-wide burden of deleterious mutations | Moderate; predictable and can be minimized with optimized gRNA design | [39] [40] |
| Library Size Requirement | Very large (10^4 - 10^6 variants) | Smaller, more focused libraries | [37] [34] |
| Development Timeline | Lengthy (iterative cycles of mutation/screening) | Accelerated (directed changes) | [34] [38] |
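To make the efficiency figures in Table 1 more concrete, the following sketch estimates how many clones would need to be genotyped to recover at least one edited clone with a chosen confidence. It assumes each clone is edited independently with the same probability, which is a simplification; the function name `clones_to_screen` is hypothetical, and the two efficiencies are the tomato examples from the table, not microbial measurements.

```python
import math


def clones_to_screen(edit_efficiency: float, confidence: float = 0.95) -> int:
    """Smallest n such that P(at least one edited clone among n) >= confidence.

    Assumes independent clones, each edited with probability edit_efficiency:
    1 - (1 - e)^n >= confidence  =>  n >= ln(1 - confidence) / ln(1 - e)
    """
    if not 0 < edit_efficiency < 1:
        raise ValueError("edit_efficiency must be strictly between 0 and 1")
    if not 0 < confidence < 1:
        raise ValueError("confidence must be strictly between 0 and 1")
    return math.ceil(math.log(1 - confidence) / math.log(1 - edit_efficiency))


# Example efficiencies quoted in Table 1 (tomato data [36]).
print(clones_to_screen(0.0769))  # HDR at 7.69%  -> 38 clones
print(clones_to_screen(0.73))    # indels at ~73% -> 3 clones
```

The gap between the two results shows why low HDR efficiency translates directly into screening cost, even when the edit itself is precisely targeted.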

Table 2: Comparison of CRISPR-Derived Editing Systems

| Editing System | Key Components | Primary Editing Outcome | Typical Application in Strain Development |
|---|---|---|---|
| CRISPR-NHEJ/HDR | Cas9 nuclease, gRNA, optional donor DNA | Gene knock-outs, insertions, or precise edits via HDR | Gene inactivation, pathway insertion, gene replacement |
| Base Editing (BE) | Nickase Cas9 (nCas9) fused to deaminase, gRNA | Targeted point mutations (C-to-T, A-to-G) within a defined window | Functional optimization of enzyme active sites, evolving promoter strength |
| Prime Editing (PE) | nCas9 fused to reverse transcriptase, Prime Editing gRNA (pegRNA) | All 12 possible base-to-base conversions, small insertions/deletions | High-fidelity correction of specific deleterious mutations |
| CRISPR-Directed Evolution (e.g., BE-TRM) | Deaminase-nCas9 fusion, gRNA library | Targeted random mutagenesis at a specific genomic locus | Continuous in vivo evolution of a gene of interest under selection |
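The "defined window" noted for base editing in Table 2 can be illustrated with a short sketch that lists which bases of a protospacer fall inside an editing window. The default window of positions 4 to 8 (counting from the PAM-distal end as position 1) is an assumption that roughly matches early cytidine base editors; the actual window depends on the editor and should be taken from its documentation. The function name and the example sequence are hypothetical.

```python
def editable_positions(protospacer: str, target_base: str = "C",
                       window: tuple[int, int] = (4, 8)) -> list[int]:
    """Return 1-based protospacer positions holding target_base inside the window.

    Position 1 is the PAM-distal (5') end of a 20-nt protospacer.
    The default window is an assumed, editor-dependent value.
    """
    seq = protospacer.upper()
    if len(seq) != 20 or set(seq) - set("ACGT"):
        raise ValueError("expected a 20-nt protospacer of A/C/G/T")
    start, end = window
    if not 1 <= start <= end <= 20:
        raise ValueError("window must lie within positions 1..20")
    return [pos for pos in range(start, end + 1) if seq[pos - 1] == target_base]


example = "GATCCACGTTAGCTAGGCTA"  # made-up protospacer for illustration
print(editable_positions(example, "C"))  # cytidine editor targets: [4, 5, 7]
print(editable_positions(example, "A"))  # adenine editor targets:  [6]
```

This only inspects the protospacer strand as written; a fuller design tool would also consider the opposite strand and bystander edits inside the same window.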

Detailed Experimental Protocols

Protocol for Random Mutagenesis and Screening in Microbes

This classic protocol is adapted from established strain improvement programs [34].

- Strain Preparation: Inoculate a single colony of the production microbe (e.g., Bacillus subtilis or Aspergillus niger) into a rich liquid medium. Grow overnight to mid-exponential phase.

- Mutagen Treatment:

  - Chemical Mutagenesis: Pellet cells and resuspend in a buffered solution containing a mutagen like ethyl methanesulfonate (EMS; e.g., 0.1-0.3 M). Incubate for a duration that results in ~90-99% kill rate (determined empirically). Terminate the reaction by thorough washing.

  - UV Mutagenesis: Spread cells on a plate and expose to UV light (e.g., 254 nm wavelength) at a distance and duration that yields 90-99% kill rate. Perform all steps under low light conditions to prevent photoreactivation.

- Outgrowth and Screening: Allow the treated population to recover in non-selective medium for several generations to allow phenotypic expression. Plate the cells to obtain single colonies.

- High-Throughput Screening: Replica-plate colonies onto assay plates or into deep-well plates for liquid culture. Screen for the desired phenotype (e.g., increased enzyme production using a chromogenic substrate or higher product titer). This step is often the bottleneck and requires a robust, scalable assay.

- Validation and Scale-Up: Isolate the top-performing mutants from the primary screen. Re-test their performance in small-scale fermenters. Confirm that elite strains are genetically stable before advancing them to further development.
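The 90-99% kill rate targeted in the mutagen treatment step is usually determined from plate counts of treated and untreated cultures. A minimal sketch of that arithmetic follows; the helper names and the colony counts are hypothetical, and the dose window is simply the range quoted in the protocol.

```python
def cfu_per_ml(colonies: int, dilution_factor: float, plated_volume_ml: float) -> float:
    """Convert a plate count to CFU/mL of the undiluted culture."""
    if colonies < 0 or dilution_factor <= 0 or plated_volume_ml <= 0:
        raise ValueError("invalid plate count inputs")
    return colonies * dilution_factor / plated_volume_ml


def kill_rate(cfu_untreated: float, cfu_treated: float) -> float:
    """Fraction of cells killed by the mutagen treatment."""
    if cfu_untreated <= 0 or cfu_treated < 0:
        raise ValueError("invalid CFU values")
    return 1.0 - cfu_treated / cfu_untreated


def in_target_window(rate: float, low: float = 0.90, high: float = 0.99) -> bool:
    """True if the kill rate lies in the 90-99% range used in the protocol."""
    return low <= rate <= high


# Hypothetical counts: 150 colonies at 10^5 dilution vs 60 colonies at 10^4,
# each from 0.1 mL plated.
untreated = cfu_per_ml(150, 1e5, 0.1)
treated = cfu_per_ml(60, 1e4, 0.1)
rate = kill_rate(untreated, treated)
print(f"kill rate: {rate:.1%}, within window: {in_target_window(rate)}")
```

Running this across a series of exposure times or mutagen concentrations gives the empirical dose curve from which the working dose is chosen.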

Protocol for Targeted Gene Inactivation Using CRISPR-Cas9

This protocol, based on methods used in plants and human cells [36] [41], can be adapted for microbial systems with appropriate vector modifications.

- gRNA Design and Vector Construction:

  - Target Selection: Identify a 20-nucleotide target sequence within the gene of interest that is immediately followed by a Protospacer Adjacent Motif (PAM, e.g., 5'-NGG-3' for SpCas9).

  - gRNA Cloning: Synthesize and clone the gRNA sequence into a CRISPR plasmid under a promoter suited to the host (e.g., a U6 promoter in eukaryotic hosts, or a constitutive bacterial promoter in prokaryotes).

  - Cas9 Expression: The plasmid must also express the Cas9 nuclease, often under a strong, constitutive promoter.

- Transformation: Introduce the constructed plasmid into the microbial host using a standard transformation method (e.g., electroporation for bacteria, protoplast transformation for fungi).

- Mutant Identification: After transformation, isolate individual clones.

  - Genotypic Screening: Perform colony PCR amplifying the target genomic region and sequence the products to identify indels caused by NHEJ repair.

  - Phenotypic Screening: If a predictable phenotype is expected (e.g., auxotrophy), screen on selective media.

- Plasmid Curing: To ensure genetic stability, eliminate the CRISPR plasmid from the confirmed mutant by serial passage in non-selective medium and verify its loss.
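The target selection step in this protocol (a 20-nucleotide sequence immediately followed by a 5'-NGG-3' PAM) can be sketched as a simple sequence scan. This is an illustrative search only, with hypothetical function names and a made-up input sequence; it does not score off-target risk or editing efficiency, which dedicated design tools handle.

```python
import re

# Lookahead so that overlapping candidate sites are all reported.
_SPCAS9_SITE = re.compile(r"(?=([ACGT]{20})([ACGT]GG))")
_COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq: str) -> str:
    """Reverse complement of an A/C/G/T sequence."""
    return seq.upper().translate(_COMPLEMENT)[::-1]


def find_spcas9_targets(seq: str) -> list[tuple[int, str, str]]:
    """Return (0-based start, 20-nt protospacer, PAM) for each NGG site on seq."""
    seq = seq.upper()
    return [(m.start(), m.group(1), m.group(2))
            for m in _SPCAS9_SITE.finditer(seq)]


# Made-up sequence containing one site on the strand as written.
gene = "AAAAA" + "ACGTACGTACGTACGTACGT" + "TGG" + "AAAA"
forward_hits = find_spcas9_targets(gene)
reverse_hits = find_spcas9_targets(reverse_complement(gene))
print(forward_hits)  # [(5, 'ACGTACGTACGTACGTACGT', 'TGG')]
print(reverse_hits)  # []
```

Scanning the reverse complement as well matters in practice, since a usable PAM may exist on only one strand of the gene of interest.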

Protocol for Base Editor-Mediated Targeted Random Mutagenesis (BE-TRM)

This advanced protocol leverages base editors for continuous in vivo evolution [37].

- System Selection: Choose an appropriate base editor (e.g., a cytidine base editor for C-to-T changes, or an adenine base editor for A-to-G changes). For broader diversification, a dual base editor like Target-ACEmax can be used.

- gRNA Library Design: Instead of a single gRNA, design a pool of gRNAs that tile across the gene or genomic region you wish to diversify. This creates a library of constructs.

- Library Delivery: Co-transform the base editor expression plasmid and the gRNA library pool into the production host at a scale that ensures high library coverage.

- Continuous Evolution Under Selection: Culture the transformed population over serial passages in a bioreactor or shake flasks. Apply a consistent selective pressure (e.g., a challenging substrate, inhibitor, or condition that favors improved mutants).

- Isolation and Sequencing of Elite Variants: Periodically sample the population. Isolate genomic DNA from the entire population or from individual high-performing clones. Sequence the target locus to identify beneficial mutations that have been enriched under selection.
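The library delivery step calls for a transformation scale that "ensures high library coverage". One common way to reason about this is to ask how many independent transformants are needed so that any given gRNA is present at least once with a chosen probability. The sketch below assumes every gRNA is equally represented in the pool and that transformants are independent draws, which real libraries only approximate; the function name is hypothetical.

```python
import math


def transformants_for_coverage(library_size: int, coverage: float = 0.99) -> int:
    """Smallest number of independent transformants n such that any given
    library member is sampled at least once with probability >= coverage.

    P(member missed) = (1 - 1/L)^n, so n >= ln(1 - coverage) / ln(1 - 1/L).
    Assumes uniform representation of all library members.
    """
    if library_size < 2:
        raise ValueError("library_size must be at least 2")
    if not 0 < coverage < 1:
        raise ValueError("coverage must be strictly between 0 and 1")
    return math.ceil(math.log(1 - coverage) / math.log(1 - 1 / library_size))


for size in (100, 1_000, 10_000):
    n = transformants_for_coverage(size, 0.99)
    print(f"{size} gRNAs -> {n} transformants (~{n / size:.1f}x library size)")
```

Under these assumptions, 99% per-member coverage needs roughly 4.6 transformants per library member; skewed pools need more, which is why libraries are often sequenced after delivery.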

Visualization of Strategic Workflows

Random Mutagenesis and Screening Workflow

The following diagram illustrates the iterative, non-targeted nature of classical strain development.

CRISPR-Based Gene Editing Workflow

This diagram outlines the targeted and rational design process of CRISPR-mediated strain engineering.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Reagents for Strain Engineering Research

| Research Reagent / Solution | Function in Experiments | Example Use Case |
|---|---|---|
| CRISPR-Cas9 Plasmid | Expresses the Cas9 nuclease and gRNA scaffold within the host cell. | Targeted gene knock-out to eliminate a competing metabolic pathway. |
| Base Editor Plasmid | Expresses a fusion protein (e.g., nCas9-deaminase) for point mutations. | Saturation mutagenesis of a key enzyme's active site for improved activity. |
| Single-Guide RNA (sgRNA) | Directs the Cas protein to the specific genomic target sequence via Watson-Crick base pairing. | Defining the exact site for a double-strand break or deamination window. |
| Homology-Directed Repair (HDR) Template | A DNA donor template (single or double-stranded) for precise editing. | Inserting a strong promoter upstream of a biosynthetic gene cluster. |
| Chemical Mutagens (e.g., EMS) | Induces random point mutations across the genome. | Generating a diverse starting population for screening for antibiotic resistance. |
| Agrobacterium tumefaciens Strain | A biological vector for delivering DNA into plant cells. | CRISPR transformation of tomato or other crop plants for trait development [36]. |
| Graph-CRISPR Prediction Model | A computational tool that integrates sgRNA sequence and secondary structure to predict editing efficiency [40]. | In silico selection of highly efficient gRNAs to minimize costly experimental trial and error. |

The strategic choice between random mutagenesis and CRISPR-based editing is not a simple binary decision but a nuanced consideration of project goals, timeline, and resource constraints. Random mutagenesis remains a powerful, knowledge-agnostic tool for trait improvement when the genetic basis of a desired phenotype is unknown, though it carries a high screening burden and genetic baggage. In contrast, CRISPR genome editing offers a rapid, precise, and rational approach for strain engineering, enabling targeted modifications with known functions, such as gene knock-outs, promoter swaps, and pathway refactoring. The emergence of base editing and BE-TRM effectively bridges these two worlds, offering a semi-targeted strategy that focuses continuous diversification on specific genomic loci, accelerating the directed evolution process within a native genomic context.

From an economic analysis perspective, the higher initial investment in CRISPR technology (reagent design, computational tools, and skilled personnel) is often justified by a significantly accelerated development timeline and by more genetically stable and well-defined production strains. Well-characterized edits can ease regulatory review and support more consistent performance in industrial-scale fermentation [34] [42]. Ultimately, the most effective strain development pipelines for industrial production will likely combine both approaches, using the brute-force power of random mutagenesis for initial, broad improvements and the surgical precision of CRISPR tools for final, targeted optimization of elite strains.

Leveraging Multi-Omics Data (Metabolomics, Proteomics) for System-Level Insight

In the competitive landscape of industrial biomanufacturing, the economic analysis of engineered production strains has evolved from simple yield measurements to a system-level understanding of microbial physiology. The integration of multi-omics data, particularly metabolomics and proteomics, provides unprecedented insights into the complex metabolic networks that determine the economic viability of bioprocesses. Where traditional analytics offered fragmented views, multi-omics reveals the intricate interplay between genetic modifications, protein expression, and metabolic flux, enabling more predictive strain engineering and process optimization [43] [44].

The global multi-omics market, valued at $2.47 billion in 2025 and projected to reach $6.73 billion by 2032, reflects the growing recognition that integrated biological data drives innovation in industrial biotechnology [45]. For researchers and scientists focused on engineered strains for industrial production, multi-omics represents not merely a technological advancement but a fundamental tool for de-risking scale-up and enhancing cost-competitiveness against traditional petroleum-based production routes [46]. This guide provides a comprehensive comparison of multi-omics approaches specifically contextualized for economic analysis of production strains, complete with experimental protocols, data interpretation frameworks, and pathway visualizations essential for informed decision-making in industrial bioprocessing.

Multi-Omics Technologies: Comparative Analytical Capabilities

Technology Platforms and Their Economic Applications