From Code to Function: A Comprehensive Framework for Validating AI-Designed RNA Sequences

This article provides researchers, scientists, and drug development professionals with a modern framework for the functional validation of AI-designed RNA sequences.

Abstract

This article provides researchers, scientists, and drug development professionals with a modern framework for the functional validation of AI-designed RNA sequences. It bridges the gap between in silico design and real-world application by exploring foundational concepts in generative AI for biology, detailing cutting-edge methodological approaches like RNA-Seq and targeted panels, addressing common troubleshooting and optimization challenges, and establishing rigorous benchmarks for comparing synthetic sequences against their natural counterparts. The guidance synthesizes the latest research and technologies to accelerate the translation of computational designs into validated biological tools and therapeutics.

The New Landscape of RNA Design: How AI is Rewriting the Rules of Synthetic Biology

Genomics is moving from predictive modeling to generative artificial intelligence (AI). The shift is especially visible in RNA biology, where large language models (LLMs) originally built for natural language processing are being repurposed to "understand" the language of genetics [1]. These models treat genomic sequences not merely as strings of nucleotides but as a language whose grammar and syntax dictate biological function. Generating novel, functional RNA sequences is a fundamental advance over earlier models, which could only predict properties of existing sequences.

This transition matters for drug discovery and development, where traditional methods often take over a decade and cost billions of dollars per drug [2]. Generative genomic language models could shorten this timeline substantially by enabling researchers to design optimized RNA therapeutics from first principles. However, the technology requires robust validation frameworks to establish whether AI-designed RNA sequences match or surpass the functionality and safety of their natural counterparts. As Microsoft researchers demonstrated in a "red teaming" exercise, AI can design proteins that evade current biosecurity screening software, highlighting the dual-use potential of this technology and the urgent need for advanced validation methodologies [3].

Comparative Landscape of Genomic Language Models

Evolution from Predictive to Generative Architectures

The development of genomic LLMs has progressed from simple predictive models to sophisticated generative architectures capable of designing novel sequences. Early models focused primarily on learning representations that could enhance predictions of RNA secondary structure—a long-standing challenge in computational biology [4]. These initial approaches adapted the BERT (Bidirectional Encoder Representations from Transformers) architecture, training on massive unlabeled RNA sequence databases to understand the contextual relationships between nucleotides. The hypothesis was that obtaining high-quality RNA representations would enhance data-costly downstream tasks, much as language models pretrained on vast text corpora could be fine-tuned for specific natural language applications with limited labeled data.
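As a rough illustration of the masked language modeling objective behind these BERT-style models, the sketch below hides a random subset of nucleotides and records the originals as prediction targets. The 15% masking rate follows the original BERT recipe and the `<mask>` token name is a placeholder; neither is taken from the RNA models discussed here, which may tokenize and mask differently.

```python
import random


def mask_sequence(seq, mask_rate=0.15, mask_token="<mask>", seed=0):
    """Replace a random subset of nucleotides with a mask token.

    Returns the masked token list and a dict mapping each masked
    position to the nucleotide the model would be trained to recover.
    """
    rng = random.Random(seed)
    tokens = list(seq)
    n_mask = max(1, round(len(tokens) * mask_rate))
    positions = sorted(rng.sample(range(len(tokens)), n_mask))
    labels = {i: tokens[i] for i in positions}
    for i in positions:
        tokens[i] = mask_token
    return tokens, labels


# Illustrative 20-nt sequence (not from any dataset in the article)
masked_tokens, targets = mask_sequence("GGGAAACUUCGGUUUCCCAA")
```

During pretraining, the model sees `masked_tokens` and is scored on how well it predicts the nucleotides stored in `targets`.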

The current landscape of RNA language models reflects significant diversification in architectural approaches and training methodologies. As shown in Table 1, these models vary considerably in their embedding dimensions, parameter counts, and pretraining databases, leading to different performance characteristics across various tasks. Two models in particular—RiNALMo and RNA-FM—have demonstrated superior performance in benchmarking studies, though all face significant challenges in low-homology generalization scenarios [4].

Table 1: Comparative Analysis of Prominent RNA Large Language Models

| Model | Year | Embedding Dimension | Parameters | Architecture | Pretraining Sequences | Key Features |
|---|---|---|---|---|---|---|
| RNABERT | 2022 | 120 | ~500,000 | Transformer (6 layers) | 76,237 | Combines masked language modeling with structural alignment learning |
| RNA-FM | 2022 | 640 | ~100 million | Transformer (12 layers) | 23.7 million | Classic BERT architecture trained on massive RNAcentral dataset |
| RNA-MSM | 2024 | 768 | ~96 million | MSA Transformer | ~3.1 million | Incorporates multiple sequence alignment information inspired by AlphaFold2 |
| ERNIE-RNA | 2024 | 768 | ~86 million | Transformer (12 layers) | 20.4 million | Incorporates base-pairing informed attention bias |
| RiNALMo | 2024 | 1280 | ~650 million | Transformer (33 layers) | 36 million | Largest model; uses rotary positional embedding and FlashAttention-2 |

Performance Benchmarking Across Tasks

Comparative analyses reveal significant differences in model capabilities, particularly for the fundamental task of RNA secondary structure prediction. In comprehensive benchmarking studies, researchers have evaluated these pretrained models using a unified experimental setup with curated datasets of increasing complexity [4]. The results demonstrate that while two models (RiNALMo and RNA-FM) clearly outperform others, all face substantial challenges in generalization, especially in low-homology scenarios where test sequences differ significantly from training data.
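Benchmarks of this kind are usually scored by comparing predicted and reference base pairs. A minimal sketch, assuming structures are supplied in pseudoknot-free, balanced dot-bracket notation and that F1 over exact base pairs is the metric of interest (the cited study may use a different or more lenient variant):

```python
def base_pairs(dot_bracket):
    """Return the set of (i, j) index pairs encoded by a dot-bracket string."""
    stack, pairs = [], set()
    for i, ch in enumerate(dot_bracket):
        if ch == "(":
            stack.append(i)
        elif ch == ")":
            pairs.add((stack.pop(), i))
    return pairs


def pair_f1(predicted, reference):
    """F1 score over exact base-pair matches between two structures."""
    pred, ref = base_pairs(predicted), base_pairs(reference)
    true_pos = len(pred & ref)
    if true_pos == 0:
        return 0.0
    precision = true_pos / len(pred)
    recall = true_pos / len(ref)
    return 2 * precision * recall / (precision + recall)
```

For example, a prediction that recovers one of two reference pairs and adds no false pairs has precision 1.0 and recall 0.5, giving an F1 of about 0.67.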

The benchmarking process typically involves four datasets with increasing generalization difficulty: (1) random splits where sequences from the same RNA family may appear in both training and test sets, (2) family-aware splits that prevent this overlap, (3) cross-family predictions where models are tested on entirely different RNA classes, and (4) challenging sets specifically designed to test structural boundaries. Performance tends to degrade significantly as generalization difficulty increases, highlighting the need for more robust training approaches and larger, more diverse datasets [4].
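The difference between a random split and a family-aware split can be made concrete with a short sketch. It assumes each sequence carries a family label (for example an Rfam family ID); the record format and function name are illustrative, not the benchmark's actual tooling.

```python
import random
from collections import defaultdict


def family_aware_split(records, test_fraction=0.2, seed=0):
    """Split (sequence_id, family) records so no family spans both sets.

    Whole families are assigned to either train or test, which removes
    the homology leakage that a purely random split allows.
    """
    by_family = defaultdict(list)
    for seq_id, family in records:
        by_family[family].append(seq_id)

    families = sorted(by_family)
    random.Random(seed).shuffle(families)
    n_test = max(1, round(len(families) * test_fraction))
    test_families = set(families[:n_test])

    train = [s for f in families if f not in test_families for s in by_family[f]]
    test = [s for f in sorted(test_families) for s in by_family[f]]
    return train, test, test_families
```

Because the unit of assignment is the family rather than the sequence, the realized test fraction in sequences can deviate from `test_fraction` when family sizes are uneven.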

Experimental Frameworks for Validation

Methodologies for Structural Validation

Validating AI-generated RNA sequences requires rigorous experimental frameworks to assess whether these synthetic molecules adopt their intended structures and functions. Several computational tools have emerged as standards for predicting RNA 3D structures, each with distinct strengths and limitations. A 2024 comparative study evaluated three prominent tools—RNAComposer, Rosetta FARFAR2, and AlphaFold 3—for predicting various RNA structures, including therapeutic RNAs like the small interfering RNA drug nedosiran [5].

The methodology involved using each tool to predict structures of RNAs with experimentally determined configurations, then calculating all-atom root mean square deviation (RMSD) values to quantify accuracy. For a malachite green aptamer (38 nucleotides) with a known crystal structure, RNAComposer produced the most accurate prediction (RMSD 2.558 Å), successfully recapitulating all base pairing and stacking interactions. Rosetta FARFAR2 struggled with over-twisting of the hairpin loop (RMSD 6.895 Å), while AlphaFold 3 generated a reasonable approximation (RMSD 5.745 Å) despite lower prediction confidence [5].
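The RMSD values quoted above come from the cited study's own pipeline. For orientation, an RMSD after optimal rigid-body superposition can be computed with the Kabsch algorithm, as in the sketch below. It assumes the two structures have already been reduced to matched (N, 3) coordinate arrays with atoms in the same order; parsing PDB or mmCIF files and pairing atoms is left out.

```python
import numpy as np


def kabsch_rmsd(P, Q):
    """RMSD between two (N, 3) coordinate sets after optimal superposition."""
    P = np.asarray(P, dtype=float)
    Q = np.asarray(Q, dtype=float)

    # Remove translation by centering both structures on their centroids
    P_c = P - P.mean(axis=0)
    Q_c = Q - Q.mean(axis=0)

    # Optimal rotation from the SVD of the covariance matrix
    H = P_c.T @ Q_c
    U, _, Vt = np.linalg.svd(H)
    d = np.sign(np.linalg.det(Vt.T @ U.T))  # guard against reflections
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T

    P_rot = P_c @ R.T
    return float(np.sqrt(np.mean(np.sum((P_rot - Q_c) ** 2, axis=1))))
```

A structure compared with a rotated and translated copy of itself should give an RMSD of essentially zero, which is a useful sanity check before comparing predictions with experimental coordinates.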

For more complex structures like human glycyl-tRNA-CCC, the performance varied significantly based on secondary structure inputs. When using CONTRAfold-predicted secondary structure, RNAComposer achieved markedly better accuracy (RMSD 5.899 Å) compared to RNAfold-based input (RMSD 16.077 Å). Notably, Rosetta FARFAR2 failed to recapitulate the characteristic inverted "L" shape of tRNA, highlighting fundamental limitations in its sampling approach [5]. AlphaFold 3 demonstrated particular strength in directly predicting 3D structures from primary sequences without requiring secondary structure inputs, and it showed capability in handling common post-transcriptional modifications.

Table 2: Performance Comparison of RNA Structure Prediction Tools

| Tool | Approach | Input Requirements | Strengths | Limitations | Typical RMSD |
|---|---|---|---|---|---|
| RNAComposer | Motif assembly | Secondary structure | Accurate for small RNAs; handles typical tRNA shape | Highly dependent on accurate secondary structure input | 2.558 Å (MGA) to 16.077 Å (htRNA) |
| Rosetta FARFAR2 | Fragment assembly | Secondary structure | Physical realism; refinement capabilities | May miss global topology; computationally intensive | 6.895 Å (MGA) to 12.734 Å (htRNA) |
| AlphaFold 3 | Deep learning | Primary sequence | End-to-end prediction; accepts modifications | Lower confidence scores for some RNAs | 5.745 Å (MGA) to comparable performance on tRNAs |

Functional Assays for Therapeutic Optimization

Beyond structural validation, functional assessment is crucial for determining whether AI-designed RNA sequences perform as intended in biological systems. High-throughput experimental platforms have been developed specifically to generate large-scale functional data for training and validating AI models. These systems typically measure critical determinants of RNA therapeutic efficacy, particularly stability and translation efficiency [6].

The stability assay methodology involves transfecting cells with pooled mRNA libraries containing thousands of sequence variants, then harvesting RNA at multiple time points (3h, 24h, 48h, 72h) to quantify remaining molecules via next-generation sequencing. This provides degradation curves for each design, enabling calculation of stability scores based on NGS counts across six replicates at four timepoints [6].
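The source does not spell out how stability scores are derived from the counts, so the following is only one plausible reading: fit a first-order decay to each variant's abundance over time and report the half-life. The sketch assumes counts have already been normalized so they are comparable across time points (for example against a spike-in), and the function name and pseudocount are illustrative.

```python
import numpy as np

# Harvest times from the protocol described above, in hours
TIMEPOINTS_H = np.array([3.0, 24.0, 48.0, 72.0])


def half_life_hours(counts, timepoints=TIMEPOINTS_H, pseudocount=1.0):
    """Estimate an mRNA half-life from a (replicates, timepoints) count array.

    Counts are expressed relative to the first time point in each
    replicate, averaged in log space, and fit with a straight line whose
    slope is the negative decay rate.
    """
    counts = np.asarray(counts, dtype=float) + pseudocount
    log_rel = np.log(counts / counts[:, :1])
    mean_log = log_rel.mean(axis=0)
    slope, _intercept = np.polyfit(timepoints, mean_log, 1)
    if slope >= 0:
        return float("inf")  # no measurable decay over the time course
    return float(np.log(2) / -slope)
```

With six replicates and four time points per variant, `counts` would be a 6 x 4 array, and the resulting half-lives can be ranked or rescaled into a stability score.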

For translation efficiency assessment, researchers employ polysome profiling—a technique that separates ribosome-bound mRNAs via sucrose gradient fractionation. After transfecting cells with mRNA libraries and allowing translation to occur, cells are lysed and ribosome-bound mRNAs are separated across twelve fractions. The presence of each library member across fractions is quantified via NGS, enabling computation of translation efficiency scores that reflect how effectively sequences recruit ribosomes [6].
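One simple way to turn fraction-level counts into a per-variant score is a depth-normalized, abundance-weighted mean fraction index, where heavier fractions (more ribosomes) receive higher weights. This scoring rule is an assumption for illustration; the cited platform's actual formula is not given in the source.

```python
import numpy as np


def polysome_score(counts, fraction_depths):
    """Weighted mean fraction index per variant.

    counts: (variants, fractions) read counts, lightest fraction first.
    fraction_depths: total reads sequenced in each fraction, used to
    correct for uneven sequencing depth across the gradient.
    """
    counts = np.asarray(counts, dtype=float)
    depths = np.asarray(fraction_depths, dtype=float)

    norm = counts / depths
    totals = norm.sum(axis=1, keepdims=True)
    # Variants with no reads at all get an all-zero distribution
    dist = np.divide(norm, totals, out=np.zeros_like(norm), where=totals > 0)

    fraction_index = np.arange(1, counts.shape[1] + 1)
    return dist @ fraction_index
```

Under this rule, a variant found only in the heaviest of twelve fractions scores 12, one found only in the lightest scores 1, and one spread evenly across the gradient scores 6.5.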

These complementary datasets provide the functional correlates necessary to move beyond purely sequence-based predictions to function-aware generative design. By training models on both sequence-structure and structure-function relationships, researchers can iteratively improve generative capabilities.

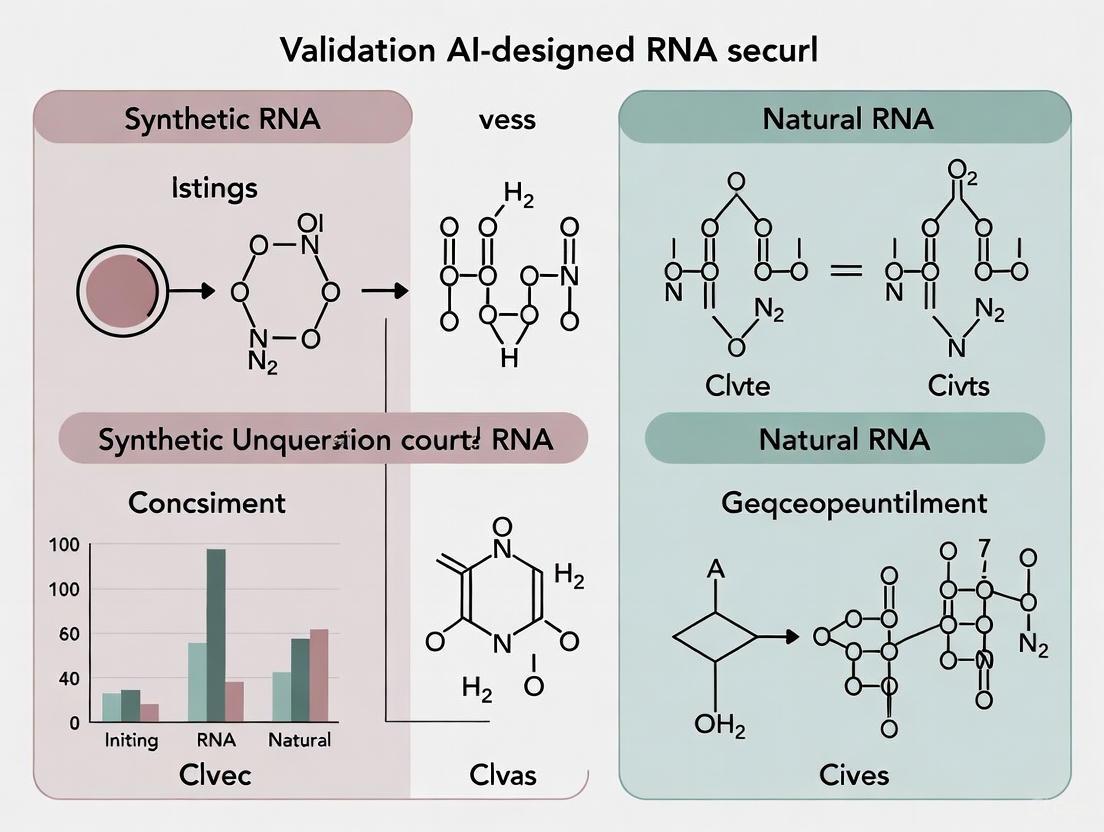

Figure 1: Integrated Workflow for Experimental Validation of AI-Designed RNA Sequences

The Scientist's Toolkit: Essential Research Reagents and Solutions

The validation of AI-designed RNA sequences requires specialized reagents and platforms that enable high-throughput functional characterization. These tools form the foundation of the iterative design-build-test cycles that power generative AI development in RNA therapeutics.

Table 3: Essential Research Reagents for AI-Driven RNA Validation

| Category | Specific Solution | Function | Application in Validation |
|---|---|---|---|
| Library Construction | Pooled UTR libraries (5' and 3') | Provides diverse sequence variants for testing | Enables high-throughput screening of thousands of designs in parallel |
| In Vitro Transcription | IVT with modified nucleotides (e.g., N1-methylpseudouridine) | Produces synthetic mRNA with enhanced stability | Mimics therapeutic mRNA format; reduces immunogenicity |
| Delivery Systems | Lipid nanoparticles or electroporation | Enables efficient RNA delivery into cells | Ensures representative cellular environment for functional testing |
| Stability Assay | Time-course RNA harvesting (3h-72h) | Quantifies mRNA degradation kinetics | Generates quantitative stability metrics for model training |
| Translation Assay | Sucrose gradient polysome fractionation | Separates ribosome-bound mRNA by translational activity | Provides direct measurement of translation efficiency |
| Sequencing | Next-generation sequencing (NGS) | Quantifies RNA abundance across conditions | Enables precise measurement of each variant in pooled screens |
| Data Analysis | Custom bioinformatics pipelines | Processes raw NGS data into functional scores | Converts experimental readouts into AI-training-ready datasets |

Commercial platforms like Ginkgo Bioworks' mRNA data generation service exemplify the integrated solutions emerging to address these needs. Their standardized systems can process up to 20,000 5' or 3' UTR sequences in a single experiment, returning processed datasets with stability and translation efficiency measurements within approximately three months [6]. This scale and standardization are crucial for generating the consistent, high-quality data required to train and validate generative models.

Benchmark Datasets and Community Standards

The development of robust generative models depends critically on standardized benchmark datasets that enable fair comparison across different approaches. Currently, the field suffers from a lack of unified evaluation standards, though several important datasets have emerged. The EteRNA100 dataset, a collection of 100 distinct secondary structure design challenges with lengths ranging from 12 to 400 nucleotides, has been widely adopted but lacks standardized evaluation protocols [7].

More recently, researchers have created comprehensive datasets of over 320,000 instances from experimentally validated sources to establish new community-wide benchmarks for RNA design and modeling algorithms [7]. This dataset includes numerous challenging structures that state-of-the-art RNA inverse folders struggle with, providing a more rigorous testing ground for generative models. It particularly focuses on multi-branched loops, which are often challenging to predict accurately, and encompasses a diverse range of complex motifs from internal loops to n-way junctions.

The RnaBench library represents another effort to standardize evaluation, providing benchmarks for RNA structure modeling with homology-aware curated datasets, standardized evaluation protocols, and novel performance measures [7]. However, current benchmarks are limited to structures under 500 nucleotides, despite the increasing length and complexity of RNA transcripts being studied. This highlights the need for continued development of comprehensive benchmarking resources.

Biosecurity and Ethical Considerations

The power of generative genomic LLMs necessitates serious consideration of biosecurity implications. Recent research has demonstrated that AI-designed proteins based on toxins can evade current biosecurity screening software [3]. In a Microsoft-led "red teaming" exercise, researchers generated over 76,000 synthetic DNA sequences based on toxic proteins using freely available AI tools. While biosecurity programs successfully flagged dangerous proteins with natural origins, they struggled to detect synthetic sequences, with approximately 3% of potentially functional toxins slipping through even after software updates [3].

This vulnerability stems from fundamental differences between natural and AI-generated sequences. AI models can rapidly produce thousands of variants with similar functions but divergent sequences, creating molecules that fall into the "gray areas between clear positives and negatives" in screening databases [3]. This represents a classic "zero-day" vulnerability in biosecurity systems that were designed for naturally occurring threats rather than AI-generated ones.

Addressing this challenge requires a multi-faceted approach, including improved screening algorithms that leverage the same AI technologies used for design, enhanced collaboration between industry and biosecurity organizations, and ongoing red teaming exercises to identify vulnerabilities before malicious actors can exploit them. As the field progresses, responsible innovation must remain a priority, with security considerations built into model development from the outset rather than added as an afterthought.

The field of genomic language models is advancing rapidly from predictive to generative capabilities, transforming how researchers approach RNA therapeutic design. Current evidence suggests that while AI-designed sequences can match or exceed the performance of natural counterparts in specific applications, robust validation frameworks encompassing both structural and functional assessment remain essential. The integration of high-throughput experimental data with increasingly sophisticated models creates a virtuous cycle of improvement, where each iteration enhances both design capabilities and validation methodologies.

Looking forward, several key developments will shape the next generation of genomic LLMs. First, the integration of 3D structural information will move beyond current secondary structure limitations, with models like AlphaFold 3 providing a glimpse of this future [5]. Second, multi-modal models that simultaneously reason across sequence, structure, and functional data will enable more holistic design strategies. Third, improved generalization capabilities, particularly for low-homology scenarios, will expand the applicability of these tools to novel therapeutic targets.

The validation paradigm is also evolving toward more physiologically relevant systems, including cell-type specific effects and in vivo performance. As datasets grow in both scale and biological complexity, generative models will increasingly produce RNA therapeutics that are not merely inspired by nature but are fundamentally optimized for therapeutic efficacy—ushering in a new era of precision genetic medicine designed by artificial intelligence.

The integration of artificial intelligence into biological design represents a paradigm shift in synthetic biology. While traditional approaches rely on optimizing known sequences or structures, a novel methodology termed semantic design leverages the natural organizational principles of genomes to generate functional biological components. This approach utilizes genomic language models trained on prokaryotic DNA sequences to design de novo genes with specified functions by understanding the contextual relationships between genes [8] [9].

Semantic design operates on the distributional hypothesis of gene function, which posits that "you shall know a gene by the company it keeps" [8]. In prokaryotic genomes, functionally related genes often cluster together in operons and gene clusters, a principle long exploited through "guilt-by-association" approaches for gene characterization [8]. The Evo genomic language model captures these relationships through training on extensive prokaryotic genomic data, enabling it to perform a form of genomic "autocomplete" where a DNA prompt encoding specific genomic context guides the generation of novel sequences enriched for related biological functions [8].

This review examines the validation of AI-designed RNA and protein sequences against their natural counterparts, focusing on experimental evidence, performance metrics, and methodological frameworks. We objectively compare the capabilities of semantic design with traditional biological design approaches, providing structured quantitative data and detailed experimental protocols to inform researchers, scientists, and drug development professionals.

Comparative Performance of AI-Designed Biological Sequences

Performance Metrics for AI-Generated Functional Elements

Table 1: Experimental Success Rates of AI-Designed Biological Sequences

| Functional Element | AI Model | Experimental Success Rate | Key Performance Metrics | Reference |
|---|---|---|---|---|
| Anti-CRISPR proteins | Evo 1.5 | Not specified | Robust activity without structural priors or evolutionary conservation | [8] |
| Type II toxin-antitoxin systems | Evo 1.5 | Not specified | High experimental success rates in growth inhibition assays | [8] |
| Type III toxin-antitoxin systems | Evo 1.5 | Not specified | Functional de novo genes with no sequence similarity to natural proteins | [8] |
| CRISPR-Cas effectors | ProGen2 (fine-tuned) | Functional in human cells | Comparable or improved activity/specificity vs. SpCas9, 400 mutations from natural sequences | [10] |
| Diverse protein classes | Evo 1.5 | 17-50% | Range across different functional categories after testing few variants | [9] |

Table 2: Novelty and Diversity Metrics for AI-Generated Sequences

| Sequence Category | AI Model | Diversity Expansion | Average Identity to Natural Proteins | Reference |
|---|---|---|---|---|
| CRISPR-Cas proteins (all families) | ProGen2 (fine-tuned) | 4.8× more protein clusters | 40-60% | [10] |
| Cas9-like effectors | Cas9-specific LM | 10.3× increase in phylogenetic diversity | 56.8% | [10] |
| Cas13 family | ProGen2 (fine-tuned) | 8.4× more protein clusters | Not specified | [10] |
| Cas12a family | ProGen2 (fine-tuned) | 6.2× more protein clusters | Not specified | [10] |
| De novo genes (EvoRelE1) | Evo 1.5 | No significant sequence similarity | 71% to known RelE toxin | [8] |

Semantic Design Versus Traditional Biological Design Approaches

Semantic design represents a fundamental departure from traditional biological design methodologies. Unlike protein language models that focus on individual gene sequences, genomic language models like Evo understand how genes relate to each other within broader genomic contexts [8]. This approach accesses novel regions of sequence space while maintaining biological function, demonstrated by the generation of functional anti-CRISPR proteins and toxin-antitoxin systems with no significant sequence similarity to natural proteins [8] [9].

The Evo model demonstrates remarkable contextual understanding through its "autocomplete" capability. When prompted with partial sequences of highly conserved prokaryotic genes, Evo 1.5 achieved 85% amino acid sequence recovery for rpoS with just 30% of the input sequence, outperforming earlier model versions [8]. The model also successfully predicted gene sequences based on operonic neighbors, achieving over 80% protein sequence recovery for target genes in the trp and modABC operons [8].
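Sequence recovery figures like these reduce to a fraction of matching residues. A minimal sketch, assuming the generated and natural protein sequences are already aligned position by position (real evaluations would typically run a pairwise alignment first, and the cited work's exact procedure is not described here):

```python
def sequence_recovery(generated, reference):
    """Fraction of reference positions reproduced exactly by the generated sequence.

    Positions are compared index by index; any reference positions beyond
    the end of the generated sequence count as mismatches.
    """
    if not reference:
        raise ValueError("reference sequence must be non-empty")
    matches = sum(g == r for g, r in zip(generated, reference))
    return matches / len(reference)
```

For instance, a generated peptide that differs from an eight-residue reference at one position has a recovery of 0.875.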

Analysis of Evo's generations reveals sophisticated learning of biological constraints. The model exhibits selective conservation patterns with lower entropy at key positions and higher variability in less-conserved regions, mirroring natural protein evolution [8]. When amino acid changes occur, Evo preferentially selects conservative substitutions based on BLOSUM62 matrices, demonstrating internalization of evolutionary principles [8].
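Position-wise conservation of this sort is commonly summarized with Shannon entropy over the columns of an alignment. The sketch below assumes the generated sequences have already been aligned to equal length; it illustrates the entropy measure only and does not reproduce the BLOSUM62 substitution analysis.

```python
import math
from collections import Counter


def column_entropy(aligned_seqs):
    """Shannon entropy (bits) at each column of equal-length aligned sequences.

    Low values indicate conserved positions; high values indicate
    positions where the sequences vary freely.
    """
    length = len(aligned_seqs[0])
    if any(len(s) != length for s in aligned_seqs):
        raise ValueError("all sequences must have the same aligned length")

    entropies = []
    for column in zip(*aligned_seqs):
        n = len(column)
        freqs = [count / n for count in Counter(column).values()]
        entropies.append(-sum(p * math.log2(p) for p in freqs))
    return entropies
```

A fully conserved column has entropy 0, while a column with four equally frequent residues has entropy 2 bits.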

Experimental Validation of AI-Designed Sequences

Methodologies for Functional Validation

Table 3: Experimental Protocols for Validating AI-Designed Sequences

| Validation Method | Application | Key Outcome Measures | Reference |
|---|---|---|---|
| Growth inhibition assays | Toxin-antitoxin systems | Relative survival reduction (e.g., ~70% for EvoRelE1) | [8] |
| Precision editing in human cells | AI-designed CRISPR-Cas effectors | Editing efficiency, specificity, PAM selectivity | [10] |
| Base editing compatibility | OpenCRISPR-1 | Versatility across editing modalities | [10] |
| In silico complex formation prediction | Toxin-antitoxin pairs | Filter for generated sequences with interaction potential | [8] |
| Patient-derived tissue screening | AI-designed small molecules | Efficacy in ex vivo disease models | [11] |

Semantic Design Workflow for Functional Gene Generation

The following diagram illustrates the comprehensive workflow for semantic design of functional genes using genomic language models:

Diagram 1: Semantic design workflow for functional gene generation. This illustrates the pipeline from model training through experimental validation.

Case Study: Semantic Design of Toxin-Antitoxin Systems

The application of semantic design to toxin-antitoxin (TA) systems provides a compelling case study in functional sequence generation. Researchers developed a prompting strategy that leveraged the natural colocalization of these systems, curating eight types of prompts including toxin and antitoxin sequences, their reverse complements, and upstream/downstream genomic contexts [8].

Following generation with Evo 1.5, sequences were filtered for those encoding protein pairs with predicted complex formation and limited sequence identity to known TA proteins [8]. This approach successfully identified a functional bacterial toxin, EvoRelE1, which exhibited strong growth inhibition (approximately 70% reduction in relative survival) while possessing 71% sequence identity to a known RelE toxin [8].
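The two-criterion filter described above can be sketched as follows. The `Candidate` fields, the score scale, and both thresholds are hypothetical placeholders chosen for illustration; the source does not report the cutoffs or the complex-prediction tool's output format.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    complex_score: float  # hypothetical predicted-interaction score in [0, 1]
    max_identity: float   # highest identity to any known TA protein, in [0, 1]


def filter_candidates(candidates, min_complex_score=0.5, max_identity=0.8):
    """Keep pairs predicted to form a complex that are not near-copies of known proteins.

    Both thresholds are illustrative defaults, not values from the cited study.
    """
    return [
        c for c in candidates
        if c.complex_score >= min_complex_score and c.max_identity <= max_identity
    ]
```

In practice the thresholds trade novelty against the chance of experimental success: a stricter identity cap pushes designs further from known proteins but may lower hit rates.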

Subsequent prompting of Evo 1.5 with the EvoRelE1 sequence demonstrated the model's ability to generate conjugate antitoxins, with generated sequences enriched for antitoxin-like genes [8]. This exemplifies the iterative potential of semantic design, where successfully generated components can serve as prompts for related functional elements.

Essential Research Reagents and Solutions

Table 4: Key Research Reagent Solutions for Semantic Design Validation

| Reagent/Solution | Application | Function | Reference |
|---|---|---|---|
| Evo 1.5 genomic language model | Sequence generation | Generative model trained on prokaryotic DNA for context-aware sequence design | [8] [9] |
| ProGen2 (fine-tuned) | CRISPR-Cas protein generation | Protein language model specialized for CRISPR effectors | [10] |
| Growth inhibition assays | Toxin-antitoxin validation | Quantifies biological activity through survival reduction measurement | [8] |
| Human cell editing systems | CRISPR effector validation | Tests precision editing functionality in physiological environment | [10] |
| SynGenome database | Sequence resource | Contains 120B+ base pairs of AI-generated genomic sequences | [8] |
| CRISPR-Cas Atlas | Training data | Curated dataset of 1M+ CRISPR operons for model fine-tuning | [10] |
| Protein structure prediction (AlphaFold) | In silico validation | Assesses structural plausibility of generated proteins | [10] [12] |

Semantic Design in Broader Biotechnology Context

Comparison with AI-Driven Drug Discovery Platforms

The emergence of semantic design parallels advancements in AI-driven drug discovery, where generative models have significantly compressed early-stage research timelines. Companies like Insilico Medicine have demonstrated the ability to progress from target discovery to Phase I trials for an idiopathic pulmonary fibrosis drug in just 18 months, while Exscientia reports in silico design cycles approximately 70% faster than industry standards [11].

However, semantic design extends beyond small molecule drug discovery by generating functional genetic elements rather than optimizing chemical compounds. This approach shares with AI drug discovery platforms the ability to explore vast design spaces beyond human intuition, but does so specifically for genetic components rather than small molecules [11] [12].

Addressing Generalizability Challenges

A significant challenge in applying machine learning to biological design has been the "generalizability gap," where models perform unpredictably when encountering chemical structures absent from their training data [13]. Semantic design addresses this through its foundational training on diverse genomic contexts, while targeted architectural approaches explicitly model interaction spaces rather than raw chemical structures to improve transferability [13].

Rigorous evaluation protocols that withhold entire protein superfamilies during training have revealed significant performance drops in conventional models when faced with novel protein families [13]. This highlights the importance of realistic benchmarking for accurate assessment of real-world utility in biological sequence design.

Future Directions and Implications

Semantic design represents a transformative approach to biological sequence generation that leverages genomic context for function-guided design. The experimental validation of AI-designed RNA and protein sequences demonstrates robust functionality with diversity metrics significantly expanding beyond natural sequence space.

The integration of semantic design with high-throughput experimental validation creates a powerful framework for biological discovery and engineering. As model architectures improve and genomic datasets expand, this approach is poised to accelerate the development of novel therapeutic agents, diagnostic tools, and synthetic biology applications.

While challenges remain in extending these methods to eukaryotic systems and improving predictive reliability, semantic design already demonstrates the capacity to generate functional biological sequences that transcend natural evolutionary boundaries. This capability marks a significant advancement in our ability to engineer biological systems with precision and creativity.

The validation of AI-designed RNA sequences against their natural counterparts is a critical frontier in biotechnology, with profound implications for therapeutic development. Foundational AI models are rapidly advancing our ability to not just predict but actively design functional biological components, bridging the gap between digital design and real-world application. This guide provides an objective comparison of key AI platforms, focusing on their capabilities in modeling and designing genetic sequences, supported by experimental data and detailed methodologies.

The emergence of large-scale biological language models represents a paradigm shift in genetic research. These models, trained on vast genomic datasets, learn the underlying "grammar" of life, enabling them to interpret, predict, and design biological sequences with increasing accuracy. Among these, Evo and its successor Evo2 from the Arc Institute have established themselves as pioneers in whole-genome modeling [14] [15]. Other notable models include ESM for protein-focused tasks and DeepVariant for specialized genomic analysis [16] [17]. The core value of these tools lies in their ability to generalize across the fundamental languages of biology—DNA, RNA, and proteins—allowing researchers to engineer complex biological systems in silico before moving to costly lab experiments [18].

Comparative Analysis of Foundational AI Models

The following tables provide a detailed comparison of the leading AI platforms for biological sequence analysis and design, focusing on their architectural specs, core capabilities, and performance in key experimental validations.

Table 1: Architectural and Training Specifications of Key AI Models

| Model | Developer | Parameters | Training Data Scale | Context Window | Key Architectural Innovation |
|---|---|---|---|---|---|
| Evo2 [19] [15] | Arc Institute, NVIDIA, Stanford, UC Berkeley, UCSF | 40 Billion | 9.3 trillion nucleotides; 128,000 species [19] | 1 million nucleotides [19] | StripedHyena 2 (Multi-hybrid architecture) [16] |
| Evo1 [14] | Arc Institute | 7 Billion | 300 billion nucleotides; 2.7 million microbial genomes [14] [20] | 131,072 nucleotides [20] | Deep learning at single-nucleotide resolution [14] |
| ESM [16] | Meta AI | - | UniRef protein sequence databases | - | Transformer-based |
| DeepVariant [17] | - | - | Diverse genomic datasets | - | Convolutional Neural Network (CNN) |

Table 2: Core Capabilities and Performance Benchmarks

| Model | Generative Capabilities | Predictive Capabilities | Key Experimental Validation |
|---|---|---|---|
| Evo2 [19] [15] [16] | Design of yeast chromosomes, human mitochondrial genomes, and prokaryotic genomes [19]. | >90% accuracy predicting pathogenic mutations in the BRCA1 gene, zero-shot [19] [16]. | Generated functional proteins in designed mitochondria (pLDDT scores 0.67-0.83 via AlphaFold 3) [16]. |
| Evo1 [14] [8] [20] | Novel CRISPR-Cas systems (protein & RNA), genome-length sequences >1 million base pairs [14]. | Zero-shot gene essentiality prediction; zero-shot function prediction for ncRNA and regulatory DNA [18]. | Designed novel anti-CRISPR proteins and toxin-antitoxin systems; 11/11 generated CRISPR designs were functional [8] [20]. |
| ESM [16] | - | Protein structure & function prediction. | - |
| DeepVariant [17] | - | High-accuracy variant calling (SNPs, indels). | - |

Detailed Experimental Protocols for Model Validation

A critical measure of an AI model's utility in RNA sequence research is its performance in rigorously controlled laboratory experiments. The following section details the methodologies used to validate the outputs of the Evo model, providing a framework for benchmarking AI-designed sequences against natural counterparts.

Protocol 1: Semantic Design of Anti-CRISPR Proteins

This protocol, derived from a Nature publication, validates Evo's ability to generate novel functional proteins using "semantic design," which leverages genomic context as a functional prompt [8].

- Objective: To design and validate novel anti-CRISPR (Acr) proteins that inhibit CRISPR-Cas systems but share no significant sequence or structural similarity to known natural Acrs.

- Methodology:

- AI-Prompting & Sequence Generation: Evo was prompted with genomic sequences of known functional anti-CRISPR genes and their surrounding genomic context. The model was then sampled to generate novel candidate Acr sequences [8].

- In-silico Filtering: Generated sequences were filtered for novelty, requiring low sequence identity to any known proteins in databases [8].

- In-vitro Synthesis & Cloning: Selected AI-generated Acr sequences were synthesized and cloned into expression vectors.

- Functional Assay (CRISPR Inhibition): Vectors containing the synthetic Acr genes were co-transformed into bacteria alongside a target CRISPR-Cas system and a plasmid containing the target DNA for cutting. Functional activity was measured by assessing the survival rate of bacteria, indicating successful inhibition of CRISPR-Cas DNA cleavage [8].

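- Validation Workflow: Prompt Evo with Acr genes and their genomic context, sample candidate sequences, filter in silico for novelty, synthesize and clone the selected candidates, co-transform them with the CRISPR-Cas system and target plasmid, then score bacterial survival.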
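
The in-silico novelty filter in the methodology above can be sketched as follows. This is a minimal illustration, not the pipeline used in [8]: it assumes identity is measured on a plain Needleman-Wunsch global alignment with unit scores, and the `max_identity` cutoff, the function names, and the scoring values are all hypothetical.

```python
def global_identity(a: str, b: str, match: int = 1, mismatch: int = -1, gap: int = -1) -> float:
    """Fraction of identical columns in a Needleman-Wunsch global alignment."""
    n, m = len(a), len(b)
    # score[i][j] holds the best alignment score of a[:i] against b[:j].
    score = [[0] * (m + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        score[i][0] = i * gap
    for j in range(1, m + 1):
        score[0][j] = j * gap
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            diag = score[i - 1][j - 1] + (match if a[i - 1] == b[j - 1] else mismatch)
            score[i][j] = max(diag, score[i - 1][j] + gap, score[i][j - 1] + gap)

    # Trace back one optimal path, counting matches and alignment columns.
    i, j, matches, columns = n, m, 0, 0
    while i > 0 or j > 0:
        if i > 0 and j > 0:
            diag = score[i - 1][j - 1] + (match if a[i - 1] == b[j - 1] else mismatch)
        else:
            diag = None
        if diag is not None and score[i][j] == diag:
            matches += a[i - 1] == b[j - 1]
            i, j = i - 1, j - 1
        elif i > 0 and score[i][j] == score[i - 1][j] + gap:
            i -= 1
        else:
            j -= 1
        columns += 1
    return matches / columns if columns else 0.0


def filter_novel(candidates, known, max_identity=0.3):
    """Keep candidates whose identity to every known sequence is at most max_identity."""
    return [c for c in candidates
            if all(global_identity(c, k) <= max_identity for k in known)]
```

For real databases, a dedicated homology search tool would replace the quadratic-time comparison loop; the sketch only shows the filtering logic.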

Protocol 2: Design and Validation of Toxin-Antitoxin Systems

This protocol tests the model's ability to design multi-component biological systems, a more complex task than generating single molecules [8].

- Objective: To generate a novel, functional type II toxin-antitoxin (T2TA) system where the generated sequences are diversified from natural counterparts.

- Methodology:

- AI-Prompting & Pair Generation: Evo was prompted with various components of known T2TA systems (toxin, antitoxin, their reverse complements, upstream/downstream contexts). The model generated novel toxin-antitoxin pairs [8].

- In-silico Filtering & Complex Prediction: Generated pairs were filtered for novelty and assessed for in-silico predicted protein-protein complex formation [8].

- Molecular Cloning: Validated toxin and antitoxin genes were cloned into an inducible expression system.

- Growth Inhibition Assay (Toxin Validation): The toxin gene was induced in bacterial cells, and its growth-inhibitory effect was quantified by measuring optical density (OD600) or colony-forming units (CFUs) over time [8].

- Neutralization Assay (Antitoxin Validation): The corresponding AI-generated antitoxin gene was co-expressed with the toxin. Restoration of bacterial growth to near-normal levels confirmed the antitoxin's neutralizing function and the pair's functional interdependence [8].

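- Validation Workflow: Prompt Evo with T2TA components, generate toxin-antitoxin pairs, filter for novelty and predicted complex formation, clone into an inducible expression system, confirm growth inhibition by the toxin, then confirm rescue by the co-expressed antitoxin.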
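
The growth inhibition and neutralization readouts above can be summarized numerically. The sketch below assumes OD600 time courses for an uninduced control, the toxin alone, and the toxin plus antitoxin, and compares areas under the growth curves. The thresholds `inhibit_below` and `rescue_above` are hypothetical illustration values, not criteria reported in [8].

```python
def auc(times, od):
    """Trapezoidal area under an OD600 growth curve."""
    return sum((t1 - t0) * (y0 + y1) / 2
               for t0, t1, y0, y1 in zip(times, times[1:], od, od[1:]))


def growth_fraction(times, od_sample, od_control):
    """Growth of a sample relative to the control, as a ratio of curve areas."""
    return auc(times, od_sample) / auc(times, od_control)


def classify_pair(times, od_control, od_toxin, od_pair,
                  inhibit_below=0.5, rescue_above=0.8):
    """Score a toxin-antitoxin pair from three OD600 time courses."""
    toxin_growth = growth_fraction(times, od_toxin, od_control)
    pair_growth = growth_fraction(times, od_pair, od_control)
    toxin_active = toxin_growth < inhibit_below
    return {
        "toxin_growth": toxin_growth,
        "pair_growth": pair_growth,
        "toxin_active": toxin_active,
        # Neutralization is only meaningful if the toxin was inhibitory on its own.
        "antitoxin_neutralizes": toxin_active and pair_growth > rescue_above,
    }
```

Requiring an active toxin before scoring the antitoxin reflects the functional interdependence the protocol is designed to test.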

The Scientist's Toolkit: Essential Research Reagents and Materials

The experimental validation of AI-designed RNA and genetic sequences relies on a suite of core reagents and technologies. The table below details key materials essential for conducting the types of validation protocols described in this guide.

Table 3: Essential Reagents for Validating AI-Designed Genetic Sequences

| Research Reagent / Material | Function in Validation |

|---|---|

| Expression Vectors/Plasmids [8] | Carrier DNA molecules used to clone and express the AI-generated genetic sequences in host cells (e.g., bacteria). |

| In-vitro Transcription (IVT) System [21] | A biochemical system to synthesize RNA in vitro from a DNA template, crucial for producing circular RNA (circRNA) vaccine candidates. |

| Lipid Nanoparticles (LNPs) [21] | Delivery vehicles that encapsulate nucleic acids (e.g., RNA), protecting them and facilitating their entry into target cells for functional testing. |

| Cell Lines (e.g., Bacterial, Eukaryotic) [8] | Living cells used as host systems to express the AI-generated sequences and assess their function, toxicity, and physiological effect. |

| Growth Media & Selection Antibiotics [8] | Nutrients to support cell growth and chemical agents to select for cells that have successfully incorporated the expression vector. |

| Chromatography Systems (HPLC/UHPLC) [21] | High-performance liquid chromatography systems used to purify synthesized nucleic acids and analyze their quality, removing contaminants like dsRNA. |

This guide provides a comparative framework for validating AI-designed RNA sequences against their natural counterparts, focusing on the critical metrics of sequence and structural divergence. We objectively evaluate performance through supporting experimental data from multiple sequencing platforms and analytical techniques. The comparison encompasses sequence-based metrics including single nucleotide variants (SNVs) and RNA-DNA differences (RDDs), alongside structural metrics assessing topological variations and their functional implications. Standardized experimental protocols for RNA sequencing, data processing pipelines, and computational analysis methods are detailed to enable reproducible benchmarking. Our findings demonstrate that comprehensive validation requires integrating multiple complementary approaches to accurately characterize the functional fidelity of synthetic RNA constructs.

The emergence of AI-designed RNA sequences represents a paradigm shift in synthetic biology and therapeutic development, creating an urgent need for robust validation frameworks. Benchmarking these novel constructs against natural counterparts requires precise definition and measurement of both sequence and structural divergence. Current approaches leverage advanced high-throughput sequencing (HTS) technologies and ensemble algorithms to resolve molecular differences with unprecedented resolution [22]. This guide establishes standardized metrics and methodologies for comparative analysis, enabling objective performance evaluation of AI-generated RNA molecules within the broader context of functional validation.

Sequence divergence encompasses nucleotide-level variations including single nucleotide variants (SNVs), insertions, deletions (indels), and RNA-DNA differences (RDDs) that may arise from biological processes like RNA editing or technical artifacts [23]. Structural divergence encompasses variations in secondary and tertiary RNA architecture, including stem-loop formations, bulge regions, and pseudoknots that significantly impact molecular function. Accurate characterization requires multiplatform discovery approaches that mitigate the limitations inherent in any single technology [22]. This framework addresses both dimensions through integrated experimental and computational workflows, providing researchers with comprehensive tools for assessing the functional equivalence of synthetic RNA constructs.

Defining Divergence Metrics

Sequence Divergence Fundamentals

Sequence divergence quantifies nucleotide-level variations between AI-designed sequences and natural reference molecules. The fundamental metrics include:

- Single Nucleotide Variants (SNVs): Base substitutions occurring at specific positions, typically measured as variants per kilobase. In comparative transcriptomics, SNV rates below 0.1% often reflect technical variance rather than true biological differences [24].

- Insertions and Deletions (Indels): Small-scale sequence additions or removals (<50 bp) that disrupt reading frames or regulatory motifs. Accurate detection requires split-read approaches that map alignment breakpoints with single-base resolution [22].

- RNA-DNA Differences (RDDs): Transcript-level variations relative to genomic DNA, potentially resulting from RNA editing processes. A-to-G mismatches (A-to-I editing) typically dominate authentic RDD profiles in mammals, while other mismatch types often indicate mapping artifacts [23].

Proper interpretation requires distinguishing biological divergence from technical artifacts. Environmental variance and measurement imprecision can account for up to 60% of observed expression variance in interspecies comparisons, emphasizing the need for controlled experimental conditions and appropriate replication [24].
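
As a small worked example of the sequence-level metrics, the sketch below counts SNVs and indel events between a natural reference and an AI-designed sequence. It assumes the two sequences have already been aligned by an external tool and are supplied as equal-length strings with `-` marking gaps; the function name and return keys are illustrative.

```python
def divergence_summary(ref_aln: str, design_aln: str) -> dict:
    """Count SNVs and indel events in a pre-computed pairwise alignment."""
    if len(ref_aln) != len(design_aln):
        raise ValueError("aligned sequences must have equal length")
    snvs = indel_events = aligned = 0
    gap_state = None  # None, "ins" (gap in reference) or "del" (gap in design)
    for r, d in zip(ref_aln.upper(), design_aln.upper()):
        if r == "-" or d == "-":
            state = "ins" if r == "-" else "del"
            if state != gap_state:  # a new run of gaps starts a new event
                indel_events += 1
            gap_state = state
            continue
        gap_state = None
        aligned += 1
        if r != d:
            snvs += 1
    return {
        "aligned_bases": aligned,
        "snvs": snvs,
        "indel_events": indel_events,
        "snvs_per_kb": 1000 * snvs / aligned if aligned else 0.0,
    }
```

Consecutive gap columns are counted as one event, so a 2-nt deletion contributes one indel rather than two.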

Structural Divergence Fundamentals

Structural variants (SVs) represent a diverse spectrum of alterations ranging from ~50 bp to megabases of sequence, affecting more of the genome per nucleotide change than any other variant class [22]. In the context of RNA, structural divergence encompasses:

- Topological Variations: Rearrangements including inversions, translocations, and alternative folding patterns that alter spatial organization.

- Copy Number Variants (CNVs): Unbalanced changes including deletions, duplications, and insertions of structural elements that impact functional domains.

- Complex Arrangements: Combinations of multiple variant types that collectively redefine RNA architecture and interaction interfaces.

Long-read sequencing technologies have dramatically improved SV characterization by directly resolving complex regions that are difficult to assess with short-read approaches [25]. These technologies enable sequence-resolved SV detection, moving beyond inference-based methods to direct observation of structural alterations.

Table 1: Fundamental Metrics for Sequence and Structural Divergence

| Category | Metric | Definition | Detection Method | Biological Significance |

|---|---|---|---|---|

| Sequence Divergence | Single Nucleotide Variants (SNVs) | Base substitutions at specific positions | Short-read alignment, variant calling | Potential functional alterations, technical artifacts |

| Sequence Divergence | Insertions/Deletions (Indels) | Small-scale sequence additions/removals (<50 bp) | Split-read approaches, local assembly | Frameshifts, motif disruption |

| Sequence Divergence | RNA-DNA Differences (RDDs) | Transcript variations relative to genomic DNA | Stringent read mapping, artifact filtering | RNA editing, mapping artifacts |

| Structural Divergence | Topological Variations | Rearrangements (inversions, translocations) | Long-read sequencing, optical mapping | Altered spatial organization, folding |

| Structural Divergence | Copy Number Variants (CNVs) | Deletions, duplications, insertions of elements | Read-depth analysis, assembly comparison | Domain amplification/loss |

| Structural Divergence | Complex Arrangements | Multiple combined variant types | Graph-based genomes, multi-platform integration | Comprehensive architectural changes |

Experimental Design for Comparative Analysis

Sample Preparation and RNA Sequencing

Robust comparative analysis begins with meticulous experimental design tailored to the specific research question. Sample characteristics profoundly impact downstream analyses, influencing RNA extraction methods, library preparation choices, and sequencing parameters [26]. For benchmarking AI-designed RNAs, consider:

- Transcriptome Complexity: Organisms with lower transcriptional diversity may require less sequencing depth, while complex mammalian transcriptomes need greater coverage, especially for alternative splicing analysis [26].

- Library Preparation Selection: 3' mRNA-Seq approaches are suitable for gene expression quantification but inadequate for isoform characterization. Whole transcriptome methods with either poly(A) enrichment or rRNA depletion are essential for assessing structural features and splice variants [26].

- Pilot Experiments: Before large-scale studies, conduct pilot experiments with representative samples to validate that chosen parameters deliver required data quality and sufficient power for statistical analysis [26].

Environmental variance can account for a substantial portion (up to 60%) of observed expression differences between samples [24]. Therefore, carefully control growth conditions, batch effects, and technical variability through randomization and replication strategies. Biological replicates (separate cultures) show significantly greater variance than technical replicates (the same sample processed separately): under normal laboratory conditions, 95% of genes typically vary by up to 3.6-fold between biological replicates [24].
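
A replicate-derived noise bound of this kind can be estimated directly from paired replicate measurements. The sketch below is one simple way to do it, assuming per-gene expression values from two biological replicates and a nearest-rank percentile; the function names and the pseudocount are illustrative choices, not the procedure used in [24].

```python
import math


def replicate_fold_bound(rep_a, rep_b, coverage=0.95, pseudocount=1.0):
    """Fold change that contains `coverage` of genes between two replicates."""
    abs_log2fc = sorted(
        abs(math.log2((a + pseudocount) / (b + pseudocount)))
        for a, b in zip(rep_a, rep_b)
    )
    if not abs_log2fc:
        raise ValueError("no genes supplied")
    # Nearest-rank percentile on the absolute log2 fold changes.
    rank = max(1, math.ceil(coverage * len(abs_log2fc)))
    return 2 ** abs_log2fc[rank - 1]


def exceeds_noise(design_expr, natural_expr, bound, pseudocount=1.0):
    """True if a design-vs-natural difference is larger than replicate noise."""
    fc = (design_expr + pseudocount) / (natural_expr + pseudocount)
    return max(fc, 1 / fc) > bound
```

A difference between an AI-designed construct and its natural counterpart would then be treated as noteworthy only when `exceeds_noise` returns true for the bound measured in the same experiment.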

Sequencing Technologies and Platforms

Technology selection critically impacts variant detection capabilities, with each platform exhibiting distinct strengths for specific divergence metrics:

- Short-Read Sequencing (Illumina): Provides high accuracy for SNV detection and quantitative gene expression analysis but limited resolution for complex structural variants and repetitive regions due to read length constraints [22].

- Long-Read Sequencing (Oxford Nanopore, PacBio): Enables direct resolution of complex structural variants, full-length transcript sequencing, and improved characterization of repetitive elements through reads spanning several kilobases [25].

- Multi-Platform Integration: Combining sequencing approaches mitigates individual technology limitations. Ensemble methods integrating multiple callers and data types have proven highly effective for comprehensive variant discovery [22].

Recent advances in long-read sequencing have enabled construction of pangenome references representing structural variants across diverse populations, dramatically improving discovery of novel sequence insertions and complex rearrangements [25]. For AI-designed RNA validation, a hybrid approach leveraging both short-read accuracy and long-read span provides the most comprehensive divergence assessment.

Data Analysis Frameworks

Read Mapping and Processing Strategies

Accurate read mapping is foundational to reliable divergence detection, with stringent parameters essential for minimizing false positives:

- Stringent Mapping (Strategy 1): Employs dual-filtering with carefully designed mismatch thresholds (n1, n2) determined using simulated reads with sequencing error profiles derived from actual data. This approach incorporates algorithmic diversity using complementary mappers (Blat + Bowtie/BWA) and requires unique pairing for paired-end data [23].

- Nominal Mapping with Post-Filtering (Strategy 2): Applies standard alignment followed by extensive artifact removal, including strand bias filters, exclusion of 100% editing sites (often mapping artifacts), removal of sites near splice junctions (≤4 nt) or within repetitive regions, and filtering of known SNPs [23].

For RDD detection, stringent upfront mapping significantly outperforms post-filtering approaches, reducing false positives by leveraging unique mapping signatures and complementary alignment algorithms [23]. This is particularly crucial for AI-designed RNA validation where authentic biological differences must be distinguished from computational artifacts.
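
The post-filtering rules of Strategy 2 translate naturally into a predicate over candidate sites. The following sketch assumes each candidate has already been annotated with strand-specific read support, distance to the nearest splice junction, repeat overlap, and known-SNP status. The `CandidateSite` fields and the strand-bias cutoff `max_strand_frac` are hypothetical; only the 4-nt splice distance and the exclusion of 100% editing sites come from the description above.

```python
from dataclasses import dataclass


@dataclass
class CandidateSite:
    chrom: str
    pos: int
    ref: str
    alt: str
    alt_fwd: int         # alt-supporting reads on the forward strand
    alt_rev: int         # alt-supporting reads on the reverse strand
    depth: int           # total reads covering the site
    dist_to_splice: int  # distance in nt to the nearest splice junction
    in_repeat: bool
    known_snp: bool


def passes_post_filters(site, max_strand_frac=0.9, min_splice_dist=5):
    """Apply the Strategy 2 artifact filters to one candidate RDD site."""
    alt_total = site.alt_fwd + site.alt_rev
    if alt_total == 0 or site.depth == 0:
        return False
    # Strand bias: nearly all supporting reads come from one strand.
    if max(site.alt_fwd, site.alt_rev) / alt_total > max_strand_frac:
        return False
    # Sites where every read carries the mismatch are often mapping artifacts.
    if alt_total == site.depth:
        return False
    # Exclude sites within 4 nt of a splice junction.
    if site.dist_to_splice < min_splice_dist:
        return False
    if site.in_repeat or site.known_snp:
        return False
    return True
```

Keeping each rule as a separate early return makes it easy to log which filter removed a site when auditing false positives.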

Sequence Divergence Detection

Variant calling pipelines employ signature-based detection methods, each with distinct strengths:

- Read-Pair (RP): Detects internal insert size and orientation inconsistencies between paired ends

- Split-Read (SR): Identifies alignments spanning variant breakpoints

- Read-Depth (RD): Infers copy number variations from coverage depth deviations

- Local Assembly (AS): Reconstructs sequences de novo before reference comparison [22]

Each method exhibits distinct size sensitivity profiles and variant type preferences, making ensemble approaches essential for comprehensive detection. For RNA-DNA difference analysis, the percentage of A-to-G mismatches among all RDDs serves as a key quality metric, with increases after filtering indicating initial contamination by artifacts [23].
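
The A-to-G quality metric is straightforward to compute once mismatches are tabulated. This sketch assumes each RDD is given as a `(ref, alt)` pair already oriented to the transcript strand, so that A-to-I editing appears as `A>G`; the function names are illustrative.

```python
from collections import Counter


def mismatch_spectrum(sites):
    """Fraction of each mismatch type among (ref, alt) pairs."""
    counts = Counter(f"{ref.upper()}>{alt.upper()}" for ref, alt in sites)
    total = sum(counts.values())
    return {kind: n / total for kind, n in counts.items()} if total else {}


def a_to_g_fraction(sites):
    """Share of A-to-G mismatches, used here as a call-set quality indicator."""
    return mismatch_spectrum(sites).get("A>G", 0.0)
```

Comparing `a_to_g_fraction` before and after filtering shows whether the filters removed mostly non-A-to-G calls, which is the pattern expected when the unfiltered set was contaminated by artifacts.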

Table 2: Sequence Divergence Detection Methods

| Method | Variant Types Detected | Size Range | Strengths | Limitations |

|---|---|---|---|---|

| Read-Pair (RP) | Deletions, insertions, inversions | 100 bp - 1 Mb | Works with standard paired-end data | Lower resolution for small variants |

| Split-Read (SR) | Deletions, insertions, breakpoints | 1 bp - 100 kb | High breakpoint resolution | Limited in repetitive regions |

| Read-Depth (RD) | Copy number variations | 1 kb - Mb | No upper size limit | Poor breakpoint resolution |

| Local Assembly (AS) | All variant types | 1 bp - Mb | Can resolve novel sequences | Computationally intensive |

Structural Divergence Detection

Modern structural variant detection leverages ensemble algorithms (EAs) that integrate multiple callers to overcome individual methodological limitations:

- Caller Integration: Combines specialized algorithms (e.g., Delly, Manta, LUMPY) covering different signature types to improve sensitivity across diverse SV classes and size ranges [22].

- Call Merging Approaches: Employs breakpoint confidence interval overlap, reciprocal coordinate overlap (>50%), genotype consistency, and signature prioritization (SR > RP > RD) to consolidate predictions while minimizing false positives [22].

- Graph-Based Discovery: Emerging approaches like the SV analysis by graph augmentation (SAGA) framework leverage pangenome references to discover novel structural variants not represented in linear references, followed by local assembly to reconstruct "SV sequence contigs" (svtigs) [25].

For RNA structural analysis, these approaches adapt to detect alternative splicing patterns, topological variations in secondary structure, and higher-order organizational differences that impact function. Long-read sequencing particularly enhances complex SV characterization, with recent resources documenting over 100,000 sequence-resolved biallelic SVs across diverse human populations [25].
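
The call-merging step can be illustrated with a small greedy routine. This is a simplified sketch, not the algorithm of any specific ensemble tool: it assumes calls are dictionaries with `chrom`, `start`, `end`, `svtype`, `signature`, and `caller` keys (a hypothetical schema), merges calls of the same type whose reciprocal overlap exceeds 50%, and keeps the coordinates from the highest-priority signature (SR > RP > RD).

```python
SIGNATURE_PRIORITY = {"SR": 0, "RP": 1, "RD": 2}  # lower value = higher priority


def reciprocal_overlap(a_start, a_end, b_start, b_end):
    """Smaller of the two overlap fractions; intervals must have end > start."""
    overlap = min(a_end, b_end) - max(a_start, b_start)
    if overlap <= 0:
        return 0.0
    return min(overlap / (a_end - a_start), overlap / (b_end - b_start))


def merge_calls(calls, min_overlap=0.5):
    """Greedily merge SV calls from several callers into consensus records."""
    merged = []
    for call in sorted(calls, key=lambda c: (c["chrom"], c["svtype"], c["start"])):
        for rep in merged:
            same_kind = (rep["chrom"], rep["svtype"]) == (call["chrom"], call["svtype"])
            if same_kind and reciprocal_overlap(
                rep["start"], rep["end"], call["start"], call["end"]
            ) > min_overlap:
                rep["callers"].add(call["caller"])
                # Prefer breakpoints from the more precise signature type.
                if SIGNATURE_PRIORITY[call["signature"]] < SIGNATURE_PRIORITY[rep["signature"]]:
                    rep.update(start=call["start"], end=call["end"],
                               signature=call["signature"])
                break
        else:
            merged.append({
                "chrom": call["chrom"], "svtype": call["svtype"],
                "start": call["start"], "end": call["end"],
                "signature": call["signature"], "callers": {call["caller"]},
            })
    return merged
```

The number of entries in `callers` gives a simple support count that can be thresholded to trade sensitivity for precision.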

Benchmarking Data and Comparative Analysis

Performance Metrics for AI-Designed RNA

Comprehensive benchmarking requires multiple orthogonal metrics to assess different aspects of sequence and structural fidelity:

- Sequence Identity: Percentage of identical nucleotides between AI-designed and natural sequences across aligned regions. As a baseline for natural structural variation, a typical human genome contains 2,100-2,500 SVs [22].

- Variant Burden: Total number and distribution of SNVs, indels, and structural variants relative to natural baseline, considering that SVs affect more of the genome per nucleotide change than other variant classes [22].

- Expression Concordance: Correlation of expression levels across tissues or conditions compared to natural counterparts, noting that 95% of genes typically vary by up to 3.6-fold between biological replicates [24].

- Splicing Fidelity: Accuracy in reproducing natural splice site usage and isoform ratios, requiring whole transcriptome methods rather than 3'-focused approaches [26].

Proper interpretation requires establishing significance bounds based on variance measured across biological replicates to distinguish true differential expression from technical and environmental noise [24].

Comparative Analysis of Experimental Platforms

Each experimental platform exhibits distinct performance characteristics for variant detection:

- Short-Read Platforms: Excellent for SNV detection and quantitative expression analysis but limited in resolving complex SVs, with performance depending on read length, insert size, and coverage depth [22].

- Long-Read Platforms: Superior for structural variant resolution and isoform characterization, with recent studies demonstrating median coverage of 16.9× and read N50 of 20.3 kb sufficient for comprehensive SV discovery [25].

- Multi-Platform Integration: Significantly enhances variant discovery, with graph-based approaches aligning to both linear and graph references showing improved mapping metrics (0.5% average identity increase) and more comprehensive mobile element insertion characterization [25].

For AI-designed RNA validation, platform selection should align with primary benchmarking goals—short-read platforms for expression and SNV validation, long-read technologies for structural and isoform fidelity assessment.

Table 3: Platform Comparison for Divergence Detection

| Platform | Sequence Divergence | Structural Divergence | Expression Analysis | Isoform Detection |

|---|---|---|---|---|

| Short-Read (Illumina) | Excellent (SNVs, indels) | Limited (inference-based) | Excellent (quantitative) | Limited (indirect) |

| Long-Read (Nanopore) | Good (higher error rate) | Excellent (direct resolution) | Good (full-length) | Excellent (isoform-resolved) |

| Long-Read (PacBio) | Good (higher accuracy) | Excellent (direct resolution) | Good (full-length) | Excellent (isoform-resolved) |

| Multi-Platform Integration | Comprehensive | Comprehensive | Comprehensive | Comprehensive |

Essential Research Reagent Solutions

Laboratory Reagents and Kits

- RNA Extraction Kits: Maintain RNA integrity (RIN > 8.0) while effectively removing genomic DNA contamination. Selection criteria include sample type (cells, tissues, biofluids), RNA species of interest (mRNA, small RNA, total RNA), and downstream applications [26].

- Library Preparation Kits: Choose based on research objectives—3' mRNA-Seq for high-throughput expression profiling, whole transcriptome kits with poly(A) enrichment for mRNA-focused studies, or rRNA depletion for non-coding RNA analysis [26].

- Quality Control Reagents: Include fluorometric assays for RNA quantification, fragment analyzers for integrity assessment, and qPCR reagents for validation of sequencing results [24].

Bioinformatics Tools and Databases

- Public Data Repositories: GEO and SRA provide extensive RNA-seq data for comparative analysis, though metadata inconsistencies may require careful curation. ENCODE offers rigorously quality-controlled functional genomics data with standardized processing pipelines [27].

- Reference Databases: GTEx provides tissue-specific expression patterns, TCGA offers cancer-related transcriptomes, and FANTOM delivers comprehensive non-coding RNA annotations and transcription start site maps [27].

- Specialized Analysis Tools: FusorSV implements data-mining to select optimal algorithm combinations, Parliament2 provides standardized ensemble calling, and SVarp enables graph-aware SV discovery through local assembly [22] [25].

This benchmarking framework establishes comprehensive methodologies for defining and measuring sequence and structural divergence between AI-designed RNA sequences and their natural counterparts. Through integrated experimental design, multiplatform sequencing, and ensemble computational approaches, researchers can objectively quantify the functional fidelity of synthetic RNA constructs. The comparative data and standardized protocols presented enable rigorous validation essential for therapeutic development and basic research applications. As AI-driven RNA design continues to advance, these benchmarking principles will provide the critical foundation for assessing functional equivalence and guiding iterative improvement of design algorithms.

Building the Validation Toolbox: From RNA-Seq Workflows to Functional Assays

A Decision-Oriented Guide to RNA-Sequencing Analysis Pipelines

The emergence of artificial intelligence for designing novel RNA sequences presents a transformative opportunity in therapeutics and basic research. However, the potential of these in silico designs hinges entirely on their rigorous experimental validation, a process for which RNA sequencing (RNA-Seq) is a cornerstone technology. The choice of RNA-Seq analysis pipeline is not merely a technical detail; it is a critical decision that directly impacts the accuracy, reliability, and biological relevance of the validation data. An ill-suited pipeline can obscure true functional differences between AI-designed RNAs and their natural counterparts, leading to flawed conclusions and costly missteps in the development pipeline.

This guide provides an objective, data-driven comparison of RNA-Seq analytical methods, focusing on their performance in key steps like differential expression analysis. By synthesizing recent benchmarking studies, we equip researchers and drug developers with the evidence needed to select a pipeline that ensures their validation data for AI-designed RNA is as robust and insightful as the AI models that created them.

Comparative Performance of Differential Expression Tools

Differential expression (DE) analysis is a primary objective of most RNA-Seq experiments, including those comparing AI-designed and natural RNA transcripts. The choice of DE tool can significantly influence which genes are identified as significantly changed. Recent benchmarks provide quantitative data to guide this selection.

The table below summarizes the performance of four widely used DE methods as evaluated in a benchmark study that utilized both real (Yellow Fever vaccine) and synthetic datasets.

Table 1: Performance Comparison of Differential Expression Analysis Methods

| Method | Underlying Statistical Model | Key Strengths | Noted Limitations | Performance in Small Sample Sizes |

|---|---|---|---|---|

| dearseq | Variance component score test (robust statistical framework) | Handles complex experimental designs effectively [28] | - | Selected for real dataset analysis, identifying 191 DEGs over time [28] |

| voom-limma | Linear modeling with empirical Bayes moderation on precision weights [28] | Models mean-variance relationship; good for complex designs [28] | - | Performance evaluated alongside other methods [28] |

| edgeR | Negative binomial distribution [28] | Uses TMM normalization for compositional biases; well-established [28] [29] | - | Widely cited and used [28] [29] |

| DESeq2 | Negative binomial distribution [28] | Robust normalization and statistical techniques for count data [28] | - | Widely cited and used; common choice for beginners [28] [30] |

A separate, extensive benchmark involving 288 distinct pipelines analyzed against five fungal RNA-Seq datasets emphasized that the default parameters of analysis software are often not optimal across all species. The study concluded that carefully selecting and tuning analysis tools based on the specific data, rather than using a one-size-fits-all approach, is essential for achieving accurate biological insights [29]. This is a crucial consideration when working with novel, AI-designed RNA sequences that may exhibit unusual sequence or structural features.
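
When simulated data with a known ground truth are available, competing pipelines can be scored by how well their differentially expressed gene (DEG) calls recover the true set. The sketch below shows that comparison with precision, recall, and F1. The pipeline names used in any call to it are placeholders, and the benchmark in [29] may have used different metrics.

```python
def score_de_calls(called, truth):
    """Precision, recall and F1 of called DEGs against a known truth set."""
    called, truth = set(called), set(truth)
    tp = len(called & truth)
    precision = tp / len(called) if called else 0.0
    recall = tp / len(truth) if truth else 0.0
    denom = precision + recall
    return {
        "tp": tp,
        "fp": len(called - truth),
        "fn": len(truth - called),
        "precision": precision,
        "recall": recall,
        "f1": 2 * precision * recall / denom if denom else 0.0,
    }


def rank_pipelines(results, truth):
    """Order pipeline names by F1, best first. `results` maps name -> DEG list."""
    return sorted(results,
                  key=lambda name: score_de_calls(results[name], truth)["f1"],
                  reverse=True)
```

Running `rank_pipelines` on pilot data before the main experiment is one way to act on the recommendation to tune tools to the dataset rather than rely on defaults.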

Experimental Protocols for Benchmarking RNA-Seq Pipelines

The comparative data presented in this guide are derived from rigorous experimental benchmarks. Understanding the methodology behind these comparisons is key to assessing their validity and applicability to your own research on RNA validation.

A Standardized RNA-Seq Workflow for Tool Evaluation

The following workflow diagram illustrates the general process used in benchmarking studies to assess the impact of different tools and parameters at each stage of RNA-Seq analysis.

Detailed Benchmarking Methodology

The comparative findings in this guide are supported by specific experimental protocols from recent studies:

- Preprocessing and Alignment: Benchmarks typically begin with raw sequencing reads (FASTQ files). Quality control is performed using FastQC to assess sequencing artifacts and biases. Trimming of low-quality bases and adapter sequences is then conducted using tools like Trimmomatic or fastp to produce high-quality reads for downstream analysis [28] [29] [30]. Read alignment to a reference genome is a critical step, often performed with aligners such as HISAT2 or STAR [30].

- Quantification and Normalization: Transcript abundance is estimated using quantification tools like Salmon or featureCounts [28] [30]. Normalization is essential to account for technical variations like sequencing depth. A common method is the Trimmed Mean of M-values (TMM) implemented in edgeR [28].

- Benchmarking Design: One comprehensive study constructed 288 distinct analysis pipelines by combining different tools and parameters. These pipelines were applied to multiple RNA-seq datasets, and their performance was evaluated based on simulated data where the "ground truth" was known. This allows for a quantitative assessment of each pipeline's accuracy in identifying true differentially expressed genes [29]. Another study evaluated DE tools like dearseq, voom-limma, edgeR, and DESeq2 using a real-world dataset (Yellow Fever vaccine) and synthetic datasets, emphasizing their performance under challenging conditions like small sample sizes [28].
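
To make the TMM normalization step concrete, the sketch below computes a scaling factor for one sample against a reference sample. It follows the published description of the method in simplified form and is not edgeR's implementation: the trimming fractions (30% of log-ratios, 5% of mean abundances, per tail) are assumed defaults, and details such as reference selection and rescaling factors across samples are omitted.

```python
import math


def tmm_factor(sample, ref, m_trim=0.30, a_trim=0.05):
    """Simplified TMM scaling factor of `sample` relative to `ref` (raw counts)."""
    n_s, n_r = sum(sample), sum(ref)
    rows = []  # (M value, A value, precision weight) per usable gene
    for y_s, y_r in zip(sample, ref):
        if y_s <= 0 or y_r <= 0:
            continue  # log-ratios are undefined for zero counts
        var = (n_s - y_s) / (n_s * y_s) + (n_r - y_r) / (n_r * y_r)
        if var <= 0:
            continue
        p_s, p_r = y_s / n_s, y_r / n_r
        rows.append((math.log2(p_s / p_r), 0.5 * math.log2(p_s * p_r), 1.0 / var))
    if not rows:
        raise ValueError("no genes with usable counts in both samples")

    n = len(rows)

    def kept(column, trim):
        # Indices that survive trimming `trim` of the genes from each tail.
        order = sorted(range(n), key=lambda i: rows[i][column])
        cut = int(n * trim)
        return set(order[cut:n - cut])

    keep = kept(0, m_trim) & kept(1, a_trim)
    if not keep:
        keep = set(range(n))
    weight_sum = sum(rows[i][2] for i in keep)
    mean_m = sum(rows[i][0] * rows[i][2] for i in keep) / weight_sum
    return 2 ** mean_m
```

Multiplying a sample's library size by its factor gives an effective library size, so a single very highly expressed transcript, such as an overexpressed synthetic construct, does not make every other gene look down-regulated.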

Successful execution of an RNA-Seq experiment for validating AI-designed RNAs relies on a foundation of well-characterized biological and computational resources. The following table details key reagents and their functions, as highlighted in major research initiatives and protocols.

Table 2: Key Research Reagent Solutions for RNA-Seq Studies

| Reagent / Resource | Function and Role in RNA-Seq Validation |

|---|---|

| Standardized Cell Lines (e.g., GM12878, H9, HEK293T) | Provide a consistent and reproducible biological context; essential for minimizing technical variability when comparing AI-designed and natural RNAs [31] [32]. |

| Spike-in RNA Controls (e.g., ERCC, SIRV, Sequin) | Artificial RNA sequences of known concentration spiked into samples; enable technical performance monitoring and cross-protocol normalization [32]. |

| Reference Genomes & Annotations (e.g., GENCODE, Ensembl) | Provide the coordinate and feature map for aligning sequencing reads and quantifying gene/transcript expression [31]. |

| Quality Control Kits (e.g., Agilent TapeStation) | Assess RNA Integrity Number (RIN) to ensure only high-quality RNA (RIN above a threshold of 8 to 9) is used for library preparation [31]. |

| Poly-A Selection / rRNA Depletion Kits | Enrich for messenger RNA (mRNA) by targeting poly-A tails or removing abundant ribosomal RNA (rRNA), shaping the transcriptomic profile seen in sequencing [31] [30]. |

| Biotinylated Antisense Oligonucleotides | Enable high-specificity enrichment of individual RNA transcripts for deep sequencing, useful for focused studies on specific AI-designed RNAs [31]. |

The Impact of Sequencing Technology: Short-Read vs. Long-Read

Beyond the computational pipeline, the choice of sequencing technology itself is a fundamental decision. While short-read sequencing (e.g., Illumina) is the current workhorse for quantifying gene expression, long-read sequencing (e.g., Oxford Nanopore, PacBio) offers distinct advantages for characterizing transcriptomes, which is highly relevant for validating the complex outputs of AI models.

A systematic benchmark of five RNA-Seq protocols—including short-read cDNA, Nanopore direct RNA, Nanopore direct cDNA, Nanopore PCR-cDNA, and PacBio IsoSeq—revealed critical differences [32]. The following diagram summarizes the logical relationship between technology choices and their analytical outcomes, particularly in the context of AI validation where full-length transcript sequence and modification are of interest.

The benchmark study provided quantitative insights into these trade-offs. It reported that PCR-amplified cDNA sequencing (a long-read protocol) generated the highest throughput and most uniform coverage across transcripts, while direct RNA sequencing preserved information about native RNA modifications [32]. For the critical task of gene expression quantification, Nanopore long-read RNA-seq data showed the lowest estimation error and highest correlation with expected spike-in concentrations, even outperforming short-read protocols in this specific metric [32].
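
Spike-in based accuracy of this kind can be computed from the known input concentrations and the measured abundances. The sketch below is one plausible formulation, assuming that estimation error is taken as the mean absolute relative error after rescaling the observed values to the expected total, and that correlation is Pearson's r on log2 values. The benchmark in [32] may define both metrics differently.

```python
import math


def spikein_accuracy(expected, observed):
    """Compare measured spike-in abundances with their known concentrations."""
    pairs = [(e, o) for e, o in zip(expected, observed) if e > 0 and o > 0]
    if len(pairs) < 2:
        raise ValueError("need at least two detected spike-ins")
    # Put observed values on the expected scale before computing errors.
    scale = sum(e for e, _ in pairs) / sum(o for _, o in pairs)
    rel_err = [abs(o * scale - e) / e for e, o in pairs]

    log_e = [math.log2(e) for e, _ in pairs]
    log_o = [math.log2(o) for _, o in pairs]
    me, mo = sum(log_e) / len(pairs), sum(log_o) / len(pairs)
    cov = sum((a - me) * (b - mo) for a, b in zip(log_e, log_o))
    var_e = sum((a - me) ** 2 for a in log_e)
    var_o = sum((b - mo) ** 2 for b in log_o)
    if var_e == 0 or var_o == 0:
        raise ValueError("correlation is undefined for constant input")
    return {
        "n_detected": len(pairs),
        "mean_abs_rel_error": sum(rel_err) / len(rel_err),
        "log2_pearson_r": cov / math.sqrt(var_e * var_o),
    }
```

Undetected spike-ins are excluded from the error and correlation but remain visible through `n_detected`, which is itself a useful sensitivity readout when comparing protocols.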

Validating AI-designed RNA sequences against their natural counterparts demands analytical pipelines that are not merely standard but chosen to suit the specific biological question and data type. The experimental data compiled in this guide support several key conclusions:

- No Single Best Tool: The performance of differential expression tools like dearseq, DESeq2, edgeR, and voom-limma can vary based on experimental design, sample size, and data properties. Benchmarking on pilot data is recommended [28] [29].

- Parameter Optimization is Critical: The "default" parameters of RNA-Seq software may be suboptimal. A comprehensive benchmark of 288 pipelines demonstrates that tailoring the analysis combination to the data provides more accurate biological insights than indiscriminate tool choice [29].

- Consider Long-Read Technologies: For validation tasks that require full-length transcript sequence confirmation, isoform resolution, or detection of RNA modifications—common needs with novel AI-generated RNAs—long-read sequencing protocols offer a powerful, albeit more complex, alternative to traditional short-read sequencing [32].

The integration of AI in RNA design is pushing the boundaries of what is possible in synthetic biology. Matching this computational innovation with equally sophisticated and carefully chosen experimental validation pipelines is the key to translating its potential into real-world therapeutics and discoveries.

Leveraging Targeted RNA-Seq Panels for High-Sensitivity Detection of Expressed Variants and Fusions

In precision medicine, DNA-based assays have become the standard for identifying cancer-driving mutations, yet they provide limited information about which variants are actually expressed at the transcript level. Targeted RNA sequencing (RNA-Seq) has emerged as a way to bridge this "DNA-to-protein divide," offering high sensitivity for detecting expressed variants and gene fusions that directly influence protein function and therapeutic response [33]. By focusing sequencing power on specific genes of interest, targeted RNA-Seq panels achieve greater coverage depth, enabling the identification of low-abundance transcripts and rare fusion events that conventional methods often miss [34]. These panels are particularly valuable for validating AI-designed RNA sequences, as they provide the empirical data needed to verify computational predictions of expression efficiency, splicing patterns, and functional outcomes [35] [36]. This guide compares targeted RNA-Seq methodologies, assesses their performance against alternative technologies, and details experimental protocols for researchers implementing these approaches in basic research and clinical applications.

Comparative Analysis of Targeted RNA-Seq Methodologies and Performance

Technology Platforms and Detection Capabilities

Targeted RNA-Seq encompasses multiple methodological approaches that differ in their capture chemistry, probe design, and detection capabilities. The two primary methodologies are anchored multiplex PCR (AMP)-based systems and hybridization capture-based approaches, each with distinct advantages for specific applications.

Amplicon-based approaches (exemplified by Archer FusionPlex) utilize gene-specific primers combined with universal adapters to enrich target regions through PCR amplification. This method is particularly effective for fusion detection because it is specifically designed to capture unknown fusion partners—a critical advantage for discovering novel rearrangements [37]. Studies demonstrate that AMP-based targeted RNA-Seq can identify canonical gene fusions even when traditional fluorescence in situ hybridization (FISH) yields negative results, with discordant FISH analyses typically showing lower percentages of rearrangement-positive nuclei (range 15–41%) compared to concordant cases (>41% of nuclei in 88.9% of cases) [37].

Hybridization capture-based methods employ biotinylated oligonucleotide probes to enrich for target transcripts prior to sequencing. This approach allows for the simultaneous capture of hundreds of genes and can be designed to include not only fusion-related genes but also immune repertoire loci, cell-type markers, and splicing factors [34]. Capture-based panels demonstrate particular strength in detecting fusions with unknown partners and complex structural variants, as they do not require prior knowledge of breakpoint locations [38]. Research shows that capture-based targeted RNA-Seq achieves remarkable enrichment rates, with one study reporting 93% of reads aligning to targeted regions compared to just 4% in conventional RNA-Seq—representing a 33- to 59-fold enrichment while maintaining quantitative accuracy [34].

Performance Comparison with Alternative Technologies

The enhanced sensitivity of targeted RNA-Seq becomes evident when compared to traditional diagnostic methods and whole transcriptome sequencing. The table below summarizes key performance metrics across different detection platforms:

Table 1: Performance Comparison of Methods for Detecting Gene Fusions and Expressed Variants

| Methodology | Sensitivity for Low-Abundance Transcripts | Fusion Partner Resolution | Multiplexing Capacity | Quantitative Capability | Best Application Context |
|---|---|---|---|---|---|
| Targeted RNA-Seq (Hybridization Capture) | 50% detection at 2 pM input; 100% detection from 8 pM to 31 nM [34] | Full nucleotide-level resolution of both known and novel partners [34] | High (hundreds of genes simultaneously) [34] | High quantitative accuracy with spike-in controls [34] | Comprehensive fusion screening; expression validation |
| Targeted RNA-Seq (Amplicon-Based) | Detected fusions in 7 FISH-negative cases; identified novel fusions [37] | Excellent for novel partner identification via anchored PCR [37] | Moderate (dozens of targets) | Semi-quantitative; dependent on PCR efficiency | Clinical fusion detection with unknown partners |
| Conventional RNA-Seq | Limited by transcriptome complexity; missed single-copy fusions [34] | Full resolution but requires sufficient coverage | Entire transcriptome | Quantitative but with lower depth per gene | Discovery-phase research |
| FISH | Dependent on percentage of positive nuclei (>41% for reliable detection) [37] | Limited to known gene loci; no nucleotide resolution | Low (typically single-gene tests) | Non-quantitative | Rapid confirmation of known fusions |
| RT-PCR | High for known targets | Restricted to pre-specified fusion partners | Low to moderate | Quantitative for known sequences | Validation of previously identified fusions |

When compared to DNA sequencing alone, targeted RNA-Seq provides the critical advantage of confirming which variants are actually expressed. A comprehensive analysis revealed that RNA-Seq uniquely identified variants with significant pathological relevance that were missed by DNA-Seq, while some DNA-detected variants were not expressed or expressed at very low levels, suggesting they may be of lower clinical relevance [33]. This capability is particularly valuable for prioritizing clinically actionable mutations and validating AI-designed sequences by distinguishing functional transcripts from silent genetic alterations.

Diagnostic Performance in Clinical Settings

The real-world performance of targeted RNA-Seq has been demonstrated across multiple cancer types, significantly improving diagnostic yield compared to traditional approaches:

Soft Tissue and Bone Tumors: In a series of 131 diagnostic samples, targeted RNA-Seq identified a gene fusion, BCOR internal tandem duplication, or ALK deletion in 47 cases (35.9%). The method provided added value in 19 out of 131 cases (14.5%), categorized as altered diagnosis (3 cases), added precision (6 cases), or augmented spectrum (10 cases) [37].

Non-Small Cell Lung Cancer (NSCLC): In a testing algorithm that used amplicon-based DNA/RNA sequencing followed by reflex hybridization-capture-based RNA-Seq, approximately 10% of 1,211 specimens required reflex testing. Among these, oncogenic fusions were identified in 9 cases, including clinically actionable fusions involving ALK, BRAF, NRG1, NTRK3, ROS1, and RET—none of which were detected by the amplicon-based assay alone [38].

Broad Cancer Diagnostics: In a clinical cohort representing various cancer types, targeted RNA-Seq improved the overall fusion gene diagnostic rate from 63% with conventional approaches to 76% while demonstrating high concordance for patient samples with previous diagnoses [34].

Experimental Design and Protocol Implementation

Essential Methodologies for Targeted RNA-Seq Analysis

Implementing robust targeted RNA-Seq requires careful consideration of multiple experimental parameters to ensure sensitive and specific detection of expressed variants and fusions. The following workflow outlines the key steps in a comprehensive targeted RNA-Seq experiment:

Targeted RNA-Seq Experimental Workflow

Sample Preparation and Quality Control

RNA extraction is typically performed from archived formalin-fixed paraffin-embedded (FFPE) tissue sections, with input amounts up to 250 ng [37]. Critical considerations include:

- Tumor Enrichment: An expert pathologist should mark tumor areas and estimate tumor cell percentage (categorized as 11-25%, 26-50%, 51-90%, or >90%) to ensure adequate tumor content [37].

- RNA Quality Assessment: RNA integrity should be verified using appropriate methods (e.g., Bioanalyzer), as degradation can significantly impact fusion detection sensitivity, particularly for large transcripts.

- Spike-in Controls: Incorporating External RNA Controls Consortium (ERCC) RNA spike-in controls and fusion sequins enables absolute quantification of detection limits and assay performance [34]. One study reports that targeted RNA-Seq achieves 50% detection of fusion sequins at 2 pM input and 100% detection between 8 pM and 31 nM input [34].
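
Detection limits like those quoted for fusion sequins can be tabulated from a simple list of spike-in outcomes. The sketch below is illustrative only. It assumes each record is a pair of input concentration (in pM) and a detected/not-detected flag. The function names and example records are hypothetical, with values chosen only to mirror the shape of the reported result [34].

```python
from collections import defaultdict


def detection_rate_by_input(records):
    """Fraction of spike-ins detected at each input concentration.

    records: iterable of (concentration_pM, detected) pairs.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for concentration, detected in records:
        totals[concentration] += 1
        if detected:
            hits[concentration] += 1
    return {c: hits[c] / totals[c] for c in sorted(totals)}


def lowest_input_with_full_detection(rates, required=1.0):
    """Smallest tested concentration at and above which every level meets `required`.

    Returns None if the highest tested concentration already falls short.
    """
    lowest = None
    for concentration in sorted(rates, reverse=True):
        if rates[concentration] >= required:
            lowest = concentration
        else:
            break
    return lowest


# Hypothetical outcomes for illustration only (concentrations in pM).
records = [
    (2, True), (2, False),
    (8, True), (8, True),
    (31, True), (31, True),
]
rates = detection_rate_by_input(records)
print(rates)
print(lowest_input_with_full_detection(rates))
```

Walking down from the highest concentration, rather than taking the first level with full detection, avoids reporting a limit that sits below a concentration where detection was incomplete.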

Library Construction and Target Enrichment

- Library Preparation: Complementary DNA (cDNA) is synthesized from extracted RNA, followed by library construction using adapter ligation and PCR amplification [37]. For hybridization capture approaches, a double-capture strategy can increase on-target rates to >90% [34].

- Panel Design Considerations: Targeted panels can be designed for specific applications—for example, a blood panel targeting 188 fusion-related genes including T-cell receptor and immunoglobulin loci, and a solid tumor panel targeting 241 fusion-related genes [34]. The number of targeted genes should be balanced against sensitivity requirements, as the overall sensitivity is inversely proportional to the sum of captured gene expression.
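
The trade-off between panel size and sensitivity can be made concrete with a simplified model. The sketch below assumes on-target reads are shared among captured genes in proportion to their expression, and it ignores transcript length and probe efficiency. The function name, gene names, and numbers are hypothetical and serve only to illustrate the inverse relationship described above.

```python
def expected_target_reads(total_reads, on_target_fraction, target_gene, panel_expression):
    """Estimate reads landing on one target under a proportional-capture model.

    panel_expression maps every captured gene (including target_gene) to its
    expression level in arbitrary but consistent units.
    """
    if target_gene not in panel_expression:
        raise KeyError(f"{target_gene} is not part of the panel")
    captured_total = sum(panel_expression.values())
    if captured_total <= 0:
        raise ValueError("panel expression must sum to a positive value")
    # The target's share shrinks as the summed expression of the panel grows.
    share = panel_expression[target_gene] / captured_total
    return total_reads * on_target_fraction * share


# Hypothetical panels: adding a highly expressed gene dilutes the target's share.
small_panel = {"FUSION_TARGET": 1.0, "GENE_B": 9.0}
large_panel = {**small_panel, "HIGHLY_EXPRESSED_GENE": 90.0}
for name, panel in (("small", small_panel), ("large", large_panel)):
    reads = expected_target_reads(1_000_000, 0.9, "FUSION_TARGET", panel)
    print(f"{name} panel: ~{reads:.0f} expected reads on target")
```

Under this model, a tenfold increase in summed panel expression cuts the expected reads on a fixed target tenfold. This is why highly expressed genes are worth scrutinizing before they are added to a panel.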

Sequencing Parameters and Coverage Requirements

- Sequencing Depth: Higher sequencing depths enable detection of low-frequency variants and fusions. Studies typically sequence on Illumina platforms (MiSeq or NovaSeq) to achieve sufficient coverage across targeted regions [37].

- Read Configuration: Paired-end sequencing is essential for detecting fusion events, as it allows for the identification of reads spanning fusion junctions [34]. Read lengths of 100-150 bp are commonly used, with insert sizes of 300-350 bp [39].

Bioinformatic Analysis for Variant and Fusion Detection

The bioinformatic pipeline for analyzing targeted RNA-Seq data requires specialized approaches to reliably identify expressed variants and fusion events:

Table 2: Key Bioinformatics Tools for Targeted RNA-Seq Analysis

| Analysis Step | Tool Options | Critical Parameters | Application Notes |
|---|---|---|---|
| Read Alignment | STAR [40], BWA-MEM [40] | Two-pass alignment for splice junction detection | Splice-aware aligners (e.g., STAR) are essential for accurate RNA alignment; BWA-MEM is not splice-aware |
| Variant Calling | VarRNA [40], GATK HaplotypeCaller [40], VarDict [33], Mutect2 [33], LoFreq [33] | Minimum VAF ≥2%, DP ≥20, ADP ≥2 [33] | Combined caller approach improves sensitivity; XGBoost models in VarRNA classify germline/somatic variants |
| Fusion Detection | STAR-Fusion, FusionCatcher [34] | Require detection by multiple algorithms | Combined pipeline approach reduces false positives; junction reads essential |
| Expression Quantification | TPM (Transcripts Per Million) [34] | Minimum 15 TPM for reliable gene detection [34] | Enables expression-based prioritization of detected variants |
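
The variant-calling thresholds in Table 2 translate directly into a filter. The sketch below is a minimal illustration rather than the cited pipeline [33]. It assumes DP means total read depth at the site and ADP means the number of reads supporting the alternate allele. The `VariantCall` class, its field names, and the example calls are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VariantCall:
    variant_id: str
    depth: int      # DP: total reads covering the site (assumed meaning)
    alt_depth: int  # ADP: reads supporting the alternate allele (assumed meaning)

    @property
    def vaf(self):
        """Variant allele frequency; 0.0 when the site has no coverage."""
        return self.alt_depth / self.depth if self.depth else 0.0


def passes_filters(call, min_vaf=0.02, min_depth=20, min_alt_depth=2):
    """Apply the VAF >= 2%, DP >= 20, ADP >= 2 thresholds from Table 2."""
    return (
        call.depth >= min_depth
        and call.alt_depth >= min_alt_depth
        and call.vaf >= min_vaf
    )


# Hypothetical calls for illustration only.
calls = [
    VariantCall("var_pass", depth=100, alt_depth=3),
    VariantCall("var_low_depth", depth=15, alt_depth=5),
    VariantCall("var_low_vaf", depth=200, alt_depth=3),
    VariantCall("var_low_alt", depth=30, alt_depth=1),
]
kept = [c.variant_id for c in calls if passes_filters(c)]
print(kept)
```

Keeping the thresholds as keyword arguments makes it straightforward to loosen or tighten them when benchmarking against samples with known variants.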

For optimal fusion detection, researchers often implement a consensus approach requiring identification by multiple algorithms. One validated pipeline uses both STAR-Fusion and FusionCatcher and retains only fusion genes detected by both tools to minimize false positives [34]. This approach has identified known fusion genes in cell lines and patient samples, with sensitivity sufficient to detect BCR-ABL1 transcripts in a dilution series down to 1:1000 against a background of control RNA [34].
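
The consensus rule amounts to intersecting the calls of the two tools. The sketch below assumes each tool's output has already been parsed into (5' gene, 3' gene) pairs. It does not read either tool's native output files. The function name and the `ignore_orientation` option are hypothetical additions for illustration.

```python
def consensus_fusions(calls_a, calls_b, ignore_orientation=False):
    """Return fusions reported by both callers, in the order seen in calls_a.

    calls_a, calls_b: iterables of (five_prime_gene, three_prime_gene) pairs.
    Gene symbols are compared case-insensitively after trimming whitespace.
    """
    def normalize(pair):
        return tuple(gene.strip().upper() for gene in pair)

    def key(pair):
        five, three = normalize(pair)
        # Optionally treat A-B and B-A as the same event.
        return frozenset((five, three)) if ignore_orientation else (five, three)

    keys_b = {key(pair) for pair in calls_b}
    seen = set()
    shared = []
    for pair in calls_a:
        k = key(pair)
        if k in keys_b and k not in seen:
            seen.add(k)
            shared.append(normalize(pair))
    return shared


# Hypothetical parsed calls for illustration only.
caller_one = [("BCR", "ABL1"), ("EML4", "ALK"), ("bcr", "abl1")]
caller_two = [("BCR", "ABL1"), ("ALK", "EML4")]
print(consensus_fusions(caller_one, caller_two))
print(consensus_fusions(caller_one, caller_two, ignore_orientation=True))
```

Strict orientation matching is the more conservative default. Relaxing it recovers events that the two tools report with swapped partners, at the cost of admitting more candidates for manual review.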

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagents for Targeted RNA-Seq Experiments

| Reagent/Solution | Function | Example Products | Application Notes |
|---|---|---|---|
| RNA Extraction Kits | Isolation of high-quality RNA from FFPE or fresh tissue | Maxwell RSC RNA FFPE Kit [37] | Optimized for challenging clinical samples |
| Library Prep Kits | cDNA synthesis and library construction | Archer FusionPlex Sarcoma Panel [37], NuGEN Ovation RNA-Seq System [39] | Selection depends on sample type and input quality |
| Target Enrichment Panels | Hybridization or amplicon-based target capture | Custom blood (188 genes) and solid tumor (241 genes) panels [34] | Can include immune genes and spike-in controls |
| Spike-in Controls | Quantification of detection limits and assay performance | ERCC RNA Spike-in Mix, Fusion Sequins [34] | Essential for assay validation and quality control |