From Cells to Cognition: Harnessing Network Principles and Emergent Properties for Biomedical Innovation

Abstract

This article provides a comprehensive exploration of the theoretical foundations of biological networks and emergent properties, tailored for researchers and drug development professionals. It covers the foundational concepts of biological emergence, from historical philosophy to modern scientific models, including neurobiological emergentism and the role of bioelectricity. The piece delves into cutting-edge methodological approaches, such as spatial biology and AI-driven network analysis, and addresses key challenges in the field, including workforce gaps and technical limitations. Finally, it examines validation frameworks and comparative analyses of network models, synthesizing how a deeper understanding of multi-scale network organization is poised to revolutionize target identification, therapeutic development, and personalized medicine.

What Are Emergent Properties? Unraveling the Core Principles from Neurons to Intelligence

Emergence describes a fundamental phenomenon where complex systems exhibit properties, behaviors, or capabilities that their individual components do not possess. These emergent properties arise only when the parts interact within a wider whole, creating novel features that are distinct from, and not reducible to, the sum of the parts [1]. The term itself, coined by philosopher G. H. Lewes in 1875, originates from the Latin emergo, meaning to arise or come forth [2]. Lewes distinguished between "resultant" effects, which are predictable, additive sums of component forces (like the weight of an object), and "emergent" effects, which are qualitatively novel and cannot be calculated from the properties of the constituent parts alone [2]. This concept has since become a cornerstone for understanding complex systems across disciplines, from physics and ecology to the social sciences and biology, offering a middle path between reductionist mechanism and vitalist dualism [2] [3].

In the specific context of modern biology, the study of emergent properties is indispensable for grappling with the profound complexity of living systems. Biological entities—from individual cells to entire ecosystems—are quintessential examples of complex systems where interactions between components (e.g., genes, proteins, cells, organisms) give rise to functions and behaviors that cannot be deduced by studying these components in isolation [4] [5] [6]. The field of network biology has emerged as a primary framework for this research, representing biological components as nodes and their interactions as edges in a network. This approach allows researchers to move beyond classical reductionism and map how intricate interactions within these networks underlie emergent phenomena such as cellular signaling, organismal development, disease resilience, and ecosystem stability [4] [6]. Understanding emergence is thus not merely an academic exercise; it is critical for elucidating the pathogenesis of complex diseases, identifying novel drug targets, and rationally modulating microbial ecosystems for human and planetary health [4] [5].

Philosophical and Historical Foundations

The intellectual roots of emergence trace back to Aristotle, whose concept of form and matter acknowledged that a compound substance can exhibit features not present in its elemental constituents [3]. However, the most systematic early development of emergentist thought came from a group known as the British Emergentists in the 19th and early 20th centuries [2]. These thinkers, including John Stuart Mill, Samuel Alexander, C. Lloyd Morgan, and C. D. Broad, sought a naturalistic explanation for phenomena like life and mind that would neither reduce them to mere mechanism nor explain them by invoking mysterious, non-physical forces (vitalism) [2].

- John Stuart Mill: In his System of Logic (1843), Mill distinguished between "homopathic" and "heteropathic" effects. Homopathic effects follow the principle of composition of causes (i.e., the whole is the sum of its parts), as seen in vector sums of forces. In contrast, heteropathic effects, exemplified by chemical reactions, represent a failure of this principle, where the joint effect of causes is different from the sum of their separate effects. Mill's heteropathic effects are the direct precursor to Lewes's emergent effects [2].

- Samuel Alexander: In Space, Time and Deity (1920), Alexander proposed that evolution proceeds through a series of levels—matter, life, mind, and deity—each emerging from and dependent on the level below it, yet possessing its own novel qualities. He argued that emergent qualities, while identical to a specific configuration of physico-chemical processes, are causally efficacious. He famously suggested that emergence must be accepted with "natural piety," as it represents a brute empirical fact that cannot be deduced from below [2].

- C. Lloyd Morgan: A biologist, Morgan in Emergent Evolution (1923) applied the concept directly to the evolutionary process. He emphasized that emergent properties are not merely epiphenomenal but bring about new kinds of lawful relationships ("a new kind of relatedness") that can downwardly influence the behavior of lower-level components [2].

- C. D. Broad: In The Mind and Its Place in Nature (1925), Broad provided the most sophisticated formulation. He argued that the properties of a whole cannot be deduced, even in principle, from the most complete knowledge of its parts and their arrangements. He classified laws into "intra-ordinal" laws (within a level) and "trans-ordinal" laws (connecting levels), with the latter being fundamental, irreducible laws of emergence [2].

A central distinction in contemporary discussions, crucial for a scientific worldview, is that between weak and strong emergence [3] [1].

- Weak Emergence: This describes novel properties that arise from the interactions of a system's components and are recognized only by observing or simulating the system as a whole. While the emergent property is unexpected, it is still reducible in principle to the interactions of the parts, even if that reduction is computationally impractical. Examples include the formation of a traffic jam or the intricate patterns of a flock of birds [1].

- Strong Emergence: This posits that some emergent properties are irreducible and exert fundamental, downward causal power on the system's constituents. This form of emergence, if it exists, would contravene a purely reductionist physicalism. Philosopher Mark Bedau notes that strong emergence "is uncomfortably like magic" from a scientific perspective, as it seems to require causal powers that are not derived from the micro-level components [1]. In biology, consciousness is often debated as a potential candidate for strong emergence, though most biological phenomena are considered weakly emergent.

Table 1: Key Thinkers in the Emergence Tradition

| Thinker | Key Work | Core Contribution to Emergence |
|---|---|---|
| G. H. Lewes | Problems of Life and Mind (1875) | Coined the term "emergent"; distinguished emergents from resultants. |
| John Stuart Mill | A System of Logic (1843) | Distinguished "heteropathic" from "homopathic" effects. |
| Samuel Alexander | Space, Time and Deity (1920) | Proposed a hierarchical view of reality with emergent levels accepted with "natural piety." |
| C. Lloyd Morgan | Emergent Evolution (1923) | Applied emergence to evolutionary theory; emphasized downward causation. |
| C. D. Broad | The Mind and Its Place in Nature (1925) | Provided a rigorous definition based on the non-deducibility of whole from parts. |

Emergence in Modern Biological Networks

The theoretical framework of emergence finds its practical, empirical grounding in modern biology through the paradigm of network biology. This field uses graph theory to represent and analyze biological systems, where biomolecules (like genes or proteins) are nodes and their physical or functional interactions are edges [4] [6]. This approach is uniquely suited to studying emergence because it explicitly maps the interactions that give rise to system-level properties.
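As a minimal sketch of the node-and-edge representation described above, the snippet below builds an undirected adjacency map from an edge list and reports each node's degree. The node names (`TF1`, `geneA`, and so on) are hypothetical placeholders, not real interactions, and the helper names are chosen only for illustration.

```python
from collections import defaultdict

# Hypothetical toy edge list; the names are placeholders, not curated interactions.
edges = [("TF1", "geneA"), ("TF1", "geneB"), ("TF1", "geneC"), ("TF2", "geneC")]

def build_adjacency(edge_list):
    """Return an undirected adjacency map {node: set of neighbours}."""
    adjacency = defaultdict(set)
    for u, v in edge_list:
        adjacency[u].add(v)
        adjacency[v].add(u)
    return dict(adjacency)

def degrees(adjacency):
    """Degree = number of distinct neighbours of each node."""
    return {node: len(neighbours) for node, neighbours in adjacency.items()}

adjacency = build_adjacency(edges)
degree = degrees(adjacency)
hub = max(degree, key=degree.get)  # node with the most connections

print(degree)
print("highest-degree node:", hub)
```

Real analyses would typically load edges from a database export and keep edge attributes such as direction or confidence; this sketch deliberately ignores both.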

Types of Biological Networks and Emergent Phenomena

Biological networks can be broadly categorized into evidence-based networks (built from curated experimental data) and statistically inferred networks (constructed from high-throughput data like gene expression) [4]. Key network types include:

- Protein-Protein Interaction (PPI) Networks: These map physical interactions between proteins. Emergent properties from PPIs can include the robustness of a cell to mutation, the specificity of signaling pathways, and the identification of functional protein complexes [6].

- Gene Regulatory Networks (GRNs): These represent how transcription factors regulate target genes. Emergent properties include cellular differentiation, developmental patterning, and the switch-like behaviors critical for cell fate decisions [6].

- Metabolic Networks: These model biochemical reactions. Emergence here manifests in the overall metabolic flux and the ability of a cell or microbial community to utilize novel nutrient sources [5].

A central emergent property in many of these networks is resilience. For example, microbial communities often exhibit a remarkable ability to maintain stability and function in the face of biotic (e.g., invasion by pathogens) or abiotic (e.g., antibiotic exposure) perturbations. This resilience is not a property of any single microbial species but emerges from the complex web of competitive, cooperative, and cross-feeding interactions within the consortium [5]. Another key emergent property is niche expansion, where a microbial community can metabolize substrates that no single member can degrade alone, a phenomenon often enabled by cross-feeding of metabolic byproducts [5].
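One simple structural proxy for this kind of robustness is to delete nodes and measure how much of the network stays connected. The sketch below assumes a hypothetical toy network with one hub (`H`) and compares removing a peripheral node with removing the hub. It measures connectivity only and is not a model of biological viability or community function.

```python
from collections import deque

def largest_component_size(adjacency, removed=frozenset()):
    """Size of the largest connected component after deleting `removed` nodes."""
    seen = set(removed)  # removed nodes are treated as already visited
    best = 0
    for start in adjacency:
        if start in seen:
            continue
        seen.add(start)
        queue = deque([start])
        size = 0
        while queue:  # breadth-first search over one component
            node = queue.popleft()
            size += 1
            for neighbour in adjacency[node]:
                if neighbour not in seen:
                    seen.add(neighbour)
                    queue.append(neighbour)
        best = max(best, size)
    return best

# Hypothetical toy network: hub "H" linked to five nodes, plus one A-B edge.
toy = {
    "H": {"A", "B", "C", "D", "E"},
    "A": {"H", "B"},
    "B": {"H", "A"},
    "C": {"H"},
    "D": {"H"},
    "E": {"H"},
}

print("intact:", largest_component_size(toy))
print("peripheral node removed:", largest_component_size(toy, {"E"}))
print("hub removed:", largest_component_size(toy, {"H"}))
```

In this toy case the network tolerates the loss of a peripheral node but fragments when the hub is removed, which is the usual intuition behind targeted versus random node-removal analyses.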

Table 2: Emergent Properties in Biological Systems

| Biological System | Component Parts | Emergent Property | Biological Function |

|---|---|---|---|

| Microbial Community | Individual microbial species | Resilience & Niche Expansion | Ecosystem stability, broad metabolic capability [5] |

| Protein-Protein Interaction Network | Individual proteins & their interactions | Robustness to Mutation | Cellular viability despite genetic variation [6] |

| Gene Regulatory Network | Genes & their regulatory interactions | Cellular Differentiation | Development of distinct cell types from a single genome [6] |

| Spatial Game Theory Model | Individual players & their strategies | Cooperative Behavior | Survival of cooperators even with high temptation to defect [7] |

Methodologies for Studying Emergence in Networks

Research into emergent properties relies on a combination of experimental data generation and sophisticated mathematical modeling. The process typically begins with the generation of large-scale, multi-omics datasets (genomics, transcriptomics, proteomics, metabolomics) that provide a parts list for the system [4] [6]. These components are then assembled into networks using data from public databases (e.g., BioGRID, STRING for PPIs; RegulonDB for GRNs) or through statistical inference from high-throughput data [6].

Mathematical modeling is indispensable for linking network structure to emergent function, because the non-linear nature of these interactions makes intuitive prediction unreliable [5]. Several classes of models are prominently used:

- Lotka-Volterra Models: These are phenomenological models based on differential equations that describe population dynamics through pairwise interaction coefficients between species. They are relatively simple to parameterize and can predict community stability and composition. However, they often assume static interactions and can miss higher-order effects or molecule-mediated interactions [5].

- Consumer-Resource Models: These models explicitly represent the consumption and production of metabolites, making them highly suitable for modeling cross-feeding in microbial ecosystems. They can capture environment-dependent shifts in interactions [5].

- Genome-Scale Metabolic Models (GEMs): GEMs are bottom-up, mechanistic models that incorporate the entire set of known metabolic reactions for an organism or community. They can predict emergent community-level metabolic capabilities, such as growth rates or byproduct secretion, from genomic information [5].

- Spatial Game Theory Models: Used to study the evolution of cooperation, these models simulate interactions between agents (e.g., cells) in a spatial context. Recent work shows that when agents can dynamically rewire their interaction network based on payoff, the system self-organizes into an approximate scale-free network—an emergent structural property that influences the population's evolutionary dynamics [7].

- Statistical Inference for GRNs: Methods like Gaussian Graphical Models (GGM), Bayesian Networks, and information-theoretic approaches (e.g., based on Mutual Information) are used to reconstruct GRNs from gene expression data. These networks can reveal emergent regulatory modules that control complex phenotypic outcomes [6].
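To make the Lotka-Volterra entry in the list above concrete, here is a minimal sketch of a generalized Lotka-Volterra model, dx_i/dt = x_i (r_i + sum_j a_ij x_j), integrated with a simple forward-Euler scheme. The growth rates, interaction coefficients, step size, and the tenfold knock-down used as a perturbation are all illustrative assumptions, not values from the cited work.

```python
def glv_step(x, r, a, dt):
    """One forward-Euler step of dx_i/dt = x_i * (r_i + sum_j a_ij * x_j)."""
    n = len(x)
    rates = [x[i] * (r[i] + sum(a[i][j] * x[j] for j in range(n))) for i in range(n)]
    # Clamp at zero so numerical error cannot produce negative abundances.
    return [max(x[i] + dt * rates[i], 0.0) for i in range(n)]

def simulate_glv(x0, r, a, dt=0.01, steps=5000):
    """Integrate the model from x0 and return the final abundances."""
    x = list(x0)
    for _ in range(steps):
        x = glv_step(x, r, a, dt)
    return x

# Assumed parameters: two species with weak mutual competition.
r = [1.0, 1.0]
a = [[-1.0, -0.5],
     [-0.5, -1.0]]

steady = simulate_glv([0.1, 0.5], r, a)

# Pulse perturbation: knock species 0 down to 10% of its steady abundance.
perturbed = [steady[0] * 0.1, steady[1]]
recovered = simulate_glv(perturbed, r, a)

print("steady state:", steady)
print("after recovery:", recovered)
```

With these assumed coefficients the coexistence point is x1 = x2 = 2/3, and the community returns to it after the pulse. A stiff or larger system would call for an adaptive ODE solver rather than fixed-step Euler.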

Table 3: Modeling Approaches for Emergent Properties in Biology

| Model Type | Key Principle | Advantages | Limitations |
|---|---|---|---|
| Lotka-Volterra | Population growth depends on linear pairwise interactions. | Simple, interpretable parameters; analytical solutions possible [5]. | Static interactions; misses higher-order and metabolite-mediated effects [5]. |
| Consumer-Resource | Explicitly models dynamics of extrinsic resources. | Captures environment-dependent interactions; good for microbial ecology [5]. | Can be complex to parameterize for many resources and species. |
| Genome-Scale Metabolic (GEM) | Stoichiometric matrix of all known metabolic reactions. | Mechanistic; predicts emergent metabolic fluxes and growth [5]. | Requires curated genome annotation; does not include regulatory information. |
| Bayesian Network | Probabilistic directed acyclic graph representing conditional dependencies among variables. | Can suggest causal structure under additional assumptions; handles uncertainty well [6]. | Computationally intensive; difficult to search all possible structures. |
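The consumer-resource row can be illustrated with a very small cross-feeding sketch. It assumes a batch culture in which hypothetical species A consumes a supplied resource R1 and secretes a byproduct R2, while species B can grow only on R2. The uptake rate, yield, secretion fraction, and Euler step size are illustrative assumptions.

```python
def simulate_cross_feeding(a0, b0, r1=10.0, uptake=1.0, yield_=0.5,
                           secretion=0.5, dt=0.01, steps=5000):
    """Forward-Euler simulation of a two-species cross-feeding chain.

    A eats R1 and secretes R2; B eats only R2. Returns final (A, B) biomass.
    """
    a, b, r2 = a0, b0, 0.0
    for _ in range(steps):
        eaten_by_a = uptake * a * r1   # R1 consumed by A per unit time
        eaten_by_b = uptake * b * r2   # R2 consumed by B per unit time
        r1 += dt * (-eaten_by_a)
        r2 += dt * (secretion * eaten_by_a - eaten_by_b)
        a += dt * yield_ * eaten_by_a
        b += dt * yield_ * eaten_by_b
    return a, b

_, b_alone = simulate_cross_feeding(a0=0.0, b0=0.1)       # B without its partner
a_with, b_with = simulate_cross_feeding(a0=0.1, b0=0.1)   # full consortium

print("B alone:", b_alone)
print("B with A:", b_with)
```

In this sketch B cannot grow on the supplied resource by itself but does grow once A is present, so the interaction between the two species is environment-dependent (it exists only while R1 is available), which a fixed pairwise coefficient would not capture.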

Experimental Protocols and Research Toolkit

To make the study of emergence concrete for researchers, this section outlines a representative experimental workflow and the essential tools required to investigate an emergent property in a biological network.

A Representative Workflow: Mapping Emergent Resilience in a Microbial Community

Objective: To characterize the emergent resilience of a synthetic microbial consortium to antibiotic perturbation using multi-omics data and network modeling.

- Community Assembly & Perturbation: A defined consortium of culturable microbes is constructed in vitro. The community is subjected to a controlled perturbation, such as a sub-lethal dose of a broad-spectrum antibiotic [5].

- Longitudinal Multi-Omics Data Collection: Over a time course, samples are collected for:

  - Metagenomic Sequencing: To track the relative abundances of all member species (absolute abundances require additional calibration, such as spike-in standards or cell counts) and identify potential horizontal gene transfer events [4].

  - RNA-Sequencing (Transcriptomics): To profile the gene expression response of each member to the stress and to each other [4] [6].

  - Metabolomics: To measure the extracellular and intracellular metabolites, revealing the metabolic interactions and byproducts that mediate community-level functions [4].

- Network Reconstruction & Integration:

  - A co-occurrence network is inferred from the metagenomic abundance data to identify species whose presence/absence is correlated [5].

  - A Gene Regulatory Network (GRN) for key members is reconstructed from the transcriptomic data using a statistical method like a Gaussian Graphical Model (GGM) or an information-theoretic approach. The GGM estimates a precision matrix (inverse covariance) from gene expression data; non-zero off-diagonal entries in this matrix indicate conditional dependencies between pairs of genes, which are represented as edges in the GRN [6].

  - The data are integrated into a Genome-Scale Metabolic Model (GEM) to predict community-level metabolic fluxes and identify critical cross-feeding interactions that may confer resilience [5].

- Modeling Emergent Dynamics: A Consumer-Resource model or a generalized Lotka-Volterra model is parameterized with the experimental data. The model is simulated with and without the perturbation to test hypotheses about which specific interactions (e.g., the production of a detoxifying metabolite by one species that protects others) are essential for the observed emergent resilience [5].

- Validation: Predictions from the model (e.g., "if species X is removed, resilience collapses") are tested experimentally by reconstructing the community without the hypothesized keystone species and re-measuring its response to perturbation [5].
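The Gaussian Graphical Model step in the workflow above can be sketched in a few lines. The example inverts a small covariance matrix to obtain the precision matrix, converts it to partial correlations, and keeps pairs whose partial correlation is non-zero. The 3x3 covariance matrix is a hypothetical one constructed for a chain gene0 - gene1 - gene2, and the threshold is an assumption. With real expression data, where genes usually outnumber samples, a regularized estimator (for example the graphical lasso) would normally replace the plain inverse.

```python
import math

def invert(matrix):
    """Gauss-Jordan inverse with partial pivoting (small dense matrices only)."""
    n = len(matrix)
    aug = [list(map(float, row)) + [1.0 if i == j else 0.0 for j in range(n)]
           for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda row: abs(aug[row][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("matrix is singular")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        scale = aug[col][col]
        aug[col] = [value / scale for value in aug[col]]
        for row in range(n):
            if row != col:
                factor = aug[row][col]
                aug[row] = [x - factor * y for x, y in zip(aug[row], aug[col])]
    return [row[n:] for row in aug]

def partial_correlations(cov):
    """rho_ij = -K_ij / sqrt(K_ii * K_jj), where K is the precision matrix."""
    k = invert(cov)
    n = len(k)
    return [[1.0 if i == j else -k[i][j] / math.sqrt(k[i][i] * k[j][j])
             for j in range(n)] for i in range(n)]

def ggm_edges(cov, threshold=1e-6):
    """Pairs (i, j), i < j, whose partial correlation exceeds the threshold."""
    pc = partial_correlations(cov)
    n = len(pc)
    return [(i, j) for i in range(n) for j in range(i + 1, n)
            if abs(pc[i][j]) > threshold]

# Hypothetical covariance for a chain gene0 - gene1 - gene2.
cov = [[0.75, 0.50, 0.25],
       [0.50, 1.00, 0.50],
       [0.25, 0.50, 0.75]]

print(ggm_edges(cov))
```

Genes 0 and 2 are marginally correlated in this matrix (covariance 0.25), yet no edge is drawn between them because their association disappears once gene 1 is conditioned on. That distinction between correlation and conditional dependence is what the GGM contributes over a plain co-expression network.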

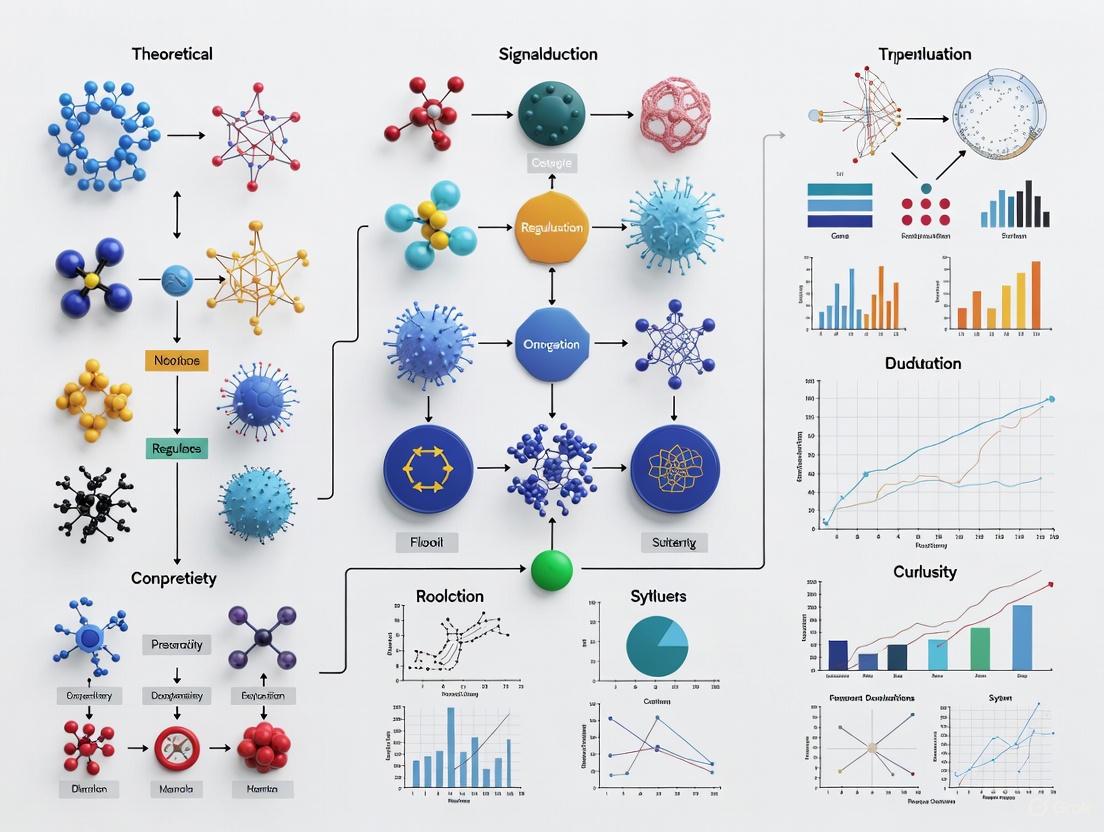

The following diagram illustrates this integrated multi-omics and modeling workflow.

Diagram 1: Workflow for studying emergent properties.

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Reagents and Resources for Emergence Research

| Reagent / Resource | Type | Function in Research |
|---|---|---|
| BioGRID Database | Public Database | Provides curated physical and genetic protein-protein interactions for network reconstruction [6]. |
| STRING Database | Public Database | Provides both known and predicted functional protein associations, often with confidence scores, for building weighted networks [6]. |
| RNA-sequencing Kit | Laboratory Reagent | Enables transcriptomic profiling to infer gene regulatory networks and cellular states [4] [6]. |
| Synthetic Microbial Community | Biological Model | A defined, culturable consortium that allows for controlled perturbation and mapping of emergent interactions [5]. |
| Gaussian Graphical Model (GGM) Software | Computational Tool | Statistical package for reconstructing gene regulatory networks from gene expression data by estimating the conditional dependence structure [6]. |

The journey to define and understand emergence, from its philosophical origins to its critical role in modern biology, underscores a fundamental shift in scientific thinking. It is the recognition that life's complexity cannot be fully understood by cataloging its parts alone. The essential character of biological systems—their resilience, their adaptability, their very functionality—is an emergent property of the intricate networks of interactions between those parts [2] [4] [5]. The theoretical foundations laid by the British Emergentists have found a powerful and practical instantiation in the field of network biology, which provides the tools, models, and conceptual framework to move from observation to prediction.

The future of emergence research in biology is exceptionally promising and points toward several key directions. First, the integration of multi-omics data will become even more sophisticated, moving beyond correlation to establish causal relationships within networks, thereby clarifying the mechanistic basis of emergent phenomena [4]. Second, there is a pressing need to develop multi-scale models that can seamlessly connect dynamics across different levels of organization, from molecular interactions within a cell to species interactions within an ecosystem [5]. Finally, the ultimate test of our understanding will be the rational modulation of complex ecosystems. Whether it is manipulating the human gut microbiome to treat disease, engineering consortia for bioremediation, or predicting the emergence of antibiotic resistance, the ability to reliably steer a system's emergent properties toward a desired outcome will be the hallmark of success. The study of emergence, therefore, is not just about explaining the world as it is, but about gaining the wisdom to shape it for the better.

In the study of complex biological systems, a fundamental phenomenon observed is emergence, where novel properties, patterns, or behaviors arise that are not present in or predictable from the individual components of the system alone [8]. These emergent properties are not the product of a single directive but result from the interplay of simpler elements organized in specific ways. Understanding the mechanisms that drive emergence is critical for fields ranging from developmental biology to drug discovery, as it allows researchers to comprehend how complex functions and pathologies develop from molecular and cellular interactions [9]. This guide focuses on three primary drivers of emergence: the interactions between components, the process of self-organization, and the formation of hierarchical organizations. These drivers are not mutually exclusive but are often intertwined, working in concert to generate the complex behaviors characteristic of living systems. By examining their roles and interrelationships, this document provides a theoretical foundation for research into biological networks and their emergent properties.

Theoretical Foundations of Emergence

Emergence is a fundamental property of complex systems, defined as the appearance of new properties or behaviors due to non-linear interactions within the system [10]. In biological contexts, this means that the whole is indeed more than the sum of its parts. A single neuron possesses none of the capabilities of a conscious mind, but vast networks of neurons interacting produce cognition, learning, and memory [8]. This non-predictability is a hallmark of emergent phenomena.

The concept of emergence challenges purely reductionist approaches in biology. While molecular biology has successfully uncovered the innermost mechanisms of the cell, work in mathematics, physics, and complexity science suggests that the inherent order within a cell may be largely self-organized and spontaneous, rather than solely a consequence of natural selection or a linear genetic program [10] [8]. Emergent properties are the "product" or "by-product" of the system, arising dynamically from the interconnectedness of its parts [10]. The study of these phenomena is, therefore, inherently a study of interactions, organization, and the dynamics of complex systems.

Key Driver 1: Interactions

Interactions are the most basic foundation of emergence. They are the channels through which the components of a system communicate and influence one another.

The Role of Interactions in Generating Novelty

Interactions are the primary source of non-linearity in complex systems. The behavior of an individual component, such as a protein or a cell, is modified by its interactions with numerous other components. This relational dynamic means that the system's future state is co-determined by these multiple, interdependent interactions [11]. It is through these interactions that novel information is generated—information that is not present in the initial or boundary conditions of the system and which inherently limits predictability [11]. As such, there is no shortcut to knowing the future state of a complex system; one must account for the trajectory through all intermediate steps shaped by interactions.

Biological Exemplar: Gene Regulatory Networks (GRNs)

Gene Regulatory Networks (GRNs) provide a quintessential example of how interactions drive emergence. GRNs are webs of protein-DNA interactions (PDIs) that govern the transcription of genes [12]. The topological analysis of GRNs across model eukaryotes reveals they are scale-free networks, meaning a majority of transcription factors (TFs) bind to few target genes, while a small number of hub TFs bind to a large proportion of targets [13] [12]. This specific pattern of interactions, characterized by a power-law distribution, is an emergent property of the network. The connectivity of these networks is not random but follows organism-specific patterns that drive phenotypic plasticity and species-specific phenotypes [13] [12]. The properties of the entire network, such as robustness and the flow of regulatory information, emerge from the specific pattern of these molecular interactions.

Table 1: Topological Properties of Gene Regulatory Networks (GRNs) in Model Organisms

| Organism | Network Type | Key Topological Feature | Power-Law Exponent (Out-degree) | Biological Implication |
|---|---|---|---|---|
| S. cerevisiae (Yeast) | Gene Regulatory | Scale-free | Organism-specific | Underlies phenotypic plasticity and regulatory capacity |
| D. melanogaster (Fruit fly) | Gene Regulatory | Scale-free | Organism-specific | Constrained by organism-specific regulatory landscape |
| C. elegans (Worm) | Gene Regulatory | Scale-free | Organism-specific | Drives species-specific phenotype |
| A. thaliana (Plant) | Gene Regulatory | Scale-free | Organism-specific | Predicts total interactions in complete GRN |

Key Driver 2: Self-Organization

Self-organization is the process whereby some form of overall order arises from local interactions between parts of an initially disordered system, without being controlled by an external agent [14].

Principles of Self-Organization

Self-organization is a process characteristic of systems far from thermodynamic equilibrium and relies on several key ingredients [14]:

- Strong dynamical non-linearity, often involving positive and negative feedback loops.

- A balance of exploitation and exploration.

- Multiple interactions among the system's components.

- A continuous supply of energy to counteract the natural tendency toward increasing entropy (disorder).

The process is often triggered by random fluctuations, which are then amplified by positive feedback, leading to the spontaneous formation of a robust, decentralized order [14]. As articulated by the cybernetician W. Ross Ashby, a system self-organizes by evolving toward a state of equilibrium (an attractor), and in doing so, its components become mutually dependent and coordinated [14] [11].

Biological Exemplars of Self-Organization

- Morphogenesis and Pattern Formation: The development of an organism's shape is a classic example of self-organization. Reaction-diffusion systems, where chemicals react and diffuse across space, can generate complex patterns like spots and stripes, which are thought to underlie animal coat patterns and shell pigmentation [10]. This process is highly sensitive to initial conditions and system parameters, demonstrating how global order can arise from local, non-linear interactions [10].

- Xenobots: Programmable living organisms constructed from frog cells, xenobots exhibit remarkable behaviors such as movement, collective action, and self-repair, despite having no nervous system [8]. Their coordinated activities are not encoded in their individual cells but emerge from the self-organization of how those cells are assembled and interact [8].

- Bioelectrical Signaling: Beyond biochemical gradients, cells use bioelectrical signaling—voltage gradients and ion flows—to coordinate decision-making and pattern formation across tissues. This form of communication is a key mediator of self-organization, guiding large-scale morphogenesis and regeneration [8].
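The reaction-diffusion mechanism mentioned under morphogenesis can be made concrete with the classical linear-stability conditions for a diffusion-driven (Turing) instability in a two-component system. The sketch below checks those textbook conditions for a Jacobian evaluated at a uniform steady state. The numerical Jacobian entries and diffusion coefficients are illustrative assumptions, not parameters of any specific biological system.

```python
def turing_unstable(fu, fv, gu, gv, du, dv):
    """Check the standard conditions for a diffusion-driven instability.

    fu, fv, gu, gv: partial derivatives of the reaction terms f(u, v), g(u, v)
                    at the uniform steady state (the Jacobian entries).
    du, dv:         diffusion coefficients of u and v.
    """
    trace = fu + gv
    det = fu * gv - fv * gu
    # 1) Without diffusion the uniform state must be stable.
    stable_without_diffusion = trace < 0 and det > 0
    # 2) With diffusion, some spatial mode must grow.
    h = dv * fu + du * gv
    diffusion_destabilises = h > 0 and h * h > 4 * du * dv * det
    return stable_without_diffusion and diffusion_destabilises

# Assumed activator (u) / inhibitor (v) kinetics near the steady state.
jacobian = dict(fu=1.0, fv=-1.0, gu=2.0, gv=-1.5)

print("equal diffusion:", turing_unstable(du=1.0, dv=1.0, **jacobian))
print("fast inhibitor:", turing_unstable(du=1.0, dv=10.0, **jacobian))
```

For these assumed kinetics the uniform state only breaks into a pattern when the inhibitor diffuses sufficiently faster than the activator, which is the "local activation, long-range inhibition" intuition. The check says nothing about the final pattern shape; that requires simulating the full non-linear equations.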

Key Driver 3: Hierarchical Organization

Hierarchical organization refers to the nesting of systems within systems, where each level of organization exhibits its own emergent properties, which in turn influence and constrain both higher and lower levels.

Hierarchies and Biological Complexity

Biological life is structured in nested hierarchies, from molecules to cells, tissues, organs, and organisms [8]. In networks, this often manifests as modularity, where densely connected clusters of nodes (modules) serve distinct functions but are also part of a larger, integrated network [9]. This hierarchical organization is not merely descriptive; it is a fundamental constraint that shapes the degrees of freedom of a complex system [10]. It allows for robustness, as failure in one module may not cascade to destroy the entire system, and it enables the evolution of complexity by allowing modules to be modified or co-opted for new functions.
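Modularity can be quantified. A common choice is the Newman modularity score Q, which compares the fraction of edges inside each module with the fraction expected if edges were placed at random while preserving node degrees. The sketch below computes Q for a given partition of a hypothetical toy network (two triangles joined by one edge). The partition is supplied by hand here; in practice it would come from a community-detection algorithm.

```python
def modularity(edges, community):
    """Newman modularity Q = sum_c [ L_c / m - (d_c / (2 m))^2 ].

    edges:     list of undirected edges (u, v)
    community: dict mapping each node to its module label
    L_c: edges inside module c; d_c: total degree of module c; m: edge count.
    """
    m = len(edges)
    internal = {}
    degree_sum = {}
    for u, v in edges:
        for node in (u, v):
            label = community[node]
            degree_sum[label] = degree_sum.get(label, 0) + 1
        if community[u] == community[v]:
            label = community[u]
            internal[label] = internal.get(label, 0) + 1
    return sum(internal.get(label, 0) / m - (d / (2 * m)) ** 2
               for label, d in degree_sum.items())

# Hypothetical toy network: two triangles connected by a single bridge edge.
edges = [(0, 1), (1, 2), (0, 2), (3, 4), (4, 5), (3, 5), (2, 3)]
two_modules = {0: "a", 1: "a", 2: "a", 3: "b", 4: "b", 5: "b"}
one_module = {node: "all" for node in range(6)}

print("two modules:", modularity(edges, two_modules))
print("one module:", modularity(edges, one_module))
```

Splitting the toy network into its two triangles gives a clearly positive Q, while lumping everything into one module gives zero, matching the intuition that modules are clusters that are denser inside than expected by chance.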

Biological Exemplar: The Brain Connectome

The brain is a prototypical hierarchical system. Neural networks are organized across multiple spatial and temporal scales, from individual synapses to local microcircuits, to large-scale brain regions, and ultimately to the entire connectome [9]. This hierarchical structure is crucial for brain function. Different levels of the hierarchy process information at different scales and with different functions, and the interactions between these levels are essential for complex cognitive processes. The emergence of consciousness and cognition is not located in any single level but arises from the coordinated activity across this multiscale, hierarchical architecture [8] [9].

Table 2: Analysis of Emergent Properties Across Biological Hierarchies

| Level of Organization | Key Components | Primary Interactions | Exemplar Emergent Property |
|---|---|---|---|
| Molecular | Proteins, DNA, Metabolites | Biochemical reactions, PDIs | Scale-free topology of GRNs [12] |
| Cellular | Organelles, Cytoskeleton | Bioelectrical signaling, Mechanotransduction | Cellular polarity; Xenobot movement [8] |
| Tissue/Organ | Multiple cell types | Paracrine signaling, Gap junctions, Extracellular matrix | Pulsatile contraction of the heart; Organ shape [8] |
| Organismal | Organ systems | Neural and endocrine signaling | Consciousness; Learning and memory [8] |
| Social/Ecological | Individual organisms | Visual, auditory, chemical cues | Swarm intelligence in ant colonies [8] [14] |

Interplay of Drivers in Biological Systems

The three drivers of emergence—interactions, self-organization, and hierarchy—do not operate in isolation. They are deeply interconnected in a recursive feedback loop (Figure 2). Local interactions between components give rise to self-organization, which produces a global pattern or order. This emergent order often manifests as a new hierarchical level of organization. This hierarchical structure, in turn, creates new contexts and constraints that shape and guide the future local interactions of the components, leading to further rounds of self-organization and the emergence of even more complex properties [8] [11].

This interplay is central to Michael Levin's theory of "multiscale competency architecture," which posits that intelligent behaviors in biological systems result from the cooperation of self-organizing, goal-directed processes operating across different biological scales—from molecular networks to cellular collectives to entire tissues [8]. In this view, each level of the hierarchy exhibits a degree of agency and problem-solving capability.

Experimental and Analytical Protocols

Studying emergence requires a shift from purely reductionist methods to systems-level approaches that can capture the dynamics of interactions, self-organization, and hierarchy.

Protocol 1: Topological Analysis of Biological Networks

This methodology is used to uncover emergent architectural properties, such as scale-freeness, in networks like GRNs or neural connectomes [13] [12].

- Network Reconstruction: Compile a graph of the biological system. Nodes represent entities (e.g., genes, neurons), and edges represent interactions (e.g., PDIs, synapses) from experimental data (ChIP-Seq, DAP-Seq, neural tracing) [12].

- Degree Distribution Analysis: For each node, calculate its degree (number of connections). Plot the probability distribution P(k) of the degrees.

- Power-Law Fitting: If the distribution is heavy-tailed, fit it to a power-law function, P(k) ~ k^(-α), using maximum likelihood estimation to determine the scaling exponent (α) [12].

- Goodness-of-Fit and Model Selection: Use a goodness-of-fit statistic (e.g., the Kolmogorov-Smirnov distance) to evaluate the fit. Compare the power-law model to alternatives (e.g., exponential, Poisson), and treat the network as scale-free only if the power law is plausible and the alternatives do not fit better [12].

- Inequality Analysis: Employ Lorenz curves to interpret the exponent α, which describes the inequality of connections (e.g., "capitalistic" vs. "socialistic" network topologies) [12].
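The fitting and inequality steps of Protocol 1 can be sketched in a few lines of NumPy. This is a minimal illustration rather than the method used in [12]: it assumes a fixed lower cutoff `k_min`, uses the continuous-approximation maximum likelihood estimator for α, and summarizes the Lorenz curve with a Gini coefficient. The function names and the synthetic degree sample are hypothetical; real degree sequences are integer-valued and usually call for a discrete estimator and a bootstrap-based goodness-of-fit test.

```python
import numpy as np

def fit_power_law(degrees, k_min=1.0):
    """MLE of alpha for P(k) ~ k^(-alpha), k >= k_min (continuous approximation)."""
    k = np.asarray(degrees, dtype=float)
    k = k[k >= k_min]
    alpha = 1.0 + k.size / np.sum(np.log(k / k_min))
    return alpha, k

def ks_distance(k, alpha, k_min=1.0):
    """Largest gap between the empirical CDF and the fitted power-law CDF."""
    k_sorted = np.sort(k)
    empirical = np.arange(1, k_sorted.size + 1) / k_sorted.size
    model = 1.0 - (k_sorted / k_min) ** (1.0 - alpha)
    return float(np.max(np.abs(empirical - model)))

def gini(degrees):
    """Gini coefficient of the degree sequence, derived from its Lorenz curve."""
    k = np.sort(np.asarray(degrees, dtype=float))
    lorenz = np.cumsum(k) / k.sum()  # cumulative share of all connections
    return float((k.size + 1 - 2.0 * lorenz.sum()) / k.size)

# Synthetic "degrees" drawn from a known power law (alpha = 2.5)
# by inverse-transform sampling; not real network data.
rng = np.random.default_rng(0)
true_alpha = 2.5
synthetic = (1.0 - rng.random(20000)) ** (-1.0 / (true_alpha - 1.0))

alpha_hat, tail = fit_power_law(synthetic)
print(f"alpha_hat={alpha_hat:.2f}  "
      f"KS={ks_distance(tail, alpha_hat):.3f}  "
      f"Gini={gini(synthetic):.2f}")
```

A Gini coefficient near 0 corresponds to evenly shared connections, while values approaching 1 indicate that a few hubs hold most of the edges.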

Protocol 2: Investigating Self-Organization in Morphogenesis

This protocol outlines an approach to study how global patterns self-organize from local cellular interactions [10] [8].

- Perturbation Experiments: Systematically perturb the proposed local interaction rules. This could involve inhibiting specific bioelectric signals (e.g., with ion channel blockers), disrupting chemical gradients, or altering cell-cell adhesion.

- Multi-Scale Imaging: Use live imaging to track the resulting changes at both the local (single-cell behavior) and global (tissue-wide pattern) levels over time.

- Agent-Based Modeling (ABM): Create a computational model where simulated "agent" cells follow the hypothesized local rules (e.g., reaction-diffusion, bioelectrical communication). The model should be informed by the experimental data.

- Model Validation and Prediction: Compare the patterns generated by the ABM to the empirical results from perturbation experiments. A validated model can then be used to predict the outcomes of novel perturbations, testing the self-organization hypothesis.
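The agent-based modeling and perturbation steps above can be illustrated with a deliberately simple toy model. The sketch below assumes a hypothetical local rule: cells on a ring share a voltage-like state with their two neighbors through junctions, and a "blocker" closes every junction touching a treated cell. The function name `simulate_tissue`, the coupling value, and the rule itself are assumptions chosen for illustration, not a model from [8] or [10].

```python
import numpy as np

def simulate_tissue(n_cells=50, steps=2000, coupling=0.2, blocked=None, seed=0):
    """Toy agent-based model of cells exchanging a voltage-like state.

    blocked: indices of cells whose junctions are closed (the perturbation).
    Returns the final states and the tissue-wide variance at every step.
    """
    rng = np.random.default_rng(seed)
    v = rng.normal(size=n_cells)              # random initial cell states
    open_cell = np.ones(n_cells, dtype=bool)
    if blocked is not None:
        open_cell[list(blocked)] = False
    # A junction conducts only if the cells on both sides are unblocked.
    left_open = open_cell & np.roll(open_cell, 1)
    right_open = open_cell & np.roll(open_cell, -1)
    variance = [v.var()]
    for _ in range(steps):
        left, right = np.roll(v, 1), np.roll(v, -1)
        # Local rule: each cell relaxes toward its coupled neighbors.
        v = v + coupling * (left_open * (left - v) + right_open * (right - v))
        variance.append(v.var())
    return v, np.array(variance)

_, control = simulate_tissue()
_, partial = simulate_tissue(blocked=range(25))   # half the ring treated
_, frozen = simulate_tissue(blocked=range(50))    # every cell treated
print(control[-1], partial[-1], frozen[-1])
```

In this toy, the global outcome (a uniform tissue-wide state) appears only when the local coupling rule is intact, which is the kind of prediction that would then be compared against imaging data from the matching perturbation experiment.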

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Studying Emergent Properties

| Reagent / Tool Category | Specific Examples | Primary Function in Research |

|---|---|---|

| Genomic Interaction Mapping | ChIP-Seq, DAP-Seq, Yeast One-Hybrid (Y1H) | High-throughput identification of Protein-DNA Interactions (PDIs) for GRN reconstruction [12] |

| Bioelectric Perturbation | Ion channel blockers and receptor antagonists (e.g., the GABA-A receptor antagonist gabazine), Optogenetics | To manipulate bioelectrical signaling networks that guide pattern formation and self-organization [8] |

| Computational Modeling | Agent-Based Modeling (ABM) platforms, Network analysis software (e.g., Cytoscape) | To simulate system dynamics from local rules and analyze topological properties of reconstructed networks [10] [11] |

| Multi-Scale Imaging | Live-cell confocal microscopy, Calcium/voltage-sensitive dyes | To visualize and quantify the emergence of global patterns from local cellular behaviors over time [8] |

The study of emergence, driven by interactions, self-organization, and hierarchical organization, provides a powerful framework for understanding biological complexity. Moving beyond a purely genetic or reductionist view, this perspective reveals how the intricate behaviors and forms of life arise from the dynamic and relational nature of biological components. For researchers and drug development professionals, this implies that therapeutic interventions must consider the system-level consequences of targeting any single component, as the network dynamics can produce unexpected, emergent outcomes. Embracing this complexity, through the experimental and analytical protocols outlined, will be essential for unlocking the next generation of insights in regenerative medicine, synthetic biology, and the treatment of complex diseases.

The study of consciousness and intelligent behavior has traditionally been confined to complex nervous systems. However, research within the framework of biological network science suggests that the fundamental principles of information processing, decision-making, and even primitive cognition operate across vastly different scales and material substrates. Network-based approaches have become ubiquitous in diverse biological fields, offering unifying concepts for understanding complex systems from gene regulation to brain circuits [9]. This section examines three distinct but interconnected domains where biological networks exhibit emergent properties relevant to consciousness and intelligence: canonical neural networks in the brain, the role of neural integration in organ function, and the cognitive capabilities of aneural biological systems such as Xenobots.

The essential concepts of biological network science—including hierarchical organization, modularity, and the balance between integration and segregation—provide a common theoretical foundation for exploring how conscious states arise from neural tissue, how neural networks govern organ development and homeostasis, and how intelligent behaviors can emerge in systems completely lacking neurons [9]. By applying consistent analytical frameworks from multivariate information theory across these diverse systems, researchers are beginning to identify universal fundamentals of biological information processing that operate independently of specific material implementations [15].

Consciousness from Neural Networks in the Brain

Defining the Neural Correlates of Consciousness

The neural correlates of consciousness (NCC) represent the minimal set of neuronal events and mechanisms sufficient for specific conscious experiences [16]. Consciousness research typically distinguishes between two key dimensions: the level of consciousness (wakefulness or arousal) and the content of consciousness (subjective experience) [17] [16]. The Glasgow Coma Scale serves as a clinical tool for assessing the level of consciousness in patients, scoring objective criteria: eye opening, verbal response, and motor response [17].

From a neurobiological perspective, consciousness requires both enabling factors that maintain adequate brain arousal and specific neural populations that generate particular conscious content. The enabling structures include various nuclei in the thalamus, midbrain, and pons that regulate overall brain arousal, while the content-specific NCC appear to involve particular neurons in the cortex and associated structures including the amygdala, thalamus, claustrum, and basal ganglia [16].

Key Neural Structures and Mechanisms

Paraventricular Thalamus (PVT) and Arousal Regulation: The paraventricular nucleus of the thalamus (PVT) has been identified as a key regulator of arousal states. Research using in vivo fiber photometry and multi-channel electrophysiological recordings in mice demonstrates that glutamatergic neurons in the PVT show high activity during waking states. Inhibition of PVT neuronal activity decreases arousal, while activation induces transitions from sleep to wakefulness and accelerates recovery from general anesthesia. The projection from the PVT to the nucleus accumbens and the input from orexin-secreting neurons in the lateral hypothalamus to PVT glutamatergic neurons represent critical pathways controlling arousal [17].

The Claustrum as a Potential Consciousness Coordinator: The claustrum, a thin, irregular sheet of neurons attached to the underside of the neocortex, has extensive reciprocal connections with almost the entire neocortex. This unique connectivity pattern has led researchers to propose its role as a potential consciousness coordinator [17]. Experimental evidence comes from a study in which electrical stimulation of the claustrum in a single patient with epilepsy resulted in immediate loss of consciousness, with immediate recovery once stimulation stopped [17]. Additionally, an examination of 171 veterans with traumatic brain injuries found that claustrum damage was associated with the duration of loss of consciousness, suggesting a role in the recovery of consciousness [17].

Functional Connectivity and Higher-Order Interactions: Advanced neuroimaging techniques have revealed that conscious states are associated with specific patterns of functional connectivity in the brain. These include a complex distribution of positively and negatively signed dependencies between brain regions, indicating correlated and anti-correlated patterns of activity [15]. Truly higher-order interactions, assessed using techniques from information theory, are widespread throughout the human brain, with alterations observed in conditions affecting consciousness such as aging, neurodegeneration, and following anesthesia [15].
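As a minimal sketch of what "positively and negatively signed dependencies" means in practice, the snippet below computes a pairwise functional connectivity matrix as Pearson correlations between regional time series. The three "regions" are synthetic signals invented for illustration (two driven in phase by a shared source, one in anti-phase); they are not neuroimaging data, and the function name is hypothetical. Pairwise correlation captures only second-order structure, so it does not by itself measure the higher-order interactions discussed above.

```python
import numpy as np

def functional_connectivity(time_series):
    """Pearson correlation matrix for an array shaped (timepoints, regions)."""
    return np.corrcoef(time_series, rowvar=False)

# Synthetic signals: regions 0 and 1 follow a shared source,
# region 2 follows the same source with opposite sign.
rng = np.random.default_rng(0)
shared = rng.normal(size=1000)
signals = np.column_stack([
    shared + 0.5 * rng.normal(size=1000),
    shared + 0.5 * rng.normal(size=1000),
    -shared + 0.5 * rng.normal(size=1000),
])

fc = functional_connectivity(signals)
print(np.round(fc, 2))  # positive entry for (0, 1), negative for (0, 2) and (1, 2)
```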

Table 1: Key Neural Structures in Consciousness

| Neural Structure | Primary Function in Consciousness | Experimental Evidence |

|---|---|---|

| Paraventricular Thalamus (PVT) | Regulates arousal states and wakefulness | Activation induces wakefulness; inhibition reduces arousal [17] |

| Claustrum | Potential consciousness coordinator; integrates information across cortical regions | Stimulation induces immediate loss of consciousness; damage is associated with longer loss of consciousness [17] |

| Frontal Cortex | Supports higher-level consciousness and cognitive functions | Activity correlates with conscious perception in binocular rivalry tasks [16] |

| Inferior Temporal Cortex | Processes specific conscious content (e.g., faces) | Neurons fire only when percept is consciously experienced [16] |

Experimental Approaches for Studying Neural Correlates of Consciousness

Perceptual Illusion Paradigms: Researchers have employed various perceptual illusions to dissociate physical stimuli from subjective experience. Techniques such as binocular rivalry, continuous flash suppression, and motion-induced blindness allow scientists to present constant physical stimuli while the subject's conscious perception fluctuates [16]. In binocular rivalry tasks, different images are presented to each eye, and subjects report alternating perceptions despite constant retinal input. Single-neuron recordings in macaque monkeys performing such tasks reveal that while primary visual cortex (V1) neurons respond largely to the retinal stimulus regardless of perception, neurons in higher cortical areas like the inferior temporal cortex fire only when their preferred stimulus is perceived [16].

Integrated Information Theory (IIT) and Consciousness Metrics: The Integrated Information Theory provides a theoretical framework for quantifying consciousness by measuring how effectively a system integrates information [15]. This approach assesses the extent to which a system's future state can be predicted more accurately based on its true joint statistics compared to a disintegrated model. Changes in integrated information have been found to correlate with alterations in consciousness following anesthesia or brain injury [15].
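The comparison between "true joint statistics" and a "disintegrated model" can be made concrete with a simple proxy. The sketch below is not the Φ of Integrated Information Theory proper; it assumes Gaussian statistics and computes a "whole-minus-sum" quantity: the time-lagged mutual information of the whole system minus the sum of the same quantity for each part taken alone. The function names and the two-node autoregressive toy systems are hypothetical.

```python
import numpy as np

def gaussian_mi(x, y):
    """Mutual information (nats) between x and y under a Gaussian assumption."""
    x = np.asarray(x, dtype=float).reshape(len(x), -1)
    y = np.asarray(y, dtype=float).reshape(len(y), -1)
    dx = x.shape[1]
    cov = np.cov(np.hstack([x, y]), rowvar=False)
    _, logdet_x = np.linalg.slogdet(cov[:dx, :dx])
    _, logdet_y = np.linalg.slogdet(cov[dx:, dx:])
    _, logdet_xy = np.linalg.slogdet(cov)
    return 0.5 * (logdet_x + logdet_y - logdet_xy)

def phi_whole_minus_sum(ts, lag=1):
    """Past-to-future information of the whole minus that of its single-node parts."""
    past, future = ts[:-lag], ts[lag:]
    whole = gaussian_mi(past, future)
    parts = sum(gaussian_mi(past[:, i], future[:, i]) for i in range(ts.shape[1]))
    return whole - parts

def simulate(coupled, n=20000, seed=0):
    """Two-node autoregressive toy system (synthetic, for illustration only)."""
    rng = np.random.default_rng(seed)
    x = np.zeros((n, 2))
    for t in range(1, n):
        # Coupled: each node is driven by the other node's previous state.
        drive = x[t - 1, ::-1] if coupled else x[t - 1]
        x[t] = 0.6 * drive + rng.normal(size=2)
    return x

phi_coupled = phi_whole_minus_sum(simulate(coupled=True))
phi_independent = phi_whole_minus_sum(simulate(coupled=False))
print(f"coupled: {phi_coupled:.3f}  independent: {phi_independent:.3f}")
```

When the nodes evolve independently, the parts predict their own futures as well as the whole does, so the proxy stays near zero; when each node's future depends on the other node, only the joint model is predictive and the proxy is clearly positive.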

Neural Networks in Organ Function and Bioengineering

The Critical Role of Innervation in Organ Development and Homeostasis

The autonomic nervous system (ANS), consisting of sympathetic ("fight-or-flight") and parasympathetic ("rest-and-digest") fibers, plays a crucial role in the development, functional regulation, and homeostasis of virtually all internal organs [18]. The ANS employs acetylcholine as the principal neurotransmitter between preganglionic and postganglionic fibers, with postganglionic sympathetic nerves mainly using norepinephrine and parasympathetic nerves employing acetylcholine to communicate with organs [18].

Pancreatic Innervation: The pancreas receives extensive autonomic innervation that critically shapes its development and function. During pancreatic organogenesis in mice, sympathetic neurons expressing vesicular monoamine transporter 2 (VMAT2) are detectable within the developing pancreatic bud by embryonic day 12.5 (E12.5) [18]. These sympathetic nerves play a crucial role in organizing the architecture of pancreatic islets, with experimental denervation in neonatal mice disrupting typical α-cell localization around β-cell cores. The autonomic signaling subsequently orchestrates insulin release during the cephalic phase, sustains glucose tolerance, synchronizes islet activity, and modulates responses to hypoglycemia and diabetes [18].

Liver, Salivary Gland, and Spleen Innervation: Similar critical roles for innervation have been established in other organs. In the liver, neural inputs regulate metabolic functions, while in salivary glands, they control secretion processes. The spleen's immune functions are similarly modulated by autonomic inputs, demonstrating the far-reaching influence of neural networks beyond traditional conscious processing [18].

Engineering Innervated Organs: Methodologies and Challenges

The growing field of organ bioengineering faces the significant challenge of incorporating functional neural networks into engineered tissues and organs. Two primary approaches have emerged:

Top-Down Organ Manufacturing: This approach utilizes decellularized organs from cadavers as scaffolds to culture autologous cells. While this method preserves the native extracellular matrix architecture, including potential pathways for neural ingrowth, it faces limitations due to the scarce availability of donor organs [18].

Bottom-Up Organ Engineering: This strategy involves fabricating the smallest structural/functional unit of an organ and using it as a building block to recreate complex architecture, typically employing additive manufacturing techniques like 3D bioprinting [18]. The creation of organoids—miniaturized functional replicas of organs—represents a promising bottom-up approach. Recent research demonstrates that human brain organoids can replicate fundamental building blocks of learning and memory, showing synaptic plasticity and increased expression of immediate early genes upon stimulation [19].

Table 2: Research Reagent Solutions for Neural Network and Consciousness Research

| Research Reagent/Tool | Application/Function | Experimental Examples |

|---|---|---|

| In vivo fiber photometry | Records neural activity in awake, behaving animals | Used to track PVT neuron activity during sleep-wake cycles [17] |

| Optogenetics | Precise control of specific neuronal populations | Activation/inhibition of PVT glutamatergic neurons to modulate arousal [17] |

| Deep brain electrodes | Stimulation and recording from deep brain structures | Claustrum stimulation in epileptic patients [17] |

| Calcium imaging | Visualizing activity in neural networks and non-neural tissues | Tracking calcium signaling in Xenobots and brain organoids [15] [19] |

| fMRI/DTI | Mapping functional and structural connectivity | Identifying networks altered in disorders of consciousness [15] [16] |

| Evolutionary algorithms | Designing biological forms and behaviors | Creating Xenobot morphologies [20] |

| Immediate early gene markers | Identifying recently activated neurons/cells | Assessing memory formation in brain organoids [19] |

Xenobots: Aneural Biological Networks Exhibiting Cognitive Behaviors

Xenobots as a Model for Primitive Cognition

Xenobots represent a revolutionary biological platform for studying the emergence of intelligent behaviors in systems completely lacking neurons. These computer-designed organisms are constructed from embryonic skin and cardiac cells of the frog Xenopus laevis, assembled into forms designed by evolutionary algorithms [20]. Despite having no neurons or traditional nervous systems, Xenobots exhibit remarkably complex behaviors including collective motion, object manipulation, and even self-healing capabilities [20].

The design process begins with an evolutionary algorithm that generates random solutions to a specified problem (such as locomotion), culls underperforming shapes, and iteratively modifies survivors until viable organisms emerge in simulation. Researchers then physically realize these designs by harvesting embryonic frog cells, pipetting them into molds, and using microsurgery to carve the resulting cell spheres into algorithm-specified shapes [20].
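The generate, cull, and modify loop described above can be sketched as a small evolutionary algorithm. This is a toy under stated assumptions, not the design pipeline from [20]: candidate "morphologies" are 16-voxel binary grids, and the `fitness` function simply counts matches to an arbitrary target pattern as a stand-in for the score a physics simulation of locomotion would return. All names and parameter values are hypothetical.

```python
import random

N_VOXELS = 16
# Arbitrary 4x4 target pattern; a placeholder for a simulated behavior score.
TARGET = [1, 1, 1, 1,
          1, 0, 0, 1,
          1, 0, 0, 1,
          1, 1, 1, 1]

def fitness(shape):
    """Toy score: number of voxels matching the placeholder target."""
    return sum(a == b for a, b in zip(shape, TARGET))

def mutate(shape, rng, rate=0.1):
    """Flip each voxel independently with probability `rate`."""
    return [1 - v if rng.random() < rate else v for v in shape]

def evolve(pop_size=20, generations=40, seed=0):
    rng = random.Random(seed)
    # Start from random candidate designs.
    population = [[rng.randint(0, 1) for _ in range(N_VOXELS)]
                  for _ in range(pop_size)]
    best_per_generation = []
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        best_per_generation.append(fitness(population[0]))
        survivors = population[: pop_size // 2]        # cull the weaker half
        children = [mutate(rng.choice(survivors), rng) for _ in survivors]
        population = survivors + children              # survivors are kept unchanged
    return max(population, key=fitness), best_per_generation

best_shape, history = evolve()
print(fitness(best_shape), history[0], history[-1])
```

Because survivors are carried over unchanged, the best score can never decrease from one generation to the next; swapping the placeholder `fitness` for a simulator call is where a real design pipeline would differ.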

Information Processing in Aneural Systems

Research has reported that Xenobots, despite being simple collections of non-neural epithelial cells, possess internal information structures comparable to those found in neural systems [15]. Using techniques from complex systems and multivariate information theory originally developed for analyzing brain activity, researchers have identified higher-order interactions in the calcium signaling networks of Xenobots that resemble the information-processing patterns observed in human brains [15].

These findings challenge traditional boundaries between neural and non-neural information processing. The coordinated calcium dynamics observed in Xenobots may represent a more ancient and fundamental form of biological cognition, one that long predates the evolution of nervous systems [15]. Similar patterns of complex calcium signaling have been identified across diverse biological systems including animal epithelial tissue, plant tissue, and fungal mycelial networks, where they coordinate crucial processes such as development, regeneration, wound healing, and cell-type differentiation [15].

Implications for Understanding Cognition and Consciousness

The demonstrated competencies of Xenobots support a perspective of cognition as a continuum rather than a binary phenomenon. As articulated by researcher Michael Levin, cognitive capacities likely extend "all the way from naked chemical networks to cells, to bacteria, organs, and then whole organisms and humans" [20]. This framework suggests that the cognitive abilities we associate with brains may represent specialized instantiations of more general principles of cellular intelligence and collective problem-solving.

This perspective is further supported by research showing that many capacities traditionally attributed to neurons—including decision-making, problem-solving, and memory—are in fact shared by more humble cells, albeit at different scales and temporal resolutions [20]. The implications for artificial intelligence are significant, suggesting that future intelligent systems might emulate these more ancient, pre-neural principles of biological intelligence rather than simply mimicking the synaptic connections of the human brain [20].

Integrated Experimental Protocols

Protocol 1: Investigating Consciousness Using Binocular Rivalry

Objective: To identify neural correlates of conscious perception by dissociating sensory stimulation from subjective experience.

Methodology:

- Stimulus Presentation: Different images are presented to each eye of a human subject or non-human primate using a mirror stereoscope or similar apparatus.

- Behavioral Reporting: Subjects are trained to report their perceptual state (which image they are consciously seeing) using continuous button presses or verbal reporting.

- Neural Recording: Simultaneously record neural activity using one or more of the following techniques:

  - Single-neuron electrophysiology (in non-human primates)

  - Functional MRI (fMRI) to measure hemodynamic activity

  - Electroencephalography (EEG) to measure electrical activity

- Data Analysis: Compare neural activity patterns during periods when the physical stimulus is identical but the subjective percept differs.

Key Measurements:

- Contrast neural responses in primary visual cortex (V1) versus higher visual areas (e.g., inferior temporal cortex)

- Calculate the percentage of neurons whose firing rates track the physical stimulus versus the subjective percept

- Identify time delays between neural activity changes and perceptual switches

Applications: This protocol has revealed that while V1 activity largely follows the physical stimulus, neurons in higher visual areas like the inferior temporal cortex fire predominantly when their preferred stimulus is consciously perceived [16].
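One way to sketch the "stimulus-tracking versus percept-tracking" measurement is a per-neuron modulation index computed across report epochs. The index definition, the 0.3 threshold, and the firing rates below are all assumptions made for illustration; published analyses typically rely on statistical tests per neuron rather than a fixed cutoff.

```python
import numpy as np

def percept_modulation_index(rates, percept_is_preferred):
    """(r_pref - r_other) / (r_pref + r_other) across report epochs.

    rates: mean firing rate per epoch (the physical stimulus is constant).
    percept_is_preferred: True where the reported percept is the neuron's
    preferred image. Assumes the neuron is not silent in both conditions.
    """
    rates = np.asarray(rates, dtype=float)
    mask = np.asarray(percept_is_preferred, dtype=bool)
    r_pref, r_other = rates[mask].mean(), rates[~mask].mean()
    return (r_pref - r_other) / (r_pref + r_other)

def fraction_percept_tracking(rate_matrix, percept_is_preferred, threshold=0.3):
    """Share of neurons whose absolute index exceeds a hypothetical threshold."""
    indices = np.array([percept_modulation_index(r, percept_is_preferred)
                        for r in rate_matrix])
    return float(np.mean(np.abs(indices) > threshold)), indices

# Made-up rates (Hz) over four epochs with alternating percepts.
percept = [True, False, True, False]
rate_matrix = [
    [20.0, 20.0, 20.0, 20.0],   # follows the constant stimulus
    [30.0, 5.0, 30.0, 5.0],     # follows the reported percept
]
fraction, indices = fraction_percept_tracking(rate_matrix, percept)
print(fraction, np.round(indices, 3))
```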

Protocol 2: Creating and Testing Xenobots

Objective: To design, fabricate, and assess the capabilities of programmable biological machines from frog embryonic cells.

Methodology:

In Silico Design:

- Use an evolutionary algorithm (e.g., a 12-line algorithm as described in original research) to generate candidate morphologies

- Simulate behavior of these morphologies in a virtual environment

- Select highest-performing designs for biological implementation

Biological Fabrication:

- Harvest embryonic skin and cardiac cells from Xenopus laevis embryos

- Pipette cells carefully into molds to form tight spheres of living tissue

- Use microsurgical techniques (tiny scalpel and cauterizing iron) to carve spheres into algorithm-specified shapes

Behavioral Assessment:

- Observe and record Xenobot behavior in petri dishes

- Track movement patterns, collective behaviors, and object manipulation capabilities

- Test self-healing capacities by performing minor injuries

Information Processing Analysis:

- Use calcium imaging to record signaling dynamics between cells

- Apply multivariate information theory measures to quantify higher-order interactions

- Compare these patterns to those observed in neural systems using the same analytical framework

Key Measurements:

- Locomotion efficiency and speed

- Collective behavior patterns and coordination

- Self-healing capacity and time

- Multivariate information metrics (integration, synergy, etc.)

Applications: This protocol has demonstrated that Xenobots exhibit sophisticated behaviors and information processing despite lacking neurons, challenging traditional concepts of cognition [15] [20].
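The "multivariate information metrics" step can be illustrated with two quantities that are simple to compute under a Gaussian assumption: total correlation (overall integration) and the O-information, whose sign indicates whether redundancy (positive) or synergy (negative) dominates. Choosing these two measures, the Gaussian estimator, and the synthetic three-channel signals is an assumption of this sketch; it is not presented as the analysis pipeline of [15], and real calcium traces would need preprocessing first.

```python
import numpy as np

def gaussian_entropy(data):
    """Differential entropy (nats) of data shaped (samples, variables), Gaussian fit."""
    data = np.asarray(data, dtype=float).reshape(len(data), -1)
    d = data.shape[1]
    cov = np.atleast_2d(np.cov(data, rowvar=False))
    _, logdet = np.linalg.slogdet(cov)
    return 0.5 * (d * np.log(2 * np.pi * np.e) + logdet)

def total_correlation(data):
    """Sum of single-variable entropies minus the joint entropy."""
    singles = sum(gaussian_entropy(data[:, i]) for i in range(data.shape[1]))
    return singles - gaussian_entropy(data)

def o_information(data):
    """O-information: > 0 redundancy-dominated, < 0 synergy-dominated."""
    n = data.shape[1]
    omega = (n - 2) * gaussian_entropy(data)
    for i in range(n):
        rest = np.delete(data, i, axis=1)
        omega += gaussian_entropy(data[:, i]) - gaussian_entropy(rest)
    return omega

rng = np.random.default_rng(0)
n = 5000
source = rng.normal(size=n)
# Redundant toy system: three noisy copies of one shared source.
redundant = np.column_stack([source + 0.5 * rng.normal(size=n) for _ in range(3)])
# Synergistic toy system: the third channel is the sum of two independent channels.
a, b = rng.normal(size=n), rng.normal(size=n)
synergistic = np.column_stack([a, b, a + b + 0.1 * rng.normal(size=n)])

omega_redundant = o_information(redundant)
omega_synergistic = o_information(synergistic)
print(f"redundant: {omega_redundant:.2f}  synergistic: {omega_synergistic:.2f}")
```

Running the same functions on recordings from neural and aneural systems is what makes the cross-system comparison in the protocol possible, since the measures make no reference to the material substrate.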

Visualization of Concepts and Pathways

Diagram 1: Information Processing Hierarchy in Biological Systems

Diagram 2: Experimental Workflow for Consciousness Research

Diagram 3: Xenobot Creation and Analysis Pipeline

The study of biological networks across scales—from neuronal populations in the brain to cellular collectives in Xenobots—reveals fundamental principles of information processing, decision-making, and the emergence of complex behaviors. The theoretical framework of biological network science provides unifying concepts for understanding how conscious states arise from neural tissue, how neural networks govern organ development and homeostasis, and how intelligent behaviors can emerge in systems completely lacking neurons.

The implications of this research extend across multiple domains. For basic science, it challenges traditional boundaries between neural and non-neural cognition, suggesting a continuum of cognitive capacities throughout biological systems. For medicine, it offers new approaches to understanding disorders of consciousness and developing innovative treatments through bioengineered tissues and organs. For artificial intelligence, it suggests alternative pathways to creating intelligent systems that emulate the more ancient, pre-neural principles of biological intelligence.

As research progresses across these interconnected domains, a more comprehensive understanding of the theoretical foundations of biological networks and their emergent properties will continue to emerge, potentially transforming our fundamental concepts of mind, intelligence, and life itself.

Neurobiological Emergentism (NBE) presents a biological, neurobiological, and evolutionary framework to explain one of science's most perplexing phenomena: the emergence of sentience from physical nervous systems. Sentience is defined as the capacity for subjective, felt experience, what philosopher Thomas Nagel characterized as "something it is like to be"; explaining how it arises is the hard problem of consciousness [21] [22]. This theory specifically addresses the subjective, feeling aspects of consciousness, encompassing both interoceptive-affective states (pain, pleasure, emotions) with inherent valence and exteroceptive sensory experiences (vision, audition) that may lack immediate emotional charge but nonetheless constitute felt experience [21].

The central challenge NBE addresses is the so-called "explanatory gap" between objectively describable neurobiological processes and the subjective personal nature of feeling [21] [22]. This gap manifests in two primary forms: (1) the personal nature problem, concerning how objective brain functions give rise to irreducibly first-person experiences, and (2) the subjective character problem, addressing how the specific qualities of experiences (e.g., the redness of red) emerge from neural activity [21]. NBE proposes that these apparent gaps result from the natural emergence of sentience in complex systems and can be scientifically explained without completely objectifying subjective experience [22].

Theoretical Foundations: Emergence in Biological Systems

Core Principles of Biological Emergence

The concept of emergence, first scientifically articulated by G.H. Lewes in 1875, provides the theoretical bedrock for NBE [21] [22]. In biological systems, emergence describes how novel properties and behaviors arise through the interactions of simpler components, creating a whole that exceeds the mere sum of its parts. Biological emergence is characterized by several fundamental principles, as detailed in Table 1.

Table 1: Fundamental Features of Biological Emergence [21] [22]

| Feature | Description | Biological Example |

|---|---|---|

| Novelty | Emergent properties are system-level features not present in or reducible to individual components. | Consciousness emerges from neural networks, though absent from individual neurons. |

| Interaction-Dependence | Requires physical integration and dynamic interaction between system components. | Bioelectrical signaling between cells enables coordinated tissue development and repair. |

| Process Nature | Emergent features are dynamic processes created by ongoing part interactions. | Cognitive functions like learning and memory arise from changing synaptic strengths. |

| Hierarchical Amplification | Complex hierarchies with multiple levels greatly enhance emergent potential. | Neural hierarchies (molecular → cellular → circuit → system) enable complex cognition. |

Neurobiological Emergence

In nervous systems, emergence operates with special intensity due to several amplifying factors outlined in Table 2. The extensive reciprocal connectivity within and between neurobiological hierarchy levels creates unprecedented opportunities for novel system properties to emerge [21] [22]. This is particularly evident in brains with complex central nervous systems, where the magnitude of interactions enables the emergence of sentience.

Table 2: Key Features of Emergence in Neurobiological Hierarchical Systems [21] [22]

| Feature | Neurobiological Significance |

|---|---|

| Hierarchical Arrangements | Critical for creating emergent features across all biology, especially pronounced in neurohierarchical systems. |

| Reciprocal Connectivity | Extensive feedback and feedforward connections within and between levels dramatically enhance emergent properties. |

| Multi-Scale Operation | Emergent properties occur simultaneously across multiple spatial scales and temporal frequencies. |

| Level Addition | Novel properties emerge system-wide as additional (typically "higher") hierarchical levels are added. |

Diagram: Hierarchical organization of biological systems that enables the emergence of complex properties like sentience

The Evolutionary Emergence of Sentience

A Three-Stage Evolutionary Model

NBE proposes that sentience emerged through a three-stage evolutionary sequence, with each stage marked by increasing neural complexity and novel emergent properties. This model provides a biological timeline for the emergence of subjective experience, spanning billions of years of evolutionary history [21] [22].

Table 3: Evolutionary Stages of Sentience Emergence [21] [22]

| Stage | Time Period | Characteristics | Example Organisms |

|---|---|---|---|

| ES1: Non-Sentient Sensing | 3.5-3.4 billion years ago | Single-celled organisms capable of sensing environmental stimuli but lacking neurons and nervous systems; non-sentient. | Early prokaryotes, bacteria. |

| ES2: Presentient Transition | ~570 million years ago | Organisms with neurons and simple nervous systems; intermediate between non-sentient and fully sentient states. | Early metazoans with simple neural nets. |

| ES3: Full Sentience | 560-520 mya (Cambrian) | Organisms with neurobiologically complex central nervous systems capable of generating subjective experience. | Vertebrates, arthropods, cephalopods. |

The Cambrian explosion period (560-520 mya) represents a critical threshold where multiple evolutionary lineages independently crossed into the sentience domain, suggesting that sufficiently complex nervous systems tend to give rise to subjective experience [22]. This parallel emergence across vertebrates, arthropods, and cephalopods is taken to indicate that sentience is a predictable emergent property of specific neural architectures rather than a unique evolutionary fluke.

Bridging the Explanatory Gaps

NBE provides a scientific resolution to the two primary explanatory gaps through the principles of biological emergence:

The Personal Nature Gap: The irreducibly first-person character of sentience results from the novel system properties that emerge from complex neural hierarchies. Just as wetness emerges from H₂O molecular interactions but isn't reducible to individual molecules, subjective experience emerges from neural networks but isn't reducible to individual neurons [21]. This explains why C.D. Broad's "mathematical archangel"—with complete objective knowledge of neurobiology—could not predict the subjective smell of ammonia without direct experience [21] [22].

The Subjective Character Gap: The specific qualities of experiences (qualia) emerge from the unique organizational patterns and interaction dynamics within neural systems. The theory replaces the notion of an unbridgeable "explanatory gap" with a natural, scientifically tractable "experiential gap" that can be studied through the principles of biological emergence [22].

Experimental Approaches and Research Methodologies

Modern Computational Approaches to Emergent Properties

Contemporary research has developed sophisticated methodologies for detecting and analyzing emergent properties in complex biological systems. Graph Neural Networks (GNNs) represent a particularly powerful approach for studying how tissue-level properties emerge from cellular interactions [23].

Table 4: Experimental Approaches for Studying Emergent Properties

| Methodology | Application | Key Findings |

|---|---|---|

| Graph Neural Networks (GNNs) | Modeling spatial omics data as cell graphs to predict tissue phenotypes. | Captures emergent tumor properties and cell-type interactions not detectable in single-cell analyses [23]. |

| Multi-Instance Learning | Analyzing dissociated single-cell data without spatial context. | Provides baseline comparison for evaluating spatial emergence effects [23]. |

| Pseudobulk Analysis | Averaging molecular profiles across cell populations. | Often performs comparably to complex spatial models for simple classification tasks [23]. |

| Attention Mechanism Analysis | Interpreting which cellular interactions drive GNN predictions. | Reveals grade-specific cell-type interactions in tumor microenvironments [23]. |
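The contrast between pseudobulk averaging and a graph-based model can be sketched without a deep learning framework. The toy below builds a k-nearest-neighbor cell graph and applies a single mean-aggregation message-passing layer with hand-set weights, so that the tissue-level readout is the average tumor-immune contact. The two eight-cell layouts, the weights, and the function names are hypothetical; an actual GNN (for example one built with PyTorch Geometric) would learn its weights from labeled tissues.

```python
import numpy as np

def knn_graph(coords, k=2):
    """Row-normalized adjacency linking each cell to its k nearest neighbors."""
    dist = np.linalg.norm(coords[:, None, :] - coords[None, :, :], axis=-1)
    np.fill_diagonal(dist, np.inf)                  # no self-loops
    neighbors = np.argsort(dist, axis=1)[:, :k]
    adj = np.zeros_like(dist)
    rows = np.repeat(np.arange(len(coords)), k)
    adj[rows, neighbors.ravel()] = 1.0
    return adj / k

def message_pass(features, adj, weight, bias):
    """One layer: concatenate own and mean-neighbor features, then ReLU."""
    neighborhood = adj @ features
    return np.maximum(0.0, np.hstack([features, neighborhood]) @ weight + bias)

def tissue_embedding(coords, features, weight, bias, k=2):
    """Tissue-level readout: average of the per-cell embeddings."""
    return message_pass(features, knn_graph(coords, k), weight, bias).mean(axis=0)

# Eight cells on a line; feature columns are one-hot [tumor, immune].
coords = np.array([[float(i), 0.0] for i in range(8)])
tumor, immune = [1.0, 0.0], [0.0, 1.0]
segregated = np.array([tumor] * 4 + [immune] * 4)
mixed = np.array([tumor, immune] * 4)

# Hand-set weights: fire for tumor cells in proportion to immune neighbors.
weight = np.array([[1.0], [0.0], [0.0], [1.0]])
bias = -1.0

pseudobulk_segregated = segregated.mean(axis=0)
pseudobulk_mixed = mixed.mean(axis=0)
emb_segregated = tissue_embedding(coords, segregated, weight, bias)
emb_mixed = tissue_embedding(coords, mixed, weight, bias)
print(pseudobulk_segregated, pseudobulk_mixed, emb_segregated, emb_mixed)
```

Both layouts have identical pseudobulk profiles, yet the graph readout separates them, which is the sense in which a spatial model can expose tissue-level structure that averaging discards.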

Diagram: Experimental workflow for applying graph neural networks to detect emergent properties in biological tissues

Key Experimental Evidence

Research across multiple domains provides empirical support for the emergentist perspective:

Spatial Omics and GNNs: Studies applying GNNs to spatial omics data have demonstrated that tissue-level properties like tumor grade and immune response emerge from cellular interaction patterns. Notably, GNN embeddings capture clinically meaningful gradients of tumor progression that reflect underlying biology beyond simple classification labels [23].

Bioelectrical Emergence: Work on Xenobots, reconfigurable biological systems created from frog cells, demonstrates how complex behaviors like movement, problem-solving, and self-repair can emerge from cellular collectives without central nervous systems [8]. This research suggests that cognitive-like functions are not exclusive to neural tissue but represent a more general biological emergent property.

Consciousness Gradients: The finding that GNN embeddings naturally organize along continuous gradients of tumor severity—despite being trained only for categorical classification—suggests these models capture emergent biological realities that reflect underlying continuous processes rather than discrete categories [23].

Research Reagents and Computational Tools

The study of emergent properties requires specialized reagents and computational resources. The following table lists key resources for investigating neurobiological emergence.

Table 5: Essential Research Reagents and Computational Tools

| Resource Category | Specific Examples | Research Application |
|---|---|---|
| Spatial Profiling Technologies | Imaging Mass Cytometry (IMC), CODEX, MERFISH | Highly multiplexed protein or RNA imaging in intact tissues to capture spatial organization [23]. |
| Graph Neural Network Frameworks | PyTorch Geometric, Deep Graph Library | Specialized libraries for implementing GNNs on biological graph data [23]. |
| Neurogenic Tagging Systems | NeuroGT (CreER-loxP) | Birthdate-based neuronal classification and manipulation for studying development [24]. |
| Bioelectric Measurement Tools | Voltage-sensitive dyes, patch clamp systems | Measuring bioelectrical signaling in non-neural tissues for morphogenetic studies [8]. |
| Synthetic Biology Tools | Optogenetics, synthetic gene circuits | Testing emergence hypotheses through controlled perturbation of cellular networks [8]. |

Neurobiological Emergentism provides a scientifically rigorous framework for understanding how sentience arises from complex nervous systems. By situating sentience within the broader context of biological emergence and evolutionary development, NBE bridges the explanatory gaps that have long perplexed consciousness researchers. The theory gains substantial support from contemporary research in spatial transcriptomics, graph neural networks, and bioelectrical communication, all of which demonstrate how complex system-level properties emerge from simpler components through specific patterns of interaction.

Future research directions should focus on identifying the precise threshold conditions for sentience emergence across different neural architectures, developing more sophisticated computational models of emergent phenomena, and establishing biomarkers for detecting conscious states across species. As Michael Levin's work on xenobots suggests, the principles of biological emergence may extend beyond nervous systems to reveal fundamental aspects of how intelligence and cognitive-like functions manifest across multiple scales of biological organization [8].

The Role of Bioelectric Signaling and Cellular Communication in Pattern Formation

Bioelectric signaling represents a fundamental layer of control in biological pattern formation, operating alongside well-established genetic and biochemical pathways. This form of cellular communication uses spatial patterns of transmembrane potential (Vmem) differences, ion flows, and electric fields to coordinate large-scale morphogenesis during embryonic development, regeneration, and tissue repair [25]. Unlike the fast action potentials of neural tissue, these slowly changing bioelectrical signals guide complex processes including cell differentiation, proliferation, migration, and ultimately the formation of anatomical structures [25]. The study of bioelectricity provides a crucial bridge between molecular genetics and the emergent properties that enable cells to collectively make decisions about anatomical structure, with significant implications for regenerative medicine and bioengineering [26] [25].

The theoretical foundation of bioelectrical patterning rests upon the concept that groups of cells form functional networks capable of processing information through ion channels, pumps, and gap junctions [25]. These networks establish dynamic pre-patterns that guide morphological outcomes through a combination of reaction-diffusion principles, field-mediated effects, and cellular coordination mechanisms [26] [27]. Recent advances in monitoring and manipulating these signals have revealed that bioelectrical patterns serve as instructive cues that transcend cellular housekeeping functions, representing a powerful information processing system that enables complex morphological outcomes from relatively simple physiological interactions [28].

Core Mechanisms of Bioelectrical Patterning

Molecular Basis of Bioelectric Signals

The generation of bioelectrical patterns originates from the coordinated activity of ion channels, pumps, and transporters embedded in cellular membranes. These proteins establish and maintain transmembrane voltage potentials (Vmem) by controlling the flow of specific ions (K+, Na+, Cl-, Ca2+) across plasma membranes [25]. The resulting patterns of Vmem are not merely epiphenomena but play instructive roles in development, with specific voltage ranges correlating with distinct cell behaviors: depolarized states typically associate with proliferation and migration, while hyperpolarization often precedes differentiation [25].
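
How ion permeabilities set Vmem can be illustrated with the Goldman-Hodgkin-Katz (GHK) voltage equation, a standard textbook relation that the article itself does not spell out. The sketch below is illustrative only: the concentrations (in mM) and relative permeabilities are generic textbook-style values assumed for this example, not measurements from [25], and Ca2+ is omitted because this form of the equation covers monovalent ions only.

```python
import math

R = 8.314462618   # gas constant, J/(mol*K)
F = 96485.33212   # Faraday constant, C/mol

def ghk_vmem_mv(perm, conc_out, conc_in, temp_k=310.0):
    """Goldman-Hodgkin-Katz voltage in mV for K+, Na+ and Cl-.

    Cl- is an anion, so its inside concentration appears in the numerator
    and its outside concentration in the denominator.
    """
    num = (perm["K"] * conc_out["K"] + perm["Na"] * conc_out["Na"]
           + perm["Cl"] * conc_in["Cl"])
    den = (perm["K"] * conc_in["K"] + perm["Na"] * conc_in["Na"]
           + perm["Cl"] * conc_out["Cl"])
    return 1000.0 * (R * temp_k / F) * math.log(num / den)

# Assumed illustrative concentrations in mM (not taken from the article).
outside = {"K": 5.0, "Na": 145.0, "Cl": 110.0}
inside = {"K": 140.0, "Na": 15.0, "Cl": 10.0}

resting = ghk_vmem_mv({"K": 1.0, "Na": 0.04, "Cl": 0.45}, outside, inside)
more_na = ghk_vmem_mv({"K": 1.0, "Na": 1.0, "Cl": 0.45}, outside, inside)
more_k = ghk_vmem_mv({"K": 5.0, "Na": 0.04, "Cl": 0.45}, outside, inside)

print(f"baseline permeabilities: {resting:.1f} mV")
print(f"raised Na+ permeability: {more_na:.1f} mV (depolarized)")
print(f"raised K+ permeability:  {more_k:.1f} mV (hyperpolarized)")
```

With these assumed values the baseline comes out near -67 mV. Raising Na+ permeability moves Vmem toward zero, and raising K+ permeability moves it further negative, which is the sense in which channel activity sets the depolarized or hyperpolarized states described above.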

Gap junctions form a crucial component of bioelectrical networks, allowing direct cell-to-cell communication through the exchange of ions and small molecules [25]. These electrical synapses enable the formation of iso-electric cell compartments known as syncytia, which can synchronize bioelectrical activity across tissue regions [25]. The dynamic control of gap junction permeability allows tissues to establish functional domains with distinct bioelectrical properties, creating a pattern formation system that is both robust and plastic in response to injury or changing environmental conditions.
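
The equalizing effect of gap junction coupling can be sketched with a toy model. The code below is an assumption-laden illustration rather than a model from [25]: it treats a row of six cells as leaky compartments, each relaxing toward its own resting potential, with neighbors linked by an ohmic gap-junction conductance. The function name `simulate_chain`, the dimensionless conductances, and the resting values are all hypothetical.

```python
def simulate_chain(rest_mv, g_gap, g_leak=1.0, dt=0.01, steps=5000):
    """Forward-Euler simulation of a chain of coupled cells.

    Each cell relaxes toward its own resting potential (leak term) and
    exchanges current with its immediate neighbors (gap-junction term).
    Conductances are dimensionless; time is in units of the leak constant.
    """
    v = list(rest_mv)
    n = len(v)
    for _ in range(steps):
        dv = []
        for i in range(n):
            leak = g_leak * (rest_mv[i] - v[i])
            gap = sum(g_gap * (v[j] - v[i])
                      for j in (i - 1, i + 1) if 0 <= j < n)
            dv.append(leak + gap)
        v = [vi + dt * d for vi, d in zip(v, dv)]
    return v

def spread(values):
    """Largest voltage difference across the chain."""
    return max(values) - min(values)

# Hypothetical tissue: three hyperpolarized and three depolarized cells.
rest = [-70.0, -70.0, -70.0, -20.0, -20.0, -20.0]

uncoupled = simulate_chain(rest, g_gap=0.0)
coupled = simulate_chain(rest, g_gap=20.0)

print("uncoupled spread (mV):", round(spread(uncoupled), 2))
print("coupled spread (mV):  ", round(spread(coupled), 2))
```

With coupling switched off, each cell keeps its own potential. With strong coupling, the voltage differences across the chain shrink and the cells approach a shared potential, a simple analogue of the iso-electric compartments described above. Lowering `g_gap` between two specific cells would restore a boundary there, which is one way to picture how regulated gap junction permeability could delimit functional domains.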

Field-Mediated Pattern Formation

Beyond localized cell-cell communication, electrostatic fields contribute to morphogenesis through a synergetics-based mechanism that enhances the complexity of Vmem patterns [26]. These fields facilitate collective patterning by projecting coarse-grained information across tissues, enabling the optimization of transient signals from symmetry-breaking organizer regions that subsequently mold Vmem patterns in tissue bulk [26]. Research has identified two contrasting pattern-coding strategies that emerge depending on field sensitivity strengths: "mosaic" patterning, which relies more on cell-autonomous mechanisms, and "stigmergic" patterning, where cells modify their environment in ways that influence subsequent cellular activity [26].

The stigmergic model particularly recapitulates the qualitative developmental sequence observed in vertebrate embryogenesis, such as the bioelectric craniofacial prepattern in frog embryos [26]. This field-based mechanism provides a pathway for long-range coordination that complements shorter-range bioelectrical signaling, enabling the establishment of complex anatomical patterns without requiring explicit genetic blueprints for every spatial detail.

Integration with Genetic Programs

Bioelectrical signaling does not operate in isolation but is tightly integrated with conventional genetic programs. Changes in Vmem patterns trigger downstream second-messenger cascades that ultimately influence gene expression, transcription factor localization, and epigenetic modifications [25]. This integration creates feedback loops where genetic elements establish the ion channel and pump proteins that generate bioelectrical patterns, which in turn regulate genetic networks to stabilize cell states and positional information.
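
The stabilizing effect of such a feedback loop can be illustrated with a deliberately simple two-variable model. Everything in the sketch is an assumption made for illustration and none of it is taken from [25]: `p` stands for a normalized bioelectric state between 0 and 1, `c` for the normalized expression of a channel that drives that state, and the Hill-type activation with its parameters (`k_half`, `hill_n`, `tau_v`, `tau_g`) is a generic choice for modeling positive feedback.

```python
def simulate_feedback(p0, c0, k_half=0.5, hill_n=4,
                      tau_v=1.0, tau_g=5.0, dt=0.01, steps=20000):
    """Toy positive-feedback loop between a bioelectric state and a gene.

    p: normalized bioelectric state, follows channel expression quickly.
    c: normalized channel expression, activated by p through a Hill function
       and changing more slowly (tau_g > tau_v).
    """
    p, c = p0, c0
    for _ in range(steps):
        activation = p ** hill_n / (k_half ** hill_n + p ** hill_n)
        dp = (c - p) / tau_v
        dc = (activation - c) / tau_g
        p, c = p + dt * dp, c + dt * dc
    return p, c

# Two hypothetical cells that start on either side of the threshold.
low_p, low_c = simulate_feedback(0.3, 0.3)
high_p, high_c = simulate_feedback(0.7, 0.7)

print("start 0.3 -> final state:", round(low_p, 3))
print("start 0.7 -> final state:", round(high_p, 3))
```

Cells starting below the threshold settle into the low state and cells starting above it settle into the high state, so a transient difference in the bioelectric variable becomes a stable difference in cell state. Real circuits involve many channels, second messengers, and spatial coupling, so this is a cartoon of the logic rather than a quantitative model.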

This reciprocal relationship enables a dynamic control system where bioelectrical signals provide real-time information about tissue-level anatomy while genetic programs provide molecular specificity. The interplay between these systems is particularly evident in regeneration, where bioelectrical patterns can initiate and guide the restoration of complex structures even in organisms not traditionally considered model systems for regeneration [25].

Quantitative Data in Bioelectrical Patterning

Table 1: Bioelectrical Signal Characteristics Across Biological Processes

| Biological Process | Signal Type | Frequency/Time Characteristics | Key Ion Channels/Transporters | Primary Functions |
|---|---|---|---|---|
| Monolayer Formation (Fibroblasts) | Quasi-periodic bursts | Dominant period: 4.2 min; Occasional bursts: 1.6-2 min [28] | Voltage-gated channels, Gap junctions | Cell adhesion, population coordination, tissue assembly [28] |
| Wound Repair (Fibroblasts) | Quasi-periodic bursts | Average period: 60-110 min (0.27-0.15 mHz); Duration: ~35 hours [28] | Calcium channels, Potassium channels | Matrix synthesis, immune cell recruitment, wound closure [28] |
| Developmental Prepatterning | Slow Vmem changes | Sustained patterns (hours-days) [25] | K+ channels (Kir7.1), Na+/K+ ATPase, Gap junctions | Axial polarity, organ identity, cell fate specification [26] [25] |
| Regeneration Initiation | Endogenous ion flows | Persistent gradients (injury potentials) [25] | V-ATPase, Sodium channels | Cell proliferation, migration, repatterning [25] |
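
The periods and frequencies in Table 1 are related by f = 1/T. The short sketch below makes the unit conversion explicit; the helper name `period_min_to_mhz` is an assumption introduced for this example.

```python
def period_min_to_mhz(period_min):
    """Convert a period in minutes to a frequency in millihertz."""
    period_s = period_min * 60.0
    return 1000.0 / period_s

# Periods reported in Table 1 (minutes).
for label, minutes in [
    ("monolayer formation, dominant", 4.2),
    ("monolayer formation, fast bursts", 1.6),
    ("wound repair, short end", 60.0),
    ("wound repair, long end", 110.0),
]:
    print(f"{label}: {minutes} min = {period_min_to_mhz(minutes):.3f} mHz")
```

The wound-repair periods of 60 to 110 minutes correspond to roughly 0.28 and 0.15 mHz, in line with the range quoted in the table, and place these signals in the 10⁻⁴ Hz band, several orders of magnitude slower than neuronal action potentials.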

Table 2: Functional Outcomes of Bioelectrical Signaling in Pattern Formation

| Bioelectrical Manipulation | Experimental System | Morphological Outcome | Proposed Mechanism |
|---|---|---|---|
| Applied electric fields | Planarian regeneration | Alteration of anterior-posterior polarity [25] | Redirection of cell migration, polarity establishment |
| Potassium channel inhibition | Zebrafish fin development | Increased fin/barbel size due to hyperpolarization-induced proliferation [25] | Cell cycle progression via membrane potential changes |
| Ion channel perturbation | Xenopus melanocyte migration | Improper colonization of tissues by neural crest derivatives [25] | Disrupted galvanotaxis and positional information |
| Endogenous field modulation | Craniofacial patterning | Stigmergic patterning recapitulating native development [26] | Field-mediated optimization of Vmem patterns in tissue bulk |

Experimental Methodologies and Protocols

Multielectrode Array (MEA) Recording for Bioelectrical Pattern Detection

Objective: To measure extracellular bioelectrical signals from non-electrogenic cell populations during pattern formation and wound response.

Materials:

- Custom-made large-area Multielectrode Arrays (MEAs) [28]

- Low-noise voltage amplifier system with stable ground connection [28]

- Fibroblast cell line (e.g., dermal fibroblasts)

- Cell culture facilities and standard media

- Microscope for visual monitoring

- Software for signal analysis (e.g., MATLAB, Python with scientific libraries)

Procedure:

- MEA Preparation: Sterilize MEA devices and coat with appropriate extracellular matrix proteins to promote cell adhesion [28].

- Cell Seeding: Plate fibroblasts at a density sufficient to form confluent monolayers (typically 3,000-4,000 cells per sensing electrode) [28].

- Baseline Recording: Establish an electrophysiological baseline with culture medium alone, verifying an average noise level of approximately 4 µV peak-to-peak [28].

- Continuous Monitoring: Record bioelectrical activity throughout monolayer formation (typically 2.5 days), confluent stability, and post-wound repair phases [28].

- Wound Induction: At 3.5 days post-seeding, inflict a controlled mechanical wound using a soft plastic blade to create a fissure in the monolayer [28].

- Post-Wound Monitoring: Continue recording for an additional 35+ hours to capture repair-associated bioelectrical patterns [28].

- Signal Analysis: Process time traces to identify signal patterns, intervals, and frequency domain characteristics using appropriate algorithms.

Key Considerations: Amplifier should be configured in alternating current mode to filter out direct current drifts. Ground connection stability is critical for reliable measurements. Experimental timeline may vary based on cell density and wound size [28].
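
The signal-analysis step of this protocol can be sketched as a search for the dominant period in a recorded trace. The example below is a minimal illustration under stated assumptions, not the analysis used in [28]: the trace is synthetic (a 20 µV oscillation with a 252 s period, a constant offset, and Gaussian noise), the 12 s sampling interval is arbitrary, and `dominant_period_s` is a hypothetical helper that evaluates a plain discrete Fourier transform. In practice an FFT routine from a scientific library would replace the explicit loops.

```python
import math
import random

def dominant_period_s(trace, dt_s):
    """Return the period (s) of the strongest nonzero-frequency component.

    The mean is subtracted first, a software analogue of removing the
    direct current offset before analysis.
    """
    n = len(trace)
    mean = sum(trace) / n
    centered = [x - mean for x in trace]
    best_k, best_power = 1, -1.0
    for k in range(1, n // 2 + 1):
        re = sum(x * math.cos(2 * math.pi * k * t / n)
                 for t, x in enumerate(centered))
        im = sum(x * math.sin(2 * math.pi * k * t / n)
                 for t, x in enumerate(centered))
        power = re * re + im * im
        if power > best_power:
            best_k, best_power = k, power
    return n * dt_s / best_k

# Synthetic trace (assumed values): 840 samples at 12 s intervals, 2.8 hours.
random.seed(0)
dt_s = 12.0
true_period_s = 252.0  # 4.2 minutes
trace = [
    50.0                                                   # DC offset, µV
    + 20.0 * math.sin(2 * math.pi * i * dt_s / true_period_s)
    + random.gauss(0.0, 1.0)                               # noise, µV
    for i in range(840)
]

period = dominant_period_s(trace, dt_s)
print(f"dominant period: {period / 60.0:.2f} min")
```

Real recordings contain quasi-periodic bursts rather than a clean sinusoid, so burst detection or time-frequency methods may be more appropriate than a single spectrum. The sketch only shows how a period such as the 4.2 minutes listed in Table 1 could be read from a time trace.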

Pharmacological Manipulation of Bioelectrical Patterns

Objective: To establish a causal relationship between specific ion flows and morphological outcomes through targeted pharmacological interventions.

Materials:

- Ion channel agonists/antagonists (specific to channels of interest)

- Gap junction blockers (e.g., carbenoxolone)

- Vmem-reporting fluorescent dyes (e.g., DiBAC or CC2-DMPE)

- Ion-specific fluorescent indicators (e.g., Calcium Green for Ca2+)

- Appropriate vehicle controls

Procedure:

- Baseline Pattern Assessment: Establish normal bioelectrical patterns and morphological progression in untreated systems.

- Compound Administration: Apply pharmacological agents at specific developmental timepoints or pre-patterning stages.

- Bioelectrical Monitoring: Track changes in Vmem patterns using fluorescence imaging or MEA recording.

- Morphological Tracking: Document subsequent effects on anatomical outcomes over time.

- Rescue Experiments: Attempt to reverse phenotypes through complementary manipulations.

- Mechanistic Analysis: Investigate downstream effects on second messengers, gene expression, and cell behaviors.

Interpretation Guidelines: Compound specificity, dose-dependency, and temporal requirements should be established. Complementary genetic manipulations provide stronger evidence for specific mechanisms [25].

Signaling Pathways and Experimental Workflows

[Figure: Bioelectrical Patterning Signaling Pathway]

[Figure: Bioelectrical Pattern Research Workflow]

Research Reagent Solutions

Table 3: Essential Research Reagents for Bioelectrical Pattern Studies

| Reagent Category | Specific Examples | Primary Function | Application Notes |

|---|---|---|---|

| Multielectrode Arrays (MEAs) | Custom large-area MEAs [28] | Extracellular recording of bioelectrical patterns from cell populations | Enables detection of ultra-low frequency signals (10⁻⁴ Hz); suitable for long-term (week+) monitoring [28] |