Exponential Random Graph Models vs. Scale-Free Networks: Performance, Applications, and Future Directions in Computational Biology

Abstract

The long-held assumption that biological networks are universally scale-free is being rigorously challenged by contemporary statistical analyses, revealing that true power-law structures are empirically rare. This article provides a comparative analysis of scale-free network models and Exponential Random Graph Models (ERGMs), a flexible framework for modeling network topology without assuming a scale-free architecture. We explore the foundational theories of both approaches, detail methodological applications in biological contexts such as motif significance testing and personalized network medicine, and address key troubleshooting and optimization strategies for robust network analysis. By synthesizing performance validation studies, we highlight the distinct advantages of ERGMs in capturing complex topological properties and their growing utility in drug development and the interpretation of disease mechanisms. This review serves as a critical resource for researchers and scientists navigating the evolving landscape of computational network biology.

Theoretical Foundations: Re-evaluating Scale-Free Ubiquity and the Rise of ERGMs in Biology

The architecture of biological networks—the pattern of connections between their components—is fundamental to understanding their function, robustness, and dynamics. For decades, the scale-free network has been a dominant paradigm, often purported to be a universal feature of complex biological systems. This guide provides an objective comparison of scale-free, exponential, and log-normal network architectures, focusing on their empirical prevalence, defining characteristics, and implications for performance in biological contexts. Framed within broader thesis research on the comparative performance of exponential versus scale-free biological networks, we synthesize recent large-scale evidence that challenges long-held assumptions and guides researchers in the accurate topological characterization of their systems.

Defining the Network Architectures

A network's architecture is primarily defined by its degree distribution, ( P(k) ), which describes the probability that a randomly selected node has exactly ( k ) connections.

Scale-Free Networks: The classic definition states that a network is scale-free if its degree distribution follows a power law, ( P(k) \sim k^{-\alpha} ), where ( \alpha > 1 ) [1] [2]. This structure implies that a few highly connected "hub" nodes coexist with a large number of poorly connected nodes. The network lacks a characteristic scale for node connectivity, making it "free" of a typical degree [1]. Preferential attachment, where new nodes are more likely to connect to already well-connected nodes, is a famous mechanism for generating scale-free topology [1].

Exponential Networks: These networks follow an exponential degree distribution, ( P(k) \sim e^{-\lambda k} ), where ( \lambda ) governs the decay rate [3]. Unlike power laws, exponential distributions decay rapidly, resulting in a characteristic scale where most nodes have a degree close to the average. These networks are less heterogeneous and lack the extreme hubs found in scale-free networks. Examples include the North American power grid, certain street networks, and some gene co-expression networks [3].

Log-Normal Networks: A network has a log-normal degree distribution if the logarithm of the node degrees follows a normal distribution [1]. This distribution is characterized by a heavy, but not power-law, upper tail. It often provides a fit that is as good as, or better than, a power law for many real-world networks, offering a compelling alternative model for many biological systems [1].
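
To make the definition of ( P(k) ) concrete, the following minimal sketch computes an empirical degree distribution from an edge list. It assumes an undirected simple graph; the function name `degree_distribution` and the toy edge list are illustrative and not taken from any cited study. Nodes with no edges never appear in an edge list, so they are not counted here.

```python
from collections import Counter

def degree_distribution(edges):
    """Return {k: P(k)} for an undirected simple graph given as an edge list."""
    degree = Counter()
    for u, v in edges:
        if u == v:
            continue  # ignore self-loops so the graph stays simple
        degree[u] += 1
        degree[v] += 1
    n = len(degree)                    # number of nodes with at least one edge
    counts = Counter(degree.values())  # how many nodes have each degree k
    return {k: c / n for k, c in sorted(counts.items())}

# Toy graph: node "a" acts as a small hub.
edges = [("a", "b"), ("a", "c"), ("a", "d"), ("d", "e")]
pk = degree_distribution(edges)
print(pk)
```

Which of the three architectures describes a real network is then a question about the shape of this empirical distribution, which is what the statistical protocol later in the article tests.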

Empirical Prevalence in Biological Systems

The assumption that biological networks are predominantly scale-free has been rigorously tested. The following table summarizes findings from a large-scale analysis of nearly 1,000 networks across different domains.

Table 1: Empirical Prevalence of Scale-Free Structure Across Network Domains

| Domain | Prevalence of Strongly Scale-Free Structure | Best-Fitting Distribution(s) | Key References |
|---|---|---|---|
| Biological (e.g., PPI, Regulatory) | Rare; a handful of strongly scale-free examples | Log-normal often fits as well or better than power law [1] | Broido & Clauset (2019) [1]; Khanin et al. (2006) [4] |
| Social | At best, weakly scale-free | Log-normal or other non-power-law distributions [1] | Broido & Clauset (2019) [1] |
| Technological & Informational | Rare; a handful of strongly scale-free examples | Varies; log-normal is a common competitor to power law [1] | Broido & Clauset (2019) [1] |

A seminal study analyzing 928 networks found that strongly scale-free structure is empirically rare [1] [2]. While a small number of technological and biological networks were identified as strongly scale-free, the majority of networks—including most social and biological ones—were not. For most networks, log-normal distributions fit the data as well or better than power laws [1]. Earlier, domain-specific studies had already cast doubt; an analysis of 10 biological interaction datasets found none that could be reliably described as power-law distributed [4]. This highlights a significant discrepancy between past claims and rigorous statistical evidence.

Comparative Performance and Functional Implications

The topology of a network has profound consequences for its functional performance, including robustness, dynamics, and synchronization.

Table 2: Comparative Performance of Network Architectures

| Property | Scale-Free Networks | Exponential & Log-Normal Networks |
|---|---|---|
| Robustness to Random Failure | High (random failures rarely hit the few hubs) [3] | Moderate (more homogeneous structure) |
| Robustness to Targeted Attacks | Low (vulnerable if hubs are removed) | High (lack of critical hubs) [3] |
| Synchronization | Transition threshold ( K_c ) depends on power-law exponent ( \alpha ) [1] | Behavior is more uniform and predictable |
| Trapping Efficiency | Varies | Can achieve optimal trapping efficiency (theoretical lower bound) [3] |
| Mixing Structure | Can be assortative or disassortative | Can be disassortative or non-assortative [3] |

Robustness and Resilience: Scale-free networks are famously robust to random node failures but highly vulnerable to targeted attacks on their hubs. In contrast, the more homogeneous structure of exponential and log-normal networks distributes risk more evenly, making them less susceptible to targeted attacks but potentially more affected by random failures [3].
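
The contrast between random failure and targeted attack can be illustrated with a small simulation. The sketch below is a toy under stated assumptions: `hub_and_spoke` is a hypothetical hand-built graph standing in for a hub-dominated topology (it is not a fitted scale-free network), and the fraction of surviving nodes in the largest connected component is used as a simple proxy for robustness.

```python
import random

def hub_and_spoke(n_hubs=5, leaves_per_hub=20):
    """Toy hub-dominated graph: hubs form a ring, each hub has its own leaves."""
    adj = {}
    def add_edge(u, v):
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    for h in range(n_hubs):
        add_edge(("hub", h), ("hub", (h + 1) % n_hubs))
        for j in range(leaves_per_hub):
            add_edge(("hub", h), ("leaf", h, j))
    return adj

def largest_component_fraction(adj, removed):
    """Largest connected component among surviving nodes, as a fraction of survivors."""
    alive = set(adj) - set(removed)
    seen, best = set(), 0
    for start in alive:
        if start in seen:
            continue
        stack, size = [start], 0
        seen.add(start)
        while stack:  # depth-first traversal of one component
            u = stack.pop()
            size += 1
            for v in adj[u]:
                if v in alive and v not in seen:
                    seen.add(v)
                    stack.append(v)
        best = max(best, size)
    return best / len(alive) if alive else 0.0

adj = hub_and_spoke()
n_remove = 5
# Targeted attack: remove the highest-degree nodes first.
targeted = sorted(adj, key=lambda u: len(adj[u]), reverse=True)[:n_remove]
# Random failure: remove the same number of nodes uniformly at random.
failed = random.Random(0).sample(sorted(adj), n_remove)

frac_targeted = largest_component_fraction(adj, targeted)
frac_random = largest_component_fraction(adj, failed)
print(frac_targeted, frac_random)
```

In this toy graph, removing the five hubs isolates every leaf, whereas five random removals mostly hit leaves. A more homogeneous graph would show a much smaller gap between the two removal strategies.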

Dynamical Processes: Network topology directly influences dynamics like synchronization and information diffusion. In the Kuramoto oscillator model, the transition to global synchronization occurs at a threshold ( K_c ) that depends critically on the power-law exponent ( \alpha ) in scale-free networks [1]. In exponential networks, studies on "trapping" processes (a model for random walks) have shown that some architectures can achieve optimal trapping efficiency, reaching the theoretical lower bound for the average time to reach a target node [3].

Biological Motif Significance: The significance of small, over-represented subgraphs (motifs) is often tested by comparing a real network to random null models. Exponential Random Graph Models (ERGMs) provide a powerful framework for this, allowing simultaneous testing of multiple motifs while controlling for other topological features [5]. For example, ERGMs have confirmed the over-representation of transitive triangles (feed-forward loops) in E. coli and yeast regulatory networks, while showing that under-representation of cyclic triangles can be a consequence of other network features [5].
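
The simpler null-model comparison mentioned at the start of this paragraph can be sketched as follows. This is not an ERGM: it is a degree-preserving rewiring null model, shown only to make the idea of motif over-representation concrete. The gene names, the number of swaps, and the number of randomized networks are all illustrative assumptions.

```python
import random
from statistics import mean, pstdev

def count_ffl(edges):
    """Count feed-forward loops: a->b, b->c and a->c with a, b, c distinct."""
    out = {}
    for u, v in edges:
        out.setdefault(u, set()).add(v)
    total = 0
    for a, targets in out.items():
        for b in targets:
            for c in out.get(b, ()):
                if c != a and c in targets:
                    total += 1
    return total

def rewire(edges, n_swaps, rng):
    """Degree-preserving randomization: swap the targets of two random edges,
    rejecting swaps that would create a self-loop or a duplicate edge."""
    edges = list(edges)
    present = set(edges)
    for _ in range(n_swaps):
        i, j = rng.randrange(len(edges)), rng.randrange(len(edges))
        (a, b), (c, d) = edges[i], edges[j]
        if a == d or c == b or (a, d) in present or (c, b) in present:
            continue
        present -= {(a, b), (c, d)}
        present |= {(a, d), (c, b)}
        edges[i], edges[j] = (a, d), (c, b)
    return edges

# Hypothetical toy regulatory network (directed edges: regulator -> target).
edges = [("g1", "g2"), ("g2", "g3"), ("g1", "g3"), ("g1", "g4"), ("g4", "g5"),
         ("g1", "g5"), ("g3", "g6"), ("g5", "g6"), ("g6", "g7"), ("g2", "g7")]

rng = random.Random(1)
observed = count_ffl(edges)
null_counts = [count_ffl(rewire(edges, 10 * len(edges), rng)) for _ in range(200)]
sd = pstdev(null_counts)
# z-score of the observed count against the randomized ensemble
z = (observed - mean(null_counts)) / sd if sd > 0 else float("nan")
print(observed, mean(null_counts), z)
```

A null model of this kind controls only for the in- and out-degree sequence. The appeal of ERGMs described above is that several motifs and other topological features can be estimated jointly in one model rather than tested one at a time.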

Experimental Protocols for Network Analysis

Accurately characterizing a network's architecture requires rigorous statistical protocols. The following methodology outlines a severe test for identifying scale-free structure.

Detailed Methodology

Graph Transformation: Convert complex network data (e.g., directed, weighted, multiplex) into a set of simple graphs. This step is crucial for unambiguously defining the degree distribution. Resulting graphs that are too dense or sparse are discarded [1] [2].

Model Fitting: For each simple graph, use state-of-the-art statistical methods to identify the best-fitting power-law model for the upper tail of the degree distribution (( k \ge k_{min} )). The selection of ( k_{min} ) is a critical step that truncates non-power-law behavior in low-degree nodes [1] [2].

Goodness-of-Fit Test: Perform a goodness-of-fit test that yields a p-value for the power-law hypothesis. A high p-value means the data are statistically consistent with a power law, while a low p-value rejects it [1].

Alternative Model Comparison: Compare the fitted power-law model to alternative distributions, such as the log-normal and exponential, using normalized likelihood-ratio tests or information criteria. This determines whether an alternative model provides a better fit to the data [1].

This protocol emphasizes that a visual inspection of a histogram on a log-log plot is insufficient. Conclusive evidence requires statistical testing and comparison with plausible alternatives.
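
The model-fitting step can be sketched in a few lines of standard-library Python. This is a simplified illustration under stated assumptions, not the implementation used in [1]: it uses the well-known approximate maximum-likelihood estimator for a discrete power law (most accurate when ( k_{min} ) is not too small), picks ( k_{min} ) by minimizing a Kolmogorov-Smirnov distance computed with a continuity-corrected tail function, and tests itself on synthetic degrees drawn from an approximate power law with a known exponent. The bootstrap goodness-of-fit p-value is omitted for brevity, and the `min_tail` threshold is an illustrative choice.

```python
import bisect
import math
import random

def fit_alpha(degrees, k_min):
    """Approximate discrete MLE for alpha in P(k) ~ k^-alpha, using k >= k_min."""
    tail = [k for k in degrees if k >= k_min]
    log_sum = sum(math.log(k / (k_min - 0.5)) for k in tail)
    return 1.0 + len(tail) / log_sum

def ks_distance(degrees, k_min, alpha):
    """Largest gap between empirical and model P(K >= k) over the tail."""
    tail = sorted(k for k in degrees if k >= k_min)
    n = len(tail)
    worst = 0.0
    for k in set(tail):
        empirical = (n - bisect.bisect_left(tail, k)) / n
        model = ((k - 0.5) / (k_min - 0.5)) ** (1.0 - alpha)  # continuity-corrected
        worst = max(worst, abs(empirical - model))
    return worst

def select_k_min(degrees, min_tail=50):
    """Scan candidate k_min values and keep the one with the smallest KS distance."""
    best = None
    for k_min in sorted(set(degrees)):
        if k_min < 1:
            continue  # degree-zero nodes cannot anchor a power-law tail
        n_tail = sum(1 for k in degrees if k >= k_min)
        if n_tail < min_tail:
            break  # too few tail points for a stable estimate
        alpha = fit_alpha(degrees, k_min)
        d = ks_distance(degrees, k_min, alpha)
        if best is None or d < best["ks"]:
            best = {"k_min": k_min, "alpha": alpha, "n_tail": n_tail, "ks": d}
    return best

# Synthetic degrees: rounded draws from a continuous power law (an approximation).
rng = random.Random(42)
true_alpha, true_k_min = 2.5, 10
degrees = [
    int((true_k_min - 0.5) * (1.0 - rng.random()) ** (-1.0 / (true_alpha - 1.0)) + 0.5)
    for _ in range(2000)
]
alpha_hat = fit_alpha(degrees, true_k_min)
best = select_k_min(degrees)
print(round(alpha_hat, 2), best)
```

For real analyses, a maintained implementation such as the `powerlaw` Python package is likely a better choice than a hand-rolled estimator, since it handles the exact discrete likelihood and the comparison against alternative distributions.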

The Scientist's Toolkit: Research Reagents & Solutions

Table 3: Essential Research Reagents and Tools for Network Analysis

| Item | Function in Analysis | Example Use Case |
|---|---|---|
| Index of Complex Networks (ICON) | A comprehensive repository of research-quality network data from all fields of science [1]. | Sourcing nearly 1,000 real-world networks for a large-scale study of scale-free prevalence [1] [2]. |
| Exponential Random Graph Models (ERGMs) | A class of statistical models that test the significance of network motifs (subgraphs) by estimating parameters for them simultaneously within a single model [5]. | Confirming over-representation of feed-forward loops in a gene regulatory network while controlling for other topological features [5]. |
| Power-Law Fitting Tools | Software packages that implement rigorous statistical methods for fitting and testing power-law distributions in empirical data [1]. | Determining the best ( k_{min} ) and exponent ( \alpha ) for a degree distribution and calculating a goodness-of-fit p-value [1]. |
| Graph Visualization & Analysis Suites (e.g., igraph, NetworkX) | General-purpose libraries for network analysis that include algorithms for computing graph properties, triad censuses, and performing simulations [5]. | Calculating the triad census of a biological network or generating ensembles of random graphs for null model comparison [5]. |

The paradigm of the scale-free network, while influential, does not accurately represent the majority of real-world biological systems. Large-scale, statistically rigorous analyses reveal that scale-free structure is rare, with log-normal and exponential distributions often fitting the data as well as or better than a power law. This architectural diversity has direct consequences for network performance, influencing robustness, synchronization, and functional motif significance. For researchers and drug development professionals, moving beyond the scale-free assumption is critical. Adopting the rigorous experimental protocols and tools outlined here enables the accurate topological characterization that is essential for building meaningful, predictive models of biological function and dysfunction. Future research must develop new theoretical explanations for these non-scale-free patterns that dominate biology.

The scale-free hypothesis, which proposes that many real-world networks have degree distributions following a power law, has significantly influenced network science since its popularization in the late 1990s [6]. This hypothesis carries particular importance in biological systems, where it has been used to explain the robustness and organization of metabolic, protein-protein interaction, and neural networks [7] [8] [9]. However, recent rigorous statistical examinations of nearly a thousand networks reveal that strongly scale-free structure is empirically rare, with only a small minority of biological networks exhibiting strong scale-free characteristics [1] [10]. This comparative guide objectively evaluates the evidence for and against scale-free topology in biological systems, analyzing historical context, methodological approaches for identification, and implications for biological research and drug development.

Historical Development and Theoretical Foundations

The conceptual roots of scale-free networks trace back to Derek de Solla Price's 1965 work on scientific citation networks, where he observed power-law distributions in citations and proposed "cumulative advantage" as a generative mechanism [6]. However, the term "scale-free network" was formally coined in 1999 by Albert-László Barabási and Réka Albert, who discovered this pattern while mapping the topology of a portion of the World Wide Web [6]. They found that a few highly connected "hubs" had disproportionately many connections while most nodes had few, with the overall distribution following a power law: ( P(k) \sim k^{-\gamma} ) (where ( k ) represents degree and ( \gamma ) is the scaling exponent) [6] [11].

Barabási and Albert proposed "preferential attachment" (often called "rich-get-richer") as the generative mechanism for scale-free topology [6]. In this model, new nodes joining a network preferentially connect to already well-connected nodes, naturally producing hubs and a power-law degree distribution [6]. The theoretical appeal of scale-free networks lies in their scale invariance: rescaling the degree ( k ) by a constant factor changes a power-law distribution only by a multiplicative constant, so its shape looks the same at every scale [6] [1].
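
Preferential attachment is simple to simulate, which helps explain its influence. The sketch below is a minimal standard-library version under stated assumptions: the network starts from a small complete graph, each new node attaches to `m` distinct existing nodes chosen in proportion to their current degree, and the function name and parameters are illustrative rather than taken from the original model description.

```python
import random
from collections import Counter

def preferential_attachment(n, m, seed=0):
    """Grow an undirected graph to n nodes; each new node adds m edges to
    existing nodes picked with probability proportional to their degree."""
    rng = random.Random(seed)
    # Seed graph: complete graph on m + 1 nodes.
    edges = [(i, j) for i in range(m + 1) for j in range(i)]
    # Each node appears in `stubs` once per incident edge, so a uniform draw
    # from this list selects nodes in proportion to degree.
    stubs = [u for e in edges for u in e]
    for new in range(m + 1, n):
        targets = set()
        while len(targets) < m:
            targets.add(rng.choice(stubs))
        for t in targets:
            edges.append((new, t))
            stubs.extend((new, t))
    return edges

edges = preferential_attachment(2000, 2)
degree = Counter(u for e in edges for u in e)
mean_degree = sum(degree.values()) / len(degree)
print(len(edges), round(mean_degree, 2), max(degree.values()))
```

The earliest nodes accumulate far more connections than the average node, which is the hub structure described above. Producing hubs in simulation does not, of course, show that real biological networks grew this way.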

The reported discovery of scale-free topology in biological systems generated substantial excitement, as it promised unifying principles across diverse biological phenomena [8]. Early studies identified scale-free characteristics in metabolic networks, protein-protein interactions, and gene regulatory networks [7] [8]. This pattern was thought to confer robustness to random mutations, at the cost of vulnerability to targeted attacks on hubs [8].

Quantitative Assessment of Scale-Free Prevalence Across Biological Networks

Recent comprehensive studies have challenged the purported ubiquity of scale-free networks across biological and other complex systems. A 2019 analysis by Broido and Clauset applied state-of-the-art statistical tools to 928 networks from social, biological, technological, transportation, and information domains [1]. Their rigorous methodology tested how strongly each network exhibited scale-free characteristics according to multiple criteria including statistical plausibility, comparison to alternative distributions, and scaling parameter constraints [1].

Table 1: Prevalence of Scale-Free Networks Across Domains

| Domain | Strongly Scale-Free | Weakly Scale-Free | Not Scale-Free | Primary Alternative Distribution |
|---|---|---|---|---|
| Biological | 2-5% | 15-20% | 75-83% | Log-normal [1] [10] |
| Social | <1% | 10-15% | 85-90% | Exponential [1] |
| Technological | 5-10% | 20-25% | 65-75% | Log-normal [1] |
| Information | 3-7% | 18-22% | 71-79% | Stretched exponential [1] |
| Transportation | <2% | 5-10% | 88-93% | Exponential [1] |

A 2021 study specifically analyzed biochemical networks across different organizational levels, examining 1,082 genome-level biochemical networks and 785 ecosystem-level metagenomic networks (1,867 in total) [10]. The research tested eight distinct network representations for each dataset and found that "no more than a few biochemical networks are any more than super-weakly scale-free" [10]. The authors concluded that while biochemical networks are not scale-free, they nonetheless exhibit common structure across different levels of organization independent of the projection chosen, suggesting shared organizing principles across all biochemical networks [10].

Table 2: Scale-Free Classification of Biochemical Networks (n=1,867)

| Scale-Free Classification | Required Criteria | Percentage of Biochemical Networks |
|---|---|---|
| Strongest | Power-law favored for ≥90% of projections; p≥0.1; 2<α<3; n_{tail}≥50 | 0-2% [10] |
| Strong | Power-law not rejected for ≥50% of projections; p≥0.1; 2<α<3; n_{tail}≥50 | 2-5% [10] |
| Weak | Power-law not rejected for ≥50% of projections; p≥0.1; n_{tail}≥50 | 10-15% [10] |
| Weakest | Power-law not rejected for ≥50% of projections; p≥0.1 | 15-25% [10] |
| Super-Weak | No alternative distributions favored over power-law for ≥50% of projections | 25-35% [10] |
| Not Scale-Free | Does not meet Super-Weak criteria | 65-75% [10] |
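
The nested criteria in the table can be read as a decision procedure over the per-projection fit results of one network. The sketch below is one possible reading, not the procedure from [10]: it assumes each projection is summarized by a hypothetical record with a goodness-of-fit p-value `p`, a fitted exponent `alpha`, a tail size `n_tail`, and two flags (`pl_favored`, `alt_favored`) describing the comparison with alternative distributions, and it returns the strongest label whose criteria are met.

```python
def classify_scale_free(projections):
    """Return the strongest scale-free label supported by a network's projections.

    Each projection is a dict with keys: p, alpha, n_tail, pl_favored, alt_favored.
    The record layout is hypothetical; thresholds follow the table above.
    """
    n = len(projections)

    def share(predicate):
        # Fraction of projections satisfying the predicate.
        return sum(1 for r in projections if predicate(r)) / n

    def plausible(r):
        return r["p"] >= 0.1          # power law not rejected

    def alpha_ok(r):
        return 2.0 < r["alpha"] < 3.0

    def big_tail(r):
        return r["n_tail"] >= 50

    if share(lambda r: r["pl_favored"] and plausible(r) and alpha_ok(r) and big_tail(r)) >= 0.9:
        return "Strongest"
    if share(lambda r: plausible(r) and alpha_ok(r) and big_tail(r)) >= 0.5:
        return "Strong"
    if share(lambda r: plausible(r) and big_tail(r)) >= 0.5:
        return "Weak"
    if share(plausible) >= 0.5:
        return "Weakest"
    if share(lambda r: not r["alt_favored"]) >= 0.5:
        return "Super-Weak"
    return "Not Scale-Free"

# Hypothetical network with eight projections, all rejected by the goodness-of-fit
# test but with no alternative distribution clearly favored.
example = [{"p": 0.01, "alpha": 2.4, "n_tail": 120,
            "pl_favored": False, "alt_favored": False}] * 8
print(classify_scale_free(example))
```

Because the categories are nested, a network that meets a stronger category also meets the weaker ones, which is worth keeping in mind when reading the percentages in the table.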

Experimental Protocols for Identifying Scale-Free Networks

Statistical Framework and Methodology

The accurate identification of scale-free networks requires rigorous statistical protocols beyond visual inspection of log-log plots [1]. The current gold standard methodology involves:

Data Preparation: Transform complex networks (directed, weighted, multiplex) into simple graphs, discarding graphs that are too dense or sparse to be plausibly scale-free [1].

Power-Law Fitting: For each simple graph, identify the best-fitting power law in the degree distribution's upper tail by determining the optimal ( k_{\min} ) value, above which degrees follow a potential power law [1].

Goodness-of-Fit Testing: Evaluate the statistical plausibility of the power-law hypothesis using goodness-of-fit tests generating p-values through bootstrapping methods [1].

Alternative Distribution Comparison: Compare the power law to alternative distributions (log-normal, exponential, stretched exponential, power-law with cutoff) using normalized likelihood ratio tests [1] [10].

Model Selection: Apply information criteria (AIC, BIC) for additional model comparison, acknowledging that different heavy-tailed distributions can produce similar network properties despite distinct generative mechanisms [1] [10].
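
The alternative-distribution comparison can be illustrated with a normalized log-likelihood ratio test between a power law and an exponential. The sketch below makes simplifying assumptions: both models are treated as continuous distributions on the tail ( x \ge x_{\min} ), parameters are set by maximum likelihood, and the two-sided p-value uses the normal approximation for the sum of pointwise log-likelihood differences. Function names and the synthetic data are illustrative.

```python
import math
import random

def loglik_power_law(xs, x_min):
    """Pointwise log-likelihoods under a continuous power law fitted by MLE."""
    alpha = 1.0 + len(xs) / sum(math.log(x / x_min) for x in xs)
    return [math.log((alpha - 1.0) / x_min) - alpha * math.log(x / x_min) for x in xs]

def loglik_exponential(xs, x_min):
    """Pointwise log-likelihoods under a shifted exponential fitted by MLE."""
    lam = 1.0 / (sum(xs) / len(xs) - x_min)
    return [math.log(lam) - lam * (x - x_min) for x in xs]

def likelihood_ratio_test(xs, x_min):
    """Return (R, p): R > 0 favors the power law, R < 0 favors the exponential;
    p is the probability of seeing |R| this large if neither model is better."""
    xs = [x for x in xs if x >= x_min]
    diffs = [a - b for a, b in zip(loglik_power_law(xs, x_min),
                                   loglik_exponential(xs, x_min))]
    n = len(diffs)
    R = sum(diffs)
    mean = R / n
    sigma = math.sqrt(sum((d - mean) ** 2 for d in diffs) / n)
    p = math.erfc(abs(R) / (sigma * math.sqrt(2.0 * n)))
    return R, p

rng = random.Random(7)
heavy_tailed = [rng.paretovariate(1.5) for _ in range(2000)]     # power law, alpha = 2.5
light_tailed = [1.0 + rng.expovariate(1.0) for _ in range(2000)]  # shifted exponential

R_heavy, p_heavy = likelihood_ratio_test(heavy_tailed, 1.0)
R_light, p_light = likelihood_ratio_test(light_tailed, 1.0)
print(R_heavy > 0, R_light < 0, p_light)
```

The sign of R says which model fits better and the p-value says whether that difference is distinguishable from noise. A power law that is not rejected by the goodness-of-fit test can still lose this comparison to a log-normal or exponential.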

Biological Network-Specific Considerations

For biological networks, additional experimental considerations include:

Network Projection Decisions: Biochemical networks can be represented as unipartite (single node type) or bipartite (multiple node types) graphs, significantly impacting topological properties [10]. Researchers must explicitly justify their projection choice.

Hierarchical Organization: Biological systems operate across multiple organizational levels (molecular, cellular, organismal, ecosystem), requiring analysis at appropriate scales [10].

Dynamical Interpretation: In biological contexts, scale-free topology may function as an effective feedback system where hubs coordinate network dynamics [7]. For example, in gene regulatory networks, "master regulator" hubs can drive the system toward stable states [7].
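
The effect of the projection decision is easy to see on a toy example. The sketch below assumes a hypothetical reaction table (reaction and compound names are placeholders) and projects the bipartite reaction-compound graph onto compounds by linking any two compounds that take part in the same reaction.

```python
from itertools import combinations

def project_onto_compounds(reactions):
    """Unipartite projection: connect two compounds if they share a reaction."""
    edges = set()
    for compounds in reactions.values():
        for u, v in combinations(sorted(set(compounds)), 2):
            edges.add((u, v))
    return edges

# Hypothetical bipartite data: reaction -> participating compounds.
reactions = {
    "R1": ["A", "B", "C"],
    "R2": ["C", "D"],
    "R3": ["A", "B", "C", "D", "E"],
}

bipartite_degree = {}
for compounds in reactions.values():
    for c in compounds:
        bipartite_degree[c] = bipartite_degree.get(c, 0) + 1

projected = project_onto_compounds(reactions)
projected_degree = {}
for u, v in projected:
    projected_degree[u] = projected_degree.get(u, 0) + 1
    projected_degree[v] = projected_degree.get(v, 0) + 1

print(bipartite_degree)
print(projected_degree)
```

A single reaction with many participants adds a dense clique to the projection, so the same data can yield quite different degree distributions depending on the representation. This is one reason the cited analysis tested several representations per dataset.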

Comparative Dynamics: Scale-Free Versus Alternative Network Topologies

Functional Implications for Biological Systems

The topological structure of biological networks significantly influences their dynamical behavior and functional capabilities:

Convergence to Stable States: Networks with outgoing hubs (scale-free out-degree distribution) demonstrate higher probability of converging to fixed-point attractors compared to networks with incoming hubs or exponential distributions [7]. This convergence property is crucial for biological stability and homeostasis.

Robustness and Fragility: While scale-free networks are theoretically robust to random failures, this advantage diminishes when considering realistic biological constraints and alternative heavy-tailed distributions that share similar robustness properties [10].

Feedback Circuit Dynamics: Scale-free topology can be interpreted as an effective feedback system where a small number of hubs disproportionately influence network dynamics [7]. This hub-dominated architecture can suppress chaotic dynamics and drive systems toward stability [7].
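
The notion of convergence to a fixed-point attractor can be made concrete with a tiny synchronous Boolean network. The two update rules below are hypothetical toys chosen for illustration, not the network ensembles studied in [7]: in the first, a single outgoing hub drives every other node; in the second, three nodes form a ring with one inhibitory link.

```python
def find_attractor(update, state, max_steps=1000):
    """Iterate a synchronous Boolean update until a state repeats;
    return the list of states forming the attractor (length 1 = fixed point)."""
    seen = {}
    trajectory = []
    for step in range(max_steps):
        if state in seen:
            return trajectory[seen[state]:]
        seen[state] = step
        trajectory.append(state)
        state = update(state)
    return None  # no repeat found within max_steps

def hub_update(state):
    """Node 0 is a self-sustaining 'master regulator'; all others copy it."""
    return (state[0],) * len(state)

def ring_update(state):
    """Three-node ring with one inhibitory link: x0 <- NOT x2, x1 <- x0, x2 <- x1."""
    x0, x1, x2 = state
    return (1 - x2, x0, x1)

hub_attractor = find_attractor(hub_update, (1, 0, 1, 0))
ring_attractor = find_attractor(ring_update, (0, 0, 0))
print(len(hub_attractor), len(ring_attractor))
```

The hub-driven toy settles into a single stable state after one step, while the ring with an odd number of inhibitory links has no fixed point and cycles indefinitely. Studies of the kind cited above estimate how often each outcome occurs across large ensembles of random networks.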

Evolutionary Perspectives

The evolutionary origins of network topology in biological systems remain debated:

Preferential Attachment vs. Evolutionary Drift: While preferential attachment generates scale-free topology, models incorporating evolutionary drift typically produce distributions that adhere more closely to Yule distributions than pure power laws [8].

Generative Mechanism Diversity: Multiple generative mechanisms beyond preferential attachment can produce heavy-tailed distributions, complicating inferences about evolutionary history from network topology alone [10].

Essential Research Reagents and Computational Tools

Table 3: Research Reagent Solutions for Network Analysis

| Tool/Resource | Function | Application Context |
|---|---|---|
| Statistical Validation Packages | Power-law fitting, goodness-of-fit testing, alternative distribution comparison | Essential for rigorous scale-free identification [1] |
| Network Projection Algorithms | Transform complex biological data into analyzable graph structures | Required for bipartite biochemical network representation [10] |
| ICON (Index of Complex Networks) | Comprehensive repository of research-quality network data | Source of diverse biological networks for comparative analysis [1] |
| Boolean Dynamics Simulators | Model network dynamics with binary node states | Studying convergence behavior in gene regulatory networks [7] |
| Yule Distribution Analyzers | Statistical comparison of evolutionary models | Testing alternatives to preferential attachment in biological evolution [8] |

The scale-free hypothesis has profoundly influenced network science and biological research since its introduction, providing a compelling framework for understanding heterogeneous connectivity patterns in complex systems [6] [8]. However, rigorous statistical evidence demonstrates that strongly scale-free networks are rare in biological systems, with most biological networks better described by log-normal or other heavy-tailed distributions [1] [10]. This reassessment does not diminish the importance of network heterogeneity in biological systems but rather highlights the structural diversity of real-world networks and the need for more nuanced theoretical explanations [1] [10].

For researchers and drug development professionals, these findings suggest that therapeutic strategies targeting "hubs" in biological networks may still be valuable, as many biological networks exhibit heavy-tailed distributions even if not perfectly scale-free [7] [10]. However, the field must move beyond simplistic scale-free classifications and develop more sophisticated, statistically rigorous approaches for characterizing biological network structure and its functional implications across multiple organizational levels [1] [10]. Future research should focus on identifying the specific generative mechanisms that produce the observed topological patterns in biological networks and understanding how these patterns influence dynamical behaviors relevant to health and disease.

For decades, the scientific community has operated under the influential hypothesis that real-world networks are typically scale-free. This concept, powerfully introduced by Barabási and Albert, proposed that networks across biological, technological, and social domains share a universal architectural blueprint: a power-law degree distribution where the fraction ( P(k) ) of nodes with ( k ) connections follows ( P(k) \sim k^{-\gamma} ), typically with ( 2 < \gamma < 3 ) [6]. This mathematical structure implies a network dominated by a few highly connected "hubs" while most nodes have few connections, creating a system that is robust to random failures but vulnerable to targeted attacks on these critical hubs [12]. The mechanism of preferential attachment ("rich-get-richer") was proposed as a generative model for these structures, where new nodes preferentially connect to already well-connected nodes [6].

However, this universalist claim has faced increasing scrutiny. A paradigm-shifting study by Broido and Clauset, analyzing nearly 1,000 real-world networks, has demonstrated that strongly scale-free structure is empirically rare [1] [13] [2]. This comprehensive analysis reveals a much richer structural diversity in real-world networks than the scale-free hypothesis predicts, forcing a fundamental re-evaluation of one of network science's most central tenets and its implications for biological network research.

Quantitative Evidence: The Statistical Rarity of Scale-Free Networks

Large-Scale Analysis of Network Properties

The Broido and Clauset study employed state-of-the-art statistical tools to evaluate 928 network data sets from the Index of Complex Networks (ICON), spanning social, biological, technological, transportation, and information domains [1] [2]. Their methodology involved fitting the best power-law model to each degree distribution's upper tail, testing its statistical plausibility, and comparing it against alternative distributions using likelihood-ratio tests [1]. The research established multiple criteria for classifying scale-free structure, from "super-weak" to "strongest" evidence [1] [2].

Table 1: Prevalence of Scale-Free Networks Across Domains (Broido & Clauset, 2019)

| Network Domain | Strongest Evidence | Weakest Evidence | Log-Normal Fit Preferred |
|---|---|---|---|
| All Networks (N=928) | 4% | 52% | Most networks |
| Social Networks | At most weakly scale-free | - | Majority |
| Biological Networks | Some strongly scale-free | - | Mixed evidence |
| Technological Networks | Some strongly scale-free | - | Mixed evidence |

The findings reveal that only a minute fraction—approximately 4%—of the analyzed networks exhibited the strongest possible evidence for scale-free structure, while the majority (52%) displayed only the weakest possible evidence [1] [13]. Social networks consistently showed, at best, weakly scale-free properties, while only a handful of biological and technological networks qualified as strongly scale-free [1]. For most networks, log-normal distributions fit the degree distribution as well as or better than power laws [1] [2], suggesting alternative generative mechanisms may be at work across many domains.

Methodological Protocols for Identifying Scale-Free Structure

The statistical evaluation of scale-free networks requires rigorous protocols to distinguish true power-law distributions from similar heavy-tailed patterns. The following experimental methodology was applied in the large-scale analysis:

Table 2: Statistical Protocol for Scale-Free Network Identification

| Step | Procedure | Purpose |
|---|---|---|
| 1. Data Transformation | Convert complex networks into simple graphs | Enable unambiguous testing of degree distributions |
| 2. Upper Tail Selection | Identify optimal k_min value | Focus analysis on region where power-law may hold |
| 3. Model Fitting | Estimate best-fitting power-law parameters | Find optimal α value for P(k) ~ k^(-α) |
| 4. Goodness-of-Fit Test | Evaluate statistical plausibility of power law | Test if data is consistent with power-law hypothesis |
| 5. Alternative Comparison | Likelihood-ratio tests against log-normal, exponential, etc. | Determine if alternative distributions fit better |

This protocol addresses critical methodological challenges, particularly the need to focus on the upper tail of the degree distribution (k ≥ k_min) where power-law behavior is most likely to manifest, and the importance of comparing against alternative distributions like the log-normal, which can be difficult to distinguish from power laws in empirical data [1] [2].

Implications for Biological Network Research

Reevaluating Biological Network Architecture

The rarity of strongly scale-free networks has profound implications for biological research, where the scale-free assumption has influenced everything from metabolic network analysis to protein-protein interaction studies [1] [14]. While some biological networks do exhibit strong scale-free properties, many others do not, suggesting a need for domain-specific models rather than universal templates [1].

This paradigm shift is particularly relevant for gene co-expression networks, which have often been modeled as scale-free systems [15]. The recognition that scale-free structure is not universal has prompted the development of more flexible modeling approaches. For instance, the recently introduced time-varying scale-free graphical lasso (tvsfglasso) method allows researchers to estimate dynamic gene co-expression networks while incorporating scale-free structure as a prior assumption rather than a universal truth [15]. This method combines Gaussian graphical models with power-law constraints on degree distribution, enabling more accurate modeling of temporal changes in gene associations during biological processes like development or disease progression [15].

Sample-Specific Network Control in Biological Applications

The movement beyond universal scale-free assumptions has paralleled important advances in sample-specific network analysis, particularly for precision medicine applications. Research evaluating Sample-Specific network Control (SSC) methods has revealed that network control principles perform differently depending on network architecture [14].

Table 3: Performance of Sample-Specific Network Control Methods

| Method Type | Examples | Recommended Context | Performance Notes |
|---|---|---|---|
| Sample-Specific Network Construction | CSN, SSN, SPCC, LIONESS | CSN and SSN generally preferred | Critical driver identification depends heavily on network construction method |
| Undirected-Network Control | MDS, NCUA | Most TCGA cancer data & single-cell RNA-seq | Generally more effective than directed methods |
| Directed-Network Control | MMS, DFVS | Context-specific applications | Less effective in most biological contexts studied |

These findings highlight that network characteristics, particularly whether they are directed or undirected, significantly impact the identification of driver nodes in biological systems [14]. This structural sensitivity reinforces the need to move beyond one-size-fits-all scale-free assumptions toward more nuanced, context-aware network models.
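
As one concrete instance of undirected-network control, and assuming that "MDS" in the table above refers to a minimum dominating set approach (the text does not expand the acronym), driver nodes can be approximated as a set of nodes such that every node is either in the set or adjacent to a member. The sketch below uses a simple greedy heuristic, which does not guarantee a minimum set, on a hypothetical toy network with placeholder gene names.

```python
def greedy_dominating_set(adj):
    """Greedy approximation of a dominating set for an undirected graph.

    adj maps each node to the set of its neighbors. At each step the node that
    covers the most not-yet-dominated nodes (itself plus neighbors) is chosen.
    """
    undominated = set(adj)
    chosen = []
    while undominated:
        best = max(sorted(adj), key=lambda u: len(({u} | adj[u]) & undominated))
        chosen.append(best)
        undominated -= {best} | adj[best]
    return chosen

# Hypothetical sample-specific network: one hub gene plus a short chain.
adj = {
    "hub": {"g1", "g2", "g3", "g4"},
    "g1": {"hub"},
    "g2": {"hub"},
    "g3": {"hub"},
    "g4": {"hub", "g5"},
    "g5": {"g4", "g6"},
    "g6": {"g5"},
}
drivers = greedy_dominating_set(adj)
print(drivers)
```

Because the result depends on which edges are present, the choice of sample-specific network construction method feeds directly into which nodes are flagged as drivers, consistent with the sensitivity noted above.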

The Scientist's Toolkit: Essential Research Reagents and Solutions

Researchers working at the intersection of scale-free analysis and biological networks require specialized methodological tools. The following table summarizes key computational reagents and their functions in contemporary network analysis:

Table 4: Essential Research Reagent Solutions for Network Analysis

| Research Reagent | Type | Function | Application Context |
|---|---|---|---|
| tvsfglasso | Software Package | Time-varying scale-free network estimation | Dynamic gene co-expression analysis |
| Power-Law Fitting Tools | Statistical Library | Estimate power-law parameters α and k_min | Testing scale-free hypothesis |
| Likelihood-Ratio Test | Statistical Test | Compare power-law vs. alternative distributions | Model selection for degree distributions |
| ICON Database | Data Resource | Access to 900+ research-quality networks | Cross-domain comparative network studies |
| SSC Workflows | Analysis Pipeline | Identify sample-specific driver nodes | Precision medicine, single-cell analysis |

These tools collectively enable researchers to rigorously test scale-free assumptions and apply appropriate network models specific to their biological context and research questions.

The compelling evidence that strongly scale-free networks are rare represents a fundamental shift in network science, moving from a universalist perspective to one that embraces structural diversity [1] [16]. This paradigm shift has particular resonance in biological research, where the scale-free assumption has long influenced analytical approaches.

For researchers studying biological networks, this transition necessitates more nuanced methodologies that:

- Test scale-free assumptions rigorously rather than presuming them [1]

- Embrace alternative distributions like log-normal where empirically supported [1] [2]

- Employ sample-specific approaches that acknowledge contextual variability [14]

- Utilize time-varying methods that capture dynamic network architecture [15]

This evolution from a universal template to context-specific modeling ultimately enriches our understanding of biological systems, recognizing their intricate structural diversity while developing more sophisticated analytical tools to match their complexity. The future of biological network research lies not in seeking universal patterns, but in developing the methodological flexibility to understand the nuanced architecture of each specific biological context.

The analysis of complex biological networks is fundamental to advancing our understanding of cellular processes, disease mechanisms, and drug discovery. Two prominent modeling frameworks have emerged for this task: Exponential Random Graph Models (ERGMs) and scale-free network models. This guide provides an objective comparison of their performance, supported by experimental data and detailed methodologies. ERGMs are a family of statistical models for analyzing social and biological networks that allow for the simultaneous modeling of endogenous network characteristics and exogenous variables [17]. In contrast, scale-free models are primarily process-based and generate networks where the fraction of nodes with degree k is hypothesized to follow a power-law distribution, a pattern with broad implications for network structure and dynamics [1] [18]. Framed within a broader thesis on the comparative performance of exponential versus scale-free biological networks, this article synthesizes findings to guide researchers, scientists, and drug development professionals in selecting appropriate analytical tools.

Theoretical Foundations and Comparative Framework

Exponential Random Graph Models (ERGMs)

ERGMs are a class of statistical models originating from social network analysis that have gained significant traction in biological contexts [19] [20]. Their fundamental principle is to represent the global structure of a network as a function of local topological features, enabling researchers to understand which micro-level configurations contribute significantly to the observed network topology [21] [22]. The model formulation is:

(P(Y = y \mid \theta) = \frac{\exp(\theta^{T} s(y))}{c(\theta)}, \quad \forall y \in \mathcal{Y})

where:

- Y is the random network

- y is the observed network

- θ is a vector of parameters

- s(y) is a vector of sufficient statistics (network features)

- c(θ) is a normalizing constant [22]

ERGMs can incorporate both endogenous variables (network structures like transitivity and reciprocity) and exogenous variables (node attributes such as age, gender, or biological function) [19]. This flexibility allows ERGMs to model complex dependencies that violate the independence assumptions of standard statistical models [22].
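To make the formula concrete, the following is a minimal sketch that evaluates it exactly on a very small graph by enumerating every possible network. The choice of sufficient statistics (edge count and triangle count), the parameter values, and the function name `ergm_distribution` are illustrative assumptions, not taken from the cited studies. Exhaustive enumeration is only feasible for a handful of nodes.

```python
import itertools
import math

def ergm_distribution(n_nodes, theta):
    """Exact ERGM probabilities for every undirected graph on n_nodes.

    theta = (theta_edges, theta_triangles); s(y) = (edge count, triangle count).
    Both the statistics and the parameter values are illustrative assumptions.
    """
    dyads = list(itertools.combinations(range(n_nodes), 2))
    weights = {}
    for mask in itertools.product([0, 1], repeat=len(dyads)):
        edges = {d for d, on in zip(dyads, mask) if on}
        n_edges = len(edges)
        n_triangles = sum(
            1
            for a, b, c in itertools.combinations(range(n_nodes), 3)
            if {(a, b), (a, c), (b, c)} <= edges
        )
        # Unnormalized weight exp(theta^T s(y))
        weights[mask] = math.exp(theta[0] * n_edges + theta[1] * n_triangles)
    c_theta = sum(weights.values())  # normalizing constant c(theta)
    return {mask: w / c_theta for mask, w in weights.items()}

probs = ergm_distribution(4, theta=(-1.0, 0.5))
print(len(probs))  # 64 graphs on 4 nodes
```

Each key is a tuple of 0/1 indicators over the node pairs, so the probability of any specific small network can be looked up directly.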

Scale-Free Network Models

Scale-free networks are characterized by degree distributions that follow a power law, where a few nodes (hubs) have many connections while most nodes have few [1] [18]. This model has been widely applied across biological domains due to its ability to represent robust, heterogeneous systems. The traditional Barabási-Albert (BA) model implements this through two mechanisms: growth (networks expand by adding new nodes) and preferential attachment (new nodes attach preferentially to well-connected nodes) [18]. However, recent research has revealed limitations in this approach, including an inability to characterize low-degree distributions and controversy over whether real-world networks universally exhibit power-law distributions [18].
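The two BA mechanisms can be sketched in a few lines. This is a simplified illustration rather than a reference implementation: the function name, the complete-graph seed of m + 1 nodes, and the parameter values are assumptions made for the example.

```python
import random

def barabasi_albert(n_nodes, m, seed=0):
    """Grow a graph by preferential attachment; returns a set of undirected edges."""
    rng = random.Random(seed)
    # Assumed starting point: a complete core of m + 1 nodes.
    edges = {(i, j) for i in range(m + 1) for j in range(i + 1, m + 1)}
    # Each node appears here once per incident edge, so a uniform draw from
    # this list is a draw proportional to degree (preferential attachment).
    endpoints = [v for e in edges for v in e]
    for new in range(m + 1, n_nodes):  # growth: one new node per step
        targets = set()
        while len(targets) < m:
            targets.add(rng.choice(endpoints))
        for t in targets:
            edges.add((t, new))
            endpoints.extend((t, new))
    return edges

edges = barabasi_albert(200, m=2)
print(len(edges))  # 3 core edges + 2 * 197 new edges = 397
```

The degree sequence of the result can then be passed to any degree-distribution or power-law fitting routine to check how heavy the tail actually is.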

Key Conceptual Differences

The table below summarizes the fundamental distinctions between these modeling approaches:

Table 1: Fundamental Differences Between ERGM and Scale-Free Models

| Feature | ERGMs | Scale-Free Models |

|---|---|---|

| Theoretical Basis | Statistical, exponential family [22] | Mechanistic, based on growth and preferential attachment [18] |

| Primary Approach | Constraint-based [18] | Process-based [18] |

| Key Assumption | Network structure emerges from local configurations [21] | Degree distribution follows power law [1] |

| Model Flexibility | High (incorporates multiple features simultaneously) [19] | Lower (primarily focused on degree distribution) [18] |

| Applicability | Single or multiple network analysis [17] | Primarily for network generation |

Performance Comparison in Biological Networks

Empirical Prevalence and Distribution Fitting

A severe test of scale-free structure applied to nearly 1,000 networks across social, biological, technological, transportation, and information domains revealed that strongly scale-free structure is empirically rare [1]. The study found robust evidence that:

- Only a handful of technological and biological networks appear strongly scale-free

- For most networks, log-normal distributions fit the data as well as or better than power laws

- Social networks are at best weakly scale-free [1]

These findings highlight the structural diversity of real-world networks and suggest that the theoretical basis for universal scale-free structure in biological systems may be overstated.

Representation of Group-Based Network Characteristics

In neuroscience, accurately constructing group-based brain connectivity networks presents challenges in accounting for inter-subject topological variability. A study comparing conventional approaches (mean/median correlation networks) to an ERGM-based method demonstrated ERGM's superior performance in capturing constitutive topological properties [21]. The ERGM approach created group-based representative brain networks that more accurately reflected the topological characteristics of the original subject pool, providing a flexible method for constructing null networks, visualization tools, and instruments for identifying hub/node types in modularity analysis [21].

Motif Significance Testing

Analysis of network motifs—small subgraphs that occur more frequently than expected by chance—is crucial for understanding biological network function. Conventional methods test motif significance one at a time and assume independence, which can yield misleading results [5]. ERGMs overcome these limitations by enabling simultaneous testing of multiple candidate motifs within a single model, naturally accounting for dependencies between motifs [5]. Applied to protein-protein interaction (PPI) networks and gene regulatory networks, ERGMs confirmed over-representation of triangles in PPI networks and transitive triangles (feed-forward loop) in regulatory networks, while showing that under-representation of cyclic triangles (feedback loop) can be explained as a consequence of other topological features [5].

Table 2: Performance Comparison in Biological Network Applications

| Application Domain | ERGM Performance | Scale-Free Model Performance |

|---|---|---|

| Degree Distribution Fitting | Not dependent on power-law assumption; fits various distributions [1] | Powerful when genuine power-law exists; poor fit for many real networks [1] [18] |

| Group Network Representation | Outperforms mean/median methods; captures topological properties [21] | Limited application in this context |

| Motif Significance Testing | Tests multiple motifs simultaneously; accounts for dependencies [5] | Typically requires separate tests for each motif; assumes independence [5] |

| Biological Interpretability | High (incorporates biological attributes) [19] [20] | Moderate (primarily topological) |

Experimental Protocols and Methodologies

ERGM Protocol for Group-Based Brain Networks

A study investigating the construction of group-based representative brain networks provides a detailed methodological framework [21]:

1. Data Acquisition and Preprocessing:

- Acquire fMRI data from subjects (e.g., 10 normal subjects: 5 female, average age 27.7)

- Collect 120 images during 5 minutes of resting state using appropriate imaging protocols

- Perform motion correction, spatial normalization to MNI space, and reslice to standardized voxel size

- Avoid spatial smoothing to prevent artificial spatial correlation [21]

2. Network Construction:

- Calculate Pearson partial correlation coefficients between time courses of all node pairs (90 AAL atlas regions)

- Adjust for motion and physiological noise

- Generate unweighted, undirected subject-specific networks by thresholding correlation matrices to create adjacency matrices [21]

3. ERGM Implementation:

- Define network statistics vector s(y) incorporating relevant topological features

- Estimate parameters θ using appropriate methods (e.g., Markov Chain Monte Carlo maximum likelihood estimation)

- Assess model goodness-of-fit by comparing simulated networks to observed data [21]

4. Validation:

- Compare topological properties of ERGM-generated group network to conventional mean/median networks

- Evaluate how well each approach captures constitutive properties of the original subject networks [21]
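The thresholding step in the network construction stage can be sketched as follows. The toy 4 x 4 matrix, the threshold of 0.5, and the decision to threshold raw rather than absolute correlations are assumptions for illustration; the cited protocol [21] does not specify them here.

```python
def threshold_to_adjacency(corr, threshold):
    """Binarize a symmetric correlation matrix into an unweighted, undirected adjacency matrix."""
    n = len(corr)
    adj = [[0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):  # upper triangle only; no self-loops
            if corr[i][j] >= threshold:
                adj[i][j] = adj[j][i] = 1
    return adj

def density(adj):
    """Fraction of possible node pairs that are connected."""
    n = len(adj)
    n_edges = sum(adj[i][j] for i in range(n) for j in range(i + 1, n))
    return n_edges / (n * (n - 1) / 2)

# Hypothetical correlation matrix for four regions.
toy_corr = [
    [1.0, 0.8, 0.1, 0.4],
    [0.8, 1.0, 0.6, 0.2],
    [0.1, 0.6, 1.0, 0.7],
    [0.4, 0.2, 0.7, 1.0],
]
adj = threshold_to_adjacency(toy_corr, threshold=0.5)
print(density(adj))  # 3 of 6 possible edges -> 0.5
```

Reporting density alongside the threshold makes it easier to compare subject-specific networks, since the same cutoff can produce very different edge counts across subjects.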

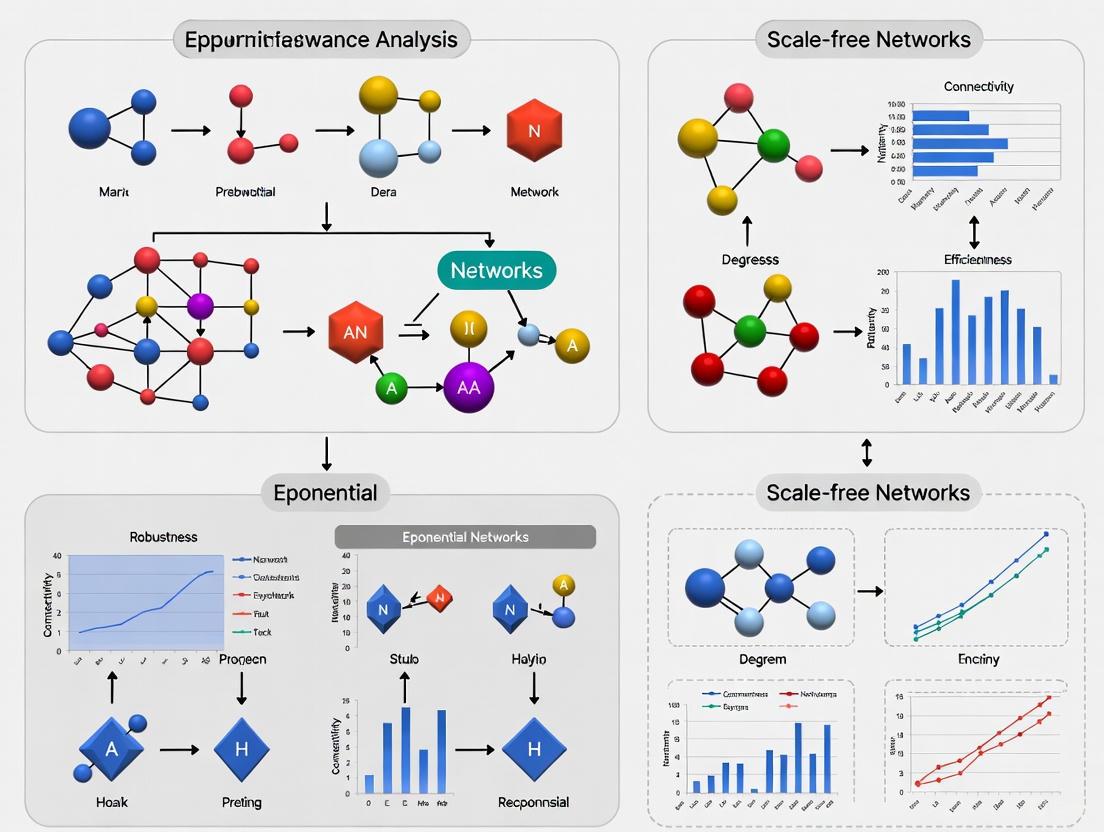

Diagram 1: ERGM analysis workflow for biological networks

Advanced ERGM Developments for Biological Applications

Addressing Computational Challenges

Traditional ERGMs face computational limitations with large biological networks due to intractable normalizing constants [23]. Recent advances have addressed these challenges:

Bayesian ERGM with Stochastic Gradient Langevin Dynamics (SGLD): This approach enables analysis of large-scale networks with high-dimensional ERGMs by estimating stochastic gradients from a short Markov chain run at each iteration [23]. The method is reported to converge to the true posterior regardless of the length of the inner Markov chain, providing a scalable algorithm for large biological networks [23].

Tapered ERGM and Latent Order Logistic (LOLOG) Models: These recently proposed variants overcome problems of model near-degeneracy that can occur with conventional ERGMs [20]. Applied to protein-protein interaction networks, gene regulatory networks, and neural networks, these models enable estimation using simple parameters for networks where conventional ERGM estimation was previously impossible [20].
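To illustrate why simulation sidesteps the intractable normalizing constant, here is a sketch of a plain edge-toggle Metropolis sampler. It is not the SGLD, tapered ERGM, or LOLOG method cited above. The edges-plus-triangles model, the parameter values, and the function name are assumptions. The key point is that the acceptance ratio depends only on the change in statistics, so c(θ) cancels.

```python
import itertools
import math
import random

def sample_ergm(n_nodes, theta_edges, theta_triangles, n_steps, seed=0):
    """Edge-toggle Metropolis sampler for an edges + triangles ERGM (illustrative)."""
    rng = random.Random(seed)
    neighbors = {v: set() for v in range(n_nodes)}  # start from the empty graph
    dyads = list(itertools.combinations(range(n_nodes), 2))
    for _ in range(n_steps):
        i, j = rng.choice(dyads)
        present = j in neighbors[i]
        sign = -1 if present else 1
        # Change statistics: toggling (i, j) changes the edge count by 1 and
        # the triangle count by the number of shared neighbours.
        shared = len(neighbors[i] & neighbors[j])
        log_ratio = sign * (theta_edges + theta_triangles * shared)
        if log_ratio >= 0 or rng.random() < math.exp(log_ratio):
            if present:
                neighbors[i].discard(j)
                neighbors[j].discard(i)
            else:
                neighbors[i].add(j)
                neighbors[j].add(i)
    return neighbors

graph = sample_ergm(20, theta_edges=-1.5, theta_triangles=0.1, n_steps=5000)
print(sum(len(nbrs) for nbrs in graph.values()) // 2, "edges")
```

With a strongly positive triangle parameter, a sampler like this tends to drift toward nearly complete graphs, which is one face of the near-degeneracy problem that the tapered and LOLOG variants are designed to address.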

Multiple Network Analysis Methods

A significant limitation of traditional ERGM is its primary application to single networks [17]. Two methods have emerged for multiple network analysis:

1. Hierarchical Approach: Treats multiple networks as a sample from a population of networks, allowing for the estimation of both within-network and between-network effects.

2. Integrated Approach: Combines information from multiple networks simultaneously to estimate a single set of parameters [17].

Research comparing these approaches indicates that multiple network analysis yields more robust results than single-network analysis, with the choice between hierarchical and integrated methods depending on factors such as the number of networks and their hierarchical structure [17].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for ERGM Research in Biological Networks

| Resource Category | Specific Tools/Software | Function/Purpose |

|---|---|---|

| Statistical Platforms | R, Python | Primary computational environments for ERGM implementation [5] |

| Network Analysis Packages | statnet (R), igraph (R/Python), NetworkX (Python) | Computing network statistics, estimating ERGM parameters, visualization [5] |

| Specialized ERGM Software | Bergm (R), PNet (Standalone) | Bayesian ERGM analysis, advanced estimation algorithms [23] |

| Biological Network Data | Protein-protein interaction databases, gene regulatory networks, neural connectivity data | Empirical networks for model validation and application [5] [20] |

| Computational Resources | High-performance computing clusters, cloud computing platforms | Handling computationally intensive estimation for large-scale networks [23] [20] |

This comparison demonstrates that ERGMs provide a flexible, powerful framework for topological analysis of biological networks, offering distinct advantages over scale-free models in many practical applications. While scale-free models remain valuable for networks exhibiting genuine power-law distributions, ERGMs deliver superior performance in capturing complex topological features, testing motif significance, and representing group-based network characteristics. Recent methodological advances have expanded ERGM applicability to larger, more complex biological networks, solidifying their position as an essential tool for researchers, scientists, and drug development professionals working with network data. The choice between these modeling frameworks should be guided by specific research questions, network characteristics, and the biological phenomena under investigation.

Biological systems, from molecular interactions within a cell to ecosystems, are fundamentally built upon networks of interactions. The organizational principles of these biological networks are a subject of intense research, primarily focused on distinguishing between two dominant architectural models: scale-free networks characterized by power-law degree distributions and prominent hub nodes, and exponential networks (including random and small-world networks) characterized by Poisson or similar distributions where most nodes have approximately the same number of connections. This framework is crucial for understanding the comparative performance of exponential versus scale-free biological networks, a central thesis in modern systems biology. The determination of which architecture better describes a biological system has profound implications for predicting system behavior, understanding robustness and fragility, and identifying potential therapeutic targets in drug development. Analyzing global properties like degree distribution, small-world structure, and the presence of motifs provides a powerful lens through which researchers can decipher the organizational logic of cellular and organismal function [24] [25].

Foundational Structural Properties of Networks

The analysis of any biological network begins with the quantification of its key topological properties. These metrics provide a mathematical foundation for classifying networks and inferring their functional capabilities and evolutionary constraints.

Degree Distribution: Scale-Free versus Exponential

The degree of a node is the number of connections (edges) it has to other nodes. The degree distribution, P(k), which gives the probability that a randomly selected node has degree k, is the primary feature distinguishing network architectures [26] [25].

- Scale-Free Networks: These networks follow a power-law distribution, (P(k) \sim k^{-\gamma}), where γ is the power-law exponent. This pattern means most nodes have very few connections, while a few nodes (hubs) have a very high number of connections. The distribution is "scale-free" because the functional form remains the same regardless of the scale at which it is observed [26] [25].

- Exponential/Random Networks: Modeled by the Erdős–Rényi model, these networks exhibit a Poisson-like distribution where the degree distribution has a pronounced peak around the mean degree. In such networks, almost all nodes have approximately the same number of links, and nodes with significantly higher or lower connectivity are exceedingly rare [26].
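As a small illustration, the empirical degree distribution P(k) can be computed directly from an edge list. The function name and the toy star graph are assumptions for the example. Nodes with no edges do not appear in an edge list and are therefore ignored here.

```python
from collections import Counter

def degree_distribution(edges):
    """Return {k: P(k)} for an undirected edge list."""
    degree = Counter()
    for u, v in edges:
        degree[u] += 1
        degree[v] += 1
    counts = Counter(degree.values())  # how many nodes have each degree
    n = len(degree)
    return {k: c / n for k, c in sorted(counts.items())}

# Toy hub-and-spoke graph: one node of degree 4, four nodes of degree 1.
star = [(0, 1), (0, 2), (0, 3), (0, 4)]
print(degree_distribution(star))  # {1: 0.8, 4: 0.2}
```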

Small-World Structure

Many real-world networks, including biological ones, exhibit the small-world property. This structure is characterized by a combination of two features:

- High Clustering Coefficient: This measures the tendency for nodes to form tightly connected groups or clusters. It is quantified as the probability that two nodes connected to a common node are also connected to each other. Biological networks often show a high clustering coefficient, indicating modular organization [25].

- Short Characteristic Path Length: This is the shortest-path distance averaged over all pairs of nodes in the network. The famous "six degrees of separation" in social networks is an example of short path length [25].

A small-world network has a clustering coefficient significantly higher than that of a random network, while maintaining a similarly short characteristic path length. This architecture facilitates efficient communication and rapid propagation of signals throughout the network [25].
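Both quantities can be computed with standard-library Python, as in the sketch below. The function names and the toy graph are assumptions. Nodes of degree below 2 are given a clustering value of 0, and path length is averaged over reachable pairs only, which are common conventions but not the only ones.

```python
from collections import deque
from itertools import combinations

def build_adjacency(edges):
    adj = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    return adj

def average_clustering(adj):
    """Mean local clustering coefficient (0 for nodes with degree < 2)."""
    total = 0.0
    for nbrs in adj.values():
        k = len(nbrs)
        if k < 2:
            continue
        links = sum(1 for a, b in combinations(nbrs, 2) if b in adj[a])
        total += 2 * links / (k * (k - 1))
    return total / len(adj)

def characteristic_path_length(adj):
    """Mean breadth-first-search distance over all reachable ordered pairs."""
    total, pairs = 0, 0
    for source in adj:
        dist = {source: 0}
        queue = deque([source])
        while queue:
            u = queue.popleft()
            for w in adj[u]:
                if w not in dist:
                    dist[w] = dist[u] + 1
                    queue.append(w)
        total += sum(dist.values())
        pairs += len(dist) - 1
    return total / pairs

# Toy graph: a triangle (0, 1, 2) with a pendant node 3 attached to node 2.
toy = build_adjacency([(0, 1), (1, 2), (0, 2), (2, 3)])
print(round(average_clustering(toy), 3))          # 0.583
print(round(characteristic_path_length(toy), 3))  # 1.333
```

In practice, both values are compared against those of randomized networks with the same number of nodes and edges before a network is called small-world.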

Hubs and Motifs

- Hubs: In scale-free networks, hubs are the few highly connected nodes that dominate the network's topology. They are critically important for the network's integrity; their removal can dramatically disrupt network connectivity and function, whereas the removal of a random, low-degree node is less likely to cause system failure. This makes hubs both sources of robustness against random failure and points of vulnerability to targeted attacks [26].

- Network Motifs: These are subgraphs (small patterns of interconnections) that occur in a network at frequencies significantly higher than in randomized networks. They are considered the fundamental building blocks of complex networks. Common motifs in regulatory networks include feedback loops (for control) and feed-forward loops (which can accelerate response times and filter noise) [26].
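The asymmetry between removing a hub and removing a low-degree node can be seen in a toy hub-and-spoke graph. The sketch below measures the size of the largest connected component after node deletion. The function name and the example graph are assumptions, and real networks will show a much less extreme contrast.

```python
def largest_component_size(adj, removed=()):
    """Size of the largest connected component after deleting the given nodes."""
    removed = set(removed)
    seen, best = set(), 0
    for start in adj:
        if start in removed or start in seen:
            continue
        stack, size = [start], 0
        seen.add(start)
        while stack:  # depth-first traversal of one component
            u = stack.pop()
            size += 1
            for w in adj[u]:
                if w not in removed and w not in seen:
                    seen.add(w)
                    stack.append(w)
        best = max(best, size)
    return best

# Toy hub-and-spoke graph: node 0 is the hub, nodes 1-5 are leaves.
star = {0: {1, 2, 3, 4, 5}, **{leaf: {0} for leaf in range(1, 6)}}
print(largest_component_size(star, removed=[3]))  # leaf removed -> 5
print(largest_component_size(star, removed=[0]))  # hub removed  -> 1
```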

Table 1: Key Structural Properties of Biological Networks

| Property | Scale-Free Network | Exponential/Random Network | Biological Significance |

|---|---|---|---|

| Degree Distribution | Power-law ((P(k) \sim k^{-\gamma})) | Poisson ((P(k) \sim \lambda^k/k!)) | Distinguishes systems with influential hubs from those with uniform connectivity. |

| Hub Presence | Strong, with a few high-degree hubs | Weak, no significant hubs | Hubs are often essential genes/proteins; vulnerability to targeted attacks. |

| Robustness to Failure | Tolerant of random node removal, vulnerable to targeted hub removal | Degrades gradually; random and targeted removal have similar effects | Explains resilience of biological systems to random mutations. |

| Motif Prevalence | Specific, over-represented subgraphs | No significantly over-represented subgraphs | Motifs perform specific information-processing functions (e.g., pulse generation). |

| Small-World Property | Often present | Can be present (via rewiring) | Enables efficient communication and modular organization in the cell. |

The Scale-Free Hypothesis: Evidence and Controversy

The initial discovery of scale-free topology in biological networks was revolutionary. Early work claimed that this architecture was a universal principle, observed across metabolic networks, protein-protein interaction networks, and gene regulatory networks [25]. The proposed generative mechanism was preferential attachment, a "rich-get-richer" model where new nodes added to the network are more likely to connect to already well-connected nodes. This model successfully explained the emergence of hubs and the power-law distribution [25].

The functional implications were profound. Scale-free topology was linked to robustness against random failures; because low-degree nodes vastly outnumber hubs, a random failure is likely to affect a non-critical node. Conversely, this architecture implies vulnerability to coordinated attacks on hub nodes [26]. This insight offered a theoretical framework for predicting essential genes and understanding the resilience of biological systems.

However, the universality of the scale-free hypothesis has become a central controversy. A landmark 2019 study in Nature Communications performed a severe test on nearly 1,000 networks from social, biological, technological, and information domains. It found that strong scale-free structure is empirically rare [1]. The study concluded that while a handful of biological and technological networks appear strongly scale-free, most real-world networks, including many biological ones, are fit as well or better by alternative distributions such as the log-normal [1]. This indicates much greater structural diversity among biological networks than previously assumed and challenges the universality of the preferential attachment mechanism, suggesting that other evolutionary processes, such as drift, play a significant role [8].

Comparative Analysis of Network Types and Properties

Biological networks are not monolithic; they exist in several distinct forms, each with its own characteristic topological features and functional roles.

Table 2: Structural Properties Across Different Biological Network Types

| Network Type | Typical Degree Distribution | Small-World Property | Common Motifs & Features | Key Experimental Methods |

|---|---|---|---|---|

| Protein-Protein Interaction (PPI) | Often reported as scale-free, but subject to ongoing debate [1] [24]. | Yes [25] | Dense overlapping neighborhoods, protein complexes. | Yeast two-hybrid, affinity purification mass spectrometry [24]. |

| Metabolic | Early studies strongly indicated scale-free structure [25]. | Yes [25] | Linear pathways, modular subnetworks. | Biochemical assays, genome annotation, flux balance analysis [24]. |

| Gene Regulatory | Scale-free with transcription factor hubs [26]. | Not reported | Feed-forward loops, feedback loops, single-input modules. | Chromatin Immunoprecipitation (ChIP-chip, ChIP-seq) [24]. |

| Protein Phosphorylation | Not reported | Not reported | Kinase-substrate cascades, feedback loops. | Mass spectrometry, protein microarrays, modified kinase assays [24]. |

| Genetic Interaction | Not reported | Not reported | Synthetic lethal pairs, buffering relationships. | Synthetic genetic array (SGA) analysis [24]. |

The following diagram illustrates the core architectural difference between a scale-free network and an exponential/random network, highlighting the presence of hubs and the different connectivity patterns.

Experimental and Computational Methodologies

Determining the structure of a biological network relies on a combination of high-throughput experimental assays and sophisticated computational and statistical tools.

Key Experimental Protocols

For Protein-Protein Interaction (PPI) Networks:

- Yeast Two-Hybrid (Y2H) Systems: A genetic method used to detect binary interactions between two proteins. A "bait" protein is fused to a DNA-binding domain, and a "prey" protein is fused to an activation domain. Interaction reconstitutes a functional transcription factor, activating a reporter gene [24].

- Affinity Purification Mass Spectrometry (AP-MS): A "bait" protein is purified along with its interacting partners using an affinity tag. The co-purified proteins are then identified using mass spectrometry. This method reveals multi-protein complexes rather than just binary interactions [24].

For Gene Regulatory Networks:

- Chromatin Immunoprecipitation followed by sequencing (ChIP-seq): This protocol is used to identify the genomic binding sites for transcription factors. Cells are cross-linked, chromatin is sheared, and a specific antibody against the transcription factor is used to immunoprecipitate the protein-DNA complexes. The bound DNA is then sequenced and mapped to the genome to identify direct targets [24].

For Metabolic Networks:

- Literature Curation and Genome Annotation: Metabolic networks are largely reconstructed by compiling known biochemical reactions from scientific literature and inferring the presence of enzymes from genomic annotations using tools like the Kyoto Encyclopedia of Genes and Genomes (KEGG) [24].

Statistical Framework for Identifying Scale-Free Topology

The claim that a network is scale-free requires rigorous statistical validation, not merely observing a straight line on a log-log plot. The state-of-the-art protocol involves:

- Parameter Estimation: Using the maximum likelihood method to estimate the power-law exponent γ and the lower bound (k_{min}) above which the power-law behavior holds [1].

- Goodness-of-Fit Test: Calculating a p-value to test the hypothesis that the data above (k_{min}) follows a power-law distribution. A sufficiently high p-value (e.g., > 0.1) indicates the power law is a plausible fit [1].

- Model Comparison: Comparing the power-law model to alternative heavy-tailed distributions (e.g., log-normal, exponential) using a normalized likelihood ratio test. This determines which model is a better fit for the data. Many networks previously thought to be scale-free are often equally well or better fit by a log-normal distribution [1].
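The parameter estimation step can be sketched with the widely used closed-form approximation to the discrete maximum likelihood estimator, γ ≈ 1 + n / Σ ln(k_i / (k_min − 1/2)). This is an approximation that is reasonable only when k_min is not too small. The function name is an assumption, and choosing k_min itself, the goodness-of-fit p-value, and the likelihood ratio comparison are not shown.

```python
import math

def fit_power_law_exponent(degrees, k_min):
    """Approximate MLE of the power-law exponent gamma for the tail k >= k_min."""
    tail = [k for k in degrees if k >= k_min]
    if not tail:
        raise ValueError("no observations at or above k_min")
    # Closed-form approximation to the discrete MLE (the 0.5 shift corrects
    # for treating integer degrees as continuous values).
    log_sum = sum(math.log(k / (k_min - 0.5)) for k in tail)
    gamma = 1.0 + len(tail) / log_sum
    std_err = (gamma - 1.0) / math.sqrt(len(tail))  # large-sample approximation
    return gamma, std_err

gamma, std_err = fit_power_law_exponent([1, 2, 4, 8], k_min=1)
print(round(gamma, 3), round(std_err, 3))
```

A fitted exponent alone does not establish scale-free structure; it is only the input to the goodness-of-fit and model comparison steps listed above.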

The following workflow diagram outlines this rigorous statistical process for characterizing network topology.

Table 3: Key Research Reagents and Databases for Biological Network Analysis

| Resource Name | Type/Function | Application in Network Research |

|---|---|---|

| STRING Database [27] | Public Database | A comprehensive resource of known and predicted protein-protein associations, integrating experimental, computational, and textual data. Used to build and analyze functional association networks. |

| Cytoscape [28] | Software Platform | An open-source software platform for visualizing complex networks and integrating them with any type of attribute data. Essential for network layout, analysis, and visualization. |

| BioGRID & IntAct [27] | Public Database | Curated repositories of physical and genetic interactions from peer-reviewed literature. Provide high-quality, experimentally derived data for network construction. |

| KEGG & Reactome [27] [24] | Pathway Database | Manually curated databases of biological pathways and processes. Used as a reference for understanding the functional context of network components and for enrichment analysis. |

| Graph Neural Networks (GNNs) [29] | Computational Model | A class of deep learning models designed to perform inference on graph-structured data. Used to predict new interactions, classify nodes, and infer individual-specific network variations. |

| Index of Complex Networks (ICON) [1] | Network Repository | A comprehensive online index of research-quality network data sets from all fields of science. Used for large-scale comparative studies of network properties. |

Implications for Drug Discovery and Development

The structural properties of biological networks have direct and powerful implications for drug development. The hub-and-spoke architecture of scale-free networks suggests a compelling strategy: targeting hub proteins. Because hubs are often critical for the survival of pathogens or cancer cells, drugs designed against them could be highly effective. However, their high connectivity also means that disrupting a hub could lead to severe side effects, requiring careful therapeutic window assessment [25].

Conversely, the emerging understanding of network motifs opens an alternative approach. Instead of targeting a single protein, targeting the dynamic function of a motif (e.g., a specific feedback loop that drives disease resilience) could offer a more nuanced and potentially less toxic intervention [26] [24]. Furthermore, the robustness inherent in scale-free networks explains why some single-target drugs fail—biological systems can often re-route flows through alternative pathways. This insight is driving the pursuit of multi-target drugs or drug combinations that perturb the network at multiple points, overcoming this robustness and leading to more durable therapeutic outcomes [25]. The ability to model and distinguish between scale-free and alternative network architectures thus provides a framework for prioritizing targets and designing more effective treatment strategies.

Methodologies in Practice: From ERGM Estimation to Scale-Free Network Applications

Biological systems are inherently composed of interconnected entities, where understanding the interdependencies within networks is critical to comprehending the behavior of any constituent part. The construction of biological networks from raw data is a fundamental process in systems biology, enabling researchers to model complex interactions ranging from molecular pathways to ecological relationships. The structural properties of these networks—particularly whether they follow scale-free or exponential degree distributions—profoundly influence their robustness, dynamics, and functional capabilities.

Contemporary research reveals an ongoing debate regarding the prevalence and performance characteristics of these network types. While scale-free networks have dominated scientific discourse, recent large-scale studies demonstrate that strongly scale-free structure is empirically rare across real-world biological networks, with many better described by log-normal or exponential distributions [1]. This comparative analysis examines the data sources, construction methodologies, and functional implications of exponential versus scale-free biological networks to guide researchers in selecting appropriate modeling frameworks for specific biological questions.

Biological networks are reconstructed from diverse data sources across omics technologies, each requiring specialized processing before network inference.

Primary Data Generation Technologies

- Next-Generation Sequencing (NGS): Provides high-throughput data for genome assembly, variant detection, and transcriptomics through RNA-Seq. NGS enables the construction of co-expression networks and regulatory networks from transcriptomic data and protein-protein interaction networks from genomic data [30].

- Microarrays: Though largely superseded by NGS for novel discovery, historical microarray data still contributes to expression quantitative trait loci (eQTL) networks and metabolic pathway reconstructions.

- Mass Spectrometry: Facilitates proteomic and metabolomic data acquisition for constructing protein-protein interaction networks and metabolic networks through co-abundance patterns and interaction assays.

- Imaging Technologies: Microscopy and MRI provide spatial relationship data for structural connectomes (e.g., brain networks) and tissue spatial networks [31].

Public Data Repositories

- Protein-Protein Interaction Databases: STRING, BioGRID, and IntAct provide pre-compiled interaction data from experimental and computational sources.

- Genomic Data Portals: The Cancer Genome Atlas (TCGA) and Gene Expression Omnibus (GEO) offer raw and processed transcriptomic data for network inference.

- Specialized Resources: The Index of Complex Networks (ICON) provides research-quality network data across biological, social, and technological domains [1].

Network Projection Methods

Network projections transform relationship data into analyzable graph structures, with specific methodological considerations for biological contexts.

Bipartite to Unipartite Projections

Many biological networks originate from bipartite structures, which are then projected to unipartite graphs for analysis. A bipartite graph contains two disjoint node sets (e.g., proteins and complexes, or actors and movies in the classic non-biological example) where edges only connect nodes from different sets. Projection creates a unipartite network containing only one node type by connecting nodes that share neighbors in the bipartite graph [32].

Table 1: Biological Bipartite Networks and Their Projections

| Bipartite Network | Node Set A | Node Set B | Projected Network | Projection Relationship |
|---|---|---|---|---|
| Actor-Movie | Actors | Movies | Actor-Actor | Co-appearance in films |
| Species-Habitat | Species | Habitats | Species-Species | Shared habitat occupancy |
| Gene-Disease | Genes | Diseases | Gene-Gene | Shared disease associations |
| Protein-Complex | Proteins | Complexes | Protein-Protein | Shared complex membership |
| Drug-Target | Drugs | Targets | Drug-Drug | Shared protein targets |

Recent research demonstrates that scale-free projections can emerge from non-scale-free bipartite structures. In the actor-movie network, for example, neither actor nor movie degree distributions follow power laws, yet their projection produces a scale-free actor-actor network without preferential attachment mechanisms [32]. This has significant implications for biological network interpretation, suggesting that observed scale-free properties may arise from projection artifacts rather than fundamental biological principles.
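As a minimal sketch of the projection step described above, the following pure-Python example projects a toy protein-complex bipartite network onto its protein node set. The function name `project_bipartite` and the toy data are hypothetical and chosen only for illustration; edge weights count shared complexes.

```python
from collections import defaultdict
from itertools import combinations

def project_bipartite(memberships):
    """Project a bipartite graph onto its 'A' node set.

    memberships maps each B-node (e.g. a protein complex) to the set of
    A-nodes (e.g. proteins) attached to it. Two A-nodes are linked in the
    projection if they share at least one B-node; the edge weight counts
    how many B-nodes they share.
    """
    weights = defaultdict(int)
    for members in memberships.values():
        for u, v in combinations(sorted(members), 2):
            weights[(u, v)] += 1
    return dict(weights)

# Hypothetical toy data: complexes and their member proteins.
complexes = {
    "C1": {"P1", "P2", "P3"},
    "C2": {"P2", "P3"},
    "C3": {"P3", "P4"},
}
projection = project_bipartite(complexes)

# Degree of each protein in the projected (unipartite) network.
degree = defaultdict(int)
for u, v in projection:
    degree[u] += 1
    degree[v] += 1

print(projection)
print(dict(degree))
```

A B-node with m members contributes m(m-1)/2 projected edges, so a few large groups can dominate the projected degree distribution. This is one intuitive route by which projection alone can reshape the tail of a degree distribution.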

Sequence Similarity Networks

Sequence Similarity Networks (SSNs), such as the Directed Weighted All Nearest Neighbors (DiWANN) network, connect biological sequences based on similarity metrics. These networks employ computationally efficient models that link each node only to its nearest neighbors by edit distance, reducing time complexity compared to all-to-all distance matrices [31]. Such approaches have proven valuable for identifying driver gene patterns in cancer genomics.
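The nearest-neighbor idea can be illustrated with a small sketch. This is not the DiWANN implementation: it computes every pairwise edit distance by brute force, so it shows only the resulting network structure (each sequence linked to all of its closest neighbors, ties kept), not the techniques that make the cited method efficient. The function names and toy sequences are hypothetical.

```python
def edit_distance(a, b):
    """Levenshtein distance via the standard two-row dynamic program."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]

def nearest_neighbor_edges(seqs):
    """Directed weighted edges (source, target, distance) from each
    sequence to every sequence at its minimum edit distance."""
    edges = []
    for s in seqs:
        dists = {t: edit_distance(s, t) for t in seqs if t != s}
        if not dists:
            continue
        d_min = min(dists.values())
        edges.extend((s, t, d) for t, d in dists.items() if d == d_min)
    return edges

# Hypothetical toy sequences.
seqs = ["ACGT", "ACGA", "TCGA", "GGGG"]
edges = nearest_neighbor_edges(seqs)
for edge in edges:
    print(edge)
```

The resulting network is directed because nearest-neighbor relations are not symmetric: the outlier `GGGG` points to three sequences, but none of them points back.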

Multi-Omics Integration Networks

Integrative approaches combine data from genomics, transcriptomics, proteomics, metabolomics, and epigenomics to construct comprehensive networks that provide multi-dimensional views of cellular processes. This multi-omics integration enables more accurate biomarker discovery and reveals detailed disease mechanisms by connecting molecular changes across biological layers [30].

Diagram 1: Multi-omics network construction workflow integrating data from multiple biological layers.

Network Representations in Biological Contexts

Biological networks employ diverse mathematical representations, each with specific advantages for particular data types and research questions.

Standard Graph Representations

- Simple Graphs: Represent pairwise relationships between entities (e.g., protein-protein interactions). Most foundational network analysis tools operate on this representation.

- Directed Acyclic Graphs (DAGs): Model causal or temporal relationships in Bayesian networks and signaling pathways where directionality matters [31].

- Bipartite Graphs: Directly represent two-mode data without projection, preserving inherent structure in gene-disease associations and ecological species-habitat relationships.

Advanced Mathematical Representations

- Hypergraphs: Capture multi-way relationships beyond pairwise interactions, crucial for modeling protein complexes and metabolic pathways where multiple entities interact simultaneously [31].

- Multilayer Networks: Represent systems where entities connect through multiple relationship types simultaneously, such as gene-regulatory networks with different regulatory mechanisms.

- Simplicial Complexes: Provide topological representations capable of modeling higher-order interactions in neural connectomes and molecular assembly pathways.

The choice of representation significantly impacts analytical outcomes. Research indicates that biological systems often deterministically construct forms of conditional hypergraphs when calculating impacts of multipoint mutations on enzyme activity, suggesting that conventional graph representations may insufficiently capture biological complexity [31].
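To make the information loss concrete, the sketch below (hypothetical names and toy data) represents protein complexes as hyperedges and reduces them to a pairwise graph by clique expansion. One three-protein complex and three separate binary interactions collapse to the same simple graph, even though the hypergraphs differ.

```python
from itertools import combinations

def clique_expansion(hyperedges):
    """Reduce a hypergraph to a simple graph by linking every pair of
    nodes that co-occur in at least one hyperedge."""
    edges = set()
    for hyperedge in hyperedges:
        edges.update(frozenset(pair) for pair in combinations(hyperedge, 2))
    return edges

# Hypothetical scenario A: a single ternary complex.
ternary_complex = [frozenset({"P1", "P2", "P3"})]

# Hypothetical scenario B: three independent pairwise interactions.
pairwise_only = [
    frozenset({"P1", "P2"}),
    frozenset({"P2", "P3"}),
    frozenset({"P1", "P3"}),
]

same_graph = clique_expansion(ternary_complex) == clique_expansion(pairwise_only)
same_hypergraph = set(ternary_complex) == set(pairwise_only)
print(same_graph, same_hypergraph)
```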

Comparative Performance: Exponential vs. Scale-Free Networks

The debate between exponential and scale-free models represents a fundamental divide in biological network science, with empirical evidence challenging long-held assumptions about network architecture.

Structural Properties and Prevalence

Table 2: Characteristics of Scale-Free vs. Exponential Biological Networks

| Property | Scale-Free Networks | Exponential Networks |
|---|---|---|
| Degree Distribution | Power law, (k^{-\alpha}) | Exponential decay |
| Hub Prevalence | Few highly connected hubs | Limited degree variation |
| Empirical Prevalence | Rare in biological systems [1] | Common in biological systems |
| Robustness to Random Failure | High | Moderate |
| Vulnerability | Targeted attacks on hubs | Diffuse vulnerability |
| Theoretical Foundation | Preferential attachment | Random processes |
| Biological Examples | Some protein-protein networks [1] | Gene co-expression, metabolic networks [3] |

Large-scale analysis of nearly 1,000 networks across domains reveals that strongly scale-free structure is empirically rare, and that log-normal distributions fit most real-world networks as well as or better than power laws [1]. While a handful of biological and technological networks appear strongly scale-free, social networks are at best weakly scale-free, which highlights the structural diversity of real-world networks.

Empirical Evidence from Network Analysis

A severe test of scale-free network prevalence applied state-of-the-art statistical tools to 928 network data sets from the Index of Complex Networks (ICON). Researchers estimated best-fitting power-law models, tested statistical plausibility, and compared to alternative distributions. The results demonstrated:

- Robust evidence that strongly scale-free structure is rare across social, biological, technological, transportation, and information networks

- For most networks, log-normal distributions fit the data as well as or better than power laws

- Social networks are at best weakly scale-free, with only a handful of technological and biological networks appearing strongly scale-free [1]

These findings challenge the universality of scale-free networks and highlight the need for new theoretical explanations of non-scale-free patterns observed in biological systems.

Implications for Biological Network Dynamics

The structural differences between exponential and scale-free networks significantly impact their dynamic behaviors and functional capabilities:

- Trapping Efficiency: Recent research on maximal planar networks with exponential degree distribution shows that the analytically derived average trapping time (ATT) can reach its theoretical lower bound, indicating optimal trapping efficiency in properly structured exponential networks [3]

- Synchronization: Under Kuramoto oscillator models, transitions to global synchronization occur at precise thresholds dependent on degree distribution parameters, with differential effects in scale-free versus exponential networks [1]

- Resilience: Exponential networks demonstrate different resilience profiles to random edge removal compared to scale-free architectures, with implications for drug target identification and therapeutic intervention strategies [3]
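The resilience contrast can be explored with a small simulation. The sketch below is an illustration under stated assumptions, not a reproduction of any cited study: it grows a preferential-attachment graph, builds an Erdős-Rényi-style random graph with the same number of edges as a stand-in for a homogeneous network, and compares the largest connected component after removing 10% of nodes at random versus removing the 10% highest-degree nodes. All function names, sizes, and the seed are hypothetical choices.

```python
import random

def barabasi_albert(n, m, rng):
    """Grow a graph by preferential attachment (each new node adds m edges)."""
    adj = {i: set() for i in range(n)}
    pool = []  # each node appears once per incident edge
    for u in range(m + 1):  # seed: small complete graph
        for v in range(u):
            adj[u].add(v); adj[v].add(u)
            pool += [u, v]
    for new in range(m + 1, n):
        targets = set()
        while len(targets) < m:
            targets.add(rng.choice(pool))  # probability proportional to degree
        for t in targets:
            adj[new].add(t); adj[t].add(new)
            pool += [new, t]
    return adj

def random_graph(n, n_edges, rng):
    """Place n_edges distinct edges uniformly at random."""
    adj = {i: set() for i in range(n)}
    added = 0
    while added < n_edges:
        u, v = rng.sample(range(n), 2)
        if v not in adj[u]:
            adj[u].add(v); adj[v].add(u)
            added += 1
    return adj

def giant_fraction(adj, removed):
    """Largest connected component among surviving nodes, as a fraction
    of the original node count."""
    alive = set(adj) - removed
    seen, best = set(), 0
    for start in alive:
        if start in seen:
            continue
        seen.add(start)
        stack, size = [start], 0
        while stack:
            u = stack.pop()
            size += 1
            for v in adj[u]:
                if v in alive and v not in seen:
                    seen.add(v)
                    stack.append(v)
        best = max(best, size)
    return best / len(adj)

rng = random.Random(0)
n, m = 1000, 2
ba = barabasi_albert(n, m, rng)
n_edges = sum(len(nb) for nb in ba.values()) // 2
er = random_graph(n, n_edges, rng)

k = n // 10  # remove 10% of nodes
results = {}
for name, g in (("preferential attachment", ba), ("random", er)):
    random_removed = set(rng.sample(sorted(g), k))
    hubs = set(sorted(g, key=lambda u: len(g[u]), reverse=True)[:k])
    results[name] = (giant_fraction(g, random_removed), giant_fraction(g, hubs))
    print(name, results[name])
```

Each result pair is (random failure, targeted hub removal). Hub removal here uses the initial degree ranking without recomputing degrees after each removal, which is a simplifying assumption.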

Experimental Protocols for Network Comparison

Rigorous comparison of network architectures requires standardized methodologies and statistical approaches.

Degree Distribution Fitting Protocol

- Data Preparation: Convert raw network data to simple graphs, discarding graphs that are too dense or sparse to be plausibly scale-free [1]

- Parameter Estimation: Identify the best-fitting power law in the degree distribution's upper tail using maximum likelihood estimation (MLE)

- Goodness-of-Fit Testing: Evaluate statistical plausibility using appropriate goodness-of-fit tests

- Model Comparison: Compare power law to alternative distributions (log-normal, exponential, stretched exponential) using normalized likelihood ratio tests [1]

- Validation: Assess robustness under alternative criteria and compare results using standard information criteria
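The parameter estimation and goodness-of-fit steps can be sketched as follows, assuming a continuous power law above a known lower cutoff `xmin`. The estimator is the standard continuous MLE, alpha = 1 + n / sum(ln(x_i / xmin)), and the fit quality is summarized by the Kolmogorov-Smirnov (KS) distance between the empirical and fitted tail CDFs. Integer-valued degrees call for the discrete likelihood (or the common approximation that replaces xmin with xmin - 1/2 inside the logarithm), and in practice xmin is usually chosen by scanning candidates and minimizing the KS distance. The function name, synthetic data, and seed are hypothetical.

```python
import math
import random

def fit_power_law_tail(data, xmin):
    """Continuous power-law MLE on the tail x >= xmin, plus KS distance."""
    tail = sorted(x for x in data if x >= xmin)
    n = len(tail)
    alpha = 1.0 + n / sum(math.log(x / xmin) for x in tail)
    ks = 0.0
    for i, x in enumerate(tail):
        model_cdf = 1.0 - (x / xmin) ** (1.0 - alpha)
        # Compare against the empirical CDF just before and just after x.
        ks = max(ks, abs(model_cdf - i / n), abs(model_cdf - (i + 1) / n))
    return alpha, ks, n

# Synthetic data drawn from a known power law by inverse-transform sampling.
rng = random.Random(42)
true_alpha, xmin = 2.5, 1.0
sample = [xmin * (1.0 - rng.random()) ** (-1.0 / (true_alpha - 1.0))
          for _ in range(2000)]

alpha_hat, ks, n_tail = fit_power_law_tail(sample, xmin)
print(f"alpha_hat={alpha_hat:.3f}  KS={ks:.4f}  n_tail={n_tail}")
```

A formal plausibility test would additionally compare the observed KS distance with KS distances from synthetic data sets generated under the fitted model; that bootstrap step is omitted here for brevity.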

Statistical Comparison Framework

The critical evaluation of scale-free versus exponential structure requires:

- Severe Testing: Applying stringent statistical criteria that unify common variations in scale-free definitions [1]

- Tail Analysis: Focusing on the upper tail of degree distributions where power laws become visible, using iterative approaches to determine optimal threshold values [32]

- Multiple Distribution Comparison: Systematically comparing power law fits to log-normal, exponential, and stretched exponential distributions using information criteria like BIC [32]
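A minimal sketch of the model comparison step is given below for one pair of candidates, a continuous power law versus a shifted exponential, both fitted by maximum likelihood on the same tail. It computes the log-likelihood ratio R, its normalized form R / (sigma * sqrt(n)), a two-sided p-value from the normal approximation, and the BIC of each model (k ln n - 2 ln L, with k = 1 free parameter each). The function name, the synthetic data, and the restriction to two candidate distributions are assumptions made for illustration.

```python
import math
import random

def compare_power_law_vs_exponential(tail, xmin):
    """Likelihood-ratio and BIC comparison on data with all x >= xmin."""
    n = len(tail)
    alpha = 1.0 + n / sum(math.log(x / xmin) for x in tail)  # power-law MLE
    lam = 1.0 / (sum(tail) / n - xmin)                        # exponential MLE
    ll_pl = [math.log((alpha - 1.0) / xmin) - alpha * math.log(x / xmin)
             for x in tail]
    ll_ex = [math.log(lam) - lam * (x - xmin) for x in tail]
    diffs = [a - b for a, b in zip(ll_pl, ll_ex)]
    R = sum(diffs)  # R > 0 favors the power law, R < 0 the exponential
    mean = R / n
    sigma = math.sqrt(sum((d - mean) ** 2 for d in diffs) / n)
    return {
        "R": R,
        "normalized_R": R / (sigma * math.sqrt(n)),
        "p_value": math.erfc(abs(R) / (math.sqrt(2.0 * n) * sigma)),
        "bic_power_law": math.log(n) - 2.0 * sum(ll_pl),
        "bic_exponential": math.log(n) - 2.0 * sum(ll_ex),
    }

rng = random.Random(7)
xmin = 1.0
exp_tail = [xmin + rng.expovariate(0.5) for _ in range(1000)]
pl_tail = [xmin * (1.0 - rng.random()) ** (-1.0 / 1.5) for _ in range(1000)]

res_exp = compare_power_law_vs_exponential(exp_tail, xmin)
res_pl = compare_power_law_vs_exponential(pl_tail, xmin)
print(res_exp)
print(res_pl)
```

A small p-value indicates that the sign of R is unlikely to be a chance fluctuation; when the p-value is large, neither model is favored and the data cannot distinguish them.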

Diagram 2: Statistical framework for comparing network architectures and classifying degree distributions.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Biological Network Construction and Analysis

| Resource | Type | Function | Representative Examples |
|---|---|---|---|
| Network Data Repositories | Data Source | Provide pre-compiled network data for analysis | Index of Complex Networks (ICON), STRING, BioGRID |
| Statistical Testing Frameworks | Analytical Tool | Evaluate distribution fits and compare models | Maximum likelihood estimation (MLE), Bayesian Information Criterion (BIC) |
| Network Generation Models | Modeling Framework | Create synthetic networks with specific properties | Barabási-Albert Model (scale-free), Randomly Stopped Linking Model [32] |
| Omics Data Integration Platforms | Data Integration | Combine multiple biological data types for network inference | Multi-omics integration tools [30] |
| Specialized Network Analysis Software | Analytical Tool | Compute network metrics and visualize structures | ProbINet (probabilistic network analysis) [33] |
| Bipartite Configuration Models | Modeling Framework | Generate bipartite networks from prescribed degree distributions | Bipartite Configuration Model [32] |

The construction of biological networks from diverse data sources involves critical choices regarding projection methods and mathematical representations that significantly impact research outcomes. Empirical evidence from large-scale network analyses challenges long-held assumptions about the prevalence of scale-free architecture in biological systems, demonstrating that strongly scale-free structure is rare and that exponential or log-normal distributions often provide better fits to real biological network data [1].

These findings have profound implications for network-based approaches in drug development and biomedical research. Rather than presuming universal scale-free properties, researchers should employ rigorous statistical frameworks to determine the actual architectural principles governing their specific biological networks. Future research directions should prioritize developing more nuanced network models that reflect the actual structural diversity observed in biological systems, moving beyond simplistic dichotomies to embrace the complex architectural patterns that underlie biological function.

Exponential Random Graph Models (ERGMs) represent a powerful class of statistical models for analyzing network structure and formation processes. These models enable researchers to move beyond descriptive network metrics to rigorous statistical inference about the local selection forces that shape global network topology [34]. In the context of comparative biological network research, ERGMs provide a principled framework for testing hypotheses about the mechanisms driving network formation and for quantifying differences between exponential (or Erdős-Rényi) and scale-free network architectures. Unlike conventional statistical methods that assume independence of observations, ERGMs explicitly account for the inherent dependencies in relational data, making them particularly suitable for modeling complex biological systems where ties between entities (proteins, genes, metabolites) are intrinsically interrelated [35].

The fundamental principle underlying ERGMs is that an observed network can be viewed as one realization from a population of possible networks with similar features. The model specifies the probability of observing a particular network configuration as a function of network statistics that capture relevant structural features [22]. In biological contexts, these features may include degree distributions, homophily (preferential connection between nodes with similar attributes), transitivity (the friend-of-a-friend effect), or other higher-order structures relevant to biological function. This tutorial provides a comprehensive workflow for ERGM application, with particular emphasis on their utility for comparing exponential and scale-free biological networks in drug development research.

Theoretical Foundation of Exponential Random Graph Models

Mathematical Formulation

ERGMs belong to the exponential family of distributions and specify the probability of a network Y taking a particular configuration y as:

[ P_{\theta,\mathcal{Y}}(Y = y) = \frac{\exp\{\theta^{T} g(y)\}}{\kappa(\theta,\mathcal{Y})}, \quad y \in \mathcal{Y} ]

where:

- (\theta) is a vector of model coefficients

- (g(y)) is a vector of network statistics that capture relevant structural features

- (\kappa(\theta,\mathcal{Y}) = \sum_{z \in \mathcal{Y}} \exp\{\theta^{T} g(z)\}) is the normalizing constant ensuring the probabilities sum to 1

- (\mathcal{Y}) represents the set of all possible networks with the given node set [34]

The model can be expanded to incorporate covariate information X, in which case the statistics become (g(y,X)). The normalizing constant presents computational challenges because the number of possible networks grows very rapidly with the number of nodes: an undirected network on (n) nodes has (2^{n(n-1)/2}) possible configurations, making direct calculation infeasible for networks of even moderate size [36].
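For very small networks the ERGM probability can be evaluated exactly by enumerating every possible graph, which makes the role of the normalizing constant concrete. The sketch below assumes an undirected network on 4 nodes with two statistics, edge count and triangle count; the coefficient values in `theta` are hypothetical and chosen only for illustration.

```python
import math
from itertools import combinations, product

def network_stats(edges, n):
    """g(y): (number of edges, number of triangles) of an undirected graph."""
    edge_set = set(edges)
    triangles = sum(
        1 for a, b, c in combinations(range(n), 3)
        if (a, b) in edge_set and (a, c) in edge_set and (b, c) in edge_set
    )
    return (len(edge_set), triangles)

def ergm_distribution(n, theta):
    """Exact ERGM probabilities by brute-force enumeration of all graphs."""
    dyads = list(combinations(range(n), 2))
    weights = {}
    for mask in product((0, 1), repeat=len(dyads)):
        edges = tuple(d for d, bit in zip(dyads, mask) if bit)
        g = network_stats(edges, n)
        weights[edges] = math.exp(sum(t * s for t, s in zip(theta, g)))
    kappa = sum(weights.values())  # normalizing constant
    return {y: w / kappa for y, w in weights.items()}, kappa

n = 4
theta = (-1.0, 0.5)  # hypothetical (edge, triangle) coefficients
probs, kappa = ergm_distribution(n, theta)

complete = tuple(combinations(range(n), 2))
print(f"networks enumerated: {len(probs)}")
print(f"P(empty graph)    = {probs[()]:.5f}")
print(f"P(complete graph) = {probs[complete]:.5f}")
```

With 4 nodes there are only 2^6 = 64 graphs, but the count reaches 2^45 (about 3.5e13) at 10 nodes, which is why practical ERGM fitting relies on approximation rather than enumeration. Setting the triangle coefficient to zero reduces the model to independent edges with probability e^θ / (1 + e^θ), the Erdős-Rényi special case.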

Interpretation through Change Statistics