Evaluating Therapeutic Protein Engineering Platforms: A Guide to AI, Automation, and Validation for Researchers

Abstract

This article provides a comprehensive evaluation of modern therapeutic protein engineering platforms for researchers, scientists, and drug development professionals. It explores the foundational technologies driving this rapidly evolving field, from AI-driven design to automated laboratory systems. The scope includes a detailed analysis of methodological approaches, strategies for troubleshooting and optimization, and rigorous frameworks for platform validation and comparison. By synthesizing the latest advancements and persistent challenges, this review serves as a strategic guide for selecting and implementing cutting-edge protein engineering solutions to accelerate the development of next-generation biologics.

The New Landscape of Therapeutic Protein Engineering: Core Technologies and Market Dynamics

Defining Modern Protein Engineering Platforms

Modern protein engineering platforms combine artificial intelligence, biofoundry automation, and high-throughput experimentation. Where rational design and directed evolution were once applied in isolation, these integrated systems run AI-driven, autonomous workflows that optimize protein therapeutics through iterative design-build-test-learn (DBTL) cycles [1] [2]. This shift coincides with strong market growth: the protein/antibody engineering sector is projected to expand from USD 3.5 billion in 2025 to USD 15.8 billion by 2035 [3].

Comparative Analysis of Platform Technologies

Table 1: Quantitative Performance Metrics of Modern Protein Engineering Platforms

| Platform / Approach | Engineering Efficiency | Key Performance Metrics | Therapeutic Application Examples | Experimental Validation |
|---|---|---|---|---|
| AI-Powered Autonomous Platforms | 90-fold improvement in substrate preference; 26-fold activity enhancement in 4 weeks [2] | Requires <500 variants; 4 rounds over 4 weeks [2] | Halide methyltransferase, phytase engineering [2] | High-throughput screening; automated characterization [2] |
| Rational Protein Design | 59.7% market share as preferred approach [3] | nM binding affinity achieved [1] | Antibodies to insulin and acyl-carrier protein [1] | Yeast display; flow cytometry [1] |
| Directed Evolution | Historically successful (Nobel Prize 2018) [4] | Varies by target and library size | Enzyme optimization [1] | Display technologies; survival assays [1] |
| Computational Library Design | 8-fold increased discovery efficiency [1] | 55-59.6% variants above wild type baseline [2] | Fibronectin domains, affibody scaffolds [1] | Phage display; yeast two-hybrid screening [1] |

Table 2: Technology Readiness Levels Across Platform Types

| Platform Characteristic | AI-Powered Autonomous | Rational Design | Directed Evolution | Library-Based Approaches |
|---|---|---|---|---|
| Therapeutic Validation | Early-stage proof of concept [2] | Clinically validated [5] | Multiple approved therapies [5] | Numerous clinical candidates [1] |
| Automation Integration | Fully integrated [2] | Partial integration [1] | Limited integration [1] | Moderate integration [1] |
| AI/ML Implementation | Core component [2] | Increasingly AI-informed [1] | Limited ML guidance [1] | ML for library design [1] |
| Throughput Capacity | Fewer than 500 variants per campaign [2] | Medium throughput [1] | High throughput possible [1] | Very high throughput [1] |
| Data Generation Quality | Quantitative performance mapping [1] | Structure-function insights [1] | Functional optimization data [1] | Sequence-performance relationships [1] |

Experimental Protocols and Methodologies

Autonomous Engineering Workflow

The most advanced platforms implement fully automated DBTL cycles incorporating several core methodologies:

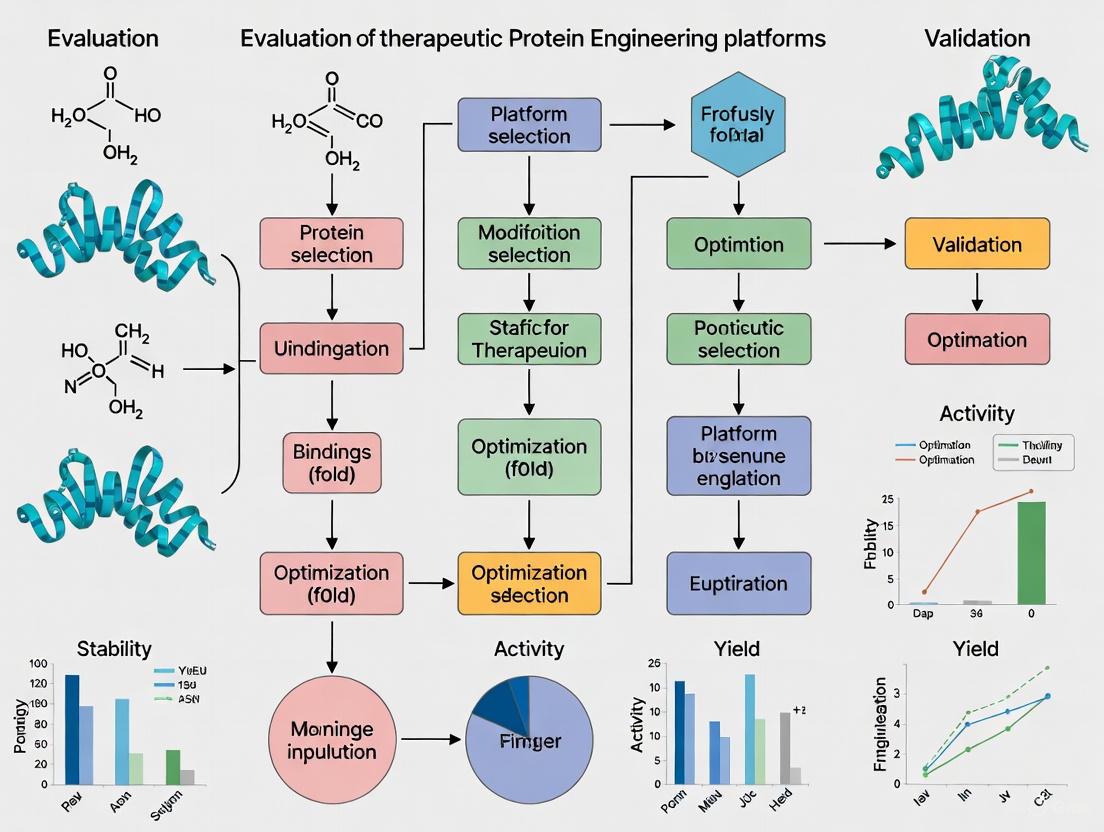

Diagram 1: Autonomous Protein Engineering Workflow

Key Methodological Components

Machine Learning-Guided Library Design: Initial variant libraries are designed using protein large language models (LLMs) like ESM-2 combined with epistasis models (EVmutation) to maximize diversity and quality. This approach generated 180 initial variants each for Arabidopsis thaliana halide methyltransferase (AtHMT) and Yersinia mollaretii phytase (YmPhytase), with 55-59.6% performing above wild-type baseline [2].

High-Fidelity Assembly Mutagenesis: Automated platforms employ HiFi-assembly based mutagenesis that eliminates intermediate sequence verification steps, achieving approximately 95% accuracy while enabling continuous workflow operation. This method allows generation of higher-order mutants through combinations of single mutants from initial libraries [2].
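The combination of single mutants into higher-order mutants can be illustrated with a short sketch. This is not the platform's implementation: the mutation notation (`K2R` style), the helper names, and the toy parent sequence are all assumptions made for illustration.

```python
from itertools import combinations


def parse_mutation(mut):
    """Split 'K2R' into (wild-type residue, 1-based position, new residue)."""
    return mut[0], int(mut[1:-1]), mut[-1]


def combine_mutations(beneficial, order=2):
    """Yield tuples of `order` single mutations that hit distinct positions."""
    ordered = sorted(beneficial, key=lambda m: parse_mutation(m)[1])
    for combo in combinations(ordered, order):
        positions = {parse_mutation(m)[1] for m in combo}
        if len(positions) == order:  # skip two substitutions at the same site
            yield combo


def apply_mutations(sequence, mutations):
    """Return the sequence carrying all mutations, checking wild-type residues."""
    residues = list(sequence)
    for mut in mutations:
        wt, pos, new = parse_mutation(mut)
        if residues[pos - 1] != wt:
            raise ValueError(f"{mut}: expected {wt} at position {pos}")
        residues[pos - 1] = new
    return "".join(residues)


parent = "MKTAYIAKQR"  # toy parent sequence, not a real enzyme
singles = ["K2R", "A4G", "A4S", "Q9E"]  # hypothetical beneficial single mutants
doubles = list(combine_mutations(singles, order=2))
variants = [apply_mutations(parent, d) for d in doubles]
```

Raising `order` enumerates triple and higher mutants the same way; in practice the number of combinations grows quickly, so a model or a fitness cutoff would be needed to decide which ones to build.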

Integrated Biofoundry Operations: Advanced platforms like the Illinois Biological Foundry for Advanced Biomanufacturing (iBioFAB) implement seven automated modules covering mutagenesis PCR, DNA assembly, transformation, colony picking, plasmid purification, protein expression, and enzyme assays. This modular approach enables robust operation with minimal human intervention [2].

Quantitative Sequence-Performance Mapping: Unlike traditional selection-based methods, modern platforms emphasize quantitative characterization to build comprehensive sequence-performance landscapes. This approach elucidates complex relationships between protein sequence and performance metrics including binding affinity, catalytic efficiency, and stability [1].
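To make the DBTL cycle concrete, the following toy loop runs four rounds of design, build/test, and learn against a simulated assay. Everything here is an assumption for illustration: `toy_assay` stands in for real construction and measurement, the "learn" step is a plain greedy pick rather than a retrained machine learning model, and the sequences are arbitrary.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def toy_assay(seq, target):
    """Stand-in for build + test: fraction of positions matching a hidden optimum."""
    return sum(a == b for a, b in zip(seq, target)) / len(target)


def propose(parent, n, rng):
    """Design step: n distinct random single-site variants of the current parent.

    n must not exceed 19 * len(parent), the number of possible single mutants.
    """
    variants = set()
    while len(variants) < n:
        pos = rng.randrange(len(parent))
        aa = rng.choice(AMINO_ACIDS)
        if aa != parent[pos]:
            variants.add(parent[:pos] + aa + parent[pos + 1:])
    return sorted(variants)


def dbtl(parent, target, rounds=4, per_round=100, seed=0):
    """Run a fixed number of DBTL rounds and return (round, parent, fitness) records."""
    rng = random.Random(seed)
    history = []
    for rnd in range(1, rounds + 1):
        designs = propose(parent, per_round, rng)               # Design
        results = {v: toy_assay(v, target) for v in designs}    # Build + Test (simulated)
        best = max(results, key=results.get)                    # Learn (greedy placeholder)
        if results[best] > toy_assay(parent, target):
            parent = best
        history.append((rnd, parent, toy_assay(parent, target)))
    return history


history = dbtl("AAAAAAAAAA", "MKTAYIAKQR")
for rnd, seq, fitness in history:
    print(rnd, seq, f"{fitness:.2f}")
```

With the defaults the loop evaluates 400 variants in total, in the same range as the fewer-than-500 budget reported in [2]; the fitness of the carried-forward parent can only stay flat or rise from round to round.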

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagent Solutions for Protein Engineering Platforms

| Reagent / Material | Function in Workflow | Specific Application Examples | Performance Considerations |
|---|---|---|---|
| CRISPR Protein Libraries | Gene editing and variant generation | Therapeutic antibody optimization [6] | Editing efficiency, specificity [6] |
| Display Technologies | In vitro selection of binders | Yeast display, phage display [1] | Throughput, diversity representation [1] |
| Cell-Free Expression Systems | Rapid protein production | High-throughput screening [2] | Yield, folding efficiency [2] |
| Stable Cell Lines | Recombinant protein production | Therapeutic protein manufacturing [7] | Titers, product quality [7] |
| Specialized Enzymes | DNA assembly and modification | HiFi assembly mutagenesis [2] | Fidelity, efficiency [2] |
| Biosensors & Reporters | Functional characterization | Enzyme activity assays [2] | Sensitivity, dynamic range [2] |
| AI Training Datasets | Machine learning model development | Protein language models [2] | Size, quality, diversity [2] |

Performance Benchmarking and Data Integrity

Modern platforms demonstrate remarkable efficiency gains compared to traditional methods. The autonomous engineering platform described in Nature Communications achieved a 90-fold improvement in substrate preference and 16-fold improvement in ethyltransferase activity for AtHMT, along with a 26-fold improvement in neutral pH activity for YmPhytase. Critically, this was accomplished in just four rounds over four weeks while requiring construction and characterization of fewer than 500 variants for each enzyme [2].

Quantitative mapping approaches have shown that designed libraries can yield 55-59.6% of variants performing above wild-type baselines, with 23-50% showing significant improvements [2]. Rational library design methods have demonstrated 8-fold increased discovery efficiency compared to naive designs when informed by large datasets of binder sequences [1].
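Hit-rate figures of this kind come from simple ratios against the wild-type measurement. The sketch below shows one way to compute them; the 2-fold cutoff for a "significant" improvement is an assumption, since the threshold used in [2] is not stated here, and the activity values are made up.

```python
def summarize_library(activities, wild_type_activity, fold_cutoff=2.0):
    """Summarize a screened library relative to the wild-type activity."""
    if wild_type_activity <= 0:
        raise ValueError("wild_type_activity must be positive")
    folds = [a / wild_type_activity for a in activities]
    n = len(folds)
    return {
        "fraction_above_wt": sum(f > 1.0 for f in folds) / n,
        "fraction_above_cutoff": sum(f >= fold_cutoff for f in folds) / n,
        "best_fold": max(folds),
    }


# Hypothetical activities in arbitrary units; wild type measured at 1.0
stats = summarize_library([0.5, 1.5, 2.0, 4.0], wild_type_activity=1.0)
print(stats)
```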

The integration of computational and experimental approaches creates powerful feedback loops. As noted in recent research, "computational design of combinatorial libraries aids the experimental search of sequence space, and high-throughput, high-integrity experimental data inform computational design" [1]. This synergy addresses the fundamental challenges of protein sequence space - its immensity, sparsity, and ruggedness - by creating increasingly accurate predictive models.

Future Directions and Platform Evolution

The trajectory of protein engineering platforms points toward increased autonomy, broader generalizability, and deeper integration of multimodal data. Platforms are evolving from target-specific solutions to generalizable systems that require only an input protein sequence and quantifiable fitness measurement [2]. The growing adoption of benchmarking tournaments, like those organized by Align, creates community standards for evaluating platform performance across diverse protein engineering challenges [8].

The therapeutic protein engineering landscape continues to expand with emerging opportunities in multispecific antibodies, antibody-drug conjugates, extracellular protein degraders, and non-oncology applications [9]. As platforms mature, their impact is extending beyond optimized single proteins to encompass entire therapeutic development workflows, potentially reducing development timelines and costs while increasing success rates for novel biologic therapeutics [5].

The Rise of AI and Machine Learning in Protein Design

The field of therapeutic protein engineering is undergoing a profound transformation, driven by the integration of artificial intelligence (AI) and machine learning (ML). Conventional protein engineering methods, while successful, are often constrained by their reliance on natural templates and labor-intensive experimental cycles [10]. AI-driven approaches are now overcoming these limitations by enabling the computational design of novel proteins with customized functions, significantly accelerating the exploration of the vast, untapped protein functional universe [10]. This guide provides an objective comparison of leading AI-powered protein design platforms, evaluates their performance against classical methods, and details the experimental protocols and resources essential for their evaluation in therapeutic development.

The AI-Driven Paradigm Shift in Protein Engineering

Traditional protein engineering strategies, such as directed evolution, have proven powerful for optimizing existing proteins but perform a local search within the protein functional universe. They require a natural protein as a starting point and involve the construction and experimental screening of immense variant libraries, a process that is costly, time-consuming, and confined to incremental improvements near the parent scaffold [10]. De novo protein design, which aims to create proteins from first principles, has historically relied on physics-based modeling tools like Rosetta. These tools use force fields and conformational sampling to design novel proteins, such as the Top7 fold, but can be hampered by approximate energy calculations and high computational expense [10].

Modern AI-augmented strategies complement and extend these methods. By training on vast biological datasets, machine learning models learn high-dimensional mappings between protein sequence, structure, and function [10]. This allows for the rapid generation of novel protein sequences and the inverse design of sequences that fold into predetermined, stable structures with desired functions [11] [12]. Frameworks like ProteinMPNN use deep learning to generate sequences based on structural inputs, resulting in proteins with improved solubility, stability, and binding energy compared to those created by conventional protein engineering [11].

Comparative Analysis of Protein Design Platforms

The performance of AI-driven platforms is best evaluated through rigorous benchmarking against traditional methods and each other. Key evaluation metrics include predicted binding energy for affinity, solubility and stability scores, and the ability to generalize to unseen protein targets without overfitting.

Table 1: Comparative Performance of AI and Traditional Protein Design Methods

| Design Method | Key Features | Reported Advantages | Key Limitations |
|---|---|---|---|
| AI/Deep Learning (e.g., ProteinMPNN) [11] | Uses structural data and neural networks to generate novel sequences. | Creates proteins with increased solubility and stability; expands accessible sequence space; faster design cycle. | Performance can be dependent on the quality and diversity of training data. |
| Directed Evolution [10] | Iterative cycles of mutation and high-throughput screening of variants based on a natural parent protein. | Proven experimental track record; does not require prior structural knowledge. | Explores a narrow sequence space; labor-intensive and time-consuming; tied to evolutionary history. |
| Physics-Based Design (e.g., Rosetta) [10] | Uses force fields and fragment assembly to find low-energy protein conformations. | Can create novel folds (e.g., Top7); versatile for various design goals. | Computationally expensive; approximate force fields can lead to misfolding; limited throughput. |

Table 2: Performance of ProteinMPNN-Designed Synthetic Binding Protein Scaffolds [11]

| Protein Scaffold | Key Therapeutic Application | Reported Improvement Over Conventional Counterparts |
|---|---|---|
| Fab | Antibody therapies | Improved binding energy or stability |
| scFv | Antibody therapies | Improved binding energy or stability |
| Diabody | Antibody therapies | Improved binding energy or stability |
| Affilin | Smaller synthetic alternative to antibodies | Improved binding energy or stability |
| Repebody | Smaller synthetic alternative to antibodies | Improved binding energy or stability |
| Neocarzinostatin-based binder | Anticancer drug delivery | Improved binding energy or stability |
| CI2-based binder | Synthetic design for stability and binding | Improved binding energy or stability |
| Evibody | Synthetic design for stability and binding | Improved binding energy or stability |

A critical consideration for AI models is their generalizability. A study of the antibody-specific AI model Graphinity found that the model appeared highly accurate under standard evaluation. However, under stricter evaluations that prevented similar antibodies from appearing in both training and test sets, its performance dropped by over 60% [13]. This indicates that models can overfit to limited data rather than learning underlying principles. Research suggests that robust AI performance likely requires datasets on the order of at least 90,000 experimentally measured mutations, far exceeding the few hundred found in many current datasets [13].
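The stricter evaluation described above amounts to splitting data by sequence cluster rather than by individual entry. The sketch below illustrates the idea under loud assumptions: it uses Python's `difflib.SequenceMatcher` ratio as a crude stand-in for a proper sequence identity measure, a greedy clustering against cluster representatives, and toy sequences. It is not the procedure used in [13].

```python
from difflib import SequenceMatcher


def similarity(a, b):
    """Rough similarity in [0, 1]; a placeholder for real sequence identity."""
    return SequenceMatcher(None, a, b, autojunk=False).ratio()


def greedy_clusters(seqs, threshold=0.8):
    """Assign each sequence to the first cluster whose representative is similar enough."""
    clusters = []  # each cluster is a list; its first member is the representative
    for s in seqs:
        for cluster in clusters:
            if similarity(s, cluster[0]) >= threshold:
                cluster.append(s)
                break
        else:
            clusters.append([s])
    return clusters


def cluster_split(seqs, test_fraction=0.2, threshold=0.8):
    """Split so that every cluster lands entirely in train or entirely in test."""
    clusters = sorted(greedy_clusters(seqs, threshold), key=len)
    target = test_fraction * len(seqs)
    train, test = [], []
    for cluster in clusters:
        destination = test if len(test) + len(cluster) <= target else train
        destination.extend(cluster)
    return train, test


seqs = ["AAAAAAAAAA", "AAAAAAAAAC", "CCCCCCCCCC", "CCCCCCCCCD", "GGGGGGGGGG"]
train, test = cluster_split(seqs, test_fraction=0.2, threshold=0.8)
```

Because membership is checked only against each cluster's representative, this greedy scheme does not strictly guarantee that no train/test pair exceeds the threshold; dedicated clustering tools handle that more carefully.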

Essential Research Reagent Solutions

Advancing AI-driven protein design from computation to clinic requires a suite of specialized research reagents and platforms for experimental validation.

Table 3: Key Research Reagent Solutions for Experimental Validation

| Reagent / Platform | Function in Protein Engineering |
|---|---|
| SUREtechnology Platform [14] | A platform for precise modification of therapeutic proteins to enhance stability, efficacy, and manufacturability. |
| Protein Engineering Foundry [14] | An integrated laboratory that enables rapid, high-throughput testing of new protein designs, integrating AI across all stages of protein creation. |
| High-Throughput Screening Systems | Automated systems for rapidly testing thousands of protein variants for binding, stability, and function. |

Experimental Protocols for Validating AI-Designed Proteins

To ensure AI-designed proteins meet therapeutic goals, a multi-stage validation protocol is essential. The workflow below outlines the key stages from computational design to functional validation.

Stage 1: Computational Design and In Silico Analysis

- Objective: Generate and select lead protein sequences computationally.
- Protocol:
  - Input Design Goal: Define the target structure or function, such as a binding pocket for a specific antigen [11] [10].
  - Sequence Generation: Use an AI model (e.g., ProteinMPNN) to generate thousands of candidate sequences that fulfill the input structural constraints [11].
  - In Silico Filtering: Analyze candidates using bioinformatics tools to predict and select for high solubility, stability, and low aggregation propensity [11] [15]. Molecular dynamics simulations can further assess folding stability.
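The in silico filtering step of Stage 1 can be pictured as thresholding and ranking on predicted properties. The sketch below is hypothetical throughout: the `Candidate` fields, the 0 to 1 score scales, and the cutoffs are illustrative assumptions, not outputs or defaults of any particular prediction tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    solubility: float   # predicted, higher is better (assumed 0-1 scale)
    stability: float    # predicted, higher is better (assumed 0-1 scale)
    aggregation: float  # predicted propensity, lower is better (assumed 0-1 scale)


def select_leads(candidates, min_solubility=0.6, min_stability=0.5,
                 max_aggregation=0.3, top_n=3):
    """Keep candidates passing every cutoff, ranked by stability then solubility."""
    passing = [
        c for c in candidates
        if c.solubility >= min_solubility
        and c.stability >= min_stability
        and c.aggregation <= max_aggregation
    ]
    passing.sort(key=lambda c: (c.stability, c.solubility), reverse=True)
    return passing[:top_n]


pool = [
    Candidate("design_01", solubility=0.82, stability=0.71, aggregation=0.12),
    Candidate("design_02", solubility=0.55, stability=0.90, aggregation=0.10),  # fails solubility
    Candidate("design_03", solubility=0.75, stability=0.88, aggregation=0.25),
    Candidate("design_04", solubility=0.91, stability=0.64, aggregation=0.45),  # fails aggregation
]
leads = select_leads(pool)
```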

Stage 2: Experimental Characterization and Validation

- Objective: Experimentally confirm the structure, stability, and function of expressed proteins.
- Protocol:
  - Gene Synthesis and Expression: Synthesize genes for selected sequences and express them in a suitable host system (e.g., E. coli, mammalian cells) [16].
  - Biophysical Characterization:
    - Size-Exclusion Chromatography (SEC): Assess protein monodispersity and identify soluble aggregates [15].
    - Dynamic Light Scattering (DLS): Determine hydrodynamic radius and detect larger aggregates not visible in SEC.
    - Surface Plasmon Resonance (SPR): Quantify binding affinity (KD) and kinetics (kon, koff) to the target antigen [13] [17].
  - Functional Assays: Perform cell-based assays relevant to the therapeutic mechanism, such as neutralizing a virus or inhibiting a receptor signaling pathway [16].
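For the SPR measurement in Stage 2, the three reported quantities are linked by KD = koff / kon for a simple 1:1 interaction. The short sketch below shows that relationship and the resulting equilibrium occupancy; the function names and the example rate constants are illustrative assumptions, not measured values.

```python
def dissociation_constant(k_on, k_off):
    """K_D in M from k_on in 1/(M*s) and k_off in 1/s, assuming 1:1 binding."""
    if k_on <= 0:
        raise ValueError("k_on must be positive")
    return k_off / k_on


def fraction_bound(concentration, k_d):
    """Equilibrium fraction of binding sites occupied at a free analyte concentration (M)."""
    return concentration / (concentration + k_d)


# Hypothetical kinetics: k_on = 1e5 1/(M*s), k_off = 1e-4 1/s
k_d = dissociation_constant(1e5, 1e-4)   # about 1e-9 M, i.e. roughly 1 nM
half_occupancy = fraction_bound(k_d, k_d)  # occupancy is 0.5 when concentration equals K_D
```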

The challenge of data diversity is a key bottleneck in developing generalizable AI models, as illustrated in the following diagram.

Market Landscape and Strategic Outlook

The AI protein design market is experiencing explosive growth, projected to grow from $1.5 billion in 2025 to $7 billion by 2033, a compound annual growth rate of 25% [18]. This growth is concentrated in therapeutic protein development, particularly for novel antibodies in oncology, driven by reduced design costs and faster discovery cycles [18]. Key players include Generate:Biomedicines and Ginkgo Bioworks, which are leveraging proprietary algorithms and high-throughput foundries [14] [18]. The market is characterized by a high level of mergers and acquisitions as large biopharma companies seek to acquire AI capabilities [18].

Critical Challenges and Future Directions

Despite the promise, several challenges remain:

- Data Scarcity and Bias: As highlighted by the Graphinity study, current experimental datasets are too small and lack diversity. For instance, over half the mutations in one major database are substitutions to a single amino acid, alanine [13]. This biases models and limits generalizability.

- Validation Bottleneck: Computational predictions must be confirmed experimentally. The process of expressing, purifying, and characterizing proteins remains a throughput bottleneck, though automated foundries aim to address this [14].

- Immunogenicity Prediction: Accurately predicting whether a novel protein will trigger an unwanted immune response in humans is complex and remains a significant hurdle for clinical translation [15] [16].

Future progress hinges on generating larger and more diverse experimental datasets, developing more robust and generalizable AI models through community blind challenges, and further integrating AI with automated experimental workflows to create closed-loop design-build-test cycles [13] [10].

Therapeutic protein engineering represents a paradigm shift in modern medicine, transforming the treatment landscape for a diverse spectrum of diseases. By deliberately modifying protein structures—enhancing their affinity, stability, pharmacokinetics, and targetability—researchers can create highly specific, potent, and adaptable biotherapeutics [15]. The global protein engineering market, valued at USD 3.08 billion in 2024, is experiencing explosive growth, projected to reach USD 13.84 billion by 2034 at a CAGR of 16.27% [19]. This expansion is fundamentally driven by the urgent, unmet clinical needs in two major therapeutic areas: oncology and rare diseases. This guide objectively compares how distinct market drivers, scientific challenges, and engineering strategies are shaping the development of protein-based therapeutics for these divergent fields, providing a framework for platform evaluation.

Quantitative Market Landscape

The financial and strategic investment in cell and protein therapies underscores their clinical and commercial significance. The table below summarizes key quantitative metrics that define the current and projected market landscape for these therapeutic areas.

Table 1: Key Market Drivers and Investment Intelligence (2025-2033)

| Metric | Oncology | Rare Diseases |
|---|---|---|
| 2025 Market Share (Cell Therapy) | $8.1 Billion (54% of total market) [20] | $3.7 Billion (25% of total market) [20] |
| Primary Growth Driver | High unmet need, transformative potential of cell therapies (e.g., CAR-T), and high prevalence [20] | Significant investment ($3.7B 2024-2025) and regulatory incentives like orphan drug designations [20] |
| Investment Allocation (2025) | 54% of all cell therapy investments [20] | Attracted $3.7 billion between 2024-2025 [20] |
| Clinical Pipeline | 34 pivotal trials ongoing in 2025 [20] | 19 new orphan cell therapy designations (2024-2025) [20] |
| Representative Companies | Novartis, Gilead (Kite), BMS [20] | Bluebird Bio, Orchard Therapeutics, Vertex [20] |
| Market Valuation (Protein Drugs) | Largest application segment (28.2% share in 2025) [21] | Key area for biologics development and personalized medicine [22] |

Comparative Analysis of Engineering Platforms and Methodologies

The distinct pathological mechanisms and patient populations in oncology versus rare diseases demand specialized engineering approaches. The following section compares core platforms and their applications, supported by experimental data and workflows.

Established Protein Engineering Platforms

Table 2: Comparison of Established Protein Engineering Platforms

| Platform/Strategy | Core Principle | Key Advantages | Oncology Application | Rare Disease Application |
|---|---|---|---|---|
| Monoclonal Antibodies (mAbs) | High-affinity binding to specific antigens using IgG or fragments [23] | High specificity, established manufacturing, long half-life (FcRn) [23] | Trastuzumab (HER2+ breast cancer); Bevacizumab (anti-VEGF) [24] [23] | Pembrolizumab (immune dysregulation); engineered mAbs for rare autoimmune conditions [21] |
| Rational Protein Design | Structure-based, computational design of mutations for desired traits [24] | Precision engineering, improved stability, reduced immunogenicity [24] [15] | Insulin glargine (long-acting); Fc mutations (e.g., YTE, LS) to tune antibody half-life [15] | Engineered enzyme replacement therapies (ERTs) with enhanced stability for improved dosing regimens [15] |
| Directed Evolution | Mimics natural selection in lab; iterative random mutagenesis & screening [24] | No prior structural knowledge needed; can discover novel solutions [24] | Optimization of antibody affinity for tumor-associated antigens [24] | Engineering of novel enzymes with enhanced activity for substrate reduction therapy [24] |

Emerging and Specialized Platforms

Table 3: Emerging Platforms and Targeting Strategies

| Platform/Strategy | Core Principle | Key Advantages | Oncology Application | Rare Disease Application |
|---|---|---|---|---|
| Alternative Protein Scaffolds | Use of small, stable, non-antibody proteins (e.g., DARPins, Affibodies) as binding domains [23] | Small size for better tissue penetration; bacterial production; access unique epitopes [23] | MP0250 (bispecific DARPin anti-VEGF/HGF, Phase II); Angiocept (Adnectin, Phase II) [23] | Ecallantide (Kunitz domain, approved for hereditary angioedema) [23] |
| Ligand/Receptor Traps | Fusing extracellular domains of receptors to Fc to sequester pathogenic ligands [23] | High affinity and specificity; leverages native biology [23] | Aflibercept (Zaltrap, VEGF trap for colorectal cancer) [23] | FP-1039 (FGF trap, in clinical development) [23] |
| Bispecific Formats | Engineering proteins that bind two different antigens simultaneously [24] | Redirects immune cells to tumors; engages multiple signaling pathways [24] | Blinatumomab (BiTE, engages T-cells to CD19+ leukemia cells) [24] | Potential for engaging multiple disease-modifying targets in complex rare diseases |

Experimental Protocol: Engineering and Characterizing a Therapeutic Protein

The following workflow, common in both fields, outlines the key stages from design to functional validation.

Diagram 1: Protein Therapeutic Development Workflow

Detailed Experimental Methodologies:

Target Identification and Validation:

- Protocol: Target relevance is established using diseased vs. healthy human tissue samples via techniques like immunohistochemistry (IHC) and RNA sequencing. Functional validation employs gene knockdown (siRNA/shRNA) or knockout (CRISPR-Cas12) in disease-relevant in vitro and in vivo models to confirm the target's role in pathology [24] [25].

- Data Interpretation: A successful validation shows a strong, specific target expression in diseased cells and a significant phenotypic modification (e.g., reduced tumor growth, restored cellular function) upon target modulation.

Protein Design and Engineering:

- Rational Design: Requires a high-resolution protein structure (from X-ray crystallography or Cryo-EM). Computational tools like molecular dynamics simulations and docking algorithms (e.g., Rosetta, Schrödinger) are used to model and select point mutations for stability (e.g., SAP analysis), reduced immunogenicity, or altered half-life (e.g., Fc-FcRn engineering) [24] [15].

- Directed Evolution: A gene library is created via error-prone PCR or DNA shuffling. This library is expressed in a host system (e.g., E. coli, yeast), and variants with desired properties are isolated through multiple rounds of high-throughput screening (e.g., FACS for binding, growth selection for stability) [24].

In Vitro Characterization:

- Binding Affinity (SPR/BLI): The engineered protein is immobilized on a biosensor chip. A concentration series of the target analyte is flowed over the surface, and the binding kinetics (kon, koff, KD) are measured in real-time using Surface Plasmon Resonance (SPR) or Bio-Layer Interferometry (BLI).

- Thermal Stability (DSF/NanoDSF): In conventional DSF, a fluorescent dye that binds to hydrophobic regions exposed upon protein unfolding is added to the sample; nanoDSF instead tracks the protein's intrinsic tryptophan and tyrosine fluorescence, so no dye is required. In both cases the sample is heated gradually while the fluorescence is monitored. The midpoint of the unfolding transition (Tm) is reported, with a higher Tm indicating greater stability.
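One common way to read Tm off a melt curve is to locate the steepest part of the unfolding transition. The sketch below does this with finite differences on a synthetic sigmoidal curve; the curve shape, the 0.5 °C step, and the function name are assumptions for illustration, and real instrument software typically applies smoothing or model fitting instead.

```python
import math


def melting_temperature(temps, fluorescence):
    """Estimate Tm as the midpoint of the interval with the steepest signal rise."""
    best_slope, tm = float("-inf"), None
    for i in range(len(temps) - 1):
        slope = (fluorescence[i + 1] - fluorescence[i]) / (temps[i + 1] - temps[i])
        if slope > best_slope:
            best_slope, tm = slope, (temps[i] + temps[i + 1]) / 2
    return tm


# Synthetic two-state unfolding curve centered at 65 °C, sampled every 0.5 °C from 25 to 95 °C
temps = [25 + 0.5 * i for i in range(141)]
signal = [1 / (1 + math.exp(-(t - 65.0) / 2.0)) for t in temps]
tm = melting_temperature(temps, signal)  # lands within one sampling step of 65 °C
```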

In Vivo Efficacy and PK/PD:

- Protocol: The lead candidate is administered to disease-relevant animal models (e.g., patient-derived xenografts for oncology, genetic knockout models for rare diseases). Pharmacokinetics (PK) involves serial blood collection to measure drug concentration over time and calculate half-life (T½), Cmax, and AUC. Pharmacodynamics (PD) involves assessing biomarker changes and disease endpoints (e.g., tumor volume, biochemical correction) [23].

- Data Interpretation: Successful candidates show a favorable PK profile (e.g., long half-life) and a clear, dose-dependent efficacy signal on the disease phenotype with statistical significance (p < 0.05) compared to the control group.
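The PK parameters named above can be derived from a concentration-time series with a few lines of non-compartmental arithmetic. This is a minimal sketch on synthetic data: the linear trapezoidal rule, the three-point terminal fit, and the mono-exponential profile are simplifying assumptions, and validated PK software should be used for real studies.

```python
import math


def trapezoid_auc(times, conc):
    """AUC from the first to the last sample by the linear trapezoidal rule."""
    return sum((t2 - t1) * (c1 + c2) / 2
               for t1, t2, c1, c2 in zip(times, times[1:], conc, conc[1:]))


def terminal_half_life(times, conc, n_points=3):
    """Half-life from a log-linear least-squares fit to the last n_points samples."""
    t = times[-n_points:]
    y = [math.log(c) for c in conc[-n_points:]]
    t_mean, y_mean = sum(t) / len(t), sum(y) / len(y)
    slope = (sum((ti - t_mean) * (yi - y_mean) for ti, yi in zip(t, y))
             / sum((ti - t_mean) ** 2 for ti in t))
    return math.log(2) / -slope


def pk_summary(times, conc):
    """Collect Cmax, Tmax, AUC(0-last) and terminal half-life."""
    cmax = max(conc)
    return {
        "cmax": cmax,
        "tmax": times[conc.index(cmax)],
        "auc_0_last": trapezoid_auc(times, conc),
        "t_half": terminal_half_life(times, conc),
    }


# Synthetic profile: C(t) = 100 * exp(-0.1 * t), with t in hours
times = [0, 1, 2, 4, 8, 12, 24]
conc = [100 * math.exp(-0.1 * t) for t in times]
summary = pk_summary(times, conc)
```

For this synthetic curve the fitted half-life recovers ln(2)/0.1 hours, while the trapezoidal AUC slightly overestimates the true integral because the decay is convex between sparse sampling points.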

The Scientist's Toolkit: Essential Research Reagents

The following reagents and platforms are critical for executing the experimental protocols described above.

Table 4: Key Research Reagent Solutions for Protein Engineering

| Reagent/Platform | Function in R&D | Example Use-Case |
|---|---|---|
| Cas12 Protein | CRISPR-associated protein for precise gene editing [25] | Functional validation of novel oncology targets or creating isogenic cell lines for rare disease modeling [25]. |
| AI-Driven Design Platforms | Accelerates in silico prediction of optimal protein structures and mutations [22] [26]. | Generating novel protein scaffolds with enhanced stability or reduced immunogenicity prior to synthesis. |
| High-Throughput Screening Instruments | Automated systems for rapidly analyzing thousands of protein variants from directed evolution libraries [19]. | Identifying clones with the highest binding affinity or thermal stability from a large combinatorial library. |
| SPR/BLI Instruments | Label-free analysis of biomolecular interactions in real-time to determine binding kinetics [19]. | Characterizing the binding affinity (KD) of an engineered monoclonal antibody to its cancer antigen. |
| Stable Cell Line Systems | Genetically engineered host cells (e.g., CHO, HEK293) for consistent, high-yield production of therapeutic proteins [21]. | Manufacturing complex, post-translationally modified proteins like monoclonal antibodies or fusion proteins. |

Signaling Pathways and Therapeutic Intervention

The therapeutic modality is determined by the underlying pathogenic mechanism, which differs significantly between oncology and rare diseases. The diagram below illustrates key pathways and intervention points.

Diagram 2: Therapeutic Intervention by Disease Mechanism

- Oncology Interventions: Primarily aim to inhibit hyperactive or aberrant pathways driving cell proliferation and survival. For example, ligand traps like Aflibercept block VEGF signaling to inhibit tumor angiogenesis [23]. Bispecific T-cell engagers physically link cancer cells to immune cells, inducing apoptosis [24].

- Rare Disease Interventions: Often aim to replace or supplement a missing or non-functional protein. Enzyme Replacement Therapy (ERT) is a direct approach, supplying a functional, engineered version of the deficient enzyme to restore metabolic function [15] [21]. Emerging strategies also include using ligand traps to block pathological signaling, as seen in FP-1039, an FGF trap designed for cancers with FGFR1 amplification but illustrating a principle applicable to rare diseases [23].

The evaluation of therapeutic protein engineering platforms reveals a dynamic and diverging landscape driven by oncology and rare diseases. Oncology is characterized by a high-volume market, intense investment, and engineering strategies centered on potent target cell killing and immune system engagement. In contrast, the rare disease sector, while smaller, is rapidly growing and propelled by significant R&D funding and regulatory incentives, with engineering efforts focused on enzyme replacement, metabolic correction, and highly personalized approaches. For researchers and drug developers, this comparative analysis underscores that the optimal choice of a protein engineering platform—from mAbs and ligand traps to novel scaffolds and AI-driven design—is not one-size-fits-all but is fundamentally dictated by the specific biological mechanism, patient population, and commercial landscape of the intended therapeutic area.

Major Players and Competitive Landscape in 2025

The global protein engineering market is growing rapidly, fueled by the convergence of artificial intelligence (AI), advanced computational design, and high-throughput experimental technologies. In 2025, the market is characterized by a collaborative ecosystem in which established life science companies, specialized biotechnology innovators, and contract research organizations (CROs) compete and partner to drive the next wave of biologic therapeutics. The market size, estimated at approximately $3.1 billion in 2024, is projected to grow at a compound annual growth rate (CAGR) of 16.3%, reaching around $14 billion by 2034 [27]. This expansion is primarily driven by the escalating demand for novel therapeutics, particularly in oncology, the rise of biosimilars, and a strategic industry shift from small-molecule drugs to complex biologics [28] [5].

The competitive dynamics are shaped by several key trends: the central role of AI and machine learning in accelerating protein design and optimization; the position of monoclonal antibodies as the leading protein type; and the importance of platform technologies that enable rapid development and scaling of new protein-based drugs. Pharmaceutical and biotechnology companies constitute the largest end-user segment, accounting for over 55% of market revenue, which underscores the field's therapeutic focus [27]. North America continues to lead the global market, while Asia-Pacific is the fastest-growing region, signaling a gradual globalization of innovation and capability [28].

Table: Key Global Market Metrics for Protein Engineering (2024-2034)

| Metric | 2024 Value | 2034 Projection | CAGR |

|---|---|---|---|

| Protein Engineering Market (estimate from [27]) | $3.1 Billion [27] | $14.0 Billion [27] | 16.3% [27] |

| Protein Engineering Market (estimate from [28]) | $3.08 Billion [28] | $13.84 Billion [28] | 16.27% [28] |

| Protein Drugs Market | $441.7 Billion [22] | $655.7 Billion [22] | 8.2% [22] |
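The relationship between the start value, end value, and CAGR columns above can be checked with the standard compound-growth formula. The following is a minimal sketch, assuming a 10-year horizon (2024 to 2034) for both protein engineering estimates; the function names `cagr` and `project` are illustrative and do not come from any of the cited market reports.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate as a fraction (0.16 means 16%)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


def project(start_value: float, rate: float, years: float) -> float:
    """Value reached after compounding `rate` once per year for `years` years."""
    return start_value * (1.0 + rate) ** years


# Figures from the table above, in USD billions.
# The 10-year horizon (2024 to 2034) is an assumption of this sketch.
estimates = {
    "source [27]": (3.1, 14.0, 10),
    "source [28]": (3.08, 13.84, 10),
}
implied = {
    name: cagr(start, end, years)
    for name, (start, end, years) in estimates.items()
}

for name, rate in implied.items():
    print(f"{name}: implied CAGR = {rate:.2%}")
```

Running the same check on any row is a quick way to see whether a reported CAGR matches its stated forecast window, since small differences usually come from a different base year.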

Market Segmentation and Key Players

The protein engineering market can be segmented along several axes, including product type, technology, protein type, and end user. Understanding these segments is crucial for evaluating the strategic positioning of the key players.

Market Dominance by Segment

In 2025, specific segments have established clear dominance, reflecting the current priorities and technological demands of the industry.

Table: Dominant Market Segments in Protein Engineering (2025)

| Segment Category | Dominant Segment | Market Share (2024-2025) | Key Drivers for Dominance |

|---|---|---|---|

| Product Type | Instruments | 45.3% [27] | Demand for high-throughput screening, precision analysis, and automated protein characterization [28] [27]. |

| Technology | Rational Protein Design | 38.2% [27] | Precision enabled by AI and computational modeling; ability to create proteins with specific, desired properties [27]. |

| Protein Type | Monoclonal Antibodies (mAbs) | 41.9% [27] | High success in oncology, immunology, and autoimmune diseases; target-specific action with minimal off-target effects [28] [27]. |

| End User | Pharmaceutical & Biotechnology Companies | 55.6% [27] | High R&D investment in biologics and a strategic shift toward precision medicine and protein-based therapies [27]. |

Analysis of Leading Companies

The competitive landscape is populated by a diverse set of players, which can be categorized into technology and tool providers, biopharmaceutical innovators, and specialized service providers. The following table offers a structured comparison of the major entities shaping the market in 2025.

Table: Major Players in the Protein Engineering Competitive Landscape (2025)

| Company | Primary Role & Core Focus | Key Strengths & Strategic Advantages | Notable Activities (2022-2025) |

|---|---|---|---|

| Thermo Fisher Scientific [29] | Technology & Tool Provider | Comprehensive portfolio of instruments, reagents, and software; global scale and distribution. | Partnership with AESKU.GROUP (Dec 2023) to distribute automated lab instruments in the U.S. [27]. |

| Danaher Corp. (Cytiva) [29] | Technology & Tool Provider | State-of-the-art analytical instruments and a strong emphasis on digital automation and workflow integration. | Continuous R&D investment in molecular biology platforms and bioprocessing tools [29]. |

| Agilent Technologies [29] | Technology & Tool Provider | Robust tools for chromatography, sequencing, and analytical validation; strong industry partnerships. | Launched the Agilent Seahorse XF Pro Analyzer for cellular metabolism analysis (June 2024) [30]. |

| Merck KGaA [29] | Technology & Tool Provider | Innovation in gene synthesis, site-directed mutagenesis, and high-quality reagents. | Focus on proprietary technologies for biopharmaceutical manufacturing [29]. |

| Lonza Group AG [29] | Service Provider (CRO/CMO) | End-to-end services from early discovery to commercial manufacturing; expertise in regulatory compliance. | A preferred partner for scaling therapeutic protein production [29]. |

| Charles River Laboratories [29] | Service Provider (CRO) | Specialized expertise in protein characterization, safety assessment, and regulatory guidance for therapeutics. | Provides end-to-end solutions to accelerate clinical timelines [29]. |

| Evotec SE [29] | Service Provider (CRO) | Integrated discovery platforms; expertise in directed evolution and gene editing. | Collaborative model and investment in next-generation tools for pharmaceutical partners [29]. |

| GenScript Biotech Corp. [29] | Biotechnology Innovator | Advanced gene synthesis, protein engineering, and CRISPR-based technologies; custom services. | Blends innovation with speed-to-market for pharmaceuticals and industrial applications [29]. |

| Bio-Techne Corporation [29] | Technology & Tool Provider | Innovative tools, consumables, and analytical software; robust catalog of reagents and kits. | Launched a new portfolio of "designer proteins" developed through AI and evolutionary engineering (Jan 2025) [30]. |

| Amgen, Inc. [28] | Biopharmaceutical Innovator | Decades of experience in developing and commercializing blockbuster protein therapeutics. | Research collaboration with Generate Biomedicines to develop protein therapies for five targets (Jan 2022) [27]. |

| Codexis, Inc. [30] | Biotechnology Innovator | Specializes in engineering novel enzymes for pharmaceutical and industrial applications. | Donated a proprietary imine reductase dataset for the Protein Engineering Tournament [31]. |

Diagram 1: The protein engineering market is structured around three primary types of competing and collaborating entities.

Experimental Protocols for Platform Evaluation

Evaluating the performance of therapeutic protein engineering platforms requires rigorous, data-driven methodologies. The following section outlines established experimental protocols that provide standardized frameworks for benchmarking computational and experimental approaches.

The Predictive Modeling Benchmarking Protocol

Objective: To assess the accuracy of computational models in predicting biophysical properties (e.g., activity, expression, thermostability) from protein sequences [31].

Workflow: This protocol is typically structured into two parallel tracks to test different model capabilities:

- Supervised Track: Participants are provided with a pre-split dataset containing sequences and their experimentally measured properties. The training set is used to build a model, which is then used to predict the properties of a withheld test set. Performance is evaluated by comparing predictions to the ground-truth experimental data for the test set [31].

- Zero-Shot Track: This more challenging track tests the inherent generalizability of models. Participants are given only protein sequences from a test set and must predict their properties without any prior training data for that specific protein, relying on the model's pre-existing knowledge or physicochemical principles [31].

Key Metrics for Evaluation: The choice of metric depends on the nature of the predicted property. Common metrics include:

- Rank Correlation Coefficients: Spearman's rank correlation is used to evaluate how well the model ranks variants by a given property.

- Mean Squared Error (MSE): Measures the average squared difference between predicted and actual values for continuous data.

- Accuracy: Used for classification tasks (e.g., predicting expression levels as "Low" or "Good") [31].
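The three metrics above can be sketched in a few lines of standard-library Python. This is an illustrative implementation, not the scoring code used by any particular benchmark; the function names are chosen for this example, and tied values receive averaged ranks, which is one common convention for Spearman's correlation.

```python
from typing import Sequence


def average_ranks(values: Sequence[float]) -> list[float]:
    """1-based ranks; tied values share the mean of the positions they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / (var_x * var_y) ** 0.5


def spearman(measured: Sequence[float], predicted: Sequence[float]) -> float:
    """Spearman's rank correlation: Pearson correlation of the ranks."""
    return pearson(average_ranks(measured), average_ranks(predicted))


def mean_squared_error(measured: Sequence[float], predicted: Sequence[float]) -> float:
    """Average squared difference between predictions and measurements."""
    return sum((m - p) ** 2 for m, p in zip(measured, predicted)) / len(measured)


def accuracy(true_labels: Sequence[str], predicted_labels: Sequence[str]) -> float:
    """Fraction of class labels predicted correctly."""
    hits = sum(t == p for t, p in zip(true_labels, predicted_labels))
    return hits / len(true_labels)


# Made-up values for five variants, for illustration only.
measured = [0.2, 1.5, 0.9, 3.1, 2.4]
predicted = [0.1, 1.2, 1.4, 2.8, 2.9]
print(spearman(measured, predicted))
print(mean_squared_error(measured, predicted))
print(accuracy(["Low", "Good", "Good", "Low"], ["Low", "Good", "Low", "Low"]))
```

Rank correlation rewards a model that orders variants correctly even when its absolute values are off, which is often what matters when only the top candidates will be synthesized.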

The Generative Design and Validation Protocol

Objective: To benchmark the ability of generative models to design novel protein sequences that maximize or satisfy a set of target biophysical properties [31].

Workflow:

- Design Challenge Definition: A specific challenge problem is posed, such as "Design a list of up to 200 amino acid sequences that maximize enzyme activity while maintaining at least 90% of the parent sequence's stability and expression" [31].

- Computational Sequence Generation: Participating teams use their proprietary platforms (e.g., AI-driven generative models, hybrid approaches) to generate and rank a list of candidate sequences.

- Experimental Characterization: The submitted sequences are synthesized and characterized experimentally by a partner organization. This step is critical for moving beyond in silico predictions and providing ground-truth validation. Partners like International Flavors and Fragrances (IFF) have provided high-throughput characterization for thousands of variants in past tournaments [31].

- Multi-objective Performance Assessment: The success of generated sequences is evaluated based on how well they meet the combined objectives of the challenge (e.g., high activity with maintained stability and expression). The final ranking of teams is based on the experimentally validated performance of their designs.
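One simple way to score a submission against a challenge like the one quoted above is to treat stability and expression as hard constraints and rank the surviving designs by activity. The sketch below assumes that scheme; the `Measured` record, the `rank_designs` function, and the example values are hypothetical and are not the tournament's published scoring procedure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Measured:
    """Experimentally measured properties of one sequence (arbitrary units)."""
    name: str
    activity: float
    stability: float
    expression: float


def rank_designs(
    designs: list[Measured],
    parent: Measured,
    retention: float = 0.90,
    max_designs: int = 200,
) -> list[Measured]:
    """Keep designs that retain at least `retention` of the parent's stability
    and expression, then sort the survivors by activity, highest first."""
    considered = designs[:max_designs]  # submissions are capped at max_designs
    passing = [
        d
        for d in considered
        if d.stability >= retention * parent.stability
        and d.expression >= retention * parent.expression
    ]
    return sorted(passing, key=lambda d: d.activity, reverse=True)


# Made-up measurements for illustration only.
parent = Measured("parent", activity=1.0, stability=60.0, expression=100.0)
designs = [
    Measured("A", activity=2.5, stability=58.0, expression=95.0),
    Measured("B", activity=4.0, stability=50.0, expression=99.0),  # fails stability
    Measured("C", activity=1.8, stability=61.0, expression=92.0),
    Measured("D", activity=3.0, stability=57.0, expression=85.0),  # fails expression
]
ranked = rank_designs(designs, parent)
print([d.name for d in ranked])
```

Treating the secondary properties as thresholds rather than folding them into a weighted sum keeps a highly active but unstable design from outranking a balanced one.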

Diagram 2: Experimental workflows for platform evaluation involve parallel tracks for predictive modeling and generative design, culminating in quantitative benchmarking.

The Scientist's Toolkit: Key Research Reagent Solutions

The experimental protocols and daily research in protein engineering rely on a suite of essential reagents, instruments, and software. The following table details key solutions that form the backbone of innovation in this field.

Table: Essential Research Reagent Solutions for Protein Engineering

| Tool / Solution | Primary Function | Role in Protein Engineering Workflow | Example Providers |

|---|---|---|---|

| High-Throughput Screening Instruments | Enable rapid analysis of thousands of protein variants for expression, activity, and stability. | Critical for evaluating large libraries generated by directed evolution or computational design. | Thermo Fisher, Agilent, Danaher [28] [27] |

| Gene Synthesis & Mutagenesis Kits | Facilitate the construction of gene libraries with random or site-specific mutations. | Foundation for creating genetic diversity required for both rational design and directed evolution. | Merck KGaA, GenScript, New England Biolabs [29] [30] |

| AI/ML-Driven Protein Design Software | Use machine learning to predict protein structure/function and generate novel sequences. | Powers rational design and de novo protein creation, dramatically accelerating the design cycle. | Capgemini (pLLM), Bio-Techne (AI platforms) [22] [30] |

| Chromatography & Analytical Instruments | Purify and characterize engineered proteins with high precision (e.g., size, charge, affinity). | Essential for ensuring the quality, purity, and correct folding of engineered protein candidates. | Agilent, Waters Corp., Bruker [29] [27] |

| Cell-Free Protein Expression Systems | Produce proteins without the use of living cells, enabling rapid and flexible synthesis. | Allows for quick expression of designed proteins for initial screening and functional testing. | Various specialized providers |

| Stable Cell Line Development Reagents | Create robust mammalian cell lines for consistent, large-scale production of therapeutic proteins. | Key to transitioning from a research candidate to a manufacturable therapeutic biologic. | Lonza, Cytiva (Danaher) [29] |

The competitive landscape for protein engineering in 2025 is dynamic and poised for continued disruption. The dominance of AI-driven rational design and monoclonal antibodies is clear, but the future will be shaped by emerging trends. The push for oral protein formulations and personalized protein therapeutics represents the next frontier in drug delivery and precision medicine [22]. Furthermore, the integration of synthetic biology is expected to enable the creation of entirely new protein modalities with enhanced therapeutic profiles [22]. As the field progresses, challenges such as high production costs, immunogenicity, and complex regulatory pathways will persist, fostering innovation in manufacturing and computational predictive models [5] [24]. Success in this evolving market will belong to those who can effectively integrate multidisciplinary capabilities—spanning computational design, high-throughput experimentation, and scalable manufacturing—within a collaborative ecosystem.

The Impact of AlphaFold and Generative AI on Protein Structure Prediction

Protein structure prediction has moved from a long-standing open challenge to a routinely solvable problem, thanks to advances in artificial intelligence (AI). For over five decades, the "protein folding problem" (predicting a protein's three-dimensional structure from its amino acid sequence) remained a critical open challenge in molecular biology [32]. Traditional experimental methods for determining protein structures, including X-ray crystallography, nuclear magnetic resonance (NMR), and cryo-electron microscopy (cryo-EM), are invaluable but often labor-intensive, costly, and technically demanding, creating a significant bottleneck in structural coverage [33] [34]. The debut of AlphaFold2 (AF2) from Google DeepMind at the CASP14 assessment in 2020 marked a pivotal breakthrough, achieving atomic-level accuracy competitive with experimental methods and effectively resolving the single-chain protein structure prediction problem for most cases [32]. This breakthrough was recognized with the 2024 Nobel Prize in Chemistry, underscoring its profound impact on the field [34].

The evolution has continued rapidly, with the subsequent development of AlphaFold3 (AF3) and a new generation of generative AI tools expanding capabilities beyond monomer prediction to model protein complexes, interactions with ligands and nucleic acids, and even to design novel proteins de novo [12] [34] [35]. These advancements are particularly transformative for therapeutic protein engineering, enabling researchers to understand disease mechanisms at a molecular level, identify novel drug targets, and design optimized protein-based therapeutics with unprecedented speed and precision [33]. This guide provides a comparative analysis of the current AI-driven protein structure prediction landscape, focusing on performance metrics, underlying methodologies, and practical applications in drug discovery and development.

Performance Comparison of Leading AI Prediction Tools

The performance of protein structure prediction tools is typically benchmarked using the Global Distance Test (GDT), Template Modeling Score (TM-score), and Root-Mean-Square Deviation (RMSD), all of which compare a prediction against an experimentally determined reference structure. The predicted Local Distance Difference Test (pLDDT) complements them as the model's own per-residue confidence estimate [34] [35]. The following tables summarize the key capabilities and quantitative performance of major contemporary tools.

Table 1: Overview of Key Protein Structure Prediction Tools and Their Primary Applications

| Tool | Developer | Key Capabilities | Therapeutic Application Strengths | Access Model |

|---|---|---|---|---|

| AlphaFold3 [36] | Google DeepMind / Isomorphic Labs | Predicts structures of proteins, protein complexes, protein-ligand, and protein-nucleic acid interactions. | Holistic view of drug targets in their molecular context. | Restricted (academic non-commercial) [36] |

| AlphaFold2 [37] | Google DeepMind | High-accuracy monomeric protein structure prediction. | Reliable target identification and characterization. | Fully open source & database of 200M+ predictions [37] |

| RoseTTAFold All-Atom [36] | David Baker Lab, UW | Broad biomolecular complex prediction, similar in scope to AF3. | De novo protein and therapeutic antibody design. | Non-commercial license [36] |

| DeepSCFold [38] | Academic Research | High-accuracy protein complex modeling using sequence-derived structural complementarity. | Modeling challenging complexes (e.g., antibody-antigen). | Academic research tool |

| Boltz-2 [35] | Academic Research | Specializes in biomolecular interaction modeling and binding affinity prediction. | Small-molecule drug binding affinity and hit discovery. | Fully open access (weights & code) [35] |

| OpenFold [36] | Academic Consortium | An open-source effort to replicate and build upon AlphaFold's performance. | Commercial R&D where AF3 is restricted. | Aims for full open-source commercial use [36] |

| SimpleFold [39] | Academic Research | Transformer-based generative model for structure and ensemble prediction. | Modeling protein dynamics and multiple conformations. | Research tool |

Table 2: Comparative Performance Metrics on Standard Benchmarks

| Tool | CASP14 GDT / Accuracy | Protein Complex Prediction (vs. AF3) | Key Experimental Performance Highlights |

|---|---|---|---|

| AlphaFold3 [35] | - | Baseline | 50% more precise than traditional docking; outperforms AF2 & specialized tools [35]. |

| AlphaFold2 [32] | ~90 GDT [35] | Not Applicable (Monomer focus) | Median backbone accuracy of 0.96 Å RMSD in CASP14 [32]. |

| DeepSCFold [38] | - | +10.3% TM-score on CASP15 targets | Improves antibody-antigen interface success rate by 24.7% over AF-Multimer [38]. |

| Boltz-2 [35] | - | - | Pearson correlation of 0.62 in binding affinity prediction, ~1000x faster than free energy perturbation (FEP) methods [35]. |

| SimpleFold [39] | Surpasses ESMFold on CASP14 [39] | - | Achieves >95% of AF2's performance on CAMEO22 without MSA or specialized architecture [39]. |

As the data indicate, AlphaFold3 sets a new benchmark for the scope and accuracy of biomolecular complex prediction, but several specialized and open-source alternatives are emerging with competitive or superior performance in specific tasks, such as DeepSCFold for complex interfaces and Boltz-2 for binding affinity.
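For readers who want to see how the reference-based metrics in these tables are defined, the sketch below computes RMSD, GDT_TS, and TM-score from matched atom coordinates. It assumes the two structures are already superimposed and residue-matched; the official GDT and TM-score programs additionally search over superpositions to maximize the score, which this simplified version does not do. The 0.5 Å floor on the TM-score length scale is also an assumption made here to keep short chains well-defined.

```python
import math
from typing import Optional, Sequence

Point = Sequence[float]


def per_residue_distances(model: Sequence[Point], reference: Sequence[Point]) -> list[float]:
    """Distances (angstroms) between matched atoms of two superimposed structures."""
    if len(model) != len(reference) or len(model) == 0:
        raise ValueError("structures must be non-empty and residue-matched")
    return [math.dist(a, b) for a, b in zip(model, reference)]


def rmsd(distances: Sequence[float]) -> float:
    """Root-mean-square deviation over the matched atoms."""
    return math.sqrt(sum(d * d for d in distances) / len(distances))


def gdt_ts(distances: Sequence[float], cutoffs: Sequence[float] = (1.0, 2.0, 4.0, 8.0)) -> float:
    """Mean percentage of residues within each distance cutoff (0 to 100)."""
    fractions = [sum(d <= c for d in distances) / len(distances) for c in cutoffs]
    return 100.0 * sum(fractions) / len(cutoffs)


def tm_d0(target_length: int) -> float:
    """Length-dependent distance scale used by TM-score."""
    if target_length <= 15:
        return 0.5  # assumed floor for very short chains
    return max(0.5, 1.24 * (target_length - 15) ** (1.0 / 3.0) - 1.8)


def tm_score(distances: Sequence[float], target_length: Optional[int] = None) -> float:
    """TM-score for one fixed superposition, normalized by the target length."""
    length = target_length if target_length is not None else len(distances)
    d0 = tm_d0(length)
    return sum(1.0 / (1.0 + (d / d0) ** 2) for d in distances) / length


# Made-up per-residue deviations for illustration only.
example = [0.5, 1.5, 3.0, 9.0]
print(rmsd(example), gdt_ts(example), tm_score(example))
```

The example shows why the metrics can disagree: a single residue that is 9 Å off dominates RMSD, while GDT_TS and TM-score cap the penalty that any one residue can contribute.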

Experimental Protocols and Methodologies

Understanding the experimental protocols and underlying architectures of these tools is crucial for researchers to select the appropriate method and interpret results correctly.

Core Architectural Paradigms

The AI models discussed here are built on distinct architectural backbones:

- AlphaFold2's Evoformer and Structure Module: AF2 introduced a novel neural network block called the Evoformer, which processes multiple sequence alignments (MSAs) and pairwise features to reason about evolutionary and spatial relationships [32]. This is followed by a structure module that explicitly represents the 3D atomic coordinates, using iterative refinement to achieve high accuracy [32].

- AlphaFold3's Diffusion-Based Architecture: AF3 replaced the structure module with a diffusion-based generative architecture. This model starts with a cloud of noisy atoms and iteratively denoises it to generate the final, precise 3D structure. This approach is particularly adept at modeling complex molecular assemblies and interactions [34] [35].

- SimpleFold's Transformer-Only Approach: Challenging the need for specialized modules, SimpleFold uses a standard Transformer architecture and a flow-matching generative objective. It directly maps protein sequences to 3D atomic structures without relying on MSAs, triangular updates, or pairwise representations, demonstrating the power of a scalable, general-purpose architecture [39].

Workflow for Protein Complex Prediction with DeepSCFold

DeepSCFold exemplifies a state-of-the-art protocol for modeling challenging protein complexes, such as antibody-antigen pairs, where traditional co-evolutionary signals may be weak [38]. The workflow is designed to leverage structural complementarity inferred directly from sequence.

The key methodological innovation in DeepSCFold is its use of two deep learning models to construct more informative paired MSAs (pMSAs). Instead of relying solely on sequence co-evolution, it predicts a protein-protein structural similarity (pSS) score and a protein-protein interaction probability (pIA) score from sequence data alone. These scores allow the algorithm to prioritize homologous sequences that are structurally relevant and likely to interact, leading to more accurate predictions of complex interfaces, especially for systems like antibody-antigen pairs [38].

Protocol for Predicting Alternative Conformations with CF-random

A significant limitation of many AI predictors is their tendency to output a single, static structure, whereas proteins are dynamic and can adopt multiple conformations critical for function (e.g., fold-switching, allostery) [40]. The CF-random protocol, built on ColabFold, was developed to generate ensembles of alternative protein conformations.

Table 3: The Scientist's Toolkit: Key Reagents and Resources for AI-Driven Structure Prediction

| Resource / Tool | Type | Primary Function in Research | Example / Source |

|---|---|---|---|

| AlphaFold Protein Structure Database | Database | Provides instant, open access to over 200 million pre-computed protein structure predictions for target identification and validation. | EMBL-EBI [37] |

| Protein Data Bank (PDB) | Database | Repository of experimentally determined 3D structures of proteins, nucleic acids, and complexes; used for validation and templating. | RCSB PDB [33] |

| UniProt | Database | Comprehensive resource for protein sequence and functional information; essential for MSA construction. | UniProt Consortium [37] |

| ColabFold | Software | Efficient, cloud-based implementation of AlphaFold2 and other tools that simplifies and accelerates prediction jobs. | Public Server [40] |

| Multiple Sequence Alignment (MSA) | Data Input | A core input for many models (e.g., AF2), providing evolutionary constraints that guide accurate folding. | Generated by HHblits, JackHMMER [38] |

| pLDDT | Metric | Per-residue estimate of prediction confidence (0-100); helps researchers identify reliable regions of a model. | Output of AlphaFold [33] |

| CF-random | Algorithm | A protocol for predicting multiple alternative protein conformations from a single sequence. | [40] |

The CF-random method works by randomly subsampling the input MSA at very shallow depths (as few as 3 sequences). Deep MSAs typically lead to one dominant conformation. Shallow MSAs provide too little information for robust co-evolutionary inference, which forces the network to explore the conformational landscape it has learned and often yields viable alternative structures [40]. This protocol has successfully predicted both conformations for about 35% of tested fold-switching proteins, a significant improvement over previous methods [40].
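The subsampling idea can be illustrated independently of any prediction software. The sketch below draws several shallow sub-alignments from a deeper MSA; it is not the CF-random implementation. Keeping the query as the first row, the specific depths, and the number of samples per depth are all assumptions of this example, and each sub-alignment would then be passed to a structure predictor in a separate run.

```python
import random


def subsample_msa(msa: list[str], depth: int, rng: random.Random) -> list[str]:
    """Return the query (first row) plus up to depth - 1 randomly chosen other rows."""
    if depth < 1 or not msa:
        raise ValueError("need a non-empty MSA and depth >= 1")
    query, others = msa[0], msa[1:]
    k = min(depth - 1, len(others))
    return [query] + rng.sample(others, k)


def shallow_msa_ensemble(
    msa: list[str],
    depths: tuple[int, ...] = (3, 4, 8),  # hypothetical depths for illustration
    samples_per_depth: int = 5,
    seed: int = 0,
) -> list[list[str]]:
    """Generate several random shallow sub-alignments at each requested depth."""
    rng = random.Random(seed)
    return [
        subsample_msa(msa, depth, rng)
        for depth in depths
        for _ in range(samples_per_depth)
    ]


# Toy alignment: a query followed by 20 made-up homologs of equal length.
toy_msa = ["MKTAYIAKQR"] + [f"MKTAYIAK{chr(65 + i)}R" for i in range(20)]
ensemble = shallow_msa_ensemble(toy_msa)
print(len(ensemble), [len(sub) for sub in ensemble[:3]])
```

Fixing the seed makes the set of sub-alignments reproducible, so that any alternative conformation found in one run can be regenerated later.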

Applications in Therapeutic Protein Engineering and Drug Discovery

The integration of these AI tools is accelerating nearly every stage of the drug discovery pipeline, from target identification to lead optimization.

Target Identification and Validation: The AlphaFold Database has democratized access to structural information, providing models for proteins previously without any structural data. This allows for the functional annotation of unknown proteins and the identification of new, tractable drug targets [37] [33]. For example, AF2 predictions have been used to pinpoint pathogenic missense variations in hereditary cancer genes by analyzing the impact of mutations on protein stability [33].
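When working with database models of this kind, a common first step is to check per-residue confidence before interpreting any region. AlphaFold model files in PDB format store pLDDT in the B-factor column, so it can be read with a few lines of standard-library Python. The sketch below assumes well-formed, fixed-width ATOM records; the confidence bands follow the thresholds shown in the AlphaFold Database, and `atom_line` is a hypothetical helper that exists only to build a toy input.

```python
def ca_plddt(pdb_text: str) -> dict[tuple[str, int], float]:
    """Map (chain, residue number) to the pLDDT stored on each CA atom."""
    scores = {}
    for line in pdb_text.splitlines():
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            chain = line[21]
            residue_number = int(line[22:26])
            scores[(chain, residue_number)] = float(line[60:66])  # B-factor column
    return scores


def confidence_band(plddt: float) -> str:
    """Confidence label for a pLDDT value (0 to 100)."""
    if plddt > 90:
        return "very high"
    if plddt > 70:
        return "confident"
    if plddt > 50:
        return "low"
    return "very low"


def atom_line(serial: int, name: str, res: str, chain: str, resnum: int, b: float) -> str:
    """Hypothetical helper: build one fixed-width ATOM record at the origin."""
    return (
        f"ATOM  {serial:5d} {name:^4s} {res:>3s} {chain}{resnum:4d}    "
        f"{0.0:8.3f}{0.0:8.3f}{0.0:8.3f}{1.0:6.2f}{b:6.2f}"
    )


# Toy two-residue model with made-up confidence values.
toy_pdb = "\n".join(
    [
        atom_line(1, "N", "MET", "A", 1, 35.2),
        atom_line(2, "CA", "MET", "A", 1, 35.2),
        atom_line(9, "CA", "LYS", "A", 2, 92.5),
    ]
)
for key, value in ca_plddt(toy_pdb).items():
    print(key, value, confidence_band(value))
```

Regions labeled low or very low are often flexible or disordered, so stability or binding-site analyses are usually restricted to the higher-confidence residues.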

Allosteric Drug Discovery: AI-predicted structures enable the identification of potential allosteric binding sites—regions distinct from the active site that can regulate protein function. This opens avenues for designing allosteric drugs that can work synergistically with traditional orthosteric drugs to overcome drug resistance [33].

De Novo Protein Design: Generative AI is catalyzing a paradigm shift from prediction to creation. Tools like RFdiffusion and ProteinMPNN allow researchers to design novel protein sequences and scaffolds that fold into desired structures and functions [12] [33]. This has direct applications in designing miniprotein therapeutics, enzymes with novel catalytic activities, and stable vaccine antigens [12] [35]. For instance, researchers have designed de novo enzymes like Kemp eliminase with dramatically improved activity and miniproteins that effectively neutralize snake venom toxins [35].

Antibody and Complex Engineering: Tools like DeepSCFold are particularly valuable for modeling the interfaces of protein complexes, such as antibody-antigen interactions. By predicting binding interfaces more accurately, these tools support the in silico engineering of antibodies with optimized affinity and specificity, reducing the need for costly and time-consuming experimental screening [38].

The field of AI-powered protein structure prediction is evolving rapidly. The initial revolution in monomer prediction, led by AlphaFold2, has swiftly given way to a new era of generative AI for biomolecular complexes and de novo design. As evidenced by the performance of tools like AlphaFold3, DeepSCFold, and Boltz-2, the focus is now on predicting and designing the intricate interactions that form the basis of cellular function [38] [35].

Future developments will likely involve a closer synthesis of generative AI with high-throughput experimental automation and physics-based simulation. A key remaining challenge is the accurate prediction of protein dynamics, multiple conformational states, and the effects of post-translational modifications [34] [40]. Methods like CF-random and generative architectures like SimpleFold's, which can natively model structural distributions, represent important steps toward this goal [40] [39]. Furthermore, the tension between restricted access to some of the most powerful models (e.g., AF3) and the scientific community's need for open tools is driving vigorous open-source development, as seen with OpenFold and Boltz-2, which will be crucial for broad commercial application in biotechnology and pharma [36] [35].

In conclusion, the impact of AlphaFold and subsequent generative AI models on therapeutic protein engineering is profound and enduring. They have not only provided a massive repository of structural hypotheses but have also created a new, powerful toolkit for rational drug design. By enabling researchers to move from sequence to structure to function with increasing reliability, these technologies are streamlining the drug discovery process, enhancing our understanding of disease mechanisms, and opening new frontiers in the design of advanced protein-based therapeutics.

Economic Potential and Market Growth Projections

The global protein engineering market is expanding rapidly, driven by increasing demand for protein-based therapeutics and advancements in biotechnology. One assessment projects growth from $3.08 billion in 2024 to approximately $13.84 billion by 2034, a compound annual growth rate (CAGR) of 16.27% over the forecast period [41] [28]. A separate assessment sizes the market at $4.11 billion in 2024 and projects $8.33 billion by 2029 at a CAGR of 15.5% [42]. The two sources differ in their baseline but agree on mid-teens annual growth. This expansion underscores protein engineering's role in the global bioeconomy, which is being reshaped by technological innovations across healthcare, agriculture, and industrial biotechnology [5].

Table 1: Global Protein Engineering Market Size Projections

| Year | Market Size (USD Billion) | Data Source |

|---|---|---|

| 2024 | $3.08 | Towards Healthcare [41] [28] |

| 2024 | $4.11 | Research and Markets [42] |

| 2025 | $3.58 (projected) | Towards Healthcare [41] [28] |

| 2025 | $4.69 (projected) | Research and Markets [42] |

| 2029 | $8.33 (projected) | Research and Markets [42] |

| 2034 | $13.84 (projected) | Towards Healthcare [41] [28] |

The escalating demand for protein-based drugs represents a primary market driver, with protein engineering techniques enabling the modification of protein sequences to tailor therapeutics for specific applications [42]. The broader market for protein-based therapeutics exceeds $300 billion annually with projections of nearly 10% CAGR over the next decade, while the industrial enzymes segment is expected to surpass $10 billion by 2030 [5]. Growth is further accelerated by the rising prevalence of chronic diseases, which increases the need for targeted therapies developed through protein engineering [42].

Market Segmentation Analysis

By Product Type

The instruments segment dominated the market in 2024 and is expected to maintain the fastest growth rate during the forecast period. This segment's prominence stems from providing faster, more efficient protein synthesis, screening, and analysis capabilities that enable large-scale production of protein-based therapeutics [28]. Automated instruments are particularly valued for high-throughput screening applications, driving continued investment and innovation in this category [41].

By Application Type

Rational protein design held the dominating market share in 2024, largely due to its increased use in developing targeted treatment approaches [41] [28]. This approach enables the development of stable, effective therapeutic proteins and enzymes while potentially reducing production timelines [28]. The hybrid approach segment is anticipated to witness the fastest growth, as it combines multiple methodologies to enhance the accuracy of developed proteins and improve success rates for therapeutic applications [41] [28].

By Protein Type

Monoclonal antibodies led the market in 2024 and are expected to remain the fastest-growing segment [41] [28]. Their dominance is attributed to wide-ranging applications, high specificity that reduces side effects, and their extensive use in biologics development [41]. Protein engineering enhances both the stability and specificity of monoclonal antibodies, leading to increased FDA approvals for disease treatments, particularly in oncology [28].

By End User

Pharmaceutical and biotechnology companies captured the largest revenue share in 2024, driven by substantial R&D activities focused on developing biologics and biosimilars [41] [28]. The contract research organizations (CROs) segment is predicted to be the fastest-growing end-user category, benefiting from increasing collaborations, outsourcing trends, and demand for advanced technologies in biologics and biosimilar production [41] [28].

Table 2: Protein Engineering Market Segmentation Analysis

| Segmentation | Dominant Segment (2024) | Fastest-Growing Segment | Key Growth Drivers |

|---|---|---|---|

| Product Type | Instruments | Instruments | High-throughput screening, automated analysis, large-scale production needs |

| Application Type | Rational Protein Design | Hybrid Approach | Targeted therapy development, improved accuracy, higher success rates |

| Protein Type | Monoclonal Antibodies | Monoclonal Antibodies | Target-specific action, reduced side effects, FDA approvals for cancer treatments |

| End User | Pharmaceutical & Biotechnology Companies | Contract Research Organizations (CROs) | R&D investments, outsourcing trends, biologics manufacturing demand |

Regional Market Analysis

North America held the major revenue share of the global protein engineering market in 2024, attributable to robust biotechnology industries, significant investments in protein engineering, and advanced research and development activities across industrial and institutional settings [41] [28]. The region's well-developed biotechnology sector utilizes protein engineering extensively for developing therapeutic proteins and protein-based products, with technological advancements continuously optimizing various applications [28].

The Asia Pacific region is expected to witness the fastest growth during the forecast period, driven by expanding industries that utilize protein engineering for enzymes, drugs, and biologics development [41] [28]. Government funding, investments from diverse sources, increasing outsourcing trends, and growing awareness of targeted therapies are enhancing innovations and prompting market growth across the region [41]. Specifically, countries like China are seeing biotechnological industries utilize protein engineering for developing new treatment options, including biosimilars and biopharmaceuticals [28].

Key Growth Drivers and Restraints

Market Drivers

Rising Demand for Novel Therapeutics: Increasing incidences of chronic diseases have amplified demand for protein-based therapeutics, including monoclonal antibodies, recombinant proteins, and enzymes for treating cancer, autoimmune diseases, and cardiovascular disorders [28]. The growing demand for biosimilars as affordable alternatives further propels market expansion [28].

Technological Advancements: Innovations in artificial intelligence and machine learning are revolutionizing protein engineering through enhanced protein structure prediction, data analysis, and design capabilities [28] [12]. The integration of AI across all stages of protein creation aims to democratize protein design research and accelerate the field [42].

Precision Medicine Expansion: Protein engineering enables the development of personalized medications tailored to individual patient profiles and disease markers, representing a significant growth opportunity [42] [28].

Government and Private Funding: Substantial investments from government entities and the private sector are accelerating research and commercialization. Recent examples include a $35 million Series A round for Portal Biotech, NSF TIP grants for AI-driven protein engineering, and 210 million JPY for RevolKa Ltd's AI-driven platform [41].

Market Challenges

High Production Costs: The development of protein-based therapeutics is expensive and complex, requiring specialized reagents, facilities, quality control measures, and skilled personnel [28]. The high costs associated with biologics production and protein therapeutics for rare diseases create affordability challenges that can limit market growth [28].

Technological Complexities: The sophistication of protein engineering technologies increases the potential for errors and product development failures. This raises the demand for highly skilled personnel and can limit accessibility for some organizations [41].

Regulatory Hurdles: The regulatory landscape for biologics includes varying exclusivity frameworks across regions—approximately 12 years in the U.S. and 8+2(+1) years in the EU—creating complexity for global market strategies [5].

Investment Trends and Innovation Focus

Leading companies are prioritizing the establishment of advanced protein engineering foundries to enhance research and industrial applications. For instance, Adaptyv Bio launched an integrated protein engineering foundry in April 2023 that enables rapid testing of new proteins across various technologies, significantly reducing reagent usage, experiment duration, and cost per data point [42]. Major players are also developing innovative products using advanced technological platforms; KBI Biopharma's SUREtechnology platform, launched in September 2023, focuses on optimized, safe, and cost-effective development and manufacturing of monoclonal antibodies [42].

The convergence of protein engineering with synthetic biology, machine learning, and automation is shaping the field's trajectory, with AI-powered platforms like AlphaFold and RFdiffusion transforming protein structure prediction and design capabilities [5]. These advancements enable researchers to create novel proteins with unprecedented precision, opening the door to de novo design of proteins for specific functions, from drug delivery vehicles to environmentally friendly biocatalysts [5].

Key Research Reagent Solutions and Experimental Platforms

Table 3: Essential Research Reagents and Platforms for Protein Engineering

| Research Tool Category | Specific Examples | Primary Function | Experimental Application |
|---|---|---|---|
| Analytical Instruments | Mass spectrometers, Spectroscopy systems, Protein purification systems | Protein characterization, purity assessment, functional analysis | Identity confirmation, concentration measurement, purity validation [43] |
| Display Technologies | Yeast surface display, Phage display | High-throughput screening of protein variants | Binder discovery, affinity maturation, stability engineering [1] |
| Screening Platforms | Flow cytometric sorting, Binder panning, Miniaturized well plates | Efficient evaluation of large variant libraries | Directed evolution, de novo discovery, sequence-performance mapping [1] |
| Computational Tools | Rosetta, AlphaFold2, RFdiffusion, AI/ML models | Protein structure prediction, sequence design, functional optimization | Rational design, mutational impact prediction, library design [1] [12] [5] |
| Characterization Reagents | Enzymes, Antibodies, Labeling and detection reagents | Functional assessment, binding quantification | Binding affinity measurement, specificity evaluation, stability profiling [42] |

The protein engineering market represents a high-growth sector within the global bioeconomy, with significant opportunities driven by therapeutic innovation, technological advancements, and increasing demand for biologics. While challenges related to production costs and technological complexity persist, continued investment in platform technologies and AI-driven design tools is expected to accelerate growth and expand applications across healthcare and industrial biotechnology. The market's trajectory positions protein engineering as a critical enabler of future innovations in precision medicine, sustainable manufacturing, and targeted therapeutics.

Inside the Platform Toolbox: From Rational Design to Automated Evolution

In the pursuit of advanced therapeutic proteins, researchers primarily employ two distinct methodological paradigms: rational design and directed evolution. These approaches represent fundamentally different philosophies in protein engineering. Rational design operates as a top-down, knowledge-driven process that leverages detailed structural insights to make precise alterations to protein sequences [44] [45]. In contrast, directed evolution follows a bottom-up, empirical strategy that mimics natural selection through iterative rounds of mutagenesis and screening to discover improved variants [46]. The choice between these methodologies carries significant implications for research timelines, resource allocation, and therapeutic outcomes within drug development pipelines. This comparative analysis examines the technical foundations, experimental workflows, and performance metrics of both approaches to guide platform selection for therapeutic protein engineering.

Fundamental Principles and Technical Foundations

Rational Design: A Structure-Informed Approach

Rational protein design rests on the principle that a protein's function is determined by its three-dimensional structure, which in turn is dictated by its amino acid sequence. This approach requires comprehensive knowledge of protein structure-function relationships, typically obtained through X-ray crystallography, cryo-electron microscopy, or nuclear magnetic resonance (NMR) spectroscopy [45]. With the advent of artificial intelligence (AI), computational prediction of protein structures has become increasingly sophisticated, with tools like AlphaFold2 capable of predicting single-chain protein structures with atomic precision from amino acid sequences [47]. The rational design workflow involves identifying the key residues or structural domains responsible for specific functions, such as catalytic activity, binding affinity, or stability, and introducing targeted mutations to enhance these properties.

Recent advancements in AI have dramatically expanded the capabilities of rational design. Deep learning tools such as RFdiffusion enable de novo backbone and topology design, while ProteinMPNN generates amino acid sequences optimized for a given 3D backbone [47]. This AI-driven rational design has demonstrated remarkable success in creating novel proteins with functions not found in nature, from enzymes with novel catalytic activities to therapeutic proteins with enhanced binding properties [47] [48]. These computational methods have effectively created a "third way" in protein engineering that combines the predictability of rational design with the exploratory power of evolutionary approaches.

Directed Evolution: Harnessing Evolutionary Principles

Directed evolution applies the principles of natural selection—variation, selection, and amplification—in a laboratory setting to engineer improved proteins without requiring prior structural knowledge [46]. This method involves creating genetic diversity through random mutagenesis or recombination, followed by high-throughput screening or selection to identify variants with desired properties [46]. The most successful variants then serve as templates for subsequent rounds of evolution, allowing progressive improvement through cumulative mutations.

Traditional directed evolution methods have been constrained by their reliance on labor-intensive screening processes and limited exploration of sequence space. However, recent technological innovations have substantially accelerated these workflows. Continuous evolution platforms, such as the T7-ORACLE system, represent a significant advancement by enabling proteins to evolve inside living cells without manual intervention [49]. This system engineers E. coli to host a second, artificial DNA replication system derived from bacteriophage T7, which targets only plasmid DNA while leaving the host genome untouched [49]. By engineering T7 DNA polymerase to be error-prone, researchers can introduce mutations into target genes at a rate 100,000 times higher than normal, achieving a round of evolution with each cell division instead of weekly cycles [49].
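To give a rough sense of what a 100,000-fold increase in mutation rate means for a single target gene, the following back-of-the-envelope sketch may help. The baseline rate of 1e-10 mutations per base pair per replication and the 1,000 bp gene length are illustrative assumptions, not values taken from the T7-ORACLE work; only the fold increase comes from the text above. The calculation treats mutations as independent rare events (a Poisson approximation).

```python
import math

def expected_mutations(per_base_rate: float, gene_length_bp: int) -> float:
    """Mean number of new mutations per gene copy per replication."""
    return per_base_rate * gene_length_bp

def prob_at_least_one(mean_mutations: float) -> float:
    """Poisson probability that a gene copy carries one or more new mutations."""
    return 1.0 - math.exp(-mean_mutations)

BASELINE_RATE = 1e-10     # assumed baseline, per bp per replication (illustrative)
FOLD_INCREASE = 100_000   # fold increase reported in the text
GENE_LENGTH_BP = 1_000    # assumed target gene length (illustrative)

elevated_rate = BASELINE_RATE * FOLD_INCREASE
mean_baseline = expected_mutations(BASELINE_RATE, GENE_LENGTH_BP)
mean_elevated = expected_mutations(elevated_rate, GENE_LENGTH_BP)

print(f"baseline: {mean_baseline:.1e} mutations per gene per replication")
print(f"elevated: {mean_elevated:.1e} mutations per gene per replication")
print(f"P(at least one new mutation, elevated): {prob_at_least_one(mean_elevated):.4f}")
```

Under these assumptions, roughly one in a hundred gene copies acquires a new mutation at each replication, so a dense culture can sample a large number of variants per generation. The real figures depend on the polymerase variant and the target gene.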

Table 1: Core Principles of Protein Engineering Methodologies

| Aspect | Rational Design | Directed Evolution |
|---|---|---|
| Philosophical Basis | Knowledge-driven, deterministic | Empirical, exploratory |
| Structural Knowledge Required | High (atomic-level) | Minimal to none |
| Primary Advantage | Precision and control | Access to novel solutions |
| Key Limitation | Limited by current structural understanding | Screening throughput constraints |
| Automation Potential | High (computational design) | Moderate (physical screening) |
| Therapeutic Success Examples | Engineered binders for venom toxins [47] | Optimized antibodies, enzymes [46] |

Experimental Protocols and Workflows

Rational Design Methodology

The rational design pipeline follows a structured, iterative process that integrates computational and experimental components:

Stage 1: Target Identification and Structural Analysis

- Obtain high-resolution structure of target protein via experimental methods or AI-based prediction (AlphaFold2, RoseTTAFold)

- Identify key functional residues through structural analysis and molecular dynamics simulations

- Map binding interfaces, catalytic sites, or stability-determining regions

Stage 2: Computational Design and In Silico Screening

- Implement targeted mutations using tools like RFdiffusion for backbone design or ProteinMPNN for sequence design

- Perform virtual screening of designed variants using molecular docking and free energy calculations

- Predict stability changes and folding efficiency through tools like DOPE and Rosetta

Stage 3: Experimental Validation

- Synthesize and express top-ranking designed variants

- Characterize biophysical properties (thermal stability, solubility, aggregation propensity)

- Assess functional performance through activity assays and binding studies

This workflow is exemplified by the development of potent, stable binders that neutralize elapid venom toxins. Researchers used RFdiffusion to engineer binders, resulting in variants with nanomolar affinity and crystal structures that closely matched the computational designs (RMSD = 1.04 Å) [47].
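The RMSD figure quoted above measures how far a solved structure deviates from the designed model. A minimal sketch of the calculation is shown below, assuming the two structures have already been superimposed (for example with a Kabsch alignment, not shown here) and that atoms are paired one-to-one. The function name and the toy coordinates are hypothetical and are not taken from the cited study.

```python
import math
from typing import Sequence, Tuple

Coord = Tuple[float, float, float]

def rmsd(model: Sequence[Coord], reference: Sequence[Coord]) -> float:
    """Root-mean-square deviation (in the input units, e.g. angstroms)
    between two pre-aligned, equally sized sets of atomic coordinates."""
    if len(model) != len(reference) or not model:
        raise ValueError("coordinate sets must be non-empty and equal in length")
    squared_dist_sum = sum(
        (a - b) ** 2
        for atom_m, atom_r in zip(model, reference)
        for a, b in zip(atom_m, atom_r)
    )
    return math.sqrt(squared_dist_sum / len(model))

# Toy example: three C-alpha positions, each displaced by exactly 1 angstrom.
design = [(0.0, 0.0, 0.0), (3.8, 0.0, 0.0), (7.6, 0.0, 0.0)]
crystal = [(0.0, 1.0, 0.0), (3.8, 1.0, 0.0), (7.6, 1.0, 0.0)]
print(rmsd(design, crystal))  # every atom is off by 1 angstrom, so RMSD is 1.0
```

In practice the superposition step matters: RMSD computed on unaligned coordinates mostly reflects the arbitrary placement of the two structures rather than real differences between design and experiment.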

Directed Evolution Protocol

Directed evolution employs an iterative experimental workflow that emphasizes high-throughput screening:

Stage 1: Library Construction

- Generate genetic diversity through error-prone PCR (epPCR) or DNA shuffling

- For continuous evolution: Clone target gene into orthogonal replication system (e.g., T7-ORACLE)

- Set mutation rate through polymerase error rate or chemical mutagenesis concentration

Stage 2: Selection or Screening

- Implement growth-coupled selection for properties conferring survival advantage

- Employ fluorescence-activated cell sorting (FACS) for surface display libraries

- Use microfluidic platforms or robotic screening for high-throughput functional assays

Stage 3: Hit Identification and Iteration

- Sequence top-performing variants to identify beneficial mutations

- Use best hits as templates for subsequent rounds of diversification

- Continue until performance plateaus or the desired activity level is achieved
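The three stages above form a loop: diversify, screen, keep the best variant, repeat. The toy simulation below sketches that loop under strong simplifying assumptions. The "assay" is a hypothetical fitness function that counts matches to a hidden optimal sequence, mutagenesis is uniform random substitution, and the sequences, library size, and stopping rule are all illustrative. It is not a model of any particular experimental platform.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def fitness(seq: str, target: str) -> float:
    """Toy stand-in for a screening assay: fraction of positions matching a hidden optimum."""
    return sum(a == b for a, b in zip(seq, target)) / len(target)

def mutate(seq: str, rate: float, rng: random.Random) -> str:
    """Random mutagenesis: each position is resampled with probability `rate`."""
    return "".join(rng.choice(AMINO_ACIDS) if rng.random() < rate else aa for aa in seq)

def directed_evolution(parent, target, library_size=200, rate=0.05,
                       max_rounds=50, patience=5, seed=0):
    """Iterate diversification and screening until the goal is met or progress plateaus."""
    rng = random.Random(seed)
    best, best_fit = parent, fitness(parent, target)
    history = [best_fit]
    stalled = 0
    for _ in range(max_rounds):
        # Stage 1: library construction from the current best template
        library = [mutate(best, rate, rng) for _ in range(library_size)]
        # Stage 2: screening (here, exhaustive scoring of every variant)
        top = max(library, key=lambda s: fitness(s, target))
        top_fit = fitness(top, target)
        # Stage 3: the best hit becomes the next template only if it improves
        if top_fit > best_fit:
            best, best_fit, stalled = top, top_fit, 0
        else:
            stalled += 1
        history.append(best_fit)
        if best_fit == 1.0 or stalled >= patience:
            break
    return best, history

# Hypothetical sequences for illustration only.
best, history = directed_evolution(parent="MKTGGGGGQR", target="MKTAYIAKQR")
print(best, history)
```

The `patience` parameter encodes the plateau criterion: the campaign stops after several consecutive rounds without improvement. Real campaigns usually carry forward several hits per round and screen only a fraction of the library, which this sketch ignores.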

A representative example is the engineering of amide synthetases using machine-learning-guided cell-free expression [50]. Researchers evaluated 1,217 enzyme variants across 10,953 unique reactions and used the resulting data to build machine learning models that predicted variants with 1.6- to 42-fold improved activity [50].

Diagram 1: Comparative Workflows for Rational Design (Blue) vs. Directed Evolution (Red)

Performance Metrics and Comparative Analysis

Efficiency and Success Rates