Evaluating DNA Assembly Fidelity by Sequencing: A Comprehensive Guide for Biomedical Researchers

Abstract

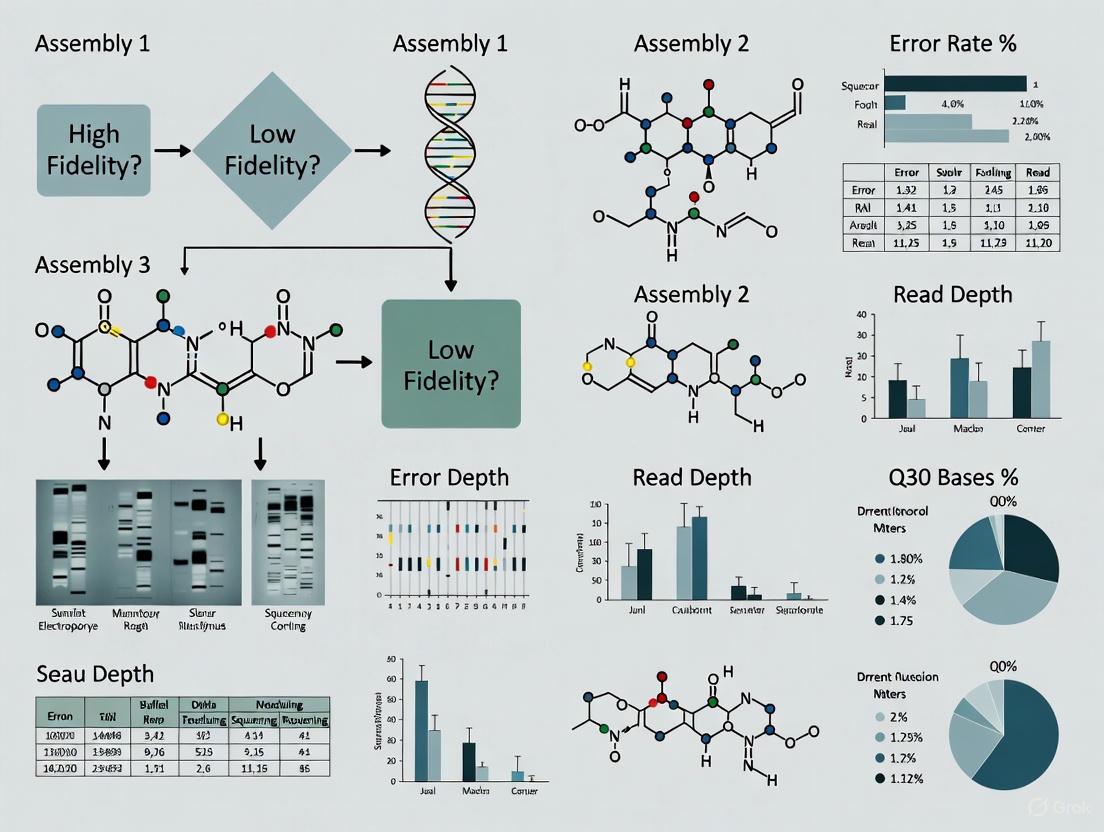

This article provides a comprehensive framework for researchers and drug development professionals to evaluate DNA assembly fidelity through modern sequencing technologies. It covers foundational principles of sequencing accuracy, explores methodological applications of tools like Golden Gate Assembly and Data-optimized Assembly Design, addresses troubleshooting and optimization strategies for error reduction, and presents validation approaches for comparative analysis. By synthesizing current advancements in HiFi, nanopore, and NGS platforms, this guide enables scientists to ensure high-fidelity DNA constructs critical for synthetic biology, therapeutic development, and precision medicine applications.

Understanding DNA Assembly Fidelity: Core Concepts and Sequencing Accuracy Fundamentals

In metabolic engineering and synthetic biology, methods for assembling genetic parts into functional DNA molecules are foundational to prototyping metabolic pathways and genetic circuits [1]. DNA assembly fidelity is the accuracy with which DNA fragments are joined, that is, the degree to which the final construct matches the intended design without errors. The earliest DNA assembly method, restriction digestion and ligation, sparked a biotechnology revolution but imposed significant limitations on the synthesis of complex DNA molecules [1]. As synthetic biology advances, increasingly complicated constructs involving multiple genes and intergenic components demand higher efficiency and fidelity than traditional cloning methods can provide [1].

The critical importance of DNA assembly fidelity extends across research and clinical applications. In therapeutic development, including the production of monoclonal antibodies, vaccines, and CRISPR-based gene therapies, assembly errors can compromise functionality or safety [2]. For instance, constructing vectors for CAR-T cell engineering or correcting mutations like CFTR F508del in cystic fibrosis requires absolute precision [2]. High-fidelity assembly is equally crucial in basic research, whether for functional studies of proteins resolved by X-ray crystallography or for building complex genetic circuits [2]. This guide objectively compares modern DNA assembly technologies through the lens of fidelity, providing researchers with performance data, experimental protocols, and analytical frameworks for evaluating assembly accuracy in their specific contexts.

DNA Assembly Technologies: Mechanisms and Fidelity Profiles

Restriction Enzyme-Based Methods

Restriction enzyme-based methods represent one of the earliest approaches to DNA assembly. Golden Gate Assembly relies on type IIs restriction enzymes, which cleave DNA outside their recognition sites to produce four-nucleotide overhangs that direct precise fragment joining [1]. When the parts are properly designed, digested fragments ligate into products that lack the original restriction sites, so the correct product is no longer cut and temperature cycling drives an efficient one-pot assembly [1].
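To make the cutting geometry concrete, the sketch below scans a sequence for BsaI sites and reports the 4-nt overhang each site would release. It assumes BsaI's commonly cited specificity, GGTCTC(1/5), meaning the strand carrying the site is cut 1 nt and the complementary strand 5 nt beyond the site; `find_bsai_overhangs` is a hypothetical helper, and other type IIs enzymes would need their own site and offsets.

```python
# Minimal sketch, assuming BsaI cuts GGTCTC(1/5): 1 nt past the site on the
# site-bearing strand and 5 nt past it on the complementary strand.
BSAI_SITE = "GGTCTC"
BSAI_SITE_RC = "GAGACC"  # the same site read on the top strand when it lies on the bottom strand


def find_bsai_overhangs(seq: str) -> list[tuple[int, str, str]]:
    """Return (site_start, orientation, overhang) for every BsaI site in seq.

    Overhangs are given as the top-strand sequence of the 4-nt region left
    single-stranded by the staggered cut. Sites too close to an end are skipped.
    """
    seq = seq.upper()
    n = len(BSAI_SITE)
    hits = []
    for i in range(len(seq) - n + 1):
        window = seq[i:i + n]
        if window == BSAI_SITE:
            # Forward site: skip 1 nt (N1), the next 4 nt form the overhang.
            start = i + n + 1
            if start + 4 <= len(seq):
                hits.append((i, "+", seq[start:start + 4]))
        elif window == BSAI_SITE_RC:
            # Reverse site: the cut lies upstream, so the overhang spans i-5 .. i-2.
            if i - 5 >= 0:
                hits.append((i, "-", seq[i - 5:i - 1]))
    return hits


print(find_bsai_overhangs("AAGGTCTCAGCTATTT"))
print(find_bsai_overhangs("TTGCTAAGAGACCTT"))
```

Both example sequences release the same GCTA overhang, one from a forward site and one from a reverse site, which is how a part can be excised with compatible ends on both sides.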

The BioBrick standard was the first strategy enabling sequential assembly of standard biological parts through iterative cycles of restriction digestion and ligation [1]. Each DNA part is flanked by specific restriction sites (EcoRI and XbaI upstream; SpeI and PstI downstream); XbaI and SpeI are isocaudamers that generate compatible sticky ends [1]. A key limitation of the original design is that its scar contains extra nucleotides beyond the 6-nucleotide XbaI/SpeI junction, which causes frameshifts and premature stop codons in protein fusions [1]. Subsequent revisions such as the BglBrick system addressed this by using more efficient, methylation-insensitive enzymes (BglII and BamHI) and producing a 6-nucleotide scar (GGATCT) that encodes glycine-serine, making it suitable for protein fusions [1].
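The reading-frame problem can be checked directly by translating a scar. This is a minimal sketch: it assumes the scar starts at a codon boundary (frame 0), which in practice depends on the fusion design, and it uses TACTAGAG as the commonly cited scar of the original BioBrick (RFC 10) standard; `check_scar` is a hypothetical helper.

```python
# Standard genetic code with codons enumerated in T, C, A, G order.
BASES = "TCAG"
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    first + second + third: AMINO_ACIDS[16 * i + 4 * j + k]
    for i, first in enumerate(BASES)
    for j, second in enumerate(BASES)
    for k, third in enumerate(BASES)
}


def check_scar(scar: str) -> dict:
    """Translate a scar in frame 0 and report whether it preserves the reading frame."""
    scar = scar.upper()
    full_codons = len(scar) - len(scar) % 3  # ignore a trailing partial codon
    peptide = "".join(CODON_TABLE[scar[p:p + 3]] for p in range(0, full_codons, 3))
    return {
        "length": len(scar),
        "in_frame": len(scar) % 3 == 0,
        "peptide": peptide,
        "has_stop": "*" in peptide,
    }


print(check_scar("GGATCT"))    # BglBrick scar
print(check_scar("TACTAGAG"))  # assumed original BioBrick (RFC 10) scar
```

The BglBrick scar yields an in-frame Gly-Ser linker, whereas the assumed BioBrick scar is eight nucleotides long, so it shifts the downstream frame, and read in frame it already contains a TAG stop codon.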

A significant advancement in restriction enzyme-based assembly comes from comprehensive ligase fidelity profiling. Research demonstrates that measuring ligation fidelity enables prediction of high-fidelity junction sets, allowing dramatically more complex assemblies of 12, 24, or even 36+ fragments in a single reaction with high accuracy and efficiency [3]. Online tools now apply these comprehensive datasets to analyze existing junction sets, select new high-fidelity overhang sequences, modify and expand existing sets, and divide known sequences at multiple high-fidelity breakpoints [3].
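The NEBridge tools rely on empirical ligation data, but a few sequence rules catch the most obvious problems before that step. The sketch below is a simple rule-based pre-filter, not the data-optimized method itself: it flags palindromic overhangs, which can ligate to themselves, and pairs of overhangs (one listed per junction) that are identical, complementary, or within one mismatch of each other in either orientation. The `min_distance` threshold of 2 and the helper names are illustrative assumptions.

```python
from itertools import combinations

COMPLEMENT = str.maketrans("ACGT", "TGCA")


def revcomp(seq: str) -> str:
    """Reverse complement of a DNA sequence (A/C/G/T only)."""
    return seq.upper().translate(COMPLEMENT)[::-1]


def hamming(a: str, b: str) -> int:
    """Number of mismatched positions between two equal-length sequences."""
    return sum(x != y for x, y in zip(a, b))


def check_overhang_set(overhangs: list[str], min_distance: int = 2) -> list[str]:
    """Return warnings for a set of 4-nt overhangs, one listed per junction."""
    ohs = [o.upper() for o in overhangs]
    warnings = []
    for oh in ohs:
        if oh == revcomp(oh):
            warnings.append(f"{oh}: palindromic, can ligate to itself")
    for a, b in combinations(ohs, 2):
        # An end carrying `a` can mispair with the partner end of `b` if a ~ b,
        # or with the `b` end itself if a ~ revcomp(b).
        distance = min(hamming(a, b), hamming(a, revcomp(b)))
        if distance < min_distance:
            warnings.append(f"{a}/{b}: {distance} mismatch(es) apart, risk of mis-ligation")
    return warnings


print(check_overhang_set(["GGAG", "TACT", "AATG", "ACGT", "AATC"]))
```

A set that passes these rules can still mis-ligate at rates that only measured fidelity data reveal, which is why the data-driven tools remain the primary design step.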

Sequence Homology-Based Methods

Sequence homology-based methods join parts through longer overlapping regions of arbitrary sequence, avoiding the sequence constraints of restriction enzyme-based approaches. They include both in vitro and in vivo methods with distinct fidelity characteristics.

NEBuilder HiFi DNA Assembly enables virtually error-free joining of DNA fragments using a proprietary high-fidelity polymerase [4]. Its key fidelity advantage is the removal of 3'- and 5'-end mismatch sequences before fragments are assembled, so even fragments carrying end mismatches can be joined accurately [4]. The method offers simple, fast seamless cloning in as little as 15 minutes and accommodates both routine cloning and complex assemblies of 2-12 fragments [4].

The Gibson assembly method uses T5 exonuclease to chew back 5' ends, generating 3' single-stranded overhangs that allow complementary fragments to anneal; Phusion polymerase then fills gaps and Taq ligase seals nicks, all in a single isothermal reaction [1]. Sequence and Ligation-Independent Cloning (SLIC) uses T4 DNA polymerase in the absence of dNTPs to generate single-stranded overhangs in vitro, and the annealed recombinant molecules are completed by endogenous repair machinery in E. coli [1]. A related method, Seamless Ligation Cloning Extract (SLiCE), uses inexpensive E. coli cell extracts to drive homology-mediated assembly, substantially reducing costs [1].
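Because homology-based methods join fragments through their terminal overlaps, the intended product can be predicted in silico and later used as the reference design for sequencing validation. The sketch below assumes linear fragments supplied in assembly order and orientation with exact overlaps; it ignores the enzymology (chew-back, fill-in, ligation) and circularization, and `min_overlap=20` is an illustrative default rather than a recommendation from [1] or [4].

```python
def find_overlap(left: str, right: str, min_overlap: int) -> int:
    """Length of the longest suffix of `left` that equals a prefix of `right`.

    Returns 0 if no such overlap of at least `min_overlap` nt exists.
    """
    longest_possible = min(len(left), len(right))
    for length in range(longest_possible, max(min_overlap, 1) - 1, -1):
        if left[-length:] == right[:length]:
            return length
    return 0


def assemble_by_overlap(fragments: list[str], min_overlap: int = 20) -> str:
    """Predict the linear product of joining ordered fragments via terminal homology."""
    product = fragments[0].upper()
    for index, fragment in enumerate(fragments[1:], start=2):
        fragment = fragment.upper()
        overlap = find_overlap(product, fragment, min_overlap)
        if overlap == 0:
            raise ValueError(f"fragment {index} shares no >= {min_overlap} nt overlap with the product")
        # Keep only the part of the incoming fragment beyond the shared overlap.
        product += fragment[overlap:]
    return product


# Hypothetical fragments sharing a 10-nt overlap (GGTACCTTGA).
part_a = "ATGCCGTTAGCAGGTACCTTGA"
part_b = "GGTACCTTGACTAGTT"
print(assemble_by_overlap([part_a, part_b], min_overlap=10))
```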

Table 1: Comparison of DNA Assembly Methods and Their Fidelity Characteristics

| Assembly Method | Mechanism | Key Fidelity Feature | Optimal Fragment Number | Scar Formation |
|---|---|---|---|---|
| Golden Gate | Type IIs restriction enzymes and ligation | Data-optimized assembly design predicts high-fidelity junction sets | 6-8 (up to 36+ with optimized overhangs) | Scarless if properly designed |
| NEBuilder HiFi | Exonuclease removal of mismatches + polymerase/ligase | Removes 3'- and 5'-end mismatch sequences prior to assembly | 2-12 | Scarless |
| Gibson Assembly | Exonuclease + polymerase + ligase | Homology-directed annealing in vitro | 2-12 | Scarless |
| SLIC/SLiCE | T4 DNA polymerase chew-back (SLIC) or E. coli cell extract (SLiCE), with in vivo repair | Endogenous repair machinery fixes nicks in vivo | 2-10 | Scarless |
| BioBrick | Restriction digestion and ligation | Standardized parts with specific scar sequences | Sequential assembly | 6-8 nucleotide scar |

Quantitative Fidelity Assessment: Experimental Data and Comparison

Ligase Fidelity Profiling

Comprehensive profiling of DNA ligase fidelity has substantially advanced our understanding of sequence-dependent ligation bias and its impact on assembly accuracy. Profiling the ligation of all three-base 5'-overhangs by T4 DNA ligase under typical conditions revealed substantial sequence-dependent variation in ligation efficiency [5]. These ligation profiles accurately predict junction fidelity and have enabled accurate and efficient assembly of 24 fragments in a single reaction [5].

This fundamental work has been extended through Data-optimized Assembly Design (DAD), which applies comprehensive ligase fidelity data to predict high-accuracy junction sets for Golden Gate assembly [5]. The practical applications are substantial: researchers have assembled the 40 kb T7 bacteriophage genome from up to 52 parts using these principles and recovered infectious phage particles after cellular transformation [5]. Similarly, genes have been constructed in a highly parallel fashion from low-cost oligonucleotide mixtures in three simple steps and as little as 4 days by applying DAD to Golden Gate Assembly with optimized ligase fidelity tools [5].
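One simplified way to turn a ligation fidelity profile into a junction-set prediction is to score each overhang end by the share of its ligation events that go to its correct Watson-Crick partner, counting only ends present in the reaction, and to multiply these scores across all ends. The sketch below follows that idea; the ligation counts are hypothetical placeholders rather than data from [5], the helper names are illustrative, and the NEBridge tools may weight and combine the data differently.

```python
from math import prod

COMPLEMENT = str.maketrans("ACGT", "TGCA")


def revcomp(seq: str) -> str:
    """Reverse complement of an uppercase DNA sequence."""
    return seq.translate(COMPLEMENT)[::-1]


def ligation_count(counts: dict[tuple[str, str], int], a: str, b: str) -> int:
    """Look up a pairing in either order (ligation between two ends is symmetric)."""
    return counts.get((a, b), counts.get((b, a), 0))


def predicted_fidelity(junctions: list[str], counts: dict[tuple[str, str], int]) -> float:
    """Estimate the fraction of assemblies in which every junction ligates correctly."""
    # Each junction contributes two ends: the overhang and its reverse complement.
    ends = set()
    for oh in junctions:
        oh = oh.upper()
        ends.update({oh, revcomp(oh)})
    per_end_scores = []
    for end in sorted(ends):
        # All ligation events between this end and any end present in the reaction.
        total = sum(ligation_count(counts, end, other) for other in ends)
        correct = ligation_count(counts, end, revcomp(end))
        per_end_scores.append(correct / total if total else 0.0)
    return prod(per_end_scores)


# Hypothetical ligation counts for illustration only (not measured data).
example_counts = {
    ("GGAG", "CTCC"): 1000,  # correct pairing
    ("AATG", "CATT"): 950,   # correct pairing
    ("GGAG", "CATT"): 10,    # hypothetical mis-ligation between non-partner ends
}
print(f"{predicted_fidelity(['GGAG', 'AATG'], example_counts):.3f}")
```

With these made-up counts, the small amount of GGAG/CATT cross-ligation lowers the predicted fidelity to about 98%, and every added junction multiplies in further per-end scores, which is why large one-pot assemblies depend on overhang sets with very little cross-reactivity.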

Table 2: Experimental Performance Metrics for DNA Assembly Methods

| Method | Assembly Complexity Demonstrated | Reported Efficiency | Key Fidelity Validation Method | Notable Applications |
|---|---|---|---|---|
| Golden Gate with DAD | 52 fragments (40 kb genome) | High efficiency with correct clones | Sequencing of final construct | T7 bacteriophage genome assembly [5] |
| NEBuilder HiFi | 2-12 fragments | Virtually error-free, high-efficiency cloning | End-point analysis with validation | sgRNA-Cas9 vector construction [4] |
| Gibson Assembly | 2-12 fragments | High efficiency with screening | Sequencing validation | Pathway construction [1] |
| SLIC/SLiCE | 2-10 fragments | Moderate to high efficiency | In vivo repair validation | Library construction [1] |

Sequencing-Based Fidelity Validation

Long-read sequencing technologies have emerged as powerful tools for validating DNA assembly fidelity. The Edinburgh Genome Foundry established a single-molecule sequencing quality control step using Oxford Nanopore sequencing, coupled with a companion Nextflow pipeline and Python package for in-depth analysis [6]. This approach provides detailed reports that enable researchers working with plasmids to rapidly analyze and interpret sequencing data, validating assembled, cloned, or edited plasmids with long-read sequencing [6].

Comparative studies of high-fidelity (HiFi) and continuous long-read (CLR) sequencing demonstrate their distinct capabilities for variant detection. HiFi sequencing identified 1.65-fold more true-positive variants on average, with 60% fewer false-positive variants, than CLR [7]. Variant calling after genome assembly proved particularly effective for detecting large insertions, even with only 10× depth of accurate long-read data [7]. These results establish 10× assembly-based variant calling as a cost-effective method for high-quality variant detection [7], which makes it an attractive option for validating assembled constructs.

Figure: DNA Assembly Fidelity Assessment Workflow

Experimental Protocols for Fidelity Evaluation

High-Fidelity Golden Gate Assembly Protocol

Principle: Golden Gate assembly uses type IIs restriction enzymes that cleave outside recognition sites, creating unique overhangs for precise fragment ligation. High-fidelity implementation employs data-optimized assembly design to select optimal overhang sets [3].

Step-by-Step Protocol:

- Fragment Preparation: Amplify DNA parts with primers that add the type IIs recognition site and the designed 4-bp overhang. Use a high-fidelity polymerase to minimize amplification errors.

- Golden Gate Reaction Setup:

  - Combine DNA parts in equimolar ratio (50-100 fmol each)

  - Add T4 DNA ligase (5 Weiss units)

  - Add a Type IIs restriction enzyme (e.g., BsaI-HFv2, 10 units)

  - Include reaction buffer and ATP

- Thermocycling Conditions:

  - 30 cycles: 37°C (2 min) + 16°C (5 min)

  - Final step: 60°C (10 min), then 80°C (10 min)

- Transformation: Introduce 2 μL of the reaction into competent E. coli cells

- Screening: Pick colonies for sequencing validation
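Equimolar setup requires converting the 50-100 fmol target into a mass for each part. A minimal sketch, assuming an average of about 650 g/mol per base pair of double-stranded DNA (some protocols use 660); the helper names and part lengths are illustrative.

```python
# Assumed average mass of one base pair of double-stranded DNA (g/mol).
AVG_BP_MASS_G_PER_MOL = 650.0


def fmol_to_ng(fmol: float, length_bp: int) -> float:
    """Mass in ng of `fmol` femtomoles of a dsDNA fragment of `length_bp` base pairs."""
    # 1 fmol = 1e-15 mol and 1 ng = 1e-9 g, so the unit factor is 1e-6.
    return fmol * length_bp * AVG_BP_MASS_G_PER_MOL * 1e-6


def ng_to_fmol(ng: float, length_bp: int) -> float:
    """Inverse of fmol_to_ng."""
    return ng / (length_bp * AVG_BP_MASS_G_PER_MOL * 1e-6)


# Hypothetical parts (lengths in bp), each targeted at 75 fmol.
parts = {"promoter": 300, "cds": 1200, "backbone": 2500}
for name, length in parts.items():
    print(f"{name}: {fmol_to_ng(75, length):.1f} ng")
```

Because the required mass scales with length, a long backbone needs several times the mass of a short promoter part to contribute the same number of molecules.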

Critical Fidelity Considerations:

- Use NEBridge Ligase Fidelity Tools to select high-fidelity overhang sets [5]

- Avoid repetitive sequences in overhangs that promote misassembly

- Include negative controls without ligase to detect template carryover

- For complex assemblies (>12 fragments), use a hierarchical assembly strategy

Long-Read Sequencing Validation Protocol

Principle: Single-molecule long-read sequencing detects assembly errors, structural variants, and contamination that short-read technologies can miss [6] [8].

Step-by-Step Protocol:

- DNA Preparation: Extract high-molecular-weight DNA and clean it up with magnetic beads

- Library Preparation:

  - Use native barcoding kits for multiplexing

  - Repair DNA ends and ligate adapters

  - Purify with magnetic beads

- Sequencing:

  - Load the library on MinION, GridION, or PromethION flow cells

  - Run sequencing for 24-72 hours

  - Basecall in real time using Dorado with the super-accurate model (sup@v5.0)

- Data Analysis:

  - Demultiplex reads with guppy_barcoder or Dorado

  - Assemble with Flye using the raw nanopore reads

  - Polish the assembly with Medaka (medakag360HAC model); Medaka models are specific to the basecaller and basecalling model, so confirm that the chosen model matches the Dorado SUP basecalls

  - Compare the assembled sequence to the reference design

  - Identify discrepancies (SNPs, indels, structural variants)
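The comparison step has one practical wrinkle: an assembler such as Flye may report a circular plasmid starting at an arbitrary position and on either strand. The sketch below rotates and, if needed, reverse-complements the assembly so it starts where the reference design starts, then lists differences with Python's standard-library `difflib.SequenceMatcher`, using `autojunk=False` because the default junk heuristic would treat every base as junk in sequences of 200 nt or more. It is an illustrative check for plasmid-sized sequences that assumes the first 20 nt of the reference are intact in the assembly; it is not the Edinburgh Genome Foundry pipeline, and dedicated aligners are preferable for production use.

```python
from difflib import SequenceMatcher
from typing import Optional

COMPLEMENT = str.maketrans("ACGT", "TGCA")


def revcomp(seq: str) -> str:
    """Reverse complement of a DNA sequence (A/C/G/T only)."""
    return seq.upper().translate(COMPLEMENT)[::-1]


def orient_circular(assembly: str, reference: str, anchor_len: int = 20) -> Optional[str]:
    """Rotate a circular assembly (reverse-complementing if needed) to start where
    the reference starts. Returns None if the anchor k-mer is not found."""
    anchor = reference[:anchor_len].upper()
    for candidate in (assembly.upper(), revcomp(assembly)):
        doubled = candidate + candidate  # lets the anchor span the origin
        start = doubled.find(anchor)
        if start != -1:
            return doubled[start:start + len(candidate)]
    return None


def list_discrepancies(assembly: str, reference: str) -> list[tuple[str, int, str, str]]:
    """Return (kind, reference_position, reference_bases, assembly_bases) per difference."""
    reference = reference.upper()
    oriented = orient_circular(assembly, reference)
    if oriented is None:
        raise ValueError("reference start not found in assembly; check orientation or anchor")
    matcher = SequenceMatcher(None, reference, oriented, autojunk=False)
    return [
        (tag, i1, reference[i1:i2], oriented[j1:j2])
        for tag, i1, i2, j1, j2 in matcher.get_opcodes()
        if tag != "equal"
    ]


design = "ATGACCGGTTCAAGCTTGCATGCCTGCAGGTCGACTCTAG"
observed = design[:25] + "A" + design[26:]  # hypothetical G>A substitution
observed = observed[30:] + observed[:30]    # assembly reported from an arbitrary start
print(list_discrepancies(observed, design))  # expected: [('replace', 25, 'G', 'A')]
```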

Quality Control Metrics:

- Minimum 10× sequencing coverage for reliable variant calling [7]

- Read N50 > 20 kb for adequate assembly contiguity of genome-scale targets (plasmid reads cannot exceed the plasmid length)

- Base quality Q20+ (99% accuracy) with the latest basecalling models

- At most 2 allele differences per isolate across datasets when using optimized protocols [8]
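The coverage and N50 thresholds above can be computed directly from read lengths. A minimal sketch with illustrative helper names and hypothetical read lengths; coverage here is the simple ratio of total sequenced bases to target length, which assumes all reads derive from the target.

```python
def read_n50(lengths: list[int]) -> int:
    """Read length at which reads sorted longest-first first cover half of all bases."""
    if not lengths:
        raise ValueError("no reads supplied")
    half_total = sum(lengths) / 2
    running = 0
    for length in sorted(lengths, reverse=True):
        running += length
        if running >= half_total:
            return length
    return 0  # not reached for non-empty input


def mean_coverage(lengths: list[int], target_length: int) -> float:
    """Total sequenced bases divided by target length (assumes all reads are on target)."""
    return sum(lengths) / target_length


# Hypothetical read lengths from a run on a 10 kb plasmid.
reads = [9_800, 10_000, 10_000, 7_500, 4_200, 3_100, 10_000, 9_900, 2_500, 10_000]
print(read_n50(reads), f"{mean_coverage(reads, 10_000):.1f}x")
```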

Figure: Sequencing-Based Fidelity Validation

Research Reagent Solutions for DNA Assembly

Table 3: Essential Research Reagents for High-Fidelity DNA Assembly

| Reagent/Tool | Manufacturer/Provider | Function in Fidelity Assessment | Key Applications |
|---|---|---|---|
| NEBuilder HiFi DNA Assembly Master Mix | New England Biolabs | Enables virtually error-free joining of DNA fragments | Seamless cloning, complex assemblies [4] |
| Golden Gate Assembly System | Various | Type IIs restriction enzymes for precise fragment assembly | Modular cloning, combinatorial libraries [5] |
| NEBridge Ligase Fidelity Tools | New England Biolabs | Online tools for predicting high-fidelity junction sets | Golden Gate assembly design optimization [5] [3] |
| Oxford Nanopore Sequencing Kits | Oxford Nanopore Technologies | Long-read sequencing for assembly validation | Plasmid verification, structural variant detection [6] [8] |
| PacBio HiFi Sequencing Reagents | Pacific Biosciences | High-accuracy long-read sequencing | Variant calling, genome assembly [7] |
| NEBuilder Assembly Tool | New England Biolabs | Online primer design for assembly reactions | Primer design with optimal overlaps [4] |

The evaluation of DNA assembly technologies reveals a landscape where fidelity optimization requires strategic method selection based on project requirements. For high-complexity assemblies involving numerous fragments (12+), Golden Gate assembly with data-optimized design principles offers unprecedented capability, enabling one-pot construction of 35+ DNA fragments with high accuracy [5]. For seamless cloning applications requiring minimal screening, NEBuilder HiFi DNA Assembly provides virtually error-free joining of 2-12 fragments with proprietary enzymes that remove end mismatches prior to assembly [4].

Critical to all assembly workflows is validation through long-read sequencing; evidence that 10× coverage with assembly-based variant calling yields cost-effective, high-quality variant detection [7] makes this a practical route to fidelity assessment. Integrating optimized assembly methods with rigorous sequencing validation establishes a robust framework for DNA construction across synthetic biology and clinical applications. As therapeutic DNA constructs grow more complex, from CRISPR-based editors to entire synthetic pathways, these fidelity assurance methods will become increasingly essential for research reproducibility and clinical safety.

In the pursuit of genomic truth, scientists rely on sequencing technologies to generate accurate representations of genetic material. The fidelity of DNA assembly in sequencing research hinges on understanding and quantifying accuracy, which is not a singular concept but a multi-faceted metric that directly impacts biological interpretation. For researchers and drug development professionals, selecting the appropriate sequencing platform and methodology requires a clear grasp of two fundamental accuracy types: read accuracy and consensus accuracy. These metrics govern our ability to distinguish true biological variation from technical artifacts, ultimately influencing diagnostic conclusions and therapeutic insights.

The distinction between these accuracy types becomes particularly crucial when investigating complex genomic regions associated with disease. Repetitive elements, structural variants, and medically relevant genes with pseudogenes (e.g., GBA) present formidable challenges that are best resolved by technologies combining long reads with high accuracy. As large-scale population genomics initiatives like the All of Us program generate data for personalized medicine, the choice of accuracy metrics and sequencing technologies carries profound implications for identifying pathogenic variants and uncovering missing heritability [9]. This guide provides an objective comparison of sequencing accuracy metrics and technologies, empowering scientists to optimize their experimental designs for maximum genomic fidelity.

Defining the Fundamental Accuracy Metrics

Read Accuracy: The Single-Measurement Benchmark

Read accuracy (also referred to as raw read accuracy) represents the inherent error rate of individual sequencing reads from a DNA sequencing technology. It is a measure of the single-pass fidelity of the sequencing instrument before any computational correction or consensus building is applied [10]. This metric is typically expressed as a percentage, with higher values indicating greater precision at the level of individual DNA molecules.

The quality of individual bases within a read is commonly expressed as a Q-score (Quality Score), a Phred-scaled value that estimates the probability of an incorrect base call. The formula Q = -10log₁₀(P) defines the relationship, where P is the probability of an erroneous call [11] [12]. For example, a Q-score of 30 (Q30) indicates a 1 in 1,000 chance of an error, corresponding to 99.9% base call accuracy [12]. This metric provides a probabilistic assessment of sequencing precision that informs downstream analytical confidence.
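The Q-score relation can be inverted to move between quality scores and error probabilities. The sketch below also shows why the mean quality of a read is better computed by averaging error probabilities than by averaging Q values: because the scale is logarithmic, a read with equal numbers of Q10 and Q30 bases has an average error rate closer to Q13 than Q20. Function names are illustrative.

```python
import math


def phred_to_error(q: float) -> float:
    """Error probability P for Phred score Q, from Q = -10 log10(P)."""
    return 10 ** (-q / 10)


def error_to_phred(p: float) -> float:
    """Phred score for error probability p (0 < p <= 1)."""
    if p <= 0:
        raise ValueError("error probability must be positive")
    return -10 * math.log10(p)


def mean_read_quality(qualities: list[float]) -> float:
    """Average per-base errors in probability space, then convert back to Q."""
    mean_error = sum(phred_to_error(q) for q in qualities) / len(qualities)
    return error_to_phred(mean_error)


print(f"Q30 -> {1 - phred_to_error(30):.4%} accuracy")
print(f"Q26 -> {1 - phred_to_error(26):.2%} accuracy")
print(f"mean of Q10 and Q30 bases = Q{mean_read_quality([10, 30]):.1f}")
```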

Consensus Accuracy: The Power of Redundancy

Consensus accuracy is determined by combining information from multiple overlapping reads covering the same genomic region, effectively eliminating random errors present in individual reads [10]. This approach leverages deep sequencing coverage—where more reads contribute to the consensus—to produce a highly accurate consolidated sequence [10] [11]. The fundamental principle is that while random errors may occur in individual reads, they will be outvoted by correct base calls at the same position across the read ensemble.

However, consensus building faces inherent limitations. The process is computationally intensive and cannot correct for systematic errors—consistent mistakes introduced by a sequencing platform due to biochemical or technical biases [10]. If a technology consistently misinterprets a particular sequence context or motif, this error will be propagated through all reads and reinforced in the consensus. Consequently, the starting quality of individual reads, particularly their freedom from systematic bias, profoundly influences the ultimate quality of the consensus sequence [10].
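The difference between random and systematic errors can be illustrated with a toy majority vote. The sketch assumes reads that are already aligned, gap-free, and of equal length (real consensus tools must handle indels and alignment uncertainty), and models random errors as independent with per-read probability p. Under that independence assumption, the chance that more than half of the reads are wrong at a position falls rapidly with depth, and for odd depths it bounds the majority-vote error from above; a systematic error shared by every read is reproduced no matter how many reads are added. Helper names are illustrative.

```python
from collections import Counter
from math import comb


def majority_consensus(aligned_reads: list[str]) -> str:
    """Column-wise majority vote over pre-aligned, gap-free reads of equal length."""
    if len({len(read) for read in aligned_reads}) != 1:
        raise ValueError("reads must be pre-aligned to the same length")
    return "".join(
        Counter(column).most_common(1)[0][0] for column in zip(*aligned_reads)
    )


def consensus_error_bound(p: float, depth: int) -> float:
    """Probability that more than half of `depth` reads are wrong at one position,
    assuming independent per-read errors with probability p."""
    return sum(
        comb(depth, k) * p**k * (1 - p) ** (depth - k)
        for k in range(depth // 2 + 1, depth + 1)
    )


# A random error in one read (position 3) is outvoted by the other reads.
print(majority_consensus(["ACGTAC", "ACGTAC", "ACCTAC"]))
# True sequence ACGTAC, but every read miscalls position 4: the error survives.
print(majority_consensus(["ACGAAC"] * 10))
for depth in (1, 5, 15):
    print(depth, f"{consensus_error_bound(0.15, depth):.2e}")
```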

Figure: Relationship between read accuracy and consensus accuracy within the sequencing workflow

Technology-Specific Performance Comparison

Long-Read Sequencing Platforms: PacBio and Oxford Nanopore

The landscape of long-read sequencing is dominated by two principal technologies: Pacific Biosciences (PacBio) and Oxford Nanopore Technologies (ONT). Each employs distinct biochemical approaches that yield characteristic accuracy profiles, with significant implications for genomic research.

PacBio sequencing utilizes Single Molecule, Real-Time (SMRT) technology. The platform offers two primary modes: Continuous Long Read (CLR) sequencing and High-Fidelity (HiFi) sequencing. CLR mode generates long reads (tens to hundreds of kilobases) but with a relatively high single-pass error rate of approximately 15% [13] [9]. HiFi sequencing, by contrast, employs circular consensus sequencing (CCS) where a DNA molecule is sequenced multiple times in a loop, producing highly accurate (>99.9%) reads of 15-20 kb [9]. This unique approach provides both length and accuracy, making HiFi reads particularly suited for applications requiring high precision without compromising contiguity.

Oxford Nanopore Technologies sequences DNA and RNA molecules by measuring changes in electrical current as nucleic acids pass through protein nanopores. Early ONT chemistries (R9.4.1) exhibited relatively high error rates (>10%) [13], but recent advancements have substantially improved performance. The latest R10.4.1 flow cells with Q20+ chemistry achieve single-read accuracy exceeding 99% (Q20) [14], with some reports indicating raw read accuracy up to 99.75% (Q26) using sophisticated basecalling models like Dorado v5 SUP [14]. ONT's distinctive capability for ultra-long reads (exceeding 100 kb) and direct detection of epigenetic modifications provides unique advantages for comprehensive genome characterization [14] [15].

Comparative Accuracy Metrics Across Platforms

Table 1: Comparative Accuracy Metrics of Major Sequencing Platforms

| Technology | Read Type | Raw Read Accuracy (Range) | Consensus Accuracy Potential | Typical Read Length | Systematic Biases |
|---|---|---|---|---|---|
| PacBio HiFi | Circular Consensus | >99.9% (Q30+) [10] [9] | Very High (>Q40) [16] | 15-20 kb [9] | Low, uniform coverage [10] |
| PacBio CLR | Continuous Long Read | ~85% (Q8) [13] | High with polishing | Tens to hundreds of kb | Low [10] |
| ONT (R10.4.1) | Nanopore | >99% (Q20) [14] | High (>Q40 with sufficient coverage) [14] | Up to hundreds of kb [14] | Context-dependent [17] |
| Illumina | Short Read | >99.9% (Q30+) [12] | Very High | 50-300 bp | PCR, amplification biases |

Impact on Genomic Applications

The choice between accuracy types and technologies carries practical consequences for genomic investigations. Variant calling reliability depends on both read and consensus accuracy. Single nucleotide variant (SNV) detection requires high base-level precision, while structural variant (SV) identification benefits from long reads that span repetitive regions [9]. A recent study evaluating the All of Us program found that HiFi reads produced the most accurate results for both small and large variants [9].

For de novo genome assembly, consensus accuracy determines the overall quality of the reconstructed sequence. Highly accurate long reads dramatically improve assembly contiguity and completeness compared to error-prone long reads [16]. Research comparing assembly outcomes across 6,750 plant and animal genomes revealed that HiFi-based assemblies were 501% more contiguous for plants and 226% more contiguous for animals compared to those generated with other long-read technologies [16].

The phasing of haplotypes in diploid or polyploid genomes represents another application where accuracy is paramount. Distinguishing maternal from paternal chromosomes requires sufficient read accuracy to confidently identify heterozygous variants against the background error rate [10]. HiFi reads, with their high accuracy and length, enable phasing of variants over large genomic distances, which is crucial for studying compound heterozygotes in Mendelian disorders [10].

Experimental Protocols for Accuracy Assessment

Standardized Workflows for Accuracy Benchmarking

Rigorous assessment of sequencing accuracy requires controlled experimental designs and standardized bioinformatic pipelines. The following protocol outlines a comprehensive approach for technology comparison:

Sample Selection and Preparation:

- Utilize well-characterized reference samples with known truth sets, such as the Genome in a Bottle (GIAB) consortium samples (e.g., HG002) [14] [9]

- Extract high-molecular-weight DNA using validated protocols (e.g., phenol:chloroform extraction) to minimize shearing [13]

- Quantify DNA using fluorometric methods (e.g., Qubit) and assess fragment size distribution via pulsed-field capillary electrophoresis (e.g., FEMTO Pulse) [13]

Library Preparation and Sequencing:

- For PacBio HiFi: Prepare SMRTbell libraries using the SMRTbell Express Template Prep Kit, optimize size selection for desired read length (typically 15-20 kb) [9]

- For ONT ligation sequencing: Use the Ligation Sequencing Kit (SQK-LSK109) with optional size selection (e.g., BluePippin) for fragments >10 kb [13]

- For ONT rapid sequencing: Employ the Rapid Sequencing Kit (SQK-RAD004) for simplified, amplification-free workflows [13]

- Sequence on appropriate platforms (PacBio Sequel II/Revio or ONT PromethION/MinION) to achieve target coverage (typically 30-50x for human genomes) [9]

Computational Analysis Pipeline:

- Basecalling: Use platform-specific tools (Dorado for ONT, CCS for PacBio HiFi) with appropriate models (e.g., SUP for highest accuracy) [14]

- Read alignment: Map reads to reference genome using optimized aligners (minimap2, pbmm2) [9]

- Variant calling: Implement specialized callers for different variant types (SNVs, indels, SVs) [9]

- Accuracy assessment: Compare calls to established truth sets, calculating precision, recall, and F1 scores [14] [9]
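The accuracy-assessment step reduces to counting true positives, false positives, and false negatives against the truth set. The sketch below uses exact matching of (chromosome, position, ref, alt) tuples, which is a simplifying assumption: dedicated benchmarking tools such as hap.py (small variants) or Truvari (structural variants) also reconcile different representations of the same variant and restrict comparisons to high-confidence regions. The variants shown are hypothetical.

```python
# A variant as (chromosome, position, reference allele, alternate allele).
Variant = tuple[str, int, str, str]


def benchmark(calls: set[Variant], truth: set[Variant]) -> dict[str, float]:
    """Precision, recall and F1 of a call set against a truth set (exact matching)."""
    tp = len(calls & truth)
    fp = len(calls - truth)
    fn = len(truth - calls)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = 2 * precision * recall / (precision + recall) if (precision + recall) else 0.0
    return {"tp": tp, "fp": fp, "fn": fn, "precision": precision, "recall": recall, "f1": f1}


truth_set = {("chr1", 100, "A", "G"), ("chr1", 200, "C", "T"),
             ("chr2", 50, "G", "A"), ("chr2", 75, "T", "C")}
call_set = {("chr1", 100, "A", "G"), ("chr1", 200, "C", "T"),
            ("chr2", 50, "G", "A"), ("chr3", 10, "A", "T")}
print(benchmark(call_set, truth_set))
```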

Cloud-Based Pipelines for Large-Scale Accuracy Evaluation

For large-cohort studies like the All of Us program, scalable computational approaches are essential, and cloud-based workflows have been implemented for population-scale accuracy assessment.

Table 2: Key Research Reagent Solutions for Accuracy Benchmarking

| Reagent/Resource | Function | Example Applications |
|---|---|---|
| SMRTbell Express Template Prep Kit 2.0 | PacBio library construction | HiFi sequencing for variant detection [13] |
| ONT Ligation Sequencing Kit (SQK-LSK109) | ONT library preparation with ligation | Structural variant detection, assembly [13] |
| ONT Rapid Sequencing Kit (SQK-RAD004) | Rapid ONT library preparation | Rapid diagnostics, plasmid sequencing [13] |
| GIAB Reference Materials | Benchmark samples with characterized variants | Technology validation, pipeline development [9] |
| Dorado Basecaller | ONT basecalling with optimized models | High-accuracy basecalling (SUP mode) [14] |
| Verkko Assembly Pipeline | Hybrid assembly tool | Telomere-to-telomere assembly [14] |

Implications for DNA Assembly Fidelity and Research Applications

Technology Selection for Specific Research Goals

The choice between sequencing technologies and accuracy metrics should be guided by specific research objectives rather than a one-size-fits-all approach. Each application domain presents distinct requirements for read length, accuracy, and throughput:

Complex Genome Assembly: For de novo assembly of eukaryotic genomes, especially those with high repetitive content, highly accurate long reads (HiFi) produce superior results. Studies demonstrate that HiFi assemblies achieve contig N50 values approximately 5x greater than those generated with error-prone long reads [16]. The combination of length and accuracy enables resolution of complex regions like centromeres, telomeres, and segmental duplications that remain fragmented with other technologies.

Medical Genomics and Variant Detection: In clinical research settings where variant accuracy is paramount, consensus accuracy is the critical end metric. For medically relevant genes with pseudogenes (e.g., SMN1, GBA) or complex polymorphisms (e.g., LPA), high single-read accuracy is also essential [9], because each read must be assigned confidently to the correct gene copy rather than to a highly similar pseudogene. Hybrid approaches that combine long reads for structural context with short reads for base-level accuracy may offer optimal solutions for comprehensive variant characterization.

Epigenetic Modification Detection: Both PacBio and ONT platforms enable detection of base modifications without special treatment, but through different mechanisms. PacBio identifies modifications via kinetic signatures in polymerase synthesis, while ONT detects them through current alterations as bases pass through nanopores [13] [15]. ONT currently supports a broader range of detectable DNA and RNA modifications [14], making it preferable for comprehensive epigenomic profiling.

Future Directions in Sequencing Accuracy

The trajectory of sequencing technology points toward continuous improvement in both read and consensus accuracy. Emerging approaches like ONT duplex sequencing (reading both strands of a DNA molecule) promise to elevate raw read accuracy to nearly HiFi levels [9]. Simultaneously, novel methodologies that enable six-letter sequencing of genetic and epigenetic bases in a single workflow represent the next frontier in comprehensive sequence characterization [15].

For the research community, these advances will gradually eliminate the traditional trade-offs between read length, accuracy, and cost. As technologies mature, the distinction between read accuracy and consensus accuracy may blur, with single-molecule approaches achieving the precision previously attainable only through consensus. Until that convergence occurs, a sophisticated understanding of these metrics remains essential for designing robust genomic studies and interpreting their findings with appropriate confidence.

Sequencing accuracy is not a monolithic concept but a hierarchical framework encompassing both individual measurement fidelity (read accuracy) and integrated sequence determination (consensus accuracy). The distinction between these metrics informs technology selection, experimental design, and analytical interpretation across genomic research applications. While PacBio HiFi currently offers the highest single-read accuracy, ONT provides unparalleled read lengths and direct epigenetic detection. Both platforms, when properly leveraged, can generate consensus sequences exceeding Q40, a quality level suited to demanding applications such as clinical variant detection.

For researchers focused on DNA assembly fidelity, the evidence strongly suggests that highly accurate long reads provide the optimal balance of contiguity and precision for resolving complex genomic regions. As sequencing technologies continue their rapid evolution, the principles of accuracy quantification remain foundational to extracting biological truth from sequence data. By aligning technological capabilities with research questions through the lens of these accuracy metrics, scientists can maximize the validity and impact of their genomic investigations.

The accurate evaluation of DNA assembly fidelity is a cornerstone of modern molecular biology and synthetic biology research. The reliability of genetic constructs directly affects downstream applications, from basic research to therapeutic development. This evaluation is performed using DNA sequencing technologies, which have undergone a remarkable evolution. Each generation of sequencing technology has brought new capabilities and trade-offs in read length, accuracy, throughput, and cost, shaping how researchers verify their work. This guide provides an objective comparison of sequencing platforms from first-generation Sanger methods to third-generation technologies, framing their performance within the context of DNA assembly fidelity assessment.

The Sequencing Technology Landscape

DNA sequencing technologies are broadly categorized into three generations based on their underlying biochemistry and operational principles.

First-generation sequencing, pioneered by Frederick Sanger in 1977, relies on the chain-termination method using dideoxynucleotides (ddNTPs) to generate DNA fragments of varying lengths that are separated by capillary electrophoresis [18]. This method produces highly accurate reads of up to 1000 base pairs, establishing it as the gold standard for validation [19].

Second-generation sequencing, commonly called Next-Generation Sequencing (NGS), introduced massively parallel sequencing in the mid-2000s [20] [21]. Platforms like Illumina utilize sequencing-by-synthesis to simultaneously read millions of short DNA fragments (typically 50-600 base pairs) [20]. This high-throughput approach dramatically reduced costs while generating enormous data volumes, making large-scale projects feasible [22].

Third-generation sequencing encompasses single-molecule, real-time (SMRT) technologies from Pacific Biosciences (PacBio) and nanopore-based sequencing from Oxford Nanopore Technologies (ONT) [23] [21]. These technologies sequence individual DNA molecules without amplification, producing exceptionally long reads (thousands to millions of base pairs) that can span complex genomic regions and structural variations [20].

Technical Comparison of Sequencing Platforms

The following tables provide a detailed technical comparison of representative platforms across the three sequencing generations, focusing on parameters critical for DNA assembly fidelity evaluation.

Table 1: Core Technology Specifications Across Sequencing Generations

| Parameter | Sanger | Illumina (NGS) | PacBio SMRT | Oxford Nanopore |
|---|---|---|---|---|
| Read Length | 500-1000 bp [18] | 50-600 bp [20] | 10-25 kb HiFi reads [21] | 10 kb - >1 Mb [21] |
| Accuracy | ~99.999% [18] | >99% per base (SBS) [20] | >99.9% (HiFi consensus) [21] | ~99% (simplex), >99.9% (duplex) [21] |
| Throughput per Run | 1-96 samples | 100-200 Gbp (Illumina) [22] | 75-100 Mbp (early), ~360 Gbp (Revio) | Variable, up to terabytes |
| Run Time | Hours | Several days [22] | Hours to days | Minutes to days |
| Template Preparation | PCR amplification | Array-based enzymatic amplification [22] | SMRTbell adapter ligation | Adapter ligation or transposase-based |
| Detection Method | Capillary electrophoresis with fluorescence [18] | Fluorescent nucleotide incorporation [22] | Real-time fluorescence in ZMW [21] | Ionic current disruption [23] |

Table 2: Performance Characteristics for DNA Assembly Fidelity Applications

| Characteristic | Sanger | Illumina (NGS) | PacBio SMRT | Oxford Nanopore |
|---|---|---|---|---|
| Error Types | Low error rate, random | Substitution errors dominant [20] | Random indels, minimal bias | Mostly indels in homopolymers |
| Variant Detection | Excellent for SNPs, small indels | Excellent for SNPs, small indels | Good for all variant types | Good for all variant types |
| Epigenetic Detection | No | Requires bisulfite conversion | Direct detection of modifications [23] | Direct detection of modifications [23] |
| Haplotype Phasing | Limited | Limited to short range | Excellent long-range phasing | Excellent long-range phasing |
| Best Use in Fidelity Assessment | Gold standard validation [19] | High-throughput variant screening | Complete assembly verification | Complex region analysis |

Experimental Protocols for Fidelity Assessment

Sanger Sequencing for NGS Validation

Sanger sequencing remains the gold standard for validating genetic variants identified by NGS, particularly in clinical settings [19].

Workflow:

- Variant Identification by NGS: Process raw NGS data through bioinformatics pipelines to identify genetic variants including single nucleotide variants (SNVs), insertions, deletions, and structural variations [19].

- Selection of Variants for Confirmation: Prioritize variants based on quality metrics (depth of coverage, variant allele frequency) and clinical relevance for orthogonal validation [19].

- PCR Amplification: Design primers flanking the target region and amplify using DNA polymerase. Proper primer design is critical for specificity [18].

- Sanger Sequencing: Perform chain-termination sequencing with fluorescently labeled dideoxynucleotides followed by capillary electrophoresis [18] [19].

- Data Analysis and Interpretation: Compare Sanger sequencing results with original NGS data to evaluate concordance. Discordant results require further investigation [19].
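The concordance check in the final step amounts to comparing two sets of variant calls. The sketch below assumes both call sets have already been normalized identically (same reference build, same left-aligned indel representation) and restricted to the loci submitted for Sanger confirmation; the `Variant` type, the `compare_calls` function, and the coordinates are hypothetical.

```python
from typing import Iterable, NamedTuple


class Variant(NamedTuple):
    chrom: str
    pos: int  # 1-based position, as in VCF
    ref: str
    alt: str


def compare_calls(ngs_calls: Iterable[Variant], sanger_calls: Iterable[Variant]) -> dict:
    """Compare NGS variants submitted for confirmation with Sanger calls at the same loci."""
    ngs, sanger = set(ngs_calls), set(sanger_calls)
    confirmed = ngs & sanger
    return {
        "confirmed": sorted(confirmed),
        "not_confirmed": sorted(ngs - sanger),  # candidate NGS false positives
        "sanger_only": sorted(sanger - ngs),    # candidate NGS false negatives
        # Fraction of NGS calls confirmed by Sanger; NaN if nothing was submitted.
        "concordance": len(confirmed) / len(ngs) if ngs else float("nan"),
    }


# Hypothetical calls for illustration only.
ngs = [Variant("chr1", 1200, "G", "A"), Variant("chr1", 1350, "C", "T")]
sanger = [Variant("chr1", 1200, "G", "A")]
print(compare_calls(ngs, sanger)["concordance"])  # → 0.5
```

Discordant entries in `not_confirmed` and `sanger_only` are the cases that, as noted above, require further investigation.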

Polymerase Fidelity Measurement Using SMRT Sequencing

Single-Molecule Real-Time (SMRT) sequencing enables direct measurement of DNA polymerase error rates, providing a powerful method for assessing fidelity in DNA assembly workflows [24].

Protocol:

- Template Preparation: Use plasmid DNA virtually devoid of nucleotide errors as template for PCR amplification with the polymerase of interest [24].

- PCR Amplification: Amplify the target sequence (e.g., LacZ amplicon) using standardized conditions appropriate for the polymerase being tested [24].

- SMRTbell Library Preparation: Ligate SMRTbell adapters to create circular templates for sequencing [24] [21].

- SMRT Sequencing: Load library onto PacBio sequencer. DNA polymerase undergoes multiple passes of the circular template, generating multiple subreads for each molecule [24] [21].

- Error Analysis: Generate highly accurate consensus sequences from subreads. Identify true replication errors by comparing to known template sequence, calculating errors per base per doubling event [24].

Key Advantage: SMRT sequencing achieves a background error rate of 9.6 × 10⁻⁸ errors/base, making it suitable for quantifying the fidelity of high-fidelity proofreading polymerases [24].
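The "errors per base per doubling" metric normalizes the observed error frequency by the number of template doublings, since each doubling gives the polymerase another opportunity to introduce errors. The Python sketch below illustrates that arithmetic under simplifying assumptions: doublings are estimated from fold amplification as log2(output/input), and the assay's background error rate (such as the 9.6 × 10⁻⁸ errors/base quoted above) is simply subtracted. The function names and input numbers are hypothetical, and published analyses may apply additional corrections.

```python
import math


def doublings(input_copies: float, output_copies: float) -> float:
    """Template doublings during PCR: d = log2(output copies / input copies)."""
    return math.log2(output_copies / input_copies)


def error_rate_per_base_per_doubling(errors: int, bases_sequenced: int, d: float,
                                     background_rate: float = 0.0) -> float:
    """Polymerase error rate = (errors / bases sequenced - background) / doublings.

    Assumption: background_rate (errors per base) is the sequencing assay's own
    error floor and is subtracted so that only polymerase-derived errors remain.
    """
    observed = errors / bases_sequenced
    return max(observed - background_rate, 0.0) / d


# Hypothetical numbers, for illustration only.
d = doublings(input_copies=1e5, output_copies=1e5 * 2 ** 16)
rate = error_rate_per_base_per_doubling(errors=40, bases_sequenced=25_000_000, d=d,
                                        background_rate=9.6e-8)
print(f"{d:.1f} doublings, {rate:.2e} errors/base/doubling")
```

The low SMRT background matters here: if the assay floor were close to the polymerase's true rate, the subtraction would leave too little signal to quantify a proofreading enzyme.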

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Reagents for Sequencing-Based Fidelity Assessment

| Reagent/Category | Function | Examples & Notes |
|---|---|---|
| High-Fidelity DNA Polymerases | PCR amplification with minimal errors for template preparation | Q5 High-Fidelity DNA Polymerase (error rate: ~5.3×10⁻⁷ errors/base/doubling), Phusion Polymerase [24] |
| Type IIS Restriction Enzymes | DNA assembly; generate defined overhangs for Golden Gate Assembly | BsaI-HFv2, BsmBI-v2 [25] |
| DNA Ligases | Join DNA fragments in assembly workflows; varying fidelity affects outcome | T4 DNA Ligase [26] |
| Library Preparation Kits | Prepare platform-specific sequencing libraries | Illumina Nextera, PacBio SMRTbell, ONT Ligation Sequencing Kits |
| Quantitation Assays | Accurately measure DNA concentration and quality before sequencing | Fluorometric methods (Qubit), spectrophotometry (NanoDrop) |
| Cloning & Transformation | Propagate assembled constructs for analysis | Competent E. coli strains, transformation reagents |

Application in DNA Assembly Fidelity Evaluation

Different sequencing technologies offer complementary strengths for assessing DNA assembly fidelity:

Sanger Sequencing provides the highest per-base accuracy for targeted validation of specific assembly junctions or critical regions [18] [19]. Its limitations include low throughput and inability to detect low-frequency variants in heterogeneous samples.

Illumina NGS enables comprehensive verification of large assemblies and library-level quality control through deep sampling [20]. High coverage depth allows detection of low-frequency errors, but short reads struggle with repetitive regions and large structural variations.

PacBio HiFi Sequencing combines long reads with high accuracy through circular consensus sequencing, making it ideal for complete assembly verification and phasing [21]. This technology excels at resolving complex regions and detecting structural variants that challenge short-read technologies.

Oxford Nanopore Sequencing provides the longest reads, enabling complete phasing and detection of large-scale structural variations [21]. While historically limited by higher error rates, duplex sequencing now achieves >99.9% accuracy, making it suitable for comprehensive assembly validation [21].

The choice of sequencing technology for DNA assembly fidelity assessment depends on the specific requirements of the project, considering factors such as assembly size, complexity, required accuracy, and available resources. Many researchers employ a hierarchical approach, using NGS for initial screening followed by Sanger or long-read sequencing for resolution of problematic regions.

In genomic research, the fidelity of DNA sequencing data is paramount. Error profiles—characteristic patterns of substitutions, insertions, and deletions (indels)—are not random but are influenced by the specific sequencing technology, experimental workflow, and the biological sample itself. For researchers and drug development professionals, a precise understanding of these error profiles is essential for accurate variant calling, reliable genome assembly, and valid biological interpretation, particularly when detecting low-frequency variants in cancer or tracing outbreaks of pathogenic bacteria [27] [28]. A failure to account for platform-specific errors can lead to false positives in single-nucleotide polymorphism (SNP) calls, hinder de novo assembly, and create unfavorable biases in quantitative methods like RNA-seq and ChIP-seq [29].

This guide provides a comparative analysis of error profiles across major sequencing platforms, primarily Illumina and Oxford Nanopore Technologies (ONT). We objectively compare their performance using supporting experimental data, summarize key quantitative findings in structured tables, and detail the methodologies that generate this critical evidence. The goal is to equip scientists with the knowledge to select the appropriate technology, implement effective error mitigation strategies, and accurately evaluate the reliability of their genomic data within the broader context of DNA assembly fidelity.

Platform Comparison: Error Profiles and Experimental Data

The fundamental principles of different sequencing technologies give rise to distinct error profiles. Illumina's sequencing-by-synthesis is generally highly accurate but susceptible to substitution errors, particularly in specific sequence contexts. In contrast, ONT's long-read sequencing, while powerful for assembly, has historically had higher error rates, although successive updates to its chemistry and basecalling have improved accuracy substantially [28] [29].

Illumina Sequencing Error Profiles

A comprehensive 2019 analysis of Illumina platforms revealed that the substitution error rate can be computationally suppressed to 10⁻⁵ to 10⁻⁴, which is 10 to 100 times lower than the commonly cited rate of 10⁻³ [27] [30]. This study provided a detailed breakdown of errors attributable to various steps in a conventional NGS workflow.

Table 1: Quantified Illumina Substitution Error Rates from Deep Sequencing Studies

| Error Type | Average Error Rate | Key Influencing Factors | Experimental Context |
|---|---|---|---|
| A>G / T>C | ~10⁻⁴ | Sequence context; base elongation inhibition | HiSeq/NovaSeq, post computational suppression [27] |
| A>C / T>G | ~10⁻⁵ | Sample-specific DNA damage | HiSeq/NovaSeq, post computational suppression [27] |
| C>A / G>T | ~10⁻⁵ | Sample handling (oxidative damage) | Hybridization-capture dataset [27] |
| C>G / G>C | ~10⁻⁵ | Polymerase fidelity during enrichment PCR | Comparison of Q5 vs. Kapa polymerases [27] |
| C>T / G>A | ~10⁻⁴ | Spontaneous cytosine deamination; strong sequence-context dependency | Identified as a major error pattern [27] [29] |
| Overall Substitution Rate | ~10⁻³ (raw); 10⁻⁵ to 10⁻⁴ (computationally suppressed) | Wet-lab protocols and computational correction | Dilution experiment using COLO829/COLO829BL cell lines [27] |

The study identified that certain errors are systematic. For instance, C>T/G>A errors exhibit a strong sequence context dependency, while elevated C>A/G>T errors are often dominated by sample-specific effects, such as oxidative damage during handling [27]. Furthermore, the target-enrichment PCR step alone was found to cause an approximately six-fold increase in the overall error rate [27]. Earlier research also identified Sequence-Specific Errors (SSEs) linked to specific motifs, such as inverted repeats and GGC sequences, which can trigger lagging-strand dephasing by inhibiting the base elongation process during sequencing-by-synthesis [29].

Oxford Nanopore Technologies (ONT) Sequencing Error Profiles

A 2025 study evaluating ONT for genotyping pathogenic bacteria with low mutation rates provides a clear view of the accuracy achievable with the R10.4.1 chemistry. Results were species-dependent, and the study also characterized the types and locations of errors remaining in the final assemblies [28].

Table 2: ONT Assembly Accuracy and Error Impact (2025 Study)

| Metric / Finding | Result / Value | Experimental Context |
|---|---|---|
| Assembly Variation | 5 to 46 nucleotide differences vs. reference | Brucella species assemblies [28] |
| Perfect Genomes Achieved | For K. variicola, Listeria spp., M. tuberculosis, S. aureus, S. pyogenes | ONT R10.4.1 sequencing [28] |
| Error Location | 81% within Coding Sequences (CDS) | Analysis of errors in ONT assemblies [28] |
| Methylation-Linked Errors | 6.5% of total errors | Use of methylation-aware polishing model [28] |
| cgMLST Allele Differences | <5 for B. anthracis, B. abortus, F. tularensis; 5 for B. melitensis | Impact on genotyping reliability [28] |
| Polishing Effect | Mainly improves quality (one round sufficient), but can sometimes degrade assembly | Evaluation of long-read polishing strategies [28] |

This research highlights that while highly accurate assemblies are possible, errors persist and can affect biologically relevant regions. The finding that 81% of errors were located within coding sequences (CDS) is particularly critical for functional genomics studies [28]. Furthermore, basecalling can be confounded by bacterial DNA methylation, though the use of a methylation-aware polishing model was shown to reduce these specific errors [28].

Experimental Protocols for Error Profiling

The quantitative data presented above are derived from rigorous experimental designs. Below, we detail the key methodologies used to generate this evidence.

Protocol 1: Dilution Series for Illumina Error Suppression Benchmarking

This protocol was designed to establish a "truth set" for distinguishing low-frequency true somatic mutations from sequencing errors [27].

- Cell Lines and DNA Extraction: Use matched cancer/normal cell lines derived from the same patient (e.g., COLO829 melanoma and COLO829BL lymphoblastoid lines). Extract high-quality genomic DNA using a standardized kit.

- Spike-in Dilutions: Spike the cancer DNA into the matched normal DNA at precise dilution ratios (e.g., 1:1000 and 1:5000) to create samples with known mutant allele fractions (e.g., 0.1% and 0.02%). Include biological or technical replicates.

- Library Preparation and Sequencing: Perform target amplicon sequencing (e.g., 130-170 bp amplicons) using different polymerases (e.g., Q5 vs. Kapa). Sequence on Illumina platforms (e.g., HiSeq 2500, NovaSeq 6000) to a very high depth (e.g., 300,000X to 1,000,000X coverage).

- Bioinformatic Processing and Analysis:

- Read Trimming: Trim 5 bp from both ends of reads to remove low-quality bases and potential adapter contamination.

- Quality Filtering: Remove reads with low mapping quality and evaluate the association between overall read quality and error rates.

- Variant Calling: Call variants in the diluted and undiluted samples.

- Error Rate Calculation: Calculate the substitution error rate at each known wild-type site in the flanking sequences as:

$$\text{error rate} = \frac{\text{number of reads with a mismatch at the position}}{\text{total number of reads at the position}}$$

- False-Positive Assessment: Use the undiluted cancer sample data to characterize false-positive calls, as true low-frequency variants will show a proportional (1000- to 5000-fold) increase in allele fraction, while errors will not.
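The error-rate formula and the dilution logic above can be sketched in a few lines of Python. This is an illustration, not the pipeline used in the cited study: `PositionCounts`, the helper functions, and the default `tolerance` are hypothetical, and the per-position counts are assumed to come from reads that already passed the trimming and quality filters.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class PositionCounts:
    total_reads: int     # reads covering a known wild-type position
    mismatch_reads: int  # reads showing a non-reference base there


def position_error_rate(c: PositionCounts) -> float:
    """Error rate at one position = reads with mismatch / total reads at position."""
    return c.mismatch_reads / c.total_reads


def mean_error_rate(positions: Sequence[PositionCounts]) -> float:
    """Average of the per-position error rates over all wild-type sites."""
    return sum(position_error_rate(c) for c in positions) / len(positions)


def consistent_with_dilution(vaf_undiluted: float, vaf_diluted: float,
                             dilution_factor: float, tolerance: float = 0.5) -> bool:
    """True if the diluted VAF is close to vaf_undiluted / dilution_factor.

    A real variant scales with the spike-in ratio; a sequencing error does not.
    `tolerance` is the allowed fractional deviation (a hypothetical default).
    """
    expected_diluted = vaf_undiluted / dilution_factor
    return abs(vaf_diluted - expected_diluted) <= tolerance * expected_diluted


# Hypothetical counts at two wild-type positions.
print(f"{mean_error_rate([PositionCounts(1_000_000, 100), PositionCounts(500_000, 100)]):.1e}")
```

A call seen at 0.02% in both the 1:1000 dilution and the undiluted sample fails the dilution check, which is the signature of a systematic error rather than a true low-frequency variant.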

Protocol 2: Evaluating ONT Assembly Fidelity for Bacterial Genomes

This protocol assesses the accuracy of assemblies from ONT data for bacterial species, which is critical for outbreak analysis [28].

- Strain Selection and Sequencing: Select reference strains of target bacteria (e.g., B. anthracis, Brucella spp., F. tularensis). Sequence the same DNA sample using both ONT (e.g., R10.4.1 flow cells) and Illumina (for validation). Incorporate publicly available data for a broader comparison.

- Assembly Strategies: Execute multiple, distinct assembly strategies, which may include:

- Different basecalling models (e.g., standard and enhanced models).

- Various assemblers (e.g., Flye, Canu).

- Polishing steps, both with long reads alone and with short reads (hybrid polishing).

- Quality Assessment:

- Reference Comparison: Compare the final assemblies to a high-quality Sanger-sequenced reference genome from a repository like NCBI RefSeq. Count the number of nucleotide differences.

- Error Profiling: Analyze the location of errors (e.g., within CDS) and the impact of factors like methylation.

- Functional Genotyping: Perform core-genome MLST (cgMLST) analysis on the assemblies to determine if errors lead to incorrect allele calls, which could mislead outbreak tracing.
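For the error-profiling step, the fraction of assembly errors that fall within coding sequences can be computed with a sorted-interval lookup. The sketch below assumes error positions (for example, from a comparison against the reference) and CDS coordinates (for example, from a GFF3 annotation) are 1-based and on the same sequence, and it ignores features that wrap around the origin of a circular chromosome; all names are hypothetical.

```python
import bisect
from typing import Iterable, List, Sequence, Tuple


def merge_intervals(intervals: Sequence[Tuple[int, int]]) -> List[Tuple[int, int]]:
    """Merge overlapping or adjacent 1-based, inclusive intervals."""
    merged: List[List[int]] = []
    for start, end in sorted(intervals):
        if merged and start <= merged[-1][1] + 1:
            merged[-1][1] = max(merged[-1][1], end)
        else:
            merged.append([start, end])
    return [(s, e) for s, e in merged]


def fraction_in_cds(error_positions: Iterable[int],
                    cds_intervals: Sequence[Tuple[int, int]]) -> float:
    """Fraction of error positions that fall inside at least one CDS interval.

    Assumption: positions and intervals share one linear coordinate system;
    CDS features spanning the origin of a circular replicon are not handled.
    """
    merged = merge_intervals(cds_intervals)
    starts = [s for s, _ in merged]
    positions = list(error_positions)
    if not positions:
        return float("nan")
    hits = 0
    for pos in positions:
        i = bisect.bisect_right(starts, pos) - 1  # last interval starting at or before pos
        if i >= 0 and pos <= merged[i][1]:
            hits += 1
    return hits / len(positions)
```

Merging overlapping CDS first ensures an error inside two overlapping genes is counted once, and the binary search keeps the lookup fast for genome-scale annotations.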

The following workflow diagram illustrates the parallel paths taken in these two key experimental protocols:

Diagram 1: Experimental protocols for sequencing error profiling.

The Scientist's Toolkit: Essential Reagents and Materials

Successful execution of the described experiments requires careful selection of reagents and computational tools. The following table details key solutions used in the featured studies.

Table 3: Key Research Reagent Solutions for Sequencing Error Analysis

| Item / Solution | Function / Purpose | Example Use-Case |
|---|---|---|
| Matched Cell Lines | Provides a ground-truth set of somatic variants for benchmarking. | COLO829/COLO829BL for dilution experiments [27]. |
| High-Fidelity Polymerases | Minimizes introduction of errors during PCR amplification in library prep. | Comparison of Q5 vs. Kapa polymerases in amplicon sequencing [27]. |
| Illumina Sequencing Kits | Executes the sequencing-by-synthesis chemistry on Illumina platforms. | HiSeq 2500 and NovaSeq 6000 SBS kits for generating deep sequencing data [27]. |
| ONT R10.4.1 Flow Cells | Provides updated pore chemistry for improved raw read accuracy. | Sequencing bacterial reference strains for assembly evaluation [28]. |
| Methylation-Aware Polishing Models | Corrects errors in ONT data caused by basecalling confusion at methylated sites. | Using the Medaka model to reduce methylation-linked errors in bacterial assemblies [28]. |
| Bioinformatic Pipelines (BWA, Flye, Medaka) | Maps reads, performs de novo assembly, and polishes sequences to reduce errors. | Essential for all data analysis, from read processing to final assembly generation [28]. |

The landscape of sequencing errors is complex and platform-dependent. Illumina technologies offer very low baseline substitution errors, which can be further suppressed computationally, but are susceptible to sequence-specific and sample-handling artifacts. ONT sequencing provides long reads that are powerful for assembly, yet the final accuracy varies by species and bioinformatic pipeline, with errors frequently affecting coding regions. For research demanding the highest accuracy, such as detecting low-frequency cancer variants or distinguishing closely related bacterial strains, a hybrid approach using both technologies remains a powerful, albeit more costly, solution. As both technologies continue to evolve, ongoing rigorous and comparative error profiling will remain a cornerstone of reliable genomic science.

Deoxyribonucleic acid (DNA) assembly fidelity, defined as the accuracy and precision with which synthetic DNA fragments are constructed into larger, functional genetic units, serves as a foundational parameter in biotechnology with profound implications for therapeutic outcomes. In the context of gene therapy and drug development, even minor errors in assembly—such as single-base substitutions, insertions, deletions, or misassemblies—can compromise therapeutic efficacy, alter safety profiles, and derail development timelines [31]. The growing reliance on synthetic biology and gene editing technologies has elevated the importance of assembly fidelity from a technical consideration to a critical determinant of product success. This guide provides a comparative analysis of how different DNA assembly methodologies perform in terms of fidelity and evaluates their subsequent impact on key downstream applications, supported by experimental data and detailed protocols.

DNA Assembly Methodologies and Fidelity Comparison

DNA assembly techniques vary significantly in their underlying mechanisms, resulting in distinct fidelity profiles. The following table summarizes the core characteristics of prominent methods.

Table 1: Comparison of DNA Assembly Methodologies and Their Fidelity

| Assembly Method | Key Feature | Typical Error Profile | Optimal Application Context | Reported Success Rate |
|---|---|---|---|---|
| NEBridge Golden Gate Assembly with DAD [32] | Type IIS restriction enzymes; Data-Optimized Assembly Design (DAD) for overhang selection. | Minimized misligation; errors primarily from source oligonucleotide synthesis. | High-throughput, in-house construction of complex gene libraries, including sequences with high GC content or repeats. | 343 out of 458 genes successfully assembled (75%) in a single large-scale test [32]. |
| Gibson Assembly [33] | Isothermal assembly using 5' exonuclease, DNA polymerase, and DNA ligase. | Potential for misassembly in repetitive regions; fidelity dependent on homology arm design. | Assembly of large DNA fragments for data storage (e.g., 32 KB files) and synthetic biology constructs [33]. | Data recovery from 32 KB file at 36x nanopore sequencing coverage [33]. |
| Enzymatic Synthesis [31] | Use of terminal deoxynucleotidyl transferase (TdT) or mirror-image polymerases. | Lower error rates reported compared to traditional phosphoramidite chemistry; enables incorporation of unnatural bases. | Synthesis of unnatural nucleic acids (L-DNA) and long, single-stranded DNA for therapeutics and data storage. | Kilobase-length L-DNA assembly demonstrated with mirror-image Pfu polymerase [31]. |
| PCR-Based Assembly [33] | Polymerase Chain Reaction to assemble multiple oligonucleotides into larger fragments. | Susceptible to polymerase-induced errors; requires high-fidelity enzymes. | Rapid construction of DNA pools for data storage; often a preliminary step for other assembly methods. | Used in readout pipelines for DNA data storage schemes [33]. |

The data reveal a trade-off between throughput, scalability, and absolute accuracy. Methods like Golden Gate Assembly with DAD are engineered for high fidelity in complex, multi-fragment constructs, making them suitable for demanding gene therapy applications where sequence perfection is paramount [32]. In contrast, while highly scalable, methods like PCR-based assembly may require more rigorous downstream sequencing validation due to inherent polymerase error rates.

Quantitative Impact of Assembly Fidelity on Key Applications

The consequences of assembly fidelity are quantifiable across development pipelines, directly affecting critical performance and safety metrics.

Table 2: Impact of Assembly Fidelity on Downstream Application Outcomes

| Application Area | Impact of High Fidelity | Impact of Low Fidelity | Supporting Data |
|---|---|---|---|
| Gene Therapy (AAV-based) | Ensures correct transgene expression; maintains safety profile. | Risk of truncated or non-functional therapeutic proteins; potential immunogenic responses. | As of 2025, 343 AAV clinical trials are active, with dose-dependent hepatotoxicity a key safety concern. Correct transgene sequence is critical for mitigating this [34]. |
| CRISPR-Based Therapeutics | Enables precise gene editing with minimal unintended consequences. | Exacerbates risks of large structural variations (SV), megabase-scale deletions, and chromosomal translocations. | Use of DNA-PKcs inhibitors to enhance HDR can increase SV frequency a thousand-fold, highlighting the need for precisely engineered templates [35]. |
| Cell & Gene Therapy Pipeline | Accelerates progression from preclinical to clinical stages. | Causes delays and failures in process development and manufacturing. | The global CGT pipeline includes 2,210 gene therapy assets. Upstream DNA supply bottlenecks can cascade, delaying manufacturing [36]. |
| DNA Data Storage | Enables error-free data recovery at very low sequencing coverage. | Necessitates high coverage and complex computational error correction, increasing cost and time. | PNC-LDPC coding scheme allowed error-free data recovery from medium-length DNA at a coverage of just 1.24-3.15x, a direct benefit of high-fidelity construction [33]. |

Taken together, these examples indicate that high assembly fidelity underpins therapeutic efficacy and safety. In gene therapy, it is a prerequisite for predictable dosing and minimized adverse events. For CRISPR applications, it is a key factor in mitigating the risk of genomic instability, a significant safety concern [35].

Experimental Protocols for Fidelity Assessment

To ensure that constructs produced with the methodologies in Table 1 are reliable, standardized experimental protocols for assessing assembly fidelity are essential.

Protocol: High-Throughput Gene Construction and Validation

This protocol is adapted from the decentralized workflow demonstrated by Lund et al. [32].

Design and Fragment Retrieval:

- Input the target gene sequence into the NEBridge SplitSet Lite High-Throughput web tool. The tool automatically divides the sequence into codon-optimized fragments with optimal break points.

- The Data-Optimized Assembly Design (DAD) algorithm assigns optimized, unique overhangs to each fragment to maximize ligation fidelity.

- Order the designed oligonucleotides as a pooled library.

- Retrieve the individual double-stranded DNA fragments from the oligo pool via a single round of multiplex PCR using barcoded primers, followed by purification.

Golden Gate Assembly:

- Set up a one-pot reaction mixture containing the retrieved DNA fragments, a Type IIS restriction enzyme (e.g., BsaI-HFv2 or BsmBI-v2), and T4 DNA Ligase.

- Run the reaction in a thermocycler with a program that cycles between the restriction enzyme's digestion temperature and the ligase's optimal temperature (e.g., 37°C and 16°C) for 25-50 cycles, followed by a final digestion step and enzyme inactivation.

Transformation and Screening:

- Transform the assembled product into competent E. coli cells.

- Plate the cells on selective media and pick individual colonies for screening.

- The primary screen can be performed by colony PCR. The final validation must be done by Sanger sequencing or next-generation sequencing (NGS) of the plasmid DNA from candidate clones to confirm perfect assembly.
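How many colonies to pick for sequencing depends on the fraction of clones that carry a correct assembly. Assuming colonies are independent and that this fraction p has been estimated (for example, from a pilot round of sequencing), the probability that at least one of n colonies is correct is 1 - (1 - p)^n. The Python sketch below inverts that expression; the function names are hypothetical. Note that a gene-level success rate, such as the 343 of 458 genes reported above, is not the same quantity as the per-colony fraction p.

```python
import math


def prob_at_least_one_correct(fraction_correct: float, n_colonies: int) -> float:
    """P(at least one correct clone among n independent colonies) = 1 - (1 - p) ** n."""
    return 1.0 - (1.0 - fraction_correct) ** n_colonies


def colonies_to_screen(fraction_correct: float, confidence: float = 0.95) -> int:
    """Smallest n such that 1 - (1 - p) ** n >= confidence."""
    if not 0.0 < fraction_correct <= 1.0:
        raise ValueError("fraction_correct must be in (0, 1]")
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be in (0, 1)")
    if fraction_correct == 1.0:
        return 1
    n = max(1, math.ceil(math.log(1.0 - confidence) / math.log(1.0 - fraction_correct)))
    # Nudge n to correct for floating-point rounding at integer boundaries.
    while prob_at_least_one_correct(fraction_correct, n) < confidence:
        n += 1
    while n > 1 and prob_at_least_one_correct(fraction_correct, n - 1) >= confidence:
        n -= 1
    return n


for p in (0.9, 0.5, 0.2):
    print(f"p = {p:.0%}: screen {colonies_to_screen(p)} colonies for 95% confidence")
```

The required number of colonies rises steeply as p falls, which is one practical reason why design choices that raise assembly fidelity also reduce sequencing cost.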

Protocol: Assessing CRISPR-Editing Outcomes with Structural Variation Analysis

This protocol is designed to detect large, unintended structural variations resulting from CRISPR/Cas9 editing, which can be influenced by the fidelity of the donor template [35].

Cell Culture and Transfection:

- Culture the target cells (e.g., hematopoietic stem cells) under standard conditions.

- Transfect the cells with a ribonucleoprotein (RNP) complex of Cas9 nuclease and guide RNA (gRNA). For HDR experiments, include a donor DNA template.

- Optional for HDR enhancement: Treat cells with a DNA-PKcs inhibitor (e.g., AZD7648). Note that this treatment has been shown to significantly increase the frequency of structural variations [35].

Genomic DNA Extraction and Long-Range PCR:

- After 72 hours, extract high-molecular-weight genomic DNA from the edited population.

- Perform long-range PCR using primers flanking the on-target edit site. The amplicon should be several kilobases in length.

Sequencing and SV Detection:

- Prepare a sequencing library from the long-range PCR amplicons. For comprehensive detection of SVs, use long-read sequencing platforms like Pacific Biosciences (PacBio) or Oxford Nanopore Technologies (ONT).

- Alternatively, use specialized assays like CAST-Seq or LAM-HTGTS [35].

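- Analyze the sequencing data for large deletions (>1 kb) and other structural variations by aligning reads to the reference genome and looking for alignment signatures of rearrangements, such as long deletions within a read alignment, split (supplementary) alignments of long reads, and discordant pairs of paired-end short reads. Because primers flanking the cut site amplify only the sequence between them, chromosomal translocations and deletions that remove a primer-binding site are better captured by specialized assays such as CAST-Seq or LAM-HTGTS.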
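Large deletions within an amplicon often appear in long-read alignments as long `D` operations in the CIGAR string. The Python sketch below scans a single alignment's CIGAR string for such deletions; `ref_start` and the CIGAR string correspond to what SAM/BAM records provide (for example, the `reference_start` and `cigarstring` attributes of a pysam `AlignedSegment`). It is a hypothetical helper, not part of any published SV caller, and it does not handle aligners that split a large deletion into separate primary and supplementary alignments.

```python
import re
from typing import List, Tuple

CIGAR_RE = re.compile(r"(\d+)([MIDNSHP=X])")
REF_CONSUMING_OPS = set("MDN=X")  # operations that advance the reference coordinate


def large_deletions(ref_start: int, cigar: str, min_len: int = 1000) -> List[Tuple[int, int]]:
    """Reference intervals (0-based, half-open) of CIGAR deletions of at least min_len bp.

    ref_start is the 0-based leftmost reference position of the alignment
    (the SAM POS field minus 1).
    """
    ops = CIGAR_RE.findall(cigar)
    if "".join(n + op for n, op in ops) != cigar:
        raise ValueError(f"malformed CIGAR string: {cigar!r}")
    deletions = []
    pos = ref_start
    for length_str, op in ops:
        length = int(length_str)
        if op == "D" and length >= min_len:
            deletions.append((pos, pos + length))
        if op in REF_CONSUMING_OPS:
            pos += length
    return deletions


print(large_deletions(99, "30S500M1500D300M20I200M"))  # → [(599, 2099)]
```

Soft clips (`S`) and insertions (`I`) do not advance the reference coordinate, so the reported interval depends only on the aligned and deleted bases preceding it.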

Signaling Pathways and Workflow Diagrams

The following diagrams illustrate the core workflows and risk pathways discussed in this guide.

High-Fidelity DNA Assembly Workflow

Diagram 1: High-fidelity DNA assembly workflow integrating computational design (DAD) with optimized Golden Gate Assembly to maximize construct accuracy [32].

CRISPR Editing Risks from Low-Fidelity Inputs

Diagram 2: CRISPR editing risks showing how low-fidelity DNA templates and certain HDR-enhancing strategies can lead to dangerous structural variations [35].

The Scientist's Toolkit: Essential Research Reagents

Successful implementation of high-fidelity DNA assembly requires specific, quality-controlled reagents and tools.

Table 3: Key Research Reagent Solutions for High-Fidelity DNA Assembly

| Reagent / Tool | Function | Application Note |
|---|---|---|
| Type IIS Restriction Enzymes (e.g., BsaI-HFv2) | Cleave DNA at sites outside their recognition sequence, generating unique, user-defined 4-base overhangs. | The core enzyme for Golden Gate Assembly, enabling seamless and directional assembly of multiple fragments [32]. |
| T4 DNA Ligase | Catalyzes the formation of phosphodiester bonds between adjacent fragments with compatible overhangs. | Used concurrently with the restriction enzyme in the one-pot Golden Gate reaction for efficient ligation [32]. |
| NEBridge SplitSet Lite HT Web Tool | A computational tool that automatically designs optimal fragments and primers for gene synthesis from oligo pools. | Integrates with DAD to ensure fragment boundaries and overhangs are optimized for both synthesis and assembly fidelity [32]. |
| Data-Optimized Assembly Design (DAD) | A computational framework that uses a large fidelity dataset to predict the most reliable overhang combinations for assembly. | Critical for minimizing misligation in complex, multi-fragment assemblies, thereby dramatically increasing success rates [32]. |
| DNA-PKcs Inhibitors (e.g., AZD7648) | Small molecule inhibitors that suppress the NHEJ DNA repair pathway to favor HDR in CRISPR editing. | Caution Required: Their use, particularly with low-fidelity templates, is linked to a drastic increase in harmful structural variations [35]. |
| Long-Range PCR Kit | Amplifies long segments of genomic DNA (several kilobases) for downstream analysis. | Essential for generating amplicons that encompass large potential structural variations for sequencing-based safety assays [35]. |

Methodological Approaches: Tools and Techniques for Fidelity Assessment

Golden Gate Assembly and Data-optimized Assembly Design (DAD) Principles

DNA assembly is a foundational technique in modern synthetic biology, enabling the construction of complex recombinant DNA constructs from smaller fragments for applications ranging from biosynthetic pathway engineering to therapeutic development [37]. Among the various methodologies, Golden Gate Assembly has emerged as a particularly powerful approach due to its ability to assemble multiple DNA fragments in a single "one-pot" reaction using Type IIS restriction enzymes and DNA ligase [38]. This technique's efficiency and fidelity critically depend on the selective ligation of complementary overhangs flanking each DNA fragment. Historically, assembly design followed theoretical guidelines to minimize misligation, but these rules often limited complexity and were not based on comprehensive experimental data [39] [40].

The emergence of Data-optimized Assembly Design (DAD) represents a paradigm shift from rule-based to empirical, data-driven assembly design. This approach leverages high-throughput sequencing data to profile the sequence-specific fidelity and bias of ligation under actual assembly conditions [37] [41]. By applying DAD principles, researchers can now design assembly reactions with dramatically increased fragment capacity while maintaining high fidelity, enabling the construction of highly complex genetic systems that were previously impractical or required cumbersome hierarchical approaches [42] [40]. This guide compares the performance of traditional Golden Gate Assembly with DAD-optimized systems, providing the experimental data and protocols essential for researchers evaluating DNA assembly fidelity by sequencing.

Traditional Golden Gate Assembly vs. DAD Principles

Fundamental Concepts and Limitations of Traditional Design

Traditional Golden Gate Assembly relies on a Type IIS restriction enzyme to generate DNA fragments with compatible overhangs and a DNA ligase to join them seamlessly [38]. The selection of overhang sequences—typically 3 or 4 bases in length—is crucial for directing the ordered assembly of multiple fragments. Conventional design followed five established rules of thumb: (1) avoid using the same overhang twice; (2) avoid palindromic sequences; (3) avoid overhangs with the same three nucleotides in a row; (4) avoid overhangs with two identical nucleotides in the same position across different pairs; and (5) avoid overhangs with either 0% or 100% GC content (the "Goldilocks rule") [39] [40].
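To make the traditional rules concrete, the sketch below checks a candidate overhang set against them. It is a minimal illustration, not a published tool, and the function names are hypothetical. Rule 3 is read here as a homopolymer run of three identical bases, which is an assumption. Rule 4 is omitted because its wording admits several readings. Rule 1 is also applied to reverse complements, because an overhang and its reverse complement in the same set would give two junctions with interchangeable ends.

```python
# Screen a Golden Gate overhang set against traditional rules of thumb.
# Illustrative only: rule 3 is read as a homopolymer run of >= 3 bases,
# and rule 4 is not implemented.

COMPLEMENT = str.maketrans("ACGT", "TGCA")


def revcomp(seq: str) -> str:
    """Reverse complement of an uppercase DNA sequence."""
    return seq.translate(COMPLEMENT)[::-1]


def rule_violations(overhangs: list[str]) -> dict[str, list[str]]:
    """Map each offending overhang to the rules it breaks."""
    violations: dict[str, list[str]] = {}
    for i, oh in enumerate(overhangs):
        others = overhangs[:i] + overhangs[i + 1:]
        problems = []
        # Rule 1: reused overhang, or one whose reverse complement is also
        # in the set (both give two junctions with interchangeable ends).
        if oh in others or revcomp(oh) in others:
            problems.append("duplicate")
        # Rule 2: palindromic (self-complementary) overhangs can self-ligate.
        if oh == revcomp(oh):
            problems.append("palindrome")
        # Rule 3 (assumed reading): three identical bases in a row.
        if any(oh[j] == oh[j + 1] == oh[j + 2] for j in range(len(oh) - 2)):
            problems.append("triplet run")
        # Rule 5: 0% or 100% GC content.
        gc = sum(base in "GC" for base in oh) / len(oh)
        if gc in (0.0, 1.0):
            problems.append("extreme GC")
        if problems:
            violations[oh] = problems
    return violations


if __name__ == "__main__":
    candidate = ["GGAG", "TACT", "GATC", "AAAT", "AGTA", "CGCT"]
    for overhang, problems in rule_violations(candidate).items():
        print(overhang, problems)
```

On this example set the check flags TACT and AGTA as a reverse-complement pair, GATC as a palindrome, and AAAT for both a triplet run and 0% GC content, while GGAG and CGCT pass.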

While effective for simple assemblies, these theoretical guidelines imposed significant limitations. The rules drastically reduced the number of available overhang sequences, consequently restricting the complexity of achievable assemblies. Most traditional Golden Gate reactions were practically limited to joining 5-10 fragments in a single reaction, with more complex assemblies requiring multi-step hierarchical approaches using different Type IIS enzymes at each stage [37] [43]. Furthermore, these rules were not derived from comprehensive experimental data on ligase behavior, potentially excluding many functional overhang sequences that violated the guidelines but would otherwise support high-fidelity assembly.

The DAD Paradigm: Data-Driven Assembly Design

Data-optimized Assembly Design fundamentally rewrites the rules for Golden Gate Assembly by replacing theoretical guidelines with empirical data. Researchers at New England Biolabs developed a high-throughput single-molecule sequencing assay using Pacific Biosciences SMRT sequencing to examine reaction outcomes for every possible overhang sequence combination under standard Golden Gate conditions [37] [41]. This comprehensive profiling quantified both the efficiency of correct Watson-Crick pairings and the frequency of mispairing for T4 DNA ligase with commonly used Type IIS restriction enzymes, including those generating both 3-base (SapI) and 4-base overhangs (BsaI-HFv2, BsmBI-v2, BbsI-HF) [37].

The key innovation of DAD is its application of this massive dataset to predict assembly outcomes before experimental execution. The data revealed that traditional rules could be relaxed, as high-fidelity reactions could be achieved with overhang sets that violated rules 3-5 [39]. More importantly, the research established that assembly fidelity and bias are determined primarily by the DNA ligase rather than the Type IIS restriction enzyme used [41]. This foundational insight enabled the development of predictive tools that calculate expected fidelity for any given overhang set, allowing researchers to select optimal sequences for their specific assembly needs rather than being constrained by generic guidelines.
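One way such a prediction can be computed is sketched below. It assumes a table of ligation counts for each (overhang, partner) pair, such as the SMRT sequencing profiles described above would provide. Each junction's fidelity is taken as the fraction of its ligation events that join the correct Watson-Crick partner, counting only partners present in the reaction. The set fidelity is the product over junctions. This reflects our understanding of the general approach, but it is a simplified sketch: for example, it scores each junction from one end only. The counts are invented for illustration.

```python
# Predict overall Golden Gate fidelity from ligation counts (simplified sketch).
from math import prod

COMPLEMENT = str.maketrans("ACGT", "TGCA")


def revcomp(seq: str) -> str:
    """Reverse complement of an uppercase DNA sequence."""
    return seq.translate(COMPLEMENT)[::-1]


def junction_fidelity(oh: str, overhangs: list[str],
                      counts: dict[tuple[str, str], float]) -> float:
    """Fraction of ligation events for `oh` that join its Watson-Crick partner.

    Only ends present in the reaction compete: every overhang in the set
    and its reverse complement.
    """
    pool = set(overhangs) | {revcomp(o) for o in overhangs}
    correct = counts.get((oh, revcomp(oh)), 0.0)
    total = sum(counts.get((oh, partner), 0.0) for partner in pool)
    return correct / total if total else 0.0


def set_fidelity(overhangs: list[str],
                 counts: dict[tuple[str, str], float]) -> float:
    """Predicted fraction of fully correct assemblies: product over junctions."""
    return prod(junction_fidelity(oh, overhangs, counts) for oh in overhangs)


# Invented counts keyed by (overhang, ligated partner); not real ligase data.
TOY_COUNTS = {
    ("GGAG", "CTCC"): 990, ("GGAG", "AGTA"): 10,
    ("GGAG", "GGTG"): 5,  # partner absent from the set below, so ignored
    ("TACT", "AGTA"): 950, ("TACT", "CTCC"): 50,
}

if __name__ == "__main__":
    print(round(set_fidelity(["GGAG", "TACT"], TOY_COUNTS), 4))  # 0.9405
```

Restricting the competitors to ends actually present in the reaction is what makes the score set-specific: the same overhang can be safe in one set and risky in another.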

Table: Comparison of Traditional vs. DAD Golden Gate Assembly Principles

| Aspect | Traditional Golden Gate | DAD-Optimized Golden Gate |
|---|---|---|
| Basis of Design | Theoretical rules of thumb | Comprehensive experimental fidelity data |
| Key Constraints | Avoid palindromes, duplicates, extreme GC content | Minimize predicted misligation based on empirical data |
| Typical Fragment Limit | 5-10 fragments per reaction | 20-35+ fragments per reaction |
| Fidelity Prediction | Limited to rule compliance | Quantitative fidelity score based on ligase behavior |
| Design Flexibility | Limited by rigid rules | Flexible, customized to specific sequence needs |
| Primary Innovation | Standardized overhang sets | Customized, context-aware overhang selection |

Performance Comparison and Experimental Data

Quantitative Fidelity and Capacity Analysis

Direct experimental comparisons show that DAD-optimized assemblies outperform traditional designs. In foundational studies, DAD-designed assemblies reached far higher fragment counts while maintaining high fidelity. Traditional rules-based design typically supported high-fidelity assembly of only 5-10 fragments, with fidelity declining rapidly beyond this point [39]. In contrast, DAD-enabled assemblies achieved:

- 35-fragment assemblies with 71% predicted fidelity and successful experimental validation [39]

- 52-fragment assembly of the entire 40 kb T7 bacteriophage genome, with recovery of infectious phage particles after cellular transformation [44] [40]

- 24-fragment assemblies with >90% fidelity, compared to significantly lower success rates with traditional design [43]

The relationship between assembly complexity and fidelity reveals the dramatic advantage of DAD. While traditional rules-based selection provides approximately 5-10 overhang pairs with 100% fidelity, DAD-based selection maintains near-perfect fidelity for up to 20 overhang pairs before gradually declining [40]. This expansion of the fidelity frontier enables researchers to undertake significantly more complex genetic engineering projects in single-pot reactions, reducing time, resources, and potential errors associated with multi-step hierarchical assembly.
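The decline with complexity follows from compounding. If a fully correct product requires every junction to ligate correctly, overall fidelity is roughly the product of the per-junction fidelities, assuming junctions behave independently. The short calculation below inverts the 71% figure reported for 35 fragments, under the illustrative assumption of one junction per fragment (a circular product). It then shows how a fixed 99% per-junction fidelity erodes as junctions are added.

```python
# Compounding of per-junction errors across an assembly.


def overall_fidelity(per_junction: float, junctions: int) -> float:
    """Chance that every junction is correct, assuming independent junctions."""
    return per_junction ** junctions


def per_junction_fidelity(overall: float, junctions: int) -> float:
    """Geometric-mean per-junction fidelity implied by an overall fidelity."""
    return overall ** (1.0 / junctions)


if __name__ == "__main__":
    # Assumes 35 junctions for a 35-fragment circular assembly.
    print(round(per_junction_fidelity(0.71, 35), 4))  # 0.9903
    for n in (10, 20, 50):
        print(n, round(overall_fidelity(0.99, n), 3))  # 0.904, 0.818, 0.605
```

Put differently, 71% overall fidelity across 35 junctions requires each junction to be correct about 99% of the time, which is why small per-junction gains from better overhang choice matter so much at high complexity.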

Experimental Validation and Case Studies

The performance advantages of DAD have been validated across multiple experimental systems. A key validation used a reverse lac operon blue/white screen (the reverse of conventional blue/white cloning, where blue colonies indicate an empty vector), in which correct assembly of the fragments reconstitutes a functional β-galactosidase gene and produces blue colonies on X-gal/IPTG plates [40]. Because correct assemblies give blue colonies, the fraction of blue colonies provides a direct, quantitative readout of assembly fidelity. DAD-based fidelity predictions closely matched these experimental outcomes, confirming their accuracy [40].
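Scoring such a screen reduces to estimating a proportion. The sketch below, with hypothetical colony counts, treats blue colonies as correct assemblies and white colonies as misassemblies. It reports a Wilson score interval so that fidelities estimated from plates with different colony numbers can be compared fairly. The choice of interval is ours, not something prescribed by the cited studies.

```python
# Assembly fidelity from a blue/white screen, with a Wilson score interval.
from math import sqrt


def fidelity_from_colonies(blue: int, white: int, z: float = 1.96):
    """Return (fidelity, lower, upper); blue = correct, white = incorrect.

    z = 1.96 gives an approximately 95% interval.
    """
    n = blue + white
    if n == 0:
        raise ValueError("no colonies counted")
    p = blue / n
    denom = 1 + z ** 2 / n
    center = (p + z ** 2 / (2 * n)) / denom
    half = z * sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    return p, max(0.0, center - half), min(1.0, center + half)


if __name__ == "__main__":
    # Hypothetical plate counts.
    p, lo, hi = fidelity_from_colonies(blue=950, white=50)
    print(f"fidelity {p:.1%} (95% CI {lo:.1%} to {hi:.1%})")
```

The Wilson interval stays within 0 to 1 and behaves sensibly near 100% fidelity, where a simple normal approximation would collapse to zero width.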

In a more ambitious application, researchers used DAD to assemble the 40 kilobase T7 bacteriophage genome from 52 fragments [44] [40]. This achievement demonstrated not only technical capability but also biological functionality, as the assembled genome produced infectious phage particles. The assembly was designed with the NEBridge SplitSet tool, which divided the genome sequence into fragments with optimal overhangs, while internal Type IIS sites were removed by domestication [40]. This case study shows how DAD enables construction of an entire functional genome in a single reaction, opening new possibilities for genome engineering and synthetic biology.
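Domestication starts with finding every internal recognition site on both strands. The sketch below scans a sequence for the recognition sites of the enzymes named in this article (BsaI GGTCTC, BsmBI and its isoschizomer Esp3I CGTCTC, BbsI GAAGAC, SapI GCTCTTC). It is an illustrative helper with hypothetical names, not part of NEBridge SplitSet, and it does not detect sites that span the origin of a circular sequence.

```python
# Find internal Type IIS recognition sites that would need domestication.
import re

SITES = {  # top-strand recognition sequences, 5'->3'
    "BsaI": "GGTCTC",
    "BsmBI": "CGTCTC",
    "Esp3I": "CGTCTC",
    "BbsI": "GAAGAC",
    "SapI": "GCTCTTC",
}
COMPLEMENT = str.maketrans("ACGT", "TGCA")


def revcomp(seq: str) -> str:
    return seq.translate(COMPLEMENT)[::-1]


def internal_sites(seq: str, enzyme: str) -> list[tuple[int, str]]:
    """Sorted (0-based top-strand start, strand) hits for `enzyme`.

    None of these sites is palindromic, so each hit is reported once.
    Sites spanning the origin of a circular sequence are not detected.
    """
    site = SITES[enzyme]
    seq = seq.upper()
    hits = []
    for motif, strand in ((site, "+"), (revcomp(site), "-")):
        # The zero-width lookahead lets overlapping matches be found.
        for match in re.finditer(f"(?={motif})", seq):
            hits.append((match.start(), strand))
    return sorted(hits)


if __name__ == "__main__":
    print(internal_sites("ATGGTCTCAAAGAGACCTT", "BsaI"))  # [(2, '+'), (11, '-')]
```

Each hit would then be removed, for example with a silent mutation introduced through PCR primers, before fragments are designed.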

Table: Experimental Performance of DAD-Optimized Assemblies

| Assembly Complexity | Target | Fidelity (Predicted/Experimental) | Key Findings |
|---|---|---|---|
| 12 fragments | lac operon cassette | 99.5% experimental fidelity [43] | Near-perfect assembly with minimal screening required |
| Up to 22 fragments | Oligonucleotide-derived constructs | Variable based on sequence difficulty [45] | Successfully assembled sequences with extreme GC content (<30% or >70%) |
| 24 fragments | lac operon cassette | >90% experimental fidelity [43] | 5- to 12-fold increase in transformants compared to traditional methods |
| 35 fragments | Custom assembly | 71% predicted fidelity [39] | Demonstrated high efficiency at unprecedented complexity |
| 52 fragments | T7 bacteriophage genome (40 kb) | Successful functional assembly [40] | Recovered infectious phage after transformation; circular assemblies yielded 500-fold more plaques than linear assemblies |

DAD Methodologies and Experimental Protocols

High-Throughput Fidelity Profiling Assay

The foundation of DAD is a high-throughput sequencing assay that profiles ligation fidelity under Golden Gate assembly conditions. The workflow proceeds in several stages. First, hairpin DNA substrates are engineered to contain Type IIS restriction enzyme recognition sites flanking randomized bases at the cleavage sites, so that all possible overhang sequences are equally represented [37] [41]. These substrates are then subjected to Golden Gate assembly reactions using T4 DNA ligase and specific Type IIS restriction enzymes under standard thermocycling conditions [37].

The resulting assembly products are sequenced using the Pacific Biosciences Single-Molecule Real-Time (SMRT) sequencing platform, which provides the deep sequencing coverage needed to detect even rare ligation events [37] [41]. Bioinformatics analysis then processes the massive dataset to quantify the relative frequency of each possible overhang pairing—both correct Watson-Crick pairs and mismatch pairs—generating a complete fidelity profile that captures both sequence-dependent efficiency and misligation tendencies [41]. This comprehensive dataset enables the prediction of assembly outcomes for any combination of overhangs before experimental execution.
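The counting step of that analysis can be sketched as follows. The sketch assumes reads have already been parsed into (top overhang, bottom overhang) pairs at each ligation junction, both written 5' to 3'; under that convention a Watson-Crick pair has a bottom overhang equal to the reverse complement of the top one. The parsing step, function name, and example events are hypothetical, and a real pipeline would also need consensus calling and quality filtering.

```python
# Tally ligation events into a per-overhang fidelity profile.
from collections import Counter

COMPLEMENT = str.maketrans("ACGT", "TGCA")


def revcomp(seq: str) -> str:
    return seq.translate(COMPLEMENT)[::-1]


def fidelity_profile(events) -> dict[str, tuple[int, float]]:
    """events: iterable of (top, bottom) overhang pairs, both read 5'->3'.

    Returns {top_overhang: (event_count, fraction_watson_crick)}.
    Each event is credited to its top overhang only.
    """
    totals: Counter = Counter()
    correct: Counter = Counter()
    for top, bottom in events:
        totals[top] += 1
        if bottom == revcomp(top):
            correct[top] += 1
    return {oh: (n, correct[oh] / n) for oh, n in totals.items()}


if __name__ == "__main__":
    # Hypothetical parsed junctions; CTTC forms a G:T mismatch with GGAG.
    events = ([("GGAG", "CTCC")] * 98 + [("GGAG", "CTTC")] * 2
              + [("TACT", "AGTA")] * 50)
    for oh, (n, frac) in fidelity_profile(events).items():
        print(oh, n, f"{frac:.1%}")
```

Keeping the raw pair counts, rather than only the summary fractions, is what allows later tools to score any candidate overhang set against the same data.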

Practical Assembly Protocols for Complex Constructions

Implementation of DAD principles extends beyond design to include optimized reaction protocols that maximize assembly efficiency, particularly for high-complexity reactions. For assemblies of medium complexity (12-36 fragments), a standard thermocycling protocol is recommended: repeated cycles of 5 minutes at 37°C (optimal for Type IIS restriction enzyme activity) followed by 5 minutes at 16°C (optimal for T4 DNA ligase activity), typically for 30-90 cycles depending on complexity, followed by a final 5-minute incubation at 60°C to inactivate enzymes [46] [43].
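Run time grows quickly with cycle number. The minimal sketch below models the cycling program described above as a list of (temperature, duration) holds and sums them, ignoring ramp times between temperatures. The class and function names are illustrative, not taken from any instrument software.

```python
# Golden Gate cycling program as data, and its total hold time.
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    temp_c: float
    minutes: float


DIGEST = Step(37, 5)      # Type IIS restriction enzyme activity
LIGATE = Step(16, 5)      # T4 DNA ligase activity
INACTIVATE = Step(60, 5)  # final enzyme inactivation


def cycling_program(cycles: int) -> list[Step]:
    """Alternate digest/ligate holds for `cycles` rounds, then a 60°C hold."""
    return [DIGEST, LIGATE] * cycles + [INACTIVATE]


def total_minutes(steps: list[Step]) -> float:
    """Sum of hold times; ramp times between temperatures are ignored."""
    return sum(step.minutes for step in steps)


if __name__ == "__main__":
    for cycles in (30, 60, 90):
        minutes = total_minutes(cycling_program(cycles))
        print(cycles, "cycles:", minutes, "min =", round(minutes / 60, 1), "h")
```

Ninety cycles of 5-minute holds already amount to roughly 15 hours before ramping, the same order of magnitude as the extended static incubations used for the most complex assemblies.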

For high-complexity assemblies (>35 fragments), research has demonstrated that a static incubation at 37°C for extended periods (15-48 hours) significantly improves fidelity, despite being suboptimal for ligase activity [39] [40]. This counterintuitive finding revealed that the higher temperature reduces misligation events, and the extended incubation compensates for reduced ligation efficiency. This protocol modification was crucial for achieving successful 52-fragment assemblies that failed under standard cycling conditions [39].

Recent applications have also demonstrated DAD's utility in highly parallelized gene construction from oligonucleotide pools. This approach enables synthesis of hundreds of genes in three simple steps: (1) parallel amplification of parts from a single oligonucleotide pool, (2) Golden Gate Assembly of parts for each construct, and (3) transformation [45]. The method significantly reduces cost and time compared with commercial gene synthesis, taking as little as 4 days from receipt of DNA to sequence-confirmed isolates [45].

Research Toolkit: Essential Reagents and Tools

Key Experimental Reagents

Successful implementation of DAD-enhanced Golden Gate Assembly requires specific reagents optimized for performance and compatibility. The following essential components represent the core toolkit for researchers.

Table: Essential Reagents for DAD-Optimized Golden Gate Assembly

| Reagent Category | Specific Examples | Function and Importance |
|---|---|---|
| Type IIS Restriction Enzymes | BsaI-HFv2, BsmBI-v2, BbsI-HF, Esp3I, SapI [37] [38] | Generate defined overhangs outside recognition sites; engineered versions offer enhanced efficiency and stability |
| DNA Ligase | T4 DNA Ligase [37] [43] | Joins complementary overhangs; preferred over T7 DNA ligase due to higher efficiency and less bias against A/T-rich sequences |
| Assembly Kits | NEBridge Golden Gate Assembly Kits (BsmBI-v2 or BsaI-HFv2) [38] [46] | Provide optimized enzyme mixes and buffers for specific Type IIS enzymes |
| DNA Polymerases | Phusion High-Fidelity DNA Polymerase [46] [45] | Amplify assembly fragments with high fidelity; crucial for generating high-quality parts |
| Competent Cells | High-efficiency E. coli strains [46] | Transform assembled constructs; higher efficiency helps recover complex assemblies with lower yields |

DAD Informatics Tools

The computational aspect of DAD is implemented through a suite of web-based tools that translate the experimental fidelity data into practical design solutions for researchers.

NEBridge Ligase Fidelity Viewer: This tool allows researchers to evaluate the predicted fidelity of existing overhang sets by uploading their sequences and selecting their specific Type IIS enzyme and thermocycling protocol. It identifies overhangs with high potential for mismatches, enabling targeted redesign of problematic junctions [42] [39].
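A rough analogue of that screening step is sketched below. Given ligation counts (here invented) and a candidate overhang set, it lists off-target partners that capture more than a chosen fraction of an overhang's ligation events. The threshold and function name are assumptions; the Viewer's own scoring and output format are not reproduced here.

```python
# Flag off-target ligation partners in an overhang set (illustrative).
COMPLEMENT = str.maketrans("ACGT", "TGCA")


def revcomp(seq: str) -> str:
    return seq.translate(COMPLEMENT)[::-1]


def flag_mismatches(overhangs: list[str],
                    counts: dict[tuple[str, str], float],
                    threshold: float = 0.01) -> list[tuple[str, str, float]]:
    """Return (overhang, wrong partner, fraction) above `threshold`, worst first.

    Fractions are relative to all ligations of that overhang with ends
    present in the reaction (the set plus reverse complements).
    """
    pool = set(overhangs) | {revcomp(o) for o in overhangs}
    flags = []
    for oh in overhangs:
        partners = {p: counts.get((oh, p), 0.0) for p in pool}
        total = sum(partners.values())
        if total == 0:
            continue  # no data for this overhang
        for partner, n in partners.items():
            fraction = n / total
            if partner != revcomp(oh) and fraction > threshold:
                flags.append((oh, partner, fraction))
    return sorted(flags, key=lambda flag: flag[2], reverse=True)


# Invented counts keyed by (overhang, ligated partner).
TOY_COUNTS = {
    ("GGAG", "CTCC"): 995, ("GGAG", "AGTA"): 5,
    ("TACT", "AGTA"): 940, ("TACT", "CTCC"): 60,
}

if __name__ == "__main__":
    print(flag_mismatches(["GGAG", "TACT"], TOY_COUNTS))  # [('TACT', 'CTCC', 0.06)]
```

In this toy case only the TACT junction would be a candidate for redesign; GGAG's off-target ligation falls below the 1% threshold.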

NEBridge GetSet Tool: For projects requiring new overhang sets, GetSet generates customized high-fidelity overhang sets based on user-specified parameters including number of fragments, overhang length (3- or 4-base), and any sequences to exclude. The tool uses a stochastic search algorithm to identify optimal sets with the highest predicted fidelity [42] [39].
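To illustrate what a stochastic search over overhang sets involves, the sketch below runs a simple hill climber. It starts from a random valid set of 4-base overhangs, repeatedly swaps one overhang for a random candidate, and keeps changes that do not lower the predicted fidelity. This is not GetSet's actual algorithm. The ligation frequencies come from a synthetic model that penalizes near-cognate partners by mismatch count, standing in for the empirical data; all names are hypothetical.

```python
# Toy stochastic search for a high-fidelity set of 4-base overhangs.
import itertools
import random
from math import prod

COMPLEMENT = str.maketrans("ACGT", "TGCA")
ALL_4MERS = ["".join(p) for p in itertools.product("ACGT", repeat=4)]


def revcomp(seq: str) -> str:
    return seq.translate(COMPLEMENT)[::-1]


def toy_ligation(oh: str, partner: str) -> float:
    """SYNTHETIC ligation frequency, not real ligase data: falls off with
    the number of mismatches between `partner` and the true partner."""
    mismatches = sum(a != b for a, b in zip(partner, revcomp(oh)))
    return {0: 1000.0, 1: 20.0, 2: 1.0}.get(mismatches, 0.0)


def is_valid(overhangs: list[str]) -> bool:
    """Reject palindromes, repeats, and reverse-complement clashes."""
    ends = set(overhangs) | {revcomp(o) for o in overhangs}
    return len(ends) == 2 * len(overhangs)


def set_fidelity(overhangs: list[str]) -> float:
    """Product over junctions of the fraction of correct ligations."""
    pool = set(overhangs) | {revcomp(o) for o in overhangs}

    def junction(oh: str) -> float:
        total = sum(toy_ligation(oh, p) for p in pool)
        return toy_ligation(oh, revcomp(oh)) / total

    return prod(junction(oh) for oh in overhangs)


def stochastic_search(k: int, iterations: int = 1000, seed: int = 0):
    """Hill climbing: swap one overhang at random, keep non-worse sets."""
    rng = random.Random(seed)
    candidates = [o for o in ALL_4MERS if o != revcomp(o)]
    current = rng.sample(candidates, k)
    while not is_valid(current):  # resample until the start set is valid
        current = rng.sample(candidates, k)
    score = set_fidelity(current)
    for _ in range(iterations):
        trial = list(current)
        trial[rng.randrange(k)] = rng.choice(candidates)
        if not is_valid(trial):
            continue
        trial_score = set_fidelity(trial)
        if trial_score >= score:
            current, score = trial, trial_score
    return current, score


if __name__ == "__main__":
    best, fidelity = stochastic_search(k=10, seed=1)
    print(best, round(fidelity, 4))
```

Accepting equal-scoring moves lets the search drift across plateaus instead of stalling; a production tool would add restarts or annealing and, above all, real ligation data.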

NEBridge SplitSet Tool: This tool automates the division of a target DNA sequence into optimal assembly fragments. Users input their sequence and desired parameters (number of fragments, search windows for breakpoints), and SplitSet identifies the highest-fidelity overhang set while avoiding internal Type IIS sites. It also outputs fragment sequences and PCR primers for part generation [42] [40].
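The core of the fragment-splitting problem can be sketched as a search over breakpoint positions. In the sketch below, each breakpoint may fall anywhere inside a user-defined window, the 4 bases at the chosen position become that junction's overhang, and every combination is tried exhaustively. As a stand-in for SplitSet's empirical fidelity score, it maximizes the smallest Hamming distance among all ends in the reaction. That heuristic, the function names, and the example sequence are assumptions, and brute force only scales to a handful of windows.

```python
# Brute-force breakpoint selection within search windows (SplitSet-like toy).
import itertools

COMPLEMENT = str.maketrans("ACGT", "TGCA")


def revcomp(seq: str) -> str:
    return seq.translate(COMPLEMENT)[::-1]


def hamming(a: str, b: str) -> int:
    return sum(x != y for x, y in zip(a, b))


def separation(overhangs: list[str]) -> int:
    """Smallest Hamming distance between any two ends in the reaction.

    Heuristic stand-in for an empirical fidelity score: near-identical ends
    invite misligation, so larger is better.
    """
    ends = list(overhangs) + [revcomp(o) for o in overhangs]
    return min(hamming(a, b) for a, b in itertools.combinations(ends, 2))


def split(seq: str, windows: list[tuple[int, int]], site: str = "GGTCTC"):
    """Choose one 4-base overhang inside each half-open (start, end) window.

    Returns (positions, overhangs, score) for the best valid choice, or None.
    `site` defaults to the BsaI recognition sequence.
    """
    seq = seq.upper()
    if site in seq or revcomp(site) in seq:
        raise ValueError("internal Type IIS site: domesticate first")
    options = [range(start, end - 3) for start, end in windows]
    best = None
    for positions in itertools.product(*options):
        overhangs = [seq[p:p + 4] for p in positions]
        ends = set(overhangs) | {revcomp(o) for o in overhangs}
        if len(ends) < 2 * len(overhangs):
            continue  # repeat, palindrome, or reverse-complement clash
        score = separation(overhangs)
        if best is None or score > best[2]:
            best = (list(positions), overhangs, score)
    return best


SEQ = "ATGACCGTTAGCTTGCAAGGCTATCGATCCGTAAGCTTACGGATCC"  # made-up sequence
WINDOWS = [(5, 12), (18, 26), (32, 40)]

if __name__ == "__main__":
    print(split(SEQ, WINDOWS))
```

Search windows keep breakpoints near the desired fragment sizes while still giving the optimizer several overhang choices per junction; combinations grow multiplicatively with the number of windows, which is why real tools use smarter search than enumeration.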