Engineering Life: Foundational Design Principles and AI-Driven Applications of Synthetic Biology

Abstract

This article provides a comprehensive guide to the design principles underpinning synthetic biology, tailored for researchers, scientists, and drug development professionals. It explores the foundational concepts of biological system engineering, from genetic circuits to chassis engineering. The scope covers methodological applications in therapeutics and biosensing, delves into advanced combinatorial and AI-driven optimization strategies, and concludes with rigorous validation frameworks and comparative analysis of tools. By integrating the latest advances, including the convergence of AI and synthetic biology, this resource aims to equip professionals with the knowledge to design, build, and troubleshoot robust artificial biological systems for biomedical innovation.

The Engineer's Toolkit: Core Concepts for Designing Biological Systems from the Ground Up

Synthetic biology represents a fundamental paradigm shift in biological engineering, moving beyond the gene-centric manipulations of traditional genetic engineering to embrace a holistic, systems-level design approach. This discipline is characterized by the application of engineering principles—including standardization, abstraction, modularity, and decoupling—to design and construct novel biological systems with predictable functions [1]. While classical genetic engineering often focuses on transferring individual genes between organisms, synthetic biology aims to create entirely new biological architectures by assembling standardized biological parts into complex, functional circuits and networks. This transition from editing existing genetic code to writing new genetic programs enables unprecedented applications in bioproduction, therapeutics, and biomedicine, fundamentally expanding our ability to program biological function for human benefit [2] [3].

The field has evolved significantly from its early milestones, such as the genetic toggle switch and synthetic oscillators engineered in E. coli [1], to increasingly sophisticated systems capable of complex behaviors like pattern formation, multistability, and self-organization [1] [4]. This progression has been facilitated by the integration of principles from quantitative biology, systems biology, and engineering, creating a new research paradigm that combines mathematical modeling with experimental validation to advance both fundamental understanding and practical application [1] [5]. As the field matures, synthetic biologists are now tackling challenges in predictability, context-dependence, and scaling, driving the development of increasingly powerful tools for designing, modeling, and implementing artificial biological systems across multiple scales of complexity [6] [3].

Core Principles: The Synthetic Biology Design Framework

Foundational Engineering Principles

Synthetic biology is guided by a core set of engineering principles that enable the systematic design and construction of biological systems. Standardization establishes uniform specifications for biological parts, allowing them to be characterized and reused across different systems without redesign. This principle is exemplified by biological repositories such as the iGEM Registry, which collects standardized DNA parts called BioBricks that can be reliably assembled using compatible techniques [7]. Abstraction organizes biological complexity into hierarchical layers, enabling engineers to work at one level without requiring exhaustive knowledge of underlying details. A synthetic biologist designing a genetic circuit, for instance, can utilize well-characterized promoter parts without modeling their precise molecular interactions with RNA polymerase. Modularity creates functional units with defined interfaces and behaviors, allowing complex systems to be built from simpler, interchangeable components. This facilitates the creation of reusable genetic devices such as sensors, oscillators, and logic gates that can be combined in various configurations. Decoupling separates design into manageable subproblems that can be addressed independently by different specialists, significantly streamlining the engineering process for complex biological systems [7] [6].

Systems-Level Design Considerations

At the systems level, synthetic biologists must account for emergent properties that arise from interactions between components rather than from their individual behaviors. These properties include robustness (system performance despite external perturbations or internal variations), performance (how effectively the system executes its designed function), and reliability (consistent operation over time and across different cellular contexts) [4]. A critical insight from systems biology is that biological circuits do not operate in isolation but are embedded within a complex cellular environment that significantly influences their behavior. This context-dependence arises from myriad factors including resource competition, cross-talk with host networks, and varying cellular conditions [3]. The context matrix framework systematically categorizes these influencing factors along three dimensions: construct (genetic elements and their organization), host (chassis physiology and genetic background), and environment (growth conditions and external stimuli) [3]. Understanding a system's position within this multidimensional space is essential for predicting failure modes and designing biological systems with robust performance across different implementation scenarios.

Table 1: Core Principles of Synthetic Biology Design

| Principle | Key Concept | Practical Implementation | Benefit |
|---|---|---|---|
| Standardization | Uniform technical specifications for biological parts | BioBrick assembly standards; SBOL Visual diagram conventions [7] [6] | Enables interoperability and reliable reuse of components |
| Abstraction | Hierarchical organization of complexity | Separation of device design from molecular implementation details | Simplifies design process; allows specialization |
| Modularity | Self-contained functional units with defined interfaces | Genetic devices with standardized input/output interfaces (e.g., inducible promoters) | Facilitates system construction from validated components |
| Decoupling | Separation of complex problems into independent tasks | Distinct teams for part characterization, device design, and system integration | Parallelizes development; improves engineering efficiency |
| Systems-Level Thinking | Consideration of emergent properties and context | Context-aware design using the construct-host-environment matrix [3] | Enhances predictability and real-world performance |

Quantitative Foundations: Modeling for Prediction and Design

The Role of Mathematical Modeling

Mathematical modeling serves as the cornerstone of quantitative synthetic biology, providing a formal framework to articulate hypotheses, explore design possibilities, and predict system behavior before experimental implementation [4]. A well-constructed model functions as a "logical machine" that derives the implications of our biological assumptions and existing knowledge, enabling researchers to effectively evaluate the consequences of their design choices and identify potential failure modes [4]. This approach is particularly valuable for understanding gene regulatory circuits, which often exhibit non-linear dynamics, feedback loops, and emergent properties that challenge intuitive reasoning [4]. Models span multiple levels of granularity, from simple ordinary differential equations capturing bulk biochemical kinetics to complex spatial models that account for cellular architecture and heterogeneity. The appropriate modeling approach depends strongly on the specific research question, with simpler models often sufficient for elucidating general design principles and more detailed models required for accurate quantitative predictions of complex system behaviors [4].

Modeling Workflow and Best Practices

The process of developing effective mathematical models follows a structured workflow that begins with thoroughly knowing your system—gathering essential information about the relevant molecular components, their interaction mechanisms, and the available experimental data that will inform and validate the model [4]. This foundation enables researchers to explicitly set down all assumptions, both simplifying approximations and core hypotheses, which must be clearly documented to properly interpret modeling results and their limitations [4]. The next critical step involves defining the circuit through a visual representation that delineates the system boundary, identifies key molecular species as nodes, and maps their interactions as edges; this abstraction serves as a direct blueprint for constructing the mathematical equations [4]. Subsequently, researchers must write down the biochemical events by translating each circuit interaction into appropriate mathematical expressions, typically using mass action kinetics, Michaelis-Menten equations, or more complex formalisms that capture the specific biochemical mechanisms [4]. The process culminates in iterative refinement through comparison with experimental data, using discrepancies to identify gaps in understanding and drive model improvement in a cyclic fashion that progressively enhances both the model and biological insight [4].
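As a minimal sketch of the "write down the biochemical events" step, the snippet below translates a constitutively expressed gene into two mass-action ODEs, dm/dt = k_tx - d_m·m for mRNA and dp/dt = k_tl·m - d_p·p for protein, and integrates them with forward Euler. The parameter names (`k_tx`, `d_m`, `k_tl`, `d_p`), their values, and the choice of integrator are illustrative assumptions, not taken from [4].

```python
def simulate_expression(k_tx, d_m, k_tl, d_p, t_end=200.0, dt=0.01):
    """Forward-Euler integration of a two-species gene expression model.

    dm/dt = k_tx - d_m * m      (transcription, mRNA decay)
    dp/dt = k_tl * m - d_p * p  (translation, protein decay/dilution)
    Returns the (mRNA, protein) levels at t_end, starting from zero.
    """
    m, p = 0.0, 0.0
    for _ in range(int(t_end / dt)):
        dm = k_tx - d_m * m
        dp = k_tl * m - d_p * p
        m += dm * dt
        p += dp * dt
    return m, p


def steady_state(k_tx, d_m, k_tl, d_p):
    """Analytical steady state obtained by setting both derivatives to zero."""
    m_ss = k_tx / d_m
    p_ss = k_tl * m_ss / d_p
    return m_ss, p_ss


# Hypothetical parameter values, chosen only to make the example concrete.
params = dict(k_tx=2.0, d_m=0.2, k_tl=5.0, d_p=0.05)
print("simulated :", simulate_expression(**params))
print("analytical:", steady_state(**params))
```

Comparing the simulated endpoint with the analytical steady state is a cheap sanity check on the equations before any fitting to experimental data.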

Advanced and Automated Modeling Approaches

Recent advances in synthetic biology modeling include the development of automated modeling frameworks that augment or replace manual model construction, particularly for large-scale systems where manual approaches become prohibitively labor-intensive [8]. These automated approaches can be categorized into three levels of increasing machine autonomy: level 1 (human-led modeling with machine assistance for specific subtasks), level 2 (human-machine collaborative modeling where both contribute significantly to the process), and level 3 (machine-led modeling with human supervision or fully autonomous modeling) [8]. The implementation of these automated approaches typically relies on structured biological knowledge bases, community standards like SBOL Visual for diagrammatic representation [6], and natural language processing tools that can extract biological information from literature to inform model construction [8]. As these technologies mature, they promise to dramatically accelerate the modeling process while improving model quality and reproducibility, ultimately enabling the creation of more complex and predictive biological designs.

Table 2: Mathematical Modeling Approaches in Synthetic Biology

| Model Type | Key Features | Appropriate Applications | Limitations |
|---|---|---|---|
| Ordinary Differential Equations (ODEs) | Continuous deterministic dynamics of concentration variables | Well-mixed systems with large molecule counts; metabolic pathways; genetic circuits | Cannot capture stochasticity or spatial effects |
| Stochastic Models | Explicit representation of random fluctuations | Systems with small molecule counts; noise propagation analysis; cell fate decisions | Computationally intensive; parameter estimation challenging |
| Rule-Based Modeling | Compact representation of combinatorial interactions | Multi-protein complexes; signaling networks with post-translational modifications | Specialized software required; visualization challenging |
| Agent-Based Models | Autonomous entities with individual behaviors | Multicellular systems; developmental biology; microbial communities | Computationally intensive; parameters often poorly constrained |
| Constraint-Based Models | Steady-state flux balance analysis | Metabolic networks; resource allocation; growth prediction | Limited dynamic information; steady-state assumption |
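To make the contrast between the ODE and stochastic rows of the table concrete, here is a small sketch of a Gillespie-style stochastic simulation for a single species that is produced at a constant rate and degraded in proportion to its copy number. The function name, rate constants, and seed are hypothetical; the deterministic ODE for the same system predicts a steady state of `k_prod / k_deg`, which the time-averaged stochastic trajectory should approach while individual snapshots fluctuate around it.

```python
import random


def gillespie_birth_death(k_prod, k_deg, t_end, seed=0):
    """Simulate production (rate k_prod) and degradation (rate k_deg * n).

    Returns the final copy number and the time-averaged copy number.
    """
    rng = random.Random(seed)
    t, n = 0.0, 0
    area = 0.0  # running time-integral of n, used for the time average
    while True:
        a_prod = k_prod
        a_deg = k_deg * n
        a_total = a_prod + a_deg
        tau = rng.expovariate(a_total)  # waiting time to the next reaction
        if t + tau > t_end:
            area += n * (t_end - t)
            break
        area += n * tau
        t += tau
        # Pick which reaction fires, weighted by its propensity.
        if rng.random() * a_total < a_prod:
            n += 1
        else:
            n -= 1
    return n, area / t_end


final_n, mean_n = gillespie_birth_death(k_prod=10.0, k_deg=1.0, t_end=2000.0)
print("final copy number:", final_n)
print("time-averaged copy number:", mean_n, "(ODE steady state: 10.0)")
```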

The Design-Build-Test-Learn Cycle: Core Methodology

Integrated Workflow for Biological Engineering

The Design-Build-Test-Learn (DBTL) cycle represents the core operational framework of synthetic biology, providing a systematic methodology for engineering biological systems through iterative refinement [7] [9]. In the Design phase, researchers specify biological parts, devices, and systems using computational tools and biological knowledge, frequently employing standardized diagrammatic representations such as SBOL Visual to communicate both structural and functional aspects of their designs [6]. The Build phase involves physical construction of the designed systems using molecular biology techniques, often employing standardized assembly methods like BioBrick cloning to combine genetic elements in specified configurations [7]. During the Test phase, the constructed systems are experimentally characterized to measure their performance and identify deviations from expected behavior, employing techniques ranging from fluorescence measurements and RT-qPCR to advanced microfluidic cultivation and single-cell imaging [9] [3]. Finally, in the Learn phase, researchers analyze the experimental data to refine their understanding of the system, update models, and inform the next design iteration, progressively improving system performance and designer knowledge with each cycle [4] [8].

Standardized Visual Communication: SBOL Visual

Effective communication of biological designs is essential for collaborative engineering, and the synthetic biology community has developed SBOL Visual as a standardized visual language for this purpose [6]. SBOL Visual defines a coherent set of glyphs (symbols) for representing genetic features, molecular species, and their functional interactions, organized into three complementary classes: sequence feature glyphs for representing nucleic acid components (e.g., promoters, coding sequences, terminators), molecular species glyphs for representing other biochemical entities (e.g., proteins, small molecules, functional RNAs), and interaction glyphs for indicating functional relationships between elements (e.g., activation, repression, degradation) [6]. This standardized notation enables clear communication of both structural arrangements and functional relationships within biological systems, facilitating collaboration, reducing misinterpretation, and supporting the development of software tools that can automatically translate between visual diagrams and machine-readable design representations [6]. The standard intentionally allows for stylistic variation and the use of glyph variants where justified, while providing precise specifications to ensure consistent interpretation across different implementations and applications.

Experimental Implementation: From Model Organisms to Novel Chassis

Protocol Development for Non-Model Organisms

While early synthetic biology work predominantly utilized model organisms like E. coli and S. cerevisiae, there is growing interest in expanding to non-model bacteria with unique metabolic capabilities, requiring the development of specialized protocols and toolkits for these novel chassis [9]. The process for developing a synthetic biology toolkit for a non-model organism begins with establishing efficient genetic transformation methods, optimizing delivery mechanisms (electroporation, conjugation, or transduction) and selection strategies appropriate for the target species [9]. Next, researchers must characterize a set of biological parts—including promoters, ribosomal binding sites, and terminators—to determine their function and performance in the new host context, identifying elements that provide a range of expression levels and regulatory control [9]. The toolkit development process also includes adapting standardized assembly techniques for the target organism and validating their efficiency, enabling reproducible construction of genetic circuits from standardized parts [9]. Finally, comprehensive characterization using fluorescence markers, RT-qPCR, and phenotypic assays establishes the performance and reliability of the toolkit, creating a foundation for more complex engineering projects in the non-model chassis [9].

Advanced Measurement and Characterization Techniques

Modern synthetic biology employs increasingly sophisticated measurement technologies that provide unprecedented insights into system behavior across multiple scales. Single-molecule imaging techniques, including various super-resolution microscopy modalities such as structured illumination microscopy (SIM), enable researchers to observe individual molecular events and localization with nanometer-scale resolution, revealing mechanistic details inaccessible through traditional bulk measurements [3]. Microfluidic cultivation platforms, including "mother machine" devices and organ-on-a-chip systems, maintain cells in precisely controlled environments over extended periods while enabling high-resolution time-lapse imaging, allowing researchers to track dynamic processes and heterogeneity in population behaviors [3]. Cybergenetic control systems implement real-time feedback between measurements and external inputs, using automated microscopy to monitor cellular states and applying inducers or other modulators to drive systems toward desired behaviors or population compositions [3]. These advanced measurement approaches are particularly valuable for observing the effects of context—including construct, host, and environmental factors—on system performance, providing critical data for improving design predictability and robustness [3].

Table 3: Essential Research Reagent Solutions for Synthetic Biology

| Reagent Category | Specific Examples | Function/Application | Implementation Considerations |
|---|---|---|---|
| Standardized DNA Parts | BioBricks from iGEM Registry; Anderson promoter collection | Modular genetic elements for circuit construction | Compatibility with assembly standard; characterization in target host [7] |
| Fluorescence Reporters | GFP, RFP, YFP variants; transcriptional/translational fusions | Quantitative measurement of gene expression and regulation | Spectral compatibility; maturation time; background autofluorescence [9] |
| Selection Markers | Antibiotic resistance genes; auxotrophic complementation markers | Selective maintenance of genetic constructs; enrichment of desired variants | Host susceptibility; concentration optimization; cross-resistance issues [7] |
| Assembly Systems | Restriction enzyme-based (BioBrick); Gibson assembly; Golden Gate | Physical construction of genetic circuits from DNA parts | Efficiency; scalability; part compatibility; fidelity [7] |
| Induction Systems | Chemical inducers (aTc, IPTG); light-sensitive promoters; biosensors | External control of gene expression; feedback implementation | Kinetics; dynamic range; toxicity; cost [2] |

Applications and Case Studies: From Bioproduction to Biomedical Innovation

Precision Metabolic Engineering for Bioproduction

Synthetic biology enables unprecedented precision in metabolic engineering for bioproduction of valuable compounds, as demonstrated by recent work on heparosan biosynthesis [2]. Heparosan, a natural polymer with significant biomedical applications, requires precise control over molecular weight (Mw) and polydispersity index (PDI) for optimal performance, and both characteristics are challenging to regulate using traditional metabolic engineering approaches [2]. Researchers addressed this challenge by designing a synthetic genetic controller that dynamically regulates the expression of heparosan biosynthesis genes in response to precursor availability, creating a feedback system that maintains optimal metabolic fluxes for producing heparosan with consistently low Mw and low PDI [2]. This controller was implemented in the probiotic E. coli Nissle 1917 chassis, creating a biosafe production platform that demonstrates how synthetic biology principles can be applied to achieve precise control over polymer properties that were previously difficult to standardize [2]. This case study illustrates the power of synthetic biology to go beyond simple pathway expression and implement sophisticated control strategies that optimize complex product characteristics, opening new possibilities for manufacturing biomaterials with tailored properties for specific biomedical applications.
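For readers less familiar with the polymer metrics, the sketch below computes the number-average molar mass Mn = ΣNᵢMᵢ / ΣNᵢ, the weight-average molar mass Mw = ΣNᵢMᵢ² / ΣNᵢMᵢ, and the index PDI = Mw / Mn from a chain-length distribution. These are the standard definitions; the function name and the two example distributions are hypothetical and are not data from [2].

```python
def molecular_weight_stats(chains):
    """Compute (Mn, Mw, PDI) from a list of (molar_mass, chain_count) pairs."""
    total_n = sum(n for _, n in chains)
    total_nm = sum(n * m for m, n in chains)
    total_nm2 = sum(n * m * m for m, n in chains)
    mn = total_nm / total_n    # number-average molar mass
    mw = total_nm2 / total_nm  # weight-average molar mass
    return mn, mw, mw / mn


# Hypothetical distributions (molar mass in Da, relative chain counts).
narrow = [(20_000, 5)]             # every chain identical
broad = [(10_000, 1), (20_000, 1)]  # two equally abundant chain sizes

for label, dist in [("narrow", narrow), ("broad", broad)]:
    mn, mw, pdi = molecular_weight_stats(dist)
    print(f"{label}: Mn={mn:.0f} Da, Mw={mw:.0f} Da, PDI={pdi:.3f}")
```

A perfectly uniform population gives PDI = 1, and any spread in chain length pushes the value above 1, which is why a low PDI is used as a proxy for product consistency.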

Engineering Microbial Communities and Host-Microbe Interactions

Advanced synthetic biology applications increasingly target systems beyond single cells, engineering microbial communities and host-microbe interactions for therapeutic and diagnostic purposes [3]. Engineering synthetic microbial communities involves programming interactions between different strains to achieve division of labor, where specialized subpopulations perform distinct metabolic functions that collectively accomplish complex tasks beyond the capabilities of individual strains [3]. These communities reduce metabolic burden on individual members, enable utilization of complex substrates, and provide enhanced robustness to environmental fluctuations compared to monoculture systems [3]. In biomedical applications, engineered bacterial strains are being developed for spatially-precise diagnostics and therapy within the mammalian gut, creating living therapeutics that can detect pathogens, monitor disease states, and locally release therapeutic compounds in response to specific physiological conditions [3]. These systems represent the cutting edge of synthetic biology, requiring sophisticated design strategies that account for multi-species interactions, spatial organization, and dynamic environments while maintaining safety and efficacy in complex biological contexts.

Future Directions: Emerging Technologies and Challenges

The future development of synthetic biology will be shaped by several converging technological advances and persistent challenges. Automated modeling approaches will progressively expand from level 1 (human-led with machine assistance) toward level 3 (machine-led with human supervision), leveraging text mining, knowledge bases, and community standards to increase modeling efficiency and enable handling of greater biological complexity [8]. Cross-scale integration will connect molecular-level events with cellular, population, and ecosystem behaviors, requiring new theoretical frameworks and computational tools to predict emergent properties across these scales [3]. Context-aware design methodologies will systematically address the effects of construct, host, and environmental factors on system performance, using frameworks like the context matrix to improve predictability and robustness while reducing trial-and-error experimentation [3]. The field will also need to develop enhanced characterization methods with improved resolution (spatial, temporal, and single-cell) to capture the full complexity of engineered biological systems and provide sufficient data for refining models and design rules [4] [3]. As these advances mature, synthetic biology will increasingly deliver on its promise to provide reliable, predictable engineering of biological systems for applications spanning healthcare, bioproduction, environmental remediation, and fundamental scientific research.

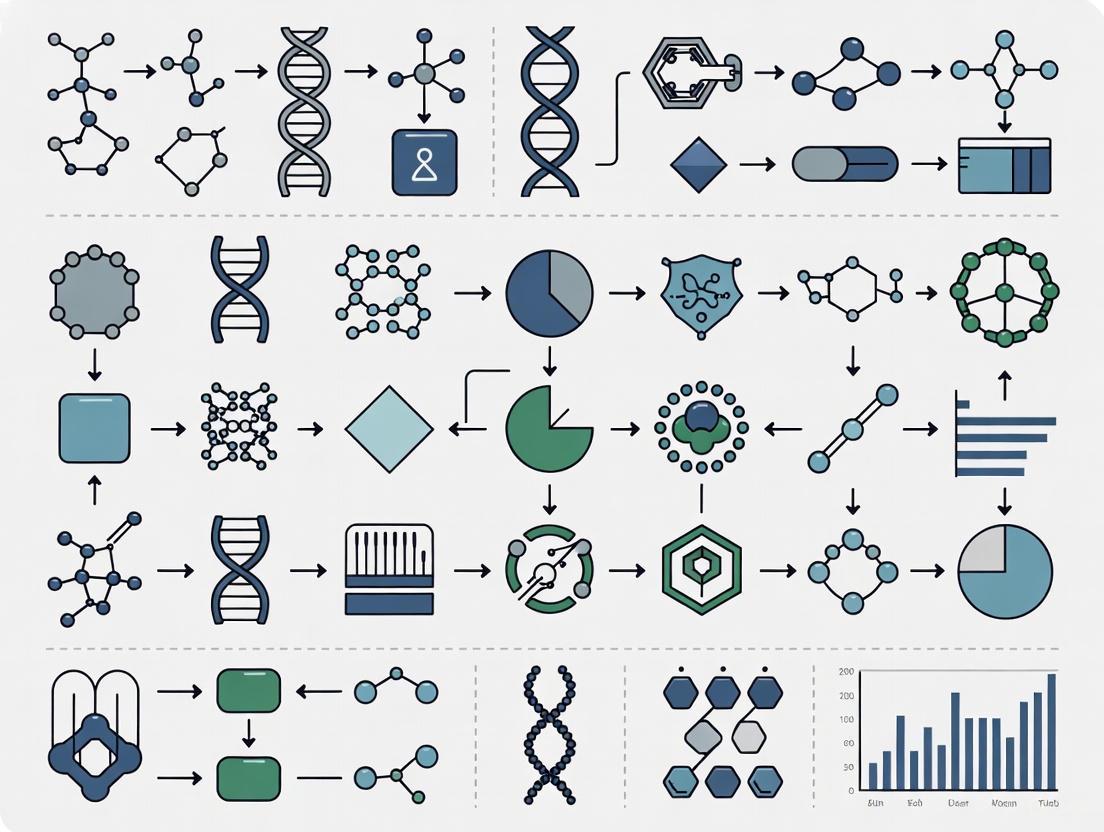

Synthetic biology represents a fundamental shift in how we interact with biological systems, moving from observation and analysis to design and construction. This field applies engineering principles of standardization, modularity, and abstraction to biological components, enabling the predictable programming of cellular behavior [10]. At the core of this discipline lies what we term the "Central Dogma of Biodesign": a structured framework for designing and assembling standardized biological parts into functional devices and systems that execute defined operations within living cells.

This technical guide examines the foundational principles and methodologies underlying the engineering of artificial biological systems. Unlike traditional genetic engineering that often operates on single genes, synthetic biology adopts a systems-level outlook that targets entire pathways, networks, and whole organisms with quantitative control and modulation [10]. The conceptual framework mirrors electronic engineering, where basic parts (e.g., promoters, coding sequences) combine to form devices (e.g., oscillators, switches), which subsequently integrate into complex systems (e.g., metabolic pathways, biosensors) [11]. This hierarchical approach enables researchers to create biological circuits that can sense, compute, and respond to environmental signals with precision approaching that of their electronic counterparts.

Foundational Principles: Orthogonalization and the Central Dogma

The Challenge of Host Interference

A significant obstacle in synthetic biology is the inadvertent interaction between engineered components and host cellular machinery. Engineered circuits predominantly utilize components derived from natural sources, making them vulnerable to undesirable crosstalk with host processes, particularly those within the host central dogma [12] [11]. This interference frequently manifests as reduced host fitness due to resource depletion or imposition of non-native functions on endogenous machinery [12]. Furthermore, complex circuits often fail in novel contexts due to off-target effects between components and unanticipated resource competition [11].

Biological Orthogonalization as a Solution

Biological orthogonalization addresses these challenges through the purposeful insulation of researcher-dictated bioactivities from native cellular processes. In synthetic biology, "orthogonal" describes biomolecules that, despite similarities in composition or function, cannot interact with one another or affect each other's substrates [12]. The implementation of mutually orthogonal systems creates isolated biological hubs where engineered components interact strongly with each other but minimally with host machinery [13].

The ultimate expression of this principle is the creation of an orthogonal central dogma—a parallel system for genetic information flow that operates independently of host replication, transcription, and translation machinery [12] [13]. This approach shares conceptual similarities with virtual machines in computing, where separation from complex host operating systems provides both portability and specialization capabilities [13].

Implementing an Orthogonal Central Dogma

Orthogonal Genetic Information Storage and Replication

The foundation of an orthogonal central dogma begins with insulating genetic information from host interference through specialized replication systems and nucleotide chemistries.

Table 1: Approaches to Orthogonal Genetic Information Storage

| Approach | Mechanism | Examples | Applications |
|---|---|---|---|
| Non-canonical Nucleobases | Incorporation of modified DNA bases unrecognized by host machinery | N6-methyldeoxyadenosine (m6dA); Synthetic 6-8 letter genetic codes [12] [11] | Epigenetic signaling; Genetic code expansion; Protection from nucleases |
| Orthogonal Replication Systems | Dedicated DNA polymerases that replicate only specific templates | φ29 bacteriophage system; Yeast OrthoRep system [12] [13] | Rapid continuous evolution; In vitro replication; Mutation rate control |
| Backbone Modification | Alteration of phosphate-sugar DNA backbone | Phosphorothioate; Alkyl phosphonate nucleic acids [12] | Novel aptamer interactions; Stabilization |

The OrthoRep system in yeast exemplifies this approach, utilizing native cytoplasmic plasmids from Kluyveromyces lactis with an orthogonal DNA polymerase that exclusively replicates the cognate cytoplasmic plasmid [12] [13]. This system enables mutation rates roughly 100,000-fold higher than that of the host genome without affecting host fitness, facilitating continuous evolution of biomolecules entirely in vivo [13].
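A back-of-the-envelope sketch shows what a 100,000-fold rate difference means for a single gene. It assumes a host rate of 1e-10 substitutions per base per generation, which is a commonly quoted order of magnitude and an assumption of this example rather than a figure from the source; the 1 kb gene length and the Poisson approximation are likewise illustrative.

```python
import math


def expected_mutations(rate_per_base, length_bp, generations=1):
    """Expected number of substitutions in a sequence over some generations."""
    return rate_per_base * length_bp * generations


def prob_at_least_one(rate_per_base, length_bp, generations=1):
    """Poisson probability that the sequence acquires one or more mutations."""
    lam = expected_mutations(rate_per_base, length_bp, generations)
    return -math.expm1(-lam)  # numerically stable 1 - exp(-lam)


HOST_RATE = 1e-10        # assumed host rate (per base per generation)
FOLD_INCREASE = 100_000  # fold increase stated in the text
ORTHOGONAL_RATE = HOST_RATE * FOLD_INCREASE
GENE_LENGTH = 1_000      # hypothetical 1 kb target gene

for label, rate in [("host genome", HOST_RATE), ("orthogonal plasmid", ORTHOGONAL_RATE)]:
    lam = expected_mutations(rate, GENE_LENGTH)
    p = prob_at_least_one(rate, GENE_LENGTH)
    print(f"{label}: {lam:.2e} expected mutations/generation, P(>=1) = {p:.2e}")
```

Under these assumptions the target gene picks up a mutation in roughly one of every hundred replications on the orthogonal plasmid, while the same gene in the genome would be essentially static on experimental timescales.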

Orthogonal Transcription Systems

Transcriptional orthogonalization creates independent regulatory channels using transcription factors and RNA polymerases that operate exclusively on their cognate promoters without interacting with host transcriptional machinery.

Table 2: Orthogonal Transcription Systems

| System Type | Key Features | Orthogonality Mechanism | Applications |
|---|---|---|---|
| Bacteriophage RNAPs | Single gene encoding RNAP; High transcription levels [13] | Cognate promoters with distinct sequences from host promoters [11] [13] | Heterologous gene expression; Genetic circuits |
| Engineered σ Factors | Respond to diverse stimuli; High dynamic range [11] | Reprogrammed promoter recognition [11] | Custom regulatory networks |
| CRISPR-Based Regulation | Sequence-programmable DNA binding [14] | Synthetic guide RNAs and activators/repressors [13] | Multiplexed gene regulation |

Bacteriophage RNA polymerase-promoter pairs (e.g., T7 RNAP) represent well-established orthogonal transcription systems that have been engineered to reduce host growth defects through reduced abortive cycling and regulated expression levels [13]. Systematic expansion of these systems has created multiple mutually orthogonal RNAP-promoter pairs enabling independent control of numerous genes within complex circuits [13].
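One way to quantify "mutually orthogonal" is to measure every polymerase against every promoter and compare each cognate signal with its worst off-target signal. The sketch below assumes a square activity matrix in which row i is polymerase i, column j is promoter j, and the diagonal holds the cognate pairs; the scoring rule and the numbers are hypothetical and are not measurements from [13].

```python
def orthogonality_ratios(activity):
    """Return, for each cognate pair, on-target activity / worst crosstalk.

    activity[i][j] is the output of polymerase i acting on promoter j.
    Crosstalk for pair i covers polymerase i on foreign promoters and
    foreign polymerases on promoter i. Requires at least two pairs.
    """
    n = len(activity)
    ratios = []
    for i in range(n):
        on_target = activity[i][i]
        off_target = max(
            [activity[i][j] for j in range(n) if j != i]
            + [activity[j][i] for j in range(n) if j != i]
        )
        ratios.append(on_target / off_target)
    return ratios


# Hypothetical reporter measurements (arbitrary fluorescence units).
measured = [
    [100.0, 1.0, 2.0],
    [0.5, 80.0, 1.0],
    [1.0, 4.0, 120.0],
]
print(orthogonality_ratios(measured))
```

A pair with a low ratio is the natural candidate for redesign, since its crosstalk limits how many channels the circuit can use independently.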

Orthogonal Translation Systems

Translation orthogonalization creates dedicated machinery for protein synthesis, enabling expanded chemical capabilities and insulation from host translational regulation.

Diagram 1: Orthogonal translation system components. The system enables incorporation of non-canonical amino acids (ncAAs) through specialized machinery.

Key advances in orthogonal translation include:

- Orthogonal ribosomes ("O-ribosomes") engineered with altered ribosomal RNA sequences that selectively translate orthogonal mRNAs without recognizing host messages [11] [13]

- Orthogonal aminoacyl-tRNA synthetase/tRNA pairs that incorporate hundreds of unnatural amino acids site-specifically into proteins [11] [13]

- Recoded genetic codes that reassign codons for non-canonical monomer incorporation, including quadruplet codons [11] [13]

- Covalently linked ribosomal subunits enabling exploration of novel rRNA sequences and functions [11]

These systems collectively enable the incorporation of multiple non-canonical amino acids into single polypeptides, dramatically expanding the chemical space accessible to biological systems [13].
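The gain in coding capacity from quadruplet codons or from expanded nucleotide alphabets follows from simple counting: an alphabet of `a` letters read in codons of length `L` yields a^L distinct codons. The short sketch below tabulates the cases mentioned in this guide; the function name is illustrative.

```python
def codon_count(alphabet_size: int, codon_length: int) -> int:
    """Number of distinct codons for a given alphabet and codon length."""
    return alphabet_size ** codon_length


cases = [
    ("standard code (4 letters, triplets)", 4, 3),
    ("quadruplet codons (4 letters)", 4, 4),
    ("6-letter alphabet, triplets", 6, 3),
    ("8-letter alphabet, triplets", 8, 3),
]
for label, alphabet, length in cases:
    print(f"{label}: {codon_count(alphabet, length)} codons")
```

The standard code already spends its 64 triplets on 20 amino acids and stop signals, so the additional codons in the other rows are what create room for non-canonical monomers.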

Stringent Multi-Level Control of Gene Expression

The Multi-Level Controller (MLC) Framework

Even with orthogonal components, synthetic circuits face challenges with leaky expression and stochastic noise. Multi-level controllers (MLCs) address these limitations by simultaneously regulating both transcription and translation, implementing a coherent type 1 feed-forward loop (C1-FFL) regulatory motif [14] [15].

Diagram 2: Multi-level controller (MLC) architecture. Both transcriptional (L1) and translational (L2) regulators must be present for gene of interest (GOI) expression.

The MLC design delivers significantly reduced basal expression—up to 50-fold lower than single-level controllers—while maintaining nearly identical maximum expression rates [14] [15]. This results in dramatically improved dynamic range exceeding 1000-fold change between induced and uninduced states [14] [15].
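The intuition behind these numbers can be illustrated with a toy model in which each regulatory level is a leaky Hill function and the multi-level output is the product of the transcriptional and translational stages. This is a deliberately simplified sketch, not the model used in [14] [15]; the leak value of 0.02, the Hill parameters, and all function names are hypothetical.

```python
def hill_activation(x, k, n):
    """Fraction of maximal activation at inducer level x."""
    return x ** n / (k ** n + x ** n)


def stage_output(x, leak, k=1.0, n=2.0):
    """One regulatory level: basal leak plus inducible activation (max 1)."""
    return leak + (1.0 - leak) * hill_activation(x, k, n)


def multi_level(x, leak_tx, leak_tl):
    """Output requires both the transcriptional and translational stage."""
    return stage_output(x, leak_tx) * stage_output(x, leak_tl)


def dynamic_range(response, x_off=0.0, x_on=1e3):
    """Fold change between fully induced and uninduced output."""
    return response(x_on) / response(x_off)


LEAK = 0.02  # hypothetical basal activity of each level
single = dynamic_range(lambda x: stage_output(x, LEAK))
multi = dynamic_range(lambda x: multi_level(x, LEAK, LEAK))
print(f"single-level: basal={stage_output(0.0, LEAK):.4f}, fold change={single:.0f}")
print(f"multi-level : basal={multi_level(0.0, LEAK, LEAK):.4f}, fold change={multi:.0f}")
```

In this toy model the leaks multiply while the induced maxima stay close to 1, so basal expression falls sharply and the fold change rises without sacrificing peak output.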

Noise Suppression in MLCs

A critical advantage of MLC architectures is their ability to suppress intrinsic transcriptional noise. While single-level controllers amplify stochastic promoter bursts into protein expression fluctuations, MLCs require near-simultaneous activation of two identical promoters—a statistically rare event [14] [15]. This creates a digital-like switch between 'on' and 'off' states, effectively filtering transient noise and ensuring more uniform population-level responses [14] [15].
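The statistical argument can be checked with a small Monte Carlo sketch. It treats time as discrete windows and assumes the two promoters fire spuriously and independently with the same per-window probability `p_burst`; both are simplifying assumptions of this example rather than claims from [14] [15]. A single-level controller leaks whenever its one promoter fires, whereas the two-level design leaks only when both fire in the same window.

```python
import random


def leak_fractions(p_burst, steps, seed=0):
    """Fraction of time windows with leaky output for each design.

    Returns (single_level_fraction, multi_level_fraction).
    """
    rng = random.Random(seed)
    single_hits = 0
    both_hits = 0
    for _ in range(steps):
        promoter_a = rng.random() < p_burst
        promoter_b = rng.random() < p_burst
        single_hits += promoter_a               # one promoter is enough
        both_hits += promoter_a and promoter_b  # coincidence required
    return single_hits / steps, both_hits / steps


single, multi = leak_fractions(p_burst=0.1, steps=100_000)
print(f"single-level leak fraction: {single:.4f} (expected about 0.1)")
print(f"multi-level leak fraction : {multi:.4f} (expected about 0.01)")
```

Under independence the coincidence probability is `p_burst` squared, so the rarer the spurious bursts, the larger the relative benefit of requiring two of them.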

Experimental Protocols for Biodesign Implementation

Protocol: Assembly of Multi-Level Controllers

This protocol describes the construction of MLCs using a modular DNA assembly system, adapted from demonstrated methodologies [14] [15]:

- Toolkit Preparation: Utilize the 8-part genetic template system (pA–pH plasmids plus pMLC-BB1 backbone) designed for combinatorial MLC assembly [14].

- Golden Gate Assembly: Perform one-pot Golden Gate reaction using 4-bp overhangs with minimal cross-reactivity for efficient ligation [14].

- Screening: Identify successful constructs through drop-out of an orange fluorescent protein (ofp) expression cassette [14].

- Characterization: Measure steady-state response curves across inducer concentrations and dynamic responses to pulse inputs [14] [15].
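As a rough illustration of what "minimal cross-reactivity" means for the Golden Gate step, the sketch below screens a set of 4-bp overhangs for three common failure modes: duplicates, palindromes (which can self-ligate), and pairs that are reverse complements of each other. The example overhangs are hypothetical and are not the ones used in the toolkit of [14]; a real design would also consider ligation fidelity data.

```python
from itertools import combinations

COMPLEMENT = str.maketrans("ACGT", "TGCA")


def revcomp(seq: str) -> str:
    """Reverse complement of a DNA sequence written 5'->3'."""
    return seq.translate(COMPLEMENT)[::-1]


def check_overhangs(overhangs):
    """Return a list of human-readable problems; an empty list means
    the set passed these basic checks."""
    problems = []
    for oh in overhangs:
        if len(oh) != 4 or set(oh) - set("ACGT"):
            problems.append(f"{oh}: not a 4-bp A/C/G/T overhang")
        elif oh == revcomp(oh):
            problems.append(f"{oh}: palindromic, can self-ligate")
    for a, b in combinations(overhangs, 2):
        if a == b:
            problems.append(f"{a}: used for more than one junction")
        elif a == revcomp(b):
            problems.append(f"{a}/{b}: reverse complements, can cross-ligate")
    return problems


# Hypothetical junction overhangs for a four-junction assembly.
print(check_overhangs(["GGAG", "TACT", "AATG", "GCTT"]))
print(check_overhangs(["AATT", "AATG", "CATT"]))
```

The first call returns an empty list, while the second flags one palindrome and one reverse-complement pair.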

Protocol: Establishing Orthogonal Replication in Yeast

For implementing orthogonal DNA replication systems in yeast [12] [13]:

- Plasmid Introduction: Transform Saccharomyces cerevisiae with engineered pGKL1/pGKL2-derived plasmids carrying the orthogonal DNA polymerase.

- Selection Maintenance: Apply continuous selection pressure to maintain the orthogonal plasmids in the population.

- Error Rate Validation: Sequence the orthogonal plasmid and the host genome to confirm the difference in mutation rate between them.

- Evolution Application: For continuous evolution, encode the target genes on the orthogonal plasmid and subject the population to selective pressure.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents for Orthogonal Biodesign

| Reagent/System | Function | Key Features | Example Applications |
|---|---|---|---|
| OrthoRep System | Orthogonal DNA replication in yeast [12] [13] | Error rate >100,000× host; Stable cytoplasmic propagation | Continuous evolution; Mutagenesis |
| Bacteriophage RNAPs | Orthogonal transcription [11] [13] | High specificity; Strong expression | Genetic circuits; Metabolic engineering |
| Orthogonal aaRS/tRNA | Non-canonical amino acid incorporation [11] [13] | Site-specific incorporation; >200 ncAAs | Protein engineering; Bioconjugation |
| Toehold Switches | RNA-based translational regulation [14] [15] | Programmable; High dynamic range | Biosensors; Multi-level control |
| CRISPR-Act/Rep | Programmable transcription control [14] | Multiplexable; Highly specific | Gene regulation; Synthetic circuits |
| Golden Gate Assembly | Modular DNA construction [14] | Standardized overhangs; Multi-part assembly | Genetic device construction |

Emerging Frontiers: AI-Driven Biodesign

The convergence of artificial intelligence with synthetic biology is accelerating biological design capabilities. Key developments include:

- Protein Language Models enabling de novo protein design with atom-level precision, moving beyond evolutionary constraints [16] [17]

- AI-driven biodesign automation that integrates design, build, test, and learn cycles with limited human supervision [16]

- Large Language Models (LLMs) applied to biological sequences for predicting physical outcomes from nucleic acid sequences [16]

- Generative AI systems like CodonTransformer for species-specific codon optimization and sequence design [18]

These AI technologies are transitioning synthetic biology from trial-and-error approaches to predictable engineering disciplines. For instance, systems like CRISPR-GPT leverage LLMs to guide researchers through experimental planning and execution, lowering expertise barriers for complex genetic engineering [18].

The implementation of standardized biological parts within orthogonal central dogma frameworks represents a paradigm shift in biological engineering. By creating insulated biological hubs that minimize host interference while maximizing predictability, synthetic biologists are overcoming fundamental constraints that have limited genetic engineering for decades.

Future developments will focus on the complete integration of orthogonal replication, transcription, and translation into unified systems [12] [13], expanded genetic codes with enhanced chemical capabilities [11], and increasingly sophisticated AI-driven design tools that account for the polyfactorial context of biological systems [16]. As these technologies mature, the Central Dogma of Biodesign will enable increasingly complex biological programming, transforming therapeutic development, biomanufacturing, and fundamental biological research.

The trajectory points toward a future where biological systems can be designed with reliability approaching other engineering disciplines, ultimately enabling the programming of cellular behaviors for addressing pressing challenges in health, sustainability, and technology.

Synthetic biology is an interdisciplinary field that combines engineering principles with biology to design and construct new biological parts, devices, and systems, and to re-design existing biological systems for useful purposes [10]. A core application within this field is the engineering of synthetic genetic circuits—interacting molecular pathways engineered to direct cells to perform specific, predefined tasks [10]. Inspired by electronic circuits, these genetic circuits harness a cell's native machinery to control gene expression, enabling the programming of cellular behaviors such as responding to specific stimuli, altering traits, or performing complex logical operations [10]. The primary types of foundational genetic circuits include oscillators, toggle switches, and logic gates, each enabling distinct dynamic behaviors and computational capabilities within living cells.

The design of these circuits is fundamentally hampered by the limited modularity of biological parts and by the metabolic burden imposed on chassis cells, which grows with circuit complexity [19]. Burden arises because engineered gene networks draw on the host's finite gene expression resources (e.g., ribosomes, amino acids), diverting them away from essential host processes and often reducing the cell growth rate [20]. This creates a selective disadvantage: cells with mutations that disrupt circuit function but improve growth rate outcompete their engineered counterparts, leading to the eventual failure of the circuit over time [20]. Consequently, a major technical challenge is to engineer circuits that are not only functional but also evolutionarily stable and efficient in their resource utilization.
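The takeover dynamic described above can be sketched with a deliberately simple two-population model. It is a toy under stated assumptions, not the host-aware model of [20]: engineered cells grow slower by a fixed `burden`, a fixed fraction mutates to non-producers each generation, and mutants never revert. All parameter values are hypothetical.

```python
def mutant_fraction(burden: float, mutation_rate: float, generations: int):
    """Fraction of non-producing mutants after each generation.

    Engineered cells have relative fitness (1 - burden); mutants have
    fitness 1. A fraction `mutation_rate` of engineered offspring lose
    circuit function each generation.
    """
    engineered, mutant = 1.0, 0.0
    history = []
    for _ in range(generations):
        offspring = engineered * (1.0 - burden)
        new_engineered = offspring * (1.0 - mutation_rate)
        new_mutant = mutant + offspring * mutation_rate
        total = new_engineered + new_mutant
        engineered, mutant = new_engineered / total, new_mutant / total
        history.append(mutant)
    return history


for burden in (0.02, 0.2):  # hypothetical low- and high-burden circuits
    final = mutant_fraction(burden, mutation_rate=1e-6, generations=200)[-1]
    print(f"burden={burden:.2f}: mutant fraction after 200 generations = {final:.4f}")
```

Even with the same mutation rate, the high-burden circuit is almost entirely displaced within 200 generations while the low-burden circuit is barely affected, which is why reducing burden is a lever on evolutionary stability.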

Core Circuit Types: Function and Design

Oscillators

Function and Principle: Oscillators are engineered to produce rhythmic, periodic gene expression patterns, mimicking natural biological processes like circadian rhythms [21]. They function as dynamic time-keeping devices within cells, enabling the study of dynamic cellular behaviors and facilitating time-controlled therapeutic interventions [21]. In biomanufacturing, their integration into microbial production systems can optimize the timing of metabolic pathway activation, thereby improving process yields [21].

Design Considerations: The evolutionary longevity of an oscillator circuit, or any synthetic gene circuit, can be quantified by metrics such as its functional half-life (τ50), defined as the time taken for the population-level output to fall below 50% of its initial value [20]. A key design strategy to enhance longevity involves implementing genetic feedback controllers. Research using multi-scale host-aware computational models has shown that post-transcriptional controllers, which exploit small RNAs (sRNAs) to silence circuit RNA, generally outperform transcriptional controllers because this mechanism provides an amplification step enabling strong control with reduced burden on the cellular machinery [20]. Furthermore, the choice of control input is critical; growth-based feedback significantly extends the circuit's functional half-life compared to intra-circuit feedback [20].

Toggle Switches

Function and Principle: Toggle switches represent a foundational component in synthetic gene circuits, functioning as bistable systems that can switch between two distinct, stable states in response to specific external stimuli or signals [21]. This binary memory function is crucial for applications requiring a permanent, inheritable change in cell state, such as in gene therapies, biosensing, and metabolic engineering [21]. Their reliability and programmability make them a preferred building block for constructing more complex genetic systems.
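Bistability can be illustrated with a commonly used dimensionless model of two mutually repressing genes. The sketch below is an assumption-laden illustration rather than a model from [21]: the equations, the parameter values (`alpha`, `n`), and the simple Euler integration are all chosen for clarity.

```python
def simulate_toggle(u0, v0, alpha=10.0, n=2.0, dt=0.01, steps=5000):
    """Integrate du/dt = alpha/(1+v^n) - u and dv/dt = alpha/(1+u^n) - v
    with forward Euler and return the final (u, v)."""
    u, v = u0, v0
    for _ in range(steps):
        du = alpha / (1.0 + v ** n) - u
        dv = alpha / (1.0 + u ** n) - v
        u, v = u + dt * du, v + dt * dv
    return u, v


# Two different starting points settle into two different stable states.
print(simulate_toggle(5.0, 1.0))  # repressor u ends high, v low
print(simulate_toggle(1.0, 5.0))  # repressor v ends high, u low
```

The same circuit ends in opposite states depending only on its history, which is the memory property that makes the toggle switch useful.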

Design and Evolutionary Stability: The bistable behavior of a toggle switch, like other circuits, is susceptible to evolutionary degradation. Studies on a positive feedback-based bistable circuit in yeast revealed that its evolutionary trajectory is heavily influenced by the selective environment [22]. For instance, in environments where high gene expression is both beneficial and costly, mutations tend to alter gene expression heterogeneity, while in environments where expression is purely costly, mutations that completely abrogate the function of the auto-activator gene accumulate, leading to a loss of bistability [22]. This underscores the necessity of designing circuits with the selective landscape in mind.

Logic Gates

Function and Principle: Logic gates in synthetic gene circuits are designed to perform Boolean operations (e.g., AND, OR, NOT), enabling cells to process multiple biological or chemical inputs and generate specific, programmed outputs [21]. This capability is at the forefront of engineering programmable cell-based therapies, sophisticated biosensors, and intelligent diagnostic tools [23] [21]. By constructing complex logic within living cells, researchers can create systems for precise disease detection and targeted treatment strategies [21].

Advanced Design and Compression: A significant advancement in logic gate design is Transcriptional Programming (T-Pro), a methodology that leverages synthetic transcription factors (TFs) and synthetic promoters to achieve circuit compression [19]. Unlike traditional designs that often rely on inversion (NOT gates) and require multiple parts, T-Pro utilizes engineered repressor and anti-repressor TFs that coordinate binding to cognate synthetic promoters, facilitating objective NOT/NOR Boolean operations with fewer components [19]. This compression is critical for reducing the metabolic burden on the host cell and for scaling circuit complexity. Recent work has expanded T-Pro from 2-input logic (16 Boolean operations) to 3-input logic (256 Boolean operations). Identifying the most compressed (smallest) circuit for each operation required algorithmic enumeration-optimization software to search a combinatorial space of over 100 trillion putative circuits [19]. On average, the resulting compressed circuits are approximately four times smaller than canonical inverter-type genetic circuits [19].
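The operation counts quoted above follow from simple counting: n inputs give 2^n input combinations, and each combination can map to 0 or 1, so there are 2^(2^n) distinct truth tables. A minimal sketch follows; the indexing convention used in `truth_table` is an arbitrary choice made here for illustration.

```python
from itertools import product


def boolean_function_count(n_inputs: int) -> int:
    """Number of distinct Boolean functions of n_inputs binary inputs."""
    return 2 ** (2 ** n_inputs)


def truth_table(function_index: int, n_inputs: int):
    """Map each input combination to the output bit encoded in function_index.

    Row i (inputs in lexicographic order) takes bit i of function_index.
    """
    rows = list(product((0, 1), repeat=n_inputs))
    return {row: (function_index >> i) & 1 for i, row in enumerate(rows)}


print(boolean_function_count(2), boolean_function_count(3))
print(truth_table(0b1000, 2))  # under this convention, the 2-input AND
```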

Table 1: Key Metrics for Foundational Synthetic Genetic Circuits

| Circuit Type | Core Function | Primary Applications | Key Performance Metrics |
|---|---|---|---|
| Oscillators | Generate rhythmic, periodic gene expression | Time-controlled therapeutics, Bioprocess optimization, Studying cellular dynamics | Period, Amplitude, Evolutionary half-life (τ50) [20] [21] |
| Toggle Switches | Maintain stable, bistable states; cellular memory | Cell fate programming, Biosensing, Metabolic engineering | Switching threshold, Stability, Evolutionary half-life (τ50) [20] [21] |
| Logic Gates | Perform Boolean computations on inputs | Programmable therapeutics, Diagnostics, Environmental monitoring | Truth table accuracy, Dynamic range, Signal-to-noise ratio [19] [23] |

Quantitative Performance and Stability Data

The performance and evolutionary stability of synthetic gene circuits are quantifiable, allowing for direct comparison and optimization. The following table summarizes key metrics from recent research, highlighting the trade-offs between initial output, short-term stability, and long-term functional persistence.

Table 2: Quantitative Metrics for Evolutionary Longevity of Gene Circuits [20]

| Circuit / Controller Design | Initial Output (P₀) | Short-Term Stability (τ±10) | Long-Term Half-Life (τ50) | Notes |
|---|---|---|---|---|
| Open-Loop System (No Controller) | High (e.g., 100%) | Shortest duration | Shortest duration | High burden leads to rapid takeover by mutants. |
| Negative Autoregulation | Reduced relative to open-loop | Significantly improved | Moderate improvement | Prolongs short-term performance by reducing burden. |
| Growth-Based Feedback | Varies with design | Moderate improvement | Greatest improvement (>3x increase possible) | Extends functional half-life most effectively. |
| Post-Transcriptional Control (sRNA) | Maintainable at high levels | High | High | Outperforms transcriptional control due to amplification and lower burden. |

Experimental Protocols for Key Studies

Protocol: Evaluating Evolutionary Longevity in Bacteria

This protocol is adapted from studies that quantify the evolutionary longevity of synthetic gene circuits in microbial populations, such as E. coli [20].

- Strain and Circuit Engineering: Clone the synthetic gene circuit (e.g., a constitutively expressed reporter protein like GFP) into the chosen bacterial host strain. The circuit should be integrated into the genome or placed on a stable plasmid to ensure inheritance.

- Culture and Passaging:

  - Inoculate the ancestral, engineered population in a defined growth medium with appropriate selective pressure (e.g., an antibiotic).

  - Grow the culture in repeated batch conditions. A typical cycle involves 24 hours of growth, after which a small sample of the culture is used to inoculate fresh medium, diluting the population (e.g., 1:100 or 1:1000) to maintain continuous growth. This serial passaging is repeated for dozens of generations.

- Monitoring and Sampling:

  - At regular intervals (e.g., every 2-4 generations), collect samples from the population.

  - Analyze these samples using flow cytometry to measure the population-level output (e.g., mean fluorescence intensity) and to track the emergence of sub-populations with different expression levels.

- Data Analysis:

  - Total Output (P): Calculate the total output of the system over time using the formula \( P = \sum_{i} N_i \cdot p_{A_i} \), where \( N_i \) is the number of cells in strain \( i \) and \( p_{A_i} \) is the output per cell for that strain [20].

  - Metrics Calculation:

    - P₀: The initial output at time zero.

    - τ±10: The time (in hours or generations) for the total output P to fall outside the range of P₀ ± 10%.

    - τ50: The time for the total output P to fall below P₀/2 [20].
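The analysis steps above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the analysis code of [20]: the function names and the example time course are hypothetical, each metric is reported at the resolution of the sampling times, and the generations-per-passage helper assumes the culture regrows to its pre-dilution density in every cycle.

```python
import math


def generations_per_passage(dilution_factor: float) -> float:
    """Doublings needed to regrow after a 1:dilution_factor transfer."""
    return math.log2(dilution_factor)


def total_output(cell_counts, output_per_cell):
    """P = sum_i N_i * p_Ai for a single time point."""
    return sum(n * p for n, p in zip(cell_counts, output_per_cell))


def first_time(times, values, condition):
    """First sampled time at which condition(value) holds, else None."""
    for t, v in zip(times, values):
        if condition(v):
            return t
    return None


def longevity_metrics(times, outputs):
    """Return (P0, tau_pm10, tau_50) from a sampled time course of P."""
    p0 = outputs[0]
    tau_pm10 = first_time(times, outputs, lambda v: abs(v - p0) > 0.10 * p0)
    tau_50 = first_time(times, outputs, lambda v: v < p0 / 2)
    return p0, tau_pm10, tau_50


# Hypothetical data: (producer cells, non-producing mutants) at each sample.
times = [0, 10, 20, 30, 40]  # generations
strain_counts = [(1000, 0), (950, 50), (850, 150), (600, 400), (400, 600)]
per_cell_output = (1.0, 0.0)  # producers emit 1 unit, mutants emit none

outputs = [total_output(c, per_cell_output) for c in strain_counts]
print(longevity_metrics(times, outputs))
print(round(generations_per_passage(100), 2))
```

Because τ±10 and τ50 are read off at sampled time points, denser sampling (or interpolation between samples) gives finer estimates.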

Protocol: Predictive Design of a Compressed 3-Input Logic Gate

This protocol outlines the workflow for designing a compressed genetic circuit using the T-Pro methodology [19].

- Wetware Expansion:

  - Engineer orthogonal sets of synthetic repressors and anti-repressors. For 3-input logic, three orthogonal systems are required. For example, develop a new set of TFs responsive to cellobiose (CelR scaffold) alongside existing systems for IPTG and D-ribose.

- Anti-Repressor Engineering:

  - Generate a "super-repressor" variant via site-saturation mutagenesis (e.g., at a key amino acid position) that retains DNA binding but is insensitive to the input ligand.

  - Use this super-repressor as a template for error-prone PCR (EP-PCR) at a low mutational rate to create a library of variants.

  - Screen the library (~10⁸ variants) using Fluorescence-Activated Cell Sorting (FACS) to identify anti-repressors (variants that bind DNA and repress transcription only in the presence of the ligand).

- Software-Driven Circuit Enumeration:

  - Define the desired 3-input Boolean truth table (one of 256 possible).

  - Employ an algorithmic enumeration method that models circuits as directed acyclic graphs. The algorithm systematically explores circuits in order of increasing complexity to guarantee the identification of the most compressed (smallest part count) design for the given truth table [19].

- Circuit Assembly and Validation:

  - Assemble the computationally selected circuit design using standard molecular biology techniques (e.g., Golden Gate assembly, Gibson assembly).

  - Transform the constructed DNA into the chassis organism.

  - Quantitatively validate the circuit's performance by measuring the output (e.g., fluorescence) in response to all 8 possible combinations of the three inputs. Compare the experimental results to the predicted truth table and quantitative performance setpoints.
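The idea of exploring circuits in order of increasing complexity can be illustrated with a generic search. The sketch below is not the T-Pro software of [19]: it assumes a simplified gate library (two-input NOR only), counts gates rather than genetic parts, and uses iterative deepening so that the first solution found is guaranteed to use the fewest gates within the search limit. Each signal is stored as an 8-bit integer whose bits are its outputs across the eight input combinations.

```python
from itertools import combinations_with_replacement

MASK = 0xFF  # eight rows in a 3-input truth table
# Truth-table columns for inputs A, B, C (bit i = value in row i).
A, B, C = 0b11110000, 0b11001100, 0b10101010
INPUTS = (A, B, C)


def nor(x: int, y: int) -> int:
    """Bitwise NOR across all eight truth-table rows."""
    return ~(x | y) & MASK


def min_nor_gates(target: int, max_gates: int = 4):
    """Smallest number of NOR gates that yields `target`, or None."""

    def search(signals, remaining):
        if target in signals:
            return True
        if remaining == 0:
            return False
        for x, y in combinations_with_replacement(signals, 2):
            z = nor(x, y)
            # Adding a signal that already exists cannot help.
            if z not in signals and search(signals + (z,), remaining - 1):
                return True
        return False

    # Try 0 gates, then 1, then 2, ... so the first hit is minimal.
    for gate_budget in range(max_gates + 1):
        if search(INPUTS, gate_budget):
            return gate_budget
    return None


print(min_nor_gates(nor(A, B)))  # NOR of A and B
print(min_nor_gates(A | B))      # OR
print(min_nor_gates(A & B))      # AND
```

The brute-force search is only practical for very small circuits; the much larger design space described above is what motivates dedicated enumeration-optimization software.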

Visualization of Pathways and Workflows

Diagram 1: T-Pro compressed logic gate architecture showing coordinated TF binding.

Diagram 2: Evolutionary degradation of an uncompressed gene circuit.

Diagram 3: Workflow for predictive design of compressed genetic circuits.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagent Solutions for Genetic Circuit Engineering

| Reagent / Material | Function in Research | Specific Examples / Notes |
|---|---|---|
| Synthetic Transcription Factors (TFs) | Engineered proteins that bind to specific DNA sequences to regulate transcription. Form the core of advanced circuit platforms like T-Pro. | Repressors (e.g., E+TAN), Anti-repressors (e.g., EA1TAN). Can be made responsive to ligands like IPTG, D-ribose, cellobiose [19]. |
| Synthetic Promoters | Engineered DNA sequences where TFs bind. Designed for orthogonality to prevent crosstalk between different circuit components. | T-Pro synthetic promoters with tandem operator designs [19]. |
| Chassis Organisms | The host cell in which the genetic circuit is implemented. | Commonly E. coli or yeast [20] [22]. Mammalian cells are used for therapeutic applications [10]. |
| Gene Synthesis Services | Provides custom-designed DNA fragments for circuit construction, bypassing the need to source natural sequences. | Essential for creating novel genetic parts not found in nature [24]. |
| Fluorescent Reporter Proteins | Serve as a quantifiable output for circuit function, allowing for high-throughput screening and characterization. | Green Fluorescent Protein (GFP) is a classic example [20]. |
| Flow Cytometer / FACS | Instrument for measuring fluorescence of individual cells (flow cytometry) and for sorting populations based on fluorescence (FACS). | Critical for screening mutant libraries (e.g., for anti-repressors) and for monitoring population heterogeneity and evolution [20] [19]. |
| CRISPR-Cas Systems | Genome editing technology used for precise integration of circuits into the host genome. | Enhances the stability of circuit inheritance compared to plasmid-based systems [21]. |

In synthetic biology, a chassis organism is the living host that houses engineered genetic circuits, functioning as a foundational platform for building artificial biological systems. The selection and engineering of an appropriate chassis are as critical as the design of the genetic circuits themselves, directly determining the system's functionality, stability, and application potential [25]. Synthetic biology aims to dismantle and reassemble biological components to create novel systems that perform useful tasks, a process heavily reliant on the chassis that hosts these constructs [26]. This guide provides a technical framework for selecting and engineering chassis across the three primary categories: microbial, mammalian, and cell-free platforms. The principles outlined here are fundamental to the broader thesis of applying rigorous engineering design—characterized by standardization, modularity, and abstraction—to the creation of reliable artificial biological systems [26].

A Framework for Systematic Chassis Selection

Selecting an optimal chassis requires a multi-factorial analysis beyond mere genetic tractability. The following constraints provide a conceptual framework for systematic selection, particularly for environmentally deployed systems [25].

Core Selection Constraints

- Constraint 1: Safety and "Do No Harm": The chassis must be safe for its intended application. This precludes known pathogens and necessitates robust biocontainment strategies to prevent uncontrolled proliferation or horizontal gene transfer. Engineered safeguards, such as toxin-antitoxin systems, auxotrophies, and inducible kill switches, are essential, with a recommended biological containment escape frequency of less than 1 in 10^8 cells [25].

- Constraint 2: Ecological and Metabolic Persistence: The chassis must survive and function in the target environment. This requires evaluating its ability to withstand biotic (e.g., microbial competition) and abiotic (e.g., nutrient availability, oxygen gradients) stresses. For environmental biosensing, organisms that persist poorly in lab conditions may be ideal, and their primary metabolism must be compatible with environmental conditions [25].

- Constraint 3: Genetic Tractability: A well-annotated genome and reliable DNA delivery methods are prerequisites. Tools for genetic manipulation have expanded beyond model organisms and now include broad-host-range plasmids, recombinase-based systems, and CRISPR-based integration tools, enabling the engineering of non-model chassis [25].
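The containment target in Constraint 1 lends itself to a quick arithmetic check. The sketch below is illustrative only: the function names and example frequencies are hypothetical, and multiplying frequencies assumes the safeguards fail independently, which real systems may violate (for example, when a single mutation disables more than one safeguard).

```python
import math


def escape_frequency(escapee_cfu: float, total_cfu: float) -> float:
    """Escapees per plated cell, from colony counts under restrictive
    and permissive conditions respectively."""
    return escapee_cfu / total_cfu


def combined_escape_frequency(frequencies) -> float:
    """Escape frequency of layered safeguards, assuming independent failure."""
    return math.prod(frequencies)


def meets_containment(frequency: float, threshold: float = 1e-8) -> bool:
    """True if the escape frequency is below the recommended threshold."""
    return frequency < threshold


single = escape_frequency(escapee_cfu=12, total_cfu=1.2e6)  # hypothetical counts
layered = combined_escape_frequency([single, 1e-4])  # add a second safeguard
print(single, meets_containment(single))
print(layered, meets_containment(layered))
```

In this hypothetical case a single safeguard misses the 1 in 10^8 target, while two independent safeguards together would meet it.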

Table: Key Constraints for Selecting a Chassis Organism

| Constraint | Key Considerations | Examples of Supporting Technologies |
|---|---|---|
| Safety & Biocontainment | Non-pathogenicity, containment strategy, escape frequency | Auxotrophy, toxin-antitoxin systems, kill switches [25] |
| Ecological Persistence | Survival in target niche, resistance to stressors, community interactions | Incubation studies with environmental samples, genome-scale metabolic modeling (GEMs) [25] |
| Genetic Tractability | Fully sequenced genome, DNA delivery methods, tool availability | Broad-host-range plasmids, recombinases, CRISPR-Cas systems [25] |

Microbial Chassis Platforms

Microbial chassis, primarily bacteria and yeast, are the most established platforms in synthetic biology due to their rapid growth and advanced toolkits.

Model Bacterial Chassis

- Escherichia coli: As a quintessential model organism, E. coli boasts unparalleled genetic tools, high transformation efficiency, and rapid growth. It is frequently used as a testbed for new genetic designs. The Nissle 1917 strain, for example, has been engineered as a probiotic to treat metabolic disorders like phenylketonuria [26].

- Pseudomonas putida & Bacillus subtilis: These are robust soil bacteria with considerable metabolic versatility and are often chosen for environmental applications where model organisms like E. coli may not persist [25].

Engineering Advanced Microbial Functions

A prime example of sophisticated microbial chassis engineering is the creation of E. coli strains with integrated Molecularly Encoded Memory via an Orthogonal Recombinase arraY (MEMORY). This system unifies decision-making, communication, and memory—three key tenets of intelligent systems [27].

Experimental Protocol: Engineering a MEMORY Chassis [27]

- Identify Orthogonal Parts: Select six orthogonal serine recombinases (e.g., A118, Bxb1, Int3) and six orthogonal transcription factors (e.g., PhlF, TetR, AraC) from the Marionette biosensing array.

- Optimize Recombinase Expression: For each recombinase, create a genetic library with a TF-regulated promoter, a degenerate RBS, and degradation tags. Clone this library into a single-copy Bacterial Artificial Chromosome (BAC).

- Screen for Digital Switching: Co-transform the BAC library with a low-copy reporter plasmid containing an inverted promoter flanked by att sites upstream of a GFP gene. Use a memory assay to screen for clones showing low GFP leakiness without inducer and high, stable GFP expression after transient induction.

- Genomic Integration and Insulation: Integrate the optimized, insulated set of six inducible recombinase genes into a specific genomic locus (e.g., attB φ80). Use strong terminators and alternate transcription directions to prevent readthrough and ensure orthogonality.

- Functional Validation: Validate the final MEMORY chassis by testing all 24 fundamental gain-of-function and loss-of-function memory circuits for both inversion and excision configurations, ensuring minimal cross-induction.
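One way to organize the validation step is to enumerate the test matrix up front. The sketch below reads the count of 24 as six recombinases × two outcomes × two mechanisms, which is an interpretation of the text rather than a statement from [27]. Only three recombinase names appear above, so `Rec4` to `Rec6` are hypothetical placeholders.

```python
from itertools import product

# First three names come from the protocol; the rest are placeholders.
RECOMBINASES = ["A118", "Bxb1", "Int3", "Rec4", "Rec5", "Rec6"]
OUTCOMES = ["gain-of-function", "loss-of-function"]
MECHANISMS = ["inversion", "excision"]


def memory_circuits():
    """All recombinase/outcome/mechanism combinations to be validated."""
    return [
        {"recombinase": r, "outcome": o, "mechanism": m}
        for r, o, m in product(RECOMBINASES, OUTCOMES, MECHANISMS)
    ]


def cross_induction_conditions(circuits):
    """Pair every circuit with induction of every recombinase.

    A condition is on-target when the induced recombinase matches the
    circuit; every other pairing probes unwanted cross-induction.
    """
    return [
        {**c, "induced": r, "on_target": r == c["recombinase"]}
        for c in circuits
        for r in RECOMBINASES
    ]


circuits = memory_circuits()
conditions = cross_induction_conditions(circuits)
print(len(circuits), len(conditions), sum(c["on_target"] for c in conditions))
```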

Diagram Title: Recombinase-Based Memory Circuit Logic

Mammalian Cell Chassis Platforms

Mammalian cells offer unique capabilities for therapeutic applications, including complex protein processing and targeted cell-to-cell interactions.

Primary Applications and Engineering Approaches

The primary application of mammalian chassis is in advanced cell-based therapies. A landmark example is the engineering of CAR-T cells for cancer treatment. This involves isolating a patient's T-cells, genetically modifying them ex vivo to express a Chimeric Antigen Receptor (CAR) that recognizes a tumor-specific antigen (e.g., CD19), and reinfusing them into the patient. The modified cells then selectively target and destroy cancerous B-cells [26].

Experimental Protocol: Generating CAR-T Cells [26]

- Leukapheresis: Isolate T lymphocytes from the patient's blood.

- T-Cell Activation: Stimulate the T-cells using anti-CD3/CD28 antibodies to promote proliferation.

- Genetic Modification: Introduce the CAR transgene into the activated T-cells using a viral vector (e.g., lentivirus or gamma-retrovirus).

- Expansion and Formulation: Culture the successfully transduced T-cells ex vivo to expand their numbers.

- Lymphodepletion and Infusion: Administer a lymphodepleting chemotherapy regimen to the patient, followed by infusion of the engineered CAR-T cells.

Table: Essential Reagent Solutions for a CAR-T Cell Workflow

| Research Reagent | Function in the Experimental Protocol |
|---|---|
| Anti-CD3/CD28 Antibodies | Immobilized on beads or plates to activate and stimulate the proliferation of isolated T-cells. |
| Lentiviral Vector | A viral delivery system used to stably integrate the CAR transgene into the genome of the host T-cells. |
| Recombinant Human IL-2 | A cytokine added to the culture medium to support the growth and survival of T-cells during ex vivo expansion. |

Cell-Free Synthetic Biology Platforms

Cell-free protein synthesis (CFPS) systems bypass the use of living cells, instead utilizing the transcriptional and translational machinery extracted from them in an open test tube environment.

Advantages and System Types

CFPS offers unique advantages: freedom from cell viability constraints, direct control over reaction conditions, and the ability to produce proteins that are toxic to cells [28]. There are two main categories of CFPS platforms [28]:

- Crude Extract Systems: A top-down approach using clarified lysate from cells (e.g., E. coli, wheat germ), containing all necessary components for translation and energy regeneration.

- PURE System: A bottom-up approach using a purified ensemble of individually defined components required for protein synthesis.

Applications of Cell-Free Platforms

CFPS is a powerful enabling technology for multiple synthetic biology domains [29]:

- Protein Engineering: Particularly useful for synthesizing toxic proteins, membrane proteins, and proteins incorporating non-canonical amino acids.

- Metabolic Engineering: Allows for the design and reconstitution of complex metabolic pathways without cellular regulatory constraints.

- Rapid Prototyping: Enables quick testing and characterization of genetic parts (promoters, RBSs) and circuits before implementation in living cells.

- Diagnostics and Biomanufacturing: Used in low-cost, paper-based diagnostics and for the industrial-scale production of therapeutics [28].

Table: Comparison of Major Cell-Free Protein Synthesis Platforms

| CFPS Platform | Key Advantages | Key Disadvantages | Representative Protein Yield (μg/mL) |
|---|---|---|---|
| E. coli Extract (ECE) | High yield, low-cost, commercially available, scales linearly over a >10^6-fold range of reaction volumes [28] | Limited post-translational modifications | GFP: ~2,300 [28] |
| Wheat Germ Extract (WGE) | Excellent for complex eukaryotic and membrane proteins | Labor-intensive extract preparation | GFP: ~9,700 [28] |
| PURE System | Highly defined and flexible composition, low nuclease/protease activity | Expensive, cannot leverage endogenous metabolism | GFP: ~380 [28] |

Diagram Title: Cell-Free Protein Synthesis Workflow

The strategic selection and engineering of chassis organisms are foundational to the successful implementation of synthetic biology's design principles. The choice is application-dependent, involving a careful balance between safety, persistence, and engineerability. Microbial chassis offer speed and a growing capacity for complex logic; mammalian chassis provide the sophistication required for next-generation therapeutics; and cell-free systems deliver unparalleled flexibility and control. As toolkits for non-model organisms and cell-free systems continue to mature, the scope of addressable challenges will expand significantly. Future progress hinges on the development of standardized, well-characterized chassis that reliably host synthetic genetic programs, ultimately enabling the creation of intelligent biological systems for healthcare, manufacturing, and environmental management.

The Design-Build-Test-Learn (DBTL) cycle represents a foundational framework in synthetic biology for the systematic and iterative development of biological systems [30]. This engineering-based approach enables researchers to rationally program organisms with desired functionalities, applying engineering principles to overcome the inherent unpredictability of biological systems [31]. The cycle formalizes the process of engineering biological components, where each iteration incorporates learning from previous experiments to progressively refine genetic designs until desired functions are achieved [30].

This framework has become increasingly crucial for advancing synthetic biology from conceptual demonstrations to real-world applications in therapeutics, biomanufacturing, and sustainable chemistry [31] [32]. The power of the DBTL cycle lies in its iterative nature—each completed cycle generates knowledge that informs subsequent designs, creating a continuous improvement loop that gradually converges on optimal biological systems [33]. By implementing this structured approach, researchers can navigate the complexity of biological systems more effectively, transforming biological engineering from an artisanal process into a predictable engineering discipline [32].

The Four Phases of the DBTL Cycle

Design Phase

The Design phase constitutes the strategic planning stage where researchers specify the genetic components and systems to be constructed. This phase leverages modular design principles, enabling the assembly of diverse genetic constructs by interchanging standardized biological parts [30]. Modern design approaches incorporate computational tools and models to predict system behavior before physical assembly, significantly enhancing initial design quality [34] [35].

Advanced design strategies now include knowledge-driven approaches that incorporate upstream in vitro investigations to inform initial designs [34]. For metabolic engineering applications, this may involve selecting enzyme homologs, promoter strengths, and ribosomal binding sites (RBS) based on prior mechanistic understanding [34] [36]. The emergence of literate programming platforms like teemi further supports this phase by enabling the integration of bioinformatic tools for automated homolog selection, promoter design, and combinatorial library generation [36]. These platforms facilitate the simulation of experimental flows for in vivo design and assembly, reducing human error and improving reproducibility [36].
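Combinatorial library generation of the kind described above reduces to a Cartesian product over part choices. The sketch below uses only the Python standard library and does not reproduce the teemi API; all part names are hypothetical placeholders.

```python
from itertools import product


def build_library(homologs, promoters, rbs_variants):
    """Every enzyme homolog x promoter x RBS combination as a design record."""
    return [
        {"id": f"design_{i:03d}", "enzyme": h, "promoter": p, "rbs": r}
        for i, (h, p, r) in enumerate(product(homologs, promoters, rbs_variants), 1)
    ]


# Hypothetical part names for illustration only.
library = build_library(
    homologs=["enzA_homolog1", "enzA_homolog2"],
    promoters=["P_weak", "P_medium", "P_strong"],
    rbs_variants=["RBS_1", "RBS_2", "RBS_3", "RBS_4"],
)
print(len(library))
print(library[0])
```

Library size grows multiplicatively with each added part category, which is one reason automated design and high-throughput build capacity matter for exploring the design space.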

Build Phase

The Build phase translates genetic designs into physical biological constructs through DNA assembly and host transformation. This stage has been revolutionized by automation and advanced genetic engineering tools that enable high-throughput construction of genetic variants [34] [32]. Automated assembly processes reduce the time, labor, and cost of generating multiple constructs, enabling researchers to explore a broader design space [30].

Key building technologies include modular assembly techniques such as Golden Gate assembly, Gibson assembly, and the ligase cycling reaction (LCR), which facilitate the seamless combination of genetic parts [32]. For pathway optimization, RBS engineering provides a powerful method for fine-tuning relative gene expression in synthetic pathways [34]. The Build phase also leverages genome editing tools such as CRISPR-Cas9 and multiplex automated genome engineering (MAGE) for precise genetic modifications [32] [35]. Automation through biofoundries has dramatically accelerated this phase, with robotic platforms enabling the construction of hundreds to thousands of microbial strains [37] [32].

Test Phase

The Test phase involves functional characterization of the constructed biological systems through a variety of analytical techniques. This critical evaluation stage assesses whether the built constructs perform as intended and provides the essential data for subsequent learning [30]. High-throughput screening methods have become indispensable for efficiently testing large libraries of genetic variants [38].

Advanced testing methodologies include multi-omic analyses (genomics, transcriptomics, proteomics, metabolomics) that provide systems-level insights into microbial metabolism and function [37] [32]. For metabolic engineering applications, mass spectrometry-based analytics enable precise quantification of metabolic outputs [32]. Emerging technologies such as RespectM enable metabolomics at the level of single microbial cells, acquiring metabolite data at a rate of 500 cells per hour [39]. Automation plays a crucial role in this phase, with liquid-handling robots and microfluidics enabling rapid, reproducible testing of thousands of samples [38] [35]. Functional assays may include measurements of metabolite production, enzyme activity, growth characteristics, and other phenotype-relevant parameters [30] [34].

Learn Phase

The Learn phase represents the knowledge extraction stage where experimental data are analyzed to generate insights that will inform the next design iteration. This phase has traditionally been a bottleneck in the DBTL cycle due to the complexity and heterogeneity of biological systems [31]. However, advances in machine learning (ML) and data analytics are revolutionizing this critical phase [33] [31].

Machine learning approaches—including gradient boosting, random forest models, and deep neural networks—excel at identifying complex patterns in multidimensional biological data, even with limited sample sizes [33] [39]. These methods can recommend new strain designs by learning from a small set of experimentally probed designs, enabling semi-automated iterative metabolic engineering [33]. Heterogeneity-powered learning (HPL) represents an emerging approach that leverages single-cell metabolomics data to train predictive models [39]. The learning phase also incorporates traditional statistical evaluations and model-guided assessments to refine understanding of biological system behavior [34]. The insights generated during this phase close the DBTL loop, initiating a new cycle with improved designs.
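The idea of recommending new designs from a small set of tested ones can be sketched with a deliberately simple stand-in. The code below is not gradient boosting or a random forest; it fits an additive main-effects model (the average titer observed for each part) and ranks untested combinations by the sum of their part averages. All part names and titers are invented for illustration.

```python
from collections import defaultdict
from itertools import product

# Hypothetical measurements: (promoter, RBS) design -> product titer in mg/L.
# All names and numbers are invented for illustration.
tested = {
    ("P_weak", "RBS_low"): 10.0,
    ("P_weak", "RBS_high"): 18.0,
    ("P_strong", "RBS_low"): 22.0,
    ("P_medium", "RBS_mid"): 25.0,
}


def fit_main_effects(data):
    """Average the measured titer for each part at each design position."""
    sums = defaultdict(float)
    counts = defaultdict(int)
    for design, titer in data.items():
        for position, part in enumerate(design):
            sums[(position, part)] += titer
            counts[(position, part)] += 1
    return {key: sums[key] / counts[key] for key in sums}


def recommend(data, top_n=3):
    """Rank untested designs by the sum of their parts' average titers."""
    effects = fit_main_effects(data)
    n_positions = len(next(iter(data)))
    options = [
        sorted({part for (pos, part) in effects if pos == position})
        for position in range(n_positions)
    ]
    candidates = [d for d in product(*options) if d not in data]

    def score(design):
        return sum(effects[(i, part)] for i, part in enumerate(design))

    return sorted(candidates, key=score, reverse=True)[:top_n]


print(recommend(tested))
```

With this toy data the top recommendation is the untested pairing of `P_strong` with `RBS_mid`. An additive model ignores interactions between parts, which is precisely the kind of structure that tree ensembles and neural networks are meant to capture.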

DBTL in Action: Experimental Case Study

Dopamine Production in E. coli

A recent implementation of the knowledge-driven DBTL cycle demonstrates its power for optimizing microbial production of valuable compounds. Researchers applied this framework to develop an E. coli strain for dopamine production, achieving concentrations of 69.03 ± 1.2 mg/L (34.34 ± 0.59 mg/g biomass), a 2.6- to 6.6-fold improvement over previous state-of-the-art production systems [34].

Table 1: Key Experimental Parameters for Dopamine Production Optimization

| Parameter | Description | Application in DBTL Cycle |
|---|---|---|
| Host Strain | E. coli FUS4.T2 | Engineered for high L-tyrosine production as dopamine precursor |
| Key Enzymes | HpaBC (from E. coli) and Ddc (from Pseudomonas putida) | Convert L-tyrosine to L-DOPA, then to dopamine |
| Engineering Strategy | Ribosome Binding Site (RBS) tuning | Fine-tuned expression of heterologous genes |
| Cultivation Medium | Minimal medium with 20 g/L glucose | Controlled fermentation conditions |
| Analytical Method | Metabolite quantification | Measured dopamine production titers |

Experimental Methodology

The dopamine production case study exemplifies a comprehensive DBTL implementation [34]:

Strain Design: The initial design phase involved selecting heterologous genes (hpaBC and ddc) and planning RBS variations to optimize expression levels. In vitro cell lysate studies informed the initial design choices before moving to in vivo testing.

Library Construction: The build phase employed automated cloning techniques to generate a library of E. coli strains with varying RBS sequences controlling hpaBC and ddc expression. This included modulating the Shine-Dalgarno sequence to fine-tune translation initiation rates without disrupting secondary structures.

High-Throughput Screening: The test phase involved cultivating strain variants in 96-well formats and quantifying dopamine production using analytical methods such as mass spectrometry. This enabled rapid evaluation of dozens to hundreds of variants.

Data Analysis and Learning: The learn phase analyzed the relationship between RBS sequences, enzyme expression levels, and dopamine production. Researchers discovered that GC content in the Shine-Dalgarno sequence significantly impacted RBS strength and dopamine yield, informing the next design iteration.
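The GC-content observation lends itself to a small worked example. The sketch below computes GC fraction for a handful of Shine-Dalgarno variants; AGGAGG is the canonical E. coli consensus, while the other sequences are hypothetical and are not the variants tested in the cited study.

```python
def gc_content(seq: str) -> float:
    """Return the fraction of G and C bases in a DNA sequence."""
    seq = seq.upper()
    if not seq:
        raise ValueError("sequence must not be empty")
    return sum(base in "GC" for base in seq) / len(seq)


# AGGAGG is the canonical E. coli Shine-Dalgarno consensus; the other
# variants are hypothetical examples, not sequences from the cited study.
sd_variants = ["AGGAGG", "AGGAGA", "AAGAGA", "GGGAGG"]

# List variants from lowest to highest GC fraction.
for sd in sorted(sd_variants, key=gc_content):
    print(f"{sd}  GC = {gc_content(sd):.2f}")
```

A ranking like this is only a descriptive feature; relating it to RBS strength or dopamine yield still requires the measured data from the Test phase.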

Table 2: Quantitative Results from DBTL Optimization of Dopamine Production

| DBTL Cycle | Dopamine Production (mg/L) | Improvement Factor | Key Learning |
|---|---|---|---|
| Initial State | 27.0 | 1.0x | Baseline production level |
| First Iteration | 45.2 | 1.7x | Optimal HpaBC expression critical |
| Second Iteration | 69.0 | 2.6x | Fine-tuned Ddc expression further enhanced yield |
| Final Optimization | 69.0 ± 1.2 | 2.6x | GC content in SD sequence affects RBS strength |
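As a quick arithmetic check, the improvement factors in Table 2 follow from dividing each titer by the baseline. The sketch below reproduces them and also derives the biomass concentration implied by the reported volumetric (69.03 mg/L) and specific (34.34 mg/g biomass) titers; that implied value is a back-calculation, not a figure reported in the text.

```python
# Titers in mg/L as listed in Table 2.
titers = {
    "Initial State": 27.0,
    "First Iteration": 45.2,
    "Second Iteration": 69.0,
}

baseline = titers["Initial State"]
fold = {stage: round(titer / baseline, 1) for stage, titer in titers.items()}
print(fold)

# Back-calculated biomass: (mg dopamine / L) / (mg dopamine / g biomass) = g biomass / L.
volumetric_mg_per_l = 69.03
specific_mg_per_g = 34.34
implied_biomass_g_per_l = volumetric_mg_per_l / specific_mg_per_g
print(f"implied biomass = {implied_biomass_g_per_l:.2f} g/L")
```

The rounded ratios come out to 1.0, 1.7, and 2.6, matching the table, and the two reported titers are mutually consistent with a culture of roughly 2 g/L biomass.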

Workflow Visualization: DBTL Cycle

Enabling Technologies and Research Reagents

The effective implementation of DBTL cycles relies on a sophisticated ecosystem of technologies and research reagents. These tools enable the high-throughput, precision engineering required for advanced synthetic biology applications.

Table 3: Essential Research Reagent Solutions for DBTL Implementation

| Reagent/Technology | Function | Application Example |
|---|---|---|
| Ribosome Binding Site (RBS) Libraries | Fine-tune translation initiation rates | Optimizing heterologous enzyme expression in metabolic pathways [34] |
| Promoter Variants | Regulate transcription levels | Controlling flux through biosynthetic pathways [32] |
| CRISPR-Cas9 Systems | Enable precise genome editing | Knocking out regulatory genes or integrating pathways [35] |
| Biofoundry Automation | Robotic liquid handling and strain construction | High-throughput assembly and screening of genetic variants [37] [32] |
| Cell-Free Protein Synthesis Systems | Rapid in vitro testing of enzyme combinations | Screening enzyme variants before in vivo implementation [34] |
| Multi-Omics Analysis Platforms | Comprehensive characterization of strains | Identifying metabolic bottlenecks and regulatory effects [32] |

Advanced Computational Approaches

Machine learning has emerged as a transformative technology for enhancing DBTL cycles, particularly in the Learn phase. Gradient boosting and random forest models have demonstrated exceptional performance in the low-data regimes typical of early DBTL cycles [33]. These methods can effectively learn from limited experimental data to recommend improved designs for subsequent iterations.

The integration of kinetic modeling with machine learning creates powerful frameworks for simulating DBTL cycles in silico before costly wet-lab experimentation [33]. These models capture non-intuitive pathway behaviors, such as instances where increasing enzyme concentrations decreases product flux due to substrate depletion [33]. By simulating thousands of virtual DBTL cycles, researchers can optimize their experimental strategies and machine learning approaches, significantly accelerating the overall engineering process.
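One simple way such a non-intuitive effect can arise is competition for a shared substrate. The sketch below is a toy mass-action model chosen for illustration, not the kinetic model used in [33]: a substrate supplied at a constant rate is consumed by a product-forming enzyme and by a second, competing enzyme, and raising the level of the second enzyme depletes the substrate pool and lowers product flux. All parameter names and values are assumptions.

```python
def product_flux(e_path: float, e_side: float, v_in: float = 1.0,
                 k_path: float = 1.0, k_side: float = 1.0) -> float:
    """Steady-state flux to product for a substrate shared by two enzymes.

    Toy mass-action model (an assumption, not the model used in [33]):
        dS/dt = v_in - (k_path*e_path + k_side*e_side) * S
    At steady state S = v_in / (k_path*e_path + k_side*e_side), and the
    product flux is k_path * e_path * S.
    """
    total_capacity = k_path * e_path + k_side * e_side
    if total_capacity <= 0:
        raise ValueError("at least one enzyme must be present")
    substrate = v_in / total_capacity
    return k_path * e_path * substrate


# Hold the pathway enzyme fixed and raise the competing enzyme.
for e_side in (0.0, 0.5, 1.0, 2.0, 4.0):
    flux = product_flux(1.0, e_side)
    print(f"side enzyme = {e_side}: product flux = {flux:.3f}")
```

In this toy system, product flux falls from 1.0 to 0.2 as the competing enzyme rises from 0 to 4, even though total enzyme capacity has increased. Published kinetic models are far richer, but the same logic explains why "more enzyme" is not always better.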

Literate programming platforms like teemi represent another computational advance, providing end-to-end workflow management for DBTL cycles [36]. These platforms support FAIR (Findable, Accessible, Interoperable, Reusable) data principles, ensuring that knowledge gained from each cycle is properly captured and utilized in subsequent iterations [36]. The integration of computational design tools with laboratory automation systems is gradually moving the field toward fully autonomous DBTL cycles that can operate with minimal human intervention [32] [36].

Future Perspectives

The future of DBTL cycles in biological engineering points toward increasingly automated, computationally driven workflows. Biofoundries will play an expanding role as central facilities for high-throughput DBTL implementation, combining advanced robotics, microfluidics, and analytics [37] [32]. The emergence of explainable machine learning will enhance the Learn phase by providing both predictions and the biological rationale behind them, deepening fundamental understanding of biological systems [31].

We anticipate that multi-omic data integration will become increasingly sophisticated, enabling systems-level understanding and engineering of microbial metabolism [32]. The application of DBTL cycles will also expand beyond traditional model organisms to nontraditional microbes with unique metabolic capabilities, greatly broadening the scope of synthetic biology applications [37]. As these technologies mature, the DBTL cycle will evolve from a primarily manual process to a fully automated pipeline, dramatically accelerating the engineering of biological systems for healthcare, manufacturing, and environmental sustainability [32].

From Blueprint to Biologics: Methodologies and Breakthrough Applications in Medicine

The field of synthetic biology, which applies engineering principles to design and construct novel biological systems, has found a powerful application in the development of living therapeutics [26] [40]. Chimeric Antigen Receptor (CAR)-T cell therapy exemplifies this approach, where a patient's own T lymphocytes are genetically reprogrammed to recognize and eliminate cancerous cells [41]. This technology represents a paradigm shift in cancer treatment, moving from traditional small-molecule drugs to living, engineered cells that operate as targeted, self-amplifying therapeutics. The core synthetic biology principle of the Design–Build–Test–Learn (DBTL) cycle is central to the iterative development and optimization of these sophisticated cellular machines [42]. By breaking down biological complexity into standardized, modular components, synthetic biology provides the framework to engineer immune cells with predictable and controllable behaviors, offering new hope for treating refractory cancers and other complex diseases [26] [43].

The Architectural Evolution of CAR-T Cells

The molecular architecture of the Chimeric Antigen Receptor is the cornerstone of CAR-T cell functionality, and its design has evolved significantly through multiple generations, each enhancing the therapeutic potential of the engineered cells [41] [44].

Core Structural Modules

All CARs consist of four fundamental domains, each serving a distinct function [44]:

- Extracellular Antigen-Binding Domain: Typically a single-chain variable fragment (scFv) derived from monoclonal antibodies, this domain acts as the sensor that recognizes and binds to specific tumor-associated antigens with high selectivity [41] [44].

- Hinge/Spacer Domain: This region provides structural flexibility, allowing the scFv to access the target antigenic epitope. Its length and composition can influence CAR function and stability [44].

- Transmembrane Domain: This alpha-helical anchor integrates the CAR into the T cell membrane and can influence receptor stability and signaling. It is often derived from proteins like CD28 or CD8 [41].

- Intracellular Signaling Domain: This is the engine of the CAR, transmitting activation signals into the T cell upon antigen binding. Its complexity has increased with each CAR generation [41] [44].

Generational Advancements in CAR Design

The progression from first- to fifth-generation CARs illustrates the iterative application of synthetic biology to enhance T cell function [41] [44].

Table: Evolution of CAR-T Cell Generations

| Generation | Key Intracellular Components | Primary Functional Enhancements | Clinical Status |
|---|---|---|---|
| First | CD3ζ | Basic T-cell activation; limited persistence and efficacy [41]. | Superseded |
| Second | CD3ζ + one co-stimulatory domain (e.g., CD28 or 4-1BB) | Enhanced proliferation, cytotoxicity, and persistence [41] [44]. | Clinically approved (e.g., Axicabtagene ciloleucel, Brexucabtagene autoleucel) [41] |
| Third | CD3ζ + multiple co-stimulatory domains (e.g., CD28 + 4-1BB) | Further improved potency, cytokine production, and persistence [41] [44]. | In clinical studies |
| Fourth (TRUCK) | Second-gen base + inducible transgenes (e.g., IL-12) | Local secretion of cytokines to modulate the tumor microenvironment; recruitment of innate immune system [41] [44]. | In clinical studies |
| Fifth | Second-gen base + truncated cytokine receptor domain (e.g., IL-2Rβ) | JAK-STAT signaling integration; enhanced memory formation, cytokine secretion, and resistance to immunosuppression [41] [44]. | Pre-clinical / Early clinical |
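The modular logic of the table can be expressed as a small data structure. The sketch below is illustrative only: the class, field names, and classification rule are assumptions that mirror the "Key Intracellular Components" column, not an established schema or tool.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class CAR:
    """Modular CAR description; field names are illustrative assumptions."""
    antigen_binding: str                            # typically an scFv
    hinge: str
    transmembrane: str                              # e.g. from CD28 or CD8
    signaling: str = "CD3ζ"
    costimulatory: Tuple[str, ...] = ()             # e.g. ("CD28",) or ("CD28", "4-1BB")
    inducible_transgene: Optional[str] = None       # e.g. IL-12 (fourth generation)
    cytokine_receptor_domain: Optional[str] = None  # e.g. IL-2Rβ (fifth generation)


def generation(car: CAR) -> str:
    """Map intracellular components to a generation, following the table."""
    if car.cytokine_receptor_domain:
        return "Fifth"
    if car.inducible_transgene:
        return "Fourth (TRUCK)"
    if len(car.costimulatory) >= 2:
        return "Third"
    if len(car.costimulatory) == 1:
        return "Second"
    return "First"


second_gen = CAR("scFv", "hinge", "CD28", costimulatory=("CD28",))
truck = CAR("scFv", "hinge", "CD8", costimulatory=("4-1BB",), inducible_transgene="IL-12")
print(generation(second_gen))  # Second
print(generation(truck))       # Fourth (TRUCK)
```

Treating each domain as an interchangeable field reflects the same modularity principle applied to genetic parts elsewhere in this article: swapping one module, such as the co-stimulatory domain, yields a new design without redefining the rest of the receptor.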

The following diagram visualizes the structural composition and key signaling pathways of these five CAR generations.