DeepCRISPR: How Machine Learning is Revolutionizing CRISPR Guide RNA Design and Prediction


Abstract

This article explores the transformative role of deep learning in overcoming the central challenges of CRISPR-based genome editing: accurately predicting on-target knockout efficacy and minimizing off-target effects. Tailored for researchers, scientists, and drug development professionals, it provides a comprehensive analysis of DeepCRISPR and other AI-driven platforms. We cover the foundational principles of applying convolutional and hybrid neural networks to sgRNA design, detail methodological advances for improved specificity, discuss troubleshooting data limitations, and present a comparative validation of current tools. The synthesis of these areas offers a critical resource for leveraging artificial intelligence to design safer and more effective gene-editing therapies.

The CRISPR Challenge: Why Accurate sgRNA Design is Critical for Genome Editing

Frequently Asked Questions (FAQs)

Q1: What is the primary function of sgRNA in the CRISPR-Cas9 system? The single guide RNA (sgRNA) is a synthetic RNA molecule that combines two natural RNA components: CRISPR RNA (crRNA) and trans-activating crRNA (tracrRNA). Its primary function is to guide the Cas9 nuclease to a specific DNA target sequence complementary to the crRNA segment. This guidance lets Cas9 introduce double-strand breaks at predetermined genomic locations [1].

Q2: How does machine learning improve sgRNA design? Machine learning, particularly deep learning, analyzes large-scale experimental data to identify sequence and epigenetic features that correlate with high on-target knockout efficacy and low off-target effects. These models learn from thousands of sgRNAs tested in various contexts to predict the performance of new sgRNA sequences, surpassing traditional hypothesis-driven design rules. For instance, the DeepCRISPR platform uses a hybrid deep neural network pre-trained on ~0.68 billion unlabeled sgRNA sequences to boost prediction accuracy [2] [3].

Q3: Why is the PAM sequence critical for sgRNA design? The Protospacer Adjacent Motif (PAM) is a short, specific DNA sequence adjacent to the target DNA site that is essential for Cas9 recognition and binding. Different Cas proteins from various bacterial species recognize different PAM sequences. For the most commonly used Streptococcus pyogenes Cas9 (SpCas9), the PAM sequence is 5'-NGG-3'. The PAM requirement defines the possible target sites within a genome, as Cas9 will only cleave DNA if the target sequence is followed by the correct PAM [1] [4].
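The PAM constraint can be made concrete with a short sketch. The function below (a hypothetical helper, not part of any tool cited here) scans one strand of a DNA string for 20-nt protospacers immediately followed by an NGG PAM. It only covers the strand as given; a complete search would also scan the reverse complement.

```python
import re

def find_spcas9_sites(seq: str, guide_len: int = 20):
    """Return (start, protospacer, pam) for each NGG PAM site on the given strand.

    `start` is the 0-based index of the first protospacer base.
    """
    seq = seq.upper()
    # A lookahead keeps matches zero-width, so overlapping sites are all reported.
    pattern = re.compile(r"(?=([ACGT]{%d})([ACGT]GG))" % guide_len)
    return [(m.start(), m.group(1), m.group(2)) for m in pattern.finditer(seq)]

# Illustrative input only; this sequence is made up for the example.
example = "TTGACCTGAAGCTGACCTGAAGTTCATCTGCACCACCGGCAAGCTG"
for start, protospacer, pam in find_spcas9_sites(example):
    print(start, protospacer, pam)
```

For a Cas nuclease with a different PAM, the `[ACGT]GG` part of the pattern would need to change accordingly.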

Q4: What are the key sequence features of an effective sgRNA? Machine learning studies have identified several key features that influence sgRNA efficacy. These include the specific nucleotide composition at particular positions along the 20-nucleotide guide sequence, the GC content of the sgRNA, and the secondary structure of the sgRNA itself. Models like DeepCRISPR automatically identify these features in a data-driven manner, with convolutional neural networks emerging as particularly effective for this analysis [2] [3].
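As a rough illustration of the hand-crafted features mentioned above, the sketch below computes GC content and position-specific nucleotide indicators for a guide. The function and feature names are assumptions made for this example; deep learning models such as DeepCRISPR learn their representations from data rather than from a fixed feature list like this one, and secondary structure is not computed here.

```python
def gc_content(guide: str) -> float:
    """Fraction of G and C bases in the guide sequence."""
    guide = guide.upper()
    return (guide.count("G") + guide.count("C")) / len(guide)

def positional_features(guide: str) -> dict:
    """Indicator features such as 'pos1_G' for the base seen at each position."""
    return {f"pos{i}_{nt}": 1 for i, nt in enumerate(guide.upper(), start=1)}

# Made-up 20-nt guide used purely for illustration.
guide = "GACCTGAAGTTCATCTGCAC"
features = {"gc_content": gc_content(guide), **positional_features(guide)}
print(len(features), features["gc_content"])
```

A feature dictionary of this kind is the typical input for conventional models such as gradient-boosted trees.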

Troubleshooting Common sgRNA Experiment Issues

Table 1: Common Experimental Challenges and Solutions

| Problem | Possible Cause | Recommended Solution |

|---|---|---|

| Low editing efficiency (insufficient indels at target locus) | Suboptimal sgRNA sequence; poor sgRNA stability; low transfection efficiency | Test 2-3 different guide RNAs per target [5]. Use chemically modified synthetic sgRNAs for improved stability and activity [5]. Verify component concentrations and delivery method [5] [6]. |

| High off-target effects (editing at unintended genomic sites) | sgRNA sequence similarity to off-target sites; prolonged sgRNA expression | Use machine learning tools (e.g., DeepCRISPR) to predict and minimize off-target profiles [2]. Deliver CRISPR components as ribonucleoproteins (RNPs) to reduce off-target effects [5]. |

| Irregular protein expression (unexpected protein levels post-editing) | Guide RNA targeting variable exons; isoform-specific editing | Design sgRNAs to target exons common to all major protein isoforms [7]. Target early exons to increase probability of frameshift mutations [4]. |

| No cleavage activity (lack of indels at target site) | Incorrect PAM specification; inefficient delivery | Confirm the correct PAM sequence for your specific Cas nuclease [1] [6]. Optimize transfection protocol and consider antibiotic selection to enrich transfected cells [6]. |

| Unpredictable editing outcomes (variable efficiency between guides) | Chromatin accessibility; epigenetic factors | Use tools like DeepCRISPR that integrate epigenetic information from relevant cell types to improve prediction [2]. |

Table 2: Machine Learning Tools for sgRNA Design

| Tool Name | Key Features | Design Approach |

|---|---|---|

| DeepCRISPR [2] | Unifies on-target and off-target prediction; Uses hybrid deep neural network; Integrates epigenetic data. | Deep Learning (Unsupervised pre-training + supervised fine-tuning) |

| sgDesigner [8] | Uses stacked generalization framework; Trained on plasmid library data for generalizability. | Machine Learning (Stacked generalization) |

| CHOPCHOP [1] | Supports multiple Cas nucleases and PAM sequences; Provides off-target prediction. | Hypothesis-driven / Empirical scoring |

| Synthego Design Tool [1] | Validates guides for editing efficiency and off-target effects; Extensive genome library. | Proprietary Algorithm |

The Scientist's Toolkit: Key Research Reagents & Materials

Table 3: Essential Reagents for CRISPR Experiments

| Item | Function | Application Notes |

|---|---|---|

| Chemically Modified Synthetic sgRNA | Guides Cas9 to target DNA; Modified for enhanced stability and reduced immune response. | Superior editing efficiency and lower cellular toxicity compared to IVT or plasmid-based guides [5]. |

| Cas9 Nuclease | Effector protein that creates double-strand breaks in target DNA. | Choose based on PAM requirement and target genome (e.g., SpCas9 for GC-rich regions) [5]. |

| Ribonucleoprotein (RNP) Complex | Pre-complexed Cas9 protein and sgRNA. | Enables DNA-free editing; increases efficiency; reduces off-target effects [5]. |

| Delivery Vehicle (e.g., Lentivirus) | Introduces CRISPR components into cells. | Critical for hard-to-transfect cells; requires careful titration [8] [2]. |

| Validation Primers & Sequencing Kits | Amplify and sequence target locus to confirm edits and assess efficiency. | Essential for verifying on-target cleavage and analyzing indel patterns [6]. |

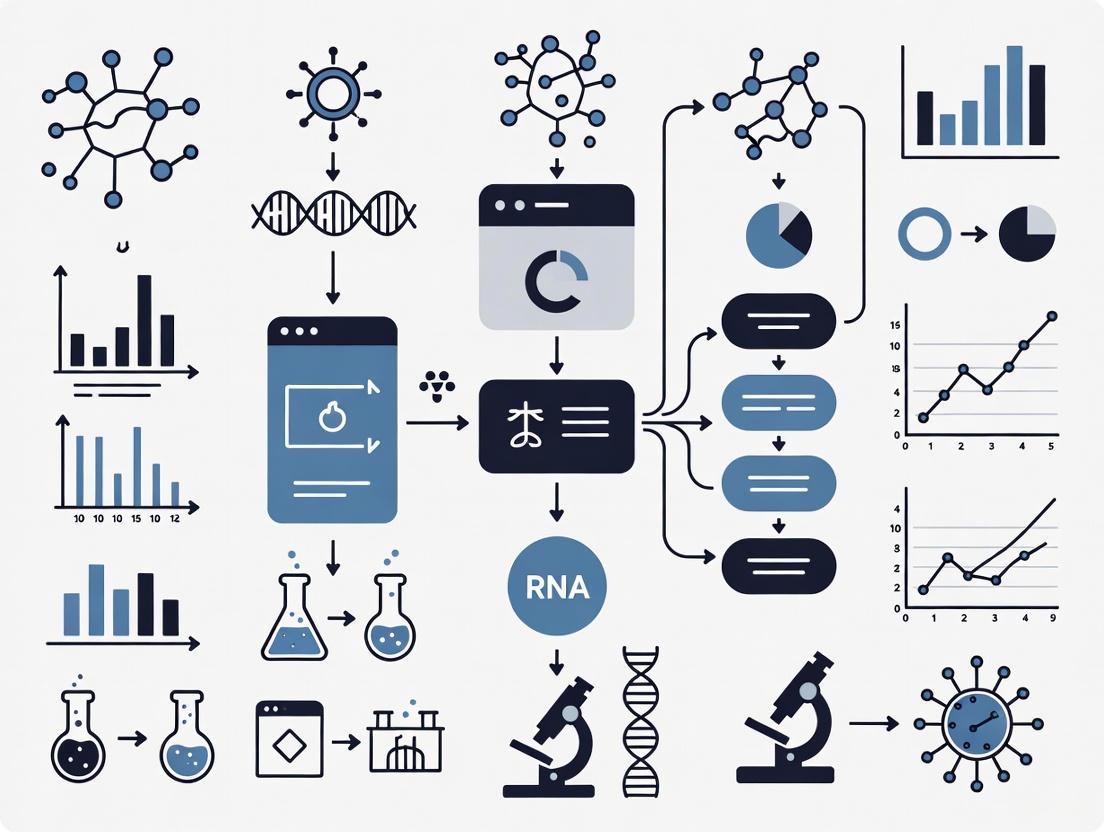

Experimental Workflows & Conceptual Diagrams

sgRNA Design and Validation Workflow

Deep Learning Framework for sgRNA Design

Troubleshooting Guide: Navigating CRISPR Experimental Design

This guide addresses common challenges in CRISPR genome editing experiments and offers targeted solutions informed by DeepCRISPR and related machine learning research. Artificial intelligence (AI) and deep learning are accelerating the optimization of gene editors, guiding the engineering of existing tools, and supporting the discovery of novel genome-editing enzymes [9].

Frequently Asked Questions

Q1: Why do different sgRNAs targeting the same gene show such variable editing efficiency?

In the CRISPR/Cas9 system, gene editing efficiency is highly influenced by the intrinsic properties of each sgRNA sequence [10]. This variability stems from multiple sequence and epigenetic features that affect how effectively the Cas9 complex binds to and cleaves the target DNA.

DeepCRISPR Solution: The DeepCRISPR platform applies a deep learning framework that uses unsupervised pre-training on ~0.68 billion genome-wide unlabeled sgRNA sequences to automatically learn meaningful representations and identify features affecting sgRNA performance [2]. This approach considers both sequence composition and epigenetic information from different cell types, enabling more accurate predictions of which sgRNAs will perform effectively.

Recommended Protocol:

- Always design 3-4 sgRNAs per gene to mitigate performance variability [10]

- Utilize DeepCRISPR or CRISPRon tools to predict on-target efficacy before experimental validation

- Consider epigenetic context from your specific cell type, as chromatin accessibility significantly impacts editing efficiency

Q2: How can I accurately predict and minimize off-target effects in my experiments?

Off-target effects occur when Cas9 cleaves unintended genomic sites with sequences similar to your target. These effects represent a major safety concern, particularly for clinical applications [11]. Traditional prediction methods based solely on sequence alignment have limited performance because they don't fully capture the molecular mechanisms of CRISPR systems.
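For reference, the sequence-similarity baseline described above can be sketched as a simple mismatch count between a guide and each candidate site. The function names and the three-mismatch cutoff are assumptions chosen for illustration; this is the kind of alignment-only heuristic that learned models aim to improve on, not a method from any cited tool.

```python
def count_mismatches(guide: str, site: str) -> int:
    """Number of positions at which the guide and the candidate site differ."""
    if len(guide) != len(site):
        raise ValueError("guide and site must have the same length")
    return sum(a != b for a, b in zip(guide.upper(), site.upper()))

def candidate_off_targets(guide: str, sites, max_mismatches: int = 3):
    """Sites that differ from the guide by 1..max_mismatches bases, closest first."""
    hits = []
    for site in sites:
        n = count_mismatches(guide, site)
        if 0 < n <= max_mismatches:  # n == 0 is the on-target site itself
            hits.append((site, n))
    return sorted(hits, key=lambda hit: hit[1])

# Toy 4-nt sequences keep the example readable; real guides are 20 nt.
print(candidate_off_targets("AAAA", ["AAAA", "AAAT", "TTTT", "AATT"], max_mismatches=2))
```

A count like this ignores mismatch position, bulges, and chromatin context, which is one reason its predictive power is limited.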

Deep Learning Solution: Recent deep learning models incorporate molecular dynamics simulations to capture RNA-DNA interactions at the atomic level. The CRISOT tool suite, for example, derives RNA-DNA molecular interaction fingerprints that significantly improve off-target prediction accuracy across diverse CRISPR systems [12]. These models analyze hydrogen bonding, binding free energies, and base pair geometric features to predict cleavage likelihood.

Table 1: Comparison of Deep Learning Models for Off-Target Prediction

| Model Name | Key Features | Advantages | Performance Metrics |

|---|---|---|---|

| CRISOT | RNA-DNA molecular interaction fingerprints from MD simulations | Generalizable across Cas9, base editors, and prime editors | Outperforms existing tools in comprehensive validations [12] |

| CRISPR-Net | Integrated multiple sequence and structural features | Strong overall performance in independent benchmarks | High AUC, Precision, and F1 scores [13] |

| R-CRISPR | Advanced neural network architecture | Robust performance with imbalanced datasets | Strong Recall and MCC metrics [13] |

| Crispr-SGRU | Gated recurrent units for sequence analysis | Effective at capturing positional dependencies | Competitive overall performance [13] |

| DeepCRISPR | Hybrid deep neural network with epigenetic features | Unifies on-target and off-target prediction | Superior to state-of-the-art tools [2] |

Recommended Protocol:

- Use CRISOT-Spec to calculate specificity scores for your sgRNA designs

- For sgRNAs with poor specificity, apply CRISOT-Opti to introduce single nucleotide mutations that reduce off-target effects while maintaining on-target activity [12]

- Validate predictions with GUIDE-seq or CIRCLE-seq for critical applications

- Consider using high-fidelity Cas9 variants (eSpCas9, SpCas9-HF1) with models specifically trained for these systems

Q3: What sequencing depth and analysis methods ensure reliable CRISPR screen results?

Genome-wide CRISPR screens require careful experimental design and appropriate bioinformatic analysis to generate meaningful results. Inadequate sequencing depth or improper statistical analysis can lead to false positives and negatives.

DeepCRISPR Context: Machine learning models like CRISPR-GPT can now assist researchers in planning and executing proper experimental designs by drawing on vast knowledge from published literature and experimental data [14].

Table 2: CRISPR Screen Sequencing and Analysis Specifications

| Parameter | Recommended Specification | Technical Rationale |

|---|---|---|

| Sequencing Depth | ≥200x per sample [10] | Ensures sufficient coverage for statistical power in sgRNA detection |

| Mapping Rate | Monitor but not primary concern [10] | Analysis uses only mapped reads; focus on absolute mapped read count |

| sgRNAs per Gene | 3-4 [10] | Mitigates impact of individual sgRNA performance variability |

| Primary Analysis Tool | MAGeCK [10] | Incorporates RRA (single-condition) and MLE (multi-condition) algorithms |

| Candidate Gene Selection | Prioritize by RRA score ranking [10] | Integrates multiple metrics; more comprehensive than LFC/p-value alone |

| Quality Control | Include positive-control sgRNAs [10] | Validates screening conditions and experimental effectiveness |

Recommended Protocol:

- Calculate required sequencing volume using: Required Data Volume = Sequencing Depth × Library Coverage × Number of sgRNAs / Mapping Rate [10]

- For a typical human whole-genome knockout screen, plan for approximately 10 Gb per sample [10]

- Use MAGeCK's RRA algorithm for single-condition comparisons and MLE for multi-condition experiments [10]

- Include well-validated positive control genes to assess screen success [10]

- For FACS-based screens, increase initial cell numbers and perform multiple sorting rounds where feasible to reduce technical noise [10]
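The sequencing-volume formula in the first step above can be turned into a small calculator. This is a sketch under stated assumptions: the result of the formula is interpreted as a read count, the conversion to gigabases uses a read length supplied by the user, and the library size, coverage, and mapping rate in the example are made-up inputs rather than values from the cited source.

```python
def required_reads(depth: float, library_coverage: float,
                   n_sgrnas: int, mapping_rate: float) -> float:
    """Sequencing Depth x Library Coverage x Number of sgRNAs / Mapping Rate."""
    if not 0 < mapping_rate <= 1:
        raise ValueError("mapping_rate must be in (0, 1]")
    return depth * library_coverage * n_sgrnas / mapping_rate

def reads_to_gigabases(reads: float, read_length_bp: int) -> float:
    """Convert a read count to gigabases for a given read length (assumption)."""
    return reads * read_length_bp / 1e9

# Hypothetical inputs: 200x depth, 99% library coverage,
# a 76,000-sgRNA library, and an 80% mapping rate.
reads = required_reads(depth=200, library_coverage=0.99,
                       n_sgrnas=76_000, mapping_rate=0.80)
print(f"{reads:,.0f} reads, {reads_to_gigabases(reads, 300):.2f} Gb at 300 bp per read pair")
```

Because the mapping rate sits in the denominator, a lower mapping rate raises the amount of raw data needed to reach the same number of usable reads.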

Q4: How can I predict outcomes for advanced editing systems like base editors?

Base editors (ABE and CBE) enable precise single-nucleotide changes without double-strand breaks but present unique challenges due to bystander editing within the activity window [15].

Deep Learning Solution: The CRISPRon framework uses a dataset-aware training approach that trains on multiple experimental datasets simultaneously while tracking their origins. This allows the model to learn systematic differences between base editor variants and experimental conditions [15].

Recommended Protocol:

- Use CRISPRon-ABE for adenine base editors and CRISPRon-CBE for cytosine base editors

- When designing base editing experiments, consider both the primary target base and potential bystander edits within the approximately 8-nucleotide activity window [15]

- Select sgRNAs that maximize editing efficiency while minimizing unintended bystander edits based on model predictions

- Be aware that different deaminase variants exhibit distinct sequence preferences—verify your specific editor is represented in the training data
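To make the bystander check above concrete, the sketch below lists every editable base inside an activity window and flags those other than the intended target. The window of protospacer positions 3 to 10 (eight nucleotides, counted from the PAM-distal end) is an assumption for illustration; the true window depends on the specific editor, and the function names are hypothetical.

```python
def editable_bases(protospacer: str, target_base: str, window=(3, 10)):
    """1-based positions of `target_base` that fall inside the activity window."""
    start, end = window
    target_base = target_base.upper()
    return [i for i, nt in enumerate(protospacer.upper(), start=1)
            if start <= i <= end and nt == target_base]

def bystander_positions(protospacer: str, target_base: str,
                        intended_pos: int, window=(3, 10)):
    """Editable positions in the window other than the intended one."""
    return [p for p in editable_bases(protospacer, target_base, window)
            if p != intended_pos]

# Made-up protospacer with adenines at positions 3 and 6.
guide = "CCACCACCCCCCCCCCCCCC"
print(bystander_positions(guide, "A", intended_pos=3))
```

For a cytosine base editor, the same functions can be called with `target_base="C"`.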

Table 3: Key Research Reagents and Computational Tools for CRISPR Experiments

| Resource Category | Specific Tools/Reagents | Function & Application |

|---|---|---|

| In Silico Prediction | DeepCRISPR [2], CRISOT [12], CRISPRon [15] | Unified prediction of on-target efficacy and off-target profiles |

| Base Editing Design | CRISPRon-ABE, CRISPRon-CBE [15] | Predicts efficiency and outcomes for adenine and cytosine base editors |

| Off-Target Detection | GUIDE-seq, CIRCLE-seq, DISCOVER-seq [11] [12] | Experimental validation of predicted off-target sites |

| Cas9 Variants | eSpCas9(1.1), SpCas9-HF1 [14] | High-fidelity enzymes with reduced off-target effects |

| Screening Analysis | MAGeCK [10] | Statistical analysis of CRISPR screen data using RRA and MLE algorithms |

| AI Assistants | CRISPR-GPT [14] | Large language model trained on CRISPR literature for experimental guidance |

Visualizing DeepCRISPR Workflows

The following diagrams illustrate key computational and experimental workflows in DeepCRISPR-informed research.

DeepCRISPR Core Architecture

Off-Target Prediction Workflow

DeepCRISPR Technical Support Center

Core Concept FAQs

What is the core innovation of the DeepCRISPR platform? DeepCRISPR is a comprehensive deep learning framework that unifies sgRNA on-target efficacy prediction and genome-wide off-target cleavage profile prediction into a single model. Its key innovation is a two-stage training process: unsupervised pre-training on ~0.68 billion unlabeled sgRNA sequences across the human genome, followed by supervised fine-tuning on labeled sgRNA datasets. This approach enables the model to automatically learn meaningful representations of sgRNAs while integrating epigenetic information from multiple cell types [2].

How does DeepCRISPR address the critical challenge of class imbalance in off-target datasets? Class imbalance, where true off-target sites are vastly outnumbered by potential mismatch sites, causes models to become biased toward dominant categories. DeepCRISPR employs a specialized bootstrapping sampling algorithm integrated directly into the training procedure to dramatically alleviate this data imbalance issue in off-target site prediction [2]. Recent research has also introduced more advanced strategies like the Efficiency and Specificity-Based (ESB) class rebalancing method, which utilizes biological properties inherent in sequence pairs rather than conventional random sampling [16].
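The general idea of bootstrapped, class-balanced sampling can be sketched as follows. This is a generic illustration, not DeepCRISPR's actual algorithm: each mini-batch draws the same number of positive and negative examples with replacement, so the rare class is seen as often as the common one. The function name and batch layout are assumptions.

```python
import random

def balanced_bootstrap_batch(positives, negatives, batch_size, rng):
    """Draw a mini-batch with equal class counts, sampling with replacement."""
    half = batch_size // 2
    batch = [(x, 1) for x in rng.choices(positives, k=half)]
    batch += [(x, 0) for x in rng.choices(negatives, k=batch_size - half)]
    rng.shuffle(batch)  # avoid presenting all positives first
    return batch

# Toy data: 2 verified off-target sites versus 100 inactive mismatch sites.
rng = random.Random(42)
positives = ["site_p1", "site_p2"]
negatives = [f"site_n{i}" for i in range(100)]
batch = balanced_bootstrap_batch(positives, negatives, batch_size=8, rng=rng)
print(sum(label for _, label in batch), "positives out of", len(batch))
```

Sampling with replacement means the same positive example may appear several times in a batch, which is the usual trade-off of oversampling a small class.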

What types of neural network architectures does DeepCRISPR utilize? DeepCRISPR employs a hybrid deep neural network architecture consisting of:

- A Deep Convolutional Denoising Neural Network (DCDNN) autoencoder for unsupervised pre-training on unlabeled sgRNA sequences

- A Convolutional Neural Network (CNN) for supervised fine-tuning on labeled sgRNA efficacy data

This hybrid design enables the model to leverage both massive unlabeled datasets and specialized labeled data for optimal performance [2].

Troubleshooting Guides

Issue 1: Poor Prediction Accuracy in New Cell Types

Problem: DeepCRISPR models trained on specific cell types show decreased performance when applied to new cellular contexts.

Solution:

- Epigenetic Integration: Ensure epigenetic features (histone modifications, chromatin accessibility) for the target cell type are properly integrated into the feature space [2].

- Transfer Learning: Use DeepCRISPR's parent network. The model pre-trained on ~0.68 billion unlabeled sgRNAs can be fine-tuned with limited cell-type-specific data [2].

- Feature Alignment: Verify that chromatin accessibility data and DNA methylation patterns from the new cell type are properly encoded in the input features.

Table: Key Epigenetic Features for Cross-Cell Type Generalization

| Feature Type | Data Source | Impact on Prediction |

|---|---|---|

| Chromatin accessibility | ATAC-seq, DNase-seq | High impact - affects Cas9 binding accessibility |

| Histone modifications | ChIP-seq data (H3K4me3, H3K27ac) | Moderate to high impact on editing efficiency |

| DNA methylation | WGBS, RRBS | Moderate impact, particularly in promoter regions |

| Chromatin states | ChromHMM, Segway | Provides integrated epigenetic context |

Issue 2: Handling Extreme Class Imbalance in Off-Target Datasets

Problem: Models show biased learning and poor minority class prediction due to significantly fewer verified off-target sites compared to potential mismatch sites.

Solution:

- ESB Rebalancing Strategy: Implement Efficiency and Specificity-Based class rebalancing that screens sequences based on biological properties rather than random sampling [16].

- Multi-Feature Extraction: Use the CRISPR-MCA hybrid model which employs multi-scale convolutional networks and multi-head self-attention mechanisms to capture features across different scales [16].

- Encoding Optimization: Select moderate-complexity encoding schemes (23×4 to 20×20 One-hot encodings) that balance performance and computational efficiency [16].

Issue 3: Suboptimal sgRNA Design for Novel Targets

Problem: Inefficient sgRNA selection for genes or genomic regions with limited prior experimental data.

Solution:

- Data Augmentation: Leverage DeepCRISPR's data augmentation technique that generates novel sgRNAs with biologically meaningful labels, expanding the effective training dataset [2].

- Multi-Model Ensemble: Combine predictions from specialized models including CRISPRon for integrated thermodynamic features and DeepHF for high-fidelity Cas9 variants [14].

- Unsupervised Pre-training: Utilize the DCDNN-based autoencoder trained on ~0.68 billion sgRNA sequences from both coding and non-coding regions to capture fundamental sequence patterns [2].

Table: Encoding Schemes for Optimal Feature Extraction

| Encoding Scheme | Dimensions | Best Use Cases | Performance Trade-offs |

|---|---|---|---|

| Basic One-hot | 23×4 | Standard SpCas9 targets | Fast computation, moderate accuracy |

| Expanded One-hot | 20×20 | Complex indel prediction | Higher accuracy, increased computational load |

| 7×24 (CRISPR-Net) | 7×24 | Datasets with insertions/deletions | Balanced performance for diverse variants |

| 14×23 (Advanced) | 14×23 | Noisy or complex datasets | Highest accuracy, significant preprocessing |
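The basic 23×4 one-hot scheme in the table can be sketched in a few lines. The column order (A, C, G, T) and the function name are assumptions for this example; published models differ in channel order and in how they combine the guide and target into one matrix.

```python
NUCLEOTIDES = "ACGT"  # assumed column order

def one_hot(seq: str):
    """Encode a DNA sequence as len(seq) rows by 4 columns (A, C, G, T)."""
    rows = []
    for nt in seq.upper():
        if nt not in NUCLEOTIDES:
            raise ValueError(f"unsupported base: {nt!r}")
        rows.append([1 if nt == ref else 0 for ref in NUCLEOTIDES])
    return rows

# Made-up 20-nt protospacer plus a 3-nt NGG PAM gives 23 rows.
target = "GACCTGAAGTTCATCTGCAC" + "TGG"
matrix = one_hot(target)
print(len(matrix), "x", len(matrix[0]))
```

The nested list can be passed to `numpy.array` or a tensor constructor when a deep learning framework is used downstream.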

Experimental Protocols & Methodologies

Protocol 1: DeepCRISPR Model Training Workflow

Purpose: Establish a standardized procedure for training and validating DeepCRISPR models on custom datasets.

Materials:

- sgRNA sequence data with known efficacy measurements

- Epigenetic feature data for target cell types (chromatin accessibility, histone modifications)

- Computational resources with GPU acceleration

- DeepCRISPR software platform (available at http://www.deepcrispr.net/)

Methodology:

- Data Collection & Preprocessing

  - Extract all 20-bp sgRNA sequences with NGG PAM from reference genome

  - Curate epigenetic information from a minimum of 13 human cell types

  - Encode each sgRNA with sequence and epigenetic features

- Unsupervised Pre-training Phase

  - Train DCDNN-based autoencoder on ~0.68 billion unlabeled sgRNA sequences

  - Apply de-noising strategy to enhance model robustness

  - Generate parent network for feature representation

- Supervised Fine-tuning Phase

  - Load labeled sgRNA dataset (~0.2 million sgRNAs with known efficacies)

  - Build hybrid neural network combining pre-trained DCDNN and CNN

  - Fine-tune entire network on labeled data

  - Validate prediction accuracy on held-out test set

- Performance Validation

  - Compare predictions with experimental validation data

  - Assess cross-cell type generalization capability

  - Benchmark against state-of-the-art tools (CFD score, MIT score)

Protocol 2: CRISPR-MCA Hybrid Model Implementation

Purpose: Implement advanced hybrid neural network for off-target prediction with enhanced imbalance handling.

Materials:

- Off-target datasets with verified cleavage sites

- Computational framework supporting TensorFlow/PyTorch

- GPU resources for deep learning model training

Methodology:

- Data Preparation & ESB Rebalancing

  - Apply Efficiency and Specificity-Based rebalancing to address class imbalance

  - Encode gRNA-target DNA sequences using optimized One-hot encoding (23×4 to 20×20)

  - Partition data into training/validation/test sets (70/15/15 ratio)

- CRISPR-MCA Model Architecture

  - Implement multi-scale convolutional neural network for local feature extraction

  - Integrate multi-head self-attention mechanism for global dependencies

  - Combine features through concatenation and fully connected layers

- Training & Optimization

  - Train model with class-weighted loss function

  - Implement early stopping based on validation performance

  - Apply hyperparameter optimization for learning rate and network dimensions

- Performance Benchmarking

  - Compare against state-of-the-art models (CRISPR-Net, AttnToMismatch_CNN)

  - Evaluate on multiple benchmark datasets

  - Assess performance on datasets containing both mismatches and indels
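Two of the steps in this protocol, the 70/15/15 partition and the class-weighted loss, can be sketched without any deep learning framework. The code below is an illustrative assumption rather than the CRISPR-MCA implementation: the split is stratified by label, the weights use the common inverse-frequency rule, and the loss is a weighted binary cross-entropy. All function names are hypothetical.

```python
import math
import random

def stratified_split(labels, percents=(70, 15, 15), seed=0):
    """Return train/val/test index lists with each class split by `percents`."""
    rng = random.Random(seed)
    train, val, test = [], [], []
    for cls in sorted(set(labels)):
        idx = [i for i, y in enumerate(labels) if y == cls]
        rng.shuffle(idx)
        n_train = len(idx) * percents[0] // 100
        n_val = len(idx) * percents[1] // 100
        train += idx[:n_train]
        val += idx[n_train:n_train + n_val]
        test += idx[n_train + n_val:]  # remainder goes to the test set
    return train, val, test

def class_weights(labels):
    """Inverse-frequency weights: n_samples / (n_classes * class_count)."""
    counts = {c: labels.count(c) for c in set(labels)}
    return {c: len(labels) / (len(counts) * k) for c, k in counts.items()}

def weighted_bce(y_true, y_prob, weights, eps=1e-7):
    """Mean binary cross-entropy with a per-class weight on each example."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1 - eps)  # clip to avoid log(0)
        total += weights[y] * -(y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / len(y_true)

# Toy labels: 20 verified off-targets (1) versus 180 inactive sites (0).
labels = [1] * 20 + [0] * 180
train, val, test = stratified_split(labels)
weights = class_weights(labels)
print(len(train), len(val), len(test), weights)
```

With these weights, an error on a rare positive example contributes more to the loss than an error on a common negative one, which counteracts the bias toward the majority class.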

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials for DeepCRISPR Experimental Validation

| Reagent/Resource | Function/Application | Specifications |

|---|---|---|

| SpCas9 Nuclease | Standard CRISPR nuclease for validation experiments | Wild-type Streptococcus pyogenes Cas9 with NGG PAM |

| Guide RNA Library | Target-specific sgRNA sequences | Minimum 3-4 sgRNAs per gene to account for performance variability |

| Positive Control Genes | Validation of screening success | Well-characterized genes with known phenotypic outcomes |

| MAGeCK Software | Statistical analysis of CRISPR screens | Implements RRA (single-condition) and MLE (multi-condition) algorithms |

| Cell-Free Validation Systems (CIRCLE-seq, SITE-seq, Digenome-seq) | In vitro off-target detection | Cell-free methods for comprehensive off-target identification |

| Cell-Based Validation Systems (GUIDE-seq) | Cellular context off-target detection | Methods considering nuclear environment and cellular factors |

| Epigenetic Data Resources | Chromatin accessibility, histone modifications | ENCODE consortium data, ATAC-seq, ChIP-seq datasets |

| DeepCRISPR Software Platform | Unified prediction framework | http://www.deepcrispr.net/ [2] [17] |

Advanced Applications & Integration

Multi-Model Framework for Enhanced Prediction

CRISPR-GPT Integration: For experimental design assistance, integrate with CRISPR-GPT - a large language model trained on 11 years of scientific literature and over 4,000 discussion threads. This provides natural language guidance for both beginners and experts [14].

Specialized Model Selection:

- Use DeepHF for high-fidelity Cas9 variants (eSpCas9(1.1), SpCas9-HF1)

- Implement CRISPRlnc for long non-coding RNA targets

- Apply EasyDesign for Cas12a-based diagnostic applications

Performance Metrics: Current deep learning models achieve >95% prediction accuracy in some applications, significantly outperforming traditional hypothesis-driven scoring methods (MIT Score, CCTop Score) [16] [14].

Frequently Asked Questions (FAQs)

Q1: What is the core innovation of the DeepCRISPR platform? DeepCRISPR is a comprehensive computational platform that unifies the prediction of sgRNA on-target knockout efficacy and off-target profile into a single deep learning framework. This integrated approach surpasses the capabilities of previous tools that treated these predictions separately [2] [18].

Q2: What specific deep learning techniques does DeepCRISPR employ? The platform uses a hybrid deep neural network architecture. Its key innovation is a two-stage training process:

- Unsupervised Pre-training: A Deep Convolutional Denoising Neural Network (DCDNN)-based autoencoder learns meaningful feature representations from ~0.68 billion unlabeled sgRNA sequences across the human genome [2].

- Supervised Fine-tuning: The pre-trained "parent network" is then fine-tuned using labeled sgRNA data (with known knockout efficacies) within a Convolutional Neural Network (CNN) to predict on-target and off-target activities [2].

Q3: How does DeepCRISPR address the challenge of limited labeled sgRNA data? DeepCRISPR tackles data sparsity through two primary strategies. First, it uses unsupervised pre-training on a massive set of unlabeled sgRNAs to learn fundamental sequence representations. Second, it applies data augmentation to generate novel sgRNAs with biologically meaningful labels, effectively increasing the size of the training set and making the model more robust [2].

Q4: What kind of data does DeepCRISPR integrate beyond the sgRNA sequence? In addition to the sgRNA sequence itself, DeepCRISPR encodes epigenetic information curated from 13 different human cell types. This allows the model to account for cell-type-specific factors that can influence sgRNA activity [2].

Q5: For which CRISPR system is the current version of DeepCRISPR designed? The publicly available version of DeepCRISPR is focused on conventional NGG PAM-based sgRNA design for the SpCas9 nuclease in human cells. The architecture can be extended to other Cas9 species or variants [2].

Troubleshooting Guide: Common Deep Learning Prediction Challenges

Problem 1: Handling Imbalanced Datasets in Off-Target Prediction A common hurdle in predicting off-target effects is the extreme class imbalance in datasets, where true off-target sites are vastly outnumbered by potential non-functional mismatch sites [16].

- Challenge: This imbalance can cause models to become biased toward the majority class (non-functional sites), reducing their accuracy in predicting the rare off-target events [16].

- Solution:

- Advanced Rebalancing Strategies: Recent research has introduced strategies like the Efficiency and Specificity-Based (ESB) class rebalancing method. This approach is specifically designed for datasets with mismatch-only off-target instances and has been shown to surpass conventional undersampling or resampling techniques [16].

- Model Architecture: Employ hybrid models, such as CRISPR-MCA, which integrates multi-scale convolutional networks with multi-head self-attention mechanisms. These models are better equipped to extract salient features from imbalanced data [16].

- Recommended Protocol:

- Dataset Analysis: Begin by calculating the ratio of positive (off-target) to negative (non-off-target) samples in your dataset.

- Strategy Application: Implement the ESB strategy or similar advanced rebalancing techniques during data pre-processing.

- Model Selection: Choose a model architecture proven to handle imbalanced data, such as a hybrid CNN-RNN or the CRISPR-MCA model [16].

Problem 2: Achieving High Generalization Across Cell Types Predictions from models trained on data from one cell type may not transfer well to others because of differences in epigenetic landscapes and cellular environments [2] [19].

- Challenge: A model trained solely on data from K562 cells might not perform accurately when applied to primary T-cells.

- Solution:

- Incorporate Epigenetic Features: As done in DeepCRISPR, integrate unified epigenetic features from multiple cell types during training to create a more generalizable model [2].

- Cell-Type-Specific Profiling: Large-scale studies have identified key factors, such as the expression of nucleotidylexotransferase (DNTT), that drive cell-type-specific mutational profiles. Informing model selection with this knowledge can improve predictions [19].

- Recommended Protocol:

- Feature Identification: For your target cell type, identify key epigenetic markers (e.g., chromatin accessibility data) if available.

- Model Transfer: Use a pre-trained model like DeepCRISPR that was trained on multiple cell types.

- Fine-Tuning: If possible, fine-tune the model on a small set of experimental data from your specific cell line to adapt it.

Problem 3: Selecting Optimal Input Encoding and Model Architecture The method for encoding sgRNA and target DNA sequences into a format understandable by a deep learning model significantly impacts predictive performance [16].

- Challenge: With numerous encoding schemes (e.g., One-hot, word embeddings) and model architectures (CNN, RNN, hybrid) available, selecting the best combination is non-trivial [16] [19].

- Solution:

- Encoding Complexity: Studies suggest that encoding schemes of moderate complexity often provide the best balance between performance and computational efficiency. Overly complex encodings do not necessarily yield better results [16].

- Include Flanking Sequences: Research shows that including flanking sequences (±10-20 bp) around the target site, especially downstream, significantly increases prediction accuracy [19].

- Architecture Performance: Recurrent Neural Networks (RNNs) have been shown to outperform CNNs and conventional models (like XGBoost) in predicting on-target activity when trained on large, uniformly processed datasets [19].

- Recommended Protocol:

- Sequence Encoding: Encode your sgRNA-target pairs using a well-established One-hot encoding scheme (e.g., 4×23 matrix) and include flanking sequences of at least ±10 bp.

- Model Benchmarking: For on-target prediction, benchmark an RNN-based model (like AIdit_ON [19]) against other architectures using your specific dataset.

- Hybrid Models for Off-Target: For off-target prediction, consider hybrid models like CRISPR-Net or CRISPR-MCA that can handle both mismatches and indels [16] [13].
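The flanking-sequence step in the protocol above can be sketched as a simple slicing helper. The function name, the 0-based coordinate convention, and the choice to return `None` near sequence edges are assumptions for illustration; a production pipeline would read from an indexed reference genome and handle strand orientation.

```python
def target_with_flanks(reference: str, start: int,
                       target_len: int = 23, flank: int = 10):
    """Return the target (protospacer + PAM) plus `flank` bp on each side.

    `start` is the 0-based index of the first protospacer base.
    Returns None when the requested window runs past either end.
    """
    lo = start - flank
    hi = start + target_len + flank
    if lo < 0 or hi > len(reference):
        return None
    return reference[lo:hi].upper()

# Made-up reference: 10 bp upstream, a 23-bp target, 10 bp downstream.
reference = "A" * 10 + "C" * 23 + "G" * 10
window = target_with_flanks(reference, start=10)
print(len(window))
```

The resulting 43-bp window can then be one-hot encoded in the same way as a bare 23-bp target, giving the model access to the sequence context on both sides.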

Performance Data and Benchmarks

Table 1: Deep Learning Model Performance on On-Target Prediction

| Model Name | Architecture | Training Data Size | Performance (Spearman Correlation) | Key Feature |

|---|---|---|---|---|

| DeepCRISPR [2] | Hybrid DCDNN + CNN | ~0.2 million sgRNAs (augmented) | Surpassed state-of-the-art tools (exact metrics not reported here) | Unsupervised pre-training on 0.68B sgRNAs |

| AIdit_ON [19] | RNN | 926,476 gRNAs | 0.898 (median) | Trained on deep-sampled, uniformly processed data from K562 cells |

| DeepHF [20] | RNN with biological features | ~58,000 gRNAs per nuclease | Outperformed other popular design tools | Predicts for WT-SpCas9 and high-fidelity variants |
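The Spearman correlation reported in the table compares the ranking of predicted scores with the ranking of measured activities. The sketch below shows one way to compute it with the standard library only; the function names and the toy numbers are illustrative assumptions, not data from the cited studies.

```python
def ranks(values):
    """1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = mean_rank
        i = j + 1
    return result


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)


def spearman(x, y):
    """Spearman correlation = Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


predicted = [0.10, 0.40, 0.35, 0.80]   # hypothetical model scores
measured = [10.0, 30.0, 20.0, 90.0]    # hypothetical indel rates (%)
rho = spearman(predicted, measured)
```

Because only ranks matter, a model can score well on this metric even if its raw outputs are not calibrated to indel percentages.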

Table 2: Addressing Common Experimental and Computational Challenges

| Problem Area | Traditional Approach | Deep Learning/Advanced Solution | Benefit |

|---|---|---|---|

| Low Knockout Efficiency [21] | Testing 3-5 sgRNAs manually | Using AI-predicted, high-efficacy sgRNAs from tools like DeepCRISPR | Increases probability of selecting highly active guides, saving time and resources |

| Off-Target Effect Prediction [16] [13] | Rule-based scores (e.g., MIT, CCTop) | Deep learning models (e.g., CRISPR-Net, R-CRISPR) | Automatically learns complex sequence features; better accuracy with high-quality training data |

| Transfection Optimization [22] | Testing ~7 conditions manually | High-throughput automated optimization (e.g., 200-parameter screening) | Systematically identifies optimal conditions for hard-to-transfect cell lines, maximizing editing efficiency |

Architectural Diagrams

DeepCRISPR Two-Stage Architecture

Data Rebalancing Strategy Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for gRNA Activity Profiling and Model Training

| Resource / Reagent | Function in Research | Example from Literature |

|---|---|---|

| Stably Expressing Cas9 Cell Lines | Provides consistent Cas9 expression, improving reproducibility and knockout efficiency in validation experiments [21]. | Used in DeepHF study to profile gRNA activity for WT-SpCas9, eSpCas9(1.1), and SpCas9-HF1 [20]. |

| Lentiviral gRNA-Target Pair Library | Enables high-throughput, direct measurement of indel rates for thousands of gRNAs in a single experiment, generating data for model training [19] [20]. | A library of 740,000 gRNA-target pairs was used to train the AIdit_ON model in K562 cells [19]. |

| Validated Off-Target Site (OTS) Datasets | High-quality experimental data (e.g., from GUIDE-seq) used to train and benchmark off-target prediction models, improving their robustness [13]. | Integration of validated OTS data from databases like CRISPRoffT is recommended to enhance model performance [13]. |

| Mouse U6 (mU6) Promoter | Expands genomic targeting sites by allowing transcription of gRNAs starting with 'A' in addition to 'G', which is crucial for high-fidelity Cas9 variants sensitive to 5' mismatches [20]. | Employed in the DeepHF study to increase the number of targetable sites for eSpCas9(1.1) and SpCas9-HF1 [20]. |

Inside DeepCRISPR: Architectural Breakdown and Workflow for Practical sgRNA Design

Frequently Asked Questions (FAQs)

Q1: What types of epigenetic features does DeepCRISPR integrate, and why are they important for sgRNA design? DeepCRISPR integrates cell type-specific epigenetic information, such as chromatin accessibility and histone modifications (e.g., H3K4me3, H3K27me3, H3K36me3) [2] [23]. These features are crucial because the local chromatin environment can significantly influence how accessible the target DNA site is to the Cas9 complex. For instance, a "closed" chromatin state (heterochromatin) can hinder binding and reduce knockout efficacy, even for an sgRNA that perfectly matches its target [24]. By learning from these features across multiple cell types, DeepCRISPR provides more accurate, context-aware predictions [2].

Q2: My model's performance drops when applying it to a new cell type. What could be the cause? This is a common challenge known as data heterogeneity. DeepCRISPR's framework is specifically designed to address this by using a unified feature space that incorporates epigenetic data from various cell types [2]. A performance drop likely indicates that the epigenetic landscape of your new cell type is substantially different from those in the training data. We recommend:

- Verify Feature Availability: Ensure you have generated or can source the required epigenetic markers (e.g., via ATAC-seq or ChIP-seq data) for the new cell type.

- Leverage Unsupervised Pre-training: The DeepCRISPR model is pre-trained on roughly 0.68 billion unlabeled sgRNAs across the human genome, which helps it generalize better to new genomic contexts, including those in different cell types [2].

Q3: What is the minimum epigenetic data required to use DeepCRISPR effectively for a custom cell line? At a minimum, data on DNA accessibility (e.g., from ATAC-seq or DNase-seq) is highly recommended, as it directly measures whether a genomic region is open and accessible for Cas9 binding [24]. While integrating more histone modification marks (e.g., H3K27ac for active enhancers, H3K9me3 for repressed regions) can further refine predictions, chromatin accessibility data is the most critical for capturing the primary structural barrier to editing efficiency [23].

Q4: How does DeepCRISPR handle the technical variation between epigenetic datasets from different laboratories? DeepCRISPR employs a deep learning framework that is trained to learn a unified feature representation [2]. This process inherently works to normalize variations across different datasets. The model's initial pre-training on a massive corpus of genome-wide sgRNAs helps it distinguish between biologically meaningful epigenetic signals and technical noise [2]. For best practices, we recommend using standardized assay protocols (e.g., as outlined in methods for ChIP-seq or CUT&Tag) where possible to minimize batch effects [23].

Troubleshooting Guides

Issue 1: Poor sgRNA On-Target Efficacy Prediction

Problem: The predicted on-target knockout scores from DeepCRISPR do not correlate well with your experimental validation results.

Investigation and Resolution:

| Step | Action | Expected Outcome & Further Step |

|---|---|---|

| 1. Isolate | Check the sequence features of your sgRNA. Ensure the target site is unique and does not have highly similar off-target sites in the genome. | Confirms the issue is not purely sequence-based. Proceed to step 2. |

| 2. Gather | Verify the epigenetic data quality for your cell type. Check the read depth, coverage, and signal-to-noise ratio of your ChIP-seq or ATAC-seq datasets [23]. | Identifies potential issues in input data. If quality is poor, re-sequence or use a public high-quality dataset. |

| 3. Reproduce | Compare your epigenetic signals at the target locus with gene expression data (e.g., from RNA-seq). Ensure the chromatin state (e.g., "open" at promoters) is consistent with the gene's expression level [24]. | Validates the biological plausibility of your epigenetic input. Inconsistencies may suggest incorrect cell type or assay conditions. |

| 4. Fix | If the epigenetic data is correct but performance is poor, consider fine-tuning the DeepCRISPR model with a small set of experimentally validated sgRNAs from your specific cell type, if available [2]. | This adapts the pre-trained model to the specific nuances of your cellular context, improving prediction accuracy. |

Issue 2: Challenges in Integrating Heterogeneous Epigenetic Data

Problem: You are unable to effectively combine epigenetic features from multiple cell types or sources into a unified input for the model.

Investigation and Resolution:

| Step | Action | Expected Outcome & Further Step |

|---|---|---|

| 1. Isolate | Simplify the problem. Start by integrating only one type of epigenetic mark (e.g., H3K4me3) across two cell types before scaling up. | Reduces complexity and helps identify where in the processing pipeline the issue occurs. |

| 2. Gather | Ensure all your epigenetic datasets are processed through the same bioinformatics pipeline (e.g., same aligner, peak-caller, and normalization method). | Eliminates technical variation arising from different data processing methods [23]. |

| 3. Reproduce | Use a control region (e.g., a known active promoter like GAPDH) to check that the epigenetic signals from your different datasets show a consistent pattern at this locus. | Confirms that each dataset is biologically valid and comparable. |

| 4. Fix | Employ the data encoding strategy used by DeepCRISPR, which uses a DCDNN-based autoencoder to learn a unified, lower-dimensional representation of the heterogeneous input data, effectively integrating sequence and epigenetic features [2]. | This deep learning approach is designed to handle the data heterogeneity issue directly. |

Quantitative Data and Performance

Table 1: Key Performance Metrics of DeepCRISPR Compared to Other Tools

This table summarizes the predictive performance of DeepCRISPR against other state-of-the-art in silico tools as reported in its initial publication [2].

| Model / Tool | Underlying Approach | Mean AUC (On-Target) | Mean AUC (Off-Target) | Key Advantage |

|---|---|---|---|---|

| DeepCRISPR | Hybrid Deep Neural Network | 0.977 | 0.989 | Unifies on/off-target prediction; integrates epigenetic features [2] |

| CRISTA | Hypothesis-driven / Learning-based | 0.883 | 0.908 | Focus on sequence features only [2] |

| CRISPRon | Learning-based | 0.921 | Not Reported | - |

| Trained on Sequence Only | Deep Learning (Ablation Study) | 0.950 | Not Reported | Highlights value of adding epigenetic data [2] |

Note: AUC (Area Under the Curve) is a metric for model performance where 1.0 is a perfect predictor and 0.5 is no better than random. Data adapted from [2].
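To make the AUC definition concrete, the sketch below computes ROC AUC as the probability that a randomly chosen positive example outscores a randomly chosen negative one, with ties counting one half. The function name and the toy labels and scores are assumptions for illustration; they are not values from [2].

```python
def roc_auc(labels, scores):
    """ROC AUC via pairwise comparison of positive and negative scores."""
    pos = [s for l, s in zip(labels, scores) if l == 1]
    neg = [s for l, s in zip(labels, scores) if l == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos
        for n in neg
    )
    return wins / (len(pos) * len(neg))


labels = [1, 1, 0, 0]          # 1 = effective guide, 0 = ineffective (toy data)
scores = [0.9, 0.4, 0.5, 0.1]  # hypothetical model outputs
print(roc_auc(labels, scores))  # 0.75
```

The pairwise form is quadratic in the number of examples, so for large screens a rank-based implementation from an established library is the more practical choice.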

Table 2: Essential Research Reagent Solutions for Epigenetic Feature Mapping

This table details key reagents and methods required to generate the epigenetic data inputs for DeepCRISPR.

| Research Reagent / Method | Function in Context of DeepCRISPR | Key Consideration |

|---|---|---|

| ATAC-seq (Assay for Transposase-Accessible Chromatin using sequencing) | Maps genome-wide regions of open chromatin (DNA accessibility), a critical feature for sgRNA efficacy prediction [24] [23]. | Works best on fresh cells; indicates regions where Cas9 can physically access DNA. |

| ChIP-seq (Chromatin Immunoprecipitation followed by sequencing) | Maps the genomic binding sites of specific histone modifications (e.g., H3K4me3 for active promoters, H3K27me3 for repressed regions) [23]. | Antibody specificity is paramount for data quality. |

| CUT&Tag (Cleavage Under Targets and Tagmentation) | A newer, more sensitive alternative to ChIP-seq for mapping histone modifications and transcription factor binding with lower background noise [23]. | Requires fewer cells than ChIP-seq and can be adapted for single-cell analysis. |

| Whole-Genome Bisulfite Sequencing (WGBS) | Provides a base-resolution map of DNA methylation (5mC), which can also influence gene expression and chromatin structure [23]. | The traditional gold standard; however, new methods like EM-Seq are emerging to reduce DNA damage [23]. |

| DNMT Inhibitors (e.g., 5-azacytidine) | Chemical reagents that inhibit DNA methyltransferases, used to experimentally alter the epigenetic state and validate its functional impact on sgRNA efficacy [23]. | Useful for experimental validation of feature importance. |

Detailed Experimental Protocols

Protocol 1: Generating Input Epigenetic Data via CUT&Tag for Histone Modifications

Background: CUT&Tag is a key method for mapping histone modifications with high signal-to-noise ratio, providing clean data for DeepCRISPR's models [23].

Methodology:

- Cell Permeabilization: Isolate and permeabilize nuclei from your target cell type.

- Antibody Binding: Incubate with a primary antibody specific to your histone mark of interest (e.g., anti-H3K27ac).

- pA-Tn5 Binding: Add a protein A-Tn5 transposase fusion protein, which binds to the antibody.

- Tagmentation: Activate the Tn5 with Mg²⁺. The transposase will simultaneously cleave the DNA and insert sequencing adapters into the regions bound by the histone mark.

- DNA Extraction and Sequencing: Extract the cleaved DNA fragments and prepare for high-throughput sequencing.

- Data Analysis: Map sequencing reads, call peaks, and generate a bedGraph or bigWig file of signal intensity across the genome. This file is used as input for DeepCRISPR.
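As an illustration of the final data-analysis step, the sketch below summarizes a bedGraph track over one sgRNA target window. It assumes the standard four-column bedGraph layout (chrom, 0-based start, exclusive end, value); how DeepCRISPR itself consumes such tracks is not specified here, so the length-weighted mean and the function name `mean_signal` are assumptions for the example.

```python
def mean_signal(bedgraph_lines, chrom, start, end):
    """Length-weighted mean signal over [start, end); uncovered bases count as 0."""
    total = 0.0
    for line in bedgraph_lines:
        # Skip blank lines and optional header/comment lines.
        if not line.strip() or line.startswith(("track", "browser", "#")):
            continue
        c, s, e, v = line.split()[:4]
        if c != chrom:
            continue
        overlap = min(int(e), end) - max(int(s), start)
        if overlap > 0:
            total += overlap * float(v)
    return total / (end - start)


# Toy track: two intervals on chr1 (values are made up).
track = [
    "track type=bedGraph",
    "chr1\t100\t110\t2.0",
    "chr1\t110\t130\t5.0",
]
signal = mean_signal(track, "chr1", 105, 128)  # 23-bp target window
```

In practice the lines would come from iterating over an open bedGraph file, and bigWig files would need a dedicated reader.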

Visual Workflow: CUT&Tag for Histone Marks

Protocol 2: Experimental Validation of sgRNA Efficacy

Background: To validate DeepCRISPR's predictions and generate fine-tuning data, you need to measure the actual knockout efficiency of designed sgRNAs.

Methodology:

- sgRNA Cloning: Clone your candidate sgRNA sequences into your chosen CRISPR plasmid backbone (e.g., lentiCRISPRv2).

- Cell Transduction: Deliver the sgRNA plasmid along with Cas9 into your target cell line using an appropriate method (lentivirus, nucleofection, etc.).

- Selection: Apply antibiotics (e.g., Puromycin) to select for successfully transduced cells.

- Efficiency Measurement:

- T7 Endonuclease I Assay: Extract genomic DNA from the selected cell pool. PCR-amplify the target region, denature and reanneal the PCR products. Digest with T7E1 enzyme, which cleaves heteroduplex DNA formed by indels. Analyze by gel electrophoresis.

- Next-Generation Sequencing (NGS): For a more quantitative measure, perform targeted amplicon sequencing of the genomic region. Use computational tools (e.g., CRISPResso2) to analyze the sequencing reads and calculate the percentage of indels at the target site.
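For the T7 Endonuclease I readout, band intensities from the gel are commonly converted to an estimated indel percentage with the formula 100 × (1 − √(1 − f)), where f is the cleaved fraction of total band intensity. The sketch below applies that formula; the function name and the intensity values are illustrative, and the result is an estimate rather than a substitute for amplicon sequencing.

```python
import math


def t7e1_indel_percent(uncut, cut1, cut2):
    """Estimate indel % from densitometry of the uncut band and the two cleavage bands."""
    total = uncut + cut1 + cut2
    if total <= 0:
        raise ValueError("band intensities must sum to a positive value")
    cleaved_fraction = (cut1 + cut2) / total
    return 100.0 * (1.0 - math.sqrt(1.0 - cleaved_fraction))


# Toy densitometry values (arbitrary units).
print(round(t7e1_indel_percent(uncut=64, cut1=18, cut2=18), 1))  # 20.0
```

The square root accounts for the fact that heteroduplexes form by random reannealing of edited and unedited strands, so the cleaved fraction is not itself the indel rate.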

Visual Workflow: sgRNA Validation

The following diagram illustrates the core architecture of DeepCRISPR, showing how sequence and epigenetic data from multiple cell types are integrated for unified sgRNA design.

Visual Workflow: DeepCRISPR Architecture

Frequently Asked Questions: Core Concepts

Q1: What is the fundamental purpose of using unsupervised pre-training for sgRNA design?

Unsupervised pre-training addresses a major challenge in developing accurate machine learning models for CRISPR: the scarcity of expensive, experimentally labeled sgRNA efficacy data. By first learning the fundamental "language" and underlying patterns from the hundreds of millions of unlabeled sgRNA sequences available across the genome (~0.68 billion in the original study), the model builds a robust foundational understanding. This pre-trained "parent network" is then fine-tuned with the limited labeled data, significantly boosting prediction performance for both on-target knockout efficacy and off-target effects [2].

Q2: What specific type of deep learning architecture is used for this pre-training?

The process typically uses a DCDNN-based autoencoder (Deep Convolutional Denoising Neural Network) [2]. This is a specific architecture designed to reconstruct its input, even when that input is corrupted with noise. By learning to denoise and accurately reconstruct sgRNA sequences, the model automatically learns a compressed, meaningful representation of the features that define an sgRNA, which is invaluable for subsequent prediction tasks.
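The corruption step that makes an autoencoder "denoising" can be sketched as below. The type of noise used in DeepCRISPR is not stated here, so masking noise (zeroing whole positions) is an assumption, as are the function name `mask_positions` and the per-position list layout.

```python
import random


def mask_positions(columns, drop_prob, rng):
    """Return a copy of the one-hot columns with each position zeroed with probability drop_prob."""
    return [
        [0] * len(col) if rng.random() < drop_prob else list(col)
        for col in columns
    ]


# One-hot columns for the toy sequence "ACGT" (order A, C, G, T).
clean = [[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 1, 0], [0, 0, 0, 1]]
noisy = mask_positions(clean, drop_prob=0.25, rng=random.Random(0))

# A denoising autoencoder would be trained to map `noisy` back to `clean`,
# so the reconstruction loss is always computed against the uncorrupted input.
```

Passing an explicit `random.Random` instance keeps the corruption reproducible between runs.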

Q3: My research involves a non-conventional organism. Can this method be applied?

Yes, the principle of unsupervised pre-training on organism-specific sgRNA sequences is a powerful strategy for non-model organisms. The DeepCRISPR framework, initially developed for human sgRNAs, has inspired similar approaches. For instance, the DeepGuide algorithm was successfully developed for the yeast Yarrowia lipolytica by using a convolutional autoencoder (CAE) for unsupervised pre-training on its genome, followed by supervised fine-tuning. This produced a highly accurate, species-specific guide activity predictor [25].

Q4: What are the primary data sources for the unlabeled sgRNAs used in pre-training?

The initial DeepCRISPR study extracted all possible ~0.68 billion (680 million) 20-nucleotide sgRNA sequences that are adjacent to an NGG PAM (required by the SpCas9 enzyme) from the entire human genome, encompassing both coding and non-coding regions [2]. For other projects, the source would be the complete genome sequence of the target organism, from which all potential sgRNA sequences conforming to the required PAM are computationally generated.
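The computational generation of candidates described above can be sketched as a scan for 20-nt protospacers followed by an NGG PAM on both strands. This is a minimal illustration rather than the pipeline used in [2]; the function names are hypothetical, and minus-strand positions are reported as indices into the reverse-complemented sequence.

```python
import re

COMPLEMENT = str.maketrans("ACGT", "TGCA")


def revcomp(seq):
    """Reverse complement of an upper-case DNA string."""
    return seq.translate(COMPLEMENT)[::-1]


def find_ngg_guides(seq):
    """List (strand, position, protospacer) for every 20-mer followed by NGG."""
    seq = seq.upper()
    guides = []
    for strand, s in (("+", seq), ("-", revcomp(seq))):
        # The lookahead lets overlapping candidate sites all be reported.
        # Restricting to [ACGT] skips windows that contain N or other symbols.
        for m in re.finditer(r"(?=([ACGT]{20})[ACGT]GG)", s):
            guides.append((strand, m.start(), m.group(1)))
    return guides


toy = "GACGTTAGCCATGGATCCAA" + "TGG"
print(find_ngg_guides(toy))  # [('+', 0, 'GACGTTAGCCATGGATCCAA')]
```

For a whole genome the same scan would be run per chromosome, ideally streaming the sequence rather than holding both strands in memory.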

Troubleshooting Guides: Common Experimental Challenges

Q1: The model's performance is poor after fine-tuning with my experimental data. What could be wrong?

This is often a data integration issue. Ensure that the epigenetic context (e.g., chromatin accessibility, nucleosome occupancy) of your experimental cell type is incorporated into the model. DeepCRISPR unifies data from different cell types by representing them in a shared feature space that includes these epigenetic features. If the pre-training was on a general genome but your fine-tuning data comes from a specific cell type with unique epigenetics, this mismatch can hamper performance. Retraining the feature representation with your cell type's epigenetic data can dramatically improve adaptability [2] [25].

Q2: How can I handle the class imbalance problem when predicting rare off-target sites?

This is a recognized challenge, as true off-target cleavage sites are vastly outnumbered by potential non-functional mismatch sites. The DeepCRISPR framework integrates an efficient bootstrapping sampling algorithm directly into the training procedure. This technique involves repeatedly drawing random samples from the training data, with a focus on the minority class (true off-targets), to create multiple balanced training sets. This process helps the model learn the characteristics of rare off-target events without being overwhelmed by the majority class [2].
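The balanced resampling idea can be sketched as follows. This is a generic class-balanced bootstrap, not the exact algorithm from [2]; the function name, the batch size, and the toy site identifiers are assumptions.

```python
import random


def balanced_bootstrap(positives, negatives, n_per_class, rng):
    """Draw one class-balanced sample, with replacement, as (example, label) pairs."""
    batch = [(x, 1) for x in rng.choices(positives, k=n_per_class)]
    batch += [(x, 0) for x in rng.choices(negatives, k=n_per_class)]
    rng.shuffle(batch)
    return batch


# Toy data: 2 validated off-target sites versus 100 inactive mismatch sites.
true_off_targets = ["ots_1", "ots_2"]
inactive_sites = [f"site_{i}" for i in range(100)]

rng = random.Random(0)
batch = balanced_bootstrap(true_off_targets, inactive_sites, n_per_class=8, rng=rng)
```

Calling the function once per training step yields a fresh balanced set each time, so the rare positives are seen often while the negatives are still sampled broadly.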

Q3: I have a limited set of labeled sgRNAs for fine-tuning. How can I improve the model's robustness?

To combat data sparsity, you can employ data augmentation techniques specifically designed for biological sequences. Similar to methods used in image processing, DeepCRISPR generates novel, biologically meaningful sgRNA variants from your existing labeled data. This artificially expands the size and diversity of your training set, making the final fine-tuned model more robust and improving its generalization to new, unseen sgRNAs [2].
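One way such augmentation can work is to vary the PAM-distal 5' end of a labeled sgRNA while keeping its efficacy label. The sketch below substitutes the first two nucleotides, giving 16 variants per guide. Treating those positions as label-preserving is an assumption made for this example and should be checked against [2] before use; the function name is hypothetical.

```python
from itertools import product


def augment_5prime(sgrna, label, n_positions=2):
    """Return all variants of the first `n_positions` bases, each paired with the original label."""
    tail = sgrna[n_positions:]
    return [
        ("".join(prefix) + tail, label)
        for prefix in product("ACGT", repeat=n_positions)
    ]


variants = augment_5prime("GACGTTAGCCATGGATCCAA", label=1)
print(len(variants))  # 16
```

The original sequence is one of the 16 variants, so the augmented set can replace rather than extend the original entry.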

Experimental Protocol & Workflow

The following workflow outlines the key steps for implementing an unsupervised pre-training framework for sgRNA efficacy prediction, based on the DeepCRISPR methodology [2].

Table 1: Key Phases of the Deep Learning Framework

| Phase | Objective | Key Input | Output | Notes |

|---|---|---|---|---|

| 1. Data Collection & Encoding | Generate and featurize all potential sgRNAs. | Reference Human Genome | ~0.68 billion sgRNAs with sequence and epigenetic features [2] | Epigenetic data (e.g., chromatin accessibility) from target cell types is crucial. |

| 2. Unsupervised Pre-training | Learn a general-purpose representation of sgRNA sequences. | ~0.68 billion unlabeled sgRNAs | A pre-trained "Parent Network" | Uses a DCDNN-based autoencoder. No experimental efficacy labels required. |

| 3. Supervised Fine-tuning | Adapt the general model to predict specific efficacy. | Parent Network + Labeled sgRNAs (e.g., from CRISPR screens) | A fine-tuned model for on/off-target prediction | Employs data augmentation to expand the limited labeled dataset. |

Performance & Quantitative Benchmarks

Table 2: Comparative Performance of Deep Learning Models

This table summarizes the performance of DeepCRISPR and other tools as reported in foundational studies. Performance is typically measured by the correlation (Pearson coefficient) between predicted and experimentally measured sgRNA activities.

| Model / Tool | Key Methodology | Reported Performance (Pearson 'r') | Notes |

|---|---|---|---|

| DeepCRISPR [2] | Unsupervised pre-training + supervised fine-tuning | Surpassed state-of-the-art in its benchmark | Performance gain attributed to pre-training on ~0.68 billion sequences. |

| DeepGuide (for Y. lipolytica) [25] | Convolutional Autoencoder (CAE) pre-training + CNN | Cas9: r = 0.5; Cas12a: r = 0.66 | Outperformed other tools (e.g., SSC, sgRNA Scorer) not trained on the specific organism. |

| SSC [25] | Learning-based model (sequence only) | Cas9: r = 0.11 | Example of a model without organism-specific pre-training. |

| Seq-deepCpf1 [25] | Neural network for Cas12a | Cas12a: r = 0.25 | Outperformed by DeepGuide's specialized approach. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for sgRNA Design and Validation

| Item | Function / Description | Example / Source |

|---|---|---|

| sgRNA Design Platform | Computational tool to search, design, and score optimal CRISPR gRNAs. | Invitrogen GeneArt CRISPR Design Tool [26] |

| Custom CRISPR Services | Provider for designing and constructing custom CRISPR constructs or cell lines. | GeneArt CRISPR Custom Services [26] |

| CRISPR Libraries | Pre-designed pooled libraries of sgRNAs for genome-wide screens. | Available as individual clones or lentiviral arrays/pools [26] |

| Data Analysis Tool | Standard software for statistical analysis of CRISPR screen sequencing data. | MAGeCK (Model-based Analysis of Genome-wide CRISPR-Cas9 Knockout) [10] |

| DeepCRISPR Code | Open-source implementation of the DeepCRISPR algorithm. | Available on GitHub and Zenodo [2] |

In machine learning research on CRISPR prediction, of which DeepCRISPR is a leading example, accurately forecasting on-target and off-target effects is paramount. Hybrid Deep Neural Networks (HDNNs), which combine different neural architectures, are at the forefront of this effort [27]. By integrating Convolutional Neural Networks (CNNs), known for their feature extraction capabilities, with the data compression and feature learning of Autoencoders (AEs), researchers can build models that are both more robust and more interpretable [28] [27]. Outside genomics, such hybrid systems have, for instance, demonstrated superior performance in predicting Critical Heat Flux (achieving an R² of 0.9908) by leveraging augmented features from autoencoders [28]. This technical support guide addresses the specific implementation and troubleshooting challenges faced by scientists and drug development professionals when deploying these architectures in a genomic research context.

Frequently Asked Questions (FAQs)

Q1: What is the primary advantage of combining an Autoencoder with a CNN in a DeepCRISPR context?

The primary advantage is enhanced feature learning and model robustness. CNNs excel at automatically learning and extracting hierarchical spatial features from raw input data, such as genomic sequences [29]. Autoencoders, through their bottleneck structure, are excellent at unsupervised learning of efficient data codings, which can be used for dimensionality reduction, feature augmentation, and denoising [28]. In a hybrid model, the autoencoder can pre-process data, create augmented feature sets, or learn compressed representations that the CNN then uses for specific prediction tasks, leading to significantly improved accuracy as demonstrated in various scientific applications [28] [30].

Q2: I am encountering overfitting despite using a hybrid model. What are the primary strategies to address this?

Overfitting is a common challenge, especially with complex models on limited biological datasets. Key strategies include:

- Data Augmentation with Autoencoders: Utilize the autoencoder component of your network to generate synthetic but realistic data variations. This expands your training set and teaches the model to learn more generalized features [28].

- Incorporating a Denoising Autoencoder (DAE): Use a Stacked Denoising Autoencoder (SDAE) which is trained to reconstruct clean inputs from corrupted or noisy versions. This forces the model to learn robust features and prevents it from overfitting to noise in the training data [31].

- Hybrid Classification with SVM: Replace the softmax classifier that usually forms the CNN's final layer with a Support Vector Machine (SVM). SVMs are known for their strong generalization capabilities, especially with limited data, and can create more effective decision boundaries from the features extracted by the convolutional layers [30].

Q3: How can I effectively manage the computational cost of training large hybrid models?

Training hybrid models is resource-intensive. To manage computational costs:

- Phased Training: Instead of training the entire hybrid network end-to-end from scratch, consider a phased approach. Pre-train the autoencoder and CNN components independently on your dataset before fine-tuning the entire integrated model [31].

- Leverage Pre-trained Models: Where possible, initialize your CNN with weights from models pre-trained on large, general datasets (e.g., ImageNet for image-like genomic data representations) to reduce convergence time.

- Optimize Input Resolution: As done in resource-constrained medical imaging applications, fix your input data to a standardized, computationally tractable size (e.g., 256x256 pixels for image data) to control the computational load of the initial layers [31].

Troubleshooting Guides

Data Preparation and Preprocessing

Problem: Model performance is poor due to low-quality or insufficiently prepared genomic data.

Genomic data for CRISPR research often requires conversion into a numerical format that CNNs can process, such as binary matrices or image-like representations of sequences.

Issue: Vanishing/Exploding Gradients during training.

- Solution: Implement Batch Normalization layers within both the CNN and Autoencoder parts of the network. This stabilizes and accelerates training by normalizing the inputs to each layer.

Issue: The model fails to learn meaningful features from the sequence data.

- Solution: Review your data encoding scheme. Ensure the method of converting DNA/RNA sequences into numerical tensors preserves the spatial and sequential relationships critical for CRISPR activity. Validate your encoding by attempting to reconstruct the original sequence from the input tensor.

Issue: High error rates in off-target prediction.

- Solution: Integrate a Denoising Autoencoder (DAE). Train the DAE to reconstruct clean genomic data representations from artificially noised versions. This pre-processing step can significantly improve the model's robustness to variations and errors in the input data [31].

Model Architecture and Integration

Problem: The CNN and Autoencoder components are not integrating effectively, leading to suboptimal performance.

The integration point between the Autoencoder and CNN is critical. The architecture must facilitate efficient information flow.

Issue: The Autoencoder's latent space is too compressed, losing information critical for the CNN's task.

- Solution: Increase the dimensionality of the latent space and monitor the reconstruction loss. The latent representation should be a meaningful compression, not a destructive one. Use a custom loss function that balances reconstruction quality with the final task's performance.

Issue: The model is complex and slow to train.

- Solution: Design a modular and phased training protocol. First, train the autoencoder to achieve good reconstruction. Then, freeze the autoencoder weights and train the CNN on the frozen latent representations. Finally, unfreeze the entire network for a short period of fine-tuning. This can be more stable and efficient than end-to-end training.

Issue: The model's predictions lack interpretability.

- Solution: Utilize the latent space of the autoencoder for visualization. The compressed features can be visualized using techniques like t-SNE or UMAP to cluster different types of on-target and off-target sites, providing insights into the model's decision-making process.

Training and Optimization

Problem: The hybrid model does not converge, or convergence is unstable.

Issue: Training loss fluctuates wildly or does not decrease.

- Solution: Use Gradient Clipping. This is especially important in deep, hybrid networks. Capping the gradients during backpropagation prevents parameter updates from becoming excessively large and destabilizing the training process.

- Solution: Adjust the learning rate. Implement a learning rate scheduler that reduces the rate as training progresses, allowing the model to fine-tune its parameters as it approaches a minimum.
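Both remedies above can be sketched without committing to a particular framework. The example shows clipping by global L2 norm and a step-decay schedule; the function names and the default values (halving every 10 epochs) are illustrative assumptions, and deep learning libraries provide their own equivalents.

```python
import math


def clip_by_global_norm(grads, max_norm):
    """Rescale a flat list of gradients so their L2 norm does not exceed max_norm."""
    norm = math.sqrt(sum(g * g for g in grads))
    if norm <= max_norm:
        return list(grads)
    scale = max_norm / norm
    return [g * scale for g in grads]


def step_decay(base_lr, epoch, drop=0.5, every=10):
    """Multiply the learning rate by `drop` once every `every` epochs."""
    return base_lr * drop ** (epoch // every)


clipped = clip_by_global_norm([3.0, 4.0], max_norm=1.0)  # norm 5 -> rescaled to norm 1
lr_epoch_25 = step_decay(0.01, epoch=25)                 # two drops applied
```

Clipping by norm preserves the direction of the update, which is usually preferable to clipping each gradient component independently.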

Issue: The model performs well on training data but poorly on validation data (overfitting).

- Solution: Employ strong regularization techniques. As mentioned in the FAQs, using an SVM as the final classifier has proven effective in hybrid models for improving generalization on limited data [30]. Additionally, incorporate L2 regularization (weight decay) and Dropout layers within the CNN to prevent complex co-adaptations of neurons.

Experimental Protocols & Data

The following table summarizes quantitative results from recent studies employing hybrid deep learning models, providing a benchmark for expected performance in various tasks.

Table 1: Performance Metrics of Hybrid Deep Learning Models in Scientific Applications

| Application Domain | Hybrid Model Architecture | Key Performance Metrics | Citation |

|---|---|---|---|

| Critical Heat Flux Prediction | DCNN combined with 3 Autoencoder configurations (Feature Augmentation) | R²: 0.9826 (Testing), NRMSE: Significantly improved | [28] |

| Composite Structure Damage Diagnosis | CNN-SVM & CAE-SVM (Replacing SoftMax with SVM classifier) | Accuracy: ~99.9%, outperforming standalone CNN or SVM | [30] |

| Medical Image Compression | Hybrid SWT + Stacked Denoising Autoencoder (SDAE) | PSNR: 50.36 dB, MS-SSIM: 0.9999, Time: 0.065s | [31] |

| CRISPR Off-Target Prediction | Deep learning models (e.g., CRISPR-Net, R-CRISPR) | Evaluation based on AUROC, PRAUC, F1 Score | [13] |

Key Research Reagent Solutions

This table outlines essential computational "reagents" and their functions for building and training HDNNs for DeepCRISPR.

Table 2: Essential Research Reagents for Hybrid Deep Learning Experiments

| Research Reagent / Tool | Function / Purpose in the Experiment |

|---|---|

| Stacked Denoising Autoencoder (SDAE) | Learns robust, hierarchical data representations by reconstructing clean data from corrupted input, improving feature quality [31]. |

| Convolutional Neural Network (CNN) | Automatically extracts spatial and hierarchical features from input data (e.g., encoded genomic sequences) [29]. |

| Support Vector Machine (SVM) Classifier | A powerful alternative to SoftMax for classification, known for creating strong decision boundaries from features, improving generalization [30]. |

| Stationary Wavelet Transform (SWT) | Provides multiresolution analysis of input data, helping the model capture information at different scales and frequencies [31]. |

| Custom Hybrid Loss Function | Combines multiple objectives (e.g., MSE for pixel-level accuracy, SSIM for perceptual quality) to guide the model more effectively [31]. |

Workflow and Architecture Diagrams

Hybrid DCNN-Autoencoder Architecture for DeepCRISPR

This diagram illustrates the data flow and integration points in a generic hybrid DCNN-Autoencoder model designed for a prediction task in DeepCRISPR.

Data Processing and Feature Augmentation Logic

This flowchart details the data preprocessing and feature augmentation pathway, a critical step for enhancing model performance.

Frequently Asked Questions (FAQs)

Q1: My deep learning model for predicting sgRNA on-target activity is overfitting due to a small dataset. What data augmentation strategies can I use? A1: In DeepCRISPR research, two effective data augmentation strategies are:

- Automix with CNLC: This method uses an autoencoder for data augmentation, generating novel, realistic sgRNA sequences. It is complemented by Confidence-based Nearest Label Correction (CNLC), a technique that refines the labels of the augmented data, improving both the quantity and quality of your training set [32].

- Sequence Augmentation: Inspired by image processing, this involves generating new sgRNA sequences through biologically meaningful transformations or perturbations of your original labeled data. This expands your training dataset and helps the model become more robust [2].

Q2: I am working on a low-data drug discovery project. Are there alternatives to standard fine-tuning? A2: Yes, meta-learning is a powerful alternative for few-shot scenarios. The Meta-Mol framework is specifically designed for molecular property prediction with limited data. It uses a Bayesian Model-Agnostic Meta-Learning (MAML) approach, which learns a general model from a variety of related tasks. This model can then be rapidly adapted to a new, low-data task with only a few gradient updates, significantly reducing overfitting risks [33].

Q3: What is a major pitfall when fine-tuning large language models (LLMs) on specialized biomedical data? A3: A key finding is that biomedical fine-tuning does not always guarantee better performance. Studies show that general-purpose LLMs can often outperform their biomedically fine-tuned counterparts on clinical tasks, especially those not purely focused on medical knowledge. Biomedically fine-tuned models have also demonstrated a higher tendency to hallucinate. A more effective strategy than pure fine-tuning can be Retrieval-Augmented Generation (RAG), which dynamically pulls information from external, trusted sources [34].

Q4: How can I address severe class imbalance in my dataset for fine-tuning? A4: For imbalanced data, a two-stage fine-tuning approach with data augmentation can be highly effective. This involves an initial fine-tuning stage on a balanced, augmented dataset, followed by a second fine-tuning stage on the original, imbalanced data. This method helps the model learn general features first before adapting to the real data distribution [35].
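One way to prepare the two training stages is sketched below. Random oversampling stands in here for the data augmentation step, which is an assumption made for brevity; the function name `balance_by_oversampling` and the toy dataset are hypothetical.

```python
import random

def balance_by_oversampling(examples, rng):
    """Resample every class (with replacement) up to the size of the largest class."""
    by_label = {}
    for features, label in examples:
        by_label.setdefault(label, []).append((features, label))
    target = max(len(group) for group in by_label.values())
    balanced = []
    for group in by_label.values():
        balanced.extend(group)
        # Top up minority classes; k is 0 for the largest class.
        balanced.extend(rng.choices(group, k=target - len(group)))
    rng.shuffle(balanced)
    return balanced

rng = random.Random(0)
# Toy data: 90 negatives and 10 positives.
original = [(f"neg_{i}", 0) for i in range(90)] + [(f"pos_{i}", 1) for i in range(10)]
stage_one = balance_by_oversampling(original, rng)  # balanced set for the first stage
stage_two = original                                # real, imbalanced set for the second stage
```

A training loop would fit on `stage_one` first and then continue on `stage_two`, possibly with a smaller learning rate; that last detail is a common practice rather than something stated in the cited work.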

Troubleshooting Guides

Issue: Poor Generalization to New Cell Types or Organisms

Problem: Your model, trained on sgRNA efficacy data from one cell type, performs poorly when applied to a new cell type or organism.

Solution: Integrate multi-modal data and leverage unsupervised pre-training.

- Methodology: The DeepCRISPR framework addresses this by incorporating epigenetic information (e.g., chromatin accessibility) from different cell types into the model. This provides a more unified feature space. Furthermore, it employs deep unsupervised representation learning on billions of unlabeled, genome-wide sgRNA sequences. This pre-trained "parent network" learns a robust foundational representation of sgRNA sequences, which is then fine-tuned on your limited labeled dataset, leading to better generalization [2].

- Experimental Protocol:

- Data Collection: Gather epigenetic data (e.g., from ENCODE) for relevant cell types.

- Unsupervised Pre-training: Train a denoising autoencoder on all possible sgRNA sequences across the genome to learn a general sequence representation.

- Supervised Fine-tuning: Create a hybrid neural network. Use the pre-trained encoder from the unsupervised pre-training step and add a task-specific classifier. Fine-tune this entire network on your smaller, labeled dataset of known sgRNA efficacies.
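The input side of this protocol can be sketched as follows: a guide is one-hot encoded, a per-base epigenetic channel is appended, and masking noise produces the corrupted input a denoising autoencoder would learn to reconstruct. The single accessibility channel, the 20% masking rate, and the helper names `encode` and `corrupt` are assumptions chosen for illustration; the network itself is omitted.

```python
import random

CHANNELS = "ACGT"

def encode(guide, accessibility):
    """One-hot encode a guide and append one per-base epigenetic channel.

    Returns 5 rows (A, C, G, T, accessibility), each of length len(guide).
    """
    if len(guide) != len(accessibility):
        raise ValueError("sequence and epigenetic track must align")
    rows = [[1.0 if base == n else 0.0 for base in guide] for n in CHANNELS]
    rows.append([float(x) for x in accessibility])
    return rows

def corrupt(matrix, drop_prob, rng):
    """Masking noise for a denoising autoencoder: zero whole positions at random."""
    length = len(matrix[0])
    keep = [rng.random() >= drop_prob for _ in range(length)]
    return [[v if k else 0.0 for v, k in zip(row, keep)] for row in matrix]

rng = random.Random(0)
# Hypothetical guide and a made-up binary accessibility track.
clean = encode("GACGTTAGCCATGCAATCGG", accessibility=[1] * 10 + [0] * 10)
noisy = corrupt(clean, drop_prob=0.2, rng=rng)
# Pre-training target: reconstruct `clean` from `noisy`.
print(len(clean), len(clean[0]))  # 5 20
```

During fine-tuning, the same `encode` output would be fed to the pre-trained encoder without corruption.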

Issue: Model Overfitting on Small Labeled Datasets

Problem: Your model achieves high accuracy on the training data but fails on the validation set or new predictions.

Solution: Implement a Bayesian meta-learning framework.

- Methodology: The Meta-Mol framework combats overfitting through several mechanisms. It uses a Bayesian MAML variant to learn a probabilistic distribution of model parameters rather than fixed point estimates, which better captures uncertainty in low-data regimes. It also replaces gradient-based inner-loop updates with a hypernetwork that generates task-specific parameter adjustments, allowing for more complex and adaptive fine-tuning without over-optimizing on a few samples [33].

- Experimental Protocol:

- Meta-Training: Sample a large number of tasks (e.g., predicting different molecular properties) from a related, larger dataset.

- Hypernetwork Training: Train a hypernetwork to output the parameters (mean and variance) for a task-specific predictor based on a small support set from a new task.

- Adaptation: For a new, low-data task, use the hypernetwork to generate a specialized model, which is then evaluated on a query set.
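The episodic sampling behind meta-training can be sketched in a few lines. The task pool, the support and query sizes, and the function name `sample_episode` are hypothetical; the hypernetwork and predictor are left out.

```python
import random

def sample_episode(task_pool, n_support, n_query, rng):
    """Pick one task and split a random draw of its examples into support and query sets."""
    task_name = rng.choice(sorted(task_pool))
    examples = rng.sample(task_pool[task_name], n_support + n_query)
    return task_name, examples[:n_support], examples[n_support:]

rng = random.Random(0)
# Toy pool: 5 property-prediction tasks, each with 50 labeled molecules.
task_pool = {
    f"property_{t}": [(f"mol_{t}_{i}", i % 2) for i in range(50)]
    for t in range(5)
}
task, support, query = sample_episode(task_pool, n_support=5, n_query=15, rng=rng)
# The support set would condition the hypernetwork; the query set scores the adapted model.
```

Because `rng.sample` draws without replacement, no molecule appears in both the support and query sets of an episode.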

Experimental Protocols for Key Cited Works

This protocol, based on CrisprDA with the Automix and CNLC methods [32], enhances sgRNA on-target activity prediction using a dedicated data augmentation pipeline.

- Data Augmentation (Automix): Feed your original sgRNA sequences into a trained autoencoder. The decoder component generates new, synthetic sgRNA sequences that resemble real data.

- Pseudo-Label Correction (CNLC): Assign initial labels to the augmented data. Then, use a confidence-based metric to identify and correct potentially mislabeled examples by comparing them to their nearest neighbors in the feature space. This improves the label quality.

- Model Training (CrisprDA): Train a parallel architecture that integrates Convolutional Neural Networks (CNNs) with attention mechanisms on the combined set of original and augmented, label-corrected data. The CNN extracts local sequence patterns, while the attention mechanism identifies long-range dependencies and important sequence motifs.
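The label-correction idea can be illustrated with a simple nearest-neighbor vote. This is a simplified stand-in and not the published CNLC algorithm: the distance metric, the value of `k`, the agreement threshold, and the function name `correct_labels` are all assumptions.

```python
import math

def correct_labels(augmented, reference, k=3, min_agreement=2 / 3):
    """Relabel augmented points whose label disagrees with confident neighbours.

    augmented, reference: lists of (feature_vector, label) with binary labels.
    A label is replaced only when at least `min_agreement` of the k nearest
    reference points share a single, different label.
    """
    corrected = []
    for features, label in augmented:
        nearest = sorted(reference, key=lambda r: math.dist(features, r[0]))[:k]
        votes = [lab for _, lab in nearest]
        majority = max(set(votes), key=votes.count)
        confidence = votes.count(majority) / len(votes)
        if majority != label and confidence >= min_agreement:
            label = majority
        corrected.append((features, label))
    return corrected

# Toy 2-D feature space with two well-separated classes.
reference = [((0.0, 0.0), 0), ((0.1, 0.2), 0), ((0.2, 0.1), 0),
             ((1.0, 1.0), 1), ((0.9, 1.1), 1), ((1.1, 0.9), 1)]
augmented = [((0.05, 0.1), 1),   # sits among class-0 points, so likely mislabeled
             ((1.0, 0.95), 1)]
print([label for _, label in correct_labels(augmented, reference)])  # [0, 1]
```

In practice the feature vectors would come from a learned embedding of the sgRNA rather than raw coordinates.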

This protocol, based on the Meta-Mol framework [33], enables the prediction of molecular properties with very few labeled examples.

- Molecular Encoding: Represent each molecule as a graph. Use a Graph Isomorphism Network (GIN) as the encoder to learn features from both atomic (node) and bond (edge) information.

- Bayesian Meta-Learning Setup:

- Sampling: In each training episode, dynamically sample multiple tasks from a pool of known molecular property prediction tasks.

- Hypernetwork Adaptation: For each task, the hypernetwork takes the support set (a few labeled molecules) and outputs the parameters for a task-specific classifier.

- Bayesian Inference: The final prediction on the query set is made by a classifier whose weights are sampled from the Gaussian distribution parameterized by the hypernetwork's output.

- Evaluation: The model's performance is assessed on its ability to make accurate predictions on novel tasks after seeing only a few examples.
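The Bayesian inference step can be sketched as Monte Carlo averaging over sampled classifier weights. The logistic classifier, the three-dimensional embedding, and every numeric value below are placeholders; in Meta-Mol the mean and standard deviation would come from the hypernetwork and the features from the GIN encoder.

```python
import math
import random

def predict_with_sampled_weights(features, mean, std, bias, n_samples, rng):
    """Average sigmoid outputs over weight vectors drawn from N(mean, std^2)."""
    probs = []
    for _ in range(n_samples):
        weights = [rng.gauss(m, s) for m, s in zip(mean, std)]
        logit = sum(w * x for w, x in zip(weights, features)) + bias
        probs.append(1.0 / (1.0 + math.exp(-logit)))
    avg = sum(probs) / n_samples
    # Standard deviation of the sampled predictions, a rough uncertainty signal.
    spread = math.sqrt(sum((p - avg) ** 2 for p in probs) / n_samples)
    return avg, spread

rng = random.Random(0)
embedding = [0.4, -1.2, 0.7]          # hypothetical molecule embedding
mean = [0.8, -0.5, 0.3]               # hypothetical hypernetwork output (means)
std = [0.1, 0.1, 0.1]                 # hypothetical hypernetwork output (std devs)
prob, uncertainty = predict_with_sampled_weights(
    embedding, mean, std, bias=0.0, n_samples=200, rng=rng)
```

A larger `uncertainty` value flags query molecules where the sampled classifiers disagree, which is the practical benefit of keeping a distribution over weights instead of a point estimate.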

Workflow Diagram: DeepCRISPR with Augmentation & Fine-Tuning

The following diagram illustrates the integrated workflow of the DeepCRISPR platform, showcasing how data augmentation and fine-tuning are applied to enhance sgRNA design.

Research Reagent Solutions

The following table details key computational tools and resources essential for implementing data augmentation and fine-tuning in DeepCRISPR and related biomedical ML research.

| Research Reagent / Tool | Function in Research |

|---|---|

| CrisprDA [32] | A parallel CNN-Attention architecture designed for sgRNA on-target activity prediction, intended to be used with the Automix and CNLC data augmentation methods. |

| Autoencoder (in Automix) [32] | A type of neural network used for unsupervised learning; core to the Automix method for generating novel, synthetic sgRNA sequences to expand training datasets. |

| Meta-Mol Framework [33] | A few-shot learning framework based on Bayesian MAML and a graph isomorphism network, used for rapid adaptation of molecular property prediction models to new tasks with limited data. |

| Hypernetwork [33] | A network that generates the weights for another network. In Meta-Mol, it produces task-specific parameters for the predictor, enabling flexible adaptation without overfitting. |

| Graph Isomorphism Network (GIN) [33] | A type of graph neural network used in Meta-Mol to encode molecular structural information from atoms and bonds into a meaningful numerical representation. |

| Convolutional Neural Network (CNN) [32] [3] [2] | A deep learning architecture widely used in sgRNA design tools (like DeepCRISPR and CrisprDA) to detect local sequence motifs and patterns critical for activity. |

| DeepCRISPR Platform [2] | A comprehensive computational platform that unifies sgRNA on-target and off-target prediction using a hybrid deep neural network, incorporating unsupervised pre-training and data augmentation. |

What is DeepCRISPR and what are its main advantages? DeepCRISPR is a comprehensive computational platform that unifies sgRNA on-target knockout efficacy and off-target profile prediction into a single deep learning framework. Its key advantages include the use of unsupervised pre-training on billions of unlabeled sgRNA sequences, automatic integration of epigenetic features from different cell types, and the ability to simultaneously optimize for both high sensitivity and specificity in sgRNA design [2].

What specific CRISPR applications is DeepCRISPR best suited for? DeepCRISPR is particularly valuable for genome-wide knockout screens, coding and non-coding region targeting, and experiments requiring high precision across different cell types. The platform has demonstrated good generalization to new cell types not included in its training data, making it suitable for novel research applications [2] [14].

What are the computational requirements for running DeepCRISPR? The platform utilizes a hybrid deep neural network architecture with GPU acceleration for processing. Specific hardware requirements are not detailed in the cited source; the implementation uses Spark-based large-scale data processing and is available through both a web interface (http://www.deepcrispr.net) and a command-line implementation [2].

Troubleshooting Common DeepCRISPR Implementation Issues

Problem: Low predicted on-target efficiency for all designed sgRNAs

- Potential Cause: Epigenetic barriers in your target cell type

- Solution: DeepCRISPR automatically integrates epigenetic features like histone modifications and chromatin accessibility. Verify that your input data includes cell-type specific epigenetic information, as this significantly affects prediction accuracy in different genomic contexts [2] [14].

Problem: Discrepancy between predicted and experimental editing efficiency

- Potential Cause: Cell-type specific factors not fully captured in training data

- Solution:

- Validate predictions using 3-4 sgRNAs per gene as recommended by experimental optimization benchmarks [22] [5]

- Perform pilot experiments in your specific cell line, as editing efficiency can vary significantly across cell types

- Consider using chemically synthesized, modified guide RNAs which have demonstrated improved editing efficiency across multiple cell types [5]

Problem: Installation and dependency issues with local implementation

- Potential Cause: Complex deep learning library dependencies

- Solution:

- Use the provided Docker containers or conda environments

- Ensure compatible GPU drivers are installed for CUDA acceleration

- Consider starting with the web interface at http://www.deepcrispr.net before attempting local installation [2]

DeepCRISPR Workflow and Experimental Design

DeepCRISPR sgRNA Design Pipeline

Quantitative Performance Metrics

Table 1: DeepCRISPR Performance Comparison with Traditional Methods

| Prediction Task | Traditional Tools | DeepCRISPR | Improvement |

|---|---|---|---|

| On-target Efficacy Prediction | Moderate accuracy (varies by tool) | Superior performance | Surpasses state-of-the-art tools [2] |

| Off-target Profile Prediction | Hypothesis-based scoring | Whole-genome prediction | More comprehensive coverage [2] |

| Cross-cell-type Generalization | Limited | Good generalization | Validated on multiple cell types [2] |

| Feature Engineering | Manual curation | Automatic learning | Data-driven feature identification [2] |

Table 2: Essential Research Reagents for DeepCRISPR Experimental Validation

| Reagent/Solution | Function | Optimization Tips |

|---|---|---|

| Chemically Modified sgRNAs | Enhanced stability and editing efficiency | Include 2'-O-methyl modifications at terminal residues [5] |

| Ribonucleoproteins (RNPs) | Complex of Cas9 protein and guide RNA | Reduces off-target effects vs. plasmid transfection [5] |

| Positive Control Guides | Benchmark editing efficiency | Use species-specific controls; Synthego offers a human controls kit [22] |

| Multiple sgRNAs per Gene (3-5) | Control for variable guide efficiency | Test different guides as performance varies significantly [21] [5] |

| Stable Cas9 Cell Lines | Consistent nuclease expression | Improves reproducibility over transient transfection [21] |

Step-by-Step Experimental Protocol for DeepCRISPR Validation

Phase 1: sgRNA Design and Selection

- Input your target genomic sequence into the DeepCRISPR web interface or local installation

- Specify cell type to enable epigenetic context integration

- Generate predictions for both on-target efficacy and off-target profiles

- Select top 3-5 sgRNAs per gene based on DeepCRISPR scores for experimental testing [5]
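The selection step can be sketched as a filter-then-rank pass over the predictions. The dictionary keys, the off-target cutoff, the gene names, and all score values below are hypothetical and do not reflect the actual DeepCRISPR output format.

```python
def select_guides(candidates, per_gene=4, max_off_target=0.2):
    """Keep up to `per_gene` guides per gene, ranked by on-target score.

    candidates: dicts with hypothetical keys 'gene', 'sequence',
    'on_target' (higher is better) and 'off_target' (lower is better).
    """
    selected = {}
    ranked = sorted(candidates, key=lambda c: c["on_target"], reverse=True)
    for cand in ranked:
        if cand["off_target"] > max_off_target:
            continue  # drop guides with too much predicted off-target risk
        picks = selected.setdefault(cand["gene"], [])
        if len(picks) < per_gene:
            picks.append(cand["sequence"])
    return selected

# Made-up predictions; "g1".."g4" stand in for real guide sequences.
candidates = [
    {"gene": "TP53", "sequence": "g1", "on_target": 0.91, "off_target": 0.05},
    {"gene": "TP53", "sequence": "g2", "on_target": 0.85, "off_target": 0.40},
    {"gene": "TP53", "sequence": "g3", "on_target": 0.80, "off_target": 0.10},
    {"gene": "KRAS", "sequence": "g4", "on_target": 0.70, "off_target": 0.02},
]
shortlist = select_guides(candidates, per_gene=2)
print(shortlist)  # {'TP53': ['g1', 'g3'], 'KRAS': ['g4']}
```

Filtering on off-target risk before ranking means a gene can end up with fewer guides than requested, which is a useful signal that the target region may need a relaxed cutoff or a different design window.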

Phase 2: Experimental Optimization and Validation

- Delivery Optimization: Test multiple transfection methods (lipofection, electroporation) as efficiency varies by cell type [21] [36]

- Dosage Titration: Optimize guide RNA concentration to balance editing efficiency and cellular toxicity [5]

- Efficiency Validation:

- Extract genomic DNA 48-72 hours post-transfection

- Amplify target region and sequence using NGS or Sanger sequencing