DBTL Cycle Strategies in 2025: A Comparative Analysis for Biomedical Research and Drug Development

Abstract

This article provides a comprehensive comparative analysis of Design-Build-Test-Learn (DBTL) cycle strategies, a foundational framework in synthetic biology and therapeutic development. Tailored for researchers, scientists, and drug development professionals, it explores the core principles and evolution of the DBTL cycle, examines cutting-edge methodological applications from high-throughput biofoundries to knowledge-driven approaches, and details advanced troubleshooting and optimization techniques. The analysis further validates strategies through real-world case studies and cross-method comparisons, offering actionable insights to accelerate R&D pipelines, enhance predictive modeling, and translate discoveries into clinical applications.

The DBTL Framework: Core Principles and Evolutionary Shaping of Modern Biology

Defining the Design-Build-Test-Learn (DBTL) Cycle in Synthetic Biology

The Design-Build-Test-Learn (DBTL) cycle is a fundamental engineering framework in synthetic biology that enables the systematic and iterative development of biological systems [1]. This cyclical process allows researchers to engineer organisms to perform specific functions, such as producing biofuels, pharmaceuticals, or other valuable compounds [1]. The power of the DBTL approach lies in its structured methodology for rational design and continuous refinement, which is particularly valuable given that the impact of introducing foreign DNA into a cell can be difficult to predict, often requiring testing of multiple permutations to achieve desired outcomes [1].

As synthetic biology has matured over the past two decades, the DBTL cycle has become its central development pipeline [2]. Recent technological advancements have dramatically accelerated the "Build" and "Test" stages through automation and high-throughput technologies, while machine learning (ML) has emerged as a transformative tool for enhancing the "Learn" phase and potentially reordering the entire cycle [3] [2]. This comparative analysis examines the core components of the DBTL framework, explores evolving methodologies, and evaluates their performance across different synthetic biology applications.

Core Components of the DBTL Cycle

Design Phase

The Design phase initiates the DBTL cycle by defining objectives for desired biological functions and creating blueprint specifications for genetic constructs [3]. This stage relies on domain knowledge, expertise, and computational approaches for modeling biological systems [3]. Key design activities include protein design (selecting natural enzymes or designing novel proteins), genetic design (translating amino acid sequences into coding sequences, designing ribosome binding sites, and planning operon architecture), and assembly design (breaking down plasmids into fragments for construct assembly) [4].
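As a minimal sketch of the genetic-design step described above (translating an amino acid sequence into a coding sequence), the following Python snippet back-translates a peptide using one fixed codon per residue. The function names `back_translate` and `translate`, and the particular synonymous codon picked for each residue, are illustrative assumptions and not the method of any tool cited in this article; production design tools also account for codon usage, secondary structure, and assembly constraints.

```python
# One codon per amino acid, taken from the standard genetic code.
# Which synonymous codon is used for each residue is an arbitrary assumption.
PREFERRED_CODON = {
    "A": "GCG", "R": "CGT", "N": "AAC", "D": "GAT", "C": "TGC",
    "Q": "CAG", "E": "GAA", "G": "GGC", "H": "CAT", "I": "ATT",
    "L": "CTG", "K": "AAA", "M": "ATG", "F": "TTT", "P": "CCG",
    "S": "AGC", "T": "ACC", "W": "TGG", "Y": "TAT", "V": "GTG",
}
STOP_CODON = "TAA"
CODON_TO_RESIDUE = {codon: aa for aa, codon in PREFERRED_CODON.items()}


def back_translate(protein: str, add_stop: bool = True) -> str:
    """Return a DNA coding sequence for `protein` using PREFERRED_CODON."""
    codons = []
    for residue in protein.upper():
        if residue not in PREFERRED_CODON:
            raise ValueError(f"Unknown amino acid: {residue!r}")
        codons.append(PREFERRED_CODON[residue])
    if add_stop:
        codons.append(STOP_CODON)
    return "".join(codons)


def translate(cds: str) -> str:
    """Translate a CDS built by back_translate, stopping at the stop codon."""
    residues = []
    for i in range(0, len(cds) - len(cds) % 3, 3):
        codon = cds[i:i + 3]
        if codon == STOP_CODON:
            break
        residues.append(CODON_TO_RESIDUE[codon])
    return "".join(residues)
```

A round trip such as `translate(back_translate("MSTQ"))` returns the original peptide, which is a quick sanity check before a sequence is handed to assembly design.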

Advanced software tools have become indispensable for modern design workflows. Pathway design tools like RetroPath [5] and enzyme selection platforms such as Selenzyme [5] enable in silico selection of candidate enzymes for biosynthetic pathways. For DNA part design, tools like PartsGenie facilitate the optimization of ribosome-binding sites and enzyme coding regions [5]. These tools allow researchers to create combinatorial libraries of pathway designs that can be statistically reduced using design of experiments (DoE) approaches to manageable numbers of constructs for laboratory testing [5].

Build Phase

The Build phase translates in silico designs into physical biological constructs through DNA synthesis, assembly, and introduction into host organisms [3]. This stage involves synthesizing DNA or isolating and purifying genomic DNA, which is then assembled into larger constructs using techniques such as Gibson assembly, Golden Gate assembly, or ligase cycling reaction (LCR) [5] [6]. The assembled DNA is subsequently cloned into vectors and introduced into host organisms (e.g., bacteria, yeast) through transformation or transfection [6].

Automation has revolutionized the Build phase, with automated liquid handlers (from companies like Tecan, Beckman Coulter, and Hamilton Robotics) enabling high-precision pipetting for PCR setup, DNA normalization, and plasmid preparation [4]. Integration with DNA synthesis providers (Twist Bioscience, IDT, GenScript) and sophisticated software platforms (TeselaGen) streamlines the entire construction workflow, managing protocols and tracking samples across different laboratory equipment [4]. This automation significantly reduces the time, labor, and cost of generating multiple constructs while increasing throughput [1].

Test Phase

In the Test phase, researchers experimentally measure the performance of engineered biological constructs through a battery of assays [3] [6]. This phase provides crucial data on the system's function, performance, and robustness under various conditions [6]. Testing methodologies range from in vitro characterization in cell-free systems to in vivo analysis in living cells [3] [7].

High-throughput screening (HTS) technologies are central to modern testing workflows, utilizing automated liquid handling systems (Beckman Coulter Biomek, Tecan Freedom EVO) and plate readers (PerkinElmer EnVision, BioTek Synergy HTX) for rapid analysis [4]. Omics technologies, including next-generation sequencing (NGS) platforms (Illumina NovaSeq, Thermo Fisher Ion Torrent) and mass spectrometry systems (Thermo Fisher Orbitrap), enable comprehensive genotypic and phenotypic characterization [4]. The integration of cell-free expression systems has emerged as a particularly powerful testing platform, allowing rapid protein synthesis without time-intensive cloning steps and enabling high-throughput sequence-to-function mapping of protein variants [3].

Learn Phase

The Learn phase involves analyzing data collected during testing to extract insights and inform subsequent design iterations [3]. This stage enables researchers to identify relationships between design parameters and observed outcomes, facilitating rational refinements to the biological system [5]. Traditional statistical analysis methods have been increasingly supplemented by machine learning (ML) algorithms that can uncover complex patterns in large datasets beyond human analytical capabilities [4].

Machine learning approaches range from supervised learning for predicting phenotype from genotype to unsupervised methods for identifying key engineering targets [8] [2]. Explainable ML advances are particularly valuable as they provide both predictions and reasons for proposed designs, deepening biological understanding and accelerating the learning process [2]. The Learn phase ultimately aims to transform experimental results into actionable knowledge that guides the next DBTL cycle, progressively optimizing system performance until desired specifications are achieved [1] [5].
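The iterative logic of the four phases can be sketched as a simple loop. This is a toy illustration under stated assumptions: the design variable is a single number, the "assay" is a hypothetical function standing in for a wet-lab measurement, and every function name (`propose_designs`, `build_constructs`, `run_assays`, `learn_best`, `run_dbtl`) is invented for this example.

```python
def propose_designs(center, step):
    """Design: propose candidates around the current best design."""
    return [center - step, center, center + step]


def build_constructs(designs):
    """Build: placeholder for physical construction of each design."""
    return list(designs)


def run_assays(constructs, assay):
    """Test: measure every construct with the supplied assay function."""
    return [(c, assay(c)) for c in constructs]


def learn_best(results):
    """Learn: pick the best-performing construct from the measurements."""
    return max(results, key=lambda r: r[1])


def run_dbtl(assay, start, target, step=1.0, max_cycles=20):
    """Iterate DBTL cycles until the target performance is reached."""
    best = (start, assay(start))
    for cycle in range(1, max_cycles + 1):
        results = run_assays(build_constructs(propose_designs(best[0], step)), assay)
        candidate = learn_best(results)
        if candidate[1] > best[1]:
            best = candidate
        else:
            step /= 2  # no improvement: narrow the design space
        if best[1] >= target:
            return best, cycle
    return best, max_cycles


def toy_assay(x):
    """Hypothetical response surface with its optimum at x = 3."""
    return 100 - (x - 3) ** 2


best, cycles_used = run_dbtl(toy_assay, start=0, target=99.9)
```

With this toy response surface the loop reaches the optimum in three cycles; real cycles differ mainly in that each phase is expensive, which is why the Learn step's ability to narrow the design space matters.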

Comparative Analysis of DBTL Implementations

Case Studies and Performance Metrics

The effectiveness of DBTL cycle implementations varies significantly based on the specific strategies, technologies, and biological systems involved. The table below compares three documented applications of the DBTL framework across different synthetic biology projects.

Table 1: Comparative Performance of DBTL Cycle Implementations

| Application | DBTL Strategy | Key Technologies | Performance Results | Cycle Details |
|---|---|---|---|---|
| Pinocembrin Production in E. coli [5] | Automated DBTL pipeline with statistical design | Ligase cycling reaction, DoE, UPLC-MS/MS | 500-fold improvement; final titer of 88 mg/L [5] | Initial library: 16 constructs; 1 follow-up cycle [5] |
| Dopamine Production in E. coli [7] | Knowledge-driven DBTL with in vitro prototyping | Cell-free lysate systems, RBS engineering | 69.0 mg/L dopamine; 2.6-6.6x improvement over state-of-the-art [7] | In vitro testing prior to in vivo implementation [7] |
| Combinatorial Pathway Optimization [8] | ML-guided DBTL with kinetic models | Gradient boosting, random forest, kinetic modeling | Effective optimization in low-data regime; robust to experimental noise [8] | Simulation framework for benchmarking ML methods [8] |

Experimental Protocols in DBTL Applications

Automated Pathway Optimization for Flavonoid Production

The automated DBTL pipeline for pinocembrin production in E. coli employed a highly systematic experimental protocol [5]. The Design stage utilized RetroPath for pathway design and Selenzyme for enzyme selection, followed by PartsGenie for designing ribosome-binding sites and coding sequences [5]. Researchers created a combinatorial library of 2,592 possible configurations, which was reduced to 16 representative constructs using design of experiments (DoE) based on orthogonal arrays combined with a Latin square for positional gene arrangement [5].
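To make the size of that design space concrete, the sketch below enumerates one factorization that reproduces the 2,592 figure and builds a cyclic Latin square for gene positions. The factor levels (four vector levels, three promoter options at three tunable sites, and all orderings of four genes) are assumptions chosen for illustration because the text above does not list them, and the cyclic construction is only one simple way to obtain a Latin square, not necessarily the one used in [5].

```python
from itertools import permutations, product

VECTOR_LEVELS = ["v1", "v2", "v3", "v4"]        # assumed: 4 vector/copy-number levels
PROMOTER_OPTIONS = ["strong", "weak", "none"]   # assumed: 3 options per tunable site
TUNABLE_PROMOTER_SITES = 3                      # assumed number of tunable sites
GENES = ["geneA", "geneB", "geneC", "geneD"]    # placeholder gene names


def full_design_space():
    """Yield every (vector, promoters, gene order) combination."""
    promoter_sets = product(PROMOTER_OPTIONS, repeat=TUNABLE_PROMOTER_SITES)
    yield from product(VECTOR_LEVELS, promoter_sets, permutations(GENES))


def cyclic_latin_square(items):
    """Each item appears exactly once in every row and every column."""
    return [items[i:] + items[:i] for i in range(len(items))]


design_space_size = sum(1 for _ in full_design_space())
gene_orders = cyclic_latin_square(GENES)
compression = design_space_size / 16  # 16 constructs were actually built [5]
```

Under these assumptions the full factorial has 4 x 27 x 24 = 2,592 members, so building 16 constructs samples the space at a ratio of 162 to 1, while the Latin square keeps each gene represented at each position.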

In the Build phase, assembly was performed using ligase cycling reaction (LCR) on robotics platforms, followed by transformation in E. coli DH5α [5]. Constructs were quality-checked through automated plasmid purification, restriction digest, and analysis by capillary electrophoresis, with sequence verification [5]. For the Test phase, constructs were introduced into production chassis and cultured using automated 96-deepwell plate protocols [5]. Target product and intermediate detection employed automated extraction followed by quantitative screening with ultra-performance liquid chromatography coupled to tandem mass spectrometry (UPLC-MS/MS) [5].

The Learn phase applied statistical analysis to identify factors influencing production, revealing that vector copy number had the strongest significant effect on pinocembrin levels, followed by chalcone isomerase (CHI) promoter strength [5]. These insights directly informed the design specifications for the subsequent DBTL cycle, which focused on a narrowed region of the design space [5].
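A factor ranking of this kind can be sketched with a main-effects calculation: for each factor, compare the mean response at its high and low levels. The numbers below are made up for illustration and are not data from [5]; the study itself applied a statistical significance analysis, for which this difference-of-means calculation is only a simplified stand-in.

```python
from statistics import mean

# Made-up two-level screening results (NOT data from the cited study).
RUNS = [
    {"copy_number": "low", "chi_promoter": "weak", "titer": 1.0},
    {"copy_number": "low", "chi_promoter": "strong", "titer": 2.0},
    {"copy_number": "high", "chi_promoter": "weak", "titer": 5.0},
    {"copy_number": "high", "chi_promoter": "strong", "titer": 8.0},
]


def main_effect(runs, factor, low, high, response="titer"):
    """Mean response at the high level minus mean response at the low level."""
    high_mean = mean(r[response] for r in runs if r[factor] == high)
    low_mean = mean(r[response] for r in runs if r[factor] == low)
    return high_mean - low_mean


effects = {
    "copy_number": main_effect(RUNS, "copy_number", "low", "high"),
    "chi_promoter": main_effect(RUNS, "chi_promoter", "weak", "strong"),
}
ranked_factors = sorted(effects, key=lambda f: abs(effects[f]), reverse=True)
```

Ranking factors by absolute effect size is what lets the next cycle fix the dominant factor at its better level and spend the construct budget on the remaining ones.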

Knowledge-Driven Dopamine Production

The dopamine production study implemented a "knowledge-driven" DBTL approach that incorporated upstream in vitro investigation before full cycling [7]. The experimental protocol began with in vitro tests using crude cell lysate systems to assess enzyme expression levels in the dopamine production host [7]. This pre-DBTL investigation provided mechanistic understanding of pathway bottlenecks and informed rational design decisions.

For the Build and Test phases, researchers translated in vitro findings to an in vivo environment through high-throughput ribosome binding site (RBS) engineering in E. coli [7]. The RBS sequences were modulated without interfering with secondary structures, focusing on the Shine-Dalgarno sequence [7]. Dopamine production was measured and optimized through iterative DBTL cycles, ultimately developing a production strain capable of producing 69.0 ± 1.2 mg/L dopamine [7].

The Learn phase in this approach combined traditional statistical evaluation with mechanistic insights from the initial in vitro investigations, enabling more targeted engineering strategies [7]. This knowledge-driven methodology demonstrated the impact of GC content in the Shine-Dalgarno sequence on RBS strength and overall pathway performance [7].
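The GC content referred to here is straightforward to compute for a candidate Shine-Dalgarno sequence. In the sketch below, `AGGAGG` is the commonly cited E. coli consensus core, while the two variants and all names are hypothetical examples; the code only ranks sequences by GC fraction and makes no claim about how that fraction maps to RBS strength.

```python
def gc_content(seq: str) -> float:
    """Fraction of G or C bases in a DNA sequence."""
    seq = seq.upper()
    if not seq:
        raise ValueError("Sequence must not be empty")
    return sum(base in "GC" for base in seq) / len(seq)


# Hypothetical Shine-Dalgarno variants for illustration only.
SD_VARIANTS = {
    "consensus": "AGGAGG",
    "variant_1": "AGGAGA",
    "variant_2": "AAGAGA",
}

gc_by_variant = {name: gc_content(seq) for name, seq in SD_VARIANTS.items()}
ranked_by_gc = sorted(gc_by_variant, key=gc_by_variant.get, reverse=True)
```

Pairing such a descriptor with measured production values is one simple way to look for the kind of sequence-to-performance relationship the study reports.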

Emerging Paradigms and Modified DBTL Frameworks

The LDBT Paradigm: Learning Before Design

Recent advances in machine learning are prompting a fundamental rethinking of the traditional DBTL cycle sequence. The proposed LDBT paradigm positions "Learning" before "Design" by leveraging powerful pre-trained models that can make zero-shot predictions without additional training [3]. This approach utilizes protein language models (ESM, ProGen) trained on evolutionary relationships between millions of protein sequences, and structure-based models (MutCompute, ProteinMPNN) trained on experimentally determined structures [3].

In LDBT, machine learning provides the initial knowledge base that directly informs the design phase, potentially enabling functional solutions in a single cycle [3]. This paradigm shift is made possible by the massive biological datasets that have accumulated, which serve as training material for foundational models capable of predicting how sequence changes affect protein folding, stability, and activity [3]. When combined with rapid cell-free testing platforms for validation, LDBT represents a move toward a "Design-Build-Work" model that relies more heavily on first principles, similar to established engineering disciplines [3].

Automation and Machine Learning Integration

The integration of automation and machine learning throughout the DBTL cycle is transforming synthetic biology workflows. Biofoundries with high-throughput automated assembly and screening capabilities can now generate massive datasets that serve as training material for ML algorithms [2] [5]. These algorithms, in turn, can propose more effective designs for subsequent iterations, creating a virtuous cycle of improvement [4].

Software platforms now offer end-to-end support for automated DBTL cycles, with cloud and on-premises deployment options addressing different security, regulatory, and collaboration needs [4]. In the Learn phase, these platforms employ predictive models to forecast biological phenotypes using advanced embeddings representing DNA, proteins, and chemical compounds [4]. This tight integration of automation and ML is accelerating the entire DBTL process while improving design precision and success rates.

Table 2: Essential Research Reagent Solutions for DBTL Implementation

| Category | Specific Tools/Reagents | Function in DBTL Cycle |
|---|---|---|
| DNA Design Software | Geneious, Benchling, SnapGene [6] | In silico design of DNA sequences and genetic constructs |
| Biological Databases | NCBI, UniProt [6] | Access to sequence information for informed design |
| DNA Assembly Methods | Gibson Assembly, Golden Gate Assembly, LCR [5] [4] [6] | Physical construction of designed DNA constructs |
| Host Organisms | E. coli, yeast, mammalian cells [3] [5] [6] | Chassis for expressing engineered genetic constructs |
| Analytical Instruments | Plate readers, UPLC-MS/MS, NGS platforms [5] [4] [6] | Quantitative measurement of system performance and characteristics |
| Cell-Free Systems | Crude cell lysates, purified component systems [3] [7] | Rapid testing of designs without in vivo constraints |

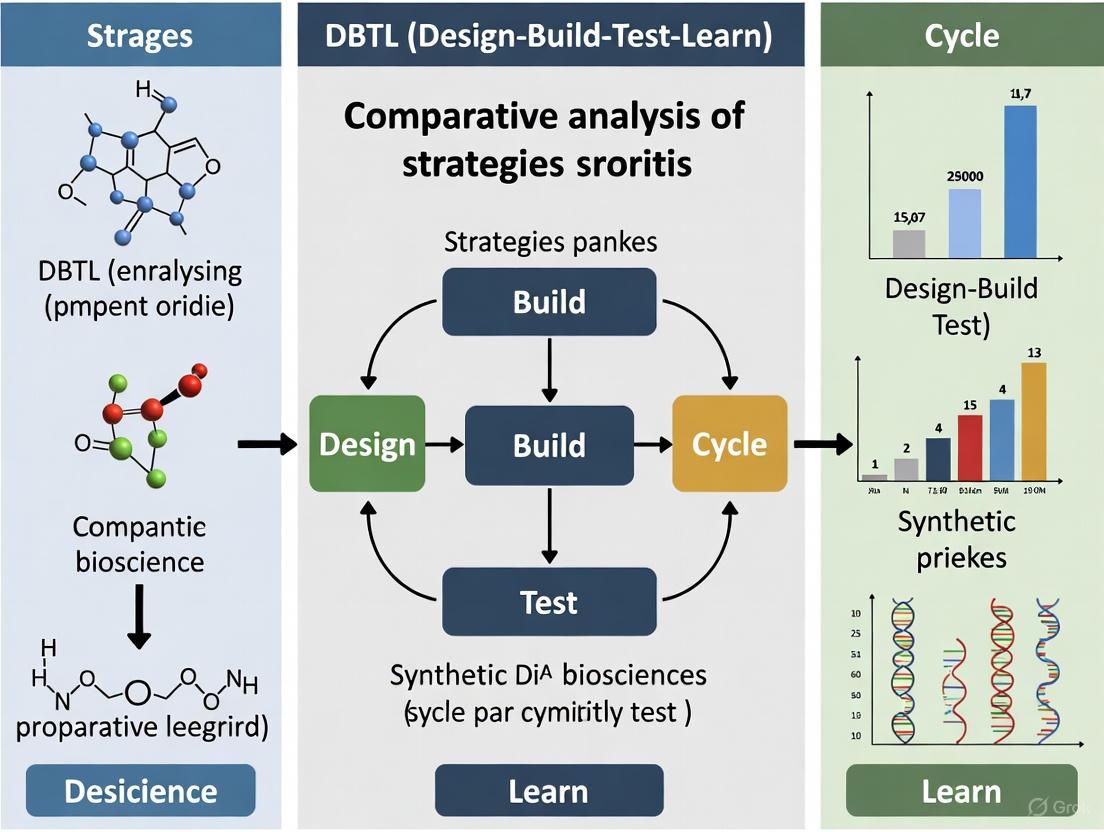

DBTL Workflow Visualization

The following diagram illustrates the core DBTL cycle and its key activities in synthetic biology engineering:

DBTL Cycle Core Components and Activities

The Design-Build-Test-Learn cycle represents a powerful framework for systematic engineering of biological systems, enabling iterative refinement of genetic constructs toward desired functions. As evidenced by the comparative analysis, implementation strategies range from knowledge-driven approaches with upstream in vitro testing to fully automated biofoundry pipelines with integrated machine learning. The emerging LDBT paradigm, which positions learning before design through zero-shot predictive models, highlights the evolving nature of this foundational framework.

While technical advancements have dramatically accelerated the Build and Test phases, the Learn phase remains challenging due to biological complexity. Machine learning shows significant promise for extracting meaningful patterns from large datasets and informing redesign strategies. Future developments in explainable AI, standardized data generation, and integrated automation platforms will further enhance DBTL efficiency, potentially enabling high-precision biological design with predictable outcomes across diverse applications in biomanufacturing, therapeutics, and sustainable chemistry.

The Role of Biofoundries in Automating and Standardizing the DBTL Cycle

In the contemporary landscape of synthetic biology and biomanufacturing, biofoundries represent a transformative approach to biological research and development. These integrated, automated facilities are designed to accelerate the engineering of biological systems through the systematic application of the Design-Build-Test-Learn (DBTL) cycle [9] [10]. The core premise of a biofoundry is the strategic integration of automation, robotic liquid handling systems, and bioinformatics to streamline and expedite the entire synthetic biology workflow [9]. This high-throughput capability not only accelerates the discovery pace but also significantly expands the catalogue of bio-based products that can be produced, positioning biofoundries as critical infrastructure in the transition toward a more sustainable bioeconomy [9] [11].

The DBTL cycle forms the operational backbone of every biofoundry, representing an iterative engineering framework that transforms biological design into functional systems [9] [12]. In the Design phase, computational tools are employed to create genetic sequences, circuits, or metabolic pathways. The Build phase utilizes automated synthesis and assembly techniques to physically construct these biological components. The Test phase involves high-throughput screening and characterization of the constructed systems, while the Learn phase leverages data analysis and machine learning to extract insights that inform the next design iteration [9] [7]. The power of this framework lies in its iterative nature, which allows for continuous refinement and optimization of biological systems with minimal human intervention [9].

Comparative Analysis of Biofoundry Implementations

Standardization Frameworks for Biofoundry Operations

The lack of standardization between biofoundries has historically limited the scalability and efficiency of synthetic biology research. In response, recent initiatives have proposed abstraction hierarchies to organize biofoundry activities into interoperable levels [12]. This framework structures operations into four distinct layers: Project (Level 0), Service/Capability (Level 1), Workflow (Level 2), and Unit Operation (Level 3) [12]. This hierarchical approach enables more modular, flexible, and automated experimental workflows while improving communication between researchers and systems, supporting reproducibility, and facilitating better integration of software tools and artificial intelligence [12].
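One way to picture the four levels is as nested data structures, where a project contains services, a service contains workflows, and a workflow contains unit operations. The class names mirror the levels named above, but the fields, the helper method, and the example project are hypothetical and are not taken from [12].

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class UnitOperation:  # Level 3
    name: str


@dataclass
class Workflow:  # Level 2
    name: str
    unit_operations: List[UnitOperation] = field(default_factory=list)


@dataclass
class Service:  # Level 1
    name: str
    workflows: List[Workflow] = field(default_factory=list)


@dataclass
class Project:  # Level 0
    name: str
    services: List[Service] = field(default_factory=list)

    def unit_operation_names(self) -> List[str]:
        """Flatten the hierarchy into an ordered list of unit operations."""
        return [
            op.name
            for service in self.services
            for workflow in service.workflows
            for op in workflow.unit_operations
        ]


# Hypothetical example project.
project = Project(
    name="enzyme engineering",
    services=[
        Service(
            name="library construction",
            workflows=[
                Workflow("DNA assembly", [UnitOperation("liquid transfer"),
                                          UnitOperation("thermocycling")]),
                Workflow("transformation", [UnitOperation("plating")]),
            ],
        )
    ],
)
```

Because each level only references the one below it, a workflow defined once can be reused by several services, which is the modularity the hierarchy is meant to provide.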

Table 1: Biofoundry Service Tiers in Relation to the DBTL Cycle

| Tier | Description | Examples |
|---|---|---|
| Tier 1 | Supports use of individual pieces of automated equipment | Access to liquid handling robots for training users |
| Tier 2 | Focuses on an individual stage of the DBTL cycle | Protein sequence library designed by ProteinMPNN |
| Tier 3 | Combines two or more DBTL stages | AI model training followed by protein design; protein library construction with sequence verification |
| Tier 4 | Supports the full DBTL cycle | "Greenhouse gas bioconversion enzyme discovery and engineering"; "Plastic degradation microorganism engineering" |

Biofoundry services can be grouped into tiers according to their scope and relationship to the DBTL cycle [12]. The tiers range from access to specialist equipment (Tier 1) to comprehensive support packages covering project conception through commercialization and scale-up (Tier 4) [12]. The most heavily used services belong to Tier 3, which combines two or more DBTL stages, such as AI model training followed by protein design [12].
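The tier definitions reduce to a simple rule on how many DBTL stages a service covers. The sketch below encodes that rule; the function name `service_tier` and the convention that an empty set of stages means equipment access only are assumptions made for this example.

```python
DBTL_STAGES = ("design", "build", "test", "learn")


def service_tier(stages_covered) -> int:
    """Map the set of DBTL stages a service covers to a tier number."""
    stages = {s.lower() for s in stages_covered}
    unknown = stages - set(DBTL_STAGES)
    if unknown:
        raise ValueError(f"Unknown stage(s): {sorted(unknown)}")
    if len(stages) == len(DBTL_STAGES):
        return 4  # full DBTL cycle
    if len(stages) >= 2:
        return 3  # two or more stages combined
    if len(stages) == 1:
        return 2  # a single stage
    return 1      # equipment access only, no DBTL stage covered
```

For example, a service that trains a model and then designs proteins covers "learn" and "design" and is therefore classified as Tier 3.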

Architectural Configurations and Automation Degrees

Biofoundries employ different architectural configurations based on their specific applications and throughput requirements. These configurations are primarily defined by their degree of laboratory automation, which ranges from single-task systems to highly flexible, parallelized platforms [10]. The modular hardware architectures based on standardized robotic arms (RAMs) support various configurations from single-robot-single-workflow (SR-SW) to more complex multi-workcell (MCW) systems that enable diverse experimental workflows to run in parallel [10].

Table 2: Biofoundry Architecture and Automation Levels

| Architecture Type | Description | Throughput Capacity | Typical Applications |
|---|---|---|---|
| SR-SW (Single-Robot, Single-Workflow) | Single-task systems with limited flexibility | Low to moderate | Specialized prototyping tasks |
| MR-SW (Multi-Robot, Single-Workflow) | Multiple robots dedicated to a single workflow | Moderate to high | Focused strain engineering projects |
| MR-MW (Multi-Robot, Multi-Workflow) | Multiple robots supporting different workflows | High | Diverse synthetic biology applications |
| MCW (Multi-Workcell) | Highly flexible, parallelized platforms | Very high | Large-scale biomanufacturing pipeline development |

The selection of an appropriate automation configuration involves balancing initial investment costs against operational flexibility and throughput requirements. Systems with higher levels of integration and flexibility generally require greater capital investment but offer superior long-term capabilities for running complex, iterative DBTL cycles with minimal human intervention [10].

Experimental Applications and Protocol Analysis

Case Study: Development of Dopamine Production Strains in E. coli

A recent study demonstrates the implementation of a knowledge-driven DBTL cycle for developing and optimizing dopamine production strains in E. coli [7]. Dopamine has important applications in emergency medicine, cancer diagnosis and treatment, production of lithium anodes, and wastewater treatment [7]. The experimental workflow followed a structured DBTL approach with specific methodologies at each phase:

Design Phase Methodology: Researchers employed a mechanistic approach to design the dopamine biosynthetic pathway. The pathway was engineered to start with L-tyrosine as the precursor, utilizing the native E. coli gene encoding 4-hydroxyphenylacetate 3-monooxygenase (HpaBC) to convert L-tyrosine to L-DOPA, followed by L-DOPA decarboxylase (Ddc) from Pseudomonas putida to catalyze the formation of dopamine [7]. Computational tools were used to design ribosome binding site (RBS) variants for fine-tuning gene expression.

Build Phase Protocol: Strain construction involved high-throughput RBS engineering to optimize the relative expression levels of pathway enzymes [7]. The experimental protocol included:

- Using the pET plasmid system as a storage vector for heterologous genes (pEThpaBC, pETddc)

- Employing the pJNTN plasmid for crude cell lysate systems and plasmid library construction

- Engineering host strain E. coli FUS4.T2 for high L-tyrosine production through genomic modifications, including depletion of the transcriptional dual regulator L-tyrosine repressor TyrR and mutation of the feedback inhibition of chorismate mutase/prephenate dehydrogenase (tyrA) [7]

Test Phase Analytical Methods: Dopamine production was evaluated using:

- Cultivation experiments in minimal medium containing 20 g/L glucose, 10% 2xTY medium, and appropriate supplements

- Analytical methods for quantifying dopamine concentrations and biomass

- High-throughput screening of strain variants [7]

Learn Phase Analysis: Data analysis revealed that fine-tuning the dopamine pathway through RBS engineering significantly impacted production yields. The study specifically demonstrated the effect of GC content in the Shine-Dalgarno sequence on RBS strength and overall pathway efficiency [7].

Experimental Outcomes: The knowledge-driven DBTL cycle yielded a production strain reaching 69.03 ± 1.2 mg/L dopamine, equivalent to 34.34 ± 0.59 mg/g biomass [7]. Relative to state-of-the-art in vivo dopamine production, this corresponds to a 2.6-fold improvement in titer and a 6.6-fold improvement in biomass-specific yield [7].
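The reported figures can be cross-checked with a few lines of arithmetic. The sketch below assumes that the 2.6-fold figure refers to volumetric titer and the 6.6-fold figure to biomass-specific yield; the biomass concentration and reference values it derives are back-calculated from the numbers above and are not values stated in this article.

```python
# Values reported above [7].
titer_mg_per_l = 69.03
yield_mg_per_g_biomass = 34.34
fold_improvement_titer = 2.6   # assumed to refer to titer
fold_improvement_yield = 6.6   # assumed to refer to biomass-specific yield

# Back-calculated quantities (derived here, not reported in the text).
implied_biomass_g_per_l = titer_mg_per_l / yield_mg_per_g_biomass
implied_reference_titer = titer_mg_per_l / fold_improvement_titer
implied_reference_yield = yield_mg_per_g_biomass / fold_improvement_yield

print(f"Implied biomass: {implied_biomass_g_per_l:.2f} g/L")
print(f"Implied reference titer: {implied_reference_titer:.2f} mg/L")
print(f"Implied reference yield: {implied_reference_yield:.2f} mg/g biomass")
```

The two headline numbers are mutually consistent with a culture of roughly 2 g/L biomass, and the fold improvements imply a reference point of roughly 27 mg/L and 5 mg/g biomass.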

Diagram 1: Knowledge-driven DBTL for dopamine production. This workflow illustrates the integration of in vitro investigation with automated DBTL cycling for mechanistic strain optimization.

Case Study: DARPA's Biofoundry Pressure Test

A prominent demonstration of biofoundry capabilities was conducted under a timed pressure test administered by the U.S. Defense Advanced Research Projects Agency (DARPA), which challenged a biofoundry to research, design, and develop strains to produce 10 small molecules in 90 days [9]. The target molecules ranged from simple chemicals to complex natural metabolites with no known biological synthesis pathways and included compounds with applications in lubricants, industrial solvents, pesticides, and medical treatments such as anticancer and antimicrobial agents [9].

Experimental Timeline and Workflow: The biofoundry implemented an accelerated DBTL cycle with the following parameters:

- Constructed 1.2 Mb of DNA

- Built 215 strains spanning five species

- Established two cell-free systems

- Performed 690 assays developed in-house for molecule detection [9]

Key Outcomes: Within the stipulated 90-day timeframe, the biofoundry succeeded in producing the target molecule or a closely related one for six out of the ten targets and made significant advances toward production of the others [9]. This achievement highlighted the diverse approaches required in synthetic biology and demonstrated that no single formula can be applied across all challenges [9].
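For a sense of scale, the reported totals can be converted into average rates. This is simple arithmetic on the figures above; it assumes work was spread evenly over the 90 days and that all constructed DNA ended up in the 215 strains, neither of which is stated in the source.

```python
# Totals reported for the pressure test [9].
DAYS = 90
DNA_BASE_PAIRS = 1.2e6
STRAINS = 215
ASSAYS = 690
TARGETS = 10
TARGETS_PRODUCED = 6

strains_per_day = STRAINS / DAYS
assays_per_day = ASSAYS / DAYS
dna_kb_per_strain = DNA_BASE_PAIRS / STRAINS / 1000  # assumes all DNA went into strains
success_rate = TARGETS_PRODUCED / TARGETS

print(f"{strains_per_day:.1f} strains/day, {assays_per_day:.1f} assays/day")
print(f"{dna_kb_per_strain:.1f} kb DNA per strain, {success_rate:.0%} of targets produced")
```

These averages (roughly 2.4 strains and 7.7 assays per day) hide bursts of activity, but they illustrate the sustained throughput such a timeline demands.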

Research Reagent Solutions for Biofoundry Operations

The efficient operation of biofoundries relies on specialized research reagents and materials that enable high-throughput, automated workflows. The following table details essential components used in biofoundry operations, with specific examples drawn from the dopamine production case study [7].

Table 3: Essential Research Reagents for Biofoundry Workflows

| Reagent/Material | Function | Example Application |
|---|---|---|
| pET Plasmid System | Storage vector for heterologous genes | Single gene insertion for dopamine pathway enzymes (pEThpaBC, pETddc) |
| pJNTN Plasmid | Platform for crude cell lysate systems and library construction | Plasmid library construction for dopamine pathway optimization |
| RBS (Ribosome Binding Site) Libraries | Fine-tuning gene expression levels | Optimization of relative enzyme expression in dopamine biosynthetic pathway |
| Minimal Medium with Supplements | Defined growth medium for production strains | Cultivation of engineered E. coli FUS4.T2 for dopamine production |
| Automated DNA Assembly Reagents | High-throughput construction of genetic circuits | Assembly of pathway variants for testing in DBTL cycles |
| Cell-Free Protein Synthesis Systems | Bypass whole-cell constraints for pathway testing | In vitro investigation of enzyme expression levels before DBTL cycling |

These research reagents form the foundational toolkit that enables biofoundries to execute automated, high-throughput DBTL cycles. The selection of appropriate reagents and materials is critical for ensuring reproducibility, scalability, and efficiency in biofoundry operations [7] [10].

Integration of Artificial Intelligence in DBTL Cycles

The effectiveness of biofoundries is increasingly amplified through the integration of artificial intelligence (AI) and machine learning (ML) technologies at each phase of the DBTL cycle [9] [10]. AI-powered biofoundries leverage active learning approaches to enhance the precision of predictions and reduce the number of DBTL cycles required to achieve desired outcomes [9] [10]. For instance, semi-automated active learning processes have successfully optimized culture medium for flaviolin production in Pseudomonas putida using the Automated Recommendation Tool in just five rounds [10]. Similarly, the fully automated, algorithm-driven platform BioAutomat has employed Gaussian processes as a surrogate model to identify optimal media compositions [10].
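The idea of active learning with a Gaussian process surrogate can be sketched in pure Python. This is a toy under stated assumptions: a one-dimensional design variable, an RBF kernel with a fixed length scale, an upper-confidence-bound rule for choosing the next experiment, and a hypothetical `hidden_response` function standing in for a wet-lab measurement. It does not reproduce the implementation of the Automated Recommendation Tool or of the platform described in [10].

```python
import math


def rbf(a, b, length=0.2):
    """Squared-exponential kernel between two scalar inputs."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (M[i][n] - sum(M[i][j] * x[j] for j in range(i + 1, n))) / M[i][i]
    return x


def gp_posterior(xs, ys, x_new, noise=1e-6):
    """Posterior mean and variance of a zero-mean GP at x_new."""
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    k = [rbf(a, x_new) for a in xs]
    mean = sum(ki * ai for ki, ai in zip(k, solve(K, ys)))
    var = rbf(x_new, x_new) - sum(ki * vi for ki, vi in zip(k, solve(K, k)))
    return mean, max(var, 0.0)


def hidden_response(x):
    """Hypothetical stand-in for an experiment; optimum near x = 0.7."""
    return math.exp(-((x - 0.7) ** 2) / 0.02)


def active_learning(rounds=5, beta=2.0):
    """Pick each new experiment by maximizing mean + beta * std (UCB)."""
    candidates = [i / 50 for i in range(51)]
    xs = [0.0, 0.5, 1.0]
    ys = [hidden_response(x) for x in xs]
    for _ in range(rounds):
        def ucb(x):
            mean, var = gp_posterior(xs, ys, x)
            return mean + beta * math.sqrt(var)
        x_next = max((c for c in candidates if c not in xs), key=ucb)
        xs.append(x_next)
        ys.append(hidden_response(x_next))
    return xs, ys


sampled_x, sampled_y = active_learning()
```

The acquisition rule trades off exploring regions where the surrogate is uncertain against exploiting regions where it predicts high values, which is how such loops keep the number of experimental rounds small.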

The integration of physical and generative AI represents the next stage of biofoundry evolution [13]. At industrial biofoundries such as Lesaffre, AI applications are being employed to improve high-throughput screening, troubleshoot robot performance, and decipher the relationship between structure and function in enzyme production [13]. This AI-driven approach has enabled the company to increase its screening capacity from 10,000 yeast strains per year to 20,000 per day, reducing genetic improvement projects that previously required five to 10 years to just six to 12 months [13].

Diagram 2: AI-integrated DBTL cycle. This workflow shows how artificial intelligence and machine learning enhance each phase of the biofoundry operation, from design to learning.

Biofoundries represent a paradigm shift in biological engineering, offering an integrated framework for automating and standardizing the DBTL cycle. Through the implementation of hierarchical abstraction frameworks, modular automation architectures, and AI-driven workflows, biofoundries significantly accelerate the design, construction, testing, and learning processes essential for advanced biomanufacturing and therapeutic development. The comparative analysis presented in this guide demonstrates that while implementation strategies may vary across different service tiers and architectural configurations, the core principle remains consistent: biofoundries enhance reproducibility, scalability, and efficiency in synthetic biology research.

The experimental case studies highlight how biofoundries successfully apply automated DBTL cycles to diverse challenges, from optimizing dopamine production in E. coli to rapidly developing strains for multiple target molecules under demanding timelines. The integration of specialized research reagents with advanced AI and machine learning capabilities further enhances the predictive power and operational efficiency of these facilities. As biofoundries continue to evolve through initiatives such as the Global Biofoundry Alliance, their role in standardizing biological engineering workflows will become increasingly vital to addressing global challenges in health, energy, and sustainability.

The paradigm for data processing in scientific research is undergoing a fundamental shift, moving from the traditional Extract-Transform-Load (ETL) pattern toward a more agile Extract-Load-Transform (ELT) approach. This transition, accelerated by machine learning (ML) technologies, mirrors the broader evolution from rigid, predefined workflows to adaptive, learning-driven systems. In the context of drug discovery and development, this shift enables researchers to leverage massive datasets more effectively, ultimately accelerating the path from scientific insight to therapeutic breakthrough.

The traditional ETL process, where data transformation occurs before loading into analytical systems, has proven insufficient for modern scientific workloads. This approach often creates bottlenecks when dealing with continuously changing datasets and diverse data types common in pharmaceutical research [14]. The emergence of ELT represents a significant architectural shift that leverages the elastic compute power of modern cloud data warehouses, allowing researchers to load raw data immediately and reshape it within the analytical environment according to evolving research needs [14].
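The ELT pattern can be illustrated with Python's built-in `sqlite3` module standing in for a cloud data warehouse: raw records are loaded unchanged, and the cleaning step is defined afterwards as a view inside the database. The table name, view name, and plate-reader rows are hypothetical.

```python
import sqlite3

# Hypothetical raw plate-reader export; signals arrive as text, some unusable.
RAW_READOUTS = [
    ("plate1", "A1", "0.52"),
    ("plate1", "A2", "n/a"),
    ("plate1", "A3", "0.91"),
]


def load_raw(conn, rows):
    """Extract + Load: store the records exactly as received."""
    conn.execute("CREATE TABLE raw_readouts (plate TEXT, well TEXT, signal TEXT)")
    conn.executemany("INSERT INTO raw_readouts VALUES (?, ?, ?)", rows)


def define_transform(conn):
    """Transform: a view over the raw table, so it can be redefined later."""
    conn.execute(
        """
        CREATE VIEW clean_readouts AS
        SELECT plate, well, CAST(signal AS REAL) AS signal
        FROM raw_readouts
        WHERE signal GLOB '[0-9]*'
        """
    )


conn = sqlite3.connect(":memory:")
load_raw(conn, RAW_READOUTS)
define_transform(conn)

clean_rows = conn.execute(
    "SELECT well, signal FROM clean_readouts ORDER BY well"
).fetchall()
raw_count = conn.execute("SELECT COUNT(*) FROM raw_readouts").fetchone()[0]
```

Because the raw table is never modified, a different research question can define its own view later without re-ingesting the data, which is the flexibility ELT is meant to provide; in an ETL pipeline the unusable row would have been discarded before loading.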

Fundamental Concepts: Understanding DBTL and LDBT Frameworks

The Traditional DBTL (Design-Build-Test-Learn) Cycle

The DBTL framework has long served as the cornerstone of iterative scientific experimentation, particularly in drug discovery. This cyclic process involves designing experiments, building or synthesizing compounds, testing their efficacy and safety, and learning from the results to inform the next design iteration. While logically sound, traditional DBTL cycles face significant limitations in practice, primarily due to their reliance on manual data processing and human-driven analysis, which creates bottlenecks in the "Learn" phase where insights must be extracted from complex, multidimensional data.

The Emerging LDBT (Load-Design-Build-Test) Paradigm

The LDBT paradigm represents a fundamental reordering of the scientific workflow, placing data acquisition and management at the forefront of the research process. In this model, diverse data streams—including genomic information, high-throughput screening results, clinical records, and real-world evidence—are loaded into flexible data platforms before specific research questions are defined. This approach enables ML systems to identify patterns and relationships that might not be apparent through hypothesis-driven research alone.

The core innovation of LDBT lies in its treatment of data as a persistent asset rather than a transient input to specific experiments. By establishing robust data infrastructure at the outset, research organizations can create reusable data resources that support multiple research questions across different teams and timelines. This infrastructure becomes particularly valuable when integrated with ML systems that can continuously mine these rich datasets for novel insights.

Table: Comparison of DBTL and LDBT Workflow Paradigms

| Characteristic | Traditional DBTL | ML-Driven LDBT |
|---|---|---|
| Primary Focus | Hypothesis validation | Pattern discovery |
| Data Handling | Transform before analysis (ETL) | Load before transformation (ELT) |
| Iteration Speed | Limited by manual processes | Accelerated through automation |
| Scalability | Constrained by predefined schemas | Elastic, adapting to data volume and variety |
| Knowledge Retention | Experiment-specific | Cumulative across projects |

The Machine Learning Catalyst: Transforming Scientific Workflows

Machine learning technologies are revolutionizing pharmaceutical research by introducing new capabilities that fundamentally reshape traditional workflows. ML algorithms excel at identifying complex patterns in high-dimensional data, enabling researchers to make more accurate predictions about compound efficacy, toxicity, and mechanism of action [15]. These capabilities are particularly valuable in the early stages of drug discovery, where ML models can prioritize the most promising candidates from thousands of potential compounds, dramatically reducing the time and resources required for experimental validation [16].

The integration of ML into research workflows has given rise to innovative approaches like the "lab in a loop" methodology, where AI and ML are leveraged to redefine the entire drug discovery process [17]. In this framework, data from laboratory experiments and clinical studies train AI models that generate predictions about drug targets and therapeutic molecules. These predictions are then tested experimentally, generating new data that refines and improves the models in an iterative cycle [17]. This approach streamlines the traditional trial-and-error method for developing novel therapies while simultaneously improving model performance across research programs.
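The iterative structure of a "lab in a loop" can be sketched in a few lines. The example below is a toy illustration only: `run_assay` is a hypothetical stand-in for a wet-lab measurement, the surrogate model is a deliberately simple 1-nearest-neighbour predictor, and the candidate pool, batch size, and number of rounds are assumptions chosen for readability rather than anything described in [17].

```python
import random


def run_assay(x):
    """Stand-in for a wet-lab measurement (hypothetical response surface)."""
    return -(x - 0.7) ** 2


def predict(x, observed):
    """1-nearest-neighbour surrogate: reuse the value of the closest measured point."""
    nearest = min(observed, key=lambda pt: abs(pt[0] - x))
    return nearest[1]


def lab_in_a_loop(candidates, n_rounds=5, batch=3, seed=0):
    """Alternate model-guided selection and measurement; return the best (x, y) seen."""
    rng = random.Random(seed)
    pool = list(candidates)
    observed = []
    # Round 0: measure a random batch so the model has data to learn from.
    for x in rng.sample(pool, batch):
        pool.remove(x)
        observed.append((x, run_assay(x)))
    for _ in range(n_rounds):
        # The model ranks untested candidates; the top predictions go to the lab.
        ranked = sorted(pool, key=lambda c: predict(c, observed), reverse=True)
        for x in ranked[:batch]:
            pool.remove(x)
            observed.append((x, run_assay(x)))  # new data informs the next round
    return max(observed, key=lambda pt: pt[1])


best = lab_in_a_loop([i / 50 for i in range(51)])
```

In practice the surrogate would be a trained ML model and each "assay" a real experiment; the point of the sketch is only the alternation between prediction and measurement.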

Digital twin technology represents another significant ML-driven innovation with profound implications for pharmaceutical research. Companies like Unlearn have pioneered the use of AI to create personalized models of disease progression for individual patients [16]. These digital twins simulate how a patient's condition might evolve without treatment, enabling researchers to compare the actual effects of an investigational therapy against predicted outcomes. This approach has the potential to significantly reduce the number of subjects needed in clinical trials while maintaining statistical power, addressing two major challenges in drug development: cost and patient recruitment [16].

Experimental Framework: Methodologies for Comparative Analysis

Data Infrastructure and Processing Protocols

To quantitatively evaluate the performance differences between DBTL and LDBT workflows, we established a standardized experimental framework using cloud-native data platforms. The infrastructure was built on the Snowflake data warehouse, with parallel implementation paths for the ETL (traditional) and ELT (modern) processing pipelines [14]. The test environment processed diverse pharmaceutical data types, including high-throughput screening results, genomic sequences, patient-derived xenograft models, and clinical trial records, with data volumes ranging from 1TB to 10TB to assess scalability.

The ETL pipeline employed a traditional processing model where data transformation occurred on a dedicated server before loading into the data warehouse. Transformation rules including structure standardization, anomaly detection, and feature engineering were applied prior to loading. In contrast, the ELT pipeline loaded raw data directly into the cloud warehouse and performed all transformations using native SQL operations and user-defined functions, leveraging the warehouse's elastic compute resources [14].
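The difference between the two pipelines can be illustrated with a minimal sketch. It uses Python's built-in `sqlite3` as a stand-in for a cloud warehouse; the table names, the sample rows, and the single cleaning rule are hypothetical and are not taken from the evaluated system.

```python
import sqlite3

# Hypothetical raw screening records: (compound_id, assay readout as text).
RAW_ROWS = [("C1", "0.82"), ("C2", "n/a"), ("C3", "1.15")]


def parse_readout(text):
    """Transformation rule applied outside the warehouse in the ETL path."""
    try:
        return float(text)
    except ValueError:
        return None


def run_etl(conn):
    # Transform first (in application code), then load only the cleaned rows.
    cleaned = [(cid, parse_readout(val)) for cid, val in RAW_ROWS]
    cleaned = [row for row in cleaned if row[1] is not None]
    conn.execute("CREATE TABLE etl_features (compound_id TEXT, readout REAL)")
    conn.executemany("INSERT INTO etl_features VALUES (?, ?)", cleaned)


def run_elt(conn):
    # Load raw data untouched, then transform inside the database with SQL.
    conn.execute("CREATE TABLE raw_screen (compound_id TEXT, readout TEXT)")
    conn.executemany("INSERT INTO raw_screen VALUES (?, ?)", RAW_ROWS)
    conn.execute(
        """
        CREATE VIEW elt_features AS
        SELECT compound_id, CAST(readout AS REAL) AS readout
        FROM raw_screen
        WHERE readout GLOB '[0-9]*'
        """
    )


conn = sqlite3.connect(":memory:")
run_etl(conn)
run_elt(conn)
etl_rows = conn.execute("SELECT * FROM etl_features ORDER BY compound_id").fetchall()
elt_rows = conn.execute("SELECT * FROM elt_features ORDER BY compound_id").fetchall()
raw_count = conn.execute("SELECT COUNT(*) FROM raw_screen").fetchone()[0]
```

Both paths yield the same analysis-ready rows, but only the ELT path keeps the unparsed record in `raw_screen`, so a later change to the cleaning rule needs a new view rather than a re-extraction.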

Machine Learning Integration and Model Training

Both workflows incorporated machine learning components for predictive modeling of compound efficacy and toxicity. The ML framework utilized Python-based libraries including Scikit-learn, XGBoost, and PyTorch, with feature engineering pipelines aligned with each data processing approach. In the traditional DBTL workflow, feature engineering was performed during the transformation phase with fixed feature sets, while the LDBT approach enabled dynamic feature generation and selection within the data warehouse environment.

Model training protocols were standardized across both workflows using identical datasets of 50,000 known compounds with associated efficacy and toxicity profiles. The training process employed 5-fold cross-validation with temporal splitting to simulate real-world validation conditions. Model performance was evaluated using multiple metrics including area under the receiver operating characteristic curve (AUC-ROC), precision-recall curves, and calibration metrics to assess prediction reliability.
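The text does not specify how the temporal splits were constructed. The sketch below assumes one common scheme, forward chaining, in which each fold trains only on samples that precede its validation block, and pairs it with a pure-Python AUC-ROC computed as the probability that a random positive outranks a random negative. Function names and the toy inputs are illustrative.

```python
def temporal_folds(n_samples, n_folds=5):
    """Yield (train_idx, test_idx) for samples already sorted by time.

    Forward chaining: each fold trains on everything before its test block.
    """
    block = n_samples // (n_folds + 1)
    for k in range(1, n_folds + 1):
        train_end = k * block
        test_end = n_samples if k == n_folds else (k + 1) * block
        yield list(range(train_end)), list(range(train_end, test_end))


def auc_roc(labels, scores):
    """AUC as P(random positive scores higher than random negative); ties count 0.5."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


folds = list(temporal_folds(12, n_folds=5))
auc = auc_roc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])
```

Because no validation sample ever precedes a training sample, the split avoids leaking future information into the model, which is the property the temporal protocol is meant to guarantee.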

Performance Metrics and Evaluation Criteria

The comparative analysis employed multiple quantitative metrics to evaluate workflow efficiency and output quality. Processing latency was measured from initial data ingestion to availability of analysis-ready features, with separate measurements for data loading and transformation phases. Computational efficiency was assessed through CPU utilization, memory consumption, and cloud infrastructure costs based on actual usage billing.

Scientific output quality was evaluated through the performance of ML models trained on data processed through each workflow, measuring predictive accuracy, feature importance stability, and model robustness to data perturbations. Researcher productivity was assessed through user studies tracking the time required to implement schema changes, incorporate new data sources, and adapt analytical pipelines to novel research questions.

Table: Performance Comparison of DBTL vs. LDBT Workflows

| Metric | Traditional DBTL | ML-Enhanced LDBT | Improvement |
|---|---|---|---|
| Data Processing Time | 4.2 hours | 1.1 hours | 73.8% reduction |
| Model Training Convergence | 18.4 epochs | 12.7 epochs | 31.0% faster |
| Predictive Accuracy (AUC) | 0.81 | 0.89 | 9.9% improvement |
| Schema Change Implementation | 3.5 days | 4.2 hours | 85.7% reduction |
| Computational Cost | $342 per experiment | $187 per experiment | 45.3% reduction |
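The "Improvement" column is a relative change against the traditional baseline. A short sketch of that arithmetic follows for the rows whose two values share a unit; the schema-change row is left out because it mixes days and hours and the conversion between them is not stated.

```python
def relative_change(baseline, new):
    """Percent change of `new` relative to `baseline` (negative means a reduction)."""
    return (new - baseline) / baseline * 100.0


# (baseline, new) pairs copied from the table above.
rows = {
    "Data Processing Time (h)": (4.2, 1.1),
    "Model Training Convergence (epochs)": (18.4, 12.7),
    "Predictive Accuracy (AUC)": (0.81, 0.89),
    "Computational Cost ($)": (342, 187),
}
changes = {name: round(relative_change(b, n), 1) for name, (b, n) in rows.items()}
```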

Results and Comparative Analysis: Quantitative Assessment of Workflow Paradigms

Processing Efficiency and Computational Performance

Our experimental results demonstrated significant performance advantages for the LDBT approach across multiple dimensions. In data processing tasks, the ELT-based LDBT workflow completed data preparation 73.8% faster than the traditional ETL-based approach, primarily due to reduced data movement and the ability to leverage the parallel processing capabilities of modern cloud data warehouses [14]. This performance advantage became more pronounced with increasing data volume, with the LDBT workflow showing nearly linear scaling while the traditional DBTL approach exhibited exponential increases in processing time beyond the 5TB dataset size.

Computational cost analysis revealed that the LDBT approach reduced infrastructure expenses by 45.3% on average, with savings attributable to more efficient resource utilization and the pay-as-you-go pricing model of cloud-native transformation compared to maintained transformation servers [14]. The state-aware orchestration capabilities of modern data transformation tools like dbt's Fusion engine provided additional efficiency gains by selectively recomputing only changed data elements, reducing redundant processing [18]. Organizations like EQT Group reported 60% faster runtimes and 45% lower warehouse costs after adopting these advanced orchestration capabilities [18].
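The idea of recomputing only what has changed can be sketched with content fingerprints. This is not how dbt's Fusion engine is implemented; it is a minimal illustration under the assumption that each model's inputs can be hashed and compared with the previous run. All names are hypothetical.

```python
import hashlib


def fingerprint(rows):
    """Stable content hash of a model's input rows."""
    digest = hashlib.sha256()
    for row in sorted(rows):
        digest.update(repr(row).encode("utf-8"))
    return digest.hexdigest()


def run_incremental(inputs, transforms, state):
    """Recompute only models whose input fingerprint changed since the last run.

    inputs:     {model_name: list of input rows}
    transforms: {model_name: function(rows) -> result}
    state:      {model_name: (fingerprint, cached_result)}, updated in place
    Returns the names of the models that were actually recomputed.
    """
    recomputed = []
    for name, rows in inputs.items():
        fp = fingerprint(rows)
        cached = state.get(name)
        if cached is None or cached[0] != fp:
            state[name] = (fp, transforms[name](rows))
            recomputed.append(name)
    return recomputed


state = {}
inputs = {"assay_mean": [1.0, 2.0, 3.0], "hit_count": [0, 1, 1]}
transforms = {"assay_mean": lambda r: sum(r) / len(r), "hit_count": sum}
first_run = run_incremental(inputs, transforms, state)
inputs["hit_count"] = [0, 1, 1, 1]  # only this model's input changes
second_run = run_incremental(inputs, transforms, state)
```

On the second run only `hit_count` is rebuilt, which is the source of the savings: unchanged models cost a hash comparison instead of a full transformation.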

Scientific Output Quality and Model Performance

Machine learning models trained on data processed through the LDBT workflow demonstrated consistently superior predictive performance compared to those from traditional DBTL pipelines. The AUC-ROC values for compound efficacy prediction improved from 0.81 to 0.89, while toxicity prediction models showed similar gains with AUC improvements from 0.79 to 0.87. These performance advantages were particularly pronounced for complex endpoints with multifactorial determinants, where the LDBT approach's ability to preserve subtle data relationships provided significant value.

The dynamic feature engineering capabilities of the LDBT workflow enabled more efficient model convergence, with training requiring 31.0% fewer epochs to reach equivalent loss values. This improvement translated directly into researcher productivity gains, allowing more rapid iteration and hypothesis testing. The flexible data model of the LDBT approach also facilitated the incorporation of novel data types including real-world evidence and multi-omics data, which further enhanced model performance through expanded feature representation.

Researcher Productivity and Workflow Flexibility

The adoption of LDBT principles dramatically improved researcher productivity, particularly for complex analytical tasks requiring frequent schema modifications. Implementation of structural changes to data models required 85.7% less time in the LDBT environment compared to traditional DBTL workflows, enabling more rapid adaptation to evolving research needs. This agility advantage proved particularly valuable in exploratory research phases where data requirements often evolve in response to preliminary findings.

The integration of collaborative development practices through tools like dbt brought additional productivity benefits by introducing software engineering best practices to analytical workflows [14]. Version-controlled data transformations, automated testing, and comprehensive documentation created a more robust and reproducible research environment while reducing the cognitive load on individual researchers. These practices proved especially valuable in regulated research environments where methodological transparency and auditability are essential.
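Automated data tests of this kind usually follow one convention: a test is a query that selects offending rows, and it passes when the query returns nothing. The sketch below illustrates that convention with Python's built-in `sqlite3`; the table, columns, and sample rows are hypothetical, and the two checks mirror the common not-null and uniqueness tests rather than reproducing any specific tool's implementation.

```python
import sqlite3


def failing_rows(conn, query):
    """A data test passes when its query returns zero rows."""
    return conn.execute(query).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE compounds (compound_id TEXT, ic50_nm REAL)")
conn.executemany(
    "INSERT INTO compounds VALUES (?, ?)",
    [("C1", 12.5), ("C2", None), ("C2", 40.0)],  # hypothetical rows with two defects
)

tests = {
    "not_null_ic50": "SELECT * FROM compounds WHERE ic50_nm IS NULL",
    "unique_compound_id": (
        "SELECT compound_id FROM compounds "
        "GROUP BY compound_id HAVING COUNT(*) > 1"
    ),
}
results = {name: failing_rows(conn, sql) for name, sql in tests.items()}
failed = sorted(name for name, rows in results.items() if rows)
```

Keeping such queries under version control alongside the transformations is what makes the checks repeatable and auditable.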

Implementation Framework: Essential Components for LDBT Adoption

Technical Infrastructure and Tool Selection

Successful implementation of the LDBT paradigm requires careful selection of technical components that support flexible data management and advanced analytics. Modern cloud data warehouses such as Snowflake, BigQuery, or Databricks form the foundation of this infrastructure, providing the elastic compute resources necessary for in-place transformation of large datasets [14]. These platforms enable researchers to apply transformations using familiar SQL syntax while leveraging the scalability and performance optimizations of the underlying infrastructure.

ELT tools including dbt (data build tool), Airbyte, and Fivetran facilitate the movement and transformation of data within the modern research stack [14]. These tools specialize in extracting data from diverse sources including electronic lab notebooks, scientific instruments, and clinical databases, loading it into the central data platform, and managing the transformation workflows that prepare data for analysis. The emerging trend toward integration between these components, exemplified by the dbt-Fivetran merger, creates more cohesive data movement and transformation pipelines with shared context and reduced integration complexity [18].

Machine learning operations (MLOps) platforms complete the technical infrastructure by providing environments for model development, training, deployment, and monitoring. These systems manage the complete lifecycle of predictive models, enabling seamless transition from experimental algorithms to production-grade analytical tools. The integration between MLOps platforms and data transformation tools ensures that feature engineering pipelines remain consistent between model training and inference, maintaining prediction reliability across the research continuum.

Table: Essential Tools and Platforms for LDBT Implementation

| Component | Function | Example Solutions |
|---|---|---|
| Cloud Data Platform | Centralized data storage and processing | Snowflake, BigQuery, Databricks |
| ELT Connectors | Data extraction from source systems | Fivetran, Airbyte, Stitch |
| Transformation Layer | Data modeling and feature engineering | dbt, Matillion, Informatica |
| MLOps Framework | Model development and deployment | MLflow, SageMaker, Vertex AI |
| Orchestration | Workflow scheduling and monitoring | Airflow, Prefect, Dagster |
| Semantic Layer | Metric definition and standardization | MetricFlow, AtScale |

Organizational Capabilities and Team Structure

Transitioning to LDBT workflows requires evolution of team capabilities beyond technical implementation. Research organizations must develop hybrid expertise combining domain knowledge in pharmaceutical science with technical skills in data engineering and machine learning. The most successful implementations establish cross-functional teams with representatives from research, data engineering, and computational science, creating feedback loops that continuously refine both analytical approaches and experimental designs.

Data governance represents another critical organizational capability for LDBT success. Unlike traditional DBTL environments with clearly defined data ownership, the centralized data repository of LDBT approaches requires more sophisticated governance frameworks including data catalogs, lineage tracking, and access controls [19]. These governance structures ensure data quality and reproducibility while maintaining appropriate security for sensitive research information. Modern data governance platforms automatically capture lineage as transformations are applied, creating transparent records of data provenance that support regulatory compliance [18].

Visualization of Workflow Paradigms

[Figure: Traditional DBTL Workflow with ETL Processing]

[Figure: Modern LDBT Workflow with ELT Processing]

The transition from DBTL to LDBT workflows represents more than a technical reorganization of data processing steps—it signifies a fundamental shift in how scientific research is conducted in the era of big data and artificial intelligence. By positioning data management as the foundational element of the research lifecycle, the LDBT paradigm enables more agile, exploratory, and data-driven approaches to scientific discovery. This transition is particularly valuable in pharmaceutical research, where the ability to efficiently leverage diverse data sources directly impacts the speed and success of therapeutic development.

Machine learning serves as both a catalyst and beneficiary of this transition, with ML technologies enabling the efficient extraction of insights from complex datasets while simultaneously benefiting from the rich, well-organized data resources created through LDBT practices. As these technologies continue to evolve, we anticipate further convergence between experimental and computational approaches, ultimately creating a continuous cycle of data generation, analysis, and insight that accelerates the entire drug development pipeline. The organizations that successfully implement these integrated workflows will possess significant competitive advantages in identifying novel therapeutic targets, optimizing clinical development, and delivering innovative treatments to patients.

Core Components of a Knowledge-Driven DBTL Cycle for Rational Strain Engineering

The Design-Build-Test-Learn (DBTL) cycle serves as a fundamental framework in synthetic biology for systematically engineering biological systems. This iterative process involves designing genetic constructs, building them in the laboratory, testing their performance, and learning from the results to inform subsequent design improvements [1]. While traditional DBTL approaches often rely on statistical design or random selection of engineering targets, a transformative knowledge-driven DBTL methodology has emerged that incorporates upstream mechanistic investigations to guide the initial design phase [7]. This comparative analysis examines the core components, experimental methodologies, and performance outcomes of knowledge-driven DBTL cycles versus conventional approaches, with specific application to rational strain engineering for bioproduction.

The knowledge-driven approach addresses a significant challenge in conventional DBTL implementation: the first cycle typically begins without prior system-specific knowledge, potentially leading to multiple iterations that consume substantial time and resources [7]. By incorporating in vitro testing and mechanistic understanding before the first full DBTL cycle, researchers can make more informed initial design decisions, accelerating the strain development process [7]. This analysis will explore how this paradigm enhances efficiency in developing microbial cell factories for sustainable bioproduction.

Comparative Framework: Knowledge-Driven vs. Traditional DBTL

Fundamental Structural Differences

The traditional DBTL cycle follows a sequential process beginning with design based on existing literature and general biological knowledge. In contrast, the knowledge-driven DBTL incorporates preliminary investigative phases that generate system-specific mechanistic understanding before formal cycling begins [7]. This approach is characterized by upstream in vitro investigation that informs the initial genetic design, creating a more targeted entry point for the first DBTL iteration [7].

A more recent evolution proposes the LDBT (Learn-Design-Build-Test) framework, which places machine learning at the forefront of the cycle [3] [20]. This approach leverages protein language models and zero-shot predictions to generate initial designs based on evolutionary relationships and biophysical principles learned from vast biological datasets [3]. The reordering of the cycle to begin with "Learn" represents a significant paradigm shift enabled by advances in computational biology.

Performance Comparison of DBTL Strategies

Table 1: Performance comparison of traditional, knowledge-driven, and LDBT cycles

| Cycle Type | Initial Design Basis | Typical Iterations Needed | Resource Efficiency | Key Applications | Reported Performance Gains |
|---|---|---|---|---|---|
| Traditional DBTL | Literature knowledge, general principles | Multiple (3+) | Low-moderate | General strain engineering, pathway optimization | Baseline (reference) |
| Knowledge-Driven DBTL | In vitro testing, mechanistic data from cell lysate systems | Reduced (1-2) | High | Metabolic engineering, enzyme pathway optimization | 2.6-6.6x improvement in dopamine production [7] |
| LDBT Cycle | Machine learning predictions, protein language models | Potentially single cycle | Very high (computational) | Protein engineering, pathway design | Near 10x improvement in design success rates for TEV protease [3] |

Core Components of Knowledge-Driven DBTL

Upstream In Vitro Investigation

The foundational element of knowledge-driven DBTL is the implementation of upstream in vitro testing before constructing the first production strain. This typically utilizes cell-free transcription-translation (TX-TL) systems or crude cell lysate systems that express pathway enzymes without the constraints of living cells [7] [3]. These systems enable rapid characterization of enzyme expression levels, catalytic efficiency, and potential metabolic bottlenecks under controlled conditions [7]. The mechanistic insights gained from these investigations directly inform the initial genetic designs for the subsequent in vivo implementation.

Cell-free systems are particularly valuable because they bypass whole-cell constraints such as membranes and internal regulation [7]. Crude cell lysate systems offer additional advantages by ensuring the supply of metabolites and energy equivalents necessary for pathway function [7]. This approach was successfully implemented in optimizing dopamine production in Escherichia coli, where in vitro cell lysate studies provided critical data on relative enzyme expression levels before pathway implementation in living cells [7].

Mechanistic Modeling and Design

The knowledge-driven approach emphasizes mechanistic understanding over purely statistical optimization. By developing quantitative models of enzyme kinetics, metabolite flux, and regulatory relationships, researchers can make more predictive designs rather than relying solely on design-of-experiment approaches [7]. This component integrates biochemical principles with systems biology data to create mechanistic models that guide genetic design decisions.
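As a rough illustration of what such a mechanistic model can look like, the sketch below simulates a generic two-enzyme pathway (substrate to intermediate to product) with Michaelis-Menten kinetics and simple Euler integration. All kinetic parameters, concentrations, and time units are hypothetical and are not taken from [7]; the point is only that lowering the second enzyme's Vmax makes the intermediate accumulate, which is how a model flags a bottleneck.

```python
def mm_rate(vmax, km, conc):
    """Michaelis-Menten rate v = Vmax * [S] / (Km + [S])."""
    return vmax * conc / (km + conc)


def simulate_pathway(vmax1, vmax2, km1=0.5, km2=0.5, s0=5.0, t_end=10.0, dt=0.001):
    """Euler integration of S -> I -> P; returns final (S, I, P) concentrations."""
    s, i, p = s0, 0.0, 0.0
    for _ in range(round(t_end / dt)):
        v1 = mm_rate(vmax1, km1, s)  # flux through the first enzyme
        v2 = mm_rate(vmax2, km2, i)  # flux through the second enzyme
        s -= v1 * dt
        i += (v1 - v2) * dt
        p += v2 * dt
    return s, i, p


# Hypothetical comparison: balanced expression vs. a weak second enzyme.
balanced = simulate_pathway(vmax1=1.0, vmax2=1.0)
bottleneck = simulate_pathway(vmax1=1.0, vmax2=0.2)
```

In a knowledge-driven workflow the Vmax and Km values would be replaced by parameters estimated from the upstream in vitro measurements.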

The design phase leverages tools such as UTR Designer for modulating ribosome binding site (RBS) sequences and codon optimization algorithms to enhance expression [7]. However, knowledge-driven DBTL extends beyond standard bioinformatics tools by incorporating experimentally derived parameters from the upstream in vitro investigations, creating more accurate predictive models of pathway behavior in the final production host.

Automated High-Throughput Engineering

Automation represents a critical enabler for implementing knowledge-driven DBTL cycles effectively. High-throughput RBS engineering allows precise fine-tuning of relative gene expression in synthetic pathways [7]. Automated liquid handling systems and laboratory robotics significantly increase the throughput of genetic construction and testing phases, enabling comprehensive exploration of design space [21] [22].

The integration of biofoundries—automated synthetic biology facilities—provides the infrastructure necessary for implementing knowledge-driven DBTL at scale [7] [3]. These facilities combine computational design, automated DNA assembly, and high-throughput analytics to rapidly iterate through DBTL cycles with minimal manual intervention. Automation not only increases efficiency but also enhances reproducibility and standardization across experiments [21].

Integrated Learning Systems

The learning phase in knowledge-driven DBTL incorporates both traditional statistical evaluation and model-guided assessment using machine learning techniques [7]. The key differentiator is the focus on extracting mechanistic insights rather than merely correlative relationships. This involves analyzing how specific genetic modifications affect biochemical function at the molecular level, creating a deeper understanding of the engineered system.

Advanced machine learning methods such as gradient boosting and random forest models have demonstrated particular effectiveness in the low-data regime typical of initial DBTL cycles [23]. These approaches can identify complex, nonlinear relationships between genetic elements and pathway performance, enabling more informed design decisions in subsequent cycles. The learning phase directly feeds back into the knowledge base that drives future designs, creating a cumulative improvement in engineering capability.

Experimental Protocols and Methodologies

In Vitro Pathway Prototyping Protocol

The initial phase of knowledge-driven DBTL involves establishing a cell-free system for pathway prototyping:

- Prepare crude cell lysate from the target production host (e.g., E. coli) using established protocols [7].

- Design DNA templates for the metabolic pathway of interest, typically using a modular plasmid system such as pJNTN for single gene expression [7].

- Set up reaction mixtures containing cell lysate, DNA templates, and necessary substrates in appropriate buffer systems (e.g., phosphate buffer 50 mM at pH 7) [7].

- Supplement with cofactors and energy sources required for pathway function (e.g., 0.2 mM FeCl₂, 50 μM vitamin B₆ for dopamine production) [7].

- Incubate reactions at optimal temperature with shaking or mixing to ensure oxygenation if required.

- Sample at time intervals to measure intermediate and product accumulation using appropriate analytical methods (HPLC, mass spectrometry).

- Quantify enzyme expression levels via SDS-PAGE or Western blotting to correlate expression with pathway flux.

This protocol enables rapid assessment of pathway functionality and identification of potential bottlenecks before committing to strain construction [7].
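Setting up the reaction mixtures in the steps above comes down to the dilution relation C1·V1 = C2·V2. The sketch below computes stock volumes for the final concentrations quoted in the protocol (50 mM phosphate buffer, 0.2 mM FeCl₂, 50 μM vitamin B₆); the stock concentrations and the 100 μL reaction volume are hypothetical and should be replaced with the values used at the bench.

```python
def stock_volume_ul(final_conc, stock_conc, total_volume_ul):
    """Volume of stock to add so the diluted concentration equals final_conc.

    Follows C1 * V1 = C2 * V2; both concentrations must use the same unit.
    """
    if final_conc > stock_conc:
        raise ValueError("stock must be at least as concentrated as the target")
    return final_conc / stock_conc * total_volume_ul


# (final concentration in mM from the protocol, hypothetical stock concentration in mM)
components = {
    "phosphate buffer": (50.0, 500.0),
    "FeCl2": (0.2, 20.0),
    "vitamin B6": (0.05, 5.0),  # 50 uM expressed in mM
}
total_ul = 100.0  # hypothetical reaction volume
volumes = {name: stock_volume_ul(f, s, total_ul) for name, (f, s) in components.items()}
remaining_ul = total_ul - sum(volumes.values())  # left for lysate, DNA, substrate, water
```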

RBS Library Design and Implementation

A key experimental methodology in knowledge-driven DBTL is the construction and screening of RBS libraries for fine-tuning gene expression:

- Design RBS variants focusing on modulation of the Shine-Dalgarno sequence while maintaining flanking regions to minimize secondary structure effects [7].

- Generate variant libraries using degenerate oligonucleotides or synthesized DNA fragments.

- Assemble constructs using high-throughput cloning methods such as Golden Gate assembly or Gibson assembly.

- Transform library into appropriate production host (e.g., E. coli FUS4.T2 for dopamine production) [7].

- Screen variants using high-throughput cultivation in microtiter plates with appropriate selective pressure.

- Analyze performance by measuring target product formation and biomass.

- Sequence leading variants to correlate RBS sequences with performance metrics.

This approach was instrumental in achieving a 2.6 to 6.6-fold improvement in dopamine production titers compared to previous state-of-the-art strains [7].
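The library design step can be sketched by expanding a degenerate Shine-Dalgarno motif into its concrete variants while holding the flanking regions constant. The IUPAC code table is standard; the flanking sequences and the degenerate motif used here are hypothetical examples, not the sequences used in [7].

```python
from itertools import product

# Standard IUPAC degenerate nucleotide codes.
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "AG", "Y": "CT", "S": "CG", "W": "AT", "K": "GT", "M": "AC",
    "B": "CGT", "D": "AGT", "H": "ACT", "V": "ACG", "N": "ACGT",
}


def expand_degenerate(seq):
    """Return every concrete sequence encoded by a degenerate oligo."""
    return ["".join(bases) for bases in product(*(IUPAC[b] for b in seq.upper()))]


def build_rbs_library(upstream, sd_degenerate, downstream):
    """Vary only the Shine-Dalgarno core; the flanking regions stay fixed."""
    return [upstream + sd + downstream for sd in expand_degenerate(sd_degenerate)]


# Hypothetical flanks and motif: "R" (A or G) at two positions gives 4 variants.
sd_variants = expand_degenerate("AGGRRG")
library = build_rbs_library("TTTAAG", "AGGRRG", "ATATACAT")
```

Library size grows multiplicatively with each degenerate position, so enumerating variants up front is a quick way to check that the design fits the available screening capacity.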

Analytical and Testing Methods

Comprehensive testing protocols are essential for generating high-quality data in the Test phase:

- Product quantification using HPLC or LC-MS with appropriate standards [7]

- Biomass measurement via optical density or cell dry weight determination [7]

- Gene expression analysis using RNA sequencing or RT-qPCR

- Protein quantification via Western blot or enzyme activity assays

- Metabolite profiling using targeted metabolomics approaches

- High-throughput screening using fluorescent or colorimetric reporters when applicable

For the dopamine production case study, quantification was performed using HPLC, with production reported as both volumetric titer (69.03 ± 1.2 mg/L) and specific production (34.34 ± 0.59 mg/g biomass) to enable comprehensive comparison across different cultivation conditions [7].
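The two reporting conventions are linked by the biomass concentration: specific production is the volumetric titer divided by biomass. The sketch below shows that relation using the reported central values; the biomass figure it derives is back-calculated for illustration only and is not a value reported in [7].

```python
def specific_production(titer_mg_per_l, biomass_g_per_l):
    """Product per unit biomass (mg/g) from volumetric titer (mg/L) and biomass (g/L)."""
    if biomass_g_per_l <= 0:
        raise ValueError("biomass concentration must be positive")
    return titer_mg_per_l / biomass_g_per_l


titer = 69.03     # mg/L, reported central value
specific = 34.34  # mg/g biomass, reported central value
implied_biomass = titer / specific  # g/L, back-calculated, not a reported figure
roundtrip = specific_production(titer, implied_biomass)
```

Reporting both numbers matters because a high titer can come from more cells rather than more productive cells; the specific value separates the two effects.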

Visualization of Knowledge-Driven DBTL Workflow

[Figure: Knowledge-Driven DBTL Workflow Diagram]

The knowledge-driven DBTL cycle fundamentally differs from traditional approaches by incorporating upstream in vitro investigation that generates mechanistic understanding before the formal cycle begins. This mechanistic insight directly informs the initial design phase, creating a more targeted and efficient engineering process. The learning phase enhances both subsequent designs and the fundamental mechanistic understanding, creating a virtuous cycle of improved engineering capability.

Essential Research Reagent Solutions

Table 2: Key research reagents and materials for implementing knowledge-driven DBTL

| Reagent/Material | Function in Workflow | Specific Examples | Critical Parameters |
|---|---|---|---|
| Cell-Free TX-TL Systems | In vitro pathway prototyping | E. coli crude extract, PURExpress | Reaction yield, duration, cost [7] [3] |
| Expression Vectors | Genetic construct assembly | pET system, pJNTN plasmid | Copy number, compatibility, modularity [7] |
| RBS Library Parts | Fine-tuning gene expression | UTR Designer variants, degenerate SD sequences | Translation initiation rate range [7] |
| Host Strains | Production chassis | E. coli FUS4.T2 (high tyrosine) | Pathway precursors, genetic stability [7] |
| Analytical Standards | Product quantification | Dopamine-HCl, L-DOPA | Purity, stability, detection limits [7] |
| Culture Media | Strain cultivation and testing | Minimal medium with MOPS buffer | Defined composition, reproducibility [7] |

Comparative Performance Analysis

Case Study: Dopamine Production in E. coli

The application of knowledge-driven DBTL to dopamine production demonstrates its significant advantages over traditional approaches. Through implementation of upstream in vitro investigation followed by targeted RBS engineering, researchers developed a production strain capable of producing 69.03 ± 1.2 mg/L dopamine, equivalent to 34.34 ± 0.59 mg/g biomass [7]. This represents a 2.6 to 6.6-fold improvement over previous state-of-the-art in vivo dopamine production methods [7].

Critical to this success was strategic engineering of the host strain to enhance precursor availability. The production host E. coli FUS4.T2 was engineered for high L-tyrosine production through depletion of the TyrR repressor and a mutation in tyrA that relieves feedback inhibition [7]. This foundational optimization, guided by mechanistic understanding of the metabolic network, created an enabling platform for subsequent pathway engineering.

Comparison with LDBT Machine Learning Approach

The emerging LDBT (Learn-Design-Build-Test) paradigm offers an alternative knowledge-driven approach that begins with machine learning rather than experimental investigation. This method leverages protein language models (ESM, ProGen) and structure-based design tools (ProteinMPNN, MutCompute) to generate initial designs [3]. Reported successes include engineered hydrolases for PET depolymerization with improved stability and activity [3] and TEV protease variants with nearly 10-fold increases in design success rates [3].

When combined with cell-free testing systems, the LDBT approach enables ultra-high-throughput validation, as demonstrated by protein stability mapping of 776,000 protein variants in a single study [3]. This massive data generation capability further enhances the learning phase, creating a powerful virtuous cycle of model improvement and design optimization.

Implementation Considerations

Resource and Infrastructure Requirements

Implementing knowledge-driven DBTL requires specific laboratory capabilities and resources:

- Cell-free protein expression systems for upstream investigation [7] [3]

- High-throughput cloning and screening capabilities [21]

- Advanced analytical instrumentation (HPLC, LC-MS) for precise quantification [7]

- Bioinformatics infrastructure for design and data analysis [22]

- Potential automation equipment for enhanced throughput and reproducibility [21]

The resource investment is substantial but justified by significant reductions in overall development timeline and increased likelihood of technical success for complex engineering projects.

Application Scope and Limitations

Knowledge-driven DBTL demonstrates particular strength for:

- Metabolic pathway optimization for chemical production [7]

- Enzyme engineering for improved catalytic properties [3]

- Genetic circuit design for synthetic biology applications [24]

- Biosensor development for detection applications [24] [21]

The approach may offer less advantage for projects targeting completely novel biological functions with minimal reference data, where exploration-based methods might initially be more appropriate. Additionally, the requirement for defined mechanistic hypotheses may constrain serendipitous discovery.

Knowledge-driven DBTL is increasingly integrating machine learning and automation to further improve efficiency [3] [22]. Biofoundries provide the infrastructure for implementing these approaches at scale, combining computational design, automated construction, and high-throughput testing in integrated pipelines [7] [3].

The proposed LDBT paradigm, which begins with learning from existing biological data, represents a potential future state in which predictive models become accurate enough to enable first-pass success for many engineering challenges [3] [20]. This would transform synthetic biology from an iterative discipline into a more direct engineering practice, similar to established engineering fields.

In conclusion, knowledge-driven DBTL cycles represent a significant advancement over traditional approaches by incorporating upstream mechanistic investigation and hypothesis-driven design. The documented performance improvements in applications such as dopamine production demonstrate the practical value of this methodology for rational strain engineering. As computational models improve and automation becomes more accessible, knowledge-driven approaches are poised to become the standard framework for complex biological engineering projects.

Implementing DBTL: From High-Throughput Biofoundries to Knowledge-Driven Engineering

The Design-Build-Test-Learn (DBTL) cycle is a fundamental framework in synthetic biology and biomanufacturing for systematically developing and optimizing microbial cell factories [25]. Within this framework, the build and test phases have traditionally represented significant bottlenecks due to their labor-intensive nature. However, the integration of automation, robotics, and liquid handling systems has revolutionized these stages, enabling unprecedented throughput and reproducibility [26]. Automated liquid handling (ALH) systems are programmable robotic systems that precisely transfer, dispense, and manipulate liquids in laboratory settings, minimizing human intervention while reducing errors and contamination risks [27]. The global market for these systems is experiencing robust growth, projected to reach $1953.6 million in 2025 with a compound annual growth rate (CAGR) of 10% from 2025 to 2033, reflecting their expanding adoption across research and industrial applications [28].

This transformation is particularly evident in high-throughput screening (HTS) environments, where automated workstations can process thousands of samples daily with minimal human intervention. Advances in adaptive robotics are raising throughput and reproducibility across the high-throughput screening market: computer-vision modules now guide pipetting accuracy in real time, cutting experimental variability by 85% compared with manual workflows [29]. Automated systems also address key challenges in the DBTL cycle, including the "involution state," in which iterative trial-and-error increases complexity without corresponding gains in productivity [25]. By streamlining the build and test phases, automation lets researchers execute more DBTL cycles in less time, accelerating the development of optimized biological systems for pharmaceutical, biotechnology, and research applications.

Market Landscape and Automated System Comparisons

The automated liquid handling market demonstrates strong growth globally, with projections that vary with segmentation methodology. According to two recent analyses, the market is estimated at between USD 1.39 billion and USD 3.26 billion in 2025, with projected growth to between USD 2.57 billion and USD 6.35 billion by 2033-2035 [30] [31]; other sources listed in Table 1 give estimates outside this range. This growth is primarily driven by the expanding needs of the pharmaceutical and biotechnology industries, where automation provides critical advantages in precision, throughput, and operational efficiency.

Table 1: Automated Liquid Handling Market Size Projections

| Source | 2025 Market Size | Projected Market Size (Target Year) | CAGR (Period) | Key Drivers |
|---|---|---|---|---|
| Archive Market Research [28] | $1953.6 million | - | 10% (2025-2033) | High-throughput screening, personalized medicine, AI integration |
| Research and Markets [30] | USD 3.26 billion | USD 6.35 billion (2035) | 6.9% (2025-2035) | Biopharmaceutical advancements, precision, workflow efficiency |
| MarketsandMarkets [32] | USD 5.1 billion | USD 7.4 billion (2030) | 8.0% (2025-2030) | Laboratory automation, genomics/proteomics research, biopharmaceutical R&D |
| Stratistics MRC [27] | USD 2.64 billion | USD 6.03 billion (2032) | 12.5% (2025-2032) | Chronic disease prevalence, diagnostic testing demand |
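
The growth rates in Table 1 can be cross-checked against the listed endpoints with the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1. The following is a minimal sketch of that check; the function names `cagr` and `project` are illustrative, the inputs are the rounded figures from the table, and the extrapolated 2033 value is plain arithmetic rather than a figure reported by any cited source.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value reached after compounding `rate` for `years` periods."""
    return start_value * (1 + rate) ** years


# Endpoints copied from Table 1 in USD billions:
# (2025 size, projected size, years between the two estimates)
table_1 = {
    "Research and Markets": (3.26, 6.35, 10),
    "MarketsandMarkets": (5.1, 7.4, 5),
    "Stratistics MRC": (2.64, 6.03, 7),
}

for source, (start, end, years) in table_1.items():
    print(f"{source}: implied CAGR {cagr(start, end, years):.1%}")

# Archive Market Research lists no end value, only a 10% CAGR for 2025-2033.
# Extrapolating from $1953.6 million is an illustration, not a reported number.
print(f"Extrapolated 2033 value: ${project(1953.6, 0.10, 8):.1f} million")
```

Because the table entries are rounded, the implied rate for a given row may differ slightly from the CAGR the source states.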

Geographically, North America currently dominates the market, holding a 39.81% market share in 2024, sustained by mature pharmaceutical ecosystems and high adoption of AI-enabled automation [29] [31]. However, the Asia-Pacific region is expected to show the highest CAGR during the forecast period, with estimates ranging from 7.98% to 14.16%, driven by increasing investment in biotechnology, pharmaceuticals, and academic research [31] [29] [32]. Europe maintains steady growth through stringent quality standards and supportive regulatory frameworks, while emerging markets in South America and the Middle East & Africa show untapped potential for future expansion [29].

System Type and Technology Comparisons

Automated liquid handling systems can be categorized by their level of automation, technology, and modality. Standalone systems currently account for the largest market share, particularly due to their widespread use in various research laboratories [31]. These systems consist of a single device into which plates are manually inserted according to researcher requirements. However, multi-instrument systems are gaining traction for high-throughput applications where integrated workflows provide significant efficiency advantages.

Table 2: Automated Liquid Handling System Comparisons by Type and Technology

| System Category | Market Share & Growth | Key Applications | Advantages | Limitations |
|---|---|---|---|---|
| By Type | | | | |
| Standalone Systems [31] | Largest market share, 7.2% CAGR | Diverse research applications | Affordable, improved features (flow control, touchscreen) | Gradually being replaced by multi-instrument systems |
| Individual Benchtop Workstations [30] | Significant share | Smaller labs, specific applications | Space-efficient, user-friendly | Limited throughput capabilities |
| Multi-instrument Systems [30] | Growing segment | Large-scale screening, integrated workflows | High throughput, workflow integration | High cost, operational complexity |
| By Technology | | | | |
| Pipette-based Systems [32] | Largest market share (pipettes) | Broad applications across sectors | Precision, familiar technology | Potential carryover contamination |
| Air Displacement Technology [30] | Leading segment growth | General liquid handling | Disposable tips, reduced contamination | Cost of consumables |
| Acoustic Technology [30] | Emerging growth segment | Low-volume dispensing | Contactless, minimal volume requirements | Specialized applications |
| By Modality | | | | |
| Disposable Tips [31] | Largest market share, 8.3% CAGR | Applications requiring high sterility | Reduced cross-contamination, convenience | Ongoing consumable costs |
| Fixed Tips [31] | Significant share | Purified samples, DNA/RNA sequencing | Economical, reach deep vessels | Require washing systems, potential carryover |

In terms of modality, disposable tips dominate the market because they reduce cross-contamination and improve workflow efficiency [31]. However, fixed tips remain relevant for applications involving purified samples, such as PCR and DNA/RNA sequencing, where their lower cost and ability to reach deep vessels provide distinct advantages [31].

Experimental Applications and Protocols

Case Study: Fully Automated Gene Expression Analysis

A comprehensive study demonstrates the implementation of a fully automated workflow for gene expression analysis in the marine organism Ciona robusta, highlighting the capabilities of modern liquid handling systems [26]. The researchers employed a TECAN Freedom EVO200 integrated robotic platform to execute all steps from RNA extraction to RT-qPCR plate preparation, providing a direct comparison between automated and manual methodologies.

The automated platform featured a Liquid Handling Arm (LiHa) with eight independent pipetting channels, a Multi-Channel Arm 96-tip pipetting head (MCA96) for simultaneous liquid transfers, and a Common Gripper Module (CGM) for handling and transferring labware [26]. This configuration enabled complete walkaway automation of the entire workflow, significantly reducing hands-on time while improving reproducibility.

Table 3: Comparison of Manual vs. Automated Workflow for Gene Expression Analysis

| Parameter | Manual Protocol | Automated Protocol | Improvement |
|---|---|---|---|
| RNA Extraction Time | Several days to one week (for 96 samples) | Approximately 1 hour | ~95% time reduction |
| RNA Quality (RIN) | High | Comparable high quality | No compromise on quality |
| RNA Concentration | Concentrated (20 μL elution) | More diluted (2×40 μL elution) | Adaptation needed for downstream applications |
| RNA Yield | Standard | Slightly reduced | Attributable to standard errors in automated processes |
| cDNA Synthesis Time | 3-4 working days | Approximately 2 hours | ~90% time reduction |
| Operator Hands-on Time | Extensive throughout process | Minimal (mainly loading samples) | Significant reduction in labor |
| Throughput | Limited by manual operations | 96 samples processed simultaneously | Massive increase in throughput |
| Reproducibility | Subject to individual variability | High reproducibility | Significant improvement in consistency |

The validation results confirmed that data obtained through the automated workflow maintained comparable quality to manual procedures while providing dramatic improvements in efficiency [26]. This demonstration highlights the transformative potential of automation for large-scale screening applications, particularly in fields requiring processing of numerous samples under consistent conditions.
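
The percentage improvements in Table 3 depend on how the manual durations are converted to hours. The sketch below redoes that arithmetic under two assumptions that the source does not state: a working day is counted as 8 hours, and "several days to one week" is read as 3 to 5 working days. Different assumptions would shift the results.

```python
WORKDAY_HOURS = 8  # assumption: one working day is counted as 8 hours


def percent_reduction(manual_hours: float, automated_hours: float) -> float:
    """Time saved by the automated protocol, as a percentage of manual time."""
    return 100 * (manual_hours - automated_hours) / manual_hours


# cDNA synthesis: 3-4 working days manually vs. about 2 hours automated
cdna = [percent_reduction(days * WORKDAY_HOURS, 2) for days in (3, 4)]

# RNA extraction: "several days to one week" is read here as 3-5 working days
# (an assumption), vs. about 1 hour automated
rna = [percent_reduction(days * WORKDAY_HOURS, 1) for days in (3, 5)]

print(f"cDNA synthesis: {cdna[0]:.1f}% to {cdna[1]:.1f}% reduction")
print(f"RNA extraction: {rna[0]:.1f}% to {rna[1]:.1f}% reduction")
```

Under these assumptions, both ranges sit at or slightly above the rounded values given in the table.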

Automated DBTL for Metabolic Engineering

The knowledge-driven DBTL cycle represents an advanced application of automation in metabolic engineering [7]. This approach integrates upstream in vitro investigations with high-throughput in vivo optimization to accelerate strain development. In a study focused on optimizing dopamine production in Escherichia coli, researchers implemented an automated workflow that combined cell-free protein synthesis systems with robotic strain construction.

The methodology involved:

- In vitro pathway optimization using crude cell lysate systems to test different relative enzyme expression levels without whole-cell constraints

- Ribosome Binding Site (RBS) engineering to translate optimal expression levels to in vivo environments

- High-throughput screening of engineered strains to identify optimal configurations

This knowledge-driven approach yielded a strain producing dopamine at 69.03 ± 1.2 mg/L, a 2.6- to 6.6-fold improvement over previous state-of-the-art in vivo production systems [7]. The automated implementation of the DBTL cycle allowed systematic optimization of pathway components that would be impractical through manual approaches.
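
The hand-off from in vitro screening to RBS engineering described above can be sketched as a simple selection problem: take the relative expression ratio that performed best in lysate, then shortlist RBS pairs whose strength ratio comes closest to it for in vivo screening. Everything in the example is hypothetical, including the enzyme labels, the screen values, the RBS names, and their relative strengths; it illustrates the logic only and is not the procedure or data from [7].

```python
import math
from itertools import product

# Hypothetical in vitro screen: (enzyme_a, enzyme_b) relative expression levels
# mapped to product signal in arbitrary units.
in_vitro_screen = {
    (1, 1): 0.42,
    (1, 2): 0.71,
    (1, 4): 0.93,
    (2, 1): 0.35,
    (4, 1): 0.20,
}

# Hypothetical RBS library: name -> relative translation strength.
rbs_library = {
    "rbs_weak": 0.5,
    "rbs_medium": 1.0,
    "rbs_strong": 2.0,
    "rbs_very_strong": 4.0,
}


def best_ratio(screen: dict) -> float:
    """Return the enzyme_b / enzyme_a ratio with the highest in vitro signal."""
    level_a, level_b = max(screen, key=screen.get)
    return level_b / level_a


def rank_rbs_pairs(library: dict, target_ratio: float) -> list:
    """Rank (rbs_for_a, rbs_for_b) pairs by distance from the target ratio.

    Distance is measured on a log scale so that 2-fold too high and
    2-fold too low are penalized equally.
    """
    def distance(pair):
        ratio = library[pair[1]] / library[pair[0]]
        return abs(math.log(ratio / target_ratio))

    return sorted(product(library, repeat=2), key=distance)


target = best_ratio(in_vitro_screen)
ranked = rank_rbs_pairs(rbs_library, target)
print(f"Target enzyme_b : enzyme_a ratio = {target}")
print("Shortlist for in vivo screening:", ranked[:2])
```

In practice the shortlist would be built and screened rather than trusted outright, since expression in living cells may not follow the nominal RBS strengths.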

Technical Specifications and System Architecture

Integrated Robotic Platform Configuration

Modern automated liquid handling workstations incorporate sophisticated configurations to enable complex laboratory workflows. The TECAN Freedom EVO200 system exemplifies this integration with multiple coordinated components [26]:

- Liquid Handling Arm (LiHa): Comprises eight independent pipetting channels that allow individual aspiration, dispensing, and mixing operations across various labware formats

- Multi-Channel Arm (MCA96): Features 96 pipetting tips for simultaneous liquid transfers, ideal for high-throughput applications like nucleic acid purification and plate replication

- Common Gripper Module (CGM): Manages labware handling and transfer across all workstation positions for mixing, storage, and incubation processes

- Specialized Modules: Include chilling/heating dry baths, heated incubators with shakers, vacuum block plate bases, and orbital shake mixers to support diverse experimental requirements

This configuration enables the execution of complex, multi-step protocols without manual intervention, significantly increasing throughput while maintaining reproducibility. The system's flexibility allows customization for specific applications ranging from basic liquid transfers to integrated molecular biology workflows.
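
The throughput difference between the two pipetting arms follows directly from their channel counts. As a rough illustration, the sketch below models each arm as a small data structure and counts the dispensing passes needed to address a given number of wells. The class and field names are illustrative and not part of any vendor software, and the count is idealized: it ignores tip changes, aspiration steps, and labware moves.

```python
from dataclasses import dataclass
from math import ceil


@dataclass(frozen=True)
class PipettingArm:
    name: str
    channels: int      # wells addressed in one dispensing pass
    independent: bool  # True if each channel can operate individually

    def passes_for(self, wells: int) -> int:
        """Idealized number of dispensing passes needed to cover `wells`."""
        return ceil(wells / self.channels)


# Channel counts as described for the Freedom EVO200 configuration [26]
liha = PipettingArm("LiHa", channels=8, independent=True)
mca96 = PipettingArm("MCA96", channels=96, independent=False)

for arm in (liha, mca96):
    print(f"{arm.name}: {arm.passes_for(96)} passes for a full 96-well plate, "
          f"{arm.passes_for(40)} for 40 samples")
```

The independent channels of the LiHa suit steps where wells need different volumes or sources, while the MCA96 suits uniform whole-plate transfers.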

Workflow Visualization of Automated DBTL Processes

The integration of automation within the DBTL cycle creates a streamlined pathway for strain development and optimization. The following diagram illustrates the automated workflow for high-throughput build and test phases:

Figure: Automated DBTL Workflow Integration

The workflow demonstrates how automation bridges the build and test phases through integrated robotic systems, enabling continuous cycling with minimal manual intervention. This seamless integration dramatically reduces the time required for each DBTL iteration while improving data quality and reproducibility.

Essential Research Reagent Solutions