Computational vs Experimental Off-Target Validation: Strategies for Drug Discovery and Gene Editing

Abstract

This article provides a comprehensive analysis of computational and experimental methods for off-target validation in biomedical research. Targeting researchers and drug development professionals, it explores the foundational principles of off-target effects, compares the expanding toolkit of in silico prediction models with established experimental assays, and addresses critical troubleshooting and optimization strategies. By presenting rigorous validation frameworks and comparative performance metrics, this resource aims to guide researchers in developing integrated workflows that enhance safety assessment in therapeutic development, from small-molecule drugs to CRISPR-based gene therapies.

Understanding Off-Target Effects: From Small Molecules to Gene Editing

In drug discovery and therapeutic development, off-target effects are unintended interactions between a therapeutic compound or tool and biological components other than its intended target. These interactions pose a significant challenge across multiple modalities, from traditional small-molecule drugs to advanced gene-editing technologies like CRISPR-Cas9. The consequences of off-target activity range from reduced therapeutic efficacy and confounded research data to serious adverse patient outcomes, including toxicity and carcinogenesis [1] [2]. As therapeutic technologies grow more potent, the accurate identification and characterization of off-target effects have become critical for both drug safety and understanding complex biological mechanisms.

The fundamental mechanisms driving off-target effects vary considerably across therapeutic platforms. In small-molecule drugs, these effects typically arise from structural similarities between binding sites on unrelated proteins or unexpected interactions with structurally unrelated but accessible binding pockets [3]. For CRISPR-based gene editing systems, off-target effects occur when the Cas nuclease cleaves DNA at genomic locations with significant sequence similarity to the intended guide RNA target but without perfect complementarity [1] [4]. Similarly, in RNA interference (RNAi) therapies, off-target silencing can affect genes with partial sequence complementarity to the designed siRNA, particularly in regions of continuous sequence identity [5]. Understanding these diverse mechanisms is essential for developing effective strategies to predict, detect, and mitigate off-target consequences across the drug discovery pipeline.

Computational vs. Experimental Validation Approaches

The assessment of off-target effects employs two complementary paradigms: computational prediction and experimental verification. Computational methods leverage bioinformatics, artificial intelligence, and structural modeling to forecast potential off-target interactions before laboratory investigation. In contrast, experimental approaches utilize biochemical, cellular, and genomic technologies to empirically detect and quantify off-target activity in controlled settings. The evolving consensus recognizes that neither approach alone is sufficient; rather, an integrated strategy combining predictive computational power with empirical experimental validation offers the most robust framework for comprehensive off-target profiling [4].

Computational Prediction Methods

Computational approaches for off-target prediction have advanced significantly with improvements in AI and the availability of large-scale biological datasets. For small-molecule therapeutics, target prediction methods like MolTarPred, RF-QSAR, and TargetNet use machine learning algorithms trained on chemical databases such as ChEMBL and BindingDB to identify potential off-target interactions based on structural similarity and quantitative structure-activity relationships (QSAR) [6]. These ligand-centric and target-centric approaches can rapidly screen compounds against thousands of potential targets, revealing hidden polypharmacology that might contribute to both side effects and potential drug repurposing opportunities.
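To make the ligand-centric idea concrete, the sketch below ranks candidate targets for a query compound by its best Tanimoto similarity to known ligands of each target. It is a minimal illustration only: the fingerprints are toy sets of integer feature IDs, the target names and the 0.5 cutoff are hypothetical, and it does not reproduce how MolTarPred, RF-QSAR, or TargetNet work internally.

```python
def tanimoto(a: set[int], b: set[int]) -> float:
    """Tanimoto (Jaccard) similarity between two fingerprint bit sets."""
    if not a and not b:
        return 0.0
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)


def predict_targets(query, known_ligands, cutoff=0.5):
    """Rank targets by the best similarity between the query and any known ligand.

    known_ligands maps a target name to a list of ligand fingerprints.
    The cutoff is an illustrative assumption, not a recommended value.
    """
    scores = {}
    for target, ligands in known_ligands.items():
        best = max((tanimoto(query, fp) for fp in ligands), default=0.0)
        if best >= cutoff:
            scores[target] = best
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical fingerprints: each integer stands for one "on" bit.
KNOWN = {
    "kinase_A": [{1, 2, 3, 4}, {2, 3, 4, 5}],
    "gpcr_B": [{10, 11, 12}],
    "ion_channel_C": [{1, 2, 20, 21}],
}
QUERY = {1, 2, 3, 4, 5}

ranked = predict_targets(QUERY, KNOWN)
print(ranked)
```

In practice the fingerprints would come from a cheminformatics toolkit and the reference ligands from a curated database such as ChEMBL; the ranking logic stays the same.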

For biologics and gene-editing platforms, computational tools employ sequence-based algorithms to identify potential off-target sites. In CRISPR applications, tools like Cas-OFFinder, CRISPOR, and CCTop scan genomes for sequences with homology to the guide RNA, considering factors including mismatch tolerance, bulge sequences, and genomic accessibility [4]. Similarly, for RNAi therapeutics, tools like siRNA Scan identify potential off-target genes by searching for contiguous regions of sequence identity (≥21 nucleotides) between the siRNA trigger and unintended transcripts [5]. Computational studies suggest that approximately 50-70% of gene transcripts in plants have potential off-targets during post-transcriptional gene silencing, with experimental verification confirming that up to 50% of predicted off-target genes can actually be silenced [5].
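The sequence-based search described above can be sketched as a brute-force scan for sites that resemble the guide and are followed by an NGG PAM. This is a simplified illustration under stated assumptions: it checks the forward strand only, ignores bulges and chromatin accessibility, and uses a hypothetical guide and toy genome. It is not the algorithm used by Cas-OFFinder, CRISPOR, or CCTop.

```python
def hamming(a: str, b: str) -> int:
    """Number of mismatched positions between two equal-length sequences."""
    return sum(x != y for x, y in zip(a, b))


def find_candidate_sites(genome: str, protospacer: str, max_mismatches: int = 3):
    """Return (position, site, PAM, mismatches) for forward-strand candidate sites.

    A site qualifies if it is followed by an NGG PAM and differs from the
    protospacer at no more than max_mismatches positions.
    """
    n = len(protospacer)
    hits = []
    # The last valid start leaves room for the site plus a 3-nt PAM.
    for i in range(len(genome) - n - 2):
        site = genome[i:i + n]
        pam = genome[i + n:i + n + 3]
        if pam[1:] != "GG":
            continue
        mismatches = hamming(site, protospacer)
        if mismatches <= max_mismatches:
            hits.append((i, site, pam, mismatches))
    return hits


# Hypothetical 20-nt guide and a toy "genome" containing a perfect match
# and a one-mismatch variant (last base changed).
GUIDE = "GACGTTAGCCTAGGATCCAA"
ONE_MM = "GACGTTAGCCTAGGATCCAT"
genome = "CC" + GUIDE + "TGG" + "ACAC" + ONE_MM + "AGG" + "TT"

hits = find_candidate_sites(genome, GUIDE)
for hit in hits:
    print(hit)
```

A production tool would also scan the reverse strand, allow alternative PAMs, and index the genome rather than scanning it linearly.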

Table 1: Comparison of Computational Off-Target Prediction Methods

| Method Category | Representative Tools | Data Sources | Key Algorithms | Primary Applications |
|---|---|---|---|---|
| Small-Molecule Target Prediction | MolTarPred, RF-QSAR, TargetNet, PPB2 | ChEMBL, BindingDB, DrugBank | Random Forest, Naïve Bayes, 2D Similarity, Neural Networks | Polypharmacology prediction, drug repurposing, toxicity screening |
| CRISPR Off-Target Prediction | Cas-OFFinder, CRISPOR, CCTop, MIT CRISPR tool | Genome sequences, PAM rules, chromatin accessibility | Sequence alignment, homology modeling, machine learning | Guide RNA design, risk assessment of therapeutic candidates |
| RNAi Off-Target Prediction | siRNA Scan | Genomic/transcriptome sequences | Sequence identity search, reverse complement matching | siRNA design, interpretation of gene silencing results |
| Cryptic Pocket Identification | PocketMiner, FAST, Markov State Models | Protein structures, molecular dynamics trajectories | Graph Neural Networks, Adaptive Sampling, MSMs | Allosteric drug discovery, overcoming drug resistance |

Experimental Verification Methods

Experimental approaches for off-target detection provide empirical validation of computational predictions and can identify unexpected off-target activities through unbiased screening. These methods broadly fall into biochemical approaches using purified components and cellular approaches that capture biological context. Biochemical methods like CIRCLE-seq and CHANGE-seq offer exceptional sensitivity for CRISPR off-target detection by sequencing Cas9-cleaved genomic DNA in vitro, with CHANGE-seq demonstrating particularly high sensitivity for rare off-targets through its tagmentation-based library preparation [4]. These approaches can identify potential cleavage sites genome-wide but may overestimate biologically relevant off-target editing due to the absence of cellular context like chromatin structure and DNA repair mechanisms.

Cellular methods such as GUIDE-seq and DISCOVER-seq profile off-target activity within living cells, capturing the influence of nuclear environment, chromatin accessibility, and DNA repair pathways. GUIDE-seq incorporates a double-stranded oligonucleotide tag into double-strand breaks followed by sequencing, providing high-sensitivity detection of off-target DSBs [4]. DISCOVER-seq uniquely exploits the recruitment of DNA repair protein MRE11 to cleavage sites, using ChIP-seq to map nuclease activity genome-wide while capturing real cellular context [4]. Each method presents distinct trade-offs between sensitivity, throughput, workflow complexity, and biological relevance.

Table 2: Comparison of Experimental Off-Target Detection Methods

| Method | Approach Category | Input Material | Detection Context | Key Strengths | Key Limitations |
|---|---|---|---|---|---|
| CHANGE-seq | Biochemical (NGS-based) | Purified genomic DNA | Naked DNA (no chromatin) | Very high sensitivity; detects rare off-targets with reduced false negatives | May overestimate biologically relevant editing |
| CIRCLE-seq | Biochemical (NGS-based) | Nanogram amounts of genomic DNA | Naked DNA (no chromatin) | High sensitivity; lower sequencing depth needed compared to DIGENOME-seq | Lacks cellular repair and chromatin context |
| GUIDE-seq | Cellular (NGS-based) | Living cells (edited) | Native chromatin + repair | High sensitivity for DSB detection; reflects true cellular activity | Requires efficient delivery of double-stranded oligo tag |
| DISCOVER-seq | Cellular (NGS-based) | Cellular DNA; ChIP-seq of MRE11 | Native chromatin + repair | Captures real nuclease activity genome-wide; uses endogenous repair machinery | Lower throughput than biochemical methods |
| UDiTaS | Cellular (NGS-based) | Genomic DNA from edited cells | Native chromatin + repair | High sensitivity for indels and rearrangements at targeted loci | Amplicon-based; requires prior knowledge of potential sites |
| DIGENOME-seq | Biochemical (NGS-based) | Micrograms of genomic DNA | Naked DNA (no chromatin) | Direct detection without enrichment; comprehensive | Requires deep sequencing; moderate sensitivity |

Comparative Analysis of Methodologies

The integration of both computational and experimental approaches provides a more complete off-target assessment than either method alone. Computational prediction excels at early-stage risk assessment and guide selection, enabling researchers to avoid therapeutic candidates with high inherent off-target potential before committing to extensive experimental validation. For example, in CRISPR guide RNA design, computational tools can immediately flag guides with numerous high-similarity genomic matches, allowing researchers to select more specific alternatives [4]. However, computational methods remain limited by their dependence on existing databases and algorithms that may not fully capture biological complexity, such as the influence of three-dimensional chromatin structure or cell-type-specific variations in gene expression.

Experimental methods provide the essential empirical validation needed to confirm actual off-target activity in biologically relevant contexts. Cellular methods particularly excel at identifying which computationally predicted off-target sites actually manifest as edits in the target cell type or tissue. However, these approaches have their own limitations, including varying sensitivity thresholds, technical artifacts, and the practical challenge of surveying the entire genome with sufficient depth [4]. The emerging consensus, reinforced by FDA guidance, recommends using multiple complementary methods for comprehensive off-target assessment, particularly for therapeutic applications [4].
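One simple way to relate the two paradigms is to compare the set of computationally predicted sites with the set detected experimentally. The sketch below does this with plain set operations. It assumes sites are matched by exact (chromosome, position) coordinates and treats the experimental set as the reference, both of which are simplifications; the coordinates are invented for illustration.

```python
def compare_site_sets(predicted: set, detected: set) -> dict:
    """Summarise overlap between predicted and experimentally detected sites.

    Precision and recall here treat the experimental set as the reference,
    which is an assumption: experimental assays have their own error rates.
    """
    confirmed = predicted & detected
    return {
        "confirmed": sorted(confirmed),
        "predicted_only": sorted(predicted - detected),
        "detected_only": sorted(detected - predicted),
        "precision": len(confirmed) / len(predicted) if predicted else 0.0,
        "recall": len(confirmed) / len(detected) if detected else 0.0,
    }


# Hypothetical site coordinates as (chromosome, position) tuples.
predicted = {("chr1", 100), ("chr2", 200), ("chr3", 300), ("chr4", 400)}
detected = {("chr1", 100), ("chr3", 300), ("chr9", 900)}

summary = compare_site_sets(predicted, detected)
print(summary)
```

Sites that appear only in the experimental set are the ones a purely in silico screen would have missed, while predicted-only sites may be false positives or sites suppressed by cellular context.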

Recent advances in AI and machine learning are gradually bridging the gap between computational prediction and experimental verification. For instance, PocketMiner, a graph neural network model, predicts locations of cryptic pockets in proteins with impressive accuracy, substantially accelerating the identification of potentially druggable off-target sites [3]. Similarly, Folding@home simulations using the goal-oriented adaptive sampling algorithm FAST have discovered over 50 cryptic pockets in proteins, revealing novel targets for antiviral drug development by simulating protein dynamics at exascale [3]. These computational approaches are increasingly being validated by experimental methods, creating a virtuous cycle of improved prediction accuracy.

Detailed Experimental Protocols

CHANGE-seq Protocol for Biochemical Off-Target Detection

CHANGE-seq (Circularization for High-throughput Analysis of Nuclease Genome-wide Effects by Sequencing) is an ultrasensitive, bias-reduced method for profiling CRISPR-Cas nuclease off-target activity in vitro. The protocol begins with genomic DNA extraction from appropriate cell lines or tissues, requiring only nanogram amounts of input DNA. The DNA undergoes end-repair and A-tailing using standard molecular biology reagents followed by adapter ligation with T-tailed duplexed adapters. Critical to the method, the adapter-ligated DNA is circularized using circligase, then treated with exonuclease to remove linear DNA molecules, thus enriching for successfully circularized fragments.

The nuclease cleavage reaction is performed by incubating the purified, circularized DNA with precomplexed Cas9 ribonucleoprotein (RNP) under optimal reaction conditions. After cleavage, the DNA is purified and tagmented using a hyperactive Tn5 transposase, which simultaneously fragments the DNA and adds sequencing adapters. This tagmentation step replaces the sonication or enzymatic fragmentation used in earlier methods, reducing bias and improving sensitivity. Finally, the tagmented DNA is amplified with indexed primers and sequenced on Illumina platforms. Bioinformatic analysis involves identifying sequencing reads with integrated adapter sequences, mapping them to the reference genome, and statistically identifying significant off-target sites [4].

GUIDE-seq Protocol for Cellular Off-Target Detection

GUIDE-seq (Genome-wide, Unbiased Identification of DSBs Enabled by Sequencing) detects off-target CRISPR-Cas9 cleavage in living cells by capturing double-strand breaks through integration of a double-stranded oligodeoxynucleotide (dsODN) tag. The protocol begins with cell preparation and transfection, typically co-transfecting cells with plasmids expressing Cas9 and guide RNA along with the 34-bp dsODN tag using appropriate transfection methods. Critical to success is maintaining an optimal ratio of dsODN to RNP, typically around 100:1, to ensure efficient tag integration without excessive toxicity. After 48-72 hours, genomic DNA is extracted using standard methods.

The extracted DNA undergoes library preparation through tag-specific amplification. First, a primary PCR is performed using a dsODN-specific primer and a primer binding to a randomly fragmented portion of the genome (via tagmentation or sonication). This is followed by a nested PCR with internal primers to enhance specificity. The final libraries are sequenced on an Illumina platform, and bioinformatic analysis identifies genomic locations with integrated dsODN tags, quantifying off-target cleavage sites. GUIDE-seq can detect off-target sites with frequencies as low as 0.1%, making it one of the most sensitive cellular methods available [4].
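The final analysis step, locating genomic positions where tag-containing reads pile up, can be sketched as a simple position-clustering routine. This is not the published GUIDE-seq pipeline: it assumes reads have already been aligned and reduced to start positions on a single chromosome, and the `window` and `min_reads` parameters are hypothetical choices for illustration.

```python
from collections import Counter


def summarise(cluster):
    """Describe one cluster of sorted read start positions."""
    peak, _ = Counter(cluster).most_common(1)[0]
    return {
        "start": cluster[0],
        "end": cluster[-1],
        "peak": peak,
        "reads": len(cluster),
    }


def call_sites(read_positions, window=10, min_reads=3):
    """Group tag-containing read positions into candidate cleavage sites.

    Consecutive positions closer than `window` bp join the same cluster;
    clusters with fewer than `min_reads` reads are discarded as noise.
    """
    sites = []
    cluster = []
    for pos in sorted(read_positions):
        if cluster and pos - cluster[-1] > window:
            if len(cluster) >= min_reads:
                sites.append(summarise(cluster))
            cluster = []
        cluster.append(pos)
    if len(cluster) >= min_reads:
        sites.append(summarise(cluster))
    return sites


# Hypothetical read start positions on one chromosome.
positions = [1000, 1002, 1001, 1002, 5000, 5003, 5001, 9000]
sites = call_sites(positions)
for site in sites:
    print(site)
```

The isolated read at position 9000 is dropped because a single read falls below the minimum-support threshold; real pipelines apply additional filters, such as requiring reads from both strands and comparing against untreated controls.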

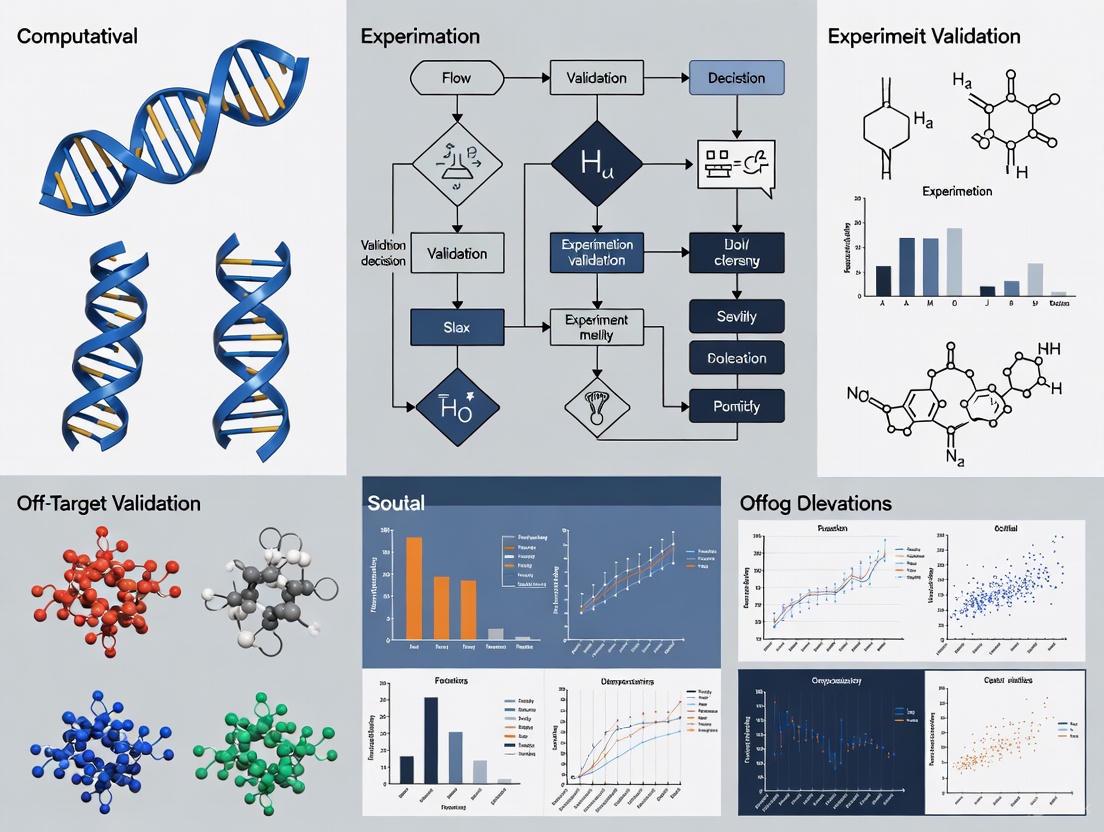

Visualization of Workflows

Integrated Off-Target Assessment Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents for Off-Target Studies

| Category | Specific Reagents/Materials | Function/Application | Example Uses |
|---|---|---|---|
| Computational Tools | MolTarPred, Cas-OFFinder, siRNA Scan, PocketMiner | Prediction of potential off-target interactions | In silico screening of small molecules, guide RNAs, or siRNAs before experimental testing |
| Genomic DNA Sources | Cell line genomic DNA, primary cell DNA, tissue-derived DNA | Substrate for biochemical off-target assays | Input for CIRCLE-seq, CHANGE-seq, DIGENOME-seq |
| Nuclease Reagents | Purified Cas nucleases, recombinant RNP complexes | Enzyme source for in vitro cleavage assays | Cas9, Cas12a proteins for biochemical off-target screening |
| Library Prep Kits | Illumina sequencing kits, tagmentation reagents | Next-generation sequencing library construction | CHANGE-seq (Tn5 transposase), GUIDE-seq (specialized adapters) |
| Oligonucleotides | dsODN tags (for GUIDE-seq), sequencing adapters, PCR primers | Tagging and amplification of cleavage sites | 34-bp double-stranded oligodeoxynucleotide tag for DSB capture |
| Cell Culture Reagents | Cell lines, transfection reagents, culture media | Cellular context off-target assessment | Delivery of CRISPR components for GUIDE-seq, DISCOVER-seq |
| Antibodies | Anti-MRE11 antibodies (for DISCOVER-seq) | Immunoprecipitation of repair complexes | ChIP-seq to capture MRE11-bound DSB sites |
| Analysis Software | Custom bioinformatics pipelines, genome alignment tools | Data processing and off-target site identification | Bowtie2, BWA for read alignment; custom scripts for site calling |

The comprehensive assessment of off-target effects requires a multidisciplinary approach integrating computational prediction with experimental validation. While computational methods provide rapid, cost-effective screening capabilities, experimental approaches deliver essential empirical verification in biologically relevant contexts. The continuing evolution of both paradigms—driven by advances in AI, sequencing technologies, and our understanding of biological systems—promises increasingly accurate off-target profiling across all therapeutic modalities. For researchers and drug developers, selecting the appropriate combination of methods based on specific therapeutic platforms, developmental stages, and regulatory requirements remains crucial for advancing safe and effective treatments through the drug development pipeline. As the field progresses, the integration of standardized off-target assessment protocols will be essential for comparing results across studies and establishing validated safety profiles for novel therapeutics.

The specificity paradigm ('one drug-one target') has been the gold standard in drug discovery for decades, leading to the perception that drugs with multiple targets are 'unselective' or 'promiscuous' and therefore high-risk [7]. However, retrospective analyses have revealed that most approved drugs actually interact with a multitude of targets rather than a single one, bearing rich polypharmacological profiles that often contribute to their therapeutic efficacy [8] [7]. This recognition has catalyzed a paradigm shift, transforming polypharmacology from a perceived liability into a strategic opportunity. Polypharmacology, the study of single drugs that act on multiple targets, is now an established branch of pharmaceutical science that provides a systematic framework for understanding these off-target activities and leveraging them for therapeutic benefit [8] [7] [9].

The clinical success of many multitarget drugs underscores this shift. Tyrosine kinase inhibitors (TKIs) in oncology, such as sunitinib, and central nervous system (CNS) drugs, such as the tricyclic antidepressant amitriptyline, exemplify how engaging multiple targets can yield superior clinical efficacy [7]. This paradigm reframes off-target effects not merely as sources of potential adverse reactions but as valuable assets for drug repurposing—the process of finding new therapeutic uses for existing drugs outside their original medical indication [8] [10]. Computational methods have become indispensable in this area due to the vast amount of data that needs to be processed to identify and validate these repurposing opportunities [8].

Computational Methods for Off-Target Prediction

Computational approaches enable the high-throughput prediction of drug off-target effects, providing a cost-effective strategy for assessing compound safety and discovering repurposing opportunities before embarking on expensive experimental work [11] [10]. These methods leverage artificial intelligence (AI), machine learning (ML), and chemogenomic data to systematically profile drug-target interactions.

Machine Learning and AI-Based Models

Advanced modeling techniques use known compound-target interaction data to predict novel off-target interactions.

- Multi-Task Graph Neural Networks: This approach frames off-target prediction as a multi-task learning problem, where a single model simultaneously learns to predict interactions for a panel of hundreds of potential safety targets. The model uses the molecular graph structure of a compound as input, and the outcomes of these off-target predictions can themselves serve as informative molecular representations for downstream tasks like Anatomical Therapeutic Chemical (ATC) classification and toxicity prediction [11].

- Ensemble Artificial Neural Networks for Transcriptional Response: Some models predict the transcriptional response of cells to drugs, simultaneously inferring drug-target interactions and their downstream effects on intracellular signaling. These models can decouple on-target and off-target effects on transcription and extract causal signaling networks, providing a deeper understanding of a drug's mechanism of action [12].

- Benchmarked Machine Learning Models: Industrial and academic research has extensively benchmarked various models, including Random Forests (RF), Gradient Boosting (GB), Deep Neural Networks (DNN), and Automated Machine Learning (AutoML) platforms, against large-scale proprietary datasets. For example, a Roche study evaluated these models on a panel of 50 safety targets, providing a practical comparison of their performance on real-world drug discovery data [11].

Table 1: Key Computational Methods for Off-Target Profiling

| Method | Core Principle | Primary Application | Reported Advantages |
|---|---|---|---|
| Multi-Task Graph Neural Network [11] | Learns to predict interactions for multiple targets simultaneously using molecular graph structures. | Precise prediction of compound off-target profiles; generates molecular representations. | High predictive accuracy; representations useful for toxicity and ATC classification. |
| Ensemble Neural Networks [12] | Models transcriptional drug response to infer drug-target interactions and downstream signaling effects. | Decoupling on/off-target effects; understanding mechanism of action. | Provides insight into biological pathways and causal signaling networks. |
| Random Forest / Gradient Boosting [11] | Tree-based ensemble methods that build multiple decision trees for classification/regression. | Building predictive models for specific off-target panels (e.g., 46-50 targets). | Handles diverse data types; robust performance on imbalanced datasets. |
| Deep Neural Networks (DNN) [11] [10] | Uses multiple processing layers to learn hierarchical representations of data. | Large-scale virtual screening and binding affinity prediction. | High capacity for learning complex patterns from raw data. |
| Chemical Similarity Search [11] | Assumes chemically similar compounds have similar biological activities. | Initial virtual screening and target prediction. | Computationally efficient; easy to implement and interpret. |

The predictive power of these models relies on robust, large-scale datasets. Key data sources include ChEMBL and PubChem, which provide compound-target interaction data (e.g., Ki, Kd, IC50) [11]. The typical workflow involves data collection and processing, model training and validation, and subsequent application to new compounds for safety assessment or repurposing hypothesis generation. The following diagram illustrates a generalized computational workflow for off-target profiling and repurposing.

Diagram 1: Computational off-target profiling workflow.
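The data collection and processing step of this workflow often involves converting raw potency measurements into a common scale and deriving training labels. The sketch below converts IC50 values in nanomolar to pIC50 (the negative base-10 logarithm of the molar IC50) and assigns binary activity labels. The 10 µM activity threshold and the example records are assumptions for illustration, not values taken from the cited studies.

```python
import math


def pic50(ic50_nm: float) -> float:
    """Convert an IC50 in nanomolar to pIC50 = -log10(IC50 in mol/L)."""
    if ic50_nm <= 0:
        raise ValueError("IC50 must be positive")
    # 1 nM = 1e-9 M, so -log10(x * 1e-9) = 9 - log10(x).
    return 9.0 - math.log10(ic50_nm)


def label_activity(records, threshold_um: float = 10.0):
    """Label each (compound, target, IC50 in nM) record as active (1) or inactive (0).

    The threshold is an illustrative assumption; real panels choose
    target-specific cutoffs.
    """
    threshold_nm = threshold_um * 1000.0
    return {
        (compound, target): int(ic50_nm <= threshold_nm)
        for compound, target, ic50_nm in records
    }


# Hypothetical bioactivity records.
records = [
    ("cpd1", "hERG", 250.0),
    ("cpd1", "5-HT2B", 50000.0),
]
labels = label_activity(records)
print(pic50(1000.0), labels)
```

Labels of this kind, one column per safety target, are the sort of input a multi-task or per-target classifier would be trained on.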

Experimental Validation of Off-Target Effects

Computational predictions are only the starting point; they require rigorous experimental validation to confirm biological relevance and therapeutic potential [13] [14]. This validation follows a hierarchical approach, progressing from simple in vitro systems to complex in vivo models and clinical analysis.

In Vitro Validation Protocols

In vitro assays provide the first empirical evidence for predicted off-target interactions.

- In Vitro Pharmacological Assays: Companies commonly profile compounds against a panel of safety-related off-targets. For example, a consensus panel of 44 early drug safety targets was proposed based on the internal panels of AstraZeneca, GlaxoSmithKline, Novartis, and Pfizer, covering toxicity to the central nervous system, immune system, gastrointestinal tract, and heart [11]. These assays quantitatively measure binding affinity or functional activity (e.g., Ki, IC50).

- Cell-Based Functional Assays: These assays evaluate the functional consequences of off-target binding in a cellular context. Examples include:
  - Enzyme inhibition assays to confirm direct target modulation [10].
  - Cell viability assays to assess anti-proliferative effects in oncology repurposing [15].
  - Reporter gene assays to measure the impact on specific signaling pathways [15].
  - High-content screening and phenotypic assays in more physiologically relevant models like organoids or 3D cultures to enhance translational relevance [15].

In Vivo and Clinical Validation

- Animal Models: In vivo studies are crucial for assessing the efficacy and safety of a repurposed drug in a whole-organism context, accounting for complex pharmacology, metabolism, and potential toxicity [14] [10].

- Retrospective Clinical Analysis: This powerful validation method uses real-world patient data to find supporting evidence.
  - Electronic Health Records (EHR) and Insurance Claims: Analyzing these datasets can reveal off-label drug usage that correlates with improved outcomes for a new disease, providing strong evidence of efficacy in humans [14].
  - Existing Clinical Trials: Searching databases like ClinicalTrials.gov for trials testing the predicted drug-disease connection indicates that the hypothesis has already reached an advanced validation stage [14].

Table 2: Experimental Validation Methods for Off-Target Effects

| Method Type | Protocol Description | Key Measured Outcomes | Role in Validation Pipeline |
|---|---|---|---|
| In Vitro Binding Assays [11] [10] | Profiling compounds against panels of purified safety targets. | Binding affinity (Ki, Kd), inhibitory concentration (IC50). | Confirms direct physical interaction with the predicted off-target. |
| Cell-Based Functional Assays [10] [15] | Measuring drug effects in cellular models (e.g., reporter gene, viability). | Pathway modulation, cell proliferation, transcriptional changes. | Confirms functional biological activity in a cellular context. |
| In Vivo Studies [14] [10] | Administering drug to animal models of the new disease indication. | Efficacy, pharmacokinetics, preliminary safety and toxicity. | Assesses complex therapeutic effects and safety in a whole organism. |
| Retrospective Clinical Analysis [14] | Mining EHRs or clinical trial databases for drug-disease connections. | Real-world evidence of drug efficacy and safety in human populations. | Provides strong supporting evidence from human data before new trials. |

Comparative Analysis: Computational vs. Experimental Approaches

A synergistic integration of computational and experimental methods forms the most robust framework for off-target profiling and drug repurposing. The table below provides a direct comparison of these approaches, highlighting their distinct strengths, limitations, and ideal applications.

Table 3: Computational vs. Experimental Off-Target Validation

| Aspect | Computational Methods | Experimental Methods |
|---|---|---|
| Throughput & Scale | Very High: can screen thousands of compounds against hundreds of targets in silico [11] [10]. | Low to Medium: limited by cost, time, and reagent availability for large-scale screening [11]. |
| Cost & Resources | Low: relatively low cost after initial model development [11] [10]. | High: substantial costs for reagents, equipment, and specialized labor [13] [10]. |
| Primary Strengths | Hypothesis generation at scale; identifies non-obvious connections; cost-effective early safety assessment [11] [10]. | Empirical confirmation of biological activity; provides insight into mechanism of action; gold standard for establishing causality [13] [14]. |
| Key Limitations | Predictions are model-dependent and may contain false positives/negatives; limited by the quality and breadth of training data [13] [11]. | Results in model systems (e.g., cell lines, animals) may not fully translate to humans; low throughput restricts the scope of investigation [13] [11]. |
| Typical Application | Early-stage drug safety assessment, prioritization of compounds for experimental testing, and generation of repurposing hypotheses [11] [14]. | Validation of computational predictions, detailed investigation of mechanism of action, and definitive proof of efficacy and safety [13] [14]. |

The following diagram illustrates how these methods are integrated into a cohesive drug repurposing pipeline, from initial prediction to clinical application.

Diagram 2: Integrated repurposing R&D pipeline.

Case Studies in Repurposing via Polypharmacology

Baricitinib: AI-Driven Repurposing for COVID-19

Baricitinib, a Janus-associated kinase (JAK) inhibitor approved for rheumatoid arthritis, was identified by BenevolentAI's machine learning algorithm as a potential treatment for COVID-19. The computational prediction was based on its purported ability to inhibit host proteins involved in viral endocytosis (AP2-associated protein kinase 1) while also mitigating the inflammatory response [10] [15]. This hypothesis was subsequently validated in clinical trials, leading to the drug's emergency use authorization for COVID-19. This case exemplifies a successful drug-centric repurposing strategy where a drug's known polypharmacological profile was leveraged for a new indication [15].

SARS-CoV-2 Multi-Target Drug Discovery

A large-scale virtual screening of 4,193 FDA-approved drugs against 24 proteins of SARS-CoV-2 identified several drugs with polypharmacological profiles against the virus. Drugs such as dihydroergotamine, ergotamine, and midostaurin were found to interact with multiple viral targets, suggesting potential as multi-targeting antiviral agents. This study highlights a disease-centric repurposing approach, starting from a specific pathogen and systematically screening for drugs that could counteract multiple proteins essential for its lifecycle [16].
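The step of picking out multi-target candidates from a screening matrix can be sketched as a simple filter over (drug, protein, score) triples. The example assumes docking-style scores where more negative means stronger predicted binding, and the cutoff, drug names, and protein names are all hypothetical; the cited study's actual scoring scheme is not described here.

```python
from collections import defaultdict


def multi_target_drugs(scores, cutoff=-8.0, min_targets=2):
    """Return drugs predicted to hit at least `min_targets` proteins.

    `scores` is an iterable of (drug, protein, score) triples. A score at or
    below `cutoff` counts as a predicted hit (assumed convention: lower is
    better).
    """
    hits = defaultdict(set)
    for drug, protein, score in scores:
        if score <= cutoff:
            hits[drug].add(protein)
    return {
        drug: sorted(proteins)
        for drug, proteins in hits.items()
        if len(proteins) >= min_targets
    }


# Hypothetical screening results.
screen = [
    ("drug_X", "protein_1", -9.1),
    ("drug_X", "protein_2", -8.4),
    ("drug_Y", "protein_1", -8.9),
    ("drug_Y", "protein_2", -5.0),
    ("drug_Z", "protein_3", -6.2),
]
candidates = multi_target_drugs(screen)
print(candidates)
```

Only `drug_X` passes, because it is the only entry with predicted hits against two distinct proteins.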

Pergolide: Off-Target Safety Profiling

The withdrawn drug Pergolide was used as a case study to demonstrate how computational off-target profiling can elucidate the mechanisms of adverse drug reactions (ADRs). An AI model predicted its off-target profile, which was then used in an ADR enrichment analysis. This approach inferred potential ADRs at the target level and provided a plausible explanation for the clinical observations that led to its withdrawal, showcasing the application of polypharmacology in enhanced drug safety assessment [11].

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful off-target profiling and validation require a suite of specialized reagents and tools. The following table details key solutions used in the featured experiments and the broader field.

Table 4: Research Reagent Solutions for Off-Target Profiling

| Reagent / Material | Function in Research | Example Use Case |
|---|---|---|
| Curated Compound-Target Interaction Databases (ChEMBL, PubChem) [11] | Provides structured bioactivity data (Ki, Kd, IC50) for model training and validation. | Building and benchmarking machine learning models for off-target prediction [11]. |
| Defined Off-Target Safety Panels [11] | A focused set of proteins associated with major safety liabilities (e.g., CNS, cardiac toxicity). | In vitro pharmacological profiling of candidate drugs to assess early safety risks [11]. |
| High-Throughput Screening Assay Kits | Enable efficient testing of compound activity against specific target classes (e.g., kinases, GPCRs). | Experimental validation of computationally predicted drug-off-target interactions [11] [15]. |
| Pathway-Specific Reporter Assay Systems [15] | Cell-based tools that measure the activation or inhibition of specific signaling pathways. | Functional validation of the downstream consequences of off-target binding in a cellular context [15]. |
| Biological Functional Assays [15] | Includes enzyme inhibition, cell viability, and other phenotypic assays to measure biological activity. | Bridging computational predictions and therapeutic reality by providing empirical data on compound behavior [15]. |

The repurposing of the CRISPR-Cas9 system from a bacterial immune mechanism into a programmable gene-editing tool has revolutionized biological research and therapeutic development [17]. At its core, the system consists of a Cas9 nuclease and a single-guide RNA (sgRNA) that directs the nuclease to a specific DNA sequence for cleavage [18]. While its potential is immense, a significant challenge limiting its broader application, particularly in clinical settings, is the phenomenon of off-target effects [18] [19] [20]. These are unintended edits at genomic locations that resemble the intended target site; they can introduce unwanted mutations and cause genomic instability [19] [17].

Understanding the mechanisms behind off-target activity is crucial for developing safer gene therapies. This guide objectively compares the performance of various technologies for predicting and validating these effects, framing the discussion within the broader thesis of computational versus experimental off-target validation research. For drug development professionals, navigating this landscape is critical, as regulatory agencies like the FDA now recommend using multiple methods, including genome-wide analysis, to characterize off-target editing in preclinical studies [4].

Mechanisms of CRISPR Off-Target Activity

The precision of CRISPR-Cas9 is governed by the complementary base pairing between the sgRNA and the target DNA sequence. However, this process is not perfectly stringent, and several interrelated factors contribute to off-target editing.

Mismatch Tolerance and the Role of the Seed Region

A primary mechanism for off-target effects is the system's tolerance for mismatches, that is, base-pairing errors between the sgRNA and genomic DNA. The widely used Streptococcus pyogenes Cas9 (SpCas9) can tolerate as many as three to five mismatched base pairs, depending on their position and context [19]. The "seed region," the 8-12 nucleotides closest to the Protospacer Adjacent Motif (PAM), is particularly critical [17]. Mismatches within this region are poorly tolerated and more likely to prevent cleavage, whereas mismatches in the PAM-distal region are more easily accommodated [20] [21].
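To make the seed/distal distinction concrete, the sketch below counts mismatches separately in the two regions. It assumes a 10-nucleotide seed (a value picked from within the 8-12 nucleotide range given above), assumes both sequences are written 5' to 3' with the PAM immediately after the last base, and uses made-up sequences; `split_mismatches` is a hypothetical helper, not part of any published tool.

```python
SEED_LENGTH = 10  # assumption: chosen from the 8-12 nt range cited in the text

def split_mismatches(guide, site, seed_length=SEED_LENGTH):
    """Return (seed_mismatches, distal_mismatches) for two protospacers.

    Sequences are read 5'->3' with the PAM directly 3' of the final base,
    so the seed region is the last `seed_length` positions.
    """
    if len(guide) != len(site):
        raise ValueError("guide and site must have the same length")
    seed_start = len(guide) - seed_length
    seed = distal = 0
    for i, (g, s) in enumerate(zip(guide.upper(), site.upper())):
        if g != s:
            if i >= seed_start:
                seed += 1
            else:
                distal += 1
    return seed, distal

# Illustrative sequences only (not real genomic targets).
guide = "GACGTTACCGGATCAAGCTA"
site_distal = "TACGTTACCGGATCAAGCTA"  # one mismatch at the PAM-distal end
site_seed = "GACGTTACCGGATCAAGCTT"    # one mismatch next to the PAM

print(split_mismatches(guide, site_distal))
print(split_mismatches(guide, site_seed))
```

Both candidate sites carry a single mismatch, but under the rule described above the seed mismatch would be expected to reduce cleavage more strongly than the distal one.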

Structural and Energetic Influences

The structure and binding dynamics of the Cas9-sgRNA complex itself play a significant role. The GC content of the sgRNA sequence is a key factor; while sufficient GC content (40-60%) stabilizes the DNA:RNA duplex, excessively high GC content can promote misfolding and increase off-target potential [19] [17]. Furthermore, the secondary structure of the sgRNA can influence its availability and efficiency, thereby impacting specificity [20]. The energetics of the RNA-DNA hybrid formation and allosteric regulation within the Cas9 protein upon DNA binding also contribute to the complex's ability to tolerate mismatches [20].
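A quick GC-content check is easy to script during guide selection. The sketch below applies the 40-60% window mentioned above as a simple filter; the function names and the example sequences are illustrative assumptions, and real design tools combine GC content with many other features.

```python
def gc_fraction(seq):
    """Fraction of G and C bases in a nucleotide sequence."""
    seq = seq.upper()
    if not seq:
        raise ValueError("empty sequence")
    return (seq.count("G") + seq.count("C")) / len(seq)

def gc_in_range(seq, low=0.40, high=0.60):
    """True if GC content falls inside the window quoted in the text."""
    return low <= gc_fraction(seq) <= high

# Illustrative guides only.
balanced = "GACGTTACCGGATCAAGCTA"
gc_rich = "GGGGCCCCGGGGCCCCGGGG"

for name, seq in (("balanced", balanced), ("gc_rich", gc_rich)):
    print(name, round(gc_fraction(seq), 2), gc_in_range(seq))
```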

Genomic and Cellular Context

Off-target activity is not determined by sequence alone. The genomic context, including the presence of repetitive or highly homologous sequences, increases the risk of erroneous cleavage [17]. Cellular factors such as chromatin accessibility and epigenetic modifications (e.g., histone modifications and DNA methylation) also influence off-target editing by determining the physical accessibility of a DNA region to the Cas9 complex [18] [17]. Tightly packed heterochromatin is less accessible than open euchromatin, affecting both on-target and off-target efficiency.

Methodologies for Off-Target Detection and Validation

A suite of technologies has been developed to identify off-target sites, each with distinct methodologies, strengths, and limitations. They can be broadly categorized into computational prediction, biochemical methods, and cellular methods.

Computational Prediction Tools

In silico tools are typically the first step in sgRNA design and off-target risk assessment. They use algorithms to scan reference genomes for sequences homologous to the sgRNA.

- Methodology: These tools use alignment-based or scoring-based models. Alignment-based tools like Cas-OFFinder and CasOT perform exhaustive searches for genomic sites with user-defined numbers of mismatches or bulges [18] [22]. Scoring-based models, such as the MIT score and Cutting Frequency Determination (CFD), assign weights to mismatches based on their position relative to the PAM, often derived from experimental data [18]. Newer deep learning models like DeepCRISPR and CCLMoff leverage artificial intelligence and pre-trained RNA language models to automatically extract sequence features and predict off-target activity with improved accuracy and generalization [21] [22].

- Experimental Protocol:
  - Input: The 20-nucleotide sgRNA sequence and a reference genome.
  - Genome Scanning: The algorithm scans the genome for sequences with a matching PAM (e.g., 5'-NGG-3' for SpCas9).
  - Comparison & Scoring: Candidate sites are compared to the sgRNA, and a score is calculated based on the number, type, and position of mismatches.
  - Output: A ranked list of potential off-target sites for further experimental validation [18] [22].
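The scan-compare-rank steps above can be sketched in a few lines. This is a deliberately minimal illustration, not a reimplementation of Cas-OFFinder or any scoring model: it assumes an NGG PAM, ranks by raw mismatch count only (no positional weights, no bulges), and runs on a toy "genome" string. All names and sequences are hypothetical.

```python
_COMPLEMENT = str.maketrans("ACGT", "TGCA")

def reverse_complement(seq):
    return seq.translate(_COMPLEMENT)[::-1]

def find_candidate_sites(guide, genome, max_mismatches=3):
    """Scan both strands for protospacers followed by an NGG PAM.

    Returns hits sorted by mismatch count. For the "-" strand, `start`
    is a coordinate on the reverse-complemented sequence.
    """
    guide = guide.upper()
    n = len(guide)
    genome = genome.upper()
    hits = []
    for strand, seq in (("+", genome), ("-", reverse_complement(genome))):
        for i in range(len(seq) - n - 2):
            pam = seq[i + n:i + n + 3]
            if pam[1:] != "GG":        # require N-G-G
                continue
            site = seq[i:i + n]
            mismatches = sum(a != b for a, b in zip(guide, site))
            if mismatches <= max_mismatches:
                hits.append({"strand": strand, "start": i, "site": site,
                             "pam": pam, "mismatches": mismatches})
    return sorted(hits, key=lambda h: h["mismatches"])

# Toy example: a perfect site and a two-mismatch site, each with a PAM.
guide = "GACGTTACCGGATCAAGCTA"
off_target = "GACGTTACCGGATCAAGGTT"
genome = "TTT" + guide + "TGG" + "AAAA" + off_target + "AGG" + "TT"

for hit in find_candidate_sites(guide, genome):
    print(hit["strand"], hit["start"], hit["site"], hit["pam"], hit["mismatches"])
```

A production search would stream a real reference genome and apply a position-aware score such as CFD; the ranking key is the only part that would need to change in this sketch.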

Biochemical Detection Methods

These are highly sensitive in vitro methods that use purified genomic DNA and Cas9 nuclease to map cleavage sites without cellular influences.

- Methodology:
  - CIRCLE-seq: Genomic DNA is sheared and circularized, and residual linear DNA is degraded with an exonuclease. The circles are then incubated with Cas9-sgRNA; only circles containing a cleavage site are linearized, and these linearized fragments are adapter-ligated, amplified, and sequenced, providing a highly sensitive profile of potential cleavage sites [18] [4] [23].
  - Digenome-seq: Purified genomic DNA is digested in vitro with Cas9-sgRNA ribonucleoprotein (RNP) and subjected to whole-genome sequencing. Cleavage sites are identified as positions where many reads share the same end when mapped to the reference genome [18] [4].
  - SITE-seq: Purified genomic DNA is cleaved in vitro with Cas9 RNP, and the cleaved ends are tagged with biotinylated adapters. The tagged fragments are then captured using streptavidin beads, enriched, and sequenced [18] [4].
- Experimental Protocol (CIRCLE-seq):
  - DNA Extraction & Circularization: High-molecular-weight genomic DNA is purified, sheared, and circularized.
  - Exonuclease Treatment: An exonuclease digests the residual linear DNA, leaving a pool of intact circles.
  - In Vitro Digestion: The circularized DNA is incubated with pre-complexed Cas9-sgRNA RNP, which linearizes circles that contain a cleavage site.
  - Library Prep & Sequencing: The newly linearized fragments are ligated to adapters, converted into a sequencing library, and analyzed by NGS [18] [4].

Cellular Detection Methods

These methods detect off-target editing within living cells, capturing the effects of chromatin structure, DNA repair pathways, and other cellular contexts.

- Methodology:
  - GUIDE-seq: A short, double-stranded oligonucleotide (dsODN) tag is transfected into cells alongside the CRISPR components. This tag is incorporated into double-strand breaks (DSBs) during repair. The tagged sites are then enriched via PCR and sequenced [18] [4].
  - DISCOVER-seq: This method exploits the natural DNA repair machinery. It uses ChIP-seq to target MRE11, a repair protein recruited to DSBs, to identify CRISPR-induced cleavage sites within native chromatin [18] [4].
  - Whole Genome Sequencing (WGS): The most comprehensive approach, WGS involves sequencing the entire genome of edited cells (often clonally derived) and comparing it to an unedited control to identify all mutations, including large structural variations [19] [4].
- Experimental Protocol (GUIDE-seq):
  - Co-delivery: Cells are co-transfected with plasmids encoding Cas9 and sgRNA, and the GUIDE-seq dsODN tag.
  - DNA Extraction & Tag Enrichment: Genomic DNA is extracted after editing. Tag-integrated fragments are enriched using PCR with primers specific to the dsODN.
  - Library Prep & Sequencing: An NGS library is prepared from the amplified products and sequenced.
  - Data Analysis: Sequences are aligned to the reference genome to identify the genomic locations where the tag was inserted, revealing DSB sites [18] [4].
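The final data-analysis step can be pictured as grouping tag-integration positions into candidate break sites. The sketch below assumes the alignment has already been done and that each read has been reduced to a (chromosome, position) pair; the 10 bp window and the two-read minimum are arbitrary illustrative thresholds, and published GUIDE-seq pipelines apply additional filters that are not modeled here.

```python
from collections import defaultdict

def cluster_tag_sites(read_positions, window=10, min_reads=2):
    """Group tag-integration positions into candidate DSB sites.

    read_positions -- iterable of (chromosome, position) tuples
    Returns (chromosome, first_pos, last_pos, read_count) tuples,
    ordered from most to least supported.
    """
    by_chrom = defaultdict(list)
    for chrom, pos in read_positions:
        by_chrom[chrom].append(pos)

    sites = []
    for chrom in sorted(by_chrom):
        positions = sorted(by_chrom[chrom])
        start = prev = positions[0]
        count = 1
        for pos in positions[1:]:
            if pos - prev <= window:
                count += 1                      # extend the current cluster
            else:
                if count >= min_reads:
                    sites.append((chrom, start, prev, count))
                start, count = pos, 1           # begin a new cluster
            prev = pos
        if count >= min_reads:
            sites.append((chrom, start, prev, count))
    return sorted(sites, key=lambda s: -s[3])

# Hypothetical integration positions from aligned reads.
reads = [("chr1", 1000), ("chr1", 1003), ("chr1", 1001), ("chr2", 500),
         ("chr1", 5000), ("chr2", 505), ("chr2", 2000)]
for site in cluster_tag_sites(reads):
    print(site)
```

Singleton positions are dropped here as likely noise; the read count per cluster gives a rough ranking of sites for follow-up.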

The workflow below illustrates the logical decision process for selecting an off-target validation strategy based on research goals.

Comparative Analysis of Off-Target Validation Methods

Performance Comparison of Detection Methods

The following table summarizes the key characteristics, advantages, and limitations of the major methodological approaches.

Table 1: Comparison of Major Off-Target Detection Approaches

| Approach | Example Methods | Input Material | Key Strengths | Key Limitations |
|---|---|---|---|---|
| In Silico | Cas-OFFinder, CCTop, CCLMoff [18] [22] | Genome sequence & computational models | Fast, inexpensive; essential for guide design [4] | Purely predictive; lacks biological context (chromatin, repair) [18] |
| Biochemical | CIRCLE-seq, Digenome-seq, SITE-seq [18] [4] [23] | Purified genomic DNA | Ultra-sensitive; comprehensive; standardized workflow; detects rare sites [4] [23] | Lacks cellular context (may overestimate); does not reflect chromatin effects [4] |
| Cellular | GUIDE-seq, DISCOVER-seq, UDiTaS [18] [4] | Living cells (edited) | Captures native chromatin & repair; identifies biologically relevant edits [18] [4] | Limited by delivery efficiency; less sensitive than biochemical methods [4] |
| In Situ | BLISS, BLESS [18] [4] | Fixed cells or nuclei | Preserves genome architecture; captures breaks in their native location [18] [4] | Technically complex; lower throughput; variable sensitivity [4] |

A critical performance metric is the sensitivity of these methods, particularly their ability to detect low-frequency off-target events. The table below compares quantitative data on detection sensitivity and other key parameters from selected studies.

Table 2: Quantitative Comparison of Selected Off-Target Assays

| Method | Reported Sensitivity | Detection Principle | Input DNA | Key Experimental Findings |
|---|---|---|---|---|
| GUIDE-seq [18] [4] | High (in cellular context) | DSB tag integration | Cellular DNA | Highly sensitive with low false-positive rate; limited by transfection efficiency [18] |
| CIRCLE-seq [18] [4] [23] | Very High (in vitro) | Circularization & exonuclease enrichment | Nanograms of purified DNA | High sensitivity; lower sequencing depth needed vs. Digenome-seq [18] [4] |
| CRISPR Amplification [23] | Extremely High (≤0.00001%) | Mutant DNA enrichment via repeated cleavage & PCR | Genomic DNA from edited cells | Detected off-target mutations at a 1.6- to 984-fold higher rate than targeted amplicon sequencing [23] |
| Digenome-seq [18] [4] | Moderate | Direct WGS of digested DNA | Micrograms of purified DNA | Requires deep sequencing; moderate sensitivity [18] [4] |

The Computational vs. Experimental Validation Paradigm

The choice between computational and experimental methods is not a matter of selecting one over the other, but rather of understanding their complementary roles in a robust validation pipeline.

Computational Prediction serves as the foundational, cost-effective first step. It is indispensable for sgRNA selection, allowing researchers to rank guides and filter out those with high predicted off-target risk before any wet-lab experiment begins [24] [19]. However, its major limitation is the reliance on sequence data alone, which fails to account for the complex biology of the cell [18]. Even advanced deep-learning models like CCLMoff, which show superior generalization by leveraging RNA language models, are ultimately predictive and require empirical confirmation [22].

Experimental Validation provides the necessary empirical ground truth. Biochemical methods like CIRCLE-seq offer unparalleled sensitivity for creating an initial "risk list" of potential off-target sites under ideal conditions [4]. However, their lack of cellular context means they may identify sites that are not actually cut in a therapeutic context. This is where cellular methods like GUIDE-seq and DISCOVER-seq are critical, as they identify which of the potential sites are actually edited in the relevant cell type, providing a more physiologically relevant assessment [18] [4]. For final therapeutic validation, especially for in vivo therapies, the FDA often expects the most comprehensive data available, which may include WGS to detect unexpected chromosomal rearrangements in addition to targeted methods [19] [4].
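One simple way to combine the three evidence streams described above is to label every candidate site by the strongest evidence supporting it. The sketch below is an illustrative bookkeeping aid under that assumption; the tier names, the function, and the site identifiers are all hypothetical and do not correspond to any regulatory classification.

```python
def tier_sites(predicted, biochemical, cellular):
    """Label each candidate site by its strongest level of evidence.

    predicted   -- sites nominated by in silico tools
    biochemical -- sites cleaved in vitro (e.g., in a CIRCLE-seq screen)
    cellular    -- sites detected in edited cells (e.g., by GUIDE-seq)
    """
    predicted, biochemical, cellular = set(predicted), set(biochemical), set(cellular)
    tiers = {}
    for site in sorted(predicted | biochemical | cellular):
        if site in cellular:
            tiers[site] = "confirmed in cells"
        elif site in biochemical:
            tiers[site] = "cleaved in vitro only"
        else:
            tiers[site] = "predicted only"
    return tiers

# Hypothetical site identifiers.
tiers = tier_sites(
    predicted={"site_A", "site_B", "site_C"},
    biochemical={"site_A", "site_B", "site_D"},
    cellular={"site_A"},
)
for site, tier in tiers.items():
    print(site, "->", tier)
```

Sites that are cleaved in vitro but never observed in cells (here `site_B` and `site_D`) are the ones the text describes as potentially overestimated by biochemical assays.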

The following diagram maps the standard workflow for off-target assessment, integrating both computational and experimental approaches.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Off-Target Analysis

| Item / Reagent | Function in Experiment | Key Considerations |
|---|---|---|
| High-Fidelity Cas9 Variants (e.g., eSpCas9, SpCas9-HF1) [19] [25] | Engineered nucleases with reduced mismatch tolerance; used to minimize off-target cleavage during editing. | Balance between high specificity and maintained on-target efficiency is crucial [19]. |
| Chemically Modified sgRNA [19] | sgRNAs with 2'-O-methyl analogs (2'-O-Me) and 3' phosphorothioate bonds (PS) to increase stability and reduce off-target effects. | Modifications can enhance editing efficiency and specificity by altering sgRNA structure and kinetics [19]. |
| dsODN Tag (for GUIDE-seq) [18] [4] | A short, double-stranded oligonucleotide that is incorporated into DSBs during cellular repair to mark the location for sequencing. | Transfection efficiency is a limiting factor; tag concentration must be optimized to avoid toxicity [18] [4]. |
| MRE11-Specific Antibody (for DISCOVER-seq) [18] [4] | Used for chromatin immunoprecipitation (ChIP) to pull down DNA fragments bound by the MRE11 DNA repair protein. | Antibody specificity is critical for low background and high-resolution results [18]. |
| Biotinylated Adapters (for SITE-seq) [18] [4] | Adapters ligated to DNA ends generated by in vitro Cas9 RNP cleavage; allow streptavidin-based enrichment of cleaved DNA fragments. | Enables direct capture of cleavage events without cellular repair, reducing background [18] [4]. |

The journey toward perfectly precise CRISPR-based therapeutics hinges on a comprehensive understanding and rigorous management of off-target effects. The mechanisms of mismatch tolerance are multifaceted, involving sgRNA-DNA interactions, protein structure, and genomic context. No single validation method provides a perfect solution; each has distinct performance trade-offs between sensitivity, throughput, and biological relevance.

The most robust strategy for researchers and drug developers is a hierarchical one that leverages the strengths of both computational and experimental paradigms. This process begins with sophisticated in silico design using modern AI-powered tools, progresses through ultra-sensitive in vitro biochemical screens to cast a wide net, and culminates in cellular validation to confirm biologically relevant off-target events in the target cell type. The evolving regulatory landscape underscores the necessity of this multi-faceted approach. By systematically employing this integrated toolkit, the field can advance safer, more effective CRISPR therapies from the bench to the clinic.

Off-target effects refer to unintended interactions between a therapeutic compound and biological targets other than its primary intended target. These interactions can lead to unexpected side effects, toxicity, or altered efficacy, presenting significant challenges in drug development. Comprehensive off-target assessment has become a critical component of the regulatory submission process for new therapies, particularly as novel modalities like gene therapies and small molecules with complex mechanisms of action advance through clinical development.

The U.S. Food and Drug Administration (FDA) emphasizes thorough off-target characterization to ensure patient safety, though specific formal guidances dedicated exclusively to off-target assessment remain limited. Instead, the FDA's expectations are embedded within broader guidelines for drug development and approval. The agency's approach is evolving to balance rigorous safety assessment with the need to accelerate development of promising therapies for serious conditions. For cell and gene therapies, the FDA recommends long-term safety monitoring to detect delayed off-target effects, reflecting the unique risk profiles of these innovative treatments [26].

This article examines the current regulatory landscape for off-target assessment, focusing specifically on FDA guidelines and how they interface with emerging computational and experimental approaches for comprehensive off-target profiling.

FDA Regulatory Framework for Off-Target Assessment

Foundational Principles and Evolving Pathways

The FDA's approach to off-target assessment is guided by several foundational principles centered on patient safety. While the agency has not issued a standalone guidance specifically dedicated to off-target assessment, its expectations are articulated through various documents addressing product-specific safety considerations. The Center for Biologics Evaluation and Research (CBER) and Center for Drug Evaluation and Research (CDER) both emphasize characterization of off-target effects as part of comprehensive safety profiling.

A significant recent development is the FDA's proposal of a "plausible mechanism pathway" for bespoke therapies when traditional clinical trials are not feasible. This pathway, outlined by FDA leaders, includes as a core element the confirmation that the intended target was successfully edited without significant off-target effects when clinically feasible [27]. This reflects a flexible yet evidence-based approach to safety assessment for highly individualized therapies.

For regenerative medicine therapies, including cell and gene products, the FDA recommends that monitoring plans for clinical trials include both short-term and long-term safety assessments [26]. The agency also encourages exploration of digital health technologies to collect safety information, potentially including data relevant to detecting off-target effects in real-world settings.

Comparison with EMA Approaches to Off-Target Assessment

Understanding how FDA guidelines compare with those of other major regulatory agencies like the European Medicines Agency (EMA) provides valuable context for global drug development strategies. The table below summarizes key comparative aspects:

Table: Comparison of FDA and EMA Approaches to Off-Target Assessment

| Aspect | FDA Approach | EMA Approach |
|---|---|---|
| Overall Philosophy | Flexible, case-by-case; accepts real-world evidence (RWE) and surrogate endpoints [28] | More comprehensive data requirements; emphasizes larger patient populations [28] |
| Experimental Evidence | Encourages novel methodologies; emphasis on functional assays [29] | Systematic profiling; requires thorough mechanistic studies |
| Computational Evidence | Increasing acceptance with strong validation; DeepTarget recognition [29] | Conservative stance; requires extensive experimental correlation |
| Post-Marketing Surveillance | Risk Evaluation and Mitigation Strategy (REMS) requirements; 15+ years of long-term follow-up (LTFU) for gene therapies [28] | Risk Management Plans; periodic safety update reports [30] |
| Expedited Pathways | RMAT designation available with ongoing safety monitoring [26] | Conditional approval with stricter post-authorization measures |

The differences in regulatory approach mean that strategic planning for off-target assessment must consider region-specific requirements. The FDA generally demonstrates greater flexibility in accepting novel approaches to off-target assessment, including computational methods and real-world evidence, particularly through its expedited programs [28].

Computational vs. Experimental Approaches for Off-Target Validation

Computational Methods for Off-Target Prediction

Computational approaches for off-target assessment leverage bioinformatics algorithms and artificial intelligence to predict unintended therapeutic interactions based on structural and sequence similarities. These methods offer the advantage of comprehensive screening across multiple potential targets before resource-intensive experimental work begins.

A prominent example is DeepTarget, an open-source computational tool that integrates large-scale drug and genetic knockdown viability screens plus omics data to determine cancer drugs' mechanisms of action [29]. Benchmark testing revealed that DeepTarget outperformed currently used tools such as RoseTTAFold All-Atom and Chai-1 in seven out of eight drug-target test pairs for predicting drug targets and their mutation specificity [29].

The methodological workflow for computational off-target assessment typically involves several key steps:

- Target Identification: Using structural bioinformatics to identify potential off-targets based on sequence or structural homology

- Binding Affinity Prediction: Applying molecular docking simulations or machine learning algorithms to estimate interaction strengths

- Functional Impact Assessment: Predicting potential physiological consequences of identified off-target interactions

- Clinical Correlation: Integrating pharmacological and clinical data to prioritize findings based on potential relevance

These methods are particularly valuable for their ability to screen thousands of potential interactions rapidly and at low cost, providing hypotheses for experimental validation [29] [31].

Experimental Methods for Off-Target Validation

Experimental approaches provide direct empirical evidence of off-target effects and remain the cornerstone of regulatory safety assessments. These methods measure actual binding interactions or functional effects in biologically relevant systems.

Key experimental methodologies include:

- In vitro binding assays (e.g., radioligand binding, fluorescence-based techniques)

- Cellular functional assays measuring downstream effects of off-target engagement

- High-throughput screening platforms against target panels

- Proteomic approaches (e.g., mass spectrometry-based chemoproteomics)

- Pharmacological profiling in engineered cell systems

The experimental workflow typically progresses from broad screening to mechanistic characterization:

- Primary Screening: Broad profiling against target families or known safety targets

- Dose-Response Characterization: Establishing potency at primary and off-targets

- Functional Consequence Assessment: Determining agonism, antagonism, or other functional effects

- Mechanistic Studies: Elucidating molecular mechanisms of off-target engagement

- Translational Correlation: Relating in vitro findings to potential clinical manifestations

Experimental methods provide direct evidence of off-target effects but are generally more resource-intensive and lower throughput than computational approaches [32].
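The dose-response step in the workflow above is often summarized with a potency value per target and a selectivity ratio between targets. The sketch below uses the standard Hill equation as an assumed response model; the IC50 values, the test concentration, and the function names are invented for illustration and do not come from the cited studies.

```python
def fractional_inhibition(conc, ic50, hill=1.0):
    """Hill-equation estimate of fractional inhibition at a given concentration.

    conc and ic50 must share the same units (e.g., nM).
    """
    if conc < 0 or ic50 <= 0:
        raise ValueError("conc must be >= 0 and ic50 must be > 0")
    return conc ** hill / (ic50 ** hill + conc ** hill)

def selectivity_ratio(ic50_off_target, ic50_on_target):
    """Fold difference in potency; larger values mean a wider safety window."""
    return ic50_off_target / ic50_on_target

# Hypothetical compound: 10 nM at the primary target, 1000 nM at an off-target.
ic50_on, ic50_off = 10.0, 1000.0
dose = 100.0  # nM, hypothetical exposure

print("selectivity:", selectivity_ratio(ic50_off, ic50_on))
print("on-target inhibition:", round(fractional_inhibition(dose, ic50_on), 3))
print("off-target inhibition:", round(fractional_inhibition(dose, ic50_off), 3))
```

Comparing predicted occupancy at a realistic exposure, rather than IC50 values alone, helps relate an in vitro off-target hit to its likely clinical relevance.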

Integrated Workflow for Comprehensive Off-Target Assessment

The most robust approach to off-target assessment combines computational and experimental methods in a complementary workflow. The following diagram illustrates this integrated strategy:

Integrated Computational-Experimental Workflow for Off-Target Assessment

This integrated approach combines the breadth of computational methods with the empirical validation of experimental techniques, creating a rigorous framework for off-target identification and characterization that meets regulatory expectations.

Comparative Analysis: Performance Metrics and Case Studies

Quantitative Comparison of Methodologies

Direct comparison of computational and experimental approaches reveals distinct performance characteristics across multiple metrics. The table below summarizes quantitative comparisons based on published studies and regulatory submissions:

Table: Performance Comparison of Off-Target Assessment Methods

| Performance Metric | Computational Methods | Experimental Methods |
|---|---|---|
| Throughput | High (1000s of targets simultaneously) [29] | Low to medium (10s-100s of targets) |
| Cost per Target | Low (<$1-10/target) [32] | High ($100-1000/target) |
| Time Requirements | Days to weeks [32] | Weeks to months |
| False Positive Rate | Variable (10-40%) [29] | Low (5-15%) |
| False Negative Rate | Variable (15-30%) | Low (5-20%) |
| Regulatory Acceptance | Increasing with validation [29] | Established standard |
| Biological Context | Limited without additional modeling | High in complex systems |
| Clinical Predictivity | Moderate (requires validation) | High with relevant models |

DeepTarget demonstrated particularly strong performance in benchmark testing, showing superior predictive ability across diverse datasets for determining both primary and secondary targets compared to other computational tools [29].
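Error rates like those in the table can be computed for any prediction tool once experimental results are available for the same set of targets. The sketch below uses the standard confusion-matrix definitions (false positive rate = FP / (FP + TN), false negative rate = FN / (FN + TP)); whether the cited figures were derived exactly this way is not stated in the source, and the target names and counts are hypothetical.

```python
def confusion_rates(predicted, confirmed, screened):
    """Compare predicted off-targets with experimentally confirmed ones.

    screened  -- every target that was tested experimentally
    predicted -- targets the computational method flagged
    confirmed -- targets with experimentally verified activity
    Returns a dict with counts plus false positive and false negative rates.
    """
    screened = set(screened)
    predicted = set(predicted) & screened
    confirmed = set(confirmed) & screened

    tp = len(predicted & confirmed)
    fp = len(predicted - confirmed)
    fn = len(confirmed - predicted)
    tn = len(screened - predicted - confirmed)

    fpr = fp / (fp + tn) if (fp + tn) else float("nan")
    fnr = fn / (fn + tp) if (fn + tp) else float("nan")
    return {"tp": tp, "fp": fp, "fn": fn, "tn": tn, "fpr": fpr, "fnr": fnr}

# Hypothetical panel of ten targets.
screened = [f"T{i}" for i in range(1, 11)]
rates = confusion_rates(
    predicted={"T1", "T2", "T3", "T4"},
    confirmed={"T1", "T2", "T5"},
    screened=screened,
)
print(rates)
```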

Case Studies in Off-Target Assessment

Case Study 1: DeepTarget for Ibrutinib in Solid Tumors

In a validation case study, DeepTarget demonstrated that EGFR T790 mutations influence response to ibrutinib in BTK-negative solid tumors [29]. The computational predictions were subsequently confirmed experimentally, demonstrating how computational methods can identify novel therapeutic applications through off-target characterization.

Experimental Protocol:

- Computational Prediction: DeepTarget analysis of drug-target interactions

- Cell Viability Assays: Treatment of EGFR T790M mutant cell lines with ibrutinib

- Western Blot Analysis: Confirmation of EGFR pathway modulation

- Binding Assays: Direct measurement of ibrutinib-EGFR interaction

This case exemplifies the complementary value of computational and experimental approaches for comprehensive off-target characterization.

Case Study 2: Pyrimethamine Repurposing

DeepTarget analysis revealed that the antiparasitic agent pyrimethamine affects cellular viability by modulating mitochondrial function in the oxidative phosphorylation pathway [29]. This off-target mechanism suggested potential repurposing opportunities for mitochondrial disorders.

Experimental Protocol:

- Computational Prediction: DeepTarget identification of mitochondrial associations

- Oxygen Consumption Rate: Measurement using Seahorse Analyzer

- ATP Production Assays: Luminescence-based quantification

- Complex I Activity: Specific enzymatic activity measurements

Essential Research Reagents and Methodologies

Successful off-target assessment requires carefully selected research tools and methodologies. The table below details key reagents and their applications in off-target studies:

Table: Essential Research Reagents for Off-Target Assessment

| Reagent Category | Specific Examples | Research Application |
|---|---|---|
| Target Panels | Eurofins Safety Screen 44, DiscoverX KINOMEscan | Broad pharmacological profiling against established safety targets |
| Cell-Based Assays | Reporter gene assays, PathHunter β-arrestin recruitment | Functional assessment of off-target engagement |
| Proteomic Tools | Activity-based protein profiling, photoaffinity labeling | Identification of unknown off-targets in complex proteomes |
| Computational Tools | DeepTarget, molecular docking software | Prediction of potential off-target interactions |
| Gene Editing Tools | CRISPR-Cas9, base editors | Validation of target specificity for gene therapies |
| Animal Models | Transgenic models, humanized target animals | In vivo assessment of off-target effects |

The selection of appropriate reagents and methodologies should be guided by the specific therapeutic modality, stage of development, and regulatory requirements. For gene therapies, the FDA looks for confirmation that the target was successfully edited without significant off-target effects when clinically feasible [27].

The regulatory landscape for off-target assessment is evolving toward greater acceptance of computational methods complemented by targeted experimental validation. The FDA's proposed "plausible mechanism pathway" for bespoke therapies represents a significant shift toward more flexible evidence requirements, where off-target assessment may be tailored to specific product characteristics and clinical contexts [27].

Future developments in off-target assessment will likely include:

- Increased integration of AI and machine learning approaches with experimental validation

- Greater use of real-world evidence for post-market off-target monitoring

- Advanced computational models that better recapitulate biological complexity

- Standardized validation frameworks for computational prediction tools

The complementary strengths of computational and experimental approaches provide a robust framework for comprehensive off-target assessment that meets regulatory requirements while supporting efficient therapeutic development. As both technologies advance, their integration will become increasingly seamless, enabling more predictive safety assessment throughout the drug development process.

The high failure rate of clinical drug development represents a significant economic burden and a challenge for pharmaceutical innovation. Analyses indicate that approximately 90% of drug candidates that enter clinical trials fail to achieve approval, with about 30% of these failures attributed to unmanageable toxicity, a significant portion of which is caused by off-target effects [33]. Off-target effects occur when a small molecule drug interacts with proteins or biological pathways other than its intended primary target, potentially leading to adverse drug reactions (ADRs) [11]. About 75% of ADRs are Type A reactions, which are dose-dependent and predictable based on a drug's secondary pharmacological profile, making off-target profiling a critical component of early safety assessment [11]. This guide compares the performance of computational and experimental methods for off-target validation, providing a framework for researchers to integrate these approaches into the drug discovery pipeline to mitigate clinical attrition risks.

The Clinical Attrition Crisis and the Off-Target Problem

The drug development process is long, costly, and fraught with risk, typically requiring 10-15 years and an average cost of more than $1-2 billion for each newly approved drug [33]. The transition from preclinical research to clinical success remains a major bottleneck. For drug candidates that advance to Phase I clinical trials, the failure rate is strikingly high, with lack of clinical efficacy (40-50%) and unmanageable toxicity (30%) being the predominant causes [33].

Toxicity presents a dual challenge in drug development. It can arise either from poorly selective compounds interacting with unrelated protein targets (off-target toxicity) or from on-target effects in tissues where inhibiting the intended target is itself harmful. To mitigate this risk, pharmaceutical companies commonly use in vitro pharmacological assays to profile compounds against comprehensive panels of safety targets. For instance, cross-screening against panels of 44-70 safety-related targets has been implemented across major pharmaceutical companies to identify potential liabilities early in the discovery process [11].

Computational Off-Target Prediction Methods

Computational approaches for off-target prediction have gained significant traction due to their cost-effectiveness and scalability compared to experimental methods. These can be broadly categorized into target-centric and ligand-centric approaches, each with distinct methodologies and applications.

Methodologies and Performance Comparison

Target-centric methods build predictive models for specific protein targets to estimate the interaction likelihood of query molecules. These often utilize Quantitative Structure-Activity Relationship (QSAR) models with various machine learning algorithms such as random forest and Naïve Bayes classifiers [6]. Structure-based methods like molecular docking simulations leverage 3D protein structures to predict binding, though their application can be limited by the availability of high-quality structural data [6].

Ligand-centric methods focus on chemical similarity between query molecules and known ligands annotated with their targets. These methods depend on the comprehensiveness of knowledge about known ligands and their targets, with effectiveness directly correlated to the quality and coverage of chemical databases [6].
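To make the ligand-centric idea concrete, the following is a minimal sketch of similarity-based target ranking. It assumes fingerprints are already available as sets of on-bit indices; the toy bit sets, the target labels `TARGET_A` and `TARGET_B`, and the helper names `tanimoto` and `rank_targets` are illustrative assumptions and are not taken from MolTarPred or any other cited tool. The Tanimoto coefficient used here is the number of shared on-bits divided by the number of bits set in either fingerprint.

```python
from collections import defaultdict


def tanimoto(fp_a: set, fp_b: set) -> float:
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 0.0  # convention chosen here for two empty fingerprints
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def rank_targets(query_fp, known_ligands, top_k=3):
    """Score each target by the highest similarity among its annotated ligands."""
    best = defaultdict(float)
    for ligand_fp, target in known_ligands:
        best[target] = max(best[target], tanimoto(query_fp, ligand_fp))
    return sorted(best.items(), key=lambda kv: kv[1], reverse=True)[:top_k]


# Toy data: hypothetical on-bit sets, not real molecular fingerprints.
known_ligands = [
    ({1, 4, 9, 16, 25}, "TARGET_A"),
    ({1, 4, 9, 30, 41}, "TARGET_A"),
    ({2, 3, 5, 7, 11}, "TARGET_B"),
    ({1, 4, 5, 7, 11}, "TARGET_B"),
]
query_fp = {1, 4, 9, 16, 11}

ranking = rank_targets(query_fp, known_ligands)
for target, score in ranking:
    print(f"{target}: {score:.3f}")
```

Scoring a target by its single most similar ligand is only one possible aggregation; averaging over the top few neighbours is an equally plausible choice, and the best option likely depends on how densely each target is annotated.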

Table 1: Performance Comparison of Computational Off-Target Prediction Methods

| Method | Type | Algorithm | Data Source | Key Performance Findings |
|---|---|---|---|---|
| MolTarPred | Ligand-centric | 2D similarity, MACCS/Morgan fingerprints | ChEMBL 20 | Most effective method in comparative study; Morgan fingerprints with Tanimoto scores outperformed MACCS [6] |
| AI/Graph Neural Network | Target-centric | Multi-task Graph Neural Network | ChEMBL, PubChem | Predicts off-target profiles for safety assessment; enables ADR inference and toxicity classification [11] |
| RF-QSAR | Target-centric | Random Forest | ChEMBL 20&21 | ECFP4 fingerprints; performance varies by target [6] |
| TargetNet | Target-centric | Naïve Bayes | BindingDB | Uses multiple fingerprint types (FP2, MACCS, E-state, ECFP) [6] |
| CMTNN | Target-centric | Neural Network (ONNX runtime) | ChEMBL 34 | Stand-alone code for local execution [6] |
| Elevation (CRISPR) | Target-centric | Two-layer machine learning | GUIDE-seq data | State-of-the-art for CRISPR off-target prediction; outperforms CFD and MIT scoring methods [34] |

Recent advances in artificial intelligence have enhanced computational prediction capabilities. Multi-task graph neural network models can predict compound off-target interactions with high precision, and these predictions can serve as molecular representations for differentiating drugs under various Anatomical Therapeutic Chemical (ATC) codes and classifying compound toxicity [11]. The predicted off-target profiles are further employed in ADR enrichment analysis, facilitating inference of potential adverse drug reactions [11].
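As a rough illustration of what an ADR enrichment analysis can look like, the sketch below uses a one-sided hypergeometric test, which is one common way to ask whether drugs predicted to hit a given off-target are over-represented among drugs reporting a given adverse reaction. The statistical test actually used in the cited work is not stated here, so this choice, the function name `hypergeom_enrichment_p`, and the toy counts are all assumptions.

```python
from math import comb


def hypergeom_enrichment_p(n_drugs, n_with_adr, n_hitting_target, n_overlap):
    """One-sided p-value P(X >= n_overlap) under a hypergeometric null.

    n_drugs:          total drugs considered
    n_with_adr:       drugs annotated with the adverse reaction
    n_hitting_target: drugs predicted to hit the off-target
    n_overlap:        drugs in both groups
    """
    upper = min(n_with_adr, n_hitting_target)
    total = comb(n_drugs, n_hitting_target)
    tail = sum(
        comb(n_with_adr, i) * comb(n_drugs - n_with_adr, n_hitting_target - i)
        for i in range(n_overlap, upper + 1)
    )
    return tail / total


# Toy counts (hypothetical): 10 drugs, 5 with the ADR, 5 predicted hitters,
# and all 5 predicted hitters carry the ADR.
p_value = hypergeom_enrichment_p(10, 5, 5, 5)
print(f"enrichment p-value: {p_value:.4f}")
```

When many target-ADR pairs are tested at once, the resulting p-values would normally need a multiple-testing correction before any pair is treated as a safety signal.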

Experimental Protocols for Computational Methods

Database Preparation and Curation

- Source Data: Extract bioactivity records from curated databases such as ChEMBL (version 34), containing over 15,000 targets, 2.4 million compounds, and 20.7 million interactions [6].

- Filtering Criteria: Select records with standard values for IC50, Ki, or EC50 below 10,000 nM. Exclude entries associated with non-specific or multi-protein targets by filtering out targets with names containing "multiple" or "complex" [6].

- Confidence Scoring: Apply a minimum confidence score threshold (e.g., a ChEMBL confidence score of at least 7, the level at which direct protein complex subunits are assigned) so that only well-validated interactions are included [6].
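The curation rules above can be sketched as a single record filter. The dictionary keys (`standard_type`, `standard_value_nm`, `confidence_score`, `target_name`) are hypothetical field names chosen for this example and the records are toy data; a real ChEMBL export will use its own schema and should be mapped accordingly.

```python
ACTIVITY_TYPES = {"IC50", "Ki", "EC50"}
MAX_VALUE_NM = 10_000          # keep activities below 10,000 nM
MIN_CONFIDENCE = 7             # minimum confidence score
EXCLUDED_NAME_TERMS = ("multiple", "complex")


def keep_record(rec: dict) -> bool:
    """Return True if a bioactivity record passes all curation filters."""
    if rec["standard_type"] not in ACTIVITY_TYPES:
        return False
    value = rec.get("standard_value_nm")
    if value is None or value >= MAX_VALUE_NM:
        return False
    if rec["confidence_score"] < MIN_CONFIDENCE:
        return False
    name = rec["target_name"].lower()
    return not any(term in name for term in EXCLUDED_NAME_TERMS)


# Toy records (hypothetical compounds and values).
records = [
    {"compound": "C1", "standard_type": "IC50", "standard_value_nm": 50.0,
     "confidence_score": 9, "target_name": "Dihydrofolate reductase"},
    {"compound": "C2", "standard_type": "Ki", "standard_value_nm": 25_000.0,
     "confidence_score": 9, "target_name": "Dihydrofolate reductase"},
    {"compound": "C3", "standard_type": "EC50", "standard_value_nm": 120.0,
     "confidence_score": 7, "target_name": "Example receptor complex"},
    {"compound": "C4", "standard_type": "IC50", "standard_value_nm": 300.0,
     "confidence_score": 5, "target_name": "Example kinase"},
    {"compound": "C5", "standard_type": "Potency", "standard_value_nm": 10.0,
     "confidence_score": 9, "target_name": "Example kinase"},
]

filtered = [rec for rec in records if keep_record(rec)]
print([rec["compound"] for rec in filtered])
```

Matching on the substrings "multiple" and "complex" is deliberately blunt and can discard legitimate targets whose names happen to contain those words, so the excluded set is worth inspecting by hand.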

Model Training and Validation

- Fingerprint Generation: Generate molecular fingerprints such as Morgan hashed bit vector fingerprints with radius two and 2048 bits for similarity calculations [6].

- Benchmark Dataset: Prepare a benchmark dataset of FDA-approved drugs excluded from the main database to prevent overlap and bias during prediction validation [6].

- Performance Validation: Use hold-out test sets with novel perturbation conditions not present in training data to ensure real-world predictive capability [35].
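The benchmark step above amounts to removing every record of a benchmark compound from the reference database before predictions are made, so that a query drug can never be matched to itself. The sketch below shows one way to do this with plain Python lists; the record layout, the `compound_id` key, and the helper name `split_benchmark` are assumptions for illustration.

```python
def split_benchmark(database, benchmark_ids):
    """Split compound-target records into a reference set and a held-out benchmark set."""
    benchmark_ids = set(benchmark_ids)
    reference = [r for r in database if r["compound_id"] not in benchmark_ids]
    held_out = [r for r in database if r["compound_id"] in benchmark_ids]
    return reference, held_out


# Toy compound-target annotations (hypothetical identifiers).
database = [
    {"compound_id": "D1", "target": "T1"},
    {"compound_id": "D1", "target": "T2"},
    {"compound_id": "D2", "target": "T1"},
    {"compound_id": "D3", "target": "T3"},
]
benchmark_ids = {"D1"}  # e.g. approved drugs reserved for validation

reference, held_out = split_benchmark(database, benchmark_ids)

# Leakage check: no benchmark compound may remain in the reference set.
leaked = {r["compound_id"] for r in reference} & benchmark_ids
print(f"reference={len(reference)} held_out={len(held_out)} leaked={len(leaked)}")
```

Splitting by compound identifier rather than by individual record matters here: a drug with several annotated targets would otherwise leak into the reference set through its remaining records.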

Computational Prediction Workflow

Experimental Off-Target Validation Methods

While computational methods provide valuable initial insights, experimental validation remains essential for confirming off-target interactions and understanding their biological implications.

Methodologies and Integrated Approaches

Metabolomics-Guided Target Discovery

- A multiscale drug target-finding workflow integrates machine-learning analysis of metabolomics data with metabolic modelling and protein structural analysis [36].

- This approach was successfully deployed to identify HPPK (folK) as an off-target of the antibiotic compound CD15-3, which was originally designed to target dihydrofolate reductase (DHFR) [36].

- The methodology combines untargeted global metabolomics to identify perturbation patterns, growth rescue experiments to confirm functional relevance, and protein structural similarity analysis to prioritize candidate targets [36].

Multi-scale Integrative Framework

- Metabolomic Perturbation Analysis: Measure metabolic changes upon drug treatment across multiple growth phases to identify significantly altered pathways [36].

- Machine Learning Classification: Train multi-class models (e.g., logistic regression) to identify mechanism-specific metabolic signatures and contextualize drug effects [36].

- Metabolic Modeling: Use constraint-based modeling to identify pathways whose inhibition aligns with observed growth rescue patterns [36].

- Structural Analysis: Compare protein active sites and structural features to identify potential off-targets with similarity to primary targets [36].

Table 2: Experimental Methods for Off-Target Validation

| Method Type | Specific Techniques | Key Applications | Advantages | Limitations |
|---|---|---|---|---|
| Biophysical Assays | Binding affinity assays, gene expression analyses, proteomics | Direct measurement of drug-target interactions | High reliability, direct evidence | Labour-intensive, lower throughput [6] |
| Metabolomics | LC/MS, GC/MS, multivariate analysis | Unbiased profiling of metabolic perturbations | Systems-level view, functional context | Complex data interpretation, requires validation [36] |
| Growth Rescue | Metabolic supplementation, gene overexpression | Functional validation of target engagement | Direct functional evidence, physiological context | Limited to essential targets, may have compensatory mechanisms [36] |
| High-Throughput Screening | In vitro safety panels (44-70 targets) | Systematic off-target profiling | Comprehensive liability assessment | Costly, requires substantial compound [11] |
| Structural Analysis | X-ray crystallography, cryo-EM, homology modeling | Understanding binding modes and selectivity | Mechanistic insights, rational design | Requires high-quality structures, may not reflect cellular environment [6] |

Experimental Protocols for Integrated Validation

Metabolomics Analysis Protocol

- Sample Collection: Harvest cells at multiple growth phases (early lag, mid-exponential, late log) under treated and untreated conditions [36].

- Metabolite Extraction: Use appropriate extraction solvents (e.g., methanol:acetonitrile:water) to quench metabolism and extract polar and non-polar metabolites.

- LC-MS Analysis: Perform untargeted global metabolomics using reversed-phase chromatography coupled to high-resolution mass spectrometry.

- Data Processing: Use software platforms (e.g., XCMS, Progenesis QI) for peak picking, alignment, and annotation against metabolite databases.

- Statistical Analysis: Identify significantly altered metabolites (fold-change >2, p-value <0.05) and perform pathway enrichment analysis.
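The statistical filter in the last step can be sketched as follows. The example assumes that per-metabolite group means and p-values have already been computed upstream, and it interprets "fold-change >2" symmetrically (more than 2-fold up or down, i.e. an absolute log2 fold change above 1); both the interpretation and the field names are assumptions made for this illustration.

```python
import math

FOLD_CHANGE_CUTOFF = 2.0
P_VALUE_CUTOFF = 0.05


def log2_fold_change(treated_mean: float, control_mean: float) -> float:
    """log2 ratio of treated to control abundance."""
    return math.log2(treated_mean / control_mean)


def significant_metabolites(metabolites):
    """Return (name, log2FC) for metabolites passing both cutoffs."""
    lfc_cutoff = math.log2(FOLD_CHANGE_CUTOFF)
    hits = []
    for m in metabolites:
        lfc = log2_fold_change(m["treated_mean"], m["control_mean"])
        if abs(lfc) > lfc_cutoff and m["p_value"] < P_VALUE_CUTOFF:
            hits.append((m["name"], round(lfc, 2)))
    return hits


# Toy abundances and p-values (hypothetical).
metabolites = [
    {"name": "met_A", "treated_mean": 400.0, "control_mean": 100.0, "p_value": 0.001},
    {"name": "met_B", "treated_mean": 30.0, "control_mean": 100.0, "p_value": 0.01},
    {"name": "met_C", "treated_mean": 150.0, "control_mean": 100.0, "p_value": 0.001},
    {"name": "met_D", "treated_mean": 500.0, "control_mean": 100.0, "p_value": 0.2},
]

hits = significant_metabolites(metabolites)
print(hits)
```

Untargeted metabolomics typically tests hundreds to thousands of features, so in practice the raw p-values would usually be adjusted for multiple testing before applying the 0.05 cutoff; the protocol above does not specify which correction to use.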

Growth Rescue Experiments

- Metabolite Supplementation: Supplement growth media with potential metabolites that might bypass the inhibited pathway and rescue growth [36].

- Gene Overexpression: Clone candidate off-target genes into expression vectors and transform into host cells to test for reduced drug sensitivity [36].

- Dose-Response Analysis: Measure IC50 shifts with and without rescue conditions to quantify the contribution of suspected off-targets to overall efficacy/toxicity.
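An IC50 shift under rescue conditions can be quantified as the ratio of the two IC50 values. The sketch below estimates each IC50 by linear interpolation on a log10 dose scale between the two measured doses that bracket 50% response; this is a simplification chosen to keep the example dependency-free, and fitting a four-parameter logistic (Hill) curve is the more usual approach. The dose series and response values are toy data.

```python
import math


def estimate_ic50(doses, responses):
    """Interpolate the dose giving 50% response on a log10 dose scale.

    doses:     ascending concentrations (same unit throughout)
    responses: percent of untreated control at each dose
    Returns None if the measured curve never crosses 50%.
    """
    points = list(zip(doses, responses))
    for (d_lo, r_lo), (d_hi, r_hi) in zip(points, points[1:]):
        if r_lo >= 50.0 >= r_hi and r_lo != r_hi:
            frac = (r_lo - 50.0) / (r_lo - r_hi)
            log_ic50 = math.log10(d_lo) + frac * (math.log10(d_hi) - math.log10(d_lo))
            return 10 ** log_ic50
    return None


# Toy growth data (hypothetical): percent growth versus drug concentration.
doses = [1.0, 10.0, 100.0, 1000.0]
growth_no_rescue = [95.0, 70.0, 30.0, 5.0]
growth_with_rescue = [98.0, 90.0, 70.0, 30.0]

ic50_base = estimate_ic50(doses, growth_no_rescue)
ic50_rescue = estimate_ic50(doses, growth_with_rescue)
fold_shift = ic50_rescue / ic50_base
print(f"IC50 without rescue: {ic50_base:.1f}")
print(f"IC50 with rescue:    {ic50_rescue:.1f}")
print(f"fold shift:          {fold_shift:.1f}")
```

A fold shift well above 1 under supplementation or overexpression is consistent with the rescued pathway or gene contributing to the compound's activity, although replicate measurements and confidence intervals would be needed before drawing that conclusion.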

Integrated Experimental Validation

Comparative Analysis: Computational vs. Experimental Approaches

The selection between computational and experimental approaches for off-target validation involves trade-offs between throughput, cost, biological relevance, and mechanistic insight.

Performance and Practical Considerations

Throughput and Scalability

- Computational Methods: Offer high throughput, capable of screening thousands of compounds against hundreds of targets in silico within days to weeks [6] [11].

- Experimental Methods: Lower throughput, with typical in vitro panels testing tens to hundreds of compounds against 44-70 safety targets over weeks to months [11].

Cost Considerations

- Computational Prediction: Relatively low cost after initial model development, primarily involving computational resources [11].

- Experimental Screening: Substantially higher costs for reagents, equipment, and personnel time, making extensive screening cost-prohibitive for large compound libraries [11].

Biological Relevance

- Computational Methods: Often limited to predicting binding events without functional context, though newer AI methods incorporate downstream effect prediction [11].

- Experimental Methods: Provide direct functional readouts in relevant cellular contexts, particularly for phenotypic assays and omics approaches [36].

Table 3: Strategic Application of Off-Target Validation Methods

| Development Stage | Recommended Computational Methods | Recommended Experimental Methods | Key Objectives |
|---|---|---|---|
| Early Discovery/Hit Identification | Ligand-based similarity searching (MolTarPred), QSAR | Minimal; select binding assays for primary target | Eliminate compounds with obvious liability risks, prioritize scaffolds |
| Lead Optimization | Multi-target machine learning (AI/graph neural networks), docking | Targeted in vitro safety panels (44-70 targets), metabolic profiling | Systematic liability profiling, SAR for selectivity, identify major off-targets |
| Preclinical Candidate Selection | Comprehensive off-target profiling, ADR prediction | Metabolomics, specialized assays (hERG, genotoxicity), proteomics | Complete safety assessment, inform clinical monitoring plans, risk mitigation |
| Post-Market | Retrospective analysis for new safety signals | Pharmacovigilance, focused mechanistic studies | Understand clinical ADRs, support label updates, drug repurposing |

Table 4: Key Research Reagents and Resources for Off-Target Studies

| Resource Category | Specific Examples | Function and Application | Key Features |
|---|---|---|---|
| Bioactivity Databases | ChEMBL, PubChem, BindingDB | Source of annotated compound-target interactions for model training and validation | ChEMBL contains 2.4M+ compounds and 20.7M+ interactions; manually curated data [6] |
| Safety Target Panels | Eurofins Safety Panel, Bioprint Database | Standardized target sets for systematic off-target screening | 44-70 safety targets covering CNS, cardiovascular, gastrointestinal liabilities [11] |
| Molecular Fingerprints | Morgan, ECFP4, MACCS | Numerical representation of chemical structure for similarity assessment | Morgan fingerprints with Tanimoto scores show superior performance in similarity-based prediction [6] |
| Metabolomics Platforms | LC-MS, GC-MS, NMR | Global profiling of metabolic perturbations to identify off-target effects | Identifies pathway-level effects; provides functional context for target engagement [36] |
| Structural Biology Tools | AlphaFold, molecular docking software | Prediction of protein-ligand interactions and binding modes | AlphaFold generates high-quality structural models for targets without experimental structures [6] |
| Machine Learning Frameworks | Graph Neural Networks, Random Forest, Logistic Regression | Prediction of off-target interactions and aggregation of off-target scores | Multi-task graph neural networks enable prediction of full off-target profiles from chemical structure [11] |

The integration of computational and experimental approaches for off-target validation represents a powerful strategy to address the persistent challenge of clinical attrition. Computational methods provide cost-effective, scalable early assessment, while experimental approaches deliver essential biological validation and mechanistic understanding. The emerging paradigm of Structure–Tissue Exposure/Selectivity–Activity Relationship (STAR) offers a comprehensive framework for balancing efficacy and safety considerations during drug optimization [33].

Companies and academic institutions that systematically implement robust off-target validation strategies can significantly de-risk their development pipelines, potentially reducing the roughly 30% of clinical failures attributable to toxicity [33]. As computational methods improve through advances in artificial intelligence and structural biology, and as experimental techniques become more sensitive and higher in throughput, the drug development community is well positioned to address the economic and safety challenges that have long hampered pharmaceutical innovation.

Methodologies in Practice: Computational Prediction and Experimental Detection