Comparative Analysis of Biopart Characterization Methods: From Foundational Principles to Advanced Applications

Abstract

This comprehensive review explores the rapidly evolving landscape of biopart characterization methodologies essential for synthetic biology and biopharmaceutical development. Targeting researchers, scientists, and drug development professionals, the article systematically examines foundational principles, current technological applications, troubleshooting strategies, and validation frameworks. By comparing established and emerging characterization techniques across diverse biological contexts—from standardized DNA components in genetic circuits to complex therapeutic proteins—this analysis provides critical insights for selecting appropriate methodologies, optimizing characterization workflows, and ensuring reliability in biological engineering applications. The synthesis of current best practices and future directions serves as both a practical guide and strategic roadmap for advancing biopart characterization in research and industrial settings.

Foundations of Biopart Characterization: Core Principles and Engineering Paradigms

In the engineering-focused discipline of synthetic biology, bioparts represent the fundamental building blocks—standardized, interchangeable DNA sequences—that enable the programmable construction of biological systems. These components form the foundation of genetic circuits and pathways, allowing researchers to apply engineering principles such as modularity and standardization to biological design. Bioparts include a diverse range of functional genetic elements, with promoters and terminators playing particularly crucial roles in controlling gene expression levels and patterns [1]. The systematic identification and characterization of these components has become a central focus in synthetic biology, driving the field toward more predictable and reliable biological engineering.

The strategic importance of bioparts extends across multiple application domains, from metabolic engineering for producing high-value compounds to the development of diagnostic and therapeutic tools. For instance, catalytic bioparts enable the design and optimization of specific metabolic pathways in engineered chassis organisms [2]. Similarly, advanced genetic circuits constructed from well-characterized bioparts underpin emerging applications in gene therapy and cell engineering, including CAR-T cells for cancer treatment and engineered hematopoietic stem cells for addressing blood disorders [3]. This broad applicability highlights why comparative analysis of biopart characterization methods represents a critical research frontier with significant implications for both basic science and translational applications.

Comparative Analysis of Major Biopart Categories

Regulatory Bioparts: Promoters and Terminators

Promoters and terminators constitute essential regulatory bioparts that precisely control gene expression in synthetic biology systems. Promoters, located upstream of gene coding sequences, are classified based on their expression patterns as constitutive, tissue-specific, or inducible [1]. In plants, promoters contain core, proximal, and distal elements with direction-sensitive motifs like CCAAT-box, initiator elements, and plant-specific Y patches [1]. Terminators, situated downstream of coding sequences, ensure proper 3' end processing, polyadenylation, and transcript stability through far upstream elements, near upstream elements, and cleavage sites [1].

Table 1: Comparative Analysis of Major Promoter Categories

| Promoter Type | Expression Pattern | Key Components | Applications | Performance Considerations |
|---|---|---|---|---|
| Constitutive | Constant across tissues and conditions | Core promoter elements (e.g., TSS, Inr) | High-level protein production | May cause cellular burden; potential silencing |
| Tissue-Specific | Restricted to specific cell types | Tissue-specific cis-regulatory elements | Metabolic engineering; avoiding pleiotropic effects | Enables spatial control; reduced burden |
| Inducible | Activated by specific stimuli | Response elements to inducers | Conditional expression; toxic pathway elements | Temporal control; may have leaky expression |
| Bidirectional | Drives two genes in opposite directions | Shared intergenic region | Gene pyramiding; compact circuit design | Prevents transcriptional silencing; coordinate regulation |

The combinatorial selection of promoter-terminator pairs significantly influences transgene expression outcomes. Recent studies demonstrate that native plant-derived terminators such as tHSP18 and tMIR frequently outperform exogenous elements like tNos, resulting in higher and more stable transgene expression [1]. Strategic combinations, including dual-terminator configurations and incorporation of matrix attachment regions, further enhance expression levels and reduce variability, underscoring the importance of co-optimizing these regulatory elements when engineering robust synthetic circuits [1].

Catalytic Bioparts and Modular Enzyme Systems

Catalytic bioparts encompass enzyme-coding sequences that perform specific biochemical transformations, serving as fundamental components for metabolic pathway engineering. The Registry and Database of Bioparts for Synthetic Biology (RDBSB) represents a comprehensive resource containing 83,193 curated catalytic bioparts with experimental evidence, including critical parameters such as activities, substrates, optimal pH and temperature, and chassis specificity [2]. These characterized components substantially accelerate the design and optimization of biological systems for specific metabolic applications.

For complex natural product biosynthesis, modular enzyme systems such as type I polyketide synthases (PKSs) and non-ribosomal peptide synthetases (NRPSs) represent higher-order biopart assemblies. These mega-enzymes operate through an assembly-line logic in which individual catalytic domains function as coordinated bioparts [4]. A prime example is 6-deoxyerythronolide B synthase (DEBS) from Saccharopolyspora erythraea (formerly Streptomyces erythraeus), which comprises a loading module and six extension modules distributed across three polypeptides that maintain functional continuity through docking domains at their N- and C-termini [4]. The modular repetition combined with functional variability in these systems underlies the remarkable chemical diversity observed in polyketides and non-ribosomal peptides.

Table 2: Comparative Analysis of Synthetic Interface Strategies for Modular Enzymes

| Interface Type | Mechanism | Key Features | Applications | Performance Metrics |
|---|---|---|---|---|
| Cognate Docking Domains | Natural protein-protein interactions | Specificity; co-evolved compatibility | PKS/NRPS module assembly | Varies with domain combination |
| Synthetic Coiled-Coils | Engineered helical interactions | Programmable affinity; orthogonal pairs | Enzyme scaffolding; spatial organization | Tunable binding strength |
| SpyTag/SpyCatcher | Covalent peptide-protein bonding | Irreversible complex formation | Enzyme complex stabilization | Rapid reaction kinetics |
| Split Inteins | Protein trans-splicing | Post-translational coupling | Domain fusion; segmental labeling | High splicing efficiency |

Advanced Characterization Methods for Bioparts

High-Throughput Experimental Approaches

The functional characterization of bioparts has been revolutionized by high-throughput automation workflows that enable parallel analysis of thousands of variants. Recent research established Chlamydomonas reinhardtii as a prototyping chassis for chloroplast synthetic biology through development of an automated platform capable of generating, handling, and analyzing over 3,000 transplastomic strains in parallel [5]. This workflow combines solid-medium cultivation in standardized 384-format plates with contactless liquid-handling robots, which raises throughput relative to traditional liquid-medium screening, reduces the time required approximately eightfold, and halves yearly maintenance costs [5].

Large-scale identification of regulatory elements has been accelerated by innovative genomic methods. Assay for Transposase-Accessible Chromatin using sequencing (ATAC-seq) and Self-Transcribing Active Regulatory Region sequencing (STARR-seq) enable comprehensive mapping of functional promoter regions [1]. When complemented with deep-learning-based models for predicting promoter activity from sequence data, these approaches provide powerful computational-experimental frameworks for expanding the repertoire of well-characterized regulatory bioparts. The integration of these methods with standardized assembly systems such as the Phytobrick/Modular Cloning (MoClo) framework, which utilizes Golden Gate cloning with Type IIS restriction enzymes, enables systematic characterization of genetic part combinations across multiple orders of magnitude in expression strength [5].

Computational Modeling and Evolutionary Stability Analysis

Host-aware computational frameworks represent advanced methods for predicting biopart performance and evolutionary stability within engineered biological systems. These multi-scale models capture interactions between host and circuit expression, mutation dynamics, and mutant competition, enabling quantitative evaluation of biopart performance against metrics such as total protein output, duration of stable output, and functional half-life [6]. Simulations performed in repeated batch conditions, where nutrients are replenished and population size is reset periodically, mirror experimental serial passaging and allow researchers to model the evolutionary trajectories of engineered strains.

Computational analyses have revealed fundamental design principles for enhancing biopart longevity. Studies comparing controller architectures found that post-transcriptional control using small RNAs generally outperforms transcriptional regulation via transcription factors due to an amplification step that enables strong control with reduced cellular burden [6]. Furthermore, growth-based feedback mechanisms significantly extend functional half-life compared to intra-circuit feedback, though the latter provides better short-term performance maintenance. These modeling approaches demonstrate that systems with separate circuit and controller genes can exhibit enhanced performance due to evolutionary trajectories where controller function loss paradoxically increases short-term production, highlighting the complex dynamics influencing biopart evolutionary stability [6].
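As a rough illustration of the repeated-batch setting described above, the following toy sketch tracks a burdened producer strain and a faster-growing loss-of-function mutant across serial dilutions. It is not the host-aware framework of [6]: the two-strain structure, the function names, and the `burden`, `mutation_rate`, and `doublings_per_batch` parameters with their default values are all assumptions chosen for illustration.

```python
def simulate_repeated_batch(n_batches, burden=0.2, mutation_rate=1e-6,
                            doublings_per_batch=10):
    """Toy two-strain competition under repeated batch culture.

    Returns the producer fraction at the end of each batch. All parameters
    are hypothetical: `burden` is the fractional growth penalty paid by the
    producer, and `mutation_rate` is the per-doubling fraction of producer
    cells that become non-producing mutants.
    """
    producer, mutant = 1.0, 0.0  # relative abundances
    fractions = []
    for _ in range(n_batches):
        for _ in range(doublings_per_batch):
            new_mutants = producer * mutation_rate
            # Producers grow more slowly because they carry the circuit burden.
            producer = (producer - new_mutants) * 2 ** (1 - burden)
            mutant = (mutant + new_mutants) * 2
        # Dilution into fresh medium resets the population size.
        total = producer + mutant
        producer, mutant = producer / total, mutant / total
        fractions.append(producer)
    return fractions


def batches_until_half(fractions):
    """1-based index of the first batch whose producer fraction is below 0.5."""
    for batch, fraction in enumerate(fractions, start=1):
        if fraction < 0.5:
            return batch
    return None


if __name__ == "__main__":
    for burden in (0.05, 0.2):
        trajectory = simulate_repeated_batch(40, burden=burden)
        print(f"burden={burden}: half of output lost by batch "
              f"{batches_until_half(trajectory)}")
```

Even this crude model reproduces the qualitative point that a higher expression burden shortens the time before non-producing mutants take over; it omits resource competition, controller feedback, and multiple mutation classes, all of which the models in [6] account for.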

Experimental Protocols for Biopart Characterization

High-Throughput Characterization of Chloroplast Bioparts

Protocol: Automated Workflow for Transplastomic Strain Analysis

This protocol enables systematic characterization of regulatory bioparts in chloroplast genomes through automated handling of thousands of transplastomic strains [5].

Strain Generation and Picking: Transform chloroplast genomes and pick individual transformants using a Rotor screening robot into standardized 384-format plates containing solid medium.

Achieving Homoplasmy: Restreak colonies through three successive rounds on selective media, screening 16 replicate colonies per construct to drive strains toward homoplasmy (approximately 80% success rate with minimal losses).

Biomass Array Preparation: Organize homoplasmic colonies into 96-array format for high-throughput biomass growth and subsequent analysis.

Liquid Medium Transfer: Use contact-free liquid handler for cell number normalization based on OD750 measurements, medium transfer, and supplementation of assay-specific compounds (e.g., luciferase substrates).

Reporter Gene Analysis: Quantify biopart activity using fluorescence or luminescence-based readouts, enabling rapid assessment of regulatory element performance across multiple orders of magnitude.

This automated platform reduces the time required for picking and restreaking from approximately 16 hours to 2 hours weekly for 384 strains, while cutting annual maintenance costs by half compared to manual methods [5].

Assessing Evolutionary Longevity in Bacterial Systems

Protocol: Quantifying Evolutionary Stability of Genetic Circuits

This protocol employs serial passaging under repeated batch conditions to evaluate the evolutionary longevity of bioparts in engineered bacterial systems [6].

Strain Preparation: Engineer ancestral strains containing the biopart or genetic circuit of interest alongside appropriate control constructs.

Batch Cultivation: Grow parallel populations in nutrient-limited media, diluting periodically into fresh medium (typically every 24 hours) to replenish nutrients and reset the population size.

Population Sampling: At each transfer timepoint, collect samples for:

- Flow cytometry to quantify population-level output (e.g., fluorescence)

- Plating and colony screening to assess mutation frequency

- DNA sequencing to identify specific mutations affecting biopart function

Data Analysis: Calculate three key metrics for evolutionary stability:

- P0: Initial output from ancestral population prior to mutation

- τ±10: Time until population output falls outside P0 ± 10%

- τ50: Time until population output declines to P0/2 (functional half-life)

This experimental approach enables direct comparison of different biopart designs and controller architectures for their ability to maintain function over evolutionary timescales, providing critical data for engineering more robust biological systems [6].
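The three metrics defined above can be computed directly from a passaging time series. The sketch below is one possible implementation, assuming output is sampled at discrete transfer timepoints, that P0 is taken as the first sample, and that the half-life is estimated by linear interpolation between flanking samples; the function names and example numbers are hypothetical.

```python
def time_outside_band(times, outputs, p0, tolerance=0.10):
    """First sampled time at which output leaves p0 * (1 +/- tolerance)."""
    low, high = p0 * (1 - tolerance), p0 * (1 + tolerance)
    for t, p in zip(times, outputs):
        if p < low or p > high:
            return t
    return None  # output stayed within the band for the whole experiment


def functional_half_life(times, outputs, p0):
    """Time at which output first drops to p0 / 2 (linear interpolation)."""
    half = p0 / 2
    for i, (t, p) in enumerate(zip(times, outputs)):
        if p <= half:
            if i == 0:
                return t
            t_prev, p_prev = times[i - 1], outputs[i - 1]
            # Interpolate between the last sample above and first sample below.
            return t_prev + (p_prev - half) / (p_prev - p) * (t - t_prev)
    return None  # output never fell to half of p0


if __name__ == "__main__":
    # Hypothetical population fluorescence at each 24-hour transfer.
    times_h = [0, 24, 48, 72, 96, 120]
    outputs = [1000, 980, 940, 850, 600, 300]
    p0 = outputs[0]
    print("P0:", p0)
    print("tau_pm10 (h):", time_outside_band(times_h, outputs, p0))
    print("tau_50 (h):", functional_half_life(times_h, outputs, p0))
```

Returning `None` when a threshold is never crossed keeps censored runs distinguishable from runs that failed at the final timepoint.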

Research Reagent Solutions for Biopart Engineering

Table 3: Essential Research Reagents for Biopart Characterization and Assembly

| Reagent/Category | Specific Examples | Function | Application Context |
|---|---|---|---|
| Cloning Systems | Golden Gate Assembly, Gateway, TOPO-TA | Modular DNA assembly | Construct fabrication; multi-gene pathway assembly |
| Selection Markers | aadA (spectinomycin), antibiotic resistance genes | Selective maintenance of engineered constructs | Stable strain engineering; chloroplast transformation |
| Reporter Genes | Fluorescent proteins, luciferases | Quantitative measurement of biopart activity | Promoter/terminator characterization; circuit performance |
| Restriction Enzymes | Type IIS enzymes (BsaI, BsmBI) | DNA cleavage outside recognition sites | Golden Gate assembly; scarless construct fabrication |
| Computational Tools | Host-aware models, deep-learning predictors | In silico performance prediction | Design prioritization; evolutionary stability assessment |
| Characterization Kits | ATAC-seq, STARR-seq reagents | Genome-wide regulatory element mapping | Novel biopart discovery; context-specific activity profiling |

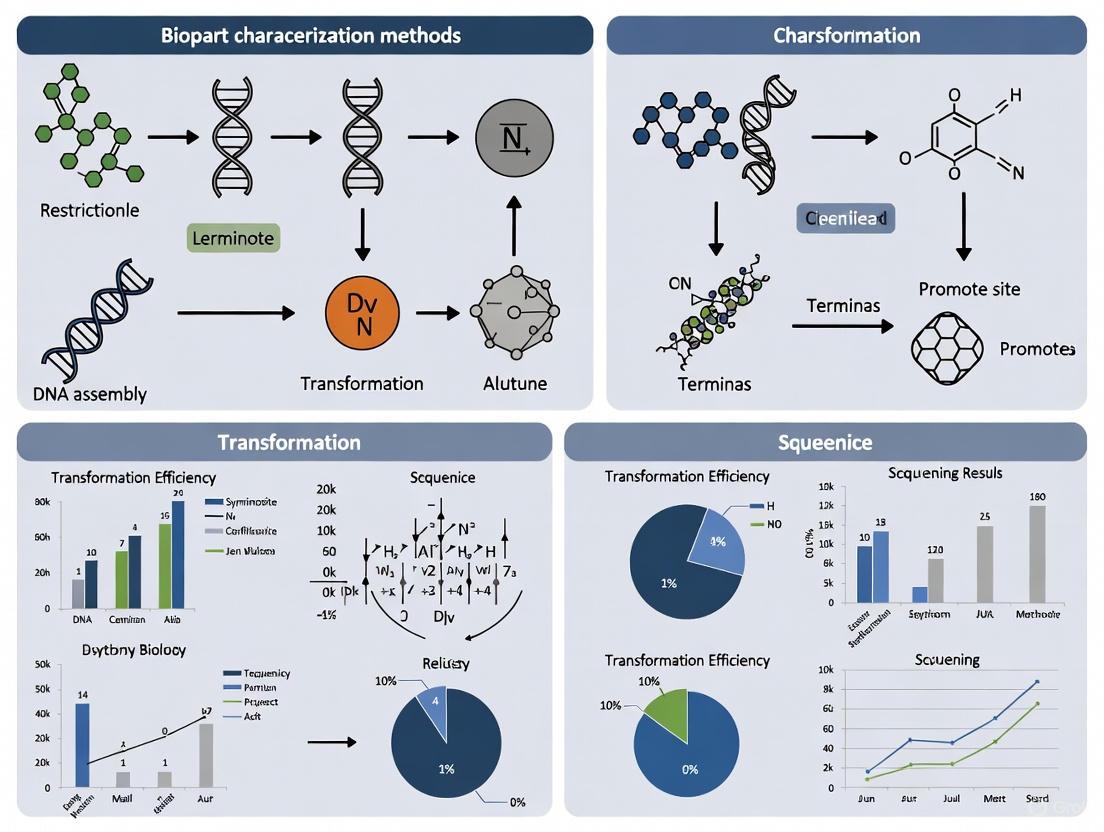

Visualization of Experimental Workflows and System Architectures

DBTL Cycle for Modular Enzyme Engineering

Diagram 1: DBTL Cycle for Modular Enzyme Engineering. This iterative framework integrates computational design, automated construction, functional testing, and machine learning to optimize modular enzyme assemblies for natural product biosynthesis [4].

High-Throughput Chloroplast Characterization Pipeline

Diagram 2: High-Throughput Chloroplast Characterization Pipeline. This automated workflow enables parallel analysis of thousands of transplastomic strains, significantly accelerating the characterization of chloroplast bioparts [5].

The comparative analysis of biopart characterization methods reveals a dynamic field transitioning from individual component analysis to integrated system-level evaluation. The emerging paradigm combines high-throughput experimental automation with sophisticated computational modeling to address both immediate function and evolutionary stability. As characterization methodologies continue advancing, they enable increasingly precise engineering of biological systems with enhanced predictability and robustness. The development of standardized, well-characterized biopart collections, combined with sophisticated assembly frameworks and predictive modeling tools, is establishing a foundation for synthetic biology to realize its full potential across therapeutic, agricultural, and industrial applications.

The application of core engineering principles—standardization, modularity, and abstraction—represents a foundational shift in how researchers approach biological design. Unlike traditional genetic engineering, which often focuses on single-gene modifications, synthetic biology aims to apply rigorous engineering logic to create complex biological systems with predictable behaviors [7]. This engineering-centric approach transforms biological components into well-defined, characterizable elements that can be reliably assembled into larger systems [8]. The adoption of these principles has created a new paradigm where biological systems can be designed, assembled, and tested within a structured framework similar to that used in computer engineering, with distinct hierarchical levels ranging from basic biological parts to integrated multicellular systems [8].

This framework is particularly crucial for biopart characterization, where consistent evaluation methods enable researchers to compare the performance of biological components across different contexts and chassis organisms. The comparative analysis of characterization methods reveals how standardization enables part reuse, modularity facilitates part combination, and abstraction allows researchers to work with biological functions without needing to understand every underlying molecular detail [7]. As the field progresses, these engineering principles are being refined to account for biological complexity, including context dependence, evolutionary pressures, and the dynamic nature of living systems [9] [8].

The DBTL Cycle: An Iterative Framework for Biopart Characterization

The Design-Build-Test-Learn (DBTL) cycle provides a structured, iterative framework for developing and characterizing biological parts [10]. This cyclic process enables systematic refinement of bioparts through successive rounds of design modification and performance evaluation. In the Design phase, researchers specify biological parts using standardized formats and conceptual models, drawing from repositories like the iGEM Registry of Standard Biological Parts or professional collections such as those from BIOFAB [7]. The Build phase involves physical construction using DNA assembly methods such as Golden Gate cloning or gene synthesis [11], while the Test phase characterizes part performance through quantitative measurements of expression levels, specificity, and other functional parameters [11]. Finally, the Learn phase uses the collected data to refine models and inform subsequent design iterations, potentially incorporating adaptive laboratory evolution to optimize performance [9].

Automation has dramatically enhanced the DBTL cycle's efficiency, particularly for high-throughput biopart characterization. Automated liquid-handling robots, coupled with plate readers or microfluidics systems, enable rapid prototyping of thousands of biological variants [7]. This automated approach significantly increases throughput, reliability, and reproducibility while enabling exploration of larger design spaces [10]. The integration of data analysis algorithms further streamlines the characterization process, creating a more seamless pipeline from experimental data to computational models [10].

DBTL Cycle for Biopart Engineering

Comparative Analysis of Biopart Characterization Methods

Biopart characterization employs diverse methodologies with varying throughput, quantitative resolution, and experimental requirements. The table below compares five principal approaches used in contemporary synthetic biology research.

Table 1: Comparison of Biopart Characterization Methods

| Method | Throughput | Quantitative Resolution | Key Applications | Experimental Requirements | Data Output |
|---|---|---|---|---|---|
| Fluorescence Microscopy with Visual Scoring [11] | Low | Semi-quantitative (1-6 scale) | Neuron-specific promoter characterization | Stereo and compound microscopes with fluorescence capabilities | Categorical intensity scores |
| Flow Cytometry | High | Quantitative (molecules/cell) | Library screening of regulatory elements | Flow cytometer with appropriate lasers and detectors | Population-level distribution statistics |
| Plate Reader Assays [7] | Medium | Quantitative (bulk fluorescence) | Promoter strength measurement | Plate reader with temperature control | Time-course or endpoint measurements |
| RNA Sequencing [12] | Medium | Quantitative (transcript counts) | Transcriptome-wide expression profiling | RNA extraction kits, sequencing platform | Transcript abundance values |
| Mass Spectrometry | Low | Quantitative (molecules/cell) | Protein expression and modification analysis | LC-MS/MS instrumentation | Peptide abundance and modifications |

Each characterization method offers distinct advantages depending on the experimental context. Fluorescence-based methods with visual scoring provide rapid, cost-effective assessment suitable for initial characterization, particularly in specialized systems like C. elegans neurons [11]. Flow cytometry enables high-throughput single-cell resolution, making it ideal for characterizing heterogeneous expression patterns in microbial systems. Plate reader assays offer a balanced approach for medium-throughput screening with quantitative precision, while RNA sequencing provides comprehensive transcript-level data but with higher resource requirements [12]. Mass spectrometry delivers direct protein-level quantification but typically with lower throughput and higher technical complexity.

Experimental Protocols for Key Characterization Methods

Protocol 1: Visual Fluorescence Scoring for Specialized Expression Patterns

This protocol details the semi-quantitative visual scoring method used to characterize cell-specific promoters, such as the BAG neuron-specific promoter Pflp-17 in C. elegans [11].

Materials:

- Transgenic organisms containing fluorescent reporter constructs

- Stereo dissection microscope with fluorescence capabilities (e.g., Olympus SZX2-FOF)

- Upright compound microscope (e.g., LEICA DM2500 LED)

- Appropriate filter sets (e.g., mTomato filter for mScarlet)

- Light sources (e.g., X-Cite XYLIS XT720L LED light, LEICA EL6000 mercury metal halide bulb)

- 2% agarose pads for immobilization

- 50 mM sodium-azide M9 solution for anesthesia

Procedure:

- Generate transgenic organisms through appropriate methods (e.g., extrachromosomal array injection or single-copy transgene insertion).

- For extrachromosomal arrays, inject a mixture containing: 10 ng/μL reporter DNA, 10 ng/μL selection marker (e.g., pCFJ108 for unc-119 rescue), 10 ng/μL antibiotic resistance marker (e.g., pCFJ782 HygroR), and 10 ng/μL piRNA interference fragment (e.g., pMNK54 targeting him-5 for male induction) [11].

- Maintain injected organisms at appropriate temperatures (e.g., 25°C for C. elegans) and apply selection agents (e.g., hygromycin) after 3 days.

- Identify transgenic organisms based on selection markers and rescue phenotypes.

- Image approximately 15 embryos at various developmental stages (gastrula, comma, 1.5-fold, 2-fold, and 3-fold) to determine expression onset.

- For fluorescence quantification, select ten L4 stage organisms and score using both dissection and compound microscopes according to the standardized 1-6 scale [11].

- For imaging, anesthetize transgenic organisms on 2% agarose pads with 50 mM sodium-azide M9 solution and capture images using appropriate objectives and filter sets.

Scoring Criteria:

- Score 6: Fluorescence visible with 1x objective at lowest zoom

- Score 5: Fluorescence visible with 1x objective at highest zoom

- Score 4: Fluorescence visible with 10x objective at lowest zoom

- Score 3: Fluorescence visible with 10x objective at highest zoom

- Score 2: Fluorescence visible with 20x air objective

- Score 1: Fluorescence visible with 40x oil immersion objective

- Score 0: No fluorescence detectable at 40x oil immersion [11]
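The scoring criteria above amount to a lookup from the least demanding viewing condition at which fluorescence is visible to a score. A minimal sketch follows, assuming each animal is recorded as the set of conditions under which its signal was seen and that replicate scores are summarized by the median; the condition labels, function names, and example data are hypothetical.

```python
from statistics import median

# Viewing conditions ordered from least to most demanding, with the
# score assigned in the criteria listed above.
VIEWING_CONDITIONS = [
    ("1x lowest zoom", 6),
    ("1x highest zoom", 5),
    ("10x lowest zoom", 4),
    ("10x highest zoom", 3),
    ("20x air", 2),
    ("40x oil", 1),
]


def fluorescence_score(visible_at):
    """Score one animal from the set of conditions where signal was visible."""
    for condition, score in VIEWING_CONDITIONS:
        if condition in visible_at:
            return score
    return 0  # not detectable even with the 40x oil immersion objective


def summarize(animals):
    """Median score across replicate animals (e.g., ten L4 organisms)."""
    return median(fluorescence_score(a) for a in animals)


if __name__ == "__main__":
    animals = [
        {"1x highest zoom", "10x lowest zoom", "40x oil"},
        {"10x lowest zoom", "20x air", "40x oil"},
        {"1x highest zoom", "40x oil"},
    ]
    print([fluorescence_score(a) for a in animals], summarize(animals))
```

Because the scale is ordinal, the median is a safer summary than the mean; the source does not state which summary statistic was used.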

Protocol 2: High-Throughput Characterization of Regulatory Elements

This protocol outlines automated methods for high-throughput biopart characterization using liquid handling robotics and plate readers [7] [10].

Materials:

- Library of biological parts in standardized vectors

- Automated liquid handling system (e.g., Hamilton STAR, Tecan Freedom EVO)

- Multi-mode microplate reader with temperature control and shaking

- Sterile 96-well or 384-well microplates

- Appropriate growth media and inducers

- Data analysis software (custom scripts or commercial packages)

Procedure:

- Clone bioparts (promoters, RBS variants, terminators) into standardized vectors using assembly methods such as Golden Gate or BioBrick assembly.

- Transform constructs into appropriate host chassis (e.g., E. coli, yeast) and inoculate into deep-well blocks containing selective media.

- Using liquid handling robots, transfer cultures into microplates and dilute to standard optical density.

- Incubate plates with controlled temperature and shaking in plate reader.

- Measure optical density and fluorescence at regular intervals (e.g., every 10-30 minutes) over the growth cycle.

- For inducible systems, add inducer compounds at specified cell densities using automated dispensing.

- Export time-course data for processing and model fitting.

- Calculate characteristic parameters: promoter strength (fluorescence/OD/unit time), expression leakage (uninduced fluorescence), dynamic range (induced/uninduced ratio), and growth effects.

- Apply statistical analysis to determine significance between variants and construct performance distributions.
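The characteristic parameters named in the procedure can be derived from exported time-course data with a few lines of code. The sketch below is one way to do so, assuming background-subtracted readings, promoter strength estimated as the average fluorescence increase per unit OD per hour, and leakage and dynamic range taken from endpoint fluorescence/OD; the function names and example values are hypothetical, and real pipelines usually restrict the calculation to exponential phase.

```python
def promoter_strength(times_h, od, fluorescence):
    """Mean rate of fluorescence increase per unit OD per hour."""
    rates = []
    for i in range(1, len(times_h)):
        d_fluo = fluorescence[i] - fluorescence[i - 1]
        dt = times_h[i] - times_h[i - 1]
        mean_od = (od[i] + od[i - 1]) / 2  # OD averaged over the interval
        rates.append(d_fluo / dt / mean_od)
    return sum(rates) / len(rates)


def endpoint_expression(od, fluorescence):
    """Fluorescence normalized to cell density at the final timepoint."""
    return fluorescence[-1] / od[-1]


def dynamic_range(induced, uninduced):
    """Fold induction: induced over uninduced (leaky) expression."""
    return induced / uninduced


if __name__ == "__main__":
    times_h = [0.0, 1.0, 2.0]
    od = [0.2, 0.2, 0.2]
    induced_fluo = [100.0, 300.0, 500.0]
    uninduced_fluo = [40.0, 45.0, 50.0]

    leakage = endpoint_expression(od, uninduced_fluo)
    induced = endpoint_expression(od, induced_fluo)
    print("strength:", promoter_strength(times_h, od, induced_fluo))
    print("leakage:", leakage)
    print("dynamic range:", dynamic_range(induced, leakage))
```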

Research Reagent Solutions for Biopart Characterization

Table 2: Essential Research Reagents for Biopart Characterization

| Reagent / Material | Function | Example Applications | Key Features |
|---|---|---|---|
| Standardized Vector Systems [11] | Modular cloning of bioparts | Golden Gate assembly systems; Fire lab vectors (C. elegans) | Standardized restriction sites; modular part exchange |
| Fluorescent Reporter Proteins [11] | Quantitative measurement of gene expression | mScarlet, GFP for promoter characterization | Brightness; photostability; maturation time |
| DNA Synthesis Services [11] | De novo generation of optimized bioparts | Custom promoter design; codon optimization | Error correction; sequence verification |
| Restriction/Assembly Enzymes [11] | DNA assembly for construct building | Golden Gate cloning (Esp3I); BioBrick assembly | Temperature stability; star activity reduction |
| Library Screening Platforms [7] | High-throughput characterization | Robotic plating; colony picking | Automation compatibility; scalability |
| Microscopy Systems [11] | Spatial and temporal expression analysis | Cell-specific promoter characterization | Fluorescence detection; image processing |

Case Study: Characterization of a Neuron-Specific Promoter

A recent study characterizing the Pflp-17 promoter for BAG neuron-specific expression in C. elegans illustrates the practical application of biopart characterization principles [11]. Researchers synthesized a 300 bp shortened version of the natural promoter, removing problematic restriction sites (ApaI, SmaI, BsaI) and homopolymer runs to ensure compatibility with gene synthesis and standardized assembly methods. The team added a standardized start sequence ("aaaaATG") and incorporated the promoter into vectors with fluorescent reporters (mScarlet and GFP) for comparative analysis.
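The kind of sequence clean-up described above can be checked programmatically before ordering a synthetic part. The following sketch scans a candidate sequence for ApaI (GGGCCC), SmaI (CCCGGG), and BsaI (GGTCTC, either strand) recognition sites and for homopolymer runs. It is not the procedure used in [11]; the function names, the example sequence, and the six-base homopolymer threshold are assumptions.

```python
import re

# Recognition sequences for the enzymes named in the case study.
SITES = {"ApaI": "GGGCCC", "SmaI": "CCCGGG", "BsaI": "GGTCTC"}
COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq):
    return seq.translate(COMPLEMENT)[::-1]


def find_sites(seq):
    """Map enzyme name to sorted 0-based start positions on either strand."""
    seq = seq.upper()
    hits = {}
    for name, site in SITES.items():
        # A set collapses palindromic sites, whose reverse complement is identical.
        motifs = {site, reverse_complement(site)}
        positions = sorted(
            m.start()
            for motif in motifs
            for m in re.finditer(f"(?={motif})", seq)  # lookahead finds overlaps
        )
        if positions:
            hits[name] = positions
    return hits


def homopolymer_runs(seq, min_len=6):
    """Return (start, run) for each single-base run of at least min_len."""
    pattern = r"([ACGT])\1{%d,}" % (min_len - 1)
    return [(m.start(), m.group()) for m in re.finditer(pattern, seq.upper())]


if __name__ == "__main__":
    candidate = "ATGGGTCTCAAAAAAACCCGGGTT"  # hypothetical test sequence
    print(find_sites(candidate))
    print(homopolymer_runs(candidate))
```

A sequence that returns an empty dictionary and an empty list passes both checks under these assumptions; the acceptable homopolymer length depends on the synthesis provider.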

The characterization workflow assessed multiple performance parameters:

- Specificity: Confirmed expression restricted to two bilaterally symmetric BAG neurons

- Strength: Evaluated using semi-quantitative visual scoring (5/6 for both multicopy arrays and single-copy insertions)

- Onset timing: Determined expression beginning at gastrula stage

- Context performance: Tested in both extrachromosomal arrays and single-copy MosTI insertions

This systematic approach demonstrated that even a short, synthetic promoter could maintain strong cell-specific expression, highlighting how standardized characterization enables direct comparison of biopart performance across different genetic contexts [11].

Biopart Characterization Workflow

Data Integration and Analysis in Biopart Characterization

Effective biopart characterization requires robust data integration and analysis methodologies. The field increasingly employs data-driven approaches, including artificial intelligence tools like Artificial Neural Networks (ANN) and Adaptive Neuro-Fuzzy Inference Systems (ANFIS), to model and predict biopart behavior [13]. These computational methods can identify complex patterns in characterization data that might not be apparent through traditional analysis, potentially reducing the number of experimental cycles needed to optimize biological systems.

Statistical approaches such as Response Surface Methodology (RSM) enable researchers to optimize multiple parameters simultaneously, as demonstrated in the development of biomass-based plastics where factors like material composition and processing conditions were systematically varied [13]. For regulatory element characterization, data analysis typically involves normalizing fluorescence measurements to cell density, fitting kinetic models to time-course data, and calculating statistical confidence intervals for performance parameters. The resulting data facilitates the creation of predictive models that describe how characterized bioparts will behave when assembled into larger systems, gradually building the comprehensive design rules needed for predictable biological engineering [7].
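As a small illustration of the normalization and confidence-interval steps mentioned above, the sketch below divides blank-corrected fluorescence by blank-corrected optical density and reports a mean with a normal-approximation interval. The function names, blank values, and replicate readings are hypothetical, and for the small replicate counts typical of plate assays a t-based interval would be wider and more appropriate.

```python
from statistics import NormalDist, mean, stdev


def normalized_expression(fluorescence, od, blank_fluorescence=0.0, blank_od=0.0):
    """Blank-corrected fluorescence per unit blank-corrected OD, per replicate."""
    return [
        (f - blank_fluorescence) / (d - blank_od)
        for f, d in zip(fluorescence, od)
    ]


def mean_with_ci(values, confidence=0.95):
    """Mean and normal-approximation confidence interval (needs >= 2 values)."""
    m = mean(values)
    sem = stdev(values) / len(values) ** 0.5  # standard error of the mean
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    return m, m - z * sem, m + z * sem


if __name__ == "__main__":
    fluorescence = [1200.0, 1250.0, 1180.0, 1220.0]
    od = [0.50, 0.52, 0.49, 0.51]
    values = normalized_expression(fluorescence, od,
                                   blank_fluorescence=100.0, blank_od=0.04)
    m, low, high = mean_with_ci(values)
    print(f"mean={m:.1f}, 95% CI=({low:.1f}, {high:.1f})")
```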

The comparative analysis of biopart characterization methods reveals a trade-off between throughput and resolution that guides method selection for specific research applications. Automated high-throughput approaches excel during initial screening phases where thousands of variants require rapid assessment, while specialized lower-throughput methods provide detailed functional insights for prioritized candidates. The integration of standardized data collection across laboratories and experimental platforms enables the creation of comprehensive biopart performance databases that accelerate biological design.

As synthetic biology continues to mature, emerging technologies like single-cell RNA sequencing and microfluidics platforms are further expanding the characterization toolbox [12] [7]. However, challenges remain in predicting contextual effects and part-part interactions that influence biopart behavior in complex systems. Addressing these limitations will require continued development of characterization methodologies that capture not only individual part performance but also interaction profiles in diverse biological contexts. The systematic application of standardization, modularity, and abstraction principles to biopart characterization provides a pathway toward more predictable biological engineering, ultimately enabling the construction of sophisticated genetic systems for therapeutic development, bioproduction, and fundamental biological research.

In the fields of synthetic biology and drug development, characterization refers to the comprehensive process of documenting the inherent properties and functional capabilities of fundamental biological components, from genetic bioparts to protein-based reagents [14]. Establishing reliability and reproducibility in characterization data is not merely an academic exercise—it is the fundamental prerequisite for building predictable biological systems and ensuring the validity of scientific findings. The antibody characterization crisis, where an estimated 50% of commercial antibodies fail to meet basic characterization standards resulting in billions of dollars in annual losses, starkly illustrates the consequences of inadequate characterization practices [14].

This guide provides a comparative analysis of characterization methodologies across different biological domains, with a focus on establishing standardized approaches that ensure data reliability across laboratories. We examine emerging solutions including automated characterization pipelines, standardized databases, and rigorous validation frameworks that collectively address current challenges in reproducibility.

Comparative Analysis of Characterization Methods

Characterization Frameworks Across Biological Domains

Table 1: Comparison of Characterization Approaches Across Biological Domains

| Domain | Primary Characterization Focus | Key Parameters | Standardization Challenges |
|---|---|---|---|
| Genetic Bioparts | Functional performance in circuits [10] | Activity, substrate specificity, optimal pH/temperature, chassis compatibility [15] | Context-dependent behavior, measurement variability [10] |
| Antibodies | Specificity and selectivity in different applications [14] | Target specificity, cross-reactivity, performance across protocols (Western blot, IHC, IF) [14] | Inconsistent validation protocols, commercial pressures [14] |
| Analytical Methods | Suitability for intended purpose [16] | Sensitivity, specificity, precision, accuracy, quantification range [16] | Resource-intensive validation, varying regulatory requirements [16] |
| Process Characterization | Manufacturing consistency and control [17] | Yield, impurity clearance, parameter ranges and interactions [17] | Scale-down model qualification, multivariate complexity [17] |

Table 2: Catalytic Bioparts Database Coverage Comparison

| Database | Total Bioparts | Experimentally Validated | With Curated Conditions | Key Strengths |
|---|---|---|---|---|
| RDBSB | 390,708 [15] | 83,193 [15] | 3,200 (pH/temperature/chassis) [15] | Integrated pathway design tools, community submission system [15] |
| BRENDA | Not specified | Not specified | Not specified | Enzyme function data, kinetic parameters [15] |
| Swiss-Prot | Not specified | Evidence codes | Limited chassis data | Protein sequence and functional annotation [15] |
| Registry of Standard Biological Parts | >300 catalytic bioparts [15] | Limited | Limited | iGEM-focused, standardized parts [15] |

Experimental Protocols for Robust Characterization

Automated Design-Build-Test-Learn (DBTL) Cycle for Biopart Characterization

The DBTL cycle represents a systematic framework for characterizing biological parts, with automation addressing key reproducibility challenges [10].

Protocol Details:

- Design: Select bioparts based on sequence data and preliminary characterization. Define characterization parameters and success metrics.

- Build: Assemble genetic constructs using standardized assembly methods. Automation through laboratory robotics improves consistency [10].

- Test: Conduct high-throughput functional assays. Automated testing significantly improves throughput, reliability, and reproducibility [10]. Measure fluorescence, enzyme activity, or growth parameters under standardized conditions.

- Learn: Analyze data to refine biopart models and inform next design cycle. Implement statistical analysis to identify significant performance differences.

Application Example: In a case study refactoring a biosensor, the automated DBTL cycle enabled rapid iteration and performance enhancement, producing a biosensor that could be readily integrated into more complex genetic circuits [10].
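The statistical comparison called for in the Learn step can be sketched as follows. This is a minimal illustration, assuming replicate fluorescence readings that have already been normalized to cell density; the replicate values are hypothetical, and Welch's t statistic is one reasonable choice of test, not the method prescribed in [10].

```python
from math import sqrt
from statistics import mean, variance


def welch_t(a, b):
    """Return Welch's t statistic and the Welch-Satterthwaite degrees of freedom."""
    va = variance(a) / len(a)  # squared standard error of group a
    vb = variance(b) / len(b)  # squared standard error of group b
    t = (mean(a) - mean(b)) / sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df


# Hypothetical normalized fluorescence (a.u. per OD600) for two biopart variants
reference = [1020.0, 980.0, 1005.0, 995.0]
variant = [1510.0, 1475.0, 1530.0, 1490.0]

t_stat, dof = welch_t(variant, reference)
print(f"t = {t_stat:.2f}, df = {dof:.1f}")
```

The statistic and degrees of freedom would then be compared against a t distribution (for example with SciPy) to obtain a p-value; that step is left out to keep the sketch dependency-free.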

Risk-Based Approach to Process Characterization

For biopharmaceutical manufacturing, process characterization follows a rational, step-wise approach to ensure consistent product quality [17].

Protocol Details:

Precharacterization Phase:

- Data Mining and Risk Assessment: Retrospectively review historical development data. Conduct Failure Mode and Effects Analysis (FMEA) to assign Risk Priority Numbers (RPN) to parameters [17].

- Scale-Down Model Qualification: Develop and qualify representative small-scale models that mimic performance at manufacturing scale. Critical parameters must remain consistent across scales [17].

- Protocol Development: Create detailed experimental protocols defining operating parameters, ranges, and analytical methods.
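The FMEA scoring in the risk assessment step can be illustrated with a short sketch. It assumes the conventional definition of the Risk Priority Number as the product of severity, occurrence, and detection scores; the parameter names and scores are hypothetical and are not taken from [17].

```python
from dataclasses import dataclass


@dataclass
class ParameterRisk:
    name: str
    severity: int    # impact on product quality if the parameter drifts
    occurrence: int  # likelihood of the drift happening
    detection: int   # difficulty of detecting the drift (higher = harder)

    @property
    def rpn(self) -> int:
        """Risk Priority Number = severity x occurrence x detection."""
        return self.severity * self.occurrence * self.detection


def rank_by_rpn(parameters):
    """Sort parameters from highest to lowest RPN."""
    return sorted(parameters, key=lambda p: p.rpn, reverse=True)


# Hypothetical scores for a chromatography unit operation
parameters = [
    ParameterRisk("load pH", 7, 4, 3),               # RPN 84
    ParameterRisk("elution conductivity", 8, 5, 4),  # RPN 160
    ParameterRisk("column temperature", 3, 2, 2),    # RPN 12
]

for p in rank_by_rpn(parameters):
    print(f"{p.name}: RPN = {p.rpn}")
```

Parameters at the top of the ranking would be the first candidates for the screening and interaction studies.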

Characterization Studies:

- Process Fingerprinting: Establish baseline performance for each unit operation.

- Screening Experiments: Use fractional factorial designs (e.g., Resolution III) to examine multiple parameters efficiently.

- Interaction Studies: Characterize interactions between key parameters using full factorial designs.

- Process Redundancy: Demonstrate consistent performance within established ranges.
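To make the difference between screening and interaction designs concrete, the sketch below generates a two-level half-fraction design for three factors (a Resolution III design in which the third factor is aliased with the interaction of the first two) alongside the corresponding full factorial. The factor names are hypothetical, and coded levels of -1 and +1 stand in for the low and high ends of each range.

```python
from itertools import product


def half_fraction_three_factors():
    """2^(3-1) Resolution III design: full factorial in A and B, with C = A*B."""
    return [(a, b, a * b) for a, b in product((-1, 1), repeat=2)]


def full_factorial(n_factors):
    """All 2^n combinations of coded low (-1) and high (+1) levels."""
    return list(product((-1, 1), repeat=n_factors))


# Hypothetical process parameters
factors = ("pH", "temperature", "load density")

print("Screening design (4 runs):")
for run in half_fraction_three_factors():
    print(dict(zip(factors, run)))

print(f"Full factorial needs {len(full_factorial(len(factors)))} runs")
```

The half fraction halves the number of runs but cannot separate a main effect from a two-factor interaction, which is why full factorial designs are reserved for the smaller set of parameters carried into interaction studies.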

Timeline Considerations: Process characterization should begin 12-15 months before validation runs, typically starting after Phase 2 clinical trials [17].

Method Validation for Analytical Procedures

For analytical methods, validation demonstrates suitability for intended purpose through defined performance characteristics [16].

Protocol Details:

- Define Validation Parameters: Establish testing protocols for critical parameters:

  - Specificity: Ability to measure analyte accurately in the presence of potential interferents.

  - Accuracy: Degree of closeness to true value.

  - Precision: Repeatability, intermediate precision, and reproducibility.

  - Detection/Quantitation Limits: Sensitivity boundaries.

  - Linearity and Range: Concentration interval over which response is proportional.

  - Robustness: Capacity to remain unaffected by small parameter variations.

- Implement Tiered Approach:

  - Method Qualification: Limited verification for early development phases.

  - Full Validation: Comprehensive characterization for commercial methods.

  - Method Verification: Demonstration of proficiency with previously validated methods.

Regulatory Alignment: Follow ICH Q2A and Q2B (since consolidated as ICH Q2(R1)) and FDA guidance, applying the requirements appropriate to the development phase [16].
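Several of the validation parameters listed above reduce to simple calculations. The sketch below shows one common way to compute them: accuracy as percent recovery, precision as percent relative standard deviation, linearity from an ordinary least-squares fit, and detection and quantitation limits from the 3.3σ/S and 10σ/S convention (σ being the response standard deviation and S the calibration slope). All numerical values are hypothetical, and acceptance criteria are deliberately left out because they depend on the method and development phase.

```python
from statistics import mean, stdev


def percent_recovery(measured, nominal):
    """Accuracy: mean measured value as a percentage of the nominal value."""
    return 100.0 * mean(measured) / nominal


def percent_rsd(values):
    """Precision: relative standard deviation of replicate measurements."""
    return 100.0 * stdev(values) / mean(values)


def linear_fit(x, y):
    """Linearity: least-squares slope, intercept, and R^2."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return slope, intercept, 1.0 - ss_res / ss_tot


def detection_limits(sigma, slope):
    """Return (LOD, LOQ) using the 3.3*sigma/S and 10*sigma/S convention."""
    return 3.3 * sigma / slope, 10.0 * sigma / slope


# Hypothetical calibration data (concentration in ug/mL, detector response in a.u.)
conc = [1.0, 2.0, 4.0, 8.0, 16.0]
resp = [2.1, 4.0, 8.2, 15.9, 32.1]

slope, intercept, r2 = linear_fit(conc, resp)
lod, loq = detection_limits(sigma=0.15, slope=slope)
print(f"slope={slope:.3f}, R^2={r2:.4f}, LOD={lod:.2f}, LOQ={loq:.2f}")
print(f"recovery={percent_recovery([9.8, 10.1, 10.0], 10.0):.1f}%")
print(f"RSD={percent_rsd([9.8, 10.1, 10.0]):.2f}%")
```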

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Resources for Characterization Studies

| Resource Type | Specific Examples | Function in Characterization | Key Features |
|---|---|---|---|
| Biopart Databases | RDBSB, Registry of Standard Biological Parts [15] | Provide curated bioparts with functional data | Experimental validation, chassis information, pathway tools [15] |
| Antibody Validation Tools | Knockout cell lines, isotype controls, application-specific standards [14] | Demonstrate antibody specificity and selectivity | Identify lot-to-lot variability, confirm target recognition [14] |
| Analytical Standards | USP standards, WHO reference materials, NIST certified materials [16] | Calibrate instruments and validate method performance | Traceable certification, established purity values [16] |
| Automation Platforms | Laboratory robotics, high-throughput screening systems [10] | Increase throughput and reduce variability in testing | Standardized protocols, reduced manual intervention [10] |
| Statistical Packages | R, Python (Pandas, NumPy, SciPy), SPSS [18] | Analyze characterization data for significance and reliability | Descriptive and inferential statistics, data visualization [19] [18] |

Emerging Challenges and Standardization Initiatives

Machine Learning in Biological Characterization

The application of machine learning classifiers to biological characterization introduces new reproducibility challenges. Studies demonstrate that classifier performance varies significantly based on:

- Data type: Transcript vs. protein signatures produce different accuracy patterns [20]

- Classifier choice: Random Forest, generalized linear models (GLM), support vector machines (SVM), and Neural Networks show variable performance across biological datasets [20]

- Training data proportion: Accuracy improves with increased training data, but optimal proportions vary by data type [20]

- Hyperparameter tuning: Affects accuracy far more for some classifiers (GLM, SVM, and naive Bayes (NB)) than for others [20]

These findings highlight the need for standardized benchmarking and validation of ML approaches in biological characterization to ensure reproducible outcomes [20].

Addressing the Antibody Characterization Crisis

Initiatives to improve antibody characterization include:

- Clearer Terminology: Distinguishing between characterization (inherent properties) and validation (context-specific suitability) [14]

- Application-Specific Controls: Implementing appropriate controls for different protocols (Western blot, IHC, IF) [14]

- Data Sharing: Encouraging publication of characterization data and negative results [14]

- Multistakeholder Engagement: Researchers, vendors, journals, and funders collaborating on standards [14]

Establishing reliability and reproducibility in biological characterization requires systematic approaches tailored to specific biological domains. Cross-cutting themes include the value of automation for reducing variability, the importance of standardized databases and protocols, and the critical need for application-appropriate controls and validation.

The comparative analysis presented in this guide demonstrates that while characterization challenges differ across domains, the fundamental principles of rigorous experimental design, appropriate controls, comprehensive documentation, and data sharing remain universally applicable. As biological research increasingly focuses on building predictable systems from well-characterized components, these characterization fundamentals will grow ever more critical to scientific advancement and therapeutic development.

The Design-Build-Test-Learn (DBTL) cycle represents a cornerstone methodology in synthetic biology, providing an iterative engineering framework for the systematic development and optimization of biological systems. This approach enables researchers to engineer organisms with novel functionalities, accelerating the creation of microbial cell factories for producing biofuels, pharmaceuticals, and other valuable compounds [21] [22]. As synthetic biology has matured over the past two decades, the DBTL cycle has evolved to incorporate increasingly sophisticated technologies, including laboratory automation, advanced analytics, and machine learning [22] [23].

This guide provides a comparative analysis of DBTL implementation strategies, focusing specifically on their application in biopart characterization. We examine contrasting approaches through detailed case studies, presenting quantitative performance data and experimental protocols to empower researchers in selecting appropriate methodologies for their specific characterization challenges.

Understanding the DBTL Cycle Framework

The DBTL cycle operates as an iterative feedback system where each phase informs the next, creating a continuous improvement loop for biological engineering projects.

Core Components of the DBTL Cycle

Design: Researchers define the biological problem and design DNA sequences encoding desired functions using computational tools and biological databases [24]. This phase leverages modular design principles to create interchangeable genetic components [21].

Build: Designed DNA constructs are synthesized and assembled using techniques such as Gibson Assembly or Golden Gate Assembly, then cloned into host organisms through transformation or transfection [24]. Automation enables high-throughput construction of biological variants [21].

Test: Engineered systems undergo rigorous functional characterization using analytical techniques including fluorescence assays, chromatography, and sequencing to evaluate performance [21] [24].

Learn: Data analysis provides insights into system behavior, informing design refinements for subsequent cycles [24]. This phase increasingly employs machine learning to extract patterns from complex datasets [22].

[Figure: DBTL cycle diagram illustrating the cyclical relationship between the Design, Build, Test, and Learn phases and their key activities]

Comparative Analysis of DBTL Implementation Strategies

DBTL cycles can be implemented through different methodologies, each with distinct advantages for biopart characterization. The table below compares knowledge-driven and automated approaches:

Table 1: Comparison of DBTL Implementation Strategies for Biopart Characterization

| Aspect | Knowledge-Driven DBTL | Automated DBTL (Biofoundry) |
|---|---|---|
| Initial Approach | In vitro testing to inform initial design [25] | Design of experiments or randomized selection [25] |
| Throughput | Moderate | High (e.g., ~400 transformations/day) [26] |
| Key Technologies | Cell-free protein synthesis, RBS engineering [25] | Laboratory robotics, integrated workstations [10] [26] |
| Learning Mechanism | Mechanistic understanding from upstream testing [25] | Machine learning on large datasets [22] |
| Experimental Focus | Pathway optimization through precise tuning [25] | Large-scale library screening [26] |
| Resource Requirements | Moderate | High initial investment |

Performance Comparison in Biopart Characterization

The following table summarizes quantitative performance data from representative studies implementing each approach:

Table 2: Performance Metrics of DBTL Approaches in Case Studies

| Metric | Knowledge-Driven (Dopamine Production) | Automated (Verazine Pathway Screening) |
|---|---|---|
| Productivity Improvement | 2.6- to 6.6-fold increase over state-of-the-art [25] | 2- to 5-fold enhancement in verazine production [26] |
| Throughput Capacity | Not specified | 2,000 yeast transformations per week [26] |
| Final Product Titer | 69.03 ± 1.2 mg/L dopamine [25] | Multiple gene candidates identified with enhanced production [26] |
| Characterization Depth | Mechanistic insights into RBS strength impact [25] | Multiplexed screening of 32 genes with 6 biological replicates [26] |

Experimental Protocols for DBTL Implementation

Knowledge-Driven DBTL for Metabolic Pathway Optimization

The dopamine production case study exemplifies a knowledge-driven DBTL approach incorporating upstream in vitro investigation [25].

Experimental Workflow

[Figure: structured workflow for optimizing dopamine production in E. coli]

Detailed Protocol: Dopamine Biosynthetic Pathway Optimization

Bacterial Strains and Genetic Components

- Production host: E. coli FUS4.T2 engineered for L-tyrosine overproduction [25]

- Key enzymes: 4-hydroxyphenylacetate 3-monooxygenase (HpaBC) from E. coli and L-DOPA decarboxylase (Ddc) from Pseudomonas putida [25]

- Plasmid system: pET system for gene storage, pJNTN for crude cell lysate system [25]

In Vitro Testing Phase

- Prepare crude cell lysate system from production strain

- Set up reaction buffer containing 0.2 mM FeCl₂, 50 μM vitamin B₆, and 1 mM L-tyrosine or 5 mM L-DOPA in 50 mM phosphate buffer (pH 7) [25]

- Test different relative enzyme expression levels to determine optimal ratios before in vivo implementation
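Setting up the reaction buffer is a dilution calculation (C1·V1 = C2·V2). The sketch below computes pipetting volumes for the final concentrations given in the protocol with L-tyrosine as substrate; the stock concentrations and the 1 mL reaction volume are assumptions chosen for illustration and do not come from [25].

```python
def stock_volume_ul(final_mm, stock_mm, total_ul):
    """Solve C1*V1 = C2*V2 for the stock volume V1 (in microliters)."""
    if final_mm > stock_mm:
        raise ValueError("final concentration exceeds stock concentration")
    return final_mm * total_ul / stock_mm


# Final concentrations from the protocol (mM); 50 uM vitamin B6 = 0.05 mM
TARGETS_MM = {
    "phosphate buffer pH 7": 50.0,
    "FeCl2": 0.2,
    "vitamin B6": 0.05,
    "L-tyrosine": 1.0,
}

# Hypothetical stock concentrations (mM)
STOCKS_MM = {
    "phosphate buffer pH 7": 500.0,
    "FeCl2": 20.0,
    "vitamin B6": 5.0,
    "L-tyrosine": 2.0,
}


def pipetting_plan(total_ul):
    """Volumes of each stock, plus the remainder left for lysate and water."""
    plan = {
        name: stock_volume_ul(TARGETS_MM[name], STOCKS_MM[name], total_ul)
        for name in TARGETS_MM
    }
    plan["remainder (lysate/water)"] = total_ul - sum(plan.values())
    return plan


for component, volume in pipetting_plan(1000.0).items():
    print(f"{component}: {volume:.1f} uL")
```

For the L-DOPA condition, the substrate entry would be swapped for 5 mM L-DOPA with a suitably concentrated stock.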

In Vivo RBS Engineering

- Design RBS variants focusing on Shine-Dalgarno sequence modulation

- Assemble bi-cistronic constructs containing hpaBC and ddc genes

- Transform engineered E. coli FUS4.T2 with variant libraries

- Screen for dopamine production in minimal medium containing 20 g/L glucose and key supplements [25]

Analytical Methods

- Quantify dopamine production using appropriate chromatographic methods

- Normalize measurements to biomass (mg/g)

- Compare performance to baseline strains
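The normalization and baseline comparison can be written out explicitly. In the sketch below the 69.03 mg/L titer is the value reported in [25]; the biomass concentration and the baseline yield are hypothetical placeholders, so the resulting numbers are for illustration only.

```python
def specific_yield(titer_mg_per_l, biomass_g_per_l):
    """Biomass-normalized production in mg product per g biomass."""
    if biomass_g_per_l <= 0:
        raise ValueError("biomass concentration must be positive")
    return titer_mg_per_l / biomass_g_per_l


def fold_change(value, baseline):
    """Ratio of a strain's performance to the baseline strain."""
    return value / baseline


titer = 69.03            # mg/L dopamine, as reported in [25]
assumed_biomass = 2.0    # g/L, hypothetical
assumed_baseline = 10.0  # mg/g, hypothetical baseline strain

yield_mg_per_g = specific_yield(titer, assumed_biomass)
print(f"specific yield: {yield_mg_per_g:.2f} mg/g")
print(f"fold change vs. baseline: {fold_change(yield_mg_per_g, assumed_baseline):.2f}")
```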

Automated DBTL for High-Throughput Biopart Characterization

The verazine pathway screening case study demonstrates a fully automated DBTL approach for characterizing gene variants in yeast [26].

Experimental Workflow

[Figure: automated workflow for high-throughput biopart characterization in yeast]

Detailed Protocol: Automated Yeast Transformation and Screening

Automation Platform and Integration

- Robotic system: Hamilton Microlab VANTAGE with Venus software [26]

- Integrated off-deck hardware: plate sealer, plate peeler, and thermal cycler [26]

- Custom user interface for parameter customization [26]

Transformation Protocol

- Program robotic platform with modular steps: transformation setup, washing, and plating

- Optimize liquid classes for viscous reagents (e.g., PEG) by adjusting aspiration and dispensing speeds [26]

- Implement lithium acetate/ssDNA/PEG method in 96-well format [26]

- Execute automated heat shock using integrated thermal cycler

- Plate transformations automatically for colony picking

Library Screening and Analysis

- Clone target genes into pESC-URA plasmid under GAL1 promoter regulation [26]

- Transform verazine-producing S. cerevisiae strain PW-42 with plasmid library [26]

- Pick six biological replicates of each strain using automated colony picker (e.g., QPix 460) [26]

- Culture in 96-deep-well plates with selective media

- Implement high-throughput chemical extraction using Zymolyase-mediated lysis and organic solvent extraction [26]

- Quantify verazine production using rapid LC-MS method (19-minute runtime) [26]
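The scale of this screen follows from simple arithmetic: 32 genes with six biological replicates each give 192 cultures, which fill two 96-well plates and occupy the LC-MS for roughly 61 hours at 19 minutes per injection. The sketch below works this out and assigns wells; the gene names and the row-wise fill order are hypothetical, and blanks, standards, and control strains are left out for brevity.

```python
from itertools import product
from math import ceil
from string import ascii_uppercase

ROWS = ascii_uppercase[:8]  # A-H
COLS = range(1, 13)         # 1-12
WELLS = [f"{row}{col}" for row, col in product(ROWS, COLS)]


def plate_layout(genes, replicates):
    """Assign each (gene, replicate) to a (plate, well), filling plates row by row."""
    samples = [(gene, rep) for gene in genes for rep in range(1, replicates + 1)]
    layout = []
    for index, (gene, rep) in enumerate(samples):
        plate, position = divmod(index, len(WELLS))
        layout.append((plate + 1, WELLS[position], gene, rep))
    return layout


genes = [f"gene_{i:02d}" for i in range(1, 33)]  # hypothetical identifiers
layout = plate_layout(genes, replicates=6)

n_plates = ceil(len(layout) / len(WELLS))
lcms_hours = len(layout) * 19 / 60  # 19-minute LC-MS method

print(f"{len(layout)} cultures on {n_plates} plates")
print(f"LC-MS instrument time: {lcms_hours:.1f} h")
```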

The Scientist's Toolkit: Essential Research Reagents and Equipment

Successful implementation of DBTL cycles requires specific laboratory resources. The following table catalogues essential solutions for biopart characterization:

Table 3: Essential Research Reagent Solutions for DBTL Implementation

| Category | Specific Solutions | Function in DBTL Cycle |
|---|---|---|
| DNA Design & Analysis | Geneious, Benchling, SnapGene software; NCBI, UniProt databases [24] | DNA sequence design and analysis (Design phase) |
| DNA Construction | Oligonucleotide synthesizer; PCR thermocycler; Gel electrophoresis; DNA assembly enzymes [24] | DNA synthesis and assembly (Build phase) |
| Host Transformation | Competent cells; Electroporation equipment; Transfection reagents [24] | Introduction of DNA into host organisms (Build phase) |
| Characterization & Analytics | Spectrophotometer; Plate reader; Chromatography systems; Microscopes [24] | Functional testing of engineered systems (Test phase) |
| Automation & Robotics | Hamilton Microlab VANTAGE; Automated colony pickers; Liquid handling systems [26] | High-throughput implementation of Build and Test phases |

The comparative analysis of DBTL implementation strategies reveals a complementary relationship between knowledge-driven and automated approaches for biopart characterization. Knowledge-driven DBTL offers deeper mechanistic insights through targeted experimentation, while automated DBTL enables rapid exploration of vast design spaces. The selection between these methodologies depends on project-specific factors including characterization depth requirements, throughput needs, and resource availability.

As synthetic biology advances, integration of machine learning promises to bridge these approaches by extracting meaningful patterns from high-throughput datasets to inform mechanistic understanding [22]. This convergence will ultimately accelerate the DBTL cycle, enabling higher-precision biological design and more efficient characterization of biological parts for therapeutic and industrial applications.

Biopart registries and standardized collections form the foundational infrastructure of modern synthetic biology, enabling researchers to design, construct, and optimize biological systems with predictable functions. These resources provide characterized genetic components—ranging from promoters and terminators to coding sequences and regulatory elements—that can be assembled into complex genetic circuits. The evolution from community-driven repositories like the iGEM Registry to professionally curated libraries such as the Plant Synthetic BioDatabase (PSBD) and Registry and Database of Bioparts for Synthetic Biology (RDBSB) reflects the field's maturation toward standardized, data-rich resources essential for reproducible research and biotechnological innovation [27] [1] [15]. For researchers and drug development professionals, selecting appropriate biopart collections significantly impacts project success, as these resources vary considerably in scope, characterization depth, and application-specific utility.

This comparative analysis examines the landscape of biopart registries through the lens of characterization methodologies, data completeness, and practical applicability. We evaluate experimental protocols for biopart validation, quantify performance metrics across platforms, and provide a structured framework for selecting repositories based on research requirements—whether for microbial engineering, plant synthetic biology, or therapeutic development.

Comparative Analysis of Major Biopart Registries

The biopart registry ecosystem encompasses community repositories, professionally curated databases, and specialized collections optimized for particular chassis or applications. The iGEM Registry represents a pioneering community-driven effort with over 20,000 documented biological parts, though fewer than 100 are specifically categorized for plant systems [27]. While valuable for educational purposes and standardizing basic parts, its transition to archive mode underscores the need for more rigorously characterized alternatives [28].

In contrast, professional libraries have emerged with enhanced curation, experimental validation, and application-specific tools. The Plant Synthetic BioDatabase (PSBD) addresses the critical gap in plant-compatible bioparts by cataloging 1,677 catalytic bioparts and 384 regulatory elements from 309 species, alongside 850 associated chemicals [27]. Its integrated bioinformatics tools—including local BLAST, chem similarity analysis, phylogenetic analysis, and visual strength assessment—support rational design of genetic circuits for plant systems [27].

The Registry and Database of Bioparts for Synthetic Biology (RDBSB) exemplifies scale and validation rigor, encompassing 83,193 curated catalytic bioparts with experimental evidence—far exceeding the coverage of traditional enzyme databases [15]. Its four-tier data classification system (from basic sequence information to comprehensive characterization with optimal pH, temperature, and chassis specificity) provides researchers with critical parameters for biosystem design [15].

Table 1: Comparative Overview of Major Biopart Registries

| Registry | Biopart Count | Specialization | Data Validation | Key Features |
|---|---|---|---|---|
| iGEM Registry | >20,000 parts (legacy) | General, educational focus | Variable; community-submitted | Standardized parts, educational resources, assembly standards |
| PSBD | 1,677 catalytic bioparts, 384 regulatory elements | Plant synthetic biology | Experimentally validated | Species-specific tools, visual strength assessment, pathway design |
| RDBSB | 83,193 catalytic bioparts | Broad synthetic biology | Experimental validation with parameters | Optimum pH/temperature data, chassis specificity, pathway tools |
| Professional Libraries | Varies by database | Domain-specific (e.g., therapeutics) | Rigorous empirical characterization | Analytical comparability, biophysical characterization |

Characterization Metrics and Data Completeness

Biopart utility in research and development depends heavily on characterization depth and data accessibility. The PSBD provides detailed functional annotations, quantitative activity measurements for regulatory elements, and standardized parts flanked with BsaI or BsmBI sites for GoldenBraid assembly [27]. Its visual strength tool enables researchers to select promoter-terminator pairs based on quantitative expression data in Nicotiana benthamiana leaves and BY2 suspension cells, presented as interactive heatmaps [27].

The RDBSB offers unparalleled biochemical parameterization, with 27,789 bioparts associated with optimal pH, temperature, or chassis information [15]. This database uniquely categorizes bioparts into four integrity levels: Level 1 (sequences only), Level 2 (with reaction data), Level 3 (experimentally validated reactions), and Level 4 (with full biochemical parameters) [15]. Such granularity enables researchers to filter parts based on characterization completeness—a critical feature for high-stakes applications like therapeutic development.
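Filtering by characterization completeness can be sketched as a small classifier over part records. The record fields below are a hypothetical schema, not the RDBSB data model, and the rule that any curated condition (pH, temperature, or chassis) qualifies a validated part for Level 4 is an assumption made for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Biopart:
    sequence: str
    reaction: Optional[str] = None          # annotated reaction, if any
    experimentally_validated: bool = False  # reaction confirmed by experiment
    conditions: dict = field(default_factory=dict)  # e.g. {"pH": 7.5}


def integrity_level(part):
    """Map a record onto the four levels described in the text."""
    if part.reaction is None:
        return 1  # sequence only
    if not part.experimentally_validated:
        return 2  # reaction data without experimental validation
    if not part.conditions:
        return 3  # experimentally validated reaction
    return 4      # validated reaction with biochemical parameters


def filter_min_level(parts, minimum):
    """Keep only parts at or above the requested integrity level."""
    return [p for p in parts if integrity_level(p) >= minimum]


# Hypothetical records
parts = [
    Biopart("ATGAAA"),
    Biopart("ATGCCC", reaction="A -> B"),
    Biopart("ATGGGG", reaction="B -> C", experimentally_validated=True),
    Biopart("ATGTTT", reaction="C -> D", experimentally_validated=True,
            conditions={"pH": 7.5, "temperature_C": 30}),
]

print([integrity_level(p) for p in parts])
print(len(filter_min_level(parts, 3)), "parts at Level 3 or higher")
```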

Table 2: Biopart Characterization Depth Across Registries

| Characterization Type | iGEM Registry | PSBD | RDBSB | Professional Libraries |
|---|---|---|---|---|
| Sequence Validation | Basic documentation | Curated sequences with annotations | Comprehensive sequence data | Certified sequences |
| Functional Data | Variable, often limited | Quantitative expression levels | Kinetic parameters | Dose-response curves |
| Performance Conditions | Rarely provided | Species-specific activity | Optimal pH/temperature ranges | Validated operational ranges |
| Standardized Assembly | BioBrick standards | GoldenBraid compatibility | Multiple standards | Platform-specific formats |
| Experimental Evidence | Community reports | Peer-reviewed literature | Structured validation | GLP/GMP compliance |

For regulatory-focused applications such as biosimilar development, professional libraries maintained by pharmaceutical organizations employ advanced characterization techniques including circular dichroism spectroscopy, hydrogen-deuterium exchange mass spectrometry, nuclear magnetic resonance, and surface plasmon resonance to establish product comparability [29] [30]. These methods provide the "fingerprint-like similarity" analysis required by regulatory agencies for demonstrating biosimilarity [30].

Experimental Characterization Methods for Biopart Validation

Structural and Functional Characterization Techniques

Robust biopart characterization employs orthogonal analytical techniques to comprehensively assess structure-function relationships. For protein-based bioparts, higher-order structure analysis uses far- and near-UV circular dichroism (CD) spectroscopy to probe secondary and tertiary structure, respectively [29]. Quantitative comparison of CD spectra through root mean square deviation (RMSD) calculations provides an objective assessment of structural similarity, and industry applications have shown the approach to be sensitive to reversible, formulation-dependent structural changes [29].
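The RMSD comparison of two spectra can be sketched in a few lines. This version assumes both spectra are sampled on the same wavelength grid and expressed in the same units; the ellipticity values are hypothetical, and any weighting or normalization used in [29] is not reproduced here.

```python
from math import sqrt


def spectral_rmsd(sample, reference):
    """Root mean square deviation between two spectra on a shared wavelength grid."""
    if len(sample) != len(reference) or not sample:
        raise ValueError("spectra must be non-empty and of equal length")
    squared = sum((s - r) ** 2 for s, r in zip(sample, reference))
    return sqrt(squared / len(sample))


# Hypothetical far-UV ellipticity values at matching wavelengths
reference_spectrum = [-8.1, -10.4, -11.9, -10.7, -6.3, -1.2]
test_spectrum = [-8.0, -10.6, -11.7, -10.9, -6.1, -1.4]

print(f"RMSD = {spectral_rmsd(test_spectrum, reference_spectrum):.3f}")
```

In practice the RMSD between test and reference would be judged against the RMSD seen among replicate scans of the reference, since that sets the noise floor for the comparison.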

Size distribution profiling employs multiple orthogonal techniques to evaluate aggregation states, a critical quality attribute for therapeutic proteins. Size exclusion chromatography (SEC) and asymmetric flow field-flow fractionation (AF4) separate species by hydrodynamic size, analytical ultracentrifugation sedimentation velocity (AUC-SV) resolves them by sedimentation coefficient, and dynamic light scattering (DLS) mathematically resolves size distributions from diffusion coefficients [29]. Gravitational sweep AUC expands the dynamic range to characterize particles up to 1.2 μm in diameter, enabling comprehensive aggregate profiling [29].
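The link between a DLS diffusion coefficient and particle size is the Stokes-Einstein relation, R_h = k_B·T / (6π·η·D). The sketch below applies it under the assumption of a dilute sample in water at 25 °C; the diffusion coefficient is a hypothetical value of the order expected for an antibody-sized protein.

```python
from math import pi

BOLTZMANN = 1.380649e-23  # J/K


def hydrodynamic_radius_nm(diffusion_m2_per_s, temperature_k=298.15,
                           viscosity_pa_s=8.9e-4):
    """Stokes-Einstein radius in nanometers.

    Defaults assume water at 25 degrees C (viscosity about 0.89 mPa*s).
    """
    radius_m = BOLTZMANN * temperature_k / (
        6 * pi * viscosity_pa_s * diffusion_m2_per_s
    )
    return radius_m * 1e9


# Hypothetical diffusion coefficient for a monomeric protein
monomer_d = 4.0e-11  # m^2/s
print(f"R_h = {hydrodynamic_radius_nm(monomer_d):.1f} nm")

# A species diffusing half as fast has twice the hydrodynamic radius
print(f"R_h = {hydrodynamic_radius_nm(monomer_d / 2):.1f} nm")
```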

For functional characterization, biosensor-based techniques including surface plasmon resonance (SPR) and biolayer interferometry (BLI) quantify binding kinetics and affinities [29]. When applied to regulatory elements such as promoters and terminators, quantitative reporter systems (e.g., fluorescent proteins) coupled with flow cytometry or microplate spectroscopy enable precise measurement of expression strength and context-dependent performance [27] [1].

Protocol: Comparative Analysis of Promoter-Terminator Combinations

Objective: Quantify relative expression strengths of promoter-terminator pairs in plant systems.

Methodology:

- Construct Design: Assemble transcriptional fusions combining target promoters and terminators with a standardized reporter gene (e.g., GFP) in GoldenBraid-compatible vectors [27].

- Plant Transformation: Deliver constructs to Nicotiana benthamiana leaves via agroinfiltration, including internal controls for normalization.

- Expression Quantification: Harvest tissue 3-5 days post-infiltration and measure reporter expression using:

  - Fluorescence microplate spectroscopy (quantitative)

  - Western blotting (protein accumulation)

  - qRT-PCR (transcript level) [27]

- Data Analysis: Normalize measurements to internal controls, compute relative expression levels, and generate heatmap visualizations of combination performance [27].

Applications: This protocol, implemented in the PSBD platform, enables systematic quantification of 114 promoter and 15 terminator combinations, revealing significant interactions between promoter and terminator elements that collectively determine expression output [27].
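The data analysis step of this protocol can be sketched as follows: each reporter reading is divided by its co-infiltrated internal control, replicates are averaged per promoter-terminator pair, and the result is arranged as a matrix ready for heatmap plotting. The part names and signal values are hypothetical, and ratio-to-control normalization is an assumed scheme rather than the exact PSBD procedure.

```python
from collections import defaultdict
from statistics import mean


def relative_expression(records):
    """Average reporter/control ratio for each (promoter, terminator) pair.

    records: iterable of (promoter, terminator, reporter_signal, control_signal).
    """
    ratios = defaultdict(list)
    for promoter, terminator, reporter, control in records:
        ratios[(promoter, terminator)].append(reporter / control)
    return {pair: mean(values) for pair, values in ratios.items()}


def as_matrix(rel, promoters, terminators):
    """Rows are promoters, columns are terminators; untested pairs are None."""
    return [[rel.get((p, t)) for t in terminators] for p in promoters]


# Hypothetical plate-reader readings (arbitrary units)
records = [
    ("P1", "T1", 200.0, 100.0),
    ("P1", "T1", 400.0, 100.0),
    ("P1", "T2", 50.0, 100.0),
    ("P2", "T1", 900.0, 300.0),
]

rel = relative_expression(records)
for row_label, row in zip(["P1", "P2"], as_matrix(rel, ["P1", "P2"], ["T1", "T2"])):
    print(row_label, row)
```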

[Figure: Experimental workflow for promoter-terminator characterization]

Advanced Characterization Frameworks

Empirical Models and Machine Learning Approaches

Advanced characterization increasingly incorporates empirical modeling and artificial intelligence to predict biopart performance. In biochar research, a related domain dealing with complex biologically derived materials, Artificial Neural Network (ANN) models successfully predict adsorption efficiency from biochar properties, which suggests that similar approaches could be applied to biopart characterization [31]. For catalytic bioparts, the RDBSB integrates pathway prediction tools such as PathFinder, which identifies optimal biosynthetic routes from substrate to product using graph theory and shortest-path algorithms [15].
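The idea of finding a biosynthetic route with a shortest-path search can be illustrated with a breadth-first search over a toy reaction graph. This is a generic sketch and not PathFinder's implementation; the graph reuses the two-step dopamine route described elsewhere in this review (HpaBC, then Ddc) and adds a longer detour built from hypothetical enzymes and intermediates.

```python
from collections import deque


def shortest_pathway(reactions, substrate, product):
    """Breadth-first search for the route with the fewest enzymatic steps.

    reactions: dict mapping a metabolite to a list of (enzyme, next_metabolite).
    Returns a list of (from, enzyme, to) steps, or None if no route exists.
    """
    queue = deque([substrate])
    came_from = {substrate: None}
    while queue:
        current = queue.popleft()
        if current == product:
            break
        for enzyme, nxt in reactions.get(current, []):
            if nxt not in came_from:
                came_from[nxt] = (current, enzyme)
                queue.append(nxt)
    if product not in came_from:
        return None
    steps = []
    node = product
    while came_from[node] is not None:
        previous, enzyme = came_from[node]
        steps.append((previous, enzyme, node))
        node = previous
    return steps[::-1]


# Toy graph: the direct route plus a hypothetical three-step detour
reactions = {
    "L-tyrosine": [("HpaBC", "L-DOPA"), ("hypothetical_enzyme_X", "intermediate_X")],
    "intermediate_X": [("hypothetical_enzyme_Y", "intermediate_Y")],
    "intermediate_Y": [("hypothetical_enzyme_Z", "dopamine")],
    "L-DOPA": [("Ddc", "dopamine")],
}

for step in shortest_pathway(reactions, "L-tyrosine", "dopamine"):
    print(" -> ".join(step))
```

Breadth-first search minimizes the number of steps only; a production tool would typically weight edges by criteria such as enzyme availability or thermodynamic feasibility.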

The PSBD employs phylogenetic analysis tools to infer functional relationships within enzyme families, enabling informed selection of catalytic bioparts with predicted substrate specificities [27]. Its chem similarity tool identifies structurally related molecules and associated enzymes, facilitating pathway design based on structural analogies [27]. These computational approaches complement empirical measurement, accelerating the design-build-test-learn cycle in synthetic biology.

Regulatory-Compliant Characterization for Therapeutic Applications

Biosimilar development requires exceptionally rigorous characterization protocols to demonstrate structural and functional equivalence to reference products. Regulatory guidelines mandate "state-of-art analytical, orthogonal methods" for comparative characterization [30]. The stepwise approach includes:

- Primary Structure Analysis: LC-MS/MS peptide mapping for amino acid sequence verification, post-translational modification identification (deamidation, oxidation, glycosylation), and disulfide bond characterization [30].

- Higher-Order Structure Assessment: CD spectroscopy, Fourier-transform infrared spectroscopy, NMR, and hydrogen-deuterium exchange MS to confirm secondary, tertiary, and quaternary structures [30].

- Functional Characterization: Cell-based bioassays, binding affinity measurements (SPR, BLI), and potency testing to verify mechanism-of-action retention [30].

This comprehensive analytical approach generates the "fingerprint-like similarity" data required for regulatory submissions, potentially reducing clinical trial requirements through demonstrated analytical comparability [30].

[Figure: Biosimilar characterization workflow]

Essential Research Reagents and Tools

Table 3: Essential Research Reagents for Biopart Characterization

| Reagent/Tool | Function | Application Examples |
|---|---|---|
| GoldenBraid Vectors | Standardized assembly system | Modular construction of genetic circuits [27] |
| Reporter Genes (GFP, LUC) | Quantitative expression measurement | Promoter/terminator strength quantification [27] |
| CD Spectroscopy | Secondary/tertiary structure analysis | Higher-order structure comparability [29] |
| Surface Plasmon Resonance | Binding kinetics measurement | Affinity and kinetic parameter determination [29] |
| Analytical Ultracentrifugation | Size distribution analysis | Aggregate quantification and characterization [29] |
| LC-MS/MS Systems | Primary structure verification | Peptide mapping, PTM identification [30] |
| Bioinformatics Tools | In silico analysis and prediction | Phylogenetic analysis, chem similarity [27] |

Biopart registries have evolved from basic parts collections (iGEM) to sophisticated, data-rich platforms (PSBD, RDBSB) with advanced characterization data and design tools. Selection criteria should prioritize characterization completeness, experimental validation, application-specific optimization, and data accessibility.

For plant synthetic biology applications, PSBD provides species-optimized bioparts and expression data. For broad metabolic engineering projects, RDBSB offers unparalleled catalytic biopart coverage with biochemical parameters. For therapeutic development, specialized professional libraries with regulatory-compliant characterization data are essential.

The future of biopart characterization lies in integrating high-throughput experimental data with machine learning prediction, expanding into non-model organisms, and developing standardized qualification frameworks for specific applications. As characterization methodologies advance, biopart registries will increasingly serve as predictive platforms rather than mere repositories, fundamentally accelerating biological design and engineering.

Advanced Characterization Techniques: Analytical Tools and Workflow Applications

This guide provides a comparative analysis of Liquid Chromatography-Mass Spectrometry (LC-MS) platforms for the characterization of biopharmaceuticals at the intact, subunit, and peptide levels. The evaluation focuses on performance metrics, experimental workflows, and practical applications to aid in the selection of appropriate methodologies for drug development.

Level-Based Analysis Comparison

The characterization of biotherapeutics, such as monoclonal antibodies (mAbs) and antibody-drug conjugates (ADCs), is typically performed at three levels, each providing distinct information crucial for ensuring product quality, safety, and efficacy [32] [33].

Table 1: Comparison of LC-MS Analysis Levels for Biopharmaceutical Characterization

| Analysis Level | Key Applications | Typical Resolution & Mass Range | Critical Quality Attributes (CQAs) Assessed | Sample Preparation Complexity |
|---|---|---|---|---|
| Intact Protein | Mass confirmation, glycosylation profiling, aggregation analysis, DAR assessment for ADCs [34] [32] | 17,500+ [34]; High Mass Range Orbitrap [33] | Product identity, drug-to-antibody ratio (DAR), aggregation, clipping [32] [33] | Low (minimal manipulation; buffer exchange) [32] |
| Subunit (Middle-Up/Down) | Heavy/light chain analysis, post-translational modification (PTM) localization, detailed DAR species characterization [35] [36] | ~35,000 for DAR species [34]; ZenoTOF 7600 [35] | Glycoform variants, oxidation, deamidation, terminal lysine [35] [33] | Medium (reduction and/or enzymatic digestion with IdeS) [35] [33] |
| Peptide (Bottom-Up) | Amino acid sequence confirmation, precise PTM and conjugation site mapping, disulfide bond characterization [34] [35] | High-Resolution MS (Orbitrap, Q-TOF) [34] | Site-specific modifications (e.g., deamidation, oxidation), glycosylation site occupancy, sequence variants [34] | High (denaturation, reduction, alkylation, enzymatic digestion) [34] |
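As a worked illustration of the DAR assessment listed for the intact level, the average drug-to-antibody ratio is conventionally reported as the intensity-weighted mean drug load across the deconvoluted species. The sketch below assumes that peak intensities (or areas) have already been extracted for each drug-load species; the function name `average_dar` and the example intensities are hypothetical and are not data from the cited studies.

```python
def average_dar(intensities):
    """Intensity-weighted average drug load.

    intensities: dict mapping drug load n (0, 1, 2, ...) to the summed
    deconvoluted peak intensity (or area) of that species.
    """
    total = sum(intensities.values())
    if total <= 0:
        raise ValueError("total intensity must be positive")
    return sum(n * i for n, i in intensities.items()) / total


# Made-up relative intensities for a cysteine-linked ADC (D0, D2, ..., D8).
example = {0: 5.0, 2: 25.0, 4: 40.0, 6: 20.0, 8: 10.0}
print(f"average DAR = {average_dar(example):.2f}")
```

The same weighting applies at the subunit level, where light-chain and heavy-chain drug loads are typically averaged separately and then combined.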

Experimental Protocols and Workflows

Multi-Dimensional LC-MS (mD-LC-MS) for Comprehensive Characterization

A robust protocol for comprehensive peak characterization in ion exchange chromatography (IEC) involves a multi-dimensional system, which allows for the isolation and online processing of chromatographic variants [37].

- Instrument Configuration: The system is based on a commercial 2D-LC platform (e.g., Agilent 1290 Infinity 2D-LC) extended with additional modules. This includes three additional pumps, two external 2-position 10-port valves, and multiple column heaters. The system is controlled by two software instances (e.g., OpenLab CDS ChemStation) to manage the complex method sequences [37].

- Key Technologies:

- Multiple Heart Cutting (MHC): A duo-valve directs the flow from the first dimension (e.g., the analytical IEC method) into two parking decks, each holding six loops (10-180 μL volume), enabling precise, closely spaced cuts of peak regions of interest [37].

- Active Solvent Modulation (ASM): A valve-based dilution feature is used to mitigate solvent incompatibility between the first-dimension buffers and the subsequent separation dimensions [37].

- Online Processing: The isolated fractions are automatically transferred and processed online, which may include reduction and enzymatic digestion (e.g., using an Immobilized Enzyme Reactor - IMER), before being analyzed by mass spectrometry [37].

- Data Acquisition and Analysis: A custom documentation application can be used to generate comprehensive reports that consolidate the pressure curves from all pumps and the method timetables, and that link each generated MS file to its corresponding chromatographic cut. This traceability is vital for routine use and troubleshooting [37].
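The bookkeeping behind multiple heart cutting can be sketched in a few lines. The example below is a simplified model, not the vendor's control logic: it assumes cuts are parked in time order, filling the six loops of one deck before moving to the second, and it checks that each cut volume (flow rate times cut duration) fits the installed loop. The names `Cut` and `plan_cuts`, the fill order, and the example flow rate are all assumptions made for illustration.

```python
from dataclasses import dataclass

DECKS = ("A", "B")   # two parking decks, as described above
LOOPS_PER_DECK = 6   # six loops per deck


@dataclass
class Cut:
    start_min: float
    end_min: float


def plan_cuts(cuts, flow_ml_min, loop_volume_ul):
    """Assign each heart cut to a (deck, loop) slot in time order.

    Assumed fill order: deck A loops 1-6, then deck B loops 1-6.
    Raises ValueError if a cut would overfill a loop or if there are
    more cuts than loops.
    """
    capacity = len(DECKS) * LOOPS_PER_DECK
    ordered = sorted(cuts, key=lambda c: c.start_min)
    if len(ordered) > capacity:
        raise ValueError(f"{len(ordered)} cuts exceed {capacity} loops")
    plan = []
    for idx, cut in enumerate(ordered):
        # Volume collected = first-dimension flow x cut duration.
        volume_ul = (cut.end_min - cut.start_min) * flow_ml_min * 1000.0
        if volume_ul > loop_volume_ul:
            raise ValueError(
                f"cut at {cut.start_min} min needs {volume_ul:.0f} uL, "
                f"loop holds {loop_volume_ul:.0f} uL"
            )
        deck = DECKS[idx // LOOPS_PER_DECK]
        loop = idx % LOOPS_PER_DECK + 1
        plan.append((deck, loop, cut, volume_ul))
    return plan


# Two closely spaced cuts at an assumed 0.4 mL/min with 80 uL loops.
for deck, loop, cut, vol in plan_cuts([Cut(10.0, 10.1), Cut(10.2, 10.3)], 0.4, 80.0):
    print(f"deck {deck} loop {loop}: {cut.start_min}-{cut.end_min} min, {vol:.0f} uL")
```

A record of this kind is also the natural key for linking each MS file back to its chromatographic cut in the documentation report.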

Single-Column LC-MS for Multilevel Analysis

A streamlined workflow using a single-column setup (e.g., a C4 column) has been demonstrated for the characterization of mAbs, bispecific antibodies, and Fc-fusion proteins across all three levels [35].

- Chromatography: A reversed-phase C4 column is used for all analyses (intact, subunit, and peptide-level). For middle-down analysis of subunits, a ZenoTOF 7600 mass spectrometer is employed [35].

- Sample Preparation:

- Intact Analysis: The sample is diluted or buffer-exchanged into a volatile solvent like water or ammonium acetate and injected directly [32].

- Subunit Analysis (Middle-Down): The intact protein is treated with a reducing agent (e.g., dithiothreitol, DTT) to separate heavy and light chains, or with the immunoglobulin-degrading enzyme IdeS to generate specific fragments such as Fc/2 and F(ab')2 [35] [36] [33].

- Peptide Mapping (Bottom-Up): The protein is denatured, reduced, alkylated, and digested with an enzyme like trypsin. The resulting peptides are then separated on the C4 column [35].

- MS Analysis: High-resolution accurate-mass (HRAM) instrumentation such as Orbitrap-based mass spectrometers is used. Data is processed with deconvolution algorithms for intact and subunit levels, and database search engines for peptide identification [34] [33].

Figure: Logical workflow of multi-level LC-MS analysis, showing the relationship between the intact, subunit, and peptide levels.
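The deconvolution step mentioned under MS analysis rests on a simple relation for positive-mode electrospray: an ion carrying z protons appears at m/z = (M + z·m_p)/z, so two neighbouring charge states are enough to recover both z and the neutral mass M. The sketch below shows only this basic arithmetic; production deconvolution algorithms fit the whole charge envelope and are considerably more elaborate. The function names and the 148,000 Da example mass are hypothetical.

```python
PROTON_MASS = 1.007276  # Da


def mz_of(mass, z):
    """m/z of an [M + zH]z+ ion for a neutral mass in Da."""
    return mass / z + PROTON_MASS


def charge_from_adjacent(mz_lower_charge, mz_higher_charge):
    """Charge z of the peak at mz_lower_charge (the larger m/z value),
    assuming the neighbouring peak at mz_higher_charge carries z + 1 protons."""
    z = (mz_higher_charge - PROTON_MASS) / (mz_lower_charge - mz_higher_charge)
    return round(z)


def neutral_mass(mz, z):
    """Neutral mass in Da from an m/z value and its charge."""
    return z * (mz - PROTON_MASS)


# Simulate two adjacent charge states of a hypothetical 148 kDa antibody.
mz_a, mz_b = mz_of(148000.0, 50), mz_of(148000.0, 51)
z = charge_from_adjacent(mz_a, mz_b)
print(z, round(neutral_mass(mz_a, z), 3))
```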

Performance Metrics and Data Comparison

The reliability of LC-MS platforms is evaluated using specific performance metrics that monitor various components of the system, from chromatography to mass spectrometry detection [38].

Table 2: Key LC-MS/MS Performance Metrics for System Evaluation

| Metric Category | Specific Metric Examples | Optimal Direction | Purpose and Application |
|---|---|---|---|
| Chromatography | Median Peak Width at Half-Height (s) [38] | ↓ (Sharper peaks) | Measures chromatographic resolution and peak broadening. |
| Chromatography | Interquartile Retention Time Period (min) [38] | ↑ (Longer period) | Indicates the quality of chromatographic separation over the gradient. |
| Electrospray Ion Source Stability | MS1 Signal Jumps/Falls >10x [38] | ↓ (Fewer instances) | Flags instability in the electrospray ionization source. |
| Electrospray Ion Source Stability | Precursor m/z for Identifications (Th) [38] | ↓ | Higher median m/z can indicate inefficient or partial ionization. |
| Dynamic Sampling | Ratio of Peptides Identified by 1 vs 2 Spectra [38] | ↑ | Estimates oversampling; higher ratios indicate more efficient sampling of unique peptides. |
| Dynamic Sampling | Number of MS2 Scans [38] | ↑ | More MS2 scans indicate more extensive sampling for identification. |
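Several of the metrics in Table 2 reduce to simple summary statistics over a run's identification results. The sketch below is a minimal illustration under stated assumptions: it takes per-peak widths, per-peptide retention times, and the peptide assigned to each identified MS2 spectrum as plain Python lists, and the function names are hypothetical rather than taken from the tooling described in [38]. Quartiles are computed with the "inclusive" method of the standard library, which is one of several reasonable conventions.

```python
from collections import Counter
from statistics import median, quantiles


def median_peak_width(widths_s):
    """Median chromatographic peak width at half-height, in seconds."""
    return median(widths_s)


def interquartile_rt_period(retention_times_min):
    """Time span (min) over which the middle 50% of identified peptides elute."""
    q1, _, q3 = quantiles(retention_times_min, n=4, method="inclusive")
    return q3 - q1


def once_vs_twice_ratio(peptide_per_spectrum):
    """Ratio of peptides identified by exactly one spectrum to those
    identified by exactly two, given one peptide label per identified spectrum."""
    spectra_per_peptide = Counter(peptide_per_spectrum)
    tally = Counter(spectra_per_peptide.values())
    if tally[2] == 0:
        raise ValueError("no peptide was identified by exactly two spectra")
    return tally[1] / tally[2]


# Toy inputs, for illustration only.
print(median_peak_width([12.0, 15.0, 18.0]))
print(interquartile_rt_period([10, 20, 30, 40, 50]))
print(once_vs_twice_ratio(["A", "A", "B", "C", "C", "D", "E", "E", "E", "F"]))
```

Tracking these values run over run, rather than judging a single number in isolation, is what makes them useful for spotting drift in the column or ion source.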

Essential Research Reagent Solutions

Successful implementation of these LC-MS workflows relies on a suite of specialized reagents and materials.

Table 3: Essential Research Reagents and Materials for LC-MS Biopharmaceutical Characterization

| Item | Function and Application |
|---|---|
| Immobilized Enzyme Reactor (IMER) | Enables fast, online enzymatic digestion (e.g., with trypsin) of protein cuts in mD-LC-MS workflows, minimizing sample handling and processing time [37]. |
| IdeS Enzyme (Immunoglobulin-degrading enzyme) | A specific protease used in middle-level analysis to generate consistent Fc/2 and F(ab')2 fragments from antibodies for detailed subunit analysis [34] [33]. |
| Reducing Agents (DTT, TCEP) | Used to break disulfide bonds for subunit (middle-down) and peptide mapping (bottom-up) analyses. TCEP is often preferred for its stability [36]. |
| Macroporous and Supermacroporous Reversed-Phase Cartridges/Columns | Provide fast online desalting and high-resolution separation of intact proteins, subunits, and large peptides, improving MS compatibility and signal [33]. |
| High-Resolution HIC (Hydrophobic Interaction Chromatography) Columns | Used for separating and characterizing intact mAb charge variants, oxidation variants, and bispecific antibodies under native-like conditions [33]. |
| C4 Reversed-Phase LC Columns | The stationary phase of choice for intact and subunit-level separations due to its ability to handle large biomolecules, enabling single-column multilevel characterization [35]. |

High-Throughput Screening (HTS) is a foundational pillar of modern drug discovery, enabling the rapid testing of hundreds of thousands of compounds to identify potential therapeutic candidates. [39] This guide provides a comparative analysis of the two dominant technological approaches in this field: automated robotic systems and advanced microfluidics. The evolution from traditional, static well-plate assays to dynamic, miniaturized systems represents a paradigm shift aimed at increasing physiological relevance while reducing costs and timelines.

Core Platform Technologies and Comparative Performance

The selection of an HTS platform involves critical trade-offs between throughput, physiological relevance, and operational cost. The table below summarizes the core characteristics of the two main technology streams.

Table 1: Comparative Analysis of Automated Robotics and Microfluidic HTS Platforms

| Feature | Traditional Automation & Robotics | Advanced Microfluidic Platforms |
|---|---|---|
| Core Principle | Automated handling of microtiter plates (96, 384, 1536 wells) using robotics. [40] | Manipulation of minute fluid volumes in micro-scale channels for cell culture and assays. [41] |
| Throughput | High; can investigate hundreds of thousands of compounds per day. [39] | Rapidly improving; considered compatible with high-throughput systems. [41] |
| Liquid Handling | Difficulty accurately dispensing volumes <1 µL; quick evaporation in 1536+ well plates. [41] | Precise handling of nanoliter to picoliter volumes, minimizing reagent consumption. [41] |
| Cell Culture Model | Primarily 2D cell monolayers; limited 3D spheroid culture in plates. [39] | Superior support for 3D models (spheroids, organoids) and dynamic, perfusion-based cultures. [41] |
| Physiological Relevance | Low; static conditions fail to recapitulate tissue-specific architecture and biomechanical cues. [41] | High; enables shear stresses, continuous perfusion, and precise drug gradients. [41] |
| Key Advantage | Proven, simple technology with established protocols and infrastructure. | Higher predictive power and better correlation with in vivo data due to more complex models. [41] |
| Primary Limitation | High consumption of costly reagents and biological samples. [41] | Ongoing standardization and integration into existing HTS workflows. [41] |

Experimental Protocols for Platform Validation

To ensure reliable and reproducible results, rigorous experimental protocols must be followed. The methodologies below are critical for benchmarking HTS platform performance.

Protocol for HTS Data Processing and Hit Identification

The goal of this protocol is to distinguish true biologically active compounds from assay variability. [42]

- Assay Validation (Pre-Screening):

- Perform a 3-day assay signal window test using controls to establish baseline performance.

- Conduct DMSO validation tests to ensure the solvent does not interfere with the assay.

- Primary Screening & Data Collection:

- Screen the compound library against the target using the automated or microfluidic system.

- Capture raw assay signals (e.g., absorbance, fluorescence, luminescence). [39]

- Multi-Level Statistical Review (Quality Control):

- Apply robust statistical methods to identify and correct for systematic row/column effects.

- Exclude data that fall outside pre-defined quality control criteria.

- Hit Identification:
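The source does not spell out how hits are called, so the following is only a hedged sketch of one common way the validation, quality-control, and hit-calling steps above are implemented in practice. It assumes three widely used conventions that are not stated in this protocol: the Z'-factor as the signal-window statistic, Tukey's median polish to remove additive row and column effects, and a robust z-score (median and MAD) with a threshold of 3 for flagging hits. All function names, the threshold, and the default number of polish sweeps are illustrative choices.

```python
from statistics import mean, median, stdev


def z_prime(pos, neg):
    """Z'-factor from positive- and negative-control well signals."""
    return 1.0 - 3.0 * (stdev(pos) + stdev(neg)) / abs(mean(pos) - mean(neg))


def median_polish(plate, n_iter=3):
    """Residuals after alternately subtracting row and column medians.

    plate: list of rows, each a list of well signals of equal length.
    """
    resid = [list(row) for row in plate]
    for _ in range(n_iter):
        for row in resid:
            m = median(row)
            for j in range(len(row)):
                row[j] -= m
        for j in range(len(resid[0])):
            m = median(r[j] for r in resid)
            for r in resid:
                r[j] -= m
    return resid


def robust_z(resid):
    """Robust z-scores: (value - median) / (1.4826 * MAD) over the whole plate."""
    flat = [v for row in resid for v in row]
    centre = median(flat)
    mad = median(abs(v - centre) for v in flat)
    if mad == 0:
        raise ValueError("MAD is zero; robust z-score is undefined")
    scale = 1.4826 * mad
    return [[(v - centre) / scale for v in row] for row in resid]


def call_hits(plate, threshold=3.0, n_iter=3):
    """(row, column) indices of wells whose |robust z| meets the threshold."""
    scores = robust_z(median_polish(plate, n_iter=n_iter))
    return [
        (i, j)
        for i, row in enumerate(scores)
        for j, s in enumerate(row)
        if abs(s) >= threshold
    ]


# Control wells from a hypothetical validation plate.
print(round(z_prime([100, 102, 98], [10, 12, 8]), 3))
```

In use, `z_prime` would be evaluated on the control wells of each validation plate before screening, and `call_hits` applied per plate to the raw signals. The threshold and the choice between robust z-scores and alternatives such as percent inhibition are project-specific decisions.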

Protocol for 3D Spheroid Formation in Microfluidic HTS

This protocol leverages microfluidics to create more physiologically relevant 3D models for screening. [41]

- Device Preparation:

- Use a microfluidic platform designed for 3D culture, such as a hanging-drop array or a phase-guide chip (e.g., OrganoPlate).

- Cell Seeding:

- Load a cell suspension at a constant concentration into the device's inlet.

- Allow cells to sediment into the culture chambers (e.g., hanging drops or gel lanes) via microfluidic networks.

- Spheroid Formation:

- Culture the cells for 24-72 hours to allow for self-assembly into uniform spheroids.

- Compound Administration: