Combinatorial Pathway Optimization: Mastering DBTL Cycles for Advanced Therapeutics and Bioproduction

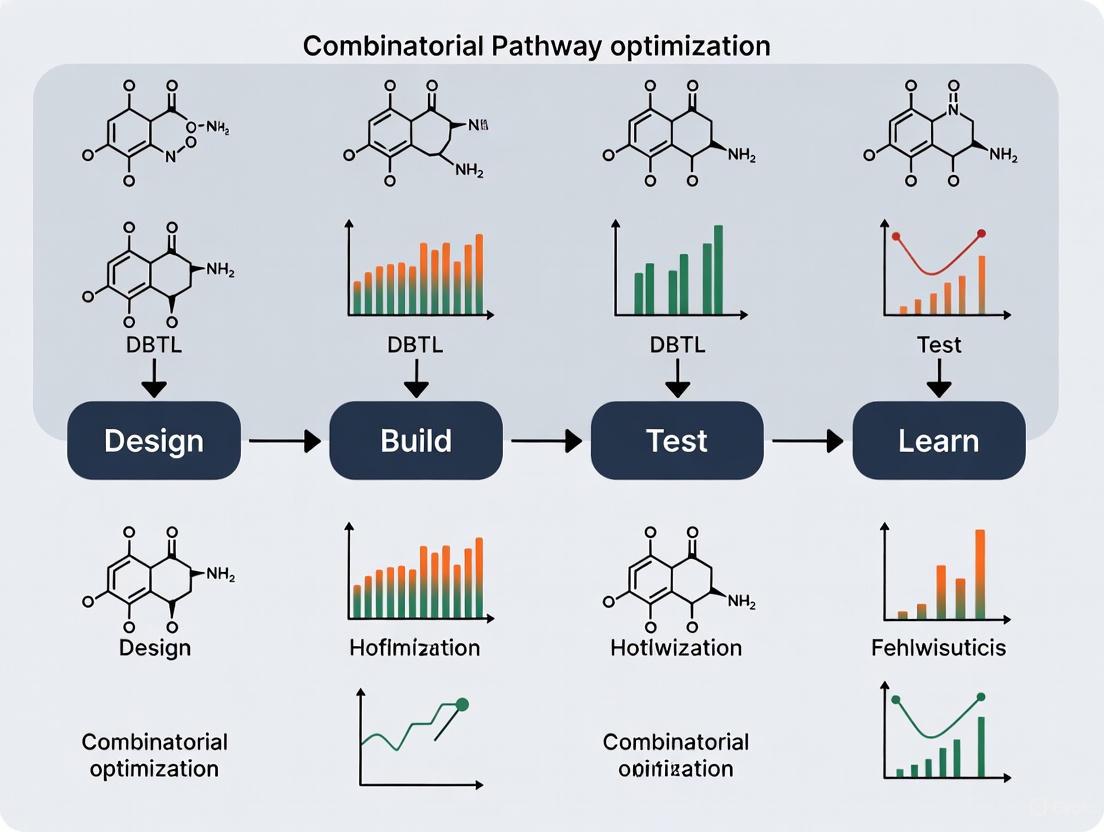

This article provides a comprehensive exploration of combinatorial pathway optimization through the lens of the Design-Build-Test-Learn (DBTL) cycle, a foundational framework in synthetic biology and precision medicine.


Abstract

This article provides a comprehensive exploration of combinatorial pathway optimization through the lens of the Design-Build-Test-Learn (DBTL) cycle, a foundational framework in synthetic biology and precision medicine. Tailored for researchers, scientists, and drug development professionals, it details the integration of multi-omics data, machine learning, and high-throughput automation to efficiently navigate vast combinatorial spaces. The scope ranges from foundational concepts of synergy and antagonism in drug combinations to advanced methodological applications in metabolic engineering and AI-driven strain design. It further addresses critical troubleshooting and optimization strategies for robust experimental workflows and concludes with rigorous validation frameworks and comparative analyses of emerging technologies, offering a roadmap for accelerating the development of novel therapeutic regimens and microbial cell factories.

The DBTL Cycle and Combinatorial Optimization: Core Principles and Biological Imperatives

Defining the Design-Build-Test-Learn (DBTL) Framework in Synthetic Biology

The Design-Build-Test-Learn (DBTL) cycle is a systematic, iterative framework central to synthetic biology, enabling the engineering of biological systems for specific functions such as producing biofuels, pharmaceuticals, and other valuable compounds [1]. This engineering approach involves designing biological parts, assembling them into constructs, testing their functionality, and learning from the data to inform the next design iteration [1] [2]. The cycle's power lies in its structured methodology for overcoming the unpredictability of biological systems, where even rational designs require multiple permutations to achieve a desired outcome [1]. Automation and modular design are key pillars of modern DBTL workflows, drastically reducing the time, cost, and labor associated with traditional methods while increasing throughput and improving overall outcomes [1] [3]. The framework is foundational to metabolic engineering and combinatorial pathway optimization, providing a structured path for developing high-performing microbial cell factories [4] [5].

The Core Components of the DBTL Cycle

Design

The Design phase involves creating a conceptual blueprint for the biological system to be implemented. This digital representation specifies the structural composition and intended function of the genetic circuit or pathway [6]. Key activities include selecting candidate enzymes, designing DNA parts (e.g., optimizing ribosome binding sites and codon usage), and assembling virtual combinatorial libraries of pathway designs [3]. The design phase heavily relies on domain knowledge, expertise, and computational modeling, and is increasingly supported by tools like RetroPath for automated pathway selection and Selenzyme for enzyme selection [3]. The shift towards data-driven design is critical, utilizing large biological datasets and machine learning to create more predictive and effective initial designs [2] [7].

Build

The Build phase translates the digital design into physical biological constructs. This involves DNA synthesis, assembly of constructs into plasmids or other vectors, and their introduction into a characterization chassis such as bacteria, yeast, or cell-free systems [1] [7]. Automation is crucial in this phase, employing robotic platforms for DNA assembly (e.g., via ligase cycling reaction) and transformation to generate the desired biological strains [3]. The build process results in a Build object—a physical sample in the laboratory, such as a DNA construct or a transformed microbial strain, which can be tracked and managed within a laboratory information management system [6].

Test

The Test phase involves experimental measurement of the built construct's performance against the objectives set during the Design stage [7]. This requires cultivating the engineered organisms, inducing expression, and quantitatively measuring the target products and key intermediates [3]. High-throughput methods, such as automated cultivation in microtiter plates coupled with analytical techniques like ultra-performance liquid chromatography-tandem mass spectrometry (UPLC-MS/MS), are essential for generating robust, comparable data [3]. The output is a Test object, which wraps the raw experimental data files produced from these measurements [6].

Learn

In the Learn phase, data collected during testing is analyzed to extract insights about the system's behavior. This involves identifying relationships between design parameters and observed production levels using statistical methods and machine learning [3]. The learning process determines whether the design objectives have been met or whether another iteration of the cycle is required. The learning phase generates an Analysis object, which contains processed or transformed data (e.g., background-subtracted signals, log transformations, model-fitting results) [6]. The knowledge gained here is fundamental to informing and improving the design in the subsequent DBTL cycle [2] [8].
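The four objects described above (a design, a Build object, a Test object, and an Analysis object) can be pictured as linked records. The following is a minimal sketch only: the class names, fields, and identifiers are hypothetical and are not the schema used in reference [6].

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class DesignRecord:
    """Digital blueprint of a construct (Design phase)."""
    name: str
    parts: List[str]  # ordered part identifiers (hypothetical)


@dataclass
class BuildRecord:
    """Physical sample that realises a design (Build phase)."""
    design: DesignRecord
    sample_id: str  # e.g., a LIMS barcode (hypothetical)


@dataclass
class MeasurementRecord:
    """Wraps the raw data files measured on a build (Test phase)."""
    build: BuildRecord
    raw_files: List[str]


@dataclass
class AnalysisRecord:
    """Processed data derived from a measurement (Learn phase)."""
    measurement: MeasurementRecord
    results: Dict[str, float] = field(default_factory=dict)

    def design_name(self) -> str:
        # Walk the provenance chain back to the originating design.
        return self.measurement.build.design.name


design = DesignRecord("pinocembrin_v1", ["Ptrc", "PAL", "4CL", "CHS", "CHI"])
build = BuildRecord(design, sample_id="S-0001")
measurement = MeasurementRecord(build, raw_files=["plate1_well_A1.raw"])
analysis = AnalysisRecord(measurement, results={"titer_mg_per_L": 0.14})
print(analysis.design_name())
```

Keeping each record pointed at its predecessor means any processed result can be traced back to the exact design that produced it, which is what the Learn phase needs when it feeds the next Design iteration.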

Workflow Visualization: DBTL Cycle

The following diagram illustrates the iterative, interconnected nature of the DBTL cycle and the key activities within each phase.

Advanced DBTL Paradigm: The LDBT Cycle and Machine Learning Integration

Recent advances are reshaping the traditional DBTL cycle. The integration of Machine Learning (ML) is so transformative that a new paradigm, the LDBT cycle (Learn-Design-Build-Test), has been proposed, where Learning precedes Design [7]. In this model, ML models pre-trained on vast biological datasets enable zero-shot predictions, that is, functional designs generated without additional experimental training data [7]. This approach leverages computational tools such as protein language models (e.g., ESM, ProGen) and structure-based design tools (e.g., ProteinMPNN, MutCompute) to create highly optimized initial designs, potentially reducing the need for multiple iterative cycles [7].

Application Notes and Protocols for Combinatorial Pathway Optimization

Case Study: Automated DBTL for Flavonoid Production in E. coli

An automated DBTL pipeline was successfully applied to optimize a (2S)-pinocembrin biosynthetic pathway in E. coli, resulting in a 500-fold improvement in production titer, reaching 88 mg L⁻¹ [3]. The pathway consisted of four enzymes (PAL, CHS, CHI, 4CL) converting L-phenylalanine to pinocembrin.

Table 1: Key Experimental Results from Iterative DBTL Cycles for Pinocembrin Production

| DBTL Cycle | Number of Constructs | Design of Experiments Compression | Pinocembrin Titer Range (mg L⁻¹) | Key Learning |
|---|---|---|---|---|
| Cycle 1 | 16 | 162:1 (from 2592) | 0.002 – 0.14 | Vector copy number had the strongest positive effect (P = 2.00 × 10⁻⁸); CHI promoter strength also highly significant (P = 1.07 × 10⁻⁷). |
| Cycle 2 | 24 | Focused library based on Cycle 1 learning | Up to 88 | Application of learned constraints (high copy number, strategic gene order) led to a 500-fold increase over the best initial construct. |

Detailed Protocol: Automated DBTL for Pathway Optimization

I. Design Phase Protocol

- Pathway Selection: Use RetroPath [3] to design the heterologous pathway from a defined starting metabolite (e.g., L-phenylalanine) to the target compound (e.g., pinocembrin).

- Enzyme Selection: Employ Selenzyme [3] to select candidate enzyme sequences for each reaction step.

- Combinatorial Library Design: Use software like PartsGenie [3] to design a library of genetic constructs, varying parameters such as:

  - Vector backbone and copy number (e.g., ColE1 [high], p15a [medium], pSC101 [low]).

  - Promoter strength (e.g., strong [Ptrc], weak [PlacUV5]).

  - Gene order permutations within an operon.

- Library Compression: Apply Design of Experiments (DoE) based on orthogonal arrays to reduce the combinatorial library to a tractable number of representative constructs for building and testing [3].
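The library-compression step can be illustrated with the smallest classical orthogonal array, L9(3⁴), which covers four three-level factors in 9 runs instead of 81. This is a sketch under stated assumptions: the factor names and the "none" promoter level are hypothetical, and the actual pipeline in [3] may use different arrays and factors.

```python
from collections import Counter
from itertools import combinations, product

# Hypothetical three-level factors; names and levels are illustrative only.
factors = {
    "copy_number": ["low", "medium", "high"],
    "promoter_gene1": ["none", "weak", "strong"],
    "promoter_gene2": ["none", "weak", "strong"],
    "promoter_gene3": ["none", "weak", "strong"],
}


def l9_orthogonal_array():
    """Return the 9 runs of an L9(3^4) array as tuples of level indices."""
    return [(i, j, (i + j) % 3, (i + 2 * j) % 3)
            for i in range(3) for j in range(3)]


def is_pairwise_balanced(runs, n_levels=3):
    """Check that every pair of columns shows each level pair equally often."""
    n_cols = len(runs[0])
    for a, b in combinations(range(n_cols), 2):
        counts = Counter((run[a], run[b]) for run in runs)
        if len(counts) != n_levels ** 2 or len(set(counts.values())) != 1:
            return False
    return True


full_library = list(product(*factors.values()))  # full factorial design
runs = l9_orthogonal_array()
names = list(factors)
compressed = [
    {names[k]: factors[names[k]][level] for k, level in enumerate(run)}
    for run in runs
]

print(len(full_library), "->", len(compressed))
print(is_pairwise_balanced(runs))
```

Because every pair of factors sees all level combinations equally often, main effects can still be estimated from the reduced set, at the cost of confounding higher-order interactions.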

II. Build Phase Protocol

- DNA Synthesis: Order designed gene sequences from commercial vendors [3].

- Automated DNA Assembly:

  - Prepare DNA parts via PCR.

  - Use a liquid-handling robot to set up Ligase Cycling Reaction (LCR) assembly reactions according to automated worklists generated by design software [3].

- Transformation and Quality Control:

  - Transform LCR reactions into E. coli cloning strain (e.g., DH5α).

  - Perform high-throughput plasmid purification from candidate clones.

  - Verify constructs by restriction digest and capillary electrophoresis, followed by sequence verification [3].

III. Test Phase Protocol

- Strain Cultivation:

  - Introduce verified constructs into the production chassis (e.g., E. coli DH5α).

  - Perform automated cultivation in 96-deepwell plates using defined media and induction protocols [3].

- Metabolite Extraction: Use an automated liquid handler to extract metabolites from culture samples.

- Quantitative Analysis:

  - Analyze samples using fast UPLC-MS/MS with high mass resolution.

  - Quantify target product (pinocembrin) and key intermediates (e.g., cinnamic acid) by comparison to authentic standards [3].

  - Use custom scripts (e.g., in R) for automated data extraction and processing.

IV. Learn Phase Protocol

- Statistical Analysis: Perform analysis of variance (ANOVA) to identify the impact of each design factor (e.g., copy number, promoter strength, gene order) on production titers [3].

- Modeling: Apply machine learning (e.g., linear models, random forest) to the dataset to build a predictive model of pathway performance [4].

- Design Refinement: Use the model and statistical insights to define constraints and priorities for the next design cycle, focusing on the most impactful parameters [3].
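Before running a formal ANOVA, it can help to rank design factors by their main effects. The sketch below computes the mean titer per factor level and the spread between levels; it is a simpler precursor to ANOVA (no significance testing), and the data values are invented for illustration rather than taken from [3].

```python
from collections import defaultdict
from statistics import mean

# Hypothetical screening data: (design factors, measured titer in mg/L).
observations = [
    ({"copy_number": "low", "promoter": "weak"}, 0.01),
    ({"copy_number": "low", "promoter": "strong"}, 0.02),
    ({"copy_number": "high", "promoter": "weak"}, 0.10),
    ({"copy_number": "high", "promoter": "strong"}, 0.14),
]


def main_effects(data):
    """Mean response per level of each factor, plus the spread between levels."""
    by_level = defaultdict(lambda: defaultdict(list))
    for design, titer in data:
        for factor, level in design.items():
            by_level[factor][level].append(titer)
    summary = {}
    for factor, levels in by_level.items():
        means = {level: mean(values) for level, values in levels.items()}
        summary[factor] = {
            "level_means": means,
            "effect_range": max(means.values()) - min(means.values()),
        }
    return summary


effects = main_effects(observations)
# Rank factors from most to least influential on titer.
ranked = sorted(effects, key=lambda f: effects[f]["effect_range"], reverse=True)
print(ranked)
```

Factors at the top of the ranking are natural candidates to fix at their best level in the next cycle, while weak factors can be dropped to shrink the design space.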

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Research Reagents and Tools for DBTL Cycles

| Item Name | Function/Application | Example Use Case |
|---|---|---|
| RetroPath [3] | Computational tool for automated metabolic pathway design. | Designing a novel pathway from a substrate to a target fine chemical. |
| Selenzyme [3] | Enzyme selection platform for choosing candidate sequences for pathway steps. | Selecting the most suitable 4-coumarate:CoA ligase (4CL) for a flavonoid pathway. |
| PartsGenie [3] | Software for designing reusable DNA parts with optimized RBS and coding sequences. | Designing a library of bicistronic constructs for RBS engineering in a dopamine pathway [8]. |
| Ligase Cycling Reaction (LCR) [3] | High-efficiency, automated DNA assembly method. | Assembling a combinatorial library of pathway variants into a plasmid backbone. |
| Cell-Free Protein Synthesis (CFPS) Systems [7] | Crude lysate or purified system for rapid in vitro transcription and translation. | Ultra-high-throughput prototyping of enzyme variants without cellular transformation [7]. |
| UTR Designer [8] | Tool for modulating Ribosome Binding Site (RBS) sequences to fine-tune translation. | Systematically varying the expression level of a pathway enzyme to balance flux. |
| JBEI-ICE Repository [3] | Open-source biological data management platform (jbe-ice.org). | Cataloging and tracking all designed DNA parts, plasmids, and associated metadata. |

The Challenge of Combinatorial Explosions in Metabolic Engineering and Drug Discovery

A considerable number of areas of bioscience, including gene and drug discovery and metabolic engineering, are best viewed as combinatorial optimization problems representing large search spaces of possible solutions populated by a much smaller number of optimal solutions [9]. The term "combinatorial explosion" describes the core challenge: the number of possible permutations in a system increases exponentially with the number of variables involved [10] [9]. In metabolic engineering, this manifests when optimizing multi-enzyme pathways, where testing all combinations of gene homologs, expression levels, and regulatory elements becomes experimentally intractable [10] [11]. Similarly, in drug discovery, screening all possible multi-drug combinations at varying doses from a library of candidate compounds is functionally impossible due to the astronomical number of possibilities [12].

This combinatorial explosion renders full factorial searches infeasible, forcing researchers to rely on sophisticated heuristic methods to identify "good enough" solutions without exhaustively testing every possibility [10] [9]. Many of these search problems are NP-hard, meaning that no polynomial-time algorithm is known for finding a guaranteed optimum, so the computational effort of exact approaches quickly surpasses practical limits as the problem grows [9]. Addressing this challenge requires integrated strategies that combine smart experimental design with computational guidance to navigate the vast search spaces efficiently.

Table 1: Examples of Combinatorial Problem Scaling in Biology

| System | Variables | Possible Combinations | Reference |
|---|---|---|---|
| Protein Engineering (300 aa) | 3 amino acid changes | ~30 billion | [9] |
| Metabolic Engineering | 6 enzymes with 5 variants each | 15,625 (5⁶) | [10] |
| Drug Screening | 4 drugs from 100 candidates | 3.9 million | [12] |
| DNA Aptamer (30mer) | 4 bases at 30 positions | 1.2 × 10¹⁸ (4³⁰) | [9] |
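The figures in the table can be reproduced with a few lines of arithmetic. The sketch below assumes the protein-engineering entry counts the ways to pick 3 positions out of 300 and substitute each with one of the 19 alternative amino acids; that reading is an interpretation that happens to match the quoted order of magnitude.

```python
from math import comb

# Protein engineering: choose 3 of 300 positions, 19 alternative residues each.
protein_variants = comb(300, 3) * 19 ** 3

# Metabolic engineering: 6 enzymes, 5 variants each.
pathway_variants = 5 ** 6

# Drug screening: unordered sets of 4 drugs from 100 candidates.
drug_sets = comb(100, 4)

# DNA aptamer: 4 possible bases at each of 30 positions.
aptamers = 4 ** 30

for label, count in [
    ("protein variants", protein_variants),
    ("pathway variants", pathway_variants),
    ("4-drug sets", drug_sets),
    ("30-mer aptamers", aptamers),
]:
    print(f"{label}: {count:.3e}")
```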

Application Notes: Metabolic Engineering

The Core Problem in Pathway Optimization

In metabolic engineering, combinatorial explosion arises when attempting to balance flux through heterologous pathways comprising multiple enzymatic steps [10]. Installing efficient pathways based purely on forward design rules remains infeasible due to insufficient a priori knowledge of pathway kinetics and intricate orchestration within cellular metabolism [10]. Traditional sequential optimization methods, which identify and remove major bottlenecks one at a time, often fail to identify globally optimal solutions because they neglect holistic interactions within the pathway and with host metabolism [10]. This limitation has driven the development of combinatorial pathway optimization approaches that create variant libraries where several pathway elements are diversified simultaneously [10] [11].

Key Diversification Strategies

Combinatorial optimization in metabolic engineering employs three primary diversification strategies, which can be used independently or in combination [10]:

Variation of Coding Sequences: This strategy employs different structural or functional gene homologs known (or suspected) to catalyze the respective reaction steps. In the absence of suitable candidates, metagenomic libraries can be exploited to identify appropriate biocatalysts [10]. For example, this approach was successfully used to graft xylose utilization into Saccharomyces cerevisiae [10].

Engineering of Expression Levels: Fine-tuning relative and absolute expression levels of involved genes is crucial for setting up a balanced pathway with high flux toward the desired product. This can be achieved by varying gene dosage through plasmid copy number or genomic integration sites, engineering transcription using promoter and terminator libraries, and modulating translation through ribosomal binding site (RBS) engineering [10] [11].

Combined and Integrated Approaches: The most powerful implementations simultaneously integrate different methods for diversity creation. For example, combinatorial refactoring of a 16-gene nitrogen fixation pathway from Klebsiella oxytoca into an E. coli host involved varying copy number, ribosome binding sites, and operon configurations, leading to a remarkable 50-fold improvement in ammonia production [10].

Protocol: Combinatorial Pathway Library Construction

Title: Modular Cloning for Combinatorial Pathway Assembly

Application: Rapid generation of genetic diversity for metabolic pathway optimization

Principle: Golden Gate and related DNA assembly methods utilize Type IIS restriction enzymes that cut outside their recognition sites, enabling seamless, directional, and simultaneous assembly of multiple DNA parts in a single reaction [13].

Table 2: Key Research Reagent Solutions for Combinatorial Pathway Engineering

| Reagent/Method | Function | Key Features |
|---|---|---|
| Type IIS Restriction Enzymes (e.g., BsaI) | Foundation of modular cloning systems; cut outside recognition sites | Creates unique, sequence-independent overhangs for seamless assembly |
| Golden Gate Assembly | Standardized framework for combinatorial part assembly | Modular, hierarchical; enables rapid variant generation |
| MoClo Toolkit | Standardized genetic part collections | Enables one-pot assembly of transcriptional units from promoters, CDS, terminators |
| Ribosome Binding Site (RBS) Libraries | Translation modulation | Varies protein expression levels without transcriptional changes |
| CRISPR/Cas9 Systems | Multiplex genome editing | Enables precise, simultaneous integration of pathway variants at genomic loci |

Materials:

- DNA parts library (promoters, RBS, coding sequences, terminators)

- Type IIS restriction enzyme (e.g., BsaI-HFv2)

- T4 DNA Ligase and buffer

- Thermocycler

- Competent E. coli cells

- Selection plates with appropriate antibiotics

Procedure:

- Library Design: Select DNA parts from standardized toolkits (e.g., MoClo). Design assembly using 4-bp overhangs that define part position and orientation [13].

- One-Pot Assembly Reaction:

  - Combine approximately 50-100 ng of each DNA part

  - Add 1.5 µL of BsaI-HFv2 (10 U/µL)

  - Add 1 µL of T4 DNA Ligase (400 U/µL)

  - Add 5 µL of 10X T4 DNA Ligase Buffer

  - Adjust total volume to 50 µL with nuclease-free water

- Thermocycling: Program thermocycler: 25 cycles of (37°C for 3 minutes + 16°C for 4 minutes), then 50°C for 5 minutes, and 80°C for 10 minutes [13].

- Transformation: Transform 5 µL of assembly reaction into competent E. coli cells, plate on selective media, and incubate overnight.

- Screening/Selection: Pick colonies for screening or apply appropriate selection pressure to identify functional constructs.
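Because the 4-bp overhangs define part position and orientation, a quick sanity check of an overhang set before ordering parts can prevent mis-assemblies. The sketch below flags palindromic overhangs (which can self-ligate) and overhangs that duplicate, or are the reverse complement of, another one in the set. The function names are hypothetical and the example overhangs are illustrative; a real design should follow the chosen toolkit's published fusion sites.

```python
COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq: str) -> str:
    """Return the reverse complement of a DNA sequence."""
    return seq.translate(COMPLEMENT)[::-1]


def check_overhangs(overhangs):
    """Return a list of problems; an empty list means the set passes."""
    problems = []
    seen = {}  # maps a sequence to the overhang that already occupies it
    for raw in overhangs:
        oh = raw.upper()
        if len(oh) != 4 or set(oh) - set("ACGT"):
            problems.append(f"{oh}: not a 4-bp DNA sequence")
            continue
        rc = reverse_complement(oh)
        if oh == rc:
            problems.append(f"{oh}: palindromic, can self-ligate")
        if oh in seen:
            problems.append(
                f"{oh}: duplicates or is the reverse complement of {seen[oh]}"
            )
        seen.setdefault(oh, oh)
        seen.setdefault(rc, oh)
    return problems


# Illustrative fusion sites for a promoter / 5'UTR / CDS / terminator unit.
print(check_overhangs(["GGAG", "TACT", "AATG", "GCTT", "CGCT"]))
print(check_overhangs(["GGAG", "CTCC", "AATT"]))
```

The check is deliberately conservative and does not score ligation fidelity between near-matching overhangs, which dedicated design tools handle.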

Visualization: Combinatorial Pathway Optimization Workflow

Application Notes: Drug Discovery

The Combinatorial Therapy Challenge

In drug discovery, combinatorial explosion becomes problematic when seeking optimal multi-drug therapies [12]. While multidrug combination therapies often show better results than monotherapies against complex diseases, the number of possible combinations is staggering [12]. For example, a chemotherapy regimen comprising four drugs chosen from a library of 100 clinically used compounds, with three different doses possible for each drug, creates at least 3.2 × 10⁸ possibilities (about 3.9 × 10⁶ four-drug sets × 3⁴ dose assignments) [12]. Conventional experimental platforms can typically test no more than 1000 combinations, covering only about 0.0003% of this search space [12]. This massive discrepancy necessitates highly efficient and systematic optimization strategies.

Phenotype-Driven Optimization Approaches

The phenotype-driven medicine concept associates combinatorial drug therapy with systems engineering and optimization theories [12]. This approach considers the biological system as an open system and optimizes drug combinations as system inputs based on phenotypic outputs according to the formula:

\(X_{\text{opt}} = \arg\max_X E = \arg\max_X f(X)\)

where \(X\) is the drug combination input, \(E\) is the efficacy output (any measurable parameter), \(f\) is the functional relationship between drug doses and efficacy, and \(X_{\text{opt}}\) is the optimal combination [12]. This framework has introduced powerful approaches and tools to drug combination optimization, moving beyond the limitations of target-driven discovery.
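For a small dose grid, the optimization above reduces to evaluating the efficacy of every candidate combination and keeping the best one. The sketch below does exactly that; the `efficacy` function and the dose levels are invented stand-ins, since in practice the efficacy is a measured phenotypic readout rather than a known formula.

```python
from itertools import product

dose_levels = [0.0, 0.5, 1.0]  # hypothetical normalised doses per drug


def efficacy(x):
    """Hypothetical stand-in for f(X); real values come from experiments."""
    a, b = x
    # Benefit from each drug, a synergy term, and a toxicity-like penalty.
    return a + b + 0.5 * a * b - 1.0 * (a ** 2 + b ** 2)


candidates = list(product(dose_levels, repeat=2))  # all two-drug dose pairs
x_opt = max(candidates, key=efficacy)              # argmax over the grid

print(x_opt, efficacy(x_opt))
```

Exhaustive evaluation like this only works for tiny grids; with more drugs or dose levels, the search has to be guided by the screening and modeling strategies discussed in this article.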

Advanced Screening Platforms

Innovative laboratory platforms have been developed to enhance screening throughput and efficiency:

- Droplet Microfluidics: These systems enable high-throughput screening by encapsulating single cells or populations in nanoliter droplets along with specific drug combinations, allowing massive parallelization of experiments [12].

- Mass-Activated Droplet Sorting (MADS): This technology enables high-throughput screening of enzymatic reactions at nanoliter scale, combining the advantages of droplet microfluidics with sensitive mass spectrometric detection [12].

- 3D Tissue Models: Advanced in vitro systems including organoids, spheroids, and organ-on-a-chip platforms provide more physiologically relevant screening environments that better predict clinical efficacy [12].

Protocol: High-Throughput Combinatorial Drug Screening

Title: Droplet Microfluidic Screening for Drug Combination Discovery

Application: Identification of synergistic drug combinations against cancer cell lines

Principle: Nanoliter droplet encapsulation of cells with precise drug combinations enables massive parallel screening while conserving reagents and cells [12].

Materials:

- Drug compound library (prepared as concentrated stocks)

- Target cell line (e.g., cancer cells)

- Droplet microfluidics device (commercial or custom-fabricated)

- Fluorescent viability dyes (e.g., Calcein AM for live cells, Propidium Iodide for dead cells)

- Droplet generation oil and surfactants

- Flow cytometer or droplet sorter

Procedure:

- Drug Preparation: Prepare drug combinations in source plates using automated liquid handling systems. Create serial dilutions for dose-response studies.

- Cell Preparation: Harvest and resuspend cells in appropriate medium at optimized density (typically 10⁶ cells/mL).

- Droplet Generation:

  - Load drug combinations and cell suspension into separate syringes

  - Co-flow through microfluidic device to generate monodisperse droplets containing single cells with specific drug combinations

  - Collect droplets in incubation chambers

- Incubation: Incubate droplets at 37°C, 5% CO₂ for 48-72 hours to allow treatment effects to manifest.

- Viability Staining: Inject viability staining solutions into droplet stream using additional microfluidic inlets.

- Analysis and Sorting:

  - Flow droplets through detection system measuring fluorescence signals

  - Identify droplets containing viable cells based on fluorescence patterns

  - Sort droplets containing promising drug combinations for further analysis

- Hit Validation: Break sorted droplets, recover cells, and validate hits in conventional well-plate assays.

Visualization: Combinatorial Drug Screening & Optimization

Computational Strategies to Counter Combinatorial Explosion

Heuristic Optimization Algorithms

Heuristic methods are essential for navigating combinatorial landscapes efficiently [9]. Several computational approaches have shown particular promise:

Evolutionary Computation: These algorithms maintain a population of candidate solutions that undergo selection, recombination, and mutation in cycles that mimic natural evolution [9]. The field that has been explicit in viewing scientific problem-solving as combinatorial optimization is evolutionary computing, where candidate solutions exhibit a level of "fitness" determined by the experimenter [9].

Active Learning: These algorithms use existing knowledge to determine the "best" experiment to do next, effectively modeling combinatorial landscapes in silico to guide experimental design [9]. By focusing experimental effort on the most promising regions of the search space, active learning dramatically reduces the number of experiments needed to find optimal solutions.

Machine Learning Integration: As the fields of combinatorial chemistry and computational chemistry have matured, combining them has led to higher hit rates [14]. It is more cost-effective to design and screen virtual chemical libraries in silico to define subsets of the chemical space likely to contain hits before actual synthesis and screening [14].
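The selection, recombination, and mutation loop described under Evolutionary Computation can be sketched in a few lines. Everything here is illustrative: the design encoding (four genes with three expression levels), the hidden `TARGET`, and the `fitness` function are hypothetical, and in a real campaign fitness would be an experimental measurement.

```python
import random

N_GENES, N_LEVELS = 4, 3   # hypothetical: 4 genes, 3 expression levels each
TARGET = (2, 0, 1, 2)      # hypothetical optimum, unknown to the algorithm


def fitness(design):
    """Hypothetical fitness; in practice this is measured experimentally."""
    return -sum((d - t) ** 2 for d, t in zip(design, TARGET))


def evolve(pop_size=12, generations=15, mutation_rate=0.2, seed=0):
    rng = random.Random(seed)
    population = [
        tuple(rng.randrange(N_LEVELS) for _ in range(N_GENES))
        for _ in range(pop_size)
    ]
    best_per_generation = []
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        best_per_generation.append(fitness(population[0]))
        parents = population[: pop_size // 2]      # selection: keep top half
        children = [population[0]]                 # elitism: carry over the best
        while len(children) < pop_size:
            p1, p2 = rng.sample(parents, 2)
            cut = rng.randrange(1, N_GENES)        # recombination: one-point crossover
            child = list(p1[:cut] + p2[cut:])
            for i in range(N_GENES):               # mutation: random level change
                if rng.random() < mutation_rate:
                    child[i] = rng.randrange(N_LEVELS)
            children.append(tuple(child))
        population = children
    return max(population, key=fitness), best_per_generation


best_design, history = evolve()
print(best_design, history[0], history[-1])
```

Carrying the best design forward unchanged (elitism) guarantees that the best fitness never decreases between generations, which is convenient when each evaluation is an expensive experiment.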

Protocol: Active Learning for Combinatorial Optimization

Title: Bayesian Optimization for Efficient Experimental Design

Application: Guiding combinatorial library screening to maximize information gain

Principle: Active learning algorithms select the most informative experiments to perform next based on previous results and uncertainty estimates, dramatically reducing the experimental burden [9].

Materials:

- Initial combinatorial library (pathway variants or drug combinations)

- High-throughput screening data from initial library

- Bayesian optimization software (e.g., GPyOpt, Dragonfly)

- Computational resources (workstation or cluster)

Procedure:

- Initial Screening: Screen a diverse but manageable subset (e.g., 5-10%) of the combinatorial library to generate initial training data.

- Model Training:

  - Define objective function (e.g., product titer, cell viability)

  - Train Gaussian process model on initial data to build surrogate model of the search space

  - Quantify uncertainty estimates across unsampled regions

- Acquisition Function Optimization:

  - Calculate acquisition function values (e.g., Expected Improvement, Upper Confidence Bound) for all unsampled combinations

  - Select top candidates that balance exploration (high uncertainty regions) and exploitation (high predicted performance)

- Iterative Experimental Cycles:

  - Experimentally test the candidates selected in the acquisition step

  - Update model with new experimental results

  - Repeat selection process until performance plateaus or resources are exhausted

- Validation: Confirm optimal candidates identified through the process in biological replicates.
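The procedure above can be sketched end to end with a small, dependency-free Gaussian process and an Upper Confidence Bound acquisition rule. This is a teaching sketch, not a substitute for a dedicated package: the kernel settings, the 5 × 5 design grid, the initial picks, and the `measure` function are all hypothetical, and a real campaign would replace `measure` with assay data.

```python
import math
from itertools import product


def rbf(a, b, length=1.5):
    """Squared-exponential kernel between two design vectors."""
    return math.exp(-sum((x - y) ** 2 for x, y in zip(a, b)) / (2 * length ** 2))


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (M[i][n] - sum(M[i][j] * x[j] for j in range(i + 1, n))) / M[i][i]
    return x


def gp_predict(X, y, x_new, noise=1e-4):
    """Posterior mean and standard deviation of a GP fitted to centred data."""
    y_mean = sum(y) / len(y)
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(X)]
         for i, a in enumerate(X)]
    k_star = [rbf(a, x_new) for a in X]
    alpha = solve(K, [v - y_mean for v in y])
    beta = solve(K, k_star)
    mu = y_mean + sum(k * a for k, a in zip(k_star, alpha))
    var = max(1.0 - sum(k * w for k, w in zip(k_star, beta)), 0.0)
    return mu, math.sqrt(var)


def measure(x):
    """Hypothetical assay; replace with a real experimental readout."""
    promoter, rbs = x
    return 10.0 - (promoter - 3) ** 2 - (rbs - 1) ** 2


candidates = list(product(range(5), repeat=2))              # 25 hypothetical designs
tested = {x: measure(x) for x in [(0, 0), (4, 4), (0, 4)]}  # initial screen

for _ in range(6):                                          # iterative cycles
    X, y = list(tested), list(tested.values())
    untested = [c for c in candidates if c not in tested]

    def ucb(c, kappa=2.0):
        # Upper Confidence Bound: predicted mean plus weighted uncertainty.
        mu, sigma = gp_predict(X, y, c)
        return mu + kappa * sigma

    best_next = max(untested, key=ucb)
    tested[best_next] = measure(best_next)                  # run the experiment

best_design = max(tested, key=tested.get)
print(len(tested), best_design, tested[best_design])
```

The `kappa` parameter sets the exploration/exploitation balance: larger values favour uncertain regions, smaller values favour designs the model already predicts to be good.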

Table 3: Computational Approaches to Combat Combinatorial Explosion

| Method | Principle | Applications | Advantages |
|---|---|---|---|
| Active Learning | Selects most informative experiments based on previous results and model uncertainty | Pathway balancing, drug combination optimization | Reduces experimental burden by 10-100x |
| Evolutionary Algorithms | Mimics natural selection with populations of candidate solutions | Protein engineering, host optimization | Effective on rugged, non-linear landscapes |
| Bayesian Optimization | Builds probabilistic surrogate models of search space | Dose optimization, metabolic engineering | Handles noise and uncertainty effectively |
| PDGrapher (Graph Neural Networks) | Solves inverse problem of finding perturbations for desired state | Target identification, drug discovery | Direct prediction without exhaustive search [15] |

Integration in the Design-Build-Test-Learn Cycle

The most effective strategy for managing combinatorial explosion involves tight integration of combinatorial approaches within the Design-Build-Test-Learn (DBTL) framework [10] [11]. Each phase of the cycle contributes to progressively refining the search space:

Design Phase: Computational tools help design focused libraries that maximize diversity while minimizing redundancy. For metabolic engineering, this includes tools for enzyme selection, codon optimization, and expression balancing [13]. For drug discovery, in silico screening and virtual library design prioritize the most promising regions of chemical space [14].

Build Phase: Advanced DNA assembly methods [13] and combinatorial chemistry techniques [14] enable rapid construction of variant libraries. Modular cloning systems and DNA-encoded libraries have been particularly transformative in increasing construction efficiency.

Test Phase: High-throughput screening technologies including biosensors [11], droplet microfluidics [12], and advanced cytometry enable rapid evaluation of combinatorial libraries at unprecedented scale.

Learn Phase: Data from screening feeds back into computational models, refining understanding of the system and guiding the next Design phase. Machine learning methods excel at extracting complex patterns from high-dimensional combinatorial screening data [11] [15].

This iterative DBTL framework, enhanced by combinatorial methods and computational guidance, represents the most powerful approach to navigating the challenge of combinatorial explosions in metabolic engineering and drug discovery.

In the context of combinatorial pathway optimization using Design-Build-Test-Learn (DBTL) cycles, the accurate quantification of drug interaction effects is paramount for making informed decisions in subsequent design iterations. The primary goal of combining drugs is to achieve a synergistic effect, where the combined therapeutic impact is greater than the expected additive effect of the individual drugs. This allows for dosage reduction, decreased toxicity, and potentially overcoming drug resistance [16]. Two established reference models, Bliss Independence and the Combination Index (Chou-Talalay method), provide distinct mathematical frameworks for defining and quantifying these interactions [17]. While Bliss Independence operates on a probabilistic framework assuming drugs act independently through different pathways, the Combination Index is derived from the mass-action law principle and can be applied regardless of the drugs' mechanisms of action [18] [19]. This application note details the protocols for both methods, enabling researchers to robustly quantify drug synergy and antagonism, thereby providing critical, data-driven learning for optimizing therapeutic combinations in DBTL cycles.

Theoretical Foundations and Quantitative Comparison

Table 1: Core Principles of Bliss Independence and Combination Index Models

| Feature | Bliss Independence Model | Combination Index (Chou-Talalay) Model |
|---|---|---|
| Fundamental Principle | Probabilistic independence; drugs act on different pathways without interacting [17] [20]. | Mass-action law; dose-effect equivalence derived from enzyme kinetics [18] [19]. |
| Definition of Additivity (No Interaction) | Observed combination response equals the predicted response: \(Y_c = Y_A + Y_B - Y_A Y_B\) [21] [17]. | Combination Index equals 1: \(CI = \frac{d_A}{D_A} + \frac{d_B}{D_B} + \frac{d_A d_B}{D_A D_B} = 1\) [21] [18]. |
| Definition of Synergism | Observed combination response greater than the Bliss-predicted response (\(Y_c > Y_p\)) [22]. | Combination Index less than 1 (\(CI < 1\)) [18] [19]. |
| Definition of Antagonism | Observed combination response less than the Bliss-predicted response (\(Y_c < Y_p\)) [22]. | Combination Index greater than 1 (\(CI > 1\)) [18] [19]. |
| Typical Application | Drugs with different mechanisms of action (mutually non-exclusive) [21]. | Applicable regardless of mechanism; can be used for both similar and different modes of action [18]. |
| Key Equation | \(Y_p = Y_A + Y_B - Y_A Y_B\) (for inhibition/response) [17]. | \(CI = \frac{d_A}{D_A} + \frac{d_B}{D_B}\) (for mutually exclusive drugs) or \(CI = \frac{d_A}{D_A} + \frac{d_B}{D_B} + \frac{d_A d_B}{D_A D_B}\) (for mutually non-exclusive drugs) [21] [18]. |

The selection between Bliss Independence and the Combination Index often depends on the scientific question and the assumed mechanism of action. Bliss Independence is most appropriate when two drugs are believed to target different biological pathways and are mutually non-exclusive [21]. In contrast, the Chou-Talalay Combination Index method is a more general framework based on the median-effect principle, which is derived from the mass-action law [18] [19]. It does not require a priori knowledge of the drugs' mechanisms, as it can model both mutually exclusive and non-exclusive interactions [18]. A critical advancement in the application of Bliss Independence is the development of a two-stage response surface model, which allows for statistical testing of synergism across all tested dose combinations, reducing the risk of false-positive claims that can arise from simply comparing observed and predicted values without accounting for variability [21] [22].
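Both reference models reduce to short calculations once the single-agent and combination responses are known. The sketch below implements the Bliss prediction and both forms of the Combination Index; the function names and example numbers are hypothetical, responses are fractional inhibitions between 0 and 1, and the doses `D_a` and `D_b` are assumed to be the single-agent doses that alone produce the same effect as the combination.

```python
def bliss_predicted(y_a: float, y_b: float) -> float:
    """Expected combined inhibition if the two drugs act independently."""
    return y_a + y_b - y_a * y_b


def bliss_excess(y_c: float, y_a: float, y_b: float) -> float:
    """Observed minus predicted response; positive values suggest synergy."""
    return y_c - bliss_predicted(y_a, y_b)


def combination_index(d_a, D_a, d_b, D_b, mutually_exclusive=True):
    """Chou-Talalay CI; d_* are combination doses, D_* equivalent single doses."""
    ci = d_a / D_a + d_b / D_b
    if not mutually_exclusive:
        ci += (d_a * d_b) / (D_a * D_b)  # extra term for non-exclusive drugs
    return ci


def classify(ci: float) -> str:
    """Interpret a CI value relative to the additivity threshold of 1."""
    if ci < 1:
        return "synergism"
    if ci > 1:
        return "antagonism"
    return "additive"


# Hypothetical example: 30% and 40% inhibition alone, 70% in combination.
print(bliss_predicted(0.3, 0.4), bliss_excess(0.7, 0.3, 0.4))
print(combination_index(1.0, 4.0, 2.0, 8.0), classify(0.5))
```

A point estimate like this ignores measurement variability, which is why the response-surface approach mentioned above adds statistical testing before a combination is called synergistic.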

Experimental Protocols

Protocol 1: Experimental Design and Viability Assay for Combination Screening

This protocol outlines the steps for designing and executing a drug combination experiment, from plate layout to data acquisition, which serves as the "Test" phase in a DBTL cycle.

Research Reagent Solutions:

- Cell Line: Select a relevant cancer cell line (e.g., LN229 glioblastoma [21] or MX-1 breast cancer [18]).

- Drugs: Gamitrinib (Hsp90 inhibitor) and a PI3K pathway kinase inhibitor [21], or Fludelone and Panaxytriol [18].

- Controls: Positive control (e.g., 10 μM Doxorubicin for 100% inhibition) and negative control (e.g., 0.2% DMSO for 0% inhibition) [21].

- Viability Assay Reagent: Fluorescent cell-viability dye or XTT assay kit [18].

Procedure:

- Plate Map Design: Utilize a matrix design (e.g., 7x7). The first block should include single-agent doses for Drug A (e.g., Gamitrinib) along one axis and single-agent doses for Drug B (e.g., a PI3K inhibitor) along the other. The inner cells of the matrix represent combination doses [21].

- Drug Dilution: Prepare a 1:3 serial dilution series for each drug, with the highest concentration typically in the micromolar range (e.g., 2.00 μM) and the lowest in the nanomolar range (e.g., 0.008 μM) [21].

- Cell Plating and Treatment: Plate cancer cells in a 384-well format. Treat cells with the single agents and combinations according to the plate map for a specified duration (e.g., 18 h at 37°C) [21].

- Viability Measurement: Add fluorescence or XTT viability reagent to the wells and incubate according to manufacturer specifications. Measure the fluorescence or absorbance intensity using a plate reader [21] [18].

- Data Normalization: Calculate the fractional viability for each well by normalizing its readout to the mean of the positive control wells (0% viability) and the mean of the negative control wells (100% viability). The fractional growth inhibition rate (the response, \(Y\)) is then calculated as \(Y = 1 - \text{viability}\) [21].
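
The dilution and normalization steps above can be sketched in a few lines of Python. This is a minimal illustration rather than the cited study's pipeline: the function names, the raw plate-reader values, and the choice of six non-zero doses per drug are assumptions made for the example. Six doses plus a vehicle-only level would be one way to arrive at seven levels per axis in a 7x7 matrix.

```python
from statistics import mean

def serial_dilution(top_um, fold, n_points):
    """Return n_points concentrations (µM), starting at top_um and dividing by fold each step."""
    return [top_um / fold**i for i in range(n_points)]

def fractional_inhibition(raw, neg_ctrl, pos_ctrl):
    """Normalize a raw readout to fractional inhibition Y = 1 - viability.

    neg_ctrl: readouts from vehicle wells (defined as 100% viability)
    pos_ctrl: readouts from kill-control wells (defined as 0% viability)
    """
    hi = mean(neg_ctrl)
    lo = mean(pos_ctrl)
    viability = (raw - lo) / (hi - lo)
    return 1.0 - viability

# 1:3 series from 2.00 µM; the sixth point is 2/243 µM, i.e. about 0.008 µM
doses = serial_dilution(2.00, 3, 6)

# Hypothetical plate-reader values, for illustration only
neg = [1000.0, 980.0, 1020.0]   # e.g. 0.2% DMSO wells
pos = [100.0, 95.0, 105.0]      # e.g. 10 µM doxorubicin wells
y = fractional_inhibition(550.0, neg, pos)
```

With these made-up controls, a well reading halfway between the two control means is assigned an inhibition of 0.5.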

Protocol 2: Data Analysis using the Bliss Independence Model

This protocol details the statistical analysis of combination data under the Bliss independence assumption, transforming raw data into a quantitative assessment of synergy.

Procedure:

- Calculate Bliss Prediction: For each combination dose \((d_A, d_B)\), calculate the predicted inhibition rate \(Y_p\) under the assumption of independence using the formula \(Y_p = Y_A + Y_B - Y_A \cdot Y_B\), where \(Y_A\) and \(Y_B\) are the observed inhibition rates for drug A and drug B alone at doses \(d_A\) and \(d_B\), respectively [21] [17].

- Compute Excess Over Bliss: For each combination, subtract the predicted response from the observed combination response \(Y_c\): \(\text{Excess} = Y_c - Y_p\). A positive excess indicates synergism, while a negative value indicates antagonism [21].

- Statistical Modeling (Two-Stage Model): To avoid false positives and account for data variability, fit a two-stage response surface model [21] [22].

- Stage 1: Fit non-linear dose-response curves (e.g., sigmoidal Emax model) to the single-agent data for each drug to estimate their parameters.

- Stage 2: Using the parameters from Stage 1, fit a global model to the combination data to estimate an overall interaction index (τ) with a 95% confidence interval. An index significantly less than 0 indicates overall synergism [21].

- Visualization: Generate a surface plot or heat map showing the "excess over Bliss" across the entire dose matrix to visualize regions of synergy and antagonism [21].
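
The point-wise part of this protocol (Bliss prediction and excess over Bliss) can be sketched as follows. The inhibition values are hypothetical and the function names are chosen for this example. The two-stage response surface model and its confidence interval are not implemented here, so the output should be read as a descriptive matrix, not as a statistical test of synergy.

```python
def bliss_prediction(y_a, y_b):
    """Predicted fractional inhibition if the two drugs act independently."""
    return y_a + y_b - y_a * y_b

def excess_over_bliss(y_c, y_a, y_b):
    """Observed minus predicted inhibition; positive values suggest synergy."""
    return y_c - bliss_prediction(y_a, y_b)

def excess_matrix(single_a, single_b, combo):
    """Excess over Bliss for a full dose matrix.

    single_a[i]: inhibition of drug A alone at dose i
    single_b[j]: inhibition of drug B alone at dose j
    combo[i][j]: observed inhibition for dose i of A combined with dose j of B
    """
    return [
        [excess_over_bliss(combo[i][j], single_a[i], single_b[j])
         for j in range(len(single_b))]
        for i in range(len(single_a))
    ]

# Hypothetical inhibition values, for illustration only
single_a = [0.10, 0.40]
single_b = [0.20, 0.50]
combo = [[0.30, 0.60],
         [0.55, 0.65]]
excess = excess_matrix(single_a, single_b, combo)
```

The resulting matrix is the quantity that a heat map of "excess over Bliss" would display, one cell per dose pair.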

Protocol 3: Data Analysis using the Chou-Talalay Combination Index Method

This protocol describes the use of the median-effect equation and Combination Index to quantify drug interactions, a method widely adopted in the field.

Procedure:

- Median-Effect Plot: For each single agent and the combination at a fixed ratio, calculate the dose and effect. Plot \(\log \left( \frac{f_a}{f_u} \right)\) vs. \(\log(\text{dose})\), where \(f_a\) is the fraction affected and \(f_u\) is the fraction unaffected (\(f_u = 1 - f_a\)). This is the median-effect plot [18] [17].

- Determine Median-Effect Parameters: Fit a linear regression to the median-effect plot. The slope of the line is \(m\) (the shape parameter of the dose-effect curve), and the x-intercept is \(\log(D_m)\), where \(D_m\) is the median-effect dose (e.g., IC50 or GI50) [18].

- Calculate Combination Index (CI): For a given combination effect level \(f_a\), first compute the dose of each drug alone that would produce that effect from the median-effect equation: \(D_A = D_{m,A} \left( \frac{f_a}{1-f_a} \right)^{1/m_A}\) and \(D_B = D_{m,B} \left( \frac{f_a}{1-f_a} \right)^{1/m_B}\). Then use the equation for mutually non-exclusive drugs: \(CI = \frac{d_A}{D_A} + \frac{d_B}{D_B} + \frac{d_A d_B}{D_A D_B}\), where \(d_A\) and \(d_B\) are the doses of drugs A and B in combination that produce effect \(f_a\), and \(D_{m,A}\), \(D_{m,B}\), \(m_A\), and \(m_B\) are the median-effect doses and slopes from the single-agent fits [18].

- Interpretation: A CI < 1 indicates synergism, CI = 1 indicates an additive effect, and CI > 1 indicates antagonism [18] [19].

- Software-Assisted Analysis: Input the dose-response data for single agents and the combination into software like CompuSyn to automatically generate the median-effect plots, CI values, Fa-CI plots, and isobolograms [18].
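
For readers who want to see the arithmetic behind the software output, the median-effect fit and the CI formula can be sketched in plain Python. This is an illustrative sketch, not a reimplementation of CompuSyn: the function names are hypothetical, and the single-agent data are synthetic, generated from known parameters so that the fit can be checked.

```python
import math

def fit_median_effect(doses, fa_values):
    """Least-squares fit of log10(fa/(1-fa)) = m*log10(D) - m*log10(Dm).

    Returns (m, Dm): the slope and the median-effect dose.
    """
    xs = [math.log10(d) for d in doses]
    ys = [math.log10(fa / (1.0 - fa)) for fa in fa_values]
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    m = sxy / sxx
    intercept = y_bar - m * x_bar
    dm = 10 ** (-intercept / m)  # the x-intercept of the line is log10(Dm)
    return m, dm

def dose_for_effect(fa, m, dm):
    """Dose of a single agent expected to produce effect fa."""
    return dm * (fa / (1.0 - fa)) ** (1.0 / m)

def combination_index(d_a, d_b, fa, fit_a, fit_b, mutually_exclusive=False):
    """CI for combination doses (d_a, d_b) that together produce effect fa."""
    big_d_a = dose_for_effect(fa, *fit_a)
    big_d_b = dose_for_effect(fa, *fit_b)
    ci = d_a / big_d_a + d_b / big_d_b
    if not mutually_exclusive:
        ci += (d_a * d_b) / (big_d_a * big_d_b)
    return ci

def _synthetic_fa(d, m, dm):
    """Median-effect curve used only to generate illustration data."""
    return 1.0 / (1.0 + (dm / d) ** m)

doses = [0.1, 0.3, 1.0, 3.0]
fit_a = fit_median_effect(doses, [_synthetic_fa(d, 1.0, 1.0) for d in doses])
fit_b = fit_median_effect(doses, [_synthetic_fa(d, 2.0, 0.5) for d in doses])

# Hypothetical combination: 0.25 of A plus 0.125 of B giving 50% effect
ci = combination_index(0.25, 0.125, 0.5, fit_a, fit_b)
```

In this synthetic case each drug alone needs 1.0 and 0.5 dose units for 50% effect, so the combination contributes 0.25 + 0.25 + 0.0625 and the CI comes out below 1.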

Integration with DBTL Cycles & Visualization

The quantitative outputs from these synergy models are the cornerstone of the "Learn" phase in a DBTL cycle for combinatorial pathway optimization. The results directly inform the next "Design" phase, guiding whether to pursue a specific drug pair, optimize the ratio of compounds, or investigate the underlying biological pathways further.

Diagram 1: The DBTL cycle for combinatorial drug optimization, highlighting the integration of Bliss and Combination Index (CI) models within the "Learn" phase to inform subsequent design iterations.

Table 2: Interpreting Model Outputs for DBTL Cycle Decisions

| Model Output | Observation | DBTL Cycle Decision & Action |

|---|---|---|

| Bliss: Significant negative τ [21]; CI: Consistent CI < 0.9 [18] | Strong, overall synergism | Proceed & Optimize: Advance the drug combination to the next DBTL cycle. Actions may include refining the dose ratio, testing in more complex models (e.g., 3D cultures, in vivo), or scaling up synthesis. |

| Bliss: τ not significant; CI: CI ≈ 1 | Additive effect | Re-design or Terminate: The combination offers no superior effect. Consider re-designing the combination with different drugs or mechanisms of action, or terminate the project to save resources. |

| Bliss: Significant positive τ [21]; CI: CI > 1.1 [18] | Antagonism | Terminate or Investigate: The combination is counterproductive and should be abandoned unless the antagonistic effect is desired for a specific context (e.g., reducing toxicity of one drug [18]). |

| Bliss: Synergy only at high doses; CI: Synergy only at high Fa | Dose-dependent synergism | Re-design & Refine: The therapeutic window may be narrow. Re-design the experiment to focus on the synergistic dose range and ratio in the next cycle. |
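
The CI side of Table 2 can be expressed as a small rule-based helper. This is only a sketch under stated assumptions: the function name and return labels are hypothetical, the 0.9 and 1.1 cutoffs are taken from the table, and the rule looks at CI values alone, ignoring the Bliss interaction index and any confidence intervals. A real decision would also weigh replicate variability.

```python
def classify_ci(ci_values, synergy_cutoff=0.9, antagonism_cutoff=1.1):
    """Map CI values measured across effect levels to a coarse decision label."""
    if not ci_values:
        raise ValueError("at least one CI value is required")
    synergistic = [ci < synergy_cutoff for ci in ci_values]
    antagonistic = [ci > antagonism_cutoff for ci in ci_values]
    if all(synergistic):
        return "proceed and optimize"
    if all(antagonistic):
        return "terminate or investigate"
    if any(synergistic):
        return "re-design and refine"    # synergy in only part of the range
    if not any(antagonistic):
        return "re-design or terminate"  # all values near 1: additive
    return "inconclusive"                # mixed additive/antagonistic; not covered by the table

decision = classify_ci([1.2, 1.0, 0.7])
```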

The rigorous application of both Bliss Independence and Combination Index models provides a powerful, quantitative framework for evaluating drug combinations within combinatorial pathway optimization research. By implementing the detailed experimental and analytical protocols outlined in this note, researchers can consistently transition from raw viability data to statistically robust conclusions regarding synergism and antagonism. This quantitative learning is essential for guiding intelligent decisions in iterative DBTL cycles, ultimately accelerating the development of effective, multi-target therapeutic strategies with the potential to reduce toxicity and overcome drug resistance.

The Design-Build-Test-Learn (DBTL) cycle is a foundational framework in synthetic biology and metabolic engineering for developing efficient microbial cell factories [5]. The integration of multi-omics data—genomics, transcriptomics, and proteomics—has revolutionized this cycle by providing deep, system-wide molecular insights that inform each stage. This integration moves pathway design beyond static genomic information to incorporate dynamic functional data, enabling more predictive and efficient engineering of biological systems [23] [24]. The application of multi-omics within DBTL cycles has been particularly transformative for combinatorial pathway optimization, where understanding interactions between multiple genetic modifications is crucial for maximizing product yields and cellular fitness [5].

Table: Multi-Omics Data Types and Their Roles in Pathway Design

| Omics Layer | Data Content | Role in Pathway Design |

|---|---|---|

| Genomics | DNA sequence, mutations, copy number variations | Identifies target genes for modification, reveals structural variants, provides context for host engineering |

| Transcriptomics | RNA expression levels, transcript sequences | Reveals gene expression dynamics, identifies regulatory elements, monitors cellular response to pathway engineering |

| Proteomics | Protein abundance, post-translational modifications, protein-protein interactions | Confirms functional enzyme levels, identifies bottlenecks in metabolic flux, reveals regulatory mechanisms |

Computational Frameworks for Multi-Omics Integration

Effective integration of multi-omics data requires sophisticated computational frameworks that can handle the heterogeneity, scale, and complexity of diverse molecular datasets. Several approaches have emerged to address these challenges.

The SynOmics framework represents a significant advancement through its use of graph convolutional networks to model both within- and cross-omics dependencies [25]. Unlike traditional early or late integration strategies, SynOmics adopts a parallel learning strategy that processes feature-level interactions at each model layer, enabling more nuanced capture of cross-omics relationships. This approach constructs omics networks in feature space, incorporating both omics-specific networks and cross-omics bipartite networks to simultaneously learn intra-omics and inter-omics relationships [25]. Experimental results demonstrate that SynOmics consistently outperforms state-of-the-art multi-omics integration methods across various biomedical classification tasks, highlighting its potential for enhancing pathway design predictions [25].

For visualization and exploration of integrated multi-omics data, tools like the expanded Cellular Overview in Pathway Tools (PTools) enable researchers to simultaneously visualize up to four types of omics data on organism-scale metabolic network diagrams [26]. This tool maps different omics datasets to distinct visual channels—reaction arrow color and thickness, plus metabolite node color and thickness—allowing intuitive interpretation of complex relationships across molecular layers. The system supports semantic zooming for detailed exploration and can animate datasets with multiple time points to reveal dynamic patterns [26].

Table: Benchmarking Multi-Omics Study Design Factors for Robust Analysis

| Factor Category | Specific Factor | Recommended Guideline | Impact on Analysis |

|---|---|---|---|

| Computational | Sample Size | ≥26 samples per class | Ensures statistical power and reliability |

| Computational | Feature Selection | <10% of omics features selected | Improves clustering performance by 34% |

| Computational | Class Balance | Under 3:1 ratio between classes | Prevents algorithmic bias toward majority class |

| Computational | Noise Characterization | Below 30% noise level | Maintains signal integrity and pattern recognition |

| Biological | Omics Combinations | GE + MI + CNV + ME (gene expression, miRNA, copy number variation, methylation) | Optimal for cancer subtyping based on TCGA analysis |

Experimental Protocols for Multi-Omics Data Generation

Genomics and Transcriptomics Profiling

Protocol 1: Whole Genome Sequencing for Pathway Engineering

- DNA Extraction: Use high-quality extraction kits to obtain high-molecular-weight DNA from bacterial cultures (OD₆₀₀ ≈ 0.6-0.8)

- Library Preparation: Utilize Illumina-compatible library prep kits with fragmentation to 350-500 bp following manufacturer protocols

- Sequencing: Run on Illumina NovaSeq X platform with 2×150 bp paired-end reads, targeting 30-50x coverage

- Read Mapping: Map reads to the reference genome using BWA-MEM

- Variant Calling: Process the aligned reads with DeepVariant for SNP and indel identification, using default parameters [23]

- Structural Variants and Annotation: Identify structural variants with Manta and annotate variants with SnpEff

Protocol 2: RNA-Seq for Transcriptomic Profiling of Engineered Strains

- RNA Extraction: Harvest cells during mid-log phase, stabilize immediately in RNA-protect reagent, extract using column-based methods

- Quality Control: Verify RNA Integrity Number (RIN) >8.0 using Bioanalyzer

- Library Preparation: Deplete rRNA using specific probes, prepare stranded RNA-seq libraries with unique dual indexes

- Sequencing: Sequence on Illumina platform to depth of 20-30 million reads per sample

- Analysis: Align to reference genome with STAR, quantify gene expression with featureCounts, perform differential expression with DESeq2

Proteomics Analysis

Protocol 3: Mass Spectrometry-Based Proteomics for Pathway Flux Analysis

- Protein Extraction: Lyse cells in 8 M urea buffer with protease inhibitors, sonicate on ice (3×10 s pulses)

- Digestion: Reduce with 5 mM DTT (30 min, 37°C), alkylate with 15 mM iodoacetamide (30 min, dark), digest with trypsin (1:50 enzyme-to-protein ratio, overnight, 37°C)

- Desalting: Desalt peptides using C18 stage tips, dry in vacuum concentrator

- LC-MS/MS Analysis: Reconstitute in 0.1% formic acid, separate on nano-LC system (75 μm × 25 cm C18 column, 90 min gradient), analyze on timsTOF Pro mass spectrometer in DIA mode

- Data Processing: Process using Spectronaut with default settings, quantify against organism-specific database

Protocol 4: Benchtop Protein Sequencing for Rapid Validation

- Sample Preparation: Digest proteins into peptides using Platinum Pro kit reagents following manufacturer's protocol [27]

- Chip Loading: Apply peptides to specialized sequencing chips containing millions of wells

- Sequencing: Run on Quantum-Si's Platinum Pro single-molecule protein sequencer with integrated analysis [27]

- Data Interpretation: Use proprietary software to identify amino acid sequences and post-translational modifications

Multi-Omics Visualization and Workflow Integration

Effective visualization is critical for interpreting multi-omics data within pathway design contexts. The following diagram illustrates the integration of multi-omics data into the DBTL cycle for combinatorial pathway optimization:

The integration of diverse omics data types requires specialized visualization approaches. The following diagram illustrates how different omics layers can be simultaneously visualized on metabolic pathway maps to inform design decisions:

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table: Key Research Reagent Solutions for Multi-Omics Pathway Design

| Tool/Platform | Type | Primary Function | Application in Pathway Design |

|---|---|---|---|

| Illumina NovaSeq X | Sequencing Platform | High-throughput DNA/RNA sequencing | Whole genome sequencing of engineered strains, transcriptome profiling |

| SomaScan Platform | Proteomics Tool | Affinity-based protein quantification | Measuring pathway enzyme abundance in response to genetic modifications |

| Quantum-Si Platinum Pro | Protein Sequencer | Benchtop single-molecule protein sequencing | Validating enzyme sequences in engineered pathways [27] |

| Olink Explore HT | Proteomics Platform | Multiplex protein quantification | High-throughput verification of proteomic changes in engineered strains |

| Pathway Tools | Software Suite | Metabolic pathway analysis and visualization | Multi-omics data visualization on metabolic maps [26] |

| SynOmics | Computational Framework | Graph-based multi-omics integration | Modeling cross-omics dependencies in engineered pathways [25] |

| Ultima UG 100 | Sequencing System | High-throughput, cost-efficient sequencing | Large-scale validation of engineered pathway libraries |

The integration of genomics, transcriptomics, and proteomics data has fundamentally transformed pathway design within DBTL cycles, enabling more predictive and efficient engineering of biological systems. By leveraging computational frameworks like SynOmics for data integration [25], advanced visualization tools for interpretation [26], and high-throughput technologies for data generation [27], researchers can now navigate the complexity of biological systems with unprecedented precision. As these multi-omics approaches continue to mature alongside developments in AI and machine learning [23] [24], they promise to accelerate the design of optimized biological pathways for therapeutic development, sustainable manufacturing, and agricultural innovation.

Combinatorial optimization represents a paradigm shift in both cancer therapy and microbial metabolic engineering. The core principle involves the systematic testing of multiple factors simultaneously—be they drugs, genetic parts, or environmental conditions—to discover synergistic interactions that a sequential approach would likely miss. This methodology is formally structured within the Design-Build-Test-Learn (DBTL) cycle, a framework that accelerates the development of complex biological systems by iteratively refining hypotheses based on experimental data [8]. In oncology, this has evolved from the early use of single cytotoxic agents to sophisticated multi-drug regimens and conjugated combinations that target specific cancer pathways, dramatically improving patient survival [28] [29]. Similarly, in microbial engineering, combinatorial optimization of pathway genes, rather than sequential tuning, is crucial for breaking through production bottlenecks and achieving economically viable titers of valuable metabolites, from biofuels to pharmaceuticals [30] [31]. The following sections detail the application notes and experimental protocols that underpin this powerful approach.

Application Notes: Anti-Cancer Drug Cocktails

The development of combination cancer therapy began with the recognition that single-agent treatments often yielded only transient benefits, followed by disease recurrence due to drug resistance. The shift to combination regimens was a pivotal advancement in clinical oncology [28].

Historical Evolution and Key Principles

The following table summarizes the evolution of cancer chemotherapy, highlighting the key advancements and their impacts.

Table 1: Evolution of Cancer Chemotherapy Approaches

| Era | Therapeutic Approach | Key Examples | Impact and Limitations |

|---|---|---|---|

| 1940s-1950s | Single-agent cytotoxic therapy | Nitrogen mustards, Aminopterin | Demonstrated principle of chemical tumor control; transient responses, universal resistance, severe toxicity [28]. |

| 1960s-Present | Multi-drug combination cocktails | Combination regimens for childhood Acute Lymphoblastic Leukemia (ALL) | Overcame resistance, increased cure rates; severe, dose-limiting toxicities remained a major challenge [28]. |

| 2000s-Present | Targeted therapy & combination | Imatinib for CML, combinations of targeted drugs | Selective targeting of cancer-specific molecules; significantly reduced toxicity, high remission rates (e.g., CML survival from ~20% to >90%) [28]. |

| Present-Future | Conjugated drug combinations & novel modalities | Antibody-Drug Conjugates (ADCs), Bispecific T-cell engagers | Selective delivery of potent cytotoxins to tumor cells (ADCs); redirecting immune cells to tumors (bispecifics); improved therapeutic window [28] [32]. |

Recent Clinical Applications and FDA Approvals (2024-2025)

Recent FDA approvals exemplify the trend towards targeted, biomarker-driven combination strategies and improved drug delivery methods.

Table 2: Select Recent FDA Approvals in Oncology (2024-2025) Illustrating Combination and Delivery Strategies

| Drug (Brand Name) | Approval Date | Indication | Key Combinatorial or Delivery Insight |

|---|---|---|---|

| Dordaviprone (Modeyso) | Q3 2025 | H3 K27M-mutated diffuse midline glioma | First-in-class therapy with dual mechanism: inhibits D2/3 dopamine receptor and overactivates mitochondrial ClpP enzyme [32]. |

| Zongertinib (Hernexeos) | Q3 2025 | HER2-mutated non-small cell lung cancer (NSCLC) | Oral tyrosine kinase inhibitor; effective against broader HER2 mutations than existing therapy; demonstrates a "very favorable safety profile" [32]. |

| Imlunestrant (Inluriyo) | Q3 2025 | ESR1-mutated, ER+/HER2- advanced breast cancer | A "pure" estrogen receptor (ER) blocker (SERD); effective alone and in combination with the CDK4/6 inhibitor abemaciclib [32]. |

| Linvoseltamab-gcpt (Lynozyfic) | Q3 2025 | Relapsed/refractory multiple myeloma | Bispecific T-cell engager; combinatorially targets BCMA on cancer cells and CD3 on T cells to engage immune system for tumor cell killing [32]. |

| Pembrolizumab (Keytruda) Subcutaneous | Q3 2025 | Multiple solid tumors | New delivery method (subcutaneous injection) for an existing drug, improving patient accessibility and convenience compared to intravenous infusion [32]. |

| Avutometinib + Defactinib (Co-Pack) | H1 2025 | KRAS-mutated recurrent ovarian cancer | Novel drug combination targeting KRAS-driven cancers, representing a new treatment option for a rare cancer [33]. |

Application Notes: Microbial Metabolite Production

Microbial cell factories are engineered to produce valuable secondary metabolites, which include many antibiotics, anticancer agents, and agrochemicals. The optimization of these pathways is a central challenge in metabolic engineering.

The Role of Secondary Metabolites and Optimization Challenge

Microbial secondary metabolites are non-essential molecules produced from intermediates of primary metabolism. They are a rich source of bioactivity. It is estimated that 45% of all bioactive microbial metabolites are produced by Actinomycetales, with the genus Streptomyces alone producing approximately 7,600 compounds [34]. Optimizing the production of these compounds requires balancing the expression of multiple pathway genes, as the abundance of one enzyme often becomes the limiting factor for the entire pathway's flux [30].

Quantitative Analysis of Combinatorial Pathway Optimization

The table below summarizes key results from studies that implemented combinatorial optimization using Design of Experiments (DoE) in microbial systems.

Table 3: Performance Gains from Combinatorial Pathway Optimization in Microbial Cell Factories

| Target Product | Host Organism | Number of Factors Optimized | Optimization Strategy | Resulting Improvement | Source |

|---|---|---|---|---|---|

| p-Coumaric Acid (pCA) | Saccharomyces cerevisiae | 6 genetic + 3 environmental | Two rounds of fractional factorial DoE | 168-fold variation in pCA titer; identified significant interaction between culture temperature and ARO4 gene expression. | [31] |

| Dopamine | Escherichia coli | 2 genes (HpaBC, Ddc) | Knowledge-driven DBTL cycle with high-throughput RBS engineering | Final titer of 69.03 ± 1.2 mg/L (34.34 ± 0.59 mg/g biomass), a 2.6- to 6.6-fold improvement over the state of the art. | [8] |

| Curcuminoid Pathway Analysis | In silico kinetic model | 7 enzymes | Simulated full factorial (128 strains) vs. fractional factorial designs | Resolution IV designs provided the best trade-off, identifying optimal strains with fewer constructions while capturing interactions. | [30] |

Experimental Protocols

Protocol 1: Combinatorial Optimization of a Microbial Pathway using Fractional Factorial Design

This protocol outlines the use of a Resolution IV fractional factorial design to optimize a multi-gene pathway in S. cerevisiae for p-coumaric acid production [31].

1. Design Phase

- Define Factors and Levels: Select the pathway genes to be optimized. For initial screening, choose two expression levels per gene (e.g., low and high, represented by weak and strong promoters).

- Select DoE Design: For 6 factors, a full factorial design would require 2^6 = 64 strains. A Resolution IV fractional factorial design can be generated using statistical software (e.g., the FrF2 package in R), requiring only 16-32 strains. This design confounds two-factor interactions with each other but allows clear identification of all main effects [30] [31].

- Strain Design Table: Create a table specifying the genetic construct for each strain to be built.
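
To make the design step concrete, the sketch below builds a 16-run, two-level design for six factors in plain Python and checks the Resolution IV property. It is an illustration only, not the design used in the cited work: the generators E = ABC and F = BCD are one standard textbook choice and are an assumption here, as are the function names. In the coded design, -1 could stand for the weak promoter and +1 for the strong promoter of each gene.

```python
from itertools import product, combinations

def frac_factorial_6_in_16():
    """Build a 2^(6-2) design: full factorial in A-D, with E = ABC and F = BCD."""
    runs = []
    for a, b, c, d in product((-1, 1), repeat=4):
        e = a * b * c
        f = b * c * d
        runs.append((a, b, c, d, e, f))
    return runs

def dot(u, v):
    """Inner product of two coded columns; zero means the columns are orthogonal."""
    return sum(x * y for x, y in zip(u, v))

design = frac_factorial_6_in_16()
columns = list(zip(*design))  # one tuple of 16 coded levels per factor

# Resolution IV check: no main effect is aliased with another main effect
# or with any two-factor interaction.
main_vs_main = all(dot(columns[i], columns[j]) == 0
                   for i, j in combinations(range(6), 2))
main_vs_2fi = all(
    dot(columns[k], [x * y for x, y in zip(columns[i], columns[j])]) == 0
    for k in range(6)
    for i, j in combinations(range(6), 2)
)

# Two-factor interactions are aliased with each other, e.g. AB with CE.
ab = [x * y for x, y in zip(columns[0], columns[1])]
ce = [x * y for x, y in zip(columns[2], columns[4])]
```

Each row of `design` corresponds to one strain in the design table. The final two lines show the price of the smaller design: the AB and CE interaction columns are identical, so their effects cannot be separated without additional runs.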

2. Build Phase

- Strain Construction: Use automated genetic engineering platforms (e.g., Golden Gate assembly followed by in vivo recombination in a Cas9-equipped host strain) to construct the library of yeast strains as per the design table [31].

- Culture Stock Preparation: Pick single colonies into 96-well plates containing solid growth medium with appropriate selection agents. Incubate at 30°C for 3 days and store correct strains at -80°C in 10% glycerol.

3. Test Phase

- Inoculation and Cultivation:

- Grow single colonies in 10 mL of rich medium (e.g., YPDA) in 50 mL tubes for 24 hours.

- Wash cultures and inoculate into defined minimal media with 20 g/L glucose in deep-well plates, to a starting OD specified by the experimental design.

- Vary other process factors as per the DoE (e.g., nitrogen source: ammonium sulfate vs. urea; pH buffered or not).

- Incubate with shaking at temperatures specified by the design (e.g., 20°C, 30°C) for a fixed period [31].

- Analytics: Measure the final optical density (OD) and the concentration of the target metabolite (e.g., p-coumaric acid) in the supernatant via High-Performance Liquid Chromatography (HPLC).

4. Learn Phase

- Statistical Analysis: Fit the production data to a linear model using ordinary least squares regression. The model will have the form:

y = β₀ + Σ(ME_i * F_i) + Σ(2FI_i:j * F_i * F_j)

where y is the product titer, β₀ is the intercept, ME_i is the main effect of factor i, F_i is the coded level of factor i, and 2FI_i:j is the two-factor interaction between factors i and j [30].

- Analysis of Variance (ANOVA): Perform ANOVA to determine which main effects and interactions significantly influence production.

- Decision Making: Identify the most important factors and their optimal levels. Use these insights to design a subsequent DBTL cycle with a narrower focus or more levels for the critical factors.
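
The model fit in the Learn phase can be sketched without any statistics package, because for an orthogonal two-level design coded as -1/+1 the ordinary least squares estimate of each coefficient reduces to a simple average. The example below is a sketch under stated assumptions: it uses a full 2^3 factorial so that every two-factor interaction is separately estimable, and the titers are synthetic, noise-free values generated from known coefficients. In a Resolution IV fractional design, only one interaction per alias group could be included in the model, and significance testing (ANOVA) is not shown.

```python
from itertools import product, combinations

def fit_effects(design, y):
    """OLS coefficients for intercept, main effects and two-factor interactions.

    Valid for orthogonal two-level designs coded as -1/+1, where each
    coefficient equals sum(column * y) / n_runs.
    """
    n = len(design)
    k = len(design[0])
    coef = {"intercept": sum(y) / n}
    for i in range(k):
        coef[f"F{i + 1}"] = sum(run[i] * yi for run, yi in zip(design, y)) / n
    for i, j in combinations(range(k), 2):
        coef[f"F{i + 1}:F{j + 1}"] = sum(
            run[i] * run[j] * yi for run, yi in zip(design, y)
        ) / n
    return coef

# Full 2^3 factorial: 8 runs, 3 factors
design = list(product((-1, 1), repeat=3))

# Synthetic titers (illustration only): y = 10 + 3*F1 - 2*F2 + 1.5*F1*F3
y = [10 + 3 * f1 - 2 * f2 + 1.5 * f1 * f3 for f1, f2, f3 in design]

coef = fit_effects(design, y)
```

The fitted dictionary recovers the coefficients used to generate the data, with a zero main effect for F3 but a non-zero F1:F3 interaction, which is the kind of pattern (a factor that matters only in combination) that sequential one-factor-at-a-time tuning tends to miss.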

Protocol 2: Knowledge-Driven DBTL Cycle for Dopamine Production in E. coli

This protocol leverages upstream in vitro experiments to inform the initial design of the in vivo DBTL cycle, accelerating strain optimization [8].

1. Design Phase

- In Vitro Pathway Analysis:

- Prepare crude cell lysates from an E. coli production host.

- Clone the pathway genes (e.g., hpaBC and ddc for dopamine) into individual plasmids under an inducible promoter (e.g., pJNTN system).

- In a cell-free reaction buffer containing substrates (L-tyrosine, cofactors FeCl₂, vitamin B6), test different relative expression levels by varying the plasmid ratios.

- Analyze L-DOPA and dopamine production to determine the optimal enzyme ratio for the pathway [8].

- In Vivo RBS Library Design: Based on the optimal ratio, design a library of bicistronic constructs for the two genes. Use RBS engineering to fine-tune the translation initiation rate (TIR) of the second gene (ddc) relative to the first (hpaBC). Design a series of RBS sequences with varying Shine-Dalgarno sequences to modulate strength without altering the coding sequence [8].

2. Build Phase

- Library Construction: Assemble the bicistronic construct with the variable RBS for ddc into a backbone plasmid. Use Golden Gate assembly or similar high-throughput methods.

- Strain Transformation: Transform the plasmid library into a genomically engineered E. coli host strain optimized for L-tyrosine overproduction (e.g., FUS4.T2 with TyrR depletion and feedback-resistant tyrA) [8].

3. Test Phase

- High-Throughput Screening:

- Grow transformants in 96-well plates containing minimal medium with appropriate inducers (e.g., 1 mM IPTG).

- After cultivation, measure biomass (OD) and dopamine production. Dopamine can be quantified via HPLC or, for initial screening, correlated with the formation of dark melanin-like pigments in alkaline conditions.

- Select the top-performing strains for validation in shake-flask cultures [8].

- Analytical Validation: Confirm dopamine titers in shake-flask supernatants using HPLC with standard calibration curves.

4. Learn Phase

- Correlation Analysis: Analyze the correlation between the designed RBS strength (e.g., predicted Gibbs free energy), the relative expression level of Ddc, and the final dopamine titer.

- Model Refinement: Update the model of the pathway to incorporate the learned relationship between RBS sequence, enzyme activity, and flux.

- Cycle Iteration: If the target titer is not met, initiate a new DBTL cycle, which could involve fine-tuning the first gene's expression, introducing additional genomic modifications, or optimizing process parameters like temperature and media composition.

Visualizing Workflows and Pathways

The DBTL Cycle for Combinatorial Optimization

Diagram Title: The Iterative DBTL Cycle

Dopamine Biosynthesis Pathway from L-Tyrosine

Diagram Title: Dopamine Biosynthesis Pathway

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Research Reagent Solutions for Combinatorial Optimization

| Reagent / Material | Function / Application | Example Use Case |

|---|---|---|

| Plasmid Systems (e.g., pET, pJNTN) | Storage and expression of heterologous genes; library construction. | Hosting genes hpaBC and ddc for dopamine production in E. coli [8]. |

| Promoter & RBS Library | Fine-tuning gene expression levels in a pathway. | Combinatorial optimization of 6 genes in S. cerevisiae using different promoters [31]; RBS engineering in E. coli [8]. |

| Cas9-integrated Host Strain | Enables precise genomic integration of pathway gene clusters. | S. cerevisiae host for efficient, standardized genomic integration of designed pathway constructs [31]. |

| DoE Software (e.g., R FrF2, JMP) | Generates efficient fractional factorial design matrices and analyzes results. | Creating a Resolution IV design for 7 factors to reduce the number of strains from 128 to a more manageable subset [30]. |

| Cell-Free Protein Synthesis (CFPS) System | In vitro testing of enzyme expression and pathway flux without cellular constraints. | Determining the optimal ratio of HpaBC to Ddc enzyme activity before in vivo strain building [8]. |

| HPLC / GC-MS Systems | Accurate quantification of target metabolite titers and analysis of metabolic profiles. | Measuring p-coumaric acid or dopamine concentrations in culture supernatants [31] [8]. |

Implementing DBTL: From AI-Driven Design to High-Throughput Building and Testing

The Design-Build-Test-Learn (DBTL) cycle is a foundational framework for modern combinatorial pathway optimization in drug development. Machine learning (ML) serves as the core "Learn" component, transforming experimental data into predictive models that guide subsequent "Design" phases, thereby creating an iterative, data-driven feedback loop. This accelerates the discovery of synergistic drug combinations and optimized therapeutic agents. Among the plethora of ML techniques, Gradient Boosting, Random Forests, and Deep Learning have emerged as particularly powerful for predictive modeling tasks. These methods excel at analyzing complex, high-dimensional biological data—including multi-omics profiles, chemical structures, and protein-protein interaction networks—to predict bioactivity, synergy, and other critical parameters. Their integration into DBTL cycles enables researchers to move beyond empirical trial-and-error, instead using computational predictions to prioritize the most promising experiments, significantly reducing development time and costs [35] [36].

The following table summarizes the core characteristics and applications of these key ML methods in the context of DBTL-driven research.

Table 1: Key Machine Learning Methods in Predictive Modeling for Drug Discovery

| Method | Core Principle | Key Advantages | Typical Applications in DBTL |

|---|---|---|---|

| Gradient Boosting | Sequentially builds an ensemble of weak prediction models (typically trees), where each new model corrects the errors of the previous one. | High predictive accuracy, robust handling of mixed data types, strong track record on structured (tabular) data. | Building baseline predictive models for activity or toxicity; feature importance analysis to guide design [37] [38]. |

| Random Forests | An ensemble "bagging" method that constructs a multitude of decision trees at training time and outputs the mode (classification) or mean (regression) of the individual trees' predictions. | Reduces overfitting, handles high-dimensional data well, provides intrinsic feature importance measures. | Initial screening of compound libraries; QSAR modeling; identifying critical genomic features [37] [39]. |

| Deep Learning (e.g., DeepSynergy) | Uses neural networks with many layers ("deep") to learn hierarchical representations and complex, non-linear patterns directly from raw or structured data. | State-of-the-art performance on large datasets; ability to automatically learn relevant features from complex inputs like graphs and multi-omics data. | Predicting novel synergistic drug combinations [37] [40]; integrating multi-omics data for patient-specific predictions [41]. |

Application Notes: ML for Drug Synergy Prediction

Predicting synergistic drug combinations is a central challenge in oncology and complex disease therapy, perfectly suited for ML-powered DBTL cycles. The following application notes detail the implementation and performance of specific deep learning models designed for this task.

Table 2: Comparison of Deep Learning Models for Drug Synergy Prediction

| Model Name | Input Data & Key Innovations | Reported Performance | Context in DBTL Cycle |

|---|---|---|---|

| DeepSynergy | Inputs: Chemical drug descriptors and genomic information (gene expression) of cancer cell lines. Innovation: One of the first deep learning models applied to large-scale drug synergy prediction; uses conical layers to model drug-cell line interactions [37] [38]. | Mean Squared Error: Significantly outperformed other methods (7.2% improvement over second best); Pearson Correlation: 0.73 for novel combinations within explored space; Classification AUC: 0.90 for classifying synergistic combinations [37]. | Learn/Design: The model is trained on high-throughput screening data ("Test" phase) and used to predict and prioritize novel, untested drug-cell line combinations for the next "Design" and "Build" cycle. |

| AuDNNsynergy | Inputs: Multi-omics data (gene expression, copy number variation, mutation) from TCGA and chemical structure data. Innovation: Uses separate autoencoders for each omics data type to create compressed, informative representations of cell lines before feeding them into a deep neural network [41]. | Outperformed four state-of-the-art approaches, including DeepSynergy, Gradient Boosting Machines, Random Forests, and Elastic Nets [41]. | Learn/Design: Enhances the "Learn" phase by integrating diverse data modalities, leading to more biologically informed predictions for designing new experiments. |

| MultiSyn | Inputs: Multi-omics data, protein-protein interaction (PPI) networks, and drug molecules decomposed into pharmacophore-containing fragments. Innovation: A semi-supervised graph neural network integrates PPI networks with multi-omics data for cell line representation. Uses a heterogeneous graph transformer to learn multi-view drug representations, improving interpretability [40]. | Outperformed several classical and state-of-the-art baselines (e.g., DeepSynergy, DeepDDS); provides visualization of key substructures critical for synergy, enhancing mechanistic understanding [40]. | Learn/Design: Represents an advanced "Learn" phase that incorporates biological network context and pharmacophore information, yielding more accurate and interpretable predictions for the subsequent "Design" of optimized drug combinations and candidates. |

Experimental Protocols

This section provides detailed, step-by-step methodologies for implementing the machine learning workflows discussed, enabling researchers to integrate these protocols into their own DBTL cycles.

Protocol: Implementing a DeepSynergy-like Model for Synergy Prediction

This protocol outlines the procedure for developing a deep learning model to predict anti-cancer drug synergy, based on the architecture and methodology of DeepSynergy [37] [38].

I. Data Acquisition and Preprocessing

- Obtain Synergy Data: Acquire a large-scale drug combination screening dataset. Example: The O'Neil et al. dataset (23,062 samples, 38 drugs, 39 cell lines) used in the original DeepSynergy study [38].

- Collect Drug Features: For each drug, compute or retrieve molecular descriptors (e.g., molecular weight, logP) and fingerprint vectors (e.g., ECFP4) from chemical databases like PubChem or using toolkits like RDKit.

- Collect Cell Line Features: For each cancer cell line, download normalized gene expression profiles (e.g., RNA-Seq or microarray data) from repositories such as the Cancer Cell Line Encyclopedia (CCLE).

- Data Integration and Normalization: Merge the drug and cell line features with the synergy scores. Apply a robust normalization strategy (e.g., Z-score normalization) to all input features to account for data heterogeneity and improve model convergence.
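
The integration and normalization step above can be sketched as follows. All drug names, cell line names, feature sizes, and synergy scores are hypothetical toy values generated for illustration; real inputs would come from the datasets named in the protocol. The sketch assumes that normalization statistics are estimated on the training rows only and then reused for held-out rows, which avoids leaking test information into the features.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical toy inputs: 4 drugs x 8 descriptors, 3 cell lines x 5 genes.
drug_feats = {f"drug_{i}": rng.normal(size=8) for i in range(4)}
cell_feats = {f"cell_{j}": rng.normal(loc=5.0, scale=2.0, size=5) for j in range(3)}

# Each record: (drug A, drug B, cell line, measured synergy score).
records = [
    ("drug_0", "drug_1", "cell_0", 12.3),
    ("drug_0", "drug_2", "cell_1", -4.1),
    ("drug_1", "drug_3", "cell_2", 0.7),
    ("drug_2", "drug_3", "cell_0", 25.0),
]

def featurize(records):
    """Concatenate drug A, drug B, and cell line features for each record."""
    X = np.array([
        np.concatenate([drug_feats[a], drug_feats[b], cell_feats[c]])
        for a, b, c, _ in records
    ])
    y = np.array([score for *_, score in records])
    return X, y

def zscore_fit(X_train):
    """Estimate per-feature mean and standard deviation on training rows."""
    mean = X_train.mean(axis=0)
    std = X_train.std(axis=0)
    std[std == 0] = 1.0  # avoid division by zero for constant columns
    return mean, std

X, y = featurize(records)
X_train, X_test = X[:3], X[3:]
mean, std = zscore_fit(X_train)
X_train_z = (X_train - mean) / std
X_test_z = (X_test - mean) / std  # reuse training statistics
```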

II. Model Architecture and Training

- Input Layer: Create two separate input branches: one for the drug pair (concatenated features of both drugs) and one for the cell line genomic features.

- Hidden Layers:

  - Utilize fully connected (dense) layers with non-linear activation functions (e.g., ReLU).

  - Implement a specific architectural feature like "conical" layers, where the number of neurons decreases in subsequent layers, forcing the network to learn a compressed, meaningful representation of the input.

  - Merge the drug and cell line pathways in the network to model their interaction.

- Output Layer: Use a single neuron with a linear activation function for regression, predicting the continuous synergy score.

- Training Procedure:

  - Partitioning: Split the data into training, validation, and test sets. Use a cell-line-wise or drug-wise split to rigorously assess the model's ability to generalize to novel contexts.

  - Loss Function: Use Mean Squared Error (MSE) as the loss function.

  - Optimization: Train the model using a stochastic gradient-based optimizer (e.g., Adam) with early stopping based on the validation set performance to prevent overfitting.
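
As a minimal sketch of the architecture and training steps above, the example below uses scikit-learn's `MLPRegressor` on synthetic data rather than a full deep learning framework. It is a simplification, not the DeepSynergy implementation: drug and cell line features are assumed to be already concatenated into a single input vector instead of two separate branches, the layer sizes are arbitrary, and the early-stopping hold-out that `MLPRegressor` creates internally is a random subset of the training data rather than a cell-line-wise split.

```python
import numpy as np
from sklearn.model_selection import GroupShuffleSplit
from sklearn.neural_network import MLPRegressor

rng = np.random.default_rng(0)
n_samples, n_features = 300, 40
X = rng.normal(size=(n_samples, n_features))     # synthetic stand-in features
y = X[:, :3].sum(axis=1) + rng.normal(scale=0.1, size=n_samples)
cell_line = rng.integers(0, 10, size=n_samples)  # hypothetical cell line ID per sample

# Cell-line-wise split: no cell line appears in both train and test.
splitter = GroupShuffleSplit(n_splits=1, test_size=0.2, random_state=0)
train_idx, test_idx = next(splitter.split(X, y, groups=cell_line))

model = MLPRegressor(
    hidden_layer_sizes=(64, 32, 16),  # "conical": each layer narrower than the last
    activation="relu",
    solver="adam",
    early_stopping=True,              # holds out part of the training data internally
    validation_fraction=0.1,
    n_iter_no_change=10,
    max_iter=500,
    random_state=0,
)
# MLPRegressor minimizes squared error and uses a linear (identity) output unit.
model.fit(X[train_idx], y[train_idx])
pred = model.predict(X[test_idx])
```

A drug-wise split follows the same pattern with drug identifiers passed as `groups`. For the full two-branch design, a framework such as PyTorch or TensorFlow gives direct control over where the branches merge.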

III. Model Validation and Interpretation

- Performance Evaluation: Calculate the Mean Squared Error (MSE) and Pearson correlation coefficient between the measured and predicted synergy scores on the held-out test set.

- Benchmarking: Compare the model's performance against established baseline methods, such as Gradient Boosting Machines and Random Forests, using the same data splits, to check whether the deep model's added complexity yields a measurable gain.

- Analysis: Perform feature importance analysis to identify which genomic features or chemical descriptors the model deems most critical for its predictions, thereby generating testable biological hypotheses.
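
The two evaluation metrics named above can be computed with a few lines of NumPy. The measured and predicted values below are made-up numbers used only to show the calculation.

```python
import numpy as np

def mse(y_true, y_pred):
    """Mean squared error between measured and predicted synergy scores."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.mean((y_true - y_pred) ** 2))

def pearson_r(y_true, y_pred):
    """Pearson correlation coefficient between measured and predicted scores."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.corrcoef(y_true, y_pred)[0, 1])

measured = [10.0, -5.0, 0.0, 20.0]   # hypothetical held-out synergy scores
predicted = [8.0, -3.0, 1.0, 18.0]   # hypothetical model predictions

print(mse(measured, predicted))  # 3.25
print(pearson_r(measured, predicted))
```

MSE penalizes large individual errors, while the Pearson coefficient measures how well the ranking and linear trend are preserved, so reporting both gives a fuller picture.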

Protocol: Building a Multi-Feature Predictor with Gradient Boosting/Random Forests

This protocol describes the use of ensemble tree-based methods for building robust predictive models, which can serve as strong baselines or be deployed where deep learning is not feasible.

I. Feature Engineering and Data Preparation

- Assemble Feature Matrix: Create a comprehensive feature matrix where each row represents a sample (e.g., a drug-drug-cell line triplet) and each column represents a feature.

- Incorporate Diverse Data Types: Include features such as:

  - Drug Structural Features: Molecular fingerprints, physicochemical properties.

  - Cell Line Characterization: Multi-omics data (gene expression, mutations, copy number variations).

  - Network-Based Features: Features derived from biological networks (e.g., centrality measures from PPI networks).

- Handle Missing Data: Impute missing values using appropriate methods (e.g., mean/median imputation, k-nearest neighbors imputation) or use algorithms that can handle missing values internally.

- Feature Preprocessing: Encode categorical variables. Tree-based models split on feature thresholds and are therefore largely insensitive to monotonic feature scaling, so normalization is usually optional for Gradient Boosting and Random Forests.
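
The missing-data and encoding steps above can be sketched with scikit-learn's imputers and one-hot encoder. The numeric matrix and the tissue labels are hypothetical toy values. In a real workflow, the imputers and encoder would be fitted on training rows only and then applied to validation and test rows.

```python
import numpy as np
from sklearn.impute import KNNImputer, SimpleImputer
from sklearn.preprocessing import OneHotEncoder

# Hypothetical numeric features with missing entries (np.nan).
numeric = np.array([
    [1.0, 10.0],
    [2.0, np.nan],
    [3.0, 30.0],
    [np.nan, 40.0],
])
# Hypothetical categorical feature: tissue of origin of each cell line.
tissue = np.array([["lung"], ["breast"], ["lung"], ["colon"]])

# Option 1: replace each missing value with the column median.
median_filled = SimpleImputer(strategy="median").fit_transform(numeric)

# Option 2: replace each missing value using the 2 nearest rows.
knn_filled = KNNImputer(n_neighbors=2).fit_transform(numeric)

# One-hot encode the categorical column; unseen categories become all zeros.
encoder = OneHotEncoder(handle_unknown="ignore")
tissue_onehot = encoder.fit_transform(tissue).toarray()

# Final feature matrix: imputed numeric columns plus one-hot columns.
X = np.hstack([median_filled, tissue_onehot])
print(X.shape)  # (4, 5)
```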

II. Model Training and Hyperparameter Tuning

- Algorithm Selection:

  - For Gradient Boosting, use implementations like XGBoost, LightGBM, or CatBoost, which are optimized for speed and performance.

  - For Random Forests, use the `RandomForestRegressor` or `RandomForestClassifier` from scikit-learn.

- Hyperparameter Optimization:

  - Key for Gradient Boosting: `learning_rate`, `n_estimators`, `max_depth`, `subsample`.

  - Key for Random Forests: `n_estimators`, `max_features`, `max_depth`, `bootstrap`.

  - Use techniques like Bayesian Optimization or `RandomizedSearchCV` to efficiently search the hyperparameter space.

- Training with Cross-Validation: Perform k-fold cross-validation (e.g., 5-fold CV) during the tuning process to obtain robust estimates of model performance and avoid overfitting.
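
The tuning procedure above can be sketched with `RandomizedSearchCV` and 5-fold cross-validation. The data are synthetic, the candidate values in each search space are illustrative assumptions rather than recommended settings, and scikit-learn's `GradientBoostingRegressor` stands in for XGBoost, LightGBM, or CatBoost to keep the example dependency-light.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor, RandomForestRegressor
from sklearn.model_selection import RandomizedSearchCV

rng = np.random.default_rng(0)
X = rng.normal(size=(200, 10))  # synthetic stand-in feature matrix
y = 2.0 * X[:, 0] - X[:, 1] + rng.normal(scale=0.1, size=200)

# Illustrative search spaces over the key hyperparameters of each method.
search_spaces = {
    "gb": (
        GradientBoostingRegressor(random_state=0),
        {
            "learning_rate": [0.01, 0.05, 0.1],
            "n_estimators": [50, 100],
            "max_depth": [2, 3, 4],
            "subsample": [0.6, 0.8, 1.0],
        },
    ),
    "rf": (
        RandomForestRegressor(random_state=0),
        {
            "n_estimators": [50, 100],
            "max_features": ["sqrt", 0.5, 1.0],
            "max_depth": [None, 5, 10],
            "bootstrap": [True, False],
        },
    ),
}

results = {}
for name, (estimator, params) in search_spaces.items():
    search = RandomizedSearchCV(
        estimator,
        params,
        n_iter=5,                          # number of sampled configurations
        cv=5,                              # 5-fold cross-validation
        scoring="neg_mean_squared_error",
        random_state=0,
    )
    search.fit(X, y)
    # Store the best configuration and its cross-validated MSE.
    results[name] = (search.best_params_, -search.best_score_)
```

When samples from the same cell line or drug pair appear many times, passing a grouped splitter such as `GroupKFold` as `cv` gives a less optimistic estimate than plain k-fold.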

III. Model Evaluation and Interpretation

- Performance Metrics: Evaluate the model on a held-out test set using task-appropriate metrics (e.g., MSE, R² for regression; AUC, Accuracy for classification).

- Feature Importance Analysis: Extract and plot feature importance scores (e.g., Gini importance or permutation importance) to identify the most influential drivers of the model's predictions. This is critical for generating biological insights within the DBTL cycle.

- Validation: Where possible, validate top model predictions through wet-lab experiments, thereby closing the DBTL loop.
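
The feature importance step above can be illustrated on synthetic data in which only the first feature drives the target, so the expected ranking is known in advance. The sketch compares the impurity-based importance built into `RandomForestRegressor` (the regression analogue of Gini importance) with permutation importance computed on held-out data.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
X = rng.normal(size=(300, 5))
y = 3.0 * X[:, 0] + rng.normal(scale=0.1, size=300)  # only feature 0 matters

X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.25, random_state=0)
model = RandomForestRegressor(n_estimators=100, random_state=0).fit(X_tr, y_tr)

# Impurity-based importance, computed from the training data during fitting.
impurity_imp = model.feature_importances_

# Permutation importance: drop in test score when one feature is shuffled.
perm = permutation_importance(model, X_te, y_te, n_repeats=10, random_state=0)

# Feature indices ordered from most to least important.
ranking = np.argsort(perm.importances_mean)[::-1]
print(ranking[0])  # 0
```

Permutation importance on held-out data is generally the safer basis for biological hypotheses, because impurity-based scores can be inflated for high-cardinality or correlated features.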

Integration of Predictive Modeling into DBTL Cycles

The true power of machine learning is realized when it is seamlessly embedded within the iterative DBTL framework, creating a self-improving research engine.

Design: In this initial phase, predictive models are used to in silico screen vast virtual libraries of drug combinations or compound structures. Models like DeepSynergy and MultiSyn propose candidate combinations with high predicted synergy, while generative models can design novel molecular structures with desired properties, directly informing the experimental plan [35] [39].

Build: The top-predicted candidates from the "Design" phase are synthesized or procured, and biological systems (e.g., cell lines engineered with specific targets) are prepared for testing.

Test: The built candidates are evaluated in high-throughput in vitro or in vivo experiments. This involves robust assays to measure critical outcomes such as cell viability, synergy scores (e.g., using the Bliss independence model), and pharmacokinetic properties [38].

Learn: This is where machine learning is applied. Data from the "Test" phase is combined with existing datasets to retrain and refine the predictive models. Feature importance analysis from tree-based models or attention mechanisms in graph networks can reveal underlying biological mechanisms—such as critical pathways or essential pharmacophores—that explain efficacy or synergy [40]. These new insights directly fuel the next "Design" phase, creating a closed, accelerating loop.
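
The Bliss independence model mentioned in the "Test" phase can be written out in a few lines. Under Bliss independence, two drugs with fractional effects $E_A$ and $E_B$ (for example, fractional growth inhibition between 0 and 1) are expected to give a combined effect of $E_A + E_B - E_A E_B$; an observed effect above that expectation suggests synergy, and one below it suggests antagonism. The function names and numbers below are illustrative assumptions.

```python
def bliss_expected(effect_a, effect_b):
    """Expected combined fractional effect under Bliss independence."""
    for effect in (effect_a, effect_b):
        if not 0.0 <= effect <= 1.0:
            raise ValueError("effects must be fractions between 0 and 1")
    return effect_a + effect_b - effect_a * effect_b

def bliss_excess(observed_ab, effect_a, effect_b):
    """Observed minus expected effect: > 0 suggests synergy, < 0 antagonism."""
    return observed_ab - bliss_expected(effect_a, effect_b)

# Hypothetical example: drug A inhibits 40%, drug B 50%, the pair 80%.
expected = bliss_expected(0.40, 0.50)
excess = bliss_excess(observed_ab=0.80, effect_a=0.40, effect_b=0.50)
print(round(expected, 2), round(excess, 2))  # 0.7 0.1
```

A score of this kind, computed per dose pair and cell line, is one example of the target value that the "Learn" phase models are retrained on.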

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Data Resources and Computational Tools for ML-Driven DBTL

| Resource Category | Specific Examples | Function and Application |

|---|---|---|

| Public Drug Combination Datasets | O'Neil et al. dataset; NCI ALMANAC | Provides large-scale, experimentally derived synergy scores for training and benchmarking predictive models like DeepSynergy [40] [38]. |

| Genomic Data Repositories | Cancer Cell Line Encyclopedia (CCLE); The Cancer Genome Atlas (TCGA) | Sources for gene expression, mutation, and copy number variation data used to create features representing biological context (cell lines) in models [40] [41]. |

| Chemical and Drug Databases | DrugBank; PubChem | Provides chemical structures, SMILES strings, and molecular descriptors necessary for featurizing drugs in a model [40] [39]. |

| Biological Network Databases | STRING Database | Provides protein-protein interaction (PPI) networks that can be integrated using graph neural networks to add functional context, as in MultiSyn [40]. |

| Machine Learning Frameworks | scikit-learn (for GB/RF); PyTorch and TensorFlow (for DL) | Core programming libraries used to implement, train, and validate the machine learning models discussed [37] [39]. |

| Specialized Computational Tools | DeepSynergy (Web Tool); RDKit (Cheminformatics) | Pre-built platforms or specialized toolkits for specific tasks within the predictive modeling workflow [37]. |