Closed-Loop DBTL Platforms: How AI Agents Are Revolutionizing Drug Discovery


Abstract

This article explores the transformative integration of AI agents into closed-loop Design-Build-Test-Learn (DBTL) platforms for drug discovery. Tailored for researchers and development professionals, it provides a comprehensive analysis of the foundational principles, methodological applications, and optimization strategies of these autonomous systems. By examining real-world implementations, performance metrics, and comparative insights from leading biotechs, the content offers a validated perspective on how agentic AI is compressing development timelines from years to months, enhancing precision, and reshaping the future of therapeutic development.

The New Discovery Engine: Understanding Closed-Loop DBTL and Agentic AI

The Design-Build-Test-Learn (DBTL) cycle has long been the cornerstone framework for biological engineering and drug discovery. This iterative process involves designing biological constructs, building them in the lab, testing their performance, and learning from the results to inform the next design cycle. However, traditional DBTL approaches have been persistently hampered by a critical bottleneck: they are slow, resource-intensive, and heavily reliant on deep human expertise at every stage [1]. The sequential nature of DBTL creates significant delays, with the "Build" and "Test" phases being particularly time-consuming, often requiring weeks or months for a single iteration.

A paradigm shift is now underway, moving from human-guided DBTL to fully autonomous LDBT (Learn-Design-Build-Test) workflows. This transformation is powered by artificial intelligence (AI) and laboratory automation, repositioning "Learning" to the beginning of the cycle [2]. Instead of starting with design based on limited human intuition, LDBT begins with machine learning models that have already learned from vast biological datasets. These AI-driven systems can then design optimized experiments, which are automatically built and tested by robotic platforms. This reordering creates a continuous, closed-loop system that dramatically accelerates discovery timelines while reducing costs and human labor requirements.

The LDBT Framework: Core Components and Workflow

The LDBT framework represents a fundamental reorganization of the scientific method for applied biology. By placing learning first, the system leverages accumulated knowledge before committing to specific experimental directions. This approach is enabled by several key technological advancements: the availability of massive biological datasets, sophisticated machine learning algorithms capable of extracting meaningful patterns from these datasets, and integrated robotic systems that can execute laboratory procedures with minimal human intervention [1] [2].

Table: Comparison of DBTL vs. LDBT Paradigms

| Feature | Traditional DBTL | Autonomous LDBT |
|---|---|---|
| Starting Point | Human hypothesis | AI-prioritized designs |
| Learning Phase | After testing results | Before design phase |
| Human Involvement | Required at all stages | Minimal oversight |
| Iteration Speed | Weeks to months | Days to weeks |
| Data Utilization | Limited to previous cycle | All available biological data |
| Scalability | Limited by human capacity | Limited by compute resources |

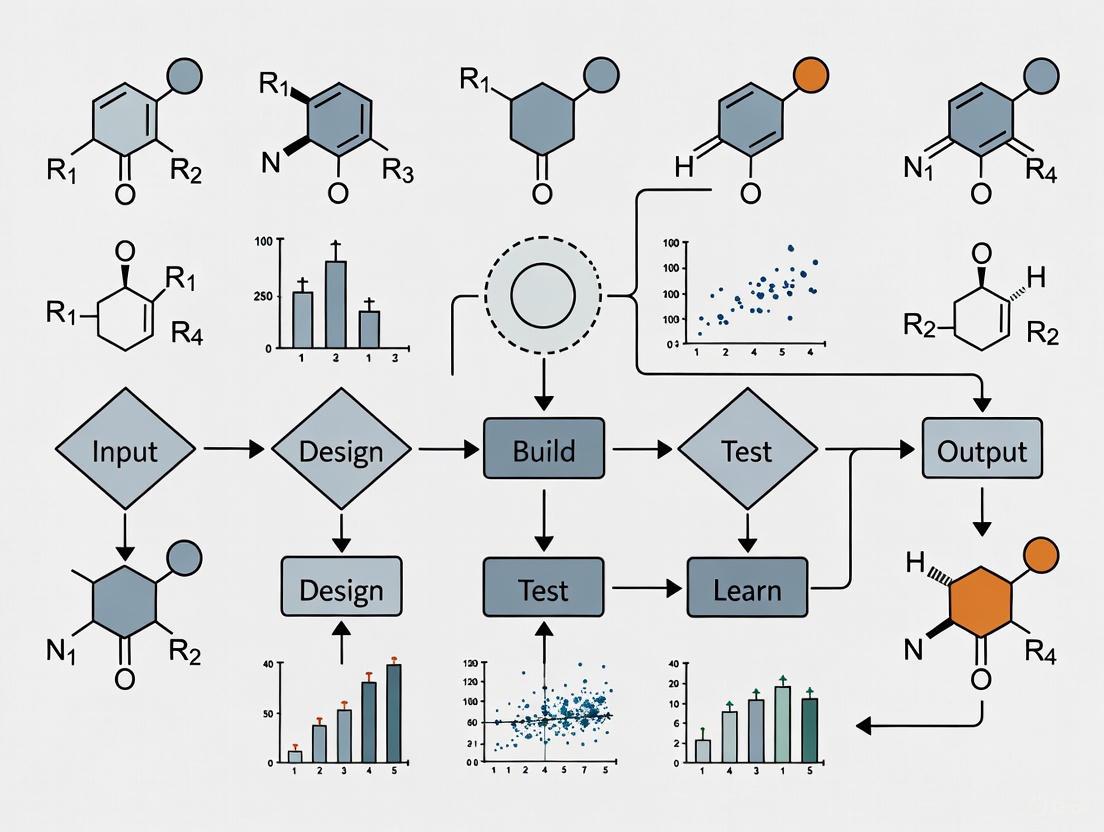

Workflow Visualization

The following diagram illustrates the core architecture and information flow of an autonomous LDBT system:

Implementation Protocols for Autonomous Enzyme Engineering

Case Study: AI-Driven Enzyme Optimization

A landmark 2025 study by Zhao et al. demonstrated a fully integrated LDBT platform for enzyme engineering [1]. The system was applied to two distinct enzymes with different engineering goals: Arabidopsis thaliana halide methyltransferase (AtHMT) and Yersinia mollaretii phytase (YmPhytase). The platform required only the protein sequence and a defined fitness metric, then operated autonomously through multiple iterations.

Table: Experimental Results from Autonomous Enzyme Engineering

| Enzyme | Engineering Goal | Iterations | Variants Screened | Key Improvement | Timeline |
|---|---|---|---|---|---|
| AtHMT | Ethyltransferase activity | 4 cycles | <500 | ~16-fold increase | 4 weeks |
| AtHMT | Substrate preference | 4 cycles | <500 | ~90-fold shift | 4 weeks |
| YmPhytase | Activity at neutral pH | 4 cycles | <500 | ~26-fold higher specific activity | 4 weeks |

Step-by-Step Experimental Protocol

Phase 1: Knowledge-Based Initial Design

Input Specification: Define the target protein sequence and quantitative fitness metric (e.g., enzymatic activity, thermostability, expression level).

Zero-Shot Library Design:

Library Prioritization: Rank variants by composite score balancing novelty, predicted fitness, and structural diversity.
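The prioritization step can be sketched as a weighted composite score. This is a minimal illustration only: the weights, the 0 to 1 scaling of each criterion, and the variant labels are hypothetical and are not taken from the cited study.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Variant:
    name: str
    predicted_fitness: float  # model score, assumed pre-scaled to 0..1
    novelty: float            # 0..1, distance from previously tested variants
    diversity: float          # 0..1, structural spread relative to the library

# Hypothetical weights; the source does not say how the terms are balanced.
WEIGHTS = {"predicted_fitness": 0.6, "novelty": 0.2, "diversity": 0.2}

def composite_score(v: Variant) -> float:
    """Weighted sum of the three prioritization criteria."""
    return (WEIGHTS["predicted_fitness"] * v.predicted_fitness
            + WEIGHTS["novelty"] * v.novelty
            + WEIGHTS["diversity"] * v.diversity)

def prioritize(variants, top_n):
    """Return the top_n variants by composite score, best first."""
    return sorted(variants, key=composite_score, reverse=True)[:top_n]

# Illustrative variants only (labels and scores are made up).
library = [
    Variant("A45G", 0.90, 0.10, 0.20),
    Variant("L102F", 0.70, 0.80, 0.60),
    Variant("T210S", 0.30, 0.90, 0.90),
]
ranked = prioritize(library, top_n=2)
print([v.name for v in ranked])
```

A weighted sum is the simplest way to trade off the three criteria; a Pareto-front selection would be an alternative when the criteria are hard to put on one scale.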

Phase 2: Automated Build and Test

DNA Construction:

Protein Production:

High-Throughput Assaying:

- Implement coupled colorimetric or fluorescent assays compatible with microtiter plates or droplet systems.

- Measure relevant enzyme kinetics (kcat, KM) or stability parameters.

Phase 3: Continuous Learning and Optimization

Data Processing: Automate data collection, normalization, and quality control.

Model Retraining:

- Train supervised "low-N" regression models on experimental results [1].

- Update protein language models with new fitness landscape information.

Next-Generation Design:

- Apply active learning algorithms to select informative variants for subsequent rounds.

- Combine beneficial mutations into higher-order combinations.

- Return to Phase 1 for subsequent iterations until fitness target achieved.
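The "combine beneficial mutations" step above can be illustrated with a short sketch. It assumes mutation labels of the form `A10G` (wild-type residue, position, new residue) and ranks combinations by summed single-mutant gains, which is an additive simplification that ignores epistasis. The labels and fitness values are hypothetical.

```python
from itertools import combinations

def position(mutation: str) -> int:
    """Extract the residue position from a label such as 'A10G'."""
    return int(mutation[1:-1])

def propose_combinations(single_fitness, wild_type_fitness, order=2):
    """Combine beneficial single mutations located at distinct positions.

    Candidates are ranked by the sum of single-mutant gains (additive
    assumption; real fitness landscapes can be strongly non-additive).
    """
    gains = {m: f - wild_type_fitness
             for m, f in single_fitness.items() if f > wild_type_fitness}
    candidates = []
    for combo in combinations(sorted(gains), order):
        # Skip combinations that place two substitutions at the same site.
        if len({position(m) for m in combo}) == order:
            candidates.append((combo, sum(gains[m] for m in combo)))
    return sorted(candidates, key=lambda c: c[1], reverse=True)

# Hypothetical measurements relative to a wild-type fitness of 1.0.
measured = {"A10G": 2.0, "A10S": 1.5, "L55F": 1.8, "K12R": 0.7}
proposals = propose_combinations(measured, wild_type_fitness=1.0)
for combo, expected_gain in proposals:
    print(combo, round(expected_gain, 2))
```

In a real loop, the additive ranking would only seed the next library; the retrained model decides which combinations are actually built.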

Workflow Automation Architecture

The experimental protocol is executed through a tightly integrated system of AI agents and robotic automation:

Essential Research Reagents and Computational Tools

Successful implementation of LDBT workflows requires both specialized laboratory reagents and sophisticated computational tools. The table below details key components of the "scientist's toolkit" for autonomous enzyme engineering:

Table: Research Reagent Solutions for LDBT Implementation

| Category | Specific Tool/Reagent | Function | Implementation Notes |
|---|---|---|---|
| Computational Models | ESM-2 (Evolutionary Scale Modeling) | Protein language model for zero-shot mutation prediction | Pre-trained on millions of natural sequences; captures evolutionary constraints [1] |
| Computational Models | EVmutation | Epistasis model for predicting mutation interactions | Accounts for non-additive effects of combinations [1] |
| Computational Models | ProteinMPNN | Structure-based sequence design | Input protein backbone, outputs optimized sequences [2] |
| Automation Systems | iBioFAB (Illinois Biofoundry) | Fully automated genetic construction | Enables continuous processing without manual steps [1] |
| Automation Systems | Cell-free expression systems | Rapid protein synthesis without living cells | Achieves >1 g/L protein in <4 hours; enables toxic protein production [2] |
| Automation Systems | Droplet microfluidics | Ultra-high-throughput screening | Screen >100,000 picoliter-scale reactions in parallel [2] |
| Specialized Reagents | High-fidelity mutagenesis kits | Error-free DNA construction | ~95% accuracy eliminates sequencing verification [1] |
| Specialized Reagents | Coupled enzyme assays | Quantitative activity measurement | Colorimetric/fluorescent readouts compatible with automation [2] |

Applications in Drug Discovery and Development

The LDBT framework demonstrates particular promise in pharmaceutical applications, where it accelerates multiple stages of the drug development pipeline. AI-driven platforms can analyze diverse biological data—including genomics, proteomics, and clinical records—to identify novel drug targets more effectively than traditional methods [3]. For example, machine learning models trained on datasets of 10,000–15,000 entries have been successfully employed for target proteins such as the main protease of SARS-CoV-2 (Mpro) in antiviral drug development and hERG in cardiotoxicity assessment [3].

In lead discovery and optimization, AI-powered virtual screening rapidly evaluates vast chemical libraries, significantly reducing reliance on resource-intensive high-throughput screening [3] [4]. Quantitative structure-activity relationship (QSAR) models and molecular docking simulations predict biological activity of novel compounds with high accuracy, guiding efficient synthesis priorities [4]. These approaches are particularly valuable for optimizing critical drug properties including solubility, stability, and bioavailability, with datasets of approximately 1,000–5,000 data points used for water solubility predictions [3].

The integration of LDBT approaches in pharmaceutical development addresses key industry challenges, including the typically extensive timelines (often exceeding 15 years) and high costs (averaging over $2.5 billion) associated with bringing a new drug to market [4]. By enabling more predictive and efficient discovery cycles, LDBT frameworks have the potential to significantly accelerate the delivery of novel therapeutics.

Artificial intelligence is undergoing a fundamental transformation in its role within scientific discovery, particularly in biomedical research and drug development. The field is rapidly evolving beyond the use of AI as specialized computational tools toward the emergence of Agentic AI systems that function as autonomous research partners [5] [6]. This transition represents a pivotal stage in the broader "AI for Science" paradigm, where AI systems progress from providing partial assistance to exercising full scientific agency [5]. Within the context of closed-loop Design-Build-Test-Learn (DBTL) platforms, this shift enables increasingly autonomous cycles of hypothesis generation, experimental design, execution, analysis, and iterative refinement—behaviors once regarded as uniquely human domains of scientific expertise [5].

Agentic AI systems in biomedical research combine large language models (LLMs), generative models, and machine learning tools with structured memory for continual learning [6]. Rather than removing humans from the discovery process, these systems augment human creativity and expertise with AI's ability to analyze massive datasets, navigate complex hypothesis spaces, and execute repetitive tasks at scale [6]. This collaboration is particularly transformative for drug discovery, where AI agents can plan discovery workflows, perform self-assessment to identify knowledge gaps, and integrate diverse biological principles and theories into their reasoning processes [6].

The Evolution from Traditional DBTL to LDBT and Agentic Workflows

The Traditional DBTL Cycle in Synthetic Biology and Drug Discovery

The established paradigm for biological engineering and drug discovery has followed the Design-Build-Test-Learn (DBTL) framework [7]. In this iterative cycle:

- Design: Researchers define objectives for desired biological function and design biological parts or systems using domain knowledge and computational modeling.

- Build: DNA constructs are synthesized, assembled into vectors, and introduced into characterization systems (in vivo chassis or in vitro cell-free systems).

- Test: Experimental measurement of engineered biological constructs' performance against design objectives.

- Learn: Analysis of collected data to inform the next design round, with cycles repeating until desired function is achieved [7].

This framework has structured approaches to protein engineering, pathway optimization, and therapeutic development, but faces limitations in speed and predictive power due to its reliance on empirical iteration rather than first-principles prediction.

The Emergence of Learning-Design-Build-Test (LDBT) and Agentic Science

Recent advances in machine learning and autonomous AI systems are driving a fundamental reorganization of this workflow. The proposed LDBT paradigm positions "Learning" at the beginning of the cycle, leveraging pre-trained models and foundational biological knowledge to generate more informed initial designs [7]. This approach leverages the growing success of zero-shot predictions from protein language models and other AI systems that can propose functional biological designs without additional training or experimental data [7].

The integration of Agentic AI transforms this further into a continuous, closed-loop system where AI agents manage the entire discovery process. These agents combine LLMs and generative models with structured memory for continual learning, and use machine learning tools to incorporate scientific knowledge, biological principles, and theories [6]. This enables a transition toward a "Design-Build-Work" model that relies more heavily on first principles, similar to established engineering disciplines [7].

Table 1: Evolution from DBTL to Agentic Workflows

| Framework | Sequence | Key Characteristics | Role of AI |
|---|---|---|---|
| Traditional DBTL | Design → Build → Test → Learn | Empirical iteration, Human-driven design | Specialized computational tools |
| LDBT | Learn → Design → Build → Test | Zero-shot predictions, Pre-trained models | Machine learning-enhanced design |
| Agentic Science | Continuous autonomous cycling | Full scientific agency, Closed-loop operation | Autonomous AI research partners |

Diagram 1: Evolution from DBTL to Agentic Science

Quantitative Performance of AI-Driven Discovery Platforms

The implementation of AI-driven approaches, particularly in drug discovery, has demonstrated substantial improvements in efficiency and acceleration of early-stage research. Multiple AI-derived small-molecule drug candidates have reached Phase I trials in a fraction of the typical ~5 years needed for traditional discovery and preclinical work [8]. By the end of 2024, over 75 AI-derived molecules had reached clinical stages, representing exponential growth from the first examples appearing around 2018-2020 [8].

Table 2: Performance Metrics of Leading AI-Driven Drug Discovery Platforms

| Company/Platform | Key AI Approach | Reported Efficiency Gains | Clinical Stage Candidates | Notable Achievements |
|---|---|---|---|---|
| Exscientia | Generative AI for small-molecule design, "Centaur Chemist" approach | Design cycles ~70% faster, 10× fewer synthesized compounds; CDK7 inhibitor achieved candidate with only 136 compounds [8] | 8 clinical compounds designed by 2023 [8] | First AI-designed drug (DSP-1181) to enter Phase I trials (2020) [8] |
| Insilico Medicine | Generative AI for target discovery and molecule design | Idiopathic pulmonary fibrosis drug: target discovery to Phase I in 18 months [8] | Multiple candidates in Phase I trials [8] | Demonstrated end-to-end AI acceleration from target identification to clinical candidate [8] |
| Recursion | Phenotypic screening with AI-driven image analysis | Massive scale cellular phenotyping with machine learning classification [8] | Multiple candidates in clinical development [8] | Integrated platform combining biology and AI; merged with Exscientia in 2024 [8] |
| BenevolentAI | Knowledge-graph-driven target discovery | AI-identified novel targets and repurposing opportunities [8] | Several candidates in clinical trials [8] | Knowledge mining from scientific literature and experimental data [8] |
| Schrödinger | Physics-based simulations with machine learning | Combined physical principles with statistical learning for molecular design [8] | Platform used for multiple partnered programs [8] | Integrated computational platform for molecular design [8] |

The efficiency gains demonstrated by these platforms are particularly notable in lead optimization. Exscientia reports in silico design cycles approximately 70% faster and requiring 10× fewer synthesized compounds than industry norms [8]. In one specific example, their CDK7 inhibitor program achieved a clinical candidate after synthesizing only 136 compounds, whereas traditional programs often require thousands [8]. This represents a significant reduction in resource requirements and acceleration of early-stage discovery.

Core Capabilities and Technologies of Agentic AI Systems

Agentic AI systems in scientific discovery integrate multiple advanced capabilities that enable autonomous operation. These systems are characterized by five core capabilities that distinguish them from traditional computational tools:

Structured Memory for Continual Learning: Agentic AI systems maintain organized knowledge repositories that accumulate and integrate information across multiple experimental cycles, enabling progressive improvement and adaptation based on accumulated evidence [6].

Hypothesis Generation and Experimental Design: Using large language models and generative algorithms, these systems can formulate novel research questions and design appropriate experimental approaches to test them, moving beyond pattern recognition to genuine scientific inquiry [5] [6].

Workflow Planning and Execution: Agentic AI can plan multi-step discovery workflows, coordinate experimental processes, and manage resource allocation while adapting to intermediate results and unexpected findings [6].

Self-Assessment and Knowledge Gap Identification: These systems can critically evaluate their own understanding, identify limitations in their knowledge, and proactively design experiments to address those gaps [6].

Integration of Multi-Modal Data and Tools: Agentic AI seamlessly combines diverse data types (genomic, structural, phenotypic) and computational tools (simulation, analysis, prediction) within a unified reasoning framework [5] [6].

Experimental Protocols for AI-Enhanced Drug Discovery

Protocol: Cell-Free Protein Expression for High-Throughput Testing

Purpose: Rapid expression and testing of AI-designed protein variants using cell-free systems to accelerate the Build-Test phases of DBTL cycles [7].

Materials and Reagents:

- Cell-free transcription-translation system (crude lysate or purified components)

- DNA templates encoding target protein variants

- Reaction substrates for functional assays

- Microfluidic device or liquid handling robot

- Detection reagents (fluorogenic or colorimetric substrates)

Procedure:

- DNA Template Preparation: Synthesize DNA sequences encoding AI-designed protein variants without cloning steps [7].

- Reaction Assembly: Combine DNA templates with cell-free expression system components in microtiter plates or microfluidic droplets [7].

- Protein Expression: Incubate reactions at appropriate temperature (typically 30-37°C) for 2-4 hours to express target proteins [7].

- Functional Assay: Directly assay expressed proteins by adding relevant substrates to reactions, measuring output via fluorescence, absorbance, or other detection methods [7].

- Data Collection: Quantify protein function and expression levels using appropriate instrumentation [7].

- Data Integration: Feed results back to AI system for analysis and next-round design [7].
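The data collection and integration steps typically involve normalizing raw plate reads before they are returned to the design model. The sketch below shows one common approach, background subtraction followed by fold change over a wild-type control. The well layout, control choice, and values are assumptions for illustration and are not specified in the protocol.

```python
from statistics import mean

def normalize_plate(raw, blank_wells, wild_type_wells):
    """Convert raw assay signals to fold change over wild type.

    raw: dict mapping well ID -> raw signal (fluorescence or absorbance).
    blank_wells / wild_type_wells: well IDs of no-DNA and wild-type controls.
    """
    background = mean(raw[w] for w in blank_wells)
    wt_signal = mean(raw[w] for w in wild_type_wells) - background
    if wt_signal <= 0:
        # Basic quality control: the plate is unusable without a working control.
        raise ValueError("wild-type control is not above background")
    controls = set(blank_wells) | set(wild_type_wells)
    return {well: (signal - background) / wt_signal
            for well, signal in raw.items() if well not in controls}

# Hypothetical plate: A1/A2 are blanks, B1/B2 wild type, C1/C2 variants.
raw_reads = {"A1": 100.0, "A2": 100.0, "B1": 300.0, "B2": 300.0,
             "C1": 500.0, "C2": 200.0}
fold_change = normalize_plate(raw_reads, ["A1", "A2"], ["B1", "B2"])
print(fold_change)
```

Reporting fold change rather than raw signal keeps measurements comparable across plates and days, which matters when results from several cycles are pooled for retraining.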

Applications: This protocol has been successfully applied in ultra-high-throughput protein stability mapping (776,000 variants) [7], enzyme engineering through iterative site saturation mutagenesis [7], and antimicrobial peptide screening (500 optimal variants selected from 500,000 computational designs) [7].

Protocol: Closed-Loop AI-Driven Small Molecule Optimization

Purpose: Iterative design and testing of small molecule therapeutics using AI-guided generative chemistry and automated synthesis [8].

Materials and Reagents:

- AI-driven molecular design platform (e.g., Exscientia's DesignStudio)

- Automated synthesis robotics (e.g., Exscientia's AutomationStudio)

- High-throughput screening assays (binding, functional, ADME)

- Target protein or cellular assays

- Compound management system

Procedure:

- Initial Design: AI generates initial compound designs based on target product profile (potency, selectivity, ADME properties) [8].

- Automated Synthesis: Robotics system synthesizes prioritized compounds (typically 100-200 compounds per cycle) [8].

- High-Throughput Testing: Compounds screened against primary target, counterscreens for selectivity, and early ADME assessment [8].

- Data Analysis: AI analyzes structure-activity relationships and identifies key molecular determinants of desired properties [8].

- Iterative Design: AI proposes next-generation compounds incorporating learning from previous cycle [8].

- Candidate Selection: Process repeats until compound meets all criteria for clinical candidate selection [8].
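The control flow of this procedure can be sketched as a simple loop. The code below is a toy illustration, not any vendor's implementation: `toy_design` and `toy_assay` are hypothetical stand-ins for the generative design platform and the synthesis-plus-assay step, and a "compound" is reduced to a single number.

```python
import random

def run_closed_loop(design, synthesize_and_test, meets_profile,
                    batch_size=150, max_cycles=10):
    """Iterate design -> make -> test -> learn until a candidate qualifies."""
    history = []                                   # all (compound, measurement) pairs
    for cycle in range(1, max_cycles + 1):
        batch = design(history, batch_size)        # AI proposes compounds
        results = [(c, synthesize_and_test(c)) for c in batch]
        history.extend(results)                    # input to the next design round
        hits = [c for c, m in results if meets_profile(m)]
        if hits:
            return {"candidate": hits[0], "cycles": cycle,
                    "compounds_made": len(history)}
    return {"candidate": None, "cycles": max_cycles,
            "compounds_made": len(history)}

# Toy stand-ins: a "compound" is a number in [0, 1]; potency peaks at 0.8.
rng = random.Random(0)

def toy_design(history, n):
    if not history:
        return [rng.random() for _ in range(n)]    # first round: explore broadly
    best = max(history, key=lambda pair: pair[1])[0]
    # Later rounds: sample near the best compound measured so far.
    return [min(1.0, max(0.0, best + rng.gauss(0, 0.05))) for _ in range(n)]

def toy_assay(compound):
    return 1.0 - abs(compound - 0.8)

outcome = run_closed_loop(toy_design, toy_assay, lambda m: m > 0.999)
print(outcome["cycles"], outcome["compounds_made"])
```

The default batch size of 150 sits inside the 100-200 compounds per cycle quoted in the procedure; the stopping rule stands in for a full target product profile.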

Applications: This approach enabled Exscientia to advance a CDK7 inhibitor to clinical candidate stage with only 136 synthesized compounds, compared to thousands typically required in traditional medicinal chemistry [8].

Diagram 2: Closed-Loop AI-Driven Small Molecule Optimization

Research Reagent Solutions for AI-Enhanced Discovery

Table 3: Essential Research Reagents and Platforms for AI-Driven Discovery

| Reagent/Platform | Function | Application in AI-Enhanced Workflows |
|---|---|---|
| Cell-Free Expression Systems | Protein synthesis without living cells | Enables rapid testing of AI-designed protein variants (>1 g/L in <4 hours); scalable from pL to kL [7] |
| DropAI Microfluidics | Picoliter-scale reaction containers | Allows screening of >100,000 reactions for massive data generation to train AI models [7] |
| Protein Language Models (ESM, ProGen) | Protein sequence and function prediction | Zero-shot prediction of beneficial mutations and protein functions; trained on evolutionary relationships [7] |
| Structure-Based Design Tools (ProteinMPNN) | Protein sequence design from structure | Designs sequences for specific backbones; combined with AlphaFold for 10× increase in design success [7] |
| Stability Prediction Tools (Prethermut, Stability Oracle) | Predicts effects of mutations on protein stability | Identifies stabilizing mutations and eliminates destabilizing variants before synthesis [7] |
| Automated Synthesis Robotics | Compound synthesis and testing | Closes the design-make-test-learn loop with minimal human intervention; enables rapid iteration [8] |
| Phenotypic Screening Platforms | High-content cellular imaging | Generates rich datasets for AI analysis of compound effects in biologically relevant systems [8] |

Implementation Challenges and Future Directions

Despite significant progress, Agentic AI in scientific discovery faces several substantial challenges. A critical question remains whether AI is "truly delivering better success, or just faster failures" [8]. While AI-designed compounds are reaching clinical trials more rapidly, none have yet received regulatory approval, with most programs remaining in early-stage trials [8]. This highlights the need for continued validation of AI-generated candidates in later-stage clinical development.

Additional challenges include:

- Data Quality and Bias: Training data limitations can propagate biases and constrain the chemical or biological space explored by AI systems [8].

- Explainability and Transparency: The "black box" nature of some complex AI models creates challenges for regulatory approval and scientific trust [8].

- Integration with Biological Complexity: Capturing the full complexity of biological systems, including cellular context and organism-level effects, remains difficult [7] [6].

- Regulatory Frameworks: Evolving guidelines from FDA and EMA on AI in drug development require careful navigation [8].

Future directions for Agentic AI in discovery include greater integration of multi-scale biological data, improved physical principles in generative models, and more sophisticated reasoning capabilities for hypothesis generation. As these systems mature, they are poised to transform areas ranging from virtual cell simulation and programmable control of phenotypes to the design of cellular circuits and development of novel therapies [6]. The ongoing merger of AI capabilities with experimental automation, exemplified by the integration of Exscientia's generative chemistry with Recursion's phenomics data, suggests continued acceleration toward more autonomous, efficient, and effective discovery platforms [8].

The paradigm of Design-Build-Test-Learn (DBTL) has long been the cornerstone of iterative engineering in biological sciences, particularly in protein engineering and drug development. The integration of artificial intelligence (AI) is now transforming this cycle into a closed-loop, autonomous research platform [2]. This evolution is powered by core AI agent architectures—ReAct, Reflection, and Multi-Agent Swarm Systems—that automate reasoning, enhance decision-making, and parallelize experimental workflows. By framing these architectures within the DBTL cycle, this article provides researchers and drug development professionals with application notes and protocols to harness AI for accelerated discovery.

A significant shift is emerging from the traditional DBTL cycle to an "LDBT" (Learn-Design-Build-Test) paradigm, where machine learning precedes design [2]. This approach leverages pre-trained models on vast biological datasets to make zero-shot predictions, potentially reducing the need for multiple iterative cycles and moving closer to a "Design-Build-Work" model. AI agent architectures are the computational engines that operationalize this shift, enabling the integration of prior knowledge and automated reasoning directly into the experimental design process.

Core AI Agent Architectures

ReAct (Reasoning + Acting)

The ReAct architecture integrates logical reasoning with actionable steps, allowing an AI agent to interact with its environment strategically.

- Principle of Operation: The agent operates in a loop: it reasons to deduce the next step, acts to execute that step (e.g., using a tool or querying an API), and observes the result, which informs the next cycle of reasoning [9]. This intertwining of thought and action is crucial for tackling complex problems requiring multi-step planning.

- Role in DBTL Platforms: Within a DBTL framework, a ReAct agent can autonomously manage the "Test" and "Learn" phases. For example, upon receiving experimental data ("Observe"), it can reason about the results, act by updating a machine learning model, and then use the refined model to design new variants for the next cycle.
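The reason-act-observe loop described above can be sketched without any LLM dependency. In this minimal example a rule-based `stub_reasoner` stands in for the language model, and `lookup_activity` is a hypothetical tool backed by an in-memory dictionary; none of these names come from a real agent framework.

```python
def react_loop(task, reason, tools, max_steps=5):
    """Alternate reasoning and acting until the reasoner returns an answer.

    reason(task, trace) -> ("act", tool_name, argument) or ("finish", answer)
    """
    trace = []                                     # (tool, argument, observation) history
    for _ in range(max_steps):
        decision = reason(task, trace)             # Reason
        if decision[0] == "finish":
            return decision[1], trace
        _, tool_name, argument = decision
        observation = tools[tool_name](argument)   # Act
        trace.append((tool_name, argument, observation))  # Observe
    return None, trace                             # step budget exhausted

# Stub tool and rule-based reasoner standing in for an LLM (both hypothetical).
assay_db = {"variant_7": 3.2, "variant_9": 1.1}
tools = {"lookup_activity": lambda name: assay_db[name]}

def stub_reasoner(task, trace):
    seen = {arg for _, arg, _ in trace}
    for name in assay_db:
        if name not in seen:
            return ("act", "lookup_activity", name)    # still missing data
    best = max(trace, key=lambda step: step[2])
    return ("finish", best[1])                         # enough evidence to answer

answer, trace = react_loop("pick the most active variant", stub_reasoner, tools)
print(answer, len(trace))
```

Keeping the full trace is what gives ReAct its transparency: every conclusion can be traced back to the observations that produced it.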

Reflection (Self-Critique and Refinement)

The Reflection architecture equips an agent with a self-critique mechanism, enabling it to evaluate and improve its own outputs or plans before finalizing them.

- Principle of Operation: After generating an initial output (e.g., an experimental design or a piece of code), the agent switches to a "critical" mode. It analyzes its own work against a set of criteria (e.g., feasibility, safety, efficiency) and produces a reflective critique. This critique is then used to revise and refine the original output [10].

- Role in DBTL Platforms: Reflection is paramount for ensuring the quality and reliability of autonomous operations. A reflecting agent can critically assess its proposed designs for protein variants in the "Design" phase, checking for potential stability issues or expression problems, thereby reducing the cost of failed "Build" and "Test" steps.
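A generate-critique-revise cycle of this kind can be sketched with a rule-based critic in place of an LLM. The checks used here (a mutation-count limit and a flag on substitutions to proline) are toy heuristics chosen for illustration, and the mutation labels are hypothetical.

```python
def critique(design):
    """Return a list of problems found in a proposed variant design."""
    issues = []
    if len(design["mutations"]) > design["max_mutations"]:
        issues.append("too_many_mutations")
    if any(m.endswith("P") for m in design["mutations"]):
        issues.append("proline_substitution")      # toy stability heuristic
    return issues

def revise(design, issues):
    """Apply a simple fix for each issue raised by the critique."""
    mutations = list(design["mutations"])
    if "proline_substitution" in issues:
        mutations = [m for m in mutations if not m.endswith("P")]
    if "too_many_mutations" in issues:
        mutations = mutations[:design["max_mutations"]]
    return {**design, "mutations": mutations}

def reflect(design, max_rounds=3):
    """Critique and revise until the critique comes back clean."""
    for _ in range(max_rounds):
        issues = critique(design)
        if not issues:
            break
        design = revise(design, issues)
    return design

draft = {"mutations": ["A10G", "L55P", "K12R", "S99N"], "max_mutations": 2}
final = reflect(draft)
print(final["mutations"])
```

The round limit matters in practice: a critic and a reviser that disagree could otherwise loop indefinitely.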

Multi-Agent Swarm Systems

Multi-agent swarm systems involve multiple specialized AI agents collaborating to solve a problem that is beyond the capability of a single agent [10]. These systems are inspired by collective problem-solving observed in nature and human organizations.

- Principle of Operation: Multiple agents, each with a defined role, expertise, and tools, work together. Their collaboration is orchestrated through specific communication patterns, such as hierarchical, sequential, or concurrent workflows [10] [9].

- Role in DBTL Platforms: A swarm system is ideal for managing the entire, complex DBTL cycle. Different agents can be assigned to specialized sub-tasks—such as experimental design, robotic control, data analysis, and hypothesis generation—working in concert to accelerate the research pace.

Comparative Analysis of Architectures

The table below summarizes the key characteristics, strengths, and ideal use cases for each architecture within a research platform.

Table 1: Comparative Analysis of Core AI Agent Architectures for DBTL Platforms

| Architecture | Core Principle | Key Strengths | Common Use Cases in DBTL | Communication Pattern |
|---|---|---|---|---|
| ReAct | Interleaves chain-of-thought reasoning with actionable steps [9]. | High transparency; handles dynamic environments. | Automated data analysis; guiding single-instrument workflows. | Sequential, model-driven loop. |
| Reflection | Generates self-critique to refine initial outputs. | Improved output quality and reliability; reduces errors. | Validating experimental designs; critiquing code for robotic control. | Sequential, iterative refinement. |
| Multi-Agent Swarm | Specialized agents collaborate under an orchestrated pattern [10] [9]. | Divides complex tasks; enables parallel work; scalable. | Managing end-to-end DBTL cycles; complex problem-solving. | Hierarchical, Sequential, Concurrent, Mesh [10]. |

Multi-Agent Swarm System Patterns for DBTL

Multi-agent systems can be instantiated in several patterns, each suited to different experimental workflows.

Hierarchical Architecture

In this pattern, agents are organized in a tree-like structure. A high-level "orchestrator" agent breaks down a top-level goal (e.g., "improve enzyme activity") and delegates sub-tasks to specialized "worker" agents [10] [9].

- DBTL Application: An orchestrator manages the entire DBTL cycle. It could delegate the "Design" phase to a specialized design agent, the "Build" phase to an agent controlling liquid handlers, and the "Test" phase to an analysis agent, aggregating their results for the "Learn" phase.
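As a rough sketch of this delegation pattern, the orchestrator below holds a registry of worker callables and aggregates their outputs. The worker functions are hypothetical placeholders that return fixed data; a real system would wrap design models, liquid-handler drivers, and analysis pipelines.

```python
def design_agent(goal):
    return {"variants": ["variant_1", "variant_2"]}        # placeholder designs

def build_agent(design):
    return {"constructs": [f"plasmid_{v}" for v in design["variants"]]}

def assay_agent(build):
    # Placeholder measurements; a real agent would read instrument output.
    return {"activity": {c: float(i + 1) for i, c in enumerate(build["constructs"])}}

class Orchestrator:
    """Top-level agent that splits one goal into phase-specific sub-tasks."""

    def __init__(self, workers):
        self.workers = workers                    # phase name -> worker callable

    def run_cycle(self, goal):
        design = self.workers["design"](goal)
        build = self.workers["build"](design)
        assay = self.workers["test"](build)
        # "Learn": aggregate worker outputs into a summary for the next cycle.
        best = max(assay["activity"], key=assay["activity"].get)
        return {"goal": goal, "best_construct": best, "results": assay["activity"]}

orchestrator = Orchestrator(
    {"design": design_agent, "build": build_agent, "test": assay_agent}
)
summary = orchestrator.run_cycle("improve enzyme activity")
print(summary["best_construct"])
```

Because workers are looked up by phase name, a specialist can be swapped (for example, a different build agent for another robot) without touching the orchestrator.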

The following diagram illustrates the hierarchical flow of tasks from a central orchestrator to specialized agents, which aligns with phases of a DBTL cycle.

Sequential Coordination

This architecture processes tasks in a linear order, where the output of one agent becomes the input for the next [10]. It is analogous to a traditional assembly line.

- DBTL Application: This pattern naturally maps to the classical DBTL cycle. A design agent passes variant sequences to a build agent, who passes physical constructs to a test agent, who finally passes assay data to a learn agent to plan the next cycle.
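In code, sequential coordination amounts to function composition over a shared state. The sketch below is illustrative only: the four stage functions are hypothetical placeholders that add dummy data, and the state is a plain dictionary.

```python
from functools import reduce

def design_stage(state):
    return {**state, "sequences": ["SEQ_A", "SEQ_B"]}      # placeholder variants

def build_stage(state):
    return {**state, "constructs": [s.lower() for s in state["sequences"]]}

def assay_stage(state):
    # Dummy readout: later constructs get higher values.
    return {**state, "assay": {c: float(i) for i, c in enumerate(state["constructs"])}}

def learn_stage(state):
    return {**state, "next_round_seed": max(state["assay"], key=state["assay"].get)}

PIPELINE = [design_stage, build_stage, assay_stage, learn_stage]

def run_pipeline(stages, initial_state):
    """Feed each stage's output into the next, in order."""
    return reduce(lambda state, stage: stage(state), stages, initial_state)

result = run_pipeline(PIPELINE, {"target": "phytase"})
print(result["next_round_seed"])
```

Each stage returns a new dictionary rather than mutating its input, so the hand-off between agents stays explicit and easy to log.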

Diagram: sequential workflow in which the output of one specialized agent becomes the input for the next.

Agents as Tools Pattern

This is a specific and practical implementation of a hierarchical system. Specialized AI agents are wrapped as callable "tools" for a central orchestrator agent [9].

- Principle of Operation: The user interacts only with the orchestrator. The orchestrator's large language model (LLM) reads the docstrings of the available agent-tools to decide which specialist to invoke for a given query. The specialist agent executes the task and returns the result to the orchestrator.

- DBTL Application: A researcher can ask the orchestrator, "Design and run an experiment to find a more stable phytase variant." The orchestrator would then call a `protein_design_agent`, a `lab_automation_agent` to handle the build and test phases, and a `data_analysis_agent` to interpret results, seamlessly executing a full DBTL cycle.
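The docstring-driven routing described above can be sketched as follows. The three agent names follow the example in the text, but their bodies are placeholders, and `choose_tool` uses simple keyword overlap as a stand-in for the orchestrator LLM's tool selection, which is an assumption made to keep the example self-contained.

```python
def protein_design_agent(query):
    """Design protein variants and propose stabilizing mutations."""
    return "designed 96 variants"                  # placeholder result

def lab_automation_agent(query):
    """Build constructs and run assays on the robotic platform."""
    return "assays complete"                       # placeholder result

def data_analysis_agent(query):
    """Analyze assay data and interpret results statistically."""
    return "top variant identified"                # placeholder result

TOOLS = [protein_design_agent, lab_automation_agent, data_analysis_agent]

def choose_tool(query, tools):
    """Pick the tool whose docstring shares the most words with the query.

    Keyword overlap stands in for the LLM reading the docstrings.
    """
    words = set(query.lower().split())

    def overlap(tool):
        doc_words = set(tool.__doc__.lower().replace(".", "").split())
        return len(words & doc_words)

    return max(tools, key=overlap)

def orchestrate(steps):
    """Route each sub-task to a specialist and collect (agent, result) pairs."""
    log = []
    for step in steps:
        tool = choose_tool(step, TOOLS)
        log.append((tool.__name__, tool(step)))
    return log

log = orchestrate(["design stabilizing mutations", "run assays", "interpret results"])
for agent_name, result in log:
    print(agent_name, "->", result)
```

The practical lesson carries over to LLM-based routing: the docstring is the interface, so vague or overlapping descriptions lead directly to the wrong specialist being called.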

Application Notes: Autonomous Enzyme Engineering

A landmark study in Nature Communications (2025) demonstrates the powerful integration of a multi-agent swarm system within a closed-loop DBTL platform for autonomous enzyme engineering [11].

Experimental Objectives and Workflow

The platform's goal was to engineer two enzymes: Arabidopsis thaliana halide methyltransferase (AtHMT) for improved ethyltransferase activity, and Yersinia mollaretii phytase (YmPhytase) for enhanced activity at neutral pH. The workflow integrated AI and robotics within a biofoundry environment, the Illinois Biological Foundry for Advanced Biomanufacturing (iBioFAB) [11].

Table 2: Key Research Reagent Solutions for Autonomous Enzyme Engineering

| Reagent / Solution | Function in the Experimental Workflow |

|---|---|

| Protein LLM (ESM-2) | An unsupervised model used in the "Learn/Design" phase to predict beneficial amino acid substitutions based on evolutionary sequences [11]. |

| Epistasis Model (EVmutation) | An unsupervised model analyzing local homologs to predict the fitness of protein variants, complementing the protein LLM for initial library design [11]. |

| HiFi Assembly Mix | Enzymatic mix for high-fidelity DNA assembly, creating mutant libraries with ~95% accuracy and eliminating the need for intermediate sequencing [11]. |

| Cell-Free Expression System | A rapid, flexible protein synthesis platform enabling high-throughput expression of protein variants without live cells [2] [11]. |

| Automated Assay Reagents | Specific substrates and buffers (e.g., for methyltransferase or phosphatase activity) configured for high-throughput, robotic handling and quantification in microplates [11]. |

Integrated AI and Robotic Protocol

Module 1: Learn & Design

- Step 1.1: The "Learn" phase begins by training a low-N machine learning model on existing variant fitness data [11]. In the first cycle no fitness data exist yet, so this step is skipped and the initial library relies on zero-shot predictions instead.

- Step 1.2: The "Design" phase uses a combination of a protein LLM (ESM-2) and an epistasis model (EVmutation) to generate a diverse and high-quality initial library of protein variants [11]. For subsequent cycles, the ML model from Step 1.1 is used to propose new variants based on the latest experimental data.

Module 2: Build

- Step 2.1: The designed variant sequences are used to automatically generate instructions for a DNA synthesizer or to design primers for a high-fidelity (HiFi) assembly-based mutagenesis PCR [11].

- Step 2.2: A robotic liquid handler prepares the mutagenesis PCR reactions.

- Step 2.3: The platform executes DpnI digestion, DNA assembly, and high-efficiency transformation into a microbial host in a 96-well format [11].

- Step 2.4: A colony picker robot selects successful transformants and inoculates them into deep-well plates for protein expression.

Module 3: Test

- Step 3.1: After a defined incubation period, a central robotic arm transfers the culture plates to a centrifuge, and then another liquid handler prepares crude cell lysates or purifies the expressed protein.

- Step 3.2: The platform dispenses the lysates and assay reagents into microplates. The enzyme activity assay (e.g., measuring methyltransferase or phosphatase activity) is performed and quantified by a plate reader [11].

- Step 3.3: The raw assay data is automatically processed and stored in a central database.

Module 4: Learn & Consolidate

- Step 4.1: The processed data is used to train or retrain the machine learning model (e.g., a Bayesian optimizer or neural network), updating the understanding of the sequence-function landscape [11].

- Step 4.2: The cycle repeats from Module 1, using the newly trained model to design an optimized library for the next round.
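The four modules above can be illustrated with a toy closed loop. This sketch makes strong simplifying assumptions that are not part of the cited study: the fitness landscape is purely additive, the mutation names and effect sizes are invented, the "assay" is a function call, and the "model" is just the measured single-mutant effects rather than a trained low-N regressor.

```python
from itertools import combinations

# Hypothetical hidden landscape: additive effect of each mutation on activity.
TRUE_EFFECT = {"A12V": 0.8, "G45S": -0.3, "L78F": 0.5, "T90I": 0.1, "K101R": -0.6}

def assay(variant):
    """Simulated Build + Test: wild-type activity is 1.0 and effects add up."""
    return 1.0 + sum(TRUE_EFFECT[m] for m in variant)

def learn(data):
    """Learn: estimate per-mutation effects from single-mutant measurements."""
    return {v[0]: y - 1.0 for v, y in data.items() if len(v) == 1}

def design(model, size, n):
    """Design: propose the n combinations of `size` mutations with the best predicted sum."""
    combos = combinations(sorted(model), size)
    return sorted(combos, key=lambda c: sum(model[m] for m in c), reverse=True)[:n]

data = {}
# Cycle 1 has no training data, so every single mutant is tested
# (a stand-in for a zero-shot initial library).
library = [(m,) for m in TRUE_EFFECT]
for cycle in range(1, 4):
    for variant in library:          # Build + Test
        data[variant] = assay(variant)
    model = learn(data)              # Learn
    library = design(model, size=cycle + 1, n=3)  # Design the next round

best = max(data, key=data.get)
print(best, round(data[best], 2), f"{len(data)} variants tested")
```

Only 11 of the 31 possible mutation combinations are measured here, which is the point of the loop: each round's data narrows the next round's library. Real landscapes show epistasis, so an additive model like this one would need to be replaced by a learned regressor.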

[Diagram: integrated workflow of the AI and robotic modules, showing both sequential flow and parallel processes.]

Key Experimental Outcomes

The autonomous platform delivered exceptional results within a condensed timeframe, demonstrating the efficiency of integrating AI agents with laboratory automation.

Table 3: Quantitative Outcomes of Autonomous Enzyme Engineering Campaign

| Engineered Enzyme | Engineering Goal | Fold Improvement | Campaign Duration | Campaign Scale |

|---|---|---|---|---|

| AtHMT | Improve substrate preference (ethyl iodide) | 90-fold | 4 weeks | Fewer than 500 variants constructed and characterized per enzyme [11] |

| AtHMT | Improve ethyltransferase activity | 16-fold | 4 weeks | Fewer than 500 variants constructed and characterized per enzyme [11] |

| YmPhytase | Improve activity at neutral pH | 26-fold | 4 weeks | Fewer than 500 variants constructed and characterized per enzyme [11] |

The integration of ReAct, Reflection, and Multi-Agent Swarm architectures into DBTL platforms marks a transformative leap toward autonomous research. These AI agent frameworks provide the cognitive backbone for automating complex reasoning, ensuring robust output, and orchestrating collaborative, parallel experimentation. As demonstrated by the autonomous engineering of enzymes, the synergy between AI and robotics within a closed-loop system can drastically accelerate the pace of discovery, reducing a process that traditionally takes months to a matter of weeks. For researchers in drug development and synthetic biology, adopting these architectures is no longer a speculative future but a practical pathway to achieving unprecedented scale, efficiency, and innovation in their experimental workflows.

The emergence of megascale datasets and sophisticated artificial intelligence (AI) models is fundamentally reshaping the synthetic biology and drug development landscape. The traditional Design-Build-Test-Learn (DBTL) cycle, while systematic, often requires multiple, time-intensive iterations to engineer functional biological systems. A significant paradigm shift is now underway, repositioning the "Learn" phase to the forefront of this cycle. This new LDBT (Learn-Design-Build-Test) framework leverages vast, pre-existing datasets and powerful machine learning models to make accurate, zero-shot predictions, thereby streamlining the path to functional designs [7]. In this context, zero-shot learning (ZSL) refers to the capability of AI models to recognize, classify, or generate predictions for categories or tasks they have never explicitly encountered during training, breaking the traditional dependency on extensive, task-specific labeled datasets [12]. This approach is particularly transformative for closed-loop DBTL platforms governed by AI agents, where the initial learning phase can drastically reduce the number of empirical cycles required, accelerating the development of novel therapeutics, enzymes, and biosynthetic pathways.

The Core Components: Megascale Data and Zero-Shot AI

Megascale Datasets: Characteristics and Curation

Megascale datasets are characterized by their volume, diversity, and depth, and they provide the substrate for training robust foundation models in biology. These datasets span genomic, proteomic, transcriptomic, and phenotypic data from millions of biological entities. For zero-shot learning to be effective, the datasets must encompass broad evolutionary and functional information, allowing models to infer deep patterns and relationships.

Table 1: Exemplary Megascale Datasets for Biological Zero-Shot Learning

| Dataset Name | Scale and Scope | Data Modalities | Primary Application in ZSL |

|---|---|---|---|

| LAD (Large-scale Attribute Dataset) [13] | 78,017 images; 230 classes; 359 attributes | Visual images, semantic attributes | Attribute-based classification and generalization to unseen object categories. |

| Protein Sequence Databases (e.g., UniRef) [7] | Millions of protein sequences from across phylogeny | Amino acid sequences, evolutionary relationships | Training protein language models (e.g., ESM, ProGen) for zero-shot function prediction and design. |

| Protein Structure Databases (e.g., PDB) [7] | Hundreds of thousands of experimentally determined structures | 3D atomic coordinates, secondary structure | Training structure-based models (e.g., AlphaFold, RoseTTAFold, ProteinMPNN) for zero-shot structure prediction and sequence design. |

Zero-Shot Learning Mechanisms in Biology

Zero-shot learning operates by leveraging semantic information and relationships between known and unknown classes to make predictions. In a biological context, this translates to several powerful mechanisms [12]:

- Semantic Embeddings and Attribute-Based Classification: Biological entities (e.g., proteins, cell types) are described by a set of human-interpretable attributes or features (e.g., structural motifs, functional domains, solubility, stability). Models are trained to map sequences or structures into a shared semantic space defined by these attributes, enabling them to infer the properties of unseen entities based on their proximity in this space.

- Generative Models: Techniques such as Generative Adversarial Networks (GANs) and Variational Autoencoders (VAEs) can generate synthetic examples of unseen classes based on the learned distribution of seen classes. This is particularly useful for creating novel protein variants or chemical compounds that satisfy desired property constraints.

- Transfer Learning: Pre-trained models on massive, general datasets (e.g., all known protein sequences) can be applied with minimal fine-tuning to specialized tasks (e.g., predicting the stability of a specific enzyme family), effectively transferring knowledge from a source domain to a target domain.
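The attribute-based mechanism can be made concrete with a small example. The sketch below assumes a hypothetical attribute predictor has already produced attribute scores for a query protein; the class names, attribute list, and numbers are invented for illustration. The unseen class is recognized purely from its attribute description, by nearest neighbor in the shared semantic space.

```python
import math

# Hypothetical attribute order:
# [has_signal_peptide, transmembrane, binds_metal, secreted]
CLASS_ATTRIBUTES = {
    "membrane_transporter": [0, 1, 0, 0],  # seen during training
    "secreted_hydrolase":   [1, 0, 0, 1],  # seen during training
    "metalloprotease":      [1, 0, 1, 1],  # unseen: known only by its attributes
}

def cosine(u, v):
    """Cosine similarity between two attribute vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0

def zero_shot_classify(predicted_attributes, class_attributes):
    """Assign the class whose attribute signature is closest to the prediction."""
    return max(
        class_attributes,
        key=lambda name: cosine(predicted_attributes, class_attributes[name]),
    )

# Attribute scores for a query protein from a hypothetical attribute predictor.
query = [0.9, 0.1, 0.8, 0.7]
label = zero_shot_classify(query, CLASS_ATTRIBUTES)
print(label)
```

No example of the unseen class was needed to label the query; the model only had to predict attributes it had already learned from seen classes.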

Application Notes: Protocols for a Closed-Loop LDBT Platform

Integrating megascale data and zero-shot learning into a closed-loop platform requires a structured experimental and computational workflow. The following protocols and application notes detail this process.

Protocol 1: In silico Protein Engineering via Zero-Shot Prediction

Objective: To design a protein (e.g., an antibody or enzyme) with enhanced properties (e.g., stability, activity) using zero-shot models without initial experimental testing.

Learn (L): Model Selection and Context Priming

- Input: A megascale dataset of protein sequences and/or structures.

- Action: Select and employ a pre-trained protein language model (e.g., ESM-3, ProGen) or a structure-based design tool (e.g., ProteinMPNN, MutCompute). The model has already "learned" the fundamental principles of protein folding and function from its training data [7].

- Output: A foundational model capable of zero-shot inference.

Design (D): Zero-Shot Sequence Generation

- Input: A set of functional constraints and objectives for the target protein (e.g., "stabilize the active site," "maintain binding affinity to antigen X," "increase solubility").

- Action: Use the model to generate novel protein sequences that are predicted to meet the objectives. This can involve sampling from the model's distribution or using it to score and rank a library of candidate sequences. Tools like Stability Oracle or DeepSol can be integrated for specific property predictions [7].

- Output: A computationally designed library of protein variants.
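One common way to "score and rank" candidates with a protein language model is a log-likelihood ratio between the mutant and wild-type residue at each position. The sketch below illustrates that scoring rule only. The probability table is a hypothetical stand-in for the output of a masked language model, and no real model is called.

```python
import math

# Hypothetical per-position amino-acid probabilities, standing in for the
# output of a masked protein language model at three positions.
POSITION_PROBS = {
    10: {"A": 0.60, "V": 0.30, "G": 0.10},
    25: {"L": 0.20, "F": 0.70, "I": 0.10},
    40: {"K": 0.80, "R": 0.15, "E": 0.05},
}
WILD_TYPE = {10: "A", 25: "L", 40: "K"}

def mutation_score(position, mutant_aa):
    """Zero-shot score: log p(mutant) - log p(wild type) at one position."""
    probs = POSITION_PROBS[position]
    return math.log(probs[mutant_aa]) - math.log(probs[WILD_TYPE[position]])

def rank_single_mutants():
    """Score every single substitution in the table and sort best-first."""
    scores = {}
    for position, probs in POSITION_PROBS.items():
        wt = WILD_TYPE[position]
        for aa in probs:
            if aa != wt:
                scores[f"{wt}{position}{aa}"] = mutation_score(position, aa)
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)

ranked = rank_single_mutants()
for name, score in ranked:
    print(f"{name}: {score:+.2f}")
```

A positive score means the model considers the substitution more plausible than the wild-type residue, which is the signal used to shortlist variants before any experiment is run.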

Build (B): Rapid Synthesis with Cell-Free Systems

- Input: The computationally designed DNA sequences.

- Action: Synthesize DNA templates without intermediate cloning steps and express proteins using a cell-free gene expression (CFE) platform. This system leverages cellular machinery in vitro, enabling rapid (hours) and high-throughput protein production, even for potentially toxic proteins [7].

- Output: A physical library of synthesized protein variants.

Test (T): High-Throughput Functional Characterization

- Input: The synthesized protein library.

- Action: Assay protein function using high-throughput methods coupled with CFE, such as fluorescence-based activity assays, cDNA display for stability mapping, or affinity selection. Liquid handling robots and microfluidics can scale testing to hundreds of thousands of variants [7].

- Output: Quantitative functional data for each variant.

Closed-Loop Feedback: The experimental results from the Test phase are fed back into the Learn phase. This new, high-quality data can be used to fine-tune the foundational model, creating a more accurate and specialized AI agent for the next iteration of the cycle, thus closing the loop [7].

Diagram 1: The closed-loop LDBT cycle for protein engineering.

Protocol 2: AI-Driven Antibody-Drug Conjugate (ADC) Target Discovery

Objective: To prioritize novel, tumor-selective antigen targets for ADC development using AI and multi-omics megascale data in a zero-shot manner.

Learn (L): Multi-Omics Data Integration

- Input: Curated megascale datasets including genomic, transcriptomic, proteomic, and clinical data from both tumor and normal tissues.

- Action: Train or utilize a pre-trained AI model (e.g., graph neural networks, deep learning classifiers) to learn the complex patterns distinguishing tumor-specific, internalizing membrane proteins from other proteins [14].

- Output: A predictive model for ideal ADC target properties.

Design (D): Zero-Shot Target Prioritization

- Input: The complete human proteome or a specific set of uncharacterized membrane proteins.

- Action: Use the trained model to score and rank all input proteins based on their predicted "ideality" as an ADC target (e.g., high tumor expression, low normal tissue expression, high predicted internalization). This constitutes a zero-shot prediction for proteins not previously known to be ADC targets [14].

- Output: A shortlist of candidate antigen targets with associated predictive scores.
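The prioritization step can be sketched as a weighted score over the three criteria named above. Everything in this example is an assumption made for illustration: the candidate names, the normalized feature values, the weights, and the linear form of the score. A trained model would replace `adc_score` in practice.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    name: str
    tumor_expr: float       # normalized tumor expression, 0..1
    normal_expr: float      # normalized normal-tissue expression, 0..1
    internalization: float  # predicted internalization probability, 0..1

def adc_score(c: Candidate, w_selectivity: float = 0.6, w_internal: float = 0.4) -> float:
    """Hypothetical 'ideality' score: reward tumor selectivity and internalization."""
    selectivity = max(c.tumor_expr - c.normal_expr, 0.0)
    return w_selectivity * selectivity + w_internal * c.internalization

# Invented candidates; the names are placeholders, not real targets.
candidates = [
    Candidate("PROT_A", tumor_expr=0.9, normal_expr=0.1, internalization=0.8),
    Candidate("PROT_B", tumor_expr=0.9, normal_expr=0.7, internalization=0.9),
    Candidate("PROT_C", tumor_expr=0.5, normal_expr=0.05, internalization=0.3),
]

shortlist = sorted(candidates, key=adc_score, reverse=True)
for c in shortlist:
    print(f"{c.name}: {adc_score(c):.2f}")
```

PROT_B internalizes best but ranks below PROT_A because its high normal-tissue expression erodes selectivity, which mirrors the trade-off the protocol is designed to surface.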

Build (B): Molecular Tool Generation

- Input: The shortlisted target genes.

- Action: Clone and express the target antigens. Generate candidate antibodies or binding scaffolds against these targets.

- Output: Recombinant antigens and antibody candidates.

Test (T): In vitro and In vivo Validation

- Input: The generated molecular tools.

- Action: Validate target expression via IHC, test antibody binding affinity and specificity, and critically, assess antigen-antibody complex internalization in relevant cell lines. Proceed to in vivo efficacy and safety studies for lead candidates [14].

- Output: Experimental confirmation of target suitability.

Closed-Loop Feedback: Validation results refine the AI model, improving its predictive power for subsequent target discovery campaigns and informing the AI agent's future search strategies.

Table 2: AI Models for Zero-Shot Target Identification in ADC Development [14]

| AI Methodology | Data Sources Utilized | ADC-Specific Challenge Addressed | ZSL Application |

|---|---|---|---|

| Graph Neural Networks (GNNs) | Genomics, transcriptomics, proteomics, molecular structures | Overcoming target heterogeneity and ensuring functional internalization | Predicting internalization efficiency and tumor-selectivity for uncharacterized proteins. |

| Natural Language Processing (NLP) | Scientific literature, patents, clinical trial databases | Aggregating fragmented evidence for novel targets | Inferring functional and expression patterns for less-studied targets from textual data. |

| Deep Learning (CNNs, Autoencoders) | Digital pathology images, multi-omics data | Assessing antigen density and spatial distribution from imaging | Quantifying expression patterns from tissue images for targets not in training data. |

Diagram 2: AI-driven ADC target discovery workflow.

The Scientist's Toolkit: Essential Research Reagents and Platforms

The practical implementation of the LDBT paradigm relies on a suite of specialized reagents, software, and platforms.

Table 3: Key Research Reagent Solutions for Megascale Zero-Shot Learning

| Item / Solution | Function / Application | Example / Specification |

|---|---|---|

| Cell-Free Expression System | Rapid, high-throughput protein synthesis without living cells. Enables Build phase for toxic or complex proteins. | E. coli or HeLa lysate-based systems; protein yields >1 g/L in <4 hours [7]. |

| Pre-trained Protein Language Model | Zero-shot prediction of protein function, stability, and mutation effects. Core of the Learn phase. | ESM-3, ProGen; trained on millions of sequences from UniRef [7]. |

| Structure Prediction & Design Tool | Zero-shot protein structure prediction and sequence design for a given backbone. Core of the Design phase. | AlphaFold2, ProteinMPNN; enables design without experimental structures [7]. |

| Droplet Microfluidics System | Ultra-high-throughput screening of biological assays. Critical for the Test phase at megascale. | Platforms like DropAI for screening >100,000 picoliter-scale reactions [7]. |

| Large-Scale Attribute Dataset | Benchmarking and training ZSL models for visual and semantic tasks in biology. | LAD dataset: 78K images, 230 classes, 359 attributes [13]. |

| AI-Optimized Distributed Training Framework | Training foundational models on megascale datasets across thousands of GPUs. | MegaScale system: achieves >55% Model FLOPs Utilization on 12,288 GPUs [15]. |

The fusion of megascale datasets and zero-shot learning within a reimagined LDBT cycle represents a cornerstone for the next generation of closed-loop, AI-agent-driven research platforms. This approach moves the field from a reliance on iterative, empirical experimentation toward a more predictive, first-principles-based engineering discipline. By establishing a robust data foundation and leveraging the generalized intelligence of models trained on this data, researchers and drug developers can dramatically accelerate the design of novel biological parts, therapeutic candidates, and optimized biosynthetic pathways, ultimately shrinking the timeline from concept to functional validation.

AI Agents in Action: Real-World Applications and Workflow Integration

The early stage of drug discovery, hit identification, aims to find chemical compounds that measurably modulate a biological target and are suitable for optimization into lead compounds [16]. This phase has been transformed by artificial intelligence (AI) and its integration into closed-loop Design-Build-Test-Learn (DBTL) platforms. These platforms use AI agents to autonomously design molecules, simulate their properties, and prioritize experiments, creating a continuous, data-driven cycle that dramatically accelerates research [7] [17] [18].

This article details practical protocols for two core methodologies—virtual screening and de novo molecular design—framed within an agentic AI-driven DBTL workflow. We provide structured data, ready-to-use experimental procedures, and key reagent solutions to enable implementation of these accelerated approaches.

Integrated DBTL Workflow for Hit Identification

The following diagram illustrates the overarching closed-loop DBTL workflow, showing the integration of agentic AI, virtual screening, and de novo design in an automated cycle for hit identification.

Diagram Title: Closed-Loop DBTL Workflow

Virtual Screening Protocols

Virtual screening uses computational methods to prioritize compounds from ultra-large libraries for experimental testing [16]. Integrated into a DBTL cycle, AI agents can autonomously manage this process, from initial docking to selecting compounds for synthesis and testing.

The HIDDEN GEM Protocol for Ultra-Large Library Screening

HIDDEN GEM is a novel methodology that integrates molecular docking, generative modeling, and similarity searching to efficiently identify hits from libraries containing billions of molecules [19].

Workflow and Quantitative Performance

The diagram below details the HIDDEN GEM cycle, which integrates initial docking, generative AI, and similarity searching to efficiently identify high-scoring compounds.

Diagram Title: HIDDEN GEM Screening Cycle

Table 1: HIDDEN GEM Performance Metrics for 16 Protein Targets [19]

| Metric | Result |

|---|---|

| Library Size Screened | 37 billion compounds (Enamine REAL Space) |

| Computational Resource | One 44-core CPU machine with one NVIDIA GTX 1080 Ti GPU |

| Total Time | ~2 days per target |

| Enrichment Factor | Up to 1000-fold over random screening |

| Compounds Actually Docked | < 600,000 |

Step-by-Step Protocol

Initialization

- Input: Prepared protein structure (PDB format), defined binding site.

- Library: Use a small, diverse initial library like the Enamine Hit Locator Library (HLL, ~460,000 compounds) [19].

- Action: Dock all HLL compounds using software like Smina or AutoDock Vina. Retain the best score per compound.

Generation

- Fine-tuning: Use the top 1% of scoring compounds from Initialization to fine-tune a pre-trained generative model (e.g., a SMILES-based model trained on ChEMBL).

- Filter Model: Train a binary classification model to discriminate the top 1% from the remaining 99%.

- AI Generation: The fine-tuned model generates novel compounds. The filter model selects those predicted to be top-scoring.

- Output: Generate approximately 10,000 unique, novel compounds. This takes ~4 hours on a single NVIDIA GTX 1080 Ti GPU [19].

- Docking: Dock and score all generated compounds.
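The split that feeds both the fine-tuning set and the filter model can be sketched as follows. The compound IDs and docking scores are synthetic, and the helper name `label_top_fraction` is hypothetical. The code assumes the Vina/Smina convention that more negative docking scores are better.

```python
def label_top_fraction(scores, fraction=0.01):
    """Label the best-scoring `fraction` of compounds 1 and all others 0.

    scores: dict of compound_id -> docking score (more negative = better).
    """
    n_top = max(1, int(len(scores) * fraction))
    ranked = sorted(scores, key=scores.get)  # ascending, so best scores come first
    top = set(ranked[:n_top])
    return {cid: int(cid in top) for cid in scores}

# Synthetic docking results for 200 hypothetical compounds.
docking_scores = {f"cmpd_{i}": -5.0 - 0.01 * i for i in range(200)}

labels = label_top_fraction(docking_scores, fraction=0.01)
fine_tune_set = [cid for cid, y in labels.items() if y == 1]
print(fine_tune_set)
```

The positives form the fine-tuning set for the generative model, while the full labeled dictionary is the training data for the binary filter model.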

Similarity Search

- Query: Select up to 1,000 top-scoring compounds from the previous steps.

- Search: Perform a massive chemical similarity search (e.g., Tanimoto similarity) against the ultra-large screening library (e.g., Enamine REAL Space).

- Output: Select the 100,000 most similar, purchasable compounds from the large library. This step requires ~3,600 CPU-core hours [19].
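Tanimoto similarity on binary fingerprints is the number of shared on-bits divided by the number of on-bits in either fingerprint. The sketch below represents each fingerprint as a set of on-bit indices and ranks a tiny library by its best similarity to any query. The fingerprints and IDs are invented, and a brute-force scan like this would not scale to billions of compounds without indexing or specialized search tools.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    a, b = set(fp_a), set(fp_b)
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def most_similar(queries, library, k):
    """Return the k library compounds with the highest similarity to any query."""
    scored = [
        (max(tanimoto(fp, q) for q in queries.values()), cid)
        for cid, fp in library.items()
    ]
    scored.sort(key=lambda item: (-item[0], item[1]))  # best first, ties by ID
    return scored[:k]

# Hypothetical fingerprints (sets of on-bit indices).
queries = {"q1": {1, 2, 3, 4}}
library = {
    "L1": {1, 2, 3, 4, 5},
    "L2": {1, 2},
    "L3": {7, 8},
}

hits = most_similar(queries, library, k=2)
print(hits)
```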

Final Docking and Hit Nomination

- Dock and score the 100,000 selected compounds.

- The top-ranking compounds are nominated as virtual hits for experimental validation in the subsequent "Build" and "Test" phases.

Deep Learning-Based Virtual Screening with HydraScreen

HydraScreen is a deep learning-based scoring function that predicts protein-ligand affinity and pose confidence [20].

Workflow

The process involves generating multiple ligand poses and using a convolutional neural network (CNN) ensemble to analyze protein-ligand interactions and predict binding affinity.

Diagram Title: HydraScreen Affinity Prediction

Step-by-Step Protocol

Input Preparation

- Protein: Remove water and ions, add hydrogens and charges, repair truncated side-chains.

- Ligand: Sanitize structures and generate stereoisomers (store up to 16 stereoisomers per compound) [20].

Pose Generation

- Use docking software (e.g., Smina) to generate an ensemble of docked conformations for each protein-ligand pair.

Deep Learning Scoring

- Process each pose through the HydraScreen CNN ensemble.

- The model outputs an affinity estimate and a pose confidence score for each conformation.

Final Affinity Calculation

- Compute a final, aggregate affinity value using a Boltzmann-like average over the entire conformational ensemble [20].
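A Boltzmann-like average weights each pose's affinity by an exponential of a per-pose score, so that more plausible poses dominate the aggregate. The sketch below shows one possible form of that calculation. The choice of pose confidence as the weighting score, the `temperature` parameter, and the example numbers are assumptions, not details taken from the HydraScreen publication.

```python
import math

def boltzmann_average(affinities, confidences, temperature=1.0):
    """Weighted mean of pose affinities with weights proportional to exp(confidence / T)."""
    if len(affinities) != len(confidences) or not affinities:
        raise ValueError("need one confidence per affinity, and at least one pose")
    shift = max(confidences)  # subtract the maximum for numerical stability
    weights = [math.exp((c - shift) / temperature) for c in confidences]
    total = sum(weights)
    return sum(w * a for w, a in zip(weights, affinities)) / total

# Hypothetical per-pose predictions (affinity in pKd-like units).
pose_affinity = [6.0, 7.5, 8.0]
pose_confidence = [0.1, 0.9, 0.2]

soft = boltzmann_average(pose_affinity, pose_confidence, temperature=1.0)
sharp = boltzmann_average(pose_affinity, pose_confidence, temperature=0.01)
print(round(soft, 3), round(sharp, 3))
```

At high temperature the result approaches a plain mean over poses; at low temperature it approaches the affinity of the single most confident pose.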

Table 2: Prospective Validation of HydraScreen for IRAK1 Hit Identification [20]

| Metric | Performance |

|---|---|

| Hit Identification Rate | 23.8% of all active compounds found in top 1% of ranked library |

| Library Screened | Diversity library of 46,743 compounds |

| Key Outcome | Identification of 3 potent (nanomolar) scaffolds, 2 of which were novel for IRAK1 |
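The hit identification rate in Table 2 can be restated as an enrichment factor, defined as the fraction of actives recovered divided by the fraction of the library selected. The short calculation below uses only the figures from the table; the function name is illustrative.

```python
def enrichment_factor(frac_actives_recovered, frac_library_selected):
    """EF = (fraction of all actives found) / (fraction of the library tested)."""
    return frac_actives_recovered / frac_library_selected

library_size = 46_743
top_fraction = 0.01        # top 1% of the ranked library
actives_recovered = 0.238  # 23.8% of all actives found there

n_selected = round(library_size * top_fraction)
ef = enrichment_factor(actives_recovered, top_fraction)
print(n_selected, round(ef, 1))
```

In other words, testing roughly 467 top-ranked compounds recovers actives about 24 times more efficiently than picking the same number at random.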

De Novo Molecular Design Protocols

De novo design uses AI to generate novel molecular structures from scratch, optimized for specific target binding and drug-like properties [21]. Within a DBTL cycle, generative models are continuously refined with experimental data from the "Test" phase.

Core Strategies for De Novo Design

Table 3: De Novo Molecular Design Strategies [21]

| Strategy | Description | Primary DBTL Phase |

|---|---|---|

| Scaffold Hopping | Generating novel core structures while maintaining similar activity to a known hit. | Hit-to-Lead |

| Scaffold Decoration | Adding functional groups to a core scaffold to enhance interactions with the target. | Hit-to-Lead / Optimization |

| Fragment Growing | Expanding a small, validated fragment by adding atoms or functional groups. | Hit Identification |

| Fragment Linking | Joining two or more fragments that bind to different sub-pockets of the target. | Hit Identification |

| Chemical Space Sampling | Selecting a diverse subset of molecules from a vast virtual chemical space (~10^63 molecules) [21]. | Hit Identification |

Protocol for Generative AI-Driven De Novo Design

This protocol can be executed by an AI agent to automatically design new molecules based on experimental feedback.

Model Selection and Training

- Architecture: Choose a generative model architecture such as a Variational Autoencoder (VAE), Generative Adversarial Network (GAN), or a modern Transformer model.

- Pre-training: Pre-train the model on a large corpus of chemical structures (e.g., ChEMBL, ZINC) to learn fundamental chemical rules and structural patterns [22].

Goal-Directed Generation

- Reward Function: Define a multi-parameter reward function for Reinforcement Learning (RL) that incorporates predicted affinity, drug-likeness (e.g., Lipinski's Rule of Five), synthetic accessibility, and target selectivity [22] [21].

- AI Generation: The AI agent uses the generative model to propose new molecules, which are then scored by the reward function. The agent iteratively improves its proposals based on this feedback.
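A multi-parameter reward of this kind can be sketched as a weighted sum of normalized terms. The example below assumes that descriptors and predictions have already been computed by other tools. The molecule records, weights, and normalization ranges are hypothetical, and the selectivity term mentioned above is omitted for brevity. The Lipinski thresholds are the standard Rule of Five values, and the synthetic accessibility score is assumed to run from 1 (easy) to 10 (hard).

```python
from dataclasses import dataclass

@dataclass
class Molecule:
    name: str
    mol_weight: float     # Da
    logp: float
    h_donors: int
    h_acceptors: int
    predicted_pkd: float  # predicted affinity from a separate model
    sa_score: float       # synthetic accessibility, 1 (easy) to 10 (hard)

def lipinski_violations(m: Molecule) -> int:
    """Count Rule of Five violations."""
    return sum([m.mol_weight > 500, m.logp > 5, m.h_donors > 5, m.h_acceptors > 10])

def reward(m: Molecule, w_aff: float = 0.5, w_drug: float = 0.3, w_sa: float = 0.2) -> float:
    """Hypothetical reward in [0, 1] combining affinity, drug-likeness, and SA."""
    affinity_term = min(max((m.predicted_pkd - 4.0) / 6.0, 0.0), 1.0)  # pKd 4..10 -> 0..1
    drug_term = 1.0 if lipinski_violations(m) <= 1 else 0.0
    sa_term = (10.0 - m.sa_score) / 9.0
    return w_aff * affinity_term + w_drug * drug_term + w_sa * sa_term

# Invented molecules for illustration.
mol_a = Molecule("mol_A", 350.0, 2.5, 2, 5, predicted_pkd=8.0, sa_score=3.0)
mol_b = Molecule("mol_B", 650.0, 6.2, 3, 8, predicted_pkd=9.0, sa_score=6.0)

for m in (mol_a, mol_b):
    print(m.name, lipinski_violations(m), round(reward(m), 3))
```

Although mol_B has the higher predicted affinity, its two Rule of Five violations and harder synthesis give it the lower reward, which is the behavior a multi-parameter objective is meant to enforce.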

In Silico Validation

- Filters: Apply computational filters to prioritize generated compounds for synthesis. These include:

  - ADMET Prediction: Use in silico predictors to estimate key properties such as solubility and stability before synthesis. Tools like DeepSol (solubility) and Stability Oracle (stability) fill this role for protein designs [7].

  - Pan-Assay Interference Compounds (PAINS) Filtering: Remove compounds with known promiscuous, non-selective motifs [20].

  - Docking: Perform molecular docking as a preliminary check for binding mode and affinity.

Iterative DBTL Integration

- The top-ranked virtual compounds proceed to the "Build" phase (synthesis).

- Synthesized compounds are then "Tested" in biochemical or cellular assays.

- The resulting data is fed back into the "Learn" phase to retrain and refine the generative model, closing the loop and improving the next round of design [18].

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Reagents and Tools for Accelerated Hit Identification

| Item / Resource | Function / Application |

|---|---|

| Ultra-Large Make-on-Demand Libraries (Enamine REAL Space, eMolecules eXplore) | Provide access to billions of synthesizable compounds for virtual screening [19]. |

| Diverse Screening Libraries (Enamine Hit Locator Library - HLL) | Smaller, curated libraries used for initial docking in workflows like HIDDEN GEM [19]. |

| DNA-Encoded Libraries (DELs) | Enable experimental screening of millions to billions of compounds in a single tube via affinity selection and NGS readout [16]. |

| Cloud-Based Robotic Labs (Strateos Cloud Lab) | Provide remote, automated platforms for high-throughput compound synthesis and testing, integrating with DBTL workflows [20]. |

| Cell-Free Expression Systems | Enable rapid, high-throughput synthesis and testing of proteins without the need for live cells, accelerating the "Build" and "Test" phases [7]. |

| Generative AI Platforms (e.g., for VAE, GAN, RL) | Core software for de novo molecular design, integrating with DBTL cycles for continuous learning [22] [21]. |

| Knowledge Graphs (e.g., Ro5's SpectraView) | Data systems that integrate biomedical data from publications, patents, and databases to aid in target evaluation and prioritization [20]. |

The integration of artificial intelligence (AI) into biological design is fundamentally reshaping the discovery and development of therapeutic proteins and antibodies. This paradigm moves beyond traditional, linear Design-Build-Test-Learn (DBTL) cycles to a more dynamic, AI-agent-driven closed-loop feedback system, often termed LDBT (Learn-Design-Build-Test). This framework leverages AI to learn from vast biological datasets, design novel biomolecules in silico, and rapidly validate them through high-throughput experiments, with data from each cycle feeding back to refine the AI models. This application note details the core protocols, quantitative results, and essential reagents driving this transformative approach, providing a practical resource for researchers and drug development professionals.

The Shift from DBTL to Closed-Loop LDBT

The conventional DBTL cycle, while effective, is often sequential and slow. The integration of AI proposes a paradigm shift to LDBT, where "Learning" precedes "Design" [7]. In this model, pre-trained AI models, informed by massive datasets, perform zero-shot predictions to generate initial designs, drastically accelerating the initial design phase and reducing reliance on iterative physical screening [7]. The "Build" and "Test" phases are accelerated using technologies like cell-free expression systems and yeast display, enabling megascale data generation [7]. This creates a closed-loop feedback system where experimental results continuously refine and improve the AI models, creating a self-optimizing discovery engine [7] [17].

The following diagram illustrates the workflow of this integrated, AI-agent-driven closed-loop system.

Quantitative Results in AI-Driven Therapeutic Protein Design

Recent breakthroughs demonstrate the efficacy of AI-driven platforms. The table below summarizes key performance metrics from published studies and industry partnerships as of 2025.

Table 1: Performance Metrics of AI-Designed Therapeutic Proteins and Antibodies

| Therapeutic Molecule / Platform | Target | Key AI Technology | Reported Affinity/Performance | Experimental Validation Method |

|---|---|---|---|---|

| De novo VHHs [23] | Influenza haemagglutinin, C. difficile toxin B (TcdB) | Fine-tuned RFdiffusion, ProteinMPNN | Initial designs: tens to hundreds of nM KD; after maturation: single-digit nM KD | Yeast surface display, SPR, Cryo-EM |

| De novo scFvs [23] | TcdB, PHOX2B peptide–MHC | Fine-tuned RFdiffusion, ProteinMPNN | Binding confirmed, atomic-level accuracy of all six CDR loops | Cryo-EM |

| AI-designed Enzymes (IDT & Profluent) [24] | Various (Oncology, Epigenetics) | Profluent Bio's ProGen3 models | Accelerated development of "next-generation enzymes" | Proprietary enzymology validation & manufacturing |

| Small Molecule (Exscientia) [8] | CDK7 (Oncology) | Generative AI Design Platform | Clinical candidate from 136 synthesized compounds (vs. thousands typically) | Phase I/II clinical trials |

Detailed Experimental Protocols for AI-Driven Design

This section outlines a standardized protocol for the de novo design of antibodies, such as single-chain variable fragments (scFvs) and single-domain antibodies (VHHs), using a fine-tuned RFdiffusion network, as validated in recent landmark studies [23].

Protocol: De Novo Antibody Design via RFdiffusion

Principle: This protocol uses an RFdiffusion network, fine-tuned on antibody-antigen complex structures, to generate novel antibody variable regions that bind a user-specified epitope on a target antigen with atomic-level precision. The process keeps the framework region of the antibody constant while designing novel complementarity-determining region (CDR) loops and the overall binding orientation [23].

Workflow Overview:

- Learn (AI Pre-training & Conditioning): The RFdiffusion model, pre-trained on protein structures, is fine-tuned on antibody complex structures. For a new design, the target protein structure and a desired framework region are provided as conditioning inputs.

- Design (In Silico Generation): The conditioned model generates thousands of novel antibody structures. ProteinMPNN is then used to design sequences for these backbone structures.

- Build (Construct Synthesis): DNA sequences encoding the top-ranked designs are synthesized and cloned into expression vectors (e.g., for yeast display or bacterial expression).

- Test (Experimental Validation): Designed antibodies are expressed and tested for binding and affinity. High-resolution structural validation (e.g., Cryo-EM) confirms binding pose accuracy.

Materials and Equipment:

- Hardware: High-performance computing cluster with multiple GPUs.

- Software: Fine-tuned RFdiffusion, ProteinMPNN, RoseTTAFold2 (fine-tuned for antibody validation) [23].

- Target Data: High-resolution 3D structure of the target antigen (PDB file or AlphaFold2 prediction). Definition of target epitope residues.

- Biological Reagents: (See Section 5: "The Scientist's Toolkit" for a detailed list).

Step-by-Step Procedure:

Part A: Learn & Design (In Silico Phase)

- Input Preparation: Prepare a PDB file of the target antigen and a list of residue numbers defining the epitope of interest. Select a stable, humanized antibody framework (e.g., h-NbBcII10FGLA for VHHs [23]).

- Condition RFdiffusion: Input the target structure, epitope "hotspot" residues, and the framework structure into the fine-tuned RFdiffusion model. The framework is provided in a global-frame-invariant manner using the template track, allowing the model to design both CDR loops and the rigid-body docking orientation [23].

- Generate Structures: Run RFdiffusion to generate a large library (e.g., 10,000-100,000) of de novo antibody variable region structures bound to the target.

- Design Sequences: For each generated backbone structure, use ProteinMPNN to design a sequence that is likely to fold into that structure. This results in a library of candidate antibody sequences.

- In Silico Filtering: Filter the designed sequences using a fine-tuned RoseTTAFold2 network. This network, provided with the target structure and epitope, re-predicts the structure of the designed antibody-antigen complex. Designs with high predicted confidence (self-consistency with the original design) and low predicted cross-reactivity are selected for experimental testing [23].
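The filtering step at the end of Part A can be pictured as a simple threshold-and-rank pass over per-design metrics. The sketch below is illustrative only: the `DesignScore` fields, the threshold values, and the example scores are assumptions made for this example and are not taken from [23]; in practice the metrics would come from the fine-tuned RoseTTAFold2 re-prediction.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DesignScore:
    """Hypothetical per-design metrics from re-predicting the complex."""
    design_id: str
    confidence: float      # predicted confidence, 0..1 (higher is better)
    rmsd_to_design: float  # Angstrom between re-predicted and designed pose


def filter_designs(scores, min_confidence=0.8, max_rmsd=2.0, top_n=500):
    """Keep confident, self-consistent designs, most self-consistent first.

    The thresholds are placeholders; real cutoffs depend on the predictor.
    """
    passing = [
        s for s in scores
        if s.confidence >= min_confidence and s.rmsd_to_design <= max_rmsd
    ]
    # Rank by self-consistency first, then by confidence as a tie-breaker.
    passing.sort(key=lambda s: (s.rmsd_to_design, -s.confidence))
    return passing[:top_n]


scores = [
    DesignScore("vhh_001", 0.91, 1.2),
    DesignScore("vhh_002", 0.65, 0.9),  # fails the confidence cutoff
    DesignScore("vhh_003", 0.88, 3.4),  # fails the self-consistency cutoff
    DesignScore("vhh_004", 0.84, 0.8),
]
selected = filter_designs(scores)
print([s.design_id for s in selected])  # ['vhh_004', 'vhh_001']
```

The `top_n` cap mirrors the idea of sending only a few hundred designs on to synthesis; the exact number is a budget decision rather than a property of the model.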

Part B: Build & Test (Experimental Phase)

- DNA Synthesis and Cloning: Synthesize DNA sequences encoding the top 100-500 filtered designs. Clone these sequences into an appropriate expression vector (e.g., for yeast surface display or bacterial expression).

- High-Throughput Screening: For scFvs or VHHs, use yeast surface display to screen for binders. Induce expression of the designed antibodies on the yeast surface and label with fluorescently-tagged antigen. Use fluorescence-activated cell sorting (FACS) to isolate yeast populations displaying antigen-binding antibodies [23].

- Affinity Measurement: Express and purify positive hits from E. coli or mammalian systems. Determine binding affinity (KD) using surface plasmon resonance (SPR) or bio-layer interferometry (BLI).

- Affinity Maturation (if needed): For designs with modest affinity, employ an affinity maturation process. This can be done using continuous evolution systems like OrthoRep to generate higher-affinity binders (e.g., moving from nanomolar to single-digit nanomolar KD) while maintaining epitope selectivity [23].

- Structural Validation: To confirm atomic-level accuracy, determine the high-resolution structure of the designed antibody in complex with its antigen using cryo-electron microscopy (cryo-EM) or X-ray crystallography. Compare the experimental structure with the computational model [23] [25].
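For the affinity measurement and maturation steps in Part B, the equilibrium dissociation constant follows from the fitted rate constants under a simple 1:1 binding model: KD = koff / kon. The sketch below converts kinetic fits to nanomolar KD and reports the fold improvement between a parent and a matured binder. The rate constants are made-up illustration values, not measurements from [23].

```python
def kd_nanomolar(k_on, k_off):
    """Return K_D in nM from k_on (1/(M*s)) and k_off (1/s), 1:1 binding model."""
    if k_on <= 0 or k_off < 0:
        raise ValueError("k_on must be positive and k_off non-negative")
    return (k_off / k_on) * 1e9


def fold_improvement(kd_parent_nm, kd_matured_nm):
    """How many times tighter the matured binder is than its parent."""
    return kd_parent_nm / kd_matured_nm


# Illustrative values only: a modest parent and a matured variant whose
# improvement comes from a slower off-rate.
parent_kd = kd_nanomolar(k_on=1e5, k_off=7.8e-3)   # about 78 nM
matured_kd = kd_nanomolar(k_on=1e5, k_off=5e-4)    # about 5 nM

print(f"parent:  {parent_kd:.1f} nM")
print(f"matured: {matured_kd:.1f} nM")
print(f"fold improvement: {fold_improvement(parent_kd, matured_kd):.1f}x")
```

Keeping kon and koff separate is useful in practice, because two binders with the same KD can differ substantially in residence time.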

Integrated Closed-Loop System Architecture

For an AI-driven discovery platform to function as a true closed-loop system, the computational and experimental components must be seamlessly integrated. The following diagram outlines the architecture of such a system, as exemplified by platforms like the Digital Catalysis Platform (DigCat) [17] and industry partnerships [24].

The Scientist's Toolkit: Research Reagent Solutions

Successful execution of these protocols relies on a suite of specialized reagents and computational tools. The following table catalogs essential solutions for AI-driven therapeutic protein design.

Table 2: Essential Research Reagents and Tools for AI-Driven Protein Design

| Item Name | Function/Application | Specific Example / Vendor |
|---|---|---|
| Fine-tuned RFdiffusion | Generative AI model for creating de novo antibody and protein structures bound to a specified target. | Custom fine-tuned version as described in [23]; available from academic sources (e.g., Baker Lab/IPD). |
| ProteinMPNN | AI-based protein sequence design tool that predicts sequences for a given protein backbone structure. | Publicly available; used for designing sequences for RFdiffusion-generated backbones [23] [7]. |
| RoseTTAFold2 (Fine-tuned) | AI structure prediction tool, fine-tuned on antibody complexes, used for in silico validation and filtering of designs. | Custom fine-tuned version for antibody-antigen complex prediction [23]. |
| Yeast Surface Display System | High-throughput platform for screening and isolating antibody binders from large libraries. | Commercial systems available; used for screening ~9,000 AI-designed VHHs/scFvs per target [23]. |
| Cell-Free Protein Synthesis System | Rapid, in vitro expression system for protein production without living cells, accelerating the Build-Test phases. | Various commercial kits (e.g., based on E. coli lysate); enables mg/mL yields in hours for testing [7]. |
| Surface Plasmon Resonance (SPR) | Label-free technique for quantifying binding kinetics (KD, kon, koff) of designed proteins. | Instruments from vendors like Cytiva (Biacore) or Sartorius (Octet); used for affinity measurement of purified binders [23]. |
| Humanized VHH Framework | A stable, optimized single-domain antibody scaffold used as a starting framework for de novo VHH design. | e.g., h-NbBcII10FGLA framework [23]. |
| OrthoRep System | A yeast-based platform for continuous in vivo mutagenesis and evolution, used for affinity maturation of initial designs. | Used to mature initial AI-designed VHHs from nanomolar to single-digit nanomolar affinity [23]. |

The integration of robotic platforms and cell-free systems is revolutionizing high-throughput testing, creating a foundation for fully autonomous research environments. This synergy is pivotal for operationalizing the closed-loop Design-Build-Test-Learn (DBTL) paradigm, where artificial intelligence (AI) agents direct experimental workflows with minimal human intervention [26]. Wet-lab automation has evolved from simple robotic liquid handlers to sophisticated, interconnected systems capable of executing complex experimental protocols. When combined with the speed and flexibility of cell-free protein synthesis (CFPS), these platforms enable an unprecedented scale of experimentation, allowing for the rapid exploration of biological hypotheses. This article details the application of these technologies within AI-driven discovery platforms, providing specific protocols and resource guides to implement these systems in modern research and drug development pipelines.

Robotic Platforms for High-Throughput Testing

Core Components of an Automated Workstation

A high-throughput screening (HTS) robotics laboratory is typically built around an integrated system of components designed to handle microtiter plates (e.g., 96, 384, or 1536-well formats) [27] [28]. These systems minimize hands-on time, enhance data quality, and reduce reagent waste by using smaller amounts of materials [28]. A standard platform includes:

- Liquid Handling Robots: These are the core workhorses for pipetting. Modern systems employ acoustic dispensing and pressure-driven methods for nanoliter-precision transfer, making workflows faster and less error-prone than manual pipetting [27].

- Robotic Arms: These transport microtiter plates between different stations on the platform, creating a seamless, end-to-end automated workflow [28].

- Plate Readers and Imaging Systems: These devices capture experimental outcomes. The trend has moved from simple absorbance readouts to high-content imaging (HCI) that can capture multi-parametric data on cell morphology, signaling, and other phenotypic changes [27].

- Integrated Software: A central software suite schedules and coordinates all instruments, including the robotic arm that moves plates between them, ensuring robust and reliable operation [11].
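Because every component above addresses samples by plate position, scheduling software typically needs a consistent mapping between a running well index and a well name. The sketch below covers the three plate formats mentioned (96, 384, and 1536 wells). It assumes row-major ordering and the common lettering in which 1536-well rows continue from Z to AA through AF; the function names are chosen for this example.

```python
# Rows x columns for the standard microtiter plate formats.
PLATE_FORMATS = {96: (8, 12), 384: (16, 24), 1536: (32, 48)}


def row_label(row):
    """Map a zero-based row index to letters: 0 -> 'A', 25 -> 'Z', 26 -> 'AA'."""
    label = ""
    row += 1
    while row > 0:
        row, rem = divmod(row - 1, 26)
        label = chr(ord("A") + rem) + label
    return label


def well_name(index, plate_format=96):
    """Convert a zero-based, row-major well index to a name such as 'B7'."""
    rows, cols = PLATE_FORMATS[plate_format]
    if not 0 <= index < rows * cols:
        raise IndexError(f"well index {index} out of range for {plate_format}-well plate")
    row, col = divmod(index, cols)
    return f"{row_label(row)}{col + 1}"


print(well_name(0, 96))       # A1
print(well_name(95, 96))      # H12
print(well_name(383, 384))    # P24
print(well_name(1535, 1536))  # AF48
```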

Application Note: Automated Clone Screening

Objective: To automate the screening of thousands of microbial or mammalian clones for a target protein or gene of interest, significantly reducing laborious manual methods and creating a unified data repository [29].

Protocol:

- Target Identification: Begin with a defined target—a receptor, protein, or gene in a biological pathway.

- Automated Culture Inoculation: A robotic arm picks and transfers colonies from an agar plate into deep-well plates containing growth medium.

- Liquid Handling for Induction: After a growth period, a liquid handler automatically adds inducers to trigger protein expression.

- Sample Preparation: The system harvests cells and performs lysis to release the target protein for analysis.

- Assay and Analysis: The robotic arm moves the assay plate to a plate reader or imager for automated quantification of the target's activity or expression.

- Data Centralization: All data from multiple processes are automatically pulled into a central software repository for analysis and decision-making [29].
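The protocol above is essentially an ordered sequence of instrument steps followed by a write to a shared data store. The sketch below models that flow in plain Python. It is a toy under stated assumptions: the step functions only log their names, the plate-reader signals are supplied by the caller rather than read from hardware, and the hit threshold and all names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class PlateRecord:
    """Tracks what happened to one plate and what was measured."""
    plate_id: str
    steps: list = field(default_factory=list)
    signals: dict = field(default_factory=dict)  # well name -> reader signal


def inoculate(plate):
    plate.steps.append("inoculate")


def induce(plate):
    plate.steps.append("induce")


def lyse(plate):
    plate.steps.append("lyse")


def read_signals(plate, simulated_signals):
    # Stand-in for a plate reader; real code would call the instrument driver.
    plate.steps.append("read")
    plate.signals = dict(simulated_signals)


def screen_plate(plate_id, simulated_signals, repository, hit_threshold=1000.0):
    """Run the steps in order, call hits, and store everything centrally."""
    plate = PlateRecord(plate_id)
    for step in (inoculate, induce, lyse):
        step(plate)
    read_signals(plate, simulated_signals)
    hits = sorted(w for w, s in plate.signals.items() if s >= hit_threshold)
    repository[plate_id] = {"steps": plate.steps, "signals": plate.signals, "hits": hits}
    return hits


repository = {}
hits = screen_plate("plate_001", {"A1": 250.0, "A2": 1830.0, "B7": 1210.0}, repository)
print(hits)                               # ['A2', 'B7']
print(repository["plate_001"]["steps"])   # ['inoculate', 'induce', 'lyse', 'read']
```

Writing the step log alongside the readings is one simple way to keep the provenance of each result in the same repository as the data itself.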

Cell-Free Systems as an Enabling Technology

Cell-free protein synthesis (CFPS) systems have emerged as a powerful tool for high-throughput testing because they bypass the need for live cells, offering unparalleled speed and flexibility [2] [30]. These systems utilize the protein biosynthesis machinery from cell lysates (e.g., E. coli, yeast, mammalian) or purified components to activate in vitro transcription and translation [30].

Key Advantages for High-Throughput Workflows:

- Rapid Protein Production: Achieve high-yield protein synthesis (>1 g/L) in less than 4 hours, without the time-consuming steps of cloning and transformation [2].

- Direct DNA Template Use: Synthesized DNA templates can be added directly to CFPS reactions, drastically accelerating the testing cycle [2].

- Tolerance to Toxicity: CFPS can express proteins that would be toxic to a living cell, expanding the range of testable molecules [30].

- Ultra-Miniaturization: Reactions can be scaled down to picoliter volumes, enabling the testing of millions of conditions in a single campaign [2] [31].
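The effect of miniaturization on throughput is simple arithmetic: the number of reactions a fixed reagent stock supports scales inversely with reaction volume. The sketch below works in integer picoliters to avoid floating-point rounding; the 1 mL stock and the 10-unit reaction volumes are arbitrary illustration values, and dead volume is ignored.

```python
# Conversion factors to picoliters, kept as integers for exact arithmetic.
PICOLITERS_PER_UNIT = {"uL": 1_000_000, "nL": 1_000, "pL": 1}


def reactions_from_stock(stock_ul, reaction_volume, unit):
    """Number of whole reactions obtainable from a stock given in microliters."""
    stock_pl = stock_ul * PICOLITERS_PER_UNIT["uL"]
    reaction_pl = reaction_volume * PICOLITERS_PER_UNIT[unit]
    return stock_pl // reaction_pl


# 1 mL of CFPS mix split into 10 uL, 10 nL, or 10 pL reactions.
for unit in ("uL", "nL", "pL"):
    count = reactions_from_stock(1000, 10, unit)
    print(f"10 {unit} reactions from 1 mL: {count:,}")
# 10 uL reactions from 1 mL: 100
# 10 nL reactions from 1 mL: 100,000
# 10 pL reactions from 1 mL: 100,000,000
```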

Integrated Experimental Protocols

This section provides a detailed methodology for implementing a closed-loop DBTL cycle for protein engineering, leveraging robotic automation and cell-free systems.

Protocol 1: Automated, Cell-Free Protein Engineering Cycle

Objective: To autonomously engineer a protein for a desired function (e.g., improved enzyme thermostability) through iterative, AI-directed DBTL cycles. This protocol is based on the SAMPLE (Self-driving Autonomous Machines for Protein Landscape Exploration) platform and the Illinois Biological Foundry for Advanced Biomanufacturing (iBioFAB) [31] [11].

The Scientist's Toolkit: Key Research Reagent Solutions

| Component | Function in the Protocol | Specific Example / Note |
|---|---|---|
| BL-7S Plasmids [30] | Overexpress a subset of translation factors in a specialized, high-yield CFPS system. | Found in Addgene (https://www.addgene.org/Cheemeng_Tan/). |
| Amino Acid Mixture [30] | Provides building blocks for in vitro protein synthesis. | A pre-mixed solution of all 20 standard amino acids. |
| Nucleotide Triphosphates (ATP, GTP, UTP, CTP) [30] | Provides energy and substrates for transcription and translation. | Critical for the CFPS reaction supplement. |
| Creatine Phosphate & Kinase [30] | An energy regeneration system to sustain prolonged protein synthesis. | Maintains ATP levels in the cell-free reaction. |
| Liquid Handling Robot | Automates all pipetting steps for nanoliter to microliter volumes. | Enables high-throughput assembly of CFPS reactions. |
| Microfluidic Dispenser | Allows for ultra-low-volume (nanoliter) reaction assembly to conserve precious reagents. | e.g., μCD (microfluidic cap-to-dispense) system [30]. |
| Robotic Arm | Integrates individual instruments (thermocyclers, incubators, plate readers) into a single workflow. | Physically moves plates between stations without human intervention. |

Detailed Methodology:

Module 1: AI-Driven Design and DNA Construction (Design and Build)

- Design: An AI agent (e.g., using a protein language model like ESM-2 or a Bayesian optimization algorithm) analyzes existing data and proposes a library of protein sequence variants to test next [31] [11].

- DNA Assembly: The robotic system executes an automated mutagenesis PCR protocol to construct the designed variants.

- The system prepares PCR reactions in a 96-well plate format using a liquid handler.

- Following PCR, a DpnI digestion step is performed to eliminate the template DNA.

- The assembled DNA is then amplified via polymerase chain reaction (PCR). The success of gene assembly and PCR is verified in real-time using a fluorescent dye like EvaGreen [31].
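The Design step in Module 1 mentions Bayesian optimization as one way for the agent to choose the next variants. A common ingredient of such methods is an acquisition function that trades off predicted performance against uncertainty. The sketch below uses an upper confidence bound (UCB) rule as one illustrative choice; it is not claimed to be the exact rule used by the platforms cited. The surrogate model is omitted: the (mean, standard deviation) predictions are placeholder numbers, and `beta` is a tunable assumption.

```python
def ucb_select(predictions, batch_size, beta=2.0):
    """Pick the variants with the highest upper confidence bound.

    predictions: dict mapping variant name -> (predicted_mean, predicted_std)
    beta: weight on uncertainty; 0 means pure exploitation.
    """
    ranked = sorted(
        predictions.items(),
        key=lambda item: item[1][0] + beta * item[1][1],
        reverse=True,
    )
    return [name for name, _ in ranked[:batch_size]]


# Placeholder surrogate predictions, e.g. melting temperature in deg C.
predictions = {
    "variant_A": (50.0, 1.0),
    "variant_B": (48.0, 4.0),  # lower mean, but highly uncertain
    "variant_C": (52.0, 0.5),
    "variant_D": (40.0, 2.0),
}

print(ucb_select(predictions, batch_size=2))            # ['variant_B', 'variant_C']
print(ucb_select(predictions, batch_size=2, beta=0.0))  # ['variant_C', 'variant_A']
```

With `beta=2.0` the uncertain variant_B is chosen ahead of the better-characterized variant_A, which is the exploratory behavior that lets a closed loop improve its own model over successive cycles.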

Module 2: Cell-Free Protein Synthesis (Test)

- Reaction Assembly: A liquid handling robot or a specialized microfluidic printer (e.g., the μCD system) dispenses nanoliter volumes of the CFPS reaction supplement and the amplified DNA expression cassettes into assay plates [30] [31]. This step is performed with high accuracy to create hundreds of unique reactions.

- Protein Expression: The plate is incubated at a controlled temperature (e.g., 30-37°C) for a defined period (typically 3-6 hours) to allow for protein synthesis [31].
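Reaction assembly in Module 2 is usually driven by a worklist that tells the dispenser which well receives which DNA template and how much of each component. The sketch below generates such a worklist for a single 384-well plate and serializes it as CSV. The column names, the 900 nL and 100 nL volumes, and the triplicate layout are assumptions for illustration; real liquid handlers each expect their own vendor-specific file format.

```python
import csv
import io

ROWS_384 = "ABCDEFGHIJKLMNOP"  # 16 rows
COLS_384 = 24
FIELDS = ["well", "variant", "cfps_mix_nl", "dna_nl"]


def build_worklist(variants, replicates=3, mix_nl=900, dna_nl=100):
    """Assign each variant to consecutive wells, row by row."""
    total = len(variants) * replicates
    if total > len(ROWS_384) * COLS_384:
        raise ValueError("too many reactions for one 384-well plate")
    worklist = []
    for i in range(total):
        row, col = divmod(i, COLS_384)
        worklist.append({
            "well": f"{ROWS_384[row]}{col + 1}",
            "variant": variants[i // replicates],
            "cfps_mix_nl": mix_nl,
            "dna_nl": dna_nl,
        })
    return worklist


def to_csv(worklist):
    """Serialize the worklist to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(worklist)
    return buffer.getvalue()


worklist = build_worklist(["v001", "v002"], replicates=3)
print(to_csv(worklist))
```

Generating the worklist in code rather than by hand keeps the mapping between well and variant available to the Learn step, which needs it to attach each assay readout to the sequence that produced it.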

Module 3: Automated Functional Assay (Test)