Building to Understand: How Synthetic Biology is Decoding Life's Design Principles

This article explores how synthetic biology, the discipline of designing and constructing biological systems, provides a powerful framework for achieving fundamental biological understanding.

Abstract

This article explores how synthetic biology, the discipline of designing and constructing biological systems, provides a powerful framework for achieving fundamental biological understanding. Targeted at researchers and drug development professionals, it details the paradigm of 'learning by building' to probe core biological questions. The scope covers foundational concepts from genetic circuit design to minimal cell assembly, examines cutting-edge methodologies including AI-driven design and machine learning optimization, addresses key troubleshooting challenges in predictability and scaling, and validates the approach through comparative analysis with traditional discovery methods. The synthesis of these areas highlights how synthetic biology is revolutionizing our ability to not just observe, but actively decipher the rules of life, with profound implications for therapeutic discovery and biomedical innovation.

The 'Build-to-Learn' Paradigm: Deconstructing Biological Complexity from the Ground Up

Synthetic biology represents a fundamental shift in biological science, moving from observational studies to a design-based research paradigm. The approach uses basic biological building blocks to create new biological molecules, cells, and organisms not found in nature, and in doing so provides new approaches and tools to probe living systems [1]. The field has rapidly evolved from "reading" DNA sequences with advanced sequencing technologies to actively "writing" and designing novel biological systems with predetermined functions. Over the past few years, transformative technologies for reading and writing DNA, RNA, and proteins have accelerated progress toward addressing more complex problems and engineering new host species [1]. This technical guide examines the core technologies driving this shift, with specific emphasis on how constructive approaches deepen fundamental biological insight.

The field is poised to offer radical solutions to significant global challenges in food production, climate change, bioremediation, and human health [1]. However, its greater contribution may be theoretical: by building biological systems from first principles, researchers can test hypotheses about the rules governing living systems in ways that observational biology alone cannot. This guide provides researchers and drug development professionals with a technical overview of the current state of genomic reading technologies, biological system writing capabilities, and the computational infrastructure that bridges these domains.

From Reading DNA: Advanced Sequencing and Multi-Omic Analysis

The ability to comprehensively "read" DNA sequences represents the foundational capability upon which synthetic biology is built. Next-Generation Sequencing (NGS) has revolutionized genomics by making large-scale DNA and RNA sequencing faster, cheaper, and more accessible than ever before [2]. Unlike traditional Sanger sequencing, which was time-intensive and costly, NGS enables simultaneous sequencing of millions of DNA fragments, democratizing genomic research and opening the door to high-impact projects like the 1000 Genomes Project and the UK Biobank [2].

Current NGS Technology Platforms

The NGS landscape continues to evolve with significant improvements in speed, accuracy, and affordability. Key platforms include Illumina's NovaSeq X, which has redefined high-throughput sequencing with unmatched speed and data output for large-scale projects, and Oxford Nanopore Technologies, which has expanded the boundaries of read length while enabling real-time, portable sequencing [2]. These platforms have enabled diverse applications ranging from rare genetic disorder diagnosis through rapid whole-genome sequencing to comprehensive cancer genomics that identifies somatic mutations, structural variations, and gene fusions in tumors [2].

Table 1: Next-Generation Sequencing Platforms and Applications

| Platform | Technology | Key Strengths | Primary Applications |
|---|---|---|---|
| Illumina NovaSeq X | Sequencing-by-synthesis | Unmatched throughput, cost-effectiveness | Large-scale population studies, whole-genome sequencing |
| Oxford Nanopore | Nanopore sensing | Long reads, real-time analysis, portability | Structural variant detection, field sequencing |
| PacBio | Single-molecule real-time (SMRT) | HiFi reads, epigenetic detection | De novo genome assembly, full-length transcript sequencing |

Multi-Omic Integration and Analysis

While genomics provides valuable insights into DNA sequences, it represents only one layer of biological complexity. Multi-omics approaches combine genomics with other layers of biological information to provide a comprehensive view of biological systems [2]. This integration includes transcriptomics (RNA expression levels), proteomics (protein abundance and interactions), metabolomics (metabolic pathways and compounds), and epigenomics (epigenetic modifications such as DNA methylation) [2]. The strategic integration of these data layers enables researchers to link genetic information with molecular function and phenotypic outcomes, creating powerful models of biological systems.

In 2025, population-scale genome studies are entering a new phase of multiomic analysis enabled by direct interrogation of molecules [3]. Unlike past studies based on molecular proxies, direct analysis of RNA and epigenomes complements DNA sequencing data and enables a more sophisticated understanding of native biology in extremely large cohorts. This approach could drive more routine adoption of precision medicine in mainstream healthcare than would be possible with genomic data alone [3].

To Writing Biological Systems: Computational Design and Engineering

The transition from reading biological information to writing functional biological systems represents the core frontier of synthetic biology. This capability moves beyond traditional genetic engineering to the computational design of biological components with predetermined functions.

Computational Protein Design for DNA Recognition

A landmark advancement in biological system writing is the computational design of sequence-specific DNA-binding proteins (DBPs). While natural DNA-binding domains like CRISPR-Cas systems, TALEs, and zinc fingers have proven powerful, each has limitations including size constraints, delivery challenges, and target site restrictions [4]. Recently, researchers have developed a computational method for designing small DBPs that recognize short specific target sequences through interactions with bases in the major groove, generating binders for five distinct DNA targets with mid-nanomolar to high-nanomolar affinities [4].

The design strategy addresses three fundamental challenges in DNA recognition: (1) achieving precise positioning for amino acid-DNA base interactions, (2) recognizing specific DNA bases through accurate molecular contact prediction, and (3) ensuring precise geometric side-chain placement through preorganization [4]. The pipeline begins with the creation of a diverse library of approximately 26,000 helix-turn-helix (HTH) DNA-binding domain scaffolds generated from metagenome sequence data and AlphaFold2 structure predictions [4].

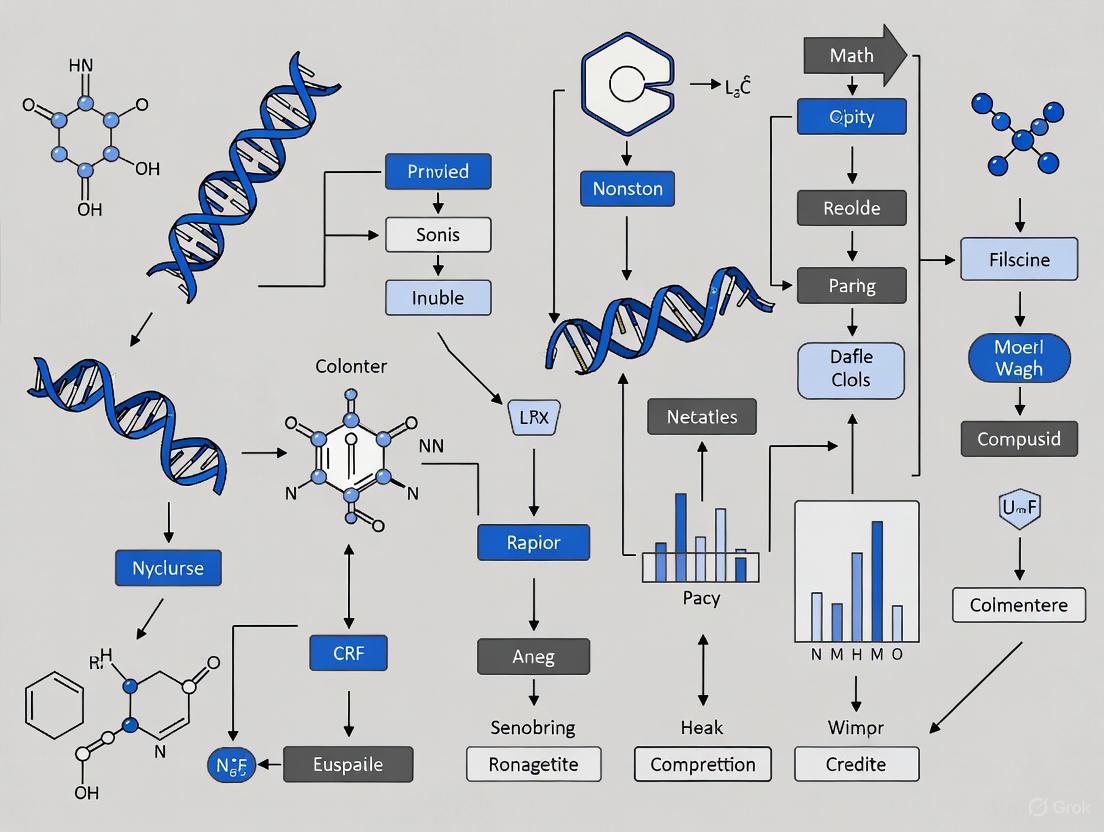

Diagram: Computational Pipeline for DNA-Binding Protein Design

Experimental Validation of Designed DBPs

The designed DBPs were experimentally validated through multiple approaches. Researchers created three sets of designs using variations of the overall design approach: one set using Rosetta-based sequence design and motif grafting, a second set employing LigandMPNN sequence design against both crystal-derived DNA and straight B-DNA, and a third set using LigandMPNN-based design with inpainting for backbone diversification [4]. The designs were screened using yeast display cell sorting, with the best-performing binders subjected to further characterization.

Crystal structures of designed DBP-target site complexes demonstrated close agreement with the design models, validating the computational approach [4]. Functional testing confirmed that the designed DBPs function in both Escherichia coli and mammalian cells to repress and activate transcription of neighboring genes. This methodology provides a route to small and readily deliverable sequence-specific DBPs for gene regulation and editing applications, complementing existing technologies like CRISPR-Cas systems [4].

Table 2: Performance Metrics of Computationally Designed DNA-Binding Proteins

| Design Set | Design Method | Number of Designs | Binding Affinity | Specificity Match | Functional Validation |
|---|---|---|---|---|---|
| Set 1 | Rosetta design + motif grafting | 21,488 designs | Mid-nanomolar | Up to 6 base-pair positions | E. coli and mammalian cells |
| Set 2 | LigandMPNN + B-DNA targets | 12,273 designs | High-nanomolar | Close computational match | Transcriptional regulation |
| Set 3 | LigandMPNN + inpainting | 100,000 designs | Nanomolar range | Model agreement | Crystal structure confirmation |

The Computational Bridge: Data Analysis and Visualization Infrastructure

The integration of reading and writing biological systems depends critically on advanced computational infrastructure that can process massive datasets and facilitate biological insight.

AI and Machine Learning in Genomic Analysis

The massive scale and complexity of genomic datasets demand advanced computational tools for interpretation. Artificial intelligence and machine learning algorithms have emerged as indispensable in genomic data analysis, uncovering patterns and insights that traditional methods might miss [2]. Key applications include variant calling with tools like Google's DeepVariant, which utilizes deep learning to identify genetic variants with greater accuracy than traditional methods; disease risk prediction through polygenic risk scores; and drug discovery by analyzing genomic data to identify new targets and streamline development pipelines [2].

Biological large language models (BioLLMs) represent a particularly promising development. These models are trained on natural DNA, RNA, and protein sequences and can generate new biologically significant sequences that serve as helpful points of departure for designing useful proteins [5]. The integration of AI with multi-omics data has further enhanced its capacity to predict biological outcomes, contributing to advancements in precision medicine [2].

Biological Data Visualization Solutions

Effective visualization of complex biological data is essential for researcher interpretation and insight generation. Biological data visualization transforms complex datasets into visual formats that are easier to interpret and analyze, helping uncover insights faster and more accurately across genomics, proteomics, and related fields [6].

Diagram: Biological Data Visualization Workflow

When creating biological visualizations, researchers should follow established principles for effective colorization. These include identifying the nature of the data (nominal, ordinal, interval, ratio), selecting appropriate color spaces, creating color palettes based on the selected color space, applying the color palette to the dataset, and checking for color context [7]. Additional considerations include evaluating color interactions, being aware of disciplinary color conventions, assessing color deficiencies, considering web content accessibility and print realities, and ensuring the visualization works in black and white [7].

For large-scale omics data analysis, platforms like Cytoscape provide open-source software for visualizing complex networks and integrating these with any type of attribute data [8]. Cytoscape supports use cases in molecular and systems biology, genomics, and proteomics, including loading molecular and genetic interaction datasets, projecting and integrating global datasets and functional annotations, establishing powerful visual mappings, performing advanced analysis and modeling using apps, and visualizing and analyzing human-curated pathway datasets [8].

Essential Research Reagents and Tools

Implementing the described methodologies requires specific research reagents and software tools. The table below details key resources for synthetic biology research.

Table 3: Essential Research Reagent Solutions for Synthetic Biology

| Category | Specific Tools/Platforms | Function | Applications |
|---|---|---|---|
| DNA Sequencing Platforms | Illumina NovaSeq X, Oxford Nanopore, PacBio | High-throughput DNA/RNA reading | Whole genome sequencing, transcriptomics, epigenomics |
| Software for Biological Data Analysis | Partek Flow, OmicsBox, Cytoscape | Bioinformatics analysis of genomic data | Genomic workflows, non-model organism research, network biology |
| Protein Design Software | Rosetta, ProteinMPNN, LigandMPNN, RIFdock | Computational protein design and optimization | De novo protein design, DNA-binding protein engineering |
| Laboratory Information Management | Benchling, Labguru | Electronic lab notebooks, sample tracking | R&D data management, inventory management, protocol standardization |
| Quality Management Systems | Veeva Vault, Scilife | Regulatory compliance, quality management | Clinical trial management, FDA/ISO compliance, audit preparation |
| Multi-omics Integration Platforms | IQVIA, BIOVIA | AI-driven analytics, data integration | Drug discovery, clinical trials, real-world evidence generation |

The convergence of advanced DNA reading technologies and computational biological writing capabilities represents a transformative frontier in synthetic biology. Together, next-generation sequencing, multi-omics integration, AI-driven analysis, and computational protein design create a powerful framework for advancing fundamental biological understanding through design-based research. As these technologies continue to mature, they promise to accelerate breakthroughs across therapeutic development, agricultural innovation, and sustainable manufacturing.

The most significant impact may be theoretical: by building biological systems from first principles, researchers can rigorously test fundamental hypotheses about the operating principles of living systems. This constructive approach complements traditional analytical methods in biology, potentially leading to unified theories of biological organization that have previously eluded the field. For drug development professionals and researchers, these advances provide an expanding toolkit for interrogating biological complexity while developing innovative solutions to pressing human challenges.

Synthetic biology represents a fundamental shift in the life sciences, applying engineering principles to design and construct novel biological systems. This field is driven by the core philosophy that biological systems can be broken down into interchangeable, standardized components that, when reassembled, can generate predictable and useful functions. The ultimate goal is not merely to manipulate biology but to fundamentally understand it through the process of design and construction [9]. This approach allows researchers to test hypotheses about biological organization and function by building systems from the ground up. The three foundational pillars enabling this paradigm are standardized biological parts, genetic circuits, and chassis organisms. Together, they form an integrated framework for programming living cells to perform complex tasks, from producing therapeutic drugs to processing environmental information [10]. This technical guide details the core principles, composition, and interplay of these components, providing a roadmap for their application in research and drug development.

Standardized Biological Parts: The Building Blocks of Programmable Biology

At the most basic level, standardized biological parts are DNA sequences that encode a specific biological function. The concept of standardization is borrowed from other engineering disciplines, where components like resistors in electronics have predictable, well-defined behaviors regardless of their context. In synthetic biology, this allows for the modular assembly of complex systems [9].

Definition and Purpose: A standardized biological part is a functional unit of DNA that governs a defined cellular process. Examples include promoters, ribosome binding sites (RBS), protein-coding sequences, and terminators. The key is that these parts are designed to be modular and interoperable, minimizing unexpected interactions when combined [11]. This design-driven genetic engineering relies on concepts of abstraction and standardization to make biological engineering more predictable and scalable [9].

The Registry of Standard Biological Parts: Initiatives like the BioBrick standard have established frameworks for sharing and assembling these parts, with the Registry serving as the shared repository [9]. It allows researchers worldwide to access and use characterized parts, accelerating the design process and enabling the reproduction of results across different laboratories.

Tuning and Optimization: A critical aspect of part design is the ability to fine-tune expression levels. For instance, using different ribosome-binding sites (RBS) can alter protein copy number, leading to different outcomes from a synthetic system [9]. Computational tools and part libraries have been developed specifically for this tuning, moving beyond the coarse-grained control that was initially possible [11].
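
As a rough illustration of why RBS strength changes protein copy number, consider a standard two-stage expression model. This model is an assumption introduced here for illustration, not one taken from the cited sources. Let $m$ and $p$ be mRNA and protein levels, $\alpha$ the transcription rate, $\beta$ the translation rate per mRNA (the quantity an RBS variant tunes), and $\delta_m$, $\delta_p$ first-order decay rates:

```latex
$$
\frac{dm}{dt} = \alpha - \delta_m m,
\qquad
\frac{dp}{dt} = \beta m - \delta_p p
\quad\Longrightarrow\quad
m^{*} = \frac{\alpha}{\delta_m},
\qquad
p^{*} = \frac{\beta\, m^{*}}{\delta_p} = \frac{\alpha \beta}{\delta_m \delta_p}
$$
```

Under these assumptions the steady-state protein level scales linearly with $\beta$, so swapping in an RBS that doubles the translation rate would double $p^{*}$ while leaving the mRNA level unchanged. Real systems can deviate from this when translation affects mRNA stability or when host resources become limiting.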

Table 1: Categories of Standardized Biological Parts

| Part Category | Function | Key Characteristics | Example |
|---|---|---|---|
| Promoter | Initiates transcription of a gene | Strength, inducibility, host compatibility | pLac, pTet [11] |
| Ribosome Binding Site (RBS) | Controls translation initiation rate | Sequence strength, affects protein yield | Varies by organism [9] |
| Protein Coding Sequence | Encodes an amino acid sequence for a protein | Codon optimization, function, folding | GFP, TetR, Cas9 [11] |
| Terminator | Signals the end of transcription | Efficiency, prevents read-through | Various Rho-dependent/independent |
| Operator | Transcription factor binding site | Specificity, binding affinity | Operator for LacI, TetR [11] |

Genetic Circuits: Computational Logic in Living Cells

Genetic circuits are networks of integrated biological parts that process information and control cellular behavior in a manner analogous to electronic circuits. They are the functional assemblies that give a synthetic biological system its "program" [10].

Circuit Design and Core Components

The design of genetic circuits involves connecting standardized parts so that the output of one part (e.g., a produced protein) becomes the input for another (e.g., regulating a promoter). The core logic is implemented using transcriptional regulators.

Transcriptional Regulators: These proteins control the flow of RNA polymerase along the DNA. The main classes used in circuit design include:

- DNA-Binding Proteins (Repressors/Activators): Proteins like TetR and LacI homologs bind to specific operator sites to block or recruit RNA polymerase, respectively. They are the workhorses for building logic gates (NOT, NOR) and dynamic circuits like oscillators [11].

- CRISPRi/a: Catalytically dead Cas9 (dCas9) can be targeted to specific DNA sequences by guide RNAs to repress (CRISPRi) or activate (CRISPRa) transcription. This technology offers unparalleled designability due to the ease of programming guide RNA sequences [11].

- Invertases: Site-specific recombinases (e.g., serine integrases) that flip DNA segments flanked by their recognition sites. They are ideal for building memory circuits because the DNA state change is permanent and does not require sustained energy [11].

Key Circuit Functions: By combining these regulators, researchers can create fundamental computing functions within a cell.

- Logic Gates: Boolean logic operations like AND, OR, and NOT allow cells to make decisions based on multiple inputs [11].

- Dynamic Circuits: These include oscillators that produce periodic pulses of gene expression and bistable switches that can toggle between two stable states, enabling cellular memory and differentiation events [11].
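
The repressor-based NOT and NOR gates mentioned above can be sketched with a Hill-type repression function. This is a minimal toy model, not a method from the cited sources: the function names, the additive treatment of the two inputs, and the parameter values (`k`, `n`, the LOW/HIGH levels, the 0.5 threshold) are all illustrative assumptions.

```python
def hill_repression(repressor, k=1.0, n=2.0):
    """Fraction of maximal promoter activity remaining at a given repressor level.

    k (half-repression level) and n (cooperativity) are assumed values.
    """
    return 1.0 / (1.0 + (repressor / k) ** n)


def nor_gate(input_a, input_b, k=1.0, n=2.0):
    """NOR gate: either input drives expression of one repressor that
    silences the output promoter. Inputs are assumed to add linearly."""
    repressor = input_a + input_b
    return hill_repression(repressor, k, n)


def not_gate(signal, k=1.0, n=2.0):
    """NOT gate: a NOR gate with its second input held at zero."""
    return nor_gate(signal, 0.0, k, n)


# Arbitrary LOW/HIGH input levels and an arbitrary ON threshold of 0.5.
LOW, HIGH = 0.0, 10.0
truth_table = {
    (a, b): nor_gate(a, b) > 0.5
    for a in (LOW, HIGH)
    for b in (LOW, HIGH)
}

if __name__ == "__main__":
    for inputs, output_on in truth_table.items():
        print(inputs, "->", "ON" if output_on else "OFF")
```

In this sketch the output is ON only when both inputs are LOW, which is the defining behavior of NOR. Layering such gates in a real cell would additionally require matching each gate's output range to the next gate's input range.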

Figure 1: Genetic Circuit Workflow. This diagram illustrates the flow of information within a synthetic genetic circuit, from input signal to functional output, and highlights the critical regulatory feedback loops and host context that influence its behavior.

Experimental Protocol: Constructing a Simple Inducible Switch

The following protocol outlines the steps for building a basic inducible gene switch, a foundational circuit for controlled expression.

Design and In Silico Modeling:

- Objective: Enable controlled expression of a target gene (e.g., a therapeutic protein) using a small molecule inducer.

- Circuit Architecture: A constitutively active promoter drives the expression of a repressor protein (e.g., TetR). This repressor binds to an operator site within a second, inducible promoter, silencing it. The inducer molecule (e.g., anhydrotetracycline, aTc) binds to the repressor, causing a conformational change that releases it from the DNA, thereby activating the inducible promoter and the downstream target gene [11].

- In Silico Simulation: Use computational modeling software (e.g., MATLAB, SimBiology) to simulate circuit dynamics. Model ordinary differential equations for protein production and degradation to predict the system's response time and expression levels upon induction.
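
The in silico step can be approximated without specialized software. The sketch below integrates a single ordinary differential equation for the target protein with forward Euler, using only the Python standard library. The model structure (a constant repressor pool, simple inducer sequestration, Hill repression) and every parameter value are assumptions chosen for illustration; they are not fitted to any real TetR system.

```python
def free_repressor(total_repressor, inducer, k_inducer=10.0):
    """Repressor still able to bind DNA after inducer sequestration (assumed form)."""
    return total_repressor / (1.0 + inducer / k_inducer)


def promoter_activity(repressor, k_repressor=1.0, hill_n=2.0, leak=0.01):
    """Relative activity of the inducible promoter, with a basal leak term."""
    return leak + (1.0 - leak) / (1.0 + (repressor / k_repressor) ** hill_n)


def simulate_switch(inducer, t_end=600.0, dt=0.1, total_repressor=20.0,
                    beta=1.0, gamma=0.02, p0=0.0):
    """Forward-Euler integration of dP/dt = beta * activity - gamma * P.

    beta: maximal production rate; gamma: degradation/dilution rate.
    Returns (times, protein_levels) as lists.
    """
    activity = promoter_activity(free_repressor(total_repressor, inducer))
    times, protein = [0.0], [p0]
    steps = int(round(t_end / dt))
    for i in range(steps):
        p = protein[-1]
        protein.append(p + dt * (beta * activity - gamma * p))
        times.append((i + 1) * dt)
    return times, protein


if __name__ == "__main__":
    for dose in (0.0, 10.0, 1000.0):
        _, levels = simulate_switch(dose)
        print(f"inducer={dose:>7}: final protein level = {levels[-1]:.2f}")
```

Sweeping `inducer` gives a predicted dose-response curve, and the time series gives a predicted response time. In this simple model the response time is set mainly by `gamma`, the degradation and dilution rate.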

DNA Assembly:

- Part Selection: Select standardized parts from a repository: a constitutive promoter, the tetR coding sequence, a strong terminator, the pTet inducible promoter, the coding sequence for your protein of interest (POI), and a final terminator.

- Assembly Method: Use a standardized assembly method such as Golden Gate or Gibson Assembly to combine these parts into a single plasmid vector in the correct order. Verify the final plasmid sequence by Sanger sequencing.

Transformation and Screening:

- Chassis Transformation: Introduce the assembled plasmid into your chosen chassis organism (e.g., E. coli) via chemical transformation or electroporation.

- Clone Screening: Plate the transformation mixture on selective antibiotic media. Pick isolated colonies and grow them in liquid culture. Isolate plasmid DNA from these cultures and verify the correct circuit assembly via restriction digest or PCR.

Circuit Characterization:

- Culture Conditions: Grow engineered cells in a controlled bioreactor or multi-well plates.

- Induction: Add a range of inducer (aTc) concentrations to parallel cultures during the mid-log growth phase.

- Output Measurement: Measure the output (e.g., fluorescence from a reporter protein like GFP) over time using a plate reader or flow cytometry. Normalize the data to cell density (OD600).

- Data Analysis: Plot the dose-response curve (output vs. inducer concentration) and the time-course dynamics. Key parameters to extract include leakiness (expression without inducer), dynamic range (maximal expression / leakiness), and response time.
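
The parameters named in the data analysis step can be computed directly from plate-reader data. The following sketch uses the definitions given above (leakiness as uninduced output, dynamic range as maximal expression divided by leakiness); the function names and the linear-interpolation approach to response time are assumptions made for illustration.

```python
def normalize(fluorescence, od600):
    """Divide each fluorescence reading by the matching OD600 reading."""
    return [f / od for f, od in zip(fluorescence, od600)]


def switch_metrics(doses, outputs):
    """Leakiness, maximal output, and dynamic range from a dose-response series.

    Expects one entry with dose 0 (the uninduced control).
    """
    pairs = sorted(zip(doses, outputs))
    if pairs[0][0] != 0:
        raise ValueError("dose-response data must include an uninduced (0) sample")
    leakiness = pairs[0][1]
    max_output = max(o for _, o in pairs)
    return {
        "leakiness": leakiness,
        "max_output": max_output,
        "dynamic_range": max_output / leakiness,
    }


def response_time(times, outputs, fraction=0.5):
    """Time at which output first rises `fraction` of the way from its
    initial value to its maximum, using linear interpolation between samples."""
    start, peak = outputs[0], max(outputs)
    threshold = start + fraction * (peak - start)
    for i in range(1, len(outputs)):
        if outputs[i] >= threshold:
            t0, t1 = times[i - 1], times[i]
            y0, y1 = outputs[i - 1], outputs[i]
            if y1 == y0:
                return t1
            return t0 + (threshold - y0) * (t1 - t0) / (y1 - y0)
    return None


if __name__ == "__main__":
    # Made-up example values, not measured data.
    print(switch_metrics([0, 1, 10, 100], [2.0, 10.0, 80.0, 100.0]))
    print(response_time([0, 10, 20, 30], [0.0, 20.0, 60.0, 100.0]))
```

Because dynamic range is a ratio against leakiness, small errors in the uninduced measurement are amplified, so subtracting background fluorescence from a no-reporter control before computing these values is usually worthwhile.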

Chassis Organisms: The Functional Host Platform

The chassis organism is the living host that houses the genetic circuit and provides the essential machinery for its operation. It is far from a passive vessel; it is an active and integral component of the overall system whose physiology deeply impacts circuit performance [12] [13].

The Chassis as a Design Parameter

The traditional synthetic biology approach has heavily relied on a narrow set of model organisms, such as Escherichia coli and Saccharomyces cerevisiae, due to their well-characterized genetics and ease of manipulation [12] [13]. However, a paradigm shift is underway towards Broad-Host-Range (BHR) Synthetic Biology, which re-conceptualizes the chassis as a tunable design parameter rather than a default choice [12].

- Functional Module: The innate traits of a chassis can be leveraged as the foundation of a design. For example, cyanobacteria are engineered as photosynthetic chassis to fix CO₂ and produce chemicals using sunlight [12] [14]. Similarly, extremophiles (thermophiles, halophiles) serve as robust chassis for industrial processes in harsh environments [12].

- Tuning Module: The same genetic circuit can exhibit different performance metrics—such as output strength, response time, and leakiness—when placed in different host organisms. This "chassis effect" provides a spectrum of performance profiles that synthetic biologists can leverage [12].

Selection of a Microbial Chassis

The choice of chassis is critical and depends on the application's specific requirements. The table below compares key chassis organisms.

Table 2: Comparison of Common and Emerging Microbial Chassis Organisms

| Chassis Organism | Type | Key Features | Ideal Applications | Notable Strains/Projects |
|---|---|---|---|---|
| Escherichia coli | Model Bacterium | Rapid growth, high genetic tractability, extensive toolkit | Protein production, metabolic engineering, basic circuit design | MGF-01 (reduced genome for higher yield) [15] |
| Saccharomyces cerevisiae | Model Yeast | Eukaryotic, GRAS status, secretory pathway | Complex eukaryotic protein production, biosynthetic pathways | Engineered for therapeutic proteins [13] |
| Synechococcus elongatus | Cyanobacterium | Oxygenic photosynthesis, fixes CO₂, "Green E. coli" | Sustainable production of biofuels & chemicals from CO₂ and light [14] | UTEX 2973 (fast-growing), PCC 7002 [14] |
| Mycoplasma mycoides | Minimal Cell | Minimal genome, reduced complexity | Fundamental study of life, simplified chassis for orthogonal functions | JCVI-syn3.0 (minimal genome with 473 genes) [13] |
| Halomonas bluephagenesis | Non-Model Bacterium | High salinity tolerance, low sterilization needs | Industrial biomanufacturing, open fermentation [12] | Engineered for bioplastic production [12] |

Experimental Protocol: Assessing Circuit Performance Across Multiple Chassis

This protocol describes a systematic approach to quantify the "chassis effect" by measuring the performance of an identical genetic circuit in different host organisms.

Strain and Circuit Preparation:

- Select a panel of chassis organisms (e.g., E. coli, Pseudomonas putida, a cyanobacterium).

- Design a standardized, well-characterized genetic circuit (e.g., an inducible GFP expression system) on a BHR plasmid vector (e.g., a SEVA plasmid) [12].

- Adapt the circuit for each chassis by ensuring the origin of replication and selection marker are functional. Perform codon optimization on the reporter gene if necessary.

- Transform the final constructs into each target chassis organism.

Cultivation and Induction:

- For each chassis, establish optimal growth conditions (media, temperature, aeration). For photosynthetic chassis, specify light intensity [14].

- In a controlled cultivation system (e.g., a multi-well plate), grow biological replicates of each engineered strain to mid-exponential phase.

- Induce the circuit using a saturating concentration of the inducer molecule.

Performance Metric Analysis:

- Growth Measurement: Monitor cell density (OD600) throughout the experiment to assess the metabolic burden imposed by the circuit.

- Fluorescence Output: Measure GFP fluorescence at regular intervals post-induction using a plate reader. Calculate the maximum fluorescence and the time to reach 50% of maximum (response time).

- Leakiness: Measure fluorescence from uninduced control cultures.

- Signal Strength/Noise: Use flow cytometry to measure fluorescence in thousands of individual cells. Calculate the population mean (signal strength) and the coefficient of variation (noise).

Data Integration and Analysis:

- For each chassis, plot growth and fluorescence over time.

- Create a comparative table of key performance indicators: leakiness, maximum output, dynamic range, response time, and growth burden.

- Correlate performance variations with known host physiology (e.g., growth rate, resource allocation mechanisms) to build predictive models for future chassis selection [12].
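
The comparative table of performance indicators can be assembled with a few helper functions. This is a sketch under stated assumptions: the function names are hypothetical, growth burden is defined here as the relative drop in growth rate versus an empty-vector control (a definition not given in the protocol), and the numbers in the usage block are invented placeholders rather than measurements.

```python
from statistics import mean, pstdev


def noise_cv(single_cell_fluorescence):
    """Coefficient of variation (std / mean) across single-cell readings."""
    return pstdev(single_cell_fluorescence) / mean(single_cell_fluorescence)


def growth_burden(rate_with_circuit, rate_empty_vector):
    """Relative growth-rate reduction versus an empty-vector control (assumed definition)."""
    return 1.0 - rate_with_circuit / rate_empty_vector


def summarize_chassis(name, induced_cells, uninduced_cells,
                      rate_with_circuit, rate_empty_vector):
    """One row of the comparison table for a single host organism."""
    signal = mean(induced_cells)
    leak = mean(uninduced_cells)
    return {
        "chassis": name,
        "signal_strength": signal,
        "leakiness": leak,
        "dynamic_range": signal / leak,
        "noise_cv": noise_cv(induced_cells),
        "growth_burden": growth_burden(rate_with_circuit, rate_empty_vector),
    }


if __name__ == "__main__":
    # Placeholder values for illustration only.
    rows = [
        summarize_chassis("host A", [90, 100, 110], [4, 5, 6], 0.8, 1.0),
        summarize_chassis("host B", [40, 50, 60], [1, 1, 1], 0.45, 0.5),
    ]
    for row in rows:
        print(row)
```

Keeping each host's metrics in one dictionary makes it straightforward to sort or correlate them against host properties such as growth rate in a later step.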

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key reagents, tools, and materials essential for research in synthetic biology.

Table 3: Essential Research Reagents and Tools for Synthetic Biology

| Tool/Reagent Category | Specific Example | Function in Research |
|---|---|---|
| DNA Assembly Kits | Gibson Assembly Master Mix, Golden Gate Assembly Kits | Modular, seamless assembly of multiple DNA parts into a vector backbone. |
| Cloning Kits | TA Cloning Kits, Restriction Enzyme & Ligation Kits | Standard molecular biology workflows for inserting DNA fragments into plasmids. |
| Gene Editing Tools | CRISPR-Cas9 kits (e.g., from Synthego), TALENs, ZFNs | Precise, targeted manipulation of genomic DNA in chassis organisms [16]. |
| DNA Synthesis Services | Twist Bioscience, Integrated DNA Technologies (IDT) | Provision of custom, high-quality double-stranded DNA fragments and genes. |
| Specialized Chassis | Scarab Genomics "Clean Genome" E. coli, Synechococcus elongatus UTEX 2973 | Optimized host organisms with reduced genomes or specialized capabilities (e.g., rapid growth) [13] [14]. |
| Reporter Proteins | Green Fluorescent Protein (GFP), Luciferase | Quantitative, real-time measurement of gene expression and circuit output. |
| Inducer Molecules | Anhydrotetracycline (aTc), Isopropyl β-d-1-thiogalactopyranoside (IPTG) | Chemical control of inducible promoters to activate or repress synthetic circuits. |
| Bioprocessing Tools | Bench-top Bioreactors, Multi-well Plate Readers | Controlled cultivation of engineered organisms and high-throughput phenotypic screening. |

The true power of synthetic biology emerges from the synergistic integration of standardized parts, logical circuits, and carefully selected chassis organisms. Mastering the design principles of each component and, more importantly, their complex interactions is the key to transitioning from proof-of-concept demonstrations to robust, real-world applications. The future of this field lies in the continued development of more sophisticated, well-characterized parts; the creation of predictive models that account for host-circuit interactions; and the expansion of the chassis repertoire to harness the full diversity of microbial life. As these core components become more refined and their interplay better understood, synthetic biology will solidify its role as a cornerstone for fundamental biological discovery and a powerful engine for biotechnological innovation.

Synthetic biology, a discipline dedicated to engineering living systems, has provided researchers with a powerful methodology for probing cellular logic. By constructing artificial genetic circuits, scientists can test hypotheses about the design principles of natural biological systems through a hands-on, rational design process [17]. This approach of "reverse engineering" life allows for the deconstruction of complex cellular phenomena into manageable, testable modules. The core premise is that by building simplified, well-defined regulatory systems, we can gain a profound understanding of the operational principles governing natural networks, from fundamental gene expression dynamics to sophisticated multi-cellular behaviors [17] [18].

This whitepaper examines how synthetic gene circuits serve as experimental platforms for uncovering the rules of biological regulation and robustness. We explore the architectural components of these circuits, quantitative design frameworks, experimental methodologies for their implementation, and how their failure modes reveal fundamental constraints on biological systems. By framing synthetic biology as a basic research tool, we demonstrate how construction for its own sake provides unique insights into the mechanistic underpinnings of cellular computation and control.

The Core Architecture of Synthetic Gene Circuits

Synthetic gene circuits are typically modular systems composed of biological components that sense, integrate, and respond to signals through programmed logical operations [19]. These systems can be deconstructed across multiple biological scales, from molecular interactions to population-level behaviors [18].

Fundamental Modules and Their Functions

- Sensors: Input modules that detect external or internal cues such as small molecules, light, temperature, or metabolic states [20] [19]. These typically consist of promoter elements that respond to specific transcription factors or environmental conditions.

- Integrators/Processors: Computational modules that perform logical operations on input signals, implementing functions such as AND, OR, NOT, or more complex Boolean logic [21] [19]. These form the "circuit" proper, where information is processed.

- Actuators: Output modules that generate measurable responses or execute cellular functions, such as fluorescent reporters, enzyme production, or activation of endogenous pathways [19].
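The three module types above can be sketched as composable functions. This is a toy illustration rather than a model of any published circuit: the Hill-type sensor response, the 0.5 activation threshold, and the output levels are all assumed values chosen for readability.

```python
def hill_activation(x, k, n):
    """Fraction of maximal promoter activity at inducer level x."""
    return x**n / (k**n + x**n)


def sensor(inducer_conc, k=1.0, n=2.0, threshold=0.5):
    """Sensor: turn an inducer concentration into an ON/OFF signal.

    k, n and threshold are hypothetical parameters, not measured values.
    """
    return hill_activation(inducer_conc, k, n) >= threshold


def and_gate(a, b):
    """Processor: Boolean AND of two sensor signals."""
    return a and b


def actuator(signal, on_level=100.0, off_level=1.0):
    """Actuator: reporter output in arbitrary units (assumed levels)."""
    return on_level if signal else off_level


def circuit(conc_1, conc_2):
    """Chain sensor -> processor -> actuator for two inducers."""
    return actuator(and_gate(sensor(conc_1), sensor(conc_2)))


if __name__ == "__main__":
    for c1, c2 in [(0.1, 0.1), (10.0, 0.1), (0.1, 10.0), (10.0, 10.0)]:
        print(c1, c2, circuit(c1, c2))
```

Swapping `and_gate` for another Boolean function changes the logic without touching the sensor or actuator, which is the practical meaning of modularity here.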

Key Regulatory Mechanisms and Their Applications

Synthetic circuits exploit control mechanisms operating at different levels of the central dogma, each offering distinct advantages for probing cellular logic [20]:

Table: Regulatory Devices in Synthetic Gene Circuits

| Regulatory Level | Molecular Components | Key Applications | Advantages |

|---|---|---|---|

| DNA Sequence | Site-specific recombinases (Cre, Flp), Serine integrases (Bxb1, PhiC31), CRISPR-Cas systems | Memory devices, State switching, Counting circuits [20] | Stable, inheritable states; Digital-like control |

| Transcriptional | Synthetic transcription factors, Orthogonal RNA polymerases, Programmable DNA-binding domains | Logic gates, Switches, Amplifiers [20] [21] | High programmability; Combinatorial control |

| Post-transcriptional | Riboswitches, Toehold switches, RNA interference, sRNAs | Tunable expression, Noise reduction, Burden mitigation [20] [22] | Rapid response; Energy efficiency |

| Post-translational | Conditional degradation tags, Protein-protein interaction domains, Allosteric regulation | Signal processing, Noise filtering, Dynamic control [20] | Fast kinetics; Metabolic sensing |

Quantitative Frameworks for Predictive Circuit Design

A significant advancement in using synthetic circuits as discovery tools has been the development of quantitative, predictive design frameworks that move beyond trial-and-error approaches.

Standardized Characterization and Modeling

Predictive design requires precise quantification of genetic parts and their interactions. Researchers have established robust measurement systems such as Relative Promoter Units (RPUs) to normalize genetic part activities across experimental batches and conditions [23]. This standardization enables the creation of mathematical models that accurately predict circuit behavior from characterized components.

For example, in plant systems where long life cycles traditionally hampered design cycles, researchers have developed rapid (~10 days) quantitative frameworks using protoplast transfection and RPU normalization to accurately predict the behavior of 21 different two-input genetic circuits (R² = 0.81 between prediction and experimental data) [23]. Similar approaches in microbial systems have enabled the development of algorithms that systematically enumerate possible circuit configurations to identify optimally compressed designs [21].

The Transcriptional Programming (T-Pro) Approach

Recent work has established Transcriptional Programming (T-Pro) as a framework for constructing compressed genetic circuits that implement complex logic with minimal components [21]. This system utilizes synthetic repressors and anti-repressors that coordinately bind to cognate synthetic promoters, reducing the need for circuit inversion operations that increase part count.

Table: Performance Metrics for T-Pro Circuit Compression

| Circuit Type | Canonical Design Parts Count | T-Pro Compressed Parts Count | Reduction Factor | Prediction Error |

|---|---|---|---|---|

| 2-input Boolean | Varies by implementation | Optimized via enumeration | ~4x reduction [21] | <1.4-fold average [21] |

| 3-input Boolean | >20 parts in traditional designs | Algorithmically optimized | ~4x smaller [21] | Quantitative setpoints achievable |

| Memory Circuits | Multiple recombinase units | Compressed T-Pro + recombinase | Specific to application | Precise activity control [21] |

This compression is particularly valuable for minimizing metabolic burden and context-dependence, two major challenges in circuit implementation [21]. The algorithmic enumeration method for T-Pro circuits models circuits as directed acyclic graphs and systematically explores the design space in order of increasing complexity, guaranteeing identification of the most compressed implementation for any given truth table from a search space of >100 trillion possible circuits [21].
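The idea of exploring designs in order of increasing complexity can be illustrated with a much smaller search. The sketch below is not the T-Pro enumeration algorithm from [21]. It assumes a simplified gate library consisting only of two-input NOR gates, and it finds the smallest number of such gates that realizes a given truth table by breadth-first search over sets of available signals. Because every circuit with fewer gates is examined before any larger one, the first hit is guaranteed to be minimal within this toy library.

```python
from itertools import combinations_with_replacement


def input_truth_tables(n_inputs):
    """Encode each input as a bitmask: bit r holds the input's value in row r."""
    n_rows = 1 << n_inputs
    tables = []
    for i in range(n_inputs):
        tt = 0
        for row in range(n_rows):
            if (row >> i) & 1:
                tt |= 1 << row
        tables.append(tt)
    return tables


def min_nor_gates(target, n_inputs=2, max_gates=6):
    """Return the fewest NOR gates needed to compute `target`, or None.

    `target` is a truth table encoded as a bitmask over the input rows.
    Gate outputs may be reused, so circuits are treated as acyclic graphs.
    """
    mask = (1 << (1 << n_inputs)) - 1
    start = frozenset(input_truth_tables(n_inputs))
    if target in start:
        return 0
    frontier = {start}
    for gates in range(1, max_gates + 1):
        next_frontier = set()
        for signals in frontier:
            for a, b in combinations_with_replacement(sorted(signals), 2):
                out = ~(a | b) & mask  # NOR of two existing signals
                if out == target:
                    return gates
                if out not in signals:
                    next_frontier.add(signals | {out})
        frontier = next_frontier
    return None


if __name__ == "__main__":
    # Rows are ordered (A=0,B=0), (A=1,B=0), (A=0,B=1), (A=1,B=1).
    for name, table in [("NOR", 0b0001), ("OR", 0b1110), ("AND", 0b1000)]:
        print(name, min_nor_gates(table))
```

For two inputs this reports one gate for NOR, two for OR, and three for AND. The real design space is far larger because it includes multiple regulator families and promoter architectures, which is why a guaranteed-minimal search over it is notable.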

Experimental Methodologies for Circuit Implementation and Analysis

Workflow for Quantitative Circuit Characterization

Diagram 1: Experimental workflow for quantitative genetic circuit characterization, showing the iterative design-build-test-learn cycle with key measurement and analysis phases.

The experimental pipeline for circuit characterization begins with standardized part measurement. For example, in plant systems, researchers have adapted the Relative Promoter Unit (RPU) system to normalize promoter activities across experimental batches [23]. Each plasmid construct contains both a normalization module (e.g., GUS driven by a reference promoter) and a circuit module (e.g., LUC driven by a test promoter). The LUC/GUS ratio provides normalized values that are then converted to RPUs by defining the reference promoter's activity as 1 RPU in each batch [23]. This approach significantly reduces batch-to-batch variation, enabling reproducible quantitative characterization.
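The normalization described above reduces to two ratios. The following minimal sketch assumes that a reference construct (LUC driven by the reference promoter) is measured in the same batch as the test construct. The function name and the example readings are hypothetical.

```python
def to_rpu(test_luc, test_gus, ref_luc, ref_gus):
    """Convert raw reporter readings to Relative Promoter Units.

    test_luc / test_gus: readings from the construct under test.
    ref_luc / ref_gus:   readings from the reference construct in the
                         same batch, whose activity is defined as 1 RPU.
    """
    if test_gus <= 0 or ref_gus <= 0 or ref_luc <= 0:
        raise ValueError("normalization readings must be positive")
    test_ratio = test_luc / test_gus  # removes transfection-efficiency effects
    ref_ratio = ref_luc / ref_gus     # batch-specific scale
    return test_ratio / ref_ratio


if __name__ == "__main__":
    # Two hypothetical batches with different absolute signal levels.
    print(to_rpu(5000.0, 1000.0, 2500.0, 1000.0))
    print(to_rpu(12000.0, 1200.0, 6000.0, 1200.0))
```

Both example batches yield the same RPU value even though their raw luminescence differs by more than twofold, which is the batch-to-batch correction the text describes.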

Protocol for Sensor and Gate Characterization

- Construct Assembly: Clone sensor elements or logic gates into standardized vectors containing both normalization and test modules using Golden Gate or similar assembly methods [23].

- Transformation/Transfection: Introduce constructs into host systems (bacterial, yeast, plant protoplasts, or mammalian cells) ensuring controlled copy number and genomic context where possible.

- Inducer Titration: Expose cells to a range of input concentrations (e.g., 0-1.2 μM auxin for plant hormone sensors, or varying concentrations of small-molecule inducers like IPTG, cellobiose, or D-ribose for bacterial transcription factors) [21] [23].

- High-Throughput Measurement: Quantify output signals using flow cytometry for fluorescence or plate readers for luminescence assays across multiple biological replicates.

- Data Normalization: Convert raw measurements to RPUs or other standardized units using the co-expressed reference standard.

- Transfer Function Analysis: Fit input-output relationships to Hill equations or other appropriate models to extract parameters (dynamic range, Hill coefficient, leakage, etc.) [23].

This methodology enabled researchers to characterize an auxin sensor in plants with 40-fold induction and Hill coefficient of 1.32, providing precise parameterization for predictive models [23].
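As a sketch of the transfer function analysis step, the code below generates a noise-free dose-response curve with the reported 40-fold induction and Hill coefficient of 1.32, then recovers the parameters by linear regression on the Hill plot. The half-maximal concentration (0.2 μM), the basal level of 1 RPU, and the titration points are assumptions made for illustration. Real data would normally be fitted by nonlinear least squares with estimated uncertainties.

```python
import math


def hill(x, y_min, y_max, k, n):
    """Output of an activating sensor at input concentration x."""
    return y_min + (y_max - y_min) * x**n / (k**n + x**n)


def fit_hill_plot(xs, ys, y_min, y_max):
    """Estimate (k, n) from log(theta / (1 - theta)) = n*log(x) - n*log(k).

    theta is the fractional response between y_min and y_max.
    Points with x <= 0 or theta outside (0, 1) are skipped.
    """
    pts = []
    for x, y in zip(xs, ys):
        theta = (y - y_min) / (y_max - y_min)
        if x > 0 and 0.0 < theta < 1.0:
            pts.append((math.log(x), math.log(theta / (1.0 - theta))))
    mean_u = sum(u for u, _ in pts) / len(pts)
    mean_v = sum(v for _, v in pts) / len(pts)
    sxx = sum((u - mean_u) ** 2 for u, _ in pts)
    sxy = sum((u - mean_u) * (v - mean_v) for u, v in pts)
    n = sxy / sxx                    # slope is the Hill coefficient
    intercept = mean_v - n * mean_u  # equals -n * log(k)
    k = math.exp(-intercept / n)
    return k, n


if __name__ == "__main__":
    doses = [0.01, 0.03, 0.1, 0.3, 1.2]  # assumed titration points, in uM
    outputs = [hill(x, 1.0, 40.0, 0.2, 1.32) for x in doses]
    print(fit_hill_plot(doses, outputs, 1.0, 40.0))
```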

Circuit Robustness and Evolutionary Longevity

A critical insight from synthetic biology is that circuit failure mechanisms reveal fundamental constraints on biological systems. Circuits impose metabolic burden by diverting cellular resources, creating evolutionary pressure for loss-of-function mutations that reduce this burden and restore growth advantage [22].

Controller Architectures for Enhanced Evolutionary Stability

Diagram 2: Genetic controller architectures for enhancing evolutionary longevity, showing different sensing strategies and actuation mechanisms that impact circuit stability.

Multi-scale modeling that captures host-circuit interactions, mutation, and population dynamics reveals that different controller architectures optimize different stability metrics [22]. Three key metrics quantify evolutionary longevity: P₀ (initial output), τ±10 (time until output deviates by ±10%), and τ50 (time until output halves) [22].
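The three longevity metrics can be read directly from a time series of population-level output. The sketch below assumes evenly or unevenly sampled measurements and reports the first sampled time at which each threshold is crossed, without interpolation. The example trajectory is invented for illustration.

```python
def longevity_metrics(times, outputs):
    """Return (P0, tau_pm10, tau_50) from a sampled output trajectory.

    P0:       output at the first time point.
    tau_pm10: first time the output deviates from P0 by more than 10%.
    tau_50:   first time the output falls below half of P0.
    A metric is None if its threshold is never crossed.
    """
    p0 = outputs[0]
    tau_pm10 = None
    tau_50 = None
    for t, p in zip(times, outputs):
        if tau_pm10 is None and abs(p - p0) > 0.10 * p0:
            tau_pm10 = t
        if tau_50 is None and p < 0.50 * p0:
            tau_50 = t
    return p0, tau_pm10, tau_50


if __name__ == "__main__":
    hours = [0, 10, 20, 30, 40, 50, 60]
    signal = [100.0, 98.0, 95.0, 89.0, 70.0, 49.0, 20.0]  # hypothetical data
    print(longevity_metrics(hours, signal))
```

Keeping the two time metrics separate matters because a controller can lengthen one without lengthening the other.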

Research shows that:

- Negative autoregulation prolongs short-term performance (τ±10) but provides limited long-term benefit [22].

- Growth-based feedback significantly extends functional half-life (τ50) by linking circuit function to host fitness [22].

- Post-transcriptional control using small RNAs (sRNAs) generally outperforms transcriptional control due to an amplification step that enables strong regulation with reduced controller burden [22].

These findings illustrate how synthetic circuits reveal fundamental trade-offs between performance, robustness, and evolutionary stability in biological systems.

Essential Research Reagent Solutions

Table: Key Research Reagents for Synthetic Gene Circuit Construction

| Reagent Category | Specific Examples | Function and Application |

|---|---|---|

| Synthetic Transcription Factors | E+TAN repressor, EA1TAN anti-repressor [21] | Engineered DNA-binding proteins for orthogonal transcriptional regulation |

| Inducible Systems | IPTG-, D-ribose-, and cellobiose-responsive regulators [21] | Chemical control of circuit components; Input signal generation |

| Synthetic Promoters | Modular Psyn designs with operator insertion sites [23] | Customizable expression levels and regulatory responses |

| Reporter Systems | Fluorescent proteins (GFP, RFP), Luciferase (LUC), GUS [23] | Quantitative measurement of circuit outputs and performance |

| Standardized Vectors | Golden Gate-compatible plasmids, RPU measurement systems [23] | Modular assembly and standardized characterization of parts |

| Host Engineering Tools | CRISPR-Cas9, Recombinases (Cre, Bxb1) [20] [24] | Genome integration, circuit memory, and context control |

Synthetic gene circuits have evolved from simple proof-of-concept demonstrations to sophisticated tools for probing the fundamental principles of biological regulation. The iterative process of designing, constructing, and testing these circuits has revealed intrinsic constraints on biological systems, including metabolic burden, evolutionary instability, and context-dependent part behavior [22] [18]. These "failure modes" are not merely engineering challenges but windows into the fundamental operating principles of life.

Future research will leverage increasingly predictive design frameworks to create circuits that maintain function over evolutionary timescales, operate reliably across different host contexts, and execute more complex computational tasks [21] [22]. The integration of machine learning approaches with high-throughput characterization data will further enhance our ability to predict circuit behavior from part specifications [24]. As these tools mature, synthetic gene circuits will continue to serve as both practical tools for biotechnology and fundamental instruments for discovering the logic of life.

By adopting a "design to understand" approach, researchers can continue to use synthetic gene circuits as experimental testbeds for exploring the constraints and capabilities of biological systems across scales—from molecular interactions to population dynamics [18]. This methodology represents a powerful paradigm for fundamental biological discovery through constructive approaches.

The pursuit of a minimal cell, a synthetic cellular construct designed to embody the core functions of life with the smallest possible set of components, represents a paradigm shift in biological research. This approach, central to synthetic biology, moves beyond traditional dissection of existing life to fundamentally understand biological design by building simplicity from the ground up. By stripping cellular processes to their bare essentials, researchers aim to uncover the first principles of life, free from the evolutionary complexities that obscure core functionalities in natural organisms [25]. The minimal cell serves as both an experimental tool and a theoretical framework, enabling a unique "design-research" cycle where the act of construction tests and refines our understanding of what constitutes life itself.

The synthetic biology philosophy underpinning this pursuit posits that complexity in natural systems arises not merely from the length of biological parts lists, but from how those parts are organized and interact [17]. This perspective suggests that novel biological functions emerge from new combinations of pre-existing modules—a principle that minimal cell research directly tests by reconstituting life-like behaviors from defined components. The minimal cell therefore becomes a simplified test-bed where researchers can intuitively grasp the ranges of behavior generated by fundamental biological circuits and exert unprecedented control over natural processes [17].

Defining the Minimal Cell: Concepts and Approaches

Conceptual Frameworks and Definitions

The term "SynCell" (synthetic cell) encompasses a spectrum of artificial constructs designed to mimic cellular functions, with definitions varying based on research objectives. Two predominant conceptual frameworks guide the field:

Functional Mimicry Framework: This approach defines SynCells as engineered cell-sized systems capable of performing specific life-like functions, such as information processing, motility, growth and division, signaling, or metabolism, without necessarily achieving full self-replication [25]. This modular perspective enables researchers to reconstitute biological features piecemeal, focusing on understanding individual processes.

Life Reboot Framework: This more ambitious definition characterizes SynCells as physicochemical systems that sustain themselves and replicate in an environment capable of open-ended evolution [25]. This framework emphasizes the ability of a fully interoperable SynCell to replicate and evolve, addressing fundamental questions about the origins and evolution of life.

Top-Down vs. Bottom-Up Engineering Strategies

Minimal cell research employs two complementary engineering strategies, each with distinct advantages for fundamental biological inquiry:

Top-Down Genome Minimization: This approach starts with existing organisms and systematically removes genes to identify the minimal set essential for life. The landmark JCVI-syn3.0 project exemplifies this strategy, resulting in a minimized cell based on Mycoplasma mycoides with roughly half as many genes as its natural counterpart [26]. With approximately 473 genes, this top-down minimized genome provides critical baseline data suggesting that a functional minimal genome synthesized from the bottom up may require 200-500 genes [25].

Bottom-Up Assembly: This approach constructs cell-like systems by assembling molecular components from non-living building blocks [25]. This strategy allows researchers to explore non-natural components and arrangements not constrained by biological evolution, potentially revealing why natural systems are organized as they are. Bottom-up assembly typically utilizes molecular building blocks such as membranes, genetic material, and proteins to create structural chassis that can host life-like functions.

Table: Comparison of Minimal Cell Engineering Approaches

| Approach | Starting Point | Key Advantages | Limitations | Exemplary System |

|---|---|---|---|---|

| Top-Down | Existing organisms | Leverages evolved functional systems; Identifies essential genes in native context | Retains evolutionary baggage; Limited to natural components | JCVI-syn3.0 (473 genes) [26] |

| Bottom-Up | Molecular components | Freedom from evolutionary constraints; Incorporation of non-natural parts; Precise control | Integration challenges; Limited complexity to date | Enzyme-loaded liposomes for chemotaxis [27] |

Key Achievements in Minimal Cell Research

Established Minimal Cell Platforms

The most advanced minimal cell platform to date is the JCVI-syn3.0 system and its derivatives (including JCVI-syn3A and JCVI-syn3B). Based on the naturally occurring Mycoplasma mycoides, this top-down minimized organism contains approximately half the genes of its parental strain and serves as a platform for exploring the first principles of life, engineering, computational modeling, and more [26]. This minimal cell has demonstrated remarkable robustness despite its reduced genome, enabling diverse research applications from aging studies to metabolic engineering.

Recent research with JCVI-syn3.0 has revealed unexpected biological complexities even in this minimalist system. Studies of its proteome have identified numerous "moonlighting" proteins—proteins that perform multiple functions by changing their location, interactions, shape, or oligomeric state [26]. For instance, highly conserved cytoplasmic proteins such as Enolase, DnaK, and EF-Tu have been found to be modified and present on the cell surface of JCVI-syn3.0, suggesting they serve secondary functions beyond their canonical roles [26]. Proteomic analyses have identified over 100 proteins from the syn3.0 proteome that inhabit the membrane and have multiple functions, potentially increasing the effective functional size of the proteome by 21% or more [26].

Reconstitution of Core Cellular Functions

Bottom-up approaches have successfully reconstructed individual cellular functions using minimal component sets:

Chemical Navigation: Researchers have created the world's simplest artificial cell capable of chemical navigation by encapsulating enzymes within lipid-based vesicles (liposomes) modified with membrane pore proteins [27]. This system demonstrates how microscopic bubbles can be programmed to follow chemical trails like natural cells, revealing the core principles behind chemotaxis without the complex machinery typically involved, such as flagella or intricate signaling pathways [27].

Information Processing: The assembly of transcription-translation (TX-TL) systems, either based on cellular extracts or reconstructed from purified components, has been widely explored and integrated with compartmentalization to achieve SynCells programmed to communicate and interact with living cells [25].

Compartmentalization: Diverse structural chassis have been developed to host minimal cellular functions, including lipid vesicles, emulsion droplets, liquid-liquid phase separated systems, proteinosomes, and hydrogels [25]. Each platform offers distinct advantages for housing specific cellular functions.

Table: Experimentally Demonstrated Minimal Cellular Functions

| Cellular Function | Minimal Component Set | Key Findings | Reference |

|---|---|---|---|

| Chemical Navigation (Chemotaxis) | Lipid vesicle + enzyme (glucose oxidase/urease) + membrane pore protein | Vesicles navigate chemical gradients; Movement direction reverses with increasing pore number | [27] |

| Information Processing | Cell-free TX-TL system + genetic program + compartment | Couples genotype to phenotype; Enables programmed communication | [25] |

| Multi-functionality (Moonlighting) | JCVI-syn3.0 proteome | >100 proteins have multiple functions; Essential cytoplasmic enzymes traffic to membrane | [26] |

| Growth in Defined Medium | JCVI-syn3B + synthetic peptides | Requires peptides (polymerized amino acids) in addition to free amino acids | [26] |

Critical Scientific Challenges and Research Frontiers

The Integration Challenge

A primary obstacle in minimal cell research is the integration of functional modules into a cohesive, self-sustaining system. While numerous life-like modules have been engineered individually, combining them presents significant scientific hurdles:

Functional Interoperability: The complexity of combining and integrating components in an interoperable and functional way scales exponentially with module numbers [25]. A defining characteristic of a living SynCell would be the presence of a functional cell cycle, where processes such as DNA replication, segregation, cell growth, and division are seamlessly coordinated and tightly integrated.

Compatibility Across Systems: Incompatibilities between diverse chemical/synthetic sub-systems developed by groups with different expertise hamper the capacity to integrate such modules into a single system [25]. This includes biochemical incompatibilities (e.g., differing ionic conditions), kinetic mismatches (e.g., differing reaction rates), and spatial constraints.

Essential Modules for a Self-Sustaining Minimal Cell

Research continues to address several core cellular functions that remain challenging to reconstitute in minimal systems:

De Novo Biomolecule Synthesis: Self-replication of all essential components, including ribosome biogenesis, lipid synthesis, and genomic DNA replication, is required to keep SynCells self-sustaining and replicable [25]. The current state-of-the-art is still far from achieving doubling of cellular components, representing one of the biggest challenges in the SynCell effort.

Controlled Cell Division: While certain elements of division have been realized (e.g., contractile ring formation or final abscission), a controlled synthetic divisome has not yet been realized, calling for extensive biophysical characterizations [25].

Energy Metabolism: Energy supply, anabolism, and catabolism are pivotal functions that keep living systems out of thermodynamic equilibrium. While metabolic networks providing energy and building blocks have been reconstituted in vitro and integrated with genetic modules, improvements in metabolic flux, efficiencies, and coupling with complementing pathways are needed [25].

Experimental Protocols and Methodologies

Protocol: Creating Chemotactic Minimal Cells

The following detailed methodology enables the creation of minimal cells capable of chemical navigation, based on published research [27]:

Materials and Reagents

- Lipid Components: 1-palmitoyl-2-oleoyl-sn-glycero-3-phosphocholine (POPC) and 1,2-dipalmitoyl-sn-glycero-3-phosphoethanolamine (DPPE) in chloroform

- Enzyme Solutions: Glucose oxidase (from Aspergillus niger) or urease (from Canavalia ensiformis) in phosphate buffer

- Membrane Pore Protein: Alpha-hemolysin (αHL) or similar pore-forming protein

- Microfluidic Device: Fabricated using standard soft lithography techniques

- Glucose or Urea Gradients: Prepared in chemotaxis buffer

Procedure

Vesicle Formation:

- Prepare lipid films by depositing POPC/DPPE (9:1 molar ratio) chloroform solutions in glass vials and evaporating solvent under nitrogen stream.

- Hydrate lipid films with enzyme solution (1-2 mg/mL glucose oxidase or urease) in phosphate buffer (pH 7.4) to a final lipid concentration of 1 mM.

- Subject the suspension to five freeze-thaw cycles (liquid nitrogen/water bath at 40°C) to form multilamellar vesicles.

- Extrude the suspension through polycarbonate membranes (100 nm pore size) using a mini-extruder to form large unilamellar vesicles.

Pore Protein Incorporation:

- Incubate vesicles with αHL (0.1 μg/mL final concentration) for 1 hour at room temperature.

- Remove unincorporated αHL by size exclusion chromatography.

Chemotaxis Assay:

- Load vesicle suspension into microfluidic device containing established chemical gradient.

- Image vesicle movement using phase-contrast microscopy at 10-second intervals for 30 minutes.

- Track and analyze trajectories using particle tracking software (e.g., ImageJ with TrackMate plugin).

Controls:

- Include vesicles lacking enzymes or pore proteins as negative controls.

- Verify gradient stability using fluorescent dextran markers.

Data Analysis

- Calculate directionality index (DI) as the net displacement divided by the total path length.

- Compare mean square displacement of experimental vs. control vesicles.

- Analyze pore number dependence by titrating αHL concentration and correlating with directional persistence.
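The first two analysis steps above can be computed from exported track coordinates. This minimal sketch assumes each trajectory is a list of (x, y) positions sampled at a constant interval. The function names and example tracks are hypothetical, and a real analysis would average over many vesicles.

```python
import math


def directionality_index(track):
    """Net displacement divided by total path length (0 to 1)."""
    path = sum(math.dist(p, q) for p, q in zip(track, track[1:]))
    if path == 0:
        return 0.0  # a stationary particle has no defined direction
    return math.dist(track[0], track[-1]) / path


def mean_square_displacement(track, lag):
    """Average squared displacement over all pairs of frames `lag` apart."""
    if lag < 1 or lag >= len(track):
        raise ValueError("lag must be between 1 and len(track) - 1")
    squared = [
        (x2 - x1) ** 2 + (y2 - y1) ** 2
        for (x1, y1), (x2, y2) in zip(track, track[lag:])
    ]
    return sum(squared) / len(squared)


if __name__ == "__main__":
    directed = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0)]
    back_and_forth = [(0.0, 0.0), (1.0, 0.0), (0.0, 0.0), (1.0, 0.0)]
    print(directionality_index(directed), directionality_index(back_and_forth))
    print(mean_square_displacement(directed, 1), mean_square_displacement(directed, 2))
```

A directionality index near 1 indicates persistent, directed motion, while values near 0 indicate movement that returns toward its starting point.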

Diagram Title: Chemotactic Minimal Cell Creation Workflow

Protocol: Defined Medium Formulation for Minimal Cells

Developing synthetic defined media is essential for controlling minimal cell growth conditions and understanding nutritional requirements:

Materials and Reagents

- Amino Acid Mixture: All 20 proteinogenic amino acids

- Vitamin Mix: Water-soluble vitamins (B1, B2, B3, B5, B6, B7, B9, B12)

- Nucleobases: Adenine, guanine, cytosine, thymine, uracil

- Synthetic Peptides: Custom-synthesized di- and tri-peptides

- Salt Solution: MgSO₄, K₂HPO₄, NaCl, FeSO₄

Procedure

Base Medium Preparation:

- Combine amino acids (each at 0.5-2.0 mM final concentration) in ultrapure water.

- Add vitamin mix (0.1-1.0 mg/L each vitamin) and nucleobases (0.1-0.5 mM each).

- Add salt solution and adjust pH to 7.2-7.4.

Peptide Supplementation:

- Prepare synthetic peptide stock solutions (10-100 mM in water).

- Add peptides to base medium at 0.1-1.0 mM final concentration.

Growth Assessment:

- Inoculate JCVI-syn3B cultures at initial OD600 of 0.05.

- Monitor growth by OD600 measurements every 2 hours for 24-48 hours.

- Compare growth rates in media with and without peptide supplementation.
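Growth rates for the comparison in the last step can be estimated from the OD600 time series by fitting a straight line to the natural logarithm of OD600 against time. The sketch below assumes that only exponential-phase readings are passed in. The example values are synthetic and do not represent measured JCVI-syn3B growth.

```python
import math


def growth_rate(times_h, od600):
    """Return (specific growth rate per hour, doubling time in hours).

    Uses an ordinary least-squares fit of ln(OD600) against time,
    which is valid only for exponential-phase readings.
    """
    logs = [math.log(v) for v in od600]
    n = len(times_h)
    mean_t = sum(times_h) / n
    mean_l = sum(logs) / n
    sxx = sum((t - mean_t) ** 2 for t in times_h)
    sxy = sum((t - mean_t) * (l - mean_l) for t, l in zip(times_h, logs))
    mu = sxy / sxx
    return mu, math.log(2) / mu


if __name__ == "__main__":
    hours = [0, 2, 4, 6, 8, 10, 12]
    # Synthetic culture starting at OD600 0.05 with an assumed 4 h doubling time.
    readings = [0.05 * 2 ** (t / 4) for t in hours]
    mu, doubling = growth_rate(hours, readings)
    print(round(mu, 4), round(doubling, 2))
```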

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table: Key Research Reagents for Minimal Cell Research

| Reagent/Solution | Function/Purpose | Example Application | Technical Notes |

|---|---|---|---|

| JCVI-syn3.0/syn3A/syn3B Strains | Minimal cell platform for top-down studies | Studying central dogma, metabolism, aging | Requires specialized media; Grows slower than natural bacteria [26] |

| PURE (Protein Synthesis Using Recombinant Elements) System | Reconstituted cell-free transcription-translation | Bottom-up gene expression; Circuit prototyping | Enables controlled studies of information processing [25] |

| Lipid Vesicles (Liposomes) | Minimal membrane compartment | Housing reactions; Studying transport & signaling | Composition tunable (e.g., POPC/DPPE); Size controlled by extrusion [27] |

| Defined Synthetic Media | Controlled nutritional environment | Identifying essential nutrients; Growth studies | JCVI-syn3B requires peptides (polymerized amino acids) in addition to free amino acids [26] |

| Membrane Pore Proteins (e.g., α-hemolysin) | Enabling molecular exchange across synthetic membranes | Chemotaxis systems; Metabolic support | Controlled incorporation critical for function [27] |

| Microfluidic Devices | Creating chemical gradients; Single-cell analysis | Chemotaxis assays; Long-term culturing | Enables precise environmental control [27] |

Quantitative Frameworks for Minimal Cell Analysis

Analytical Approaches for Spatial Organization

Recent advances in spatial analysis provide quantitative frameworks for characterizing minimal cell organization and interactions:

The "colocatome" framework catalogs significant, normalized colocalizations between pairs of cell subpopulations, enabling comparisons across biological samples [28]. This approach uses the colocation quotient (CLQ) spatial metric to identify cell subpopulation pairs in close proximity (positive colocalization) versus those that are distant (negative colocalization), combined with spatial randomization to assess significance compared to null distributions [28].
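A minimal sketch of the CLQ metric follows. It assumes the commonly used nearest-neighbor definition, CLQ(A→B) = (C_AB / N_A) / (N_B / (N − 1)), where C_AB counts the A cells whose nearest neighbor is of type B. Ties between equidistant neighbors are not split, and the spatial randomization step used to assess significance is omitted. The data layout and function name are assumptions rather than the interface of the software used in [28].

```python
import math


def colocation_quotient(cells, a, b):
    """CLQ for how strongly type-a cells have type-b nearest neighbors.

    cells: list of (x, y, label) tuples.
    Values above 1 suggest attraction, values below 1 suggest avoidance.
    """
    n = len(cells)
    n_a = sum(1 for c in cells if c[2] == a)
    n_b = sum(1 for c in cells if c[2] == b)
    if a == b:
        n_b -= 1  # a cell cannot be its own neighbor
    if n < 2 or n_a == 0 or n_b <= 0:
        raise ValueError("not enough cells of the requested types")
    count = 0
    for i, (x, y, label) in enumerate(cells):
        if label != a:
            continue
        # Brute-force nearest neighbor; fine for small illustrative inputs.
        nearest = min(
            (j for j in range(n) if j != i),
            key=lambda j: math.dist((x, y), cells[j][:2]),
        )
        if cells[nearest][2] == b:
            count += 1
    return (count / n_a) / (n_b / (n - 1))


if __name__ == "__main__":
    sample = [
        (0.0, 0.0, "A"), (0.1, 0.0, "B"),
        (10.0, 0.0, "A"), (10.1, 0.0, "B"),
        (5.0, 5.0, "C"), (5.2, 5.0, "C"),
    ]
    print(colocation_quotient(sample, "A", "B"))
    print(colocation_quotient(sample, "A", "C"))
```

In this constructed example every A cell sits next to a B cell, so the A→B quotient is well above 1, while the A→C quotient is 0.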

Quantitative Characterization of Gene Expression

Synthetic biological approaches have contributed significantly to quantitative descriptions of gene expression, transforming qualitative notions of transcriptional regulation into quantifiable parameters:

Combinatorial Promoter Libraries: These libraries allow unbiased measurement of transcriptional activity across possible promoter architectures, revealing rules that describe promoter responsiveness to transcription factors [17]. Studies in E. coli have shown that repressors effectively repress expression from core, proximal, and distal promoter regions, with strength greatest in core regions, while activators work primarily in distal sites [17].

Transfer Function Mapping: Synthetic constructs have been used to map the transfer function that relates input concentration of transcription factors and inducers to output concentration of reporter genes, enabling quantitative prediction of circuit behavior [17].

Diagram Title: Quantitative Gene Expression Framework

Future Directions and Concluding Perspectives

The pursuit of a minimal cell continues to evolve, with several emerging frontiers promising to advance both fundamental understanding and practical applications:

Global Collaboration: The recent SynCell Global Summit brought together scientists from SynCell communities worldwide to establish consensus on future research directions, highlighting the need for international collaboration to overcome integration challenges [25].

Non-Natural Biology: The option to explore non-natural components in SynCell design presents opportunities to expand functional capabilities beyond those found in nature, using building blocks such as polymersomes or nanoparticles [25].

Theoretical Frameworks: Developing predictive models for minimal cell behavior represents a critical frontier, because the current lack of theoretical frameworks that predict the behavior and robustness of reconstituted systems hampers design efforts [25].

The minimal cell pursuit exemplifies the synthetic biology paradigm of understanding through building, providing a powerful approach to fundamental biological questions. As research progresses, the integration of functional modules into cohesive, self-sustaining systems will continue to test and refine our understanding of life's essential principles, with potential applications spanning medicine, biotechnology, and beyond.

Synthetic biology is founded on a core premise: to understand biology, one must be able to design and construct it. This approach has transformed our fundamental biological understanding, moving from passive observation to active creation and testing. The evolution of foundational tools for DNA synthesis, sequencing, and genome editing has been instrumental in this shift, enabling researchers to dissect and reassemble the molecular machinery of life with increasing precision. These technologies form an interdependent toolkit: DNA synthesis writes genetic information, sequencing reads it, and genome editing rewrites it [29]. Together, they create a powerful engineering cycle for biological systems. This technical guide examines the current state of these core technologies, detailing their methodologies, applications, and integration, framed within the context of using synthetic design to uncover fundamental biological principles.

DNA Synthesis: From Oligos to Whole Genomes

DNA synthesis technologies provide the foundational ability to write genetic code from scratch, offering researchers the freedom to move beyond naturally occurring sequences and test hypotheses through constructive biology.

Core Synthesis Methodologies

The field encompasses both established chemical methods and emerging enzymatic approaches, each with distinct advantages and limitations.

Table 1: Comparison of DNA Synthesis Methodologies

| Method | Core Principle | Typical Product Length | Key Advantages | Key Limitations |

|---|---|---|---|---|

| Phosphoramidite Chemistry | Step-wise chemical synthesis on a solid support (silica gel) [29] | ~200 nucleotides [29] | Low cost; widely established | Length limitation; use of hazardous chemicals |

| Template-Independent Enzymatic Synthesis (TiEOS) | Enzymatic addition of nucleotides using terminal deoxynucleotidyl transferase (TdT) [29] | Developing technology | Avoids harsh chemicals; potential for longer reads | Lower efficiency; still under development |

| Microarray-Derived Synthesis | Light-directed or electrochemical parallel synthesis on a chip [29] | Varies | High-throughput; massive parallelism | Lower single-sequence fidelity; complex workflow |
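
The length limit listed for phosphoramidite chemistry can be understood as compounding per-step losses. The sketch below assumes every coupling step succeeds independently with the same efficiency, so that a strand of n nucleotides requires n - 1 successful couplings. The function name and the 99.5% efficiency are illustrative assumptions, not values from the cited source.

```python
def full_length_yield(length_nt: int, coupling_efficiency: float) -> float:
    """Fraction of strands that reach full length in stepwise synthesis.

    Simplified model: a strand of n nucleotides needs n - 1 coupling steps,
    each succeeding independently with probability `coupling_efficiency`.
    """
    if length_nt < 1:
        raise ValueError("length_nt must be at least 1")
    if not 0.0 < coupling_efficiency <= 1.0:
        raise ValueError("coupling_efficiency must be in (0, 1]")
    return coupling_efficiency ** (length_nt - 1)


# Illustrative per-step efficiency (an assumption, not a measured value).
EFFICIENCY = 0.995
for n in (20, 60, 100, 200, 400):
    print(f"{n:>4} nt: {full_length_yield(n, EFFICIENCY):.1%} full-length product")
```

Under this model, roughly a third of strands are full length at 200 nt, and the fraction keeps falling geometrically beyond that, which is one reason longer constructs are assembled from shorter oligonucleotides rather than synthesized in one pass.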

Experimental Protocol: Gene Assembly via Phosphoramidite Synthesis and Gibson Assembly

The following protocol is typical for producing a synthetic gene of kilobase scale.

- Oligonucleotide Synthesis: Design and synthesize overlapping oligonucleotides (∼200 nt) covering the entire target gene sequence using column-based phosphoramidite chemistry [29].

- Gene Assembly: Pool the oligonucleotides and perform an assembly reaction, such as Gibson Assembly, which uses a one-pot isothermal reaction with a 5' exonuclease, a DNA polymerase, and a DNA ligase to seamlessly join overlapping DNA fragments [29].

- Cloning: Insert the assembled product into a plasmid vector via in vitro ligation and transform into competent E. coli cells.

- Sequence Verification: Isolate plasmid DNA from multiple bacterial colonies and verify the complete sequence of the synthetic gene using Sanger sequencing or next-generation sequencing (NGS).

- Functional Validation: The synthetic gene can then be used in downstream applications, such as expression in a host organism to test the function of a designed genetic circuit or the activity of an engineered enzyme [17].
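
The oligonucleotide design step in this protocol can be sketched as a simple tiling problem. The example below is a minimal illustration, assuming single-strand tiling with a fixed overlap; the function names, the 30-nt overlap, and the random test sequence are hypothetical choices. Real design tools also balance melting temperatures, alternate strands, and avoid repeats, none of which is modeled here.

```python
import random


def tile_oligos(sequence: str, oligo_len: int = 200, overlap: int = 30) -> list[str]:
    """Split `sequence` into fragments of at most `oligo_len` nt in which each
    fragment shares at least `overlap` nt with the previous one."""
    if not 0 < overlap < oligo_len:
        raise ValueError("overlap must be positive and shorter than oligo_len")
    if len(sequence) <= oligo_len:
        return [sequence]
    step = oligo_len - overlap
    oligos = []
    start = 0
    while True:
        end = start + oligo_len
        if end >= len(sequence):
            # Anchor the final fragment at the 3' end so it stays full length.
            oligos.append(sequence[-oligo_len:])
            break
        oligos.append(sequence[start:end])
        start += step
    return oligos


def reassemble(oligos: list[str]) -> str:
    """Merge fragments by their longest suffix/prefix match (sanity check only;
    repetitive sequences could be merged incorrectly)."""
    result = oligos[0]
    for frag in oligos[1:]:
        for k in range(min(len(result), len(frag)), 0, -1):
            if result.endswith(frag[:k]):
                result += frag[k:]
                break
        else:
            raise ValueError("fragment does not overlap the growing assembly")
    return result


# Hypothetical 1 kb target sequence, generated only for demonstration.
random.seed(0)
gene = "".join(random.choice("ACGT") for _ in range(1000))
fragments = tile_oligos(gene, oligo_len=200, overlap=30)
print(len(fragments), "oligos;", "round trip OK:", reassemble(fragments) == gene)
```

The in silico round trip only confirms that the tiling covers the target with consistent overlaps; it says nothing about assembly efficiency at the bench.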

Figure 1: Gene Synthesis and Validation Workflow

DNA Sequencing: Reading the Genome with Speed and Context

Sequencing technology has evolved from decoding linear sequences to mapping the spatial and functional organization of the genome within the cell, providing critical readouts for synthetic biology designs.

Advanced Sequencing Technologies and Applications

Modern sequencing platforms can be broadly categorized by read length and application, with recent breakthroughs focusing on speed, accuracy, and multiomic integration.

Table 2: Key Sequencing Platforms and Their Research Applications

| Platform/Technology | Read Type | Typical Read Length | Key Research Applications |
|---|---|---|---|
| Roche Sequencing by Expansion (SBX) [30] | Long-read | Not Specified | Bulk RNA sequencing, methylation mapping, rapid clinical sequencing (e.g., <4 hours for a human genome) |
| PacBio HiFi [31] | Long-read | 15,000-20,000 bases | De novo genome assembly, variant detection, full-length transcript sequencing |
| Illumina NGS [3] | Short-read | 100-300 bases | Whole-genome sequencing, population studies, targeted sequencing |
| Expansion In Situ Genome Sequencing [32] | Spatial | N/A | Linking nuclear structure to gene repression; sequencing DNA within intact, expanded cells |

Experimental Protocol: Expansion In Situ Genome Sequencing

This novel protocol from the Broad Institute sequences DNA within intact cells while preserving spatial context.

- Cell/Tissue Fixation and Permeabilization: Fix cells (e.g., patient-derived skin cells) to preserve native 3D nuclear architecture and permeabilize membranes to allow reagent entry [32].

- In Situ Sequencing Library Prep: Perform library preparation for sequencing inside the fixed cells, incorporating barcodes that retain spatial information [32].

- Polymer Gel Expansion: Embed the sample in a swellable polymer gel and expand it physically in water. This expansion increases the physical distance between molecules, allowing standard microscopes to achieve nanoscale resolution [32].

- Imaging and Sequencing: Place the expanded sample on a sequencer (e.g., an Illumina flow cell). Sequence the genome directly within the expanded cells and simultaneously perform high-resolution imaging to map the location of DNA sequences relative to nuclear proteins [32].

- Data Integration and Analysis: Integrate the sequenced DNA data with the imaging data to link structural abnormalities (e.g., nuclear invaginations in progeria) to local gene repression and other functional genomic changes [32].
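
The benefit of the gel expansion step can be made concrete with a back-of-the-envelope calculation: if a sample is enlarged uniformly, the effective resolution in pre-expansion coordinates is the microscope's optical resolution divided by the linear expansion factor. The 250 nm optical resolution and 4-fold expansion below are illustrative assumptions, not parameters reported in the cited protocol.

```python
def effective_resolution_nm(optical_resolution_nm: float, expansion_factor: float) -> float:
    """Resolution in original (pre-expansion) sample coordinates, assuming
    uniform, isotropic expansion of the gel-embedded sample."""
    if expansion_factor <= 0:
        raise ValueError("expansion_factor must be positive")
    return optical_resolution_nm / expansion_factor


# Illustrative values only (assumptions, not from the cited protocol).
print(effective_resolution_nm(250.0, 4.0))  # 62.5
```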

Figure 2: Expansion In Situ Sequencing Workflow

Genome Editing: Programming the Code of Life

Genome editing, particularly CRISPR-based systems, has provided an unparalleled tool for precise, programmable modification of genomes, enabling both functional interrogation of genetic elements and the development of novel therapeutics.

The Genome Editing Toolbox

The core editing platforms have expanded beyond initial CRISPR-Cas9 systems to include more precise editors and sophisticated control mechanisms.

Table 3: Evolution of Key Genome-Editing Technologies

| Technology | Mechanism of Action | Key Applications | Clinical Stage (as of 2025) |
|---|---|---|---|
| CRISPR-Cas9 Nucleases [33] [34] | Creates double-strand breaks in DNA | Gene knockouts, gene therapy (e.g., Casgevy for sickle cell disease) [35] | Approved therapy; multiple Phase I-III trials [35] |
| Base Editing [33] | Chemically converts one base pair to another without double-strand breaks | Correcting point mutations responsible for genetic diseases | Early-phase clinical trials |
| Prime Editing [33] | "Search-and-replace" editing directly using a reverse transcriptase template | Precise gene insertion, deletion, and all 12 possible base-to-base conversions | Preclinical research |
| Anti-CRISPR Proteins (LFN-Acr/PA) [34] | Inhibits Cas9 activity after editing is complete | Reducing off-target effects; increasing safety of CRISPR therapies | Preclinical development |
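
The figure of 12 base-to-base conversions in Table 3 is simply the number of ordered pairs of distinct bases (4 x 3). The short sketch below enumerates them and splits them into transitions and transversions. The classification is standard background rather than something taken from the cited sources, and the names used are illustrative.

```python
from itertools import permutations

BASES = "ACGT"
PURINES = {"A", "G"}


def classify(ref: str, alt: str) -> str:
    """Transition: purine<->purine or pyrimidine<->pyrimidine; else transversion."""
    same_class = (ref in PURINES) == (alt in PURINES)
    return "transition" if same_class else "transversion"


conversions = list(permutations(BASES, 2))  # ordered (from, to) pairs
transitions = [(r, a) for r, a in conversions if classify(r, a) == "transition"]
transversions = [(r, a) for r, a in conversions if classify(r, a) == "transversion"]

print(len(conversions), len(transitions), len(transversions))  # 12 4 8
```

As general background, the canonical cytosine and adenine base editors install transition mutations, which is why an editor covering all 12 conversions widens the set of correctable point mutations.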

Experimental Protocol: In Vivo CRISPR Therapy with Anti-CRISPR Control

This protocol outlines a cutting-edge therapeutic genome-editing approach that includes a safety switch to deactivate the editor.

- Lipid Nanoparticle (LNP) Formulation: Encapsulate CRISPR-Cas9 mRNA and single-guide RNA (sgRNA) into lipid nanoparticles (LNPs), which have a natural affinity for the liver and protect the editing components [35].

- Systemic Administration: Administer the LNP formulation to the patient via intravenous (IV) infusion, allowing systemic delivery and uptake by target cells in the liver [35].

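- Genome Editing: Once the Cas9 mRNA is translated, the Cas9-sgRNA complex enters the nucleus and creates a double-strand break at the target genomic locus. Because no donor template is delivered in this formulation, the break is repaired mainly by non-homologous end joining, which disrupts the target gene; repair via homology-directed repair would require co-delivery of a donor template.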

- Anti-CRISPR Delivery: After a predetermined time window sufficient for on-target editing, administer the LFN-Acr/PA system. This system uses a component derived from anthrax toxin to deliver anti-CRISPR proteins into cells rapidly and efficiently, shutting down residual Cas9 activity to minimize off-target effects [34].

- Efficacy and Safety Monitoring: Use blood tests to measure reduction of the target protein (a biomarker for editing efficacy) and employ NGS-based methods to monitor for potential off-target edits in the patient's genome [35].

Figure 3: In Vivo CRISPR Therapy with Safety Switch

Integrated Workflows and the Scientist's Toolkit

The convergence of synthesis, sequencing, and editing, powered by artificial intelligence and bioinformatics, is creating unified workflows for biological discovery and engineering.

The Role of AI and Multiomics

Artificial intelligence is revolutionizing how researchers design experiments and interpret complex biological data. Machine learning models are being used to optimize the activity of genome editors like Cas9, predict their off-target effects, and even discover novel editing enzymes from microbial genomes [33]. Furthermore, the integration of multiomic datasets—genomic, epigenomic, and transcriptomic—from the same sample provides a systems-level view that is essential for understanding the functional outcomes of synthetic biological designs [3]. Bioinformatics tools are critical for off-target prediction and target gene selection, tasks that require accurate genome sequence information [31].

Research Reagent Solutions

Table 4: Essential Research Reagents and Their Functions in Synthetic Biology

| Reagent / Material | Function in Research |
|---|---|
| Lipid Nanoparticles (LNPs) [35] | Delivery vehicle for in vivo transport of CRISPR components; naturally accumulates in liver cells. |
| Anti-CRISPR Proteins (Acrs) [34] | Act as a safety switch to deactivate Cas9 after editing, reducing off-target effects. |
| Terminal Deoxynucleotidyl Transferase (TdT) [29] | Key enzyme for template-independent enzymatic DNA synthesis (TiEOS). |
| Hi-C Reagents [31] | Used in chromosome conformation capture to guide accurate genome assembly. |
| PacBio HiFi Reads [31] | High-fidelity long reads used for de novo genome assembly. |
| Unique Molecular Identifiers (UMIs) [30] | Molecular barcodes used in NGS to improve accuracy by tagging individual molecules. |
| TET-assisted pyridine borane sequencing (TAPS) [30] | High-fidelity methylation mapping method for epigenomic research. |
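
To illustrate how the UMIs listed in Table 4 improve accuracy, the sketch below groups reads by their UMI and takes a per-position majority vote, so that errors affecting a minority of copies of one original molecule are voted out. It is a minimal illustration that assumes equal-length reads within each UMI group and exact UMI matching; the function name and toy reads are hypothetical, and production pipelines additionally handle UMI sequencing errors and alignment.

```python
from collections import Counter, defaultdict


def umi_consensus(reads: list[tuple[str, str]]) -> dict[str, str]:
    """Collapse (umi, sequence) pairs into one consensus sequence per UMI.

    Assumes all sequences sharing a UMI have the same length.
    """
    groups: dict[str, list[str]] = defaultdict(list)
    for umi, seq in reads:
        groups[umi].append(seq)

    consensus = {}
    for umi, seqs in groups.items():
        # Majority vote at each position across reads from the same molecule.
        consensus[umi] = "".join(
            Counter(column).most_common(1)[0][0] for column in zip(*seqs)
        )
    return consensus


# Toy data: the third read carries a single error (G -> C at position 3).
toy_reads = [
    ("AAT", "ACGT"),
    ("AAT", "ACGT"),
    ("AAT", "ACCT"),
    ("GGC", "TTTT"),
]
print(umi_consensus(toy_reads))  # {'AAT': 'ACGT', 'GGC': 'TTTT'}
```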

The Synthetic Biologist's Toolkit: AI, Genome Engineering, and Novel Applications in Biomedicine

Precision genome engineering represents a cornerstone of modern synthetic biology, providing the foundational tools to conduct "design research" for fundamental biological understanding. By moving from observation to deliberate construction and perturbation of genetic systems, researchers can reverse-engineer the logic of life. The advent of Clustered Regularly Interspaced Short Palindromic Repeats (CRISPR) and associated Cas proteins has revolutionized this field, offering unprecedented control over genetic information. This technological paradigm shift enables scientists to systematically probe gene function, model diseases, and engineer novel cellular behaviors with high precision.

Framed within the broader thesis of synthetic biology, CRISPR-Cas systems are more than just gene-editing tools; they are programmable platforms for testing hypotheses about biological design principles. The ability to make targeted perturbations—whether altering DNA sequences, modifying epigenetic states, or controlling gene expression—allows for a dissection of causality in complex biological networks that was previously impossible. This guide details the core mechanisms, methodologies, and applications of CRISPR-Cas and its next-generation derivatives, providing a technical roadmap for leveraging these systems to advance fundamental biological understanding through designed interventions.

The Core CRISPR-Cas9 Mechanism

The CRISPR-Cas9 system functions as a programmable DNA-targeting complex. Its mechanism is derived from a natural immune system in microbes, which they use to find and eliminate unwanted invaders like viruses by incorporating snippets of the invader's DNA into their own genome for future recognition [36]. For biotechnological application, this system is reconstituted as a two-component complex.

- Guide RNA (gRNA): A synthetic RNA molecule that combines the functions of the natural trans-activating CRISPR RNA (tracrRNA) and CRISPR RNA (crRNA). It is engineered with a ~20 nucleotide targeting sequence at its 5' end that defines the specific genomic locus for editing via Watson-Crick base pairing [37] [38].

- Cas9 Nuclease: An enzyme that acts as "molecular scissors." Upon binding to the gRNA, it undergoes a conformational change, enabling it to scan DNA for a Protospacer Adjacent Motif (PAM) sequence (e.g., 5'-NGG-3' for Streptococcus pyogenes Cas9). When it finds a PAM site, the gRNA's targeting sequence anneals to the complementary DNA strand, and the Cas9 enzyme introduces a precise double-strand break (DSB) in the DNA [38].
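
The PAM-dependent targeting described above can be sketched as a sequence scan. The example below is a minimal illustration for the S. pyogenes 5'-NGG-3' PAM: it searches one strand only and returns each 20-nt protospacer that lies immediately 5' of a PAM. The function name and test sequence are hypothetical; a real search would also scan the reverse complement.

```python
import re


def find_target_sites(dna: str, guide_len: int = 20) -> list[tuple[int, str, str]]:
    """Return (protospacer_start, protospacer, pam) for every NGG PAM on the
    given strand that has a full-length protospacer upstream of it."""
    dna = dna.upper()
    sites = []
    # A lookahead is used so that overlapping PAMs (e.g. in "AGGG") are all found.
    for match in re.finditer(r"(?=([ACGT]GG))", dna):
        pam_start = match.start()
        if pam_start >= guide_len:
            protospacer = dna[pam_start - guide_len:pam_start]
            sites.append((pam_start - guide_len, protospacer, match.group(1)))
    return sites


# Hypothetical sequence: a 20-nt stretch followed by "AGGG" (two overlapping PAMs).
example = "ACGTACGTACGTACGTACGT" + "AGGG"
for start, protospacer, pam in find_target_sites(example):
    print(start, protospacer, pam)
```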

The cell then attempts to repair this DSB primarily through two endogenous pathways, which synthetic biologists harness to achieve different editing outcomes:

- Non-Homologous End Joining (NHEJ): An error-prone repair pathway that often results in small insertions or deletions (indels) at the cut site. This is useful for targeted gene disruption, as these indels can knock out gene function by shifting the reading frame or creating premature stop codons [37] [38].

- Homology-Directed Repair (HDR): A precise repair pathway that uses a homologous DNA template to repair the break. By co-delivering a designed donor DNA template, researchers can leverage HDR to introduce specific point mutations, correct mutations, or insert new genetic sequences [37].
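
The frameshift logic behind NHEJ-mediated knockouts comes down to arithmetic on indel length: an indel whose length is not a multiple of three changes every downstream codon. The sketch below illustrates this on a toy coding sequence; the sequence and helper names are hypothetical.

```python
def indel_effect(indel_len: int) -> str:
    """Classify an indel by length in bp (positive = insertion, negative = deletion)."""
    if indel_len == 0:
        return "no change"
    return "in-frame" if indel_len % 3 == 0 else "frameshift"


def codons(seq: str) -> list[str]:
    """Split a sequence into complete codons, dropping any trailing partial codon."""
    usable = len(seq) - len(seq) % 3
    return [seq[i:i + 3] for i in range(0, usable, 3)]


def delete_bases(seq: str, start: int, length: int) -> str:
    """Remove `length` bases beginning at 0-based index `start`."""
    return seq[:start] + seq[start + length:]


cds = "ATGGCCAAAGGTTAA"  # toy open reading frame ending in a TAA stop codon
print(codons(cds))                      # ['ATG', 'GCC', 'AAA', 'GGT', 'TAA']
print(codons(delete_bases(cds, 4, 1)))  # ['ATG', 'GCA', 'AAG', 'GTT']
print(codons(delete_bases(cds, 3, 3)))  # ['ATG', 'AAA', 'GGT', 'TAA']
```

The 1-bp deletion rewrites every downstream codon and removes the original stop codon from the frame, whereas the 3-bp deletion removes a single codon and leaves the rest of the frame intact.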

The following diagram illustrates the core mechanism and key outcomes of CRISPR-Cas9 genome editing.

Experimental Protocol: A Step-by-Step Workflow

This section provides a generalized, yet detailed, protocol for a typical CRISPR-Cas9 genome editing experiment in mammalian cells, from design to validation. The workflow integrates both computational and bench-based steps, embodying the synthetic biology "design-build-test-learn" cycle [39].

Identifying the Target and Designing gRNAs

- Target Selection: Identify the precise genomic locus to be edited. For gene knockout, target early exons; for precise editing, ensure the mutation site is close to the PAM.

- gRNA Design: Use bioinformatic tools to design gRNAs with high on-target efficiency and low off-target potential. Key criteria include:

  - A PAM sequence (e.g., NGG) immediately downstream of the target site in the genomic DNA.

  - A 20-nucleotide guide sequence that is unique within the genome.

  - A GC content between 40-60%.
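
The design criteria listed so far can be expressed as simple checks on a candidate guide. The sketch below is a minimal illustration, assuming an NGG PAM, exact-match uniqueness on both strands, and a small in-memory reference sequence; the function names and toy sequences are hypothetical. Dedicated design tools score mismatched off-target sites as well, which this example does not attempt.

```python
_COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq: str) -> str:
    return seq.upper().translate(_COMPLEMENT)[::-1]


def gc_fraction(seq: str) -> float:
    seq = seq.upper()
    return (seq.count("G") + seq.count("C")) / len(seq)


def count_overlapping(text: str, pattern: str) -> int:
    """Count occurrences of `pattern` in `text`, including overlapping ones."""
    count, i = 0, text.find(pattern)
    while i != -1:
        count += 1
        i = text.find(pattern, i + 1)
    return count


def check_guide(guide: str, pam: str, reference: str) -> dict[str, bool]:
    """Evaluate a candidate guide against the basic criteria in the text."""
    guide, pam, reference = guide.upper(), pam.upper(), reference.upper()
    rc = reverse_complement(guide)
    hits = count_overlapping(reference, guide)
    if rc != guide:  # avoid double counting a palindromic guide
        hits += count_overlapping(reference, rc)
    return {
        "pam_is_ngg": len(pam) == 3 and pam.endswith("GG"),
        "length_is_20": len(guide) == 20,
        "gc_40_to_60": 0.40 <= gc_fraction(guide) <= 0.60,
        "unique_in_reference": hits == 1,
    }


# Toy reference containing the candidate guide once, followed by a TGG PAM.
candidate = "GATCACAGGTTACAGGCCTA"
toy_reference = "TTTT" + candidate + "TGG" + "AAAA"
print(check_guide(candidate, "TGG", toy_reference))
```

Returning the individual checks rather than a single pass/fail value makes it easier to see which criterion a rejected guide violates.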