Benchmarking Predictive Models in Biological Design: From AI Foundations to Clinical Impact

Abstract

This article provides a comprehensive roadmap for researchers and drug development professionals on establishing robust benchmarking practices for predictive models in biological system design. It explores the foundational need for standardized evaluation in overcoming reproducibility challenges in bioinformatics and AI-driven drug discovery. The content details current methodological approaches, from community-driven platforms to genetic circuit design software, and addresses critical troubleshooting aspects like data bias and model interpretability. Finally, it synthesizes strategies for rigorous validation and comparative analysis, underscoring how credible benchmarking ecosystems are essential for de-risking drug discovery, accelerating therapeutic development, and building trustworthy AI for clinical translation.

The Critical Need for Benchmarking in Predictive Biology

In biological system design, a significant and often overlooked obstacle is stifling progress: the reproducibility bottleneck. Predictive models, from AI-driven virtual cells to diagnostic tools, are frequently developed using bespoke, non-standardized evaluation methods. Researchers therefore spend weeks building custom evaluation pipelines for tasks that should take hours, diverting time from discovery to debugging and reconciling implementation differences [1]. This article compares emerging solutions designed to overcome this bottleneck, giving researchers and drug development professionals a clear guide to the current benchmarking landscape.

# The High Cost of Inconsistent Evaluation

The lack of trustworthy, reproducible benchmarks creates multiple systemic problems that slow the pace of scientific discovery.

- Wasted Research Effort: Without unified evaluation methods, the same model can yield different performance scores across laboratories due not to scientific factors, but to implementation variations [1]. This forces researchers to dedicate extensive time to rebuilding evaluation pipelines instead of improving their models.

- Unreliable Comparisons and Cherry-Picking: The field often relies on bespoke benchmarks created for individual publications, which can lead to cherry-picked results that look good in isolation but are difficult to reproduce or cross-check across studies [1]. This lack of true comparability erodes trust in models and hampers collective progress.

- Hidden Model Discrepancy: Predictive models can perform well on training data but fail to generalize to new experimental protocols or scenarios. This model discrepancy is a major challenge for uncertainty quantification, as a model's performance is often tightly linked to the specific design of the experiments used to train it [2].

- Fragile and Non-Reproducible Results: Machine learning models, especially those initialized through stochastic processes, can suffer from reproducibility issues. Changes in random seeds can alter predictive performance and feature importance, making it difficult to obtain stable, interpretable results [3].

# Benchmarking Solutions: A Comparative Guide

To address these challenges, several initiatives and frameworks have been developed. The following table summarizes and compares the core approaches of several key benchmarking ecosystems in biological and AI research.

Table 1: Comparison of Benchmarking Ecosystems for Predictive Models

| Benchmarking Solution | Primary Focus | Core Methodology | Key Advantages | Featured Experimental Metrics |

|---|---|---|---|---|

| CZI Virtual Cell Benchmarking Suite [1] | AI-driven virtual cell models & single-cell transcriptomics | Community-driven, living benchmark suite with no-code web interface and Python tools. | Standardized toolkit for biological relevance; multiple metrics per task; dynamic and open for contributions. | Cell clustering accuracy, cross-species integration performance, perturbation expression prediction accuracy. |

| NewtonBench [4] | Scientific law discovery in physics by LLM agents | Interactive exploration of simulated complex systems using "metaphysical shifts" in canonical laws. | Resolves trade-off between scientific relevance, scalability, and memorization-resistance; tests genuine reasoning. | Symbolic accuracy of discovered laws, performance degradation with system complexity/noise. |

| PLOS Continuous Benchmarking Ecosystem [5] | General bioinformatics methods | Formalized benchmark definitions via configuration files and workflow systems (e.g., CWL). | Promotes FAIR principles (Findable, Accessible, Interoperable, Reusable); high extensibility and provenance tracking. | Varies by task; focuses on workflow reproducibility and component reusability. |

| Google AI Empirical Software System [6] | Multi-disciplinary scorable tasks (genomics, public health, etc.) | LLM-powered generation and tree-search-based optimization of code for empirical software. | Automates hypothesis testing and code optimization; reduces exploration time from months to hours. | Overall score on OpenProblems benchmark (14% improvement over ComBat [6]), average WIS for COVID-19 forecasting. |

# Experimental Evidence: Quantifying the Bottleneck and Solution Efficacy

Evidence of Inconsistency in Predictive Modeling

A systematic review of predictive models for lung cancer based on exhaled volatile organic compounds (VOCs) provides concrete evidence of the reproducibility bottleneck. The review, which analyzed 11 articles and 46 different models, found substantial heterogeneity in model performance and constituents [7].

Table 2: Inconsistencies in Exhaled VOC-Based Predictive Models for Lung Cancer [7]

| Aspect of Variation | Findings | Impact on Reproducibility |

|---|---|---|

| Model Constituents | 84 different VOCs were incorporated into models across studies. Only 3 compounds were consistently identified as key predictors. | Lack of consensus on fundamental biomarkers prevents model standardization and validation. |

| Reported Performance | Wide variation in sensitivity, specificity, and AUC indicators across studies. | Makes it impossible to determine the true clinical value or compare model efficacy reliably. |

| Methodology | Heterogeneity in breath collection, analysis, and modeling methods. | Results are highly specific to the experimental protocol, limiting generalizability. |

Efficacy of Advanced Benchmarking and Validation Protocols

NewtonBench's "Metaphysical Shift" Protocol:

- Objective: To rigorously evaluate the scientific law discovery capability of LLMs in a way that prevents memorization and requires first-principles reasoning [4].

- Methodology:

- Task Formalization: Define the discovery task around an Equation (as a mathematical expression tree) and a Model (an experimental system of multiple equations) [4].

- Metaphysical Shift: Systematically alter the mathematical structure of canonical physical laws (e.g., modifying operators or exponents) to create novel, conceptually grounded problems [4].

- Interactive Evaluation: Agents must actively design experiments by specifying input parameters to a simulated environment, moving beyond static function fitting to true model discovery [4].

- Key Result: Evaluations of 11 state-of-the-art LLMs revealed a "clear but fragile" capability for discovery. Performance degraded sharply with increased system complexity and observational noise, highlighting a core challenge for automated science [4].
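
The task formalization and shift described above can be illustrated with a small sketch. This is not NewtonBench's code: the `Var`, `Const`, and `BinOp` types, the `shift_exponent` helper, and the choice of Newtonian gravity with a new exponent of 3 are all assumptions made for illustration.

```python
import operator
from dataclasses import dataclass
from typing import Dict, Union

# Hypothetical expression-tree node types (illustrative, not a real library API).
@dataclass(frozen=True)
class Var:
    name: str

@dataclass(frozen=True)
class Const:
    value: float

@dataclass(frozen=True)
class BinOp:
    op: str            # one of "+", "-", "*", "/", "**"
    left: "Expr"
    right: "Expr"

Expr = Union[Var, Const, BinOp]

OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul,
       "/": operator.truediv, "**": operator.pow}

def evaluate(expr: Expr, env: Dict[str, float]) -> float:
    """Evaluate an expression tree for the given variable assignments."""
    if isinstance(expr, Var):
        return env[expr.name]
    if isinstance(expr, Const):
        return expr.value
    return OPS[expr.op](evaluate(expr.left, env), evaluate(expr.right, env))

def shift_exponent(expr: Expr, old: float, new: float) -> Expr:
    """Return a copy of the tree in which every exponent equal to `old` becomes `new`."""
    if isinstance(expr, BinOp):
        left = shift_exponent(expr.left, old, new)
        right = shift_exponent(expr.right, old, new)
        if expr.op == "**" and right == Const(old):
            right = Const(new)
        return BinOp(expr.op, left, right)
    return expr

# Canonical law: F = m1 * m2 / r**2 (gravitational constant set to 1 for brevity).
canonical = BinOp("/", BinOp("*", Var("m1"), Var("m2")),
                  BinOp("**", Var("r"), Const(2.0)))
# Shifted law: same structure, but the inverse-square exponent is altered.
shifted = shift_exponent(canonical, old=2.0, new=3.0)

def run_experiment(law: Expr, **inputs: float) -> float:
    """Simulated environment: the agent picks inputs and sees only the output."""
    return evaluate(law, inputs)

observation = run_experiment(shifted, m1=2.0, m2=3.0, r=2.0)
```

Because the shifted law no longer matches the textbook form, an agent cannot recover it from memory. It has to vary `r` while holding the masses fixed and infer the exponent from the observations.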

Novel Validation for Subject-Specific Insights:

- Objective: To stabilize machine learning models for reproducible and explainable results, countering variability from stochastic initialization [3].

- Methodology:

- Repeated Trials: For each subject in a dataset, run the ML model (e.g., Random Forest) up to 400 times with different random seeds [3].

- Feature Aggregation: Aggregate feature importance rankings across all trials for each subject.

- Stable Feature Sets: Identify the top subject-specific and group-specific features based on consistent importance across trials [3].

- Key Result: This method significantly reduced variability in feature rankings and improved the consistency of model performance metrics, leading to more robust and interpretable predictions [3].
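
The repeated-trial procedure can be sketched as follows. To stay dependency-free, the sketch swaps in a toy stand-in for the seeded model fit (a bootstrap resample scored by absolute Pearson correlation) where the cited work uses a model such as Random Forest [3]. The function names and synthetic data are assumptions.

```python
import random
from collections import Counter
from statistics import mean

def pearson(xs, ys):
    """Pearson correlation; returns 0.0 if either input is constant."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return 0.0 if vx == 0 or vy == 0 else cov / (vx * vy) ** 0.5

def stochastic_importance(rows, labels, seed):
    """Toy stand-in for one seeded model fit: bootstrap the rows, score features by |r|."""
    rng = random.Random(seed)
    idx = [rng.randrange(len(rows)) for _ in rows]
    ys = [labels[i] for i in idx]
    return [abs(pearson([rows[i][j] for i in idx], ys)) for j in range(len(rows[0]))]

def stable_features(rows, labels, n_trials=400, top_k=1):
    """Aggregate rankings over seeds: mean rank and top-k selection frequency per feature."""
    n_features = len(rows[0])
    rank_sums = [0] * n_features
    top_counts = Counter()
    for seed in range(n_trials):
        importance = stochastic_importance(rows, labels, seed)
        order = sorted(range(n_features), key=lambda j: -importance[j])
        for rank, j in enumerate(order, start=1):
            rank_sums[j] += rank
        top_counts.update(order[:top_k])
    mean_rank = [s / n_trials for s in rank_sums]
    top_freq = [top_counts[j] / n_trials for j in range(n_features)]
    return mean_rank, top_freq

def make_toy_data(n=60, seed=0):
    """Synthetic data: feature 0 determines the label, features 1 and 2 are noise."""
    rng = random.Random(seed)
    rows = [[rng.gauss(0, 1) for _ in range(3)] for _ in range(n)]
    labels = [1 if row[0] > 0 else 0 for row in rows]
    return rows, labels

rows, labels = make_toy_data()
mean_rank, top_freq = stable_features(rows, labels)
```

A feature whose `top_freq` stays near 1.0 across seeds is a candidate stable predictor. Features that enter the top set only occasionally are more likely artifacts of a particular initialization.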

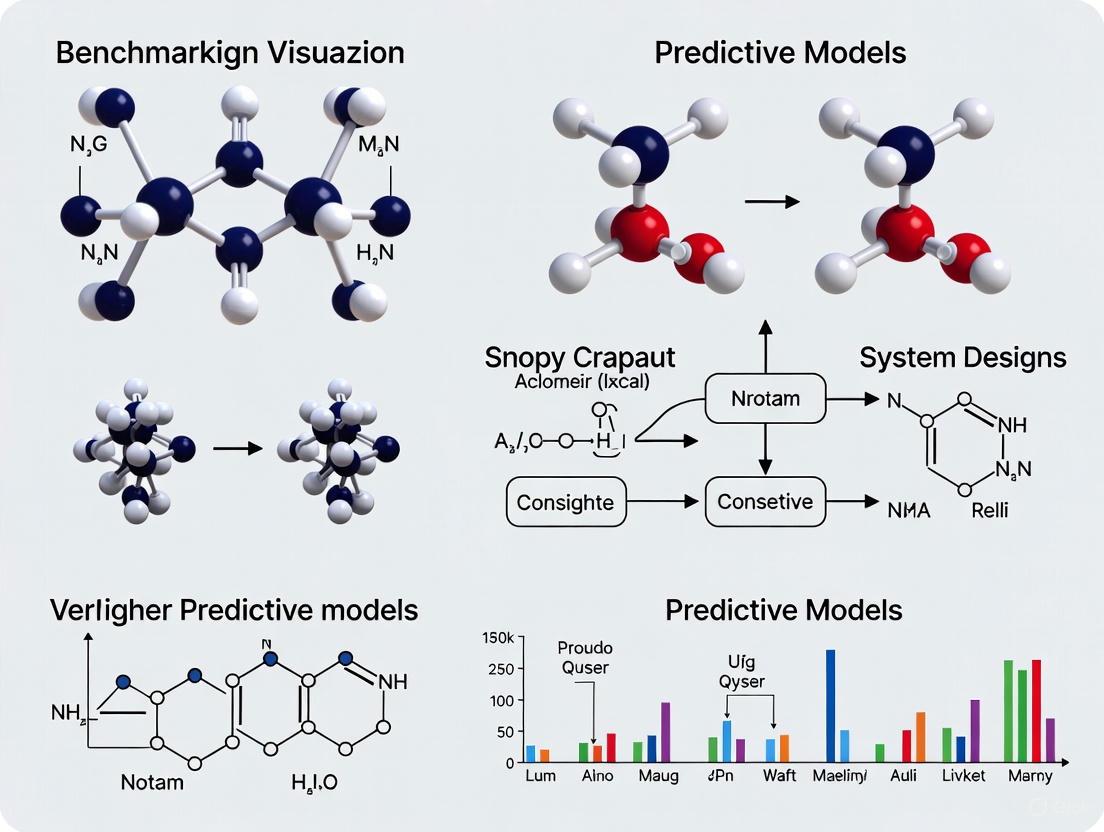

# Visualizing the Benchmarking Workflow

The following diagram illustrates the logical workflow of a continuous benchmarking ecosystem, showing how standardized components come together to ensure reproducible and comparable results.

For researchers aiming to implement robust benchmarking for their predictive models, the following tools and resources are essential.

Table 3: Key Reagents and Resources for Reproducible Model Benchmarking

| Resource / Reagent | Function in Benchmarking | Application Example |

|---|---|---|

| CZI cz-benchmarks Python Package [1] | Enables embedded evaluation of models during training/inference; integrates with tools like TensorBoard. | Standardized performance assessment of a new single-cell transcriptomics model on community-defined tasks. |

| Common Workflow Language (CWL) [5] | Provides a standard for defining computational workflows, ensuring portability and reproducibility across environments. | Creating a shareable, executable definition of a full model training and evaluation pipeline. |

| PROBAST (Prediction Model Risk of Bias Assessment Tool) [7] | A structured tool to assess the risk of bias and applicability of diagnostic and prognostic prediction models. | Critically appraising the methodological quality of a new VOC-based lung cancer prediction model during systematic review. |

| Stochastic Model Validation Scripts [3] | Custom code to run repeated model trials with varying random seeds, aggregating results for stable feature importance. | Ensuring that the key biomarkers identified by a diagnostic ML model are robust and not an artifact of random initialization. |

| Benchmark Definition File [5] | A configuration file (e.g., YAML) that formally specifies all benchmark components, software versions, and parameters. | Snapshotting the exact conditions of a benchmarking study for future reproduction or extension by other labs. |

# The Path Forward

Overcoming the reproducibility bottleneck requires a cultural and technical shift towards community-driven, standardized evaluation. The benchmarking solutions profiled demonstrate a clear path forward: adopting living, evolving benchmarks that are transparent, trusted, and representative of real scientific needs [1]. By integrating these practices and tools, the field of biological design can transform model evaluation from a recurring obstacle into a catalyst for accelerated, reliable discovery.

In the rapidly evolving field of biological artificial intelligence (AI), robust benchmarking has emerged as the critical framework for distinguishing genuine capability from hyperbolic claims. As foundation models transition from predicting protein structures to generating novel biological designs, the community faces a pressing need for standardized evaluation protocols that can reliably measure progress toward functionally meaningful endpoints. Benchmarks provide the essential yardstick for comparing model performance, identifying limitations, and guiding future development efforts across diverse biological domains, from DNA and protein engineering to single-cell analysis and system-level prediction.

The current landscape is characterized by a tension between rapid technical innovation and the fundamental biological complexity these models aim to capture. As noted in critical assessments of the field, many demonstrated capabilities of current biological AI models can be matched or surpassed by simpler statistical approaches, raising questions about what truly novel scientific capabilities these systems enable [8]. This underscores the necessity for benchmarking suites that move beyond automating existing analyses to instead define entirely new capabilities that would represent genuine scientific advancement. The protein structure prediction field, with its decades-long commitment to the Critical Assessment of Protein Structure Prediction (CASP) challenge, serves as a powerful exemplar of how community-wide benchmarking can drive progress on a fundamental biological problem [8].

This guide examines the current state of biological AI benchmarking through a systematic analysis of emerging benchmark suites, evaluation metrics, and experimental protocols. By synthesizing methodologies from leading research initiatives, we provide researchers with a standardized framework for objective model comparison and performance validation across key domains of biological design.

Current Benchmark Suites for Biological AI

The development of comprehensive benchmark suites has accelerated dramatically in recent years, with several emerging standards specifically designed to evaluate model performance on biologically meaningful tasks. These suites span multiple biological scales—from DNA-level sequence analysis to cellular-level predictions—and incorporate diverse task types including classification, regression, and generation.

Table 1: Major Biological AI Benchmark Suites

| Benchmark Suite | Biological Scale | Primary Tasks | Sequence Length | Key Metrics |

|---|---|---|---|---|

| DNALONGBENCH [9] | DNA | Enhancer-target prediction, 3D genome organization, eQTL analysis, regulatory activity, transcription initiation | Up to 1 million bp | AUROC, AUPR, Pearson correlation, Stratum-adjusted correlation |

| BioLLM [10] | Single-cell | Gene expression analysis, cell type identification, perturbation response | Variable | Zero-shot performance, Fine-tuning efficiency, Task accuracy |

| ProteinBench [11] | Protein | Folding, variant effect prediction, generative design | Full protein sequences | Accuracy, Structural quality metrics, Design validity |

| BEND & LRB [9] | DNA & Expression | Regulatory element identification, Gene expression prediction | Thousands to ~200k bp | AUROC, AUPR, Pearson correlation |

DNALONGBENCH represents one of the most comprehensive efforts to date, specifically designed to evaluate model performance on long-range DNA dependencies that are crucial for understanding genome structure and function [9]. This suite addresses a critical gap in biological AI evaluation by focusing on interactions that span up to one million base pairs, which until recently remained largely inaccessible to computational modeling. The benchmark's deliberate inclusion of both classification and regression tasks across one-dimensional (sequence-based) and two-dimensional (contact map) outputs reflects the multifaceted nature of genomic regulation.

For single-cell analysis, BioLLM provides a unified framework that enables standardized comparison of diverse foundation models through consistent APIs and evaluation protocols [10]. This approach addresses the significant challenge of heterogeneous architectures and coding standards that have hampered objective model comparison in the field. The framework supports both zero-shot evaluation—testing inherent model capabilities without task-specific training—and fine-tuning performance, providing insights into how readily models can adapt to specialized analytical tasks.

Evaluation Metrics and Performance Gaps

Rigorous benchmarking requires multiple complementary metrics that collectively capture different dimensions of model performance. The field has largely moved beyond single-metric evaluations toward comprehensive assessment frameworks that balance statistical performance with practical utility.

Table 2: Core Evaluation Metrics Across Biological AI Domains

| Metric Category | Specific Metrics | Interpretation | Ideal Value |

|---|---|---|---|

| Classification Performance | AUROC, AUPR, Accuracy | Measures ability to distinguish between classes | 1.0 |

| Regression Performance | Pearson correlation, Mean squared error, Stratum-adjusted correlation | Measures agreement with continuous experimental measurements | 1.0 (for correlation), 0 (for error) |

| Model Calibration | Expected calibration error, Reliability diagrams | Measures alignment between predicted probabilities and actual outcomes | 0 |

| Operational Performance | Inference speed, Memory usage, Scaling behavior | Measures practical deployment considerations | Task-dependent |
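
To make the first three metric categories concrete, here is a minimal pure-Python sketch of AUROC, Pearson correlation, and expected calibration error. It assumes binary labels and equal-width probability bins. Production benchmarks would normally rely on an established library, and the toy inputs below are invented.

```python
from statistics import mean

def auroc(labels, scores):
    """Probability that a random positive outscores a random negative (ties count 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def pearson(xs, ys):
    """Pearson correlation between predictions and continuous measurements."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / (vx * vy) ** 0.5

def expected_calibration_error(labels, probs, n_bins=10):
    """Bin predictions by probability; average |observed rate - mean prediction| by bin size."""
    bins = [[] for _ in range(n_bins)]
    for y, p in zip(labels, probs):
        bins[min(int(p * n_bins), n_bins - 1)].append((y, p))
    ece = 0.0
    for members in bins:
        if members:
            observed = mean(y for y, _ in members)
            predicted = mean(p for _, p in members)
            ece += len(members) / len(labels) * abs(observed - predicted)
    return ece

labels = [0, 0, 1, 1]
probs = [0.1, 0.4, 0.35, 0.8]
toy_auroc = auroc(labels, probs)                               # 3 of 4 pairs ranked correctly
toy_ece = expected_calibration_error(labels, probs, n_bins=2)
```

Reporting these together matters because they can disagree. A model can rank cases well (high AUROC) while its probabilities are systematically too high or too low (non-zero calibration error).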

Recent benchmarking studies have revealed consistent performance patterns across biological domains. In DNA-based prediction tasks, specialized expert models consistently outperform more general foundation models across most evaluation metrics. For example, in the DNALONGBENCH evaluation, task-specific expert models achieved superior performance on all five tasks compared to foundation models like HyenaDNA and Caduceus, with particularly pronounced advantages in regression tasks such as contact map prediction and transcription initiation signal prediction [9]. The performance gap was most dramatic in transcription initiation prediction, where the expert model Puffin achieved an average score of 0.733, significantly surpassing CNN-based approaches (0.042) and foundation models (0.108-0.132) [9].

Similar patterns emerge in single-cell analysis, where evaluations through BioLLM have revealed distinct performance trade-offs across leading architectures. In these assessments, scGPT demonstrated robust performance across multiple tasks, while Geneformer and scFoundation showed strong capabilities in gene-level tasks, attributed to their effective pretraining strategies [10]. scBERT consistently lagged behind other models, likely due to its smaller parameter count and more limited training data [10].

Experimental Protocols for Model Evaluation

Standardized experimental protocols are essential for ensuring fair and reproducible model comparisons. Based on analysis of leading benchmarking studies, we outline a consensus methodology for evaluating biological AI systems.

Data Partitioning and Preprocessing

All benchmarking studies emphasize rigorous data partitioning to prevent data leakage and ensure realistic performance estimation. For genomic tasks, this typically involves chromosome-level splits, where certain chromosomes are entirely withheld during training and used exclusively for validation and testing [9]. This approach prevents inflation of performance metrics due to local sequence similarity and provides a more realistic assessment of generalization capability. For single-cell data, careful stratification by experimental batch, donor, and tissue source is critical to ensure models are evaluated on truly novel biological contexts rather than technical variations of seen data [10].
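
A group-level split of this kind can be sketched in a few lines. The record layout, the `grouped_split` helper, and the choice of held-out chromosomes are illustrative assumptions. The same function applies to single-cell data by grouping on a donor or batch key.

```python
def grouped_split(records, key, val_groups, test_groups):
    """Assign whole groups (e.g. chromosomes or donors) to exactly one partition."""
    val_groups, test_groups = set(val_groups), set(test_groups)
    if val_groups & test_groups:
        raise ValueError("a group cannot be in both validation and test sets")
    split = {"train": [], "val": [], "test": []}
    for record in records:
        group = record[key]
        if group in test_groups:
            split["test"].append(record)
        elif group in val_groups:
            split["val"].append(record)
        else:
            split["train"].append(record)
    return split

# Hypothetical genomic windows; real benchmarks define their own held-out chromosomes.
records = [
    {"chrom": "chr1", "start": 0},
    {"chrom": "chr1", "start": 1000},
    {"chrom": "chr8", "start": 0},
    {"chrom": "chr9", "start": 0},
    {"chrom": "chr9", "start": 1000},
]
split = grouped_split(records, "chrom", val_groups={"chr8"}, test_groups={"chr9"})

# Leakage check: no chromosome appears in more than one partition.
seen = {name: {r["chrom"] for r in part} for name, part in split.items()}
assert not (seen["train"] & seen["val"] or seen["train"] & seen["test"]
            or seen["val"] & seen["test"])
```

Splitting by group rather than by row is the key design choice. A random row-level split would place neighbouring windows from one chromosome on both sides of the split.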

Model Training and Fine-tuning

For foundation model evaluation, two primary approaches have emerged: zero-shot/few-shot evaluation and task-specific fine-tuning. Zero-shot evaluation assesses the inherent capabilities of pretrained models without additional task-specific training, providing insights into the general biological knowledge captured during pretraining [10]. Fine-tuning evaluations then measure how readily these models can adapt to specific tasks with limited additional training data. In both scenarios, consistent hyperparameter optimization protocols and computational budgets are essential for fair comparisons across models.

Statistical Significance Testing

Given the often-subtle performance differences between state-of-the-art models, rigorous statistical testing is essential. Current best practices recommend repeated evaluations with different random seeds, followed by appropriate paired statistical tests (e.g., paired t-tests or Wilcoxon signed-rank tests) to determine whether observed differences are significant, with confidence intervals reported alongside [9]. For multi-task benchmarks, correction for multiple hypothesis testing is necessary to prevent inflation of false positive rates.
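
The sketch below shows one way to run such a comparison with the standard library only. Because the t-distribution is not available there, it substitutes an exact paired sign-flip permutation test for the paired t-test named above, and it applies the Holm step-down correction across tasks. The per-seed scores and per-task p-values are made up.

```python
from itertools import product

def paired_sign_flip_pvalue(scores_a, scores_b):
    """Exact two-sided paired permutation test on per-seed score differences."""
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    observed = abs(sum(diffs))
    hits = total = 0
    # Under the null, each paired difference is equally likely to have either sign.
    for signs in product((1, -1), repeat=len(diffs)):
        total += 1
        if abs(sum(s * d for s, d in zip(signs, diffs))) >= observed - 1e-12:
            hits += 1
    return hits / total

def holm_adjust(pvalues):
    """Holm step-down adjustment for testing several tasks at once."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, i in enumerate(order):
        running_max = max(running_max, (m - rank) * pvalues[i])
        adjusted[i] = min(1.0, running_max)
    return adjusted

# Hypothetical scores for two models evaluated with the same five seeds.
model_a = [0.81, 0.83, 0.80, 0.82, 0.84]
model_b = [0.78, 0.79, 0.79, 0.80, 0.80]
p_single_task = paired_sign_flip_pvalue(model_a, model_b)

# Hypothetical raw p-values from three benchmark tasks.
adjusted = holm_adjust([0.01, 0.04, 0.03])
```

With n seeds the smallest p-value this exact test can produce is 2 / 2^n. Five seeds can therefore never reach the conventional 0.05 threshold, even when one model wins on every seed, so the number of seeds should be set with the intended significance level in mind.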

Research Reagent Solutions for Biological Benchmarking

The experimental ecosystem for biological AI benchmarking relies on both computational tools and carefully curated biological data resources. The table below outlines essential research reagents and their functions in benchmark development and validation.

Table 3: Essential Research Reagents for Biological AI Benchmarking

| Reagent Category | Specific Examples | Function in Benchmarking | Access Source |

|---|---|---|---|

| Foundation Models | scGPT, Geneformer, HyenaDNA, Caduceus, ESM, ProtT5 | Provide baseline performance and transfer learning capabilities | GitHub, Hugging Face, Model repositories |

| Expert Models | Enformer, Akita, ABC model, Puffin | Establish state-of-the-art performance bounds for specific tasks | GitHub, Original publications |

| Data Resources | ENCODE, GTEx, Single-cell Atlases, PDB | Provide standardized training and evaluation datasets | Public data portals, Controlled access repositories |

| Evaluation Frameworks | BioLLM, ProteinBench | Standardize metrics, data processing, and comparison protocols | GitHub, Open-source distributions |

Specialized expert models serve as critical baseline comparators in biological benchmarking. For example, Enformer establishes the expected performance ceiling for gene expression prediction tasks, while Akita provides reference performance for 3D genome organization prediction [9]. These expert models are typically highly specialized for their specific tasks and often incorporate domain-specific architectural innovations that make them difficult to surpass by general-purpose foundation models.

Data resources with comprehensive metadata annotation are equally crucial, as the biological context of evaluation data significantly influences model performance interpretation. For genomic benchmarks, resources from ENCODE and related consortia provide uniformly processed functional genomics data across multiple cell types and experimental conditions [9]. For single-cell benchmarking, datasets with well-annotated cell types, experimental conditions, and perturbation responses enable more nuanced evaluation of model capabilities [10].

Visualization of Model Architectures and Performance

The current landscape of biological AI benchmarking reveals a field in transition, moving from isolated model evaluations toward comprehensive, community-driven assessment frameworks. The emergence of standardized benchmark suites like DNALONGBENCH and evaluation frameworks like BioLLM represents significant progress in enabling objective model comparison [9] [10]. However, important challenges remain, including the consistent performance advantage of specialized expert models over general foundation models and the need for benchmarks that test truly novel capabilities beyond what current approaches can achieve.

Looking forward, the field must develop benchmarks that strike at the heart of what "solving" fundamental biological problems would mean, moving beyond incremental improvements on established tasks [8]. This will likely require closer integration between computational and experimental approaches, with benchmarks designed around closed-loop cycles of prediction and experimental validation. Additionally, as noted in critical assessments, the field may need to reconsider its level of abstraction—the most impactful advances may come from models that operate at the level of whole organisms or tissues rather than isolated cellular or molecular systems [8].

For researchers engaged in biological AI development, adherence to emerging benchmarking standards is increasingly essential for meaningful scientific contribution. By adopting the protocols, metrics, and evaluation frameworks outlined in this guide, the community can accelerate progress toward AI systems that genuinely advance our understanding and engineering of biological systems.

Computational models have become a critical framework for interrogation and discovery in biological phenomena, enabling hypothesis generation, testing, and greater mechanistic understanding of complex systems [12]. The field of computational systems biology has emerged as an essential biomolecular technique that complements experimental biology by providing a rigorous mathematical foundation for understanding biological system designs and their modes of operation [13]. As predictive models grow in sophistication and application scope (from molecular and cellular levels to tissue, organ, and population levels), ensuring their reliability through rigorous benchmarking has become a fundamental requirement for research credibility and translational applications [14] [12]. This guide examines the complete stakeholder ecosystem involved in developing, validating, and implementing these predictive models for biological system design, with particular emphasis on comparative performance evaluation across different methodological approaches.

The Stakeholder Ecosystem in Predictive Model Development

The development and application of predictive models in biological research involves a diverse network of stakeholders with complementary expertise and responsibilities. This ecosystem ranges from those creating novel computational methods to those applying established models to answer specific biological questions.

Stakeholder Roles and Responsibilities

The modeling and analysis of biological systems requires close collaboration between professionals with different specializations [13] [14]. The National Institutes of Health's Modeling and Analysis of Biological Systems (MABS) study section explicitly recognizes this diversity in its review processes, which encompass applications concerned with the integration of computational modeling and analytical experimentation to understand complex biological systems across full biological scales [14].

Table 1: Key Stakeholder Roles in Predictive Model Development

| Stakeholder Category | Primary Responsibilities | Expertise Domain |

|---|---|---|

| Method Developers | Create novel modeling frameworks and algorithms; develop mathematical foundations; implement computational tools | Mathematics, Computer Science, Engineering, Computational Biology |

| Experimental Biologists | Provide biological context; design regulated connectivity diagrams; supply semi-quantitative information on stimuli and responses [13] | Molecular Biology, Cell Biology, Physiology, Specific Domain Knowledge |

| Translational Modelers | Convert biological information into mathematical constructs; execute forward and inverse modeling; collaborate on interpretation [13] | Systems Biology, Mathematical Modeling, Data Integration |

| End-User Analysts | Apply validated models to specific research questions; generate biological insights; test hypotheses | Domain-Specific Biological Research, Drug Development, Clinical Applications |

| Funding & Policy Agencies | Establish review criteria; allocate resources; set standards for validation and benchmarking | Research Administration, Scientific Review, Policy Development |

Collaborative Workflows in Model Development

Effective biological systems modeling requires iterative collaboration between experimental biologists and mathematical modelers [13]. This process typically begins with the biologist designing a regulated connectivity diagram of processes comprising a biological system, accompanied by semi-quantitative information on stimuli and measured or expected responses. The modeler then converts this information through methods of forward and inverse modeling into a mathematical construct that can be used for simulations and hypothesis testing. Both parties collaboratively interpret the results and devise improved concept maps in an iterative refinement cycle [13].

Diagram Title: Predictive Modeling Stakeholder Collaboration Workflow

Benchmarking Predictive Models: Experimental Protocols and Performance Metrics

Rigorous benchmarking is essential for verifying model transportability across different data sources, also known as external validation [15]. Predictive model performance may deteriorate when applied to data sources not used for training, making external validation a critical step in successful model deployment.

External Validation Methodology

A recent benchmarking study published in 2025 provides a robust framework for estimating external model performance using only external summary statistics when patient-level external data sources are inaccessible [15]. This approach is particularly valuable in biological and clinical contexts where data privacy and accessibility present significant challenges.

Table 2: External Validation Performance Metrics for Predictive Models

| Performance Metric | Description | Benchmark Error (95th Percentile) | Interpretation in Biological Context |

|---|---|---|---|

| AUROC (Discrimination) | Area Under Receiver Operating Characteristic curve; measures model's ability to distinguish between classes | 0.03 [15] | Estimated discrimination stays within 0.03 of actual external performance at the 95th percentile |

| Calibration-in-the-large | Measures how well predicted probabilities match observed frequencies | 0.08 [15] | Good calibration accuracy for biological prediction tasks |

| Brier Score (Overall Accuracy) | Measures overall model accuracy; mean squared difference between predicted probabilities and actual outcomes | 0.0002 [15] | Minimal error in probabilistic predictions |

| Scaled Brier Score | Brier score scaled for easier interpretation | 0.07 [15] | Consistent performance across different scaling approaches |
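
For reference, the calibration and accuracy metrics in the table can be computed as in the sketch below. It uses common textbook definitions, which may differ in detail from those in the cited study. Calibration-in-the-large is taken as mean observed outcome minus mean predicted probability, and the scaled Brier score as one minus the ratio of the Brier score to that of a prevalence-only predictor. The toy labels and probabilities are invented.

```python
from statistics import mean

def brier_score(labels, probs):
    """Mean squared difference between predicted probabilities and binary outcomes."""
    return mean((p - y) ** 2 for y, p in zip(labels, probs))

def scaled_brier(labels, probs):
    """1 - Brier / Brier_reference, where the reference always predicts the prevalence."""
    prevalence = mean(labels)
    reference = prevalence * (1 - prevalence)
    return 1 - brier_score(labels, probs) / reference

def calibration_in_the_large(labels, probs):
    """Mean observed outcome minus mean predicted probability (0 is ideal)."""
    return mean(labels) - mean(probs)

labels = [0, 0, 1, 1]
probs = [0.1, 0.4, 0.35, 0.8]

brier = brier_score(labels, probs)
scaled = scaled_brier(labels, probs)
citl = calibration_in_the_large(labels, probs)
```

The raw Brier score depends strongly on outcome prevalence, so small absolute errors in it (such as the 0.0002 reported above) are easier to interpret alongside the scaled version.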

Comprehensive Benchmarking Protocol

The external validation benchmarking protocol involves several methodical steps to ensure robust performance estimation [15]:

Dataset Configuration: Utilize multiple large heterogeneous data sources where each source sequentially plays the role of internal training data, with the remaining sources serving as external validation sets.

Target Cohort Definition: Define specific patient or biological system cohorts for evaluation. In the referenced 2025 study, the target cohort included patients with pharmaceutically-treated depression, with models predicting risk of developing various conditions including diarrhea, fracture, gastrointestinal hemorrhage, insomnia, or seizure [15].

Model Training and Validation: For each internal data source, train multiple model types predicting specific biological outcomes, then extract population-level statistics from external cohorts to estimate model performance without accessing unit-level external data.

Weighting Algorithm Application: Apply specialized weighting algorithms to internal cohort units to reproduce external statistics, enabling performance estimation without direct data access.

Performance Comparison: Compare estimated performance metrics against actual external performance calculated by testing models directly in external cohorts.

This methodology demonstrates particular value for biological and clinical researchers working with sensitive or inaccessible data, as it enables reliable transportability assessment while maintaining data privacy and security [15].
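
The weighting idea can be illustrated with a deliberately simplified sketch. It is not the algorithm from the cited study, which reproduces many external statistics at once. Here internal units are reweighted by post-stratification so that the weighted prevalence of a single binary covariate matches one reported external proportion, and a weighted Brier score is then computed. All names and numbers are assumptions.

```python
def poststratify_weights(flags, external_rate):
    """Weights that make the weighted rate of a binary covariate equal `external_rate`."""
    internal_rate = sum(flags) / len(flags)
    if not 0 < internal_rate < 1:
        raise ValueError("covariate must vary in the internal cohort")
    w_one = external_rate / internal_rate
    w_zero = (1 - external_rate) / (1 - internal_rate)
    return [w_one if f else w_zero for f in flags]

def weighted_brier(labels, probs, weights):
    """Brier score in which each internal unit counts in proportion to its weight."""
    total = sum(weights)
    return sum(w * (p - y) ** 2 for y, p, w in zip(labels, probs, weights)) / total

# Hypothetical internal cohort: covariate flag, observed outcome, model prediction.
flags = [1, 1, 0, 0]
labels = [1, 0, 0, 1]
probs = [0.9, 0.2, 0.1, 0.6]

# Suppose the external site reports only that 80% of its cohort has the covariate.
weights = poststratify_weights(flags, external_rate=0.8)
weighted_rate = sum(w * f for w, f in zip(weights, flags)) / sum(weights)

internal_brier = weighted_brier(labels, probs, [1.0] * len(labels))
estimated_external_brier = weighted_brier(labels, probs, weights)
```

In the benchmarking protocol, an estimate like `estimated_external_brier` would then be compared with the value obtained by running the model directly in the external cohort. The gap between the two is the benchmark error summarized in Table 2.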

Comparative Performance Analysis Across Modeling Approaches

Different modeling approaches exhibit distinct performance characteristics across various biological contexts and data environments. Understanding these differences is crucial for stakeholders when selecting appropriate methodologies for specific research questions.

Impact of Model Design Choices on Biological Insight

Model design choices significantly impact the biological insights derived from computational simulations [12]. Key considerations include:

System Representation Choices: Decisions regarding geometry (rectangular vs. hexagonal) and dimensionality (2D, 3D, or 3D center slices) balance accurate biological representation with computational cost, with different choices driving quantitative changes in emergent behavior [12].

Cell-to-Cell Variability: Incorporation of cell-to-cell heterogeneity significantly impacts cell-level and temporal trends observed in simulations, affecting the interpretation of single-cell emergent dynamics [12].

Environmental Dynamics: Choices regarding nutrient dynamics and other environmental factors directly influence emergent outcomes and system behavior predictions [12].

These design decisions create fundamental trade-offs between realism, precision, and generality that must be deliberately balanced based on specific research contexts and questions [12].

Algorithm Selection for Biological Prediction Tasks

Different predictive algorithms offer varying advantages for biological prediction tasks, with selection dependent on data characteristics and research objectives [16].

Table 3: Comparative Performance of Predictive Modeling Algorithms

| Algorithm | Best Suited For | Advantages | Limitations in Biological Context |

|---|---|---|---|

| Random Forest | Classification tasks with large volumes of data; feature importance estimation [16] | Resistant to overfitting; handles thousands of input variables; maintains accuracy with missing data [16] | Limited interpretability of complex biological mechanisms |

| Generalized Linear Model (GLM) | Scenarios requiring model interpretability; categorical predictors [16] | Clear understanding of predictor influences; relatively straightforward interpretation; resistant to overfitting [16] | Requires large datasets; susceptible to outliers in biological measurements |

| Gradient Boosted Model (GBM) | Complex prediction tasks with hierarchical data structures [16] | High predictive accuracy; handles complex nonlinear relationships in biological systems | Computationally intensive; requires careful parameter tuning |

| Biochemical Systems Theory (BST) | Dynamic analysis of biochemical and gene regulatory systems [13] | Approximates unknown processes with power-law functions; flexible framework for biological systems [13] | Parameter estimation challenges; steep learning curve for experimental biologists |

Essential Research Reagents and Computational Tools

Successful benchmarking of predictive models in biological research requires both wet-lab reagents and computational resources that enable robust model development and validation.

Table 4: Essential Research Reagent Solutions for Predictive Model Benchmarking

| Research Reagent/Tool | Function/Purpose | Application Context |

|---|---|---|

| OHDSI Standardized Data | Provides harmonized observational health data with standardized structure, content, and semantics [15] | Enables consistent feature definitions across external validation datasets |

| BST-Box Software | Supports biochemical systems theory modeling activities including concept map modeling and simulation [13] | Facilitates collaboration between biologists and modelers through specialized modeling environment |

| ARCADE ABM Framework | Agent-based modeling testbed for simulating cancer cell growth in tumor microenvironments [12] | Enables investigation of modeling choices on emergent behavior in spatial-temporal contexts |

| External Summary Statistics | Limited descriptive statistics from external data sources enabling transportability assessment [15] | Allows model performance estimation without accessing unit-level external data |

| Weighting Algorithm | Assigns weights to internal cohort units to reproduce external statistics [15] | Enables accurate external performance estimation while maintaining data privacy |

The stakeholder ecosystem for predictive model development in biological research encompasses a diverse range of expertise from method developers to end-user analysts, with successful outcomes dependent on effective collaboration across these domains. Benchmarking remains a critical component for ensuring model reliability and transportability, with recent methodological advances enabling robust external validation even when direct data access is limited. As computational approaches continue to evolve toward more sophisticated multi-scale integration and agent-based modeling, maintaining rigorous benchmarking standards and clear communication across stakeholder groups will be essential for advancing biological understanding and therapeutic development.

In modern drug development, where bringing a single new drug to market can cost over $2 billion, the failure of a predictive model carries an exceptionally high price [17] [18]. Benchmarking—the process of systematically comparing a model's performance against historical data or alternative approaches—has emerged as an essential discipline for quantifying and mitigating this risk [17] [18]. Effective benchmarking enables researchers to assess a drug candidate's probability of technical success, allocate scarce resources strategically, and make data-driven decisions about which programs to advance, pivot, or terminate [17].

This guide provides an objective comparison of contemporary predictive modeling approaches in drug discovery, with a specific focus on their benchmarking methodologies and performance metrics. By examining experimental protocols and quantitative results across different model classes, we aim to establish a framework for evaluating model utility within biological system design research.

Comparative Analysis of Predictive Modeling Approaches

Performance Metrics Across Model Types

Different model architectures excel at distinct aspects of the drug discovery pipeline. The table below summarizes key performance indicators for several recently published approaches.

Table 1: Performance comparison of predictive models in drug discovery applications

| Model/Platform | Primary Application | Key Performance Metrics | Reported Results | Reference |

|---|---|---|---|---|

| SANDSTORM (Sequence and Structure Neural Network) | Functional RNA molecule prediction (toehold switches, CRISPR guides, UTRs) | Mean Squared Error (MSE); R² (coefficient of determination); Spearman correlation; Area Under the Curve (AUC) | Matched prior model performance with 67% fewer parameters; AUC = 0.97 for structure-dependent classification | [19] |

| CANDO Platform (Computational Analysis of Novel Drug Opportunities) | Multi-scale therapeutic discovery and drug repurposing | Ranking accuracy of known drugs for indications; correlation with chemical similarity | Ranked 7.4%-12.1% of known drugs in top 10 candidates; performance correlated with chemical similarity (Spearman > 0.5) | [18] |

| Regression ML for DDI (Support Vector Regressor) | Predicting pharmacokinetic drug-drug interactions | Percentage of predictions within 2-fold of observed values; cross-validation performance | 78% of predictions within 2-fold of actual exposure changes | [20] |

| MD-Feature NN Model (Molecular Dynamics Neural Network) | Predicting biological activity of photoswitchable peptides | Prediction accuracy for cytotoxic activity (IC₅₀); generalization to novel peptide analogs | Reliable prediction of activity differences between photoisomers; generalized to peptides with different activity types | [21] |
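The "within 2-fold" criterion reported for the DDI regression model is simple to compute. The sketch below assumes predicted and observed exposure changes are given as positive ratios; the function name and the toy values are hypothetical and are not data from the cited study.

```python
def fraction_within_fold(predicted, observed, fold=2.0):
    """Share of predictions whose predicted/observed ratio lies in [1/fold, fold].

    Assumes every value is strictly positive (e.g. exposure ratios).
    """
    hits = sum(
        1 for p, o in zip(predicted, observed)
        if 1.0 / fold <= p / o <= fold
    )
    return hits / len(observed)

# Toy values for illustration only.
predicted_ratios = [1.0, 3.0, 2.5, 0.4]
observed_ratios = [1.5, 1.2, 2.0, 1.0]
share = fraction_within_fold(predicted_ratios, observed_ratios)
```

Because the criterion is ratio-based, it treats a 2-fold over-prediction and a 2-fold under-prediction symmetrically, which an absolute-error metric would not.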

Domain-Specific Evaluation Metrics

Traditional machine learning metrics often prove misleading in drug discovery contexts characterized by imbalanced datasets and rare but critical events [22]. The field has consequently developed specialized evaluation approaches:

Table 2: Domain-specific metrics for biopharma applications

| Metric | Application Context | Advantage Over Generic Metrics |

|---|---|---|

| Precision-at-K | Ranking top drug candidates or biomarkers | Prioritizes highest-scoring predictions rather than averaging performance across all data [22] |

| Rare Event Sensitivity | Detecting low-frequency events (e.g., toxicity signals, rare mutations) | Focuses on critical findings that generic accuracy metrics might obscure [22] |

| Pathway Impact Metrics | Evaluating biological relevance of predictions | Assesses mechanistic insights and pathway alignment rather than just statistical correctness [22] |

| Temporal Validation | Assessing model performance on data from different time periods | Tests predictive validity against future observations rather than just historical data [18] |
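Two of the metrics in the table can be illustrated with a short sketch. The function names and toy inputs below are hypothetical; the second example is constructed to show how plain accuracy can look strong while every rare event is missed.

```python
def precision_at_k(scores, labels, k):
    """Fraction of true positives among the k highest-scoring predictions."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    return sum(label for _, label in ranked[:k]) / k

def accuracy(predictions, labels):
    """Share of predictions that match the labels."""
    return sum(1 for p, y in zip(predictions, labels) if p == y) / len(labels)

def sensitivity(predictions, labels):
    """Share of true positive cases the model detected (recall)."""
    positives = sum(labels)
    if positives == 0:
        return float("nan")
    true_pos = sum(1 for p, y in zip(predictions, labels) if p == 1 and y == 1)
    return true_pos / positives

# Ranking example: two of the top three candidates are true hits.
scores = [0.9, 0.8, 0.7, 0.4, 0.3, 0.1]
labels = [1, 0, 1, 0, 0, 1]
top3_precision = precision_at_k(scores, labels, k=3)

# Rare-event example: one positive in ten, and the model predicts all negatives.
rare_labels = [0] * 9 + [1]
rare_predictions = [0] * 10
rare_accuracy = accuracy(rare_predictions, rare_labels)        # 0.9
rare_sensitivity = sensitivity(rare_predictions, rare_labels)  # 0.0
```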

Experimental Protocols and Benchmarking Methodologies

Data Sourcing and Ground Truth Establishment

Robust benchmarking begins with carefully curated data sources and clearly defined validation protocols:

- Data Completeness and Recency: Traditional benchmarking solutions that are updated infrequently fail to capture newly generated drug development data. Dynamic approaches incorporate new data in near real time and so produce more accurate benchmarks [17].

- Ground Truth Mappings: Most drug discovery benchmarking protocols begin with established drug-indication associations from databases like:

  - Comparative Toxicogenomics Database (CTD)

  - Therapeutic Targets Database (TTD)

  - DrugBank [18]

- Data Splitting Strategies: Common approaches include:

  - K-fold cross-validation: Very commonly employed across studies

  - Temporal splits: Splitting based on approval dates to test predictive validity

  - Leave-one-out protocols: Particularly useful for smaller datasets [18]
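A temporal split can be sketched as follows. The record layout, field names, and placeholder drug and indication labels are hypothetical; the only idea taken from the text is that associations approved before a cutoff date form the training set and later ones form the test set.

```python
from datetime import date

def temporal_split(records, cutoff):
    """Train on associations approved before the cutoff, test on the rest."""
    train = [r for r in records if r["approved"] < cutoff]
    test = [r for r in records if r["approved"] >= cutoff]
    return train, test

# Placeholder drug-indication records for illustration only.
records = [
    {"drug": "drug_A", "indication": "indication_1", "approved": date(2012, 5, 1)},
    {"drug": "drug_B", "indication": "indication_2", "approved": date(2016, 9, 15)},
    {"drug": "drug_C", "indication": "indication_1", "approved": date(2021, 3, 30)},
]

train_set, test_set = temporal_split(records, cutoff=date(2019, 1, 1))
```

Unlike a random k-fold split, this arrangement never lets the model see an association approved after the ones it is asked to predict, which is what makes it a test of predictive rather than retrospective validity.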

Benchmarking Workflow for Predictive Models

The following diagram illustrates a generalized benchmarking workflow adapted from current best practices in computational drug discovery:

Diagram 1: Benchmarking workflow for predictive models

Case Study: SANDSTORM RNA Prediction Architecture

The SANDSTORM (Sequence and Structure Neural Network) architecture demonstrates how incorporating both sequence and structural information improves predictive performance for RNA-based therapeutics [19]:

Experimental Protocol:

- Input Representation:

  - Sequence: One-hot encoded nucleotide sequences

  - Structure: Novel structural array encoding base pairing interactions

- Architecture: Dual-input convolutional neural network with independent convolutional channels for sequence and structure

- Training: Model trained on diverse RNA classes (toehold switches, CRISPR guides, UTRs, RBSs)

- Validation: Performance compared to sequence-only models using multiple randomized train-test splits

Key Findings:

- The dual-input model significantly outperformed sequence-only models in classifying structure-dependent RNA functions (AUC = 0.97 vs. 0.72)

- Integrated gradients revealed the model correctly identified structurally important regions

- The architecture achieved comparable performance to specialized models with 67% fewer parameters [19]
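The two input representations in the protocol can be illustrated with a minimal sketch. One-hot encoding is standard; the structure encoding shown here is an assumption, namely a symmetric base-pair contact matrix derived from dot-bracket notation, and it may differ from the structural array that SANDSTORM actually uses. Function names and the toy hairpin are hypothetical.

```python
def one_hot(sequence, alphabet="ACGU"):
    """Encode each nucleotide as a 4-element indicator vector."""
    return [[1 if base == letter else 0 for letter in alphabet] for base in sequence]

def pairing_matrix(dot_bracket):
    """Symmetric matrix with a 1 wherever two positions are base-paired.

    Assumes a well-formed dot-bracket string without pseudoknots.
    """
    n = len(dot_bracket)
    matrix = [[0] * n for _ in range(n)]
    open_positions = []
    for j, symbol in enumerate(dot_bracket):
        if symbol == "(":
            open_positions.append(j)
        elif symbol == ")":
            i = open_positions.pop()  # most recent unmatched opening bracket
            matrix[i][j] = 1
            matrix[j][i] = 1
    return matrix

# Toy hairpin: three G-C pairs closing a three-nucleotide loop.
sequence = "GGGAAACCC"
structure = "(((...)))"

sequence_input = one_hot(sequence)
structure_input = pairing_matrix(structure)
```

In a dual-input network of the kind described, the two arrays would feed separate convolutional channels before being merged.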

Essential Research Reagents and Computational Tools

Successful implementation of predictive models requires both computational resources and experimental validation tools. The table below details key solutions mentioned in the evaluated studies.

Table 3: Research reagent solutions for predictive model development and validation

| Resource/Tool | Type | Primary Function | Application Context |

|---|---|---|---|

| Scikit-learn Python Library | Software Library | Machine learning model implementation and evaluation | General-purpose ML pipeline development [20] |

| Molecular Dynamics (MD) Simulation | Computational Method | Sampling 3D conformations and dynamics of molecules | Generating dynamic features for flexible compounds [21] |

| SimCYP Simulator | PBPK Modeling Platform | Predicting pharmacokinetic parameters and drug interactions | Generating CYP activity profiles and DDI predictions [20] |

| SciVal | Benchmarking Platform | Research performance analysis and benchmarking | Comparing institutional and researcher output [23] [24] |

| InCites Benchmarking & Analytics | Research Evaluation Tool | Analyzing institutional productivity and collaboration | Benchmarking against global research baselines [23] [24] |

| Washington Drug Interaction Database | Clinical Data Resource | Source of clinical drug interaction studies | Ground truth for DDI prediction models [20] |

The high cost of failure in drug development necessitates rigorous benchmarking practices for predictive models. Our analysis reveals that the most effective approaches share several key characteristics: they utilize dynamic, current data; employ domain-specific evaluation metrics; and validate performance against biologically relevant endpoints. As computational methods continue to evolve, standardized benchmarking protocols will become increasingly vital for translating model predictions into successful therapeutic outcomes. Researchers should prioritize models that demonstrate not only statistical superiority but also biological interpretability and robustness across diverse validation scenarios.

Implementing Benchmarks: Community Tools and Practical Workflows

Benchmarking suites have become foundational tools for validating predictive models in biological system design. This guide objectively compares four community-driven platforms—CZI's Benchmarking Suite, DNALONGBENCH, OpenVT, and collaborative AI platforms—focusing on their performance, technical specifications, and applicability for different research needs.

The table below summarizes the core characteristics and quantitative performance data for the featured community-driven platforms.

Table 1: Platform Overview and Comparative Performance

| Platform Name | Primary Focus | Key Performance Metrics | Reported Performance Highlights | Input Specifications |

|---|---|---|---|---|

| CZI Benchmarking Suite [1] [25] | Virtual cell model development | Multiple metrics across tasks (e.g., clustering accuracy, integration quality) | Reduces model evaluation time to roughly 3 hours, compared with roughly 3 weeks when building custom pipelines [1] | Biological tasks from single-cell analysis [1] |

| DNALONGBENCH [26] | Long-range DNA dependency prediction | AUROC, Pearson Correlation Coefficient (PCC), Stratum-Adjusted Correlation Coefficient (SCC) | Expert models consistently outperform foundation models (HyenaDNA, Caduceus) and lightweight CNNs across all 5 tasks [26] | Sequences up to 1 million base pairs [26] |

| OpenVT [27] | Multicellular virtual tissue simulation | Explanatory power for tissue mechanisms, virtual experiment precision | Aims to accelerate understanding of tissue development, homeostasis, and disease [27] | Agent-based models, cell-type description libraries [27] |

| Collaborative AI Platforms [28] [29] | AI-powered drug discovery | Clinical candidate synthesis count, discovery timeline compression | AI-designed drugs reaching Phase I trials in ~2 years (vs. typical ~5 years); specific programs achieved candidates with only 136 compounds synthesized [29] | Multi-modal data (genomic, chemical, phenotypic) [28] |

Detailed Experimental Protocols and Workflows

A rigorous experimental protocol is essential for obtaining trustworthy, reproducible benchmark results. The following workflow is synthesized from the examined platforms.

Community-Driven Benchmark Development Workflow

The diagram below outlines the key stages for establishing a community-driven benchmark.

Protocol Steps and Platform-Specific Implementations

Task and Metric Definition: The first step involves the community defining biologically meaningful tasks and corresponding quantitative metrics.

- CZI Suite: Initial release includes six tasks for single-cell analysis, such as cell clustering and perturbation expression prediction, each paired with multiple metrics for a thorough performance view [1].

- DNALONGBENCH: Established criteria of biological significance, long-range dependency, and task diversity to select five tasks, including enhancer-target gene interaction (AUROC) and contact map prediction (SCC & PCC) [26].

Data Curation and Standardization: This critical phase involves gathering, harmonizing, and formatting datasets for model training and evaluation.

- CZI & NVIDIA Collaboration: Focuses on scaling biological data processing to billions of data points, leveraging GPU-accelerated tools to create large-scale, robust datasets for model development [30].

- CZI Platform: Provides linked, processed datasets for training and evaluation, such as the Human Protein Atlas (HPA) Source Dataset and the CZ CELLxGENE Discover Census, which offers API access to over 33 million human cells [31].

Tool Development and Model Execution: This involves creating user-friendly tools for running benchmarks and executing model evaluations.

- CZI Suite: Offers a three-part toolkit: 1) cz-benchmarks, an open-source Python package; 2) a VCP CLI for programmatic interaction; and 3) a no-code web interface for biologists [1] [25].

- DNALONGBENCH: Implements three model types for baseline comparisons: a lightweight Convolutional Neural Network (CNN), existing expert models specific to each task, and fine-tuned DNA foundation models like HyenaDNA and Caduceus [26].

Result Integration and Community Feedback: The final stage involves sharing results publicly and incorporating community feedback to refine the benchmarks.

- CZI Platform: Features an interactive web interface where users can explore and compare benchmarking results, filtering by task, dataset, or metric [1] [25]. It is designed as a "living, evolving product" where researchers can propose new tasks and contribute data [1].

- OpenVT: Aims to establish an active open-source community for sharing and applying biomedical data and models, using standards to enable sharing across platforms like CompuCell3D and PhysiCell [27].

The Scientist's Toolkit: Research Reagent Solutions

This section details key computational "reagents"—datasets, software tools, and models—that are essential for conducting experiments in this field.

Table 2: Essential Research Reagents for AI Biology Benchmarking

| Reagent Name | Type | Primary Function | Relevance to Benchmarking |

|---|---|---|---|

| CZ CELLxGENE Discover Census [31] | Dataset | Provides standardized single-cell RNA sequencing data from over 33 million human cells. | Serves as a massive, curated training and evaluation dataset for transcriptomic model development. |

| Human Protein Atlas (HPA) [31] | Dataset | Contains images capturing subcellular localization of thousands of proteins. | Used as a source and evaluation dataset for imaging models like SubCell that analyze protein distribution. |

| cz-benchmarks [1] [30] | Software Package | An open-source Python package containing standardized benchmark tasks and metrics. | Embedded directly in training code to evaluate model performance on community-defined tasks. |

| RDKit [32] | Software Library | An open-source toolkit for cheminformatics (e.g., molecule search, fingerprint computation). | Used in drug development pipelines for chemical database management and virtual screening preparation. |

| scGPT [31] | AI Model | A foundation model designed to analyze large-scale single-cell multi-omics data. | A benchmarkable model on the CZI platform for tasks like perturbation prediction and data integration. |

| Exscientia's Centaur Chemist [29] | AI Platform | Integrates generative AI with human expertise for small-molecule drug design. | Represents a commercial platform whose reported efficiency (e.g., 70% faster design cycles) serves as a performance benchmark for the industry. |

| Federated Learning [28] | Computational Method | A privacy-preserving technology that allows AI models to learn from decentralized data without sharing it. | Enables secure multi-institutional collaboration on benchmark development and model training. |

Performance Analysis and Key Findings

A synthesis across the platforms reveals critical insights into the current state of biological AI benchmarking.

Comparative Strengths and Adopted Strategies

CZI's Integrated Ecosystem: The suite's primary strength is its multi-faceted approach, uniting a Python package for developers, a CLI for programmatic access, and a no-code GUI for biologists [1] [25]. This democratizes access and fosters a tighter collaboration between computational and experimental scientists, which is crucial for ensuring biological relevance [31].

DNALONGBENCH's Rigorous Baselines: A key finding from DNALONGBENCH is that while DNA foundation models show promise, they still lag behind task-specific expert models in capturing long-range genomic dependencies [26]. This highlights the importance of including specialized baselines in benchmarks to avoid the "illusion of progress" and provides a clear challenge for the community to address.

Addressing the Overfitting Challenge: CZI's approach is explicitly designed to combat overfitting to static benchmarks. By creating a "living, evolving" resource where new tasks and data can be contributed, the platform encourages the development of models that generalize to new datasets and research questions rather than just optimizing for a fixed set of metrics [1].

The Collaborative Model for Drug Discovery: Platforms like those from Exscientia and Recursion demonstrate a strategic shift towards collaboration over competition. By providing smaller biotechs with access to sophisticated AI tools and data via secure, federated environments, the industry aims to accelerate innovation collectively [28] [29]. The merger of Exscientia and Recursion itself represents a consolidation of complementary strengths to create a more powerful "AI drug discovery superpower" [29].

Formalizing Benchmarks with Workflow Systems (e.g., Common Workflow Language)

Benchmarking is a critical, yet complex, component of computational method development in biological research. A benchmark is defined as a conceptual framework to evaluate the performance of computational methods for a given task, requiring a well-defined objective and a precise definition of correctness or ground-truth in advance [5]. In fields like bioinformatics, the proliferation of tools for analyzing high-throughput sequencing (HTS) data—including RNA-Seq, ChIP-Seq, and germline variant calling—has made neutral and reproducible performance comparisons increasingly vital [33]. However, the process is fraught with challenges, including software dependency conflicts, difficulties in executing diverse tools uniformly, and a lack of standardized metrics that span different application domains [5] [34].

The inherent generality of the workflow paradigm makes it a powerful abstraction for designing complex applications executed on large-scale distributed infrastructures. Unfortunately, this same generality becomes an obstacle when evaluating workflow implementations or Workflow Management Systems (WMSs), as no consistent and universally agreed-upon key performance indicators exist in the literature [34]. Different scientific domains often prioritize different aspects of the execution process; for instance, compute-intensive workflows with billions of fine-grained tasks require minimal control-plane overhead, while data-intensive workflows with fewer, larger steps benefit more from overlapping computation and communication [34]. This lack of community consensus on benchmarking suites represents a significant barrier for domain experts attempting to compare WMSs based on their specific needs.

Common Workflow Language (CWL) as a Formalization Solution

The Common Workflow Language (CWL) is an open standard designed to describe and implement portable, scalable, and reproducible data analysis workflows [35] [33]. It provides a vendor-neutral specification for defining the syntax and input/output semantics of command-line tools and workflows, creating a common layer that reduces the incidental complexity of connecting heterogeneous programs together [35]. By treating command line tools as a flexible, non-interactive unit of code sharing and reuse, CWL establishes a precise data and execution model that can be implemented across various computing platforms, from single workstations to clusters, grids, and cloud environments [35].

CWL addresses core reproducibility challenges through several key features. Its platform-independent design enables workflow execution on any infrastructure—local machines, clusters, or cloud-based systems—without modification [33]. The language's interoperability allows integration of diverse tools and software, facilitating the development of complex, multi-tool pipelines. When combined with containerization technologies like Docker, CWL resolves software dependency and compatibility issues by packaging tools with their entire environment, ensuring consistent behavior across different systems [33]. Furthermore, CWL workflows are defined using human-readable and machine-processable formats like YAML and JSON, which enhances transparency and allows for version control and formal validation [35] [33].

Formally, a CWL document describes a computational process through several key components. The specification defines a process as a basic unit of computation that accepts input data, performs computation, and produces output data, with examples including CommandLineTools, Workflows, and ExpressionTools [35]. An input object describes the inputs to a process invocation, while an output object describes the resulting output, with both having defined schemas that specify valid formats, required fields, and data types [35]. This formalization enables precise description of tool interfaces, data requirements, and execution dependencies, which is fundamental for creating standardized benchmarks.
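To make these components concrete, the sketch below builds a minimal CommandLineTool description as a Python dictionary and serializes it to JSON, one of the formats CWL accepts. It is an illustration rather than a description taken from the cited pipelines: the choice of FastQC as the wrapped tool, the input and output names, and the container image reference are all assumptions, and the image name in particular is a placeholder.

```python
import json

# A minimal CWL CommandLineTool: one input object field, one output object field.
fastqc_tool = {
    "cwlVersion": "v1.2",
    "class": "CommandLineTool",
    "baseCommand": "fastqc",
    # Write reports into the job's working directory so the glob below finds them.
    "arguments": ["--outdir", "."],
    "hints": {
        # Placeholder image name; substitute a real, pinned image in practice.
        "DockerRequirement": {"dockerPull": "example/fastqc:latest"},
    },
    "inputs": {
        "reads": {
            "type": "File",                  # schema: the input must be a file
            "inputBinding": {"position": 1}  # placed after the arguments on the command line
        },
    },
    "outputs": {
        "report": {
            "type": "File",
            "outputBinding": {"glob": "*_fastqc.html"},
        },
    },
}

cwl_text = json.dumps(fastqc_tool, indent=2)
```

Because the tool's interface is declared as data, a benchmark harness can validate it, version it, and wire it into a larger Workflow without inspecting the tool's implementation.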

CWL in Action: Implementation for Biological Workflows

Case Study: High-Throughput Sequencing Analysis

The practical implementation of CWL for reproducible bioinformatics analysis is demonstrated in automated pipelines for processing HTS data from RNA-Seq, ChIP-Seq, and germline variant calling experiments [33]. These CWL-implemented workflows have achieved high accuracy in reproducing previously published research findings on Chronic Lymphocytic Leukemia (CLL) and in analyzing whole-genome sequencing data from four Genome in a Bottle Consortium (GIAB) samples [33].

The RNA-Seq analysis workflow exemplifies a complete CWL pipeline (Supplementary Figure S1) [33]. The workflow begins with raw FASTQ files as input and proceeds through multiple processing stages: initial quality control using FastQC, read trimming with Trim Galore, optional custom processing using FASTA/Q Trimmer, reference genome mapping with HISAT2, and file format conversion and sorting using Samtools [33]. The pipeline then branches into two independent workflows for differential expression analysis, demonstrating how complex analytical pathways can be structured within the CWL framework.

The technical architecture of these implementations shows how CWL addresses common computational biology challenges. Each tool within the workflow is executed using Docker containers, which automates software installation and ensures cross-platform compatibility [33]. The CWL descriptions themselves define all input parameters, output expectations, and execution requirements in a standardized format that can be validated, shared, and re-executed consistently across different computing environments [33]. This approach effectively overcomes issues of software incompatibility and laborious configuration that typically plague bioinformatics analyses.

Benchmarking Ecosystem and Community Initiatives

Community-driven initiatives are crucial for establishing formal benchmarking practices using workflow systems. The Workflow Benchmarking Group (WfBG) represents one such effort that seeks to define a shared vocabulary of performance metrics, design and maintain benchmarking suites of real-world workflows, and develop a reproducible methodology to collect and report benchmark results [34]. These efforts aim to foster a positive continuous improvement process similar to what has been achieved in high-performance computing through standardized benchmarks like HPLinpack [34].

The WfBG specifically aims to define several benchmarking suites to evaluate different metrics of interest, acknowledging that no single performance metric serves all workflow domains [34]. The group collaboratively maintains a catalog of state-of-the-art implementations of real-world workflows for various workflow languages and frameworks, while explicitly avoiding the determination of a universally "best" tool, instead focusing on helping users compare WMSs for their specific needs [34].

Specialized benchmarking frameworks like CatBench demonstrate the application of these principles to specific domains, such as evaluating Machine Learning Interatomic Potentials (MLIPs) for adsorption energy predictions in heterogeneous catalysis [36]. CatBench implements a systematic evaluation process that incorporates real-world application principles, including full relaxation requirements, multi-category anomaly detection mechanisms, and flexible assessment capabilities for different MLIP architectures on user-specified systems [36]. The framework employs a four-step anomaly detection mechanism: multiple independent relaxations to detect reproducibility failures, structural integrity checks to identify non-physical relaxations, bond-length change rate algorithms to detect adsorbate migration, and energy deviation thresholds to flag energy anomalies [36].

Comparative Analysis: CWL Versus Alternative Approaches

Workflow System Landscape

While CWL offers significant advantages for formalizing benchmarks, the broader ecosystem contains numerous workflow languages and systems. Over 350 workflow languages, platforms, or systems have been identified, with CWL representing one standard that has gained significant traction, particularly in bioinformatics and life sciences [5]. Other workflow systems often include domain-specific optimizations or different execution models that may be better suited to particular computational patterns or resource environments.

The choice between workflow systems often involves trade-offs between expressiveness, performance, portability, and community support. Systems like Nextflow and Snakemake offer powerful domain-specific languages, while more general-purpose systems like Apache Airflow provide scheduling and monitoring capabilities. The key differentiator for CWL is its position as an open standard rather than a specific implementation, which promotes interoperability and reduces vendor lock-in [35] [33].

Performance Metrics and Evaluation Framework

A standardized approach to benchmarking requires clearly defined performance metrics that enable fair comparison across different workflow systems and computational methods. The table below summarizes key metric categories relevant for evaluating bioinformatics workflows:

Table: Essential Performance Metrics for Workflow Benchmarking

| Metric Category | Specific Metrics | Interpretation and Importance |

|---|---|---|

| Runtime Performance | Wall-clock time, CPU hours, Memory usage, Peak memory | Measures computational efficiency and resource requirements; crucial for cost estimation and infrastructure planning |

| Scalability | Strong scaling efficiency, Weak scaling efficiency, Data throughput | Evaluates performance maintenance with increasing resources or problem sizes; indicates suitability for large-scale studies |

| Accuracy & Quality | Variant calling accuracy, Differential expression precision, Adsorption energy MAE [36] | Quantifies analytical correctness against ground truth; determines scientific validity of results |

| Robustness | Task failure rate, Exception handling, Checkpointing effectiveness | Measures reliability and fault tolerance; important for production environments and long-running analyses |

| Reproducibility | Result consistency across platforms, Environment reproducibility, Parameter transparency | Ensures findings can be independently verified; fundamental requirement for scientific validity |
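The two scalability metrics in the table have standard definitions that are easy to compute from measured wall-clock times. The sketch below uses hypothetical function names and toy timings.

```python
def strong_scaling_efficiency(t_one, t_n, n_workers):
    """Fixed total problem size: ideal runtime on n workers is t_one / n."""
    return t_one / (n_workers * t_n)

def weak_scaling_efficiency(t_one, t_n):
    """Problem size grows with the worker count: ideal runtime stays at t_one."""
    return t_one / t_n

# Toy wall-clock times in seconds.
strong = strong_scaling_efficiency(t_one=100.0, t_n=15.0, n_workers=8)
weak = weak_scaling_efficiency(t_one=100.0, t_n=125.0)
```

An efficiency of 1.0 means perfect scaling in either regime; values well below 1.0 indicate that coordination or data-movement overhead is consuming the added resources.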

These metrics can be formalized within benchmarking frameworks to enable consistent evaluation. For instance, in the CatBench framework for MLIP evaluation, metrics like Normal Rate (percentage of successful relaxations), Mean Absolute Error (MAE), and computational cost (seconds per relaxation step) provide a multidimensional assessment of model performance [36]. The framework's use of Average Distance within Threshold (ADwT) and Average Maximum Distance within Threshold (AMDwT) offers structure-based accuracy measures that complement energy-based metrics [36].
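As a rough sketch of how such metrics might be aggregated, the snippet below computes a normal rate, an MAE, and a cost per relaxation step from a list of relaxation records. The `Relaxation` record, its field names, and the choice to compute MAE over normal runs only are assumptions made for illustration; this is not CatBench's actual API, and ADwT/AMDwT are omitted because their exact definitions are not given here.

```python
from dataclasses import dataclass
from statistics import fmean


@dataclass
class Relaxation:
    predicted_ev: float  # model adsorption energy (eV)
    reference_ev: float  # reference adsorption energy (eV)
    category: str        # "normal", "migration", or "anomaly"
    seconds: float       # wall time of the whole relaxation
    steps: int           # number of optimizer steps taken


def summarize(runs):
    """Aggregate per-relaxation records into three summary metrics."""
    normal = [r for r in runs if r.category == "normal"]
    return {
        # Share of relaxations classified as normal, in percent.
        "normal_rate_pct": 100.0 * len(normal) / len(runs),
        # MAE restricted to normal runs (an assumption of this sketch).
        "normal_mae_ev": fmean([abs(r.predicted_ev - r.reference_ev) for r in normal]),
        # Total wall time divided by total optimizer steps.
        "seconds_per_step": sum(r.seconds for r in runs) / sum(r.steps for r in runs),
    }


# Hypothetical records, for illustration only.
runs = [
    Relaxation(-0.50, -0.40, "normal", 2.0, 20),
    Relaxation(-1.10, -1.30, "normal", 3.0, 30),
    Relaxation(0.20, -0.90, "anomaly", 5.0, 50),
    Relaxation(-0.70, -0.70, "migration", 1.0, 10),
]
print(summarize(runs))
```

Note that `summarize` raises an error if no run is classified as normal, which is arguably the right behavior for a model that fails every relaxation.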

Experimental Protocols for Benchmark Implementation

Standardized Benchmarking Methodology

Implementing rigorous benchmarks with workflow systems requires a structured experimental approach. The following protocol outlines key steps for creating formal benchmarks using CWL:

Benchmark Definition: Create a formal specification of all benchmark components in a single configuration file that defines the scope, topology, software environments, parameters, and snapshot policies for a release [5]. This definition should explicitly state the ground truth and evaluation metrics.

Workflow Implementation: Develop CWL descriptions for all tools and workflows included in the benchmark, ensuring complete encapsulation of dependencies through containerization [33]. The implementation should include both the methods being evaluated and any pre-/post-processing steps required for metric calculation.

Execution Environment Provisioning: Establish consistent computing environments across all benchmark runs, using container technologies to ensure identical software stacks [5] [33]. Document all hardware specifications, including CPU architecture, memory capacity, and storage subsystems.

Provenance Tracking: Execute workflows with comprehensive provenance capture, recording all input parameters, software versions, intermediate results, and output data [5]. The CWL standard facilitates this tracking through its formal execution model.

Metric Calculation and Reporting: Implement automated collection of performance metrics during workflow execution, followed by systematic aggregation and reporting in standardized formats [34]. Results should be suitable for both interactive exploration and programmatic access.
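The workflow implementation and provenance steps above can be sketched together. The snippet below writes a minimal CWL `CommandLineTool` as JSON-compatible Python data (CWL documents may be expressed as JSON) and derives a small provenance record from it. The container image name, the input file name, and the `canonical_digest` and `provenance_record` helpers are hypothetical; a real benchmark would rely on the provenance facilities of its workflow runner rather than this hand-rolled record.

```python
import hashlib
import json
import platform

# Minimal CWL CommandLineTool wrapping `samtools flagstat`.
# The image name is a placeholder, not a real published image.
flagstat_tool = {
    "cwlVersion": "v1.2",
    "class": "CommandLineTool",
    "baseCommand": ["samtools", "flagstat"],
    "requirements": {
        "DockerRequirement": {"dockerPull": "example.org/samtools:placeholder"}
    },
    "inputs": {"bam": {"type": "File", "inputBinding": {"position": 1}}},
    "stdout": "flagstat.txt",
    "outputs": {"report": {"type": "stdout"}},
}


def canonical_digest(obj) -> str:
    """SHA-256 of a canonical JSON encoding, so key order does not matter."""
    encoded = json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def provenance_record(tool: dict, params: dict) -> dict:
    """Capture what was run, with which parameters, on which platform."""
    return {
        "tool_sha256": canonical_digest(tool),
        "params": params,
        "params_sha256": canonical_digest(params),
        "python": platform.python_version(),
        "system": platform.system(),
        "machine": platform.machine(),
    }


# Hypothetical job input in CWL's File object form.
job = {"bam": {"class": "File", "path": "sample.bam"}}
record = provenance_record(flagstat_tool, job)
print(json.dumps(record, indent=2))
```

Hashing a canonical encoding of the tool description and parameters gives each benchmark run a stable identifier, so two runs can be compared only when their digests match.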

Case Study: MLIP Benchmarking Protocol

The CatBench framework provides a specific example of a comprehensive benchmarking protocol for machine learning interatomic potentials [36]. Their methodology includes:

Dataset Curation: Standardized adsorption energy datasets are created from Catalysis-Hub or user-defined data, automatically converting adslab, slab, and gas-phase reference structures into benchmark-ready formats [36].

Multi-Category Anomaly Detection: Relaxation results are classified into normal, adsorbate migration, and anomaly categories, with further subdivision of anomalies into reproducibility failures, non-physical relaxations, and energy anomalies [36]. This fine-grained classification distinguishes genuine model failures from physically reasonable structural changes.

Comprehensive Model Evaluation: Multiple MLIP architectures (including CHGNet, MACE, SevenNet, GemNet-OC, Equiformer, eSEN, and UMA) are assessed across diverse adsorption scenarios, covering 37 metal types and 2,035 bimetallic alloy surfaces [36].

Performance Trade-off Analysis: Pareto analysis reveals the balance between accuracy and computational efficiency, enabling informed model selection based on application requirements [36]. For instance, evaluations show UMA-s delivers 0.200 eV normal MAE at 0.099 seconds/step, while GRACE offers faster execution (0.010 seconds/step) with slightly reduced accuracy [36].
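A Pareto analysis of this kind can be sketched as follows: a model stays on the front if no other model is at least as good on both accuracy and cost and strictly better on one. The model names and numbers below are hypothetical placeholders, not CatBench results, and `pareto_front` is an illustrative helper rather than part of the framework.

```python
def pareto_front(models):
    """Return names of models not dominated on (mae_ev, sec_per_step).

    Lower is better on both axes.
    """
    front = []
    for name, mae, cost in models:
        dominated = any(
            (m2 <= mae and c2 <= cost) and (m2 < mae or c2 < cost)
            for _, m2, c2 in models
        )
        if not dominated:
            front.append(name)
    return front


# Hypothetical (name, MAE in eV, seconds per step), for illustration only.
candidates = [
    ("model_a", 0.20, 0.100),
    ("model_b", 0.30, 0.010),
    ("model_c", 0.35, 0.050),  # dominated by model_b
    ("model_d", 0.25, 0.200),  # dominated by model_a
]
print(pareto_front(candidates))
```

Models on the front represent different accuracy/cost trade-offs; choosing among them depends on whether the application is limited by accuracy or by compute budget.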

Essential Research Reagents and Computational Tools

Formalizing benchmarks requires both computational infrastructure and specialized software tools. The following table details key components of the benchmarking toolkit:

Table: Essential Research Reagents and Computational Tools for Workflow Benchmarking

| Tool Category | Specific Tools/Platforms | Function and Application |
|---|---|---|
| Workflow Systems | Common Workflow Language (CWL), Nextflow, Snakemake | Define, execute, and manage computational pipelines; CWL provides platform independence and standardization [35] [33] |
| Containerization | Docker, Singularity, Podman | Package tools and dependencies into portable, reproducible environments; essential for consistent execution [33] |
| Benchmarking Frameworks | CatBench [36], WfCommons [34], WfBench [34] | Provide specialized infrastructure for designing, executing, and analyzing benchmark studies |
| Performance Analysis | Custom metrics collectors, WfFormat [34], Workflow Trace Archive [34] | Collect, standardize, and analyze performance metrics across multiple workflow executions |
| Data Resources | GIAB references [33], Catalysis-Hub [36], public repositories (SRA, ENA) | Provide ground-truth datasets and standardized inputs for benchmark validation |
| Specialized Analytical Tools | HISAT2 [33], Samtools [33], CHGNet [36], MACE [36] | Domain-specific tools that form the analytical core of scientific workflows; targets of benchmark evaluations |

Visualization of Benchmarking Workflows

The following diagrams illustrate key workflow architectures and benchmarking processes discussed in this guide.

[Figure: Conceptual Framework for Benchmarking Systems]

[Figure: CWL Implementation for HTS Data Analysis]

The formalization of benchmarks with workflow systems like CWL represents a critical advancement for ensuring reproducibility, fairness, and utility in computational biology method evaluation. By providing standardized mechanisms for defining workflow components, execution environments, and performance metrics, these approaches address fundamental challenges in comparative method assessment. The integration of containerization technologies with platform-independent workflow definitions creates an ecosystem where benchmarks can be executed consistently across diverse computational environments, enabling meaningful comparisons and facilitating community verification.

Looking forward, the evolution of benchmarking ecosystems will likely focus on increasing automation, enhancing metric standardization, and expanding community engagement. Initiatives like the Workflow Benchmarking Group and frameworks like CatBench demonstrate the growing recognition that systematic, reproducible evaluation is essential for scientific progress in computational fields. As these practices mature, they will enable more continuous benchmarking processes, where method performance can be tracked over time and across technological evolution. For researchers in biological system design and drug development, adopting these formalized benchmarking approaches will provide more reliable guidance for tool selection and method development, ultimately accelerating scientific discovery.

The pharmaceutical industry is undergoing a transformative shift, moving from traditional, labor-intensive drug discovery processes toward data-driven, predictive approaches powered by artificial intelligence (AI) and machine learning (ML). This evolution is characterized by a "predict first" mindset, where researchers use computational power to conceptualize, create, and evaluate promising molecules more effectively than ever before [37]. AI's influence on pharmaceutical research has never been more pronounced, with performance on demanding biological benchmarks continuing to improve rapidly [38]. The integration of AI spans the entire drug development pipeline, from initial target identification and lead compound optimization to critical toxicity prediction and clinical trial design [39]. This guide provides a comprehensive comparison of AI/ML applications across biological research, focusing specifically on their performance in predictive modeling for biological system design, with supporting experimental data and standardized benchmarking methodologies.

Performance Benchmarking of AI Models in Biological Research

Established AI Benchmarks for Biological Applications

AI benchmarks serve as standardized tests to measure and compare the performance of AI models on specific tasks. These benchmarks typically include test datasets, evaluation methods, and leaderboards for ranking model performance [40]. For biological applications, several benchmarks have emerged as standards for evaluating model capabilities:

Table 1: Key AI Benchmarks for Biological and Chemical Applications

| Benchmark Name | Primary Focus | Evaluation Metrics | Key Features |
|---|---|---|---|
| SWE-bench [38] [40] | General software engineering (code repair; not biology-specific) | Accuracy in resolving real-world GitHub issues | 2,200+ issues from 12 Python repositories; tests code patch generation |
| MMLU-Pro [40] | Multitask language understanding | Accuracy on challenging Q&A across domains | 12,000 questions; 10 choice options to reduce guessing |
| BIG-Bench [40] | Reasoning and extrapolation | Performance across diverse tasks | 204 tasks contributed by 450 authors; covers linguistics, biology, physics |
| DO Challenge [41] | Virtual screening for drug discovery | Overlap score with top molecular structures | 1 million molecular conformations; measures identification of promising drug candidates |
| HELM Safety [38] | AI safety and factuality | Comprehensive safety metrics | Standardized framework for assessing model safety and reliability |

Performance Comparison of AI Models in Toxicity Prediction

Toxicity prediction represents a critical application of ML in pharmaceutical research, where accurate models can significantly reduce late-stage drug failures. Recent research has demonstrated the effectiveness of optimized ensemble models compared to individual algorithms:

Table 2: Performance Comparison of ML Models in Drug Toxicity Prediction [42]

| Model Type | Model Name | Scenario 1 Accuracy (%) | Scenario 2 Accuracy (%) | Scenario 3 Accuracy (%) |
|---|---|---|---|---|
| Individual Models | Kstar | 85 | 81 | 85 |
| Individual Models | Random Forest | 82 | 80 | 83 |
| Individual Models | Decision Tree | 79 | 78 | 80 |
| Individual Models | AIPs-DeepEnC-GA (DL) | 72 | 70 | 72 |
| Ensemble Model | OEKRF (Optimized Ensemble) | 77 | 89 | 93 |

Scenario 1: Original features; Scenario 2: Feature selection + resampling with percentage split; Scenario 3: Feature selection + resampling with 10-fold cross-validation [42]

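The optimized ensemble model (OEKRF), which combines an eager learner (Random Forest) with a lazy learner (Kstar), exceeded the top-performing individual model by 8 percentage points (93% vs. 85%) and the deep learning model by 21 percentage points (93% vs. 72%) in the most rigorous testing scenario, Scenario 3 [42]. In Scenario 1, without feature selection or resampling, the ensemble (77%) trailed Kstar (85%), so the gain depends on the preprocessing and validation setup. Overall, the results highlight the value of hybrid approaches in biological prediction tasks.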

Experimental Protocols for AI Model Evaluation

Protocol for Toxicity Prediction Modeling

The superior performance of the OEKRF model in toxicity prediction was achieved through a rigorous experimental methodology [42]:

Data Preprocessing Pipeline:

- Dataset: The toxicity dataset contained annotated samples with features related to molecular structures and biological activity.

- Feature Selection: Principal Component Analysis (PCA) was employed for dimensionality reduction, creating linear combinations of original features while preserving crucial information.

- Resampling Techniques: Both oversampling and undersampling methods were applied to address class imbalance issues in the dataset.

- Validation Methods: Three scenarios were implemented: (1) original features with basic validation, (2) feature selection with resampling using percentage split (80% training, 10% testing, 10% validation), and (3) feature selection with resampling using 10-fold cross-validation.

Model Training and Evaluation:

- Seven machine learning algorithms (Gaussian Process, Linear Regression, SMO, Kstar, Bagging, Decision Tree, Random Forest) were compared against the optimized ensemble.

- The ensemble model was created through systematic combination of Random Forest and Kstar algorithms.

- Performance was evaluated using accuracy metrics and composite scores (W-saw and L-saw) that combined multiple performance parameters to strengthen model validation.

- The 10-fold cross-validation approach was particularly effective for addressing overfitting and improving model generalization.
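The 10-fold cross-validation step can be sketched with the standard library alone. This is a minimal illustration, not the study's pipeline: the 1-nearest-neighbour learner is a simple stand-in for the ensemble, the data are synthetic, and all function names are hypothetical.

```python
import random


def k_fold_indices(n_samples, k=10, seed=0):
    """Shuffle indices once and split them into k nearly equal folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    return [indices[i::k] for i in range(k)]


def cross_validate(X, y, fit, predict, k=10, seed=0):
    """Return per-fold accuracy; each sample is held out exactly once."""
    scores = []
    for held_out in k_fold_indices(len(X), k, seed):
        test = set(held_out)
        train = [i for i in range(len(X)) if i not in test]
        model = fit([X[i] for i in train], [y[i] for i in train])
        hits = sum(predict(model, X[i]) == y[i] for i in held_out)
        scores.append(hits / len(held_out))
    return scores


# Stand-in learner: 1-nearest-neighbour on squared Euclidean distance.
def fit_1nn(X, y):
    return list(zip(X, y))


def predict_1nn(model, x):
    nearest = min(model, key=lambda row: sum((a - b) ** 2 for a, b in zip(row[0], x)))
    return nearest[1]


# Synthetic, well-separated two-class data (not the toxicity dataset).
rng = random.Random(1)
X = [(rng.gauss(c * 5.0, 0.5), rng.gauss(c * 5.0, 0.5)) for c in (0, 1) for _ in range(50)]
y = [c for c in (0, 1) for _ in range(50)]
scores = cross_validate(X, y, fit_1nn, predict_1nn)
print(sum(scores) / len(scores))
```

One design point worth keeping in a real pipeline: preprocessing such as PCA and resampling would normally be fitted inside `fit`, on the training folds only, so that information from the held-out fold does not leak into the model.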

[Figure: Toxicity Prediction Workflow. The experimental protocol for toxicity prediction modeling involves sequential stages from data preparation to performance validation.]

Protocol for Virtual Screening Benchmark (DO Challenge)

The DO Challenge benchmark provides a standardized framework for evaluating AI systems in virtual screening scenarios [41]:

Task Setup:

- Dataset: 1 million unique molecular conformations with custom-generated DO Score labels indicating drug candidate potential.

- Objective: Identify the top 1,000 molecular structures with the highest DO Score using limited resources.

- Constraints: Agents can request only 10% of the true DO Score values (100,000 structures) and are allowed only 3 submission attempts.

- Evaluation Metric: Percentage overlap between the submitted structures and the actual top 1,000, i.e. Score = |Submission ∩ Top1000| / 1000 × 100%.
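The evaluation metric reduces to a set intersection. The following sketch assumes structures are identified by hashable IDs; `overlap_score` is an illustrative name, not the benchmark's own code.

```python
def overlap_score(submission, true_top):
    """Percentage of the true top set that appears in the submission."""
    true_top = set(true_top)
    return 100.0 * len(set(submission) & true_top) / len(true_top)


# Hypothetical IDs: the submission recovers half of the true top 1,000.
print(overlap_score(range(500, 1500), range(1000)))
```

Because the score counts only membership in the top set, the ordering of structures within a submission does not matter.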

Successful Strategy Components:

- Strategic Structure Selection: Implementation of active learning, clustering, or similarity-based filtering approaches.

- Spatial-Relational Neural Networks: Utilization of Graph Neural Networks (GNNs), attention-based architectures, or 3D CNNs to capture spatial relationships in molecular conformations.

- Position Non-Invariance: Employment of features sensitive to translation and rotation of molecular structures.

- Strategic Submitting: Intelligent combination of true labels and model predictions, leveraging multiple submission opportunities iteratively.
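One plausible reading of the strategic submitting component is sketched below: structures with a measured DO Score are ranked by that value, and all others by the model's prediction. This is an assumption about how labels and predictions might be combined, not the method used by any particular DO Challenge entrant; it also assumes predictions are on the same scale as the true scores. The function and variable names are hypothetical.

```python
def build_submission(measured, predicted, k=1000):
    """Pick the top-k structures, trusting measured scores over predictions.

    measured:  dict mapping structure ID -> true score (labelled subset)
    predicted: dict mapping structure ID -> model score (all structures)
    """
    combined = {**predicted, **measured}  # measured values override predictions
    ranked = sorted(combined, key=combined.get, reverse=True)
    return ranked[:k]


# Tiny hypothetical example with k=2.
measured = {"a": 0.9, "b": 0.1}
predicted = {"a": 0.2, "b": 0.8, "c": 0.5, "d": 0.3}
print(build_submission(measured, predicted, k=2))
```

In an iterative setting, the feedback from one submission could inform which structures to label or re-rank before the next attempt, within the limit of three submissions.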