Benchmarking Machine Learning in DBTL Cycles: A Framework for Accelerating Drug Discovery

Abstract

This article provides a comprehensive framework for benchmarking machine learning (ML) methods within Design-Build-Test-Learn (DBTL) cycles, tailored for researchers and professionals in drug development. It explores the foundational shift towards data-driven bioengineering, details the practical application of ML models and validation techniques, addresses common pitfalls in benchmarking, and presents rigorous methods for comparative model analysis. By synthesizing current trends and methodologies, this guide aims to equip scientists with the knowledge to reliably benchmark ML approaches, thereby enhancing the efficiency and predictive power of DBTL cycles in biomedical research.

From DBTL to LDBT: The Foundational Shift of Machine Learning in Bioengineering

Understanding the Classic DBTL Cycle in Synthetic Biology

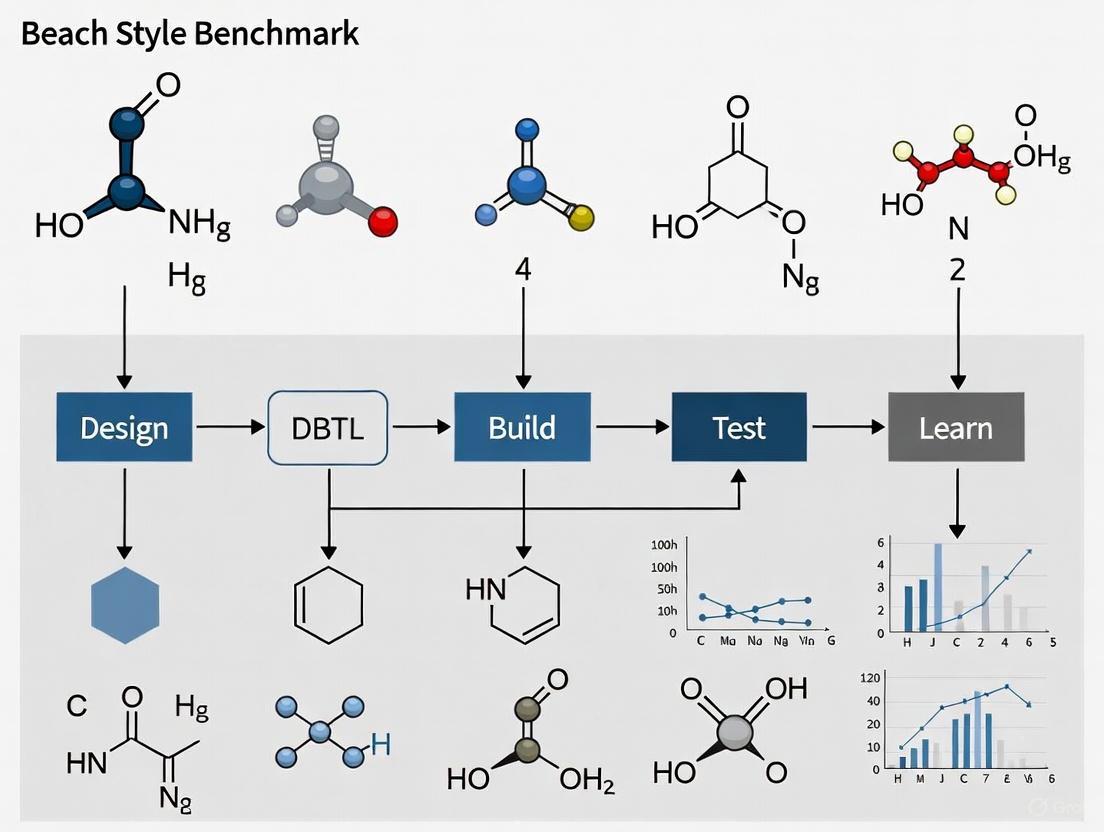

The Design-Build-Test-Learn (DBTL) cycle is a systematic, iterative framework central to synthetic biology, enabling the engineering of biological systems with desired functionalities [1] [2]. This guide compares the performance of the classic DBTL framework against emerging machine learning (ML)-augmented paradigms, providing experimental data and protocols for benchmarking ML methods in DBTL research.

The Classic DBTL Framework: A Foundational Benchmark

The DBTL cycle provides a structured approach for biological engineering, mirroring principles from established engineering disciplines [3]. Its four stages form a continuous loop for system optimization.

- Design: Researchers define objectives and create a conceptual plan using computational modeling, domain knowledge, and existing biological parts to specify genetic sequences or system alterations [1] [3].

- Build: The digital design is physically implemented through DNA synthesis, assembly into vectors (e.g., plasmids), and introduction into a host chassis (e.g., bacteria, yeast, or cell-free systems) [1] [2].

- Test: The constructed biological systems are experimentally characterized to measure performance against the desired objectives, often using high-throughput sequencing and functional assays [1] [4].

- Learn: Data from the test phase are analyzed to understand system behavior, identify bottlenecks, and inform revisions for the next design iteration [1] [5].

The diagram below illustrates the logical flow and iterative nature of the classic DBTL cycle.

Performance Comparison: Classic vs. ML-Augmented DBTL

The table below summarizes key performance indicators, comparing the classic DBTL cycle against modern ML-enhanced approaches. This data serves as a benchmark for evaluating ML method efficacy.

| Performance Metric | Classic DBTL Cycle | ML-Augmented DBTL Cycle |
|---|---|---|
| Primary Workflow | Reactive testing and iterative refinement [1] | Proactive, data-driven prediction [1] [5] |
| Cycle Iteration Speed | Time-consuming, often requires multiple turns [3] [5] | Dramatically accelerated via predictive design [1] [3] |
| Data Handling & Learning | Challenging to learn from big data; relies on trial-and-error [4] | Leverages large datasets for pattern recognition and predictive modeling [1] [4] |
| Predictive Power | Limited by first-principles biophysical models [1] | High; captures non-linear, high-dimensional interactions [1] [5] |
| Typical Experimental Throughput | Manual or semi-automated, lower throughput [2] [6] | Highly automated (e.g., biofoundries), enabling megascale testing [3] [7] |
| Encountered Bottlenecks | "Learning" phase is a major bottleneck [4] | "Build" and "Test" phases can become bottlenecks without automation [7] |
| Automation Dependency | Automation improves throughput but is not always integral [6] | Tight integration with automation is crucial for data generation [3] [7] |

A significant paradigm shift emerging from ML integration is the reordering of the cycle to LDBT (Learn-Design-Build-Test), where machine learning models pre-trained on vast biological datasets are used for zero-shot prediction, potentially reducing the need for multiple iterative cycles [3]. The comparative workflow illustrates this shift.

Experimental Protocols for Benchmarking ML in DBTL

To objectively compare DBTL approaches, researchers can implement the following key experiments focusing on protein engineering, a common application in synthetic biology.

Experiment 1: Benchmarking Zero-Shot Prediction for Protein Engineering

This protocol tests the ability of pre-trained ML models to design functional proteins without prior specific experimental data.

- Objective: To compare the initial success rate of ML zero-shot designs against designs generated by traditional, knowledge-based methods.

- Methodology:

  - Design: Use a pre-trained protein language model (e.g., ESM, ProGen) or a structure-based tool (e.g., ProteinMPNN) to generate sequences for a target protein with desired properties (e.g., thermostability, catalytic activity). In parallel, design a control set using traditional homology modeling or site-directed mutagenesis based on literature [3].

  - Build: Synthesize DNA sequences for all designed variants. Use a cell-free protein expression system for rapid, high-throughput synthesis without cloning [3].

  - Test: Express and purify protein variants. Assay for the target function (e.g., enzyme activity via colorimetric assay) and stability (e.g., thermal shift assay). The cell-free system allows for direct screening of thousands of variants in plate reader format [3].

  - Learn: Calculate the fraction of functional variants in the ML-designed pool versus the traditional design pool. Use the results to benchmark the predictive power of the ML model.

- Supporting Data: In one study, combining ProteinMPNN with AlphaFold for structure assessment led to a nearly 10-fold increase in design success rates for TEV protease variants compared to previous methods [3].
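The Learn step of Experiment 1 comes down to comparing two success rates. A minimal sketch of that comparison follows, assuming each variant has already been scored as functional or non-functional. The counts are hypothetical, and the one-sided Fisher exact test is one reasonable choice of significance test rather than a method prescribed by the cited studies.

```python
from math import comb


def success_rate(functional: int, total: int) -> float:
    """Fraction of tested variants that passed the functional assay."""
    return functional / total


def fisher_exact_greater(a: int, b: int, c: int, d: int) -> float:
    """One-sided Fisher exact p-value for the 2x2 table [[a, b], [c, d]].

    a, b = functional / non-functional ML designs
    c, d = functional / non-functional control designs
    Returns P(X >= a) under the hypergeometric null of equal success rates.
    """
    row1, col1, n = a + b, a + c, a + b + c + d
    upper = min(row1, col1)
    tail = sum(comb(col1, k) * comb(n - col1, row1 - k) for k in range(a, upper + 1))
    return tail / comb(n, row1)


# Hypothetical plate-scale counts (not taken from any cited study)
ml_functional, ml_total = 30, 96
control_functional, control_total = 6, 96

ml_rate = success_rate(ml_functional, ml_total)
control_rate = success_rate(control_functional, control_total)
fold_improvement = ml_rate / control_rate
p_value = fisher_exact_greater(
    ml_functional, ml_total - ml_functional,
    control_functional, control_total - control_functional,
)
print(f"ML {ml_rate:.3f} vs control {control_rate:.3f}; "
      f"fold {fold_improvement:.1f}; p = {p_value:.2e}")
```

Reporting the fold improvement together with a p-value helps separate a real gain from plate-to-plate variation when the pools are small.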

Experiment 2: Resolving DBTL Involution with ML-Guided Fermentation Optimization

This protocol addresses the "involution" problem, in which traditional DBTL cycles yield diminishing returns in complex strain engineering.

- Objective: To test if ML can incorporate multiscale bioprocess data to predict and optimize fermentation titers, breaking the involution cycle [5].

- Methodology:

  - Design: Train an ML model (e.g., a Random Forest or Gradient Boosting regressor) on a historical dataset linking strain genotypes, fermentation conditions (e.g., pH, temperature, feed rate), and final product titer [5].

  - Build: Engineer a new microbial strain based on insights from the model or use a set of existing production strains.

  - Test: Conduct fermentations in bioreactors under conditions predicted by the ML model to be high-performing. Monitor and record the final product titer.

  - Learn: Compare the achieved titer against model predictions and against titers from strains developed through classical DBTL without ML guidance. Use the new data to retrain and improve the ML model.

- Supporting Data: Integrative ML models that combine genomic and fluxomic data have been shown to accurately predict complex phenotypes like cellular growth in E. coli, outperforming traditional genome-scale metabolic models (GEMs) alone [5].
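The Learn step of Experiment 2 involves two comparisons: prediction versus measurement, and ML-guided versus classical titers. The sketch below shows one way to quantify both. The function names and all titer values are hypothetical and only illustrate the bookkeeping.

```python
from math import sqrt


def rmse(predicted, observed):
    """Root-mean-square error between predicted and measured titers."""
    squared = [(p - o) ** 2 for p, o in zip(predicted, observed)]
    return sqrt(sum(squared) / len(squared))


def relative_gain(ml_titers, classic_titers):
    """Fractional improvement of the best ML-guided titer over the best classical titer."""
    return max(ml_titers) / max(classic_titers) - 1.0


# Hypothetical titers in g/L (illustrative only)
predicted_titers = [4.0, 5.0, 6.0]   # model predictions for the tested conditions
observed_titers = [3.0, 5.0, 8.0]    # bioreactor measurements for the same runs
classic_titers = [2.5, 3.0, 4.0]     # strains from classical DBTL without ML guidance

prediction_error = rmse(predicted_titers, observed_titers)
gain = relative_gain(observed_titers, classic_titers)
print(f"RMSE = {prediction_error:.2f} g/L, gain over classical = {gain:.0%}")
```

A large prediction error alongside a large gain suggests the model ranks conditions usefully but is poorly calibrated, which is a reason to retrain on the new runs.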

The Scientist's Toolkit: Essential Research Reagent Solutions

The table below details key reagents and platforms essential for implementing both classic and ML-augmented DBTL cycles.

| Tool / Reagent | Function in DBTL Cycle |
|---|---|
| Cell-Free Gene Expression Systems | Accelerates the Build and Test phases by enabling rapid protein synthesis without cloning; ideal for high-throughput data generation for ML training [3]. |
| Automated Biofoundries | Integrates laboratory robotics to automate Build and Test processes, dramatically increasing throughput and reproducibility for gathering large-scale data [7]. |
| Protein Language Models (e.g., ESM, ProGen) | Core to the Learn and Design phases; pre-trained on evolutionary data to predict protein function and generate novel, functional sequences via zero-shot inference [3]. |
| Structure-Based Design Tools (e.g., ProteinMPNN) | Used in the Design phase to generate amino acid sequences that will fold into a desired protein backbone structure [3]. |
| High-Throughput DNA Synthesizers | Enables the physical Build phase of large genetic variant libraries designed computationally, providing the link between digital models and physical DNA [1]. |
| CRISPR-Cas9 Genome Editing | A key Build technology for making precise, targeted modifications to an organism's genome to implement designed genetic changes [1]. |

The traditional Design-Build-Test-Learn (DBTL) cycle has long been the cornerstone framework for scientific experimentation in fields like synthetic biology and metabolic engineering. In this paradigm, researchers design biological systems, build DNA constructs, test their performance, and finally learn from the results to inform the next design iteration [3]. However, this process often requires multiple costly and time-consuming cycles to achieve desired functions, with the Build-Test phases creating significant bottlenecks [3].

A fundamental paradigm shift is now emerging: the LDBT (Learn-Design-Build-Test) cycle, in which a Learn phase driven by machine learning (ML) precedes the Design phase [3]. This reordering leverages the predictive power of ML models trained on vast biological datasets to generate better initial designs, potentially reducing the number of experimental iterations needed. The LDBT approach aims to transform biological engineering into a more predictive discipline, moving closer to a "Design-Build-Work" model similar to established engineering fields [3].

Comparative Analysis: LDBT vs. Traditional DBTL Performance

The performance difference between LDBT and traditional DBTL approaches can be quantified across several key metrics, from experimental efficiency to success rates in protein and pathway engineering.

Table 1: Overall Performance Comparison of DBTL vs. LDBT Approaches

| Performance Metric | Traditional DBTL | LDBT Approach | Experimental Basis |
|---|---|---|---|
| Cycle Efficiency | Multiple iterative cycles required [3] | Potential for single-cycle success [3] | Computational analysis of cycle efficiency [3] |
| Design Success Rate | Limited by empirical iteration [3] | ~10x increase in protein design success [3] | ProteinMPNN + AlphaFold combination [3] |
| Data Utilization | Learns only from current experiment data | Leverages evolutionary and structural data [3] | Protein language models (ESM, ProGen) [3] |
| Throughput Capability | Limited by in vivo building/testing [3] | Ultra-high-throughput via cell-free testing [3] | Cell-free systems screening >100,000 variants [3] |
| Optimal Configuration Finding | Sequential optimization may miss global optimum [8] | Identifies globally optimal configurations [8] | Kinetic modeling of metabolic pathways [8] |

Table 2: Machine Learning Model Performance in Simulated DBTL Cycles

| Machine Learning Model | Performance in Low-Data Regime | Robustness to Training Bias | Robustness to Experimental Noise | Study Findings |
|---|---|---|---|---|
| Gradient Boosting | Top performer [8] | High [8] | High [8] | Effective for combinatorial pathway optimization [8] |
| Random Forest | Top performer [8] | High [8] | High [8] | Effective for combinatorial pathway optimization [8] |
| Automated Recommendation Tool | Variable performance [8] | Moderate [8] | Moderate [8] | Success in some applications (dodecanol, tryptophan) [8] |
| Bayesian Optimization | Can "get lost" in high-dimensional spaces [9] | Requires careful space definition [9] | Dependent on surrogate model [9] | Enhanced by multimodal data integration (CRESt system) [9] |

Experimental Protocols and Methodologies

Core LDBT Protocol for Protein Engineering

Objective: To engineer stabilized protein variants with enhanced catalytic activity using an LDBT approach.

Learning Phase:

- Utilize protein language models (ESM, ProGen) trained on millions of protein sequences for zero-shot prediction of beneficial mutations [3].

- Employ structure-based tools (MutCompute, ProteinMPNN) to identify mutations that are probable given the local chemical environment [3].

- Generate initial variant libraries computationally, prioritizing sequences with predicted stabilizing mutations and improved function [3].

Design Phase:

- Select top-ranking variants from in silico predictions for experimental testing [3].

- Design DNA sequences codon-optimized for the expression system.

Build Phase:

- Synthesize DNA templates without intermediate cloning steps [3].

- Express protein variants using cell-free transcription-translation systems, enabling rapid production (>1 g/L in <4 hours) of potentially toxic proteins without cellular constraints [3].

Test Phase:

- Measure protein stability via cDNA display, enabling ΔG calculations for hundreds of thousands of variants [3].

- Assess catalytic activity using fluorescent or colorimetric assays in microtiter plates or droplet microfluidics [3].

- Validate predictions and generate data for subsequent model refinement.

Integrated ML-Experimental Workflow for Pathway Optimization

Objective: To optimize a metabolic pathway for maximal product flux using simulated DBTL cycles and machine learning.

Workflow:

- Kinetic Model Development: Represent the metabolic pathway using ordinary differential equations (ODEs) embedded in a physiologically relevant cell model (e.g., E. coli core kinetic model) [8].

- In Silico Library Generation: Create in silico libraries by varying multiple enzyme concentrations (Vmax parameters) simultaneously to mimic combinatorial DNA library construction [8].

- Data Generation: Simulate product flux for thousands of pathway variants to create a comprehensive training dataset, accounting for metabolic burden and bioprocess conditions [8].

- Machine Learning Training: Train gradient boosting and random forest models on the simulated data to predict product flux from enzyme expression levels [8].

- Recommendation Algorithm: Implement an algorithm that uses ML model predictions to recommend new strain designs for the next DBTL cycle, balancing exploration and exploitation [8].

- Validation: Test the optimized pathway designs experimentally, feeding results back to improve model accuracy.
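Two of the steps above, in silico library generation and the recommendation algorithm, can be sketched in a few lines. Everything here is an illustrative assumption: `toy_flux` is a stand-in for a kinetic simulation and not the model of [8], the Vmax levels are arbitrary, and epsilon-greedy selection is just one simple way to balance exploration and exploitation.

```python
import itertools
import random

VMAX_LEVELS = (0.25, 0.5, 1.0, 2.0, 4.0)  # hypothetical relative Vmax multipliers


def build_library(levels=VMAX_LEVELS, n_enzymes=3):
    """Full-factorial library: every combination of expression levels."""
    return list(itertools.product(levels, repeat=n_enzymes))


def toy_flux(design, burden=0.05):
    """Stand-in for a kinetic simulation: bottleneck flux minus a burden penalty."""
    return min(design) - burden * sum(design)


def measure(design, rng, noise_sd=0.05):
    """Simulated Test step: toy flux plus Gaussian measurement noise."""
    return toy_flux(design) + rng.gauss(0.0, noise_sd)


def recommend(candidates, predict, batch_size, rng, epsilon=0.2):
    """Epsilon-greedy batch: mostly top-predicted designs plus a few random ones."""
    n_explore = int(round(epsilon * batch_size))
    n_exploit = batch_size - n_explore
    ranked = sorted(candidates, key=predict, reverse=True)
    exploit, rest = ranked[:n_exploit], ranked[n_exploit:]
    explore = rng.sample(rest, min(n_explore, len(rest)))
    return exploit + explore


rng = random.Random(0)
library = build_library()
measurements = {design: measure(design, rng) for design in library[:5]}
# toy_flux is passed as the predictor only for illustration; in practice this
# would be the prediction function of a trained model.
next_batch = recommend(library, toy_flux, batch_size=10, rng=rng)
print(len(library), "designs; next batch:", next_batch[:3], "...")
```

Raising `epsilon` spends more of each batch on unexplored regions, which can help when the training data are biased toward a corner of the design space.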

Multimodal Active Learning for Materials Discovery

Objective: To discover novel catalyst materials using the CRESt (Copilot for Real-world Experimental Scientists) platform that integrates diverse data sources.

Workflow:

- Knowledge Integration: The system searches scientific literature for descriptions of elements or precursor molecules relevant to the target material [9].

- Representation Learning: Creates embeddings for each recipe based on the existing knowledge base before experimentation [9].

- Search Space Reduction: Performs principal component analysis (PCA) in the knowledge embedding space to identify a reduced search space capturing most performance variability [9].

- Bayesian Optimization: Uses Bayesian optimization in the reduced space to design new experiments [9].

- Robotic Experimentation: Employs liquid-handling robots, carbothermal shock synthesis systems, and automated electrochemical workstations for high-throughput testing [9].

- Multimodal Feedback: Incorporates newly acquired experimental data, literature knowledge, and human feedback into large language models to augment the knowledge base and refine the search space [9].

Visualizing the Paradigm Shift: Workflows and Signaling Pathways

The Traditional DBTL Cycle vs. The LDBT Paradigm

Machine Learning-Driven Experimental Optimization

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents and Platforms for LDBT Implementation

| Tool/Reagent | Function | Application in LDBT |
|---|---|---|
| Cell-Free Expression Systems | Protein biosynthesis machinery from cell lysates or purified components for in vitro transcription/translation [3]. | Rapid building and testing of protein variants without cloning; enables high-throughput screening [3]. |
| cDNA Display Platforms | Technology for linking proteins to their encoding cDNA for stability screening [3]. | Ultra-high-throughput protein stability mapping (e.g., 776,000 variants) [3]. |
| Droplet Microfluidics | Picoliter-scale reaction compartments for massively parallel screening [3]. | Screening >100,000 cell-free reactions with multi-channel fluorescent imaging [3]. |
| Protein Language Models (ESM, ProGen) | Deep learning models trained on evolutionary relationships in protein sequences [3]. | Zero-shot prediction of beneficial mutations and protein function in Learning phase [3]. |
| Structure-Based Design Tools (ProteinMPNN, MutCompute) | Deep neural networks trained on protein structures for sequence design [3]. | Designing protein variants that fold into desired structures with improved properties [3]. |
| Ax Adaptive Experimentation Platform | Open-source platform using Bayesian optimization for experiment guidance [10]. | Efficient parameter optimization in complex experimental spaces with multiple constraints [10]. |
| CRESt Platform | Integrated system combining multimodal AI with robotic experimentation [9]. | Materials discovery through literature mining, robotic synthesis, and automated characterization [9]. |
| Kinetic Modeling Frameworks (SKiMpy) | Symbolic kinetic modeling in Python for metabolic pathways [8]. | Simulating DBTL cycles and benchmarking ML methods for metabolic engineering [8]. |

The iterative process of Design-Build-Test-Learn (DBTL) cycles is a cornerstone of modern bioengineering, enabling the systematic development and optimization of biological systems. However, the "Build" and "Test" phases often create significant bottlenecks, being both time-consuming and resource-intensive. The integration of advanced machine learning (ML) is transforming this paradigm by shifting predictive capabilities earlier in the cycle. This guide focuses on two key ML concepts—zero-shot prediction and protein language models (PLMs)—which are critical for bioengineers aiming to accelerate research in areas like drug discovery and protein engineering. By enabling accurate forecasts of biological behavior without the need for experimental data on every new variant, these methods are paving the way for more efficient and intelligent bioengineering workflows. This article provides an objective comparison of leading tools in this space, detailing their performance, experimental protocols, and integration into next-generation DBTL frameworks.

Understanding Zero-Shot Prediction in Biology

In the context of bioengineering, zero-shot prediction refers to the ability of a machine learning model to accurately predict the properties or functions of a biological sequence (e.g., a protein, DNA sequence, or drug compound) without having been explicitly trained on labeled data for that specific task or entity. This is achieved by leveraging foundational knowledge learned from vast, general datasets during pre-training.

The significance for DBTL cycles is profound. A model capable of zero-shot prediction can inform the "Design" phase with reliable forecasts for novel compounds or protein variants, potentially reducing the number of iterative cycles required to reach an optimal solution. This approach directly addresses the challenge of predicting responses for novel compounds with unknown properties, a scenario that conventional supervised learning methods handle poorly because no labeled examples of the new compound exist [11].
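One common way to obtain a zero-shot score from a protein language model is a log-odds ratio: the model's probability for the mutant residue at a position, divided by its probability for the wild-type residue. The sketch below assumes those per-position probabilities are already available. The `site_probs` table is hand-written, hypothetical data rather than the output of any real model, and this scoring rule is not claimed to be the one used by the specific tools discussed here.

```python
from math import log


def zero_shot_score(site_probs, wild_type, mutations):
    """Sum of log p(mutant) - log p(wild type) over the mutated positions.

    site_probs: {position: {amino_acid: probability}}, assumed to come from a
                pre-trained model (hypothetical values in this example).
    mutations:  list of (position, mutant_amino_acid); positions are 0-indexed.
    A positive score means the model considers the variant more plausible
    than the wild type at those positions.
    """
    score = 0.0
    for position, mutant_aa in mutations:
        wild_type_aa = wild_type[position]
        probs = site_probs[position]
        score += log(probs[mutant_aa]) - log(probs[wild_type_aa])
    return score


wild_type = "MKV"
site_probs = {  # hypothetical per-position probabilities
    1: {"K": 0.50, "R": 0.25, "E": 0.05},
    2: {"V": 0.20, "I": 0.40, "D": 0.02},
}

score_k_to_r = zero_shot_score(site_probs, wild_type, [(1, "R")])
score_v_to_i = zero_shot_score(site_probs, wild_type, [(2, "I")])
score_double = zero_shot_score(site_probs, wild_type, [(1, "R"), (2, "I")])
print(score_k_to_r, score_v_to_i, score_double)
```

Because no assay labels enter the calculation, candidate variants can be ranked before any Build or Test step has been run.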

Comparative Analysis of Zero-Shot Prediction Tools

The table below summarizes key zero-shot prediction tools and their documented performance.

Table 1: Comparison of Zero-Shot Prediction Tools for Bioengineering

| Tool Name | Primary Application | Key Features | Reported Performance |
|---|---|---|---|
| ProMEP [12] [13] | Protein Mutational Effect Prediction | Multimodal (sequence & structure); MSA-free; ~160M protein training set | Spearman's correlation: 0.523 (ProteinGym benchmark); Guided engineering of TnpB (5-site mutant efficiency: 74.04% vs WT 24.66%) |
| MSDA (Zero-shot DRP) [11] | Drug Response Prediction | Multi-source domain adaptation; Predicts response for novel compounds | General performance improvement of 5-10% in preclinical screening (GDSCv2, CellMiner datasets) |
| ProGen [14] | Protein Sequence Generation | Language model trained on 280M sequences; Controllable generation via tags | Generated functional lysozymes with catalytic efficiency similar to natural ones (sequence identity as low as 31.4%) |
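Mutational-effect predictors such as those in the table are typically scored by Spearman's rank correlation between predicted scores and measured fitness. A minimal standard-library sketch of that metric follows. The two score lists are hypothetical, and in practice a library routine such as `scipy.stats.spearmanr` would normally be used instead.

```python
from math import sqrt


def average_ranks(values):
    """1-based ranks, with tied values sharing the mean of their rank positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared_rank = (start + end) / 2 + 1
        for k in range(start, end + 1):
            ranks[order[k]] = shared_rank
        start = end + 1
    return ranks


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def spearman(x, y):
    """Spearman's rho: Pearson correlation computed on the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical zero-shot scores and assay measurements for five variants
predicted_scores = [0.1, 0.4, 0.3, 0.9, 0.5]
measured_fitness = [1.0, 2.0, 3.0, 5.0, 4.0]
rho = spearman(predicted_scores, measured_fitness)
print(f"Spearman rho = {rho:.3f}")
```

A rank-based metric suits this setting because zero-shot scores are on an arbitrary scale and only their ordering of variants is expected to match the assay.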

The Rise of Protein Language Models (PLMs)

Protein Language Models are a class of large language models adapted to the "language of life." Just as LLMs like ChatGPT learn the statistical relationships between words in human language, PLMs are trained on millions of protein sequences to learn the underlying "grammar" and "syntax" of proteins. This self-supervised pre-training allows them to build rich, internal representations of proteins that encapsulate information about evolution, structure, and function.

PLMs are revolutionizing the "Learn" phase of DBTL cycles. They can automatically extract features from massive amounts of unlabeled protein data, moving beyond traditional methods that rely on hand-designed feature extractors [15]. These models are then fine-tuned for specific downstream tasks such as predicting protein function, fitness, or structure, thereby providing a powerful, generalizable starting point for various bioengineering challenges.

Comparative Analysis of Protein Language Models

The field of PLMs is rapidly evolving, with models differing in architecture, training data, and specialization.

Table 2: Comparison of Protein Language Models (PLMs)

| Model Name | Modality | Key Architecture/Features | Primary Applications & Performance |
|---|---|---|---|
| ProteinGPT [16] | Multimodal (Sequence & Structure) | Integrates ESM-2 sequence encoder & inverse folding structure encoder; LLM backbone | Protein property Q&A; Outperforms baseline models and general-purpose LLMs on protein-specific queries. |
| ESM (Evolutionary Scale Modeling) [17] [15] | Sequence | Transformer-based; Pre-trained on UniRef databases | Protein function prediction; Widely used as a state-of-the-art sequence encoder. |
| ProMEP's Base Model [12] | Multimodal (Sequence & Structure) | Equivariant structure embedding; trained on ~160M AlphaFold structures | State-of-the-art (SOTA) performance on function annotation and protein-protein interaction tasks. |
| ProGen [14] | Sequence | Language model based on Transformer architecture; control tags | Generation of functional protein sequences across diverse families. |

Benchmarking Methodologies for DBTL Cycles

Evaluating the real-world performance of ML-guided bioengineering requires robust, standardized benchmarking. Due to the cost and time associated with physical DBTL cycles, mechanistic kinetic model-based frameworks have emerged as a valuable tool for simulation and comparison [8].

These frameworks use ordinary differential equations (ODEs) to model cellular metabolism, representing a synthetic pathway embedded within a physiologically relevant cell model. Researchers can simulate combinatorial pathway optimization by in silico perturbations of enzyme concentrations (e.g., changing Vmax parameters) and then use the simulated product flux data to benchmark different machine learning models and DBTL cycle strategies [8].
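To make the ODE idea concrete, the sketch below integrates a two-step pathway S -> I -> P with forward Euler and compares two values of the second enzyme's Vmax. It is a toy under stated assumptions: Michaelis-Menten rate laws, arbitrary parameter values, and no host-cell model, so it is far simpler than the frameworks described in [8].

```python
def michaelis_menten(vmax, km, concentration):
    """Michaelis-Menten rate law (assumed here for both pathway steps)."""
    return vmax * concentration / (km + concentration)


def simulate_pathway(vmax1, vmax2, km1=0.5, km2=0.5, s0=10.0, t_end=5.0, dt=0.001):
    """Forward-Euler integration of S -> I -> P; returns final (S, I, P)."""
    s, i, p = s0, 0.0, 0.0
    for _ in range(int(round(t_end / dt))):
        v1 = michaelis_menten(vmax1, km1, s)  # S -> I, catalyzed by enzyme 1
        v2 = michaelis_menten(vmax2, km2, i)  # I -> P, catalyzed by enzyme 2
        s -= v1 * dt
        i += (v1 - v2) * dt
        p += v2 * dt
    return s, i, p


# In silico perturbation: same pathway, two expression levels of enzyme 2
baseline = simulate_pathway(vmax1=1.0, vmax2=1.0)
boosted = simulate_pathway(vmax1=1.0, vmax2=2.0)
print(f"product at t_end: baseline {baseline[2]:.3f}, boosted {boosted[2]:.3f}")
```

Sweeping `vmax1` and `vmax2` over a grid and recording the final product gives the kind of simulated design-to-flux table on which ML models can then be trained and compared.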

Experimental Protocol for In Silico Benchmarking

The following workflow, adapted from research in this area, outlines a general protocol for benchmarking ML models in iterative metabolic engineering [8]:

- Pathway and Model Definition: A kinetic model of a core metabolic host (e.g., E. coli) is established, integrating a synthetic pathway for a product of interest.

- Combinatorial Library Simulation: A large in silico library of strain designs is created by varying the expression levels of multiple pathway enzymes simultaneously. Each design is simulated to obtain the product flux (the fitness metric).

- Initial DBTL Cycle Sampling: An initial subset of designs is selected from the full library to simulate the first experimental "Build-Test" cycle.

- Machine Learning Model Training: The data from the initial subset is used to train various ML models (e.g., gradient boosting, random forest) to predict product flux from enzyme expression levels.

- Recommendation and Iteration: The trained model predicts the fitness of all remaining designs in the library. A recommendation algorithm selects the next set of promising strains for the subsequent simulated DBTL cycle.

- Performance Evaluation: The process is repeated over multiple cycles. The performance of different ML models is compared based on how quickly they identify the highest-producing strain designs across iterations.
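The protocol above can be sketched end to end with a toy fitness function. The assumptions are substantial and deliberate: `toy_flux` replaces the kinetic model, a k-nearest-neighbour average replaces gradient boosting or random forest so that the example needs only the standard library, and the recommendation rule is purely greedy.

```python
import itertools
import random


def toy_flux(design):
    """Hypothetical fitness: the bottleneck enzyme sets flux, expression adds burden."""
    return min(design) - 0.05 * sum(level ** 2 for level in design)


def knn_predict(design, training, k=3):
    """Surrogate model: mean measured flux of the k nearest tested designs."""
    nearest = sorted(
        training,
        key=lambda item: sum((a - b) ** 2 for a, b in zip(item[0], design)),
    )[:k]
    return sum(flux for _, flux in nearest) / len(nearest)


def run_simulated_dbtl(n_cycles=4, batch_size=10, noise_sd=0.05, seed=0):
    rng = random.Random(seed)
    levels = (0.5, 1.0, 2.0, 3.0, 4.0)
    library = list(itertools.product(levels, repeat=3))
    training, tested, best_so_far = [], set(), []
    batch = rng.sample(library, batch_size)  # cycle 1: random initial sample
    for _ in range(n_cycles):
        for design in batch:  # simulated Build and Test with measurement noise
            training.append((design, toy_flux(design) + rng.gauss(0.0, noise_sd)))
            tested.add(design)
        # Evaluate on the noise-free flux of the best design tested so far
        best_so_far.append(max(toy_flux(design) for design in tested))
        # Learn and Design: rank untested designs by predicted flux
        candidates = [design for design in library if design not in tested]
        candidates.sort(key=lambda d: knn_predict(d, training), reverse=True)
        batch = candidates[:batch_size]
    return best_so_far, max(toy_flux(design) for design in library)


best_so_far, global_best = run_simulated_dbtl()
print("best true flux after each cycle:", [round(b, 3) for b in best_so_far])
print("global optimum of the library:", round(global_best, 3))
```

Running the same loop with different surrogate models, noise levels, or biased initial samples, and comparing the resulting `best_so_far` curves, is the essence of the benchmarking comparison.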

Studies using this framework have found that gradient boosting and random forest models tend to outperform other methods in low-data regimes and are robust to training set biases and experimental noise [8].

The Evolving DBTL Workflow: From DBTL to LDBT

The power of zero-shot prediction and advanced PLMs is catalyzing a fundamental shift in the synthetic biology paradigm. The traditional DBTL cycle is being reordered into a new LDBT (Learn-Design-Build-Test) cycle [3].

In this new paradigm, the "Learn" phase comes first. Researchers leverage pre-trained foundational models (PLMs, zero-shot predictors) that already contain vast biological knowledge. This knowledge directly informs the "Design" of parts and systems. The subsequent "Build" and "Test" phases then serve to validate the in silico predictions, often in a single, efficient cycle. This approach brings bioengineering closer to a "Design-Build-Work" model used in other engineering disciplines [3].

The following diagram illustrates the logical relationship and flow between the traditional DBTL cycle and the emerging LDBT paradigm.

The Scientist's Toolkit: Essential Research Reagents & Platforms

Success in ML-guided bioengineering relies on a combination of computational tools and experimental platforms that enable high-throughput validation.

Table 3: Key Research Reagent Solutions and Experimental Platforms

| Item / Solution | Function in ML-Guided Bioengineering |
|---|---|
| Cell-Free Expression Systems [3] | Rapid, high-throughput protein synthesis and testing without cloning; enables megascale data generation for model training and validation. |
| AlphaFold2/3 & RoseTTAFold [18] | Provides high-accuracy protein structure predictions; used as inputs for structure-based models and for functional analysis. |
| Liquid Handling Robots & Microfluidics [3] | Automates the "Build" and "Test" phases; allows for screening of thousands to hundreds of thousands of reactions (e.g., picoliter-scale droplet assays). |
| Pre-trained Model Weights (e.g., for ESM, ProGen) | Foundational models that can be fine-tuned on specific datasets or used for zero-shot prediction, saving computational resources and time. |
| Biofoundries (e.g., ExFAB) [3] | Integrated facilities that combine automation, computation, and biology to execute DBTL/LDBT cycles at a large scale. |

Zero-shot prediction models and sophisticated Protein Language Models are no longer speculative technologies but are actively reshaping bioengineering research. As benchmarked against traditional DBTL cycles, their integration offers a path to substantially shorter development times and more intelligent exploration of biological design spaces. The emergence of the LDBT cycle underscores a move toward a more predictive, data-driven approach to biological design. For researchers and drug development professionals, proficiency in these tools, including an understanding of their strengths, limitations, and appropriate application contexts, is becoming indispensable for maintaining a competitive edge. The future will likely see these models become more accurate, multimodal, and seamlessly integrated with automated experimental platforms, further closing the loop between digital design and physical biological systems.

The Critical Role of High-Quality, Megascale Data for Foundational Models

In the realm of scientific machine learning (ML), particularly for biomedical and synthetic biology applications, the quality and scale of training data fundamentally determine the predictive power and utility of resulting models. The established paradigm of Design-Build-Test-Learn (DBTL) cycles for biological engineering relies on iterative experimentation to accumulate knowledge and refine biological designs [8]. Within this framework, megascale experimental datasets—those encompassing hundreds of thousands to millions of precise measurements—provide the essential substrate for training foundational models that can accurately predict complex biological phenomena like protein folding stability and metabolic pathway behavior.

The emergence of high-throughput experimental techniques has enabled a dramatic shift from small-scale, bespoke measurements to industrial-scale data generation. This shift is critical because the complex sequence-structure-function relationships in biology inhabit high-dimensional spaces that can only be effectively navigated with vast amounts of high-fidelity data [19] [3]. This article examines the generation, quality requirements, and application of such data through the lens of DBTL cycle research, providing a comparative analysis of experimental platforms and their outputs.

Megascale Data Generation: Experimental Platforms and Protocols

cDNA Display Proteolysis for Protein Folding Stability

Overview and Principle: cDNA display proteolysis is a recently developed high-throughput method for quantifying protein thermodynamic folding stability (ΔG) at an unprecedented scale [19]. The technique leverages the principle that proteases cleave unfolded proteins more rapidly than folded ones, allowing folding stability to be inferred from protease susceptibility measurements.

Table 1: Key Characteristics of cDNA Display Proteolysis

| Characteristic | Specification |
|---|---|
| Throughput | Up to 900,000 protein domains per one-week experiment |
| Total Measurements | 1.8 million (776,000 high-quality curated ΔG values) |
| Cost | ~$2,000 per library (excluding DNA synthesis/sequencing) |
| Key Innovation | Cell-free molecular biology combined with next-generation sequencing |
| Proteases Used | Trypsin and chymotrypsin (orthogonal specificity) |
| Reproducibility | R = 0.97 for trypsin, 0.99 for chymotrypsin |

Experimental Workflow: The method involves creating a DNA library encoding test proteins, which are then transcribed and translated using cell-free cDNA display, resulting in proteins covalently attached to their cDNA. These protein-cDNA complexes are incubated with varying protease concentrations, followed by pull-down of intact (protease-resistant) proteins and quantification via deep sequencing [19].

Figure 1: cDNA Display Proteolysis Workflow for Megascale Protein Stability Data

Data Quality and Validation: The resulting sequencing counts are processed through a Bayesian model incorporating single-turnover protease kinetics to infer K50 values (protease concentration at half-maximal cleavage rate) and ultimately thermodynamic ΔG values [19]. The method demonstrates high consistency with traditional purified protein measurements (Pearson correlations >0.75 across 1,188 variants of 10 proteins), establishing its reliability for quantitative biophysical measurements [19].
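The link between protease susceptibility and ΔG can be illustrated with a simplified two-state calculation. This is not the Bayesian model of [19]. It assumes that cleavage occurs only from the unfolded state, so the unfolded fraction equals K50,U / K50,obs, where K50,U is the K50 expected for the fully unfolded sequence. Both K50 inputs below are hypothetical numbers.

```python
from math import log

GAS_CONSTANT_KCAL = 1.987204e-3  # kcal/(mol*K)


def delta_g_unfolding(k50_observed, k50_unfolded, temperature_k=298.15):
    """Two-state estimate of unfolding free energy in kcal/mol.

    Assumption: fraction unfolded f_U = K50_U / K50_obs, so
    K_unfold = f_U / (1 - f_U) and
    dG_unfold = -RT ln(K_unfold) = RT ln(K50_obs / K50_U - 1).
    """
    ratio = k50_observed / k50_unfolded
    if ratio <= 1.0:
        raise ValueError("K50_obs must exceed K50_U for a finite estimate")
    return GAS_CONSTANT_KCAL * temperature_k * log(ratio - 1.0)


# Hypothetical K50 values in arbitrary but matching concentration units
marginal = delta_g_unfolding(k50_observed=2.0, k50_unfolded=1.0)
stable = delta_g_unfolding(k50_observed=101.0, k50_unfolded=1.0)
print(f"marginal: {marginal:.2f} kcal/mol, stable: {stable:.2f} kcal/mol")
```

Under this simplification, a protein that needs twice the protease concentration of its unfolded reference sits at ΔG = 0, and each further tenfold increase in the ratio adds roughly 1.4 kcal/mol at 25 °C.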

Kinetic Model-Based Framework for Metabolic Pathway Optimization

Overview and Principle: For metabolic pathway engineering, a mechanistic kinetic model-based framework provides a simulated environment for generating megascale data and benchmarking ML approaches [8]. This approach uses ordinary differential equations (ODEs) to describe changes in intracellular metabolite concentrations over time, with reaction fluxes modeled using kinetic mechanisms derived from mass action principles.

Simulation Framework: The framework integrates synthetic pathways into established core kinetic models of organisms like Escherichia coli, embedding the pathway within a physiologically relevant cell and bioprocess model [8]. This allows in silico perturbation of enzyme concentrations and properties to simulate their effects on metabolic flux and product formation.

Figure 2: Kinetic Modeling Framework for Metabolic Pathway Simulation
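
The ODE-based simulation idea can be illustrated with a toy example. This is a sketch under strong assumptions, not the framework of [8]: a two-step pathway S -> I -> P with irreversible Michaelis-Menten kinetics, integrated with forward Euler, and with all rate constants chosen arbitrarily. It only shows how an in silico change in enzyme concentration propagates to product formation.

```python
def simulate_pathway(e1, e2, s0=10.0, t_end=5.0, dt=0.01,
                     kcat1=1.0, km1=1.0, kcat2=1.0, km2=1.0):
    """Integrate a toy pathway S -> I -> P with forward Euler.

    Each step follows irreversible Michaelis-Menten kinetics, with flux
    proportional to the enzyme concentration (e1 or e2). All parameter
    values are illustrative assumptions.
    Returns the final (S, I, P) concentrations.
    """
    s, i, p = s0, 0.0, 0.0
    for _ in range(int(round(t_end / dt))):
        v1 = kcat1 * e1 * s / (km1 + s)   # flux S -> I
        v2 = kcat2 * e2 * i / (km2 + i)   # flux I -> P
        s += -v1 * dt
        i += (v1 - v2) * dt
        p += v2 * dt
    return s, i, p

baseline = simulate_pathway(e1=1.0, e2=1.0)
boosted = simulate_pathway(e1=2.0, e2=1.0)   # in silico doubling of enzyme 1
print(f"product at baseline: {baseline[2]:.3f}")
print(f"product with 2x enzyme 1: {boosted[2]:.3f}")
```

Sweeping `e1` and `e2` over a grid yields a synthetic design-to-titer dataset of arbitrary size, which is the kind of data an ML model can be benchmarked on across simulated DBTL cycles. A production-grade model would use an adaptive ODE solver and a full host metabolism rather than this two-step toy.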

Applications for ML Benchmarking: This simulated framework enables systematic testing of ML methods over multiple DBTL cycles without the cost and time constraints of physical experiments [8]. Researchers have used this approach to demonstrate that gradient boosting and random forest models outperform other methods in low-data regimes and remain robust against training set biases and experimental noise [8].

Comparative Analysis of Megascale Data Generation Platforms

Table 2: Platform Comparison for Megascale Biological Data Generation

| Platform | Primary Application | Scale | Key Advantages | Validation Metrics |

|---|---|---|---|---|

| cDNA Display Proteolysis [19] | Protein folding stability measurement | 776,000 high-quality ΔG measurements | Fast (1 week), accurate, uniquely scalable | R=0.94 between trypsin/chymotrypsin; >0.75 correlation with traditional methods |

| Kinetic Modeling Framework [8] | Metabolic pathway optimization | Virtually unlimited in silico designs | Enables DBTL strategy comparison; models complex physiology | Captures non-intuitive pathway behaviors; embedded in bioprocess context |

| Cell-Free Expression Systems [3] | Protein and pathway prototyping | >100,000 reactions via microfluidics | Rapid (>1g/L protein in <4h); scalable pL-kL; customizable | Successful AMP design (6/500 candidates); 20-fold pathway improvement |

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Research Reagent Solutions for Megascale Experimentation

| Reagent/Platform | Function | Application Context |

|---|---|---|

| Cell-Free cDNA Display [19] | In vitro protein synthesis with cDNA linkage | Enables protein stability mapping via display technology |

| Orthogonal Proteases (Trypsin/Chymotrypsin) [19] | Cleave at different amino acid residues | Provides complementary stability measurements; controls for protease specificity |

| DropAI Microfluidics [3] | Encapsulates reactions in picoliter droplets | Enables ultra-high-throughput screening (>100,000 reactions) |

| DNA Library Synthesis [19] | Generates diverse variant libraries | Creates input material for megascale screening experiments |

| Next-Generation Sequencing [19] | Quantifies protein survival post-proteolysis | Provides digital readout for millions of protein variants |

| Mechanistic Kinetic Models [8] | Simulates metabolic pathway dynamics | Generates training data for ML; predicts pathway behavior |

Data Quality Framework for ML-Ready Biological Data

The utility of megascale datasets for training foundational models depends critically on adherence to data quality dimensions. The "Six Dimensions" model of data quality provides a framework for evaluation [20]:

- Completeness – Comprehensive coverage of sequence space (e.g., all single amino acid variants) [19]

- Accuracy – High correlation with gold-standard measurements (R>0.75 with traditional methods) [19]

- Validity – Conformance to expected biophysical parameters and model constraints [20]

- Consistency – Reproducibility between experimental replicates (R=0.97-0.99) and orthogonal methods [19]

- Timeliness – Rapid generation compatible with iterative DBTL cycles (900,000 measurements/week) [19]

- Uniqueness – Avoidance of duplicate measurements that bias training datasets [20]

As one example of supporting infrastructure, the Databricks Lakehouse Platform implements this quality framework through features such as Lakehouse Monitoring for quality metrics, Delta Live Tables for data pipeline reliability, and Unity Catalog for lineage tracking [20].
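
Independently of any particular platform, several of these dimensions reduce to simple computations. The sketch below checks completeness, uniqueness, and replicate consistency on a tiny table of stability measurements. The record layout (`variant`, `dg_rep1`, `dg_rep2`), the variant names, and the numeric values are hypothetical and serve only to exercise the code.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def quality_report(records, expected_ids):
    """Summarize completeness, uniqueness, and consistency for a dataset.

    records: list of dicts with keys 'variant', 'dg_rep1', 'dg_rep2'.
    expected_ids: variants the library was designed to cover.
    """
    seen = [r["variant"] for r in records]
    unique_ids = set(seen)
    measured = [r for r in records
                if r["dg_rep1"] is not None and r["dg_rep2"] is not None]
    return {
        # Completeness: share of designed variants that were measured.
        "completeness": len(unique_ids & set(expected_ids)) / len(expected_ids),
        # Uniqueness: number of repeated variant entries.
        "duplicates": len(seen) - len(unique_ids),
        # Consistency: agreement between replicate measurements.
        "replicate_r": pearson([r["dg_rep1"] for r in measured],
                               [r["dg_rep2"] for r in measured]),
    }

# Toy records (made-up values, kcal/mol).
records = [
    {"variant": "A1G", "dg_rep1": 1.2, "dg_rep2": 1.1},
    {"variant": "A1V", "dg_rep1": 2.5, "dg_rep2": 2.7},
    {"variant": "L5P", "dg_rep1": -0.4, "dg_rep2": -0.2},
    {"variant": "L5P", "dg_rep1": -0.4, "dg_rep2": -0.2},  # duplicate entry
]
report = quality_report(records, expected_ids=["A1G", "A1V", "L5P", "K9E"])
print(report)
```

Duplicates are reported rather than silently dropped, so that the decision to deduplicate before model training stays explicit.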

Transforming DBTL Cycles Through Megascale Data and ML

Enhanced Learning Through Expanded Experimental Scale

The massive scale of modern biological datasets fundamentally transforms the Learning phase of DBTL cycles. Where traditional approaches might examine dozens or hundreds of variants, megascale experiments generate sufficient data to train complex ML models that capture subtle, non-intuitive relationships in biological systems [8]. For example, the protein stability dataset of 776,000 measurements enables quantification of environmental factors affecting amino acid fitness, identification of thermodynamic couplings between protein sites, and analysis of evolutionary amino acid usage patterns [19].

The Emergence of LDBT: Learning-Driven Design

The accumulation of megascale datasets, combined with advanced ML models, is prompting a paradigm shift from Design-Build-Test-Learn (DBTL) to Learn-Design-Build-Test (LDBT) cycles [3]. In this new framework, Learning precedes Design through:

- Protein language models (ESM, ProGen) trained on evolutionary relationships [3]

- Structure-based design tools (ProteinMPNN, MutCompute) enabling zero-shot prediction [3]

- Hybrid physico-ML approaches combining statistical power with physical principles [3]

This reordering allows researchers to leverage prior knowledge embedded in pre-trained models, potentially reducing the number of experimental cycles required to achieve functional designs [3].

Figure 3: LDBT Cycle with Learning-First Approach

The generation and utilization of high-quality, megascale datasets represent a critical enabling capability for developing foundational models in biology. As experimental technologies continue to advance in throughput and accuracy, and as ML methodologies become increasingly sophisticated at extracting insights from complex biological data, the synergy between large-scale experimentation and computational modeling will drive accelerated progress in synthetic biology, metabolic engineering, and drug development. The integration of these approaches within structured DBTL (or LDBT) frameworks provides a systematic methodology for navigating the vast design spaces of biological systems, ultimately reducing the time and cost required to develop novel biological solutions to pressing human challenges.

Methodologies and Real-World Applications of ML in DBTL Workflows

In the rapidly evolving field of biological data science, selecting the optimal machine learning (ML) approach is pivotal for accelerating research and development cycles, particularly within the Design-Build-Test-Learn (DBTL) framework. The paradigm is even shifting towards LDBT, where learning precedes design, underscoring the critical role of predictive modeling [3] [21]. This guide provides a comprehensive comparison between ensemble and single-model ML approaches, offering researchers, scientists, and drug development professionals an evidence-based foundation for model selection. We synthesize recent findings and benchmark studies to delineate the performance, applicability, and practical implementation of these strategies in biological prediction tasks.

The table below summarizes the core comparative insights between ensemble and single-model approaches, drawing on recent benchmark studies across various biological domains.

Table 1: High-Level Comparison of Ensemble and Single-Model Approaches

| Aspect | Ensemble Models | Single-Model Approaches |

|---|---|---|

| Average Predictive Accuracy | Generally higher, with documented accuracy up to 95.4% in classification tasks [22]. | Variable; can be high but often lower than ensembles in head-to-head comparisons [23]. |

| Prediction Error | Lower; the reduction is explained by the "Diversity Prediction Theorem" [23]. | Typically higher for a given model, as it lacks error-cancellation from diverse predictions [23]. |

| Robustness & Stability | High; produces more stable predictive features and is resilient to overfitting [22] [24]. | Can be susceptible to overfitting and less stable across different prediction scenarios [22]. |

| Data Integration Prowess | Excels at integrating multi-modal, multi-omics data (e.g., genomics, transcriptomics) [24]. | Often struggles with heterogeneous data types; simpler models may require extensive pre-processing [25]. |

| Theoretical Foundation | Supported by the "Diversity Prediction Theorem" and the "No Free Lunch Theorem" [23]. | Limited by the "No Free Lunch Theorem", which states no single model is best for all problems [23]. |

| Computational Cost | Higher during training and prediction due to multiple model runs [25]. | Lower, making them suitable for resource-constrained settings or specific, well-defined tasks [25]. |

| Interpretability | Can be complex to interpret ("black box"), though methods like feature importance exist [23]. | Often simpler to interpret, especially linear models or decision trees [25]. |

| Ideal Use Case | Integrating diverse data types for robust clinical outcome prediction or complex trait analysis [24] [23]. | Efficiently adapting to specific datasets with limited computational resources or for simpler tasks [25]. |

Performance and Benchmarking Data

Quantitative benchmarks from recent studies provide compelling evidence for the performance advantages of ensemble methods.

Accuracy and Error Metrics in Direct Comparisons

In a study on genomic prediction for crop breeding, an ensemble-average model was benchmarked against six individual genomic prediction models for traits like "days to anthesis" and "tiller number." The ensemble approach consistently increased prediction accuracies and reduced prediction errors compared to the best individual models [23]. The performance gain is quantitatively explained by the Diversity Prediction Theorem, which states that the ensemble's squared error equals the average squared error of the individual models minus the diversity of their predictions [23].

Another study on biomedical signal classification achieved a state-of-the-art classification accuracy of 95.4% by employing an ensemble framework that integrated Random Forest, Support Vector Machines (SVM), and Convolutional Neural Networks (CNNs). This hybrid model outperformed its individual components by effectively mitigating overfitting and leveraging the strengths of each algorithm [22].

Multi-Omics Data Integration Performance

Ensemble methods have proven particularly powerful for integrating multi-class, multi-omics data for clinical outcome prediction. A 2025 benchmarking study showed that ensemble models like PB-MVBoost and AdaBoost with soft vote were the best-performing models for integrating complementary omics modalities, achieving an Area Under the Receiver Operating Characteristic Curve (AUC) of up to 0.85 in predicting outcomes for hepatocellular carcinoma, breast cancer, and irritable bowel disease. The study concluded that these ensembles produced more stable predictive features than models using individual data modalities or simple data concatenation [24].

Table 2: Benchmark Performance in Multi-Omics Clinical Prediction

| Study Focus | Best-Performing Ensemble Model(s) | Key Performance Metric | Comparison Baseline |

|---|---|---|---|

| Multi-omics clinical outcome prediction [24] | PB-MVBoost, AdaBoost (Soft Vote) | AUC up to 0.85 | Simple concatenation of modalities and other individual models. |

| Biomedical signal classification from spectrograms [22] | Ensemble of RF, SVM, and CNN | Classification Accuracy: 95.4% | Individual Random Forest, SVM, and CNN classifiers. |

| Genomic prediction for crop breeding [23] | Naïve Ensemble-Average | Higher prediction accuracy, lower prediction error | Six individual genomic prediction models (e.g., Bayesian models, RR-BLUP). |
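
For readers less familiar with the AUC metric reported above, the sketch below computes it for the binary case as the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one. This is an illustration of the metric only; the multi-class averaging used in [24] is not reproduced, and the labels and scores are toy values.

```python
def roc_auc(labels, scores):
    """Binary ROC AUC via pairwise comparison of positives and negatives.

    Equals the probability that a random positive outranks a random
    negative; tied scores count as half a win.
    """
    pos = [s for l, s in zip(labels, scores) if l == 1]
    neg = [s for l, s in zip(labels, scores) if l == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))

# Toy example: three of the four positive/negative pairs are ranked correctly.
auc = roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])
print(auc)
```

The pairwise loop is quadratic in the number of samples, which is acceptable for illustration; rank-based formulations are preferable for large datasets.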

Theoretical Foundations: Why Ensembles Work

The superiority of ensemble approaches is not merely empirical; it is grounded in robust theoretical principles.

The No Free Lunch Theorem: This theorem posits that no single ML model can be universally superior across all possible problems. When averaged over all scenarios, the performance of all models is equivalent [23]. A model that wins on one biological dataset therefore carries no guarantee of winning on the next, which is why committing to a single "best" model is a risky strategy for the diverse and unpredictable challenges in biological research.

The Diversity Prediction Theorem: This theorem provides the mathematical backbone for ensembles. It states that the squared error of an ensemble's averaged prediction equals the average squared error of its individual models minus the diversity of their predictions, where diversity is the average squared deviation of the individual predictions from the ensemble prediction [23]. Because diversity is never negative, the ensemble's squared error can never exceed the average squared error of its members, and combining models that make different types of errors cancels part of the individual mistakes. This is the core mechanism behind the success of ensembles.
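
The identity can be checked numerically for a single prediction target. The sketch below uses three made-up model predictions and a made-up true value; only the arithmetic relation between the three quantities is the point.

```python
def diversity_decomposition(predictions, truth):
    """Return (ensemble squared error, average squared error, diversity).

    The Diversity Prediction Theorem states that
    ensemble_sq_error == avg_sq_error - diversity
    when the ensemble prediction is the plain average of the members.
    """
    n = len(predictions)
    ensemble = sum(predictions) / n
    ensemble_sq_error = (ensemble - truth) ** 2
    avg_sq_error = sum((p - truth) ** 2 for p in predictions) / n
    diversity = sum((p - ensemble) ** 2 for p in predictions) / n
    return ensemble_sq_error, avg_sq_error, diversity

# Hypothetical predictions of one trait value by three models.
ens, avg, div = diversity_decomposition([48.0, 52.0, 61.0], truth=55.0)
print(f"ensemble error {ens:.3f} = average error {avg:.3f} - diversity {div:.3f}")
```

In this toy case the members disagree strongly, so most of their average error is cancelled by averaging. With identical members, diversity is zero and the ensemble is no better than any single model.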

Experimental Protocols and Workflows

Implementing a successful ML strategy requires a structured workflow, from data preparation to model validation.

Protocol for Ensemble Construction in Biomedical Signal Classification

The ensemble model that reached 95.4% accuracy in classifying percussion and palpation signals followed this protocol [22]:

- Signal Preprocessing and Feature Extraction: Raw biological signals were first converted into time-frequency representations (spectrograms) using the Short-Time Fourier Transform (STFT). This step captures crucial temporal and spectral properties for analysis.

- Model Selection and Training: Three distinct classifiers were trained independently:

  - Convolutional Neural Network (CNN): To extract spatial and hierarchical features from the spectrogram images.

  - Support Vector Machine (SVM): To handle high-dimensional data and find optimal separating hyperplanes.

  - Random Forest (RF): An ensemble method itself, used to reduce overfitting through its committee of decision trees.

- Ensemble Integration: Predictions from the CNN, SVM, and Random Forest were combined into a final meta-prediction. This leverages the complementary strengths of each model, where one model's weakness is compensated by another's strength.
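
The integration step above can be sketched as soft voting, in which the class probabilities of the base models are averaged and the most probable class is selected. This is an assumption for illustration: the source does not specify which combination rule was used in [22], and the probability values below are invented.

```python
def soft_vote(prob_rows, weights=None):
    """Combine per-model class probabilities by (weighted) averaging.

    prob_rows: one list of class probabilities per model, same class order.
    weights: optional per-model weights summing to 1; equal by default.
    Returns (predicted class index, combined probabilities).
    """
    n_models = len(prob_rows)
    if weights is None:
        weights = [1.0 / n_models] * n_models
    n_classes = len(prob_rows[0])
    combined = [sum(w * row[c] for w, row in zip(weights, prob_rows))
                for c in range(n_classes)]
    label = max(range(n_classes), key=lambda c: combined[c])
    return label, combined

# Hypothetical outputs of the three base classifiers for one spectrogram.
cnn_probs = [0.6, 0.4]
svm_probs = [0.3, 0.7]
rf_probs = [0.2, 0.8]
label, combined = soft_vote([cnn_probs, svm_probs, rf_probs])
print(label, [round(p, 3) for p in combined])
```

Here the CNN alone would predict class 0, but the averaged probabilities favor class 1, which illustrates how one model's error can be outvoted. A stacked meta-learner trained on the base-model outputs is a common alternative to fixed averaging.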

Protocol for Ensemble Genomic Prediction

The study demonstrating enhanced genomic prediction in teosinte plants detailed the following methodology [23]:

- Data Preparation: A population of Recombinant Inbred Lines (RILs) was genotyped with single nucleotide polymorphisms (SNPs) and phenotyped for complex traits. Quality control and imputation were performed to handle missing marker calls.

- Individual Model Training: Six different genomic prediction models were trained separately. These models represented a diverse set of statistical approaches to capture both additive and non-additive genetic effects.

- Naïve Ensemble-Averaging: A straightforward ensemble was constructed by averaging the predicted phenotypes from all six individual models. This equal-weighting approach requires no additional training, and by the Diversity Prediction Theorem its squared error cannot exceed the average squared error of the individual models.

- Validation: The ensemble's prediction accuracy was compared against each individual model using cross-validation techniques.
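
The averaging and validation steps above can be sketched with a toy cross-validation loop. The two base models here (a mean predictor and a one-variable least-squares line) are stand-ins chosen for brevity, not the six genomic prediction models of [23], and the data are synthetic.

```python
def fit_mean(xs, ys):
    """Baseline model: always predict the training mean."""
    mean_y = sum(ys) / len(ys)
    return lambda x: mean_y

def fit_line(xs, ys):
    """Ordinary least-squares line through one predictor."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    return lambda x: my + slope * (x - mx)

def cross_validate(xs, ys, fitters, k=5):
    """Return the MSE of each model, then of their equal-weight average.

    Uses k interleaved folds; every sample is held out exactly once.
    """
    n = len(xs)
    sq_err = [0.0] * (len(fitters) + 1)      # last slot holds the ensemble
    for fold in range(k):
        test_idx = set(range(fold, n, k))
        train = [(x, y) for i, (x, y) in enumerate(zip(xs, ys))
                 if i not in test_idx]
        train_x, train_y = zip(*train)
        models = [fit(train_x, train_y) for fit in fitters]
        for i in test_idx:
            preds = [m(xs[i]) for m in models]
            for j, p in enumerate(preds):
                sq_err[j] += (p - ys[i]) ** 2
            sq_err[-1] += (sum(preds) / len(preds) - ys[i]) ** 2
    return [e / n for e in sq_err]

# Synthetic data: linear trend plus a deterministic perturbation.
xs = [float(i) for i in range(20)]
ys = [0.5 * x + (1.0 if i % 3 == 0 else -0.5) for i, x in enumerate(xs)]
mse_mean, mse_line, mse_ensemble = cross_validate(xs, ys, [fit_mean, fit_line])
print(mse_mean, mse_line, mse_ensemble)
```

The ensemble's error is guaranteed to be no worse than the average error of its members, but not necessarily better than the best member. In this toy setup the weak mean predictor may drag the average down, which is why the diversity and quality of the base models both matter.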

The following diagram illustrates the core logical relationship and workflow of building an ensemble model based on the Diversity Prediction Theorem.

The Scientist's Toolkit: Key Research Reagents and Solutions

The following table details essential computational tools and materials referenced in the featured studies.

Table 3: Key Research Reagent Solutions for ML in Biology

| Item / Solution Name | Function / Application | Relevant Context |

|---|---|---|

| Cell-Free Transcription-Translation (TX-TL) Systems [3] [21] | Rapid, high-throughput testing of genetic designs without using live cells. Accelerates the "Build-Test" phase of DBTL cycles. | Synthetic biology, metabolic engineering, protein engineering. |

| Short-Time Fourier Transform (STFT) [22] | Converts raw, time-series biomedical signals (e.g., from percussion) into spectrograms for machine learning analysis. | Biomedical signal processing and classification. |

| scFMs (Single-Cell Foundation Models) [25] [26] | Large-scale pretrained models (e.g., Geneformer, scGPT) for analyzing single-cell omics data. Can be adapted to various downstream tasks. | Single-cell genomics, cancer research, drug sensitivity prediction. |

| UTR Designer [27] | A computational tool for designing ribosome binding site (RBS) sequences to fine-tune gene expression in synthetic biology. | Rational strain engineering, metabolic pathway optimization. |

| Voting Ensemble / Meta Learner [24] | A class of ensemble methods that combine predictions from multiple base models via voting or a meta-classifier. | Multi-omics data integration, clinical outcome prediction. |

| Random Forest (RF) [22] | An ensemble learning method that uses many decision trees and is robust against overfitting. | General-purpose classification and regression, biomedical signal processing. |

| Liquid Biopsy & ctDNA Analysis [28] | A non-invasive method to obtain tumor-derived genetic material from blood or CSF for diagnostic and monitoring purposes. | Neuro-oncology, cancer diagnostics, minimal residual disease (MRD) detection. |

Integrated Workflow: The LDBT Cycle with ML Selection

The choice of ML model is deeply embedded in the modern synthetic biology workflow. The emerging LDBT (Learn-Design-Build-Test) cycle places learning first, where machine learning models pre-trained on vast biological datasets inform the initial design [3] [21]. Ensemble models are particularly suited for the "Learn" phase when integrating diverse, multi-modal data. The "Test" phase is increasingly accelerated by high-throughput platforms like cell-free systems, which generate the large-scale, high-quality data required to train and validate both single and ensemble models effectively [3]. This creates a powerful, closed-loop system where experimental testing continuously improves the predictive ML models, which in turn guide more effective designs.

The diagram below illustrates this integrated, iterative cycle, highlighting the role of ML and rapid testing.

The evidence strongly indicates that ensemble machine learning approaches generally offer superior performance, robustness, and data integration capabilities for complex biological prediction tasks within DBTL research cycles. Their foundation in the Diversity Prediction Theorem makes them a powerful strategy for navigating the "No Free Lunch" reality of data science.

However, single-model approaches remain relevant for specific, well-defined tasks, particularly under computational resource constraints [25]. The future of ML in biology is not a strict choice between one or the other but will involve intelligent model selection ecosystems. As seen in single-cell foundation models, the trend is towards leveraging large, pre-trained models as powerful feature extractors, upon which simpler, task-specific models or ensembles can be efficiently built [25] [26]. This hybrid strategy, combined with the accelerating power of high-throughput experimental testing, promises to further compress the DBTL cycle and drive the next wave of discovery in biomedicine and biotechnology.

Accelerating the Build and Test Phases with Cell-Free Expression Systems

In synthetic biology and metabolic engineering, the Design-Build-Test-Learn (DBTL) cycle is a foundational framework for engineering biological systems. This iterative process involves designing genetic constructs, building them in a host organism, testing their performance, and learning from the data to inform the next design cycle [3] [8]. However, the traditional DBTL cycle faces significant bottlenecks, particularly in the Build and Test phases where molecular cloning, cell-based expression, and functional characterization can require weeks of experimental work. These temporal constraints severely limit the iteration speed necessary for rapid biological engineering and the generation of large datasets required for training robust machine learning models [3] [27].

Cell-free expression systems (CFES) have emerged as a transformative technology for accelerating the Build and Test phases. Also known as cell-free protein synthesis (CFPS), this technology utilizes the transcriptional and translational machinery extracted from cells to produce proteins in vitro without the constraints of living organisms [29] [30]. By decoupling protein production from cell viability, CFES enables rapid protein synthesis in hours rather than days, direct access to the reaction environment, and high-throughput implementation [31] [29]. This review provides a comprehensive comparison of how cell-free platforms are revolutionizing DBTL cycles by dramatically accelerating build and test phases, with specific focus on benchmarking machine learning applications in biomedical research and drug development.

Technical Comparison: Cell-Free vs. Cell-Based Expression Systems

Key Performance Metrics and Experimental Outcomes

Cell-free expression systems offer distinct advantages and some limitations compared to traditional cell-based methods. The table below summarizes key performance metrics based on current experimental data.

Table 1: Performance comparison between cell-free and cell-based expression systems

| Parameter | Cell-Free Expression Systems | Traditional Cell-Based Systems |

|---|---|---|

| Expression Timeline | 4-24 hours [29] [30] | 1-7 days (including cell growth) [29] |

| Throughput Capability | High (pL to kL scale); >100,000 reactions in parallel [3] | Limited by cell culture requirements |

| Toxic Protein Production | Suitable [32] [29] | Often problematic |

| Non-Canonical Amino Acid Incorporation | Straightforward [30] | Complex, limited by cell viability |

| Open System Accessibility | Direct manipulation possible [30] | Restricted by cell membranes |

| Automation Compatibility | High (compatible with microfluidics) [3] | Moderate |

| Typical Protein Yield | >1 g/L in <4 hours demonstrated [3] | Variable, depends on optimization |

Applications in Pharmaceutical Development

The unique advantages of CFES have enabled specific applications in drug discovery and development:

- Antibody Discovery: Accelerated workflows for synthesizing and screening antigen-binding fragments (sdFab) against targets like SARS-CoV-2 spike protein within days instead of weeks [30].

- Membrane Protein Production: Successful synthesis of functionally active G protein-coupled receptors (GPCRs) – targets for 34% of FDA-approved drugs – which are often challenging to express in cellular systems [30].

- Vaccine Development: Rapid prototyping of virus-like particles (VLPs) and glycoconjugate vaccines with improved stability characteristics [30].

- Antibody-Drug Conjugates: Site-specific conjugation of cytotoxic payloads to antibodies via non-canonical amino acids for targeted cancer therapies [30].

Experimental Protocols for DBTL Integration

Core Methodology: Cell-Free System Preparation and Operation

The fundamental workflow for implementing CFES in DBTL cycles involves several key stages, with variations depending on the specific application requirements.

Table 2: Key research reagent solutions for cell-free expression systems

| Reagent Component | Function | Examples & Notes |

|---|---|---|

| Cell Extract | Provides transcriptional/translational machinery | E. coli S30 extract, wheat germ extract, HEK293 lysate [29] [30] |

| Energy Source | Fuels phosphorylation and polymerization | Phosphoenolpyruvate, creatine phosphate, or glycolytic intermediates [30] |

| Template DNA | Encodes gene of interest | Linear expression templates (LETs) or plasmids; LETs bypass cloning [30] |

| Amino Acid Mixture | Building blocks for translation | All 20 canonical amino acids; may include non-canonical variants [30] |

| Cofactors | Enzyme activators | Mg²⁺, K⁺, NH₄⁺ ions [29] |

| Detection Components | Enable functional testing | Fluorescent dyes, split-protein systems for complementation assays [30] |

Protocol 1: Basic E. coli-Based CFPS Setup

- Extract Preparation: Grow E. coli cells to mid-log phase in rich medium (e.g., 2xYT) to maximize ribosome content. Harvest cells by centrifugation and disrupt via homogenization or sonication. Clarify lysate by centrifugation and perform runoff reaction to reduce endogenous mRNA [29].

- Reaction Assembly: Combine cell extract with energy solution (e.g., 3 mM ATP, GTP, CTP, UTP), amino acid mixture (1 mM each), cofactors (10-15 mM Mg²⁺, 100-150 mM K⁺), DNA template (10-50 nM), and energy-regenerating system (e.g., phosphoenolpyruvate) [29] [30].

- Incubation and Monitoring: Conduct reaction at 30-37°C for 2-24 hours. Monitor protein synthesis in real-time if using fluorescent reporters [30].

- Analysis: Assess protein yield via SDS-PAGE, western blot, or activity assays. For high-throughput applications, use fluorescence-based methods [30].
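
For the reaction assembly step, pipetting volumes follow from the dilution relation C1 x V1 = C2 x V2. The sketch below computes them for a few components. The final concentrations fall within the ranges given in the protocol, while the stock concentrations and the 15 µL reaction volume are assumptions chosen for illustration.

```python
def stock_volume_ul(stock_conc, final_conc, reaction_volume_ul):
    """Volume of stock needed to reach final_conc (C1 * V1 = C2 * V2).

    stock_conc and final_conc must be given in the same unit.
    """
    if final_conc > stock_conc:
        raise ValueError("final concentration exceeds stock concentration")
    return final_conc * reaction_volume_ul / stock_conc

REACTION_UL = 15.0   # hypothetical reaction volume in microliters

# name: (stock concentration, target final concentration), both in mM.
# Stock concentrations are assumptions; targets follow the protocol ranges.
components = {
    "Mg2+": (100.0, 12.0),
    "K+": (2000.0, 120.0),
    "amino acids (each)": (10.0, 1.0),
}

volumes = {name: stock_volume_ul(stock, final, REACTION_UL)
           for name, (stock, final) in components.items()}
# Volume left for cell extract, DNA template, and the energy mix.
remaining_ul = REACTION_UL - sum(volumes.values())

for name, vol in volumes.items():
    print(f"{name}: {vol:.2f} uL")
print(f"remaining for extract/template/energy mix: {remaining_ul:.2f} uL")
```

Encoding the recipe as data makes it straightforward to generate worklists for liquid handlers when many reaction conditions are screened in parallel.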

Protocol 2: High-Throughput Screening with Microfluidics

- Miniaturization: Utilize droplet microfluidics to create picoliter-scale reaction chambers [3].

- Parallelization: Implement platforms like DropAI to screen >100,000 conditions simultaneously [3].

- Detection: Integrate multi-channel fluorescent imaging for real-time monitoring [3].

- Data Collection: Couple with automated image analysis and machine learning for pattern recognition [3].

Case Study: Dopamine Production Optimization

A knowledge-driven DBTL cycle successfully optimized dopamine production in E. coli by integrating cell-free and cell-based approaches:

- In Vitro Prototyping: Tested different relative enzyme expression levels for HpaBC and Ddc in cell lysate systems to identify optimal ratios without the constraints of cellular metabolism [27].

- In Vivo Translation: Translated optimal expression ratios to engineered E. coli strains through ribosomal binding site (RBS) engineering [27].

- Results: Achieved dopamine production of 69.03 ± 1.2 mg/L (34.34 ± 0.59 mg/g biomass), a 2.6- to 6.6-fold improvement over previous in vivo production systems [27].

Workflow Visualization: Integrating CFES into Machine Learning-Enhanced DBTL Cycles

The integration of cell-free systems with machine learning creates a powerful framework for biological engineering. The following workflow diagrams illustrate this synergistic relationship.

Figure 1: The accelerated LDBT cycle powered by cell-free expression systems and machine learning. This paradigm reshapes the traditional DBTL cycle by placing Learning first, enabled by pre-trained models that can make zero-shot predictions, while CFES dramatically accelerates the Build and Test phases.

Figure 2: Machine learning and CFPS integration for therapeutic protein development. ML models generate designs which are rapidly tested in CFPS platforms, creating large datasets that further refine the models in an iterative improvement cycle.

Quantitative Benchmarking Data

Performance Metrics in Published Studies

The effectiveness of CFES in accelerating DBTL cycles is demonstrated through concrete experimental data from recent studies.

Table 3: Quantitative benchmarking of cell-free expression in research applications

| Application | Experimental Scale | Time Savings | Key Outcomes | Reference |

|---|---|---|---|---|

| Antimicrobial Peptide Engineering | 500 variants validated from 500,000 surveyed computationally | Weeks to days | 6 promising AMP designs identified | [3] |

| Antibody Discovery | High-throughput sdFab screening | Single 3-day experiment | Effective antibody sequences against SARS-CoV-2 | [30] |

| Enzyme Engineering | 776,000 protein variants | Ultra-high-throughput mapping | ΔG calculations for stability optimization | [3] |

| Metabolic Pathway Optimization | 20-fold improvement in product titer | Accelerated prototyping | 3-HB production increased in Clostridium | [3] |

| Deep Screening of scFvs | Library diversity 4×10⁶ | Traditional methods require larger libraries | 5200-fold increased binding affinity | [30] |

Impact on Machine Learning Model Training

The massive datasets generated through CFES-enabled high-throughput testing directly enhance machine learning model performance:

- Data Volume: CFPS enables experimental testing of >100,000 protein variants, providing the mega-scale datasets required for training robust ML models [3].

- Model Accuracy: When deep-learning sequence generation was paired with CFPS validation, researchers successfully engineered enzymes with improved catalytic activity and stability [3].

- Zero-Shot Prediction Improvement: CFPS-generated datasets have been extensively utilized to benchmark and improve zero-shot predictors for protein folding and function [3].

Cell-free expression systems represent a transformative technology for accelerating the Build and Test phases of DBTL cycles in biomedical research. By enabling rapid, high-throughput protein synthesis and characterization, CFES directly addresses the critical bottleneck in iterative biological engineering. The integration of these systems with machine learning approaches creates a powerful synergy – CFPS generates the large-scale experimental data required for training accurate models, while ML provides intelligent design predictions that can be rapidly validated in cell-free platforms. This virtuous cycle is reshaping the landscape of synthetic biology, drug discovery, and enzyme engineering, moving the field closer to a predictive engineering discipline where designed biological systems work as intended on the first or second iteration. As both CFES and ML technologies continue to advance, their integration promises to further accelerate the pace of biological innovation and therapeutic development.

The paradigm of Design-Build-Test-Learn (DBTL) has long been the cornerstone of biological engineering and drug discovery. This iterative cycle involves designing biological constructs, building them, testing their performance, and learning from the data to inform the next design iteration [3]. However, the integration of machine learning (ML) is fundamentally reshaping this workflow, accelerating its pace, and enhancing its predictive power. The application of ML now spans the entire drug development pipeline, from the initial design of novel drug molecules to the optimization of late-stage clinical trials. This guide provides a comparative analysis of the performance of various applied ML methods, frameworks, and platforms, benchmarking them within the modern DBTL cycle context to offer an objective resource for researchers and drug development professionals.

A significant shift is the move from a traditional DBTL cycle to an "LDBT" (Learn-Design-Build-Test) cycle, where machine learning and prior knowledge precede the design phase [3]. This is further powered by technologies like cell-free expression systems, which accelerate the Build and Test phases by enabling rapid, high-throughput synthesis and testing of proteins without the constraints of living cells [3]. The diagram below illustrates this evolved, data-driven cycle.

ML in Molecular Design and Optimization

Key Generative Models and Performance Benchmark

In molecular design, generative artificial intelligence (GenAI) models are used to create novel, synthesizable chemical structures with desired properties. Different model architectures offer distinct advantages and are suited for specific tasks.

Table 1: Comparative Performance of Key Generative AI Models in Molecular Design

| Model Architecture | Key Principle | Strengths | Common Applications | Example Performance Notes |
|---|---|---|---|---|
| Variational Autoencoder (VAE) [33] [34] | Encodes input into a latent distribution; decodes to generate new data. | Smooth, continuous latent space for interpolation; disentangled representations allow property editing. | Inverse molecular design; exploring chemical space. | Integrated with Bayesian optimization for efficient candidate identification [34]. |
| Generative Adversarial Network (GAN) [33] [34] | A generator and discriminator network are trained adversarially. | Can produce highly realistic, novel molecules. | Image synthesis; molecular generation. | Can suffer from mode collapse (limited diversity of outputs) [34]. |
| Transformer [34] | Uses self-attention mechanisms to process sequential data. | Captures long-range dependencies in data; highly adaptable. | Generating molecules represented as text (e.g., SMILES); predicting properties. | Excels in tasks like goal-directed generation and molecular optimization [34]. |
| Flow-based Models [33] | Learns a series of invertible transformations to map data to a latent distribution. | Explicitly models the probability density function, enabling exact likelihood calculation. | Molecular generation and efficient computation of properties. | -- |
| Diffusion Models [34] | Progressively adds noise to data and learns to reverse the process. | High-quality generation; stability in training. | High-quality molecular generation; refining structures against target properties. | GaUDI framework achieved 100% validity in generated structures for organic electronics [34]. |
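
As a brief illustration of the "adds noise and learns to reverse" principle in the last row, the forward (noising) process in the standard denoising diffusion formulation can be written as below. This is the textbook parameterization with a noise schedule $\beta_t$, given here only as a reference point; specific frameworks such as GaUDI may use a different parameterization.

$$
q(x_t \mid x_{t-1}) = \mathcal{N}\!\left(x_t;\ \sqrt{1-\beta_t}\,x_{t-1},\ \beta_t I\right),
\qquad
q(x_t \mid x_0) = \mathcal{N}\!\left(x_t;\ \sqrt{\bar{\alpha}_t}\,x_0,\ (1-\bar{\alpha}_t) I\right),
\qquad
\bar{\alpha}_t = \prod_{s=1}^{t} (1-\beta_s)
$$

The generative model is then trained to approximate the reverse transitions, stepping from $x_t$ back toward $x_{t-1}$, so that sampling starts from pure noise and ends at a structure.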

Optimization Strategies for Enhanced Molecular Design

To steer these generative models toward molecules with optimal drug-like properties, several optimization strategies are employed:

- Reinforcement Learning (RL): An agent is trained to make sequential decisions (e.g., adding atoms) to build a molecule, receiving rewards based on achieving target properties like drug-likeness, binding affinity, and synthetic accessibility [34]. For example, GraphAF combines flow-based models with RL fine-tuning for targeted optimization [34].

- Property-Guided Generation: This integrates property prediction directly into the generation process. The Guided Diffusion for Inverse Molecular Design (GaUDI) framework combines a property prediction network with a diffusion model to optimize for single or multiple objectives simultaneously [34].

- Bayesian Optimization (BO): Particularly useful when evaluating candidates is computationally expensive (e.g., docking simulations), BO builds a probabilistic model to guide the search for optimal molecules in a latent or chemical space [34].
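
To make the Bayesian optimization strategy above more concrete, the following is a minimal sketch, not the method used in any cited work. It assumes a one-dimensional stand-in for a latent space, a Gaussian-process surrogate with a squared-exponential kernel, and expected improvement as the acquisition function. The `toy_score` function is a hypothetical placeholder for an expensive evaluation such as a docking simulation.

```python
import math
import numpy as np

def rbf_kernel(a, b, length_scale=0.2):
    """Squared-exponential kernel between two sets of 1-D points."""
    d = a[:, None] - b[None, :]
    return np.exp(-0.5 * (d / length_scale) ** 2)

def gp_posterior(x_train, y_train, x_query, noise=1e-4):
    """Posterior mean and standard deviation of a zero-mean GP (unit prior variance)."""
    k_tt = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
    k_tq = rbf_kernel(x_train, x_query)
    solved = np.linalg.solve(k_tt, k_tq)            # K^-1 k*
    mean = solved.T @ y_train
    var = 1.0 - np.sum(k_tq * solved, axis=0)
    return mean, np.sqrt(np.clip(var, 1e-12, None))

def expected_improvement(mean, std, best):
    """Expected improvement over the best observed value (maximization)."""
    z = (mean - best) / std
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z ** 2) / math.sqrt(2.0 * math.pi)
    return (mean - best) * cdf + std * pdf

def toy_score(x):
    """Hypothetical stand-in for an expensive evaluation (e.g., docking)."""
    return -(x - 0.7) ** 2

def run_bo(n_rounds=8, seed=0):
    rng = np.random.default_rng(seed)
    candidates = np.linspace(0.0, 1.0, 101)         # toy 1-D "latent space"
    idx = list(rng.choice(len(candidates), size=3, replace=False))
    for _ in range(n_rounds):
        x_train = candidates[idx]
        y_train = toy_score(x_train)
        mean, std = gp_posterior(x_train, y_train, candidates)
        ei = expected_improvement(mean, std, y_train.max())
        ei[idx] = -np.inf                           # never re-evaluate a candidate
        idx.append(int(np.argmax(ei)))
    x_seen = candidates[idx]
    return x_seen[np.argmax(toy_score(x_seen))], len(idx)

best_x, n_evaluations = run_bo()
print(f"best candidate after {n_evaluations} evaluations: x = {best_x:.2f}")
```

In practice the candidate grid would be replaced by points in a learned latent space (for example, from a VAE), and the surrogate would typically come from an established library rather than a hand-written GP.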

Experimental Benchmarking: The Lo-Hi Framework

Evaluating ML models for molecular property prediction requires realistic benchmarks. The Lo-Hi benchmark provides a practical framework based on real-world drug discovery stages [35]:

- Hit Identification (Hi): Focuses on identifying active compounds from large, diverse chemical libraries.

- Lead Optimization (Lo): Focuses on optimizing a smaller set of candidate compounds for properties like potency and selectivity.

This benchmark addresses limitations of earlier benchmarks (like MoleculeNet) which were found to be "unrealistic and overoptimistic" [35]. It employs a novel molecular splitting algorithm (Balanced Vertex Minimum k-Cut) to create more challenging and realistic test scenarios, providing a more reliable measure of model performance in practical settings [35].
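
The idea behind a stricter split can be illustrated with a simple leakage check: flag any test molecule that is too similar to something in the training set. The sketch below is not the Balanced Vertex Minimum k-Cut algorithm itself. It assumes fingerprints are already available as sets of on-bit indices, and the 0.4 Tanimoto threshold is an illustrative assumption.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0

def max_train_similarity(test_fp, train_fps):
    """Highest similarity between one test molecule and any training molecule."""
    return max((tanimoto(test_fp, fp) for fp in train_fps), default=0.0)

def leaky_test_items(train_fps, test_fps, threshold=0.4):
    """Indices of test molecules whose nearest training neighbor exceeds the threshold."""
    return [
        i for i, fp in enumerate(test_fps)
        if max_train_similarity(fp, train_fps) > threshold
    ]

# Toy fingerprints (hypothetical bit indices, not real molecules).
train = [{1, 2, 3, 4}, {10, 11, 12}]
test = [{1, 2, 3, 5}, {20, 21, 22}]

print(tanimoto(train[0], test[0]))      # 0.6 (3 shared bits / 5 total bits)
print(leaky_test_items(train, test))    # [0]
```

A split that passes this kind of check forces the model to generalize to chemically dissimilar compounds, which is closer to the hit-identification setting than a random split.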

Case Study: AI-Driven Platform Performance

Several AI-driven platforms have demonstrated the real-world impact of these technologies by advancing novel drug candidates into clinical trials.

Table 2: Performance Metrics of Leading AI-Driven Drug Discovery Platforms (2025 Landscape)

| Company / Platform | Core AI Approach | Key Achievements & Clinical Candidates | Reported Efficiency Gains |
|---|---|---|---|
| Exscientia [36] | Generative AI for small-molecule design; "Centaur Chemist" integrating human expertise. | Multiple clinical candidates, including the first AI-designed drug (DSP-1181 for OCD) to enter Phase I. | Design cycles ~70% faster, requiring 10x fewer synthesized compounds than industry norms. A CDK7 inhibitor candidate was identified after synthesizing only 136 compounds. |
| Insilico Medicine [36] | Generative AI for target discovery and molecule design. | AI-designed drug for Idiopathic Pulmonary Fibrosis (IPF) progressed from target to Phase I in 18 months. | Demonstrated rapid transition from target identification to clinical candidate. |
| BenevolentAI [37] [36] | Knowledge-graph-driven target discovery and validation. | Identified and validated novel targets for IPF and chronic kidney disease (in collaboration with AstraZeneca). | AI used to analyze scientific literature and biomedical data to hypothesize novel disease targets. |
| Schrödinger [36] | Physics-based simulations combined with ML. | Multiple partnered and internal programs in clinical stages. | Platform aims to predict binding affinity and molecular properties with high accuracy. |

ML in Clinical Trial Planning and Optimization

The application of ML extends significantly into clinical development, aiming to de-risk trials and improve their efficiency and success rates.

AI Applications Across the Clinical Trial Workflow

Machine learning algorithms analyze large, complex datasets—including electronic health records, past trial data, and real-world evidence—to optimize key aspects of trial planning and execution [37] [38]. The following diagram maps the primary AI use cases onto the clinical trial lifecycle.

Quantitative Impact of AI on Clinical Trial Efficiencies

The integration of AI and ML tools in clinical trial management is yielding measurable improvements in performance and timelines.

Table 3: Reported Impact of AI/ML Solutions on Clinical Trial Operations

| Application Area | AI Function | Reported Outcome / Performance Gain |
|---|---|---|
| Study Timelines [39] | Integrated data insights for faster decision-making. | Removes 50% of study timeline "whitespace". |
| Site Selection [38] | Predictive analytics to identify high-performing sites. | Improved identification of top-enrolling sites by 30-50%; accelerated enrollment by 10-15%. |
| Contracting & Negotiations [39] | Automated issue detection and mitigation. | Negotiations completed almost a month faster. |
| Patient Enrollment [39] | Early detection of enrollment risk at clinical sites. | Enables proactive creation of action plans before issues are manually apparent. |

A major challenge in traditional trials is patient recruitment, where an estimated 40% of sites fail to enroll a single patient and nearly 90% of trials experience significant delays due to recruitment issues [38]. AI tools directly address this by analyzing site-level data to predict enrollment potential and flag at-risk sites early [38] [39]. Furthermore, AI can enhance trial diversity by identifying investigators and clinics in underserved areas that have access to more diverse patient pools [38].

The effective application of ML in drug development relies on a foundation of high-quality data, software tools, and experimental systems.

Table 4: Essential Research Reagents and Resources for ML-Driven Drug Development

| Item / Resource | Type | Primary Function in ML-DBTL Cycles |
|---|---|---|
| ZINC Database [33] | Chemical Database | A source of ~2 billion purchasable compounds for virtual screening and training generative models on "drug-like" chemical space. |
| ChEMBL Database [33] | Bioactivity Database | A curated resource of ~1.5M bioactive molecules with experimental measurements, used for training predictive models. |
| Cell-Free Expression System [3] | Experimental Platform | Accelerates the "Build" and "Test" phases by enabling rapid, high-throughput protein synthesis and testing without cloning into living cells. |
| ProteinMPNN [3] | Software Tool | A deep learning-based tool for designing protein sequences that fold into a desired backbone structure, improving design success rates. |
| AlphaFold2 [33] | Software Tool | Provides highly accurate protein 3D structure predictions, which are crucial for structure-based drug design and generating training data. |
| Lo-Hi Benchmark [35] | Evaluation Framework | Provides a practical benchmark for evaluating ML models on tasks relevant to real-world hit identification and lead optimization. |
| Cloud-Based AI Platforms (e.g., AWS) [36] | Computational Infrastructure | Provides scalable computing power and managed services for training and deploying large, complex AI models. |
| RBS Library (e.g., UTR Designer) [27] | Genetic Tool | Enables fine-tuning of gene expression levels in synthetic biological pathways, a key aspect of the "Design" and "Build" phases in strain engineering. |

Experimental Protocols for Key Applications

Protocol: Coupling Cell-Free Protein Synthesis with ML for Pathway Optimization

This methodology, as exemplified by the iPROBE (in vitro prototyping and rapid optimization of biosynthetic enzymes) approach, integrates high-throughput experimental data generation with machine learning to optimize metabolic pathways [3].

- Design: Use ML models to generate a diverse set of pathway designs (e.g., varying enzyme homologs or expression levels via RBS sequences) [3] [27].

- Build: Assemble DNA templates encoding the pathway variants. This can be done rapidly using automated cloning techniques.

- Test (Cell-Free): Express the pathway variants in a cell-free protein synthesis (CFPS) system derived from crude cell lysates. The system is supplemented with necessary precursors and energy sources. Measure the output of the desired metabolite using colorimetric or fluorescent assays in microtiter plates or droplet microfluidics [3]. This allows for testing thousands of variants in parallel.

- Learn: Train a neural network or other supervised learning model on the generated dataset, where the inputs are the pathway designs (e.g., RBS sequences, enzyme combinations) and the outputs are the measured metabolite titers. The trained model can then predict the performance of untested designs [3].

- Iterate: Use the model's predictions to select a new, optimized batch of designs for the next round of testing, closing the DBTL loop.
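
The loop described in the steps above can be sketched in a few lines. This is an illustrative toy, not the iPROBE implementation. It assumes a hypothetical design space of two enzymes with three homologs each plus three RBS strength levels, a `simulated_assay` function with made-up effects standing in for the cell-free measurement, and ridge regression in place of a neural network as the "Learn" model.

```python
import itertools
import numpy as np

N_HOMOLOGS, N_RBS = 3, 3   # hypothetical design space: 3 x 3 x 3 = 27 designs

def all_designs():
    """Enumerate (homolog_1, homolog_2, rbs_level) combinations."""
    return list(itertools.product(range(N_HOMOLOGS), range(N_HOMOLOGS), range(N_RBS)))

def encode(design):
    """One-hot encode a design tuple into a feature vector of length 9."""
    vec = np.zeros(2 * N_HOMOLOGS + N_RBS)
    vec[design[0]] = 1.0
    vec[N_HOMOLOGS + design[1]] = 1.0
    vec[2 * N_HOMOLOGS + design[2]] = 1.0
    return vec

def simulated_assay(design, rng):
    """Stand-in for a cell-free titer measurement (made-up additive effects plus noise)."""
    effects = ([0.2, 1.0, 0.5], [0.8, 0.1, 0.4], [0.3, 0.9, 0.6])
    titer = sum(effects[i][level] for i, level in enumerate(design))
    return titer + rng.normal(scale=0.05)

def fit_ridge(X, y, alpha=1e-2):
    """Closed-form ridge regression; the intercept column is also regularized."""
    X1 = np.hstack([X, np.ones((len(X), 1))])
    return np.linalg.solve(X1.T @ X1 + alpha * np.eye(X1.shape[1]), X1.T @ y)

def predict(weights, X):
    return np.hstack([X, np.ones((len(X), 1))]) @ weights

def run_dbtl(n_cycles=3, batch_size=6, seed=0):
    rng = np.random.default_rng(seed)
    designs = all_designs()
    tested = {}
    # Design (round 1): start from a random batch.
    batch = [designs[i] for i in rng.choice(len(designs), batch_size, replace=False)]
    for _ in range(n_cycles):
        for d in batch:                                   # Build + Test
            tested[d] = simulated_assay(d, rng)
        X = np.array([encode(d) for d in tested])         # Learn
        y = np.array(list(tested.values()))
        weights = fit_ridge(X, y)
        untested = [d for d in designs if d not in tested]
        scores = predict(weights, np.array([encode(d) for d in untested]))
        top = np.argsort(scores)[::-1][:batch_size]       # Design (next round)
        batch = [untested[i] for i in top]
    best = max(tested, key=tested.get)
    return best, tested

best_design, results = run_dbtl()
print(f"tested {len(results)} designs; best so far: {best_design}")
```

This sketch selects each new batch greedily from the model's top predictions. A real campaign would usually balance exploitation with exploration, for instance by using predictive uncertainty when choosing the next batch.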

Protocol: AI-Driven Site Selection and Enrollment Forecasting

This protocol outlines how AI is used to optimize operational planning in clinical trials [38] [39].

- Data Aggregation: Compile a large, historical dataset from past clinical trials. This dataset should include operational data (e.g., country and site performance, contract negotiation timelines), protocol details, and patient enrollment records.

- Feature Engineering: Process the data to create meaningful features for the model, such as site activation timelines, past enrollment rates by therapeutic area, and protocol complexity scores.

- Model Training: Train a machine learning model (e.g., a regression model for forecasting timelines, a classification model for identifying at-risk sites) on the historical data. The model learns the complex relationships between protocol characteristics, site attributes, and enrollment outcomes.

- Prediction and Scenario Analysis: For a new trial protocol, use the trained model to:

  - Predict site activation dates and forecast patient enrollment rates for different potential sites [39].

  - Identify sites with a high risk of under-enrollment before the trial begins [38].

  - Run "what-if" scenarios to model the impact of adding or removing sites, or changing inclusion criteria, on the overall enrollment timeline [38].

- Actionable Outputs: The model's outputs guide strategic decisions, allowing study planners to select the optimal mix of high-performing sites, proactively deploy enrollment support to at-risk sites, and create a more accurate and efficient global trial footprint [38].
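
The scenario-analysis step of this protocol can be illustrated with simple arithmetic. The sketch below assumes a trained model (not shown) has already produced a predicted enrollment rate per site in patients per month. The site names, rates, and the constant-rate assumption are all hypothetical simplifications.

```python
import math

def months_to_target(site_rates, target_patients):
    """Months needed to reach the enrollment target at a constant combined rate."""
    total_rate = sum(site_rates.values())
    if total_rate <= 0:
        return math.inf
    return target_patients / total_rate

def flag_at_risk(site_rates, min_rate):
    """Sites whose predicted rate falls below a chosen minimum (patients/month)."""
    return sorted(site for site, rate in site_rates.items() if rate < min_rate)

def what_if(site_rates, target_patients, add=None, remove=()):
    """Re-estimate the timeline after adding or removing sites."""
    scenario = {s: r for s, r in site_rates.items() if s not in remove}
    scenario.update(add or {})
    return months_to_target(scenario, target_patients)

# Hypothetical model outputs: predicted patients per month for each site.
predicted = {"site_A": 4.0, "site_B": 1.0, "site_C": 0.0, "site_D": 5.0}

print(months_to_target(predicted, 120))                    # 12.0
print(flag_at_risk(predicted, min_rate=1.5))               # ['site_B', 'site_C']
print(what_if(predicted, 120, add={"site_E": 2.0}, remove=("site_C",)))  # 10.0
```

A production forecast would also account for staggered site activation dates and uncertainty in the predicted rates, which this constant-rate toy ignores.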

Implementing Automated, Closed-Loop DBTL Cycles in Biofoundries

The Design-Build-Test-Learn (DBTL) cycle has long been the cornerstone of synthetic biology, providing a systematic framework for engineering biological systems. This iterative process involves designing genetic constructs, building them in biological systems, testing their functionality, and learning from the results to inform the next design iteration. However, traditional DBTL approaches have been hampered by significant bottlenecks, particularly in the Build and Test phases, which are often slow, resource-intensive, and reliant on deep human expertise [40]. These limitations have constrained the pace of innovation in fields ranging from metabolic engineering to therapeutic development.

A transformative shift is now underway with the emergence of fully automated, closed-loop DBTL cycles integrated within biofoundries. These integrated facilities combine robotic automation, computational analytics, and advanced data science to streamline and accelerate biological engineering [41]. Most notably, a paradigm reordering of the cycle itself is being proposed—from DBTL to LDBT (Learn-Design-Build-Test)—where machine learning models pre-trained on vast biological datasets precede and inform the design phase [42] [21]. This evolution, powered by artificial intelligence and automation, is transitioning synthetic biology from a bespoke craft to a scalable, predictable engineering discipline, potentially realizing the ambition of a "Design-Build-Work" model akin to more established engineering fields [42].

Comparative Analysis of DBTL Implementation Frameworks