Automating Biological Circuit Design: How Simulation and AI Are Revolutionizing Synthetic Biology

The automated design of biological circuits using simulation represents a paradigm shift in synthetic biology, moving from labor-intensive trial-and-error to a predictable engineering discipline.


Abstract

The automated design of biological circuits using simulation represents a paradigm shift in synthetic biology, moving from labor-intensive trial-and-error to a predictable engineering discipline. This article explores the foundational principles, current methodologies, and future directions of this rapidly advancing field. We examine how computational tools, from algorithmic enumeration to machine learning and black-box optimization, are enabling the predictive design of complex genetic systems. For researchers and drug development professionals, we provide a comprehensive overview of how these technologies are being applied to overcome critical challenges in circuit complexity, context-dependence, and metabolic burden, thereby accelerating the development of sophisticated biological computers, living therapeutics, and engineered biosystems.

The Foundations of Automated Biological Design: From Manual Tweaks to Predictive Simulation

A central challenge in synthetic biology, often termed the "synthetic biology problem," is the fundamental discrepancy between our ability to design genetic circuits qualitatively and our inability to predict their quantitative performance accurately [1]. While researchers can intuitively assemble genetic parts to create circuits with desired logical functions—such as switches, oscillators, or logic gates—the quantitative expression levels, dynamics, and metabolic impact of these circuits in living cells remain notoriously difficult to forecast [1] [2]. This problem arises because biological components lack strict modularity and composability; when genetic parts are combined, their individual behaviors change due to context effects, resource competition, and unforeseen interactions with the host cell [1] [2].

The synthetic biology problem presents a significant bottleneck for the automated design of biological circuits, as it limits the transition from conceptual designs to reliably functioning constructed systems. This challenge becomes increasingly pronounced as circuit complexity grows, with larger designs imposing greater metabolic burden on chassis cells and exhibiting more unpredictable behaviors [1]. Overcoming this problem requires new methodologies that integrate computational design with experimental validation to bridge the gap between qualitative intention and quantitative outcome.

The T-Pro Platform: A Solution Framework

The Transcriptional Programming (T-Pro) platform addresses the synthetic biology problem by pairing wetware with software [1]. The framework enables the predictive design of compressed genetic circuits for higher-state decision-making: reported circuits are approximately 4-fold smaller than canonical inverter-based genetic circuits, with quantitative prediction errors averaging below 1.4-fold across more than 50 test cases [1].

Core Principles of Circuit Compression

Traditional genetic circuit design often relies on inversion to achieve NOT/NOR Boolean operations, requiring multiple genetic parts to implement basic logical functions. In contrast, T-Pro utilizes synthetic transcription factors (repressors and anti-repressors) and cognate synthetic promoters to implement logical operations directly, significantly reducing part count [1]. This process of designing smaller genetic circuits is termed "compression" [1]. By minimizing the genetic footprint of designed circuits, T-Pro reduces metabolic burden and context effects, thereby improving the alignment between qualitative design and quantitative performance.

Expansion to 3-Input Boolean Logic

Recent advancements in T-Pro wetware have expanded its capacity from 2-input to 3-input Boolean logic, increasing the design space from 16 to 256 distinct truth tables [1]. This expansion required the development of an additional set of orthogonal synthetic transcription factors responsive to cellobiose, complementing existing IPTG and D-ribose responsive systems [1]. The engineering workflow involved creating anti-repressor variants through site saturation mutagenesis and error-prone PCR, followed by screening via fluorescence-activated cell sorting (FACS) to identify functional anti-repressors with desired characteristics [1].
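
The jump from 16 to 256 follows from counting: a circuit with n binary inputs has 2^n input states, and each state can independently map to 0 or 1, which gives 2^(2^n) truth tables. A minimal Python sketch of that arithmetic follows; the function names are illustrative and are not part of any T-Pro software.

```python
from itertools import product

def count_truth_tables(n_inputs: int) -> int:
    """Number of distinct Boolean functions of n_inputs binary inputs."""
    n_states = 2 ** n_inputs      # rows in the truth table
    return 2 ** n_states          # each row independently outputs 0 or 1

def enumerate_truth_tables(n_inputs: int):
    """Explicitly list every output column; only practical for small n."""
    n_states = 2 ** n_inputs
    return list(product((0, 1), repeat=n_states))

print(count_truth_tables(2), count_truth_tables(3))
print(len(enumerate_truth_tables(3)))
```

The explicit enumeration is only there to confirm the closed form for small n; the count grows doubly exponentially, which is why larger design spaces call for algorithmic search rather than manual inspection.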

Quantitative Performance Assessment

The T-Pro platform has been reported to predict circuit behavior quantitatively across several applications [1]. The table below summarizes the key performance metrics.

Table 1: Quantitative Performance Metrics of the T-Pro Platform

| Application | Performance Metric | Result | Significance |
|---|---|---|---|
| Genetic Circuit Design | Average Size Reduction | ~4x smaller | Reduced metabolic burden on host cells |
| Quantitative Prediction | Average Error | <1.4-fold | High prediction accuracy across >50 test cases |
| Boolean Logic Scale | Input Capacity | 3-input (8-state) | Supports 256 distinct truth tables |
| Metabolic Engineering | Flux Control | Precise setpoints | Predictable control through toxic biosynthetic pathways |
| Genetic Memory | Recombinase Activity | Target-specific | Predictive design of synthetic memory circuits |

These performance metrics highlight the potential of integrated wetware-software solutions in addressing the synthetic biology problem, particularly in achieving predictable quantitative behaviors from qualitative designs.

Experimental Protocols

Protocol: Engineering Anti-Repressors for T-Pro Wetware

Objective: Engineer anti-repressor transcription factors responsive to cellobiose for expanding T-Pro to 3-input Boolean logic.

Materials:

- CelR transcriptional regulator scaffold

- Site-directed mutagenesis kit

- Error-prone PCR reagents

- Fluorescence-activated cell sorting (FACS) system

- Synthetic promoter library

- Fluorescent reporter genes

Procedure:

Generate Super-Repressor Variant:

- Perform site saturation mutagenesis at amino acid position 75 of the E+TAN repressor scaffold

- Screen variants for ligand insensitivity while maintaining DNA binding function

- Identify L75H mutant (ESTAN) with desired super-repressor phenotype [1]

Error-Prone PCR Library Generation:

- Use ESTAN super-repressor as template for error-prone PCR

- Aim for a low mutation rate to maintain structural integrity

- Generate library of approximately 10^8 variants [1]

FACS Screening:

- Transform variant library into host cells with fluorescent reporter system

- Sort population using FACS to identify anti-repressor phenotypes

- Isolate unique anti-repressors (EA1TAN, EA2TAN, EA3TAN) [1]

Alternate DNA Recognition Engineering:

- Equip validated anti-CelRs with additional ADR functions (EAYQR, EANAR, EAHQN, EAKSL)

- Verify retention of anti-repressor phenotype across all ADR combinations [1]

Orthogonality Validation:

- Test cross-reactivity between cellobiose-responsive components and existing IPTG/D-ribose systems

- Confirm orthogonality through promoter-TF interaction assays [1]

Protocol: Algorithmic Enumeration for Circuit Compression

Objective: Identify the most compressed circuit implementation for a given truth table from a combinatorial space of >100 trillion putative circuits.

Materials:

- T-Pro component library (synthetic TFs, promoters)

- Algorithmic enumeration software

- High-performance computing resources

Procedure:

Circuit Modeling:

- Model candidate circuits as directed acyclic graphs

- Represent synthetic transcription factors and promoters as nodes with specific interaction properties [1]

Systematic Enumeration:

- Enumerate circuits in sequential order of increasing complexity

- Prioritize circuits with minimal genetic components (promoters, genes, RBS, TFs) [1]

Optimization Implementation:

- Apply algorithmic optimization to guarantee identification of the most compressed circuit

- Evaluate each candidate circuit against target truth table

- Select minimal implementation that satisfies all logical requirements [1]

Validation:

- Compare algorithmically determined circuits with intuitive designs

- Verify functional equivalence between compressed and canonical implementations

- Assess quantitative performance predictions for selected designs [1]
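
The enumeration strategy in this protocol, visiting candidates in order of increasing size so that the first match is guaranteed to be minimal, can be illustrated with a toy search. The sketch below is not the T-Pro algorithm: it assumes a hypothetical part set of NOT and NOR gates, counts gates in a tree-shaped formula (intermediate outputs are not shared), and encodes each truth table as a bitmask. All function names are illustrative.

```python
from itertools import product

def input_tables(n):
    """Truth tables of the n input variables, one bit per truth-table row."""
    rows = 2 ** n
    tables = []
    for var in range(n):
        bit = n - 1 - var  # first variable is the most significant bit of the row index
        tables.append(sum(1 << r for r in range(rows) if (r >> bit) & 1))
    return tables

def minimal_gate_counts(n, max_gates=6):
    """Map each reachable truth table to the fewest NOT/NOR gates that realize it."""
    mask = (1 << (2 ** n)) - 1
    cost = {t: 0 for t in input_tables(n)}   # bare inputs need no gates
    levels = [set(cost)]                     # levels[k]: tables first reached with k gates
    for k in range(1, max_gates + 1):
        candidates = set()
        # A NOT gate on top of any (k-1)-gate function.
        for t in levels[k - 1]:
            candidates.add(~t & mask)
        # A NOR gate whose two operands use k-1 gates in total.
        for i in range(k):
            for a, b in product(levels[i], levels[k - 1 - i]):
                candidates.add(~(a | b) & mask)
        # Anything already seen was reached with fewer gates, so skip it.
        new = {t for t in candidates if t not in cost}
        for t in new:
            cost[t] = k
        levels.append(new)
    return cost

costs = minimal_gate_counts(2)
A, B = input_tables(2)
print("AND:", costs[A & B], "gates; OR:", costs[A | B], "gates")
```

Because every function is recorded at the first size where it appears, the stored cost is minimal for this toy gate set. A real compression search would replace the two gate rules with the interaction rules of the actual component library and would need pruning to cope with a far larger space.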

Protocol: Integrating Qualitative and Quantitative Data for Parameter Identification

Objective: Combine qualitative phenotypes and quantitative time-course data for robust parameter estimation in biological models.

Materials:

- Quantitative experimental data (time courses, dose-response curves)

- Qualitative phenotypic observations (viability, oscillatory behavior, relative expression)

- Constrained optimization software

- Model of the biological system

Procedure:

Formulate Objective Function:

- For quantitative data: Compute sum-of-squares difference between model predictions and experimental data

- For qualitative data: Convert observations into inequality constraints on model outputs [3]

Construct Combined Objective Function:

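- Implement a static penalty function: $f_{\text{tot}}(x) = f_{\text{quant}}(x) + f_{\text{qual}}(x)$

- $f_{\text{quant}}(x) = \sum_j \left[ y_{j,\text{model}}(x) - y_{j,\text{data}} \right]^2$

- $f_{\text{qual}}(x) = \sum_i C_i \cdot \max\left(0, g_i(x)\right)$, where each qualitative observation is written as a constraint $g_i(x) < 0$ and $C_i$ is the constant penalty weight applied when that constraint is violated [3]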

Parameter Optimization:

- Initialize parameter values within biologically plausible ranges

- Apply optimization algorithm (differential evolution or scatter search)

- Minimize the combined objective function $f_{\text{tot}}(x)$ [3]

Uncertainty Quantification:

- Apply profile likelihood approach to assess parameter identifiability

- Compare confidence intervals from qualitative, quantitative, and combined data

- Validate improved parameter precision with combined data approach [3]
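
The combined objective in this protocol can be sketched in a few lines. The example below is a minimal illustration under stated assumptions: the exponential-decay model, the data points, the single qualitative constraint, and the penalty weight are all hypothetical and chosen only to show how the sum-of-squares term and the penalty term fit together.

```python
import math

def model(x, t):
    """Hypothetical one-species decay model: y(t) = A * exp(-k * t)."""
    amplitude, rate = x
    return amplitude * math.exp(-rate * t)

# Hypothetical quantitative data, generated here from known parameters (A=2.0, k=0.5).
TIMES = [0.0, 1.0, 2.0, 4.0]
Y_DATA = [model((2.0, 0.5), t) for t in TIMES]

# Hypothetical qualitative observation: "the signal is below 0.1 by t = 10".
# Each entry is (penalty weight C_i, constraint function g_i), satisfied when g_i(x) < 0.
QUAL_CONSTRAINTS = [
    (10.0, lambda x: model(x, 10.0) - 0.1),
]

def f_quant(x):
    """Sum of squared differences between model and quantitative data."""
    return sum((model(x, t) - y) ** 2 for t, y in zip(TIMES, Y_DATA))

def f_qual(x):
    """Static penalty: zero when a constraint holds, C_i * g_i(x) when violated."""
    return sum(c * max(0.0, g(x)) for c, g in QUAL_CONSTRAINTS)

def f_tot(x):
    return f_quant(x) + f_qual(x)

print(f_tot((2.0, 0.5)))   # fits the data and satisfies the constraint
print(f_tot((2.0, 0.1)))   # slow decay: data misfit plus a constraint penalty
```

In practice `f_tot` would be passed to a global optimizer such as differential evolution or scatter search, as the protocol describes; the optimizer is omitted here to keep the sketch dependency-free.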

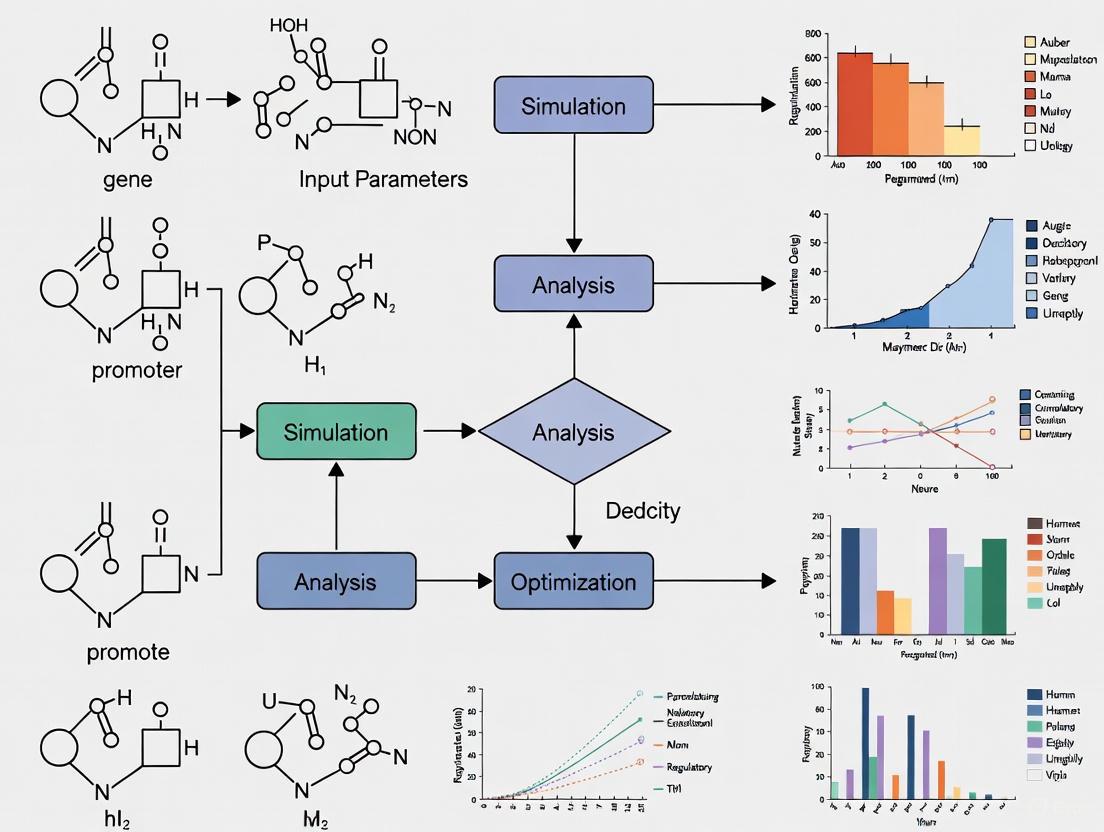

Visualization of Workflows

T-Pro Circuit Design and Implementation Workflow

T-Pro Circuit Design Workflow: This diagram illustrates the comprehensive process from truth table specification to experimental validation, highlighting the integration of algorithmic design with experimental implementation.

Qualitative and Quantitative Data Integration

Data Integration for Parameter Identification: This workflow demonstrates how qualitative and quantitative data are combined to improve parameter estimation in biological models, leading to more reliable predictive designs.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Reagents for Synthetic Biology Circuit Design

| Reagent / Tool | Type | Function | Application Example |
|---|---|---|---|
| Synthetic Transcription Factors | Wetware | Implement logical operations via repression/anti-repression | T-Pro circuit components for Boolean logic [1] |
| Synthetic Promoters | Wetware | Provide regulatory targets for synthetic TFs | T-Pro synthetic promoters with tandem operator designs [1] |
| Orthogonal Inducer Systems | Chemical Inducers | Provide orthogonal input signals | IPTG, D-ribose, cellobiose responsive systems [1] |
| Algorithmic Enumeration Software | Software | Identify minimal circuit implementations | T-Pro circuit compression optimization [1] |
| Constrained Optimization Framework | Computational Method | Combine qualitative and quantitative data | Parameter identification with mixed data types [3] |
| FACS Screening | Experimental Platform | High-throughput variant selection | Anti-repressor engineering and characterization [1] |
| Error-Prone PCR | Molecular Biology Technique | Generate diverse variant libraries | Creating anti-repressor diversity for screening [1] |
| Static Penalty Functions | Mathematical Formulation | Convert constraints into optimization objectives | Handling qualitative data in parameter estimation [3] |

The synthetic biology problem, the disconnect between qualitative design and quantitative performance, represents a fundamental challenge in engineering biological systems. The T-Pro platform demonstrates that integrated wetware-software solutions can address this problem through circuit compression, algorithmic design, and quantitative prediction [1]. By combining specialized biological parts with computational tools that explicitly account for context effects and performance setpoints, researchers have achieved average prediction errors below 1.4-fold in genetic circuit implementation [1].

Furthermore, methodologies that integrate qualitative and quantitative data for parameter identification provide a robust framework for model refinement and validation [3]. This approach leverages the full spectrum of experimental observations, from precise measurements to categorical phenotypes, to constrain model parameters and improve predictive capability.

As synthetic biology continues to advance toward more complex and sophisticated systems, addressing the synthetic biology problem will remain essential for realizing the full potential of automated biological circuit design. The tools, protocols, and frameworks presented here provide a foundation for developing more predictable and reliable biological engineering workflows.

The automated design of biological circuits requires a comprehensive toolkit of well-characterized, orthogonal regulatory devices that function predictably within host cells. These devices operate across the central dogma of molecular biology, enabling precise control at the transcriptional, translational, and post-translational levels. The integration of these multi-level control mechanisms is fundamental to constructing sophisticated genetic circuits that can process information and execute complex cellular functions with minimal metabolic burden. Advanced computational approaches, including machine learning pipelines like SONAR, can now explain up to 63% of the variance in protein abundance from sequence features alone, which can shorten the design-build-test cycle for synthetic genetic circuits [4]. This application note details the key regulatory devices and experimental protocols for their implementation, specifically framed within the context of automated design and simulation of biological circuits.

Transcriptional Control Devices

Enhancer-Derived RNAs and Transcriptional Activation

Enhancers are crucial transcriptional control elements that act over distances to positively regulate gene expression. Recent genomic studies have revealed that active enhancers are broadly transcribed, producing enhancer-derived RNAs (eRNAs). The expression levels of these eRNAs positively correlate with the expression of nearby protein-coding genes, suggesting a potential functional role in enhancer activity [5]. These eRNAs are typically non-polyadenylated, lower in abundance compared to coding transcripts, and exhibit cell-type specificity, making them valuable as markers of active enhancer elements and potential tools for fine-tuning transcriptional circuits.

Key Experimental Evidence:

- Historical Context: Early evidence of enhancer transcription came from studies of the β-globin Locus Control Region (LCR), where transcriptional initiation sites were identified within DNAse-I hypersensitivity sites, distinct from the globin gene promoters [5].

- Genomic Era Findings: Genome-wide studies using techniques like GRO-seq have demonstrated that eRNA transcription is induced by stimuli and widely distributed, serving as a reliable marker for active enhancers. Their transcription correlates well with enhancer activity, though some studies indicate that pharmacological inhibition of eRNA transcription does not necessarily inhibit enhancer-promoter looping, as measured by 3C assays [5].

Synthetic Transcription Factors and Promoters for Circuit Compression

Transcriptional Programming (T-Pro) utilizes engineered repressor and anti-repressor transcription factors (TFs) paired with cognate synthetic promoters to achieve complex logic operations with minimal genetic parts. This approach enables circuit compression, reducing the number of required components and the associated metabolic burden on the host cell.

Key Wetware Components:

- Orthogonal TF/Promoter Sets: Complete sets of synthetic repressors and anti-repressors responsive to orthogonal signals (e.g., IPTG, D-ribose, cellobiose) form the basis of 3-input Boolean logic circuits [1].

- Anti-Repressor Engineering: Anti-repressors are engineered from repressor scaffolds through a multi-step process: (1) generating a super-repressor variant that retains DNA binding but is ligand-insensitive via site-saturation mutagenesis, and (2) performing error-prone PCR on the super-repressor to create variants that activate transcription in the presence of the ligand [1].

Table 1: Orthogonal Inducer Systems for Transcriptional Control

| Inducer Signal | Transcription Factor Scaffold | Regulatory Phenotype | Application in Circuit Design |
|---|---|---|---|
| IPTG | LacI-derived repressor/anti-repressor | Repression or activation of cognate promoter | 2-input and 3-input Boolean logic |
| D-ribose | RbsR-derived repressor/anti-repressor | Repression or activation of cognate promoter | 2-input and 3-input Boolean logic |
| Cellobiose | CelR-derived repressor/anti-repressor | Repression or activation of cognate promoter | 3-input Boolean logic expansion |

Figure 1: A compressed transcriptional circuit implementing 3-input logic using orthogonal synthetic transcription factors. Each TF responds to a specific inducer and regulates a single synthetic promoter containing multiple binding sites.

Protocol: Implementing a 3-Input T-Pro Boolean Logic Circuit

Objective: Construct and validate a compressed genetic circuit implementing a specific 3-input Boolean logic operation using T-Pro components.

Materials:

- Plasmids: Expression vectors encoding the required synthetic repressors and anti-repressors (e.g., IPTG-, D-ribose-, and cellobiose-responsive TFs).

- Reporter Plasmid: Vector containing the synthetic promoter with cognate operator sites driving a fluorescent protein (e.g., GFP).

- Host Cells: Appropriate microbial or mammalian chassis (e.g., E. coli, HEK293 cells).

- Inducers: Stock solutions of IPTG, D-ribose, and cellobiose in suitable buffers.

Procedure:

Circuit Design:

- Utilize algorithmic enumeration software to identify the most compressed circuit design for your target truth table [1].

- Select the required synthetic TFs and promoter architecture based on the software output.

Strain Construction:

- Co-transform the host cells with the reporter plasmid and the required TF expression plasmids.

- Include appropriate selection markers and maintain selective pressure.

Induction Assay:

- Inoculate cultures and grow to mid-log phase.

- Divide culture into 8 separate aliquots.

- Add inducers according to all possible combinations of the 3-input signals (000, 001, 010, 011, 100, 101, 110, 111).

- Incubate for an additional 6-8 hours to allow gene expression.

Output Measurement:

- Measure fluorescence intensity using flow cytometry or a plate reader.

- Normalize data to cell density.

- Compare the output pattern to the expected truth table.

Validation:

- Confirm circuit performance matches predictions across multiple biological replicates.

- If discrepancies exist, refine model parameters and consider context effects from genetic positioning.
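
The induction and comparison steps of this protocol can be laid out programmatically. The sketch below generates the eight inducer combinations in the order listed above and checks binarized readings against a target truth table. The fluorescence values, the ON/OFF threshold, and the function names are hypothetical; a real threshold would come from control measurements.

```python
from itertools import product

INDUCERS = ("IPTG", "D-ribose", "cellobiose")

def induction_conditions():
    """All 8 on/off combinations, in the order 000, 001, ..., 111."""
    return list(product((0, 1), repeat=len(INDUCERS)))

def truth_table_mismatches(fluorescence, expected, threshold):
    """Return the conditions whose binarized reading disagrees with the target.

    fluorescence: dict mapping a condition tuple to a normalized reading
    expected:     dict mapping the same tuples to 0 or 1
    threshold:    hypothetical ON/OFF cutoff
    """
    mismatches = []
    for cond in induction_conditions():
        observed = int(fluorescence[cond] >= threshold)
        if observed != expected[cond]:
            mismatches.append(cond)
    return mismatches

# Illustrative example: a 3-input AND gate with made-up readings.
expected = {c: int(all(c)) for c in induction_conditions()}
readings = {c: (950.0 if all(c) else 40.0) for c in induction_conditions()}

for cond in induction_conditions():
    added = [name for name, on in zip(INDUCERS, cond) if on] or ["no inducer"]
    print("".join(map(str, cond)), "+".join(added))
print("mismatches:", truth_table_mismatches(readings, expected, threshold=300.0))
```

Binarizing against a single threshold only checks the qualitative truth table; the quantitative comparison to predicted expression levels would use the normalized readings directly.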

Translational Control Devices

RNA-Binding Proteins and Regulation Mechanisms

RNA-binding proteins (RBPs) serve as versatile post-transcriptional regulators in synthetic circuits. They can be engineered to respond to various cues and provide precise control over translation. Common RBPs used in synthetic biology include L7Ae, which binds kink-turn (K-turn) RNA motifs in the 5'UTR to inhibit translation, and MS2, which can be fused to translational activators or repressors [6].

Key Engineering Strategies:

- Protease-Responsive RBPs: Inserting protease cleavage sites into RBP sequences links their activity to upstream proteases, creating post-translational control over translation. For example, engineering L7Ae to harbor a tobacco etch virus protease (TEVp) cleavage site (TCS) creates a translational repressor that is inactivated by TEVp cleavage, so the target mRNA is derepressed when the protease is present [6].

- Insertion Site Selection: Based on structural data, insertion sites should be in loop regions away from the RNA-binding domain to minimize impact on RBP function while allowing protease access.

Table 2: Translation Regulatory Devices and Their Characteristics

| Regulatory Device | Mechanism of Action | Dynamic Range | Orthogonality |
|---|---|---|---|
| L7Ae (wild-type) | Binds K-turn motif in 5'UTR, repressing translation | High (strong repression) | High in mammalian cells |
| L7Ae-CS3 (TEVp-responsive) | Derepressed upon TEVp cleavage at inserted TCS | 77-fold derepression [6] | Orthogonal to host proteases |
| MS2-cNOT7 | Fusion protein that can activate or repress translation | Configurable based on fusion partner | High in mammalian cells |
| miRNA target sites | Endogenous miRNA-mediated repression | Dependent on miRNA expression | Cell-type specific |

Sequence Features Governing Translation Efficiency

Machine learning analysis of sequence features has revealed critical determinants of protein abundance at the translational level. The SONAR pipeline demonstrates that features within the coding sequence (CDS) contribute more significantly to predicting protein abundance than features in the 5' or 3'UTRs, challenging conventional emphasis on UTR-centric regulation [4].

Key Sequence Features:

- Codon Usage: Adaptation between coding sequences and the tRNA pool creates a conserved translation efficiency profile where the first 30-50 codons are translated with lower efficiency, acting as a "ramp" to prevent ribosomal traffic jams [7].

- Regulatory Motifs: GC content, TOP-like CT-rich motifs in the 5'UTR, and AU-rich elements in the 3'UTR significantly impact translation efficiency and mRNA stability [4].

- RNA Modifications: Predicted m6A and m7G modification sites in the CDS and UTRs contribute to translation regulation.

Protocol: Engineering a Protease-Responsive Translation Repressor

Objective: Create and characterize a translation repressor whose activity is controlled by protease cleavage.

Materials:

- RBP Template: Plasmid encoding wild-type RBP (e.g., L7Ae).

- Protease Source: Plasmid encoding the cognate protease (e.g., TEVp).

- Reporter Plasmid: Vector containing RBP binding sites (e.g., 2x K-turn) in the 5'UTR of a fluorescent reporter gene.

- Site-Directed Mutagenesis Kit: For inserting protease cleavage sites into the RBP.

Procedure:

Cleavage Site Insertion:

- Analyze RBP crystal structure to identify surface-accessible loops away from the functional domains.

- Design primers to insert the protease cleavage site (e.g., the TEVp cleavage site ENLYFQ/G, where "/" marks the scissile bond) into selected loops.

- Perform site-directed mutagenesis to generate RBP-CS variants.

Initial Screening:

- Co-transfect cells with (1) the RBP-CS variant plasmid and (2) the reporter plasmid, with or without (3) the protease plasmid.

- Measure fluorescence after 24-48 hours.

- Select variants that show strong repression in the absence of protease and significant derepression in its presence.

Characterization:

- Generate dose-response curves by titrating protease expression.

- Measure temporal dynamics of derepression over 24-96 hours.

- Test specificity using orthogonal proteases with different cleavage sites.

Circuit Integration:

- Implement the validated RBP-CS in a larger circuit context.

- Verify orthogonality and lack of crosstalk with other circuit components.

Figure 2: Protease-controlled translation regulation. In the absence of protease, the RBP binds its target mRNA and represses translation. Protease cleavage inactivates the RBP, derepressing translation of the output protein.

Post-Translational Control Devices

Engineered Proteases and Signaling Cascades

Proteases provide powerful post-translational control devices for synthetic circuits due to their high specificity, modularity, and ability to implement signal amplification. Viral proteases like TEVp, TVMVp, TUMVp, and SuMMVp offer orthogonality to host cellular processes and can be engineered to create multi-layer regulatory networks [6].

Key Applications:

- Protease Cascades: Connecting multiple proteases in series creates signaling cascades that can amplify signals or integrate multiple inputs.

- Protein Sensors: Fusing proteases to specific binding domains (e.g., single-chain antibodies) creates sensors that detect target proteins and transduce signals through proteolytic activity.

- Orthogonal Protease Systems: Multiple viral proteases with distinct cleavage specificities can be used in the same circuit without crosstalk.

Analysis of Post-Translational Modifications

Post-translational modifications (PTMs) including phosphorylation, acetylation, and ubiquitination play pivotal roles in regulating cellular signaling and protein function. Tools like PTMNavigator enable researchers to overlay experimental PTM data with pathway diagrams, providing insights into how PTMs modulate cellular pathways [8].

PTM Analysis Capabilities:

- Pathway Enrichment Analysis: PTM-signature enrichment analysis (PTM-SEA) identifies pathways enriched in regulated PTMs, overcoming limitations of traditional gene-centric approaches [8].

- Kinase-Substrate Mapping: Identifies potential upstream kinases based on phosphorylation patterns.

- Visualization: Projects PTM perturbation data onto canonical pathways from KEGG and WikiPathways, enabling intuitive interpretation of signaling network alterations.

Protocol: Implementing a Protease-Based Protein Sensor

Objective: Construct a circuit that detects a specific target protein and produces a measurable output via protease-mediated activation.

Materials:

- Intrabody-Protease Fusion: Plasmid encoding a protease (e.g., TEVp) fused to a single-chain antibody (scFv) specific for your target protein.

- Intrabody-RBP Fusion: Plasmid encoding the protease-responsive RBP (e.g., L7Ae-CS3) fused to a complementary scFv that binds a different epitope on the same target protein.

- Reporter Plasmid: Vector containing RBP binding sites driving a fluorescent reporter.

- Target Protein: Plasmid expressing the protein to be detected.

Procedure:

Component Validation:

- Verify that scFv-L7Ae-CS3 fusions retain repression capability using the reporter plasmid.

- Confirm that TEVp-scFv fusions retain protease activity using a fluorescence-based protease assay.

Sensor Assembly:

- Co-transfect cells with (1) scFv-L7Ae-CS3, (2) TEVp-scFv, and (3) the reporter plasmid, with or without (4) the target protein expression plasmid.

- Use a regulated promoter (e.g., tetracycline-inducible) for TEVp-scFv expression to minimize leakiness.

Specificity Testing:

- Measure reporter output in the presence and absence of the target protein.

- Test against related proteins to verify specificity.

- Optimize expression levels of sensor components to maximize signal-to-noise ratio.

Characterization:

- Determine the detection limit and dynamic range of the sensor.

- Measure response time from target expression to output detection.

- Validate in relevant cell types or conditions.

Figure 3: PTM-regulated signaling pathway. A kinase cascade relays signals through sequential phosphorylation events, ultimately leading to transcription factor activation and target gene expression. PTMs (phosphorylation, shown as "P") control the activity state of each signaling component.

Integrated Circuit Design and Analysis Tools

Software and Visualization Platforms

BioTapestry is an open-source computational tool specifically designed for genetic regulatory network (GRN) modeling and visualization. It provides genome-oriented representations with emphasis on cis-regulatory elements, offering multiple hierarchical views of network states across different cell types, spatial domains, and time points [9].

Key Features for Automated Design:

- Hierarchical Representation: Three-level hierarchy (View from the Genome, View from All Nuclei, View from the Nucleus) enables tracking of GRN states across different conditions and time points.

- Cis-Regulatory Focus: Explicit representation of transcription factor binding sites and cis-regulatory modules.

- Off-DNA Interactions: Compact symbols for complex processes like signal transduction while maintaining regulatory connectivity.

PTMNavigator provides a PTM-centric interface for pathway-level data analysis, integrating multiple enrichment algorithms and visualization tools specifically for post-translational modification data [8].

Machine Learning for Predictive Design

The SONAR pipeline uses machine learning to predict protein abundance from sequence features, revealing the relative contribution of different regulatory elements and their cell-type specificity [4].

Key Insights for Circuit Design:

- Feature Importance: Coding sequence features contribute more to protein abundance prediction than UTR features on average.

- Cell-Type Specificity: The importance of specific sequence features varies by cell type, necessitating context-aware design.

- Predictive Capacity: Using sequence features alone, SONAR can predict up to 63% of protein abundance variance, independent of promoter or enhancer information.
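
A figure such as "63% of variance" is conventionally a coefficient of determination (R²): the fraction of variation in measured abundance that the predictions account for. The sketch below shows that calculation on made-up numbers. It assumes the standard R² definition, which may differ in detail from the metric SONAR reports, and the data are purely illustrative.

```python
def r_squared(observed, predicted):
    """Coefficient of determination: 1 - (residual sum of squares / total sum of squares)."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot

# Hypothetical log-scale protein abundances and model predictions.
observed = [1.0, 2.0, 3.0, 4.0]
predicted = [1.5, 1.5, 3.5, 3.5]
print(f"variance explained: {r_squared(observed, predicted):.2f}")
```

An R² of 0.63 would mean that a little over a third of the variation in abundance is still unexplained by sequence features, which is worth keeping in mind when such predictions feed a circuit model.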

Research Reagent Solutions

Table 3: Essential Research Reagents for Circuit Construction and Analysis

| Reagent Category | Specific Examples | Function/Application | Key Characteristics |
|---|---|---|---|
| Synthetic Transcription Factors | IPTG-/D-ribose-/cellobiose-responsive repressors and anti-repressors [1] | Implement transcriptional logic operations | Orthogonal, high dynamic range, ligand-responsive |
| Engineered RBPs | L7Ae-CS3, MS2-cNOT7 with protease cleavage sites [6] | Post-transcriptional regulation | Protease-controllable, specific RNA binding |
| Orthogonal Proteases | TEVp, TVMVp, TUMVp, SuMMVp [6] | Post-translational signal processing | Specific cleavage sequences, minimal host interactions |
| Analysis Tools | PTMNavigator [8], BioTapestry [9] | Circuit modeling and data visualization | Pathway integration, hierarchical representation |
| Machine Learning Pipelines | SONAR [4] | Predictive protein expression design | Sequence feature-based prediction, cell-type specific models |

The integration of transcriptional, translational, and post-translational control devices provides a comprehensive toolkit for constructing sophisticated genetic circuits with predictable behaviors. By leveraging engineered transcription factors, RNA-binding proteins, proteases, and computational design tools, researchers can implement complex logic operations with minimal genetic footprint. The continued development of automated design platforms that incorporate machine learning and multi-level regulatory principles will further advance our ability to program cellular functions for therapeutic and biotechnological applications.

Application Notes

The automated design of sophisticated genetic circuits is fundamentally challenged by the intrinsic complexity and context-dependence of biological systems. Computational modeling and simulation have emerged as indispensable technologies to overcome these hurdles, enabling the transition from qualitative, intuitive design to predictive, quantitative engineering of cellular behavior [10] [1]. This paradigm shift is critical for applications ranging from living therapeutics to sustainable bioproduction, where reliability and predictability are paramount.

A primary challenge in circuit design is limited modularity: biological parts often behave differently when removed from their original context or assembled into new systems [11] [1]. This context-dependence arises from myriad factors, including uncharacterized interactions with the host chassis, resource competition, and emergent properties of interconnected components. Furthermore, as circuit complexity increases, so does the metabolic burden on the host cell, which can distort circuit function and limit operational capacity [1]. Simulation-driven design addresses these issues by creating in silico environments where parts and circuits can be tested virtually before physical assembly, allowing designers to identify and mitigate failure modes early in the development process.

Quantitative Characterization and Workflow Standardization

Establishing robust, standardized metrics is a crucial first step in building reliable predictive models. A study on recombinase-based digitizer circuits demonstrated the power of moving beyond simple fold-change measurements to more informative metrics like Signal-to-Noise Ratio (SNR) and Area Under the Receiver Operating Characteristic Curve (AUC) [11]. This quantitative framework revealed performance differences across three digitizer topologies that would otherwise be overlooked (Table 1) and enabled the development of a mixed phenotypic/mechanistic model capable of predicting how these circuits amplify a cell-to-cell communication signal [11].

Table 1: Performance Metrics for Recombinase-Based Digitizer Circuits [11]

| Circuit Topology | Fold Change (FC) | Signal-to-Noise Ratio (SNR) | Key Characteristic |

|---|---|---|---|

| No-shRNA | 8.5x | ~0 dB | Significant leaky expression in OFF-state |

| Feedforward-shRNA | 15x | Data Not Specified | Effectively controls leaky expression |

| Constant-shRNA | 4.5x | Data Not Specified | Over-repression leads to low activation |

This workflow exemplifies the modern Design-Build-Test-Learn (DBTL) cycle, where computational tools are integrated at every stage [12]. The cycle begins with in silico design and simulation, proceeds to physical construction, involves rigorous experimental testing, and concludes by using the new data to refine models and inform the next design iteration. Automation technologies, including robotic liquid handling and microfluidics, are accelerating this cycle, enabling high-throughput characterization essential for generating the large datasets required to parameterize complex models [12].

Predictive Design of Compressed Genetic Circuits

A landmark application of simulation is the predictive design of compressed genetic circuits. So-called "wetware" (engineered biological components) and "software" (computational design tools) were co-developed to create genetic circuits that perform higher-state decision-making with a minimal genetic footprint [1]. This "T-Pro" (Transcriptional Programming) platform utilizes synthetic transcription factors and promoters to implement complex Boolean logic.

The computational challenge was immense; scaling from 2-input to 3-input Boolean logic expanded the combinatorial design space to over 100 trillion putative circuits [1]. An algorithmic enumeration method was developed to navigate this space, systematically identifying the most compressed (smallest) circuit design for any of the 256 possible 3-input Boolean operations. This software, combined with quantitative models that account for genetic context, enabled the predictive design of multi-state circuits that were, on average, four times smaller than canonical designs, with quantitative predictions achieving an average error below 1.4-fold across more than 50 test cases (Table 2) [1].

Table 2: Performance of Predictive Models for Compressed Genetic Circuits [1]

| Application | Key Achievement | Quantitative Prediction Accuracy |

|---|---|---|

| Multi-state Biocomputing Circuits | 4x size reduction vs. canonical circuits | Average error < 1.4-fold for >50 test cases |

| Recombinase Genetic Memory | Predictive design of specific memory activity | Successfully demonstrated |

| Metabolic Pathway Control | Predictive control of flux through a toxic pathway | Successfully demonstrated |

The diagram below illustrates the core concept of circuit compression, contrasting the traditional approach with the T-Pro methodology.

Circuit Compression Concept

Protocols

Protocol 1: Quantitative Characterization of a Genetic Digitizer Circuit

This protocol details the process for quantitatively characterizing a recombinase-based digitizer circuit using flow cytometry, establishing a dataset for model parameterization and validation [11].

Research Reagent Solutions

Table 3: Essential Reagents for Digitizer Characterization

| Reagent / Material | Function / Description |

|---|---|

| HEK293FT Cell Line | Mammalian cell chassis for circuit expression and testing. |

| Digitizer Plasmid Constructs | Plasmids encoding the no-shRNA, feedforward-shRNA, or constant-shRNA circuit designs. |

| Doxycycline (Dox) | Small-molecule input signal that induces recombinase (Flp) expression via the Tet-ON system. |

| Flow Cytometer | Instrument for measuring the distribution of fluorescence (output) across thousands of individual cells. |

Step-by-Step Procedure

Cell Culture and Transfection: Culture HEK293FT cells under standard conditions (DMEM + 10% FBS, 37°C, 5% CO₂). Transfect the cells with the digitizer plasmid construct(s) using a preferred method (e.g., polyethyleneimine (PEI), lipofection). Include a constitutive fluorescent protein (e.g., CFP) marker plasmid to identify successfully transfected cells [11].

Input Titration and Induction: Immediately after transfection, divide the cells into multiple culture wells. Titrate a stock solution of doxycycline into the media across a range of concentrations (e.g., 0 nM to 225 nM). Include an uninduced (0 nM Dox) control well to measure basal OFF-state activity [11].

Time-Series Sampling: Incubate the cells and collect samples at multiple time points post-induction (e.g., 24, 48, 72, and 96 hours). This time-series data is critical for capturing dynamic circuit behaviors, such as the gradual accumulation of leaky recombination [11].

Flow Cytometry Data Acquisition: For each sample, analyze at least 10,000 single-cell events on a flow cytometer. Record fluorescence intensities for the constitutive marker (CFP) and the circuit output (GFP).

Data Pre-processing and Gating: Analyze the flow cytometry data using software such as FlowJo or Python. Gate the population to focus on single, live cells. Further, gate on the top 30% of cells expressing the constitutive CFP marker to standardize comparisons across populations and minimize noise from transfection variability [11].
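The marker-based gate in this step can be sketched in a few lines of Python. This is a minimal illustration, not the pipeline used in [11]: the function name `gate_top_fraction` and the synthetic arrays are hypothetical, and it assumes single, live cells have already been selected upstream.

```python
import numpy as np

def gate_top_fraction(marker, output, fraction=0.30):
    """Return output values for cells whose marker signal lies in the top `fraction`.

    `marker` is the constitutive transfection marker (e.g., CFP) and `output`
    is the circuit reporter (e.g., GFP), one value per cell.
    """
    marker = np.asarray(marker, dtype=float)
    output = np.asarray(output, dtype=float)
    # Cells at or above the (1 - fraction) quantile form the gated population.
    threshold = np.quantile(marker, 1.0 - fraction)
    return output[marker >= threshold]

# Synthetic example (made-up values): 10 cells with increasing CFP signal.
cfp = np.arange(10)
gfp = np.arange(10) * 100
gated_gfp = gate_top_fraction(cfp, gfp)
```

Gating on a quantile rather than a fixed intensity keeps the selected fraction constant across samples, even when overall transfection efficiency differs between wells.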

Metric Calculation: For each experimental condition (Dox concentration, time point), calculate the key performance metrics:

- Fold Change (FC): FC = (Geometric Mean of GFP in ON-state) / (Geometric Mean of GFP in OFF-state).

- Signal-to-Noise Ratio (SNR): Calculate in decibels (dB) as SNR = 10 * log10( (Mean_ON - Mean_OFF)² / (σ²_ON + σ²_OFF) ), where σ is the standard deviation.

- Area Under the Curve (AUC): Generate a Receiver Operating Characteristic (ROC) curve by plotting the true positive rate against the false positive rate for classifying ON/OFF states across the population. Calculate the AUC to quantify the distinguishability of the two states [11].
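The three metrics can be computed directly from the gated single-cell GFP values. The sketch below is an illustration under stated assumptions rather than the analysis code from [11]: the function names are hypothetical, variances are population variances on the linear scale (the source does not say whether values are log-transformed first), and the AUC is obtained from the rank-based identity AUC = P(ON > OFF) + 0.5 P(ON = OFF) instead of tracing the ROC curve explicitly.

```python
import numpy as np

def fold_change(on, off):
    """Ratio of geometric means (requires strictly positive values)."""
    on, off = np.asarray(on, float), np.asarray(off, float)
    gmean = lambda x: np.exp(np.mean(np.log(x)))
    return gmean(on) / gmean(off)

def snr_db(on, off):
    """SNR = 10 * log10((mean_on - mean_off)^2 / (var_on + var_off)), in dB."""
    on, off = np.asarray(on, float), np.asarray(off, float)
    signal = (on.mean() - off.mean()) ** 2
    noise = on.var() + off.var()
    return 10.0 * np.log10(signal / noise)

def roc_auc(on, off):
    """Area under the ROC curve for separating ON cells from OFF cells."""
    on = np.asarray(on, float)
    off_sorted = np.sort(np.asarray(off, float))
    # For each ON cell, count OFF cells strictly below it and tied with it.
    below = np.searchsorted(off_sorted, on, side="left")
    ties = np.searchsorted(off_sorted, on, side="right") - below
    return (below.sum() + 0.5 * ties.sum()) / (on.size * off_sorted.size)

# Made-up values for illustration only.
gfp_on = [10.0, 20.0, 30.0]
gfp_off = [1.0, 2.0, 3.0]
fc = fold_change(gfp_on, gfp_off)
snr = snr_db(gfp_on, gfp_off)
auc = roc_auc(gfp_on, gfp_off)
```

An AUC of 0.5 means the ON and OFF populations are indistinguishable, while 1.0 means every ON cell is brighter than every OFF cell. This is why AUC and SNR can expose leaky OFF-state expression that a fold-change figure alone hides.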

The following workflow diagram summarizes this characterization pipeline.

Digitizer Characterization Workflow

Protocol 2: Predictive Design of a Compressed Genetic Circuit using T-Pro

This protocol outlines the computational and experimental workflow for designing a 3-input Boolean logic circuit with predictable quantitative performance, using the T-Pro software and wetware suite [1].

Research Reagent Solutions

Table 4: Essential Reagents for T-Pro Circuit Design

| Reagent / Material | Function / Description |

|---|---|

| Orthogonal Synthetic TF/SP Libraries | Engineered transcription factors (repressors/anti-repressors) and their cognate synthetic promoters, responsive to IPTG, D-ribose, and cellobiose. |

| Algorithmic Enumeration Software | Custom software that identifies the minimal (compressed) circuit design for a target truth table from a vast combinatorial space. |

| Quantitative Context-Aware Model | A mathematical model that predicts circuit output levels by accounting for the specific genetic context of parts. |

Step-by-Step Procedure

Part 1: In Silico Circuit Design and Enumeration

Define Truth Table: Specify the desired 3-input (8-state) Boolean logic operation as a truth table, defining the output (ON/OFF) for every combination of the three inputs (e.g., IPTG, D-ribose, cellobiose) [1].

Algorithmic Circuit Enumeration: Input the target truth table into the T-Pro algorithmic enumeration software. The software models the circuit as a directed acyclic graph and systematically searches the combinatorial space, iterating through designs of increasing complexity until it identifies the most compressed (smallest) circuit that implements the target logic [1].

Design Selection and Validation: The software returns one or more valid, compressed circuit designs. Select the final design based on criteria such as the number of parts or compatibility with downstream assembly methods.

Part 2: Quantitative Performance Prediction and Assembly

Model-Based Performance Prediction: Use the selected circuit design and the quantitative context-aware model to predict the output expression level (e.g., fluorescence intensity) for each of the eight input states. The model incorporates parameters that account for the specific genetic context of the promoters, coding sequences, and other regulatory elements used in the design [1].

Genetic Construct Assembly: Physically build the final DNA construct encoding the designed circuit using standard molecular biology techniques such as Gibson Assembly or Golden Gate cloning.

Part 3: Experimental Validation and Model Refinement

Circuit Characterization: Transform/transfect the assembled construct into the chosen chassis organism (e.g., E. coli). Measure the circuit's output in response to all eight input combinations using flow cytometry or plate reader assays.

Model Validation and Refinement: Compare the experimentally measured output levels with the model's predictions. If the discrepancy exceeds an acceptable error margin (e.g., the <1.4-fold average error achieved in the original study), use the new experimental data to refine the model's parameters, enhancing its predictive power for future designs [1]. This step closes the DBTL loop, turning a single design into a learning cycle.
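A simple way to carry out this comparison is to compute a symmetric fold error per input state and average it. The definition below (the larger of predicted/measured and measured/predicted, then an arithmetic mean) is an assumption made for illustration, since the source does not spell out how the average error in [1] was calculated. The numbers are made up.

```python
import numpy as np

def fold_errors(predicted, measured):
    """Symmetric fold error per state: max(p/m, m/p), always >= 1."""
    p = np.asarray(predicted, dtype=float)
    m = np.asarray(measured, dtype=float)
    return np.exp(np.abs(np.log(p) - np.log(m)))

# Hypothetical predicted vs. measured outputs (arbitrary fluorescence units).
predicted = [100.0, 200.0, 50.0, 400.0]
measured = [100.0, 100.0, 100.0, 400.0]

errors = fold_errors(predicted, measured)
mean_error = errors.mean()
# Flag the design for model refinement if the average error exceeds the margin.
needs_refinement = mean_error > 1.4
```

Working in log space treats a two-fold overprediction and a two-fold underprediction as equally wrong, which suits expression data spanning orders of magnitude.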

Circuit compression is an engineering paradigm focused on reducing the number of components in a genetic circuit while preserving its logical function. In synthetic biology, as circuit complexity increases, the metabolic burden on host cells intensifies, often leading to system failure and limited design capacity. This resource burden arises because biological parts are not strictly composable; their function is influenced by genetic context and cellular resource limitations [13]. Circuit compression addresses this by developing minimized genetic architectures that require fewer transcriptional units, promoters, and coding sequences, thereby enhancing circuit performance, predictability, and host viability [13] [14]. This document provides application notes and protocols for implementing compression in the automated design of biological circuits, framed within simulation-based research.

Quantitative Performance of Compressed Genetic Circuits

Recent advances have demonstrated the significant benefits of circuit compression. The tables below summarize key performance metrics from foundational studies.

Table 1: Performance Metrics of 3-Input T-Pro Compression Circuits

| Performance Metric | Value | Context / Comparison |

|---|---|---|

| Average Size Reduction | ~4x smaller | Compared to canonical inverter-type genetic circuits [13] [15] |

| Quantitative Prediction Error | < 1.4-fold (average) | Across >50 test cases [13] [15] |

| Boolean Logic Capacity | 256 distinct truth tables | 3-input Boolean logical operations (eight-state) [13] |

Table 2: Compression-Driven Performance Gains in Automated Design

| Design Strategy | Functions Improved | Maximum Performance Gain | Average Performance Gain |

|---|---|---|---|

| Structural Variants (same gate count) | 22 of 33 functions | 3.8-fold | 29% [14] |

| Structural Variants (+1 excess gate) | 30 of 33 functions | 7.9-fold | 111% [14] |

| Novel Robustness Score | 22 of 33 functions | 26-fold | Not specified [14] |

Experimental Protocols for Circuit Compression

Protocol: Algorithmic Enumeration for 3-Input T-Pro Circuit Design

This protocol describes the qualitative design of maximally compressed genetic circuits using an algorithmic enumeration method, enabling higher-state decision-making with a minimal genetic footprint [13].

Principle: Scaling from 2-input to 3-input Boolean logic raises the number of distinct truth tables from 16 to 256 and expands the space of putative circuits to the order of 10^14, making intuitive design impractical. An algorithmic approach systematically explores the combinatorial space to guarantee the identification of the smallest circuit for a given operation [13].
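The truth-table counts follow from a short calculation: an n-input Boolean function assigns one of two outputs to each of the 2^n input states, so the number of distinct functions is

```latex
N(n) = 2^{2^{n}}, \qquad N(2) = 2^{4} = 16, \qquad N(3) = 2^{8} = 256 .
```

The number of candidate circuits is far larger than N(3), because many different circuit structures implement the same truth table.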

Materials:

- Software for algorithmic enumeration (e.g., custom Python-based optimizer [13])

- Library of orthogonal synthetic transcription factors (repressors/anti-repressors)

- Library of cognate synthetic promoters

Procedure:

- Define the Truth Table: Specify the desired 3-input (8-state) Boolean logic function as a truth table with inputs A, B, C and output Z.

- Model as a Directed Acyclic Graph (DAG): Represent the circuit topology as a DAG, where nodes represent genetic components (promoters, genes) and edges represent regulatory interactions.

- Systematic Enumeration: Execute the enumeration algorithm to generate circuits in sequential order of increasing complexity (i.e., part count).

- Validation and Selection: The algorithm identifies all functional circuit structures for the given truth table. The solution with the fewest genetic parts is selected as the compressed design.

- Technology Mapping: Map the selected compressed topology onto specific biological parts from the available wetware library (e.g., synthetic TFs built on the LacI, RbsR, and CelR scaffolds, responsive to IPTG, D-ribose, and cellobiose, respectively [13]).
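The idea of enumerating by increasing part count can be illustrated with a toy search. The sketch below is not the T-Pro software: it assumes a hypothetical library containing only 2-input NOR gates, counts gates in tree-structured circuits (no sharing of intermediate signals, a simplification of the DAG model), and encodes each truth table as an 8-bit integer. It does show why visiting sizes in ascending order guarantees that the first match is minimal.

```python
from itertools import product

# Truth tables as 8-bit masks; bit i is the output in input state i = 4a + 2b + c.
A, B, C = 0xF0, 0xCC, 0xAA
MASK = 0xFF

def truth_table(fn):
    """Pack a Python function fn(a, b, c) -> bool into an 8-bit mask."""
    tt = 0
    for i, (a, b, c) in enumerate(product((0, 1), repeat=3)):
        if fn(a, b, c):
            tt |= 1 << i
    return tt

def min_nor_formula(target, max_gates=12):
    """Return (gate_count, expression) for the smallest NOR-only formula."""
    best = {A: "A", B: "B", C: "C"}      # function -> smallest known expression
    by_size = {0: [A, B, C]}             # functions first reached with k gates
    if target in best:
        return 0, best[target]
    for k in range(1, max_gates + 1):
        new = []
        for i in range(k):               # split the k - 1 remaining gates
            j = k - 1 - i
            if j < i:
                break                    # NOR is symmetric; skip mirrored splits
            for f in by_size[i]:
                for g in by_size[j]:
                    h = ~(f | g) & MASK
                    if h not in best:
                        best[h] = f"NOR({best[f]}, {best[g]})"
                        new.append(h)
        by_size[k] = new
        if target in best:               # first hit is minimal by construction
            return k, best[target]
    return None

not_a = min_nor_formula(truth_table(lambda a, b, c: not a))
a_or_b = min_nor_formula(truth_table(lambda a, b, c: a or b))
```

Because every function is recorded at the first size at which it appears, no smaller implementation can exist within this toy gate library. A real enumerator would add the wetware constraints described above, such as the available repressor and anti-repressor sets and promoter architectures.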

Protocol: Implementing a Csr Network-Based BUFFER Gate

This protocol details the construction of a compressed post-transcriptional BUFFER Gate (cBUFFER) by rewiring the native E. coli Carbon Storage Regulatory (Csr) network [16].

Principle: The global RNA-binding protein CsrA represses translation by binding to GGA motifs in the 5' UTR of target mRNAs, occluding the Ribosome Binding Site (RBS). The sRNA CsrB sequesters CsrA, de-repressing translation. This native interaction is co-opted to build a BUFFER Gate where inducing CsrB expression activates a synthetic output [16].

Materials:

- Plasmid Backbone: ColE1 origin of replication plasmid.

- Promoters: Weak constitutive promoter (e.g., PCon) for the output gene; inducible promoter (e.g., PLlacO) for csrB.

- Engineered 5' UTR: The glgC 5' UTR sequence (positions -61 to -1 relative to the native start codon) containing CsrA binding motifs.

- Reporter Gene: gfpmut3 or another fluorescent protein gene.

- csrB Gene: Wild-type csrB sRNA sequence.

- Host Strains: Wild-type E. coli and csrA::kan mutant for control experiments.

Procedure:

- Construct Assembly:

  - Clone the engineered glgC 5' UTR upstream of the reporter gene (e.g., gfpmut3) on the plasmid, under the control of the weak constitutive promoter.

  - Clone the csrB gene under the control of the inducible PLlacO promoter on the same plasmid.

- Transformation and Culturing:

  - Transform the constructed plasmid into both wild-type and csrA::kan E. coli strains.

  - Grow cultures in appropriate medium to mid-exponential phase.

- Induction and Measurement:

  - Induce the expression of csrB by adding IPTG over a concentration range (e.g., 10 - 1000 µM).

  - Monitor fluorescence accumulation over time (e.g., every 20 minutes for 60-120 minutes) using a plate reader or flow cytometer.

  - Measure the optical density to correlate fluorescence with cell growth.

- Validation and Tuning:

  - Positive Control: The wild-type strain with the intact glgC UTR should show increased fluorescence upon IPTG induction, with up to 8-fold activation reported for the initial design.

  - Negative Controls:

    - The wild-type strain with a mutated glgC UTR (CsrA binding sites disrupted) should show no activation upon induction.

    - The csrA::kan strain should show minimal activation regardless of induction, confirming CsrA-dependent regulation.

  - Tunability: The system's output can be tuned by adjusting the IPTG concentration or by rationally engineering the RBS and CsrB sequences [16].
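The induction measurements in this protocol reduce to an OD-normalised fluorescence and a fold activation relative to the uninduced culture. The following sketch shows one way to compute both. The function name and all numerical values are hypothetical and chosen only to make the arithmetic easy to follow; the sketch assumes the first entry of each array is the uninduced (0 µM IPTG) condition.

```python
import numpy as np

def fold_activation(fluorescence, od, blank=0.0):
    """OD-normalised fluorescence, relative to the first (uninduced) condition."""
    per_od = (np.asarray(fluorescence, float) - blank) / np.asarray(od, float)
    return per_od / per_od[0]

# Made-up plate-reader values at a single time point.
iptg_uM = [0, 10, 100, 1000]
fluor = [500.0, 1000.0, 2400.0, 4400.0]
od600 = [0.50, 0.50, 0.60, 0.55]

activation = fold_activation(fluor, od600)
```

Dividing by optical density before taking the ratio keeps growth differences between induced and uninduced cultures from being mistaken for changes in gate output.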

Signaling Pathways and Workflow Visualization

T-Pro 3-Input Circuit Compression Mechanism

The diagram below illustrates the core mechanism of Transcriptional Programming (T-Pro), which utilizes synthetic anti-repressors to achieve circuit compression, avoiding the need for larger inverter-based architectures [13].

Csr Network Post-Transcriptional Regulation

This diagram outlines the experimental workflow and logical relationships for building a compressed BUFFER gate within the native Csr post-transcriptional regulatory network [16].

The Scientist's Toolkit: Research Reagent Solutions

The following table catalogues essential materials and their functions for implementing the described circuit compression protocols.

Table 3: Key Research Reagents for Genetic Circuit Compression

| Item Name | Function / Application | Key Features / Examples |

|---|---|---|

| Orthogonal Synthetic TFs | Core wetware for T-Pro circuit implementation. Enables input-specific regulation without cross-talk. | Repressor/Anti-repressor sets responsive to IPTG (LacI), D-ribose (RbsR), and cellobiose (CelR) [13]. |

| Synthetic Promoters (SPs) | Cognate DNA binding sites for synthetic TFs. The combination of TFs and SPs defines the circuit's logic. | Tandem operator designs that can be regulated by multiple TFs simultaneously, enabling compressed logic [13]. |

| Engineered 5' UTRs | Post-transcriptional regulation scaffold. Provides a platform for implementing repression and BUFFER gates. | The glgC 5' UTR (-61 to -1) with CsrA GGA-binding motifs for CsrA-based repression [16]. |

| Algorithmic Enumeration Software | Qualitative design software for finding the smallest circuit topology for a given Boolean function. | Software that models circuits as Directed Acyclic Graphs (DAGs) and systematically enumerates designs by increasing complexity [13]. |

| Robustness Scoring Function | Quantitative metric for automated circuit selection in GDA workflows. Accounts for model inaccuracy and cell-to-cell variability. | A modified Wasserstein metric that scores circuits based on the separation and overlap of their ON/OFF output distributions [14]. |
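To give a feel for distribution-based scoring, the sketch below computes the plain one-dimensional Wasserstein-1 distance between ON and OFF output samples. It is not the modified metric from [14], whose exact form is not given here. The sample distributions are synthetic, and the helper assumes equally sized samples so that the distance reduces to the mean gap between sorted values.

```python
import numpy as np

def wasserstein_1d(a, b):
    """Wasserstein-1 distance between two equally sized 1D samples."""
    a = np.sort(np.asarray(a, dtype=float))
    b = np.sort(np.asarray(b, dtype=float))
    if a.size != b.size:
        raise ValueError("this sketch assumes equally sized samples")
    # For equal sample sizes the optimal transport plan pairs sorted values.
    return float(np.mean(np.abs(a - b)))

# Synthetic log10-fluorescence distributions (illustrative only).
rng = np.random.default_rng(0)
off = rng.normal(2.0, 0.3, 5000)
on_good = rng.normal(4.0, 0.3, 5000)    # well-separated ON state
on_leaky = rng.normal(2.5, 0.3, 5000)   # ON state overlapping with OFF

score_good = wasserstein_1d(off, on_good)
score_leaky = wasserstein_1d(off, on_leaky)
```

Unlike a ratio of means, a distance between whole distributions responds to the shape and overlap of the ON and OFF populations, which is the property a robustness score needs when cell-to-cell variability is large.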

Simulation in Action: Methodologies and Real-World Applications for Predictive Circuit Design

The forward engineering of biological systems presents a grand challenge, requiring sophisticated computational approaches to manage complexity. Bio-design automation (BDA) has emerged as a critical discipline, applying computational techniques from electronic design automation to biological engineering workflows [17]. These workflows encompass five main areas: specification, design, building, testing, and learning [17].

A fundamental challenge in synthetic biology is that biological circuit components lack strict composability, creating a discrepancy between qualitative design and quantitative performance prediction known as the "synthetic biology problem" [1]. As circuit complexity increases, limitations in biological part modularity and the metabolic burden imposed on chassis cells severely constrain design capacity [1].

Algorithmic enumeration addresses these challenges by systematically exploring the combinatorial design space to identify minimal genetic implementations. This approach is exemplified by the T-Pro (Transcriptional Programming) framework, which leverages synthetic transcription factors and promoters to achieve circuit compression—designing genetic circuits with fewer parts for higher-state decision-making [1]. This review details the software architecture, experimental protocols, and applications of algorithmic enumeration methods for guaranteeing minimal circuit designs in synthetic biology.

Software Architecture and Implementation

Algorithmic Enumeration Method

The T-Pro framework employs a generalizable algorithmic enumeration method for designing 3-input Boolean logic circuits. This approach models genetic circuits as directed acyclic graphs and systematically enumerates circuits in sequential order of increasing complexity [1].

- Search Space Complexity: The combinatorial space for 3-input T-Pro circuits is on the order of 10^14 putative circuits, from which 256 non-synonymous operations with prescribed truth tables must be selected [1].

- Compression Optimization: The algorithm guarantees identification of the most compressed circuit implementation for a given truth table by sequentially exploring designs with increasing part counts [1].

- Wetware Integration: The software coordinates with expanded T-Pro wetware, including synthetic transcription factors responsive to orthogonal signals (IPTG, D-ribose, and cellobiose) with engineered alternate DNA recognition functions [1].

Quantitative Performance Prediction

A critical innovation in modern algorithmic enumeration tools is their ability to provide quantitative performance predictions with high accuracy:

Table 1: Performance Metrics of Algorithmic Enumeration Software

| Metric | Performance Value | Validation Method |

|---|---|---|

| Prediction Error | <1.4-fold average error | >50 test cases |

| Circuit Size Reduction | ~4x smaller than canonical inverter-type circuits | Component count comparison |

| Boolean Logic Capacity | 256 distinct 3-input truth tables | Functional validation |

| Multi-state Decision Making | 8-state (000 to 111) | Truth table verification |

The software incorporates workflows that account for genetic context effects when quantifying expression levels, enabling predictive design of genetic circuits with precise performance setpoints [1]. This represents a significant advancement beyond qualitative design-by-eye approaches that require labor-intensive experimental optimization.

Experimental Protocols

Wetware Engineering for Circuit Components

Objective: Engineer orthogonal sets of synthetic transcription factors (repressors and anti-repressors) for 3-input Boolean logic circuits.

Materials:

- CelR regulatory core domain (RCD) scaffold

- Site saturation mutagenesis reagents

- Error-prone PCR (EP-PCR) materials

- Fluorescence-activated cell sorting (FACS) capability

- Synthetic promoter libraries with tandem operator designs

Methodology:

- Repressor Selection: Verify synthetic transcription factors against synthetic promoter sets using tandem operator designs. Select optimal repressors based on dynamic range and ON-state expression level in the presence of ligand [1].

- Super-repressor Generation: Perform site saturation mutagenesis at strategic amino acid positions (e.g., position 75 for CelR scaffold) to create ligand-insensitive variants that retain DNA binding function [1].

- Anti-repressor Engineering: Conduct error-prone PCR on super-repressor templates at low mutation rates to generate variant libraries (~10^8 variants) [1].

- Functional Screening: Use FACS to identify anti-repressor variants with desired phenotypes. Validate anti-repressor function across multiple alternate DNA recognition domains [1].

Computational Enumeration Workflow

Objective: Identify minimal genetic circuit implementations for target Boolean functions.

Figure 1: Algorithmic enumeration workflow for identifying minimal genetic circuit designs. The process systematically explores circuits of increasing complexity until identifying the most compressed implementation satisfying the target truth table.

Implementation Details:

- Truth Table Specification: Precisely define the desired 3-input Boolean logic function across all 8 possible input states (000 to 111) [1].

- Systematic Enumeration: Generate candidate circuits using a directed acyclic graph model, exploring implementations with increasing component counts [1].

- Functional Validation: Verify each candidate circuit against the target truth table, proceeding until identifying the minimal implementation [1].

- Context Integration: Incorporate genetic context parameters to enable quantitative performance prediction for the compressed design [1].

Circuit Validation and Performance Characterization

Objective: Experimentally validate computationally designed circuits and measure performance metrics.

Materials:

- Chassis cells (appropriate microbial hosts)

- Induction ligands (IPTG, D-ribose, cellobiose)

- Reporter genes (fluorescent proteins)

- Flow cytometry or microplate readers

Protocol:

- Construct Assembly: Implement the computationally designed circuit using standardized assembly methods.

- Transfer Function Analysis: Measure input-output relationships across a range of inducer concentrations.

- Burden Assessment: Quantify growth rates and metabolic burden compared to control strains.

- Truth Table Verification: Confirm circuit functionality matches all states of the target Boolean logic table.
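Transfer functions measured in this kind of protocol are commonly summarised with a Hill curve. The source does not state which functional form was used in [1], so the Hill form is an assumption here, and all parameter values are made up for illustration.

```python
import numpy as np

def hill_activation(x, y_min, y_max, K, n):
    """Hill-type dose response: output rises from y_min to y_max around x = K."""
    x = np.asarray(x, dtype=float)
    return y_min + (y_max - y_min) * x**n / (K**n + x**n)

# Hypothetical inducer titration (arbitrary concentration units).
inducer = np.logspace(-1, 3, 9)
response = hill_activation(inducer, y_min=50.0, y_max=5000.0, K=10.0, n=2.0)

# Dynamic range across the titrated window (highest / lowest dose).
dynamic_range = response[-1] / response[0]
```

At x = K the output sits exactly halfway between y_min and y_max, which makes K a convenient summary of inducer sensitivity when comparing circuit variants.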

Applications and Case Studies

Circuit Compression for Biocomputing

The T-Pro framework with algorithmic enumeration has demonstrated significant advantages for biological computing applications:

Table 2: Research Reagent Solutions for Genetic Circuit Design

| Reagent Category | Specific Examples | Function in Circuit Design |

|---|---|---|

| Synthetic Transcription Factors | E+TAN repressor, EA1TAN anti-repressor | Perform core logical operations through DNA binding regulation |

| Synthetic Promoters | Tandem operator designs | Provide regulated expression platforms responsive to synthetic TFs |

| Orthogonal Inducer Systems | IPTG, D-ribose, cellobiose | Enable independent control of multiple circuit inputs |

| Regulatory Core Domains | CelR RCD with ADR variations | Create orthogonal protein-DNA interactions for circuit scaling |

| Reporter Systems | Fluorescent proteins (GFP, RFP) | Quantify circuit performance and output states |

- 3-Input Boolean Logic: The expansion from 2-input to 3-input Boolean logic enables 256 distinct truth tables compared to the previous 16 operations [1].

- Reduced Metabolic Burden: Compressed circuits are approximately four times smaller than canonical inverter-type genetic circuits, significantly reducing the resource load placed on host cells [1].

- Predictive Design: Quantitative predictions show an average error below 1.4-fold across more than 50 test cases, reducing the need for iterative experimental optimization [1].

Metabolic Pathway Control

Algorithmic enumeration software has been successfully applied to metabolic engineering challenges:

- Flux Control: Precisely control flux through toxic biosynthetic pathways by implementing optimal regulatory programs [1].

- Operon Design: Design compressed genetic circuits that coordinate expression of multiple pathway enzymes with minimal genetic footprint [1].

- Setpoint Targeting: Achieve precise expression levels for metabolic enzymes using quantitative performance prediction workflows [1].

Synthetic Memory Systems

The methodology enables predictive design of recombinase-based genetic memory:

- State Switching: Implement stable genetic memory elements with target recombination efficiencies [1].

- Minimal Design: Utilize circuit compression to create memory systems with reduced part counts compared to traditional architectures [1].

The Scientist's Toolkit

Algorithmic Enumeration Tools:

- T-Pro Circuit Designer: Specialized software for enumerating and optimizing transcriptional programming circuits [1].

- Cello: Compiles Verilog code specifying logic circuits to DNA sequences with prediction of design correctness [17].

- Eugene: Rule-based specification language that incorporates abstraction layers and automatically generates combinatorial biological devices [17].

Supporting Frameworks:

- GenoCAD: Gene design and simulation tool with repository of biological parts and support for various grammars/libraries [17].

- Proto/Biocompiler: Generates optimized genetic regulatory network designs from specifications written in a high-level programming language [17].

DNA Assembly and Construction:

- Standardized Part Libraries: Curated collections of promoters, coding sequences, and terminators with characterized performance metrics.

- Automated Assembly Workflows: Robotic platforms for high-throughput construction of genetic circuits.

Characterization Platforms:

- Flow Cytometry: Single-cell resolution measurement of circuit performance in population contexts.

- Microplate Readers: Bulk measurement of circuit transfer functions across induction ranges.

Figure 2: Architecture of a compressed 3-input genetic circuit using synthetic transcription factors. Multiple inputs regulate synthetic TFs that integrate at a single promoter implementing complex logic with minimal components.

Leveraging Machine Learning for Sequence-to-Function and Composition-to-Function Predictions

The automated design of biological circuits represents a frontier in synthetic biology, enabling the programming of cellular behaviors for therapeutic and biotechnological applications. A central challenge in this endeavor is the predictive mapping of biological sequences—whether DNA, RNA, or protein—to their resulting functions. Machine learning (ML), and particularly deep learning (DL), has emerged as a transformative technology for creating these sequence-to-function and composition-to-function models. By leveraging large-scale biological data, these models allow researchers to bypass traditionally labor-intensive and expensive experimental characterization, accelerating the design-build-test cycle for genetic circuits, enzymes, and therapeutic proteins. This Application Note details key ML methodologies and provides standardized protocols for their implementation, specifically framed within the context of simulation research for automated biological circuit design.

Key Machine Learning Approaches

Computational protein function prediction methods can be broadly categorized based on the input information they utilize. The following table summarizes the main classes of methods, their input features, and example applications.

Table 1: Categories of Machine Learning Methods for Function Prediction

| Method Category | Primary Input Features | Example Algorithms & Tools | Key Applications in Circuit Design |

|---|---|---|---|

| Sequence-Based | Protein/DNA primary sequence, amino acid k-mers, physicochemical properties | FUTUSA [18] [19], ProLanGO [19], DeepGOPlus [19] | Predicting novel enzyme activity (e.g., oxidoreductase, acetyltransferase) from sequence alone [18] |

| Structure-Based | 3D protein structure, spatial & biochemical features from PDB or AlphaFold | DeepFRI [19], Struct2GO [19], GAT-GO [19] | Predicting protein-protein interactions (PPIs) with high biological accuracy [20] |

| Interaction-Based | Protein-Protein Interaction (PPI) network data, functional associations | Graph2GO [19], deepNF [19], NetGO3 [19] | Mapping functional modules and conserved interaction patterns within synthetic pathways [20] |

| Integrative | Combined sequence, structure, interaction, and/or textual data | TransFun [19], MultiPredGO [19] | Holistic functional annotation for poorly characterized proteins in novel circuits [19] |

Sequence-to-Function Prediction

Sequence-to-function models directly map a linear sequence of nucleotides or amino acids to a specific functional output, a capability essential for predicting the behavior of novel genetic parts and enzymes in a circuit.

Methodologies and Algorithms

- Convolutional Neural Networks (CNNs): Models like FUTUSA (Function Teller Using Sequence Alone) use CNN-based feature extraction from protein sequences. They employ sequence segmentation to train on regional sequence patterns and their relationships, which has been shown to improve predictive performance by 49% compared to processing full-length sequences [18] [19]. This is particularly powerful for identifying local motifs and functional domains.

- Recurrent Neural Networks (RNNs): Methods such as ProLanGO treat function prediction as a language translation problem, where the protein sequence (ProLan) is "translated" into a function language (GOLan) of Gene Ontology terms. An encoder-decoder RNN architecture captures the sequential dependencies between amino acids [19].

- Transformers and Attention Mechanisms: These architectures are increasingly used due to their ability to capture long-range dependencies in sequences. They offer enhanced interpretability by using attention mechanisms to identify key residues critical for function [19].

Application Protocol: Predicting Enzyme Function with FUTUSA

Objective: To predict the molecular function of a protein (e.g., enzyme commission class) using only its amino acid sequence.

Experimental Workflow:

Diagram 1: FUTUSA Prediction Workflow

Step-by-Step Procedure:

Input Preparation:

- Obtain the FASTA format sequence of the target protein.

- Pre-process the sequence by segmenting it into overlapping regional windows to enhance pattern recognition [18].
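
The input-preparation step can be sketched in a few lines of Python. This is a minimal illustration, not FUTUSA's actual preprocessing: the helper names `read_fasta` and `segment_sequence`, the window length, and the stride are all assumptions chosen for readability.

```python
def read_fasta(text):
    """Parse a single-record FASTA string into (header, sequence)."""
    lines = [ln.strip() for ln in text.strip().splitlines() if ln.strip()]
    if lines and lines[0].startswith(">"):
        return lines[0][1:], "".join(lines[1:]).upper()
    return "", "".join(lines).upper()


def segment_sequence(seq, window=8, stride=4):
    """Split a sequence into overlapping windows (window and stride are assumed values)."""
    if len(seq) <= window:
        return [seq]
    segments = [seq[i:i + window] for i in range(0, len(seq) - window + 1, stride)]
    # Add a final window so the C-terminal residues are always covered.
    if (len(seq) - window) % stride != 0:
        segments.append(seq[-window:])
    return segments


header, sequence = read_fasta(">demo_protein\nMKTAYIAKQR\nQISFVKSHFS\n")
windows = segment_sequence(sequence, window=8, stride=4)
```

Because consecutive windows overlap, a motif that straddles one window boundary still appears intact in a neighboring window.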

Feature Extraction:

- The segmented sequences are fed into a one-dimensional CNN to generate numerical embeddings for each amino acid, moving beyond simple one-hot encoding to capture richer physicochemical information [19].

- A second CNN layer then extracts spatial features and local sequence patterns from these embeddings.

Classification:

- The extracted features are passed through one or more fully connected (dense) layers to create hidden feature representations that integrate information from across the sequence.

- The final classification layer (e.g., a softmax layer) uses these hidden features to predict the probability of each functional class (e.g., monooxygenase activity) [18] [19].
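
The feature-extraction and classification steps can be illustrated with a toy forward pass. The sketch below is deliberately simplified and is not the FUTUSA architecture: it uses one-hot encoding instead of learned embeddings, a single convolutional layer instead of two, and random untrained weights. All function names, layer sizes, and the three output classes are assumptions made for illustration.

```python
import math
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def one_hot(seq):
    """Encode each residue as a 20-dimensional indicator vector."""
    return [[1.0 if aa == a else 0.0 for a in AMINO_ACIDS] for aa in seq]


def conv1d_relu(x, filters):
    """Slide each filter (width x channels) along the sequence, then apply ReLU."""
    width = len(filters[0])
    out = []
    for start in range(len(x) - width + 1):
        window = x[start:start + width]
        row = []
        for f in filters:
            act = sum(w * v for f_row, x_row in zip(f, window)
                      for w, v in zip(f_row, x_row))
            row.append(max(0.0, act))
        out.append(row)
    return out


def global_max_pool(features):
    """Keep the strongest response of each filter across all positions."""
    return [max(column) for column in zip(*features)]


def dense(vec, weights, biases):
    return [sum(w * v for w, v in zip(row, vec)) + b
            for row, b in zip(weights, biases)]


def softmax(logits):
    peak = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def init_params(n_filters=4, width=3, n_classes=3, seed=0):
    """Random, untrained weights; a real model would learn these from data."""
    rng = random.Random(seed)
    filters = [[[rng.gauss(0, 0.5) for _ in AMINO_ACIDS] for _ in range(width)]
               for _ in range(n_filters)]
    weights = [[rng.gauss(0, 0.5) for _ in range(n_filters)]
               for _ in range(n_classes)]
    return filters, weights, [0.0] * n_classes


def predict_proba(seq, params):
    filters, weights, biases = params
    pooled = global_max_pool(conv1d_relu(one_hot(seq), filters))
    return softmax(dense(pooled, weights, biases))


probabilities = predict_proba("MKTAYIAKQR", init_params())
```

The output is one probability per hypothetical functional class. With untrained weights the values are meaningless; the point is only to show how convolution, pooling, dense, and softmax layers connect.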

Validation:

- In silico validation: Perform cross-validation on hold-out test sets with known functions.

- Experimental validation: For high-confidence predictions, validate the function using targeted biochemical assays (e.g., enzyme activity assays).

Application in Circuit Design: This protocol can predict the catalytic function of an enzyme encoded by a novel sequence, allowing researchers to incorporate it into a metabolic pathway within a genetic circuit. Furthermore, once trained on a specific function, the model can predict the functional consequence of point mutations, such as assessing the impact of a mutation in phenylalanine hydroxylase responsible for phenylketonuria (PKU) [18].

Composition-to-Function Prediction

Composition-to-function models predict the emergent behavior of a system composed of multiple interacting parts, such as the logical output of a genetic circuit built from promoters, coding sequences, and transcription factors.

Methodologies and Algorithms

- Structure-Based PPI Prediction: These methods use 3D structural information, either from experimental data (Protein Data Bank) or predictions (AlphaFold), to predict whether and how two proteins interact. They offer greater biological accuracy than sequence-only methods by explicitly modeling spatial and biochemical complementarity [20] [21].

- Graph Neural Networks (GNNs): GNNs are ideal for modeling relational data, such as PPI networks or genetic circuits. They operate on graph structures where nodes represent proteins or genetic parts, and edges represent interactions. GNNs can predict novel interactions and functional properties of entire networks [20] [19].

- Algorithmic Enumeration for Circuit Compression: For genetic circuit design, methods like Transcriptional Programming (T-Pro) use algorithmic enumeration to navigate a vast combinatorial space of genetic parts. The algorithm, modeled as a directed acyclic graph, systematically identifies the smallest possible circuit (compressed circuit) that implements a desired Boolean logic function, thereby minimizing the metabolic burden on the host chassis [1].

Application Protocol: Designing a Compressed Genetic Logic Circuit with T-Pro

Objective: To design a 3-input Boolean logic genetic circuit (e.g., for higher-state decision-making) with a minimal number of genetic parts.

Experimental Workflow:

Diagram 2: T-Pro Circuit Design Workflow

Step-by-Step Procedure:

Problem Definition:

- Define the desired 3-input (8-state) Boolean logic operation as a truth table (e.g., INPUTS: A, B, C; OUTPUT: Y) [1].

In Silico Design via Algorithmic Enumeration:

- Use the T-Pro enumeration algorithm to search the combinatorial space of possible circuits. The algorithm explores circuits in order of increasing complexity, guaranteeing the identification of the most compressed (smallest) design that satisfies the target truth table [1].

- The output is a qualitative circuit design specifying the required synthetic promoters, repressors, and anti-repressors.
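
The idea of searching circuits in order of increasing complexity can be shown with a toy enumerator. This sketch is not the T-Pro algorithm: it assumes a generic library containing only two-input NOR gates, counts gates in a formula without sharing sub-circuits, and encodes each 3-input truth table as an 8-bit integer. The names `table_of` and `smallest_nor_formula` are hypothetical.

```python
INPUT_NAMES = "ABC"
N_STATES = 2 ** len(INPUT_NAMES)   # 8 input states for 3 inputs
MASK = (1 << N_STATES) - 1


def input_table(i):
    """Truth table of input i: bit s is set when input i is ON in state s."""
    return sum(1 << s for s in range(N_STATES) if (s >> i) & 1)


def table_of(fn):
    """Encode a Python predicate fn(a, b, c) as an 8-bit truth table."""
    return sum(1 << s for s in range(N_STATES)
               if fn(s & 1, (s >> 1) & 1, (s >> 2) & 1))


def smallest_nor_formula(target, max_gates=10):
    """Return (gate_count, expression) for the smallest NOR formula, or None."""
    best = {input_table(i): (0, INPUT_NAMES[i]) for i in range(len(INPUT_NAMES))}
    by_cost = {0: list(best)}
    if target in best:
        return best[target]
    for k in range(1, max_gates + 1):          # increasing complexity
        found = {}
        for cost_a in range(k):
            cost_b = k - 1 - cost_a
            if cost_b < cost_a:                # NOR is symmetric
                break
            for f in by_cost.get(cost_a, []):
                for g in by_cost.get(cost_b, []):
                    h = ~(f | g) & MASK
                    if h not in best and h not in found:
                        found[h] = (k, f"NOR({best[f][1]},{best[g][1]})")
        best.update(found)
        by_cost[k] = list(found)
        if target in best:                     # first hit is the smallest formula
            return best[target]
    return None


gates, expression = smallest_nor_formula(table_of(lambda a, b, c: a and b))
```

Because candidate formulas are generated level by level, the first formula that matches the target truth table has the fewest gates within this simplified gate library.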

Wetware Assembly:

- Cloning: Assemble the designed circuit using standardized biological parts (e.g., from the iGEM registry). The T-Pro framework relies on orthogonal sets of synthetic transcription factors (TFs) responsive to different inducers (e.g., IPTG, D-ribose, and cellobiose) [1].

- Chassis Transformation: Introduce the constructed plasmid into the microbial chassis (e.g., E. coli).

Testing and Validation:

- Characterization: Measure the circuit's output (e.g., fluorescence) in response to all combinations of input signals.

- Performance Analysis: Compare the quantitative performance (e.g., transfer function, dynamic range) to the model's predictions. The T-Pro workflow has been shown to achieve quantitative predictions with an average error below 1.4-fold [1].
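
A fold-error comparison between predicted and measured outputs might be computed as follows. The source does not specify how the average is taken, so this sketch assumes the arithmetic mean of per-state symmetric fold errors; the function names and the numbers in the example are purely illustrative.

```python
def fold_error(predicted, measured):
    """Symmetric fold error: 1.0 is a perfect match, 2.0 is a two-fold miss."""
    if predicted <= 0 or measured <= 0:
        raise ValueError("fold error is only defined for positive values")
    return max(predicted / measured, measured / predicted)


def average_fold_error(predicted, measured):
    """Arithmetic mean of per-state fold errors (an assumed averaging scheme)."""
    errors = [fold_error(p, m) for p, m in zip(predicted, measured)]
    return sum(errors) / len(errors)


# Illustrative fluorescence values for four input states (not real data).
predicted_output = [100.0, 950.0, 120.0, 1000.0]
measured_output = [110.0, 900.0, 100.0, 1250.0]
mean_error = average_fold_error(predicted_output, measured_output)
```

The symmetric definition penalizes over-prediction and under-prediction equally, which matters when outputs span several orders of magnitude.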

Application in Circuit Design: This protocol enables the automated design of complex genetic circuits that are, on average, approximately 4 times smaller than canonical designs, significantly reducing the metabolic burden on the host cell and improving circuit stability and predictability [1]. This is directly applicable to building sophisticated sensors, processors, and actuators in synthetic biology.

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for ML-Guided Biological Design

| Reagent / Resource | Type | Function in Experimentation | Example Use-Case |
|---|---|---|---|
| Synthetic Transcription Factors (TFs) [1] | Wetware (Protein) | Engineered repressors and anti-repressors that bind synthetic promoters to implement logical operations in genetic circuits. | Core component for building T-Pro compression circuits responsive to inducers like IPTG, ribose, and cellobiose. |
| T-Pro Synthetic Promoters [1] | Wetware (DNA) | Engineered DNA sequences containing tandem operator sites for binding synthetic TFs, facilitating transcriptional programming. | Provides the regulatory logic for genetic circuits, working in concert with synthetic TFs. |
| AlphaFold Database [20] | Software/Database | Provides highly accurate predicted 3D protein structures for millions of proteins, updated regularly. | Source of structural data for structure-based PPI prediction when experimental structures are unavailable. |
| DIP / IntAct / STRING [20] | Database | Curated databases of experimentally verified and predicted Protein-Protein Interactions (PPIs). | Used as ground-truth data for training and validating interaction-based ML models like GNNs. |
| Negatome Database [20] | Database | A manually curated collection of protein pairs that are known not to interact. | Provides critical negative examples for training ML models to avoid false-positive PPI predictions. |
| FUTUSA [18] [19] | Software (Deep Learning) | A CNN-based deep learning program that predicts protein function from sequence information alone. | First-step tool for functional annotation of newly identified or poorly characterized proteins in a circuit. |

The automated design of biological circuits presents a fundamental challenge: how to optimize system performance when the relationship between circuit components and their functional output is complex, poorly understood, or computationally expensive to model directly. Black-box optimization methods have emerged as powerful tools for this task, as they do not require detailed mechanistic knowledge of the underlying system but instead treat the system as a "black box" where inputs are mapped to outputs through iterative experimentation. In the context of biological circuit design, these algorithms efficiently navigate high-dimensional parameter spaces—such as concentrations of inducers, gene expression rates, and regulatory strengths—to find combinations that yield desired circuit behaviors.

Two particularly influential classes of algorithms for this purpose are Bayesian optimization (BO) and evolutionary algorithms (EAs). Bayesian optimization constructs a probabilistic model of the objective function and uses it to direct the search toward promising regions, making it exceptionally sample-efficient for expensive experiments [22]. Evolutionary algorithms, inspired by natural selection, maintain a population of candidate solutions that undergo selection, mutation, and recombination to progressively improve fitness over generations [23] [24]. These methods are transforming biological research by enabling the optimization of molecular designs (e.g., antibodies, peptides), gene circuit tuning, culture protocol optimization, and patient-specific dose adjustment, even in the face of substantial biological noise and variability across individuals [22].
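
The selection, recombination, and mutation loop of an evolutionary algorithm can be sketched as follows. This is a generic illustration rather than a method from the cited works: the truncation selection, uniform crossover, Gaussian mutation, population size, and the `toy_fitness` function (which stands in for a measured circuit output) are all assumptions.

```python
import random


def toy_fitness(genome, target=(0.2, 0.5, 0.8)):
    """Hypothetical objective: closeness of three normalized expression levels to a target."""
    return -sum((g - t) ** 2 for g, t in zip(genome, target))


def evolve(fitness, n_params=3, pop_size=20, generations=40, sigma=0.1, seed=0):
    rng = random.Random(seed)
    population = [[rng.random() for _ in range(n_params)] for _ in range(pop_size)]
    for _ in range(generations):
        # Selection: keep the fitter half of the population unchanged (elitism).
        population.sort(key=fitness, reverse=True)
        parents = population[: pop_size // 2]
        children = []
        while len(parents) + len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            # Recombination: each parameter is inherited from one parent at random.
            child = [rng.choice(pair) for pair in zip(a, b)]
            # Mutation: Gaussian perturbation, clipped to the allowed range [0, 1].
            child = [min(1.0, max(0.0, g + rng.gauss(0, sigma))) for g in child]
            children.append(child)
        population = parents + children
    return max(population, key=fitness)


best_genome = evolve(toy_fitness)
```

In a laboratory setting each fitness evaluation would be an experiment, so population size and generation count would be limited by throughput rather than compute.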

Bayesian Optimization for Biological Circuit Design

Core Principles and Biological Applicability

Bayesian optimization is a sequential global optimization strategy designed to find the extremum of a black-box function with minimal evaluations, a critical feature when each evaluation represents a costly or time-consuming biological experiment [25]. Its effectiveness in biological contexts stems from several inherent advantages: it does not require the objective function to be differentiable, it handles noisy outcomes common in biological data, and it efficiently manages the exploration-exploitation trade-off inherent in experimental design [25].

The power of BO derives from three interconnected components:

- A probabilistic surrogate model, typically a Gaussian Process (GP), which estimates the function and its uncertainty at unexplored points based on observed data.

- An acquisition function which uses the surrogate model's predictions to quantify the utility of evaluating each point in the parameter space, balancing exploration of uncertain regions with exploitation of known promising areas.

- A Bayesian update mechanism that refines the surrogate model as new data becomes available [25].

This framework is particularly suited to biological applications because it can incorporate prior knowledge (a "prior") and update beliefs with new experimental evidence (the "posterior"), making it ideal for lab-in-the-loop research where each data point is expensive to acquire [25].
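
The three components described above (surrogate, acquisition function, and update loop) can be sketched in plain Python for a single normalized input. This is a minimal teaching example under several assumptions: a zero-mean Gaussian Process with an RBF kernel of fixed length scale, an upper-confidence-bound acquisition function, a grid of candidate points, and a hypothetical `toy_yield` function standing in for a real experiment. It is not the BioKernel implementation.

```python
import math


def rbf(a, b, length=0.2):
    """RBF kernel with an assumed fixed length scale."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[p] = M[p], M[c]
        for r in range(c + 1, n):
            factor = M[r][c] / M[c][c]
            for j in range(c, n + 1):
                M[r][j] -= factor * M[c][j]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][j] * x[j] for j in range(r + 1, n))) / M[r][r]
    return x


def gp_posterior(xs, ys, x_new, noise=1e-4):
    """Surrogate model: posterior mean and standard deviation at x_new."""
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    k_star = [rbf(a, x_new) for a in xs]
    mean = sum(k * w for k, w in zip(k_star, solve(K, ys)))
    var = rbf(x_new, x_new) - sum(k * w for k, w in zip(k_star, solve(K, k_star)))
    return mean, math.sqrt(max(var, 0.0))


def ucb(mean, std, kappa=2.0):
    """Acquisition function: larger kappa favors exploration."""
    return mean + kappa * std


def bayes_opt(objective, initial_xs, n_iter=10, grid_size=101):
    xs = list(initial_xs)
    ys = [objective(x) for x in xs]
    grid = [i / (grid_size - 1) for i in range(grid_size)]
    for _ in range(n_iter):
        x_next = max(grid, key=lambda x: ucb(*gp_posterior(xs, ys, x)))
        xs.append(x_next)               # run the next "experiment"
        ys.append(objective(x_next))    # Bayesian update uses all data so far
    i_best = max(range(len(xs)), key=lambda i: ys[i])
    return xs[i_best], ys[i_best]


def toy_yield(x):
    """Hypothetical yield as a function of normalized inducer concentration."""
    return math.exp(-((x - 0.63) ** 2) / 0.02)


best_x, best_y = bayes_opt(toy_yield, [0.0, 0.5, 1.0])
```

In practice the objective call would be replaced by a wet-lab measurement, and a maintained GP library would handle kernel hyperparameters and measurement noise more carefully than this fixed-parameter sketch does.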

Detailed Experimental Protocol for Bayesian Optimization

Protocol Title: Bayesian Optimization for Gene Circuit Tuning in Metabolic Engineering

Objective: To optimize the expression levels of multiple genes in a synthetic metabolic pathway (e.g., for limonene or astaxanthin production) to maximize product yield.

Materials and Reagents:

- E. coli Marionette strain with genomically integrated orthogonal inducible transcription factors [25].

- Plasmids containing the target metabolic pathway genes.

- Chemical inducers (e.g., naringenin) for transcriptional control.

- Spectrophotometer or HPLC for product quantification.

Software Requirements:

- Bayesian optimization software (e.g., BioKernel, a no-code BO framework) [25].

- Tools for heteroscedastic noise modeling to account for non-constant measurement uncertainty.

Procedure:

- Define the Optimization Problem:

- Inputs (Parameters to Vary): Identify the n control parameters (e.g., the concentrations of n different inducers regulating pathway genes).

- Output (Objective Function): Define the quantitative measurement to maximize (e.g., limonene production measured in mg/L).

- Constraints: Specify any technical constraints (e.g., inducer concentration ranges, total experiment budget).

Initial Experimental Design: