Automated DBTL Pipelines: Accelerating Microbial Production of Fine Chemicals and Therapeutics

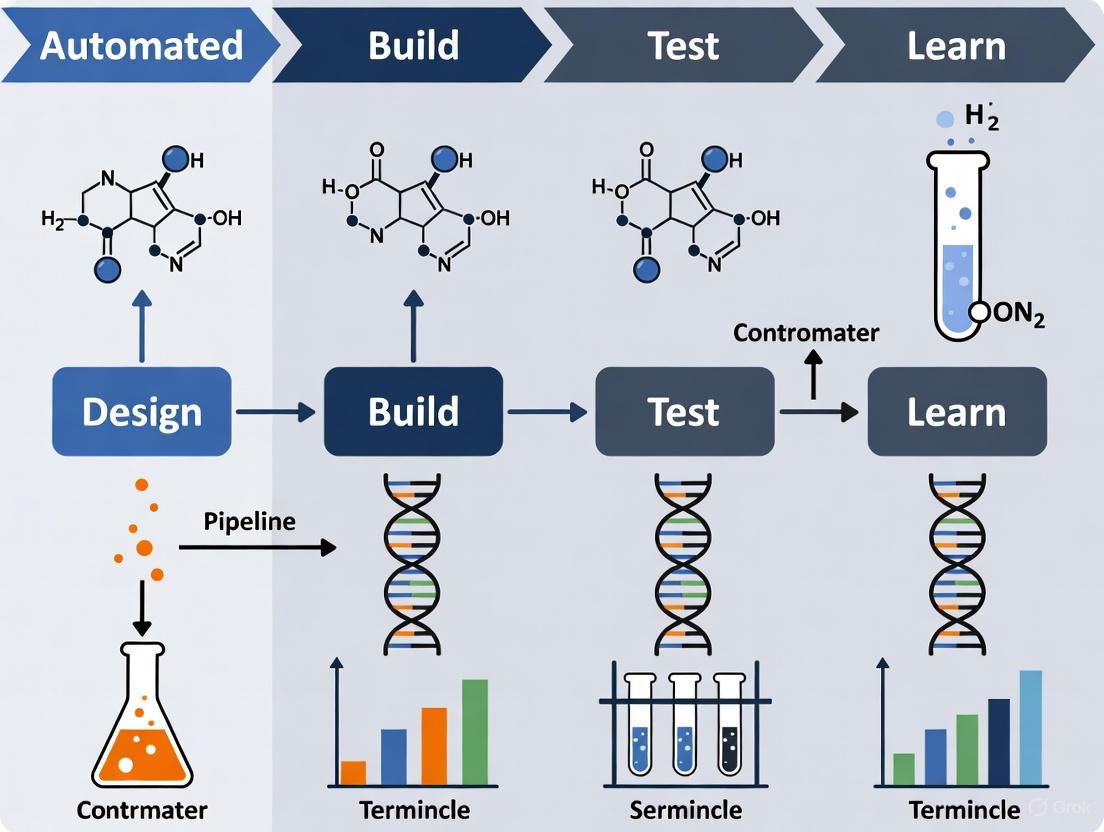

Abstract

This article explores the transformative role of automated Design-Build-Test-Learn (DBTL) pipelines in synthetic biology for the microbial production of fine chemicals and pharmaceutical precursors. Tailored for researchers, scientists, and drug development professionals, it provides a comprehensive examination of the foundational principles, methodological implementations, and AI-driven optimization of these integrated systems. Through specific case studies on compounds like pinocembrin, dopamine, and colicins, we detail how automation and machine learning are overcoming traditional bottlenecks in strain engineering and pathway optimization. The content further validates these approaches with performance benchmarks and discusses emerging paradigms, offering a strategic resource for deploying these accelerated engineering cycles in both academic and industrial biomanufacturing.

The DBTL Framework: Foundations for Automated Microbial Engineering

Defining the Design-Build-Test-Learn (DBTL) Cycle in Synthetic Biology

The Design-Build-Test-Learn (DBTL) cycle represents a systematic framework central to modern synthetic biology, enabling the iterative engineering of biological systems for enhanced production of valuable compounds. This article details the implementation of an automated DBTL pipeline for microbial production of fine chemicals, using the optimization of (2S)-pinocembrin in Escherichia coli as a primary case study. Through two iterative DBTL cycles, we achieved a 500-fold improvement in production titers, demonstrating the power of this approach for rapid strain development. The protocols and data presented provide researchers with a blueprint for implementing automated DBTL workflows in their own metabolic engineering projects.

In synthetic biology, the Design-Build-Test-Learn (DBTL) cycle provides a structured engineering framework for developing biological systems with desired functions [1]. This iterative process begins with in silico Design of biological parts, proceeds to physical Building of genetic constructs, advances to experimental Testing of system performance, and concludes with Learning from generated data to inform the next design cycle [2]. The DBTL framework has become fundamental to synthetic biology because it addresses a core challenge: despite rational design, introducing foreign DNA into cellular systems often produces unpredictable outcomes, necessitating multiple testing iterations [1].

Automation has dramatically accelerated DBTL cycling in recent years, with biofoundries implementing robotic platforms and computational tools to streamline each phase [3]. This automation enables researchers to explore vast design spaces efficiently, significantly reducing the time and resources required for strain optimization [4]. The DBTL approach is particularly valuable for microbial production of fine chemicals, where it has successfully optimized pathways for compounds ranging from flavonoids to alkaloids [4] [5].

DBTL Phase Protocols and Applications

Design Phase: Computational Planning

Objective: Design genetic constructs and pathways in silico to meet predefined engineering objectives.

Protocol:

- Pathway Selection: Utilize computational tools like RetroPath to identify potential biosynthetic pathways for your target compound [4].

- Enzyme Selection: Employ enzyme selection platforms such as Selenzyme to identify optimal enzymes for each pathway step based on catalytic efficiency, substrate specificity, and host compatibility [4].

- Genetic Design: Translate protein sequences to coding sequences with optimized codon usage for the host organism. Design regulatory elements including promoters, ribosome binding sites (RBS), and terminators [4].

- Assembly Design: Generate detailed DNA assembly protocols specifying cloning methods (Gibson assembly, Golden Gate, etc.), fragment ordering, and necessary overhang sequences [3].

- Library Design: For multivariate optimization, create combinatorial libraries covering different parameters (promoter strengths, gene orders, copy numbers). Apply Design of Experiments (DoE) methodologies to reduce library size while maintaining representativeness [4].

Application Note: In the pinocembrin case study, researchers designed an initial library of 2,592 possible configurations varying four parameters: vector copy number, promoter strength for each gene, and gene order. Using statistical DoE, this was reduced to 16 representative constructs, achieving a 162:1 compression ratio [4].
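The size of the full design space and the compression ratio can be checked with a few lines of Python. This is a minimal sketch, not the authors' design tool. The factorization used here (four backbone expression levels, three promoter options in each of the three intergenic regions, and 24 gene orders) is an assumption chosen because it reproduces the reported total of 2,592, and the level labels are placeholders.

```python
from itertools import permutations, product

# Assumed factor levels (placeholder labels, not the authors' part names).
BACKBONE_LEVELS = ["level_1", "level_2", "level_3", "level_4"]  # 4 expression levels
INTERGENIC_PROMOTERS = ["strong", "weak", "none"]               # options ahead of genes 2-4
GENES = ["PAL", "4CL", "CHS", "CHI"]


def enumerate_designs():
    """Yield every (backbone, intergenic promoter choices, gene order) combination."""
    gene_orders = list(permutations(GENES))                                     # 4! = 24
    promoter_sets = list(product(INTERGENIC_PROMOTERS, repeat=len(GENES) - 1))  # 3^3 = 27
    yield from product(BACKBONE_LEVELS, promoter_sets, gene_orders)


full_library = list(enumerate_designs())
reduced_library_size = 16  # size of the DoE-selected subset reported in the text

print(len(full_library))                          # 2592
print(len(full_library) // reduced_library_size)  # 162
```

Enumerating the space explicitly is only practical for small libraries like this one, but it gives a quick consistency check before a DoE tool selects the representative subset.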

Build Phase: Physical Construction

Objective: Physically assemble designed genetic constructs and introduce them into host organisms.

Protocol:

- DNA Synthesis: Order DNA fragments from commercial providers (Twist Bioscience, IDT, GenScript) or amplify from existing stocks [4] [3].

- Automated Assembly: Implement robotic liquid handling systems (Tecan, Beckman Coulter, Hamilton) for high-throughput DNA assembly. For the pinocembrin pathway, ligase cycling reaction (LCR) was used for assembly [4].

- Transformation: Introduce assembled constructs into appropriate host cells (E. coli DH5α for plasmid propagation, production strains for functional testing) [4].

- Quality Control: Verify constructs through automated plasmid purification, restriction digest analysis, and sequencing (Sanger or NGS) [4] [3].

Application Note: Integration with laboratory information management systems (LIMS) like TeselaGen enables sample tracking and protocol management throughout the Build phase. Automated platforms can manage inventory, track freezer stocks, and execute complex pipetting workflows with minimal human intervention [3].

Test Phase: Functional Characterization

Objective: Experimentally measure performance of engineered biological systems.

Protocol:

- Cultivation: Grow engineered strains in appropriate media formats (96-deepwell plates for high-throughput screening) with standardized growth conditions [4].

- Induction: Implement automated induction protocols for gene expression control [4].

- Metabolite Analysis: Extract metabolites from cultures and quantify target compounds and intermediates using analytical methods such as UPLC-MS/MS [4].

- Data Processing: Implement automated data extraction and processing pipelines using custom scripts (R, Python) for rapid analysis [4].

Application Note: In the pinocembrin study, automated 96-deepwell plate growth and induction protocols enabled rapid screening of construct libraries. Quantitative UPLC-MS/MS analysis provided precise measurements of pinocembrin and key intermediates like cinnamic acid, revealing production titers ranging from 0.002 to 0.14 mg/L in the initial library [4].

Learn Phase: Data Analysis and Insight Generation

Objective: Analyze experimental data to extract insights and guide subsequent design cycles.

Protocol:

- Statistical Analysis: Apply statistical methods to identify significant factors influencing system performance. For the initial pinocembrin library, this included ANOVA to determine effects of copy number, promoter strengths, and gene order [4].

- Machine Learning: Implement ML algorithms to build predictive models linking genotype to phenotype. These models can identify non-intuitive relationships and optimize multi-parameter systems [2] [3].

- Hypothesis Generation: Formulate new design hypotheses based on statistical and ML analysis to improve system performance in subsequent DBTL cycles.

Application Note: Analysis of the initial pinocembrin library revealed that vector copy number had the strongest significant effect on production (P value = 2.00 × 10⁻⁸), followed by chalcone isomerase (CHI) promoter strength (P value = 1.07 × 10⁻⁷). Interestingly, high levels of the intermediate cinnamic acid suggested phenylalanine ammonia-lyase (PAL) activity was not rate-limiting despite its promoter strength showing some effect [4].
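The factor-ranking step can be illustrated with a one-way ANOVA F statistic computed per design factor. The sketch below uses only the standard library, and every titer in it is hypothetical; it is not the data or the exact statistical model used in [4]. A larger F value indicates that a factor explains more of the variation in titer. Converting F to a P value requires the F distribution, which a statistics package such as SciPy (`scipy.stats.f_oneway`) provides.

```python
from collections import defaultdict
from statistics import mean


def one_way_anova_f(groups):
    """Return the one-way ANOVA F statistic for a list of measurement groups."""
    values = [x for group in groups for x in group]
    grand_mean = mean(values)
    group_means = [mean(group) for group in groups]
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means))
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, group_means) for x in g)
    df_between, df_within = len(groups) - 1, len(values) - len(groups)
    return (ss_between / df_between) / (ss_within / df_within)


# Hypothetical screening runs (copy number, gene order, titer in mg/L); NOT data from [4].
runs = [
    ("low", "order_a", 0.01), ("low", "order_a", 0.02),
    ("low", "order_b", 0.02), ("low", "order_b", 0.03),
    ("high", "order_a", 0.10), ("high", "order_a", 0.12),
    ("high", "order_b", 0.11), ("high", "order_b", 0.13),
]
FACTOR_COLUMNS = {"copy_number": 0, "gene_order": 1}

f_stats = {}
for factor, column in FACTOR_COLUMNS.items():
    by_level = defaultdict(list)
    for run in runs:
        by_level[run[column]].append(run[2])  # group titers by the factor's level
    f_stats[factor] = one_way_anova_f(list(by_level.values()))

# Rank factors from most to least influential.
for factor, f_value in sorted(f_stats.items(), key=lambda item: item[1], reverse=True):
    print(f"{factor}: F = {f_value:.2f}")
```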

Case Study: Pinocembrin Production in E. coli

The power of the automated DBTL pipeline was demonstrated through the optimization of (2S)-pinocembrin production in E. coli [4]. The biosynthetic pathway comprised four enzymes: phenylalanine ammonia-lyase (PAL), 4-coumarate:CoA ligase (4CL), chalcone synthase (CHS), and chalcone isomerase (CHI) converting L-phenylalanine to pinocembrin [4].

Table 1: Pinocembrin Production Through DBTL Iterations

| DBTL Cycle | Key Design Changes | Production Titer (mg/L) | Fold Improvement |
|---|---|---|---|
| Initial constructs | 16 representative designs from full combinatorial library | 0.002 - 0.14 | Baseline |
| Cycle 2 | High-copy backbone; optimized CHI promoter and position | Up to 88 | 500-fold |

Table 2: Statistical Analysis of Design Factors in Initial Library

| Design Factor | Effect on Pinocembrin Production | P-value |
|---|---|---|
| Vector copy number | Strongest positive effect | 2.00 × 10⁻⁸ |
| CHI promoter strength | Strong positive effect | 1.07 × 10⁻⁷ |
| CHS promoter strength | Moderate effect | 1.01 × 10⁻⁴ |
| 4CL promoter strength | Moderate effect | 1.01 × 10⁻⁴ |
| PAL promoter strength | Weak effect | 3.06 × 10⁻⁴ |
| Gene order | Not significant | > 0.05 |

Pathway Engineering and Workflow

Diagram 1: Pinocembrin biosynthetic pathway. The diagram shows the conversion of L-phenylalanine to pinocembrin through five enzymatic steps, including C4H. In the E. coli case study, the expressed pathway comprised four enzymes (PAL, 4CL, CHS, CHI); the C4H step was bypassed by using cinnamic acid as a precursor or through endogenous activity [4].

Diagram 2: Automated DBTL workflow for pinocembrin production. The integrated pipeline features specialized computational tools for Design, robotic automation for Build, high-throughput analytics for Test, and statistical modeling for Learn phases [4] [3].

Advanced DBTL Methodologies

Knowledge-Driven DBTL and Cell-Free Prototyping

Recent advances have introduced knowledge-driven DBTL cycles that incorporate upstream in vitro investigation to guide rational strain engineering [6]. This approach was successfully applied to dopamine production in E. coli, where cell-free lysate systems were used to test different enzyme expression levels before implementing changes in vivo [6]. This strategy enabled the development of a dopamine production strain achieving 69.03 ± 1.2 mg/L, a 2.6 to 6.6-fold improvement over previous reports [6].

Cell-free expression systems represent another powerful methodology for accelerating DBTL cycles. These systems enable rapid protein synthesis without cloning steps, typically producing >1 g/L of protein in under 4 hours [2]. When combined with microfluidics, cell-free systems can screen over 100,000 reactions in picoliter-scale droplets, generating massive datasets for machine learning model training [2].

Machine Learning and the LDBT Paradigm

The integration of machine learning is transforming traditional DBTL approaches. Protein language models (ESM, ProGen) and structure-based design tools (ProteinMPNN) now enable zero-shot predictions of protein function and stability [2]. These advances have prompted proposals for a paradigm shift from DBTL to LDBT (Learn-Design-Build-Test), where machine learning precedes design based on large biological datasets [2].

Table 3: Machine Learning Tools for Biological Design

| Tool Name | Type | Primary Application | Key Feature |
|---|---|---|---|
| ESM [2] | Protein language model | Function prediction | Trained on evolutionary relationships |
| ProGen [2] | Protein language model | Sequence generation | Designs diverse functional sequences |
| MutCompute [2] | Structure-based deep learning | Residue optimization | Predicts stabilizing mutations |
| ProteinMPNN [2] | Structure-based deep learning | Sequence design | Designs sequences for target structures |
| Prethermut [2] | Stability prediction | Thermostability optimization | Predicts effects of mutations on stability |
| DeepSol [2] | Solubility prediction | Solubility optimization | Predicts protein solubility from sequence |

Essential Research Reagents and Solutions

Table 4: Key Research Reagent Solutions for DBTL Implementation

| Reagent/Resource | Function/Application | Example Suppliers/Tools |
|---|---|---|
| DNA Synthesis Providers | Supply custom DNA fragments and libraries | Twist Bioscience, IDT, GenScript [3] |
| Automated Liquid Handlers | Enable high-throughput pipetting and assembly | Tecan, Beckman Coulter, Hamilton [3] |
| Cell-Free Expression Systems | Rapid protein synthesis without cloning | PURExpress, homemade extracts [2] |
| Analytical Instruments | Metabolite quantification and characterization | UPLC-MS/MS, HPLC, plate readers [4] [3] |
| Software Platforms | DBTL cycle management and data analysis | TeselaGen, CLC Genomics, Geneious [3] |
| Design Tools | In silico pathway and part design | RetroPath, Selenzyme, PartsGenie [4] |

The Design-Build-Test-Learn cycle provides a powerful framework for systematic engineering of biological systems, with automated implementations dramatically accelerating strain development for fine chemical production. The pinocembrin case study demonstrates how iterative DBTL cycling coupled with statistical analysis can achieve remarkable improvements in production titers. Emerging methodologies including knowledge-driven DBTL, cell-free prototyping, and machine learning integration are further enhancing the efficiency and predictive power of this approach. As these technologies mature, DBTL pipelines will continue to transform synthetic biology from an empirical art to a predictive engineering discipline.

The Critical Need for Automation in Strain Development for Fine Chemicals

The microbial production of fine chemicals presents a promising biosustainable manufacturing solution but is often hindered by the immense resource investments and lengthy development times required for strain engineering [4]. The Design-Build-Test-Learn (DBTL) cycle, a core engineering paradigm, has been adopted to structure this development process. However, its traditional, manual implementation remains a major bottleneck. The integration of laboratory automation and robotics is therefore not merely an incremental improvement but a critical enabler that transforms the DBTL cycle from a slow, sequential process into a rapid, high-throughput, and iterative workflow [4] [7]. This automated pipeline is essential for the efficient discovery and optimization of microbial strains, making the production of high-value fine chemicals economically viable [4] [6].

This application note details the components of an automated DBTL pipeline, provides quantitative evidence of its impact, and outlines specific protocols for its implementation, framed within the context of a broader thesis on advancing microbial production research.

The Automated DBTL Pipeline: Components and Workflow

An automated DBTL pipeline for strain development integrates computational design, robotic construction, high-throughput analytics, and data analysis into a continuous, iterative cycle [4]. The workflow and logical relationships between these stages are illustrated below.

Stage 1: Design

The Design phase employs a suite of bioinformatics tools for in silico pathway prototyping. For any target compound, tools like RetroPath [4] and Selenzyme [4] automate the selection of potential biosynthetic routes and candidate enzymes. The PartsGenie software [4] then designs reusable DNA parts, optimizing elements like ribosome-binding sites (RBS) and codon usage. A critical step is using Design of Experiments (DoE) to reduce the vast combinatorial design space of pathway variants (e.g., promoters, gene order) into a smaller, statistically representative library for testing, achieving compression ratios as high as 162:1 [4].

Stage 2: Build

The Build stage translates digital designs into physical DNA constructs. This stage leverages commercial DNA synthesis followed by automated, robot-assisted assembly using methods like ligase cycling reaction (LCR) [4]. Automated protocols are also established for host transformation and subsequent quality control, including high-throughput plasmid purification, restriction digest, and sequence verification [4] [7]. A key advancement is the development of integrated robotic protocols for organisms like Saccharomyces cerevisiae, which can increase throughput to ~400 transformations per day, a 10-fold improvement over manual methods [7].

Stage 3: Test

In the Test phase, constructed strains are cultivated in automated systems, typically using 96-deepwell plates [4]. Following growth, the process of metabolite extraction is automated. Quantitative analysis of the target chemical and key intermediates is performed using fast, sensitive methods such as ultra-performance liquid chromatography coupled to tandem mass spectrometry (UPLC-MS/MS) [4]. The development of rapid LC-MS methods, which can reduce analyte detection runtime from 50 minutes to 19 minutes, is crucial for screening large libraries efficiently [7].
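The effect of the shorter LC-MS method on screening time is easy to quantify. The following back-of-the-envelope sketch assumes strictly serial injections of one full 96-well plate with no equilibration, blank, or standard runs in between; real instrument schedules will add overhead.

```python
def instrument_hours(n_samples, runtime_min):
    """Total instrument time in hours for serial injections (no overhead assumed)."""
    return n_samples * runtime_min / 60


samples_per_plate = 96
slow = instrument_hours(samples_per_plate, 50)  # original 50-minute method
fast = instrument_hours(samples_per_plate, 19)  # rapid 19-minute method
print(f"{slow:.1f} h vs {fast:.1f} h per plate ({slow / fast:.2f}x faster)")
```

Under these assumptions a plate that would occupy the instrument for 80 hours is finished in about 30 hours, which is the difference between one and more than two plates per working week on a single instrument.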

Stage 4: Learn

The Learn phase involves analyzing the high-throughput data to extract meaningful insights. This is achieved through the application of statistical methods and machine learning (ML) to identify the relationships between genetic design factors (e.g., promoter strength, copy number) and observed production titers [4] [8]. These insights directly inform the design of the next, improved library of strains, thus closing the DBTL loop [4] [6].
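In its simplest form, closing the loop means picking, for each design factor, the level associated with the best average titer and carrying that choice into the next library. The sketch below shows this main-effects selection with hypothetical factor names and titers. It ignores interactions between factors, which a proper statistical or ML model would capture.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical Test-phase results; factor names, levels, and titers are illustrative only.
results = [
    {"copy_number": "low", "chi_promoter": "weak", "titer_mg_l": 0.004},
    {"copy_number": "low", "chi_promoter": "strong", "titer_mg_l": 0.02},
    {"copy_number": "high", "chi_promoter": "weak", "titer_mg_l": 0.05},
    {"copy_number": "high", "chi_promoter": "strong", "titer_mg_l": 0.14},
]


def best_levels(rows, factors, response="titer_mg_l"):
    """For each factor, return the level with the highest mean response (main effect)."""
    choice = {}
    for factor in factors:
        by_level = defaultdict(list)
        for row in rows:
            by_level[row[factor]].append(row[response])
        choice[factor] = max(by_level, key=lambda level: mean(by_level[level]))
    return choice


next_design = best_levels(results, ["copy_number", "chi_promoter"])
print(next_design)  # {'copy_number': 'high', 'chi_promoter': 'strong'}
```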

Quantitative Impact of Automation

The implementation of an automated DBTL cycle has demonstrated dramatic improvements in the speed and success of strain engineering projects. The following table summarizes key performance metrics from recent applications.

Table 1: Performance Metrics of Automated DBTL Pipelines in Strain Engineering

| Target Compound / Process | Host Organism | Key Automated Step | Quantitative Improvement | Throughput / Efficiency Gain |
|---|---|---|---|---|
| (2S)-Pinocembrin [4] | Escherichia coli | Pathway assembly & screening | 500-fold increase in production (over 2 cycles); final titer of 88 mg/L | Library compression of 162:1 via DoE [4] |
| Dopamine [6] | Escherichia coli | High-throughput RBS engineering | Final titer of 69.03 ± 1.2 mg/L; 2.6 to 6.6-fold improvement over previous state-of-the-art | Knowledge-driven DBTL cycle using upstream in vitro testing [6] |
| Verazine [7] | Saccharomyces cerevisiae | Robotic yeast transformation | Identified genes giving a 2 to 5-fold increase in production | Throughput of ~400 transformations/day (10x manual) [7] |
| General Strain Construction [7] | Saccharomyces cerevisiae | Integrated robotic workflow | Successful transformation rate compatible with downstream automation | Pipeline capacity of 2,000 transformations/week [7] |

Detailed Experimental Protocols

Protocol: Automated Build Phase for Yeast Strain Construction

This protocol outlines the automated high-throughput transformation of S. cerevisiae using a Hamilton Microlab VANTAGE system, as described by Robinson et al. [7].

I. Research Reagent Solutions

Table 2: Essential Reagents for Automated Yeast Transformation

| Reagent / Material | Function / Explanation |
|---|---|
| Competent S. cerevisiae cells | Engineered production host (e.g., verazine-producing strain PW-4). |
| pESC-URA plasmid library | Expression vector with auxotrophic marker for selection. |
| Lithium Acetate (LiOAc) | Component of transformation mix; alters cell wall to facilitate DNA uptake. |
| Single-Stranded Carrier DNA (ssDNA) | Blocks nucleases and improves plasmid DNA uptake efficiency. |
| Polyethylene Glycol (PEG) | Promotes cell membrane fusion and DNA entry during heat shock. |
| YPAD Agar Plates | Growth medium for outgrowth and selection of successful transformants. |

II. Workflow Diagram

III. Step-by-Step Procedure

- Workflow Initialization: Load the deck of the Hamilton VANTAGE platform according to the predefined labware layout. The user interface (programmed in Hamilton VENUS) allows for customization of parameters like DNA volume and incubation times.

- Transformation Mix Assembly: The robotic arm pipettes the plasmid DNA library, competent yeast cells, and transformation mix reagents (LiOAc, PEG, ssDNA) into a 96-well plate. Note: Pipetting parameters for viscous reagents like PEG are pre-optimized for accuracy.

- Heat Shock: The robotic arm transfers the 96-well plate to an off-deck thermal cycler (e.g., Inheco ODTC) for a heat shock incubation at 42°C. The plate is automatically sealed and peeled using integrated off-deck devices during this step.

- Cell Washing: Following heat shock, the plate is centrifuged, and the robot aspirates the supernatant. The cell pellets are then resuspended in water.

- Plating: The transformed cell suspension is automatically transferred onto solid YPAD agar plates for outgrowth.

- Downstream Processing: The resulting colonies can be picked using an automated colony picker (e.g., QPix 460) for subsequent high-throughput culturing and screening.
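The transformation mix assembly step depends on a worklist that tells the liquid handler what to dispense into which well. The sketch below generates such a worklist as generic CSV. It is not the Hamilton VENUS worklist format; the column names, plasmid names, and per-well volumes are all hypothetical placeholders that a validated protocol would replace.

```python
import csv
import io
from string import ascii_uppercase

ROWS, COLS = 8, 12  # standard 96-well plate geometry

# Hypothetical per-well volumes in microliters; not taken from the published protocol.
REAGENT_VOLUMES_UL = {"plasmid_dna": 5, "competent_cells": 20, "transformation_mix": 100}
FIELDS = ["dest_well", "sample", "reagent", "volume_ul"]


def well_ids():
    """Return well names in row-major order: A1..A12, B1..B12, ..., H12."""
    return [f"{ascii_uppercase[r]}{c}" for r in range(ROWS) for c in range(1, COLS + 1)]


def build_worklist(plasmid_names):
    """Assign each plasmid to one destination well and list the transfers it needs."""
    wells = well_ids()
    if len(plasmid_names) > len(wells):
        raise ValueError("more plasmids than wells on one plate")
    rows = []
    for well, plasmid in zip(wells, plasmid_names):
        for reagent, volume in REAGENT_VOLUMES_UL.items():
            rows.append({"dest_well": well, "sample": plasmid,
                         "reagent": reagent, "volume_ul": volume})
    return rows


# Arbitrary example library of 24 plasmids.
worklist = build_worklist([f"plasmid_{i:02d}" for i in range(1, 25)])

buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=FIELDS)
writer.writeheader()
writer.writerows(worklist)
print("\n".join(buffer.getvalue().splitlines()[:4]))  # header plus first three transfers
```

Keeping the worklist as plain data, separate from the robot method, makes it straightforward to regenerate for a new library and to archive alongside the Build records.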

Protocol: Automated Test Phase for Metabolite Screening

This protocol details the high-throughput screening of microbial cultures for fine chemical production, compatible with 96-deepwell plate formats [4] [7].

I. Research Reagent Solutions

Table 3: Essential Reagents for Metabolite Screening

| Reagent / Material | Function / Explanation |
|---|---|
| Production Media | Chemically defined or rich media optimized for the production host and target pathway. |
| Inducer (e.g., IPTG, Galactose) | Triggers the expression of heterologous biosynthetic pathway genes. |
| Zymolyase / Lysozyme | Enzymes for efficient cell lysis: Zymolyase for yeast/fungal cell walls, lysozyme for bacterial cell walls. |
| Organic Solvents (e.g., Methanol, Acetonitrile) | Used for metabolite extraction and protein precipitation. |
| LC-MS Grade Solvents & Standards | High-purity solvents for UPLC-MS/MS; authentic chemical standards for quantification. |

II. Workflow Diagram

III. Step-by-Step Procedure

- Cultivation: Inoculate production media in 96-deepwell plates from the library of engineered strains. Use a liquid handling robot for consistency. Incubate the plates with shaking in a controlled environment. Induce pathway expression at the optimal growth phase using an automated dispenser.

- Metabolite Extraction: At a defined time post-induction, harvest the cells by centrifugation. Implement an automated extraction protocol, which may involve:

  - Cell Lysis: For robust cell walls (e.g., yeast), add a buffer containing Zymolyase and incubate to digest the wall [7].

  - Solvent Extraction: Add a suitable organic solvent (e.g., methanol or acetonitrile) to the lysate or cell pellet to extract the intracellular and extracellular metabolites. This also precipitates proteins.

- Sample Analysis: Centrifuge the extraction plate to pellet cell debris and precipitated protein. Transfer the clarified supernatant to a new plate for analysis.

- UPLC-MS/MS Quantification: Use an autosampler to inject samples onto the UPLC-MS/MS system. A rapid, optimized method (e.g., 19-minute runtime [7]) is critical for high throughput. The mass spectrometer should be operated in Multiple Reaction Monitoring (MRM) mode for high sensitivity and specificity when quantifying target compounds against a standard curve.
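Quantification against a standard curve, as in the final step above, amounts to fitting a calibration line to the authentic standards and inverting it for each sample. The sketch below uses an ordinary least-squares fit. The concentrations and peak areas are hypothetical, and a real assay may need weighting or a non-linear model depending on the detector response.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def quantify(peak_area, slope, intercept):
    """Invert the calibration line to get a concentration from an MRM peak area."""
    return (peak_area - intercept) / slope


# Hypothetical calibration standards (mg/L) and their peak areas; not measured data.
standard_conc = [0.0, 0.5, 1.0, 2.0, 4.0]
standard_area = [100.0, 600.0, 1100.0, 2100.0, 4100.0]

slope, intercept = fit_line(standard_conc, standard_area)
sample_conc = quantify(1600.0, slope, intercept)
print(f"{sample_conc:.2f} mg/L")
```

Samples whose peak areas fall outside the range covered by the standards should be diluted and re-run rather than extrapolated.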

Automation is the cornerstone of a modern, efficient biofoundry. The integration of robotics and data science at every stage of the DBTL cycle—from design to learning—dramatically accelerates the development of microbial cell factories for fine chemicals. The quantitative data and detailed protocols provided herein serve as a blueprint for research institutions and industrial laboratories to implement these critical technologies, thereby overcoming traditional bottlenecks and unlocking new possibilities in sustainable biomanufacturing.

The implementation of automated Design-Build-Test-Learn (DBTL) pipelines represents a paradigm shift in microbial metabolic engineering for fine chemicals production. This application note details the core components of an integrated automated DBTL platform, from specialized software tools to robotic hardware systems. We present quantitative performance data from case studies on flavonoid and dopamine production in Escherichia coli, along with detailed protocols for pathway assembly and screening. The documented pipeline achieves up to 500-fold improvement in production titers through iterative cycling, demonstrating the transformative potential of automation in biopharmaceutical research and development.

The Design-Build-Test-Learn (DBTL) framework has emerged as a cornerstone of modern synthetic biology and metabolic engineering. Automated DBTL pipelines integrate computational design, robotic construction, high-throughput analytical testing, and machine learning-driven analysis into an iterative, closed-loop system [4]. These platforms are particularly valuable for optimizing microbial production of fine chemicals, where traditional approaches require substantial time and resource investments.

Biofoundries—specialized facilities housing integrated automation systems—enable the rapid prototyping of microbial strains through sophisticated robotic workflows [7]. The automation of each DBTL phase significantly accelerates strain development cycles, with demonstrated capacity of up to 2,000 transformations per week in yeast systems—a 10-fold improvement over manual methods [7]. For pharmaceutical applications, these systems enhance product quality through reduced human intervention and precise process control while ensuring compliance with regulatory requirements [9] [10].

Core Components of an Automated DBTL Pipeline

Design Phase: In Silico Pathway Design and Library Construction

The Design phase initiates the DBTL cycle through computational selection of biosynthetic pathways and enzymatic components. Automated pathway design utilizes software tools including RetroPath for pathway selection and Selenzyme for enzyme selection [4]. DNA parts are designed with simultaneous optimization of ribosome-binding sites (RBS) and coding sequences using tools such as PartsGenie [4].

Combinatorial libraries are constructed in silico by varying multiple parameters: plasmid copy number (e.g., ColE1, p15a, pSC101 origins), promoter strength (e.g., Ptrc, PlacUV5), intergenic regions, and gene order permutations [4]. Statistical methods like Design of Experiments (DoE) enable efficient exploration of design spaces, achieving compression ratios of 162:1 (reducing 2,592 combinations to 16 representative constructs) while maintaining library diversity [4].

Table 1: Software Tools for Automated DBTL Design Phase

| Tool Name | Function | Application Example | Reference |
|---|---|---|---|
| RetroPath | Pathway selection | Flavonoid biosynthesis pathway design | [4] |
| Selenzyme | Enzyme selection | Identification of optimal enzymes for target reactions | [4] |
| PartsGenie | DNA part design | RBS optimization and coding sequence refinement | [4] |
| UTR Designer | RBS engineering | Fine-tuning translation initiation rates | [6] |
| TeselaGen Platform | DNA assembly protocol generation | Managing complex combinatorial libraries | [3] |

Build Phase: Automated DNA Assembly and Strain Construction

The Build phase translates digital designs into physical biological constructs through automated laboratory workflows. Robotic platforms such as the Hamilton Microlab VANTAGE execute modular protocols for DNA assembly, transformation, and quality control [7]. Integration with external hardware (plate sealers, thermal cyclers, colony pickers) enables end-to-end automation of molecular biology workflows [7].

DNA assembly employs standardized methods such as ligase cycling reaction (LCR) or Gibson assembly, with automated worklist generation ensuring reagent precision [4]. Liquid handling robots from manufacturers including Tecan, Beckman Coulter, and Hamilton Robotics provide high-precision pipetting for PCR setup, DNA normalization, and plasmid preparation [3]. Quality control is implemented through automated plasmid purification, restriction digest analysis, and sequence verification [4].
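DNA normalization, one of the pipetting tasks mentioned above, follows the dilution relation C1 × V1 = C2 × V2. The sketch below computes the stock and diluent volumes for each well. The measured concentrations, target concentration, and final volume are hypothetical values chosen for illustration.

```python
def normalization_volumes(stock_ng_per_ul, target_ng_per_ul, final_volume_ul):
    """Return (stock volume, diluent volume) in microliters using C1 * V1 = C2 * V2."""
    if stock_ng_per_ul < target_ng_per_ul:
        raise ValueError("stock is more dilute than the target concentration")
    stock_volume = target_ng_per_ul * final_volume_ul / stock_ng_per_ul
    return stock_volume, final_volume_ul - stock_volume


# Hypothetical plasmid prep concentrations (ng/µL) keyed by source well.
measured = {"A1": 120.0, "A2": 80.0, "A3": 200.0}

for well, conc in measured.items():
    dna_ul, water_ul = normalization_volumes(conc, target_ng_per_ul=40.0, final_volume_ul=30.0)
    print(f"{well}: {dna_ul:.1f} µL DNA + {water_ul:.1f} µL water")
```

In practice the computed volumes also need to be checked against the minimum volume the liquid handler can dispense accurately.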

Table 2: Robotic Systems for DBTL Build Phase

| System Type | Example Models | Primary Function | Throughput Capacity | Reference |
|---|---|---|---|---|
| Automated Liquid Handlers | Hamilton VANTAGE, Tecan Freedom EVO, Beckman Coulter Biomek | Precise reagent dispensing, PCR setup, DNA normalization | ~400 transformations/day (up to 2,000/week) | [7] [3] |
| Integrated Robotics | Hamilton iSWAP | Plate movement between instruments | Hands-free operation of multi-step protocols | [7] |
| External Hardware | Inheco ODTC thermocycler, 4titude plate sealer | Specific process steps (heat shock, sealing) | Parallel processing of 96-well plates | [7] |
| Colony Pickers | QPix 460 | Automated selection of transformed colonies | High-throughput strain library generation | [7] |

Test Phase: High-Throughput Screening and Analytics

The Test phase employs automated cultivation and analytical systems to rapidly characterize library performance. Robotic platforms execute 96-deepwell plate growth and induction protocols with precise environmental control [4]. Sample processing includes automated metabolite extraction followed by quantitative analysis using ultra-performance liquid chromatography coupled to tandem mass spectrometry (UPLC-MS/MS) [4] [7].

High-throughput screening systems incorporate plate readers (e.g., PerkinElmer EnVision, BioTek Synergy HTX) for rapid phenotypic assessment [3]. For secondary metabolite detection, a rapid LC-MS method reduces the runtime from 50 to 19 minutes while maintaining data quality, which is critical for screening large libraries [7]. Data extraction and processing are automated through custom scripts (e.g., R-based pipelines) that convert raw data into analyzable formats [4].
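A data-processing script of this kind typically groups replicate wells by construct and reports summary statistics. The source pipeline is described as R-based; the sketch below shows the same idea in Python instead. The CSV layout, column names, and titers are hypothetical and do not correspond to any particular instrument export.

```python
import csv
import io
from collections import defaultdict
from statistics import mean, stdev

# Hypothetical instrument export; column names and values are placeholders.
RAW_EXPORT = """well,construct,titer_mg_l
A1,construct_01,0.010
A2,construct_01,0.014
A3,construct_02,0.120
A4,construct_02,0.140
"""


def summarize(raw_csv):
    """Group replicate wells by construct and return the mean and standard deviation."""
    replicates = defaultdict(list)
    for row in csv.DictReader(io.StringIO(raw_csv)):
        replicates[row["construct"]].append(float(row["titer_mg_l"]))
    return {
        name: {"mean": mean(values), "sd": stdev(values) if len(values) > 1 else 0.0}
        for name, values in replicates.items()
    }


summary = summarize(RAW_EXPORT)

# Report constructs from highest to lowest mean titer.
for name, stats in sorted(summary.items(), key=lambda item: item[1]["mean"], reverse=True):
    print(f"{name}: {stats['mean']:.3f} ± {stats['sd']:.3f} mg/L")
```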

Learn Phase: Data Integration and Machine Learning

The Learn phase applies statistical analysis and machine learning to extract design principles from experimental data. Statistical methods identify significant factors influencing production titers, such as plasmid copy number and promoter strength effects [4]. Machine learning algorithms build predictive models connecting genetic designs to phenotypic outcomes, enabling genotype-to-phenotype predictions for subsequent DBTL cycles [3].

Platforms like TeselaGen's Discover Module employ predictive models to forecast biological phenotypes using quantitative data and advanced embeddings representing DNA, proteins, and chemical compounds [3]. The integration of all experimental data into centralized repositories with standardized application programming interfaces (APIs) facilitates data mining and pattern recognition across multiple DBTL cycles [3].

Application Notes: Microbial Production of Fine Chemicals

Case Study 1: Flavonoid Production in E. coli

Background: Flavonoids represent a structurally diverse class of natural products with pharmaceutical applications. Pinocembrin serves as a key biosynthetic precursor, produced from L-phenylalanine via a four-enzyme pathway [4].

Experimental Protocol:

- Pathway Design: A combinatorial library was designed incorporating four expression levels (vector backbone selection), promoter strength variations (strong Ptrc vs. weak PlacUV5), intergenic region regulation (strong, weak, or no promoter), and 24 gene order permutations [4].

- Library Reduction: Design of Experiments based on orthogonal arrays combined with Latin square arrangement reduced 2,592 combinations to 16 representative constructs [4].

- Strain Construction: Automated LCR assembly was performed on robotic platforms, followed by transformation into E. coli DH5α. Constructs were verified through automated plasmid purification, restriction digest, and sequencing [4].

- Screening: Cultures were grown in 96-deepwell plates with automated induction and metabolite extraction. Pinocembrin and intermediate (cinnamic acid) quantification used UPLC-MS/MS with high mass resolution [4].

- Data Analysis: Statistical analysis identified significant factors affecting production titers using custom R scripts [4].
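The library size quoted in the protocol can be reproduced with a short enumeration. This is a sketch of one factorization that yields 2,592, assuming three intergenic positions (one between each adjacent pair of the four genes), each with three promoter options; the source does not spell out this breakdown, so treat it as an interpretation rather than the authors' stated design.

```python
from itertools import permutations, product

genes = ["PAL", "4CL", "CHS", "CHI"]
vector_levels = 4                             # four expression levels from vector backbone choice
intergenic_options = ["strong", "weak", "none"]
n_intergenic = len(genes) - 1                 # assumption: one regulatory slot between adjacent genes

gene_orders = list(permutations(genes))
intergenic_combos = list(product(intergenic_options, repeat=n_intergenic))

library_size = vector_levels * len(intergenic_combos) * len(gene_orders)
compression = library_size / 16               # 16 constructs were actually built

print(len(gene_orders), len(intergenic_combos), library_size)
print(f"Compression ratio: {compression:.0f}:1")
```

With these assumptions the full factorial space is 4 × 27 × 24 = 2,592 designs, so the 16-construct library samples it at a ratio of 162:1.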

Results and Learning: Initial library screening revealed pinocembrin titers ranging from 0.002 to 0.14 mg/L [4]. Statistical analysis identified vector copy number as the strongest positive factor (P = 2.00×10⁻⁸), followed by chalcone isomerase (CHI) promoter strength (P = 1.07×10⁻⁷) [4]. A second DBTL cycle incorporating these insights achieved 88 mg/L pinocembrin—a 500-fold improvement over initial constructs [4].

Figure 1: Flavonoid Biosynthesis Pathway for Pinocembrin Production. Enzymes: PAL (phenylalanine ammonia-lyase), 4CL (4-coumarate:CoA ligase), CHS (chalcone synthase), CHI (chalcone isomerase).

Case Study 2: Dopamine Production in E. coli

Background: Dopamine has applications in emergency medicine, cancer treatment, and materials science. A knowledge-driven DBTL approach incorporated upstream in vitro testing to inform strain design [6].

Experimental Protocol:

- In Vitro Testing: Crude cell lysate systems expressed pathway enzymes to assess relative expression levels and identify bottlenecks before in vivo implementation [6].

- Host Engineering: E. coli FUS4.T2 was engineered for enhanced L-tyrosine production through deletion of the transcriptional dual regulator TyrR and mutation of feedback inhibition in chorismate mutase/prephenate dehydrogenase (tyrA) [6].

- RBS Engineering: High-throughput RBS engineering fine-tuned expression of HpaBC (4-hydroxyphenylacetate 3-monooxygenase) and Ddc (L-DOPA decarboxylase) using simplified Shine-Dalgarno sequence modulation [6].

- Cultivation: Strains were cultured in 96-deepwell plates in minimal medium with 20 g/L glucose, 10% 2xTY, MOPS buffer, and appropriate supplements [6].

- Analytical Methods: Dopamine quantification employed LC-MS with optimized rapid methods to reduce analysis time while maintaining sensitivity [6].

Results and Learning: The optimized dopamine production strain achieved titers of 69.03 ± 1.2 mg/L (34.34 ± 0.59 mg/g biomass)—a 2.6 to 6.6-fold improvement over previous in vivo production systems [6]. The study demonstrated the impact of GC content in the Shine-Dalgarno sequence on RBS strength and translation efficiency [6].
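Since the study links Shine-Dalgarno GC content to RBS strength, a small helper for ranking candidate sequences by GC fraction may be useful. The sketch below only computes GC content; it does not predict translation rates. Apart from the canonical AGGAGG consensus, the variant sequences are hypothetical examples and are not taken from [6].

```python
def gc_content(seq: str) -> float:
    """Fraction of G and C bases in a DNA sequence."""
    seq = seq.upper()
    if not seq:
        raise ValueError("empty sequence")
    return sum(base in "GC" for base in seq) / len(seq)


# AGGAGG is the canonical Shine-Dalgarno consensus; the others are made-up variants.
sd_variants = ["AGGAGG", "AGGAGA", "AAGAGA", "AAAAGA"]

for sd in sorted(sd_variants, key=gc_content, reverse=True):
    print(sd, f"{gc_content(sd):.2f}")
```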

Figure 2: Dopamine Biosynthesis Pathway from L-Tyrosine. Enzymes: HpaBC (4-hydroxyphenylacetate 3-monooxygenase), Ddc (L-DOPA decarboxylase).

Essential Research Reagent Solutions

Table 3: Key Research Reagents for Automated DBTL Pipelines

| Reagent Category | Specific Examples | Function in DBTL Pipeline | Application Notes | Reference |
|---|---|---|---|---|
| DNA Assembly Systems | Ligase Cycling Reaction (LCR), Gibson Assembly | Construction of genetic pathways | Automated worklist generation enables robotic execution | [4] |
| Vector Systems | pET system, pJNTN, pESC-URA | Gene expression in microbial hosts | Varying copy numbers (ColE1, p15a, pSC101) modulate expression | [4] [6] |
| Induction Systems | IPTG-inducible promoters, GAL1 promoter | Controlled gene expression | Concentration optimization critical for metabolic burden management | [6] [7] |
| Selection Markers | Ampicillin, kanamycin resistance genes | Strain selection and maintenance | Antibiotic concentrations: ampicillin 100 μg/mL, kanamycin 50 μg/mL | [6] |
| Analytical Standards | Pinocembrin, dopamine, L-DOPA | Metabolite quantification | Essential for UPLC-MS/MS method development and validation | [4] [6] |
| Cell Lysis Reagents | Zymolyase, organic solvents | Metabolite extraction from cells | Automated processing enables high-throughput sample preparation | [7] |

Workflow Integration and Automation Protocols

Integrated DBTL Pipeline Operation

Figure 3: Integrated Automated DBTL Workflow. The cycle connects computational design with robotic execution and machine learning, with an automation layer ensuring seamless transitions between phases.

Protocol: Automated Yeast Transformation for Pathway Screening

This protocol adapts the lithium acetate/ssDNA/PEG method to a 96-well format on a Hamilton VANTAGE platform [7]:

Materials:

- Competent S. cerevisiae cells (prepared in-house)

- Plasmid DNA library (concentration normalized to 50-100 ng/μL)

- Lithium acetate (1.0 M)

- Single-stranded carrier DNA (2.0 mg/mL)

- PEG solution (40% w/v polyethylene glycol 3350)

- Selective agar plates

- 96-well microplates (capable of withstanding heat shock)

Automated Workflow:

- Transformation Setup: Program Hamilton VENUS software with customized parameters (DNA volume, reagent ratios, incubation times).

- Cell Resuspension: Distribute competent cells to 96-well plate using liquid handler (50 μL/well).

- Reagent Addition: Add plasmid DNA (1-5 μL), lithium acetate (25 μL), ssDNA (5 μL), and PEG solution (150 μL) with optimized pipetting parameters for viscous solutions.

- Heat Shock: Transfer plate to Inheco ODTC thermal cycler for 42°C incubation (20-40 minutes, user-defined).

- Washing: Centrifuge plate, remove supernatant, resuspend in recovery medium using robotic arm.

- Plating: Transfer aliquots to selective agar plates using automated plate replicator.

- Colony Picking: Incubate 2-3 days at 30°C, then pick colonies using QPix 460 system.

Critical Parameters:

- Pipetting accuracy: Adjust aspiration/dispense speeds for viscous PEG solution

- Heat shock uniformity: Ensure consistent temperature across 96-well plate

- Transformation efficiency: Optimize cell density and DNA concentration

- Quality control: Include positive (known plasmid) and negative (no DNA) controls
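Before programming the liquid handler, it can help to check the per-well fill volume and the bulk reagent volumes implied by the protocol. The sketch below uses the per-well volumes listed in the workflow; the 10% overage for dead volume is an assumption, and the function is illustrative rather than part of the Hamilton VENUS software.

```python
# Per-well volumes in microlitres, taken from the protocol steps above.
PER_WELL_UL = {
    "competent cells": 50.0,
    "lithium acetate (1.0 M)": 25.0,
    "ssDNA (2.0 mg/mL)": 5.0,
    "PEG 3350 (40% w/v)": 150.0,
}
DNA_UL_MAX = 5.0  # upper end of the 1-5 uL plasmid DNA range


def bulk_volumes_ml(n_wells: int, overage: float = 0.10) -> dict:
    """Bulk reagent volumes in mL, padded by a fractional overage (assumed 10%)."""
    factor = n_wells * (1.0 + overage) / 1000.0
    return {name: round(vol * factor, 3) for name, vol in PER_WELL_UL.items()}


max_well_volume_ul = sum(PER_WELL_UL.values()) + DNA_UL_MAX
bulk = bulk_volumes_ml(96)

print(f"Maximum fill per well: {max_well_volume_ul:.0f} uL")
for name, volume in bulk.items():
    print(f"{name}: {volume} mL")
```

The maximum fill of 235 μL per well is worth comparing against the working volume of the chosen microplate, and the PEG volume dominates the reagent budget.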

Automated DBTL pipelines represent a transformative technological platform for accelerating microbial strain engineering for fine chemicals production. The integration of specialized software tools, robotic hardware systems, and machine learning algorithms creates an iterative optimization cycle capable of achieving order-of-magnitude improvements in production titers. As demonstrated in the flavonoid and dopamine case studies, these systems enable rapid identification of metabolic bottlenecks and design principles that would be impractical to discover through manual approaches.

Future developments in automated bioprocessing will likely focus on enhanced integration across platforms, improved machine learning models leveraging larger datasets, and expansion to non-traditional microbial hosts. For the pharmaceutical industry, these advancements promise to accelerate development timelines while improving product quality and process consistency through reduced human intervention and precise control of critical process parameters.

The microbial production of fine chemicals presents a promising biosustainable manufacturing solution. Its advancement at an industrial level, however, has been hindered by the large resource investments required for strain development. The automated Design-Build-Test-Learn (DBTL) pipeline represents a transformative approach, integrating computational design and laboratory automation to rapidly prototype and optimize biochemical pathways in microbial chassis. This pipeline is designed to be compound-agnostic and enables rapid iterative cycling with automation at every stage, dramatically accelerating the development of efficient microbial cell factories [4].

The core of this pipeline involves:

- Design: In silico selection of pathways and enzymes, and automated design of genetic parts.

- Build: Automated DNA assembly and construction of pathway libraries.

- Test: High-throughput cultivation and analytical screening.

- Learn: Data analysis to identify optimal genetic configurations for the next cycle [4].

This article examines the application of this framework across three key chassis organisms: Escherichia coli, Saccharomyces cerevisiae, and Pseudomonas putida, detailing their unique metabolic capabilities and providing practical protocols for their engineering.

Organism Profiles and Recent Production Milestones

The selection of an appropriate chassis organism is fundamental to the success of any bioproduction process. E. coli, S. cerevisiae, and P. putida have emerged as predominant hosts due to their distinct metabolic advantages, well-characterized genetics, and suitability for industrial fermentation.

E. coli is a genetically tractable workhorse whose industrial competitiveness increasingly depends on expanding its molecular repertoire through first-in-class pathways and achieving best-in-class titer, rate, and yield (TRY). Recent milestones include the first demonstration of producing aromatic homopolyester and poly(ester amide)s directly from glucose [11].

S. cerevisiae offers the advantages of being Generally Recognized As Safe (GRAS), robust in industrial fermentations, and capable of performing complex eukaryotic post-translational modifications. Its industrial use is widespread, with global production of S. cerevisiae yeast estimated to be in the hundreds of millions of kilograms annually [12].

P. putida is valued for its remarkable metabolic versatility and exceptional tolerance to chemical and physical stresses, making it particularly suitable for the production of toxic compounds or for processes using heterogeneous feedstocks. The market for P. putida-based technologies is experiencing steady growth, indicative of its increasing industrial adoption [13].

Table 1: Key Characteristics of Chassis Organisms in Bioproduction

| Characteristic | Escherichia coli | Saccharomyces cerevisiae | Pseudomonas putida |
|---|---|---|---|
| Genetic Tools | Extensive, well-developed | Extensive, well-developed | Developing, but advanced |
| Growth Rate | Very High | High | Moderate |
| Stress Tolerance | Moderate | High | Very High |
| Preferred Substrate | Simple sugars (e.g., Glucose) | Simple sugars (e.g., Glucose, Sucrose) | Diverse, including aromatics and glycerol |
| Typical Bioreactor Cell Density | High (OD~50-100) | High (OD~50-100) | Very High (e.g., 50 million cells/mL) [13] |
| Key Advantage | Rapid prototyping, high yields | GRAS status, eukaryotic protein processing | Solvent tolerance, flexible metabolism |
| Example Product | (2S)-Pinocembrin [4] | Fatty Alcohols [14] | 7-Methylxanthine [15] |

Table 2: Recent Production Achievements with Chassis Organisms

| Organism | Target Product | Titer/Yield | Key Engineering Strategy | Reference |
|---|---|---|---|---|
| E. coli | (2S)-Pinocembrin | 88 mg L⁻¹ | Application of an automated DBTL cycle for pathway optimization. | [4] |
| S. cerevisiae | Fatty Alcohols | Increase up to 56% | Downregulation of TOR1 and deletion of HDA1 to enhance cellular robustness and extend chronological lifespan. | [14] [16] |
| P. putida | 7-Methylxanthine | 9.2 ± 0.42 g L⁻¹, 100% yield | Deletion of glpR, integration of ndmABD, overexpression of fdhA, and identification of efficient caffeine transporter. | [15] |

Detailed Application Notes and Protocols

Protocol 1: Automated DBTL for Pathway Prototyping in E. coli

This protocol outlines the application of a fully integrated, automated DBTL pipeline for optimizing a flavonoid pathway in E. coli, as demonstrated for (2S)-pinocembrin production [4].

Design Phase

- Pathway Selection: Use retrobiosynthesis tools like RetroPath to identify potential pathways from a target compound (e.g., (2S)-pinocembrin) to host metabolites [4].

- Enzyme Selection: Employ enzyme selection tools like Selenzyme to choose candidate enzymes (e.g., PAL, 4CL, CHS, CHI for pinocembrin) based on sequence and function [4].

- Genetic Design: Use software (e.g., PartsGenie) to design genetic parts with optimized ribosome-binding sites (RBS) and codon-optimized coding sequences.

- Library Design: Create a combinatorial library of pathway designs by varying:

- Vector Backbone: Origin of replication (e.g., ColE1-high, p15a-medium, pSC101-low) and promoter (e.g., Ptrc-strong, PlacUV5-weak).

- Gene Order: Systematically permute the order of genes in the operon.

- Intergenic Regions: Include strong, weak, or no additional promoter between genes.

- Library Compression: Apply Design of Experiments (DoE) methodologies, such as orthogonal arrays combined with a Latin square, to reduce the combinatorial library (e.g., from 2592 to 16 constructs) to a tractable size for testing [4].
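The Latin square idea behind the gene-order compression can be sketched with a simple cyclic construction: four gene orders are chosen so that every gene occupies every operon position exactly once. This is a minimal illustration only; the actual array used in [4] is not reproduced here, and the helper names are hypothetical.

```python
genes = ["PAL", "4CL", "CHS", "CHI"]


def cyclic_latin_square(items):
    """Row i is the item list rotated left by i positions."""
    n = len(items)
    return [[items[(row + col) % n] for col in range(n)] for row in range(n)]


def is_latin_square(square):
    """True if every symbol appears exactly once in each row and each column."""
    n = len(square)
    symbols = set(square[0])
    rows_ok = all(len(row) == n and set(row) == symbols for row in square)
    cols_ok = all({square[r][c] for r in range(n)} == symbols for c in range(n))
    return len(symbols) == n and rows_ok and cols_ok


gene_orders = cyclic_latin_square(genes)
for order in gene_orders:
    print(" -> ".join(order))
print("Balanced positions:", is_latin_square(gene_orders))
```

Sampling 4 of the 24 permutations this way keeps positional effects balanced across genes, and the selected orders can then be crossed with the levels of the other factors through an orthogonal array.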

Build Phase

- DNA Synthesis: Commission commercial synthesis of the designed gene fragments.

- Automated Assembly: Use a liquid handling robot to assemble the pathway constructs via ligase cycling reaction (LCR) or other DNA assembly methods, following automated worklists generated by design software.

- Transformation: Transform assembled plasmids into an appropriate E. coli production chassis (e.g., DH5α or BL21).

- Quality Control (QC): Perform high-throughput, automated plasmid purification, analytical restriction digest, and sequence verification to ensure construction fidelity [4].

Test Phase

- Cultivation: Conduct growth and production in 96-deepwell plates using automated liquid handling. Induce expression at the appropriate growth phase.

- Metabolite Extraction: Use automated quenching and extraction protocols for intracellular metabolites.

- Analytical Screening: Employ fast UPLC-MS/MS for quantitative, high-throughput screening of the target product and key intermediates [4].

Learn Phase

- Data Analysis: Apply statistical analysis (e.g., ANOVA) to production data to identify the main factors (e.g., vector copy number, promoter strength for specific genes) significantly influencing product titer.

- Redesign: Use the statistical model to inform the design of a second, refined library targeting the most influential factors, initiating the next DBTL cycle [4].
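As a simplified stand-in for the statistical step, the sketch below ranks design factors by the spread of their level means. This is a main-effects screen, not a full ANOVA, and it produces no P values. The titers are invented for illustration and merely span the range reported for the first library; they are not data from [4].

```python
from collections import defaultdict
from statistics import mean

# Hypothetical screening results: (copy_number, chi_promoter, titer in mg/L)
results = [
    ("low", "weak", 0.002),
    ("low", "strong", 0.010),
    ("high", "weak", 0.040),
    ("high", "strong", 0.140),
]
FACTORS = {"copy_number": 0, "chi_promoter": 1}  # factor name -> column index


def level_means(rows, factor_index):
    """Mean titer for each level of one design factor."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[factor_index]].append(row[-1])
    return {level: mean(values) for level, values in groups.items()}


def effect_range(rows, factor_index):
    """Difference between the best and worst level means for one factor."""
    means = level_means(rows, factor_index)
    return max(means.values()) - min(means.values())


ranked = sorted(FACTORS, key=lambda name: effect_range(results, FACTORS[name]), reverse=True)
for name in ranked:
    print(name, level_means(results, FACTORS[name]))
```

Factors at the top of the ranking are the natural candidates to fix or refine in the next library, while low-ranked factors can be dropped to shrink the design space.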

Diagram 1: Automated DBTL pipeline for microbial strain engineering.

Protocol 2: Engineering Cellular Robustness in S. cerevisiae for Enhanced Production

This protocol details a strategy to enhance the production of fatty alcohols in S. cerevisiae by engineering cellular robustness rather than directly manipulating the product pathway, a method that can serve as a general strategy for building more effective microbial cell factories [14] [16].

Genetic Modifications for Robustness

- Downregulate TOR1 Expression: The Target of Rapamycin (TOR) kinase is a central regulator of cell growth and metabolism. Moderate downregulation (e.g., using a weaker promoter or CRISPRi) can extend chronological lifespan and enhance stress resistance without severely compromising growth.

- Delete Histone Deacetylase HDA1: Deletion of HDA1 alters global gene expression patterns, leading to improved stress response and metabolic balance. This can be achieved via standard homologous recombination or CRISPR-Cas9.

Verification of Robustness Phenotypes

- Chronological Lifespan (CLS) Assay:

- Inoculate yeast strains in synthetic complete medium and incubate at 30°C with shaking.

- Monitor the optical density (OD600) to determine the exponential growth phase.

- At stationary phase (day 2), serially dilute culture samples and spot them onto solid YPD plates to determine the number of colony-forming units (CFUs) per mL. This day is considered day 0 for the CLS assay.

- Continue incubating the main culture and periodically sample to determine CFUs over time (e.g., every 2-3 days). A strain with an extended CLS will maintain viability for a longer period.

- Stress Resistance Assay: Expose exponentially growing cells to various stressors (e.g., oxidative stress with H₂O₂, osmotic stress with NaCl) and monitor cell viability or growth inhibition compared to the wild-type strain.
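The CFU counts from the CLS assay translate into viability curves with two small calculations. The sketch below assumes a 5 μL spot volume and example colony counts, both of which are hypothetical values chosen for illustration.

```python
def cfu_per_ml(colonies: int, dilution_factor: float, plated_volume_ml: float) -> float:
    """Colony-forming units per mL of the undiluted culture."""
    if dilution_factor <= 0 or plated_volume_ml <= 0:
        raise ValueError("dilution factor and plated volume must be positive")
    return colonies * dilution_factor / plated_volume_ml


def survival_percent(cfu_now: float, cfu_day0: float) -> float:
    """Viability relative to day 0 of the CLS assay."""
    return 100.0 * cfu_now / cfu_day0


# Hypothetical counts from 5 uL spots of a 10^4-fold dilution.
day0 = cfu_per_ml(colonies=42, dilution_factor=1e4, plated_volume_ml=0.005)
day6 = cfu_per_ml(colonies=21, dilution_factor=1e4, plated_volume_ml=0.005)

print(f"Day 0: {day0:.2e} CFU/mL")
print(f"Day 6 survival: {survival_percent(day6, day0):.0f}%")
```

Plotting survival against time for engineered and wild-type strains gives the comparison that the assay is designed to produce.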

Production Evaluation

- Cultivation for Production: Grow engineered and control strains in production medium with the appropriate carbon source (e.g., glucose). Induce expression of the fatty alcohol pathway genes if under an inducible promoter.

- Metabolite Extraction and Analysis: Extract fatty alcohols from the culture using an organic solvent (e.g., ethyl acetate). Quantify production using GC-MS or GC-FID. The engineered robust strain is expected to show significantly higher titers (e.g., up to 56% increase) and possibly higher productivity in prolonged fermentations [14] [16].

Protocol 3: Systematic Engineering of P. putida for High-Yield Bioconversion

This protocol describes the systematic engineering of P. putida EM42 for the selective and high-yield conversion of caffeine to 7-methylxanthine (7-MX) in minimal salt media with glycerol, culminating in high-titer production in a bioreactor [15].

Step 1: Enable Glycerol Utilization

- Delete the transcriptional repressor glpR: This deregulates the glp operon, enabling efficient glycerol catabolism. Use allelic exchange or CRISPR-based genome editing for clean deletion.

Step 2: Establish the Heterologous Production Pathway

- Genomically integrate the ndmABD cassette: This cassette encodes the heterologous N-demethylase complex from another bacterium, which catalyzes the specific demethylation of caffeine to 7-MX.

- Select a strong, constitutive native promoter (e.g., Pgap) to drive the expression of ndmABD.

- Implement a redox-balancing strategy: Overexpress a native formate dehydrogenase (e.g., fdhA) to regenerate NADH required for the N-demethylase reaction, improving cofactor balance and reaction efficiency.

Step 3: Engineer Substrate Uptake

- Identify and overexpress a native caffeine transporter: The discovery and overexpression of the native transporter PP_RS18750 was crucial for efficient caffeine uptake from the media, preventing substrate limitation [15].

Step 4: Bioreactor Process Optimization

- Operate in fed-batch mode to achieve high cell density. Use a minimal salts medium with glycerol as the primary carbon and energy source.

- Maintain dissolved oxygen at a sufficient level (e.g., >30% air saturation) through controlled agitation and aeration.

- Employ a controlled feeding strategy for caffeine: Add caffeine feed once a high cell density is achieved to drive the bioconversion. The feeding rate should be optimized to maintain a sub-inhibitory concentration while maximizing conversion rate.

- Monitor the process: Track cell density (OD600), glycerol and caffeine consumption, and 7-MX accumulation over time. Under optimized conditions, this process has achieved titers of 9.2 ± 0.42 g L⁻¹ of 7-MX with 100% yield in a 3-L bioreactor [15].
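When planning the caffeine feed, it is useful to convert between mass and molar quantities, because removing two methyl groups makes 7-MX lighter than caffeine. The sketch below assumes the reported yield is a molar yield, which the source does not state explicitly; the molecular weights follow from the formulas C8H10N4O2 (caffeine) and C6H6N4O2 (7-MX).

```python
MW_CAFFEINE = 194.19  # g/mol, C8H10N4O2
MW_7MX = 166.14       # g/mol, C6H6N4O2


def molar_yield(product_g_per_l: float, substrate_g_per_l: float) -> float:
    """Moles of 7-MX formed per mole of caffeine consumed."""
    return (product_g_per_l / MW_7MX) / (substrate_g_per_l / MW_CAFFEINE)


def caffeine_required(product_g_per_l: float, yield_fraction: float = 1.0) -> float:
    """Caffeine (g/L) needed to reach a 7-MX titer at a given molar yield."""
    return product_g_per_l / MW_7MX * MW_CAFFEINE / yield_fraction


needed = caffeine_required(9.2)
print(f"Caffeine for 9.2 g/L 7-MX at 100% molar yield: {needed:.2f} g/L")
print(f"Maximum mass yield: {MW_7MX / MW_CAFFEINE:.3f} g 7-MX per g caffeine")
```

Under this assumption, the reported titer corresponds to roughly 10.75 g/L of caffeine fed and fully converted over the course of the run.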

Diagram 2: Engineered 7-MX biosynthesis pathway in P. putida.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Microbial Metabolic Engineering

| Reagent/Material | Function/Application | Example Use Case |
|---|---|---|
| RetroPath [4] | Retrobiosynthetic tool for automated pathway design from a target molecule. | Design phase: Identifying potential pathways for (2S)-pinocembrin synthesis in E. coli. |
| Selenzyme [4] | Automated enzyme selection platform for suggested pathway reactions. | Design phase: Selecting candidate genes (PAL, 4CL, CHS, CHI) for the pinocembrin pathway. |
| PartsGenie & PlasmidGenie [4] | Software for designing genetic parts (RBS, coding sequences) and generating robotic assembly worklists. | Design/Build phase: Creating combinatorial libraries and automating DNA assembly protocols. |
| Ligase Cycling Reaction (LCR) [4] | A DNA assembly method suitable for automated, high-throughput construction of genetic pathways. | Build phase: Assembling multiple pathway variants in a 96-well plate format on a robotic platform. |
| UPLC-MS/MS [4] | High-resolution, quantitative analytical chemistry platform for metabolomics and pathway screening. | Test phase: Rapidly measuring titers of pinocembrin and key intermediates from many culture samples. |
| CRISPRi/sRNA Libraries [11] | Tools for targeted gene knockdown at genome scale to identify gene targets that enhance production. | Learn/Redesign phase: Systems-level identification of knockdown targets to optimize flux in E. coli. |
| Dynamic Biosensors [11] | Genetic circuits that link product concentration to a measurable output (e.g., fluorescence). | Test phase: High-throughput screening of mutant libraries for desired production phenotypes. |

Fine chemicals, such as flavonoids and alkaloids, represent a category of high-value, physiologically active compounds with critical applications in pharmaceuticals, cosmetics, and nutritional supplements [17]. Their conventional extraction from native plants faces significant challenges, including low abundance in natural sources, complex purification processes, and fluctuating supply chains [17] [18]. Bio-based production through microbial fermentation and enzymatic synthesis has emerged as a sustainable alternative, offering cost-effective and environmentally friendly manufacturing solutions [17].

The Design-Build-Test-Learn (DBTL) pipeline represents a transformative, automated approach for optimizing the microbial production of these fine chemicals [4] [19]. This framework enables rapid prototyping and iterative refinement of biosynthetic pathways, dramatically accelerating the development of efficient microbial cell factories. By integrating computational design with laboratory automation, the DBTL pipeline systematically addresses the bottlenecks that have traditionally hindered the industrial-scale development of bio-based fine chemical production [4].

Target Fine Chemicals: Structures, Functions, and Production Systems

Flavonoids

Flavonoids constitute a large family of plant secondary metabolites characterized by a C6-C3-C6 skeleton structure, comprising two aromatic rings linked by a three-carbon bridge [20]. These compounds exhibit diverse biological activities, including potent antioxidant, anti-inflammatory, antibacterial, and anticancer properties [20]. The global flavonoid market continues to expand, projected to reach USD 3.4 billion by 2031, driven by increasing applications in pharmaceutical and health food industries [20].

Pinocembrin, a simple flavonoid, serves as a key precursor to more complex flavonoids and has been successfully produced in engineered E. coli using automated DBTL approaches [4]. Similarly, chrysoeriol, a 3′-O-methoxy flavone derived from luteolin, demonstrates valuable pharmacological effects including neuroprotective, antidiabetic, and anticancer activities [20]. Recent advances in plant synthetic biology have enabled the production of chrysoeriol in engineered Nicotiana benthamiana by reconstructing a simplified four-step biosynthetic pathway [20].

Alkaloids

Alkaloids are nitrogen-containing compounds found in various plant species with vast potential for medicinal and pharmacological applications [21]. Their global interest as natural therapeutic agents continues to grow, particularly due to their lower toxicity profiles compared to synthetic compounds [21]. Notable plant-derived alkaloids that have become indispensable in modern pharmacotherapy include the anticancer agents vincristine and vinblastine from Madagascar periwinkle (Catharanthus roseus), and the analgesic morphine from opium poppy (Papaver somniferum) [18].

Research indicates that alkaloid potency and concentration are significantly influenced by environmental factors such as soil composition and climate, adding complexity to their standardized production [21]. Emerging evidence also suggests promising synergistic effects when alkaloids are combined with other phytochemicals, opening new avenues for multi-compound therapeutic formulations [21].

Pharmaceutical Precursors

Beyond complete compounds, bio-based production systems have been successfully applied to pharmaceutical precursors, addressing supply limitations of complex natural products. Seminal examples include artemisinic acid, a precursor to the antimalarial drug artemisinin, produced in both yeast (25 g/L) and tobacco (120 mg/kg) through synthetic biology approaches [20]. Similarly, amorpha-4,11-diene, a precursor to artemisinin, has been synthesized in engineered microorganisms [17].

The table below summarizes key fine chemicals, their functions, and production platforms:

Table 1: Fine Chemicals Overview: Functions and Production Platforms

| Chemical Category | Example Compounds | Therapeutic Functions | Production Platforms |
|---|---|---|---|
| Flavonoids | Pinocembrin, Chrysoeriol, Apigenin | Antioxidant, anti-inflammatory, anticancer | E. coli, S. cerevisiae, N. benthamiana |
| Alkaloids | Morphine, Vincristine, Quinine | Analgesic, anticancer, antimalarial | Microbial fermentation, plant extraction |
| Isoprenoids | Artemisinic acid, Amorpha-4,11-diene | Antimalarial, precursors | E. coli, S. cerevisiae, plant platforms |
| GABA | γ-aminobutyric acid | Neurotransmitter, antihypertensive | L. brevis, C. glutamicum, E. coli |

The Automated DBTL Pipeline: Methodology and Implementation

The automated DBTL pipeline represents an integrated, compound-agnostic platform for rapid optimization of biosynthetic pathways [4]. Its modular architecture enables efficient cycling through design, construction, testing, and data analysis phases with minimal manual intervention.

DBTL Stage Protocols

Design Stage Protocol

Objective: In silico selection and design of biosynthetic pathways and genetic constructs.

Procedure:

- Pathway Selection: Use RetroPath software to identify potential biosynthetic routes to the target compound [4].

- Enzyme Selection: Employ Selenzyme platform to select optimal enzymes for each pathway step based on catalytic efficiency, substrate specificity, and compatibility with the host organism [4].

- Parts Design: Utilize PartsGenie software to design genetic parts with optimized ribosome-binding sites and codon-optimized coding sequences [4].

- Library Design: Apply Design of Experiments (DoE) methodologies to reduce combinatorial libraries to tractable numbers. For example, reduce 2592 possible configurations to 16 representative constructs using orthogonal arrays combined with Latin square design [4].

Deliverable: Statistically representative library of pathway designs ready for construction.

Build Stage Protocol

Objective: Automated construction of designed genetic pathways.

Procedure:

- DNA Synthesis: Commission commercial synthesis of designed genetic parts [4].

- Part Preparation: Perform PCR amplification and clean-up of genetic parts (currently off-deck but amenable to automation) [4].

- Automated Assembly: Set up ligase cycling reaction (LCR) assembly on robotics platforms using automated worklists generated by PlasmidGenie software [4].

- Transformation: Introduce assembled constructs into suitable production host (e.g., E. coli DH5α) [4].

- Quality Control: Conduct high-throughput plasmid purification, restriction digest analysis by capillary electrophoresis, and sequence verification [4].

Deliverable: Sequence-verified constructs transformed into production host.

Test Stage Protocol

Objective: High-throughput screening of constructed strains for product formation.

Procedure:

- Cultivation: Grow strains in 96-deepwell plates using automated growth and induction protocols [4].

- Metabolite Extraction: Perform automated extraction of target compounds and intermediates from cultures [4].

- Analysis: Conduct quantitative analysis using fast ultra-performance liquid chromatography coupled to tandem mass spectrometry (UPLC-MS/MS) with high mass resolution [4].

- Data Processing: Extract and process data using custom-developed R scripts for quantification of target products and pathway intermediates [4].

Deliverable: Quantitative production data for all library constructs.
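The data-processing step typically converts peak areas into concentrations through a calibration curve built from analytical standards. The source pipeline uses custom R scripts for this; the Python sketch below shows the same idea with an ordinary least-squares line. The standard concentrations and peak areas are invented for illustration.

```python
from statistics import mean


def fit_line(x, y):
    """Ordinary least-squares slope and intercept for y = slope * x + intercept."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar


def quantify(peak_area, slope, intercept):
    """Invert the calibration line to get a concentration from a peak area."""
    return (peak_area - intercept) / slope


# Hypothetical standards (mg/L) and their measured peak areas.
standards_mg_per_l = [0.0, 0.05, 0.1, 0.5, 1.0]
peak_areas = [10.0, 510.0, 1010.0, 5010.0, 10010.0]

slope, intercept = fit_line(standards_mg_per_l, peak_areas)
titer = quantify(1410.0, slope, intercept)
print(f"slope={slope:.1f}, intercept={intercept:.1f}, sample titer={titer:.3f} mg/L")
```

In practice, samples falling outside the range of the standards should be diluted and re-run rather than extrapolated.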

Learn Stage Protocol

Objective: Extract design principles from experimental data to inform next cycle.

Procedure:

- Statistical Analysis: Identify relationships between design factors (e.g., promoter strength, gene order, copy number) and production titers using statistical methods [4].

- Machine Learning: Apply machine learning algorithms to identify non-obvious interactions and optimize predictive models [4].

- Pathway Redesign: Use statistical insights to redesign pathway for improved performance in next DBTL cycle [4].

Deliverable: Redesigned pathway library with improved production characteristics.

Case Study: Flavonoid Production in E. coli

Pathway Engineering for Pinocembrin Production

The application of the automated DBTL pipeline to (2S)-pinocembrin production in E. coli demonstrates the power of this approach [4]. The reconstructed pathway comprises four enzymes converting L-phenylalanine to (2S)-pinocembrin: phenylalanine ammonia-lyase (PAL), 4-coumarate:CoA ligase (4CL), chalcone synthase (CHS), and chalcone isomerase (CHI) [4].

DBTL Iterations and Productivity Enhancement

The implementation of two iterative DBTL cycles for pinocembrin production demonstrated remarkable improvement in titers:

Table 2: DBTL Cycle Progression for Pinocembrin Production in E. coli

| DBTL Cycle | Key Design Factors | Production Titer (mg/L) | Fold Improvement |
|---|---|---|---|
| Initial Library | Broad exploration: 4 expression levels, promoter strength variations, 24 gene orders | 0.002 - 0.14 | Baseline |
| Statistical Analysis | Identified vector copy number and CHI promoter strength as most significant factors | N/A | N/A |
| Redesigned Library | High copy number (ColE1), CHI at pathway start, optimized 4CL and CHS expression | Up to 88 | 500-fold |

The statistical analysis from the first DBTL cycle revealed that vector copy number had the strongest significant effect on pinocembrin production (P = 2.00 × 10⁻⁸), followed by a positive effect of the CHI promoter strength (P = 1.07 × 10⁻⁷) [4]. Weaker effects were observed for the CHS, 4CL, and PAL promoter strengths, in that order [4]. Gene order did not show a significant effect in this pathway [4].

Essential Research Reagents and Solutions

Successful implementation of DBTL pipelines requires carefully selected research reagents and molecular tools:

Table 3: Essential Research Reagent Solutions for DBTL Pipeline Implementation

| Reagent Category | Specific Examples | Function in DBTL Pipeline |
|---|---|---|
| Host Strains | E. coli DH5α, C. glutamicum, S. cerevisiae, N. benthamiana | Production chassis with complementary metabolic capabilities |
| Vector Systems | p15a (medium copy), pSC101 (low copy), ColE1 (high copy) origins | Tunable expression control through copy number variation |
| Promoter Systems | Ptrc (strong), PlacUV5 (weak) | Transcriptional regulation of pathway genes |
| Enzyme Tools | Ligase Cycling Reaction (LCR) reagents | Automated, efficient DNA assembly |
| Analytical Instruments | UPLC-MS/MS systems | High-throughput, quantitative metabolite analysis |
| Software Platforms | RetroPath, Selenzyme, PartsGenie, PlasmidGenie | In silico design, enzyme selection, and automated workflow generation |

Concluding Remarks

The integration of automated DBTL pipelines for microbial production of fine chemicals represents a paradigm shift in biomanufacturing, dramatically accelerating the development timeline for bio-based production processes [4]. The case studies presented demonstrate that iterative DBTL cycling can achieve remarkable improvements in production titers—up to 500-fold enhancement through just two cycles [4].

This approach effectively addresses the longstanding challenges in natural product supply chains by enabling sustainable, bio-based production of complex molecules that are difficult to synthesize chemically or extract in sufficient quantities from natural sources [17] [18]. As synthetic biology tools continue to advance and automation becomes more accessible, the DBTL framework is poised to become the standard methodology for developing microbial cell factories for diverse fine chemicals, from flavonoids and alkaloids to pharmaceutical precursors [4] [20].

The modular nature of the pipeline ensures its adaptability across different host organisms and target compound classes, promising broad impact on the sustainable production of high-value chemicals for pharmaceutical, nutraceutical, and cosmetic applications [4]. Future developments will likely focus on increasing automation throughput, enhancing computational prediction accuracy, and expanding the repertoire of amenable host systems to further accelerate the bio-based manufacturing revolution.

Building and Implementing Automated DBTL Pipelines: From Concepts to Bench

The microbial production of fine chemicals represents a sustainable alternative to traditional chemical synthesis. Central to this approach is the Design-Build-Test-Learn (DBTL) cycle, an engineering framework for the systematic development and optimization of microbial strains [4]. This application note focuses on the automated "Design" phase, specifically on the use of in silico tools for biochemical pathway design and enzyme selection. The integration of computational tools like RetroPath for pathway discovery and Selenzyme for enzyme selection into automated biofoundries has enabled the rapid prototyping of biosynthetic pathways, significantly reducing the time and resources required for strain development [22] [4]. The application of this pipeline has been successfully demonstrated for the production of compounds such as the flavonoid (2S)-pinocembrin and dopamine, leading to productivity improvements of several hundred-fold [4] [23].

Core Tool Functions

- RetroPath: This is a pathway discovery tool that uses reaction rules (encoded as reaction SMARTS) to establish possible biosynthetic pathways from a set of starting substrates to a target compound. It operates within a retrosynthesis workflow, identifying potential metabolic routes that may not exist in nature [22] [4].

- Selenzyme: This is a free online enzyme selection tool that takes a target reaction (e.g., from RetroPath output) as input and mines databases to shortlist candidate enzyme sequences. It ranks candidates based on multiple criteria, including reaction similarity, phylogenetic distance from the host chassis, and predicted protein properties [22].
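The retrosynthesis idea behind RetroPath can be illustrated with a deliberately simplified sketch. It is not RetroPath's implementation: real rules are reaction SMARTS applied to molecular structures, whereas the `RULES` table below is a hand-written, hypothetical lookup keyed by compound name. The intermediate names (cinnamoyl-CoA, pinocembrin chalcone) are supplied for illustration, and co-substrates such as malonyl-CoA are omitted.

```python
from collections import deque

# Hypothetical rule table: product -> [(enzyme, main substrate)].
# Real RetroPath rules are reaction SMARTS, not name lookups.
RULES = {
    "(2S)-pinocembrin": [("CHI", "pinocembrin chalcone")],
    "pinocembrin chalcone": [("CHS", "cinnamoyl-CoA")],
    "cinnamoyl-CoA": [("4CL", "cinnamic acid")],
    "cinnamic acid": [("PAL", "L-phenylalanine")],
}


def find_pathway(target, sources, rules, max_steps=6):
    """Breadth-first backward search from the target to any source compound.

    Returns the enzymes in forward (biosynthetic) order, or None if no
    route is found within max_steps.
    """
    queue = deque([(target, [])])
    seen = {target}
    while queue:
        compound, steps = queue.popleft()
        if compound in sources:
            return list(reversed(steps))
        if len(steps) >= max_steps:
            continue
        for enzyme, substrate in rules.get(compound, []):
            if substrate not in seen:
                seen.add(substrate)
                queue.append((substrate, steps + [enzyme]))
    return None


pathway = find_pathway("(2S)-pinocembrin", {"L-phenylalanine"}, RULES)
print(pathway)  # ['PAL', '4CL', 'CHS', 'CHI']
```

Searching backward from the target mirrors the retrosynthesis direction; each enzyme-labelled step in the returned route is the kind of single reaction that would then be passed to an enzyme selection tool.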

Tool Comparison and Integration

The table below summarizes the key characteristics of these core design tools.

Table 1: Comparison of In Silico Tools for Pathway Design and Enzyme Selection

| Feature | RetroPath | Selenzyme |

|---|---|---|

| Primary Function | Retrosynthesis-based pathway discovery [22] [4] | Enzyme candidate selection and ranking [22] |

| Typical Input | Target compound molecule | A target reaction (e.g., in .rxn or SMIRKS format) [22] |

| Core Methodology | Uses reaction rules (e.g., reaction SMARTS) [22] | Screens reaction databases using Tanimoto similarity and collects annotated sequences [22] |

| Key Output | Potential metabolic pathways to the target compound [4] | A ranked list of enzyme candidate sequences with associated data [22] |

| Integration in DBTL | Upstream pathway design [4] | Downstream enzyme selection for a designed pathway [22] [4] |

These tools are designed to work in sequence. A typical workflow begins with RetroPath generating potential pathways, after which each reaction step in a selected pathway is submitted to Selenzyme to identify the most suitable enzyme sequences for the biological assembly [4].
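The Tanimoto similarity used to screen reaction databases can be written in a few lines. The sketch below assumes fingerprints are represented as sets of "on" feature indices; the reaction names and fingerprint values are hypothetical and do not come from Selenzyme or any database.

```python
def tanimoto(a, b):
    """Tanimoto (Jaccard) coefficient between two sets of fingerprint features."""
    a, b = set(a), set(b)
    if not a and not b:
        return 0.0  # convention chosen for this sketch; toolkits differ here
    return len(a & b) / len(a | b)


# Hypothetical fingerprints: each integer stands for one "on" bit.
query = {1, 4, 7, 9}
known_reactions = {
    "rxn_A": {1, 4, 7, 8},
    "rxn_B": {2, 3},
    "rxn_C": {1, 4, 7, 9},
}

# Rank known reactions by similarity to the query, most similar first.
ranked = sorted(
    known_reactions,
    key=lambda name: tanimoto(query, known_reactions[name]),
    reverse=True,
)
print(ranked)  # ['rxn_C', 'rxn_A', 'rxn_B']
```

A score of 1.0 means identical feature sets and 0.0 means no shared features, so the top of the ranked list holds the known transformations most like the query reaction.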

Workflow Diagram

The following diagram illustrates the logical workflow and data flow between the key computational tools in the Design phase, and their integration with the subsequent Build phase.

Diagram 1: In Silico Design Workflow for DBTL Pipeline.

Case Study: Application to Flavonoid Production

The automated DBTL pipeline was applied to engineer an E. coli strain for the production of (2S)-pinocembrin, achieving a 500-fold increase in titer after two DBTL cycles, reaching competitive levels of up to 88 mg L⁻¹ [4].

Pathway Design and Enzyme Selection

The biosynthetic pathway for pinocembrin consists of four reaction steps, starting from the precursor L-phenylalanine. The enzymes catalyzing these steps are:

- Phenylalanine ammonia-lyase (PAL)

- 4-coumarate:CoA ligase (4CL)

- Chalcone synthase (CHS)

- Chalcone isomerase (CHI) [4]

For the initial design cycle, enzymes were selected from Arabidopsis thaliana (PAL, CHS, CHI) and Streptomyces coelicolor (4CL) using the Selenzyme tool [4]. This case study exemplifies the enzyme selection process within the broader DBTL context.

Quantitative Results from Iterative DBTL Cycling

The table below summarizes the quantitative outcomes from the two iterative DBTL cycles, highlighting the key factors that influenced production titers.

Table 2: Quantitative Results from Pinocembrin DBTL Cycles

| DBTL Cycle | Key Design Changes | Production Titer Range | Key Learning & Statistically Significant Factors |

|---|---|---|---|

| Cycle 1 | Combinatorial library of 16 constructs from 2592 designs using DoE. Varied vector copy number, promoter strength, and gene order. | 0.002 – 0.14 mg L⁻¹ | • Vector copy number had the strongest positive effect (P = 2.00 × 10⁻⁸). • CHI promoter strength had a significant positive effect (P = 1.07 × 10⁻⁷). • High accumulation of the intermediate cinnamic acid indicated PAL activity was not limiting [4]. |

| Cycle 2 | Designs focused on the high-copy-number vector. CHI position was fixed at the start of the operon. PAL was fixed at the end. | Up to 88 mg L⁻¹ | The re-design based on Cycle 1 learnings successfully alleviated bottlenecks, leading to a 500-fold improvement in production [4]. |

Experimental Protocols

Protocol: Using Selenzyme for Enzyme Candidate Selection

This protocol describes the steps for selecting enzyme sequences for a given biochemical reaction using the Selenzyme web server.

Prepare Reaction Query:

- Define the target reaction for which you need an enzyme.

- The reaction can be provided in several formats: an .rxn file, a SMILES string, a SMIRKS/SMARTS reaction rule, or an external database ID or EC number [22].

Submit Query to Web Server:

- Access the Selenzyme web server at http://selenzyme.synbiochem.co.uk.

- Submit the reaction query. The tool will screen it against its reaction database (powered by biochem4j) to find similar known chemical transformations [22].

Review and Rank Candidates:

- The server returns an interactive table of candidate enzyme sequences.

- Candidates are pre-ranked, but the table can be sorted based on user-defined summary scores. These scores are a weighted average of properties like:

  - Reaction similarity.

  - Phylogenetic distance between the source organism and your intended host (e.g., E. coli).

  - UniProt protein evidence level.

  - Predicted solubility and transmembrane regions (computed via the EMBOSS suite) [22].
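A weighted-average summary score of this kind can be sketched as follows. The exact formula, scales, and default weights used by Selenzyme are not given here, so everything below is an assumption made for illustration: the field names, the normalization of every property to the range 0 to 1, the choice to reward a smaller phylogenetic distance, and the placeholder candidate IDs.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    seq_id: str                 # placeholder ID, not a real accession
    reaction_similarity: float  # assumed 0..1, higher is better
    phylo_distance: float       # assumed normalized 0..1, lower is better
    evidence: float             # assumed normalized 0..1, higher is better
    solubility: float           # assumed 0..1, higher is better


# Hypothetical user-defined weights.
WEIGHTS = {"similarity": 2.0, "phylo": 1.0, "evidence": 1.0, "solubility": 1.0}


def summary_score(c, weights):
    """Weighted average of the candidate's properties (all scaled to 0..1)."""
    weighted = (
        weights["similarity"] * c.reaction_similarity
        + weights["phylo"] * (1.0 - c.phylo_distance)  # closer to host scores higher
        + weights["evidence"] * c.evidence
        + weights["solubility"] * c.solubility
    )
    return weighted / sum(weights.values())


candidates = [
    Candidate("cand_B", 0.6, 0.1, 0.5, 0.9),
    Candidate("cand_A", 0.9, 0.2, 1.0, 0.5),
]
ranked = sorted(candidates, key=lambda c: summary_score(c, WEIGHTS), reverse=True)
print([c.seq_id for c in ranked])  # ['cand_A', 'cand_B']
```

Changing the weights re-sorts the table, which is the practical effect of the user-defined scores: a user who cares most about expression in the host could raise the phylogenetic and solubility weights relative to reaction similarity.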

Inspect Sequence Details (Optional):

- For selected candidates, a multiple sequence alignment (MSA) can be generated using T-Coffee and visualized with MSAViewer to highlight conserved regions and the predicted catalytic site [22].

Export Results:

- Download the final shortlist of candidate sequences as a .csv file for integration into the next stage of the DBTL pipeline [22].

Protocol: Initial Library Design and Statistical Compression

This protocol outlines the process of designing a manageable initial library for testing, following enzyme selection.

Define Combinatorial Space:

- Identify the genetic factors to vary. For the pinocembrin pathway, this included:

  - Vector backbone (origin of replication controlling copy number).

  - Promoter strength (e.g., strong Ptrc vs. weak PlacUV5) for each gene.

  - Gene order within the operon [4].

Apply Design of Experiments (DoE):

- Use statistical methods (e.g., orthogonal arrays combined with a Latin square) to reduce the total number of constructs to a representative subset.

- In the pinocembrin case, a library of 2592 possible configurations was reduced to 16 representative constructs, a compression ratio of 162:1 [4].
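To make the size of the combinatorial space concrete, the sketch below enumerates a full factorial design and computes the compression ratio. The factor levels are an assumption: this text does not list them, and the values here (four backbone levels, three promoter options at each of three downstream positions, and all gene orders) were chosen only because their product matches the 2592 designs reported. The selection of the 16 representative constructs by orthogonal arrays is not reproduced.

```python
from itertools import permutations, product

genes = ["PAL", "4CL", "CHS", "CHI"]

# Assumed factor levels (illustrative only; not stated in this text).
backbones = ["backbone_1", "backbone_2", "backbone_3", "backbone_4"]
promoter_options = ["strong", "weak", "none"]  # for the 3 downstream positions

# Full factorial: every backbone x every gene order x every promoter combination.
designs = [
    (backbone, order, promoters)
    for backbone in backbones
    for order in permutations(genes)
    for promoters in product(promoter_options, repeat=len(genes) - 1)
]

library_size = 16
compression_ratio = len(designs) // library_size

print(len(designs))       # 2592 = 4 backbones x 24 gene orders x 27 promoter sets
print(compression_ratio)  # 162
```

The enumeration shows why compression is needed: even a four-gene pathway with a handful of levels per factor produces thousands of constructs, far more than is practical to build and screen in one cycle.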

Generate Assembly Instructions:

- Use downstream software (e.g., PlasmidGenie) to automatically generate assembly recipes and robotics worklists for the chosen library constructs, enabling automated pathway assembly via methods like ligase cycling reaction (LCR) [4].
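The idea of turning a list of constructs into a robotics worklist can be sketched in general terms. This is not PlasmidGenie's output format, which is not described here; the column names, part names, source wells, and transfer volume are all hypothetical. The two example constructs follow the Cycle 2 layout, with CHI first and PAL last in the operon.

```python
import csv
import io

WELL_ROWS = "ABCDEFGH"


def build_worklist(constructs, part_wells, volume_ul=1.0):
    """Return one transfer row per (construct, part) pair.

    Destination wells are filled column-wise on a 96-well plate:
    A1, B1, ..., H1, A2, ...
    """
    if len(constructs) > 96:
        raise ValueError("more constructs than wells on a 96-well plate")
    rows = []
    for i, (name, parts) in enumerate(constructs.items()):
        dest = f"{WELL_ROWS[i % 8]}{i // 8 + 1}"
        for part in parts:
            rows.append({
                "construct": name,
                "part": part,
                "source_well": part_wells[part],
                "dest_well": dest,
                "volume_ul": volume_ul,
            })
    return rows


def to_csv(rows):
    """Serialize worklist rows to CSV text with a header line."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=list(rows[0].keys()))
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()


# Hypothetical part plate layout and constructs.
part_wells = {"backbone_high_copy": "A1", "CHI": "A2", "CHS": "A3", "4CL": "A4", "PAL": "A5"}
constructs = {
    "construct_01": ["backbone_high_copy", "CHI", "CHS", "4CL", "PAL"],
    "construct_02": ["backbone_high_copy", "CHI", "4CL", "CHS", "PAL"],
}
rows = build_worklist(constructs, part_wells)
worklist_csv = to_csv(rows)
print(worklist_csv)
```

Each row is a single liquid transfer, so a liquid handler can execute the file line by line; pooling all parts of one construct into one destination well corresponds to setting up one assembly reaction.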

The Scientist's Toolkit: Research Reagent Solutions

The table below lists key reagents, tools, and software used in the featured pinocembrin production case study.

Table 3: Essential Research Reagents and Tools for DBTL Implementation

| Item Name | Function / Description | Example Use in Case Study |

|---|---|---|

| Selenzyme | Online tool for selecting and ranking enzyme sequences for a given reaction. | Selected candidate genes for PAL, 4CL, CHS, and CHI [22] [4]. |

| RetroPath | Retrosynthesis tool for designing novel biochemical pathways to a target molecule. | Identified the four-step pathway from L-phenylalanine to (2S)-pinocembrin [4]. |

| PartsGenie | Software for designing reusable DNA parts with optimized RBS and coding sequences. | Designed DNA parts for pathway assembly following enzyme selection [4]. |

| Ligase Cycling Reaction (LCR) | A DNA assembly method for constructing pathways from multiple parts. | Used for the automated robotic assembly of pathway variants [4]. |

| E. coli DH5α | A standard cloning strain for plasmid propagation and maintenance. | Used as the host for pathway assembly and initial screening [4]. |

| UPLC-MS/MS | Ultra-Performance Liquid Chromatography coupled with tandem Mass Spectrometry. | Enabled high-throughput, quantitative screening of pinocembrin and intermediates from cultures [4]. |

Within the automated Design-Build-Test-Learn (DBTL) cycle for microbial production of fine chemicals, the Build phase is critical for translating digital designs into physical biological entities. This stage encompasses the high-throughput construction of genetic designs and the generation of engineered microbial strains, forming the foundation for all subsequent testing and learning. Automated biofoundries have dramatically accelerated this process, enabling the rapid prototyping of biosynthetic pathways that was previously a major bottleneck in metabolic engineering [5] [4]. This technical note details the implementation of an automated Build pipeline, specifically for high-throughput strain construction in Saccharomyces cerevisiae, providing application notes, protocols, and resources for researchers developing microbial production platforms for pharmaceuticals and fine chemicals.

Workflow Integration and Automation Architecture

The automated strain construction pipeline was implemented on a Hamilton Microlab VANTAGE liquid handling system, chosen for its modular deck layout and capacity for hardware integration [7]. The workflow was programmed using Hamilton VENUS software (v2.2.13.4) and strategically divided into three discrete, modular steps: (1) Transformation setup and heat shock, (2) Washing, and (3) Plating (Figure 1) [7]. This modular approach enables robust execution and troubleshooting while allowing researchers to customize parameters for specific experimental needs.

A critical innovation in this pipeline is the seamless integration of external off-deck hardware devices through the Hamilton iSWAP robotic arm, which enables complete hands-free operation after initial manual deck setup [7]. The system coordinates with several specialized instruments:

- Inheco ODTC 96-well thermocycler for precise temperature control during heat shock

- 4titude a4S plate sealer for secure plate sealing during incubation

- Brooks Automation XPeel plate peeler for efficient seal removal [7]

This integration is facilitated through instrument-specific software drivers and communication protocols within the Hamilton device libraries, creating a cohesive automated platform that significantly reduces manual labor while improving reproducibility.

User Interface and Parameter Customization

To enhance usability and flexibility, a custom user interface was developed with dialog boxes for each workflow step, enabling researchers to adjust key experimental parameters on demand [7]. Customizable parameters include:

- DNA volume and concentration

- Lithium acetate/single-stranded DNA/PEG ratios

- Heat shock incubation times and temperatures

- Washing and plating conditions

The interface incorporates programmed checkpoints to detect common errors, such as incomplete cell resuspension, and initiates corrective loops to ensure robust performance across diverse experimental conditions [7]. This focus on user experience makes automated strain construction accessible to researchers without specialized robotics expertise.
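The checkpoint-and-corrective-loop pattern can be sketched outside of any particular robot software. The example below is plain Python, not Hamilton VENUS code; the parameter names, default values, and the `mix` and `is_resuspended` callables are hypothetical stand-ins for the real liquid-handling step and its check.

```python
from dataclasses import dataclass


@dataclass
class TransformationParams:
    # Placeholder defaults for illustration; not values from the source.
    dna_volume_ul: float = 5.0
    heat_shock_temp_c: float = 42.0
    heat_shock_minutes: float = 30.0
    max_resuspension_attempts: int = 3


def resuspend_with_checkpoint(mix, is_resuspended, max_attempts):
    """Repeat the mixing step until the checkpoint passes.

    Returns the number of attempts used; raises if the checkpoint still
    fails after max_attempts so the run can stop for operator attention.
    """
    for attempt in range(1, max_attempts + 1):
        mix()
        if is_resuspended():
            return attempt
    raise RuntimeError(f"cells not resuspended after {max_attempts} attempts")


# Simulated hardware: the pellet is only fully resuspended after two mixes.
state = {"mixes": 0}


def mix():
    state["mixes"] += 1


def is_resuspended():
    return state["mixes"] >= 2


params = TransformationParams()
attempts = resuspend_with_checkpoint(mix, is_resuspended, params.max_resuspension_attempts)
print(attempts)  # 2
```

Bounding the loop matters in practice: an unbounded retry could stall a plate indefinitely, whereas a capped retry either recovers quickly or fails loudly at a known step.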

Application Notes: High-Throughput Yeast Transformation

Protocol Optimization and Validation