Automated Biofoundry Workflows: Accelerating Biomedical Engineering from Discovery to Biomanufacturing

Abstract

This article explores the transformative role of automated biofoundries in biomedical engineering, providing a comprehensive guide for researchers and drug development professionals. It covers the foundational principles of the Design-Build-Test-Learn (DBTL) cycle and the efforts of the Global Biofoundry Alliance to standardize the field. The piece details cutting-edge methodologies, including the integration of protein language models for zero-shot enzyme design and semi-automated workflows for engineering therapeutic proteins. It addresses critical troubleshooting aspects, such as adapting manual protocols for automation and achieving interoperability. Finally, it examines real-world validation through case studies in enzyme engineering and biomanufacturing, demonstrating significant reductions in development timelines and enhanced production of biomedical targets, thereby charting a course toward more predictive and autonomous biomedical discovery.

The Biofoundry Framework: Core Principles and the DBTL Cycle Powering Modern Biomedicine

Automated biofoundries represent a paradigm shift in biological engineering, transforming traditional artisanal research processes into streamlined, industrialized workflows. These integrated facilities leverage robotic automation, computational analytics, and high-throughput instrumentation to accelerate synthetic biology research and applications through iterative Design-Build-Test-Learn (DBTL) cycles [1]. The global biofoundry ecosystem has expanded significantly since the establishment of the Global Biofoundry Alliance (GBA) in 2019, which now includes over 30 academic and research institutions worldwide [1] [2]. This growth reflects the increasing recognition of biofoundries as essential infrastructure for advancing biomedical engineering, sustainable biomanufacturing, and therapeutic development.

The transformative potential of biofoundries lies in their ability to address the fundamental challenges of biological complexity and experimental reproducibility. Where traditional biological research might require years of development for a single product (developing microbial production of the artemisinin precursor, for example, required an estimated 150 person-years), biofoundries can compress these timelines dramatically through parallelization and automation [3]. By integrating advanced computational design with robotic implementation and analysis, biofoundries enable systematic exploration of biological design spaces that would be intractable through manual approaches.

Core Architectural Framework

The DBTL Engineering Cycle

The operational foundation of every biofoundry is the Design-Build-Test-Learn cycle, an iterative engineering framework that transforms biological designs into optimized systems [1] [4]. This closed-loop process enables continuous refinement of biological constructs, pathways, or organisms through successive iterations of computational design, physical construction, experimental validation, and data-driven learning.

Table 1: The Four Phases of the DBTL Cycle in Biofoundries

| Phase | Key Activities | Technologies & Tools | Outputs |
|---|---|---|---|
| Design | Genetic circuit design, Pathway optimization, DNA sequence design | CAD tools, Cello, Retrobiosynthesis algorithms, ProteinMPNN, PLMs | Digital DNA sequences, Genetic constructs, Oligo libraries |
| Build | DNA synthesis, DNA assembly, Strain engineering, Genome editing | Liquid handlers, PCR systems, Colony pickers, Automated transformation | Physical DNA constructs, Engineered strains, Variant libraries |
| Test | High-throughput screening, Functional characterization, Analytics | Plate readers, Flow cytometers, Mass spectrometers, Fragment analyzers | Quantitative data, Production yields, Functional measurements |
| Learn | Data analysis, Pattern recognition, Model training, Prediction | Machine learning, Statistical analysis, Bayesian optimization, Automated Recommendation Tool (ART) | Refined designs, Predictive models, New hypotheses |

The DBTL cycle's power emerges from its iterative nature – each cycle generates data that informs subsequent designs, creating a continuous improvement loop. Recent advances have enabled fully automated DBTL cycles with minimal human intervention, dramatically accelerating the engineering timeline [1]. The integration of artificial intelligence and machine learning at each phase has further enhanced the precision of predictions and reduced the number of cycles needed to achieve desired outcomes [5] [3].

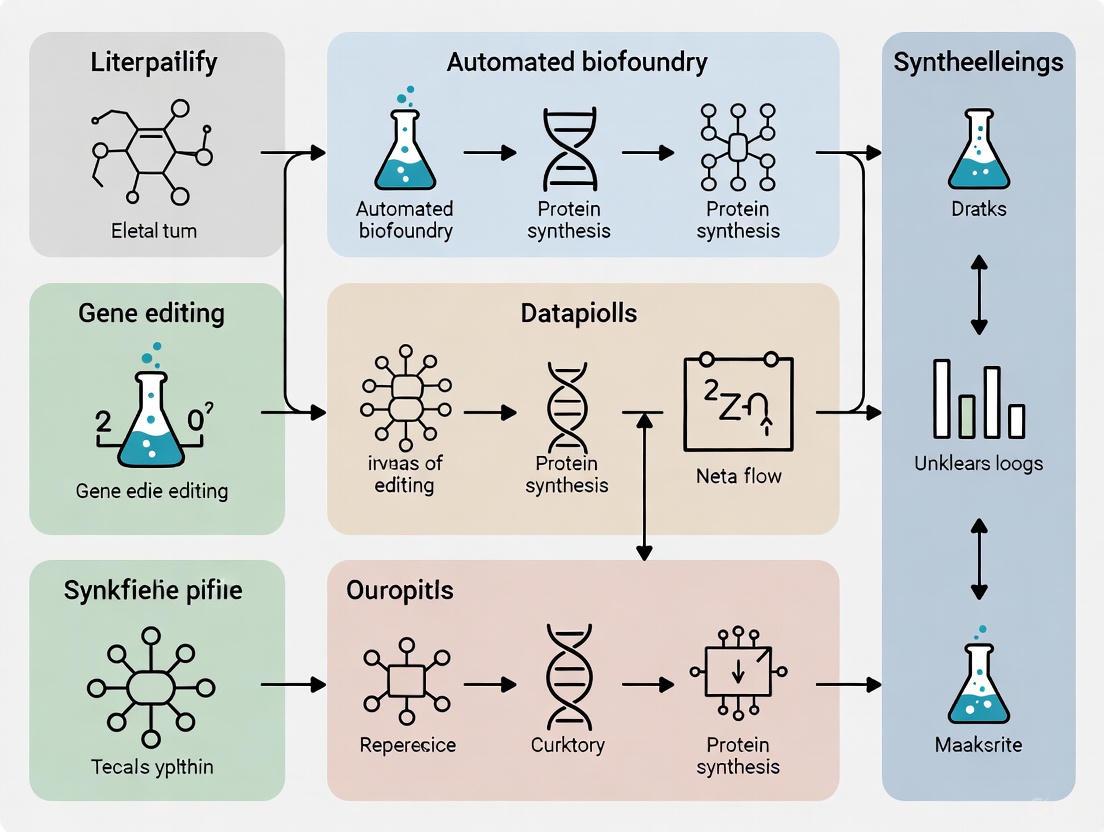

Diagram 1: DBTL Cycle in Biofoundries

To address challenges in standardization and interoperability, a four-level abstraction hierarchy has been developed to organize biofoundry activities into modular, interoperable components [4]. This framework enables more flexible and automated experimental workflows while improving communication between researchers and systems.

Level 0: Project - Represents the overall research objectives and requirements from external users, such as developing a novel microbial strain for therapeutic protein production or optimizing a biosynthetic pathway.

Level 1: Service/Capability - Defines the specific functions that the biofoundry provides to achieve project goals. These services are categorized into tiers based on their complexity and scope within the DBTL cycle:

- Tier 1: Equipment access (e.g., liquid handler training)

- Tier 2: Single DBTL stage service (e.g., protein sequence design)

- Tier 3: Multiple DBTL stage integration (e.g., protein library construction and verification)

- Tier 4: Full DBTL cycle support (e.g., complete strain engineering for bioproduction) [4]

Level 2: Workflow - Comprises the sequence of tasks needed to deliver a specific service. Each workflow is assigned to a single stage of the DBTL cycle to ensure modularity. Examples include "DNA Oligomer Assembly" (Build stage) or "High-Throughput Screening" (Test stage). The standardization of 58 distinct biofoundry workflows enables reconfiguration and reuse across different projects [4].

Level 3: Unit Operations - Represents the fundamental hardware or software elements that perform individual experimental or computational tasks. These include 42 hardware unit operations (e.g., "Liquid Transfer" using liquid handling robots) and 37 software unit operations (e.g., "Protein Structure Generation" using RFdiffusion) [4].

Diagram 2: Biofoundry Abstraction Hierarchy
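The four levels above map naturally onto nested data structures. The sketch below is one hypothetical way to encode them, not the schema used in [4]: class names, fields, and the rule that derives a service tier from the number of DBTL stages its workflows touch are all assumptions made for illustration. It does enforce the one constraint the text states explicitly, namely that each workflow belongs to exactly one DBTL stage.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    DESIGN = "Design"
    BUILD = "Build"
    TEST = "Test"
    LEARN = "Learn"


@dataclass
class UnitOperation:
    """Level 3: one hardware or software step, e.g. "Liquid Transfer"."""
    name: str
    kind: str  # "hardware" or "software"


@dataclass
class Workflow:
    """Level 2: a sequence of unit operations assigned to exactly one DBTL stage."""
    name: str
    stage: Stage
    operations: list[UnitOperation] = field(default_factory=list)


@dataclass
class Service:
    """Level 1: a capability offered to users, delivered by one or more workflows."""
    name: str
    workflows: list[Workflow] = field(default_factory=list)

    def tier(self) -> int:
        # Hypothetical rule mirroring the tiers above: no workflow = equipment access,
        # one stage = Tier 2, several stages = Tier 3, all four stages = Tier 4.
        n_stages = len({wf.stage for wf in self.workflows})
        if n_stages == 0:
            return 1
        if n_stages == 4:
            return 4
        return 2 if n_stages == 1 else 3


@dataclass
class Project:
    """Level 0: the external user's overall objective."""
    goal: str
    services: list[Service] = field(default_factory=list)


transfer = UnitOperation("Liquid Transfer", "hardware")
assembly = Workflow("DNA Oligomer Assembly", Stage.BUILD, [transfer])
screening = Workflow("High-Throughput Screening", Stage.TEST, [transfer])
library = Service("Protein library construction and verification", [assembly, screening])
project = Project("Therapeutic protein production strain", [library])
print(library.tier())  # 3
```

Because unit operations are plain objects, the same "Liquid Transfer" operation can be reused by several workflows, which is the kind of reuse the standardized hierarchy is meant to enable.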

Application Notes: Success Stories and Implementation Cases

DARPA's Biofoundry Pressure Test

One of the most compelling demonstrations of biofoundry capabilities was a timed pressure test administered by the U.S. Defense Advanced Research Projects Agency (DARPA), which challenged a biofoundry to research, design, and develop microbial strains for producing 10 target molecules within 90 days [1]. The challenge was particularly demanding as the biofoundry had no prior knowledge of the target molecules or the starting date.

The target molecules represented diverse chemical classes and applications:

- 1-Hexadecanol: A simple chemical used as fastener lubricant

- Tetrahydrofuran: An industrial solvent with no known biological synthesis pathway

- Carvone: A monoterpene with applications as mosquito repellent and pesticide

- Epicolactone: A multicyclic tropolone with antimicrobial activity

- Barbamide: A potent molluscicide for antifouling applications

- Vincristine, Rebeccamycin, Enediyne C-1027: Anticancer agents

- Pyrrolnitrin: An antifungal agent

- Pacidamycin D: An antibacterial agent against pseudomonads

Despite the complexity and novelty of these targets, the biofoundry successfully constructed 1.2 Mb of DNA, built 215 strains spanning five microbial species, established two cell-free systems, and performed 690 custom assays within the stipulated timeframe [1]. The team succeeded in producing the target molecule or a closely related analog for six of the ten targets and made significant progress on the others. This achievement highlighted the versatility and robustness of biofoundry approaches when addressing diverse biological engineering challenges.

AI-Driven Protein Engineering Platform

Recent advances have integrated protein language models (PLMs) with automated biofoundry operations to create a closed-loop system for protein evolution. The Protein Language Model-enabled Automatic Evolution (PLMeAE) platform demonstrates how machine learning can accelerate the DBTL cycle for enzyme optimization [6].

In a case study optimizing Methanocaldococcus jannaschii p-cyanophenylalanine tRNA synthetase (pCNF-RS), the PLMeAE platform implemented two complementary modules:

- Module I: For proteins without previously identified mutation sites, using PLMs to predict high-fitness single mutants through zero-shot learning

- Module II: For proteins with known mutation sites, employing PLMs to sample informative multi-mutant variants for experimental characterization

Through four rounds of automated DBTL cycles completed in just 10 days, the platform identified enzyme variants with activity improved by up to 2.4-fold compared to the wild type [6]. According to the authors, this outperformed traditional directed evolution approaches, demonstrating how combining protein foundation models with biofoundry automation can dramatically accelerate protein engineering timelines.

Isoprene Synthase Engineering for Gas Fermentation

Another successful implementation demonstrated the optimization of isoprene synthase (IspS) for methane-to-isoprene conversion using semi-automated biofoundry workflows [7]. The project achieved a 4.5-fold improvement in catalytic efficiency along with enhanced thermostability through sequence coevolution-guided engineering.

The engineered enzyme showed improved functionality in Methylococcus capsulatus Bath, validating its application in gas fermentation systems. This advancement reached Technology Readiness Level (TRL) 4, corresponding to technology validated in the laboratory [7]. The project highlights how biofoundries can bridge fundamental enzyme engineering with industrial bioprocess development, creating a pipeline from initial design to scalable production.

Table 2: Performance Metrics from Biofoundry Implementation Cases

| Project | Engineering Target | Performance Improvement | Timeframe | Key Technologies |
|---|---|---|---|---|
| DARPA Challenge | 10 diverse small molecules | 6/10 targets produced | 90 days | High-throughput DNA construction, Multi-species engineering, Custom assays |
| PLMeAE Platform | tRNA synthetase enzyme | 2.4-fold activity increase | 10 days (4 cycles) | Protein language models, Automated variant construction, ML-based fitness prediction |
| Isoprene Synthase | Catalytic efficiency & thermostability | 4.5-fold improvement | Not specified | Sequence coevolution analysis, Semi-automated workflows, Gas fermentation validation |

Experimental Protocols

Protocol: Automated DBTL for Cell-Free Protein Synthesis Optimization

This protocol describes a fully automated DBTL pipeline for optimizing cell-free protein synthesis (CFPS) systems, adapted from recent research that achieved 2- to 9-fold yield improvements for antimicrobial colicins in just four cycles [5].

Design Phase

- Objective: Generate experimental designs for optimizing CFPS component compositions

- Materials: ChatGPT-4 or similar LLM for code generation, Active Learning (AL) framework with Cluster Margin sampling strategy

- Procedure:

- Use natural language prompts to instruct ChatGPT-4 to generate Python scripts for experimental design and microplate layout generation

- Implement Active Learning with Cluster Margin approach to select experimental conditions that balance uncertainty and diversity

- Generate design of experiments (DoE) covering component variations in cell-free systems (extract concentration, energy sources, nucleotide ratios)

- Format output for compatibility with liquid handling systems

Note: In the original study, code generated by ChatGPT-4 was used without manual revision, substantially reducing coding time [5].
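The kind of script the Design phase asks for (enumerate conditions, lay them out on a 96-well plate, export a worklist) can be sketched as follows. This is a hand-written illustration, not the LLM-generated code from [5]. The factor names and levels are hypothetical, a full factorial stands in for the Active Learning selection described above, and the CSV columns are placeholders, since the import format a given liquid handler expects is instrument specific.

```python
import csv
import io
import itertools
import string

# Hypothetical factors and levels; substitute the components of your own CFPS system.
FACTORS = {
    "extract_pct": [20, 27, 33],   # cell extract, % (v/v)
    "energy_mM": [10, 20, 30],     # energy source concentration
    "ntp_ratio": [0.5, 1.0, 1.5],  # nucleotide level relative to a reference mix
}


def full_factorial(factors):
    """Every combination of factor levels, as a list of dicts."""
    names = list(factors)
    return [dict(zip(names, combo)) for combo in itertools.product(*factors.values())]


def plate_wells(n_rows=8, n_cols=12):
    """96-well IDs in row-major order: A1, A2, ..., H12."""
    for row in string.ascii_uppercase[:n_rows]:
        for col in range(1, n_cols + 1):
            yield f"{row}{col}"


def plate_layout(designs, replicates=3):
    """Assign replicated designs to consecutive wells; fail if the plate overflows."""
    wells = plate_wells()
    layout = []
    for idx, design in enumerate(designs):
        for rep in range(1, replicates + 1):
            well = next(wells, None)
            if well is None:
                raise ValueError("design does not fit on one 96-well plate")
            layout.append({"well": well, "condition": idx, "replicate": rep, **design})
    return layout


designs = full_factorial(FACTORS)        # 3 x 3 x 3 = 27 conditions
plate = plate_layout(designs)            # 81 wells, leaving 15 free for controls
buf = io.StringIO()
writer = csv.DictWriter(buf, fieldnames=list(plate[0]))
writer.writeheader()
writer.writerows(plate)
print(buf.getvalue().splitlines()[1])    # A1,0,1,20,10,0.5
```

Filling wells in row-major order keeps replicates adjacent; randomizing well positions instead would reduce edge and position effects at the cost of a less readable layout.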

Build Phase

- Objective: Automatically prepare CFPS reactions according to designed experiments

- Materials: Opentrons or similar liquid handling system, 96-well microplates, CFPS components (cell extract, energy sources, amino acids, nucleotides, DNA template)

- Procedure:

- Program liquid handler using generated protocols from Design phase

- Set up temperature-controlled areas for reagent storage (4°C) and reaction incubation (30-37°C)

- Perform automated liquid transfers to assemble CFPS reactions in 96-well format

- Include appropriate controls (negative controls without DNA template, positive controls with known templates)

- Seal plates and transfer to incubation system
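Before the liquid handler can assemble reactions, each designed composition has to be converted into transfer volumes. A minimal sketch, assuming simple dilution from stock solutions (C1·V1 = C2·V2) topped up with water. The stock concentrations, the 15 µL reaction volume, and the 1 µL template volume are hypothetical. Setting the template volume to zero produces the no-DNA negative control mentioned above.

```python
# Hypothetical stock concentrations, in the same units as the targets below
STOCKS = {"extract_pct": 100.0, "energy_mM": 500.0}


def transfer_volumes(targets, stocks=STOCKS, total_ul=15.0, template_ul=1.0):
    """Volumes (uL) per component from C1*V1 = C2*V2, topped up with water.

    Set template_ul=0.0 for no-DNA negative controls.
    """
    vols = {comp: final * total_ul / stocks[comp] for comp, final in targets.items()}
    vols["dna_template"] = template_ul
    water = total_ul - sum(vols.values())
    if water < 0:
        raise ValueError(f"components exceed the reaction volume by {-water:.2f} uL")
    vols["water"] = water
    return vols


reaction = transfer_volumes({"extract_pct": 33, "energy_mM": 30})
control = transfer_volumes({"extract_pct": 33, "energy_mM": 30}, template_ul=0.0)
```

In practice, volumes below the liquid handler's reliable minimum would also need to be caught, for example by switching to an intermediate dilution of the stock.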

Test Phase

- Objective: Quantify protein synthesis yields and functional activity

- Materials: Plate reader with fluorescence/absorbance capabilities, activity assay reagents, standard curves for quantification

- Procedure:

- Measure protein yield using fluorescence (GFP-fusion) or absorbance methods

- Perform functional assays specific to target protein (e.g., antimicrobial activity assays for colicins)

- Normalize measurements using standard curves

- Export data in standardized format for analysis
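The normalization step can be sketched with a linear standard curve: fit signal against known concentrations, invert the fit for each sample, and subtract the mean of the no-DNA controls. The numbers below are illustrative, and a linear response is an assumption that only holds within the assay's dynamic range.

```python
import numpy as np


def fit_standard_curve(conc, signal):
    """Least-squares line: signal = slope * conc + intercept."""
    slope, intercept = np.polyfit(conc, signal, 1)
    return slope, intercept


def background_corrected(sample_signal, control_signal, slope, intercept):
    """Convert signals to concentrations, then subtract the mean no-DNA control."""
    def to_conc(signal):
        return (np.asarray(signal, dtype=float) - intercept) / slope
    return to_conc(sample_signal) - to_conc(control_signal).mean()


# Illustrative numbers only: standards in ug/mL and their fluorescence readings
slope, intercept = fit_standard_curve([0, 25, 50, 100], [10, 60, 110, 210])
yields = background_corrected([110, 210], [20, 20], slope, intercept)
```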

Learn Phase

- Objective: Analyze data and generate improved designs for next DBTL cycle

- Materials: Machine learning platform (Python with scikit-learn), previously generated data

- Procedure:

- Train machine learning models to correlate CFPS composition with protein yield

- Apply Active Learning strategy to identify most informative next experiments

- Generate new experimental designs based on model predictions

- Iterate through additional DBTL cycles until performance targets are met
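The Learn-phase loop can be sketched as "fit a model, then pick a batch that is both uncertain and diverse". The block below is a simplified stand-in for the Cluster Margin strategy used in [5], not a reproduction of it: because yield is a regression target, the spread of a random forest's per-tree predictions replaces the classification margin, and k-means clustering of the most uncertain candidates supplies diversity. The training data are synthetic and the function name `select_batch` is hypothetical.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.ensemble import RandomForestRegressor


def select_batch(X_train, y_train, X_pool, batch_size=8, top_frac=0.5, seed=0):
    """Choose the next experiments: uncertain candidates spread across clusters."""
    model = RandomForestRegressor(n_estimators=200, random_state=seed)
    model.fit(X_train, y_train)
    # Uncertainty proxy: spread of the individual trees' predictions
    per_tree = np.stack([tree.predict(X_pool) for tree in model.estimators_])
    uncertainty = per_tree.std(axis=0)
    # Keep the most uncertain part of the pool as candidates
    n_cand = max(batch_size, int(len(X_pool) * top_frac))
    cand = np.argsort(uncertainty)[::-1][:n_cand]
    # Diversity: cluster the candidates and take the most uncertain member of each cluster
    labels = KMeans(n_clusters=batch_size, n_init=10, random_state=seed).fit_predict(X_pool[cand])
    chosen = []
    for k in range(batch_size):
        members = cand[labels == k]
        if members.size:
            chosen.append(int(members[np.argmax(uncertainty[members])]))
    return chosen


rng = np.random.default_rng(0)
X_pool = rng.uniform(0, 1, size=(200, 3))   # candidate compositions (scaled)
X_train = rng.uniform(0, 1, size=(24, 3))   # compositions already tested
y_train = X_train @ np.array([1.0, 2.0, -1.0]) + rng.normal(0, 0.1, 24)  # synthetic yields
next_batch = select_batch(X_train, y_train, X_pool, batch_size=8)
```

Selecting only the single most uncertain point per cluster prevents the batch from collapsing onto one poorly explored region, which is the main failure mode of pure uncertainty sampling.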

Protocol: Automated Protein Engineering Using PLMeAE Platform

This protocol outlines the implementation of the Protein Language Model-enabled Automatic Evolution platform for directed protein evolution [6].

Initial Variant Design (Module I - No Prior Mutation Sites)

- Objective: Identify promising single-point mutations for proteins without known mutation sites

- Materials: ESM-2 or similar protein language model, target protein sequence

- Procedure:

- Input wild-type protein sequence into PLM

- Systematically mask each amino acid position and calculate likelihood scores for all possible substitutions

- Rank variants by predicted fitness (likelihood score)

- Select top 96 variants for experimental characterization

- Output DNA sequences of the selected variants for synthesis
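Module I's mask-and-score loop can be sketched with masked-marginal scoring, a common zero-shot heuristic for masked language models: each substitution is scored as log p(mutant residue) minus log p(wild-type residue) at the masked position. The exact scoring used in PLMeAE may differ. The model is abstracted as a hypothetical `scorer` callable; wrapping an actual ESM-2 forward pass is left out, and `toy_scorer` exists only so the sketch runs.

```python
import math
from typing import Callable, Dict, List, Tuple

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

# Hypothetical interface: given (sequence, 0-based position), return log-probabilities
# for all 20 amino acids with that position masked. A real implementation would wrap
# an ESM-2 forward pass on the masked sequence.
MaskedScorer = Callable[[str, int], Dict[str, float]]


def rank_single_mutants(seq: str, scorer: MaskedScorer, top_n: int = 96) -> List[Tuple[str, float]]:
    """Masked-marginal zero-shot score: log p(mutant) - log p(wild type) at each masked site."""
    scored = []
    for pos, wt in enumerate(seq):
        logp = scorer(seq, pos)  # one masked forward pass per position
        for aa in AMINO_ACIDS:
            if aa != wt:
                scored.append((f"{wt}{pos + 1}{aa}", logp[aa] - logp[wt]))  # 1-based label
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_n]


def toy_scorer(seq: str, pos: int) -> Dict[str, float]:
    """Stand-in for a PLM: uniform except a preference for tryptophan."""
    logits = {aa: 0.0 for aa in AMINO_ACIDS}
    logits["W"] = 2.0
    log_z = math.log(sum(math.exp(v) for v in logits.values()))
    return {aa: v - log_z for aa, v in logits.items()}


top = rank_single_mutants("MAK", toy_scorer, top_n=3)
```

For a protein of length L this needs L masked forward passes and yields 19·L candidates, from which the top 96 fill one plate.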

Multi-Site Variant Design (Module II - Known Mutation Sites)

- Objective: Design multi-mutant variants when target sites are identified

- Materials: Pre-trained PLM, identified mutation sites from previous cycles or structural analysis

- Procedure:

- Encode protein sequence using PLM to create sequence representations

- For given mutation sites, generate combinatorial variants

- Use PLM to predict fitness of multi-mutant variants

- Select diverse set of variants covering predicted fitness landscape

- Output sequences for automated DNA synthesis
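For Module II, a minimal sketch of the combinatorial step: enumerate all variants at the known sites, score them with whatever fitness predictor is available, and pick a set that spans the predicted landscape rather than only its top. Splitting the ranked list into bins and taking the best of each bin is one simple, hypothetical way to do that; the counting of tryptophans in the usage lines is a toy scorer, not a PLM.

```python
import itertools


def combinatorial_variants(seq, sites, alphabet="ACDEFGHIKLMNPQRSTVWY"):
    """Yield (mutation_label, sequence) for every combination at the given 1-based sites."""
    for combo in itertools.product(alphabet, repeat=len(sites)):
        residues = list(seq)
        muts = []
        for site, aa in zip(sites, combo):
            wt = seq[site - 1]
            if aa != wt:
                residues[site - 1] = aa
                muts.append(f"{wt}{site}{aa}")
        if muts:  # skip the wild type itself
            yield "/".join(muts), "".join(residues)


def stratified_pick(scored, n_bins, per_bin):
    """Sort by predicted fitness, split into equal bins, take the best of each bin.

    Any remainder after integer division (the lowest-scoring tail) is ignored.
    """
    ranked = sorted(scored, key=lambda item: item[1], reverse=True)
    size = max(1, len(ranked) // n_bins)
    picks = []
    for b in range(n_bins):
        picks.extend(ranked[b * size:(b + 1) * size][:per_bin])
    return picks


variants = list(combinatorial_variants("MAK", [2, 3]))
scored = [(label, s.count("W")) for label, s in variants]  # toy fitness predictor
picks = stratified_pick(scored, n_bins=4, per_bin=2)
```

Note that the space grows as 20^k for k sites, so beyond three or four sites enumeration gives way to sampling.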

Build Phase: Automated Variant Construction

- Objective: High-throughput construction of designed protein variants

- Materials: Automated biofoundry with liquid handlers, thermocyclers, DNA assembly reagents, expression vectors

- Procedure:

- Automate DNA assembly using standardized protocols (Golden Gate, Gibson Assembly)

- Transform constructs into expression host (E. coli or other chassis)

- Pick colonies and culture in 96-deep well plates

- Induce protein expression with isopropyl β-D-1-thiogalactopyranoside (IPTG)

- Harvest cells for functional testing
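Before assembly, each designed variant has to be turned back into DNA. A minimal sketch, assuming an in-frame coding sequence and a fixed choice of one frequently used E. coli codon per amino acid (a simplification; dedicated codon-optimization tools weigh more factors). The function checks each mutation's wild-type residue against the standard genetic code before replacing the codon.

```python
BASES = "TCAG"
# Standard genetic code (NCBI table 1), codons ordered TTT, TTC, TTA, TTG, TCT, ...
AA_TABLE = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TO_AA = {a + b + c: AA_TABLE[16 * i + 4 * j + k]
               for i, a in enumerate(BASES)
               for j, b in enumerate(BASES)
               for k, c in enumerate(BASES)}

# One high-usage E. coli codon per amino acid (an assumption, not an optimization)
PREFERRED_CODON = {
    "A": "GCG", "R": "CGT", "N": "AAC", "D": "GAT", "C": "TGC",
    "Q": "CAG", "E": "GAA", "G": "GGC", "H": "CAT", "I": "ATT",
    "L": "CTG", "K": "AAA", "M": "ATG", "F": "TTT", "P": "CCG",
    "S": "AGC", "T": "ACC", "W": "TGG", "Y": "TAT", "V": "GTG",
}


def apply_mutations(cds: str, mutations: str) -> str:
    """Apply mutations such as 'A2G/K3W' (1-based residue numbers) to a coding sequence."""
    if len(cds) % 3:
        raise ValueError("coding sequence length is not a multiple of 3")
    codons = [cds[i:i + 3] for i in range(0, len(cds), 3)]
    for mut in mutations.split("/"):
        wt, pos, new = mut[0], int(mut[1:-1]), mut[-1]
        found = CODON_TO_AA[codons[pos - 1]]
        if found != wt:
            raise ValueError(f"{mut}: residue {pos} is {found}, not {wt}")
        codons[pos - 1] = PREFERRED_CODON[new]
    return "".join(codons)


print(apply_mutations("ATGGCTAAATAA", "A2G/K3W"))  # ATGGGCTGGTAA
```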

Test Phase: High-Throughput Functional Characterization

- Objective: Quantify fitness metrics for all variants

- Materials: Plate readers, activity assay reagents, cell lysis systems

- Procedure:

- Lyse cells using automated protocols

- Perform enzyme activity assays in high-throughput format

- Measure protein expression levels (e.g., via fluorescence, Western blot)

- Collect stability data (thermal shift assays)

- Compile dataset linking variants to functional metrics
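When compiling the dataset, activities from different plates are easier to compare after normalizing to wild-type controls run on the same plate. The sketch below assumes each plate carries its own wild-type wells (a layout choice not specified in the source) and reports each variant's mean fold change across plates.

```python
from collections import defaultdict
from statistics import mean


def fold_change(records, wt_label="WT"):
    """records: (plate, variant, activity) tuples.

    Each variant is divided by the mean wild-type activity on its own plate, then
    averaged across plates, so plate-to-plate drift cancels out.
    """
    wt = defaultdict(list)
    for plate, variant, activity in records:
        if variant == wt_label:
            wt[plate].append(activity)
    ratios = defaultdict(list)
    for plate, variant, activity in records:
        if variant == wt_label:
            continue
        if not wt[plate]:
            raise ValueError(f"plate {plate} has no wild-type control")
        ratios[variant].append(activity / mean(wt[plate]))
    return {variant: mean(r) for variant, r in ratios.items()}


records = [  # illustrative readings
    ("P1", "WT", 10.0), ("P1", "WT", 12.0), ("P1", "A2G", 22.0),
    ("P2", "WT", 5.0), ("P2", "A2G", 10.0), ("P2", "K3W", 4.0),
]
print(fold_change(records))  # {'A2G': 2.0, 'K3W': 0.8}
```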

Learn Phase: Model Retraining and Optimization

- Objective: Improve fitness predictions using experimental data

- Materials: Collected variant fitness data, ML platform (multi-layer perceptron)

- Procedure:

- Encode tested variants using PLM

- Train supervised ML model to predict fitness from sequence representations

- Validate model performance using cross-validation

- Apply Bayesian optimization or similar algorithms to explore sequence space

- Select next round of variants balancing exploration and exploitation
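The retraining loop can be sketched as follows, under two stated substitutions: one-hot encoding stands in for the PLM embeddings used by PLMeAE, and an upper confidence bound computed from an ensemble of multi-layer perceptrons stands in for full Bayesian optimization, which would normally use a probabilistic surrogate such as a Gaussian process. The `kappa` parameter trades exploitation (mean prediction) against exploration (ensemble disagreement). All data are synthetic.

```python
import numpy as np
from sklearn.model_selection import cross_val_score
from sklearn.neural_network import MLPRegressor

AA = "ACDEFGHIKLMNPQRSTVWY"


def one_hot(seqs):
    """Placeholder encoding; PLMeAE encodes variants with a PLM instead."""
    X = np.zeros((len(seqs), len(seqs[0]) * len(AA)))
    for i, seq in enumerate(seqs):
        for j, aa in enumerate(seq):
            X[i, j * len(AA) + AA.index(aa)] = 1.0
    return X


def make_mlp(seed=0):
    return MLPRegressor(hidden_layer_sizes=(32,), max_iter=2000, random_state=seed)


def propose_next(X_train, y_train, X_pool, n_next=4, kappa=1.0, n_models=5):
    """Rank the pool by an upper confidence bound from an ensemble of MLPs."""
    preds = np.stack([make_mlp(s).fit(X_train, y_train).predict(X_pool) for s in range(n_models)])
    ucb = preds.mean(axis=0) + kappa * preds.std(axis=0)  # exploitation + exploration
    return [int(i) for i in np.argsort(ucb)[::-1][:n_next]]


rng = np.random.default_rng(0)
train = ["".join(rng.choice(list(AA), 3)) for _ in range(40)]
pool = ["".join(rng.choice(list(AA), 3)) for _ in range(200)]
y = np.array([s.count("W") for s in train], dtype=float) + rng.normal(0, 0.1, 40)  # synthetic fitness
cv_r2 = cross_val_score(make_mlp(), one_hot(train), y, cv=5)  # held-out R^2 per fold
picks = propose_next(one_hot(train), y, one_hot(pool))
```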

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Automated Biofoundries

| Category | Specific Examples | Function in Workflow | Implementation Notes |
|---|---|---|---|
| DNA Assembly Systems | j5 DNA assembly design, Golden Gate Assembly, Gibson Assembly | Modular construction of genetic circuits and pathways | j5 outputs compatible with Opentrons via AssemblyTron [1] |
| Liquid Handling Platforms | Opentrons, Tecan Fluent, Labcyte Echo, Agilent Bravo | Automated reagent transfer and reaction assembly | Acoustic liquid handlers enable nanoliter-scale transfers [2] |
| Protein Language Models | ESM-2, ProteinMPNN | Zero-shot prediction of functional protein variants | ESM-2 used for variant fitness prediction without experimental data [6] |
| Machine Learning Tools | Automated Recommendation Tool (ART), scikit-learn, Active Learning | Data analysis and predictive modeling for DBTL cycles | ART provides Bayesian optimization for strain engineering [3] |
| Cell-Free Systems | E. coli extract, HeLa extract, PURExpress | Rapid prototyping of protein production without living cells | CFPS enables high-throughput protein production optimization [5] |
| High-Throughput Screening | Plate readers, flow cytometers, fragment analyzers | Functional characterization of libraries | Multiplexed assays enable parallel testing of thousands of variants [8] |
| Automated Colony Processing | QPix systems, Singer Instruments PIXL | Picking, arraying, and replicating microbial colonies | Enables processing of thousands of colonies per hour [2] |

Automated biofoundries represent a transformative infrastructure for biological engineering, integrating computational design, robotic automation, and artificial intelligence to accelerate the design and optimization of biological systems. Through the structured implementation of Design-Build-Test-Learn cycles and standardized abstraction hierarchies, these facilities enable unprecedented throughput and reproducibility in synthetic biology research.

The continued advancement of biofoundries depends on several key factors: the development of interoperable standards and workflows, the integration of more sophisticated AI and machine learning capabilities, and the expansion of global collaboration through initiatives like the Global Biofoundry Alliance. As these facilities become more accessible and their methodologies more refined, they hold tremendous potential to accelerate breakthroughs in therapeutic development, sustainable biomanufacturing, and fundamental biological research.

The protocols and applications detailed in this article provide a roadmap for researchers seeking to leverage biofoundry capabilities for their own biomedical engineering projects. By adopting these automated, high-throughput approaches, the scientific community can overcome traditional limitations in biological design and usher in a new era of predictable, scalable biological engineering.

The Design-Build-Test-Learn (DBTL) cycle represents a foundational framework in modern biomedical engineering and synthetic biology, enabling the systematic engineering of biological systems. This iterative process facilitates the development of optimized microbial strains for biomedical applications, such as drug discovery and therapeutic compound production. By integrating automation, machine learning, and high-throughput technologies within biofoundries, the DBTL cycle significantly accelerates research and development timelines while improving reproducibility and success rates. This article deconstructs the DBTL framework through practical applications in metabolic engineering, detailing experimental protocols, key reagents, and workflow visualizations to provide researchers with actionable methodologies for implementation in automated biofoundry environments.

The DBTL cycle is a crucial framework in synthetic biology for the development and optimization of biological systems, forming the core operational principle of modern biofoundries [9]. These specialized facilities integrate automation, synthetic biology, and advanced computational tools to accelerate the engineering of biological systems, transforming raw biological materials into finished products through a structured, iterative process [9]. The cycle consists of four interconnected phases: (1) Design, where computational tools are used to plan genetic circuits or metabolic pathways; (2) Build, where biological components are constructed through automated synthesis and assembly; (3) Test, where engineered systems are evaluated via high-throughput screening; and (4) Learn, where data is analyzed to refine subsequent designs [9]. This integrated approach significantly reduces the time and cost associated with biotechnological research, enhancing reproducibility, scalability, and standardization while making complex biological engineering projects more feasible and efficient [9].

In the context of biomedical engineering, DBTL cycles have demonstrated remarkable efficacy in optimizing the production of valuable compounds. For instance, researchers have successfully applied knowledge-driven DBTL cycles to develop an optimized dopamine production strain in Escherichia coli, achieving concentrations of 69.03 ± 1.2 mg/L, a 2.6- to 6.6-fold improvement over previous state-of-the-art production methods [10]. Similarly, semi-automated biofoundry workflows have enabled 4.5-fold improvements in catalytic efficiency for engineered isoprene synthase, demonstrating the framework's potential for enzyme engineering and pathway optimization [7]. The power of the DBTL approach lies in its iterative nature, where each cycle incorporates learning from previous iterations to progressively refine strain performance and pathway efficiency.

Phase 1: Design – Computational Planning and Pathway Design

The Design phase initiates the DBTL cycle, focusing on computational planning and pathway design using bioinformatics tools and mathematical modeling. This stage involves selecting appropriate genetic components, designing DNA constructs, and predicting system behavior before physical implementation. In metabolic engineering projects, the Design phase typically begins with identifying target pathways, followed by selecting suitable enzyme variants, optimizing codon usage, and designing regulatory elements such as promoters and ribosome binding sites (RBS) [10]. Combinatorial pathway optimization requires varying multiple pathway genes simultaneously, which often causes a combinatorial explosion of the design space that must be addressed through strategic sampling [11].
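The size of a combinatorial design space is the product of the number of options per gene, which is why exhaustive testing quickly becomes impractical. The sketch below computes that size and draws a uniform random subset without enumerating every design, by decoding integer indices as mixed-radix numbers. Uniform random sampling is only the simplest strategy; design-of-experiments or model-guided sampling are common alternatives. The five-genes-by-five-levels example is illustrative.

```python
import math
import random


def design_space_size(levels_per_gene):
    """Number of distinct designs: product of the options available for each gene."""
    return math.prod(levels_per_gene)


def decode(index, levels_per_gene):
    """Map an integer to one design (mixed-radix digits = level chosen for each gene)."""
    design = []
    for n in levels_per_gene:
        index, level = divmod(index, n)
        design.append(level)
    return tuple(design)


def sample_designs(levels_per_gene, k, seed=0):
    """Draw k distinct designs uniformly without materializing the full space."""
    size = design_space_size(levels_per_gene)
    rng = random.Random(seed)
    return [decode(i, levels_per_gene) for i in rng.sample(range(size), k)]


levels = [5, 5, 5, 5, 5]  # e.g. 5 promoter/RBS strengths for each of 5 genes (illustrative)
print(design_space_size(levels))  # 3125
first_plate = sample_designs(levels, 96)
```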

Advanced computational approaches are increasingly employed to enhance the Design phase. Mechanistic kinetic models provide a valuable framework for representing metabolic pathways embedded in physiologically relevant cell models [11]. These models describe changes in intracellular metabolite concentrations over time using ordinary differential equations, with each reaction flux described by kinetic mechanisms derived from mass action principles. This approach allows for in silico manipulation of pathway elements, such as modifying enzyme concentrations or catalytic properties, to predict their effects on system performance [11]. Additionally, machine learning tools are being integrated into the Design phase to predict biological system behavior without requiring full mechanistic understanding [12]. The Automated Recommendation Tool (ART), for instance, leverages machine learning and probabilistic modeling to guide synthetic biology design in a systematic fashion, providing a set of recommended strains to be built in the next engineering cycle alongside probabilistic predictions of their production levels [12].
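To make the kinetic-model idea concrete, here is a deliberately small sketch: a two-step pathway S → I → P with Michaelis-Menten rate laws (themselves derived from mass-action kinetics under a quasi-steady-state assumption), integrated with explicit Euler steps. All parameter values are illustrative. Models of the kind described in [11] embed pathways in whole-cell models and would normally be solved with an adaptive ODE solver such as `scipy.integrate.solve_ivp`.

```python
def simulate(e1=1.0, e2=1.0, s0=10.0, t_end=10.0, dt=1e-3, kcat=(1.0, 1.0), km=(1.0, 1.0)):
    """Explicit-Euler integration of S -> I -> P with Michaelis-Menten rate laws.

    e1, e2 are enzyme levels; all parameter values are illustrative, not fitted.
    """
    s, i, p = s0, 0.0, 0.0
    for _ in range(int(round(t_end / dt))):
        v1 = e1 * kcat[0] * s / (km[0] + s)   # flux through enzyme 1
        v2 = e2 * kcat[1] * i / (km[1] + i)   # flux through enzyme 2
        s, i, p = s - v1 * dt, i + (v1 - v2) * dt, p + v2 * dt
    return s, i, p


baseline = simulate()
boosted = simulate(e2=2.0)   # in silico doubling of the second enzyme
```

Comparing `boosted` with `baseline` is the in silico experiment described above: the substrate trajectory is unchanged, less intermediate accumulates, and more product is formed by the end of the simulation.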

Figure 1: The iterative DBTL cycle for bioengineering. Each phase feeds into the next, creating a continuous improvement loop for strain development and pathway optimization.

Phase 2: Build – Genetic Construction and Automation

The Build phase translates computational designs into physical biological entities through genetic construction and assembly. This stage has been revolutionized by automation and standardized protocols, enabling high-throughput implementation of genetic designs. In biofoundries, the Build phase leverages robotic liquid-handling systems, automated DNA assembly, and molecular cloning techniques to construct plasmid libraries and engineer microbial strains with minimal human intervention [13]. A key aspect of this phase is the implementation of designed genetic modifications, such as RBS engineering to fine-tune relative gene expression in synthetic pathways [10].

Advanced biofoundries manage complex construction processes with distributed workflow automation, representing workflows as directed acyclic graphs (DAGs) and executing them with orchestrators [13]. This approach enables highly flexible and standardized automation. The build process typically involves several key steps: (1) DNA synthesis or amplification of genetic parts, (2) assembly of genetic constructs using standardized methods (e.g., Golden Gate assembly, Gibson assembly), (3) transformation into microbial chassis, and (4) verification of constructed strains through colony PCR and sequencing [10]. For metabolic engineering applications, this often includes constructing plasmid libraries with varying expression levels for pathway enzymes. For example, in dopamine production strain development, researchers used the pJNTN plasmid system both for testing in crude cell lysate systems and for plasmid library construction, enabling high-throughput RBS engineering to optimize enzyme expression levels [10].
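The DAG-plus-orchestrator pattern can be illustrated with Python's standard-library `graphlib` (Python 3.9+). The step names below follow the four build steps listed above but are hypothetical identifiers, and the `run` function is a toy single-process orchestrator; production systems [13] dispatch ready steps to instruments or workers in parallel.

```python
from graphlib import TopologicalSorter

# Each key lists the steps it depends on (hypothetical step names)
BUILD_DAG = {
    "part_synthesis": set(),
    "assembly": {"part_synthesis"},
    "transformation": {"assembly"},
    "colony_pcr": {"transformation"},
    "sequencing": {"transformation"},
    "verified_strain": {"colony_pcr", "sequencing"},
}


def run(dag, execute):
    """Toy orchestrator: dispatch every step whose dependencies are complete."""
    sorter = TopologicalSorter(dag)
    sorter.prepare()  # raises graphlib.CycleError if the graph is not acyclic
    order = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # steps in one batch could run in parallel
        for step in ready:
            execute(step)
            order.append(step)
            sorter.done(step)
    return order


print(run(BUILD_DAG, lambda step: None))
# ['part_synthesis', 'assembly', 'transformation', 'colony_pcr', 'sequencing', 'verified_strain']
```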

Phase 3: Test – High-Throughput Screening and Analytics

The Test phase involves comprehensive characterization and performance evaluation of constructed biological systems through high-throughput screening and analytical techniques. This critical stage provides the experimental data necessary to assess design efficacy and identify bottlenecks. In metabolic engineering applications, testing typically involves cultivation experiments, product quantification, and multi-omics analyses to evaluate strain performance and pathway functionality [10]. Advanced biofoundries automate this phase using integrated robotic systems that can conduct thousands of experiments simultaneously, drastically increasing data generation speed while enhancing reproducibility [9].

For dopamine production optimization, researchers employed a structured testing protocol including: (1) cultivation in minimal medium with appropriate carbon sources and inducers, (2) sampling at regular intervals to monitor biomass growth and metabolite concentrations, (3) HPLC analysis for dopamine quantification, and (4) calculation of production titers and yields [10]. The testing phase also often incorporates cell-free protein synthesis systems to bypass whole-cell constraints and rapidly assess enzyme expression levels and pathway functionality before full cellular implementation [10]. This approach allows for faster iteration and reduces the resource intensity of testing. The data generated during this phase typically includes targeted measurements of the desired product, biomass growth parameters, and potentially broader omics data (proteomics, metabolomics) to provide insights into system-wide responses to genetic modifications [12].

Detailed Protocol: Dopamine Quantification in Engineered E. coli

Purpose: To quantify dopamine production in engineered E. coli strains [10]

Materials:

- Engineered E. coli strains harboring dopamine pathway

- Minimal medium: 20 g/L glucose, 10% 2xTY medium, 2.0 g/L NaH₂PO₄·2H₂O, 5.2 g/L K₂HPO₄, 4.56 g/L (NH₄)₂SO₄, 15 g/L MOPS, 50 µM vitamin B₆, 5 mM phenylalanine, 0.2 mM FeCl₂, 0.4% (v/v) trace element stock solution [10]

- Antibiotics: ampicillin (100 µg/mL), kanamycin (50 µg/mL)

- Inducer: IPTG (1 mM)

- Phosphate buffer (50 mM, pH 7.0)

- HPLC system with electrochemical or UV detection

Procedure:

- Inoculate engineered E. coli strains in minimal medium with appropriate antibiotics and incubate at 37°C with shaking at 220 rpm.

- At OD₆₀₀ ≈ 0.6, induce dopamine pathway expression with 1 mM IPTG.

- Continue incubation for 24-48 hours, sampling at regular intervals (e.g., 0, 6, 12, 24, 48 hours).

- Centrifuge 1 mL culture samples at 13,000 × g for 5 minutes to separate biomass and supernatant.

- Analyze supernatant using HPLC with C18 column and mobile phase consisting of 50 mM phosphate buffer (pH 3.0) with 5-10% methanol.

- Detect dopamine at 280 nm or using electrochemical detection.

- Quantify dopamine concentration by comparison with standard curve (0-100 mg/L).

- Normalize production to biomass (OD₆₀₀) to obtain biomass-specific titers.

Notes: For intracellular dopamine quantification, resuspend cell pellets in 500 µL phosphate buffer and disrupt cells by sonication before centrifugation and HPLC analysis.
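The standard-curve and biomass-normalization steps can be sketched as below. Two details are assumptions added for illustration rather than part of the protocol in [10]: a dilution factor for samples diluted before injection, and a flag for readings outside the calibrated 0-100 mg/L range. Converting per-OD₆₀₀ values to mg/g biomass would additionally require a strain-specific OD₆₀₀-to-dry-weight factor, which is not given here.

```python
import numpy as np


def quantify(peak_areas, od600, std_conc, std_areas, dilution=1.0, upper=100.0):
    """HPLC peak areas -> dopamine titer (mg/L) and titer per OD600 unit."""
    slope, intercept = np.polyfit(std_conc, std_areas, 1)
    measured = (np.asarray(peak_areas, dtype=float) - intercept) / slope
    # Readings outside the calibrated range (0 to upper mg/L) should be re-run after dilution
    out_of_range = (measured < 0) | (measured > upper)
    titer = measured * dilution
    return titer, titer / np.asarray(od600, dtype=float), out_of_range


titer, per_od, flagged = quantify(
    peak_areas=[151.0, 331.0], od600=[2.0, 4.0],
    std_conc=[0, 25, 50, 100], std_areas=[1, 76, 151, 301],  # illustrative calibration
    dilution=2.0,
)
```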

Phase 4: Learn – Data Analysis and Machine Learning

The Learn phase represents the knowledge extraction and hypothesis generation component of the DBTL cycle, where experimental data is analyzed to gain insights and inform subsequent design iterations. This critical phase transforms raw experimental results into actionable knowledge, enabling continuous improvement of biological designs. Traditional approaches to this phase have included statistical analysis and mechanistic modeling, but increasingly, machine learning algorithms are being employed to identify complex patterns and relationships within multidimensional data sets [11] [12]. The learning process typically involves correlating genetic designs (e.g., promoter combinations, RBS sequences) or molecular profiling data (e.g., proteomics, metabolomics) with performance metrics (e.g., product titer, yield) to build predictive models [12].

Simulation-based benchmarking reported in [11] indicates that gradient boosting and random forest models outperform other machine learning methods in the low-data regime typical of early DBTL cycles, and that they are robust to training set biases and experimental noise. These algorithms can effectively handle the complex, nonlinear relationships often encountered in biological systems. The Automated Recommendation Tool (ART) exemplifies the application of machine learning in the Learn phase, combining scikit-learn libraries with a Bayesian ensemble approach to provide predictions and uncertainty quantification specifically tailored to synthetic biology applications [12]. ART generates probabilistic predictions of strain performance and recommends specific designs for the next DBTL cycle based on optimization objectives. When applying these computational tools, if the number of strains to be built is limited, evidence suggests that starting with a larger initial DBTL cycle is more favorable than distributing the same number of strains equally across multiple cycles [11].
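A minimal way to compare the two model families on a small strain dataset is k-fold cross-validation, sketched below with scikit-learn. The dataset is synthetic (30 strains, four genes at three expression levels, a made-up nonlinear response), so which model wins here says nothing about the benchmark in [11]; the point is only the evaluation pattern to apply to real DBTL data.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor, RandomForestRegressor
from sklearn.model_selection import cross_val_score

rng = np.random.default_rng(1)
# Synthetic stand-in data: 30 strains, 4 genes, each at one of 3 expression levels
X = rng.integers(0, 3, size=(30, 4)).astype(float)
y = X[:, 0] * X[:, 1] - 0.5 * X[:, 2] ** 2 + rng.normal(0, 0.3, 30)  # toy nonlinear titer

models = {
    "random_forest": RandomForestRegressor(n_estimators=300, random_state=0),
    "gradient_boosting": GradientBoostingRegressor(random_state=0),
}
# Mean of 5-fold negative MAE: values closer to zero are better
scores = {name: cross_val_score(model, X, y, cv=5, scoring="neg_mean_absolute_error").mean()
          for name, model in models.items()}
best = max(scores, key=scores.get)
```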

Figure 2: Engineered dopamine biosynthesis pathway in E. coli. Heterologous enzymes HpaBC and Ddc convert endogenous L-tyrosine to dopamine via the intermediate L-DOPA.

Integrated DBTL Case Study: Optimizing Dopamine Production

The application of a knowledge-driven DBTL cycle to optimize dopamine production in E. coli provides an illustrative case study of the framework's power in metabolic engineering [10]. This project demonstrated how iterative DBTL cycles, incorporating upstream in vitro investigation, can significantly accelerate strain development while providing mechanistic insights. The approach achieved a 2.6- to 6.6-fold improvement over state-of-the-art dopamine production methods, reaching titers of 69.03 ± 1.2 mg/L (34.34 ± 0.59 mg/g biomass) [10].

The project began with in vitro testing using crude cell lysate systems to assess enzyme expression levels and pathway functionality before moving to full cellular implementation. This preliminary investigation informed the initial in vivo strain design, focusing on RBS engineering to optimize the expression of two key enzymes: 4-hydroxyphenylacetate 3-monooxygenase (HpaBC) and L-DOPA decarboxylase (Ddc) [10]. The Build phase involved constructing plasmid libraries with varying RBS sequences controlling the expression of these enzymes, followed by transformation into an engineered E. coli host with enhanced L-tyrosine production. The Test phase employed high-throughput cultivation and HPLC analysis to quantify dopamine production across different RBS combinations. In the Learn phase, researchers analyzed the correlation between RBS sequence features (particularly GC content in the Shine-Dalgarno sequence) and enzyme performance, determining that fine-tuning the translational initiation rates through RBS optimization was critical for maximizing pathway flux [10]. This learning informed subsequent DBTL cycles, progressively increasing dopamine production through iterative optimization.
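The correlation step of such a Learn phase can be illustrated with a short sketch. It computes the GC content of candidate Shine-Dalgarno sequences and the Pearson correlation between that GC content and a measured readout. The sequences and expression values below are hypothetical placeholders, not data from [10]. A correlation over a handful of variants is only a starting point for a predictive model.

```python
import math

def gc_content(seq):
    """Fraction of G or C bases in a nucleotide sequence."""
    seq = seq.upper()
    return sum(base in "GC" for base in seq) / len(seq)

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences of numbers."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)

# Hypothetical Shine-Dalgarno variants and relative expression readouts (not data from [10]).
sd_variants = ["AAGGAA", "AGGAGA", "AGGAGG", "GGGAGG"]
relative_expression = [0.4, 0.7, 1.0, 0.9]
gc = [gc_content(s) for s in sd_variants]
print([round(v, 2) for v in gc], round(pearson(gc, relative_expression), 2))
```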

Table 1: Key Research Reagent Solutions for DBTL-based Metabolic Engineering

| Reagent/Category | Specific Examples | Function in DBTL Workflow |
|---|---|---|
| Plasmid Systems | pET system, pJNTN system [10] | Carry and express heterologous genes in microbial hosts |
| Enzymes | HpaBC (4-hydroxyphenylacetate 3-monooxygenase), Ddc (L-DOPA decarboxylase) [10] | Catalyze specific reactions in engineered metabolic pathways |
| E. coli Strains | DH5α (cloning), FUS4.T2 (production) [10] | Serve as microbial chassis for genetic construction and production |
| Media Components | Minimal medium with MOPS buffer, trace elements, vitamin B₆ [10] | Support controlled microbial growth and product formation |
| Inducers | Isopropyl β-D-1-thiogalactopyranoside (IPTG) [10] | Regulate expression of pathway genes in inducible systems |
| Analytical Tools | HPLC with electrochemical or UV detection [10] | Quantify target compound production and pathway intermediates |

Table 2: Quantitative Performance Metrics in DBTL Cycle Implementation

| Performance Metric | Reported Value/Outcome | Application Context |
|---|---|---|
| Dopamine Production | 69.03 ± 1.2 mg/L (34.34 ± 0.59 mg/g biomass) [10] | Knowledge-driven DBTL cycle optimization in E. coli |
| Improvement Factor | 2.6- to 6.6-fold increase over previous methods [10] | Dopamine production strain development |
| Catalytic Efficiency | 4.5-fold improvement [7] | Isoprene synthase engineering in semi-automated biofoundry |
| Tryptophan Production | 106% increase from base strain [12] | ART-guided DBTL cycle implementation |
| Machine Learning Advantage | Gradient boosting and random forest outperform in low-data regime [11] | Simulated DBTL cycles for combinatorial pathway optimization |

Biofoundry Automation and Workflow Implementation

The implementation of DBTL cycles in automated biofoundry environments represents a transformative advancement in engineering biology, addressing limitations of manual approaches through standardized, high-throughput workflows [13]. Biofoundries specialize in integrating software-based design with automated construction and testing pipelines, organized around the DBTL paradigm to enable rapid prototyping of genetic devices [13]. A significant challenge in this context is workflow automation, which requires translating high-level experimental procedures into precise, machine-readable instructions that can be executed by robotic systems with minimal human intervention [13].

Advanced biofoundries address this challenge through three-tier hierarchical models for workflow implementation: (1) human-readable workflow descriptions, (2) procedures for data and machine interaction using directed acyclic graphs (DAGs) and orchestrators, and (3) automated implementation using biofoundry resources [13]. This approach employs DAGs for workflow representation and orchestrators like Airflow for execution, enabling complex, multi-step experiments to be conducted with high reproducibility and scalability [13]. The integration of physical and data standards is crucial for this automation, including ANSI standards for microplates and data standards like SBOL (Synthetic Biology Open Language) for genetic designs [13]. The resulting automated workflows can run thousands of experiments in parallel, generating standardized, high-quality data that feed directly into the Learn phase of the DBTL cycle. This infrastructure enables exploration of biological design spaces that would be intractable using manual methods, shortening the development timeline for engineered biological systems.
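The sketch below illustrates the DAG idea without depending on Airflow's API. It encodes a hypothetical DBTL workflow as a dependency map and dispatches it with Python's standard-library `graphlib.TopologicalSorter` (Python 3.9+). Tasks released together in one batch do not depend on each other. That is the property an orchestrator exploits to run them in parallel. The task names and the `execute` callback are assumptions.

```python
from graphlib import TopologicalSorter

# Hypothetical DBTL workflow: each task maps to the set of tasks it depends on.
workflow = {
    "design_constructs": set(),
    "generate_worklist": {"design_constructs"},
    "pcr_assembly": {"generate_worklist"},
    "transformation": {"pcr_assembly"},
    "colony_picking": {"transformation"},
    "plate_reader_assay": {"colony_picking"},
    "sequence_verification": {"colony_picking"},
    "upload_results": {"plate_reader_assay", "sequence_verification"},
}

def run(workflow, execute):
    """Dispatch tasks in dependency order; tasks within one batch could run in parallel."""
    sorter = TopologicalSorter(workflow)
    sorter.prepare()                 # raises graphlib.CycleError if the graph is not a DAG
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        for task in ready:
            execute(task)            # e.g. hand off to a robot or a compute node
            sorter.done(task)
        batches.append(ready)
    return batches

batches = run(workflow, execute=lambda task: print("running", task))
```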

Table 3: Implementation Tools for Automated DBTL Workflows

| Tool Category | Specific Technologies | Role in DBTL Automation |
|---|---|---|
| Workflow Representation | Directed Acyclic Graphs (DAGs) [13] | Model experimental workflows as connected computational and laboratory tasks |
| Workflow Orchestration | Airflow [13] | Execute workflows, assign tasks to resources, and monitor progress |
| Data Management | Vendor-neutral archives, Neo4j graph database [13] | Store and link operational data, experimental results, and design information |
| Platform-Agnostic Programming | LabOP, PyLabRobot [13] | Enable protocol development transferable across different automated platforms |
| Genetic Design Standards | Synthetic Biology Open Language (SBOL) [13] | Standardize representation of genetic designs for reproducibility and sharing |
| Machine Learning Framework | Automated Recommendation Tool (ART) [12] | Bridge Learn and Design phases through predictive modeling and strain recommendation |

The field of synthetic biology stands at a pivotal juncture, where its potential to revolutionize biomedical engineering, drug development, and biomanufacturing is increasingly constrained by challenges of scalability, reproducibility, and interoperability across research facilities. Biofoundries—integrated facilities that combine automation, robotic systems, and computational analytics—aim to accelerate biological engineering through iterative Design-Build-Test-Learn (DBTL) cycles [1]. However, the lack of standardized methodologies and terminology has historically limited their efficiency and collaborative potential.

In response, the Global Biofoundry Alliance (GBA) was established as an international consortium to coordinate efforts and address common challenges [14]. Concurrently, recent research has proposed a conceptual framework of abstraction hierarchies to standardize biofoundry operations [15]. This application note examines how these parallel developments are fostering global standardization, thereby enhancing the reliability and throughput of automated workflows for biomedical research and therapeutic development.

The Global Biofoundry Alliance: A Framework for International Collaboration

Origin and Objectives

The GBA was formally launched in May 2019 in Kobe, Japan, following a preliminary meeting of 15 non-commercial biofoundries from four continents in London in June 2018 [14] [16]. This voluntary alliance operates under a non-binding Memorandum of Understanding, relying on goodwill and cooperation among its signatories, which include research institutions and funding agencies that operate non-commercial biofoundries [14].

The GBA's primary objectives are to:

- Develop, promote, and support non-commercial biofoundries worldwide.

- Intensify collaboration and communication among member facilities.

- Collectively develop responses to technological and operational challenges.

- Enhance the visibility and sustainability of biofoundries.

- Explore grand challenge projects with global societal impact [14].

Growth and Membership

The alliance has experienced significant growth since its inception. From the initial 15 founding members, the GBA has expanded to include over 40 member biofoundries globally as of 2025 [16]. The table below summarizes a selection of notable member biofoundries and their locations, illustrating the global distribution of this infrastructure.

Table 1: Selected Member Biofoundries of the Global Biofoundry Alliance

| Biofoundry Name | Location |
|---|---|
| London DNA Foundry | United Kingdom |
| iBioFoundry | USA (University of Illinois Urbana-Champaign) |
| DOE Agile BioFoundry | USA |
| VTT Biofoundry | Finland |
| Kobe Biofoundry | Japan |
| K-Biofoundry | South Korea |
| Australian Genome Foundry | Australia |
| Paris Biofoundry | France |
| A*STAR SPARROW Biofoundry | Singapore |
| Shenzhen Biofoundry | China |

This network enables cost-effective access to specialized equipment and expertise for product prototyping and commercial process validation, which are crucial for securing investment in biotechnological innovations [14].

The Four-Level Hierarchy

A recent publication proposes an abstraction hierarchy that organizes biofoundry activities into four distinct but interoperable levels [15]. This framework is designed to streamline the DBTL cycle by creating modular, flexible, and automated experimental workflows.

Table 2: The Four-Level Abstraction Hierarchy for Biofoundry Operations

| Level | Name | Description | Example |
|---|---|---|---|
| Level 0 | Project | Series of tasks to fulfill requirements of external users | Engineering a microbial strain for therapeutic protein production |
| Level 1 | Service/Capability | Functions that the biofoundry provides to clients | AI-driven protein engineering or modular long-DNA assembly |
| Level 2 | Workflow | DBTL-based sequence of tasks needed to deliver a service | DNA Oligomer Assembly or Liquid Media Cell Culture |
| Level 3 | Unit Operation | Individual experimental or computational tasks | Liquid Transfer, Thermocycling, or Protein Structure Generation |

This hierarchical structure allows researchers and engineers to work at appropriate levels of complexity without needing to understand every detail of lower-level operations [15].
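One way to make the four levels machine-readable is a set of nested records. The sketch below uses Python dataclasses and fills them with examples from the abstraction-hierarchy table. Several elements are illustrative assumptions rather than part of the published framework: the class and field names, the extra Design workflow, and the DBTL stage labels.

```python
from dataclasses import dataclass, field

@dataclass
class UnitOperation:                 # Level 3
    name: str
    kind: str                        # "hardware" or "software"

@dataclass
class Workflow:                      # Level 2
    name: str
    dbtl_stage: str                  # "Design", "Build", "Test", or "Learn" (illustrative labels)
    unit_operations: list[UnitOperation] = field(default_factory=list)

@dataclass
class Service:                       # Level 1
    name: str
    workflows: list[Workflow] = field(default_factory=list)

@dataclass
class Project:                       # Level 0
    name: str
    services: list[Service] = field(default_factory=list)

    def unit_operations(self):
        """Flatten the hierarchy to the Level 3 operations a scheduler would execute."""
        return [op for s in self.services for w in s.workflows for op in w.unit_operations]

project = Project(
    "Engineering a microbial strain for therapeutic protein production",
    services=[Service("AI-driven protein engineering", workflows=[
        Workflow("Protein design (hypothetical)", "Design",
                 [UnitOperation("Protein Structure Generation", "software")]),
        Workflow("DNA Oligomer Assembly", "Build",
                 [UnitOperation("Liquid Transfer", "hardware"),
                  UnitOperation("Thermocycling", "hardware")]),
        Workflow("Liquid Media Cell Culture", "Test",
                 [UnitOperation("Liquid Transfer", "hardware")]),
    ])],
)
for op in project.unit_operations():
    print(op.kind, op.name)
```

Keeping Level 3 operations as plain records lets one vocabulary (such as "Liquid Transfer") be reused across workflows. This reuse is the kind of shared terminology the hierarchy aims to provide.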

Workflow and Unit Operation Classification

The abstraction framework further catalogs specific processes within biofoundries. Researchers have identified 58 distinct biofoundry workflows, each assigned to a specific stage of the DBTL cycle [15]. These are supported by 42 hardware unit operations (e.g., Liquid Transfer, Nucleic Acid Extraction) and 37 software unit operations (e.g., Protein Structure Generation) [15].

The hierarchical relationship between these levels creates a standardized vocabulary and structure for describing complex biofoundry operations.

Integrated Applications in Biomedical Engineering

Case Study: Semi-Automated Enzyme Engineering

The synergy between GBA collaboration and standardized abstraction hierarchies finds practical application in biomedical engineering. Recent research demonstrates scalable enzyme engineering workflows for isoprene synthase (IspS), a rate-limiting enzyme in isoprene biosynthesis with potential industrial and biomedical applications [17].

This study integrated computational mutation design based on sequence coevolution analysis with laboratory automation, conducting three rounds of site-directed mutagenesis and screening. Researchers synthesized approximately 100 genetic mutants per round, with workflows scalable to thousands without extensive optimization [17]. This approach identified IspS variants with a 4.5-fold improvement in catalytic efficiency and enhanced thermostability, subsequently improving methane-to-isoprene bioconversion in Methylococcus capsulatus Bath to achieve a titer of 319.6 mg/L [17].

Experimental Protocol: Sequence Coevolution-Guided Enzyme Engineering

Objective: Engineer enhanced IspS enzymes through iterative DBTL cycles using biofoundry automation and computational design.

Methodology:

Design Phase:

- Perform sequence coevolution analysis to identify potential beneficial mutations.

- Computationally design mutation sets targeting improved catalytic efficiency and stability.

- Format designs for automated DNA synthesis.

Build Phase:

- Utilize liquid-handling robots for high-throughput site-directed mutagenesis.

- Employ automated colony picking systems to isolate mutant constructs.

- Prepare mutant libraries in microtiter plates for screening.

Test Phase:

- Express mutant enzymes in suitable microbial hosts using automated culture systems.

- Implement high-throughput assays to measure isoprene production.

- Screen for thermostability using automated microplate thermoshift assays.

Learn Phase:

- Analyze mutant performance data to identify beneficial mutations.

- Feed results into subsequent DBTL cycles for further optimization.

- Use machine learning approaches to refine predictive models for mutation effects.
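The Learn step of this protocol can be sketched as a small ranking routine with four steps:

- average the replicate readouts;
- express each variant as a fold change over wild type;
- keep the variants above a cutoff;
- propose pairwise combinations for the next round.

The mutation names, the 1.2-fold cutoff, and the pairwise-recombination strategy are hypothetical choices for illustration. They are not the procedure reported in [17].

```python
from itertools import combinations
from statistics import mean

def fold_improvements(measurements, wild_type="WT"):
    """Mean activity of each variant divided by the mean wild-type activity."""
    wt = mean(measurements[wild_type])
    return {v: mean(vals) / wt for v, vals in measurements.items() if v != wild_type}

def next_round(measurements, min_fold=1.2, max_combos=10):
    """Keep beneficial single mutants (best first) and propose pairwise combinations."""
    folds = fold_improvements(measurements)
    beneficial = sorted((v for v, f in folds.items() if f >= min_fold),
                        key=lambda v: folds[v], reverse=True)
    combos = [f"{a}/{b}" for a, b in combinations(beneficial, 2)]
    return beneficial, combos[:max_combos]

# Hypothetical triplicate activity readouts (arbitrary units) from one screening round.
round1 = {
    "WT": [1.00, 0.95, 1.05],
    "A123V": [1.60, 1.50, 1.70],
    "L45F": [0.80, 0.90, 0.85],
    "S210T": [1.30, 1.25, 1.35],
    "K88R": [1.10, 1.00, 1.05],
}
beneficial, combos = next_round(round1)
print(beneficial, combos)
```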

This workflow exemplifies the abstraction hierarchy, where the project (Level 0) is enzyme engineering, the service (Level 1) is protein optimization, the workflows (Level 2) include mutagenesis and screening, and the unit operations (Level 3) include specific automated steps like liquid handling and plate reading [15] [17].

Essential Research Reagents and Materials

Successful implementation of standardized biofoundry workflows requires specific reagents and instrumentation. The following table details key components essential for executing automated enzyme engineering protocols.

Table 3: Research Reagent Solutions for Biofoundry Workflows

| Reagent/Material | Function in Workflow |
|---|---|
| Liquid-handling robots | Automated transfer of liquids in microplate formats |
| Automated colony pickers | High-throughput selection of transformed clones |
| Microtiter plates (96/384/1536-well) | Standardized format for parallel experiments |
| Thermal cyclers | Automated DNA amplification and enzymatic reactions |
| DNA assembly reagents | Modular construction of genetic circuits |
| Cell lysis reagents | Preparation of biological samples for analysis |
| Enzyme substrates | Activity assays for engineered enzymes |
| Automated bioreactors | Controlled microbial cultivation for characterization |

Implications for Biomedical Research and Drug Development

The standardization efforts driven by the GBA and abstraction hierarchies have profound implications for biomedical engineering and pharmaceutical development. By establishing shared terminologies and operational standards, these initiatives directly address reproducibility challenges that have historically plagued biological research [15].

For drug development professionals, these advances translate to accelerated therapeutic discovery pipelines. The ability to rapidly engineer enzymatic pathways or microbial hosts for antibiotic production showcases the potential of standardized biofoundry operations [1]. The DARPA pressure test demonstrated this ability by producing bioactive molecules such as barbamide and pyrrolnitrin. Furthermore, integrating artificial intelligence and machine learning with standardized data outputs from biofoundry workflows improves predictive modeling and reduces the number of DBTL cycles required to achieve desired biological functions [15] [1].

Visualizing the Integrated System

The GBA, the abstraction hierarchies, and their applications in biomedical engineering can be viewed as an integrated system in which standardization enables collaboration and innovation.

The synergistic relationship between the Global Biofoundry Alliance and standardized abstraction hierarchies represents a transformative development in synthetic biology and biomedical engineering. The GBA provides the organizational framework for international collaboration, while abstraction hierarchies offer the conceptual infrastructure for standardizing complex operations. Together, they enable more reproducible, scalable, and efficient biofoundry workflows that accelerate the engineering of biological systems for therapeutic applications, biomanufacturing, and fundamental research. As these standards continue to evolve and be adopted, they promise to significantly shorten development timelines and enhance the reliability of biological engineering outcomes for drug development professionals and biomedical researchers.

The transition from artisanal, one-off experiments to automated, scalable research pipelines represents a paradigm shift in biomedical engineering. This evolution centers on achieving research reproducibility—the ability to independently verify scientific findings using the same materials and methods. Within automated biofoundry workflows, reproducibility extends beyond merely repeating an experiment to encompass the verification of results through biological feature values and computational provenance [18]. The "reproducibility crisis," in which a significant majority of researchers have failed to reproduce others' experiments (and even their own), underscores the critical need for this shift [18]. Modern approaches now differentiate between repeatability (same team, same environment), reproducibility (different team, different environment, same setup), and replicability (different team, different environment, different setup) [18].

Biofoundries operationalize this paradigm through integrated systems that automate the design-build-test-learn cycle, transforming biomedical research from a craft into an engineering discipline. The scalability of these systems enables researchers to systematically address complex biological questions that were previously intractable through manual approaches, while simultaneously generating the structured data necessary for true reproducibility assessment [18] [19].

Reproducibility Assessment Framework

The Reproducibility Scale

Moving beyond binary assessments of reproducibility requires a graduated framework that evaluates the degree of reproducibility achieved. This fine-grained approach enables researchers to determine not just whether results match, but how closely they align across key biological interpretations [18].

Table 1: Reproducibility Scale for Workflow Execution Results

| Reproducibility Level | Description | Validation Approach |
|---|---|---|
| Identical Results | Output files are exactly the same at the byte level | Checksum comparison of output files |
| Equivalent Biological Interpretation | Biological feature values match within acceptable thresholds | Comparison of extracted biological features (e.g., mapping rates, variant frequencies) |
| Consistent Trends | Overall conclusions align despite numerical differences | Qualitative comparison of results, trends, and statistical significance |
| Divergent Results | Fundamental interpretations differ | Identification of discrepancies in key findings and conclusions |
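At the top level of the scale, byte-level identity can be checked by hashing every output file. The following is a minimal sketch using `hashlib` and `pathlib`. It assumes that both runs write their outputs under comparable directory trees. The function names are hypothetical.

```python
import hashlib
from pathlib import Path

def sha256sum(path, chunk_size=1 << 20):
    """Stream a file through SHA-256 so large BAM/VCF outputs need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def identical_outputs(original_dir, reproduced_dir):
    """True if both trees hold the same relative file paths with byte-identical content."""
    orig, repro = Path(original_dir), Path(reproduced_dir)
    orig_files = sorted(p.relative_to(orig) for p in orig.rglob("*") if p.is_file())
    repro_files = sorted(p.relative_to(repro) for p in repro.rglob("*") if p.is_file())
    if orig_files != repro_files:
        return False
    return all(sha256sum(orig / rel) == sha256sum(repro / rel) for rel in orig_files)
```

Byte-level comparisons can fail for benign reasons, such as timestamps or command lines embedded in file headers. This is one reason the scale also has a level based on comparing extracted biological feature values.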

Quantitative Validation Methods

Automated validation of reproducibility employs biological feature values—quantifiable metrics representing the biological interpretation of results. For example, in RNA sequencing workflows, the mapping rate (percentage of reads mapped to a reference genome) serves as a key biological feature value for validation [18]. The validation process involves two critical steps:

- Biological Feature Extraction: Automated tools extract relevant numerical features from output files and logs (e.g., using SAMtools to extract mapping statistics from SAM files) [18].

- Threshold-Based Comparison: Extracted features are compared against expected values using predefined tolerance thresholds [18].
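The two steps can be sketched for the RNA-seq mapping-rate example. The sketch assumes that the feature is read from the text report of `samtools flagstat` and that the tolerance is a relative difference in percent. The flagstat excerpts are trimmed, made-up examples. The regular expression targets the overall `mapped` line rather than `primary mapped`, and should be checked against the samtools version in use.

```python
import re

# Matches e.g. "97542 + 0 mapped (97.54% : N/A)" but not the "primary mapped" line.
MAPPED_LINE = re.compile(r"^(\d+) \+ (\d+) mapped \(([\d.]+)%", re.MULTILINE)

def mapping_rate(flagstat_text):
    """Return the overall mapping rate (%) reported in `samtools flagstat` text output."""
    match = MAPPED_LINE.search(flagstat_text)
    if match is None:
        raise ValueError("no 'mapped' line found in flagstat output")
    return float(match.group(3))

def within_tolerance(original, reproduced, tolerance_pct):
    """Relative difference test: |reproduced - original| / |original| * 100 <= tolerance."""
    return abs(reproduced - original) / abs(original) * 100 <= tolerance_pct

# Hypothetical, trimmed flagstat reports from an original and a reproduced run.
ORIGINAL_FLAGSTAT = """100000 + 0 in total (QC-passed reads + QC-failed reads)
97542 + 0 mapped (97.54% : N/A)
97542 + 0 primary mapped (97.54% : N/A)
"""
REPRODUCED_FLAGSTAT = """100000 + 0 in total (QC-passed reads + QC-failed reads)
97100 + 0 mapped (97.10% : N/A)
97100 + 0 primary mapped (97.10% : N/A)
"""

orig_rate = mapping_rate(ORIGINAL_FLAGSTAT)
repro_rate = mapping_rate(REPRODUCED_FLAGSTAT)
print(orig_rate, repro_rate, within_tolerance(orig_rate, repro_rate, tolerance_pct=1.0))
```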

Table 2: Common Biological Feature Values for Reproducibility Assessment

| Research Domain | Biological Feature Values | Extraction Method | Typical Threshold |
|---|---|---|---|
| Genomics/RNA-seq | Mapping rate, read count, variant frequency | SAMtools, custom scripts | 1-5% variation |
| Medical Imaging | Signal-to-noise ratio, contrast measurements | Image analysis algorithms | 3-5% variation |
| Clinical Studies | Effect sizes, hazard ratios, confidence intervals | Statistical analysis | Determined by statistical power |
| Drug Screening | IC50 values, efficacy metrics | Dose-response curve fitting | 2-fold variation |

Emerging Technologies Enabling Automated Reproducibility

Workflow Management Systems

Specialized workflow languages and execution systems provide the foundation for reproducible research by capturing computational methods in machine-readable formats. Common Workflow Language (CWL), Workflow Description Language (WDL), Nextflow, and Snakemake have formed large user communities and enable execution across different computing environments through virtualization technologies [18]. These systems abstract software and computational requirements, facilitating data analysis re-execution by different teams in different environments—a core requirement for reproducibility [18].

Provenance Capture and Metadata Standards

Workflow provenance—structured archives packaging workflow-related metadata in machine-readable formats—enables the verification of execution results. Frameworks such as Research Object Crate (RO-Crate) and CWLProv generate comprehensive provenance information that packages workflow descriptions, execution parameters, input and output data, tests, and documentation [18]. When distributed through platforms like WorkflowHub, Dockstore, and nf-core, this provenance allows researchers to verify new execution results against original findings [18].
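The following is a minimal sketch of packaging outputs as an RO-Crate, assuming a directory of workflow results. It follows the RO-Crate 1.1 layout and writes an `ro-crate-metadata.json` file containing three parts: the metadata descriptor, a root `Dataset`, and one `File` entity per file. It omits recommended root properties such as `description`, `datePublished`, and `license`. It also omits the richer workflow-run provenance that tools such as CWLProv capture. Treat it as a starting point rather than a conforming implementation. The function names are hypothetical.

```python
import json
from pathlib import Path

def build_ro_crate_metadata(crate_dir, name):
    """Minimal RO-Crate 1.1 metadata: descriptor, root dataset, and one File entity per file."""
    root = Path(crate_dir)
    files = sorted(p for p in root.rglob("*")
                   if p.is_file() and p.name != "ro-crate-metadata.json")
    file_entities = [
        {"@id": p.relative_to(root).as_posix(), "@type": "File",
         "contentSize": str(p.stat().st_size)}
        for p in files
    ]
    return {
        "@context": "https://w3id.org/ro/crate/1.1/context",
        "@graph": [
            {"@id": "ro-crate-metadata.json", "@type": "CreativeWork",
             "conformsTo": {"@id": "https://w3id.org/ro/crate/1.1"},
             "about": {"@id": "./"}},
            {"@id": "./", "@type": "Dataset", "name": name,
             "hasPart": [{"@id": e["@id"]} for e in file_entities]},
            *file_entities,
        ],
    }

def write_ro_crate(crate_dir, name):
    """Write ro-crate-metadata.json into crate_dir and return the metadata dict."""
    metadata = build_ro_crate_metadata(crate_dir, name)
    Path(crate_dir, "ro-crate-metadata.json").write_text(json.dumps(metadata, indent=2))
    return metadata
```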

LLM-Based Autonomous Agents for Reproducibility

Large Language Models are emerging as powerful tools for automating reproducibility assessments. Recent exploratory studies demonstrate that LLM-based autonomous agents can partially reproduce published research findings when provided with study abstracts, methods sections, and data dictionary descriptions [20]. In one study focusing on Alzheimer's disease research using National Alzheimer's Coordinating Center (NACC) data, LLM agents successfully reproduced approximately 53.2% of findings across five studies [20]. These agents operated by writing and executing code to dynamically reproduce study findings, though implementation flaws and missing methodological details limited complete reproducibility in some cases [20].

Experimental Protocols for Reproducibility Assessment

Protocol 1: Biological Feature Value Extraction

Purpose: To systematically extract quantitative biological feature values from workflow outputs for reproducibility assessment.

Materials:

- Workflow output files (BAM, VCF, CSV, etc.)

- Extraction scripts (Python/R)

- Data visualization tools (Tableau, matplotlib, ggplot2)

Procedure:

- Identify key biological interpretations from the original study

- Select appropriate feature values that represent these interpretations

- Implement extraction algorithms using standardized tools (e.g., SAMtools for BAM files)

- Validate extraction methods on control datasets

- Apply extraction to both original and reproduced output files

- Record feature values in structured format (JSON/CSV) for comparison

Validation: Compare extracted values against known benchmarks for accuracy.

Protocol 2: Threshold-Based Reproducibility Validation

Purpose: To determine reproducibility success using predefined tolerance thresholds for biological feature values.

Materials:

- Extracted feature values from original and reproduced results

- Statistical analysis software (R, Python, GraphPad Prism)

- Threshold criteria based on biological significance

Procedure:

- Calculate percentage differences or absolute differences for each feature value

- Apply predefined tolerance thresholds (established during workflow design)

- For multiple features, apply statistical tests (e.g., t-tests, correlation analysis)

- Classify reproducibility level based on the proportion of features within thresholds

- Generate reproducibility report with quantitative metrics

Analysis: Determine whether results meet criteria for "Equivalent Biological Interpretation" per the reproducibility scale.
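Protocol 2 can be sketched as follows. The sketch assumes that each feature carries either a relative tolerance in percent, as for mapping rates, or a fold tolerance, as for IC50 values in the feature-value table.

- The source does not fix how the proportion of passing features maps onto the scale. The 50% cutoff for "Consistent Trends" is therefore a placeholder, and that level should still be confirmed by qualitative review.
- "Identical Results" requires a checksum comparison and is not handled here.

Feature names and values are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Feature:
    name: str
    original: float
    reproduced: float
    tolerance: float            # percent for mode "relative", fold for mode "fold"
    mode: str = "relative"      # "relative" (e.g. mapping rate) or "fold" (e.g. IC50)

    def passes(self):
        """True if the reproduced value lies within tolerance of the original."""
        if self.mode == "fold":
            hi = max(self.original, self.reproduced)
            lo = min(self.original, self.reproduced)
            return hi / lo <= self.tolerance
        return abs(self.reproduced - self.original) / abs(self.original) * 100 <= self.tolerance

def classify(features, trend_cutoff=0.5):
    """Map the fraction of passing features onto the reproducibility scale (cutoff is a placeholder)."""
    fraction = sum(f.passes() for f in features) / len(features)
    if fraction == 1.0:
        level = "Equivalent Biological Interpretation"
    elif fraction >= trend_cutoff:
        level = "Consistent Trends"       # confirm by qualitative review
    else:
        level = "Divergent Results"
    return level, fraction

features = [
    Feature("mapping_rate_pct", 97.54, 97.10, tolerance=1.0),
    Feature("ic50_nM", 120.0, 200.0, tolerance=2.0, mode="fold"),
    Feature("read_count_millions", 42.0, 38.5, tolerance=5.0),
]
print(classify(features))
```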

Visualization Framework for Reproducibility Assessment

Figures: Reproducibility validation workflow; reproducibility scale decision framework.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Research Reagent Solutions for Reproducible Biofoundry Workflows

| Reagent Solution | Function | Implementation Example |
|---|---|---|
| Workflow Language Specifications | Describe computational methods in portable, executable formats | CWL, WDL, Nextflow scripts |
| Containerization Platforms | Package software dependencies for consistent execution | Docker, Singularity containers |
| Provenance Capture Tools | Generate structured metadata about workflow executions | RO-Crate, CWLProv |
| Biological Feature Extractors | Quantify key biological interpretations from raw outputs | SAMtools, custom Python/R scripts |
| Reproducibility Validation Frameworks | Automate comparison of results against thresholds | Tonkaz, custom validation pipelines |
| Workflow Sharing Platforms | Distribute reproducible workflows and provenance | WorkflowHub, Dockstore, nf-core |
| LLM-Based Validation Agents | Automate reproducibility assessment through AI | GPT-4o agents for code generation and execution [20] |

The transition from artisanal to automated biomedical research represents both a technological and cultural shift toward reproducibility by design. By implementing the frameworks, protocols, and tools outlined in these application notes, researchers can systematically enhance the reproducibility and scalability of their work. The integration of graduated reproducibility assessment, biological feature validation, and emerging technologies like LLM agents creates a foundation for more rigorous, transparent, and efficient biomedical discovery within automated biofoundry environments. As these practices mature, they promise to accelerate the translation of basic research into clinical applications through more reliable and verifiable scientific outcomes.

The global synthetic biology market is experiencing exponential growth, fueled by its convergence with the sustainable bioeconomy. The bioeconomy, an economic system that utilizes renewable biological resources to produce food, materials, and energy, is valued at over €2.4 trillion in the EU alone and provides work for approximately 17.2 million people [21]. Synthetic biology, which involves redesigning organisms by engineering their genetic material, is a key enabling technology for this bioeconomy [22].

Table 1: Synthetic Biology Market Size and Growth Projections

| Source | 2024 Market Size | 2025 Market Size | 2032/2034 Forecast Size | Projected CAGR |
|---|---|---|---|---|
| Fortune Business Insights [22] | USD 14.30 billion | USD 17.09 billion | USD 63.77 billion by 2032 | 20.7% |
| Precedence Research [23] | USD 20.01 billion | USD 24.58 billion | USD 192.95 billion by 2034 | 28.63% |
| Nova One Advisor [24] | USD 16.35 billion | - | USD 80.70 billion by 2034 | 17.31% |
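The projected rates follow the compound annual growth rate formula, CAGR = (V_end / V_start)^(1/n) - 1 over n years. The short check below applies it to two rows of the table. For Fortune Business Insights it uses the 2025 and 2032 values (n = 7), and for Nova One Advisor the 2024 and 2034 values (n = 10). Both reproduce the stated 20.7% and 17.31%. Each source uses its own base year and horizon, so the rates are not directly comparable across rows.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values separated by `years` years."""
    return (end_value / start_value) ** (1 / years) - 1

def project(start_value, rate, years):
    """Value after compounding `rate` annually for `years` years."""
    return start_value * (1 + rate) ** years

fbi = cagr(17.09, 63.77, 2032 - 2025)     # Fortune Business Insights, USD billion
nova = cagr(16.35, 80.70, 2034 - 2024)    # Nova One Advisor, USD billion
print(f"{fbi:.1%}  {nova:.2%}")           # prints: 20.7%  17.31%
```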

This growth is propelled by several key drivers:

- Technological Advancements: Innovations in DNA sequencing, gene editing (e.g., CRISPR-Cas9), and software for designing biological systems are fundamental market drivers [22]. The integration of AI and machine learning is accelerating the design and optimization of biological systems [22].

- Demand for Sustainable Solutions: There is a significant shift toward sustainable production, with synthetic biology enabling eco-friendly drug production, biodegradable medical solutions, and the replacement of fossil-based materials with renewable alternatives [22] [21] [25].

- Healthcare Applications: The market is dominated by healthcare applications, including the development of novel therapeutics, vaccines, and advanced diagnostics [23] [24]. The rise in genetic disorders increases the demand for genetically engineered medicines [22].

- Strategic and Financial Investment: High levels of investment from both public and private sectors are fueling innovation and commercialization. For instance, the U.S. National Science Foundation awarded $75 million to create five biofoundries in 2024 [24].

Table 2: Regional Market Dynamics

| Region | Market Share (2024) | Key Growth Factors |
|---|---|---|
| North America [22] [23] | 39.6-52.09% | Advanced research infrastructure, strong presence of key players (e.g., Illumina, Thermo Fisher), supportive FDA policies, and significant investment in R&D and personalized medicine. |
| Europe [24] | Notable Growth | Adoption of sustainable manufacturing methods, government subsidies, and R&D investments, with strong contributions from the UK and Germany. |
| Asia Pacific [22] [23] | Fastest-Growing Region | Government support for domestic biotech, rising investments, increasing collaborations, and a growing need to address healthcare demands from a large and aging population. |

Application Note: An Automated Biofoundry Workflow for Enzyme Engineering

The following application note details a real-world experiment demonstrating how semi-automated biofoundry workflows can address key market and bioeconomy demands by engineering a critical enzyme for sustainable biomanufacturing.

This note describes a scalable, semi-automated workflow for engineering isoprene synthase (IspS), a rate-limiting enzyme in isoprene biosynthesis [7] [17]. Isoprene is a valuable chemical traditionally derived from petroleum. By integrating computational design with laboratory automation, Lee et al. achieved a 4.5-fold improvement in the catalytic efficiency of IspS and enhanced its thermostability [17]. The engineered enzyme was introduced into Methylococcus capsulatus Bath, enabling the conversion of methane, a potent greenhouse gas, into isoprene at a titer of 319.6 mg/L in gas fermentation [7] [17]. This approach establishes a framework for rapid enzyme optimization. It aligns with the synthetic biology market's drive toward sustainable chemical production and with the bioeconomy's goal of using renewable and even waste resources.

The synthetic biology market demands higher-throughput and more reliable methods for biological design to accelerate R&D cycles [22]. Biofoundries, which integrate automation, analytics, and informatics, are emerging as transformative platforms to meet this demand. This application note outlines a protocol for sequence coevolution-guided enzyme engineering executed within a semi-automated biofoundry environment. The primary objectives were:

- To develop a scalable workflow for enzyme engineering suitable for biofoundry applications.

- To enhance the catalytic efficiency and thermostability of IspS.

- To validate the performance of the engineered enzyme in a relevant industrial biomanufacturing context—specifically, the conversion of methane to isoprene [7] [17].

This work directly contributes to the sustainable bioeconomy by creating a pathway to produce value-added chemicals from a greenhouse gas, reducing dependence on fossil fuels [21] [26].

Experimental Protocol

The following protocol was adapted from the research conducted by Lee et al. [7] [17].

Stage 1: Computational Mutation Design

Procedure:

- Multiple Sequence Alignment: Align homologous IspS protein sequences from diverse organisms using standard alignment software (e.g., Clustal Omega, MAFFT).

- Identify Covarying Sites: Use a coevolution analysis package (e.g., GREMLIN, EVcouplings) to identify pairs of residue positions whose amino acids have changed in a correlated way across the aligned homologs. Such pairs often indicate functionally or structurally important interactions, such as residue-residue contacts.

- Design Mutagenesis Libraries: Based on the coevolution analysis, select target residues for mutagenesis. Design oligonucleotide primers for site-directed mutagenesis to create focused mutant libraries. The workflow was scaled to synthesize approximately 100 genetic mutants per round [17].
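The primer-design step can be sketched in a few lines. The example below is a minimal illustration, not the study's method: it builds a complementary (QuikChange-style) primer pair that swaps one codon of a template open reading frame. The codon table `PREFERRED_CODON`, the 15-nt default flank, the `pos10W` naming scheme, and the toy template are all assumptions; a practical design would also check melting temperature, GC content, and self-complementarity, and would draw codons from a curated usage table for the expression host.

```python
# Minimal sketch: complementary (QuikChange-style) mutagenic primer pair for one codon.
# PREFERRED_CODON is an illustrative assumption, not a curated codon-usage table.
PREFERRED_CODON = {
    "A": "GCG", "R": "CGT", "N": "AAC", "D": "GAT", "C": "TGC",
    "Q": "CAG", "E": "GAA", "G": "GGC", "H": "CAT", "I": "ATT",
    "L": "CTG", "K": "AAA", "M": "ATG", "F": "TTT", "P": "CCG",
    "S": "AGC", "T": "ACC", "W": "TGG", "Y": "TAT", "V": "GTG",
}

_COMPLEMENT = {"A": "T", "T": "A", "G": "C", "C": "G"}


def reverse_complement(seq: str) -> str:
    """Return the reverse complement of a DNA sequence (A/C/G/T only)."""
    return "".join(_COMPLEMENT[base] for base in reversed(seq.upper()))


def mutagenic_primers(orf: str, residue: int, new_aa: str, flank: int = 15):
    """Replace codon `residue` (1-based, start codon = 1) with a codon for `new_aa`.

    Returns (forward, reverse) primers carrying `flank` nt of template on each side.
    """
    start = (residue - 1) * 3
    if residue < 1 or start + 3 > len(orf):
        raise ValueError("residue lies outside the open reading frame")
    left = orf[max(0, start - flank):start]
    right = orf[start + 3:start + 3 + flank]
    forward = left + PREFERRED_CODON[new_aa] + right
    return forward, reverse_complement(forward)


def design_library(orf: str, targets):
    """Expand hypothetical (residue, [amino acids]) targets into a primer table."""
    library = []
    for residue, substitutions in targets:
        for aa in substitutions:
            fwd, rev = mutagenic_primers(orf, residue, aa)
            library.append((f"pos{residue}{aa}", fwd, rev))
    return library


# Toy template: start codon, 40 alanine codons, stop codon (not the IspS gene).
toy_orf = "ATG" + "GCT" * 40 + "TAA"
fwd, rev = mutagenic_primers(toy_orf, residue=10, new_aa="W", flank=6)
print(fwd, rev)  # → GCTGCTTGGGCTGCT AGCAGCCCAAGCAGC
```

In practice, the target residues passed to `design_library` would come from the top-ranked coevolving pairs, for example by testing substitutions observed at those positions in the alignment.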

Stage 2: Automated Build & Transform

Materials:

- Oligonucleotide primers for site-directed mutagenesis.

- DNA polymerase (e.g., Phusion U Hot Start DNA Polymerase).

- Template plasmid containing the wild-type IspS gene.

- E. coli competent cells for transformation.

- Liquid handling robot and thermal cycler.

Procedure:

- Mutant Fragment Synthesis: Use the designed primers in PCR to generate mutant gene fragments. Setting up the parallel PCR reactions can be automated with a liquid handling robot.

- DNA Assembly: Digest the PCR products and the destination vector with appropriate restriction enzymes, then perform a ligation reaction to assemble the mutant IspS gene into the expression vector.

- Transformation: Transform the assembled plasmids into competent E. coli cells using a high-throughput electroporation system.

- Culture and Plasmid Extraction: Plate transformed cells on selective agar and incubate. Pick individual colonies into deep-well plates containing liquid culture medium. After incubation, use an automated plasmid purification system to extract and normalize the mutant plasmid libraries.
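Because roughly 100 mutants are built per round, PCR setup on a liquid handler is typically driven by a transfer list rather than programmed well by well. The sketch below generates such a list as CSV. The column names, plate names, reservoir layout, and 24 µL + 1 µL volumes are hypothetical, and each liquid-handler vendor defines its own import format, so treat this as a template to adapt rather than a file any particular robot will accept.

```python
import csv
import io
from string import ascii_uppercase

# Hypothetical column layout; real liquid-handler import formats differ by vendor.
HEADER = ["SourcePlate", "SourceWell", "DestPlate", "DestWell", "Volume_uL", "MutantID"]


def well_names(rows: int = 8, cols: int = 12):
    """96-well positions in column-major order (A1, B1, ..., H1, A2, ...)."""
    return [f"{ascii_uppercase[r]}{c + 1}" for c in range(cols) for r in range(rows)]


def pcr_worklist(mutant_ids, mastermix_ul: float = 24.0, primer_ul: float = 1.0) -> str:
    """Build a CSV transfer list for parallel mutagenesis PCR setup.

    Assumes (hypothetically) that master mix containing polymerase, buffer, dNTPs,
    and template sits in a reservoir, and that each mutant's primer pair is
    pre-arrayed in the matching well of a primer plate. Reactions beyond 96
    spill onto additional plates.
    """
    wells = well_names()
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow(HEADER)
    for i, mutant in enumerate(mutant_ids):
        plate_no = i // len(wells) + 1
        well = wells[i % len(wells)]
        dest = f"PCR_Plate_{plate_no}"
        # Master mix first, then the mutant-specific primer pair into the same well.
        writer.writerow(["MasterMix_Reservoir", "A1", dest, well, mastermix_ul, mutant])
        writer.writerow([f"Primer_Plate_{plate_no}", well, dest, well, primer_ul, mutant])
    return out.getvalue()


# About 100 mutants per round, as in the described study, needs two 96-well plates.
ids = [f"IspS_M{n:03d}" for n in range(1, 101)]
worklist = pcr_worklist(ids)
```

Column-major well order is used here because it matches how 8-channel pipetting heads traverse a plate; a row-major layout would work equally well if the primer plate is arrayed that way.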

Stage 3: High-Throughput Screening

Materials:

- Deep-well plates containing growth medium.

- Inducer for gene expression (e.g., IPTG).

- Substrate for IspS (dimethylallyl diphosphate, DMADP).

- Microplate reader (for coupled assays) or headspace GC-MS system (for direct isoprene detection).

Procedure:

- Expression of Mutant Library: Transfer the cultures to expression plates and induce protein expression with IPTG.

- Cell Lysis: Lyse the cells chemically or enzymatically to release the expressed IspS variants.

- Activity Assay: In a new assay plate, mix the cell lysates with the substrate DMADP. Isoprene production can be detected indirectly via a colorimetric coupled assay or, more precisely, by high-throughput headspace GC-MS; because isoprene is highly volatile, reactions should be run in sealed plates or vials.

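- Data Collection: Measure initial reaction velocities across a range of DMADP concentrations to estimate kcat and Km, and hence catalytic efficiency (kcat/Km); an initial velocity at a single substrate concentration is a useful proxy for primary screening but does not by itself yield kcat/Km. Assess thermostability by incubating lysates at an elevated temperature for a set time and measuring residual activity relative to an unheated control.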
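The kinetic analysis in the Data Collection step can be sketched as follows, assuming initial velocities have been measured at several DMADP concentrations. To stay dependency-free, the sketch uses the Hanes-Woolf linearization ([S]/v0 = [S]/Vmax + Km/Vmax); with noisy data, a direct nonlinear fit of the Michaelis-Menten equation is generally more reliable. Converting Vmax to kcat requires the enzyme concentration, so values from crude lysates are apparent unless IspS is quantified. The data are synthetic and the function names are illustrative.

```python
# Minimal sketch: kinetic parameters via Hanes-Woolf linearization, plus residual
# activity after a heat challenge. Units and data are illustrative only.


def linear_fit(xs, ys):
    """Ordinary least-squares slope and intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def kinetic_parameters(substrate_uM, v0_uM_per_s, enzyme_uM):
    """Hanes-Woolf: [S]/v0 = [S]/Vmax + Km/Vmax, so slope = 1/Vmax and
    intercept = Km/Vmax. kcat = Vmax/[E] requires a known enzyme concentration."""
    ys = [s / v for s, v in zip(substrate_uM, v0_uM_per_s)]
    slope, intercept = linear_fit(substrate_uM, ys)
    vmax = 1.0 / slope
    km = intercept * vmax
    kcat = vmax / enzyme_uM
    return {"Km_uM": km, "kcat_per_s": kcat, "kcat_over_Km": kcat / km}


def residual_activity_pct(heated_rate, unheated_rate):
    """Activity remaining after the heat step, as % of the unheated control."""
    return 100.0 * heated_rate / unheated_rate


# Synthetic, noise-free data: Vmax = 2.0 uM/s, Km = 50 uM, [E] = 0.1 uM.
s = [10.0, 25.0, 50.0, 100.0, 200.0]
v = [2.0 * x / (50.0 + x) for x in s]
params = kinetic_parameters(s, v, enzyme_uM=0.1)
# params is approximately {"Km_uM": 50, "kcat_per_s": 20, "kcat_over_Km": 0.4}
```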

Stage 4: Data Analysis and Iteration

Procedure:

- Data Integration: Automatically transfer screening data (activity, stability) to a central database linked to the mutant sequence information.

- Variant Selection: Rank variants based on combined improvements in catalytic efficiency and thermostability.

- Loop Closure: Use the data from the best-performing variants to inform the next round of computational design, creating subsequent-generation libraries that combine beneficial mutations. The described study completed three rounds of this design-build-test-learn cycle [17].
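One way to implement the Variant Selection and Loop Closure steps is sketched below. The scoring rule (geometric mean of the fold changes in kcat/Km and residual activity over wild type) and the pairwise-combination strategy are assumptions chosen for illustration, not necessarily the criteria used in the described study; the mutation names and values are invented.

```python
from itertools import combinations
from math import sqrt


def rank_variants(variants, wt_efficiency, wt_residual_pct):
    """Score each (name, kcat/Km, residual %) tuple by the geometric mean of its
    fold changes over wild type, then sort best-first."""
    scored = []
    for name, efficiency, residual in variants:
        fold_activity = efficiency / wt_efficiency
        fold_stability = residual / wt_residual_pct
        # Geometric mean rewards balanced gains and penalizes activity-stability trade-offs.
        scored.append((name, round(sqrt(fold_activity * fold_stability), 3)))
    return sorted(scored, key=lambda item: item[1], reverse=True)


def next_round_designs(ranked, top_n=3):
    """Pair up the top single mutants that beat wild type (score > 1)."""
    winners = [name for name, score in ranked[:top_n] if score > 1.0]
    return ["/".join(pair) for pair in combinations(winners, 2)]


# Hypothetical screening results: (mutation, kcat/Km relative units, residual activity %).
screen = [
    ("A12V", 2.0, 60.0),
    ("K45R", 1.2, 40.0),
    ("D88E", 0.5, 80.0),
    ("G101S", 0.8, 30.0),
]
ranked = rank_variants(screen, wt_efficiency=1.0, wt_residual_pct=40.0)
print(ranked)  # [('A12V', 1.732), ('K45R', 1.095), ('D88E', 1.0), ('G101S', 0.775)]
print(next_round_designs(ranked))  # ['A12V/K45R']
```

D88E illustrates why a combined score matters: it doubles residual activity but halves catalytic efficiency, so it scores no better than wild type and is not carried forward.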

Stage 5: Bioprocess Validation

Procedure:

- Strain Engineering: Clone the top-performing engineered IspS gene into an appropriate expression vector and introduce it into the industrial host Methylococcus capsulatus Bath.

- Gas Fermentation: Evaluate the performance of the engineered strain in a controlled bioreactor system fed with methane as the sole carbon source.

- Product Quantification: Monitor cell growth and periodically sample the fermentation broth and off-gas to quantify isoprene production, confirming the industrial relevance of the engineered enzyme [7] [17].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Reagents and Materials for Automated Enzyme Engineering

| Item | Function/Description | Key Players/Examples |
|---|---|---|
| Oligonucleotide Pools & Synthetic DNA [23] [24] | Cost-effective source for constructing mutant libraries in protein and metabolic engineering; enables high-throughput screening. | Twist Bioscience [27], GenScript [27] |
| CRISPR/Cas9 Systems [22] [28] | Advanced gene-editing tool for precise genome manipulation; revolutionizes the engineering of host organisms. | CRISPR Therapeutics [27], Merck KGaA [27] |
| DNA Synthesis & Sequencing Tools [22] | Fundamental for reading (sequencing) and writing (synthesizing) genetic material, the core of all synthetic biology workflows. | Illumina [22], Thermo Fisher [22] [27] |
| Specialized Enzymes | High-fidelity DNA polymerases for accurate PCR, and restriction enzymes for DNA assembly in the Build phase. | New England Biolabs [24] |
| Biofoundry Automation Software | Enables experimental design, workflow automation, and data integration; critical for managing the design-build-test-learn cycle. | Synthace [27] |
| Cell-Free Systems [25] | Cell-free bioprocessing (e.g., for hyaluronic acid production) bypasses biological bottlenecks of living cells, enabling safer and more scalable production. | Enzymit [25] |

The integration of semi-automated biofoundry workflows represents a paradigm shift in biomedical engineering and industrial biomanufacturing. The demonstrated protocol for IspS engineering highlights a scalable, iterative approach that directly addresses key market needs: accelerating R&D cycles, improving the efficiency of biological systems, and enabling the sustainable production of chemicals from renewable or waste resources like methane [7] [17]. As these platforms become more integrated with AI-guided, closed-loop systems, they will further de-risk the scaling process and solidify synthetic biology's role as a cornerstone of the modern bioeconomy [7]. This synergy between advanced biofoundries and sustainable goals is essential for meeting the demands of the rapidly growing synthetic biology market and for building a more resilient, low-carbon economy [21] [26].

From Code to Cure: Implementing Automated Workflows for Enzyme and Therapeutic Protein Engineering

Application Notes

Biological and Engineering Context

Isoprene synthase (IspS) is a critical rate-limiting enzyme in the metabolic pathway for isoprene biosynthesis. Engineering this enzyme presents a significant challenge for sustainable biomanufacturing, as its catalytic efficiency and stability directly impact the viability of microbial platforms for converting renewable feedstocks into valuable chemicals. The integration of semi-automated biofoundry workflows with sequence coevolution analysis has established a robust framework for accelerating the engineering of such enzymes, moving beyond traditional, labor-intensive methods [17] [7].

This approach is firmly situated within the Design-Build-Test-Learn (DBTL) engineering cycle, a paradigm central to modern synthetic biology and biofoundry operations [13] [1]. Biofoundries are specialized facilities that integrate software-based design with automated construction and testing pipelines to streamline biological engineering. The trend toward automation is driven by the need for higher throughput, greater reliability, and improved replicability in biological research and development [13]. The case of IspS engineering exemplifies how these principles can be applied to a real-world protein engineering problem, demonstrating a scalable path from computational design to improved industrial performance.

The implementation of sequence coevolution-guided mutagenesis and semi-automated screening led to the rapid identification of superior IspS variants. The table below summarizes the key quantitative outcomes from the study.

Table 1: Key Experimental Results from IspS Engineering

| Parameter | Result | Context/Significance |
|---|---|---|
| Catalytic Efficiency | Up to 4.5-fold improvement | Compared to the wild-type IspS enzyme [17] [7]. |
| Thermostability | Simultaneously enhanced | Specific metrics not provided; noted as an important improvement alongside activity [17]. |
| Isoprene Titer | 319.6 mg/l | Achieved in Methylococcus capsulatus Bath using methane as a feedstock [17] [7]. |
| Technology Readiness Level (TRL) | Level 4 | Validated proof-of-concept in a relevant laboratory environment [7]. |
| Throughput Capability | ~100 mutants synthesized and screened per round; scalable to thousands [17]. | Demonstrates the high-throughput potential of the workflow. |

Experimental Protocols

The engineering of IspS was conducted through an integrated semi-automated workflow. The following diagram illustrates the logical flow and interactions between the key stages of this process.

Detailed Methodologies

Protocol 1: Computational Mutation Design via Sequence Coevolution

Objective: To identify residue pairs for mutagenesis that are predicted to be important for IspS function and stability.

Principle: Sequence coevolution analysis detects pairs of amino acid positions within a protein (or across interacting proteins) that have mutated in a correlated manner throughout evolution. This correlation often indicates a functional or structural constraint, such as a residue-residue contact that is crucial for stabilizing the protein's three-dimensional structure or its active site [29] [30] [31].

Procedure:

- Sequence Alignment Compilation: Collect a large and diverse multiple sequence alignment (MSA) of homologous isoprene synthase sequences from public databases.

- Coevolutionary Analysis: Process the MSA using a statistical model (e.g., a pseudolikelihood maximization method within a tool like EVcouplings) to compute evolutionary coupling (EC) scores for all possible pairs of amino acid positions [29] [30].

- Identification of Relevant ECs: The analysis yields intra-protein couplings (within IspS) and, when interaction partners are included in a concatenated alignment, inter-protein couplings. High-ranking intra-protein ECs are the main candidates for residue contacts that stabilize the fold or active site; high-ranking inter-protein ECs, where relevant, are most likely to represent direct physical contacts at a protein-protein interface [30].

- Mutation Design: Select the top-ranked coevolving residue pairs. Design site-directed mutagenesis libraries that target these positions, exploring combinations of amino acids observed in the evolutionary record or predicted to enhance interactions.
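To make the idea of a coupling score concrete, the sketch below ranks column pairs of a toy alignment by mutual information (MI) with the average product correction (APC). This is a deliberately simpler, older statistic than the pseudolikelihood-based couplings computed by EVcouplings or GREMLIN, substituted here so the example runs on the Python standard library; it omits sequence reweighting, pseudocounts, and gap handling, and is generally worse at separating direct from indirect couplings. The alignment is a toy, not IspS data.

```python
from collections import Counter
from itertools import combinations
from math import log


def mutual_information(msa, i, j):
    """Mutual information (nats) between alignment columns i and j."""
    n = len(msa)
    col_i = [seq[i] for seq in msa]
    col_j = [seq[j] for seq in msa]
    freq_i, freq_j = Counter(col_i), Counter(col_j)
    mi = 0.0
    for (a, b), count in Counter(zip(col_i, col_j)).items():
        p_ab = count / n
        mi += p_ab * log(p_ab / ((freq_i[a] / n) * (freq_j[b] / n)))
    return mi


def apc_corrected_scores(msa):
    """MI for every column pair minus the average product correction (APC),
    which damps background signal arising from phylogeny and column entropy."""
    length = len(msa[0])
    mi = {pair: mutual_information(msa, *pair) for pair in combinations(range(length), 2)}
    overall_mean = sum(mi.values()) / len(mi)
    if overall_mean == 0.0:
        return {pair: 0.0 for pair in mi}  # no covariation signal at all
    # Mean MI of each column with every other column (each column is in length - 1 pairs).
    col_mean = [
        sum(v for pair, v in mi.items() if k in pair) / (length - 1)
        for k in range(length)
    ]
    return {
        (a, b): value - col_mean[a] * col_mean[b] / overall_mean
        for (a, b), value in mi.items()
    }


# Toy alignment: columns 0 and 3 covary perfectly (A<->E, G<->D);
# columns 1 and 2 vary independently of everything else.
toy_msa = ["AKLE", "ARLE", "AKVE", "ARVE", "GKLD", "GRLD", "GKVD", "GRVD"]
scores = apc_corrected_scores(toy_msa)
ranked = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
print(ranked[0][0])  # (0, 3), i.e. positions 1 and 4 in 1-based numbering
```

The top-ranked pairs from a real analysis would then feed the Mutation Design step as target positions.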

Protocol 2: Semi-Automated Build and Test Workflow

Objective: To construct and screen a library of IspS genetic mutants in a high-throughput, reproducible manner.

Principle: Biofoundries automate laboratory tasks using liquid-handling robots and other automated platforms, which are coordinated by workflow management software. This translates a high-level experimental design into low-level, machine-readable instructions executed in a specific sequence [13] [15].

Procedure:

- Workflow Orchestration: Define the build-test workflow as a Directed Acyclic Graph (DAG), where each node is a discrete unit operation (e.g., "PCR Setup," "Transformation") and the edges define the sequence. Orchestration software (e.g., Apache Airflow) executes the graph, instructing the biofoundry resources and collecting all operational and experimental data [13].


- Automated Build Phase:

  - Genetic Mutant Synthesis: Use a liquid-handling robot to set up approximately 100 site-directed mutagenesis PCR reactions per engineering round as defined by the computational design [17].

  - Strain Construction: Automate the transformation of the constructed IspS variants into the production host, Methylococcus capsulatus Bath.

- Automated Test Phase:

  - Cultivation: Inoculate and grow mutant strains in deep-well microplates.

  - High-Throughput Screening: Employ an automated assay, likely based on a colorimetric or fluorometric readout linked to enzyme activity, to screen the library. The workflow is designed to be easily scaled up to screen thousands of mutants [17].

- Data Integration: All screening data is automatically captured, curated, and stored by the supporting IT infrastructure, ready for the Learn phase [13].
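The DAG-based orchestration described above can be illustrated without Airflow itself. The sketch below uses Python's standard-library `graphlib.TopologicalSorter` (Python 3.9+) as a stand-in scheduler: it releases each unit operation only once its prerequisites are done and groups operations that could run in parallel. The node names and the `run_step` callback are hypothetical placeholders for instrument drivers and data capture; an Airflow deployment would express the same dependencies as tasks in a DAG definition.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical unit operations for one build-test round. Each key lists the
# operations that must finish before it can start (the DAG's incoming edges).
WORKFLOW = {
    "pcr_setup": {"primer_plate_prep", "master_mix_prep"},
    "assembly": {"pcr_setup"},
    "transformation": {"assembly"},
    "cultivation": {"transformation"},
    "screening_assay": {"cultivation"},
    "data_capture": {"screening_assay"},
}


def execute(dag, run_step):
    """Run steps in dependency order; return the batches that were ready together."""
    sorter = TopologicalSorter(dag)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # steps whose prerequisites are all done
        for step in ready:
            run_step(step)  # in a biofoundry: dispatch to an instrument, log results
            sorter.done(step)
        batches.append(ready)
    return batches


run_log = []
batches = execute(WORKFLOW, run_log.append)
print(batches[0])  # ['master_mix_prep', 'primer_plate_prep']
```

Here the two preparation steps come back in the same batch because neither depends on the other, which is exactly the information a scheduler uses to run them on separate instruments at once.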

The Scientist's Toolkit: Research Reagent Solutions

The following table details key materials and resources essential for implementing this enzyme engineering workflow.

Table 2: Essential Research Reagents and Resources

| Item | Function/Description | Specific Example/Note |
|---|---|---|
| Sequence Coevolution Tool (e.g., EVcouplings) | Software for identifying evolutionarily coupled residues from multiple sequence alignments. | Critical for the Design phase; predicts stabilizing residue contacts [29] [30]. |
| Liquid-Handling Robot | Automated platform for precise liquid transfers in microplates. | Enables high-throughput PCR setup and assay screening in the Build and Test phases [13]. |
| Workflow Orchestrator (e.g., Apache Airflow) | Software that coordinates the execution of automated tasks in the correct sequence. | Manages the DAG representation of the experimental protocol [13]. |